YAC-Net: A Lightweight Deep Learning Model for Automated Parasite Egg Detection in Microscopy Images

This article explores YAC-Net, a novel lightweight deep learning model designed for the accurate and efficient detection of parasite eggs in microscopy images.


Abstract

This article explores YAC-Net, a novel lightweight deep learning model designed for the accurate and efficient detection of parasite eggs in microscopy images. Written for researchers and drug development professionals, it details the model's foundational context in combating intestinal parasitic infections, its architectural improvements over existing frameworks such as YOLOv5, and its optimization for low-resource settings. It then provides a methodological breakdown of YAC-Net's application, discusses troubleshooting and performance optimization strategies, and presents validation and comparative analysis against state-of-the-art detection methods. Together, these sections underscore YAC-Net's potential to automate diagnostic processes in biomedical research and global public health initiatives.

The Urgent Need for Automated Diagnostics: Exploring YAC-Net's Role in Public Health and Drug Development

The Global Burden of Intestinal Parasitic Infections (IPIs)

Intestinal parasitic infections (IPIs) pose a critical global health challenge, affecting over one billion people worldwide and representing a significant cause of morbidity and mortality, particularly in developing regions [1]. The World Health Organization (WHO) estimates that 24% of the world's population (approximately 1.5 billion people) suffers from IPIs, predominantly from soil-transmitted helminths [1]. Recent meta-analyses indicate that approximately 450 million people become ill annually as a direct result of these infections, with children bearing the highest burden [2].

The prevalence of IPIs demonstrates significant geographical variation, influenced by climatic, socioeconomic, and environmental factors. These infections are most prevalent in tropical and subtropical regions, including sub-Saharan Africa, Asia, Latin America, and the Caribbean [1]. Over 50% of the population in some sub-Saharan African regions is affected by IPIs [1]. In developed countries, intestinal protozoal infections are more common than helminthic infections, with Giardia lamblia being the most prevalent parasitic cause of diarrhea in the United States [1].

Table 1: Global Prevalence of Major Intestinal Parasitic Infections

| Parasite Category | Representative Pathogens | Estimated Global Burden | High Prevalence Regions |

|---|---|---|---|

| Soil-Transmitted Helminths | Ascaris lumbricoides, Trichuris trichiura, Ancylostoma duodenale, Necator americanus | 1.5 billion people infected [1] | Sub-Saharan Africa, Asia, Latin America [1] |

| Intestinal Protozoa | Giardia duodenalis, Cryptosporidium parvum/hominis, Entamoeba histolytica | 7% prevalence in developed countries; up to 30% in developing countries for giardiasis [1] | Global distribution, with higher burden in developing regions [1] |

| Other Pathogenic Parasites | Blastocystis spp., Cyclospora cayetanensis, Cystoisospora belli | Significant contributor to diarrheal illness globally [1] | Both developed and developing countries [1] |

Clinical Significance and Health Impact

Morbidity and Mortality

IPIs contribute significantly to global disease burden, measured by Disability-Adjusted Life Years (DALYs). The clinical manifestations of IPIs vary widely depending on the causative organism, host immune status, and infection intensity. Common presentations include diarrhea, dysentery, abdominal pain, nausea, vomiting, nutritional deficiencies, iron deficiency anemia, and perianal itching [1]. In severe cases, particularly with heavy worm burdens, intestinal obstruction can occur [1].

The impact of parasitic infections extends beyond acute gastrointestinal symptoms. Chronic infections can lead to impaired nutrient absorption, growth retardation in children, cognitive deficits, and reduced work capacity in adults [2]. A 2025 systematic review and meta-analysis revealed a significant association between IPIs and colorectal cancer (CRC), with infected individuals having 3.61 times higher odds of developing CRC [3] [4]. The pooled prevalence of IPIs among CRC patients was 19.67%, suggesting a potential role of chronic inflammation in carcinogenesis [3].

Economic Consequences

The economic impact of IPIs is substantial, resulting from healthcare costs, lost productivity, and effects on cognitive development. The burden is particularly heavy in agricultural communities where infection can reduce work capacity. Beyond human infections, parasites also damage agriculture: soil-borne nematodes cause global crop yield losses estimated at $125 to $350 billion annually [2].

Major Pathogenic Parasites: Biology and Pathogenesis

Giardia duodenalis

Giardia duodenalis (also known as Giardia lamblia or Giardia intestinalis) is a flagellate protozoan and a major cause of epidemic and sporadic diarrhea worldwide [1]. It exists in two forms: the vegetative/active trophozoite and the infectious cyst [1]. Infection occurs through ingestion of cysts in fecal-contaminated water or food, with excystation occurring in the duodenum [1].

Pathogenesis: Trophozoites adhere to enterocyte brush borders via a ventral sucking disc, causing damage to the brush border epithelium, shortening of microvilli, and disruption of epithelial barrier function [1]. These changes lead to nutrient malabsorption, diarrhea, and steatorrhea through multiple mechanisms including disaccharidase deficiency, impaired glucose-dependent sodium absorption, and chloride hypersecretion [1].

Clinical Presentation: Patients typically present with diarrhea, abdominal cramps, nausea, gas, and bloating [1]. Chronic giardiasis may manifest with full-spectrum malabsorption of carbohydrates, proteins, fats, vitamins, and minerals, leading to significant weight loss and nutritional deficiencies [1].

Cryptosporidium Species

Cryptosporidium parvum and C. hominis are intracellular protozoan parasites causing diarrheal illness worldwide, primarily transmitted through contaminated water [1]. The infection begins with ingestion of oocysts that excyst in the small intestine, releasing sporozoites that invade epithelial cells [1].

Pathogenesis: Cryptosporidium affects primarily the distal jejunum and ileum, altering epithelial barrier function and increasing intestinal permeability [1]. Diarrhea results mainly from enterotoxic effects that increase secretion of water and electrolytes [1]. Animal models demonstrate inhibition of glucose-stimulated sodium absorption, lymphocytic infiltration in the lamina propria, crypt hyperplasia, and villous atrophy [1].

Clinical Presentation: Immunocompetent individuals typically experience self-limiting acute diarrhea, while immunocompromised patients (e.g., AIDS, transplant recipients) may develop chronic protracted diarrhea, acalculous cholecystitis, sclerosing cholangitis, and acute pancreatitis [1].

Table 2: Characteristics of Major Intestinal Parasitic Pathogens

| Parasite | Transmission Route | Primary Target Site | Key Pathogenic Mechanisms |

|---|---|---|---|

| Giardia duodenalis | Fecal-oral (contaminated water/food) [1] | Proximal small intestine [1] | Brush border damage, villous shortening, disrupted barrier function, disaccharidase deficiency [1] |

| Cryptosporidium spp. | Waterborne, fecal-oral, recreational water [1] | Distal jejunum and ileum [1] | Epithelial barrier disruption, enterotoxin-mediated secretion, villous atrophy [1] |

| Entamoeba histolytica | Fecal-oral [5] | Colon, liver (for abscesses) [5] | Contact-dependent cytolysis, invasion of intestinal mucosa [5] |

| Soil-Transmitted Helminths | Soil-transmitted, percutaneous (hookworm) [1] | Small intestine [1] | Mucosal invasion, blood feeding (hookworm), nutrient competition [1] |

Diagnostic Approaches and Protocols

Conventional Diagnostic Methods

The primary method for diagnosing most IPIs remains stool microscopy [1]. The Centers for Disease Control and Prevention (CDC) recommends collecting three stool samples over several days to improve detection sensitivity [1]. Different staining techniques enhance identification:

- Direct fluorescent antibody (DFA) testing for Giardia and Cryptosporidium (sensitivity: 93-100%, specificity: 99.8-100%) [1]

- Trichrome staining for protozoal trophozoites [1]

- Enzyme immunoassay (EIA) and rapid immunochromatographic cartridge assays for antigen detection [1]

For Giardia infections, duodenal fluid aspiration or biopsy may be necessary when stool examinations are repeatedly negative [1]. Molecular assays using PCR-based techniques are increasingly available for subtyping genetic variants of parasites [1].
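The sensitivity and specificity figures quoted for DFA testing translate into predictive values only once a prevalence is fixed. The sketch below applies Bayes' rule to the lower bounds of the quoted ranges; the 7% prevalence is an assumed example value, not a recommendation for any particular setting.

```python
from typing import Tuple


def predictive_values(sensitivity: float, specificity: float,
                      prevalence: float) -> Tuple[float, float]:
    """Return (PPV, NPV) for a binary diagnostic test via Bayes' rule."""
    true_pos = sensitivity * prevalence
    false_neg = (1.0 - sensitivity) * prevalence
    true_neg = specificity * (1.0 - prevalence)
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Lower bounds of the DFA ranges above; prevalence is an assumed illustration.
ppv, npv = predictive_values(sensitivity=0.93, specificity=0.998, prevalence=0.07)
print(f"PPV = {ppv:.3f}, NPV = {npv:.3f}")
```

Re-running the function with a lower prevalence shows how quickly the positive predictive value falls even when specificity is very high.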

Experimental Protocol: Standard Stool Examination Procedure

Materials Required:

- Fresh stool specimen

- Microscope slides and coverslips

- Saline solution

- Lugol's iodine

- Formalin-ethyl acetate concentration reagents

- Appropriate staining solutions (trichrome, modified acid-fast)

- Centrifuge and centrifuge tubes

Procedure:

- Sample Collection: Collect stool specimen in clean, wide-mouthed container without preservatives for fresh examination.

- Direct Wet Mount:

  - Emulsify small portion of stool (approximately 2 mg) in saline solution.

  - Prepare similar preparation in Lugol's iodine.

  - Examine under 10x and 40x objectives for cysts, trophozoites, ova, and larvae.

- Formalin-Ethyl Acetate Concentration:

  - Mix 1-2 g stool with 10 mL formalin in centrifuge tube, let stand 30 minutes.

  - Strain through gauze into second centrifuge tube.

  - Add 3 mL ethyl acetate, stopper, and shake vigorously.

  - Centrifuge at 500 x g for 10 minutes.

  - Examine sediment from interface layer as wet mount.

- Staining Procedures:

  - Prepare smears for trichrome staining (protozoa) or modified acid-fast staining (coccidian parasites).

  - Follow specific staining protocols for each method.

- Microscopic Examination:

  - Systematically scan entire coverslip area under low power.

  - Use high-power magnification for detailed morphology.

  - Identify parasites based on size, shape, internal structures.
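The concentration step specifies a relative centrifugal force (500 x g), whereas many benchtop centrifuges are set in rpm. A minimal conversion sketch follows, using the standard relation RCF = 1.118e-5 x r x rpm^2 with r in centimetres. The 10 cm rotor radius is an assumed example; the actual radius should be taken from the rotor's documentation.

```python
import math

RCF_FACTOR = 1.118e-5  # standard constant for radius in cm and speed in rpm


def rcf_from_rpm(rpm: float, rotor_radius_cm: float) -> float:
    """Relative centrifugal force (x g) produced at a given speed."""
    return RCF_FACTOR * rotor_radius_cm * rpm ** 2


def rpm_from_rcf(rcf: float, rotor_radius_cm: float) -> float:
    """Speed in rpm needed to reach a target relative centrifugal force."""
    return math.sqrt(rcf / (RCF_FACTOR * rotor_radius_cm))


# Assumed rotor radius of 10 cm, for illustration only.
target_rpm = rpm_from_rcf(500, rotor_radius_cm=10.0)
print(f"500 x g on a 10 cm rotor needs about {target_rpm:.0f} rpm")
```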

Emerging Diagnostic Technologies: Deep Learning Applications

YAC-Net and AI-Enhanced Detection

Recent advances in artificial intelligence have revolutionized parasitic diagnosis through automated detection systems. The YAC-Net model represents a lightweight deep-learning approach designed specifically for rapid and accurate detection of parasitic eggs in microscopy images while reducing automation costs [6]. This system helps address challenges in traditional microscopy, including operator fatigue, inter-observer variability, and time-intensive manual processes.

The Kubic FLOTAC Microscope (KFM) exemplifies this technological evolution, combining FLOTAC/Mini-FLOTAC techniques with AI-powered predictive models for automated parasite egg detection [7]. This portable digital microscope system demonstrates high sensitivity, accuracy, and precision in both laboratory and field settings, with studies showing a mean absolute error of only 8 eggs per sample in fecal egg counts [7].
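Mean absolute error, the metric quoted for the KFM egg counts, is the average absolute difference between reference and automated counts per sample. The sketch below shows the calculation; the counts are hypothetical placeholders, not data from the cited study.

```python
def mean_absolute_error(reference_counts, automated_counts):
    """Average absolute difference between paired per-sample egg counts."""
    if not reference_counts or len(reference_counts) != len(automated_counts):
        raise ValueError("need two non-empty lists of equal length")
    diffs = [abs(r - a) for r, a in zip(reference_counts, automated_counts)]
    return sum(diffs) / len(diffs)


# Hypothetical paired counts (manual reading vs. automated detection).
manual = [120, 45, 0, 310]
automated = [112, 50, 2, 322]
mae = mean_absolute_error(manual, automated)
print(f"MAE = {mae:.2f} eggs per sample")
```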

Experimental Protocol: AI-Assisted Parasite Detection Workflow

Materials Required:

- Kubic FLOTAC Microscope or standard microscope with digital imaging capability

- FLOTAC or Mini-FLOTAC kit

- Stool samples prepared using standardized flotation techniques

- Computer workstation with GPU capability

- Deep learning model (YAC-Net or similar architecture)

Procedure:

- Sample Preparation:

  - Prepare fecal samples using Mini-FLOTAC technique with appropriate flotation solution.

  - Fill chambers and allow to stand for 10-15 minutes.

- Image Acquisition:

  - Capture digital images of entire chamber using KFM or microscope-mounted camera.

  - Ensure consistent lighting and magnification across samples.

  - Save images in standardized format (JPEG or PNG).

- Model Processing:

  - Input images into YAC-Net model for automated detection.

  - Model generates bounding boxes around suspected parasitic structures.

  - Classification probabilities assigned to each detection.

- Validation and Verification:

  - Expert parasitologist reviews AI-generated annotations.

  - Correct misclassifications and add missed detections if necessary.

  - Update model with corrected annotations for continuous learning.

- Quantification and Reporting:

  - Calculate eggs per gram (EPG) based on detection counts.

  - Generate standardized clinical report.
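The quantification step can be sketched as follows: detections are filtered by confidence, counted per class, and scaled to eggs per gram using the amount of feces actually examined. The detection dictionary layout, the 0.5 confidence cut-off, and the dilution values (2 g of feces in 40 mL of suspension, 2 mL read) are all assumptions for illustration; the values from the kit protocol in use should be substituted.

```python
from collections import Counter


def count_eggs(detections, min_confidence=0.5):
    """Count detections per class label at or above a confidence cut-off."""
    return Counter(d["label"] for d in detections
                   if d["confidence"] >= min_confidence)


def eggs_per_gram(egg_count, feces_g, suspension_ml, volume_read_ml):
    """Scale a raw count by the grams of feces contained in the volume read."""
    grams_examined = feces_g * volume_read_ml / suspension_ml
    return egg_count / grams_examined


# Hypothetical model output for one sample.
detections = [
    {"label": "Ascaris lumbricoides", "confidence": 0.97},
    {"label": "Ascaris lumbricoides", "confidence": 0.88},
    {"label": "Trichuris trichiura", "confidence": 0.91},
    {"label": "Trichuris trichiura", "confidence": 0.32},  # below cut-off
]

counts = count_eggs(detections)
epg = {label: eggs_per_gram(n, feces_g=2.0, suspension_ml=40.0, volume_read_ml=2.0)
       for label, n in counts.items()}
print(epg)
```

Keeping the scaling factor explicit, rather than hard-coding a multiplier, makes it harder to apply the wrong factor when the dilution protocol changes.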

The following diagram illustrates the integrated diagnostic workflow combining conventional and AI-enhanced approaches:

Therapeutic Interventions and Drug Development

Current Antiparasitic Agents

Treatment of IPIs involves various classes of antiparasitic drugs targeting different parasitic groups:

Antiprotozoal Agents:

- Metronidazole and tinidazole: First-line treatment for giardiasis, amebiasis, and trichomoniasis [8] [9]. Metronidazole is activated inside Giardia trophozoites through reduction by ferredoxins, damaging parasite DNA [1].

- Nitazoxanide: Effective against cryptosporidiosis and giardiasis [9].

- Paromomycin: Luminal amebicide used for intestinal infections [9].

Anthelmintic Agents:

- Albendazole and mebendazole: Broad-spectrum anthelmintics effective against soil-transmitted helminth infections including ascariasis, hookworm infection, and trichuriasis [8].

- Pyrantel pamoate: Over-the-counter medication for enterobiasis (pinworms) and ascariasis [9].

- Praziquantel: Treatment for trematode and cestode infections [8].

- Ivermectin: Used for strongyloidiasis and off-label for scabies and lice [9].

Table 3: Drug Therapy for Common Intestinal Parasitic Infections

| Infection | First-Line Treatment | Alternative Agents | Special Considerations |

|---|---|---|---|

| Giardiasis | Metronidazole 250 mg TID for 5-7 days or Tinidazole 2 g single dose [1] [9] | Nitazoxanide, Albendazole, Paromomycin [9] | High single-dose tinidazole efficacy (92%) [1] |

| Cryptosporidiosis | Nitazoxanide | Paromomycin (in immunocompromised) | Often self-limiting in immunocompetent hosts [1] |

| Amebiasis | Metronidazole + Luminal agent (Paromomycin or Iodoquinol) [9] | Tinidazole, Erythromycin, Tetracycline | Luminal agent essential to eradicate cyst carriage [9] |

| Enterobiasis (Pinworm) | Albendazole or Pyrantel Pamoate [9] | Mebendazole | Treat all household contacts; repeat dose in 2 weeks [9] |

| Soil-Transmitted Helminths | Albendazole or Mebendazole [8] | Pyrantel Pamoate, Ivermectin | Single-dose regimens typically effective [8] |

Drug Development and Research Targets

Current drug development for parasitic diseases focuses on addressing limitations of existing agents, including resistance, toxicity, and limited spectrum of activity [10]. Key strategies include:

- Target-Based Drug Discovery: Identification of parasite-specific biochemical pathways and essential enzymes [5].

- Phenotypic Screening: Whole-organism screening of compound libraries against pathogenic parasites [10].

- Repurposing Approaches: Evaluation of existing drugs for antiparasitic activity [5].

Promising molecular targets for new antiparasitic drugs include:

- Methionine aminopeptidase 2 (MetAP2): Target of fumagillin in microsporidia [5].

- β-tubulin: Binding site for benzimidazoles like albendazole [5].

- Aspartic proteases: Inhibited by ritonavir and indinavir in microsporidia [5].

- Chitin synthesis enzymes: Targets for nikkomycins in microsporidia [5].

Research Reagent Solutions and Essential Materials

Table 4: Essential Research Reagents for IPI Investigation

| Reagent/Material | Application | Function | Examples/Specifications |

|---|---|---|---|

| FLOTAC/Mini-FLOTAC System | Parasite egg concentration and quantification [7] | Standardized fecal egg counting technique with high sensitivity and accuracy | Multi-chamber design, disposable |

| Kubic FLOTAC Microscope (KFM) | Automated digital parasitological diagnosis [7] | Portable microscope with AI integration for egg detection and classification | Battery-powered, web interface, dedicated AI server |

| Deep Learning Models (YAC-Net) | Automated parasite detection in microscopy images [6] | Lightweight convolutional neural network for rapid egg identification | Optimized for parasitic egg morphology |

| Parasite-Specific Antigen Detection Kits | Immunodiagnosis of specific parasitic infections [1] | Enzyme immunoassay (EIA) and rapid immunochromatographic tests for antigen detection | Giardia, Cryptosporidium, E. histolytica specific tests |

| Staining Reagents | Microscopic differentiation of parasitic structures [1] | Enhance visualization of cysts, trophozoites, and ova | Trichrome, modified acid-fast, iodine solutions |

| Antiparasitic Compound Libraries | Drug discovery and development [10] | Screening collections for identifying novel therapeutic agents | Diversity-oriented synthetic compounds, natural product extracts |

The global burden of intestinal parasitic infections remains substantial, affecting predominantly vulnerable populations in resource-limited settings. Effective management requires integrated approaches combining accurate diagnosis, effective treatment, and preventive measures. Emerging technologies, particularly AI-enhanced diagnostic systems like YAC-Net and the Kubic FLOTAC Microscope, offer promising avenues for improving detection accuracy and accessibility [7] [6].

Future research priorities include:

- Development of novel therapeutic agents to address drug resistance and limited treatment options [10]

- Validation and implementation of AI-based diagnostic systems in diverse field settings [7] [6]

- Enhanced understanding of parasite-host interactions and chronic sequelae of infections, including the association with colorectal cancer [3] [4]

- Implementation of integrated control programs combining mass drug administration with improved sanitation, health education, and access to clean water [1]

Addressing the challenge of IPIs requires multidisciplinary collaboration between researchers, healthcare providers, and public health officials to reduce the substantial global burden of these neglected infections.

Limitations of Manual Microscopy and Traditional Machine Learning

Modern biological and biomedical research heavily relies on microscopy for visualizing cellular and subcellular structures. For decades, image analysis in this domain has been dominated by two primary approaches: manual analysis by human experts and traditional machine learning algorithms. While these methods have enabled significant discoveries, they present considerable limitations in accuracy, throughput, and reproducibility, particularly in the era of high-content screening and large-scale quantitative biology [11] [12]. This document outlines the key constraints of these established approaches, providing a scientific rationale for the development of advanced deep learning frameworks like YAC-Net for microscopy image detection. The limitations discussed herein frame the critical research gap that next-generation computational models aim to address.

Critical Limitations of Manual Microscopy Analysis

Manual microscopy remains a reference technique in many laboratories, particularly for clinical validation and complex morphological assessments. However, quantitative analyses reveal significant constraints across multiple performance dimensions.

Quantitative Performance Deficits

Table 1: Performance Comparison of Manual vs. Automated Microscopy Analysis

| Performance Metric | Manual Microscopy | AI-Powered Automation | Experimental Evidence |

|---|---|---|---|

| Analysis Speed | Time-consuming; limited by human fatigue [13] | High-throughput; operates continuously [13] | Processes hundreds to thousands of items per minute [13] |

| Consistency & Reproducibility | Subject to intra- and inter-observer variability [12] | Applies uniform criteria consistently [13] | Reduces subjective inconsistencies in evaluation [13] |

| Quantitative Accuracy | Prone to human error in repetitive tasks [13] | Sub-pixel accuracy in measurements [13] | Detects minute variations invisible to human eye [13] |

| Data Volume Handling | Limited by practical human capacity [12] | Processes terabytes of multidimensional data [14] | Enables analyses previously deemed impractical [14] |

| Fatigue-Related Error Rate | Increases with prolonged task duration [13] | No performance degradation over time [13] | Contributes to mistakes in monotonous inspection [13] |

Operational and Economic Constraints

Beyond quantitative performance issues, manual microscopy presents significant operational challenges. The dependency on highly trained specialists creates workflow bottlenecks, particularly in high-volume diagnostic settings like hematology laboratories [15]. Furthermore, the labor-intensive nature of manual analysis makes it economically unsustainable for large-scale studies, as the time required for expert annotation often exceeds the data acquisition time by orders of magnitude [12].

In clinical practice, even advanced digital systems that incorporate manual verification pathways demonstrate these limitations. For instance, the Sysmex XN-3100 hematology analyzer requires manual reclassification of abnormal cells, particularly immature granulocytes and blasts, as the automated preclassification shows "significant deviation from linearity" (p<0.01) for these cell types [15]. This necessary human intervention slows diagnostic throughput and introduces variability dependent on operator expertise.

Limitations of Traditional Machine Learning in Microscopy

Traditional machine learning approaches (e.g., random forests, support vector machines) using hand-crafted features (e.g., intensity, texture, shape descriptors) represented a significant advancement over purely manual analysis but face fundamental limitations in complex biological imaging contexts.

Technical and Performance Constraints

Table 2: Key Limitations of Traditional Machine Learning in Microscopy

| Limitation Category | Specific Technical Constraints | Impact on Microscopy Analysis |

|---|---|---|

| Feature Engineering Dependency | Relies on manually designed features and thresholds [12] | Poor generalization across different imaging conditions or modalities [12] |

| Background Noise Sensitivity | Standard thresholding algorithms struggle with varying background [12] | High false positive/negative rates in low signal-to-noise images [12] |

| Complex Pattern Recognition | Limited capacity for hierarchical feature learning [16] | Inadequate for subtle morphological distinctions (e.g., spine classification) [17] |

| 3D Image Processing | Computational inefficiency with 3D image stacks [12] | Loss of spatial information critical for structural analysis [12] |

| Adaptation to New Data | Requires complete reprocessing and threshold recalibration [12] | Labor-intensive maintenance for evolving experimental conditions |

Practical Implementation Challenges

The technical limitations of traditional machine learning manifest in concrete analytical failures across multiple application domains. In neuron and dendritic spine analysis, these algorithms struggle with the complex morphological diversity of spines (filopodia, stubby, thin, and mushroom types), particularly in distinguishing subtle neck structures critical for functional classification [17]. Similarly, in parasitology, distinguishing between visually similar entities like Fasciola hepatica and Calicophoron daubneyi eggs presents considerable challenges for traditional pattern recognition approaches, necessitating specialized image processing steps to prevent false positives and misclassification [7].

The threshold selection problem represents a particularly persistent challenge. As noted in the development of TrueSpot, an automated fluorescence detection tool, "Setting a threshold at which algorithms consider puncta to be true signals versus just noise in an image has been a challenging aspect of image analysis" for traditional algorithms [12]. This limitation becomes especially problematic in fluorescence microscopy where background noise and signal intensity can vary substantially between experiments.
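To make the threshold selection problem concrete, the sketch below implements Otsu's method, a classic global thresholding algorithm, in plain Python and applies it to two hypothetical image fields with different background levels. The intensity values are invented for illustration, and this is not the approach used by TrueSpot.

```python
def otsu_threshold(pixels, levels=256):
    """Return the threshold t that maximises between-class variance.

    Pixels with intensity <= t are treated as background.
    """
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var = 0, -1.0
    w_bg, sum_bg = 0, 0
    for t in range(levels):
        w_bg += hist[t]
        sum_bg += t * hist[t]
        w_fg = total - w_bg
        if w_bg == 0:
            continue
        if w_fg == 0:
            break
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        between_var = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if between_var > best_var:
            best_t, best_var = t, between_var
    return best_t


# Hypothetical 8-bit intensities for two fields of view.
dim = [20] * 90 + [60] * 10      # background 20, puncta 60
bright = [80] * 90 + [120] * 10  # background 80, puncta 120
combined = dim + bright

per_field = (otsu_threshold(dim), otsu_threshold(bright))
global_t = otsu_threshold(combined)
false_positives = sum(1 for p in [80] * 90 if p > global_t)
print(per_field, global_t, false_positives)
```

Thresholding each field separately isolates the puncta in both, but a single threshold computed over the combined data settles at the dim background level, so every background pixel of the brighter field is counted as signal. This is the kind of recalibration burden that hand-tuned pipelines carry between experiments.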

Experimental Protocols for Benchmarking Analysis Methods

To quantitatively evaluate the limitations of traditional approaches and validate improved methodologies, standardized benchmarking protocols are essential. The following sections describe key experimental workflows for assessing analytical performance.

Protocol for Dendritic Spine Analysis and Classification

Objective: To quantitatively compare manual, traditional machine learning, and deep learning approaches for classifying dendritic spine morphologies in neuronal imaging.

Materials and Reagents:

Table 3: Research Reagent Solutions for Neuronal Imaging

| Reagent/Probe | Function | Application Notes |

|---|---|---|

| MemBright probes | Uniform plasma membrane labeling | Enables clear visualization of spine necks and heads; works in live/fixed samples [17] |

| Fluorescent Phalloidin | F-actin binding for spine labeling | Labels spine heads efficiently but neck signal may be weaker [17] |

| Membranous GFP variants | Membrane-specific targeting | Improved neck detection vs. cytosolic GFP (Addgene #117858) [17] |

| DiIC₁₈ / DiD | Lipophilic membrane dye | Diffuses through entire membrane; variability complicates segmentation [17] |

Imaging Workflow:

- Sample Preparation: Transfect hippocampal neurons with a membranous GFP variant or label with MemBright dye (5 min incubation in culture media) [17].

- Image Acquisition: Acquire 3D image stacks using super-resolution microscopy (Airyscan or SIM for live imaging; 3D-STED for fixed samples) [17].

- Multi-view Fusion: For fixed samples, acquire 4 views from different angles and align in same spatial reference frame [18].

- Resolution Enhancement: Apply deconvolution using Classic Maximum Likelihood Estimation in Huygens software [17].

Analysis Workflow:

Diagram Title: Experimental Workflow for Spine Analysis Benchmarking

Protocol for Parasite Egg Detection and Discrimination

Objective: To evaluate the performance of different computational approaches in distinguishing between morphologically similar parasite eggs in fecal samples.

Materials and Reagents:

- Kubic FLOTAC Microscope (KFM) system [7]

- FLOTAC/Mini-FLOTAC sample preparation apparatus [7]

- Fecal samples spiked with Fasciola hepatica and Calicophoron daubneyi eggs [7]

Experimental Workflow:

- Sample Preparation: Prepare egg-spiked samples and naturally infected samples using FLOTAC technique to create datasets for model training and evaluation [7].

- Image Acquisition: Acquire digital microscopy images using the KFM system, which includes automated parasite egg detection via an AI predictive model [7].

- Model Training: Train traditional machine learning models (using hand-crafted morphological features) and deep learning models (end-to-end) on the annotated dataset.

- Performance Evaluation: Compare quantitative metrics including fecal egg count accuracy, false positive rates, and discrimination accuracy between parasite species.
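The performance evaluation step depends on deciding which predicted boxes count as correct. A common convention, sketched below, matches each prediction to an unmatched ground-truth box of the same class when their intersection over union (IoU) is at least 0.5, processing predictions in descending confidence. The box coordinates, tuple layout, and greedy matching rule are assumptions for illustration, not the evaluation code of the cited studies.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """predictions: (box, label, confidence); ground_truth: (box, label)."""
    matched = set()
    tp = fp = 0
    for box, label, _conf in sorted(predictions, key=lambda p: p[2], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, (gt_box, gt_label) in enumerate(ground_truth):
            if idx in matched or gt_label != label:
                continue
            overlap = iou(box, gt_box)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            tp += 1
        else:
            fp += 1  # wrong species, poor localisation, or spurious detection
    fn = len(ground_truth) - len(matched)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical annotations and predictions for one image.
truth = [((10, 10, 50, 50), "Fasciola hepatica"),
         ((100, 100, 140, 140), "Calicophoron daubneyi")]
preds = [((12, 12, 52, 52), "Fasciola hepatica", 0.90),
         ((100, 100, 140, 140), "Fasciola hepatica", 0.80),      # species confusion
         ((300, 300, 340, 340), "Calicophoron daubneyi", 0.60)]  # spurious box
precision, recall = precision_recall(preds, truth)
print(precision, recall)
```

Because a correctly localised box with the wrong species label counts as both a false positive and a missed egg, this metric penalises exactly the Fasciola/Calicophoron confusion the protocol is designed to measure.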

Analysis Workflow:

Diagram Title: Parasite Egg Detection and Discrimination Workflow

The limitations of manual microscopy and traditional machine learning present significant constraints on the scale, accuracy, and reproducibility of image-based research in biological sciences and clinical diagnostics. Manual methods, while valuable for complex qualitative assessments, suffer from inherent limitations in throughput, consistency, and quantitative rigor. Traditional machine learning approaches, while improving analytical scalability, remain constrained by their dependency on hand-crafted features and sensitivity to imaging variations. These documented limitations establish a compelling rationale for the development and implementation of advanced deep learning frameworks like YAC-Net, which offer the potential for end-to-end learning, robust performance across diverse imaging conditions, and discovery of previously unrecognized morphological features directly from pixel data.

The Rise of Deep Learning in Biomedical Image Analysis

Application Note: YAC-Net for Automated Parasite Egg Detection

The application of deep learning in biomedical image analysis represents a paradigm shift in diagnostic methodologies, particularly for tasks requiring high precision and reproducibility. Within this context, the YAC-Net deep learning model exemplifies this transition, specifically engineered for automated detection of parasite eggs in microscopy images. Intestinal parasitic infections (IPIs) remain a serious public health challenge in developing countries, with soil-transmitted helminth (STH) infection being a primary cause. The World Health Organization's 2023 statistics indicate approximately 1.5 billion STH-infected individuals worldwide, creating an urgent need for scalable diagnostic solutions [19].

Traditional microscopic examination, while considered the gold standard, suffers from limitations including low efficiency, high workload, and dependence on the expertise and physical condition of the examiner. Manual examination accuracy is closely tied to the operator's prior knowledge, leading to potential inconsistencies. Automated detection systems can eliminate these dependencies, providing accurate, fast, and standardized results while offering identification services to populations lacking specialized professional expertise [19].

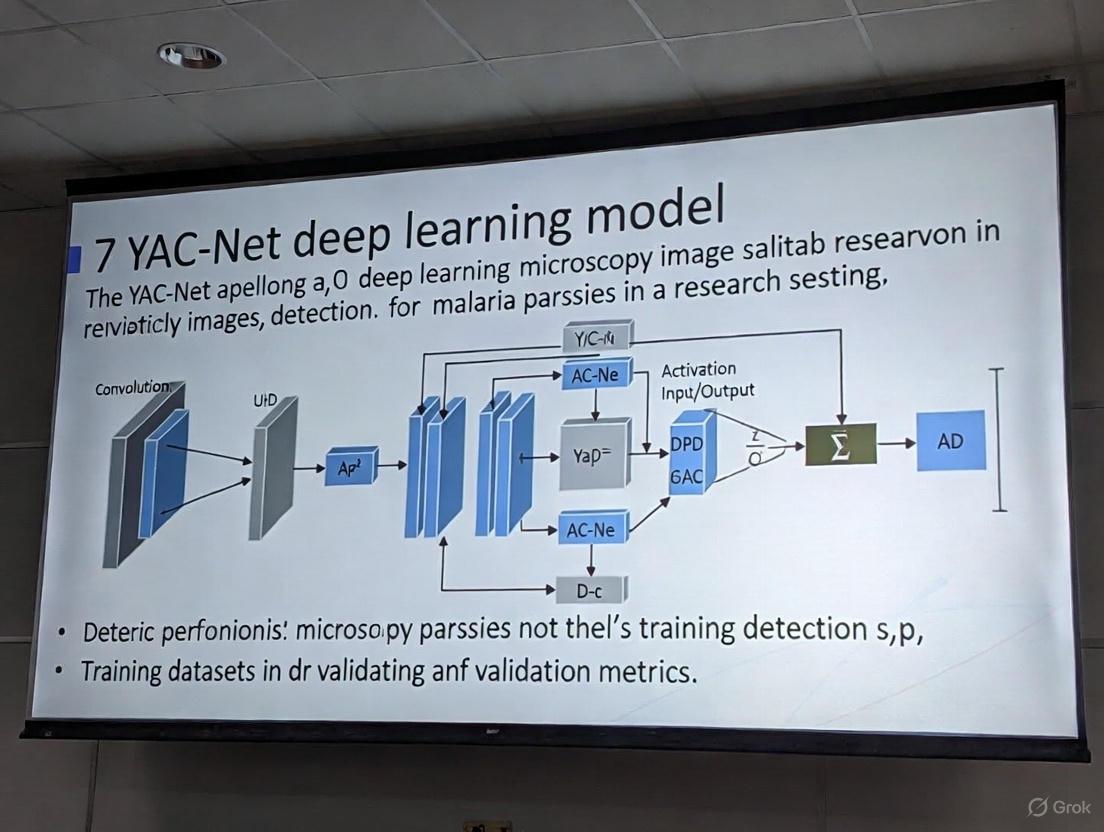

Technical Innovation: YAC-Net Architecture

YAC-Net is a lightweight deep-learning model designed to achieve rapid and accurate detection of parasitic eggs while reducing computational requirements. Built upon the YOLOv5n baseline model, it incorporates two key architectural improvements tailored to the characteristics of parasite egg images [19]:

- Asymptotic Feature Pyramid Network (AFPN) in the Neck: Replaces the traditional Feature Pyramid Network (FPN). Unlike FPN, which primarily integrates semantic feature information at adjacent levels, AFPN's hierarchical and asymptotic aggregation structure fully fuses spatial contextual information of egg images. Its adaptive spatial feature fusion mode helps the model select beneficial features while ignoring redundant information, thereby reducing computational complexity and improving detection performance [19].

- C2f Module in the Backbone: Replaces the original C3 module. This modification enriches gradient flow information, significantly enhancing the backbone network's feature extraction capability [19].
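The adaptive spatial feature fusion idea described above can be illustrated with a toy example: at every spatial location, features from several pyramid levels are combined using weights that are normalised with a softmax across levels. In the real network the weight logits are learned by convolutional layers and the features are multi-channel tensors; here both are small hand-written scalar maps, so this is a conceptual sketch rather than the YAC-Net implementation.

```python
import math


def adaptive_fuse(feature_maps, weight_logits):
    """Fuse same-sized 2D maps with per-location softmax weights across levels."""
    rows, cols = len(feature_maps[0]), len(feature_maps[0][0])
    fused = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            logits = [w[r][c] for w in weight_logits]
            peak = max(logits)  # subtract the max for numerical stability
            exps = [math.exp(v - peak) for v in logits]
            total = sum(exps)
            fused[r][c] = sum(e / total * f[r][c]
                              for e, f in zip(exps, feature_maps))
    return fused


# Three hypothetical levels, already resized to a common 1x2 resolution.
features = [[[1.0, 1.0]], [[2.0, 2.0]], [[3.0, 3.0]]]
# Equal logits at column 0; level 0 strongly preferred at column 1.
logits = [[[0.0, 10.0]], [[0.0, -10.0]], [[0.0, -10.0]]]
fused = adaptive_fuse(features, logits)
print(fused)
```

Where the logits are equal the output is a plain average of the levels; where one level dominates, the others are effectively ignored at that location, which is how the fusion can suppress redundant information.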

Table 1: Performance Comparison of YAC-Net Against Baseline and Other Methods

| Model | Precision (%) | Recall (%) | F1 Score | mAP@0.5 | Parameters |

|---|---|---|---|---|---|

| YOLOv5n (Baseline) | 96.7 | 94.9 | 0.9578 | 0.9642 | 2,410,378 |

| YAC-Net (Proposed) | 97.8 | 97.7 | 0.9773 | 0.9913 | 1,924,302 |

The experimental results, validated on the ICIP 2022 Challenge dataset using fivefold cross-validation, demonstrate that YAC-Net not only surpasses its baseline but also achieves state-of-the-art performance compared to other contemporary detection methods. Notably, it reduces the number of parameters by one-fifth, making it suitable for deployment in resource-constrained environments [19].
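The table figures can be cross-checked with a few lines of arithmetic. The sketch below recomputes F1 as the harmonic mean of the tabulated precision and recall, and derives the relative parameter reduction. Small differences from the tabulated F1 values are expected because the inputs here are rounded; the published figures may also be averaged across folds, which is an assumption rather than something the source states.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# Values copied from the performance table above.
models = {
    "YOLOv5n": {"precision": 0.967, "recall": 0.949, "params": 2_410_378},
    "YAC-Net": {"precision": 0.978, "recall": 0.977, "params": 1_924_302},
}

for name, m in models.items():
    print(f"{name}: F1 = {f1_score(m['precision'], m['recall']):.4f}")

reduction = 1 - models["YAC-Net"]["params"] / models["YOLOv5n"]["params"]
print(f"Parameter reduction: {reduction:.1%}")
```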

Broader Applicability and Workflow Integration

The principles embodied by YAC-Net are reflected in other successful biomedical imaging platforms. For instance, the Kubic FLOTAC Microscope (KFM) is an AI-enhanced, portable digital microscope that integrates a robust AI predictive model for automated parasite egg detection. It combines the high sensitivity and accuracy of the FLOTAC/Mini-FLOTAC sample preparation technique with a dedicated AI server for image analysis. This system has been specifically optimized to discriminate between hard-to-distinguish eggs, such as those of Fasciola hepatica and Calicophoron daubneyi, minimizing false positives and ensuring accurate egg counts through additional image processing steps [7].

Furthermore, the trend towards multi-modal, lightweight models is evident in other areas of medical image analysis. Research into optimized deep learning frameworks that can simultaneously diagnose multiple diseases from various imaging modalities (e.g., Chest X-ray, MRI, endoscopy) using truncated architectures and metaheuristic optimization highlights the drive for computational efficiency without sacrificing diagnostic accuracy [20].

Experimental Protocols

Protocol 1: Model Training and Evaluation for YAC-Net

Objective: To train and evaluate the YAC-Net deep learning model for the detection of parasite eggs in microscopy images.

Materials:

- Dataset: ICIP 2022 Challenge dataset or equivalent annotated dataset of parasite egg microscopy images.

- Hardware: Computer with a CUDA-compatible GPU (e.g., NVIDIA GeForce RTX series) for accelerated training.

- Software: Python 3.8+, PyTorch 1.7+, Ultralytics YOLOv5 library, OpenCV, NumPy.

- Model: YAC-Net implementation (based on modified YOLOv5n with AFPN and C2f modules).

Procedure:

Data Preparation:

- Organize the dataset into training, validation, and test sets. A fivefold cross-validation strategy is recommended for robust evaluation [19].

- Apply standard data augmentation techniques to improve model generalization (e.g., random flipping, rotation, scaling, color jittering, and mosaic augmentation).
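
The fivefold split recommended above can be sketched with the standard library alone. The file names below are hypothetical placeholders, and the split is a plain shuffled partition; for a multi-class egg dataset, a stratified split per class may be preferable.

```python
import random


def kfold_splits(items, k=5, seed=0):
    """Yield (train, val) lists for k-fold cross-validation."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)      # fixed seed for reproducible folds
    folds = [shuffled[i::k] for i in range(k)]  # k roughly equal, disjoint folds
    for i in range(k):
        val = folds[i]
        train = [x for j, fold in enumerate(folds) if j != i for x in fold]
        yield train, val


image_ids = [f"img_{n:04d}.jpg" for n in range(23)]  # hypothetical file names
splits = list(kfold_splits(image_ids, k=5))
for fold_idx, (train, val) in enumerate(splits):
    print(f"fold {fold_idx}: {len(train)} train / {len(val)} val")
```

Each image appears in exactly one validation fold, so metrics averaged over the five runs cover the whole dataset once.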

Model Configuration:

- Initialize the model with the YAC-Net architecture, replacing the standard FPN with AFPN and the C3 modules with C2f modules in the backbone.

- Configure training hyperparameters. A standard initial setup includes:

  - Optimizer: SGD or Adam

  - Learning Rate: Use a learning rate scheduler (e.g., OneCycleLR)

  - Batch Size: Maximize based on available GPU memory.

  - Epochs: Train until validation metrics plateau.

Training:

- Load the preprocessed training dataset.

- Execute the training loop, monitoring losses (box loss, objectness loss, classification loss) on both training and validation sets.

- Save model checkpoints at intervals or when performance on the validation set improves.
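
The "save on improvement" and "train until metrics plateau" rules above can be combined in a small helper. This is an illustrative sketch, not part of any YAC-Net code: the class name `PlateauTracker`, the `patience` and `min_delta` values, and the validation scores are all hypothetical.

```python
class PlateauTracker:
    """Track the best validation score and signal when it stops improving."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # minimum gain that counts as improvement
        self.best_score = float("-inf")
        self.best_epoch = -1
        self.epochs_since_best = 0

    def update(self, epoch, score):
        """Return True when this epoch is a new best (i.e. save a checkpoint)."""
        if score > self.best_score + self.min_delta:
            self.best_score = score
            self.best_epoch = epoch
            self.epochs_since_best = 0
            return True
        self.epochs_since_best += 1
        return False

    @property
    def should_stop(self):
        return self.epochs_since_best >= self.patience


# Hypothetical validation mAP@0.5 values, one per epoch.
val_map = [0.80, 0.88, 0.93, 0.95, 0.949, 0.951, 0.950, 0.9505, 0.950]
tracker = PlateauTracker(patience=3, min_delta=0.002)
saved_epochs = []
stopped_at = None
for epoch, score in enumerate(val_map):
    if tracker.update(epoch, score):
        saved_epochs.append(epoch)  # in real training: write model weights here
    if tracker.should_stop:
        stopped_at = epoch
        break
```

With these made-up scores, checkpoints are written for the first four epochs and training halts once three consecutive epochs fail to beat the best score by at least `min_delta`.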

Evaluation:

- Use the held-out test set to evaluate the final model.

- Calculate key performance metrics, including Precision, Recall, F1 Score, and mean Average Precision at an IoU threshold of 0.5 (mAP@0.5) [19].

- Compare the parameter count and computational speed (FPS) against the baseline model.
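
To make the evaluation metrics concrete, the sketch below computes precision, recall, and F1 at an IoU threshold of 0.5 for a single image and a single class. It uses a simple greedy matching rule, which is an assumption of this example; a full mAP@0.5 computation additionally sweeps the confidence threshold and integrates precision over recall. All boxes are made up.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall_f1(predictions, ground_truth, iou_threshold=0.5):
    """Greedy one-to-one matching of predictions (highest score first) to ground truth."""
    matched = set()
    tp = 0
    for box, _score in sorted(predictions, key=lambda p: p[1], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, gt in enumerate(ground_truth):
            if idx in matched:
                continue  # each ground-truth box can be matched only once
            overlap = iou(box, gt)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            tp += 1
    fp = len(predictions) - tp
    fn = len(ground_truth) - tp
    precision = tp / (tp + fp) if predictions else 0.0
    recall = tp / (tp + fn) if ground_truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Made-up example: three annotated eggs, three predictions (one is a false positive).
ground_truth = [(10, 10, 50, 50), (100, 100, 140, 140), (200, 200, 240, 240)]
predictions = [((12, 12, 52, 52), 0.9), ((100, 100, 140, 140), 0.8), ((300, 300, 340, 340), 0.7)]
precision, recall, f1 = precision_recall_f1(predictions, ground_truth)
```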

Troubleshooting:

- If overfitting is observed (high training metrics, low validation metrics), increase the intensity of data augmentation or incorporate regularization techniques like dropout or weight decay.

- For poor convergence, verify the learning rate schedule and consider using a pre-trained backbone for transfer learning.

Protocol 2: Sample Processing and AI-Assisted Diagnosis Using KFM System

Objective: To prepare fecal samples and perform automated parasite egg detection and counting using the Kubic FLOTAC Microscope system.

Materials:

- Kubic FLOTAC Microscope: Includes portable digital microscope, integrated AI server, and web interface [7].

- FLOTAC or Mini-FLOTAC kit: Including fill-FLOTAC chambers, flotation cups, and solution filters [7].

- Flotation Solution: Zinc sulfate or sodium nitrate solution of appropriate specific gravity.

- Fecal Sample: Fresh or preserved fecal material from the subject.

Procedure:

Sample Preparation:

- Weigh a specific amount of feces (e.g., 1-2 grams) and place it into a flotation cup.

- Add the flotation solution gradually, emulsifying the sample thoroughly to create a homogeneous suspension.

- Filter the suspension to remove large debris.

- Carefully transfer the filtered suspension to the FLOTAC chamber, avoiding bubble formation.

System Setup:

- Power on the KFM device. Ensure the integrated battery is charged or connect to a power source.

- Access the microscope control web interface from a connected computer or tablet.

- Launch the dedicated AI analysis software/server.

Image Acquisition and Analysis:

- Place the prepared FLOTAC chamber into the microscope stage.

- Initiate the automated scanning procedure via the web interface. The system will capture multiple field-of-view images.

- The onboard AI model will process the captured images in real-time, detecting, classifying, and counting parasite eggs.

- Review the generated clinical report, which includes egg types and counts per gram of sample.
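
The "eggs per gram" figure in the report is, in general, a raw count scaled by the dilution and by the fraction of the suspension that was actually examined. How the KFM software performs this step is not described in the source, so the function below is only a generic sketch of the arithmetic; the function name and all numeric values are illustrative assumptions.

```python
def eggs_per_gram(eggs_counted, feces_mass_g, suspension_volume_ml, volume_examined_ml):
    """Scale a raw egg count to eggs per gram (EPG) of feces."""
    if min(feces_mass_g, suspension_volume_ml, volume_examined_ml) <= 0:
        raise ValueError("mass and volumes must be positive")
    # Each counted egg stands for this many eggs per gram of the original sample.
    multiplication_factor = suspension_volume_ml / (volume_examined_ml * feces_mass_g)
    return eggs_counted * multiplication_factor


# Illustrative numbers only: 2 g of feces in 40 ml of suspension, 2 ml examined.
epg = eggs_per_gram(
    eggs_counted=12,
    feces_mass_g=2.0,
    suspension_volume_ml=40.0,
    volume_examined_ml=2.0,
)
print(epg)
```

In this example each counted egg corresponds to 10 eggs per gram, so the multiplication factor should always be derived from the dilution and chamber volume actually used.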

Troubleshooting:

- If the AI model produces false positives, ensure the sample preparation protocol is followed precisely to minimize artifacts. The system may require re-optimization for specific parasite types [7].

- For low detection sensitivity, verify the concentration and cleanliness of the flotation solution and the quality of the sample.

Workflow and Pathway Visualizations

Diagram 1: AI-Powered Parasite Egg Detection Workflow

Diagram 2: YAC-Net Model Optimization Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Automated Parasite Egg Detection Experiments

| Item Name | Function / Application | Key Characteristics / Notes |

|---|---|---|

| ICIP 2022 Challenge Dataset | Benchmark dataset for training and evaluating parasite egg detection models. | Provides standardized, annotated microscopy images for comparative algorithm validation [19]. |

| FLOTAC / Mini-FLOTAC Chamber | Sample preparation apparatus for fecal egg flotation and concentration. | Enables highly sensitive, quantitative egg counts; compatible with the KFM digital microscopy system [7]. |

| Zinc Sulfate Flotation Solution | Medium for separating parasite eggs from fecal debris via flotation. | Specific gravity (~1.18-1.20) is critical for optimal recovery of specific parasite eggs. |

| Kubic FLOTAC Microscope (KFM) | Portable, AI-integrated digital microscope for automated egg detection. | Combines reliable hardware with a dedicated AI server for end-to-end analysis in field and lab settings [7]. |

| YAC-Net Model Weights | Pre-trained parameters for the YAC-Net deep learning architecture. | Allows for transfer learning and fine-tuning on new datasets, accelerating project initiation [19]. |

| GPU-Accelerated Workstation | Hardware for model training and inference. | CUDA-compatible GPU (e.g., NVIDIA RTX) significantly reduces time required for model development and evaluation. |

YAC-Net's Position in the Evolving Landscape of AI-Assisted Diagnostics

The integration of artificial intelligence (AI) into medical diagnostics represents a paradigm shift in how diseases are detected and classified. Within this dynamic field, YAC-Net emerges as a specialized deep-learning model explicitly designed for the automated detection of parasite eggs in microscopy images [19]. Intestinal parasitic infections (IPIs) remain a serious public health challenge in developing nations, and their diagnosis critically depends on the accurate detection of parasites or their eggs in patient samples [19]. YAC-Net addresses the pressing need for diagnostic tools that are not only accurate but also computationally efficient, thereby lowering the hardware barriers for implementing automated screening in resource-limited settings [19]. This application note details the model's architecture, benchmarks its performance against contemporary methods, and provides a standardized protocol for its application in parasitic egg detection.

Technical Specifications and Performance Benchmarking

YAC-Net is architected as a lightweight deep-learning model, with its design originating from the YOLOv5n model, which serves as its baseline [19]. The developers introduced two key structural modifications to enhance performance and reduce computational complexity:

- Asymptotic Feature Pyramid Network (AFPN) in the Neck: The original Feature Pyramid Network (FPN) was replaced with an AFPN. This hierarchical and asymptotic aggregation structure allows for a more complete fusion of spatial contextual information from the input images. Its adaptive spatial feature fusion mechanism helps the model select beneficial features while ignoring redundant information, which reduces computational demands and improves detection performance [19].

- C2f Module in the Backbone: The C3 module in the backbone was replaced with a C2f module. This enrichment of gradient flow information enhances the backbone network's feature extraction capability, contributing to the model's high accuracy [19].

Ablation studies confirmed the effectiveness of these modifications in the process of model lightweighting [19]. The model was trained and evaluated using the ICIP 2022 Challenge dataset, with experiments conducted via fivefold cross-validation [19].

Table 1: Performance Metrics of YAC-Net on the Test Set

| Metric | Value |

|---|---|

| Precision | 97.8% |

| Recall | 97.7% |

| F1 Score | 0.9773 |

| mAP@0.5 | 0.9913 |

| Number of Parameters | 1,924,302 |

Table 2: Comparative Analysis of YAC-Net Against Other Detection Methods

| Model/Method | Key Characteristics | Reported Performance (mAP or Equivalent) |

|---|---|---|

| YAC-Net | Lightweight; modified YOLOv5n with AFPN & C2f [19] | mAP@0.5: 0.9913 [19] |

| Faster R-CNN | Two-stage detector; complex structure [21] [22] | Used in parasitic egg and colorectal cancer detection [21] [22] |

| YOLOv5n (Baseline) | Original model before YAC-Net modifications [19] | Lower than YAC-Net (YAC-Net improves mAP@0.5 by 0.0271) [19] |

| C2BNet | Coupled Composite Backbone with Swin-Transformer & CNN; large parameters [19] | High performance but computationally intensive [19] |

The experimental results demonstrate that, compared to its baseline (YOLOv5n), YAC-Net improves precision by 1.1 percentage points, recall by 2.8 percentage points, F1 score by 0.0195, and mAP@0.5 by 0.0271, while simultaneously reducing the number of parameters by one-fifth [19]. When benchmarked against other state-of-the-art detection methods, YAC-Net achieves leading performance in precision, F1 score, mAP@0.5, and parameter count [19].

Diagram 1: YAC-Net high-level architecture for parasite egg detection.

Detailed Experimental Protocol for Parasite Egg Detection

This protocol outlines the procedure for applying the pre-trained YAC-Net model to detect parasitic eggs in stool microscopy images, based on the methodology described in the foundational research [19].

Research Reagent Solutions and Essential Materials

Table 3: Essential Materials and Software for YAC-Net Implementation

| Item | Function/Description | Example/Note |

|---|---|---|

| Microscopy Stool Images | Raw input data for the detection model. | Images should be prepared per standard parasitology methods (e.g., similar to Kato-Katz [21]). |

| ICIP 2022 Challenge Dataset | Benchmark dataset for training and validating parasite egg detection models. | Largest public dataset of its kind; used for YAC-Net's development [19]. |

| YAC-Net Pre-trained Model | The core deep learning model for object detection. | Available from the original publication; built upon PyTorch framework. |

| Python (v3.8+) | Programming language environment. | Essential for running model inference scripts. |

| PyTorch Library | Deep learning framework. | Required to load and execute the YAC-Net model. |

| OpenCV Library | Computer vision library for image processing. | Used for image pre-processing and visualization of results. |

| GPU with CUDA Support | Hardware accelerator. | Recommended for significantly reducing inference time. |

Step-by-Step Workflow

Step 1: Image Acquisition and Pre-processing

- Image Acquisition: Collect stool sample images using a microscope equipped with a digital camera. Ensure consistent magnification and lighting conditions across images to maintain uniformity.

- Pre-processing: Resize input images to the required input dimensions of the YAC-Net model (typically 640x640 pixels). Normalize pixel values to a standard range (e.g., 0-1) to facilitate stable model inference.
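
The pre-processing step can be sketched as two small calculations: the geometry of the resize and the pixel normalization. Whether YAC-Net expects a plain resize or an aspect-preserving resize with padding ("letterboxing", common in YOLO-style pipelines) is not stated in the source, so the letterbox variant below is an assumption. The actual image resampling would be done with a library such as OpenCV and is omitted here.

```python
def letterbox_geometry(width, height, target=640):
    """Return (new_w, new_h, pad_left, pad_top) for an aspect-preserving resize."""
    scale = min(target / width, target / height)   # fit the longer side to target
    new_w, new_h = round(width * scale), round(height * scale)
    pad_left = (target - new_w) // 2                # centre the image horizontally
    pad_top = (target - new_h) // 2                 # centre the image vertically
    return new_w, new_h, pad_left, pad_top


def normalize_pixels(row):
    """Map 8-bit pixel values (0-255) to floats in [0, 1]."""
    return [value / 255.0 for value in row]


# A 1920x1080 micrograph scaled into a 640x640 model input.
geometry = letterbox_geometry(1920, 1080)
normalized = normalize_pixels([0, 128, 255])
print(geometry, normalized)
```

Keeping the aspect ratio avoids distorting egg shapes, which matters when shape is a discriminating feature between species.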

Step 2: Model Loading and Inference

- Environment Setup: Install the necessary software dependencies, including Python, PyTorch, OpenCV, and other libraries as specified in the model's repository.

- Load Model: Load the pre-trained YAC-Net weights into the model architecture using PyTorch. Transfer the model to a GPU if available for faster computation.

- Run Inference: Pass the pre-processed image through the model. YAC-Net will perform a single forward pass to predict bounding boxes and class probabilities for parasite eggs present in the image.

Step 3: Post-processing and Result Interpretation

- Post-processing: Apply a confidence threshold (e.g., 0.5) to filter out weak detections. Use Non-Maximum Suppression (NMS) to eliminate redundant bounding boxes that refer to the same egg.

- Visualization: Draw the final bounding boxes and class labels on the original image. The output should clearly indicate the location and type of any detected parasite eggs.

- Quantification: The model's output can be used to quantify the number of eggs per image, which can be correlated with infection intensity.
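
The post-processing and quantification steps can be illustrated with a plain-Python sketch of confidence filtering, greedy per-class NMS, and per-class counting. The class names and boxes are placeholders, and the NMS IoU threshold of 0.45 is an assumed value; production pipelines normally use an optimized library routine instead.

```python
from collections import Counter


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def filter_detections(detections, conf_threshold=0.5, iou_threshold=0.45):
    """Confidence filtering followed by greedy per-class NMS.

    Each detection is (box, score, class_name) with box = (x1, y1, x2, y2).
    """
    candidates = sorted(
        (d for d in detections if d[1] >= conf_threshold),
        key=lambda d: d[1],
        reverse=True,
    )
    kept = []
    for det in candidates:
        # Keep a box unless a higher-scoring box of the same class overlaps it.
        if all(det[2] != k[2] or iou(det[0], k[0]) <= iou_threshold for k in kept):
            kept.append(det)
    return kept


# Hypothetical raw model output for one image.
raw = [
    ((10, 10, 50, 50), 0.92, "Ascaris"),
    ((12, 11, 52, 51), 0.80, "Ascaris"),        # duplicate of the first egg
    ((100, 100, 150, 150), 0.65, "Trichuris"),
    ((200, 200, 240, 240), 0.30, "Ascaris"),    # below the confidence threshold
]
kept = filter_detections(raw)
egg_counts = Counter(class_name for _box, _score, class_name in kept)
```

The resulting per-class counts are the quantity that would be carried forward to estimate infection intensity.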

Diagram 2: YAC-Net diagnostic workflow from sample to result.

YAC-Net's Position in the AI Diagnostics Ecosystem

YAC-Net occupies a strategic position within the AI-assisted diagnostics landscape by addressing the critical trade-off between model performance and computational efficiency. While sophisticated models like Faster R-CNN and composite networks with Swin-Transformers have demonstrated high accuracy in various medical image analysis tasks, including colorectal cancer screening [22] and general microscopic image segmentation [23], they often require substantial computational resources [19]. This limits their deployability in remote or resource-poor settings where parasitic infections are most prevalent.

YAC-Net's lightweight design, achieved through the innovative use of AFPN and the C2f module, allows it to achieve state-of-the-art performance on parasitic egg detection with a parameter count reduced by one-fifth compared to its baseline [19]. This makes it a compelling solution for point-of-care diagnostic applications. Its design philosophy aligns with the growing need for "edge AI" in medicine, where models are optimized to run on lower-power devices without sacrificing diagnostic accuracy. Furthermore, the model's high recall rate of 97.7% is particularly crucial for a screening tool, as it minimizes false negatives, ensuring that infections are not missed [19].

YAC-Net represents a significant advancement in the application of deep learning for parasitic disease diagnostics. By prioritizing a lightweight architecture without compromising on performance, it provides a practical and effective pathway toward automating the detection of parasite eggs in microscopy images. The detailed protocols and performance benchmarks outlined in this application note underscore its robustness and readiness for further validation in clinical settings. For researchers and drug development professionals, YAC-Net serves as a powerful tool that can streamline diagnostic processes, enhance throughput, and contribute to the fight against neglected tropical diseases by making advanced diagnostic technology more accessible.

Connecting Automated Detection to Accelerated Drug Discovery and Development

The integration of automated detection systems powered by deep learning is fundamentally transforming the landscape of drug discovery and development. These technologies are poised to address critical bottlenecks in data generation and analysis, enabling researchers to extract more predictive information from complex, physiologically relevant model systems. This shift is particularly evident in the analysis of microscopy images, where high-content data can be repurposed to predict broader biological activity, thereby accelerating project timelines and reducing reliance on costly, low-throughput customized assays [24]. Framed within the context of ongoing research into the YAC-Net deep learning model—a lightweight architecture designed for precise, automated detection of parasite eggs in microscope images—this document outlines practical protocols and applications that bridge advanced detection and pharmaceutical development [25]. By providing detailed methodologies and data, these application notes aim to equip scientists with the tools to leverage such models for enhancing the efficiency and predictive power of their discovery workflows.

Quantitative Performance Data

The deployment of specialized deep learning models for image-based detection in a drug discovery context necessitates a clear understanding of model performance and computational requirements. The table below summarizes key quantitative metrics for the YAC-Net model and illustrates how repurposing high-content imaging data can impact drug discovery campaigns.

Table 1: Performance Metrics of the YAC-Net Detection Model

This table details the detection performance of the YAC-Net model as evaluated on the ICIP 2022 Challenge dataset using fivefold cross-validation [25].

| Metric | YOLOv5n (Baseline) | YAC-Net (Proposed) | Improvement |

|---|---|---|---|

| Precision | 96.7% | 97.8% | +1.1 percentage points |

| Recall | 94.9% | 97.7% | +2.8 percentage points |

| F1 Score | 0.9578 | 0.9773 | +0.0195 |

| mAP@0.5 | 0.9642 | 0.9913 | +0.0271 |

| Number of Parameters | 2,410,378 | 1,924,302 | Reduction of 486,076 (~20%) |

Table 2: Impact of Repurposing High-Content Imaging Data in Drug Discovery

This table summarizes the experimental results from repurposing a high-throughput imaging assay to predict activity in two separate drug discovery projects, demonstrating a significant increase in hit rates and chemical diversity [24].

| Project | Conventional Assay Hit Rate | Image-Based Prediction Hit Rate | Fold Increase in Hit Rate | Key Outcome |

|---|---|---|---|---|

| Project A | Baseline | 50x higher | 50 | Increased hit rate |

| Project B | Baseline | 250x higher | 250 | Increased chemical structure diversity of hits |

Experimental Protocols

Protocol A: Implementation of YAC-Net for High-Content Microscopy Image Analysis

This protocol describes the procedure for utilizing the pre-trained YAC-Net model to detect and quantify objects of interest (e.g., parasite eggs, specific cellular phenotypes) in microscopy images, generating structured data for downstream analysis.

I. Materials and Equipment

- YAC-Net Model Weights: Pre-trained model file (`yac_net.pt`).

- Inference Hardware: A computer with a CUDA-compatible GPU (e.g., NVIDIA GeForce RTX 3080) is recommended for speed.

- Software Environment: Python 3.8+, PyTorch 1.9+, OpenCV, and other dependencies as outlined in the YAC-Net repository.

- Input Data: A directory of microscopy images in standard formats (e.g., `.tiff`, `.png`).

II. Procedure

Environment Setup

- Create a Python virtual environment and install the required packages as listed in the project's `requirements.txt` file.

- Ensure the CUDA drivers and PyTorch are correctly configured to utilize the GPU.

Data Preparation

- Organize the microscopy images into a single directory. For consistent results, ensure images are saved with minimal compression.

- (Optional) If the model was trained on a specific image format or bit depth, pre-process the images to match, such as normalizing pixel values to [0, 1].

Model Inference

- Load the pre-trained YAC-Net model weights into the model architecture using the PyTorch `load_state_dict()` function.

- Set the model to evaluation mode with `model.eval()`.

- Use the provided inference script to process the image directory. The script will:

  a. Load and pre-process each image (e.g., resizing, normalization).

  b. Pass the image through the YAC-Net model.

  c. Apply non-maximum suppression (NMS) with an IoU threshold (e.g., 0.45) to remove overlapping detections.

  d. Output the bounding box coordinates, confidence scores, and class labels for all detections above a set confidence threshold (e.g., 0.5).

Output and Analysis

- The results can be saved in a structured format, such as a JSON file or CSV, for easy integration with data analysis pipelines.

- The output data can then be used for quantitative analysis, such as calculating the number of objects per image, object density, or spatial distribution.
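
As an illustration of the structured output described above, the sketch below serializes a list of detections to CSV and JSON and derives an objects-per-image count. The field names and example rows are hypothetical; the actual inference script may use a different schema.

```python
import csv
import io
import json
from collections import Counter

# Hypothetical inference output: one dict per detection.
detections = [
    {"image": "well_A01.png", "x1": 34, "y1": 50, "x2": 90, "y2": 112, "confidence": 0.91, "label": "egg"},
    {"image": "well_A01.png", "x1": 210, "y1": 40, "x2": 262, "y2": 95, "confidence": 0.77, "label": "egg"},
    {"image": "well_A02.png", "x1": 15, "y1": 300, "x2": 70, "y2": 358, "confidence": 0.84, "label": "egg"},
]


def to_csv(rows):
    """Serialize a list of flat dicts to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = to_csv(detections)                 # write to disk with open(..., "w") as needed
json_text = json.dumps(detections, indent=2)

# Simple downstream metric: number of detected objects per image.
objects_per_image = Counter(row["image"] for row in detections)
print(objects_per_image)
```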

Protocol B: Repurposing a High-Content Glucocorticoid Receptor (GR) Translocation Assay for Predictive Modeling

This protocol is adapted from seminal work demonstrating that data from a single high-content assay can be repurposed to predict compound activity in unrelated biological assays [24].

I. Materials and Equipment

- Cell Line: U-2 OS cells (or another suitable line) stably expressing a glucocorticoid receptor-GFP fusion protein.

- Compound Library: A diverse set of small molecules for screening.

- Reagents: Cell culture media, serum, buffers, fixation reagent (e.g., paraformaldehyde), nuclear stain (e.g., DAPI).

- Equipment: Automated fluorescence microscope, high-throughput liquid handler, plate reader.

- Software: Image analysis software (e.g., CellProfiler) and machine learning environment (e.g., Python with scikit-learn).

II. Procedure

Primary High-Content Screening

- Seed cells into 384-well microplates and culture until 50-60% confluent.

- Using an automated liquid handler, treat cells with compounds from the library at a single concentration (e.g., 10 µM) for a defined period (e.g., 2 hours). Include positive (e.g., Dexamethasone) and negative (DMSO vehicle) controls on every plate.

- Fix cells, stain nuclei with DAPI, and acquire images for each well using an automated fluorescence microscope with a 20x objective. Capture images in the GFP (GR-GFP) and DAPI (nuclei) channels.

Image Analysis and Feature Extraction

- Use image analysis software to identify nuclei based on the DAPI signal.

- For each cell, quantify the translocation of GR-GFP from the cytoplasm to the nucleus. Extract a rich set of ~800+ morphological features from each cell, including intensity, texture, and shape measurements from both channels [24] [26].

- Aggregate the single-cell data to generate a profile (e.g., median values) for each compound well, resulting in a high-dimensional feature vector for every compound.
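
The aggregation step can be sketched as a per-well median over single-cell measurements. The feature names (`nuc_cyto_ratio`, `nuclear_area`) and values are hypothetical stand-ins for the several hundred features a tool such as CellProfiler would export.

```python
from collections import defaultdict
from statistics import median


def well_profiles(cells):
    """Collapse per-cell feature dicts into one median profile per well."""
    by_well = defaultdict(list)
    for cell in cells:
        by_well[cell["well"]].append(cell["features"])
    profiles = {}
    for well, feature_dicts in by_well.items():
        names = feature_dicts[0].keys()
        # The median is robust to the occasional mis-segmented cell.
        profiles[well] = {n: median(f[n] for f in feature_dicts) for n in names}
    return profiles


# Hypothetical single-cell measurements from two wells.
cells = [
    {"well": "A01", "features": {"nuc_cyto_ratio": 1.0, "nuclear_area": 100}},
    {"well": "A01", "features": {"nuc_cyto_ratio": 3.0, "nuclear_area": 120}},
    {"well": "A01", "features": {"nuc_cyto_ratio": 2.0, "nuclear_area": 110}},
    {"well": "B02", "features": {"nuc_cyto_ratio": 0.5, "nuclear_area": 90}},
    {"well": "B02", "features": {"nuc_cyto_ratio": 0.7, "nuclear_area": 94}},
]
profiles = well_profiles(cells)
```

Each well's dictionary then serves as the feature vector for the compound tested in that well.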

Model Training and Predictions for a New Assay

- Data Integration: Compile the feature vectors from the GR translocation screen with the known activity outcomes from your target drug discovery project's assay (e.g., a functional assay for a different target).

- Model Training: Train a machine learning model, such as a Random Forest or Bayesian Matrix Factorization model, on this combined dataset [24]. The model will learn the relationship between the cellular phenotypes induced by the compounds and their activity in the target assay.

- Activity Prediction: Use the trained model to predict the activity of new, untested compounds in the target assay based solely on their profiles from the GR translocation screen. This prioritizes compounds for testing in the more resource-intensive target assay.

Workflow Visualization

The following diagram illustrates the integrated workflow connecting automated microscopy detection with predictive modeling for accelerated drug discovery.

Diagram Title: AI-Driven Drug Discovery Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Automated Detection and High-Content Screening

This table details key reagents, tools, and software essential for implementing the protocols described in this document.

| Item Name | Function / Application | Key Characteristics / Examples |

|---|---|---|

| YAC-Net Deep Learning Model [25] | Automated object detection in microscopy images. | Lightweight CNN; uses AFPN & C2f modules; high precision (97.8%) with reduced parameters. |

| High-Content Screening (HCS) Platform [26] | Automated image acquisition for phenotypic profiling. | Integrated systems with automated microscopy, liquid handling, and environmental control. |

| Glucocorticoid Receptor (GR) Assay Kit [24] | Provides a rich source of phenotypic data for predictive model training. | Cell line with GR-GFP construct; fixation & staining reagents. |

| CellProfiler / ImageJ | Open-source software for quantitative analysis of biological images. | Used for feature extraction (morphology, intensity, texture) from raw microscopy images. |

| Python with ML Libraries (scikit-learn, PyTorch) | Environment for building and training predictive models from extracted features. | Enables use of models like Random Forest or Bayesian Matrix Factorization for activity prediction. |

| Modular Liquid Handler [27] | Automation of reagent dispensing and compound transfers in microplates. | Systems like the "Research 3 neo pipette" or Tecan's "Veya" for walk-up automation and reproducibility. |

| 3D Cell Culture System [27] | Provides more physiologically relevant models for screening. | Automated platforms like the MO:BOT for standardizing organoid culture and screening. |

Architecture and Implementation: A Technical Deep Dive into the YAC-Net Model

Within the context of developing YAC-Net, a deep learning model for microscopy image detection, the selection of an appropriate base architecture is a critical foundational decision. This choice directly influences the model's performance, computational efficiency, and suitability for deployment in research and clinical settings. For YAC-Net, which focuses on detecting parasite eggs in microscopy images, YOLOv5n was chosen as the starting baseline model. This document outlines the quantitative and qualitative reasoning behind this selection, providing a detailed protocol for researchers and scientists in drug development who face similar decisions in their computer vision pipelines.

The decision was driven by the need for a highly efficient and adaptable architecture that maintains a small computational footprint without sacrificing the potential for high accuracy, a balance that is essential for analyzing complex microscopy data where features can be small and nuanced [28].

Quantitative Comparison of YOLOv5 Variants

The YOLOv5 family consists of several models, ranging from the ultra-lightweight YOLOv5n (nano) to the much larger YOLOv5x (extra large). The primary differentiators among them are their depth and width, which are governed by two scaling factors: depth_multiple (model depth) and width_multiple (layer channel width) [29] [30]. The following table summarizes the key architectural and performance metrics across the variants, based on the COCO dataset benchmark.

Table 1: Architectural and Performance Specifications of YOLOv5 Variants

| Model Variant | Depth Multiple | Width Multiple | Parameters (M) | mAP@0.5:0.95 (val, %) | CPU Speed (ms) | V100 Speed (ms) |

|---|---|---|---|---|---|---|

| YOLOv5n | 0.33 | 0.25 | 1.9 | 28.0 | 45 | 6.3 |

| YOLOv5s | 0.33 | 0.50 | 7.2 | 37.4 | 98 | 6.4 |

| YOLOv5m | 0.67 | 0.75 | 21.2 | 45.4 | 224 | 8.2 |

| YOLOv5l | 1.0 | 1.0 | 46.5 | 49.0 | 430 | 10.1 |

| YOLOv5x | 1.25 | 1.25 | 86.7 | 50.7 | 766 | 12.1 |

As illustrated in Table 1, YOLOv5n has the smallest number of parameters (1.9 million) and the fastest inference time on both CPU and GPU hardware [31]. While its mAP is the lowest among the family, its minimal resource consumption makes it an ideal "blank slate" for research and development, particularly when the goal is to build upon and specialize a model for a specific domain like microscopy [32].
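
To show how the two multipliers in Table 1 shrink or grow a network, the sketch below applies a compound-scaling rule of the kind used by YOLOv5-style model parsers: repeat counts are scaled by the depth multiple and channel counts by the width multiple, rounded up to a multiple of 8. The base layer (9 repeats, 512 channels) is a hypothetical example, and the rounding rule reflects our understanding of the public implementation rather than a verified copy of it.

```python
import math


def make_divisible(value, divisor=8):
    """Round up to the nearest multiple of `divisor`."""
    return math.ceil(value / divisor) * divisor


def scale_layer(repeats, channels, depth_multiple, width_multiple):
    """Apply compound scaling to one layer spec (repeat count, output channels)."""
    # Layers that are not repeated stay as they are; others keep at least one repeat.
    scaled_repeats = max(round(repeats * depth_multiple), 1) if repeats > 1 else repeats
    scaled_channels = make_divisible(channels * width_multiple, 8)
    return scaled_repeats, scaled_channels


# (depth_multiple, width_multiple) pairs taken from Table 1.
VARIANTS = {
    "YOLOv5n": (0.33, 0.25),
    "YOLOv5s": (0.33, 0.50),
    "YOLOv5m": (0.67, 0.75),
    "YOLOv5l": (1.0, 1.0),
    "YOLOv5x": (1.25, 1.25),
}

# Hypothetical base layer: a block repeated 9 times with 512 output channels.
scaled = {name: scale_layer(9, 512, gd, gw) for name, (gd, gw) in VARIANTS.items()}
for name, (repeats, channels) in scaled.items():
    print(f"{name}: {repeats} repeats, {channels} channels")
```

Because the parameter count of a convolution grows roughly with the product of its input and output channels, quartering the width accounts for most of the gap between the nano and large variants.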

Rationale for Selecting YOLOv5n in Microscopy Research

Suitability for Resource-Constrained Environments

Microscopy research, especially in drug development, often involves processing thousands of high-resolution images. The requirement for high-throughput analysis, coupled with the potential for deployment on laboratory computers without high-end GPUs, makes computational efficiency a prime concern. YOLOv5n, with its minimal parameter count and fast inference speed, is designed specifically for "embedded devices and real-time applications" [28] and "scenarios requiring efficient, real-time processing" [32]. This aligns with the need for scalable and accessible analysis tools in scientific environments.

Effectiveness as a Baseline for Architectural Improvement

A core objective in developing YAC-Net is to innovate and improve upon existing architectures. Starting with a large, high-performance model like YOLOv5x offers less room for measurable improvement and is computationally expensive for rapid experimentation. YOLOv5n provides a lean baseline; its smaller architecture allows for faster training cycles, enabling researchers to quickly prototype, test, and validate new modules or techniques. Its performance establishes a clear lower bound, making it easier to quantify the contribution of any proposed enhancements. This approach is evidenced in successful research where YOLOv5n was used as a baseline and subsequently improved to outperform the original model on a specialized detection task [28].

Proven Efficacy in Biological Image Detection

Evidence from recent literature supports the applicability of YOLOv5 variants to biological and medical imaging tasks, demonstrating that even the smaller models can be effectively tuned for high performance.

Table 2: Performance of YOLOv5 Variants on Specialized Datasets

| Application Domain | Dataset | Model | Key Metric | Result |

|---|---|---|---|---|

| Urine Sediment Analysis [33] | Microscopic Urine Images | YOLOv5l | mAP@0.5 | 85.8% |

| Urine Sediment Analysis [33] | Microscopic Urine Images | YOLOv5x | mAP@0.5 | 85.4% |

| Industrial Defect Detection [28] | NEU-DET | Original YOLOv5n | mAP | 71.0% |

| Industrial Defect Detection [28] | NEU-DET | Improved YOLOv5n | mAP | 75.3% |

| Multi-Sized Cell Detection [34] | Fluorescence Microscopy | YOLOv5-FPN | Average Precision | 0.8 (80.0%) |

For instance, in a study on urinary particle detection, all YOLOv5 variants were evaluated, with YOLOv5l and YOLOv5x achieving the highest mean average precision (mAP) [33]. This demonstrates the family's inherent capability for microscopy-like data. Furthermore, research on industrial surface defect detection, a problem space whose challenges (small and indistinct features) are analogous to those of microscopic detection, successfully used YOLOv5n as a baseline. The researchers then enhanced it with targeted improvements, increasing its mAP by 4.3 percentage points, from 71.0% to 75.3% [28]. This validates the strategy of starting with a lightweight model and architecturally optimizing it for a specific domain.

YOLOv5n Baseline Evaluation and Optimization Protocol

This protocol provides a step-by-step methodology for establishing a YOLOv5n performance baseline on a custom microscopy dataset and outlines initial paths for optimization, as applied in the YAC-Net project.

Phase 1: Baseline Establishment

Step 1: Environment and Dataset Setup

- Installation: Clone the official Ultralytics YOLOv5 repository and install dependencies as specified in `requirements.txt` [31].

- Data Preparation: Annotate microscopy images with bounding boxes. Format the dataset in the YOLOv5 structure and create a corresponding dataset YAML file (e.g., `microscopy_data.yaml`) defining paths, class names, and number of classes.

- Data Verification: Use the YOLOv5 training script's built-in functionality to visualize the first training batch (`train_batch*.jpg`). This critical step confirms that images and labels are loaded correctly and that data augmentation is functioning as intended [35].
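
The data preparation step can be made concrete with a short sketch that converts a pixel-space bounding box into a YOLO-format label line (class index followed by normalized center and size) and builds the text of a minimal dataset YAML file. The directory layout, the dataset root, and the class names are assumptions chosen for illustration.

```python
def to_yolo_label(class_id, box, image_width, image_height):
    """Convert a pixel box (x1, y1, x2, y2) to a YOLO label line.

    YOLO labels store: class x_center y_center width height, normalized to [0, 1].
    """
    x1, y1, x2, y2 = box
    x_center = (x1 + x2) / 2 / image_width
    y_center = (y1 + y2) / 2 / image_height
    width = (x2 - x1) / image_width
    height = (y2 - y1) / image_height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


def dataset_yaml(root, class_names):
    """Build the text of a minimal dataset YAML file (paths relative to `root`)."""
    lines = [
        f"path: {root}",
        "train: images/train",
        "val: images/val",
        f"nc: {len(class_names)}",
        "names:",
    ]
    lines += [f"  {i}: {name}" for i, name in enumerate(class_names)]
    return "\n".join(lines) + "\n"


# One annotated egg in a 640x640 image; class names are placeholders.
label_line = to_yolo_label(0, (100, 200, 300, 400), 640, 640)
yaml_text = dataset_yaml("datasets/microscopy", ["ascaris", "trichuris"])
print(label_line)
print(yaml_text)
```

One label file per image, with one such line per annotated object, is the layout the training script expects alongside the YAML file.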

Step 2: Initial Training with Default Parameters

- Execute the training command to establish a performance baseline with default settings [35]:

  `python train.py --data microscopy_data.yaml --weights yolov5n.pt --epochs 300 --img 640 --batch-size 16`

- Key Parameters:

  - `--weights yolov5n.pt`: Loads pre-trained COCO weights for transfer learning.

  - `--epochs 300`: A standard starting point for training epochs [35].

  - `--img 640`: Input image size. If small objects are prevalent in your microscopy images, consider increasing this value (e.g., `--img 1280`) in subsequent runs [35].

  - `--batch-size`: Use the largest value that fits your GPU memory.

Step 3: Baseline Validation

- Upon completion, evaluate the model on the validation set. Key metrics to record from the results file (results.txt in older YOLOv5 releases, results.csv in more recent ones) and validation plots include:

- mAP@0.5 (PASCAL VOC metric)

- mAP@0.5:0.95 (COCO metric)

- Training and validation loss curves

- Analyze the precision-recall curve and confusion matrix to identify specific class-wise performance issues.
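To make the IoU threshold behind mAP@0.5 concrete, here is a minimal single-class, single-image sketch of how detections can be matched to ground-truth boxes at IoU 0.5 to obtain precision and recall. It is a simplified illustration under those assumptions, not the evaluation code used by YOLOv5; the function names and the example boxes are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(preds, gts, iou_thr=0.5):
    """Greedy matching of predictions to ground truth at one IoU threshold.

    preds: list of (confidence, box); gts: list of boxes.
    Each ground-truth box can be matched by at most one prediction.
    """
    matched = set()
    true_pos = 0
    # Visit predictions from most to least confident.
    for _, box in sorted(preds, key=lambda p: p[0], reverse=True):
        best_j, best_iou = -1, 0.0
        for j, gt in enumerate(gts):
            if j in matched:
                continue
            overlap = iou(box, gt)
            if overlap > best_iou:
                best_j, best_iou = j, overlap
        if best_j >= 0 and best_iou >= iou_thr:
            matched.add(best_j)
            true_pos += 1
    precision = true_pos / len(preds) if preds else 0.0
    recall = true_pos / len(gts) if gts else 0.0
    return precision, recall


# Hypothetical example: one good detection, one false alarm, one missed egg.
gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [(0.9, (1, 1, 10, 10)), (0.8, (50, 50, 60, 60))]
print(precision_recall(preds, gts))
```

Average precision summarizes how precision and recall trade off as the confidence threshold varies, and mAP averages that value over classes; mAP@0.5:0.95 additionally averages over IoU thresholds from 0.5 to 0.95.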

Phase 2: Initial Optimization and Hyperparameter Tuning

Step 4: Data Augmentation Refinement

YOLOv5 includes on-the-fly augmentations like mosaic, scaling, and color space adjustments [29] [30]. For microscopy data, consider:

- Adjusting the mosaic augmentation cutoff with --close-mosaic to stabilize late-stage training [35], provided the installed training script exposes this option.

- Modifying the hyp.scratch-low.yaml configuration file to increase or decrease the intensity of certain augmentations based on the characteristics of your dataset.
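To show what mosaic augmentation does to the annotations, the sketch below remaps normalized YOLO-format labels when four images are tiled into a fixed 2x2 grid. This is a deliberately simplified version: the YOLOv5 implementation uses a random mosaic center and crops the result, and the function name tile_labels_2x2 is hypothetical.

```python
def tile_labels_2x2(labels_per_image):
    """Remap YOLO labels of four equally sized images tiled into a 2x2 grid.

    labels_per_image: four lists of (cls, xc, yc, w, h), each normalized to [0, 1],
    ordered top-left, top-right, bottom-left, bottom-right.
    Returns one list of labels normalized to the combined image.
    """
    if len(labels_per_image) != 4:
        raise ValueError("expected labels for exactly four images")
    merged = []
    for idx, labels in enumerate(labels_per_image):
        col, row = idx % 2, idx // 2  # quadrant position in the grid
        for cls, xc, yc, w, h in labels:
            # Each tile covers half of the mosaic along both axes.
            merged.append((cls, (xc + col) / 2, (yc + row) / 2, w / 2, h / 2))
    return merged


# Hypothetical example: one egg in the first tile, one in the last.
mosaic = tile_labels_2x2([[(0, 0.5, 0.5, 0.2, 0.2)], [], [], [(1, 0.5, 0.5, 0.4, 0.4)]])
print(mosaic)
```

Because every object shrinks to half its original relative size, the model sees more small instances per batch, which is one reason mosaic is associated with small-object performance.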

Step 5: Hyperparameter Evolution

- Employ YOLOv5's built-in hyperparameter evolution to automatically find a better starting set of hyperparameters than the defaults [35] [33]:

python train.py --data microscopy_data.yaml --weights yolov5n.pt --epochs 300 --img 640 --evolve

- This process uses a genetic algorithm to mutate hyperparameters over generations, selecting for those that yield the highest fitness value (typically mAP).
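The mutate-and-select idea behind hyperparameter evolution can be sketched in a few lines. The example below is a toy illustration, not the YOLOv5 implementation: toy_evaluate is a hypothetical stand-in for a full training run, and the fitness weights, mutation probability, sigma, and bounds are assumptions chosen for the example.

```python
import random


def fitness(map50, map50_95, weights=(0.1, 0.9)):
    """Scalar fitness as a weighted sum of the two mAP metrics (weights assumed)."""
    return weights[0] * map50 + weights[1] * map50_95


def mutate(hyp, bounds, rng, sigma=0.2, prob=0.8):
    """Multiply each hyperparameter by Gaussian noise, then clip to its bounds."""
    child = {}
    for key, value in hyp.items():
        low, high = bounds[key]
        if rng.random() < prob:
            value *= 1.0 + rng.gauss(0.0, sigma)
        child[key] = min(max(value, low), high)
    return child


def evolve(evaluate, hyp, bounds, generations=30, seed=0):
    """Keep the best candidate seen so far; mutate it once per generation."""
    rng = random.Random(seed)
    best, best_fit = dict(hyp), evaluate(hyp)
    history = [best_fit]
    for _ in range(generations):
        candidate = mutate(best, bounds, rng)
        candidate_fit = evaluate(candidate)
        if candidate_fit > best_fit:
            best, best_fit = candidate, candidate_fit
        history.append(best_fit)
    return best, best_fit, history


def toy_evaluate(hyp):
    """Hypothetical stand-in for training: fitness peaks near lr0=0.02, momentum=0.9."""
    penalty = 2000.0 * (hyp["lr0"] - 0.02) ** 2 + (hyp["momentum"] - 0.9) ** 2
    map50 = max(0.0, 0.95 - penalty)
    return fitness(map50, 0.7 * map50)


start = {"lr0": 0.01, "momentum": 0.937}
bounds = {"lr0": (1e-5, 0.1), "momentum": (0.6, 0.98)}
best, best_fit, history = evolve(toy_evaluate, start, bounds)
print(best, round(best_fit, 4))
```

In practice every call to the evaluation function is a complete training run, so the number of generations is limited mainly by the available compute budget.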

Step 6: Architectural Investigation (Post-Baseline)

After a strong baseline is established, consider architectural modifications informed by the specific failure modes observed. As demonstrated in successful research, these can include [28]:

- Integrating attention mechanisms (e.g., Coordinate Attention) into the backbone to enhance feature extraction.

- Replacing the native PANet neck with a more advanced feature fusion network like Gold-YOLO to improve information flow.

- Adopting a more advanced loss function such as SIoU to improve bounding box regression.

Workflow and Resource Toolkit

Experimental Workflow Diagram

The following diagram illustrates the logical flow for the baseline selection, evaluation, and initial optimization of YOLOv5n within a research project.

Diagram Title: YOLOv5n Baseline Selection and Optimization Workflow

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 3: Essential Components for a YOLOv5n-based Detection Project

| Component / Solution | Function / Role | Example / Note |

|---|---|---|

| Ultralytics YOLOv5 Repo | Core codebase for training, validation, and export of models. | GitHub repository [31]. |

| Pre-trained Weights (yolov5n.pt) | Enables transfer learning, providing a strong starting point for feature detection and significantly reducing training time and data requirements. | Trained on the COCO dataset [35]. |

| Custom Dataset YAML File | Configuration file that informs the trainer about the dataset structure, number of classes, and class names. | Essential for adapting the model to a new task [31]. |

| Hyperparameter Configuration (hyp.*.yaml) | Defines the training "recipe," including learning rates, augmentation intensities, and loss weights. | Start with hyp.scratch-low.yaml [35]. |

| Genetic Algorithm (GA) | An automated method for hyperparameter optimization, evolving them over generations to maximize a fitness metric like mAP. | Implemented in YOLOv5 via --evolve [35] [33]. |

| Mosaic Data Augmentation | A training-time augmentation that combines four images into one, improving the model's ability to learn context and detect objects at various scales. | Particularly effective for addressing the "small object problem" [29] [30]. |

| Test-Time Augmentation (TTA) | An inference method that creates augmented versions of an input image and averages the predictions. | Can be enabled to boost final prediction accuracy at a cost of speed [35]. |

The selection of YOLOv5n as the foundational model for the YAC-Net project is a strategic decision grounded in its minimal computational footprint, rapid prototyping capabilities, and demonstrated potential for specialization. The quantitative data shows it provides the most efficient starting point, while published research confirms that its architecture is a viable canvas for innovations targeting complex detection tasks in microscopy and beyond. The protocols and toolkit provided herein offer a concrete roadmap for other researchers to implement this baseline strategy, establishing a robust and efficient foundation for advanced deep learning model development in scientific image analysis.

Within the framework of the YAC-Net deep learning model for microscopy image detection, the replacement of the traditional Feature Pyramid Network (FPN) with the Asymptotic Feature Pyramid Network (AFPN) constitutes a foundational innovation. This architectural modification directly addresses critical limitations inherent in previous automated parasite egg detection systems, specifically the loss or degradation of feature information during multi-scale feature fusion, which impairs the model's ability to encode objects with significant scale variance [25] [36]. In the context of analyzing microscopic parasite egg images, where objects can vary considerably in size and appearance, this degradation presents a substantial obstacle to achieving high precision and recall.

The conventional FPN structure, which employs a top-down pathway with lateral connections, primarily integrates semantic feature information from adjacent levels [25]. While effective to a degree, this approach suffers from a significant semantic gap between non-adjacent levels. High-level features, which contain rich semantic information, must propagate through multiple intermediate scales before fusing with low-level features that possess detailed spatial information. Throughout this propagation, semantic information can be lost or degraded, compromising the final feature maps used for detection [36]. In contrast, the AFPN introduces a hierarchical and asymptotic aggregation structure that supports direct interaction between non-adjacent levels. It initiates the fusion process by combining two adjacent low-level features and then asymptotically (progressively) incorporates higher-level features into the fusion process [25] [36]. This methodology avoids the larger semantic gap between non-adjacent levels, thereby preserving more discriminative information crucial for accurately identifying and localizing parasite eggs of varying sizes. Furthermore, the integration of an adaptive spatial fusion (ASF) operation allows the model to dynamically mitigate potential multi-object information conflicts that can occur during feature fusion at each spatial location, effectively learning to select beneficial features while ignoring redundant information [36] [37].
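The per-location weighting idea behind adaptive spatial fusion can be illustrated with a small pure-Python sketch. It assumes single-channel feature maps that have already been resized to a common resolution and that the fusion weights are softmax-normalized across levels at each position; in a real network these weights would be produced by learned layers rather than supplied by hand, and the function name is hypothetical.

```python
import math


def adaptive_spatial_fusion(features, logits):
    """Fuse same-sized feature maps with per-location softmax weights.

    features: list of L maps, each an H x W nested list of floats.
    logits:   list of L maps of unnormalized weights, same shape as features.
    Returns one H x W fused map.
    """
    n_levels = len(features)
    height, width = len(features[0]), len(features[0][0])
    fused = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            scores = [logits[level][y][x] for level in range(n_levels)]
            peak = max(scores)  # subtract the max for numerical stability
            exps = [math.exp(s - peak) for s in scores]
            total = sum(exps)
            # Weighted sum across levels; the weights at each location sum to 1.
            fused[y][x] = sum(
                (e / total) * features[level][y][x] for level, e in enumerate(exps)
            )
    return fused


# Hypothetical example: two levels on a 1 x 2 map.
features = [[[1.0, 1.0]], [[3.0, 3.0]]]
logits = [[[0.0, 10.0]], [[0.0, -10.0]]]
print(adaptive_spatial_fusion(features, logits))
```

At the first location both levels receive equal weight, so the output is their average; at the second, the first level dominates, which is how a location can effectively ignore a conflicting level.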

Performance Analysis and Quantitative Data

The integration of the Asymptotic Feature Pyramid Network into the YAC-Net model has yielded substantial quantitative improvements across all key detection metrics while simultaneously reducing the computational footprint of the model. The following tables summarize the core experimental results from the ablation study and comparative analysis, demonstrating the efficacy of this innovation.

Table 1: Ablation Study of YAC-Net Modifications on the ICIP 2022 Challenge Dataset (Fivefold Cross-Validation)

| Model Component | Precision (%) | Recall (%) | F1 Score | mAP@0.5 | Parameters |

|---|---|---|---|---|---|

| YOLOv5n (Baseline) | 96.7 | 94.9 | 0.9578 | 0.9642 | ~2,400,000* |

| + AFPN Replacement | 97.5 | 97.2 | 0.9735 | 0.9850 | ~1,924,302 |

| + C2f Module | 97.1 | 96.5 | 0.9680 | 0.9775 | - |

| YAC-Net (Full Model) | 97.8 | 97.7 | 0.9773 | 0.9913 | 1,924,302 |

*Note: The baseline parameter count is an estimate based on the reported reduction.

Table 2: Comparative Performance of YAC-Net Against State-of-the-Art Detection Methods

| Detection Method | Precision (%) | Recall (%) | F1 Score | mAP@0.5 | Parameter Count |

|---|---|---|---|---|---|

| Faster R-CNN [25] | - | - | - | - | High |

| Cascade Mask R-CNN [25] | - | - | - | - | High |

| YOLOX [25] | - | - | - | - | - |

| YAC-Net (Proposed) | 97.8 | 97.7 | 0.9773 | 0.9913 | 1,924,302 |

The data in Table 1 reveals that the replacement of the FPN with the AFPN is the single most impactful modification, contributing significantly to the gains in recall, F1 score, and mAP@0.5. Crucially, this performance boost is achieved alongside a reduction of approximately one-fifth in the number of parameters compared to the baseline YOLOv5n model [25]. This dual benefit of enhanced accuracy and reduced model complexity is a direct validation of the AFPN's efficient design. As shown in Table 2, the complete YAC-Net model achieves best-in-class performance on the ICIP 2022 Challenge dataset, establishing it as a highly effective and lightweight solution for parasite egg detection [25].

Experimental Protocol and Workflow

This section provides a detailed, actionable protocol for implementing and validating the AFPN module within a deep learning-based detection framework, such as YAC-Net, for microscopy image analysis.

Dataset Preparation and Preprocessing

- Dataset: Utilize the ICIP 2022 Challenge dataset or a comparable in-house dataset of microscopic parasite egg images [25].

- Data Annotation: Ensure all images are annotated with bounding boxes and class labels for each parasite egg instance. Common classes include helminth eggs like Ascaris lumbricoides, Trichuris trichiura, and hookworm species.

- Data Splitting: Employ a fivefold cross-validation strategy to ensure robust model evaluation and mitigate overfitting. Partition the entire dataset into five folds, using four for training and one for validation in a rotating manner [25].

- Data Augmentation: Apply standard online data augmentation techniques during training to improve model generalization.
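For the data-splitting step in the list above, a fivefold rotation can be sketched as follows. This is a minimal illustration that shuffles item identifiers once and rotates the validation fold; the function name and the fixed seed are assumptions, and the source does not state whether the original study stratified folds by class.

```python
import random


def kfold_splits(items, k=5, seed=0):
    """Yield (train, val) lists for k-fold cross-validation.

    Items are shuffled once with a fixed seed, dealt into k folds,
    and each fold serves as the validation set exactly once.
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    for i in range(k):
        val = folds[i]
        train = [x for j, fold in enumerate(folds) if j != i for x in fold]
        yield train, val


# Hypothetical example: 23 image identifiers split into five folds.
for fold_index, (train, val) in enumerate(kfold_splits(range(23))):
    print(fold_index, len(train), len(val))
```

Metrics are then averaged over the five runs so that no single train/validation partition dominates the reported result.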

Model Configuration and Training

- Baseline Model: Initialize the model with a pre-trained YOLOv5n backbone. This provides a strong starting point for feature extraction [25].

- AFPN Integration:

- Backbone Enhancement: Replace the C3 modules in the YOLOv5n backbone with C2f modules to enrich gradient flow and improve feature extraction capability [25].

- Training Hyperparameters:

- Optimizer: Stochastic Gradient Descent (SGD) or Adam.

- Learning Rate: Apply a cosine annealing scheduler, starting from 0.01.

- Batch Size: Set to 16 or 32, depending on GPU memory constraints.

- Epochs: Train for a minimum of 300 epochs to ensure convergence.

- Loss Function: Use a composite loss comprising Binary Cross-Entropy for classification and CIoU loss for bounding box regression.
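Two of the settings above lend themselves to a short worked sketch: the cosine annealing schedule and the CIoU regression loss. The code below is a scalar, framework-free illustration. The final learning rate of zero is an assumption (the text only gives the starting value of 0.01), and the CIoU function follows the commonly published formulation rather than any specific YAC-Net code.

```python
import math


def cosine_lr(epoch, total_epochs, lr0=0.01, lr_min=0.0):
    """Cosine annealing from lr0 at epoch 0 down to lr_min at total_epochs."""
    return lr_min + 0.5 * (lr0 - lr_min) * (1.0 + math.cos(math.pi * epoch / total_epochs))


def ciou_loss(pred, target, eps=1e-9):
    """CIoU loss for two boxes given as (x1, y1, x2, y2)."""
    px1, py1, px2, py2 = pred
    tx1, ty1, tx2, ty2 = target
    pw, ph = px2 - px1, py2 - py1
    tw, th = tx2 - tx1, ty2 - ty1

    # Plain IoU term.
    inter_w = max(0.0, min(px2, tx2) - max(px1, tx1))
    inter_h = max(0.0, min(py2, ty2) - max(py1, ty1))
    inter = inter_w * inter_h
    iou = inter / (pw * ph + tw * th - inter + eps)

    # Squared center distance over squared diagonal of the enclosing box.
    rho2 = ((px1 + px2 - tx1 - tx2) ** 2 + (py1 + py2 - ty1 - ty2) ** 2) / 4.0
    c2 = (max(px2, tx2) - min(px1, tx1)) ** 2 + (max(py2, ty2) - min(py1, ty1)) ** 2 + eps

    # Aspect-ratio consistency term and its trade-off weight.
    v = (4.0 / math.pi ** 2) * (math.atan(tw / (th + eps)) - math.atan(pw / (ph + eps))) ** 2
    alpha = v / (1.0 - iou + v + eps)

    return 1.0 - iou + rho2 / c2 + alpha * v


print(cosine_lr(0, 300), cosine_lr(150, 300), cosine_lr(300, 300))
print(ciou_loss((0, 0, 10, 10), (0, 0, 10, 10)), ciou_loss((0, 0, 1, 1), (2, 2, 3, 3)))
```

Unlike plain IoU, the center-distance term still provides a useful signal when the predicted and target boxes do not overlap, which matters early in training when many predictions miss small eggs entirely.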

Model Evaluation and Validation

- Primary Metrics: Calculate Precision, Recall, F1 Score, and mean Average Precision at IoU=0.5 (mAP@0.5) on the held-out test set [25].

- Complexity Analysis: Report the total number of model parameters and the computational load in giga floating-point operations (GFLOPs) to quantify the model's efficiency [25] [38].

- Ablation Studies: Conduct controlled experiments to isolate the performance contribution of the AFPN by comparing the baseline model against the model with only the AFPN modification and the final YAC-Net model (AFPN + C2f) [25].
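As a quick consistency check on the primary metrics, the F1 score can be recomputed from precision and recall as their harmonic mean. The sketch below does this for the rows of Table 1; because the tabulated precision and recall are themselves rounded, the recomputed values should be expected to agree with the reported F1 scores only to within a few units in the fourth decimal place.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both as fractions in [0, 1])."""
    if precision + recall == 0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


# Precision and recall in percent, copied from Table 1.
table_1 = {
    "YOLOv5n (Baseline)": (96.7, 94.9),
    "+ AFPN Replacement": (97.5, 97.2),
    "+ C2f Module": (97.1, 96.5),
    "YAC-Net (Full Model)": (97.8, 97.7),
}

for name, (precision_pct, recall_pct) in table_1.items():
    print(name, round(f1_score(precision_pct / 100, recall_pct / 100), 4))
```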

The workflow for this protocol is visualized in the following diagram, outlining the key stages from data preparation to model deployment.

Visualizing the Architectural Innovation

The core innovation of the AFPN lies in its structure for feature fusion. The following diagram contrasts the traditional FPN design with the AFPN, highlighting the direct, non-adjacent level interactions that reduce information loss.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and resources essential for replicating the AFPN-based YAC-Net model and its evaluation.

Table 3: Essential Research Reagents and Computational Resources

| Resource Name | Type | Function / Application | Key Characteristics |

|---|---|---|---|

| ICIP 2022 Challenge Dataset [25] | Dataset | Benchmark for training and evaluating parasite egg detection models. | Annotated microscopic images of intestinal parasite eggs. |

| YOLOv5n [25] | Software Model | Baseline object detection model; serves as the starting backbone for YAC-Net. | Ultra-lightweight, pre-trained on large-scale datasets. |

| Asymptotic Feature Pyramid Network (AFPN) [36] | Algorithm/Module | Replaces FPN for multi-scale feature fusion with reduced information loss. | Supports direct non-adjacent level fusion; includes Adaptive Spatial Fusion (ASF). |