Template Selection for Out-of-Sample Geometric Morphometric Registration: Strategies for Robust Classification in Biomedical Research


Abstract

The application of geometric morphometric (GM) classification rules to new, out-of-sample individuals is a critical challenge in biomedical research, particularly for clinical diagnostics and drug development. This article provides a comprehensive guide for researchers and scientists on template selection strategies for registering out-of-sample data into an existing GM shape space. We explore the foundational importance of template choice, review methodological frameworks like multi-template approaches and landmark-free registration, and offer practical solutions for optimizing performance and avoiding artifacts. The content synthesizes current evidence on validation protocols and comparative performance of different methods, empowering professionals to build reliable and scalable GM tools for phenotypic assessment.

The Core Challenge: Why Template Selection is Fundamental for Out-of-Sample GM

Defining the Out-of-Sample Problem in Geometric Morphometrics

Frequently Asked Questions (FAQs)

FAQ 1: What is the out-of-sample problem in geometric morphometrics? The out-of-sample problem refers to the challenge of classifying new individuals that were not part of the original study sample. In geometric morphometrics, classification rules are typically built from aligned coordinates (like Procrustes coordinates) derived from a training sample. These transformations use the entire sample's information, making it unclear how to apply this registration to a new individual without performing a new global alignment. This prevents the straightforward application of existing classification rules to new subjects [1].
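A short sketch shows how aligned coordinates depend on the whole sample. It uses synthetic 2D landmarks and a minimal partial-Procrustes GPA written in NumPy. This is illustrative code, not the implementation used in [1]. The alignment is then repeated with one unusual new individual added. Both the consensus and the aligned coordinates of existing training specimens move. This is why a classifier fitted to one GPA cannot simply absorb a new subject.

```python
import numpy as np


def center_scale(X):
    """Translate a k x m configuration to its centroid and scale it to unit centroid size."""
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)


def rotation_to(X, Y):
    """Orthogonal Procrustes rotation R minimising ||X @ R - Y|| (reflections excluded)."""
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return U @ D @ Vt


def gpa(configs, n_iter=100, tol=1e-12):
    """Minimal partial-Procrustes GPA: returns aligned shapes and their consensus."""
    shapes = np.array([center_scale(X) for X in configs])
    mean = shapes[0]
    for _ in range(n_iter):
        shapes = np.array([X @ rotation_to(X, mean) for X in shapes])
        new_mean = center_scale(shapes.mean(axis=0))
        converged = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if converged:
            break
    return shapes, mean


rng = np.random.default_rng(0)
base = np.array([[0, 0], [1, 0], [1, 1], [0, 1], [0.5, 1.5]], dtype=float)
train = base + rng.normal(scale=0.03, size=(20, 5, 2))  # synthetic training sample
new = base.copy()
new[4] += [0.4, 0.3]                                     # a new, unusual individual

aligned_a, mean_a = gpa(train)                               # training sample alone
aligned_b, mean_b = gpa(np.concatenate([train, new[None]]))  # with the new individual

# Put both solutions in a common orientation before comparing them.
R = rotation_to(mean_b, mean_a)
mean_shift = np.linalg.norm(mean_b @ R - mean_a)
spec0_shift = np.linalg.norm(aligned_b[0] @ R - aligned_a[0])
print(f"consensus moved by {mean_shift:.4f}; training specimen 0 moved by {spec0_shift:.2e}")
```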

FAQ 2: Why is solving the out-of-sample problem crucial for applied research? Solving this problem is essential for practical applications in fields like nutritional assessment and drug development. For instance, the goal of the SAM Photo Diagnosis App Program is to develop an offline smartphone tool for identifying the nutritional status of children from arm shape images. After validating a classification rule on different populations, the app must be able to assess new children; this requires obtaining the registered coordinates for a new child's arm shape within the training sample's shape space before classification can proceed [1].

FAQ 3: How does template selection influence out-of-sample registration? The choice of template used for registering new, out-of-sample raw coordinates is a critical methodological decision. Different template configurations from the study sample can be used as targets for this registration, and understanding sample characteristics and collinearity among shape variables is crucial for achieving optimal classification results [1].

FAQ 4: Are there automated, landmark-free methods that address this problem? Yes, automated landmark-free approaches like Deterministic Atlas Analysis (DAA) offer potential solutions. These methods use a dynamically computed geodesic mean shape (an atlas) to which all specimens in a dataset are compared. The deformation required to map this atlas onto each specimen is quantified, providing a basis for shape comparison without relying on manually placed homologous landmarks. This can enhance efficiency for large-scale studies [2].

Troubleshooting Guides

Issue 1: Poor Classification Performance on New Data

Problem: A classifier built from a training sample performs poorly when applied to new, out-of-sample individuals.

- Potential Cause 1: Inappropriate template for registration. The template chosen to register the new individual's raw coordinates may not adequately represent the shape space of the training sample.

- Potential Cause 2: Modality differences in data sources. Using 3D data from mixed modalities (e.g., CT scans and surface scans) can introduce artifacts and reduce correspondence between shape measurements.

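- Solution for Cause 1: Compare several candidate templates drawn from the training sample, such as the consensus shape and individual specimens. Keep the one that classifies held-out individuals most accurately [1].

- Solution for Cause 2: Standardize data with a surface reconstruction technique such as Poisson surface reconstruction, which creates watertight, closed surfaces for all specimens. This has been shown to significantly improve correspondence between different methods of shape measurement [2].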

Issue 2: High Processing Time and Observer Bias

Problem: The process of manual landmark placement is too slow and prone to observer bias, especially for large datasets.

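- Potential Cause: Reliance on manual or semi-automated landmarking. Traditional geometric morphometrics is largely manual, which limits processing speed, introduces observer bias, and hinders comparisons across highly disparate forms [2] [3].

- Solution: Consider automated, landmark-free approaches such as Deterministic Atlas Analysis [2] or the morphVQ pipeline [3]. These establish correspondence without manually placed landmarks.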

Experimental Data & Protocols

Table 1: Approaches for Registering New Specimens into a Shape Space

| Method Name | Core Principle | Reported Advantages | Context of Use |
|---|---|---|---|
| Template Registration [1] | Registers out-of-sample raw coordinates to a chosen template from the training sample. | Allows new individuals to be projected into an existing shape space. | Nutritional assessment from 2D arm shape images. |
| Deterministic Atlas Analysis (DAA) [2] | Uses a sample-dependent geodesic mean shape (atlas) and quantifies the deformations needed to fit each specimen. | Landmark-free; enhanced efficiency for large-scale studies across disparate taxa. | Macroevolutionary analysis of 3D mammalian crania. |
| morphVQ Pipeline [3] | Uses descriptor learning and functional maps to establish correspondence between whole surfaces. | Automated; captures more morphological detail; computationally efficient. | Genus-level classification of biological shapes from 3D bone models. |

Table 2: Effect of Kernel Width on a Deterministic Atlas Analysis (DAA)

This table shows how the kernel width, a key DAA parameter, affects the number of control points generated. The example uses Arctictis binturong as the initial template on a dataset of 322 specimens [2].

| Kernel Width (mm) | Number of Control Points Generated | Implication for Shape Analysis |
|---|---|---|
| 40.0 | 45 | Captures broad-scale shape variation. |
| 20.0 | 270 | A balanced level of detail for many studies. |
| 10.0 | 1,782 | Captures finer-scale shape deformations. |
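The sketch below makes the kernel-width trade-off concrete under two stated assumptions:

1. As in LDDMM-style atlas methods, the deformation is parameterised by momentum vectors α_k on control points c_k. The velocity is v(x) = Σ_k exp(-|x - c_k|² / σ²) α_k, where σ is the kernel width.
2. Control points sit on a regular grid spaced one kernel width apart.

Neither assumption is taken from [2]. The helper names and bounding box are hypothetical, so the printed counts will not match Table 2. Check the Deformetrica documentation for its actual defaults.

```python
import numpy as np


def grid_control_points(bbox_min, bbox_max, spacing):
    """Hypothetical helper: regular grid of control points covering a bounding box."""
    axes = []
    for lo, hi in zip(bbox_min, bbox_max):
        n = int(np.ceil((hi - lo) / spacing)) + 1
        axes.append(lo + spacing * np.arange(n))
    mesh = np.meshgrid(*axes, indexing="ij")
    return np.stack([m.ravel() for m in mesh], axis=1)


def velocity(points, control_points, momenta, kernel_width):
    """Gaussian-kernel velocity field: v(x) = sum_k exp(-|x - c_k|^2 / sigma^2) * alpha_k."""
    d2 = ((points[:, None, :] - control_points[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-d2 / kernel_width ** 2) @ momenta


# Hypothetical 120 x 80 x 60 mm bounding box around a cranium.
bbox_min, bbox_max = (0.0, 0.0, 0.0), (120.0, 80.0, 60.0)
counts = {w: len(grid_control_points(bbox_min, bbox_max, w)) for w in (40.0, 20.0, 10.0)}
print(counts)  # {40.0: 36, 20.0: 140, 10.0: 819}

# One control point at the origin pushing in +x: its influence decays with distance.
cp = np.zeros((1, 3))
alpha = np.array([[1.0, 0.0, 0.0]])
probe = np.array([[0.0, 0.0, 0.0], [20.0, 0.0, 0.0], [60.0, 0.0, 0.0]])
print(velocity(probe, cp, alpha, kernel_width=20.0)[:, 0])
```

Narrower kernels add control points and also shorten the range over which each one acts. This is why smaller widths capture finer-scale deformations at a higher computational cost.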

Detailed Experimental Protocol: Template-Based Out-of-Sample Registration

This protocol outlines a methodology for evaluating out-of-sample cases using a template for registration, based on research for nutritional assessment [1].

1. Sample Collection and Training Set Creation:
   - Design: Assemble a reference sample with a convenience sampling design that ensures equal proportions of key factors (e.g., nutritional status, age, sex).
   - Criteria: Establish clear selection and exclusion criteria (e.g., age range, specific physiological conditions, absence of identifying marks).
   - Ethics: Obtain informed consent from legal guardians and secure approval from the relevant ethical review board.

2. Data Acquisition and Landmarking:
   - Imaging: Capture standardized images (e.g., of the left arm) from all subjects in the training sample.
   - Landmark Digitization: Manually place landmarks and corresponding semilandmarks on all images in the training dataset.

3. Shape Variable Processing:
   - Alignment: Perform a Generalized Procrustes Analysis (GPA) on the entire training dataset to align all landmark configurations and isolate shape variation.
   - Classifier Construction: Build a classifier (e.g., Linear Discriminant Analysis) using the Procrustes-aligned coordinates from the training sample.

4. Out-of-Sample Registration and Classification:
   - Template Selection: Select one or more template configurations from the training sample to serve as the target for registration.
   - New Individual Processing: For a new subject, capture an image and digitize the raw landmark coordinates.
   - Registration: Register the new individual's raw coordinates to the selected template(s). This step aligns the new data to the same coordinate system as the training sample.
   - Classification: Apply the classifier to the new individual's registered coordinates to determine its group membership.
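Steps 3 and 4 can be sketched in NumPy on synthetic 2D data. This is an illustration under stated assumptions, not the implementation in [1]. The sketch makes four choices:

- It uses a minimal partial-Procrustes GPA.
- It uses the training consensus as the single registration template.
- It projects orthogonally into the tangent space at the consensus.
- It uses a hand-written, equal-prior LDA whose pooled covariance is pseudo-inverted, because Procrustes coordinates are rank-deficient (the collinearity issue raised in FAQ 3).

All function names are hypothetical.

```python
import numpy as np


def center_scale(X):
    """Centre a k x m configuration and scale it to unit centroid size."""
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)


def rotation_to(X, Y):
    """Orthogonal Procrustes rotation R minimising ||X @ R - Y|| (no reflections)."""
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return U @ D @ Vt


def gpa(configs, n_iter=100, tol=1e-12):
    """Minimal partial-Procrustes GPA returning aligned shapes and the consensus."""
    shapes = np.array([center_scale(X) for X in configs])
    mean = shapes[0]
    for _ in range(n_iter):
        shapes = np.array([X @ rotation_to(X, mean) for X in shapes])
        new_mean = center_scale(shapes.mean(axis=0))
        done = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if done:
            break
    return shapes, mean


def tangent(shapes, mean):
    """Orthogonal projection of unit-size shapes into the tangent space at the consensus."""
    X = shapes.reshape(len(shapes), -1)
    m = mean.ravel()
    return X - np.outer(X @ m, m)


def fit_lda(X, y):
    """Equal-prior LDA; pinv copes with the rank deficiency of Procrustes coordinates."""
    classes = np.unique(y)
    means = np.array([X[y == c].mean(axis=0) for c in classes])
    resid = X - means[np.searchsorted(classes, y)]
    S_inv = np.linalg.pinv(resid.T @ resid / (len(X) - len(classes)))
    return classes, means, S_inv


def predict_lda(model, X):
    """Assign each row of X to the class with the highest linear discriminant score."""
    classes, means, S_inv = model
    scores = X @ S_inv @ means.T - 0.5 * np.einsum("ij,jk,ik->i", means, S_inv, means)
    return classes[np.argmax(scores, axis=1)]


# Synthetic 2D training sample: group 1 differs from group 0 at the apex landmark.
rng = np.random.default_rng(1)
base = np.array([[0, 0], [1, 0], [1, 1], [0, 1], [0.5, 1.5]], dtype=float)
variant = base.copy()
variant[4] += [0.3, 0.2]
raw_train = np.concatenate([base + rng.normal(scale=0.03, size=(20, 5, 2)),
                            variant + rng.normal(scale=0.03, size=(20, 5, 2))])
y_train = np.repeat([0, 1], 20)

# Step 3: GPA on the training sample only, then fit the classifier.
aligned, consensus = gpa(raw_train)
model = fit_lda(tangent(aligned, consensus), y_train)

# Step 4: a new individual in arbitrary position, orientation and scale.
theta = 0.7
rot = np.array([[np.cos(theta), -np.sin(theta)], [np.sin(theta), np.cos(theta)]])
raw_new = 3.0 * variant @ rot.T + [5.0, -2.0]
shape_new = center_scale(raw_new)
registered = shape_new @ rotation_to(shape_new, consensus)   # template = consensus
label = predict_lda(model, tangent(registered[None], consensus))[0]
print("predicted group:", label)
```

To try a different template, replace `consensus` in the registration line with another training configuration. The tangent projection should still use the training consensus, so that the new individual lands in the same space as the classifier.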

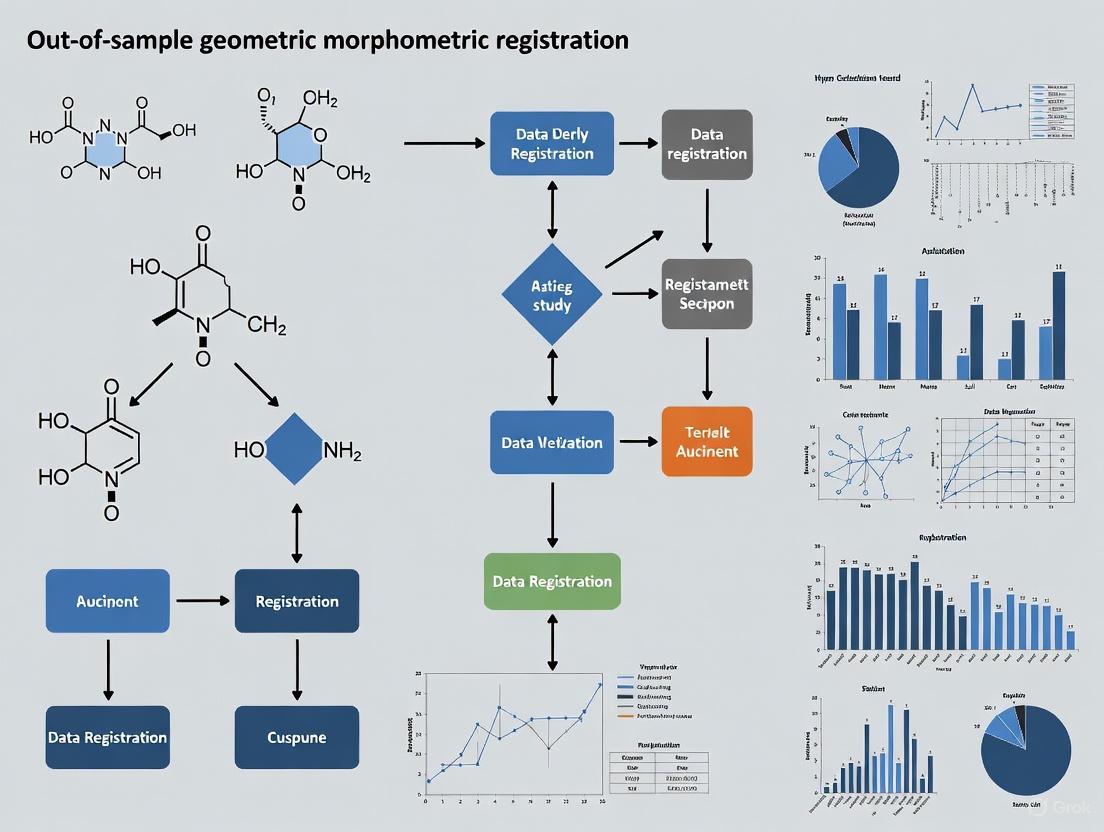

Workflow Visualization

Out-of-Sample Registration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for Out-of-Sample GMM Research

| Item Name | Function / Application | Relevance to Out-of-Sample Problem |
|---|---|---|
| SAM Photo Diagnosis App [1] | A smartphone application for capturing and analyzing arm shape images to identify nutritional status. | A real-world application where solving the out-of-sample problem is critical for field use. |
| Deformetrica Software [2] | Implements the Deterministic Atlas Analysis (DAA) framework for landmark-free shape comparison. | Provides a methodological framework for incorporating new specimens without manual landmarking. |
| morphVQ Software [3] | A shape analysis pipeline using learned shape descriptors and functional maps for automated phenotyping. | Offers an efficient, automated alternative to capture shape variation for new samples comprehensively. |
| Poisson Surface Reconstruction [2] | A technique to create watertight, closed 3D surface meshes from scan data. | Standardizes mixed-modality data (CT/surface scans), improving correspondence for new data. |
| Semi-landmarks [1] | Points placed along curves and surfaces to capture outline and surface shape. | Crucial for accurately describing the geometry of new specimens in studies of complex shapes like the arm. |

The Critical Role of a Template in Registration and Shape Space Projection

Frequently Asked Questions

1. What is the fundamental role of a template in geometric morphometric (GM) registration? A template provides a standardized reference configuration of landmarks and semi-landmarks, serving as the common target onto which all other specimens in a study are aligned [4]. This process is crucial for capturing shape variation by establishing geometric homology across your sample. The biological question guiding your research strongly influences the template's design, and this is especially critical when using curve and surface semi-landmarks [4].

2. Why is template selection particularly critical for classifying out-of-sample individuals? For out-of-sample classification, a new individual's raw coordinates are registered (aligned) to a single template configuration, rather than being included in a full Generalized Procrustes Analysis (GPA) with the entire sample [5]. The choice of this template—such as the sample mean shape, an individual specimen close to the mean, or a representative from a specific group—directly impacts the registered coordinates of the new specimen. This, in turn, affects how accurately it will be projected into the existing sample's shape space and classified [5].

3. How does template complexity (landmark density) affect my analysis? Finding the optimal number of coordinate points is essential [6]. An overly simple template with too few points will fail to capture enough morphological detail, limiting your ability to detect shape differences. An overly complex template leads to oversampling, which increases data collection time, reduces computational efficiency, and can diminish statistical power by introducing extraneous information [6]. The optimal density should be adapted to the level of morphological variation in your specific sample [4].

4. My data contains damaged or fragmented specimens. How can a template help? A well-defined template serves as a complete model of the structure, enabling you to estimate the position of missing landmarks on damaged specimens through imputation [6]. The best imputation method (e.g., regression-based) depends on the extent of damage. A robust template is key to reconstructing missing data, which is a common challenge when working with archaeological or paleontological materials [6].
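The sketch below is a minimal illustration of template-based estimation. It fits a least-squares similarity transform (scale, rotation, translation) from a complete template to the landmarks a damaged specimen still has. It then maps the template's remaining landmarks across. This is a deliberately simple stand-in, not the regression-based method cited above. Thin-plate-spline or regression estimates usually do better when shape varies non-rigidly. Missing landmarks are assumed to be stored as NaN rows, and the function names are hypothetical.

```python
import numpy as np


def similarity_fit(src, dst):
    """Least-squares similarity transform mapping rows of src onto dst (Umeyama-style)."""
    mu_s, mu_d = src.mean(axis=0), dst.mean(axis=0)
    A, B = src - mu_s, dst - mu_d
    U, S, Vt = np.linalg.svd(A.T @ B)
    D = np.eye(src.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))   # exclude reflections
    R = U @ D @ Vt
    scale = np.sum(S * np.diag(D)) / np.sum(A ** 2)
    return scale, R, mu_s, mu_d


def impute_from_template(specimen, template):
    """Fill NaN landmarks of `specimen` from a template fitted to its preserved landmarks."""
    missing = np.isnan(specimen).any(axis=1)
    scale, R, mu_s, mu_d = similarity_fit(template[~missing], specimen[~missing])
    filled = specimen.copy()
    filled[missing] = scale * (template[missing] - mu_s) @ R + mu_d
    return filled


template = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 0], [1, 0, 1]], dtype=float)
c, s = np.cos(0.5), np.sin(0.5)
Rz = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
complete = 2.0 * template @ Rz.T + [10.0, 5.0, -3.0]   # hypothetical undamaged specimen
damaged = complete.copy()
damaged[[4, 5]] = np.nan                                 # two landmarks lost to damage
restored = impute_from_template(damaged, template)
print("max imputation error:", np.abs(restored - complete).max())
```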

5. Are there standardized methods for creating a 3D template? Yes, one reproducible procedure involves using polygonal modeling software to generate a regular template configuration [4]. This method gives the researcher control over the template's geometry, allowing them to systematically define its complexity. Another approach involves creating a preliminary template that intentionally oversamples the structure, then applying a landmark sampling algorithm to determine the optimal number of points for your specific research question [6].

Troubleshooting Guides

Problem: Poor Out-of-Sample Classification Performance

Symptoms

- New specimens are consistently misclassified, even when similar specimens in the training set are classified correctly.

- High variance in the projected shapes of out-of-sample specimens.

Diagnosis and Solutions

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Suboptimal Template Choice | Compare classification results using different templates (e.g., mean shape, a specific specimen). | Test multiple template candidates and select the one that yields the most stable and biologically meaningful classification for your out-of-sample data [5]. |
| Template Complexity Mismatch | Evaluate if the template captures relevant morphological features for the hypothesis being tested [6]. | Re-estimate the optimal coordinate density for your sample. Simplify an overly complex template or add more semi-landmarks to an overly simple one [4] [6]. |
| Insufficient Training Sample Size | Analyze how estimates of mean shape and shape variance change as you reduce your sample size [7]. | Increase your training sample size if possible. Be aware that small sample sizes lead to unstable mean shape estimates and increased shape variance, which undermines the template's reliability [7]. |

Problem: Template Registration Errors and Alignment Failures

Symptoms

- Draped semi-landmarks cluster or fold unnaturally on the target specimen.

- Poor alignment of major morphological structures after Procrustes registration.

Diagnosis and Solutions

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Inconsistent Landmark Homology | Visually inspect landmark and semi-landmark placement across several specimens. | Re-establish a clear, biologically homologous protocol for landmark definition. Ensure all digitization is performed by a single observer or train multiple observers to high consistency [7]. |
| Irregular Template Geometry | Check the initial spacing and distribution of points on your template. | Use polygonal modeling tools to create a template with a regular and uniform point distribution, which provides a better foundation for sliding semi-landmarks [4]. |
| Large Shape Disparity in Sample | Perform a Principal Component Analysis (PCA) to visualize the morphospace of your sample. | If your sample has extremely diverse forms (e.g., pelvis shapes across different theropod species), ensure your template design is complex enough to capture this variation. A single, simple template may be insufficient for highly disparate morphologies [4]. |

Experimental Protocols

Protocol 1: Determining Optimal Coordinate Point Density

Objective: To establish a landmark and semi-landmark protocol that adequately captures morphological shape without over-sampling [6].

Materials:

- 3D scans of a representative sub-sample of specimens (e.g., n=5) [6].

- 3D modeling software (e.g., Artec Studio, Viewbox 4) [6].

- Geometric morphometrics software (e.g., the R geomorph package) [7].

Methodology:

- Design a Preliminary Template: Create a template that intentionally over-samples the structure of interest. This involves defining a large number of landmarks, curve semi-landmarks, and surface semi-landmarks [6]. For example, a protocol for the human os coxae might start with 25 landmarks, 159 curve semi-landmarks, and 425 surface semi-landmarks (Total k=609 points) [6].

- Apply the Template: Digitize this high-density template on all specimens in your sub-sample.

- Analyze Coordinate Density: Subject the configurations to a landmark sampling algorithm (e.g., Watanabe’s Landmark Sampling). This analysis will indicate the minimal number of points required to capture the majority of shape variation in your sample [6].

- Define Final Template: Based on the results, create a simplified final template with the optimal number of points for your full study.
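The idea behind a landmark sampling analysis can be sketched as follows. The sketch is written in the spirit of Watanabe's approach but is not a reimplementation of it. Here the "fit" of a subset is the correlation between two sets of approximate Procrustes distances (Euclidean distances between aligned shapes). One set comes from a random subset of points, the other from the full over-sampled configuration, and the correlation is averaged over random draws. The subset size at which the curve plateaus suggests how many points are enough. The data and function names are hypothetical.

```python
import numpy as np


def center_scale(X):
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)


def rotation_to(X, Y):
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return U @ D @ Vt


def gpa(configs, n_iter=100, tol=1e-12):
    """Minimal partial-Procrustes GPA returning the aligned shapes."""
    shapes = np.array([center_scale(X) for X in configs])
    mean = shapes[0]
    for _ in range(n_iter):
        shapes = np.array([X @ rotation_to(X, mean) for X in shapes])
        new_mean = center_scale(shapes.mean(axis=0))
        done = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if done:
            break
    return shapes


def procrustes_distances(configs):
    """Upper-triangle Euclidean distances between GPA-aligned shapes."""
    flat = gpa(configs).reshape(len(configs), -1)
    d = np.sqrt(((flat[:, None, :] - flat[None, :, :]) ** 2).sum(axis=-1))
    return d[np.triu_indices(len(configs), k=1)]


def sampling_curve(configs, sizes, n_rep=20, seed=0):
    """Mean correlation between subset-based and full-configuration shape distances."""
    rng = np.random.default_rng(seed)
    d_full = procrustes_distances(configs)
    curve = {}
    for size in sizes:
        fits = []
        for _ in range(n_rep):
            idx = rng.choice(configs.shape[1], size=size, replace=False)
            d_sub = procrustes_distances(configs[:, idx, :])
            fits.append(np.corrcoef(d_full, d_sub)[0, 1])
        curve[size] = float(np.mean(fits))
    return curve


# Synthetic over-sampled data: 15 specimens x 60 points in 3D.
rng = np.random.default_rng(42)
base = rng.normal(size=(60, 3))
modes = rng.normal(size=(3, 60, 3))
weights = rng.normal(scale=0.1, size=(15, 3))
configs = base + np.einsum("sj,jkd->skd", weights, modes) + rng.normal(scale=0.01, size=(15, 60, 3))

for size, fit in sampling_curve(configs, sizes=[5, 10, 20, 40, 60]).items():
    print(f"{size:>3} points: mean fit = {fit:.3f}")
```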

Protocol 2: Evaluating Templates for Out-of-Sample Registration

Objective: To identify the most effective template for projecting new individuals into an existing shape space for classification [5].

Materials:

- A training sample with known group affiliations (e.g., nutritional status, species).

- A test set of specimens withheld from the initial analysis.

- GM software capable of Procrustes registration and discriminant analysis.

Methodology:

- Withhold Test Data: Before any alignment, remove a subset of specimens from the sample to serve as a test set with known group membership. Neither the templates nor the classifier should be built with these specimens.

- Build Classifier: Perform a standard GPA and build a classification model (e.g., Linear Discriminant Analysis) on the remaining training specimens only.

- Create Candidate Templates: From the training GPA, generate several candidate templates, including:

  - The mean shape configuration from the GPA.

  - An individual specimen that is closest to the mean shape.

  - A group-specific representative (e.g., the mean shape of a "healthy" group).

- Test Registration: For each candidate template, register the raw coordinates of each test specimen to it. Then project the newly registered specimen into the training shape space and classify it.

- Compare Performance: Evaluate the classification accuracy for each template candidate. The template that yields the highest classification accuracy for the out-of-sample test specimens is the best choice for your application [5].
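Below is a compact sketch of this protocol on synthetic 2D data. The test set is withheld before any alignment. The GPA, classifier and candidate templates use the training specimens only, and each template is scored by held-out accuracy. For brevity, the classifier is a nearest-group-mean rule in tangent space that stands in for LDA. All names are illustrative.

```python
import numpy as np


def center_scale(X):
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)


def rotation_to(X, Y):
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return U @ D @ Vt


def gpa(configs, n_iter=100, tol=1e-12):
    shapes = np.array([center_scale(X) for X in configs])
    mean = shapes[0]
    for _ in range(n_iter):
        shapes = np.array([X @ rotation_to(X, mean) for X in shapes])
        new_mean = center_scale(shapes.mean(axis=0))
        done = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if done:
            break
    return shapes, mean


def tangent(shapes, mean):
    X = shapes.reshape(len(shapes), -1)
    m = mean.ravel()
    return X - np.outer(X @ m, m)


rng = np.random.default_rng(7)
base = np.array([[0, 0], [1, 0], [1, 1], [0, 1], [0.5, 1.5]], dtype=float)
variant = base.copy()
variant[4] += [0.3, 0.2]
raw = np.concatenate([base + rng.normal(scale=0.04, size=(30, 5, 2)),
                      variant + rng.normal(scale=0.04, size=(30, 5, 2))])
y = np.repeat([0, 1], 30)

# 1. Withhold a test set before any alignment or template building.
is_test = np.zeros(len(raw), dtype=bool)
is_test[::4] = True

# 2. GPA and a simple classifier (group means in tangent space) on training data only.
aligned, consensus = gpa(raw[~is_test])
y_train = y[~is_test]
T_train = tangent(aligned, consensus)
group_means = np.array([T_train[y_train == g].mean(axis=0) for g in (0, 1)])

# 3. Candidate templates, all derived from the training GPA.
closest = np.argmin(np.linalg.norm(aligned - consensus, axis=(1, 2)))
candidates = {
    "consensus": consensus,
    "closest_to_mean": aligned[closest],
    "group0_mean": center_scale(aligned[y_train == 0].mean(axis=0)),
}

# 4-5. Register test specimens to each template, project, classify and score.
accuracy = {}
for name, template in candidates.items():
    shapes = [center_scale(X) for X in raw[is_test]]
    registered = np.array([S @ rotation_to(S, template) for S in shapes])
    dists = np.linalg.norm(tangent(registered, consensus)[:, None, :] - group_means[None], axis=-1)
    accuracy[name] = float(np.mean(np.argmin(dists, axis=1) == y[is_test]))
print(accuracy)
```

With only a handful of test specimens, accuracy moves in coarse steps. Repeating the split, or using cross-validation, gives a fairer comparison between templates.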

Workflow Diagram

Out-of-Sample Classification Workflow

The Scientist's Toolkit: Key Research Reagents

| Item | Function in Template-Based Research |
|---|---|
| 3D Structured-Light Scanner (e.g., Artec Eva) | Creates high-resolution 3D surface meshes of physical specimens, which serve as the raw data for digitizing landmarks and building templates [6]. |
| Geometric Morphometrics Software (e.g., R geomorph, Viewbox 4, tpsDig2) | Performs essential steps like Generalized Procrustes Analysis (GPA), sliding of semi-landmarks, statistical shape analysis, and visualization of results [7] [6]. |
| Polygonal Modeling Software (e.g., MeshLab, Blender) | Used to design and create the initial 3D template, allowing researchers to control the geometry and point distribution of landmark configurations before applying them to actual specimens [4]. |
| Template Configuration | The core reagent of the analysis. A k × m matrix (k = number of points, m = 3 for 3D data) that defines the homologous points for a structure. Its design directly influences all downstream results [4] [6] [5]. |
| Landmark Sampling Algorithm | A computational tool that helps determine the optimal number of coordinate points needed to represent an object's shape without over- or under-sampling, ensuring statistical power and efficiency [6]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary risks of using an arbitrary or single template for out-of-sample registration? Using an arbitrary or single template introduces registration bias, where the alignment process is optimized for one specific shape that may not represent the morphological variation in your entire sample or the new specimen. This can lead to misclassification, as the shape coordinates obtained for the out-of-sample individual may be inaccurate, causing it to be assigned to the wrong group [1].

FAQ 2: How can poor template choice affect my study's conclusions? Poor template choice can generate artifacts in the shape data that are misinterpreted as biological signal. For instance, in taxonomic studies, this can lead to incorrect conclusions about the relatedness of species or the identity of a new specimen. Over-reliance on Principal Component Analysis (PCA) plots derived from biased registrations has been shown to produce conflicting and unreliable results in evolutionary studies [8].

FAQ 3: Is there an optimal number of templates I should use? There is no universal number, but your template set must capture the spectrum of shape variation present in your training sample. Relying on a single, arbitrarily chosen template is discouraged. One approach is to use multiple templates to ensure robust out-of-sample registration, including the sample consensus (mean shape) and specimens that represent the extremes of the sample's shape variation [1].

FAQ 4: My data involves 2D images of symmetric structures. What specific pitfalls should I avoid? For symmetric structures, a major pitfall is not decomposing shape variation into its symmetric and asymmetric components during analysis. Using a single, potentially asymmetric template for registration can conflate true symmetric variation with directional asymmetry, leading to biased results. A specialized geometric morphometrics framework is required to properly analyze these components [9].

FAQ 5: Can increasing my overall sample size compensate for a poor template? A large sample size is always beneficial for defining population-level shape variation. However, it does not directly solve the problem of out-of-sample registration bias. A large but morphologically restricted training sample will still provide a poor set of templates if it does not encompass the shape diversity that a new specimen might possess [7] [1].

Troubleshooting Guides

Problem: Low Classification Accuracy for New Specimens

You have built a classifier (e.g., for nutritional status or species identification) that performs well on your original sample but fails to accurately classify new individuals.

Potential Cause 1: Template is not representative.

- Diagnosis: The template used to register the out-of-sample individual's raw coordinates is morphologically distinct from the new specimen, forcing a suboptimal alignment that distorts its true shape.

- Solution: Do not rely on a single template. Create a template set that includes the Procrustes consensus (mean shape) and specimens representing the major axes of shape variation in your training sample (e.g., from a Principal Component Analysis). Register the new specimen to all templates in this set and use the resulting coordinates for classification, or use the consensus for a more stable reference [1].

Potential Cause 2: Classifier is overly tuned to sample-specific alignment artifacts.

- Diagnosis: The classifier has learned patterns that are idiosyncratic to the particular Generalized Procrustes Analysis (GPA) of your training sample, which do not generalize to new alignments.

- Solution: Ensure your validation protocol correctly simulates the real-world application. When testing classifier performance, split your data into training and test sets, then perform GPA separately on the training set. The test set individuals must then be registered to a template derived only from the training set (e.g., the training set's consensus shape) before classification. This prevents data leakage and provides a realistic accuracy estimate [1].

Problem: Inconsistent or Biased Shape Data

The shape data for out-of-sample specimens show unexpected patterns, such as a systematic shift in one direction of morphospace, or high levels of asymmetric variation in a symmetric structure.

Potential Cause 1: Registration amplifies allometric (size-related) bias.

- Diagnosis: The template has a very different size or allometry from the new specimen. During registration, the scaling component can confound the pure shape information, pulling the new specimen's coordinates toward the template's allometric trajectory.

- Solution: If allometry is a concern in your study, consider using multiple templates that represent different size classes within your sample for registration. Additionally, explicitly test for and account for allometric effects in your statistical models [1].
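One generic way to test for allometry is an ordinary least squares regression of tangent-space shape coordinates on log centroid size. The sketch below reports the share of shape variance that size explains. It is an illustration on synthetic data, not the model used in [1]. A permutation-based Procrustes ANOVA (for example, `procD.lm` in the R geomorph package) is the usual way to attach a significance test.

```python
import numpy as np


def center_scale(X):
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)


def rotation_to(X, Y):
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return U @ D @ Vt


def gpa(configs, n_iter=100, tol=1e-12):
    shapes = np.array([center_scale(X) for X in configs])
    mean = shapes[0]
    for _ in range(n_iter):
        shapes = np.array([X @ rotation_to(X, mean) for X in shapes])
        new_mean = center_scale(shapes.mean(axis=0))
        done = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if done:
            break
    return shapes, mean


def tangent(shapes, mean):
    X = shapes.reshape(len(shapes), -1)
    m = mean.ravel()
    return X - np.outer(X @ m, m)


def allometry_r2(raw_configs):
    """Share of tangent-space shape variance explained by log centroid size (OLS)."""
    log_cs = np.log([np.linalg.norm(X - X.mean(axis=0)) for X in raw_configs])
    shapes, mean = gpa(raw_configs)
    T = tangent(shapes, mean)
    T = T - T.mean(axis=0)
    design = np.column_stack([np.ones(len(log_cs)), log_cs])
    coef, *_ = np.linalg.lstsq(design, T, rcond=None)
    resid = T - design @ coef
    return 1.0 - (resid ** 2).sum() / (T ** 2).sum()


rng = np.random.default_rng(3)
base = np.array([[0, 0], [1, 0], [1, 1], [0, 1], [0.5, 1.5]], dtype=float)
apex_up = np.zeros_like(base)
apex_up[4] = [0.0, 1.0]
sizes = np.linspace(1.0, 3.0, 30)
# Synthetic allometry: larger individuals have a relatively taller apex.
raw = np.array([s * (base + 0.2 * (s - 2.0) * apex_up) for s in sizes])
raw = raw + rng.normal(scale=0.005, size=raw.shape)
r2 = allometry_r2(raw)
print(f"shape variance explained by log centroid size: {r2:.3f}")
```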

Potential Cause 2: Template introduces artificial asymmetry.

- Diagnosis: When studying symmetric structures like flowers or skulls, using an asymmetric template will impart that asymmetry onto all newly registered specimens.

- Solution: Utilize a symmetric template. This can be created by reflecting and averaging landmark configurations. Employ a specialized geometric morphometric protocol that explicitly separates symmetric and asymmetric shape components during analysis, which provides a more biologically informative interpretation and avoids artifact generation [9].
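A symmetric template can be built in four steps:

1. Reflect a configuration.
2. Relabel paired landmarks so that left and right correspond.
3. Align the mirrored copy onto the original.
4. Average the two.

Half their difference is the configuration's asymmetric component. The sketch below assumes a 2D bilateral structure reflected across the y-axis and a user-supplied list of left/right landmark pairs. Midline landmarks are left unpaired, and the function name is hypothetical.

```python
import numpy as np


def center_scale(X):
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)


def rotation_to(X, Y):
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return U @ D @ Vt


def symmetric_template(X, pairs):
    """Return (symmetric component, asymmetric component) of a 2D configuration."""
    perm = np.arange(len(X))
    for left, right in pairs:
        perm[left], perm[right] = right, left
    mirrored = (X * np.array([-1.0, 1.0]))[perm]    # reflect, then relabel paired points
    original = center_scale(X)
    mirror_std = center_scale(mirrored)
    aligned_mirror = mirror_std @ rotation_to(mirror_std, original)
    symmetric = center_scale((original + aligned_mirror) / 2)
    asymmetric = (original - aligned_mirror) / 2
    return symmetric, asymmetric


pairs = [(0, 1), (2, 3)]                          # landmarks 4 and 5 lie on the midline
skewed = np.array([[-1.0, 0.0], [1.1, 0.1], [-0.5, 1.0], [0.6, 1.0], [0.05, 2.0], [0.0, -0.5]])
sym, asym = symmetric_template(skewed, pairs)
print("asymmetry of input:", round(float(np.linalg.norm(asym)), 4))
print("asymmetry of template:", float(np.linalg.norm(symmetric_template(sym, pairs)[1])))
```

A quick check that the template is symmetric: applying the function to its own symmetric output returns an asymmetric component that is numerically zero.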

Workflow: Impact of Template Choice on Out-of-Sample Registration and Data Integrity

Problem: Poor Performance in Discriminating Closely Related Groups

Your analysis fails to find consistent shape differences between two closely related species or populations, or the differences change depending on which view or landmark set is used.

- Potential Cause: Inadequate sample size and template representation for the morphological scale of the question.

- Diagnosis: The templates and training sample do not adequately capture the subtle, but consistent, shape differences that distinguish the groups. This is compounded by high intraspecific variance.

- Solution: Increase the sample size of your training set to better estimate population-level shape parameters and variances. Conduct pilot studies using multiple views or skeletal elements to identify which anatomical structures provide the strongest discriminatory signal for your specific hypothesis. A small sample size can lead to unstable mean shape estimates, which directly impacts the quality of your templates [7].

The table below summarizes findings from various studies on the performance of different analytical methods, highlighting the limitations of traditional approaches.

Table 1: Performance Comparison of Morphometric Methods in Classification Tasks

| Study Context | Traditional Method | Alternative Method | Key Finding on Performance | Citation |
|---|---|---|---|---|
| Carnivore Tooth Mark Identification | Geometric Morphometrics (2D outlines) | Deep Learning (Convolutional Neural Networks) | GMM classification accuracy < 40%, while Deep Learning achieved ~81% accuracy. | [10] |
| Species Discrimination (Vole Skulls) | Visual/Subjective Assessment | Learning-Vector-Quantization Neural Networks | Neural networks misclassified only 3% of specimens, a task the human eye could not perform reliably. | [11] |
| Hominin Taxonomy (Skull Morphology) | Principal Component Analysis (PCA) | Supervised Machine Learning Classifiers | PCA outcomes found to be artifacts of input data, unreliable and not reproducible. Supervised classifiers were more accurate. | [8] |
| Impact of Sample Size (Bat Skulls) | Geometric Morphometrics with small samples | Geometric Morphometrics with large samples (n > 70) | Reducing sample size increased shape variance and impacted mean shape estimates, undermining robustness. | [7] |

The Scientist's Toolkit: Essential Materials & Methods

Table 2: Key Research Reagents and Solutions for Robust Geometric Morphometrics

| Item | Function/Description | Considerations for Template Selection | Citation |
|---|---|---|---|
| Representative Template Set | A collection of landmark configurations (e.g., mean shape, extreme morphologies) used to register out-of-sample specimens. | The cornerstone of avoiding bias. The set must represent the shape diversity of the training sample to prevent forcing new specimens into an unnatural alignment. | |
| Generalized Procrustes Analysis (GPA) | A statistical procedure that superimposes landmark configurations by removing the effects of position, scale, and orientation. | Standard for analyzing the training sample. Crucially, out-of-sample specimens should not be included in this initial GPA; they are aligned to a template derived from it. | |
| Symmetric Template | A template created by reflecting and averaging a configuration, used for analyzing bilaterally or rotationally symmetric structures. | Essential for preventing the introduction of artificial asymmetry during the registration of new specimens to a symmetric structure. | [9] |
| Supervised Machine Learning Classifiers | Algorithms like Linear Discriminant Analysis, Neural Networks, or Support Vector Machines trained to assign specimens to predefined groups. | Often provide higher classification accuracy than traditional unsupervised methods (e.g., PCA) and are more robust for identifying new taxa or groups. | [8] [11] |
| High-Resolution Micro-CT Scanner | Imaging technology for obtaining high-quality 2D or 3D digital models of biological structures. | Provides the foundational data integrity. 3D data is often superior, as 2D analyses can introduce biases based on object positioning and miss critical morphological information. | [12] [13] |

Detailed Experimental Protocol: Out-of-Sample Registration for Classification

This protocol outlines a robust methodology for registering a new specimen for classification, based on the geometric morphometric workflows described in [1] and [9].

Aim: To obtain unbiased shape coordinates for a new specimen that are directly comparable to an existing training sample's shape space.

Materials and Software:

- Raw landmark coordinates of a new specimen.

- Training dataset of landmark coordinates with known group affiliations.

- Morphometric software (e.g., R with the geomorph package, TPS series).

Step-by-Step Method:

Step 1: Build a Representative Template Set from Your Training Sample:

- Perform a standard Generalized Procrustes Analysis (GPA) on the entire training dataset.

- Conduct a Principal Component Analysis (PCA) on the Procrustes coordinates to visualize the major axes of shape variation.

- Select a set of templates for out-of-sample registration. This set should include:

- The Procrustes consensus shape (mean configuration).

- A few specimens that represent the extremes of the primary PCs (e.g., at the positive and negative ends of PC1 and PC2).

Step 2: Register the New Specimen to Each Template:

- For each template in your set, perform a Partial Procrustes Superimposition. This aligns the new specimen's raw coordinates to the specific template, minimizing the Procrustes distance between them.

- This step yields multiple sets of registered coordinates for the single new specimen, one for each template used.

Step 3: Project the Registered Coordinates into the Training Shape Space:

- Take each set of registered coordinates from Step 2 and project them into the tangent space of the training sample. This is a critical step to make the new specimen's shape directly comparable to the original data.

- This typically involves using the projection matrix derived from the training sample's GPA.

Step 4: Classify the New Specimen:

- Use your pre-built classifier (e.g., Linear Discriminant Analysis, Neural Network) to classify the new specimen's projected shape coordinates.

- If you have registered to multiple templates, you can run the classification for each result and use a consensus or probabilistic outcome.
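The four steps can be sketched together on synthetic 2D data. The template set is the consensus plus the training specimens at the negative and positive ends of PC1 and PC2. The new individual is registered to each template by partial Procrustes superimposition and projected into the tangent space at the training consensus. Each registration is then classified, and the five labels are combined by majority vote. A nearest-group-mean rule stands in for the pre-built classifier, and all names are illustrative.

```python
import numpy as np
from collections import Counter


def center_scale(X):
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)


def rotation_to(X, Y):
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return U @ D @ Vt


def gpa(configs, n_iter=100, tol=1e-12):
    shapes = np.array([center_scale(X) for X in configs])
    mean = shapes[0]
    for _ in range(n_iter):
        shapes = np.array([X @ rotation_to(X, mean) for X in shapes])
        new_mean = center_scale(shapes.mean(axis=0))
        done = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if done:
            break
    return shapes, mean


def tangent(shapes, mean):
    X = shapes.reshape(len(shapes), -1)
    m = mean.ravel()
    return X - np.outer(X @ m, m)


rng = np.random.default_rng(11)
base = np.array([[0, 0], [1, 0], [1, 1], [0, 1], [0.5, 1.5]], dtype=float)
variant = base.copy()
variant[4] += [0.3, 0.2]
raw_train = np.concatenate([base + rng.normal(scale=0.03, size=(25, 5, 2)),
                            variant + rng.normal(scale=0.03, size=(25, 5, 2))])
y_train = np.repeat([0, 1], 25)

# Step 1: GPA, PCA of tangent coordinates, and the template set.
aligned, consensus = gpa(raw_train)
T = tangent(aligned, consensus)
Tc = T - T.mean(axis=0)
_, _, Vt = np.linalg.svd(Tc, full_matrices=False)
pc_scores = Tc @ Vt[:2].T                                   # PC1 and PC2 scores
extremes = [int(f(pc_scores[:, j])) for j in range(2) for f in (np.argmin, np.argmax)]
templates = [consensus] + [aligned[i] for i in extremes]
group_means = np.array([T[y_train == g].mean(axis=0) for g in (0, 1)])

# Steps 2-4 for one new individual in arbitrary position, orientation and scale.
theta = -1.2
rot = np.array([[np.cos(theta), -np.sin(theta)], [np.sin(theta), np.cos(theta)]])
shape_new = center_scale(0.5 * variant @ rot.T + [2.0, 3.0])
votes = []
for template in templates:
    registered = shape_new @ rotation_to(shape_new, template)    # Step 2
    t_new = tangent(registered[None], consensus)[0]              # Step 3
    votes.append(int(np.argmin(np.linalg.norm(group_means - t_new, axis=1))))  # Step 4
label = Counter(votes).most_common(1)[0][0]
print("votes:", votes, "-> majority label:", label)
```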

Multi-Template Registration Workflow

Frequently Asked Questions

FAQ 1: Why is a single template often insufficient for registering out-of-sample specimens? Using a single template can introduce bias, especially when the study sample is highly variable. The accuracy of registration depends on how well the algorithm can align the template with each target specimen. This becomes difficult as the morphological difference between the template and target increases, leading to larger registration errors [14]. For robust out-of-sample registration, using multiple templates that represent the morphological diversity of your population is recommended [14].

FAQ 2: How do I select appropriate templates for a multi-template approach? If prior information about your sample's morphological variation is available, use it to select templates. When no prior information exists, an unbiased method like K-means clustering can be used. This involves:

- Performing a Generalized Procrustes Analysis (GPA) on point clouds of all specimens.

- Conducting a Principal Component Analysis (PCA) on the Procrustes coordinates.

- Applying K-means clustering to the PC scores to identify major morphological clusters.

- Selecting the specimens closest to the centroids of these clusters as your templates [14]. This method ensures your templates collectively capture the overall variation in your dataset.
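The PCA, K-means, and closest-to-centroid steps can be sketched with NumPy alone. This is a hypothetical illustration rather than SlicerMorph's implementation. It assumes the point clouds are already GPA-aligned, in point-to-point correspondence, and flattened to one row per specimen; it uses k-means++ seeding with several restarts, and the number of retained PCs is an arbitrary choice.

```python
import numpy as np

# Assumption: `aligned` is (n_specimens, n_points * dims) of GPA-aligned, corresponding points.


def pca_scores(aligned, n_pcs):
    centered = aligned - aligned.mean(axis=0)
    U, s, _ = np.linalg.svd(centered, full_matrices=False)
    return U[:, :n_pcs] * s[:n_pcs]


def kmeans_pp_init(X, k, rng):
    """k-means++ seeding: later centers are drawn with probability proportional to squared distance."""
    centers = [X[rng.integers(len(X))]]
    for _ in range(1, k):
        d2 = ((X[:, None, :] - np.array(centers)[None]) ** 2).sum(axis=2).min(axis=1)
        centers.append(X[rng.choice(len(X), p=d2 / d2.sum())])
    return np.array(centers)


def kmeans(X, k, n_init=10, n_iter=100, seed=0):
    """Lloyd's algorithm with restarts; keeps the run with the lowest within-cluster sum of squares."""
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_init):
        centers = kmeans_pp_init(X, k, rng)
        for _ in range(n_iter):
            labels = ((X[:, None, :] - centers[None]) ** 2).sum(axis=2).argmin(axis=1)
            updated = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                                for j in range(k)])
            if np.allclose(updated, centers):
                break
            centers = updated
        labels = ((X[:, None, :] - centers[None]) ** 2).sum(axis=2).argmin(axis=1)
        inertia = ((X - centers[labels]) ** 2).sum()
        if best is None or inertia < best[0]:
            best = (inertia, centers, labels)
    return best[1], best[2]


def select_templates(aligned, k, n_pcs=10):
    """Indices of the specimens closest to each K-means centroid in PC space."""
    scores = pca_scores(aligned, n_pcs)
    centers, labels = kmeans(scores, k)
    templates = []
    for j in range(k):
        members = np.flatnonzero(labels == j)
        if members.size == 0:
            continue  # an empty cluster contributes no template
        dist = ((scores[members] - centers[j]) ** 2).sum(axis=1)
        templates.append(int(members[dist.argmin()]))
    return templates


# Toy sample: three well-separated shape groups of five specimens each.
rng = np.random.default_rng(2)
group_means = rng.normal(scale=5.0, size=(3, 12))
aligned = np.repeat(group_means, 5, axis=0) + 0.1 * rng.normal(size=(15, 12))
templates = select_templates(aligned, k=3, n_pcs=2)
```

Choosing an actual specimen nearest each centroid, rather than the centroid itself, matters because a template must be a real mesh that can be manually landmarked.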

FAQ 3: My dataset contains 3D models from different scanning modalities (e.g., CT and surface scans). How does this affect my analysis? Mixing modalities, such as computed tomography (CT) scans and surface scans, can introduce non-biological shape differences because they capture surface topology differently. This can significantly impact the results of landmark-free methods. A recommended solution is to standardize your data using Poisson surface reconstruction, which creates consistent, watertight, closed meshes from all specimens, thereby improving correspondence between shape measurements [2].

FAQ 4: What are the key metrics for evaluating the performance of a registration method? When comparing a new registration method (like a landmark-free approach) to a traditional "gold standard" (like manual landmarking), several metrics are crucial for evaluation [2] [14]:

- Root Mean Square Error (RMSE): Quantifies the average error in landmark positioning compared to the gold standard.

- Mantel Test & PROTEST: Assess the correlation between shape distance matrices derived from different methods.

- Phylogenetic Signal: Measures the degree to which shape similarity tracks phylogenetic relatedness (e.g., Kmult).

- Morphological Disparity: Estimates the amount of shape variation within a group.

- Evolutionary Rates: Calculates the rate of shape change over time or across a phylogeny.
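RMSE takes only a few lines to compute. This sketch assumes automated and gold-standard landmarks are stored as NumPy arrays in the same coordinate system. It averages over landmarks within each specimen; published studies may instead report RMSE per landmark across specimens.

```python
import numpy as np


def landmark_rmse(estimated, gold):
    """Per-specimen RMSE between estimated and gold-standard landmarks.

    estimated, gold: (n_specimens, k_landmarks, dims) in the same coordinate system.
    RMSE = sqrt(mean over landmarks of the squared Euclidean distance)."""
    sq_dist = ((np.asarray(estimated) - np.asarray(gold)) ** 2).sum(axis=-1)  # (n, k)
    return np.sqrt(sq_dist.mean(axis=1))


gold = np.zeros((1, 2, 3))
estimated = np.array([[[3.0, 4.0, 0.0], [0.0, 0.0, 0.0]]])  # first landmark 5 units off, second exact
print(landmark_rmse(estimated, gold))  # [3.53553391]
```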

Troubleshooting Guides

Problem: Poor Out-of-Sample Registration Accuracy

- Potential Cause 1: Inadequate Template Representativeness. A single template may not capture the full morphological spectrum of your population.

- Solution: Implement a multi-template pipeline. One such method is MALPACA (Multiple Automated Landmarking through Point cloud Alignment and Correspondence), which uses several templates and takes the median landmark estimate from all corresponding registrations for each target specimen, reducing single-template bias [14].

- Potential Cause 2: Incorrect registration parameters.

- Solution: In methods like Deterministic Atlas Analysis (DAA), the kernel width parameter controls the spatial scale of deformation. A smaller kernel width captures finer details but requires more computational power. Systematically test different kernel widths and evaluate their performance using metrics like RMSE to find the optimal setting for your data [2].
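The median-of-templates step described for MALPACA under Potential Cause 1 is simple to express. `median_consensus` is a hypothetical helper, not part of SlicerMorph. It assumes the per-template landmark estimates have already been exported and stacked into one NumPy array.

```python
import numpy as np


def median_consensus(estimates):
    """Coordinate-wise median across templates.

    estimates: (n_templates, n_specimens, k_landmarks, 3), one landmark set per template.
    Returns (n_specimens, k_landmarks, 3)."""
    return np.median(np.asarray(estimates, dtype=float), axis=0)


truth = np.array([[[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]])  # one specimen, two landmarks
estimates = [truth + 0.01, truth - 0.01, truth + 10.0]  # the third template registered badly
print(np.allclose(median_consensus(estimates), truth + 0.01))  # True
```

Unlike a mean, the median is not pulled toward the single badly registered template, which is why it reduces single-template bias.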

Problem: Low Correlation Between Traditional and Automated Shape Data

- Potential Cause: Methodological differences in capturing shape. Landmark-free methods and traditional landmarking capture shape variation in fundamentally different ways, which can lead to discrepancies.

- Solution:

- Perform a thorough heatmap analysis based on thin-plate spline deformations to visually identify how and where the shape is captured differently by each method [2].

- Use statistical tests like the Mantel test and PROTEST to quantify the overall correlation between the shape matrices generated by the two methods [2]. This helps determine if the patterns of variation are consistent, even if the exact numerical values differ.
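A permutation Mantel test can be sketched in NumPy. The sketch makes three assumptions: Pearson correlation on the upper triangles, rows and columns of one matrix permuted together, and a one-sided p-value. Established implementations, such as the R vegan package, offer more options.

```python
import numpy as np


def pairwise_distances(X):
    """Euclidean distance matrix between rows of X (e.g., flattened Procrustes coordinates)."""
    diff = X[:, None, :] - X[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))


def mantel_test(D1, D2, n_perm=999, seed=0):
    """Correlation between two distance matrices and a one-sided permutation p-value."""
    D1, D2 = np.asarray(D1, dtype=float), np.asarray(D2, dtype=float)
    iu = np.triu_indices_from(D1, k=1)
    r_obs = np.corrcoef(D1[iu], D2[iu])[0, 1]
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(n_perm):
        p = rng.permutation(len(D2))
        r = np.corrcoef(D1[iu], D2[np.ix_(p, p)][iu])[0, 1]  # shuffle specimen labels of D2
        hits += r >= r_obs
    return r_obs, (hits + 1) / (n_perm + 1)


rng = np.random.default_rng(3)
manual = rng.normal(size=(20, 6))                     # e.g., shape data from manual landmarks
automated = manual + 0.05 * rng.normal(size=(20, 6))  # a closely matching automated method
r, p_value = mantel_test(pairwise_distances(manual), pairwise_distances(automated))
```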


Problem: Low Sample Size and Statistical Power

- Potential Cause: Too few specimens relative to the number of shape variables (landmarks × dimensions) to estimate the population distribution reliably, which leads to overfitting.

- Solution: Employ data augmentation techniques. Generative Adversarial Networks (GANs) can be used to create highly realistic synthetic landmark data that follows the original training distribution. This augmented dataset can improve the quality and robustness of subsequent statistical models and classifiers [15].

Experimental Protocols & Data

Table 1: Quantitative Metrics for Method Evaluation. This table outlines key metrics for comparing the performance of a new registration method against a gold standard.

| Metric | Description | Interpretation |
|---|---|---|
| Root Mean Square Error (RMSE) [14] | Square root of the mean squared distance between estimated and gold standard landmark positions. | Lower values indicate higher landmarking accuracy. |
| Mantel Test [2] | Correlates pairwise distance matrices from two methods. | A significant positive correlation suggests the methods capture similar overall variation patterns. |
| PROTEST [2] | Procrustes-based test of association between two multivariate datasets (e.g., shape data from two methods). | A significant result indicates concordance between the multivariate datasets. |
| Phylogenetic Signal (e.g., Kmult) [2] | Measures how trait variation depends on phylogenetic relatedness. | Helps assess if evolutionary inferences are consistent between methods. |
| Morphological Disparity [2] | Quantifies the volume of morphospace occupied by a group. | Evaluates whether the methods yield similar estimates of morphological diversity. |
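PROTEST can be sketched the same way. This NumPy version is a hypothetical illustration. It centers each matrix and scales it to unit Frobenius norm, then uses the sum of singular values of XᵀY as the Procrustes correlation (the square root of 1 - m12²). It also assumes both matrices describe the same specimens in the same row order, and obtains the p-value by permuting the rows of one matrix.

```python
import numpy as np


def procrustes_correlation(X, Y):
    """Symmetric Procrustes correlation r = sqrt(1 - m12^2) between two (n_specimens x p) matrices."""
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    X = X / np.linalg.norm(X)
    Y = Y / np.linalg.norm(Y)
    return np.linalg.svd(X.T @ Y, compute_uv=False).sum()


def protest(X, Y, n_perm=999, seed=0):
    """Procrustes correlation with a permutation p-value obtained by shuffling the rows of Y."""
    X, Y = np.asarray(X, dtype=float), np.asarray(Y, dtype=float)
    r_obs = procrustes_correlation(X, Y)
    rng = np.random.default_rng(seed)
    hits = sum(procrustes_correlation(X, Y[rng.permutation(len(Y))]) >= r_obs for _ in range(n_perm))
    return r_obs, (hits + 1) / (n_perm + 1)


rng = np.random.default_rng(4)
scores_a = rng.normal(size=(25, 4))           # e.g., PC scores from manual landmarks
Q = np.linalg.qr(rng.normal(size=(4, 4)))[0]  # arbitrary orthogonal matrix
scores_b = 3.0 * scores_a @ Q + 1.0           # same configuration, rotated, scaled, and shifted
r, p_value = protest(scores_a, scores_b)
```

Because the statistic is invariant to translation, scaling, and rotation, two methods that differ only by such a transformation give r = 1.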

Table 2: Addressing Data Modality Challenges. This table summarizes the problem of mixed data modalities and a proposed solution.

| Aspect | Challenge | Proposed Solution |
|---|---|---|
| Data Modality | Using mixed modalities (e.g., CT vs. surface scans) introduces non-biological shape differences [2]. | Poisson surface reconstruction to create uniform, watertight meshes [2]. |
| Impact | Reduces correspondence between shape measurements from manual and automated methods [2]. | Standardizes mesh topology, significantly improving cross-method concordance [2]. |

Protocol: K-means Multi-Template Selection

Goal: To objectively select a set of representative templates for automated landmarking when no prior morphological information is available [14].

- Input: 3D surface models (e.g., in PLY format) for the entire study sample.

- Point Cloud Extraction: Generate sparse point clouds from all 3D models to reduce computational burden.

- Generalized Procrustes Analysis (GPA): Perform Procrustes superimposition on the point clouds to remove differences in position, orientation, and scale.

- Principal Component Analysis (PCA): Decompose the Procrustes-aligned coordinates into PC scores to reduce dimensionality.

- K-means Clustering: Apply K-means clustering to the PC scores to identify the major morphological groups within the sample. The value of K (number of clusters) can be chosen based on the study design.

- Template Selection: For each identified cluster, select the specimen whose point cloud is closest to the centroid of that cluster. These specimens become your templates.
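The GPA step can be sketched as follows. This is a simplified, hypothetical implementation. It assumes every point cloud has the same number of points in one-to-one correspondence, because GPA cannot be applied to unordered point clouds. It scales each configuration to unit centroid size and iterates rotations onto the running mean until the mean stops changing.

```python
import numpy as np

# Assumption: every configuration has the same points in the same order.


def rotate_onto(X, Y):
    """Rotate centered configuration X onto Y with the proper rotation minimizing ||X R - Y||."""
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return X @ (U @ D @ Vt)


def gpa(configs, tol=1e-10, max_iter=100):
    """Generalized Procrustes Analysis of (n, k, m) configurations; returns aligned shapes and consensus."""
    X = np.asarray(configs, dtype=float)
    X = X - X.mean(axis=1, keepdims=True)                  # remove position
    X = X / np.linalg.norm(X, axis=(1, 2), keepdims=True)  # remove scale (unit centroid size)
    mean = X[0]
    for _ in range(max_iter):
        X = np.array([rotate_onto(x, mean) for x in X])    # remove orientation
        new_mean = X.mean(axis=0)
        new_mean /= np.linalg.norm(new_mean)
        converged = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if converged:
            break
    return X, mean


# Toy check: rotated, scaled, and translated copies of one shape collapse onto one configuration.
rng = np.random.default_rng(5)
base = rng.normal(size=(30, 3))
copies = []
for i in range(4):
    Q = np.linalg.qr(rng.normal(size=(3, 3)))[0]
    if np.linalg.det(Q) < 0:
        Q[:, 0] *= -1  # keep a proper rotation
    copies.append(rng.uniform(0.5, 2.0) * base @ Q + rng.normal(size=3))
aligned, consensus = gpa(copies)
```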

Protocol: Post-hoc Quality Control for Multi-Template Landmarking

Goal: To assess the performance of individual templates in a multi-template pipeline and refine landmark estimates [14].

- Run MALPACA: Execute the multi-template pipeline (e.g., MALPACA) to obtain landmark estimates for all target specimens from each template.

- Import Data: Import the landmark estimates from each individual template into a statistical environment like R.

- Assess Convergence: Analyze how closely the landmark estimates from different templates converge for each target specimen. Identify outlier estimates that are far from the consensus.

- Refine Estimates (Optional): Remove the outlier estimates and re-calculate the final landmark positions (e.g., by taking the median of the remaining estimates). Compare the RMSE of the refined estimates to the original to check for improvement.
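The convergence check and refinement can be scripted once the per-template estimates are loaded. The outlier rule below is a hypothetical choice, not the rule used in [14]. A template is flagged when its RMSE to the all-template median exceeds `factor` times the median of those RMSEs; adapt the rule and threshold to your data.

```python
import numpy as np


def refine_consensus(estimates, factor=2.0):
    """Post-hoc QC for one target specimen.

    estimates: (n_templates, k_landmarks, 3), one landmark set per template.
    Hypothetical outlier rule: per-template RMSE to the median > factor * median of those RMSEs."""
    est = np.asarray(estimates, dtype=float)
    med = np.median(est, axis=0)
    dev = np.sqrt(((est - med) ** 2).sum(axis=-1).mean(axis=-1))  # per-template RMSE to the median
    keep = dev <= factor * np.median(dev)
    return np.median(est[keep], axis=0), keep, dev


truth = np.zeros((4, 3))
offsets = [0.1, -0.1, 0.05, -0.05, 5.0]  # the last template drifted badly
estimates = np.array([truth + o for o in offsets])
refined, keep, dev = refine_consensus(estimates)
print(keep)  # [ True  True  True  True False]
```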

Workflow Visualization

The following diagram illustrates the core workflow for template selection and out-of-sample registration, integrating the solutions to key challenges.

Diagram 1: Workflow for robust out-of-sample registration, integrating solutions for data modality and template selection.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools. This table lists key software and methodological "reagents" for geometric morphometrics research.

| Item | Function / Description |
|---|---|
| 3D Slicer with SlicerMorph [14] | An open-source platform for image analysis and visualization. The SlicerMorph extension provides specific tools for GM, including automated landmarking pipelines (ALPACA, MALPACA). |
| Generalized Procrustes Analysis (GPA) [16] | A core superimposition method that registers landmark configurations by removing differences in location, orientation, and scale, isolating shape for analysis. |
| K-means Clustering [14] | An unsupervised machine learning algorithm used for template selection by identifying natural morphological clusters in a dataset when prior information is lacking. |
| Deterministic Atlas Analysis (DAA) [2] | A landmark-free method that computes a sample-specific average shape (atlas) and measures individual shapes as deformations from this atlas using control points and momentum vectors. |
| Generative Adversarial Networks (GANs) [15] | A class of artificial intelligence algorithms used for data augmentation; they can generate synthetic geometric morphometric data to improve statistical power in studies with small sample sizes. |
| Poisson Surface Reconstruction [2] | An algorithm used to create watertight, closed 3D meshes from point cloud data, crucial for standardizing models from different scanning modalities. |

A Practical Toolkit: Methodological Frameworks for Template Selection and Application

Frequently Asked Questions

What is a single-template approach in geometric morphometrics? A single-template approach is a registration-based method where one specimen, chosen as a template or atlas, is used to guide the automated landmarking of all other specimens in a study sample. The registration algorithm maps the landmarks from this single template onto every target specimen [14].

What is the main technical limitation of using a single template? The primary limitation is that registration accuracy decreases as the morphological difference between the template and target specimens increases. This can introduce systematic bias and larger landmarking errors, especially in studies with high morphological variability [14].

My dataset contains multiple species. Is a single-template approach suitable? For highly variable samples, such as those spanning different species, a single-template approach is generally not recommended. Its performance significantly declines when morphological variation is large. In such cases, a multiple-template approach is superior for accommodating the wide range of forms [14].

How does template choice affect my results? The choice of template is critical. Selecting a template that is morphologically atypical of your sample can lead to poor registration for the majority of your specimens. The ideal template should be as close as possible to the average shape of your study population to minimize overall error [14] [2].
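In practice, "as close as possible to the average shape" can be operationalized as the specimen with the smallest distance to the GPA consensus. A minimal sketch follows, assuming the configurations have already been superimposed by GPA. The helper name is hypothetical.

```python
import numpy as np


def most_central_specimen(aligned, consensus):
    """Index of the specimen closest to the consensus, plus all distances.

    aligned: (n, k, m) GPA-aligned configurations; consensus: (k, m) mean shape.
    Assumption: Euclidean distance between aligned coordinates approximates the Procrustes distance."""
    d = np.sqrt(((np.asarray(aligned) - consensus) ** 2).sum(axis=(1, 2)))
    return int(d.argmin()), d


rng = np.random.default_rng(6)
consensus = rng.normal(size=(10, 3))
direction = rng.normal(size=(10, 3))
aligned = np.array([consensus + s * direction for s in (0.8, -0.5, 0.1, 0.4)])
idx, dist = most_central_specimen(aligned, consensus)
print(idx)  # 2
```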

Are there alternatives if a single template isn't working for my dataset? Yes. If you encounter high errors, consider these strategies:

- Multiple-Template Approach: Methods like MALPACA use several templates and take the median landmark estimate from all registrations, reducing bias [14].

- Landmark-Free Methods: Techniques like Deterministic Atlas Analysis (DAA) avoid landmarks altogether by comparing shapes based on the deformation of an iteratively computed atlas shape [2].

Troubleshooting Common Problems

Problem: High landmark estimation errors across many specimens.

- Potential Cause: The single template is morphologically too distant from a large portion of your sample.

- Solutions:

- Re-assess Template Choice: If possible, select a new template that is more central to the morphological distribution of your dataset [14].

- Switch to a Multi-Template Method: This is the most robust solution. Implement a pipeline like MALPACA, which is designed to handle higher variability [14].

- Validate with a Subset: Manually landmark a small, morphologically diverse subset of your specimens to quantify the error and confirm the need for a different approach [14].

Problem: Successful registration for some species but poor results for others.

- Potential Cause: The single template cannot capture the shape disparities across distinct taxonomic groups.

- Solutions:

- Implement Species-Specific Landmarking: Run separate single-template analyses for each species, using a representative template from within that species. This often yields more accurate results than a global single-template approach [14].

- Adopt a Multi-Template Framework: Use a method that automatically selects and leverages multiple templates from across the morphological spectrum of your data [14].

Problem: Inconsistent landmark placement on symmetric or repetitive structures.

- Potential Cause: The registration algorithm struggles with bilateral symmetry or structures that lack clear, unique homologous points.

- Solutions:

- Define Landmarks More Precisely: Ensure the template has clearly defined, biologically homologous landmarks.

- Utilize Semi-Landmarks: For curves and surfaces, incorporate sliding semi-landmarks in your initial template to better capture the geometry of these structures.

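- Post-hoc Symmetry Analysis: Consider methods designed to handle symmetry after data collection, such as analyses of object symmetry. These mirror each configuration, relabel the paired landmarks, and average the original and mirrored copies to separate symmetric and asymmetric shape components.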
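The mirror, relabel, and average idea can be sketched for a single configuration. This is a simplified, hypothetical illustration. It assumes you supply the list of paired (left, right) landmark indices, mirrors across the x = 0 plane, and lets a Procrustes rotation absorb any difference between that plane and the specimen's own symmetry plane.

```python
import numpy as np


def rotate_onto(X, Y):
    """Proper rotation of centered X that best matches centered Y (least squares)."""
    U, _, Vt = np.linalg.svd(X.T @ Y)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return X @ (U @ D @ Vt)


def symmetrize(config, pairs):
    """Split a (k, 3) configuration into symmetric and asymmetric components.

    pairs: (i, j) index pairs of bilateral landmarks (user-supplied assumption);
    unpaired midline landmarks keep their index."""
    X = config - config.mean(axis=0)
    X = X / np.linalg.norm(X)
    perm = np.arange(len(X))
    for i, j in pairs:
        perm[i], perm[j] = j, i
    mirrored = (X * np.array([-1.0, 1.0, 1.0]))[perm]  # reflect, then swap left/right labels
    mirrored = rotate_onto(mirrored, X)
    return 0.5 * (X + mirrored), 0.5 * (X - mirrored)  # symmetric part, asymmetric part


# A perfectly symmetric toy configuration, arbitrarily rotated: its asymmetric part should vanish.
shape = np.array([[1.0, 2.0, 3.0], [-1.0, 2.0, 3.0], [0.0, 1.0, 0.0], [0.0, -2.0, 1.0],
                  [2.0, -1.0, 0.5], [-2.0, -1.0, 0.5]])
pairs = [(0, 1), (4, 5)]
Q = np.linalg.qr(np.random.default_rng(7).normal(size=(3, 3)))[0]
sym, asym = symmetrize(shape @ Q, pairs)
```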

Experimental Protocol: Evaluating a Single-Template Approach

This protocol provides a step-by-step guide to assess the feasibility and accuracy of using a single template for your specific dataset.

1. Goal: To determine whether a single-template approach provides sufficient landmarking accuracy for a given study sample by comparing automated landmark estimates to a manually annotated "gold standard."

2. Experimental Workflow: The following diagram outlines the key stages of this validation experiment.

3. Materials and Reagents

| Item | Function / Description |
|---|---|
| 3D Surface Models | Input data; high-resolution mesh files (e.g., PLY, STL format) of all specimens [14]. |
| Landmarking Software | Software with automated registration (e.g., ALPACA in SlicerMorph) and manual landmarking tools [14]. |
| "Gold Standard" Landmarks | A set of manually placed landmarks on every specimen, serving as the ground truth for error calculation [14]. |
| Statistical Software (R) | For performing Procrustes superimposition, calculating Root Mean Square Error (RMSE), and other morphometric analyses [14]. |

4. Step-by-Step Procedure

Dataset Curation:

- Assemble your 3D surface models. Ensure data quality and consistent orientation.

- For multi-species or highly variable samples: Deliberately include specimens that represent the extremes of morphological variation [14].

Create a "Gold Standard" (GS):

- Manually annotate all specimens with the required landmarks. This is time-consuming but critical for validation.

- To minimize bias, have one experienced researcher perform all manual landmarking or establish a strict protocol if multiple researchers are involved [14].

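Template Selection:

- Choose one specimen as the single template. Because registration error grows with the morphological distance between template and target, a specimen close to the mean shape of the sample is generally the safest choice.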

Automated Landmarking:

- Using your chosen software (e.g., ALPACA), run the automated landmarking pipeline for the entire dataset using the single selected template [14].

- Ensure all output landmarks are in the same coordinate system as your GS.

Error Quantification and Analysis:

- For each specimen, calculate the Root Mean Square Error (RMSE) between the automated landmark positions and the GS landmarks.

- Compare the RMSE to your pre-defined acceptable threshold, which should be informed by the level of biological variation you are studying and the magnitude of typical intra-observer error in your field [14].

- Analyze if errors are randomly distributed or systematically linked to specific morphological traits.
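These checks can be scripted. The sketch below uses a hypothetical helper. It computes per-specimen and per-landmark RMSE and flags specimens above a user-chosen threshold. As one simple test for systematic rather than random error, it also correlates each specimen's error with its distance from the gold-standard mean shape. The toy data and threshold are made up for illustration.

```python
import numpy as np


def error_report(auto, gold, threshold):
    """Summarize automated-vs-gold-standard landmark error.

    auto, gold: (n_specimens, k_landmarks, dims) in the same coordinate system; gold assumed GPA-aligned.
    threshold: acceptable per-specimen RMSE, in the same units as the coordinates."""
    sq = ((auto - gold) ** 2).sum(axis=-1)   # (n, k) squared landmark errors
    per_specimen = np.sqrt(sq.mean(axis=1))
    per_landmark = np.sqrt(sq.mean(axis=0))  # which landmarks are hard to place
    flagged = np.flatnonzero(per_specimen > threshold)
    # Distance of each gold-standard shape from the mean shape, as a simple "atypicality" score.
    atypicality = np.sqrt(((gold - gold.mean(axis=0)) ** 2).sum(axis=(1, 2)))
    r = np.corrcoef(atypicality, per_specimen)[0, 1]  # r near 1: errors grow away from the mean
    return per_specimen, per_landmark, flagged, r


rng = np.random.default_rng(8)
base, direction = rng.normal(size=(5, 3)), rng.normal(size=(5, 3))
t = np.arange(5.0)
gold = base + 0.1 * t[:, None, None] * direction
auto = gold + 0.05 * np.abs(t - 2.0)[:, None, None]  # error grows with distance from the middle specimen
per_specimen, per_landmark, flagged, r = error_report(auto, gold, threshold=0.15)
```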

5. Quantitative Benchmarks and Decision Matrix

The table below summarizes key performance metrics to guide your evaluation, based on comparisons with manual landmarking.

| Metric | Single-Template Performance | Interpretation & Action |
|---|---|---|
| Overall RMSE | High error across most specimens. | The single template is a poor fit for the entire sample. Action: Switch to a multi-template approach [14]. |
| Landmark-Specific Error | High error concentrated on specific landmarks (e.g., those on highly variable structures). | The registration algorithm struggles with local shape differences. Action: Manually check/refine these landmarks or use a different template [14]. |
| Correlation with GS Morphospace | Low correlation in Procrustes distances or PC scores. | Automated method captures different biological signals. Action: Multi-template methods show significantly higher correlation and are preferable [14]. |
| Performance in Disparate Taxa | Significant performance drop in specific groups (e.g., Primates, Cetacea). | The template cannot capture the shape disparities. Action: Use a species-specific or multi-template approach [2]. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Geometric Morphometrics |
|---|---|
| SlicerMorph | An open-source extension for 3D Slicer; provides tools for ALPACA, MALPACA, and other morphometric analyses [14]. |
| ALPACA (Automated Landmarking through Point cloud Alignment and Correspondence) | A specific, fast single-template automated landmarking method that uses sparse point clouds for efficiency [14]. |
| MALPACA (Multiple ALPACA) | The multi-template extension of ALPACA, which uses median landmark estimates from multiple templates to reduce bias [14]. |
| Deterministic Atlas Analysis (DAA) | A landmark-free method that uses diffeomorphic transformations and an iteratively computed atlas to compare shapes without predefined landmarks [2]. |
| Generalized Procrustes Analysis (GPA) | A standard procedure to superimpose landmark configurations by removing the effects of position, orientation, and scale [14]. |
| K-means Clustering | An algorithm that can be used on shape data (e.g., PC scores from GPA) to help select a diverse and representative set of templates for a multi-template approach [14]. |

Multi-Template Strategies (e.g., MALPACA) for Highly Variable Datasets

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of using a multi-template strategy like MALPACA over single-template automated landmarking? Multi-template strategies significantly outperform single-template methods when landmarking highly variable specimens, such as those from different species. Using multiple templates accommodates large morphological variations by reducing the bias introduced by any single template. For each landmark, the median estimate from all templates is used, which produces more accurate and reliable results compared to reliance on a single source [14].

Q2: I have no prior information about the morphological variation in my dataset. How can I select appropriate templates? When prior information is unavailable, a K-means-based template selection method can be used. This unbiased approach uses point clouds from your 3D surface models to approximate morphological patterns. The process involves [14]:

- Performing a Generalized Procrustes Analysis (GPA) on the point clouds.

- Conducting a Principal Component Analysis (PCA) on the Procrustes-aligned coordinates.

- Applying K-means clustering to the PC scores to group the specimens, then identifying the specimen closest to each cluster centroid. These specimens are then manually landmarked and used as templates for the MALPACA pipeline.

Q3: Can I perform a quality check on the results after running MALPACA? Yes, a key advantage of the multi-template pipeline is the ability to conduct post-hoc quality control. You can analyze the landmark estimates from each individual template to assess how closely they converge. This allows for the identification of potential outlier estimates from specific templates, which can then be excluded to refine the final median estimate and improve overall accuracy [14].

Q4: How do I handle the classification of new, out-of-sample individuals in a geometric morphometrics study? Classifying new individuals not included in the original training sample requires obtaining their registered coordinates in the shape space of the training sample. This involves using one or more templates from your training set for the registration of the new individual's raw coordinates. The choice of template can affect the results, so understanding your sample's characteristics is crucial for optimal classification performance [5].

Troubleshooting Guides

Problem: High Landmarking Error in Morphologically Diverse Sample

Your single-template automated landmarking method is producing high errors when applied to a dataset containing multiple species or highly variable forms.

Solution: Implement a multi-template pipeline like MALPACA.

- Template Selection: Use the K-means method described in FAQ Q2 to select a representative set of templates if no prior information is available.

- Run MALPACA: Landmark your selected templates manually, then run the MALPACA pipeline. This involves independently running the ALPACA registration for each template against every target specimen [14].

- Calculate Final Landmarks: For each landmark on each target specimen, the final 3D coordinate is the median of all corresponding estimates from every template used [14].

Problem: Poor Performance on Out-of-Sample Classification

A classification model built from your training sample does not perform well when applied to new, out-of-sample individuals.

Solution: Ensure proper registration of new individuals into the training sample's shape space.

- Template Choice: The configuration of the template(s) used for registering the out-of-sample individual's raw coordinates is critical. The template should be morphologically representative of the expected variation [5].

- Registration: Perform Procrustes analysis or another alignment method to register the new individual's configuration to the template.

- Classification: Once registered to the same shape space, the pre-built classifier from your training sample can be applied directly to the new individual.

Problem: Dataset Contains Mixed-Modality Scans (e.g., CT and surface scans)

Using mixed modalities in landmark-free analyses can lead to challenges and inaccurate results due to differences in mesh topology [2].

Solution: Standardize your data by creating watertight, closed surfaces for all specimens.

- Poisson Surface Reconstruction: Apply this technique to all your scans to generate consistent, closed meshes. This step has been shown to significantly improve the correspondence between shape variations measured using different methods when dealing with mixed modalities [2].

Table 1: Performance Comparison of ALPACA vs. MALPACA [14]

| Sample Type | Method | Number of Templates | Performance Metric (vs. Gold Standard) |
|---|---|---|---|
| Mouse (single population) | ALPACA (single-template) | 1 | Higher Root Mean Square Error (RMSE) |
| Mouse (single population) | MALPACA (multi-template) | 7 | Lower RMSE |
| Ape (multi-species) | ALPACA (single-template) | 1 | Higher Root Mean Square Error (RMSE) |
| Ape (multi-species) | MALPACA (multi-template) | 6 | Lower RMSE |

Table 2: K-means vs. Random Template Selection for MALPACA [14]

| Selection Method | Number of Random Trials | Performance Outcome |
|---|---|---|
| K-means | 100 (mouse), 50 (ape) | Consistently avoids the worst-performing template combinations and shows good performance. |
| Random | 100 (mouse), 50 (ape) | Performance is variable; can result in selecting poor-performing template sets. |

Experimental Protocols

Protocol 1: Executing the MALPACA Pipeline [14]

- Input Data: Collect 3D surface models (e.g., in PLY format) for all specimens in the study sample.

- Template Selection:

- With prior knowledge: Manually landmark specimens that best represent the morphological extremes and variation in your dataset.

- Without prior knowledge: Apply the K-means template selection procedure (see FAQ Q2) to select representative specimens. Manually landmark these selected templates.

- Multi-Template Registration: For each template, run the ALPACA (Automated Landmarking through Point cloud Alignment and Correspondence) method against every target specimen in the dataset. This step can be run independently for each template-target pair.

- Consensus Landmark Generation: For each target specimen, for every landmark coordinate (x, y, z), calculate the median value from all estimates provided by the different templates. This median is the final landmark position.

- Post-hoc Quality Check (Optional): Import the individual landmark estimates from each template into analysis software (e.g., R). Assess the convergence of estimates across templates and remove clear outliers before re-calculating the final median.

Protocol 2: K-means Multi-Template Selection [14]

- Point Cloud Extraction: Obtain sparse point clouds from the 3D surface models of all specimens in the study sample.

- Generalized Procrustes Analysis (GPA): Perform GPA on all point clouds to align them and isolate shape variation.

- Principal Component Analysis (PCA): Decompose the Procrustes-aligned coordinates via PCA to reduce dimensionality.

- K-means Clustering: Apply K-means clustering to the PC scores. The number of clusters (K) can be specified based on the desired number of templates.

- Template Identification: From each cluster, select the specimen that is closest to the cluster centroid. These specimens are your candidate templates.

Workflow and Methodology Diagrams

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Methodologies [14] [2]

| Item Name | Type | Function / Application |
|---|---|---|
| SlicerMorph | Software Extension | An open-source morphometrics toolkit within 3D Slicer. It provides modules for the MALPACA pipeline and K-means template selection [14]. |
| 3D Slicer | Software Platform | A free, open-source platform for medical image informatics, image processing, and three-dimensional visualization. It serves as the base for SlicerMorph [14]. |
| ALPACA (Automated Landmarking through Point cloud Alignment and Correspondence) | Algorithm/Method | A fast, lightweight automated landmarking method that uses sparse point clouds for registration. It forms the core registration step in MALPACA [14]. |
| Generalized Procrustes Analysis (GPA) | Statistical Method | Aligns configurations of landmarks (or point clouds) by optimizing position, orientation, and scale. Used for isolating shape variation in template selection [14]. |
| Deterministic Atlas Analysis (DAA) | Landmark-free Method | A method based on Large Deformation Diffeomorphic Metric Mapping (LDDMM) that compares shapes without predefined landmarks, useful for highly disparate taxa [2]. |
| Poisson Surface Reconstruction | Data Processing Method | A technique to create watertight, closed 3D surface meshes from scan data, crucial for standardizing mixed-modality datasets (e.g., CT and surface scans) [2]. |

Frequently Asked Questions (FAQs)

Q1: What is the main advantage of using Deterministic Atlas Analysis (DAA) over traditional landmark-based methods? DAA is a landmark-free approach that offers two key advantages. First, it is highly efficient and less time-consuming as it eliminates the need for manual or semi-automated landmarking, which is a slow and labor-intensive process. Second, it is better suited for comparing morphologically disparate taxa, as it does not rely on identifying homologous anatomical points across very different species, a requirement that can limit traditional geometric morphometrics [2].

Q2: How does the choice of an initial template affect my DAA results? The initial template selection can influence the analysis, though the overall impact on shape predictions may be minimal. However, a critical effect is on the number of control points generated. Different templates can yield vastly different numbers of control points (e.g., 32 vs. 420 in one study), and a poor choice can introduce a systematic bias by drawing the template specimen toward the center of the morphospace, thereby reducing apparent morphological differentiation. It is recommended to test multiple initial templates and select one that produces a sufficient number of control points and does not exhibit this central clustering artifact [2].

Q3: My dataset contains 3D models from mixed scanning modalities (e.g., CT and surface scans). Will this affect the DAA? Yes, using mixed modalities (open and closed meshes) can challenge the DAA process. A recommended solution is to standardize your data by using Poisson surface reconstruction, which creates watertight, closed surfaces for all specimens. This step has been shown to significantly improve the correspondence between shape variation patterns captured by manual landmarking and DAA [2].

Q4: What is the "kernel width" parameter, and how should I set it? The kernel width is a key parameter in DAA that controls the spatial extent of the deformations used to map the atlas to each specimen. A smaller kernel width yields finer-scale deformations and generates a higher number of control points. For example, kernel widths of 40.0 mm, 20.0 mm, and 10.0 mm can produce 45, 270, and 1,782 control points, respectively. The choice of kernel width involves a trade-off between detail and computational load, and it should be optimized for your specific dataset [2].
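To see why a smaller kernel width gives finer deformations, consider how a velocity field is built from control points and momenta. The sketch assumes a Gaussian kernel of the form exp(-|x - c|² / σ²); conventions for the constant in the exponent differ between implementations. For counting control points, it also assumes they sit on a regular grid whose spacing equals the kernel width; check your DAA software's documentation for its actual defaults. The counts are for a toy bounding box and are not meant to reproduce the figures quoted above.

```python
import numpy as np

# Assumptions: Gaussian kernel exp(-d^2 / width^2); control-point grid spacing equal to the kernel width.


def gaussian_kernel(x, c, width):
    """K[i, j] = exp(-|x_i - c_j|^2 / width^2) for points x (n, 3) and control points c (m, 3)."""
    d2 = ((x[:, None, :] - c[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-d2 / width ** 2)


def velocity_field(points, control_points, momenta, width):
    """v(x) = sum_k K(x, c_k) * alpha_k, evaluated at each point."""
    return gaussian_kernel(points, control_points, width) @ momenta


def grid_control_points(bbox_min, bbox_max, spacing):
    """Regular grid of control points covering a bounding box."""
    axes = [np.arange(lo, hi + 1e-9, spacing) for lo, hi in zip(bbox_min, bbox_max)]
    mesh = np.meshgrid(*axes, indexing="ij")
    return np.stack([a.ravel() for a in mesh], axis=1)


# One control point at the origin pushing in +x: how strongly does a point 10 mm away move?
cp = np.zeros((1, 3))
alpha = np.array([[1.0, 0.0, 0.0]])
query = np.array([[10.0, 0.0, 0.0]])
for w in (40.0, 20.0, 10.0):
    v = velocity_field(query, cp, alpha, w)[0, 0]
    n_cp = len(grid_control_points([0, 0, 0], [80, 80, 80], w))
    print(f"width {w:>4} mm: velocity {v:.3f}, control points in an 80 mm cube: {n_cp}")
```

With a wide kernel, a single momentum moves a large neighborhood almost rigidly; with a narrow kernel, its influence decays quickly, so more control points are needed to describe the same surface, and computation grows accordingly.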

Q5: Can DAA be used for macroevolutionary studies? Yes, DAA shows great promise for large-scale macroevolutionary analyses across disparate taxa. Studies have found that while estimates of phylogenetic signal, morphological disparity, and evolutionary rates may vary slightly between DAA and manual landmarking, the overall patterns are comparable. This makes DAA a valuable tool for enabling the analysis of larger and more diverse datasets in evolutionary biology [2].

Troubleshooting Guides

Problem: Poor Atlas Registration or Unbiological Deformation Fields

Possible Causes and Solutions:

Cause 1: Suboptimal initial template.

- Solution: Do not select a template arbitrarily. Follow a data-driven approach: perform an initial Principal Component Analysis (PCA) on a subset of your data and choose candidate templates from specimens at the extremes of the major principal components, plus one close to the grand mean shape. Testing these candidates lets you pick an initial template that works well across the morphological diversity present in your sample [17].

Cause 2: Inappropriate kernel width.

- Solution: The kernel width should be tuned to the scale of the morphological features you are studying. If you are missing fine-grained shape details, try reducing the kernel width to increase the number of control points. Be aware that this will increase computation time [2].

Cause 3: Mixed mesh modalities in the input data.

- Solution: Standardize all meshes in your dataset to be watertight (closed) surfaces. Use Poisson surface reconstruction on all your 3D models before running the DAA pipeline to ensure topological consistency [2].

Problem: DAA Results Are Inconsistent with Manual Landmarking

Possible Causes and Solutions:

Cause 1: Fundamental differences in how shape is quantified.

- Solution: Some disagreement is expected. DAA captures shape based on dense deformations across the entire surface, while manual landmarking relies on discrete homologous points. This can lead to differences, particularly in certain clades like Primates and Cetacea. Use statistical comparisons (e.g., Mantel test, PROTEST) to quantify the correlation between the shape matrices generated by each method and ensure the biological conclusions are robust [2].

Cause 2: Lack of biological signal in automated results.

- Solution: Validate your DAA pipeline on a smaller subset of data that has also been manually landmarked. Compare the mean shape and variance-covariance patterns from both methods to ensure the automated approach retains biological integrity. Techniques like neural network-based shape optimization can be applied to refine the automated landmarks to better match expert biological annotations [17].

Experimental Protocols

Protocol 1: Standardized DAA Pipeline for a Mixed-Modality Dataset

This protocol is adapted from a large-scale study on mammalian crania [2].

- Data Standardization: Convert all 3D models (whether from CT or surface scans) into watertight, closed meshes using Poisson surface reconstruction.

- Initial Template Selection: Select an initial template mesh. It is advisable to test several candidates (e.g., one from a morphologically average specimen and others from extremes) and choose the one that generates a sufficient number of control points without introducing bias.

- Parameter Setting: Set the kernel width parameter (e.g., 10.0 mm, 20.0 mm, 40.0 mm). Consider running a sensitivity analysis on a data subset.

- Atlas Generation and Deformation: Run the DAA software (e.g., Deformetrica) to generate the sample-dependent atlas and compute the deformations that map this atlas to every specimen in the dataset. The output will be momentum vectors ("momenta") for each control point on each specimen.

- Shape Data Analysis: Use the momenta as the basis for shape comparison. Apply multivariate statistical techniques like kernel Principal Component Analysis (kPCA) to visualize and analyze shape variation.
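To make the roles of the control points, momenta and kernel width concrete, the sketch below evaluates the initial velocity field v(x) = Σᵢ K(x, cᵢ) αᵢ generated by momenta αᵢ attached to control points cᵢ. It assumes a Gaussian kernel K(x, c) = exp(−‖x − c‖²/σ²) with σ the kernel width; the full LDDMM deformation computed by Deformetrica integrates such fields over time, which this sketch omits.

```python
import numpy as np

def velocity_field(points, control_points, momenta, kernel_width):
    """Initial velocity v(x) = sum_i exp(-|x - c_i|^2 / sigma^2) * alpha_i.

    points: (n, 3) positions to evaluate; control_points: (m, 3);
    momenta: (m, 3); kernel_width: sigma, in the same units as the points.
    """
    x = np.asarray(points, dtype=float)
    c = np.asarray(control_points, dtype=float)
    alpha = np.asarray(momenta, dtype=float)
    sq_dist = ((x[:, None, :] - c[None, :, :]) ** 2).sum(axis=-1)  # (n, m)
    weights = np.exp(-sq_dist / kernel_width ** 2)                 # Gaussian kernel
    return weights @ alpha                                         # (n, 3)
```

With a single control point at the origin, a point 10 mm away moves at about exp(−1) ≈ 0.37 of the momentum for σ = 10 mm but at exp(−1/16) ≈ 0.94 of it for σ = 40 mm, which is why wider kernels produce smoother, broader-scale deformations.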

Protocol 2: Optimizing Landmark Detection with Registration and Deep Learning

This protocol enhances automated landmark data to achieve accuracy comparable to manual annotation [17].

- Image Registration: Affine-align all volumetric images (e.g., μCT scans) to a pre-constructed atlas. Then, perform deformable registration using algorithms like ANIMAL or SyN to establish voxel-to-voxel correspondence.

- Landmark Propagation: Propagate the atlas's landmark configuration to each specimen via the computed deformation fields.

- Neural Network Optimization: Train a feedforward neural network (FFNN) as a regression model that minimizes the difference between the registered automated landmarks and a set of expert-placed manual landmarks, using a loss function defined on shape differences.

- Landmark Refinement: Apply the trained network to the automated landmarks to produce an optimized set of landmarks that are statistically indistinguishable from manual annotations.
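The training and refinement steps can be sketched with scikit-learn's `MLPRegressor` as a stand-in for the FFNN. This sketch assumes flattened landmark arrays for the same specimens and predicts the correction (manual minus automated) rather than the coordinates themselves. It uses the default squared-error loss, not the shape-specific loss described above, and the helper names are hypothetical.

```python
import numpy as np
from sklearn.neural_network import MLPRegressor

def train_landmark_refiner(auto_lms, manual_lms, seed=0):
    """Fit a small feedforward network that predicts manual - automated.

    auto_lms, manual_lms: (n_specimens, 3 * n_landmarks) arrays of flattened
    coordinates for the same specimens in the same order.
    """
    auto_lms = np.asarray(auto_lms, dtype=float)
    residuals = np.asarray(manual_lms, dtype=float) - auto_lms
    # default squared-error loss; a shape-specific loss would need a custom model
    net = MLPRegressor(hidden_layer_sizes=(64,), learning_rate_init=0.01,
                       max_iter=2000, random_state=seed)
    net.fit(auto_lms, residuals)
    return net

def refine(net, auto_lms):
    """Add the predicted correction back onto the automated landmarks."""
    auto_lms = np.asarray(auto_lms, dtype=float)
    return auto_lms + net.predict(auto_lms)
```

Learning the residual keeps the network's task small: when the registered landmarks are already close, it mainly has to learn systematic offsets.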

Table 1: Impact of DAA Parameters on Analysis Output [2]

| Parameter | Tested Value | Number of Control Points | Impact on Analysis |
|---|---|---|---|
| Kernel Width | 40.0 mm | 45 | Captures broad-scale shape variation |
| Kernel Width | 20.0 mm | 270 | Balances detail and computation |
| Kernel Width | 10.0 mm | 1,782 | Captures finer-scale shape details |
| Initial Template | Arctictis binturong | 270 | Minimal bias; recommended |
| Initial Template | Cacajao calvus | 420 | Template drawn to morphospace center |
| Initial Template | Schizodelphis morckhoviensis | 32 | Too few points; insufficient detail |

Table 2: Comparison of Shape Analysis Methods [2] [17]

| Method | Key Feature | Pros | Cons |
|---|---|---|---|
| Manual Landmarking | Relies on homologous points identified by an expert | Biologically meaningful; established gold standard | Time-consuming and labor-intensive; subjective and prone to observer bias; difficult across disparate taxa |
| DAA (Landmark-Free) | Uses deformation momenta and control points | Automated and efficient; suitable for large, disparate datasets; standardized and repeatable | Results may differ from landmarking; sensitive to parameters and mesh quality; biological interpretation of momenta can be complex |
| Registration + Deep Learning | Optimizes automated landmarks via neural networks | Retains biological integrity of manual data; highly accurate and automated | Requires a manually landmarked training set; increased computational complexity |

Workflow and Pathway Diagrams

[Figure: DAA Workflow for Geometric Morphometrics]

[Figure: Template Selection Impact on DAA]

The Scientist's Toolkit

Table 3: Essential Research Reagents and Software for DAA [2] [18] [17]

| Item | Function in DAA Research | Notes |
|---|---|---|
| Deformetrica | Software platform for performing Deterministic Atlas Analysis (DAA) and computing large deformation diffeomorphic metric mapping (LDDMM). | The primary software implementation for the DAA method discussed [2]. |
| Poisson Surface Reconstruction | An algorithm used to create watertight, closed surfaces from 3D point clouds or open meshes. | Critical for standardizing datasets that mix different 3D scanning modalities (CT vs. surface scans) [2]. |
| MorphoJ | An integrated software package for geometric morphometric analysis of landmark data. | Used for traditional GM analyses (e.g., Procrustes superimposition, PCA) to compare and validate DAA results [18]. |
| 3D Slicer / ITK-SNAP | Open-source software for visualization and processing of 3D biomedical images. | Used for image segmentation, visualization, and pre-processing of volumetric data before mesh generation [17]. |
| ANTs (Advanced Normalization Tools) | A comprehensive toolkit for image registration, including the SyN (Symmetric Normalization) algorithm. | Used in complementary registration-based workflows for automated landmarking and atlas building [17]. |

K-means clustering is a method of vector quantization that aims to partition n observations into k clusters, where each observation belongs to the cluster with the nearest mean (cluster centroid). This results in a partitioning of the data space into Voronoi cells [19]. In the context of geometric morphometric research, this algorithm provides a powerful, unsupervised approach to organizing complex morphological data, enabling researchers to identify inherent groupings within their datasets without a priori assumptions.

The primary goal of unbiased template selection is to identify a representative sample from a population that does not over-represent any particular anatomical feature or demographic subset [20]. Traditional template selection often relies on single specimens or simple averaging, which can introduce systematic biases, particularly when working with diverse populations. By implementing k-means clustering, researchers can systematically group specimens based on morphological similarity and select templates that best represent the central tendency of each natural grouping within their population, thereby enhancing the generalizability of registration and normalization procedures for out-of-sample data.
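A minimal sketch of this idea with scikit-learn, assuming Procrustes-aligned, flattened shape data: cluster the specimens with `KMeans`, then, because a centroid is a mean shape rather than a real specimen, take the member specimen closest to each centroid as that cluster's template. The function name and defaults are illustrative.

```python
import numpy as np
from sklearn.cluster import KMeans

def kmeans_templates(shapes, k, seed=0):
    """Cluster aligned shapes and return one template specimen per cluster.

    shapes: (n_specimens, n_features) Procrustes-aligned, flattened coordinates.
    Returns (template_indices, fitted_model).
    """
    X = np.asarray(shapes, dtype=float)
    km = KMeans(n_clusters=k, n_init=10, random_state=seed).fit(X)
    dists = km.transform(X)  # distance of every specimen to every centroid
    templates = []
    for c in range(k):
        members = np.flatnonzero(km.labels_ == c)
        # the real specimen nearest this cluster's centroid
        templates.append(int(members[np.argmin(dists[members, c])]))
    return templates, km
```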

Theoretical Foundation

The K-Means Algorithm

The standard k-means algorithm, often called Lloyd's algorithm, uses an iterative refinement technique to partition datasets [19]. Given a set of observations (x₁, x₂, ..., xₙ), where each observation is a d-dimensional real vector, k-means clustering aims to partition the n observations into k (≤ n) sets S = {S₁, S₂, ..., Sₖ} to minimize the within-cluster sum of squares (WCSS) [19]:

\[ \underset{S}{\arg\min} \sum_{i=1}^{k} \sum_{\mathbf{x} \in S_i} \left\lVert \mathbf{x} - \boldsymbol{\mu}_i \right\rVert^2 \]

where μᵢ is the mean of points in Sᵢ [19]. This objective makes each cluster as compact as possible around its centroid, so the centroids (or, when a template must be an actual specimen, the specimens closest to them) are natural candidates for representative templates.
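The objective can be checked numerically. The hypothetical helper below computes the WCSS of a given partition; on the one-dimensional toy data {0, 2, 10, 12}, the compact partition {0, 2}, {10, 12} scores far lower than an interleaved one.

```python
import numpy as np

def wcss(X, labels):
    """Within-cluster sum of squares for a given partition.

    X: (n, d) data; labels: length-n integer cluster assignments.
    Each cluster's centroid mu_i is the mean of its members.
    """
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    total = 0.0
    for c in np.unique(labels):
        members = X[labels == c]
        mu = members.mean(axis=0)
        total += float(np.sum((members - mu) ** 2))
    return total

print(wcss([[0], [2], [10], [12]], [0, 0, 1, 1]))  # 4.0
print(wcss([[0], [2], [10], [12]], [0, 1, 0, 1]))  # 100.0
```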

Connection to Out-of-Sample Registration

In geometric morphometrics, the gold standard for landmark data acquisition has traditionally been manual detection by a single observer. While accurate for small-scale investigations, this approach becomes limiting for large-scale studies requiring automated, standardized data collection [21]. The k-means protocol aims to address this limitation with a data-driven method for template selection intended to improve registration performance on unseen data.

The concept of out-of-sample performance is critical here. Where in-sample evaluation assesses how well a model reproduces the data used to build it, out-of-sample evaluation tests its performance on new, unseen data [22]. For template selection in morphometric registration, this translates to how well templates chosen via k-means facilitate accurate registration of specimens not included in the template selection process.
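One simple way to quantify this, sketched here under the assumption that mean squared distance to the nearest centroid is an acceptable proxy for registration difficulty, is to fit k-means on a training split and score held-out specimens against those fixed centroids. scikit-learn's `KMeans.score` returns the negative k-means objective for whatever data it is given, so dividing by the number of specimens gives a per-specimen figure for each split. The helper name is hypothetical.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.model_selection import train_test_split

def out_of_sample_fit(shapes, k, test_size=0.25, seed=0):
    """Per-specimen mean squared distance to the nearest centroid, in-sample
    (training split) and out-of-sample (held-out split). Centroids are
    fitted on the training split only."""
    X = np.asarray(shapes, dtype=float)
    train, test = train_test_split(X, test_size=test_size, random_state=seed)
    km = KMeans(n_clusters=k, n_init=10, random_state=seed).fit(train)
    # KMeans.score(X) is the negative of the k-means objective evaluated on X
    in_sample = -km.score(train) / len(train)
    out_sample = -km.score(test) / len(test)
    return in_sample, out_sample
```

A held-out value much larger than the in-sample value suggests the selected templates do not represent new specimens well.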

Experimental Protocol

Data Preparation and Preprocessing

Before applying k-means clustering, morphological data must be standardized and normalized:

- Landmark Configuration: Collect coordinate data from anatomical landmarks across all specimens. For 3D data, this will result in a matrix of size n × 3m, where n is the number of specimens and m is the number of landmarks.

- Procrustes Alignment: Perform Generalized Procrustes Analysis to remove variation due to position, orientation, and scale, so that the remaining variation reflects shape alone.

- Feature Vector Construction: Use the Procrustes coordinates as feature vectors for clustering. Additional features such as principal component scores from shape space can also be incorporated.
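The superimposition and flattening steps can be sketched in NumPy as below. This is a minimal full GPA under stated assumptions (no missing landmarks, reflections disallowed, a fixed number of iterations rather than a convergence test); established tools such as MorphoJ or R's geomorph should be preferred for real analyses. The output is the n × 3m matrix used as k-means input.

```python
import numpy as np

def gpa(configs, n_iter=10):
    """Minimal Generalized Procrustes Analysis for 3D landmarks.

    configs: (n_specimens, m_landmarks, 3) array. Removes position
    (centering), scale (unit centroid size) and orientation (rotation onto
    the iteratively updated mean). Returns an (n, 3m) flattened matrix.
    """
    X = np.asarray(configs, dtype=float)
    X = X - X.mean(axis=1, keepdims=True)                  # remove position
    X = X / np.linalg.norm(X, axis=(1, 2), keepdims=True)  # unit centroid size
    mean = X[0].copy()
    for _ in range(n_iter):
        for i in range(len(X)):
            # optimal rotation of X[i] onto the mean (orthogonal Procrustes)
            u, _, vt = np.linalg.svd(X[i].T @ mean)
            d = np.sign(np.linalg.det(u @ vt))
            D = np.diag([1.0, 1.0, d])                     # forbid reflections
            X[i] = X[i] @ (u @ D @ vt)
        mean = X.mean(axis=0)
        mean /= np.linalg.norm(mean)
    return X.reshape(len(X), -1)
```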

Table 1: Essential Research Reagents and Computational Tools

| Item Name | Function/Application | Implementation Notes |
|---|---|---|
| Shape Coordinate Data | Raw morphological measurements | Landmark coordinates from geometric morphometrics |
| Procrustes Superposition | Removes non-shape variation | Standard step in geometric morphometric analysis |
| Euclidean Distance Metric | Measures similarity between shapes | Default for k-means; favors compact, roughly spherical clusters [19] |
| Cluster Validity Indices | Guide the choice of cluster count (k) | Include the Within-Cluster Sum of Squares (WCSS) [19] |
| Python / scikit-learn | Algorithm implementation | Provides an efficient k-means implementation and data handling |

K-Means Implementation for Template Selection

Within the template-selection protocol, clustering follows the standard algorithm, which alternates between two steps [19]:

Assignment Step: Assign each observation to the cluster with the nearest mean (centroid) based on squared Euclidean distance: \( S_i^{(t)} = \{ x_p : \lVert x_p - m_i^{(t)} \rVert^2 \le \lVert x_p - m_j^{(t)} \rVert^2 \ \forall j,\ 1 \le j \le k \} \)

Update Step: Recalculate the means (centroids) of the observations assigned to each cluster: \( m_i^{(t+1)} = \frac{1}{\lvert S_i^{(t)} \rvert} \sum_{x_j \in S_i^{(t)}} x_j \)

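The algorithm has converged when the assignments no longer change; at that point the centroids, and hence the within-cluster sum of squares, also stop changing. The result is a local minimum of the WCSS, not necessarily the global one [19].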
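The two steps translate almost directly into NumPy. The sketch below runs plain Lloyd iterations from user-supplied starting centroids and stops when no assignment changes; it does not handle clusters that become empty, which production implementations must.

```python
import numpy as np

def lloyd_kmeans(X, init_centroids, max_iter=100):
    """Plain Lloyd iterations starting from the given centroids."""
    X = np.asarray(X, dtype=float)
    m = np.array(init_centroids, dtype=float)
    labels = None
    for _ in range(max_iter):
        # assignment step: nearest centroid by squared Euclidean distance
        sq = ((X[:, None, :] - m[None, :, :]) ** 2).sum(axis=-1)
        new_labels = sq.argmin(axis=1)
        if labels is not None and np.array_equal(new_labels, labels):
            break  # converged: no assignment changed
        labels = new_labels
        # update step: each centroid becomes the mean of its members
        m = np.array([X[labels == i].mean(axis=0) for i in range(len(m))])
    return labels, m

labels, centroids = lloyd_kmeans([[0], [2], [10], [12]], [[0], [2]])
print(labels.tolist(), centroids.ravel().tolist())  # [0, 0, 1, 1] [1.0, 11.0]
```

Even from the deliberately poor start, the iterations recover the compact partition {0, 2}, {10, 12} within three passes.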

Determining the Optimal Number of Clusters (k)

Selecting the appropriate value for k is critical. The elbow method provides a graphical approach for determining the optimal number of clusters by identifying the point where the rate of decrease in WCSS sharply changes [23].
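A sketch of the elbow computation with scikit-learn, whose `KMeans.inertia_` attribute is the WCSS of the fitted model. Dividing by the total sum of squares expresses each value as a fraction of total variance, the scale used in Table 2 below. The helper name is hypothetical, and the resulting curve still needs to be inspected (for example, plotted) to locate the elbow.

```python
import numpy as np
from sklearn.cluster import KMeans

def wcss_fractions(shapes, k_values, seed=0):
    """WCSS for each candidate k, as a fraction of the total sum of squares."""
    X = np.asarray(shapes, dtype=float)
    total_ss = float(((X - X.mean(axis=0)) ** 2).sum())  # equals WCSS for k = 1
    fractions = []
    for k in k_values:
        km = KMeans(n_clusters=k, n_init=10, random_state=seed).fit(X)
        fractions.append(km.inertia_ / total_ss)  # inertia_ is the fitted WCSS
    return fractions
```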

Table 2: Cluster Quality Metrics for k-Selection

| Number of Clusters (k) | Within-Cluster Sum of Squares | Between-Cluster Variance | Recommended Application |
|---|---|---|---|
| k = 2 | High (~85% of total variance) | Low | Basic population stratification |
| k = 3 | Moderate (~70% of total variance) | Moderate | Standard morphometric studies |
| k = 4-5 | Lower (~50-60% of total variance) | High | Fine-grained population analysis |
| k > 5 | Low (<50% of total variance) | Very High | Specialized, hypothesis-driven research |

Troubleshooting Guide: Common Issues and Solutions

Q1: How do I determine the optimal number of clusters (k) for my morphometric data?

Problem: The k-means algorithm requires pre-specifying the number of clusters, but the optimal k for morphological data isn't known.

Solution:

- Implement the Elbow Method: Plot the within-cluster sum of squares (WCSS) against different k values. The "elbow" point where the rate of decrease sharply changes indicates the optimal k [23].

- Consider Biological Relevance: Ensure the chosen k has anatomical meaning. For instance, when creating population-specific brain templates, k might correspond to known morphological subtypes.

- Use Silhouette Analysis: Calculate silhouette scores for different k values; higher average scores indicate better-defined clusters.
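Silhouette analysis can be sketched with scikit-learn's `silhouette_score`; the helper below is hypothetical. The silhouette is undefined for a single cluster, so candidate values should start at k = 2.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_score

def silhouette_by_k(shapes, k_values, seed=0):
    """Mean silhouette width for each candidate k (each k must be >= 2)."""
    X = np.asarray(shapes, dtype=float)
    scores = {}
    for k in k_values:
        # fixed seed and several restarts so the comparison across k is stable
        labels = KMeans(n_clusters=k, n_init=10, random_state=seed).fit_predict(X)
        scores[k] = float(silhouette_score(X, labels))
    return scores
```

The k with the highest mean silhouette is a candidate; it should still be weighed against the elbow plot and biological relevance.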

Q2: My k-means results vary with each run due to random initialization. How can I ensure stable, reproducible template selection?

Problem: The standard k-means algorithm is sensitive to initial centroid placement, leading to inconsistent templates across runs.

Solution:

- Use k-means++ Initialization: This initialization method spreads out the initial centroids, which typically yields more consistent results and a lower WCSS than purely random initialization [19].

- Set a Random Seed: Fix the random number generator seed for reproducible results during development and testing.

- Multiple Initializations: Run the algorithm multiple times with different initializations and select the result with the lowest WCSS [19].
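The three recommendations map onto arguments of scikit-learn's `KMeans`: `init="k-means++"`, a fixed `random_state`, and `n_init` restarts, of which the fit with the lowest inertia (WCSS) is kept. The wrapper name and default values below are illustrative.

```python
from sklearn.cluster import KMeans

def stable_kmeans(shapes, k, seed=42, n_init=20):
    """k-means with k-means++ seeding, a fixed seed and several restarts.

    scikit-learn runs n_init initializations and keeps the one with the
    lowest inertia (WCSS), so repeated calls with the same seed agree.
    """
    model = KMeans(n_clusters=k, init="k-means++", n_init=n_init, random_state=seed)
    return model.fit(shapes)
```

Recording the seed and `n_init` alongside the selected templates makes the selection auditable.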