Sustaining Diagnostic Excellence: Modern Strategies for Parasite Identification Proficiency in the Era of AI and Automation

Abstract

This article addresses the critical challenge of maintaining high levels of technologist proficiency in parasite identification amid a rapidly evolving diagnostic landscape. For researchers, scientists, and drug development professionals, we explore the foundational pressures necessitating new training paradigms, evaluate the integration of AI and deep learning tools as both aids and training supplements, provide optimization strategies for hybrid human-AI workflows, and present validation frameworks for assessing competency. By synthesizing recent advancements in molecular diagnostics, digital pathology, and artificial intelligence, this review offers a comprehensive roadmap for developing resilient proficiency models that enhance diagnostic accuracy, accelerate training, and future-proof laboratory expertise against emerging parasitic threats.

The Evolving Diagnostic Landscape: Foundational Challenges in Parasitology Proficiency

Technical Support & Troubleshooting Hub

This technical support center provides troubleshooting guides and FAQs to help researchers address common challenges in traditional microscopy within parasite identification research. The content supports the broader thesis that maintaining technologist proficiency requires both skill reinforcement and the strategic integration of new technologies.

Frequently Asked Questions (FAQs)

1. How does user subjectivity directly impact parasite identification, and what can I do to minimize it? Subjectivity arises from individual interpretation of visual patterns, leading to diagnostic variability. This is particularly challenging for subtle features or borderline cases [1]. To minimize its impact:

- Implement Blinded Second Reviews: For critical findings, have a second technologist examine the slides without access to the initial diagnosis.

- Use Established Diagnostic Criteria: Create and consistently use an internal guide with reference images and clear morphological criteria for common parasites.

- Quantify When Possible: Use standardized counting chambers for parasite loads (e.g., eggs per gram) to replace subjective estimates with numerical data.
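Converting a chamber count into eggs per gram is a simple scaling step. Below is a minimal sketch. It assumes a McMaster-type flotation in which a known mass of feces is suspended in a known total volume and a known volume is read under the grid. The 2 g / 30 mL / 0.3 mL set-up in the example is one common configuration and gives a multiplication factor of 50. Substitute the values from your own SOP. The function name is hypothetical.

```python
def eggs_per_gram(eggs_counted: int, feces_g: float, total_volume_ml: float,
                  volume_examined_ml: float) -> float:
    """Convert a McMaster-style chamber count into eggs per gram (EPG)."""
    if feces_g <= 0 or volume_examined_ml <= 0:
        raise ValueError("feces mass and examined volume must be positive")
    # Scale the eggs seen in the examined volume up to the whole suspension,
    # then divide by the mass of feces that went into that suspension.
    return eggs_counted * (total_volume_ml / volume_examined_ml) / feces_g


# Assumed set-up: 2 g feces in 30 mL total suspension, both chambers read
# (2 x 0.15 mL = 0.3 mL), so each egg counted represents 50 EPG.
print(eggs_per_gram(12, feces_g=2.0, total_volume_ml=30.0, volume_examined_ml=0.3))
```

With this set-up, 12 eggs counted corresponds to 600 EPG. Recording the raw count alongside the EPG keeps the calculation auditable during proficiency review.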

2. What are the specific signs of technologist fatigue in my data, and how can workflow adjustments help? Fatigue leads to a decline in performance, increasing the likelihood of missed diagnoses [1]. Key signs include a drop in detection rates for low-abundance parasites or an increase in inconclusive reports later in the workday. To combat this:

- Schedule Strategically: Rotate high-concentration tasks (e.g., screening unknown samples) with other duties and enforce regular breaks.

- Implement Workload Caps: Establish reasonable daily limits for the number of slides a technologist must screen.

- Leverage Pre-screening Tools: Where available, use digital pathology systems with AI algorithms to pre-screen slides, flagging regions of interest for the technologist to review, thus reducing the visual field they must inspect [1] [2].
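A small script can track one sign of fatigue: a drop in detection rates later in the workday. Below is a minimal sketch. It assumes each screened slide is logged as an (hour_of_day, is_positive) pair. The function names, the block size, and the 50% decline threshold are hypothetical choices, not a validated rule. Positivity also depends on which samples happen to be screened at which time of day. A flag is therefore a prompt to investigate, not proof of fatigue.

```python
from collections import defaultdict


def positivity_by_block(records, block_hours=2):
    """records: iterable of (hour_of_day, is_positive). Returns {block_start_hour: rate}."""
    counts = defaultdict(lambda: [0, 0])  # block start -> [positives, total slides]
    for hour, is_positive in records:
        block = (int(hour) // block_hours) * block_hours
        counts[block][0] += int(is_positive)
        counts[block][1] += 1
    return {block: pos / total for block, (pos, total) in sorted(counts.items())}


def flag_late_day_decline(rates, threshold=0.5):
    """Flag when the last block's rate falls below threshold x the first block's rate."""
    if len(rates) < 2:
        return False
    blocks = sorted(rates)
    first, last = rates[blocks[0]], rates[blocks[-1]]
    return first > 0 and last < threshold * first


# Hypothetical log: morning slides vs. late-afternoon slides.
log = [(8, True), (9, True), (10, False), (11, True),
       (16, False), (16, False), (17, True), (17, False)]
rates = positivity_by_block(log, block_hours=4)
print(rates, flag_late_day_decline(rates))
```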

3. Our lab has varying levels of expertise. How can we standardize diagnoses effectively? Expertise gaps are a major source of inter-observer variability [1]. Standardization is key to managing this.

- Develop a Centralized Image Library: Build a digital library of reference cases, including classic examples, atypical presentations, and common mimics, with expert annotations.

- Conduct Regular Proficiency Testing: Use standardized slide sets to periodically assess all technologists and identify areas where training is needed.

- Promote Cross-Training: Facilitate mentorship and peer-review sessions where less experienced staff can discuss challenging cases with senior experts.

4. Are there modern digital tools that can help overcome these limitations without fully replacing our microscopes? Yes, a hybrid approach is often feasible. You can augment your traditional workflow with digital tools.

- Digital Slide Scanners: Convert select glass slides into high-resolution Whole Slide Images (WSIs). This allows for easy second opinions, remote consultations, and digital archiving [3] [2].

- AI-Based Analysis Software: These tools can act as an assistant, automatically detecting, quantifying, and characterizing parasites or specific morphological features, thereby adding a layer of objective, data-driven analysis [1] [4] [2].

Troubleshooting Common Experimental Issues

| Issue | Root Cause | Solution & Protocol |
|---|---|---|
| Low Diagnostic Consistency | High inter-observer variability due to subjective interpretation of morphological features [1]. | Protocol for Consistency Assessment: (1) Select a set of 10–20 slides covering a range of parasites and difficulty levels. (2) Have all technologists in the lab independently examine and diagnose each slide. (3) Calculate percent agreement or a kappa statistic for the group. (4) For slides with low agreement, hold a consensus session to review them and establish definitive diagnostic criteria. |
| Missed Diagnoses in High-Throughput Screening | Operator fatigue from reviewing large volumes of slides, leading to decreased attention to detail [1]. | Protocol for Workload Management: (1) Segment work: break large batches into smaller sets of 15–20 slides. (2) Mandatory breaks: institute a 5-minute break after each set. (3) Random re-check: have another technologist randomly re-check 5% of slides already screened as negative to ensure ongoing vigilance. |
| Inability to Resolve Subtle Morphological Features | Limitations of conventional microscopy, such as shallow depth of focus on uneven samples or low contrast [5]. | Protocol for Enhanced Imaging: (1) If a digital microscope is available, use its Extended Focal Image (EFI) function, which captures images at multiple focal planes and merges them into a single, fully in-focus image [5]. (2) For low-contrast samples, use High Dynamic Range (HDR) imaging to reveal details that are otherwise difficult to observe [5]. |
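Step 3 of the consistency protocol can be computed directly. When more than two technologists are involved, Fleiss' kappa is one common choice. Cohen's kappa covers the two-rater case. The sketch below is our chosen illustration, not a statistic the source prescribes. Each row of the input is one slide and each column one diagnostic category. Each cell holds the number of technologists who chose that category. The function also returns per-slide agreement, so that low-agreement slides can be routed to the step 4 consensus session. The data and category labels are hypothetical.

```python
def fleiss_kappa(table):
    """Fleiss' kappa for a slides x categories table of rating counts.

    table[i][j] = number of technologists who assigned slide i to category j.
    Returns (kappa, per_slide_agreement).
    """
    n_slides = len(table)
    n_raters = sum(table[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in table):
        raise ValueError("every slide needs the same number (>= 2) of ratings")
    # Observed agreement on each slide: fraction of rater pairs that agree.
    per_slide = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in table
    ]
    p_bar = sum(per_slide) / n_slides
    # Chance agreement from the overall share of ratings in each category.
    total = n_slides * n_raters
    shares = [sum(row[j] for row in table) / total for j in range(len(table[0]))]
    p_e = sum(s * s for s in shares)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when every rating falls in one category")
    return (p_bar - p_e) / (1.0 - p_e), per_slide


# Hypothetical: 4 slides, 3 technologists; columns = [negative, Giardia, Entamoeba]
ratings = [
    [3, 0, 0],
    [0, 3, 0],
    [0, 1, 2],
    [1, 2, 0],
]
kappa, per_slide = fleiss_kappa(ratings)
low_agreement = [i for i, p in enumerate(per_slide) if p < 1.0]
print(round(kappa, 2), low_agreement)  # 0.45 [2, 3]
```

Values near 1 indicate near-perfect agreement beyond chance. Slides 2 and 3 (zero-based) would go to the consensus session.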

Quantitative Comparison: Traditional vs. Advanced Diagnostic Methods

The following table summarizes data on the performance and characteristics of traditional and advanced methods, highlighting the quantitative benefits of newer technologies in addressing traditional limitations.

| Parameter | Traditional Microscopy | Advanced Molecular Detection | AI-Augmented Digital Pathology |
|---|---|---|---|
| Typical Diagnostic Concordance | Baseline (high inter-observer variability) [1] | N/A (gold standard for specific IDs) | ~98.3% concordance with light microscopy [2] |
| Time for 500 Target Analyses | Manual process: ~10 days [6] | A few hours [6] | Rapid automated analysis (minutes to hours) [1] |
| Susceptibility to Operator Fatigue | High [1] | Low (automated systems) | Low (automated pre-screening) [1] |
| Key Limitation Addressed | (Baseline) | Speed, sensitivity, specificity [6] | Subjectivity, workload, reproducibility [1] |

Experimental Workflow for Integrating Digital Tools

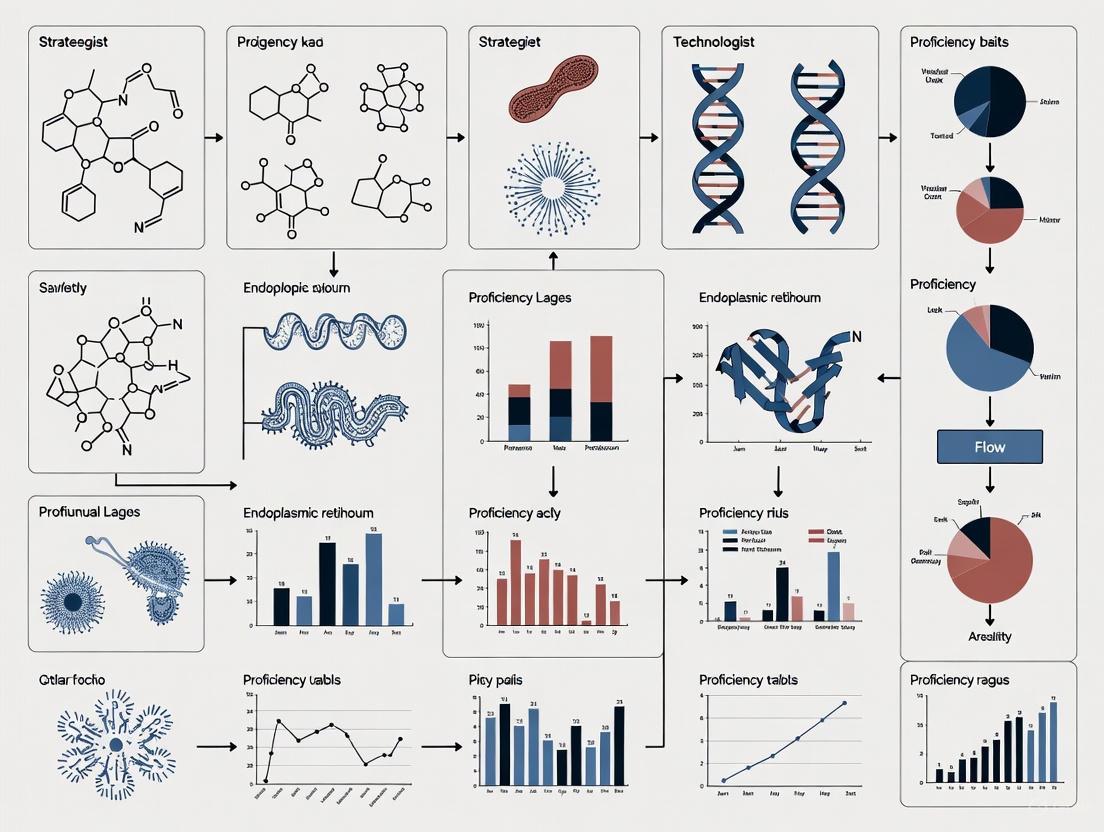

This workflow diagram outlines a protocol for leveraging digital tools to enhance proficiency and address gaps in a traditional microscopy setting.

Research Reagent Solutions for Enhanced Parasite Identification

This table lists key reagents and materials used in modern parasitic diagnostics to improve accuracy and objectivity.

| Item | Function in Research & Diagnosis |
|---|---|
| High-Quality Staining Reagents (e.g., Trichrome, Giemsa) | Enhance contrast and highlight specific morphological features of parasites for more reliable visual identification. |
| Monoclonal Antibodies | Used in immunohistochemistry (IHC) and serological tests (e.g., ELISA) to detect specific parasite antigens with high specificity, reducing cross-reactivity [4] [7]. |
| PCR Master Mixes & Primers | Essential for molecular methods such as the polymerase chain reaction (PCR) to amplify and detect specific parasite DNA sequences, offering high sensitivity and specificity [4] [7]. |
| Next-Generation Sequencing (NGS) Kits | Allow comprehensive genomic analysis of parasites, enabling species identification, detection of drug-resistance markers, and discovery of new pathogens [4] [7]. |
| CRISPR-Cas Reagents | Power new, highly specific molecular diagnostic assays for detecting parasite DNA, potentially enabling rapid point-of-care testing [7]. |
| Automated Image Analysis Software | Provides AI and machine learning algorithms to automatically identify, quantify, and characterize parasites in digital images, reducing subjectivity and fatigue [1] [2]. |

Parasitic infections represent a critical global health challenge, affecting nearly a quarter of the world's population and contributing significantly to mortality and morbidity, particularly in tropical and subtropical regions [4] [8]. These infections cause diverse health problems, including malnutrition, anemia, impaired cognitive and physical development in children, and increased susceptibility to other diseases [4]. The World Health Organization notes that 13 of the 20 listed neglected tropical diseases are caused by parasites, underscoring the urgent need for improved diagnostic methods [4].

The economic burden is equally staggering, with parasitic infections draining billions from economies through healthcare costs and lost productivity [4]. Accurate diagnosis is fundamental to reducing this dual burden, enabling targeted treatment, preventing complications, and facilitating effective surveillance and control programs [4] [8]. This technical resource center provides essential guidance for maintaining diagnostic accuracy in parasitology research and clinical practice.

The Global Impact of Parasitic Infections

Parasitic infections cause a spectrum of clinical manifestations, from mild discomfort to severe, life-threatening illness [9] [10]. Gastrointestinal parasites can lead to enteritis, diarrhea, dysentery, nutritional depletion, mechanical obstruction, and invasive disease [9]. The table below summarizes the significant global prevalence and impact of selected parasitic infections:

Table 1: Global Prevalence and Impact of Selected Parasitic Infections

| Parasite/Disease | Global Prevalence/Cases | Key Health Impacts | Vulnerable Populations |
|---|---|---|---|
| Soil-transmitted helminths | Approximately 1.5 billion people [8] | Malnutrition, anemia, impaired cognitive development [9] | Children in resource-poor settings [9] |
| Malaria | 249 million cases annually [11] | Fever, organ impairment, death | Children under 5 (account for ~80% of deaths) [11] |
| Schistosomiasis | Approximately 151 million cases [4] | Tissue damage, organ impairment | Communities with poor sanitation |
| Food-borne trematodes | Approximately 44.47 million cases [4] | Various gastrointestinal and systemic effects | Consumers of raw/undercooked food |

Economic Burden Quantification

The economic impact of parasitic infections extends beyond direct healthcare costs to include substantial indirect costs from lost productivity and long-term developmental deficits [4]. The following table summarizes specific economic losses attributed to various parasitic infections:

Table 2: Documented Economic Impact of Parasitic Infections

| Parasitic Infection | Region | Economic Impact |
|---|---|---|
| Malaria | India | US$1,940 million in 2014 [4] |
| Visceral leishmaniasis | State of Bihar, India | 11% of annual household expenditures [4] |
| Ectoparasitic infections | United States | Considerable economic burden with significant outpatient treatment costs [4] |
| Neurocysticercosis | United States | Over US$400 million annually in healthcare and lost productivity [4] |
| Porcine cysticercosis | Latin America | Economic losses exceeding US$164 million [4] |
| Ticks and tick-borne diseases | India (dairy production) | Loss of US$787.63 million [4] |

Diagnostic Challenges and Methodologies

Traditional Diagnostic Methods

Conventional diagnostic approaches include microscopy, serological testing, histopathology, and culture [12] [7]. Although these methods are foundational in parasitology, they are time-consuming, require expert interpretation, and have variable sensitivity and specificity [8] [12]. For intestinal parasites, the ova and parasite (O&P) examination has been a standard method, but its sensitivity for a single specimen is only 75.9% [13].
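The 75.9% single-specimen figure helps explain why multiple specimens are recommended. The arithmetic below extends it to n specimens under a simplifying assumption: each specimen independently has the same chance of detection. Real shedding is correlated between days, so this overstates the gain. The sketch is illustrative arithmetic, not data from the source. The function name is hypothetical.

```python
def cumulative_sensitivity(single_sensitivity: float, n_specimens: int) -> float:
    """Probability that at least one of n specimens is positive.

    ASSUMES results are independent across specimens, which real
    intermittent shedding violates; treat the output as an upper bound.
    """
    if not 0.0 <= single_sensitivity <= 1.0 or n_specimens < 1:
        raise ValueError("sensitivity must be in [0, 1] and n_specimens >= 1")
    # Miss probability compounds across specimens; detection is its complement.
    return 1.0 - (1.0 - single_sensitivity) ** n_specimens


for n in (1, 2, 3):
    print(n, round(cumulative_sensitivity(0.759, n), 3))
```

Under this assumption, sensitivity rises from 0.759 with one specimen to about 0.942 with two and 0.986 with three.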

Advanced Diagnostic Technologies

Molecular methods have significantly enhanced detection capabilities. Polymerase chain reaction (PCR), next-generation sequencing, and loop-mediated isothermal amplification (LAMP) offer improved sensitivity and specificity [4] [12]. Emerging technologies, including nanotechnology, CRISPR-Cas systems, and multi-omics approaches, provide new avenues for precise parasite detection and biological understanding [12] [7].

Artificial intelligence, particularly convolutional neural networks, is revolutionizing parasitic diagnostics by enhancing detection accuracy and efficiency in image analysis [4]. These technologies are particularly valuable in addressing challenges posed by complex parasite life cycles and increasing drug resistance [4].

Troubleshooting Guides for Parasite Identification

Common Diagnostic Challenges and Solutions

Table 3: Troubleshooting Common Parasite Diagnostic Issues

| Problem | Possible Causes | Recommended Solutions |
|---|---|---|
| Intermittent shedding of parasites in stool | Natural life cycle of the parasite | Collect multiple specimens (minimum of 3, on alternate days); for non-diarrheal patients, collect 2 specimens after normal bowel movements and 1 after a cathartic [13] |
| Low sensitivity of O&P exam | Intermittent shedding, improper specimen preservation | Use multiple collection methods; ensure proper transport media (Total-Fix or paired vials of 10% formalin and PVA); collect an adequate stool sample (minimum 5 g or 5 mL, ideally 10 g or 10 mL) [13] |
| Inability to distinguish active from past infection | Serological tests detect antibodies that persist after infection | Combine methods; use antigen detection tests or molecular methods that indicate current infection [8] |
| False-negative results | Low parasite load, inappropriate test selection | Use concentration techniques; employ multiple diagnostic methods (molecular, antigen detection); repeat testing [8] |
| Cross-reactivity in serological tests | Antigenic similarity between different parasite species | Use confirmatory tests with higher specificity (immunoblot, PCR); consider geographic prevalence when interpreting results [4] |

Specimen Collection and Handling Protocols

Stool Specimen Collection for O&P Examination:

- Collect specimens in appropriate transport media (Total-Fix or paired vials of 10% formalin and PVA)

- Obtain an adequate stool sample (minimum of 5 g or 5 mL, ideally 10 g or 10 mL)

- Transport specimens at room temperature

- Avoid antibiotics, laxatives, and antacids until after stool collection, as these can interfere with detection [13]

Alternative Specimen Types:

- Urine: For detection of Schistosoma haematobium, collect around noon in sterile, leak-proof container; transport refrigerated [13]

- Sputum or bronchoalveolar lavage: For detection of Paragonimus westermani eggs, Strongyloides stercoralis larvae; submit in Total-Fix, 10% formalin, or unpreserved [13]

- Perianal sample: For pinworm detection, use pinworm paddle or clear cellulose tape applied to glass slide [13]

Frequently Asked Questions (FAQs)

Q: What is the recommended first-line test for diagnosis of intestinal parasites? A: The O&P exam is not routinely recommended as the primary test for intestinal parasites in the United States, as more common parasites are better detected by other methods. Testing should be guided by symptoms, travel history, and geographic disease prevalence [13].

Q: How many stool specimens are recommended for optimal parasite detection? A: For routine examination before treatment, a minimum of 3 specimens collected on alternate days is recommended. Submitting more than one specimen collected on the same day typically does not increase test sensitivity [13].

Q: What are the key advantages of molecular methods over traditional microscopy? A: Molecular methods like PCR offer enhanced sensitivity and specificity, ability to detect low parasite loads, species differentiation, and reduced dependence on technical expertise. They are particularly valuable for detecting parasites that are morphologically similar or present in low numbers [8] [12].

Q: How can diagnostic methods distinguish between active and past infections? A: Methods that detect parasite antigens, DNA, or viable organisms indicate active infection. Serological tests that measure antibodies may not distinguish between current and past infections, as antibodies can persist after resolution of infection [8].

Q: What quality control measures are essential for maintaining diagnostic accuracy? A: Key measures include: regular proficiency testing, continuing education, use of appropriate positive and negative controls, validation of methods, standardized procedures, and participation in external quality assessment schemes [8] [14].

Experimental Protocols for Parasite Identification

Multiparameter Diagnostic Approach Protocol

Principle: Combining multiple diagnostic methods increases detection sensitivity and specificity, providing a comprehensive assessment of parasitic infection [8].

Materials:

- Stool transport media (Total-Fix or 10% formalin and PVA)

- Microscope with appropriate magnification (10x, 40x, 100x oil immersion)

- DNA extraction kits

- PCR reagents and equipment

- Antigen detection kits (ELISA or rapid tests)

Procedure:

- Collect stool specimens on alternate days (minimum of 3 samples)

- Process each sample for:

  - Direct wet mount examination

  - Formalin-ethyl acetate concentration technique

  - Permanent staining (Trichrome or modified acid-fast)

- Perform antigen detection tests for specific parasites (e.g., Giardia, Cryptosporidium)

- Extract DNA from preserved stool samples

- Conduct PCR for target parasite DNA

- Correlate results from all methods for final diagnosis

Interpretation: A parasite is considered present if identified by any validated method. Molecular methods can confirm species and detect low-level infections missed by microscopy.
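The interpretation rule, "present if identified by any validated method", amounts to a logical OR across validated results for each target. Below is a minimal sketch. It assumes each result is recorded with the method name, the target parasite, the outcome, and whether that method is validated in your laboratory. The MethodResult type and final_call function are hypothetical names. Listing which methods detected each target keeps the correlation step transparent for review.

```python
from dataclasses import dataclass


@dataclass
class MethodResult:
    method: str             # e.g. "wet mount", "trichrome", "antigen EIA", "PCR"
    target: str             # parasite the method reports on
    positive: bool
    validated: bool = True  # only validated methods may drive the final call


def final_call(results):
    """Return {target: [methods]} for targets detected by at least one validated method."""
    detected = {}
    for r in results:
        if r.validated and r.positive:
            detected.setdefault(r.target, []).append(r.method)
    return detected


# Hypothetical panel for one patient.
panel = [
    MethodResult("wet mount", "Giardia", False),
    MethodResult("antigen", "Giardia", True),
    MethodResult("PCR", "Giardia", True),
    MethodResult("modified acid-fast", "Cryptosporidium", False),
    MethodResult("research PCR", "Blastocystis", True, validated=False),
]
print(final_call(panel))
```

The unvalidated research assay is recorded but does not contribute to the final report.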

Diagnostic Workflow Visualization

Diagram Title: Parasite Diagnostic Workflow

Research Reagent Solutions

Table 4: Essential Research Reagents for Parasitology Diagnostics

| Reagent/Material | Function/Application | Specific Examples/Notes |
|---|---|---|
| Stool preservatives | Preserve parasite morphology for microscopy | Total-Fix, 10% formalin, PVA (polyvinyl alcohol) [13] |
| DNA extraction kits | Isolation of parasite nucleic acids for molecular tests | Kits optimized for stool samples; include inhibitor removal |
| PCR master mixes | Amplification of parasite DNA | Include controls for inhibition detection; species-specific primers |
| Staining reagents | Enhance microscopic visualization | Trichrome stain for protozoa, modified acid-fast for coccidia |
| Antigen detection kits | Detect parasite-specific proteins | ELISA or rapid tests for Giardia, Cryptosporidium |
| Cell culture systems | Support parasite growth | For culture-based detection (e.g., Entamoeba histolytica) |
| Positive control specimens | Quality assurance | Characterized parasite samples for test validation |

The significant health and economic burden of parasitic infections underscores the critical importance of diagnostic accuracy. While traditional methods remain foundational, technological advancements including molecular diagnostics, artificial intelligence, and novel biomarker detection are transforming the field. Maintaining technologist proficiency through standardized protocols, continuing education, and quality control measures is essential for accurate parasite identification and optimal patient outcomes. The integration of multiple diagnostic approaches provides the most comprehensive assessment, enabling effective treatment, control, and ultimately reduction of the global burden of parasitic diseases.

The field of diagnostic parasitology is undergoing a profound transformation, moving from traditional morphological assessment toward advanced molecular detection methods. This shift is revolutionizing how researchers and laboratory technologists identify parasites, offering unprecedented accuracy and specificity. While conventional microscopy has been the gold standard for centuries, its limitations in sensitivity, specificity, and ability to differentiate morphologically similar species have accelerated the adoption of molecular techniques, particularly polymerase chain reaction (PCR)-based methods. This technical support center provides essential troubleshooting guidance and methodological frameworks to help scientists maintain proficiency and navigate challenges during this transitional period.

FAQs: Navigating the Methodological Shift

1. What are the primary advantages of molecular methods over morphological identification for parasites?

Molecular diagnostics investigate human, viral, and microbial genomes and the products they encode, offering much higher sensitivity and specificity than conventional methods [15]. Morphological identification often struggles to differentiate visually similar species, whereas molecular techniques can identify species definitively from genetic markers. This is crucial for determining zoonotic potential and appropriate treatment protocols [16] [17].

2. When should I use molecular testing instead of traditional morphological methods?

Molecular testing is particularly valuable in specific scenarios [17]:

- When you need to differentiate morphologically similar species or genotypes (e.g., Giardia duodenalis assemblages)

- For definitive identification of zoonotic pathogens (e.g., Echinococcus multilocularis)

- When investigating spurious parasitism (parasites passing through but not infecting the host)

- For detecting low-level infections where sensitivity is critical

- When specific species identification impacts treatment decisions or public health measures

3. What are the main types of PCR assays used in parasite detection?

There are two fundamental approaches [17]:

- Species-specific PCR assays: Designed with primers unique to a particular parasite species, providing rapid confirmation without need for sequencing.

- Universal PCR assays: Use primers targeting conserved genetic regions to amplify variable regions, allowing identification of multiple related species through subsequent sequencing.
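Both assay types start from the same in-silico question: will the primer pair produce a product on a given reference sequence, and how long will it be? Below is a minimal sketch. It assumes exact primer matches only. Real tools such as NCBI Primer-BLAST tolerate mismatches and search whole databases. The template and primers are hypothetical sequences, and the function names are illustrative. For a species-specific assay, a product should appear only on the target species' sequence. For a universal assay, the region between the primers is what would be sequenced.

```python
def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA sequence (A/C/G/T/N only)."""
    complement = {"A": "T", "T": "A", "G": "C", "C": "G", "N": "N"}
    return "".join(complement[base] for base in reversed(seq.upper()))


def predicted_amplicon(template: str, fwd: str, rev: str):
    """Return the predicted PCR product on the top strand, or None.

    Exact matching only: the forward primer must appear verbatim, and the
    reverse primer's reverse complement must appear downstream of it.
    """
    template = template.upper()
    start = template.find(fwd.upper())
    if start == -1:
        return None
    # The reverse primer anneals to the top strand as its reverse complement.
    rev_site = template.find(reverse_complement(rev), start + len(fwd))
    if rev_site == -1:
        return None
    return template[start:rev_site + len(rev)]


# Hypothetical reference fragment and primer pair.
reference = "TTGACCATGGCAAGTCGATTACGGCTAGCATCGGATCCAAT"
product = predicted_amplicon(reference, fwd="ATGGCAAGT", rev="ATCCGATGC")
print(product, len(product) if product else 0)
```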

4. Why might molecular and morphological methods show conflicting results in biodiversity studies?

Recent research indicates that molecular methods like eDNA analysis sometimes report higher biodiversity in intensively managed soils compared to woodlands, while morphological assessments suggest the opposite trend [18]. These discrepancies may stem from methodological differences including primer bias, detection of relict DNA from non-living organisms, or the ability of molecular methods to detect cryptic species missed by morphological examination.

5. What are the emerging alternatives to PCR-based molecular detection?

While PCR remains fundamental, several advanced techniques are gaining traction [16]:

- DNA barcoding: Analyzes specific barcode sequences for identification with approximately 95% accuracy.

- Next-generation sequencing (NGS): Allows analysis of entire genomes at an unprecedented scale.

- CRISPR-based detection: Developing as the next generation of rapid, accurate, and inexpensive molecular assays.

- Artificial intelligence: Employs well-trained algorithms to analyze images with 98.8–99.0% precision.

Troubleshooting Guide: Common PCR Issues and Solutions

Table 1: PCR Troubleshooting for Parasite Detection

| Observation | Possible Cause | Recommended Solution |
|---|---|---|
| No PCR Product | Poor template quality or integrity | Minimize DNA shearing during isolation; evaluate integrity by gel electrophoresis; store DNA in molecular-grade water or TE buffer [19]. |
| | Poor primer design or specificity | Verify primer complementarity to the target; use online design tools; ensure the primers are not complementary to each other [20]. |
| | Suboptimal annealing temperature | Calculate primer Tm accurately; optimize with a gradient cycler; start typically 3–5 °C below the primer Tm [19]. |
| | Presence of PCR inhibitors | Re-purify DNA (e.g., ethanol precipitation followed by a 70% ethanol wash); use polymerases with high inhibitor tolerance [19]. |
| Multiple or Non-Specific Bands | Low annealing temperature | Increase the temperature incrementally; use hot-start polymerases [19] [20]. |
| | Excess primer or Mg²⁺ concentration | Optimize primer concentration (0.1–1 μM); adjust Mg²⁺ in 0.2–1 mM increments [19] [20]. |
| | Primer-dimer formation | Avoid GC-rich 3' ends; increase primer length; verify there are no direct repeats [20]. |
| Faint or Weak Bands | Insufficient template DNA | Increase input DNA quantity; choose high-sensitivity polymerases; increase to 40 cycles for low copy numbers [19]. |
| | Insufficient number of cycles | Use 25–35 cycles in general; extend to 40 for low template amounts [19]. |
| | Suboptimal extension time | Increase extension time for longer amplicons; lower the extension temperature for long targets (>10 kb) [19]. |
| Sequence Errors | Low-fidelity polymerase | Use high-fidelity enzymes such as Q5 or Phusion; reduce cycle number [20]. |
| | Unbalanced dNTP concentrations | Ensure equimolar dATP, dCTP, dGTP, and dTTP concentrations [19]. |
| | UV-damaged DNA | Use long-wavelength UV (360 nm) for gel visualization; limit exposure time [19]. |
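Two rows of the table ("calculate primer Tm accurately" and "avoid GC-rich 3' ends") suggest a quick screen that can flag problems before primers are ordered. Below is a minimal sketch. For primers under 14 nt it uses the Wallace rule (2 °C per A/T, 4 °C per G/C). For longer primers it uses the basic GC-content formula Tm = 64.9 + 41 × (G+C − 16.4)/N. The "more than 3 G/C in the last five bases" cutoff is a common rule of thumb, not a figure from the source. The function names are hypothetical. Nearest-neighbor calculators from polymerase vendors, which also correct for salt and primer concentration, are more accurate. A gradient run remains the final check.

```python
def primer_tm(primer: str) -> float:
    """Rough melting temperature (deg C) from base composition only."""
    p = primer.upper()
    gc = p.count("G") + p.count("C")
    at = p.count("A") + p.count("T")
    n = len(p)
    if n < 14:
        return 2.0 * at + 4.0 * gc          # Wallace rule for short oligos
    return 64.9 + 41.0 * (gc - 16.4) / n    # basic GC-content formula for longer primers


def suggested_annealing(fwd: str, rev: str, offset: float = 5.0) -> float:
    """Starting annealing temperature: `offset` deg C below the lower primer Tm (table: 3-5 deg C)."""
    return min(primer_tm(fwd), primer_tm(rev)) - offset


def gc_rich_3prime(primer: str, window: int = 5, max_gc: int = 3) -> bool:
    """Flag primers with more than `max_gc` G/C bases in the last `window` bases."""
    tail = primer.upper()[-window:]
    return sum(base in "GC" for base in tail) > max_gc


# Hypothetical 20-mer primer pair.
fwd, rev = "AGCTAGCTAGCTAGCTAGCT", "AGCTAGCTAGCTAGCTAGCT"
print(round(primer_tm(fwd), 2), round(suggested_annealing(fwd, rev), 2), gc_rich_3prime(fwd))
```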

Experimental Workflows and Methodologies

Molecular Detection Workflow for Parasites

Method Selection Algorithm for Parasite Identification

Research Reagent Solutions for Molecular Parasitology

Table 2: Essential Reagents for Molecular Parasite Detection

| Reagent Category | Specific Examples | Function and Application |
|---|---|---|
| DNA Polymerases | Hot-start DNA polymerases | Prevent non-specific amplification by remaining inactive until high-temperature activation [19]. |
| | High-fidelity enzymes (Q5, Phusion) | Reduce sequence errors for cloning and sequencing; essential for accurate genotyping [20]. |
| | Polymerases with high processivity | Efficient for complex templates (GC-rich, secondary structures) and long targets [19]. |
| PCR Additives | GC Enhancer | Helps denature GC-rich DNA and sequences with secondary structures [19]. |
| | DMSO, Formamide | Co-solvents that help denature difficult templates; use at the lowest effective concentration [19]. |
| Magnesium Salts | MgCl₂, MgSO₄ | Cofactor for DNA polymerases; concentration requires optimization (typically 1.5–2.5 mM) [19]. |
| Primer Design | Specific primers (species-specific) | Designed for unique genomic regions of target parasites for exclusive detection [17]. |
| | Universal primers (conserved regions) | Target conserved genetic regions (ITS, CO1, 18S) to amplify variable regions for multiple species [17]. |
| Template Preparation | DNA purification kits | Remove PCR inhibitors from complex samples (feces, soil, blood); essential for reliability [20]. |
| | TE buffer (pH 8.0) | Proper storage medium for DNA to prevent degradation by nucleases [19]. |

The transition from morphological to molecular detection methods represents a significant advancement in parasite identification research, offering enhanced accuracy, sensitivity, and specificity. While this shift presents technical challenges, the troubleshooting guides and methodological frameworks provided here offer practical support for maintaining technologist proficiency. By understanding both the capabilities and limitations of molecular methods, and implementing robust troubleshooting protocols, researchers can effectively navigate this methodological evolution and contribute to improved parasitic disease diagnosis and management.

Frequently Asked Questions & Troubleshooting Guides

This technical support center provides resources to help researchers navigate the complex interplay between parasite biology, drug resistance, and environmental factors. The guidance is framed within strategies for maintaining technologist proficiency in parasite identification research.

Antimicrobial Resistance (AMR) Surveillance

Q: Our laboratory is establishing an AMR surveillance program for bloodstream infections. What key pathogen-antibiotic combinations should we prioritize for tracking?

A: According to the latest WHO Global Antimicrobial Resistance Surveillance System (GLASS) report, your surveillance program should generate standardized data for key pathogen-antibiotic combinations. The 2025 report provides adjusted national AMR estimates based on data from 110 countries between 2016-2023, analyzing over 23 million bacteriologically confirmed cases [21].

Table: Key Pathogen-Antibiotic Combinations for AMR Surveillance

| Infection Type | Pathogen | Antibiotics Tracked | Surveillance Priority |
|---|---|---|---|
| Bloodstream infections | Multiple bacterial species | Beta-lactams, carbapenems, vancomycin | Critical |
| Urinary tract infections | Escherichia coli, Klebsiella pneumoniae | Fluoroquinolones, cephalosporins | High |
| Gastrointestinal infections | Salmonella spp., Campylobacter spp. | Macrolides, fluoroquinolones | High |
| Urogenital gonorrhoea | Neisseria gonorrhoeae | Cephalosporins, azithromycin | Critical |

Q: We've encountered inconsistent results with our antimicrobial susceptibility testing (AST) devices. What troubleshooting steps should we follow?

A: Inconsistent AST results can stem from various technical issues. For example, specific VITEK 2 AST cards were recently recalled because some wells contained incorrect antibiotic concentrations [22]. Follow this systematic troubleshooting protocol:

- Verify reagent quality: Check for recalls or lot-specific issues with your AST cards or panels [22].

- Calibration validation: Ensure automated systems like the Selux AST System or BD Kiestra MRSA Application are properly calibrated according to manufacturer specifications [22].

- Control organisms: Regularly test with quality control organisms to verify system performance.

- Incubation conditions: Confirm temperature and atmosphere (aerobic/anaerobic) requirements are strictly maintained.

- Sample preparation: Standardize inoculum preparation methods across technicians.
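The "control organisms" step usually comes down to comparing each day's MIC for the QC strain against a published acceptable range. Below is a minimal sketch. It assumes MICs on the two-fold dilution series in mg/L. The range in the example is a placeholder. Real ranges must come from the current CLSI M100 or EUCAST QC tables, or from the manufacturer's package insert. The function name is hypothetical. Reporting how many dilutions a result falls outside the range helps separate a one-off reading error from a systematic problem such as a defective card lot.

```python
import math


def qc_check(mic_results, low, high):
    """Compare daily QC-strain MICs (mg/L) against an acceptable range [low, high].

    Returns (day, mic, status) tuples; out-of-range results report how many
    two-fold dilutions they lie outside the nearest range limit.
    """
    report = []
    for day, mic in mic_results:
        if low <= mic <= high:
            report.append((day, mic, "in range"))
        else:
            edge = low if mic < low else high
            # Rounding absorbs conventional MIC labels such as 0.06 for 0.0625.
            steps = abs(round(math.log2(mic / edge)))
            report.append((day, mic, f"OUT by {steps} dilution(s)"))
    return report


# Placeholder range (0.25-1 mg/L), not a real CLSI/EUCAST value.
for row in qc_check([("Mon", 0.5), ("Tue", 2.0), ("Wed", 0.06)], low=0.25, high=1.0):
    print(row)
```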

Table: Recently Cleared AST Systems and Their Applications

| Device Name | Manufacturer | Clearance Date | Primary Application |
|---|---|---|---|
| PBC Separator with Selux AST System | Selux Diagnostics, Inc. | February 15, 2024 | Automated inoculation preparation for positive blood cultures |
| VITEK 2 AST-Gram Positive Daptomycin | bioMérieux | July 5, 2023 | MIC determination for daptomycin against Gram-positive bacteria |
| HardyDisk AST Sulbactam/Durlobactam | Hardy Diagnostics | July 6, 2023 | Disk diffusion assay for Acinetobacter baumannii-complex |

Complex Parasite Life Cycles

Q: Our research involves parasites with complex life cycles. What experimental conditions promote stable coexistence of multiple parasite species in a single host?

A: Mathematical modeling reveals that host-manipulating parasites can coexist under specific ecological conditions despite competition for intermediate hosts [23]. Your experimental design should account for these three critical conditions:

Parasite Coexistence Conditions

Troubleshooting Tip: If you observe competitive exclusion in your parasite communities, manipulate host behavior to create a balanced predation risk that benefits both parasite species.

Q: How does timing of transmission affect virulence evolution in our mosquito-microsporidian model system?

A: Experimental selection of Vavraia culicis in Anopheles gambiae hosts demonstrates that selection for late transmission increases virulence [24]. Parasites selected for late transmission showed:

- Higher host mortality rates

- Shorter host lifespans

- More rapid production of infective spores

- Increased exploitation of host resources

Experimental Protocol: Transmission Timing and Virulence

- Establish two selection lines: early-transmission (ET) and late-transmission (LT) parasites

- For ET line: passage parasites to new hosts during early infection stages (24-48 hours post-infection)

- For LT line: passage parasites only during late infection stages (96+ hours post-infection)

- Maintain selection pressure for 6+ host generations

- Compare virulence metrics: host longevity, spore production dynamics, and mortality curves
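Mortality curves for the ET and LT lines are usually compared with a survival estimator. Below is a minimal Kaplan-Meier sketch in plain Python. It assumes each host is recorded as a pair: the day of death or censoring, and a flag showing whether the host died. The host data are hypothetical and are not results from [24].

```python
def kaplan_meier(records):
    """Kaplan-Meier survival estimate.

    records: iterable of (time, died) pairs; died=False marks a censored host
    (e.g. still alive when the experiment ended).
    Returns [(time, survival_probability), ...] at each time with a death.
    """
    records = sorted(records)
    at_risk = len(records)
    survival = 1.0
    curve = []
    i = 0
    while i < len(records):
        t = records[i][0]
        deaths = removed = 0
        # Process all hosts that share this time point together.
        while i < len(records) and records[i][0] == t:
            deaths += records[i][1]
            removed += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= removed
    return curve

def median_survival(curve):
    """First time at which estimated survival is 0.5 or lower (None if never)."""
    for t, s in curve:
        if s <= 0.5:
            return t
    return None

# Hypothetical host data (day of death, died?) for one ET and one LT line.
et_hosts = [(10, True), (12, True), (12, True), (14, True), (16, False)]
lt_hosts = [(8, True), (9, True), (9, True), (11, True), (13, True)]
print("ET median:", median_survival(kaplan_meier(et_hosts)))
print("LT median:", median_survival(kaplan_meier(lt_hosts)))
```

A formal comparison between lines would normally add a log-rank test or a Cox model. Survival-analysis libraries such as lifelines provide both.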

Climate Change Impacts

Q: How should we modify our thermal limit experiments for ectotherms to achieve greater ecological realism?

A: Traditional Critical Thermal Maxima (CTmax) experiments with rapid ramping temperatures lack ecological realism [25]. Implement this improved protocol using Incremental Temperature with Diel fluctuations (ITDmax):

Thermal Limit Experimental Designs
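The ITDmax protocol itself is described in [25], and its parameters are not reproduced here. The sketch below only illustrates the general idea. It generates hourly setpoints in which the daily mean temperature rises in fixed increments, with a sinusoidal day-night cycle superimposed. The starting mean, daily increment, amplitude, and hour of the daily peak are all hypothetical. They would need to be set from the study species' field thermal regime.

```python
import math

def incremental_diel_schedule(start_mean, daily_increment, amplitude, days,
                              peak_hour=14):
    """Hourly setpoints (deg C) for a ramp with diel fluctuation.

    The daily mean increases by `daily_increment` each day, and within a day
    the temperature follows a 24 h cosine that peaks at `peak_hour`.
    All parameter values are assumptions, not values from the cited protocol.
    """
    schedule = []
    for day in range(days):
        mean = start_mean + day * daily_increment
        for hour in range(24):
            phase = 2 * math.pi * (hour - peak_hour) / 24
            schedule.append((day, hour, mean + amplitude * math.cos(phase)))
    return schedule

# Hypothetical example: 25 deg C start, +1 deg C/day, +/-3 deg C diel swing, 5 days.
program = incremental_diel_schedule(25.0, 1.0, 3.0, days=5)
print(max(t for _, _, t in program))  # highest setpoint, at the final day's peak hour
```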

Q: Our soil warming experiments yield inconsistent CO2 emission results. What critical factors are we missing?

A: Recent research challenges the assumption that warming alone increases soil microbial CO2 emissions [26]. The missing factors in your experimental design are likely:

- Carbon availability: Heating alone doesn't stimulate microbial activity without easily available carbon sources

- Nutrient balance: Microbes require nitrogen and phosphorus in addition to carbon

- Microbial resources: Depleted microbial resources constrain warming effects

Experimental Correction:

- Add carbon substrates (e.g., plant litter, root exudates) to warming treatments

- Include nutrient amendments (N, P) in factorial design with temperature

- Measure multiple carbon pools: plant material, living microbes, dead microbial biomass

- Account for microbial metabolic strategies and enzyme production
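Crossing temperature fully with the carbon, nitrogen, and phosphorus amendments keeps their effects separable. The minimal sketch below enumerates every treatment combination and replicate. The factor levels and the replicate count are hypothetical.

```python
from itertools import product

# Hypothetical factor levels; substitute the levels used in your own design.
FACTORS = {
    "temperature": ["ambient", "warmed"],
    "carbon": ["none", "added"],
    "nitrogen": ["none", "added"],
    "phosphorus": ["none", "added"],
}

def factorial_design(factors, replicates):
    """Return one dict per experimental unit for a full factorial design."""
    names = list(factors)
    units = []
    for combo in product(*(factors[n] for n in names)):
        for rep in range(1, replicates + 1):
            unit = dict(zip(names, combo))
            unit["replicate"] = rep
            units.append(unit)
    return units

design = factorial_design(FACTORS, replicates=4)
print(len(design))  # 2 x 2 x 2 x 2 treatments x 4 replicates = 64 units
```

Randomise the physical placement of these units before setup, for example with random.shuffle. This avoids confounding treatment with bench or chamber position.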

Technologist Proficiency Maintenance

Q: With declining parasitology education hours, how can we maintain morphological identification skills among our research staff?

A: Implement a digital morphology database using Whole-Slide Imaging (WSI) technology [27]. This approach compensates for the growing scarcity of physical specimens in developed countries, where improved sanitation has reduced the number of parasitic infections available for teaching.

Table: Digital Parasitology Database Implementation

| Component | Specification | Proficiency Benefit |
|---|---|---|
| Scanning Technology | SLIDEVIEW VS200 scanner with Z-stack function | Preserves rare specimens indefinitely |
| Specimen Types | Parasite eggs, adults, arthropods (50+ specimens) | Comprehensive morphological reference |
| Accessibility | Shared server with 100 simultaneous users | Enables team training and consistency |
| Educational Features | Bilingual annotations (English/Japanese) | Standardized terminology across team |
| Security | ID and password protection | Maintains data integrity |

Experimental Protocol: Virtual Slide Database Creation

- Specimen Collection: Curate existing slide specimens of parasite eggs, adults, and arthropods

- Digital Scanning: Use high-resolution slide scanner with Z-stack function for thicker specimens

- Quality Control: Review all digital images for focus and clarity before incorporation

- Taxonomic Organization: Structure database folders according to standard classification

- Annotation: Add explanatory texts in multiple languages for each specimen

- Implementation: Host on secure server with controlled access for research team

Troubleshooting Tip: If morphological expertise continues to decline despite digital resources, implement monthly proficiency testing using the database's unknown specimen module.
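Automated scoring keeps monthly unknown-specimen results comparable from month to month. The minimal sketch below assumes each unknown has a reference identification. The 80% pass mark, the function name, and the specimen IDs are hypothetical lab-policy placeholders, not features of the cited database.

```python
def score_unknowns(answers, key, pass_fraction=0.8):
    """Score one technologist's identifications against a reference key.

    answers, key: dicts mapping specimen ID -> taxon name.
    Matching ignores case and surrounding spaces; unanswered specimens count
    as incorrect. pass_fraction is a hypothetical lab policy, not a standard.
    Returns (fraction_correct, passed, misses).
    """
    misses = []
    correct = 0
    for specimen, truth in key.items():
        given = answers.get(specimen, "")
        if given.strip().lower() == truth.lower():
            correct += 1
        else:
            misses.append((specimen, truth, given or "(no answer)"))
    fraction = correct / len(key)
    return fraction, fraction >= pass_fraction, misses

# Hypothetical monthly set of five unknowns.
key = {"U01": "Ascaris lumbricoides", "U02": "Trichuris trichiura",
       "U03": "Giardia duodenalis", "U04": "Taenia sp.",
       "U05": "Enterobius vermicularis"}
answers = {"U01": "Ascaris lumbricoides", "U02": "Trichuris trichiura",
           "U03": "Entamoeba coli", "U04": "Taenia sp.",
           "U05": "enterobius vermicularis"}
fraction, passed, misses = score_unknowns(answers, key)
print(fraction, passed, misses)
```

Tracking the misses list across months can show which taxa the team repeatedly confuses. Those taxa are the best targets for focused review of the virtual slides.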

The Scientist's Toolkit

Table: Essential Research Reagents and Materials

| Reagent/Material | Application | Function | Technical Notes |
|---|---|---|---|
| Selux Gram-Negative Comprehensive Panel | Antimicrobial susceptibility testing | Quantitative in vitro AST for Gram-negative organisms | Can expand to incorporate new drugs; cleared with prospective change protocol for breakpoint updates [22] |
| VITEK 2 AST cards | Automated antimicrobial susceptibility testing | Miniaturized, abbreviated broth dilution method for MIC determination | Check for recent recalls; ensure proper storage and handling [22] |
| Whole-Slide Imaging (WSI) System | Parasite morphology preservation | Digitizes glass specimens for education and reference | Prevents specimen deterioration; enables remote collaboration [27] |
| Soil Nutrient Amendments (C, N, P) | Climate warming experiments | Provides necessary resources for microbial metabolic activity | Enables differentiation between temperature and resource limitation effects [26] |
| Experimental Mosquito Colonies | Parasite transmission studies | Maintains consistent host populations for virulence evolution research | Essential for controlled selection experiments [24] |

The field of diagnostic parasitology faces a critical paradox: while technological advancements offer unprecedented diagnostic power, the specialized workforce required to implement and interpret these tools is in dangerous decline. This crisis stems from a convergence of factors, including an ageing workforce in many developed countries, the increasing technological complexity of modern diagnostics, and the vast socioeconomic impact of globalization and environmental change [28]. The traditional gold standard of microscopy, which requires extensive hands-on training and expertise, is increasingly being supplemented or replaced by immunodiagnostic and molecular methods [28] [12]. This shift, while improving sensitivity and specificity, creates a new challenge: maintaining technologist proficiency across both conventional and advanced technological platforms. This technical support center is designed to address these challenges by providing immediate, accessible guidance to help researchers and scientists navigate the complexities of modern parasitic disease research, thereby supporting ongoing proficiency and effective knowledge transfer in an era of specialized workforce shortages.

Technical Support Center

Troubleshooting Guides

FAQ: Why is my PCR producing multiple or non-specific bands?

Multiple or non-specific PCR products are a common issue that can complicate the interpretation of parasitic DNA detection assays, such as those for Giardia or Cryptosporidium [29].

- Possible Cause & Solution: Premature Replication

- Cause: Polymerase activity before the initial denaturation step can lead to non-specific priming.

- Solution: Use a hot-start polymerase, such as OneTaq Hot Start DNA Polymerase. Set up reactions on ice using chilled components and add samples to a thermocycler preheated to the denaturation temperature [29].

- Possible Cause & Solution: Primer Annealing Temperature is Too Low

- Cause: Low annealing temperatures allow primers to bind to non-target sequences with partial complementarity.

- Solution: Increase the annealing temperature. Recalculate primer Tm values using an NEB Tm calculator and test an annealing temperature gradient [29].

- Possible Cause & Solution: Poor Primer Design

- Cause: Primers with complementary regions (self-dimers or hairpins) or GC-rich 3' ends can misprime.

- Solution: Verify that primers are non-complementary, both internally and to each other. Increase the length of the primer and avoid GC-rich 3' ends. Use software dedicated to primer design [29].

- Possible Cause & Solution: Incorrect Mg++ Concentration

- Cause: Mg++ concentration affects primer annealing and enzyme fidelity.

- Solution: Adjust the Mg++ concentration in 0.2–1 mM increments to find the optimal concentration for your specific reaction [29].

- Possible Cause & Solution: Contamination with Exogenous DNA

- Cause: Contamination from previous PCR products or environmental DNA.

- Solution: Use positive displacement pipettes or aerosol-resistant tips. Designate a dedicated work area and pipettor for reaction setup only, and always wear gloves [29].
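Several of these primer checks can be scripted as a first pass. The sketch below estimates Tm with the Wallace rule, Tm ≈ 2(A+T) + 4(G+C) °C. This is a crude approximation for short oligos and is not the nearest-neighbor method used by the NEB Tm Calculator, so use it only to flag candidates and confirm Tm with the calculator. The 3'-end rule applied here (no more than three G/C in the last five bases) is a common rule of thumb and is an assumption of this sketch. The example primer is hypothetical.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")

def wallace_tm(primer):
    """Rough Tm (deg C) by the Wallace rule; only a screen for short oligos."""
    p = primer.upper()
    at = p.count("A") + p.count("T")
    gc = p.count("G") + p.count("C")
    return 2 * at + 4 * gc

def gc_fraction(primer):
    """Fraction of G and C bases in the primer."""
    p = primer.upper()
    return (p.count("G") + p.count("C")) / len(p)

def gc_rich_3prime(primer, window=5, max_gc=3):
    """True if the last `window` bases hold more than `max_gc` G/C (rule of thumb)."""
    tail = primer.upper()[-window:]
    return sum(base in "GC" for base in tail) > max_gc

def longest_self_complementary_run(primer):
    """Longest stretch shared by the primer and its own reverse complement.

    Long runs flag possible hairpins or self-dimers. This is a crude string
    screen, not a thermodynamic secondary-structure calculation.
    """
    p = primer.upper()
    rc = p.translate(COMPLEMENT)[::-1]
    best = 0
    prev = [0] * (len(rc) + 1)
    # Dynamic-programming longest common substring between p and rc.
    for i in range(1, len(p) + 1):
        cur = [0] * (len(rc) + 1)
        for j in range(1, len(rc) + 1):
            if p[i - 1] == rc[j - 1]:
                cur[j] = prev[j - 1] + 1
                best = max(best, cur[j])
        prev = cur
    return best

primer = "GTCAGGATCCGCGC"  # hypothetical sequence
print(wallace_tm(primer), round(gc_fraction(primer), 2),
      gc_rich_3prime(primer), longest_self_complementary_run(primer))
```

This example primer would be flagged twice. Its 3' end is GC-rich, and it contains a six-base palindrome (GGATCC) that could form self-dimers.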

FAQ: My multiplex gastrointestinal PCR panel is negative, but the patient has a high clinical suspicion of parasitosis. What are the next steps?

Multiplex syndromic panels (e.g., for gastrointestinal infections) are valuable but have limited parasitic targets, often only including Giardia lamblia, Cryptosporidium spp., Entamoeba histolytica, and Cyclospora cayetanensis [28] [8]. They do not detect other clinically important parasites like Dientamoeba fragilis, Blastocystis hominis, or many helminths [28] [8].

- Action 1: Initiate Morphological Examination

- Action 2: Leverage Species-Specific Serology

- Procedure: If a tissue-invasive parasite is suspected (e.g., Strongyloides, Echinococcus), collect serum for parasite-specific antibody testing. Note that serology can indicate past or present infection and may not distinguish between active and resolved disease [8].

- Action 3: Employ Targeted Molecular Assays

- Action 4: Consider Alternative Specimen Types

- Procedure: For specific parasites, other specimens are superior. Use a Pinworm Exam (pinworm paddle) for Enterobius vermicularis. Submit a visible worm in alcohol for morphological identification. Sputum or bronchoalveolar lavage can be examined for Paragonimus westermani eggs or Strongyloides larvae [13].

Research Reagent Solutions

The following table details essential materials and their functions for establishing a foundational parasitology research laboratory, combining traditional and modern approaches [28] [29] [8].

Table 1: Key Research Reagent Solutions for Parasitology Diagnostics

| Item | Function/Application |
|---|---|
| Total-Fix or 10% Formalin & PVA | Preferred transport and preservative media for stool specimens intended for microscopic O&P examination; ensures morphological integrity of parasites [13]. |
| High-Fidelity DNA Polymerase (e.g., Q5) | Essential for accurate amplification of parasite DNA in PCR applications, minimizing sequence errors during amplification, which is critical for genotyping and resistance studies [29]. |
| Monarch Spin PCR & DNA Cleanup Kit | For purifying nucleic acids from complex sample matrices (e.g., stool) to remove PCR inhibitors, thereby increasing assay sensitivity and reliability [29]. |
| Lateral Flow Immunoassay (LFIA) Kits | Rapid, point-of-care tests for detecting specific parasite antigens (e.g., for giardiasis, cryptosporidiosis); useful for initial screening and in resource-limited settings [28] [12]. |
| PreCR Repair Mix | Used to repair damaged DNA template before amplification, which can be crucial when working with archival or poorly preserved clinical samples [29]. |
| Specific Antibodies for IFA/ELISA | Key reagents for indirect immunofluorescence assays (IFA) or enzyme-linked immunosorbent assays (ELISA) to detect host antibodies or circulating parasite antigens [28] [8]. |

Experimental Protocols for Proficiency Maintenance

Protocol: Comprehensive Diagnostic Workflow for Gastrointestinal Parasites

This integrated protocol combines multiple diagnostic methods to ensure accurate detection and address the limitations of any single technique [8].

Principle: No single diagnostic method detects all gastrointestinal parasites with perfect sensitivity and specificity. An algorithmic approach that combines antigen detection, molecular methods, and morphological examination maximizes diagnostic accuracy and helps maintain technologist proficiency across different platforms [8] [13].

Specimen Collection and Transport:

- Collection: Collect a minimum of three stool specimens on alternate days to account for intermittent shedding of parasites [13].

- Preservation: Preserve each specimen in a validated transport medium. Total-Fix or paired vials of 10% formalin (for concentration and permanent smear) and PVA (for permanent smear) are recommended [13].

- Rejection Criteria: Do not accept specimens in unvalidated preservatives such as Ecofix or Protofix. O&P examination is generally not recommended for patients who have been hospitalized for more than 3 days, because diarrhea that develops in hospital is more likely to have a non-parasitic cause [13].

Procedure:

- Initial Screening with Multiplex PCR Panel:

- Use an automated nucleic acid extraction system to purify DNA from a portion of the preserved stool sample.

- Perform a multiplexed PCR gastrointestinal panel targeting common bacterial, viral, and parasitic pathogens (Giardia, Cryptosporidium, E. histolytica) according to the manufacturer's instructions [28] [8].

- Microscopic Examination (O&P):

- Concentration: Perform a formalin-ethyl acetate sedimentation concentration procedure on the formalin-preserved sample to concentrate eggs, cysts, and larvae.

- Staining: Prepare a permanent stained smear (e.g., trichrome stain) from the PVA-preserved sample. This is critical for the identification of protozoan trophozoites and cysts [28] [8].

- Examination: Systematically examine both the concentrated wet mount and the stained smear under appropriate magnification (10x, 40x, 100x oil immersion). Proficiency requires knowledge of key morphological features [8].

- Reflexive & Specialized Testing:

- If the PCR panel is negative but microscopy is suspicious or clinical suspicion remains high, proceed with species-specific PCR for pathogens not included in the panel in use (e.g., Dientamoeba fragilis, or Cyclospora on panels that lack it) [8] [12].

- For suspected extra-intestinal parasites or to establish exposure, submit serum for serological testing (e.g., Strongyloides IgG, Echinococcus IgG) [8].
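The reflex rules above can be written as explicit decision logic, which also makes them easy to audit during training. The minimal sketch below assumes a boolean input for each finding. The class name and field names are hypothetical. The output is a list of suggested follow-up tests for a technologist or clinician to review, not an automated order.

```python
from dataclasses import dataclass

@dataclass
class CaseFindings:
    """Hypothetical summary of one patient's results; field names are illustrative."""
    panel_positive: bool             # multiplex GI PCR detected a parasite target
    microscopy_suspicious: bool      # O&P concentrate or stained smear is suspicious
    high_clinical_suspicion: bool
    extraintestinal_suspected: bool  # e.g. Strongyloides or Echinococcus
    exposure_assessment_needed: bool

def reflex_tests(f: CaseFindings):
    """Return the follow-up tests suggested by the reflex rules in this protocol."""
    tests = []
    if not f.panel_positive and (f.microscopy_suspicious or f.high_clinical_suspicion):
        tests.append("Species-specific PCR for non-panel targets (e.g. Dientamoeba fragilis)")
    if f.extraintestinal_suspected or f.exposure_assessment_needed:
        tests.append("Serology (e.g. Strongyloides IgG, Echinococcus IgG)")
    return tests

case = CaseFindings(panel_positive=False, microscopy_suspicious=False,
                    high_clinical_suspicion=True, extraintestinal_suspected=False,
                    exposure_assessment_needed=False)
print(reflex_tests(case))
```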

Quality Control: Include positive control samples (e.g., known Giardia cysts) in each batch of microscopic and molecular tests to ensure reagent and procedural validity.

Proficiency Notes: Regular participation in external quality assurance (EQA) programs is essential. Cross-training technologists in both morphological and molecular techniques builds a resilient and proficient workforce capable of handling complex diagnostic challenges [28] [8].

Workflow Visualization: Integrated Diagnostic Pathway

The following diagram illustrates the logical workflow for the comprehensive diagnosis of gastrointestinal parasites, demonstrating how methods interlink to form a complete diagnostic picture.

The performance characteristics of diagnostic methods vary significantly. Understanding these metrics is crucial for selecting the appropriate test and interpreting results correctly, especially in the context of declining hands-on experience with gold-standard methods.

Table 2: Performance Comparison of Parasitic Diagnostic Methods

| Diagnostic Method | Key Parasites Detected | Estimated Sensitivity of a Single Test | Key Advantages | Key Limitations & Expertise Requirements |
|---|---|---|---|---|
| Microscopy (O&P) | Broad spectrum of protozoa and helminths | ~75.9% [13] | Low cost; detects unexpected parasites; gold standard for many | Labour-intensive; requires high expertise [28] [8] [12] |
| Rapid Lateral Flow (LFA) | Giardia, Cryptosporidium, E. histolytica [28] | Varies by target & kit | Fast; point-of-care; minimal training | Limited target menu; cross-reactivity possible [28] |
| Multiplex PCR Panels | Giardia, Cryptosporidium, E. histolytica, Cyclospora [28] | High for included targets | High throughput; detects multiple pathogens simultaneously | Limited parasite menu; does not detect helminths well [28] [8] |
| AI-Based Image Analysis | Blood parasites (malaria), intestinal helminths [30] | Comparable to expert microscopist (studies ongoing) | Rapid; can standardize diagnosis; reduces workload | Requires curated image databases; limited real-world validation [30] |
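Table 2's single-test microscopy sensitivity also shows why the protocol calls for three specimens. Suppose each specimen is an independent chance to detect the parasite. Under that assumption, the probability of at least one positive result in n specimens is 1 - (1 - s)^n. The assumption is optimistic, because shedding and examiner errors tend to be correlated. A short worked example using the ~75.9% figure:

```python
def cumulative_sensitivity(single_test_sensitivity, n_specimens):
    """P(at least one positive) over n specimens, assuming independent tests."""
    return 1 - (1 - single_test_sensitivity) ** n_specimens

for n in (1, 2, 3):
    print(n, round(cumulative_sensitivity(0.759, n), 3))
```

Under this assumption, three specimens raise the estimated yield from about 76% to about 99%. Real-world gains are likely to be smaller, because the independence assumption rarely holds.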

Augmented Intelligence: Methodological Integration of AI and Digital Tools in Training and Diagnostics

Troubleshooting Guides

General Deep Learning Model Debugging

Q: My model's performance is worse than expected. What should I do? A: Follow this systematic debugging workflow to identify the issue [31] [32]:

Common Implementation Bugs and Solutions [31] [33]:

| Bug Type | Symptoms | Solution |
|---|---|---|
| Incorrect tensor shapes | Silent failures, broadcasting errors | Step through model creation with a debugger and check tensor shapes |
| Input pre-processing errors | Poor performance, normalization issues | Verify normalization (e.g., scale images to [0,1] or [-0.5,0.5]) |
| Incorrect loss function input | Loss behaves unexpectedly | Ensure the correct input format (e.g., logits vs. softmax probabilities) |
| Train/evaluation mode issues | Layers that depend on the mode, such as batch norm or dropout, behave incorrectly | Toggle train/eval mode appropriately |
| Numerical instability | inf or NaN outputs | Check exponent, log, and division operations |
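Two of the bugs in this table, numerical instability and passing probabilities where the loss expects logits, can both be avoided by computing the loss in log space with the log-sum-exp trick. Below is a framework-free sketch in plain Python. Deep learning frameworks provide fused equivalents; PyTorch's nn.CrossEntropyLoss, for example, expects raw logits.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax: subtract the max before exponentiating."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum for z in logits]

def cross_entropy_from_logits(logits, target_index):
    """Cross-entropy loss for one example, computed directly from raw logits."""
    return -log_softmax(logits)[target_index]

# A naive softmax would fail here: math.exp(1000) raises OverflowError.
print(cross_entropy_from_logits([1000.0, 0.0, -1000.0], 1))
```

Applying softmax before a loss that already applies it internally squashes the gradients. Training still runs, but it converges poorly, which makes this bug easy to miss.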

Q: How can I verify my implementation is correct? A: Follow this validation protocol [31] [33]:

- Start Simple: Begin with a minimal implementation (<200 lines) using tested components

- Overfit a Single Batch: Drive training error close to zero to catch fundamental bugs

- Compare to Known Results: Match your implementation against official implementations
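The "overfit a single batch" check can be illustrated without any framework. The sketch below trains logistic regression by gradient descent on one tiny, separable batch. If the loss does not approach zero, the bug lies in the model, loss, or update code. The data, step count, and learning rate are hypothetical choices for this illustration.

```python
import math

def train_on_single_batch(xs, ys, steps=3000, lr=0.5):
    """Overfit one small batch with logistic regression and return the final loss.

    On a batch this small and separable, the loss should approach zero; if it
    does not, suspect the model, loss, or parameter-update code, not the data.
    """
    w = [0.0] * len(xs[0])
    b = 0.0
    loss = float("inf")
    for _ in range(steps):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        loss = 0.0
        for x, y in zip(xs, ys):
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1 / (1 + math.exp(-z))
            # Binary cross-entropy, clamped to avoid log(0).
            loss -= y * math.log(max(p, 1e-12)) + (1 - y) * math.log(max(1 - p, 1e-12))
            for k, xi in enumerate(x):
                grad_w[k] += (p - y) * xi
            grad_b += p - y
        n = len(xs)
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
        loss /= n  # mean loss of this step, measured before the update
    return loss

# Tiny, linearly separable batch: label 1 when the second feature dominates.
xs = [[0.0, 1.0], [1.0, 0.0], [0.2, 0.9], [0.9, 0.1]]
ys = [1, 0, 1, 0]
print(f"final loss: {train_on_single_batch(xs, ys):.4f}")
```

In practice, run the same check with your real model. Train repeatedly on one fixed batch and confirm that the loss goes to roughly zero. This catches sign errors in the update, a wrong loss, and labels misaligned with their inputs.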

Model-Specific Issues

Q: My vision transformer isn't converging on medical images. What's wrong? A: Medical imaging datasets often have unique challenges. Consider these approaches [34]:

| Issue | Solution for Medical Imaging |
|---|---|
| Limited labeled data | Use self-supervised pre-training (DINOv2) |
| Class imbalance | Apply data augmentation strategies |
| Similar visual features | Leverage explainable AI (GradCAM, SHAP) |
| Small dataset size | Use pre-trained backbones with fine-tuning |

Experimental Protocol for Medical Image Classification [34]:

Frequently Asked Questions

Architecture Selection

Q: When should I choose DINOv2 over YOLO or ConvNeXt? A: Base your selection on task requirements and data constraints [34] [35] [36]:

| Model Type | Best For | Parasitology Application | Performance |
|---|---|---|---|
| DINOv2 | Self-supervised learning, multiple vision tasks | Feature extraction from limited labeled data | 96.48% accuracy on skin diseases [34] |
| YOLO | Real-time object detection | Rapid parasite detection in images | Varies by version and dataset |
| ConvNeXt | CNN-based feature extraction | Traditional image classification | Strong baseline performance [34] |

Q: What makes DINOv2 suitable for medical imaging? A: DINOv2 excels in medical applications due to [35] [37]:

- Self-supervised learning: Reduces need for extensive labeled data

- Multi-purpose backbone: Handles classification, segmentation, detection

- Robust features: Trained on 142 million curated images (LVD-142M)

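- No fine-tuning required: Frozen DINOv2 features paired with a simple classifier, such as a linear probe, perform well on many tasks without retraining the backbone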

Performance Optimization

Q: How can I improve my model's accuracy on parasite images? A: Implement these evidence-based strategies [31] [34] [33]:

| Strategy | Implementation | Expected Benefit |
|---|---|---|
| Data augmentation | Rotation, flipping, color jittering | Improved generalization |
| Transfer learning | Pre-trained on ImageNet or medical datasets | Faster convergence, better performance |
| Explainable AI | GradCAM, SHAP heatmaps | Clinical insights, validation |
| Ensemble methods | Combine multiple models | Increased accuracy and robustness |
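The augmentations in the first row are simple array operations. The dependency-free sketch below applies a random flip, rotation, and brightness jitter to a grayscale image stored as a list of rows with values in [0, 1]. The jitter range and flip probability are hypothetical defaults. Real pipelines would normally use a library such as torchvision or albumentations.

```python
import random

def hflip(img):
    """Mirror a 2-D image (list of rows) left-right."""
    return [row[::-1] for row in img]

def rot90(img):
    """Rotate a 2-D image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def jitter_brightness(img, max_delta, rng):
    """Scale intensities by a random factor in [1-max_delta, 1+max_delta], clamped to [0, 1]."""
    factor = 1 + rng.uniform(-max_delta, max_delta)
    return [[min(1.0, max(0.0, v * factor)) for v in row] for row in img]

def augment(img, rng, max_delta=0.2):
    """Apply a random combination of flip, rotation and brightness jitter."""
    if rng.random() < 0.5:
        img = hflip(img)
    for _ in range(rng.randrange(4)):  # 0-3 quarter turns
        img = rot90(img)
    return jitter_brightness(img, max_delta, rng)

rng = random.Random(42)  # seeded so the augmentation sequence is reproducible
image = [[0.1, 0.2, 0.3],
         [0.4, 0.5, 0.6]]
augmented = augment(image, rng)
print(len(augmented), len(augmented[0]))  # shape may be 2x3 or 3x2 after rotation
```

Flips and rotations are generally safe for eggs and cysts, whose orientation on a slide is arbitrary. Aggressive color changes, by contrast, may remove stain cues the model needs.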

Experimental Protocol for Parasite Identification [16] [17]:

- Sample Collection: Assemble a sufficiently large and diverse set of parasite images

- Data Pre-processing: Apply normalization and augmentation

- Model Training: Use appropriate architecture with medical imaging considerations

- Validation: Compare against traditional methods (microscopy, PCR)

- Clinical Correlation: Partner with domain experts for validation

The Scientist's Toolkit

Research Reagent Solutions

| Tool | Function | Application in Parasite Research |
|---|---|---|
| DINOv2 Pre-trained Models | Feature extraction without fine-tuning | Rapid prototyping for new parasite datasets |
| GradCAM/SHAP | Model interpretability | Identify visual features used for classification |
| Data Augmentation Pipeline | Increase effective dataset size | Handle limited medical image data |
| Traditional Microscopy | Gold standard validation | Ground truth for model training [16] |
| PCR Techniques | Molecular confirmation | Species identification when morphology is insufficient [17] |

Experimental Workflow for Parasite Identification Research

Quantitative Performance Comparison

Reported Performance Metrics in Medical Imaging [34]:

| Model | Dataset | Accuracy | F1-Score | Application |
|---|---|---|---|---|
| DINOv2 | 31-class skin disease | 96.48% | 97.27% | Dermatology |
| DINOv2 | HAM10000 | High | High | Skin disease |
| DINOv2 | Dermnet | High | High | Dermatology |
| Traditional Microscopy | Parasite detection | 70-95% | Varies | Parasitology [16] |
| DNA barcoding | Species identification | 95.0% | Varies | Parasite diagnosis [16] |

Technical Support Center

Troubleshooting Guides

Scanner Image Quality Issues

Problem: Blurred or Out-of-Focus Whole Slide Images (WSIs)

- Question: Why are my scanned parasite images blurred or out-of-focus?

- Answer: This is often due to incorrect scanner settings or slide preparation artifacts.

- Step 1: Verify that the scanner's Z-stacking or auto-focus function is enabled, especially for thick parasite specimens.

- Step 2: Check that the slide is clean and free of dust, debris, or fingerprints on the glass surface.

- Step 3: Ensure the slide is properly seated in the scanner tray or holder.

- Step 4: For persistent issues, perform a scanner calibration according to the manufacturer's protocol. Real-world studies show that focus errors can affect up to 30% of digital slides on some scanner models [38].

Problem: Digital Artifacts in the Image

- Question: What are these strange lines, tiles, or color shifts in my digital slide?

- Answer: These are typically digital artifacts introduced during the scanning process.

- Step 1: Identify the artifact type. Tiling can be caused by image processing errors or camera overexposure [38]. Color shifts may arise from incorrect white balance settings during pre-scan setup.

- Step 2: Rescan the slide. If the artifact disappears, it was likely a temporary glitch.

- Step 3: If the artifact persists, clean the scanner's optical path (lenses, cameras) as per the user manual.

- Step 4: Contact technical support if the problem continues, as it may indicate a hardware issue with the camera or sensor.

AI Platform Analysis Errors

Problem: AI Model Fails to Detect Target Parasites

- Question: The AI-assisted review platform is not identifying parasites that are visibly present. What should I do?

- Answer: This usually indicates a mismatch between the AI model's training data and your current samples.

- Step 1: Verify the stain type and protocol. AI models are often trained on specific stains (e.g., H&E). Using a different stain can reduce accuracy [39].

- Step 2: Check image quality. Blur, artifacts, or uneven illumination can confuse AI algorithms. Rescan the slide if necessary.

- Step 3: Retrain or fine-tune the AI model. The system may need additional training examples from your specific lab's specimens to improve its predictive ability [39].

- Step 4: Review the model's confidence threshold. Lowering the threshold may help the system detect fainter or smaller parasites, but it also increases the number of false-positive detections that must be reviewed manually.
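Before changing the threshold, quantify the trade-off on slides a technologist has already reviewed. The minimal sketch below uses hypothetical validation data. Its function name and inputs are illustrative and do not belong to any platform's API.

```python
def threshold_tradeoff(detections, n_true_objects, thresholds):
    """Recall and false-positive count for each candidate confidence threshold.

    detections: (confidence, is_true_positive) pairs from a reviewer-annotated
    validation set. n_true_objects: parasites the reviewer marked in the same
    images, including any the model never detected at all.
    """
    rows = []
    for t in thresholds:
        kept = [tp for score, tp in detections if score >= t]
        tp = sum(kept)
        fp = len(kept) - tp
        rows.append((t, tp / n_true_objects, fp))
    return rows

# Hypothetical reviewed detections; one true parasite was missed entirely.
detections = [(0.95, True), (0.90, True), (0.70, True), (0.60, False),
              (0.45, True), (0.40, False), (0.30, False)]
for t, recall, fp in threshold_tradeoff(detections, 5, [0.8, 0.5, 0.35]):
    print(f"threshold {t}: recall {recall:.2f}, false positives {fp}")
```

The threshold to adopt is the lowest one whose false-positive burden the reviewing technologists can realistically absorb.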

Problem: Inconsistent AI Results Between Scans

- Question: Why does the AI platform give different results for the same slide when scanned multiple times?

- Answer: Inconsistencies often stem from variations in the input WSIs.

- Step 1: Ensure consistent scanning parameters (magnification, resolution, focus method) across all scans.

- Step 2: Standardize slide preparation to minimize variations in stain intensity and section thickness.

- Step 3: Check for the presence of new artifacts in one of the scans that might be interfering with the analysis.

- Step 4: If the AI-assisted feature is still in Preview, some inconsistency may be an inherent limitation; report it to the vendor so it can be addressed [40].

Frequently Asked Questions (FAQs)

Q1: What is the typical throughput I can expect from a high-volume slide scanner? A1: Throughput varies significantly by scanner model. A study comparing 16 scanners found that the total time to scan a set of 347 slides, including both instrument run time and technician operation time, ranged from approximately 13.5 to 47 hours [38]. Table 1 (Whole Slide Scanner Performance Metrics), under Experimental Protocols & Data, summarizes key performance metrics from this real-world evaluation.

Q2: How does magnification (20x vs. 40x) impact my parasite identification workflow? A2: 20x magnification is often sufficient for routine identification of larger parasites and general histopathology, offering faster scanning speeds and smaller file sizes. 40x magnification is essential for visualizing subcellular structures and identifying smaller parasites (e.g., microsporidia), providing the detail needed for complex diagnoses but requiring more storage and longer scanning times [41].

Q3: Our AI model is producing confusing results. Who is responsible for addressing this? A3: Maintaining model accuracy is a shared responsibility. Researchers are responsible for providing high-quality, consistently prepared slides and validating the AI's findings against gold-standard methods. IT/AI Support Teams are responsible for managing the IT infrastructure, ensuring data privacy, and retraining models with new data. Vendors are responsible for providing supported, well-documented platforms and incorporating user feedback [40].

Q4: What are the most common sources of error in a fully digital workflow? A4: The most common errors are concentrated at the beginning and end of the workflow, as illustrated in the following diagram.

Q5: Are AI-generated summaries and analyses reliable enough for diagnostic purposes? A5: During Preview phases, AI-generated outputs should not be used as the sole basis for diagnostic decisions. These features are designed to assist with troubleshooting and provide initial insights. They may not always be accurate or complete. For critical production issues and formal diagnoses, traditional pathologist review and official support channels remain essential [40].

Experimental Protocols & Data

Real-World Scanner Performance Comparison

The following table summarizes data from a clinical study comparing the performance of 16 whole slide imaging scanners from 7 vendors when processing 347 real-world glass slides [38]. This data is critical for assessing institutional resources.

Table 1: Whole Slide Scanner Performance Metrics [38]

| Performance Metric | Range Observed (for 347 slides) | Key Findings & Impact |
|---|---|---|
| Total Scan Time | 13 hours 30 minutes to 47 hours 2 minutes | Includes technician operation time. Affects daily workflow capacity. |
| Technician Operation Time | 1 hour 30 minutes to 9 hours 24 minutes | Includes pre- and post-scan work. Impacts staffing requirements. |
| Image Quality Errors | 8% to 61% of slides per run | High error rates necessitate rescanning, reducing net throughput. |
| Missing Tissue Errors | 0% to 21% of slides | Scanner failed to detect all tissue/parasite material on the slide. |
| Out-of-Focus Errors | 0% to 30.1% of slides | Compromises diagnostic clarity and AI analysis accuracy [38]. |
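Per-slide figures make the scanner totals above easier to compare with a lab's daily volume. The worked calculation below includes a rescan adjustment. That adjustment is an assumption layered on the published figures, since the table does not pair specific error rates with specific scan times.

```python
N_SLIDES = 347  # size of the slide set in the scanner study

def minutes(hours, mins):
    """Convert an hours-and-minutes duration to minutes."""
    return hours * 60 + mins

def minutes_per_usable_slide(total_minutes, n_slides=N_SLIDES, rescan_fraction=0.0):
    """Average minutes per slide, inflated for rescans.

    Assumes each slide that fails QC is rescanned once at the same average
    cost and that the rescan succeeds (a simplification).
    """
    return total_minutes / n_slides * (1 + rescan_fraction)

for label, total in (("fastest", minutes(13, 30)), ("slowest", minutes(47, 2))):
    per_slide = minutes_per_usable_slide(total)
    print(f"{label}: {per_slide:.2f} min/slide, about {60 / per_slide:.0f} slides/hour")
```

At the reported extremes this works out to roughly 2.3 versus 8.1 minutes per slide before any rescans. A high image-quality error rate widens the gap further.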

Digital Workflow Protocol for Parasite Identification

Aim: To digitally transform the process of parasite identification and quantification from gross specimen to analytical report.

Materials:

- Specimens: Amphibian, fish, or snail hosts [42]

- Key Reagent Solutions: See Table 2 below.

- Equipment: Whole Slide Scanner (e.g., Leica, Hamamatsu, Grundium [43]), dedicated workstation, AI-assisted review platform.

Methodology:

- Specimen Necropsy & Isolation: Perform systematic necropsy on host species. Isolate macro-parasites and preserve them for molecular and morphological vouchers [42].

- Slide Preparation: Prepare stained glass slides (H&E, IHC, or special stains) from tissue sections containing parasites.

- Digital Slide Scanning:

- Load slides into a high-throughput scanner (e.g., capable of handling 100-450 slides per batch [43]).

- Set scanning parameters to at least 20x magnification (40x recommended for small parasites). Ensure consistent focus settings.

- Initiate scan and use barcoding for sample tracking.

- Quality Control (QC) Review:

- A senior technician must review 100% of digital images for critical errors like missing tissue, blur, or artifacts [38].

- Log error rates and rescan any slides that fail QC.

- AI-Assisted Review & Analysis:

- Upload approved WSIs to the AI platform.

- Run the pre-trained parasite detection model to identify and quantify infections.

- Manually verify the AI's findings, especially low-confidence detections.

- Data Storage & Collaboration: Archive digital slides and analysis results in a secure, searchable database. Use remote consultation capabilities for expert second opinions [41].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Parasitology Research [42]

| Item | Function/Benefit |
|---|---|
| Fixatives (e.g., Formalin) | Preserves tissue morphology and parasites in situ, preventing degradation. |
| Histological Stains (H&E) | Provides contrast for visualizing tissue architecture and general parasite morphology. |
| Special Stains (e.g., Gram, Ziehl-Neelsen) | Helps identify specific parasite types or associated bacterial infections. |
| Molecular Preservation Buffer | Stabilizes nucleic acids from isolated parasites for downstream genetic analysis and vouchers [42]. |
| Macro-parasite Mounting Medium | Secures isolated parasites on slides for creating permanent morphological vouchers [42]. |

Workflow and Troubleshooting Diagrams

Digital Pathology Workflow

Image Analysis Troubleshooting Logic

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: My model confuses protozoan cysts with debris or air bubbles. How can I improve its specificity? Answer: Low specificity for protozoan cysts is often due to their small size and morphological similarity to non-parasitic objects. To address this:

- Leverage Attention Mechanisms: Integrate Convolution and Attention networks (CoAtNet). The attention mechanism helps the model focus on the most relevant parts of the image, enhancing feature extraction from small, indistinct objects like protozoan cysts [44].

- Use Advanced Preprocessing: Apply image segmentation techniques, such as Otsu thresholding, as a preprocessing step. This isolates potential parasitic regions and reduces background noise, which helps the network learn more discriminative features and has been shown to improve overall classification accuracy by approximately 3% [45].

- Employ Data Augmentation: Generate more training samples with techniques like rotation, scaling, and color jittering to make the model more robust to the varied appearances of cysts and impurities [46].
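Otsu's method picks the gray level that best splits an image's intensity histogram into two classes by maximizing the between-class variance. The dependency-free sketch below works on 8-bit grayscale intensities. It only makes the technique concrete and is not the preprocessing code used in [45].

```python
def otsu_threshold(pixels, levels=256):
    """Otsu's method: the threshold that maximizes between-class variance.

    pixels: iterable of integer intensities in [0, levels-1].
    Returns t such that pixels <= t form one class and pixels > t the other.
    """
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    total = sum(hist)
    sum_all = sum(i * h for i, h in enumerate(hist))
    weight_bg = sum_bg = 0
    best_t, best_var = 0, -1.0
    for t in range(levels):
        weight_bg += hist[t]
        if weight_bg == 0:
            continue
        weight_fg = total - weight_bg
        if weight_fg == 0:
            break
        sum_bg += t * hist[t]
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        # Between-class variance, up to a constant factor of 1/total**2.
        between = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if between > best_var:
            best_var, best_t = between, t
    return best_t

# Hypothetical 8-bit intensities: dark objects (~10-12) on a bright background (~190-200).
pixels = [10] * 30 + [12] * 20 + [200] * 40 + [190] * 10
t = otsu_threshold(pixels)
mask = [p <= t for p in pixels]  # True marks the darker class (candidate objects here)
print(t, sum(mask))
```

Which side of the threshold counts as "parasite" depends on whether objects are darker or brighter than the background under your stain. Production pipelines typically use a library implementation, such as scikit-image's threshold_otsu or OpenCV's threshold function with the THRESH_OTSU flag.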

FAQ 2: For a new, small dataset of helminth eggs, which model architecture should I choose to avoid overfitting? Answer: With limited data, your priority should be models that perform well without requiring millions of images.

- Use Transfer Learning: Fine-tune a pre-trained model like DenseNet201 or ResNet50V2. These models, trained on large datasets like ImageNet, can be adapted to parasitology tasks and have been used in ensemble models to achieve test accuracies above 97% [46].

- Consider YOLOv4-tiny: If your goal is object detection (identifying and locating eggs within an image), YOLOv4-tiny is designed for efficiency and lower computational cost. It has achieved high precision (96.25%) and sensitivity (95.08%) in recognizing 34 classes of intestinal parasites, making it suitable for environments with limited resources [47].

- Explore Self-Supervised Learning (SSL): For very small datasets, SSL models like DINOv2 are highly effective. DINOv2 can learn features from unlabeled datasets and has demonstrated exceptional performance, with a large variant achieving 98.93% accuracy and 99.57% specificity in intestinal parasite identification, even with limited data fractions [48].

FAQ 3: What is the most reliable way to validate the performance of my CNN model for clinical use? Answer: Beyond standard accuracy, use a comprehensive set of validation metrics and procedures accepted in clinical and technical literature [48]:

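- Compute a Full Suite of Metrics: Report precision, sensitivity (recall), specificity, and F1-score. The F1-score is particularly important for imbalanced datasets because it combines precision and sensitivity and, unlike overall accuracy, is not inflated when negative images greatly outnumber positive ones.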

- Perform Statistical Agreement Analysis: Calculate Cohen’s Kappa to measure the level of agreement between your model and human expert technologists. A Kappa score greater than 0.90 indicates almost perfect agreement and is a strong validation signal [48].

- Utilize Cross-Validation: Perform k-fold cross-validation (e.g., five-fold) to ensure your model's performance is consistent and not dependent on a particular split of the training and test data [45].
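A minimal sketch of the validation suite above makes the definitions explicit. It assumes binary labels (1 = parasite present, 0 = absent) and a simple shuffled k-fold split. The helper names are hypothetical, and scikit-learn's `precision_score`, `recall_score`, `f1_score`, `cohen_kappa_score`, and `StratifiedKFold` are tested equivalents that would normally be preferred.

```python
import numpy as np


def binary_metrics(y_true, y_pred):
    """Precision, sensitivity (recall), specificity and F1 for 0/1 labels."""
    t = np.asarray(y_true).astype(bool)
    p = np.asarray(y_pred).astype(bool)
    tp = int(np.sum(t & p))
    tn = int(np.sum(~t & ~p))
    fp = int(np.sum(~t & p))
    fn = int(np.sum(t & ~p))
    precision = tp / (tp + fp) if tp + fp else 0.0
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    # F1 is the harmonic mean of precision and sensitivity.
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if precision + sensitivity else 0.0)
    return {"precision": precision, "sensitivity": sensitivity,
            "specificity": specificity, "f1": f1}


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters (e.g. model vs. expert) over the same items."""
    a, b = np.asarray(rater_a), np.asarray(rater_b)
    p_observed = float(np.mean(a == b))
    # Chance agreement from each rater's marginal label frequencies.
    p_expected = sum(float(np.mean(a == k)) * float(np.mean(b == k))
                     for k in np.union1d(a, b))
    if p_expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (p_observed - p_expected) / (1.0 - p_expected)


def kfold_indices(n_samples, k=5, seed=0):
    """Yield (train, test) index arrays for a shuffled k-fold split.

    Assumption: plain (not stratified) folds; a stratified split is safer
    when some parasite classes are rare.
    """
    order = np.random.default_rng(seed).permutation(n_samples)
    folds = np.array_split(order, k)
    for i in range(k):
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train, folds[i]


# Usage: compare model calls against an expert's reads on eight images.
expert = [1, 1, 1, 0, 0, 0, 0, 0]
model = [1, 1, 0, 0, 0, 0, 0, 1]
print(binary_metrics(expert, model))
print(round(cohens_kappa(expert, model), 3))
```

In a five-fold setup, the metrics would be computed on each held-out fold and reported as a mean and spread rather than from a single split.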

FAQ 4: How can I implement an automated diagnostic system in a resource-limited lab with low-end GPU devices? Answer: Computational efficiency is key for deployment in low-resource settings.

- Select Lightweight Models: Opt for models known for their speed and lower memory footprint, such as YOLOv4-tiny or YOLOv7-tiny. These are specifically designed to run on low-end GPU hardware while maintaining high performance [48] [47].

- Apply Model Compression: Techniques like pruning and quantization can reduce the size of a trained model and increase its inference speed, often with only a small loss in accuracy. Re-validate the compressed model on your test set before deployment.

- Use a Modular Workflow: Implement a pipeline where a lightweight model performs initial screening, and only difficult cases are flagged for review by a more complex model or a human expert.
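The modular screening idea can be expressed as a simple routing rule. This sketch assumes the lightweight detector returns a confidence score per detection. Images whose detections are all clearly confident or clearly negligible are routed automatically, and anything in between is flagged for review. The thresholds (0.90 and 0.30) and the `Detection`/`route_image` names are hypothetical; in practice the thresholds would be calibrated on validation data so that positives are not missed while the review queue stays manageable.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    label: str          # predicted class, e.g. a parasite stage name
    confidence: float   # detector score in [0, 1]


def route_image(detections: List[Detection],
                accept: float = 0.90,   # hypothetical; calibrate on validation data
                reject: float = 0.30) -> str:
    """Decide whether a lightweight model's result needs further review."""
    # Any detection in the uncertain band goes to a larger model or a human expert.
    if any(reject <= d.confidence < accept for d in detections):
        return "review"
    # Otherwise every detection is either clearly positive or negligible.
    if any(d.confidence >= accept for d in detections):
        return "screened_positive"
    return "screened_negative"


print(route_image([Detection("Ascaris lumbricoides egg", 0.97)]))
print(route_image([Detection("Giardia cyst", 0.55)]))
print(route_image([]))
```

Raising `accept` or lowering `reject` sends more images to review, trading throughput for safety.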

Troubleshooting Guides

Issue: Poor Model Generalization on Images from a Different Microscope Symptoms: High accuracy on the original test set but poor performance on new data from a different source. Solution:

- Domain Adaptation through Preprocessing: Standardize inputs before they reach the model. Apply Gaussian or median filters to reduce noise and artifacts specific to the new microscope [46]. Histogram equalization, applied to the intensity channel or to each color channel, can also help normalize contrast and color variations between instruments.

- Fine-Tuning: Retrain the final layers of your pre-trained model on a small, new dataset acquired from the different microscope. This helps the model adapt to the new imaging characteristics.

- Data Diversity in Training: Ensure your original training set includes images from multiple sources, with variations in staining, lighting, and microscope models to build a more robust model from the start.
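Histogram equalization can be sketched with NumPy alone. This is a minimal global version for an 8-bit grayscale (or single-channel) image, using the standard cumulative-distribution mapping; `equalize_histogram` is a hypothetical name, and OpenCV's `cv2.equalizeHist` or scikit-image's `skimage.exposure.equalize_hist` are library alternatives. For color images, this sketch assumes it is applied per channel or to a luminance channel.

```python
import numpy as np


def equalize_histogram(gray: np.ndarray) -> np.ndarray:
    """Global histogram equalization for an 8-bit single-channel image."""
    hist = np.bincount(gray.ravel(), minlength=256)
    cdf = np.cumsum(hist)
    cdf_min = cdf[np.nonzero(cdf)[0][0]]   # CDF value at the darkest occupied level
    if cdf_min == gray.size:                # constant image: nothing to stretch
        return gray.copy()
    # Map each level so the cumulative distribution becomes roughly uniform on 0-255.
    lut = np.round((cdf - cdf_min) / (gray.size - cdf_min) * 255)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[gray]


# Usage: a low-contrast image occupying only levels 100-150 is stretched to 0-255.
low_contrast = np.array([[100, 120], [130, 150]], dtype=np.uint8)
print(equalize_histogram(low_contrast))
```

Applying the same transform to images from every microscope, at both training and inference time, is what makes it useful for reducing the gap between sources.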

Issue: Low Detection Accuracy for Overlapping or Clustered Parasitic Eggs Symptoms: The model fails to detect individual eggs when they are touching or overlapping in the image. Solution:

- Segmentation Models: Move from bounding-box detection to instance segmentation models such as Mask R-CNN, which delineate the exact boundary of each egg and can separate touching eggs in clusters. Semantic segmentation networks such as U-Net can serve a similar purpose when their output masks are split into individual eggs during post-processing [44] [47].

- Data Augmentation: Artificially create training examples with overlapping eggs by pasting multiple egg images into a single background. This teaches the model to recognize these challenging scenarios.

- Post-Processing Techniques: Implement post-processing algorithms that can split connected regions identified by the model based on shape and size criteria.
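One simple size-based post-processing rule is sketched below. It assumes a binary egg mask from the model and a known typical single-egg area in pixels (both hypothetical inputs), labels connected regions with `scipy.ndimage.label`, and flags regions much larger than one egg as probable clusters with an estimated egg count. The `cluster_factor` of 1.5 is an illustrative assumption. This estimates counts rather than drawing boundaries; a distance-transform watershed (for example `skimage.segmentation.watershed`) is a common next step when individual outlines are needed.

```python
import numpy as np
from scipy import ndimage


def estimate_egg_counts(mask, single_egg_area, cluster_factor=1.5):
    """Label connected regions in a binary mask and estimate eggs per region.

    Assumptions: single_egg_area is a typical egg area in pixels for this
    magnification, and cluster_factor is a hypothetical cut-off.
    """
    labeled, n_regions = ndimage.label(mask)
    areas = np.bincount(labeled.ravel(), minlength=n_regions + 1)[1:]  # skip background
    results = []
    for region_id, area in enumerate(areas, start=1):
        results.append({
            "region": region_id,
            "area": int(area),
            # Regions well above one egg's area are treated as touching/overlapping eggs.
            "is_cluster": bool(area > cluster_factor * single_egg_area),
            "estimated_eggs": max(1, int(round(area / single_egg_area))),
        })
    return results


mask = np.zeros((20, 20), dtype=bool)
mask[1:6, 1:6] = True      # one isolated egg (25 px)
mask[10:15, 2:12] = True   # two touching eggs (50 px)
for region in estimate_egg_counts(mask, single_egg_area=25):
    print(region)
```

Regions flagged as clusters could also be routed to an expert, in line with the modular workflow described earlier.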

Experimental Protocols & Data

Table 1: Performance Comparison of Deep Learning Models in Parasitology

| Model Name | Task Type | Key Performance Metrics | Best For / Notes |
|---|---|---|---|
| CoAtNet (CoAtNet-0) [44] | Classification | 93% Accuracy, 93% F1-Score | High accuracy on parasitic egg image classification; simpler structure with lower computational cost. |
| YOLOv4-tiny [47] | Object Detection | 96.25% Precision, 95.08% Sensitivity | Resource-constrained settings; fast detection on low-end GPUs; recognized 34 parasite classes. |
| DINOv2-large [48] | Classification | 98.93% Accuracy, 99.57% Specificity | Situations with limited labeled data; self-supervised learning. |
| CNN with Otsu Segmentation [45] | Classification | 97.96% Accuracy (~3% gain over baseline) | Boosting performance of simpler CNNs; improves interpretability by highlighting parasitic regions. |
| Ensemble Model (VGG16, ResNet50V2, etc.) [46] | Classification | 97.93% Test Accuracy, 0.9793 F1-Score | Maximizing diagnostic accuracy and robustness by combining multiple models. |

Detailed Methodology: Implementing a YOLOv4-tiny Model for Parasite Detection

Objective: To train an object detection model that automatically recognizes protozoan cysts and helminth eggs in stool sample images.

Materials: See Table 2, "Essential Research Reagent Solutions & Materials", below.

Protocol:

- Dataset Preparation:
  - Collect images of stool samples prepared using a modified direct smear method [47].
  - Annotate the images by drawing bounding boxes around all parasitic objects and labeling each with the correct class, using an annotation tool such as LabelImg.
  - Split the dataset into training (80%) and testing (20%) sets [48].
- Model Configuration:
  - Download the YOLOv4-tiny architecture configuration files.
  - Adjust the configuration to match the number of classes in your dataset.
  - Set hyperparameters such as batch size, subdivisions, and learning rate. On GPUs with limited memory, reduce the number of images processed per step; in Darknet this is done by increasing subdivisions, which splits each batch into smaller mini-batches.
- Training:
  - Initialize the model with weights pre-trained on a large dataset such as COCO to leverage transfer learning.
  - Train the model on your training set, monitoring the loss to ensure it decreases.
- Evaluation:
  - Use the test set to evaluate the model's performance.
  - Generate a confusion matrix and calculate key metrics such as precision, sensitivity (recall), and mean Average Precision (mAP) [47].
  - Compare the model's predictions against the ground truth annotations made by human experts.
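The evaluation step can be illustrated with IoU-based matching of predicted boxes to expert annotations. This sketch assumes boxes given as `(x_min, y_min, x_max, y_max)` in pixels and the commonly used IoU threshold of 0.5. It greedily matches higher-confidence predictions first, counting true positives, false positives, and false negatives from which precision and sensitivity follow. The function names are hypothetical; computing mAP additionally requires sweeping the confidence threshold and averaging precision per class, which detection frameworks usually report directly.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # assumed format: (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_detections(preds: List[Tuple[Box, str, float]],
                     truths: List[Tuple[Box, str]],
                     iou_threshold: float = 0.5):
    """Greedily match predictions (box, label, score) to expert boxes (box, label)."""
    matched = set()
    tp = fp = 0
    for box, label, _score in sorted(preds, key=lambda p: p[2], reverse=True):
        best_j, best_iou = None, iou_threshold
        for j, (t_box, t_label) in enumerate(truths):
            if j in matched or t_label != label:
                continue  # each expert box is matched once, and only by the same class
            overlap = iou(box, t_box)
            if overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j is None:
            fp += 1
        else:
            matched.add(best_j)
            tp += 1
    fn = len(truths) - len(matched)
    precision = tp / (tp + fp) if tp + fp else 0.0
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "sensitivity": sensitivity}


truths = [((0, 0, 10, 10), "Ascaris"), ((20, 20, 30, 30), "Trichuris")]
preds = [((1, 1, 10, 10), "Ascaris", 0.9),      # good match
         ((20, 20, 30, 30), "Ascaris", 0.8),    # right place, wrong class
         ((50, 50, 60, 60), "Trichuris", 0.7)]  # nothing there
print(match_detections(preds, truths))
```

Counting a correctly placed box with the wrong class as a false positive mirrors how per-class confusion between similar eggs shows up in the confusion matrix.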

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function in the Experiment |
|---|---|
| Stool Sample Collection Kit | For standardized and safe collection of patient specimens. |
| Formalin-ethyl acetate centrifugation technique (FECT) | A concentration method used to prepare stool samples for microscopic examination, often considered a gold standard for creating ground truth data [48]. |
| Merthiolate-Iodine-Formalin (MIF) Stain | A solution for fixation and staining of parasites, enhancing contrast and visibility of morphological features in microscopic images [48]. |
| Microscope with Digital Camera | To acquire high-resolution digital images of the prepared slides for model training and testing. |
| Annotation Software (e.g., LabelImg, VGG Image Annotator) | To create bounding box or segmentation mask labels on images, which are essential for supervised learning. |
| GPU-Accelerated Workstation | To efficiently handle the intensive computational demands of training deep learning models. |

Workflow Diagrams

Diagram 1: Overall AI Parasite Diagnostic Workflow.

Diagram 2: Model Selection Logic for Parasite Classification.

Technical Troubleshooting Guides

Common Multi-Omics Integration Challenges and Solutions

Table 1: Frequent Technical Pitfalls and Their Resolutions

| Challenge | Root Cause | Solution | Reference |
|---|---|---|---|
| Data Heterogeneity | Different measurement techniques, data types, scales, and noise levels across omics layers | Apply appropriate normalization for each data type (log transformation for metabolomics, quantile normalization for transcriptomics), followed by z-score standardization to a common scale | [49] [50] |
| Missing Data Points | Limitations in mass spectrometry (varying ionization, in-source fragmentation); low capture efficiency in single-cell techniques | For metabolomics, use providers that report Level 1 and Level 2 metabolite identifications; choose imputation methods that account for the structure of the data | [50] [51] |
| Discrepancies Between Omics Layers | Often biological (post-translational modifications, protein degradation) rather than technical artifacts | Verify data quality, then use pathway analysis to identify shared biological pathways that might explain the apparent discrepancies | [50] |
| Low Statistical Power | High background noise, small effect sizes, inadequate sample size | Use tools such as MultiPower for sample size estimation; increase replicates; ensure adequate sample collection | [51] |
| Batch Effects | Technical variation from sample processing across different dates or platforms | Implement batch effect correction algorithms during preprocessing; randomize sample processing order | [52] |
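As a simple illustration of batch correction, the sketch below median-centers each feature within each processing batch on log-scale data and then restores the overall median. It is a deliberately minimal stand-in for dedicated methods such as ComBat (available in the R `sva` package): it removes only additive per-batch shifts, and if biological groups are confounded with batch it will remove biological signal as well, which is one reason randomizing the processing order matters. The function name and the samples-by-features layout are assumptions.

```python
import numpy as np


def center_by_batch(X, batches):
    """Remove additive per-batch shifts from log-scale data.

    Assumptions: rows = samples, columns = features, values already log-transformed,
    and batch is not confounded with the biological groups of interest.
    """
    X = np.asarray(X, dtype=float)
    batches = np.asarray(batches)
    corrected = X.copy()
    for batch in np.unique(batches):
        rows = batches == batch
        corrected[rows] -= np.median(X[rows], axis=0)  # per-feature median of this batch
    # Add back the overall median so values stay on the original scale.
    return corrected + np.median(X, axis=0)


# Usage: batch "b" reads about 100 units higher on every feature.
X = np.array([[1, 10], [3, 12], [101, 110], [103, 112]])
print(center_by_batch(X, ["a", "a", "b", "b"]))
```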

Data Preprocessing and Quality Control

Table 2: Normalization Methods by Omics Type

| Omics Data Type | Recommended Normalization Methods | Purpose | Quality Metrics |
|---|---|---|---|
| Transcriptomics | Quantile normalization, TPM/FPKM for RNA-Seq | Ensure uniform expression distribution across samples | Check for low-count genes, 3'/5' bias, library complexity |
| Proteomics | Quantile normalization, median centering | Account for varying ionization efficiencies | Evaluate protein identification FDR, intensity distribution |
| Metabolomics | Log transformation, total ion current normalization | Stabilize variance, account for concentration differences | Assess peak shape, retention time stability, internal standards |
| Genomics | GC-content normalization, read depth scaling | Correct for technical sequencing biases | Monitor mapping rates, insert sizes, coverage uniformity |
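For the metabolomics row, total ion current (TIC) normalization followed by a log transform might look like the sketch below. It assumes a samples-by-features matrix of raw, non-negative peak intensities in which every sample has non-zero total signal. Rescaling to the median TIC and adding a pseudocount of 1 before `log2` are illustrative choices, not prescribed values, and the function name is hypothetical.

```python
import numpy as np


def tic_log_normalize(intensities, pseudocount=1.0):
    """TIC-normalize each sample, then log2-transform.

    Assumptions: rows = samples, columns = metabolite features, raw intensities >= 0,
    and every sample has a non-zero total ion current.
    """
    X = np.asarray(intensities, dtype=float)
    tic = X.sum(axis=1, keepdims=True)   # total ion current of each sample
    # Scale every sample to the median TIC so intensities stay in a familiar range.
    scaled = X / tic * np.median(tic)
    # The pseudocount keeps zero intensities finite after the log transform.
    return np.log2(scaled + pseudocount)


# Usage: the second sample was injected at twice the concentration of the first.
raw = np.array([[10, 30], [20, 60]])
print(tic_log_normalize(raw))
```

After TIC scaling the two samples become identical, showing how the step removes overall loading differences while the log transform stabilizes variance.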

Frequently Asked Questions (FAQs)

Experimental Design Questions

What is the optimal sample size for a multi-omics study? Sample size requirements depend on effect size, background noise, and the number of omics layers. Use statistical power tools like MultiPower specifically designed for multi-omics experiments. Generally, multi-omics studies require larger sample sizes than single-omics studies to achieve the same statistical power [51].

How should I handle different data scales when integrating genomics and proteomics data?

- Apply omics-specific normalization first (e.g., quantile normalization for transcriptomics)

- Follow with cross-omics standardization such as z-score normalization

- Use integration tools that inherently handle multi-scale data (MOFA+, Seurat v4) [49] [50]
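The first two steps can be sketched in NumPy. Quantile normalization here follows the textbook procedure on a features-by-samples matrix: each sample's k-th smallest value is replaced by the mean of the k-th smallest values across samples. Tied values are not averaged, which is a simplification. The subsequent z-score puts each feature on a mean-0, SD-1 scale so that layers measured in different units can be combined. Function names are hypothetical, and dedicated tools such as MOFA+ apply their own preprocessing.

```python
import numpy as np


def quantile_normalize(X):
    """Quantile-normalize the columns (samples) of a features-by-samples matrix.

    Assumption: ties are not averaged, a simplification of the full procedure.
    """
    X = np.asarray(X, dtype=float)
    order = np.argsort(X, axis=0)                 # row indices of each sample, ascending
    reference = np.sort(X, axis=0).mean(axis=1)   # mean value at each rank across samples
    out = np.empty_like(X)
    for j in range(X.shape[1]):
        out[order[:, j], j] = reference           # k-th smallest value -> reference[k]
    return out


def zscore_features(X):
    """Standardize each feature (row) to mean 0 and SD 1 across samples."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=1, keepdims=True)
    sd = X.std(axis=1, ddof=1, keepdims=True)
    sd[sd == 0] = 1.0                             # constant features become all zeros
    return (X - mean) / sd


# Usage: 4 transcripts x 3 samples, normalized and then put on a common z-score scale.
expr = np.array([[5, 4, 3], [2, 1, 4], [3, 4, 6], [4, 2, 8]])
print(zscore_features(quantile_normalize(expr)))
```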

What controls should be included for quality assurance?

- Technical replicates across all omics platforms

- Reference standards with known values for each omics type

- Blank samples to identify background signals

- Pooled quality control samples analyzed throughout the batch [52]
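Pooled QC samples are often used to screen out unstable features by their coefficient of variation (CV) across repeated QC injections. The sketch below assumes a QC-injections-by-features intensity matrix. The 0.30 cut-off and the function name are hypothetical, and each laboratory would set its own threshold for its platform.

```python
import numpy as np


def qc_cv_filter(qc_intensities, max_cv=0.30):
    """CV of each feature across pooled-QC injections, plus a keep/drop flag.

    Assumptions: rows = repeated pooled-QC injections, columns = features;
    max_cv is a hypothetical, lab-specific cut-off.
    """
    Q = np.asarray(qc_intensities, dtype=float)
    mean = Q.mean(axis=0)
    sd = Q.std(axis=0, ddof=1)
    with np.errstate(divide="ignore", invalid="ignore"):
        cv = np.where(mean > 0, sd / mean, np.inf)  # features never detected get CV = inf
    return cv, cv <= max_cv


# Usage: feature 0 is stable across injections, feature 1 drifts, feature 2 is absent.
qc = np.array([[100, 10, 0], [100, 30, 0], [100, 20, 0]])
cv, keep = qc_cv_filter(qc)
print(cv, keep)
```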

Data Analysis Questions

How can I identify key biomarkers using integrated genomics and proteomics data?

- Perform differential expression analysis on each omics layer separately

- Apply integration techniques like multi-omics factor analysis

- Prioritize candidates showing consistent changes across multiple omics layers

- Validate findings in independent cohorts when possible [50]
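The prioritization step can be made concrete with a small filter. This sketch assumes that per-layer differential analysis has already produced a log2 fold change and an adjusted p-value for each feature, and that features have been mapped to a shared gene identifier. It keeps genes that are significant in at least two layers with the same direction of change. The input layout, names, and thresholds are hypothetical.

```python
def consistent_candidates(layer_results, alpha=0.05, min_layers=2):
    """Return genes significant in >= min_layers omics layers with a consistent sign.

    Assumed input: {layer_name: {gene_id: (log2_fold_change, adjusted_p)}},
    with all layers already mapped to the same gene identifiers.
    """
    support = {}
    for layer, results in layer_results.items():
        for gene, (lfc, padj) in results.items():
            if padj < alpha and lfc != 0:
                support.setdefault(gene, []).append((layer, 1 if lfc > 0 else -1))
    hits = {}
    for gene, entries in support.items():
        directions = {sign for _, sign in entries}
        if len(entries) >= min_layers and len(directions) == 1:
            hits[gene] = sorted(layer for layer, _ in entries)
    return hits


# Usage with hypothetical results from two layers.
layers = {
    "transcript": {"GENE_A": (1.2, 0.01), "GENE_B": (0.8, 0.02), "GENE_C": (1.0, 0.20)},
    "protein": {"GENE_A": (0.9, 0.03), "GENE_B": (-0.7, 0.01), "GENE_C": (1.1, 0.01)},
}
print(consistent_candidates(layers))
```

Genes with opposite directions across layers (GENE_B here) are not discarded as errors; they are candidates for the discrepancy analysis described below.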

What statistical methods are appropriate for multi-omics datasets?

- For univariate analysis: t-tests, ANOVA with multiple testing corrections

- For multivariate analysis: PLS-DA, canonical correlation analysis

- For integration: Multi-omics factor analysis, joint dimensionality reduction

- Always control false discovery rate using methods like Benjamini-Hochberg [50]
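The Benjamini-Hochberg adjustment is short enough to write out. The sketch below returns BH-adjusted p-values in the original order: each sorted p-value is scaled by m/i, monotonicity is enforced from the largest p-value downward, and values are capped at 1. The function name is hypothetical; R's `p.adjust(p, method = "BH")` and statsmodels' `multipletests(pvals, method="fdr_bh")` are established implementations of the same procedure.

```python
import numpy as np


def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)   # p_(i) * m / i
    # Enforce monotonicity from the largest p-value downward, then cap at 1.
    adjusted_sorted = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.minimum(adjusted_sorted, 1.0)
    return adjusted


# Usage: features with adjusted p below the chosen FDR (e.g. 0.05) are reported.
pvals = [0.01, 0.04, 0.03, 0.20]
print(benjamini_hochberg(pvals))
```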

How do I resolve discrepancies between genomic mutations and protein abundance? Investigate biological mechanisms rather than assuming technical errors:

- Check for post-translational modifications that affect protein function

- Examine protein degradation rates and translational regulation

- Consider alternative splicing or RNA editing events

- Analyze time-delayed effects between transcription and translation [50]

Interpretation and Validation Questions

How can I link genomic variations to proteomic changes in my data?

- Perform association analysis between SNPs and protein abundance (protein QTL mapping)

- Integrate with pathway databases to identify affected biological processes

- Use causal inference methods to determine directionality of effects

- Validate findings with functional experiments when possible [50]
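The association step of protein QTL mapping can be sketched for a single SNP-protein pair as an additive linear regression of protein abundance on allele dosage (0, 1, or 2 copies of the alternative allele). The sketch assumes no covariates. A real pQTL scan would add covariates such as age, sex, and batch, test many pairs, and correct for multiple testing. `scipy.stats.linregress` gives the same slope and p-value for this simple case, and the function name is hypothetical.

```python
import numpy as np
from scipy import stats


def pqtl_test(dosage, abundance):
    """Additive pQTL test for one SNP and one protein (no covariates assumed)."""
    g = np.asarray(dosage, dtype=float)
    y = np.asarray(abundance, dtype=float)
    X = np.column_stack([np.ones_like(g), g])     # intercept + allele dosage
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ beta
    dof = len(y) - X.shape[1]
    sigma2 = residuals @ residuals / dof
    se_slope = np.sqrt(sigma2 * np.linalg.inv(X.T @ X)[1, 1])
    t_stat = beta[1] / se_slope
    p_value = 2 * stats.t.sf(abs(t_stat), dof)    # two-sided test of slope = 0
    return {"effect_per_allele": float(beta[1]), "se": float(se_slope),
            "t": float(t_stat), "p": float(p_value)}


# Usage: hypothetical log-abundance values for six individuals.
dosage = [0, 0, 1, 1, 2, 2]
abundance = [1.0, 1.2, 2.0, 2.2, 3.0, 3.2]
print(pqtl_test(dosage, abundance))
```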