Strategies to Mitigate Observer Bias in Geometric Morphometrics: From Foundational Concepts to Automated Solutions


Abstract

Observer bias in geometric morphometric (GM) landmark placement is a critical methodological challenge that can compromise data integrity and research reproducibility in biomedical and drug development research. This article provides a comprehensive framework for understanding, quantifying, and mitigating these biases. We explore the foundational sources of error—including inter-observer, intra-observer, and methodological variations—and evaluate both established protocols and emerging automated technologies. By systematically comparing traditional manual landmarking with advanced deep learning and landmark-free approaches, we offer evidence-based strategies for protocol standardization, operator training, and analytical validation. This guide empowers researchers to enhance measurement reliability, improve classification accuracy in phenotypic analyses, and strengthen the validity of morphological assessments in clinical and pharmaceutical applications.

Understanding Observer Bias in Geometric Morphometrics: Sources, Impact, and Measurement

Observer bias is a type of detection bias that occurs when a researcher's expectations, opinions, or prejudices influence what they perceive or record in a study [1] [2]. This systematic error arises when observers' conscious or unconscious predispositions affect their interpretation of data, particularly in studies where measurements are taken or recorded manually [2] [3]. In geometric morphometrics—a quantitative method for analyzing shape variation using landmarks—observer bias can significantly compromise data integrity, especially when combining datasets from multiple observers or methods [4] [5].

This technical guide addresses the critical sources of observer variation in geometric morphometric research and provides evidence-based troubleshooting strategies to enhance data reliability and validity.

Core Concepts and Definitions

Types of Observer Bias in Geometric Morphometrics

| Bias Type | Definition | Primary Impact on Morphometrics |

|---|---|---|

| Inter-observer Error | Systematic differences in measurements recorded by different observers [4] [5] | Introduces variability when multiple researchers place landmarks on the same specimens, potentially obscuring true biological signals [4] |

| Intra-observer Error | Variation in measurements recorded by the same observer across multiple trials | Leads to inconsistency in landmark placement over time, reducing measurement repeatability [5] |

| Methodological Error | Discrepancies arising from different data collection techniques or equipment [5] | Causes inconsistencies when combining data from different sources (e.g., calipers, MicroScribe, 3D models) [5] |

| Observer-Expectancy Effect | Researcher's cognitive biases subconsciously influence study outcomes [1] [2] | May lead to systematic misplacement of landmarks in the direction expected by the research hypotheses |

Quantitative Impact of Observer Bias

Evidence from systematic reviews demonstrates the substantial impact of unmitigated observer bias:

| Research Context | Impact of Non-Blinded Assessment | Source |

|---|---|---|

| Randomized Controlled Trials with binary outcomes | Exaggerated odds ratios by 36% on average [3] | Hróbjartsson et al. |

| Randomized Controlled Trials with measurement scale outcomes | Exaggerated effect size by 68% on average [3] | Hróbjartsson et al. |

| Randomized Controlled Trials with time-to-event outcomes | Overstated hazard ratio by approximately 27% [3] | Hróbjartsson et al. |

| Geometric morphometric studies | Interobserver error comparable to intraspecific variation in some taxa [5] | Robinson et al. |

Experimental Protocols for Assessing Observer Error

Protocol 1: 3D Printed Replica Method for Inter-observer Error Assessment

Background: Traditional inter-observer error assessment requires all observers to converge on the same original specimens, which is logistically and financially challenging, especially in international collaborations [4].

Materials and Methods:

- Reference Collection Creation: Select representative lithic points or biological specimens that capture the morphological variation of interest [4].

- 3D Scanning and Printing: Create high-resolution 3D scans of original specimens and produce accurate 3D printed copies for distribution to multiple observers [4].

- Standardized Photography: Develop clear protocols for photographing replicas, including standardized camera setup, lighting, and orientation to minimize parallax error [4].

- Data Collection: Each observer records metric measurements and landmark placements on their set of replicas following identical protocols [4].

- Error Assessment: Compare datasets using statistical analyses (e.g., Procrustes ANOVA, Euclidean distances) to quantify inter-observer variability [4].

Validation: Research demonstrates that when photography procedures are standardized and dimensions are clearly defined, the resulting metric and geometric morphometric data are minimally affected by inter-observer error, supporting this method as an effective solution for collaborative research frameworks [4].
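As a rough illustration of the error-assessment step, the sketch below aligns two observers' 2D landmark configurations of the same replica with an ordinary Procrustes fit and reports the residual Procrustes distance. The function names and coordinates are hypothetical, and the complex-number formulation only covers 2D data; it is a minimal sketch of the idea, not the procedure used in [4].

```python
import math

def to_shape(landmarks):
    """Center a 2D configuration and scale it to unit centroid size."""
    z = [complex(x, y) for x, y in landmarks]
    centroid = sum(z) / len(z)
    z = [p - centroid for p in z]
    size = math.sqrt(sum(abs(p) ** 2 for p in z))
    return [p / size for p in z]

def procrustes_distance(config_a, config_b):
    """Distance between two configurations after removing position, scale, rotation."""
    a, b = to_shape(config_a), to_shape(config_b)
    # Optimal rotation of b onto a (reflections are not allowed).
    s = sum(p * q.conjugate() for p, q in zip(a, b))
    rot = s / abs(s) if abs(s) > 0 else 1.0
    return math.sqrt(sum(abs(p - rot * q) ** 2 for p, q in zip(a, b)))

# Hypothetical placements on the same replica (arbitrary units).
observer_1 = [(0, 0), (4, 0), (4, 3), (0, 3)]
observer_2 = [(10, 5), (10, 13), (4, 13), (4, 5)]  # same shape, rotated, scaled, shifted
observer_3 = [(0, 0), (4, 0), (4.5, 3), (0, 3)]    # one landmark placed differently

print(procrustes_distance(observer_1, observer_2))  # close to zero
print(procrustes_distance(observer_1, observer_3))  # clearly above zero
```

Distances near zero indicate that two observers captured the same shape; larger values can be compared against distances between different specimens to judge whether observer error matters for the question at hand.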

Protocol 2: Comprehensive Error Assessment Across Multiple Methods

Objective: Evaluate variance contributions from multiple sources in geometric morphometric data collection [5].

Experimental Design:

- Specimen Selection: Choose representative specimens spanning relevant taxonomic groups (e.g., 14 anthropoid crania) [5].

- Multiple Methods: Collect data using complementary approaches: traditional calipers, MicroScribe digitizer, 3D models from surface scanners (NextEngine), and microCT scans [5].

- Repeated Measures: Each observer collects data five times for each method and specimen [5].

- Statistical Analysis:

  - For linear data: Use ANOVA models to examine variance at genus, species, specimen, observer, method, and trial levels [5].

  - For 3D data: Employ geometric morphometric methods; use principal components analysis to examine distribution in morphospace and Procrustes distances to generate UPGMA trees [5].

Key Findings: In linear morphometric data, most variance occurs at the genus level, with greater variance at the observer than method levels. For 3D data, interobserver and intermethod error can be similar to intraspecific distances among individuals, with interobserver error sometimes exceeding intermethod error [5].
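The variance-partitioning idea for linear data can be sketched with a much simpler design than the one in [5]: a balanced table with one measurement per specimen and observer, split into specimen, observer, and residual sums of squares. The measurements and the function name are made up for illustration, and the nested genus, species, method, and trial levels of the original analysis are deliberately left out.

```python
def partition_sums_of_squares(table):
    """Share of total sum of squares by specimen (rows), observer (columns), residual."""
    n, k = len(table), len(table[0])
    grand = sum(sum(row) for row in table) / (n * k)
    row_means = [sum(row) / k for row in table]
    col_means = [sum(row[j] for row in table) / n for j in range(k)]
    ss_specimen = k * sum((m - grand) ** 2 for m in row_means)
    ss_observer = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in table for x in row)
    ss_residual = ss_total - ss_specimen - ss_observer
    return {
        "specimen": ss_specimen / ss_total,
        "observer": ss_observer / ss_total,
        "residual": ss_residual / ss_total,
    }

# Hypothetical cranial length (mm): rows are specimens, columns are observers.
lengths = [
    [50.1, 50.6, 50.3],
    [62.0, 62.7, 62.2],
    [55.4, 55.9, 55.5],
    [70.2, 70.9, 70.4],
]
shares = partition_sums_of_squares(lengths)
print({name: round(value, 4) for name, value in shares.items()})
```

In this toy table the second observer measures consistently high, so the observer share exceeds the residual share even though differences among specimens dominate.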

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Reagent | Function in Mitigating Observer Bias | Application Context |

|---|---|---|

| 3D Printed Reference Collection | Provides identical specimens for multiple observers, enabling inter-observer error assessment without travel [4] | Collaborative research designs; international studies |

| Poisson Surface Reconstruction | Creates watertight, closed surfaces from mixed modalities (CT, surface scans), standardizing mesh topology [6] | Landmark-free morphometric analyses |

| Deterministic Atlas Analysis (DAA) | Landmark-free approach that quantifies deformation energy needed to map a computed atlas onto each specimen [6] | Macroevolutionary analyses across disparate taxa |

| Functional Data Geometric Morphometrics (FDGM) | Converts 2D landmark data into continuous curves, modeling non-rigid deformations undetected by GPA [7] | Capturing subtle shape variations in craniodental morphology |

| XYOM Software | Identifies influential landmark subsets through random search and hierarchical methods, improving discriminatory power [8] | Optimizing landmark selection for species discrimination |

Troubleshooting Guides & FAQs

FAQ 1: How can I combine geometric morphometric data from multiple observers without introducing significant error?

Solution: Implement a comprehensive pre-collaboration reliability assessment:

- Develop Detailed Protocols: Create standardized procedures for specimen orientation, landmark definitions, and digitization techniques [4] [1].

- Conduct Training Sessions: Train all observers together until they consistently produce similar measurements for the same specimens [1] [2].

- Assess Interrater Reliability: Calculate quantitative interrater reliability metrics and set a minimum threshold for agreement before beginning actual data collection [1].

- Use Reference Collections: Distribute 3D printed replicas of key specimens to all observers for periodic recalibration throughout the project [4].

Evidence: Studies show that when procedures are standardized and dimensions clearly defined, metric and geometric morphometric data are minimally affected by inter-observer error [4].

FAQ 2: What is the most effective way to reduce observer bias in landmark placement?

Solution: Implement a multi-faceted approach:

- Blinding (Masking): Keep observers unaware of research hypotheses and group assignments when placing landmarks [1] [3].

- Standardized Protocols: Create detailed, structured protocols for all observation procedures with visual examples of correct and incorrect landmark placement [1] [2].

- Multiple Observers: Involve at least two independent observers for a subset of specimens and assess interrater reliability [1].

- Automated Methods: Where possible, use landmark-free approaches like Deterministic Atlas Analysis or Functional Data Geometric Morphometrics to reduce human intervention [6] [7].

Evidence: Non-blinded outcome assessors generate effect sizes exaggerated by 36-68% on average, highlighting the critical importance of blinding [3].

FAQ 3: Are some geometric morphometric approaches less susceptible to observer bias than others?

Solution: Consider alternative morphometric approaches:

- Outline-Based Methods: These methods demonstrate relatively lower levels of intra-observer error compared to inter-observer error and avoid issues with subjective landmark homology [4].

- Landmark-Free Methods: Techniques like DAA (Deterministic Atlas Analysis) eliminate landmark placement subjectivity entirely by using control points and momentum vectors to quantify shape variation [6].

- Functional Data GM: This approach converts landmark data into continuous curves, potentially capturing shape variations between landmarks that traditional GM might miss [7].

Evidence: Outline-based methods are likely more suitable for collaborative research designs due to greater objectivity in data capture compared to landmark-based methods [4].

FAQ 4: How does methodological error compare to inter-observer error in geometric morphometrics?

Solution: Understand the relative contributions of different error sources:

- Variance Partitioning: Studies partitioning variance in linear morphometric data found greater variance at the observer level than method level [5].

- 3D Data Considerations: For 3D geometric morphometric data, interobserver and intermethod error are often similar, though interobserver error may be higher than intermethod error [5].

- Context Dependence: The impact of error depends on research context—interobserver error may obscure patterns in intraspecific studies but be negligible in analyses of deeply divergent taxa [5].

Recommendation: Conduct interobserver and intermethod reliability assessments prior to full data collection, especially for studies focused on intraspecific variation or closely related species [5].

Workflow Diagrams for Bias Mitigation

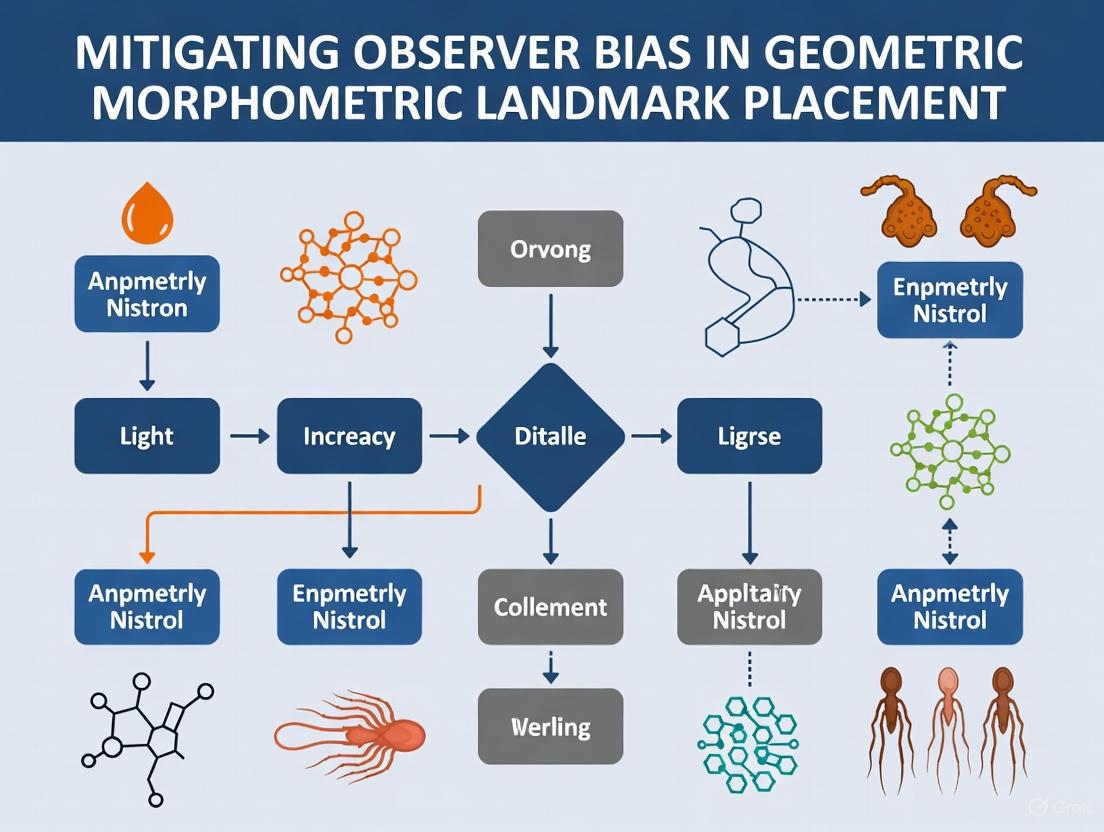

Diagram 1: Comprehensive workflow for mitigating observer bias throughout research phases.

Diagram 2: Experimental workflow for assessing inter-observer error using 3D replica methodology.

The Impact of Bias on Data Integrity and Research Reproducibility

Troubleshooting Guides

Guide 1: Addressing Low Reproducibility in Geometric Morphometric Studies

Problem: Landmark data produces inconsistent results between research teams, leading to low reproducibility of morphometric analyses.

Symptoms:

- Significant variation in landmark coordinates when the same specimen is digitized multiple times

- Inability to replicate statistical classifications (e.g., species identification) using the same dataset

- High intra- or inter-observer error metrics in Procrustes analysis

Diagnosis and Solutions:

| Problem Source | Diagnostic Steps | Corrective Actions |

|---|---|---|

| Inter-observer Error [9] | Have multiple researchers landmark the same 10-15 specimens; Compare coordinate values using Procrustes ANOVA | Implement standardized landmark identification training; Create detailed visual guides with example landmarks; Use consensus sessions where researchers landmark together |

| Intra-observer Error [9] | Single researcher landmarks same specimen multiple times with washout periods; Calculate coefficient of variation for each landmark | Establish fixed protocols for landmark identification; Take regular breaks during digitization sessions; Re-landmark subset of specimens to monitor consistency |

| Specimen Presentation Bias [9] | Image same specimens at different orientations; Compare landmark configurations from each presentation | Standardize imaging protocols using specimen holders; Document exact orientation parameters for replication; Use 3D imaging when 2D projections introduce distortion |

| Instrumental Error [9] | Image same specimens using different equipment (scanners, cameras); Compare resulting landmark data | Standardize imaging equipment across study; Use calibration standards for cameras/scanners; Document all equipment specifications and settings |

Verification: After implementing corrections, replicate a subset of measurements (≥20% of dataset) to confirm error reduction. Successful intervention should reduce measurement error to <10% of total shape variation [9].
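One simple way to check the 10% guideline is to compare within-specimen (replicate) scatter with total scatter. The sketch below assumes the coordinates have already been Procrustes-aligned and flattened to one vector per digitization; the data and function names are hypothetical, and the plain sum-of-squares ratio is a simplification of a full Procrustes ANOVA.

```python
def mean_vector(vectors):
    """Element-wise mean of equally long coordinate vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def squared_distance(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

def measurement_error_share(replicates_by_specimen):
    """Fraction of total shape variation that lies among replicates of a specimen."""
    all_reps = [rep for reps in replicates_by_specimen.values() for rep in reps]
    grand_mean = mean_vector(all_reps)
    ss_total = sum(squared_distance(rep, grand_mean) for rep in all_reps)
    ss_within = sum(
        squared_distance(rep, mean_vector(reps))
        for reps in replicates_by_specimen.values()
        for rep in reps
    )
    return ss_within / ss_total

# Hypothetical aligned coordinates (x1, y1, x2, y2), two digitizations per specimen.
aligned = {
    "spec_A": [[0.00, 0.00, 1.00, 0.00], [0.01, 0.00, 1.00, 0.01]],
    "spec_B": [[0.00, 0.20, 1.00, 0.30], [0.00, 0.21, 0.99, 0.30]],
    "spec_C": [[0.10, -0.20, 0.90, -0.30], [0.10, -0.19, 0.90, -0.31]],
}
share = measurement_error_share(aligned)
print(f"error share: {share:.4f}, within guideline: {share < 0.10}")
```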

Guide 2: Mitigating Algorithmic Bias in Automated Landmarking

Problem: Automated landmark identification systems introduce systematic errors that compromise data integrity.

Symptoms:

- Consistent under/over-estimation of specific morphological features

- Poor performance with specimens that deviate from training set morphology

- Discrepancies between manually-placed and automatically-placed landmarks

Diagnosis and Solutions:

| Problem Source | Diagnostic Steps | Corrective Actions |

|---|---|---|

| Unrepresentative Training Data [10] [11] | Audit training dataset for population coverage; Test AI performance across different specimen subgroups | Expand training set to include morphological diversity; Use data augmentation techniques; Implement multiple genotype-specific templates [10] |

| Image Registration Error [10] | Visualize registration alignment quality; Identify areas with poor correspondence | Optimize image pre-processing parameters; Use specimen-specific registration protocols; Apply multi-level registration approaches |

| Data Drift [11] | Monitor landmark accuracy over time as new specimens are added; Compare to ground truth manual landmarks | Establish continuous validation protocols; Re-train models regularly with new data; Implement model performance tracking |

| Software-Specific Bias [12] | Compare results across different automated systems (e.g., WebCeph, Deformetrica) against manual standards | Use ensemble methods combining multiple algorithms; Establish software-specific calibration curves; Maintain manual validation for critical landmarks |

Verification: Validate automated landmark placement against manual digitization by expert researchers for a representative subset (≥30 specimens). Target accuracy should be within a mean Euclidean distance of 1.5-2.0 mm for craniofacial landmarks [12].
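The verification step amounts to a per-landmark mean Euclidean distance between manual and automated placements, compared against a threshold. The sketch below uses invented landmark names and coordinates in millimetres, and takes 2.0 mm (the upper end of the range quoted above) as the default cut-off.

```python
import math

def per_landmark_error(manual, automated):
    """Mean Euclidean distance per landmark, in the units of the input.

    Both arguments map a landmark name to a list of (x, y) points,
    one per specimen, listed in the same specimen order.
    """
    return {
        name: sum(math.dist(m, a) for m, a in zip(manual[name], automated[name]))
        / len(manual[name])
        for name in manual
    }

def flag_landmarks(errors, threshold_mm=2.0):
    """Names of landmarks whose mean error exceeds the threshold."""
    return sorted(name for name, err in errors.items() if err > threshold_mm)

# Hypothetical placements for two landmarks on two specimens (mm).
manual = {"lm_1": [(10.0, 10.0), (12.0, 11.0)], "lm_2": [(30.0, 5.0), (31.0, 6.0)]}
automated = {"lm_1": [(13.0, 14.0), (12.0, 14.0)], "lm_2": [(30.0, 6.0), (31.0, 7.0)]}

errors = per_landmark_error(manual, automated)
print(errors)                  # per-landmark mean distances
print(flag_landmarks(errors))  # landmarks needing manual review
```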

Frequently Asked Questions (FAQs)

Q1: What constitutes acceptable levels of measurement error in geometric morphometric studies?

Acceptable error levels depend on your research question and biological effect sizes. As a general guideline:

- Inter-observer error should explain <10% of total shape variation [9]

- Intra-observer error should show high repeatability (coefficient of variation <5% for consistent landmarks) [12]

- For taxonomic classification studies, error should not significantly impact group assignments (>90% consistency in replicate classifications) [9]

Always report measurement error metrics alongside your biological results to provide context for your findings.

Q2: How can we balance the efficiency of automated landmarking with the need for data integrity?

Implement a tiered validation approach:

- Full manual validation on a subset: Manually check every automated landmark for 5-10% of specimens spanning your morphological range

- Targeted correction: Identify landmarks with consistently poor automated performance and manually correct these specific points

- Quality metrics: Establish quantitative benchmarks for acceptable automated landmark placement (e.g., maximum Euclidean distance from manual validation points) [10] [12]

Studies show this hybrid approach can reduce landmarking time by 60-80% while maintaining data integrity comparable to full manual digitization [10].
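Choosing the 5-10% of specimens "spanning your morphological range" can be done by ordering specimens along a major axis of shape variation and taking evenly spaced picks. The sketch below assumes a score per specimen is already available (for example a first principal component score from a preliminary analysis); the IDs, scores, and function name are hypothetical.

```python
import math

def pick_validation_subset(scores, fraction=0.10):
    """Pick evenly spaced specimens along a shape axis, including both extremes.

    scores: {specimen_id: score along a major axis of shape variation}
    """
    ordered = sorted(scores, key=scores.get)
    n_pick = max(2, math.ceil(fraction * len(ordered)))
    step = (len(ordered) - 1) / (n_pick - 1)
    # int(x + 0.5) rounds half up, so the chosen indices never repeat.
    return [ordered[int(i * step + 0.5)] for i in range(n_pick)]

# Hypothetical scores for 40 specimens.
scores = {f"spec_{i:02d}": -0.2 + 0.01 * i for i in range(40)}
print(pick_validation_subset(scores, fraction=0.10))
```

Evenly spaced picks keep extreme morphologies in the validation set, which is where automated methods are reported to struggle most.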

Q3: What specific landmarks are most vulnerable to placement bias, and how can we address them?

Evidence identifies several high-variability landmarks:

- Gonion (Go): Shows highest variability in both manual and automated studies [12]

- Posterior Nasal Spine (PNS): Challenging for both experts and AI algorithms [12]

- Landmarks in areas with poor image registration: Such as regions with high morphological variability [10]

Mitigation strategies include:

- Developing landmark-specific placement protocols with visual examples

- Using semi-landmarks in high-curvature regions

- Implementing multiple independent placements with consensus determination

Q4: How does bias in landmark placement actually impact downstream evolutionary and taxonomic analyses?

The impacts are substantial and quantifiable:

- Classification reliability: In one study, different landmark replicates yielded different species classifications for the same specimens [9]

- Morphological disparity: Automated methods may underestimate true shape variance compared to manual landmarking [10]

- Phylogenetic signal: Both the strength and patterns of phylogenetic signal can vary between landmarking methods [6]

- Effect size attenuation: Measurement error can obscure real biological effects, reducing statistical power

These impacts necessitate error assessment as a routine component of morphometric study design.

Q5: What documentation standards should we implement to ensure research integrity in morphometrics?

Comprehensive documentation should include:

- Imaging protocols: Equipment specifications, specimen orientation, resolution settings [9]

- Landmark definitions: Detailed descriptions and visual examples of all landmarks and semi-landmarks

- Digitization protocols: Software used, number of observers, training procedures [13]

- Error assessment: Intra- and inter-observer error metrics with calculation methods [9]

- Processing details: All data transformation and analysis parameters

This documentation enables proper replication and assessment of potential bias sources.

| Error Source | Percentage of Total Shape Variation Explained | Impact on Species Classification | Recommended Mitigation |

|---|---|---|---|

| Inter-observer Variation [9] | Up to 30% | High - affects group membership predictions | Standardized training; Multiple observers |

| Intra-observer Variation [9] | 5-15% | Moderate - affects statistical power | Regular calibration; Breaks during digitization |

| Specimen Presentation [9] | 10-25% | High - introduces systematic distortion | Standardized imaging protocols |

| Imaging Device Differences [9] | 5-20% | Moderate - equipment-specific effects | Equipment standardization; Cross-calibration |

| Automated vs. Manual Landmarking [10] | 15-40% | Variable - depends on landmark type | Hybrid validation approach |

Table 2: Performance Comparison of Landmark Identification Methods

| Method | Reproducibility (Coefficient of Variation) | Time Requirement | Typical Applications |

|---|---|---|---|

| Manual Landmarking by Expert [12] | Moderate (varies by landmark) | High (hours to days) | Small datasets; Method development |

| AI-Assisted Landmarking [12] | High (lower CV for most landmarks) | Moderate (requires validation) | Clinical applications; Medium datasets |

| Fully Automated Landmarking [10] | High (algorithmically consistent) | Low (minutes to hours) | Large-scale studies; High-throughput screening |

| Landmark-Free Methods [6] | Algorithmically consistent | Low to moderate | Macroevolutionary studies; Highly disparate taxa |

Experimental Protocols

Protocol 1: Comprehensive Error Assessment in Geometric Morphometrics

Purpose: Quantify and document measurement error from multiple sources in landmark data.

Materials:

- High-resolution imaging equipment (calibrated)

- 10-15 representative specimens

- Geometric morphometrics software (e.g., MorphoJ, R geomorph package)

- Multiple trained researchers (≥3 for inter-observer assessment)

Procedure:

- Specimen Imaging

  - Image each specimen three times at different orientations

  - Use multiple imaging devices if available (e.g., different cameras, scanners)

  - Document all imaging parameters (resolution, scale, orientation)

- Landmark Digitization

  - Each researcher landmarks all specimens three times with washout periods

  - Blind researchers to specimen identity during digitization

  - Randomize digitization order across sessions

- Data Analysis

  - Perform Procrustes superimposition on all datasets

  - Conduct Procrustes ANOVA to partition variance components

  - Calculate intraclass correlation coefficients for repeatability

  - Test classification consistency using linear discriminant analysis

Validation: A successful assessment will quantify error from each source and identify the largest contributors to total measurement error in your specific research context.
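The blinding and randomization steps of the digitization stage can be prepared ahead of time. The sketch below assigns each specimen an opaque code and shuffles the presentation order independently for each session; the ID format, the seed, and the function name are assumptions made for illustration.

```python
import random

def build_schedule(specimen_ids, n_sessions=3, seed=0):
    """Return (key, sessions): a blinding key and one shuffled order per session.

    The key maps real specimen IDs to opaque codes and should be held by
    someone who does not digitize; observers only see the coded orders.
    """
    rng = random.Random(seed)  # fixed seed so the schedule can be regenerated
    codes = list(range(1, len(specimen_ids) + 1))
    rng.shuffle(codes)
    key = {sid: f"S{code:03d}" for sid, code in zip(specimen_ids, codes)}
    sessions = []
    for _ in range(n_sessions):
        order = list(key.values())
        rng.shuffle(order)
        sessions.append(order)
    return key, sessions

key, sessions = build_schedule(["skull_A", "skull_B", "skull_C", "skull_D"])
for number, order in enumerate(sessions, start=1):
    print(f"session {number}: {order}")
```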

Protocol 2: Validation Framework for Automated Landmarking Systems

Purpose: Establish reliability metrics for AI-based landmark identification in research applications.

Materials:

- Automated landmarking software (e.g., WebCeph, Deformetrica)

- Manually landmarked validation set (≥30 specimens)

- Computing infrastructure for analysis

Procedure:

- Ground Truth Establishment

  - Create manual landmark consensus from multiple expert digitizers

  - Resolve discrepancies through collaborative review

  - Document final landmark positions as reference standard

- System Validation

  - Process all specimens through automated pipeline

  - Calculate Euclidean distances between automated and manual landmarks

  - Identify systematic biases in landmark placement

  - Assess performance variation across morphological groups

- Performance Benchmarking

  - Compare reproducibility metrics between manual and automated methods

  - Evaluate computational efficiency and scalability

  - Establish application-specific accuracy thresholds

Validation: The automated system should achieve mean accuracy within acceptable application-specific thresholds (e.g., <2.0 mm for clinical cephalometrics [12]) while maintaining high reproducibility.
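To separate systematic bias from random scatter in the system-validation step, one option is to compare the length of the mean offset vector (automated minus manual) with the mean radial error for a landmark. When the two are close, the automated placements are shifted in a consistent direction; when the mean offset is near zero, the error is mostly unsystematic. The coordinates and function name below are hypothetical.

```python
import math

def landmark_bias(manual, automated):
    """Summarize automated-minus-manual offsets for one landmark across specimens."""
    dx = [a[0] - m[0] for m, a in zip(manual, automated)]
    dy = [a[1] - m[1] for m, a in zip(manual, automated)]
    n = len(dx)
    mean_dx, mean_dy = sum(dx) / n, sum(dy) / n
    return {
        "mean_offset": (mean_dx, mean_dy),
        "bias_magnitude": math.hypot(mean_dx, mean_dy),
        "mean_radial_error": sum(math.hypot(x, y) for x, y in zip(dx, dy)) / n,
    }

# One landmark on four specimens (mm).
reference = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
shifted = [(1.0, 0.0), (11.0, 0.0), (1.0, 10.0), (11.0, 10.0)]     # always +1 mm in x
scattered = [(1.0, 0.0), (9.0, 0.0), (0.0, 11.0), (10.0, 9.0)]     # 1 mm off, mixed directions

print(landmark_bias(reference, shifted))
print(landmark_bias(reference, scattered))
```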

Research Reagent Solutions

Table 3: Essential Materials for Bias-Resistant Morphometric Research

| Item | Function | Specification Guidelines |

|---|---|---|

| Calibrated Imaging System | Standardized specimen digitization | Fixed focal length lenses; Resolution ≥10MP; Scale calibration; Distortion correction |

| Specimen Positioning Equipment | Minimize presentation bias | Customizable holders; Angle measurement capability; Stable mounting system |

| Manual Digitization Tools | Reference standard creation | Tablet with pressure sensitivity; Software with landmark visualization; Training protocols |

| Automated Landmarking Software | High-throughput data collection | Validated against manual standards; Customizable parameters; Uncertainty quantification |

| Data Validation Tools | Error assessment and quality control | Procrustes ANOVA implementation; Classification stability tests; Visualization of placement error |

Workflow Diagrams

Diagram 1: Bias Assessment Protocol in Morphometric Research

Diagram 2: Integrated Landmarking Workflow for Data Integrity

Troubleshooting Guides

Guide 1: Troubleshooting Low ICC Values in Interrater Reliability Studies

Problem: Intraclass Correlation Coefficient (ICC) analysis returns low values (e.g., below 0.5), indicating poor reliability among raters placing landmarks in geometric morphometric studies.

Theory of Probable Cause: Low ICC values typically stem from either high between-rater variation (systematic differences in how raters place landmarks) or inconsistencies in the measurement process itself [14].

Testing the Theory:

- Test 1: Calculate ICC for each rater pair using a two-way random effects model for absolute agreement (ICC(2,1)). Consistent low values across all pairs suggest a widespread protocol issue. Isolated low values point to a specific inconsistent rater [14].

- Test 2: Visually inspect landmark placement by different raters on the same specimen using thin-plate spline deformation grids. Look for systematic offsets in specific landmarks [15].

Resolution Plan:

- Action 1: Implement enhanced rater training focused on landmarks with high variance, using clear, standardized definitions for each landmark location [16].

- Action 2: Re-calibrate raters by having all raters place landmarks on a small set of training specimens, discussing discrepancies until consensus is reached.

- Action 3: If inconsistency persists, review and refine the landmarking protocol. For difficult-to-define landmarks, consider using semi-landmarks to capture curvature information [15].

Verification:

- Re-run the ICC analysis on a new set of specimens after implementing the above actions. The ICC should show a significant improvement toward the moderate (0.5-0.75) or good (0.75-0.9) range [14].
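For reference, ICC(2,1) can be computed from the mean squares of a two-way table with subjects in rows and raters in columns. The sketch below also returns ICC(2,k) and a single-rater consistency coefficient for comparison; it assumes a balanced table with no missing values and a scalar measurement (for example one landmark coordinate or one inter-landmark distance per specimen). The ratings are a small illustrative table, not data from this guide.

```python
def icc_two_way(ratings):
    """ICC estimates from a balanced subjects-by-raters table (list of rows)."""
    n, k = len(ratings), len(ratings[0])
    grand = sum(map(sum, ratings)) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    msr = ss_rows / (n - 1)                                      # between subjects
    msc = ss_cols / (k - 1)                                      # between raters
    mse = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))   # residual
    return {
        # Absolute agreement, single rater.
        "ICC(2,1)": (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n),
        # Absolute agreement, mean of k raters.
        "ICC(2,k)": (msr - mse) / (msr + (msc - mse) / n),
        # Consistency, single rater: ignores constant offsets between raters.
        "consistency_single": (msr - mse) / (msr + (k - 1) * mse),
    }

# Six subjects (rows) scored by four raters (columns).
ratings = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
for name, value in icc_two_way(ratings).items():
    print(f"{name}: {value:.2f}")
```

In this table the raters differ strongly in their average level, so the absolute-agreement ICC(2,1) is far lower than the consistency coefficient. A gap like that points to systematic rater offsets rather than random noise.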

Guide 2: Addressing Inconsistent Results from Euclidean Distance Analysis

Problem: Euclidean Distance Analysis with Singular Value Decomposition (EDSVD) yields unstable or biologically implausible shape models when comparing landmark configurations [17].

Theory of Probable Cause: Instability in EDSVD can be caused by highly correlated distance measurements, landmarks with extremely high variance, or insufficient data scaling prior to analysis.

Testing the Theory:

- Test 1: Check the correlation matrix of the Euclidean distances. If many distances are highly correlated (e.g., |r| > 0.9), this can cause multicollinearity issues in the SVD [17].

- Test 2: Perform a Procrustes ANOVA to identify landmarks with disproportionately high variance, which may be outliers or poorly defined [15].

Resolution Plan:

- Action 1: Standardize the Euclidean distances to unit centroid size to separate size and shape information, making the analysis more stable [17].

- Action 2: Remove or re-evaluate landmarks identified as high-variance outliers in the Procrustes ANOVA.

- Action 3: If using many inter-landmark distances, consider a variable selection method to reduce the set to the most biologically informative distances.

Verification:

- Re-run the EDSVD and check if the primary axes of shape variation now reflect known biological differences among the specimens. Compare the results with those from a Procrustes-based method to confirm general consistency [17] [15].
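The distance-based checks above can be sketched in a few lines: build the inter-landmark distances for each specimen, divide by centroid size, and flag pairs of distance variables whose correlation across specimens exceeds 0.9 in absolute value. Function names and data are hypothetical, and the sketch covers only these preparatory checks, not the decomposition itself.

```python
import math
from itertools import combinations

def scaled_distances(landmarks):
    """All pairwise inter-landmark distances divided by centroid size (2D)."""
    n = len(landmarks)
    cx = sum(p[0] for p in landmarks) / n
    cy = sum(p[1] for p in landmarks) / n
    size = math.sqrt(sum((x - cx) ** 2 + (y - cy) ** 2 for x, y in landmarks))
    return [math.dist(p, q) / size for p, q in combinations(landmarks, 2)]

def pearson(u, v):
    """Pearson correlation; returns 0.0 if either variable is constant."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    denom = math.sqrt(sum((a - mu) ** 2 for a in u) * sum((b - mv) ** 2 for b in v))
    return cov / denom if denom > 0 else 0.0

def correlated_pairs(distance_rows, threshold=0.9):
    """Index pairs of distance variables with |r| above the threshold."""
    columns = list(zip(*distance_rows))
    return [
        (i, j)
        for i, j in combinations(range(len(columns)), 2)
        if abs(pearson(columns[i], columns[j])) > threshold
    ]

# Scaling check: a configuration and its doubled copy give the same distances.
triangle = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]
doubled = [(2 * x, 2 * y) for x, y in triangle]
print(scaled_distances(triangle))
print(scaled_distances(doubled))

# Toy distance table: rows are specimens, columns are distance variables.
toy_rows = [[1.0, 2.0, 5.0], [2.0, 4.0, 3.0], [3.0, 6.0, 4.0]]
print(correlated_pairs(toy_rows))  # columns 0 and 1 move together
```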

Frequently Asked Questions (FAQs)

Q1: Which form of ICC should I use for my geometric morphometric study, and why does the selection matter?

The choice of ICC form is critical and depends on your research design and the inferences you wish to make [14]. The table below outlines the common models:

| ICC Model | When to Use | Key Consideration |

|---|---|---|

| One-Way Random | Different, random sets of raters measure different subjects (e.g., multi-center studies). | Rarely used in standard morphometrics; generalizes to a population of raters [14]. |

| Two-Way Random | The same set of randomly selected raters measures all subjects. | Recommended for most studies. Results generalize to any raters with similar characteristics [14]. |

| Two-Way Mixed | The same specific set of raters (the only raters of interest) measures all subjects. | Results are only valid for the specific raters in your study; not generalizable [14]. |

You must also decide between "single rater" or "mean of k raters" (depending on whether your protocol relies on one rater's judgment or the average of multiple) and between "consistency" or "absolute agreement" (where absolute agreement is stricter and recommended for assessing rater bias, as it is sensitive to systematic differences) [14].

Q2: My ICC value is 0.6. Is this acceptable for publication?

An ICC of 0.6 falls into the "moderate" reliability category. According to Koo & Li (2016), values between 0.50 and 0.75 indicate moderate reliability [14]. While this may be acceptable in early-stage research or for traits that are inherently difficult to measure, many journals prefer ICC values in the "good" (0.75-0.9) or "excellent" (>0.9) range for key morphological measurements. You should report the ICC value along with its 95% confidence interval and justify its acceptability in the context of your field [14].

Q3: How does Euclidean Distance Analysis (EDSVD) compare to Procrustes-based methods for quantifying shape and mitigating bias?

Both methods are established tools in geometric morphometrics but have different approaches and strengths [17] [15].

| Feature | Euclidean Distance Analysis (EDSVD) | Procrustes-Based Methods |

|---|---|---|

| Primary Data | Matrix of inter-landmark distances [17]. | Raw landmark coordinates [15]. |

| Bias Mitigation | Standardizing distances to unit centroid size helps control for size-related bias [17]. | Procrustes superimposition removes non-shape variation (position, orientation, scale) [15]. |

| Interpretation | Can be less intuitive; shape differences visualized via reconstructed distances or principal coordinates [17]. | Direct visualization of shape change as landmark displacements or deformation grids is highly intuitive [15]. |

| Key Advantage | Does not require alignment (registration) of specimens [17]. | The current gold standard; rich toolkit for visualization and analysis [15]. |

Procrustes-based methods are generally preferred in modern morphometrics due to their superior and intuitive visualization capabilities [15]. However, EDSVD remains a valid tool, and its results are often similar to those from principal component analysis of Procrustes coordinates [17].

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key methodological "reagents" for designing a reliable geometric morphometrics study aimed at mitigating observer bias.

| Item Name | Function in Experiment | Key Consideration |

|---|---|---|

| Standardized Landmarking Protocol | A detailed document with written and visual definitions for each landmark. | The single most important tool for reducing random error and systematic bias between raters. |

| Two-Way Random Effects ICC Model | The statistical model to quantify the agreement between multiple raters who are considered a random sample from a larger population [14]. | Use ICC(2,1) for the reliability of a single rater's measurements. Use ICC(2,k) for the reliability of the mean rating from all raters [14]. |

| Procrustes ANOVA (Procrustes MANOVA) | A statistical method to partition shape variance into components (e.g., specimen, rater, error) to identify significant rater effects [15]. | Directly tests for the presence of systematic bias in landmark placement among different raters. |

| Training Set of Specimen Images | A curated set of images representing morphological diversity, used to train and calibrate raters before the main study. | Including specimens of varying complexity helps ensure rater consistency across the full range of the study. |

| Semi-Landmarks | Points placed on curves and surfaces between traditional landmarks to capture more comprehensive shape information [15]. | Reduces subjectivity in capturing non-point-like homologous structures, thereby mitigating a source of bias. |
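To make the two-way random effects ICC in the table concrete, here is a minimal sketch that computes ICC(2,1) and ICC(2,k) from the mean squares of a specimens-by-raters table, using the standard Shrout and Fleiss mean-square formulas. It assumes a complete table of one univariate measurement (for example, a single landmark coordinate), and the example values are hypothetical.

```python
import numpy as np

def icc_two_way_random(ratings):
    """Return (ICC(2,1), ICC(2,k)) for an (n specimens x k raters) table with no missing cells."""
    x = np.asarray(ratings, dtype=float)
    n, k = x.shape
    grand = x.mean()
    ss_rows = k * ((x.mean(axis=1) - grand) ** 2).sum()   # between specimens
    ss_cols = n * ((x.mean(axis=0) - grand) ** 2).sum()   # between raters
    ss_err = ((x - grand) ** 2).sum() - ss_rows - ss_cols  # residual
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    icc_single = (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)
    icc_average = (msr - mse) / (msr + (msc - mse) / n)
    return float(icc_single), float(icc_average)

# Hypothetical x-coordinates (mm) of one landmark: 5 specimens (rows) x 3 raters (columns).
ratings = [[10.1, 10.3, 10.2],
           [12.4, 12.9, 12.5],
           [9.8, 10.1, 9.9],
           [11.6, 11.9, 11.5],
           [13.0, 13.4, 13.1]]
icc_single, icc_average = icc_two_way_random(ratings)
print(f"ICC(2,1) = {icc_single:.3f}, ICC(2,k) = {icc_average:.3f}")
```

In practice an established statistics package would also report confidence intervals, which this sketch omits.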

Experimental Workflow for Reliability Assessment

The following diagram illustrates a robust methodology for setting up a geometric morphometric study and quantifying observer reliability, incorporating steps to mitigate bias.

Methodology for Assessing Rater Reliability

Interpreting ICC Results & Diagnosing Problems

This table provides a standard framework for interpreting your ICC results and outlines potential next steps based on the outcome.

| ICC Value | Reliability | Interpretation | Recommended Action |

|---|---|---|---|

| < 0.50 | Poor | Unacceptable level of agreement. Rater bias is a major concern. | Essential to review landmark definitions, retrain raters, and re-run pilot study [14]. |

| 0.50 - 0.75 | Moderate | Moderate agreement. May be sufficient for group-level comparisons. | Identify and review landmarks with the highest variance. Consider if this level of precision is sufficient for study aims [14]. |

| 0.75 - 0.90 | Good | Solid agreement. Suitable for most research applications. | Proceed with full data collection. Report ICC with confidence intervals [14]. |

| > 0.90 | Excellent | High degree of agreement. Ideal for critical measurements. | Proceed with full data collection. The protocol is highly reliable [14]. |
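The thresholds in the table can be encoded as a small helper so that every landmark or coordinate is graded consistently. This is only a sketch: the function name is hypothetical, and because the table does not say which band the boundary values belong to, the code assumes 0.50 counts as Moderate, 0.75 as Good, and 0.90 as Good.

```python
def classify_icc(icc):
    """Map an ICC estimate to the reliability labels used in the table above."""
    if icc > 1.0:
        raise ValueError("an ICC estimate cannot exceed 1")
    if icc < 0.50:
        return "Poor"
    if icc < 0.75:
        return "Moderate"
    if icc <= 0.90:   # assumption: exactly 0.90 is still "Good"
        return "Good"
    return "Excellent"

# Hypothetical per-landmark ICC estimates.
for name, value in {"nasion": 0.95, "porion": 0.62, "orbitale": 0.42}.items():
    print(name, classify_icc(value))
```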

Troubleshooting Guides

Guide 1: Addressing Low Inter-Observer Reliability

Problem: Different observers are identifying the same landmark in different locations, leading to inconsistent data.

Solution:

- Implement Structured Training: Before the study, conduct joint training sessions using a subset of images not included in the main analysis. All observers should mark the same landmarks, followed by discussion to reach a consensus on precise definitions in all three planes of space [18].

- Establish a Reference Standard: Define a set of landmarks with an intraclass correlation coefficient (ICC) of 0.70 or higher as your "reference standard" for the study [19].

- Use Multiple Observers: Employ several independent observers to mark the same landmarks. Calculate the interrater reliability to ensure data is recorded consistently [20] [2].

Guide 2: Managing Landmark Identification Errors in Complex Cases

Problem: Landmark identification is inaccurate in patients with metal artifacts, malocclusion, or missing teeth.

Solution:

- Leverage Validated AI Models: Integrate an automatic 3D landmarking model, such as one based on a lightweight 3D U-Net network, which has demonstrated high precision (Mean Radial Error consistently below 1.4 mm) even in these challenging conditions [19].

- Multi-Planar Verification: For certain landmarks, use a combination of multi-planar views (axial, coronal, sagittal) alongside 3D volume-rendered images for confirmation. For example, the Sella point (S) must be marked in multiplanar views [21].

- Prioritize Reliable Landmarks: Focus on landmarks known to have higher inherent reliability, such as midline and dental landmarks, and be cautious with those that are less reliable, such as Porion, Orbitale, and condylar points [21].

Guide 3: Mitigating Systematic Bias in Landmark Placement

Problem: Observer expectations or subjective judgments are influencing how landmarks are placed.

Solution:

- Apply Blinding Techniques: Keep observers unaware of key study details, such as patient group assignments (e.g., control vs. treatment) or the primary study hypothesis, to prevent their expectations from influencing the data collection [2] [22].

- Develop Standard Operating Procedures (SOPs): Create and document clear, step-by-step protocols for identifying every landmark. This includes specifying the exact sequence of software tools and the planes of view used for verification [19] [20].

- Engage in Reflexive Practices: Observers should maintain a reflexive journal to document and critically evaluate their own decisions and potential biases during the identification process [23].
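One way to put the blinding step into practice is to hand observers anonymous codes in a randomized order while a third party keeps the decoding key. The sketch below illustrates the idea; the function name, the code format, and the specimen IDs are all hypothetical.

```python
import random

def blind_specimens(specimen_groups, seed):
    """Return (worklist, key): anonymous codes for the observer, and a
    code -> (specimen_id, group) key to be held by someone who does not landmark."""
    rng = random.Random(seed)
    ids = sorted(specimen_groups)
    rng.shuffle(ids)                      # randomize presentation order
    key = {}
    for index, specimen_id in enumerate(ids, start=1):
        code = f"S{index:03d}"            # code carries no group information
        key[code] = (specimen_id, specimen_groups[specimen_id])
    worklist = list(key)                  # codes only, in the randomized order
    return worklist, key

# Hypothetical study with two groups.
groups = {"rat_01": "control", "rat_02": "treatment",
          "rat_03": "control", "rat_04": "treatment"}
worklist, key = blind_specimens(groups, seed=2024)
```

Image files would need to be renamed to the codes as well, since file names and metadata can otherwise reveal group membership.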

Frequently Asked Questions (FAQs)

FAQ 1: Which 3D cephalometric landmarks are considered the most and least reliable?

Landmark reliability varies based on their anatomical location and definition. The table below summarizes this information based on systematic reviews and empirical studies.

Table 1: Reliability of Common 3D Cephalometric Landmarks

| Reliability Category | Landmark Examples | Notes |

|---|---|---|

| High Reliability | Midline skeletal landmarks (e.g., Nasion, A point, B point) and dental landmarks [21]. | These points are often easily identifiable with minimal ambiguity. |

| Low Reliability | Porion, Orbitale, and condylar landmarks [21]. | These areas have lower reliability due to complex anatomy or image superimposition. |

FAQ 2: What statistical measures should I use to assess landmark identification reliability?

The appropriate statistical test depends on your data type and study design.

Table 2: Statistical Measures for Assessing Landmark Reliability

| Method | Use Case | Interpretation |

|---|---|---|

| Intraclass Correlation Coefficient (ICC) | Preferred for assessing both intra- and inter-observer reliability of coordinate data [19] [18]. | Values > 0.9 indicate excellent reliability [18]. |

| Mean Radial Error (MRE) | Measures the average Euclidean (radial) distance in millimeters between an identified landmark and a reference standard [19]. | An MRE below 2 mm is often considered clinically acceptable. |

| Success Detection Rate (SDR) | Calculates the percentage of landmarks identified within a specific error threshold (e.g., 2 mm, 3 mm, 4 mm) [19]. | Useful for presenting clinical applicability. |
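As a minimal sketch of the last two measures in Table 2, the functions below compute MRE and SDR from predicted and reference coordinates. The function names and toy coordinates are illustrative, and the code assumes "within a threshold" means an error less than or equal to it.

```python
import numpy as np

def radial_errors(predicted, reference):
    """Euclidean distance (same units as the input, e.g. mm) for each landmark."""
    diff = np.asarray(predicted, dtype=float) - np.asarray(reference, dtype=float)
    return np.linalg.norm(diff, axis=-1)

def mean_radial_error(predicted, reference):
    return float(radial_errors(predicted, reference).mean())

def detection_rates(predicted, reference, thresholds_mm=(2.0, 3.0, 4.0)):
    """Percentage of landmarks whose error is at or below each threshold."""
    errors = radial_errors(predicted, reference)
    return {t: float((errors <= t).mean() * 100.0) for t in thresholds_mm}

# Toy example: four landmarks with errors of 1, 2.5, 3.5 and 5 mm (hypothetical).
reference = [[0, 0, 0], [10, 0, 0], [0, 10, 0], [0, 0, 10]]
predicted = [[1, 0, 0], [10, 2.5, 0], [0, 10, 3.5], [3, 4, 10]]
mre = mean_radial_error(predicted, reference)
rates = detection_rates(predicted, reference)
```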

FAQ 3: Our research uses both Spiral CT (SCT) and Cone-Beam CT (CBCT). Will this affect landmark reliability?

Yes, the imaging modality can influence precision. A 2025 study found that while an AI model performed well on both, SCT bone landmarks were more precise than SCT dental landmarks, whereas CBCT dental landmarks exhibited greater precision compared to CBCT bone landmarks [19]. The clinical application also differs; SCT often uses more landmarks for complex craniofacial assessment, while CBCT uses fewer, more specialized landmarks for dental and jaw structures [19]. You should validate your protocol for each modality separately.

FAQ 4: What are the core components of a rigorous experimental protocol for a reliability study?

A robust methodology should include the components outlined in the workflow below.

The Scientist's Toolkit: Essential Materials & Reagents

Table 3: Key Research Reagent Solutions for 3D Cephalometry

| Item | Function/Application | Example/Note |

|---|---|---|

| Geometric Morphometrics Software | Analysis of 2D and 3D landmark data; performs statistical shape analysis. | MorphoJ is a widely used program for this purpose [24]. |

| 3D Cephalometric Analysis Software | Visualization, landmark identification, and 3D model reconstruction from medical images. | Dolphin 3D and Mimics are examples used in research [19] [18]. |

| AI Landmarking Model | Automated, high-precision landmark detection to reduce manual workload and observer bias. | Models based on 3D U-Net architecture can achieve MRE < 1.3 mm [19]. |

| Validated Cephalometric Landmark Set | A predefined set of anatomical points with clear operational definitions in all 3 planes of space. | Critical for ensuring all observers are measuring the same thing [19] [25] [18]. |

| High-Resolution CBCT/SCT Scanner | Acquisition of 3D medical images for landmark identification. | Equipment like i-CAT CBCT or similar spiral CT scanners [21]. |

Visualizing the Bias Mitigation Workflow

A comprehensive strategy to mitigate observer bias involves steps throughout the research lifecycle, as shown in the following diagram.

Frequently Asked Questions (FAQs)

Q1: What are the most significant sources of measurement error in geometric morphometric studies? Measurement error originates from multiple phases of a study. Key sources include:

- Observer Error: Variation in landmark placement by the same observer (intra-observer) or between different observers (inter-observer). This is often the largest source of error [26] [27] [28].

- Data Acquisition Error: Decisions related to imaging, such as voxel size in micro-CT scans, segmentation strategies, and thresholding algorithms, can introduce artefactual variance [26].

- Specimen Presentation: In 2D analyses, how a specimen is positioned or tilted in front of the camera can significantly impact landmark coordinates and subsequent classification results [28].

- Specimen Preparation: Preservation methods (e.g., formalin fixation) and long-term storage can alter the natural form of specimens, inducing artifactual variation [27].

Q2: How does measurement error impact my research findings? Measurement error introduces "artefactual variance" that can inflate the total variance in your dataset [27]. This has several critical consequences:

- Loss of Statistical Power: High random error increases "noise," which can obscure true biological signals and lead to a failure to detect significant differences between groups [27].

- Biased Results: Systematic error, such as consistent differences in how one observer places landmarks compared to another, can bias the results, causing artifactual variation to be misinterpreted as biologically meaningful [27].

- Reduced Classification Accuracy: In analyses like Linear Discriminant Analysis, measurement error can change the predicted group membership of both known and unknown (e.g., fossil) specimens [28].

Q3: What is the first step in managing systematic error? The most critical first step is to systematically assess and quantify the measurement error in your own dataset [26] [27]. This involves collecting replicate measurements to quantify the variance introduced by your specific observers, imaging protocols, and specimen handling. Without this assessment, you cannot know the magnitude of the problem or whether your biological findings are reliable [26].

Q4: Can automated landmarking eliminate observer error? Automated landmarking methods based on image registration can standardize landmark placement and eliminate human observer error [10]. However, they introduce other potential error sources, such as stochastic image registration errors, and may underestimate biological shape variance compared to manual landmarking. The accuracy of automated methods depends on the quality of image alignment and the specific anatomical location [10].

Q5: How can I improve consistency among multiple observers? Ensuring all observers are consistent is crucial [26]. Effective strategies include:

- Structured Training: Implement a training period where all observers practice on the same specimens and discuss landmark definitions [26].

- Detailed Protocol: Provide a written, detailed guide with definitions and images for every landmark.

- Blinding: Observers should be blinded to group assignments (e.g., treatment vs. control) to prevent bias [23].

Troubleshooting Guides

Problem: High Intra-Observer Error

Symptoms: Large differences in landmark coordinates when the same observer digitizes the same specimen multiple times.

Solutions:

- Training and Familiarization: Prior to formal data collection, engage in a training period to become thoroughly familiar with the landmark protocol and the range of morphological variation in your sample [26].

- Minimize Session Breaks: Acquire landmarks for a given study in as few sessions as possible to reduce drift in landmark identification over time [26].

- Use of Semi-Landmarks: For complex curves and surfaces, consider using sliding semi-landmarks, which can improve consistency along outlines where Type I or II landmarks are sparse [29].

- Standardize Data Processing: Apply consistent and careful surface simplification and use optimal segmentation parameters to create clearer, more consistent 3D models for landmarking [26].

Problem: High Inter-Observer Error

Symptoms: Significant differences in landmark coordinates when the same specimens are digitized by different observers.

Solutions:

- Consensus Training: Hold joint training sessions where all observers digitize a subset of specimens together, discussing and reconciling differences until a consensus on landmark location is reached [26].

- Standardize Equipment and Setup: Use the same imaging equipment and standardized specimen presentations for all observers to minimize variance from these sources [28].

- Leverage Automation: For large datasets, consider using a fully automated landmarking method to algorithmically standardize placement across the entire sample [10]. Alternatively, use semi-automated methods where an initial rough placement is provided, which the observer then refines.

Problem: Error Introduced by Imaging and Data Processing

Symptoms: Landmark coordinates are influenced by choices in voxel size, segmentation algorithm, or surface simplification.

Solutions:

- Pilot Study: Conduct a pilot study to systematically evaluate how different segmentation strategies and voxel sizes affect landmark placement on a subset of specimens [26].

- Consistent Parameters: Once an optimal protocol is identified, use the same voxel size, segmentation strategy, and thresholding parameters for all specimens in the study [26].

- Documentation: Thoroughly document all imaging and processing parameters in your methods section to ensure reproducibility.

The table below summarizes the contribution of different factors to the total variance in landmark data, as found in a systematic study of micro-CT-derived surfaces [26].

Table 1: Contribution of Different Factors to Total Landmark Variance

| Factor | Contribution to Variance | Impact & Notes |

|---|---|---|

| Intra-observer Error | Significant (Major source) | Can be reduced with training and fewer sessions [26]. |

| Inter-observer Error | Significant | Can clearly exceed intra-observer error, especially with inexperienced observers [26]. |

| Segmentation Strategy | <1% | Contribution was small but significant in the studied context [26]. |

| Surface Simplification | Not Significant | Slight simplification had no significant effect [26]. |

| Voxel Size | Not Significant | Did not significantly contribute to variance in this study [26]. |

The following table illustrates how different error sources can impact the practical outcome of a morphometric analysis, using a case study on vole teeth classification [28].

Table 2: Impact of Data Acquisition Error on Species Classification Accuracy

| Error Source | Impact on Landmark Precision | Impact on Species Classification |

|---|---|---|

| Imaging Device (Different cameras) | Substantial | Impacts predicted group memberships [28]. |

| Specimen Presentation (Tilting) | Greatest discrepancy | Greatest discrepancy in classification results [28]. |

| Inter-observer Variation | Substantial | Impacts predicted group memberships [28]. |

| Intra-observer Variation | Substantial | Impacts predicted group memberships [28]. |

Experimental Protocols

Protocol 1: Quantifying Observer Error

Purpose: To quantify the amount of variance in landmark data introduced by intra- and inter-observer error.

Materials: 3D surface models or images of a subset of specimens (e.g., n=20), geometric morphometric software (e.g., TpsDig, Viewbox, geomorph in R).

Methodology:

- Repeated Measurements: A single observer (O1) digitizes the entire set of landmarks on all 20 specimens twice, with a minimum of one week between sessions to avoid memory bias [28].

- Multiple Observers: A second observer (O2) also digitizes the same 20 specimens.

- Data Analysis: Perform a Procrustes ANOVA on the aligned coordinates to partition variance components and estimate the mean squares for "Specimen" (biological signal), "Observer" (systematic bias), and the residual (which includes random measurement error) [27].
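The variance partition in the data analysis step can be sketched as follows. This is a simplified illustration rather than the procedure of any particular package: it assumes a balanced design in which every observer digitizes every specimen once, runs a basic generalized Procrustes alignment, and reports sums of squares only (no degrees of freedom, mean squares, or significance tests). All data are simulated and all names are hypothetical.

```python
import numpy as np

def align_to(reference, config):
    """Rotate a centered configuration onto a reference (least squares, no reflection)."""
    u, _, vt = np.linalg.svd(config.T @ reference)
    u[:, -1] *= np.sign(np.linalg.det(u @ vt))  # flip last axis if the fit would mirror
    return config @ (u @ vt)

def generalized_procrustes(configs, iterations=10):
    """Center, scale to unit centroid size, and iteratively rotate (N, k, d) configs to their mean."""
    x = np.asarray(configs, dtype=float)
    x = x - x.mean(axis=1, keepdims=True)
    x = x / np.sqrt((x ** 2).sum(axis=(1, 2), keepdims=True))
    mean = x[0]
    for _ in range(iterations):
        x = np.array([align_to(mean, c) for c in x])
        mean = x.mean(axis=0)
        mean = mean / np.sqrt((mean ** 2).sum())
    return x

def procrustes_sums_of_squares(coords):
    """Partition shape variation for a balanced (specimen, observer, k, d) array."""
    n, m, k, d = coords.shape
    aligned = generalized_procrustes(coords.reshape(n * m, k, d)).reshape(n, m, k * d)
    grand = aligned.mean(axis=(0, 1))
    ss_specimen = m * ((aligned.mean(axis=1) - grand) ** 2).sum()  # biological signal
    ss_observer = n * ((aligned.mean(axis=0) - grand) ** 2).sum()  # systematic observer effect
    ss_total = ((aligned - grand) ** 2).sum()
    return {"specimen": ss_specimen, "observer": ss_observer,
            "residual": ss_total - ss_specimen - ss_observer, "total": ss_total}

# Simulated data: 20 specimens, 2 observers, 6 landmarks in 2D.
rng = np.random.default_rng(0)
base = np.array([[0, 0], [2, 0], [3, 1], [2, 2], [0, 2], [-1, 1]], dtype=float)
specimens = base + rng.normal(scale=0.10, size=(20, 1, 6, 2))  # variation among specimens
noise = rng.normal(scale=0.01, size=(20, 2, 6, 2))             # random digitizing error
bias = np.zeros((1, 2, 6, 2))
bias[0, 1, 0] = [0.05, 0.0]                                    # observer 2 shifts the first landmark
ss = procrustes_sums_of_squares(specimens + noise + bias)
for term in ("specimen", "observer", "residual"):
    print(f"{term:9s} SS = {ss[term]:.5f} ({100 * ss[term] / ss['total']:.1f}% of total)")
```

For a real study, a dedicated implementation such as the Procrustes ANOVA in geomorph or MorphoJ is the safer choice, since it handles replicate sessions, degrees of freedom, and permutation tests.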

Protocol 2: Evaluating the Impact of Imaging Parameters

Purpose: To assess the artefactual variance introduced by different segmentation strategies.

Materials: Raw micro-CT scan data for a subset of specimens, segmentation software (e.g., ITK-SNAP).

Methodology:

- Generate Multiple Surfaces: For each specimen, generate multiple 3D surface models using different local thresholding algorithms or parameters [26].

- Landmarking: Digitize the same set of landmarks on each variant of the surface model.

- Statistical Comparison: Use a nested PERMANOVA to test for a significant effect of the "Segmentation" factor on the Procrustes shape coordinates, comparing its variance contribution to the biological variation among specimens [26].

Research Reagent Solutions

Table 3: Essential Materials and Software for Geometric Morphometrics

| Item | Function | Example Software / Tool |

|---|---|---|

| 3D Imaging System | To create digital representations of specimens. | micro-CT Scanner, Laser Surface Scanner [26] [28]. |

| Segmentation Software | To convert volumetric image data (from CT) into 3D surface models (meshes). | ITK-SNAP [29]. |

| Geometric Morphometrics Software | To digitize landmarks, perform Procrustes superimposition, and conduct shape statistics. | tps series (TpsDig, TpsUtil) [28], Viewbox [29], R package geomorph [29] [28]. |

| Spatial Transcriptomics Framework | For identifying anomalous tissue regions that may require specialized landmarking. | STANDS (Spatial Transcriptomics ANomaly Detection and Subtyping) [30]. |

| Fiberoptic Confocal Microscope | For real-time intraoperative identification of specific tissue types (e.g., conduction system in heart). | Cellvizio 100 series with miniprobe [31]. |

Workflow and Relationship Diagrams

Diagram 1: Systematic Error Mapping Workflow

This diagram illustrates a logical workflow for identifying, quantifying, and mitigating systematic error in a geometric morphometrics study.

This diagram maps the primary sources of measurement error to their potential impacts on morphometric research outcomes.

Proven Protocols and Standardization Strategies for Reliable Landmark Placement

Comprehensive Operator Training and Calibration Protocols

Troubleshooting Guides and FAQs

This section provides targeted solutions for common challenges in geometric morphometric research, specifically designed to mitigate observer bias and improve data reproducibility.

FAQ: Addressing Common Landmarking Challenges

Q: Our research group gets different results when multiple people place landmarks on the same specimen. How can we standardize our work? A: Inter-observer error is a major source of bias. Implement these solutions:

- Develop Detailed SOPs: Create and enforce strict, written Standard Operating Procedures for landmark placement. These should include defined scope, required equipment, measurement parameters and tolerances, environmental conditions, and step-by-step processes [32].

- Conduct Rigorous Training: Use your SOPs to train all personnel, followed by an error study to quantify both intra- and inter-observer error before beginning actual data collection [33].

- Adopt Automated Landmarking: For large datasets, consider automated methods like Deterministic Atlas Analysis (DAA) or other atlas-based image registration techniques. These are algorithmically standardized and eliminate human inconsistency, though they must be validated for your specific taxa and research question [6] [10].

Q: We are considering automated landmarking. What are the key trade-offs? A: Automated methods offer speed and repeatability but present new challenges.

- Advantages: They are significantly faster than manual methods, eliminate intra- and inter-observer bias, and are essential for analyzing large datasets or high-density morphometric data [6] [10].

- Challenges & Considerations: Automated methods may underestimate true biological shape variance, struggle with highly disparate taxa, and are susceptible to stochastic image registration errors. Their accuracy can be influenced by image quality, modality (CT vs. surface scans), and the selected parameters of the algorithm itself [6] [10]. Always validate an automated protocol against a manually landmarked subset of your data.

Q: How can we quantify and control for error in our landmarking process? A: Integrate error quantification into your standard research protocol.

- Perform an Error Study: As demonstrated in foundational studies, a subset of specimens (e.g., 10-20%) should be landmarked multiple times by the same observer (intra-observer error) and by different observers (inter-observer error) [33].

- Quantify the Error: Calculate the average Euclidean distance (in mm) between landmark placements for both intra- and inter-observer trials. Establish a baseline for acceptable error in your specific research context [33].

- Use Appropriate Metrics: Statistical tests like the Mantel test or PROTEST can be used to quantify the overall correlation between shape matrices generated by different methods or observers [6].

Troubleshooting Guide: Specific Issues and Solutions

| Problem | Possible Cause | Recommended Solution |

|---|---|---|

| High intra-observer error on specific landmarks [33] | Poorly defined landmark protocol or ambiguous anatomical definition | Refine the landmarking SOP with clearer definitions and visual examples. Use 3D rendering software to rotate the view and confirm landmark location. |

| Low correlation between manual and automated landmarking results [6] [10] | Mixed imaging modalities (e.g., CT & surface scans) or poor image registration | Standardize image data. Use Poisson surface reconstruction to create watertight, closed meshes from all specimens before analysis [6]. |

| Automated landmarks show systematic bias, pulling extreme shapes toward the mean [6] | Suboptimal initial template selection during atlas generation for methods like DAA | Test multiple initial templates and select one that is not a morphological extreme. The template choice can systematically bias results [6]. |

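| Shape variance estimates are lower with automated landmarks [10] | Automated placement excludes human placement error, which inflates variance in manual data; automated methods may also underestimate true biological shape variance [10] | The lower variance may partly reflect the removal of human error rather than a loss of signal. Compare results to a manually landmarked gold-standard subset to judge how much of the difference is removed observer error and how much is lost biological variation. |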

| Outliers in automated landmarking analysis [10] | Stochastic image registration errors | Review specimen preparation and image acquisition protocols to minimize artifacts. Visually inspect failed registrations to diagnose the cause. |

Experimental Protocols for Mitigating Observer Bias

This section provides detailed, actionable methodologies for key experiments and procedures critical to establishing a robust, low-bias geometric morphometrics workflow.

Protocol: Intra- and Inter-Observer Error Study

Purpose: To quantify the precision and consistency of landmark placement, establishing the reliability of your morphometric data [33].

Materials:

- A subset of at least 10 specimen images representative of the full study's morphological range.

- 3D image analysis software (e.g., MorphoJ, Landmark Editor).

- The finalized landmarking SOP.

Methodology:

- Initial Landmarking: A single trained observer (Observer 1) places the complete set of landmarks on all 10 specimens in the subset. This dataset is labeled "Round 1".

- Intra-Observer Trial: After a minimum washout period of two weeks, Observer 1 re-landmarks the same 10 specimens, with the data presented in a different random order. This dataset is labeled "Round 2".

- Inter-Observer Trial: A second trained observer (Observer 2), using the same SOP, landmarks the same 10 specimens, creating the "Observer 2" dataset.

- Data Analysis:

- For intra-observer error, perform a Procrustes ANOVA on the "Round 1" and "Round 2" datasets from Observer 1 to isolate the variance due to measurement error.

- For inter-observer error, perform a Procrustes ANOVA on the "Round 1" dataset from Observer 1 and the dataset from Observer 2.

- Calculate the mean Euclidean error for each landmark by comparing the x, y, z coordinates between trials. This identifies which landmarks are most prone to error [33].
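The per-landmark error calculation in the last step might look like the sketch below. It assumes both trials were digitized on the same scans, so the raw coordinates share one coordinate system and unit; the landmark names and coordinates are hypothetical.

```python
import numpy as np

def per_landmark_error(trial_a, trial_b):
    """Mean Euclidean distance per landmark between two (n specimens, k landmarks, 3) trials."""
    a = np.asarray(trial_a, dtype=float)
    b = np.asarray(trial_b, dtype=float)
    if a.shape != b.shape:
        raise ValueError("trials must contain the same specimens and landmarks")
    return np.linalg.norm(a - b, axis=2).mean(axis=0)

def rank_landmarks(errors, names):
    """List (name, error) pairs from most to least error-prone."""
    order = np.argsort(errors)[::-1]
    return [(names[i], float(errors[i])) for i in order]

# Toy data: 2 specimens x 3 landmarks, coordinates in mm (hypothetical).
names = ["nasion", "porion", "sella"]
round_1 = np.zeros((2, 3, 3))
round_2 = np.array([[[0.3, 0.4, 0.0], [0.0, 0.0, 0.1], [0.0, 0.0, 0.0]],
                    [[0.0, 0.0, 1.5], [0.1, 0.0, 0.0], [0.0, 0.4, 0.0]]])
errors = per_landmark_error(round_1, round_2)
ranking = rank_landmarks(errors, names)
```

Landmarks at the top of the ranking are the first candidates for a tighter definition in the SOP.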

Protocol: Validating an Automated Landmarking Pipeline

Purpose: To implement and validate an automated landmarking method (e.g., DAA) against a manually generated gold standard, ensuring it captures biologically relevant shape variation [6] [10].

Materials:

- A dataset of 3D specimen meshes.

- Software for automated landmarking (e.g., Deformetrica).

- A subset of specimens (≈10%) with manually placed landmarks following the intra- and inter-observer error study protocol above.

Methodology:

- Data Standardization: If datasets come from mixed modalities (CT, surface scans), process all meshes using Poisson surface reconstruction to generate watertight, closed surfaces. This step is critical for improving correspondence between different methods [6].

- Initial Template Selection: Generate atlases from different initial templates. Select a template that is morphologically central to your dataset to avoid biasing results toward extreme forms [6].

- Parameter Optimization: Run the automated analysis (e.g., DAA) with different kernel width parameters. A smaller kernel width captures finer-scale deformations but generates more control points [6].

- Validation & Correlation:

- Perform a Procrustes superimposition on both the manual and automated landmark data.

- Use a Mantel test to assess the correlation between the pairwise Procrustes distance matrices generated from the manual and automated data [6].

- Use PROTEST to evaluate the agreement between the two multivariate configurations [6].

- Visually compare results using thin-plate spline deformation grids and Euclidean distance heatmaps to identify regions where the methods disagree [6].
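For the Mantel step, a minimal permutation-based sketch is shown below. It assumes two square, symmetric distance matrices over the same specimens in the same order, uses a Pearson correlation, and reports a one-sided p-value; the synthetic matrices merely stand in for pairwise Procrustes distances from manual and automated data.

```python
import numpy as np

def mantel_test(dist_a, dist_b, permutations=999, seed=0):
    """Return (r, p): correlation of two distance matrices and a one-sided permutation p-value."""
    a = np.asarray(dist_a, dtype=float)
    b = np.asarray(dist_b, dtype=float)
    if a.shape != b.shape or a.shape[0] != a.shape[1]:
        raise ValueError("inputs must be square matrices of equal size")
    upper = np.triu_indices_from(a, k=1)          # each specimen pair once
    observed = np.corrcoef(a[upper], b[upper])[0, 1]
    rng = np.random.default_rng(seed)
    at_least_as_large = 0
    for _ in range(permutations):
        perm = rng.permutation(a.shape[0])        # relabel specimens in one matrix
        permuted = a[np.ix_(perm, perm)]
        if np.corrcoef(permuted[upper], b[upper])[0, 1] >= observed:
            at_least_as_large += 1
    p_value = (at_least_as_large + 1) / (permutations + 1)
    return float(observed), p_value

def pairwise_distances(points):
    return np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)

# Synthetic stand-ins for manual and automated distance matrices (8 specimens).
rng = np.random.default_rng(1)
scores = rng.normal(size=(8, 3))
manual = pairwise_distances(scores)
automated = pairwise_distances(scores + rng.normal(scale=0.05, size=scores.shape))
r, p = mantel_test(manual, automated)
```

Rows and columns are permuted together so that the dependence among distances sharing a specimen is preserved under the null.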

Workflow Visualization

The following diagram illustrates the logical pathway for establishing a reliable landmarking protocol, integrating both manual and automated approaches to mitigate bias.

Decision Workflow for Mitigating Landmark Placement Bias

The Scientist's Toolkit: Essential Research Reagents and Materials

This table details key software, materials, and methodological solutions required for geometric morphometric studies focused on reducing observer bias.

| Item/Solution | Function & Relevance to Bias Mitigation |

|---|---|

| Standard Operating Procedure (SOP) | A detailed, written protocol defining every aspect of landmark placement. It is the foundational document for ensuring consistency and repeatability across and within observers [32]. |

| 3D Geometric Morphometrics Software (e.g., MorphoJ, Landmark Editor) | Software platforms used for placing landmarks, performing Procrustes superimposition, and conducting statistical shape analysis. Essential for executing the error studies that quantify bias [33]. |

| Deterministic Atlas Analysis (DAA) | A "landmark-free" morphometric method that compares shapes by calculating the deformation of an atlas template. It enhances efficiency and eliminates human landmarking bias for large-scale studies across disparate taxa [6]. |

| Poisson Surface Reconstruction | An algorithm used to standardize 3D mesh data. It creates watertight, closed surfaces from mixed imaging modalities (CT, surface scans), which is a critical pre-processing step to improve the performance of automated landmarking methods [6]. |

| Procrustes ANOVA | A statistical method that partitions shape variance into components (e.g., group effects, individual variation, measurement error). It is the primary tool for quantifying intra- and inter-observer error in landmark data [33]. |

| Mantel Test & PROTEST | Statistical tests used to compare two representations of the same specimens: the Mantel test correlates two pairwise distance matrices, and PROTEST evaluates the agreement between two multivariate configurations. Used to validate the correlation between manual and automated landmarking outputs [6]. |

Standardized Landmark Definitions Across Multiple Anatomical Planes

Core Concepts: Anatomical Planes and Landmark Types

What are the three principal anatomical planes used to define landmark locations?

In human anatomy, three principal hypothetical planes are used to describe the location of structures and divide the body into sections. All descriptions assume the body is in the standard anatomical position (upright and facing forward) [34] [35].

Table 1: The Three Principal Anatomical Planes

| Plane Name | Alternative Names | Orientation | Divides Body Into |

|---|---|---|---|

| Sagittal | Anteroposterior | Vertical | Left and right sections |

| Coronal | Frontal | Vertical | Front (anterior) and back (posterior) sections |

| Transverse | Axial, Horizontal | Horizontal | Upper (superior) and lower (inferior) sections |

A specific type of sagittal plane is the median (or midsagittal) plane, which passes directly through the midline of the body, dividing it into equal left and right halves. Any sagittal plane parallel to this but off-center is called a parasagittal plane [34].

How do anatomical planes relate to geometric morphometrics?

In geometric morphometrics (GM), the anatomical planes provide a crucial, standardized reference framework for capturing the 3D coordinates of anatomical landmarks. This allows for the precise quantification of biological shape [36]. By defining landmarks in relation to these universal planes, researchers can ensure that the shape data they collect is comparable across multiple specimens and studies, which is foundational for mitigating observer bias.

What are the main types of landmarks used in geometric morphometrics?

Landmarks are discrete, homologous points that can be precisely located on every specimen in a study. They are the primary data source for capturing shape.

Table 2: Key Landmark Types in Geometric Morphometrics

| Landmark Type | Description | Role in Mitigating Bias |

|---|---|---|

| Type I (Anatomical) | Defined by precise local topology or histology (e.g., foramina, suture intersections). Highest level of homology [36]. | Considered the most reliable and least prone to interpretation, thus reducing observer bias. |

| Type II (Mathematical) | Defined by a local property, such as a point of maximum curvature (e.g., the tip of a bone process) [36]. | More subjective than Type I, making standardized protocols essential for consistency. |

| Type III (Extrema) | Defined by the most extreme point of a structure, often requiring other landmarks for context (e.g., the furthest point on the back of the skull) [36]. | Most prone to placement bias; requires rigorous training and calibration. |

| Semi-landmarks | Points used to capture the shape of curves and surfaces where no discrete landmarks exist [36]. | Automating their placement and sliding procedures can significantly reduce bias and improve repeatability. |

Troubleshooting Common Experimental Issues

FAQ: My specimens are damaged or fragmented, leading to missing landmark data. What are my options?

Missing data is a frequent challenge when working with archaeological, paleontological, or clinical specimens. The best solution depends on the extent of the damage [36].

- Problem: A few specimens have missing data in the same small region.

- Solution: Consider using statistical imputation methods, such as Partial Least Squares regression, to estimate the missing coordinate points. Be aware that these methods require a sufficient sample size relative to the number of missing points to be reliable [36].

- Problem: Widespread or significant damage across many specimens.

- Solution: For larger-scale missingness, parametric methods may fail. Alternative approaches include using a template or atlas to reconstruct missing regions based on the complete specimens in your sample [36]. The choice of imputation method can impact downstream analyses, such as the detection of structural modularity, so method selection should be carefully considered.
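As a rough illustration of the regression-based idea for small amounts of missing data, the sketch below estimates missing coordinates from the complete specimens. It uses ordinary multivariate least squares as a simpler stand-in for Partial Least Squares regression, and it assumes all configurations have already been brought into a common alignment. The function name `impute_missing_landmarks` and the array layout are illustrative assumptions, not part of any cited protocol.

```python
import numpy as np

def impute_missing_landmarks(complete, partial):
    """Estimate NaN coordinates in `partial` from fully observed specimens.

    complete: (n, k, 3) array of complete configurations in a common alignment.
    partial:  (k, 3) array in the same alignment, with NaN marking missing values.
    Assumption: ordinary least squares stands in for PLS regression here.
    """
    n = complete.shape[0]
    X = complete.reshape(n, -1)           # one row of 3k coordinates per specimen
    y = partial.reshape(-1).copy()
    missing = np.isnan(y)
    if not missing.any():
        return partial.copy()
    mean = X.mean(axis=0)
    Xc = X - mean
    # Linear map from observed to missing coordinates, fitted on complete specimens
    # (minimum-norm solution if there are fewer specimens than observed variables).
    B, *_ = np.linalg.lstsq(Xc[:, ~missing], Xc[:, missing], rcond=None)
    y[missing] = mean[missing] + (y[~missing] - mean[~missing]) @ B
    return y.reshape(partial.shape)
```

With few complete specimens relative to the number of observed coordinates, the fitted map is underdetermined and the estimates become unreliable, which is the sample-size caveat noted above.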

FAQ: How can I determine the optimal number of landmarks to use for my study?

Determining the correct density of coordinate points is essential. Under-sampling fails to capture meaningful shape variation, while over-sampling wastes time, reduces computational efficiency, and can diminish statistical power [36].

- Problem: Uncertainty about how many landmarks are sufficient.

- Solution: Employ a Landmark Sampling Evaluation Curve (LaSEC) protocol [36]. This involves:

  - Creating a preliminary template that deliberately over-samples the structure.

  - Applying this template to a small, random subsample of your specimens.

  - Systematically reducing the number of points and evaluating how well the reduced set approximates the shape captured by the full set.

  - Identifying the point of diminishing returns, where adding more landmarks no longer significantly improves shape representation.
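The subsampling logic above can be sketched as follows. This is a simplified illustration rather than the published LaSEC implementation: the fit statistic used here (correlation between pairwise Procrustes distances from the reduced and full landmark sets) is a stand-in chosen for brevity, and the function names are hypothetical.

```python
import numpy as np

def pairwise_procrustes(shapes):
    """Pairwise partial Procrustes distances (upper triangle) for an (n, k, d) array."""
    centered = shapes - shapes.mean(axis=1, keepdims=True)
    unit = centered / np.linalg.norm(centered, axis=(1, 2), keepdims=True)
    n = len(unit)
    dists = []
    for i in range(n):
        for j in range(i + 1, n):
            u, s, vt = np.linalg.svd(unit[i].T @ unit[j])
            if np.linalg.det(u @ vt) < 0:    # disallow reflections
                s[-1] = -s[-1]
            dists.append(np.sqrt(max(0.0, 2.0 - 2.0 * s.sum())))
    return np.array(dists)

def lasec_curve(shapes, subset_sizes, n_reps=20, seed=0):
    """Mean fit between random landmark subsets and the full set, per subset size."""
    rng = np.random.default_rng(seed)
    k = shapes.shape[1]
    full = pairwise_procrustes(shapes)
    curve = {}
    for m in subset_sizes:
        fits = []
        for _ in range(n_reps):
            idx = rng.choice(k, size=m, replace=False)
            reduced = pairwise_procrustes(shapes[:, idx])
            fits.append(np.corrcoef(full, reduced)[0, 1])
        curve[m] = float(np.mean(fits))
    return curve
```

Plotting the returned values against subset size gives a curve that typically rises steeply and then flattens; the flattening region is where additional landmarks contribute little new shape information.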

FAQ: My landmarking process is time-consuming and shows low repeatability between users. How can I improve this?

Manual landmark placement is inherently time-consuming and susceptible to observer bias, which threatens the validity of your results [6].

- Problem: Low throughput and high inter-observer error.

- Solution: Explore automated or landmark-free methods.

  - Automated Landmarking: Uses atlas templates or algorithms to predict landmark positions on new specimens, dramatically improving speed and repeatability [6].

  - Landmark-Free Methods (e.g., DAA): Techniques like Deterministic Atlas Analysis (DAA) bypass the need for predefined homologous points altogether. They work by quantifying the deformation energy required to map a sample-derived mean shape (an "atlas") onto each specimen. The vectors controlling this deformation ("momenta") then become the data for shape analysis [6]. These are particularly promising for comparing highly disparate taxa where homology is obscure.

Experimental Protocol: A Procrustean Workflow for the Os Coxae

This protocol outlines a standardized method for capturing 3D shape data of the human os coxae (hip bone), adaptable to other skeletal elements.

Materials & Equipment:

- Specimens: Well-preserved, unfragmented ossa coxae (e.g., n=29) [36].

- Scanner: High-resolution 3D scanner (e.g., Artec Eva structured-light scanner) [36].

- Software: Scanning software (e.g., Artec Studio), morphometrics digitization software (e.g., Viewbox 4), and statistical environment (e.g., R) [36].

Methodology:

- 3D Scanning:

  - Preferentially scan left-side elements. For right-side elements, scan and later mirror the 3D mesh so that it matches the left-side orientation, which facilitates comparison [36].

  - Process scans to create watertight polygon meshes and save in a universal format (e.g., PLY).

- Template Design & Digitization:

  - Develop a digitization template comprising fixed landmarks (Type I, II, III) and semi-landmarks on curves and surfaces to capture the overall shape of the ilium, ischium, and pubis [36].

  - Apply this template consistently to all specimen meshes, resulting in a k x 3 matrix (X) for each specimen, where k is the total number of points and the columns represent x, y, z coordinates [36].

- Procrustes Superimposition (in R):

  - Combine all individual matrices into a k x 3 x n array, where n is the number of specimens.

  - Perform Generalized Procrustes Analysis (GPA). This is an iterative process that removes non-shape differences by [36]:

    - Centering: Translating each configuration so its centroid is at the origin (0,0,0).

    - Scaling: Scaling all configurations to a unit size (Centroid Size = 1).

    - Rotating: Rotating configurations to minimize the sum of squared distances between corresponding landmarks across all specimens.

- Downstream Analysis:

  - The resulting Procrustes-aligned coordinates are typically projected into a linear tangent space, where they can be analyzed with standard multivariate statistical techniques (e.g., PCA, MANOVA) to explore shape variation, integration, and modularity [36].
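The protocol performs superimposition in R; purely to illustrate what the centering, scaling, and rotating steps do, here is a minimal sketch in Python with NumPy. It is not the cited workflow's code. It assumes an (n, k, 3) array layout (specimens first) rather than the k x 3 x n layout described above, it omits semi-landmark sliding and tangent-space projection, and the function names are illustrative.

```python
import numpy as np

def rotate_onto(shape, target):
    """Rotate a centered (k, 3) configuration onto a target, without reflection."""
    u, _, vt = np.linalg.svd(shape.T @ target)
    if np.linalg.det(u @ vt) < 0:
        u[:, -1] *= -1                      # force a proper rotation
    return shape @ (u @ vt)

def gpa(configs, tol=1e-10, max_iter=100):
    """Generalized Procrustes Analysis on an (n, k, 3) array.

    Returns the aligned coordinates and the original centroid sizes.
    """
    centered = configs - configs.mean(axis=1, keepdims=True)   # centering
    csize = np.linalg.norm(centered, axis=(1, 2))               # centroid size
    aligned = centered / csize[:, None, None]                   # scaling to unit size
    mean = aligned[0]
    for _ in range(max_iter):                                   # iterative rotation
        aligned = np.array([rotate_onto(s, mean) for s in aligned])
        new_mean = aligned.mean(axis=0)
        new_mean /= np.linalg.norm(new_mean)
        if np.linalg.norm(new_mean - mean) < tol:
            break
        mean = new_mean
    return aligned, csize

def shape_pca(aligned):
    """PCA of aligned coordinates; returns scores and explained-variance ratios."""
    flat = aligned.reshape(len(aligned), -1)
    flat = flat - flat.mean(axis=0)
    _, s, vt = np.linalg.svd(flat, full_matrices=False)
    return flat @ vt.T, s**2 / np.sum(s**2)
```

Centroid size is returned separately because it is removed from the shape data during scaling but is often needed later, for example when testing for allometry.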

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Geometric Morphometrics

| Item | Function & Role in Mitigating Bias |
|---|---|
| Structured-Light 3D Scanner | Non-contact device for creating high-resolution 3D models of specimens. Standardizes the initial data capture, eliminating bias from manual measurement [36]. |
| Open-Access Digitization Template | A pre-defined set of landmark and semi-landmark locations for a specific anatomical structure (e.g., os coxae). Provides a standardized protocol for all users to follow, ensuring comparability across studies and reducing placement ambiguity [36]. |
| Geometric Morphometrics Software (e.g., Viewbox, R geomorph) | Software for placing landmarks, performing Procrustes superimposition, and statistical shape analysis. Automates calculations, removing human calculation error and ensuring analytical consistency [36]. |
| Deterministic Atlas Analysis (DAA) Software (e.g., Deformetrica) | A landmark-free approach that uses diffeomorphic mappings to compare shapes. Mitigates bias associated with the manual identification and placement of homologous points, ideal for disparate forms [6]. |
| Poisson Surface Reconstruction Algorithm | A computational method to create watertight, closed meshes from scan data. Standardizes mesh topology, which is critical for the performance and reliability of landmark-free analyses on datasets from mixed scanning modalities (CT vs. surface scans) [6]. |

Troubleshooting Guide: Common Issues and Solutions

| Problem Category | Specific Issue | Potential Cause | Recommended Solution | Supporting Evidence |
|---|---|---|---|---|
| Data Acquisition & Imaging | Inconsistent shape data when using different imaging devices (e.g., DSLR vs. digital microscope). | Inter-instrument variation; different sensors and lenses capturing images differently. | Standardize the imaging equipment across the entire study. Use the same camera, lens, and settings for all specimens. [28] | Studies found that comparing datasets from different cameras explained a substantial amount of total variation. [28] |
| Data Acquisition & Imaging | Inconsistent results when mixing 3D data modalities (e.g., CT and surface scans). | Different mesh topologies (open vs. closed surfaces) from various modalities create non-comparable data. | Standardize data by converting all meshes to a common type, such as using Poisson surface reconstruction to create watertight, closed surfaces. [6] | Research on mammal crania showed Poisson reconstruction significantly improved correspondence between shape variation patterns. [6] |
| Specimen Presentation | High measurement error and misclassification in 2D analyses. | Changes in specimen orientation (e.g., tilting) relative to the camera lens. | In 2D GM, rigorously standardize specimen presentation. Secure specimens in a fixed position to ensure identical orientation for all images. [28] | Intentionally tilting specimens resulted in the greatest discrepancies in species classification results. [28] |
| Specimen Presentation | Reduced ability to discriminate between closely related species. | Inappropriate sample size or 2D view/element choice for the research question. | Conduct preliminary analyses using multiple views, elements, and sample sizes to ensure robust conclusions. [37] | Reducing sample size impacted mean shape and increased shape variance; trends were not consistent across different views. [37] |
| Observer & Workflow Bias | Lack of repeatability and high inter-observer variation in landmark placement. | Different levels of experience and inherent subjectivity between multiple users digitizing landmarks. | Standardize landmark digitization to a single, trained observer. If multiple observers are necessary, implement rigorous cross-training and quantify inter-observer error. [28] | Datasets digitized by different individuals exhibited the greatest discrepancies in landmark precision. [28] |
| Observer & Workflow Bias | "Alert fatigue" or desensitization when using AI-assisted tools. | Frequent exposure to AI-generated alerts can diminish attention to critical notifications. | Calibrate AI alert systems to minimize unnecessary notifications and integrate them thoughtfully into the workflow to avoid cognitive overload. [38] | Studies found radiologists with high-frequency AI system use experienced increased burnout and alert desensitization. [38] |

Frequently Asked Questions (FAQs)

How does specimen presentation specifically affect 2D geometric morphometric analyses?

In 2D geometric morphometrics, the data collected are highly sensitive to the angle at which a three-dimensional specimen is presented to the camera. Even slight tilting can dramatically alter the apparent positions of landmarks in the 2D image, introducing significant "presentation error." [28] One study demonstrated that this error source had a greater impact on statistical classification results than the type of camera used. [28] Therefore, meticulous standardization of specimen orientation is not just recommended but critical for generating reproducible 2D data.
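To make the geometry of presentation error concrete, the following sketch tilts a small set of made-up 3D landmark coordinates and projects them onto an image plane. It assumes a simple orthographic projection (dropping the depth axis) and a tilt about the horizontal image axis; real cameras add perspective effects, and the coordinates are invented purely for illustration.

```python
import math

def project_after_tilt(points, tilt_deg):
    """Tilt 3D points about the x-axis, then drop z (orthographic projection)."""
    t = math.radians(tilt_deg)
    c, s = math.cos(t), math.sin(t)
    return [(x, y * c - z * s) for x, y, z in points]

def mean_displacement(a, b):
    """Mean Euclidean distance between corresponding 2D points."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)

# Hypothetical landmark coordinates (arbitrary units) on a specimen with some relief.
landmarks = [(0.0, 0.0, 0.0), (10.0, 0.0, 2.0), (10.0, 8.0, 5.0), (0.0, 8.0, 1.0)]
flat = project_after_tilt(landmarks, 0)
for angle in (2, 5, 10):
    shift = mean_displacement(flat, project_after_tilt(landmarks, angle))
    print(f"tilt {angle:>2} deg: mean apparent landmark shift = {shift:.3f} units")
```

Because each landmark shifts by an amount that depends on its own depth, the distortion is not a uniform translation or scaling, so Procrustes superimposition cannot remove it.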

What is the single most impactful step I can take to reduce measurement error in my landmark data?

The most impactful step is to standardize and document every aspect of your data acquisition protocol. Evidence consistently shows that the largest discrepancies in landmark precision stem from comparisons of datasets digitized by different individuals. [28] To mitigate this:

- Standardize the operator: Use a single, well-trained individual for digitizing.

- Standardize the equipment: Use the same imaging device and setup for all specimens.

- Standardize the presentation: Especially in 2D, ensure identical specimen orientation. [28]

Consistency across all these factors is key to minimizing total measurement error.
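When multiple observers cannot be avoided, their disagreement can at least be measured. The sketch below is one simple way to do so, assuming every observer digitized the same images so that raw coordinates share a coordinate frame; the function name and the variance-share summary are illustrative choices, not a replacement for a formal Procrustes ANOVA.

```python
import numpy as np

def observer_error(coords):
    """Summarize inter-observer disagreement.

    coords: (observers, specimens, landmarks, dims) array; all observers are
    assumed to have digitized the same images in the same coordinate frame.
    Returns (mean deviation from consensus per landmark,
             share of total coordinate variance due to observer disagreement).
    """
    consensus = coords.mean(axis=0)                      # per-specimen mean across observers
    dev = np.linalg.norm(coords - consensus, axis=-1)    # distance of each placement from consensus
    per_landmark = dev.mean(axis=(0, 1))
    grand = coords.mean(axis=(0, 1))                     # mean configuration over everything
    ss_total = np.sum((coords - grand) ** 2)
    ss_observer = np.sum((coords - consensus) ** 2)
    return per_landmark, ss_observer / ss_total
```

Landmarks with the largest per-landmark deviation are the natural candidates for tighter definitions or additional training, and a large variance share signals that observer effects could rival the biological signal of interest.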

My research involves comparing 3D models from CT scans and surface scans. How can I make this data consistent?

Combining 3D data from mixed modalities like CT and surface scans is a common challenge, as they often produce meshes with different properties (e.g., open vs. closed surfaces). A method shown to improve consistency is Poisson surface reconstruction. [6] This technique creates watertight, closed surfaces for all specimens, standardizing the mesh topology. Research on a large dataset of mammals found that this standardization significantly improved the correspondence between shape variation patterns measured using different methods. [6]

How do I choose between a high-density manual landmarking approach and a newer, automated landmark-free method?

The choice depends on your research question and the scale of your study.

- Manual Landmarking: This is the established gold standard, ideal for capturing homologous anatomical loci with high biological meaning. It is best for studies focused on specific structures and when comparing morphologically similar taxa. However, it is time-consuming and prone to observer bias. [6] [37]

- Automated Landmark-Free Methods (e.g., DAA): These offer enhanced efficiency and are less tied to strict homology, making them promising for large-scale studies across more disparate taxa. [6] However, one study found that while landmark-free methods produced comparable macroevolutionary patterns to manual landmarking, the results were not identical, indicating that the choice of method can influence downstream biological interpretations. [6]

A hybrid approach is often wise: using automated methods for initial, large-scale screening and manual methods for detailed, hypothesis-driven analysis of specific structures.

Experimental Protocol: Quantifying and Mitigating Observer Bias

This protocol provides a detailed methodology for assessing the impact of inter-observer variation on landmark data, based on established experimental designs. [28]

Objective: To quantify the error introduced by different observers (inter-observer variation) during landmark digitization and evaluate its impact on a typical classification analysis.

Materials:

- A set of specimen images (e.g., 30-50 images of vole molars, bat crania). [28] [37]

- Geometric morphometrics software (e.g., TpsDig, geomorph in R). [28]

- Statistical software (e.g., R).

Procedure:

- Image Set Preparation: Select a representative subset of specimen images. Ensure all images are acquired with the same device and identical specimen presentation to isolate observer error. [28]

- Observer Recruitment: Engage multiple observers (e.g., 2-3) with varying levels of experience in geometric morphometrics.