Strategies for Reducing False Positives in Automated Parasite Egg Detection: A Guide for Biomedical Researchers


Abstract

Automated detection of parasite eggs in microscopic images is transforming parasitology diagnostics, yet high false positive rates remain a significant barrier to clinical reliability. This article provides a comprehensive analysis for researchers and drug development professionals, covering the foundational causes of false positives, advanced deep-learning methodologies like YOLO with attention mechanisms, practical troubleshooting and optimization techniques, and rigorous validation frameworks. By synthesizing current research and performance metrics, we offer a roadmap for developing more accurate, efficient, and clinically viable diagnostic tools.

Understanding the Challenge: Why False Positives Plague Automated Parasite Egg Detection

The Critical Impact of False Positives on Diagnostic Reliability and Patient Care

In diagnostic testing, a false positive occurs when a test indicates the presence of a disease or condition that is not actually present [1]. Understanding and minimizing these errors is paramount in automated parasite egg detection, as they can compromise research validity, lead to unnecessary treatments, and misdirect valuable resources [1] [2].

This technical support center provides researchers and scientists with a foundational understanding of key accuracy metrics and practical guides to troubleshoot and refine their automated diagnostic systems, with a specific focus on reducing false positives.

Core Concepts of Diagnostic Accuracy

What are the key metrics for evaluating diagnostic test performance?

The reliability of a diagnostic test is quantified using several key performance indicators. Sensitivity and Specificity are intrinsic measures of a test's accuracy, while Predictive Values are highly influenced by the prevalence of the condition in the population being tested [3].

The calculations for these metrics are derived from a 2x2 contingency table comparing test results against a reference standard (gold standard) [3]:

| Metric | Formula | Description |
|---|---|---|
| Sensitivity | True Positives / (True Positives + False Negatives) | The ability of a test to correctly identify individuals who have the disease. A highly sensitive test is good at "ruling out" the disease when the result is negative [3]. |
| Specificity | True Negatives / (True Negatives + False Positives) | The ability of a test to correctly identify individuals who do not have the disease. A highly specific test is good at "ruling in" the disease when the result is positive [3]. |
| Positive Predictive Value (PPV) | True Positives / (True Positives + False Positives) | The probability that a subject with a positive test result truly has the disease [3]. |
| Negative Predictive Value (NPV) | True Negatives / (True Negatives + False Negatives) | The probability that a subject with a negative test result truly does not have the disease [3]. |
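The four formulas above can be computed directly from the cells of the 2x2 table. The Python sketch below does this and then applies Bayes' theorem to show why PPV depends on prevalence while sensitivity and specificity do not. The `ConfusionCounts` container and the function names are illustrative and do not come from the cited sources.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    tp: int  # true positives
    fp: int  # false positives
    tn: int  # true negatives
    fn: int  # false negatives


def diagnostic_metrics(c: ConfusionCounts) -> dict:
    """Sensitivity, specificity, PPV and NPV from a 2x2 contingency table."""
    return {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
    }


def ppv_at_prevalence(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV from Bayes' theorem: the share of positive calls that are real."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


if __name__ == "__main__":
    print(diagnostic_metrics(ConfusionCounts(tp=90, fp=10, tn=890, fn=10)))
    # The same test (sensitivity 0.90, specificity 0.95) at falling prevalence
    for prevalence in (0.5, 0.1, 0.01):
        print(prevalence, round(ppv_at_prevalence(0.90, 0.95, prevalence), 3))
```

With sensitivity 0.90 and specificity 0.95, the PPV is roughly 0.95 at 50% prevalence, 0.67 at 10%, and 0.15 at 1%. In a low-prevalence population, most positive calls from an unchanged test can therefore be false positives.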

What is the real-world impact of false positives in diagnostic testing?

False positives have tangible consequences that extend beyond the laboratory. The implications are multifaceted [1]:

- Patient Harm: Can lead to unnecessary therapeutic interventions, including medications and invasive procedures like biopsies, which carry their own risks and side effects. They also cause significant psychological distress, such as anxiety while awaiting follow-up results [1] [4].

- Resource Mismanagement: Generate increased healthcare costs due to redundant follow-up tests, consultations, and treatments. This also wastes valuable laboratory supplies and clinician time, delaying care for other patients [1].

- Diagnostic Delays: A false positive result can lead to a diagnostic odyssey where the actual cause of a patient's symptoms is overlooked, potentially leading to worsened health outcomes [1].

- Erosion of Trust: Frequent false positives can undermine confidence in a laboratory, a specific testing method, or even the broader healthcare system [1].

Troubleshooting False Positives in Automated Parasite Egg Detection

What are the common causes of false positives in my automated detection system?

In the context of automated parasite egg detection using systems like the Kubic FLOTAC Microscope (KFM), false positives can arise from multiple points in the workflow [1] [5]:

| Category | Specific Cause | Impact on Detection |
|---|---|---|
| Sample & Reagent Issues | Cross-contamination from other samples or reagents. | Introduces foreign genetic or particulate material that can be misclassified as a target [1]. |
| | Degraded or poor-quality samples. | Alters the appearance of non-target structures, increasing misidentification risk [1]. |
| | Expired or faulty reagents. | Can cause non-specific binding or anomalous reactions [1]. |
| Technical & Instrumental Issues | Improperly calibrated digital microscopes or scanners. | Can create imaging artifacts or enhance non-specific features [6]. |
| | Suboptimal image acquisition (e.g., focus, lighting). | Reduces image quality, making accurate AI classification difficult [6]. |
| Bioinformatic & Model Issues | Non-specific binding or cross-reactivity in molecular assays. | The test detects a closely related but non-target organism [1]. |
| | Insufficiently trained AI/Deep Learning model. | The model has not learned the distinct features of the target egg and confuses it with visually similar debris or other egg types [5]. |
| | Inadequate pre-processing of input images. | Failure to normalize images can exaggerate irrelevant features [6]. |

What experimental protocols can I implement to minimize false positives?

Implementing rigorous, end-to-end protocols is key to enhancing specificity. The following workflow, based on best practices from the AI-KFM challenge and related research, outlines a strategic approach to system optimization [6] [5]:

Detailed Methodologies:

Sample Preparation & Curation of a Robust Dataset:

- Purpose: To train a model that can generalize well and avoid learning artifacts from a limited set of samples.

- Protocol: Collect and prepare fecal samples using a validated method like FLOTAC or Mini-FLOTAC [6] [5]. The dataset should include images derived from real-world samples with varying concentrations of eggs and diverse levels of contamination [6]. It is critical that this dataset includes "negative" samples (without target eggs) and samples with visually similar debris to teach the model what not to detect.

Dedicated Image Pre-processing:

- Purpose: To enhance the relevant features of the target parasite eggs and suppress background noise before the image is analyzed by the AI model [5].

- Protocol: Apply techniques such as contrast enhancement, color normalization, and noise reduction filters. This step helps in making the distinguishing features of the target eggs (e.g., shape, texture, shell appearance of Fasciola hepatica vs. Calicophoron daubneyi) more prominent to the detection algorithm [5].
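As a rough illustration of these three steps, the NumPy sketch below chains three operations:

- gray-world color normalization;
- a 3x3 median filter for noise reduction;
- a percentile contrast stretch.

The specific methods, their order, and the percentile values are assumptions for illustration, not the pre-processing used in [5]. Adaptive methods such as CLAHE from an image library are common alternatives. The sketch needs NumPy 1.20 or later for `sliding_window_view`.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def gray_world(img: np.ndarray) -> np.ndarray:
    """Color normalization: scale each channel so all channel means are equal."""
    means = img.reshape(-1, img.shape[-1]).mean(axis=0)
    gains = means.mean() / np.maximum(means, 1e-8)
    return np.clip(img * gains, 0.0, 1.0)


def median_3x3(img: np.ndarray) -> np.ndarray:
    """Noise reduction: per-channel 3x3 median filter with edge padding."""
    padded = np.pad(img, ((1, 1), (1, 1), (0, 0)), mode="edge")
    windows = sliding_window_view(padded, (3, 3), axis=(0, 1))  # (H, W, C, 3, 3)
    return np.median(windows, axis=(-2, -1))


def contrast_stretch(img: np.ndarray, low_pct: float = 1.0, high_pct: float = 99.0) -> np.ndarray:
    """Contrast enhancement: map each channel's low/high percentiles to 0 and 1."""
    out = np.empty_like(img)
    for ch in range(img.shape[-1]):
        lo, hi = np.percentile(img[..., ch], [low_pct, high_pct])
        span = hi - lo if hi > lo else 1.0
        out[..., ch] = np.clip((img[..., ch] - lo) / span, 0.0, 1.0)
    return out


def preprocess(rgb_uint8: np.ndarray) -> np.ndarray:
    """Hypothetical pipeline: uint8 RGB (H, W, 3) in, float image in [0, 1] out."""
    img = rgb_uint8.astype(np.float64) / 255.0
    img = gray_world(img)
    img = median_3x3(img)
    return contrast_stretch(img)
```

The median filter removes isolated bright or dark pixels, which could otherwise be picked up as small spurious structures, while preserving the edges of larger objects such as egg shells.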

AI Model Training and Tuning for Specificity:

- Purpose: To develop a model that can accurately discriminate between target eggs, non-target organisms, and debris.

- Protocol: Utilize state-of-the-art deep learning models such as Convolutional Neural Networks (CNNs), including CNN-based object detectors like YOLO or Faster R-CNN [6]. The model must be trained not only on positive examples but also on a wide array of negative examples and potential confounding factors. Techniques like data augmentation can increase the effective size and diversity of your training set.

Rigorous Validation and Performance Assessment:

- Purpose: To objectively quantify the system's accuracy and its rate of false positives in a real-world context.

- Protocol: Evaluate the system's performance on a separate, independent validation dataset where the true egg counts have been verified by expert manual counting (e.g., using optical microscopy) [5]. Performance should be assessed using metrics like Mean Absolute Error (MAE) between the AI count and the manual count, in addition to standard detection metrics [5].
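A minimal sketch of the count-level comparison follows. It assumes one AI count and one verified manual count per sample; the function names and example counts are hypothetical. MAE ignores the direction of errors, so the sketch also reports the mean signed error. A consistently positive value suggests over-counting, which is the pattern systematic false positives produce.

```python
def mean_absolute_error(ai_counts, manual_counts):
    """Average absolute difference between automated and expert egg counts."""
    if not ai_counts or len(ai_counts) != len(manual_counts):
        raise ValueError("expected two non-empty lists of equal length")
    return sum(abs(a - m) for a, m in zip(ai_counts, manual_counts)) / len(ai_counts)


def mean_signed_error(ai_counts, manual_counts):
    """Average (AI - manual); a positive value means systematic over-counting."""
    if not ai_counts or len(ai_counts) != len(manual_counts):
        raise ValueError("expected two non-empty lists of equal length")
    return sum(a - m for a, m in zip(ai_counts, manual_counts)) / len(ai_counts)


if __name__ == "__main__":
    ai = [12, 0, 35, 7]      # hypothetical eggs counted by the system per sample
    manual = [10, 0, 30, 8]  # hypothetical expert counts for the same samples
    print(mean_absolute_error(ai, manual))  # 2.0
    print(mean_signed_error(ai, manual))    # 1.5
```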

Technical Support & FAQs

My model has high sensitivity but low specificity. What should I adjust?

This is a common trade-off. To improve specificity and reduce false positives, consider the following actions:

- Review Your Training Data: Ensure your training dataset is rich in negative examples and challenging non-target images (confounders). The model may not have learned enough about what constitutes a "negative."

- Adjust Confidence Thresholds: Most object detection models output a confidence score. Increasing the confidence threshold required for a positive detection can filter out weaker, less certain false predictions.

- Tune the Model Architecture: Explore different model architectures or loss functions that penalize false positives more heavily. You may need a more complex model capable of learning finer distinctions.

- Enhance Pre-processing: As indicated in the workflow, implement or refine dedicated image processing steps to make the specific features of your target eggs more distinct from background debris [5].
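Threshold tuning is easiest to reason about as a sweep across candidate values. The sketch below assumes each detection has already been labeled as matched or unmatched against the ground truth, for example at IoU ≥ 0.5. The function name, scores, and counts are hypothetical.

```python
def sweep_thresholds(scores, is_match, n_ground_truth, thresholds):
    """For each threshold, keep detections with score >= t and report metrics.

    scores: detection confidences; is_match: True if the detection was matched
    to a ground-truth egg; n_ground_truth: total number of true eggs.
    """
    rows = []
    for t in thresholds:
        kept = [m for s, m in zip(scores, is_match) if s >= t]
        tp = sum(kept)
        fp = len(kept) - tp
        precision = tp / len(kept) if kept else 1.0  # convention when nothing is kept
        recall = tp / n_ground_truth if n_ground_truth else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        rows.append({"threshold": t, "tp": tp, "fp": fp,
                     "precision": precision, "recall": recall, "f1": f1})
    return rows


if __name__ == "__main__":
    scores = [0.95, 0.90, 0.80, 0.60, 0.55, 0.40, 0.30]
    is_match = [True, True, False, True, False, False, True]
    for row in sweep_thresholds(scores, is_match, n_ground_truth=5, thresholds=[0.25, 0.5, 0.7]):
        print(row)
```

In this toy example, raising the threshold from 0.25 to 0.7 cuts false positives from 3 to 1 but drops recall from 0.8 to 0.4. This is why the operating point should be chosen on a validation set rather than by maximizing precision alone.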

How can I validate my system in the absence of a perfect gold standard?

In many real-world scenarios, a perfect gold standard may not exist. In these cases:

- Use Expert Consensus: Employ a panel of multiple human experts to manually count and classify samples. The consensus of the panel can serve as a robust reference standard.

- Leverage Alternative Confirmatory Tests: If available, use a different, highly specific diagnostic method (e.g., PCR for specific parasite DNA) to confirm a subset of positive and negative results.

- Benchmark Against Established Methods: Validate your automated system's output against well-regarded, though imperfect, manual methods like the McMaster or Wisconsin techniques, while acknowledging their own limitations [6].
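One simple way to turn a panel's annotations into a reference standard is sketched below. It uses three aggregation rules: majority voting for labels, the median for counts, and adjudication for ties. These rules are assumptions, not a protocol prescribed by the sources, and the labels and counts are hypothetical.

```python
from collections import Counter
from statistics import median


def consensus_label(votes):
    """Majority vote over expert labels for one object.

    Returns (label, agreement). The label is None on a tie, so the object can be
    sent for adjudication instead of being silently resolved.
    """
    ranked = Counter(votes).most_common()
    top_label, top_count = ranked[0]
    agreement = top_count / len(votes)
    if len(ranked) > 1 and ranked[1][1] == top_count:
        return None, agreement
    return top_label, agreement


def consensus_count(expert_counts):
    """Per-sample reference egg count; the median is robust to one outlying reader."""
    return median(expert_counts)


if __name__ == "__main__":
    print(consensus_label(["F. hepatica", "F. hepatica", "debris"]))
    print(consensus_label(["F. hepatica", "debris"]))
    print(consensus_count([10, 12, 30]))
```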

The Scientist's Toolkit: Key Research Reagent Solutions

The following reagents and materials are essential for developing and validating automated parasite egg detection systems [1] [6] [5]:

| Item | Function in the Workflow |
|---|---|
| FLOTAC / Mini-FLOTAC Apparatus | A validated sample preparation technique that provides high sensitivity and accuracy for fecal egg counts, forming the physical basis for sample analysis with systems like the KFM [6] [5]. |
| Flotation Solutions | Solutions of defined specific gravity used to separate parasite eggs from fecal debris, a critical pre-analytical step [6]. |
| Kubic FLOTAC Microscope (KFM) | A compact, portable digital microscope designed to autonomously scan and acquire images from FLOTAC preparations, enabling automated in-field analysis [6] [5]. |
| Synthetic Negative Controls | Samples known to be free of the target parasite, used in quality control to identify and eliminate systematic causes of false positives before reporting results [1]. |
| High-Quality, Annotated Datasets | Curated image libraries (e.g., the AI-KFM challenge dataset) with precise bounding boxes or segmentation masks for parasite eggs, which are essential for training and validating AI models [6]. |
| Convolutional Neural Network (CNN) Models | A class of deep learning models for image analysis. CNN-based object detectors such as YOLO or Faster R-CNN are particularly effective for image recognition and object detection tasks in this field [6]. |

Automated detection of parasite eggs from microscopic images is a transformative technology for diagnostics in parasitology. However, a significant challenge impeding its reliability is the occurrence of false positives, where the system misidentifies non-target objects such as impurities, air bubbles, or other microscopic debris as parasite eggs. This technical support document outlines the common sources of these errors and provides evidence-based troubleshooting strategies to enhance the accuracy of your detection systems.

Troubleshooting Guides & FAQs

Frequently Asked Questions

FAQ 1: What are the most common causes of false positives in automated parasite egg detection? False positives primarily arise from two interrelated challenges:

- Morphological Similarities: Non-target objects, such as impurities, platelets, or cellular debris, can have shapes, sizes, and color intensities that closely mimic those of genuine parasite eggs [7] [8]. For example, in malaria diagnosis, platelets and impurities can be highly similar to malarial parasite cells [7].

- Complex Backgrounds: Microscopic images often contain noisy, unevenly lit, or cluttered backgrounds. These complex conditions can confuse detection models, causing them to assign high confidence to background artifacts [8] [9]. Stool samples, in particular, present highly complex and variable backgrounds [10].

FAQ 2: What deep learning architectures are most effective for reducing false positives? Modern approaches often leverage advanced versions of the You Only Look Once (YOLO) architecture, enhanced with attention mechanisms, for a balance of speed and high accuracy.

- YOLO-based Models: Frameworks like YOLOv5 and YOLOv8 are widely used for their real-time, single-stage detection capabilities [11] [9]. Their efficiency makes them suitable for resource-constrained environments.

- Attention Mechanisms: Integrating modules like the Convolutional Block Attention Module (CBAM) or Contextual Transformer (CoT) blocks helps the model focus on the most relevant features of the parasite egg while suppressing irrelevant background information. This has been shown to significantly improve precision [8] [9].
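To make the attention idea concrete, the NumPy sketch below runs a forward pass of a CBAM-style block in two stages:

- channel attention, computed by a shared two-layer MLP over average- and max-pooled descriptors;
- spatial attention, computed by a convolution over channel-wise mean and max maps.

The weights are random stand-ins, and the 7x7 kernel and reduction ratio are assumptions. In the cited detectors, these modules are learned end to end inside the network, typically in a deep-learning framework.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def channel_attention(x, w1, w2):
    """x: (C, H, W). Shared MLP (C -> C/r -> C) on avg- and max-pooled vectors."""
    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)  # ReLU between the two layers
    return sigmoid(mlp(x.mean(axis=(1, 2))) + mlp(x.max(axis=(1, 2))))  # (C,)


def spatial_attention(x, kernel):
    """kernel: (2, k, k) convolved over stacked [mean, max] channel-pooled maps."""
    k = kernel.shape[-1]
    pooled = np.stack([x.mean(axis=0), x.max(axis=0)])  # (2, H, W)
    padded = np.pad(pooled, ((0, 0), (k // 2, k // 2), (k // 2, k // 2)))
    windows = sliding_window_view(padded, (k, k), axis=(1, 2))  # (2, H, W, k, k)
    return sigmoid(np.einsum("chwij,cij->hw", windows, kernel))  # (H, W)


def cbam(x, w1, w2, kernel):
    """Channel attention first, then spatial attention, as in CBAM."""
    x = x * channel_attention(x, w1, w2)[:, None, None]
    return x * spatial_attention(x, kernel)[None, :, :]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    c, h, w, r = 8, 10, 12, 2  # channels, height, width, reduction ratio (assumed)
    x = rng.standard_normal((c, h, w))
    w1 = rng.standard_normal((c // r, c))
    w2 = rng.standard_normal((c, c // r))
    kernel = rng.standard_normal((2, 7, 7))
    print(cbam(x, w1, w2, kernel).shape)
```

Both attention maps lie between 0 and 1, so the block can only attenuate features. Training teaches it to attenuate background clutter more strongly than egg-like structures.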

FAQ 3: Besides model architecture, what other experimental factors are critical for minimizing errors? The quality and management of your dataset are as important as the model choice.

- High-Quality Annotation: Ensure bounding boxes are tightly and accurately drawn around parasite eggs by experienced personnel. Inconsistent or poor-quality annotations will directly lead to model errors [9].

- Comprehensive Data Augmentation: Applying techniques like random rotation, scaling, and adjustments to brightness and contrast during training can simulate various imaging conditions. This improves the model's robustness and its ability to generalize to new, unseen data [9].

- Strategic Data Preprocessing: For high-resolution images, a sliding window cropping strategy can be used to create smaller sub-images. This prevents the loss of fine morphological details that is common when images are simply resized to fit model input requirements [7].
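A minimal sliding-window sketch is shown below. The 640-pixel tile and 20% overlap are assumed values, and all names are illustrative. An egg lying in an overlap zone can be detected in two tiles, and the duplicate would be scored as a false positive. The sketch therefore also maps tile-local boxes back to full-image coordinates and merges them with greedy non-maximum suppression (NMS).

```python
def tile_starts(length, tile, stride):
    """Start offsets that cover [0, length); the last tile is flush with the edge."""
    if length <= tile:
        return [0]
    starts = list(range(0, length - tile + 1, stride))
    if starts[-1] != length - tile:
        starts.append(length - tile)
    return starts


def tile_windows(height, width, tile=640, overlap=0.2):
    """Overlapping crop windows as (top, left, bottom, right) pixel bounds."""
    stride = max(1, round(tile * (1 - overlap)))
    return [(top, left, min(top + tile, height), min(left + tile, width))
            for top in tile_starts(height, tile, stride)
            for left in tile_starts(width, tile, stride)]


def to_global(box, window):
    """Shift a tile-local box (x1, y1, x2, y2) into full-image coordinates."""
    top, left = window[0], window[1]
    x1, y1, x2, y2 = box
    return (x1 + left, y1 + top, x2 + left, y2 + top)


def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy non-maximum suppression; returns indices of boxes to keep."""
    keep = []
    for i in sorted(range(len(boxes)), key=lambda k: scores[k], reverse=True):
        if all(iou(boxes[i], boxes[j]) < iou_threshold for j in keep):
            keep.append(i)
    return keep


if __name__ == "__main__":
    windows = tile_windows(1500, 2000)
    print(len(windows), windows[:2])
    # The same egg seen in two horizontally overlapping tiles
    a = to_global((560, 50, 620, 110), (0, 0, 640, 640))
    b = to_global((48, 52, 108, 112), (0, 512, 640, 1152))
    print(nms([a, b], [0.9, 0.8]))
```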

Troubleshooting Guide: Reducing False Positives

| Problem | Possible Cause | Solution | Key Performance Metric to Monitor |
|---|---|---|---|
| High false positive rate in cluttered samples. | Model is distracted by complex background noise and non-target particles. | Integrate an attention mechanism (e.g., CBAM, CoT) into the detection model to recalibrate feature importance [8] [9]. | Precision (should increase) |
| Model fails to distinguish eggs from morphologically similar debris. | Insufficient or non-representative training data; poor feature discrimination. | Apply extensive data augmentation and use a deeper backbone network (e.g., Darknet-53) for better feature learning [7] [9]. | F1-Score (balance of Precision & Recall) |
| Low confidence in detecting small or overlapping oocysts. | Loss of fine-grained features in the model's neck or head network. | Incorporate a feature refinement strategy and a dedicated detection head for small objects [9]. | Recall & mAP@0.5 (should increase) |
| Model performs well on training data but poorly on new images. | Overfitting to the specific conditions of the training set. | Increase the diversity of the training dataset through augmentation and use semi-supervised learning to leverage unlabeled data [12] [13]. | mAP on validation/test set |

Experimental Protocols & Performance Data

Detailed Methodology: YOLO with Attention Integration

The following workflow is adapted from successful frameworks for detecting pinworm eggs [8] and Eimeria oocysts [9].

1. Image Acquisition & Dataset Preparation

- Microscopy: Capture images using a standardized protocol. For example, use a phase contrast microscope with a 100x objective lens [14] or a digital microscope at 200x magnification [9]. Maintain consistent exposure time and gain settings.

- Annotation: Manually label images using a tool like LabelImg. Bounding boxes should be tightly drawn around the parasite eggs. All annotations must be reviewed and validated by experienced biomedical experts [9].

- Data Augmentation: Apply a suite of transformations to the training data, such as random rotation, scaling, and brightness and contrast adjustments [9].
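Geometric augmentations must transform the bounding boxes together with the pixels; otherwise the labels no longer line up with the eggs. The sketch below shows this, and to keep the box mapping exact it uses only quarter-turn rotations and flips, plus a brightness/contrast change. The augmentation policy and its ranges are assumptions. Arbitrary-angle rotation and scaling require interpolation and are usually handled by an augmentation library.

```python
import numpy as np


def hflip(img, boxes):
    """Mirror left-right. Boxes are (x1, y1, x2, y2) in pixel-edge coordinates."""
    w = img.shape[1]
    return img[:, ::-1], [(w - x2, y1, w - x1, y2) for x1, y1, x2, y2 in boxes]


def rot90_ccw(img, boxes):
    """Rotate 90 degrees counter-clockwise, as np.rot90 does by default."""
    w = img.shape[1]
    return np.rot90(img), [(y1, w - x2, y2, w - x1) for x1, y1, x2, y2 in boxes]


def brightness_contrast(img, brightness, contrast):
    """Photometric change only; box coordinates are unaffected."""
    mean = img.mean()
    return np.clip((img - mean) * contrast + mean + brightness, 0.0, 1.0)


def random_augment(img, boxes, rng):
    """Hypothetical policy: random quarter-turns, flips and brightness/contrast."""
    for _ in range(rng.integers(0, 4)):
        img, boxes = rot90_ccw(img, boxes)
    if rng.random() < 0.5:
        img, boxes = hflip(img, boxes)
    img = brightness_contrast(img, rng.uniform(-0.1, 0.1), rng.uniform(0.8, 1.2))
    return img, boxes


if __name__ == "__main__":
    image = np.zeros((4, 6, 3))
    image[0:2, 1:3] = 1.0  # a 2x2 "egg" at x in [1, 3), y in [0, 2)
    print(rot90_ccw(image, [(1, 0, 3, 2)])[1])
    print(random_augment(image, [(1, 0, 3, 2)], np.random.default_rng(1))[1])
```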

2. Model Architecture & Training (YOLO-GA Example)

The YOLO-GA framework enhances standard YOLO by incorporating global context and adaptive feature recalibration [9].

- Backbone: Use a standard CNN backbone (e.g., CSPDarknet) to extract initial features.

- Contextual Transformer (CoT) Blocks: Integrate CoT blocks into the backbone. This module captures local and global contextual dependencies, helping the model understand the spatial relationships between an egg and its surroundings [9].

- Normalized Attention Module (NAM): Insert NAM into the model's neck (feature fusion network). This module adaptively recalibrates channel and spatial attention weights, emphasizing informative features and suppressing noise [9].

- Training: Use the Adam optimizer. The model is trained to minimize a loss function that combines bounding box regression, objectness, and classification loss.
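The NAM channel gate can be sketched in a few lines. The version below follows the channel branch of NAM as it is commonly described. Each channel is weighted by the magnitude of its batch-normalization scale factor (γ), and a sigmoid of the weighted, normalized features then gates the input. This is a NumPy illustration with hand-set γ and β and batch statistics, not the YOLO-GA implementation [9]. The spatial branch and the placement of the module in the neck are omitted.

```python
import numpy as np


def nam_channel_attention(x, gamma, beta, eps=1e-5):
    """x: (N, C, H, W); gamma, beta: (C,) batch-norm scale and shift."""
    mean = x.mean(axis=(0, 2, 3), keepdims=True)
    var = x.var(axis=(0, 2, 3), keepdims=True)
    bn = (x - mean) / np.sqrt(var + eps) * gamma[None, :, None, None] + beta[None, :, None, None]
    # Channel importance = |gamma_c| / sum_j |gamma_j|
    weights = np.abs(gamma) / np.abs(gamma).sum()
    attention = 1.0 / (1.0 + np.exp(-bn * weights[None, :, None, None]))
    return attention * x  # gate the original (residual) features


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    features = rng.standard_normal((2, 4, 5, 5))
    gamma = np.array([1.0, 0.0, 0.5, 2.0])  # hypothetical learned scales
    beta = np.array([0.1, 0.3, -0.2, 0.0])
    print(nam_channel_attention(features, gamma, beta).shape)
```

A channel whose γ is zero receives zero weight, so its gate is a constant 0.5 that carries no feature-dependent emphasis. Channels with large |γ| vary more after normalization, and their gates respond more strongly to the features.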

3. Validation & Analysis

- Performance Metrics: Evaluate the model on a held-out test set using precision, recall, F1-score, and mean Average Precision (mAP) at an Intersection-over-Union (IoU) threshold of 0.5 [8].

- Visualization: Use class activation mapping (e.g., Grad-CAM) to generate heatmaps of the model's attention. This allows you to verify that the model is focusing on the correct morphological structures of the parasite egg, providing interpretability and a check against learning spurious correlations [9].
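Precision, recall, and F1 at IoU 0.5 all rest on one matching step, sketched below under common assumptions. Detections are processed in order of confidence, and each ground-truth egg can be matched at most once, so a second detection of the same egg counts as a false positive. The function names and boxes are illustrative. mAP@0.5 additionally summarizes precision over the full range of confidence thresholds and averages across classes, which this sketch does not compute.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_detections(detections, ground_truth, iou_threshold=0.5):
    """Greedy matching by descending confidence; returns (tp, fp, fn).

    detections: list of (box, score); ground_truth: list of boxes.
    """
    order = sorted(range(len(detections)), key=lambda k: detections[k][1], reverse=True)
    matched = set()
    tp = fp = 0
    for k in order:
        box = detections[k][0]
        best_j, best_iou = None, iou_threshold
        for j, gt in enumerate(ground_truth):
            overlap = iou(box, gt)
            if j not in matched and overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j is None:
            fp += 1  # no unmatched egg overlaps enough: false positive
        else:
            matched.add(best_j)
            tp += 1
    return tp, fp, len(ground_truth) - len(matched)


def precision_recall_f1(tp, fp, fn):
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


if __name__ == "__main__":
    gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
    dets = [((1, 1, 10, 10), 0.9), ((50, 50, 60, 60), 0.8), ((0, 0, 10, 10), 0.7)]
    counts = match_detections(dets, gts)
    print(counts, precision_recall_f1(*counts))
```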

Quantitative Performance of Detection Models

The following table summarizes the performance of various advanced models reported in recent literature, providing benchmarks for your own experiments.

Table 1: Performance Metrics of Recent Parasite Detection Models

| Model Name | Target Parasite | Key Innovation | Reported Precision | Reported mAP@0.5 | Citation |
|---|---|---|---|---|---|
| YCBAM | Pinworm | Integrates YOLO with self-attention & CBAM. | 0.997 | 0.995 | [8] |
| YOLO-GA | Eimeria oocysts | Combines Contextual Transformer (CoT) & Normalized Attention (NAM). | 0.952 | 0.989 | [9] |
| AIDMAN | Malaria (Plasmodium) | YOLOv5 + Attentional Aligner + CNN classifier. | N/A | 0.986 (Cell-level) | [11] |
| CoAtNet-based Model | Various Intestinal Parasites | Hybrid convolution and attention network. | N/A | 0.93 (Avg. Accuracy) | [10] |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Microscopy-Based Parasite Detection Workflows

| Item / Reagent | Function in the Experiment | Example & Notes |
|---|---|---|
| Giemsa Stain | Stains cellular components of parasites to enhance contrast and visibility for identification. | Used for staining thin blood film smears for malaria parasite detection [7]. |
| M9 Modified Medium | A defined culture medium for maintaining and growing bacterial strains under controlled conditions. | Used in studies investigating the morphology of antibiotic-resistant E. coli [14]. |
| Cell Painting Assay Kits | A high-content microscopy assay using multiple fluorescent dyes to label various cellular organelles. | Enables unbiased morphological profiling of cells, useful for detecting subtle morphological signatures [15]. |
| Phosphate Buffered Saline (PBS) | A salt solution used for washing cell pellets and resuspending samples to maintain a stable pH. | Used to prepare bacterial specimens for morphological observation under light microscopy [14]. |
| Methanol | Used as a fixative for thin blood smears, preserving the cellular structure before staining. | Applied to air-dried blood smears before Giemsa staining [7]. |

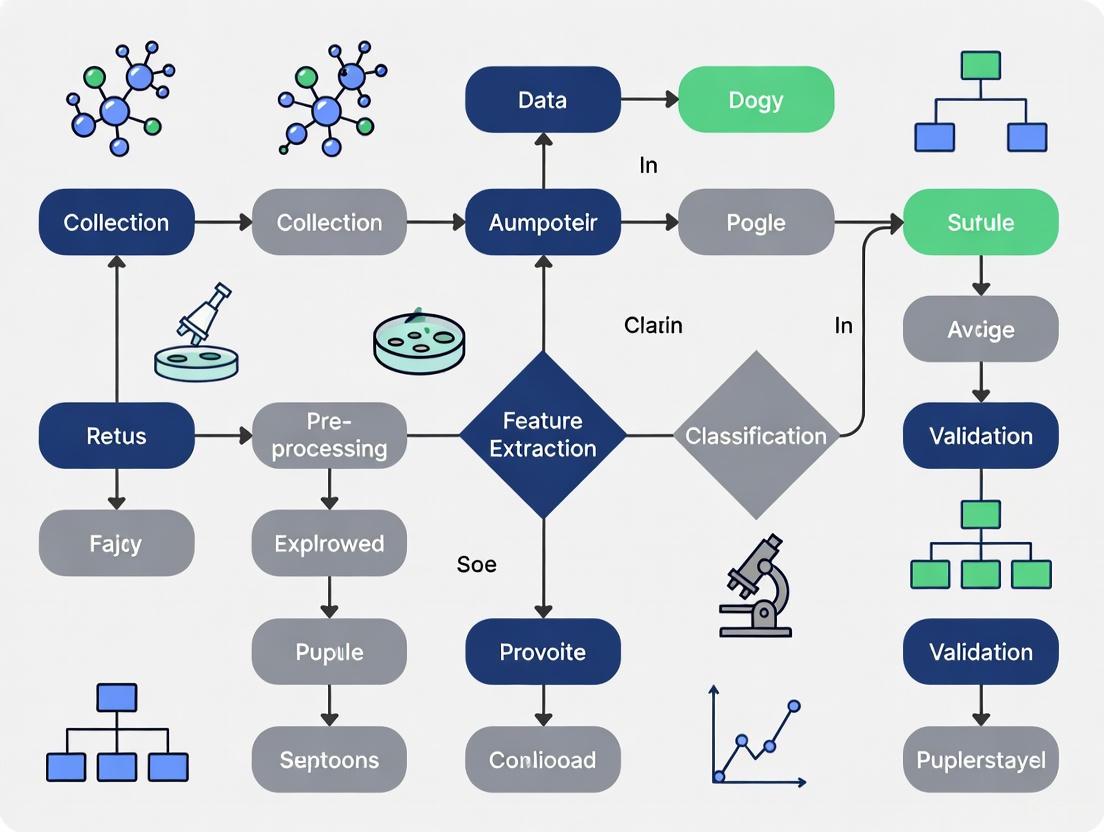

Workflow and System Diagrams

Automated Detection Workflow

YOLO-GA Model Architecture

Frequently Asked Questions

What is a "false positive" in the context of automated parasite detection? A false positive occurs when a detection model identifies an area or object as a target of interest (like a parasite egg) when it is not. In medical imaging more broadly, a model might flag a bubble, mucosal fold, or piece of debris as a lesion; such artifacts are a documented source of false alerts in computer-aided polyp detection during colonoscopy [16]. The exact definition can vary. Some studies define a false positive based on how long an alert box is continuously traced by the system on a non-target area [16].

Why are false positives a critical metric to benchmark? High false positive rates can significantly undermine the utility of an automated detection system. They can overwhelm human reviewers with false alarms, reduce trust in the system, and waste computational and investigative resources. Benchmarking helps compare different models and optimize the trade-off between sensitivity (finding all true positives) and specificity (avoiding false positives) [16] [17].

What factors commonly cause false positives in parasite detection models? Common causes include:

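- Morphological Similarities: Non-target objects can resemble the target. In computer-aided colonoscopy, bubbles, stool particles, and mucosal folds trigger false alerts [16]; in stool microscopy, debris and other particles can likewise resemble parasite eggs.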

- Interfering Substances: Rheumatoid factor (RF) has been documented to cause false positives in rapid diagnostic tests for other parasitic diseases, such as malaria [18].

- Poor Data Quality: The quality of the input data, such as inadequate bowel preparation in colonoscopy or poor staining in microscopy, is a major contributor to false alerts [16].

- Model Architecture Limitations: Some models may struggle with objects at certain scales, lighting conditions, or levels of occlusion, leading to misidentification [19].

How can I reduce the false positive rate of my detection model? Strategies can be divided into two main categories:

- Prevention during Training/Configuration: Fine-tuning the model's matching algorithms and similarity thresholds, using high-quality and diverse training data that includes common false positive sources, and incorporating data augmentation [19] [17].

- Post-Processing and Suppression: Implementing rules or a secondary AI model to automatically clear likely false positives based on additional context (e.g., size, shape, or the presence of other identifiers), providing a transparent and auditable reason for the suppression [17].

Troubleshooting Guides

Guide: Diagnosing a High False Positive Rate

Problem: Your detection model is generating an unacceptably high number of false alerts.

Investigation Steps:

- Characterize the False Positives: Manually review a sample of the false positives and categorize them. Are they predominantly bubbles, folds, or a specific type of debris? This identifies the primary source of noise [16].

- Analyze Model Performance by Subgroup: Check if the high false positive rate is concentrated in a specific subset of your data, such as images with poor quality or from a particular source.

- Review the Definition of a Positive: In video-based detection, a very sensitive system might trigger instantaneous alerts. Assess if applying a minimum duration threshold for a valid positive (e.g., ≥0.5 seconds) would filter out transient noise without missing true positives [16].

- Check for Class Imbalance: Ensure your training data is not heavily skewed toward either background/negative examples or positive examples. A strong imbalance biases the model toward the majority class, which can shift its errors toward false negatives or toward false positives [19].
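The minimum-duration rule from the step on reviewing the definition of a positive can be sketched as follows. The sketch assumes the system emits one boolean alert flag per video frame for a given region. The function names, the 30 fps frame rate, and the 0.5-second default are illustrative.

```python
import math


def alert_segments(frame_flags):
    """Group consecutive True frames into (start, end) runs, end exclusive."""
    segments, start = [], None
    for i, flag in enumerate(frame_flags):
        if flag and start is None:
            start = i
        elif not flag and start is not None:
            segments.append((start, i))
            start = None
    if start is not None:
        segments.append((start, len(frame_flags)))
    return segments


def persistent_alerts(frame_flags, fps, min_seconds=0.5):
    """Keep only alerts lasting at least min_seconds; shorter ones are treated as noise."""
    min_frames = math.ceil(min_seconds * fps)
    return [(s, e) for s, e in alert_segments(frame_flags) if e - s >= min_frames]


if __name__ == "__main__":
    flags = [True] * 5 + [False] * 3 + [True] * 20 + [False] * 2
    print(alert_segments(flags))             # [(0, 5), (8, 28)]
    print(persistent_alerts(flags, fps=30))  # [(8, 28)]
```

Raising `min_seconds` removes more transient false alarms, but it also risks discarding genuine targets that appear only briefly. The value should therefore be checked against annotated footage.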

Common Solutions:

- Data Augmentation: Augment your training dataset with more examples of the common false positive categories to teach the model to ignore them [19].

- Adjust Detection Threshold: Increase the confidence threshold required for the model to make a positive call. This reduces false positives but usually increases false negatives, so choose the operating threshold on a validation set.

- Implement Secondary Filters: Create a rule-based system to dismiss detections that do not meet certain size, shape, or intensity criteria for a true parasite egg [17].
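A sketch of such a rule-based filter follows. It returns a reason with every decision, so that each suppression is transparent and auditable. The `Detection` and `EggCriteria` fields and all numeric limits in the example are hypothetical placeholders. Real limits would come from reference morphometrics for the target species and from the microscope's pixel-to-micrometre calibration.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    width_um: float        # bounding-box width in micrometres
    height_um: float       # bounding-box height in micrometres
    mean_intensity: float  # mean grey level inside the box, scaled to [0, 1]


@dataclass
class EggCriteria:
    min_length_um: float
    max_length_um: float
    max_aspect_ratio: float
    min_intensity: float
    max_intensity: float


def check_detection(d, c):
    """Return (keep, reason) so every suppression can be audited later."""
    length = max(d.width_um, d.height_um)
    breadth = max(min(d.width_um, d.height_um), 1e-9)
    if not c.min_length_um <= length <= c.max_length_um:
        return False, f"length {length:.1f} um outside [{c.min_length_um}, {c.max_length_um}]"
    if length / breadth > c.max_aspect_ratio:
        return False, f"aspect ratio {length / breadth:.2f} > {c.max_aspect_ratio}"
    if not c.min_intensity <= d.mean_intensity <= c.max_intensity:
        return False, f"intensity {d.mean_intensity:.2f} outside expected range"
    return True, "passed all rules"


if __name__ == "__main__":
    criteria = EggCriteria(50, 100, 2.5, 0.1, 0.9)  # placeholder values only
    for det in (Detection(80, 50, 0.5), Detection(20, 18, 0.5), Detection(60, 55, 0.98)):
        print(det, check_detection(det, criteria))
```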

Guide: Implementing a Benchmarking Protocol for False Positives

Objective: To establish a standardized method for comparing the false positive performance of different detection models or model versions.

Experimental Protocol:

- Curate a Gold Standard Test Set: Assemble a diverse and well-annotated dataset of images. The annotations must include not only the locations of true parasites but also labels for common false positive sources (e.g., "fold," "bubble," "debris") [20] [16].

- Define Your False Positive Metric: Clearly specify the criteria for a false positive. For image-based detection, this could be at the image-level, object-level, or for video, a time-based threshold (e.g., any alert lasting ≥1 second on a non-target area) [16].

- Run Inference: Process the entire gold standard test set with the model(s) you are evaluating.

- Calculate Key Metrics: Tally the results and calculate the following core metrics for a comprehensive view [21] [20]:

- False Positives Per Image (FPPI): The average number of false positives in each image.

- Specificity: The proportion of truly negative samples (e.g., images containing no target) that the system correctly reports as negative.

- Precision: The proportion of positive identifications that were actually correct.
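The three metrics can be computed from per-image tallies, as in the sketch below. It assumes object-level TP/FP counts have already been obtained by matching detections to the annotations. True negatives are not defined at the object level, so the sketch uses an image-level definition of specificity: a target-free image counts as a true negative only if it produced no detections. These conventions and the example tallies are assumptions.

```python
def benchmark_false_positives(images):
    """images: list of dicts with object-level 'tp' and 'fp' counts and an
    image-level ground-truth flag 'has_target'."""
    n_images = len(images)
    total_tp = sum(im["tp"] for im in images)
    total_fp = sum(im["fp"] for im in images)
    negatives = [im for im in images if not im["has_target"]]
    true_negatives = sum(1 for im in negatives if im["fp"] == 0)
    return {
        "fppi": total_fp / n_images,  # false positives per image, over all images
        "precision": total_tp / (total_tp + total_fp) if total_tp + total_fp else 0.0,
        "specificity": true_negatives / len(negatives) if negatives else float("nan"),
    }


if __name__ == "__main__":
    tallies = [
        {"tp": 3, "fp": 1, "has_target": True},
        {"tp": 0, "fp": 0, "has_target": False},
        {"tp": 0, "fp": 2, "has_target": False},  # e.g. two bubbles flagged
        {"tp": 2, "fp": 0, "has_target": True},
    ]
    print(benchmark_false_positives(tallies))
```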

The table below summarizes quantitative performance data from recent relevant studies to serve as a benchmarking reference.

Table 1: Benchmarking False Positive Performance in Diagnostic Models

| Study / Model | Application Context | False Positive Rate / Incidence | Specificity | Related Performance Metrics |
|---|---|---|---|---|
| Multi-model Deep Learning Framework [21] | Malaria Detection (Thin Blood Smears) | Not Explicitly Stated | 96.90% | Accuracy: 96.47%, Sensitivity: 96.03% |
| ParaEgg Diagnostic Tool [20] | Human Intestinal Helminthiasis | -- | 95.5% | Sensitivity: 85.7%, PPV: 97.1%, NPV: 80.1% |
| Kato-Katz Smear (for comparison) [20] | Human Intestinal Helminthiasis | -- | 95.5% | Sensitivity: 93.7% |
| CADe for Colon Polyps (≥0.5s threshold) [16] | Colonoscopy Polyp Detection | 1.8 per colonoscopy | 93.2% | Accuracy: 97.8% |
| CADe for Colon Polyps (≥2s threshold) [16] | Colonoscopy Polyp Detection | 0.05 per colonoscopy | 99.8% | Accuracy: 99.9% |
| Malaria RDTs (in RF-positive patients) [18] | Malaria Rapid Diagnostic Test | 2.2% - 13% (by test brand) | -- | -- |

Guide: Selecting a Model Architecture to Minimize False Positives

Problem: Choosing an object detection model that balances high sensitivity with a low false positive rate.

Considerations and Options: The choice of model architecture inherently influences its propensity for false positives. Below is a comparison of modern architectures and their characteristics.

Table 2: Object Detection Models and False Positive Considerations

| Model Architecture | Key Principle | Strengths for FP Reduction | Weaknesses & FP Risks |
|---|---|---|---|
| YOLO Series [19] [22] | Single-shot detector that performs localization and classification in one pass. | High speed, suitable for real-time applications. Newer versions integrate attention mechanisms for better small-object discrimination [22]. | Can struggle with small objects or objects in close proximity, potentially leading to missed detections or false positives on background noise [19]. |
| EfficientDet [19] | Uses a weighted Bi-directional Feature Pyramid Network (BiFPN) for efficient multi-scale feature fusion. | High computational efficiency and strong performance. Compound scaling balances model size and accuracy. Excellent at detecting objects at various scales. | While generally accurate, its performance is dependent on the quality and diversity of the training data to learn robust features against false positives. |
| Faster R-CNN / Cascade R-CNN [19] | Two-stage detector that first proposes regions of interest and then classifies them. | Typically achieves high accuracy and precision. The two-stage process can be more effective at filtering out non-objects. | Slower than single-shot detectors due to its complex pipeline, which may not be suitable for all real-time applications [19]. |
| Models with Attention Mechanisms [22] | Integrates attention modules to help the model focus on more relevant features in the image. | Explicitly designed to enhance feature representation, which can significantly improve the detection of small objects like early-stage parasites and suppress background noise [22]. | Increased model complexity and potential need for more data to train effectively. |

Decision Workflow: The following diagram outlines a logical pathway for selecting and optimizing a model to achieve a low false positive rate.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Parasite Detection Experiments

| Reagent / Material | Function in Research Context |
|---|---|
| Giemsa Stain | The gold standard for staining blood smears to visualize malaria parasites; used for preparing ground truth data [21]. |
| Formalin-Ether Concentration Technique (FET) | A conventional copromicroscopic method for concentrating parasite eggs in stool samples; used as a comparator for novel diagnostic tools [20]. |
| Kato-Katz Smear | A semi-quantitative method for preparing thick stool smears to detect and count helminth eggs; often used as a reference standard in field studies [20]. |
| ParaEgg Diagnostic Tool | A newer copromicroscopic tool evaluated for its high sensitivity and specificity in detecting intestinal helminths in both human and animal samples [20]. |
| Sodium Nitrate Flotation (SNF) | A flotation technique that uses a specific solution to separate and concentrate parasite eggs from stool debris for easier microscopic identification [20]. |
| Gold Standard Test Dataset | A meticulously annotated collection of images (e.g., 27,558 thin blood smear images) used for training and, crucially, for benchmarking model performance against a known truth [21]. |

Experimental Workflow for False-Positive Mitigation

The following diagram outlines a key experimental pipeline, adapted from mass spectrometry-based screening, for identifying and mitigating a specific false-positive mechanism [23].

Troubleshooting Guide: Common False-Positive Scenarios

This section addresses specific issues researchers might encounter during high-throughput screening (HTS) experiments.

| Problem Area | Specific Issue & Symptoms | Proposed Solution & Methodology | Key Performance Metrics for Validation |
|---|---|---|---|
| Compound Interference | Unexplained inhibition in positive control wells; signal inconsistency across replicates [23]. | Implement an orthogonal assay with a different detection principle (e.g., non-optical readout) to confirm activity [23]. | >5-fold reduction in hit rate after orthogonal confirmation; Z' factor >0.5 for assay quality [23]. |
| Statistical False Positives | High hit rate (>10%); results fail upon retest or in dose-response; p-values just below 0.05 [24]. | Apply multiple testing corrections (e.g., Benjamini-Hochberg procedure) to control the False Discovery Rate (FDR) [24]. | FDR controlled at ≤5%; comparison of pre- and post-correction hit lists [24]. |
| Assay Artifact | Signal drift over time; correlation between compound concentration and signal in negative controls. | Include internal controls in every plate to normalize for background signal and systematic drift. | Coefficient of variation (CV) <15% across all control wells; stable signal in negative controls over time. |
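The Benjamini-Hochberg step-up procedure referenced in the table is short enough to sketch directly. It sorts the m p-values, finds the largest rank k with p(k) ≤ (k/m)·q, and rejects the k smallest. The p-values below are invented for illustration.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Indices of hypotheses rejected with the false discovery rate controlled at q."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # ranks 1..m by p-value
    k_max = 0
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= rank / m * q:
            k_max = rank  # step-up: keep the largest rank that passes
    return sorted(order[:k_max])


if __name__ == "__main__":
    p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
    print(sum(x < 0.05 for x in p))  # 5
    print(benjamini_hochberg(p))     # [0, 1]
```

Here five of the ten p-values fall below 0.05, but only two survive FDR control at q = 0.05.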

Research Reagent Solutions for HTS

This table details essential materials and their functions in setting up a robust HTS campaign focused on minimizing false positives.

| Reagent / Material | Function in the Assay | Key Characteristics & Selection Criteria |
|---|---|---|
| Orthogonal Assay Kits | To confirm primary hits using a different biochemical or biophysical principle, ruling out technology-specific artifacts [23]. | Should have a different readout (e.g., Mass Spectrometry vs. Fluorescence) and not share reagents with the primary assay [23]. |
| High-Fidelity Enzymes/Substrates | Essential components for the biochemical reaction in the primary screen. | High purity and batch-to-batch consistency are critical to minimize background noise and variability. |
| Stable Cell Lines | For cell-based assays, used to express the target protein or pathway. | Should demonstrate stable expression over multiple passages (>20) and low background signaling. |
| Control Compound Plates | Included on every screening plate for quality control and normalization. | Should contain known inhibitors/activators (positive controls) and neutral compounds (negative controls). |

Statistical Methods for Multiple Testing Corrections

When many comparisons are made in a single study, the probability of obtaining at least one false positive (the family-wise error rate) rises quickly with the number of comparisons. The table below shows this for independent tests at α = 0.05. Statistical corrections are essential to keep this family-wise error rate under control [24].

| Number of Comparisons (C) | Per-Comparison α (αpc) | Family-Wise Error Rate (αfw) |
|---|---|---|
| 1 | 0.05 | 0.05 |
| 3 | 0.05 | 0.14 |
| 6 | 0.05 | 0.26 |
| 10 | 0.05 | 0.40 |
| 15 | 0.05 | 0.54 |

Formula (assuming independent comparisons): αfw = 1 - (1 - αpc)^C [24]
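As a quick check of the table above, the formula can be evaluated directly. The following is a minimal Python sketch. Like the formula, it assumes the C comparisons are independent. The function name `family_wise_error_rate` is purely illustrative.

```python
def family_wise_error_rate(alpha_pc: float, c: int) -> float:
    """Probability of at least one false positive among c independent tests,
    each performed at the per-comparison significance level alpha_pc."""
    return 1.0 - (1.0 - alpha_pc) ** c


if __name__ == "__main__":
    # Reproduces the alpha_fw column of the table for alpha_pc = 0.05.
    for c in (1, 3, 6, 10, 15):
        print(f"C = {c:2d}  alpha_fw = {family_wise_error_rate(0.05, c):.2f}")
```

Running it prints 0.05, 0.14, 0.26, 0.40 and 0.54, matching the table.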

The table below compares two common approaches for correcting for multiple comparisons.

| Correction Method | Procedure | Best Use Case | Advantages / Disadvantages |
|---|---|---|---|
| Bonferroni | Divide the significance level (α) by the number of comparisons (c); a result is significant only if p ≤ α/c [24]. | When strict control of Type I errors (false positives) is required. | Advantage: Simple to implement. Disadvantage: Very conservative; power drops as c grows, raising the false negative rate [24]. |
| Benjamini-Hochberg (BH) | 1. Set a target False Discovery Rate (FDR), Q. 2. Sort the m p-values in ascending order, p(1) ≤ ... ≤ p(m). 3. Find the largest k such that p(k) ≤ (k/m) * Q. 4. Declare the hypotheses ranked 1 to k significant [24]. | Exploratory analyses with many tests, where a small proportion of false discoveries is tolerable. | Advantage: Less strict and more powerful than Bonferroni. Disadvantage: Controls the expected proportion of false discoveries among significant results, not the probability of making any false discovery at all [24]. |
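Both procedures take only a few lines of code. The following is an illustrative plain-Python sketch. The function names are hypothetical and not a specific library's API. In practice, an established implementation such as `statsmodels.stats.multitest.multipletests` is preferable.

```python
from typing import List, Sequence


def bonferroni(p_values: Sequence[float], alpha: float = 0.05) -> List[bool]:
    """Reject H0_i when p_i <= alpha / m (controls the family-wise error rate)."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg(p_values: Sequence[float], q: float = 0.05) -> List[bool]:
    """Benjamini-Hochberg step-up procedure (controls the false discovery rate).

    Returns a reject flag for each p-value, in the ORIGINAL input order.
    """
    m = len(p_values)
    # Indices that sort the p-values ascending: p(1) <= ... <= p(m).
    order = sorted(range(m), key=lambda i: p_values[i])
    # Largest 1-based rank k with p(k) <= (k / m) * q.
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            k_max = rank
    # Reject every hypothesis ranked 1..k_max, even one that individually
    # exceeded its own rank threshold (the "step-up" rule).
    reject = [False] * m
    for rank, idx in enumerate(order, start=1):
        reject[idx] = rank <= k_max
    return reject


if __name__ == "__main__":
    p = [0.039, 0.001, 0.205, 0.008, 0.041, 0.060, 0.042, 0.074]
    print(bonferroni(p))          # only p = 0.001 passes 0.05 / 8
    print(benjamini_hochberg(p))  # p = 0.001 and p = 0.008 are rejected
```

With these eight made-up p-values, Bonferroni (threshold 0.05/8 = 0.00625) rejects only p = 0.001. BH also rejects p = 0.008, which illustrates its greater power.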

Frequently Asked Questions (FAQs)

Q1: What is the difference between a false positive and a false negative in a screening context? A false positive occurs when a compound is incorrectly identified as a "hit," while a false negative occurs when a truly active compound is missed. Reducing one can often increase the other, so the balance must be chosen based on the consequences for the project [25].

Q2: Why can't we just use a stricter p-value threshold instead of formal corrections? A stricter fixed threshold (e.g., p < 0.01) does reduce false positives, but it is chosen arbitrarily and does not scale with the number of tests. With 100 independent tests at p < 0.01, about one false positive is still expected when no real effects exist. Statistical corrections like Bonferroni or BH tie the threshold to the number of tests. This provides a mathematically sound framework for controlling the error rate across the entire experiment, which is especially important when the number of tests is large [24].

Q3: Our primary screen uses a fluorescent readout. What is a good orthogonal method for confirmation? Mass spectrometry (MS)-based assays are well suited to orthogonal confirmation. They are label-free and measure substrate or product directly. As a result, they are not susceptible to the optical interference that affects fluorescence-based assays (e.g., compound autofluorescence, quenching, or inner-filter effects) [23].

Q4: How many significant figures should we use when reporting corrected p-values? It is common practice to report p-values to two or three significant figures (e.g., p = 0.034). For very small p-values, scientific notation is appropriate (e.g., p = 2.1e-5). Ensure your analysis software or graphing tool is correctly configured to handle this output [26].

Advanced Detection Architectures: From YOLO to Attention Mechanisms for Enhanced Specificity

Leveraging YOLO-based Models for Efficient and Accurate Egg Localization

Troubleshooting Guide: Common YOLO Experimental Challenges

Q1: My model achieves a high mAP@0.5 but has an unacceptable number of false positives in real-world use. How can I address this?

- Problem Interpretation: A high mAP@0.5 combined with many false positives in practice usually means the model finds most eggs but also fires on non-egg objects at the confidence threshold you actually deploy. mAP summarizes precision across all confidence thresholds, so it does not directly reveal the false positive rate at a single operating point. In addition, mAP@0.5 uses a relatively lenient Intersection over Union (IoU) threshold of 0.50. Any detection that overlaps the ground truth by just 50% counts as correct, which can mask imprecise bounding boxes [27].

- Recommended Actions:

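- Increase the IoU Threshold: Evaluate your model's performance using mAP@0.50:0.95. An unusually large drop in this metric relative to mAP@0.5 points to imprecise localization. Consider using a higher IoU threshold during validation to enforce stricter localization requirements. For non-maximum suppression (NMS), the direction is reversed: a lower NMS IoU threshold suppresses more overlapping boxes. This removes duplicate detections that would otherwise count as false positives [27].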

- Adjust Confidence Threshold: Tune the confidence threshold upward. A higher confidence score will only retain predictions the model is most certain about, reducing false detections at the cost of potentially missing some true positives with lower confidence [27].

- Integrate a Secondary Classifier: For critical applications, use your YOLO model as a region proposer. Crop the image regions from YOLO's detections and re-classify them with one or more traditional machine learning models (e.g., SVM) using features such as HOG. A voting scheme across these models then filters out false positives [28].

- Review Training Data: Ensure your dataset includes ample "hard negative" examples—image patches that resemble parasite eggs (e.g., debris, bubbles, artifacts) but are not. This teaches the model to distinguish relevant features better [29].
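To see how the confidence and NMS thresholds interact, the following NumPy sketch applies a confidence cutoff followed by greedy NMS. It is a generic illustration, not the post-processing code of any particular YOLO release. Boxes are assumed to be in (x1, y1, x2, y2) pixel format, and the default thresholds are placeholders to tune on your own validation data.

```python
import numpy as np


def box_iou(box: np.ndarray, boxes: np.ndarray) -> np.ndarray:
    """IoU between one box and N boxes, all in (x1, y1, x2, y2) format."""
    x1 = np.maximum(box[0], boxes[:, 0])
    y1 = np.maximum(box[1], boxes[:, 1])
    x2 = np.minimum(box[2], boxes[:, 2])
    y2 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter)


def filter_detections(boxes, scores, conf_thres=0.5, nms_iou_thres=0.45):
    """Drop low-confidence boxes, then apply greedy non-maximum suppression.

    Raising conf_thres removes uncertain detections; lowering nms_iou_thres
    suppresses more overlapping (duplicate) boxes. Returns indices of the
    kept boxes, highest score first.
    """
    boxes = np.asarray(boxes, dtype=float)
    scores = np.asarray(scores, dtype=float)
    candidates = np.flatnonzero(scores >= conf_thres)
    # Visit candidates from highest to lowest confidence.
    order = candidates[np.argsort(-scores[candidates])]
    keep = []
    while order.size > 0:
        best = order[0]
        keep.append(int(best))
        rest = order[1:]
        # Discard remaining boxes that overlap the chosen box too much.
        order = rest[box_iou(boxes[best], boxes[rest]) <= nms_iou_thres]
    return keep
```

Consider two heavily overlapping boxes (IoU about 0.82) scored 0.92 and 0.85. With the default `nms_iou_thres=0.45`, only the first is kept. With `nms_iou_thres=0.9`, both are kept, and the duplicate would be scored as a false positive.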

Q2: The model performs well on clean, lab-acquired images but fails when deployed on data from a different microscope or preparation technique. How can I improve robustness?

- Problem Interpretation: This is a classic domain shift problem. The model has overfitted to the specific visual characteristics (e.g., lighting, contrast, background, magnification) of its training data and cannot generalize to new domains [30].

- Recommended Actions:

- Employ Multi-Domain Training: If possible, train your model on a consolidated dataset comprising images from multiple sources—different microscopes, sample preparation methods (e.g., Kato-Katz, FLOTAC), and environmental conditions [6] [30].

- Use Domain-Invariant Learning: During training, use techniques like Maximum Mean Discrepancy (MMD) with Normalized Squared Feature Estimation (NSFE) to explicitly encourage the model to learn features that are consistent across different domains. This method helps the model focus on the essential features of the egg itself, rather than the domain-specific noise [30].

- Incorporate Data Augmentation: Aggressively use data augmentation (e.g., color jitter, Gaussian blur, motion blur, noise injection) to simulate visual variations the model might encounter in different deployment environments [6].
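The core quantity behind MMD-based domain-invariant training is simple to compute. The following sketch estimates the squared MMD between two batches of backbone features using a Gaussian kernel. It shows plain MMD only, not the NSFE variant cited above. In a real training loop, the same expression would be written in your deep-learning framework and added to the detection loss. The kernel bandwidth `sigma` is an assumption to tune.

```python
import numpy as np


def rbf_kernel(a: np.ndarray, b: np.ndarray, sigma: float) -> np.ndarray:
    """Gaussian kernel matrix between the rows of a (n, d) and b (m, d)."""
    sq_dists = (
        np.sum(a**2, axis=1)[:, None]
        + np.sum(b**2, axis=1)[None, :]
        - 2.0 * a @ b.T
    )
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * sigma**2))


def mmd2(source: np.ndarray, target: np.ndarray, sigma: float = 1.0) -> float:
    """Biased estimate of squared MMD between two batches of feature vectors.

    Values near zero mean the two domains produce similar feature
    distributions; larger values indicate a domain gap.
    """
    k_ss = rbf_kernel(source, source, sigma)
    k_tt = rbf_kernel(target, target, sigma)
    k_st = rbf_kernel(source, target, sigma)
    return float(k_ss.mean() + k_tt.mean() - 2.0 * k_st.mean())
```

Features from two microscopes with similar statistics give a small value, and a systematic shift between them increases it. Minimizing this term pushes the backbone toward domain-invariant features.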

Q3: I need to deploy the model on a device with limited computational resources. How can I maintain accuracy while reducing the model's size and latency?

- Problem Interpretation: Standard YOLO models might be too heavy for edge devices, requiring a balance between performance and efficiency.

- Recommended Actions:

- Adopt a Lightweight Architecture: Start with a lightweight baseline like YOLOv5n or YOLOv8n. Further modify the architecture by replacing the backbone with a more efficient network like GhostNet, which can significantly reduce parameters and computations [31] [32].

- Use Advanced Feature Pyramids: Replace the standard Feature Pyramid Network (FPN) with an Asymptotic Feature Pyramid Network (AFPN). The AFPN more efficiently fuses multi-scale features through a hierarchical and gradual fusion process, improving the detection of small objects like parasite eggs while reducing redundant information and computational cost [31].

- Optimize for Deployment: Use deployment toolkits like TensorRT to optimize the trained model. This can significantly increase inference speed (e.g., by 1.4x in one reported case) with little or no loss of accuracy, making real-time analysis on portable microscopes feasible [32].

Frequently Asked Questions (FAQs)

Q: What is the practical difference between mAP50 and mAP50-95, and which should I prioritize for my research?

A: These metrics evaluate different aspects of your model's performance, and the choice depends on your application's requirements.

- mAP50 (mean Average Precision at IoU=0.50): Measures how good your model is at finding eggs, even if the bounding box is not perfectly precise. It is useful for initial screening where the primary goal is to not miss any potential eggs [27].

- mAP50-95 (mean Average Precision averaged over IoU from 0.50 to 0.95): A much stricter metric. It evaluates how good your model is at both finding and precisely localizing eggs. A high mAP50-95 indicates robust performance and is often more representative of real-world usability where accurate quantification and analysis are needed [27].

For parasite egg detection, where accurate counting and size analysis might be important, a high mAP50-95 is a better indicator of a reliable model [8].
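The difference between the two metrics is easy to see with a single prediction. The sketch below computes the IoU of a predicted box shifted 20 pixels from its ground truth. It then counts how many of the ten mAP50-95 IoU thresholds (0.50, 0.55, ..., 0.95) would still match it. The boxes are made-up values. A real mAP computation also involves ranking by confidence and matching across all predictions.

```python
def iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


# The ten IoU thresholds averaged by mAP50-95.
THRESHOLDS = [round(0.50 + 0.05 * i, 2) for i in range(10)]

ground_truth = (0, 0, 100, 100)
prediction = (20, 0, 120, 100)  # same size, shifted 20 px to the right

score = iou(ground_truth, prediction)  # 8000 / 12000
matched = [t for t in THRESHOLDS if score >= t]
print(f"IoU = {score:.3f}; matched at {len(matched)} of {len(THRESHOLDS)} thresholds")
```

This prints `IoU = 0.667; matched at 4 of 10 thresholds`. The prediction is a full true positive for mAP50 but is matched only at 0.50 to 0.65 within mAP50-95. This is why loosely localized eggs depress mAP50-95.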

Q: How can I effectively reduce false negatives in my egg detection pipeline?

A: To minimize missed detections (false negatives), focus on recall-oriented strategies.

- Lower Confidence Threshold: Temporarily decrease the confidence threshold during inference to allow more potential detections to be considered. Be aware that this will likely increase false positives, which must then be managed [27].

- Data-Centric Improvements: Add more training examples of eggs that are occluded, out-of-focus, or have unusual orientations. Ensure your dataset is comprehensively annotated so the model learns the full spectrum of egg appearances [28].

- Model-Centric Improvements: For datasets with many small eggs, consider adding or enhancing a dedicated small-object detection layer in the YOLO architecture to better capture fine-grained features [32].

Q: My dataset is limited and imbalanced. What are the most effective strategies to tackle this?

A: Limited and imbalanced data is a common challenge in medical imaging.

- Leverage Transfer Learning: Start with a model pre-trained on a large, general-purpose dataset (like COCO) and fine-tune it on your specific parasitic egg data. This provides a strong foundational feature extractor [6].

- Apply Rigorous Data Augmentation: Use techniques like rotation, scaling, flipping, and color space adjustments to artificially increase the diversity and size of your training set, improving model generalization [6] [31].

- Utilize Attention Mechanisms: Integrate modules like the Convolutional Block Attention Module (CBAM) into your YOLO architecture. CBAM helps the model learn to focus on the most informative spatial and channel-wise features of the eggs, making it more data-efficient and robust, even with limited examples [8].
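The following minimal NumPy sketch shows the kinds of augmentation listed above: rotation, flipping, and color adjustment, plus additive noise. It assumes float RGB images in [0, 1]. Note that 90-degree rotations swap height and width for non-square images. Scaling and blur are omitted. For detection training, the same geometric transforms must also be applied to the bounding-box labels, which this sketch does not do.

```python
import numpy as np


def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Return a randomly perturbed copy of an H x W x 3 float image in [0, 1].

    Applies a random 90-degree rotation, a random horizontal flip, a random
    per-channel gain (a simple color-space adjustment) and additive Gaussian
    noise, then clips back to [0, 1].
    """
    out = np.rot90(image, k=int(rng.integers(0, 4)), axes=(0, 1))
    if rng.random() < 0.5:
        out = out[:, ::-1, :]  # horizontal flip
    gains = rng.uniform(0.8, 1.2, size=(1, 1, image.shape[2]))
    out = out * gains
    out = out + rng.normal(0.0, 0.02, size=out.shape)
    return np.clip(out, 0.0, 1.0)
```

Calling `augment` several times on the same image, with one shared `np.random.default_rng(seed)`, yields different variants for each training epoch.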

Performance Metrics & Quantitative Benchmarks

The following table summarizes key performance metrics from recent studies on egg detection, providing a benchmark for model evaluation.

Table 1: Performance Metrics of Recent Egg Detection Models

| Model / Study | Primary Task | Key Metric | Reported Score | Context & Notes |
|---|---|---|---|---|
| YCBAM (YOLOv8-based) [8] | Pinworm Egg Detection | mAP50 / mAP50-95 | 0.995 / 0.653 | Integrated self-attention and CBAM for high precision in noisy images. |
| YAC-Net (YOLOv5n-based) [31] | Parasite Egg Detection | mAP50 / Precision | 0.991 / 97.8% | Lightweight model; uses AFPN and C2f modules. |
| Multi-Domain YOLOv8 [30] | Cracked Chicken Egg Detection | mAP (Unknown IoU) | 88.8% | Trained with NSFE-MMD for robustness on unknown test domains. |
| YOLO-Goose (YOLOv8s-based) [32] | Goose & Egg Identification | mAP50 | 96.4% | Designed for individual animal identification and egg matching. |
| Faster R-CNN with ML Voting [28] | S. mansoni Egg Detection | AP@IoU=0.50 | 0.884 | Combined DL object detection with traditional ML to reduce false positives. |

Table 2: Core Object Detection Metrics and Their Interpretation [27]

| Metric | Definition | What a Low Score Indicates |
|---|---|---|
| Precision | Proportion of correct detections among all positive predictions. | High false positive rate; model predicts objects that are not present. |
| Recall | Proportion of actual objects that were successfully detected. | High false negative rate; model misses many true objects. |
| mAP50 | Average precision across classes at an IoU threshold of 0.50. | Model struggles with basic object finding. |
| mAP50-95 | Average precision across classes and IoU thresholds (0.50 to 0.95). | Model struggles with precise object localization. |
| F1 Score | Harmonic mean of Precision and Recall. | Low precision, low recall, or both; as a harmonic mean, F1 is pulled toward the weaker of the two. |
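The count-based metrics in Table 2 follow directly from true positives (TP), false positives (FP), and false negatives (FN). The sketch below uses hypothetical counts to show that cutting false positives raises precision and F1 while leaving recall unchanged.

```python
def detection_metrics(tp: int, fp: int, fn: int) -> dict:
    """Precision, recall and F1 from detection counts (0.0 when undefined)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts: the same 90 detected and 10 missed eggs,
# before and after removing most false positives.
print(detection_metrics(tp=90, fp=30, fn=10))  # precision 0.75, f1 about 0.818
print(detection_metrics(tp=90, fp=5, fn=10))   # precision about 0.947, f1 about 0.923
```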

Experimental Protocol: Reducing False Positives with a Hybrid DL-ML Approach

This protocol is based on a study that combined a Deep Learning object detector with traditional Machine Learning classifiers to effectively reduce false positives in S. mansoni egg diagnosis [28].

Objective: To implement a two-stage validation system where a YOLO model performs initial detection, and a secondary ML model verifies the predictions to filter out false positives.

Methodology:

Stage 1: Deep Learning-Based Object Detection

- Model Training: Train a YOLO model (e.g., YOLOv8) on your annotated dataset of parasite eggs.

- Inference for Region Proposals: Use the trained YOLO model on new images. Set a low confidence threshold (e.g., 0.3-0.5) to ensure high recall and minimize false negatives. The output is a set of bounding boxes (region proposals) for all suspected eggs.

Stage 2: Machine Learning-Based Verification

- Feature Extraction: For each proposed bounding box from Stage 1, crop the image region. Extract Histogram of Oriented Gradients (HOG) features from each cropped region. HOG provides a robust descriptor of shape and texture.

- ML Model Training: Train multiple traditional ML classifiers (e.g., Support Vector Machine - SVM, Random Forest - RF, k-Nearest Neighbors - k-NN) on a dataset of HOG features. This dataset must include correct egg patches (true positives) and challenging non-egg patches (hard negatives).

- Voting Scheme: During inference on new data, pass the HOG features of each proposed region through all the trained ML models. A final detection is only confirmed if a majority of the ML models classify the region as a true egg.

Expected Outcome: This hybrid approach leverages the high recall of YOLO for candidate generation and the high precision of traditional ML models for candidate verification, leading to a significant reduction in false positives and a more reliable automated diagnostic system [28].
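Stage 2 can be sketched with scikit-learn's `VotingClassifier`, using hard (majority) voting over an SVM, a Random Forest, and a k-NN classifier. To keep the example self-contained, the descriptor is a simplified HOG-like feature written in NumPy. It builds per-cell, magnitude-weighted orientation histograms with a single global normalization. It omits the overlapping-block normalization of full HOG, such as `skimage.feature.hog`. The function names, cell size, and classifier settings are illustrative assumptions. All crops are assumed to be resized to one common square size before feature extraction.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier, VotingClassifier
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC


def hog_like_features(patch: np.ndarray, cell: int = 8, bins: int = 9) -> np.ndarray:
    """Simplified HOG-style descriptor for a 2-D grayscale patch.

    Builds one histogram of unsigned gradient orientation (0-180 degrees) per
    cell, weighted by gradient magnitude, then L2-normalizes the concatenation.
    """
    gy, gx = np.gradient(patch.astype(float))  # derivatives along rows, cols
    magnitude = np.hypot(gx, gy)
    orientation = np.rad2deg(np.arctan2(gy, gx)) % 180.0
    h, w = patch.shape
    hists = []
    for r in range(0, h - cell + 1, cell):
        for c in range(0, w - cell + 1, cell):
            hist, _ = np.histogram(
                orientation[r:r + cell, c:c + cell],
                bins=bins,
                range=(0.0, 180.0),
                weights=magnitude[r:r + cell, c:c + cell],
            )
            hists.append(hist)
    v = np.concatenate(hists)
    return v / (np.linalg.norm(v) + 1e-8)


def build_verifier() -> VotingClassifier:
    """Majority ("hard") vote over SVM, Random Forest and k-NN classifiers."""
    return VotingClassifier(
        estimators=[
            ("svm", SVC(kernel="rbf")),
            ("rf", RandomForestClassifier(n_estimators=100, random_state=0)),
            ("knn", KNeighborsClassifier(n_neighbors=5)),
        ],
        voting="hard",
    )


def verify_proposals(verifier: VotingClassifier, patches) -> np.ndarray:
    """True where the ensemble confirms a YOLO proposal as an egg (label 1).

    `patches` are grayscale crops of the proposed boxes, resized to the
    same size that was used for training.
    """
    features = np.stack([hog_like_features(p) for p in patches])
    return verifier.predict(features) == 1
```

To use it, fit `build_verifier()` on features of true egg crops (label 1) and hard-negative crops (label 0). Then keep only the Stage 1 boxes for which `verify_proposals` returns True.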

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Materials and Computational Tools for Automated Parasite Egg Detection Research

| Item / Solution | Function / Description | Example Use Case |
|---|---|---|
| Kubic FLOTAC Microscope (KFM) | A compact, portable digital microscope for autonomous analysis of fecal specimens in field and lab settings [6] [33]. | Used in the AI-KFM challenge to create a standardized, real-world dataset for model training and benchmarking. |
| FLOTAC / Mini-FLOTAC | Fecal egg count techniques used to prepare samples, providing high sensitivity and accuracy for parasite egg flotation [6]. | Standardizing sample preparation for creating consistent and high-quality image datasets. |
| Kato-Katz Technique | A widely used parasitological technique for preparing thick fecal smears on slides for microscopic examination [28]. | The gold-standard method for creating slides for human schistosomiasis diagnosis in research and clinical settings. |
| Attention Mechanisms (e.g., CBAM) | A module that can be integrated into CNNs to help the model focus on more informative features (both spatially and channel-wise) [8]. | Improving feature extraction in complex microscopic backgrounds, enhancing detection of small and translucent pinworm eggs. |
| Multi-Domain Training with MMD | A training strategy using Maximum Mean Discrepancy (MMD) to learn domain-invariant features from data collected across different sources [30]. | Improving model robustness and performance when deployed on data from new microscopes or sample origins. |
| Asymptotic Feature Pyramid Network (AFPN) | A feature pyramid structure that allows for more adaptive and gradual fusion of multi-scale features compared to standard FPN [31]. | Enhancing the detection performance of lightweight models for small objects like parasite eggs while reducing computational complexity. |

Integrating Attention Modules like CBAM to Suppress Background Noise

In automated parasite egg detection, a significant challenge is the high rate of false positives caused by complex background noise, debris, and artifacts in microscopic images that resemble target structures. This technical support document provides a practical guide for researchers integrating Convolutional Block Attention Module (CBAM) to enhance feature extraction, suppress irrelevant background information, and improve detection accuracy. The following sections offer troubleshooting guidance, experimental protocols, and reagent solutions to address common implementation challenges.

Frequently Asked Questions (FAQs)

Q1: What is CBAM and how does it help reduce false positives in parasite detection?

CBAM is a lightweight attention module that sequentially infers attention maps along channel and spatial dimensions of feature maps [34]. This dual attention mechanism allows deep learning models to:

- Selectively emphasize informative features related to parasite eggs while suppressing less relevant background features [35]

- Enhance spatial focus on small targets with distinctive morphological features [8]

- Improve discrimination between true parasite eggs and visually similar artifacts [35]

Q2: Which base architectures are most compatible with CBAM integration?

CBAM can be integrated into most popular detection backbones. Research has demonstrated successful implementations with:

- YOLO variants (YOLOv7, YOLOv8) for real-time detection [8] [36]

- Mask R-CNN for instance segmentation tasks [35]

- U-Net architectures for precise segmentation [37]

- EfficientNet for classification-focused tasks [34]

Q3: What are the most common performance issues when implementing CBAM?

Common issues researchers encounter include:

- Vanishing attention: Where the attention mechanism fails to learn meaningful feature weighting

- Overfitting on small datasets: Particularly problematic in medical imaging with limited annotated data

- Computational overhead: Though lightweight, CBAM can impact inference speed on edge devices

- Hyperparameter sensitivity: Poor tuning leads to suboptimal attention focus

Q4: How can I evaluate whether CBAM is functioning correctly in my model?

Effective evaluation strategies include:

- Visualizing attention maps to see which image regions the model prioritizes

- Ablation studies comparing performance with and without CBAM

- Analyzing precision-recall curves specifically for challenging negative cases

- Per-class accuracy analysis to identify specific parasite types benefiting from attention

Troubleshooting Guides

Poor Attention Map Quality

Symptoms:

- Attention maps appear diffuse or random

- Model fails to focus on parasite egg regions

- No improvement in false positive rates after CBAM integration

Solutions:

- Verify gradient flow to CBAM layers during backpropagation

- Adjust initialization of attention weights to prevent vanishing gradients

- Incorporate intermediate supervision to guide attention learning

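- Tune the channel reduction ratio r of the channel attention module. A smaller r gives the shared MLP more hidden units (C/r) and more capacity to model channel relationships, at a small computational cost [34]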

Performance Degradation After CBAM Integration

Symptoms:

- Decreased overall detection accuracy

- Increased inference time beyond requirements

- Unstable training loss curves

Solutions:

- Position CBAM correctly within the backbone network (in ResNet-style blocks, the original CBAM design applies it to the output of the residual branch, before the addition with the identity shortcut)

- Reduce CBAM modules to critical network stages rather than every layer

- Apply progressive integration starting with shallow layers then deepening

- Utilize lightweight alternatives like SEConv for computational efficiency while maintaining accuracy [36]

Overfitting on Limited Medical Data

Symptoms:

- Excellent training performance but poor validation results

- Attention maps that memorize specific images rather than learning general features

Solutions:

- Implement strong data augmentation (rotation, color variation, elastic deformations)

- Apply transfer learning from pre-trained models on natural images

- Add regularization to attention weights (L2 regularization, dropout)

- Use larger datasets with diverse parasite egg representations and background scenarios [8]

Key Experimental Protocols

Baseline Establishment Protocol

Objective: Establish performance baseline before CBAM integration

Procedure:

- Select your base detection architecture (YOLO, Faster R-CNN, etc.)

- Train on your parasite dataset with standard parameters

- Evaluate and record key metrics:

- Mean Average Precision (mAP) at IoU 0.5 and 0.5:0.95

- Precision and recall rates

- False positive/negative rates

- Inference speed (FPS)

- Analyze failure cases to identify specific false positive patterns

CBAM Integration Protocol

Objective: Correctly integrate CBAM into selected architecture

Procedure:

- Identify integration points: Insert CBAM after convolution blocks where feature refinement is needed

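- Implement the CBAM forward pass. Channel attention comes first: a shared MLP is applied to average-pooled and max-pooled channel descriptors, and the results are summed and passed through a sigmoid. Spatial attention follows: a 7x7 convolution over the channel-wise average and max maps, then a sigmoid. Each attention map is multiplied element-wise into the feature map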

- Initialize CBAM parameters using He or Xavier initialization

- Fine-tune the complete model with a lower learning rate (10-100x reduction)

- Validate functionality by visualizing attention maps on sample images
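The forward pass described in this protocol can be written out explicitly. The NumPy sketch below follows the CBAM structure for a single (C, H, W) feature map. Channel attention comes from a shared MLP over average- and max-pooled descriptors. Spatial attention then comes from a k x k convolution over channel-wise average and max maps. The sketch is for understanding the data flow and checking shapes only. Weights are random placeholders, and biases and batch normalization are omitted. A trainable version would be written as a module in your deep-learning framework.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(f: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """Channel weights (C,) for f of shape (C, H, W).

    w1 has shape (C // r, C) and w2 has shape (C, C // r), where r is the
    reduction ratio; the same MLP is applied to both pooled descriptors.
    """
    def mlp(v: np.ndarray) -> np.ndarray:
        return w2 @ np.maximum(w1 @ v, 0.0)  # ReLU hidden layer

    avg_desc = f.mean(axis=(1, 2))  # (C,)
    max_desc = f.max(axis=(1, 2))   # (C,)
    return sigmoid(mlp(avg_desc) + mlp(max_desc))


def spatial_attention(f: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Spatial weights (H, W) from a k x k convolution; kernel is (2, k, k)."""
    stacked = np.stack([f.mean(axis=0), f.max(axis=0)])  # (2, H, W)
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(stacked, ((0, 0), (pad, pad), (pad, pad)))  # zero padding
    h, w = f.shape[1:]
    out = np.empty((h, w))
    for i in range(h):
        for j in range(w):
            # Cross-correlation, as in deep-learning "convolution" layers.
            out[i, j] = np.sum(padded[:, i:i + k, j:j + k] * kernel)
    return sigmoid(out)


def cbam(f: np.ndarray, w1: np.ndarray, w2: np.ndarray, kernel: np.ndarray):
    """Apply channel attention, then spatial attention, to one feature map."""
    mc = channel_attention(f, w1, w2)
    refined = f * mc[:, None, None]
    ms = spatial_attention(refined, kernel)
    return refined * ms[None, :, :], mc, ms
```

The returned spatial map `ms` is the map to extract for the attention visualization protocol that follows.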

Attention Visualization Protocol

Objective: Verify CBAM is focusing on relevant image regions

Procedure:

- Forward pass a sample image through the network

- Extract spatial attention maps from each CBAM module

- Resize attention maps to input image dimensions using interpolation

- Overlay attention heatmaps on original images

- Qualitatively assess whether high-attention regions correspond to parasite eggs

- Compare attention patterns between true positives, false positives, and false negatives
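Steps 3 to 6 of this protocol can be sketched in NumPy as follows. The sketch assumes you have already extracted a 2-D spatial attention map (values in [0, 1]) and a grayscale image scaled to [0, 1]. Nearest-neighbor upsampling is used for simplicity; bilinear interpolation gives smoother heatmaps. `mean_attention_in_box` is a hypothetical helper for comparing how much attention true-positive and false-positive boxes receive.

```python
import numpy as np


def upsample_nearest(att: np.ndarray, out_h: int, out_w: int) -> np.ndarray:
    """Resize a low-resolution attention map to (out_h, out_w), nearest neighbor."""
    rows = np.arange(out_h) * att.shape[0] // out_h
    cols = np.arange(out_w) * att.shape[1] // out_w
    return att[rows[:, None], cols[None, :]]


def overlay(image_gray: np.ndarray, att: np.ndarray, alpha: float = 0.4) -> np.ndarray:
    """Blend the image with a min-max normalized attention map shown in red."""
    a = upsample_nearest(att, *image_gray.shape)
    a = (a - a.min()) / (a.max() - a.min() + 1e-8)
    rgb = np.repeat(image_gray[..., None], 3, axis=2)
    heat = np.zeros_like(rgb)
    heat[..., 0] = a  # red channel carries the attention
    return (1.0 - alpha) * rgb + alpha * heat


def mean_attention_in_box(att_up: np.ndarray, box) -> float:
    """Average upsampled attention inside an (x1, y1, x2, y2) pixel box."""
    x1, y1, x2, y2 = (int(v) for v in box)
    return float(att_up[y1:y2, x1:x2].mean())
```

Averaging `mean_attention_in_box` separately over true-positive and false-positive boxes gives a simple numeric companion to the visual comparison.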

Quantitative Performance Data

Table 1: CBAM Performance Across Different Detection Frameworks for Biological Targets

| Architecture | Dataset | Precision | Recall | mAP@0.5 | False Positive Reduction |
|---|---|---|---|---|---|
| YCBAM (YOLOv8 + CBAM) [8] | Pinworm parasite eggs | 0.997 | 0.993 | 0.995 | Significant (prec: 0.997 vs baseline ~0.95) |
| Mask R-CNN-CBAM [35] | Agricultural pests | 0.959 | 0.952 | - | 2.67% improvement over Mask R-CNN |
| CBAM-EfficientNetV2 [34] | Breast cancer histopathology | - | - | - | Peak accuracy: 98.96% (400X magnification) |
| YOLO-PAM [38] | Malaria parasites | - | - | 0.836 | Effective for multi-species detection |

Table 2: Ablation Study Results Showing CBAM Component Contributions

| Model Component | Performance Impact | Key Metric Change |
|---|---|---|
| Full CBAM Module [35] | Highest overall improvement | F1 score +5.5% |
| Channel Attention Only | Moderate improvement | Precision focus |
| Spatial Attention Only | Better localization | Recall improvement |
| Dual-channel downsampling with CBAM [35] | Small target enhancement | AP@50 +3.1% |
| Feature Pyramid Network + CBAM [35] | Multi-scale improvement | Small-target recall +6% |

Research Reagent Solutions

Table 3: Essential Research Components for CBAM Integration Experiments

| Component | Specification | Research Function |
|---|---|---|
| Base Detection Framework | YOLOv8, Mask R-CNN, or EfficientNet | Foundation for CBAM integration and performance comparison |
| Annotation Tools | LabelImg, VGG Image Annotator | Creating bounding box or segmentation labels for parasite eggs |
| Attention Visualization | Grad-CAM, custom attention visualization scripts | Qualitative validation of CBAM focus areas |
| Evaluation Metrics | mAP, Precision, Recall, F1-Score | Quantitative performance assessment before/after CBAM |
| Computational Resources | GPU with 8GB+ VRAM | Handling deep learning models and attention mechanisms |

Workflow Diagrams

CBAM Integration Workflow in Detection Pipeline

CBAM Dual Attention Mechanism Operation

The Role of Lightweight Models for Deployment in Resource-Limited Settings

Technical Support Center

Troubleshooting Guide: Common Model Deployment Issues

Issue 1: High False Positive Rate in Noisy Microscopy Images

- Problem: Model misclassifies impurities, bubbles, or artifacts as parasite eggs.

- Solution:

- Integrate an Attention Mechanism: Add a Convolutional Block Attention Module (CBAM) to help the model focus on relevant spatial regions and channel features, reducing background interference [8].

- Implement Multi-Scale Feature Fusion: Use an Asymptotic Feature Pyramid Network (AFPN) to better fuse spatial contextual information from different scales, improving the model's ability to distinguish small eggs from complex backgrounds [31].

- Augment Training Data: Include a wide variety of impurity examples and use data augmentation techniques (e.g., rotations, blurring, contrast changes) to improve model robustness [6].

Issue 2: Model is Too Large for On-Device Deployment

- Problem: The trained model requires more computational resources (memory, CPU) than available on the target edge device.

- Solution:

- Apply Model Quantization: Reduce the numerical precision of model weights from 32-bit floating-point to 8-bit or 4-bit integers. This can reduce memory usage by 4x to 8x with minimal accuracy loss [39].

- Use a Lightweight Baseline: Start with an already-efficient model architecture like YOLOv5n or YOLOv8-S, then further optimize it [40] [31].

- Prune the Network: Remove redundant neurons or less important attention heads from the model to create a smaller, faster network [39].
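The memory arithmetic behind quantization is easy to check. The NumPy sketch below performs symmetric, per-tensor, round-to-nearest post-training quantization of a float32 weight tensor to int8. It is a simplified illustration, not GPTQ or the scheme of any particular deployment toolkit. Real pipelines typically quantize per channel and calibrate activations as well.

```python
import numpy as np


def quantize_int8(w: np.ndarray):
    """Map float32 weights to int8 with one symmetric scale per tensor."""
    scale = float(np.abs(w).max()) / 127.0
    if scale == 0.0:  # all-zero tensor: any scale works
        scale = 1.0
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Recover approximate float32 weights from int8 values and the scale."""
    return q.astype(np.float32) * scale
```

The int8 tensor uses one quarter of the float32 memory. Each dequantized weight differs from the original by at most half a quantization step (`scale / 2`), up to floating-point rounding.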

Issue 3: Poor Generalization to New Data Sources

- Problem: A model trained on one dataset performs poorly on images from a different microscope or preparation technique.

- Solution:

- Employ Federated Learning: Train models across multiple decentralized data sources (e.g., different field clinics) without exchanging data to improve generalization while preserving privacy [40].

- Use Data from Standardized Protocols: Train models on datasets created with standardized, real-world protocols like the AI-KFM challenge dataset, which reflects varied field conditions [6].

- Fine-Tune with Local Data: Collect a small set of local images and perform transfer learning to adapt a pre-trained model to your specific environment [39].
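Many federated learning setups center on a server-side aggregation step such as Federated Averaging (FedAvg). Each site trains locally, and only model parameters are shared. The sketch below shows just that weighted averaging step in NumPy. The list-of-arrays parameter format and the use of local image counts as weights are assumptions. Communication, security, and local training are omitted.

```python
import numpy as np


def federated_average(client_weights, client_sizes):
    """Weighted average of model parameters from several sites (FedAvg-style).

    client_weights: one list of NumPy arrays per site, with matching shapes.
    client_sizes: number of local training images at each site (the weights).
    """
    total = float(sum(client_sizes))
    n_tensors = len(client_weights[0])
    return [
        sum(site[i] * (n / total) for site, n in zip(client_weights, client_sizes))
        for i in range(n_tensors)
    ]
```

A site with three times as many local images pulls the shared model three times as strongly toward its parameters.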

Frequently Asked Questions (FAQs)

Q1: What is the most important optimization for achieving real-time performance on a low-power device?

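A: It depends on the model type. A quantized Key-Value (KV) cache matters only for autoregressive transformer models that generate output token by token. Caching past keys and values avoids recomputing them at every step, so each new token costs roughly linear rather than quadratic time in the sequence length. Quantizing the cache also shrinks its memory footprint [39]. Single-pass object detectors such as YOLO have no KV cache. For them, the main levers are a lightweight architecture such as YOLOv8-S, which is designed for minimal computational overhead, combined with model quantization and runtime optimization [40] [39].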

Q2: Our model achieves high accuracy on validation data but has high false positives in the field. What steps should we take?

A: This most likely indicates a domain shift problem. First, incorporate an attention mechanism (e.g., CBAM or self-attention) into your object detector. Studies show that the YCBAM architecture, which integrates YOLO with attention modules, achieved a precision of 0.9971 and a recall of 0.9934 for pinworm egg detection by helping the model ignore irrelevant background features [8]. Second, ensure your training dataset includes a high variety of real-world, noisy images, such as those from the AI-KFM challenge, which contain varying levels of contamination [6].

Q3: Which lightweight model architecture provides the best balance of accuracy and efficiency for parasite egg detection?

A: Recent research indicates that optimized versions of YOLO (You Only Look Once) are highly effective. The lightweight YAC-Net model, an improved version of YOLOv5n, achieved a precision of 97.8% and a recall of 97.7% for parasite egg detection while reducing parameters by one-fifth compared to its baseline [31]. Similarly, the YOLOv8-S model has demonstrated exceptional object detection performance with minimal computational overhead [40].

Q4: How can we address the challenge of limited labeled training data in resource-limited settings?

A: Two effective strategies are:

- Leverage Pre-Trained Models: Use models pre-trained on large general image datasets (like ImageNet) and fine-tune them on your smaller, domain-specific parasite egg dataset. This transfer learning approach requires less labeled data [8].

- Explore Data Augmentation and Generative Models: Systematically augment your existing data. Generative Adversarial Networks (GANs) have also been explored for generating synthetic training data. This was noted as a distinctive approach in the AI-KFM challenge and may require more expertise [6].

Experimental Protocols & Performance Data

Table 1: Performance Comparison of Lightweight Models for Medical Detection

| Model / System | Task | Key Metric | Result | Reference |
|---|---|---|---|---|
| YAC-Net (YOLOv5n-based) | Parasite Egg Detection | Precision / Recall / mAP@0.5 | 97.8% / 97.7% / 0.9913 | [31] |
| AIDMAN (YOLOv5 + Transformer) | Malaria Diagnosis | Prospective Clinical Validation Accuracy | 98.44% | [41] |
| YCBAM (YOLOv8 + Attention) | Pinworm Egg Detection | Precision / mAP@0.5 | 0.9971 / 0.9950 | [8] |
| Vision Transformer | Orange Disease Classification | Accuracy | 96% | [40] |

Table 2: Core AI Research Reagents for Automated Parasite Detection

| Research Reagent | Function in the Experimental Pipeline | Key Consideration for Low-Resource Settings |
|---|---|---|
| YOLO Models (v5n, v8-S) | Provides the core object detection backbone; balances speed and accuracy. | Low computational overhead ideal for edge deployment [40] [31]. |
| Attention Modules (CBAM, Self-Attention) | Directs model focus to salient features (eggs), suppressing background noise and reducing false positives. | Critical for handling noisy, real-world field images [8]. |
| Asymptotic Feature Pyramid Network (AFPN) | Integrates multi-scale feature information, helping to detect small objects like parasite eggs. | Improves performance on low-resolution images [31]. |
| Quantization Tools (e.g., GPTQ) | Reduces model memory footprint by lowering the precision of weights and activations. | Enables deployment on devices with limited RAM and storage [39]. |
| Kubic FLOTAC Microscope (KFM) | Standardized, portable digital microscope for creating consistent field image datasets. | Ensures models are trained and validated on realistic, field-representative data [6]. |

Experimental Workflow Diagram

Diagram 1: Lightweight Model Workflow for Parasite Egg Detection.

Model Optimization Pathway

Diagram 2: Model Optimization Pathway.

Multi-Task and Transfer Learning Approaches to Improve Feature Learning

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of using multi-task learning for automated parasite detection?

Multi-task learning (MTL) improves feature learning by sharing representations between a primary classification task and an auxiliary task. In diagnostic applications, an auxiliary task such as nuclear segmentation forces the network to learn biologically relevant features, such as nuclear morphology, which yields a more robust representation for the main task of distinguishing infected from uninfected cells. The approach is most helpful when training data are limited. In a related medical imaging task (cervical precancer diagnosis), a multi-task model reached a sensitivity of 0.94 at a specificity of 0.58 and outperformed the state-of-the-art architectures it was compared against [42]. Note that a specificity of 0.58 still means roughly 42% of negative cases are flagged as positive, so higher sensitivity alone does not solve the false-positive problem.

Q2: My transfer learning model is performing poorly on my parasite image dataset. What could be wrong?

A common issue is domain mismatch. If your pre-trained model (e.g., from natural images like ImageNet) is too dissimilar from your medical images, features may not transfer effectively. Consider these solutions:

- Strategy 1: Same-Modality Transfer. Use a model pre-trained on images from the same modality, even if the anatomy is different. For example, a model pre-trained on chest X-rays was successfully fine-tuned for bone tumor detection on knee X-rays, as both are radiographs sharing low-level features like contrast and edge characteristics [43].

- Strategy 2: Progressive Fine-tuning. Gradually fine-tune the model on datasets that bridge the gap between the source domain (e.g., natural images) and your specific target domain (parasite images) [43].

- Strategy 3: Ensemble Methods. Combine predictions from multiple pre-trained models (e.g., VGG16, ResNet50V2, DenseNet201). This leverages the complementary strengths of different architectures and can achieve higher accuracy and robustness than a single model [44].

Q3: How can I reduce false positives in my detection system without compromising sensitivity?

Reducing false positives is a key challenge. Several strategies from recent research can be applied:

- Architecture Choice: Implement a multi-task network. By jointly learning to classify and localize (e.g., segment nuclei), the model learns more specific features related to the parasite's structure, reducing confusion with background artifacts [42].

- Data-Centric Approach: Introduce a filtering step. Train a preliminary classifier to screen out images that are clearly irrelevant (e.g., images with no cells) before they reach your main detector. This can reduce the false positive rate for the overall system [45].

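- Optimized Operating Points: Analyze your model's performance at different classification thresholds and choose the threshold that meets your required sensitivity with the highest specificity. In bone tumor detection, a transfer learning model achieved a specificity of 0.903, versus 0.867 for a model trained from scratch, at the same high-sensitivity operating point (sensitivity 0.903). Across 475 negative samples, that 0.036 gain in specificity corresponds to about 17 fewer false positives (0.036 × 475 ≈ 17) [43].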
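The operating-point analysis can be sketched as follows: sweep the decision threshold from high to low and stop at the first (highest) threshold whose sensitivity reaches the target. Because lowering the threshold can only add positive predictions, this choice gives the best specificity available at that sensitivity. The function name and inputs are illustrative assumptions, not the procedure used in [43].

```python
import numpy as np

def threshold_for_sensitivity(scores, labels, target_sensitivity):
    """Highest threshold whose sensitivity meets the target; returns (threshold, sens, spec, fp)."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    if not labels.any():
        raise ValueError("need at least one positive sample")
    # Candidate thresholds: every distinct score, from highest to lowest.
    for t in np.unique(scores)[::-1]:
        pred = scores >= t
        tp = np.sum(pred & labels)
        fn = np.sum(~pred & labels)
        sens = tp / (tp + fn)
        if sens >= target_sensitivity:
            fp = np.sum(pred & ~labels)
            tn = np.sum(~pred & ~labels)
            spec = tn / (tn + fp) if (tn + fp) > 0 else float("nan")
            return float(t), float(sens), float(spec), int(fp)
    raise ValueError("target sensitivity not reachable")

# Worked check of the figures quoted from [43]:
# (0.903 - 0.867) * 475 negatives is about 17 fewer false positives.
print(round((0.903 - 0.867) * 475))  # 17
```

Choose the target sensitivity on a validation set and then report specificity on a held-out test set, so the threshold is not tuned on the data used to claim performance.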

Q4: What is the benefit of using an ensemble of models over a single model?

Ensemble learning combines predictions from multiple models to make a final, more robust decision. The key benefits are:

- Improved Accuracy and Generalization: By leveraging the diverse strengths of different architectures, ensembles mitigate individual model weaknesses and reduce overfitting. One study achieved a test accuracy of 97.93% using an ensemble, outperforming all standalone models [44].

- Enhanced Reliability: Techniques like majority voting or adaptive weighted averaging ensure the final prediction is based on a consensus, making the system more stable and trustworthy for clinical settings [44] [21].
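Majority (hard) voting can be sketched in a few lines of plain Python. This is an illustrative assumption about how voting might be implemented, not code from [44] or [21]. Ties are resolved in favor of the label first seen in model order, which is how `collections.Counter.most_common` orders equal counts.

```python
from collections import Counter

def majority_vote(predictions_per_model):
    """Hard-voting ensemble: each inner list holds one model's predicted label per sample."""
    n_samples = len(predictions_per_model[0])
    final = []
    for i in range(n_samples):
        votes = Counter(model_preds[i] for model_preds in predictions_per_model)
        # most_common keeps first-seen order among equal counts, so ties go to earlier models.
        final.append(votes.most_common(1)[0][0])
    return final

preds = [
    ["egg", "egg", "debris"],     # model A
    ["egg", "debris", "debris"],  # model B
    ["debris", "egg", "debris"],  # model C
]
print(majority_vote(preds))  # ['egg', 'egg', 'debris']
```

Ordering the models from most to least accurate on validation data makes this tie-breaking rule favor the strongest model.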

Troubleshooting Guides

Issue: Model Overfitting on a Limited Parasite Image Dataset

Problem: Your model performs well on training data but poorly on validation/test sets, indicating overfitting.

Solution Steps:

- Implement Data Augmentation: Apply random transformations (rotations, flips, brightness/contrast adjustments) to artificially expand your dataset and teach the model to be invariant to these changes. This is a standard technique used to enhance robustness [44] [46].

- Incorporate Multi-Task Learning: Add an auxiliary task. For parasite detection, this could be pixel-level segmentation of eggs or nuclei. This acts as a regularizer by forcing the network to learn generalizable, structurally relevant features [42].

- Use Transfer Learning with Fine-Tuning: Start with a model pre-trained on a large dataset (e.g., ImageNet). Fine-tune the final layers, or all layers, with a small learning rate on your specific parasite dataset. This leverages generalized feature detectors without needing millions of new images [44] [46] [47].

- Apply Regularization Techniques: Use dropout layers and L2 weight regularization in your network architecture to prevent complex co-adaptations of neurons on the training data [44].
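A minimal NumPy sketch of the augmentations listed above, assuming float images of shape (H, W, C) scaled to [0, 1]. Rotation is restricted to multiples of 90 degrees so that no interpolation is needed; arbitrary angles would require an interpolating rotation (for example `scipy.ndimage.rotate`). The jitter ranges are hypothetical defaults, not values from [44] or [46].

```python
import numpy as np

def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Random flips, 90-degree rotation, and brightness/contrast jitter for an (H, W, C) image in [0, 1]."""
    out = image
    if rng.random() < 0.5:
        out = np.fliplr(out)          # horizontal flip (axis 1)
    if rng.random() < 0.5:
        out = np.flipud(out)          # vertical flip (axis 0)
    # Rotate by 0, 90, 180, or 270 degrees; non-square images swap H and W.
    out = np.rot90(out, k=int(rng.integers(0, 4)))
    contrast = rng.uniform(0.8, 1.2)      # hypothetical jitter range
    brightness = rng.uniform(-0.1, 0.1)   # hypothetical jitter range
    mean = out.mean()
    out = (out - mean) * contrast + mean + brightness
    return np.clip(out, 0.0, 1.0)

rng = np.random.default_rng(42)
patch = rng.random((64, 64, 3))
augmented = [augment(patch, rng) for _ in range(8)]  # eight random variants of one patch
```

Apply augmentation only to the training split; validation and test images should stay unaltered so that measured false-positive rates reflect real inputs.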

Issue: High Computational Cost of Complex Models

Problem: Training large ensembles or deep networks is computationally expensive and slow.

Solution Steps:

- Evaluate a Hybrid DL-ML Model: Instead of a purely deep learning approach, use a pre-trained CNN as a feature extractor. Then, feed these features to a traditional machine learning classifier like a Support Vector Machine (SVM). One study achieved 99% accuracy with an inference time of only 0.025 seconds using this method [46].

- Opt for a Compact Multi-Task Architecture: Design a single, efficient network that performs multiple tasks instead of running several separate models. A compact multi-task CNN for cervical precancer diagnosis demonstrated that high performance (AUC of 0.87) could be achieved with a simpler architecture [42].

- Consider Adaptive Ensembles: If an ensemble is necessary, use an adaptive weighted averaging method that assigns higher weight to more accurate models [44]. Weighted averaging by itself still runs every member model, so the computational saving comes from dropping members whose weights are negligible; this lets you tune the performance-cost trade-off.
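The hybrid DL-ML idea can be sketched with NumPy alone. Here `extract_features` is a hypothetical stand-in for a frozen pre-trained CNN (a fixed random projection followed by ReLU, not NASNet as in [46]), and the classifier is a linear SVM trained with Pegasos-style subgradient descent on the L2-regularized hinge loss. In practice a library SVM would likely be used; the point is that only the small classifier is trained, while the expensive backbone runs once per image in inference mode.

```python
import numpy as np

def extract_features(images, projection):
    """Hypothetical stand-in for a frozen CNN backbone: flatten, project, ReLU."""
    flat = images.reshape(len(images), -1)
    return np.maximum(flat @ projection, 0.0)

def _add_bias(X):
    # Append a constant column so the bias is learned as an ordinary weight.
    return np.hstack([X, np.ones((len(X), 1))])

def train_linear_svm(X, y, lam=1e-3, epochs=20, seed=0):
    """Pegasos-style subgradient descent on the L2-regularized hinge loss; y must be in {-1, +1}."""
    rng = np.random.default_rng(seed)
    Xb = _add_bias(np.asarray(X, dtype=float))
    w = np.zeros(Xb.shape[1])
    t = 0
    for _ in range(epochs):
        for i in rng.permutation(len(Xb)):
            t += 1
            eta = 1.0 / (lam * t)
            violates = y[i] * (Xb[i] @ w) < 1   # hinge loss is active for this sample
            w *= 1.0 - eta * lam                # shrinkage from the L2 penalty
            if violates:
                w += eta * y[i] * Xb[i]
    return w

def predict(w, X):
    """Return +1 / -1 labels from the learned linear decision function."""
    return np.where(_add_bias(np.asarray(X, dtype=float)) @ w >= 0, 1, -1)
```

A usage pattern would be to compute `extract_features` once for the whole dataset, cache the result, and then train and evaluate `train_linear_svm` on the cached features, which is far cheaper than fine-tuning the backbone.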

Experimental Protocols from Key Studies

Protocol 1: Ensemble Transfer Learning for High-Accuracy Classification

This protocol is adapted from a study achieving 97.93% accuracy in malaria detection [44].

- Objective: To classify images as parasitized or uninfected using an ensemble of pre-trained models.

- Materials: Dataset of 27,558 single-cell images segmented from thin blood smear slides [44] [21].

- Methodology:

- Data Preparation: Split data into training, validation, and test sets. Apply data augmentation (rotations, flips, etc.).

- Model Selection & Fine-Tuning: Select multiple pre-trained architectures (e.g., VGG16, ResNet50V2, DenseNet201). Fine-tune each one on the training set.

- Prediction: Generate classification probabilities for each model on the test set.

- Ensemble Integration: Combine the predictions using an adaptive weighted averaging technique, where weights are proportional to each model's performance on the validation set.

- Expected Outcome: The ensemble model will outperform any individual base model in accuracy, precision, and F1-score.
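The ensemble-integration step can be sketched as follows, assuming each model outputs class probabilities and weights are set proportional to a validation score (for example, validation accuracy). The exact weighting rule used in [44] may differ; the function name is hypothetical.

```python
import numpy as np

def weighted_average_ensemble(probs_per_model, val_scores):
    """Combine per-model class probabilities using weights proportional to validation scores."""
    probs = np.asarray(probs_per_model, dtype=float)  # shape (n_models, n_samples, n_classes)
    weights = np.asarray(val_scores, dtype=float)
    weights = weights / weights.sum()                 # normalize so weights sum to 1
    combined = np.tensordot(weights, probs, axes=1)   # shape (n_samples, n_classes)
    return combined, combined.argmax(axis=1)

# Two hypothetical models scoring one sample over the classes [parasitized, uninfected].
combined, labels = weighted_average_ensemble(
    [[[0.9, 0.1]], [[0.2, 0.8]]],
    val_scores=[0.9, 0.6],
)
```

With these inputs the weights are 0.6 and 0.4, so the combined probabilities are [0.62, 0.38] and the sample is labeled parasitized. Because the weights sum to 1, each combined row remains a valid probability distribution.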

Protocol 2: Multi-Task Learning for Enhanced Feature Learning

This protocol is adapted from a framework for diagnosing cervical precancer, which achieved 0.94 sensitivity [42].

- Objective: Improve the feature learning for a main classification task by jointly training on an auxiliary segmentation task.

- Materials: A dataset of medical images with classification labels and, ideally, segmentation masks. If pixel-level annotations are scarce, proxy labels from other algorithms can be used [42].

- Methodology:

- Network Architecture: Design a convolutional neural network with a shared encoder and two task-specific heads: a classification head for the main task (e.g., infected/uninfected classification) and a segmentation decoder for the auxiliary task (e.g., nuclear segmentation).

- Joint Training: Train the network by minimizing a combined loss function:

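$$\mathcal{L}_{\text{total}} = \alpha \, \mathcal{L}_{\text{classification}} + \beta \, \mathcal{L}_{\text{segmentation}}$$

where α and β are weights that balance the two tasks.

- Inference: For final deployment, use only the classification branch of the network. The features learned will be more discriminative due to the multi-task training.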

- Expected Outcome: The multi-task model will show better generalization and higher sensitivity on the main classification task compared to a model trained for classification alone, particularly when training data is limited.
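The combined objective can be sketched numerically. Using binary cross-entropy for both terms is an assumption (segmentation losses such as Dice are also common), as are the default α and β values; in a real training loop the same expression would be computed on a deep learning framework's autograd tensors so that gradients from both heads flow into the shared encoder.

```python
import numpy as np

def bce(prob, target, eps=1e-7):
    """Mean binary cross-entropy; probabilities are clipped to avoid log(0)."""
    prob = np.clip(np.asarray(prob, dtype=float), eps, 1 - eps)
    target = np.asarray(target, dtype=float)
    return float(np.mean(-(target * np.log(prob) + (1 - target) * np.log(1 - prob))))

def multitask_loss(cls_prob, cls_label, seg_prob, seg_mask, alpha=1.0, beta=0.5):
    """Total loss = alpha * L_classification + beta * L_segmentation (BCE for both, an assumption)."""
    loss_cls = bce(cls_prob, cls_label)   # image-level infected / uninfected term
    loss_seg = bce(seg_prob, seg_mask)    # per-pixel term averaged over the mask
    return alpha * loss_cls + beta * loss_seg, loss_cls, loss_seg
```

Because the segmentation term is averaged over pixels while the classification term is averaged over images, the two can differ in scale; α and β are usually tuned on validation data so that neither task dominates training.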

Protocol 3: Cross-Domain Transfer Learning for Data Efficiency

This protocol is based on a study that successfully applied transfer learning from chest X-rays to knee X-rays for tumor detection [43].

- Objective: Transfer knowledge from a source domain with a large dataset (e.g., chest X-rays) to a target domain with limited data (e.g., parasite microscopy images).

- Materials: A large, annotated source dataset (e.g., ImageNet, CheXpert) and a smaller target dataset of parasite images.

- Methodology:

- Pre-training: Pre-train a model (e.g., YOLOv5 for detection or a CNN for classification) on the large source dataset.

- Model Initialization: Initialize your target model with the pre-trained weights. This is the key difference from training from scratch (YOLO-TL vs. YOLO-SC) [43].

- Fine-Tuning: Fine-tune the entire model on the target parasite dataset. Use a low learning rate to adapt the pre-trained features without destroying them.

- Expected Outcome: The transfer learning model will converge faster and achieve higher specificity and better false-positive reduction at high-sensitivity operating points compared to a model trained from scratch [43].
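Initializing from pre-trained weights usually means copying every tensor whose name and shape match the target model and leaving the rest (typically the class-specific detection or classification head) at its fresh initialization. The sketch below shows that filtering on plain dictionaries of NumPy arrays; the parameter names are hypothetical, and the same pattern is commonly applied to a PyTorch `state_dict` before calling `load_state_dict`.

```python
import numpy as np

def init_from_pretrained(target_state, source_state):
    """Copy each source tensor whose name and shape match the target; keep the rest unchanged."""
    new_state, loaded, skipped = {}, [], []
    for name, tensor in target_state.items():
        src = source_state.get(name)
        if src is not None and src.shape == tensor.shape:
            new_state[name] = src.copy()      # reuse pre-trained weights
            loaded.append(name)
        else:
            new_state[name] = tensor.copy()   # e.g. a head with a different number of classes
            skipped.append(name)
    return new_state, loaded, skipped

# Hypothetical parameter names: an 80-class source head versus a 2-class parasite head.
source = {"backbone.conv1.weight": np.ones((8, 3, 3, 3)), "head.weight": np.ones((80, 16))}
target = {"backbone.conv1.weight": np.zeros((8, 3, 3, 3)), "head.weight": np.zeros((2, 16))}
state, loaded, skipped = init_from_pretrained(target, source)
```

After this initialization, fine-tuning with a low learning rate, as described in the protocol, adapts the copied backbone weights gradually while the newly initialized head learns the target classes.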

The table below summarizes key performance metrics from the cited studies to provide benchmarks for your own research.

Table 1: Performance Metrics of Different Learning Approaches in Medical Imaging

| Study Focus | Core Approach | Reported Performance | Key Advantage |
|---|---|---|---|
| Malaria Detection [44] | Ensemble Transfer Learning (VGG16, ResNet50V2, etc.) | Accuracy: 97.93%, F1-Score: 0.9793 | Outperforms all standalone models; high robustness. |
| Cervical Precancer Detection [42] | Multi-Task Learning (Classification + Segmentation) | Sensitivity: 0.94, Specificity: 0.58, AUC: 0.87 | Improves feature learning; performance comparable to expert colposcopy. |
| Bone Tumor Detection [43] | Same-Modality Transfer Learning (X-ray to X-ray) | AUC: 0.954, Specificity: 0.903 (at sensitivity = 0.903) | Reduces false positives effectively without sacrificing sensitivity. |
| Malaria Diagnosis [46] | Hybrid NASNet & SVM Feature Engineering | Accuracy: 99%, Inference Time: ~0.025 s | Combines high accuracy with very fast prediction times. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Their Functions for Automated Parasite Detection Research

| Research Reagent / Material | Function in the Experiment |
|---|---|
| Giemsa-stained Blood Smear Images | Images of smears prepared with the gold-standard Giemsa staining method, used for training and validation; the stain clearly differentiates parasites from RBCs [44] [21]. |
| Pre-trained Model Weights (e.g., ImageNet) | Provides a strong feature extraction foundation, enabling effective transfer learning and reducing data requirements [44] [47]. |
| Data Augmentation Pipeline | Software to generate transformed versions of images (rotation, flip, etc.), increasing dataset diversity and reducing overfitting [44] [46]. |
| Nuclear Segmentation Masks | Pixel-level annotations used as ground truth for training the auxiliary task in a multi-task learning framework [42]. |
| High-Resolution Microendoscope (HRME) | A low-cost, point-of-care imaging device capable of capturing subcellular nuclear morphology for in vivo analysis [42]. |

Workflow and Architecture Diagrams

MTL for Parasite Detection

TL with Ensemble Framework

Optimizing Performance: Practical Strategies to Tune Models and Minimize Errors

Frequently Asked Questions

Q: What are the most common data-related causes of false positives in automated parasite egg detection?

A: The most common causes are class imbalance, where background debris patches vastly outnumber egg patches, training the model to be overly sensitive [48], and insufficient morphological diversity in the dataset, which fails to teach the model to distinguish eggs from visually similar impurities [8] [48].
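One common way to counter this kind of class imbalance is to weight the training loss by inverse class frequency. The sketch below uses the same formula as scikit-learn's `class_weight="balanced"` heuristic, n_samples / (n_classes × count); whether [48] used this weighting is not stated here, so treat it as an assumption. Up-weighting the rare egg class tends to raise sensitivity at some cost in specificity, so re-check the decision threshold on validation data after retraining.

```python
import numpy as np

def inverse_frequency_weights(labels):
    """Per-class loss weights n_samples / (n_classes * count), mirroring the 'balanced' heuristic."""
    classes, counts = np.unique(labels, return_counts=True)
    weights = len(labels) / (len(classes) * counts)
    return dict(zip(classes.tolist(), weights.tolist()))

# Hypothetical patch labels: 0 = background/debris, 1 = egg.
patch_labels = np.array([0] * 90 + [1] * 10)
weights = inverse_frequency_weights(patch_labels)  # background ~0.56, egg 5.0
```

These weights would typically be passed to the loss function of the training framework so that each egg patch contributes as much total gradient as the far more numerous background patches.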

Q: How can I improve my model's performance when using low-cost microscopes that produce low-resolution images?

A: For low-resolution images, employ patch-based classification with sliding windows. Divide the image into small, overlapping patches [7] [48]. This allows the model to analyze fine details at a local level. Combine this with extensive data augmentation, such as random flipping, rotation, and contrast enhancement, to artificially create more variation and help the model learn robust features from limited data [48].
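A minimal sketch of overlapping sliding-window patch extraction with NumPy. The 64-pixel patch size and 32-pixel stride (50% overlap) are hypothetical defaults, not values from [7] or [48]. An extra window flush with the right and bottom borders is added so that edge regions are not skipped; images smaller than the patch size yield a single, smaller patch.

```python
import numpy as np

def sliding_window_patches(image, patch=64, stride=32):
    """Yield (row, col, patch) for overlapping windows; stride < patch produces overlap."""
    h, w = image.shape[:2]
    rows = list(range(0, max(h - patch, 0) + 1, stride))
    cols = list(range(0, max(w - patch, 0) + 1, stride))
    # Add a final window flush with each border so edge regions are always covered.
    if rows[-1] + patch < h:
        rows.append(h - patch)
    if cols[-1] + patch < w:
        cols.append(w - patch)
    for r in rows:
        for c in cols:
            yield r, c, image[r:r + patch, c:c + patch]

image = np.zeros((100, 100, 3))
patches = list(sliding_window_patches(image))  # 3 x 3 = 9 windows of 64 x 64
```

The returned (row, col) offsets map each patch-level prediction back to image coordinates; because windows overlap, the same egg can be detected more than once, so overlapping detections are usually merged (for example with non-maximum suppression) before counting.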