Strategic Hit Triage: Integrating Cheminformatics and Counter-Screens to Derive Robust Leads from HTS


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of triaging hits from High-Throughput Screening (HTS). It details a synergistic strategy that combines computational cheminformatics analysis with empirical counter-screening to efficiently distinguish true, promising leads from false positives and assay artifacts. The content spans from foundational concepts and common pitfalls to advanced methodological applications, workflow optimization, and final validation techniques. By outlining a robust, integrated triage pipeline, this resource aims to equip scientists with the knowledge to enhance the quality of their screening output, conserve valuable resources, and increase the likelihood of successful probe or drug discovery.

The HTS Triage Imperative: Laying the Groundwork for Success

High-Throughput Screening (HTS) generates vast amounts of data from testing thousands to millions of compounds against biological targets. The crucial process that follows—HTS triage—involves classifying and prioritizing these screening hits for further investigation. This guide provides troubleshooting and methodological support for researchers navigating the complex journey from initial screening results to validated chemical starting points.

Core Concepts of HTS Triage

What is HTS Triage? HTS triage is the classification or prioritization of hits from screening campaigns into compounds that are likely to survive further investigation, those that probably have no chance of succeeding, and those where expert intervention could make a significant difference in their outcome. Like its medical counterpart, HTS triage is a combination of science and art, learned through extensive laboratory experience [1].

Why is Early Chemistry Partnership Critical? An early partnership between biologists and medicinal chemists is essential for designing robust assays and efficient workflows. This collaboration helps weed out assay artifacts, false positives, and promiscuous bioactive compounds, ultimately giving projects a better chance at identifying truly useful chemical matter [1].

Troubleshooting Guides: Addressing Common HTS Challenges

Frequent Issues and Solutions

| Problem Category | Specific Issue | Signs & Symptoms | Recommended Solution | Prevention Tips |
|---|---|---|---|---|
| Assay Interference | Compound Fluorescence | High signal in fluorescence-based assays without biological relevance; concentration-dependent but target-independent activity [2] | Use orange/red-shifted fluorophores; include a pre-read after compound addition; use time-resolved fluorescence [2] | Pre-profile compound library for fluorescence; use ratiometric fluorescence output [2] |
| Assay Interference | Luciferase Inhibition | Activity in luciferase-reporter assays without true target engagement; concentration-dependent inhibition of luciferase enzyme [2] | Test actives against purified firefly luciferase with substrate at its Km concentration; use orthogonal assay with alternate reporter [2] | Use previous profiling efforts to identify FLuc inhibitors; consider alternative detection methods [3] |
| Assay Interference | Compound Aggregation | Non-specific enzyme inhibition; protein sequestration; IC50 sensitive to enzyme concentration; steep Hill slopes [2] | Include 0.01-0.1% Triton X-100 in assay buffer; confirm reversibility by diluting compound [2] | Include detergent in initial assay buffer; monitor for time-dependent inhibition [2] |
| Compound Integrity | Sample Degradation | Discrepancy between expected and observed activity; poor correlation between screening rounds [4] | Implement rapid LC-UV/MS analysis concurrent with concentration-response testing [4] | Proper compound storage conditions; regular library quality control; minimize freeze-thaw cycles [4] |
| Chemical Liabilities | PAINS (Pan-Assay Interference Compounds) | Activity across multiple unrelated assay types; unusual concentration-response curves [1] | Apply PAINS filters and other computational filters early in triage process [1] | Curate screening library to minimize PAINS; educate team on common interference chemotypes [1] |
| Cellular Toxicity | Cytotoxicity in Cell-Based Assays | Apparent inhibition due to cell death; occurs more commonly at higher compound concentrations [2] | Implement cytotoxicity counter-screens; establish potency window between target effect and toxicity [5] | Shorter compound incubation times; monitor multiple cytotoxicity markers simultaneously [3] |

Advanced Problem: When to Run Counter-Screens

The timing of counter-screens significantly impacts triage efficiency. This workflow illustrates strategic placement options:

Strategic Considerations:

- Early Deployment: Run counter-screens before hit confirmation when specificity cannot be established from primary data (e.g., cytotoxicity-prone cell lines) [5]

- Standard Practice: Implement at hit confirmation stage for technology interference assessment (e.g., luciferase inhibition) [5]

- Potency Stage: Use specificity counter-screens at hit potency stage to identify selectivity windows [5]

Frequently Asked Questions (FAQs)

Triage Strategy & Prioritization

Q: What are the key criteria for prioritizing hits during triage? A: Prioritization should consider multiple factors: confirmed biological activity in dose-response, favorable physicochemical properties, absence of interference behaviors, structural novelty, tractability for medicinal chemistry optimization, and selectivity over related targets. How heavily each criterion is weighted depends on project goals and target novelty [1].

Q: How much of a typical screening library consists of problematic compounds? A: Even carefully tended screening libraries contain approximately 5% PAINS (Pan-Assay Interference Compounds), similar to the universe of commercially available compounds. This must be kept in mind during active triage [1].

Q: What computational approaches can assist with hit prioritization? A: New machine learning approaches like Minimum Variance Sampling Analysis (MVS-A) can help distinguish true bioactive compounds from assay artifacts by analyzing learning dynamics during model training on HTS data, requiring no prior assumptions about interference mechanisms [6].

Technical & Methodological Questions

Q: What is the difference between counter-screens and orthogonal assays? A: Counter-screens identify compounds that interfere with assay technology or format (e.g., luciferase inhibition), while orthogonal assays use different detection methods to confirm target-specific activity. Both are essential for comprehensive hit validation [2].

Q: How can we rapidly address compound integrity concerns during triage? A: Implement high-speed UHPLC-UV/MS platforms that analyze ~2,000 samples per instrument weekly. Running integrity assessments concurrently with concentration-response testing provides simultaneous potency and integrity data for better decision-making [4].

Q: What are the most common types of assay interference? A: The most prevalent interference mechanisms include compound aggregation (affecting 1.7-1.9% of libraries), compound fluorescence (varying by wavelength), firefly luciferase inhibition (~3% of libraries), and redox cycling [2].

Experimental Protocols & Methodologies

Essential Research Reagent Solutions

| Reagent/Category | Specific Examples | Function in HTS Triage | Implementation Notes |
|---|---|---|---|
| Cheminformatics Tools | RDKit, Chemistry Development Kit (CDK), MayaChemTools [7] | Calculate molecular descriptors, structural analysis, PAINS filtering | RDKit offers Python API; CDK is Java-based; select based on workflow integration needs [7] |
| Counter-Screen Assays | Luciferase inhibition assay, Cytotoxicity panels, Redox sensitivity tests [3] | Identify technology-specific interference and false positives | Deploy based on primary assay technology; consider timing in workflow [5] |
| Compound Integrity Tools | UHPLC-UV/MS systems [4] | Verify compound identity and purity after storage | High-speed platforms enable analysis of ~2000 samples/week [4] |
| Machine Learning Tools | Minimum Variance Sampling Analysis (MVS-A) [6] | Prioritize true positives and identify false positives without mechanism assumptions | Uses gradient boosting; computes sample influence scores; requires <30 seconds per assay [6] |
| Database Management | RDKit PostgreSQL cartridge, Open Babel, ChemDB [7] | Structure and similarity searching, data organization | Enables substructure searching and chemical data management [7] |

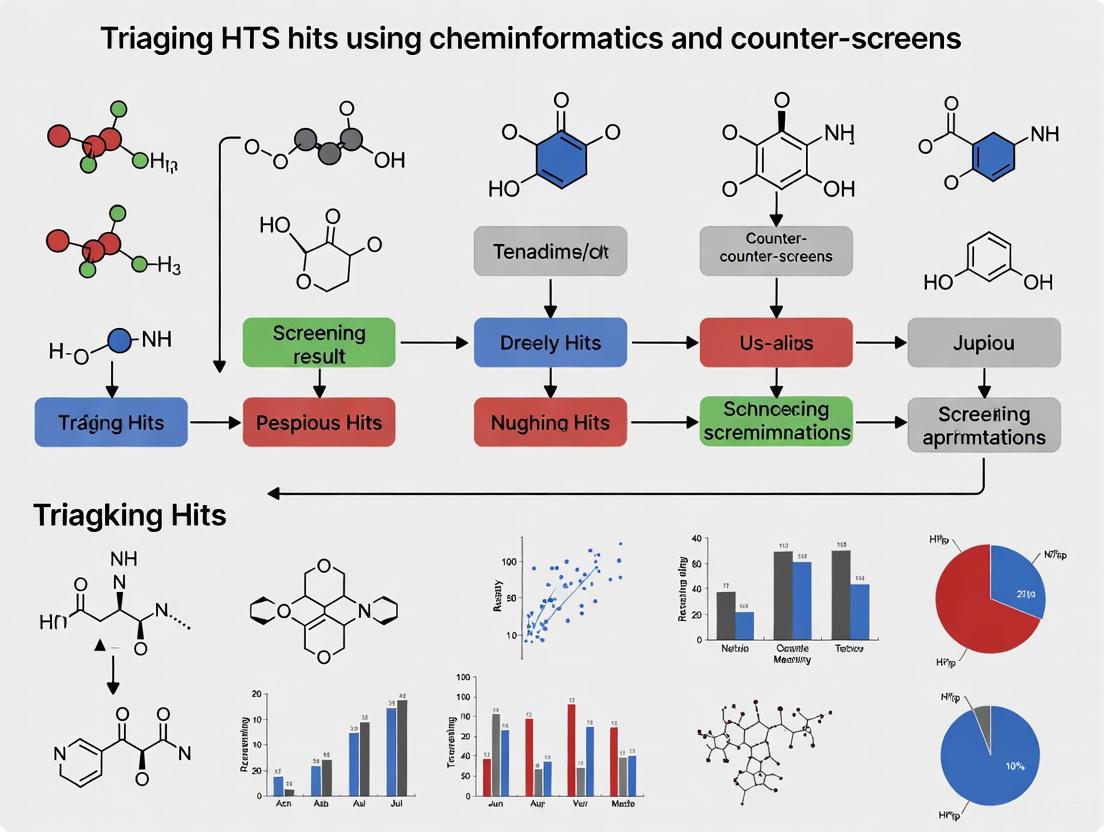

Comprehensive HTS Triage Workflow

This integrated workflow combines cheminformatics and experimental approaches for systematic hit prioritization:

Protocol Implementation Notes:

Cheminformatics Execution:

- Apply PAINS filters and rapid elimination of swill (REOS) filters first [1]

- Calculate key physicochemical properties (molecular weight, logP, hydrogen bond donors/acceptors) [8]

- Perform structural clustering to identify representative chemotypes [1]

- Apply machine learning approaches like MVS-A for additional prioritization [6]
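The structural clustering step can be sketched in plain Python. In practice the fingerprints would come from a toolkit such as RDKit; here each compound's fingerprint is assumed to be pre-computed as a set of "on" bit indices, the compound IDs are hypothetical, and the 0.6 Tanimoto cutoff is an illustrative choice rather than a recommended value. The algorithm is a Taylor-Butina-style variant that recomputes neighbour counts after each cluster is removed.

```python
from itertools import combinations


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity of two fingerprints given as sets of 'on' bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def butina_cluster(fps: dict, cutoff: float = 0.6) -> list:
    """Taylor-Butina-style clustering.

    fps maps compound ID -> frozenset of on-bits. Two compounds are neighbours
    when their Tanimoto similarity is >= cutoff. Returns a list of clusters,
    each a list of IDs whose first element is the cluster centroid.
    """
    neighbours = {cid: set() for cid in fps}
    for i, j in combinations(fps, 2):
        if tanimoto(fps[i], fps[j]) >= cutoff:
            neighbours[i].add(j)
            neighbours[j].add(i)

    unassigned = set(fps)
    clusters = []
    while unassigned:
        # Centroid = unassigned compound with the most unassigned neighbours
        # (ties broken by ID so the result is deterministic).
        centroid = max(unassigned, key=lambda c: (len(neighbours[c] & unassigned), c))
        members = [centroid] + sorted(neighbours[centroid] & unassigned)
        clusters.append(members)
        unassigned -= set(members)
    return clusters


if __name__ == "__main__":
    hits = {  # hypothetical hit IDs with toy fingerprints
        "HTS-001": frozenset({1, 2, 3, 4}),
        "HTS-002": frozenset({1, 2, 3, 5}),
        "HTS-003": frozenset({1, 2, 3, 4, 6}),
        "HTS-004": frozenset({10, 11, 12}),
    }
    for cluster in butina_cluster(hits):
        print(cluster)
```

Taking the centroid of each cluster gives one representative per chemotype for follow-up, while singletons such as HTS-004 stay visible instead of being absorbed into larger series.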

Experimental Validation:

Data Integration:

- Combine cheminformatics and experimental data into unified scoring system

- Consider target novelty and project resources when setting prioritization thresholds [1]

- Document decision rationale for future reference and learning

Effective HTS triage requires both rigorous scientific methodology and practical experimental wisdom. By implementing these troubleshooting guides, FAQs, and standardized protocols, research teams can significantly improve their hit selection efficiency, reduce resource waste on false positives, and accelerate the discovery of genuine chemical starting points for drug development.

In high-throughput screening (HTS), the difference between a true lead compound and a false positive represents more than a scientific discrepancy: it is a substantial financial risk. False positives, compounds that demonstrate activity unrelated to the targeted biology, can consume valuable resources as they progress through increasingly costly validation stages [2]. With typical HTS hit rates of only 0.01-0.1% for genuine actives, these artifacts can easily obscure true signals and derail projects [2]. This section provides actionable troubleshooting guides and FAQs to help you implement a robust triage strategy, leveraging cheminformatics and strategic counter-screens to safeguard your research.

Understanding HTS False Positives and Their Costs

What are the most common types of assay interference?

Assay interference arises from various compound-specific behaviors that can mimic genuine biological activity. The table below summarizes the most prevalent types, their mechanisms, and their impact on screening campaigns.

Table 1: Common Types of Assay Interference and Their Characteristics

| Interference Type | Mechanism of Action | Effect on Assay | Reported Prevalence |
|---|---|---|---|
| Compound Aggregation | Forms colloidal aggregates that non-specifically sequester proteins [2] | Non-specific enzyme inhibition; protein sequestration [2] | 1.7–1.9% of library; can comprise up to 90-95% of actives in some biochemical assays [2] |
| Compound Fluorescence | The compound itself fluoresces, interfering with fluorescent detection methods [2] | General increase or decrease in detected signal; bleed-through in adjacent wells [2] | Varies by spectral window; can constitute up to 50% of actives in assays using blue-shifted spectra [2] |
| Luciferase Inhibition | Directly inhibits the firefly or nano luciferase reporter enzyme [2] [9] | Inhibition or activation of signal in luciferase-based assays [2] | At least 3% of library; up to 60% of actives in some cell-based assays [2] |
| Redox Cycling | Generates hydrogen peroxide (H₂O₂) in the presence of reducing agents in the assay buffer [2] [9] | Time-dependent enzyme inactivation; effect is often sensitive to pH and reducing agent concentration [2] | ~0.03% of compounds generate H₂O₂ at appreciable levels; enrichment can be as high as 85% in a given assay [2] |
| Thiol Reactivity | Covalently modifies cysteine residues in proteins [9] | Nonspecific interactions in cell-based assays; on-target covalent modification in biochemical assays [9] | Varies by library and assay conditions [9] |
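The prevalence figures in Table 1 explain why unfiltered hit lists can be dominated by artifacts. The back-of-the-envelope sketch below compares expected counts under pessimistic assumptions: every aggregator reads out as active, every genuine active is detected, and the two groups do not overlap. The library size is hypothetical.

```python
def hit_list_composition(library_size: int, true_active_rate: float, interferer_rate: float):
    """Expected counts of genuine actives and interference 'hits' in a primary screen.

    Worst-case assumptions: every interferer reads out as active, every genuine
    active is detected, and the two groups do not overlap.
    """
    true_hits = library_size * true_active_rate
    artifact_hits = library_size * interferer_rate
    fraction_genuine = true_hits / (true_hits + artifact_hits)
    return true_hits, artifact_hits, fraction_genuine


if __name__ == "__main__":
    # Hypothetical 100,000-compound library; 0.1% genuine actives (upper end of
    # 0.01-0.1%) and 1.7% aggregators (lower end of 1.7-1.9%).
    genuine, artifacts, frac = hit_list_composition(100_000, 0.001, 0.017)
    print(f"{genuine:.0f} genuine, {artifacts:.0f} aggregator hits, {frac:.1%} genuine")
```

With these inputs, about 100 genuine actives sit among roughly 1,700 aggregator hits, so only about 6% of the raw list would be real. That is in the same range as the 90-95% aggregator share of actives reported for some biochemical assays.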

Troubleshooting Guides & FAQs

FAQ: How can we quickly identify nuisance compounds in our screening library or hit list?

Computational tools can pre-emptively flag many problematic compounds. While PAINS (Pan-Assay Interference Compounds) filters are widely known, they can flag compounds that do not actually interfere and can also miss many true interferers [9]. More recent, model-based tools offer improved accuracy:

- Liability Predictor: A free webtool that uses Quantitative Structure-Interference Relationship (QSIR) models to predict compounds with tendencies for thiol reactivity, redox activity, and luciferase inhibition. External validation showed 58-78% balanced accuracy [9].

- SCAM Detective: A computational tool designed to predict small, colloidally aggregating molecules (SCAMs), the most common source of false positives [9].

- OCHEM Alerts: A public website hosting a variety of filters, including those for AlphaScreen and His-Tag frequent hitters [10].

Troubleshooting Guide: Our primary biochemical screen yielded a high hit rate. What is the first triage step?

A high hit rate often indicates pervasive assay interference. Your first step should be to conduct a confirmation assay with robust counter-screens.

Protocol: Confirmation and Counter-Screen Assay

- Confirmatory Dose-Response: Re-test all primary hits in a dose-response format (in triplicate) using the original primary assay. This confirms the activity and provides initial potency (IC50/EC50) data [10].

- Execute Counter-Screens:

  - For Luciferase-Based Assays: Test hits in a luciferase-only assay (e.g., using purified firefly luciferase) to identify inhibitors of the reporter enzyme itself [2] [9].

  - For Fluorescence-Based Assays: Test hits in a target-free assay system containing only the fluorophore and detection reagents to identify fluorescent compounds or quenchers [2].

  - For Aggregation Suspects: Re-test hits in the primary assay with the addition of a non-ionic detergent (e.g., 0.01-0.1% Triton X-100). A significant reduction in potency suggests aggregation-based inhibition [2].

- Analyze Data: Compounds that show concentration-dependent activity in the primary confirmation assay but are inactive in the relevant counter-screen are less likely to be artifacts and should be prioritized [10].
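The decision logic of this protocol can be written down explicitly so it is applied the same way to every compound. This is a minimal sketch: the class and field names are hypothetical, and the 3-fold detergent-induced potency loss used as the aggregation cut-off is an assumption to replace with a project-specific threshold.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ConfirmedHit:
    compound_id: str
    ic50_um: Optional[float]            # None: no concentration-dependent activity on re-test
    counter_screen_active: bool         # active in the relevant technology counter-screen
    ic50_detergent_um: Optional[float]  # None: detergent re-test not run; inf: inactive with detergent


def triage_call(hit: ConfirmedHit, max_detergent_shift: float = 3.0) -> str:
    """Apply the confirmation / counter-screen / detergent logic to one hit.

    max_detergent_shift is a hypothetical cut-off: a larger fold-loss of potency
    when detergent is added is treated as a sign of aggregation.
    """
    if hit.ic50_um is None:
        return "not confirmed"
    if hit.counter_screen_active:
        return "likely technology artifact"
    if hit.ic50_detergent_um is not None:
        shift = hit.ic50_detergent_um / hit.ic50_um  # inf / x stays inf
        if shift > max_detergent_shift:
            return "suspected aggregator"
    return "prioritize"


if __name__ == "__main__":
    hits = [
        ConfirmedHit("C1", None, False, None),
        ConfirmedHit("C2", 1.2, True, None),
        ConfirmedHit("C3", 2.0, False, float("inf")),
        ConfirmedHit("C4", 2.0, False, 2.5),
    ]
    for h in hits:
        print(h.compound_id, triage_call(h))
```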

FAQ: What is the difference between a counter-screen and an orthogonal assay?

Both are critical for hit validation, but they serve distinct purposes:

- Counter-Screen: An assay designed specifically to identify compounds that interfere with the technology or format of your primary assay. Its goal is to eliminate technology-specific artifacts [2]. Examples include a luciferase inhibitor assay for a luciferase-based primary screen, or a fluorescence interference assay for a fluorescence-based readout [2] [10].

- Orthogonal Assay: An assay that measures activity against the same biological target but uses a completely different detection technology or assay format. A positive result in an orthogonal assay provides strong evidence that the compound's activity is directed at the biology of interest and is not an artifact of the detection method [2]. For example, a fluorescence-based biochemical screen might be followed up with a mass spectrometry-based assay or a cellular thermal shift assay (CETSA) [11].

Troubleshooting Guide: We need to deploy an orthogonal assay to confirm our hits. What are our options?

Orthogonal assays are a powerful way to confirm true biological activity. The workflow below outlines the logical process for selecting and utilizing an orthogonal assay.

Detailed Methodologies for Key Orthogonal Assays:

1. Mass Spectrometry-Based Binding or Activity Assay

MS-based methods directly detect reaction products or binding, avoiding interference from light-based artifacts [11].

- Key Technology: Systems like the RapidFire MS can dramatically increase throughput, processing samples in seconds instead of minutes [12].

- Workflow: a. Incubate the target protein with test compounds and substrates. b. Use an online solid-phase extraction (SPE) cartridge to rapidly desalt and concentrate the reaction mixture. c. Inject directly into a mass spectrometer for label-free quantification of substrates and products [12].

- Troubleshooting Note: While powerful, MS-based screens can have unique false-positive mechanisms, such as compound-mediated ionization suppression, which requires specific control experiments to identify [11].
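Label-free MS readouts are typically reduced to a percent conversion of substrate to product and then normalized to on-plate controls. The sketch below assumes peak areas have already been extracted for substrate and product; the optional response factor is a hypothetical parameter for the case where the two species ionize with different efficiencies, and the peak areas in the example are invented.

```python
def percent_conversion(substrate_area: float, product_area: float,
                       response_factor: float = 1.0) -> float:
    """Substrate-to-product conversion (%) from extracted-ion peak areas.

    response_factor (hypothetical, default 1.0) rescales the product signal to
    substrate-equivalent units when the two species ionize differently.
    """
    product = product_area * response_factor
    return 100.0 * product / (substrate_area + product)


def percent_inhibition(sample_conv: float, uninhibited_conv: float,
                       fully_inhibited_conv: float) -> float:
    """Normalize a well's conversion to controls (0% = uninhibited, 100% = fully inhibited)."""
    return 100.0 * (uninhibited_conv - sample_conv) / (uninhibited_conv - fully_inhibited_conv)


if __name__ == "__main__":
    dmso = percent_conversion(substrate_area=8.0e5, product_area=2.0e5)   # uninhibited control
    blank = percent_conversion(substrate_area=1.0e6, product_area=0.0)    # no-enzyme control
    well = percent_conversion(substrate_area=9.0e5, product_area=1.0e5)   # test compound
    print(f"{percent_inhibition(well, dmso, blank):.0f}% inhibition")
```

Because conversion is a ratio of the two species, it is less sensitive to signal loss that affects substrate and product equally; it does not replace the ion-suppression control experiments mentioned above.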

2. Differential Scanning Fluorimetry (DSF)

DSF (or thermal shift assay) measures the change in a protein's melting temperature (Tm) upon ligand binding; binding typically stabilizes the folded state and raises Tm.

- Workflow: a. Mix the purified target protein with a fluorescent dye (e.g., SYPRO Orange) that binds to hydrophobic regions exposed upon denaturation. b. Dispense the mixture into a qPCR plate in the presence and absence of test compounds. c. Ramp the temperature incrementally while measuring fluorescence. d. Plot fluorescence vs. temperature to generate protein melt curves. A positive shift in Tm (ΔTm) for the compound-treated sample suggests binding [9].

- Note: Colored or fluorescent compounds can interfere; using a label-free method like nanoDSF is an alternative.
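Tm is commonly extracted either by fitting a sigmoidal (Boltzmann) model or from the peak of the first derivative of the melt curve. The sketch below takes the simpler derivative route on synthetic, noise-free curves (the `logistic_melt` helper is purely illustrative); real data usually need smoothing and trimming of the post-transition region first.

```python
import math


def melting_temperature(temps: list, signal: list) -> float:
    """Estimate Tm as the midpoint of the interval with the steepest signal rise.

    The first derivative is approximated by finite differences between
    consecutive readings; the curve is assumed to rise through the transition.
    """
    best_slope, tm = -math.inf, None
    for i in range(len(temps) - 1):
        slope = (signal[i + 1] - signal[i]) / (temps[i + 1] - temps[i])
        if slope > best_slope:
            best_slope, tm = slope, (temps[i] + temps[i + 1]) / 2
    return tm


def delta_tm(temps: list, dmso_signal: list, compound_signal: list) -> float:
    """Thermal shift: Tm(compound) - Tm(DMSO control); positive values suggest stabilization."""
    return melting_temperature(temps, compound_signal) - melting_temperature(temps, dmso_signal)


def logistic_melt(temps: list, tm: float, width: float = 1.0) -> list:
    """Synthetic, noise-free two-state melt curve (for illustration only)."""
    return [1.0 / (1.0 + math.exp(-(t - tm) / width)) for t in temps]


if __name__ == "__main__":
    temps = [40 + 0.5 * i for i in range(41)]  # 40-60 C in 0.5 C steps
    shift = delta_tm(temps, logistic_melt(temps, 50.25), logistic_melt(temps, 54.25))
    print(f"dTm = {shift:+.2f} C")
```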

3. Surface Plasmon Resonance (SPR)

SPR provides real-time, label-free data on binding kinetics (kon and koff) and affinity (KD).

- Workflow: a. Immobilize the purified target protein on a biosensor chip. b. Flow test compounds at different concentrations over the chip surface. c. Monitor the change in the refractive index (response units) at the chip surface as compounds bind and dissociate. d. Analyze the sensorgrams to quantify kinetic parameters.
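For a simple 1:1 interaction, the fitted rate constants relate directly to affinity. The helper functions below sketch those relationships only; they do not fit sensorgrams, and the rate constants in the example are illustrative values, not measurements.

```python
def kd_from_rates(kon_per_m_per_s: float, koff_per_s: float) -> float:
    """Equilibrium dissociation constant (M) for 1:1 binding: KD = koff / kon."""
    return koff_per_s / kon_per_m_per_s


def residence_time_s(koff_per_s: float) -> float:
    """Mean lifetime of the complex, 1 / koff (seconds)."""
    return 1.0 / koff_per_s


def steady_state_response(rmax_ru: float, conc_m: float, kd_m: float) -> float:
    """Equilibrium response for 1:1 Langmuir binding: Req = Rmax * C / (KD + C)."""
    return rmax_ru * conc_m / (kd_m + conc_m)


if __name__ == "__main__":
    kd = kd_from_rates(kon_per_m_per_s=1e5, koff_per_s=1e-3)  # illustrative rate constants
    print(f"KD = {kd * 1e9:.0f} nM, residence time = {residence_time_s(1e-3):.0f} s")
    print(f"Req at C = KD: {steady_state_response(100.0, kd, kd):.0f} RU")
```

The last line reflects a useful sanity check: at an analyte concentration equal to KD, a 1:1 interaction should reach half of Rmax at equilibrium.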

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagents for HTS Triage

| Reagent / Tool | Function in Triage | Example Use Case |
|---|---|---|
| Non-ionic Detergent (Triton X-100) | Disrupts compound aggregates by masking hydrophobic surfaces [2] | Add at 0.01-0.1% to assay buffer to test for aggregation-based inhibition; a loss of activity suggests an artifact [2]. |
| Purified Reporter Enzyme (Luciferase) | Serves as the core component of a counter-screen [2] [9] | Test primary hits for direct inhibition of firefly or nano luciferase to rule out reporter-based artifacts [9]. |
| Dithiothreitol (DTT) / Catalase | Tools to investigate redox activity [2] | Replacing DTT with weaker reducing agents (e.g., glutathione) or adding catalase (which degrades H₂O₂) can eliminate activity from redox cyclers [2]. |
| His-Tagged Protein & Alternative Tags | Controls for tag-binding artifacts [10] | If the primary assay uses a His-tagged protein, a counter-screen with a differently tagged protein (e.g., GST) can identify compounds that bind the tag rather than the target. |
| Cheminformatics Filters (e.g., Liability Predictor) | Computationally flags compounds with high risk of interference [9] | Profile screening libraries or hit lists prior to experimental validation to deprioritize likely artifacts. |

Robust triage is not a single step but a multi-layered defense strategy. It begins with a well-designed compound library, continues with vigilant computational profiling of primary hits, and is solidified through rigorous experimental confirmation using counter-screens and orthogonal assays. By integrating cheminformatics with careful experimental design, researchers can efficiently navigate the sea of potential artifacts, ensuring that precious resources are invested only in the most promising and genuine chemical matter.

Troubleshooting Guides

Guide 1: Identifying and Managing PAINS and Promiscuous Inhibitors

Problem: High-throughput screening (HTS) hits show non-drug-like behavior: they inhibit multiple unrelated targets, have uncorrelated structure-activity relationships, and are difficult to optimize.

Explanation: These are often Pan-Assay Interference Compounds (PAINS) or promiscuous inhibitors. They do not represent specific target binding but interfere with the assay system itself. A common mechanism is the formation of colloidal aggregates, which can non-specifically inhibit enzymes [13] [14].

Steps for Resolution:

- Cheminformatics Triage: Upon identifying HTS hits, filter the compound list using PAINS filters and other computational tools to flag substructures known to be associated with promiscuous activity [1].

- Test for Aggregate-Based Inhibition:

  - Add Detergent: Include a non-ionic detergent like Triton X-100 or Tween-20 in your assay buffer. Inhibition caused by colloidal aggregates is often reduced or eliminated by low concentrations of detergent [13].

  - Conduct a Concentration-Dependent Assay: Test the inhibitor over a range of concentrations. Aggregators often show an unusually steep concentration-response curve (high Hill slope) [14].

  - Use Dynamic Light Scattering (DLS): Confirm the presence of aggregates directly by measuring the particle size distribution of the compound in aqueous buffer. Promiscuous inhibitors often form particles of 30-400 nm in diameter [14].

  - Check for Time-Dependence: Aggregate-based inhibition is often time-dependent, increasing with pre-incubation of enzyme and compound, yet reversible; classical competitive inhibitors usually show no such pre-incubation effect [13].

  - Evaluate Serum Sensitivity: The inhibitory activity of aggregators is often attenuated by the presence of albumin or other serum components [13].
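The steepness check can be quantified without a full curve fit. For a Hill-type curve, inhibition = C^n / (IC50^n + C^n), the ratio IC90/IC10 equals 81^(1/n), so the Hill coefficient n follows from two interpolated concentrations. The threshold of 2.0 used to flag steep curves is an illustrative assumption; projects often set their own cut-off.

```python
import math


def hill_slope_from_ic10_ic90(ic10: float, ic90: float) -> float:
    """Hill coefficient implied by the IC10 and IC90 of a Hill-type curve.

    IC10 = IC50 * (1/9) ** (1/n) and IC90 = IC50 * 9 ** (1/n), so
    IC90 / IC10 = 81 ** (1/n) and n = ln(81) / ln(IC90 / IC10).
    """
    return math.log(81) / math.log(ic90 / ic10)


def looks_steep(n: float, threshold: float = 2.0) -> bool:
    """Flag unusually steep curves; the 2.0 threshold is an illustrative assumption."""
    return n > threshold


if __name__ == "__main__":
    for name, ic10, ic90 in [("well-behaved", 0.1, 8.1), ("suspect", 1.0, 3.0)]:
        n = hill_slope_from_ic10_ic90(ic10, ic90)
        print(f"{name}: nH = {n:.1f}, steep = {looks_steep(n)}")
```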

Guide 2: Diagnosing and Mitigating General Assay Interference

Problem: A screening hit produces a signal that does not accurately reflect the true concentration of the target analyte, leading to a false positive or false negative result.

Explanation: Assay interference occurs when a component in the sample causes a clinically significant difference in the assay result. Interferents can be endogenous or exogenous and disrupt the assay through various mechanisms [15].

Steps for Resolution:

- Check Serum Indices: Most automated clinical chemistry analyzers can measure HIL (Hemolysis, Icterus, Lipemia) indices. Review these indices for your sample to identify common physical interferences [15].

- Investigate Specific Interferents:

  - Biotin: For immunoassays, check whether the patient is taking high doses of biotin (Vitamin B7), which can cause positive or negative interference in streptavidin-biotin based assay systems [15].

  - Heterophilic Antibodies: If you suspect a false positive in a sandwich immunoassay, consider the presence of human antibodies that can bridge the capture and detection antibodies. This can often be mitigated by adding nonspecific mouse serum or a proprietary blocking reagent to the assay [15].

  - Macrocomplexes: For tests like prolactin or certain enzymes, a discrepantly high result may be due to the analyte forming a complex with immunoglobulins. Treatment with polyethylene glycol (PEG) can precipitate these complexes for clarification [15].

- Perform a Dilution Test: Dilute the sample and re-assay. A non-linear result (one that does not dilute proportionally) suggests interference.

- Use an Alternative Method: Confirm the result using an assay based on a different detection principle (e.g., switch from an immunoassay to a mass spectrometry-based method) [4].
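The dilution test can be expressed as a percent recovery: each diluted result, multiplied back by its dilution factor, should match the neat result. The 80-120% acceptance window below is a hypothetical example; laboratories define their own limits for each assay.

```python
def dilution_recoveries(neat_result: float, diluted: dict) -> dict:
    """Percent recovery per dilution: measured x dilution factor / neat result x 100."""
    return {factor: 100.0 * measured * factor / neat_result
            for factor, measured in diluted.items()}


def is_nonlinear(recoveries: dict, low: float = 80.0, high: float = 120.0) -> bool:
    """True if any recovery falls outside [low, high] (hypothetical acceptance window)."""
    return any(not (low <= r <= high) for r in recoveries.values())


if __name__ == "__main__":
    # Dilution factor -> measured value; the numbers are invented for illustration.
    rec = dilution_recoveries(neat_result=100.0, diluted={2: 50.0, 4: 15.0})
    print(rec, "-> suspect interference" if is_nonlinear(rec) else "-> dilutes linearly")
```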

Frequently Asked Questions (FAQs)

FAQ 1: What does PAINS stand for and why are these compounds problematic? PAINS stands for Pan-Assay Interference Compounds. They are problematic because they appear as "hits" in many different HTS campaigns by interfering with the assay technology or biological readout, rather than acting specifically on the intended target. Pursuing them wastes significant time and resources [1].

FAQ 2: What is the difference between a promiscuous inhibitor and a PAINS compound? The terms are closely related and often used interchangeably. "Promiscuous inhibitor" describes the behavior of a compound that inhibits many diverse targets. "PAINS" refers to structural classes, defined by substructure alerts, that are frequently promiscuous. The overlap is incomplete: a compound carrying a PAINS alert is not necessarily promiscuous, and many promiscuous inhibitors, such as colloidal aggregators, carry no PAINS alert [13] [1].

FAQ 3: At what stage of the HTS process should I start looking for assay interference? The triage for assay interference should begin as early as possible, ideally during the hit confirmation stage. Using cheminformatics filters to flag potential PAINS and planning for counter-screens during the primary screen design will save resources. Some counter-screens can even be run before hit confirmation to filter out nonspecific compounds early [5].

FAQ 4: My hit compound is fluorescent. Is it automatically a false positive? Not automatically, but it is a major red flag, especially in assays using fluorescence detection. The compound's own emission can add to the assay signal, and its light absorption can quench it; either way, the result may be an artifact. You must run a technology counter-screen (e.g., measuring compound fluorescence at the assay's wavelengths in the absence of all other components) to rule out this interference [5].

FAQ 5: What are HIL interferences and which common tests are affected? HIL stands for Hemolysis, Icterus, and Lipemia. These are common sample conditions that can interfere with spectrophotometric measurements [15]. The table below summarizes their effects:

| Interference Type | Falsely Increases | Falsely Decreases |
|---|---|---|
| Hemolysis (H) | Potassium, AST, LDH, Phosphate, Magnesium | Insulin |
| Icterus (I) | Creatinine (Jaffé method) | Hydrogen peroxide-based assays (e.g., cholesterol) |
| Lipemia (L) | None listed | Plasma electrolytes (indirect ISE, via the electrolyte exclusion effect); turbidimetric/nephelometric assays (e.g., immunoglobulins) |

FAQ 6: What is a counter-screen and why is it crucial? A counter-screen is a secondary assay designed to identify compounds that are active for the wrong reasons. It helps distinguish true target activity from false positives caused by general assay interference, technology-specific interference, or off-target effects. It is crucial for ensuring that only high-quality, specific hits progress to more costly stages of development [5].

Workflow Visualizations

HTS Hit Triage Workflow

This diagram outlines the key decision points for triaging high-throughput screening hits to eliminate false positives.

Mechanism of Promiscuous Inhibition

This diagram illustrates the mechanism by which some compounds form aggregates leading to non-specific enzyme inhibition.

Research Reagent Solutions

The following table details key reagents and materials used to identify and manage assay interferences.

| Reagent/Material | Function in Troubleshooting |
|---|---|
| Non-ionic Detergents (e.g., Triton X-100) | Disrupts colloidal aggregates formed by promiscuous inhibitors, thereby abolishing their non-specific inhibitory activity [13]. |
| Mouse Serum or Blocking Reagents | Blocks heterophilic antibodies in immunoassays to prevent false positive results [15]. |
| Polyethylene Glycol (PEG) | Precipitates macrocomplexes (e.g., macroprolactin) to help determine the true concentration of the analyte [15]. |
| Bovine Serum Albumin (BSA) | Attenuates the activity of promiscuous inhibitors, serving as a diagnostic tool; also used as a carrier protein in assays [13]. |
| Dynamic Light Scattering (DLS) Instrument | Detects and measures the size of colloidal aggregates (30-400 nm) in compound solutions, confirming an aggregation mechanism [14]. |
| UHPLC-UV/MS System | Rapidly assesses compound integrity (purity and identity) of HTS hits to rule out false positives from degraded or misidentified samples [4]. |

| UHPLC-UV/MS System | Rapidly assesses compound integrity (purity and identity) of HTS hits to rule out false positives from degraded or misidentified samples [4]. |

Troubleshooting Guide: Addressing Common HTS Hit Triage Challenges

| Challenge | Signs & Symptoms | Root Cause | Corrective Action | Preventive Strategy |
|---|---|---|---|---|
| Assay Interference Compounds | Illogical SAR; activity in irrelevant assays; unusual concentration-response curves [1] [5] | Compound fluorescence, luminescence inhibition, redox reactivity, or signal quenching [5] | Run technology-specific counter-screens (e.g., luciferase inhibition assay for luminescent readouts) [5] | Design assays to minimize interference; include counterscreens early in the triage cascade [5] |
| Promiscuous/Pan-Assay Interference Compounds (PAINS) | Hits belong to chemotypes known for non-specific activity; high molecular hit rate across multiple HTS campaigns [1] [16] | Compounds that form aggregates, react covalently, or act as membrane disruptors [1] | Filter hits against PAINS substructure libraries; assess purity and integrity [1] [4] | Curate screening libraries to remove known PAINS; apply cheminformatic filters pre-screen [1] |
| Cytotoxicity in Cell-Based Assays | Activity in a cell-based primary screen but no binding in biochemical assays; reduced cell viability [5] | Hit compounds are generally cytotoxic, causing signal modulation through cell death [5] | Implement a cytotoxicity counter-screen (e.g., measuring ATP levels) to establish a selectivity window [5] | Use a specificity counter-screen with a relevant cell line (e.g., knockout) in parallel with the primary screen [5] |
| Compound Integrity Issues | Inability to confirm activity upon re-test; poor correlation between biological activity and structure [4] | Compound degradation, precipitation, or evaporation during storage [4] | Perform rapid LC-UV/MS analysis to confirm identity and purity concurrently with concentration-response testing [4] | Regularly monitor collection health; use proper storage conditions; integrate integrity checks early in workflow [4] |
| Poor Lead-Like Properties | Hits have high lipophilicity (ClogP), high molecular weight, or are "flat" (low Fsp3) [16] [17] | Library compounds have suboptimal physicochemical properties from the start [17] | Prioritize hits with "lead-like" properties (e.g., MW 175-400, ClogP <4) for follow-up [17] | Design screening libraries with a focus on quality, lead-like space, and 3D character [17] |
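The lead-like window in the last table row can be applied as a simple filter once descriptors have been calculated. This sketch assumes each hit already carries molecular weight, ClogP and Fsp3 values (for example from a cheminformatics toolkit); the compound IDs and values are hypothetical, and ranking the survivors by Fsp3 is one illustrative way to favour less "flat" hits.

```python
def fsp3(n_sp3_carbons: int, n_carbons: int) -> float:
    """Fraction of carbon atoms that are sp3-hybridized (0 for carbon-free molecules)."""
    return n_sp3_carbons / n_carbons if n_carbons else 0.0


def lead_like_hits(hits: list, mw_range=(175.0, 400.0), max_clogp: float = 4.0) -> list:
    """Keep hits with MW in mw_range and ClogP < max_clogp, highest Fsp3 first."""
    low, high = mw_range
    passing = [h for h in hits if low <= h["mw"] <= high and h["clogp"] < max_clogp]
    return sorted(passing, key=lambda h: h["fsp3"], reverse=True)


if __name__ == "__main__":
    hits = [
        {"id": "H1", "mw": 320.0, "clogp": 2.5, "fsp3": 0.40},
        {"id": "H2", "mw": 450.0, "clogp": 3.0, "fsp3": 0.50},  # too heavy
        {"id": "H3", "mw": 250.0, "clogp": 4.5, "fsp3": 0.20},  # too lipophilic
        {"id": "H4", "mw": 280.0, "clogp": 1.0, "fsp3": fsp3(6, 10)},
    ]
    print([h["id"] for h in lead_like_hits(hits)])
```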

Frequently Asked Questions (FAQs) for HTS Hit Triage

Q1: Our HTS produced several hits that are known luciferase inhibitors. How should we handle them? You should run a dedicated luciferase inhibition counter-screen [5]. This assay uses the same detection technology as your primary screen but without the target. Hits active in this counter-screen are likely false positives due to assay technology interference. The optimal stage for this is during hit confirmation or potency determination. If a compound shows activity in your primary screen but also inhibits luciferase, it should be deprioritized unless it demonstrates a significant potency window (e.g., 10-fold more active in the primary assay) [5].
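The 10-fold potency window can be computed directly from the two IC50 values. In this sketch, a compound with no luciferase inhibition up to the top tested concentration is treated as having an unlimited window, which is an optimistic simplification: strictly, the window is only known to exceed the top concentration divided by the primary IC50. Compound IDs and values are hypothetical.

```python
import math
from typing import Optional


def potency_window(primary_ic50: float, counter_ic50: Optional[float]) -> float:
    """Fold-difference between luciferase counter-screen IC50 and primary IC50.

    counter_ic50=None means no luciferase inhibition up to the top tested
    concentration; this is treated (optimistically) as an unlimited window.
    """
    return math.inf if counter_ic50 is None else counter_ic50 / primary_ic50


def luciferase_call(primary_ic50: float, counter_ic50: Optional[float],
                    min_window: float = 10.0) -> str:
    """'keep' if the primary activity is at least min_window-fold more potent."""
    if potency_window(primary_ic50, counter_ic50) >= min_window:
        return "keep"
    return "deprioritize"


if __name__ == "__main__":
    for cid, primary, counter in [("L1", 0.5, 10.0), ("L2", 1.0, 3.0), ("L3", 2.0, None)]:
        print(cid, luciferase_call(primary, counter))
```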

Q2: What is the most efficient way to integrate compound purity assessment into the triage workflow? A novel and efficient approach is to run ultra-high-pressure liquid chromatography–ultraviolet/mass spectrometric (UHPLC-UV/MS) analysis in parallel with your concentration-response curve (CRC) assays [4]. This can be done by either splitting a single liquid sample for both analyses or running them serially. This method provides compound integrity data (identity and purity) at the same time as potency data, enabling medicinal chemists to make faster, more informed decisions about which hits to pursue without adding weeks to the cycle time [4].
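Running integrity and potency in parallel leaves two result sets that need joining before chemists review them. A minimal sketch, assuming both are keyed by compound ID; the purity cut-off, field names and status labels are hypothetical and should follow local QC rules.

```python
def merge_potency_and_integrity(crc: dict, lcms: dict, min_purity_pct: float = 85.0) -> dict:
    """Attach an integrity status to each compound's potency.

    crc:  compound ID -> IC50 (uM) from the concentration-response run
    lcms: compound ID -> {'identity_confirmed': bool, 'purity_pct': float}
    min_purity_pct is a hypothetical cut-off; set it to your own QC standard.
    """
    merged = {}
    for cid, ic50 in crc.items():
        qc = lcms.get(cid)
        if qc is None:
            status = "integrity not measured"
        elif not qc["identity_confirmed"]:
            status = "expected mass not found"
        elif qc["purity_pct"] < min_purity_pct:
            status = "low purity"
        else:
            status = "pass"
        merged[cid] = {"ic50_um": ic50, "integrity": status}
    return merged


if __name__ == "__main__":
    crc = {"A": 1.0, "B": 2.0, "C": 3.0}
    lcms = {
        "A": {"identity_confirmed": True, "purity_pct": 95.0},
        "B": {"identity_confirmed": False, "purity_pct": 90.0},
    }
    for cid, row in merge_potency_and_integrity(crc, lcms).items():
        print(cid, row)
```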

Q3: Which molecular descriptors have the greatest influence on promiscuous behavior in HTS? Beta-binomial statistical models of molecular hit rates have shown that lipophilicity (ClogP) has the largest influence on the likelihood of a compound being a promiscuous hit [16]. This is followed by the fraction of sp3-hybridized carbons (Fsp3) and molecular size (heavy atom count) [16]. This means that hits with high ClogP, low Fsp3 ("flat" molecules), and high heavy atom counts should be treated with greater caution during triage.

Q4: When is the best time to deploy a counter-screen in an HTS campaign? The timing is flexible and should be dictated by the specific project needs [5].

- Standard Practice: Run the counter-screen at the hit confirmation stage alongside triplicate testing. This verifies selectivity while confirming activity [5].

- Early Triage: If the primary screen is prone to a specific type of interference (e.g., cytotoxicity in a cell-based assay), run the counter-screen immediately after the primary screen. This filters out problematic chemotypes before investing in confirmation [5].

- Potency Stage: Running a counter-screen during hit potency (XC50) determination allows you to establish a selectivity index, which can be valuable even for compounds with some off-target activity [5].

Q5: How can we quickly build confidence in the structure-activity relationships (SAR) of HTS hits? Immediately after hit confirmation, employ two parallel strategies:

- Hit Explosion: The integrated medicinal chemist designs and synthesizes a small set of novel analogs around the confirmed hit scaffolds to rapidly explore tolerated substituents [17].

- Hit Expansion ("SAR by Catalogue"): Computational chemists use the hit structures to search commercial catalogues for readily available analogs. Screening these purchased compounds quickly fills gaps in the initial SAR [17]. This combined approach generates a robust SAR dataset in a very short time.

Essential Research Reagent Solutions

The following reagents and tools are critical for effective HTS hit triage.

| Reagent / Tool | Function in HTS Hit Triage |
|---|---|
| Annotated Libraries (e.g., FDA-approved drugs) | Used during assay development to identify expected actives and flag compounds that cause assay interference [17]. |
| PAINS Filters | Cheminformatic filters used to identify and eliminate compounds with substructures known to cause pan-assay interference [1]. |
| Counter-Screen Assay Reagents | Specific reagents (e.g., parent cell line, inactive mutant protein, luciferase enzyme) needed to run assays that identify technology-specific or target-nonspecific false positives [5]. |
| "Lead-like" Screening Library | A curated collection of compounds with desirable properties (MW ~175-400, ClogP <4) designed to yield high-quality, developable hits from the outset [17]. |
| UHPLC-UV/MS Platform | Enables high-speed analysis of compound integrity (identity and purity), providing crucial data for triage decisions [4]. |

Experimental Workflow for Integrated Hit Triage

The following diagram illustrates the essential partnership between biology and medicinal chemistry in the HTS triage workflow.

Counter-Screen Implementation Strategy

Determining when and how to use counter-screens is a key decision point. The adapted screening cascade below shows how to integrate them early for efficient triage.

The Triage Toolkit: A Practical Guide to Cheminformatics and Counter-Screen Implementation

Frequently Asked Questions (FAQs)

Q1: What is the primary purpose of applying REOS, PAINS, and drug-likeness filters in triaging HTS hits? The primary purpose is to identify and prioritize promising lead compounds while eliminating those with undesirable properties early in the drug discovery pipeline. REOS (Rapid Elimination of Swill) filters help remove compounds with reactive, promiscuous, or otherwise problematic functional groups that are likely to cause toxicity or assay interference [18]. PAINS (Pan-Assay Interference Compounds) filters specifically target compounds that are known to produce false-positive results in high-throughput screening (HTS) assays through non-specific mechanisms [18]. Drug-likeness filters, often based on calculated properties or adherence to rules like the "Rule of Five," help prioritize molecules with physicochemical properties typical of successful oral drugs, thereby improving the likelihood of favorable pharmacokinetics [18].

Q2: My HTS hit passes all the standard filters but shows inconsistent activity in follow-up assays. What could be wrong? This is a common issue that can arise from several factors:

- Chemical or Metabolic Instability: The compound may degrade under assay conditions or, in cell-based follow-up assays, be rapidly metabolized. Consider performing stability assays.

- PAINS Behavior Not Covered by Standard Filters: Standard PAINS libraries may not cover all interference mechanisms. Re-evaluate the compound's structure for potential redox-activity, metal chelation, or membrane disruption properties that could cause promiscuous behavior [18].

- Insufficient Data Quality: The initial single-concentration HTS result may have been a false positive. Re-test the compound in a dose-response format to confirm the activity and determine accurate potency (e.g., IC50/EC50).

- Compound Purity: The original sample may have been impure. Re-purify the compound and re-test to confirm the activity is intrinsic to the intended structure.

Q3: How can I convert a 2D chemical structure from a database into a 3D model for further analysis? Using the ICM software environment, you can follow this protocol [19]:

- Read the 2D structure (e.g., in SDF or SMILES format) into a molecular table.

- Right-click on the molecule in the table.

- Select Chemistry/Convert to 3D and Optimize from the context menu. This process generates a 3D conformation with optimized geometry, which is essential for molecular docking, 3D-QSAR, and other structure-based analyses [19].

Q4: What are the key molecular descriptors to calculate for a preliminary drug-likeness assessment? A preliminary assessment typically involves a set of whole-molecule physicochemical properties. The following table summarizes key descriptors and their ideal ranges for drug-like compounds [20]:

Table: Key Molecular Descriptors for Drug-Likeness Assessment

| Descriptor | Description | Common Ideal Range (for oral drugs) |
|---|---|---|
| Molecular Weight (MW) | Mass of the molecule. | ≤ 500 Da |
| LogP | Partition coefficient (octanol/water); measures lipophilicity. | ≤ 5 |
| Hydrogen Bond Donors (HBD) | Number of OH and NH groups. | ≤ 5 |
| Hydrogen Bond Acceptors (HBA) | Number of O and N atoms. | ≤ 10 |
| Topological Polar Surface Area (TPSA) | Surface sum over polar atoms; related to membrane permeability. | ≤ 140 Ų |
| Number of Rotatable Bonds (RB) | Number of bonds that allow rotation; a measure of molecular flexibility. | ≤ 10 |

These descriptors can be calculated using cheminformatics toolkits like RDKit or directly within software like ICM by right-clicking the 'mol' column header and selecting Insert Column..., then choosing the desired chemical property [19] [20].
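The thresholds in the table above can be applied as a simple pass/fail screen. This is a minimal sketch, assuming descriptor values have already been calculated (for example exported from RDKit or an ICM chemical table) and stored under the hypothetical keys shown. Tolerating one violation follows Lipinski's convention for the Rule of Five; applying that tolerance across all six descriptors is a judgment call, so it is exposed as a parameter.

```python
from typing import Dict, List

# Upper limits from the descriptor table (MW in Da, TPSA in square angstroms).
DRUG_LIKE_LIMITS: Dict[str, float] = {
    "MW": 500.0,
    "LogP": 5.0,
    "HBD": 5,
    "HBA": 10,
    "TPSA": 140.0,
    "RB": 10,
}


def limit_violations(descriptors: Dict[str, float],
                     limits: Dict[str, float] = DRUG_LIKE_LIMITS) -> List[str]:
    """Return the names of descriptors whose value exceeds the upper limit."""
    return [name for name, limit in limits.items() if descriptors[name] > limit]


def is_drug_like(descriptors: Dict[str, float], max_violations: int = 1) -> bool:
    """Pass a compound if it breaks at most `max_violations` limits (tolerance is adjustable)."""
    return len(limit_violations(descriptors)) <= max_violations


# Hypothetical descriptor values for two HTS hits.
hit_a = {"MW": 412.5, "LogP": 3.1, "HBD": 2, "HBA": 6, "TPSA": 88.0, "RB": 6}
hit_b = {"MW": 618.7, "LogP": 5.9, "HBD": 1, "HBA": 9, "TPSA": 72.0, "RB": 11}
print(limit_violations(hit_a), is_drug_like(hit_a))  # [] True
print(limit_violations(hit_b), is_drug_like(hit_b))  # ['MW', 'LogP', 'RB'] False
```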

Q5: How can I programmatically screen a library of compounds against the PAINS filter?

Many cheminformatics packages provide this functionality. For instance, using the R programming environment and the ChemmineR package, you can:

- Load your compound library in SDF format into an SDFset object.

- Use the fmcsR package to perform a maximum common substructure search against a predefined set of PAINS SMARTS patterns.

- Compute the Tanimoto similarity or overlap coefficient to identify and flag compounds that match known PAINS substructures [20].

The Tanimoto coefficient (TC) is calculated based on molecular fingerprints as follows [20]:

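$$\mathrm{TC} = \frac{c}{a + b - c}$$

where a and b are the numbers of bits set to 1 in the fingerprints of the two molecules being compared and c is the number of bits set to 1 in both. Equivalently, for binary fingerprint vectors $\mathbf{M}_1$ and $\mathbf{M}_2$, $\mathrm{TC} = \frac{\mathbf{M}_1\cdot\mathbf{M}_2}{\mathbf{M}_1\cdot\mathbf{M}_1 + \mathbf{M}_2\cdot\mathbf{M}_2 - \mathbf{M}_1\cdot\mathbf{M}_2}$.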
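The coefficient above is easy to compute when a fingerprint is stored as the set of its "on" bit positions. This is a minimal sketch under that assumption: in practice the fingerprints would come from a toolkit such as RDKit or ChemmineR, and the reference names and the 0.7 threshold below are hypothetical. Note that similarity to a PAINS exemplar is a weaker test than an explicit substructure match.

```python
from typing import Dict, List, Set


def tanimoto(fp1: Set[int], fp2: Set[int]) -> float:
    """TC = c / (a + b - c), with fingerprints stored as sets of 'on' bit positions."""
    a, b = len(fp1), len(fp2)
    c = len(fp1 & fp2)
    denom = a + b - c
    # Two empty fingerprints share no information; report 0.0 by convention here.
    return c / denom if denom else 0.0


def flag_similar(query: Set[int], references: Dict[str, Set[int]],
                 threshold: float = 0.7) -> List[str]:
    """Names of reference fingerprints at or above the similarity threshold."""
    return [name for name, fp in references.items() if tanimoto(query, fp) >= threshold]


print(tanimoto({1, 2, 3, 4}, {3, 4, 5}))  # 0.4
```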

Troubleshooting Guides

Problem 1: High Attrition Rate After Applying REOS/Drug-Likeness Filters

- Symptoms: A very large percentage of your HTS hit list is removed by initial filters, leaving few compounds for follow-up.

- Possible Causes and Solutions:

- Cause: Overly Stringent Filter Criteria. The thresholds for molecular weight, LogP, or other descriptors may be too strict for your target class (e.g., natural products, macrocycles).

- Solution: Broaden the filter criteria. Consult the literature for property distributions of successful drugs in your therapeutic area. Consider using target-specific guidelines instead of general rules.

- Cause: Library Bias. The chemical library screened may be enriched in compounds that are structurally simple or biased toward "lead-like" rather than "drug-like" space.

- Solution: Analyze the chemical space of your starting library using principal component analysis (PCA) or t-distributed stochastic neighbor embedding (t-SNE) to understand its inherent biases [21]. This may justify screening a more diverse library.

Problem 2: Suspected PAINS Activity in a Confirmed Hit

- Symptoms: A compound shows activity in multiple unrelated assays, has a steep dose-response curve, or its activity diminishes upon slight structural modification.

- Investigation and Action Protocol:

- Confirm the Substructure: Use a molecular editor (like the one in ICM: Tools/Chemistry/Molecular Editor) to visually inspect the compound and confirm it contains a known PAINS substructure [19].

- Counter-Screen: Perform a specific counter-screen designed to detect the suspected interference mechanism (e.g., a redox-activity assay or a fluorescence interference assay).

- Test Close Analogs: If available, test close structural analogs that lack the suspected PAINS substructure. If the activity disappears, it strongly suggests the original hit was a false positive.

- Deprioritize: Unless there is strong and specific evidence for a true target engagement, deprioritize the compound to avoid wasting resources [18].
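A steep dose-response curve, one of the symptoms listed above, can be checked quickly from the concentrations that give 10% and 90% of the maximal effect. For a Hill-type curve, EC90/EC10 = 81^(1/n), so the Hill coefficient is n = ln 81 / ln(EC90/EC10). This is a minimal sketch assuming the data follow a standard Hill (four-parameter logistic) model; the cutoff of 2 is a hypothetical heuristic, not a fixed standard.

```python
import math


def hill_coefficient(ec10: float, ec90: float) -> float:
    """Hill coefficient implied by a Hill-type curve: n = ln 81 / ln(EC90/EC10)."""
    if not 0 < ec10 < ec90:
        raise ValueError("expected 0 < EC10 < EC90")
    return math.log(81) / math.log(ec90 / ec10)


def is_steep(ec10: float, ec90: float, max_hill: float = 2.0) -> bool:
    """Flag curves steeper than `max_hill`; the cutoff is a heuristic, not a standard."""
    return hill_coefficient(ec10, ec90) > max_hill


print(hill_coefficient(1.0, 81.0))  # 1.0
print(is_steep(1.0, 3.0))           # True (n is about 4)
```

A flagged curve is a prompt for the counter-screens above (for example, adding detergent to test for aggregation), not a verdict on its own.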

Problem 3: Inconsistent 3D Coordinate Generation

- Symptoms: The 3D model of a molecule generated by software has distorted geometry, high strain energy, or incorrect stereochemistry.

- Resolution Steps:

- Check 2D Input: Ensure the initial 2D structure is correctly drawn, with proper atom hybridization and stereochemistry defined.

- Use a Robust Algorithm: Use a well-established energy minimization force field after the initial 3D conversion. In ICM, the Convert to 3D and Optimize command handles this automatically [19].

- Preserve Known Coordinates: If you have a known good 3D structure (e.g., from a crystal structure), use the Load and Preserve Coordinates option instead of re-generating the 3D model from scratch [19].

- Validate the Output: Visually inspect the generated 3D structure for obvious errors, such as overlapping atoms or impossibly long bonds.

Experimental Workflow for Hit Triage

The following diagram illustrates a logical workflow for triaging HTS hits using cheminformatics filters and counter-screens, as discussed in the FAQs and troubleshooting guides.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Software and Resources for Cheminformatics Hit Triage

| Item / Resource | Function / Description | Application in Hit Triage |
|---|---|---|
| ICM Software | A comprehensive computational biology platform with integrated chemistry tools [19]. | Used for chemical table management, 2D to 3D structure conversion, molecular editing, and property calculation [19]. |
| RDKit | An open-source cheminformatics toolkit for Python/C++ [21]. | Calculating molecular descriptors (MW, LogP, HBD, HBA, TPSA) and fingerprints for similarity searching and model building [21]. |
| R Software & ChemmineR | A statistical computing environment with cheminformatics packages [20]. | Used for analyzing molecular similarity, clustering compounds, and performing maximum common substructure searches (e.g., for PAINS detection) [20]. |
| FooDB | A public database of food components [21]. | Can serve as a source of naturally occurring, often drug-like compounds for benchmarking or understanding "chemical space" [21]. |
| Molecular Editor | A tool for drawing and modifying chemical structures (e.g., within ICM) [19]. | Essential for visually inspecting hit structures, modifying them, and preparing structures for reports or presentations [19]. |
| Chemical Table | A database table within software like ICM that stores molecules and their associated data [19]. | The central workspace for managing, filtering, and analyzing the HTS hit list and associated properties [19]. |

Core Concepts: From HTS Triage to Targeted Virtual Profiling

What is the central goal of virtual screening and profiling in modern drug discovery? Virtual screening is a computational technique used to search libraries of small molecules to identify those structures most likely to bind to a drug target, thereby accelerating the early stages of drug discovery by prioritizing compounds for experimental testing [22]. Virtual profiling extends this by predicting a compound's activity profile across multiple biological targets, such as a panel of kinases. This is crucial for triaging HTS hits, as it helps rapidly identify non-selective, promiscuous, or otherwise problematic compounds early, saving significant resources [1] [23]. Techniques like Profile-QSAR and Kinase-Kernel represent advanced implementations of this principle, moving beyond single-target prediction to a more holistic, family-wide view of chemical activity.

How does this fit into a thesis on triaging HTS hits? A thesis focused on triaging HTS hits using cheminformatics and counter-screens would position these methods as a powerful computational counter-screen. Before running costly experimental counter-screens, virtual profiling can:

- Predict Selectivity: Forecast a hit's activity against related anti-targets or important off-target families (e.g., kinase selectivity panels).

- Identify Promiscuous Chemotypes: Flag compounds with substructures known to cause pan-assay interference (PAINS) or exhibit frequent-hitting behavior [1].

- Prioritize Lead-like Hits: Rank hits based on predicted desirable properties and activity profiles, focusing medicinal chemistry efforts on the most promising chemical series [24].

The following diagram illustrates how virtual profiling is integrated into a comprehensive HTS triage workflow.

Troubleshooting Guides

Common Issues and Solutions for Virtual Profiling

Problem 1: Poor Predictive Performance of Profile-QSAR Model

- Symptoms: Model predictions do not correlate with experimental follow-up testing; high error rates in cross-validation.

- Possible Causes & Solutions:

- Insufficient or Low-Quality Training Data: The Profile-QSAR method requires a substantial amount of high-quality IC₅₀ data for the new kinase target to effectively leverage the historical kinase knowledgebase [23]. Solution: Ensure you have at least 500 reliable experimental IC₅₀ measurements for your target kinase to train a robust model.

- Descriptor Mismatch: The predictive power comes from using predictions from 100+ historical kinase QSARs as descriptors. Solution: Verify that the historical kinase QSARs cover a broad and relevant chemical and target space related to your new kinase [23].

Problem 2: Kinase-Kernel Produces Unreliable Predictions for a Novel Kinase

- Symptoms: Predictions for a kinase with no training data are inconsistent or lack confidence.

- Possible Causes & Solutions:

- Low Sequence Similarity to Profiled Kinases: Kinase-Kernel works by interpolating from the new kinase's nearest neighbors based on active-site sequence similarity. Solution: Check the sequence similarity of your novel kinase to the 115+ kinases with trained Profile-QSAR models. If the nearest neighbors are too distant, the predictions will be weak. Consider alternative methods or generating a small amount of training data [23].

Problem 3: High Computational Resource Demand

- Symptoms: Virtual screening of large compound libraries takes too long, creating a bottleneck.

- Possible Causes & Solutions:

- Inefficient Docking Setup: Standard docking of millions of compounds is computationally intensive. Solution: For kinase targets, investigate methods like Surrogate AutoShim, which uses a pre-docked "Universal Kinase Surrogate Receptor" ensemble. This allows for the prediction of IC₅₀s for millions of compounds in hours instead of weeks [23].

- Library Size: Screening ultra-large libraries (billions of compounds) is prohibitive. Solution: Utilize techniques like Chemical Space Docking, which screens vast, non-enumerated chemical spaces on-the-fly without the need to physically store all structures [25].

Table 1: Troubleshooting Common Virtual Profiling Issues

| Problem | Primary Cause | Recommended Solution |
|---|---|---|
| Poor Model Performance | Insufficient training data (<500 IC₅₀s) | Generate more high-quality bioactivity data for the target. |
| Unreliable Kinase-Kernel Predictions | Novel kinase has low sequence similarity to profiled kinases | Gather a small training set or use a complementary 3D method if a structure exists. |
| High Computational Load | Docking massive, enumerated compound libraries | Use surrogate docking (e.g., Surrogate AutoShim) or Chemical Space Docking. |
| Inability to Find Novel Chemotypes | Over-reliance on known actives for similarity searches | Use scaffold-hopping tools like FTrees or maximum common substructure searches [25]. |

Data Management and Integrity Issues

Problem: Inconsistent or Uninterpretable Screening Results

- Symptoms: Inability to reconcile data from different assay stages; difficulty tracing HTS hit progression.

- Possible Causes & Solutions:

- Lack of Integrated Informatics Platform: HTS and virtual screening generate large, complex data sets. Solution: Implement a unified informatics platform that integrates sample management (e.g., Titian Mosaic), automated screening execution, and data analysis (e.g., Genedata Screener) to maintain data fidelity and streamline analysis [24].

- Ignoring Compound History: Failure to check new HTS hits against historical screening data can lead to rediscovery of promiscuous or problematic compounds. Solution: Utilize databases like PubChem and internal corporate databases to check the "natural history" of screening hits across previous assays and targets [1] [23].
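When checking a hit's screening history, a compound that was active in 1 of 1 prior assays should not be treated like one active in 20 of 50. A shrinkage estimate under a beta prior, in the spirit of the beta-binomial hit-rate models mentioned earlier in this guide, handles small sample sizes. This is a minimal sketch, assuming hit and assay counts have already been retrieved from PubChem or an internal database. The Beta(1, 99) prior (a 1% baseline hit rate) and the 5% cutoff are hypothetical and would normally be fitted to the library's own historical hit rates.

```python
def posterior_hit_rate(hits: int, assays: int, alpha: float = 1.0, beta: float = 99.0) -> float:
    """Posterior mean hit rate with a Beta(alpha, beta) prior: (hits + alpha) / (assays + alpha + beta)."""
    if not 0 <= hits <= assays:
        raise ValueError("need 0 <= hits <= assays")
    return (hits + alpha) / (assays + alpha + beta)


def is_frequent_hitter(hits: int, assays: int, cutoff: float = 0.05,
                       alpha: float = 1.0, beta: float = 99.0) -> bool:
    """Flag compounds whose shrunken hit rate exceeds the (hypothetical) cutoff."""
    return posterior_hit_rate(hits, assays, alpha, beta) > cutoff


print(round(posterior_hit_rate(1, 1), 4))    # 0.0198
print(round(posterior_hit_rate(20, 50), 4))  # 0.14
```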

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between Profile-QSAR and traditional QSAR?

- A: Traditional QSAR builds a model linking a compound's physicochemical descriptors directly to its activity against a single target. Profile-QSAR is a meta-QSAR approach. It uses the predicted activities from over 100 historical kinase QSAR models as input descriptors for a new model trained on data for a new kinase. This effectively allows every prediction for the new kinase to be informed by over 1.5 million historical IC₅₀ data points, which underpins the method's reported accuracy and extrapolation power [23].

Q2: When should I use Kinase-Kernel versus Profile-QSAR?

- A: The choice is determined by the availability of training data for your kinase target:

- Use Profile-QSAR when you have approximately 500 experimental IC₅₀s for your new kinase target. This data is used to train a new, highly accurate model.

- Use Kinase-Kernel when you have little to no training data for your new kinase. It predicts activity by interpolating from the models of your kinase's nearest neighbors based on active-site sequence similarity [23].
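The nearest-neighbor idea behind Kinase-Kernel can be illustrated with a similarity-weighted average. This is a simplified sketch, not the published algorithm: it assumes pre-aligned active-site residue strings, uses plain fraction-identity as the similarity, and takes as given each neighbor kinase's model prediction for the compound. The kinase names, `k`, and `min_identity` are hypothetical. The reliability flag mirrors the troubleshooting advice that predictions from distant neighbors are weak.

```python
import math
from typing import Dict, Tuple


def site_identity(site_a: str, site_b: str) -> float:
    """Fraction of identical residues between two pre-aligned active-site strings."""
    if len(site_a) != len(site_b) or not site_a:
        raise ValueError("active sites must be pre-aligned and of equal, non-zero length")
    return sum(x == y for x, y in zip(site_a, site_b)) / len(site_a)


def interpolate_pic50(new_site: str,
                      neighbours: Dict[str, Tuple[str, float]],
                      k: int = 3,
                      min_identity: float = 0.5) -> Tuple[float, bool]:
    """Similarity-weighted mean of the k nearest kinases' predicted pIC50 values.

    `neighbours` maps kinase name -> (aligned active-site string, predicted pIC50
    for the compound from that kinase's model). The boolean is False when even
    the closest kinase falls below `min_identity`, i.e. the estimate is an
    extrapolation.
    """
    ranked = sorted(((site_identity(new_site, site), pred)
                     for site, pred in neighbours.values()), reverse=True)[:k]
    weight = sum(sim for sim, _ in ranked)
    if weight == 0:
        return math.nan, False
    estimate = sum(sim * pred for sim, pred in ranked) / weight
    return estimate, ranked[0][0] >= min_identity


profiled = {"KIN_A": ("ACDEFG", 7.0), "KIN_B": ("ACDEFH", 6.0), "KIN_C": ("WWWWWW", 4.0)}
print(interpolate_pic50("ACDEFG", profiled))
```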

Q3: Can these virtual profiling methods predict cellular activity and selectivity?

- A: Yes. A key advantage of methods like Profile-QSAR is their ability to predict beyond simple biochemical IC₅₀. They can be trained to predict cellular activity, selectivity profiles across dozens of kinases, and even entire kinase affinity profiles for over 115 kinases, all from the same underlying data and model [23].

Q4: My HTS identified a hit, but it's not a kinase inhibitor. Are these concepts applicable?

- A: Absolutely. While Profile-QSAR and Kinase-Kernel are specialized for kinases, the core concept of virtual profiling is universal. For any protein family with sufficient accumulated screening data (e.g., GPCRs, nuclear receptors, proteases), similar meta-modeling or machine learning approaches can be built. Furthermore, structure-based virtual screening and pharmacophore-based methods are widely applicable for non-kinase triage [22] [25].

Q5: What are the most common pitfalls in triaging HTS hits with cheminformatics?

- A: Common pitfalls include:

- Ignoring PAINS: Not filtering for pan-assay interference compounds, which leads to pursuing assay artifacts [1].

- Chasing Promiscuous Hits: Investing resources into compounds that are frequent hitters across many target classes, indicating potential non-specific binding [23].

- Neglecting Chemical Tractability: Selecting hits with complex structures or undesirable properties that are difficult to optimize through medicinal chemistry [1] [24].

- Working in Silos: A lack of early partnership between biologists and medicinal chemists during the triage process, which is critical for robust outcomes [1].

Experimental Protocols & Methodologies

Workflow: Implementing a Profile-QSAR Model for Kinase Selectivity Prediction

This protocol outlines the steps to create and use a Profile-QSAR model for predicting the activity and selectivity of HTS hits against a panel of kinases.

1. Prerequisite Data Collection

- Historical Kinase Knowledgebase: Assemble a collection of 100+ previously developed QSAR models, covering a diverse set of kinases and chemical scaffolds. This knowledgebase should be built on over 1.5 million historical IC₅₀ measurements [23].

- Target-Specific Training Set: For your new kinase of interest, generate a robust set of approximately 500 compounds with reliably measured IC₅₀ values. This set should be structurally diverse to ensure model generalizability.

2. Model Training

- Descriptor Generation: For each compound in your target-specific training set, obtain the predicted activity values (pIC₅₀ or pKᵢ) from each of the 100+ historical kinase QSAR models. These predictions form the new "descriptor" matrix for the Profile-QSAR model [23].

- Meta-Model Construction: Using the target-specific experimental IC₅₀s as the response variable and the historical model predictions as descriptors, train a new QSAR model (e.g., using partial least squares regression or machine learning algorithms). This is the Profile-QSAR model.

3. Prediction and Profiling

- New Compound Prediction: To profile a new HTS hit, process its structure through the same pipeline: generate its prediction descriptors from the historical kinase models, and then input these descriptors into your trained Profile-QSAR model.

- Selectivity Assessment: Run this prediction for all kinase targets for which you have Profile-QSAR models (e.g., 115+ kinases) to generate a predicted activity profile, enabling immediate virtual assessment of selectivity.
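The descriptor-generation, meta-model, and prediction steps above can be sketched end to end on toy data. This is a minimal sketch under stated assumptions: two lambda functions stand in for the 100+ trained historical kinase QSARs, each compound is a hypothetical two-number feature vector, and ordinary least squares (via lightly ridge-stabilized normal equations) replaces the PLS or machine-learning model mentioned in step 2. All names are illustrative.

```python
from typing import Callable, List, Sequence

# A "historical model" maps a compound representation to a predicted pIC50.
# Real Profile-QSAR uses 100+ trained kinase QSARs; these are stand-ins.
HistoricalModel = Callable[[Sequence[float]], float]


def profile_descriptors(compound: Sequence[float], models: List[HistoricalModel]) -> List[float]:
    """Descriptor row = one predicted activity per historical kinase model."""
    return [model(compound) for model in models]


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Gauss-Jordan elimination with partial pivoting for a small square system."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        lead = aug[col][col]
        aug[col] = [v / lead for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [row[n] for row in aug]


def fit_meta_model(x: List[List[float]], y: List[float], ridge: float = 1e-8) -> List[float]:
    """Least-squares weights [intercept, w1, ..., wk] via ridge-stabilized normal equations."""
    rows = [[1.0] + list(r) for r in x]  # prepend an intercept column
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) + (ridge if i == j else 0.0) for j in range(k)]
           for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(k)]
    return _solve(xtx, xty)


def predict(weights: List[float], descriptors: Sequence[float]) -> float:
    """Apply the meta-model to one compound's Profile-QSAR descriptor row."""
    return weights[0] + sum(w * d for w, d in zip(weights[1:], descriptors))


# Toy run: two stand-in historical models and six training compounds.
models: List[HistoricalModel] = [lambda f: f[0] + 0.1 * f[1], lambda f: 0.5 * f[0] + 2.0 * f[1]]
train = [(1, 2), (2, 1), (3, 5), (4, 3), (5, 6), (0.5, 4)]
X = [profile_descriptors(c, models) for c in train]
y = [1.0 + 0.6 * d[0] + 0.2 * d[1] for d in X]  # synthetic "measured" pIC50s
w = fit_meta_model(X, y)
print([round(v, 3) for v in w])  # [1.0, 0.6, 0.2]
```

Because the synthetic responses are an exact linear function of the stand-in predictions, the fit recovers the generating weights; with real data the residuals are what cross-validation would assess.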

The workflow and relationship between key computational methods are summarized in the following diagram.

Workflow: Structure-Based Triage with Surrogate AutoShim

For kinases where a 3D structural perspective is needed, this protocol uses a pre-docked surrogate receptor ensemble for rapid IC₅₀ prediction.

1. Prepare the Universal Kinase Surrogate Receptor

- Ensemble Selection: Curate an ensemble of 16 diverse kinase crystal structures that collectively represent a wide range of kinase active site conformations and sequences [23].

- Pre-Docking: Dock a vast virtual library (e.g., 4 million internal and commercial compounds) into each receptor in this surrogate ensemble. Store all docking scores and pharmacophore interaction fingerprints for billions of generated poses.

2. Train the AutoShim Model

- Training Data: Use the same target-specific training set of ~500 experimental IC₅₀s.

- Model Fitting: For the new kinase target, train an AutoShim scoring function by adjusting the weights of pharmacophore interaction "shims" within the surrogate receptor's binding site to best reproduce the experimental training IC₅₀s [23].

3. Rapid Screening and Prediction

- Apply Model: To screen a compound, its pre-computed docking poses and interaction fingerprints from the surrogate ensemble are retrieved. The trained AutoShim model is applied to these stored poses to predict an IC₅₀.

- Output: Rank the entire virtual library based on the predicted IC₅₀s from the AutoShim model, all without performing any new docking calculations for the new target.

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Resources for Virtual Screening and Profiling

| Tool / Resource Name | Type | Primary Function | Relevance to HTS Triage |
|---|---|---|---|
| Profile-QSAR [23] | Computational Algorithm | 2D meta-QSAR for kinase activity/selectivity prediction | Profiling HTS hits against a kinase panel to predict selectivity and polypharmacology. |
| Kinase-Kernel [23] | Computational Algorithm | Predicts kinase activity for targets with no training data. | Extending virtual profiling to kinome coverage beyond kinases with existing assay data. |
| AutoDock Vina [26] | Docking Software | Generates binding poses and scores for ligand-receptor complexes. | Structure-based virtual screening and pose prediction for HTS hit validation. |
| SeeSAR [25] | Interactive Software | Visual analysis and prioritization of docking results. | Rapid, intuitive triage of virtual screening hits based on binding interactions and HYDE affinity estimation. |
| PyRx [26] | Software Platform | Integrated virtual screening environment with docking wizards. | Provides a user-friendly interface for preparing compounds, running docking screens, and analyzing results. |
| FTrees / SpaceLight [25] | Similarity Search Tool | Finds structurally diverse analogs using pharmacophores/fingerprints. | "Scaffold hopping" to find novel chemotypes from an HTS hit while maintaining activity. |
| PubChem [23] | Public Database | Repository of chemical structures and bioassay data. | Checking the screening history and promiscuity of HTS hits across public domain assays. |
| Lead-like Compound Library [22] | Compound Collection | A library of compounds with optimized physicochemical properties. | A high-quality source for virtual screening to increase the likelihood of finding tractable hits. |

The journey from a primary high-throughput screen to a confirmed hit list is a critical, multi-stage process designed to efficiently separate true positives from false leads. The workflow integrates cheminformatics and experimental counter-screens to prioritize compounds with the highest potential for success in downstream drug discovery campaigns [27] [28].

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: Our primary screen yielded an excessively high hit rate (>5%). What are the first steps in the cheminformatic triage to quickly focus on the most promising compounds?

An unusually high hit rate often indicates a high proportion of false positives. The first cheminformatic triage should rapidly filter compounds based on undesirable chemical properties.

- Problem: The hit list is too large to handle experimentally.

- Solution: Immediately apply a series of computational filters to eliminate compounds with problematic structures.

- Actionable Protocol:

- Apply Structural Alert Filters: Use industry-standard rules to flag and remove compounds that are frequent hitters in biochemical assays. This includes:

- PAINS (Pan-Assay Interference Compounds): Filters out compounds with substructures known to cause assay interference through non-specific mechanisms [29].

- REOS (Rapid Elimination Of Swill): Removes compounds with undesirable physicochemical properties or functional groups [29].

- Lilly Medicinal Chemistry Filters: A set of rules to identify compounds with properties outside drug-like space [29].

- Assess Drug-Likeness: Filter based on simple property calculations (e.g., molecular weight, lipophilicity, number of rotatable bonds) to prioritize "lead-like" compounds [27].

- Perform Structure-Based Clustering: Group the remaining hits by chemical similarity. This allows you to select a diverse subset of chemotypes for confirmation, ensuring you don't waste resources on confirming multiple nearly identical compounds [29].

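The structure-based clustering step can be sketched with greedy leader (sphere-exclusion) clustering, one common choice alongside methods such as Butina clustering. This is a minimal sketch, assuming fingerprints are stored as sets of "on" bits and that hits are supplied in decreasing order of potency, so each cluster's leader is its most potent member. The compound IDs and the 0.6 threshold are hypothetical.

```python
from typing import Dict, List, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """|A & B| / |A | B|, identical to c / (a + b - c) for bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def leader_cluster(fps: Dict[str, Set[int]], threshold: float = 0.6) -> List[List[str]]:
    """Each compound joins the first cluster whose leader is within `threshold`,
    otherwise it founds a new cluster. Input order determines the leaders."""
    clusters: List[List[str]] = []
    leaders: List[Set[int]] = []
    for cid, fp in fps.items():
        for members, leader_fp in zip(clusters, leaders):
            if tanimoto(fp, leader_fp) >= threshold:
                members.append(cid)
                break
        else:
            clusters.append([cid])
            leaders.append(fp)
    return clusters


fps = {"h1": {1, 2, 3, 4}, "h2": {1, 2, 3, 5}, "h3": {10, 11, 12}, "h4": {10, 11, 12, 13}}
clusters = leader_cluster(fps)
print(clusters)                   # [['h1', 'h2'], ['h3', 'h4']]
print([c[0] for c in clusters])   # ['h1', 'h3'] -> one representative per chemotype
```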

FAQ 2: After the first triage and confirmation, several compounds failed to show activity in the counter-screen. What does this indicate, and how should we proceed?

This is a common and often positive outcome, as it helps to eliminate false positives and identify compounds with specific activity.

- Problem: Confirmed hits are inactive in orthogonal or counter-screen assays.

- Solution: Systematically investigate the cause of the discrepancy to determine if the activity in the primary screen was specific or artifactual.

- Actionable Protocol:

- Interpret the Result: A compound that is active in the primary screen but inactive in a counter-screen designed to detect a specific interference (e.g., fluorescence, reactivity) is unlikely to owe its primary activity to that interference mechanism, so it can advance. Conversely, compounds that are also active in the interference counter-screen are likely artifacts and are typically deprioritized [27] [28].

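- Investigate Mechanism: If the counter-screen is an orthogonal assay with a different readout (e.g., measuring binding vs. functional activity), the result can reveal the compound's mechanism of action. A hit active in both is a very strong candidate, whereas loss of activity in the orthogonal assay raises the suspicion that the primary signal was specific to the primary assay's detection technology [30].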

- Check Data Quality: Review the raw data and Z'-factor for both the primary and counter-screen assays to rule out technical failure [31].

- Decision Point: Compounds that are active in target-agnostic interference counter-screens (e.g., for aggregation) should be discarded. The remaining, clean compounds move to the next triage stage.
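The data-quality check above relies on the Z'-factor, computed from positive- and negative-control wells as Z' = 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|. Values of 0.5 or higher are conventionally taken to indicate an excellent assay. This is a minimal sketch using sample standard deviations; the control values are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def z_prime(positive: Sequence[float], negative: Sequence[float]) -> float:
    """Z' = 1 - 3 * (SD_pos + SD_neg) / |mean_pos - mean_neg|, from control wells."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("positive and negative control means are identical")
    return 1 - 3 * (stdev(positive) + stdev(negative)) / separation


pos = [100.0, 102.0, 98.0]  # hypothetical positive-control signals
neg = [10.0, 12.0, 8.0]     # hypothetical negative-control signals
print(round(z_prime(pos, neg), 3))  # 0.867
```

Running this per plate for both the primary and counter-screen assays makes it easy to spot plates where a discrepancy may reflect technical failure rather than compound behavior.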

FAQ 3: During the second triage, we have multiple chemotypes with similar potency. What criteria should we use to prioritize them for full profiling?

When potency is similar, prioritization should be based on a broader set of properties that predict successful lead optimization.

- Problem: Difficulty in ranking structurally distinct hit series.

- Solution: Employ a multi-parameter optimization approach that considers chemical attractiveness and synthetic tractability.

- Actionable Protocol:

- Analyze Structure-Activity Relationships (SAR): Even at this early stage, look for preliminary SAR. A series where small structural changes lead to significant potency changes is more attractive than one with "flat" SAR, as it suggests a specific interaction with the target [32].

- Evaluate Lead-Like Properties: Calculate and compare properties like:

- Molecular Weight: Lower is generally better (<350 Da is ideal for leads).

- Lipophilicity (cLogP): Prioritize series with lower cLogP.

- Fraction of sp3 Carbons (Fsp3): Higher Fsp3 is often correlated with better developability [27].

- Assess Synthetic Tractability: Consider the ease of synthesizing analogues. Are building blocks readily available? Is the chemistry straightforward? This will be crucial for the upcoming medicinal chemistry cycle [28].

- Review Commercial Availability: Check if close analogues are available for purchase to rapidly test your initial SAR hypotheses [27].
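One way to compare series on lead-like properties without choosing arbitrary weights is Pareto (non-dominated) ranking: a series is set aside only if another series is at least as good on every criterion and strictly better on one. This is a swapped-in technique, not one prescribed by the sources above, and it does not capture SAR quality or synthetic tractability, which still need a chemist's judgment. The series names and property values are hypothetical.

```python
from typing import Dict, List

Props = Dict[str, float]


def dominates(a: Props, b: Props) -> bool:
    """True if series `a` is no worse than `b` on every criterion and better on at least one.

    Criteria: lower MW, lower cLogP, higher Fsp3.
    """
    no_worse = a["mw"] <= b["mw"] and a["clogp"] <= b["clogp"] and a["fsp3"] >= b["fsp3"]
    better = a["mw"] < b["mw"] or a["clogp"] < b["clogp"] or a["fsp3"] > b["fsp3"]
    return no_worse and better


def pareto_front(series: Dict[str, Props]) -> List[str]:
    """Series not dominated by any other series (candidates for full profiling first)."""
    return [name for name, props in series.items()
            if not any(dominates(other, props)
                       for other_name, other in series.items() if other_name != name)]


series = {
    "series_A": {"mw": 320.0, "clogp": 2.5, "fsp3": 0.45},
    "series_B": {"mw": 380.0, "clogp": 3.8, "fsp3": 0.20},
    "series_C": {"mw": 300.0, "clogp": 3.0, "fsp3": 0.30},
}
print(pareto_front(series))  # ['series_A', 'series_C']
```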

FAQ 4: How can we leverage AI and machine learning in the triage process beyond standard filtering?

Modern triage workflows are enhanced by AI to uncover hidden patterns and improve prediction accuracy.

- Problem: Standard filters are reactive; we want predictive tools to identify the best hits proactively.

- Solution: Integrate AI-driven tools for data analysis and compound prioritization.

- Actionable Protocol:

- Incorporate AI-Enhanced Triaging: Use machine learning models to predict compound bioactivity and toxicity based on chemical descriptors and high-dimensional data, moving beyond simple rule-based filters [29].

- Utilize the "Informacophore" Concept: Employ machine learning to identify the minimal chemical structure and computed molecular descriptors essential for biological activity. This helps in understanding the key features driving activity and in scaffold prioritization [32].

- Implement SAR Analysis: Use platforms like KNIME to automate statistical analysis and visualization. AI can help cluster compounds and predict which chemotypes have the highest potential for optimization [29].
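Before any predictive modeling, hits are usually grouped into chemotypes. A common unsupervised baseline for this is Taylor-Butina (sphere-exclusion) clustering on fingerprint similarity. The sketch below is a dependency-free illustration, assuming fingerprints have already been computed elsewhere and are represented as sets of "on" bit indices; the 0.6 Tanimoto cutoff is a hypothetical default. RDKit ships its own implementation in `rdkit.ML.Cluster.Butina`, which works on distances (1 minus similarity) rather than similarities.

```python
from itertools import combinations


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 1.0
    inter = len(a & b)
    return inter / (len(a) + len(b) - inter)


def butina_cluster(fps: list, sim_cutoff: float = 0.6) -> list:
    """Taylor-Butina sphere-exclusion clustering.

    Returns clusters as lists of compound indices; the first index in each
    cluster is its centroid. Singletons come out as one-member clusters.
    """
    n = len(fps)
    # Neighbour lists: pairs at or above the similarity cutoff.
    neighbors = [set() for _ in range(n)]
    for i, j in combinations(range(n), 2):
        if tanimoto(fps[i], fps[j]) >= sim_cutoff:
            neighbors[i].add(j)
            neighbors[j].add(i)

    # Visit candidate centroids from most to fewest neighbours (ties broken by index).
    order = sorted(range(n), key=lambda i: (-len(neighbors[i]), i))
    assigned = set()
    clusters = []
    for centroid in order:
        if centroid in assigned:
            continue
        # The centroid claims all of its neighbours not already in a cluster.
        members = [centroid] + sorted(neighbors[centroid] - assigned)
        assigned.update(members)
        clusters.append(members)
    return clusters


if __name__ == "__main__":
    fps = [
        frozenset({1, 2, 3, 4}),
        frozenset({1, 2, 3, 5}),
        frozenset({1, 2, 3, 4, 5}),
        frozenset({10, 11, 12}),
        frozenset({10, 11, 13}),
        frozenset({20, 21}),
    ]
    print(butina_cluster(fps, sim_cutoff=0.5))
```

Large clusters point to series with built-in SAR, while singletons are harder to prioritize; the cutoff strongly changes cluster granularity, so it is worth checking several values on the actual hit set.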

Essential Research Reagents and Solutions

The following table details key materials and tools used in a robust HTS triage workflow.

| Item | Function / Application in HTS Triage |
|---|---|
| LeadFinder Diversity Library [27] [28] | A diverse collection of 150,000 low molecular weight, lead-like compounds used for primary screening and follow-up. |
| Liquid Chromatography-Mass Spectrometry (LCMS) [27] [28] | A critical quality control (QC) tool used to verify the identity and purity of compounds, especially those advancing to hit validation stages. |
| Echo Acoustic Dispensing [27] [28] | Precision dispensing technology for highly accurate and non-contact transfer of compounds and reagents in nanoliter volumes for confirmation assays. |
| Genedata Screener [27] [28] | A robust software platform for processing, managing, and statistically analyzing large, complex HTS datasets, enabling efficient data interrogation. |
| Orthogonal Assay Reagents [27] [30] | Reagents for secondary assays with a different readout technology (e.g., HTRF, AlphaScreen, NanoBRET) to confirm activity and rule out technology-specific artifacts. |

Detailed Experimental Protocols

Protocol 1: Primary Screen and Initial Cheminformatic Triage

Objective: To conduct the primary HTS and perform the first computational triage to select compounds for hit confirmation.

Methodology:

- Primary Screening: Test the entire compound library (e.g., 150,000 - 500,000 compounds) at a single concentration (typically 1-10 µM) in the developed assay [27] [28].

- Data Normalization: Normalize raw data to positive and negative controls on each plate. Calculate percent activity or inhibition for every compound.

- Hit Identification: Set a statistically relevant activity threshold (e.g., >3 standard deviations from the mean of negative controls) to define initial "hits" [27].

- First Cheminformatic Triage: Subject the initial hit list to computational filtering using predefined rule sets (PAINS, REOS, and the Lilly MedChem rules) to remove compounds with undesirable properties [29]. The output is a refined list for confirmation.
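The normalization and hit-calling steps above can be sketched for a single plate as follows. This is a minimal sketch assuming an inhibition assay in which the signal falls as the target is inhibited; the function names, well IDs, and control layout are hypothetical. The threshold applies the >3 SD rule to the negative controls after they have been normalized.

```python
from statistics import mean, stdev


def percent_inhibition(raw: float, neg_mean: float, pos_mean: float) -> float:
    """Scale a raw signal so the negative-control mean is 0% and the
    positive-control mean is 100% inhibition.

    Assumes inhibition lowers the signal (neg = uninhibited, e.g. DMSO;
    pos = fully inhibited reference).
    """
    return 100.0 * (neg_mean - raw) / (neg_mean - pos_mean)


def call_hits(plate: dict, neg_wells: list, pos_wells: list, n_sd: float = 3.0):
    """Normalize one plate and flag wells above mean + n_sd * SD of the negative controls.

    plate maps well ID -> raw signal. Returns
    (threshold_pct, {well: (pct_inhibition, is_hit)}) for the non-control wells.
    """
    neg_mu = mean(plate[w] for w in neg_wells)
    pos_mu = mean(plate[w] for w in pos_wells)

    # Spread of the negative controls on the % inhibition scale sets the cutoff.
    neg_pct = [percent_inhibition(plate[w], neg_mu, pos_mu) for w in neg_wells]
    threshold = mean(neg_pct) + n_sd * stdev(neg_pct)

    controls = set(neg_wells) | set(pos_wells)
    results = {}
    for well, raw in plate.items():
        if well in controls:
            continue
        pct = percent_inhibition(raw, neg_mu, pos_mu)
        results[well] = (pct, pct > threshold)
    return threshold, results


if __name__ == "__main__":
    plate = {
        "N1": 1000, "N2": 1020, "N3": 980, "N4": 1010, "N5": 990,  # negative controls
        "P1": 100, "P2": 110, "P3": 90,                            # positive controls
        "C01": 500, "C02": 970, "C03": 940,                        # test compounds
    }
    thr, res = call_hits(plate, ["N1", "N2", "N3", "N4", "N5"], ["P1", "P2", "P3"])
    print(f"threshold = {thr:.2f}% inhibition")
    for well, (pct, hit) in res.items():
        print(well, f"{pct:.1f}%", "HIT" if hit else "")
```

Because the cutoff is derived from each plate's own controls, a plate with noisy controls automatically gets a stricter threshold; in a real campaign this would be run per plate and combined with plate-quality checks.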

Protocol 2: Hit Confirmation and Counter-Screening

Objective: To experimentally confirm the activity of triaged hits and eliminate false positives through orthogonal methods.

Methodology:

- Hit Confirmation: Re-test the triaged hits in a dose-response manner (e.g., 8-point, 1:3 serial dilution) in the primary assay to confirm activity and generate preliminary potency (IC50/EC50) data [27].

- Counter-Screening: Test confirmed hits in one or more of the following assays:

- Assay Interference Counterscreen: Run the compound in an assay designed to detect a specific artifact (e.g., fluorescence quenching, chemical reactivity) [27].

- Orthogonal Assay: Test the compound in a different assay format that measures the same biological target but uses a different readout technology (e.g., switch from fluorescence to luminescence) [30].

- Selectivity/Counterscreen: Test against related but undesirable targets (e.g., anti-targets) to assess initial selectivity [28].
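The 8-point, 1:3 dilution scheme used for hit confirmation can be generated as below. This is a small sketch in which the 30 µM top concentration and the optional solubility cap are assumptions for illustration; eight points at 3-fold spacing span 3^7 = 2,187-fold, or about 3.3 log units.

```python
import math


def serial_dilution(top_conc_um: float, n_points: int = 8, fold: float = 3.0,
                    solubility_limit_um=None) -> list:
    """Concentrations (µM) for an n-point serial dilution, highest first.

    If a solubility limit is given, the top concentration is capped at it so
    the curve is not distorted by precipitated compound.
    """
    top = top_conc_um if solubility_limit_um is None else min(top_conc_um, solubility_limit_um)
    return [top / fold ** i for i in range(n_points)]


def log_span(concs: list) -> float:
    """Width of the tested range in log10 units."""
    return math.log10(concs[0] / concs[-1])


if __name__ == "__main__":
    series = serial_dilution(30.0)  # hypothetical 30 µM top concentration
    print(", ".join(f"{c:.4g}" for c in series))
    print(f"span: {log_span(series):.2f} log units")
```

The tested window should bracket the expected IC50/EC50; a compound whose potency falls outside it will give an open-ended curve rather than a reliable value.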

Protocol 3: Secondary Cheminformatic Triage and Hit Profiling

Objective: To prioritize the confirmed and counter-screened hits for final in-depth profiling.

Methodology:

- Second Cheminformatic Triage: Analyze the confirmed hit list using more advanced techniques:

- Structure-Based Clustering: Group hits into distinct chemotype series [29].

- SAR Analysis: For each series, analyze the relationship between chemical structure and confirmed potency to identify the most promising scaffolds [32].

- Property Calculations: Calculate and profile key physicochemical properties to prioritize drug-like series [27].

- Final Hit Profiling: Subject the top-priority compounds and series from the second triage to a full panel of assays to generate comprehensive data. This includes:

In modern drug discovery, a primary High-Throughput Screening (HTS) campaign can test hundreds of thousands of compounds against a biological target to identify initial "hits" [33]. However, a significant portion of these initial hits are often false positives, caused by compound interference with the assay technology or undesirable compound properties [5] [34]. Without a robust triage strategy, researchers risk wasting substantial time and resources pursuing misleading leads.

This case study walks through a successful integrated triage campaign for a kinase target, detailing how cheminformatics and strategic counter-screens were combined to efficiently distinguish true, promising hits from assay artifacts. The accompanying technical guides provide actionable protocols for researchers to implement similar strategies.

Our case study focuses on a project targeting a novel kinase for oncology. The initial HTS of a 500,000-compound library yielded 10,000 primary hits—a hit rate of 2%. The integrated triage campaign was designed to efficiently filter these hits down to a manageable number of high-quality leads for further optimization.

Table: Triage Campaign at a Glance

| Stage | Input Compounds | Output Compounds | Key Triage Method |
|---|---|---|---|
| Primary HTS | 500,000 | 10,000 | Biochemical ATPase Activity Assay |
| Hit Confirmation | 10,000 | 2,500 | Dose-Response & Cheminformatics Filtering |
| Counter-Screening | 2,500 | 800 | Technology & Specificity Counter-Screens |
| Orthogonal Assay | 800 | 150 | Cell-Based Phosphorylation Assay |
| Hit Validation | 150 | 25 | Selectivity Profiling & Cytotoxicity |
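The stage-by-stage attrition implied by the campaign table can be checked with a few lines of arithmetic. The sketch below uses only the counts from the table and also verifies that each stage's input matches the previous stage's output.

```python
# Stage counts taken from the campaign table: (stage, input compounds, output compounds).
STAGES = [
    ("Primary HTS", 500_000, 10_000),
    ("Hit Confirmation", 10_000, 2_500),
    ("Counter-Screening", 2_500, 800),
    ("Orthogonal Assay", 800, 150),
    ("Hit Validation", 150, 25),
]


def pass_rates(stages: list) -> list:
    """Return (stage, fraction passing) after checking the funnel is consistent."""
    for (_, _, prev_out), (name, n_in, _) in zip(stages, stages[1:]):
        if n_in != prev_out:
            raise ValueError(f"{name}: input {n_in} does not match previous output {prev_out}")
    return [(name, n_out / n_in) for name, n_in, n_out in stages]


if __name__ == "__main__":
    for name, rate in pass_rates(STAGES):
        print(f"{name:<18} {rate:8.2%}")
    overall = STAGES[-1][2] / STAGES[0][1]
    print(f"{'Overall':<18} {overall:8.3%}")
```

Run on these numbers, the per-stage pass rates are 2%, 25%, 32%, 18.75% and about 16.7%, so only 0.005% of the screened library (25 of 500,000) reaches validated-hit status.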

The workflow below illustrates the sequential stages of this triage campaign, showing how hits were progressively filtered at each step.

The Experimental Triage Workflow: Protocols & Troubleshooting

This section provides the detailed experimental protocols for each stage of the triage cascade, alongside solutions to common problems.

Stage 1: Primary HTS and Hit Confirmation

Experimental Protocol: Biochemical ATPase Activity Assay

- Objective: To identify compounds that inhibit the target kinase's enzymatic activity.

- Reagents:

- Purified recombinant kinase protein.

- ATP solution (1 mM stock).

- Fluorogenic peptide substrate.

- Test compounds (screened at 10 µM final concentration).

- Assay buffer (50 mM HEPES pH 7.5, 10 mM MgCl₂, 1 mM DTT, 0.01% Tween-20).

- Procedure:

- Dispense 5 µL of compound solution into a 384-well assay plate using an acoustic dispenser.

- Add 10 µL of kinase/substrate mixture in assay buffer.

- Initiate the reaction by adding 10 µL of ATP solution.

- Incubate for 60 minutes at room temperature.

- Stop the reaction and develop the signal according to the detection kit's instructions.

- Measure fluorescence (Ex/Em = 535/587 nm) on a plate reader.

- Data Analysis: Compounds showing >50% inhibition compared to controls are considered primary hits.
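The well-volume arithmetic behind this protocol can be worked through with C1V1 = C2V2. This is a sketch under one stated assumption: the procedure does not say whether the ATP addition is pre-diluted, so the code assumes the 10 µL addition is the 1 mM stock used as-is. With 5 + 10 + 10 = 25 µL per well, reaching 10 µM compound requires a 50 µM compound solution, and undiluted 1 mM ATP would end up at 400 µM.

```python
def final_conc(stock: float, added_ul: float, total_ul: float) -> float:
    """C_final = C_stock * V_added / V_total (i.e. C1*V1 = C2*V2)."""
    return stock * added_ul / total_ul


def required_stock(final: float, added_ul: float, total_ul: float) -> float:
    """Concentration the added solution must have to reach `final` in the well."""
    return final * total_ul / added_ul


# Addition volumes from the procedure (µL).
VOLUMES_UL = {"compound": 5.0, "kinase_substrate": 10.0, "atp": 10.0}
TOTAL_UL = sum(VOLUMES_UL.values())  # 25 µL per well

# Compound solution needed for a 10 µM final screening concentration.
compound_solution_um = required_stock(10.0, VOLUMES_UL["compound"], TOTAL_UL)

# Final ATP, ASSUMING the 10 µL addition is the 1 mM (1000 µM) stock used undiluted.
atp_final_um = final_conc(1000.0, VOLUMES_UL["atp"], TOTAL_UL)


def is_primary_hit(pct_inhibition: float, cutoff: float = 50.0) -> bool:
    """Primary-hit rule from the protocol: > 50% inhibition versus controls."""
    return pct_inhibition > cutoff


if __name__ == "__main__":
    print(f"total volume: {TOTAL_UL:g} µL")
    print(f"compound solution needed: {compound_solution_um:g} µM")
    print(f"final ATP (undiluted stock): {atp_final_um:g} µM")
```

If the ATP solution is in fact pre-diluted, only the `final_conc` input changes; the same two helpers cover any addition in the procedure.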

Technical Support: HTS Hit Confirmation

Q: After the primary screen, my hit confirmation rate is low. Many actives do not reproduce. What could be the cause? A: Low confirmation rates are often due to compound precipitation or interference with the assay readout.

- Solution 1 (Precipitation): Check the solubility of your hits in the assay buffer. Visually inspect the assay plates for precipitation or turbidity. Re-test compounds in a dose-response format, ensuring the highest concentration does not exceed their solubility limit.

- Solution 2 (Assay Interference): Many compounds are fluorescent or quench fluorescence. Re-test the primary hits using an orthogonal readout that does not rely on fluorescence (e.g., a luminescence-based ADP-detection assay or label-free mass spectrometry) to confirm activity.

Stage 2: Cheminformatics Analysis of Confirmed Hits

Before proceeding to resource-intensive counter-screens, a cheminformatics analysis provides a powerful first filter to eliminate compounds with undesirable properties.

Experimental Protocol: Cheminformatics Filtering

- Objective: To remove compounds with poor drug-likeness or known nuisance behavior.

- Software: Any cheminformatics toolkit (e.g., RDKit, KNIME, commercial software).

- Procedure:

- Calculate key molecular properties for all confirmed hits: Molecular Weight (MW), Calculated LogP (cLogP), Number of Hydrogen Bond Donors (HBD), and Number of Hydrogen Bond Acceptors (HBA).

- Filter compounds using the "Rule of 3" (MW < 300, cLogP ≤ 3, HBD ≤ 3, HBA ≤ 3; originally proposed to define fragment-like compounds) or other lead-like criteria relevant to the project.

- Screen structures against PAINS (Pan-Assay Interference Compounds) substructure filters and other in-house or public lists of undesirable substructures.

- Perform cluster analysis to identify and prioritize chemically diverse series over singletons.

- Data Analysis: Compounds failing the lead-like filters or containing PAINS motifs are deprioritized or removed from the list.
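The filtering and deprioritization logic can be sketched as follows. This is a minimal sketch assuming properties are already calculated and that substructure alerts come from a separate matching step (for example, RDKit's `FilterCatalog` with the PAINS catalog); the alert names in the usage example are illustrative. The cutoffs follow the published Rule of 3 (MW < 300, cLogP, HBD and HBA each ≤ 3) and are kept in one dict so project-specific limits can be swapped in. Flagged compounds are returned with reasons rather than deleted, in line with treating alerts as warnings.

```python
from dataclasses import dataclass, field


@dataclass
class Hit:
    cid: str
    mw: float
    clogp: float
    hbd: int
    hba: int
    # Names of matched PAINS / undesirable-substructure alerts, assumed to be
    # produced by a separate substructure-matching step.
    alerts: list = field(default_factory=list)


# Rule of 3 cutoffs; replace with project-specific lead-like limits as needed.
RULES = {"mw_lt": 300.0, "clogp_le": 3.0, "hbd_le": 3, "hba_le": 3}


def property_failures(h: Hit) -> list:
    """List every property that violates the cutoffs in RULES."""
    fails = []
    if not h.mw < RULES["mw_lt"]:
        fails.append(f"MW {h.mw:g}")
    if not h.clogp <= RULES["clogp_le"]:
        fails.append(f"cLogP {h.clogp:g}")
    if not h.hbd <= RULES["hbd_le"]:
        fails.append(f"HBD {h.hbd}")
    if not h.hba <= RULES["hba_le"]:
        fails.append(f"HBA {h.hba}")
    return fails


def triage(hits: list):
    """Split hits into (keep, deprioritized); deprioritized entries carry their reasons."""
    keep, deprioritized = [], []
    for h in hits:
        reasons = property_failures(h) + [f"alert: {a}" for a in h.alerts]
        if reasons:
            deprioritized.append((h.cid, reasons))
        else:
            keep.append(h.cid)
    return keep, deprioritized


if __name__ == "__main__":
    hits = [
        Hit("H1", mw=280, clogp=2.5, hbd=1, hba=3),
        Hit("H2", mw=420, clogp=4.1, hbd=2, hba=6),
        Hit("H3", mw=250, clogp=1.8, hbd=2, hba=2, alerts=["catechol_A"]),
    ]
    keep, flagged = triage(hits)
    print("keep:", keep)
    for cid, reasons in flagged:
        print(cid, "->", "; ".join(reasons))
```

Keeping the reasons attached makes it easy to route PAINS-flagged compounds to a mechanism-specific counter-screen instead of dropping them silently.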

The following diagram illustrates the key decision points in the cheminformatics analysis workflow.

Technical Support: Cheminformatics Analysis

Q: A compound has an excellent activity profile but is flagged as a PAINS. Should I automatically discard it? A: Not necessarily. A PAINS flag is a warning, not an automatic rejection.

- Solution: Investigate the compound further. Test it in a counter-screen designed to detect its specific suspected mechanism of interference (e.g., redox activity, aggregation). If it passes the counter-screen, it may still be a valuable starting point, but proceed with caution and use rigorous controls in all subsequent experiments.

Stage 3: Strategic Deployment of Counter-Screens

Counter-screens are essential for identifying and eliminating false positives that passed the initial assays [5]. They are broadly categorized as follows:

Table: Types of Counter-Screens in HTS Triage

| Counter-Screen Type | Objective | Example Protocol | What It Identifies |
|---|---|---|---|
| Technology Counter-Screen | Identify compounds interfering with detection technology. | Run the primary assay detection system (e.g., luciferase) in the absence of the biological target. | Compounds that inhibit luciferase, are fluorescent, or quench the signal. |
| Specificity Counter-Screen | Eliminate compounds with non-specific or off-target effects. | Test compounds in a cell viability assay (e.g., ATP-based CellTiter-Glo) or against a related but undesired target. | General cytotoxic compounds or promiscuous inhibitors. |

Experimental Protocol: Luciferase Inhibition Counter-Screen

- Objective: To identify compounds that inhibit the luciferase reporter enzyme, a common artifact in reporter gene assays.

- Reagents: Commercially available luciferase enzyme and luciferin substrate.

- Procedure:

- Dispense compounds into a white, solid-bottom plate.

- Add luciferase enzyme in assay buffer.

- Initiate the reaction by injecting luciferin substrate.

- Measure luminescence immediately on a plate reader.

- Data Analysis: Compounds that reduce luminescence signal in this target-free system are flagged as luciferase inhibitors and removed from consideration.
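The flagging step can be sketched as below. This is a minimal sketch assuming DMSO-only wells as the uninhibited reference and, optionally, a no-enzyme background; the 20% inhibition cutoff and the compound IDs are hypothetical and should be set from the assay's own control variability.

```python
from statistics import mean


def luciferase_inhibition(signal: float, dmso_mean: float, background: float = 0.0) -> float:
    """% loss of luminescence relative to DMSO wells, after background subtraction."""
    return 100.0 * (1.0 - (signal - background) / (dmso_mean - background))


def flag_luciferase_inhibitors(compound_signals: dict, dmso_signals: list,
                               background: float = 0.0, cutoff_pct: float = 20.0):
    """Return ({compound: % inhibition}, set of compounds above the cutoff)."""
    dmso_mu = mean(dmso_signals)
    inhibition = {cid: luciferase_inhibition(s, dmso_mu, background)
                  for cid, s in compound_signals.items()}
    flagged = {cid for cid, pct in inhibition.items() if pct > cutoff_pct}
    return inhibition, flagged


if __name__ == "__main__":
    dmso = [100_000, 102_000, 98_000]
    compounds = {"A": 99_000, "B": 50_000}
    inh, flagged = flag_luciferase_inhibitors(compounds, dmso, background=1_000)
    for cid, pct in inh.items():
        print(cid, f"{pct:.1f}%", "flag" if cid in flagged else "")
```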

Technical Support: Counter-Screen Strategy

Q: When is the best time to run a counter-screen in my triage cascade? A: The timing can be flexible and should be optimized for efficiency [5].

- Solution 1 (Standard Practice): Run counter-screens in parallel with hit confirmation (dose-response) to immediately see if activity tracks with the undesired effect.

- Solution 2 (Early Triage): If a high frequency of a specific artifact is suspected (e.g., cytotoxicity in a sensitive cell line), run the counter-screen immediately after the primary screen to filter the hit list before confirmation, saving resources.
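Whether activity "tracks" with the undesired effect is often judged from the fold-difference between counter-screen and primary potency. The sketch below assumes IC50 values in the same units for both assays, uses `math.inf` for compounds inactive in the counter-screen, and applies a hypothetical 10-fold minimum window; the appropriate window is a project decision.

```python
import math


def selectivity_window(primary_ic50: float, counter_ic50: float) -> float:
    """Fold-difference between counter-screen and primary IC50 (same units).
    Pass math.inf for compounds inactive in the counter-screen."""
    return counter_ic50 / primary_ic50


def triage_by_window(pairs: dict, min_fold: float = 10.0) -> dict:
    """pairs: {compound: (primary_ic50, counter_ic50)} -> {compound: (fold, decision)}."""
    out = {}
    for cid, (primary, counter) in pairs.items():
        fold = selectivity_window(primary, counter)
        decision = "advance" if fold >= min_fold else "flag: tracks counter-screen"
        out[cid] = (fold, decision)
    return out


if __name__ == "__main__":
    data = {
        "A": (0.25, 8.0),       # µM; 32-fold window
        "B": (1.0, 2.0),        # only 2-fold: activity likely tracks the artifact
        "C": (0.5, math.inf),   # inactive in the counter-screen
    }
    for cid, (fold, decision) in triage_by_window(data).items():
        print(cid, fold, decision)
```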

Stage 4: Orthogonal and Secondary Assays

Experimental Protocol: Cell-Based Target Phosphorylation Assay

- Objective: To confirm that hits can inhibit the target kinase in a more physiologically relevant cellular context.

- Reagents: Cell line expressing the target kinase, phospho-specific antibody for the target substrate.

- Procedure:

- Seed cells in a 96-well plate and incubate overnight.

- Treat cells with test compounds over a dose range (e.g., 0.1 nM - 10 µM) for 2 hours.

- Stimulate the pathway with an appropriate agonist (if required).

- Lyse cells and measure substrate phosphorylation levels via Western Blot or an ELISA-like immunoassay.

- Data Analysis: Calculate IC₅₀ values for inhibition of substrate phosphorylation. Compounds that show potent activity in this orthogonal system are prioritized.
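IC₅₀ values are typically obtained by fitting a four-parameter logistic (4PL) curve. The sketch below substitutes a coarse grid search for the nonlinear least-squares fitting normally done with curve-fitting software or `scipy.optimize.curve_fit`, so it runs with the standard library only. It assumes responses are normalized to 100% (uninhibited) and 0% (fully inhibited) controls, so top and bottom are held fixed; only log10(IC₅₀) and the Hill slope are fitted. The example IC₅₀ of 50 nM and Hill slope of 1.2 are synthetic.

```python
import math


def four_pl(conc: float, log_ic50: float, hill: float,
            bottom: float = 0.0, top: float = 100.0) -> float:
    """Four-parameter logistic: % phosphorylation signal remaining at `conc`."""
    return bottom + (top - bottom) / (1.0 + (conc / 10 ** log_ic50) ** hill)


def fit_ic50(concs: list, responses: list, bottom: float = 0.0, top: float = 100.0):
    """Grid-search least-squares fit of log10(IC50) and Hill slope.

    Grid: log10(IC50) from -1.00 to 5.00 in 0.01 steps (same units as `concs`),
    Hill slope from 0.50 to 3.00 in 0.05 steps. Returns (IC50, Hill slope).
    """
    best_sse, best_log_ic50, best_hill = math.inf, None, None
    for i in range(601):
        log_ic50 = -1.0 + 0.01 * i
        for j in range(51):
            hill = 0.5 + 0.05 * j
            sse = sum((four_pl(c, log_ic50, hill, bottom, top) - r) ** 2
                      for c, r in zip(concs, responses))
            if sse < best_sse:
                best_sse, best_log_ic50, best_hill = sse, log_ic50, hill
    return 10 ** best_log_ic50, best_hill


if __name__ == "__main__":
    # Half-log dose range from 0.1 nM to 10 µM (10,000 nM), as in the procedure.
    concs_nm = [10 ** (-1 + 0.5 * k) for k in range(11)]
    # Synthetic, noise-free responses for a compound with IC50 = 50 nM, Hill = 1.2.
    resp = [four_pl(c, math.log10(50.0), 1.2) for c in concs_nm]
    ic50, hill = fit_ic50(concs_nm, resp)
    print(f"IC50 ~ {ic50:.1f} nM, Hill ~ {hill:.2f}")
```

Values returned at the edge of the grid, or outside the tested concentrations, indicate an incomplete curve and should be reported as bounds (e.g., "> 10 µM") rather than as fitted IC₅₀ values.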

The Scientist's Toolkit: Essential Reagents & Materials

Table: Key Research Reagent Solutions for HTS Triage

| Reagent / Material | Function in Triage Campaign | Example Vendor / Product Code |
|---|---|---|
| Diverse Compound Library | Provides a wide range of chemical starting points for HTS. A high-quality library is crucial for success [33]. | Evotec (>850,000 compounds) [33] |