Shape Space and Classification in Morphometrics: From Mathematical Foundations to Biomedical Applications

Abstract

This article provides a comprehensive exploration of shape space theory and classification methodologies within geometric morphometrics, tailored for researchers and drug development professionals. It covers the foundational mathematical principles, including key shape space models like Kendall's shape space and differential coordinates. The scope extends to practical applications in drug discovery and clinical assessment, detailing both alignment-based and alignment-free methods. It also addresses critical challenges such as measurement error, data pooling, and the 'out-of-sample' problem, offering optimization strategies and validation protocols. Finally, the article evaluates the performance of different classification techniques and discusses emerging computational trends, synthesizing key takeaways for biomedical research.

The Geometry of Form: Unpacking the Core Principles of Shape Space

The concept of a shape space provides a formal mathematical framework for quantifying and comparing forms in nature, technology, and science. In essence, a shape space is a mathematical construct in which each point represents a distinct shape, and distances between points correspond to quantitative measures of shape dissimilarity [1]. This conceptualization has become fundamental to numerous disciplines, from evolutionary biology and paleontology to pharmaceutical development and materials science. The study of shape space enables researchers to move beyond qualitative descriptions to rigorous statistical analyses of form, variation, and transformation.

The importance of shape space analysis stems from the critical role that form plays in determining function across biological and physical systems. In molecular biology, shape complementarity governs interactions between drugs and their protein targets, antibodies and antigens, and enzymes and their substrates [2]. In evolutionary biology, shape changes in fossil lineages provide evidence for evolutionary processes and environmental adaptations [3]. The quantitative framework of shape space allows researchers to precisely characterize these relationships, test hypotheses about factors affecting form, and visualize complex morphological patterns.

Mathematical Foundations of Shape

Topological Concepts of Shape

Topology, often described as "rubber-sheet geometry," provides the most fundamental mathematical perspective on shape by focusing on properties that remain invariant under continuous deformations such as stretching, bending, and twisting [4]. Unlike classical geometry, which concerns itself with precise distances and angles, topology considers two objects to be equivalent if one can be transformed into the other without tearing or gluing. A circle is thus topologically equivalent to an ellipse or square, while a sphere is equivalent to a cube but not to a torus (doughnut shape) [4].

The mathematical formalization of these concepts occurs through topological spaces, which define the minimal structure needed to discuss continuity and connectedness [5]. A topological space consists of a set of points along with a collection of open sets that satisfy specific axioms governing unions and intersections. This abstract framework enables the definition of key topological properties including:

- Connectedness: Whether a space is "all in one piece," meaning it cannot be split into two disjoint non-empty open sets

- Compactness: A generalization of the closed and bounded sets of Euclidean space

- Homeomorphism: The fundamental equivalence relation in topology, given by a continuous bijection between two spaces whose inverse is also continuous

These topological concepts provide the foundational "glue" for constructing more structured shape spaces, as they define the most basic level of shape equivalence and transformation.

Geometric Morphometrics and Shape Spaces

While topology provides the basic language of shape transformation, geometric morphometrics operationalizes shape analysis for practical scientific applications. Geometric morphometrics defines shape as "all the geometric information that remains when location, scale, and rotational effects are filtered out from an object" [1]. This definition leads directly to the construction of explicit shape spaces with measurable distances between shapes.

The most common approach to constructing such shape spaces uses Procrustes superimposition [1]. This method involves:

- Translation: Centering configurations at the origin

- Scaling: Normalizing to unit centroid size

- Rotation: Aligning configurations to minimize distances between corresponding points

The resulting Procrustes shape coordinates reside in a curved, non-Euclidean space. For statistical analysis, shapes are typically projected into a tangent space that approximates this curved shape space near a reference configuration, enabling the application of conventional multivariate statistics [1].
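The three superimposition steps can be sketched in a few lines of NumPy. This is a minimal illustration for two configurations only, assuming landmarks are stored as rows of a k x m array and that reflections are not allowed; the function name `superimpose` and the example coordinates are hypothetical, not part of any cited software.

```python
import numpy as np


def superimpose(reference, target):
    """Procrustes-align `target` onto `reference` (k x m arrays, rows = landmarks)."""

    def center_and_scale(config):
        centered = config - config.mean(axis=0)      # translation: centroid to origin
        return centered / np.linalg.norm(centered)   # scaling: unit centroid size

    ref = center_and_scale(np.asarray(reference, dtype=float))
    tgt = center_and_scale(np.asarray(target, dtype=float))

    # Rotation: SVD solution of min_R ||tgt @ R - ref|| over proper rotations.
    u, _, vt = np.linalg.svd(tgt.T @ ref)
    u[:, -1] *= np.sign(np.linalg.det(u @ vt))       # exclude reflections
    return ref, tgt @ (u @ vt)


# Hypothetical example: the same quadrilateral, rotated, enlarged, and shifted.
ref_config = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.5, 1.2]])
theta = 0.7
rot = np.array([[np.cos(theta), -np.sin(theta)],
                [np.sin(theta), np.cos(theta)]])
moved_config = 3.0 * ref_config @ rot + np.array([5.0, -2.0])

ref_aligned, moved_aligned = superimpose(ref_config, moved_config)
```

Because the second configuration differs from the first only in position, size, and orientation, the two aligned configurations coincide up to rounding error, which is exactly what "same shape" means under the definition above.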

Table 1: Key Mathematical Spaces for Shape Analysis

| Space Type | Key Properties | Primary Applications |
|---|---|---|
| Topological Space | Defines continuity and connectedness; no metric structure | Qualitative shape classification; fundamental shape equivalence |
| Shape Space | Curved manifold; Procrustes distance metric | Biological morphometrics; comparative anatomy |
| Tangent Space | Euclidean approximation to shape space | Multivariate statistical analysis |
| Form Space | Incorporates size and shape together | Allometric studies; growth analysis |

Methodological Frameworks for Shape Analysis

Landmark-Based Approaches

Landmark-based methods form the cornerstone of modern geometric morphometrics. This approach relies on the precise identification of anatomically homologous points across specimens, classified into three distinct types [1]:

- Type I landmarks: Defined by local tissue geometry (e.g., intersection of three sutures)

- Type II landmarks: Points of maximum or minimum curvature (e.g., tip of a structure)

- Type III landmarks: Extremal points defined by maximal distance from other landmarks

The configuration of landmarks for each specimen is recorded as a matrix of coordinates, which undergoes Procrustes superimposition to extract shape variables [1]. The power of this approach lies in its ability to preserve the geometric relationships among landmarks throughout analysis, enabling sophisticated visualization of shape change through deformation grids and vector diagrams.

Landmark-based methods face limitations when studying structures lacking numerous homologous points or when comparing highly disparate forms. These challenges have led to the development of complementary approaches using semilandmarks, which capture information along curves and surfaces [1].

Landmark-Free Methods

Recent computational advances have enabled landmark-free approaches that capture shape variation without requiring manually identified homologous points. These methods are particularly valuable for analyzing large datasets or structures with few clear landmarks [6].

One prominent landmark-free method is Deterministic Atlas Analysis (DAA), implemented through Large Deformation Diffeomorphic Metric Mapping (LDDMM) [6]. This approach:

- Generates a consensus "atlas" shape through iterative alignment of all specimens

- Calculates deformation fields mapping the atlas to each specimen

- Uses momentum vectors at control points to quantify shape variation

The DAA framework automatically distributes control points throughout the shape, with density controlled by a kernel width parameter [6]. Smaller kernel values produce more control points and capture finer-scale shape details. This method has demonstrated particular utility in large-scale evolutionary studies encompassing highly divergent forms where homologous landmarks become scarce.

Table 2: Comparison of Shape Analysis Methodologies

| Method | Data Type | Key Advantages | Limitations |
|---|---|---|---|
| Traditional Landmarks | Type I-III landmarks | Clear biological homology; well-established statistics | Time-consuming; limited coverage of surfaces |
| Semilandmarks | Points along curves/surfaces | Captures outline and surface geometry | Requires sliding algorithms; arbitrary spacing |
| Outline Analysis | Mathematical functions fitted to outlines | Comprehensive boundary capture; no landmarks needed | Disregards internal homology; sensitive to noise |
| DAA/LDDMM | Dense surface meshes | Automated; comprehensive coverage; no landmarks | Complex implementation; computationally intensive |

Applications Across Scientific Disciplines

Molecular Shape in Drug Discovery

In pharmaceutical research, molecular shape similarity serves as a powerful principle for identifying potential drug candidates, based on the concept that structurally similar molecules often share similar biological properties [7]. Shape-based virtual screening compares the three-dimensional geometry of a query molecule with large databases of compounds to identify those whose shapes resemble the query and are therefore likely to fit the same binding site on the target protein [7] [2].

Multiple computational approaches have been developed to quantify molecular shape similarity:

- Atom-Based Methods: Ultrafast Shape Recognition (USR) and related techniques describe molecular shape using distributions of atomic distances from reference points [7]

- Gaussian Models: Represent atoms as overlapping Gaussian functions to calculate molecular volumes and overlap scores [8]

- Alignment-Based Methods: Optimize the spatial overlap between molecules through rotational and translational adjustments [7]

These methods enable scaffold hopping—identifying compounds with different molecular frameworks but similar overall shapes that may interact with the same biological target [7]. The Tanimoto Similarity Index provides a standardized measure of shape overlap, ranging from 0 (no overlap) to 1 (identical shapes) [8].
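To make the atom-based idea concrete, the following is a minimal sketch of a USR-style descriptor. It assumes the commonly described recipe of four reference points (the centroid, the atom closest to it, the atom farthest from it, and the atom farthest from that one) and three moments per distance distribution; the exact moment definitions vary between implementations, and the function names and random coordinates here are hypothetical.

```python
import numpy as np


def usr_descriptor(coords):
    """12-number shape descriptor from an (n_atoms x 3) coordinate array."""
    coords = np.asarray(coords, dtype=float)
    centroid = coords.mean(axis=0)
    d_centroid = np.linalg.norm(coords - centroid, axis=1)
    closest = coords[d_centroid.argmin()]             # atom closest to the centroid
    farthest = coords[d_centroid.argmax()]            # atom farthest from the centroid
    d_farthest = np.linalg.norm(coords - farthest, axis=1)
    far_from_far = coords[d_farthest.argmax()]        # atom farthest from `farthest`

    descriptor = []
    for ref in (centroid, closest, farthest, far_from_far):
        d = np.linalg.norm(coords - ref, axis=1)
        mean = d.mean()
        spread = d.std()
        skew = np.cbrt(((d - mean) ** 3).mean())      # signed cube root of third moment
        descriptor.extend([mean, spread, skew])
    return np.array(descriptor)


def usr_similarity(desc_a, desc_b):
    """Score in (0, 1]; 1 means identical descriptors."""
    return 1.0 / (1.0 + np.abs(desc_a - desc_b).mean())


# Hypothetical "molecules": random atom clouds, one a rigidly moved copy.
rng = np.random.default_rng(1)
mol = rng.normal(size=(20, 3))
c, s = np.cos(0.9), np.sin(0.9)
rot_z = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
moved = mol @ rot_z.T + np.array([1.0, -2.0, 0.5])
other = rng.normal(size=(20, 3)) * np.array([3.0, 1.0, 0.3])

score_same = usr_similarity(usr_descriptor(mol), usr_descriptor(moved))
score_other = usr_similarity(usr_descriptor(mol), usr_descriptor(other))
```

Because the descriptor is built only from interatomic distances, it needs no alignment step: a rotated and translated copy scores essentially 1, while a differently shaped cloud scores lower.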

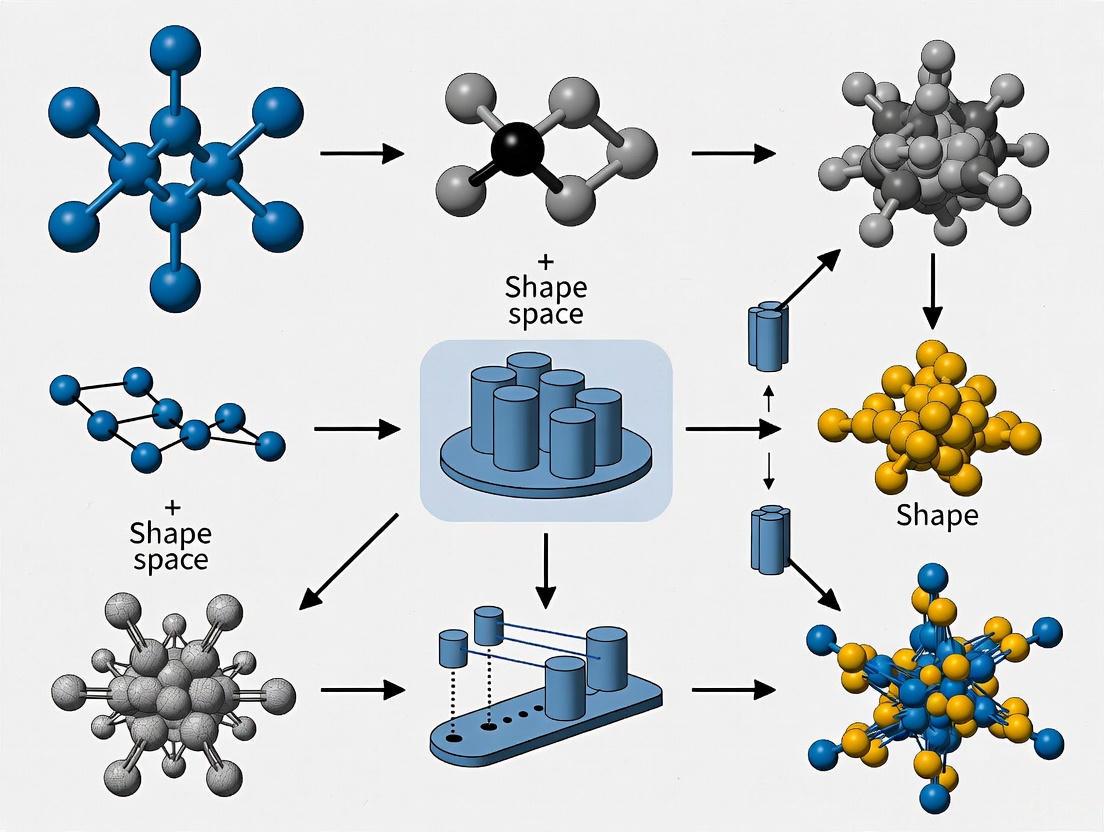

Diagram 1: Molecular Shape Similarity Screening Workflow. This flowchart illustrates the computational pipeline for shape-based virtual screening of compound databases.

Biological Morphometrics and Evolution

In evolutionary biology, shape space analysis has revolutionized the study of phenotypic evolution by enabling precise quantification of morphological change [9] [3]. Allometry—the study of size-related shape changes—has been particularly advanced through geometric morphometric frameworks [9]. Two primary schools of thought have emerged:

- Gould-Mosimann School: Defines allometry as the covariation between shape and size, typically analyzed through multivariate regression of shape variables on size measures [9]

- Huxley-Jolicoeur School: Characterizes allometry as covariation among morphological features all containing size information, represented by the first principal component of form space [9]
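The Gould-Mosimann view translates directly into a multivariate regression. The sketch below illustrates that school only, assuming shape variables are already available as rows of a matrix (for example tangent-space coordinates) and using log centroid size as the predictor; the function name and the simulated data are hypothetical.

```python
import numpy as np


def allometric_regression(shape_vars, centroid_size):
    """Regress shape variables (n x p) on log centroid size (n,).

    Returns the allometric slope vector (p,) and the proportion of total
    shape variance explained by size.
    """
    y = np.asarray(shape_vars, dtype=float)
    log_size = np.log(np.asarray(centroid_size, dtype=float))
    design = np.column_stack([np.ones_like(log_size), log_size])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)   # least-squares fit
    fitted = design @ coef
    ss_total = ((y - y.mean(axis=0)) ** 2).sum()
    ss_resid = ((y - fitted) ** 2).sum()
    return coef[1], 1.0 - ss_resid / ss_total


# Simulated data: three shape variables that change linearly with log size.
rng = np.random.default_rng(2)
size = rng.uniform(5.0, 50.0, size=40)
true_slope = np.array([0.02, -0.01, 0.005])
shape_vars = np.outer(np.log(size), true_slope) + rng.normal(scale=1e-3, size=(40, 3))

slope, explained = allometric_regression(shape_vars, size)
```

The slope vector describes the direction of size-related shape change, and the explained proportion indicates how much of the shape variation in the sample is allometric.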

These approaches have been applied across different biological levels:

- Ontogenetic allometry: Shape changes during growth within a species

- Static allometry: Shape variation among adults of the same species

- Evolutionary allometry: Shape differences across related species or higher taxa

Geometric morphometric analyses have revealed conserved patterns of morphological integration, evolutionary rates varying across structures, and the influence of developmental constraints on evolutionary trajectories [9] [3].

Experimental Protocols and Research Tools

Detailed Methodological Protocols

Protocol 1: Procrustes-Based Geometric Morphometrics

This established protocol for landmark-based shape analysis involves sequential steps to isolate biological shape variation from other sources of geometric differences [1]:

Landmark Digitization

- Identify and record Type I, II, and III landmarks across all specimens

- Ensure landmarks represent biologically homologous points

- For 3D data, use micro-CT scanners or laser scanners for coordinate acquisition

Procrustes Superimposition

- Translate configurations to a common origin (centroid)

- Scale configurations to unit centroid size

- Rotate configurations to minimize the sum of squared distances between corresponding landmarks

Statistical Analysis in Tangent Space

- Project Procrustes coordinates into a Euclidean tangent space

- Apply multivariate statistical methods (PCA, MANOVA, regression)

- Visualize shape changes along statistical axes using deformation grids

Validation and Visualization

- Assess measurement error through replicate measurements

- Visualize mean shapes and shape changes using thin-plate spline deformations

- Map statistical results back to anatomical space for biological interpretation

Protocol 2: Landmark-Free Shape Analysis Using DAA

This emerging protocol for automated shape analysis is particularly suitable for large datasets and structures lacking clear landmarks [6]:

Data Standardization and Preprocessing

- Convert all specimens to watertight, closed meshes using Poisson surface reconstruction

- Ensure consistent mesh quality and resolution across specimens

- Apply consistent mesh decimation if needed for computational efficiency

Atlas Generation

- Select an initial template specimen representative of morphological diversity

- Iteratively compute a consensus atlas shape minimizing total deformation energy

- Generate control points guided by the kernel width parameter (typically 10-40 mm)

Shape Registration and Comparison

- Compute diffeomorphic deformations mapping atlas to each specimen

- Calculate momentum vectors at control points quantifying deformation magnitude and direction

- Apply kernel Principal Component Analysis (kPCA) to explore shape variation

Macroevolutionary Analysis

- Compare shape distances with phylogenetic distances

- Calculate morphological disparity across groups

- Estimate evolutionary rates in shape space

Diagram 2: Comparative Workflows for Shape Analysis Methodologies. This diagram contrasts the key stages in landmark-based and landmark-free approaches to shape space analysis.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Tools for Shape Space Analysis

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Imaging Modalities | Micro-CT scanners, laser surface scanners, MRI | Generate 3D digital representations of specimens |
| Landmarking Software | tpsDig2, MorphoJ, EVAN Toolbox | Digitize landmarks and perform basic shape analysis |
| Shape Analysis Platforms | R (geomorph package), PAST, Deformetrica | Comprehensive statistical analysis of shape data |
| Molecular Shape Tools | ROCS, USR-VS, OptiPharm | Calculate molecular shape similarity for drug discovery |
| Visualization Software | MeshLab, Landmark Editor, Paraview | Visualize 3D shapes and shape transformations |

The mathematical formalization of shape space has transformed how researchers across diverse fields quantify, compare, and analyze form. From the abstract foundations of topology to the practical applications in drug discovery and evolutionary biology, shape space concepts provide a unified framework for understanding morphological variation. The continuing development of both landmark-based and landmark-free methods ensures that shape analysis can adapt to increasingly large and complex datasets while maintaining biological relevance.

As shape space methodologies evolve, several frontiers appear particularly promising: the integration of developmental dynamics into shape models, the reconciliation of discrete character data with continuous shape variables, and the application of machine learning to identify biologically meaningful shape features automatically. These advances will further solidify shape space as an essential conceptual and analytical framework throughout the scientific disciplines concerned with form and function.

Shape spaces provide a foundational mathematical framework for analyzing and comparing biological forms in morphometrics research. A shape space is a mathematical construct where each point corresponds to a distinct shape, and distances between points quantify shape dissimilarity [10]. The core definition of shape in this context encompasses all geometric features of an object except for its size, position, and orientation [10]. This conceptual separation allows researchers to focus specifically on morphological variation without confounding factors from placement or scale. The development of rigorous shape space theories has provided morphometrics with a firm mathematical foundation for statistical operations such as estimating average shapes and characterizing shape variation within and between populations—operations that are fundamental to biological applications across evolutionary biology, anthropology, and biomedical sciences [10].

The complexity of shape spaces stems from their inherent curvature and multidimensionality, particularly for configurations with more than three landmarks [10]. For biological shapes represented by landmark configurations, the dimensionality of Kendall's shape space can be calculated as 2k-4 for 2D data (where k is the number of landmarks) and 3k-7 for 3D data [10]. This reduction from the original coordinate representation accounts for the non-shape components: in 2D, one dimension is removed for size, two for translation, and one for rotation, while in 3D, one dimension is removed for size, three for translation, and three for rotation [10]. Understanding these foundational concepts is crucial for researchers applying geometric morphometrics to drug development, where precise quantification of morphological changes can reveal treatment effects, toxicity responses, or structural modifications at cellular or organismal levels.
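Both counts are instances of one general formula. As a worked check, write k for the number of landmarks and m for the number of spatial dimensions (m is a symbol introduced here for the derivation). The km original coordinates lose m dimensions for translation, 1 for size, and m(m-1)/2 for rotation:

$$
\dim = km - m - 1 - \frac{m(m-1)}{2}
$$

$$
m = 2:\quad 2k - 2 - 1 - 1 = 2k - 4, \qquad m = 3:\quad 3k - 3 - 1 - 3 = 3k - 7
$$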

Kendall's Shape Space

Kendall's shape space, named after David G. Kendall, represents one of the most established mathematical frameworks for shape analysis in morphometrics. This approach defines shape as a property that remains after filtering out effects of translation, rotation, and scaling [11]. Mathematically, a Kendall shape space for k landmarks in m dimensions is denoted as $\Sigma_m^k = S_m^k / SO(m)$, where $S_m^k$ represents the pre-shape space consisting of centered and normalized configurations ($S_m^k := \{X \in \mathbb{R}^{m \times k} \mid \sum_i X_i = 0,\ \|X\|_F = 1\}$), and $SO(m)$ represents the special orthogonal group (rotation matrices) whose action on the pre-shape space is quotiented out [12]. The pre-shape space itself can be identified with a hypersphere $\mathbb{S}^{(k-1)m-1}$ through a transformation $\psi(X) = HX / \|HX\|$, where H is a matrix that centers the configuration [12].
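The pre-shape map can be illustrated numerically. The sketch below assumes that H is the Helmert submatrix, a standard choice of centering matrix that the text does not name explicitly, and it stores landmarks as rows (a k x m array, the transpose of the convention in the formula) so that H multiplies from the left. Function names and coordinates are hypothetical.

```python
import numpy as np


def helmert_submatrix(k):
    """(k-1) x k matrix with orthonormal rows, each orthogonal to the ones vector."""
    h = np.zeros((k - 1, k))
    for j in range(1, k):
        scale = 1.0 / np.sqrt(j * (j + 1))
        h[j - 1, :j] = -scale        # first j entries
        h[j - 1, j] = j * scale      # entry j + 1; remaining entries stay zero
    return h


def preshape(landmarks):
    """Map a k x m landmark array to a unit-norm (k-1) x m pre-shape."""
    x = np.asarray(landmarks, dtype=float)
    hx = helmert_submatrix(x.shape[0]) @ x   # removes translation
    return hx / np.linalg.norm(hx)           # removes scale


# Hypothetical configuration of four landmarks in the plane.
config = np.array([[0.0, 0.0], [2.0, 0.0], [2.5, 1.5], [0.5, 2.0]])
z = preshape(config)
z_moved = preshape(3.0 * config + 7.0)       # rescaled and translated copy
```

The result has (k-1)m entries and unit Frobenius norm, so it is a point on the unit sphere in (k-1)m-dimensional space, which is the hypersphere of dimension (k-1)m-1 mentioned above. Rotation is deliberately not removed at this stage.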

Procrustes Distance and Superimposition

The fundamental metric in Kendall's shape space is the Procrustes distance, which quantifies shape difference through a rigorous superimposition process [10]. The procedure for comparing two landmark configurations involves three sequential steps:

- Size normalization: Both configurations are scaled to unit centroid size, where centroid size is defined as the square root of the sum of squared distances of each landmark from the centroid [10].

- Translation: The scaled configurations are shifted so their centroids coincide at the origin [10].

- Rotation: One configuration is rotated around the common centroid to minimize the sum of squared distances between corresponding landmarks [10].

This process exists in two variants: partial Procrustes superimposition (both configurations scaled to unit centroid size) and full Procrustes superimposition (only the target is fixed at unit size while the other is optimally scaled) [10]. The full Procrustes distance is the square root of the sum of squared distances between corresponding landmarks after this optimal superimposition, that is, the smallest value that the superimposition can achieve [10].
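The relationship between the two variants is easier to see numerically. The following sketch computes the partial and full Procrustes distances, together with the great-circle (Riemannian) distance rho, from the singular values of the cross-product matrix of two pre-shapes. It relies on the standard identities partial = sqrt(2 - 2 cos rho) and full = sin rho; the function name and triangles are hypothetical.

```python
import numpy as np


def procrustes_distances(config_a, config_b):
    """Distances between two k x m landmark configurations (rows = landmarks)."""

    def to_preshape(config):
        centered = np.asarray(config, dtype=float)
        centered = centered - centered.mean(axis=0)
        return centered / np.linalg.norm(centered)

    a, b = to_preshape(config_a), to_preshape(config_b)
    u, s, vt = np.linalg.svd(a.T @ b)
    if np.linalg.det(u @ vt) < 0:
        s[-1] = -s[-1]                       # restrict to rotations, no reflection
    cos_rho = np.clip(s.sum(), -1.0, 1.0)    # best achievable inner product
    rho = np.arccos(cos_rho)
    return {
        "riemannian": rho,
        "partial": np.sqrt(2.0 - 2.0 * cos_rho),
        "full": np.sin(rho),
    }


# Two hypothetical triangles with different shapes.
tri_a = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
tri_b = np.array([[0.0, 0.0], [1.0, 0.0], [0.5, 1.0]])
dist = procrustes_distances(tri_a, tri_b)

# A rotated, rescaled, translated copy of tri_a has the same shape.
angle = 1.1
rot = np.array([[np.cos(angle), -np.sin(angle)],
                [np.sin(angle), np.cos(angle)]])
dist_same = procrustes_distances(tri_a, 2.5 * tri_a @ rot + 3.0)
```

For nearby shapes the three values are almost equal, which is one reason the choice of variant rarely matters for datasets with limited shape variation.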

Table 1: Dimensionality of Kendall's Shape Space for Different Landmark Configurations

| Landmarks | Data Type | Original Coordinates | Non-shape Dimensions | Shape Dimensions |
|---|---|---|---|---|
| 3 | 2D | 6 | 4 | 2 |
| 4 | 2D | 8 | 4 | 4 |
| 5 | 2D | 10 | 4 | 6 |
| k | 2D | 2k | 4 | 2k-4 |
| k | 3D | 3k | 7 | 3k-7 |

Visualizing Kendall's Shape Space for Triangles

The simplest non-trivial Kendall shape space is for triangles in 2D, which forms a spherical surface known as the shape sphere [10]. This provides an intuitive model for understanding properties of shape spaces in general. On this sphere, each point represents a distinct triangle shape; the two poles correspond to equilateral triangles of opposite orientation, the equator to collinear (flat) triangles, and the two hemispheres to triangles that are mirror images of one another [10]. Great circles on this sphere correspond to repeated applications of specific shape changes, helping visualize why shape spaces are curved, closed surfaces [10]. For biological datasets with more landmarks, the shape spaces become higher-dimensional, but the curved geometry persists in abstract form and has implications for statistical analysis.

Point Distribution Model

The Point Distribution Model (PDM) represents a Euclidean approximation to Kendall's shape space that enables multivariate statistical analysis [11]. This approach linearizes the curved shape space by working in a tangent space projected from a mean shape, creating a vector space where standard multivariate statistical techniques can be directly applied [11]. The PDM is constructed through Procrustes alignment of all specimens to a common reference shape, effectively reducing rotational and translational effects while preserving shape variability in a linear space [11].

Mathematical Foundation and Construction

The Point Distribution Model operates by projecting shapes from the curved Kendall shape space onto a Euclidean tangent space at a specific point, typically the mean shape or a reference shape [11]. The projection is a good approximation when shape variation is sufficiently small, and empirical analyses suggest that the approximation is satisfactory to excellent for most biological datasets [10]. The construction process involves:

- Procrustes Superimposition: All specimen configurations are aligned to a reference shape using Generalized Procrustes Analysis (GPA)

- Mean Shape Calculation: The average of aligned shapes is computed

- Tangent Space Projection: Shapes are projected to the Euclidean space tangent to the shape space at the mean shape

- Covariance Estimation: The covariance matrix of the tangent coordinates is computed

- Principal Components Analysis: Eigen decomposition of the covariance matrix identifies major modes of shape variation

Table 2: Comparison of Shape Representation Models

| Model | Mathematical Structure | Invariance | Statistical Framework |
|---|---|---|---|
| Kendall's Shape Space | Riemannian manifold | Rotation, Translation, Scale | Geometric statistics on manifolds |
| Point Distribution Model | Euclidean tangent space | Rotation, Translation (via alignment) | Standard multivariate statistics |
| Differential Coordinates | Lie group structure | Translation | Riemannian geometry on Lie groups |
| Fundamental Coordinates | Lie group structure | Euclidean motion (alignment-free) | Riemannian operations on groups |

The resulting principal components represent the major axes of shape variation within the sample, ordered by the amount of variance they explain. Each principal component corresponds to a mode of shape variation that can be visualized as a deformation from the mean shape. The PDM enables compact representation of shape variability through a limited number of principal components, facilitating statistical hypothesis testing, classification, and regression analysis of shape data.
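The construction steps of a Point Distribution Model can be sketched end to end. This is a minimal illustration, not the implementation of any cited library: it assumes a simple iterative Generalized Procrustes Analysis, an orthogonal projection onto the tangent space at the mean, and simulated 2D data; all names are hypothetical.

```python
import numpy as np


def _preshape(config):
    centered = config - config.mean(axis=0)
    return centered / np.linalg.norm(centered)


def _rotate_onto(config, reference):
    u, _, vt = np.linalg.svd(config.T @ reference)
    u[:, -1] *= np.sign(np.linalg.det(u @ vt))   # proper rotations only
    return config @ (u @ vt)


def point_distribution_model(configs, n_iter=10):
    """Return mean shape, PC variances, PC directions, and PC scores."""
    shapes = np.array([_preshape(np.asarray(c, dtype=float)) for c in configs])
    mean = shapes[0]
    for _ in range(n_iter):                      # Generalized Procrustes Analysis
        shapes = np.array([_rotate_onto(s, mean) for s in shapes])
        mean = _preshape(shapes.mean(axis=0))

    flat = shapes.reshape(len(shapes), -1)
    mu = mean.ravel()
    tangent = flat - np.outer(flat @ mu, mu)     # drop the component along the mean

    centered = tangent - tangent.mean(axis=0)
    cov = centered.T @ centered / (len(flat) - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)       # eigendecomposition of covariance
    order = np.argsort(eigvals)[::-1]            # largest variance first
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    return mean, eigvals, eigvecs, centered @ eigvecs


# Simulated sample: a pentagon with small landmark noise in arbitrary poses.
rng = np.random.default_rng(0)
base = np.array([[0.0, 0.0], [2.0, 0.0], [3.0, 1.5], [1.0, 3.0], [-1.0, 1.5]])
configs = []
for _ in range(30):
    angle = rng.uniform(0.0, 2.0 * np.pi)
    rot = np.array([[np.cos(angle), -np.sin(angle)],
                    [np.sin(angle), np.cos(angle)]])
    noisy = base + rng.normal(scale=0.05, size=base.shape)
    configs.append(rng.uniform(0.5, 2.0) * noisy @ rot + rng.normal(size=2))

mean_shape, variances, modes, scores = point_distribution_model(configs)
```

With five landmarks in 2D, only 2k-4 = 6 of the ten principal components carry variance; the remaining four correspond to the position, size, and orientation that the alignment removed.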

Differential Coordinates Model

The Differential Coordinates model represents a more recent approach that addresses limitations of previous methods by employing a differential representation focused on local geometric variability [11]. This framework encodes shapes using differential coordinates that capture the local geometric structure of the shape, endowing the shape space with a Lie group structure that provides excellent theoretical properties and enables efficient algorithms [11]. Unlike the Point Distribution Model, this approach preserves the nonlinear nature of shape variation while offering computational advantages.

Mathematical Framework

In the Differential Coordinates model, shapes are represented using localized shape descriptors that are translation-invariant by construction [11]. The mathematical foundation leverages the fact that these differential coordinates form a Lie group, which provides:

- Closed-form expressions for Riemannian operations

- Numerical robustness without iterative approximation schemes

- Computational efficiency for geodesic calculations and interpolation

The model achieves rotational invariance through Procrustes alignment to a reference shape, similar to the Point Distribution Model, but preserves more of the nonlinear structure of shape variability [11]. This makes it particularly suitable for analyzing biological shapes with complex, nonlinear variation patterns that might be oversimplified by Euclidean approximation.

Experimental Protocol and Implementation

The implementation of Differential Coordinates analysis follows a structured workflow:

- Surface Mesh Representation: Shapes are represented as triangular surface meshes with vertices (v) and faces (f), where $v \in \mathbb{R}^{n \times 3}$ holds the coordinates of n vertices and $f \in \mathbb{N}^{m \times 3}$ lists the integer vertex indices forming m triangles [11]

- Reference Shape Selection: A representative template shape is chosen as the deformable reference

- Correspondence Establishment: Correspondences between vertices across all specimens are established (solving the "correspondence problem")

- Differential Coordinate Computation: Local shape descriptors are computed for each specimen

- Statistical Analysis: Geometric statistics are performed in the Lie group structure

Comparative Analysis and Applications

Geometric and Statistical Properties

Each shape framework offers distinct advantages for morphometric analysis. Kendall's shape space provides the most mathematically rigorous foundation with proper account of curvature but requires specialized geometric statistics [10]. The Point Distribution Model offers practical simplicity through linearization but may distort relationships in data with substantial shape variation [11]. The Differential Coordinates model balances computational efficiency with respect for nonlinear structure but requires more sophisticated implementation [11].

The curvature of shape spaces has important implications for statistical analysis. In Kendall's shape space, the intrinsic curvature means that linear combinations of shapes do not generally remain in the space, and averaging must be performed using Fréchet means [10]. The validity of tangent space approximation depends on the scale of variation in the dataset, with empirical evidence suggesting it works well for most biological applications where variation is relatively limited [10].

Applications in Morphometrics and Drug Development

These mathematical frameworks enable sophisticated analysis of biological shapes with applications in evolutionary biology, systematics, and increasingly in biomedical research and drug development. Specific applications include:

- Quantifying morphological changes in response to pharmaceutical treatments

- Characterizing disease progression through structural changes in tissues or organs

- Classifying pathological states based on cellular or subcellular morphology

- Analyzing anatomical development and the effects of genetic or environmental factors

In drug development, shape analysis can reveal subtle treatment effects that might be missed by traditional measurements, providing biomarkers for efficacy or toxicity. The probabilistic extensions of these frameworks, such as the Kendall Shape Probabilistic U-Net that incorporates shape spaces into deep learning models, further expand applications to image segmentation and analysis in biomedical imaging [12].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Shape Analysis

| Tool/Reagent | Function | Application Context |
|---|---|---|
| morphomatics Library | Implementation of shape space models | General shape analysis across frameworks |
| Surface Mesh (v, f) | Digital representation of shapes | Discrete representation of biological forms |
| Procrustes Alignment Algorithm | Remove non-shape variation | Preprocessing for all shape frameworks |
| Principal Components Analysis | Dimensionality reduction | Point Distribution Model implementation |
| Exponential/Logarithmic Maps | Geodesic calculations | Navigation in nonlinear shape spaces |
| Kendall Shape VAE | Probabilistic shape modeling | Shape-aware image segmentation [12] |
| PyVista | 3D visualization | Visualizing shapes and deformations [11] |

Kendall's Shape Space, Point Distribution Models, and Differential Coordinates provide complementary mathematical frameworks for analyzing biological shapes in morphometrics research. Kendall's approach offers a rigorous foundation based on Riemannian geometry, the Point Distribution Model enables practical application of multivariate statistics through linearization, and Differential Coordinates balance computational efficiency with respect for nonlinear structure. Together, these frameworks empower researchers to quantify and analyze morphological variation with unprecedented mathematical precision, opening new avenues for understanding biological form in contexts ranging from evolutionary biology to pharmaceutical development. As shape analysis continues to evolve, these core mathematical frameworks provide the foundation for increasingly sophisticated analysis of biological morphology and its relationship to function, development, and evolution.

The precise quantification of biological form is fundamental to understanding patterns of growth, evolution, and variation. Geometric morphometrics (GM) has emerged as the gold standard for analyzing shape, using coordinate-based data to quantify morphological differences while preserving geometric information throughout statistical analyses [13] [6]. This approach has transformed the study of phenotypic evolution by enabling researchers to capture and analyze complex anatomical structures with unprecedented precision. Shape representation in GM relies primarily on three complementary data types: landmarks, semilandmarks, and surface meshes, each addressing specific challenges in capturing biological form.

The fundamental challenge in morphometrics lies in establishing biological homology—ensuring that compared points represent the same biological entity across specimens. While landmarks provide discrete points of known homology, many biological structures lack sufficient such points for comprehensive shape characterization. This limitation has driven the development of semilandmarks and surface representations that densely sample curves and surfaces between traditional landmarks [14] [13]. These approaches have expanded the scope of morphometric studies to encompass entire structures rather than being limited to discrete points, enabling more nuanced investigations of morphological variation and evolution.

Landmarks: The Foundation of Geometric Morphometrics

Definition and Classification

Landmarks are defined as discrete, anatomically corresponding points that can be reliably identified across all specimens in a study. These points represent biological homologues, meaning they share common evolutionary and developmental origins [14] [15]. In geometric morphometrics, landmarks are typically categorized into three distinct types based on their anatomical definability:

- Type I landmarks are defined by local biological features, such as sutures between bones or small foramina, where distinct structures intersect.

- Type II landmarks represent points of maximum curvature or other local geometric features that can be reliably located based on tissue morphology.

- Type III landmarks are defined extrinsically, often as extremal points that require reference to other landmarks or the specimen's boundaries.

The primary strength of landmarks lies in their established biological homology, which provides a solid foundation for interpreting statistical shape differences in evolutionary or developmental contexts [14]. This biological validity makes them indispensable for studies investigating transformational processes.

Limitations and Constraints

Despite their biological relevance, landmarks present significant practical limitations. Their number is constrained by the availability of clearly identifiable homologous points, which rapidly diminishes when studying closely related taxa or smooth biological surfaces [13]. The problem becomes particularly acute in phylogenetically broad studies, where identifiable homologous points become increasingly scarce [6]. Furthermore, landmarks alone cannot capture the morphological information between discrete points, so they can miss substantial shape variation along curves and across surfaces [13].

Table 1: Landmark Types and Their Characteristics in Morphometric Analysis

| Landmark Type | Definition Basis | Biological Homology | Examples | Primary Limitations |
|---|---|---|---|---|
| Type I | Local biological features | Strong | Sutures, foramina | Limited number on smooth surfaces |
| Type II | Maximum curvature | Moderate | Bony processes, apex of curves | More susceptible to identification error |
| Type III | Extrema relative to other points | Weak | Extremal points, endpoints | Most dependent on overall configuration |

Semilandmarks: Enhancing Shape Capture

Conceptual Framework

Semilandmarks (also called "sliding semilandmarks") were developed to address the limitation of sparse landmark coverage by providing a method to quantify shape along curves and surfaces between traditional landmarks [13]. Unlike landmarks, semilandmarks do not possess established biological homology in the traditional sense. Instead, they rely on geometric homology, where equivalence is determined algorithmically based on their relative positions on curves or surfaces bounded by true landmarks [14] [15].

The theoretical foundation of semilandmarks recognizes that while individual semilandmark positions may not be biologically meaningful, the overall curves and surfaces they represent are homologous structures [15]. As noted by researchers, "the coordinates of semilandmarks along the surface are meaningless, and one cannot interpret the position of single semilandmarks, only the surface geometry that all semilandmarks describe together" [15]. This conceptual shift requires treating semilandmarks as a collective representation of form rather than as discrete homologous points.

Technical Implementation

The placement of semilandmarks follows a multi-stage process. First, a template specimen is manually landmarked, and semilandmarks are distributed along curves or across surfaces between landmarks. This template is then warped to each target specimen using thin-plate spline (TPS) interpolation based on the true landmarks [14] [16]. The semilandmarks are subsequently "slid" to minimize either bending energy or Procrustes distance, effectively removing the tangential component of their placement error [14] [13].

Two primary criteria are used for the sliding process:

- Bending energy minimization: This approach positions semilandmarks to minimize the deformation energy required to transform the template to the target specimen, giving greater weight to landmarks and semilandmarks local to the point being slid [14].

- Procrustes distance minimization: This method slides semilandmarks to minimize the squared Procrustes distance between specimens, where all landmarks and semilandmarks influence the sliding equally, regardless of proximity [14].

The number of sliding iterations also affects the final configuration: in one study, classification accuracy peaked at 12 iterations and did not improve with further iterations [16].
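The Procrustes-distance criterion can be illustrated with a deliberately simplified sketch for a 2D open curve. It assumes that each semilandmark may move only along a tangent estimated from its two neighbours, and that the least-squares step is the projection of the residual onto that tangent. The function name `slide_procrustes` and the `fixed` mask are hypothetical; production implementations also re-project the slid points onto the curve and iterate together with the Procrustes fit.

```python
import numpy as np

def slide_procrustes(curve, reference, fixed):
    """Slide semilandmarks along local tangents toward a reference shape.

    curve, reference: (k, 2) arrays of corresponding points on an open curve.
    fixed: boolean sequence of length k; True marks true landmarks (not slid).
    """
    curve = np.asarray(curve, dtype=float)
    reference = np.asarray(reference, dtype=float)
    slid = curve.copy()
    for i in range(1, len(curve) - 1):
        if fixed[i]:
            continue
        # Tangent direction approximated by the chord between the neighbours.
        tangent = curve[i + 1] - curve[i - 1]
        tangent = tangent / np.linalg.norm(tangent)
        # Least-squares step: project the residual onto the tangent, so only
        # the tangential part of the difference to the reference is removed.
        step = np.dot(reference[i] - curve[i], tangent)
        slid[i] = curve[i] + step * tangent
    return slid

# Illustrative data: one semilandmark is unevenly spaced along a straight curve.
curve = np.array([[0, 0], [1, 0], [2.5, 0], [3, 0], [4, 0]], dtype=float)
reference = np.array([[0, 0.2], [1, 0.2], [2, 0.2], [3, 0.2], [4, 0.2]])
fixed = [True, False, False, False, True]
slid = slide_procrustes(curve, reference, fixed)
```

In this toy case the misplaced point moves back along the curve, while the offset perpendicular to the curve (the part that reflects actual shape difference) is left untouched.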

Surface Meshes and Advanced Approaches

Surface Mesh Representation

Surface meshes provide the most comprehensive approach to shape representation by capturing continuous anatomical surfaces rather than discrete points. A surface mesh consists of vertices (points), edges (connections between points), and faces (polygonal surfaces, typically triangles), creating a continuous representation of the anatomical structure [15]. Surface meshes are particularly valuable for visualizing statistical results, as they can be warped to landmark and semilandmark configurations to create realistic representations of mean shapes or shape extremes [15].

In practical application, a template surface mesh is warped to fit estimated landmark and semilandmark configurations using thin-plate spline interpolation [15]. This enables the creation of surfaces representing statistical estimates, such as means or allometrically scaled shapes, which have utility in clinical contexts for assessing anomalies or building models for functional analyses like finite element analysis [15].
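As a rough sketch of this warping step, the code below fits a 3D thin-plate spline to corresponding landmark sets and applies it to mesh vertices. It assumes the mesh is stored as a vertex array plus a face index array, and it uses the kernel U(r) = r for 3D data (the 2D case conventionally uses r^2 log r). The names `tps_fit` and `tps_warp` are illustrative and not taken from any particular package; the landmarks must not all be coplanar.

```python
import numpy as np

def tps_fit(src, dst):
    """Solve for 3D thin-plate spline coefficients mapping src onto dst.

    src, dst: (k, 3) arrays of corresponding (semi)landmarks.
    Returns a (k + 4, 3) array: k kernel weights, then 4 affine rows.
    """
    k = src.shape[0]
    K = np.linalg.norm(src[:, None, :] - src[None, :, :], axis=2)  # U(r) = r
    P = np.hstack([np.ones((k, 1)), src])                          # 1, x, y, z
    L = np.zeros((k + 4, k + 4))
    L[:k, :k] = K
    L[:k, k:] = P
    L[k:, :k] = P.T
    rhs = np.vstack([dst, np.zeros((4, 3))])
    return np.linalg.solve(L, rhs)

def tps_warp(points, src, coeffs):
    """Apply a fitted spline to arbitrary points, e.g. mesh vertices."""
    U = np.linalg.norm(points[:, None, :] - src[None, :, :], axis=2)
    P = np.hstack([np.ones((len(points), 1)), points])
    return U @ coeffs[:len(src)] + P @ coeffs[len(src):]

# Illustrative use: warp template vertices; the face list is reused unchanged.
rng = np.random.default_rng(0)
template_landmarks = rng.normal(size=(8, 3))
target_landmarks = template_landmarks + 0.1 * rng.normal(size=(8, 3))
vertices = rng.normal(size=(20, 3))        # stand-in for template mesh vertices
faces = np.array([[0, 1, 2], [1, 2, 3]])   # triangle indices into `vertices`
coeffs = tps_fit(template_landmarks, target_landmarks)
warped_vertices = tps_warp(vertices, template_landmarks, coeffs)
```

Only vertex positions change under the warp; because the connectivity in `faces` is untouched, the warped mesh keeps the topology of the template.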

Landmark-Free Methods

Recent technological advances have prompted the development of landmark-free approaches that bypass traditional landmark identification entirely. These methods include:

- Deterministic Atlas Analysis (DAA): A Large Deformation Diffeomorphic Metric Mapping (LDDMM) approach that computes deformations between a dynamically generated atlas and each specimen without requiring pre-defined landmarks [6].

- Iterative Closest Point (ICP) algorithms: Rigid registration approaches that align surfaces by iteratively minimizing distances between point clouds [14] [6].

- Auto3dgm: A package that uses a template specimen with the greatest geometric similarity to project semilandmarks to all other specimens [14].

These landmark-free methods offer significant advantages in processing speed and reduced researcher bias, making them particularly suitable for analyzing large datasets [6]. However, they face challenges when applied to phylogenetically disparate taxa, as the correspondence points identified may not represent biological homologues [6].
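To make the ICP idea concrete, here is a minimal rigid ICP sketch. It assumes brute-force nearest-neighbour matching, a least-squares rigid fit via SVD (the Kabsch solution), and a fixed number of iterations with no outlier rejection; the function names are illustrative. It shows why the resulting correspondences are driven purely by surface proximity.

```python
import numpy as np

def best_rigid_transform(A, B):
    """Least-squares rotation R and translation t such that R @ a + t ~ b."""
    ca, cb = A.mean(axis=0), B.mean(axis=0)
    H = (A - ca).T @ (B - cb)
    U, _, Vt = np.linalg.svd(H)
    # Guard against reflections so that R is a proper rotation.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    D = np.diag([1.0] * (A.shape[1] - 1) + [d])
    R = Vt.T @ D @ U.T
    return R, cb - R @ ca

def icp(source, target, n_iter=30):
    """Rigidly align `source` to `target`; both are (n, m) point clouds."""
    src = source.copy()
    for _ in range(n_iter):
        # Match every source point to its closest target point.
        d2 = ((src[:, None, :] - target[None, :, :]) ** 2).sum(axis=2)
        matched = target[d2.argmin(axis=1)]
        R, t = best_rigid_transform(src, matched)
        src = src @ R.T + t
    return src

# Illustrative data: a 4 x 4 x 4 grid, slightly rotated and shifted.
g = np.arange(4.0)
target = np.array(np.meshgrid(g, g, g)).reshape(3, -1).T
theta = 0.05
Rz = np.array([[np.cos(theta), -np.sin(theta), 0.0],
               [np.sin(theta),  np.cos(theta), 0.0],
               [0.0, 0.0, 1.0]])
source = target @ Rz.T + 0.05
aligned = icp(source, target, n_iter=5)
```

ICP of this kind converges to a local optimum, so a reasonable initial alignment matters; with large initial misalignment the nearest-neighbour matches can be wrong.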

Table 2: Comparison of Semilandmarking and Landmark-Free Approaches

| Method | Basis of Correspondence | Homology Assurance | Automation Level | Best Application Context |
|---|---|---|---|---|
| Sliding Semilandmarks | Landmark-guided | Geometric homology | Semi-automated | Studies requiring biological interpretability |
| Deterministic Atlas Analysis | Deformation-based | Sample-dependent | Automated | Large-scale studies across disparate taxa |
| Iterative Closest Point | Surface proximity | Topographic similarity | Automated | Classification and discrimination tasks |
| Auto3dgm | Template projection | Geometric similarity | Automated | Rapid data processing |

Methodological Comparisons and Experimental Insights

Performance of Different Semilandmarking Approaches

Comparative studies have systematically evaluated the performance of different semilandmarking approaches, revealing both consistencies and important differences. One comprehensive study compared three landmark-driven approaches: sliding TPS, hybrid rigid registration combining least-squares and ICP algorithms (LS&ICP), and an approach combining TPS with non-rigid ICP (TPS&NICP) [14] [15]. The findings demonstrated that while sliding TPS and TPS&NICP produced highly consistent results, the LS&ICP approach yielded notably different semilandmark locations and subsequent statistical outcomes [15].

These differences translated to variations in estimates of mean shapes, principal components of shape variation, and allometric patterns [14] [15]. Importantly, consistency within methods was highest for sliding TPS and TPS&NICP, particularly when true landmarks were densely distributed across the surface [15]. This suggests that the performance of semilandmarking approaches is contingent on the landmark framework guiding them.

Effect of Semilandmark Density

The density of semilandmarks represents a critical methodological decision in study design. Research indicates that while increasing semilandmark density enhances shape capture, it does not necessarily improve analytical outcomes proportionally. Studies comparing different densities found that estimates of surface mesh shape remained generally consistent across densities, suggesting that beyond a certain threshold, additional semilandmarks provide diminishing returns [15].

However, surfaces warped using landmarks alone demonstrated notable differences compared to those incorporating semilandmarks, with the discrepancy dependent on landmark coverage and template selection [15]. This underscores the importance of semilandmarks for accurately representing surfaces between landmarks, particularly in regions with sparse landmark coverage.

Experimental Protocol: Iteration Effects in Sliding Semilandmarks

A systematic investigation of iteration effects in sliding semilandmarks provides guidance for optimizing this parameter [16]. The experimental protocol employed the following methodology:

- Sample: 80 3D facial scans (40 males, 40 females) from the Stirling/ESRC 3D Face Database

- Template construction: 16 anatomical landmarks placed manually on a template mesh

- Semilandmark generation: 484 semilandmarks automatically generated and uniformly distributed across the facial surface

- Sliding procedure: Semilandmarks slid along target meshes using TPS warping with bending energy minimization

- Iteration test: Five relaxation states tested (1, 6, 12, 24, and 30 iterations)

- Analysis: Principal Component Analysis for dimensionality reduction, followed by Linear Discriminant Analysis for gender classification

The results demonstrated that classification accuracy peaked at 12 iterations (96.43%) rather than increasing progressively with more iterations [16]. This indicates an optimal threshold for the sliding process beyond which additional iterations do not improve results and may even reduce accuracy. The processing time increased linearly with iteration count, making higher iterations computationally expensive without analytical benefit [16].
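The analysis stage of such a protocol (PCA followed by a two-group linear discriminant) can be sketched as follows. This is not the study's code: the data are synthetic, the number of retained components is arbitrary, and the helper names `pca_scores`, `fit_lda`, and `predict_lda` are hypothetical.

```python
import numpy as np

def pca_scores(X, n_components):
    """Project rows of X onto the leading principal axes (via SVD)."""
    mean = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    axes = Vt[:n_components]
    return (X - mean) @ axes.T, axes, mean

def fit_lda(scores, labels):
    """Two-group Fisher discriminant: weight vector w and cut-off c."""
    g0, g1 = scores[labels == 0], scores[labels == 1]
    m0, m1 = g0.mean(axis=0), g1.mean(axis=0)
    # Pooled within-group covariance matrix.
    Sw = ((g0 - m0).T @ (g0 - m0) + (g1 - m1).T @ (g1 - m1)) / (len(scores) - 2)
    w = np.linalg.solve(Sw, m1 - m0)
    c = w @ (m0 + m1) / 2          # midpoint between projected group means
    return w, c

def predict_lda(scores, w, c):
    return (scores @ w > c).astype(int)

# Synthetic stand-in for aligned shape variables: 80 specimens, 20 variables.
rng = np.random.default_rng(0)
labels = np.repeat([0, 1], 40)
X = rng.normal(scale=0.5, size=(80, 20))
X[labels == 1, 0] += 3.0           # the groups differ along one variable
scores, axes, mean = pca_scores(X, n_components=5)
w, c = fit_lda(scores, labels)
accuracy = (predict_lda(scores, w, c) == labels).mean()
```

The accuracy computed here is a resubstitution estimate on the training data, which tends to be optimistic; a cross-validated estimate is the more defensible figure to report.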

Practical Applications and Classification Context

Nutritional Status Assessment in Children

Geometric morphometrics has demonstrated significant utility in practical classification problems, such as assessing nutritional status in children. The SAM Photo Diagnosis App Program developed a smartphone application to identify severe acute malnutrition in children aged 6-59 months from images of their left arms [17] [18]. This approach represents an innovative application of GM techniques in real-world screening contexts.

The methodology involved:

- Sample collection: Images from 410 Senegalese children with either optimal nutritional condition or severe acute malnutrition

- Landmark and semilandmark placement: Anatomical points digitized on arm images

- Classification model development: Linear discriminant analysis applied to shape variables

- Out-of-sample validation: Methodological framework for classifying new individuals not included in the training sample

A critical challenge addressed in this research was the classification of out-of-sample individuals, requiring specialized approaches to obtain registered coordinates in the training sample's shape space [17]. This application highlights the translation of morphometric shape representation from theoretical framework to practical tool with significant public health implications.

Taxonomic Discrimination in Fisheries

Geometric morphometrics has also proven valuable in taxonomic discrimination, as demonstrated in a study of three Indian shad species [19]. The research analyzed digital images from 120 specimens using GM approaches to investigate body shape variations. The analysis successfully differentiated the species with 100% accuracy using Canonical Variate Analysis and Discriminant Function Analysis, though the limited sample size for one species (Hilsa kelee, n=6) necessitated leave-one-out cross-validation to address potential overfitting [19].

This application illustrates how shape representation using landmarks and semilandmarks can provide discrimination beyond traditional morphometric approaches, offering insights into subtle morphological differences with taxonomic significance.
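Leave-one-out cross-validation of the kind mentioned above can be sketched generically. In the example below a nearest-centroid classifier stands in for CVA/DFA purely to keep the code short (a substitution, not the study's method), the data are synthetic, and the group sizes merely mimic an unbalanced design with one small group.

```python
import numpy as np

def fit_centroids(X, y):
    """Store one mean vector per class."""
    classes = np.unique(y)
    return classes, np.array([X[y == c].mean(axis=0) for c in classes])

def predict_centroids(model, X):
    """Assign each row of X to the class with the nearest centroid."""
    classes, centroids = model
    d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[d2.argmin(axis=1)]

def loocv_accuracy(X, y, fit, predict):
    """Refit the classifier n times, each time predicting the held-out case."""
    hits = 0
    for i in range(len(X)):
        keep = np.arange(len(X)) != i
        model = fit(X[keep], y[keep])
        hits += int(predict(model, X[i:i + 1])[0] == y[i])
    return hits / len(X)

# Synthetic shape scores for three groups of unequal size (10, 10 and 6).
rng = np.random.default_rng(0)
sizes = [10, 10, 6]
centres = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
X = np.vstack([rng.normal(size=(n, 2)) + c for n, c in zip(sizes, centres)])
y = np.repeat([0, 1, 2], sizes)
acc = loocv_accuracy(X, y, fit_centroids, predict_centroids)
```

Because each specimen is classified by a model that never saw it, the estimate is less optimistic than resubstitution accuracy. For the estimate to be honest, any preprocessing that uses the whole sample would also need to be repeated inside the loop.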

Table 3: Essential Software Tools for Shape Representation in Morphometrics

| Software Tool | Primary Function | Key Features | Application Context |
|---|---|---|---|
| EVAN Toolbox | Semilandmark processing | Sliding semilandmarks along curves and surfaces | General morphometric analysis |
| Viewbox | Template creation and warping | Semiautomated semilandmark placement | 3D facial analysis [16] |
| Geomorph R Package | Statistical shape analysis | Procrustes ANOVA, phylogenetic integration | Comprehensive GM analysis |
| Morpho R Package | Sliding semilandmarks | Minimization of bending energy/Procrustes distance | Landmark and semilandmark processing |
| Deformetrica | Landmark-free analysis | Deterministic Atlas Analysis (DAA) | Large-scale datasets [6] |
| Auto3dgm | Automated correspondence | Template-based point correspondence | Rapid data processing [14] |

The representation of biological form through landmarks, semilandmarks, and surface meshes provides a sophisticated framework for analyzing shape variation within a defined shape space. Each approach offers distinct advantages: landmarks provide biological homology, semilandmarks enable dense shape capture between landmarks, and surface meshes facilitate comprehensive visualization and analysis. The integration of these methods allows researchers to construct detailed representations of morphological variation that can be leveraged for classification purposes.

Each methodological choice in shape representation carries implications for subsequent classification analyses. Landmark-based approaches maintain biological interpretability but may lack comprehensive shape coverage. Semilandmark methods enhance shape capture but introduce algorithmic dependence in point placement. Landmark-free approaches offer automation and efficiency but may sacrifice biological correspondence. Understanding these trade-offs is essential for designing morphometric studies that yield biologically meaningful and statistically robust classification systems.

As morphometric research continues to evolve, the integration of traditional landmark-based approaches with emerging landmark-free methods holds promise for addressing the challenges of analyzing increasingly large and complex morphological datasets. This synthesis will expand the scope of morphometric studies and enhance our understanding of shape variation across evolutionary, developmental, and clinical contexts.

The quantification of shape is a fundamental challenge across numerous scientific disciplines, from evolutionary biology and archaeology to modern drug discovery. Geometric morphometrics (GM) provides a powerful suite of tools for addressing this challenge by capturing and analyzing the geometry of anatomical structures or objects while controlling for differences in size, position, and orientation [20]. The core concept in GM is shape space—a mathematical space in which each point represents a unique object shape. Navigating this space requires robust metrics to quantify similarity and difference, enabling researchers to classify specimens, identify patterns of variation, and test hypotheses about form and function [17].

This technical guide focuses on two primary classes of tools for this task: Procrustes distance, which measures the difference between shapes after optimal superimposition, and multidimensional shape similarity metrics, which integrate numerous geometric descriptors to predict perceptual similarity. The Procrustes paradigm is particularly central to modern morphometrics, as it provides a rigorous framework for placing specimens into a common coordinate system for statistical analysis [20]. Understanding these metrics is essential for anyone working in morphometrics research, as they form the basis for almost all subsequent statistical analyses and interpretations of shape data.

Theoretical Foundations of Shape Similarity

The Challenge of Quantifying Shape

Quantifying visual shape similarity is a complex problem because shape perception involves multiple competing constraints. An effective shape representation must balance sensitivity (the ability to discriminate between subtly different shapes) and robustness (providing a consistent description across irrelevant transformations like rotation or scaling) [21]. Different shape descriptors inherently represent trade-offs between these goals; a descriptor invariant to rotation may be highly sensitive to other transformations like "bloating" or the addition of noise to a contour [21].

Human visual perception likely resolves this conflict by representing shape in a multidimensional space defined by many complementary shape descriptors [21]. This approach motivates computational models that combine multiple geometric properties to predict human shape similarity judgments. No single metric can perfectly capture all aspects of shape similarity, which is why the field employs a variety of distance measures tailored to different data types and research questions.
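The sensitivity/robustness trade-off can be seen with just three elementary contour descriptors. The sketch below is not ShapeComp; it only computes area, perimeter, and compactness for a closed polygon, with the function name `contour_descriptors` chosen for illustration.

```python
import numpy as np

def contour_descriptors(xy):
    """Area, perimeter and compactness of a closed polygon.

    xy: (n, 2) array of vertices in order; the polygon is closed implicitly.
    """
    x, y = xy[:, 0], xy[:, 1]
    # Shoelace formula for polygon area.
    area = 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))
    perimeter = np.linalg.norm(np.roll(xy, -1, axis=0) - xy, axis=1).sum()
    # Compactness equals 1 for a circle and is smaller for other shapes.
    compactness = 4 * np.pi * area / perimeter ** 2
    return np.array([area, perimeter, compactness])

square = np.array([[0, 0], [1, 0], [1, 1], [0, 1]], dtype=float)
theta = 0.7
R = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta),  np.cos(theta)]])
rotated_doubled = 2.0 * square @ R.T

d_small = contour_descriptors(square)
d_large = contour_descriptors(rotated_doubled)
```

Area and perimeter change with scale, whereas compactness is unchanged by rotation and scaling. A multidimensional metric combines many descriptors with different invariances, typically after standardizing each one, so that no single sensitivity dominates.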

Procrustes Distance and Shape Space

The Procrustes distance is a cornerstone metric in geometric morphometrics for comparing shapes defined by landmark coordinates. The process begins with a set of homologous landmarks—anatomically corresponding points—captured from each specimen. The core idea of Procrustes analysis is to remove the effects of non-shape-related variation through an iterative least-squares optimization process known as Generalized Procrustes Analysis (GPA) [20] [17].

The GPA procedure involves three sequential steps:

- Translation: Each shape configuration is centered to a common origin (typically the centroid of the landmarks) to eliminate positional differences.

- Scaling: Configurations are scaled to a standard size (unit centroid size) to remove the effect of size.

- Rotation: Configurations are rotated to minimize the sum of squared distances between corresponding landmarks [20].

After this superimposition, the Procrustes distance between two shapes is calculated as the square root of the sum of squared differences between the coordinates of their corresponding landmarks [22]. The resulting aligned coordinates reside in a non-Euclidean space known as Kendall's shape space. For statistical analysis, shapes are typically projected into a linear tangent space where standard multivariate methods can be applied with acceptable accuracy [20].
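The quantities in this description can be written compactly. The notation is introduced here for illustration and is not taken from the cited sources: a configuration is a $k \times m$ matrix $X$ with rows $x_i$ (landmarks), $\bar{x}$ is its centroid, and $R$ ranges over rotations.

$$
\mathrm{CS}(X) = \sqrt{\sum_{i=1}^{k} \lVert x_i - \bar{x} \rVert^2},
\qquad
Z_X = \frac{X - \mathbf{1}\,\bar{x}^{\top}}{\mathrm{CS}(X)}
$$

$$
d_P(X, Y) = \min_{R \in SO(m)} \lVert Z_X R - Z_Y \rVert_F
$$

Here $Z_X$ is the centred configuration scaled to unit centroid size, and $\lVert \cdot \rVert_F$ is the Frobenius norm, that is, the square root of the sum of squared coordinate differences. With both configurations held at unit size this quantity is usually called the partial Procrustes distance; the full Procrustes distance additionally optimizes over the scale of one configuration.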

Table 1: Key Distance Metrics in Geometric Morphometrics

| Metric Name | Data Type | Calculation | Key Properties | Primary Applications |
|---|---|---|---|---|
| Procrustes Distance | Landmark coordinates | Square root of summed squared coordinate differences after GPA | Invariant to position, scale, rotation; defines geometric shape space | Hypothesis testing of shape difference, morphological systematics [20] [22] |
| Mahalanobis Distance | Multivariate data (e.g., Procrustes coordinates) | Measures distance in terms of standard deviations from a group mean, accounting for covariance | Scale-invariant, accounts for variable correlations | Classifying specimens into groups, discriminant analysis [22] |
| ShapeComp Similarity | 2D contours/outlines | Multidimensional Euclidean distance from >100 shape features (e.g., area, compactness) | Predicts human perceptual similarity; perceptually uniform stimuli | Psychophysical research, visual neuroscience, AI vision [21] |

Methodological Protocols for Shape Analysis

A Standard Procrustes Analysis Workflow

The following workflow details the essential steps for a landmark-based geometric morphometric study, from data collection to statistical analysis. This protocol is adapted from applications in osteology [20] and entomology [22].

Step 1: Data Acquisition and Digitization

- Specimen Selection: Ensure specimens are well-preserved and represent the biological variation of interest. For bilateral structures, decide whether to use one side (flipping scans if necessary) or both.

- Landmark Definition: Establish a template of homologous landmarks (fixed anatomical points), curve semi-landmarks (points along homologous curves), and surface semi-landmarks (points on homologous surfaces). The number and placement of points are critical to capture morphology without over-sampling [20].

- Data Capture: Use high-resolution 3D scanners (e.g., structured-light scanners like Artec Eva) or 2D imaging systems. For 2D, ensure consistent orientation and scale. Save data as coordinate matrices.

Step 2: Configuration Preprocessing

- Data Organization: For n specimens, each with k landmarks in m dimensions (2D or 3D), combine coordinate matrices into a k x m x n array [20].

- Handling Missing Data: For damaged specimens, employ imputation methods. The choice of method (e.g., regression-based, thin-plate spline) depends on the extent of missingness [20].

- Semi-landmark Sliding: If used, relax semi-landmarks along curves and surfaces to minimize bending energy, so that their positions are geometrically comparable across specimens.

Step 3: Generalized Procrustes Analysis (GPA)

- Translation: Center each configuration by subtracting its centroid (mean x, y, z coordinates).

- Scaling: Scale all configurations to unit Centroid Size (CS), calculated as the square root of the sum of squared distances of all landmarks from their centroid.

- Rotation: Rotate configurations to minimize the global sum of squared distances between corresponding landmarks via an iterative algorithm. The final output is the set of Procrustes-aligned coordinates.
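The three GPA steps can be sketched in NumPy as below. This is a minimal illustration, not the implementation used by any particular package: it assumes complete landmark data with no sliding, forbids reflections, and stores specimens along the first axis, i.e. an (n, k, m) array rather than k x m x n (`np.transpose(array, (2, 0, 1))` converts the latter). The names `rotate_onto` and `gpa` are hypothetical.

```python
import numpy as np

def rotate_onto(A, B):
    """Rotate centred configuration A (k, m) onto B by least squares."""
    U, _, Vt = np.linalg.svd(A.T @ B)
    # Flip the last axis if needed so the result is a rotation, not a reflection.
    U[:, -1] *= np.sign(np.linalg.det(U @ Vt))
    return A @ (U @ Vt)

def gpa(configs, tol=1e-10, max_iter=100):
    """Generalized Procrustes Analysis on an (n, k, m) array.

    Returns the aligned coordinates and the unit-size mean shape.
    """
    X = configs - configs.mean(axis=1, keepdims=True)        # translation
    X = X / np.linalg.norm(X, axis=(1, 2), keepdims=True)    # unit centroid size
    mean = X[0]                                              # initial reference
    for _ in range(max_iter):
        X = np.array([rotate_onto(x, mean) for x in X])      # rotation
        new_mean = X.mean(axis=0)
        new_mean /= np.linalg.norm(new_mean)
        if np.linalg.norm(new_mean - mean) < tol:
            break
        mean = new_mean
    return X, new_mean

# Illustrative check: copies of one shape differing only in pose and size.
rng = np.random.default_rng(1)
base = rng.normal(size=(5, 2))
configs = []
for _ in range(6):
    th = rng.uniform(0, 2 * np.pi)
    R = np.array([[np.cos(th), -np.sin(th)], [np.sin(th), np.cos(th)]])
    configs.append(rng.uniform(0.5, 3.0) * base @ R.T + rng.normal(size=2))
aligned, mean_shape = gpa(np.array(configs))
```

Since the six configurations differ only in position, size, and orientation, they coincide after alignment. With real data the remaining differences between aligned configurations are the shape variation of interest.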

Step 4: Statistical Analysis and Distance Calculation

- Procrustes ANOVA: Test for significant shape difference between groups, accounting for measurement error and other factors.

- Principal Component Analysis (PCA): Reduce dimensionality of aligned coordinates to visualize major trends of shape variation in a morphospace [22].

- Distance Calculation: Compute Procrustes distances between specimens or group means for clustering and classification. Use Mahalanobis distances for group discrimination [22].
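The practical difference between the two distances in the last step can be shown with a small sketch. It assumes the inputs are a reduced set of shape variables such as PC scores (raw Procrustes coordinates have a singular covariance matrix, because alignment removes degrees of freedom) and that a pooled within-group covariance is appropriate. The function names and the data are illustrative.

```python
import numpy as np

def pooled_covariance(X, y):
    """Pooled within-group covariance of the rows of X, grouped by y."""
    classes = np.unique(y)
    scatter = np.zeros((X.shape[1], X.shape[1]))
    for c in classes:
        resid = X[y == c] - X[y == c].mean(axis=0)
        scatter += resid.T @ resid
    return scatter / (len(X) - len(classes))

def mahalanobis_between_means(X, y, a, b):
    """Mahalanobis distance between the means of groups a and b."""
    diff = X[y == a].mean(axis=0) - X[y == b].mean(axis=0)
    return float(np.sqrt(diff @ np.linalg.solve(pooled_covariance(X, y), diff)))

# Synthetic scores: variable 0 is highly variable, variable 1 is not.
rng = np.random.default_rng(0)
noise = rng.normal(size=(50, 2)) * [10.0, 1.0]
X = np.vstack([noise, noise + [2.0, 0.0], noise + [0.0, 2.0]])
y = np.repeat([0, 1, 2], 50)

euclid_01 = np.linalg.norm(X[y == 0].mean(axis=0) - X[y == 1].mean(axis=0))
euclid_02 = np.linalg.norm(X[y == 0].mean(axis=0) - X[y == 2].mean(axis=0))
maha_01 = mahalanobis_between_means(X, y, 0, 1)
maha_02 = mahalanobis_between_means(X, y, 0, 2)
```

Both group pairs are equally far apart in Euclidean terms, but the pair separated along the low-variance variable is much farther apart in Mahalanobis terms, which is why Mahalanobis distance is the natural choice for discrimination.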

Protocol for Out-of-Sample Classification

A common challenge in applied morphometrics is classifying a new specimen that was not part of the original Procrustes alignment. The following protocol addresses this [17]:

- Template Selection: Choose a single specimen or the mean shape from the reference (training) sample to serve as a template.

- Target Registration: Perform a Procrustes fit of the new specimen's raw coordinates to the selected template. This is an ordinary (pairwise) Procrustes alignment that removes differences in position, scale, and orientation relative to the template, without rerunning a full GPA on all specimens.

- Projection: Project the newly registered coordinates into the existing tangent space of the reference sample.

- Classification: Apply the pre-existing classification rule (e.g., linear discriminant function) to the new specimen's projected coordinates.
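A minimal sketch of this protocol follows, under several stated assumptions: the template is the centred, unit-size mean shape of the training sample; tangent coordinates are approximated by residuals from that mean; and the training stage has already produced PCA axes (`components`) and a two-group linear rule (`w`, `c`). All of these names are hypothetical placeholders for whatever the training pipeline stored.

```python
import numpy as np

def fit_to_template(raw, template):
    """Ordinary Procrustes fit of one raw configuration (k, m) to a template.

    The template is assumed to be centred and scaled to unit centroid size.
    """
    A = raw - raw.mean(axis=0)            # remove position
    A = A / np.linalg.norm(A)             # remove size
    U, _, Vt = np.linalg.svd(A.T @ template)
    U[:, -1] *= np.sign(np.linalg.det(U @ Vt))   # rotation only
    return A @ (U @ Vt)

def classify_new(raw, template, components, w, c):
    """Register, project, and classify a specimen outside the training set."""
    aligned = fit_to_template(raw, template)
    # Residuals from the mean shape, projected onto the stored PCA axes.
    scores = (aligned - template).ravel() @ components.T
    return int(scores @ w > c)

# Illustrative check: a rotated, enlarged, shifted copy of the template.
rng = np.random.default_rng(2)
template = rng.normal(size=(6, 2))
template -= template.mean(axis=0)
template /= np.linalg.norm(template)
th = 1.1
R = np.array([[np.cos(th), -np.sin(th)], [np.sin(th), np.cos(th)]])
raw = 4.0 * template @ R.T + np.array([3.0, -1.0])
registered = fit_to_template(raw, template)
```

The key design point is that nothing about the training sample is recomputed: the mean shape, PCA axes, and discriminant rule stay fixed, so adding a new individual cannot shift the coordinates of the reference specimens.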

Table 2: The Scientist's Toolkit: Essential Reagents and Software for Geometric Morphometrics

| Tool/Reagent | Specification/Type | Primary Function in Workflow |
|---|---|---|
| High-Resolution 3D Scanner (e.g., Artec Eva) | Hardware | Captures surface topography of specimens to create 3D digital models for landmarking [20]. |
| Digitization Software (e.g., Viewbox 4, TPSDig2) | Software | Provides interface for placing and recording coordinates of landmarks, curve points, and surface points on digital specimens [20] [22]. |
| Geometric Morphometrics Software (e.g., MorphoJ, R package geomorph) | Software | Performs core analyses: Generalized Procrustes Analysis, PCA, statistical testing of shape difference, and visualization [22]. |
| Statistical Environment (e.g., R) | Software | Provides a flexible platform for advanced statistical analysis, custom scripting, and data visualization of shape data [20] [17]. |
| Human Os Coxae Template [20] | Research Protocol | Pre-defined set of landmarks for a specific structure; ensures consistency and homology across studies. |
| Shape Feature Model (e.g., ShapeComp) [21] | Computational Model | Predicts human perceptual shape similarity from outlines using a multidimensional feature space; useful for psychophysics and AI. |

Applications and Case Studies in Research

Taxonomic Classification of Thrips

Geometric morphometrics successfully distinguishes closely related insect species where traditional methods struggle. A 2025 study on eight species of Thrips used 11 landmarks on the head and 10 on the thorax. Procrustes-based PCA revealed significant shape differences, with the first three principal components accounting for over 73% of head shape variation. Procrustes distance and Mahalanobis distance matrices, analyzed with permutation tests, statistically confirmed species separations. For instance, T. angusticeps and T. australis showed the greatest head shape divergence, while the thorax landmark configuration best separated T. nigropilosus, T. obscuratus, and T. hawaiiensis. This demonstrates GM's power as a complementary tool for identifying quarantine-significant pests [22].

Nutritional Status Assessment in Children

GM enables non-invasive nutritional screening by analyzing body shape. The SAM Photo Diagnosis App Program uses a smartphone app to classify nutritional status in children aged 6-59 months from photos of the left arm. A discriminant model is built from Procrustes-aligned landmarks and semi-landmarks from a reference sample. For out-of-sample classification, the app registers a new child's arm photo to a template from the reference sample, projecting it into the established shape space for classification. This digital health tool highlights GM's potential for real-world public health interventions, relying on a robust registration and classification protocol for new individuals [17].

Analyzing Morphological Integration in Human Osteology

A 2025 study of the human os coxae (hip bone) illustrates the use of Procrustes methods to investigate developmental and functional modularity. Researchers developed a detailed landmark template (25 fixed landmarks, 159 curve semi-landmarks, 425 surface semi-landmarks) from 3D scans. After Procrustes alignment, they analyzed patterns of shape covariation between the ilium, ischium, and pubis—bones that fuse during development. This protocol allowed them to test the hypothesis that these modules retain statistically independent patterns of variation due to their distinct developmental origins and functional roles, such as locomotion versus obstetric demands [20].

The fields of shape similarity quantification and geometric morphometrics are being transformed by the integration of artificial intelligence (AI) and machine learning (ML). In drug discovery, AI tools analyze the "chemical shape space" to perform virtual screening of millions of compounds, predicting bioactive molecules and optimizing lead compounds by assessing properties like shape similarity [23]. ML algorithms, including deep neural networks, are also being applied directly to morphometric data for classification tasks, potentially uncovering complex, non-linear patterns of shape variation that traditional methods might miss [17] [23].

Furthermore, advanced models like ShapeComp demonstrate that combining over 100 complementary shape descriptors (e.g., area, compactness, Fourier descriptors) into a single multidimensional metric can accurately predict human perceptual shape similarity, outperforming both pixel-based methods and some deep learning models [21]. This aligns with the core morphometric principle that no single metric can capture all aspects of shape, pointing toward a future of hybrid, multi-method approaches.

In conclusion, Procrustes distance provides a mathematically rigorous foundation for comparing shapes in a normalized space, while multidimensional similarity metrics offer powerful tools for modeling perceptual shape space. Together, these methodologies for quantifying shape similarity form an indispensable toolkit for modern morphometrics research. They enable the rigorous testing of hypotheses across diverse fields, from taxonomy and paleontology to biomedical engineering and drug discovery, driving forward our understanding of the relationship between form and function.

The quantification of biological shape is a cornerstone of evolutionary biology, medical imaging, and comparative anatomy. At the heart of this quantification lies the mapping problem—the challenge of establishing accurate, biologically meaningful correspondences between points on two or more anatomical structures. Whether comparing mammalian skulls across evolutionary timescales, analyzing differences in leaf morphology, or tracking morphological changes in medical conditions, researchers must solve this fundamental problem before any meaningful statistical analysis of shape can proceed [6]. The correspondence solution directly determines which aspects of shape variation are captured and ultimately influences all subsequent biological interpretations.

Traditional geometric morphometrics has largely relied on manual landmark placement—expert-identified homologous points that correspond across specimens. While this approach has proven immensely valuable, it introduces significant limitations: the process is time-consuming, susceptible to observer bias, and fundamentally constrained by the number of landmarks a researcher can practically place [24] [6]. As biological datasets expand to include thousands of 3D specimens obtained from CT scanning and other imaging technologies, and as research questions require more comprehensive capture of morphological detail, the field has increasingly turned toward automated correspondence methods that can operate without exhaustive manual intervention [24] [6]. These new approaches aim to capture shape variation more comprehensively while minimizing human bias, thereby enabling more powerful analyses of shape space and classification across diverse biological contexts.

Mathematical Foundations of Shape Correspondence

The mathematical treatment of shape correspondence has evolved along several parallel tracks, each with distinct advantages for particular biological applications. Quasi-conformal theory provides a powerful framework for representing surface deformations through Beltrami coefficients (μ), which quantify the local deviation from angle preservation. Intuitively, while conformal maps transform infinitesimal circles into infinitesimal circles, quasi-conformal maps transform them into ellipses with bounded eccentricity, providing a continuous measure of local distortion [25]. This formalism enables the computation of landmark-matching mappings between surfaces even when they lack global one-to-one correspondence, automatically detecting and aligning only the most relevant corresponding parts between two anatomical structures [25].
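For intuition, the Beltrami coefficient of a planar affine map can be computed directly from its 2x2 Jacobian, which is how the quantity is typically evaluated per triangle on a flattened mesh. The sketch below assumes a map f = u + iv with Jacobian rows (u_x, u_y) and (v_x, v_y), and uses the standard definitions f_z = (f_x - i f_y)/2 and f_zbar = (f_x + i f_y)/2; the function names are illustrative.

```python
import math

def beltrami_coefficient(J):
    """Beltrami coefficient mu = f_zbar / f_z of an affine map with Jacobian J."""
    (a, b), (c, d) = J                      # a = u_x, b = u_y, c = v_x, d = v_y
    f_z = 0.5 * complex(a + d, c - b)
    f_zbar = 0.5 * complex(a - d, c + b)
    return f_zbar / f_z

def dilatation(mu):
    """Ratio of the major to the minor axis of the image of a small circle."""
    return (1 + abs(mu)) / (1 - abs(mu))

# A rotation is conformal: circles stay circles, so mu is zero.
th = 0.4
rotation = [[math.cos(th), -math.sin(th)], [math.sin(th), math.cos(th)]]
mu_rot = beltrami_coefficient(rotation)

# Stretching x by a factor of 2 turns circles into 2:1 ellipses.
stretch = [[2.0, 0.0], [0.0, 1.0]]
mu_stretch = beltrami_coefficient(stretch)
K = dilatation(mu_stretch)
```

The modulus of the coefficient measures how far the map is from angle-preserving, and its argument encodes the direction of greatest stretching, which is why a field of Beltrami coefficients is a compact description of local surface distortion.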

Diffeomorphic mapping approaches, particularly Large Deformation Diffeomorphic Metric Mapping (LDDMM), model shape transformations as smooth, invertible deformations that preserve topological structure. In methods like Deterministic Atlas Analysis (DAA), a mean template shape (an "atlas") is computed from the dataset, and the deformation required to map this atlas onto each specimen is quantified through momentum vectors ("momenta") at control points [6]. These momenta capture the optimal deformation trajectory and serve as the basis for comparing shape variation across specimens without requiring predefined landmarks.

Functional maps represent a more recent approach that operates in the spectral domain rather than directly in coordinate space. This method establishes correspondence through linear operators that map functions defined on one surface to another, effectively transforming the correspondence problem into one of finding a consistent basis between shapes [24]. The morphVQ pipeline leverages this approach with learned shape descriptors to estimate functional correspondence between whole triangular meshes, producing Latent Shape Space Differences (LSSDs) that characterize morphological variation through area-based and conformal operators [24].
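The core idea, that correspondence becomes a small linear operator between spectral bases, can be sketched as a least-squares problem. The example below is a minimal illustration and not the morphVQ implementation: it assumes descriptor functions have already been projected onto k basis functions per shape (matrices `A` and `B`, both hypothetical and synthetic here), and it fits only the data term min ‖CA − B‖. Practical pipelines typically add regularization and refinement on top of this.

```python
import numpy as np

def fit_functional_map(A, B):
    """Least-squares functional map C such that C @ A approximates B.

    A: (k_source, q) spectral coefficients of q descriptors on the source shape.
    B: (k_target, q) coefficients of the same descriptors on the target shape.
    """
    # Solve A.T @ C.T = B.T in the least-squares sense.
    Ct, *_ = np.linalg.lstsq(A.T, B.T, rcond=None)
    return Ct.T

rng = np.random.default_rng(0)
k, q = 6, 40                      # basis size and number of descriptors (assumed)
C_true = rng.normal(size=(k, k))  # synthetic ground-truth map
A = rng.normal(size=(k, q))
B = C_true @ A                    # noise-free target coefficients

C_est = fit_functional_map(A, B)
print(np.abs(C_est - C_true).max())
```

Because C has only k × k entries regardless of mesh resolution, the optimization stays small even for dense surfaces.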

Comparative Analysis of Correspondence Methodologies

Table 1: Key Methodologies for Solving the Shape Correspondence Problem

| Method | Mathematical Foundation | Correspondence Type | Key Advantages | Limitations |
|---|---|---|---|---|
| Traditional Landmarking | Procrustes superimposition | Discrete point homology | Biologically interpretable; well-established statistics | Limited morphological coverage; observer bias; time-intensive |
| morphVQ [24] | Functional maps + descriptor learning | Continuous surface mapping | Automated; captures comprehensive shape variation; computationally efficient | Requires quality surface meshes; black-box nature of learned descriptors |
| DAA (LDDMM) [6] | Diffeomorphic transformations | Deformation-based momentum vectors | No predefined landmarks needed; handles substantial shape differences | Sample-dependent atlas; sensitive to kernel width parameter; mixed modalities problematic |
| Quasi-conformal Registration [25] | Beltrami equation + quasi-conformal theory | Landmark-guided surface mapping | Handles inconsistent regions; optimal part-matching without global correspondence | Requires some landmark constraints; complex implementation |
| Auto3DGM [24] | Farthest point sampling + GDPF | Pseudolandmark correspondence | Fully automated; no template required | Lower resolution than surface-based methods |

Table 2: Performance Comparison on Biological Classification Tasks

| Method | Classification Accuracy | Computational Efficiency | Morphological Coverage | Required Expertise |
|---|---|---|---|---|
| Manual Landmarking | High (with expert digitization) | Low (hours to days per specimen) | Limited to landmark regions | High (domain knowledge required) |
| morphVQ [24] | Comparable to manual landmarking | High | Comprehensive (whole surfaces) | Medium (parameter tuning) |
| DAA [6] | Varies across taxa | Medium | Comprehensive | Medium (atlas selection critical) |
| Global PCA Models [26] | Moderate for gross morphology | High | Global geometry only | Low |
| Local/Wavelet Models [26] | High for detailed structures | Medium | Multi-scale detail | Medium |

The performance comparison reveals significant trade-offs between methodological approaches. morphVQ demonstrates particular strength in computational efficiency while maintaining classification accuracy comparable to manual landmarking [24]. DAA shows excellent potential for broad taxonomic comparisons but exhibits sensitivity to data preparation, particularly in handling mixed imaging modalities (CT vs. surface scans), though this can be mitigated through Poisson surface reconstruction to create watertight meshes [6]. Quasi-conformal registration excels in datasets where specimens share only partial correspondence, automatically identifying and aligning only common regions while excluding inconsistent parts [25].

Experimental Protocols for Correspondence Establishment

morphVQ Pipeline for Automated Phenotyping

The morphVQ pipeline implements a fully automated approach to shape correspondence through several refined stages. The process begins with data preparation and preprocessing, requiring triangular mesh models of biological specimens derived from micro-CT or other scanning modalities [24].

Step 1: Initial rigid alignment

- Apply the Generalized Dataset Procrustes Framework (GDPF) from Auto3DGM

- Subsample shapes at low resolution (128-256 pseudolandmarks)

- Establish initial rotation and translation parameters for coarse alignment [24]
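The low-resolution subsampling in Step 1 relies on farthest point sampling, which Table 1 lists as the basis of Auto3DGM's pseudolandmarks. A minimal sketch of that sampling scheme follows. It is an illustration rather than the Auto3DGM code: it uses Euclidean distances on a synthetic point cloud, and the function name and starting index are assumptions.

```python
import numpy as np

def farthest_point_sampling(vertices, n_samples, start=0):
    """Greedily pick points, each maximizing distance to those already chosen."""
    vertices = np.asarray(vertices, dtype=float)
    chosen = [start]
    # Distance from every vertex to its nearest chosen point so far.
    dist = np.linalg.norm(vertices - vertices[start], axis=1)
    for _ in range(n_samples - 1):
        nxt = int(np.argmax(dist))
        chosen.append(nxt)
        dist = np.minimum(dist, np.linalg.norm(vertices - vertices[nxt], axis=1))
    return np.array(chosen)

rng = np.random.default_rng(0)
mesh_vertices = rng.normal(size=(5000, 3))   # stand-in for mesh vertex coordinates
idx = farthest_point_sampling(mesh_vertices, 128)
pseudolandmarks = mesh_vertices[idx]
print(pseudolandmarks.shape)
```

The greedy rule spreads samples evenly over the shape, so even 128 points give a usable skeleton for estimating the coarse rotation and translation.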

Step 2: Descriptor learning and functional map computation

- Learn shape descriptors directly from the aligned polygon models

- Estimate functional correspondences between pairs of specimens

- Use Consistent ZoomOut refinement to improve map quality [24]

Step 3: Latent Shape Space Difference (LSSD) calculation

- Compute area-based and conformal (angular) LSSDs

- These represent shape variation between specimens in the functional domain

- Enable statistical analysis of morphological variation [24]

Validation: The method has been validated through genus-level classification tasks, demonstrating comparable accuracy to manual landmarking while capturing more comprehensive morphological detail [24].

Deterministic Atlas Analysis (DAA) Protocol

DAA provides an alternative landmark-free approach suitable for datasets with substantial morphological variation. The protocol involves both preprocessing and analytical stages [6].

Preprocessing and standardization

- Convert all specimens to watertight, closed surfaces using Poisson surface reconstruction

- This critical step eliminates issues arising from mixed imaging modalities

- Ensure consistent mesh topology across the dataset [6]

Template selection and atlas generation

- Select an initial template specimen (choice has minimal impact on results)

- Iteratively compute a geodesic mean shape (atlas) that minimizes total deformation energy

- Generate control points guided by a kernel width parameter (e.g., 20.0 mm produces ~270 control points) [6]

Deformation quantification

- Compute momentum vectors ("momenta") at each control point

- These represent optimal deformation trajectories from atlas to each specimen

- Perform kernel Principal Component Analysis (kPCA) on momenta to visualize shape space [6]
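The final step, kernel PCA on the momenta, can be sketched with NumPy alone. The example is a hedged illustration rather than the procedure of [6]: the Gaussian (RBF) kernel, the default `gamma`, and the random momenta array are assumptions chosen only to show the mechanics of centering the kernel matrix and extracting component scores.

```python
import numpy as np

def kernel_pca(X, n_components=2, gamma=None):
    """Kernel PCA with an RBF kernel; returns component scores and eigenvalues."""
    X = np.asarray(X, dtype=float)
    n = X.shape[0]
    if gamma is None:
        gamma = 1.0 / X.shape[1]             # assumed default bandwidth
    sq = (X ** 2).sum(axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    K = np.exp(-gamma * d2)
    # Double-center the kernel matrix (centering in feature space).
    H = np.eye(n) - np.full((n, n), 1.0 / n)
    Kc = H @ K @ H
    eigvals, eigvecs = np.linalg.eigh(Kc)
    order = np.argsort(eigvals)[::-1][:n_components]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = eigvecs * np.sqrt(np.maximum(eigvals, 0.0))
    return scores, eigvals

rng = np.random.default_rng(0)
n_specimens, n_control_points = 30, 45
# Hypothetical momenta: one 3D vector per control point per specimen.
momenta = rng.normal(size=(n_specimens, n_control_points, 3))

scores, eigvals = kernel_pca(momenta.reshape(n_specimens, -1), n_components=2)
print(scores.shape)
```

Each row of `scores` places one specimen in a low-dimensional shape space that can be plotted or passed to a classifier.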

Parameter optimization: Kernel width selection balances morphological sensitivity against computational burden. Smaller values (10.0 mm) capture finer detail but increase the number of control points (1,782), while larger values (40.0 mm) give a coarser characterization with far fewer points (45) [6].
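The steep growth in control points as the kernel width shrinks is what one would expect if points sit on a regular 3D grid whose spacing equals the kernel width. The sketch below illustrates that scaling. Both the grid rule and the bounding-box dimensions are assumptions: the box is hypothetical, chosen only so that the counts land on the figures quoted above, and neither is taken from [6].

```python
import math

def grid_control_point_count(box_mm, kernel_width_mm):
    """Number of nodes on a regular grid with the given spacing inside a box."""
    return math.prod(math.floor(side / kernel_width_mm) + 1 for side in box_mm)

# Hypothetical bounding box (mm); not reported in the source.
hypothetical_box_mm = (100.0, 80.0, 170.0)

counts = {
    width: grid_control_point_count(hypothetical_box_mm, width)
    for width in (10.0, 20.0, 40.0)
}
print(counts)
```

Under this assumption, halving the kernel width multiplies the number of control points by up to eight, and the number of momenta to optimize grows with it.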

[Figure: DAA experimental workflow]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Shape Correspondence Research

| Tool/Software | Primary Function | Methodology | Application Context |
|---|---|---|---|
| morphVQ [24] | Automated shape correspondence | Functional maps + descriptor learning | General anatomical structures; bone morphology |
| Deformetrica [6] | Diffeomorphic registration | LDDMM/Deterministic Atlas Analysis | Macroevolutionary studies; disparate taxa |
| Geomorph [27] | Geometric morphometrics analysis | Traditional & modern GM | General biological shapes; comprehensive stats |
| MorphoLeaf [27] | Plant leaf morphometrics | Landmark & outline analysis | Plant leaves; digital identification |
| Auto3DGM [24] | Automated pseudolandmarking | Farthest point sampling + GDPF | General 3D shapes; initial alignment |

Visualization Framework for Correspondence Analysis

[Figure: Shape correspondence method classification]

The solution to the mapping problem between shapes represents more than a technical exercise in computational geometry—it fundamentally shapes our understanding of biological form and its evolution. As correspondence methods evolve from discrete landmark-based approaches toward continuous, automated frameworks, they enable researchers to address more complex questions about morphological adaptation, diversification, and development across broader taxonomic scales. The emerging synergy between mathematical theory, computational implementation, and biological application promises to transform morphometrics from a specialized methodology into a general framework for understanding the evolution of form.

Each correspondence method carries implicit assumptions about the nature of biological variation, and the choice of method should be guided by the specific research question, dataset characteristics, and analytical goals. Landmark-based approaches retain value for hypothesis-driven studies of specific morphological structures, while landmark-free methods excel in exploratory analyses across disparate taxa or when comprehensive shape characterization is required. Future developments will likely focus on hybrid approaches that leverage the biological interpretability of landmarks with the comprehensive coverage of continuous correspondence methods, ultimately providing richer representations of shape space for classifying and understanding biological diversity.

From Theory to Practice: Classification Methods and Real-World Applications

In morphometrics research, the quantitative analysis of form and shape is fundamental to understanding biological variation, evolutionary patterns, and diagnostic characteristics in fields ranging from drug development to paleontology [28] [29]. The concept of "shape space" provides a mathematical framework in which biological forms are represented as points, enabling statistical analysis of morphological patterns that are often invisible to the human eye. Within this space, classification techniques are the main tools for identifying, categorizing, and interpreting complex morphological data. This section examines three classification methodologies, Linear Discriminant Analysis (LDA), Support Vector Machines (SVM), and Neural Networks, with specific emphasis on their application to morphometric research problems.

The drive toward more quantitative, reproducible, and objective analysis in morphology has accelerated the adoption of these machine learning techniques [29]. Traditional morphometric approaches often grapple with subjective interpretation and observer bias, limitations that can significantly affect research outcomes in pharmaceutical development and systematic biology. By contrast, LDA, SVM, and neural networks offer data-driven frameworks for morphological classification that can identify subtle, diagnostically significant patterns within high-dimensional shape data [30] [29]. The subsections below cover the theoretical foundations, practical implementation, and relative strengths of each technique in the context of morphometric analysis.

Theoretical Foundations of Classification Techniques

Linear Discriminant Analysis (LDA)

Linear Discriminant Analysis is a supervised classification approach that operates by finding linear combinations of features that best separate two or more classes of objects or events [31] [32]. Developed from Fisher's linear discriminant in the 1930s, LDA follows a generative model framework, modeling the data distribution for each class and using Bayes' theorem to classify new data points [31]. The algorithm fundamentally seeks to identify a lower-dimensional projection that maximizes between-class variance while minimizing within-class variance, effectively enhancing class separability in the reduced space.

The core mathematical objective of LDA is to find the projection vector v that maximizes Fisher's criterion:

$$J(v) = \frac{v^{\mathsf T} S_B\, v}{v^{\mathsf T} S_W\, v}$$

where $S_B$ is the between-class scatter matrix and $S_W$ is the within-class scatter matrix [31] [32]. LDA rests on several assumptions: the features within each class follow a Gaussian distribution, all classes share a common covariance matrix, and the classes can be separated by linear boundaries [31]. When these assumptions hold, LDA yields optimal classification boundaries at low computational cost. For high-dimensional morphological data in which the number of features exceeds the sample size, however, $S_W$ is singular, so LDA is usually applied after dimension reduction (for example, to principal component scores) or with a regularized covariance estimate.
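For two classes, the direction that maximizes Fisher's criterion has a closed form: it is proportional to the inverse within-class scatter matrix applied to the difference of the class means. The sketch below illustrates this on synthetic data standing in for shape variables. The data, seed, and function names are hypothetical, and the between-class scatter is written up to a constant factor, which does not change the maximizer.

```python
import numpy as np

def scatter_matrices(X0, X1):
    """Within-class and (unscaled) between-class scatter for two classes."""
    m0, m1 = X0.mean(axis=0), X1.mean(axis=0)
    S_w = (X0 - m0).T @ (X0 - m0) + (X1 - m1).T @ (X1 - m1)
    d = m1 - m0
    return S_w, np.outer(d, d), d

def fisher_direction(X0, X1):
    """Unit vector maximizing between-class over within-class variance."""
    S_w, _, d = scatter_matrices(X0, X1)
    v = np.linalg.solve(S_w, d)
    return v / np.linalg.norm(v)

def fisher_criterion(v, X0, X1):
    """J(v) = (v^T S_B v) / (v^T S_W v)."""
    S_w, S_b, _ = scatter_matrices(X0, X1)
    return (v @ S_b @ v) / (v @ S_w @ v)

rng = np.random.default_rng(0)
cov = np.array([[3.0, 1.2, 0.0],
                [1.2, 1.0, 0.3],
                [0.0, 0.3, 0.5]])
# Synthetic stand-ins for shape variables (e.g., principal component scores).
X0 = rng.multivariate_normal([0.0, 0.0, 0.0], cov, size=60)
X1 = rng.multivariate_normal([1.0, 0.5, 0.2], cov, size=60)

v_opt = fisher_direction(X0, X1)
random_directions = rng.normal(size=(200, 3))
best_random = max(fisher_criterion(u, X0, X1) for u in random_directions)
print(fisher_criterion(v_opt, X0, X1), best_random)
```

The call to `np.linalg.solve` fails or becomes unstable when the within-class scatter matrix is singular, which is the practical reason shape data are often reduced to a few principal components before LDA.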

Support Vector Machines (SVM)

Support Vector Machines represent a distinct approach to classification, focusing on finding the optimal hyperplane that maximizes the margin between classes in a high-dimensional feature space [33] [34]. Developed in the 1990s, SVMs employ a discriminative approach, concentrating specifically on the instances most difficult to classify—the support vectors—which are the data points closest to the decision boundary [33] [35].

The fundamental optimization problem for a linear SVM can be expressed as:

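$$\min_{w,\,b,\,\zeta}\ \tfrac{1}{2}\lVert w\rVert^{2} + C\sum_{i}\zeta_i \quad \text{subject to} \quad y_i\,(w^{\mathsf T}x_i + b) \ge 1 - \zeta_i, \quad \zeta_i \ge 0$$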

Where w is the normal vector to the hyperplane, C is a regularization parameter controlling the trade-off between maximizing margin and minimizing classification error, and ζᵢ are slack variables that allow for misclassification in non-separable cases [33]. For non-linearly separable data, SVMs employ the "kernel trick," mapping input features into higher-dimensional spaces using kernel functions such as Radial Basis Function (RBF), polynomial, or sigmoid kernels without explicitly computing the coordinates in that space [33] [34]. This capability makes SVMs particularly valuable for complex morphological patterns where linear separation is insufficient.
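To make the roles of the slack variables and C concrete, the sketch below evaluates the soft-margin objective for a fixed hyperplane. For given w and b, the smallest slack satisfying both constraints is ζᵢ = max(0, 1 − yᵢ(wᵀxᵢ + b)), which is the hinge loss. The four points and the hyperplane are hypothetical toy values, and no optimization is performed here.

```python
import numpy as np

def soft_margin_objective(w, b, X, y, C):
    """Return (objective, slack) for a linear SVM with hyperplane (w, b)."""
    margins = y * (X @ w + b)
    slack = np.maximum(0.0, 1.0 - margins)   # smallest feasible slack per point
    objective = 0.5 * (w @ w) + C * slack.sum()
    return objective, slack

# Toy data: labels are +1 / -1.
X = np.array([[ 2.0, 0.0],    # correct side, outside the margin
              [ 0.5, 0.0],    # correct side, inside the margin
              [-2.0, 0.0],    # correct side, outside the margin
              [ 0.5, 1.0]])   # misclassified
y = np.array([1, 1, -1, -1])
w = np.array([1.0, 0.0])
b = 0.0

objective, slack = soft_margin_objective(w, b, X, y, C=1.0)
print(objective, slack)
```

Points outside the margin contribute no slack, points inside it contribute a slack between 0 and 1, and misclassified points contribute more than 1. Raising C penalizes these violations more heavily, at the cost of a narrower margin.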

Neural Networks

Neural Networks, particularly deep learning architectures, represent a paradigm shift in classification capability through their ability to automatically learn hierarchical feature representations directly from raw data [28] [30]. Unlike LDA and SVM, which typically operate on pre-engineered features, neural networks can discover and optimize the feature representation itself, making them exceptionally powerful for image-based morphometric analysis.