Overcoming Low-Resolution Microscopy: Advanced AI Strategies for Accurate Egg Identification in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on handling low-resolution microscopic images for egg identification, a common challenge in parasitology and biomedical studies. It explores the fundamental limitations of low-resolution imaging and details how cutting-edge deep learning and AI-enhanced microscopy techniques can overcome these hurdles. The content covers practical methodologies for image enhancement and automated detection, troubleshooting for common imaging errors, and a comparative analysis of traditional versus modern AI-driven approaches. By synthesizing the latest research, this article serves as a strategic resource for improving diagnostic accuracy, accelerating research workflows, and enabling reliable analysis even with cost-effective or resource-limited microscopy setups.

The Low-Resolution Challenge: Understanding the Impact on Egg Identification Accuracy

Frequently Asked Questions

Q1: What specific morphological features become indecipherable in low-resolution microscopic images of parasite eggs? In low-resolution images, critical features for species identification are lost. These include the texture and thickness of the eggshell, the presence and characteristics of an operculum (lid), the internal structures of the developing larva, and subtle variations in shape and size that are essential for differentiating between morphologically similar species [1] [2]. With insufficient detail, eggs from different species can appear nearly identical, leading to misclassification.

Q2: How does low resolution directly impact the performance of automated detection systems? Low resolution causes a significant drop in detection and classification accuracy for automated systems such as deep learning models, because the models lack sufficient pixel data to learn discriminative features [2]. It also makes small eggs more likely to be missed entirely and more likely to be confused with background debris and impurities, as the model cannot reliably distinguish fine egg contours from noise [3] [2].

Q3: Are there computational methods to mitigate the problems caused by low-resolution images? Yes, several computational approaches can help. Image enhancement techniques, such as contrast manipulation, can broaden the range of brightness values to improve feature visibility [4] [5]. Purpose-built deep learning detectors also help: YAC-Net is a lightweight model designed to detect parasite eggs accurately with reduced computational requirements [1], and YCBAM integrates attention mechanisms that focus the model on the most relevant image regions, such as egg boundaries [3]. Furthermore, transfer learning with pre-trained networks can boost classification performance on poor-quality images by leveraging features learned from larger datasets [2].

Experimental Protocols for Analysis

Protocol 1: Evaluating Detection Performance Across Resolutions

This protocol outlines a method to quantitatively assess how image resolution affects the accuracy of a deep learning model in detecting parasite eggs.

- Dataset Preparation: Collect a dataset of high-resolution microscopic images of parasite eggs, confirmed by expert annotation [2].

- Resolution Degradation: Downsample the high-resolution images by several factors to approximate the lower effective resolution of low-magnification or low-cost systems (e.g., spanning the range from 1000x to 10x instruments) [2]. Pixel downsampling approximates the loss of sampling density but does not reproduce every optical difference between microscopes.

- Model Training: Train a standard object detection model (e.g., a YOLO-based architecture) on the original high-resolution dataset. For comparison, train another model on the downsampled, low-resolution images [1] [2].

- Performance Metrics: Evaluate and compare both models on held-out test images at their respective resolutions. Key metrics to record are detailed in Table 1 below.
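The degradation step can be prototyped with plain NumPy. The sketch below assumes 2-D grayscale arrays and uses block averaging followed by nearest-neighbour upsampling; the function names and factor values are illustrative assumptions, not the procedure used in [2], and a real study might instead use an anti-aliased resize or re-image the samples on the lower-grade instrument.

```python
import numpy as np


def degrade_resolution(image: np.ndarray, factor: int) -> np.ndarray:
    """Simulate a coarser acquisition of a 2-D grayscale image.

    Each factor x factor block is averaged (area downsampling), then every
    low-resolution pixel is repeated back onto the (cropped) original grid so
    that models trained at different resolutions receive same-sized inputs.
    """
    if factor < 1:
        raise ValueError("factor must be >= 1")
    h, w = image.shape
    # Crop so both dimensions are divisible by the factor.
    h_c, w_c = h - h % factor, w - w % factor
    cropped = image[:h_c, :w_c].astype(np.float64)
    low = cropped.reshape(h_c // factor, factor, w_c // factor, factor).mean(axis=(1, 3))
    # Nearest-neighbour upsampling back to the cropped size.
    return np.kron(low, np.ones((factor, factor)))


def resolution_series(image: np.ndarray, factors=(1, 2, 4, 8)) -> dict:
    """Build one degraded copy of the image per downsampling factor."""
    return {f: degrade_resolution(image, f) for f in factors}
```

Training one model per entry of `resolution_series(...)` and evaluating each on equally degraded test images yields the resolution-versus-accuracy comparison the protocol aims for.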

Protocol 2: Contrast Enhancement for Feature Improvement

This protocol describes a standard method for improving contrast in low-resolution images to aid visual inspection and automated analysis.

- Image Conversion: Convert the original RGB image to grayscale to reduce computational complexity [2].

- Histogram Analysis: Generate an intensity histogram of the image (or a per-channel RGB histogram if color is retained) to visualize its dynamic range [4].

- Apply Intensity Transformation: Use an intensity transfer function to stretch the histogram. This function broadens the range of brightness values in the mid-range levels of the grayscale image (or of each color channel), resulting in an overall increase in perceived contrast [4] [5].

- Validation: Visually inspect the enhanced image to confirm that the edges and contours of target eggs are more distinct from the background.
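A minimal NumPy sketch of Protocol 2, assuming 8-bit RGB input. The BT.601 luma weights, the percentile window, and the function names are assumptions chosen for illustration. A linear stretch with clipping is the simplest intensity transfer function; the curves used in [4] [5] may differ (for example, S-shaped curves that expand the mid-tones more than the extremes).

```python
import numpy as np


def to_grayscale(rgb: np.ndarray) -> np.ndarray:
    """Weighted RGB-to-gray conversion (ITU-R BT.601 luma weights)."""
    return rgb[..., :3].astype(np.float64) @ np.array([0.299, 0.587, 0.114])


def intensity_histogram(gray: np.ndarray, bins: int = 256):
    """Histogram over the 8-bit range, used to inspect dynamic range."""
    return np.histogram(gray, bins=bins, range=(0.0, 255.0))


def stretch_contrast(gray: np.ndarray, low_pct: float = 1.0, high_pct: float = 99.0) -> np.ndarray:
    """Linear intensity transfer function mapping [p_low, p_high] onto [0, 1].

    Values outside the percentile window are clipped, so a few very dark or
    bright pixels (debris, glare) do not compress the range used by the eggs.
    """
    lo, hi = np.percentile(gray, [low_pct, high_pct])
    if hi <= lo:  # flat image: nothing to stretch
        return np.zeros_like(gray, dtype=np.float64)
    return np.clip((gray - lo) / (hi - lo), 0.0, 1.0)
```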

Data Presentation

Table 1: Impact of Microscope Resolution on Parasite Egg Classification

The following table compares the performance of a Convolutional Neural Network (CNN) when classifying parasite eggs from images taken with different microscope types.

| Microscope Type | Magnification | Approximate Resolution | Model Precision | Model Recall | Key Limitations |
|---|---|---|---|---|---|
| High-Quality Microscope [2] | 1000x | High (detailed texture visible) | >97% [3] | High [3] | Expensive; limited availability in resource-constrained settings [2]. |
| Low-Cost USB Microscope [2] | 10x | 640 x 480 pixels | Lower than high-resolution models [2] | Lower than high-resolution models [2] | Lacks detail for species classification; low contrast; abundant impurities [2]. |

Table 2: Reagent and Computational Toolkit for Low-Resolution Image Research

This table lists key software and methodological solutions used in research to address challenges in low-resolution microscopic image analysis.

| Tool / Solution | Type | Primary Function |
|---|---|---|
| Transfer Learning (AlexNet, ResNet50) [2] | Computational Method | Leverages features from large image datasets to improve classification on smaller, low-resolution medical image sets. |
| Patch-Based Sliding Window [2] | Image Analysis Technique | Divides a large, low-resolution image into smaller patches to systematically search for and localize small objects like parasite eggs. |
| YAC-Net [1] | Lightweight Deep Learning Model | A modified YOLOv5n architecture designed for accurate parasite egg detection with reduced computational requirements. |
| YCBAM (YOLO Convolutional Block Attention Module) [3] | Deep Learning Model with Attention | Integrates self-attention mechanisms to help the model focus on spatially relevant features like egg boundaries in complex backgrounds. |
| Intensity Transfer Function [4] | Image Processing Algorithm | Increases image contrast by mapping input pixel brightness to a wider range of output values, making features more distinguishable. |
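The patch-based sliding window can be sketched as a generator of overlapping crops. The patch size, stride, and border handling below are assumptions; the study in [2] may tile differently. Each yielded `(row, col)` offset lets detections found inside a patch be mapped back to full-image coordinates.

```python
import numpy as np


def sliding_window_patches(image, patch_size, stride):
    """Yield (row, col, patch) tuples that tile a 2-D image.

    Windows overlap when stride < patch_size. An extra row/column of windows
    is aligned to the bottom/right border so edge pixels are never skipped.
    """
    h, w = image.shape[:2]
    if stride < 1 or patch_size > min(h, w):
        raise ValueError("need stride >= 1 and patch_size <= image size")
    rows = list(range(0, h - patch_size + 1, stride))
    cols = list(range(0, w - patch_size + 1, stride))
    if rows[-1] != h - patch_size:
        rows.append(h - patch_size)
    if cols[-1] != w - patch_size:
        cols.append(w - patch_size)
    for r in rows:
        for c in cols:
            yield r, c, image[r:r + patch_size, c:c + patch_size]


if __name__ == "__main__":
    field = np.zeros((100, 100))
    n = sum(1 for _ in sliding_window_patches(field, patch_size=32, stride=24))
    print(n, "patches")
```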

Workflow Diagrams

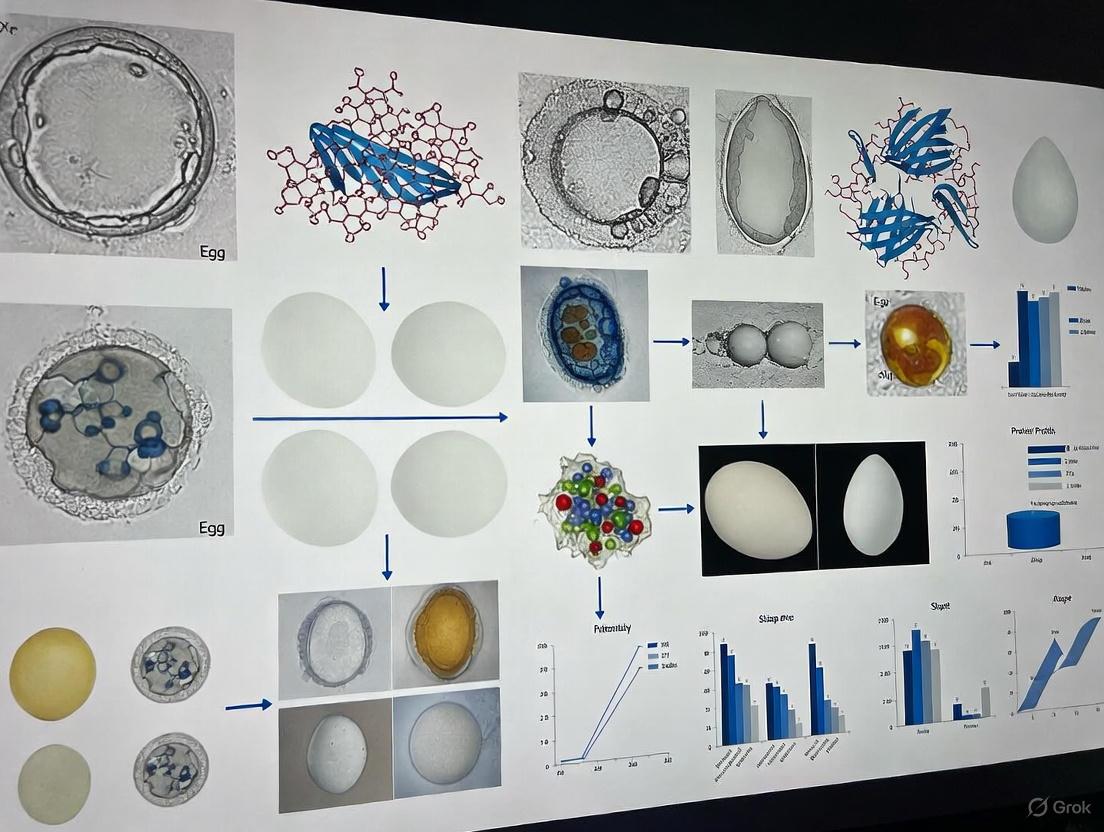

Diagram 1: Low-Resolution Analysis Problem Pathway

Diagram 2: Computational Enhancement Solution Workflow

In biological research, particularly in specialized fields like egg identification, the quality of microscopic images is paramount. Image degradation can stem from a multitude of sources, ranging from the fundamental physical limits of optics to practical errors in sample handling. For researchers relying on techniques such as RNA-FISH or immunofluorescence to study oocytes or early embryos, these artifacts can obscure critical details of gene expression and cellular structure, leading to inaccurate data. Understanding and mitigating these sources of degradation is a critical first step toward obtaining reliable, high-quality results for your analysis.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Our fluorescence microscopy images for RNA localization in eggs have inconsistent spot detection. What could be causing this? A1: Inconsistent detection of signal puncta (discrete spots) in images such as those from RNA-FISH is often caused by background noise and signal intensity that vary across samples. Manual or semi-automated quantification of these images is labor-intensive, prone to bias, and difficult to reproduce [6]. We recommend fully automated tools such as TrueSpot, which uses an automated threshold selection algorithm to handle images with varying background noise and achieved higher precision and recall than other tools in its evaluation [6].
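TrueSpot's own threshold-selection algorithm is not reproduced here. As a hedged illustration of what automatic thresholding means, the sketch below uses Otsu's method, a classic histogram-based threshold, followed by 4-connected component counting. Both functions are illustrative stand-ins and would need spot-specific refinements (local background subtraction, size filters) for real RNA-FISH data.

```python
from collections import deque

import numpy as np


def otsu_threshold(image: np.ndarray, bins: int = 256) -> float:
    """Otsu's method: pick the cut that maximises between-class variance."""
    counts, edges = np.histogram(image, bins=bins)
    p = counts / counts.sum()
    centers = (edges[:-1] + edges[1:]) / 2.0
    w0 = np.cumsum(p)                # weight of the "background" class per cut
    mu = np.cumsum(p * centers)      # cumulative first moment per cut
    w1 = 1.0 - w0
    with np.errstate(divide="ignore", invalid="ignore"):
        sigma_b = (mu[-1] * w0 - mu) ** 2 / (w0 * w1)
    # Cuts that leave one class empty give 0/0; treat them as invalid.
    sigma_b = np.nan_to_num(sigma_b, nan=0.0, posinf=0.0, neginf=0.0)
    k = int(np.argmax(sigma_b))
    return float(edges[k + 1])       # upper edge of the last background bin


def count_spots(mask: np.ndarray) -> int:
    """Count 4-connected foreground regions with a breadth-first flood fill."""
    h, w = mask.shape
    seen = np.zeros((h, w), dtype=bool)
    n = 0
    for r in range(h):
        for c in range(w):
            if not mask[r, c] or seen[r, c]:
                continue
            n += 1
            seen[r, c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and not seen[ny, nx]:
                        seen[ny, nx] = True
                        queue.append((ny, nx))
    return n
```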

Q2: Our Atomic Force Microscopy (AFM) images of zona pellucida samples are too low-resolution or contain streaks. How can we improve them without damaging the sample with long scan times? A2: Achieving high resolution in ambient AFM usually requires slow scanning, which lengthens measurements and can risk damaging soft biological samples. AFM scans can also contain inherent artifacts such as streaking [7]. A viable alternative is to acquire lower-pixel-resolution images to reduce measurement time and then enhance them with deep learning models, which have been shown to outperform traditional interpolation methods at upscaling and to eliminate common artifacts such as streaking [7].

Q3: What are the most common sample preparation errors that lead to artifacts in SEM images of biological specimens? A3: For biological specimens, preparation is a common source of artifacts. Key errors include:

- Drying Effects: Improper drying can cause shrinking or distortion of delicate cellular structures [8].

- Charging: Imaging non-conductive materials without an adequate conductive coating can lead to charge accumulation, distorting the image [8].

- Beam Damage: Sensitive biological materials may degrade under the electron beam if the parameters are not optimized [8].

- Contamination: Residual particles or films on the specimen surface can lead to misleading images [8].

Q4: How can we achieve long-term, high-fidelity live-cell imaging of developmental processes without excessive phototoxicity? A4: Traditional super-resolution techniques often trade off between resolution, speed, and phototoxicity. New computational approaches can help. The DPA-TISR (Deformable Phase-Space Alignment for Time-Lapse Image Super-Resolution) neural network is designed for this purpose. It leverages temporal dependencies between consecutive frames to transform low-resolution, low-light time-lapse images into super-resolution sequences with high fidelity and temporal consistency, enabling multicolor live-cell SR imaging for over 10,000 time points [9].

Troubleshooting Guide: Identifying and Solving Common Issues

The table below summarizes common issues, their potential causes, and solutions.

| Category | Specific Issue | Potential Cause | Recommended Solution |
|---|---|---|---|
| Signal Detection | Inconsistent spot quantification in fluorescence images | Varying background noise; manual thresholding [6] | Use automated detection software (e.g., TrueSpot) with robust thresholding [6] |
| Signal Detection | Low signal-to-noise ratio | Low photon count; detector inefficiency [8] | Increase signal averaging; use more sensitive detectors (e.g., EMCCD, sCMOS); optimize staining protocol |
| Microscopy Technique | Low resolution in AFM | Pixel count or scan time limited to avoid long measurements and sample damage; tip bluntness [7] | Acquire fast, low-resolution scans and enhance with deep learning models [7] |
| Microscopy Technique | Artifacts (e.g., streaking) in AFM | Scanning distortions; tip-sample interactions [7] | Apply deep learning models, which can eliminate such artifacts during enhancement [7] |
| Microscopy Technique | Charging in SEM | Electron accumulation on non-conductive samples [8] | Apply a thin, uniform conductive coating (e.g., gold, carbon); use low-voltage imaging [8] |
| Sample Preparation | Shrinking or distortion of biological samples | Improper drying techniques (e.g., air drying) [8] | Use critical point drying or cryo-preparation methods (e.g., cryo-SEM) [8] |
| Sample Preparation | Beam damage in SEM or TEM | Excessive electron beam current or dose [8] | Use lower beam energy; reduce exposure time; use cryo-conditions to stabilize the sample [8] |
| Sample Preparation | Contamination | Dirty sample surface or holder [8] | Ensure thorough cleaning of sample and holder prior to insertion into the microscope [8] |

Quantitative Data & Experimental Protocols

Performance Comparison of Image Enhancement Techniques

The following table summarizes quantitative metrics comparing traditional and deep-learning methods for enhancing low-resolution AFM images, based on a study that upscaled images from 128x128 to 512x512 pixels. Higher PSNR and SSIM values indicate better fidelity to the ground-truth high-resolution image [7].

| Method / Model | Type | PSNR (dB, higher is better) | SSIM (higher is better) |
|---|---|---|---|
| Bilinear | Traditional | 29.02 | 0.901 |
| Bicubic | Traditional | Not specified | Not specified |
| Lanczos4 | Traditional | Not specified | Not specified |
| NinaSR-B0 | Deep Learning | Not specified | Not specified |
| RCAN | Deep Learning | Not specified | Not specified |
| EDSR | Deep Learning | Not specified | Not specified |

Note on Findings: The study concluded that deep learning models outperformed traditional interpolation methods for super-resolution of AFM images. Per-method values other than the bilinear baseline are not reproduced here; collectively, the deep learning models showed a superior ability to enhance resolution and fidelity and eliminated common AFM artifacts such as streaking [7].
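For reference, PSNR compares a reconstruction with its ground truth through the mean squared error: PSNR = 10 * log10(MAX^2 / MSE), where MAX is the data range (255 for 8-bit images). A minimal NumPy version follows; the function name and the fallback of taking the range from the reference image are assumptions. SSIM is more involved (local means, variances, and covariances over sliding windows), so an established implementation such as scikit-image's `structural_similarity` is preferable to a hand-rolled one.

```python
import numpy as np


def psnr(reference, test, data_range=None):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    reference = np.asarray(reference, dtype=np.float64)
    test = np.asarray(test, dtype=np.float64)
    mse = np.mean((reference - test) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    if data_range is None:
        # Assumption: use the reference's own dynamic range if none is given.
        data_range = reference.max() - reference.min()
    if data_range <= 0:
        raise ValueError("data_range must be positive")
    return float(10.0 * np.log10(data_range ** 2 / mse))
```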

Protocol: Enhancing Low-Resolution AFM Images Using Pre-Trained Deep Learning Models

This protocol is adapted from research on enhancing low-resolution AFM images of complex surfaces [7]; the same approach may be applicable to biological membranes and surfaces.

Objective: To convert a low-resolution (128 x 128 pixel) AFM image into a high-resolution (512 x 512 pixel) image using a pre-trained super-resolution (SR) deep learning model.

Materials:

- Low-resolution AFM image (.tiff, .png, or compatible format).

- Computer with Python and PyTorch/TensorFlow installed.

- Pre-trained SR model (e.g., NinaSR, RCAN, CARN, RDN, EDSR).

Procedure:

- Image Acquisition: Acquire an AFM image of your sample at a low pixel resolution (128 x 128). For validation purposes, you may also acquire a high-resolution (512 x 512) image of the same area to serve as ground truth.

- Data Preprocessing:
  - Load your low-resolution image into the computational environment.
  - Normalize the pixel values to a range suitable for the model (e.g., [0, 1]).
  - If the model requires specific input dimensions, resize the image accordingly.

- Model Application:
  - Load the pre-trained weights of your chosen SR model (e.g., EDSR).
  - Pass the preprocessed low-resolution image through the model to generate a 4x upscaled (super-resolved) image.

- Post-processing:
  - Convert the model's output back to the original data range (e.g., 0-255 for 8-bit image display).
  - Save the enhanced super-resolution image.

- Validation (Optional):
  - Compare the enhanced image to the ground truth high-resolution image using fidelity metrics like PSNR and SSIM to quantitatively assess the improvement [7].
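The pre- and post-processing steps of this protocol can be sketched independently of any particular network. In the sketch below, `upscale_fn` is a hypothetical callable standing in for a pre-trained SR model's forward pass; the nearest-neighbour placeholder only makes the pipeline runnable and provides no super-resolution. Loading real weights (e.g., EDSR or RCAN in PyTorch) depends on the package used and is deliberately left out.

```python
import numpy as np


def normalize_to_unit(image):
    """Scale pixel values to [0, 1]; also return the original (min, max)."""
    image = np.asarray(image, dtype=np.float64)
    lo, hi = float(image.min()), float(image.max())
    if hi == lo:
        return np.zeros_like(image), (lo, hi)
    return (image - lo) / (hi - lo), (lo, hi)


def nearest_upscale(unit_image, scale):
    """Placeholder upscaler (pixel repetition); NOT super-resolution."""
    return np.kron(unit_image, np.ones((scale, scale)))


def to_uint8(unit_image):
    """Map [0, 1] values to the 8-bit display range."""
    return np.clip(np.rint(unit_image * 255.0), 0, 255).astype(np.uint8)


def enhance_afm(low_res, upscale_fn=nearest_upscale, scale=4):
    """Normalize, upscale via a (hypothetical) model hook, convert for display.

    upscale_fn(unit_image, scale) is a hypothetical interface: wrap a
    pre-trained SR network so it accepts and returns 2-D float arrays.
    """
    unit, value_range = normalize_to_unit(low_res)
    sr = upscale_fn(unit, scale)
    return to_uint8(sr), value_range
```

The returned `(lo, hi)` range allows the normalized output to be mapped back to physical height units rather than 8-bit display values.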

Visualization of Concepts & Workflows

Diagram 1: Decision Workflow for Addressing Microscope Image Degradation

The Scientist's Toolkit: Research Reagent Solutions

Key Research Reagents for Fluorescence Microscopy

This table details essential reagents and materials used in advanced fluorescence microscopy techniques, crucial for experiments like imaging gene expression in eggs.

| Reagent / Material | Function in Experiment | Specific Example / Note |
|---|---|---|
| Fluorescent Dyes & Labels | Tagging specific biomolecules (e.g., RNA, proteins) for visualization under a microscope. | Used in RNA-FISH and immunofluorescence to label individual RNA molecules or proteins [6]. |
| Conductive Coating Materials | Applied to non-conductive biological samples to prevent charging artifacts in electron microscopy. | Gold, carbon, or platinum-palladium; applied via sputter coating to create a thin, conductive layer [8]. |
| Cryo-Preparation Chemicals | Preserving the native state of biological structures by rapid freezing, avoiding drying artifacts. | Used in cryo-SEM and cryo-TEM; involves plunge freezing in ethane slush or high-pressure freezing [8]. |
| Wiener-Butterworth (WB) Deconvolution | A computational algorithm (not a physical reagent) used to enhance image resolution and clarity during post-processing. | Used in SPI microscopy for non-iterative rapid deconvolution, providing ~40x faster processing than traditional methods [10]. |
| Fixed Biological Specimens | Prepared samples for method validation and testing of imaging protocols. | Fixed samples with labeled structures such as β-tubulin, mitochondria, and peroxisomes are used to validate microscope performance [10]. |
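The WB method described in [10] is not reproduced here. For intuition about non-iterative deconvolution, the sketch below implements classical Wiener deconvolution, the filter that the Wiener-Butterworth name builds on. It assumes a known Gaussian PSF, circular boundary conditions, and a constant regularization term `k`; all three are simplifying assumptions, and the function names are illustrative.

```python
import numpy as np


def gaussian_psf(shape, sigma):
    """Centred, normalised 2-D Gaussian point-spread function (assumed PSF model)."""
    h, w = shape
    yy, xx = np.meshgrid(np.arange(h) - h // 2, np.arange(w) - w // 2, indexing="ij")
    psf = np.exp(-(yy ** 2 + xx ** 2) / (2.0 * sigma ** 2))
    return psf / psf.sum()


def psf_to_otf(psf):
    """Optical transfer function; ifftshift moves the PSF centre to index (0, 0)."""
    return np.fft.fft2(np.fft.ifftshift(psf))


def circular_blur(image, psf):
    """Blur by circular (FFT-based) convolution with the PSF."""
    return np.real(np.fft.ifft2(np.fft.fft2(image) * psf_to_otf(psf)))


def wiener_deconvolve(blurred, psf, k=1e-3):
    """Classical Wiener deconvolution with a constant noise-to-signal term k."""
    otf = psf_to_otf(psf)
    wiener = np.conj(otf) / (np.abs(otf) ** 2 + k)
    return np.real(np.fft.ifft2(np.fft.fft2(blurred) * wiener))
```

A larger `k` suppresses noise amplification at frequencies where the PSF transmits little signal, at the cost of sharpness.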

Frequently Asked Questions (FAQs)

FAQ 1: What are the precise morphological dimensions of a pinworm egg, and why do these measurements pose a detection challenge? Pinworm eggs (Enterobius vermicularis) measure 50–60 μm in length and 20–30 μm in width [11] [12] [13]. Their small size places them at the limit of visibility for the human eye and makes them difficult to resolve in low-resolution or noisy microscopic images, often requiring high magnification for accurate identification [3].
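The egg dimensions translate directly into a sampling requirement. The arithmetic below assumes that the specimen-plane pixel size equals the camera's sensor pixel pitch divided by the total magnification; the 3.45 μm pitch is a hypothetical camera value, not one taken from the cited studies.

```python
def sample_pixel_size_um(sensor_pixel_um: float, magnification: float) -> float:
    """Camera pixel size projected onto the specimen plane (um per pixel)."""
    return sensor_pixel_um / magnification


def pixels_across(feature_um: float, sensor_pixel_um: float, magnification: float) -> float:
    """How many camera pixels span a feature of the given physical size."""
    return feature_um / sample_pixel_size_um(sensor_pixel_um, magnification)


def nyquist_pixel_limit_um(smallest_detail_um: float) -> float:
    """Largest specimen-plane pixel that still samples a detail twice (Nyquist)."""
    return smallest_detail_um / 2.0


if __name__ == "__main__":
    SENSOR_PIXEL_UM = 3.45    # hypothetical camera pixel pitch
    EGG_SHORT_AXIS_UM = 20.0  # lower bound of the 20-30 um egg width
    for mag in (4, 10, 40):
        n = pixels_across(EGG_SHORT_AXIS_UM, SENSOR_PIXEL_UM, mag)
        print(f"{mag}x: {n:.1f} pixels across the egg's short axis")
```

Under these assumptions the 20 μm short axis spans roughly 23, 58, and 232 pixels at 4x, 10x, and 40x. Covering the whole egg is not the same as resolving shell or larval detail: by the Nyquist criterion each smallest detail needs at least two pixels, and finer pixel sampling cannot recover detail beyond the objective's optical resolution.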

FAQ 2: Beyond their small size, what other morphological characteristics complicate automated image analysis? The eggs are transparent (colorless) and have a distinctive asymmetrical shape, flattened on one side and often described as "slice of bread" shaped [11] [13]. The lack of color contrast, combined with this irregular, non-geometric outline, makes it difficult for standard image processing algorithms to distinguish the eggs from background debris or artifacts in a sample [3].

FAQ 3: How does the egg's surface property impact the laboratory environment and sample analysis? The outer shell of pinworm eggs is adhesive [11] [12]. This stickiness causes eggs to readily cling to fingers, under fingernails, clothing, bedding, and dust particles [13]. In a research setting, this increases the risk of sample cross-contamination and can lead to false positives if laboratory surfaces and equipment are not meticulously cleaned [11].

FAQ 4: What is the recommended standard method for diagnosing pinworm infection, and what is its key limitation? The diagnostic gold standard is the cellulose tape test ("Scotch" test), where transparent tape is applied to the perianal skin first thing in the morning to collect eggs, which are then examined under a microscope [11] [14]. A key limitation is its dependence on examiner skill and experience, and its sensitivity can be variable, sometimes requiring multiple tests on consecutive days to confirm a negative result [3] [14].

FAQ 5: What are the most common experimental treatments used in pinworm research, and what is a critical consideration for a successful protocol? Common antihelminthic agents include Albendazole, Mebendazole, and Pyrantel Pamoate [15] [14] [13]. A critical factor for eradicating the parasite in an experimental cohort is treating all individuals within a shared environment simultaneously, even if they are asymptomatic, to prevent rapid reinfection and break the cycle of transmission [15] [13].

Troubleshooting Common Experimental Challenges

Challenge 1: Low detection rate and high false negatives in manual egg counting.

- Potential Cause: The small size and transparency of the eggs make them easy to miss during manual microscopy, especially in samples with a high background of debris [3].

- Solution: Implement an automated detection system. Recent studies have successfully used deep learning models, such as an enhanced YOLO (You Only Look Once) architecture, to achieve precision and recall rates exceeding 97% in detecting pinworm eggs in microscopic images [1] [3]. These models can be trained to recognize the specific morphological features of pinworm eggs, reducing human error.

Challenge 2: Poor image quality and resolution for reliable automated analysis.

- Potential Cause: Standard microscope cameras or settings may not provide sufficient detail for software to distinguish eggs from similarly sized particles.

- Solution: Optimize image acquisition protocols. Ensure consistent and adequate lighting (e.g., Köhler illumination) and use microscopes with high-resolution cameras. For image analysis, employ pre-processing techniques such as noise reduction and contrast enhancement to standardize input images before they are fed into the detection algorithm [3].

Challenge 3: Persistent reinfection in a laboratory animal cohort.

- Potential Cause: The sticky, environmentally resistant eggs contaminate the housing environment (bedding, food, water dispensers), leading to repeated transmission [11] [13].

- Solution: Combine antihelminthic treatment with rigorous environmental decontamination. All bedding should be changed and cages thoroughly cleaned with hot water. Linen, towels, and other washable materials should be laundered in hot water simultaneously with treatment administration [14] [13]. Avoid shaking materials to prevent aerosolizing eggs [14].

The table below summarizes the key quantitative data related to Enterobius vermicularis.

Table 1: Pinworm (Enterobius vermicularis) Morphological and Life Cycle Metrics

| Parameter | Specification | Experimental Significance |
|---|---|---|
| Egg Dimensions | 50–60 μm by 20–30 μm [11] [13] | Defines the resolution requirement for imaging systems; target size for object detection models. |
| Egg Maturation Time | Larvae within eggs become infective within 4–6 hours after deposition [11] [13] | Critical for understanding the timeline of infectivity and for designing studies on larval development. |
| Time to Oviposition | Approximately 2–6 weeks after ingestion of eggs [13] | Informs the duration of experimental studies tracking infection and life cycle progression. |
| Adult Female Worm Size | 8–13 mm long by 0.3–0.5 mm wide [11] | Useful for gross anatomical identification in animal models. |
| Adult Male Worm Size | 2–5 mm long by 0.1–0.2 mm wide [11] | Useful for gross anatomical identification in animal models. |
| Estimated Eggs per Gravid Female | More than 10,000, up to about 16,000 [12] [13] | Indicates the high potential for environmental contamination and transmission from a single worm. |

Experimental Protocols

Protocol 1: Standardized Cellulose Tape Test for Egg Collection

This is the primary method for collecting pinworm eggs for imaging and analysis [11] [14].

- Materials: Clear cellulose tape (e.g., "Scotch" tape), microscope slides, disposable gloves.

- Procedure:
  - The sample should be collected first thing in the morning, before defecation or bathing [11].
  - Cut a 3–4 inch strip of clear tape. With the sticky side down, firmly press the tape over the perianal skin folds.
  - Carefully peel the tape away and adhere it, sticky side down, onto a clean microscope slide. Avoid folding the tape or creating air bubbles.
  - The slide can then be examined directly under a microscope. Eggs can be viewed in a wet mount or stained with iodine for better visualization [11].

- Technical Notes: For higher diagnostic sensitivity, this process should be repeated for 3 to 5 consecutive mornings [15] [14].

Protocol 2: Workflow for Automated Egg Detection using a Deep Learning Model

This protocol outlines the steps to implement a deep learning-based detection system, such as the YCBAM model described in the literature [3].

- Dataset Curation: Collect a large set of microscopic images of pinworm eggs. The dataset must be annotated by experts, meaning each egg in every image is labeled with a bounding box. Models in recent studies were trained on datasets containing over 1,000 images [3].

- Model Selection & Training: Choose an object detection architecture such as YOLOv8. Integrate attention modules such as the Convolutional Block Attention Module (CBAM) to help the model focus on the small, critical features of the eggs and suppress irrelevant background noise [3]. Train the model on your annotated dataset.

- Validation & Testing: Evaluate the trained model on a separate set of images that it has not seen before. Key performance metrics to calculate include Precision, Recall, and mean Average Precision (mAP) [3].

- Deployment: The trained model can be deployed on a computer connected to a microscope to analyze new samples in real-time or in batch processing.
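Precision and recall for a detector depend on how predictions are matched to annotations. The sketch below assumes axis-aligned boxes `(x1, y1, x2, y2)` and greedy, score-ordered matching at an IoU threshold of 0.5, a common convention; the exact matching rules and thresholds used in [3] may differ. mAP additionally sweeps the score threshold and averages precision over recall levels (and classes), which is omitted here.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(preds, truths, iou_thr=0.5):
    """Greedy matching for one image.

    preds:  list of (score, box) detections
    truths: list of ground-truth boxes
    Each ground-truth box can be matched at most once; unmatched
    predictions are false positives, unmatched truths false negatives.
    """
    matched = set()
    tp = 0
    for _, box in sorted(preds, key=lambda p: p[0], reverse=True):
        best_j, best_iou = None, iou_thr
        for j, gt in enumerate(truths):
            if j in matched:
                continue
            overlap = iou(box, gt)
            if overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j is not None:
            matched.add(best_j)
            tp += 1
    fp, fn = len(preds) - tp, len(truths) - tp
    precision = tp / (tp + fp) if preds else 0.0
    recall = tp / (tp + fn) if truths else 0.0
    return precision, recall
```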

The following diagram illustrates the logical workflow for this automated detection process:

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 2: Essential Materials for Pinworm Egg Research

| Item | Function / Application | Key Notes |
|---|---|---|
| Clear Cellulose Tape | Collection of eggs from the perianal skin for microscopic analysis. | The standard material for the "tape test"; transparency is critical for light microscopy [11] [14]. |
| Microscope Slides & Coverslips | Preparation of samples for microscopic examination. | Standard equipment for creating wet mounts of tape test samples or other specimens. |
| Iodine Stain | Enhancing the visibility of translucent pinworm eggs under the microscope. | Stains the larval interior, making eggs easier to identify against the background [11]. |
| Antihelminthic Agents (e.g., Albendazole, Mebendazole) | Experimental treatment to eliminate adult worms and disrupt the parasite life cycle. | Typically administered as a single dose, repeated after two weeks to target newly matured worms [15] [13]. |
| Deep Learning Model (e.g., YOLOv8 with CBAM) | Automated detection and quantification of eggs in digital microscope images. | Reduces human error and increases throughput; attention modules (CBAM) are particularly useful for small object detection [3]. |
| Gilson's Fluid | Preservation of ova and parasites for later morphological study. | A fixative solution used in parasitology to preserve eggs for size and developmental studies [16]. |

Limitations of Traditional Manual Examination and Human Visual Perception

Frequently Asked Questions (FAQs)

Q1: What are the primary limitations of human visual perception when analyzing low-resolution microscopic images?

Human visual perception struggles with low-resolution images due to several inherent limitations. When image resolution drops as low as 5 pixels per character dimension, critical details become indistinguishable, leading to significant identification errors [17]. Human analysts also experience visual fatigue during prolonged examination, which lowers concentration and increases the rate of missed specimens. Furthermore, human perception has a limited ability to process multiple visual features simultaneously, making it difficult to identify subtle patterns or slight variations between highly similar morphological structures [18].

Q2: How do computational methods address the limitations of manual examination for egg identification?

Computational approaches, particularly deep learning models, overcome human limitations through several mechanisms. They employ data augmentation techniques to simulate various image conditions, creating training variations that enhance model robustness [19]. Advanced feature extraction using convolutional neural networks automatically learns discriminative features that may be imperceptible to human observers [17]. These models also provide quantitative assessment capabilities, eliminating subjective bias through consistent, measurable evaluation criteria [18]. For low-resolution challenges specifically, specialized frameworks like ReSTOLO implement two-stage detection that separates localization and classification tasks, achieving precision and recall rates exceeding 85% even with limited data [18].

Q3: What specific image quality issues most significantly impact identification accuracy?

The most impactful image quality issues for egg identification include:

- Low Resolution: Causes loss of critical morphological details and edge definition, fundamentally limiting discernible information [17].

- Noise Interference: Introduces visual artifacts that obscure genuine features, particularly problematic with specimens having fine textures or similar morphological structures [17].

- Insufficient Contrast: Reduces distinction between the specimen and background, complicating segmentation and feature extraction.

- Blur and Focus Issues: Result in unclear boundaries and internal structures, preventing accurate morphological analysis.

Q4: What data augmentation techniques are most effective for low-resolution microscopic images?

Table: Effective Data Augmentation Techniques for Low-Resolution Microscopy

| Technique Category | Specific Methods | Impact on Model Performance |
|---|---|---|
| Geometric Transformations | Rotation, Flip, Translation, Scaling | Improves invariance to orientation and position [19] |
| Color and Contrast Adjustments | Brightness/Contrast Variation, Color Jitter, Grayscale Conversion | Enhances robustness to lighting and staining variations [19] |
| Noise and Artifact Simulation | Adding Gaussian Noise, Masking, Blurring | Prevents overfitting and improves performance on imperfect images [19] |
| Mixing, Occlusion, and Generative Methods | MixUp, CutMix, CutOut, Generative AI | Creates more diverse training samples and teaches the model to handle occlusions [19] |
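Several rows of the table can be combined into a single random augmentation function. The sketch below assumes grayscale images scaled to [0, 1] and uses illustrative parameter ranges. It restricts rotation to multiples of 90 degrees to avoid interpolation; arbitrary angles (such as the ±15° used later in this section) would need an interpolating routine such as `scipy.ndimage.rotate`. On non-square images, a 90-degree rotation swaps height and width.

```python
import numpy as np


def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Return one randomly augmented copy of a 2-D grayscale image in [0, 1]."""
    # Geometric: random multiple-of-90-degree rotation and optional flip.
    out = np.rot90(image, k=int(rng.integers(4)))
    if rng.random() < 0.5:
        out = np.fliplr(out)
    # Contrast and brightness jitter around the image mean.
    mean = out.mean()
    out = (out - mean) * rng.uniform(0.8, 1.2) + mean + rng.uniform(-0.1, 0.1)
    # Noise simulation: additive Gaussian noise.
    out = out + rng.normal(0.0, 0.02, size=out.shape)
    # CutOut-style occlusion: blank one random square (1/8 of the short side).
    h, w = out.shape
    s = max(1, min(h, w) // 8)
    r, c = int(rng.integers(0, h - s + 1)), int(rng.integers(0, w - s + 1))
    out[r:r + s, c:c + s] = 0.0
    return np.clip(out, 0.0, 1.0)
```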

Q5: How can researchers optimize imaging protocols to minimize identification challenges?

Optimizing imaging protocols involves both equipment configuration and image processing strategies. Implement multi-frame capture with image stitching to cover a larger field of view while retaining the resolution of higher magnification [20]. Ensure consistent lighting conditions and use contrast enhancement techniques during acquisition. For computational analysis, employ pre-processing pipelines that include noise reduction filters (median filters for impulse noise, Gaussian filters for general noise) and normalization to standardize input values, which can speed up training and improve accuracy [17].
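The noise-reduction and normalization steps can be sketched in NumPy as follows. The 3x3 window, reflective padding, and z-score normalization are assumptions chosen for illustration; for Gaussian smoothing, `scipy.ndimage.gaussian_filter` is a standard choice.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def median_filter(image: np.ndarray, size: int = 3) -> np.ndarray:
    """Median filter for impulse (salt-and-pepper) noise; size must be odd."""
    if size % 2 == 0:
        raise ValueError("size must be odd")
    pad = size // 2
    padded = np.pad(image.astype(np.float64), pad, mode="reflect")
    windows = sliding_window_view(padded, (size, size))  # shape (H, W, size, size)
    return np.median(windows, axis=(-2, -1))


def zscore_normalize(image: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Scale to zero mean and unit variance so inputs share a common range."""
    image = image.astype(np.float64)
    return (image - image.mean()) / (image.std() + eps)
```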

Troubleshooting Guides

Problem: Low Identification Accuracy with High Similarity Specimens

Issue: Difficulty distinguishing between morphologically similar egg types in low-resolution images.

Solution: Implement a two-stage recognition framework inspired by ReSTOLO that separates localization and classification tasks [18].

Experimental Protocol:

- Image Acquisition: Capture images using standardized microscopic parameters, ensuring consistent resolution and lighting.

- Data Preparation: Apply augmentation techniques including random rotation (±15°), slight brightness/contrast variation, and minimal noise addition to expand dataset diversity [19].

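- Model Training: Train the two stages separately: a localization model that detects candidate egg regions, and a classification model that assigns a species label to each cropped region [18].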

- Validation: Evaluate using precision, recall, and F1-score metrics, with particular attention to confusion between similar classes.
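The separation of localization and classification can be expressed as a thin pipeline. In the sketch below, `localize` and `classify` are hypothetical callables (for example, a detector returning `(x1, y1, x2, y2)` boxes and a crop classifier). It shows the general two-stage pattern, not the ReSTOLO architecture itself, and the context padding around each crop is an assumed detail.

```python
import numpy as np


def two_stage_identify(image, localize, classify, pad=4):
    """Run a localization stage, then classify each padded crop.

    localize(image) -> iterable of (x1, y1, x2, y2) integer boxes  (hypothetical)
    classify(crop)  -> label                                       (hypothetical)
    """
    h, w = image.shape[:2]
    results = []
    for x1, y1, x2, y2 in localize(image):
        # Add a margin of context around the box, clipped to the image.
        cx1, cy1 = max(0, x1 - pad), max(0, y1 - pad)
        cx2, cy2 = min(w, x2 + pad), min(h, y2 + pad)
        crop = image[cy1:cy2, cx1:cx2]
        results.append(((x1, y1, x2, y2), classify(crop)))
    return results


if __name__ == "__main__":
    demo = np.zeros((20, 20))
    demo[2:6, 2:6] = 1.0

    def toy_localize(img):
        return [(2, 2, 6, 6), (10, 10, 14, 14)]

    def toy_classify(crop):
        return "egg" if crop.mean() > 0.1 else "debris"

    print(two_stage_identify(demo, toy_localize, toy_classify))
```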

Workflow Diagram:

Problem: Limited Training Data for Rare Specimens

Issue: Insufficient image samples for specific egg types, leading to model bias and poor generalization.

Solution: Deploy advanced data augmentation and few-shot learning techniques to maximize limited data utility.

Experimental Protocol:

- Data Augmentation Pipeline: Implement a comprehensive augmentation strategy:

- Apply geometric transformations (rotation, scaling, shearing) with constrained parameters to maintain biological validity [19].

- Use color space modifications (hue, saturation, brightness) to simulate different staining intensities.

- Add generative augmentation (TextCaps) that introduces natural variations simulating real-world appearance changes [17].

- Few-Shot Learning Approach: For classes with very few samples (≤10 images), employ few-shot learning frameworks that learn to compare samples rather than classify directly.

- Transfer Learning: Initialize models with pre-trained weights from general image datasets, then fine-tune on the specialized microscopic image domain [20].

- Evaluation: Use cross-validation with multiple splits to reliably estimate performance with small datasets.
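The "learn to compare" idea behind few-shot learning can be illustrated with the inference step of a prototypical-network-style classifier: each rare class is represented by the mean embedding of its few labelled examples, and a query image is assigned to the nearest class mean. This sketch assumes embeddings have already been produced by some backbone (for example, a pre-trained network as in the transfer-learning step); a full few-shot framework would also train that embedding episodically, which is not shown.

```python
import numpy as np


def fit_prototypes(support_embeddings: np.ndarray, support_labels):
    """Average the embeddings of each class into a single prototype vector."""
    labels = np.asarray(support_labels)
    classes = np.unique(labels)
    protos = np.stack([support_embeddings[labels == c].mean(axis=0) for c in classes])
    return classes, protos


def predict(query_embeddings: np.ndarray, classes: np.ndarray, protos: np.ndarray):
    """Assign each query to the class whose prototype is nearest (Euclidean)."""
    # Pairwise squared distances, shape (n_query, n_classes).
    d = ((query_embeddings[:, None, :] - protos[None, :, :]) ** 2).sum(axis=-1)
    return classes[d.argmin(axis=1)]
```

Because classification reduces to a distance comparison, a new rare egg type can be added with a handful of labelled images and no retraining of the classifier head.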

Workflow Diagram:

Problem: Handling Variable Image Quality and Resolution

Issue: Inconsistent image quality due to different imaging equipment or preparation techniques.

Solution: Develop a quality assessment and normalization pipeline to standardize inputs.

Experimental Protocol:

- Quality Metric Definition: Establish quantitative metrics for focus (blurriness), contrast, noise level, and illumination evenness.

- Pre-processing Pipeline:

- Implement noise reduction using median filtering for impulse noise and Gaussian filtering for general noise [17].

- Apply contrast enhancement techniques (histogram equalization, CLAHE) to improve feature discernibility.

- Use normalization to standardize pixel values across all images, accelerating model convergence [17].

- Resolution Handling: For significantly different resolutions, employ multi-scale processing approaches or resizing with appropriate interpolation methods.

- Model Architecture Selection: Choose architectures with demonstrated robustness to resolution variations, such as those incorporating attention mechanisms or feature pyramids.
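One way to make the quality metrics concrete is sketched below. The specific choices (variance of the Laplacian for focus, intensity standard deviation for RMS contrast, a median-absolute-deviation estimate of noise, and the ratio of darkest to brightest tile mean for illumination evenness) are common heuristics assumed here, not metrics prescribed by the cited studies; acceptance thresholds would need to be calibrated on your own images.

```python
import numpy as np
from scipy import ndimage


def quality_metrics(image: np.ndarray, tiles: int = 4) -> dict:
    """Heuristic quality scores for a 2-D grayscale image (at least tiles x tiles pixels)."""
    img = image.astype(np.float64)

    # Focus: variance of the Laplacian. Blur weakens edges, lowering this value.
    focus = float(ndimage.laplace(img).var())

    # Contrast: RMS contrast, i.e. the standard deviation of intensities.
    contrast = float(img.std())

    # Noise: rough sigma estimate from the residual after a 3x3 median filter,
    # using the median absolute deviation (MAD) scaled for Gaussian noise.
    residual = img - ndimage.median_filter(img, size=3)
    noise = float(1.4826 * np.median(np.abs(residual - np.median(residual))))

    # Illumination evenness: darkest / brightest tile mean (1.0 = perfectly even).
    h, w = img.shape
    th, tw = h // tiles, w // tiles
    means = [img[i * th:(i + 1) * th, j * tw:(j + 1) * tw].mean()
             for i in range(tiles) for j in range(tiles)]
    evenness = float(min(means) / max(means)) if max(means) > 0 else 0.0

    return {"focus": focus, "contrast": contrast, "noise": noise, "evenness": evenness}
```

For the contrast-enhancement step, CLAHE is available as `skimage.exposure.equalize_adapthist` in scikit-image or via `cv2.createCLAHE` in OpenCV.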

Research Reagent Solutions

Table: Essential Materials for Microscopic Egg Identification Research

| Reagent/Equipment | Function/Purpose | Usage Considerations |
|---|---|---|
| Standard Staining Solutions | Enhance contrast and highlight morphological features for better visual and computational analysis | Optimize concentration to avoid artifact creation; test compatibility with imaging systems [18] |
| Image Annotation Software | Create accurate ground truth data for training and evaluating computational models | Ensure consistency across multiple annotators; establish clear labeling guidelines [20] |
| Data Augmentation Tools | Programmatically expand training datasets and improve model generalization | Select tools supporting microscopy-specific transformations; avoid unrealistic alterations [19] |
| Deep Learning Frameworks | Provide infrastructure for developing and training custom identification models | Choose based on model architecture needs (TensorFlow, PyTorch) and deployment requirements [17] |
| High-Resolution Reference Dataset | Serve as benchmark for evaluating algorithm performance on optimal quality images | Establish standardized acquisition protocols; ensure representative specimen coverage [18] |

AI and Deep Learning Solutions: From Image Enhancement to Automated Detection

In the field of parasitic egg identification research, the quality of microscopic images is paramount. Low-resolution (LR) images can obscure critical morphological details, hindering accurate detection and analysis. Deep Learning-based Super-Resolution (SR) has emerged as a powerful technique to enhance image quality by reconstructing high-resolution (HR) images from their LR counterparts. This technical support center provides essential guidance on implementing SR models such as EDSR and RCAN to improve the clarity and diagnostic value of low-resolution microscopic images.

Frequently Asked Questions (FAQs)

1. What are the key advantages of deep learning SR models like EDSR and RCAN over traditional methods for microscopic image enhancement?

Traditional interpolation-based methods (e.g., bilinear, bicubic) often produce blurred images that lack fine texture details [21]. Deep learning SR models significantly outperform these methods. They not only enhance image resolution but can also simultaneously address common microscopy issues. For instance, in Atomic Force Microscopy (AFM), deep learning models have been shown to completely eliminate streaking artifacts, which traditional methods could only partially attenuate [7]. Furthermore, models like RCAN employ channel attention mechanisms to adaptively rescale feature maps, enhancing diagnostically crucial details while suppressing less relevant information [21].

2. How do I choose between different SR models like EDSR, RCAN, and RDN for my parasite egg image data?

The choice depends on your specific priorities regarding image quality, computational cost, and the need to preserve specific features. The table below summarizes the performance of several state-of-the-art models to help you decide.

Table 1: Performance Comparison of Deep Learning Super-Resolution Models

| Model | Key Architectural Feature | Reported Performance (PSNR / SSIM) | Best For |
|---|---|---|---|
| EDSR | Deep residual network without batch normalization [21] | ~35.85 dB / 0.85 (general images) [22] | Scenarios requiring a balance of performance and faster processing [23] |
| RCAN | Residual channel attention network [21] | ~37.88 dB / 0.986 (thermal images) [23] | Enhancing fine-grained details critical for egg identification |
| RDN | Residual dense network [7] | ~30.18 dB / 0.945 (thermal images) [23] | Extracting abundant local features from images |
| SwinIR | Swin Transformer architecture for image restoration [21] | ~37.84 dB / 0.99 (general images) [22] | Capturing long-range dependencies and high perceptual quality |

Note: These figures come from different studies and datasets (general vs. thermal images), so they indicate typical ranges rather than a direct ranking; benchmark candidate models on your own egg images.

3. Which evaluation metrics are most relevant for assessing SR performance in a biomedical context?

A combination of fidelity metrics and task-based metrics is recommended.

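- Fidelity Metrics: These quantify pixel-level similarity between the SR image and a ground truth HR image. The most widely used are Peak Signal-to-Noise Ratio (PSNR), measured in decibels, and the Structural Similarity Index (SSIM), which equals 1 for identical images.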

- Task-Based Metrics: These are ultimately more important for clinical or research utility. If SR is used to improve parasite egg segmentation, you should monitor the Dice coefficient or Intersection over Union (IoU). For classification tasks, track improvements in accuracy or the F1-score [21].
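The fidelity and task-based metrics can be computed with a few lines of NumPy, as in the sketch below. It assumes images scaled to [0, 1] (hence `data_range=1.0`) and binary segmentation masks; returning 1.0 when both masks are empty is a convention chosen here. For SSIM, scikit-image's `skimage.metrics.structural_similarity` is a well-tested implementation.

```python
import numpy as np


def psnr(sr: np.ndarray, hr: np.ndarray, data_range: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB: 10 * log10(MAX^2 / MSE)."""
    mse = np.mean((sr.astype(np.float64) - hr.astype(np.float64)) ** 2)
    return float("inf") if mse == 0 else float(10 * np.log10(data_range ** 2 / mse))


def dice(pred: np.ndarray, target: np.ndarray) -> float:
    """Dice coefficient: 2|A and B| / (|A| + |B|)."""
    pred, target = pred.astype(bool), target.astype(bool)
    denom = pred.sum() + target.sum()
    return 1.0 if denom == 0 else float(2 * np.logical_and(pred, target).sum() / denom)


def iou(pred: np.ndarray, target: np.ndarray) -> float:
    """Intersection over Union: |A and B| / |A or B|."""
    pred, target = pred.astype(bool), target.astype(bool)
    union = np.logical_or(pred, target).sum()
    return 1.0 if union == 0 else float(np.logical_and(pred, target).sum() / union)
```

Comparing Dice or IoU of egg masks segmented from SR images against masks segmented from the original LR images shows whether the enhancement actually helps the downstream task, independently of PSNR.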

4. My high-resolution ground truth images contain scanning artifacts. Will the SR model learn and amplify these artifacts?

Not necessarily. Research on AFM images has shown that streaking artifacts present in the high-resolution "ground truth" scans were often completely eliminated in the images generated by deep learning models [7]. The models appear to learn a clean, high-resolution version of the underlying structure rather than replicating every artifact in the training data. Because this was demonstrated on AFM data, it is still worth inspecting your own super-resolved outputs for artifacts that occur systematically in your ground truth.

Troubleshooting Guides

Problem 1: Poor Generalization to Real-World Low-Resolution Images

Symptom: The model performs well on synthetically down-scaled images but poorly on real low-resolution images from your microscope.

Solutions:

- Address the Domain Gap: Models trained on natural images (e.g., the DIV2K dataset [24]) may not transfer well to the microscopic domain. Fine-tune pre-trained models on a dataset of your own microscopic images [7].

- Use Real Image Pairs: If possible, train the model using paired low-resolution and high-resolution images acquired from your microscope, as this accounts for the real optical degradation process [7].

- Pre-process Your Data: Ensure consistency in intensity ranges and color channels between your training data and the images you intend to enhance.

Problem 2: Over-Smoothing and Lack of Textural Detail

Symptom: The super-resolved images appear overly smooth and lack the high-frequency textures needed to distinguish fine features of parasite eggs.

Solutions:

- Model Selection: Switch to a model designed for perceptual quality. While EDSR achieves high PSNR, GAN-based models like ESRGAN can generate more realistic textures, though they may introduce hallucinated features [21] [22].

- Loss Function: Models trained with a combination of loss functions (e.g., L1 loss for fidelity and a perceptual loss for texture) often produce more visually pleasing results [21].

- Leverage Attention Mechanisms: Use models like RCAN, which use channel attention to enhance important high-frequency features selectively [21].
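A combined loss of the kind described above can be sketched in PyTorch as an L1 pixel term plus a perceptual term, where the perceptual term is the L1 distance between feature maps of a frozen network. In practice the feature extractor is usually a pre-trained VGG; here it is any `nn.Module` passed in, and the weight of 0.1 is an illustrative assumption to be tuned.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class CombinedSRLoss(nn.Module):
    """L1 pixel loss plus a weighted perceptual (feature-space) loss."""

    def __init__(self, feature_extractor: nn.Module, perceptual_weight: float = 0.1):
        super().__init__()
        # The feature network is frozen: it only measures similarity.
        self.features = feature_extractor.eval()
        for p in self.features.parameters():
            p.requires_grad_(False)
        self.perceptual_weight = perceptual_weight

    def forward(self, sr: torch.Tensor, hr: torch.Tensor) -> torch.Tensor:
        pixel = F.l1_loss(sr, hr)                                     # fidelity term
        perceptual = F.l1_loss(self.features(sr), self.features(hr))  # texture term
        return pixel + self.perceptual_weight * perceptual
```

Raising `perceptual_weight` generally trades PSNR for sharper textures, which is the same fidelity-versus-realism trade-off noted above for GAN-based models.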

Problem 3: Long Inference Times and Computational Bottlenecks

Symptom: Processing images takes too long, making it impractical for high-throughput analysis of many samples.

Solutions:

- Implement Lightweight Models: Explore efficient architectures like LiteLoc, which uses dilated convolutions and a simplified U-Net to achieve high precision with low computational overhead [25].

- Optimize Workflow: Use a scalable framework that supports parallel processing across multiple GPUs and CPUs to maximize hardware utilization [25].

- Model Compression: Apply techniques like network pruning or quantization to reduce the size and complexity of your SR model, speeding up inference [25].

Experimental Protocols

Protocol 1: Benchmarking SR Models for Microscopy

This protocol outlines how to quantitatively compare different SR models on your specific dataset of microscopic images.

Research Reagent Solutions: Table 2: Essential Materials for Super-Resolution Experiments

| Item | Function/Description |
|---|---|
| High-Resolution Microscope | Provides the ground truth high-resolution images for training and evaluation. |
| DIV2K Dataset | A dataset of 800 high-quality training images and 100 validation images, commonly used for pre-training SR models [24]. |
| PyTorch or TensorFlow | Deep learning frameworks used for implementing and training SR models. |
| GPU (e.g., NVIDIA GTX 1080 Ti or higher) | Hardware accelerator essential for training deep learning models in a reasonable time [23]. |

Methodology:

- Data Preparation:

- Acquire a set of paired images: true low-resolution (LR) images and their corresponding high-resolution (HR) ground truth images captured from your microscope [7].

- If true LR images are unavailable, you can generate a synthetic test set by down-sampling your HR images using a realistic degradation model (e.g., bicubic down-sampling with blur) [21].

- Split your data into training, validation, and test sets.

- Model Training & Inference:

- Select models to benchmark (e.g., EDSR, RCAN, RDN).

- For each model, use its standard training procedure or a pre-trained model. The Adam optimizer with a learning rate of 0.0001 (1e-4) is commonly used [23].

- Process your LR test images with each trained model to generate the super-resolved (SR) images.

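- Quantitative Evaluation:

- Compute PSNR and SSIM between each SR image and its HR ground truth, and report the mean value per model on the test set.

- Qualitative & Task-Based Evaluation: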

- Visually inspect the SR images for the presence of artifacts, sharpness, and textural realism.

- Evaluate the downstream task performance. For egg identification, this could involve measuring the detection accuracy (e.g., using a model like YOLOv5 [1]) or segmentation performance on the SR images compared to the original LR images.
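For the synthetic option in the data-preparation step, a simple degradation model (Gaussian blur, then downsampling, then optional noise) might look like the sketch below. The ×4 scale, blur σ, and noise level are assumptions; `scipy.ndimage.zoom` with `order=3` performs cubic-spline interpolation, which is close to, but not identical to, the bicubic kernel used by MATLAB or PIL. Because real optical degradation rarely matches any synthetic model exactly, true LR/HR pairs remain preferable.

```python
import numpy as np
from scipy import ndimage


def degrade(hr: np.ndarray, scale: int = 4, blur_sigma: float = 1.0,
            noise_std: float = 0.0, rng=None) -> np.ndarray:
    """Synthesize an LR image from an HR image: blur, downsample, add noise."""
    # Gaussian blur approximates the optical point-spread function.
    blurred = ndimage.gaussian_filter(hr.astype(np.float64), sigma=blur_sigma)
    # Cubic-spline downsampling by 1/scale (output size is rounded).
    lr = ndimage.zoom(blurred, 1.0 / scale, order=3)
    if noise_std > 0:
        if rng is None:
            rng = np.random.default_rng()
        # Additive Gaussian noise approximates sensor noise.
        lr = lr + rng.normal(0.0, noise_std, size=lr.shape)
    return lr
```

Applying the same function to every HR test image yields a paired synthetic test set on which all candidate models see identical inputs.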

The workflow for this benchmarking process is summarized in the following diagram:

Protocol 2: Integrating SR into an Egg Detection Pipeline

This protocol describes how to incorporate a trained SR model as a pre-processing step to improve the performance of an automated parasite egg detection system.

Methodology:

- Build the Pipeline: Construct a two-stage pipeline. The first stage takes a raw low-resolution microscopic image and uses your chosen SR model (e.g., RCAN) to generate a high-resolution image. The second stage feeds this enhanced image into an object detection model, such as a lightweight YOLO variant (e.g., YAC-Net [1]), for egg identification and counting.

- End-to-End Validation: Test the entire pipeline on a dedicated set of low-resolution images. Compare the egg detection results (e.g., using precision, recall, and F1-score [1]) against the same detection model operating on the original low-resolution images without SR enhancement.

- Optimization: To reduce latency, consider converting the SR model to an optimized format (like TensorRT) or using a distilled, lighter-weight SR network to speed up the pre-processing step [25].
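The end-to-end comparison can be sketched as follows: predictions are matched one-to-one to ground-truth boxes at an IoU threshold (0.5 here, an assumed value), and precision, recall, and F1 are pooled over all test images, with and without the SR step. `detector` and `sr_model` are hypothetical callables standing in for your trained detection and SR models. Because SR enlarges the image, predicted boxes are divided by `sr_scale` so they can be compared with ground truth annotated on the original low-resolution images.

```python
def box_iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_counts(preds, gts, iou_thr=0.5):
    """Greedy one-to-one matching, highest-scoring predictions first.

    `preds` is a list of (box, score); returns (true pos., false pos., false neg.)."""
    matched, tp = set(), 0
    for box, _score in sorted(preds, key=lambda p: p[1], reverse=True):
        best_j, best_iou = None, iou_thr
        for j, gt in enumerate(gts):
            if j not in matched:
                iou = box_iou(box, gt)
                if iou >= best_iou:
                    best_j, best_iou = j, iou
        if best_j is not None:
            matched.add(best_j)
            tp += 1
    return tp, len(preds) - tp, len(gts) - tp


def evaluate(images, gts_per_image, detector, sr_model=None, sr_scale=1.0, iou_thr=0.5):
    """Pooled precision, recall and F1 for `detector`, optionally after `sr_model`."""
    tp = fp = fn = 0
    for image, gts in zip(images, gts_per_image):
        if sr_model is not None:
            image = sr_model(image)
        preds = detector(image)
        if sr_model is not None:
            # Map boxes from the super-resolved frame back to LR coordinates.
            preds = [(tuple(c / sr_scale for c in box), s) for box, s in preds]
        t, f_pos, f_neg = match_counts(preds, gts, iou_thr)
        tp, fp, fn = tp + t, fp + f_pos, fn + f_neg
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```

Calling `evaluate` once without `sr_model` and once with it, on the same images and annotations, gives the baseline and SR-enhanced figures for a like-for-like comparison.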

The logical structure of this integrated pipeline is as follows:

Frequently Asked Questions (FAQs)

General Architecture Questions

Q1: What is the advantage of integrating CBAM into a YOLO model for microscopic image analysis?

Integrating the Convolutional Block Attention Module (CBAM) enhances YOLO's capability to detect small, low-contrast targets like parasite eggs by allowing the model to selectively focus on the most relevant features. CBAM sequentially infers attention maps along both the channel and spatial dimensions of the feature maps. This means it can adaptively emphasize important feature channels (e.g., those highlighting edges or textures of eggs) and critical spatial regions (the exact location of the egg within a cluttered background). This dual attention significantly improves feature extraction from complex backgrounds, increasing the model's sensitivity and accuracy for small targets in low-resolution images [3].
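The sequential channel-then-spatial attention described above can be sketched in PyTorch as follows, following the design of the original CBAM paper (a shared MLP over average- and max-pooled channel descriptors, then a 7×7 convolution over channel-pooled maps). The reduction ratio of 16 and kernel size of 7 are that paper's defaults, assumed here; how YCBAM [3] or SE-CBAM-YOLOv7 [26] insert the module into YOLO is not reproduced.

```python
import torch
import torch.nn as nn


class ChannelAttention(nn.Module):
    """'What' to emphasize: one weight per feature channel."""

    def __init__(self, channels: int, reduction: int = 16):
        super().__init__()
        hidden = max(channels // reduction, 1)
        # Shared MLP (as 1x1 convs) applied to both pooled descriptors.
        self.mlp = nn.Sequential(
            nn.Conv2d(channels, hidden, 1, bias=False),
            nn.ReLU(inplace=True),
            nn.Conv2d(hidden, channels, 1, bias=False),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        avg = self.mlp(torch.mean(x, dim=(2, 3), keepdim=True))
        mx = self.mlp(torch.amax(x, dim=(2, 3), keepdim=True))
        return torch.sigmoid(avg + mx)  # shape (N, C, 1, 1)


class SpatialAttention(nn.Module):
    """'Where' to look: one weight per spatial location."""

    def __init__(self, kernel_size: int = 7):
        super().__init__()
        self.conv = nn.Conv2d(2, 1, kernel_size, padding=kernel_size // 2, bias=False)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        avg = torch.mean(x, dim=1, keepdim=True)
        mx = torch.amax(x, dim=1, keepdim=True)
        return torch.sigmoid(self.conv(torch.cat([avg, mx], dim=1)))  # shape (N, 1, H, W)


class CBAM(nn.Module):
    """Channel attention followed by spatial attention, applied sequentially."""

    def __init__(self, channels: int, reduction: int = 16, kernel_size: int = 7):
        super().__init__()
        self.ca = ChannelAttention(channels, reduction)
        self.sa = SpatialAttention(kernel_size)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        x = x * self.ca(x)     # reweight channels
        return x * self.sa(x)  # then reweight locations
```

Because the output has the same shape as the input, a block like this can be placed after a feature map in the YOLO neck without changing the surrounding layers.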

Q2: How do lightweight CNNs, like the one in SE-CBAM-YOLOv7, help with deployment in resource-constrained settings?

Lightweight CNNs reduce the computational cost and number of parameters in a model without significantly compromising performance. In the SE-CBAM-YOLOv7 architecture, the standard convolution is replaced with a lightweight Squeeze-and-Excitation Convolution (SEConv). This replacement reduces the computational parameters of the network, accelerating the detection process. This is crucial for real-time applications or for deploying automated diagnostic tools in field settings or laboratories with limited computational resources, as it lowers the hardware requirements for performing automated detection [26] [1].

Q3: My model performs well on high-quality images but fails on low-resolution or blurred data. What architectural improvements can help?

This is a common challenge in microscopic imaging. The following architectural strategies have proven effective:

- Asymptotic Feature Pyramid Network (AFPN): Replacing a standard Feature Pyramid Network (FPN) with an AFPN can be beneficial. An AFPN uses a hierarchical and gradual fusion structure to more fully integrate spatial contextual information from different scales. Its adaptive spatial fusion mode helps the model select beneficial features and ignore redundant information, which improves performance on low-resolution images and reduces computational complexity [1].

- Enhanced Feature Extraction Backbone: Modifying the backbone network, for instance by replacing a C3 module with a C2f module (as seen in the YAC-Net model), can enrich gradient flow and improve the feature extraction capability of the network, helping it learn more discriminative features from lower-quality data [1].

Implementation and Optimization Questions

Q4: I am experiencing slow inference speeds even with a lightweight YOLO model. What can I do to improve performance?

Slow inference can stem from several factors. Please refer to the detailed troubleshooting guide in the next section for a step-by-step diagnosis. Key areas to check include the model version, input image size, and the use of hardware acceleration like half-precision (FP16). For example, using an input size of 320x320 instead of 640x640 can significantly increase FPS (frames per second), though it may involve a trade-off with accuracy, particularly for smaller objects [27].

Q5: How critical is the choice of YOLO version for my project?

The choice of YOLO version involves a direct trade-off between speed and accuracy. Smaller models (e.g., YOLOv5n, YOLOv11n) are faster and have fewer parameters, making them ideal for deployment, while larger models (e.g., YOLOv5x, YOLOv11x) are more accurate but require more computational resources. The table below summarizes this trade-off based on benchmark data [27].

Table 1: Comparison of YOLO Model Versions (Representative Data)

| Model | mAP (%) | FPS | Use Case Recommendation |
|---|---|---|---|
| YOLOv11n | 38.3 | ~180 | Optimal for high-speed, resource-constrained deployment. |
| YOLOv11m | 49.1 | ~95 | Recommended optimum for balancing speed and accuracy [27]. |
| YOLOv11x | 52.2 | ~45 | Best when maximum accuracy is required and resources are sufficient. |

Note: mAP and FPS values can vary based on dataset and hardware.

Troubleshooting Guides

Issue: Slow Model Inference Speed

Slow inference speed can bottleneck an entire automated system. Follow this guide to identify and resolve the issue.

Table 2: Troubleshooting Slow Inference Speed

| Step | Issue | Solution & Rationale | Key Parameter to Adjust |
|---|---|---|---|
| 1 | Model is too large for the task. | Switch to a smaller model variant (e.g., from YOLOv11l to YOLOv11n). Smaller models have fewer layers and parameters, leading to faster computation [27]. | model = YOLO('yolo11n.pt') |
| 2 | Input image resolution is too high. | Reduce the input image size. A lower resolution (e.g., 320x320) requires the model to process fewer pixels, drastically improving FPS, though it may reduce accuracy for small objects [27]. | imgsz=320 |
| 3 | Not leveraging hardware acceleration. | Enable half-precision (FP16) inference. Using 16-bit floating-point numbers reduces memory usage and accelerates computation on supported GPUs (e.g., with NVIDIA TensorRT), often with a minimal loss in accuracy [27]. | half=True |
| 4 | Data loading is a bottleneck. | Increase the number of data-loading worker threads so the GPU is not left waiting for the CPU to pre-process images. This mainly matters when images pass through a data loader, as during training and validation [27]. | workers=8 |

Experimental Protocol for Speed Optimization:

- Baseline: Measure the FPS and mAP of your current model with default settings.

- Intervention: Apply one optimization from the table above (e.g., change image size to 320).

- Evaluation: Re-measure FPS and mAP. Calculate the performance trade-off.

- Iterate: Try a combination of optimizations (e.g., a smaller model with FP16 enabled) to find the best balance for your specific application.
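The baseline/intervention loop needs a consistent way to measure throughput. The harness below is a framework-agnostic sketch: `infer` is any callable that runs your model on one frame, a few warm-up calls are excluded from timing, and `trade_off` reports the FPS gain against the mAP change. The names and the percentage-based summary are assumptions for illustration; mAP itself would come from your usual validation run.

```python
import time
from typing import Callable, Sequence


def measure_fps(infer: Callable[[object], object], frames: Sequence[object],
                warmup: int = 5) -> float:
    """Frames per second of `infer` over `frames`, after a few warm-up calls."""
    for frame in frames[:warmup]:
        infer(frame)  # warm-up: first calls often include one-off setup costs
    start = time.perf_counter()
    for frame in frames:
        infer(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed if elapsed > 0 else float("inf")


def trade_off(baseline: dict, candidate: dict) -> dict:
    """Relative FPS gain (%) and absolute mAP change of a candidate vs the baseline."""
    return {
        "fps_gain_pct": 100.0 * (candidate["fps"] - baseline["fps"]) / baseline["fps"],
        "map_change": candidate["map"] - baseline["map"],
    }
```

With an Ultralytics model, `infer` could be something like `lambda f: model.predict(f, imgsz=320, half=True, verbose=False)`. If `infer` launches asynchronous GPU work through another framework, it should synchronize (e.g., `torch.cuda.synchronize()`) before returning, or the timings will be too optimistic.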

Issue: Poor Detection Accuracy for Small Eggs

Difficulty in detecting small or low-contrast parasite eggs is a primary challenge.

Table 3: Troubleshooting Low Detection Accuracy

| Step | Issue | Solution & Rationale | Architectural Component |
|---|---|---|---|
| 1 | Model loses fine-grained feature information for small objects. | Integrate an attention mechanism like CBAM. CBAM enhances critical features in both channel and spatial dimensions, helping the model focus on small target characteristics and ignore background noise [26] [3]. | Convolutional Block Attention Module (CBAM) |
| 2 | Model struggles with multi-scale objects. | Use an advanced feature fusion neck like AFPN. Unlike standard FPN, AFPN better integrates multi-scale contextual information, allowing the model to leverage both low-level spatial details and high-level semantic information effectively [1]. | Asymptotic Feature Pyramid Network (AFPN) |
| 3 | Backbone feature extraction is insufficient. | Enhance the backbone network. For example, replacing C3 modules with C2f modules can enrich gradient information flow, improving the backbone's ability to extract discriminative features from challenging images [1]. | Backbone (e.g., C2f module) |

Experimental Protocol for an Improved Architecture (e.g., SE-CBAM-YOLOv7):

- Backbone Modification: Replace standard convolution (Conv) in the baseline YOLO model with lightweight SEConv to reduce parameters and focus computation [26].

- Feature Enhancement: Modify the SPPCSPC module to be based on SEConv, enhancing multi-scale feature extraction and the model's receptive field [26].

- Feature Fusion Enhancement: Integrate the CBAM attention module into the feature fusion path (e.g., creating a CBAMConcat module). This allows the network to adaptively refine features by emphasizing important channels and spatial locations before prediction [26].

- Evaluation: Train the modified model and compare its mAP, especially on small targets, against the baseline. The proposed SE-CBAM-YOLOv7, for instance, showed a 1.7% increase in mAP and a significant improvement in detecting small aircraft targets [26]. This result may carry over to small egg detection, but it should be verified on egg image data.

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" and their functions for building effective detection models in the context of low-resolution microscopic egg identification.

Table 4: Essential Research Reagents for Model Development

| Research Reagent | Function & Explanation | Exemplar Use Case |
|---|---|---|
| YOLO Model Variants | A family of single-stage object detectors offering a balance of speed and accuracy. Different sizes (n, s, m, l, x) allow researchers to select the appropriate model based on their computational constraints and accuracy requirements [27] [1]. | Small variants such as YOLOv5n or YOLOv8n are common baselines for customized architectures; YAC-Net, for example, is a lightweight modification of YOLOv5n [1], while SE-CBAM-YOLOv7 builds on YOLOv7 [26]. |
| Convolutional Block Attention Module (CBAM) | A lightweight attention module that sequentially applies channel and spatial attention to feature maps. It helps the model focus on "what" and "where" is important, crucial for distinguishing small eggs from complex backgrounds [26] [3]. | Integrated into the YOLO neck (e.g., as a CBAMConcat module) to refine features before the final detection head, improving feature fusion capability [26]. |
| Asymptotic Feature Pyramid Network (AFPN) | A feature fusion network designed for more effective multi-scale feature integration. It mitigates the information loss common in traditional FPN structures, which is vital for detecting objects of varying sizes [1]. | Replacing the standard PANet or FPN in a YOLO model's neck to improve the detection of eggs at different scales and resolutions [1]. |
| Public Parasite Egg Datasets | Curated, annotated image datasets used for training and validating models. The quality, size, and diversity of the dataset are fundamental to model performance. | The ICIP 2022 Challenge dataset is a key resource for training and benchmarking models like YAC-Net in a standardized manner [1]. |

This technical support center provides troubleshooting guides and frequently asked questions (FAQs) for researchers and scientists working on the automated identification of parasite eggs from low-resolution microscopic images.

### Frequently Asked Questions (FAQs)

FAQ 1: What are the most common causes of poor model performance on my low-resolution microscopic images? Poor performance often stems from challenges in the image data itself. Pinworm eggs, for example, are small (50–60 μm in length and 20–30 μm in width) and can have a thin, colorless, and transparent shell, making them morphologically similar to other microscopic particles and difficult to distinguish from background debris [3]. Additionally, low-resolution images may lack the detail necessary for the model to learn these distinguishing features.

FAQ 2: How can I improve the detection of small, transparent eggs in a cluttered background? Consider using deep learning architectures that incorporate attention mechanisms. For instance, the YOLO Convolutional Block Attention Module (YCBAM) integrates self-attention and a Convolutional Block Attention Module (CBAM) to help the model focus on essential image regions and critical features like egg boundaries, while reducing the influence of irrelevant background noise [3]. This has been shown to achieve a high mean Average Precision (mAP) of 0.9950 [3].

FAQ 3: My automated detection is computationally expensive. Are there more efficient models? Yes, designing lightweight models is an active research area. One approach is to modify existing architectures like YOLO with more efficient components. The YAC-Net model, for example, uses an Asymptotic Feature Pyramid Network (AFPN) and a C2f module to enrich gradient information. This strategy reduced the number of parameters by one-fifth compared to its baseline model while still achieving a precision of 97.8% and an mAP of 0.9913 on parasite egg detection [1].

FAQ 4: I have issues with image file handling and data quality. What should I check? A common challenge is the improper export of images from the microscope. Ensure your export settings are configured to avoid "lossy" compression, which can introduce artifacts, and select a format like TIFF that preserves all intensity values. Microscope images often contain more than 256 intensity values per channel (e.g., 16-bit), and export software set for standard 8-bit RGB images can clip or compress this data, destroying information [28].
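The effect of a careless export is easy to demonstrate. The sketch below simulates 12-bit camera data stored in a 16-bit array and two plausible 8-bit conversions: hard clipping at 255 and linear rescaling of the full 16-bit range. Both functions are hypothetical stand-ins for what export software might do; in this example, clipping saturates most pixels, and rescaling collapses 4,096 distinct levels into 17.

```python
import numpy as np


def clip_to_8bit(img16: np.ndarray) -> np.ndarray:
    """Hypothetical export that clips every value above 255."""
    return np.clip(img16, 0, 255).astype(np.uint8)


def rescale_to_8bit(img16: np.ndarray) -> np.ndarray:
    """Hypothetical export that maps the full 0-65535 range onto 0-255."""
    return np.round(img16.astype(np.float64) / 65535 * 255).astype(np.uint8)


# 12-bit camera data (0-4095) stored in a 16-bit array, every level present once.
raw = np.arange(4096, dtype=np.uint16).reshape(64, 64)

print("distinct levels in raw data:", np.unique(raw).size)
print("after clipping:", np.unique(clip_to_8bit(raw)).size,
      "levels; saturated fraction:", (clip_to_8bit(raw) == 255).mean())
print("after rescaling:", np.unique(rescale_to_8bit(raw)).size, "levels")
```

Saving to a lossless 16-bit TIFF (for example with the `tifffile` package) avoids both problems, and checking `image.dtype` and the number of distinct values right after loading is a quick sanity check on any exported file.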

FAQ 5: What is the difference between object detection and instance segmentation for my analysis? Your choice depends on the scientific question. Object detection (providing a centroid and bounding box) is suitable for tasks like counting how many eggs or cells are present in an image. Instance segmentation (finding the exact boundary of each object) is necessary if you need to measure properties of the objects themselves, such as their size or shape. Segmentation is typically more computationally demanding but provides more detailed information [28].

### Troubleshooting Guides

Guide 1: Troubleshooting Image Preprocessing for Low-Resolution Images

Problem: Blurry, noisy, or low-contrast images are leading to high false-positive and false-negative rates in model inference.

Solutions:

- Apply Deep Learning-Based Enhancement: Modern deep learning methods generally outperform traditional filters for tasks like denoising and resolution enhancement (super-resolution) [22]. Consider using models like DnCNN for denoising (reported PSNR of 37.01 dB) or ESRGAN for super-resolution to improve image quality before analysis [22].

- Leverage Fully Automated Tools: For fluorescent spot quantification, tools like TrueSpot can automatically set signal thresholds, which is particularly useful for images with varying background noise. TrueSpot has been shown to outperform other tools in such conditions [6].

- Verify Your Export Settings: As highlighted in the FAQs, always confirm that your microscope's export function is not downgrading your image data. Use lossless formats and preserve the original bit-depth [28].

Guide 2: Troubleshooting Model Training and Deployment

Problem: The model works well in experimentation but fails in a production environment or on new data.

Solutions:

- Address the Experimentation vs. Production Gap: Models in production must handle dynamic data streams in real-time with strict latency requirements, unlike the static datasets used in experimentation [29]. Implement a robust MLOps framework for continuous monitoring of model performance, data drift, and system health [30] [29].

- Implement a Lightweight Model for Deployment: To reduce the hardware requirements for automated detection, especially in resource-constrained settings, deploy optimized models like YAC-Net [1]. This promotes scalability and allows for potential edge deployment.

- Ensure Rigorous Validation: Test your model extensively on data from different sources (e.g., different soil types or imaging parameters) to ensure generalization. Avoid data leakage during validation to get a true picture of model performance [29].
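A frequent source of leakage in microscopy is splitting at the image level when several images (or crops) come from the same slide, sample, or patient, so near-duplicate views end up in both training and test sets. The sketch below assigns whole groups to one side of the split; the function name and the 20% test fraction are assumptions, and scikit-learn's `GroupShuffleSplit` and `GroupKFold` implement the same idea.

```python
import random
from collections import defaultdict


def group_split(sample_ids, groups, test_fraction=0.2, seed=0):
    """Split samples so each group (e.g. slide or patient) lands entirely
    in either the training set or the test set, never both."""
    by_group = defaultdict(list)
    for sid, g in zip(sample_ids, groups):
        by_group[g].append(sid)
    # Sort first so the shuffle is reproducible for a given seed.
    group_names = sorted(by_group)
    random.Random(seed).shuffle(group_names)
    n_test = max(1, round(test_fraction * len(group_names)))
    test = [s for g in group_names[:n_test] for s in by_group[g]]
    train = [s for g in group_names[n_test:] for s in by_group[g]]
    return train, test
```

The same grouping should be reused for any cross-validation folds, so that no slide contributes images to both the training and the evaluation side of a fold.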

### Experimental Protocols & Data

Detailed Methodology: YCBAM for Pinworm Egg Detection

This protocol outlines the procedure for using the YOLO Convolutional Block Attention Module (YCBAM) to detect pinworm eggs [3].

- Image Acquisition: Collect microscopic images of samples using standard microscopy techniques. The model is designed to handle noisy and varied environments common in microscopic imaging.

- Data Annotation: Expert personnel manually label the images, marking the location of pinworm eggs to create ground truth data for training and validation.

- Model Architecture:

- Base Network: Use YOLOv8 as the base object detection model.

- Integration of Attention Modules: Integrate the Convolutional Block Attention Module (CBAM) into the YOLO architecture. CBAM sequentially infers attention maps along both the channel and spatial dimensions, allowing the model to focus on "what" and "where" is meaningful.

- Self-Attention Mechanism: Incorporate a self-attention mechanism to capture long-range dependencies within the image, providing a dynamic feature representation.

- Training: Train the YCBAM model on the annotated dataset. The study achieved efficient learning and convergence, indicated by a training box loss of 1.1410 [3].

- Evaluation: Evaluate model performance on a held-out test set using standard metrics. The original study reported a precision of 0.9971, recall of 0.9934, and a mAP@0.5 of 0.9950 [3].

Performance Comparison of Detection Models

The following table summarizes the quantitative performance of various deep-learning models for parasite egg detection, as reported in recent literature. This allows for easy comparison of different approaches.

Table 1: Performance Metrics of Parasite Egg Detection Models

| Model Name | Base Architecture | Key Innovation | Precision | Recall | mAP@0.5 | Parameters | Source |
|---|---|---|---|---|---|---|---|
| YCBAM | YOLOv8 | Convolutional Block Attention Module (CBAM) & Self-Attention | 0.997 | 0.993 | 0.995 | Not Specified | [3] |
| YAC-Net | YOLOv5n | Asymptotic Feature Pyramid Network (AFPN) & C2f module | 0.978 | 0.977 | 0.991 | ~1.92 million | [1] |
| CSAE | Custom Autoencoder | Convolutional Selective Autoencoder | Not Specified | Not Specified | Not Specified | Not Specified | [31] |

Note: CSAE was evaluated by accuracy rather than precision, recall, or mAP, reaching human-level accuracy (≥95% for most image sets) [31].

### Workflow Visualization

The following diagram illustrates the complete end-to-end workflow for microscopic image analysis, from acquiring the image to interpreting the model's results.

Diagram 1: End-to-End Microscopic Image Analysis Workflow

### The Scientist's Toolkit

This table lists key computational tools and resources used in the development of automated detection systems for microscopic images.

Table 2: Key Research Reagent Solutions (Computational Tools)

| Tool Name | Type/Function | Brief Description | Application in Workflow |
|---|---|---|---|
| YCBAM | Deep Learning Model | A YOLO-based model integrated with attention mechanisms for precise object detection [3]. | Model Training & Inference |
| YAC-Net | Lightweight Deep Learning Model | A modified YOLOv5 model designed for low computational resource environments [1]. | Model Training & Inference (Edge) |
| TrueSpot | Automated Software Tool | A robust tool for automated detection and quantification of fluorescent signal puncta in 2D/3D images [6]. | Image Preprocessing & Analysis |
| U-Net / ResU-Net | Segmentation Architecture | CNN architectures used for accurately segmenting pinworm eggs from complex backgrounds [3]. | Image Segmentation |
| Kubeflow | MLOps Platform | An open-source platform for running scalable and portable ML workloads on Kubernetes [32]. | Model Deployment & Orchestration |
| Weights & Biases (W&B) | Experiment Tracker | A platform for tracking experiments, versioning datasets, and visualizing results [32]. | Experiment Management |

Leveraging Transfer Learning for Effective Models with Limited Training Data

In the field of biomedical research, particularly in parasitic egg identification, researchers often face the significant challenge of developing accurate computer vision models with very limited training data. This is especially true when working with low-resolution microscopic images, where acquiring a large, expertly annotated dataset is time-consuming and expensive. Transfer Learning (TL) is a powerful machine learning technique that directly addresses this problem. It involves taking a model pre-trained on a large, general-purpose dataset (like ImageNet) and adapting or fine-tuning it for a new, specific task [33] [34]. This approach allows knowledge gained from one task to be transferred to another, related task, significantly reducing the required amount of task-specific data, computational resources, and training time [33] [35] [34].

Within a thesis focused on low-resolution microscopic images for egg identification, transfer learning is often essential, not merely convenient. As noted in research on parasitic egg detection using low-cost USB microscopes, the poor image quality and lack of detailed features make it difficult to train a robust model from scratch [2]. Transfer learning provides a pathway around these limitations by leveraging features and patterns learned from millions of high-quality natural images.

Core Concepts: How Transfer Learning Works

At its core, transfer learning repurposes the knowledge a model has already acquired. In a Convolutional Neural Network (CNN), the initial layers learn to detect very general and fundamental features like edges, curves, and textures [33]. The middle layers combine these to form more complex shapes and patterns, while the final layers are highly specialized for recognizing specific objects from the original training dataset [33].

Transfer learning capitalizes on this hierarchy by reusing the early and middle layers of a pre-trained model, which contain generally applicable feature detectors. The final, task-specific layers are then replaced and retrained on the new dataset. The two primary strategies for implementing transfer learning are:

- Feature Extractor Approach: The convolutional layers of the pre-trained model are frozen (their weights are not updated) and used as a fixed feature extractor. Only the newly added classifier layers are trained on the target dataset [36] [35]. This method is faster and less prone to overfitting on very small datasets.

- Fine-Tuning Approach: After, or instead of, the feature extractor method, some or all of the layers of the pre-trained model are unfrozen and trained on the new data with a very low learning rate [33] [35]. This allows the model to adapt its pre-learned features to the specifics of the new domain, which is crucial when the new images (e.g., low-resolution micrographs) differ significantly from the original training data.
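To make the difference between the two strategies concrete, here is a minimal NumPy sketch under toy assumptions: a fixed random projection followed by ReLU stands in for a pre-trained backbone (in practice, e.g., the convolutional layers of ResNet50), and a logistic-regression head plays the role of the newly added classifier. With `backbone_lr=0` the backbone stays frozen (feature extractor approach); with a small positive `backbone_lr` it is updated as well (fine-tuning). All names, sizes, and learning rates are illustrative, not taken from the cited studies.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for a pre-trained backbone: a fixed projection + ReLU (hypothetical;
# a real backbone would be, e.g., ResNet50's convolutional layers).
N_IN, N_FEAT = 64, 16
W_pretrained = rng.normal(scale=1 / np.sqrt(N_IN), size=(N_IN, N_FEAT))


def backbone(x, W):
    return np.maximum(x @ W, 0.0)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def bce_loss(x, y, W, w_head, b_head):
    """Mean binary cross-entropy of the full model (backbone + head)."""
    p = np.clip(sigmoid(backbone(x, W) @ w_head + b_head), 1e-12, 1 - 1e-12)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))


def train(x, y, W_init, epochs=200, head_lr=0.1, backbone_lr=0.0):
    """backbone_lr == 0 -> feature extractor; small backbone_lr > 0 -> fine-tuning."""
    W = W_init.copy()                            # never modify the pre-trained weights in place
    w_head, b_head = np.zeros(W.shape[1]), 0.0   # new classifier head, trained from zero init
    n = len(y)
    for _ in range(epochs):
        h = backbone(x, W)
        grad_z = (sigmoid(h @ w_head + b_head) - y) / n   # d(mean BCE)/d(logit)
        if backbone_lr > 0:
            # Backpropagate through the head and the ReLU into the backbone weights.
            grad_h = np.outer(grad_z, w_head) * (h > 0)
            W -= backbone_lr * (x.T @ grad_h)
        w_head -= head_lr * (h.T @ grad_z)
        b_head -= head_lr * grad_z.sum()
    return W, w_head, b_head


# Toy data standing in for flattened image patches with egg / no-egg labels.
x = rng.normal(size=(200, N_IN))
y = (x[:, 0] > 0).astype(float)

W_fe, w_fe, b_fe = train(x, y, W_pretrained)                    # feature extractor
W_ft, w_ft, b_ft = train(x, y, W_pretrained, backbone_lr=0.01)  # fine-tuning
```

In a deep-learning framework the same idea is usually expressed by disabling gradients on the backbone parameters (e.g., setting `requires_grad = False` in PyTorch) and, for fine-tuning, re-enabling them with a lower learning rate than the head.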

Essential Research Reagent Solutions

The following table details key "research reagents" (the foundational models and datasets) commonly used in transfer learning experiments for medical image analysis.

Table 1: Key Research Reagents for Transfer Learning Experiments

| Research Reagent | Type | Primary Function in Research |
|---|---|---|
| ImageNet Dataset | Dataset | A large-scale dataset of natural images used to pre-train backbone models, providing them with a rich understanding of general visual features [36] [34]. |
| ResNet (Residual Network) | Pre-trained Model | A deep CNN architecture that uses skip connections to solve the vanishing gradient problem, enabling the training of very deep networks. A popular variant is ResNet50 [36] [2]. |
| Inception (GoogleNet) | Pre-trained Model | A CNN architecture known for its efficiency and use of "inception modules" that apply multiple filter sizes in parallel, allowing the network to capture features at various scales [36] [37]. |
| VGGNet | Pre-trained Model | A CNN characterized by its simplicity and depth, using only 3x3 convolutional layers stacked on top of each other. It provides strong performance as a feature extractor [36] [35]. |
| AlexNet | Pre-trained Model | A pioneering deep CNN that significantly advanced the field of image classification. It is relatively shallow compared to modern architectures but remains a useful benchmark [36] [2]. |

Experimental Protocols and Performance

To guide experimental design, the table below summarizes methodologies and results from relevant studies, particularly in low-resolution image analysis.

Table 2: Summary of Experimental Protocols and Performance in Transfer Learning

| Study / Context | Pre-trained Models Used | TL Approach & Key Methodology | Reported Performance Metrics |
|---|---|---|---|
| Parasitic Egg Classification in Low-Mag Microscopy [2] | AlexNet, ResNet50 | Fine-tuning with a patch-based sliding window technique; the last two layers were replaced. Greyscale conversion and contrast enhancement were applied for pre-processing. | The proposed framework outperformed state-of-the-art object recognition methods (specific accuracy figures are not given here). |
| General Medical Image Analysis [36] | Inception, ResNet, VGG, etc. | Feature Extractor was the most favored single approach, followed by Fine-tuning from scratch. | TL demonstrated efficacy despite data scarcity. Deep models like ResNet or Inception as feature extractors saved computational cost without degrading performance. |
| Parasitic Egg Recognition (Chula-ParasiteEgg Dataset) [38] | Various CNN-based models, CoAtNet | A novel CoAtNet (Convolution and Attention) model was tuned for the task, leveraging both convolution and attention mechanisms. | Average Accuracy: 93%; Average F1 Score: 93%. |
| General Computer Vision [33] | VGG, ResNet, MobileNet | Feature Extractor & Fine-Tuning. Steps include: 1) Select pre-trained model, 2) Remove old classifier, 3) Add new classifier, 4) Freeze feature extractor layers, 5) Train new layers, 6) Optionally fine-tune. | TL provides improved performance, reduced training time, and lower data requirements, especially when tasks are similar. |
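The pre-processing and patch-based sliding window steps described for [2] can be sketched in NumPy as follows. The excerpt does not state the exact greyscale weights, contrast method, patch size, or stride, so the ITU-R BT.601 luma weights, percentile-based contrast stretching, 64-pixel patches, and 32-pixel stride below are assumptions chosen for illustration.

```python
import numpy as np


def to_greyscale(rgb):
    """Weighted sum of R, G, B (ITU-R BT.601 luma weights; an assumption)."""
    return rgb[..., :3].astype(float) @ np.array([0.299, 0.587, 0.114])


def stretch_contrast(img, low_pct=2.0, high_pct=98.0):
    """Linearly map the [low_pct, high_pct] percentile range to [0, 1]."""
    lo, hi = np.percentile(img, [low_pct, high_pct])
    if hi <= lo:                      # flat image: nothing to stretch
        return np.zeros_like(img, dtype=float)
    return np.clip((img - lo) / (hi - lo), 0.0, 1.0)


def sliding_window_patches(img, patch=64, stride=32):
    """Return every patch x patch window and its top-left corner at the given stride."""
    h, w = img.shape[:2]
    if h < patch or w < patch:
        raise ValueError("image is smaller than one patch")
    patches, corners = [], []
    for top in range(0, h - patch + 1, stride):
        for left in range(0, w - patch + 1, stride):
            patches.append(img[top:top + patch, left:left + patch])
            corners.append((top, left))
    return np.stack(patches), corners


# Usage on a synthetic 128 x 160 RGB frame standing in for a USB-microscope image.
rng = np.random.default_rng(0)
frame = rng.integers(0, 256, size=(128, 160, 3))
grey = stretch_contrast(to_greyscale(frame))
patches, corners = sliding_window_patches(grey)
print(patches.shape)  # (12, 64, 64)
```

Each patch would then be passed to the fine-tuned classifier, and the stored corners map positive patches back to image coordinates. With this loop, pixels beyond the last full stride are not covered when `(w - patch)` or `(h - patch)` is not a multiple of `stride`; padding the image first is one common remedy.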

Workflow for a Parasitic Egg Identification Experiment

The following diagram illustrates a typical end-to-end workflow for setting up a transfer learning experiment, incorporating steps from the cited protocols.

The Scientist's Toolkit: Troubleshooting Guides and FAQs

This section directly addresses common challenges you might encounter during your experiments, providing actionable guidance based on the principles of transfer learning.

FAQ 1: When should I use transfer learning versus training a model from scratch?

Answer: You should strongly consider transfer learning in the following scenarios, which are common in scientific research:

- You have little data: This is the primary use case. A model trained from scratch on too little data usually performs poorly, whereas starting from a pre-trained model helps produce more accurate models [33].

- You have limited computational resources or time: Training a model from scratch requires significant computation and time. Leveraging a pre-trained model drastically reduces both [33] [34].

- Your target task is related to the source task: The features learned from the pre-trained model's dataset (e.g., general shapes and edges from ImageNet) are applicable to your new task (e.g., detecting egg-shaped objects in microscope images) [33].

Training from scratch is mainly worth considering when you have a very large dataset, your images differ drastically from natural images (e.g., certain types of medical scans), and you have the computational capacity to support it [33].

FAQ 2: My model is overfitting to my small training dataset. What can I do?

Answer: Overfitting is a major challenge when working with limited data. Here are several strategies to mitigate it:

- Data Augmentation: Artificially increase the size and diversity of your training data by applying random (but realistic) transformations. For microscopic images, this can include random rotation, horizontal/vertical flipping, and slight color/contrast adjustments [2].

- Use the Feature Extractor Approach First: Before attempting fine-tuning, try using the pre-trained model as a fixed feature extractor. This involves freezing all the pre-trained layers and only training the new classifier head you have added. This significantly reduces the number of trainable parameters and thus the risk of overfitting [33] [36].

- Apply Stronger Regularization: Increasing the dropout rate and the L2 weight penalty helps prevent the model from becoming too specialized to the training data.

- Use a Validation Set: Always reserve a portion of your data for validation. Monitor the validation loss closely, and stop training when it stops improving (early stopping) to prevent the model from memorizing the training data.
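Two of these strategies, data augmentation and early stopping, are simple enough to sketch directly. The NumPy example below is a minimal illustration under stated assumptions: patches are square and scaled to [0, 1], the contrast jitter range (plus or minus 10%) and the patience value are hypothetical, and the validation-loss curve is made up for demonstration.

```python
import numpy as np


def augment(patch, rng):
    """Random 90-degree rotation, flips, and mild contrast jitter for a square patch in [0, 1]."""
    out = np.rot90(patch, k=int(rng.integers(4)))
    if rng.random() < 0.5:
        out = np.fliplr(out)
    if rng.random() < 0.5:
        out = np.flipud(out)
    factor = rng.uniform(0.9, 1.1)          # hypothetical +/-10% contrast range
    mean = out.mean()
    return np.clip((out - mean) * factor + mean, 0.0, 1.0)


class EarlyStopping:
    """Signal a stop after `patience` epochs without validation-loss improvement."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.bad_epochs = val_loss, 0   # improvement: reset the counter
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Usage: augment one patch, then replay a made-up validation-loss curve.
rng = np.random.default_rng(0)
aug = augment(rng.random((64, 64)), rng)

stopper = EarlyStopping(patience=3)
val_losses = [0.90, 0.70, 0.60, 0.61, 0.62, 0.63, 0.64]
stop_epoch = next(i for i, loss in enumerate(val_losses) if stopper.step(loss))
print(stop_epoch, stopper.best)  # 5 0.6
```

In a real training loop you would also checkpoint the weights whenever `best` improves and restore that checkpoint after stopping, so the final model corresponds to the lowest validation loss rather than the last epoch.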

FAQ 3: How do I choose which pre-trained model to use for my project?

Answer: The choice involves a trade-off between model performance, size, and computational speed. Consider the following guidelines:

- Start with Modern, Well-Established Models: Models like ResNet, Inception, and EfficientNet are generally strong starting points. Literature reviews in medical imaging have found Inception and ResNet to be among the most widely used and effective [36] [37].

- Consider Your Hardware Constraints: If you are deploying on a system with limited resources (e.g., an embedded system or mobile device), smaller architectures like MobileNet or SqueezeNet are designed for efficiency.

- Benchmark Empirically: The best model for your specific dataset can only be determined through experimentation. It is a common and good practice to empirically evaluate multiple pre-trained models on a held-out validation set to identify the optimal one for your task [36].
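As a rough illustration of why MobileNet-style architectures are cheaper, the sketch below compares weight counts for a standard convolution and a depthwise separable convolution (the building block MobileNet uses). Biases are ignored, and the 3x3 kernel with 128 input and 256 output channels is an arbitrary example rather than a layer from any specific model.

```python
def standard_conv_params(k, c_in, c_out):
    """One k x k kernel spanning all input channels for each output channel."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """One k x k depthwise filter per input channel, then a 1 x 1 pointwise convolution."""
    return k * k * c_in + c_in * c_out


std = standard_conv_params(3, 128, 256)        # 294,912 weights
sep = depthwise_separable_params(3, 128, 256)  # 1,152 + 32,768 = 33,920 weights
print(std, sep, round(std / sep, 1))           # 294912 33920 8.7
```

Roughly an order of magnitude fewer weights (and multiply-adds) per layer is what makes these models practical on embedded or mobile hardware, usually at some cost in accuracy that should be checked empirically on your own validation set.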

FAQ 4: Should I use the Feature Extractor method or Fine-Tuning?

Answer: The decision flow below outlines the key considerations for choosing the right strategy for your low-resolution image task.

FAQ 5: What is "negative transfer" and how can I avoid it?

Answer: Negative transfer occurs when the knowledge from the source task (e.g., ImageNet) actually harms the performance on the target task, instead of improving it [34]. This typically happens when the two tasks or domains are not sufficiently similar.

To avoid negative transfer:

- Ensure Domain Similarity: Transfer learning works best when the source and target problems are related. For example, a model pre-trained on natural images can be effective for microscopic images because low-level features like edges are shared [33] [34].

- Do Not Use a Mismatched Pre-Trained Model: Avoid using a model pre-trained on a completely unrelated domain if possible. For instance, a model trained solely on textual data would not be suitable for image analysis.

- Start with a Conservative Approach: Begin with the feature extractor method. If performance is poor, it may indicate a domain mismatch. Fine-tuning might help bridge the gap, but if performance continues to degrade, the chosen pre-trained model may not be appropriate for your task.

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: My microscopic images are low-resolution and often blurry. Can I still use them for reliable automated egg detection?

Yes, with the right approach. Deep learning models have been specifically developed to handle these challenges. For instance:

- Image Enhancement: Deep learning models have been reported to outperform traditional methods for enhancing low-resolution images, allowing significantly shorter scanning times without compromising detail [7].

- Robust Detection: Lightweight models like YAC-Net are designed to maintain detection performance even with low-resolution and blurred egg images, reducing hardware requirements [1].

- Pre-processing: Techniques such as Otsu thresholding and the watershed algorithm can be applied during image analysis to distinguish foreground from background and accurately identify regions of interest, improving input quality for models [39].
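Otsu's method picks the grey-level threshold that maximizes the between-class variance of the resulting foreground/background split. A minimal NumPy version is sketched below. The 256-bin histogram and the synthetic test image are assumptions; a production pipeline would more likely call a library implementation (e.g., scikit-image's `threshold_otsu`) and follow it with watershed segmentation to separate touching eggs.

```python
import numpy as np


def otsu_threshold(img, bins=256):
    """Return t such that `img > t` is Otsu's foreground."""
    hist, edges = np.histogram(img.ravel(), bins=bins)
    p = hist / hist.sum()                        # probability of each bin
    centers = (edges[:-1] + edges[1:]) / 2
    w0 = np.cumsum(p)                            # weight of the class at or below each bin
    mu_cum = np.cumsum(p * centers)              # cumulative first moment
    mu_total = mu_cum[-1]
    # Between-class variance for each split: (mu_total*w0 - mu_cum)^2 / (w0 * (1 - w0)).
    with np.errstate(divide="ignore", invalid="ignore"):
        sigma_b = (mu_total * w0 - mu_cum) ** 2 / (w0 * (1.0 - w0))
    sigma_b = np.nan_to_num(sigma_b, nan=0.0, posinf=0.0, neginf=0.0)
    k = int(np.argmax(sigma_b))
    return edges[k + 1]                          # upper edge of the last background bin


# Synthetic test: dim background with one bright 30 x 30 "egg" plus mild noise.
rng = np.random.default_rng(0)
img = np.full((100, 100), 0.2)
img[30:60, 30:60] = 0.8
img += rng.normal(0.0, 0.02, size=img.shape)
t = otsu_threshold(img)
mask = img > t
print(int(mask.sum()))  # 900
```

Because Otsu assumes a roughly bimodal histogram, it works best after contrast enhancement and can fail on unevenly illuminated fields, where a local (adaptive) threshold may be needed instead.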

Q2: I am working in a resource-constrained setting. What type of detection model should I choose?

Prioritize lightweight, one-stage detector models that balance accuracy with lower computational costs.

- Model Choice: The YOLO (You Only Look Once) family is often a strong choice. YOLO models are one-stage detectors, so they are faster and need less computational power than two-stage detectors (like Faster R-CNN) while still achieving high accuracy [1] [40].

- Proven Performance: The YOLOv4 model has demonstrated high recognition accuracy (up to 100% for some species like Clonorchis sinensis) for various parasitic helminth eggs [40]. Another study using a YOLO-based model with a Convolutional Block Attention Module (YCBAM) for pinworm eggs achieved a mean Average Precision (mAP) of 0.995 [3].
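The mAP figures quoted above are the mean, over egg classes, of each class's average precision (AP): the area under the precision-recall curve traced by detections sorted by confidence. The sketch below uses the all-point interpolation popularized by PASCAL VOC. It assumes each detection has already been labelled a true or false positive (typically via an IoU threshold such as 0.5); other protocols, such as COCO's 101-point interpolation averaged over several IoU thresholds, give different numbers, so this is not necessarily how [3] or [40] computed their results.

```python
import numpy as np


def average_precision(scores, is_tp, n_ground_truth):
    """All-point interpolated AP for one class."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    tp = np.asarray(is_tp, dtype=float)[order]     # detections ranked by confidence
    cum_tp = np.cumsum(tp)
    cum_fp = np.cumsum(1.0 - tp)
    recall = cum_tp / n_ground_truth
    precision = cum_tp / (cum_tp + cum_fp)
    # Pad the curve, then make precision non-increasing from right to left.
    mrec = np.concatenate(([0.0], recall, [1.0]))
    mpre = np.concatenate(([0.0], precision, [0.0]))
    mpre = np.maximum.accumulate(mpre[::-1])[::-1]
    # Sum rectangle areas wherever recall increases.
    idx = np.where(mrec[1:] != mrec[:-1])[0]
    return float(np.sum((mrec[idx + 1] - mrec[idx]) * mpre[idx + 1]))


# Four detections of one egg class, with three ground-truth eggs in the image set.
ap = average_precision([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 1], n_ground_truth=3)
print(round(ap, 4))  # 0.8333

# mAP is the mean of per-class APs (a hypothetical second class with AP = 1.0).
m_ap = float(np.mean([ap, 1.0]))
```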

Q3: How can I improve my model's performance when it struggles to distinguish eggs from background debris or other artifacts?

Incorporate attention mechanisms into your model architecture.

- Focus on Relevant Features: Modules like the Convolutional Block Attention Module (CBAM) help the model learn to focus on spatially and channel-wise important features, such as egg boundaries, while ignoring redundant information and background noise [3].

- Improved Feature Fusion: Using an Asymptotic Feature Pyramid Network (AFPN) instead of a standard Feature Pyramid Network (FPN) allows for better integration of contextual information from different levels, helping the model understand egg morphology more effectively and ignore irrelevant details [1].
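To show what CBAM computes, here is a NumPy sketch of its forward pass on a single feature map: channel attention from a shared two-layer MLP over average- and max-pooled channel descriptors, followed by spatial attention from a 7x7 convolution over the channel-wise average and max maps. Biases are omitted, the weights are random placeholders rather than trained values, and the reduction ratio r = 2 is an arbitrary choice; inside YCBAM the module sits within a YOLO network and is trained end to end.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def channel_attention(f, w1, w2):
    """f: (C, H, W). Shared MLP (C -> C/r -> C) on avg- and max-pooled descriptors."""
    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)
    avg, mx = f.mean(axis=(1, 2)), f.max(axis=(1, 2))
    return sigmoid(mlp(avg) + mlp(mx))[:, None, None]          # (C, 1, 1)


def spatial_attention(f, kernel):
    """kernel: (2, k, k), applied with zero 'same' padding to [mean_c(f); max_c(f)]."""
    desc = np.stack([f.mean(axis=0), f.max(axis=0)])           # (2, H, W)
    k = kernel.shape[-1]
    padded = np.pad(desc, ((0, 0), (k // 2, k // 2), (k // 2, k // 2)))
    _, h, w = f.shape
    logits = np.empty((h, w))
    for i in range(h):
        for j in range(w):
            logits[i, j] = np.sum(padded[:, i:i + k, j:j + k] * kernel)
    return sigmoid(logits)[None, :, :]                         # (1, H, W)


def cbam(f, w1, w2, kernel):
    f = f * channel_attention(f, w1, w2)      # 1) re-weight channels
    return f * spatial_attention(f, kernel)   # 2) re-weight spatial positions


rng = np.random.default_rng(0)
C, r, H, W = 8, 2, 16, 16
feat = rng.normal(size=(C, H, W))
w1 = rng.normal(scale=0.1, size=(C // r, C))   # placeholder weights, not trained
w2 = rng.normal(scale=0.1, size=(C, C // r))
kernel = rng.normal(scale=0.1, size=(2, 7, 7))
refined = cbam(feat, w1, w2, kernel)
print(refined.shape)  # (8, 16, 16)
```

Because both attention maps lie in (0, 1), CBAM can only attenuate features; training teaches it which channels and locations (e.g., egg boundaries rather than debris) to leave nearly untouched.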

Q4: What is the gold standard for evaluating the detection performance of my model?

Use the following established object detection metrics, which are calculated based on True Positives (TP), False Positives (FP), and False Negatives (FN) [40]:

- Precision:

Precision = TP / (TP + FP). Reflects the proportion of detected eggs that are actually eggs (high precision means few false positives).

- Recall: