Optimizing Dimensionality Reduction for Morphometric Discriminant Analysis: A Guide for Biomedical Researchers

Abstract

Morphometric analysis is pivotal in biomedical research for discerning subtle phenotypic changes, yet its high-dimensional nature poses significant analytical challenges. This article provides a comprehensive guide for researchers and drug development professionals on optimizing dimensionality reduction (DR) techniques to enhance morphometric discriminant analysis. We explore the foundational principles of DR in biological contexts and evaluate the performance of leading linear and non-linear methods, including UMAP, t-SNE, and PaCMAP, on real-world datasets such as drug-induced transcriptomes. The guide delves into methodological applications, tackles common troubleshooting and optimization scenarios including parameter tuning and handling dose-dependent variations, and presents a rigorous framework for the validation and comparative analysis of DR outputs. By integrating insights from recent benchmarking studies and advanced machine learning approaches, this resource aims to equip scientists with the knowledge to select, apply, and validate DR methods effectively, thereby improving the reliability and biological interpretability of their morphometric studies.

The Why and What: Establishing the Core Principles of Dimensionality Reduction in Morphometrics

Defining the High-Dimensional Challenge in Morphometrics and Drug Response

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: What constitutes a "high-dimensional" dataset in morphometrics and drug screening?

In morphometrics and drug screening, dimensionality refers to the number of features or variables measured per sample. A dataset is considered high-dimensional when the number of features (e.g., hundreds to thousands of morphological or gene expression parameters) is comparable to or exceeds the number of observations, which complicates both estimation and computation [1] [2].

- Example from the field: A typical high-dimensional profiling experiment might capture roughly 1,000 morphological features from Cell Painting and ~978 gene expression levels from the L1000 assay for each sample, across tens of thousands of chemical and genetic perturbations [3].

FAQ 2: What are the primary challenges when working with high-dimensional morphometric data?

High-dimensional data introduces several critical challenges that can hinder analysis and interpretation:

- The Curse of Dimensionality: As the number of features grows, data becomes sparse, making it difficult to identify patterns. Conventional distance metrics also lose effectiveness [2].

- Data Sparsity and Redundancy: Many measured features may be irrelevant or convey the same information, introducing noise and increasing computational load without benefit [2].

- Increased Risk of Overfitting: Models can easily learn noise or idiosyncrasies in the training data rather than meaningful biological patterns, leading to poor performance on new, unseen data [3] [2].

- High Computational Complexity: Processing, storing, and analyzing datasets with thousands of features demands significant time and memory resources [2].

- Measurement Error and Pooling Risks: In morphometrics, pooling datasets from multiple operators or devices can introduce systematic biases and errors that are difficult to disentangle from true biological variation, especially when the biological signal is subtle [4].
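The loss of distance contrast mentioned under the curse of dimensionality can be illustrated with a small simulation. This is a minimal sketch on uniformly random synthetic points, not morphometric data; the sample size, the feature counts, and the helper name `relative_contrast` are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_points = 500  # hypothetical number of samples


def relative_contrast(dim):
    """Return (max - min) / min of Euclidean distances from one query point."""
    points = rng.uniform(size=(n_points, dim))
    query = rng.uniform(size=dim)
    dists = np.linalg.norm(points - query, axis=1)
    return (dists.max() - dists.min()) / dists.min()


# As the number of features grows, nearest and farthest points become
# almost equally distant, so the relative contrast shrinks.
contrast = {dim: relative_contrast(dim) for dim in (2, 10, 100, 1000)}
for dim, value in contrast.items():
    print(f"{dim:>5} features: relative contrast = {value:.2f}")
```

A shrinking contrast means that "nearest" and "farthest" neighbours are hard to tell apart, which is why neighbourhood-based methods tend to benefit from a reduction step first.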

FAQ 3: How can I predict one data modality from another, and what accuracy can I expect?

It is possible to computationally predict one profiling modality from another (e.g., gene expression from morphology) by leveraging the shared information subspace between them [3].

Baseline Protocol: Cross-Modality Prediction

- Data Preparation: Obtain treatment-level profiles for your perturbations (e.g., morphological profiles and gene expression profiles) [3].

- Model Setup: Frame the problem as a regression. To predict the mRNA level of a single landmark gene (y_l) from morphological features (X_cp), use the model y_l = f(X_cp) + e_l [3].

- Model Training: Use a regression model. Baseline studies suggest:

- Validation: Evaluate performance using metrics like accuracy and area under the receiver operating characteristic curve (AUC).

Expected Performance: Performance varies by dataset. Some show excellent accuracy for specific predictions, while others do not. One study comparing high-dimensional vs. low-dimensional models for detecting imaging response to treatment in multiple sclerosis found a significant improvement, with AUC increasing from 0.686 (low-dimensional) to 0.890 (high-dimensional) [5].
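The regression framing y_l = f(X_cp) + e_l from the baseline protocol can be sketched as follows. This is an illustrative sketch only: the data are synthetic, Lasso is used because it appears in Table 1 rather than because the cited study is known to prescribe it, and the example scores with R², a regression metric, instead of the accuracy and AUC named in the protocol. All sizes and coefficients are assumptions.

```python
import numpy as np
from sklearn.linear_model import LassoCV
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_treatments, n_features = 300, 100  # hypothetical sizes

# Synthetic stand-in for treatment-level morphological profiles (X_cp).
X_cp = rng.normal(size=(n_treatments, n_features))

# Assumption: only five features carry signal for this landmark gene.
true_coef = np.zeros(n_features)
true_coef[:5] = [1.5, -1.0, 0.8, 0.6, -0.5]
y_l = X_cp @ true_coef + rng.normal(scale=0.5, size=n_treatments)  # f(X_cp) + e_l

X_train, X_test, y_train, y_test = train_test_split(
    X_cp, y_l, test_size=0.25, random_state=0
)

# Scaling and the L1 penalty strength are both learned on training data only.
model = make_pipeline(StandardScaler(), LassoCV(cv=5, random_state=0))
model.fit(X_train, y_train)

r2_test = model.score(X_test, y_test)            # held-out R^2
n_selected = int(np.sum(model[-1].coef_ != 0))   # features with non-zero weight
print(f"held-out R^2 = {r2_test:.2f}, non-zero coefficients = {n_selected}")
```

In practice one such model would be fitted per landmark gene, and performance on real profiles should be expected to be far lower than on this clean synthetic example.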

FAQ 4: What are the best techniques to reduce dimensionality in my data?

The optimal technique depends on your data structure and research goal. The table below summarizes common approaches.

Table 1: Dimensionality Reduction and Feature Selection Techniques

| Technique | Category | Brief Description | Best Use Cases |

|---|---|---|---|

| Principal Component Analysis (PCA) | Dimensionality Reduction | Transforms data into uncorrelated principal components that capture maximum variance [2]. | Linear data structures; efficient, interpretable reduction [2]. |

| Linear Discriminant Analysis (LDA) | Dimensionality Reduction | A supervised technique that finds feature combinations that best separate classes [2]. | Classification problems with labeled data [2]. |

| t-SNE / UMAP | Dimensionality Reduction | Non-linear techniques that preserve local relationships and complex structures [2]. | Visualizing complex, non-linear data patterns [2]. |

| Lasso (L1) Regularization | Feature Selection | Adds a penalty that shrinks coefficients, effectively performing feature selection by zeroing out irrelevant features [3] [2]. | Sparse datasets where only a subset of features is relevant; integrated into model training [2]. |

| Random Forests | Feature Selection | Tree-based algorithms that naturally rank feature importance through the training process [2]. | Handling high-dimensional data with varying feature relevance; robust to irrelevant features [2]. |

FAQ 5: My multi-operator morphometric study shows high variation. How can I troubleshoot this?

High inter-operator (IO) variation is a common issue that threatens the validity of pooled datasets [4].

- Troubleshooting Guide: Mitigating Inter-Operator Bias

- Problem: Landmark Misplacement

- Problem: Systematic Bias in Specific Regions

- Cause: Certain complex morphological regions (e.g., curves and surfaces) are more prone to inconsistent digitization [6].

- Solution: For complex structures, employ sliding semilandmarks on curves and surfaces. This method uses a template to semi-automate placement, minimizing subjectivity after initial landmark and curve definition [6].

- Problem: Inability to Disentangle Operator Effect from Biological Signal

- Cause: IO error is of the same magnitude or direction as the biological variation of interest [4].

- Solution: Follow a pre-pooling validation workflow [4]:

- Estimate intra-operator and IO measurement errors using a pilot dataset.

- Compare the amount of variation introduced by IO error to the biological variation under study.

- If IO error is significant and non-random, avoid pooling data from problematic operators or protocols.

Experimental Protocols

Protocol 1: Workflow for Assessing Measurement Error Before Pooling Morphometric Datasets

This protocol helps determine if datasets from multiple operators can be pooled reliably [4].

- Pilot Data Collection: Have each operator perform repeated measurements on an identical subset of specimens.

- Data Acquisition: Apply your morphometric protocol (e.g., landmark-only, landmarks with semilandmarks) to the pilot data [4].

- Error Quantification:

- Calculate intra-operator error for each operator by comparing their own replicates.

- Calculate inter-operator (IO) error by comparing measurements of the same specimen across different operators.

- Statistical Comparison: Compare the magnitude of IO error to the size of the biological effect you are studying (e.g., variation between species or treatment groups).

- Decision Point:

- If IO error is small relative to biological effect → Datasets can likely be pooled.

- If IO error is large or systematic → Do not pool datasets; instead, refine the measurement protocol and retrain operators.

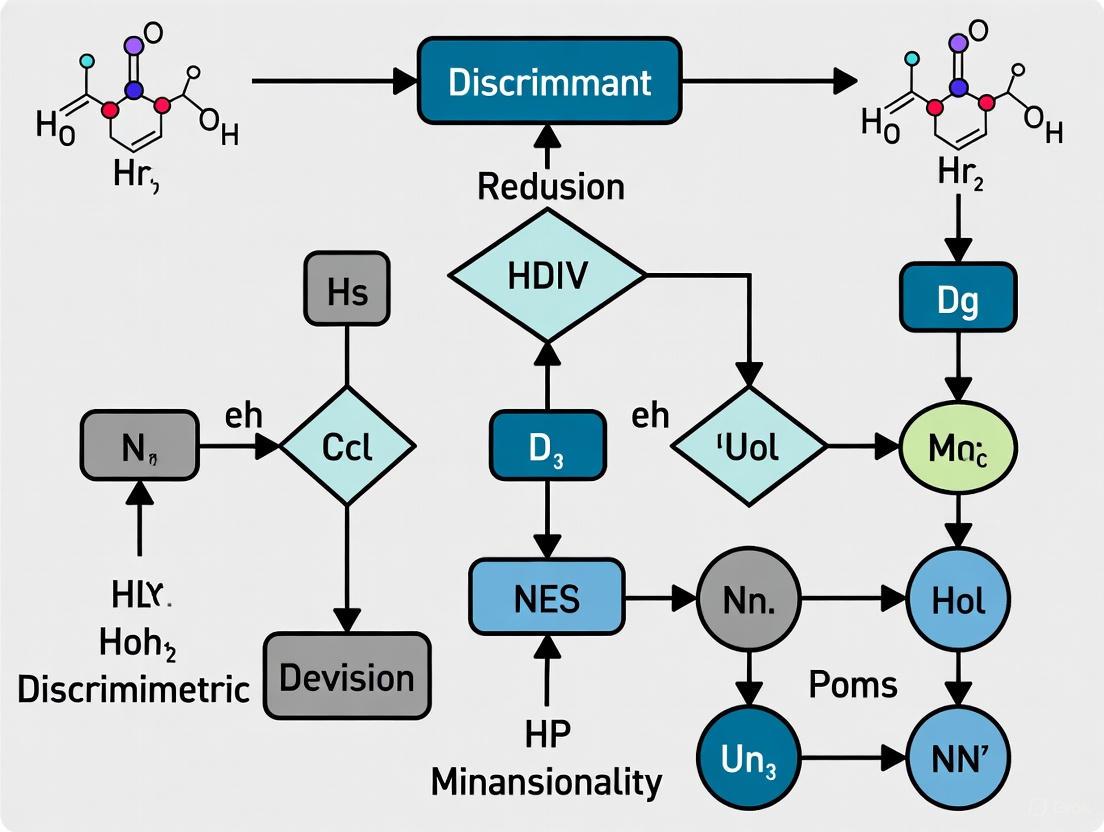

The following workflow diagram illustrates the key decision points in this process:
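The error quantification and comparison steps of Protocol 1 can be sketched numerically. The example below is a simplified illustration on simulated coordinates: the pilot design (20 specimens, 3 operators, 3 replicates), the noise levels, and the plain variance-component summaries are all assumptions, and a real study would more likely use a Procrustes ANOVA or a similar formal decomposition.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical pilot design: specimens x operators x replicates x coordinates.
n_specimens, n_operators, n_replicates, n_coords = 20, 3, 3, 10

true_shape = rng.normal(scale=1.0, size=(n_specimens, 1, 1, n_coords))      # biology
operator_bias = rng.normal(scale=0.2, size=(1, n_operators, 1, n_coords))   # IO bias
noise = rng.normal(scale=0.1,
                   size=(n_specimens, n_operators, n_replicates, n_coords))
data = true_shape + operator_bias + noise

# Intra-operator error: spread of replicates around each operator-by-specimen mean.
cell_means = data.mean(axis=2, keepdims=True)
intra_var = ((data - cell_means) ** 2).sum(axis=(2, 3)).mean() / (n_replicates - 1)

# Inter-operator error: spread of operator means around each specimen mean.
specimen_means = cell_means.mean(axis=1, keepdims=True)
inter_var = (((cell_means - specimen_means) ** 2).sum(axis=(1, 2, 3)).mean()
             / (n_operators - 1))

# Biological variation: spread of specimen means around the grand mean.
grand_mean = specimen_means.mean(axis=0, keepdims=True)
bio_var = ((specimen_means - grand_mean) ** 2).sum() / (n_specimens - 1)

io_ratio = inter_var / bio_var
print(f"intra = {intra_var:.3f}, inter = {inter_var:.3f}, "
      f"biological = {bio_var:.3f}, IO/biological = {io_ratio:.3f}")
```

A small IO-to-biological ratio supports pooling. What counts as "small" depends on the effect size under study and is not fixed by this sketch.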

Protocol 2: High-Dimensional Model for Detecting Imaging Response to Treatment

This protocol outlines the methodology for using high-dimensional modeling to detect subtle treatment effects in medical imaging, as demonstrated in multiple sclerosis research [5].

- Image Acquisition and Processing: Collect longitudinal, standard-of-care MRI scans from patients (pre- and post-treatment).

- Feature Extraction: Use fully-automated image analysis software to extract a high-dimensional set of features. The cited study extracted 144 regional trajectories of brain volume change and disconnection over time [5].

- Confounder Regression: Statistically adjust the extracted imaging-derived parameters for potential confounders (e.g., age, sex, scan timing) to ensure residual effects are not due to these variables [5].

- Model Building and Training:

- Build a high-dimensional model of the relationship between treatment and the trajectories of change. The cited study used an Extremely Randomized Trees (ERT) classifier [5].

- For comparison, build a conventional, low-dimensional model using a limited set of common biomarkers (e.g., total lesion count, whole-brain volume).

- Model Evaluation:

- Quantify performance using receiver operating characteristic (ROC) curves and calculate the Area Under the Curve (AUC).

- Perform statistical testing (e.g., via simulated randomized controlled trials) to compare the statistical power and efficiency of high-dimensional versus low-dimensional models [5].
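The confounder regression, model building, and ROC evaluation steps of Protocol 2 can be sketched on synthetic data. This is not the cited study's pipeline: the patient count, effect sizes, the single `age` confounder, and the use of two features as a stand-in for conventional biomarkers are all assumptions. Only the feature count (144) and the classifier family (extremely randomized trees) follow the text above.

```python
import numpy as np
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.linear_model import LinearRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_predict

rng = np.random.default_rng(0)
n_patients, n_features = 400, 144           # synthetic cohort, 144 features
treated = rng.integers(0, 2, size=n_patients)
age = rng.normal(45, 10, size=n_patients)   # hypothetical confounder

X = rng.normal(size=(n_patients, n_features))
X[:, :30] += 0.6 * treated[:, None]         # weak effect spread over 30 features
X += 0.02 * (age[:, None] - 45)             # confounder shifts every feature

# Confounder regression: keep the residuals after regressing features on age.
confounders = age.reshape(-1, 1)
X_resid = X - LinearRegression().fit(confounders, X).predict(confounders)


def cv_auc(features):
    """Cross-validated AUC of an extremely randomized trees classifier."""
    clf = ExtraTreesClassifier(n_estimators=300, random_state=0)
    proba = cross_val_predict(clf, features, treated, cv=5,
                              method="predict_proba")[:, 1]
    return roc_auc_score(treated, proba)


auc_high = cv_auc(X_resid)         # high-dimensional model: all 144 features
auc_low = cv_auc(X_resid[:, :2])   # low-dimensional model: two features only
print(f"AUC high-dimensional = {auc_high:.3f}, low-dimensional = {auc_low:.3f}")
```

The simulated effect is deliberately spread thinly across many features, which is the situation in which a high-dimensional model would be expected to help. The numbers it prints say nothing about real imaging data.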

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Assays for High-Dimensional Profiling

| Item or Assay | Function in High-Dimensional Research |

|---|---|

| Cell Painting Assay | A high-content, microscopy-based assay that uses fluorescent dyes to stain up to eight cellular components, generating ~1,000 morphological features that form a high-dimensional profile for each sample [3]. |

| L1000 Assay | A high-throughput gene expression profiling technology that measures the mRNA levels of ~978 "landmark" genes, capturing a large portion of the transcriptional state of a cell population under perturbation [3]. |

| Sliding Semilandmarks | A geometric morphometric method used to quantify shapes of complex biological structures (e.g., bones, organs) along curves and surfaces, allowing for dense and biologically informed capture of morphology beyond traditional landmarks [6]. |

| t-SNE / UMAP | Non-linear dimensionality reduction algorithms critical for visualizing and exploring the structure of high-dimensional data (e.g., from Cell Painting) by preserving local relationships in a 2D or 3D map [2]. |

| Lasso (L1) Regression | A regularized regression technique that not only builds predictive models but also performs feature selection by shrinking the coefficients of less important features to zero, helping to simplify high-dimensional models [3] [2]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between local and global structure in my high-dimensional biological data?

Local structure refers to the fine-grained relationships and distances between data points that are close neighbors in the high-dimensional space. In contrast, global structure describes the overall geometry, large-scale patterns, and relationships between distant data points. Preserving local structure means maintaining the accuracy of small-scale clustering, which is crucial for identifying distinct cell populations or subtle morphological variations. Global structure preservation ensures that the broader organization and relative positioning of major clusters remain intact, which is essential for understanding large-scale phenotypic differences.

FAQ 2: When should I prioritize local structure preservation over global structure in morphometric analysis?

Prioritize local structure preservation when your research focuses on identifying fine-grained subpopulations, detecting rare cell types, or analyzing subtle shape variations. For instance, when classifying children's nutritional status from arm shape landmarks, preserving local structure helps capture the subtle morphological differences that distinguish between healthy and malnourished individuals. Conversely, prioritize global structure when analyzing broad phenotypic categories or when the overall data topology is more important than fine-grained cluster separation.

FAQ 3: How does the "curse of dimensionality" affect my ability to preserve both local and global structures?

The curse of dimensionality describes the exponential increase in complexity and data sparsity that occurs as the number of dimensions grows. In high-dimensional spaces, distance measures become less meaningful, making it difficult for any single dimensionality reduction technique to faithfully preserve both local and global relationships. This is particularly problematic in biological data like transcriptomics, where you might measure thousands of genes across only a few samples, or in morphometrics with numerous landmark coordinates.

FAQ 4: What are the practical consequences of choosing a technique that poorly preserves local structure in morphometric data?

Poor local structure preservation can lead to the loss of biologically meaningful fine-grained patterns. In geometric morphometrics for nutritional assessment, this might mean failing to distinguish between subtle arm shape variations that indicate different malnutrition states. Clusters that represent distinct biological entities may merge artificially, while homogeneous populations might appear fragmented, leading to incorrect biological interpretations and reduced classification accuracy.

FAQ 5: Can I use multiple dimensionality reduction techniques in tandem to better address both structure types?

Yes, combining multiple techniques is often beneficial. A common approach is to use a linear method like Principal Component Analysis for initial noise reduction and global structure preservation, followed by a nonlinear method like UMAP or t-SNE for enhanced local structure visualization and clustering. This hybrid approach can leverage the strengths of different algorithms while mitigating their individual limitations.
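A minimal sketch of the hybrid approach, assuming scikit-learn is available and using t-SNE for the non-linear step (UMAP would slot into the same place). The synthetic blobs, the choice of 10 principal components, and the perplexity are illustrative assumptions, not recommendations.

```python
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.manifold import TSNE
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a high-dimensional morphometric feature matrix.
X, labels = make_blobs(n_samples=300, n_features=50, centers=4, random_state=0)

# Step 1: linear reduction for denoising and global variance structure.
X_scaled = StandardScaler().fit_transform(X)
X_pca = PCA(n_components=10, random_state=0).fit_transform(X_scaled)

# Step 2: non-linear embedding of the PCA scores for local structure.
embedding = TSNE(n_components=2, perplexity=30, init="pca",
                 random_state=0).fit_transform(X_pca)
print(X_pca.shape, embedding.shape)
```

Running the non-linear step on PCA scores rather than raw features also reduces its runtime, which matters for t-SNE on larger datasets.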

Troubleshooting Guides

Issue 1: Poor Cluster Separation in Low-Dimensional Embedding

Symptoms: Biologically distinct populations appear merged in the reduced space; clustering algorithms perform poorly on the embedded data.

Diagnosis and Solutions:

Check Local Structure Preservation: If known subpopulations are merging, your technique may be over-prioritizing global structure. Switch to or add a method that better preserves local neighborhoods.

- Action: Compare results using UMAP with different neighborhood size parameters. Smaller neighborhood sizes will emphasize local structure.

- Example: In single-cell data, if T-cell subsets are not separating, reduce the n_neighbors parameter in UMAP from the default (15) to a smaller value (e.g., 5-10).

Assess Input Data Quality: High noise or irrelevant features can obscure biological signals.

- Action: Apply feature selection (e.g., using Random Forest feature importance) before dimensionality reduction. Preprocess your morphometric data to remove technical artifacts.

Validate with Known Labels: Use a small set of known, confidently labeled data points to verify whether the embedding maintains their relationships.
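The feature selection action above can be sketched with scikit-learn's `SelectFromModel` wrapped around a random forest. The synthetic dataset and the `"median"` importance threshold are assumptions for illustration; a suitable threshold for real data needs to be chosen empirically.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel

# Synthetic data: 200 features, of which only 10 are informative.
X, y = make_classification(n_samples=300, n_features=200, n_informative=10,
                           n_redundant=0, random_state=0)

# Keep the features whose forest importance is at or above the median importance.
forest = RandomForestClassifier(n_estimators=300, random_state=0)
selector = SelectFromModel(forest, threshold="median").fit(X, y)
X_selected = selector.transform(X)
print(f"kept {X_selected.shape[1]} of {X.shape[1]} features")
```

Because this selection step uses the labels, it should be fitted inside each cross-validation fold when the embedding feeds a classifier. Otherwise the performance estimate will be optimistic.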

Issue 2: Loss of Meaningful Global Topology

Symptoms: The overall arrangement of clusters appears distorted; relationships between major populations do not reflect known biology; distances between clusters are not interpretable.

Diagnosis and Solutions:

Technique Selection Error: Nonlinear methods like t-SNE are designed to prioritize local structure and often distort global relationships.

- Action: For global structure analysis, use Principal Component Analysis, which is designed to preserve global variance. The first principal component captures the direction of maximum variance in the data, followed by subsequent components that capture the next highest variances while being uncorrelated with previous components.

Parameter Tuning: Some methods offer parameters that balance local/global preservation.

- Action: In UMAP, increasing the n_neighbors parameter shifts the balance toward global structure, and increasing the min_dist parameter spreads points out so that broad topology is emphasized over tight local packing. In t-SNE, increasing perplexity may help capture more global relationships.

Comparative Analysis: Run multiple methods and compare the consistent patterns across them. Persistent patterns across different techniques are more likely to represent true biological structure.

Issue 3: Inconsistent Results When Adding New Data

Symptoms: Embedding changes dramatically when new samples are projected; classification rules built on the original embedding fail on new data.

Diagnosis and Solutions:

Out-of-Sample Projection Problem: Some techniques create embeddings specific to a dataset and lack a straightforward way to add new points.

- Action: Use methods with built-in projection capabilities or established protocols. For geometric morphometrics, establish a fixed template or Procrustes registration method for new individuals. Research shows that sample-dependent processing steps like Generalized Procrustes Analysis need special consideration for out-of-sample classification.

Model Stability: Ensure your embedding is stable and representative.

- Action: Use a sufficiently large and diverse training set. For methods like PCA, you can project new data into the existing principal component space defined by your original dataset.

Implementation Check: Verify that you are using the same preprocessing, normalization, and parameter settings for both training and new data.
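For PCA, projecting new samples into an existing space can be sketched with a scikit-learn pipeline, which also enforces identical preprocessing for training and new data. The array sizes are hypothetical and the data are random. For landmark data, the new specimens would first need to be registered to the training template, which this sketch does not cover.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X_train = rng.normal(size=(100, 30))  # original dataset (hypothetical)
X_new = rng.normal(size=(5, 30))      # samples that arrive later

# Scaling parameters and principal axes are learned from the training data only.
reducer = make_pipeline(StandardScaler(), PCA(n_components=5))
train_scores = reducer.fit_transform(X_train)

# New samples reuse the stored means, scales, and axes; nothing is refitted.
new_scores = reducer.transform(X_new)
print(train_scores.shape, new_scores.shape)
```

Because the fitted pipeline is never refitted, the existing embedding and any classification rule built on it stay unchanged when new samples are projected.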

Dimensionality Reduction Technique Comparison

The table below summarizes how common techniques balance local versus global structure preservation:

| Technique | Local Structure Preservation | Global Structure Preservation | Best Use Cases in Morphometrics |

|---|---|---|---|

| Principal Component Analysis | Poor | Excellent | Initial exploration, noise reduction, visualizing major sources of shape variance. |

| UMAP | Excellent | Good (adjustable) | Identifying fine-grained subpopulations, detailed cluster analysis. |

| t-SNE | Excellent | Poor | Visualizing local clustering structure when global topology is not required. |

| Autoencoders | Adjustable | Adjustable | Handling complex nonlinearities; architecture and loss function determine preservation focus. |

Experimental Protocol: Comparing Local/Global Preservation

Objective: Systematically evaluate how well different dimensionality reduction techniques preserve the local and global structure of your morphometric data.

Materials:

- High-dimensional morphometric dataset (e.g., landmark coordinates)

- Computing environment with Python/R and relevant DR libraries

- Ground truth labels (if available) for biological populations

Methodology:

Data Preprocessing:

- Standardize your data by subtracting the mean and scaling to unit variance.

- For geometric morphometric data, perform Procrustes alignment to remove non-shape variation.

Baseline Generation:

- Compute a distance matrix in the original high-dimensional space (e.g., Procrustes distance for shape data).

Dimensionality Reduction:

- Apply multiple techniques to the same dataset:

- PCA

- t-SNE (vary perplexity: 5, 30, 50)

- UMAP (vary n_neighbors: 5, 15, 50)

Structure Preservation Assessment:

- Local Structure: For each point, identify its k-nearest neighbors in the original space. Calculate what percentage are preserved as nearest neighbors in the embedded space.

- Global Structure: Calculate the correlation between pairwise distances in the original high-dimensional space and the embedded low-dimensional space.

Biological Validation:

- Apply clustering to the embeddings and compare cluster purity against biological labels.

- Visually inspect embeddings for biological coherence.
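The two structure preservation measures in this protocol can be sketched as below. The helper names `knn_preservation` and `pairwise_distance_correlation` are hypothetical, Spearman correlation is one reasonable choice among several, and Euclidean distance on synthetic blobs stands in for Procrustes distance on real shape data. Only PCA is evaluated here; other embeddings can be passed to the same functions.

```python
import numpy as np
from scipy.spatial.distance import pdist
from scipy.stats import spearmanr
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.neighbors import NearestNeighbors
from sklearn.preprocessing import StandardScaler


def knn_preservation(X_high, X_low, k=10):
    """Mean fraction of each point's k nearest neighbours shared by both spaces."""
    def neighbours(X):
        nn = NearestNeighbors(n_neighbors=k + 1).fit(X)
        # Column 0 is the query point itself, so it is dropped.
        return nn.kneighbors(X, return_distance=False)[:, 1:]

    shared = [len(set(a) & set(b))
              for a, b in zip(neighbours(X_high), neighbours(X_low))]
    return float(np.mean(shared)) / k


def pairwise_distance_correlation(X_high, X_low):
    """Spearman correlation between all pairwise distances in the two spaces."""
    rho, _ = spearmanr(pdist(X_high), pdist(X_low))
    return float(rho)


X, labels = make_blobs(n_samples=200, n_features=20, centers=3, random_state=0)
X_high = StandardScaler().fit_transform(X)
X_low = PCA(n_components=2).fit_transform(X_high)

local_score = knn_preservation(X_high, X_low, k=10)
global_score = pairwise_distance_correlation(X_high, X_low)
print(f"local (kNN overlap) = {local_score:.2f}, "
      f"global (distance correlation) = {global_score:.2f}")
```

Tabulating these two scores for each method and parameter setting gives the local and global comparison this protocol calls for.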

Research Reagent Solutions

The table below outlines key computational tools for dimensionality reduction in morphometric research:

| Tool/Technique | Function | Key Consideration |

|---|---|---|

| Principal Component Analysis | Linear dimensionality reduction; maximizes variance explained. | Excellent for global structure; provides interpretable components. |

| UMAP | Nonlinear dimensionality reduction; preserves local neighborhood structure. | Highly effective for local structure; global preservation tunable via parameters. |

| t-SNE | Nonlinear technique focusing on local probability distributions. | Excellent for visualization of local clusters; distances between clusters not meaningful. |

| Variational Autoencoder | Deep learning approach for nonlinear dimensionality reduction. | Highly flexible; can learn complex manifolds but requires significant data and tuning. |

| Procrustes Analysis | Aligns shapes by removing translation, rotation, and scaling effects. | Essential preprocessing for geometric morphometrics before applying other DR techniques. |
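As a small illustration of what Procrustes alignment removes, the sketch below aligns one landmark configuration to a rotated, scaled, and translated copy of itself using `scipy.spatial.procrustes`. This is ordinary two-shape Procrustes analysis, not the Generalized Procrustes Analysis typically applied to whole samples, and the landmark coordinates are random placeholders.

```python
import numpy as np
from scipy.spatial import procrustes

rng = np.random.default_rng(0)
reference = rng.normal(size=(12, 2))  # 12 hypothetical 2D landmarks

# Same shape after a 30-degree rotation, 2.5x scaling, and a translation.
theta = np.deg2rad(30)
rotation = np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta), np.cos(theta)]])
moved = 2.5 * reference @ rotation.T + np.array([4.0, -1.0])

# The same configuration with a small amount of digitization noise added.
noisy = moved + rng.normal(scale=0.05, size=moved.shape)

# The disparity is the residual sum of squares after optimal superimposition.
_, _, disparity_exact = procrustes(reference, moved)
ref_aligned, noisy_aligned, disparity_noisy = procrustes(reference, noisy)
print(f"exact copy: {disparity_exact:.2e}, noisy copy: {disparity_noisy:.2e}")
```

The exact copy aligns with essentially zero residual, so what remains for the noisy copy reflects shape difference (here, simulated digitization error) rather than position, size, or orientation.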

FAQs: Selecting and Troubleshooting Dimensionality Reduction Techniques

Q1: My high-dimensional morphometric data is causing my classification model to overfit. What is the most straightforward technique to improve generalizability?

A1: Principal Component Analysis (PCA) is often the most suitable initial approach. PCA is a linear dimensionality reduction technique that enhances model generalizability by transforming correlated variables into a set of uncorrelated principal components, capturing the maximum variance in the data with fewer features [7] [8]. This process reduces model complexity and helps prevent overfitting, which is a common consequence of the "curse of dimensionality" where data becomes sparse [7] [9]. To implement PCA, first standardize your data, then compute the covariance matrix and its eigenvectors (principal components) and eigenvalues (variance explained) [10] [11]. You can choose the number of components by selecting the top \(k\) eigenvectors that capture a sufficient amount (e.g., 95%) of the total variance [9].
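One way to apply this in practice is to place PCA inside a cross-validated pipeline so that the components are learned from training folds only. The sketch below assumes scikit-learn. The synthetic dataset, the logistic regression classifier, and the 95% threshold are illustrative choices rather than prescriptions.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# More features (300) than specimens (150), many of them redundant.
X, y = make_classification(n_samples=150, n_features=300, n_informative=10,
                           n_redundant=100, random_state=0)

pipeline = make_pipeline(
    StandardScaler(),
    # A float n_components keeps the fewest components reaching that variance share.
    PCA(n_components=0.95, svd_solver="full"),
    LogisticRegression(max_iter=1000),
)

# Scaling and PCA are refitted inside every fold, so no test data leaks in.
cv_accuracy = cross_val_score(pipeline, X, y, cv=5).mean()

pipeline.fit(X, y)
pca = pipeline.named_steps["pca"]
print(f"components kept: {pca.n_components_}, "
      f"variance explained: {pca.explained_variance_ratio_.sum():.3f}, "
      f"CV accuracy: {cv_accuracy:.3f}")
```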

Q2: When should I choose a non-linear method like t-SNE over a linear method like PCA for my data?

A2: Choose a non-linear method when your data involves complex, non-linear relationships that a linear projection cannot adequately capture [12]. While PCA focuses on preserving global variance, t-SNE is designed to preserve the local structure of the data, making it superior for visualizing clusters and understanding small-scale patterns [7] [9]. Research comparing PCA to non-linear methods on morphometric data has found that non-linear techniques show superior preservation of small differences between morphologies [13]. However, note that t-SNE is primarily a visualization tool for 2D or 3D spaces and is computationally intensive, making it less suitable for general-purpose feature reduction preceding other algorithms [7] [9].

Q3: I need to reduce dimensions for a supervised classification task involving multiple fish species. Should I use PCA or LDA?

A3: For a supervised classification task like discriminating between species, Linear Discriminant Analysis (LDA) is typically more appropriate. Unlike the unsupervised PCA, LDA is a supervised technique that explicitly uses class labels to project data onto a lower-dimensional space [7] [11]. The goal of LDA is to maximize the separation between different classes while minimizing the spread (variance) within each class [11]. This has been proven useful in morphometric discriminant analysis research, for instance, in the differentiation of six native freshwater fish species in Ecuador, where LDA successfully created models that could discriminate between species based on morphometric measurements [14].

Q4: The clusters in my t-SNE plot look different every time I run it. What key hyperparameters should I tune for stability and meaningful results?

A4: t-SNE uses random initialization and stochastic optimization, so results can vary between runs. Fixing the random seed makes a given configuration repeatable. Beyond that, to improve stability and interpretability, focus on tuning these key hyperparameters [9]:

- Perplexity: This parameter controls the size of the effective neighborhood for each point. It can be thought of as a guess for the number of close neighbors each point has. Typical values are between 5 and 50 [9]. A low perplexity focuses on very local structures, while a high perplexity considers more global patterns.

- Learning Rate: This controls the step size of the optimization process (gradient descent). If the learning rate is too high, the visualization may look like a "ball" of scattered points. If it's too low, the algorithm may get stuck in a poor local minimum [9].

- Exaggeration: This hyperparameter controls the magnitude of attraction between similar points in the early stages of optimization, helping to form more distinct clusters [9].
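These hyperparameters map onto arguments of scikit-learn's `TSNE` class, as in the sketch below. The specific values are starting points taken from the ranges discussed above, not tuned recommendations, and the data are synthetic. The seed is fixed through `random_state`.

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.manifold import TSNE

X, labels = make_blobs(n_samples=150, n_features=30, centers=3, random_state=0)

kl_by_perplexity = {}
for perplexity in (5, 30, 50):
    tsne = TSNE(
        n_components=2,
        perplexity=perplexity,      # effective neighbourhood size
        learning_rate=200.0,        # gradient descent step size
        early_exaggeration=12.0,    # attraction boost in early iterations
        init="pca",                 # data-driven start instead of a random one
        random_state=0,             # fixed seed for repeatable runs
    )
    embedding = tsne.fit_transform(X)
    kl_by_perplexity[perplexity] = float(tsne.kl_divergence_)

for perplexity, kl in kl_by_perplexity.items():
    print(f"perplexity {perplexity:>2}: final KL divergence = {kl:.3f}")
```

The final KL divergence is useful for comparing repeated runs at the same perplexity. It is not directly comparable across different perplexity values, because the target distribution changes with perplexity.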

Q5: How can I objectively evaluate the performance of a dimensionality reduction algorithm on my dataset?

A5: Performance can be evaluated based on the goal of the reduction [10]:

- For Visualization: If the goal is visualization, the success is qualitative—assess whether the low-dimensional plot reveals meaningful clusters or patterns that align with domain knowledge [12].

- For Model Performance: If dimensionality reduction is a preprocessing step for a supervised learning task (e.g., classification), the most direct evaluation is the performance (e.g., accuracy) of the final model on a held-out test set [12]. Using cross-validation to compare performance with and without dimensionality reduction is a robust method [12].

- Preservation of Distances/Structure: Some metrics quantitatively assess how well the low-dimensional embedding preserves the distances or neighborhood structures from the high-dimensional space. For discriminant analysis, the reliability of group separation can be assessed using techniques like leave-one-out cross-validation to generate a misclassification table [15].
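Two of these evaluation routes can be sketched together: a neighbourhood preservation score via `sklearn.manifold.trustworthiness`, and cross-validated accuracy with and without the reduction step. The dataset, the choice of 10 components, and the classifier are assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.manifold import trustworthiness
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=200, n_features=100, n_informative=8,
                           random_state=0)

# Structure preservation: 1.0 means the embedding introduced no false neighbours.
X_scaled = StandardScaler().fit_transform(X)
X_reduced = PCA(n_components=10).fit_transform(X_scaled)
trust = trustworthiness(X_scaled, X_reduced, n_neighbors=5)

# Model performance: identical classifier with and without the reduction step.
with_dr = make_pipeline(StandardScaler(), PCA(n_components=10),
                        LogisticRegression(max_iter=1000))
without_dr = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
acc_with = cross_val_score(with_dr, X, y, cv=5).mean()
acc_without = cross_val_score(without_dr, X, y, cv=5).mean()

print(f"trustworthiness = {trust:.3f}, accuracy with DR = {acc_with:.3f}, "
      f"without DR = {acc_without:.3f}")
```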

Experimental Protocols for Key Dimensionality Reduction Techniques

Protocol: Principal Component Analysis (PCA)

Objective: To reduce the dimensionality of a morphometric dataset by transforming the original variables into a set of uncorrelated principal components that capture maximum variance.

Materials:

- Standardized morphometric dataset (matrix \(X\) with dimensions \(n \times p\), where \(n\) is the number of specimens and \(p\) is the number of original variables).

Procedure:

- Data Standardization: Standardize the dataset \(X\) to have a mean of 0 and a standard deviation of 1 for each variable. This ensures that variables with larger scales do not dominate the analysis [10] [11].

- Compute Covariance Matrix: Calculate the \(p \times p\) covariance matrix of the standardized data. This matrix represents the pairwise covariances between all original variables [10].

- Eigen Decomposition: Perform eigendecomposition on the covariance matrix to obtain its eigenvectors and eigenvalues. The eigenvectors represent the principal components (axes of maximum variance), and the corresponding eigenvalues represent the amount of variance captured by each principal component [7] [10].

- Select Principal Components: Sort the eigenvectors in descending order of their eigenvalues. Choose the top \(k\) eigenvectors to form a projection matrix \(W\) (dimensions \(p \times k\)). The choice of \(k\) can be based on the cumulative explained variance (e.g., retaining components that collectively explain >95% of the total variance) or by looking for an "elbow" in a scree plot of the eigenvalues [9] [10].

- Project Data: Transform the original data into the new \(k\)-dimensional subspace by taking the matrix product of the standardized data \(X\) and the projection matrix \(W\). The result is a new dataset \(Y = X \cdot W\) with dimensions \(n \times k\) [10].
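The five steps can be traced directly in NumPy. This is a minimal sketch on synthetic data with hypothetical sizes (n = 100, p = 6, k = 2). In routine work a library implementation such as scikit-learn's `PCA` is the more practical choice.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p, k = 100, 6, 2  # hypothetical sizes

# Synthetic measurements driven by two latent factors plus a little noise.
latent = rng.normal(size=(n, 2))
X_raw = latent @ rng.normal(size=(2, p)) + 0.1 * rng.normal(size=(n, p))

# 1. Standardize each variable to mean 0 and standard deviation 1.
X = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0, ddof=1)

# 2. Covariance matrix of the standardized data (p x p).
cov = np.cov(X, rowvar=False)

# 3. Eigendecomposition; eigh suits symmetric matrices, returns ascending order.
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# 4. Sort in descending order and keep the top k eigenvectors as W (p x k).
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]
explained = np.cumsum(eigenvalues) / eigenvalues.sum()
W = eigenvectors[:, :k]

# 5. Project the standardized data: Y = X W (n x k).
Y = X @ W
print("cumulative explained variance:", np.round(explained, 3))
```

As a sanity check, the covariance matrix of `Y` should be diagonal, with the top k eigenvalues on the diagonal, confirming that the new components are uncorrelated.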

Protocol: Linear Discriminant Analysis (LDA) for Morphometric Discrimination

Objective: To project morphometric data onto a lower-dimensional space that maximizes the separation between pre-defined groups (e.g., species, sexes).

Materials:

- Standardized morphometric dataset (matrix \(X\)).

- A vector of group labels (e.g., species identification for each specimen).

Procedure:

- Compute Mean Vectors: Calculate the mean vector for each group in the dataset [11].

- Compute Scatter Matrices:

- Within-Class Scatter Matrix (\(S_W\)): Calculate the scatter of data points around their respective class means. This represents the variance within each group [7] [11].

- Between-Class Scatter Matrix (\(S_B\)): Calculate the scatter of the class means around the overall global mean. This represents the variance between different groups [7] [11].

- Solve the Generalized Eigenvalue Problem: Solve for the eigenvectors and eigenvalues of the matrix \(S_W^{-1} S_B\) [7]. The eigenvectors define the new axes (linear discriminants), and the eigenvalues indicate the discriminatory power of each axis.

- Select Linear Discriminants: Sort the eigenvectors by decreasing eigenvalue. Select the top \(m\) eigenvectors (where \(m\) is at most the number of groups minus one) to form a transformation matrix \(W\) [11].

- Project Data: Transform the original data onto the new discriminatory space via \(Y = X \cdot W\). The resulting dataset \(Y\) can be used for classification or visualization [14].

Validation:

- Cross-Validation: Use leave-one-out cross-validation to assess the accuracy of the discriminant model. This involves iteratively training the model on all but one specimen and then trying to classify the held-out specimen. The resulting classification/misclassification table provides a robust measure of the model's performance [15].
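The LDA projection and leave-one-out validation can be sketched with scikit-learn, which solves the discriminant problem internally rather than through the explicit scatter-matrix steps above. The three groups, their labels (`sp_A`, `sp_B`, `sp_C`), and the simulated measurements are hypothetical.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import LeaveOneOut, cross_val_predict

rng = np.random.default_rng(0)
n_per_group, n_vars = 30, 8  # hypothetical design
group_names = ["sp_A", "sp_B", "sp_C"]

# Simulated measurements: each group scatters around its own mean vector.
group_means = rng.normal(scale=2.0, size=(3, n_vars))
X = np.vstack([rng.normal(loc=m, size=(n_per_group, n_vars))
               for m in group_means])
y = np.repeat(group_names, n_per_group)

# Projection onto the discriminant axes (at most groups - 1 = 2 of them).
lda = LinearDiscriminantAnalysis()
Y = lda.fit(X, y).transform(X)

# Leave-one-out: each specimen is classified by a model that never saw it.
predicted = cross_val_predict(LinearDiscriminantAnalysis(), X, y,
                              cv=LeaveOneOut())
table = confusion_matrix(y, predicted, labels=group_names)
loo_accuracy = np.trace(table) / table.sum()

print(table)
print(f"leave-one-out accuracy = {loo_accuracy:.3f}")
```

Rows of the printed table are true groups and columns are predicted groups, so off-diagonal counts show which groups are confused with each other.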

Method Selection and Workflow Visualization

The following diagram illustrates a logical workflow for selecting an appropriate dimensionality reduction technique based on your data and research goals.

Quantitative Comparison of Dimensionality Reduction Techniques

The table below summarizes the key characteristics of major dimensionality reduction techniques to aid in selection.

Table 1: Comparative Analysis of Dimensionality Reduction Techniques

| Technique | Type | Key Objective | Key Metric | Optimal Use Case | Limitations |

|---|---|---|---|---|---|

| PCA [7] [9] | Linear, Unsupervised | Maximize variance captured | Explained Variance Ratio, Eigenvalues | General-purpose compression, noise reduction, linear data. | Fails to capture complex non-linear structures. |

| LDA [7] [11] | Linear, Supervised | Maximize class separation | Between-class / Within-class variance ratio, Classification accuracy. | Supervised classification tasks with labeled data. | Requires class labels; assumes normal data and equal class covariances. |

| t-SNE [7] [9] | Non-linear, Unsupervised | Preserve local data structure | Kullback-Leibler Divergence (Trustworthiness) [13]. | Visualizing high-dimensional data in 2D/3D to reveal clusters. | Computationally heavy; results vary with parameters (perplexity); global structure may be lost. |

| UMAP [9] | Non-linear, Unsupervised | Preserve local & global structure | — | Visualization and as a general-purpose non-linear preprocessor. Faster than t-SNE for large data. | Less interpretable parameters; results can be sensitive to parameter choices. Unlike standard t-SNE, a fitted umap-learn model can project new samples with its transform method. |

| Kernel PCA [16] | Non-linear, Unsupervised | Capture non-linear variance in a higher-dimensional space | — | Data with non-linear relationships where linear PCA fails. | Choice of kernel and kernel parameters can be difficult; computationally more complex than linear PCA. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential "Research Reagent Solutions" for Morphometric DR Experiments

| Item / Tool | Function in DR Research |

|---|---|

| Geometric Morphometric Software (e.g., MorphoJ) | Provides a dedicated environment for performing statistical shape analysis, including Procrustes superimposition, and implements techniques like Discriminant Function Analysis (DFA) for group comparisons [15]. |

| Python/R with Specialized Libraries (scikit-learn) | Offers open-source, flexible programming environments with comprehensive libraries for implementing a wide array of DR techniques (PCA, LDA, t-SNE, UMAP) and integrating them into custom analysis pipelines [7] [11]. |

| Standardized Morphometric Data | A dataset of 2D or 3D landmarks or outlines collected from specimens. This is the primary input for the analysis. The protocol in [14] used 27 morphometric measurements and 20 landmarks on 1355 fish. |

| High-Performance Computing (HPC) Cluster | Essential for processing large-scale morphometric datasets (e.g., 3D micro-CT scans) or running computationally intensive algorithms like t-SNE on thousands of samples, significantly reducing computation time [9]. |

| Cross-Validation Framework | A methodological "reagent" used to rigorously evaluate the performance and generalizability of a DR model, particularly in supervised settings like LDA, to prevent over-optimistic performance estimates [15]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary computational approaches for predicting a drug's Mechanism of Action (MOA)?

Two major complementary approaches exist. Structure-based methods, like AlphaFold3, predict direct protein-small molecule binding affinity from static structures [17]. Conversely, functional genomics methods, like the DeepTarget tool, integrate large-scale drug viability screens with genetic knockout (e.g., CRISPR-Cas9) and omics data (gene expression, mutation) from matched cancer cell lines to identify both direct and indirect, context-dependent MOAs driving cancer cell death [17].

FAQ 2: How can I identify if a drug's efficacy is due to an off-target effect?

Computational tools can systematically predict context-specific secondary targets. For instance, DeepTarget identifies two types of secondary effects: 1) those contributing to efficacy even when primary targets are present, found by decomposing drug response into gene knockout effects, and 2) those mediating responses specifically when primary targets are not expressed, identified by calculating Drug-KO Similarity (DKS) scores in cell lines lacking primary target expression [17]. This helps categorize off-target effects into clinically relevant secondary mechanisms.

FAQ 3: My dimensionality reduction results are inconsistent. What are common pitfalls?

Inconsistent results often stem from poor organization and a lack of reproducibility in the computational workflow [18]. Other factors include incorrect parameterization of models, flaws in initial data preparation, or not accounting for confounding factors in input data (e.g., variation in screen quality, copy number effects) [17] [18]. Maintaining a chronological lab notebook and fully automated, restartable driver scripts for experiments is crucial for tracking, replicating, and troubleshooting analyses [18].

FAQ 4: What defines a "high-confidence" drug-target interaction for benchmarking?

High-confidence drug-target pairs are typically curated from multiple independent, authoritative sources. Gold-standard datasets for benchmarking may include pairs where the drug has:

- FDA approval for a specific anti-cancer indication linked to a target mutation [17].

- Clinical resistance data linked to a tumor mutation in the target gene (e.g., from COSMIC or oncoKB) [17].

- Multiple independent validation reports (e.g., in BioGrid) or high-confidence status from scientific advisory boards (e.g., ChemicalProbes.org) [17].

- Direct interaction and activity (e.g., as an inhibitor/antagonist) documented in DrugBank [17].

FAQ 5: How can we predict if a drug will work better for mutant vs. wild-type protein targets?

Preferential targeting of mutant forms can be predicted by comparing drug-target relationships in different genetic contexts. The underlying principle is that if a drug specifically targets a mutant form, the similarity between drug treatment and target knockout effects (DKS score) will be significantly higher in cell lines harboring the mutant target versus those with the wild-type version. This difference is quantified as a mutant-specificity score [17].
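The comparison described in FAQ 5 can be illustrated with a small sketch. It rests on two assumptions that are not spelled out in the text above: the DKS score is treated as a Pearson correlation between a drug-response profile and a knockout-viability profile across cell lines, and the mutant-specificity score is taken as the simple difference between the two DKS values. The function names and the synthetic profiles are hypothetical, and DeepTarget's actual scoring may differ.

```python
import numpy as np

def dks(drug_response, ko_effect):
    """Assumed DKS: Pearson correlation of two profiles across cell lines."""
    return float(np.corrcoef(drug_response, ko_effect)[0, 1])

def mutant_specificity(drug_response, ko_effect, is_mutant):
    """Assumed score: DKS in mutant lines minus DKS in wild-type lines."""
    is_mutant = np.asarray(is_mutant, dtype=bool)
    dks_mut = dks(drug_response[is_mutant], ko_effect[is_mutant])
    dks_wt = dks(drug_response[~is_mutant], ko_effect[~is_mutant])
    return dks_mut - dks_wt, dks_mut, dks_wt


# Synthetic example: 20 mutant and 20 wild-type cell lines.
rng = np.random.default_rng(2)
is_mutant = np.repeat([True, False], 20)
ko_effect = rng.normal(size=40)

# In mutant lines the drug mimics the knockout; in wild-type lines it does not.
drug_response = np.where(
    is_mutant,
    ko_effect + 0.2 * rng.normal(size=40),
    rng.normal(size=40),
)

score, dks_mut, dks_wt = mutant_specificity(drug_response, ko_effect, is_mutant)
print(f"DKS mutant={dks_mut:.2f}, wild-type={dks_wt:.2f}, specificity={score:.2f}")
```

A clearly positive score on real data would be consistent with preferential targeting of the mutant form, although the significance of the difference still needs a proper statistical test.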

Troubleshooting Guides

Issue 1: Poor Clustering of Drugs by Known MOA in Dimensionality Reduction

Problem: When using tools like DeepTarget, a UMAP plot based on Drug-KO Similarity (DKS) scores fails to cluster compounds by their known mechanisms of action [17].

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incorrect Data Preprocessing | Verify that Chronos-processed CRISPR dependency scores are used, as they account for sgRNA efficacy, screen quality, and copy number effects [17]. | Re-run the pipeline using the properly processed and normalized dependency scores. |

| Low-Quality Input Data | Check the quality metrics for the original drug response and CRISPR-KO viability profiles from data sources (e.g., DepMap) [17]. | Filter out cell lines or drugs with poor-quality data or low signal-to-noise ratios. |

| High Dimensional Noise | Perform principal component analysis (PCA) on the DKS score matrix to see if too much variance is captured in later components, indicating noise. | Apply feature selection or increase the regularization in the dimensionality reduction algorithm. |
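The PCA diagnostic in the last row can be sketched with NumPy alone. The matrix below is a synthetic stand-in for a DKS score matrix (an assumption for illustration), built from three latent signals plus noise; the helper name `explained_variance_ratio` is introduced here.

```python
import numpy as np

def explained_variance_ratio(matrix):
    """Fraction of total variance carried by each principal component."""
    centered = matrix - matrix.mean(axis=0)
    singular_values = np.linalg.svd(centered, compute_uv=False)
    variances = singular_values ** 2
    return variances / variances.sum()


# Hypothetical drugs x genes matrix: three latent signals plus noise.
rng = np.random.default_rng(3)
latent = rng.normal(size=(100, 3))
loadings = rng.normal(size=(3, 50))
dks_matrix = latent @ loadings + 0.3 * rng.normal(size=(100, 50))

ratio = explained_variance_ratio(dks_matrix)
cumulative = np.cumsum(ratio)

# Number of components needed to reach 90% of the variance.
n_for_90 = int(np.searchsorted(cumulative, 0.90) + 1)
print(f"Components needed for 90% variance: {n_for_90}")
```

If many components are needed before the cumulative curve flattens, the variance is spread thinly across dimensions, which is the noise pattern the table warns about.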

Issue 2: Failure to Experimentally Validate a Predicted Off-Target Effect

Problem: A computationally predicted secondary target or off-target effect cannot be confirmed in subsequent laboratory experiments.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Cellular Context Differences | Ensure the cell lines used for experimental validation genetically match those where the prediction was strong (e.g., same mutation profile, low primary target expression) [17]. | Repeat the validation assay in a panel of cell lines that better represent the predicted context of the off-target effect. |

| Insufficient Pathway Engagement | The predicted target may be inhibited computationally, but the drug concentration in experiments may be insufficient to trigger the downstream phenotypic effect. | Perform a dose-response curve and measure downstream pathway activity (e.g., via phospho-protein assays) in addition to viability. |

| Indirect Mechanism | The prediction may not be a direct binding target but part of the downstream pathway or a synthetic lethal interaction [17]. | Use complementary methods like protein-binding assays (SPR, CETSA) to confirm direct binding, or use transcriptomics to see if the drug treatment mimics the gene knockout's transcriptional signature. |

Issue 3: High Misclassification Rates in Drug Response Prediction

Problem: A model built to classify cells as responsive or non-responsive to a drug performs poorly on validation data.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incorrect Feature Selection | Check if the features used (e.g., mutation status, gene expression) are known to be the primary drivers of response for that drug class [17]. | Incorporate prior biological knowledge (e.g., from gold-standard datasets) to guide feature selection. Use recursive feature elimination. |

| Class Imbalance | Calculate the ratio of responsive to non-responsive samples in your training set. A highly skewed ratio can bias the model. | Apply techniques like SMOTE for oversampling the minority class, use different error cost functions, or use precision-recall curves for evaluation instead of accuracy. |

| Model Overfitting | Check if the model's performance on training data is much higher than on test/validation data. | Increase regularization (e.g., in quadratic discriminant analysis), simplify the model, or perform more robust cross-validation [19]. |
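Two of the diagnostic steps above, checking the class ratio and comparing training with held-out performance, can be sketched with scikit-learn. The synthetic data, the logistic-regression model, and the specific settings (`C=0.1`, `class_weight="balanced"`) are assumptions chosen for illustration; class weighting stands in for the "different error cost functions" option, and SMOTE is not shown.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import average_precision_score
from sklearn.model_selection import train_test_split

# Synthetic responsive (1) vs non-responsive (0) data with about 10% responders.
X, y = make_classification(
    n_samples=600, n_features=20, n_informative=5, n_redundant=2,
    n_clusters_per_class=1, weights=[0.9, 0.1], class_sep=1.5, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# Diagnostic 1: ratio of majority to minority samples in the training set.
imbalance_ratio = (y_train == 0).sum() / (y_train == 1).sum()

# Regularized model with class weights that penalize minority-class errors more.
model = LogisticRegression(C=0.1, class_weight="balanced", max_iter=1000)
model.fit(X_train, y_train)

# Diagnostic 2: area under the precision-recall curve on train vs test data.
ap_train = average_precision_score(y_train, model.predict_proba(X_train)[:, 1])
ap_test = average_precision_score(y_test, model.predict_proba(X_test)[:, 1])
print(f"Imbalance ratio: {imbalance_ratio:.1f}")
print(f"Average precision: train={ap_train:.2f}, test={ap_test:.2f}")
```

A large gap between `ap_train` and `ap_test` points toward overfitting, while a test value close to the minority-class prevalence suggests the features carry little signal.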

Experimental Protocols & Data

Table 1: Gold-Standard Datasets for Validating Drug-Target Predictions

The following high-confidence datasets are used for benchmarking computational target prediction tools like DeepTarget [17].

| Dataset Name | Description | Number of Drug-Target Pairs |

|---|---|---|

| COSMIC Resistance | Tumor mutation in target gene causes clinical resistance to the drug [17]. | 16 |

| oncoKB Resistance | Target mutation linked to clinical resistance per the oncoKB database [17]. | 28 |

| FDA Mutation-Approval | FDA approval for anti-cancer treatment linked to a specific target mutation [17]. | 86 |

| SAB ChemicalProbes | High-confidence interactions curated by the ChemicalProbes.org Scientific Advisory Board [17]. | 24 |

| Biogrid Highly Cited | Multiple independent validation reports in the BioGrid database [17]. | 28 |

| DrugBank Active Inhibitors | Directly interacting inhibitors documented in DrugBank [17]. | 90 |

| DrugBank Active Antagonists | Directly interacting antagonists documented in DrugBank [17]. | 52 |

| SelleckChem Selective | Highly selective inhibitors based on binding profiles [17]. | 142 |

Table 2: Key Payload Classes in Antibody-Drug Conjugates (ADCs) and Their Mechanisms

Understanding ADC payloads is key to predicting their efficacy and off-target toxicity [20].

| Payload Class | Mechanism of Action | Example Payloads | Common Off-Target Toxicities |

|---|---|---|---|

| Microtubule-Disrupting Agents | Inhibit tubulin polymerization, causing mitotic arrest and apoptosis [20]. | Monomethyl auristatin E (MMAE), DM1, DM4 [20]. | Peripheral neuropathy, hepatotoxicity, cardiotoxicity [20]. |

| Topoisomerase I Inhibitors | Trap the topoisomerase I (TOP1)-DNA cleavage complex, so that DNA single-strand breaks persist and trigger apoptosis [20]. | Deruxtecan (DXd), Exatecan [20]. | Myelosuppression, interstitial lung disease [20]. |

| DNA Alkylating Agents | Cause DNA cross-linking, leading to irreversible DNA damage and cell death [20]. | Pyrrolobenzodiazepines (PBDs) [20]. | Hematological toxicity [20]. |

Workflow and Pathway Visualizations

DeepTarget Prediction Workflow

ADC Payload Mechanisms & Toxicity

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Example Sources / Tools |

|---|---|---|

| Cancer Cell Line Panels | Provide matched drug response and genomic data across diverse genetic backgrounds for robust analysis [17] [21]. | DepMap, NCI-60 [17] [21]. |

| CRISPR-KO Viability Data | Genome-wide knockout screens essential for computing Drug-KO Similarity (DKS) scores to identify targets [17]. | DepMap (Chronos-processed) [17]. |

| Gold-Standard Validation Sets | Curated, high-confidence drug-target pairs used to benchmark and validate computational predictions [17]. | COSMIC, oncoKB, DrugBank, ChemicalProbes.org [17]. |

| Open-Source Prediction Tools | Implemented algorithms for systematic MOA prediction and target identification. | DeepTarget [17]. |

| Bioinformatics Programming Tools | Languages and environments for data analysis, visualization, and automating computational workflows [22]. | R/RStudio, Python, Command Line/Bash [22]. |

| Electronic Lab Notebook | A chronologically organized document (e.g., wiki, blog, or custom system) to record detailed procedures, observations, and code, ensuring reproducibility [18]. | Lab-specific wikis, commercial ELN systems [18]. |

The How: A Practical Guide to Implementing Top-Performing DR Methods

Frequently Asked Questions

Q1: Which dimensionality reduction (DR) methods are most effective for separating distinct drug responses, like different Mechanisms of Action (MOAs)?

Methods that excel at preserving local data structures and creating well-separated clusters are ideal for this task. Based on large-scale benchmarking on the CMap dataset, the top-performing methods are:

- PaCMAP

- TRIMAP

- t-SNE

- UMAP [23]

These methods consistently ranked highest in internal validation metrics (like Silhouette score) and external clustering metrics (like Adjusted Rand Index), demonstrating their strength in grouping drugs with similar molecular targets and separating those with different MOAs [23].

Q2: We need to analyze subtle, dose-dependent changes in gene expression. Which DR methods should we use?

Detecting continuous, gradient-like patterns requires methods that effectively preserve global data structure and trajectory. For this specific application:

- Spectral Embedding

- PHATE

- t-SNE [23]

These methods showed stronger performance in capturing the nuanced transcriptomic variations that occur across different drug dosage levels, where other top methods for discrete analysis struggled [23].

Q3: Our primary goal is clear visualization for interpretation. Are the default parameters in DR tools sufficient?

Relying solely on standard parameter settings can limit optimal performance [23]. Each method has hyperparameters that significantly influence the output:

- t-SNE: The perplexity value balances the attention between local and global data structure.

- UMAP: The number of neighbors (n_neighbors) sets how much local versus global structure is emphasized, and the minimum distance (min_dist) sets how tightly points are packed in the embedding.

For critical results, it is highly recommended to invest time in hyperparameter optimization to ensure the visualization accurately reflects the underlying biology of your data [23].
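A simple perplexity sweep for t-SNE could be organized as in the sketch below. The synthetic blobs, the three candidate values, and the use of the Silhouette score on known labels as the selection criterion are all assumptions made for illustration; other criteria may suit a given dataset better.

```python
from sklearn.datasets import make_blobs
from sklearn.manifold import TSNE
from sklearn.metrics import silhouette_score

# Synthetic stand-in for profiles with three known classes.
X, labels = make_blobs(
    n_samples=150, n_features=20, centers=3, cluster_std=2.0, random_state=0
)

scores = {}
for perplexity in (5, 15, 30):
    embedding = TSNE(
        n_components=2, perplexity=perplexity, init="pca", random_state=0
    ).fit_transform(X)
    # Score how well the known classes separate in the 2D embedding.
    scores[perplexity] = silhouette_score(embedding, labels)

best_perplexity = max(scores, key=scores.get)
for perplexity, score in scores.items():
    print(f"perplexity={perplexity}: silhouette={score:.2f}")
print(f"Best perplexity by this criterion: {best_perplexity}")
```

A numeric score should be read alongside the plots themselves, because a setting that maximizes cluster compactness can also exaggerate separation that is not present in the original data.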

Q4: How does Principal Component Analysis (PCA) compare to modern non-linear methods for this type of data?

While PCA is a widely used, fast, and interpretable linear method, its performance in preserving biological similarity from drug-induced transcriptomic data is generally poorer compared to non-linear methods like UMAP and t-SNE [23]. PCA focuses on preserving global variance but often fails to capture the complex, non-linear manifold structures that characterize biological data, which can obscure finer local differences crucial for distinguishing drug responses [23] [24].

Troubleshooting Guides

Problem: Poor Cluster Separation in DR Embedding

Your low-dimensional projection fails to clearly separate known biological classes (e.g., different MOAs).

| Potential Cause | Solution | Reference Method / Rationale |

|---|---|---|

| Incorrect Method Choice | Switch to a method known for strong local structure preservation, such as PaCMAP, t-SNE, or UMAP. | These methods optimize to keep similar data points close together, enhancing cluster separation [23]. |

| Suboptimal Hyperparameters | Systematically tune key parameters. For UMAP, increase n_neighbors to capture more global structure. For t-SNE, adjust perplexity. | Hyperparameter exploration is critical, as standard settings are often not optimal [23]. |

| Data Preprocessing Issues | Ensure proper normalization and scaling of your transcriptomic data (e.g., z-scores). High technical noise can overwhelm biological signal. | The CMap benchmark used z-score normalized data to ensure comparability across genes and profiles [23]. |
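Per-gene standardization of an expression matrix can be sketched as below. Note the assumption: this is a plain z-score across samples, not the control-referenced differential-expression z-scores distributed with CMap, and the function name `zscore_by_gene` is introduced here.

```python
import numpy as np

def zscore_by_gene(expression):
    """Standardize each column (gene) to mean 0 and sample std 1."""
    mean = expression.mean(axis=0)
    std = expression.std(axis=0, ddof=1)
    # Genes with zero variance are left at 0 instead of dividing by zero.
    safe_std = np.where(std > 0, std, 1.0)
    return (expression - mean) / safe_std


# Synthetic samples x genes matrix; the last gene is constant on purpose.
rng = np.random.default_rng(2)
expression = rng.normal(loc=5.0, scale=2.0, size=(50, 4))
expression[:, 3] = 7.0

z = zscore_by_gene(expression)
print(z.mean(axis=0).round(6))
print(z.std(axis=0, ddof=1).round(6))
```

Standardizing before DR keeps a few high-variance genes from dominating distance calculations, which matters for every neighbor-based method discussed here.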

Problem: Failure to Capture Biological Trajectories

The DR output does not reveal a continuous gradient or progression (e.g., a dose-response relationship) that is known to exist.

| Potential Cause | Solution | Reference Method / Rationale |

|---|---|---|

| Method Inherently Discretizes Data | Employ a method specifically designed for trajectory inference. PHATE is particularly powerful as it uses diffusion geometry to model manifold continuity. | PHATE was developed to visualize transitional structures and progressions in high-dimensional biological data [23]. |

| Over-Emphasis on Local Neighborhoods | If using t-SNE, try significantly raising the perplexity value so that each point takes a wider neighborhood into account. Alternatively, use Spectral Embedding, which performed well in dose-dependency benchmarks. | Spectral and PHATE showed stronger performance for dose-dependent transcriptomic changes [23]. |
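One way to check whether an embedding recovers a known gradient is to correlate an embedding axis with the dose ranks. The sketch below applies scikit-learn's `SpectralEmbedding` to synthetic profiles that drift along a curved path as dose increases; the data, the `n_neighbors=10` setting, and the rank-correlation check are assumptions for illustration, not part of the cited benchmark.

```python
import numpy as np
from sklearn.manifold import SpectralEmbedding

rng = np.random.default_rng(4)
dose = np.linspace(0.0, 1.0, 120)  # hypothetical normalized dose levels

# Synthetic 30-gene profiles that move along a curved path as dose increases.
direction_a = rng.normal(size=30)
direction_b = rng.normal(size=30)
profiles = (
    np.outer(np.sin(dose * np.pi / 2), direction_a)
    + np.outer(1 - np.cos(dose * np.pi / 2), direction_b)
    + 0.02 * rng.normal(size=(120, 30))
)

embedding = SpectralEmbedding(
    n_components=2, affinity="nearest_neighbors", n_neighbors=10, random_state=0
).fit_transform(profiles)

def ranks(values):
    """Rank positions of the values (ties are not expected here)."""
    return np.argsort(np.argsort(values))

# Spearman-style correlation between dose and the first embedding axis.
rho = np.corrcoef(ranks(dose), ranks(embedding[:, 0]))[0, 1]
print(f"Rank correlation between dose and first axis: {rho:.2f}")
```

The sign of `rho` is arbitrary because embedding axes can flip; what matters is that its magnitude is close to 1 when the gradient is preserved.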

Problem: Long Computation Time or High Memory Usage

The DR algorithm is too slow or resource-intensive for your dataset.

| Potential Cause | Solution | Reference Method / Rationale |

|---|---|---|

| Dataset is Very Large | For an initial exploration, use PCA for its speed, acknowledging its limitations. For non-linear reduction, consider Spectral or PHATE, which were among the top performers and are feasible for large datasets. | Benchmarking studies evaluate scalability; PCA is noted for speed, while Spectral and PHATE are applied to large CMap data [23]. |

| Inefficient Algorithm for Data Size | Explore methods known for computational efficiency. SOMDE has been shown to perform well with low memory usage and running time in related spatial transcriptomic benchmarks. | While not in the CMap DR benchmark, SOMDE's design for scalability is noted in other large-scale transcriptomic evaluations [25]. |

The following table summarizes the relative performance of various DR methods across key tasks, as benchmarked on the CMap dataset [23].

| DR Method | Preserving Local Structure (Cluster Separation) | Preserving Global Structure (Trajectory) | Computational Efficiency | Key Application Scenario |

|---|---|---|---|---|

| PaCMAP | Excellent | Good | Good | Distinguishing discrete classes (e.g., MOAs) |

| t-SNE | Excellent | Good (with tuning) | Moderate | Cluster visualization and dose-response |

| UMAP | Excellent | Good | Good | General-purpose exploratory analysis |

| TRIMAP | Excellent | Good | Good | Balancing local/global structure |

| Spectral | Good | Excellent | Moderate | Detecting gradients and trajectories |

| PHATE | Good | Excellent | Moderate | Analyzing progressions (e.g., dosing) |

| PCA | Poor | Excellent | Excellent | Fast initial overview, linear trends |

Experimental Protocol: Benchmarking DR on CMap-Style Data

This protocol outlines how to evaluate DR method performance using an approach similar to the benchmark study [23].

1. Objective

To systematically evaluate the ability of different dimensionality reduction (DR) methods to preserve biologically meaningful structures in drug-induced transcriptomic data.

2. Materials and Dataset Preparation

- Data Source: Utilize the Connectivity Map (CMap) dataset, a comprehensive resource of drug-induced gene expression profiles [23] [26].

- Data Extraction: Download level 5 data, which represents gene-level z-scores of differential expression (treatment vs. control) for 12,328 genes.

- Benchmark Conditions: Construct several evaluation datasets from CMap:

- Condition A (Different Cell Lines): Profiles from multiple cell lines (e.g., A549, MCF7) treated with the same compound.

- Condition B (Different Drugs): Profiles from a single cell line treated with multiple distinct compounds.

- Condition C (Different MOAs): Profiles from a single cell line treated with compounds targeting distinct molecular mechanisms of action.

- Condition D (Varying Dosages): Profiles from a single cell line treated with the same compound at different concentrations [23].

3. Dimensionality Reduction Execution

- Method Selection: Apply a wide array of DR methods (e.g., PCA, t-SNE, UMAP, PaCMAP, TRIMAP, Spectral, PHATE).

- Embedding Generation: Generate low-dimensional embeddings (e.g., 2D for visualization, higher dimensions for analysis) for each dataset and method.

- Parameter Consideration: Run each method with its default parameters initially, then perform a hyperparameter sensitivity analysis for critical findings.

4. Performance Evaluation and Metrics

- Internal Validation: Assess the quality of the embedding's intrinsic structure without external labels.

- Silhouette Score: Measures how similar an object is to its own cluster compared to other clusters.

- Davies-Bouldin Index (DBI): Evaluates cluster separation based on the ratio of within-cluster to between-cluster distances [23].

- External Validation: Assess how well the embedding clusters align with known biological labels.

- Adjusted Rand Index (ARI): Measures the similarity between two data clusterings, corrected for chance.

- Normalized Mutual Information (NMI): Measures the mutual dependence between the clustering and the true labels [23].

- Visual Inspection: Critically examine 2D scatter plots of the embeddings to assess cluster separation, trajectory, and overall layout intuitiveness.
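The four metrics listed in step 4 are all available in scikit-learn, so an evaluation helper could look like the sketch below. The helper name `evaluate_embedding`, the synthetic data, the PCA embedding, and the choice of agglomerative (hierarchical) clustering to obtain cluster assignments are assumptions for illustration.

```python
import numpy as np
from sklearn.cluster import AgglomerativeClustering
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.metrics import (
    adjusted_rand_score,
    davies_bouldin_score,
    normalized_mutual_info_score,
    silhouette_score,
)

def evaluate_embedding(embedding, true_labels):
    """Cluster the embedding, then compute internal and external metrics."""
    n_classes = len(np.unique(true_labels))
    clusters = AgglomerativeClustering(n_clusters=n_classes).fit_predict(embedding)
    return {
        # Internal metrics use only the embedding and its cluster assignments.
        "silhouette": silhouette_score(embedding, clusters),
        "davies_bouldin": davies_bouldin_score(embedding, clusters),
        # External metrics compare the cluster assignments with known labels.
        "ari": adjusted_rand_score(true_labels, clusters),
        "nmi": normalized_mutual_info_score(true_labels, clusters),
    }


# Synthetic stand-in: three known classes, embedded to 2D with PCA.
X, labels = make_blobs(
    n_samples=150, n_features=20, centers=3, cluster_std=1.5, random_state=0
)
embedding = PCA(n_components=2, random_state=0).fit_transform(X)

metrics = evaluate_embedding(embedding, labels)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Higher is better for the Silhouette score, ARI, and NMI, whereas a lower Davies-Bouldin Index indicates better separation, so the metrics should not be averaged together without rescaling.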

Research Reagent Solutions

| Item | Function in Experiment | Specification / Note |

|---|---|---|

| CMap Database | Provides the foundational drug perturbation transcriptomic profiles for benchmarking. | Use the latest build; contains ~7,000 profiles from 5 cell lines treated with 1,309 compounds [26]. |

| LINCS L1000 Database | A larger-scale alternative/complement to CMap, featuring gene expression signatures from a vast number of genetic and chemical perturbations. | Data is based on L1000 assay, measuring 978 landmark genes [26]. |

| DR Software Libraries | Implementation of the dimensionality reduction algorithms. | Common choices include: scikit-learn (PCA, Spectral), umap-learn (UMAP), openTSNE (t-SNE). |

Workflow Diagram for DR Benchmarking

DR Method Selection Logic

Frequently Asked Questions

1. Which dimensionality reduction method is best for preserving both local and global structures in my data? PaCMAP is specifically designed to preserve both local and global structure by using a unique loss function and a graph optimization process that initially captures global structure before refining local details [27] [28]. TRIMAP also aims for this balance but may struggle with local structure in some cases [28]. UMAP preserves more global structure than t-SNE but still focuses heavily on local neighborhoods [29] [30].

2. I am new to dimensionality reduction and need a method that works well without extensive parameter tuning. What do you recommend? PaCMAP is an excellent starting point, as it is robust to initialization and works effectively with its default hyperparameters across many datasets [28]. In a large-scale benchmark study, standard parameter settings limited the optimal performance of many DR methods, highlighting the value of a method that performs well out-of-the-box [23].

3. My primary goal is to visualize clear, separated clusters in a high-dimensional dataset like transcriptomic data. Which method should I choose? For cluster separation in complex biological data like transcriptomes, t-SNE, UMAP, PaCMAP, and TRIMAP have been shown to outperform other methods [23] [31]. A 2025 benchmarking study on drug-induced transcriptomic data confirmed their effectiveness in grouping samples with similar molecular targets [23].

4. Why might my t-SNE or UMAP visualization show clusters that I know are not close together in the original high-dimensional space? This is a common limitation. t-SNE and UMAP primarily optimize for preserving local structure (i.e., distances to nearest neighbors) and can distort the global structure (distances between clusters) [28] [30]. Their loss functions do not exert attractive forces over longer distances, so the relative positions of clusters on the plot may not reflect their true relationships [30].

5. How does PaCMAP achieve better global structure preservation than UMAP or t-SNE? PaCMAP uses a combination of three types of point pairs in its loss function—neighbor pairs, mid-near pairs, and further pairs. The attractive forces from the mid-near and further pairs help to pull the larger data structure into shape, preserving global relationships. Furthermore, it employs a dynamic optimization process that focuses on getting the global structure right before refining the local details [27] [28].

6. My dataset is very large. Are any of these methods particularly fast or scalable? UMAP and PaCMAP are recognized for their scalability [29] [28]. In independent tests on the MNIST dataset (60,000 samples), PaCMAP completed the embedding faster than UMAP, which was in turn faster than t-SNE [28].

Troubleshooting Guides

Problem: Poor Preservation of Global Structure

- Symptoms: The relative positions and distances between major clusters in your low-dimensional plot do not match your understanding of the high-dimensional data. For example, a 3D object like a mammoth does not retain its shape when projected to 2D [30].

- Solutions:

- Switch your algorithm: Use PaCMAP or TRIMAP, which are specifically designed to better capture global structure [30].

- Adjust UMAP: While not a perfect fix, you can try increasing the n_neighbors parameter in UMAP (e.g., from 15 to 50 or 100). This forces the algorithm to consider a larger local neighborhood when constructing its initial graph, which can improve global coherence [29].

- Check your initialization: Both t-SNE and UMAP depend on an informative initialization to impart global structure (PCA is the usual choice for t-SNE, while UMAP's default is a spectral layout). Using a random initialization instead can lead to significantly worse and unpredictable global layouts [30].
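The symptom described above can be quantified by comparing pairwise distances before and after reduction. The sketch below computes a Spearman-style correlation between the two sets of distances; the helper names, the synthetic clustered data, and the use of a PCA projection as the "faithful" embedding are assumptions for illustration, and the same function can be applied to any embedding.

```python
import numpy as np

def pairwise_distances(points):
    """Euclidean distance between every pair of rows."""
    diff = points[:, None, :] - points[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

def ranks(values):
    return np.argsort(np.argsort(values))

def global_distance_agreement(high_dim, low_dim):
    """Rank correlation between pairwise distances before and after DR."""
    upper = np.triu_indices(high_dim.shape[0], k=1)
    d_high = pairwise_distances(high_dim)[upper]
    d_low = pairwise_distances(low_dim)[upper]
    return float(np.corrcoef(ranks(d_high), ranks(d_low))[0, 1])


# Synthetic data: three well-separated clusters in 10 dimensions.
rng = np.random.default_rng(6)
centers = rng.normal(scale=10.0, size=(3, 10))
X = np.vstack([c + rng.normal(size=(60, 10)) for c in centers])

# A PCA projection (via SVD) serves as an embedding that keeps global layout.
centered = X - X.mean(axis=0)
_, _, components = np.linalg.svd(centered, full_matrices=False)
faithful = centered @ components[:2].T

# Shuffling rows destroys the correspondence and serves as a negative control.
shuffled = faithful[rng.permutation(len(faithful))]

score_faithful = global_distance_agreement(X, faithful)
score_shuffled = global_distance_agreement(X, shuffled)
print(f"faithful={score_faithful:.2f}, shuffled={score_shuffled:.2f}")
```

Values near 1 suggest that large-scale distances survived the projection, while values near 0 suggest that inter-cluster positions in the plot should not be interpreted.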

Problem: Long Computation Times

- Symptoms: The dimensionality reduction process takes an impractically long time to complete.

- Solutions:

- Use a faster algorithm: For large datasets, consider using UMAP or PaCMAP over t-SNE, as they are generally more computationally efficient [29] [28].

- Sample your data: If possible, run the method on a representative subset of your data to fine-tune hyperparameters before performing the full-scale analysis.

- Leverage PCA initialization: For t-SNE, using PCA initialization (init='pca') is not only good for global structure but is also faster than random initialization [30].

Problem: Failure to Capture Subtle, Continuous Trends (e.g., Dose-Response)

- Symptoms: The method fails to show a clear gradient or trajectory in data where you expect a continuous change, such as in dose-dependent transcriptomic changes.

- Solutions:

- Choose a trajectory-aware method: Standard clustering-focused methods like UMAP and t-SNE can struggle here. A 2025 benchmark study found that Spectral, PHATE, and t-SNE showed stronger performance in detecting subtle dose-dependent transcriptomic changes [23] [31].

- Explore other specialists: Consider methods like PHATE (Potential of Heat-diffusion for Affinity-based Transition Embedding), which is designed to model diffusion-based geometry and visualize gradual biological transitions [23].

Method Comparison & Selection Guide

The table below summarizes the key characteristics, strengths, and weaknesses of the four top-tier methods to help you make an informed choice.

| Method | Core Principle | Best For | Key Strengths | Key Weaknesses / Considerations |

|---|---|---|---|---|

| t-SNE [29] | Minimizes divergence between high-/low-dimensional probability distributions. | Visualizing local structure and clear cluster separation [23]. | Excellent at revealing local clusters; well-established. | Computationally slow; distorts global structure; sensitive to perplexity parameter [29] [28]. |

| UMAP [29] | Approximates a high-dimensional graph, then optimizes a low-dimensional equivalent. | Balancing speed and clarity for large datasets [29] [28]. | Faster than t-SNE; clearer global structure than t-SNE. | Global structure can still be unreliable; results can be sensitive to parameter choices [30]. |

| PaCMAP [27] [28] | Optimizes a loss function using three types of point pairs (neighbor, mid-near, further) in a dynamic process. | Preserving both local and global structure with minimal tuning [28] [30]. | Superior global structure preservation; robust to parameters; fast. | Newer method with a smaller user base than UMAP/t-SNE. |

| TRIMAP [23] | Optimizes embedding using triplets of points (two neighbors, one random point). | Capturing global structure and large-scale data relationships [23] [30]. | Effective at preserving global structure; performs well in benchmarks. | Can struggle with fine local structure details [28]. |

Experimental Protocols for Benchmarking

To objectively evaluate these methods on your own data, you can adapt the following benchmarking protocol from a recent scientific study.

Protocol: Benchmarking DR Methods for Discriminant Analysis

- Source: Adapted from Kwon et al. (2025), Scientific Reports [23] [31].

- Objective: To evaluate the ability of DR methods to preserve biologically meaningful structures (e.g., from different classes, doses, or cell lines).

1. Data Preparation & Experimental Conditions

- Dataset: Use a dataset with known ground truth labels (e.g., cell lines, drug treatments, molecular mechanisms of action).

- Benchmark Conditions: Test methods under distinct conditions to assess different aspects of performance:

- Condition i: Different subjects or cell lines treated with the same compound.

- Condition ii: The same cell line treated with different compounds.

- Condition iii: The same cell line treated with compounds targeting distinct mechanisms of action (MOAs).

- Condition iv: The same cell line treated with the same compound at varying dosages (to test sensitivity to continuous change).

2. Dimensionality Reduction Application

- Apply all DR methods (UMAP, t-SNE, PaCMAP, TRIMAP) to the high-dimensional data under each condition to generate 2D embeddings.

- Use standard or optimized hyperparameters for each method. The Kwon et al. study noted that standard settings often limit performance, so some hyperparameter exploration is advised [23].

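3. Evaluation Metrics

Use a combination of internal and external validation metrics to assess the quality of the embeddings.

- Internal Cluster Validation Metrics (Assess cluster compactness and separation without ground truth): for example, the Silhouette Score and the Davies-Bouldin Index [23].

- External Cluster Validation Metrics (Assess how well clusters match known labels): for example, the Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI) [23].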

4. Visualization and Interpretation

- Visually inspect the 2D embeddings to see if they align with known biological groups or expected trajectories.

- The Kwon et al. study found that hierarchical clustering applied to the embeddings was particularly effective for evaluating clustering accuracy against ground truth labels [23].

The Scientist's Toolkit: Research Reagent Solutions

This table lists the essential "research reagents"—the software tools and metrics—you will need to conduct your dimensionality reduction analysis effectively.

| Tool / Reagent | Function / Purpose | Typical Application in DR Analysis |

|---|---|---|

| scikit-learn (Python) | A core machine learning library. | Provides implementations of PCA and t-SNE, and utilities for calculating metrics like the Silhouette Score [28]. |

| UMAP-learn (Python) | A specialized library for the UMAP algorithm. | Used to apply the UMAP algorithm to high-dimensional data for visualization and analysis [28]. |

| PaCMAP (Python) | A library for the PaCMAP algorithm. | The primary tool for running PaCMAP, which is effective at preserving both local and global structure [28]. |

| TRIMAP (Python) | A library for the TRIMAP algorithm. | Used to run the TRIMAP algorithm, which is strong at preserving global structure [28]. |

| Silhouette Score | An internal evaluation metric. | Quantifies the quality of clusters formed in the low-dimensional embedding without using ground truth labels [23]. |

| Adjusted Rand Index (ARI) | An external evaluation metric. | Measures the agreement between the clustering in the DR result and the known ground truth labels [23]. |

Workflow Diagram for Method Selection

The diagram below outlines a logical workflow to guide you in selecting and applying the appropriate dimensionality reduction method.

The analysis of complex, high-dimensional data is a fundamental challenge in modern scientific research, particularly in studies of brain dynamics, cellular processes, and morphometric analysis. Potential of Heat-diffusion for Affinity-based Transition Embedding (PHATE) is a dimensionality reduction technique specifically designed to preserve both local and global data structure, along with the continuous progression of data dynamics, in the low-dimensional embedding space [32]. Unlike methods such as t-distributed Stochastic Neighbor Embedding (t-SNE), which may fail to preserve global similarities, PHATE provides a smoother account of a system's evolution, making it well suited to capturing subtle, continuous variations in data where other techniques might obscure progressive changes [32].

This technical support center focuses on the application of PHATE within the context of morphometric discriminant analysis research, where it enables researchers to visualize and analyze the progressive nature of biological and structural changes. By providing detailed troubleshooting guides, experimental protocols, and analytical workflows, we aim to support researchers in optimizing their use of dimensionality reduction for detecting nuanced patterns that are critical in fields such as neuroscience, drug development, and environmental science.

Frequently Asked Questions (FAQs)

Q1: What makes PHATE more suitable for analyzing continuous biological processes compared to other dimensionality reduction methods?

PHATE excels at preserving the temporal dynamics and continuous trajectories inherent in biological systems. It leverages diffusion geometry and potential distance metrics to capture the underlying continuous manifold of data, making it particularly effective for visualizing processes like neuronal state transitions [32] or cellular differentiation. Whereas methods like PCA may oversimplify non-linear relationships and t-SNE often emphasizes local structure at the expense of global continuity, PHATE maintains both, revealing the progression of subtle variations rather than presenting data as discrete, disconnected clusters.

Q2: How do I determine the optimal parameters for PHATE when working with morphometric data?

Parameter optimization depends on your specific dataset and research question. For most morphometric applications, start with these guidelines:

- knn (number of neighbors): Controls local connectivity. For datasets with 5,000-50,000 data points, begin with knn=5 and increase to knn=10-30 for noisier data or to capture broader relationships [33].

- decay (alpha parameter): Sets how quickly kernel affinities fall off with distance (the decay rate of the kernel tails). The default is typically decay=40, but for particularly sparse or dense datasets, values between 15 and 40 may improve results [33].

- t (diffusion time): Often set to 'auto' to allow PHATE to select the value from the data, using the knee point of the von Neumann entropy of the diffusion operator.

Always validate your parameter choices by checking the stability of the resulting embeddings and their biological plausibility.

Q3: I'm encountering installation and dependency conflicts when setting up PHATE. How can I resolve these issues?

Installation issues commonly arise from pre-existing Python environments or dependency version mismatches. The most reliable approach is to create a fresh virtual environment (for example with venv or conda) and install PHATE into it [34].

If you encounter specific error messages like "TypeError: __init__() got an unexpected keyword argument 'use.alpha'", this indicates dependency version incompatibility, particularly with the graphtools package [33]. Ensure you're using compatible versions by installing PHATE together with its dependencies in the same clean environment.
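Before reinstalling, it can help to record which versions are currently present in the active environment. The following is a small sketch using only the standard library; the package list is an assumption (phate and graphtools are named above, scprep is included as a commonly co-installed companion), and the helper name installed_versions is hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version

# PyPI distribution names to inspect (scprep is an assumed companion package).
PACKAGES = ("phate", "graphtools", "scprep")


def installed_versions(packages=PACKAGES):
    """Return {package: version string, or None if it is not installed}."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


versions = installed_versions()
for name, ver in versions.items():
    print(f"{name}: {ver or 'not installed'}")
```

Including this output when reporting an "unexpected keyword argument" error makes it easier to spot a stale graphtools release.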

Q4: Can PHATE be integrated with other analysis tools commonly used in morphometric research?

Yes, PHATE is designed for integration with standard scientific Python workflows. You can seamlessly incorporate PHATE with:

- Scanpy and Seurat for single-cell data analysis

- Scikit-learn for downstream clustering and classification

- Matplotlib and Plotly for visualization of embeddings

- NumPy and pandas for data manipulation

This interoperability makes PHATE particularly valuable in comprehensive analytical pipelines where multiple techniques are applied sequentially to extract meaningful biological insights.

Troubleshooting Common Experimental Issues

Data Preprocessing Problems

Problem: Inconsistent embedding results across similar datasets

This often stems from improper data normalization before applying PHATE. Morphometric data from different sources or collection batches may have varying scales that disproportionately influence the neighborhood graph construction.

Solution: Implement robust standardization:

- Apply Z-score normalization to each feature (mean=0, standard deviation=1)

- For data with outliers, use robust scaling (centering with median, scaling with IQR)

- Ensure consistent preprocessing across all compared datasets

Validation: Check that the post-normalization distribution of features is consistent across datasets using Q-Q plots or Kolmogorov-Smirnov tests.
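The standardization and validation steps above could be sketched as follows. The two synthetic batches are hypothetical, and scaling each batch separately is only one possible reading of "consistent preprocessing"; fitting a single scaler on a reference batch and reusing it is an equally reasonable alternative.

```python
import numpy as np
from scipy.stats import ks_2samp
from sklearn.preprocessing import RobustScaler, StandardScaler

rng = np.random.default_rng(0)
# Two hypothetical batches of the same 5 features, recorded on different scales.
batch_a = rng.normal(loc=10.0, scale=2.0, size=(200, 5))
batch_b = rng.normal(loc=50.0, scale=8.0, size=(200, 5))

# Z-score each batch (mean 0, standard deviation 1 per feature).
z_a = StandardScaler().fit_transform(batch_a)
z_b = StandardScaler().fit_transform(batch_b)

# Robust alternative for outlier-prone data: center on the median, scale by the IQR.
r_a = RobustScaler().fit_transform(batch_a)

# Two-sample Kolmogorov-Smirnov test per feature: small p-values flag features
# whose post-normalization distributions still differ between batches.
p_values = np.array([ks_2samp(z_a[:, j], z_b[:, j]).pvalue
                     for j in range(z_a.shape[1])])
print(np.round(p_values, 3))
```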

Problem: Poor separation of known biological groups in PHATE embedding

When PHATE fails to separate groups that are known to be biologically distinct, the issue often lies in the high-dimensional neighborhood graph construction.

Solution:

- Adjust the knn parameter to optimize local versus global structure preservation

- Experiment with different distance metrics (Euclidean, cosine, correlation) based on your data type

- Apply feature selection to remove uninformative variables before dimensionality reduction

- Consider multiscale PHATE approaches to capture structure at different resolutions

Algorithm-Specific Errors

Problem: "Unexpected keyword argument" errors during execution

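As seen in the error traceback "TypeError: __init__() got an unexpected keyword argument 'use.alpha'", this occurs when there are API incompatibilities between PHATE and its dependencies [33].

Solution: Reinstall PHATE and its dependencies (particularly graphtools) at compatible versions in a fresh virtual environment, as described in Q3.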

Problem: Excessive memory usage with large datasets

PHATE's graph construction can be memory-intensive for datasets with >100,000 points.

Solution:

- Use PCA pre-processing to reduce dimensionality to 50-100 components before applying PHATE

- Implement data subsampling strategies while maintaining population representation

- Employ approximate nearest neighbor algorithms when exact computation is prohibitive

- Consider batch processing strategies for extremely large datasets
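The first two strategies above can be combined in a few lines. This is a sketch under stated assumptions: the matrix size, the four group labels, the 25% sampling fraction, and the choice of 50 components are all illustrative values, not recommendations from the cited sources.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
# Hypothetical feature matrix: 10,000 samples x 200 features, 4 groups.
X = rng.normal(size=(10_000, 200)).astype(np.float32)
groups = rng.integers(0, 4, size=X.shape[0])

# Stratified subsample: keep 25% of samples while preserving group proportions.
X_sub, _, g_sub, _ = train_test_split(
    X, groups, train_size=0.25, stratify=groups, random_state=0)

# Compress to 50 principal components before the neighbor-graph step.
pca = PCA(n_components=50, svd_solver="randomized", random_state=0)
X_reduced = pca.fit_transform(X_sub)
print(X_reduced.shape, f"variance kept: {pca.explained_variance_ratio_.sum():.2f}")
```

The reduced matrix can then be passed to the embedding step. The Python PHATE implementation also exposes an n_pca argument for an internal PCA step, so explicit pre-reduction is mainly useful when the same reduced matrix is shared across several methods.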

Experimental Protocols and Methodologies

Standard PHATE Workflow for Morphometric Analysis

The following workflow has been adapted from published research applying PHATE to neuroimaging data [32] and can be generalized to various morphometric applications:

Step 1: Data Acquisition and Preprocessing

- Acquire raw data (e.g., MEG signals, microscopic images, or geometric measurements)

- Apply quality control metrics to remove artifacts and outliers

- Perform source reconstruction if working with neuroimaging data [32]

- Standardize data using z-score normalization with a threshold typically set at 2-3 standard deviations [32]

Step 2: Temporal Segmentation and Feature Extraction

- For time-series data, identify transient events or "avalanches" of activity [32]

- Extract morphometric features relevant to your research question (shape descriptors, texture metrics, etc.)

- Create a feature matrix where rows represent observations and columns represent features

Step 3: PHATE Embedding Calculation

Step 4: Validation and Interpretation

- Apply K-means clustering to identify natural groupings in the PHATE space [32]

- Compute transition probabilities between states for dynamic data [32]

- Validate against null models to ensure significance of observed patterns [32]

- Correlate PHATE coordinates with external biological variables
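The clustering, transition-probability, and null-model steps above might look like the following sketch. The random-walk "embedding", the choice of four states, the mean self-transition probability as test statistic, and the helper transition_matrix are all assumptions made for illustration; they are not the procedure of the cited study.

```python
import numpy as np
from sklearn.cluster import KMeans


def transition_matrix(labels, n_states):
    """Row-normalized counts of transitions between consecutive state labels."""
    counts = np.zeros((n_states, n_states))
    np.add.at(counts, (labels[:-1], labels[1:]), 1)
    row_sums = counts.sum(axis=1, keepdims=True)
    return np.divide(counts, row_sums, out=np.zeros_like(counts), where=row_sums > 0)


rng = np.random.default_rng(0)
# Stand-in for a time-ordered 2D embedding (assumption): a slow random walk.
embedding = np.cumsum(rng.normal(scale=0.1, size=(1000, 2)), axis=0)

n_states = 4
labels = KMeans(n_clusters=n_states, n_init=10, random_state=0).fit_predict(embedding)
observed = transition_matrix(labels, n_states)
observed_self = np.trace(observed) / n_states  # mean self-transition probability

# Null model: shuffle the temporal order, which keeps state frequencies
# but destroys sequential structure.
null_self = np.array([
    np.trace(transition_matrix(rng.permutation(labels), n_states)) / n_states
    for _ in range(200)
])
p_value = (1 + np.sum(null_self >= observed_self)) / (1 + len(null_self))
print(f"mean self-transition: {observed_self:.2f}, permutation p = {p_value:.3f}")
```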

Quantitative Thresholds for MEG Data Analysis

Table 1: Standard Parameters for Neuronal Avalanche Detection in MEG Data

| Parameter | Recommended Value | Purpose | Validation Approach |

|---|---|---|---|

| Z-score threshold | 3 SD [32] | Binarize activation patterns | Test robustness across 2-4 SD [32] |

| Minimum avalanche size | 2 active regions [32] | Define significant events | Compare to null models |

| Cluster number (K-means) | Data-driven (e.g., elbow method) | Identify discrete states | Check against surrogate data [32] |

| PHATE dimensions | 2-3 for visualization [32] | Final embedding | Preserve >80% variance |
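To make the first two rows of Table 1 concrete, here is a simplified sketch of threshold-based avalanche detection. It assumes an avalanche is a run of consecutive time points in which at least one region exceeds the z-score threshold, and that "size" means the number of distinct regions recruited during the run; the function detect_avalanches and the synthetic signals are hypothetical and may differ from the definitions used in [32].

```python
import numpy as np


def detect_avalanches(signals, z_thresh=3.0, min_regions=2):
    """signals: array (n_timepoints, n_regions). Returns (start, stop) pairs, stop exclusive."""
    z = (signals - signals.mean(axis=0)) / signals.std(axis=0)
    active = np.abs(z) > z_thresh                  # binarized activation pattern
    any_active = active.any(axis=1).astype(int)
    # Locate the starts and stops of runs of consecutive active time points.
    edges = np.diff(np.concatenate(([0], any_active, [0])))
    starts = np.flatnonzero(edges == 1)
    stops = np.flatnonzero(edges == -1)
    avalanches = []
    for start, stop in zip(starts, stops):
        # Size: number of distinct regions active at any point during the event.
        size = active[start:stop].any(axis=0).sum()
        if size >= min_regions:
            avalanches.append((int(start), int(stop)))
    return avalanches


rng = np.random.default_rng(0)
signals = rng.normal(size=(2000, 20))
signals[500:503, :6] += 8.0   # inject one synthetic burst across six regions
events = detect_avalanches(signals)
print(len(events), events[:3])
```

Re-running the function with z_thresh values between 2 and 4 is one simple way to carry out the robustness check listed in the table.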

Research Reagent Solutions and Computational Tools

Table 2: Essential Tools for PHATE-Based Morphometric Analysis

| Tool/Category | Specific Implementation | Application Context | Key Considerations |

|---|---|---|---|

| Dimensionality Reduction | PHATE algorithm [32] [34] | Capturing continuous trajectories | Superior to t-SNE for preserving dynamics [32] |

| Clustering Method | K-means clustering [32] | Identifying discrete states from continuous embeddings | Optimal cluster number varies by dataset |

| Data Processing | Z-score standardization [32] | Data normalization before analysis | Threshold of 3 SD recommended for neural data [32] |

| Visualization | Matplotlib, Plotly [34] | Visualizing PHATE embeddings | 2D/3D scatter plots with color-coded features |

| Validation Framework | Null model comparisons [32] | Testing statistical significance | Temporal randomization preserves marginal statistics [32] |

| Programming Environment | Python (>=3.9) [34] | Primary computational platform | Requires specific dependency versions |

Workflow Visualization

Diagram 1: Comprehensive PHATE Analysis Workflow for Morphometric Data

Diagram 2: Specialized Workflow for MEG Data Analysis with PHATE

Troubleshooting Guides

Guide: Resolving Low CNN Classification Accuracy on Morphometric Data

Problem: Your Convolutional Neural Network (CNN) is achieving low accuracy when classifying shapes or biological structures from images, such as seeds, teeth, or bone surface modifications.

Explanation: CNNs require sufficient and relevant data to learn discriminative features. Low accuracy can stem from an inadequate dataset size, poor data quality, or a model architecture that is not complex enough to capture the essential morphological patterns.

Solution Steps:

- Data Augmentation: Artificially expand your training dataset by applying random, realistic transformations to your images. These include rotations, scaling, slight translations, and adjustments to brightness and contrast. This helps the model generalize better and prevents overfitting.

- Leverage Transfer Learning: Instead of training a CNN from scratch, initialize your model with weights pre-trained on a large, general image dataset (e.g., ImageNet). Fine-tune the final layers of this pre-trained model on your specific morphometric data. This approach is particularly effective when you have a limited dataset [35].

- Try Few-Shot Learning: If your dataset is very small, explore Few-Shot Learning (FSL) models. Research has shown FSL can achieve high accuracy (e.g., 79.52%) in classifying tooth marks, performing nearly as well as full-scale Deep Learning models in scenarios with limited data [36].
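The data augmentation step can be prototyped without a deep learning framework. The sketch below covers rotation, translation, and brightness jitter only (scaling and contrast are omitted for brevity); the helper augment, its parameter ranges, and the rectangular test mask are illustrative assumptions.

```python
import numpy as np
from scipy import ndimage


def augment(image, rng, max_angle=15.0, max_shift=3.0, brightness=0.2):
    """Return one randomly transformed copy of a 2D grayscale image scaled to [0, 1]."""
    angle = rng.uniform(-max_angle, max_angle)
    out = ndimage.rotate(image, angle, reshape=False, mode="nearest")
    shift = rng.uniform(-max_shift, max_shift, size=2)
    out = ndimage.shift(out, shift, mode="nearest")
    out = out * rng.uniform(1 - brightness, 1 + brightness)  # brightness jitter
    return np.clip(out, 0.0, 1.0)


rng = np.random.default_rng(0)
image = np.zeros((64, 64))
image[20:44, 26:38] = 1.0      # hypothetical binary shape mask
augmented = np.stack([augment(image, rng) for _ in range(8)])
print(augmented.shape)
```

Keep the transformation ranges within what is morphologically plausible for the specimens; for example, large rotations may be inappropriate if orientation itself is a diagnostic feature.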

Guide: Debugging Suboptimal GMM Clustering Results

Problem: The Gaussian Mixture Model (GMM) is failing to identify meaningful, well-separated clusters in your high-dimensional morphometric or transcriptomic data.

Explanation: GMMs make soft, probabilistic cluster assignments and can model ellipsoidal cluster shapes, offering more flexibility than K-Means. Poor performance often relates to incorrect model initialization, wrong assumptions about the data's distribution, or an improperly chosen number of components.

Solution Steps:

- Smart Initialization: Avoid random initialization. Use the results of a K-Means++ algorithm to set the initial means and covariances for the GMM's Expectation-Maximization (EM) algorithm. This leads to more stable and faster convergence.

- Model Selection with BIC/AIC: Determine the optimal number of Gaussian components (clusters) by fitting multiple GMMs with different numbers of components (k). Plot the Bayesian Information Criterion (BIC) or Akaike Information Criterion (AIC) for each model (lower is better) and choose the k that minimizes the criterion or, if the curve keeps decreasing, the k at the elbow where the improvement plateaus [37].

- Experiment with Covariance Types: The GMM's covariance_type hyperparameter controls the shape and orientation of the clusters. Test different types:

  - 'full': Each component has its own general covariance matrix (maximum flexibility).

  - 'tied': All components share the same general covariance matrix.

  - 'diag': Each component has its own diagonal covariance matrix.

  - 'spherical': Each component has its own single variance value.

  Start with 'full' for the most flexibility, but if the model overfits, try a more constrained type [37].
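The three solution steps can be combined into a single grid search with scikit-learn's GaussianMixture. This is a sketch on synthetic data (make_blobs is an assumption standing in for real morphometric or CNN-derived features), and the search ranges are illustrative.

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.mixture import GaussianMixture

# Synthetic stand-in for extracted features (assumption): 3 groups in 5 dimensions.
X, _ = make_blobs(n_samples=600, n_features=5, centers=3,
                  cluster_std=1.0, random_state=0)

results = []
for cov_type in ("full", "tied", "diag", "spherical"):
    for k in range(1, 7):
        # init_params="kmeans" seeds EM from a K-Means fit, which in scikit-learn
        # uses k-means++ seeding by default.
        gmm = GaussianMixture(n_components=k, covariance_type=cov_type,
                              init_params="kmeans", n_init=3,
                              random_state=0).fit(X)
        results.append((gmm.bic(X), k, cov_type))

best_bic, best_k, best_cov = min(results)   # lower BIC is better
print(f"best: k={best_k}, covariance_type={best_cov}, BIC={best_bic:.1f}")
```

Swapping gmm.bic for gmm.aic gives the AIC variant; AIC penalizes complexity less strongly, so it tends to favor more components.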

Guide: Integrating a CNN Feature Extractor with a GMM Clustering Head

Problem: You want to build a hybrid pipeline where a CNN extracts features from images and a GMM performs clustering on these features, but the integration is not working correctly.