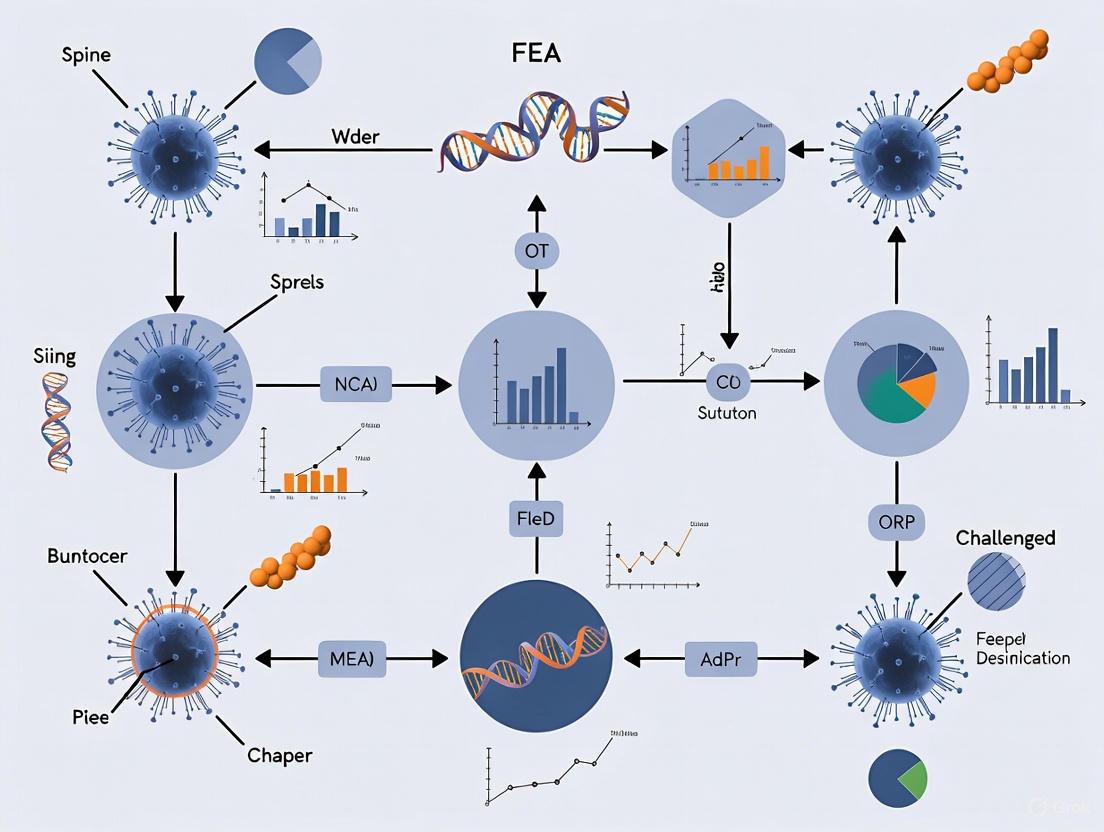

Navigating FEA Protocol Challenges: Verification, Validation and Multiphysics Solutions for Biomedical Research

This article addresses the critical challenges researchers face in implementing reliable Finite Element Analysis protocols for biomedical applications.

Abstract

This article addresses the critical challenges researchers face in implementing reliable Finite Element Analysis protocols for biomedical applications. Covering foundational principles to advanced applications, we explore multiphysics coupling, multiscale modeling, and computational demands while providing practical verification and validation methodologies. Through comparative analysis and troubleshooting guidance, we establish robust frameworks for ensuring FEA result credibility in drug development and clinical research contexts, emphasizing the hybrid approach that combines computational efficiency with experimental validation.

Understanding FEA Fundamentals: Core Principles and Current Landscape Challenges

The Critical Importance of FEA Protocol Reliability in Biomedical Research

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving Common FEA Solution Errors

Finite Element Analysis in biomedical research often encounters specific solution errors. The table below outlines common issues, their underlying causes, and recommended solutions.

Table 1: Common FEA Solution Errors and Resolution Strategies

| Error Scenario | Root Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|---|
| Unconverged Solution [1] | Nonlinearities from material properties (e.g., plasticity), contact, or large deformations preventing solver convergence. | Check Newton-Raphson residual plots to identify "hotspot" elements with high residuals [1]. | Refine mesh in contact regions, use displacement-based loading instead of force, or ramp loads more slowly [1]. |
| Degree of Freedom (DOF) Limit Exceeded [1] | Rigid Body Motion (RBM) due to insufficient constraints, allowing parts to move freely. | Run a Modal analysis; modes at or near 0 Hz indicate under-constrained parts [1]. | Ensure all parts are properly constrained by supports or connected via contacts/joints to supported parts [1]. |
| Element Formulation Errors / High Distortion [1] | Elements become highly distorted, skewed, or inverted, making a meaningful solution impossible. | Locate the specific failing elements using solver error messages and inspect their shape and location [1]. | Improve mesh quality in the affected region; use ramped effects for contacts with initial penetration [1]. |
| Singularities [2] | Boundary conditions or model geometry (e.g., sharp corners, point loads) creating theoretically infinite stresses. | Identify localized "red spots" of very high stress at sharp re-entrant corners or single nodes [2]. | Avoid applying forces to single nodes; round sharp corners if possible; understand that localized infinite stresses may not be physically meaningful [2]. |
| Mesh Discretization Error [3] | Mesh is too coarse to accurately capture the physical phenomena of interest, such as stress gradients. | Perform a mesh convergence study by refining the mesh and observing if the results change significantly [3]. | Systematically refine the mesh in critical regions until the solution stabilizes (i.e., converges) [3]. |

Guide 2: Addressing Model Setup and Validation Pitfalls

Beyond solver errors, foundational mistakes during model setup can compromise the entire analysis. This guide addresses these critical early-stage challenges.

Table 2: Model Setup and Validation Pitfalls

| Common Pitfall | Impact on Reliability | Corrective Protocol |
|---|---|---|
| Unclear Analysis Objectives [3] | Using inappropriate modeling techniques (e.g., linear vs. nonlinear), leading to incorrect conclusions. | Before modeling, explicitly define what the FEA must capture (e.g., peak stress, stiffness, fatigue life) [3]. |
| Inconsistent Segmentation [4] | Significant variations in biomechanical data (stress, strain) due to inconsistent 3D model generation from medical scans. | Apply the same standardized segmentation procedure (e.g., KI, KI-95.0) to all specimens in a study [4]. |
| Unrealistic Boundary Conditions [3] | Model behavior that does not reflect real-world physics, invalidating results. | Develop a strategy to test and validate boundary conditions, ensuring they properly represent the physical environment [3]. |
| Ignoring Contact Conditions [3] | Incorrect load transfer and structural response in assemblies, as software does not assume contact by default. | Specify contact conditions between bodies and conduct robustness studies to check parameter sensitivity [3]. |
| Inadequate Verification & Validation (V&V) [3] | No confidence in the numerical accuracy or real-world predictive capability of the model. | Implement a V&V process including mathematical checks, accuracy checks, and correlation with experimental test data [3]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why is a mesh convergence study considered a fundamental step in reliable FEA? A mesh convergence study is essential because discretization error depends on mesh density. As elements are made smaller (mesh refinement), the computed solution approaches the exact solution of the underlying mathematical model. A mesh is considered "converged" when further refinement does not produce significant changes in the quantities of interest, giving confidence that the numerical error is acceptable [3].

FAQ 2: Our FEA models of 3D-printed trabecular bone structures sometimes differ greatly from physical tests. What could be the issue? A common oversight is neglecting the geometrical and material peculiarities of thin, additively manufactured struts. Simplifying the material model or not using realistic, as-manufactured geometries can severely reduce model fidelity. A systematic approach integrating experimental geometry characterization and material property testing (e.g., using a ductile damage model for titanium alloys) is crucial for developing reliable FE models of these complex structures [5].

FAQ 3: How can small variations in the CT segmentation process impact my biomechanical FEA results? Research shows that even a 5.0% variation in segmentation intensity values can lead to statistically significant differences in key biomechanical measurements, including average displacement, pressure, stress, and strain. This highlights that the segmentation process is a source of variance and mandates that consistent, standardized segmentation procedures be applied to all specimens within a single study to ensure valid conclusions [4].

FAQ 4: What are singularities, and how should I handle "infinite stress" spots in my model? A singularity is a point in your model where stresses theoretically tend toward an infinite value, often caused by boundary conditions at sharp corners or point loads. These peaks are usually artifacts of the idealized model (perfectly sharp corners, loads acting on zero area) rather than real stresses, and the reported value keeps rising as the mesh is refined instead of converging. You should avoid applying forces to single nodes and understand that these localized infinite stresses may not be physically meaningful. The focus should be on the stress distribution in areas away from these singular points [2].

Experimental Protocols for FEA Reliability

Protocol 1: Standardized Segmentation and Mesh Generation for Anatomical Structures

Objective: To generate consistent and accurate 3D finite element models from CT data for biomechanical analysis.

Detailed Methodology:

- Data Acquisition: Acquire CT data from cadaveric or patient scans. Exclude specimens with joint replacements, surgical pins, or advanced osteoarthritis to avoid artifacts [4].

- Standardized Segmentation: Perform segmentation in a dedicated imaging platform (e.g., 3D Slicer). Apply the exact same segmentation algorithm and intensity value parameters (e.g., Kittler-Illingworth method) to all specimens in the study to minimize variation [4].

- Mesh Processing and Standardization: Import the segmented 3D model (e.g., as an .stl file) into mesh processing software (e.g., MeshLab). Apply a standardized sequence of automated functions: isolated piece removal, non-manifold edge repair, duplicate face removal, and surface hole closure [4].

- Geometry Preparation: Crop the model to the region of interest (e.g., femoral head) and uniformly orient it along a standard axis to ensure consistent application of loads and constraints in subsequent FEA [4].

- Finite Element Model Creation: Import the processed mesh into FEA software (e.g., FEBio). Convert the triangular surface mesh into a solid mesh using nodally integrated tetrahedral elements for better performance [4].

- Analysis: Assign consistent material properties (e.g., Young’s modulus of 16800 MPa, Poisson’s ratio of 0.31 for bone) and boundary conditions (e.g., 1800N compressive load) to all models [4].
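As a rough illustration of the material assignment in the last step, the sketch below derives the other isotropic elastic constants from the Young's modulus and Poisson's ratio quoted above. The function name `isotropic_constants` is a hypothetical helper, not part of FEBio or any other package; it assumes the N-mm-MPa unit system implied by the protocol's values.

```python
def isotropic_constants(youngs_modulus_mpa: float, poissons_ratio: float) -> dict:
    """Derive shear modulus, bulk modulus, and Lame's first parameter (all in MPa).

    Hypothetical helper for illustration; standard linear-elasticity relations.
    """
    if not -1.0 < poissons_ratio < 0.5:
        raise ValueError("Poisson's ratio must lie in (-1, 0.5) for a stable isotropic solid")
    e, nu = youngs_modulus_mpa, poissons_ratio
    shear = e / (2.0 * (1.0 + nu))                          # G = E / (2(1 + nu))
    bulk = e / (3.0 * (1.0 - 2.0 * nu))                     # K = E / (3(1 - 2 nu))
    lame_lambda = e * nu / ((1.0 + nu) * (1.0 - 2.0 * nu))  # lambda
    return {"shear_modulus": shear, "bulk_modulus": bulk, "lame_lambda": lame_lambda}


# Bone values quoted in the protocol: E = 16800 MPa, nu = 0.31
bone = isotropic_constants(16800.0, 0.31)
for name, value in bone.items():
    print(f"{name}: {value:.1f} MPa")
```

Keeping every model in one unit system (here N, mm, MPa) is what makes the 1800 N load and the 16800 MPa modulus directly compatible.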

Protocol 2: Finite Element Model Verification and Validation (V&V) Framework

Objective: To ensure the computational model is solved correctly (Verification) and that it accurately represents the real-world physical behavior (Validation).

Detailed Methodology:

- Verification - Accuracy & Mathematical Checks: Perform checks to ensure the model is free of numerical errors. This includes checking for rigid body motion, unrealistic deformations, and unit system consistency [3] [1].

- Verification - Mesh Convergence Study: Refine the mesh in critical regions and observe key outputs (e.g., peak stress). A converged mesh is achieved when further refinement produces no significant change in results, ensuring numerical accuracy [3].

- Validation - Correlation with Test Data: Whenever possible, compare FEA results (e.g., strain at a specific location) with data obtained from physical experimental tests (e.g., strain gauge records). The correlation between simulation and experiment validates the model's predictive capability [3] [5].

The Scientist's Toolkit: Essential Research Reagents & Materials

This table details key computational and material solutions used in developing reliable FE models for biomedical research, as featured in the cited experiments.

Table 3: Key Reagents and Materials for Reliable Biomedical FEA

| Item Name | Function / Role in FEA Protocol |
|---|---|
| 3D Slicer [4] | An open-source software platform for segmenting DICOM image data (e.g., CT scans) to create initial 3D models of anatomical structures. |
| FEBio [4] | An open-source finite element software package specifically tailored for biomechanics and bioengineering applications, supporting nonlinear materials and contact. |
| Kittler-Illingworth (KI) Algorithm [4] | A specific image segmentation algorithm used to extract osteological structures from CT data, forming the basis for generating consistent 3D models. |
| Isotropic Elastic Material Model [4] | A material model used to represent bone in simulations, defined by a Young's modulus (e.g., 16800 MPa) and Poisson's ratio (e.g., 0.31). |
| Tetrahedral Elements [4] | A type of finite element, often used as a solid mesh for modeling complex anatomical geometries, with nodally integrated variants offering improved performance. |
| Ductile Damage Model [5] | An advanced material model used for metals like Ti6Al4V that simulates plastic deformation and failure, crucial for modeling 3D-printed trabecular structures. |

Current Challenges in Multiphysics Coupling for Biological Systems

Troubleshooting Common Multiphysics FEA Simulation Failures

This section addresses frequent errors and solution strategies encountered when modeling biological systems.

Table 1: Common Simulation Failures and Troubleshooting Guide

| Error Symptom | Potential Root Cause | Solution Strategy | Relevant Biological Context |
|---|---|---|---|
| Non-convergence of solver | Material model nonlinearity is too high; contact definition is overly complex [6]. | Simplify the material model initially; use a stabilized solver; implement an arc-length method for path-dependent problems [6]. | Simulating soft tissue mechanics (e.g., intervertebral discs) with hyperelasticity [6]. |
| Unphysical stress concentrations | Inappropriate mesh granularity at critical features; unrealistic boundary conditions [7]. | Perform mesh sensitivity analysis, especially at geometric discontinuities; re-evaluate and smooth applied loads and constraints [7]. | Bone-implant interfaces in orthopedic devices; stent-artery interaction [7] [6]. |
| Violation of incompressibility | Use of inappropriate element formulation that cannot handle near-incompressible material behavior [7]. | Switch to mixed (u-P) elements (e.g., Taylor-Hood) that treat displacement and pressure as independent unknowns [7]. | Modeling fluid-saturated tissues like cartilage or meniscus [7]. |
| Inaccurate fluid-structure interaction (FSI) | Mismatched spatial or temporal discretization between the fluid and solid domains [8]. | Ensure compatible element types and sizes at the interface; use strongly-coupled FSI solvers with smaller time steps [8]. | 3D bioprinting extrusion, where bioink flow interacts with the deposited structure [8]. |
| High computational cost & long solve times | Model is too refined globally; use of a direct solver for a large-scale problem [9] [10]. | Use adaptive mesh refinement; employ efficient iterative solvers and preconditioners tailored for coupled systems [9] [10]. | Whole-organ simulations (e.g., cardiac mechanics) or multi-scale models [11] [10]. |

Essential Experimental Protocols for Model Validation

Accurate simulation requires rigorous validation against experimental data. Below are detailed protocols for key validation experiments.

Protocol for Mechanical Testing of Biological Soft Tissues

This protocol provides a methodology for obtaining stress-strain data to calibrate material models for soft tissues (e.g., ligaments, tendons).

- 1. Objective: To characterize the quasi-static tensile mechanical properties of a biological soft tissue specimen for FEA material model calibration.

- 2. Materials and Reagents:

- Universal mechanical testing machine (e.g., Instron)

- Phosphate-Buffered Saline (PBS) solution

- Environmental chamber for temperature control

- Custom-designed clamps with abrasive surfaces to prevent slippage

- Digital calipers

- Video extensometer or strain gauges

- 3. Procedure:

- Specimen Preparation: Harvest and prepare tissue specimens according to standard anatomical dissection techniques. Keep specimens hydrated with PBS throughout preparation and testing.

- Mounting: Carefully mount the specimen into the testing machine's clamps, ensuring the long axis of the tissue is aligned with the direction of tensile loading. Apply a minimal preload (e.g., 0.1 N) to remove any slack.

- Geometric Measurement: Use digital calipers to measure the cross-sectional area (e.g., width and thickness) of the specimen within the gauge length.

- Testing: Activate the environmental chamber to maintain physiological temperature (e.g., 37°C). Pre-condition the specimen by applying 10 cycles of low-amplitude tensile strain. Then, conduct a tensile test to failure at a constant strain rate (e.g., 0.5% per second) or perform a stress-relaxation test.

- Data Recording: Continuously record applied load (N) and actuator displacement (mm) or direct strain measurement at a minimum sampling rate of 50 Hz.

- 4. Data Analysis:

- Convert load-displacement data to engineering stress (force/original area) versus engineering strain (change in length/original length).

- Fit an appropriate constitutive model (e.g., Neo-Hookean, Mooney-Rivlin for soft tissues) to the stress-strain data to derive material parameters for FEA input [7].
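The two data-analysis steps above can be sketched as follows. This is a minimal illustration that assumes an incompressible Neo-Hookean material in uniaxial tension, for which the engineering (nominal) stress is P = mu * (lambda - lambda^-2) with stretch lambda = 1 + strain. The function names are hypothetical, and a real calibration would normally use a dedicated optimizer and check goodness of fit.

```python
def engineering_stress_strain(loads_n, displacements_mm, width_mm, thickness_mm, gauge_length_mm):
    """Convert load-displacement records to engineering stress (MPa) and strain (-)."""
    area_mm2 = width_mm * thickness_mm           # original cross-sectional area
    stress = [force / area_mm2 for force in loads_n]
    strain = [disp / gauge_length_mm for disp in displacements_mm]
    return stress, strain


def fit_neo_hookean_mu(stress_mpa, strain):
    """Closed-form least-squares estimate of the shear modulus mu (MPa).

    Assumes incompressible uniaxial tension: P = mu * (lam - lam**-2).
    """
    basis = [(1.0 + e) - (1.0 + e) ** -2 for e in strain]
    denominator = sum(b * b for b in basis)
    if denominator == 0.0:
        raise ValueError("need at least one data point with non-zero strain")
    return sum(p * b for p, b in zip(stress_mpa, basis)) / denominator


# Synthetic check: data generated with mu = 2.0 MPa should be recovered exactly
strains = [0.0, 0.05, 0.10, 0.15]
stresses = [2.0 * ((1.0 + e) - (1.0 + e) ** -2) for e in strains]
print(f"fitted mu = {fit_neo_hookean_mu(stresses, strains):.3f} MPa")
```

Because the model is linear in mu, the fit reduces to a one-parameter linear regression; models with several parameters (e.g., Mooney-Rivlin) need a general least-squares solver.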

Protocol for Experimental Validation of a Bone-Implant Construct

This protocol outlines a method for validating an FEA model of an orthopedic implant.

- 1. Objective: To validate the strain distribution predicted by an FEA model of a bone-implant construct using experimental strain measurements.

- 2. Materials and Reagents:

- Composite or cadaveric bone specimen

- Orthopedic implant (e.g., femoral stem, fracture plate)

- Strain gauges and data acquisition system

- Surgical instruments for implantation

- Mechanical testing machine

- Cyanoacrylate adhesive for strain gauge bonding

- 3. Procedure:

- Instrumentation: Select a composite or cadaveric bone model. Surface-bond strain gauges at critical locations on the bone (e.g., proximal, medial, distal) where high strain is predicted by a preliminary FEA model.

- Implantation: Surgically implant the device according to the manufacturer's surgical technique guide.

- Experimental Testing: Mount the instrumented bone-implant construct into the mechanical testing machine. Apply a physiologically relevant quasi-static load (e.g., joint force during walking).

- Data Collection: Record micro-strain values from all strain gauges simultaneously under the applied load.

- 4. Data Analysis:

- Compare the experimental strain gauge readings directly with the strain values predicted by the FEA model at the corresponding locations.

- Calculate quantitative metrics such as the coefficient of determination (R²) and relative error to objectively assess the model's predictive power [7] [6]. A model is often considered validated if the prediction error is within 10-15% of experimental measurements.
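A minimal sketch of the comparison described above, assuming paired strain values at matching gauge locations. The function name and the sample micro-strain values are hypothetical and are used only to show the arithmetic.

```python
def validation_metrics(measured, predicted):
    """Return (R^2, maximum relative error) for paired measured/predicted values."""
    if len(measured) != len(predicted) or len(measured) < 2:
        raise ValueError("need two or more paired values")
    mean_measured = sum(measured) / len(measured)
    ss_res = sum((m - p) ** 2 for m, p in zip(measured, predicted))  # residual sum of squares
    ss_tot = sum((m - mean_measured) ** 2 for m in measured)         # total sum of squares
    r_squared = 1.0 - ss_res / ss_tot
    max_rel_error = max(abs(p - m) / abs(m) for m, p in zip(measured, predicted))
    return r_squared, max_rel_error


# Hypothetical micro-strain readings (gauges) and FEA predictions at the same locations
gauge = [100.0, 200.0, 300.0]
fea = [110.0, 190.0, 300.0]
r2, worst = validation_metrics(gauge, fea)
print(f"R^2 = {r2:.3f}, worst relative error = {worst:.1%}")
print("within 15% threshold:", worst <= 0.15)
```

Reporting the worst-case relative error alongside R² is a deliberate choice here: a high R² can hide a large error at a single gauge.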

Frequently Asked Questions (FAQs)

Q1: How can I manage the different time and length scales when modeling a biological system from the cellular to the organ level? A1: Multi-scale modeling remains a primary challenge [11] [10]. A common strategy is a "hierarchical" or "information-passing" approach. Separate FEA models are created at distinct scales (e.g., tissue and organ). The results from the smaller-scale model (e.g., average tissue properties) are used as input parameters for the larger-scale model [11]. Emerging research focuses on AI-based surrogate models to accelerate this data transfer across scales [10].

Q2: What are the best practices for reporting my FEA study to ensure reproducibility and facilitate peer review? A2: Comprehensive reporting is critical. Beyond basic model geometry and loads, you must document:

- Model Structure: Precise material laws and parameters, mesh type and density, and all contact definitions [7].

- Verification & Validation (V&V): Describe steps taken to ensure the model solves the equations correctly (verification) and that it accurately represents reality (validation), ideally with quantitative comparisons to experimental data [7] [6].

- Software and Solvers: Specify the software, version, and solver settings (e.g., solver type, convergence tolerances) [7].

Q3: My model of a bioprinted structure does not accurately capture the post-printing behavior. What could be missing? A3: This is a key challenge in 3D bioprinting simulations. Traditional FEA may fail to capture the highly dynamic, multi-physics nature of the process. Your model likely needs to better account for the time-dependent coupling between the mechanical deformation during extrusion, the evolving material properties (e.g., cross-linking, viscosity), and the cell-matrix interactions that occur during and after printing [8]. Future tools aim to provide more accurate real-time simulation of these interactions [8].

Q4: How can AI and machine learning be integrated with traditional physics-based FEA? A4: AI is being used to augment FEA in several ways, as highlighted in recent research:

- Surrogate Modeling: AI models can be trained on FEA simulation data to create ultra-fast approximate models, which is vital for uncertainty quantification and design optimization [10].

- Parameter Identification: AI can help determine difficult-to-measure model input parameters from experimental data [10].

- Automated Research: "Virtual scientists" powered by AI can propose novel hypotheses and design strategies, such as new vaccine designs, which can then be analyzed using FEA and experimental validation [12].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Biological Multiphysics FEA

| Tool / "Reagent" | Function / Purpose | Example Use-Case |
|---|---|---|
| FEBio | Open-source FEA software specifically designed for biomechanics and bioengineering [7]. | Modeling soft tissue mechanics, cartilage contact, and biphasic material behavior [7]. |
| Continuity | An open-source modeling environment for multi-scale problems in cardiac bioengineering [7]. | Simulating integrated electrophysiology and mechanics of the heart [7]. |
| AI Surrogate Models | Machine learning models trained on FEA data to provide instant predictions, bypassing costly simulations [10]. | Rapid parameter exploration and uncertainty quantification in patient-specific model calibration [11] [10]. |
| Digital Twin Framework | A patient-specific computer model that is updated with data from the individual over time [11]. | Pre-operative surgical planning for orthopedic procedures; in silico testing of medical devices [11] [6]. |
| CFD-FEM Coupling | Co-simulation of Computational Fluid Dynamics (CFD) and Finite Element Method (FEM) [13]. | Modeling blood flow interaction with vessel walls (FSI); simulating air flow in respiratory airways [13]. |
| DEM-FEM Coupling | Co-simulation of Discrete Element Method (DEM) and FEM for granular materials [13]. | Simulating the mechanical behavior of bone granules or agricultural grains during processing and handling [13]. |

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center is designed to assist researchers, scientists, and drug development professionals in navigating the specific challenges of implementing multiscale finite element analysis (FEA) within physiological environments. The guidance is framed within the broader context of thesis research on FEA protocol challenges and solutions.

Frequently Asked Questions (FAQs)

Q1: What are the primary causes of solution non-convergence in nonlinear biomechanical models? Non-convergence typically stems from three main sources: complex contact conditions between biological structures, nonlinear material behaviors (e.g., tissue hyperelasticity), and inappropriate solver selection for dynamic problems. Implementing a stepped loading approach and verifying contact parameters can significantly improve convergence [3] [14].
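To make the stepped loading idea concrete, here is a minimal single-degree-of-freedom sketch: a hardening spring solved with Newton-Raphson iterations while the load is ramped in equal increments. The spring law, stiffness values, and function name are illustrative assumptions, not taken from any cited solver.

```python
def solve_with_load_steps(total_force, n_steps, k_linear=100.0, k_cubic=50.0,
                          tol=1e-8, max_iter=25):
    """Ramp the load in n_steps increments and run Newton-Raphson at each one.

    Internal force of the assumed hardening spring: f(u) = k_linear*u + k_cubic*u**3.
    Returns the final displacement and a (load, displacement, iterations) history.
    """
    u = 0.0
    history = []
    for step in range(1, n_steps + 1):
        target = total_force * step / n_steps           # current load level
        for iteration in range(1, max_iter + 1):
            residual = target - (k_linear * u + k_cubic * u ** 3)
            if abs(residual) <= tol * max(1.0, abs(target)):
                break                                   # this load step has converged
            tangent = k_linear + 3.0 * k_cubic * u ** 2  # tangent stiffness df/du
            u += residual / tangent                     # Newton update
        else:
            raise RuntimeError(f"load step {step} did not converge; try more steps")
        history.append((target, u, iteration))
    return u, history


final_u, history = solve_with_load_steps(150.0, n_steps=5)
for load, disp, iters in history:
    print(f"load {load:6.1f}  displacement {disp:.6f}  iterations {iters}")
```

Each step starts from the previous converged displacement, so the initial guess stays close to the solution; that is the reason ramping helps in strongly nonlinear problems.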

Q2: How can I validate that my mesh is sufficiently refined for capturing stress concentrations in biological tissues? A mesh convergence study is fundamental. Systematically refine your mesh in critical regions and monitor key outputs like peak stress. A mesh is considered converged when further refinement produces no significant changes in results (typically <2% variation). This is especially crucial for capturing stress concentrations near geometric discontinuities in physiological structures [3].
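The convergence criterion above can be checked with a few lines of code. The sketch below assumes you have already run the model at successive refinement levels and collected one output per level; the peak-stress values and the function name are hypothetical.

```python
def first_converged_level(peak_values, tolerance=0.02):
    """Return the index of the first refinement level whose result differs from the
    previous level by less than `tolerance` (relative change), or None if none does."""
    for i in range(1, len(peak_values)):
        previous, current = peak_values[i - 1], peak_values[i]
        if current == 0.0:
            raise ValueError("relative change is undefined for a zero result")
        if abs(current - previous) / abs(current) < tolerance:
            return i
    return None


# Hypothetical peak stresses (MPa) from four successively refined meshes
peaks = [80.0, 95.0, 99.0, 100.0]
level = first_converged_level(peaks)
print("converged at refinement level:", level)
```

A result of `None` means the quantity is still changing by more than the tolerance, so either more refinement is needed or the location is a singularity.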

Q3: My model results contradict experimental findings. What verification steps should I prioritize? First, confirm your unit system is consistent throughout the model. Then, methodically verify boundary conditions and material properties against your experimental setup. Finally, simplify the model to a case with a known analytical solution to verify the fundamental physics are being captured correctly before reintroducing complexity [3].

Q4: What are the computational trade-offs between implicit and explicit dynamics solvers for simulating physiological processes? Implicit solvers (e.g., Abaqus/Standard) are generally more efficient for static or low-speed dynamic problems but can struggle with complex contacts. Explicit solvers (e.g., Abaqus/Explicit, LS-DYNA) are better suited for high-speed dynamic events like impact or blast simulation, but they are only conditionally stable and therefore require very small time steps, which increases computational cost for long-duration events [14].
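The time step restriction of explicit solvers can be estimated before running anything. The sketch below uses the common one-dimensional estimate: time step ≈ smallest element length divided by the elastic wave speed sqrt(E/rho). The safety factor, the density of 1900 kg/m³, and the 0.5 mm element size are illustrative assumptions; commercial solvers use their own, more detailed estimates.

```python
import math


def stable_time_step(min_element_length_m, youngs_modulus_pa, density_kg_m3, safety_factor=0.9):
    """Rough explicit stable time step (s) from the 1D bar wave speed c = sqrt(E / rho)."""
    wave_speed = math.sqrt(youngs_modulus_pa / density_kg_m3)  # m/s
    return safety_factor * min_element_length_m / wave_speed


# Assumed values: stiff bone-like material, 0.5 mm smallest element
dt = stable_time_step(0.5e-3, 16.8e9, 1900.0)
steps_for_one_second = math.ceil(1.0 / dt)
print(f"stable time step ~ {dt:.2e} s, about {steps_for_one_second:,} steps per simulated second")
```

The step count per simulated second shows why explicit schemes suit short impact events better than slow physiological loading.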

Q5: How can I effectively manage the high computational cost of multiscale simulations? Leverage high-performance computing (HPC) resources and consider cloud-based FEA platforms that offer scalable computational power. Additionally, employ sub-modeling techniques where a global model informs a more refined local model, focusing computational resources only on regions of interest [15] [16].

Troubleshooting Guides

Guide 1: Resolving Contact Instabilities

- Problem: The simulation aborts due to contact errors, or results show unrealistic penetrations or forces at interfaces between biological components (e.g., bone-cartilage, device-tissue).

- Investigation Checklist:

- Verify initial contact conditions to ensure parts are not initially over-constrained or penetrating.

- Check the contact formulation; "Surface-to-Surface" is often more stable than "Node-to-Surface" for soft tissues.

- Adjust the contact stiffness; too high a value can cause instability, while too low can allow excessive penetration.

- Solution Protocol:

- Simplify: Start with a bonded contact to ensure basic connectivity works.

- Incrementally Introduce Complexity: First, change the bonded contact to a rough or frictionless contact. Then, gradually increase the friction coefficient to the desired value.

- Stabilize: Use automatic stabilization or viscous damping if numerical noise persists, but use minimal values to avoid influencing the physical results [3] [14].

Guide 2: Addressing Unrealistic Stress Singularities

- Problem: The post-processor shows extremely high, localized stresses at specific points (e.g., sharp re-entrant corners, point loads) that are not physically plausible.

- Investigation Checklist:

- Identify if the high stress occurs at a point load or a sharp corner in the geometry.

- Check if the stress value continues to increase without bound as the mesh is refined at that location.

- Solution Protocol:

- Geometry: Round sharp corners with a small fillet, even if the CAD model appears sharp, as this better represents real-world conditions.

- Loading: Replace point loads with pressure loads distributed over a small, realistic area.

- Post-Processing: Exclude the highly localized stress at the singularity and instead report the stress at a small distance away, or use averaged stress values for assessment [3].
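The second checklist item (does the stress keep growing with refinement?) can be turned into a simple heuristic. The sketch below assumes the mesh was refined by a roughly constant ratio at each level and flags a location as likely singular when the stress increments do not shrink. The function name and stress values are hypothetical.

```python
def looks_singular(peak_stresses):
    """Heuristic: True if the stress increases at every refinement and the
    increments are not getting smaller (no sign of convergence)."""
    if len(peak_stresses) < 3:
        raise ValueError("need results from three or more refinement levels")
    increments = [b - a for a, b in zip(peak_stresses, peak_stresses[1:])]
    return all(later >= earlier > 0.0
               for earlier, later in zip(increments, increments[1:]))


# Hypothetical peak stresses (MPa) at one node over four uniform refinements
at_sharp_corner = [100.0, 141.0, 200.0, 283.0]   # grows without levelling off
at_fillet = [80.0, 95.0, 99.0, 100.0]            # increments shrink
print("sharp corner singular?", looks_singular(at_sharp_corner))
print("fillet singular?", looks_singular(at_fillet))
```

This is only a screening check; a location it flags should still be inspected against the geometry and load definition.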

Guide 3: Implementing a Robust Multiscale Workflow

- Problem: Difficulty in efficiently transferring data (e.g., boundary conditions, deformation fields) between models at different spatial scales (organ, tissue, cellular).

- Investigation Checklist:

- Clearly define the specific physical values (displacement, strain, stress) that must be passed between scales.

- Ensure the mesh at the interface of the larger-scale model is sufficiently refined to provide meaningful boundary conditions for the smaller-scale model.

- Solution Protocol:

- Global Analysis: Run the macro-scale model (e.g., organ level) and save the solution (displacements and reactions) at the cut boundary for the region of interest.

- Submodeling: Create a more detailed, refined model of the region of interest (e.g., tissue or cellular level). Import the displacement field from the global analysis as the boundary condition for this submodel.

- Validation: Compare the results in the submodel at the driven boundary to the global model's results to ensure consistency [17]. This methodology is exemplified in research that links community-scale factors to individual biological outcomes, demonstrating the principle of passing information across scales [18].
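The data transfer in the submodeling step can be illustrated in one dimension: displacements known at coarse global nodes are linearly interpolated onto the finer submodel boundary nodes. This is a simplified sketch with hypothetical names and values; FEA packages perform the equivalent mapping with the element shape functions in 3D.

```python
from bisect import bisect_right


def interpolate_cut_boundary(global_coords, global_disp, sub_coords):
    """Linearly interpolate global nodal displacements onto submodel boundary nodes.

    `global_coords` must be sorted in ascending order and contain two or more nodes.
    """
    if len(global_coords) < 2 or len(global_coords) != len(global_disp):
        raise ValueError("need two or more global nodes with one displacement each")
    mapped = []
    for x in sub_coords:
        if not global_coords[0] <= x <= global_coords[-1]:
            raise ValueError(f"submodel node at {x} lies outside the global cut boundary")
        j = min(bisect_right(global_coords, x), len(global_coords) - 1)
        x0, x1 = global_coords[j - 1], global_coords[j]
        t = (x - x0) / (x1 - x0)                     # local coordinate in [0, 1]
        mapped.append((1.0 - t) * global_disp[j - 1] + t * global_disp[j])
    return mapped


# Hypothetical coarse global nodes (mm) and displacements (mm) along the cut boundary
coarse_x = [0.0, 1.0, 2.0]
coarse_u = [0.0, 0.2, 0.6]
fine_x = [0.0, 0.5, 1.5, 2.0]
print(interpolate_cut_boundary(coarse_x, coarse_u, fine_x))
```

The consistency check in the validation step amounts to confirming that the submodel reproduces these driven values at its boundary and that stresses near it agree with the global model.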

Quantitative Data and Methodologies

FEA Market and Software Capabilities

Table 1: Global FEA Market Overview (2024-2033 Forecast) [19] [16]

| Metric | Value / Trend | Details |
|---|---|---|
| Market Size (2024) | USD 5.67 Billion | Base year valuation. |
| Forecast (2033) | USD 10.23 Billion | Projected market value. |
| CAGR | ~7.4% | Compound Annual Growth Rate. |
| Key Growth Drivers | Product complexity, lightweight design demands, regulatory pressures. | Adoption in automotive, aerospace, and medical industries. |
| Key Restraint | High computational cost and lack of skilled professionals. | Barriers to entry for smaller organizations. |

Table 2: Leading FEA Software for Advanced Biomechanical Analysis (2025) [14]

| Software | Primary Strengths | Ideal for Multiscale Physiology |
|---|---|---|
| ANSYS Mechanical | Comprehensive multiphysics, high-fidelity results, strong HPC support. | Coupling fluid-solid interaction (FSI) for cardiovascular systems or thermal-structural analysis. |
| Abaqus (Dassault) | Superior nonlinear mechanics (materials, contact), robust implicit/explicit solvers. | Modeling soft tissue deformation, complex contact in joint mechanics, and injury biomechanics. |
| MSC Nastran | Industry standard for linear dynamics, vibration, and buckling analysis. | Analyzing implant vibration or structural dynamics of biomedical devices. |
| Altair OptiStruct | Leading topology/shape optimization integrated with FEA. | Simulation-driven design of lightweight, patient-specific orthopedic implants. |

Detailed Experimental Protocol: FEA and Optimization of a Robotic Arm

This protocol from a recent study exemplifies a complete FEA-based optimization workflow, directly applicable to refining mechanical designs for biomedical applications [20].

Table 3: Key Research Reagent Solutions for FEA & Optimization [20]

| Item / "Reagent" | Function in the Protocol |
|---|---|
| 3D CAD Software (SolidWorks) | Creating the high-fidelity geometric model of the structure for analysis. |
| FEA Solver (ANSYS Mechanical) | Performing the structural simulation to compute stress, strain, and deformation. |
| Topology Optimization Module | Algorithmically determining the optimal material layout within a defined design space. |
| Multi-Objective Genetic Algorithm (GA) | An optimization tool used to find the best design that balances competing goals (e.g., weight vs. strength). |

Methodology:

- Objective Definition: The goal was to minimize the mass of a robotic arm while maintaining its structural integrity under operational loads. This is analogous to designing lightweight surgical tools or implants.

- Kinematic and Dynamic Analysis: The study first analyzed the arm's motion and determined the joint driving forces and velocities, which are critical for defining accurate loading conditions in the FEA.

- Finite Element Model Setup:

- A 3D model was imported into the FEA software.

- Materials: Appropriate material properties (e.g., Young's modulus, yield strength) were assigned.

- Constraints and Loads: Boundary conditions were applied to replicate the mounting points, and forces from the dynamic analysis were applied.

- Meshing: The model was discretized into finite elements. A mesh convergence study was highlighted as a critical step for accuracy [20].

- Structural Optimization:

- Design Space: The entire volume of the robotic arm components was defined as the region that could be modified.

- Constraints: Performance targets, including maximum allowable stress and minimum stiffness, were set as constraints.

- Objective Function: Minimize total mass.

- Optimization Loop: Using topology optimization and GA, the software iteratively modified the material distribution within the design space. After 11 optimization cycles, the final design was generated.

- Validation: The optimized design was analyzed again with FEA to ensure it met all strength and stiffness requirements under load.

Results: The protocol achieved a 14.28% reduction in mass (from 75.12 kg to 64.39 kg) while maintaining structural performance, demonstrating the power of combining FEA with optimization algorithms [20].

Workflow and Conceptual Diagrams

Diagrams not reproduced: Multiscale FEA Troubleshooting Workflow; Submodeling Technique for Multiscale Analysis; FEA Model Verification & Validation Process.

Computational Resource Demands and Performance Limitations

Troubleshooting Guides and FAQs

FAQ: Why is my FEA simulation running so slowly and using excessive memory?

Slow FEA simulations are often due to model complexity, inadequate hardware, or suboptimal solver settings. Key bottlenecks include a high number of finite elements, insufficient RAM, and communication overhead in parallel computing [21].

- Troubleshooting Checklist:

- Model Size: Check the number of degrees of freedom in your model. Models with millions of degrees of freedom are computationally intensive [21].

- Mesh Quality: Use hexahedral (brick) elements where possible, as they generally provide more accurate results at lower element counts than tetrahedral elements. For thin-walled structures, consider using 2D shell elements to greatly improve run time and accuracy [22].

- Hardware Resources: Monitor memory (RAM) usage during simulation. Memory bandwidth constraints are a significant barrier, and exceeding available system memory causes performance bottlenecks [21].

- Solver and HPC Configuration: For large-scale problems, ensure you are using an iterative solver and that the HPC workload is properly balanced across computing cores to minimize communication delays [21].
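
As a rough way to act on the model-size and RAM items in the checklist above, the following sketch estimates the number of degrees of freedom and the memory needed only to store a sparse stiffness matrix in compressed sparse row (CSR) form. The function names, the default of 3 DOFs per node, and the figure of 81 nonzeros per row (an interior node of a structured 8-node hexahedral mesh) are illustrative assumptions, not values from the cited sources. Direct solvers usually need considerably more than this lower bound because factorization adds fill-in.

```python
def estimate_dofs(n_nodes, dofs_per_node=3):
    """Total unknowns for a model (3 translations per node is an assumption)."""
    return n_nodes * dofs_per_node


def csr_memory_bytes(n_dofs, avg_nnz_per_row=81, value_bytes=8, index_bytes=4):
    """Lower-bound storage for a CSR matrix: values, column indices, row pointers."""
    nnz = n_dofs * avg_nnz_per_row
    values_and_columns = nnz * (value_bytes + index_bytes)
    row_pointers = (n_dofs + 1) * index_bytes
    return values_and_columns + row_pointers


# Hypothetical model with one million nodes.
n_dofs = estimate_dofs(1_000_000)
gib = csr_memory_bytes(n_dofs) / 2**30
print(f"{n_dofs:,} DOFs -> about {gib:.1f} GiB for the stiffness matrix alone")
```

Comparing this estimate with the RAM available on the workstation or node gives an early indication of whether the model needs simplification or an iterative solver before a run is attempted.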

FAQ: How can I reduce the computational cost of my FEA without sacrificing critical accuracy?

Strategic model simplification and the use of advanced computing paradigms can significantly reduce costs.

- Actionable Protocol:

- Geometry Simplification: Remove unnecessary details from your CAD model, such as small fillets, rounds, and very small components that do not affect global stiffness [22]. Using a "defeature" or "fill" command in your pre-processing software can automate this.

- Mesh Optimization: Perform a mesh convergence study. Start with a coarser mesh and progressively refine it only in critical areas until the results stop changing significantly. This ensures you are not using an unnecessarily fine mesh everywhere [22].

- Leverage Cloud HPC: Use cloud-based High-Performance Computing (HPC) for on-demand, scalable resources. This eliminates the need for massive capital investment in on-premise infrastructure and allows you to pay only for the computing power you use [23].

- Explore AI Surrogates: For well-understood problems requiring many iterations, consider developing a machine learning surrogate model, such as a Graph Neural Network (GNN). Once trained on high-fidelity FEM data, these models can predict mechanical behavior in a fraction of the time required for a full simulation [24] [25].
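
The mesh convergence step in the protocol above can be sketched as a small driver loop. This is a minimal illustration, not a production workflow: `solve_bar_peak_stress` is a hypothetical stand-in for a real FEA run (a 1D axial bar with linear elements, fixed at one end and loaded uniformly), the refinement is uniform rather than local, and the 1% tolerance is an assumed stopping criterion.

```python
def solve_bar_peak_stress(n_elems, length=1.0, E=1.0, A=1.0, q=1.0):
    """Toy FEA run: bar fixed at x=0 under uniform axial load q per unit length.

    Returns the stress in the element next to the fixed end, which
    approaches the exact wall stress q*length/A as the mesh is refined.
    """
    h = length / n_elems
    k = E * A / h
    n = n_elems  # unknowns are nodes 1..n, node 0 is fixed
    diag = [2.0 * k] * n
    diag[-1] = k
    off = [-k] * (n - 1)
    rhs = [q * h] * n
    rhs[-1] = q * h / 2.0
    # Thomas algorithm for the tridiagonal system K u = f
    for i in range(1, n):
        m = off[i - 1] / diag[i - 1]
        diag[i] -= m * off[i - 1]
        rhs[i] -= m * rhs[i - 1]
    u = [0.0] * n
    u[-1] = rhs[-1] / diag[-1]
    for i in range(n - 2, -1, -1):
        u[i] = (rhs[i] - off[i] * u[i + 1]) / diag[i]
    return E * u[0] / h  # first-element strain times modulus


def mesh_convergence(solve, n_start=2, factor=2, rel_tol=0.01, max_levels=12):
    """Refine until the monitored result changes by less than rel_tol."""
    history, previous, n = [], None, n_start
    for _ in range(max_levels):
        value = solve(n)
        history.append((n, value))
        if previous is not None and abs(value - previous) <= rel_tol * abs(value):
            return history, True
        previous = value
        n *= factor
    return history, False


history, converged = mesh_convergence(solve_bar_peak_stress)
for n, value in history:
    print(f"{n:4d} elements -> peak stress {value:.4f}")
```

In practice `solve` would wrap a call to the actual solver and return the quantity of interest (for example, peak stress at a known hotspot), and refinement would be restricted to the critical regions.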

Table 1: HPC Performance Benchmarks for FEA Simulations

| Simulation Type | Hardware Configuration | Traditional CPU Runtime | Accelerated HPC Runtime | Performance Gain |

|---|---|---|---|---|

| Complex Aerospace CFD [26] | 172M elements; 8x AMD MI300X GPUs | Several weeks | ~3.7 hours | ~98% reduction |

| General Large-Scale FEA [21] | CPU-only clusters | Hours to days | Minutes to hours | Significant reduction (exact % varies) |

| Typical Cloud HPC Workload [23] | On-premise hardware | Hours | "Minutes" on cloud HPC | Drastic reduction |

Table 2: Common FEA Computational Bottlenecks and Mitigations

| Bottleneck | Impact on Simulation | Recommended Mitigation Strategy |

|---|---|---|

| Memory Bandwidth [21] | Creates performance bottlenecks; limits model size. | Use cloud HPC with high-memory nodes; simplify model geometry [26] [22]. |

| Load Imbalance [21] | Reduced parallel efficiency; increased execution time. | Use advanced domain decomposition strategies in HPC settings. |

| Communication Overhead [21] | Diminishing returns when using thousands of computing cores. | Optimize HPC solver settings and parallel processing techniques. |

| Model Discretization [22] | Long run times, inaccurate results, poor mesh quality. | Use hexahedral elements over tetrahedral; employ shell elements for thin structures. |

Experimental Protocols for Performance Optimization

Protocol 1: Creating a Simplified, FEA-Ready Model from a Complex CAD File

Objective: To reduce computational demand by generating a simplified yet accurate geometry for meshing.

- Geometry Import: Import the original CAD model into your pre-processing software (e.g., Ansys SpaceClaim).

- Defeaturing: Identify and remove features that are irrelevant to the analysis. Common targets include:

- Small Fillets/Rounds: Use the "Fill" command to remove them [22].

- Insignificant Bodies: Remove very small components (e.g., small resistors on a circuit board) that do not contribute to global stiffness [22].

- Fasteners: Replace bolts and rivets with simplified 1D beam elements or rigid contact constraints [22].

- Midsurface Creation: For thin-walled structures, use the "Create Midsurface" tool to generate a surface body, which can later be meshed with efficient shell elements [22].

- Validation: Compare the simplified model's mass and center of gravity with the original to ensure gross properties are conserved.
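
The final validation step of this protocol can be sketched as a simple comparison of gross mass properties. The body lists, the 2% mass tolerance, and the 1 mm center-of-gravity tolerance below are hypothetical values chosen for illustration; in practice the masses and centroids would be exported from the CAD or pre-processing tool.

```python
import math


def mass_properties(bodies):
    """bodies: iterable of (mass, (x, y, z)) pairs, one per solid body."""
    bodies = list(bodies)
    total = sum(mass for mass, _ in bodies)
    cog = tuple(sum(mass * c[i] for mass, c in bodies) / total for i in range(3))
    return total, cog


def compare_models(original, simplified, mass_tol=0.02, cog_tol=1.0):
    """Report relative mass error and center-of-gravity shift (same length unit)."""
    m0, c0 = mass_properties(original)
    m1, c1 = mass_properties(simplified)
    mass_error = abs(m1 - m0) / m0
    cog_shift = math.dist(c0, c1)
    ok = mass_error <= mass_tol and cog_shift <= cog_tol
    return {"mass_error": mass_error, "cog_shift": cog_shift, "ok": ok}


# Hypothetical assembly: the 0.1 kg body is removed during defeaturing.
original = [(10.0, (0.0, 0.0, 0.0)), (0.1, (50.0, 0.0, 0.0)), (5.0, (100.0, 0.0, 0.0))]
simplified = [(10.0, (0.0, 0.0, 0.0)), (5.0, (100.0, 0.0, 0.0))]
report = compare_models(original, simplified)
print(report)
```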

Protocol 2: Implementing a Hybrid FEM-Neural Network Surrogate Model

Objective: To create a data-driven surrogate model for rapid prediction of mechanical behavior, bypassing the need for full FEM simulations after training.

- Synthetic Dataset Generation: Use a high-fidelity, well-parameterized FEM model to generate a diverse set of 500–800 simulated samples, covering variations in geometry, loads, and boundary conditions [24].

- Graph Extraction: Transform the FEM models into graph structures, \(\mathcal{G}\), where nodes, \(N\), represent discrete physical points and edges, \(V\), capture structural connectivity. Nodal inputs, \(X\), can simulate sensor data (e.g., reaction forces), while target outputs, \(y\), represent mechanical stress [25].

- Model Training: Train a Graph Neural Network (GNN) on the extracted graph dataset. The GNN performs regression on nodes to learn the mapping between inputs and stress distributions [25].

- Model Validation: Test the trained GNN surrogate model against a hold-out set of high-fidelity FEM results to confirm its predictive accuracy and reliability [24] [25].
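
The graph extraction step can be illustrated with a small, library-free sketch. It assumes 2D elements whose node IDs are listed in order around the element and treats each element side as a graph edge; the cited work may construct its graphs differently. The function names and sample values are hypothetical. Edges are emitted in both directions because GNN libraries such as PyTorch Geometric store undirected graphs as pairs of directed edges.

```python
def mesh_to_edges(elements):
    """Turn element connectivity into a sorted list of directed (source, target) edges."""
    undirected = set()
    for elem in elements:
        # Pair each node with the next one around the element (wrapping at the end).
        for a, b in zip(elem, elem[1:] + elem[:1]):
            undirected.add((min(a, b), max(a, b)))
    directed = set()
    for a, b in undirected:
        directed.add((a, b))
        directed.add((b, a))
    return sorted(directed)


def build_sample(elements, node_inputs, node_targets):
    """Bundle one FEM result as a graph sample: edges, nodal inputs X, nodal targets y."""
    if len(node_inputs) != len(node_targets):
        raise ValueError("inputs and targets must have one entry per node")
    return {"edges": mesh_to_edges(elements), "x": node_inputs, "y": node_targets}


# Two triangles sharing the edge between nodes 1 and 2.
elements = [(0, 1, 2), (1, 3, 2)]
sample = build_sample(
    elements,
    node_inputs=[[0.0], [0.0], [12.5], [12.5]],   # e.g. reaction force per node (made up)
    node_targets=[80.0, 95.0, 110.0, 70.0],       # e.g. nodal stress from FEM (made up)
)
print(len(sample["edges"]), "directed edges")
```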

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Advanced FEA Research

| Tool / Solution | Function in Research | Example Providers / Standards |

|---|---|---|

| Cloud HPC Platforms | Provides on-demand, scalable computing resources to handle large-scale simulations without capital investment in physical hardware [23]. | Rescale, Amazon Web Services (AWS), Microsoft Azure [26] [23]. |

| Commercial FEA Software | Industry-standard tools for performing high-fidelity, multi-physics simulations (e.g., linear dynamics, nonlinear deformation, crash tests) [23]. | Ansys Mechanical, Abaqus, LS-Dyna [23]. |

| Graph Neural Network (GNN) Libraries | Enable the creation of AI surrogate models from FEM data, allowing for real-time predictive modeling after training [25]. | PyTorch Geometric, Deep Graph Library (DGL). |

| Open-Source FEA Tools | Cost-effective solutions for concept testing and running a massive number of simulations, driven by a community of developers [23]. | CalculiX, FEniCS, Code_Aster. |

Technical Support Center

This support center addresses key challenges researchers face when integrating Artificial Intelligence (AI) and cloud computing into Finite Element Analysis (FEA) workflows. The guidance is framed within broader thesis research on overcoming FEA protocol challenges to enhance reliability, efficiency, and accessibility in computational engineering.

Troubleshooting Guides

Issue 1: Inaccurate Results from AI-Generated Models or Meshes

- Problem Statement: An AI tool suggests a mesh or a simplified model that produces results diverging from established benchmarks or experimental data.

- Diagnosis Procedure:

- Verify the AI's Inputs: Check the quality and relevance of the training data or the constraints provided to the AI system. Ensure the boundary conditions and load cases defined for the AI are physically realistic [27].

- Perform a Mesh Convergence Analysis: Manually refine the mesh around critical areas identified by the AI (e.g., high-stress gradients) and observe if the results stabilize. This validates whether the AI-suggested mesh is sufficient [27].

- Cross-Check with a High-Fidelity Model: Run a simulation using a traditional, high-fidelity model with a proven mesh. Compare key results (e.g., max stress, natural frequencies) with the AI-generated output to quantify the discrepancy [28].

- Solution Protocol:

- Do not treat AI as a black box. The researcher's role is to provide interpretation, validation, and judgment [28].

- Establish a review protocol requiring human oversight of all AI-generated outputs before critical decisions are made. Document the AI tool usage and the validation steps taken in your research records [29].

- Utilize software features that embed validation checks, such as displacement limits or maximum stress thresholds, directly into the simulation workflow [27].
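
One way to make the cross-check and threshold steps above repeatable is a small review function that is run on every AI-generated result before it is accepted. The sketch below is illustrative only: the result keys, the 5% discrepancy tolerance, and the limit values are assumptions and do not correspond to any specific software feature.

```python
def relative_discrepancy(candidate, reference):
    """Relative difference between an AI-assisted result and a trusted reference."""
    return abs(candidate - reference) / abs(reference)


def review_ai_result(ai, reference, limits, max_discrepancy=0.05):
    """Return a list of findings; an empty list means no automatic objection."""
    findings = []
    for key, ref_value in reference.items():
        d = relative_discrepancy(ai[key], ref_value)
        if d > max_discrepancy:
            findings.append(f"{key}: differs from reference by {d:.1%}")
    for key, limit in limits.items():
        if ai[key] > limit:
            findings.append(f"{key}: {ai[key]} exceeds limit {limit}")
    return findings


# Hypothetical values for one load case.
ai_result = {"max_stress_mpa": 212.0, "max_displacement_mm": 1.90}
hifi_reference = {"max_stress_mpa": 240.0, "max_displacement_mm": 1.95}
design_limits = {"max_stress_mpa": 250.0, "max_displacement_mm": 2.00}

findings = review_ai_result(ai_result, hifi_reference, design_limits)
for line in findings:
    print("REVIEW:", line)
```

An empty findings list does not replace human review; it only filters out results that fail basic checks before an engineer looks at them.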

Issue 2: High Cloud Computing Costs and Unmanageable Data Transfer Latency

- Problem Statement: Cloud-based FEA simulations are exceeding budgeted costs, or data transfer times are creating bottlenecks in the research timeline.

- Diagnosis Procedure:

- Analyze Cloud Resource Configuration: Review the simulation jobs to check if the allocated computational resources (e.g., number of cores, RAM) are excessive for the problem size.

- Check for Inefficient Models: Identify models with poorly configured solvers or an overly dense mesh that unnecessarily consume high computational resources [27].

- Evaluate Data Transfer Workflows: Determine if entire large CAD assemblies are being transferred for every minor iteration, rather than using more efficient data management strategies.

- Solution Protocol:

- Implement cost-management practices by leveraging scalable cloud resources. Start with smaller instances for initial tests and scale up only for final, high-fidelity simulations [28].

- Before sending to the cloud, use AI-powered tools like Reduced Order Models (ROMs) or real-time simulation software (e.g., ANSYS Discovery) to pre-optimize designs and weed out poor concepts locally, reducing the number of costly cloud runs [30] [31].

- For latency, leverage cloud platforms that offer robust collaboration features, allowing team members to work on the same model without transferring large files repeatedly [28].

Issue 3: Integration Failures with Legacy Data and Systems

- Problem Statement: New AI or cloud-based FEA tools cannot read data from legacy systems, or the integration requires disruptive changes to established research protocols.

- Diagnosis Procedure:

- Audit Data Infrastructure: Identify the data formats, standards, and storage systems used by existing legacy tools.

- Identify Standardization Gaps: Check for inconsistencies in data structuring across different projects and platforms that prevent seamless integration [29].

- Solution Protocol:

- Prioritize AI solutions that act as a knowledge layer and work alongside existing tools, enhancing workflows without requiring a full replacement of legacy systems [30].

- Implement data integration platforms or cloud-based practice management tools designed to connect disparate systems and create a unified data architecture [29].

- Start with high-ROI, low-integration pilots. Use cloud-based SaaS platforms that eliminate large upfront investments and can often interface with legacy data through exported files [29].

Frequently Asked Questions (FAQs)

Q1: Will AI eventually replace the need for FEA specialists and researchers?

A1: No. AI is positioned to augment, not replace, expert researchers. AI handles repetitive tasks, suggests optimizations, and accelerates computations, but it lacks human judgment. The responsibility for validation, interpretation of results in a real-world context, and critical thinking remains with the researcher. The future belongs to those who combine deep fundamental knowledge with modern tools [28] [32].

Q2: What is the biggest risk of adopting AI in FEA, and how can it be mitigated?

A2: The biggest risk is uncritical trust in AI-generated outputs, which leads to inaccurate results and potential professional liability. This is often summarized as "Garbage In, Garbage Out" [27].

Mitigation Strategy: Establish and document a robust verification and validation (V&V) protocol. This includes cross-checking AI results with high-fidelity models or experimental data, maintaining human oversight for safety-critical decisions, and using software features that allow validation thresholds to be defined and checked [29] [27] [31].

Q3: How is cloud computing "democratizing" FEA, and what are the associated concerns?

A3: Democratization means making advanced FEA accessible to a broader group of users, including those in smaller organizations or without specialized FEA training, by removing hardware barriers and simplifying interfaces through cloud platforms [28].

Associated Concerns:

- Risk of Misuse: Users without deep FEA knowledge may trust colorful contour plots without understanding the underlying physics, leading to dangerous inaccuracies [28].

- Data Security: Processing sensitive designs on external servers raises concerns about intellectual property protection [28] [33].

- Solution: Democratization must be balanced with training, safeguards, and mentorship to ensure results remain trustworthy [28].

Q4: What are AI-based Reduced Order Models (ROMs) and why are they important?

A4: AI-based ROMs are simplified, data-driven models that approximate the behavior of complex, high-fidelity simulations. They are trained on full simulation data to capture essential physics with a fraction of the computational cost [31].

Importance: They are crucial for applications requiring rapid iterations, such as design exploration, optimization, and real-time control, where using the full high-fidelity model would be too slow or computationally prohibitive [31].

Quantitative Data on Emerging FEA Trends

Table 1: Market and Adoption Metrics for FEA and AI in Engineering

| Metric | Value | Source / Context |

|---|---|---|

| Global FEA Service Market Value (2024) | USD 134 Million | IntelMarketResearch [34] |

| Projected FEA Market Value (2032) | USD 187 Million | IntelMarketResearch [34] |

| Projected CAGR (2025-2032) | 5.0% | IntelMarketResearch [34] |

| Engineering Firms Believing AI will Positively Impact Operations (2025) | 78% | ACEC Survey [29] |

| Productivity Gain for Skilled Workers Using Generative AI | Nearly 40% | MIT Sloan Field Study [29] |

| Engineers & Architects Using AI Tools Daily (2025) | 36% | Arup Survey [29] |

Table 2: Documented Performance Improvements from AI and Advanced Workflows

| Improvement Type | Measured Outcome | Example / Context |

|---|---|---|

| Design Acceleration | Designs produced in seconds vs. weeks | Thornton Tomasetti’s Asterisk [29] |

| Weight Reduction | 45% lighter component | Airbus using Autodesk Generative Design [30] |

| Energy Savings | 15-25% reduction in energy use | AI-optimized HVAC systems (Uni. of Maryland) [29] |

| Administrative Efficiency | 25% reduction in admin time; 2x faster billing | Red Brick Consulting using AI-powered management [29] |

| Error Reduction | 32% fewer design mistakes | Engineering teams using Leo AI [30] |

Experimental Protocols for Cited Studies

Protocol 1: Validation of AI-Optimized Structural Design

This protocol outlines the methodology for validating the performance of a structural component generated by an AI-driven generative design tool, as referenced in the Airbus A320 case study [30].

- Objective Definition: Define the design goals and constraints input into the AI (e.g., minimize mass, subject to load capacity, fixed connection points, and manufacturability constraints).

- AI Model Generation: Use the generative design software to produce multiple design alternatives.

- High-Fidelity FEA: Import the top AI-generated design into a traditional FEA software (e.g., Altair OptiStruct, ANSYS Mechanical). Apply the full set of boundary conditions and loads.

- Benchmarking: Run the same FEA analysis on the traditional, human-designed component.

- Comparison and Validation: Compare key performance indicators (KPIs) including:

- Total mass

- Maximum stress and factor of safety

- Maximum displacement under load

- Natural frequencies (if dynamic performance is critical)

- Iteration: Refine the AI constraints based on initial results and repeat until the design meets all performance criteria.

Protocol 2: Development and Testing of an AI-Based Reduced Order Model (ROM)

This protocol describes the creation and validation of an AI-based ROM for rapid thermal analysis, relevant to trends discussed by MathWorks [31].

- Data Generation: Use a high-fidelity computational fluid dynamics (CFD) or thermal model to simulate a wide range of input parameters (e.g., boundary temperatures, heat fluxes, material properties). This creates a comprehensive dataset for training.

- Model Training: Employ a machine learning framework (e.g., in MATLAB) to train a neural network. The inputs are the system parameters, and the outputs are the key results from the high-fidelity model (e.g., temperature distribution, heat flow rates).

- ROM Validation:

- Select a new set of input parameters not used in training.

- Run the simulation using both the high-fidelity model and the new AI-based ROM.

- Quantify the accuracy by calculating the relative error for the output metrics.

- Deployment: Integrate the validated ROM into a larger system simulation or a real-time control application where the original high-fidelity model would be too computationally expensive to run.
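
The ROM validation step can be sketched as a relative-error check over held-out inputs. Everything below is a toy illustration: `hifi_model` stands in for the high-fidelity thermal solver (here a linear temperature profile between two boundary temperatures), `rom_model` is a deliberately biased copy of it, and the 5% acceptance tolerance is an assumption rather than a value from the cited source.

```python
import math


def relative_l2_error(rom_output, reference_output):
    """Relative L2 error between a ROM prediction and the high-fidelity result."""
    num = math.sqrt(sum((r - f) ** 2 for r, f in zip(rom_output, reference_output)))
    den = math.sqrt(sum(f ** 2 for f in reference_output))
    return num / den


def validate_rom(rom_model, hifi_model, holdout_inputs, tol=0.05):
    """Return the worst-case relative error on inputs not used for training."""
    errors = [relative_l2_error(rom_model(x), hifi_model(x)) for x in holdout_inputs]
    worst = max(errors)
    return worst, worst <= tol


def hifi_model(boundary_temps, n_points=5):
    """Toy 'high-fidelity' model: linear temperature profile in kelvin."""
    t_left, t_right = boundary_temps
    return [t_left + (t_right - t_left) * i / (n_points - 1) for i in range(n_points)]


def rom_model(boundary_temps):
    """Toy surrogate with a 1% systematic bias, for demonstration only."""
    return [1.01 * t for t in hifi_model(boundary_temps)]


worst_error, accepted = validate_rom(rom_model, hifi_model, [(300.0, 350.0), (280.0, 400.0)])
print(f"worst relative error: {worst_error:.3%}, accepted: {accepted}")
```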

Workflow Visualization

AI-Cloud FEA Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software and Platforms for Modern FEA Research

| Tool / "Reagent" | Primary Function | Application in FEA Workflow |

|---|---|---|

| Generative Design Software (e.g., Autodesk Generative Design) | AI-driven design space exploration | Generates multiple optimized design concepts based on defined constraints and goals, often producing non-intuitive geometries [30]. |

| AI-Based Reduced Order Models (ROMs) | Fast, approximate simulation | Replaces computationally heavy high-fidelity models for rapid iteration, system-level simulation, and real-time applications [31]. |

| Real-Time Simulation Software (e.g., ANSYS Discovery) | GPU-accelerated instant feedback | Provides immediate simulation results during model editing, enabling rapid "what-if" scenario testing and concept validation [30]. |

| Cloud HPC Platforms (e.g., SimScale, Rescale, Ansys Cloud) | On-demand computational power | Provides access to virtually unlimited computing resources for large, complex, or multi-physics simulations without local hardware investment [28] [32]. |

| Specialized Engineering AI (e.g., Leo AI) | Engineering knowledge and query assistant | Automates repetitive tasks like part selection, provides CAD-aware Q&A, validates designs with code, and surfaces internal standards [30]. |

| Meshing & FEA Pre/Post-Processors (e.g., Altair HyperWorks) | Model preparation and results analysis | Offers advanced, automated meshing capabilities, contact definition, and boundary condition application, often enhanced with AI to guide and validate setups [27]. |

Implementing Robust FEA Methods: From Model Setup to Advanced Applications

Effective Coupling Strategies for Multiphysics Problems

Frequently Asked Questions (FAQs)

1. What defines a multiphysics problem in FEA, and why is it particularly challenging? A multiphysics problem involves the simultaneous simulation of two or more interacting physical phenomena. The primary challenge is the bidirectional coupling between different physical fields, where the solution of one physics affects the others and vice versa. This creates a complex, interdependent system that cannot be accurately solved by analyzing each physics in isolation. Challenges include managing strong nonlinearities, achieving convergence of the coupled solutions, and capturing the correct interaction mechanisms across different spatial and temporal scales [35] [36].

2. What are the fundamental categories of coupling strategies? Coupling strategies are generally categorized as either monolithic or partitioned [37]. The choice between them involves a trade-off between computational robustness and flexibility.

| Strategy | Description | Pros & Cons |

|---|---|---|

| Monolithic (Strong) Coupling | All physics are solved simultaneously within a single system of equations. | Pros: High accuracy and numerical stability for strongly coupled problems. [37] Cons: Computationally demanding, complex implementation, and difficult to extend with new physics. [37] |

| Partitioned (Weak) Coupling | Individual physics are solved sequentially by separate solvers, exchanging data at the interfaces. | Pros: Modular, flexible, and can leverage existing single-physics solvers. [37] Cons: Potentially lower stability and accuracy; risk of error accumulation. [37] |

3. My coupled simulation will not converge. What are the most common causes? Non-convergence in multiphysics simulations often stems from several key areas that require verification:

- Incorrect coupling definitions: Ensure that the correct physical quantities are being transferred between fields and that the coupling is bidirectional if required.

- Material property nonlinearities: Temperature-dependent or field-dependent material properties can cause instability. Verify that property models are accurate across the expected range of conditions [35].

- Insufficient mesh resolution: The mesh must be fine enough to resolve the gradients in all coupled fields, especially at the interfaces where interactions occur [38].

- Overly large solver time steps: For transient problems, using too large a time step can prevent the coupled iterative process from converging within the allotted iterations.

4. How can I validate my multiphysics model against real-world behavior? Validation is a critical step to ensure the reliability of your model [39]. A robust validation protocol includes:

- Correlation Analysis: Systematically compare FEA results with experimental or trusted reference data, such as from physical prototypes or established analytical solutions. Analyze differences to identify discrepancies [39].

- Sensitivity Analysis: Run "what-if" scenarios to understand how uncertain parameters (e.g., material properties, boundary conditions) affect the results. This helps assess the model's robustness and identify which parameters are most critical to update for improved accuracy [39].

- Mesh Refinement Studies: Progressively refine the mesh in critical regions and observe if the solution changes significantly. This ensures that your results are not dependent on a discretization that is too coarse [38].
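
The sensitivity analysis described above can be sketched as a one-at-a-time perturbation study. The example uses the closed-form tip deflection of an end-loaded cantilever, \(\delta = FL^3/(3EI)\), as a stand-in for a full FEA run; the nominal parameter values and the 1% perturbation size are illustrative assumptions.

```python
def tip_deflection(F, L, E, I):
    """Cantilever tip deflection under an end load (Euler-Bernoulli beam theory)."""
    return F * L**3 / (3.0 * E * I)


def normalized_sensitivities(model, nominal, rel_step=0.01):
    """Central-difference estimate of d(ln output)/d(ln parameter) for each input."""
    base = model(**nominal)
    result = {}
    for name, value in nominal.items():
        high = dict(nominal, **{name: value * (1.0 + rel_step)})
        low = dict(nominal, **{name: value * (1.0 - rel_step)})
        result[name] = (model(**high) - model(**low)) / (2.0 * rel_step * base)
    return result


# Hypothetical nominal values in SI units.
nominal = {"F": 100.0, "L": 0.2, "E": 110e9, "I": 1e-10}
sens = normalized_sensitivities(tip_deflection, nominal)
for name, s in sorted(sens.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {s:+.2f}")
```

A normalized sensitivity near 3 for the length means a 1% error in that input changes the result by roughly 3%, which identifies it as the parameter most worth measuring carefully.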

Troubleshooting Guides

Guide 1: Resolving Instability in Strongly Coupled Thermal-Stress Analysis

This guide addresses a common scenario where thermal expansion induces stress, and the resulting deformation alters heat transfer paths.

1. Symptom: The solution oscillates and fails to converge after applying coupled thermal and structural loads.

2. Investigation Path: The following workflow outlines a systematic approach to diagnose and resolve the instability:

3. Protocols for Key Steps:

- Verifying Material Properties: Confirm that the coefficient of thermal expansion and temperature-dependent Young's modulus are correctly defined. For accuracy, use tabular data from material tests rather than single values [35].

- Correcting Boundary Conditions: Ensure the structure is not over-constrained, which can create artificially high stresses. A common error is fixing all degrees of freedom when the actual part can expand freely in some directions.

- Solver Adjustment: For a partitioned approach, reduce the coupling load transfer increment. For a monolithic approach, activate a full Newton-Raphson iterative method to handle the nonlinearities more effectively.
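
The effect of reducing the coupling increment in a partitioned scheme can be shown with a scalar toy problem. In the sketch below, `thermal_toy` and `structural_toy` are hypothetical one-line stand-ins for the thermal and structural solvers, with coefficients chosen so that plain staggered iteration oscillates and diverges; under-relaxation of the transferred displacement is used here as one common way to damp the exchange, which may differ from the mechanism a given commercial solver exposes.

```python
def partitioned_solve(solve_thermal, solve_structural, u0=0.0,
                      relax=1.0, tol=1e-8, max_iter=50):
    """Staggered (Gauss-Seidel) coupling with under-relaxation of the displacement."""
    u = u0
    for iteration in range(1, max_iter + 1):
        temperature = solve_thermal(u)             # thermal solve with current deformation
        u_solver = solve_structural(temperature)   # structural solve with new temperature
        u_next = (1.0 - relax) * u + relax * u_solver
        if abs(u_next - u) <= tol * max(1.0, abs(u_next)):
            return solve_thermal(u_next), u_next, iteration, True
        u = u_next
    return solve_thermal(u), u, max_iter, False


def thermal_toy(u):
    """Toy model: deformation improves a heat path and lowers the temperature."""
    return 100.0 - 30.0 * u


def structural_toy(temperature):
    """Toy model: thermal expansion proportional to temperature."""
    return 0.05 * temperature


_, _, _, ok_plain = partitioned_solve(thermal_toy, structural_toy, relax=1.0)
T, u, n_iter, ok_relaxed = partitioned_solve(thermal_toy, structural_toy, relax=0.5)
print("no relaxation converged:", ok_plain)
print(f"relax=0.5 converged: {ok_relaxed} after {n_iter} iterations (T={T:.3f}, u={u:.4f})")
```

The coupled fixed point of this toy system is T = 40 and u = 2, which the relaxed iteration reaches while the unrelaxed one does not.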

Guide 2: Improving Accuracy in Electromagnetic-Thermal Coupling

1. Symptom: Simulated temperatures in windings are significantly lower than experimental measurements, despite correct loss calculations [35].

2. Investigation Path: Use this flowchart to diagnose and correct accuracy issues in electromagnetic-thermal coupling.

3. Protocols for Key Steps:

- Mapping Loss Densities: Electromagnetic losses (e.g., copper and iron losses) must be accurately calculated and mapped as heat generation sources for the thermal analysis. Ensure that the loss values are passed as volumetric heat sources to the thermal solver, rather than as a single total value [35]. The mathematical formulation for copper loss, for instance, must account for temperature-dependent resistance: \(P_{cu} = m I^2 R_{20}\,[1 + \alpha_{cu}(T - 20)]\) [35].

- Mesh Refinement at Hotspots: Create a much finer mesh in regions with high thermal gradients, such as inside winding bundles and at the interfaces between different materials. A coarse mesh will diffuse heat, underestimating peak temperatures [35] [38].

- Improving Convection Modeling: Replace simplified convection coefficients with a more realistic computational fluid dynamics (CFD) model if possible. Alternatively, use calibrated convection correlations that match your experimental operating conditions.
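
The loss-mapping step can be sketched as follows, using the copper-loss expression quoted above. Interpreting \(m\) as the number of phases and \(I\) as the RMS phase current is an assumption, as are the function names, region names, volumes, and the iron-loss value; the temperature coefficient of about 0.0039 per °C is a typical handbook value for copper.

```python
def copper_loss(m, current, r20, alpha_cu, temp_c):
    """P_cu = m * I^2 * R20 * [1 + alpha_cu * (T - 20)], with T in degrees Celsius."""
    return m * current**2 * r20 * (1.0 + alpha_cu * (temp_c - 20.0))


def volumetric_heat_sources(region_losses_w, region_volumes_m3):
    """Convert per-region losses in W into heat generation rates in W/m^3."""
    return {name: region_losses_w[name] / region_volumes_m3[name]
            for name in region_losses_w}


# Hypothetical machine: 3 phases, 10 A, 0.5 ohm per phase at 20 C, winding at 120 C.
p_cu = copper_loss(m=3, current=10.0, r20=0.5, alpha_cu=0.0039, temp_c=120.0)
losses = {"winding": p_cu, "core": 40.0}        # core (iron) loss is a made-up value
volumes = {"winding": 2.0e-4, "core": 1.0e-3}   # region volumes in m^3 (made up)

sources = volumetric_heat_sources(losses, volumes)
for name, q in sources.items():
    print(f"{name}: {q:.3e} W/m^3")
```

Because the copper loss depends on winding temperature, the loss and thermal calculations would normally be repeated until the temperature used in `copper_loss` matches the temperature returned by the thermal solver.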

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and methodologies used in advanced multiphysics research, as identified in the literature.

| Tool/Method | Function in Multiphysics Research |

|---|---|

| Topology Optimization | A computational method for structurally optimizing material layout within a design space. Used to achieve significant mass reduction (e.g., 22.4% weight reduction) while preserving performance under multiphysics constraints [35]. |

| Physics-Informed Neural Networks (PINNs) | A machine learning approach that integrates physical laws (PDEs) directly into the neural network's loss function. Used as a mesh-free alternative for solving complex coupled systems, though it faces challenges in balancing multiple loss terms [40]. |

| Multistage PINN | An advanced PINN variant that progressively increases the complexity of the physical system during training. This staged learning enhances accuracy and computational efficiency for strongly coupled problems, reducing training time by over 90% compared to standard PINNs [40]. |

| Neuromorphic Hardware (e.g., Loihi 2) | Specialized, brain-inspired computing platforms that can directly implement FEM by solving large, sparse linear systems with spiking neural networks. Offers a pathway to highly energy-efficient numerical computing for PDEs [41]. |

| Bidirectional Evolutionary Structural Optimization (BESO) | A specific topology optimization technique that systematically removes and adds material to evolve the structure toward an optimal design. Effective for lightweighting components under multiphysics loads [35]. |

Appendix: Benchmarking Data for Coupling Strategies

The table below summarizes quantitative performance data for various coupling and solution methods as reported in recent research.

| Method / Framework | Reported Performance Metric | Application Context |

|---|---|---|

| Thermal-Electrical-Vibration Framework [35] | Achieved 22.4% weight reduction via topology optimization. | Flux-switching permanent magnet linear motors |

| Multiphysics Coupling Framework [42] | Enhanced mass flow rate solution accuracy by 9.6% to 13.8% compared to a single-field model. | High length-to-diameter ratio combustion system |

| Multistage PINN [40] | Reduced training time by >90% while maintaining better alignment with FEM solutions. | Solving coupled multiphysics systems (e.g., material degradation, fluid dynamics) |

| Classic Correction Method [42] | Improved accuracy by 6.7% relative to the uncoupled case. | High length-to-diameter ratio combustion system |

Troubleshooting Common Computational Challenges

FAQ: My multiscale simulation is computationally expensive and slow. What strategies can improve efficiency?

High computational cost is a common challenge in multiscale modeling. The table below summarizes solutions and their key characteristics.

Table: Efficiency Improvement Strategies for Multiscale Modeling

| Strategy | Key Mechanism | Suitable For | Key Benefit |

|---|---|---|---|

| FFT-based Homogenization [43] | Solves micro-scale PDEs in frequency domain using Green's functions | Materials with periodic microstructures | Significant reduction in computing time and memory usage |

| Machine Learning Surrogates [44] [45] | Replaces high-fidelity RVE simulations with trained neural network models | History-dependent materials (e.g., elasto-plasticity) | Drastic acceleration of online computation; handles path-dependency |

| Reduced-Order Modeling (ROM) [46] | Constructs low-dimensional models from high-fidelity simulation data | Complex systems where full-order models are prohibitive | Fast evaluation on laptop-class hardware |

| Localized Orthogonal Decomposition (LOD) [47] | Constructs low-dimensional multiscale finite element space by solving local patch problems | Elliptic multiscale problems without scale separation | High approximation properties with cheap, parallelizable computations |

| Adaptive Dynamic Multilevel Methods [48] | Dynamically adapts the solution grid based on a-posteriori error control | Multiphase flow in highly heterogeneous porous media | Reduces computational load by focusing resources where needed |

Experimental Protocol: Implementing an FFT-based Homogenization Method for Thin Structures [43]

- Problem Formulation: Model the structure at both macro and micro scales using Classical Plate Theory (CPT). The governing equations for the microscopic homogenization problem are defined by the equilibrium of stress and moment resultants.

- Microscale Analysis: For each integration point in the macro model, apply the FFT-based method to the Representative Volume Element (RVE) of the microstructure.

- Spectral Solution: Transform the PDEs of the plate theory to the frequency domain. Use the Lippmann-Schwinger equation and Green's functions to compute the local stress and strain fields.

- Tangent Operator Calculation: Derive the macroscopic tangent operator in an algorithmically consistent manner within the FFT framework to ensure Newton-Raphson convergence in nonlinear macro-scale solvers.

- Information Transfer: The homogenized stress and moment resultants, along with the tangent operator, are passed back to the macro-scale Finite Element Method (FEM) solver to complete the global Newton iteration.
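
To make the Lippmann-Schwinger fixed-point idea concrete, the sketch below applies the classic basic scheme to a 1D periodic laminate rather than to the plate formulation of the cited work. In 1D the Fourier-space Green operator of the reference medium equals \(1/C_0\) for every nonzero frequency and zero for the mean, so applying it reduces to subtracting the mean stress and dividing by \(C_0\); no FFT call is needed in this special case. The function name, the choice of \(C_0\) as the average of the extreme stiffnesses, and the cell stiffness values are illustrative assumptions.

```python
def basic_scheme_1d(stiffness, mean_strain=1.0, tol=1e-10, max_iter=10000):
    """Fixed-point iteration for the effective stiffness of a 1D periodic cell.

    stiffness: list of local moduli on a regular grid.
    Returns (effective_stiffness, iterations_used).
    """
    c0 = 0.5 * (min(stiffness) + max(stiffness))   # reference medium stiffness
    n = len(stiffness)
    strain = [mean_strain] * n                      # start from the macroscopic strain
    for iteration in range(1, max_iter + 1):
        stress = [c * e for c, e in zip(stiffness, strain)]
        mean_stress = sum(stress) / n
        # Equilibrium in 1D means uniform stress; measure the deviation from it.
        residual = max(abs(s - mean_stress) for s in stress)
        if residual <= tol * abs(mean_stress):
            return mean_stress / mean_strain, iteration
        # Green operator applied to the stress: remove the mean, scale by 1/C0.
        strain = [e - (s - mean_stress) / c0 for e, s in zip(strain, stress)]
    raise RuntimeError("basic scheme did not converge")


cell = [1.0, 4.0, 10.0, 4.0]                        # made-up phase stiffnesses
c_eff, iterations = basic_scheme_1d(cell)
harmonic_mean = len(cell) / sum(1.0 / c for c in cell)
print(f"effective stiffness {c_eff:.6f} after {iterations} iterations")
print(f"analytical (harmonic mean) {harmonic_mean:.6f}")
```

For a 1D laminate the exact effective stiffness is the harmonic mean of the phase stiffnesses, which gives a direct check on the iteration. In 2D and 3D the same update is applied in Fourier space with a frequency-dependent Green operator, and the converged fields supply the homogenized quantities returned to the macro-scale solver.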

Diagram 1: Concurrent FEM-FFT Multiscale Workflow

FAQ: How do I handle path-dependent material behavior (like plasticity) in a multiscale framework without excessive computational cost?

Path-dependent behavior requires tracking the internal state variables of the material at the micro-scale throughout the loading history.

- Conventional Approach (\(\mathrm{FE}^2\)): At every macro-scale integration point and time step, a full nonlinear RVE simulation is run. This is accurate but prohibitively expensive for large models [44].

- Recommended Solution: Machine Learning Surrogates: Train a Recurrent Neural Network (RNN), such as a Gated Recurrent Unit (GRU), to learn the constitutive response of the RVE.

- Data Generation: Perform high-fidelity offline RVE simulations under a wide range of strain paths. A random walk-based sampling algorithm is effective for this [44].

- Model Training: Train a GRU model that maps strain increments to stress outputs, with the hidden state capturing the path-dependent history.

- Software Integration: Implement the trained GRU model as a user material (UMAT) subroutine in a finite element solver like ABAQUS. This replaces the online RVE solves with a fast, data-driven prediction [44].
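The structure of such a surrogate can be sketched in a few lines. The example below is an illustration under stated assumptions, not the implementation from [44]: the class `GRUSurrogate`, the helper `random_walk_increments`, the layer sizes, and the random (untrained) weights are all hypothetical, and bias terms are omitted for brevity. It shows how the hidden state carries the loading history from one strain increment to the next.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def random_walk_increments(n_steps, step_size=1e-3, n_strain=3, seed=0):
    """Random-walk strain increments for offline RVE data generation (hypothetical sampler)."""
    rng = np.random.default_rng(seed)
    return step_size * rng.standard_normal((n_steps, n_strain))

class GRUSurrogate:
    """Single GRU cell plus linear read-out; weights are random placeholders, not trained."""

    def __init__(self, n_strain=3, n_hidden=8, seed=0):
        rng = np.random.default_rng(seed)
        w = lambda *shape: 0.5 * rng.standard_normal(shape)
        self.n_hidden = n_hidden
        self.Wz, self.Uz = w(n_hidden, n_strain), w(n_hidden, n_hidden)  # update gate
        self.Wr, self.Ur = w(n_hidden, n_strain), w(n_hidden, n_hidden)  # reset gate
        self.Wh, self.Uh = w(n_hidden, n_strain), w(n_hidden, n_hidden)  # candidate state
        self.Wo = w(n_strain, n_hidden)                                  # stress read-out

    def step(self, d_eps, h):
        """Map one strain increment and the previous hidden state to (stress, new state)."""
        z = sigmoid(self.Wz @ d_eps + self.Uz @ h)
        r = sigmoid(self.Wr @ d_eps + self.Ur @ h)
        h_cand = np.tanh(self.Wh @ d_eps + self.Uh @ (r * h))
        h_new = (1.0 - z) * h + z * h_cand
        return self.Wo @ h_new, h_new

    def run_path(self, d_eps_path):
        """Evaluate a whole loading path, starting from a zero hidden state."""
        h = np.zeros(self.n_hidden)
        stresses = []
        for d_eps in d_eps_path:
            sigma, h = self.step(d_eps, h)
            stresses.append(sigma)
        return np.array(stresses), h

model = GRUSurrogate()
stresses, h_final = model.run_path(random_walk_increments(20))
print(stresses.shape, h_final.shape)
```

In a UMAT-style integration, one plausible design is to store the hidden state alongside the other solution-dependent state variables at each integration point, so that `step` is the only call needed per increment. The surrogate would also have to supply a material tangent, which this sketch does not compute.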

FAQ: My material lacks clear scale separation. Will homogenization methods still work?

Yes, but you must choose methods designed for this challenge. Traditional homogenization assumes a clear separation of scales, which is often violated in real materials like complex geological formations or composite materials [48].

- Applicable Methods:

- Multiscale Finite Element Methods (MsFEM): Construct multiscale basis functions that capture the local fine-scale features on a coarse grid. These basis functions are computed by solving local problems on fine-scale patches of the domain [47].

- Localized Orthogonal Decomposition (LOD): This method constructs a specialized coarse-scale approximation space by solving localized fine-scale problems on patches around coarse grid elements. It provides convergence independent of structural assumptions or scale separation [47].
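To make the idea of a multiscale basis function concrete, here is a minimal sketch for a single coarse element of a 1D diffusion-type problem, -(a(x) u')' = f, with the coefficient `a` piecewise constant on fine cells. The function name `msfem_element_1d` and the test values are hypothetical. In 1D the local problem (a φ')' = 0 has a closed-form solution, so no fine-scale solver is needed.

```python
import numpy as np

def msfem_element_1d(a_fine, h_fine):
    """Multiscale basis and stiffness for one coarse element made of equal fine cells.

    a_fine : coefficient value in each fine cell of the coarse element
    h_fine : width of one fine cell
    """
    a_fine = np.asarray(a_fine, dtype=float)
    # Running integral of 1/a from the left coarse node to each fine node
    compliance = np.concatenate(([0.0], np.cumsum(h_fine / a_fine)))
    phi_right = compliance / compliance[-1]   # solves (a phi')' = 0, phi: 0 -> 1
    phi_left = 1.0 - phi_right                # partition of unity on the element
    k = 1.0 / compliance[-1]                  # integral of a * (phi')^2 over the element
    K_elem = k * np.array([[1.0, -1.0], [-1.0, 1.0]])
    return phi_left, phi_right, K_elem

# Coarse element [0, 1] with a stiff and a soft half
phi_l, phi_r, K = msfem_element_1d([1.0, 10.0], h_fine=0.5)
print(phi_r)    # basis values at the fine nodes
print(K[0, 0])  # coarse stiffness entry
```

The resulting coarse stiffness uses the harmonic average of `a` over the element, whereas a standard linear element with an arithmetically averaged coefficient would give 5.5 for the same data. In higher dimensions the local problems no longer have closed-form solutions and must be solved numerically on each patch.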

Experimental Protocol: Benchmarking Homogenization vs. Multiscale Methods [48]

- Unified Framework Setup: Implement both the Homogenization (HO) and Multiscale (MS) methods within the same adaptive dynamic multilevel (ADM) framework. This ensures a fair comparison.

- Problem Definition: Select a benchmark problem involving fully implicit multiphase flow in a highly heterogeneous porous medium with no clear scale separation.

- Pre-processing: At the start of the simulation, pre-compute the local basis functions for the Multiscale method and the local effective parameters for the Homogenization method.

- Dynamic Simulation: Run the fully implicit simulation. The ADM framework will dynamically adapt the grid levels at each time step based on an error analysis.

- Post-processing and Comparison: Compare the results based on key metrics: accuracy (compared to a fine-scale reference solution), computational time, and memory usage.

Troubleshooting Methodological and Implementation Issues

FAQ: What is the fundamental difference between computational homogenization and multiscale methods?

While both are upscaling strategies, their core mechanisms differ, as summarized below.

Table: Comparison of Homogenization and Multiscale Methods

| Feature | Computational Homogenization | Multiscale Methods (e.g., MsFEM) |

|---|---|---|

| Primary Goal | Determine effective coarse-scale model parameters (e.g., permeability, stiffness) [48]. | Directly resolve fine-scale features on a coarse grid [48]. |

| Core Mechanism | Solves local periodic problems to compute average properties [48]. | Computes local basis functions that map solutions between coarse and fine scales [48] [47]. |

| Scale Separation | Often assumes periodicity or scale separation, though advanced methods relax this [48]. | Specifically designed for problems without clear scale separation [48] [47]. |

| Output | An effective constitutive model for the coarse scale. | A set of multiscale basis functions for the coarse-scale system. |

FAQ: How can I manage uncertainty in my multiscale model parameters and predictions?

Uncertainty Quantification (UQ) is critical for reliable predictions, especially when using multiscale models for decision-making.

- Sources of Uncertainty: These include material properties, geometry, boundary conditions, and measurement errors [49].

- UQ Methods:

- Probabilistic Methods: Represent uncertainties as probability distributions and use Monte Carlo sampling or polynomial chaos expansions to propagate them through the model [49].

- Sensitivity Analysis: Identify the parameters that most significantly influence your Quantity of Interest (QoI). This helps focus UQ efforts on the most influential factors [49] [45].

- Bayesian Frameworks: Use Bayesian inference to update model parameters and their uncertainties based on available experimental data. This is particularly useful for integrating multi-fidelity data [45].
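As a small illustration of the Bayesian update, the sketch below assumes the simplest possible setting: a single scalar parameter (for example a stiffness value) with a normal prior, and independent measurements with known normal noise. The function name `update_normal_mean` and all numbers are hypothetical. Real multiscale calibrations usually need sampling methods such as MCMC rather than this closed-form update.

```python
import numpy as np

def update_normal_mean(prior_mean, prior_std, data, noise_std):
    """Conjugate normal-normal update for one uncertain parameter.

    Returns the posterior mean and standard deviation of the parameter.
    """
    data = np.asarray(data, dtype=float)
    prior_precision = 1.0 / prior_std**2
    data_precision = data.size / noise_std**2
    post_precision = prior_precision + data_precision
    post_mean = (prior_precision * prior_mean + data.sum() / noise_std**2) / post_precision
    return post_mean, np.sqrt(1.0 / post_precision)

# Hypothetical values: prior belief 10 +/- 2, four measurements of 12 with noise 1
mean, std = update_normal_mean(10.0, 2.0, [12.0, 12.0, 12.0, 12.0], 1.0)
print(mean, std)
```

The posterior mean moves from the prior toward the data in proportion to their relative precisions, and the posterior standard deviation is always smaller than the prior one. That is the sense in which experimental data reduces parameter uncertainty.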

Diagram 2: Uncertainty Quantification Workflow

FAQ: What are the best practices for coupling different physics (e.g., fluid-solid-thermal) in a multiscale simulation?

Multiphysics coupling introduces nonlinearities and potential instabilities.

- Coupling Schemes:

- Strong (Direct) Coupling: Solves all physics simultaneously in a single system. This is more accurate and stable for tightly coupled problems but is computationally demanding and complex to implement.

- Weak (Iterative) Coupling: Solves each physics domain separately and passes information between them iteratively within a time step. This is easier to implement and can leverage existing single-physics solvers, but may require sub-iterations for stability [49].


The Scientist's Toolkit: Research Reagent Solutions

This section details essential computational tools and frameworks used in modern multiscale modeling research.

Table: Key Software and Implementation Tools

| Tool / Solution | Function | Application Context |

|---|---|---|

| DARSim2 Simulator [48] | Open-source simulator for benchmarking homogenization and multiscale methods. | Fully implicit multiphase flow in porous media. |

| ABAQUS UMAT Subroutine [44] | Interface for implementing user-defined material models in the ABAQUS FEM solver. | Integrating machine learning surrogates (e.g., GRU models) for multiscale simulation. |

| FFT-based Homogenization Code [43] | Specialized solver for periodic microstructures using Fast Fourier Transforms. | Efficient concurrent multiscale analysis of thin plate structures and composites. |

| Simcenter 3D Materials Engineering [50] | Commercial software for adaptive multiscale modeling of material microstructures. | Predicting micro-level failure and its impact on overall part performance. |

| Localized Orthogonal Decomposition (LOD) [47] | A numerical method to create coarse-scale finite element spaces with fine-scale accuracy. | Solving elliptic multiscale problems with high contrast and no scale separation. |

Uncertainty Quantification in Material Properties and Boundary Conditions

In Finite Element Analysis (FEA), uncertainty quantification (UQ) is the process of identifying, characterizing, and accounting for errors and variations in simulation inputs to assess their impact on predicted outcomes. For researchers and scientists, understanding and implementing UQ is crucial for developing reliable, predictive computational models, especially when physical testing is limited or impossible. In the context of FEA protocols, uncertainties primarily originate from two key areas: imperfectly defined material properties and idealized boundary conditions.

All FEA models are approximations of reality. Without proper UQ, these models can produce mathematically correct yet physically misleading results, leading to flawed scientific conclusions or design decisions [51]. A systematic approach to UQ is, therefore, an essential component of rigorous computational research.

FAQs: Core Concepts and Troubleshooting

Q1: What are the primary types of uncertainty encountered in FEA?

Two main types of uncertainty affect FEA:

- Epistemic Uncertainty: Arises from a lack of knowledge or incomplete information about the model, such as imperfectly known material parameters or simplified boundary conditions. This uncertainty can be reduced by gathering more or higher-quality data [52].

- Aleatoric Uncertainty: Stems from inherent, unpredictable randomness in the system or data, such as natural variations in material microstructure or fluctuations in operational loads. This uncertainty is generally irreducible [52].

Q2: How can uncertain material properties impact my FEA results?

Uncertainties in material properties, such as Young's modulus, Poisson's ratio, or yield strength, can significantly alter the model's response. For instance, in stiffness-driven optimization problems, the impact on the objective value is often proportional to the changes in constitutive properties. However, for strength-based problems, the effect is not always consistent and can change with different design requirements, sometimes showing an increase of up to 25% in the maximum failure index under worst-case material deviations [53]. Using a linear material model beyond the yield point, where material behavior is nonlinear, is a common error that produces mathematically correct but physically unrealistic results [51].

Q3: What are common mistakes when defining boundary conditions that introduce uncertainty?

Defining unrealistic boundary conditions is a frequent source of error [3] [54]. Common mistakes include:

- Over-constraining or Under-constraining the Model: Under-constraining leaves rigid-body motion unrestrained, which makes the stiffness matrix singular, while over-constraining introduces unrealistic stiffness [55].

- Applying Forces to a Single Node: This creates a stress singularity. The computed stress at that node is non-physical and keeps growing as the mesh is refined [2].

- Using Statically Equivalent Loads for Local Effects: According to Saint-Venant's principle, this is valid only when assessing regions far from the load application. It is not suitable for analyzing local stress concentrations [2].

Q4: What is a mesh convergence study, and why is it critical for UQ?

A mesh convergence study is a fundamental step for quantifying discretization uncertainty. It involves progressively refining the mesh and observing the change in key results (like peak stress or displacement). A mesh is considered "converged" when further refinement does not produce significant changes in the results [3]. Neglecting this study means you cannot know if your results are numerically accurate or merely an artifact of a poorly discretized model.
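One common way to put numbers on a mesh convergence study is Richardson extrapolation over three systematically refined meshes. The sketch below is an illustration under assumptions: the function name `richardson` is hypothetical, the refinement ratio `r` is constant between meshes, and the result converges monotonically (otherwise the logarithm is undefined). The sample values are synthetic, not from any cited study.

```python
import math

def richardson(f_coarse, f_medium, f_fine, r):
    """Estimate convergence order and mesh-independent value from three meshes.

    r is the element-size ratio between successive meshes (e.g. 2 for halving).
    Returns (observed order p, extrapolated value, relative change fine vs medium).
    """
    p = math.log((f_coarse - f_medium) / (f_medium - f_fine)) / math.log(r)
    f_extrap = f_fine + (f_fine - f_medium) / (r**p - 1.0)
    rel_change = abs(f_fine - f_medium) / abs(f_fine)
    return p, f_extrap, rel_change

# Synthetic results following f = 1 + h^2 for h = 1, 0.5, 0.25
p, f_extrap, rel_change = richardson(2.0, 1.25, 1.0625, r=2.0)
print(p, f_extrap, rel_change)
```

An observed order far below the theoretical order of the element, or a result that keeps growing with refinement, suggests the quantity is not converging. That is typical of stress read at a singular point.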

Q5: How can I validate my FEA model when experimental data is scarce?

When direct test data is unavailable, a robust Verification & Validation process is essential [3]. This includes:

- Verification: Ensuring the model is solved correctly (i.e., "solving the equations right"). This involves mathematical checks and accuracy checks, such as mesh convergence studies and ensuring energy balance.

- Validation: Ensuring the correct model is being solved (i.e., "solving the right equations"). This can involve correlating with simplified analytical solutions or benchmarking against highly trusted, high-fidelity models or limited experimental data points [3].

Troubleshooting Guides

Guide: Managing Material Property Uncertainty

Problem: Simulation results for failure criteria or fatigue life show high sensitivity to small variations in material input parameters.

Solution Steps:

- Identify Key Parameters: Perform a sensitivity analysis to determine which material properties (e.g., ultimate tensile strength, creep constants) most significantly influence your critical outputs.

- Characterize the Uncertainty: Quantify the variation for these key parameters. This can be done through statistical analysis of experimental material test data or from literature.

- Implement a UQ Method:

- Monte Carlo Simulation: Run a large number of simulations with material properties randomly sampled from their defined distribution functions to build a statistical output.

- Worst-Case Analysis: Run simulations using the extreme upper and lower bounds of material properties to understand the full possible range of outcomes [53].

- Bayesian Methods: Use frameworks like Bayesian Neural Networks (BNNs) to produce predictions with inherent uncertainty estimates, which is particularly useful with limited data [56].

- Validate and Update: If possible, use any available test data to validate the statistical distribution of your results and update your material property distributions accordingly.
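The Monte Carlo and worst-case steps can be sketched with a closed-form model standing in for the FEA solve. The example below assumes a cantilever beam whose tip deflection is P L^3 / (3 E I), with Young's modulus `E` as the only uncertain input, sampled uniformly between its bounds. The function names, the load, geometry, and modulus range are all hypothetical placeholder values.

```python
import numpy as np

def tip_deflection(E, P=100.0, L=0.1, I=1e-9):
    """Cantilever tip deflection; stands in for a full FEA run (SI units)."""
    return P * L**3 / (3.0 * E * I)

def worst_case(E_lo, E_hi):
    """Deflection bounds: deflection decreases monotonically with stiffness."""
    return tip_deflection(E_hi), tip_deflection(E_lo)

def monte_carlo(E_lo, E_hi, n_samples=10_000, seed=0):
    """Sample E uniformly within its bounds and summarize the output statistics."""
    rng = np.random.default_rng(seed)
    E = rng.uniform(E_lo, E_hi, n_samples)
    d = tip_deflection(E)
    return d.mean(), d.std(ddof=1), np.percentile(d, [2.5, 97.5])

E_lo, E_hi = 15e9, 20e9   # hypothetical modulus range in Pa
d_min, d_max = worst_case(E_lo, E_hi)
mean, std, interval = monte_carlo(E_lo, E_hi)
print(d_min, d_max)
print(mean, std, interval)
```

Evaluating only the bounds works here because the output is monotonic in the single uncertain input. With several interacting parameters the extreme response may not occur at a corner of the parameter box, which is one reason to run the sampling study as well.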

Guide: Mitigating Boundary Condition Uncertainty

Problem: Stresses and deformations in the model change drastically with small adjustments to supports or loads, indicating low robustness.

Solution Steps:

- Understand the Real-World Interface: Critically analyze how the component is actually supported and loaded. Consider using force measurement sensors or contact pressure films in physical tests to gather data [51].

- Simplify with Caution: Avoid over-simplifying complex connections. If contact and load transfer are important, model the contact interfaces explicitly rather than relying on idealized constraints [3].

- Perform a Boundary Condition Sensitivity Study: Systematically vary the applied loads and constraint locations within their realistic range of uncertainty and observe the impact on results.

- Check for Singularities: Inspect results for localized stress hotspots (often displayed as small red spots) at points of load application or constraint. Re-apply loads over a realistic area rather than a single point and avoid perfectly sharp corners in the geometry [2].

Quantitative Data on Uncertainty and Errors

Table 1: Impact of Material Uncertainty on Different Optimization Problems

| Optimization Problem Type | Impact of Material Uncertainty | Observed Change in Objective |

|---|---|---|

| Stiffness/Compliance Minimization | Consistent and predictable | Proportional to changes in constitutive properties [53] |

| Strength/Failure Index Minimization | Significant and inconsistent | Up to 25% increase in maximum failure index [53] |

Table 2: Common FEA Errors and Their Potential Consequences

| Error Category | Specific Error | Potential Consequence |

|---|---|---|