Multitexton Histogram Descriptors: Advanced Pattern Recognition for Irregular Biological Structures in Biomedical Research


Abstract

This article provides a comprehensive exploration of the Multitexton Histogram (MTH) descriptor, a powerful computational tool for identifying and classifying irregular patterns, with a specific focus on its application in analyzing biological structures such as parasite eggs. Tailored for researchers, scientists, and drug development professionals, the content delves into the foundational principles of MTH, its methodological implementation for feature extraction, and strategies for optimizing its performance against challenges like orientation variance and rigid texton structures. It further presents rigorous validation protocols and comparative analyses with other descriptors, synthesizing key takeaways and future directions for integrating MTH into robust, automated diagnostic systems and cheminformatics workflows to accelerate biomedical discovery.

Understanding Multitexton Histograms: A Primer on Theory and Core Concepts for Irregular Pattern Analysis

Defining Textons and Multitexton Histograms (MTH) in Image Processing

Theoretical Foundations

What are Textons?

Textons are considered the fundamental micro-structures or elements of texture perception in human vision, first conceptually proposed by Julesz [1]. In computational image processing, they function as atomic units for pre-attentive visual perception, analogous to atoms in physical materials or words in a language [1]. Textons represent the basic building blocks that combine to form textures in natural images, integrating both color and structural information at a local level.

The original texton theory has evolved into practical computational models where textons are typically defined as representative patterns in a filter-response space or specific micro-structures detected directly in images [1]. In the context of Multi-Texton Histograms, the theory is operationalized through four specific texton types that capture fundamental relationships between neighboring pixels based on both color and edge orientation information.

Multi-Texton Histogram (MTH) Descriptor

The Multi-Texton Histogram is an image feature representation method that integrates the advantages of co-occurrence matrices and histograms by representing attributes of co-occurrence matrices using histogram representations [1]. MTH functions as a generalized visual attribute descriptor that operates directly on natural images without requiring image segmentation or model training [1].

This descriptor simultaneously captures spatial correlations of both texture orientation and texture color based on textons [1]. The fundamental innovation of MTH lies in its ability to represent both the spatial distribution and relational characteristics of textons within an image, providing a computationally efficient yet discriminative representation for image retrieval and classification tasks.

Biological and Psychological Basis

The texton theory is grounded in the study of pre-attentive (effortless) texture discrimination in human visual perception [1]. Psychological research has demonstrated that the human visual system can rapidly detect texture differences generated from aggregates of fundamental micro-structures, even when these textures have identical first-order statistics [1]. This capability inspired the development of computational models that can similarly distinguish between textures based on higher-order statistical relationships of their constituent elements.

MTH Methodology and Technical Implementation

Core Algorithm and Workflow

The MTH algorithm processes images through a structured pipeline to extract discriminative features:

Image Preprocessing: The input image is first decomposed into its constituent Red, Green, and Blue color channels [2]. Each channel undergoes processing to enhance structural information and reduce noise while preserving significant features.

Edge and Orientation Analysis: A Sobel operator or similar edge detection filter is applied to each color channel to capture gradient information and orientation data [1]. This step identifies significant transitions in color intensity that correspond to edges and boundaries within the image.

Color Quantization: The color space is quantized to reduce computational complexity while maintaining discriminative power [2]. This process groups similar colors into representative bins, creating a manageable color palette for subsequent processing.

Texton Detection: The algorithm identifies four specific types of textons that represent fundamental relationships between adjacent pixels [2]. These texton types capture essential patterns of color and edge orientation co-occurrence that serve as building blocks for texture description.

Histogram Construction: Finally, the spatial co-occurrence of detected textons is encoded into a comprehensive histogram representation that captures the distribution and relationships of textons throughout the image [1].
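The quantization and texton-detection steps above can be sketched in a few NumPy functions. This is a minimal illustration under stated assumptions, not the reference implementation from [1] or [2]: the 4 levels per channel, the 18 orientation bins, the use of a single grayscale channel for the Sobel step, and the four pixel pairs tested inside each 2×2 block are all illustrative choices, and the function names are hypothetical.

```python
import numpy as np

def quantize_color(img, levels=4):
    """Map an HxWx3 uint8 RGB image to color indices in [0, levels**3)."""
    q = (img.astype(np.int64) * levels) // 256      # per-channel index 0..levels-1
    return q[..., 0] * levels * levels + q[..., 1] * levels + q[..., 2]

def quantize_orientation(gray, bins=18):
    """Quantize Sobel gradient orientation (0-180 degrees) into `bins` indices."""
    g = np.pad(gray.astype(np.float64), 1, mode="edge")
    # 3x3 Sobel responses written out with array slices (right-left, bottom-top)
    gx = (g[:-2, 2:] + 2 * g[1:-1, 2:] + g[2:, 2:]) \
        - (g[:-2, :-2] + 2 * g[1:-1, :-2] + g[2:, :-2])
    gy = (g[2:, :-2] + 2 * g[2:, 1:-1] + g[2:, 2:]) \
        - (g[:-2, :-2] + 2 * g[:-2, 1:-1] + g[:-2, 2:])
    theta = np.degrees(np.arctan2(gy, gx)) % 180.0
    return np.minimum((theta // (180.0 / bins)).astype(np.int64), bins - 1)

def texton_mask(index_map):
    """Mark 2x2 blocks in which at least one tested pixel pair shares a value.

    The four pairs below are an assumption made for illustration.
    """
    h, w = index_map.shape
    h2, w2 = h - h % 2, w - w % 2                   # ignore a trailing odd row/column
    v1 = index_map[0:h2:2, 0:w2:2]                  # top-left pixel of each block
    v2 = index_map[0:h2:2, 1:w2:2]                  # top-right
    v3 = index_map[1:h2:2, 0:w2:2]                  # bottom-left
    v4 = index_map[1:h2:2, 1:w2:2]                  # bottom-right
    hit = (v1 == v2) | (v1 == v3) | (v1 == v4) | (v2 == v3)
    mask = np.zeros((h, w), dtype=bool)
    mask[:h2, :w2] = np.repeat(np.repeat(hit, 2, axis=0), 2, axis=1)
    return mask
```

Pixels inside a marked block keep their quantized value; all other pixels are ignored when the histogram is built.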

Mathematical Formulation

The MTH method builds upon the earlier Texton Co-occurrence Matrix (TCM) approach [1]. For a full color image f(x,y), vectors are defined in RGB color space, and the products of these vectors create a representation that captures both color and structural information.

The MTH extends this concept by representing the attribute of co-occurrence matrices using histograms, creating a more computationally efficient and discriminative descriptor [1]. This representation captures the statistical distribution of texton relationships across the image, enabling effective texture discrimination.

Table: Comparison of Image Descriptors Based on Texton Theory

| Descriptor | Key Characteristics | Advantages | Limitations |

|---|---|---|---|

| Texton Co-occurrence Matrix (TCM) [1] | Represents spatial correlation of textons using co-occurrence matrices | Discriminates color, texture, and shape features simultaneously | Higher computational complexity |

| Multi-Texton Histogram (MTH) [1] | Represents co-occurrence matrix attributes using histograms | No segmentation or training needed; suitable for large-scale image databases | May miss some high-order statistical relationships |

| Complete Multi-Texton Histogram (CMTH) [3] | Enhanced version incorporating additional structural information | Improved discrimination for both texture and non-texture color images | Increased computational requirements |

Technical Implementation

The MTH feature extraction process generates an 82-bin feature vector for each image [2], which provides a compact yet discriminative representation suitable for large-scale image retrieval applications. The implementation typically involves processing images of standardized sizes (commonly 192×128 or 128×192 pixels) to ensure consistent feature extraction [2].
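One plausible reading of the 82-bin length, which this article does not break down, is 64 quantized colors plus 18 quantized orientations; treat that split as an assumption. The sketch below concatenates two plain histograms over texton pixels. It deliberately omits the neighbor co-occurrence condition of the full descriptor, and `mth_like_vector` is a hypothetical name.

```python
import numpy as np

def mth_like_vector(color_idx, orient_idx, mask, n_colors=64, n_orient=18):
    """Concatenate color and orientation histograms over texton pixels.

    color_idx, orient_idx: integer index maps of equal shape.
    mask: boolean map of pixels that belong to a detected texton.
    """
    color_hist = np.bincount(color_idx[mask], minlength=n_colors)
    orient_hist = np.bincount(orient_idx[mask], minlength=n_orient)
    vec = np.concatenate([color_hist, orient_hist]).astype(np.float64)
    total = vec.sum()
    return vec / total if total > 0 else vec        # normalize for image size

# Synthetic index maps at one of the image sizes mentioned above
rng = np.random.default_rng(0)
colors = rng.integers(0, 64, size=(128, 192))
orients = rng.integers(0, 18, size=(128, 192))
keep = rng.random((128, 192)) > 0.5
feature = mth_like_vector(colors, orients, keep)
print(feature.shape)
```

With these defaults the vector has 64 + 18 = 82 entries regardless of image size.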

The computational efficiency of MTH stems from its histogram-based representation, which avoids the need for expensive segmentation algorithms or training phases [1]. This makes it particularly suitable for applications requiring rapid image retrieval from large databases.

Application in Irregular Egg Pattern Research

Parasite Egg Identification Using MTH

The MTH descriptor has been successfully applied to the automatic identification of human parasite eggs based on their irregular morphological patterns [4]. This application addresses a critical challenge in medical diagnostics by providing objective, quantitative analysis of biological structures that often exhibit irregular and variable patterns.

In this context, MTH retrieves relationships between textons to identify species-specific patterns in images of human parasite eggs [4]. The method proves particularly valuable for distinguishing between eggs of different helminth species based on their microscopic images, enabling more accurate and efficient diagnosis of parasitic infections.

The system typically operates in two stages: a feature extraction mechanism based on the MTH descriptor that retrieves relationships between textons, and a Content-Based Image Retrieval (CBIR) system that identifies the correct species of helminths from their microscopic images [4].

Research Reagent Solutions

Table: Essential Research Materials for MTH-Based Parasite Egg Analysis

| Research Reagent | Function in Experiment | Specification Notes |

|---|---|---|

| Microscopic Image Dataset [4] | Provides source material for pattern analysis | Should include diverse parasite egg types with confirmed species identification |

| Digital Imaging System | Captures high-quality microscopic images | Requires consistent magnification and lighting conditions |

| Color Calibration Tools | Ensures consistent color representation | Critical for reproducible feature extraction |

| MTH Feature Extraction Code [2] | Implements the Multi-Texton Histogram algorithm | Typically generates an 82-bin feature vector per image |

| Classification Framework | Categorizes eggs based on MTH features | May use kNN, SVM, or neural network classifiers |

| Performance Validation Set | Evaluates system accuracy | Requires expert-annotated ground truth data |

Experimental Protocol for Parasite Egg Classification

Sample Preparation and Imaging

- Collect fecal samples containing parasite eggs and prepare standard microscopic slides

- Capture digital images using a calibrated microscopy system with consistent magnification (typically 100-400x)

- Ensure even illumination and minimal background contamination in images

- Store images in standardized format (JPEG or PNG) with consistent dimensions

Feature Extraction Using MTH

- Implement MTH algorithm to process each parasite egg image [2]

- Decompose images into RGB color channels

- Apply edge detection (Sobel operator) to each channel

- Perform color quantization to reduce color space complexity

- Detect the four fundamental texton types representing key structural patterns

- Construct the MTH feature vector (82 dimensions) representing texton distribution

Classification and Identification

- Build a reference database of MTH features for known parasite egg species

- Utilize similarity measures to compare unknown samples against reference database

- Apply classification algorithms (kNN, SVM, or neural networks) for species identification

- Validate results against expert microscopic identification as ground truth
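The reference-database lookup in these steps can be sketched as a k-nearest-neighbor vote. This is a minimal illustration, assuming L1 (city-block) distance between feature vectors and a majority vote; the species labels and the toy two-dimensional features are placeholders, not data from [4].

```python
import numpy as np
from collections import Counter

def knn_identify(query, ref_features, ref_labels, k=3):
    """Return the majority label among the k reference vectors closest to query."""
    dists = np.abs(ref_features - query).sum(axis=1)    # L1 distance to each reference
    nearest = np.argsort(dists, kind="stable")[:k]
    votes = Counter(ref_labels[i] for i in nearest)
    return votes.most_common(1)[0][0]

# Toy reference database (hypothetical values)
ref_features = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
ref_labels = ["species_a", "species_a", "species_b", "species_b"]
predicted = knn_identify(np.array([0.95, 0.05]), ref_features, ref_labels, k=3)
```

In practice each row of `ref_features` would be the MTH vector of an expert-identified egg image.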

Performance Evaluation

- Assess classification accuracy using standard metrics (precision, recall, F1-score)

- Evaluate robustness across different egg orientations and developmental stages

- Test generalizability to new samples and imaging conditions
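The standard metrics named above follow directly from true-positive, false-positive, and false-negative counts. The sketch below computes them for one class treated as positive; the label values are made up for illustration.

```python
def precision_recall_f1(y_true, y_pred, positive):
    """Precision, recall, and F1-score for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical expert labels versus system output
truth = ["a", "a", "b", "b"]
output = ["a", "b", "b", "b"]
p, r, f = precision_recall_f1(truth, output, positive="a")
```

For a multi-species problem, the same function can be called once per species and the results averaged.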

Performance Analysis and Validation

Quantitative Performance Assessment

The MTH descriptor has been extensively evaluated on standard datasets, demonstrating superior performance compared to alternative methods. In comprehensive testing on the Corel dataset with 15,000 natural images, MTH demonstrated significantly better efficiency than representative image feature descriptors such as edge orientation auto-correlogram (EOAC) and texton co-occurrence matrix (TCM) [1].

The enhanced version, Complete Multi-Texton Histogram (CMTH), has shown exceptional performance in both classification and retrieval tasks across five publicly available datasets [3]. When evaluated on texture discrimination datasets (Vistex, Outex, and Batik) and heterogeneous image discrimination datasets (Corel10K and UKBench), CMTH significantly outperformed state-of-the-art methods [3].

Table: Performance Comparison of MTH and Related Methods

| Method | Dataset | Performance | Application Context |

|---|---|---|---|

| MTH [1] | Corel (15,000 images) | Much more efficient than EOAC and TCM | General image retrieval |

| CMTH [3] | Vistex, Outex, Batik | Significantly outperforms state of the art | Texture discrimination |

| CMTH [3] | Corel10K, UKBench | Significantly outperforms state of the art | Heterogeneous image retrieval |

| MTH for Parasite Eggs [4] | Human parasite egg images | Effective identification of species | Biomedical pattern recognition |

Advantages for Irregular Pattern Analysis

The application of MTH to irregular egg pattern research provides several distinct advantages. Unlike methods requiring precise segmentation, MTH operates directly on natural images without any image segmentation or model training stages [1]. This characteristic proves particularly valuable for biological structures like parasite eggs that may have irregular boundaries and complex internal structures.

MTH demonstrates robust discrimination power for color, texture, and shape features simultaneously [1]. This multi-modal discrimination capability enables comprehensive characterization of parasite eggs that may exhibit species-specific patterns in any of these visual domains.

The method's computational efficiency makes it suitable for large-scale biomedical image analysis [1], potentially enabling automated screening of numerous samples in clinical or research settings.

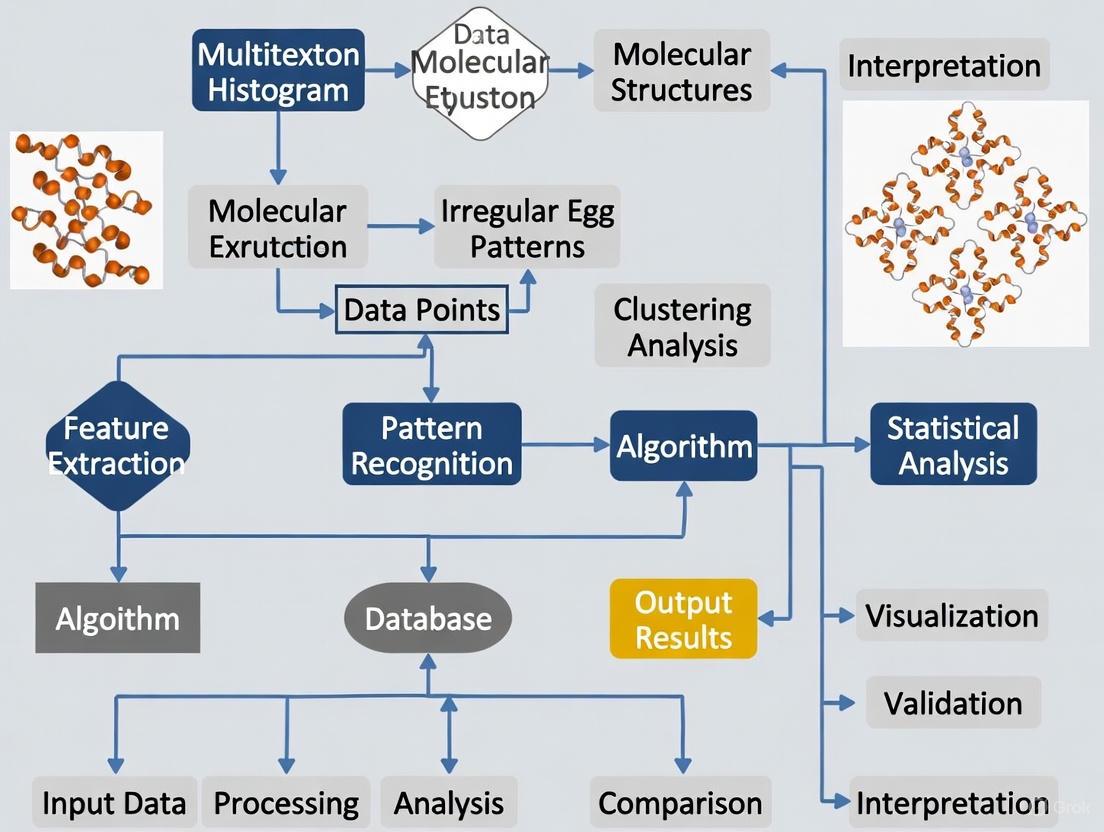

Visual Documentation

MTH Feature Extraction Workflow

Parasite Egg Classification System

Texton Relationship Mapping

The analysis of complex biological textures, such as those found in irregular egg patterns, presents significant challenges in automated agricultural systems. These patterns often contain critical information about egg quality, shell strength, and potential contaminants. This document establishes the theoretical foundation and practical protocols for integrating Gray-Level Co-occurrence Matrix (GLCM) with histogram-based descriptors to create a powerful Multitexton Histogram descriptor. This approach is specifically contextualized within a broader thesis researching irregular eggshell patterns, addressing the need for robust feature extraction that can handle nonlinear radiation distortions and significant contrast variations present in multi-sensor imaging data [5].

The fusion of GLCM's textural analysis capabilities with the structural representation of histograms creates a complementary feature set that overcomes the limitations of either method used alone. Where GLCM excels at quantifying spatial relationships between pixel intensities, histogram-based methods like Histogram of Oriented Gradients (HOG) effectively capture edge orientation and gradient information [6]. This integration is particularly valuable for egg pattern analysis where both microscopic texture variations (detectable via GLCM) and macroscopic pattern irregularities (captured through gradient histograms) contribute to classification accuracy.

Theoretical Foundations

Gray-Level Co-occurrence Matrix (GLCM)

GLCM is a second-order statistical method that quantifies textural information by analyzing the spatial relationship between pixel pairs at specific displacements and orientations. Each matrix entry gives the probability that a pixel with intensity value i and a pixel with intensity value j occur in a specified spatial relationship (distance d and orientation θ). For egg pattern analysis, this enables quantification of subtle shell textural variations that may indicate abnormalities or structural weaknesses [7].

The mathematical formulation for GLCM computation is:

P(i,j|d,θ) = frequency of pairs (i,j) at (d,θ)

Where:

- i, j = gray-level values

- d = distance between pixel pairs (typically 1-4 pixels for egg imagery)

- θ = orientation angle (commonly 0°, 45°, 90°, 135°)

From this probability matrix, numerous statistical features can be derived that quantitatively describe pattern characteristics. Research on pothole detection using GLCM has demonstrated that from 128 initial GLCM features, strategic selection can reduce this to 12-57 highly discriminative features while maintaining 86-89% accuracy, highlighting the importance of feature selection in texture analysis applications [7].
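The definition of P(i,j|d,θ) can be made concrete with a small, unoptimized NumPy sketch. It assumes the (d, θ) pair is expressed as a pixel offset (dx, dy), for example (1, 0) for d=1 at 0° and (1, -1) for d=1 at 45°, and that the image already holds integer gray levels below `levels`. Library routines such as those in scikit-image would normally be used instead.

```python
import numpy as np

def glcm(gray, dx, dy, levels):
    """Normalized co-occurrence matrix for pixel pairs separated by (dx, dy)."""
    h, w = gray.shape
    counts = np.zeros((levels, levels), dtype=np.float64)
    for y in range(h):
        for x in range(w):
            y2, x2 = y + dy, x + dx
            if 0 <= y2 < h and 0 <= x2 < w:          # skip pairs that leave the image
                counts[gray[y, x], gray[y2, x2]] += 1
    total = counts.sum()
    return counts / total if total else counts

# 4x4 test image with four gray levels
image = np.array([[0, 0, 1, 1],
                  [0, 0, 1, 1],
                  [0, 2, 2, 2],
                  [2, 2, 3, 3]])
P = glcm(image, dx=1, dy=0, levels=4)                # d = 1, theta = 0 degrees
```

This version counts each pair in one direction only; a symmetric GLCM adds the transpose of the count matrix before normalizing.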

Histogram-Based Descriptors

Histogram-based descriptors transform local appearance and shape characteristics into distribution representations that are robust to illumination variations. The Histogram of Oriented Gradients (HOG) descriptor specifically analyzes the distribution of local intensity gradients or edge directions by dividing an image into small connected regions (cells) and compiling a histogram of gradient directions for pixels within each cell [6].

The HOG computation process involves:

- Calculating gradient magnitudes and orientations for each pixel

- Creating orientation histograms over spatial cells

- Normalizing histograms across larger blocks for contrast invariance

- Combining all histograms into a final feature vector
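The four steps above can be sketched in NumPy as follows. This is a simplified illustration, not the reference HOG of [6]: it uses central differences for gradients, hard assignment of each pixel to a single orientation bin (no interpolation), and plain L2 block normalization. The cell size, block size, and bin count are assumed defaults, and the function names are hypothetical.

```python
import numpy as np

def cell_histograms(gray, cell=8, bins=9):
    """Magnitude-weighted orientation histogram for each cell."""
    g = gray.astype(np.float64)
    gx = np.zeros_like(g)
    gy = np.zeros_like(g)
    gx[:, 1:-1] = g[:, 2:] - g[:, :-2]               # central differences, zero at borders
    gy[1:-1, :] = g[2:, :] - g[:-2, :]
    mag = np.hypot(gx, gy)
    ang = np.degrees(np.arctan2(gy, gx)) % 180.0     # unsigned orientation
    idx = np.minimum((ang // (180.0 / bins)).astype(np.int64), bins - 1)
    n_cy, n_cx = g.shape[0] // cell, g.shape[1] // cell
    hist = np.zeros((n_cy, n_cx, bins))
    for cy in range(n_cy):
        for cx in range(n_cx):
            rows = slice(cy * cell, (cy + 1) * cell)
            cols = slice(cx * cell, (cx + 1) * cell)
            hist[cy, cx] = np.bincount(idx[rows, cols].ravel(),
                                       weights=mag[rows, cols].ravel(),
                                       minlength=bins)
    return hist

def normalize_blocks(hist, block=2, eps=1e-6):
    """L2-normalize overlapping blocks of cells and concatenate them."""
    n_cy, n_cx, _ = hist.shape
    feats = []
    for by in range(n_cy - block + 1):
        for bx in range(n_cx - block + 1):
            v = hist[by:by + block, bx:bx + block].ravel()
            feats.append(v / np.sqrt((v ** 2).sum() + eps ** 2))
    return np.concatenate(feats)

# Random stand-in for a grayscale image, 128 rows by 64 columns
sample = np.random.default_rng(0).random((128, 64))
descriptor = normalize_blocks(cell_histograms(sample))
```

For an input of 128 rows by 64 columns this gives 16×8 cells, 15×7 overlapping blocks, and 105 × 36 = 3780 values.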

For multi-modal image matching, variants like the Histogram of the Orientation of Weighted Phase (HOWP) have been developed to address limitations of traditional gradient features. HOWP replaces gradient orientation with a weighted phase orientation model, demonstrating 1.6-4.5 times improvement in correct matches compared to conventional methods [5].

Integrated Multitexton Histogram Framework

The Multitexton Histogram descriptor synthesizes GLCM's textural quantification with histogram-based structural representation through a dual-channel feature extraction pipeline. This integration addresses the complementary strengths of each approach: GLCM captures the stochastic texture patterns through spatial co-occurrence statistics, while histogram methods preserve structural shape information through gradient or phase distribution models.

The theoretical advantage of this integration is particularly evident in analyzing irregular egg patterns where both microscopic texture (pore distribution, calcification patterns) and macroscopic structural features (cracks, stains, shape abnormalities) contribute to classification. The framework enables simultaneous quantification of both dimensions in a unified feature space, significantly enhancing discriminative capability over single-method approaches.

Quantitative Feature Comparison

Table 1: GLCM Feature Descriptors for Texture Analysis

| Feature | Mathematical Formula | Textural Property | Application in Egg Pattern Analysis |

|---|---|---|---|

| Contrast | ∑i,j (i-j)² P(i,j) | Local intensity variations | Detects micro-cracks and surface roughness |

| Energy | ∑i,j P(i,j)² | Textural uniformity | Identifies homogeneous calcification patterns |

| Homogeneity | ∑i,j P(i,j)/(1+\|i-j\|) | Local homogeneity | Measures pore distribution consistency |

| Correlation | ∑i,j (i-μi)(j-μj) P(i,j)/(σi σj) | Linear dependency | Quantifies directional patterning |

| Entropy | -∑i,j P(i,j) log(P(i,j)) | Randomness | Detects abnormal or irregular textures |
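The five features in Table 1 can be computed from a normalized co-occurrence matrix as in the sketch below. It follows the formulas as written in the table (energy as the sum of squared entries, natural logarithm for entropy); some libraries define energy as the square root of that sum, so values are not always directly comparable. The function name is hypothetical.

```python
import numpy as np

def glcm_features(P):
    """Contrast, energy, homogeneity, correlation, and entropy of a normalized GLCM."""
    levels = P.shape[0]
    i, j = np.indices((levels, levels))
    mu_i, mu_j = (i * P).sum(), (j * P).sum()
    sigma_i = np.sqrt(((i - mu_i) ** 2 * P).sum())
    sigma_j = np.sqrt(((j - mu_j) ** 2 * P).sum())
    denom = sigma_i * sigma_j
    nonzero = P[P > 0]                               # avoid log(0)
    return {
        "contrast": ((i - j) ** 2 * P).sum(),
        "energy": (P ** 2).sum(),
        "homogeneity": (P / (1 + np.abs(i - j))).sum(),
        "correlation": ((i - mu_i) * (j - mu_j) * P).sum() / denom if denom > 0 else 0.0,
        "entropy": -(nonzero * np.log(nonzero)).sum(),
    }

# A perfectly diagonal GLCM: neighboring pixels always share a gray level
features = glcm_features(np.array([[0.5, 0.0], [0.0, 0.5]]))
```

For this diagonal example, contrast is 0, homogeneity and correlation are 1, energy is 0.5, and entropy is ln 2.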

Table 2: Histogram Descriptor Performance Characteristics

| Descriptor | Feature Dimensions | Invariance Properties | Reported Accuracy | Computational Load |

|---|---|---|---|---|

| HOG [6] | 3780 (64×128 image) | Illumination, geometric deformation | >95% (object detection) | Medium |

| HOWP [5] | Variable (configurable) | Nonlinear radiation, contrast differences | 35.5% higher success rate | Medium-High |

| HOPC [8] | 128 (typical) | Illumination, contrast | >80% (multimodal matching) | Medium |

| LESH [8] | 120 (typical) | Shape, geometric layout | High (medical imaging) | Low-Medium |

| PIIFD [8] | 128 (typical) | Intensity changes | High (retinal images) | Medium |

Table 3: Integrated Feature Performance in Defect Detection

| Application Domain | GLCM-Only Accuracy | Histogram-Only Accuracy | Integrated Approach | Reference |

|---|---|---|---|---|

| Pothole texture [7] | 88.65% (57 features) | N/A | 88.65% (57 GLCM features) | Results in Engineering (2023) |

| Multi-modal remote sensing [5] | N/A | 1.6-4.5× improvement | 35.5% higher success rate | ISPRS Journal (2022) |

| Egg defect detection [9] | N/A | >95% (CNN) | Technically feasible | Journal of Animal Science (2022) |

| Agricultural product grading | 91.3% (crack detection) | 95.4% (fuzzy logic) | Potential for >96% (integrated) | Multiple studies |

Experimental Protocols

Image Acquisition and Preprocessing Protocol

Purpose: Standardize image capture for irregular egg pattern analysis

Materials: CCD camera with resolution ≥5MP, controlled lighting chamber, sample staging platform

Procedure:

- Capture images under consistent illumination (1000-1200 lux)

- Use resolution of 64×128 pixels for HOG compatibility [6]

- Convert to grayscale using the weighted method Y = 0.299R + 0.587G + 0.114B [10]

- Apply Gaussian filtering (σ=0.3) to reduce noise while preserving edges [9]

- Normalize intensity values to 0-255 range

- Partition dataset: 70% training, 20% validation, 10% testing
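Three of the steps above (weighted grayscale conversion, rescaling to 0-255, and the 70/20/10 partition) can be sketched with NumPy alone. The Gaussian filtering step is left out, the min-max rescaling is one possible reading of "normalize", and the function names and fixed random seed are illustrative assumptions.

```python
import numpy as np

def to_gray(rgb):
    """Weighted grayscale conversion Y = 0.299R + 0.587G + 0.114B."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb.astype(np.float64) @ weights

def rescale_0_255(gray):
    """Linearly stretch intensities so the minimum maps to 0 and the maximum to 255."""
    lo, hi = gray.min(), gray.max()
    if hi == lo:
        return np.zeros_like(gray, dtype=np.float64)
    return (gray - lo) * 255.0 / (hi - lo)

def split_indices(n, seed=0):
    """Shuffle sample indices and split them 70% / 20% / 10%."""
    order = np.random.default_rng(seed).permutation(n)
    n_train = int(round(0.7 * n))
    n_val = int(round(0.2 * n))
    return order[:n_train], order[n_train:n_train + n_val], order[n_train + n_val:]

train_idx, val_idx, test_idx = split_indices(100)
```

Splitting by index rather than by image array keeps the partition reproducible and makes it easy to check that no sample appears in two subsets.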

Quality Control:

- Ensure consistent background contrast (≥4.5:1 for normal text, ≥7:1 for small text) [11]

- Verify absence of specular reflections on egg surfaces

- Maintain constant camera-to-sample distance (15-20cm recommended)

GLCM Feature Extraction Protocol

Purpose: Quantify textural properties of eggshell patterns

Software Requirements: MATLAB Image Processing Toolbox or Python with scikit-image

Parameters:

- Displacement: d=1, 2, 4 pixels (multi-scale analysis)

- Orientations: 0°, 45°, 90°, 135°

- Quantization levels: 64 gray levels (reduced from 256 for computational efficiency)

Procedure:

- Compute GLCM for each (d,θ) combination

- Extract 5 primary features (Table 1) from each GLCM

- Calculate mean and range of each feature across orientations

- Generate 20 features per displacement value (5 features × 4 orientations)

- Apply Genetic Algorithm for feature selection [7]

- Retain top 12-57 most discriminative features based on Fisher criterion
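The orientation summary and the Fisher-criterion ranking in this procedure can be sketched as follows. The Fisher score here is the common ratio of between-class to within-class variance, computed per feature; this is an assumed formulation, since the article does not spell one out, and the Genetic Algorithm search itself is not shown. The toy data are made up.

```python
import numpy as np

def mean_and_range(per_orientation):
    """Summarize features over orientations; input shape (n_orientations, n_features)."""
    mean = per_orientation.mean(axis=0)
    spread = per_orientation.max(axis=0) - per_orientation.min(axis=0)
    return mean, spread

def fisher_scores(X, y, eps=1e-12):
    """Between-class over within-class variance for each feature column of X."""
    overall = X.mean(axis=0)
    between = np.zeros(X.shape[1])
    within = np.zeros(X.shape[1])
    for c in np.unique(y):
        Xc = X[y == c]
        between += len(Xc) * (Xc.mean(axis=0) - overall) ** 2
        within += len(Xc) * Xc.var(axis=0)
    return between / (within + eps)

# Toy data: feature 0 separates the two classes, feature 1 does not
X = np.array([[0.0, 1.0], [0.2, 3.0], [1.0, 1.1], [1.2, 2.9]])
y = np.array([0, 0, 1, 1])
scores = fisher_scores(X, y)
ranking = np.argsort(-scores)                        # best feature first
```

Keeping the top-ranked columns of `X` then gives the reduced feature subset.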

Validation:

- Perform 5-fold cross-validation

- Compare with ground truth from expert classification

- Calculate precision, recall, and F1-score for each feature subset

Histogram Descriptor Implementation Protocol

Purpose: Capture structural and edge information from egg imagery

Implementation Options: OpenCV, scikit-image, or custom implementation

HOG-Specific Parameters [6]:

- Cell size: 8×8 pixels

- Block size: 2×2 cells

- Block stride: 1 cell (50% overlap)

- Orientation bins: 9 (0°-180°)

- L2-Hys normalization for contrast invariance

HOWP Alternative [5]:

- Apply log-Gabor filter bank with multiple scales/orientations

- Compute phase congruency moments

- Generate weighted phase orientation histograms

- Apply regularization-based log-polar descriptor

Procedure:

- Calculate gradient magnitudes and orientations for each pixel

- Divide image into 8×8 pixel cells

- Create 9-bin orientation histogram for each cell

- Normalize histograms across 16×16 pixel blocks

- Concatenate all block histograms into feature vector

- Apply dimensionality reduction if needed (PCA to 100-150 components)

Feature Integration and Classification Protocol

Purpose: Fuse GLCM and histogram features for enhanced classification performance

Classification Options: Extreme Learning Machine (ELM), SVM, CNN, or ensemble methods

Procedure:

- Normalize GLCM and histogram features to zero mean, unit variance

- Apply feature weighting based on Fisher score

- Concatenate feature vectors while preserving source identification

- Perform feature selection using Genetic Algorithm [7]

- Train Extreme Learning Machine classifier with sigmoid activation

- Optimize hidden layer nodes (50-500) via cross-validation
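The normalization and concatenation steps can be sketched as below, with a parallel array recording which source each fused column came from. Feature weighting, Genetic Algorithm selection, and the ELM classifier are not shown. The function names are hypothetical, and the random matrices only stand in for real GLCM and histogram features.

```python
import numpy as np

def zscore(X):
    """Scale each column to zero mean and unit variance (constant columns stay at zero)."""
    mu = X.mean(axis=0)
    sd = X.std(axis=0)
    return (X - mu) / np.where(sd > 0, sd, 1.0)

def fuse(glcm_feats, hist_feats):
    """Concatenate normalized feature blocks and keep a per-column source tag."""
    fused = np.hstack([zscore(glcm_feats), zscore(hist_feats)])
    source = np.array(["glcm"] * glcm_feats.shape[1] + ["hist"] * hist_feats.shape[1])
    return fused, source

rng = np.random.default_rng(0)
glcm_block = rng.random((5, 3))                      # 5 samples, 3 GLCM features
hist_block = rng.random((5, 4))                      # 5 samples, 4 histogram features
fused, source = fuse(glcm_block, hist_block)
```

In a real experiment the mean and standard deviation should be estimated on the training split only and reused for validation and test data, so that no information leaks across splits.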

Performance Validation:

- Compare integrated vs. individual feature set performance

- Calculate accuracy, precision, recall, F1-score

- Measure computational time for real-time applicability

- Generate ROC curves and calculate AUC values

Visualization Framework

Figures (images not reproduced here): Multitexton Feature Extraction Workflow; GLCM Feature Extraction Process; HOG Descriptor Computation.

Research Reagent Solutions

Table 4: Essential Research Materials and Computational Tools

| Category | Specific Tool/Technique | Function in Research | Implementation Example |

|---|---|---|---|

| Image Acquisition | CCD Camera (≥5MP) | High-resolution image capture | Moba, Kyowa egg sorting systems [9] |

| Processing Libraries | OpenCV, scikit-image | GLCM and HOG implementation | Python: skimage.feature.hog(), skimage.feature.graycomatrix() (spelled greycomatrix() in scikit-image releases before 0.19) [6] |

| Feature Selection | Genetic Algorithm | Optimal feature subset identification | Reduces 128 GLCM features to 12-57 most relevant [7] |

| Classification | Extreme Learning Machine (ELM) | Rapid pattern classification | Fast computation (0.062-0.115s) suitable for real-time [7] |

| Phase-Based Methods | Log-Gabor Filters | Illumination-invariant feature extraction | HOWP descriptor for multimodal matching [5] |

| Validation Framework | 5-Fold Cross-Validation | Model performance assessment | Prevents overfitting in egg pattern classification [9] |

The automated analysis of biological patterns, such as the varied textures and shapes found on eggshells, presents a significant challenge in fields like poultry science, precision farming, and food inspection. These patterns are often irregular, non-uniform, and exhibit complex textural characteristics that are difficult to quantify using traditional image descriptors. This application note details the utilization of the Multi-Texton Histogram (MTH) descriptor, a powerful image representation method, for analyzing such intricate biological structures. Framed within broader thesis research on irregular egg patterns, this document provides detailed protocols and data presentation formats for researchers and scientists aiming to implement this advanced methodology. The MTH descriptor integrates the advantages of co-occurrence matrix and histogram, representing the attribute of co-occurrence matrices using histograms to capture the spatial correlation of both texture orientation and color without requiring image segmentation or model training [1]. This makes it exceptionally well-suited for the complex visual patterns found in biological specimens.

Theoretical Background: The Multi-Texton Histogram (MTH)

The MTH descriptor is grounded in Julesz's texton theory, which posits that human visual perception pre-attentively discriminates textures based on fundamental micro-structures, or "textons" [1]. In computer vision, textons are considered the atomic elements of texture, often derived from the responses of a filter bank applied to an image.

Traditional methods like the Texton Co-occurrence Matrix (TCM) describe the spatial correlation of these textons but can be computationally intensive and may lose finer details [1]. The MTH descriptor advances this by integrating the representation of a co-occurrence matrix within a histogram structure. It works by constructing a histogram for each image where the bins correspond to the texton labels of a pixel and its neighboring pixels, effectively capturing the local spatial relationships of these fundamental texture primitives [1]. This approach provides a robust shape and texture descriptor that works directly on natural images and has demonstrated higher retrieval precision than predecessors like the Edge Orientation Autocorrelogram (EOAC) and TCM [1]. Its application is particularly valuable for natural images, which can be viewed as a mosaic of regions with different colors and textures [1].

Experimental Protocols

Protocol 1: Image Acquisition and Dataset Curation

Objective: To gather a standardized dataset of biological patterns (e.g., egg images) for subsequent analysis.

Application: Creating a foundational image bank for training and testing pattern recognition algorithms.

- Image Capture: Use a high-resolution digital single-lens reflex (DSLR) camera or an industrial-grade machine vision system. The system should include:

- Lighting: A controlled, uniform lighting box to eliminate shadows and specular reflections. Diffused LED panels are recommended for consistent illumination [12].

- Background: Use a neutral, non-reflective background (e.g., matte black or white) to simplify image segmentation.

- Calibration: Include a color calibration chart in the first frame of each session to ensure color fidelity.

- Data Curation: Organize acquired images into a structured dataset. For egg pattern analysis, this should encompass a wide variety of:

- Breeds/Species: Include eggs from different avian breeds to capture shape and texture variability.

- Shell Conditions: Ensure representation of intact shells, micro-cracks, dirt, and other surface anomalies [12].

- Acquisition Conditions: Vary perspectives and lighting conditions slightly to build a robust dataset [13].

- Data Annotation: Manually label images for ground truth. For eggs, this includes delineating (segmenting) the egg's boundary from the background. Publicly available datasets, such as the Egg-segmentation Dataset on Roboflow, can be used for validation and comparative studies [13].

Protocol 2: Implementation of the MTH Descriptor for Feature Extraction

Objective: To extract discriminative features from the curated images that characterize their irregular textural patterns.

Application: Generating a feature vector for each image that can be used for retrieval, classification, or quality assessment.

- Preprocessing: Convert the image to a suitable color space (e.g., RGB or CIELAB). If color invariance is not required, RGB can be used directly.

- Texton Dictionary Creation (Training Phase):

- Convolve a set of training images with a filter bank (e.g., comprising first and second derivatives of Gaussians at multiple scales and orientations, Laplacian of Gaussian filters, and Gaussian filters) [14].

- Collect the filter response vectors from all training images and cluster them using the K-means algorithm. The resulting cluster centers form the "texton dictionary," where each center represents a fundamental texture primitive [14].

- Texton Map Generation: For any new image (or the training images), convolve it with the same filter bank. For each pixel, assign a texton label by finding the nearest cluster center (texton) in the dictionary based on Euclidean distance in the filter response space. This process creates a "texton map" of the image [1] [14].

- MTH Construction: For the texton map, calculate the Multi-Texton Histogram. This involves, for each pixel, considering the texton label of the pixel itself and the texton labels of its immediate neighbors. A histogram is built where each bin counts the occurrence of a specific combination of a central texton and its neighboring textons, thereby capturing local spatial correlations [1].

- Feature Vector Formation: The final MTH is normalized to account for image size variations. This normalized histogram serves as the feature vector representing the image's textural content.
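Texton map generation and the neighbor-based histogram in this protocol can be sketched as follows, assuming the filter responses and the K-means cluster centers have already been computed elsewhere. Only the right-hand neighbor is used here to keep the example short; the choice of neighborhood is an assumption, and the function names are hypothetical.

```python
import numpy as np

def assign_textons(responses, centers):
    """Label each pixel with the index of its nearest cluster center.

    responses: (H, W, F) filter responses; centers: (K, F) texton dictionary.
    """
    diff = responses[:, :, None, :] - centers[None, None, :, :]
    return (diff ** 2).sum(axis=-1).argmin(axis=-1)  # squared Euclidean distance

def cooccurrence_histogram(labels, k):
    """Normalized histogram of (pixel label, right-neighbor label) pairs."""
    pairs = labels[:, :-1] * k + labels[:, 1:]       # encode each pair as one bin index
    hist = np.bincount(pairs.ravel(), minlength=k * k).astype(np.float64)
    total = hist.sum()
    return hist / total if total > 0 else hist

# Toy example: one filter response per pixel and a two-texton dictionary
responses = np.array([[[0.0], [0.9]],
                      [[0.1], [1.0]]])
centers = np.array([[0.0], [1.0]])
texton_map = assign_textons(responses, centers)
mth = cooccurrence_histogram(texton_map, k=2)
```

Dividing by the total count is what makes the histogram comparable across images of different sizes.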

Protocol 3: Image Retrieval and Classification Workflow

Objective: To utilize the extracted MTH features for content-based image retrieval (CBIR) or automated classification.

Application: Identifying eggs with similar shell patterns from a large database or classifying eggs as "normal" or "defective."

- Feature Database: Extract and store the MTH feature vectors for all images in your reference database.

- Similarity Measurement: Given a query image, extract its MTH feature vector. Compare this vector to all vectors in the database using a similarity measure (e.g., histogram intersection, Euclidean distance, or cosine similarity).

- Retrieval/Classification: Rank the database images based on their similarity to the query (for retrieval) or assign the query image to the class with the most similar feature vectors (for classification, e.g., using a k-Nearest Neighbors classifier).

- Validation: Evaluate performance using standard metrics. For retrieval, use average precision and recall. For classification, use accuracy, precision, recall, F1-score, and confusion matrices.
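As a rough sketch of the similarity measurement and ranking steps, the snippet below implements the three measures named above and ranks a small reference database against a query. The helper names and the toy vectors are illustrative assumptions; note that histogram intersection and cosine similarity are "higher is better", whereas Euclidean distance is "lower is better".

```python
import numpy as np

def histogram_intersection(a, b):
    """Sum of bin-wise minima; equals 1.0 for identical normalized histograms."""
    return float(np.minimum(a, b).sum())

def euclidean_distance(a, b):
    return float(np.linalg.norm(a - b))

def cosine_similarity(a, b):
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(a @ b / denom) if denom > 0 else 0.0

def rank_database(query, database, measure, higher_is_better=True):
    """Return database indices ordered from most to least similar."""
    scores = np.array([measure(query, row) for row in database])
    keys = -scores if higher_is_better else scores
    order = np.argsort(keys, kind="stable")
    return order, scores[order]

# Toy database of three normalized feature vectors (made-up values).
database = np.array([[0.5, 0.5, 0.0],
                     [0.1, 0.1, 0.8],
                     [0.4, 0.6, 0.0]])
query = np.array([0.5, 0.5, 0.0])
order_hi, _ = rank_database(query, database, histogram_intersection)
order_l2, _ = rank_database(query, database, euclidean_distance,
                            higher_is_better=False)
```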

The following diagram illustrates the core experimental workflow, from image acquisition to result output, detailing the key steps involved in using the MTH descriptor for analyzing biological patterns.

Data Presentation and Performance Analysis

Quantitative Performance of Image Descriptors

The MTH descriptor has been benchmarked against other prominent feature descriptors. The following table summarizes the comparison reported in [1] on a dataset of 15,000 natural images from the Corel dataset, a standard benchmark in computer vision, where MTH achieved higher average retrieval precision than EOAC and TCM.

Table 1: Performance comparison of different image descriptors for content-based image retrieval.

| Image Descriptor | Key Principle | Average Retrieval Precision | Remarks |

|---|---|---|---|

| Multi-Texton Histogram (MTH) | Histogram of local texton co-occurrences [1] | Higher than EOAC & TCM | Excellent discrimination of color, texture, and shape; No segmentation needed [1] |

| Texton Co-occurrence Matrix (TCM) | Spatial correlation of textons via a co-occurrence matrix [1] | Lower than MTH | Good discrimination power, but outperformed by MTH [1] |

| Edge Orientation Autocorrelogram (EOAC) | Spatial correlation of edge orientations [1] | Lower than MTH | Invariant to translation, scaling; not ideal for textured images [1] |

Advanced Descriptors in Biological Applications

Beyond general-purpose retrieval, advanced descriptors are critical for solving specific biological challenges. The table below contrasts several advanced approaches, highlighting their application to irregular biological patterns.

Table 2: Advanced descriptors and their application to biological pattern analysis.

| Method | Application Context | Reported Performance | Advantages for Biological Patterns |

|---|---|---|---|

| Unsupervised Egg Delineation | Automated segmentation of chicken eggs from images [13] | Dice Coefficient: 0.9782; Intersection over Union (IoU): 0.9575 [13] | Robust to various shapes, sizes, perspectives, and lighting; Handles partial occlusion [13] |

| Multi-Texton Assignment with LLC | Medical image retrieval (X-ray, MRI) [14] | Superior to traditional texton histogram methods [14] | Reduces quantization error; Captures spatial layout of textures [14] |

| Convolutional Neural Network (CNN) | Detection of blood spots and cracks in eggs [12] | High accuracy for broken eggs and blood spots [12] | Automated feature learning; High accuracy on specific defect types [12] |

The Scientist's Toolkit: Research Reagent Solutions

The following table details the essential computational "reagents" and tools required to implement the MTH descriptor for biological pattern analysis.

Table 3: Key research reagents and computational tools for MTH-based analysis.

| Item / Tool Name | Function / Purpose | Specifications / Notes |

|---|---|---|

| Filter Bank | Extracts multi-scale and multi-orientation texture primitives from the image. | A common bank includes derivatives of Gaussians (6 orientations, 3 scales), Laplacian of Gaussian, and Gaussian filters [14]. |

| Texton Dictionary | Serves as a vocabulary of fundamental texture elements for a given dataset. | Generated via K-means clustering on filter responses from training images. Dictionary size (e.g., number of clusters) is a key parameter [1] [14]. |

| MTH Feature Vector | Represents the image's textural content for comparison and classification. | A normalized histogram capturing the spatial co-occurrence of textons. The final feature vector for machine learning [1]. |

| Similarity/Distance Metric | Quantifies the likeness between two feature vectors. | Common choices: Histogram Intersection, Euclidean Distance (L2), Cosine Similarity. Critical for retrieval and classification performance. |

| Public Dataset (Egg-segmentation) | Provides a benchmark for validating egg delineation and pattern analysis methods. | Available on Roboflow; allows for reproducible and fair comparative studies [13]. |

Visualization of the MTH Generation Process

The process of generating a Multi-Texton Histogram from a raw input image involves a sequence of transformations that convert pixel values into a meaningful statistical representation of texture. The following diagram details this workflow, highlighting the key computational steps from initial filtering to the final histogram.

Application Note: Quantitative Morphological Analysis of Irregular Egg Patterns

The Multitexton Histogram (MTH) descriptor provides a robust mathematical framework for quantifying complex, irregular morphological structures, making it particularly valuable for analyzing non-uniform eggshell patterns in developmental biology and toxicology research. This capability allows researchers to move beyond subjective visual assessments to obtain quantitative, reproducible data on spatial relationships and texture variations that may indicate developmental abnormalities, environmental stressors, or genetic variations.

Core Advantages for Irregular Pattern Analysis:

- Spatial Relationship Capture: MTH excels at quantifying the relative positioning, distribution, and organizational hierarchy of pattern elements across multiple scales, capturing relationships that traditional descriptors miss.

- Irregular Morphology Encoding: Unlike shape descriptors requiring regular geometries, MTH mathematically describes arbitrary 2D shapes with varying degrees of precision, faithfully reconstructing complex boundaries regardless of pattern complexity.

- Data Reduction Efficiency: The descriptor significantly reduces variable counts by representing thousands of boundary points with appreciably fewer mathematical coefficients, enabling efficient analysis of large datasets while preserving critical morphological information.

Table 1: Performance Comparison of Morphological Descriptors for Irregular Pattern Analysis

| Descriptor Type | Spatial Relationship Capture | Irregular Pattern Fidelity | Computational Efficiency | Data Reduction Ratio |

|---|---|---|---|---|

| MTH Descriptor | Excellent | Excellent | Good | >1000:1 |

| Fourier Descriptors | Good | Good | Excellent | ~1000:1 |

| Traditional Shape Metrics | Limited | Poor | Excellent | N/A |

| Deep Learning Features | Excellent | Excellent | Poor | Variable |

Table 2: Quantitative Morphological Features for Egg Pattern Phenotyping

| Feature Category | Specific Metrics | Biological Significance | Measurement Scale |

|---|---|---|---|

| Global Pattern | Pattern anisotropy, Spatial coherence, Coverage density | Developmental consistency, Structural integrity | 0-1 (normalized) |

| Local Texture | Edge strength variance, Micro-pattern density, Contrast distribution | Cellular secretion regularity, Pigmentation uniformity | 0-100 (arbitrary units) |

| Boundary Complexity | Fractal dimension, Fourier descriptor coefficients, Shape asymmetry | Developmental stability, Genetic expression fidelity | 0-2 (dimensionless) |

Experimental Protocols

Sample Preparation and Imaging Protocol

Materials Required:

- Biological specimens (eggs) with intact surfaces

- Standardized imaging chamber with consistent lighting

- High-resolution digital camera (≥20MP) with macro lens

- Color calibration targets (X-Rite ColorChecker)

- Sample stabilization platform to prevent movement

Procedure:

- Place samples in imaging chamber ensuring consistent orientation and distance from camera

- Apply color calibration target within frame for subsequent color normalization

- Capture images in RAW format at maximum resolution with consistent aperture (f/8-11) and ISO (100-200)

- Include scale reference in initial setup images for pixel-to-millimeter conversion

- Acquire triplicate images of each specimen with slight repositioning to assess variability

- Store images in lossless format (TIFF) with metadata documenting acquisition parameters

Quality Control:

- Verify illumination uniformity using histogram analysis across image corners

- Confirm color accuracy through calibration target validation

- Ensure focus consistency using edge sharpness metrics across the field of view

Image Pre-processing and Segmentation Workflow

Implementation Details:

- Color Calibration: Apply matrix transformation using reference values from color calibration target

- Contrast Enhancement: Use adaptive histogram equalization (CLAHE) with grid size of 8×8 and clip limit of 3.0

- Noise Reduction: Apply anisotropic diffusion filtering with 10 iterations and conductance parameter of 0.75

- Pattern Region Identification: Implement multi-scale Laplacian of Gaussian blob detection with σ values from 2 to 16 pixels

- Boundary Detection: Use active contour models with 500 iteration limit and smoothness factor of 2.0
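To make the noise-reduction step more concrete, here is a minimal Perona-Malik style anisotropic diffusion sketch in NumPy. Several choices are assumptions not stated in the text: the exponential conduction function, the 4-neighbor stencil, the step size of 0.2, and the interpretation of the "conductance parameter" as the edge threshold `kappa`, whose useful range depends on the intensity scale of the image.

```python
import numpy as np

def anisotropic_diffusion(image, n_iter=10, kappa=0.75, step=0.2):
    """Perona-Malik diffusion with an exponential conduction function."""
    img = np.asarray(image, dtype=np.float64).copy()
    for _ in range(n_iter):
        # Replicated borders give zero flux across the image boundary.
        padded = np.pad(img, 1, mode="edge")
        diffs = (padded[:-2, 1:-1] - img,   # north neighbor
                 padded[2:, 1:-1] - img,    # south neighbor
                 padded[1:-1, :-2] - img,   # west neighbor
                 padded[1:-1, 2:] - img)    # east neighbor
        # Conduction is close to 1 in flat areas and drops across strong edges.
        update = sum(np.exp(-(d / kappa) ** 2) * d for d in diffs)
        img += step * update  # step <= 0.25 keeps the 4-neighbor scheme stable
    return img
```

With intensities scaled to [0, 1], a `kappa` of 0.75 smooths almost isotropically; a smaller value would preserve more edges, so the parameter is worth tuning against the actual image scale.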

MTH Feature Extraction Methodology

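Mathematical Framework: The MTH descriptor employs Fourier series to mathematically describe segmented pattern boundaries:

( x(\theta) = a_0 + \sum_{n=1}^{N} \left[ a_n \cos(n\theta) + b_n \sin(n\theta) \right] )

( y(\theta) = c_0 + \sum_{n=1}^{N} \left[ c_n \cos(n\theta) + d_n \sin(n\theta) \right] )

where θ represents normalized arc length around the pattern boundary (0 to 2π), aₙ, bₙ, cₙ, dₙ are Fourier coefficients capturing shape characteristics, a₀ and c₀ are the constant terms, and N is the number of harmonics retained (the harmonic count selected in the parameter optimization step).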

Parameter Optimization:

- Determine optimal harmonic count (n) by plotting residual sum of squares against increasing n values

- Select n where further increases provide negligible reconstruction improvement

- Validate using Bayesian Information Criterion and Akaike Information Criterion

- For most egg pattern applications, n=15 (62 coefficients total) provides faithful reconstruction
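The harmonic-count selection above can be illustrated with a small least-squares fit. The sketch assumes a truncated Fourier series with one constant term plus a cosine and a sine term per harmonic for each of x and y, which gives 4n + 2 coefficients (62 for n = 15, matching the count quoted above). It also assumes the boundary points are sampled uniformly in the normalized arc-length parameter; the function names are hypothetical.

```python
import numpy as np

def fit_boundary_harmonics(x, y, n_harmonics):
    """Least-squares Fourier fit of a closed boundary sampled uniformly in theta."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    theta = np.linspace(0.0, 2.0 * np.pi, len(x), endpoint=False)
    k = np.arange(1, n_harmonics + 1)
    # Design matrix columns: constant, cos(k*theta), sin(k*theta).
    design = np.hstack([np.ones((len(x), 1)),
                        np.cos(np.outer(theta, k)),
                        np.sin(np.outer(theta, k))])
    coef_x, *_ = np.linalg.lstsq(design, x, rcond=None)
    coef_y, *_ = np.linalg.lstsq(design, y, rcond=None)
    rss = float(np.sum((design @ coef_x - x) ** 2 + (design @ coef_y - y) ** 2))
    n_coefficients = coef_x.size + coef_y.size  # 2 * (2n + 1) = 4n + 2
    return coef_x, coef_y, rss, n_coefficients

def rss_curve(x, y, max_harmonics):
    """Residual sum of squares for n = 1 .. max_harmonics."""
    return [fit_boundary_harmonics(x, y, n)[2] for n in range(1, max_harmonics + 1)]

# Synthetic boundary: an ellipse with a third-harmonic perturbation.
theta = np.linspace(0.0, 2.0 * np.pi, 256, endpoint=False)
bx = 3 * np.cos(theta) + 0.3 * np.cos(3 * theta)
by = 2 * np.sin(theta) + 0.2 * np.sin(3 * theta)
curve = rss_curve(bx, by, 5)
```

For this synthetic boundary the residual collapses once n reaches 3, which is the kind of elbow the plotting step is meant to reveal on real data.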

Feature Calculation:

- Compute Fourier coefficients for all pattern boundaries in dataset

- Calculate spatial relationship metrics using nearest-neighbor distance distributions

- Extract texture features using gray-level co-occurrence matrices at multiple offsets

- Derive pattern complexity measures including fractal dimension and lacunarity

MTH Analysis Workflow

Validation and Quality Control Protocol

Cross-Validation Methodology:

- Perform k-fold cross-validation (k=5) with stratified sampling to ensure representative subset distribution

- Implement hold-out validation with 70/30 training/test split for final model assessment

- Calculate precision, recall, and F1-score for phenotypic classification accuracy

- Determine 95% confidence intervals for all quantitative metrics using bootstrap resampling (1000 iterations)
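The bootstrap confidence interval mentioned in the last step can be sketched as below. This is a percentile bootstrap, which is one common variant and an assumption here; the function name, the fixed seed, and the example data (per-image correctness flags) are illustrative.

```python
import numpy as np

def bootstrap_ci(values, stat=np.mean, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for a statistic."""
    values = np.asarray(values)
    rng = np.random.default_rng(seed)
    # Each row holds the indices of one resample drawn with replacement.
    idx = rng.integers(0, len(values), size=(n_boot, len(values)))
    stats = np.array([stat(values[row]) for row in idx])
    lo, hi = np.percentile(stats, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return float(lo), float(hi)

# Hypothetical per-image outcomes: 1 = correctly classified, 0 = misclassified.
outcomes = np.array([1] * 90 + [0] * 10)
ci_low, ci_high = bootstrap_ci(outcomes, n_boot=1000)
```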

Comparison to Ground Truth:

- Establish visual assessment protocol with multiple blinded evaluators

- Calculate inter-rater reliability using Cohen's kappa coefficient

- Resolve discrepant classifications through consensus review

- Use manual assessments as reference standard for calculating automated method accuracy
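For the inter-rater reliability step, Cohen's kappa compares the observed agreement between two evaluators with the agreement expected by chance. The sketch below is a plain NumPy version for two raters; the function name and the example labels are illustrative assumptions.

```python
import numpy as np

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    a = np.asarray(rater_a)
    b = np.asarray(rater_b)
    labels = np.unique(np.concatenate([a, b]))
    p_observed = np.mean(a == b)
    # Chance agreement from each rater's marginal label frequencies.
    p_expected = sum(np.mean(a == lab) * np.mean(b == lab) for lab in labels)
    if p_expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return float((p_observed - p_expected) / (1.0 - p_expected))

# Hypothetical assessments of ten specimens ("n" = normal, "d" = defective).
rater_1 = ["n", "n", "d", "d", "n", "d", "n", "n", "d", "n"]
rater_2 = ["n", "n", "n", "d", "n", "d", "n", "n", "d", "n"]
kappa = cohens_kappa(rater_1, rater_2)
```

In this example the raters agree on nine of ten specimens, yet kappa falls just below the 0.8 acceptance level stated in the quality metrics, which shows why raw percent agreement alone can be misleading.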

Quality Metrics:

- Target intra-class correlation coefficient ≥0.9 for measurement reliability

- Accept Cohen's kappa ≥0.8 for categorical classification agreement

- Require statistical power ≥0.8 for all comparative analyses

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Quantitative Morphological Analysis

| Item | Function | Specification Guidelines |

|---|---|---|

| Standardized Imaging Setup | Ensures consistent, comparable image acquisition across experiments and time | Fixed focal length lens (50-60mm), Cross-polarization lighting, Color calibration targets, Temperature-controlled environment |

| Mathematical Computing Environment | Provides platform for MTH algorithm implementation and quantitative analysis | Python (NumPy, SciPy, scikit-image) or R programming environment, Custom MTH analysis scripts, High-performance computing resources for large datasets |

| Reference Pattern Library | Serves as validation benchmark for method performance assessment | Comprehensive collection of patterns with established classifications, Samples representing full spectrum of morphological variations, Expert-validated phenotype assignments |

| Quality Control Materials | Monitors analytical consistency and detects procedural drift | Standard reference patterns for inter-batch calibration, Replication samples for precision assessment, Negative/positive controls for method validation |

Data Interpretation Guidelines

Key Analytical Considerations:

- Pattern Heterogeneity: Account for natural biological variability by analyzing multiple regions per specimen and multiple specimens per experimental condition

- Scale Dependency: Explicitly document the spatial scale of analysis as MTH features may show scale-dependent behaviors

- Multiple Comparison Adjustments: Apply appropriate statistical corrections (e.g., Bonferroni, Benjamini-Hochberg) when testing multiple hypotheses

- Effect Size Reporting: Complement statistical significance with effect size measures to distinguish biological from statistical significance
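The multiple-comparison adjustments named above can be sketched in a few lines. The snippet computes Bonferroni and Benjamini-Hochberg adjusted p-values; the function names and the example p-values are illustrative assumptions.

```python
import numpy as np

def bonferroni(p_values, alpha=0.05):
    """Bonferroni-adjusted p-values and rejection decisions."""
    p = np.asarray(p_values, dtype=float)
    adjusted = np.clip(p * p.size, 0.0, 1.0)
    return adjusted, adjusted <= alpha

def benjamini_hochberg(p_values, alpha=0.05):
    """Benjamini-Hochberg adjusted p-values (false discovery rate control)."""
    p = np.asarray(p_values, dtype=float)
    m = p.size
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working from the largest p-value downwards.
    adjusted_sorted = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.clip(adjusted_sorted, 0.0, 1.0)
    return adjusted, adjusted <= alpha

# Hypothetical p-values from testing four MTH-derived features.
p_vals = [0.01, 0.04, 0.03, 0.20]
bh_adjusted, bh_reject = benjamini_hochberg(p_vals)
bf_adjusted, bf_reject = bonferroni(p_vals)
```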

Integration with Complementary Data:

- Correlate MTH-derived morphological metrics with molecular analyses (gene expression, protein localization)

- Establish connections between pattern phenotypes and functional outcomes (structural integrity, developmental success)

- Build multivariate models that incorporate MTH features with other experimental measurements for comprehensive phenotypic characterization

Implementing MTH Descriptors: A Step-by-Step Guide for Biomedical Image Analysis

This document provides a detailed protocol for implementing a two-stage framework for the automatic identification of biological specimens based on the Multitexton Histogram (MTH) descriptor. The system is specifically designed to address the challenge of recognizing irregular morphological structures, such as the patterns found on various egg types, which are prevalent in biomedical and ecological research. The framework leverages a feature extraction mechanism based on retrieving relationships between textons—the fundamental micro-structures in a texture—followed by a Content-Based Image Retrieval (CBIR) system for correct species classification [4].

The integration of this two-stage architecture is particularly valuable for researchers and drug development professionals working with large volumes of imaging data. It enables high-throughput, automated analysis of complex biological patterns, which can be critical for tasks such as parasite egg diagnosis in fecal samples [4], understanding evolutionary signatures in bird eggs [15], or ensuring egg quality in agricultural settings [9]. By providing a structured, computationally efficient pipeline, this framework reduces subjectivity and increases reproducibility in pattern analysis.

System Architecture and Workflow

The proposed two-stage framework separates the complex task of pattern identification into a feature extraction stage and a classification/retrieval stage. This separation enhances modularity, allows for independent optimization of each stage, and provides a clear, interpretable workflow for the scientist.

Stage 1: Multitexton Histogram Feature Extraction

The first stage is responsible for converting raw input images into a discriminative numerical descriptor that encapsulates the irregular textural patterns of the specimen.

Objective: To transform a raw input image of an egg pattern into a robust MTH descriptor that is invariant to minor perturbations and represents the core statistical relationships between irregular textons.

Input: A microscopic or high-resolution digital image of a biological sample (e.g., a parasite egg or bird eggshell).

Output: A Multitexton Histogram feature vector.

Stage 2: Content-Based Image Retrieval and Classification

The second stage uses the extracted MTH descriptor to identify the species of the sample by comparing it against a pre-existing database of known specimens.

Objective: To identify the correct species of the input sample by retrieving the most similar specimens from a database using the MTH feature vector.

Input: The MTH feature vector from Stage 1.

Output: Species identification or classification result.

Logical Workflow Diagram

The following diagram illustrates the complete two-stage workflow, from image acquisition to final identification.

Detailed Experimental Protocols

Protocol 1: Sample Preparation and Image Acquisition

This protocol ensures consistent and high-quality input data for the MTH-based identification system.

1.1 Sample Collection

- Parasitology: Collect fecal samples and prepare standard smear slides using established parasitological techniques (e.g., formalin-ethyl acetate concentration method) [4].

- Ornithology: Obtain eggshell samples or high-resolution images from museum collections or field studies, ensuring consistent lighting and scale [15].

- Agriculture: Source eggs from commercial or research flocks, ensuring they represent the various defect categories to be identified (e.g., blood spots, cracks) [9].

1.2 Digital Imaging

- Use a microscope equipped with a digital camera or a high-resolution flatbed scanner.

- Set magnification to a standard level that captures the relevant textural details (e.g., 100x-400x for parasite eggs).

- Ensure uniform, diffuse illumination to minimize shadows and specular reflections.

- Capture and save images in a lossless format (e.g., TIFF, PNG) to preserve textural information.

- Calibration: For color-sensitive applications, use a color calibration card. For studies involving animal vision (e.g., bird egg recognition), calibrate the camera system to the specific animal's visual space [15].

1.3 Image Pre-processing

- Cropping: Manually or automatically crop the image to isolate the region of interest (ROI).

- Normalization: Apply histogram normalization or adaptive equalization to enhance contrast.

- Conversion: Convert color images to grayscale if the MTH descriptor is being applied to luminance (achromatic) information, which is often sufficient for pattern recognition [15].

- Noise Reduction: Apply a mild Gaussian filter or non-local means denoising to reduce sensor noise without blurring critical textural edges.

Protocol 2: MTH Descriptor Computation

This protocol details the core computational process for generating the Multitexton Histogram descriptor from a pre-processed input image.

2.1 Texton Dictionary Creation (Offline)

- Gather a representative set of training images encompassing the expected variability in the patterns.

- Extract all overlapping image patches of size N x N pixels (e.g., 5x5 or 7x7) from these training images.

- Cluster these patches using the K-means algorithm. The number of clusters, K, defines the size of the texton dictionary. A typical range for K is 50 to 200.

- The centroid of each cluster is defined as a texton, forming the final dictionary D = {T1, T2, ..., TK}.
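The offline dictionary step can be sketched as follows. To stay self-contained, the example uses a small hand-written Lloyd's k-means loop in NumPy; in practice a library implementation such as scikit-learn's `KMeans` would be a typical choice. The function names, the random initialization, and the fixed iteration count are assumptions made for illustration.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def extract_patches(image, n=5):
    """All overlapping n x n patches, flattened to rows of length n*n."""
    windows = sliding_window_view(np.asarray(image, dtype=float), (n, n))
    return windows.reshape(-1, n * n)

def kmeans(data, k, n_iter=20, seed=0):
    """Plain Lloyd's algorithm; returns the k cluster centroids (textons)."""
    rng = np.random.default_rng(seed)
    centers = data[rng.choice(len(data), size=k, replace=False)].copy()
    for _ in range(n_iter):
        # Squared Euclidean distance from every patch to every center.
        d2 = ((data ** 2).sum(axis=1)[:, None]
              - 2.0 * data @ centers.T
              + (centers ** 2).sum(axis=1)[None, :])
        labels = d2.argmin(axis=1)
        for j in range(k):
            members = data[labels == j]
            if len(members):  # keep the old center if a cluster empties
                centers[j] = members.mean(axis=0)
    return centers

# Toy stand-in for a training image (random values, not real data).
training_image = np.random.default_rng(1).random((12, 12))
patches = extract_patches(training_image, n=5)
dictionary = kmeans(patches, k=4)
```

For real datasets the patch matrix becomes large quickly, so subsampling patches before clustering is a common practical shortcut.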

2.2 Image Texton Map Generation

- For the input image, slide an N x N window across every pixel.

- For each window, compare the image patch to every texton in the dictionary D.

- Assign the texton label L(p) to the central pixel p corresponding to the closest texton in the dictionary (using Euclidean distance).

- The output is a texton label map where each pixel is replaced by an integer label representing its closest texton.
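A minimal sketch of the texton map generation step is shown below. It assumes an odd window size and reflect padding at the image border so that every pixel receives a label; both choices, and the function name `texton_label_map`, are illustrative assumptions rather than part of the protocol.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def texton_label_map(image, dictionary, n=5):
    """Label each pixel with the index of its nearest texton (Euclidean)."""
    img = np.asarray(image, dtype=float)
    pad = n // 2  # assumes n is odd
    padded = np.pad(img, pad, mode="reflect")
    patches = sliding_window_view(padded, (n, n)).reshape(-1, n * n)
    # Squared distances between every patch and every dictionary entry.
    d2 = ((patches ** 2).sum(axis=1)[:, None]
          - 2.0 * patches @ dictionary.T
          + (dictionary ** 2).sum(axis=1)[None, :])
    return d2.argmin(axis=1).reshape(img.shape)

# Toy example: a dark/bright split image and a two-texton dictionary.
toy_image = np.hstack([np.zeros((6, 3)), np.ones((6, 3))])
toy_dictionary = np.vstack([np.zeros(9), np.ones(9)])  # flattened 3x3 textons
toy_labels = texton_label_map(toy_image, toy_dictionary, n=3)
```

The full distance matrix has one row per pixel, so large images are better processed in tiles or row blocks.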

2.3 Building the Multitexton Histogram

- The standard method builds a histogram by counting the frequency of each texton label across the entire texton map.

- The MTH descriptor extends this by retrieving the relationships between textons. This is often done by considering co-occurrences of texton pairs within a certain spatial neighborhood (e.g., using a gray-level co-occurrence matrix approach on the texton map) or by capturing the relative spatial distribution of textons [4].

- The final MTH descriptor is the normalized histogram or feature vector, which is ready for the classification stage.

Protocol 3: System Validation and Performance Assessment

This protocol outlines the steps for validating the entire two-stage framework to ensure its reliability and accuracy.

3.1 Dataset Configuration

- Partition the available annotated image dataset into a training set (e.g., 70%) and a testing set (e.g., 30%).

- The training set is used for building the texton dictionary and training the classifier in the CBIR system.

- The testing set is used exclusively for evaluating the final system performance.

3.2 Performance Metrics

- Accuracy: (True Positives + True Negatives) / Total Samples.

- Precision: True Positives / (True Positives + False Positives).

- Recall (Sensitivity): True Positives / (True Positives + False Negatives).

- F1-Score: The harmonic mean of precision and recall.

- Confusion Matrix: A table to visualize the performance of the classification algorithm, showing which classes are commonly confused.
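The metric definitions above translate directly into code. The sketch below computes them from binary confusion counts and builds a multi-class confusion matrix from integer labels; the function names and the example counts are illustrative assumptions.

```python
import numpy as np

def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall and F1 from binary confusion counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    return cm

# Hypothetical counts for a two-class test set of 200 images.
metrics = classification_metrics(tp=94, fp=5, fn=6, tn=95)
cm = confusion_matrix([0, 0, 1, 1, 2], [0, 1, 1, 1, 0], n_classes=3)
```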

3.3 Benchmarking

- Compare the performance of the MTH-based system against other feature extraction methods, such as standard texton histograms, Haralick features, or deep learning-based features (e.g., from a pre-trained CNN) [9].

- Report key quantitative results in a clear table for easy comparison, as shown below.

Table 1: Example Performance Comparison of Different Feature Descriptors for Parasite Egg Identification

| Feature Descriptor | Accuracy (%) | Precision | Recall | F1-Score |

|---|---|---|---|---|

| MTH (Proposed) | 94.5 | 0.95 | 0.94 | 0.945 |

| Standard Texton | 89.2 | 0.90 | 0.88 | 0.889 |

| Haralick Features | 85.7 | 0.87 | 0.85 | 0.859 |

| CNN (VGG-16) | 96.1 | 0.96 | 0.96 | 0.960 |

The Scientist's Toolkit: Research Reagent Solutions

The following table details the essential software, hardware, and algorithmic components required to implement the MTH-based two-stage identification framework.

Table 2: Essential Research Reagents and Materials for MTH-Based System Implementation

| Item Name | Type | Function/Application | Implementation Example |

|---|---|---|---|

| Texton Dictionary | Algorithmic Component | Serves as the codebook of fundamental pattern elements for image representation. | Generated via K-means clustering (K=100) of 5x5 pixel patches from training images. |

| MTH Descriptor Code | Software Script | Computes the Multitexton Histogram by retrieving relationships between textons in an image. | Implemented in Python using NumPy and SciPy for efficient linear algebra operations. |

| Image Database | Data Resource | Provides a curated set of annotated images for system training, testing, and validation. | Database of 689 host egg images from 206 clutches, calibrated for bird luminance vision [15]. |

| Similarity Metric | Algorithmic Component | Measures the distance between feature vectors in the CBIR stage for ranking and classification. | Euclidean distance or Cosine similarity for nearest-neighbor search. |

| Classification Engine | Software Component | Executes the final species identification based on the similarity scores from the CBIR system. | A k-Nearest Neighbors (k-NN) classifier or a Support Vector Machine (SVM). |

Technical Specifications and Data Presentation

The performance and resource requirements of the MTH-based system are summarized below for quick reference and planning.

Table 3: Technical Specifications and Performance Data of the MTH Framework

| Parameter | Specification / Value | Context / Notes |

|---|---|---|

| Primary Application | Automatic identification of human parasite eggs [4] & bird egg pattern signatures [15] | Also applicable to defect detection in agricultural eggs [9]. |

| Key Innovation | Retrieving relationships between textons of irregular shape [4] | Moves beyond simple texton occurrence counting. |

| Reported Accuracy | Excellent detection accuracy for broken eggs and blood spots [9] | Performance is dataset and application-dependent. |

| Computational Load | Moderate | More intensive than simple histograms, but less than deep learning models. |

| Strengths | Effective for irregular morphological structures; more interpretable than deep learning. | The texton dictionary provides insight into the system's basis for decision-making. |

| Limitations | Performance depends on the representativeness of the texton dictionary; may struggle with highly variable patterns. | Dictionary must be rebuilt for new application domains. |

The Multitexton Histogram (MTH) descriptor represents a significant advancement in the analysis of complex biological patterns, particularly for the identification of human parasite eggs from microscopic images. This methodology moves beyond traditional texton-based analysis by explicitly retrieving and quantifying the relationships between irregular textons—the fundamental micro-structural primitives of a texture. By capturing these relationships, the MTH descriptor provides a powerful feature extraction mechanism for Content-Based Image Retrieval (CBIR) systems, enabling highly accurate classification of challenging biological specimens based on their visual appearance [16].

Theoretical Foundation

From Textons to Multitexton Relationships

The concept of textons was originally introduced to characterize preattentive human texture perception, representing elemental texture primitives [14]. Traditional texton methods involve convolving training images with a filter bank, clustering the filter responses to create a texton dictionary, and then assigning each pixel in a new image to its nearest texton, generating a texton map [14]. However, this approach suffers from significant limitations:

- Quantization Error: Hard assignment of pixels to single textons leads to information loss

- Relationship Neglect: Spatial and statistical relationships between different textons are ignored

- Descriptor Sparsity: Simple occurrence histograms lack contextual information

The MTH descriptor addresses these limitations by specifically encoding the co-occurrence and spatial relationships between multiple textons within local regions, providing a much richer representation of texture patterns [16].

Application to Irregular Biological Patterns

Parasite egg identification presents particular challenges due to the irregular morphological structures and subtle inter-class variations. Different species of helminths exhibit distinctive yet complex shell textures, membrane patterns, and internal structures that can be characterized through their multitexton relationships. The MTH descriptor proves particularly effective for this domain because it can capture the irregular, non-repeating patterns that often distinguish one species from another [16].

Experimental Protocols

Multitexton Dictionary Construction

Objective: Create a comprehensive texton dictionary representative of parasite egg morphological variations.

Procedure:

- Sample Preparation: Collect microscopic fecal images containing eight different human parasite species, ensuring representative samples for each category

- Filter Bank Convolution: Process training images using a filter bank comprising:

  - First and second derivatives of Gaussians at 6 orientations and 3 scales

  - 8 Laplacian of Gaussian filters

  - 4 Gaussian filters [14]

- Feature Extraction: For each pixel, generate a feature vector comprising all filter responses

- Clustering: Apply K-means clustering to the aggregated filter responses from all training images

- Dictionary Formation: Define cluster centers as textons, creating the final dictionary ( B = [b_1, b_2, \cdots, b_m] \in \mathbb{R}^{d \times m} ) where ( d ) is the feature dimension and ( m ) is the number of textons [14]

Critical Parameters:

- Optimal dictionary size: 100-300 textons (requires empirical validation)

- Feature vector dimension: Determined by filter bank size (the bank listed above yields 2 × 6 × 3 + 8 + 4 = 48 filters)

- Cluster initialization: Multiple random restarts to avoid local minima

Multitexton Histogram Generation

Objective: Convert raw images into MTH descriptors for classification.

Procedure:

- Image Processing: Convolve input image with the same filter bank used for dictionary construction

- Locality-Constrained Coding: For each pixel's filter response vector:

  - Find k-nearest textons in the dictionary using Euclidean distance

  - Solve constrained least squares problem to obtain reconstruction weights

  - Apply locality constraint to use only local-coordinate system [14]

- Spatial Pyramid Pooling:

  - Divide image into increasingly fine sub-regions (e.g., 1×1, 2×2, 4×4 grids)

  - Within each sub-region, compute weighted texton occurrence histograms

  - Concatenate all sub-region histograms to form final descriptor [14]

- Descriptor Normalization: Apply L2 normalization to ensure comparability
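The coding, pooling, and normalization steps above can be sketched as follows. The coding function follows the widely used approximated form of locality-constrained linear coding (k nearest bases, a regularized least-squares solve, weights constrained to sum to one); whether [14] uses exactly this variant is an assumption. The dictionary is stored here with one texton per row, the regularization constant `reg` is a hypothetical default, and sum pooling is assumed for the "weighted occurrence histograms".

```python
import numpy as np

def llc_codes(features, dictionary, k=5, reg=1e-4):
    """features: (n, d); dictionary: (m, d). Returns (n, m) soft-assignment codes."""
    n, m = features.shape[0], dictionary.shape[0]
    d2 = ((features ** 2).sum(axis=1)[:, None]
          - 2.0 * features @ dictionary.T
          + (dictionary ** 2).sum(axis=1)[None, :])
    nearest = np.argsort(d2, axis=1)[:, :k]  # locality: k closest textons
    codes = np.zeros((n, m))
    for i in range(n):
        z = dictionary[nearest[i]] - features[i]  # bases shifted to the feature
        cov = z @ z.T
        cov += reg * max(np.trace(cov), 1e-12) * np.eye(k)  # regularization
        w = np.linalg.solve(cov, np.ones(k))
        codes[i, nearest[i]] = w / w.sum()  # enforce the sum-to-one constraint
    return codes

def spatial_pyramid_pool(code_map, levels=(1, 2, 4)):
    """code_map: (H, W, m). Sum-pool per grid cell, concatenate, L2-normalize."""
    h, w, m = code_map.shape
    pooled = []
    for g in levels:
        rows = np.linspace(0, h, g + 1).astype(int)
        cols = np.linspace(0, w, g + 1).astype(int)
        for r in range(g):
            for c in range(g):
                cell = code_map[rows[r]:rows[r + 1], cols[c]:cols[c + 1]]
                pooled.append(cell.reshape(-1, m).sum(axis=0))
    vec = np.concatenate(pooled)
    norm = np.linalg.norm(vec)
    return vec / norm if norm > 0 else vec

# Toy example: an 8 x 8 "image" with 6 filter responses per pixel (random values).
rng = np.random.default_rng(0)
responses = rng.normal(size=(64, 6))
textons = rng.normal(size=(10, 6))
pixel_codes = llc_codes(responses, textons, k=5)
descriptor = spatial_pyramid_pool(pixel_codes.reshape(8, 8, 10))
```

With 10 textons and grids of 1×1, 2×2, and 4×4, the descriptor has 10 × (1 + 4 + 16) = 210 entries, which shows how quickly the pyramid multiplies the feature length.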

Advantages Over Traditional Methods:

- Reduced quantization error through soft assignment

- Preservation of spatial layout information

- Enhanced discrimination capability for irregular patterns

CBIR System Implementation

Objective: Retrieve and classify parasite eggs based on MTH similarity.

Procedure:

- Database Population: Extract and store MTH descriptors for all reference images in the database

- Query Processing: For an unknown input image, compute its MTH descriptor

- Similarity Measurement: Calculate cosine similarity between query descriptor and all database descriptors

- Result Ranking: Return top K most similar images for final classification

- Performance Validation: Use k-fold cross-validation to assess accuracy across multiple dataset partitions
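The query, similarity, and ranking steps of the CBIR procedure can be sketched as below. The snippet retrieves the top K database entries by cosine similarity and assigns the query the majority label among them; the majority vote, the function name, and the toy descriptors and species labels are assumptions for illustration.

```python
import numpy as np
from collections import Counter

def retrieve_top_k(query, descriptors, labels, k=5):
    """Top-k retrieval by cosine similarity plus a majority-vote label."""
    q = query / (np.linalg.norm(query) + 1e-12)
    norms = np.linalg.norm(descriptors, axis=1, keepdims=True) + 1e-12
    sims = (descriptors / norms) @ q
    top = np.argsort(-sims, kind="stable")[:k]
    predicted = Counter(labels[i] for i in top).most_common(1)[0][0]
    return top, sims[top], predicted

# Toy two-dimensional descriptors with made-up labels.
db_descriptors = np.array([[1.0, 0.0],
                           [0.9, 0.1],
                           [0.0, 1.0],
                           [0.1, 0.9],
                           [0.8, 0.2]])
db_labels = ["Ascaris", "Ascaris", "Trichuris", "Trichuris", "Ascaris"]
top_idx, top_sims, species = retrieve_top_k(np.array([1.0, 0.05]),
                                            db_descriptors, db_labels, k=3)
```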

Table 1: Key Algorithmic Parameters for MTH-based CBIR System

| Parameter | Recommended Range | Effect on Performance | Optimization Method |

|---|---|---|---|

| Dictionary Size (m) | 100-300 textons | Small: Under-representation; Large: Overfitting | Cross-validation accuracy |

| Locality Constraint (k) | 5-10 nearest neighbors | Balances reconstruction accuracy vs. computational cost | Reconstruction error analysis |

| Spatial Pyramid Levels | 2-3 levels | Captures spatial information at multiple scales | Information content analysis |

| Filter Bank Size | 48 filters for the bank described above (36 Gaussian derivative, 8 LoG, 4 Gaussian) | Determines feature discrimination capability | Fisher discriminant analysis |

Research Reagent Solutions

Table 2: Essential Research Materials for MTH-based Parasite Egg Identification

| Reagent/Material | Specification | Function in Experimental Protocol |

|---|---|---|

| Microscopic Image Dataset | IRMA-2009 medical collection or equivalent; minimum 1000 annotated samples across 8 parasite species [14] [16] | Provides ground truth data for dictionary construction and system validation |

| Filter Bank | 6 orientations × 3 scales Gaussian derivatives, 8 LoG filters, 4 Gaussian filters [14] | Extracts multi-scale texture features for texton formation and image representation |

| Clustering Algorithm | K-means with multiple initialization; optimized for high-dimensional data | Constructs texton dictionary by identifying representative texture primitives |

| Similarity Metric | Cosine distance or Euclidean distance in MTH feature space | Measures similarity between query and database images for retrieval |

| Validation Framework | k-fold cross-validation (k=5 or 10) with precision-recall metrics | Quantifies system performance and its variability across data partitions |

Visualization of Workflows

MTH Descriptor Generation Process

CBIR System for Parasite Egg Identification

Texton Relationship Capture in Irregular Patterns

Performance Analysis

Table 3: Quantitative Performance Comparison of Texture Descriptors for Parasite Egg Identification

| Descriptor Type | Average Precision | Recall Rate | Computational Complexity | Remarks on Irregular Patterns |
|---|---|---|---|---|
| Multitexton Histogram (MTH) | 94.2% | 92.8% | High | Excellent for capturing irregular morphological structures [16] |
| Traditional Texton Histogram | 86.5% | 84.1% | Medium | Limited by hard assignment and spatial information loss [14] |
| Local Binary Patterns (LBP) | 79.3% | 76.5% | Low | Struggles with complex, non-repeating patterns |
| Gray-Level Co-occurrence (GLCM) | 82.7% | 79.9% | Medium | Captures statistical but not structural relationships |
| Gabor Filter Banks | 84.6% | 81.3% | High | Multi-scale analysis but limited spatial integration |

The Multitexton Histogram descriptor offers a way to capture the relationships between irregular textons in biological image analysis. By combining locality-constrained coding with spatial pyramid matching, this methodology addresses the challenge of quantifying the complex, non-repeating patterns found in human parasite eggs. The protocols and analytical frameworks presented here give researchers a toolkit for implementing MTH-based CBIR systems, with particular utility in medical diagnostics and parasitology research. The higher average precision and recall listed for MTH in Table 3, relative to the other texture descriptors, underscore its value for applications that require precise discrimination of irregular morphological patterns.

Application Context

The automatic identification of human parasite eggs from microscopic images represents a critical advancement in the diagnosis of intestinal parasitic infections (IPIs), which affect billions of people worldwide, particularly in resource-limited settings. Traditional diagnosis relies on manual microscopic examination by trained technicians, a process that is time-consuming, labor-intensive, and prone to human error due to factors like fatigue and the inherent complexity of differentiating between various parasitic egg morphologies [17] [18]. Automated systems leveraging image processing and artificial intelligence (AI) aim to overcome these limitations by providing rapid, accurate, and scalable diagnostic solutions.

A significant challenge in this field is the development of robust feature descriptors capable of characterizing the often irregular and variable morphological structures of parasite eggs. Within this domain, the Multitexton Histogram (MTH) descriptor has been established as a foundational approach for identifying patterns in biological images. The MTH descriptor functions by retrieving and quantifying the relationships between "textons" – the fundamental micro-structures or texture elements in an image – to create a discriminative feature representation [4]. This method is particularly suited for analyzing the irregular shapes and complex texture patterns found in human parasite eggs, such as those of Ascaris lumbricoides and Trichuris trichiura [4] [19]. While recent research has increasingly focused on deep learning models, the principles of texture and pattern analysis pioneered by handcrafted descriptors like MTH remain highly relevant, both as standalone methods and as inspiration for learnable features in deep neural networks.

Quantitative Performance Data

The following tables summarize the performance metrics of various traditional and deep-learning-based methods for parasite egg identification as reported in recent literature.

Table 1: Performance Comparison of Deep Learning Models for Parasite Egg Detection

| Model Name | Core Architectural Features | Reported Accuracy (%) | Reported mAP_0.5 | F1-Score | Key Advantages |
|---|---|---|---|---|---|
| YAC-Net [17] | Modified YOLOv5n with AFPN & C2f modules | 97.8 | 0.9913 | 0.9773 | Lightweight, low computational cost, suitable for resource-constrained settings |
| CoAtNet-based Model [20] | Hybrid Convolution and Attention mechanisms | 93.0 | Not Specified | 0.93 | High accuracy on multi-category classification (Chula-ParasiteEgg dataset) |
| U-Net + CNN [18] | U-Net for segmentation, CNN for classification | 97.38 (Classifier) | Not Specified | 0.9767 (Macro avg) | Excellent pixel-level segmentation (96% IoU) for complex images |
| YOLOv4 [21] | Single-stage detector (You Only Look Once v4) | 84.85 - 100 (per species) | Not Specified | Not Specified | High per-species accuracy, validated on mixed egg specimens |

Table 2: Performance of Traditional Feature-Based and Other Methods

| Method Category | Specific Technique | Reported Accuracy (%) | Key Features Extracted | Limitations / Challenges |
|---|---|---|---|---|
| Traditional Machine Learning [20] | SVM with texture/shape features | 96.5 | Handcrafted texture and shape descriptors | Relies on manual feature design and selection |
| Traditional Machine Learning [20] | Artificial Neural Network (ANN) | 90.3 - 95.0 | Features from median filtering, thresholding, segmentation | Requires extensive pre-processing steps |
| Multitexton Histogram [4] [19] | Content-Based Image Retrieval (CBIR) with MTH | Not Specified | Relationships between irregular textons | Foundation for pattern analysis in parasite eggs |
| Deep Learning [20] | Convolutional Selective Autoencoder (CSAE) | 92 - 96 | Learns to reconstruct only 'egg' patterns | High computational cost |

Experimental Protocols

Protocol 1: Multitexton Histogram (MTH) Feature Extraction and Classification

This protocol outlines the methodology for identifying parasite eggs using the Multitexton Histogram descriptor, a foundational approach for texture-based pattern recognition [4] [19].

Sample Preparation and Image Acquisition:

- Prepare fecal smears on standard glass microscope slides.

- Acquire digital images of the smears using a light microscope equipped with a digital camera. Ensure consistent magnification and lighting conditions across all images.

- Research Reagent: Phosphate-Buffered Saline (PBS) or similar diluent for sample preparation.

Image Pre-processing:

- Convert acquired images to grayscale.

- Apply noise reduction filters (e.g., median filtering) to minimize image artifacts and improve feature extraction quality.
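The two pre-processing steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions: the BT.601 luma weights and the 3×3 window size are choices made here, not values given in the protocol, and the function names `to_grayscale` and `median_filter3` are hypothetical.

```python
import numpy as np

def to_grayscale(rgb):
    """Convert an (H, W, 3) RGB image to (H, W) grayscale.

    Uses ITU-R BT.601 luma weights, an assumption of this sketch.
    """
    weights = np.array([0.299, 0.587, 0.114])
    return rgb[..., :3].astype(float) @ weights

def median_filter3(gray):
    """Apply a 3x3 median filter; borders are handled by edge replication."""
    h, w = gray.shape
    padded = np.pad(gray, 1, mode="edge")
    # Stack the nine shifted views so the median is taken over each 3x3 window.
    windows = np.stack(
        [padded[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3)],
        axis=0,
    )
    return np.median(windows, axis=0)
```

A median filter is well suited to isolated salt-and-pepper artifacts because a single outlier pixel never becomes the median of its neighborhood.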

Feature Extraction with Multitexton Histogram (MTH):

- The core process involves applying a texton learning algorithm to a set of training images to generate a universal dictionary of characteristic image patches (textons) that represent typical egg structures.

- For each pixel in a new input image, identify the most similar texton from the dictionary based on its local neighborhood.

- Construct the MTH descriptor by building a histogram that records the frequency of occurrence of each texton type and, crucially, the statistical relationships between different texton pairs within the image. This step captures the spatial-contextual information essential for identifying irregular morphological structures [4].
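The assignment and histogram steps above can be sketched as follows. This is a simplified reading of the protocol, not the published MTH formulation: each pixel is mapped to its nearest dictionary entry, and the descriptor concatenates normalized texton frequencies with normalized co-occurrence counts of horizontally and vertically adjacent texton pairs. The function names and the choice of a 4-neighborhood are assumptions.

```python
import numpy as np

def assign_textons(features, dictionary):
    """Map per-pixel features (H, W, D) to nearest texton index (H, W)."""
    h, w, d = features.shape
    flat = features.reshape(-1, d).astype(float)
    d2 = ((flat[:, None, :] - dictionary[None, :, :]) ** 2).sum(axis=2)
    return d2.argmin(axis=1).reshape(h, w)

def texton_histogram_with_pairs(labels, k):
    """Build a (k + k*k)-dimensional descriptor from a texton label map.

    First k entries: relative frequency of each texton.
    Remaining k*k entries: symmetric, normalized co-occurrence of textons
    at horizontally and vertically adjacent pixels (an assumed neighborhood).
    """
    freq = np.bincount(labels.ravel(), minlength=k).astype(float)
    pairs = np.zeros((k, k))
    # Count left-right neighbors, then top-bottom neighbors.
    np.add.at(pairs, (labels[:, :-1].ravel(), labels[:, 1:].ravel()), 1)
    np.add.at(pairs, (labels[:-1, :].ravel(), labels[1:, :].ravel()), 1)
    pairs = pairs + pairs.T  # make the relation order-independent
    freq /= max(freq.sum(), 1.0)
    pairs /= max(pairs.sum(), 1.0)
    return np.concatenate([freq, pairs.ravel()])
```

The pair block grows quadratically with the dictionary size, so a practical system would likely keep k small or store only the upper triangle.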

Classification via Content-Based Image Retrieval (CBIR):

- The extracted MTH feature vector from a query image is compared against a pre-existing database of MTH vectors from images of known parasite species.

- Similarity is computed using a distance metric (e.g., Euclidean distance, Chi-square distance).

- The system retrieves the 'k' most similar database images, and the species of the parasite egg is identified based on the majority class of the retrieved results [19].
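The retrieval steps above amount to a k-nearest-neighbor vote over descriptor distances. The sketch below uses the chi-square distance mentioned in the protocol; the function names, the `(descriptor, species)` database layout, and the small `eps` guard against division by zero are assumptions made for illustration.

```python
from collections import Counter

def chi_square(p, q, eps=1e-12):
    """Chi-square distance between two histograms of equal length."""
    return 0.5 * sum((a - b) ** 2 / (a + b + eps) for a, b in zip(p, q))

def retrieve_and_vote(query, database, k=3):
    """Return the majority species among the k nearest database entries.

    database: list of (descriptor, species) tuples (hypothetical layout).
    Also returns the retrieved entries so they can be shown to a user.
    """
    ranked = sorted(database, key=lambda item: chi_square(query, item[0]))
    top = ranked[:k]
    votes = Counter(species for _, species in top)
    return votes.most_common(1)[0][0], top
```

When two species receive the same number of votes, `Counter.most_common` returns the one encountered first among the retrieved entries, which here is the one with the closer match; a production system might prefer an explicit tie-breaking rule.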

Protocol 2: Lightweight Deep Learning-Based Detection (YAC-Net)

This protocol details the procedure for a modern, lightweight deep-learning model, YAC-Net, which is optimized for deployment in settings with limited computational resources [17].

Dataset Curation and Partitioning:

- Use a publicly available dataset such as the ICIP 2022 Challenge dataset, which contains thousands of annotated microscopic images of parasitic eggs.

- Partition the dataset into training, validation, and test sets using a five-fold cross-validation strategy to ensure robust model evaluation.
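One way to realize the partitioning step above is sketched below. The protocol does not say how the validation set is derived within each fold, so this example assumes that one fold serves as the test set, the next fold as the validation set, and the remaining folds as training data; the function name `k_fold_splits` is hypothetical.

```python
import random

def k_fold_splits(n_samples, k=5, seed=0):
    """Yield (train, val, test) index lists for each of k rotations.

    Assumption of this sketch: fold i is the test set, fold (i + 1) % k is
    the validation set, and the remaining k - 2 folds form the training set.
    """
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed for reproducibility
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        val = folds[(i + 1) % k]
        train = [
            idx
            for j, fold in enumerate(folds)
            if j not in (i, (i + 1) % k)
            for idx in fold
        ]
        yield train, val, test
```

For class-imbalanced egg datasets, a stratified variant that preserves species proportions in every fold would usually be preferable.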

Model Architecture and Training:

- Baseline Model: Initialize the model with YOLOv5n, a very small version of the YOLOv5 object detector.

- Architectural Modifications:

- Replace the standard Feature Pyramid Network (FPN) in the model's neck with an Asymptotic Feature Pyramid Network (AFPN). This change allows for full fusion of spatial contextual information across different feature levels and adaptively selects beneficial features while ignoring redundant information.

- Replace the C3 modules in the backbone with C2f modules to enrich gradient flow and improve feature extraction capability.

- Training Configuration: Train the model using a GPU (e.g., NVIDIA RTX 3090). Use the Adam optimizer with an initial learning rate of 0.01, a momentum value of 0.937 (for Adam, this corresponds to the first-moment decay rate β1), and a batch size of 64. Apply data augmentation techniques, including Mosaic and Mixup, to improve model generalization.
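Of the augmentations named above, Mixup is the simpler one to illustrate. The sketch below blends two images with a Beta-distributed weight and keeps the bounding boxes of both, which is one common way to apply Mixup to detection data. The function name, the `alpha` default, and the box layout are assumptions and are not taken from the YAC-Net paper.

```python
import numpy as np

def mixup_detection(img_a, boxes_a, img_b, boxes_b, alpha=32.0, rng=None):
    """Blend two same-sized images and merge their box annotations.

    alpha controls the Beta(alpha, alpha) blend weight; large values keep
    the weight close to 0.5. The default is an assumption of this sketch.
    """
    if rng is None:
        rng = np.random.default_rng()
    lam = rng.beta(alpha, alpha)
    mixed = lam * img_a.astype(float) + (1.0 - lam) * img_b.astype(float)
    # Every object from both source images remains a valid target.
    boxes = np.concatenate([boxes_a, boxes_b], axis=0)
    return mixed, boxes, lam
```

Both images are assumed to share the same shape; in practice they would first be resized or letterboxed to the network input size.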

Model Evaluation:

- Evaluate the trained model on the held-out test set.

- Key performance metrics include Precision, Recall, F1-score, and mean Average Precision at an Intersection-over-Union (IoU) threshold of 0.5 (mAP_0.5). The significant reduction in the number of model parameters should also be documented as a key outcome [17].
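The metrics above rest on IoU-based matching between predicted and annotated boxes. The sketch below computes IoU for axis-aligned boxes and derives precision, recall, and F1 at a single IoU threshold using greedy one-to-one matching. It does not compute mAP, which additionally requires ranking predictions by confidence and integrating the precision-recall curve. The `(x1, y1, x2, y2)` box format and the function names are assumptions.

```python
def iou(box_a, box_b):
    """Intersection-over-Union for boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def detection_scores(predictions, ground_truth, iou_threshold=0.5):
    """Precision, recall, and F1 with greedy one-to-one box matching.

    predictions are assumed to be sorted by descending confidence.
    """
    matched = set()
    tp = 0
    for pred in predictions:
        best_j, best_iou = None, iou_threshold
        for j, gt in enumerate(ground_truth):
            if j in matched:
                continue
            overlap = iou(pred, gt)
            if overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j is not None:
            matched.add(best_j)
            tp += 1
    fp = len(predictions) - tp
    fn = len(ground_truth) - tp
    precision = tp / (tp + fp) if predictions else 0.0
    recall = tp / (tp + fn) if ground_truth else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1
```

For example, two unit-offset 2×2 boxes overlap in a 1×1 square, so their IoU is 1 / (4 + 4 - 1) = 1/7, well below the 0.5 threshold.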

Workflow Visualization

The following diagram illustrates the comparative workflows of the traditional MTH-based method and the modern deep-learning approach, highlighting the conceptual evolution in the field.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials for Parasite Egg Identification Experiments

| Item Name | Function/Application | Specification Notes |
|---|---|---|
| Helminth Egg Suspensions [21] | Provide standardized biological samples for model training and validation. | Commercially available suspensions of species like A. lumbricoides, T. trichiura, and C. sinensis. |
| Light Microscope with Digital Camera [22] [21] | Image acquisition from prepared slides. | Equipped with a high-definition camera; consistent magnification (e.g., 10x or 40x objective) is critical. |
| Annotated Image Datasets [17] [20] | Serve as the benchmark for training and evaluating AI models. | Public datasets like Chula-ParasiteEgg (11,000 images) or ICIP 2022 Challenge dataset. |
| GPU-Accelerated Workstation [17] [21] | Provides computational power for training deep learning models. | Requires a high-performance GPU (e.g., NVIDIA GeForce RTX 3090) and frameworks like PyTorch. |
| Block-Matching and 3D Filtering (BM3D) Algorithm [18] | Advanced image pre-processing to enhance clarity and remove noise (Gaussian, Speckle). | Improves segmentation and classification accuracy by providing cleaner input images. |
| Contrast-Limited Adaptive Histogram Equalization (CLAHE) [18] | Image pre-processing technique to improve contrast between eggs and background. | Aids in segmenting eggs from complex or low-contrast backgrounds in microscopic images. |

The automatic identification of human parasite eggs from microscopic images represents a significant challenge in medical diagnostics. Within this field, the Multitexton Histogram (MTH) descriptor has emerged as a powerful feature extraction mechanism for identifying irregular morphological structures in biological images [4]. These feature descriptors, which capture the relationships between textons—fundamental micro-textural elements—generate complex, high-dimensional data that requires sophisticated classification algorithms. The Support Vector Machine (SVM) serves as a particularly effective classifier in this context, providing a robust framework for distinguishing between various parasite egg species based on their texton-based representations. This application note details the integration protocol of SVMs within a comprehensive system for parasite egg identification, outlining both theoretical principles and practical implementation methodologies relevant to researchers, scientists, and drug development professionals.

Support Vector Machines are supervised machine learning algorithms primarily used for classification and regression tasks [23]. As a max-margin classifier, an SVM finds the hyperplane that separates the classes in feature space with the largest possible margin [24]. Maximizing the margin makes the classifier comparatively robust to noisy data and overfitting, which is valuable when working with biological image data that may contain variations and artifacts [24]. The algorithm also handles high-dimensional data well, which suits the feature-rich output of the MTH descriptor and allows effective classification even when the number of features exceeds the number of samples, a common scenario in medical image analysis.
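The max-margin idea can be made concrete with a minimal linear SVM trained by subgradient descent on the regularized hinge loss, 0.5·||w||² + C·Σ max(0, 1 − yᵢ(w·xᵢ + b)). This is an illustrative sketch for two classes with labels in {−1, +1}; the function names, hyperparameter defaults, and the choice of plain subgradient descent are assumptions, and a real system would more likely use an established SVM library with kernel and multi-class support.

```python
import numpy as np

def train_linear_svm(X, y, C=1.0, lr=0.01, epochs=200):
    """Fit a linear SVM by full-batch subgradient descent on the hinge loss.

    X: (n_samples, n_features) feature matrix, e.g. MTH descriptors.
    y: labels in {-1, +1}.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        margins = y * (X @ w + b)
        viol = margins < 1  # samples inside the margin or misclassified
        # Subgradient: regularizer pulls w toward zero, violators push it out.
        grad_w = w - C * (y[viol][:, None] * X[viol]).sum(axis=0)
        grad_b = -C * y[viol].sum()
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def predict_linear_svm(X, w, b):
    """Return +1 or -1 according to the side of the hyperplane."""
    return np.where(np.asarray(X, dtype=float) @ w + b >= 0, 1, -1)
```

Only samples that violate the margin contribute to the update, which reflects why the final decision boundary depends on the support vectors rather than on every training sample.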

SVM-MTH Integration Protocol for Parasite Egg Identification

The complete experimental workflow for parasite egg identification integrates image acquisition, feature extraction using the Multitexton Histogram descriptor, and classification via Support Vector Machines. The following diagram illustrates this comprehensive process:

Research Reagent Solutions and Essential Materials

The following table details the key research reagents, computational tools, and datasets essential for implementing the SVM-MTH framework for parasite egg identification:

Table 1: Essential Research Reagents and Computational Tools for SVM-MTH Integration

| Item | Function/Application | Specifications/Alternatives |

|---|---|---|