Machine Learning for Geometric Morphometric Classification: Advanced Methods and Biomedical Applications


Abstract

This article explores the integration of machine learning (ML) with geometric morphometrics (GM) for precise shape-based classification, a methodology gaining significant traction in biological and biomedical research. We first establish the foundational principles of GM and the transition from traditional statistical analysis to ML. The core of the article details the ML pipeline for GM data, covering feature engineering, algorithm selection (including SVMs, Random Forests, and Neural Networks), and implementation in platforms like R and Python. We then address critical challenges such as class imbalance, data standardization, and model interpretability, providing practical optimization strategies. A comparative analysis validates the performance of ML against traditional morphometrics and highlights emerging deep learning approaches. Designed for researchers and drug development professionals, this review serves as a comprehensive guide for leveraging ML-GM integration to enhance classification accuracy in studies of morphological variation, from paleontology and archaeology to future clinical diagnostics.

The Fundamentals of Geometric Morphometrics and the Shift to Machine Learning

Geometric Morphometrics (GM) is a collection of approaches that provides a mathematical description of biological forms based on geometric definitions of their size and shape, using Cartesian coordinates of points placed on biological structures [1]. This paradigm has revolutionized the quantitative analysis of form by allowing researchers to statistically analyze the entire geometry of anatomical structures rather than relying on traditional linear measurements. The field has blossomed through the development and extensions of the geometric morphometric paradigm, now widely used across biological sciences from developmental studies to analyses of ancestral morphologies [2].

The fundamental advantage of GM over traditional morphometrics lies in its ability to retain the full geometric configuration of landmarks throughout statistical analysis, enabling visualization of shape changes in biologically meaningful ways. These methods have become indispensable in evolutionary biology, systematics, paleontology, and biomedical research, where precise quantification of morphological variation is essential. By preserving geometric relationships throughout analysis, GM allows researchers to directly visualize statistical results as actual shape changes, providing powerful insights into patterns of morphological evolution, developmental pathways, and functional adaptations.

Theoretical Foundations

Landmarks: The Basic Data Units

Landmarks are defined as discrete, anatomically corresponding points that can be precisely located and reliably measured across all specimens in a study. They represent the fundamental data units in geometric morphometrics and are typically categorized into three distinct types based on their biological and mathematical properties [1]:

Table 1: Landmark Types in Geometric Morphometrics

| Type | Definition | Examples | Reliability |
|---|---|---|---|
| Type I | Points defined by local biological features, often at tissue intersections | Intersections between primary and secondary veins, sutures between bones | Highest reliability due to clear biological definition |
| Type II | Points representing maxima of curvature or other geometric features | Tips of processes, petal lobes, furthest extents of structures | Moderate reliability, dependent on clear geometry |
| Type III | Points defined by geometric constructions from other landmarks | Midpoints between Type I landmarks, extremal points | Lowest reliability as they are computationally derived |

These landmarks provide the foundational coordinate data that capture the geometry of biological forms. Type I landmarks are generally preferred when available, as they represent the most biologically homologous points, while Type III landmarks are used sparingly to supplement coverage of morphological structures.

Semilandmarks: Capturing Curves and Surfaces

A significant limitation of traditional landmark-based GM is that landmarks alone often fail to capture the comprehensive geometry of biological structures, particularly along curves and surfaces where discrete anatomical points may be scarce. Semilandmarks address this limitation by allowing the quantification of homologous curves and surfaces [2].

The development of sliding and surface semilandmark techniques has greatly enhanced the quantification of shape by densely sampling regions between traditional landmarks. These points are "semilandmarks" because they lack individual biological homology but represent homologous curves or surfaces across specimens. Mathematically, semilandmarks are allowed to slide along tangents to curves or surfaces to minimize bending energy or Procrustes distance, establishing geometric correspondence [2].

Semilandmarks are particularly valuable for studying structures with limited discrete landmarks, such as cranial vaults, limb bones, or smooth botanical surfaces. Their application has enabled more comprehensive quantification of diverse morphologies, including beak shape in birds, fish fins, turtle shells, and hominin crania [2].

Shape Spaces and the Procrustes Framework

The mathematical foundation of GM relies on the concept of shape space: a multidimensional space in which each point represents the shape of a complete landmark configuration. To compare shapes, extraneous factors like size, position, and orientation must be eliminated through Generalized Procrustes Analysis (GPA) [1].

GPA superimposes landmark configurations by optimizing three parameters:

- Translation - moving configurations to a common center

- Scaling - normalizing all configurations to unit centroid size

- Rotation - rotating configurations to minimize distances between corresponding landmarks

After Procrustes superimposition, the resulting Procrustes coordinates represent pure shape variables that can be analyzed using standard multivariate statistical methods. The Procrustes distance between two landmark configurations quantifies their shape difference, serving as the fundamental metric in shape space.

Table 2: Key Concepts in Shape Space Theory

| Concept | Mathematical Definition | Biological Interpretation |
|---|---|---|
| Kendall's Shape Space | Pre-shape sphere (all configurations after removing translation and scale) with rotation additionally removed | Abstract space of all possible shapes |
| Procrustes Distance | Square root of the sum of squared differences between corresponding landmarks after superimposition | Quantitative measure of shape difference |
| Tangent Space | Linear approximation to shape space at a reference form (consensus) | Euclidean space where conventional statistics apply |
| Consensus Configuration | Mean shape obtained through GPA | Reference form representing central tendency |

Practical Protocols for Geometric Morphometrics

Data Acquisition and Digitization

Modern geometric morphometrics leverages advanced imaging technologies for data acquisition. The protocol varies depending on specimen size, resolution requirements, and available resources:

Imaging Modalities:

- Computed Tomography (CT) Scanning: Ideal for 3D reconstruction of internal and external structures, especially for bony elements or dense tissues [2]

- Surface Laser Scanning: Suitable for capturing external morphology of larger specimens

- Photographic Imaging: Cost-effective for 2D analyses when structures can be properly flattened

Landmarking Protocol:

- Define landmark protocol - Establish explicit definitions for each landmark position

- Training and calibration - Ensure consistent landmark placement across operators

- Repeatability assessment - Conduct multiple measurements to estimate measurement error

- Data validation - Check for outliers and biologically impossible configurations

For complex 3D structures, the combination of landmarks, curve semilandmarks, and surface semilandmarks provides the most comprehensive shape characterization [2]. Surface semilandmarks are typically applied using a template-based approach, where a standardized mesh is warped to fit each specimen's morphology.

Data Processing and Analysis Workflow

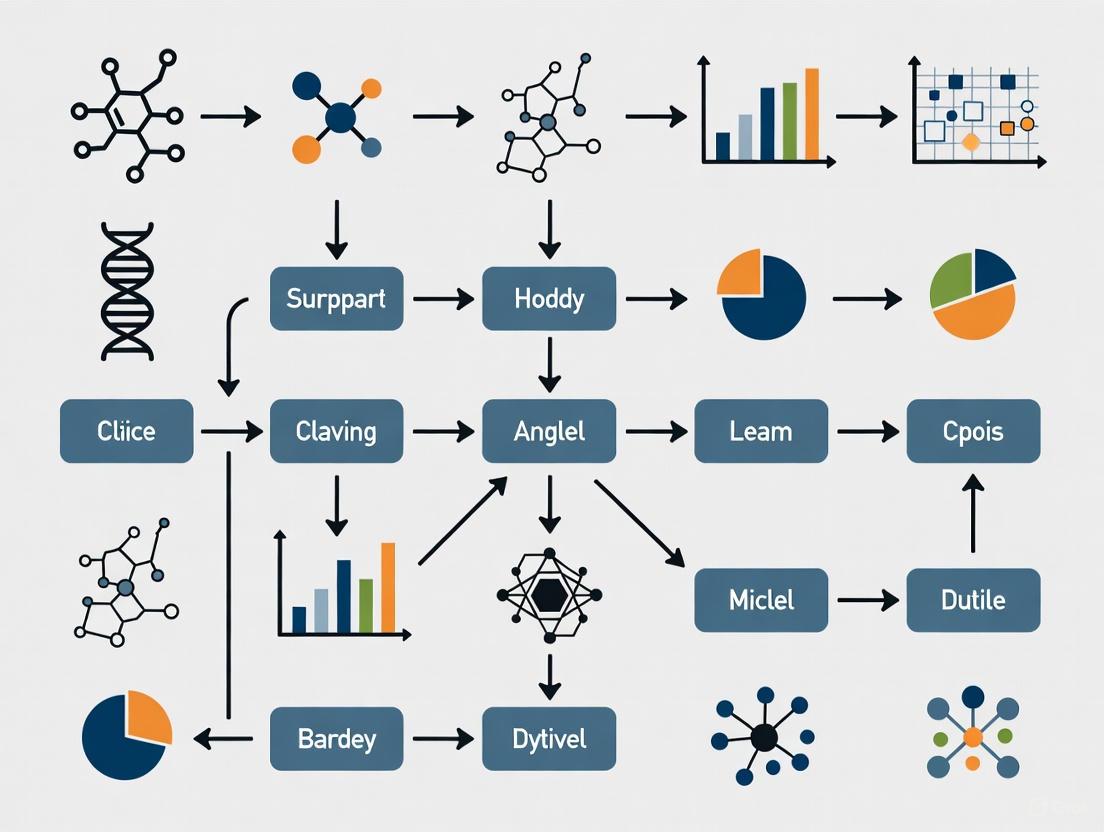

The following diagram illustrates the complete geometric morphometrics workflow from raw data to statistical analysis:

Critical Steps in Detail:

Generalized Procrustes Analysis (GPA)

- Translate all configurations to a common origin (usually the centroid)

- Scale configurations to unit centroid size

- Rotate configurations to minimize Procrustes distances

- Iterate until convergence to obtain the consensus configuration
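The four GPA steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation used by any particular GM package: the function names (`center_and_scale`, `rotate_onto`, `gpa`) are hypothetical, the input is assumed to be an `(n, k, d)` array of complete landmark configurations without semilandmarks or missing data, and the demo data are noise-free rotated, scaled, and shifted copies of one random shape so that the result is easy to check.

```python
import numpy as np

def center_and_scale(X):
    """Translate a (k, d) configuration to its centroid and scale it to unit centroid size."""
    Xc = X - X.mean(axis=0)
    return Xc / np.linalg.norm(Xc)

def rotate_onto(X, ref):
    """Rotate a centered configuration X onto ref by least squares (rotation only, no reflection)."""
    U, _, Vt = np.linalg.svd(X.T @ ref)
    # Flip the last singular direction if needed so the result is a proper rotation.
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    return X @ (U @ D @ Vt)

def gpa(configs, tol=1e-10, max_iter=100):
    """Generalized Procrustes Analysis on an (n, k, d) array of landmark configurations."""
    aligned = np.array([center_and_scale(X) for X in configs])
    consensus = aligned[0]                      # start from the first specimen
    for _ in range(max_iter):
        aligned = np.array([rotate_onto(X, consensus) for X in aligned])
        new_consensus = center_and_scale(aligned.mean(axis=0))
        converged = np.linalg.norm(new_consensus - consensus) < tol
        consensus = new_consensus
        if converged:
            break
    return aligned, consensus

# Synthetic demo: ten rotated, rescaled, and shifted copies of one 6-landmark shape.
rng = np.random.default_rng(0)
base = rng.normal(size=(6, 2))
configs = []
for _ in range(10):
    theta = rng.uniform(0, 2 * np.pi)
    R = np.array([[np.cos(theta), -np.sin(theta)],
                  [np.sin(theta),  np.cos(theta)]])
    configs.append(rng.uniform(0.5, 3.0) * base @ R + 5 * rng.normal(size=2))

aligned, consensus = gpa(np.array(configs))
```

Because the demo copies differ only in position, size, and orientation, every aligned configuration coincides with the consensus after superimposition. With real specimens the remaining differences are the shape variation of interest.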

Shape Variable Extraction

- Procrustes coordinates represent shape variables after GPA

- Centroid size (square root of sum of squared distances from landmarks to centroid) serves as size measure

- Residuals from consensus represent individual shape variation
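For reference, the quantities in this list can be written out explicitly. The notation below is an assumption introduced here for illustration (k landmarks x_1, ..., x_k per configuration, n specimens, and a superscript GPA marking aligned configurations); the consensus is written as the plain mean of the aligned configurations, which some implementations additionally rescale to unit centroid size.

```latex
% Centroid size of a configuration X with landmarks x_1, ..., x_k
\bar{x} = \frac{1}{k}\sum_{i=1}^{k} x_i, \qquad
\mathrm{CS}(X) = \sqrt{\sum_{i=1}^{k} \lVert x_i - \bar{x} \rVert^{2}}

% Consensus and shape residual of specimen j after GPA
\bar{X} = \frac{1}{n}\sum_{j=1}^{n} X_j^{\mathrm{GPA}}, \qquad
E_j = X_j^{\mathrm{GPA}} - \bar{X}
```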

Statistical Analysis

- Principal Component Analysis (PCA): Identifies major axes of shape variation

- Canonical Variate Analysis (CVA): Maximizes separation among pre-defined groups

- Regression: Analyzes allometry (shape-size relationships)

- Modularity/Integration Tests: Examines covariation among anatomical regions
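As an illustration of the first item, PCA of shape data amounts to a singular value decomposition of the residuals from the consensus. The sketch below assumes an `(n, k, d)` array of already aligned configurations; the function name `shape_pca` and the synthetic data (a random consensus plus one dominant mode of variation and a little noise) are hypothetical stand-ins for real Procrustes coordinates.

```python
import numpy as np

def shape_pca(aligned):
    """PCA of aligned landmark configurations given as an (n, k, d) array."""
    n = aligned.shape[0]
    flat = aligned.reshape(n, -1)                 # one row of coordinates per specimen
    residuals = flat - flat.mean(axis=0)          # deviations from the mean shape
    U, s, Vt = np.linalg.svd(residuals, full_matrices=False)
    scores = U * s                                # PC scores per specimen
    explained = s**2 / np.sum(s**2)               # proportion of variance per PC
    return scores, Vt, explained

# Synthetic stand-in for Procrustes coordinates: one dominant shape trend plus noise.
rng = np.random.default_rng(0)
consensus = rng.normal(size=(8, 2))
mode = rng.normal(size=(8, 2))
t = rng.normal(size=30)
aligned = consensus + 0.1 * t[:, None, None] * mode + 0.005 * rng.normal(size=(30, 8, 2))

scores, axes, explained = shape_pca(aligned)
```

Each row of `axes` is a shape change vector that can be reshaped to `(k, d)` and added to the mean shape to visualize what a principal component represents.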

Symmetry Analysis Protocol

Many biological structures exhibit symmetrical organization, requiring specialized analytical approaches. The protocol for symmetry analysis involves:

- Symmetry Definition: Classify symmetry type (bilateral, rotational, translational)

- Landmark Configuration: Assign landmarks to symmetry components

- Procrustes ANOVA: Partition variance into symmetric and asymmetric components

- Biological Interpretation: Relate symmetric and asymmetric variation to developmental, genetic, or environmental factors

For bilaterally symmetric structures, the approach separates variation into:

- Symmetric Component: Differences among individuals

- Asymmetric Component: Differences between sides within individuals (fluctuating asymmetry, directional asymmetry, antisymmetry)
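For a single structure with object symmetry (one configuration containing paired and midline landmarks), the split into symmetric and asymmetric components can be sketched as follows: reflect the configuration, relabel the paired landmarks, superimpose the mirror image on the original, and average. This is a simplified illustration and not a full Procrustes ANOVA; the function name `symmetric_asymmetric`, the `pairs` index convention, and the four-landmark example are assumptions made here.

```python
import numpy as np

def symmetric_asymmetric(X, pairs, axis=0):
    """Split a (k, d) configuration into symmetric and asymmetric components.

    pairs[i] is the index of the landmark that mirrors landmark i
    (midline landmarks map to themselves).
    """
    Xc = X - X.mean(axis=0)
    mirrored = Xc.copy()
    mirrored[:, axis] *= -1                 # reflect across one coordinate axis
    mirrored = mirrored[pairs]              # relabel so paired landmarks swap places
    # Rotate the relabelled mirror image onto the original (proper rotation only).
    U, _, Vt = np.linalg.svd(mirrored.T @ Xc)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))
    mirrored = mirrored @ (U @ D @ Vt)
    symmetric = (Xc + mirrored) / 2         # average of original and mirror image
    asymmetric = Xc - symmetric             # deviation from perfect symmetry
    return symmetric, asymmetric

# Two midline landmarks (0, 1) and one left-right pair (2, 3).
pairs = np.array([0, 1, 3, 2])
X_sym = np.array([[0.0, 1.0], [0.0, -1.0], [1.0, 0.5], [-1.0, 0.5]])
X_asym = X_sym.copy()
X_asym[2] += [0.1, 0.05]                    # displace one landmark of the pair

sym_a, asym_a = symmetric_asymmetric(X_sym, pairs)
sym_b, asym_b = symmetric_asymmetric(X_asym, pairs)
```

The perfectly symmetric example yields an asymmetric component of zero, while the perturbed one does not. Separating fluctuating from directional asymmetry additionally requires comparing these components across many individuals.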

Integration with Machine Learning for Classification

Machine Learning Approaches in Morphometrics

The integration of machine learning (ML) with geometric morphometrics has created powerful frameworks for taxonomic classification and morphological pattern recognition. Recent advances demonstrate several promising approaches:

Functional Data Geometric Morphometrics (FDGM): This innovative approach converts discrete landmark data into continuous curves represented as linear combinations of basis functions [3]. FDGM has demonstrated superior performance in classifying shrew species based on craniodental morphology, outperforming classical GM approaches when combined with machine learning classifiers such as Support Vector Machines and Random Forests [3].

Deep Learning with Convolutional Neural Networks (CNNs): CNNs applied directly to specimen images have shown remarkable performance in classification tasks. In archaeobotanical studies, CNNs outperformed traditional GM methods for seed classification, demonstrating higher accuracy in distinguishing wild from domestic species [4]. This approach leverages automated feature detection rather than relying on manually placed landmarks.

Traditional ML Classifiers with Shape Data: Standard machine learning algorithms (Naïve Bayes, SVM, Random Forest, Generalized Linear Models) can be applied to Procrustes shape coordinates or principal component scores derived from GM analysis [3]. This hybrid approach maintains the biological interpretability of GM while leveraging the classification power of ML.
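A minimal sketch of this hybrid approach with scikit-learn is shown below. The data are synthetic stand-ins for Procrustes coordinates of two groups (the group difference `shift`, the noise level, and all sizes are arbitrary assumptions), and the choice of five principal components is illustrative only. Placing PCA inside the pipeline means the components are re-estimated on each training fold, so the held-out specimens do not influence the feature extraction.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(1)
n_per_group, k, d = 40, 10, 2
consensus = rng.normal(size=(k, d))
shift = 0.05 * rng.normal(size=(k, d))      # hypothetical between-group shape difference

# Synthetic stand-in for Procrustes coordinates of two groups.
group_a = consensus + 0.03 * rng.normal(size=(n_per_group, k, d))
group_b = consensus + shift + 0.03 * rng.normal(size=(n_per_group, k, d))
X = np.concatenate([group_a, group_b]).reshape(2 * n_per_group, -1)
y = np.repeat([0, 1], n_per_group)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
models = {
    "SVM (RBF)": make_pipeline(PCA(n_components=5), StandardScaler(), SVC(kernel="rbf")),
    "Random Forest": make_pipeline(
        PCA(n_components=5), RandomForestClassifier(n_estimators=200, random_state=0)
    ),
}
# Mean cross-validated accuracy per classifier.
results = {name: cross_val_score(model, X, y, cv=cv).mean() for name, model in models.items()}
```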

Comparative Performance of GM and ML Methods

Table 3: Performance Comparison of Geometric Morphometrics and Machine Learning Methods

| Method | Accuracy Range | Data Requirements | Interpretability | Best Application Context |
|---|---|---|---|---|
| Traditional GM with Linear Discriminant Analysis | 70-85% | 20-50 specimens per group | High | Well-defined groups with clear morphological differences |
| Functional Data GM with ML | 85-95% [3] | 30+ specimens per group | Moderate | Complex shapes with subtle interspecific variation |
| Convolutional Neural Networks (CNNs) | >90% [4] | Large datasets (hundreds to thousands) | Low | High-throughput classification without landmark identification |
| Geometric Morphometrics with Random Forest | 80-90% | 50+ specimens per group | Moderate | Complex classification problems with multiple groups |

The choice between methods depends on research goals: traditional GM provides greater biological interpretability, while ML approaches often achieve higher classification accuracy, particularly for complex morphological patterns [4].

Essential Research Tools and Reagents

Table 4: Research Toolkit for Geometric Morphometric Studies

| Tool Category | Specific Tools/Software | Primary Function | Application Context |
|---|---|---|---|
| Imaging Equipment | Micro-CT scanners, Surface laser scanners, Digital microscopes | 3D/2D specimen digitization | Data acquisition across scales |
| Landmark Digitization | tpsDig2, ImageJ, Landmark Editor | Precise landmark coordinate collection | Initial data collection |
| Statistical Analysis | R (geomorph, Morpho), MorphoJ, PAST | GM-specific statistical analyses | Shape analysis and hypothesis testing |
| Machine Learning Integration | R (caret, randomForest), Python (scikit-learn, TensorFlow) | Advanced classification algorithms | Pattern recognition and prediction |
| Visualization | R (rgl, ggplot2), ParaView, MeshLab | 3D shape visualization and rendering | Results communication |

Integrated GM-ML Workflow for Classification Research

The following diagram illustrates a modern integrated workflow combining geometric morphometrics and machine learning for classification research:

This integrated framework leverages the strengths of both approaches: GM provides biological interpretability and visualization capabilities, while ML enhances classification performance and pattern recognition. The workflow can be adapted based on research questions, with the GM pathway preferred when understanding specific morphological changes is essential, and the direct ML pathway suitable for high-throughput classification tasks.

Application Notes and Implementation Guidelines

Protocol Optimization for Specific Research Contexts

Taxonomic Classification Studies: For distinguishing closely related species, combine high-density semilandmarks with functional data analysis approaches [3]. The dorsal craniodental view has proven particularly informative for shrew species classification. Implement cross-validation procedures to avoid overfitting, especially with limited sample sizes.

Paleontological Applications: When working with fragmentary fossil material, utilize template-based semilandmark methods to reconstruct missing regions [2]. Machine learning approaches are particularly valuable for identifying subtle morphological patterns indicative of domestication or environmental adaptations in archaeobotanical remains [4].

Developmental and Evolutionary Studies: For analyzing symmetry and asymmetry in evolutionary developmental contexts, implement the Procrustes ANOVA framework to separate directional asymmetry, fluctuating asymmetry, and antisymmetry components [1]. This approach provides insights into developmental stability and canalization.

Data Quality Assurance and Validation

Landmark Reliability Assessment

- Conduct multiple digitization sessions to calculate measurement error

- Use intraclass correlation coefficients to quantify repeatability

- Implement Procrustes ANOVA to partition variance components

Model Validation Protocols

- Apply k-fold cross-validation for machine learning models

- Use holdout test sets never exposed during model training

- Calculate sensitivity, specificity, and balanced accuracy metrics

- Generate confusion matrices to identify systematic misclassifications
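The validation steps listed above can be combined as in the following scikit-learn sketch. The data are synthetic and deliberately imbalanced (90 versus 30 specimens), the Random Forest is an arbitrary choice of classifier, and all variable names are illustrative assumptions. The holdout set is split off first and is not used during cross-validation or fitting.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import balanced_accuracy_score, confusion_matrix, recall_score
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split

rng = np.random.default_rng(2)
# Synthetic, imbalanced two-class data standing in for shape variables.
X = np.vstack([rng.normal(0.0, 1.0, size=(90, 8)), rng.normal(1.0, 1.0, size=(30, 8))])
y = np.array([0] * 90 + [1] * 30)

# Holdout test set, stratified so both classes are represented.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

clf = RandomForestClassifier(n_estimators=200, random_state=0)

# k-fold cross-validation on the training portion only.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
cv_scores = cross_val_score(clf, X_train, y_train, cv=cv, scoring="balanced_accuracy")

# Final fit and evaluation on the untouched holdout set.
clf.fit(X_train, y_train)
y_pred = clf.predict(X_test)
cm = confusion_matrix(y_test, y_pred)                      # rows: true class, columns: predicted
sensitivity = recall_score(y_test, y_pred, pos_label=1)    # true positive rate
specificity = recall_score(y_test, y_pred, pos_label=0)    # true negative rate
balanced = balanced_accuracy_score(y_test, y_pred)         # mean of the two rates
```

With unequal group sizes, balanced accuracy is usually more informative than raw accuracy, because a classifier that always predicts the larger group would still score 75% raw accuracy on these data.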

Future Directions and Emerging Methodologies

The field continues to evolve with several promising developments:

- Deep learning integration with 3D landmark data for improved classification accuracy

- Automated landmark placement using neural networks to reduce digitization time

- Multimodal data fusion combining geometric morphometrics with genetic, ecological, and functional data

- Open science frameworks enhancing reproducibility through shared data and code protocols [5]

Geometric morphometrics, particularly when integrated with machine learning, provides a powerful quantitative framework for addressing fundamental questions in evolutionary biology, systematics, and functional morphology. By following these standardized protocols and leveraging the appropriate tools, researchers can maximize the insights gained from morphological data while ensuring reproducibility and statistical rigor.

The quantitative analysis of shape, or morphometrics, has been transformed by geometric morphometrics (GM), which lets researchers capture and analyze the complete geometry of anatomical structures rather than relying on simple linear measurements. This shift has opened applications across the biological, medical, and materials sciences, from classifying insect species for agricultural biosecurity to assessing nutritional status in children and characterizing electrochemical interfaces in energy materials [6] [7] [8]. However, as morphological datasets grow in dimensionality and complexity, traditional statistical methods like Principal Component Analysis (PCA) and Linear Discriminant Analysis (LDA) face fundamental limitations in capturing the intricate, non-linear patterns inherent in biological and material structures.

PCA, while invaluable for exploratory data analysis and dimensionality reduction, operates on the fundamental assumption that the most informative directions in data space are linear combinations of the original variables that maximize variance [9] [10]. This linearity assumption proves problematic when analyzing complex morphological structures where shape variation follows curved manifolds rather than straight lines. Similarly, LDA, despite its supervised nature that makes it powerful for classification tasks, seeks linear boundaries between predefined classes and assumes normal data distribution and equal class covariances [9] [10]. These mathematical presuppositions rarely hold true for real-world morphological data, where allometric growth patterns, ecological adaptations, and evolutionary constraints create complex non-linear relationships.

The limitations of these traditional approaches become particularly evident in high-stakes applications such as medical diagnostics, species identification with quarantine implications, or development of functional materials, where accurate classification directly impacts health outcomes, economic decisions, and scientific advancement [6] [8] [11]. This application note examines these limitations through both theoretical and practical lenses, provides detailed protocols for implementing advanced machine learning alternatives, and offers a strategic framework for selecting appropriate analytical pathways based on specific research questions and data characteristics.

Critical Limitations of PCA and LDA for Morphological Data Analysis

The Linearity Constraint in Non-Linear Morphological Spaces

The most fundamental limitation of both PCA and LDA lies in their inherent linearity assumption, which directly contradicts the non-linear nature of most morphological phenomena. Biological structures develop and evolve along curved trajectories, with shape changes often following complex allometric patterns where form changes disproportionately with size [9]. When researchers apply PCA to such data, the resulting principal components may effectively capture variance but fail to represent the true underlying biological or physical structure. For instance, in taxonomic studies of leaf-footed bugs (Acanthocephala species), PCA of pronotum shapes accounted for 67% of total shape variation but still resulted in morphological overlaps between closely related species, limiting definitive classification [11].

The linearity problem becomes even more pronounced with LDA, which constructs linear decision boundaries between classes. In morphological datasets with complex class distributions, these straight boundaries inevitably misclassify specimens that fall in the curved regions between class centroids. This limitation was evident in electrochemical impedance spectroscopy data analysis, where LDA's performance for classifying equivalent circuits "crucially depends on slow electrochemical processes" and showed inferior performance compared to non-linear methods [8]. The algorithm's struggle to capture the complex, frequency-dependent processes at electrode-electrolyte interfaces highlights how physical and biological phenomena often inhabit spaces that cannot be adequately partitioned with linear hyperplanes.

The Curse of Dimensionality and Data Sparsity

Morphometric studies frequently generate high-dimensional data, particularly when using landmark-based approaches with numerous coordinates or outline-based methods with hundreds of semilandmarks. In these high-dimensional spaces, PCA and LDA face the "curse of dimensionality," where data becomes increasingly sparse as dimensions grow, fundamentally undermining statistical reliability [9] [10]. The data sparsity problem means that the number of required training examples grows exponentially with each additional dimension to maintain the same coverage density—a requirement rarely feasible in morphological studies where sample collection is often expensive, time-consuming, or limited by rarity.

This dimensionality challenge manifests practically in multiple ways. PCA components become increasingly unstable with high dimension-to-sample size ratios, with the direction of variance captured by each principal component shifting substantially with the addition of new specimens [9]. For LDA, the covariance matrix estimation becomes numerically unstable when the number of features approaches the number of samples, leading to overfitted models that fail to generalize to new data. Research on roselle (Hibiscus sabdariffa L.) morphological traits demonstrated that machine learning models like Random Forest significantly outperformed traditional methods in capturing non-linear genotype-by-environment interactions, achieving R² values of 0.84 compared to poorer performance with linear models [12]. This performance gap underscores how linear methods struggle with the high-dimensional, complex relationships characteristic of morphological datasets.
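The covariance estimation problem can be explored with a small experiment: fit LDA on data with nearly as many variables as specimens, once with the plain covariance estimate and once with a shrinkage estimate. Shrinkage is not discussed in the text above; it is introduced here as an assumption, being one common way to stabilize the estimate, and is available in scikit-learn through `solver="lsqr"` with `shrinkage="auto"`. The data are synthetic, and no particular outcome is implied for real morphometric data.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold, cross_val_score

rng = np.random.default_rng(3)
n_per_class, n_features = 25, 40            # nearly as many variables as specimens
signal = np.zeros(n_features)
signal[:5] = 0.8                            # only a few variables separate the groups

X = np.vstack([
    rng.normal(size=(n_per_class, n_features)),
    rng.normal(size=(n_per_class, n_features)) + signal,
])
y = np.repeat([0, 1], n_per_class)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
plain = LinearDiscriminantAnalysis(solver="lsqr", shrinkage=None)     # empirical covariance
shrunk = LinearDiscriminantAnalysis(solver="lsqr", shrinkage="auto")  # Ledoit-Wolf shrinkage

acc_plain = cross_val_score(plain, X, y, cv=cv).mean()
acc_shrunk = cross_val_score(shrunk, X, y, cv=cv).mean()
```

Comparing `acc_plain` and `acc_shrunk` across several random seeds or feature counts gives a feel for how sensitive linear discrimination is to the ratio of variables to specimens.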

Sensitivity to Statistical Assumptions and Data Artifacts

Both PCA and LDA carry stringent statistical assumptions that morphological data frequently violate. LDA assumes multivariate normal distributions within each class, equal covariance matrices across classes, and absence of multicollinearity—conditions rarely satisfied in morphological studies where sampling is often unbalanced and covariates are intrinsically correlated [9] [10]. PCA, while less assumption-bound, remains highly sensitive to data scaling, outliers, and missing values, which are common challenges in morphological research involving natural variation or imperfect preservation.

The practical consequences of these statistical limitations are evident across multiple domains. In geometric morphometric approaches for classifying children's nutritional status, researchers noted significant challenges with out-of-sample classification using traditional GM workflows based on Procrustes alignment and linear discrimination [6]. The requirement for a new global alignment for each new specimen introduced artifacts and dependencies on template selection, complicating real-world deployment. Similarly, in urban form analysis, PCA could only capture linear variance in data, failing to identify complex morphological patterns that non-linear methods like UMAP successfully revealed [13]. These case studies highlight how the theoretical foundations of traditional statistical methods constrain their practical utility for complex morphological data.

Table 1: Comparative Limitations of PCA and LDA for Morphological Data Analysis

| Limitation Aspect | Impact on PCA | Impact on LDA | Example from Literature |
|---|---|---|---|
| Linearity Assumption | Fails to capture curved manifolds and allometric trajectories | Creates suboptimal linear boundaries between non-linearly separable classes | Urban form analysis required UMAP to reveal non-linear patterns [13] |
| High-Dimensional Data | Components become unstable with more dimensions than samples | Covariance matrix estimation fails, leading to overfitting | Roselle plant morphology better analyzed with Random Forest (R²=0.84) [12] |
| Statistical Assumptions | Sensitive to outliers, scaling, and missing data | Requires multivariate normality and equal covariances | EIS data classification required 1D-CNN to handle complex patterns [8] |
| Class Imbalance | Not directly applicable (unsupervised) | Performance degrades with unbalanced class sizes | Insect identification showed morphological overlaps in closely related species [11] |
| Interpretability | Components may not correspond to biologically meaningful axes | Directions maximize separation but may not reflect causal factors | Nutritional assessment from arm shapes required specialized alignment [6] |

Advanced Machine Learning Approaches for Morphological Data

Non-Linear Manifold Learning Techniques

Non-linear dimensionality reduction techniques address the linearity constraint of PCA by explicitly modeling the curved manifolds on which morphological data often lie. Algorithms such as t-Distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP) have been applied successfully to complex morphological datasets; t-SNE mainly preserves local neighborhood structure, while UMAP also retains more of the global structure [9] [13]. These methods operate on different principles than PCA: rather than maximizing variance, they preserve neighborhood relationships, which lets them unfold curved morphological spaces into lower-dimensional representations that maintain meaningful relationships between specimens.
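As a small illustration of a neighborhood-preserving embedding, the sketch below runs scikit-learn's `TSNE` on synthetic data with three groups. The data, the perplexity of 20, and the PCA initialization are assumptions chosen for the example rather than recommended settings.

```python
import numpy as np
from sklearn.manifold import TSNE
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(4)
# Three synthetic groups in a 12-dimensional variable space.
centers = rng.normal(scale=4.0, size=(3, 12))
X = np.vstack([c + rng.normal(size=(40, 12)) for c in centers])
labels = np.repeat([0, 1, 2], 40)

X_std = StandardScaler().fit_transform(X)   # z-scores so every variable contributes equally
embedding = TSNE(
    n_components=2, perplexity=20, init="pca", random_state=0
).fit_transform(X_std)
```

The resulting `embedding` has one 2D point per specimen and is typically plotted colored by `labels`. Distances between well-separated clusters in a t-SNE plot should not be over-interpreted, since the method emphasizes local neighborhoods.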

The practical advantages of these non-linear approaches are particularly evident in visualization and exploratory analysis of morphological data. In urban form studies, researchers found that UMAP combined with BIRCH clustering successfully identified 14 distinct urban form types organized into five families with similar characteristics across the metropolitan area of Thessaloniki, Greece [13]. The non-linear embedding captured complex multi-scale morphological patterns that PCA failed to reveal, enabling more nuanced understanding of urban development patterns. Similarly, in single-cell RNA sequencing data (a form of molecular morphology), t-SNE has become the standard for visualizing high-dimensional gene expression patterns, allowing researchers to identify distinct cell types and states based on their transcriptional profiles [9]. These successes across domains highlight how abandoning the linearity constraint enables more faithful representation of complex morphological spaces.

Deep Learning Architectures for Representation Learning

Deep learning methods, particularly autoencoders and convolutional neural networks (CNNs), offer powerful alternatives for morphological data analysis by learning hierarchical representations directly from raw data without relying on pre-specified features or linear transformations. Autoencoders learn to compress high-dimensional morphological data into lower-dimensional latent spaces through encoder-decoder architectures, typically outperforming PCA in reconstruction accuracy and preservation of semantically meaningful features [9] [10]. Variational autoencoders (VAEs) extend this approach by learning probabilistic latent spaces that enable generative sampling and interpolation between morphological forms.

CNNs have revolutionized image-based morphological analysis, automatically learning relevant features from pixel data without requiring manual landmark annotation. In astrophysics, the Spherinator project employs a variational autoencoder with convolutional neural networks to create an explorable 2D representation of simulated galaxy images, enabling morphological classification at unprecedented scale [14]. Similarly, in electrochemical research, 1D-CNNs achieved approximately 86% accuracy in classifying equivalent circuits from impedance spectroscopy data, significantly outperforming linear methods and providing insights into the critical frequency ranges that drive classification decisions [8]. These deep learning approaches demonstrate particular strength when applied to large, complex morphological datasets where manual feature engineering becomes impractical and linear approximations fail to capture meaningful patterns.

Ensemble and Hybrid Approaches

Ensemble methods like Random Forest and hybrid approaches that combine multiple algorithms offer robust alternatives for morphological classification tasks that challenge traditional methods. Random Forest constructs many decision trees during training, each on a bootstrap sample of the data, and outputs the mode of the trees' classes (classification) or their mean prediction (regression). Averaging over trees lets it handle non-linear relationships and high-dimensional data while overfitting less readily than a single decision tree [12]. Its built-in feature importance measures also provide interpretability missing from many deep learning approaches.
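The feature importance idea can be illustrated with a synthetic example in which the class depends non-linearly on only two of ten variables. The data-generating rule, sample size, and forest settings are assumptions made for this sketch. The importances shown are scikit-learn's impurity-based values, which are one of several possible importance measures.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(5)
n_specimens, n_variables = 300, 10
X = rng.normal(size=(n_specimens, n_variables))
# Hypothetical non-linear rule: class depends only on variables 0 and 1 (radial boundary).
y = (X[:, 0] ** 2 + X[:, 1] ** 2 > 1.4).astype(int)

forest = RandomForestClassifier(n_estimators=300, oob_score=True, random_state=0)
forest.fit(X, y)

importances = forest.feature_importances_   # impurity-based importances, summing to 1
ranking = np.argsort(importances)[::-1]     # variable indices, most important first
oob_accuracy = forest.oob_score_            # accuracy estimated on out-of-bag specimens
```

In a GM context the variables would be Procrustes coordinates or PC scores, so a high-ranking variable points to the landmarks or shape axes that drive the classification.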

The integration of machine learning with multi-objective optimization algorithms represents a particularly powerful paradigm for morphological analysis. In roselle plant research, combining Random Forest with the Non-dominated Sorting Genetic Algorithm II (NSGA-II) enabled researchers to simultaneously optimize multiple conflicting morphological traits—branch number, growth period, boll number, and seed number—identifying optimal genotype and planting date combinations that would be impossible to discover with traditional methods [12]. Similarly, hybrid workflows that combine non-linear dimensionality reduction with specialized clustering algorithms, such as the UMAP + BIRCH pipeline used in urban form analysis, offer scalable solutions for detecting coherent morphological types in large, high-dimensional datasets [13]. These integrated approaches demonstrate how moving beyond standalone statistical methods enables more comprehensive morphological analysis and optimization.

Table 2: Machine Learning Alternatives to PCA and LDA for Morphological Data

| Method | Key Advantages | Ideal Use Cases | Implementation Considerations |
|---|---|---|---|
| t-SNE | Preserves local structure and reveals clusters | Visualization of high-dimensional data, exploratory analysis | Perplexity parameter sensitive; cluster sizes not meaningful [9] [10] |
| UMAP | Better preservation of global structure than t-SNE | Large-scale morphological datasets, preprocessing for clustering | More scalable than t-SNE; results depend on the number of neighbors and minimum distance settings [13] |
| Autoencoders | Learns non-linear representations; generative capability | Complex feature extraction, data compression, anomaly detection | Requires more data and tuning; variational versions enable sampling [9] [14] |
| Random Forest | Handles non-linearity and high dimensionality; robust to outliers | Classification and regression with complex feature interactions | Provides feature importance; less interpretable than linear models [12] |
| 1D/2D-CNNs | Automatically learns relevant features from raw data | Image-based morphology, spectral data, time-series morphology | Requires substantial data; minimal preprocessing needed [8] |

Experimental Protocols for Advanced Morphological Analysis

Protocol 1: Dimensionality Reduction with UMAP

Principle: Uniform Manifold Approximation and Projection (UMAP) constructs a high-dimensional graph representation of data then optimizes a low-dimensional layout to preserve as much of the topological structure as possible [13]. Unlike PCA, UMAP makes no linearity assumptions and can capture complex non-linear relationships in morphological data.

Step-by-Step Workflow:

- Data Preparation: Standardize all morphological features (landmark coordinates, linear measurements, or outline data) using z-score normalization to ensure equal contribution to the manifold learning process.

- Parameter Selection: Set the number of neighbors (typically 15-50) to balance local versus global structure preservation. Higher values emphasize global structure.

- Minimum Distance Tuning: Adjust the minimum distance parameter (typically 0.1-0.5) to control how clustered the embedding appears. Lower values result in tighter clusters.

- Manifold Construction: Compute the UMAP embedding using the standardized data and selected parameters.

- Validation: Assess embedding quality through downstream tasks (clustering accuracy, classification performance) or qualitative assessment of known morphological groupings.

Applications: This protocol has been successfully applied to urban form analysis, where UMAP reduced 17 multi-scale morphological indicators to a lower-dimensional space before clustering with BIRCH, revealing 14 distinct urban form types with geographical coherence [13].

Protocol 2: Morphological Classification with 1D-CNN

Principle: 1D Convolutional Neural Networks (CNNs) learn hierarchical features directly from raw data sequences, making them ideal for classifying morphological data represented as landmark coordinates, outline points, or spectral measurements [8].

Step-by-Step Workflow:

- Data Representation: Format morphological data as 1D sequences, preserving the natural ordering of landmarks or measurements.

- Architecture Design: Construct a 1D-CNN with alternating convolutional and pooling layers to learn features at multiple scales, followed by fully connected layers for classification.

- Model Training: Train the network using appropriate loss functions (categorical cross-entropy for classification) with regularization (dropout, batch normalization) to prevent overfitting.

- Interpretation: Apply explainable AI techniques like SHAP analysis to identify which morphological features most influence classification decisions.

- Validation: Evaluate performance using hold-out test sets or cross-validation, reporting accuracy, F1-score, and confusion matrices.
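To make the convolution and pooling operations in the architecture step concrete, here is a minimal NumPy sketch of a single forward pass through one convolutional filter, a ReLU, and a max-pooling layer. The input sequence and the hand-set kernel are hypothetical; in a real 1D-CNN the kernel weights are learned during training, and a deep learning framework would be used instead of this hand-written code.

```python
import numpy as np

def conv1d(signal, kernel):
    """Valid-mode 1D cross-correlation, as computed by a CNN convolutional layer."""
    n_out = len(signal) - len(kernel) + 1
    return np.array([np.dot(signal[i:i + len(kernel)], kernel) for i in range(n_out)])

def relu(x):
    """Rectified linear unit: negative responses are set to zero."""
    return np.maximum(x, 0.0)

def max_pool1d(x, size):
    """Non-overlapping max pooling; trailing values that do not fill a window are dropped."""
    n_windows = len(x) // size
    return x[:n_windows * size].reshape(n_windows, size).max(axis=1)

# Hypothetical input: 8 ordered measurements along an outline.
sequence = np.array([0.0, 1.0, 3.0, 2.0, 0.0, -1.0, 1.0, 4.0])
# A hand-set difference kernel that responds to local increases.
kernel = np.array([-1.0, 1.0])

feature_map = relu(conv1d(sequence, kernel))
pooled = max_pool1d(feature_map, size=2)
print(feature_map)  # → [1. 2. 0. 0. 0. 2. 3.]
print(pooled)       # → [2. 0. 2.]
```

Because the kernel slides along the sequence, the ordering of landmarks or measurements matters, which is why the data representation step asks for the natural ordering to be preserved.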

Applications: This approach achieved approximately 86% accuracy in classifying equivalent circuits from electrochemical impedance spectroscopy data, significantly outperforming traditional methods and providing insights into the critical frequency ranges that drive classification decisions [8].

Protocol 3: Multi-Objective Optimization with ML and NSGA-II

Principle: Integrating machine learning models with multi-objective evolutionary algorithms enables simultaneous optimization of multiple, potentially conflicting morphological traits [12].

Step-by-Step Workflow:

- Data Collection: Assemble morphological measurements across multiple traits of interest from specimens representing different genotypes, treatments, or conditions.

- Model Training: Develop predictive models (Random Forest recommended) for each morphological trait based on input parameters (genotype, environmental conditions).

- Optimization Setup: Define objective functions for each trait to be optimized, specifying direction (maximize/minimize) and constraints.

- NSGA-II Execution: Implement the Non-dominated Sorting Genetic Algorithm II to identify Pareto-optimal solutions representing the best trade-offs between objectives.

- Validation: Experimentally verify predicted optima and refine models iteratively with additional data.
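The notion of Pareto-optimal solutions in the NSGA-II step can be illustrated with a small non-dominated filter. This is only a sketch of the first non-dominated front, assuming all objectives are maximized; it is not a full NSGA-II implementation (which also involves crowding distance, selection, crossover, and mutation), and the trait values are invented for illustration.

```python
import numpy as np

def pareto_front(objectives):
    """Return indices of non-dominated rows, assuming every objective is maximized.

    Row j dominates row i if it is at least as good in all objectives
    and strictly better in at least one.
    """
    objectives = np.asarray(objectives, dtype=float)
    front = []
    for i, candidate in enumerate(objectives):
        at_least_as_good = np.all(objectives >= candidate, axis=1)
        strictly_better = np.any(objectives > candidate, axis=1)
        if not np.any(at_least_as_good & strictly_better):
            front.append(i)
    return front

# Hypothetical model predictions for five candidate settings:
# columns are (branch number, boll number), both to be maximized.
predicted = np.array([
    [20, 100],
    [26,  90],
    [18, 116],
    [19,  95],   # dominated by row 0
    [26,  80],   # dominated by row 1
])
front = pareto_front(predicted)
print(front)  # → [0, 1, 2]
```

An objective that should be minimized (for example, growth period) can be handled by negating its column before calling the function.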

Applications: This protocol successfully optimized roselle plant morphology, identifying that the Qaleganj genotype planted on May 5 produced optimal values for branch number (26), growth period (176 days), boll number (116), and seed number (1517) per plant [12].

Table 3: Essential Software and Computational Tools for Morphological Machine Learning

| Tool/Platform | Primary Function | Application in Morphological Research | Implementation Considerations |

|---|---|---|---|

| MorphoJ [11] | Geometric morphometrics analysis | Generalized Procrustes analysis, PCA, discriminant analysis | Specialized for landmark data; user-friendly interface |

| Scikit-learn [12] | Machine learning in Python | PCA, LDA, Random Forest, and other ML algorithms | Extensive documentation; integration with scientific Python stack |

| UMAP [13] | Non-linear dimensionality reduction | Visualization and preprocessing of complex morphological data | Parameters significantly affect results; requires tuning |

| TensorFlow/PyTorch [14] | Deep learning frameworks | Autoencoders, CNNs for complex morphological pattern recognition | Steeper learning curve; requires GPU for large datasets |

| StreamFlow/Flyte [14] | Workflow orchestration | Reproducible pipelines for large-scale morphological analysis | StreamFlow for HPC clusters; Flyte for cloud-native environments |

The limitations of PCA and LDA for complex morphological data necessitate a more nuanced, problem-driven approach to analytical method selection. Through the case studies and protocols presented herein, a clear framework emerges for matching methodological approach to research question. For visualization and exploration of unknown morphological spaces, non-linear dimensionality reduction techniques like UMAP can reveal structure that PCA misses. For classification tasks with complex decision boundaries, deep learning approaches like 1D-CNNs can outperform LDA, and explainable AI techniques such as SHAP help recover interpretability. Most powerfully, integrated machine learning and optimization frameworks enable not just description but active optimization of morphological traits.

The progression beyond traditional statistics does not render methods like PCA and LDA obsolete—they remain valuable for initial data exploration, baseline comparisons, and applications where linear approximations suffice. However, researchers working with complex morphological data must expand their analytical toolkit to include the non-linear, ensemble, and deep learning approaches detailed in this application note. By doing so, they can overcome the fundamental constraints of linear methods and uncover richer, more meaningful patterns in morphological data—advancing fields as diverse as taxonomy, materials science, biomedical research, and beyond.

Functional Data Geometric Morphometrics (FDGM) represents a paradigm shift in shape analysis, moving beyond discrete landmark points to model biological forms as continuous mathematical curves. This innovative approach combines the statistical rigor of Functional Data Analysis (FDA) with the established principles of Geometric Morphometrics (GM), enabling researchers to capture subtle shape variations that traditional methods might miss [3]. By treating entire shapes as functions, FDGM opens new possibilities for analyzing complex biological structures in evolutionary biology, taxonomy, and paleontology.

The fundamental innovation of FDGM lies in its treatment of landmark data not as isolated points, but as points interconnected to form continuous curves. These curves are then represented as linear combinations of basis functions, allowing for analysis of shape variation across the entire form rather than just at predetermined landmark locations [3]. This approach is particularly valuable for studying structures where biologically significant shape variations occur between traditional landmarks, providing a more comprehensive understanding of morphological diversity.

Core Concepts of FDGM

From Discrete Landmarks to Continuous Functions

Traditional geometric morphometrics relies on the precise placement of anatomical landmarks: discrete points that correspond biologically across specimens [3]. While powerful, this approach inherently limits analysis to specific, predetermined locations, potentially missing meaningful shape information that occurs between landmarks.

FDGM addresses this limitation through a conceptual and mathematical transformation:

- Curve Conversion: 2D landmark data is converted into continuous curves through interpolation techniques

- Basis Function Representation: These continuous curves are represented as linear combinations of mathematical basis functions

- Functional Space Analysis: Statistical analyses are performed within the functional space rather than on discrete point coordinates [3]

This functional representation enables researchers to analyze shape variation as a continuous phenomenon across the entire structure, rather than being constrained to discrete measurement points.

Mathematical Foundation

The mathematical framework of FDGM builds upon functional data analysis principles. Each shape is represented as a function:

\[ f(t) = \sum_{k=1}^{K} c_k \phi_k(t) \]

where \(\phi_k(t)\) are basis functions (e.g., Fourier basis or B-splines), \(c_k\) are coefficients, and \(t\) represents the spatial domain [3]. This representation allows for the application of functional versions of standard statistical methods, including functional principal component analysis (FPCA) and functional linear discriminant analysis.
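The basis expansion above can be sketched numerically by estimating the coefficients with ordinary least squares. The example below assumes a Fourier basis, a curve sampled at 50 equally spaced positions on [0, 1), and an invented test signal; the helper name `fourier_basis` is hypothetical and not taken from any FDA package.

```python
import numpy as np

def fourier_basis(t, n_harmonics):
    """Design matrix with columns 1, cos(2*pi*k*t), sin(2*pi*k*t) for k = 1..n_harmonics."""
    columns = [np.ones_like(t)]
    for k in range(1, n_harmonics + 1):
        columns.append(np.cos(2 * np.pi * k * t))
        columns.append(np.sin(2 * np.pi * k * t))
    return np.column_stack(columns)

# Hypothetical curve sampled at 50 positions on [0, 1).
t = np.linspace(0.0, 1.0, 50, endpoint=False)
observed = 1.5 + 0.8 * np.cos(2 * np.pi * t) - 0.3 * np.sin(4 * np.pi * t)

# Five basis functions in total: constant, cos1, sin1, cos2, sin2.
Phi = fourier_basis(t, n_harmonics=2)
coefficients, *_ = np.linalg.lstsq(Phi, observed, rcond=None)
reconstructed = Phi @ coefficients

print(np.round(coefficients, 3))  # order: const, cos1, sin1, cos2, sin2
```

Each specimen is then summarized by its coefficient vector, which is the object that functional PCA and downstream classifiers operate on. With noisy data, the number of basis functions controls the trade-off between smoothness and fidelity.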

A critical step in FDGM involves curve registration or functional alignment to ensure that corresponding geometric features (peaks, valleys) are properly aligned across specimens [3]. This process accounts for non-rigid deformations and complex shape changes that may not be captured by traditional Procrustes alignment alone.

Comparative Framework: FDGM vs. Traditional Approaches

Methodological Comparison

Table 1: Comparison between Traditional GM and FDGM Approaches

| Feature | Traditional GM | FDGM |

|---|---|---|

| Data Representation | Discrete landmark coordinates | Continuous curves/functions |

| Shape Information | Limited to landmark positions | Captures between-landmark variation |

| Alignment Method | Generalized Procrustes Analysis (GPA) | GPA + Functional alignment/curve registration |

| Statistical Framework | Multivariate statistics | Functional data analysis |

| Landmark Requirement | Requires exact correspondence | More flexible with landmark correspondence |

Performance Advantages

Recent studies have demonstrated significant advantages of FDGM over traditional approaches:

- Enhanced Sensitivity: FDGM shows improved sensitivity to subtle shape variations, particularly for species with minor morphological distinctions [3]

- Superior Classification Accuracy: In shrew craniodental classification, FDGM outperformed traditional GM, with the dorsal view providing the best distinction between species [3]

- Comprehensive Shape Capture: The continuous curve approach captures shape information between traditional landmarks, providing more complete morphological characterization [3]

Extension to three-dimensional data further enhances these advantages. Recent innovations incorporate square-root velocity function (SRVF) and arc-length parameterization for 3D morphometric data, enabling analysis of complex surfaces and volumes while preserving geometric properties [15].

Application Notes: Implementation Protocols

Standard FDGM Protocol for 2D Data

Table 2: Step-by-Step FDGM Protocol for 2D Shape Classification

| Step | Procedure | Tools/Packages | Key Parameters |

|---|---|---|---|

| 1. Data Acquisition | Capture 2D images of specimens under standardized conditions | Digital camera with fixed setup | Consistent orientation, scale, and resolution |

| 2. Landmark Digitization | Place homologous landmarks on all specimens | TpsDig2, MorphoJ [16] | 13-15 landmarks typically sufficient [16] |

| 3. Curve Conversion | Convert landmark coordinates to continuous curves | Custom R/Python scripts | Fourier or B-spline basis functions |

| 4. Functional Alignment | Align curves to account for non-rigid deformations | FDA packages (R/Python) | Landmark-based registration |

| 5. Shape Analysis | Apply functional PCA and discriminant analysis | Functional data analysis packages | Number of principal components |

| 6. Machine Learning Integration | Implement classifiers using shape features | Naïve Bayes, SVM, Random Forest, GLM [3] | Cross-validation for parameter tuning |

Advanced 3D FDGM Protocol

For three-dimensional data, the protocol extends to incorporate recent methodological innovations:

- Data Acquisition: 3D scanning or photogrammetry (e.g., Structure-from-Motion) [17]

- Preprocessing: Point cloud classification using geometric features and RGB values [17]

- Functional Representation: Apply SRVF and arc-length parameterization [15]

- Analysis Pipelines: Implement multiple approaches including FDM, arc-FDM, soft-SRV-FDM, and elastic-SRV-FDM [15]

Machine Learning Integration

Classification Framework

The integration of machine learning with FDGM significantly enhances classification performance across biological applications:

- Feature Extraction: Functional principal component scores serve as input features for classifiers [3]

- Algorithm Selection: Multiple algorithms including Naïve Bayes, Support Vector Machine, Random Forest, and Generalized Linear Models have been successfully applied [3]

- Performance Validation: Cross-validation and independent test sets ensure robust performance assessment

In shrew species classification, the combination of FDGM with machine learning achieved superior classification accuracy compared to traditional GM approaches, with the dorsal craniodental view providing the most discriminatory power [3].
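The feature-extraction idea (component scores from a functional representation used as classifier inputs) can be sketched as follows. This is an illustrative approximation under stated assumptions, not the pipeline of the cited study [3]: the curves are simulated, the basis is an orthonormal Fourier basis, and ordinary PCA on the basis coefficients is used as a common stand-in for functional PCA.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(1)
t = np.linspace(0.0, 1.0, 60, endpoint=False)

# Orthonormal Fourier basis on [0, 1): constant plus two harmonics (cos1, sin1, cos2, sin2).
basis = np.column_stack(
    [np.ones_like(t)]
    + [np.sqrt(2) * f(2 * np.pi * k * t) for k in (1, 2) for f in (np.cos, np.sin)]
)

# Simulated outlines for two hypothetical groups that differ in the first harmonic.
n_per_group = 40
labels = np.repeat([0, 1], n_per_group)
amplitude = np.where(labels == 0, 1.0, 1.4)[:, None]
curves = amplitude * np.cos(2 * np.pi * t) + rng.normal(scale=0.3, size=(labels.size, t.size))

# Least-squares basis coefficients, one row per specimen.
coefficients = np.linalg.lstsq(basis, curves.T, rcond=None)[0].T

# PCA on the coefficients, then Naive Bayes on the component scores.
# Fitting PCA inside the pipeline keeps it within each cross-validation fold.
model = make_pipeline(PCA(n_components=3), GaussianNB())
accuracy = cross_val_score(model, coefficients, labels, cv=5).mean()
print(f"5-fold CV accuracy: {accuracy:.2f}")
```

Swapping `GaussianNB` for an SVM or Random Forest only requires changing the last pipeline step, which makes it straightforward to compare the classifiers listed above on the same features.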

Comparative Performance

Table 3: Machine Learning Classification Performance with Morphometric Approaches

| Application Domain | Traditional GM Accuracy | FDGM Accuracy | Best Performing Classifier |

|---|---|---|---|

| Shrew Craniodental Classification | Lower than FDGM [3] | Superior performance [3] | Varies by view (dorsal best) [3] |

| Deep-Sea Coral/Sponge Classification | N/A | N/A | Random Forest (84.5% accuracy) [17] |

| Seed Domestication Classification | Outperformed by CNN [4] | N/A | Convolutional Neural Networks [4] |

| Kangaroo Dietary Classification | Baseline for comparison [15] | Enhanced with FDA innovations [15] | Support Vector Machines [15] |

Research Toolkit

Essential Software and Analytical Tools

Table 4: Essential Research Tools for FDGM Implementation

| Tool Name | Function | Application Context |

|---|---|---|

| TpsDig2 [16] | Landmark digitization | Collecting 2D coordinate data from images |

| MorphoJ [16] | Geometric morphometrics analysis | Traditional GM and preliminary shape analysis |

| R FDA Package | Functional data analysis | Implementing FDGM statistical analyses |

| Python Scikit-learn | Machine learning implementation | Classification algorithms and validation |

| Custom SRVF Scripts [15] | 3D functional analysis | Advanced 3D shape analysis pipelines |

Experimental Materials Protocol

For morphological studies employing FDGM:

- Sample Preparation: Standardize specimen orientation and imaging conditions [3]

- Landmark Selection: Choose biologically homologous points covering key morphological features [16]

- Data Quality Control: Implement reproducibility protocols including open data and code sharing [5]

- Validation Sets: Reserve specimens for independent testing of classification models [3]

Visualization and Workflow

Figure: FDGM analytical workflow, from specimen collection to classification results.

Figure: Methodological comparison of the traditional GM and FDGM approaches.

Functional Data Geometric Morphometrics represents a significant advancement in shape analysis methodology. By modeling biological forms as continuous curves rather than discrete points, FDGM captures more comprehensive shape information and enhances classification performance when integrated with machine learning algorithms.

The future development of FDGM points toward several promising directions:

- Integration with Deep Learning: Combining functional data approaches with convolutional neural networks for enhanced pattern recognition [4]

- Expansion to 3D Data: Application of SRVF and elastic registration methods to three-dimensional morphological data [15]

- Multimodal Data Fusion: Combining shape data with other data types (genetic, ecological) for comprehensive biological analysis

- Reproducibility Frameworks: Addressing current limitations in reproducibility through standardized protocols and open data sharing [5]

As morphological studies continue to evolve, FDGM provides a powerful framework for extracting maximum biological information from shape data, with applications spanning taxonomy, evolutionary biology, ecology, and archaeological science. The integration of this innovative morphological approach with machine learning classification represents a particularly promising pathway for advancing quantitative morphological research.

Why Machine Learning? Addressing Non-Linearities and High-Dimensional Shape Data

The analysis of biological shape is a fundamental endeavor in fields ranging from drug development to evolutionary biology. Geometric Morphometrics (GM) has long been the standard quantitative framework for capturing and analyzing shape variation using landmark coordinates [3]. However, traditional statistical methods often struggle with the inherent complexities of shape data, which is characteristically high-dimensional and may contain complex non-linear relationships [3] [18]. Machine Learning (ML) provides a powerful suite of tools that directly address these challenges, enabling researchers to build more accurate and robust classification models from morphometric data. This document outlines the theoretical rationale for applying ML to GM and provides detailed protocols for its implementation in classification research.

The core challenge lies in the nature of shape data itself. After a Generalized Procrustes Analysis (GPA), which aligns landmark configurations by removing differences in position, orientation, and scale, the resulting data exists in a high-dimensional space [3]. When analyzing complex structures with many landmarks, the number of dimensions can easily exceed the number of specimens, a scenario where traditional statistical models are prone to overfitting and lose their ability to generalize to new data [18] [19]. Furthermore, the biological relationships underpinning shape variation—such as allometric growth patterns or adaptations to ecological niches—are often non-linear. While methods like Principal Component Analysis (PCA) can reduce dimensionality, they are inherently linear and may fail to capture these more complex patterns [3] [20].

Machine learning models are exceptionally well-suited to this context. They can natively handle high-dimensional input spaces and, through the use of non-linear activation functions (e.g., ReLU, Sigmoid) or kernel methods, learn intricate decision boundaries that linear models cannot [21]. This allows ML to detect subtle, data-driven patterns in shape, thereby improving classification accuracy for tasks such as taxonomic identification, morphological response to treatment, or diagnostic screening [5] [4] [22].

Quantitative Comparisons: Machine Learning vs. Traditional Morphometrics

Direct comparative studies suggest that ML approaches, particularly deep learning, can match or exceed traditional morphometric methods. The following tables summarize key findings from recent research.

Table 1: Comparative Performance of GM and ML in Species Classification

| Study Subject | Method | Key Performance Metric | Result | Reference |

|---|---|---|---|---|

| Shrew Crania (3 species) | Functional Data GM (FDGM) + Machine Learning | Classification Accuracy | Favored FDGM; Dorsal view was best | [3] |

| Archaeobotanical Seeds | Geometric Morphometrics (GMM) | Classification Accuracy | Outperformed by CNN | [4] |

| Archaeobotanical Seeds | Convolutional Neural Network (CNN) | Classification Accuracy | Superior to GMM | [4] |

| Cut Marks (Tool Type) | Geometric Morphometrics + Machine Learning | Identification of tool material (flint vs. metal) | Successfully identified flint tools on Iron Age site | [22] |

Table 2: Machine Learning Algorithms for High-Dimensional and Small Data Challenges

| Algorithm Category | Example Algorithms | Strengths | Ideal Use Case in Morphometrics |

|---|---|---|---|

| Traditional ML | Support Vector Machine (SVM), Random Forest (RF), Naïve Bayes | Effective in high-dimensional spaces; Less prone to overfitting with small data than deep learning | Initial classification models with limited sample size [3] [19] |

| Deep Learning | Convolutional Neural Networks (CNNs) | Automatically learns relevant features; State-of-the-art for image-based classification | Direct classification from images, bypassing landmarking [5] [4] |

| Dimensionality Reduction | PCA, t-SNE, UMAP, Autoencoders | Reduces data complexity; Aids in visualization and model performance | Pre-processing step for high-dimensional landmark data [18] [23] |

Experimental Protocols

This section provides a detailed workflow for applying machine learning to geometric morphometric data, from data acquisition to model interpretation.

Protocol 1: A Standard Workflow for Landmark-Based ML Classification

Application Note: This protocol is designed for classification tasks (e.g., species, genotypes, treatment groups) when data is collected as 2D or 3D landmarks.

Materials and Reagents:

- Specimens (e.g., skulls, seeds, medical images)

- Imaging equipment (e.g., microscope with camera, micro-CT scanner)

- Software for digitizing landmarks (e.g., MorphoJ, tpsDig2)

- Computing environment with programming capabilities (e.g., R, Python)

Procedure:

- Data Acquisition:

- Image Capture: Standardize imaging conditions (orientation, scale, lighting) to minimize non-biological variance. For the shrew crania study, three standardized views (dorsal, jaw, lateral) were used [3].

- Landmark Digitization: Identify and digitize homologous anatomical landmarks across all specimens. The number of landmarks should be sufficient to capture the geometry of the biological structure [3].

- Data Preprocessing:

- Generalized Procrustes Analysis (GPA): Perform GPA on the raw landmark coordinates to superimpose configurations, removing variation due to translation, rotation, and scale. The resulting Procrustes coordinates represent shape variables for subsequent analysis [3] [22].

- Training/Test Split: Randomly split the Procrustes coordinates and their associated class labels into a training set (e.g., 70-80%) and a held-out test set (e.g., 20-30%). The test set must only be used for the final evaluation of the model's generalization ability.

- Dimensionality Reduction and Model Training:

- Principal Component Analysis (PCA): Perform PCA on the Procrustes coordinates from the training set. The principal components (PCs) are new, uncorrelated variables that capture the major axes of shape variance [3] [22].

- Feature Selection: Use the PC scores as features for the machine learning model. The number of PCs to retain can be determined by a scree plot or by retaining enough PCs to explain a high percentage (e.g., >95%) of the total variance.

- Model Training: Train a selected machine learning classifier (e.g., SVM, Random Forest, Naïve Bayes) using the PC scores from the training set. Optimize model hyperparameters via cross-validation on the training set only [3].

- Model Evaluation:

- Prediction: Project the held-out test set onto the PCs fitted on the training set, then use the trained model to predict class labels for these test specimens.

- Performance Metrics: Calculate accuracy, precision, recall, F1-score, and generate a confusion matrix to evaluate model performance [4].
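The procedure above, from the train/test split onward, might be sketched with scikit-learn as follows. The data are a synthetic stand-in for flattened Procrustes coordinates, and the class structure, split ratio, and hyperparameter grid are illustrative assumptions rather than recommendations from the cited studies.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.metrics import accuracy_score, confusion_matrix, f1_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.svm import SVC

rng = np.random.default_rng(42)

# Synthetic stand-in for flattened Procrustes coordinates:
# 90 specimens, 12 landmarks in 2D (24 columns), three hypothetical classes.
n_per_class, n_coords = 30, 24
class_means = rng.normal(scale=0.05, size=(3, n_coords))
X = np.vstack([m + rng.normal(scale=0.02, size=(n_per_class, n_coords)) for m in class_means])
y = np.repeat([0, 1, 2], n_per_class)

# Hold out a stratified test set that is touched only once, at the end.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# PCA keeps enough components for 95% of training variance; the SVM uses the PC scores.
pipeline = Pipeline([
    ("pca", PCA(n_components=0.95, svd_solver="full")),
    ("svm", SVC()),
])

# Hyperparameters are tuned by cross-validation on the training set only.
search = GridSearchCV(
    pipeline,
    param_grid={"svm__C": [0.1, 1, 10], "svm__kernel": ["linear", "rbf"]},
    cv=5,
)
search.fit(X_train, y_train)

# The fitted pipeline projects the test set onto the training PCs before predicting.
y_pred = search.predict(X_test)
print("accuracy:", accuracy_score(y_test, y_pred))
print("macro F1:", f1_score(y_test, y_pred, average="macro"))
print(confusion_matrix(y_test, y_pred))
```

Wrapping PCA and the classifier in one `Pipeline` means the PCA is refitted inside every cross-validation fold, which avoids leaking validation specimens into the dimensionality reduction step.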

Protocol 2: Functional Data and Deep Learning Approaches

Application Note: This protocol outlines advanced methods that can capture subtler shape variations, either by treating outlines as continuous functions or by using deep learning to bypass landmark digitization.

Procedure:

- Functional Data Geometric Morphometrics (FDGM):

- Curve Representation: Convert discrete 2D landmark data into continuous curves using mathematical basis functions (e.g., B-splines) [3].

- Analysis: Analyze the resulting functional data using methods like functional PCA. This approach can be more sensitive to shape variations that occur between traditional landmarks [3].

- Machine Learning Integration: As with standard GM, the scores from functional PCA can be used as features in standard machine learning classifiers to improve classification performance [3].

- Deep Learning with Convolutional Neural Networks (CNNs):

- Input Data: Use the standardized raw images as direct input to the model, bypassing the landmark digitization step entirely [4].

- Model Architecture: Employ a CNN architecture (e.g., VGG, ResNet). The convolutional layers will automatically learn discriminative features directly from the pixel data.

- Training: Train the CNN on the labeled images. Techniques like transfer learning (using a pre-trained model) and data augmentation (rotating, flipping images) can be highly effective, especially with smaller datasets [5] [4].

- Comparison: This approach has been shown to outperform GMM in tasks like seed classification, as it leverages the full image information rather than a pre-defined set of points [4].

The following workflow diagram illustrates the two primary pathways for applying machine learning to shape data.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for Morphometric Machine Learning

| Item Name | Function/Application | Specification Notes |

|---|---|---|

| Structured-Light 3D Scanner (e.g., DAVID SLS-2) | High-resolution 3D model generation for detailed shape capture. | Used for creating 3D models of bones/tools for cross-sectional analysis [22]. |

| Generalised Procrustes Analysis (GPA) | The foundational statistical procedure for aligning landmark configurations and extracting pure "shape" variables. | A critical pre-processing step before any shape analysis [3] [22]. |

| R Statistical Software | Primary environment for conducting Geometric Morphometrics and traditional statistical analysis. | Key packages: Momocs for outline-based morphometrics, geomorph for landmark-based GM analysis [4]. |

| Python Programming Language | Primary environment for building and training machine learning and deep learning models. | Key libraries: scikit-learn for SVM/RF, TensorFlow/PyTorch for CNNs, NumPy for data handling [18]. |

| Principal Component Analysis (PCA) | Linear dimensionality reduction technique to transform high-dimensional shape data into a lower-dimensional set of uncorrelated components. | PC scores are used as features for machine learning models to reduce the risk of overfitting [3] [18]. |

| Support Vector Machine (SVM) | A powerful classification algorithm effective in high-dimensional spaces, capable of learning non-linear boundaries using kernel functions. | One of several traditional ML models suitable for morphometric classification [3] [19]. |

| Convolutional Neural Network (CNN) | A class of deep neural networks most commonly applied to analyzing visual imagery, capable of automated feature learning. | Outperforms traditional GMM in image-based classification tasks (e.g., seed identification) [5] [4]. |

The integration of machine learning with geometric morphometrics represents a significant methodological advance for classification research. ML directly addresses the core challenges of morphometric data—its high dimensionality and potential non-linearities—by providing tools that are more flexible and powerful than traditional statistical methods. As demonstrated in studies across biology, archaeology, and paleontology, ML techniques, from SVMs to CNNs, consistently achieve high classification accuracy, uncover subtle morphological patterns, and offer automation potential. The protocols provided herein offer a roadmap for researchers in drug development and other scientific fields to leverage these powerful tools, thereby enhancing the rigor, reproducibility, and scope of their shape-based analyses.

Building the Machine Learning Pipeline for Morphometric Data

In the field of drug discovery and pharmaceutical research, the quantitative analysis of biological shape—or geometric morphometrics—has emerged as a critical tool for understanding phenotypic changes induced by therapeutic compounds or disease states [24]. The high failure rates and exorbitant costs associated with traditional drug development pipelines have intensified the need for more predictive preclinical models and analytical methods [25] [26]. Machine learning (ML) offers powerful capabilities for pattern recognition in complex datasets, but its effectiveness hinges on appropriate data preprocessing and feature engineering [25]. This application note details methodologies for transforming raw morphological data into features suitable for ML-driven classification research, with specific applications for researchers and drug development professionals.

Core Concepts in Morphological Feature Engineering

Procrustes Coordinates: Establishing Biological Homology

Procrustes analysis is a cornerstone of geometric morphometrics, providing a statistical framework for comparing biological shapes by removing non-shape-related variations. The process involves a similarity test for two datasets where each input matrix represents sets of points or vectors (the rows of the matrix) [27].

The Generalized Procrustes Analysis (GPA) standardizes configurations of landmark points through three operations [24] [28]:

- Translation: Configurations are centered around the origin by subtracting centroid coordinates.

- Scaling: Configurations are scaled to unit size, typically achieved by setting \(\operatorname{tr}(AA^{T}) = 1\) [27].

- Rotation: Configurations are rotated to minimize the sum of squared distances between corresponding landmarks, known as the Procrustes distance [27].

The mathematical objective is to minimize \(M^{2} = \sum (\mathrm{data1} - \mathrm{data2})^{2}\), the sum of the squares of the pointwise differences between the two input datasets [27]. This process ensures that shape comparisons focus solely on biologically meaningful variations rather than differences in position, orientation, or size.
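A short example with `scipy.spatial.procrustes` illustrates the three operations and the disparity \(M^{2}\) for a pair of configurations. The landmark coordinates, rotation angle, scale factor, and displacement below are invented for illustration.

```python
import numpy as np
from scipy.spatial import procrustes

# Hypothetical 2D configuration of five landmarks (rows are landmarks).
reference = np.array([[0.0, 0.0], [2.0, 0.0], [3.0, 1.5], [1.0, 3.0], [-1.0, 1.5]])

# A copy that differs only in position, orientation, and size.
theta = np.deg2rad(40.0)
rotation = np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta),  np.cos(theta)]])
transformed = 2.5 * reference @ rotation.T + np.array([10.0, -4.0])

# A copy with one landmark displaced, i.e. a genuine shape difference.
deformed = transformed.copy()
deformed[2] += np.array([0.8, 0.0])

std_ref, fitted_same, disparity_same = procrustes(reference, transformed)
_, _, disparity_diff = procrustes(reference, deformed)

print(f"tr(AA^T) of standardized reference: {np.trace(std_ref @ std_ref.T):.3f}")
print(f"M^2, same shape:      {disparity_same:.2e}")
print(f"M^2, different shape: {disparity_diff:.2e}")
```

The disparity is effectively zero when the two configurations differ only by a similarity transform, and becomes positive once a landmark is displaced. Note that this function aligns one pair of configurations; aligning a whole sample requires the generalized (iterative) version.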

Outline Representations: Capturing Continuous Morphology

While landmark-based methods excel when homologous points are available, many biological structures lack clearly defined landmarks or exhibit shape variations between phylogenetically distant species where homology is ambiguous [29]. Outline representations address this limitation by capturing the continuous contour of a structure. Common methodologies include:

- Elliptic Fourier Analysis (EFA): Describes closed contours through Fourier coefficients, effectively capturing smooth outlines [29].

- Landmark-Free Deep Learning: Approaches like Morpho-VAE (Morphological regulated Variational AutoEncoder) use image-based deep learning frameworks to extract morphological features without manual landmark annotations [29]. This method combines unsupervised and supervised learning to reduce dimensionality while focusing on morphologically discriminative features.

Experimental Protocols

Protocol 1: Generalized Procrustes Analysis for Standardization

Application Context: Aligning 3D nasal cavity landmark data to assess olfactory region accessibility for nose-to-brain drug delivery [24].

Materials and Software:

- 3D meshes of biological structures (e.g., from CT scans)

- Software: Viewbox 4.0, R with geomorph package [24]

- Anatomically defined fixed landmarks and sliding semi-landmarks

Methodology:

- Landmark Digitization: Manually place fixed anatomical landmarks on a template model in homologous regions present across all specimens [24].

- Semi-Landmark Placement: Distribute semi-landmarks across surface patches of the template model. Use Thin Plate Spline (TPS) warping to project these semi-landmarks from the template to each specimen, allowing them to slide tangentially along the surface to minimize bending energy [24].

- GPA Implementation: Input the raw landmark coordinates (fixed and slid semi-landmarks) into the GPA algorithm to perform the three standardization operations described above: translation, scaling, and rotation.

- Output: The aligned Procrustes coordinates, which represent the shape information free of position, orientation, and size effects, are now ready for subsequent multivariate analysis or as features for machine learning models.
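
The GPA step of this protocol can be sketched in NumPy as follows. This is a simplified illustration rather than the geomorph implementation: it assumes semi-landmarks have already been slid, uses synthetic specimens generated from a hypothetical base shape, and the function name `generalized_procrustes` is introduced here only for the example.

```python
import numpy as np

def generalized_procrustes(configs, max_iter=100, tol=1e-10):
    """Align an (n_specimens, n_landmarks, n_dims) array; return aligned shapes and mean."""
    shapes = np.asarray(configs, dtype=float).copy()
    # Translation: move each centroid to the origin.
    shapes -= shapes.mean(axis=1, keepdims=True)
    # Scaling: unit centroid size, i.e. tr(A A^T) = 1 for every configuration.
    shapes /= np.linalg.norm(shapes, axis=(1, 2), keepdims=True)
    mean = shapes[0].copy()
    for _ in range(max_iter):
        for i, shape in enumerate(shapes):
            # Rotation: least-squares fit of each shape onto the current mean via SVD.
            u, s, vt = np.linalg.svd(shape.T @ mean)
            if np.linalg.det(u @ vt) < 0:
                u[:, -1] *= -1.0  # exclude reflections
            shapes[i] = shape @ (u @ vt)
        new_mean = shapes.mean(axis=0)
        new_mean /= np.linalg.norm(new_mean)
        converged = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if converged:
            break
    return shapes, mean

# Synthetic data: one hypothetical 8-landmark 3D shape, randomly rotated,
# scaled, and shifted, with small landmark noise.
rng = np.random.default_rng(0)
base = rng.normal(size=(8, 3))
specimens = []
for _ in range(10):
    q, _r = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(q) < 0:
        q[:, 0] *= -1.0  # make it a proper rotation
    noisy = base + rng.normal(scale=0.01, size=base.shape)
    specimens.append(rng.uniform(0.5, 2.0) * noisy @ q + rng.normal(size=3))

aligned, mean_shape = generalized_procrustes(np.array(specimens))
features = aligned.reshape(len(aligned), -1)  # one row of Procrustes coordinates per specimen
print(features.shape)
```

Flattening the aligned array gives one feature row per specimen, which is the form most machine learning libraries expect.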

Protocol 2: Landmark-Free Feature Extraction Using Morpho-VAE

Application Context: Classifying primate mandible shapes to understand morphological adaptations without predefined landmarks [29].

Materials and Software:

- Sample images of biological structures (e.g., mandibles)

- Python with deep learning frameworks (e.g., TensorFlow, PyTorch)

- Morpho-VAE architecture [29]

Methodology:

- Image Preprocessing: For 3D objects, generate multiple 2D projections from different angles (e.g., frontal, lateral, superior). Standardize image size and orientation [29].

- Morpho-VAE Architecture Setup:

- Configure the VAE module with encoder and decoder networks.

- Integrate a classifier module that connects to the latent space.

- Define the combined loss function: (E_{total} = (1 - \alpha)E_{VAE} + \alpha E_{C}), where (E_{VAE}) is the VAE loss (reconstruction + regularization), (E_{C}) is the classification loss, and (\alpha) is a hyperparameter (e.g., 0.1) balancing both objectives [29].

- Model Training:

- Train the network on the image dataset.

- The encoder learns to compress input images into a low-dimensional latent space ((\zeta)).

- The classifier ensures that the latent space captures features discriminative for the labeled classes (e.g., species families) [29].

- Feature Extraction: Use the trained encoder to transform input images into latent vectors ((\zeta)). These vectors serve as the landmark-free feature representation for downstream machine learning tasks like classification or clustering.
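
The combined objective can be illustrated numerically without a deep learning framework. The sketch below assumes a mean-squared-error reconstruction term, a standard Gaussian KL regularizer, and categorical cross-entropy for the classifier; these specific choices and all array values are assumptions for illustration and may differ from the published Morpho-VAE implementation.

```python
import numpy as np

def vae_loss(x, x_recon, mu, log_var):
    """E_VAE: reconstruction error (assumed squared error) plus KL regularization."""
    recon = np.mean(np.sum((x - x_recon) ** 2, axis=1))
    kl = np.mean(-0.5 * np.sum(1.0 + log_var - mu**2 - np.exp(log_var), axis=1))
    return recon + kl

def classification_loss(logits, labels):
    """E_C: mean categorical cross-entropy computed from raw class scores."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -np.mean(log_probs[np.arange(len(labels)), labels])

def total_loss(e_vae, e_c, alpha=0.1):
    """E_total = (1 - alpha) * E_VAE + alpha * E_C."""
    return (1.0 - alpha) * e_vae + alpha * e_c

# Stand-in encoder/decoder/classifier outputs for a batch of four flattened images.
rng = np.random.default_rng(0)
x = rng.random((4, 16))
x_recon = x + rng.normal(scale=0.05, size=x.shape)
mu = rng.normal(scale=0.1, size=(4, 3))       # latent means
log_var = rng.normal(scale=0.1, size=(4, 3))  # latent log-variances
logits = rng.normal(size=(4, 5))
labels = np.array([0, 2, 1, 4])

e_vae = vae_loss(x, x_recon, mu, log_var)
e_c = classification_loss(logits, labels)
e_total = total_loss(e_vae, e_c, alpha=0.1)
print(f"E_VAE={e_vae:.4f}  E_C={e_c:.4f}  E_total={e_total:.4f}")
```

With (\alpha) near 0 the model behaves like a plain VAE; raising it pushes the latent space toward class separability at some cost in reconstruction quality.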

The following diagram illustrates the Morpho-VAE workflow for landmark-free feature extraction:

Comparative Analysis of Feature Engineering Approaches

Table 1: Comparison of Morphological Feature Engineering Techniques

| Feature Type | Mathematical Foundation | Data Requirements | Primary Applications in Drug Discovery | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| Procrustes Coordinates | Generalized Procrustes Analysis (GPA) [24] [28] | Anatomically defined landmarks (fixed and sliding semi-landmarks) [24] | Personalizing nasal drug delivery [24]; quantifying morphological biomarkers | Maintains biological homology; strong statistical theory; interpretable results | Requires expert anatomical knowledge; limited to structures with definable landmarks |

| Outline Representations (EFA) | Elliptic Fourier Analysis [29] | Continuous outline coordinates | Characterizing cell morphology [29]; analyzing organelle shapes | Suitable for smooth, complex outlines; does not require homologous points | Less effective for structures with sharp angles or internal details; may require many coefficients |

| Landmark-Free Deep Learning (Morpho-VAE) | Variational Autoencoder (VAE) with classifier integration [29] | 2D image projections of 3D structures [29] | High-throughput phenotypic screening; classifying tissue morphology in digital pathology | Fully automated; captures complex, non-linear shape features; can impute missing data [29] | "Black box" nature reduces interpretability; requires large datasets for training |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Computational Tools for Morphological Analysis

| Item Name | Specification/Function | Application Context |

|---|---|---|

| Viewbox 4.0 | Software for digitizing landmarks and semi-landmarks, and performing Geometric Morphometric analysis [24]. | Precise placement of anatomical landmarks and semi-landmarks on 3D models for Procrustes analysis [24]. |

| R geomorph Package | An R package for performing geometric morphometric shape analysis, including GPA and PCA [24]. | Statistical analysis of shape, multivariate regression, and visualization of shape variations. |

| Sliding Semi-Landmarks | Points placed on curves and surfaces that slide to minimize bending energy, allowing comparison of non-homologous regions [24]. | Capturing the geometry of complex biological surfaces and contours between fixed landmarks in 3D studies [24]. |

| Generalized Procrustes Analysis (GPA) | Algorithm that standardizes landmark configurations by removing effects of position, scale, and orientation [24] [28]. | The core step in landmark-based morphometrics to isolate pure "shape" information for statistical comparison. |

| Morpho-VAE Framework | A deep learning architecture combining a Variational Autoencoder (VAE) with a classifier to extract discriminative shape features [29]. | Landmark-free, automated feature extraction from 2D image data for classification tasks (e.g., mandible morphology) [29]. |

| ITK-SNAP | Open-source software for semi-automatic segmentation of 3D medical images [24]. | Creating 3D surface meshes from CT or MRI scans, which serve as the base for landmarking. |

Implementation Workflow for ML-Based Morphometric Classification

The integration of feature engineering with machine learning classification involves a structured pipeline, from data acquisition to model deployment, as visualized below:

This workflow demonstrates two parallel paths for feature extraction—landmark-based and landmark-free—that converge at the machine learning classification stage. This flexible approach allows researchers to select the most appropriate method based on their specific data characteristics and research objectives.

The integration of machine learning (ML) with geometric morphometric (GM) data is transforming biological classification research. By quantifying shape from anatomical landmarks, GM provides a rich, high-dimensional dataset that ML algorithms can leverage for precise taxonomic, ecological, and phenotypic discrimination [3]. This combination is particularly powerful in applications ranging from species classification to nutritional assessment and forensic analysis [6] [30]. The selection of an appropriate algorithm is paramount, as the performance of different ML models can vary significantly based on the data structure, sample size, and research objective.

This article provides a structured comparison of four prominent classification algorithms—Support Vector Machine (SVM), Random Forest (RF), Naïve Bayes (NB), and Generalized Linear Models (GLM)—within the context of geometric morphometrics. We present quantitative performance comparisons from recent studies, detail standardized protocols for implementation, and visualize the analytical workflow to equip researchers with the practical knowledge needed to select and apply the optimal model for their classification tasks.

Performance Comparison in Morphometric Research

Empirical evidence from recent studies provides critical guidance for algorithm selection. The following tables summarize the performance of SVM, RF, NB, and GLM across diverse morphometric classification tasks.

Table 1: Algorithm Performance in Shrew Craniodental Species Classification [3] [31]

| Algorithm | Accuracy | Precision | Recall | F1-Score | Notes |

|---|---|---|---|---|---|

| Generalized Linear Model (GLM) | 95.4% | Not Reported | Not Reported | Not Reported | Best performer with Functional Data GM |

| Support Vector Machine (SVM) | 89.9% | Not Reported | Not Reported | Not Reported | Third best performance |

| Random Forest (RF) | 90.4% | Not Reported | Not Reported | Not Reported | Second best performance |

| Naïve Bayes (NB) | 86.5% | Not Reported | Not Reported | Not Reported | Lowest performance among the four |

Table 2: Algorithm Performance in Other Morphometric and Classification Contexts

| Study Context | Best Performer | Best Performance | Other Algorithms | Other Performance |

|---|---|---|---|---|

| Fake News Classification [32] | SVM | 100% Accuracy | Random Forest | 99% Accuracy |

| | | | Naïve Bayes | 94% Accuracy |

| Sex Estimation from 3D Tooth Shapes [30] | Random Forest | 97.95% Accuracy | Support Vector Machine | 70-88% Accuracy |

| | | | Artificial Neural Network | 58-70% Accuracy |

| Stingless Bee Species Classification [33] | SVM with SMOTE | AUC: 0.9918, Sensitivity: 0.959 | Random Forest with SMOTE | Lower AUC & Sensitivity |

Essential Research Toolkit for GM-ML Classification

A successful GM-ML pipeline requires specialized tools and software for data acquisition, processing, and analysis.

Table 3: Key Research Reagents and Software Solutions

| Item Name | Function / Application | Specific Example / Note |

|---|---|---|

| 3D Scanner / Digitizer | Captures high-resolution 3D surface data of specimens. | Lab-based scanners (e.g., inEOS X5) for dental casts [30]. |

| Landmarking Software | Allows precise placement of 2D/3D landmarks on specimens. | 3D Slicer [30], MorphoJ [30], Thin Plate Spline (TPS) software [3]. |

| Statistical Shape Analysis Tools | Performs Procrustes alignment and basic statistical shape analysis. | MorphoJ [30], PAleontological STatistics (PAST) [30]. |

| R / Python Programming Environment | Provides a flexible platform for Functional Data Analysis and advanced ML modeling. | R packages for FDA and scikit-learn in Python for implementing SVM, RF, NB, and GLM. |

| Data Balancing Algorithms | Addresses class imbalance in datasets to improve model performance. | Synthetic Minority Oversampling Technique (SMOTE), Adaptive Synthetic (ADASYN) [33]. |

Experimental Protocols for GM-ML Classification

Protocol 1: Standard Workflow for 2D/3D Geometric Morphometrics with ML

This protocol outlines the foundational steps for classifying shapes, such as shrew crania or children's arm shapes, using landmark data [3] [6].

- Sample Collection & Imaging: Collect specimens or images under standardized conditions. For the shrew study, 89 crania were imaged from three views (dorsal, jaw, lateral) [3]. For child nutritional status, standardized photographs of the left arm are taken [6].

- Landmark Digitization: Identify and digitize homologous anatomical landmarks (and semi-landmarks if needed) on all specimens using software like 3D Slicer or MorphoJ. The number and type of landmarks are critical and study-dependent [30].

- Generalized Procrustes Analysis (GPA): Superimpose the raw landmark configurations to remove the effects of translation, rotation, and scale. This results in Procrustes coordinates that represent shape variables [3] [30].

- Feature Space Reduction: Perform Principal Component Analysis (PCA) on the Procrustes coordinates. The resulting principal component (PC) scores, which capture the major axes of shape variation, are used as features for the machine learning models [3] [30].

- Model Training & Validation:

- Split the PC scores into training and test sets, or use cross-validation (e.g., leave-one-out).

- Train the four classifiers (SVM, RF, NB, GLM) on the training data.

- Tune hyperparameters (e.g., SVM's regularization parameter C, RF's number of trees) via grid search.

- Evaluate model performance on the held-out test set using metrics from Table 1.
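
The steps above can be sketched with scikit-learn. The data here are synthetic stand-ins for flattened Procrustes coordinates, multinomial logistic regression is used as an assumed stand-in for the GLM, and the number of retained components and the grid of C values are illustrative choices rather than settings from the cited studies.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC

# Synthetic "Procrustes coordinates": 3 classes, 30 specimens each,
# 20 coordinates per specimen (e.g. 10 two-dimensional landmarks).
rng = np.random.default_rng(42)
n_per_class, n_coords = 30, 20
class_means = rng.normal(scale=0.05, size=(3, n_coords))
X = np.vstack([m + rng.normal(scale=0.02, size=(n_per_class, n_coords))
               for m in class_means])
y = np.repeat(np.arange(3), n_per_class)

models = {
    # Inner grid search tunes the SVM regularization parameter C.
    "SVM": GridSearchCV(SVC(kernel="rbf"), {"C": [0.1, 1, 10, 100]}, cv=3),
    "RF": RandomForestClassifier(n_estimators=200, random_state=0),
    "NB": GaussianNB(),
    "GLM": LogisticRegression(max_iter=1000),  # logistic regression as the GLM
}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = {}
for name, clf in models.items():
    # PCA sits inside the pipeline so it is refit on each training fold only.
    pipe = make_pipeline(PCA(n_components=5), clf)
    scores[name] = cross_val_score(pipe, X, y, cv=cv, scoring="accuracy").mean()

for name, acc in scores.items():
    print(f"{name}: {acc:.3f}")
```

Placing PCA inside the pipeline is a deliberate design choice: fitting it on all specimens before splitting would let test-set shape variation influence the features.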

Protocol 2: Functional Data Geometric Morphometrics (FDGM) with ML

This advanced protocol enhances shape analysis by treating landmark outlines as continuous curves, which can capture more subtle shape variations [3] [34].

- Steps 1-3: Follow the same sample collection, landmark digitization, and GPA as in Protocol 1.

- Curve Conversion: Convert the aligned 2D landmark configurations into continuous curves using mathematical representation via basis functions (e.g., B-splines) [3].

- Functional PCA (FPCA): Apply FPCA to the continuous curves to extract the dominant modes of functional variation. The resulting FPC scores serve as the feature set for classification [3] [34].

- Classification & Comparison: Train and validate the SVM, RF, NB, and GLM classifiers on the FPC scores. Compare their performance against the results from the standard GM pipeline (Protocol 1) to assess the benefit of the FDA approach [3].
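
A rough sketch of the curve conversion and FPCA steps is given below. It fits an interpolating cubic B-spline through each aligned configuration and then applies ordinary PCA to the densely resampled curves, which is only a discretized approximation of FPCA and not necessarily the procedure used in the cited work; the helper `landmarks_to_curve` and the synthetic arc-shaped specimens are assumptions for illustration.

```python
import numpy as np
from scipy.interpolate import make_interp_spline
from sklearn.decomposition import PCA

def landmarks_to_curve(config, n_samples=100, degree=3):
    """Fit a B-spline through ordered 2D landmarks and resample it densely."""
    config = np.asarray(config, dtype=float)
    # Chord-length parameter in [0, 1]; requires distinct consecutive landmarks.
    seg = np.linalg.norm(np.diff(config, axis=0), axis=1)
    t = np.concatenate([[0.0], np.cumsum(seg)])
    t /= t[-1]
    spline = make_interp_spline(t, config, k=degree)
    return spline(np.linspace(0.0, 1.0, n_samples))

# Synthetic aligned configurations: 40 arcs of 12 landmarks with varying height.
rng = np.random.default_rng(1)
u = np.linspace(0.0, np.pi, 12)
aligned = np.array([
    np.column_stack([np.cos(u), (1.0 + 0.2 * rng.normal()) * np.sin(u)])
    + rng.normal(scale=0.01, size=(12, 2))
    for _ in range(40)
])

curves = np.array([landmarks_to_curve(c) for c in aligned])  # (40, 100, 2)

# Discretized FPCA: PCA on the resampled curve values.
fpca = PCA(n_components=3)
fpc_scores = fpca.fit_transform(curves.reshape(len(curves), -1))
print(fpc_scores.shape, np.round(fpca.explained_variance_ratio_, 3))
```

The resulting `fpc_scores` array can be passed to the same classifiers as the PC scores from Protocol 1, which keeps the comparison between the two pipelines like-for-like.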

Protocol 3: Handling Class Imbalance with SMOTE/ADASYN

This protocol is applied when dealing with imbalanced datasets, where some classes (e.g., certain species) have far fewer specimens than others [33].

- Data Preparation: Complete the GM or FDGM pipeline to obtain the feature set (PC or FPC scores).

- Imbalance Treatment: Apply balancing techniques only to the training set.

- Synthetic Minority Oversampling Technique (SMOTE): Generates synthetic examples for the minority class in feature space.