Leveraging Transfer Learning with ResNet50 for Advanced Parasite Egg Classification in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying transfer learning with ResNet50 to automate the classification of parasitic eggs from microscopic images. It covers foundational concepts, a step-by-step methodological pipeline for implementation, strategies for troubleshooting and optimizing model performance, and a comparative analysis with other state-of-the-art deep learning models. By synthesizing recent validation studies, the article demonstrates how this approach achieves high diagnostic accuracy, streamlines the drug discovery workflow, and offers a scalable solution for improving global parasitic disease diagnostics.

Parasite Diagnostics and the Foundational Role of ResNet50

The Critical Need for Automation in Parasitic Egg Diagnosis

Parasitic infections remain a profound global health challenge, disproportionately affecting populations in low- and middle-income countries. Soil-transmitted helminths (STH) alone infect over 1.5 billion people worldwide, causing significant morbidity including anemia, impaired child development, and adverse pregnancy outcomes [1] [2]. Traditional diagnostic methods, primarily manual microscopy of stool samples, are fraught with limitations: they are time-consuming, labor-intensive, and require specialized expertise that is often scarce in resource-constrained settings [3] [4]. The diagnostic process is further complicated by the morphological similarities between different parasitic eggs and the presence of abundant impurities in samples, leading to diagnostic errors and unreliable quantification [5].

These challenges have catalyzed the development of automated diagnostic systems leveraging artificial intelligence (AI). Deep learning, particularly convolutional neural networks (CNNs), has demonstrated remarkable potential in transforming parasitology diagnostics by enabling rapid, accurate, and scalable detection of parasitic eggs in microscopic images [3] [6]. This document details the application of transfer learning with ResNet50, a powerful deep learning architecture, for the classification of parasitic eggs, providing researchers with structured protocols, performance data, and implementation frameworks to advance the field of automated parasitological diagnosis.

The Diagnostic Challenge and the Case for Automation

Limitations of Conventional Microscopy

Conventional microscopy, while considered the gold standard, suffers from several critical drawbacks that automation seeks to address:

- Operator Dependency and Subjectivity: Diagnostic accuracy is intrinsically linked to the technician's skill and experience, leading to variability and inconsistency [5].

- Low Throughput and High Time Requirement: A single microscopic examination can take 8-10 minutes for an expert, creating bottlenecks in high-volume settings and hindering large-scale monitoring programs [5].

- High Error Rates: The process is prone to both false negatives (missed infections) and false positives (misidentification of artifacts), with reported sensitivities for some parasites such as Taenia ranging from only 3.9% to 52.5% [7].

The Transformative Potential of Deep Learning

Deep learning models address these limitations by providing an end-to-end, automated analysis. CNNs can learn discriminative features directly from image data, eliminating the need for manual feature engineering and reducing subjective bias [5]. This capability is crucial for identifying subtle morphological differences between species and distinguishing eggs from background debris. The integration of AI with low-cost, portable digital microscopes, such as the Schistoscope [2] or the Kubic FLOTAC Microscope (KFM) [4], paves the way for deploying high-quality diagnostics in field and point-of-care settings.

ResNet50 and Transfer Learning for Parasite Egg Classification

Rationale for Model Selection

ResNet50, a 50-layer deep residual network, is particularly well-suited for medical image analysis tasks. Its key innovation—skip connections that bypass one or more layers—mitigates the vanishing gradient problem, enabling the effective training of very deep networks that can learn complex, hierarchical features from images [8]. For parasitic egg classification, these features may encompass texture, shape, shell structure, and internal characteristics.

Transfer learning is a strategy that involves taking a pre-trained model (typically on a large, general-purpose dataset like ImageNet) and fine-tuning it on a specific, often smaller, target dataset [5]. This approach is highly beneficial in medical imaging where large, annotated datasets are scarce and training deep networks from scratch is computationally prohibitive. It allows researchers to leverage generic feature detectors (e.g., for edges, textures) and rapidly adapt them to the specialized domain of parasitology.

Performance of ResNet50 in Comparative Studies

Studies have consistently demonstrated the efficacy of ResNet50 in parasitic egg classification. The table below summarizes its performance in comparison to other deep-learning architectures.

Table 1: Performance Comparison of Deep Learning Models for Parasitic Egg Classification

| Model | Dataset | Key Performance Metrics | Reference/Context |
|---|---|---|---|
| ResNet50 | Low-cost USB microscope images (4 classes) | High classification accuracy as part of a patch-based detection framework [5] | Suwannaphong et al., 2024 |
| ResNet50 + SE | Microscopic images of helminth eggs | High accuracy; used with a Support Vector Machine (SVM) classifier [7] | Muthulakshmi et al., 2025 |
| ConvNeXt Tiny | Ascaris lumbricoides and Taenia saginata images | F1-Score: 98.6% [7] | Comparative Study, 2025 |
| MobileNet V3 S | Ascaris lumbricoides and Taenia saginata images | F1-Score: 98.2% [7] | Comparative Study, 2025 |
| EfficientNet V2 S | Ascaris lumbricoides and Taenia saginata images | F1-Score: 97.5% [7] | Comparative Study, 2025 |
| CoAtNet | Chula-ParasiteEgg (11,000 images) | Average Accuracy: 93%, F1-Score: 93% [6] | Sukunya et al., 2023 |

The high performance of ResNet50 and similar architectures underscores the viability of deep learning for this task. While newer models like ConvNeXt Tiny may achieve marginally higher scores, ResNet50 remains a robust and well-established benchmark due to its proven architecture and widespread adoption.

Experimental Protocol: Transfer Learning with ResNet50 for Egg Classification

This protocol provides a step-by-step methodology for implementing a ResNet50-based classifier to distinguish between different species of parasitic eggs in microscopic images.

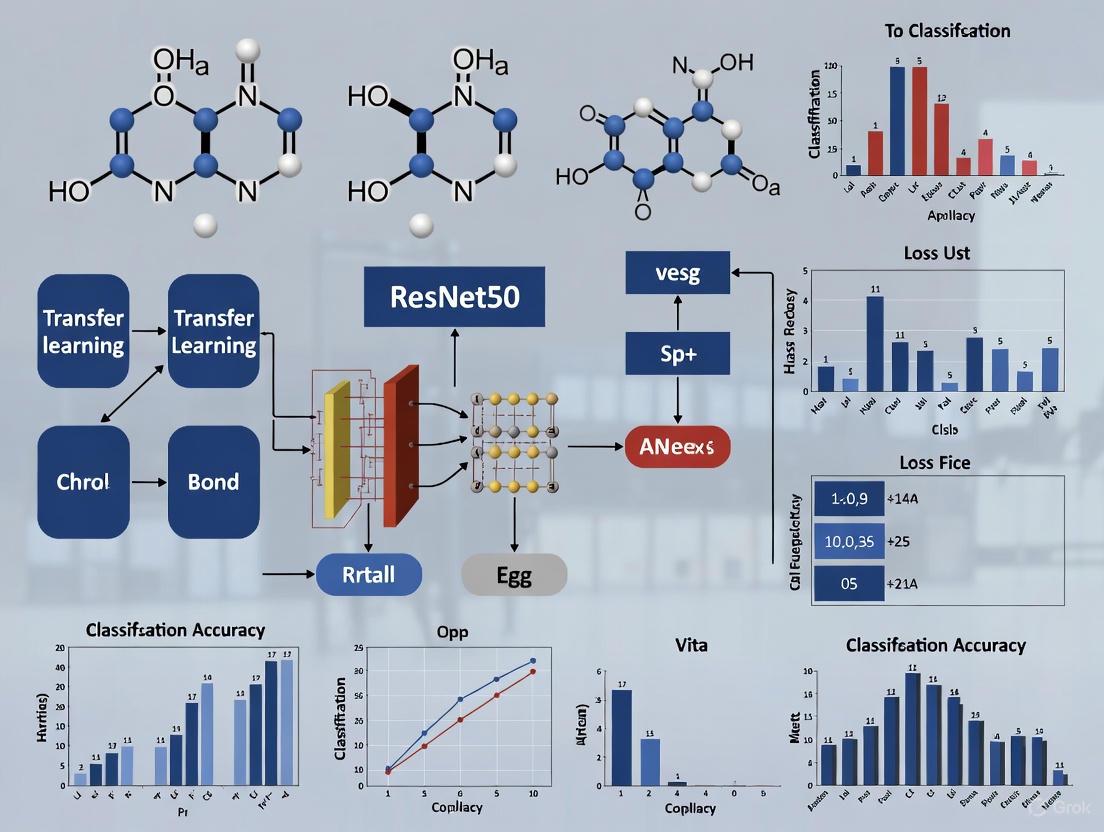

The following diagram illustrates the end-to-end experimental workflow:

Detailed Methodology

Stage 1: Data Acquisition and Preparation

- Image Acquisition: Acquire digital microscopic images of fecal smears. The platform can vary from high-resolution research microscopes to low-cost, portable devices like the Schistoscope [2] or a USB microscope [5]. Consistency in magnification and staining (if used) is critical.

- Data Annotation: Expert microscopists must annotate images, marking the location and species of each parasitic egg. Common classes include Ascaris lumbricoides, Trichuris trichiura, hookworm, and Schistosoma mansoni [2].

- Data Preprocessing:

  - Grayscale Conversion: Convert RGB images to grayscale to reduce computational complexity [5].

  - Contrast Enhancement: Apply techniques like histogram equalization to improve the visibility of egg features [5].

  - Patch-Based Processing: For low-resolution images or to augment data, use a sliding window to divide images into smaller patches (e.g., 100×100 pixels) that fully encapsulate a single egg [5].
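The grayscale conversion and contrast-enhancement steps above can be sketched with NumPy alone. This is a minimal illustration rather than the pipeline used in [5]: the function names are hypothetical, the luminance weights are the common ITU-R BT.601 values (an assumption), and global histogram equalization stands in for whichever enhancement technique a given study uses.

```python
import numpy as np

def to_grayscale(rgb: np.ndarray) -> np.ndarray:
    """Convert an H x W x 3 uint8 RGB image to an H x W uint8 grayscale image."""
    weights = np.array([0.299, 0.587, 0.114])  # BT.601 luminance weights (assumed)
    gray = rgb[..., :3].astype(np.float64) @ weights
    return np.clip(np.rint(gray), 0, 255).astype(np.uint8)

def equalize_histogram(gray: np.ndarray) -> np.ndarray:
    """Global histogram equalization for a uint8 grayscale image."""
    hist = np.bincount(gray.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]          # CDF value at the darkest intensity present
    total = gray.size
    if total == cdf_min:               # constant image: nothing to stretch
        return gray.copy()
    # Map the darkest present intensity to 0 and the brightest to 255.
    lut = np.rint((cdf - cdf_min) / (total - cdf_min) * 255.0)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[gray]
```

For low-contrast fields of view, a locally adaptive method may preserve shell detail better than this global mapping; the sketch only shows the basic idea.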

Stage 2: Model Preparation and Training

- Model Loading: Load a ResNet50 model pre-trained on the ImageNet dataset.

- Architecture Modification: Replace the final fully connected layer (originally for 1000 ImageNet classes) with a new layer containing nodes equal to the number of parasitic egg classes (e.g., 4 classes + 1 for background) [5].

- Fine-Tuning Strategy:

  - Freeze Early Layers: Keep the weights of the initial layers (which detect generic features) frozen for the first phase of training.

  - Fine-Tune Deeper Layers: Unfreeze and train the deeper layers of the network along with the new classification head to adapt to the specific features of parasitic eggs.

- Training Configuration:

  - Optimizer: Use Adam (as in YOLOv4 studies [9]) or SGD.

  - Learning Rate: Set a low initial learning rate (e.g., 0.001) for fine-tuning.

  - Data Augmentation: Apply random rotations (0-160 degrees), flipping, and shifting to increase the diversity and size of the training dataset and prevent overfitting [5].
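The augmentation step above can be illustrated with a small NumPy sketch. It is a simplification under stated assumptions: rotations are restricted to multiples of 90 degrees so that no interpolation library is needed (the text describes arbitrary angles between 0 and 160 degrees), and the function names and the `max_shift` default are hypothetical.

```python
import numpy as np

def shift_with_padding(patch: np.ndarray, dy: int, dx: int, fill: int = 0) -> np.ndarray:
    """Translate a 2-D patch by (dy, dx) pixels, filling exposed borders with `fill`."""
    h, w = patch.shape
    if abs(dy) >= h or abs(dx) >= w:
        raise ValueError("shift must be smaller than the patch size")
    out = np.full_like(patch, fill)
    src_y, dst_y = slice(max(0, -dy), min(h, h - dy)), slice(max(0, dy), min(h, h + dy))
    src_x, dst_x = slice(max(0, -dx), min(w, w - dx)), slice(max(0, dx), min(w, w + dx))
    out[dst_y, dst_x] = patch[src_y, src_x]
    return out

def augment(patch: np.ndarray, rng: np.random.Generator, max_shift: int = 10) -> np.ndarray:
    """Return one randomly flipped, rotated and shifted copy of a square 2-D patch."""
    out = patch
    if rng.random() < 0.5:
        out = np.fliplr(out)               # horizontal flip
    if rng.random() < 0.5:
        out = np.flipud(out)               # vertical flip
    out = np.rot90(out, k=int(rng.integers(0, 4)))   # 0, 90, 180 or 270 degrees
    dy, dx = (int(v) for v in rng.integers(-max_shift, max_shift + 1, size=2))
    return shift_with_padding(out, dy, dx)
```

Calling `augment(patch, np.random.default_rng(0))` repeatedly yields different variants of the same egg patch; augmentation of this kind is normally applied to the training split only.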

Stage 3: Validation and Inference

- Validation: Use a held-out validation set (e.g., 20% of the data) to monitor performance and prevent overfitting. Early stopping can be used to halt training when validation performance plateaus [9].

- Inference: On new test images, apply the same pre-processing and patch-based analysis. The model classifies each patch, and the results are aggregated to produce a final classification for the entire image.
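The text does not specify how patch-level results are aggregated, so the following is only one plausible scheme, stated as an assumption: discard background and low-confidence patches, then take a majority vote over the remaining ones. The function name, the `min_confidence` threshold and the convention that index 0 is background are all hypothetical.

```python
import numpy as np

def aggregate_patch_predictions(probs, class_names, background_index=0, min_confidence=0.5):
    """Summarize per-patch class probabilities into an image-level label.

    probs: array-like of shape (n_patches, n_classes), one softmax row per patch.
    Returns (label, counts), where counts maps each egg class to the number of
    confident patches assigned to it.
    """
    probs = np.asarray(probs, dtype=float)
    predicted = probs.argmax(axis=1)
    confidence = probs.max(axis=1)
    keep = (predicted != background_index) & (confidence >= min_confidence)

    counts = {name: 0 for i, name in enumerate(class_names) if i != background_index}
    for idx in predicted[keep]:
        counts[class_names[idx]] += 1

    if not any(counts.values()):           # no confident egg patch anywhere
        return class_names[background_index], counts
    return max(counts, key=counts.get), counts
```

With overlapping patches a single egg can be counted several times, so these counts are vote tallies, not egg counts; turning them into egg counts would need an additional merging step.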

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of an automated diagnostic system requires both computational and wet-lab components. The following table details key materials and their functions.

Table 2: Key Research Reagents and Materials for Automated Parasite Egg Diagnosis

| Item Name | Function/Application | Example/Note |
|---|---|---|
| Kato-Katz Kit | Preparation of thick fecal smears for microscopic examination; the gold standard for STH and schistosomiasis diagnosis [2]. | Standard 41.7 mg template. |
| Schistoscope | A low-cost, automated digital microscope for acquiring images from prepared slides in field settings [2]. | Enables automated focusing and scanning. |
| Kubic FLOTAC Microscope (KFM) | A portable digital microscope designed to analyze fecal specimens prepared with FLOTAC or Mini-FLOTAC [4]. | Allows for autonomous scanning in lab and field. |
| Annotated Image Datasets | Used to train and validate deep learning models. Requires expert annotation for ground truth. | Examples: Chula-ParasiteEgg-11 [4], ICIP 2022 Challenge dataset [1]. |
| Pre-trained ResNet50 Model | The foundational deep learning model to which transfer learning is applied for the specific task. | Typically pre-trained on the ImageNet dataset. |
| GPU Computing Resource | Essential for efficient training and fine-tuning of deep learning models. | e.g., NVIDIA GeForce RTX 3090 [9]. |

The automation of parasitic egg diagnosis is no longer a futuristic concept but an achievable reality with the potential to revolutionize global health. Transfer learning with established architectures like ResNet50 provides a practical and powerful pathway for researchers to develop highly accurate classification systems without requiring massive, prohibitively expensive datasets. By following the detailed protocols and leveraging the toolkit outlined in this document, the scientific community can accelerate the development and deployment of these critical diagnostic tools, bringing us closer to the goal of accessible, reliable, and rapid diagnosis for all populations affected by parasitic diseases.

ResNet50 is a 50-layer deep convolutional neural network (CNN) architecture developed by Microsoft Research in 2015 that revolutionized deep learning by enabling the training of very deep networks without succumbing to the vanishing gradient problem [10] [11]. The model's design introduces residual learning frameworks that utilize skip connections (also known as residual connections) to allow information to bypass one or more layers [12] [13]. These connections enable the network to learn residual functions with reference to the layer inputs rather than learning unreferenced functions, significantly simplifying the training of deep networks [12].
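The residual formulation described above can be written compactly. The notation is the usual one for residual learning and is introduced here for illustration: $\mathbf{x}$ is the block input, $\mathcal{F}$ the stacked layers with weights $\{W_i\}$, $\mathbf{y}$ the block output (before the final activation), and $\mathcal{L}$ the training loss.

```latex
\begin{aligned}
\mathbf{y} &= \mathcal{F}(\mathbf{x}, \{W_i\}) + \mathbf{x}, \\
\frac{\partial \mathcal{L}}{\partial \mathbf{x}}
  &= \frac{\partial \mathcal{L}}{\partial \mathbf{y}}
     \left( \mathbf{I} + \frac{\partial \mathcal{F}}{\partial \mathbf{x}} \right).
\end{aligned}
```

The identity term $\mathbf{I}$ gives the gradient a path back to earlier layers that is not scaled by the block's weights, which is the mechanism behind the reduced vanishing-gradient effect.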

The core innovation lies in the residual blocks, specifically the bottleneck residual block design which utilizes three convolutional layers per block: a 1x1 convolution for reducing dimensionality, a 3x3 convolution for feature processing, and another 1x1 convolution for restoring dimensionality [11]. This design efficiently manages computational complexity while maintaining the network's representational power [11]. The skip connections create gradient super-highways that allow gradients to flow backward through the network without being diminished by multiplication through multiple layers, thus effectively solving the vanishing gradient problem that had previously hampered deep network training [10] [13].
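The bottleneck design described above can be sketched as a small PyTorch module. This is an illustrative re-implementation under the usual conventions (batch normalization after each convolution, a fourfold channel expansion, a 1x1 projection on the shortcut when shapes differ), not code taken from any cited source; the class and argument names are hypothetical.

```python
import torch
from torch import nn

class Bottleneck(nn.Module):
    """1x1 reduce -> 3x3 process -> 1x1 restore, added to a skip connection."""
    expansion = 4  # output channels = mid_channels * expansion

    def __init__(self, in_channels: int, mid_channels: int, stride: int = 1):
        super().__init__()
        out_channels = mid_channels * self.expansion
        self.body = nn.Sequential(
            nn.Conv2d(in_channels, mid_channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(mid_channels, mid_channels, kernel_size=3,
                      stride=stride, padding=1, bias=False),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(mid_channels, out_channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(out_channels),
        )
        # Project the input only when its shape differs from the block output.
        if stride != 1 or in_channels != out_channels:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=1,
                          stride=stride, bias=False),
                nn.BatchNorm2d(out_channels),
            )
        else:
            self.shortcut = nn.Identity()
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # The addition is the skip connection: output = F(x) + x.
        return self.relu(self.body(x) + self.shortcut(x))
```

For example, `Bottleneck(256, 64)` maps a 256-channel feature map to 256 channels through a 64-channel 3x3 convolution, so the expensive 3x3 operation runs on a quarter of the channels.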

Figure 1: ResNet50 architecture with detailed bottleneck residual block

Advantages of Pre-trained Models for Research

The ImageNet pre-trained ResNet50 model provides researchers with a powerful feature extractor that has learned rich, hierarchical feature representations from the 1000-category ImageNet (ILSVRC) subset of roughly 1.28 million training images, drawn from the full ImageNet collection of over 14 million images [10] [14]. This pre-training confers several significant advantages:

Reduced Computational Requirements: Training deep networks from scratch requires substantial computational resources and time. Using pre-trained weights eliminates the need for this initial computationally intensive phase [14].

Faster Convergence: Models initialized with pre-trained weights converge significantly faster during fine-tuning compared to randomly initialized weights, as they begin with meaningful feature representations rather than random filters [5].

Effective Feature Extraction: Even for domains dissimilar to natural images, the low-level and mid-level features learned on ImageNet (edges, textures, shapes) often transfer well to specialized domains, requiring only the higher-level features to be adapted to the target task [14] [5].

Improved Performance with Limited Data: The pre-trained model can achieve high accuracy with relatively small datasets, making it particularly valuable in scientific domains where labeled data is scarce and expensive to obtain [14] [5].

Table 1: Performance Comparison of Training Approaches

| Training Approach | Data Requirements | Training Time | Typical Accuracy | Best Use Cases |
|---|---|---|---|---|
| Training from Scratch | Very Large (>1000 images/class) | Very Long | High (with sufficient data) | Large datasets, novel domains dissimilar to ImageNet |
| Full Fine-tuning | Medium (100-1000 images/class) | Medium | High | Similar domains to ImageNet, sufficient computational resources |
| Feature Extraction (Frozen Backbone) | Small (<100 images/class) | Short | Moderate to High | Small datasets, limited computational resources, similar domains |

Application Notes: ResNet50 for Parasite Egg Classification

Domain-Specific Adaptations

For parasite egg classification, ResNet50 requires specific adaptations to address the unique challenges of microscopic image analysis. Research demonstrates that modifying the input channel processing is essential when working with grayscale medical images [15] [5]. The standard ResNet50 expects 3-channel RGB input, but microscopic images are often single-channel grayscale. The network can be adapted by replicating the grayscale channel three times or modifying the first convolutional layer to accept single-channel input [5].

Additional domain-specific adaptations include the integration of multi-feature fusion, where deep features extracted from ResNet50 are combined with handcrafted texture descriptors such as Local Binary Patterns (LBP) to capture fine-grained patterns that may be significant for differentiating parasite species [15]. Attention mechanisms, particularly Convolutional Block Attention Modules (CBAM), can be incorporated to help the model focus on diagnostically relevant regions in the image, improving both accuracy and interpretability [15].
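A minimal sketch of the multi-feature fusion idea follows. It makes several assumptions that are not taken from [15]: the basic 3x3 LBP operator with a 256-bin histogram, L2 normalization of the deep features, and plain concatenation as the fusion rule. The 2048-dimensional vector in the usage note is the size of ResNet50's pooled output; function names are hypothetical.

```python
import numpy as np

def lbp_histogram(gray) -> np.ndarray:
    """Normalized 256-bin histogram of basic 3x3 Local Binary Pattern codes."""
    g = np.asarray(gray, dtype=np.int32)
    h, w = g.shape
    center = g[1:-1, 1:-1]
    codes = np.zeros_like(center)
    # Eight neighbours, clockwise from top-left; each contributes one bit.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]
    for bit, (dy, dx) in enumerate(offsets):
        neighbour = g[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        codes |= (neighbour >= center).astype(np.int32) << bit
    hist = np.bincount(codes.ravel(), minlength=256).astype(np.float64)
    return hist / hist.sum()

def fuse_features(deep_features, gray) -> np.ndarray:
    """Concatenate an L2-normalized deep feature vector with the LBP histogram."""
    deep = np.asarray(deep_features, dtype=np.float64).ravel()
    deep = deep / (np.linalg.norm(deep) + 1e-12)
    return np.concatenate([deep, lbp_histogram(gray)])
```

Passing a 2048-dimensional ResNet50 feature vector and the corresponding grayscale patch yields a 2304-dimensional descriptor that a downstream classifier could consume.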

Handling Data-Specific Challenges

Parasite egg classification presents several data-specific challenges that must be addressed for successful model deployment:

Class Imbalance: Parasite egg datasets typically contain far more background patches than egg-containing patches, requiring careful data balancing strategies [5]. Techniques include oversampling minority classes, undersampling majority classes, and appropriate use of data augmentation [5].

Small Object Detection: Parasite eggs often occupy a small fraction of the total image area, necessitating patch-based processing approaches where images are divided into smaller patches (e.g., 100×100 pixels) to ensure eggs are sufficiently represented in the input [5].

Image Quality Variations: Low-cost microscopy systems produce images with poor contrast, noise, and limited detail, requiring preprocessing techniques such as Multiscale Curvelet Filtering with Directional Denoising (MCF-DD) to enhance image quality while preserving diagnostically important features [15].
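As an illustration of the oversampling strategy named under class imbalance above, the following pure-Python sketch resamples minority classes with replacement until every class matches the largest one. The function name and the fixed seed are hypothetical; real pipelines often combine this with augmentation so that duplicated samples are not pixel-identical.

```python
import random
from collections import defaultdict

def oversample_minority(samples, labels, seed=0):
    """Resample with replacement so every class has as many items as the largest class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_class[label].append(sample)

    target = max(len(items) for items in by_class.values())
    out_samples, out_labels = [], []
    for label, items in by_class.items():
        # Keep all originals, then draw the shortfall at random.
        extra = [rng.choice(items) for _ in range(target - len(items))]
        out_samples.extend(items + extra)
        out_labels.extend([label] * target)

    # Shuffle so that classes are interleaved in the returned lists.
    order = list(range(len(out_samples)))
    rng.shuffle(order)
    return [out_samples[i] for i in order], [out_labels[i] for i in order]
```

Oversampling is best applied after the train/validation/test split and to the training portion only, otherwise duplicated samples can leak into the evaluation data.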

Table 2: ResNet50 Performance in Parasite Egg Classification Studies

| Study | Dataset Size | Classes | Preprocessing | Modifications | Reported Accuracy |
|---|---|---|---|---|---|
| Intestinal Parasite Classification [5] | 162 images | 4 parasite species + background | Grayscale conversion, contrast enhancement, patch-based processing (100×100 pixels) | Fine-tuning last layers, input adaptation for grayscale | 97.8% precision, 97.7% recall |
| Lightweight Parasite Detection [1] | ICIP 2022 Challenge dataset | Multiple parasite egg types | Standard normalization | Comparative baseline for lightweight models | High performance (exact values not specified) |
| Enhanced Pneumonia Detection [15] | Kaggle Chest X-ray dataset | Pneumonia vs. Normal | MCF-DD denoising, multi-feature fusion | Attention mechanisms, hybrid feature fusion | Higher accuracy than standard approaches |

Experimental Protocols

Transfer Learning Protocol for Parasite Egg Classification

Figure 2: Transfer learning workflow for parasite egg classification

Data Preparation and Augmentation Protocol

Image Preprocessing Steps:

- Grayscale Conversion: Convert RGB images to single-channel grayscale to reduce computational complexity while maintaining relevant features for parasite egg identification [5].

- Contrast Enhancement: Apply histogram equalization or contrast-limited adaptive histogram equalization (CLAHE) to improve visualization of low-magnification microscopic images [5].

- Patch Extraction: Divide each microscopic image into overlapping patches of 100×100 pixels with four-fifths overlap to ensure eggs are adequately represented [5].

- Data Augmentation: Generate additional training samples through:

  - Random horizontal and vertical flipping

  - Random rotation between 0-160 degrees

  - Random shifting by 50 pixels horizontally and vertically around egg locations [5]
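The patch-extraction step above can be sketched as a sliding window in NumPy. With 100×100 patches and four-fifths overlap, the window advances by 20 pixels; the function name and the choice to return top-left corners alongside the patches are illustrative assumptions.

```python
import numpy as np

def extract_patches(image: np.ndarray, patch_size: int = 100, overlap: float = 0.8):
    """Slide a square window over a 2-D image.

    Returns (patches, corners): an array of shape (n, patch_size, patch_size)
    and the (top, left) coordinate of each patch in the original image.
    """
    stride = max(1, int(round(patch_size * (1.0 - overlap))))  # 100 px, 80% -> 20 px
    h, w = image.shape[:2]
    patches, corners = [], []
    for top in range(0, h - patch_size + 1, stride):
        for left in range(0, w - patch_size + 1, stride):
            patches.append(image[top:top + patch_size, left:left + patch_size])
            corners.append((top, left))
    if not patches:                      # image smaller than one patch
        return np.empty((0, patch_size, patch_size), dtype=image.dtype), corners
    return np.stack(patches), corners
```

Keeping the corner coordinates makes it possible to map each patch-level prediction back to a location in the full image. Border strips narrower than one stride are not covered by this simple loop; padding the image first is one way to include them.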

Data Balancing:

- Randomly select approximately 10,000 background patches to balance with augmented egg patches

- Ensure equal representation across all parasite species classes [5]
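The background-balancing step above amounts to random undersampling of the majority class. A minimal pure-Python sketch follows; the function name, the `"background"` label string and the fixed seed are hypothetical, and the default cap mirrors the figure of approximately 10,000 given above.

```python
import random

def balance_background(patches, labels, background_label="background",
                       max_background=10_000, seed=0):
    """Keep every egg patch and at most `max_background` random background patches."""
    rng = random.Random(seed)
    background = [i for i, y in enumerate(labels) if y == background_label]
    eggs = [i for i, y in enumerate(labels) if y != background_label]
    if len(background) > max_background:
        background = rng.sample(background, max_background)  # sample without replacement
    keep = sorted(eggs + background)                          # preserve original order
    return [patches[i] for i in keep], [labels[i] for i in keep]
```

Fixing the seed keeps the selected background subset reproducible between runs, which helps when comparing training configurations.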

Model Training and Fine-tuning Protocol

Optimizer Configuration:

- Utilize Stochastic Gradient Descent (SGD) with momentum or Adam optimizer

- Set initial learning rate of 0.001 with reduction on plateau

- Use mini-batch size of 16-32 depending on available GPU memory [16]

Fine-tuning Strategy:

- Feature Extraction Phase: Freeze all ResNet50 layers, train only the newly added classification head for 10-20 epochs

- Selective Fine-tuning: Unfreeze later ResNet50 blocks (stages 3 and 4) while keeping early layers frozen

- Full Fine-tuning: Unfreeze all layers and train with very low learning rate (1e-5 to 1e-6) for final optimization [14]

Training Monitoring:

- Track training and validation accuracy/loss after each epoch

- Implement early stopping with patience of 10-15 epochs

- Save model checkpoints based on validation accuracy [5]
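The monitoring rules above (track validation accuracy, checkpoint on improvement, stop after a fixed patience) can be captured in a framework-independent helper. This is a sketch with hypothetical names; the caller is assumed to save a checkpoint whenever `is_best` is true.

```python
class EarlyStopping:
    """Stop when validation accuracy has not improved for `patience` epochs."""

    def __init__(self, patience: int = 10, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta      # smallest change that counts as an improvement
        self.best = None
        self.best_epoch = None
        self.bad_epochs = 0

    def step(self, epoch: int, val_accuracy: float):
        """Record one epoch; return (is_best, should_stop)."""
        if self.best is None or val_accuracy > self.best + self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_accuracy, epoch, 0
            return True, False
        self.bad_epochs += 1
        return False, self.bad_epochs >= self.patience
```

In a training loop, `is_best, stop = stopper.step(epoch, val_acc)` would be called once per epoch, saving the model when `is_best` is true and breaking out of the loop when `stop` is true.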

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Resource Category | Specific Solution/Tool | Function/Purpose | Implementation Notes |
|---|---|---|---|
| Computational Framework | TensorFlow/Keras with ResNet50 | Deep learning framework and model architecture | Pre-trained models available via tf.keras.applications.ResNet50 [10] |
| Data Augmentation | TensorFlow Image Augmentation | Increases dataset diversity and size | Random flip, rotation, contrast adjustment [13] [5] |
| Optimization Algorithms | SGD with Momentum, Adam | Model parameter optimization during training | Adam and SGD momentum achieve >97.5% accuracy in classification tasks [16] |
| Preprocessing Tools | Multiscale Curvelet Filtering with Directional Denoising (MCF-DD) | Noise suppression in medical images | Preserves diagnostic details while removing noise [15] |
| Feature Enhancement | Local Binary Patterns (LBP) | Handcrafted texture feature extraction | Combined with ResNet50 features in hybrid approach [15] |
| Attention Mechanisms | Convolutional Block Attention Module (CBAM) | Focus model on diagnostically relevant regions | Improves interpretability and accuracy [15] |
| Evaluation Metrics | Precision, Recall, F1-Score, mAP | Performance quantification | Essential for imbalanced datasets in medical imaging [15] [1] |

Performance Optimization and Interpretation

Optimization Strategies

Research demonstrates that optimizer selection significantly impacts ResNet50 performance. Comparative studies show that Adam and SGD with momentum optimizers achieve the highest accuracy (97.66% and 97.58% respectively) in medical image classification tasks [16]. The choice of optimizer should be determined by the specific characteristics of the dataset and computational constraints.

For parasite egg classification with limited data, progressive fine-tuning approaches yield superior results compared to full network training from scratch. This involves initially freezing the backbone network and training only the classification head, followed by gradual unfreezing of later layers while monitoring validation performance to prevent overfitting [14] [5].

Interpretation and Explainability

Incorporating attention mechanisms and visualization techniques is crucial for building trust in model predictions, particularly in medical applications. Class Activation Mapping (CAM) and Gradient-weighted Class Activation Mapping (Grad-CAM) can highlight the image regions most influential in the classification decision, allowing domain experts to verify that the model focuses on biologically relevant features [15].
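The core Grad-CAM computation is short enough to sketch in NumPy. The function below assumes the caller has already captured, for one image and one target class, the activations of the last convolutional layer and the gradients of the class score with respect to them (for example with framework hooks); the function name and the normalization to a maximum of 1 are illustrative choices.

```python
import numpy as np

def grad_cam(activations, gradients) -> np.ndarray:
    """Compute a Grad-CAM heat map from (C, H, W) activations and gradients."""
    activations = np.asarray(activations, dtype=np.float64)
    gradients = np.asarray(gradients, dtype=np.float64)
    # Channel importance: gradient averaged over the spatial dimensions.
    weights = gradients.mean(axis=(1, 2))
    # Weighted sum of feature maps over channels -> (H, W).
    cam = np.tensordot(weights, activations, axes=1)
    cam = np.maximum(cam, 0.0)          # keep only positive evidence for the class
    peak = cam.max()
    return cam / peak if peak > 0 else cam
```

The resulting map has the spatial size of the feature maps (7×7 for a 224×224 input to ResNet50) and is typically upsampled to the patch size before being overlaid on the image for expert review.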

For parasite egg classification, the patch-based prediction approach naturally provides localization information by indicating which image patches contain the detected eggs [5]. This spatial information can be combined with confidence scores to provide comprehensive diagnostic support to laboratory technicians.

Core Principles of Transfer Learning for Medical Image Analysis

Transfer learning has emerged as a cornerstone technique in medical image analysis, effectively addressing the critical challenge of limited annotated datasets in healthcare domains. This approach involves leveraging knowledge from pre-trained deep learning models, initially developed on large-scale general image datasets like ImageNet, and adapting it to specialized medical imaging tasks. The fundamental principle rests on the understanding that low-level features such as edges, textures, and shapes are universally valuable across image recognition tasks. By transferring these generic features, models require significantly less domain-specific data to achieve high performance, accelerating development and improving accuracy where expert annotations are scarce and costly to obtain.

Within parasitology, this methodology has demonstrated remarkable success in automating the detection and classification of parasitic eggs in microscopic images, transforming diagnostic processes that traditionally relied on manual, time-consuming examination by skilled technicians. The application of transfer learning with established architectures like ResNet50 has enabled the development of systems capable of providing rapid, accurate identifications, thereby overcoming human resource constraints and variability in diagnostic expertise, particularly in resource-limited settings where parasitic infections are most prevalent.

Core Conceptual Framework

Theoretical Foundations and Key Terminology

The operational framework of transfer learning for medical image analysis is built upon several foundational concepts:

- Source Domain: A rich data environment (e.g., ImageNet) containing millions of general images used for initial model training. This domain provides the foundational feature hierarchies that the model learns.

- Target Domain: The specific, data-scarce application area (e.g., microscopic images of parasite eggs). The goal is to successfully apply knowledge from the source domain to this new, different domain.

- Pre-trained Model: A deep learning model (e.g., ResNet50) whose weights have been previously optimized on the source domain. These models have already learned to extract meaningful hierarchical features from raw pixels.

- Feature Extraction: The process of using the pre-trained model as a fixed feature extractor for new samples from the target domain. The final layers of the model are typically removed, and the output of the remaining layers is used as input to a new classifier.

- Fine-tuning: A more advanced strategy where not only the new classifier is trained on the target task, but some layers of the pre-trained model are also unfrozen and further trained with a low learning rate. This allows the model to adapt its generic features to the specific characteristics of the target domain.

The underlying hypothesis is that the feature representations learned from natural images are sufficiently general to be relevant for medical tasks. For parasite egg classification, a model that can identify contours, shapes, and textures in photographs of everyday objects can effectively learn to distinguish the morphological characteristics of different parasite species, such as the 50–60 μm long, 20–30 μm wide pinworm eggs with their thin, clear, bi-layered shell [3].

Comparative Analysis: From Scratch vs. Transfer Learning

Table 1: Performance comparison of deep learning models in parasitic egg detection, highlighting the efficacy of transfer learning approaches.

| Model / Approach | Reported Accuracy | Precision | F1-Score | Key Advantages |
|---|---|---|---|---|
| Custom CNN (from scratch) | 93.0% [6] | N/R | 93.0% [6] | Simplified structure, tailored for specific data. |
| CoAtNet (from scratch) | 93.0% [6] | N/R | 93.0% [6] | Integrates convolution and attention; high accuracy. |
| ResNet-101 (Transfer Learning) | >97.0% [3] | N/R | N/R | High classification accuracy; robust feature extraction. |
| NASNet-Mobile (Transfer Learning) | >97.0% [3] | N/R | N/R | Optimized for mobile devices; high efficiency. |
| YOLO-based Models (e.g., YAC-Net) | N/R | 97.8% [1] | 97.73% [1] | High detection precision and recall; real-time capability. |
| YCBAM (YOLO with Attention) | N/R | 99.71% [3] | N/R | Superior detection performance (mAP@0.5: 0.9950). |

Abbreviation: N/R, Not explicitly reported in the cited source.

The comparative data reveals a clear trend: models utilizing transfer learning, such as ResNet-101 and NASNet-Mobile, consistently achieve top-tier accuracy exceeding 97% in classifying Enterobius vermicularis (pinworm) eggs from microscopic images [3]. This performance often surpasses that of models trained from scratch, which, while effective, may require more data and computational resources to reach similar performance levels. Furthermore, advanced object detection frameworks like YOLO, when enhanced with attention mechanisms (YCBAM), demonstrate that transfer learning principles can be extended beyond classification to achieve exceptional precision (0.9971) in localizing and identifying parasite eggs within complex, noisy backgrounds [3].

Experimental Protocols and Application Notes

Detailed Protocol: Transfer Learning with ResNet50 for Parasite Egg Classification

This protocol details the procedure for adapting a ResNet50 model, pre-trained on ImageNet, to classify parasite eggs in microscopic images.

I. Research Reagent Solutions and Computational Materials

Table 2: Essential materials, tools, and reagents required for the experiment.

| Item Name / Category | Specification / Example | Primary Function in the Protocol |
|---|---|---|
| Pre-trained Model | ResNet50 (ImageNet weights) | Provides the foundational convolutional neural network architecture and pre-learned feature extractors. |
| Dataset | Labeled microscopic images of parasite eggs (e.g., Chula-ParasiteEgg [6]) | Serves as the target domain data for fine-tuning and evaluating the model. |
| Deep Learning Framework | PyTorch or TensorFlow | Provides the programming environment and libraries for building, modifying, and training neural networks. |
| Computational Hardware | GPU (e.g., NVIDIA CUDA-enabled) | Accelerates the computationally intensive processes of model training and inference. |
| Data Augmentation Tools | Framework-integrated (e.g., torchvision.transforms) | Artificially increases dataset size and diversity through transformations (rotation, flipping), improving model robustness. |
| Optimizer | Stochastic Gradient Descent (SGD) or Adam | Algorithm responsible for updating model weights during training to minimize loss. |

II. Step-by-Step Methodology

Data Preparation and Preprocessing:

- Image Standardization: Resize all input images to a fixed dimension of 224x224 pixels, which is the standard input size for the original ResNet50 model.

- Data Augmentation: Apply a suite of random transformations to the training data to improve generalization. This includes rotation (±15°), horizontal and vertical flipping, and slight variations in brightness and contrast.

- Data Partitioning: Split the dataset into three subsets: training (70%), validation (15%), and test (15%). Ensure stratification to maintain class distribution across splits.

- Pixel Value Normalization: Normalize image pixel values using the mean and standard deviation of the ImageNet dataset ([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]). This is crucial because the pre-trained model's weights are tuned to this distribution.
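Two of the steps above, the stratified 70/15/15 partition and ImageNet normalization, can be sketched without a deep learning framework. The function names and the fixed seed are hypothetical; the mean and standard deviation are the values quoted above, and images are assumed to be H x W x 3 arrays in RGB order.

```python
import random
from collections import defaultdict

import numpy as np

IMAGENET_MEAN = np.array([0.485, 0.456, 0.406])
IMAGENET_STD = np.array([0.229, 0.224, 0.225])

def normalize_imagenet(rgb_uint8: np.ndarray) -> np.ndarray:
    """Scale an H x W x 3 uint8 image to [0, 1], then standardize each channel."""
    scaled = rgb_uint8.astype(np.float32) / 255.0
    return ((scaled - IMAGENET_MEAN) / IMAGENET_STD).astype(np.float32)

def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = int(round(fractions[0] * len(indices)))
        n_val = int(round(fractions[1] * len(indices)))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]      # remainder goes to the test split
    return train, val, test
```

When patches are cut from the same slide or image, splitting at the image level rather than the patch level is usually safer, since overlapping patches in different splits would inflate the test metrics. PyTorch models expect channels-first tensors, so the normalized array would still need to be transposed to 3 x H x W.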

Model Adaptation and Modification:

- Load Pre-trained Model: Initialize the model with weights pre-trained on the ImageNet dataset.

- Replace Classifier Head: Remove the final fully connected layer of the original ResNet50 (which outputs 1000 classes for ImageNet) and replace it with a new, randomly initialized layer. The output dimension of this new layer should match the number of parasite egg classes in your target dataset (e.g., 5 classes).

- Freeze Feature Extractor: In the initial phase of training, freeze the weights of all convolutional layers in the ResNet50 backbone. This keeps the pre-trained features from being overwritten by the large gradient updates that the randomly initialized head produces early in training.

Training and Fine-tuning:

- Phase 1 - Classifier Training: Train only the newly replaced fully connected layer for a few epochs. Use a relatively higher learning rate (e.g., 0.01) for this new layer to allow for rapid learning.

- Phase 2 - Full Fine-tuning: Unfreeze all or a portion (e.g., the last 5-10 layers) of the convolutional backbone. Continue training the entire model using a much lower learning rate (e.g., 0.0001) to make subtle adjustments to the pre-trained features, adapting them specifically to the characteristics of parasite egg images.

- Loss Function and Monitoring: Use Cross-Entropy Loss as the objective function. Monitor performance on the validation set after each epoch to select the best model and to detect overfitting.

Model Evaluation:

- Final Assessment: Evaluate the performance of the best-performing saved model on the held-out test set, which contains images the model has never seen during training or validation.

- Performance Metrics: Report standard classification metrics, including Accuracy, Precision, Recall, F1-Score, and the Confusion Matrix, to provide a comprehensive view of model performance.
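As an illustration of how the listed metrics relate to the confusion matrix, the following sketch derives accuracy and per-class precision, recall, and F1-score from raw counts. The matrix values and the function names are hypothetical and serve only to show the arithmetic.

```python
from typing import Dict, List


def per_class_metrics(confusion: List[List[int]]) -> List[Dict[str, float]]:
    """Compute precision, recall, and F1 per class.

    confusion[i][j] is the number of images of true class i predicted as class j.
    """
    n = len(confusion)
    results = []
    for k in range(n):
        tp = confusion[k][k]
        fp = sum(confusion[i][k] for i in range(n)) - tp  # predicted k, truly another class
        fn = sum(confusion[k]) - tp                        # truly k, predicted another class
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        results.append({"precision": precision, "recall": recall, "f1": f1})
    return results


def accuracy(confusion: List[List[int]]) -> float:
    """Fraction of all images that lie on the diagonal of the confusion matrix."""
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[k][k] for k in range(len(confusion)))
    return correct / total if total else 0.0


# Hypothetical 3-class confusion matrix (rows: true class, columns: predicted class).
toy_confusion = [
    [48, 1, 1],
    [2, 45, 3],
    [0, 4, 46],
]
metrics = per_class_metrics(toy_confusion)
overall_accuracy = accuracy(toy_confusion)
macro_f1 = sum(m["f1"] for m in metrics) / len(metrics)
```

Reporting the per-class values alongside the macro average makes it visible when a rare egg class performs much worse than the overall accuracy suggests.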

Workflow Visualization

Diagram 1: Transfer Learning Workflow for ResNet50.

Diagram 2: ResNet50 Adaptation Logic.

Advanced Applications and Performance Analysis

Advanced Model Architectures and Performance

Beyond standard transfer learning, research has shown that integrating attention mechanisms and custom modules with pre-trained architectures can yield state-of-the-art results. For instance, the YOLO Convolutional Block Attention Module (YCBAM) framework integrates YOLO with self-attention and a Convolutional Block Attention Module (CBAM) to enhance the detection of pinworm parasite eggs [3]. This integration allows the model to focus on spatially and channel-wise relevant features, significantly improving detection in challenging imaging conditions. The YCBAM model demonstrated a precision of 0.9971, a recall of 0.9934, and a mean Average Precision (mAP@0.5) of 0.9950 [3].

Similarly, the YAC-Net model, a lightweight derivative of YOLOv5, replaced the standard Feature Pyramid Network (FPN) with an Asymptotic Feature Pyramid Network (AFPN) and the C3 module with a C2f module [1]. This enriched gradient flow and improved spatial context fusion, leading to a precision of 97.8%, a recall of 97.7%, and an mAP@0.5 of 0.9913, while simultaneously reducing the number of parameters by one-fifth compared to its baseline [1]. These advancements highlight that transfer learning serves as a powerful foundation upon which further, task-specific optimizations can be built to achieve exceptional performance.

Quantitative Performance Benchmarking

Table 3: Detailed performance metrics of advanced deep learning models for parasitic egg detection.

| Model Architecture | Precision | Recall | mAP@0.5 | mAP@0.5:0.95 | Key Architectural Innovation |
|---|---|---|---|---|---|
| YCBAM [3] | 0.9971 | 0.9934 | 0.9950 | 0.6531 | Integration of YOLO with Self-Attention and CBAM. |
| YAC-Net [1] | 0.978 | 0.977 | 0.9913 | N/R | AFPN structure and C2f module for lightweight design. |
| CoAtNet-based Model [6] | N/R | N/R | N/R | N/R | Hybrid convolution and attention network. |

Abbreviation: N/R, Not explicitly reported in the cited source.

The quantitative data underscore the effectiveness of these advanced models. The YCBAM architecture's precision of 0.9971 and recall of 0.9934 indicate very low rates of false positives and false negatives, which is critical for a reliable diagnostic tool [3]. Its mAP@0.5 of 0.9950 further confirms that it localizes and identifies eggs accurately, although the lower mAP@0.5:0.95 of 0.6531 shows that localization is less precise under stricter overlap thresholds. YAC-Net is equally notable for achieving high precision and recall with a reduced parameter count, making it suitable for deployment in resource-constrained environments [1]. This aligns with the overarching goal of creating accessible and efficient automated diagnostic solutions.

Concluding Synthesis

The core principles of transfer learning, centered on repurposing knowledge from data-rich source domains for data-scarce target domains, have profoundly impacted medical image analysis. Applying these principles with architectures like ResNet50 as an adaptable foundation has enabled significant advances in the automated detection and classification of parasitic eggs. The empirical evidence demonstrates that this approach not only achieves high diagnostic accuracy (often surpassing 97%) but also provides a robust platform for innovation through the integration of attention mechanisms and specialized modules. These advancements are paving the way for rapid, precise, and accessible diagnostic tools that can alleviate the burden on healthcare professionals and improve patient outcomes in regions most affected by parasitic infections. Future work will likely focus on further model optimization for edge devices, enhancing interpretability for clinical trust, and expanding these techniques to a wider array of neglected tropical diseases.

The accurate morphological differentiation of parasite eggs is a critical, yet time-consuming and expertise-dependent, step in the diagnosis of parasitic infections. This document provides detailed application notes and protocols for researchers focusing on three prevalent helminths: Ascaris lumbricoides (roundworm), Taenia species (tapeworm), and Enterobius vermicularis (pinworm). The content is specifically framed within a research context that leverages transfer learning with ResNet50 for the automated classification of parasitic eggs, a method that shows significant promise in overcoming the limitations of manual microscopy [17]. By establishing a clear morphological baseline and standardizing imaging protocols, this work aims to facilitate the development of robust, data-driven diagnostic models.

Morphological Characteristics of Target Parasite Eggs

A precise understanding of the morphological characteristics of target parasite eggs is the foundation for both manual identification and the creation of accurately labeled datasets for training deep learning models. The following subsections and comparative tables detail the key identifying features of Ascaris, Taenia, and Pinworm eggs. It is important to note that the morphological details can vary depending on the type of fecal preparation and stain used, as summarized in Table 1 [18].

Table 1: Visibility of Key Morphological Features in Different Stool Preparations

| Stage/Feature | Unstained (Saline) | Unstained (Formalin) | Temporary Stain (Iodine) | Permanent Stains |
|---|---|---|---|---|
| Trophozoite Motility | Visible | Not Visible | Visible | Not Applicable |
| Cytoplasm Inclusions | Visible | Visible | Visible | Visible |
| Trophozoite Nucleus | Usually not visible | Visible, not distinctive | Visible | Visible |
| Cyst Nuclei | Visible | Visible | Visible | Visible |
| Chromatoid Bodies | Easily visible | Visible | Less visible | Visible |

Ascaris lumbricoides

Ascaris lumbricoides is one of the most common intestinal nematodes worldwide [19]. Its eggs have a characteristic appearance, though they can be observed in both fertilized and unfertilized forms.

Table 2: Morphology of Ascaris lumbricoides Eggs

| Characteristic | Fertilized Egg | Unfertilized Egg |
|---|---|---|
| Size | 45-75 µm in length, 35-50 µm in width [3] | 88-94 µm in length, 44-48 µm in width |
| Shape | Round or oval | Elongated and more oval |
| Shell | Thick, mammillated (bumpy), albuminous coat | Thinner shell with a less prominent albuminous coat |
| Content | Contains a single, large, unsegmented ovum | Filled with a disorganized mass of refractile granules |
| Color | Golden-brown in iodine stain [18] | Brownish in iodine stain |

Taenia Species

Taenia saginata (beef tapeworm) and Taenia solium (pork tapeworm) are cestodes that infect humans. Their eggs are morphologically similar and cannot be differentiated to the species level based on egg morphology alone [20] [19].

Table 3: Morphology of Taenia Species Eggs

| Characteristic | Description |
|---|---|
| Size | 31-43 µm in diameter |
| Shape | Spherical or subspherical |
| Shell | A thick, radially striated wall, often dark brown in color |
| Content | Contains a fully-developed, hexacanth (six-hooked) embryo (oncosphere) |
| Key Feature | Eggs are typically released in the intestine and passed in gravid proglottids [20]. The eggs of cyclophyllidean tapeworms like Taenia are not operculated [20]. |

Enterobius vermicularis (Pinworm)

The pinworm, Enterobius vermicularis, causes the most common nematode infection in the United States [19]. Its eggs are transparent and flattened on one side.

Table 4: Morphology of Enterobius vermicularis Eggs

| Characteristic | Description |
|---|---|
| Size | 50-60 µm in length, 20-30 µm in width [3] |
| Shape | Oval, asymmetrical with one flattened side ("D-shaped") |
| Shell | Thin, colorless, transparent, and double-lined |
| Content | Often contains a coiled larva, which may be visible moving under a microscope [3] |
| Key Feature | Eggs are typically recovered via the Scotch tape test, not routine stool examination [3] [19]. |

Experimental Protocols for Microscopy and Image Acquisition

Standardized sample preparation and image acquisition are paramount for generating a high-quality dataset usable for deep learning model training. The following protocols ensure consistency and reproducibility.

Sample Collection and Preparation

- Stool Specimens (for Ascaris and Taenia): Collect fresh stool samples in clean, sealed containers. For fixation, use 10% formalin or sodium acetate-acetic acid-formalin (SAF) to preserve egg morphology. Fixed samples can be used for concentration techniques like formalin-ethyl acetate sedimentation to increase detection sensitivity [18].

- Perianal Specimen (for Pinworm): Use the Scotch tape test. Press the sticky side of clear cellulose tape against the perianal folds first thing in the morning, before bathing or defecation. Place the tape adhesive-side down on a microscope slide for direct examination [3] [19].

Staining and Mounting

The choice of preparation affects the visibility of key morphological features (see Table 1).

- Wet Mounts: For general observation and initial detection. An unstained saline mount allows for observation of motility in larvae. An iodine-stained mount (e.g., Lugol's iodine) enhances the visibility of nuclei and glycogen vacuoles, causing cysts to stain reddish-brown [18].

- Permanent Stains: For detailed morphological study and archiving of images for datasets. Stains like Wheatley's trichrome are essential for observing the nuclear structure of protozoan trophozoites and cysts, which is critical for species identification [18].

Microscopy and Image Capture

- Microscope Setup: Use a compound light microscope with 10x, 40x, and 100x oil immersion objectives.

- Image Capture: Use a high-resolution digital camera (e.g., 5 MP or greater) mounted on the microscope.

- Standardization: Maintain consistent lighting (Köhler illumination), magnification, and resolution across all images. Capture images in RAW format if possible to retain maximum detail for later processing.

- Multiple Focal Planes: For permanent stained slides, capture images at multiple focal planes (z-stacking) to ensure all structural details are recorded.

- Metadata Logging: For each image, record essential metadata including parasite species (if known), stain type, magnification, and sample preparation method.
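One way to keep the metadata from the last step consistent is to store it as a structured record per image. The sketch below uses a Python dataclass serialized to one JSON line; the field names, the file name, and the example values are illustrative assumptions, not a required schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class ImageMetadata:
    """One record per captured image; all field names are illustrative."""
    image_file: str
    species: Optional[str]   # None when the species is not yet known
    stain: str
    magnification: int       # objective magnification, e.g. 40 for a 40x objective
    preparation: str


def to_json_line(record: ImageMetadata) -> str:
    """Serialize a record as one JSON line, suitable for appending to a log file."""
    return json.dumps(asdict(record), sort_keys=True)


record = ImageMetadata(
    image_file="slide_0001_field_03.png",  # hypothetical file name
    species="Ascaris lumbricoides",
    stain="iodine",
    magnification=40,
    preparation="formalin-ethyl acetate sedimentation",
)
line = to_json_line(record)
```

Keeping stain and preparation method alongside each image makes it possible to check later whether a model's errors cluster in one preparation type.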

Integration with ResNet50 Transfer Learning Framework

The standardized morphological data and imaging protocols directly feed into the development of an automated classification system using a ResNet50 transfer learning framework. ResNet50, a 50-layer deep convolutional neural network, is well-suited for this task due to its residual learning blocks that mitigate the vanishing gradient problem in deep networks, allowing it to learn complex features from images effectively [17].

Workflow for Model Development

The process of developing a ResNet50 model for parasite egg classification follows a structured pipeline from dataset creation to deployment.

Experimental Protocol for ResNet50 Fine-tuning

This protocol outlines the specific steps for adapting a pre-trained ResNet50 model to the task of parasite egg classification.

Dataset Curation:

- Image Collection: Compile a dataset of microscope images of Ascaris, Taenia, and Pinworm eggs using the microscopy and image acquisition protocols described above.

- Annotation: Label each image with the correct species class. Use bounding boxes if performing object detection, or image-level labels for classification.

- Partitioning: Split the dataset into training (∼70%), validation (∼15%), and test (∼15%) sets, ensuring class balance across splits.

Data Preprocessing and Augmentation:

- Resizing: Resize all input images to 224x224 pixels, the default input size for ResNet50.

- Normalization: Normalize pixel values using the mean and standard deviation from the ImageNet dataset.

- Augmentation: Apply random transformations to the training data to improve model generalization. This includes rotation (±15°), horizontal/vertical flipping, zoom (±10%), and brightness/contrast adjustments (±20%) [21].
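A minimal sketch of the flip and brightness/contrast parts of this augmentation is shown below in plain NumPy. It assumes images are float arrays in [0, 1] and that augmentation is applied before mean/std normalization; rotation and zoom are omitted here because they need an interpolation routine from an imaging library. The function name `augment` and the random test image are placeholders.

```python
import numpy as np


def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Randomly flip an HxWx3 float image in [0, 1] and jitter brightness/contrast by up to 20%."""
    out = image.copy()
    if rng.random() < 0.5:
        out = out[:, ::-1, :]   # horizontal flip
    if rng.random() < 0.5:
        out = out[::-1, :, :]   # vertical flip
    contrast = rng.uniform(0.8, 1.2)
    brightness = rng.uniform(0.8, 1.2)
    mean = out.mean()
    out = (out - mean) * contrast + mean   # contrast: stretch around the image mean
    out = out * brightness                 # brightness: scale the overall intensity
    return np.clip(out, 0.0, 1.0)


rng = np.random.default_rng(seed=0)
image = rng.random((224, 224, 3))   # hypothetical stand-in for a micrograph scaled to [0, 1]
augmented = augment(image, rng)
```

Augmentation of this kind is applied to the training set only; validation and test images stay unmodified so that the reported metrics reflect real inputs.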

Model Configuration and Transfer Learning:

- Load Pre-trained Model: Initialize the model with weights pre-trained on the ImageNet dataset.

- Replace Classifier: Remove the final fully connected layer (of 1000 classes for ImageNet) and replace it with a new layer with 3 output units (for Ascaris, Taenia, Pinworm) with a softmax activation.

- Fine-tuning:

- Stage 1: Freeze the convolutional base of ResNet50 and train only the newly replaced classifier layers for a few epochs using a low learning rate (e.g., 1e-3).

- Stage 2: Unfreeze a portion of the deeper layers of the convolutional base and continue training the entire unfrozen network with an even lower learning rate (e.g., 1e-5) to allow for subtle feature adaptation.

Training and Evaluation:

- Compilation: Compile the model using an optimizer (e.g., Adam or SGD with momentum) and a loss function (categorical cross-entropy).

- Training: Train the model on the augmented training set, using the validation set for hyperparameter tuning and to monitor for overfitting.

- Evaluation: Evaluate the final model's performance on the held-out test set. Report standard metrics including accuracy, precision, recall, F1-score, and area under the ROC curve (AUC) [17].
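For the AUC metric mentioned above, a one-vs-rest value per class can be computed directly from its definition: the probability that a randomly chosen positive image receives a higher score than a randomly chosen negative one. The sketch below is a simple quadratic-time illustration with hypothetical scores; a library routine would normally be used on real data.

```python
from typing import Sequence


def binary_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the probability that a random positive outscores a random negative (ties count 0.5)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical one-vs-rest scores for a single class (1 = that class, 0 = any other class).
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.95, 0.80, 0.40, 0.60, 0.30, 0.20, 0.10]
auc = binary_auc(labels, scores)
```

For a three-class model, the same function would be called once per class with that class's softmax output as the score, and the results averaged.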

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Research Reagents and Materials

| Item | Function/Application |
|---|---|
| 10% Formalin Solution | Universal fixative for stool specimens; preserves parasite egg morphology for long-term storage and subsequent processing [18]. |
| Lugol's Iodine Solution | Temporary stain used in wet mounts to enhance visualization of nuclear structures and glycogen in cysts [18]. |
| Wheatley's Trichrome Stain | Permanent stain used for detailed morphological study of parasites on fixed smear slides; allows for differentiation of internal structures [18]. |
| Microscope Slides and Coverslips | Standard consumables for preparing specimens for microscopic examination. |
| Cellulose Tape | Essential for the perianal "Scotch tape test" used specifically for collecting Enterobius vermicularis (pinworm) eggs [3]. |
| Formalin-ethyl Acetate | Reagents used in the sedimentation concentration procedure to separate and concentrate parasite eggs and cysts from stool debris. |
| Labeled Image Dataset | A curated collection of parasite egg images, tagged by species and preparation method; the fundamental resource for training and validating deep learning models [1] [6]. |

This document provides a comprehensive guide linking the traditional morphological identification of Ascaris, Taenia, and Pinworm eggs with modern deep-learning methodologies. The detailed protocols for microscopy and the structured framework for implementing a ResNet50-based classifier are designed to support researchers in building accurate, automated diagnostic tools. By standardizing the input data and leveraging powerful transfer learning techniques, this approach has the potential to significantly increase the efficiency, scalability, and accessibility of parasitic infection diagnosis, thereby advancing both clinical diagnostics and public health initiatives.

Building Your Classifier: A Step-by-Step ResNet50 Pipeline

This application note details the protocols for acquiring and curating microscopic image datasets, a foundational step for research focused on transfer learning with ResNet50 for human parasite egg classification. The performance of deep learning models, including fine-tuned architectures like ResNet50, is critically dependent on the quality, quantity, and appropriateness of the training data [6] [22]. Within the domain of medical parasitology, data preparation presents unique challenges, such as the small size of target objects (e.g., pinworm eggs measuring 50–60 μm in length and 20–30 μm in width), their morphological similarities to other microscopic particles, and the frequent scarcity of expert-annotated samples [3]. This document provides researchers and laboratory professionals with a structured framework to build robust datasets that effectively support model development and generalization.

Data Sourcing and Acquisition

The initial phase involves gathering a sufficient volume of raw microscopic images. The methodologies and sources outlined below ensure a diverse and representative dataset.

Experimental Protocol: Sample Preparation and Image Capture

The following protocol, adapted from contemporary research, ensures the acquisition of high-quality, consistent microscopic images [3] [23].

- Sample Collection and Preparation: Stool samples are collected and processed using standard parasitological techniques, such as formalin-ethyl acetate sedimentation or the Kato-Katz method, to concentrate parasitic eggs. For pinworm detection, the scotch tape test is employed [3].

- Microscopy Setup: A brightfield microscope equipped with a high-resolution digital camera (recommended: 5MP or higher) is used. A 10x or 40x objective lens is typically suitable for visualizing most helminth eggs.

- Image Capture Parameters:

- Consistency: Maintain consistent lighting intensity across all slides to minimize illumination variance.

- Focus: Capture multiple focal planes (z-stacking) if possible, to ensure egg structures are fully in focus.

- Resolution: Capture images at the camera's native resolution (e.g., 2592x1944 pixels) to preserve fine morphological details.

- Field Selection: Systematically capture images from multiple, non-overlapping fields on each slide to ensure a random sample of the material.

- Data Volume: Aim for a minimum of several hundred to thousands of images, depending on the number of parasite species to be classified. Studies have successfully utilized datasets ranging from 1,200 to over 11,000 images [3] [6].

Public Datasets for Benchmarking

Researchers can supplement their data with publicly available datasets to benchmark model performance.

- Chula-ParasiteEgg Dataset: A publicly available dataset containing 11,000 microscopic images, which has been used to train and evaluate models like CoAtNet for parasitic egg recognition [6].

Data Curation and Preprocessing

Raw images are often unsuitable for immediate model training. This stage focuses on enhancing image quality and preparing data for annotation.

Preprocessing for Image Enhancement

Preprocessing techniques are applied to improve the signal-to-noise ratio and standardize the input data. The table below summarizes key techniques and their functions.

Table 1: Image Preprocessing Techniques for Parasitic Egg Analysis

| Technique | Function | Application Example |
|---|---|---|
| Noise Reduction (BM3D) | Removes various types of image noise (Gaussian, Salt and Pepper) while preserving edges [23]. | Enhancing clarity of egg boundaries in low-quality images. |
| Contrast Enhancement (CLAHE) | Improves local contrast, making eggs more distinguishable from the background [23]. | Differentiating transparent or colorless pinworm eggs from the background [3]. |
| Color Normalization | Standardizes color and intensity distributions across images from different batches or microscopes. | Reducing model confusion caused by variations in staining or lighting. |
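To make the color normalization row concrete, the sketch below matches the per-channel mean and standard deviation of an image to those of a reference image. This is a deliberately simple stand-in chosen for illustration, not the method used in the cited studies; BM3D and CLAHE require dedicated image-processing libraries and are not shown. The synthetic arrays stand in for micrographs from two batches.

```python
import numpy as np


def match_channel_statistics(image: np.ndarray, reference: np.ndarray) -> np.ndarray:
    """Shift and scale each RGB channel of `image` to the per-channel mean/std of `reference`.

    Both inputs are HxWx3 float arrays in [0, 1]; the output is clipped to the same range.
    """
    img_mean = image.mean(axis=(0, 1))
    img_std = image.std(axis=(0, 1)) + 1e-8   # avoid division by zero on flat channels
    ref_mean = reference.mean(axis=(0, 1))
    ref_std = reference.std(axis=(0, 1))
    matched = (image - img_mean) / img_std * ref_std + ref_mean
    return np.clip(matched, 0.0, 1.0)


rng = np.random.default_rng(seed=1)
reference = 0.5 + 0.1 * rng.standard_normal((64, 64, 3))   # hypothetical reference micrograph
darker = 0.3 + 0.05 * rng.standard_normal((64, 64, 3))     # hypothetical image from another batch
normalized = match_channel_statistics(darker, reference)
```

Choosing one well-exposed reference image per stain type keeps the normalization from erasing color differences that are diagnostically meaningful.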

Data Annotation and Labeling

Annotation is the process of labeling data with the correct answers, which for object classification involves assigning a class label to each image or region of interest.

Annotation Protocol for Image-Level Classification

This protocol is designed for projects where the goal is to classify an entire image based on the presence or type of parasite egg.

- Annotation Tool Selection: Utilize annotation platforms that support classification tasks. Options include self-service platforms like Label Your Data or SuperAnnotate, which are designed for customizable AI workflows [24].

- Class Label Definition: Establish a clear and definitive guide for each parasite egg class (e.g., Ascaris lumbricoides, Trichuris trichiura, Enterobius vermicularis), including reference images and descriptions of key morphological features.

- Annotator Training: For scientific imagery, annotators require specialized training. As noted in annotation guides, "when dealing with scientific objects/events that are unfamiliar to the annotators, the definitions of classes can be complex and may require trained eyes" [22].

- Quality Assurance: Implement a multi-step review process.

- Primary Annotation: A trained annotator labels the image.

- Expert Validation: A domain expert (e.g., a medical parasitologist) reviews a significant subset, if not all, of the annotations to ensure biological accuracy.

- Inter-Annotator Agreement: Have a portion of the dataset annotated by multiple individuals to measure consistency and identify ambiguous cases [22].
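Inter-annotator agreement can be quantified in several ways; one common choice, assumed here for illustration, is Cohen's kappa, which corrects the raw agreement rate for the agreement expected by chance. The labels below are hypothetical.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two annotators labeling the same images."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of images")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0   # both annotators used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from two annotators for the same ten images.
annotator_1 = ["ascaris"] * 4 + ["trichuris"] * 3 + ["enterobius"] * 3
annotator_2 = ["ascaris"] * 3 + ["trichuris"] * 4 + ["enterobius"] * 3
kappa = cohens_kappa(annotator_1, annotator_2)
```

Images on which the annotators disagree are natural candidates for the expert validation step.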

Dataset Augmentation and Curation

A well-curated final dataset is balanced and partitioned to rigorously evaluate model performance.

Managing Class Imbalance

Parasite egg datasets are often imbalanced, as some species are more common than others. This can bias a model toward the majority class.

- Techniques: Employ data augmentation to synthetically increase the representation of rare classes. This includes applying random (but realistic) transformations such as rotation, flipping, slight scaling, and brightness/contrast adjustments to the existing images of the under-represented class [24].

- Objective: Achieve a roughly equal number of images per class in the training set to prevent model bias.
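The balancing objective can be turned into a concrete augmentation budget by counting how many extra images each class needs to reach the size of the largest class. The class counts below are hypothetical.

```python
from typing import Dict


def augmentation_targets(class_counts: Dict[str, int]) -> Dict[str, int]:
    """Return how many augmented images each class needs to match the largest class."""
    largest = max(class_counts.values())
    return {name: largest - count for name, count in class_counts.items()}


# Hypothetical training-set counts per class.
counts = {
    "Ascaris lumbricoides": 900,
    "Trichuris trichiura": 600,
    "Enterobius vermicularis": 150,
}
extra_needed = augmentation_targets(counts)
```

When a class would need many augmented copies per original image, as in the smallest class here, collecting more real samples is usually preferable to relying on augmentation alone.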

Dataset Partitioning

Split the fully annotated and curated dataset into three distinct subsets to monitor for overfitting during model training.

- Training Set (70-80%): Used to train the ResNet50 model.

- Validation Set (10-15%): Used to tune hyperparameters (like learning rate) and evaluate model performance during training.

- Test Set (10-15%): A held-out set used only once, at the very end, to provide an unbiased evaluation of the final model's generalization ability.
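A stratified split of this kind can be sketched with the standard library alone. The example below assumes a 70/15/15 split and image-level string labels; the function name, the seed, and the label list are illustrative.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def stratified_split(
    labels: Sequence[str],
    fractions: Tuple[float, float, float] = (0.70, 0.15, 0.15),
    seed: int = 0,
) -> Dict[str, List[int]]:
    """Split sample indices into train/val/test while preserving per-class proportions."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    by_class: Dict[str, List[int]] = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)
    splits: Dict[str, List[int]] = {"train": [], "val": [], "test": []}
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        splits["train"] += indices[:n_train]
        splits["val"] += indices[n_train:n_train + n_val]
        splits["test"] += indices[n_train + n_val:]   # remainder goes to the test set
    return splits


# Hypothetical label list: 100 images of one class and 40 of another.
labels = ["ascaris"] * 100 + ["enterobius"] * 40
splits = stratified_split(labels)
```

If several images come from the same slide or patient, splitting by slide or patient rather than by image avoids near-duplicate fields leaking from the training set into the test set.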

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and tools essential for creating a high-quality dataset for parasitic egg classification.

Table 2: Essential Research Reagents and Tools for Data Curation

| Item | Function / Explanation |
|---|---|
| High-Resolution Microscope Camera | Captures detailed images necessary for distinguishing subtle morphological features of different parasite eggs. |
| Standard Parasitological Stains | Enhances visual contrast of eggs against the background, aiding both human and automated identification. |
| Data Annotation Platform | Software used to efficiently label images. Platforms like Label Your Data or SuperAnnotate streamline this process [24]. |
| Image Processing Libraries | Software libraries for implementing preprocessing algorithms like BM3D and CLAHE [23]. |
| Augmentation Pipelines | Automated pipelines that apply transformations to training images, increasing dataset diversity and size. |
| Domain Expert (Parasitologist) | Validates annotations to ensure biological accuracy, a critical step for building a reliable ground-truth dataset [22]. |

Within the domain of medical parasitology, automated diagnostic systems leveraging deep learning offer a promising avenue to address the limitations of manual microscopic examination, which is time-consuming, labor-intensive, and prone to human error [3] [6]. Transfer learning enables researchers to adapt powerful pre-trained models for specific tasks with limited data, making it particularly suitable for biomedical applications like parasite egg classification [25]. This protocol details the implementation of transfer learning using the ResNet50 architecture, a robust convolutional neural network, specifically framed within the context of parasite egg classification research. By modifying the classifier head of a ResNet50 model pre-trained on the ImageNet dataset, researchers can efficiently develop highly accurate classifiers for identifying and categorizing parasitic eggs in microscopic images [26] [6].

Key Concepts and Definitions

Transfer Learning: A machine learning technique where a model developed for one task is reused as the starting point for a model on a second task. In deep learning, this involves using a pre-trained model and adapting it to a new, similar problem, saving significant time and computational resources while often improving performance, especially with limited data [25].

Feature Extraction: One of the two main approaches in transfer learning. It involves using the representations learned by a pre-trained model to extract meaningful features from new samples. The pre-trained model's convolutional base is used as a fixed feature extractor, and only the newly added classifier layers are trained on the target dataset [27].

Fine-Tuning: The second main approach, which involves unfreezing some of the top layers of a frozen model base and jointly training both the newly-added classifier layers and the last layers of the base model. This allows "fine-tuning" of the higher-order feature representations in the base model to make them more relevant for the specific task [27].

ResNet50 (Residual Network): A 50-layer deep convolutional neural network architecture known for its use of residual connections, or "skip connections," which help mitigate the vanishing gradient problem in very deep networks, enabling the training of effective models with many layers [25].
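The idea behind a skip connection can be shown with a toy example. The sketch below uses dense layers in NumPy purely for illustration; the actual ResNet50 blocks use convolutions with batch normalization. With all-zero weights the residual function contributes nothing, so the block passes its (non-negative) input through unchanged, which is why very deep stacks of such blocks remain easy to optimize.

```python
import numpy as np


def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)


def residual_block(x: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """Compute relu(F(x) + x), where F is two linear maps with a ReLU in between."""
    fx = relu(x @ w1) @ w2   # the residual function F(x)
    return relu(fx + x)      # the skip connection adds the input back before the final ReLU


rng = np.random.default_rng(seed=0)
x = rng.random((4, 8))       # batch of 4 feature vectors with non-negative entries
w_zero = np.zeros((8, 8))
# With all-zero weights F(x) = 0, so the block reduces to the identity on non-negative inputs.
identity_out = residual_block(x, w_zero, w_zero)
```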

Table 1: Performance Metrics of Deep Learning Models in Parasite Egg Classification

| Model/Approach | Task | Accuracy | Precision | Recall/Sensitivity | F1-Score | mAP |
|---|---|---|---|---|---|---|
| CoAtNet [6] | Parasitic egg recognition | 93% | - | - | 93% | - |
| CNN Classifier [23] | Parasitic egg classification | 97.38% | - | - | 97.67% (macro avg) | - |
| U-Net + CNN [23] | Parasitic egg segmentation & classification | 96.47% (pixel) | 97.85% | 98.05% | - | - |
| YCBAM (YOLO + CBAM) [3] | Pinworm egg detection | - | 99.71% | 99.34% | - | 99.50% |
| Transfer Learning with ResNet50 (General Example) [26] | CIFAR-10 classification | 94% (training) | - | - | - | - |
| Transfer Learning with ResNet50 (General Example) [28] | Furniture classification | 97% (test) | - | - | - | - |

Table 2: Comparison of Transfer Learning Approaches

| Approach | Training Data Requirements | Computational Cost | Training Time | Typical Use Cases |
|---|---|---|---|---|
| Feature Extraction [25] | Low | Low | Very Fast (e.g., 30 seconds [28]) | Limited data, Similar domain |
| Fine-Tuning [27] | Medium | Medium to High | Moderate to Slow | Sufficient data, Domain adaptation needed |
| Training from Scratch | Very High | Very High | Slow (Hours to Days) | Very large datasets, Unique features |

Experimental Protocols

Protocol 1: Feature Extraction with ResNet50 for Parasite Egg Classification

Purpose: To adapt a pre-trained ResNet50 model for parasite egg classification using the feature extraction approach, ideal for limited datasets.

Materials and Reagents:

- Pre-trained ResNet50 model (Keras/TensorFlow)

- Parasite egg image dataset (e.g., Chula-ParasiteEgg-11 [6])

- Python environment with TensorFlow/Keras

- GPU-accelerated computing resources (recommended)

Procedure:

- Data Preprocessing:

- Load and resize parasite egg images to 224×224 pixels (default input size for ResNet50).

- Apply the preprocessing function specific to ResNet50 (tf.keras.applications.resnet50.preprocess_input).

- Split data into training and validation sets (e.g., 80/20 split).

- Implement data augmentation (rotation, flipping, zooming) to increase dataset diversity.

Model Preparation:

- Load the pre-trained ResNet50 model with ImageNet weights, excluding the top classification layer (include_top=False).

- Freeze all layers in the ResNet50 base model to prevent their weights from being updated during training.

- Add a new classifier head consisting of:

- Global Average Pooling layer

- Optional: Dropout layer (e.g., rate=0.2) for regularization

- Final Dense layer with softmax activation and units equal to the number of parasite egg classes

Model Compilation:

- Compile the model with an optimizer (e.g., RMSprop or Adam) with a low learning rate (e.g., 0.0001).

- Specify loss function (categorical crossentropy for multi-class classification).

- Define evaluation metrics (e.g., accuracy).

Model Training:

- Train the model using the augmented parasite egg training dataset.

- Use early stopping callback to prevent overfitting.

- Monitor validation accuracy to evaluate performance.

Model Evaluation:

- Evaluate the trained model on the held-out test set of parasite egg images.

- Generate confusion matrix and classification report.

- Visualize activation heatmaps to interpret model decisions [29].
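The model preparation and compilation steps of Protocol 1 can be sketched with the Keras API as follows. This is an illustrative outline under stated assumptions, not a reference implementation: the function name `build_feature_extractor` is hypothetical, input images are assumed to have been passed through tf.keras.applications.resnet50.preprocess_input already, labels are assumed to be one-hot encoded, and the Adam optimizer is one of the two options the protocol names.

```python
from typing import Optional


def build_feature_extractor(num_classes: int, weights: Optional[str] = "imagenet"):
    """Build a frozen ResNet50 base with a new softmax head (requires TensorFlow)."""
    import tensorflow as tf  # imported here so this module loads without TensorFlow installed

    base = tf.keras.applications.ResNet50(
        weights=weights, include_top=False, input_shape=(224, 224, 3)
    )
    base.trainable = False  # freeze every layer of the pre-trained base

    inputs = tf.keras.Input(shape=(224, 224, 3))  # expects already preprocessed images
    x = base(inputs, training=False)              # keep batch-norm layers in inference mode
    x = tf.keras.layers.GlobalAveragePooling2D()(x)
    x = tf.keras.layers.Dropout(0.2)(x)           # optional regularization from the protocol
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)

    model = tf.keras.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

A call such as `build_feature_extractor(num_classes=11)` would match an 11-class dataset. During training, a `tf.keras.callbacks.EarlyStopping` callback monitoring the validation loss covers the early-stopping step.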

Protocol 2: Fine-Tuning ResNet50 for Enhanced Parasite Egg Detection

Purpose: To further improve model performance by unfreezing and fine-tuning the higher-level layers of the ResNet50 base model.

Materials and Reagents:

- ResNet50 model with feature extraction layers trained using Protocol 1

- Extended parasite egg image dataset

- Python environment with TensorFlow/Keras

Procedure:

- Model Preparation:

- Start with the model trained in Protocol 1.

- Unfreeze a portion of the upper layers of the ResNet50 base model (typically the last 10-20% of layers).

- Keep the earlier layers frozen as they contain more generic features.

Model Re-compilation:

- Compile the model with an even lower learning rate (e.g., 0.00001) to avoid disrupting the previously learned features.

Model Training:

- Train the model for additional epochs using the parasite egg dataset.

- Closely monitor validation loss to detect overfitting.

- Implement learning rate reduction on plateau if necessary.

Model Evaluation:

- Evaluate the fine-tuned model on the test set.

- Compare performance metrics with the feature extraction-only model.

- Utilize visualization techniques to compare feature representations before and after fine-tuning [29].
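The unfreezing and re-compilation steps of Protocol 2 can be outlined as below. The sketch assumes `model` is a Keras classifier built on a ResNet50 sub-model `base`, that labels are one-hot encoded, and that the top 20% of base layers are unfrozen; both function names are hypothetical, and the 175-layer figure in the example is only a placeholder for `len(base.layers)`.

```python
def first_trainable_index(num_layers: int, trainable_fraction: float) -> int:
    """Index of the first layer to unfreeze when the last `trainable_fraction` of layers is trained."""
    if not 0.0 < trainable_fraction <= 1.0:
        raise ValueError("trainable_fraction must be in (0, 1]")
    return num_layers - max(1, round(num_layers * trainable_fraction))


def unfreeze_top_layers(model, base, trainable_fraction: float = 0.2, learning_rate: float = 1e-5):
    """Unfreeze the top of `base` and recompile `model` with a low learning rate (requires TensorFlow)."""
    import tensorflow as tf  # imported lazily so the helper above works without TensorFlow

    base.trainable = True
    cut = first_trainable_index(len(base.layers), trainable_fraction)
    for layer in base.layers[:cut]:
        layer.trainable = False   # earlier, more generic layers stay frozen
    model.compile(                # recompiling is needed for the trainable changes to take effect
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model


# With a hypothetical 175-layer base, training the last 20% unfreezes layers 140 onward.
cut_example = first_trainable_index(175, 0.2)
```

For the learning-rate reduction step, `tf.keras.callbacks.ReduceLROnPlateau` monitoring the validation loss is one available option.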

Workflow Visualization

Diagram 1: ResNet50 Transfer Learning Workflow for Parasite Egg Classification. This diagram illustrates the complete pipeline from input microscopic images to parasite egg classification output, highlighting the frozen pre-trained base and trainable custom classifier head.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Transfer Learning in Parasite Egg Classification

| Tool/Reagent | Function | Specifications/Alternatives |

|---|---|---|

| ResNet50 Pre-trained Model | Provides foundational feature extraction capabilities trained on ImageNet | Input size: 224×224×3; 50 layers deep; Residual connections |

| Chula-ParasiteEgg Dataset [6] | Benchmark dataset for training and evaluation | 11,000 microscopic images; 11 parasite egg categories |

| TensorFlow/Keras Framework | Deep learning framework for implementation | Python-based; Supports transfer learning workflows |

| Data Augmentation Pipeline | Increases effective dataset size and model robustness | Operations: rotation, flip, zoom, contrast adjustment |

| GPU Acceleration | Speeds up model training process | NVIDIA GPUs with CUDA support recommended |

| Grad-CAM Visualization [29] | Generates activation heatmaps for model interpretability | Highlights regions of input image most relevant to classification |

| Out-of-Domain Detection [30] | Identifies non-parasite egg images in real-world deployment | Thresholding methods (SoftMax, ODIN) to detect irrelevant inputs |
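As an illustration of the SoftMax thresholding listed in the last row of the table, the sketch below rejects an input when the highest class probability falls under a cutoff. The threshold of 0.7, the function names, and the three class labels are hypothetical choices for the example. ODIN additionally combines temperature scaling with a small input perturbation; only the temperature parameter is represented here.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def classify_or_reject(logits, class_names, threshold=0.7, temperature=1.0):
    """Return (label, confidence); label is None when confidence < threshold."""
    probs = softmax(logits, temperature)
    best = max(range(len(probs)), key=probs.__getitem__)
    confidence = probs[best]
    label = class_names[best] if confidence >= threshold else None
    return label, confidence


# Illustrative class list and logits (not taken from any cited study).
classes = ["Ascaris lumbricoides", "Trichuris trichiura", "hookworm"]
print(classify_or_reject([6.0, 1.0, 0.5], classes))  # one dominant logit: label kept
print(classify_or_reject([1.1, 1.0, 0.9], classes))  # near-uniform logits: rejected
```

In practice the threshold would be tuned on a held-out set that contains both parasite egg images and irrelevant inputs such as debris or empty fields.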

Strategic Freezing and Fine-Tuning of Model Layers

Transfer learning has emerged as a pivotal technique in computational parasitology, enabling the development of robust diagnostic models even with limited medical image datasets. This approach leverages knowledge from pre-trained models, significantly reducing training time and computational costs while enhancing performance. The strategic decision of which layers to freeze and which to fine-tune represents a critical methodological consideration that directly impacts model efficacy, generalizability, and computational efficiency. Within parasite egg classification research, transfer learning has demonstrated remarkable success, with pre-trained models like ResNet-50 achieving high accuracy by adapting learned feature hierarchies from natural images to the distinct morphological patterns of parasitic structures [6] [1]. This protocol details systematic approaches for layer freezing and fine-tuning specifically contextualized within ResNet-50 architectures for parasitic egg classification, providing researchers with evidence-based methodologies to optimize model performance for this specialized domain.

Background and Rationale

The ResNet-50 Architecture in Medical Imaging

The ResNet-50 architecture has established itself as a cornerstone in medical image analysis due to its residual learning framework that mitigates vanishing gradient problems in deep networks. The model comprises five primary stages: an initial stem convolution and max-pooling layer, followed by four hierarchical stages containing 3, 4, 6, and 3 residual blocks respectively. Each residual block contains multiple convolutional layers with batch normalization and ReLU activation, progressively extracting more abstract representations through its depth [31]. In parasite egg classification, this hierarchical feature extraction proves particularly valuable, as early layers capture universal low-level features like edges and textures relevant to egg shell morphology, while deeper layers encode more specialized representations that may require adaptation to recognize species-specific parasitic characteristics [6] [1].
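The stage layout described above also explains the "50" in the model's name. The short check below assumes the standard bottleneck design with three convolutions per residual block, and maps the five stages onto the attribute names used by torchvision's ResNet-50 implementation; the dictionary names are introduced only for this example.

```python
# Residual (bottleneck) blocks per stage, as listed in the text for stages 2-5.
BLOCKS_PER_STAGE = {"stage2": 3, "stage3": 4, "stage4": 6, "stage5": 3}
CONVS_PER_BOTTLENECK = 3  # 1x1 -> 3x3 -> 1x1 convolutions in each block

total_blocks = sum(BLOCKS_PER_STAGE.values())
# Stem convolution + convolutions inside the blocks + final fully connected layer.
weighted_layers = 1 + total_blocks * CONVS_PER_BOTTLENECK + 1

# How the five stages correspond to torchvision attribute names.
TORCHVISION_NAMES = {
    "stage1": ("conv1", "bn1", "relu", "maxpool"),
    "stage2": ("layer1",),
    "stage3": ("layer2",),
    "stage4": ("layer3",),
    "stage5": ("layer4",),
}

print(total_blocks, weighted_layers)
```

Projection convolutions on the shortcut paths are not included in this count, which follows the usual convention for naming the architecture.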

Theoretical Basis for Strategic Layer Freezing

The fundamental principle underlying strategic layer freezing stems from the observation that deep convolutional neural networks learn features hierarchically. Early layers typically learn general-purpose visual patterns (edges, gradients, basic shapes) that remain largely transferable across domains, while later layers develop increasingly specialized representations tuned to the original training dataset [31]. Studies in medical imaging, including work on parasitic egg classification, report that selectively fine-tuning only the deeper layers of a pre-trained model often yields better performance than either training from scratch or fine-tuning the entire network [6]. This approach balances domain adaptation against the preservation of valuable generalized features, while also reducing computational requirements and mitigating overfitting on the small datasets typical of medical imaging [1].

Quantitative Performance Comparison

Table 1: Performance of various deep learning models in parasite egg classification and related medical imaging tasks

| Model Architecture | Application | Accuracy | Precision | Recall | F1-Score | Parameters |

|---|---|---|---|---|---|---|

| ResNet-50 (Fine-tuned) | Parasite Egg Classification | 93% [6] | - | - | 93% [6] | - |

| CoAtNet | Parasite Egg Classification | 93% [6] | - | - | 93% [6] | - |

| 3-layer CNN | Parasite Egg Classification | 93% [6] | - | - | - | - |

| VGG-16 (Fine-tuned) | Osteoporosis Classification | 88% [31] | - | - | - | - |

| ResNet-50 | Osteoporosis Classification | 90% [31] | - | - | - | - |

| YAC-Net | Parasite Egg Detection | - | 97.8% [1] | 97.7% [1] | 97.73% [1] | 1,924,302 [1] |

| YCBAM | Pinworm Egg Detection | - | 99.71% [3] | 99.34% [3] | - | - |

Table 2: Performance impact of fine-tuning strategies on ResNet-50 across medical applications

| Fine-Tuning Strategy | Application Domain | Performance Metric | Result | Comparative Baseline |

|---|---|---|---|---|

| Full Fine-tuning | Osteoporosis Classification | Accuracy | 90% [31] | 83% (No Fine-tuning) [31] |

| Partial Fine-tuning (Later Layers) | Alzheimer's Disease Prediction | Accuracy | 83% [32] | 63% (Baseline 3D-CNN) [32] |

| Transfer Learning | Breast Cancer Response Prediction | Balanced Accuracy | 86% [33] | - |

| Feature Extraction + Classifier | Parasite Egg Classification | Accuracy | 93% [6] | 66% (3-layer CNN) [31] |

Experimental Protocols

Protocol 1: Progressive Layer Unfreezing for ResNet-50

Objective: To systematically adapt a ResNet-50 model for parasite egg classification while minimizing overfitting risks through controlled layer unfreezing.

Materials:

- Pre-trained ResNet-50 model (ImageNet weights)

- Parasite egg image dataset (e.g., Chula-ParasiteEgg with 11,000 images) [6]

- Deep learning framework (PyTorch or TensorFlow)

- GPU-enabled computational environment

Methodology:

- Initial Setup: Remove the original classification head of ResNet-50 and replace it with a new head appropriate for parasite egg classes (typically 2-20 classes depending on parasite diversity).

- Phase 1 - Feature Extractor Freezing:

- Freeze all ResNet-50 backbone layers

- Train only the new classification head for 20-50 epochs

- Use moderate learning rate (1e-3 to 1e-4)

- Monitor validation loss for early stopping

- Phase 2 - Intermediate Layer Fine-tuning:

- Unfreeze stages 4 and 5 of ResNet-50 (the last 9 residual blocks: 6 in stage 4 and 3 in stage 5)

- Reduce learning rate by factor of 10 (1e-4 to 1e-5)

- Train for additional 30-60 epochs

- Apply learning rate scheduling based on validation performance

- Phase 3 - Full Model Fine-tuning (Conditional):

- For large datasets (>5,000 images), consider unfreezing all layers

- Use minimal learning rate (1e-5 to 1e-6)

- Apply strong regularization (weight decay, dropout)

- Limited epochs (20-30) to prevent overfitting
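The three phases can be expressed as a small schedule keyed on parameter-name prefixes. This is a sketch under stated assumptions: it uses torchvision's ResNet-50 naming, where `layer3` and `layer4` correspond to stages 4 and 5 and the replacement head is stored in `fc`. The learning rates and epoch caps are single values picked from the ranges given above, and `PHASES` and `apply_phase` are hypothetical names.

```python
from types import SimpleNamespace

# Trainable parameter-name prefixes per phase (torchvision naming assumed).
PHASES = {
    1: {"prefixes": ("fc.",), "lr": 1e-3, "max_epochs": 50},
    2: {"prefixes": ("fc.", "layer3.", "layer4."), "lr": 1e-4, "max_epochs": 60},
    3: {"prefixes": ("",), "lr": 1e-5, "max_epochs": 30},  # "" matches every name
}


def apply_phase(named_parameters, phase):
    """Set `requires_grad` according to the phase; return the trainable names."""
    prefixes = PHASES[phase]["prefixes"]
    trainable = []
    for name, param in named_parameters:
        param.requires_grad = name.startswith(prefixes)
        if param.requires_grad:
            trainable.append(name)
    return trainable


# Stand-in parameters so the sketch runs without PyTorch installed.
demo = [
    (name, SimpleNamespace(requires_grad=True))
    for name in (
        "conv1.weight",
        "layer1.0.conv1.weight",
        "layer3.5.conv3.weight",
        "layer4.2.bn3.bias",
        "fc.weight",
        "fc.bias",
    )
]
for phase in (1, 2, 3):
    print(phase, PHASES[phase]["lr"], apply_phase(demo, phase))
```

With PyTorch, `model.named_parameters()` could be passed in directly, and the optimizer would be rebuilt over the trainable parameters at the start of each phase. Note that setting `requires_grad` to false does not stop batch-normalization layers from updating their running statistics in training mode; putting frozen modules into evaluation mode is a separate step.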

Validation: Perform five-fold cross-validation to ensure robustness of results [1]. Compute precision, recall, and F1-score in addition to accuracy, as class imbalance is common in parasitological datasets.
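To show why accuracy alone can mislead on imbalanced data, the sketch below computes per-class precision, recall, and F1-score and their macro averages in plain Python. The labels and predictions are invented for illustration, and the helper names are hypothetical; a library such as scikit-learn would normally be used for the same calculation.

```python
def per_class_metrics(y_true, y_pred):
    """Return {label: {"precision", "recall", "f1"}} for every observed label."""
    pairs = list(zip(y_true, y_pred))
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        report[label] = {"precision": precision, "recall": recall, "f1": f1}
    return report


def macro_average(report, metric):
    """Unweighted mean of one metric across classes."""
    return sum(scores[metric] for scores in report.values()) / len(report)


# Invented example: a model that always predicts the majority class.
y_true = ["ascaris"] * 8 + ["taenia"] * 2
y_pred = ["ascaris"] * 10

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
report = per_class_metrics(y_true, y_pred)
print(f"accuracy={accuracy:.2f}")
print(f"macro F1={macro_average(report, 'f1'):.2f}")
print(f"taenia recall={report['taenia']['recall']:.2f}")
```

Here accuracy looks acceptable while the minority class is never detected, which the macro-averaged F1-score and per-class recall make visible.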

Protocol 2: Differential Learning Rate Strategy

Objective: To implement layer-specific learning rates that decrease progressively from later to earlier layers in the network.

Materials:

- As in Protocol 1

- Learning rate scheduler supporting per-layer rates

Methodology:

- Layer Grouping: Divide ResNet-50 into three logical groups:

- Group A: Classification head (highest learning rate: 1e-3 to 1e-4)

- Group B: Stages 4-5 (medium learning rate: 1e-4 to 1e-5)

- Group C: Stages 1-3 (lowest learning rate: 1e-5 to 1e-6)

- Simultaneous Training: Train all groups simultaneously with their respective learning rates

- Adaptive Adjustment: Monitor loss convergence for each group and adjust rates accordingly

- Regularization: Apply L2 regularization (weight decay = 1e-4) and data augmentation specific to microscopic images (rotation, flipping, brightness/contrast variation)

Validation: Compare training and validation curves across groups to detect overfitting or underfitting in specific network segments.
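The layer grouping can be sketched as a function that sorts parameters into groups and attaches a learning rate to each. The output is a list of dictionaries with `params`, `lr`, and `weight_decay` keys, which is the per-group format accepted by PyTorch optimizers. The prefixes assume torchvision's ResNet-50 naming (`layer3` and `layer4` for stages 4-5, `fc` for the head), the rates are single values chosen from the ranges above, and `GROUP_RULES` and `build_param_groups` are hypothetical names.

```python
# (group name, parameter-name prefixes, learning rate); first match wins.
GROUP_RULES = (
    ("head", ("fc.",), 1e-3),                 # Group A: classification head
    ("late", ("layer3.", "layer4."), 1e-4),   # Group B: stages 4-5
    ("early", ("",), 1e-5),                   # Group C: everything else (stages 1-3)
)


def build_param_groups(named_parameters, weight_decay=1e-4):
    """Assign each parameter to the first matching group and attach its rate."""
    groups = {
        name: {"params": [], "lr": lr, "weight_decay": weight_decay}
        for name, _, lr in GROUP_RULES
    }
    for param_name, param in named_parameters:
        for group_name, prefixes, _ in GROUP_RULES:
            if param_name.startswith(prefixes):
                groups[group_name]["params"].append(param)
                break
    # Drop empty groups so an optimizer never receives an empty parameter list.
    return [group for group in groups.values() if group["params"]]


# Strings stand in for parameter tensors so the sketch runs without PyTorch.
demo_params = [
    ("conv1.weight", "p0"),
    ("layer2.0.conv1.weight", "p1"),
    ("layer4.1.conv2.weight", "p2"),
    ("fc.bias", "p3"),
]
for group in build_param_groups(demo_params):
    print(group["lr"], group["params"])
```

With PyTorch, the result of `build_param_groups(model.named_parameters())` could be passed as the first argument to an optimizer such as `torch.optim.AdamW`, so that all three groups train simultaneously at their own rates.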

Protocol 3: Attention-Enhanced Fine-tuning

Objective: To integrate attention mechanisms with ResNet-50 fine-tuning for improved focus on parasite egg morphological features.

Materials:

- As in Protocol 1

- Convolutional Block Attention Module (CBAM) implementation [3]

Methodology:

- Architecture Modification: Insert CBAM modules after stages 3, 4, and 5 of ResNet-50

- Selective Freezing: Initially freeze all original ResNet-50 layers, train only CBAM modules and classification head

- Progressive Unfreezing: Unfreeze ResNet-50 stages sequentially while maintaining CBAM modules trainable

- Multi-task Learning: Optionally add auxiliary segmentation heads to reinforce spatial awareness

Validation: Utilize gradient-weighted class activation mapping (Grad-CAM) to visualize whether the model attends to morphologically relevant regions of parasite eggs.
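The core Grad-CAM computation is compact enough to show on plain nested lists. The sketch assumes the feature maps of the chosen convolutional layer and the gradients of the target class score with respect to them have already been extracted from the model (with hooks in PyTorch or a gradient tape in TensorFlow, for instance). The final rescaling to the range 0-1 is a common visualization convenience rather than part of the method itself, and the toy values are invented.

```python
def grad_cam(activations, gradients):
    """Compute a Grad-CAM heatmap.

    activations, gradients: nested lists shaped [channels][height][width],
    holding the feature maps and the gradients of the class score w.r.t. them.
    """
    height, width = len(activations[0]), len(activations[0][0])
    # Channel weights: global-average-pool each gradient map.
    weights = [sum(map(sum, grad)) / (height * width) for grad in gradients]
    # Weighted sum of feature maps followed by ReLU.
    cam = [
        [
            max(0.0, sum(w * fmap[i][j] for w, fmap in zip(weights, activations)))
            for j in range(width)
        ]
        for i in range(height)
    ]
    # Rescale to [0, 1] for display when the map is not all zeros.
    peak = max(map(max, cam))
    if peak > 0:
        cam = [[value / peak for value in row] for row in cam]
    return cam


# Toy input: channel 0 supports the class, channel 1 counts against it.
toy_activations = [[[1, 0], [0, 0]], [[0, 0], [0, 2]]]
toy_gradients = [[[1, 1], [1, 1]], [[-1, -1], [-1, -1]]]
heatmap = grad_cam(toy_activations, toy_gradients)
print(heatmap)
```

Upsampled to the input resolution and overlaid on the micrograph, such a heatmap indicates whether high values coincide with the egg shell and internal structures or with background debris.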

Research Reagent Solutions

Table 3: Essential research reagents and computational materials for transfer learning in parasite egg classification

| Reagent/Material | Specification/Example | Function in Research |

|---|---|---|

| Pre-trained Models | ResNet-50 (ImageNet weights), VGG-16, CoAtNet [31] [6] | Feature extraction backbone providing initial weights for transfer learning |

| Parasite Image Datasets | Chula-ParasiteEgg (11,000 images) [6], ICIP 2022 Challenge Dataset [1] | Benchmark data for model training and validation |

| Data Augmentation Tools | Albumentations, Torchvision Transforms | Generate synthetic training data through transformations, addressing limited dataset sizes |

| Attention Modules | CBAM [3], Self-Attention Mechanisms | Enhance feature representation by focusing on spatially relevant regions |

| Model Frameworks | PyTorch 1.12.1 [32], Python 3.8 [32] | Infrastructure for model implementation, training, and evaluation |

| Evaluation Metrics | Precision, Recall, F1-Score, mAP@0.5 [1] [3] | Quantify model performance across multiple dimensions |

Workflow Visualization

Strategic Freezing Workflow for ResNet-50 in Parasite Egg Classification

ResNet-50 Architecture with Strategic Freezing for Parasite Egg Classification

Strategic freezing and fine-tuning of model layers is a critical methodological consideration in transfer learning for parasitic egg classification. The experimental protocols outlined above provide structured approaches for maximizing model performance while conserving computational resources and mitigating overfitting. The studies cited here suggest that progressive unfreezing and differential learning rates tend to outperform both training from scratch and complete fine-tuning, with ResNet-50 reaching 93% accuracy in parasite egg classification [6]. Integrating attention mechanisms can further improve performance, particularly in challenging detection scenarios involving small objects or complex backgrounds [3]. As parasitological diagnostics increasingly adopt automated methods, these transfer learning strategies will be important for developing accurate, efficient, and deployable classification systems suitable for both clinical and resource-constrained settings.

Data Preprocessing and Augmentation Techniques for Enhanced Generalization

This application note details a comprehensive protocol for data preprocessing and augmentation, contextualized within a research project utilizing transfer learning with a ResNet50 architecture for the classification of parasite eggs in low-quality microscopic images. The methodologies described are designed to enhance model generalization, combat overfitting, and improve performance when working with limited and challenging datasets, which is a common scenario in biomedical research. The procedures outlined herein are tailored for an audience of researchers, scientists, and drug development professionals.

In the domain of medical image analysis, particularly for intestinal parasitic egg classification, the acquisition of large, high-quality, and expertly labeled datasets is a significant challenge. Deep learning models, such as Convolutional Neural Networks (CNNs), are data-hungry and prone to overfitting on small datasets. Transfer learning, which involves fine-tuning a model pre-trained on a large dataset like ImageNet, provides a powerful starting point [34] [5]. However, the domain shift between natural images (ImageNet) and medical microscopic images necessitates robust data preprocessing and augmentation strategies to ensure the model generalizes well to the target task. This document provides a step-by-step protocol for preparing and augmenting a dataset of low-cost microscopic images for a parasite egg classification task using a ResNet50 model.

Data Preprocessing Protocols

Proper data preprocessing is critical for standardizing input data and aligning it with the expectations of a pre-trained model. The following protocol is essential for preparing low-quality microscopic images.

Image Conversion and Enhancement

Function: To reduce computational complexity and improve the visibility of critical features in low-magnification, low-contrast images. Protocol: