Improving Cross-Validation Rates in Morphometric Classification: A Robust Framework for Biomedical Research

Abstract

Morphometric classification, powered by machine learning, is revolutionizing quantitative analysis in biomedical research, from neuron-glia discrimination to brain tumor diagnostics. However, the reliability of these models hinges on robust cross-validation practices, an area where methodological flaws can severely impact reproducibility. This article provides a comprehensive guide for researchers and drug development professionals, addressing the foundational principles, methodological applications, and critical optimization strategies for cross-validation in morphometric studies. We explore common pitfalls, such as statistical misinterpretations in repeated cross-validation, and present rigorous validation and comparative frameworks to ensure model accuracy and generalizability. By synthesizing insights from recent neuroimaging, cell biology, and entomology research, this work aims to establish best practices that enhance the validity and clinical translation of morphometric classification models.

The Critical Role of Cross-Validation in Morphometric Machine Learning

Core Concepts and Frequently Asked Questions

What is Morphometric Classification? Morphometric classification is a computational approach that quantifies and analyzes the shape, size, and structural properties of biological forms—from cellular components to entire organs—to identify patterns and build diagnostic models. In biomedical research, it leverages machine learning to classify conditions based on morphological features extracted from imaging data [1] [2] [3].

Why is Cross-Validation Critical in Morphometric Studies? Proper cross-validation is essential for obtaining reliable performance estimates and ensuring that classification models generalize to new data sources. Traditional k-fold cross-validation can lead to overoptimistic performance claims when the goal is to generalize to new data collection sites or populations. Leave-Source-Out Cross-Validation (LSO-CV) provides more realistic and reliable estimates by iteratively leaving out all data from one source during training and using it for testing [4].

What are Common Data Quality Issues Affecting Classification? When working with structural MRI data for morphometric analysis, several preprocessing errors can significantly impact downstream classification accuracy:

| Error Type | Impact on Classification | Recommended Fix |
|---|---|---|
| Skull Strip Errors [5] | Introduces non-brain tissue, corrupting feature extraction | Manually edit brainmask.mgz to remove residual non-brain tissue |
| Segmentation Errors [5] | Creates inaccuracies in gray/white matter boundaries, affecting regional measurements | Manually edit wm.mgz volume to fill holes or correct mislabeled regions |
| Topological Defects [5] | Prevents accurate surface-based measurements and feature calculation | Use automated topology fixing tools followed by manual verification |
| Intensity Normalization Errors [5] | Reduces comparability across subjects, increasing dataset variance | Re-run intensity normalization with adjusted parameters |

Troubleshooting Guide: Improving Cross-Validation Performance

Problem: My model performs well during k-fold CV but fails on external data.

- Root Cause: Data leakage or batch effects where the model learns source-specific artifacts rather than true biological signals [4].

- Solution: Implement Leave-Source-Out Cross-Validation. With data from multiple sources (e.g., hospitals, study sites), iteratively hold out all data from one entire source as the test set. This provides a nearly unbiased estimate of performance on new sources [4].
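A minimal sketch of LSO-CV using scikit-learn's `LeaveOneGroupOut`, with each acquisition site treated as a group. The synthetic features, the site offsets standing in for a batch effect, and the scaler-plus-logistic-regression model are illustrative assumptions, not the setup used in [4].

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import LeaveOneGroupOut, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical data: 4 sites x 60 subjects, 20 morphometric features each.
rng = np.random.default_rng(0)
n_sites, n_per_site, n_features = 4, 60, 20
sites = np.repeat(np.arange(n_sites), n_per_site)
y = rng.integers(0, 2, size=sites.size)
X = rng.normal(size=(sites.size, n_features))
X[y == 1, :3] += 0.8           # weak "biological" signal in 3 features
X += 0.5 * sites[:, None]      # site-specific offset (a batch effect)

model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
lso = LeaveOneGroupOut()
# Each split trains on all sites but one and tests on the held-out site.
scores = cross_val_score(model, X, y, groups=sites, cv=lso)
for site, score in zip(np.unique(sites), scores):
    print(f"held-out site {site}: accuracy = {score:.2f}")
print(f"LSO-CV mean accuracy: {scores.mean():.2f}")
```

Reporting the per-site scores alongside the mean makes the between-site variability of LSO-CV visible, which a single pooled k-fold number hides.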

Problem: High variance in cross-validation performance metrics.

- Root Cause: Insufficient data or high heterogeneity within classes, common in neuropsychiatric disorders like schizophrenia [1].

- Solution:

Problem: Morphometric features do not generalize across populations.

- Root Cause: Population-specific anatomical variations or differences in data acquisition protocols.

- Solution: For geometric morphometrics, carefully select a template configuration from your study sample for registering out-of-sample individuals. Test different template choices to minimize registration artifacts [3].

Experimental Protocols for Robust Morphometric Classification

Protocol 1: Constructing Morphometric Similarity Networks for Schizophrenia Classification

This protocol is adapted from a study that achieved 80.85% classification accuracy for schizophrenia patients vs. healthy controls [1].

1. Data Acquisition and Preprocessing:

- Acquire T1-weighted structural MRI scans using standardized protocols.

- Process the scans through FreeSurfer's `recon-all` pipeline to extract cortical surfaces and subcortical segmentations [1].

- Critical Step: Manually inspect the output for the common errors listed in the troubleshooting table above [5].

2. Feature Extraction:

- For each subject, extract multiple morphometric features from predefined brain regions:

- Cortical thickness

- Surface area

- Gray matter volume

- Mean curvature

- Gaussian curvature [1]

3. Individual Network Construction:

- Construct Morphometric Similarity Networks (MSNs) by calculating inter-regional similarity based on the multiple morphometric features [1].

4. Population Graph Formation:

- Create a population-level graph where nodes represent subjects (with MSN features) and edges represent similarity between subjects' topological features.

- Incorporate phenotypic information (e.g., age, sex) into edge construction.

- Apply thresholding to eliminate spurious connections [1].

5. Model Training and Validation:

- Implement Graph Convolutional Networks (GCNs) with variational edge learning to adaptively optimize edge weights.

- Use leave-source-out cross-validation to evaluate generalization across data collection sites [1] [4].
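Steps 3 and 4 can be sketched in NumPy. The sketch assumes one common MSN recipe (z-score each feature across regions, then take the Pearson correlation between regional feature vectors) and a hypothetical population-graph rule (cosine similarity of MSN edge vectors, weighted by sex and age agreement, then thresholded). The similarity measures, phenotype weighting, `threshold`, and `max_age_gap` are assumptions and may differ from [1]; the GCN itself is not shown.

```python
import numpy as np

def morphometric_similarity_network(features):
    """features: (n_regions, n_features) morphometric values for one subject."""
    # z-score each feature across regions so thickness, area, volume and
    # curvature values contribute on a comparable scale
    z = (features - features.mean(axis=0)) / features.std(axis=0)
    # Pearson correlation between every pair of regional feature vectors
    msn = np.corrcoef(z)
    np.fill_diagonal(msn, 0.0)  # ignore self-similarity
    return msn

def population_graph(msns, sex, age, threshold=0.5, max_age_gap=5.0):
    """Hypothetical subject-by-subject adjacency built from MSNs and phenotypes."""
    iu = np.triu_indices(msns[0].shape[0], k=1)
    # one vector per subject: the upper triangle of its MSN
    vecs = np.stack([m[iu] for m in msns])
    vecs = vecs / np.linalg.norm(vecs, axis=1, keepdims=True)
    sim = vecs @ vecs.T  # cosine similarity between subjects
    # phenotype agreement in {0, 1, 2}: same sex, plus age within max_age_gap
    pheno = (sex[:, None] == sex[None, :]).astype(float)
    pheno += np.abs(age[:, None] - age[None, :]) <= max_age_gap
    adj = sim * pheno
    adj[adj < threshold] = 0.0  # drop weak, likely spurious edges
    np.fill_diagonal(adj, 0.0)
    return adj

# Usage on random stand-in data: 10 subjects, 68 regions, 5 features.
rng = np.random.default_rng(0)
msns = [morphometric_similarity_network(rng.normal(size=(68, 5))) for _ in range(10)]
sex = rng.integers(0, 2, size=10)
age = rng.uniform(20, 60, size=10)
A = population_graph(msns, sex, age)
print(A.shape)  # (10, 10)
```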

Protocol 2: Geometric Morphometric Classification for Nutritional Status

This protocol outlines the geometric morphometrics approach for classifying children's nutritional status from arm shape images [3].

1. Data Collection:

- Capture standardized photographs of the left arm under consistent lighting, positioning, and camera distance.

- Collect traditional anthropometric measurements (weight, height, mid-upper arm circumference) for ground truth labeling [3].

2. Landmarking and Registration:

- Place anatomical landmarks and semi-landmarks along the arm contour.

- For out-of-sample classification: Register new individuals to a template configuration from the reference sample using Procrustes analysis [3].

3. Model Development and Testing:

- Apply linear discriminant analysis, neural networks, or support vector machines to the aligned coordinates.

- Strictly separate training and test sets at the study design phase—do not perform joint Procrustes alignment on the entire dataset before splitting [3].
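The train/test separation above can be sketched as follows: generalized Procrustes analysis (GPA) is fitted on the training configurations only, and each out-of-sample specimen is then registered to the resulting template by ordinary Procrustes superimposition. This is a minimal NumPy sketch assuming 2D landmark arrays of shape `(n_landmarks, 2)`; it treats semi-landmarks as fixed points (no sliding) and uses the training mean shape as the template, which is only one of the template choices discussed in [3].

```python
import numpy as np

def center_and_scale(shape):
    """Remove position and scale: centre on the centroid, unit centroid size."""
    shape = shape - shape.mean(axis=0)
    return shape / np.linalg.norm(shape)

def align_to(shape, reference):
    """Ordinary Procrustes: rotate a centred, scaled shape onto the reference."""
    shape = center_and_scale(shape)
    u, _, vt = np.linalg.svd(shape.T @ reference)
    if np.linalg.det(u @ vt) < 0:  # disallow reflections
        u[:, -1] *= -1
    return shape @ (u @ vt)

def fit_gpa(train_shapes, n_iter=10):
    """Generalized Procrustes analysis on the TRAINING specimens only."""
    template = center_and_scale(train_shapes[0])
    for _ in range(n_iter):
        aligned = np.stack([align_to(s, template) for s in train_shapes])
        template = center_and_scale(aligned.mean(axis=0))
    return aligned, template

# Hypothetical data: 20 training arms and 1 new child, 12 landmarks in 2D.
rng = np.random.default_rng(0)
train = [rng.normal(size=(12, 2)) for _ in range(20)]
new_child = rng.normal(size=(12, 2))

train_aligned, template = fit_gpa(train)
# The new specimen is registered to the fixed template; it never
# influences the template or the training coordinates.
new_aligned = align_to(new_child, template)
```

The flattened `train_aligned` coordinates would then train the classifier, and `new_aligned` is classified with it, so no test specimen ever shapes the reference frame.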

Quantitative Performance Data

Table 1: Classification Performance of Morphometric Similarity Network Approach (MSN-GCN) for Schizophrenia Detection [1]

| Metric | Performance | Experimental Details |
|---|---|---|
| Mean Accuracy | 80.85% | 377 patients vs. 590 healthy controls |
| Key Discriminatory Regions | Superior temporal gyrus, Postcentral gyrus, Lateral occipital cortex | Identified through saliency analysis |
| Dataset Size | 967 subjects | Multi-site data from 6 public databases |

Table 2: Cross-Validation Methods Comparison for Multi-Source Data [4]

| Cross-Validation Method | Bias | Variance | Recommended Use Case |
|---|---|---|---|
| K-Fold CV (Single-Source) | High (overoptimistic) | Low | Not recommended for multi-source studies |
| K-Fold CV (Multi-Source) | High (overoptimistic) | Low | Better than single-source, but still optimistic |
| Leave-Source-Out CV (LSO-CV) | Near zero | Moderate to high | Recommended for estimating generalization to new sites |

Table 3: Key Software Tools for Morphometric Analysis

| Tool Name | Function | Application Context |
|---|---|---|
| FreeSurfer [1] [5] | Automated cortical reconstruction and subcortical segmentation | Structural MRI analysis, morphometric feature extraction |
| NeuroMorph [6] | 3D mesh analysis and morphometric measurements | Analysis of segmented neuronal structures from electron microscopy |
| Nipype [7] | Pipeline integration and workflow management | Combining tools from different neuroimaging software packages |
| PyBIDS [8] | Dataset organization and querying | Managing data structured according to the Brain Imaging Data Structure (BIDS) |
| ANTs [7] | Image registration and segmentation | Structural MRI processing, spatial normalization |
| DIPY [7] | Diffusion MRI analysis | White matter mapping, tractography |

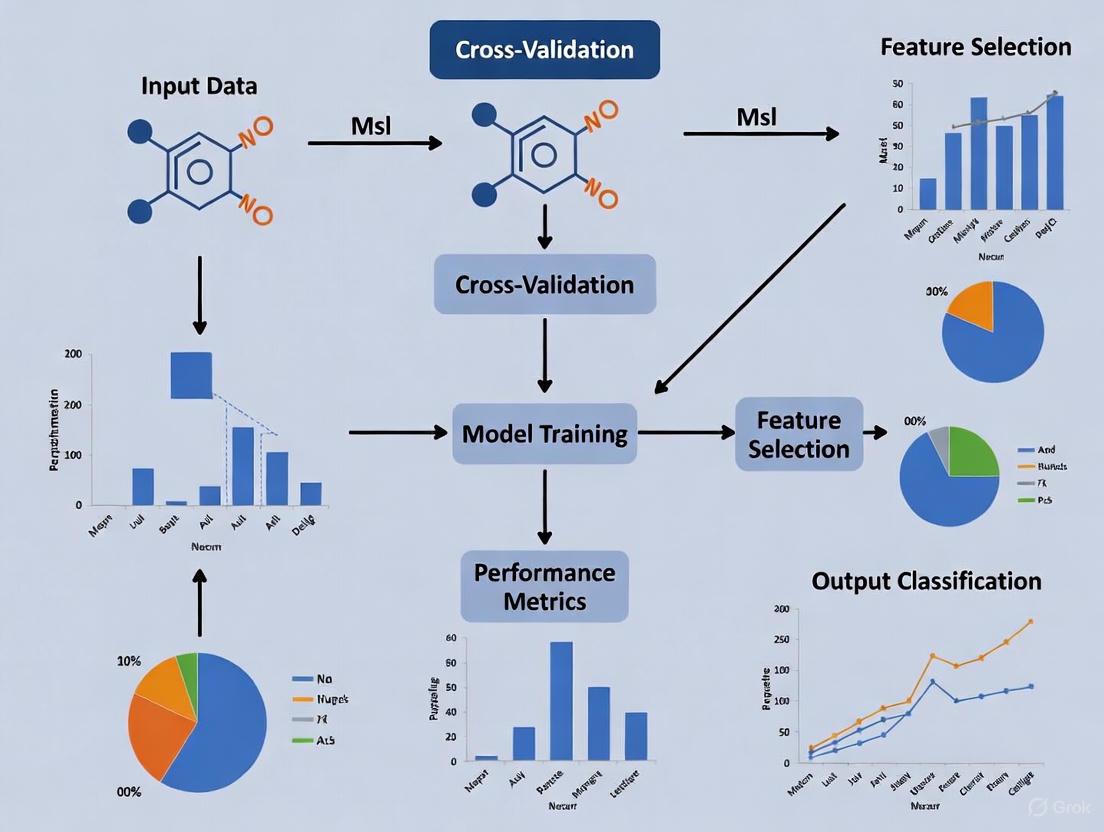

Workflow Visualization Diagrams

Diagram 1: Morphometric Similarity Network Classification Workflow

Diagram 2: Cross-Validation Strategies for Multi-Source Data

FAQs on Cross-Validation and Reproducibility

Q1: What is the core link between cross-validation and the reproducibility crisis in biomedical machine learning?

Reproducibility, the ability of independent researchers to reproduce a study's findings, is a cornerstone of science. However, many fields, including machine learning (ML) for healthcare and medical imaging, are experiencing a reproducibility crisis [9]. A common cause of irreproducible, over-optimistic results is the misapplication of ML techniques, specifically an incorrect setup of the training and test sets used to develop and evaluate a model [10]. Cross-validation is a core statistical procedure designed to provide a realistic estimate of a model's performance on unseen data. When implemented correctly, it exposes overfitting instead of hiding it and guards against selecting overfitted models, which makes it non-negotiable for producing reliable, reproducible findings [11] [12].

Q2: I'm getting great performance metrics during training, but my model fails on new data. What is the most likely cause?

The most probable cause is data leakage, a critical flaw where information from the test set inadvertently "leaks" into the training process [12]. This creates an overly optimistic performance estimate during development that does not generalize. Leakage can occur in several ways, but a common mistake in cross-validation is performing feature selection or data preprocessing (like normalization) before splitting the data into folds [13] [10]. Any step that learns from the data, such as estimating normalization statistics or selecting features, must be placed inside the cross-validation loop and fitted solely on the training folds of each split.
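The effect is easy to reproduce on pure noise. In this sketch (synthetic data; the sample size, feature count, `SelectKBest` with `k=20`, and logistic regression are arbitrary assumptions), selecting features on the full dataset before cross-validation inflates accuracy, whereas placing the selector inside a scikit-learn `Pipeline` keeps the estimate near chance.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Pure noise: 60 subjects, 5000 features, labels unrelated to the features.
rng = np.random.default_rng(42)
X = rng.normal(size=(60, 5000))
y = np.repeat([0, 1], 30)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# WRONG: the selector sees every sample, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=cv)

# RIGHT: the selector is refitted on the training folds of each split.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
honest = cross_val_score(pipe, X, y, cv=cv)

print(f"selection before CV: {leaky.mean():.2f}")
print(f"selection inside CV: {honest.mean():.2f}")
```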

Q3: For my morphometric classification study, should I use standard k-fold cross-validation?

It depends on your data structure. Standard k-fold is a good starting point, but it is often inappropriate for biomedical data. You should consider:

- Stratified k-fold: Use this if your classification classes are imbalanced (e.g., 80% healthy, 20% disease). It preserves the class percentage in each fold [13].

- Group k-fold: Use this if your data has natural groupings (e.g., multiple samples from the same patient, or measurements from the same lab). This ensures all samples from one group are in either the training or test set, preventing optimistic bias [13].

- Time Series Split: Use this for any longitudinal or time-course data to prevent the model from learning from the future to predict the past [14].
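The three splitters above exist in scikit-learn as `StratifiedKFold`, `GroupKFold`, and `TimeSeriesSplit`. This sketch uses a hypothetical cohort of 20 patients with 3 samples each and an 80/20 class imbalance, and checks the property each splitter is meant to guarantee; for `TimeSeriesSplit` it assumes the rows are already in chronological order.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, StratifiedKFold, TimeSeriesSplit

# Hypothetical cohort: 20 patients x 3 samples, 80% healthy (0) / 20% disease (1).
patients = np.repeat(np.arange(20), 3)
y = np.where(patients < 16, 0, 1)
X = np.zeros((len(y), 1))  # features are irrelevant to the splitting logic

# Stratified k-fold: every test fold keeps the 20% disease rate.
for _, test in StratifiedKFold(n_splits=4, shuffle=True, random_state=0).split(X, y):
    print("stratified test-fold disease rate:", y[test].mean())

# Group k-fold: no patient contributes samples to both train and test.
for train, test in GroupKFold(n_splits=4).split(X, y, groups=patients):
    assert not set(patients[train]) & set(patients[test])

# Time-series split: training indices always precede test indices.
for train, test in TimeSeriesSplit(n_splits=4).split(X):
    assert train.max() < test.min()
```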

Q4: How can I use cross-validation for hyperparameter tuning without biasing my results?

You must use nested cross-validation [14] [15]. A single cross-validation procedure used for both tuning and final performance estimation leads to optimistically biased results. Nested cross-validation features two loops:

- Inner Loop: Used on the training fold to perform hyperparameter tuning (e.g., via GridSearchCV).

- Outer Loop: Used to provide an unbiased estimate of the model's true generalization error after tuning is complete [15].
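In scikit-learn, nested cross-validation can be written compactly by passing a `GridSearchCV` object (the inner loop) to `cross_val_score` (the outer loop). The bundled `load_breast_cancer` dataset stands in for a morphometric feature table, and the linear SVM and grid of `C` values are assumptions for illustration.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)
pipe = make_pipeline(StandardScaler(), SVC(kernel="linear"))

# Inner loop: picks C using only the outer-training data it is given.
inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
tuned = GridSearchCV(pipe, {"svc__C": [0.01, 0.1, 1, 10]}, cv=inner_cv)

# Outer loop: each outer fold refits the whole search, then scores it on
# data the search never saw.
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)
nested_scores = cross_val_score(tuned, X, y, cv=outer_cv)
print(f"nested CV accuracy: {nested_scores.mean():.3f} +/- {nested_scores.std():.3f}")
```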

Troubleshooting Guide: Common Cross-Validation Pitfalls

Problem 1: Data Leakage and Over-Optimistic Performance

- Symptoms: High accuracy during cross-validation that drops significantly when the model is applied to a truly held-out test set or new external data.

- Root Cause: Information from the test set has been used to inform the training process. A survey of ML-based science found that data leakage affects at least 294 papers across 17 fields, leading to wildly overoptimistic conclusions [12].

- Solution:

- Implement a `Pipeline` that encapsulates all preprocessing steps and the model estimator together. Scikit-learn's `Pipeline` ensures that all transformations are fitted only on the training folds during cross-validation [11].

- Perform any feature selection that depends on the target variable (supervised selection) inside the cross-validation loop, not before the data is split [10].

Problem 2: High Variance in Cross-Validation Scores

- Symptoms: The performance metric (e.g., accuracy) varies widely across the different folds of cross-validation.

- Root Cause: The dataset may be too small, or the random splits may be unrepresentative due to underlying group structures or class imbalances.

- Solution:

- Repeat the cross-validation multiple times with new random splits and average the scores to lower the variance [13].

- Switch from standard k-fold to stratified or group k-fold as needed to ensure representative splits [13].

- Consider using a repeated k-fold strategy, which combines multiple rounds of k-fold cross-validation with different random splits.

Problem 3: Poor Generalization from Cross-Validation

- Symptoms: The final model, selected based on cross-validation performance, does not perform well in production.

- Root Cause: The cross-validation protocol may not accurately reflect the real-world predictive task. Furthermore, the model may have been overfitted to the cross-validation procedure itself by testing too many hyperparameter configurations.

- Solution:

Experimental Protocols & Data

Benchmarking ML Classifiers for Morphometric Classification

The following table summarizes the performance of various ML classifiers applied to a fruit fly morphometrics dataset, a representative morphometric classification task. It provides a benchmark for expected performance and highlights the importance of algorithm selection [16].

Table 1: Performance of Machine Learning Classifiers on Fruit Fly Morphometrics

| Classifier Model | Predictive Accuracy (%) | Kappa Statistic | Area Under Curve (AUC) | Notes |
|---|---|---|---|---|
| K-Nearest Neighbor (KNN) | 93.2 | N/A | N/A | Accuracy not significantly better than the "no-information rate" (p-value > 0.1) |
| Random Forest (RF) | 91.1 | 0.54 | N/A | Poor model; accuracy not better than random guessing (p-value > 0.1) |
| SVM (Linear Kernel) | 95.7 | 0.81 | 0.91 | Performance significantly better than random (p-value < 0.0001) |
| SVM (Radial Kernel) | 96.0 | 0.81 | 0.93 | Performance significantly better than random (p-value = 0.0002) |
| SVM (Polynomial Kernel) | 95.1 | 0.78 | 0.96 | Performance significantly better than random (p-value < 0.0001) |
| Artificial Neural Network (ANN) | 96.0 | 0.83 | 0.98 | Performance significantly better than random (p-value < 0.0001) |
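Why can 93% accuracy fail to beat the no-information rate? When one class dominates, always predicting the majority class already scores highly. The sketch below computes the no-information rate (NIR), a one-sided exact binomial test of accuracy against it, and Cohen's kappa. The labels and predictions are synthetic, and the test used in [16] is assumed, not confirmed, to be of this kind (it is, for example, what R's caret package reports as "P-Value [Acc > NIR]").

```python
import numpy as np
from scipy.stats import binomtest
from sklearn.metrics import accuracy_score, cohen_kappa_score

def accuracy_vs_nir(y_true, y_pred):
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    acc = accuracy_score(y_true, y_pred)
    # NIR: accuracy of always predicting the most frequent class
    nir = np.bincount(y_true).max() / len(y_true)
    n_correct = int(np.sum(y_true == y_pred))
    # One-sided exact test: is the accuracy greater than the NIR?
    p = binomtest(n_correct, len(y_true), nir, alternative="greater").pvalue
    kappa = cohen_kappa_score(y_true, y_pred)
    return acc, nir, p, kappa

# Hypothetical test set: 180 majority-class and 20 minority-class specimens.
y_true = np.array([0] * 180 + [1] * 20)
# The classifier gets every majority specimen right but only 6 of the 20
# minority specimens: 93% accurate overall.
y_pred = np.array([0] * 180 + [1] * 6 + [0] * 14)
acc, nir, p, kappa = accuracy_vs_nir(y_true, y_pred)
print(f"accuracy={acc:.3f}  NIR={nir:.3f}  p(acc > NIR)={p:.3f}  kappa={kappa:.2f}")
```

Here accuracy exceeds the 90% NIR by only three percentage points, which is not significant for 200 specimens, while the moderate kappa reflects how many minority specimens are missed.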

Detailed Protocol: Nested Cross-Validation for Robust Model Evaluation

This protocol ensures a rigorous and reproducible model assessment, critical for any biomedical ML study.

- Data Preparation: Start with a cleaned dataset. Define a final hold-out test set (e.g., 20% of the data) and set it aside. Do not use this set for any aspect of model development or tuning. The remaining 80% is your development set.

- Setup Cross-Validation Loops:

- Outer Loop: Configure a k-fold cross-validation (e.g., 5-folds) on the development set. This loop is for performance estimation.

- Inner Loop: Configure another k-fold cross-validation (e.g., 5-folds) within the training fold of the outer loop. This loop is for hyperparameter tuning.

- Model Training and Tuning: For each fold in the outer loop:

- Split the development data into `outer_train` and `outer_test` folds.

- On the `outer_train` fold, perform a grid or random search over hyperparameters. For each candidate hyperparameter set, run the inner-loop cross-validation.

- Select the hyperparameters that yield the best average performance across the inner folds.

- Train a model on the entire `outer_train` fold using these best hyperparameters.

- Performance Evaluation: Use this model to predict the `outer_test` fold and calculate the performance metric.

- Final Model: After completing the outer loop, average the performance metrics from all `outer_test` folds. This is your nearly unbiased performance estimate. To get a final model for deployment, train it on the entire development set using the hyperparameters found to be best on average, then evaluate it once on the hold-out test set defined in the first step.
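The protocol can be sketched end to end with an explicit outer loop. Assumptions: the bundled `load_breast_cancer` dataset stands in for a morphometric feature table, logistic regression with a grid over `C` stands in for the model, and "best on average" is interpreted as the `C` value chosen most often across outer folds (a simple majority vote; other conventions exist).

```python
from collections import Counter

import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
# Set aside a 20% hold-out test set that tuning never touches.
X_dev, X_hold, y_dev, y_hold = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=5000))
grid = {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]}
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
inner = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

outer_scores, chosen = [], []
for train_idx, test_idx in outer.split(X_dev, y_dev):
    # Inner loop: tune on outer_train only; refit=True retrains on all of it.
    search = GridSearchCV(pipe, grid, cv=inner)
    search.fit(X_dev[train_idx], y_dev[train_idx])
    # Score the tuned model on the untouched outer_test fold.
    outer_scores.append(search.score(X_dev[test_idx], y_dev[test_idx]))
    chosen.append(search.best_params_["logisticregression__C"])

print(f"nested CV estimate: {np.mean(outer_scores):.3f}")

# Final model: most frequently chosen C, refit on the whole development set,
# then evaluated exactly once on the hold-out set.
best_C = Counter(chosen).most_common(1)[0][0]
final = pipe.set_params(logisticregression__C=best_C).fit(X_dev, y_dev)
print(f"hold-out accuracy: {final.score(X_hold, y_hold):.3f}")
```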

Essential Tools & Workflows for Reproducible Research

The Scientist's Toolkit: Key Research Reagents & Software

Table 2: Essential Tools for Reproducible Biomedical ML Research

| Tool / Reagent | Type | Primary Function | Reference/Link |
|---|---|---|---|
| scikit-learn | Software Library | Provides unified interfaces for models, pipelines, and cross-validation. | https://scikit-learn.org [11] |
| RENOIR | Software Platform | Offers standardized pipelines for model training/testing with repeated sampling to evaluate sample size dependence. | https://github.com/alebarberis/renoir [10] |
| PSIS-LOO | Computational Method | An efficient method for approximating leave-one-out cross-validation, useful for Bayesian models. | https://avehtari.github.io/modelselection/CV-FAQ.html [17] |
| Stratified K-Fold | Algorithm | A resampling method that preserves the percentage of samples for each class in every fold. | scikit-learn documentation [13] [11] |
| Nested Cross-Validation | Experimental Protocol | A rigorous procedure for obtaining unbiased performance estimates when tuning model hyperparameters. | [14] [15] |

Workflow: Correct vs. Incorrect Cross-Validation

The following diagram illustrates a standardized, robust workflow for ML analysis that integrates proper cross-validation to avoid common pitfalls, inspired by tools like RENOIR [10].

Correct ML Workflow with Hold-Out Test Set

Conceptual Pitfall: The Danger of Data Leakage

This diagram visualizes the critical conceptual error of data leakage and its impact on model performance estimates, a key issue behind the reproducibility crisis [12].

Data Leakage in Cross-Validation

Frequently Asked Questions

1. What is the primary goal of cross-validation in model evaluation? Cross-validation is a resampling procedure used to estimate the skill of a machine learning model on unseen data. Its primary goal is to test the model's ability to predict new data that was not used in estimating it, thereby flagging problems like overfitting or selection bias and providing insight into how the model will generalize to an independent dataset [18] [19].

2. How do I choose between K-Fold, Leave-One-Out (LOOCV), and Repeated K-Fold validation? The choice depends on your dataset size, computational resources, and need for estimate stability.

- K-Fold is a good general-purpose choice [18] [20].

- LOOCV is suitable for very small datasets but is computationally expensive [21] [20].

- Repeated K-Fold provides a more robust and stable performance estimate by reducing the noise from a single run of K-Fold, making it ideal for small- to modestly-sized datasets where a less noisy estimate is critical [22].

3. I have an imbalanced dataset. Which cross-validation method should I use? For imbalanced datasets, standard K-Fold cross-validation can lead to folds with unrepresentative class distributions. It is recommended to use Stratified K-Fold Cross-Validation, which ensures that each fold has the same proportion of class labels as the full dataset. This helps the classification model generalize better [20] [13] [19].

4. What is a common mistake that leads to over-optimistic performance estimates during cross-validation? A common and critical mistake is information leakage. This occurs when data preparation (e.g., normalization, feature selection) is applied to the entire dataset before splitting it into training and validation folds. This allows information from the validation set to influence the training process. To avoid this, all preparation steps must be performed after the split, within the cross-validation loop, using only the training data to fit any parameters and then applying that fit to the validation data [18] [13].

5. Why should I use a separate test set even after performing cross-validation? Cross-validation is used for model selection and hyperparameter tuning. During this process, you might inadvertently overfit the model to the validation splits. Using a completely separate, held-out test set that was never used in any part of the model training or validation process provides a final, unbiased evaluation of how your model will perform on truly unseen data [13].

Comparison of Common Cross-Validation Schemes

The table below summarizes the key characteristics, advantages, and disadvantages of K-Fold, Leave-One-Out, and Repeated K-Fold cross-validation to help you select the appropriate method.

| Method | Description | Best For | Advantages | Disadvantages |
|---|---|---|---|---|
| K-Fold [18] [20] [19] | Dataset is randomly split into k equal-sized folds. The model is trained on k-1 folds and tested on the remaining one. This process is repeated k times. | General use on datasets of various sizes. A value of k=5 or k=10 is common. | Lower bias than a single train-test split; efficient use of data; good trade-off between dataset size and compute time. | A single run can give a noisy estimate of performance; results can vary based on the random splits. |
| Leave-One-Out (LOOCV) [21] [19] | A special case of K-Fold where k equals the number of samples (n). Each iteration uses a single observation as the test set and the remaining n-1 as the training set. | Very small datasets. | Uses maximum data for training (low bias); deterministic (no randomness in results). | Computationally expensive for large n; high variance in the estimate as each test set is only one sample [21] [20]. |
| Repeated K-Fold [22] | Repeats the K-Fold cross-validation process multiple times (e.g., 3, 5, or 10 repeats) with different random splits. | Small to modest-sized datasets where a stable, reliable performance estimate is needed. | Reduces the noise and variability of a single K-Fold run; provides a more accurate estimate of true model performance. | Significantly more computationally expensive than a single K-Fold run (fits n_repeats * k models) [22]. |
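The computational-cost column can be checked with each splitter's `get_n_splits` method, which returns how many models the scheme fits. The sample size of 120 and the 10-fold, 5-repeat settings are arbitrary assumptions.

```python
import numpy as np
from sklearn.model_selection import KFold, LeaveOneOut, RepeatedKFold

X = np.zeros((120, 4))  # hypothetical dataset: 120 specimens, 4 features

schemes = {
    "10-fold": KFold(n_splits=10, shuffle=True, random_state=0),
    "LOOCV": LeaveOneOut(),
    "10-fold x 5 repeats": RepeatedKFold(n_splits=10, n_repeats=5, random_state=0),
}
for name, cv in schemes.items():
    # number of train/test splits, i.e. number of models fitted
    print(f"{name}: {cv.get_n_splits(X)} model fits")
```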

Experimental Protocols for Morphometric Classification

Improving cross-validation rates is a key concern in morphometric classification research, where the goal is to correctly assign specimens to groups based on their shape outlines. The following protocols detail methodologies to optimize your cross-validation pipeline.

Protocol 1: Optimizing Dimensionality Reduction for CVA

Canonical Variates Analysis (CVA) is often used for morphometric classification but requires more specimens than variables. Outline data, represented by many semi-landmarks, creates a high-dimensionality problem. This protocol uses a PCA-based dimensionality reduction method optimized for cross-validation rate [23].

Methodology:

- Data Preparation: Represent your specimen outlines using a geometric morphometric method (e.g., semi-landmarks, elliptical Fourier analysis) [23].

- Dimensionality Reduction: Perform a Principal Components Analysis (PCA) on the aligned outline data. This creates a new set of variables (PC scores) that are fewer in number than the original specimens [23].

- Iterative Optimization:

- Define a range of possible numbers of PC axes (m) to use in the subsequent CVA.

- For each value of m, perform a linear Canonical Variates Analysis (CVA) using the first m PC scores as the input features.

- For each CVA model, calculate the cross-validation classification rate. Do not use the resubstitution rate, as it is optimistically biased [23].

- Model Selection: Select the number of PC axes (m) that results in the highest cross-validation rate of correct assignment. This approach has been shown to produce higher cross-validation assignment rates than using a fixed number of PC axes [23].
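A minimal scikit-learn sketch of this search, using `PCA` followed by `LinearDiscriminantAnalysis` (multi-group linear discriminant analysis, which stands in here for the CVA classifier). One deliberate deviation from the steps above: PCA is refitted inside each training fold via a `Pipeline`, so the cross-validation rate is not inflated by fitting PCA on all specimens. The synthetic "outline" data (3 groups of 40 specimens, 50 semi-landmarks) and the range of 1 to 40 axes are hypothetical.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Hypothetical outline data: 3 groups x 40 specimens, 50 semi-landmarks (x, y).
rng = np.random.default_rng(0)
n_groups, n_per_group, n_coords = 3, 40, 100
y = np.repeat(np.arange(n_groups), n_per_group)
group_means = rng.normal(scale=0.3, size=(n_groups, n_coords))
X = group_means[y] + rng.normal(size=(len(y), n_coords))

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
rates = {}
for m in range(1, 41):  # candidate numbers of PC axes
    model = make_pipeline(PCA(n_components=m), LinearDiscriminantAnalysis())
    # cross-validated (not resubstitution) rate of correct assignment
    rates[m] = cross_val_score(model, X, y, cv=cv).mean()

best_m = max(rates, key=rates.get)
print(f"best number of PC axes: {best_m}, CV rate: {rates[best_m]:.2f}")
```

Because the winning `m` is chosen using the same cross-validation rates that are then reported, that best rate is itself slightly optimistic; wrapping the search in nested cross-validation gives a less biased figure.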

Protocol 2: Implementing Repeated K-Fold for Stable Performance Estimation

This protocol outlines the steps for implementing Repeated K-Fold cross-validation, which is crucial for obtaining a reliable performance estimate for your morphometric classifier, especially with limited data [22].

Methodology:

- Configuration: Choose the number of folds (k), typically 10, and the number of repeats (n_repeats), such as 3, 5, or 10 [22].

- Repeated Validation:

- For each of the `n_repeats` repetitions:

- Randomly shuffle the entire dataset and split it into k folds.

- For each of the k folds:

- Use the current fold as the validation set and the remaining k-1 folds as the training set.

- Train your chosen classification model (e.g., a classifier built on CVA scores) on the training set.

- Use the trained model to predict the validation set and calculate a performance score (e.g., accuracy).

- Retain the score and discard the model.

- Performance Estimation: Once all repeats and folds are complete, you will have `k * n_repeats` performance scores. The final model performance is reported as the mean and standard deviation of all these scores. This average is expected to be a more accurate and less noisy estimate of the true underlying model performance [22].
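The loop above maps onto scikit-learn's `RepeatedStratifiedKFold`, which reshuffles and re-splits the data for every repeat. In this sketch the classifier (`LinearDiscriminantAnalysis`) and the synthetic data are placeholder assumptions, and stratification is added on top of the protocol to keep group proportions stable across folds.

```python
import numpy as np
from sklearn.base import clone
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import RepeatedStratifiedKFold

# Hypothetical morphometric scores: 90 specimens, 6 variables, 3 groups.
rng = np.random.default_rng(0)
y = np.repeat([0, 1, 2], 30)
X = rng.normal(size=(90, 6)) + y[:, None] * 0.7

k, n_repeats = 10, 5
rkf = RepeatedStratifiedKFold(n_splits=k, n_repeats=n_repeats, random_state=0)
template = LinearDiscriminantAnalysis()
scores = []
for train_idx, test_idx in rkf.split(X, y):
    model = clone(template).fit(X[train_idx], y[train_idx])  # fresh, unfitted copy
    scores.append(model.score(X[test_idx], y[test_idx]))      # retain the score
    # the fitted model is discarded when the next iteration rebinds `model`

scores = np.array(scores)
print(f"{len(scores)} scores, accuracy = {scores.mean():.3f} +/- {scores.std():.3f}")
```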

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational tools and their functions essential for implementing the cross-validation schemes and protocols described above.

| Tool / Solution | Function in Cross-Validation & Morphometrics |
|---|---|
| scikit-learn (sklearn) | A comprehensive Python library providing implementations of KFold, LeaveOneOut, RepeatedKFold, cross_val_score, and various classifiers, making it easy to implement the protocols [18] [20] [22]. |
| Principal Components Analysis (PCA) | A statistical technique used for dimensionality reduction. It is critical for morphometric outline studies to reduce the number of variables before applying CVA, helping to avoid overfitting and improving cross-validation rates [23]. |
| Canonical Variates Analysis (CVA) | A multiple-group form of discriminant analysis. It is often the primary classification method in morphometric research to assign specimens to groups based on shape [23]. |
| Stratified K-Fold | A variant of K-Fold that returns stratified folds, preserving the percentage of samples for each class. This is essential for obtaining representative performance estimates on imbalanced datasets [20] [19]. |

Frequently Asked Questions (FAQs)

Q1: What is the clinical significance of distinguishing molecular glioblastoma (molGB) from low-grade glioma (LGG) on MRI? Molecular glioblastomas are IDH-wildtype tumors that are biologically aggressive (WHO Grade 4) but can appear as non-contrast-enhancing lesions on MRI, mimicking benign low-grade gliomas [24]. Accurate distinction is critical because molGB requires immediate, aggressive treatment with radiotherapy and temozolomide, whereas LGG may be managed with monitoring or less intensive initial therapy [24]. Misdiagnosis can lead to significant delays in appropriate treatment.

Q2: Our morphometric model is overfitting. How can we improve cross-validation performance? Overfitting often occurs when model complexity is high relative to the dataset size. To improve cross-validation rates:

- Feature Selection: Apply rigorous feature selection methods like ANOVA F-Test, Mutual Information, or Recursive Feature Elimination to reduce the feature set to the most informative ones, preventing the model from learning noise [24].

- Data Augmentation: Artificially expand your training dataset by applying label-preserving transformations to your existing data, such as flips and rotations, and pair this with regularization such as dropout to enhance model robustness and generalizability [24].

- Model Simplification: Consider using simpler, more interpretable models like Logistic Regression or Linear Support Vector Machines as a baseline, ensuring they are properly regularized [24].
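One way to compare the selection strategies listed above without leaking information is to put each selector inside a `Pipeline` with a regularized logistic-regression baseline and score all of them on the same cross-validation splits. The synthetic `make_classification` data, `k=10` retained features, `C=0.1`, and the RFE `step` are assumptions for illustration, not the configuration in [24].

```python
from functools import partial

from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE, SelectKBest, f_classif, mutual_info_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical radiomic-style table: 120 patients, 200 features, 8 informative.
X, y = make_classification(n_samples=120, n_features=200, n_informative=8,
                           n_redundant=0, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
base = LogisticRegression(C=0.1, max_iter=1000)  # L2-regularized baseline

selectors = {
    "ANOVA F-test": SelectKBest(f_classif, k=10),
    "Mutual information": SelectKBest(partial(mutual_info_classif, random_state=0), k=10),
    "RFE": RFE(LogisticRegression(max_iter=1000), n_features_to_select=10, step=10),
}
results = {}
for name, selector in selectors.items():
    # Scaling and selection are refitted on the training folds of every split.
    pipe = make_pipeline(StandardScaler(), selector, base)
    results[name] = cross_val_score(pipe, X, y, cv=cv).mean()
    print(f"{name}: CV accuracy = {results[name]:.2f}")
```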

Q3: Can cell morphology predict molecular or genetic profiles? Evidence suggests a complex but exploitable relationship. A shared subspace exists where changes in gene expression can correlate with changes in cell morphology [25]. Machine learning models, including multilayer perceptrons, have demonstrated the ability to predict the mRNA expression levels of specific landmark genes from Cell Painting morphological profiles with good accuracy, and vice-versa [25]. This indicates that morphological data can be a proxy for some molecular states.

Q4: What is an appropriate mathematical framework for comparing complex cell morphologies? The Gromov-Wasserstein (GW) distance, a concept from metric geometry, is a powerful and generalizable framework [26]. It quantifies the minimum amount of physical deformation needed to change one cell's morphology into another's, resulting in a true mathematical distance [26]. This approach does not rely on pre-defined, cell-type-specific shape descriptors and is effective for complex shapes like neurons and glia, enabling rigorous algebraic and statistical analyses [26].

Troubleshooting Guides

Issue 1: Poor Model Generalization Across Independent Datasets

Problem: A morphometric classifier (e.g., a deep learning ResNet-3D model) trained to differentiate molGB from LGG performs well on internal validation but fails on a new, external dataset [24].

Diagnosis: This typically indicates dataset shift or inadequate feature learning. The model has likely learned features specific to the scanner protocol, patient population, or artifacts of your initial dataset that are not generalizable.

Solution:

- Employ Domain-Invariant Features: Utilize mathematical frameworks like the Gromov-Wasserstein distance, which is designed to be stable and discriminative across different technologies and experimental conditions [26].

- Leverage Transfer Learning: Pretrain your model's encoder on a large, public dataset of brain MRIs (e.g., BraTS) to learn general features of brain pathology before fine-tuning on your specific task [24].

- Implement Rigorous Cross-Validation: Use a 3-fold cross-validation strategy, repeated multiple times (e.g., 100 times) with different fold compositions. This provides a more robust estimate of model performance on your available data [24].

Issue 2: Low Accuracy in Cross-Modal Prediction Tasks

Problem: A regression model designed to predict gene expression profiles from Cell Painting morphological profiles shows low accuracy for most genes [25].

Diagnosis: The relationship between morphology and gene expression is complex and not one-to-one. Some genes have a strong morphological signature, while others do not [25]. The model may be capturing only the shared information and missing the modality-specific subspace.

Solution:

- Benchmark Model Performance: Start with baseline models like Lasso (linear) and Multilayer Perceptron or MLP (non-linear) to establish a performance benchmark for your dataset [25].

- Identify Predictable Genes: Analyze the results to identify specific genes that can be well-predicted from morphology. An enrichment analysis of these genes can reveal biological patterns and provide insight into the shared biology between morphology and transcription [25].

- Fuse Modalities, Don't Just Translate: For tasks like mechanism-of-action prediction, avoid relying solely on cross-modal prediction. Instead, use multi-modal fusion techniques to build a superior representation from both data types simultaneously, leveraging both shared and complementary information [25].
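The benchmarking and gene-ranking steps can be sketched with scikit-learn. This is not the pipeline of [25]: the simulated profiles (only genes 0 to 3 carry a morphological signal), the Lasso penalty, the MLP size, and the R² cut-off of 0.3 are all assumptions chosen for illustration.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.metrics import r2_score
from sklearn.model_selection import KFold, cross_val_predict
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_samples, n_morph, n_genes = 300, 50, 8
X = rng.normal(size=(n_samples, n_morph))        # hypothetical morphological profiles
W = np.zeros((n_morph, n_genes))
W[:5, :4] = rng.normal(size=(5, 4))              # only genes 0-3 depend on morphology
Y = X @ W + rng.normal(scale=0.5, size=(n_samples, n_genes))

cv = KFold(n_splits=5, shuffle=True, random_state=0)
baselines = {
    "lasso": make_pipeline(StandardScaler(), Lasso(alpha=0.05)),
    "mlp": make_pipeline(StandardScaler(),
                         MLPRegressor(hidden_layer_sizes=(64,), max_iter=2000,
                                      random_state=0)),
}

per_gene_r2 = {}
for name, model in baselines.items():
    # Out-of-fold predictions for all genes at once (both models accept 2D targets).
    Y_hat = cross_val_predict(model, X, Y, cv=cv)
    per_gene_r2[name] = r2_score(Y, Y_hat, multioutput="raw_values")

# Genes that either baseline predicts well are candidates for enrichment analysis.
best_r2 = np.maximum(per_gene_r2["lasso"], per_gene_r2["mlp"])
predictable = np.flatnonzero(best_r2 > 0.3)
print("per-gene best R2:", np.round(best_r2, 2))
print("predictable genes:", predictable)
```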

Table 1: Survival Outcomes of Glioblastoma Subtypes Treated with Radiotherapy and Temozolomide

| Glioblastoma Subtype | Contrast Enhancement on MRI | Median Overall Survival (Months) | Hazard Ratio (HR) | Study Findings |

|---|---|---|---|---|

| Molecular Glioblastoma (molGB) | Absent | 31.2 | 0.45 | Significantly improved survival compared to histGB [24] |

| Molecular Glioblastoma (molGB) | Present | 20.6 | - | No significant difference from histGB [24] |

| Histological Glioblastoma (histGB) | Present (defining feature) | 18.4 | Reference | Standard poor prognosis [24] |

Table 2: Performance of AI Models in Differentiating Molecular Glioblastoma from Low-Grade Glioma

| AI Model Type | Input Data | Key Preprocessing Steps | Performance (ROC AUC) |

|---|---|---|---|

| Deep Learning (ResNet10-3D) | 3D FLAIR MRI Volumes | Skull-stripping, registration to template, tumor-centric cropping [24] | 0.85 [24] |

| Machine Learning (Random Forest, SVM) | Radiomic Features from FLAIR MRI | Feature selection (ANOVA F-Test, Mutual Info), standardization [24] | - |

Experimental Protocols

Protocol 1: Differentiating Molecular GBM from LGG Using MRI and AI

Objective: To train a deep learning model to differentiate non-contrast-enhancing molecular glioblastoma (molGB) from low-grade glioma (LGG) based on FLAIR MRI sequences [24].

Materials:

- Patient preoperative FLAIR MRI volumes.

- Segmentation masks of the tumor region (can be generated via a pre-trained U-Net model and validated by a neuro-oncologist) [24].

Methodology:

- Image Preprocessing:

- Convert DICOM files to NIfTI format.

- Perform skull-stripping using HD-BET [24].

- Register images to a standard anatomical template (e.g., SRI24).

- Resample to a uniform isotropic resolution.

- Model Training:

- Use a 3D architecture like ResNet10-3D.

- Pretraining: Improve performance by pretraining the model encoder on a large, public dataset (e.g., BraTS) using a self-supervised contrastive learning method (e.g., SimCLR) [24].

- Fine-tuning: Train the model using tumor-centered crops (e.g., 64x64x64 volumes) around the segmentation mask.

- Apply data augmentation (flipping, rotations) and dropout regularization.

- Train with Adam optimizer (learning rate 1e-4) for 15-30 epochs.

- Validation:

- Perform 3-fold cross-validation, repeated 100 times with different fold compositions.

- Evaluate performance using the Receiver Operating Characteristic Area Under the Curve (ROC AUC).
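The tumor-centered cropping step can be sketched in NumPy. This assumes the volume is already skull-stripped, registered, and resampled, and centers the 64×64×64 window on the bounding box of the mask (a centroid would work equally well); `tumor_centric_crop` is a hypothetical helper, and voxels outside the scan are zero-padded.

```python
import numpy as np


def tumor_centric_crop(volume, mask, size=(64, 64, 64)):
    """Crop a fixed-size window centered on the tumor mask, zero-padding at borders."""
    coords = np.argwhere(mask > 0)
    if coords.size == 0:
        raise ValueError("empty segmentation mask")
    center = (coords.min(axis=0) + coords.max(axis=0)) // 2   # bounding-box center
    size = np.asarray(size)
    start = center - size // 2
    end = start + size
    out = np.zeros(tuple(size), dtype=volume.dtype)
    # Part of the requested window that actually lies inside the volume.
    src_lo = np.maximum(start, 0)
    src_hi = np.minimum(end, volume.shape)
    dst_lo = src_lo - start
    dst_hi = dst_lo + (src_hi - src_lo)
    out[dst_lo[0]:dst_hi[0], dst_lo[1]:dst_hi[1], dst_lo[2]:dst_hi[2]] = \
        volume[src_lo[0]:src_hi[0], src_lo[1]:src_hi[1], src_lo[2]:src_hi[2]]
    return out


# Hypothetical 100^3 volume with a small tumor near two borders.
vol = np.arange(100 ** 3, dtype=np.float32).reshape(100, 100, 100)
mask = np.zeros_like(vol, dtype=bool)
mask[10:20, 50:60, 90:95] = True
crop = tumor_centric_crop(vol, mask)
print(crop.shape)   # (64, 64, 64)
```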

Protocol 2: Quantifying Cell Morphology with Metric Geometry

Objective: To quantify and compare complex cell morphologies (e.g., neurons, glia) in a way that reflects biophysical deformation and enables integration with other data modalities [26].

Materials:

- 2D segmentation masks or 3D digital reconstructions of individual cells.

Methodology:

- Data Discretization:

- For each cell, evenly sample points from its outline (2D) or surface (3D).

- Distance Matrix Calculation:

- For the set of sampled points for a cell, compute a pairwise distance matrix.

- Choose a distance metric:

- Euclidean distance: Accounts for the absolute positioning of cell appendages.

- Geodesic distance: Invariant under bending, sensitive to topological features like branching [26].

- Compute Gromov-Wasserstein (GW) Distance:

- For each pair of cells, compute the GW distance between their respective distance matrices using optimal transport. This distance quantifies the minimum "effort" required to deform one shape into the other [26].

- Downstream Analysis:

- Use the resulting GW distance matrix as a basis for:

- Dimensionality reduction (e.g., UMAP) to visualize a "cell morphology space."

- Clustering to identify morphological populations.

- Computing medoid or average cell morphologies for each cluster.

- Integrating with transcriptomic data to find genes associated with morphological changes [26].

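The discretization, distance-matrix, and GW steps can be sketched with NumPy alone. This is not CAJAL's API: it is a generic entropically regularized Gromov-Wasserstein solver (square loss, Sinkhorn inner loop, uniform point weights) and uses Euclidean distances only, since geodesic distances would need a mesh or skeleton graph. Dividing each distance matrix by its maximum is an assumption that makes the comparison size-invariant; the outlines are hypothetical.

```python
import numpy as np


def resample_outline(vertices, n_points):
    """Evenly sample n_points along a closed 2D polygon by arc length."""
    closed = np.vstack([vertices, vertices[:1]])
    seg = np.linalg.norm(np.diff(closed, axis=0), axis=1)
    cum = np.concatenate([[0.0], np.cumsum(seg)])
    t = np.linspace(0.0, cum[-1], n_points, endpoint=False)
    return np.column_stack([np.interp(t, cum, closed[:, 0]),
                            np.interp(t, cum, closed[:, 1])])


def euclidean_dmat(points):
    d = np.sqrt(((points[:, None, :] - points[None, :, :]) ** 2).sum(axis=-1))
    return d / d.max()   # assumption: compare shapes independently of size


def sinkhorn(p, q, cost, eps, n_iter=300):
    K = np.exp(-(cost - cost.min()) / eps)   # shifting the cost leaves the plan unchanged
    u = np.ones_like(p)
    for _ in range(n_iter):
        v = q / (K.T @ u)
        u = p / (K @ v)
    return u[:, None] * K * v[None, :]


def entropic_gw(C1, C2, eps=0.05, n_outer=50):
    """Entropic GW discrepancy (square loss) between two distance matrices."""
    p = np.full(len(C1), 1.0 / len(C1))
    q = np.full(len(C2), 1.0 / len(C2))
    const = ((C1 ** 2) @ p)[:, None] + ((C2 ** 2) @ q)[None, :]
    T = np.outer(p, q)                        # start from the independent coupling
    for _ in range(n_outer):
        T = sinkhorn(p, q, const - 2.0 * C1 @ T @ C2.T, eps)
    return float(np.sum((const - 2.0 * C1 @ T @ C2.T) * T)), T


theta = np.linspace(0, 2 * np.pi, 40, endpoint=False)
r = 1.0 + 0.3 * np.cos(3 * theta) + 0.1 * np.sin(theta)           # irregular cell
cell_a = np.column_stack([r * np.cos(theta), r * np.sin(theta)])
cell_b = np.column_stack([1.5 * np.cos(theta), 0.6 * np.sin(theta)])  # elongated cell
rot = np.array([[0.0, -1.0], [1.0, 0.0]])
cell_a_moved = cell_a @ rot.T + 5.0                                  # rotated and shifted copy

A = euclidean_dmat(resample_outline(cell_a, 50))
A_moved = euclidean_dmat(resample_outline(cell_a_moved, 50))
B = euclidean_dmat(resample_outline(cell_b, 50))
d_ab, T_ab = entropic_gw(A, B)
d_moved_b, _ = entropic_gw(A_moved, B)
print(round(d_ab, 6), round(d_moved_b, 6))
```

Only intra-cell distances enter the computation, so rotating or translating a cell leaves its GW distances to other cells unchanged. The solver is a local method on a nonconvex problem; a dedicated implementation such as CAJAL is preferable for real analyses.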

Experimental Workflow and Pathway Diagrams

Morphometric-Genomic Integration Workflow

Diagram Title: Multi-Modal Profiling Workflow for Linking Morphology and Gene Expression

Cell Morphometry Analysis with CAJAL

Diagram Title: CAJAL Framework for Cell Morphometry Using Metric Geometry

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Morphometric-Genomic Integration Studies

| Research Reagent / Tool | Function | Example Use Case |

|---|---|---|

| Cell Painting Assay | A high-content, high-throughput microscopy assay that uses up to six fluorescent dyes to stain major cellular compartments, enabling the extraction of thousands of morphological features [25]. | Generating high-dimensional morphological profiles from cell populations perturbed by drugs or genetic manipulations [25]. |

| L1000 Assay | A high-throughput gene expression profiling technology that measures the mRNA levels of ~978 "landmark" genes, capturing a majority of the transcriptional variance in the genome [25]. | Generating gene expression profiles from the same perturbations used in Cell Painting to enable multi-modal analysis [25]. |

| CAJAL Software | An open-source Python library that implements the Gromov-Wasserstein distance for quantifying and comparing cell morphologies based on principles of metric geometry [26]. | Creating a unified "morphology space" for neurons and glia, integrating morphological data across experiments, and identifying genes associated with morphological changes [26]. |

| BraTS Toolkit | A publicly available image processing pipeline for brain tumor MRI data. Includes steps for skull-stripping (HD-BET) and registration to standard templates [24]. | Preprocessing clinical brain MRI scans (converting DICOM, skull-stripping) before training deep learning models for tumor classification [24]. |

| pyRadiomics | An open-source Python package for the extraction of a large set of engineered features (shape, intensity, texture) from medical images [24]. | Extracting quantitative features from the FLAIR hypersignal region of gliomas to feed into traditional machine learning classifiers [24]. |

Implementing Robust Cross-Validation for Diverse Morphometric Data Types

Data Preparation and Feature Selection for Morphometric Analysis

Troubleshooting Guides

G1: Handling Measurement Error (ME) in Pooled Datasets

Problem: When pooling morphometric datasets from multiple operators or studies, high within-operator and inter-operator (IO) measurement error can obscure true biological signals and degrade cross-validation performance [27].

Solution:

- Estimate Errors: Before pooling data, conduct a pilot study to quantify Intra-operator ME (variation from repeated measurements by one operator) and IO bias (systematic variation between different operators) [27].

- Validate Protocol: Use an analytical workflow to assess if the error variation is significantly smaller than the biological variation of interest. If IO bias is large and directional, avoid pooling datasets [27].

- Select Robust Methods: Choose morphometric approaches (e.g., specific landmark types) that demonstrate low IO error and high repeatability in your pilot analysis [27].

Prevention: Establish and document a standardized data acquisition protocol for all operators, including detailed definitions of landmarks and measurement procedures [27].

G2: Optimizing Digitization Effort and Variable Inflation

Problem: Capturing shape using dense configurations of points (e.g., sliding semi-landmarks) leads to an inflation of variables. This can dramatically increase digitization time and potentially lead to biologically inaccurate results without guaranteeing an increase in precision [27].

Solution:

- Power Analysis: Use a small data subset to estimate the analytical power of your study. Select a morphometric protocol that provides sufficient statistical power without unnecessary variables [27].

- Reduce Variables: Systematically reduce the number of morphometric variables to the minimum set required for effective analysis, thus optimizing digitization effort [27].

Prevention: Prioritize well-defined landmarks and carefully consider the necessity of adding semi-landmarks. The goal is to capture shape accurately, not to maximize the number of variables [27].

G3: Addressing Flawed Cross-Validation Practices in Model Comparison

Problem: Using a simple paired t-test on accuracy scores from repeated cross-validation (CV) runs to compare models is a flawed practice. The statistical significance of the accuracy difference can be artificially influenced by the choice of CV setups (number of folds K and repetitions M), leading to p-hacking and non-reproducible conclusions [28].

Solution:

- Avoid Naive T-Tests: Do not directly apply a paired t-test to the K × M accuracy scores from two models, as the scores are not independent [28].

- Use Robust Tests: Employ statistical tests specifically designed for correlated CV results, such as the 5×2cv paired t-test or the corrected resampled t-test [28].

- Standardize CV Setup: Clearly report and justify the chosen CV configuration (K, M). Be aware that higher K and M can increase the likelihood of detecting statistically significant differences by chance alone, even between models with the same intrinsic predictive power [28].

Prevention: Adopt a unified and unbiased framework for model comparison that is less sensitive to specific CV configurations [28].
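A minimal sketch of the corrected resampled t-test for K-fold CV repeated M times follows. It uses the Nadeau and Bengio variance correction in the repeated K-fold form described by Bouckaert and Frank, which inflates the variance term by n_test/n_train to account for overlapping training sets. Both models must be scored on the same splits in the same order; the scores below are synthetic, and the function name is hypothetical.

```python
import numpy as np
from scipy import stats


def corrected_resampled_ttest(scores_a, scores_b, n_train, n_test, k, m):
    """Two-sided corrected repeated K-fold CV t-test on paired per-fold scores."""
    d = np.asarray(scores_a, float) - np.asarray(scores_b, float)
    n = k * m
    if d.size != n:
        raise ValueError("expected k * m paired scores")
    var_d = d.var(ddof=1)
    # The naive test would use var_d / n; the correction adds n_test / n_train.
    t_stat = d.mean() / np.sqrt((1.0 / n + n_test / n_train) * var_d)
    p_value = 2 * stats.t.sf(abs(t_stat), df=n - 1)
    return t_stat, p_value


# Hypothetical accuracies from 5-fold CV repeated 10 times on 200 samples.
rng = np.random.default_rng(0)
a = 0.80 + rng.normal(0, 0.02, 50)
b = a - 0.005 + rng.normal(0, 0.01, 50)

t_corr, p_corr = corrected_resampled_ttest(a, b, n_train=160, n_test=40, k=5, m=10)
p_naive = stats.ttest_rel(a, b).pvalue
print(f"naive p = {p_naive:.4f}, corrected p = {p_corr:.4f}")
```

Because the corrected denominator is always larger, the corrected p-value is never smaller than the naive one for the same scores.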

G4: Selecting Morphometric Features for Predictive Modeling

Problem: With many potential morphometric features, identifying the most relevant ones for predicting processes like erosion or formation material is challenging. Using irrelevant features can reduce model accuracy and generalizability [29].

Solution:

- Apply Feature Selection: Use algorithms to identify the most important morphometric parameters. Effective approaches include Principal Component Analysis (PCA), which builds composite variables rather than selecting original ones, and search strategies such as Greedy, Best first, Genetic search, and Random search [29].

- Validate with Modeling: Employ neural network models like the Group Method of Data Handling (GMDH) to predict outcomes (e.g., erosion rates) based on the selected morphometric features and validate the model's accuracy (e.g., R² score) [29].

Prevention: Integrate feature selection as a standard step in the modeling workflow to build simpler, more interpretable, and more robust models [29].
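Greedy forward selection can be sketched with scikit-learn's `SequentialFeatureSelector`. Placing the selector inside a pipeline means it is refitted on every outer training fold, so the validation folds never influence which features are chosen. GMDH is not part of scikit-learn, so a plain linear regression stands in for it here; the synthetic data and the choice of four features are assumptions.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical stand-in for morphometric parameters (12) and an erosion-rate target.
X, y = make_regression(n_samples=150, n_features=12, n_informative=4,
                       noise=5.0, random_state=0)

selector = SequentialFeatureSelector(LinearRegression(), n_features_to_select=4,
                                     direction="forward", cv=5)
model = make_pipeline(StandardScaler(), selector, LinearRegression())

# Outer CV scores the whole select-then-fit procedure, not one fixed feature set.
outer = KFold(n_splits=5, shuffle=True, random_state=0)
r2 = cross_val_score(model, X, y, cv=outer, scoring="r2")
print(f"outer-CV R2: {r2.mean():.3f} +/- {r2.std():.3f}")

# Refit on all data only to report which features the procedure keeps.
model.fit(X, y)
chosen = np.flatnonzero(model.named_steps["sequentialfeatureselector"].get_support())
print("selected feature indices:", chosen)
```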

Frequently Asked Questions (FAQs)

Q1: What are the main sources of error in morphometric studies? The primary sources are methodological, instrumental, and personal. A significant challenge is inter-operator (IO) bias, where different users systematically measure or digitize the same structure differently. This is especially problematic when pooling datasets from multiple sources [27].

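Q2: Why is my model's cross-validation accuracy high, but it fails on new, unseen data? This is a classic sign of overfitting, where the model has learned the noise in your training data rather than the underlying biological signal. Overfitted models have low bias but high variance, and cross-validation is used to choose a model complexity that balances this bias-variance tradeoff. Using too many features (variable inflation) relative to your sample size is a common cause [27] [30]. A high CV score that does not carry over can also mean that information leaked into the CV loop (for example, scaling or feature selection fitted on the full dataset) or that the new data come from a different source than the training data.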

Q3: What is the difference between k-fold CV and leave-p-out CV?

In k-fold CV, the dataset is randomly split into k equal-sized folds. Each fold is used once as a validation set while the remaining k-1 folds form the training set. In leave-p-out CV, p samples are left out as the validation set, and the model is trained on the remaining n-p samples. This process is repeated over all possible combinations of p samples, making it computationally very expensive. Leave-one-out CV is a special case where p = 1 [30].
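The cost difference is easy to quantify: k-fold CV always trains k models, whereas leave-p-out trains one model per size-p subset, that is, C(n, p) models. The generator below is a hypothetical helper that enumerates the splits explicitly; scikit-learn's `LeavePOut` produces the same splits.

```python
from itertools import combinations
from math import comb


def leave_p_out_splits(n, p):
    """Yield (train, test) index lists for every possible size-p validation set."""
    for test in combinations(range(n), p):
        held_out = set(test)
        train = [i for i in range(n) if i not in held_out]
        yield train, list(test)


# Number of train/validate rounds for n = 20 samples.
for p in (1, 2, 3):
    print(f"p={p}: {comb(20, p)} splits")   # 20, 190, 1140
print(comb(100, 5))                          # 75287520 rounds for n=100, p=5
```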

Q4: How can self-organizing maps (SOM) be used in morphometric analysis? SOM is an unsupervised neural network algorithm that can be used to classify alluvial fans or other structures based on their morphometric properties. It helps identify the key morphometric factors (e.g., fan length, minimum height) that are most influential in determining characteristics like formation material or erosion rates, without prior class labels [29].
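The core SOM training loop can be sketched in NumPy: each step finds the best-matching unit (BMU) for one sample and pulls that node and its grid neighbors toward the sample, with a learning rate and neighborhood radius that shrink over time. This is a generic online SOM, not the implementation used in [29]; the grid size, linear decay schedule, and the two synthetic groups of standardized fan measurements are assumptions.

```python
import numpy as np


def train_som(X, grid_shape=(4, 4), n_iter=3000, lr0=0.5, sigma0=None, seed=0):
    """Train a rectangular SOM; returns node weights and node grid coordinates."""
    rng = np.random.default_rng(seed)
    rows, cols = grid_shape
    sigma0 = max(rows, cols) / 2.0 if sigma0 is None else sigma0
    coords = np.array([(r, c) for r in range(rows) for c in range(cols)], dtype=float)
    weights = X[rng.integers(0, len(X), size=rows * cols)].astype(float)  # init from samples
    for t in range(n_iter):
        frac = t / n_iter
        lr = lr0 * (1.0 - frac)                      # linearly decaying learning rate
        sigma = sigma0 * (1.0 - frac) + 1e-3         # shrinking neighborhood radius
        x = X[rng.integers(len(X))]
        bmu = np.argmin(((weights - x) ** 2).sum(axis=1))
        grid_d2 = ((coords - coords[bmu]) ** 2).sum(axis=1)
        h = np.exp(-grid_d2 / (2.0 * sigma ** 2))    # Gaussian neighborhood on the grid
        weights += lr * h[:, None] * (x - weights)
    return weights, coords


def map_samples(X, weights):
    """Index of the best-matching node for each sample."""
    d = ((X[:, None, :] - weights[None, :, :]) ** 2).sum(axis=2)
    return d.argmin(axis=1)


# Two hypothetical groups of fans described by four standardized morphometric features.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 0.5, size=(30, 4)), rng.normal(4.0, 0.5, size=(30, 4))])
weights, coords = train_som(X)
bmu = map_samples(X, weights)
print("nodes used by group A:", sorted(set(bmu[:30].tolist())))
print("nodes used by group B:", sorted(set(bmu[30:].tolist())))
```

Inspecting which input features differ most between the node weights of separate map regions is one way to see which morphometric factors drive the grouping.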

Q5: What is a "hold-out CV" approach?

This is a common practice where the entire dataset is first split into a training set (D_train) and a hold-out test set (D_test). The model training and hyperparameter tuning (using k-fold or other CV methods) are performed only on D_train. The final, chosen model is then evaluated exactly once on the hold-out D_test to get an unbiased estimate of its performance on unseen data [30].
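A minimal hold-out sketch with scikit-learn: all hyperparameter tuning (here a small Random Forest grid chosen for illustration) runs through k-fold CV on D_train only, and D_test is scored exactly once at the end. The synthetic data and grid values are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split

X, y = make_classification(n_samples=300, n_features=15, n_informative=5,
                           random_state=0)
# D_train / D_test split, stratified so both keep the class balance.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

search = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid={"max_depth": [3, 6, None], "min_samples_leaf": [1, 5]},
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring="accuracy",
)
search.fit(X_train, y_train)             # tuning sees D_train only
test_acc = search.score(X_test, y_test)  # single evaluation on D_test
print(search.best_params_, f"hold-out accuracy = {test_acc:.3f}")
```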

Data Tables

This table summarizes the process of assessing measurement errors prior to data pooling.

| Error Type | Description | Impact on Analysis | Assessment Method |

|---|---|---|---|

| Intra-operator ME | Variation occurring when a single operator repeatedly measures the same specimen. | Adds non-systematic "noise" that can reduce statistical power. | Replicated measurements on the same objects by the same operator; compared to biological variation. |

| Inter-operator (IO) Bias | Systematic, directional variation introduced by different operators measuring the same specimens. | Can create artificial variation that mimics or obscures true biological signal, especially dangerous when pooling data. | Multiple operators measure the same set of specimens; IO variation is compared to intra-operator ME and biological variation. |

This table lists algorithms used to identify the most important morphometric features for predictive modeling.

| Algorithm Type | Brief Description | Key Advantage |

|---|---|---|

| Principal Component Analysis (PCA) | Transforms original variables into a new set of uncorrelated variables (principal components). | Reduces dimensionality while preserving most of the data's variance. |

| Greedy Search | Makes the locally optimal choice at each stage with the hope of finding a global optimum. | Computationally efficient for large feature sets. |

| Best First Search | Explores a graph by expanding the most promising node chosen according to a specified rule. | Can find a good solution without searching the entire space. |

| Genetic Search | Uses mechanisms inspired by biological evolution (e.g., selection, crossover, mutation). | Effective for complex search spaces with many local optima. |

| Random Search | Evaluates random combinations of features. | Simple to implement and can be surprisingly effective. |

This table shows features identified as most important for predicting erosion and formation material in a watershed study.

| Target Variable | Selected Morphometric Features | Feature Selection Algorithm Used |

|---|---|---|

| Formation Material | Minimum fan height (Hmin-f), Maximum fan height (Hmax-f), Minimum fan slope, Fan length (Lf) | Multiple (PCA, Greedy, Best first, etc.) |

| Erosion Rate | Basin area, Fan area (Af), Maximum fan height (Hmax-f), Compactness coefficient (Cirb) | Multiple (PCA, Greedy, Best first, etc.) |

Experimental Protocols

Objective: To estimate within- and among-operator biases and determine whether morphometric datasets from multiple operators can be safely pooled for analysis.

Materials:

- A subset of specimens (e.g., 6 third lower molars).

- Multiple operators.

- Data acquisition equipment (e.g., calipers, DSLR camera for 2D, or 3D scanner).

- Digitization software (e.g., tpsDig2).

Methodology:

- Repeated Measurements: Each operator performs repeated, blinded measurements on all specimens in the subset.

- Error Quantification:

- Calculate Intra-operator ME for each operator by analyzing variation across their own replicates.

- Calculate Inter-operator (IO) Bias by analyzing systematic differences between the average measurements of different operators.

- Decision Analysis:

- Compare the magnitude of IO bias to the intra-operator ME and, most importantly, to the biological variation under investigation (e.g., variation between species).

- If IO bias is significant and overlaps with the direction of biological variation, pooling data from these operators should be avoided.

- Use this analysis to select a morphometric protocol (choice of landmarks/measurements) that minimizes IO error.
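The error-quantification step can be sketched for a single linear measurement (for example, molar length). Intra-operator error is expressed as the common one-way ANOVA percent measurement error (%ME: within-specimen variance as a share of total variance), and IO bias as each operator's mean signed offset from a reference operator. The study in [27] may use a different, Procrustes-based workflow; the array layout (operators × specimens × replicates), helper names, and simulated values are hypothetical.

```python
import numpy as np


def percent_me(x):
    """%ME for one operator; x has shape (specimens, replicates)."""
    s, r = x.shape
    spec_means = x.mean(axis=1)
    ms_among = r * spec_means.var(ddof=1)
    ms_within = ((x - spec_means[:, None]) ** 2).sum() / (s * (r - 1))
    s2_among = max((ms_among - ms_within) / r, 0.0)
    return 100.0 * ms_within / (ms_within + s2_among)


def io_bias(data):
    """Mean signed offset of each operator from operator 0; data is (ops, specimens, reps)."""
    op_means = data.mean(axis=2)
    return (op_means - op_means[0]).mean(axis=1)


# Hypothetical pilot: 3 operators, 6 molars, 3 blinded replicates each (mm).
rng = np.random.default_rng(0)
n_ops, n_spec, n_rep = 3, 6, 3
true_len = rng.normal(11.0, 0.8, size=n_spec)
op_offset = np.array([0.0, 0.02, 0.30])          # operator 3 measures systematically long
data = (true_len[None, :, None] + op_offset[:, None, None]
        + rng.normal(0.0, 0.05, size=(n_ops, n_spec, n_rep)))

me = [percent_me(data[o]) for o in range(n_ops)]
bias = io_bias(data)
bio_sd = data.mean(axis=(0, 2)).std(ddof=1)      # between-specimen (biological) SD
print("intra-operator %ME:", np.round(me, 2))
print("IO bias vs operator 1 (mm):", np.round(bias, 3))
print("IO bias / biological SD:", np.round(bias / bio_sd, 2))
```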

Objective: To rigorously compare the accuracy of two classification models in a cross-validation setting, avoiding flawed statistical practices.

Materials:

- A single dataset with features and labels.

- A base classifier (e.g., Logistic Regression).

Methodology:

- Create Perturbed Models: To test the comparison framework itself, create two models with the same intrinsic predictive power.

- Train a base model (e.g., Logistic Regression) on a training fold.

- Create two "perturbed" models by adding and subtracting a small, random Gaussian vector to the model's coefficients.

- Cross-Validation: Evaluate the two perturbed models using a K-fold CV, repeated M times.

- Statistical Comparison:

- Incorrect Practice: Applying a standard paired t-test to the K × M accuracy scores. This will likely show a "significant" difference due to the non-independence of scores, an artifact of the CV setup.

- Correct Practice: Use a statistical test designed for correlated CV results. This framework demonstrates how the flawed practice can lead to p-hacking, where changing K or M changes the significance outcome even for models with no real difference.
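The perturbed-model experiment can be sketched with scikit-learn and SciPy. In each split a logistic regression is fitted, and one random Gaussian vector is added to a copy of its coefficients and subtracted from another, so neither copy is better in expectation. The naive paired t-test is then applied across (K, M) settings to show how the outcome depends on the CV configuration. The dataset, noise scale, and settings are assumptions, not those of [28].

```python
import copy

import numpy as np
from scipy import stats
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold


def perturbed_pair_scores(X, y, k, m, noise_scale=0.1, seed=0):
    """Accuracy of two +/- perturbed copies of one base model on every K x M split."""
    rng = np.random.default_rng(seed)
    cv = RepeatedStratifiedKFold(n_splits=k, n_repeats=m, random_state=seed)
    acc_a, acc_b = [], []
    for train_idx, test_idx in cv.split(X, y):
        base = LogisticRegression(max_iter=1000).fit(X[train_idx], y[train_idx])
        delta = rng.normal(0.0, noise_scale, size=base.coef_.shape)
        model_a, model_b = copy.deepcopy(base), copy.deepcopy(base)
        model_a.coef_ = base.coef_ + delta   # same direction, opposite signs
        model_b.coef_ = base.coef_ - delta
        acc_a.append(model_a.score(X[test_idx], y[test_idx]))
        acc_b.append(model_b.score(X[test_idx], y[test_idx]))
    return np.array(acc_a), np.array(acc_b)


X, y = make_classification(n_samples=200, n_features=10, n_informative=4,
                           random_state=0)
scores, pvals = {}, {}
for k, m in [(2, 5), (5, 10), (10, 10)]:
    a, b = perturbed_pair_scores(X, y, k, m)
    scores[(k, m)] = (a, b)
    # Flawed practice: treats the K*M correlated scores as independent samples.
    pvals[(k, m)] = stats.ttest_rel(a, b).pvalue
    print(f"K={k:2d} M={m:2d}  naive paired t-test p = {pvals[(k, m)]:.3f}")
```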

Diagrams and Visualizations

Morphometric Analysis Workflow

Cross-Validation Process

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function in Morphometric Analysis |

|---|---|

| Digital Calipers | For acquiring traditional linear measurements (e.g., maximum tooth length and width) directly from specimens [27]. |

| DSLR Camera with Macro Lens | For capturing high-resolution 2D images of specimens, which serve as the basis for subsequent 2D landmark digitization [27]. |

| 3D Scanner / CT Scanner | For creating high-fidelity 3D models of specimens, enabling 3D landmarking and surface analysis [27]. |

| Digitization Software (e.g., tpsDig2) | Software used to place landmarks and semi-landmarks on 2D images or 3D models, converting visual information into quantitative (x,y,z) coordinate data [27]. |

| Geometric Morphometrics Software (e.g., MorphoJ) | Specialized software for performing Procrustes superimposition, statistical analysis of shape, and visualization of shape variation [27]. |

| Self-Organizing Map (SOM) Algorithm | An unsupervised neural network used to classify and explore morphometric datasets, identifying key patterns and clusters without pre-defined labels [29]. |

| Group Method of Data Handling (GMDH) Algorithm | A supervised neural network used for predicting outcomes (e.g., erosion rate) from morphometric features, known for its high accuracy in modeling complex relationships [29]. |

Frequently Asked Questions (FAQs)

1. Which classifier typically performs best for morphometric data? In the comparative studies cited here, the Random Forest (RF) algorithm often achieves the highest performance for morphometric classification tasks. In a 2025 study analyzing 3D dental morphometrics for sex estimation, Random Forest significantly outperformed other models, achieving up to 97.95% accuracy with balanced precision and recall. Support Vector Machines (SVM) showed moderate performance (70-88% accuracy), while Artificial Neural Networks (ANN) had the lowest metrics in this specific application (58-70% accuracy) [31]. RF's robustness is attributed to its ability to handle tabular data and high-dimensional feature spaces effectively [31].

2. What are the most critical errors to avoid during model training? The most impactful errors affecting cross-validation rates include [32]:

- Overfitting and Underfitting: Overfitting becomes likely when there are too few training examples for the model's complexity, while underfitting happens when the model is too simple for complex data.

- Data Imbalance: Severe class imbalance in your training dataset can drastically bias model predictions.

- Data Leakage: When information from outside the training dataset inadvertently influences the model, resulting in performance metrics that are "too good to be true."

- Incorrect Data and Labeling: Errors in the source data or its labels will prevent the model from learning correct patterns.

3. How can I improve the performance and generalizability of my model?

- Apply K-Fold Cross-Validation: This technique is crucial for robust performance estimation. A study on photovoltaic system efficiency used 10-fold cross-validation with Random Forest, demonstrating strong generalization and stable results [33].

- Implement Feature Selection: Identify and use the most important features. Research shows this not only reduces processing time but can also improve the model's performance [33].

- Ensure Data Quality: Meticulously check for and handle issues like missing values, incorrect labels, and outliers before training [32].

- Conduct Thorough Model Experimentation: Avoid settling on the first model. Systematically test different algorithms, hyperparameters, and training strategies to find the most effective solution [32].

Troubleshooting Guides

Problem: Poor Cross-Validation Performance

Possible Causes and Solutions:

- Cause 1: Data Imbalance

- Solution: Apply techniques to rebalance your dataset. This can include oversampling the minority class, undersampling the majority class, or using algorithmic approaches designed to handle imbalanced data [32].

- Cause 2: Data Leakage

- Solution: Ensure all data preprocessing steps (like scaling) are calculated within each fold of the cross-validation and not on the entire dataset before splitting. Always withhold a final validation set until after model development is complete [32].

- Cause 3: Classifier-Specific Pitfalls

- Solution - For ANN: If using an Artificial Neural Network, ensure your dataset is large enough and the architecture is suitable. ANNs can perform poorly on small or structured tabular datasets; in one study, they classified females less reliably (recall: 0.33–0.88) than males (recall: 0.36–1.0) [31].

- Solution - For SVM: The choice of kernel and its parameters is critical. Experiment with different kernels (linear, RBF) and use cross-validation to tune hyperparameters [34].
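The SVM and imbalance advice can be combined in one scikit-learn sketch: the scaler sits inside the pipeline (avoiding leakage), `class_weight="balanced"` reweights the minority class, stratified folds preserve the class ratio, and the grid compares linear and RBF kernels. The 80/20 synthetic data and the grid values are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Hypothetical imbalanced morphometric dataset (about 80% / 20%).
X, y = make_classification(n_samples=240, n_features=12, n_informative=5,
                           weights=[0.8, 0.2], random_state=0)

pipe = Pipeline([("scale", StandardScaler()),
                 ("svm", SVC(class_weight="balanced"))])
param_grid = [
    {"svm__kernel": ["linear"], "svm__C": [0.1, 1, 10]},
    {"svm__kernel": ["rbf"], "svm__C": [0.1, 1, 10],
     "svm__gamma": ["scale", 0.01, 0.1]},
]
search = GridSearchCV(pipe, param_grid,
                      cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
                      scoring="balanced_accuracy")
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

Because `best_score_` is also the selection criterion, it is optimistic; report final performance on a held-out set or with nested CV.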

Problem: Model is Overfitting

Possible Causes and Solutions:

- Cause 1: Model is Too Complex for the Data

- Solution:

- For Random Forest: Adding trees to the ensemble generally improves and stabilizes performance, but it does not make individual trees simpler; to limit overfitting, also constrain tree complexity with parameters such as max_depth or min_samples_leaf [31] [35].

- For Neural Networks: Reduce the number of layers and hidden units. Apply regularization techniques like L1/L2 regularization or dropout [32].

- General Solution: Perform feature reduction to decrease the dimensionality of your input data [32].
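One practical way to spot overfitting is to compare training and validation scores inside the same CV loop. The sketch below contrasts an unconstrained Random Forest with one whose trees are limited by `max_depth` and `min_samples_leaf`; the noisy synthetic data (40 features, 10% flipped labels) and the specific limits are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_validate

X, y = make_classification(n_samples=150, n_features=40, n_informative=5,
                           flip_y=0.1, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

summary = {}
for label, params in [("unconstrained", {}),
                      ("constrained", {"max_depth": 4, "min_samples_leaf": 5})]:
    rf = RandomForestClassifier(n_estimators=200, random_state=0, **params)
    res = cross_validate(rf, X, y, cv=cv, return_train_score=True)
    train_acc, val_acc = res["train_score"].mean(), res["test_score"].mean()
    summary[label] = (train_acc, val_acc)
    # A large train-validation gap is the typical overfitting signature.
    print(f"{label:>13s}: train={train_acc:.3f} validation={val_acc:.3f} "
          f"gap={train_acc - val_acc:.3f}")
```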

The diagram below outlines a systematic workflow to diagnose and remedy overfitting.

Problem: Choosing the Wrong Classifier

Solution: Select a classifier based on your data characteristics and the empirical evidence from morphometric literature. The table below summarizes a quantitative comparison from a key study.

Table: Classifier Performance in a 3D Dental Morphometrics Study (2025) [31]

| Classifier | Highest Accuracy | Typical Accuracy Range | Key Strengths | Key Weaknesses |

|---|---|---|---|---|

| Random Forest (RF) | 97.95% (Mandibular Second Premolar) | 85% - 98% | High accuracy, handles tabular data well, minimal sex bias, robust to overfitting. | Less interpretable than simpler models. |

| Support Vector Machine (SVM) | ~88% | 70% - 88% | Effective in high-dimensional spaces. | Performance highly dependent on kernel and parameters; showed moderate performance. |

| Artificial Neural Network (ANN) | ~70% | 58% - 70% | Can model complex non-linear relationships. | Lowest metrics in this study; struggled with female classification recall; requires large data. |

Table: Summary of Common Training Errors and Fixes [32]

| Error Type | What It Means | How to Fix It |

|---|---|---|

| Data Imbalance | The training set is not representative of all classes. | Use resampling techniques (oversampling, undersampling), or use class weights in the algorithm. |

| Data Leakage | Information from the test set leaks into the training process. | Perform data preparation (like scaling) inside the cross-validation folds. Use a completely held-out validation set. |

| Overfitting | The model learns the training data too well, including its noise, and fails to generalize. | Simplify the model, use regularization, get more training data, or perform feature reduction. |

| Underfitting | The model is too simple to capture the underlying trend in the data. | Increase model complexity, add more relevant features, or reduce noise in the data. |

Experimental Protocols & Workflows

This protocol can be adapted for general morphometric classification.

1. Sample Preparation & Digital Acquisition

- Sample Collection: Obtain dental casts from 60 males and 60 females (or your relevant biological samples). Ensure a consistent age range to minimize age-related variation.

- Inclusion Criteria: Select samples with full complement of the structures under study. Exclude any with damage, restoration, or developmental anomalies.

- Digitization: Create 3D digital models using a high-resolution 3D scanner.

2. Landmarking and Data Extraction

- Software: Use 3D Slicer software for placing landmarks.

- Landmark Definition: Identify anatomic and geometric landmarks based on established evidence (e.g., cusp tips, fissure junctions, crests of curvatures). The number of landmarks will vary based on structural complexity.

- Data Export: Record the 3D coordinates (x, y, z) of all landmarks and tabulate them for analysis.

3. Data Preprocessing

- Procrustes Superimposition: Perform this in specialized software like MorphoJ to remove the effects of size, rotation, and translation, isolating pure shape information.

- Principal Component Analysis (PCA): Use PCA on the Procrustes-aligned coordinates for dimensional reduction and to identify major trends of shape variation.

4. Machine Learning Classification

- Data Splitting: Use a robust method like 5-fold or 10-fold cross-validation.

- Model Training: Train multiple classifiers (RF, SVM, ANN) on the pre-processed landmark data (e.g., principal components).

- Performance Evaluation: Calculate accuracy, precision, recall, F1-score, and AUC (Area Under the Curve) to evaluate and compare models.
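Steps 3 and 4 can be sketched end to end in Python. The Procrustes step is a partial generalized Procrustes analysis (translation removed, unit centroid size, iterative rotation to a consensus) written in NumPy as a stand-in for MorphoJ, which is not scripted here. The landmark data are simulated, and the PCA size and classifier settings are assumptions. Note that running GPA on the pooled sample lets every fold share one consensus shape, a mild form of leakage that is common in practice; a stricter variant aligns each validation fold to the training consensus.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def align(x, ref):
    """Rotate a centered configuration x (landmarks x dims) onto ref, no reflection."""
    U, _, Vt = np.linalg.svd(x.T @ ref)
    if np.linalg.det(U @ Vt) < 0:
        U[:, -1] *= -1  # flip the axis of the smallest singular value
    return x @ (U @ Vt)


def gpa(configs, n_iter=10):
    """Partial generalized Procrustes analysis on configs of shape (n, landmarks, dims)."""
    X = configs - configs.mean(axis=1, keepdims=True)      # remove translation
    X = X / np.linalg.norm(X, axis=(1, 2), keepdims=True)  # unit centroid size
    ref = X[0]
    for _ in range(n_iter):
        X = np.stack([align(x, ref) for x in X])           # remove rotation
        ref = X.mean(axis=0)
        ref = ref / np.linalg.norm(ref)                    # rescaled consensus shape
    return X


# Hypothetical data: 40 specimens per group, 8 landmarks in 3D, random pose and size.
rng = np.random.default_rng(0)
base = rng.normal(size=(8, 3))
shift = np.zeros((8, 3))
shift[0, 0] = 0.6                                          # group difference at one landmark
configs, labels = [], []
for label in (0, 1):
    for _ in range(40):
        shape = base + label * shift + rng.normal(scale=0.1, size=(8, 3))
        Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
        if np.linalg.det(Q) < 0:
            Q[:, 0] *= -1                                  # keep a proper rotation
        configs.append(rng.uniform(0.5, 2.0) * shape @ Q + rng.normal(size=3))
        labels.append(label)
configs, y = np.array(configs), np.array(labels)

aligned = gpa(configs)
X = aligned.reshape(len(y), -1)                            # Procrustes coordinates

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
classifiers = {
    "RF": RandomForestClassifier(n_estimators=300, random_state=0),
    "SVM": SVC(kernel="rbf"),
    "ANN": MLPClassifier(hidden_layer_sizes=(32,), max_iter=2000, random_state=0),
}
accs = {}
for name, clf in classifiers.items():
    # PCA is refitted inside each training fold, so it never sees the validation fold.
    pipe = make_pipeline(PCA(n_components=10), StandardScaler(), clf)
    accs[name] = cross_val_score(pipe, X, y, cv=cv, scoring="accuracy")
    print(f"{name}: {accs[name].mean():.3f} +/- {accs[name].std():.3f}")
```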

The entire experimental and analytical workflow is visualized below.

The Scientist's Toolkit: Essential Research Reagents & Software

Table: Key Software and Analytical Tools for Morphometrics

| Item Name | Function / Application | Specific Use Case |

|---|---|---|

| 3D Slicer | Open-source software platform for medical image informatics, image processing, and 3D visualization. | Placing 3D landmarks on digital models of teeth or bones [31]. |

| MorphoJ | Integrated software package for geometric morphometrics. | Performing Procrustes superimposition and multivariate statistical analysis of shape [31]. |

| Scikit-Learn (Python) | Open-source machine learning library for Python. | Implementing Random Forest, SVM, and Neural Network models, along with cross-validation and feature selection [36]. |

| Random Forest Classifier | Ensemble machine learning algorithm for classification and regression. | The primary model for high-accuracy morphometric classification, as demonstrated in multiple studies [31] [34] [33]. |

| K-Fold Cross-Validation | A resampling procedure used to evaluate machine learning models on a limited data sample. | Provides a robust estimate of model performance and generalizability, essential for reliable results [31] [33] [35]. |

Step-by-Step Guide to K-Fold Cross-Validation with Morphometric Data

What is K-Fold Cross-Validation?

K-Fold Cross-Validation is a statistical technique used to evaluate the performance of machine learning models. It involves dividing the dataset into K subsets (folds) of approximately equal size. The model is trained K times, each time using K-1 folds for training and the remaining fold for validation. This process ensures every data point is used for validation exactly once and for training K-1 times, providing a robust estimate of the model's generalization ability [37] [18].

In morphometric classification research, where data collection can be expensive and time-consuming, K-Fold Cross-Validation maximizes data utilization and helps develop models that generalize well to new, unseen morphometric data.

Why Use K-Fold in Morphometric Research?

Morphometric data presents unique challenges including limited sample sizes, high-dimensional feature spaces, and potential measurement variability. K-Fold Cross-Validation addresses these challenges by:

- Efficient Data Usage: Every data point contributes to both training and validation, crucial when sample collection is resource-intensive [37]

- Overfitting Detection: Identifies when models learn dataset-specific noise rather than generalizable patterns [38]

- Performance Reliability: Provides more stable performance estimates than single train-test splits [39]

- Model Selection: Enables comparison of different algorithms and feature sets for morphometric classification [40]

Theoretical Foundations

The K-Fold Algorithm

The standard K-Fold Cross-Validation process follows these steps [37] [18]:

- Shuffle the dataset randomly to eliminate ordering biases

- Split the dataset into K equal-sized folds (subsets)

- For each fold k:

- Use fold k as the validation set

- Use the remaining K-1 folds as the training set

- Train the model on the training set

- Evaluate the model on the validation set

- Record the performance metric

- Calculate the average performance across all K folds

The performance of the model is computed as:

\[ \text{Performance} = \frac{1}{K} \sum_{k=1}^{K} \text{Metric}(M_k, F_k) \]

where \(M_k\) is the model trained on all folds except \(F_k\), and \(F_k\) is the test fold [37].
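The algorithm and averaging formula above can be written out directly in NumPy. The nearest-centroid classifier is a deliberately simple stand-in for a real morphometric model, the helper names are hypothetical, and the two-cluster data are synthetic; in practice `sklearn.model_selection.KFold` provides the same splitting.

```python
import numpy as np


def kfold_indices(n_samples, k, seed=0):
    """Shuffle indices once, then split them into k nearly equal folds."""
    rng = np.random.default_rng(seed)
    return np.array_split(rng.permutation(n_samples), k)


def nearest_centroid_fit(X, y):
    classes = np.unique(y)
    return classes, np.stack([X[y == c].mean(axis=0) for c in classes])


def nearest_centroid_predict(model, X):
    classes, centroids = model
    d = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[d.argmin(axis=1)]


def kfold_cv_accuracy(X, y, k=5, seed=0):
    folds = kfold_indices(len(y), k, seed)
    scores = []
    for i, test_idx in enumerate(folds):
        # M_k is trained on every fold except F_k ...
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        model = nearest_centroid_fit(X[train_idx], y[train_idx])
        # ... and scored on F_k.
        scores.append(np.mean(nearest_centroid_predict(model, X[test_idx]) == y[test_idx]))
    return float(np.mean(scores)), scores   # (1/K) * sum of per-fold metrics


rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0, 1, (50, 3)), rng.normal(2, 1, (50, 3))])
y = np.repeat([0, 1], 50)
mean_acc, fold_scores = kfold_cv_accuracy(X, y, k=5)
print(np.round(fold_scores, 3), round(mean_acc, 3))
```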

Visualizing the K-Fold Process

K-Fold Cross-Validation Workflow: This diagram illustrates the iterative process of training and validation across K folds.

Bias-Variance Tradeoff in K Selection

The choice of K involves a critical bias-variance tradeoff [37] [39] [18]:

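- Small K (e.g., 2, 3, 5): Lower computational cost, but each model is trained on a smaller share of the data, so performance estimates tend to be pessimistically biased

- Large K (e.g., 10, 15, 20): Lower bias, because each training set is close to the full dataset, but higher computational cost; because the training sets overlap heavily, the variance of the estimate can also rise

- K = n (Leave-One-Out CV): Lowest bias but highest computational cost, and often the highest variance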

For most morphometric applications, K=5 or K=10 provides a good balance between bias and variance [39] [18]. K=10 is particularly common as it generally results in model skill estimates with low bias and modest variance.

Experimental Protocol for Morphometric Data

Research Reagent Solutions

| Component | Function in Morphometric Analysis | Implementation Example |
|---|---|---|
| Data Collection Tools | Acquire raw morphometric measurements | Microscopy systems, digital calipers, image analysis software |
| Feature Extraction | Convert raw data into quantifiable features | Shape descriptors, landmark coordinates, texture analysis algorithms |
| Scikit-Learn Library | Provides K-Fold implementation and ML algorithms | sklearn.model_selection.KFold, sklearn.ensemble.RandomForestClassifier |
| Pandas & NumPy | Data manipulation and numerical computations | Data cleaning, transformation, and array operations |
| Performance Metrics | Quantify model performance | Accuracy, precision, recall, F1-score, ROC-AUC |
| Visualization Tools | Interpret results and identify patterns | Matplotlib, Seaborn, PCA plots |

Step-by-Step Implementation

Data Preparation and Preprocessing

Critical Consideration for Morphometric Data: Always perform preprocessing (like scaling) within each fold to prevent data leakage [18]. Fit the scaler on the training fold only, then transform both training and validation folds.
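A minimal sketch of that rule with scikit-learn's `StandardScaler` is shown below. It is written as an explicit loop so the fit/transform boundary is visible. The synthetic data, the artificial rescaling of feature columns (mimicking raw measurements in mixed units), and the logistic-regression classifier are all illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=120, n_features=8, random_state=1)
# Assumed: features on very different scales, as raw morphometric measurements often are
X = X * np.array([1, 10, 100, 1000, 1, 10, 100, 1000])

kf = KFold(n_splits=5, shuffle=True, random_state=0)
scores = []
for train_idx, val_idx in kf.split(X):
    scaler = StandardScaler()
    # Fit on the training fold only: means and SDs come from training samples alone
    X_train = scaler.fit_transform(X[train_idx])
    # Reuse the training-fold statistics on the validation fold (no refitting)
    X_val = scaler.transform(X[val_idx])
    clf = LogisticRegression(max_iter=1000).fit(X_train, y[train_idx])
    scores.append(clf.score(X_val, y[val_idx]))

print(f"Mean accuracy: {np.mean(scores):.3f}")
```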

K-Fold Cross-Validation Implementation
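A compact implementation can rely on scikit-learn's `cross_validate`. It accepts a list of scorers and returns one array of per-fold values for each metric. The sketch rests on several assumptions: synthetic data, a scaler plus random-forest pipeline, and 5 shuffled folds. It reports per-fold accuracy, precision, recall, and F1 as mean ± sample standard deviation.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import KFold, cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=150, n_features=12, n_informative=6, random_state=0)

# The pipeline is refit from scratch in every training fold, so the scaler never sees
# validation data. (Scaling does not change a random forest's splits; it is included
# only to show where preprocessing belongs.)
pipe = make_pipeline(StandardScaler(), RandomForestClassifier(n_estimators=200, random_state=0))
cv = KFold(n_splits=5, shuffle=True, random_state=42)

metrics = ["accuracy", "precision", "recall", "f1"]
results = cross_validate(pipe, X, y, cv=cv, scoring=metrics)

for metric in metrics:
    fold_values = results[f"test_{metric}"]  # one value per fold
    print(f"{metric:>9}: {fold_values.mean():.3f} ± {fold_values.std(ddof=1):.3f}")
```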

Performance Metrics Table

| Fold | Accuracy | Precision | Recall | F1-Score |
|---|---|---|---|---|
| Fold 1 | 0.933 | 0.945 | 0.922 | 0.933 |
| Fold 2 | 0.967 | 0.956 | 0.978 | 0.967 |
| Fold 3 | 0.933 | 0.923 | 0.944 | 0.933 |
| Fold 4 | 0.967 | 0.978 | 0.956 | 0.967 |
| Fold 5 | 0.900 | 0.889 | 0.912 | 0.900 |
| Average | 0.940 ± 0.028 | 0.938 ± 0.034 | 0.942 ± 0.026 | 0.940 ± 0.028 |

Example performance metrics from a morphometric classification study using 5-fold cross-validation. Averages are reported as mean ± sample standard deviation across folds. The narrow spread across folds (accuracy 0.900 to 0.967) indicates model stability.
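The ± value depends on whether the standard deviation divides by K or by K-1, so it is worth stating which convention a report uses. A minimal NumPy sketch follows, using the five fold accuracies listed in the table above.

```python
import numpy as np

# Per-fold accuracies from the 5-fold example table
fold_accuracy = np.array([0.933, 0.967, 0.933, 0.967, 0.900])

mean_acc = fold_accuracy.mean()
sd_sample = fold_accuracy.std(ddof=1)  # divides by K-1 (sample SD)
sd_pop = fold_accuracy.std(ddof=0)     # divides by K (population SD)

print(f"{mean_acc:.3f} ± {sd_sample:.3f} (ddof=1)")  # 0.940 ± 0.028
print(f"{mean_acc:.3f} ± {sd_pop:.3f} (ddof=0)")     # 0.940 ± 0.025
```

With only five folds the two conventions differ noticeably. The sample SD (`ddof=1`) is the more conservative choice.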

Troubleshooting Common Issues

Frequently Asked Questions

Q1: Why does my model show high performance variance across folds? A: High variance often indicates that your dataset may be too small or contains outliers that disproportionately affect certain folds. Solutions include:

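- Average over several random partitions with Repeated K-Fold instead of relying on a single split. Simply raising K (e.g., from 5 to 10) makes each validation fold smaller and does not by itself reduce fold-to-fold variance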

- Ensure proper shuffling before creating folds

- Check for and address outliers in morphometric measurements

- Consider stratified K-Fold if class distribution is imbalanced

Q2: How do I handle data preprocessing without causing data leakage? A: Data leakage occurs when information from the validation set influences the training process [41]. To prevent this:

- Always fit scalers, imputers, and other preprocessing steps on the training fold only

- Use scikit-learn Pipelines to encapsulate preprocessing and modeling steps

- Apply the fitted preprocessing transformer to the validation fold without refitting
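Leakage is easiest to see with a data-dependent step that uses the labels. The sketch below therefore substitutes supervised feature selection (`SelectKBest`) for a scaler, because its leakage effect is large. The pure-noise data and all sizes are illustrative assumptions. Because the features carry no signal, any estimate well above 0.5 is an artifact of leakage.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
# Pure noise: 60 specimens, 2,000 features, labels unrelated to X
X = rng.normal(size=(60, 2000))
y = np.array([0, 1] * 30)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# LEAKY: features chosen using all samples, including the future validation folds
X_selected = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(LogisticRegression(max_iter=1000), X_selected, y, cv=cv).mean()

# CORRECT: selection is refit inside each training fold via a Pipeline
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
proper_acc = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"Leaky estimate:    {leaky_acc:.2f}")   # typically far above chance
print(f"Pipeline estimate: {proper_acc:.2f}")  # typically near 0.5 (chance)
```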

Q3: What is the optimal K for my morphometric dataset? A: The optimal K depends on your dataset size and characteristics [18]:

- For datasets with <100 samples: Consider Leave-One-Out CV (K=n) or K=5

- For datasets with 100-1000 samples: K=10 is a common, well-balanced default

- For datasets with >1000 samples: K=5 often suffices

- Always use stratified K-Fold for imbalanced class distributions

Q4: My computational time is too high with K-Fold. How can I optimize? A: Computational constraints are common with large morphometric datasets:

- Use a smaller K value (K=3 or K=5)

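- Implement parallel processing using scikit-learn's `n_jobs` parameter

- Reduce feature dimensionality through PCA or feature selection, fitted within each training fold (e.g., as a Pipeline step) rather than on the full dataset before CV, which would leak information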

- Use simpler models during initial experimentation
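The sketch below combines two of these suggestions on synthetic data. Folds are evaluated in parallel through `n_jobs=-1`, and PCA sits inside the pipeline so it is refit on each training fold. It uses K=3 for speed; the component count and forest size are arbitrary choices for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

X, y = make_classification(n_samples=500, n_features=30, random_state=0)
cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)  # small K to save time

# PCA is part of the pipeline, so it is fitted on each training fold only
pipe = make_pipeline(PCA(n_components=10),
                     RandomForestClassifier(n_estimators=100, random_state=0))

# n_jobs=-1 evaluates the folds in parallel on all available CPU cores
scores = cross_val_score(pipe, X, y, cv=cv, n_jobs=-1)
print(scores.round(3))
```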

Q5: How do I interpret significantly different performance across folds? A: Large performance variations suggest your model may be sensitive to specific data subsets:

- Examine the composition of each fold - there may be meaningful biological subgroups

- Check if certain morphometric features have different distributions across folds

- Consider whether your dataset contains multiple distinct populations that should be modeled separately
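One way to examine fold composition is to tabulate class counts and feature summaries for each validation fold. This is a minimal sketch that assumes an imbalanced synthetic dataset. With real data, the same table would be built for biologically meaningful features.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import KFold

# Assumed imbalanced toy data (roughly 80:20 classes)
X, y = make_classification(n_samples=100, n_features=5, weights=[0.8, 0.2], random_state=0)
kf = KFold(n_splits=5, shuffle=True, random_state=0)

fold_summary = []
for k, (_, val_idx) in enumerate(kf.split(X), start=1):
    class_counts = np.bincount(y[val_idx], minlength=2)  # samples per class in this fold
    feature_means = X[val_idx].mean(axis=0)               # per-feature mean in this fold
    fold_summary.append((class_counts, feature_means))
    print(f"Fold {k}: class counts = {class_counts}, feature 0 mean = {feature_means[0]:.2f}")
```

Folds whose class counts or feature means stand out from the rest are the first candidates to inspect when scores diverge.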

Advanced K-Fold Variations for Morphometric Data

Stratified K-Fold for Imbalanced Data

Morphometric studies often have imbalanced class distributions. Stratified K-Fold preserves the percentage of samples for each class across folds:
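A minimal sketch with scikit-learn's `StratifiedKFold` follows, assuming toy labels with a 90:10 class ratio. Each validation fold receives the same share of minority-class specimens.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Imbalanced toy labels: 90 specimens of class 0, 10 of class 1
y = np.array([0] * 90 + [1] * 10)
X = np.zeros((100, 3))  # feature values do not affect how folds are assigned

skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
minority_per_fold = [int(y[val_idx].sum()) for _, val_idx in skf.split(X, y)]
print(minority_per_fold)  # [2, 2, 2, 2, 2]: every fold keeps the 10% minority rate
```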

Group K-Fold for Correlated Samples

When morphometric data contains multiple measurements from the same subject or related specimens, Group K-Fold ensures entire groups stay together in folds:
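A sketch with scikit-learn's `GroupKFold` is shown below, assuming a hypothetical design of 20 subjects with 4 measurements each. The `groups` argument carries the subject ID, and the loop checks that no subject appears on both sides of any split.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

rng = np.random.default_rng(0)
# Hypothetical design: 20 subjects, 4 measurements (e.g., cells or slices) per subject
subject_id = np.repeat(np.arange(20), 4)
X = rng.normal(size=(80, 6))
y = np.repeat(rng.integers(0, 2, size=20), 4)  # one label per subject

gkf = GroupKFold(n_splits=5)
for k, (train_idx, val_idx) in enumerate(gkf.split(X, y, groups=subject_id), start=1):
    train_subjects = set(subject_id[train_idx])
    val_subjects = set(subject_id[val_idx])
    # No subject contributes measurements to both training and validation
    assert train_subjects.isdisjoint(val_subjects)
    print(f"Fold {k}: {len(val_subjects)} validation subjects")
```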

Repeated K-Fold for More Robust Estimates

Repeating K-Fold with different random splits provides more reliable performance estimates:
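A minimal sketch with `RepeatedStratifiedKFold` follows, assuming synthetic data and a scaled logistic-regression pipeline. One caution applies: the 50 resulting scores are not independent, because training sets overlap within and across repeats. They should therefore not be fed into a naive t-test as if they were 50 separate samples. Summarizing per-repeat means, as below, is a modest safeguard, not a full statistical correction.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=120, n_features=10, random_state=0)
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# 5 folds x 10 repeats = 50 fits, each repeat using a different random partition
rskf = RepeatedStratifiedKFold(n_splits=5, n_repeats=10, random_state=0)
scores = cross_val_score(pipe, X, y, cv=rskf)

# Splits are yielded repeat by repeat, so rows below correspond to repeats
per_repeat = scores.reshape(10, 5).mean(axis=1)
print(f"Mean accuracy: {scores.mean():.3f}")
print(f"SD across repeat means: {per_repeat.std(ddof=1):.3f}")
```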

Troubleshooting Decision Framework

K-Fold Cross-Validation Troubleshooting Guide: This decision framework helps diagnose and address common issues encountered during implementation.

Applications in Morphometric Classification Research

Case Study: Improving Cross-Validation Rates

A recent study on bioactivity prediction demonstrated how modified cross-validation approaches can better estimate real-world performance [42]. By implementing k-fold n-step forward cross-validation, researchers achieved more realistic performance estimates for out-of-distribution compounds.

For morphometric research, this suggests that standard random splits may not always reflect real-world scenarios where new data may differ systematically from training data. Consider time-based or group-based splitting when temporal or batch effects are present in morphometric data collection.
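For time-ordered acquisition, scikit-learn's `TimeSeriesSplit` provides a generic forward-chaining split in which training data always precede test data. It is shown here only as a stand-in; it is not the k-fold n-step forward procedure of [42]. The sketch assumes specimens are already sorted by acquisition date. For batch or site effects, a group-based splitter such as `LeaveOneGroupOut`, with batch IDs as groups, plays the analogous role.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Hypothetical: 30 specimens already sorted by acquisition date
acquisition_order = np.arange(30)
X = np.random.default_rng(0).normal(size=(30, 4))

tscv = TimeSeriesSplit(n_splits=4)
for k, (train_idx, test_idx) in enumerate(tscv.split(X), start=1):
    # Every training specimen was acquired before every test specimen
    assert acquisition_order[train_idx].max() < acquisition_order[test_idx].min()
    print(f"Split {k}: train on first {len(train_idx)}, test on next {len(test_idx)}")
```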

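Key practices for K-Fold cross-validation of morphometric data:

- Shuffle data before creating folds to eliminate ordering biases, unless the splits must respect acquisition time [18]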

- Use appropriate K values based on dataset size and computational constraints [37] [39]

- Implement stratified sampling for imbalanced class distributions in morphometric data

- Prevent data leakage by keeping all preprocessing within the cross-validation loop [41] [18]

- Report both mean and variance of performance metrics across folds [39]

- Consider dataset-specific variations like Group K-Fold for correlated measurements

- Use cross-validation for model selection and hyperparameter tuning, not just final evaluation [40]
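When the same folds are used both to tune hyperparameters and to report performance, the reported score is optimistic. Nested cross-validation separates the two tasks. This minimal sketch assumes synthetic data and an illustrative SVM grid. An inner 3-fold search picks hyperparameters, and an outer 5-fold loop scores the whole tuning procedure.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=150, n_features=15, random_state=0)

pipe = make_pipeline(StandardScaler(), SVC())
# Illustrative grid; "svc__" addresses the SVC step created by make_pipeline
param_grid = {"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # tunes hyperparameters
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # estimates performance

# GridSearchCV is itself an estimator: the full search reruns inside each outer training fold
search = GridSearchCV(pipe, param_grid, cv=inner_cv)
nested_scores = cross_val_score(search, X, y, cv=outer_cv)
print(f"Nested CV accuracy: {nested_scores.mean():.3f} ± {nested_scores.std(ddof=1):.3f}")
```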

Future Directions

Emerging approaches in cross-validation include nested cross-validation for hyperparameter optimization, and domain-specific validation strategies that better simulate real-world deployment conditions [42] [30]. For morphometric research, developing validation protocols that account for biological variability and measurement consistency will be crucial for improving classification reliability.

By implementing robust K-Fold cross-validation protocols tailored to morphometric data characteristics, researchers can develop more reliable classification models that generalize effectively to new specimens and conditions.

Frequently Asked Questions (FAQs)

Q1: What is the clinical value of predicting glioma-associated epilepsy (GAE) using radiomics? GAE is a common and often debilitating symptom in glioma patients. Accurate prediction allows for early intervention, tailored anti-seizure medication strategies, and improved patient quality of life. Radiomics provides a non-invasive method to preoperatively identify patients at high risk, enabling personalized treatment plans and potentially preventing seizure-related complications [43] [44].

Q2: Which MRI sequences are most informative for building a GAE prediction model? Multiple sequences contribute valuable information. T2-weighted (T2WI) and T2 Fluid-Attenuated Inversion Recovery (T2-FLAIR) are foundational sequences widely used because they effectively visualize the tumor core and peritumoral edema, which are crucial regions for feature extraction [43] [45]. Multiparametric approaches that also include T1-weighted (T1WI) and contrast-enhanced T1 (T1Gd) sequences can provide a more comprehensive feature set and have been shown to yield the best prediction results [46] [47].

Q3: What are the key clinical and molecular features that improve GAE prediction models? Integrating clinical and molecular data with radiomic features consistently enhances model performance. Important clinical features include patient age and tumor grade [43]. Key molecular markers identified in studies are IDH mutation status, ATRX deletion, and Ki-67% expression level [44]. Models that combine radiomics with these non-imaging features outperform models based on imaging alone [43] [44].

Q4: My radiomics model performs well on training data but generalizes poorly to new data. What could be the cause? Poor generalization is often a sign of overfitting, frequently caused by a high number of radiomic features relative to the number of patient samples. To mitigate this:

- Implement Robust Feature Selection: Use methods like Least Absolute Shrinkage and Selection Operator (LASSO) regression or Random Forest-based feature selection to identify the most relevant, non-redundant features [46] [44].

- Apply Proper Validation: Always use a strict train-test split or, better yet, k-fold cross-validation on your training cohort, and finally evaluate on a held-out validation cohort that was not used in any part of the model building process [43] [48].

- Increase Cohort Size: If possible, use multi-institutional data to increase the diversity and size of your dataset, which helps the model learn more generalizable patterns [47].
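The sketch below combines these safeguards under several stated assumptions. Synthetic data with far more features than informative signals stands in for a radiomics matrix. L1-penalized logistic regression replaces LASSO, as the usual analogue for a binary outcome such as GAE. `C=0.5` is an arbitrary penalty strength. Feature selection lives inside the pipeline, so each CV training fold selects its own features, and the hold-out cohort is scored only once at the end.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical radiomics-like setting: 120 patients, 300 features, few informative
X, y = make_classification(n_samples=120, n_features=300, n_informative=8, random_state=0)

# Hold out a validation cohort that is never touched during model building
X_train, X_hold, y_train, y_hold = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

model = make_pipeline(
    StandardScaler(),
    # L1-penalized logistic regression as a LASSO-style selector (assumed C=0.5)
    SelectFromModel(LogisticRegression(penalty="l1", solver="liblinear", C=0.5)),
    RandomForestClassifier(n_estimators=300, random_state=0),
)

# k-fold cross-validation on the training cohort only
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
cv_scores = cross_val_score(model, X_train, y_train, cv=cv, scoring="roc_auc")

# Fit once on the full training cohort, then score the untouched hold-out cohort
model.fit(X_train, y_train)
holdout_acc = model.score(X_hold, y_hold)
n_selected = int(model.named_steps["selectfrommodel"].get_support().sum())
print(f"CV AUC: {cv_scores.mean():.3f}, hold-out accuracy: {holdout_acc:.3f}, "
      f"features kept: {n_selected}")
```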

Troubleshooting Common Experimental Challenges

Issue: Low Cross-Validation Accuracy in Morphometric Classification

Problem: You are building a classifier to predict epilepsy risk based on tumor location and morphometric features, but your cross-validation accuracy is unacceptably low, suggesting the model is not reliably learning the underlying patterns.

Solution: This requires a multi-faceted approach focusing on data, features, and model architecture.

Internal Cross-Validation: Instead of relying only on a single random split, perform leave-one-out cross-validation (LOOCV) or stratified k-fold cross-validation. This is particularly useful for smaller cohorts: nearly all available data are used for training in each iteration, and every patient is used for testing exactly once [48]. One study on pediatric low-grade glioma (LGG) achieved an accuracy of 0.938 using LOOCV with a combination of radiomics and tumor location features [48].
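A minimal LOOCV sketch with scikit-learn's `LeaveOneOut` follows, assuming a hypothetical 40-patient cohort and a linear SVM. Each fold scores a single patient, so per-fold accuracies are 0 or 1 and only their mean is meaningful. Metrics such as ROC-AUC would instead need pooled out-of-fold predictions, for example from `cross_val_predict`.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import LeaveOneOut, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Hypothetical small cohort: 40 patients, 10 combined radiomic + location features
X, y = make_classification(n_samples=40, n_features=10, n_informative=4, random_state=0)

pipe = make_pipeline(StandardScaler(), SVC(kernel="linear"))
# LOOCV: 40 fits, each tested on exactly one held-out patient (score is 0 or 1)
scores = cross_val_score(pipe, X, y, cv=LeaveOneOut())
print(f"LOOCV accuracy: {scores.mean():.3f} over {len(scores)} folds")
```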

Advanced Feature Selection and Integration:

- Dimensionality Reduction: With a large number of extracted features, use algorithms like the minimum redundancy maximum relevance (mRMR) to select a subset of features that are highly predictive of the target class while being minimally redundant with each other [48].

- Combine Feature Types: Do not rely on a single feature type. Evidence shows that integrating tumor location features (e.g., temporal lobe involvement) with texture and shape features (e.g., "High Dependence High Grey Level Emphasis," "Elongation") significantly boosts predictive performance compared to using either type alone [48]. The table below summarizes feature types and their importance.
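scikit-learn has no built-in mRMR selector, so the function below is a hypothetical, simplified greedy variant. Relevance is the mutual information between each feature and the label (`mutual_info_classif`). Redundancy is approximated by the mean absolute Pearson correlation with features already chosen, rather than the mutual-information redundancy term of the original mRMR formulation. Like any supervised selector, it should be run inside each training fold.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import mutual_info_classif

def mrmr_select(X, y, k, random_state=0):
    """Hypothetical greedy mRMR sketch: relevance = MI with the label,
    redundancy = mean |Pearson r| with already-selected features."""
    relevance = mutual_info_classif(X, y, random_state=random_state)
    corr = np.abs(np.corrcoef(X, rowvar=False))
    selected = [int(np.argmax(relevance))]  # start with the most relevant feature
    remaining = set(range(X.shape[1])) - set(selected)
    while len(selected) < k and remaining:
        candidates = sorted(remaining)
        # Score = relevance minus average redundancy with the current selection
        scores = [relevance[j] - corr[j, selected].mean() for j in candidates]
        best = candidates[int(np.argmax(scores))]
        selected.append(best)
        remaining.remove(best)
    return selected

X, y = make_classification(n_samples=100, n_features=40, n_informative=5,
                           n_redundant=5, random_state=0)
print(mrmr_select(X, y, k=8))
```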

Table: Key Radiomic and Morphometric Features for GAE Prediction

| Feature Category | Specific Examples | Reported Importance / Notes |
|---|---|---|
| Tumor Location | Temporal lobe involvement, Midbrain involvement | Often identified as the most important predictor [48]. |
| Shape Features | Elongation, Area Density | Describes the 3D geometry of the tumor [48]. |
| Texture Features | High Dependence High Grey Level Emphasis, Information Correlation 1 | Captures intra-tumoral heterogeneity [43] [48]. |
| First-Order Statistics | Intensity Range | Describes the distribution of voxel intensities [48]. |

Model and Algorithm Selection: Test multiple machine learning classifiers. Research indicates that Support Vector Machine (SVM) and Random Forest (RF) models are often top performers for this task.

- A study on frontal glioma used an SVM-based model for its final predictor [43].

- Another study on lower-grade glioma found that a Random Forest model provided the best performance and was integrated into a web application for clinical use [44].

- Experiment with different algorithms and use cross-validation to select the best performer for your specific dataset.
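The sketch below compares candidate classifiers on identical folds, assuming synthetic data and near-default SVM and random-forest settings. Picking the winner from these same scores slightly inflates its estimate. The chosen model should therefore still be confirmed on a held-out cohort or with nested cross-validation.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=150, n_features=20, n_informative=6, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)  # same folds for every model

candidates = {
    "SVM": make_pipeline(StandardScaler(), SVC(kernel="rbf")),
    "Random Forest": RandomForestClassifier(n_estimators=300, random_state=0),
}
mean_auc = {name: cross_val_score(m, X, y, cv=cv, scoring="roc_auc").mean()
            for name, m in candidates.items()}
best_model = max(mean_auc, key=mean_auc.get)
print(mean_auc, "->", best_model)
```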

Issue: Inconsistent Manual Segmentation of Regions of Interest (ROIs)

Problem: Manually delineating the tumor and peritumoral edema for feature extraction is time-consuming and introduces inter-observer variability, which can negatively impact model robustness and reproducibility.

Solution:

- Best Practice Protocol: Establish a standardized segmentation protocol. This typically involves a two-step process where one researcher (e.g., a Ph.D. candidate) performs the initial slice-by-slice manual segmentation using software like ITK-SNAP, which is then reviewed and modified by a senior neuroradiologist [43] [46]. This ensures consistency and accuracy.

- Leverage Automated Segmentation: To reduce manual burden and improve objectivity, explore using pre-trained deep learning models for automatic ROI segmentation. The nnU-Net framework, for example, has been successfully used for this purpose in glioma radiomics studies, automatically processing images and segmenting tumors [46].
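Agreement between two segmentations, such as the initial and senior-reviewed masks described above, is commonly quantified with the Dice similarity coefficient, 2|A∩B| / (|A| + |B|). The helper below is a hypothetical minimal implementation on NumPy boolean arrays. The toy 4x4 masks are illustrative; in practice the masks would be loaded from the segmentation files.

```python
import numpy as np

def dice_coefficient(mask_a, mask_b):
    """Hypothetical helper: Dice similarity of two binary ROI masks (1.0 = identical, 0.0 = disjoint)."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    total = a.sum() + b.sum()
    if total == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return 2.0 * np.logical_and(a, b).sum() / total

# Toy 2D example: two raters outline overlapping 2x2 regions
rater1 = np.zeros((4, 4), dtype=bool)
rater1[0:2, 0:2] = True
rater2 = np.zeros((4, 4), dtype=bool)
rater2[0:2, 1:3] = True
print(dice_coefficient(rater1, rater2))  # 0.5
```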

Experimental Protocols & Workflows

Detailed Protocol: Building a Clinical-Radiomics Model for GAE Prediction

The following workflow, based on established methodologies, outlines the key steps for constructing a robust predictive model [43] [46] [48].

Diagram Title: Radiomics Model Development Workflow for Glioma-Associated Epilepsy