Geometric vs. Linear Morphometrics: A Comparative Analysis of Discriminatory Power in Biomedical Research

Abstract

This article provides a comprehensive comparison of geometric morphometrics (GMM) and linear morphometrics (LMM) for researchers and drug development professionals. It explores the foundational principles of both methods, examines their application across diverse fields from neuroimaging to cancer diagnostics, addresses common methodological challenges and optimization strategies, and presents empirical evidence comparing their discriminatory performance. By synthesizing findings from recent studies, this review serves as a practical guide for selecting appropriate morphometric approaches to maximize classification accuracy and biological insight in phenotypic profiling and biomarker discovery.

Foundational Principles: Understanding Geometric and Linear Morphometrics

Morphometrics, the quantitative analysis of biological form, relies fundamentally on three core data types for capturing morphological information: landmarks, semilandmarks, and linear measurements. These distinct approaches form the foundation for both traditional and modern shape analysis methodologies, each with unique strengths, limitations, and appropriate applications in biological research. Landmarks represent discrete, anatomically homologous points that can be precisely located across all specimens in a study [1]. In contrast, semilandmarks are utilized to quantify shapes along curves and surfaces where true anatomical homologues are scarce, sliding along tangents to minimize bending energy or Procrustes distance against a reference [1] [2]. Linear measurements, the basis of traditional morphometrics, consist of standardized distance measurements between landmarks or other anatomical points, providing readily interpretable size information but limited capacity to capture complex geometric shape [3] [4].

The ongoing methodological evolution in morphometrics reflects a transition from traditional linear measurement-based approaches toward geometric frameworks that preserve the spatial relationships of anatomical structures throughout analysis. This comparison guide examines the discriminatory performance, technical requirements, and appropriate applications of these three fundamental data types within the broader research context of geometric versus linear morphometrics, providing researchers with evidence-based guidance for methodological selection.

Conceptual Definitions and Technical Specifications

Landmarks: Homologous Points

Landmarks are defined as discrete, anatomically homologous points that can be precisely located and consistently identified across all specimens in a study. From a biological perspective, landmarks represent points that are considered equivalent in each individual at every stage of developmental or evolutionary transformation [1]. Technically, landmarks are represented as two-dimensional or three-dimensional coordinates that capture their spatial position. The primary strength of landmarks lies in their biological interpretability, as they represent genuinely comparable anatomical loci across specimens. However, their primary limitation is that many biological structures, particularly smooth surfaces such as cranial vaults, offer few identifiable landmarks, thus restricting the morphological information that can be captured [1].

Semilandmarks: Geometric Homology

Semilandmarks are points used to quantify shapes along curves and surfaces where identifiable anatomical landmarks are insufficient. They are located using algorithms that establish geometric correspondence rather than developmental homology [1]. The technical process involves three key steps: initial placement along curves or surfaces between true landmarks, sliding along tangents to minimize either bending energy or Procrustes distance against a reference form, and subsequent treatment as homologous points in statistical analyses [2]. This approach enables the quantification of entire morphological structures rather than isolated points, significantly increasing data density. However, this method introduces methodological dependencies, as results can vary based on the chosen sliding algorithm (bending energy versus Procrustes distance) and sampling density [1] [2].
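The first of the three steps, initial placement along a curve, can be sketched as equal arc-length resampling of a digitized outline. This is a minimal illustration under stated assumptions: the curve is an open 2D polyline, and the function name `resample_curve` and the example coordinates are hypothetical. The sliding step (bending energy or Procrustes distance minimization) is not implemented here.

```python
import math

def resample_curve(points, n):
    """Place n points at equal arc-length spacing along an open 2D polyline."""
    if n < 2 or len(points) < 2:
        raise ValueError("need at least two input points and n >= 2")
    # Cumulative arc length at every vertex of the digitized curve.
    cum = [0.0]
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        cum.append(cum[-1] + math.hypot(x1 - x0, y1 - y0))
    total = cum[-1]
    out = []
    seg = 0
    for k in range(n):
        target = total * k / (n - 1)
        # Advance to the segment that contains the target arc length.
        while seg < len(points) - 2 and cum[seg + 1] < target:
            seg += 1
        span = cum[seg + 1] - cum[seg]
        t = 0.0 if span == 0 else (target - cum[seg]) / span
        (x0, y0), (x1, y1) = points[seg], points[seg + 1]
        out.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return out

# Hypothetical outline digitized between two true landmarks (the end points).
curve = [(0.0, 0.0), (4.0, 0.0), (4.0, 4.0)]
semilandmarks = resample_curve(curve, 5)
```

The number of points `n` is the sampling density, which the text identifies as one of the choices that can influence results.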

Linear Measurements: Traditional Dimensions

Linear measurements constitute the foundation of traditional morphometrics, consisting of standardized distance measurements between landmarks or other anatomical points. Common examples include lengths, widths, and heights of morphological structures, typically measured using calipers or digital tools [3] [4]. These measurements provide immediately interpretable biological data with straightforward collection protocols. However, they reduce complex morphological structures to single dimensions, inevitably losing geometric information about the spatial arrangement of structures. While multiple measurements can be combined, they cannot fully reconstruct the original geometry, presenting fundamental limitations for comprehensive shape analysis [4].
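The loss of geometric information can be shown with a small sketch. The landmark names and coordinates below are hypothetical; the point is only that two visibly different configurations can yield identical length and height measurements.

```python
import math

def linear_measurement(landmarks, a, b):
    """Euclidean distance between two named landmarks."""
    return math.dist(landmarks[a], landmarks[b])

# Hypothetical 2D landmark coordinates (mm) for two specimens.
specimen_1 = {
    "anterior": (0.0, 0.0),
    "posterior": (30.0, 0.0),
    "dorsal": (15.0, 8.0),
    "ventral": (15.0, -4.0),
}
# Same length and height, but the deepest point sits much further forward.
specimen_2 = {
    "anterior": (0.0, 0.0),
    "posterior": (30.0, 0.0),
    "dorsal": (5.0, 8.0),
    "ventral": (5.0, -4.0),
}

measurements = {}
for name, lm in (("specimen_1", specimen_1), ("specimen_2", specimen_2)):
    measurements[name] = (
        linear_measurement(lm, "anterior", "posterior"),  # length
        linear_measurement(lm, "dorsal", "ventral"),      # height
    )
```

Both specimens give the same pair of distances, so an analysis based on these two measurements alone could not distinguish them, whereas the coordinates themselves differ.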

Table 1: Core Conceptual Comparison of Morphometric Data Types

| Feature | Landmarks | Semilandmarks | Linear Measurements |
|---|---|---|---|
| Basis of Homology | Developmental/evolutionary equivalence | Geometric correspondence | Anatomical reference points |
| Data Structure | 2D/3D coordinates | 2D/3D coordinates (with sliding) | Scalar distances |
| Information Captured | Spatial position of discrete points | Curves and surfaces between landmarks | Size and proportions |
| Primary Limitation | Sparse on smooth surfaces | Algorithm-dependent placement | Loss of geometric relationships |

Performance Comparison: Discriminatory Power and Applications

Taxonomic Discrimination

Multiple empirical studies have directly compared the discriminatory power of geometric morphometric methods (utilizing landmarks and semilandmarks) against traditional linear morphometrics. In species complex resolution, geometric morphometrics often demonstrates superior capability to separate closely related taxa. Research on Sorex shrews revealed that while linear morphometrics achieved a jackknifed classification rate exceeding 92%, geometric morphometrics provided enhanced visualization of shape differences and better separation of centroid sizes among species [3]. Similarly, a study on Taterillus gerbils found that geometric morphometrics of cranial morphology "quantitatively supported traditional species boundaries" despite moderate classification accuracy [5].

Fossil shark tooth identification studies demonstrate another compelling application, where geometric morphometrics successfully recovered the same taxonomic separation as traditional morphometrics while capturing additional shape variables that traditional methods overlooked [4]. This pattern of geometric methods extracting more comprehensive morphological information appears consistent across diverse taxonomic groups.

Measurement Error and Repeatability

The manual placement of landmarks introduces potential observer error and measurement bias, though semiautomated approaches are being developed to address this limitation [6]. Semilandmark placement introduces additional methodological variability, as results can differ based on the chosen algorithm (bending energy versus Procrustes distance minimization) and the density of points [1] [2]. Studies comparing sliding methods found that while statistical results (F-scores, p-values) were often similar, estimates of within- and between-sample variation differed, and the correlation between principal component axes was low [2].

Linear measurements generally exhibit high repeatability with standard protocols but capture less morphological information. Emerging automated landmarking approaches aim to reduce observer error associated with manual placement, potentially increasing accuracy in shape approximation [6].

Allometric Analysis

A crucial consideration in taxonomic applications is distinguishing true shape differences from allometric variation (shape change correlated with size). Research suggests there is "substantial risk that discrimination comes from variation in size, rather than shape" in morphometric discrimination when size effects are not explicitly tested [5]. This is particularly relevant when comparing taxa of different sizes, as allometric patterns can confound taxonomic distinctions. The Sorex study specifically tested for allometric growth, finding it was not observed in the skull and mandible variations among the species examined [3]. Linear measurements inherently confound size and shape information, while geometric approaches enable their separation through Procrustes superposition, though the risk of allometric confounding remains if not explicitly tested.

Table 2: Empirical Performance Comparison Across Study Systems

| Study System | Landmarks Performance | Semilandmarks Performance | Linear Measurements Performance |
|---|---|---|---|
| Sorex shrews [3] | N/A | Effective species separation; centroid size differentiation | 92%+ classification rate; measurement overlap between some species |
| Shark teeth [4] | Captured comprehensive shape variation | Enabled analysis of root curvature | Effective but missed some shape variables |
| Human facial skeleton [2] | Limited on smooth surfaces | Method-dependent results (BE vs. PD) | Traditional approach; limited geometric information |
| Modern human populations [2] | Limited by low variation | Sensitive to sliding algorithm with low variation | Established approach but less powerful for subtle differences |

Experimental Protocols and Methodologies

Standard Landmark and Semilandmark Protocol

A typical experimental workflow for geometric morphometric analysis involves several standardized steps. First, specimens are digitized using appropriate imaging technology (e.g., CT scanning, surface scanning, or photography). Next, homologous landmarks are manually identified and digitized on each specimen using software such as tpsDig [2] [4]. For semilandmark placement, curves and surfaces are defined between landmarks, and points are initially placed equidistantly along these contours. These points are then slid along tangents to minimize either bending energy or Procrustes distance against a reference specimen [1] [2]. Finally, all landmark and semilandmark coordinates undergo Generalized Procrustes Analysis (GPA) to remove differences in position, orientation, and scale, isolating pure shape variation for subsequent statistical analysis.
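The superimposition step can be sketched for the 2D case as follows. This is a simplified illustration, not the implementation used in any cited study: landmarks are assumed to be stored as complex numbers (x + yj), reflections are not allowed, semilandmark sliding is omitted, and the function names are hypothetical.

```python
import cmath
import math

def center_and_scale(config):
    """Translate to the centroid and scale to unit centroid size."""
    centroid = sum(config) / len(config)
    centered = [z - centroid for z in config]
    csize = math.sqrt(sum(abs(z) ** 2 for z in centered))
    return [z / csize for z in centered]

def rotate_onto(config, reference):
    """Rotate a centered configuration to the least-squares fit on the reference."""
    s = sum(z * w.conjugate() for z, w in zip(config, reference))
    if abs(s) == 0:
        return list(config)
    rot = s.conjugate() / abs(s)  # unit complex number encoding the optimal rotation
    return [z * rot for z in config]

def gpa(configs, iterations=10):
    """Iteratively align all configurations to their evolving mean shape."""
    aligned = [center_and_scale(c) for c in configs]
    mean = aligned[0]
    for _ in range(iterations):
        aligned = [rotate_onto(c, mean) for c in aligned]
        n = len(aligned)
        mean = [sum(c[k] for c in aligned) / n for k in range(len(mean))]
        mean = center_and_scale(mean)
    return aligned, mean

# Hypothetical specimen, plus a copy that is scaled, rotated, and translated.
triangle = [0 + 0j, 4 + 0j, 0 + 3j]
moved = [z * 2.0 * cmath.exp(0.7j) + (5 - 2j) for z in triangle]
aligned, mean_shape = gpa([triangle, moved])
```

Because the second configuration differs from the first only in position, orientation, and scale, the two aligned configurations coincide after superimposition; any residual differences in real data are interpreted as shape variation.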

Linear Measurement Protocol

Traditional morphometric protocols begin with the identification of standardized measurement points on each specimen. Linear distances are then collected using digital calipers or measurement tools in imaging software. These raw measurements are typically log-transformed to normalize variance and allow for multivariate statistical analysis such as principal component analysis or discriminant function analysis [3] [4].

Methodological Comparisons

When comparing methodologies, researchers often apply multiple approaches to the same specimen set. For example, a study on Sorex shrews employed "morphological, linear and geometric morphometric analysis" on the same specimens to enable direct comparison of results [3]. Similarly, the shark tooth study applied both traditional and geometric morphometrics to the same teeth, allowing for "comparison with traditional morphometrics" and assessment of which approach "is more effective" [4]. Such comparative designs provide the most robust evidence for evaluating methodological performance.

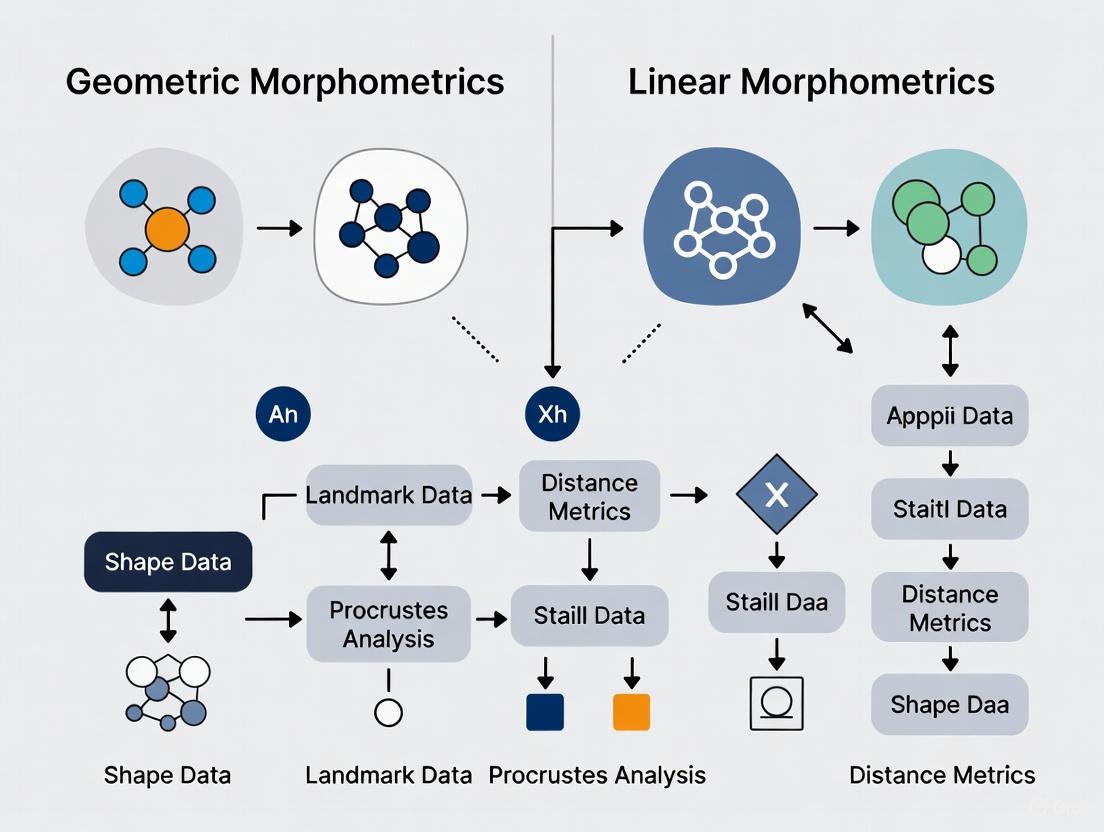

Morphometrics Methodological Workflow

Research Reagent Solutions: Essential Materials and Tools

Table 3: Essential Research Tools for Morphometric Data Collection

| Tool Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Imaging Equipment | CT scanners, surface scanners, digital cameras | Specimen digitization | All morphometric approaches |
| Digitization Software | tpsDig, MorphoJ, Landmark | Landmark/semilandmark placement | Geometric morphometrics |
| Measurement Tools | Digital calipers, ImageJ | Linear distance collection | Traditional morphometrics |
| Analysis Packages | PAST, R (geomorph, Morpho) | Statistical shape analysis | All approaches |
| Alignment Methods | Generalized Procrustes Analysis (GPA) | Remove non-shape variation | Geometric morphometrics |
| Sliding Algorithms | Minimum bending energy, Procrustes distance | Semilandmark optimization | Semilandmark approaches |

The comparative evidence indicates that geometric morphometric approaches utilizing landmarks and semilandmarks generally provide superior discriminatory power for taxonomic and morphological research compared to traditional linear measurements. This advantage derives from their capacity to capture and preserve geometric relationships throughout analysis, enabling more nuanced visualization and interpretation of shape differences. However, this enhanced capability comes with increased methodological complexity and sensitivity to analytical decisions, particularly regarding semilandmark placement algorithms and densities.

For researchers selecting among these approaches, geometric morphometrics is recommended when comprehensive shape analysis is prioritized, specimens possess adequate homologous landmarks, and resources permit the more complex analytical pipeline. Linear morphometrics remains appropriate for rapid assessment of major size differences, when methodological simplicity is advantageous, or for comparison with historical datasets. Semilandmarks provide essential tools for analyzing complex biological shapes but require transparent reporting of methodological parameters and recognition that their results represent approximations of biological reality. As the field advances toward increasingly automated approaches, the integration of these complementary data types will continue to enhance our understanding of morphological diversity and evolution.

In the fields of biological taxonomy, medical phenotyping, and industrial metrology, the accurate capture and analysis of shape data are paramount. Two fundamental paradigms dominate this data acquisition landscape: coordinate-based approaches and measurement-based approaches. Coordinate-based methods capture the precise spatial coordinates of morphological structures, often creating detailed digital models [7]. In contrast, measurement-based approaches traditionally rely on linear distances, angles, and ratios between defined points [8]. Within scientific research, particularly in studies comparing the discriminatory performance of geometric morphometrics (GMM, a coordinate-based technique) versus linear morphometrics (LMM, a measurement-based technique), understanding the strengths, limitations, and appropriate applications of these paradigms is crucial for robust experimental design and valid conclusions. This guide provides an objective comparison of these methodologies, supported by experimental data and detailed protocols.

Fundamental Concepts and Definitions

Coordinate-Based Approaches

Coordinate-based data acquisition involves recording the precise two-dimensional or three-dimensional spatial coordinates of points located on an organism's structure. In geometric morphometrics, these are typically captured as landmarks (anatomically homologous points), semilandmarks (points along curves and surfaces), or outline points [7] [9]. The primary output is a configuration of points that retains the complete geometry of the structure throughout analysis. Modern implementations often use digital scanners, coordinate measuring machines (CMMs), or calibrated photographic techniques to capture these data [7] [10].

Measurement-Based Approaches

Measurement-based data acquisition, historically the foundation of morphometrics, involves collecting linear distances between landmarks, as well as angles and ratios derived from these measurements [8]. This approach produces a set of one-dimensional variables that describe size and shape characteristics but do not inherently preserve the complete geometric structure of the specimen. Traditional tools include calipers, but digital interfaces now allow for automated data collection from images or directly from instruments [11].

Table 1: Core Conceptual Differences Between the Two Paradigms

| Characteristic | Coordinate-Based Approaches | Measurement-Based Approaches |
|---|---|---|
| Primary Data | 2D/3D coordinate points | Linear distances, angles, ratios |
| Geometric Relationship | Preserved entirely | Partially lost |
| Statistical Framework | Multivariate shape space | Traditional multivariate statistics |
| Primary Analysis Methods | Procrustes analysis, PCA, CVA | Discriminant Analysis, PCA, CVA |
| Common Tools | Digital scanners, CMMs, calibrated photography | Calipers, rulers, protractors |

Methodological Comparison in Taxonomic Research

Experimental Evidence from Mammalian Taxonomy

A 2023 study by Viacava et al. directly compared the performance of 3D geometric morphometrics (coordinate-based) and four published linear measurement sets (measurement-based) in discriminating three clades of antechinus, a mammalian species complex known for subtle shape differences [8]. The researchers used linear discriminant analysis (LDA) to assess discriminatory performance under three conditions: raw data, data with isometry (overall size) removed, and data with allometry (non-uniform effects of size) removed.

Table 2: Performance Comparison in Taxonomic Discrimination (Based on Viacava et al., 2023 [8])

| Data Type | Analysis Method | Key Finding | Statistical Note |
|---|---|---|---|
| Linear Measurements (Raw) | Linear Discriminant Analysis | High group discrimination | Discrimination largely driven by size variation |
| Linear Measurements (Size-Removed) | Linear Discriminant Analysis | Reduced discriminatory power | Remaining shape differences less diagnostic |
| Geometric Morphometrics (Raw) | Principal Component Analysis | Moderate group discrimination | |
| Geometric Morphometrics (Size-Removed) | Linear Discriminant Analysis | Better group discrimination after isometry and allometry removal | Separation based on non-allometric shape differences |

The study revealed that while raw linear measurements showed high discriminatory power in initial analyses, this discrimination was primarily driven by variation in size rather than shape [8]. When the effects of size were statistically removed, the discriminatory performance of linear measurements decreased substantially. Conversely, geometric morphometrics provided better discrimination between groups after isolating non-allometric shape differences, suggesting it is more effective at detecting true shape variation independent of size.

Visualizing the Experimental Workflow

The following diagram illustrates the key methodological steps employed in comparative studies of geometric and linear morphometrics:

Technical Implementation Across Fields

Data Acquisition Techniques and Technologies

The implementation of coordinate-based and measurement-based approaches varies significantly across different research and application fields, each with distinct technical requirements and performance characteristics.

Table 3: Technical Implementation in Different Fields

| Field | Coordinate-Based Methods | Measurement-Based Methods |
|---|---|---|
| Biological Morphology | 3D surface scanners, micro-CT, laser scanning, photogrammetry, landmark digitization software (tpsDig, Landmark) [7] [11] | Digital calipers, ocular micrometers, standardized measurement protocols [8] |
| Medical Phenotyping | Intraoral laser rulers with digital cameras, calibrated photographic systems [10] | Traditional calipers, manual Mallampati scoring, visual assessment scales [10] |
| Industrial Metrology | Coordinate Measuring Machines (CMMs) with scanning probes, laser scanners, structured light systems [12] [13] | Handheld calipers, micrometers, height gauges, touch-trigger CMM probing [12] |

Performance Comparison in Industrial Metrology

A 2020 study comparing scanning (coordinate-based) and touch-trigger (measurement-based) probe systems on Coordinate Measuring Machines found no statistically significant differences in measurement uncertainty for basic geometric features [12]. However, the scanning method acquired data significantly faster, although it required more calibration time. This demonstrates the efficiency advantage of coordinate-based approaches for comprehensive surface characterization, although discrete point measurement remains sufficient in some contexts.

Experimental Protocols

Protocol for Comparative Taxonomic Studies

The following detailed protocol is adapted from Viacava et al. (2023) for conducting comparative studies of geometric and linear morphometrics:

Specimen Selection: Select specimens representing the groups to be discriminated (e.g., species, populations, age classes). Ensure adequate sample size considering the higher dimensionality of geometric morphometric data.

Data Collection:

- Coordinate-Based Data: Digitize 3D landmarks using standardized anatomical definitions. Use surface scanners or CT scanners for complete shape capture. For 2D studies, use standardized photographic views with scale calibration.

- Measurement-Based Data: Collect linear measurements using digital calipers or from digital images. Follow published measurement protocols specific to the taxonomic group.

Data Processing:

- Coordinate-Based Pipeline:

  - Perform Generalized Procrustes Analysis to remove effects of position, orientation, and scale.

  - Extract shape variables (Procrustes coordinates) for analysis.

  - Optionally, apply semilandmark protocols for curves and surfaces.

- Measurement-Based Pipeline:

  - Compile linear measurements into data matrix.

  - Apply size correction if needed (e.g., Mosimann-style size correction).

Statistical Analysis:

- Conduct Principal Component Analysis (PCA) to visualize shape variation.

- Perform Linear Discriminant Analysis (LDA) to assess group discrimination.

- Use cross-validation to estimate classification rates and avoid overfitting.

- Compare performance using correct classification rates and discriminant function plots.
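The cross-validation step can be sketched with a leave-one-out (jackknife) loop. To keep the example self-contained, a nearest-centroid classifier stands in for LDA; this substitution is an assumption made for illustration, as are the function names and the toy data. In practice an LDA implementation from a statistics package would take its place inside the same loop.

```python
import math

def nearest_centroid_predict(train_x, train_y, sample):
    """Assign the sample to the group whose mean vector is closest."""
    groups = {}
    for row, label in zip(train_x, train_y):
        groups.setdefault(label, []).append(row)
    best_label, best_dist = None, math.inf
    for label, rows in groups.items():
        centroid = [sum(col) / len(rows) for col in zip(*rows)]
        d = math.sqrt(sum((c - s) ** 2 for c, s in zip(centroid, sample)))
        if d < best_dist:
            best_label, best_dist = label, d
    return best_label

def leave_one_out_rate(x, y):
    """Correct classification rate when each specimen is held out in turn."""
    hits = 0
    for i in range(len(x)):
        train_x = x[:i] + x[i + 1:]
        train_y = y[:i] + y[i + 1:]
        if nearest_centroid_predict(train_x, train_y, x[i]) == y[i]:
            hits += 1
    return hits / len(x)

# Hypothetical shape variables (e.g., two PC scores) for two groups.
features = [[1.0, 2.0], [1.2, 1.9], [0.9, 2.1], [3.0, 0.5], [3.1, 0.4], [2.9, 0.6]]
labels = ["A", "A", "A", "B", "B", "B"]
rate = leave_one_out_rate(features, labels)
```

Holding each specimen out before fitting is what guards against the optimistic bias of resubstitution rates, which matters most when the number of shape variables is large relative to the sample size.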

Protocol for Digital Morphometric Phenotyping

Based on the method described by Sutherland et al. (2017) for upper airway phenotyping:

Equipment Setup: Use a digital camera with an intraoral laser ruler that projects two parallel beams of known separation distance (e.g., 1.0 or 1.5 cm) for scale calibration [10].

Image Acquisition:

- Capture standardized photographs:

  - P1: Front view with mouth open maximally, tongue within mouth, no phonation.

  - P2: Front view with mouth open maximally, tongue extended maximally.

  - P3: Side profile with mouth open, tongue extended.

  - P4: Front view with mouth open, tongue depressed, no phonation.

Data Extraction:

- Use image analysis software to measure structures in pixels.

- Convert pixel measurements to physical units using the known laser distance as calibration reference.

- Extract quantitative measures: tongue width, length, area; mouth dimensions; uvula parameters.
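The calibration step reduces to a scale factor derived from the two laser points. The sketch below assumes the beam separation is known in millimetres and that the pixel coordinates of the laser points have already been located in the image; all names and numbers are hypothetical.

```python
import math

def mm_per_pixel(laser_a, laser_b, separation_mm):
    """Scale factor from the pixel distance between the two laser points."""
    pixels = math.dist(laser_a, laser_b)
    if pixels == 0:
        raise ValueError("laser points coincide")
    return separation_mm / pixels

# Hypothetical image: laser points 300 px apart, beams 10 mm (1.0 cm) apart.
scale = mm_per_pixel((100.0, 200.0), (400.0, 200.0), separation_mm=10.0)

tongue_width_mm = 1350 * scale          # lengths scale linearly
tongue_area_mm2 = 90000 * scale ** 2    # areas scale with the square of the factor
```

Note that area measurements must be multiplied by the squared scale factor, a detail that is easy to overlook when converting pixel counts.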

Analysis:

- Compare measures between patient and control groups.

- Assess associations with clinical indices (e.g., AHI for sleep apnea).

- Determine discriminatory power using ROC analysis or similar methods.
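For a single measure, the area under the ROC curve can be computed directly from its rank interpretation: the probability that a randomly chosen patient has a larger value than a randomly chosen control, with ties counted as one half. The sketch below uses invented values and a hypothetical function name.

```python
def roc_auc(patient_values, control_values):
    """AUC via pairwise comparison of patient and control values."""
    wins = 0.0
    for p in patient_values:
        for c in control_values:
            if p > c:
                wins += 1.0
            elif p == c:
                wins += 0.5
    return wins / (len(patient_values) * len(control_values))

# Hypothetical tongue widths (mm) in patients and controls.
patients = [48.0, 52.0, 55.0, 60.0]
controls = [44.0, 47.0, 50.0, 52.0]
auc = roc_auc(patients, controls)
```

An AUC of 0.5 indicates no discrimination and 1.0 indicates perfect separation; this pairwise form assumes that larger values indicate the patient group.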

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Materials for Morphometric Research

| Item | Function | Application Context |
|---|---|---|
| Digital Calipers | Precise linear measurement collection | Measurement-based data acquisition [8] |
| tpsDig Software | Landmark and outline digitization from image files | Coordinate-based 2D data collection [11] |
| Coordinate Measuring Machine (CMM) | High-precision 3D coordinate acquisition | Industrial metrology and engineering [12] [13] |
| Laser Ruler Assembly | Projection of parallel beams for photographic calibration | Medical phenotyping and digital morphometrics [10] |
| Micro-CT Scanner | High-resolution 3D imaging without specimen destruction | Detailed internal morphology studies [7] |
| StereoMorph R Package | 2D and 3D landmark and curve data collection using consumer cameras | Low-cost coordinate-based data acquisition [11] |
| Geomorph R Package | Geometric morphometric analysis of landmark data | Statistical analysis of coordinate-based data [8] |

The comparative evidence indicates that coordinate-based and measurement-based approaches each have distinct advantages depending on research goals. Coordinate-based geometric morphometrics provides superior capability for analyzing complex shape differences independent of size, particularly valuable for discriminating cryptic species or quantifying subtle morphological changes [8]. Measurement-based linear morphometrics offers practical advantages in terms of equipment requirements and analytical simplicity, with strong performance when size variation contributes to group differences [8]. The choice between paradigms should be guided by research questions, sample availability, and equipment access, with the understanding that hybrid approaches often provide the most comprehensive morphological insights.

The analysis of biological form is a cornerstone of evolutionary biology, taxonomy, and various applied scientific fields. Within this domain, the concepts of size, shape, and their relationship (allometry) are fundamental. The study of allometry, which examines how organismal traits change with size, remains essential for understanding evolution and development [14]. Historically, two primary schools of thought have shaped the methodological approaches to allometry: the Huxley–Jolicoeur school, which characterizes allometry as the covariation among morphological traits that all contain size information, and the Gould–Mosimann school, which defines allometry as the covariation between shape and size after their separation [14] [15]. This distinction is not merely academic; it underlies fundamental differences in how researchers quantify, analyze, and interpret morphological data.

The rise of geometric morphometrics (GMM) has revolutionized the analysis of shape by preserving geometric relationships throughout statistical analyses, in contrast to traditional linear morphometrics (LMM) based on sets of distance measurements [8] [3]. This guide provides a comprehensive comparison of these frameworks, their performance in discriminatory tasks, and their handling of allometry, supported by experimental data and detailed methodologies to inform research practices across biological and medical sciences.

Theoretical Foundations: Two Schools of Allometric Thought

The Gould-Mosimann School: Size-Shape Covariation

The Gould-Mosimann framework explicitly separates size and shape according to the criterion of geometric similarity [14] [15]. In this conceptualization, shape is defined as the morphological information that remains after accounting for differences in position, orientation, and scale. Allometry is then studied as the covariation between this purified shape variable and a measure of size, typically centroid size (the square root of the sum of squared distances of all landmarks from their centroid) [16].
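The definition of centroid size given above translates directly into code. The function name and the example square are illustrative only.

```python
import math

def centroid_size(landmarks):
    """Square root of the summed squared distances of landmarks from their centroid."""
    k = len(landmarks)
    dims = len(landmarks[0])
    centroid = [sum(p[d] for p in landmarks) / k for d in range(dims)]
    return math.sqrt(
        sum((p[d] - centroid[d]) ** 2 for p in landmarks for d in range(dims))
    )

# Hypothetical configuration: a square, and the same square at twice the scale.
square = [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0)]
doubled = [(2 * x, 2 * y) for x, y in square]

cs_small = centroid_size(square)
cs_large = centroid_size(doubled)
```

Doubling every coordinate doubles centroid size, which is why it serves as a scale measure that is independent of how the landmarks are arranged.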

Within geometric morphometrics, this concept is implemented operationally through the multivariate regression of shape variables on centroid size [14]. The regression coefficient vector indicates the direction of shape change associated with size increase, providing a multivariate representation of allometry. This approach directly tests the hypothesis that shape depends on size and allows for visualization of the predicted shape changes along the allometric vector.
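A minimal sketch of this regression follows, assuming shape variables are stored one row per specimen and regressed on size by ordinary least squares, one coefficient per variable. The data are synthetic and the function name is hypothetical; dedicated packages additionally provide permutation tests and visualization.

```python
def allometric_vector(sizes, shapes):
    """Least-squares slope of each shape variable on size."""
    n = len(sizes)
    mean_size = sum(sizes) / n
    ss_size = sum((s - mean_size) ** 2 for s in sizes)
    p = len(shapes[0])
    means = [sum(row[j] for row in shapes) / n for j in range(p)]
    return [
        sum((s - mean_size) * (row[j] - means[j]) for s, row in zip(sizes, shapes))
        / ss_size
        for j in range(p)
    ]

# Synthetic data: two shape variables change with size, the third does not.
sizes = [1.0, 2.0, 3.0, 4.0]
shapes = [[0.1 * s, 0.3 - 0.05 * s, 0.2] for s in sizes]
coefficients = allometric_vector(sizes, shapes)
```

The resulting coefficient vector is the direction of shape change per unit increase in size; adding multiples of it to the mean shape gives the predicted shapes along the allometric trajectory.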

The Huxley-Jolicoeur School: Covariation Among Traits

The Huxley-Jolicoeur school defines allometry as the covariation among morphological features that all contain size information, without necessarily separating size and shape into distinct components [14] [15]. This framework originated from the observation that pairwise plots of log-transformed measurements often fit straight lines, interpretable through constant ratios between relative growth rates of different parts [14].
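The straight-line relationship follows from a power-law model of relative growth. The symbols below ($x$ and $y$ for two measurements, $b$ for the scaling constant, $\alpha$ for the allometric coefficient) are notational assumptions introduced for this illustration.

```latex
y = b\,x^{\alpha}
\quad\Longrightarrow\quad
\log y = \log b + \alpha \log x ,
\qquad
\alpha = \frac{\dfrac{1}{y}\dfrac{dy}{dt}}{\dfrac{1}{x}\dfrac{dx}{dt}}
```

The slope $\alpha$ of the log-log plot is therefore the ratio of the relative growth rates of the two parts; $\alpha = 1$ corresponds to isometry.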

In its multivariate generalization, allometric trajectories are characterized by the first principal component (PC1) of morphological variables, which represents the line of best fit to the data points [14]. In geometric morphometrics, this approach is implemented in analyses using either Procrustes form space or conformation space (also known as size-and-shape space), where position and orientation are standardized but size is retained [15].
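For log-transformed linear measurements, this multivariate generalization can be sketched as the leading eigenvector of the covariance matrix, computed here by power iteration to avoid external dependencies. The data are synthetic and the function name is hypothetical; under isometry all loadings would equal 1/sqrt(p) for p measurements.

```python
import math

def first_principal_component(rows, iterations=200):
    """Leading eigenvector of the sample covariance matrix (power iteration)."""
    n, p = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(p)]
    centered = [[r[j] - means[j] for j in range(p)] for r in rows]
    cov = [
        [sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(p)]
        for a in range(p)
    ]
    v = [1.0 / math.sqrt(p)] * p  # start from the isometric direction
    for _ in range(iterations):
        w = [sum(cov[a][b] * v[b] for b in range(p)) for a in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v

# Synthetic growth series: two traits grow isometrically, the third as size^1.5.
growth = [1.0, 2.0, 4.0, 8.0]
log_measurements = [
    [math.log(10.0 * s), math.log(5.0 * s), math.log(2.0 * s ** 1.5)]
    for s in growth
]
pc1 = first_principal_component(log_measurements)
```

The third loading is larger than the other two, in proportion to the faster relative growth of that trait, which is how departures from isometry are read from PC1 in this framework.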

Comparative Framework of Allometric Concepts

Table 1: Comparison of Allometric Frameworks in Morphometrics

| Aspect | Gould-Mosimann School | Huxley-Jolicoeur School |
|---|---|---|
| Conceptual Definition | Covariation between shape and size | Covariation among traits containing size information |
| Size-Shape Relationship | Explicitly separated | Integrated in form space |
| Primary Analytical Method | Multivariate regression of shape on size | First principal component in form space |
| Morphospace Used | Shape tangent space | Conformation space (size-and-shape space) |
| Size Measurement | External (e.g., centroid size) | Internal to the morphological space |
| Visualization | Shape change along size vector | Trajectory in multivariate morphospace |
| Biological Emphasis | Allometry as shape change correlated with size | Allometry as general size-related growth trajectory |

Methodological Implementation: Geometric vs. Linear Morphometrics

Geometric Morphometrics (GMM)

Geometric morphometrics is based on the statistical analysis of landmark coordinates, which capture the geometry of biological structures. The standard analytical workflow involves:

- Landmark Digitization: Capture of 2D or 3D coordinates of biologically homologous points

- Generalized Procrustes Analysis (GPA): Superimposition that removes differences in position, orientation, and scale

- Tangent Space Projection: Mapping to a linear space for multivariate statistics

- Allometric Analysis: Regression of Procrustes coordinates on centroid size [16] [15]

GMM provides several advantages: it preserves complete geometric information throughout analysis, allows visualization of shape changes, and separates shape variation from size variation [3]. The method is particularly powerful for capturing subtle morphological differences within species by analyzing landmarks on organismal structure [17].

Linear Morphometrics (LMM)

Traditional linear morphometrics relies on collections of distance measurements between landmarks. The standard workflow includes:

- Measurement Collection: Caliper-based or digital distance measurements

- Size Correction: Application of ratios, residuals, or Burnaby's method

- Multivariate Analysis: Principal components or discriminant analysis of measurement vectors
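One ratio-based size correction is the log-shape ratio associated with Mosimann, in which each measurement is divided by the geometric mean of all measurements for that specimen. The sketch below uses invented measurements and a hypothetical function name; it removes isometric size only and does not correct for allometry.

```python
import math

def log_shape_ratios(measurements):
    """Log of each measurement divided by the specimen's geometric mean."""
    logs = [math.log(m) for m in measurements]
    log_size = sum(logs) / len(logs)  # log of the geometric mean
    return [v - log_size for v in logs]

# Hypothetical specimens: identical proportions, twofold difference in size.
small = [10.0, 5.0, 2.0]
large = [20.0, 10.0, 4.0]

ratios_small = log_shape_ratios(small)
ratios_large = log_shape_ratios(large)
```

Both specimens yield the same log-shape ratios, so any group discrimination that survives this correction cannot be attributed to overall size alone.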

While LMM benefits from simpler data collection and analysis, it captures only a subset of morphological information and cannot fully reconstruct the geometry of forms [3]. As noted in shrew taxonomy studies, linear measurements often overlap across species, limiting discriminatory power [3].

Experimental Workflow for Morphometric Analysis

The following diagram illustrates a standardized workflow for comparative morphometric studies, integrating both geometric and linear approaches:

Performance Comparison: Discriminatory Power in Taxonomic Applications

Empirical Evidence from Mammalian Species Complexes

A comprehensive study comparing the taxonomic resolution of GMM and LMM in antechinus (a mammalian species complex) revealed critical insights into their relative performance [8]. The research assessed discrimination using raw data, data with isometry removed, and data after allometric correction across four published LMM protocols and a 3D GMM dataset.

Table 2: Performance Comparison in Taxonomic Discrimination of Antechinus Species Complex

| Method | Raw Data Discrimination | After Isometry Removal | After Allometric Correction | Risk of Size-Confounded Results |
|---|---|---|---|---|
| Linear Morphometrics (LMM) | High discrimination | Reduced discrimination | Further reduced discrimination | Substantial risk |
| Geometric Morphometrics (GMM) | Moderate discrimination | Improved discrimination | Best discrimination | Minimal risk with proper correction |
| Principal Findings | LMM showed high group discrimination with raw data | GMM discriminated groups better after isometry removal | GMM performed best after allometric correction | LMM discrimination primarily from size variation |

The study demonstrated that while LMM can be a powerful tool for taxonomic discrimination, there is substantial risk that this discrimination comes from variation in size rather than shape [8]. This finding has profound implications for taxonomic practice, suggesting that measurement protocols might benefit from GMM-based pilot studies to differentiate allometric and non-allometric shape differences before developing easier-to-apply LMM protocols.

Case Study: Anatolian Shrew Morphometrics

Research on Anatolian Sorex species (S. volnuchini, S. raddei, and S. satunini) provides additional comparative data [3]. The study employed morphological features (molar teeth), linear morphometrics, and geometric morphometrics to address taxonomic complexities arising from morphological similarity.

The linear morphometric analysis yielded a jackknifed classification rate exceeding 92%, indicating strong discriminatory power [3]. However, geometric morphometrics provided additional insights through visualization of shape differences and explicit tests of allometry. The study found no allometric growth (shape change correlated with size) in the skull (ventral and dorsal views) or the mandible across these species [3]. This absence of allometry simplified taxonomic interpretation by eliminating size-related shape changes as a confounding factor.

Allometry Correction Methods: Performance and Applications

Comparison of Allometric Vector Estimation Methods

Computer simulation studies have compared the performance of four methods for estimating allometric vectors from landmark data [15]:

- Multivariate regression of shape on size

- First principal component (PC1) of shape

- PC1 in conformation space

- PC1 of Boas coordinates

Table 3: Performance Comparison of Allometric Vector Estimation Methods

| Method | Theoretical Framework | Performance with Isotropic Noise | Performance with Anisotropic Noise | Implementation Complexity |
|---|---|---|---|---|
| Regression of shape on size | Gould-Mosimann | Consistently better than PC1 of shape | Robust performance | Low (standard in most software) |
| PC1 of shape | Gould-Mosimann | Suboptimal compared to regression | Variable performance | Low |
| PC1 in conformation space | Huxley-Jolicoeur | Very close to simulated allometric vectors | Excellent performance | Moderate |
| PC1 of Boas coordinates | Huxley-Jolicoeur | Almost identical to conformation space | Excellent performance | High |

Simulations with no residual variation showed that all four methods are logically consistent with one another, with minor nonlinearities in the mapping between conformation space and shape tangent space [15]. When residual variation was added (either isotropic or with patterns independent of allometry), regression of shape on size performed consistently better than the PC1 of shape. The PC1s of conformation and Boas coordinates were nearly identical and very close to the simulated allometric vectors under all conditions [15].
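The kind of simulation described above can be sketched for two of the four estimators. The example below is a toy setup with assumed values (200 specimens, 10 shape variables, isotropic noise with an arbitrary standard deviation), not a reconstruction of the published simulations [15]. It estimates the allometric direction by regression of shape on size and by PC1 of shape, then measures the angle of each estimate from the simulated vector.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 200, 10                                  # specimens, shape variables (hypothetical)
true_vec = rng.normal(size=p)
true_vec /= np.linalg.norm(true_vec)            # simulated allometric direction
log_size = rng.normal(size=n)
# Simulated tangent-space shape: allometric signal plus isotropic residual noise
shape = 0.1 * np.outer(log_size, true_vec) + rng.normal(scale=0.05, size=(n, p))

def regression_vector(shape, size):
    """Unit vector of slopes from regressing every shape variable on size."""
    s = size - size.mean()
    Y = shape - shape.mean(axis=0)
    b = s @ Y / (s @ s)
    return b / np.linalg.norm(b)

def pc1_vector(shape):
    """First principal component of the shape variables."""
    Y = shape - shape.mean(axis=0)
    return np.linalg.svd(Y, full_matrices=False)[2][0]

def angle_deg(a, b):
    """Angle between two directions, ignoring sign (a PC's sign is arbitrary)."""
    return float(np.degrees(np.arccos(np.clip(abs(a @ b), 0.0, 1.0))))

angle_regression = angle_deg(regression_vector(shape, log_size), true_vec)
angle_pc1 = angle_deg(pc1_vector(shape), true_vec)
print(f"regression: {angle_regression:.1f} deg, PC1: {angle_pc1:.1f} deg")
```

A single run like this only shows how such a comparison is set up. Ranking the methods, as the cited study does, requires many replicates across noise structures and effect sizes.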

Practical Implications for Allometry Correction

The choice of allometry correction method has significant implications for downstream analyses. In taxonomic studies, failure to properly account for allometry can lead to spurious group discrimination based on size differences rather than genuine shape differences [8]. The simulation results suggest that for studies firmly within the Gould-Mosimann paradigm, regression-based approaches provide robust allometric correction, while for approaches in the Huxley-Jolicoeur tradition, conformation space PC1 offers excellent performance [15].

Applications Across Biological Disciplines

Nutritional Assessment in Children

Geometric morphometrics has been applied to nutritional status classification in children aged 6-59 months using arm shape analysis from photographs [17]. The SAM Photo Diagnosis App Program aims to develop an offline smartphone tool for identifying nutritional status, demonstrating the translational potential of morphometric methods. This application faces the challenge of classifying new individuals not included in the original study sample, requiring specialized approaches for out-of-sample prediction in the shape space [17].

Forensic Analysis of Bitemarks

Morphometric analysis has been applied to dog bitemarks, assessing inter-canine distance and interdental incisor-canine distance for forensic identification [18] [19]. Studies found high agreement between dental measurements and skin lesions, particularly for inter-canine measurements across different arch types and skull classifications (mesocephalic, dolichocephalic, brachycephalic) [19]. This application demonstrates the practical utility of morphometric approaches in legal medicine.

Phylogenetic Reconstruction

The potential use of morphometric data in phylogenetic reconstruction represents an emerging application, though systematic reviews indicate that continuous morphometric data alone do not consistently improve phylogenetic resolution or accuracy compared to discrete morphological characters [6]. Challenges include widespread non-independence of landmarks due to functional or developmental correlation, which violates assumptions of standard trait evolution models [6].

Essential Research Tools and Reagents

Table 4: Research Toolkit for Morphometric Studies

| Tool/Reagent | Function/Purpose | Application Context |
|---|---|---|
| Digital Calipers | Precision linear measurements | Traditional LMM data collection |
| 3D Scanner | High-resolution surface capture | GMM landmark digitization |
| Geomorph R Package | Procrustes analysis and shape statistics | GMM data analysis [20] |
| tpsDig Software | 2D landmark digitization | GMM data collection |
| MorphoJ | Comprehensive morphometric analysis | Integrated GMM analysis |
| Standardized Imaging Setup | Controlled specimen photography | 2D GMM data collection |
| Specimen Stabilization Mount | Elimination of positional variance | Standardized data collection |
| Centroid Size Calculation | Standardized size measurement | Allometric analysis [15] |

The choice between geometric and linear morphometrics, and between different allometric frameworks, should be guided by research questions, sample characteristics, and analytical goals. Geometric morphometrics provides superior visualization, complete geometric information, and robust allometric correction when properly implemented. Linear morphometrics offers practical advantages in data collection and analysis simplicity but risks confounding size and shape differences.

For taxonomic discrimination, evidence suggests that GMM with appropriate allometric correction provides the most reliable separation of genuine shape differences from size variation [8] [3]. The Gould-Mosimann framework, implemented through multivariate regression of shape on size, offers a statistically robust approach for allometric studies, while the Huxley-Jolicoeur framework, implemented through PC1 in conformation space, provides an excellent alternative for analyses that treat size and shape as integrated features [14] [15].

Future methodological development should address challenges in out-of-sample classification [17], phylogenetic reconstruction [6], and standardization of allometry correction protocols across biological disciplines.

Morphometrics, the quantitative analysis of form, is a cornerstone of biological and medical research. For centuries, Linear Morphometrics (LMM)—the collection of point-to-point distance measurements—has been the standard tool for taxonomic and clinical studies, ranging from distinguishing between closely related rodent species to analyzing human cranial variations [21]. Its longevity is rooted in simplicity: measurements are easily acquired with basic tools and analyzed with straightforward statistics. However, this traditional approach carries significant limitations, primarily its limited capacity to capture holistic shape and its confounding of size and shape differences [21] [8]. In response, Geometric Morphometrics (GMM) has emerged as a modern standard, using the coordinates of anatomical landmarks to provide a comprehensive characterization of shape, explicitly separating it from size through Procrustes superimposition [21].

The choice between LMM and GMM is not merely a technicality; it fundamentally shapes the questions a researcher can ask and the answers they will find. This guide provides an objective comparison of their performance, underpinned by recent experimental data. The core thesis, supported by empirical findings, is that while LMM can effectively discriminate between groups, this power often stems from size variation. In contrast, GMM provides a more rigorous toolkit for isolating non-allometric shape differences, which are crucial for understanding true morphological divergence [21] [8]. This distinction is critical for researchers in fields like drug development, where precise phenotypic characterization in model organisms can inform mechanistic studies.

Performance Comparison: Experimental Data and Quantitative Findings

A 2023 study directly compared the discriminatory performance of four established LMM protocols against a 3D GMM dataset using three clades of antechinus, a mammalian species complex known for subtle shape differences [21] [8]. The research assessed the methods using raw data, data with isometry (overall size) removed, and data after allometric correction (non-uniform size effects removed).

Table 1: Comparative Performance of LMM and GMM in Taxonomic Discrimination

| Performance Metric | Linear Morphometrics (LMM) | Geometric Morphometrics (GMM) |
|---|---|---|
| Group Discrimination (Raw Data) | High [21] [8] | Effective [21] [8] |
| Group Discrimination (Size & Allometry Removed) | Performance drops significantly; discrimination often reliant on size [21] [8] | Maintains or improves discrimination; isolates non-allometric shape differences [21] [8] |
| Shape Characterization | Limited, based on isolated distances; may use non-homologous points (e.g., max/min dimensions) [21] | Holistic; retains geometric structure of landmarks [21] |
| Treatment of Size & Allometry | Difficult to separate; measurements often contain redundant size information [21] | Explicitly separates size (Centroid Size) and shape via Procrustes superimposition [21] |
| Data Acquisition | Low-cost, easy to acquire [21] | More complex; requires digitization and specialized software [21] |
| Visualization of Results | Limited to statistical plots (e.g., PCA score plots) [21] | Powerful visualization of shape change along axes (e.g., warp grids) [21] |
| Risk of Inflated Variance | Can inflate variance in first principal components [21] | Provides more balanced representation of shape variance [21] |

The key finding is that while LMM showed high group discrimination with raw data, its performance was substantially reliant on size variation. When size and allometry were statistically removed, GMM demonstrated superior ability to discriminate groups based on shape alone [21] [8]. This is critical because non-allometric shape variation is more likely to reflect independent adaptive processes and genuine species divergence rather than simple growth trajectories [21].

Methodologies: Protocols for a Direct Comparison

The experimental protocol from the 2023 antechinus study provides a framework for a rigorous comparison.

Experimental Workflow

[Diagram: key steps for a comparative analysis of LMM and GMM, highlighting the parallel and divergent processes.]

Detailed Methodological Steps

Specimen and Data Acquisition:

- LMM Protocol: Using digital calipers, collect a set of linear distances. These often include standard cranial measurements like skull length, width, and height, as defined by existing taxonomic literature [21].

- GMM Protocol: Using a 3D scanner or digitizer, capture the 3D coordinates of predefined anatomical landmarks (e.g., suture intersections, tips of processes) on the same specimens. These landmarks must be biologically homologous across all individuals [21].

Data Processing:

- LMM: The matrix of linear measurements is log-transformed to normalize data. Size correction may be attempted using ratios or residuals from a regression on a composite size variable, though these methods have known limitations [21].

- GMM: The landmark coordinates undergo a Generalized Procrustes Analysis (GPA). This procedure translates, rotates, and scales all specimens to a common unit size, minimizing the sum of squared distances between corresponding landmarks [21]. This produces two separate datasets:

  - Shape Variables: The Procrustes-aligned coordinates, which represent pure shape.

  - Centroid Size: A size variable calculated as the square root of the sum of squared distances of all landmarks from their centroid [21].

- Allometric Correction: For GMM, a multivariate regression of the shape variables on centroid size is performed. The residuals from this regression represent shape variation after removing the effects of allometry [21].
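The centroid size definition and the allometric correction in the steps above translate directly into code. The sketch below assumes the shape variables have already been Procrustes-aligned and flattened to one row per specimen. The function names and the random stand-in data are hypothetical, and many analyses regress on log centroid size rather than raw centroid size, a choice the protocol above does not specify.

```python
import numpy as np

def centroid_size(landmarks):
    """Square root of the summed squared distances of landmarks from their centroid."""
    centred = landmarks - landmarks.mean(axis=0)
    return float(np.sqrt((centred ** 2).sum()))

def allometry_residuals(shape_vars, size):
    """Residual shape after a multivariate linear regression of shape on size."""
    design = np.column_stack([np.ones(len(size)), size])      # intercept + size
    coef, *_ = np.linalg.lstsq(design, shape_vars, rcond=None)
    return shape_vars - design @ coef

unit_square = np.array([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [0.0, 1.0]])
cs = centroid_size(unit_square)     # each corner lies sqrt(0.5) from the centroid

# Hypothetical stand-in data: 30 specimens, 8 flattened shape variables
rng = np.random.default_rng(1)
size = rng.normal(size=30)
shape_vars = np.outer(size, rng.normal(size=8)) + rng.normal(scale=0.1, size=(30, 8))
residuals = allometry_residuals(shape_vars, size)
```

The residuals are, by construction, uncorrelated with the size variable, so any group separation that survives in them cannot be attributed to the linear allometric trend.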

Data Analysis and Comparison:

- Both the processed LMM and GMM datasets (raw, size-corrected, and allometry-corrected) are analyzed using Principal Component Analysis (PCA) to visualize group separation.

- Linear Discriminant Analysis (LDA) is used to quantitatively assess the classification performance of each method and dataset for discriminating the known taxonomic groups [21] [8].
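One way to quantify classification performance is a jackknifed (leave-one-out) discriminant analysis, in which each specimen is classified by a model fitted to all the others. The sketch below is a simplified stand-in for LDA that assumes equal priors and a pooled covariance matrix. The function names and the two simulated groups are hypothetical, and the cited studies may have used different software and settings.

```python
import numpy as np

def lda_predict(train_X, train_y, x):
    """Assign x to the group with the smallest Mahalanobis distance
    (pooled within-group covariance, equal priors)."""
    labels = np.unique(train_y)
    means = np.array([train_X[train_y == g].mean(axis=0) for g in labels])
    centred = train_X - means[np.searchsorted(labels, train_y)]
    pooled = centred.T @ centred / (len(train_X) - len(labels))
    inv = np.linalg.pinv(pooled)
    dists = [(x - m) @ inv @ (x - m) for m in means]
    return labels[int(np.argmin(dists))]

def jackknife_accuracy(X, y):
    """Leave-one-out classification rate."""
    hits = 0
    for i in range(len(X)):
        keep = np.arange(len(X)) != i
        hits += int(lda_predict(X[keep], y[keep], X[i]) == y[i])
    return hits / len(X)

# Hypothetical data: two well-separated groups of 15 specimens, 3 variables each
rng = np.random.default_rng(2)
X = np.vstack([rng.normal(0.0, 1.0, size=(15, 3)),
               rng.normal(10.0, 1.0, size=(15, 3))])
y = np.repeat([0, 1], 15)
accuracy = jackknife_accuracy(X, y)
```

Running the same function on raw, isometry-corrected, and allometry-corrected versions of a dataset gives directly comparable classification rates for each treatment of size.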

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key solutions and tools required for conducting morphometric research.

Table 2: Essential Research Reagents and Materials for Morphometrics

| Item Name | Function/Brief Explanation |
|---|---|
| 3D Scanner / Microscribe | Device for capturing high-resolution 3D models or 3D coordinates of biological specimens for GMM. Replaces calipers for distance-based measurement [21]. |
| Digital Calipers | Tool for collecting traditional LMM measurements with high precision. Remains the standard instrument for linear-based protocols [21]. |
| Geometric Morphometrics Software (e.g., geomorph R package) | Specialized statistical software for performing Procrustes superimposition, shape visualization, and associated multivariate analyses [21]. |
| Statistical Software (e.g., R, PAST) | Platform for conducting standard multivariate analyses (PCA, LDA) on both LMM and GMM datasets and for general data manipulation [21]. |
| Anatomical Landmark Protocol | A predefined and justified set of biologically homologous points (e.g., suture intersections) to ensure consistency and repeatability in GMM data collection [21]. |
| Standard Linear Measurement Protocol | A published set of linear measurements (e.g., skull greatest length) specific to the taxon or structure under investigation for LMM [21]. |

Decision Framework: Choosing the Right Method for Your Research Question

The choice between LMM and GMM should be driven by the specific research question and logistical constraints. The following decision pathway synthesizes the experimental findings into a practical guide.

Interpretation of the Decision Workflow

- Choose LMM when: The research requires a low-cost, rapid method for initial screening or discrimination where the differences between groups are expected to be large and potentially correlated with size. It is also suitable when research must align directly with a vast body of historical literature built on linear measurements [21].

- Choose GMM when: The research question demands a rigorous separation of size and shape, such as in studies of cryptic species or complex morphological integration. It is the unequivocal choice when the goal is to identify and visualize subtle, non-allometric shape changes or to explore the relationship between shape and other variables (e.g., ecology, genetics) without the confounding effect of size [21] [8].

- Consider a Hybrid Approach: A powerful strategy is to use GMM for an initial pilot study. This can identify the specific anatomical regions that contribute most to shape variation. This knowledge can then inform the development of a targeted, efficient, and highly effective LMM protocol that avoids the pitfalls of redundant or non-homologous measurements, combining the statistical rigor of GMM with the practicality of LMM [21].

Both Geometric and Linear Morphometrics are powerful tools in the researcher's arsenal. The experimental evidence clearly shows that LMM's discriminatory power can be misleadingly inflated by size variation, while GMM provides a more robust framework for isolating true shape differences through its explicit treatment of allometry [21] [8]. The decision is not always binary. For exploratory taxonomy or functional morphology studies where shape is paramount, GMM is the superior choice. For large-scale, rapid screening where resources are limited and size differences are diagnostically relevant, LMM remains a valid and practical tool. Ultimately, the most informed research may leverage the strengths of both, using GMM to discover the true nature of morphological differences and LMM to apply that knowledge efficiently at scale.

Methodological Applications: From Neuroimaging to Drug Discovery

Tensor-Based Morphometry in Neurodegenerative Disease Tracking

In the study of neurodegenerative diseases, researchers rely on sensitive biomarkers to track the progression of pathological changes in the brain. Morphometry, the quantitative analysis of brain structure, has emerged as a critical tool for detecting subtle anatomical changes that occur during disease progression. Two principal approaches have dominated this field: linear morphometrics, which involves manual or semi-automated measurements of specific structures (e.g., hippocampal volume), and geometric morphometrics, which analyzes the complete shape and deformation patterns of brain structures. As a specialized form of geometric morphometrics, Tensor-Based Morphometry (TBM) has gained prominence for its ability to provide detailed, voxel-level maps of tissue growth or atrophy by analyzing deformation fields derived from image registration [22] [23].

TBM functions by calculating the Jacobian determinant of deformation fields that map individual brain images to a common template. These Jacobian determinants represent local volume differences: values greater than 1 indicate local expansion, while values less than 1 indicate local contraction or atrophy. This approach offers significant advantages for neurodegenerative disease tracking, as it can detect diffuse atrophic patterns throughout the brain without requiring prior anatomical segmentation [24] [25]. The methodological robustness of TBM has been demonstrated across various neurodegenerative conditions, including Alzheimer's disease (AD), frontotemporal dementia (FTD), and Huntington's disease (HD), establishing it as a powerful tool for both clinical research and therapeutic trial monitoring [26] [25] [27].
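The Jacobian determinant computation can be illustrated on a synthetic deformation. The sketch below assumes the deformation is stored as an array of mapped coordinates on a regular grid and uses finite differences for the spatial derivatives. The function name, array layout, and 10% uniform expansion are assumptions for the example, and real pipelines obtain the field from a registration package.

```python
import numpy as np

def jacobian_determinant(phi, spacing=1.0):
    """phi: (3, nx, ny, nz) array holding the mapped x, y, z position of every voxel."""
    # grads[i, j] = d phi_i / d x_j, estimated by finite differences
    grads = np.array([np.gradient(phi[i], spacing) for i in range(3)])
    J = np.moveaxis(grads, (0, 1), (-2, -1))       # shape (nx, ny, nz, 3, 3)
    return np.linalg.det(J)

# Hypothetical deformations on a 5 x 5 x 5 grid
grid = np.stack(np.meshgrid(*[np.arange(5.0)] * 3, indexing="ij"))
det_expand = jacobian_determinant(1.1 * grid)      # uniform 10% stretch per axis
det_identity = jacobian_determinant(grid)          # no deformation
```

A uniform 10% stretch along each axis gives a determinant of 1.1 cubed (about 1.33) at every voxel, which is local expansion, while the identity mapping gives exactly 1.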

Comparative Performance of TBM Methodologies

Field Strength Comparison: 3T vs. 1.5T MRI for TBM

Magnetic field strength represents a fundamental consideration in MRI-based tracking of neurodegeneration, affecting both image quality and statistical power. A comparative study investigating TBM for tracking Alzheimer's disease progression directly addressed this question by analyzing 110 subjects scanned longitudinally at both 3.0T and 1.5T field strengths. The cohort included 24 patients with Alzheimer's Disease, 51 individuals with mild cognitive impairment (MCI), and 35 healthy controls [26].

Table 1: Sample Size Requirements for Detecting 25% Slowing of Atrophy with 80% Power

| Patient Group | Field Strength | Subjects Required |
|---|---|---|
| Alzheimer's Disease | 1.5T | 37 |
| Alzheimer's Disease | 3.0T | 49 |
| Mild Cognitive Impairment | 1.5T | 108 |
| Mild Cognitive Impairment | 3.0T | 166 |

Surprisingly, the study found that 1.5T scans demonstrated slightly higher statistical power for detecting longitudinal change, although this advantage did not reach statistical significance. The atrophy measures derived at both field strengths showed strong correlations with clinical decline as measured by standard cognitive tests including the Alzheimer's Disease Assessment Scale-cognitive subscale (ADAS-cog), Mini-Mental State Examination (MMSE), and Clinical Dementia Rating sum-of-boxes (CDR-SB) [26]. This finding has important practical implications for clinical trial design, suggesting that 1.5T scanners—which are more widely available and cost-effective—remain viable for longitudinal TBM studies.
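Sample size estimates of this kind are typically derived from the mean and standard deviation of the measured atrophy rate. The sketch below uses a standard two-arm normal-approximation formula. Whether the cited study used exactly this formula is an assumption, and the atrophy values in the example are invented for illustration rather than taken from the study.

```python
from math import ceil
from statistics import NormalDist

def n_per_arm(mean_change, sd_change, slowing=0.25, power=0.80, alpha=0.05):
    """Subjects per arm needed to detect a fractional slowing of the mean change
    (two-sided test, normal approximation)."""
    z = NormalDist().inv_cdf
    effect = slowing * mean_change
    return ceil(2 * sd_change ** 2 * (z(1 - alpha / 2) + z(power)) ** 2 / effect ** 2)

# Hypothetical atrophy measure: mean 2.0% per year with SD 1.0%
n_example = n_per_arm(mean_change=2.0, sd_change=1.0)
n_noisier = n_per_arm(mean_change=2.0, sd_change=1.5)
```

The required sample grows with the square of the ratio of standard deviation to mean change, which is why a method with less measurement variability can need fewer subjects even if its images have lower signal-to-noise ratio.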

Registration Methodologies in TBM

The accuracy and sensitivity of TBM depend critically on the image registration algorithms used to compute deformation fields. A comprehensive comparison of registration methodologies examined multiple approaches, including viscous fluid registration, inverse-consistent linear elastic registration, and both symmetric and asymmetric unbiased registration techniques [22] [23].

Table 2: Performance Comparison of Registration Methods for TBM

| Registration Method | Reproducibility | Sensitivity to True Change | Resistance to Noise |
|---|---|---|---|
| Fluid Registration | Moderate | High | Low |
| Inverse-Consistent Linear Elastic | Moderate | Moderate | Moderate |
| Symmetric Unbiased | High | High | High |
| Asymmetric Unbiased | High | High | High |

The investigation revealed that unbiased registration methods (both symmetric and asymmetric) demonstrated superior performance characteristics. These methods produced symmetric log-Jacobian distributions with zero mean under the null hypothesis of no change, making them less likely to detect artifactual changes in the absence of true physiological change. This property is particularly valuable for longitudinal studies where minimizing false positive findings is crucial. The unbiased methods also showed higher reproducibility and more accurate measurement of biological deformations by explicitly penalizing bias in the resulting statistical maps [22] [23].

The foundational principle of unbiased registration involves coupling the computation of deformations with statistical analyses of the resulting Jacobian maps. The approach minimizes an energy functional that incorporates both image matching criteria (such as sum of squared differences or mutual information) and regularization terms based on the Kullback-Leibler divergence between probability density functions associated with the deformations [23]. This mathematical framework ensures that the deformation fields maintain intuitive axiomatic properties essential for reliable morphometric analysis.
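The emphasis on log-Jacobian distributions has a simple arithmetic motivation, shown below with an assumed expansion factor. A mapping and its inverse produce Jacobian values that are not symmetric around 1, but their logarithms are equal in magnitude and opposite in sign, so a zero-mean symmetric log-Jacobian distribution treats expansion and contraction even-handedly.

```python
import math

growth = 1.25               # hypothetical local expansion factor
shrink = 1 / growth         # the inverse mapping contracts by the same proportion

raw_offsets = (growth - 1, shrink - 1)               # unequal distances from 1
log_values = (math.log(growth), math.log(shrink))    # equal magnitude, opposite sign
```

This is only the scalar intuition. The registration methods discussed above build the symmetry into the regularization term itself.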

Advanced TBM Techniques: Diffusion Tensor-Based Morphometry

While conventional TBM typically utilizes T1-weighted images, the relative homogeneity of white matter on these scans limits sensitivity to changes in specific pathways. To address this limitation, Diffusion Tensor-Based Morphometry (DTBM) has been developed, leveraging both scalar and directional information from diffusion tensor imaging [24].

In a study comparing T1W-TBM, FA-TBM (using fractional anisotropy), and DTBM in patients with hereditary spastic paraplegia type 11 (SPG11), DTBM demonstrated superior capability for detecting pathway-specific atrophy. While T1W-TBM showed diffuse white matter atrophy in SPG11 patients, DTBM revealed that atrophy predominantly affected several long-range pathways, providing more specific anatomical localization [24].

The DTBM methodology involves proper alignment of diffusion tensor images, which requires specialized registration approaches that account for tensor reorientation consistent with anatomical transformations. The resulting deformation fields capture volume changes with enhanced sensitivity to specific white matter pathways, making DTBM particularly valuable for disorders characterized by selective white matter degeneration [24].

Recent applications of DTBM have expanded to developmental studies, where it has revealed pathway-specific volumetric trajectories from infancy through young adulthood. Different white matter pathways exhibit distinct growth patterns, with the corticospinal tract showing protracted growth into young adulthood while corpus callosum growth is largely complete within the first three years of life [28]. These findings demonstrate DTBM's utility for investigating both developmental and degenerative processes affecting white matter architecture.

TBM in Action: Comparative Neurodegenerative Disease Studies

Differential Diagnosis: Frontotemporal Dementia vs. Alzheimer's Disease

The diagnostic differentiation between frontotemporal dementia (FTD) and Alzheimer's disease represents a clinically challenging scenario where TBM has demonstrated significant utility. A comprehensive study compared the effectiveness of hippocampal volumetry (HV), voxel-based morphometry (VBM), and TBM for distinguishing between these neurodegenerative conditions [25].

The investigation revealed distinctive patterns of brain atrophy in each disorder. FTD patients showed predominant atrophy in anterior brain regions, including the frontal lobes and anterior temporal lobes, often with right-side predominance. In contrast, AD patients exhibited more prominent atrophy in medial temporal lobe structures, including the hippocampus and entorhinal cortex, as well as posterior cingulate and parietal regions [25].

TBM proved particularly valuable for capturing the specific anatomical patterns characteristic of each disorder. The deformation patterns derived from TBM analysis aligned with known neuropathological differences between FTD and AD, providing a sensitive method for differential diagnosis. The whole-brain nature of TBM allowed for comprehensive assessment without requiring a priori hypotheses about specific affected regions, an advantage over region-of-interest approaches like hippocampal volumetry [25].

Enhanced Longitudinal Analysis: Two-Level Deformation-Based Morphometry

Recent methodological advances have further refined TBM approaches for longitudinal studies. A novel two-level deformation-based morphometry pipeline has been developed to enhance sensitivity for detecting within-subject changes over time [29].

This innovative approach involves two sequential registration stages. First, Jacobian determinants are calculated for each individual using within-subject registration to capture longitudinal changes. Subsequently, these Jacobian maps are transformed to an unbiased common space for group-level statistical analysis. This methodology minimizes registration bias that can occur when directly registering individual brains with substantial morphological differences to a common template [29].

Validation studies demonstrated that this two-level pipeline is 4.5 times more sensitive for detecting longitudinal within-subject volume changes compared to conventional one-level approaches. The method also more accurately captured the magnitude of induced volume changes in synthetic experiments and showed lower false positive rates in test-retest scenarios [29]. When applied to the OASIS-2 dataset, the enhanced pipeline better captured cortical volume changes associated with cognitive decline in dementia patients compared to healthy controls, confirming its utility for neurodegenerative disease tracking.

TBM-Based Classification: Tensor-Based Grading for Huntington's Disease

The principles of TBM have been extended to develop novel classification frameworks for neurodegenerative disorders. Tensor-based grading represents an innovative approach that combines patch-based analysis with deformation tensor metrics to model local patterns of anatomical changes [27].

In this methodology, deformation-based tensor fields are first computed from non-rigid image registration. Local similarity metrics are then calculated using a patch-based approach within a log-Euclidean framework. Finally, an elastic-net regularization model selects the most discriminative features for classification [27].

When applied to the classification of pre-manifest Huntington's disease patients and healthy controls, tensor-based grading achieved a classification accuracy of 87.5% ± 0.5%, substantially outperforming conventional patch-based grading methods (81.3% ± 0.6%). Furthermore, the tensor-based grading provided complementary information to putamen volume, an established imaging marker for Huntington's disease, suggesting potential for combined biomarker approaches [27].
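The log-Euclidean comparison at the heart of this approach can be sketched for a single pair of tensors. The example assumes that each voxel carries a symmetric positive-definite 3 x 3 tensor derived from the deformation. The function names and test tensors are hypothetical, and the patch search and elastic-net feature selection of the published method are not shown.

```python
import numpy as np

def spd_log(T):
    """Matrix logarithm of a symmetric positive-definite tensor via eigendecomposition."""
    w, V = np.linalg.eigh(T)
    return (V * np.log(w)) @ V.T

def log_euclidean_distance(A, B):
    """Frobenius norm of the difference between the matrix logarithms of A and B."""
    return float(np.linalg.norm(spd_log(A) - spd_log(B), ord="fro"))

identity = np.eye(3)
stretched = np.diag([np.e, 1.0, 1.0])     # stretch by a factor e along one axis
d = log_euclidean_distance(identity, stretched)
```

Working in the log domain turns the curved space of positive-definite tensors into an ordinary vector space, so standard similarity measures and linear models can be applied to the transformed values.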

Experimental Protocols for TBM Analysis

Standard TBM Processing Pipeline

A typical TBM analysis involves several sequential processing steps, each requiring specific methodological considerations:

Image Acquisition and Preprocessing: High-resolution 3D T1-weighted images are acquired using standardized protocols, such as the Alzheimer's Disease Neuroimaging Initiative (ADNI) protocol. Preprocessing typically includes geometric distortion correction, intensity non-uniformity correction, bias field correction, and spatial calibration based on phantom scans [22] [23].

Image Registration: Non-rigid registration is performed to align each subject's image to a common template. The choice of registration algorithm significantly impacts results, with unbiased methods generally preferred for their superior reproducibility and accuracy [22].

Jacobian Map Computation: The determinant of the Jacobian matrix is computed from the deformation field at each voxel, representing local volume differences between the subject and template.

Statistical Analysis: Jacobian maps are analyzed using general linear models to identify significant group differences or correlations with clinical variables. Multiple comparison correction is typically applied using false discovery rate or random field theory methods.
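For the multiple comparison step, the false discovery rate option can be sketched with the Benjamini-Hochberg procedure applied to a vector of voxelwise p-values. The function name and the four example p-values are hypothetical, and neuroimaging packages provide their own implementations, often with additional spatial modelling.

```python
import numpy as np

def benjamini_hochberg(p_values, q=0.05):
    """Boolean mask of tests declared significant at false discovery rate q."""
    p = np.asarray(p_values, dtype=float)
    order = np.argsort(p)
    m = p.size
    passed = p[order] <= q * np.arange(1, m + 1) / m
    mask = np.zeros(m, dtype=bool)
    if passed.any():
        k = np.nonzero(passed)[0].max()       # largest rank meeting its threshold
        mask[order[:k + 1]] = True
    return mask

# Hypothetical p-values from four voxels
significant = benjamini_hochberg([0.039, 0.001, 0.2, 0.008])
```

Here the two smallest p-values fall below their rank-specific thresholds (0.0125 and 0.025), while 0.039 narrowly misses its threshold of 0.0375, so only two voxels are retained.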

Essential Research Reagents and Tools

Table 3: Essential Research Tools for TBM Studies

| Tool Category | Specific Examples | Function in TBM Analysis |
|---|---|---|
| Image Processing Software | ANTs, SPM, FSL | Non-rigid image registration and Jacobian computation |
| Statistical Analysis Packages | R, MATLAB, Python with RMINC | Statistical modeling of Jacobian maps |
| Template Construction Tools | ANTs multivariate template construction | Creation of unbiased population templates |
| Quality Control Tools | MINC tools, ITK-SNAP | Visualization and quality assessment of registration results |
| DTI Processing Software | TORTOISE, DRTAMAS | Processing diffusion data for DTBM |

| DTI Processing Software | TORTOISE, DRTAMAS | Processing diffusion data for DTBM |

Visualizing TBM Workflows

[Figure: Comprehensive TBM Analysis Pipeline (TBM Analysis Methodology Overview).]

The diagram illustrates the comprehensive workflow for TBM analysis, from image acquisition through final applications. The process begins with MRI acquisition, which can utilize either conventional T1-weighted imaging or diffusion tensor imaging (DTI). Following preprocessing to correct for various artifacts, unbiased non-rigid registration aligns images to a common template. The resulting deformation fields are used to compute Jacobian maps, which undergo statistical analysis to identify significant patterns of structural difference. The workflow highlights several methodological variations, including conventional TBM, diffusion tensor-based morphometry (DTBM), longitudinal analysis pipelines, and tensor-based grading approaches, each offering unique advantages for specific research applications.

Tensor-Based Morphometry represents a powerful methodology for tracking neurodegenerative disease progression, offering distinct advantages over both traditional linear morphometrics and other geometric morphometric approaches. The technique's sensitivity to distributed atrophic patterns, combined with its ability to provide detailed voxel-level maps of structural change, makes it particularly valuable for studying heterogeneous neurodegenerative conditions.

Key performance comparisons reveal several important considerations for researchers: (1) 1.5T and 3T scanners show comparable statistical power for longitudinal TBM studies, with 1.5T potentially offering a slight advantage despite its lower signal-to-noise ratio; (2) unbiased registration methods significantly improve reproducibility and reduce false positive findings compared to conventional registration approaches; (3) advanced TBM variations, including DTBM and tensor-based grading, provide enhanced sensitivity for specific applications such as white matter pathway analysis and disease classification.

The integration of TBM into large-scale neurodegenerative disease studies like ADNI has established its validity as a biomarker for disease progression. Future methodological developments will likely focus on enhancing multi-modal integration, refining longitudinal analysis pipelines, and improving accessibility for clinical applications. As geometric morphometrics continues to evolve, TBM remains a cornerstone technique for quantifying and visualizing the structural consequences of neurodegenerative pathology.

Nuclear Mechano-Morphometric Biomarkers for Cancer Diagnosis

Comparative Performance of Morphometric Methodologies

The discriminatory power of a nuclear morphometric analysis is highly dependent on the chosen methodology. The table below summarizes the performance of geometric (non-linear, shape-based) and linear morphometric approaches in classifying cell status, based on experimental data from studies of normal and cancerous cell lines [30].

| Morphometric Approach | Cell Types / Conditions Compared | Key Discriminatory Features | Reported Classification Accuracy |
|---|---|---|---|
| Geometric (Deep Learning on full nucleus) | NIH/3T3 (mouse fibroblast) vs. BJ (human fibroblast) | Complex, non-linear texture and shape patterns | 96.1% (Validation) [30] |
| Geometric (Deep Learning on full nucleus) | BJ (normal human fibroblast) vs. MCF10A (fibrocystic breast) | Primarily shape features | 88.2% (Validation) [30] |
| Geometric (Deep Learning on full nucleus) | MCF7 vs. MCF10A vs. MDA-MB-231 (breast cell lines) | Complex texture and shape patterns | 87.8% (Validation) [30] |
| Linear Morphometrics (Logistic Regression) | BJ (normal human fibroblast) vs. MCF10A (fibrocystic breast) | Pre-defined shape features | Slightly lower than deep learning approach [30] |
| Geometric (Deep Learning on nuclear patches) | BJ (normal human fibroblast) vs. MCF10A (fibrocystic breast) | Texture features at the scale of heterochromatin foci | 78.8% (Validation) [30] |

Experimental Protocols for Morphometric Analysis

Protocol: SCENMED Platform for Nuclear Morphometric Classification

The Single-Cell Nuclear Mechanical Diagnostics (SCENMED) platform combines fluorescence imaging and deep learning to discriminate between normal and cancer cells based on nuclear architecture [30].

- Cell Culture and Preparation: Seed cells (e.g., NIH/3T3, BJ, MCF10A, MCF7, MDA-MB-231) in glass-bottom dishes and allow them to grow overnight.

- Fixation and Staining: Fix and permeabilize the cells. Stain the nuclei with DAPI (4′,6-diamidino-2-phenylindole).

- Image Acquisition: Acquire several thousand wide-field images of nuclei using a 60× objective.

- Image Processing and Segmentation: Employ a 4-step image processing procedure to identify individual nuclei, removing artifacts like overexposure, edge blur, and drift blur.

- Data Analysis and Classification:

  - Geometric/Deep Learning Path: Classify the pre-processed nucleus images using a convolutional neural network (CNN) pipeline based on the VGG architecture.

  - Linear Morphometrics Path: Extract pre-defined morphometric features (e.g., shape, texture) from each nucleus and use a supervised linear model (e.g., logistic regression) for classification.
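To make the linear morphometrics path concrete, the sketch below extracts a handful of pre-defined features from a segmented nucleus. The feature set (area, aspect ratio from the pixel-coordinate covariance, mean and standard deviation of intensity) and the function name `nuclear_features` are illustrative assumptions; the features actually used in [30] are not listed here. The resulting feature vectors could then be passed to any supervised linear classifier, such as a logistic regression.

```python
import numpy as np

def nuclear_features(image, mask):
    """Simple shape and intensity descriptors for one segmented nucleus.

    image: 2D array of DAPI intensities; mask: 2D boolean array (True inside the nucleus).
    The mask must span more than one row and one column, otherwise the
    aspect ratio is undefined (division by zero).
    """
    mask = np.asarray(mask, dtype=bool)
    ys, xs = np.nonzero(mask)
    coords = np.stack([xs, ys]).astype(float)
    # Eigenvalues of the coordinate covariance (ascending) give the squared
    # spread along the minor and major axes of the nucleus.
    minor, major = np.linalg.eigvalsh(np.cov(coords))
    values = np.asarray(image, dtype=float)[mask]
    return {
        "area": int(ys.size),                      # pixel count
        "aspect_ratio": float(np.sqrt(major / minor)),
        "mean_intensity": float(values.mean()),
        "intensity_sd": float(values.std()),
    }

# Toy example: a 4 x 16 pixel rectangular "nucleus" with uniform intensity.
image = np.zeros((20, 20))
mask = np.zeros((20, 20), dtype=bool)
mask[8:12, 2:18] = True
image[mask] = 100.0
features = nuclear_features(image, mask)
print(features["area"], round(features["aspect_ratio"], 3))  # 64 4.123
```

Hand-picked descriptors like these are easy to interpret, which is the main argument for this path, but they capture only what was defined in advance.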

Protocol: Chromatin Condensation Analysis via HC/EC Ratio

This protocol details the measurement of the heterochromatin-to-euchromatin (HC/EC) ratio, a quantitative metric for nuclear mechano-biology status [30].

- Image Acquisition: Follow the first four steps of the SCENMED protocol (cell culture through image processing and segmentation) to obtain processed images of DAPI-stained nuclei.

- Intensity Thresholding: Calculate a threshold value to distinguish between heterochromatin (highly condensed, brighter DAPI staining) and euchromatin (less condensed, dimmer DAPI staining). The threshold is determined based on the image intensity distribution.

- Pixel Classification: Classify each pixel within the nucleus as belonging to either heterochromatin or euchromatin based on the calculated threshold.

- Ratio Calculation: Compute the HC/EC ratio by dividing the number of pixels classified as heterochromatin by the number of pixels classified as euchromatin for each nucleus.
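The thresholding, pixel classification, and ratio steps can be sketched as follows. The protocol states only that the threshold is derived from the image intensity distribution, so the rule used here (mean plus `k` standard deviations of the intensities inside the nucleus) is an assumption chosen for illustration, as are the function name and the default `k`.

```python
import numpy as np

def hc_ec_ratio(image, nucleus_mask, k=0.5):
    """Return (HC/EC pixel ratio, threshold) for one segmented nucleus."""
    pixels = np.asarray(image, dtype=float)[np.asarray(nucleus_mask, dtype=bool)]
    # Assumed rule, not taken from the source: mean + k * SD of nuclear intensities.
    threshold = pixels.mean() + k * pixels.std()
    n_hc = int(np.count_nonzero(pixels > threshold))  # brighter, condensed chromatin
    n_ec = pixels.size - n_hc                         # dimmer, less condensed chromatin
    ratio = n_hc / n_ec if n_ec else float("inf")
    return ratio, threshold

# Toy nucleus: 20 bright pixels (200) and 80 dim pixels (50).
image = np.full((10, 10), 50.0)
image[:2, :] = 200.0
mask = np.ones((10, 10), dtype=bool)
ratio, threshold = hc_ec_ratio(image, mask)
print(ratio)  # 0.25, i.e. 20 heterochromatin pixels / 80 euchromatin pixels
```

Because the ratio depends directly on the threshold rule, the same rule should be applied to every nucleus and every condition being compared.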

Workflow and Conceptual Diagrams

Figure: SCENMED experimental workflow.

Figure: Relationship between morphometrics and biomarkers.

Research Reagent Solutions

The following table details key reagents and materials essential for conducting experiments in nuclear mechano-morphometric biomarker research.

| Research Reagent / Material | Function in Experiment |
|---|---|
| DAPI (4′,6-diamidino-2-phenylindole) | Fluorescent stain that binds strongly to A-T rich regions in DNA, used to visualize the nucleus and its internal chromatin structure for image analysis [30]. |
| Cell Lines (e.g., BJ, MCF10A, MDA-MB-231) | Provide biologically relevant in vitro models of normal human tissue and various cancer states (e.g., fibrocystic, metastatic) for comparative studies [30]. |
| VGG-based Convolutional Neural Network | A deep learning architecture adapted for image classification tasks, used to automatically identify complex, non-linear morphometric patterns from nuclear images [30]. |
| Procrustes Analysis Algorithms | A statistical method for geometric morphometrics that translates, rotates, and scales landmark configurations into a common frame, isolating shape variation from nuisance differences in position, orientation, and size [17]. |
| Liquid Biopsy Assays | Enable non-invasive sampling and analysis of circulating tumor cells (CTCs) or cell-free DNA (cfDNA), providing a source of material for molecular biomarker detection [31]. |
| High-Throughput Proteomics/Genomics Platforms | Technologies such as next-generation sequencing (NGS) and mass spectrometry that accelerate the discovery and validation of molecular biomarkers from various sample types [32] [31]. |

Geometric Morphometrics for GPCR Structure Classification in Pharmaceutical Research

G protein-coupled receptors (GPCRs) represent one of the most important drug target classes in pharmaceutical research, with over 30% of FDA-approved drugs acting through these receptors [33]. Despite their therapeutic importance, analyzing their dynamic, complex structures remains challenging due to their structural flexibility and the subtle nature of their conformational changes. Traditional methods for quantifying structural variations in GPCRs have relied on linear measurements, but these approaches often fail to capture the comprehensive three-dimensional nature of receptor activation and ligand-induced conformational changes.

Geometric morphometrics (GM) offers a powerful alternative for analyzing shape variation in three-dimensional space. This technique, borrowed from disciplines such as anthropology and paleontology, provides a mathematical framework for quantifying and comparing complex biological shapes [34]. When applied to GPCR structures, GM can discriminate between receptor states based on characteristics such as activation state, bound ligands, and the presence of fusion proteins, with the most significant results focused at the intracellular face where G protein coupling occurs [35].

This review examines the application of geometric morphometrics to GPCR structure classification within the broader context of morphometric performance research, specifically comparing its discriminatory power against traditional linear-based methods for pharmaceutical applications.

Geometric versus Linear Morphometrics: Theoretical Foundations and Performance Comparison

Fundamental Methodological Differences

Linear Morphometrics (LMM) traditionally relies on manually defined measurements between specific points, such as distances, angles, or ratios. In GPCR research, this might include measuring distances between specific Cα atoms across transmembrane helices or angles between helical axes. While straightforward to implement, LMM captures only a limited aspect of structural variation and may overlook subtle but functionally important shape changes.

Geometric Morphometrics (GM) utilizes Cartesian landmark coordinates common to all structures being compared. For GPCRs, this typically involves the XYZ coordinates of the Cα atoms at both ends of each transmembrane helix, effectively capturing the three-dimensional arrangement of the entire transmembrane bundle [35]. The core analytical process involves Procrustes superimposition, which removes differences in position, orientation, and scale between landmark configurations, followed by principal component analysis to identify the main patterns of shape variation [36].
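The superimposition and ordination steps can be illustrated with a short NumPy sketch. It is a simplified generalized Procrustes analysis (centre, scale to unit centroid size, then iteratively rotate onto the running mean) followed by PCA through a singular value decomposition. The function names are hypothetical, and dedicated morphometrics software may differ in details such as convergence criteria and tangent-space projection.

```python
import numpy as np

def align_to(reference, shape):
    """Rotate a centred, unit-size configuration onto the reference (no reflection)."""
    u, _, vt = np.linalg.svd(shape.T @ reference)
    if np.linalg.det(u @ vt) < 0:
        u[:, -1] *= -1  # flip one axis so the result is a proper rotation
    return shape @ (u @ vt)

def generalized_procrustes(configs, n_iter=10):
    """Superimpose configurations of shape (n_specimens, n_landmarks, n_dims)."""
    x = np.asarray(configs, dtype=float)
    x = x - x.mean(axis=1, keepdims=True)                  # remove position
    x = x / np.linalg.norm(x, axis=(1, 2), keepdims=True)  # unit centroid size
    mean = x[0]
    for _ in range(n_iter):
        x = np.stack([align_to(mean, s) for s in x])       # remove orientation
        mean = x.mean(axis=0)
        mean = mean / np.linalg.norm(mean)
    return x, mean

def shape_pca(aligned):
    """PC scores and explained-variance fractions of aligned configurations."""
    flat = aligned.reshape(len(aligned), -1)
    flat = flat - flat.mean(axis=0)
    u, s, _ = np.linalg.svd(flat, full_matrices=False)
    return u * s, s**2 / np.sum(s**2)

# Toy data: five noisy, rotated, shifted, rescaled copies of one random configuration.
rng = np.random.default_rng(0)
base = rng.normal(size=(14, 3))
configs = []
for _ in range(5):
    q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(q) < 0:
        q[:, 0] *= -1
    noisy = base + 0.01 * rng.normal(size=base.shape)
    configs.append(2.5 * noisy @ q + rng.normal(size=3))
aligned, mean_shape = generalized_procrustes(configs)
scores, explained = shape_pca(aligned)
```

After superimposition, the remaining differences between configurations reflect shape alone, which is why the PCA scores can be interpreted as shape variables.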

Comparative Performance in Taxonomic Resolution

A critical 2023 study by Viacava et al. directly compared the performance of these methods for discriminating closely related species, with findings highly relevant to GPCR classification [8]. The research demonstrated that while raw LMM data showed apparently high discriminatory power in principal component analysis, this discrimination primarily reflected size variation rather than genuine shape differences. After removing isometric (overall size) and allometric (size-related shape) effects, GM provided superior discrimination of taxonomic groups based purely on shape characteristics.
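The distinction between isometric and allometric size effects can be made concrete for linear measurements. The sketch below uses log-shape ratios (each log measurement minus the log of the specimen's geometric mean) to remove isometric size, then regresses the result on log size and keeps the residuals to remove allometry. This is one standard approach, offered here as an assumption; it is not necessarily the procedure used in the cited study, and the function names are hypothetical.

```python
import numpy as np

def log_shape_ratios(measurements):
    """Remove isometric size from linear measurements (specimens x variables).

    Returns the log-shape ratios and each specimen's log geometric-mean size.
    """
    logs = np.log(np.asarray(measurements, dtype=float))
    log_size = logs.mean(axis=1, keepdims=True)  # log of the geometric mean
    return logs - log_size, log_size.ravel()

def remove_allometry(shape_vars, log_size):
    """Residuals of a linear regression of each shape variable on log size."""
    design = np.column_stack([np.ones_like(log_size), log_size])
    beta, *_ = np.linalg.lstsq(design, shape_vars, rcond=None)
    return shape_vars - design @ beta  # residuals are also mean-centred

# Two specimens that differ only in overall size share identical log-shape ratios.
ratios, log_size = log_shape_ratios([[2.0, 4.0, 8.0], [4.0, 8.0, 16.0]])
print(np.allclose(ratios[0], ratios[1]))  # True
```

Running a PCA on raw measurements and again on the size-corrected values is a quick way to see how much apparent group separation is driven by size alone.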

Table 1: Performance Comparison of Morphometric Methods in Discrimination Tasks

| Performance Metric | Linear Morphometrics | Geometric Morphometrics |
|---|---|---|
| Shape Characterization | Partial, limited to predefined measurements | Holistic, captures complete 3D structure |
| Size/Shape Separation | Poor, often confounded | Excellent with proper statistical treatment |
| Allometry Assessment | Requires additional analyses | Integrated into analytical framework |
| Data Visualization | Limited 2D plots | Rich 3D shape deformation visualizations |
| Discriminatory Power | High for size-based differences, lower for pure shape | Superior for shape-based classification after size correction |
| Technical Barrier | Low | Moderate to high |

The implications for GPCR research are significant: while LMM might quickly identify gross structural differences, GM provides more biologically meaningful discrimination of subtle conformational states that may be critical for understanding drug mechanism of action.

Application to GPCR Structures: Experimental Evidence

Proof of Concept: Wiseman et al. (2021) Study

The pioneering application of GM to GPCR structures was demonstrated by Wiseman et al. in 2021, establishing this methodology as a novel approach for membrane protein analysis [34]. Their research implemented a comprehensive workflow from structure selection through statistical analysis, specifically designed to classify GPCRs based on structural characteristics.

Table 2: Key Findings from GM Application to GPCR Structures [35] [36]

| Structural Characteristic | GM Discrimination Result | Localization of Maximum Variation |
|---|---|---|
| Activation State | Successful classification of active vs. inactive states | Intracellular face |
| Bound Ligand Type | Significant differentiation between ligand classes | Intracellular face for family B receptors |
| Fusion Proteins | Strong discrimination with fusion protein present | Intracellular face |
| Thermostabilizing Mutations | No significant differences detected | Not applicable |
| Receptor Family | Successful classification across GPCR families | Both extracellular and intracellular faces |

The experimental protocol began with acquiring GPCR structures from the GPCRdb database and cross-referencing with the mpstruc database. Researchers identified the first and last residues of each transmembrane helix using the GPCRdb numbering system, then extracted XYZ coordinates for the Cα atoms at these positions using Swiss-PdbViewer software. This created a set of 14 landmarks (7 helices × 2 ends), each described by three coordinates (X, Y, Z), for each receptor structure [36].
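The coordinate-extraction step can also be scripted. The sketch below reads Cα coordinates directly from the fixed-width `ATOM` records of a PDB-format file, which is a plain-Python substitute for the Swiss-PdbViewer step and not the authors' procedure. It assumes the helix-end residues are already known as PDB residue numbers (translating GPCRdb numbering to PDB numbering is not shown), and the function names, chain identifier, residue numbers, and toy coordinates are all hypothetical.

```python
import numpy as np

def ca_landmarks(pdb_lines, helix_ends, chain="A"):
    """Return an (n_landmarks, 3) array of C-alpha coordinates.

    helix_ends: list of (first_residue, last_residue) PDB residue numbers,
    one pair per transmembrane helix, in the order the landmarks should appear.
    """
    wanted = [res for ends in helix_ends for res in ends]
    found = {}
    for line in pdb_lines:
        if not line.startswith("ATOM"):
            continue
        # PDB fixed columns: atom name 13-16, chain 22, residue number 23-26.
        if line[12:16].strip() != "CA" or line[21] != chain:
            continue
        resseq = int(line[22:26])
        if resseq in wanted and resseq not in found:
            # x, y, z occupy columns 31-38, 39-46, and 47-54.
            found[resseq] = [float(line[30:38]), float(line[38:46]), float(line[46:54])]
    missing = [r for r in wanted if r not in found]
    if missing:
        raise ValueError(f"no CA atom found for residues {missing}")
    return np.array([found[r] for r in wanted])

def toy_atom(serial, resseq, xyz, name="CA", chain="A"):
    """Build a correctly aligned ATOM record for demonstration purposes only."""
    x, y, z = xyz
    return f"ATOM  {serial:>5}  {name:<3} ALA {chain}{resseq:>4}    {x:8.3f}{y:8.3f}{z:8.3f}"

# Toy input with two "helices"; a real receptor would supply seven pairs.
lines = [
    toy_atom(1, 35, (1.0, 2.0, 3.0)),
    toy_atom(2, 35, (9.0, 9.0, 9.0), name="N"),  # ignored: not a C-alpha atom
    toy_atom(3, 60, (4.0, 5.0, 6.0)),
    toy_atom(4, 70, (7.0, 8.0, 9.0)),
    toy_atom(5, 95, (10.0, 11.0, 12.0)),
]
landmarks = ca_landmarks(lines, helix_ends=[(35, 60), (70, 95)])
print(landmarks.shape)  # (4, 3)
```

Stacking one such array per receptor structure gives the landmark data set that Procrustes superimposition and PCA operate on.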