Geometric Morphometrics vs. Computer Vision: A Comparative Analysis for Advanced Morphological Classification in Biomedicine

Abstract

This article provides a comprehensive analysis of geometric morphometrics (GMM) and computer vision (CV), particularly deep learning, for morphological classification in biomedical research and drug development. It explores the foundational principles of both approaches, examines their methodological applications across diverse domains from paleontology to precision medicine, and addresses key challenges and optimization strategies. Through comparative validation against real-world data, we demonstrate that while GMM offers interpretability for homologous structures, CV methods generally achieve higher classification accuracy, often exceeding 80%, in complex, high-dimensional tasks. The synthesis concludes with a forward-looking perspective on hybrid models and 3D geometric deep learning, outlining their potential to transform morphological analysis in clinical and research settings.

Foundational Principles: From Landmarks to Latent Spaces in Morphological Analysis

Contents

- Introduction

- Core Concepts of Geometric Morphometrics

- Quantitative Comparison of Sliding Semilandmark Methods

- Experimental Protocols for Semilandmark Analysis

- Geometric Morphometrics in the Age of Computer Vision

- Essential Research Toolkit

- Conclusion

Introduction

Geometric morphometrics (GM) is a fundamental advance in the quantitative analysis of biological form, enabling researchers to capture, analyze, and visualize the geometry of morphological structures with high precision. Unlike traditional morphometric approaches that rely on linear measurements, angles, or ratios, GM analyzes the Cartesian coordinates of biological landmarks, preserving the full geometry of each configuration throughout statistical analysis [1] [2]. This methodology has become indispensable in fields ranging from evolutionary biology to anthropology, particularly for discriminating groups with subtle morphological differences, such as modern human populations [1].

At the heart of GM lies a triad of core components: landmarks for defining homologous anatomical points, semilandmarks for quantifying homologous curves and surfaces, and Procrustes analysis for superimposing shapes to remove non-biological variation. This framework allows scientists to address complex questions about shape variation, allometry, and morphological integration. However, the field is currently undergoing a significant transformation with the rise of computer vision and deep learning approaches, which offer powerful alternatives for morphological classification [3] [4]. This guide provides a comprehensive comparison of these methodologies, supported by experimental data and detailed protocols.

Core Concepts of Geometric Morphometrics

Landmarks and Their Biological Significance

Landmarks are discrete, anatomically homologous points that can be precisely located and reliably reproduced across all specimens in a study. They are traditionally categorized into three types:

- Type I landmarks occur at discrete juxtapositions of tissues, such as the intersection of sutures or foramina.

- Type II landmarks represent points of maximum curvature or other local morphological extremes.

- Type III landmarks are defined by extremal points or constructed geometrically, such as the point of maximum distance from another landmark [5].

These landmarks form the foundational data structure for GM, represented as coordinate configurations that preserve the spatial relationships between points throughout analysis.

The Role of Semilandmarks in Quantifying Curves and Surfaces

Many biological structures lack enough traditional landmarks to capture their complete geometry. Semilandmarks solve this problem by allowing researchers to quantify homologous curves and surfaces [6]. Semilandmarks are not individually homologous: they are placed along outlines and then "slid" to remove tangential variation, because the curves themselves are homologous between specimens while the individual points on them are not [1].

Two primary algorithms govern the sliding of semilandmarks:

- Minimum Bending Energy (BE): This method slides semilandmarks along tangents to minimize the bending energy required to deform the reference shape onto the target specimen, effectively assuming the deformation is as smooth as possible [1].

- Minimum Procrustes Distance (D): This approach slides semilandmarks to minimize the Procrustes distance between the target and reference by aligning points along directions perpendicular to the reference curve [1].

Procrustes Superimposition: Registering Biological Shapes

Generalized Procrustes Analysis (GPA) is the statistical procedure that standardizes landmark configurations by removing the effects of position, scale, and orientation through three sequential operations:

- Translation: Centering each configuration at the origin (0,0)

- Scaling: Scaling configurations to unit Centroid Size

- Rotation: Rotating configurations to minimize the sum of squared distances between corresponding landmarks [5]

This process results in Procrustes shape coordinates that reside in a curved shape space, which is typically projected onto a tangent space for subsequent multivariate statistical analysis. The consensus configuration represents the mean shape of all specimens after superimposition.
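The three GPA steps and the tangent-space projection can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the exact implementation used by packages such as geomorph or MorphoJ: it assumes 2D configurations stored as an `(n, k, 2)` array, solves the rotation step with an SVD (the orthogonal Procrustes solution, with reflections excluded), and uses the orthogonal projection onto the tangent plane at the mean. The function names are hypothetical.

```python
import numpy as np


def center_and_scale(config):
    """Translate a (k, 2) configuration to the origin and scale it to unit centroid size."""
    centered = config - config.mean(axis=0)
    centroid_size = np.sqrt((centered ** 2).sum())
    return centered / centroid_size


def rotate_onto(config, reference):
    """Rotate `config` to minimize the summed squared distances to `reference`."""
    u, _, vt = np.linalg.svd(config.T @ reference)
    if np.linalg.det(u @ vt) < 0:
        # Flip the axis with the smallest singular value so we rotate, never reflect.
        u[:, -1] *= -1
    return config @ (u @ vt)


def gpa(configs, tol=1e-12, max_iter=100):
    """Generalized Procrustes Analysis of an (n, k, 2) array; returns aligned shapes and their mean."""
    aligned = np.array([center_and_scale(c) for c in configs])  # translation + scaling
    mean = aligned[0]                                           # initial reference
    for _ in range(max_iter):
        aligned = np.array([rotate_onto(c, mean) for c in aligned])  # rotation
        new_mean = center_and_scale(aligned.mean(axis=0))
        converged = np.sum((new_mean - mean) ** 2) < tol
        mean = new_mean
        if converged:
            break
    return aligned, mean


def tangent_coordinates(aligned, mean):
    """Orthogonal projection of aligned shapes onto the tangent space at the (unit-size) mean."""
    mu = mean.ravel()
    flat = aligned.reshape(len(aligned), -1)
    return flat - np.outer(flat @ mu, mu)
```

Each row returned by `tangent_coordinates` is one specimen's shape vector and can be passed to PCA or another multivariate method; the mean returned by `gpa` plays the role of the consensus configuration.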

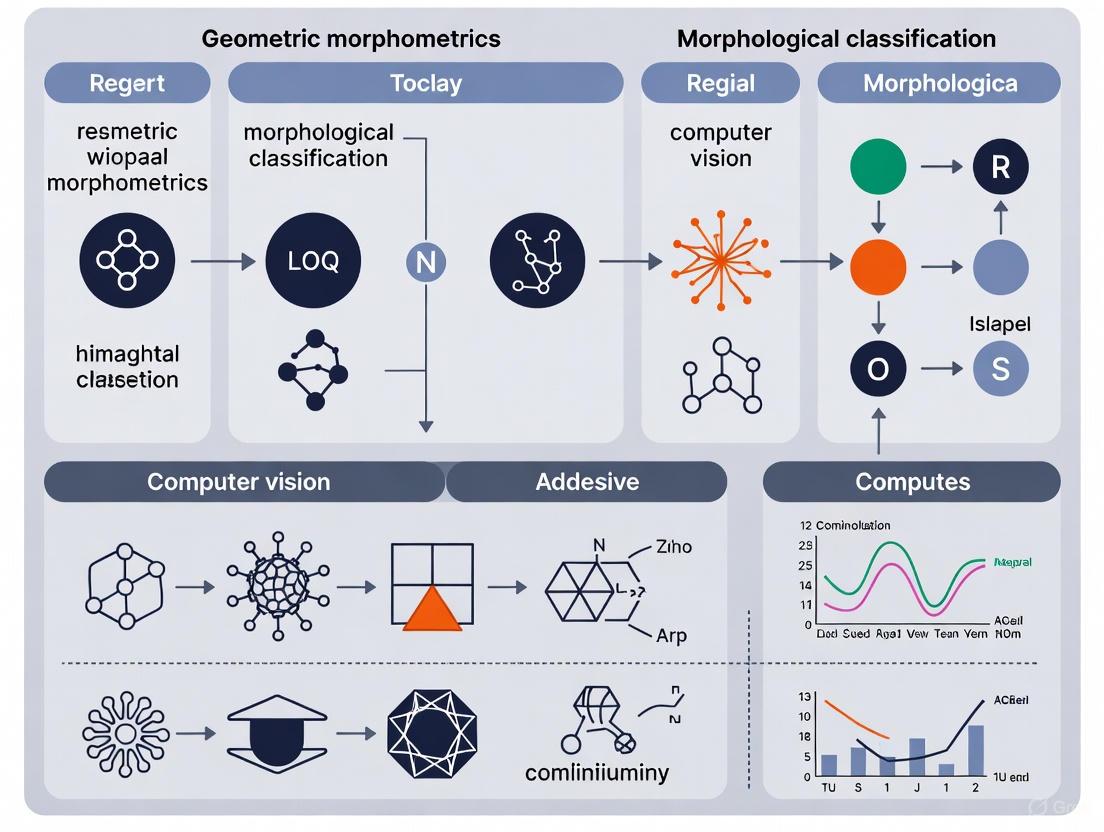

The following diagram illustrates the complete workflow of a geometric morphometric analysis, integrating both landmarks and semilandmarks:

Quantitative Comparison of Sliding Semilandmark Methods

The choice of sliding criterion can significantly influence analytical outcomes, particularly when studying samples with low morphological variation. A seminal study by Perez et al. (2006) systematically compared the Minimum Bending Energy (BE) and Minimum Procrustes Distance (D) methods using human molars and craniometric data, revealing important practical differences [1].

Table 1: Comparison of Sliding Semilandmark Methods Based on Empirical Studies

| Analysis Metric | Minimum Bending Energy (BE) | Minimum Procrustes Distance (D) | Biological Interpretation |
|---|---|---|---|
| Statistical Power (F-scores & P-values) | Similar to D method | Similar to BE method | Both methods provide comparable statistical power for group discrimination [1] |
| Within-group Variation Estimation | Different estimates compared to D | Different estimates compared to BE | Methods yield different estimates of within-sample variation [1] |
| Between-group Variation Estimation | Different estimates compared to D | Different estimates compared to BE | Methods yield different estimates of between-sample variation [1] |
| Principal Component Correlation | Low correlation with D-based PCs | Low correlation with BE-based PCs | First principal axes differ substantially between methods [1] |
| Classification Performance | Similar correct classification % | Similar correct classification % | Both methods show comparable discriminant function classification rates [1] |
| Group Ordination | Different arrangement along discriminant scores | Different arrangement along discriminant scores | Despite similar classification, ordination of groups differs between methods [1] |

The implications of these differences are particularly important for studies of modern human populations, where morphological variation is inherently low. Researchers must recognize that their choice of sliding criterion may influence estimates of within- and between-group variation, potentially affecting biological interpretations.

Table 2: Performance Comparison of GM vs. Computer Vision Approaches

| Method | Classification Accuracy | Data Requirements | Strengths | Limitations |
|---|---|---|---|---|
| Traditional GM | 65-89% (depending on sample size and structure) [4] | 15-20 specimens per group [4] | Biological interpretability; visualization of shape changes; established statistical framework | Requires landmark correspondence; limited by landmark selection |
| Functional Data GM | Improved classification over traditional GM for shrew craniodental data [3] | Similar to traditional GM | Enhanced sensitivity to subtle shape variations; models continuous curves | Complex implementation; newer methodology with fewer software resources |
| Convolutional Neural Networks (CNN) | Outperforms GM in seed classification (15-30% error reduction) [4] | Typically large training datasets (often thousands of images), although with transfer learning CNNs have outperformed outline GM with as few as 50 images per class [10] | No landmark selection needed; automatic feature extraction; handles complex shapes | Black-box nature; limited biological interpretability; large data requirements |

Experimental Protocols for Semilandmark Analysis

Protocol 1: Sliding Semilandmarks Using Minimum Bending Energy

Application Context: This method is particularly suitable when assuming smooth biological deformations, such as in studies of cranial vaults or molar outlines [1].

Step-by-Step Methodology:

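Landmark and Semilandmark Digitization:

- Digitize the fixed landmarks on every specimen using a digitizing program such as tpsDig2

- Place the same number of semilandmarks along each curve, spaced approximately evenly between the landmarks that bound it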

Reference Selection:

- Select a reference specimen (often the consensus of a preliminary Procrustes fit)

- Ensure the reference represents the overall sample well

Sliding Procedure:

- Allow semilandmarks to slide along tangents to the curve

- Optimize positions to minimize the bending energy between reference and target forms

- Iterate until convergence is achieved across all specimens

Procrustes Superimposition:

- Perform Generalized Procrustes Analysis on the combined landmark and slid semilandmark data

- Project coordinates into tangent space for subsequent statistical analysis

Biological Rationale: The BE method implements the conservative assumption that biological deformations tend to be smooth, making it appropriate for structures where this assumption is biologically justified [1].
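The core of the bending-energy criterion can be illustrated with one linearized sliding step. This is a minimal NumPy sketch under several assumptions: 2D data already superimposed, a single reference configuration, unit tangent vectors supplied by the user (for example, estimated from neighboring semilandmarks), and the thin-plate spline kernel `U(r) = r^2 log(r^2)`. Minimizing the quadratic form `(y + U s)^T B (y + U s)` over the slide amounts `s` gives `s = -(U^T B U)^(-1) U^T B y`. In a full analysis this step alternates with re-projecting the points onto the curve and with GPA until convergence; the function names are hypothetical, and this is not the code used in [1] or in any particular package.

```python
import numpy as np


def tps_bending_energy_matrix(reference):
    """Bending-energy matrix of a 2D thin-plate spline on a (k, 2) reference configuration.

    Returns the upper-left k x k block of inv(L), where L = [[K, P], [P.T, 0]],
    K[i, j] = U(|p_i - p_j|) with U(r) = r^2 log(r^2), and P = [1, x, y].
    """
    k = len(reference)
    d2 = ((reference[:, None, :] - reference[None, :, :]) ** 2).sum(axis=-1)
    with np.errstate(divide="ignore", invalid="ignore"):
        kernel = np.where(d2 > 0, d2 * np.log(d2), 0.0)  # U(0) is defined as 0
    p = np.hstack([np.ones((k, 1)), reference])
    l_matrix = np.zeros((k + 3, k + 3))
    l_matrix[:k, :k] = kernel
    l_matrix[:k, k:] = p
    l_matrix[k:, :k] = p.T
    return np.linalg.inv(l_matrix)[:k, :k]


def slide_bending_energy(target, reference, sliders, tangents):
    """One linearized sliding step under the minimum bending energy criterion.

    target, reference : (k, 2) configurations (already superimposed)
    sliders           : indices of the sliding semilandmarks
    tangents          : (len(sliders), 2) unit tangent vectors at those points
    """
    k = len(target)
    bending = np.kron(np.eye(2), tps_bending_energy_matrix(reference))  # acts on [x..., y...]
    y = np.concatenate([target[:, 0], target[:, 1]])
    u = np.zeros((2 * k, len(sliders)))
    for j, (i, t) in enumerate(zip(sliders, tangents)):
        u[i, j] = t[0]      # x-component of the tangent for semilandmark i
        u[k + i, j] = t[1]  # y-component
    # Minimize (y + u @ s)^T B (y + u @ s) over the slide amounts s.
    s = -np.linalg.solve(u.T @ bending @ u, u.T @ bending @ y)
    y_new = y + u @ s
    return np.column_stack([y_new[:k], y_new[k:]])


def bending_energy(target, reference):
    """Bending energy (up to a constant factor) of the spline mapping reference onto target."""
    bending = np.kron(np.eye(2), tps_bending_energy_matrix(reference))
    y = np.concatenate([target[:, 0], target[:, 1]])
    return float(y @ bending @ y)
```

Because the identity mapping has zero bending energy, a copy of the reference whose semilandmarks were displaced only along their tangents is slid back onto the reference, which makes a convenient sanity check.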

Protocol 2: Sliding Semilandmarks Using Minimum Procrustes Distance

Application Context: This approach is valuable when the primary goal is optimal point-to-point correspondence between specimens, as in studies of facial symmetry [1].

Step-by-Step Methodology:

Initial Data Collection:

- Follow the same landmark and semilandmark digitization as in Protocol 1

Perpendicular Alignment:

- Slide each semilandmark along the tangent to its curve until it sits at the foot of the perpendicular dropped from the corresponding reference semilandmark

- Minimize the Procrustes distance between each specimen and the reference

Iterative Optimization:

- Update the reference form (often to the consensus)

- Repeat sliding process until convergence

Final Procrustes Fit:

- Perform final GPA on the complete dataset

- Use resulting coordinates for statistical shape analysis

Technical Note: This method effectively removes the component of variation along the tangent direction, focusing only on differences perpendicular to the curve [1].
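For configurations that have already been superimposed, the minimum Procrustes distance criterion reduces to a per-point projection: each semilandmark moves along its tangent `t` by `s = (r - x) · t`, which leaves the residual to the reference point `r` perpendicular to the tangent. The sketch below assumes 2D coordinates and user-supplied tangent vectors; in practice this step is iterated together with GPA and re-projection onto the curve, and the function name is hypothetical.

```python
import numpy as np


def slide_procrustes_distance(target, reference, sliders, tangents):
    """Minimum Procrustes distance sliding for already superimposed (k, 2) configurations.

    Each sliding semilandmark moves along its tangent t by s = (r - x) . t, which places it
    at the foot of the perpendicular dropped from the reference point r onto the tangent line.
    """
    slid = np.asarray(target, dtype=float).copy()
    for i, t in zip(sliders, tangents):
        t = np.asarray(t, dtype=float)
        t = t / np.linalg.norm(t)              # ensure a unit tangent
        s = np.dot(reference[i] - slid[i], t)  # signed slide along the tangent
        slid[i] = slid[i] + s * t
    return slid
```

Fixed landmarks are left untouched; only the indices listed in `sliders` move.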

The methodological relationship between these approaches and their position within the broader morphometric landscape can be visualized as follows:

Geometric Morphometrics in the Age of Computer Vision

The emergence of computer vision and deep learning approaches presents both competition and potential complementarity to traditional GM methods. A study by Bonhomme et al. (2025) directly compared GM with Convolutional Neural Networks (CNNs) for archaeobotanical seed classification and found that CNNs outperformed GM outline analysis in most comparisons, particularly with larger sample sizes [4].

This performance advantage, however, comes with significant trade-offs. While CNNs excel at classification tasks, they function as "black boxes" with limited capacity for biological interpretation. In contrast, GM provides explicit information about which specific morphological features contribute to group differences, allowing researchers to visualize shape changes along principal components or discriminant axes.

Functional Data Geometric Morphometrics (FDGM) represents a hybrid approach that converts landmark data into continuous curves using basis function expansions [3]. This methodology enhances sensitivity to subtle shape variations and has demonstrated improved classification performance for shrew craniodental structures compared to traditional GM [3]. The FDGM framework is particularly valuable for species with minor morphological distinctions or for monitoring subtle shape changes in response to environmental factors.
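To make the idea of a basis function expansion concrete, the sketch below represents a closed outline, given as ordered, Procrustes-aligned semilandmarks, as two smooth coordinate functions x(t) and y(t) fitted by least squares to a truncated Fourier basis. The choice of a Fourier basis, the equal spacing of the curve parameter t, and the function names are assumptions for illustration; the FDGM work cited in [3] may use other bases (such as B-splines) and smoothing penalties.

```python
import numpy as np


def fourier_basis(t, n_harmonics):
    """Design matrix with columns [1, cos(2*pi*h*t), sin(2*pi*h*t)] for h = 1..n_harmonics."""
    columns = [np.ones_like(t)]
    for h in range(1, n_harmonics + 1):
        columns += [np.cos(2 * np.pi * h * t), np.sin(2 * np.pi * h * t)]
    return np.column_stack(columns)


def fit_outline_functions(points, n_harmonics=3):
    """Least-squares basis coefficients of x(t) and y(t) for a closed outline of ordered points.

    Assumes the points are equally spaced in the curve parameter t in [0, 1).
    Returns an array of shape (2 * n_harmonics + 1, 2): one column per coordinate.
    """
    t = np.arange(len(points)) / len(points)
    coefficients, *_ = np.linalg.lstsq(fourier_basis(t, n_harmonics), points, rcond=None)
    return coefficients


def evaluate_outline(coefficients, n_points):
    """Evaluate the fitted coordinate functions on a denser, equally spaced grid."""
    t = np.arange(n_points) / n_points
    n_harmonics = (coefficients.shape[0] - 1) // 2
    return fourier_basis(t, n_harmonics) @ coefficients
```

Once each specimen is summarized by its coefficient array, the flattened coefficients can serve as inputs to downstream analyses such as functional PCA or a discriminant analysis.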

Essential Research Toolkit

Table 3: Essential Software Tools for Geometric Morphometrics

| Software/Tool | Primary Function | Key Features | Access |
|---|---|---|---|
| geomorph R package [7] [8] | Comprehensive GM analysis | Landmark & semilandmark analysis; phylogenetic integration; Procrustes ANOVA | Free (R environment) |
| tpsDig2 [1] [5] | Landmark digitization | 2D landmark data collection; semilandmark placement | Free |
| MorphoJ [2] | Statistical shape analysis | User-friendly interface; extensive visualization tools | Free |
| PAST [2] | Paleontological statistics | Multivariate statistics; includes basic GM capabilities | Free |
| Momocs [4] | Outline analysis | Elliptical Fourier analysis; outline processing | Free (R environment) |

Table 4: Key Research Reagents and Materials

| Material/Resource | Specification | Application in GM |
|---|---|---|
| Imaging System | Digital camera with standardized distance and orientation [1] | Ensuring comparable, orthogonal images for 2D GM |
| Specimen Mounting Apparatus | Stabilization jig with standardized planes (e.g., Frankfurt plane) [1] | Consistent specimen orientation across imaging sessions |
| Scale Bar | Metric reference included in image frame [2] | Scale calibration and verification |
| 3D Scanner (optional) | Laser or structured light scanner | 3D surface data acquisition for complex structures |
| Landmark Template | Digital or physical guide | Consistent landmark placement across specimens |

Conclusion

Geometric morphometrics, founded on the triad of landmarks, semilandmarks, and Procrustes analysis, provides a powerful, biologically interpretable framework for quantifying and analyzing morphological variation. The choice between sliding semilandmark methods involves important trade-offs: while Minimum Bending Energy assumes smooth biological deformations, Minimum Procrustes Distance focuses on optimal point correspondence, with each method potentially yielding different biological interpretations, particularly in studies of modern human populations characterized by low morphological variation [1].

As the field advances, Functional Data GM enhances traditional approaches by modeling shapes as continuous functions, providing greater sensitivity to subtle variations [3]. However, emerging evidence indicates that deep learning methods, particularly Convolutional Neural Networks, can outperform GM for specific classification tasks, though at the cost of biological interpretability [4]. The optimal methodological approach depends critically on research goals: GM remains superior for hypothesis-driven studies of specific morphological structures, while computer vision approaches offer advantages for pure classification tasks with sufficient training data. Future directions likely involve hybrid approaches that leverage the strengths of both paradigms, combining the biological interpretability of GM with the classification power of computer vision.

The quantification and classification of morphological shapes are fundamental to numerous scientific fields, from evolutionary biology to archaeology and medical imaging. For decades, geometric morphometrics (GMM), based on the statistical analysis of defined anatomical landmarks, has been the established methodology for such analyses [9]. However, the recent ascent of deep learning, particularly Convolutional Neural Networks (CNNs), offers a paradigm shift towards landmark-free, data-driven feature extraction [10] [9].

This guide provides an objective comparison of these two methodologies within the context of morphological classification research. We focus on the performance of CNNs against traditional GMM, supported by recent experimental data and detailed protocols, to inform researchers and professionals about the capabilities and applications of these powerful tools.

Methodological Comparison: Geometric Morphometrics vs. Convolutional Neural Networks

Core Principles

Geometric Morphometrics (GMM) is a landmark-based approach. It relies on the manual identification and digital recording of anatomically homologous points across specimens. The coordinates of these landmarks are then analyzed using multivariate statistics, such as Principal Component Analysis (PCA), to capture and compare shape variation [9]. A widely used complement is the Elliptical Fourier Transform (EFT), which describes a closed outline as a sum of harmonic ellipses, each defined by four coefficients, capturing smooth contours without predefined landmarks [10].
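The EFT coefficients can be computed from an ordered outline with the Kuhl and Giardina (1982) formulas, which treat the outline as a polygon parameterized by arc length. The NumPy sketch below is illustrative only: it assumes a 2D outline with no repeated consecutive points and returns raw, unnormalized coefficients (outline packages such as Momocs additionally normalize for size, rotation, and starting point before analysis). The function name is hypothetical.

```python
import numpy as np


def elliptic_fourier_coefficients(contour, n_harmonics=10):
    """Elliptic Fourier coefficients (a_n, b_n, c_n, d_n) of a closed outline.

    `contour` is an (m, 2) array of ordered outline points without consecutive duplicates;
    the outline is closed automatically by joining the last point back to the first.
    """
    points = np.asarray(contour, dtype=float)
    closed = np.vstack([points, points[:1]])
    dxy = np.diff(closed, axis=0)               # segment displacements
    dt = np.sqrt((dxy ** 2).sum(axis=1))        # segment lengths
    t = np.concatenate([[0.0], np.cumsum(dt)])  # cumulative arc length
    perimeter = t[-1]
    phi = 2 * np.pi * t / perimeter
    coefficients = np.zeros((n_harmonics, 4))
    for n in range(1, n_harmonics + 1):
        const = perimeter / (2 * n ** 2 * np.pi ** 2)
        d_cos = np.cos(n * phi[1:]) - np.cos(n * phi[:-1])
        d_sin = np.sin(n * phi[1:]) - np.sin(n * phi[:-1])
        coefficients[n - 1] = const * np.array([
            np.sum(dxy[:, 0] / dt * d_cos),  # a_n
            np.sum(dxy[:, 0] / dt * d_sin),  # b_n
            np.sum(dxy[:, 1] / dt * d_cos),  # c_n
            np.sum(dxy[:, 1] / dt * d_sin),  # d_n
        ])
    return coefficients
```

For a finely sampled unit circle that starts at (1, 0) and runs counterclockwise, the first harmonic is close to (a1, b1, c1, d1) = (1, 0, 0, 1) and the higher harmonics are negligible, as expected for a single ellipse.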

Convolutional Neural Networks (CNNs) represent a deep learning approach for image analysis. They automatically learn a hierarchy of relevant features directly from pixel data. Through multiple layers, CNNs detect simple patterns like edges, combine them into more complex structures, and ultimately learn representations that are highly effective for classification tasks without manual feature engineering [10] [11].
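The edge-detection behavior of an early convolutional layer can be illustrated with a hand-set filter. The sketch below implements a single-channel "valid" 2D cross-correlation (the operation deep learning frameworks compute in a convolutional layer) followed by a ReLU, applied to a toy image containing a vertical edge. The filter weights and function name are chosen purely for illustration; in a trained CNN the weights are learned from data, and real layers add biases, multiple channels, and strides.

```python
import numpy as np


def conv2d_valid(image, kernel):
    """Single-channel 'valid' 2D cross-correlation, as applied per filter in a conv layer."""
    kh, kw = kernel.shape
    h, w = image.shape
    out = np.zeros((h - kh + 1, w - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out


# Hand-set vertical-edge filter; a trained CNN would learn such weights from data.
edge_kernel = np.array([[-1.0, 0.0, 1.0],
                        [-1.0, 0.0, 1.0],
                        [-1.0, 0.0, 1.0]])

# Toy image: dark left half, bright right half (a vertical edge between columns 2 and 3).
image = np.zeros((5, 6))
image[:, 3:] = 1.0
feature_map = np.maximum(conv2d_valid(image, edge_kernel), 0.0)  # ReLU activation
print(feature_map)
```

The response is non-zero only in the columns whose 3×3 window straddles the edge; deeper layers combine many such maps into detectors for more complex structures.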

Comparative Workflow

The fundamental difference in approach between Geometric Morphometrics and Convolutional Neural Networks for a classification task can be visualized in the following experimental workflow, synthesized from recent comparative studies [10] [4] [9].

Performance Benchmarking: CNN vs. GMM/EFT

Recent empirical studies directly comparing CNN and GMM/EFT workflows demonstrate a consistent performance advantage for deep learning models across multiple domains and dataset sizes.

Archaeobotanical Seed Classification

A 2025 study by Bonhomme et al. directly compared the two approaches on four plant taxa (barley, olive, date palm, grapevine) that are crucial for understanding domestication history [10] [4]. The researchers photographed seeds and fruit stones and applied both EFT and a CNN (VGG19 architecture) to binary classification tasks.

Table 1: Performance Comparison on Archaeobotanical Seeds (Bonhomme et al., 2025) [10] [4]

| Taxon | Sample Size | Method | Accuracy (relative) | Key Finding |
|---|---|---|---|---|
| Barley | 1,769 seeds | EFT with LDA | Higher | EFT outperformed CNN in this case |
| | | CNN (VGG19) | Lower | |
| Olive | 473 seeds | EFT with LDA | Lower | CNN outperformed EFT |
| | | CNN (VGG19) | Higher | |
| Date Palm | 1,087 seeds | EFT with LDA | Lower | CNN outperformed EFT |
| | | CNN (VGG19) | Higher | |
| Grapevine | 1,430 seeds | EFT with LDA | Lower | CNN outperformed EFT |
| | | CNN (VGG19) | Higher | |

The study concluded that the CNN outperformed EFT in most cases, even for datasets as small as 50 images per class. This suggests that CNN feature learning remains robust with limited data, a common scenario in archaeobotanical research [10].

Honey Bee Wing Morphometrics

A 2025 study on honey bee populations across Europe further underscores the effectiveness of CNNs. Researchers used wing images to classify bees from five different countries, comparing three pre-trained CNN models [12].

Table 2: Performance of CNN Models on Wing Morphometrics (2025 Study) [12]

| CNN Model | Accuracy | Precision | Recall | F1-Score |
|---|---|---|---|---|
| VGG16 | 95% | Not Specified | Not Specified | Not Specified |
| InceptionV3 | Lower than VGG16 | Not Specified | Not Specified | Not Specified |
| ResNet50 | Lower than VGG16 | Not Specified | Not Specified | Not Specified |

The research highlighted not only the high predictive power of CNNs but also the advantages of automated CNN-based workflows over manual morphometric methods in terms of speed, objectivity, and scalability for large datasets [12].

Essential Research Reagents and Computational Tools

Implementing the methodologies discussed requires a suite of software tools and computational resources. The following table lists key solutions mentioned in the featured experiments.

Table 3: Research Reagent Solutions for Morphological Classification

| Tool/Solution | Type | Primary Function | Example Use Case |
|---|---|---|---|
| Momocs R Package [10] | Software Library | Geometric morphometrics and outline analysis | Analysis of seed outlines via Elliptical Fourier Transforms (EFT) |
| VGG19 [10] | Pre-trained CNN Model | Feature extraction and image classification | Baseline architecture for seed classification with transfer learning |
| Morpho-VAE [9] | Specialized Deep Learning Framework | Landmark-free morphological feature extraction | Analyzing primate mandible shapes from image data |
| R & Python with Keras [10] | Programming Environment | Bridging statistical analysis and deep learning | Implementing a reproducible workflow from data prep to model training |
| DeepWings [12] | Specialized Software | Automated landmark detection and classification | Wing geometric morphometrics classification of honey bees |
| Single-Board Computers (e.g., Raspberry Pi) [13] | Hardware Platform | Edge deployment of trained CNN models | On-device skin cancer detection in resource-constrained settings |

Advanced CNN Architectures and Implementation Protocols

State-of-the-Art CNN Models

The field of computer vision is rapidly evolving. While studies like Bonhomme et al. used the established VGG19, recent state-of-the-art models offer enhanced performance [14].

Table 4: State-of-the-Art Image Classification Models (2025)

| Model | Key Architectural Feature | Reported Top-1 Accuracy (ImageNet) | Strengths |
|---|---|---|---|
| CoCa (Contrastive Captioners) | Combines contrastive learning & captioning | 91.0% (Fine-tuned) | Exceptional multimodal understanding |
| DaViT (Dual Attention Vision Transformer) | Dual spatial & channel attention mechanisms | 90.4% (DaViT-Giant, Fine-tuned) | Captures global and local interactions |
| ConvNeXt V2 | Modernized pure convolutional architecture | 88.9% (ConvNeXt V2-Huge, Fine-tuned) | High efficiency and accuracy balance |
| EfficientNet | Compound scaling of depth, width, resolution | ~88%+ (Fine-tuned) | Optimal performance-parameter trade-off |

Detailed Experimental Protocol: Implementing a CNN for Morphological Classification

Based on the methodologies from the cited studies, here is a generalized protocol for training a CNN for a task like seed or wing classification [10] [13] [12]:

Data Collection and Preprocessing:

- Acquire high-resolution, standardized images of the specimens (e.g., seeds, wings, mandibles).

- Resize images to a uniform dimension compatible with the chosen CNN input layer (e.g., 224×224 pixels).

- Apply image normalization to scale pixel values to a standard range (e.g., 0 to 1 or -1 to 1).

Data Augmentation:

- Artificially expand the dataset and improve model robustness by applying random, realistic transformations. These include rotation, flipping, zooming, and brightness adjustment, which simulate natural morphological variation and imaging conditions.
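The normalization and augmentation steps above can be sketched in NumPy. This is a minimal illustration under stated assumptions: square 8-bit RGB images already resized (e.g., to 224×224), and augmentation limited to flips, 90-degree rotations, and brightness jitter, because these need no interpolation. Arbitrary-angle rotation and zoom are usually delegated to a framework (for example, the Keras `RandomFlip`, `RandomRotation`, and `RandomZoom` layers), and when using pre-trained weights the model's own preprocessing (e.g., `tf.keras.applications.vgg19.preprocess_input`) should be preferred over a generic rescaling. The function names and jitter range are hypothetical, and whether a flip is realistic depends on the specimen (for example, left and right wings).

```python
import numpy as np


def normalize(image, mode="unit"):
    """Scale 8-bit pixel values to [0, 1] (mode="unit") or [-1, 1] (mode="symmetric")."""
    scaled = image.astype(np.float32) / 255.0
    return scaled if mode == "unit" else 2.0 * scaled - 1.0


def augment(image, rng):
    """Random horizontal flip, 90-degree rotation and brightness jitter.

    Expects a square (H, W, C) image already scaled to [0, 1]; output stays in [0, 1].
    """
    if rng.random() < 0.5:
        image = image[:, ::-1, :]                                     # horizontal flip
    image = np.rot90(image, k=int(rng.integers(0, 4)), axes=(0, 1))  # 0/90/180/270 degrees
    image = image * rng.uniform(0.8, 1.2)                             # brightness jitter
    return np.clip(image, 0.0, 1.0)
```

Augmentation should be applied on the fly to training images only, so that each epoch sees a different random variant while validation and test images remain unmodified.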

Model Selection and Transfer Learning:

- Select a pre-trained model (e.g., VGG16, VGG19, ResNet50) whose weights were learned from a large benchmark dataset like ImageNet.

- Remove the original classification head (the final layers) of the pre-trained network.

- Add a new, randomly initialized head tailored to the number of classes in the specific morphological problem (e.g., wild vs. domesticated).

Model Training:

- Freeze the convolutional base (feature extractor) and train only the new classification head for a few epochs. This lets the randomly initialized head learn to map the pre-trained features to the new classes without large, noisy gradients disturbing the pre-trained weights.

- Unfreeze part or all of the convolutional base and continue training with a very low learning rate (fine-tuning). This refines the pre-trained features to be more specific to the morphological data.

- Use a loss function such as categorical cross-entropy (or binary cross-entropy for two-class problems) and an optimizer such as Adam or SGD.

Model Evaluation:

- Evaluate the trained model on a held-out test set that was not used during training or validation.

- Report standard metrics including Accuracy, Precision, Recall, and F1-Score.
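For a binary task such as wild vs. domesticated, the four evaluation metrics follow directly from counts of true positives, false positives, and false negatives. The sketch below is a minimal NumPy version with a hypothetical function name, assuming integer class labels and a user-chosen positive class; in practice, `sklearn.metrics.precision_recall_fscore_support` or `sklearn.metrics.classification_report` compute the same quantities, including multi-class averages.

```python
import numpy as np


def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for a binary task, treating `positive` as the positive class."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = np.sum((y_pred == positive) & (y_true == positive))  # true positives
    fp = np.sum((y_pred == positive) & (y_true != positive))  # false positives
    fn = np.sum((y_pred != positive) & (y_true == positive))  # false negatives
    accuracy = np.mean(y_pred == y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": float(accuracy), "precision": float(precision),
            "recall": float(recall), "f1": float(f1)}


# Example with eight held-out test specimens (1 = domesticated, 0 = wild).
print(classification_metrics([1, 1, 1, 0, 0, 0, 1, 0], [1, 0, 1, 0, 1, 0, 1, 0]))
```

Reporting precision and recall alongside accuracy matters when classes are imbalanced, because a model can reach high accuracy by favoring the majority class.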

The Trade-off: Accuracy vs. Interpretability

A critical consideration when adopting CNNs is the trade-off between their high accuracy and the "black-box" nature of their predictions. In one study, depth-scaled (very deep) CNNs achieved the highest accuracy among the variants compared (81.99% Top-1), but at the cost of interpretability and a substantial increase in parameters (24 million) [15]. In contrast, width-scaled or baseline models may offer a better balance, maintaining reasonable accuracy with greater transparency. Techniques like Grad-CAM and LIME are increasingly used to visualize the regions of an image that most influenced the CNN's decision, helping to bridge the interpretability gap [15].
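Grad-CAM weights each feature map of a chosen convolutional layer by the spatial average of the gradient of the class score with respect to that map, sums the weighted maps, and keeps only positive evidence with a ReLU. The sketch below shows only this combination step in NumPy; it assumes the activations and gradients, both shaped `(H, W, K)`, have already been extracted from a trained model (for example, with `tf.GradientTape` in TensorFlow), and it omits upsampling the map to image resolution. The function name is hypothetical.

```python
import numpy as np


def grad_cam(activations, gradients):
    """Grad-CAM map from one convolutional layer.

    activations : (H, W, K) feature maps A^k for a single image
    gradients   : (H, W, K) gradients of the target class score y^c with respect to A^k
    Returns an (H, W) map scaled to [0, 1] (all zeros if there is no positive evidence).
    """
    weights = gradients.mean(axis=(0, 1))                        # alpha_k: averaged gradients
    cam = np.maximum((activations * weights).sum(axis=-1), 0.0)  # ReLU of the weighted sum
    peak = cam.max()
    return cam / peak if peak > 0 else cam
```

Overlaying the upsampled map on a specimen image shows which regions, such as a seed's outline or a wing's venation, drove the prediction, which can then be compared with the landmarks a GM analysis would use.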

The empirical evidence clearly indicates that Convolutional Neural Networks generally outperform traditional geometric morphometrics for morphological classification tasks across diverse domains [10] [4] [12]. The key advantage of CNNs lies in their ability to automatically learn discriminative features directly from images, bypassing the labor-intensive and potentially subjective manual processes of landmarking or outline tracing.

However, the choice between methods is not absolute. GMM remains a powerful tool for hypothesis-driven research where specific anatomical landmarks are of biological interest. The future of morphological analysis lies not in the replacement of one method by the other, but in their complementary use. CNNs can serve as a powerful, automated screening and classification tool, while GMM can provide detailed, interpretable analyses of specific shape changes. As deep learning models become more transparent and accessible, they are poised to become an indispensable component of the modern morphological scientist's toolkit.

In scientific research, particularly within fields requiring morphological classification such as biology, paleontology, and drug development, two fundamental analytical paradigms exist: hypothesis-driven and data-driven science. The hypothesis-driven approach begins with a specific, educated guess about a system, and experiments are designed to test this predetermined hypothesis [16] [17]. This method is analogous to problem-driven technology development, where the starting point is a known problem, and tools are sought to address it [16]. In contrast, the data-driven approach starts with no specific hypothesis; instead, it begins with a broad question and involves computationally intensive analysis of large datasets to uncover hidden patterns, relationships, and novel insights that can subsequently generate new hypotheses [16] [17]. This is akin to tool-driven technology, where one starts with a powerful tool and explores its potential applications [16]. Understanding the core differences, strengths, and weaknesses of these paradigms is crucial for researchers applying them to modern morphological analysis techniques, such as geometric morphometrics and computer vision.

Philosophical and Practical Distinctions

The distinction between these paradigms is profound, influencing every stage of the research lifecycle, from initial design to final interpretation. Hypothesis-driven science provides a clear direction from the outset, focusing inquiry on a specific set of variables and mechanisms derived from existing theory or observation [17]. It is the traditional cornerstone of the scientific method, responsible for groundbreaking discoveries like penicillin and relativity [16] [17]. Its strength lies in its ability to test causal relationships and build upon established knowledge.

Conversely, data-driven science embraces a more exploratory, bottom-up philosophy. It is particularly suited for complex systems where underlying principles are not fully understood, allowing the data itself to reveal unexpected patterns [16]. A significant advantage of this paradigm is its capacity for higher levels of serendipity; the process of tinkering with data without a fixed direction can lead to less bias and the discovery of more transformative ideas [16]. For instance, the development of quantum mechanics was largely forced by experimental data that contradicted existing intuitive theories [16]. However, a major critique of pure data-driven science, especially with complex machine learning models, is the lack of deep understanding, as these models can become "black boxes" that provide predictions without explanatory power [16].

Table 1: Core Philosophical Differences Between Paradigms

| Aspect | Hypothesis-Driven | Data-Driven |
|---|---|---|
| Starting Point | A specific hypothesis or question [17] | A broad question or a dataset [17] |
| Primary Goal | To test and falsify a pre-existing hypothesis [17] | To discover patterns and generate new hypotheses [16] [17] |
| Researcher's Role | Design controlled experiments to test a specific idea [17] | Curate data and apply algorithms to explore and model the system [16] |
| Bias Susceptibility | Higher risk of confirmation bias towards the initial hypothesis [16] | Lower risk of initial bias, but prone to seeing spurious correlations [16] |
| Typical Output | Causal explanation for a specific phenomenon [17] | Predictive models and novel associations [16] [17] |

Application in Morphological Classification Research

The debate between these paradigms is highly relevant in the field of morphological classification, where researchers aim to quantify and analyze the shape and structure of biological specimens. The two dominant methodologies in this space—Geometric Morphometrics (GMM) and Computer Vision (CV)—often align with different analytical paradigms.

Geometric Morphometrics (GMM): A Hypothesis-Driven Approach

GMM is a sophisticated method for quantifying shape and size variations in biological structures. It relies on the precise identification of homologous points, known as landmarks, across specimens [18]. These landmarks are ontogenetically conserved biological features, and their Cartesian coordinates are analyzed using statistical techniques like Procrustes superimposition to isolate pure shape variation from differences in size, orientation, and position [18]. The requirement for homology makes GMM an inherently hypothesis-driven tool; the researcher must have prior anatomical knowledge to identify comparable points, framing the analysis within a specific biological context. This approach is powerful for testing explicit hypotheses about taxonomy, ecology, and evolution [18]. However, it is manually intensive, susceptible to operator bias, and its applicability diminishes when comparing highly disparate taxa with few discernible homologous points [19].

Computer Vision (CV): A Data-Driven Approach

Computer vision, particularly Deep Learning (DL) models like Convolutional Neural Networks (CNNs), represents a more data-driven paradigm. Instead of relying on pre-defined homologous points, these algorithms learn to identify discriminative patterns directly from raw pixel data in images [20]. For example, in a study comparing methods for identifying carnivore agents from tooth marks, a Deep CNN classified marks with 81% accuracy, significantly outperforming a GMM approach which showed limited discriminant power (<40%) [20]. This data-driven method excels at handling large datasets and complex patterns without requiring explicit prior knowledge of homology, thus overcoming a key limitation of GMM [20] [19]. The trade-off, however, is the "black box" nature of these models, which can make it difficult to extract biologically meaningful explanations for their classifications [16] [20].

Table 2: Comparison of GMM and Computer Vision for Morphological Analysis

| Feature | Geometric Morphometrics (GMM) | Computer Vision (CV) |
|---|---|---|
| Analytical Paradigm | Primarily Hypothesis-Driven | Primarily Data-Driven |
| Core Data | Landmarks and semi-landmarks (homologous points) [18] | Raw pixels or extracted features from images [20] |
| Key Strength | Provides biologically meaningful, interpretable shape data [18] | High classification accuracy and automation; handles large datasets [20] |
| Key Limitation | Manual, time-consuming, and limited by homology [19] | "Black-box" nature; limited biological interpretability [16] [20] |
| Accuracy in a Direct Comparison (tooth marks) | Lower (<40%) [20] | Higher (81%) [20] |
| Automation Level | Low to Medium (requires expert input) [19] | High (once trained) [20] |

Experimental Data and Performance Comparison

Empirical studies directly comparing these methodologies provide critical insights for researchers selecting an analytical approach. A pivotal 2025 study offers a rigorous experimental comparison in the context of taphonomy—identifying carnivore agency from tooth marks on bones [20].

Experimental Protocol

The study established a controlled, experimentally derived set of Bone Surface Modifications (BSM) generated by four different types of carnivores [20]. Two analytical methods were applied to this identical dataset:

- Geometric Morphometrics (GMM): This method utilized both outline analysis via Fourier analyses and a semi-landmark approach to capture the shape of the tooth pits [20].

- Computer Vision (CV): This approach employed Deep Convolutional Neural Networks (DCNN) and Few-Shot Learning (FSL) models to classify the same set of tooth mark images [20].

The performance of each method was evaluated based on its classification accuracy in correctly identifying the carnivore agent responsible for the tooth marks.

Results and Performance Metrics

The results demonstrated a clear performance gap between the two paradigms in this classification task. The quantitative findings are summarized in the table below.

Table 3: Experimental Performance in Carnivore Agency Classification [20]

| Methodology | Specific Technique | Reported Classification Accuracy |
|---|---|---|
| Geometric Morphometrics (GMM) | Outline (Fourier) & Semi-Landmark Analysis | < 40% |
| Computer Vision (CV) | Deep Convolutional Neural Network (DCNN) | 81.00% |
| Computer Vision (CV) | Few-Shot Learning (FSL) Model | 79.52% |

The study concluded that while GMM shows potential when using 3D topographical information, its current two-dimensional application has limited discriminant power for this task [20]. In contrast, computer vision methods offered an "unprecedented objective means of classifying BSM to taxon-specific agency with confidence indicators" [20]. This experiment underscores a key trade-off: the data-driven CV approach achieved superior predictive accuracy, while the GMM approach, built on explicitly defined outline and semi-landmark descriptors of pit shape, provided less effective classification in this specific context.

Integrated Methodologies and Workflows

Recognizing that neither paradigm is universally superior, modern scientific practice is increasingly moving towards hybrid workflows that integrate both hypothesis-driven and data-driven elements. This synergistic approach aims to leverage the strengths of each while mitigating their respective weaknesses [17] [21].

A prime example is the application of FAIR (Findable, Accessible, Interoperable, Reusable) principles to computational modeling workflows in neuroscience [21]. This framework facilitates the combination of mechanistic, hypothesis-driven models with phenomenological, data-driven models, allowing for validation against experimental data across multiple biological scales [21]. The hybrid workflow can be conceptualized as a cycle, where data-driven exploration generates novel hypotheses, which are then rigorously tested and refined via hypothesis-driven experimentation, the results of which further enrich the data for the next cycle of exploration [17].

The following diagram illustrates the logical workflow of this integrated approach, showing how hypothesis-driven and data-driven processes feed into and reinforce each other.

The Scientist's Toolkit: Essential Research Reagents and Materials

Selecting the right tools is critical for executing research within either paradigm. The following table details key solutions and materials essential for morphological classification research.

Table 4: Essential Research Reagents and Materials for Morphological Analysis

| Item | Function/Description | Typical Use Case |
|---|---|---|
| High-Resolution Scanners (CT, Surface) | Generates 2D/3D digital images of specimens for analysis [19]. | Data acquisition for both GMM and CV. |
| Landmarking Software (e.g., tpsDig2) | Allows for manual or semi-automated placement of homologous landmarks on digital images [18]. | Geometric Morphometrics (GMM) data collection. |
| Structuring Element (Kernel) | A small matrix used in morphological image processing to define the neighborhood of pixels for operations like erosion and dilation [22] [23]. | Pre-processing images in Computer Vision. |
| Deep Learning Frameworks (e.g., TensorFlow, PyTorch) | Provides libraries to build, train, and deploy complex neural network models like CNNs [20]. | Implementing data-driven Computer Vision models. |
| Procrustes Analysis Software | Superimposes landmark configurations to remove non-shape variation (size, position, rotation) [18]. | Analyzing and comparing shapes in GMM. |
| FAIR-Compliant Model Repositories | Databases for storing and sharing models and workflows in findable, accessible, interoperable, and reusable formats [21]. | Enhancing reproducibility and collaboration in both paradigms. |
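The structuring-element entry can be made concrete with a short sketch. The Python below is our own illustration, assuming a binary NumPy image and a 3x3 cross-shaped kernel; `erode` and `dilate` are hypothetical helper names, not part of any library. For real pipelines, `scipy.ndimage.binary_erosion` and `scipy.ndimage.binary_dilation` provide tested implementations.

```python
import numpy as np

def _windows(img: np.ndarray, se: np.ndarray):
    """Yield (row, col, neighbourhood) for every pixel; the border is padded with background."""
    kh, kw = se.shape
    padded = np.pad(img.astype(bool), ((kh // 2, kh // 2), (kw // 2, kw // 2)),
                    constant_values=False)
    for i in range(img.shape[0]):
        for j in range(img.shape[1]):
            yield i, j, padded[i:i + kh, j:j + kw]

def erode(img: np.ndarray, se: np.ndarray) -> np.ndarray:
    """A pixel survives only if every 'on' cell of the kernel covers foreground."""
    mask = se.astype(bool)
    out = np.zeros(img.shape, dtype=bool)
    for i, j, win in _windows(img, se):
        out[i, j] = win[mask].all()
    return out

def dilate(img: np.ndarray, se: np.ndarray) -> np.ndarray:
    """A pixel turns on if any 'on' cell of the kernel covers foreground.
    (Strictly, dilation uses the reflected kernel; for the symmetric cross
    used here the two are identical.)"""
    mask = se.astype(bool)
    out = np.zeros(img.shape, dtype=bool)
    for i, j, win in _windows(img, se):
        out[i, j] = win[mask].any()
    return out

# 3x3 cross-shaped structuring element (4-connected neighbourhood)
cross = np.array([[0, 1, 0],
                  [1, 1, 1],
                  [0, 1, 0]])

# Toy binary image: a 3x3 square of foreground inside a 5x5 frame
img = np.zeros((5, 5), dtype=bool)
img[1:4, 1:4] = True

eroded = erode(img, cross)       # shrinks the square to its centre pixel
dilated = dilate(img, cross)     # grows the square by one pixel along each side
opened = dilate(eroded, cross)   # opening = erosion followed by dilation
```

The kernel's shape decides which neighbours count: with a cross, diagonal neighbours are ignored, so the corners of the frame stay empty after dilation.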

The choice between hypothesis-driven and data-driven analytical paradigms is not a matter of selecting the objectively "better" option, but rather of aligning the methodology with the research goal. The hypothesis-driven approach, exemplified by geometric morphometrics, provides deep, biologically interpretable insights and is ideal for testing well-defined questions based on established knowledge. The data-driven approach, empowered by computer vision and deep learning, offers powerful predictive accuracy and the capacity to discover novel patterns in large, complex datasets without strong prior assumptions.

As the experimental evidence shows, computer vision can significantly outperform geometric morphometrics in specific classification tasks [20]. However, the most robust and impactful scientific progress will likely come from integrating both paradigms [17] [21]. By using data-driven methods to generate novel hypotheses and hypothesis-driven methods to validate and provide causal understanding, researchers can navigate the complexities of morphological classification with both the power of data and the clarity of theory.

The Rise of Geometric Deep Learning for 3D Molecular and Protein Structures

The field of quantitative morphology is experiencing a transformative shift from traditional geometric morphometrics (GM) toward geometric deep learning (GDL). While GM has long relied on manual landmark placement and statistical analysis of shape coordinates, this approach struggles with the complexity and scale of 3D molecular structures. Geometric deep learning represents a fundamental advancement by operating directly on non-Euclidean domains—graphs, surfaces, and manifolds—that naturally represent molecular and protein structures. This paradigm enables researchers to capture spatial, topological, and physicochemical features essential for predicting function and interactions [24].

The limitations of traditional GM are already visible in conventional morphological tasks. In one archaeobotanical study, convolutional neural networks (CNNs) significantly outperformed GM for seed classification [4]. Similarly, in shrew craniodental morphology research, functional data geometric morphometrics (FDGM) combined with machine learning surpassed conventional GM approaches [3]. These results from organismal morphology suggest considerable potential for GDL on 3D molecular and protein structures, whose complexity far exceeds what manual landmark-based methods can handle.

Foundations of Geometric Deep Learning

Core Principles and Molecular Representations

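Geometric deep learning frameworks are built on mathematical principles of symmetry and equivariance, which are crucial for modeling 3D molecular structures accurately. Unlike traditional deep learning models that process Euclidean data (e.g., images, text), GDL handles non-Euclidean data through specialized architectures that preserve geometric relationships [24]. For molecular structures, this means that predicted scalar properties (such as energies) remain invariant under rotations and translations, and, in E(3)-symmetric models, reflections, while predicted vector quantities (such as forces or coordinates) transform together with the input. None of these operations alters the physical properties of an isolated molecule [25]; models that must distinguish chirality therefore restrict themselves to SE(3), which excludes reflections.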

Molecular representations in GDL primarily utilize three formats:

- 3D Graphs: Atoms as nodes and bonds as edges, with 3D coordinates preserving spatial geometry [25]

- 3D Surfaces: Molecular surfaces meshed into polygons capturing shape and chemical features [25]

- 3D Grids: Voxelized representations that map atom positions and their properties onto a regular Euclidean grid, so that standard 3D convolutions can be applied [25]

These representations enable GDL models to learn from structural data while respecting the physical constraints and symmetries inherent to molecular systems.
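To make the graph and grid representations concrete, here is a minimal NumPy sketch, assuming Cartesian coordinates in ångströms. `radius_graph` and `voxelize` are hypothetical helpers, and the 1.2 Å cutoff and 0.5 Å voxel spacing are illustrative choices rather than values from the cited work. Edges are approximated by a distance cutoff instead of true chemical bond perception.

```python
import numpy as np

def radius_graph(coords: np.ndarray, cutoff: float):
    """Directed edges (i, j) between distinct atoms closer than `cutoff`."""
    diff = coords[:, None, :] - coords[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    close = (dist < cutoff) & ~np.eye(len(coords), dtype=bool)
    i, j = np.nonzero(close)
    return np.stack([i, j], axis=1), dist[i, j]

def voxelize(coords: np.ndarray, grid_size: int, spacing: float) -> np.ndarray:
    """Count atoms per voxel in a cubic grid centred on the molecule's centroid."""
    centred = coords - coords.mean(axis=0)
    half = grid_size * spacing / 2.0
    idx = np.floor((centred + half) / spacing).astype(int)
    inside = np.all((idx >= 0) & (idx < grid_size), axis=1)   # drop atoms outside the box
    grid = np.zeros((grid_size,) * 3, dtype=np.float32)
    np.add.at(grid, tuple(idx[inside].T), 1.0)
    return grid

# Toy water-like molecule: O at the origin and two H atoms about 0.96 A away
coords = np.array([[0.0, 0.0, 0.0],
                   [0.96, 0.0, 0.0],
                   [-0.24, 0.93, 0.0]])

edges, dists = radius_graph(coords, cutoff=1.2)   # O-H edges only (H-H is ~1.52 A apart)
grid = voxelize(coords, grid_size=8, spacing=0.5)
```

Because graph edges depend only on interatomic distances, they do not change when the molecule is rotated; a voxel grid does change, which is why grid-based models often rely on rotation augmentation or equivariant convolutions.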

Key Architectural Advances

Equivariant Graph Neural Networks (EGNNs) form the backbone of modern GDL approaches for 3D structures. These networks explicitly model the relationships between atomic coordinates and molecular properties while maintaining SE(3)/E(3) equivariance—meaning their predictions transform consistently with the input structure's orientation and position [25]. This property is essential for producing physically meaningful predictions that generalize across different molecular conformations.
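The equivariance property can be checked numerically. Below is a minimal NumPy sketch of one layer following the update rules of the E(n)-equivariant GNN of Satorras et al. (2021): messages depend on node features and squared distances, coordinates move along relative position vectors, and features are updated from aggregated messages. The graph is fully connected and the MLP weights are random and untrained; the class name `EGNNLayer`, the layer sizes, and the residual feature update are our illustrative choices, not details taken from reference [25].

```python
import numpy as np

rng = np.random.default_rng(0)

def random_mlp(in_dim: int, hidden: int, out_dim: int):
    """Two-layer MLP with SiLU activation and fixed random (untrained) weights."""
    w1 = rng.normal(scale=0.3, size=(in_dim, hidden))
    w2 = rng.normal(scale=0.3, size=(hidden, out_dim))
    def forward(z: np.ndarray) -> np.ndarray:
        a = z @ w1
        return (a / (1.0 + np.exp(-a))) @ w2   # SiLU(a) = a * sigmoid(a)
    return forward

class EGNNLayer:
    def __init__(self, feat_dim: int, msg_dim: int = 16, hidden: int = 32):
        self.phi_e = random_mlp(2 * feat_dim + 1, hidden, msg_dim)     # edge messages
        self.phi_x = random_mlp(msg_dim, hidden, 1)                    # coordinate weights
        self.phi_h = random_mlp(feat_dim + msg_dim, hidden, feat_dim)  # feature update

    def __call__(self, h: np.ndarray, x: np.ndarray):
        n, f = h.shape
        diff = x[:, None, :] - x[None, :, :]              # (n, n, 3): x_i - x_j
        d2 = np.sum(diff ** 2, axis=-1, keepdims=True)    # squared distances (invariant)
        h_i = np.broadcast_to(h[:, None, :], (n, n, f))
        h_j = np.broadcast_to(h[None, :, :], (n, n, f))
        not_self = 1.0 - np.eye(n)[:, :, None]            # exclude i == j
        m = self.phi_e(np.concatenate([h_i, h_j, d2], axis=-1)) * not_self
        # Coordinates move along relative position vectors, so they rotate with the input
        x_new = x + np.sum(diff * self.phi_x(m) * not_self, axis=1) / (n - 1)
        # Features depend only on invariant messages, so rotation leaves them unchanged
        h_new = h + self.phi_h(np.concatenate([h, m.sum(axis=1)], axis=-1))
        return h_new, x_new

layer = EGNNLayer(feat_dim=4)
h = rng.normal(size=(6, 4))   # per-atom invariant features (e.g. embedded atom types)
x = rng.normal(size=(6, 3))   # 3D coordinates
h_out, x_out = layer(h, x)
```

Applying any orthogonal matrix Q and translation t to `x` leaves `h_out` unchanged and transforms `x_out` in exactly the same way, which is the consistency property described above.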

The architectural landscape has diversified to include specialized frameworks:

- Message Passing Neural Networks: Propagate information along molecular graphs [26]

- Equivariant Transformers: Handle attention mechanisms with geometric constraints [27]

- Diffusion Models: Generate novel molecular structures through denoising processes [28]

Performance Comparison: GDL Versus Alternative Approaches

Protein Structure Prediction

Table 1: Performance Comparison of Protein Structure Prediction Methods

| Method | Approach Type | TM-Score (Hard Targets) | Multidomain Protein Handling | Key Strengths |
|---|---|---|---|---|
| D-I-TASSER | Hybrid GDL + Physics | 0.870 | Excellent | Integrates deep learning with physics-based simulations |
| AlphaFold3 | End-to-end GDL | 0.849 | Limited | State-of-the-art accuracy for single domains |
| AlphaFold2 | End-to-end GDL | 0.829 | Limited | Revolutionized protein structure prediction |
| C-I-TASSER | Contact-guided | 0.569 | Moderate | Uses predicted contact restraints |
| I-TASSER | Template-based | 0.419 | Moderate | Traditional homology modeling |

Recent benchmarking on 500 nonredundant "Hard" domains from SCOPe and CASP experiments demonstrates the superior performance of GDL-enhanced methods. D-I-TASSER, which integrates multisource deep learning potentials with iterative threading assembly refinement, achieved a template modeling (TM) score of 0.870, significantly outperforming AlphaFold2 (0.829) and AlphaFold3 (0.849) [29]. The hybrid approach proves particularly advantageous for difficult targets where pure deep learning methods struggle.
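For readers unfamiliar with the metric, the TM-score of a model against a native structure of length $L$ is $\frac{1}{L}\sum_i \frac{1}{1 + (d_i/d_0)^2}$, where $d_i$ is the distance between aligned residue pairs and $d_0 = 1.24\,(L-15)^{1/3} - 1.8$ Å. The NumPy sketch below is a simplification under stated assumptions: residue correspondence is known one-to-one, and the superposition comes from the Kabsch (minimum-RMSD) fit rather than the score-maximizing search performed by the reference TM-score program, so it yields a lower bound on the true score. The 0.5 Å floor on $d_0$ follows, to our understanding, the reference program's handling of short chains; the function names are hypothetical.

```python
import numpy as np

def kabsch_superimpose(mobile: np.ndarray, target: np.ndarray) -> np.ndarray:
    """Optimally rotate and translate `mobile` onto `target` (least squares, no reflection)."""
    mu_m, mu_t = mobile.mean(axis=0), target.mean(axis=0)
    P, Q = mobile - mu_m, target - mu_t
    U, _, Vt = np.linalg.svd(P.T @ Q)
    d = np.sign(np.linalg.det(Vt.T @ U.T))     # guard against an improper rotation
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return P @ R.T + mu_t

def tm_score(model: np.ndarray, native: np.ndarray) -> float:
    """TM-score assuming residue i of `model` corresponds to residue i of `native`."""
    L = len(native)
    d0 = max(1.24 * np.cbrt(L - 15) - 1.8, 0.5)   # length-dependent distance scale (A)
    d = np.linalg.norm(kabsch_superimpose(model, native) - native, axis=1)
    return float(np.mean(1.0 / (1.0 + (d / d0) ** 2)))

# Toy "protein": a 60-residue random walk, plus a rigidly moved copy of it
rng = np.random.default_rng(0)
native = np.cumsum(rng.normal(scale=2.0, size=(60, 3)), axis=0)
q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
if np.linalg.det(q) < 0:
    q[:, 0] *= -1                               # make q a proper rotation
moved = native @ q.T + np.array([5.0, -3.0, 1.0])
```

A rigidly moved copy scores 1.0, and adding coordinate noise pushes the score toward 0. The $1/L$ normalization is what makes scores comparable across proteins of different lengths.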

Molecular Generation for Drug Design

Table 2: Performance Comparison of Molecular Generation Methods

| Method | Approach | Vina Score (kcal/mol; lower is better) | Novelty | Synthetic Accessibility | Key Innovation |
|---|---|---|---|---|---|
| DiffGui | Equivariant Diffusion | -7.92 | 98.7% | 0.71 | Bond diffusion + property guidance |
| Pocket2Mol | E(3)-equivariant GNN | -7.35 | 97.8% | 0.68 | Autoregressive atom generation |
| GraphBP | 3D Graph Generation | -7.21 | 96.5% | 0.65 | Distance and angle embeddings |
| 3D-CNN | Voxel-based VAE | -6.89 | 95.2% | 0.62 | 3D convolutional networks |

For structure-based drug design, DiffGui, a target-conditioned E(3)-equivariant diffusion model, demonstrates state-of-the-art performance by generating molecules with high predicted binding affinity (Vina score of -7.92 kcal/mol; more negative values indicate stronger predicted binding) while maintaining drug-like properties [28]. The model's integration of bond diffusion and explicit property guidance addresses critical limitations of earlier autoregressive and voxel-based methods, which often produced unrealistic molecular geometries or suffered from error accumulation during sequential generation.

Protein-Protein Interaction Prediction

SpatPPI, a specialized GDL framework for predicting protein-protein interactions involving intrinsically disordered regions (IDRs), outperforms previous structure-based (SGPPI) and sequence-based (D-SCRIPT, Topsy-Turvy) methods on benchmark datasets [26]. By leveraging structural cues from folded domains to guide dynamic adjustment of IDRs through geometric modeling, SpatPPI achieves superior Matthews correlation coefficient (MCC) and area under precision-recall curve (AUPR) metrics, demonstrating GDL's advantage for complex biomolecular interactions where traditional methods struggle with structural flexibility.
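Both metrics summarize a binary interact/no-interact classifier. MCC = (TP·TN - FP·FN) / √((TP+FP)(TP+FN)(TN+FP)(TN+FN)) uses all four confusion-matrix cells, which keeps it informative when true interactions are rare, while AUPR summarizes precision over all score thresholds. The standard-library sketch below estimates AUPR as average precision, one common estimator; the helper names and toy data are our assumptions, and scikit-learn's `matthews_corrcoef` and `average_precision_score` are the usual production choices.

```python
import math

def mcc(y_true, y_pred):
    """Matthews correlation coefficient for binary labels (1 = interacting, 0 = not)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

def average_precision(y_true, scores):
    """Step-wise area under the precision-recall curve (ties in scores are not handled)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    n_pos = sum(y_true)
    hits, total = 0, 0.0
    for rank, i in enumerate(order, start=1):
        if y_true[i] == 1:
            hits += 1
            total += hits / rank   # precision at each new true positive
    return total / n_pos if n_pos else 0.0

# Toy protein pairs: true labels and predicted interaction scores
labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.1]
preds = [1 if s >= 0.5 else 0 for s in scores]   # threshold chosen for illustration
```

Unlike MCC, average precision needs no threshold, so the two metrics together describe both a fixed operating point and overall ranking quality.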

Experimental Protocols and Methodologies

D-I-TASSER Protein Structure Prediction Workflow

The exceptional performance of D-I-TASSER stems from its sophisticated integration of deep learning with physical simulations. The protocol involves several key stages [29]:

- Deep Multiple Sequence Alignment Construction: Iterative searching of genomic and metagenomic databases to identify evolutionary patterns

- Spatial Restraint Generation: Using DeepPotential, AttentionPotential, and AlphaFold2 to create geometric constraints

- Replica-Exchange Monte Carlo Simulations: Assembling template fragments guided by a hybrid deep learning and knowledge-based force field

- Domain Partition and Assembly: Specialized handling of multidomain proteins through boundary splitting and reassembly
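Replica-exchange Monte Carlo, the sampling engine in the third step, runs copies of a system at different temperatures and periodically swaps neighbouring copies, accepting a swap with probability min(1, exp[(β_i - β_j)(E_i - E_j)]). Hot replicas cross energy barriers easily and pass those configurations down to cold ones. The sketch below applies this textbook scheme to a one-dimensional double-well energy; the energy function, temperature ladder, and step size are hypothetical toy choices and bear no relation to D-I-TASSER's actual force field or fragment-assembly moves.

```python
import math
import random

def energy(x: float) -> float:
    """Toy double-well energy: minima at x = -1 and x = +1, barrier of 5 at x = 0."""
    return 5.0 * (x * x - 1.0) ** 2

def replica_exchange(betas, n_sweeps=2000, step=0.5, seed=0):
    """betas: inverse temperatures ordered from coldest (largest) to hottest (smallest)."""
    rng = random.Random(seed)
    xs = [rng.uniform(-2.0, 2.0) for _ in betas]
    cold_trace, n_swaps = [], 0
    for _ in range(n_sweeps):
        # 1. Independent Metropolis move in every replica
        for k, beta in enumerate(betas):
            x_new = xs[k] + rng.uniform(-step, step)
            d_e = energy(x_new) - energy(xs[k])
            if d_e <= 0 or rng.random() < math.exp(-beta * d_e):
                xs[k] = x_new
        # 2. Attempt swaps between neighbouring temperatures
        for k in range(len(betas) - 1):
            delta = (betas[k] - betas[k + 1]) * (energy(xs[k]) - energy(xs[k + 1]))
            if delta >= 0 or rng.random() < math.exp(delta):
                xs[k], xs[k + 1] = xs[k + 1], xs[k]
                n_swaps += 1
        cold_trace.append(xs[0])   # samples from the coldest replica
    return cold_trace, n_swaps

trace, n_swaps = replica_exchange([5.0, 2.0, 0.8, 0.3])
```

At the coldest temperature alone, crossing the barrier would require an uphill move with acceptance around exp(-25); the swaps let that replica visit both wells without such a move.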

DiffGui Molecular Generation Process

DiffGui employs a sophisticated dual-diffusion process that simultaneously models atoms and bonds [28]:

- Dual Diffusion Framework: Separate noise schedules for atom positions/types and bond types

- Property-Guided Sampling: Incorporation of binding affinity, drug-likeness (QED), synthetic accessibility (SA), and physicochemical properties (LogP, TPSA) during generation

- E(3)-Equivariant Denoising: Equivariant graph neural networks that update both atom and bond representations

- Two-Phase Noise Injection: Initial phase focuses on bond diffusion with minimal atom disruption; secondary phase perturbs atom types and positions

This methodology ensures generated molecules maintain structural feasibility while optimizing for desired molecular properties—a significant advancement over earlier approaches that often produced energetically unstable structures.
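The forward (noising) half of such a dual-diffusion scheme can be sketched generically. The NumPy code below is not DiffGui's implementation: it uses a standard Gaussian step on centred atom coordinates and a uniform-transition categorical step on bond types, with hypothetical two-phase schedules that loosely mirror the idea of corrupting bonds first and atoms later. The schedule shapes, the 100-step horizon, the four bond categories, and all function names are our assumptions.

```python
import numpy as np

T = 100
t = np.arange(T + 1) / T   # normalised time: 0 = clean data, 1 = fully noised

# Hypothetical two-phase schedules of the cumulative signal fraction alpha_bar(t).
# Phase 1 (t <= 0.5): bonds are destroyed while atoms are barely perturbed.
# Phase 2 (t > 0.5): atom positions are noised; bonds are already fully noised.
alpha_bar_bond = np.clip(1.0 - 2.0 * t, 0.0, 1.0)
alpha_bar_atom = np.where(t <= 0.5, 1.0 - 0.1 * t, 0.95 * (1.0 - t) / 0.5)

def noise_positions(x0, alpha_bar, rng):
    """Gaussian forward step q(x_t | x_0) on centred coordinates with zero-mean noise."""
    x0c = x0 - x0.mean(axis=0)
    eps = rng.normal(size=x0.shape)
    eps -= eps.mean(axis=0)   # keep the centre of mass at the origin (translation-free)
    return np.sqrt(alpha_bar) * x0c + np.sqrt(1.0 - alpha_bar) * eps

def noise_bonds(b0, alpha_bar, n_types, rng):
    """Keep each bond type with probability alpha_bar, otherwise resample it
    uniformly from the n_types categories (including 'no bond')."""
    probs = alpha_bar * np.eye(n_types)[b0] + (1.0 - alpha_bar) / n_types
    u = rng.random((len(b0), 1))
    idx = (u > np.cumsum(probs, axis=1)).sum(axis=1)   # inverse-CDF categorical sampling
    return np.minimum(idx, n_types - 1)                # guard against round-off at the top

rng = np.random.default_rng(0)
x0 = rng.normal(size=(8, 3))             # toy ligand coordinates
b0 = rng.integers(0, 4, size=28)         # toy bond types for 8*7/2 atom pairs (0 = no bond)
mid = T // 2
x_mid = noise_positions(x0, alpha_bar_atom[mid], rng)   # atoms still close to x0
b_mid = noise_bonds(b0, alpha_bar_bond[mid], 4, rng)    # bonds fully randomised
```

A learned equivariant denoiser would then be trained to reverse these steps; that network, and any property guidance during sampling, is outside the scope of this sketch.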

SpatPPI IDR Interaction Prediction

SpatPPI addresses the challenging problem of predicting protein interactions involving intrinsically disordered regions through a specialized geometric learning approach [26]:

- Local Coordinate Systems: Embedding backbone dihedral angles into multidimensional edge attributes

- Dynamic Edge Updates: Reconstructing spatially enriched residue embeddings that adapt to IDR flexibility

- Two-Stage Decoding: Generating residue-level contact probability matrices that preserve partition-specific interaction modes

- Bidirectional Computation: Eliminating input-order biases through forward and reversed protein pair evaluation

This protocol enables SpatPPI to capture the spatial variability of disordered regions without requiring supervised conformational input, outperforming methods that rely solely on inter-residue distances without angular features.
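Backbone dihedral angles are a typical source of the angular features mentioned above. The NumPy sketch below computes φ and ψ from N, CA, and C coordinates and encodes each angle as a (sin, cos) pair, which avoids the artificial jump between -180° and +180°. SpatPPI's exact embedding is not described here, so this is a generic encoding under our own assumptions; the function names are hypothetical, and the coordinates in the example are random placeholders rather than a real protein.

```python
import numpy as np

def dihedral(p0, p1, p2, p3) -> float:
    """Signed torsion angle (radians) about the p1-p2 axis, in (-pi, pi]."""
    b0, b1, b2 = p0 - p1, p2 - p1, p3 - p2
    b1 = b1 / np.linalg.norm(b1)
    v = b0 - np.dot(b0, b1) * b1   # component of b0 perpendicular to the axis
    w = b2 - np.dot(b2, b1) * b1   # component of b2 perpendicular to the axis
    return float(np.arctan2(np.dot(np.cross(b1, v), w), np.dot(v, w)))

def backbone_dihedral_features(N, CA, C):
    """Per-residue [sin phi, cos phi, sin psi, cos psi] from (L, 3) backbone coordinates.
    phi is undefined for the first residue and psi for the last; those slots stay 0."""
    L = len(CA)
    feats = np.zeros((L, 4))
    for i in range(L):
        if i > 0:       # phi_i: C(i-1) - N(i) - CA(i) - C(i)
            phi = dihedral(C[i - 1], N[i], CA[i], C[i])
            feats[i, 0], feats[i, 1] = np.sin(phi), np.cos(phi)
        if i < L - 1:   # psi_i: N(i) - CA(i) - C(i) - N(i+1)
            psi = dihedral(N[i], CA[i], C[i], N[i + 1])
            feats[i, 2], feats[i, 3] = np.sin(psi), np.cos(psi)
    return feats

rng = np.random.default_rng(1)
N, CA, C = (rng.normal(size=(5, 3)) for _ in range(3))   # placeholder coordinates
feats = backbone_dihedral_features(N, CA, C)
```

Features like these can be attached to residue nodes or to edges between sequence neighbours; they complement pairwise distances, which alone cannot distinguish mirror-image backbone conformations.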

Table 3: Key Research Tools and Resources for Geometric Deep Learning

| Resource | Type | Primary Application | Key Features | Access |
|---|---|---|---|---|
| D-I-TASSER | Software Suite | Protein Structure Prediction | Hybrid GDL + physical force fields | https://zhanggroup.org/D-I-TASSER/ |
| SpatPPI | Web Server | Protein-Protein Interactions | Specialized for intrinsically disordered regions | http://liulab.top/SpatPPI/server |
| DiffGui | Code Framework | Molecular Generation | Bond diffusion + property guidance | Reference implementation |
| GeoRecon | Pretraining Framework | Molecular Representation Learning | Graph-level geometric reconstruction | Research code |
| E(n) Equivariant GNNs | Architecture | General Molecular Learning | Built-in rotational/translational equivariance | Open-source libraries |

Successful implementation of GDL methods requires access to specialized computational resources:

- GPU Clusters: Essential for training and inference with large 3D molecular graphs

- Molecular Dynamics Simulations: Used for conformational sampling and validation (e.g., in SpatPPI and D-I-TASSER)

- Structural Biology Databases: AlphaFold DB, Protein Data Bank (PDB), and domain-specific datasets like HuRI-IDP for protein interactions

- Equivariant Deep Learning Libraries: Frameworks supporting SE(3)/E(3)-equivariant operations

Future Directions and Challenges

Despite remarkable progress, geometric deep learning for 3D molecular structures faces several important challenges. Data scarcity remains a significant limitation, particularly for high-quality annotated structural data [24]. Interpretability of GDL models continues to be difficult, though emerging explainable AI approaches show promise for extracting mechanistic insights [24]. Computational cost presents barriers for widespread adoption, especially for researchers without access to high-performance computing resources.

The most promising research directions include:

- Integration of physical priors with learned representations to improve generalization [27]

- Multi-modal learning combining structural, evolutionary, and physicochemical information [27]

- Foundation models for molecular structures that enable efficient transfer learning [30]

- Dynamic conformational modeling beyond static structures to capture functional flexibility [24]

As geometric deep learning continues to mature, its convergence with high-throughput experimentation and automated discovery pipelines promises to accelerate progress across structural biology, drug discovery, and materials science. The paradigm shift from traditional geometric morphometrics to GDL represents not merely an incremental improvement but a fundamental transformation in how we quantify, analyze, and design molecular structures.

Methodological Deep Dive and Domain-Specific Applications

The accurate classification of biological specimens is a cornerstone of research in entomology, plant biology, and systematics. For centuries, this process relied on traditional linear morphometrics (LMM), which uses point-to-point measurements such as lengths and widths [31]. However, the limitations of LMM—including measurement redundancy, dominance of size information, and inability to capture complex geometric shapes—have driven scientists toward more powerful analytical techniques [31] [18]. Two modern approaches now dominate the field: Geometric Morphometrics (GMM), which provides a sophisticated statistical framework for analyzing pure shape variation, and Computer Vision (CV) approaches, particularly deep learning, which offer automated pattern recognition from images [4].

Geometric morphometrics represents a significant methodological evolution from traditional measurement-based approaches. Unlike LMM, which relies on linear distances, GMM uses Cartesian coordinates of anatomical reference points (landmarks) to preserve the complete geometry of biological structures [18] [32]. Through Procrustes superimposition, GMM isolates pure shape variation by translating, scaling, and rotating specimens to remove differences in position, size, and orientation [18]. Because size is retained separately (as centroid size), GMM can rigorously distinguish isometric scaling, in which shape stays constant as size changes, from allometry, in which shape changes with size. This is particularly valuable for taxonomic studies where separating these components is essential for accurate species delimitation [31].

Meanwhile, computer vision, especially convolutional neural networks (CNNs), has emerged as a powerful alternative that can automatically learn discriminative features directly from images without requiring manual landmark placement [4]. This review objectively compares the performance, methodologies, and applications of GMM and computer vision for taxonomic identification across entomological and botanical specimens, providing researchers with evidence-based guidance for selecting appropriate analytical tools.

Performance Comparison: Quantitative Metrics

Evaluating the performance of classification methods requires multiple metrics, as each captures different aspects of model effectiveness. Accuracy measures overall correctness across all classes, precision indicates how many positive identifications are actually correct, and recall (or sensitivity) measures the ability to find all relevant cases [33] [34]. The F1-score is the harmonic mean of precision and recall, which makes it particularly useful with imbalanced datasets [34]. For shape-specific analyses, additional metrics such as Procrustes distance quantify shape differences in GMM, while IoU (Intersection over Union) assesses localization accuracy in computer vision tasks [34] [35].
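These definitions reduce to a few counts of true positives (TP), false positives (FP), and false negatives (FN): precision = TP/(TP+FP), recall = TP/(TP+FN), and F1 = 2PR/(P+R). The dependency-free Python sketch below computes them for one class treated as positive, plus IoU for two axis-aligned bounding boxes. The function names, the `(x_min, y_min, x_max, y_max)` box convention, and the toy labels are our own assumptions. Library implementations such as scikit-learn's `precision_recall_fscore_support` also handle multiclass averaging.

```python
def precision_recall_f1(y_true, y_pred, positive):
    """Per-class precision, recall and F1, treating `positive` as the class of interest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def box_iou(a, b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

# Toy example: five specimens from two taxa, with "A" treated as the positive class
y_true = ["A", "A", "A", "B", "B"]
y_pred = ["A", "A", "B", "B", "A"]
p, r, f1 = precision_recall_f1(y_true, y_pred, positive="A")   # each equals 2/3
iou = box_iou((0, 0, 2, 2), (1, 1, 3, 3))                       # 1 / 7
```

For multiclass taxonomic problems, per-class values like these are usually averaged (macro or weighted) to give a single summary score.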

Table 1: Performance Comparison of GMM and Computer Vision in Taxonomic Studies

| Study & Organism | Method | Accuracy | Precision/Recall | Key Findings |
|---|---|---|---|---|
| Archaeobotanical Seeds [4] | GMM (Elliptical Fourier) | 75.2% | Not specified | Lower accuracy compared to CNN; requires manual feature engineering |
| Archaeobotanical Seeds [4] | CNN (Computer Vision) | 83.9% | Not specified | Superior performance; automatic feature extraction; benefits from large datasets |
| Mammal Skulls (Antechinus) [31] | Linear Morphometrics | High (raw data) | Not specified | Discrimination inflated by size variation; poor allometric correction |
| Mammal Skulls (Antechinus) [31] | Geometric Morphometrics | Good (after allometry removal) | Not specified | Effective discrimination after removing size effects; better shape analysis |
| Human Facial Aging [32] | GMM (Facial Landmarks) | 69.3% | 87.3% sensitivity (6-year-olds) | Effective for age discrimination; performance varies by demographic group |

The comparative analysis reveals a complex performance landscape where each method excels in different contexts. For archaeobotanical seed classification, CNNs demonstrated clear superiority with 83.9% accuracy compared to 75.2% for GMM [4]. This performance advantage stems from the CNN's ability to automatically learn relevant features from entire images without requiring manual landmark identification. However, GMM maintains important strengths in scenarios requiring biological interpretability, particularly when allometric correction is essential [31]. In mammalian skull analyses, traditional LMM showed high discriminatory power with raw data, but this was substantially inflated by size variation rather than genuine shape differences. After proper allometric correction, GMM provided more biologically meaningful discrimination based on true shape variation [31].

Experimental Protocols and Methodologies

Geometric Morphometrics Workflow

The standard GMM pipeline involves a systematic, multi-stage process that requires careful execution at each step to ensure biologically meaningful results. The first critical stage is image acquisition, where standardized 2D photographs or 3D scans are obtained under controlled conditions to minimize non-biological variation [36] [18]. For taxonomic studies in entomology, this might involve mounting insect specimens in standardized orientations, while plant studies often require imaging leaves, flowers, or seeds against neutral backgrounds [18] [4].

The second stage involves landmarking, where homologous anatomical points are identified and digitized across all specimens [18]. Landmarks are typically categorized into three types: Type I (discrete anatomical points such as suture intersections), Type II (maxima of curvature), and Type III (extremal points) [18]. In many botanical studies, landmarks are supplemented with semi-landmarks along curves and contours to capture more complex geometries [18]. This process is often time-consuming and requires significant expertise to ensure homology and consistency across specimens [19].
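Before any sliding step, semi-landmarks are commonly placed at equal arc-length intervals along a digitized curve. The NumPy sketch below shows that initial placement only, assuming an open 2D or 3D polyline without repeated consecutive points. `resample_curve` is a hypothetical helper, and the subsequent sliding of semi-landmarks (for example, by minimizing bending energy) is deliberately not shown.

```python
import numpy as np

def resample_curve(points: np.ndarray, n: int) -> np.ndarray:
    """Place n semi-landmarks at equal arc-length spacing along an open polyline."""
    seg_len = np.linalg.norm(np.diff(points, axis=0), axis=1)
    cum = np.concatenate([[0.0], np.cumsum(seg_len)])   # arc length at each vertex
    targets = np.linspace(0.0, cum[-1], n)               # evenly spaced arc lengths
    out = np.empty((n, points.shape[1]))
    for d in range(points.shape[1]):                     # interpolate each coordinate
        out[:, d] = np.interp(targets, cum, points[:, d])
    return out

# Toy outline: an L-shaped curve of total length 4 traced between two fixed landmarks
curve = np.array([[0.0, 0.0], [2.0, 0.0], [2.0, 2.0]])
semis = resample_curve(curve, 5)
```

Because spacing is defined by arc length rather than by the original digitizing clicks, specimens digitized with different point densities end up with the same number of comparably spaced semi-landmarks.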

The core analytical stage is Procrustes superimposition, which removes variation due to position, orientation, and scale by iteratively translating, rotating, and scaling all specimens to optimize fit against a consensus configuration [18]. This produces two main data outputs: Procrustes shape coordinates for analyzing shape variation, and centroid size (the square root of the sum of squared distances of all landmarks from their centroid) for studying size variation [31]. The resulting shape variables are then analyzed using multivariate statistical methods such as Principal Component Analysis (PCA) for exploratory analysis, Linear Discriminant Analysis (LDA) for classification, and Canonical Variate Analysis (CVA) for group discrimination [31].
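The superimposition and centroid-size steps can be sketched in a few lines of NumPy. This is a simplified generalized Procrustes analysis under stated assumptions: reflections are excluded, a fixed number of iterations replaces a convergence test, and semi-landmark sliding is omitted. The function names are hypothetical; in practice, R packages such as geomorph (`gpagen`) or Momocs handle these details.

```python
import numpy as np

def centroid_size(X: np.ndarray) -> float:
    """Square root of the summed squared distances of landmarks from their centroid."""
    return float(np.sqrt(np.sum((X - X.mean(axis=0)) ** 2)))

def rotate_onto(X: np.ndarray, ref: np.ndarray) -> np.ndarray:
    """Rotate centred configuration X to best fit ref in the least-squares sense."""
    U, _, Vt = np.linalg.svd(X.T @ ref)
    D = np.eye(X.shape[1])
    D[-1, -1] = np.sign(np.linalg.det(U @ Vt))   # exclude reflections
    return X @ (U @ D @ Vt)

def gpa(configs, n_iter: int = 10):
    """Return aligned shape coordinates, the consensus shape, and centroid sizes."""
    sizes = np.array([centroid_size(X) for X in configs])
    # Remove position (centring) and size (scaling to unit centroid size)
    shapes = [(X - X.mean(axis=0)) / s for X, s in zip(configs, sizes)]
    consensus = shapes[0]
    for _ in range(n_iter):
        # Remove orientation by rotating every shape onto the current consensus
        shapes = [rotate_onto(X, consensus) for X in shapes]
        mean = np.mean(shapes, axis=0)
        consensus = mean / centroid_size(mean)
    return np.array(shapes), consensus, sizes

def procrustes_distance(A: np.ndarray, B: np.ndarray) -> float:
    """Euclidean distance between two superimposed shapes."""
    return float(np.linalg.norm(A - B))

# Toy 2D configuration of five landmarks, plus a rotated, tripled and shifted copy
base = np.array([[0.0, 0.0], [2.0, 0.0], [3.0, 1.0], [1.0, 3.0], [0.0, 2.0]])
theta = np.radians(30)
R = np.array([[np.cos(theta), -np.sin(theta)], [np.sin(theta), np.cos(theta)]])
copy = 3.0 * base @ R.T + np.array([10.0, -4.0])
shapes, consensus, sizes = gpa([base, copy])
```

The copy differs from the original only in position, orientation, and size, so after superimposition its Procrustes distance to the original is essentially zero, while its centroid size is three times larger. The flattened rows of `shapes` are the variables that PCA, LDA, or CVA would then take as input.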

Computer Vision and Deep Learning Protocol

Computer vision approaches, particularly convolutional neural networks (CNNs), follow a markedly different workflow that emphasizes automated feature learning rather than manual morphological quantification. The process begins with data collection and preprocessing, where large datasets of images are compiled and standardized through cropping, resizing, and normalization [4]. Unlike GMM, which requires careful specimen orientation during imaging, CNNs can often accommodate greater variation in initial image conditions.

A crucial step for deep learning approaches is data augmentation, where the training dataset is artificially expanded through transformations such as rotation, flipping, scaling, and brightness adjustment [4]. This technique improves model robustness and generalizability by exposing the network to variations not present in the original dataset. For the archaeobotanical seed study, this involved creating multiple modified versions of each seed image to enhance learning [4].
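The augmentation step can be sketched with plain NumPy, assuming single-specimen images stored as float arrays scaled to [0, 1]. The `augment` helper is hypothetical and limited to 90-degree rotations, horizontal flips, and brightness scaling, because arbitrary-angle rotation and rescaling require interpolation (available, for example, through `scipy.ndimage.rotate` or torchvision transforms). The parameter ranges are illustrative, not those used in the seed study [4].

```python
import numpy as np

def augment(img: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Return one randomly transformed copy of an (H, W) or (H, W, C) image in [0, 1]."""
    out = np.rot90(img, k=int(rng.integers(0, 4)), axes=(0, 1))  # 0, 90, 180 or 270 degrees
    if rng.random() < 0.5:
        out = np.flip(out, axis=1)                                 # horizontal mirror image
    out = out * rng.uniform(0.8, 1.2)                              # global brightness change
    return np.clip(out, 0.0, 1.0)

rng = np.random.default_rng(42)
seed_image = rng.random((8, 6))                             # stand-in for a grayscale seed photo
augmented = [augment(seed_image, rng) for _ in range(5)]    # five extra training examples
```

Each transformation encodes an assumption that the label is unchanged by it; mirror flips, for instance, should be disabled when left/right asymmetry is itself diagnostic.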

The core of the CNN approach is feature learning, where the network automatically discovers discriminative patterns through multiple convolutional layers that progressively detect edges, textures, shapes, and complex morphological structures [4]. This contrasts sharply with GMM's manual landmark specification. The final stages involve model training through backpropagation to minimize classification error, followed by performance evaluation on held-out test datasets using metrics such as accuracy, precision, recall, and F1-score [4].
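What a single convolutional filter computes can be shown with a hand-set kernel. The NumPy sketch below applies a vertical-edge filter, a ReLU, and global max pooling to two toy images. In a real CNN the kernel weights are learned by backpropagation and many such filters are stacked in successive layers; the fixed kernel and the helper names here are our illustrative assumptions.

```python
import numpy as np

def conv2d_valid(img: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """'Valid' 2D cross-correlation: the operation CNN convolution layers compute."""
    kh, kw = kernel.shape
    out = np.empty((img.shape[0] - kh + 1, img.shape[1] - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(img[i:i + kh, j:j + kw] * kernel)
    return out

def relu(z: np.ndarray) -> np.ndarray:
    return np.maximum(z, 0.0)

# Hand-set vertical-edge filter; a trained CNN would learn its own weights
kernel = np.array([[-1.0, 0.0, 1.0]] * 3)

edge_img = np.zeros((5, 5))
edge_img[:, 3:] = 1.0          # dark left, bright right: one vertical edge
flat_img = np.ones((5, 5))     # uniform image: no edge

edge_score = relu(conv2d_valid(edge_img, kernel)).max()   # global max pooling
flat_score = relu(conv2d_valid(flat_img, kernel)).max()
```

The filter responds only where intensity changes from left to right, which is why early CNN layers tend to act as edge and texture detectors that later layers combine into shape-level features.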

The Scientist's Toolkit: Essential Research Solutions

Table 2: Essential Tools and Software for Morphometric Research

| Tool Category | Specific Examples | Application & Function |
|---|---|---|
| GMM Software | Momocs [36] [4], geomorph [36] | R packages for comprehensive GMM analysis including Procrustes fitting, statistical testing, and visualization |
| Deep Learning Frameworks | TensorFlow, PyTorch with R/reticulate [4] | Building, training, and deploying CNN models for automated image classification |
| Imaging Equipment | Digital cameras, scanners, CT systems [19] | Standardized 2D and 3D image acquisition of specimens under controlled conditions |
| Landmarking Tools | tpsDig, MorphoJ [31] | Precise digitization of anatomical landmarks and semi-landmarks on biological structures |
| Statistical Platforms | R Statistical Environment [36] [32] | Multivariate statistical analysis including PCA, LDA, and phylogenetic comparative methods |

Successful implementation of morphometric research requires both specialized software and hardware solutions. For GMM approaches, the R ecosystem provides comprehensive analytical capabilities through packages like Momocs and geomorph, which support the complete workflow from landmark data management to statistical analysis and visualization [36] [4]. These tools enable researchers to perform Procrustes superimposition, assess measurement error, conduct statistical tests for group differences, and create visualizations of shape variation [36]. For computer vision approaches, deep learning frameworks such as TensorFlow and PyTorch—accessible through R's reticulate package—provide the infrastructure for building and training CNN models [4].

Imaging technology represents another critical component, with choices ranging from standard digital cameras for 2D imaging to micro-CT scanners for 3D reconstruction of internal and external structures [19]. The selection of appropriate imaging technology depends on research questions, specimen size, required resolution, and whether surface or volumetric data is needed. For many entomological applications, high-resolution macro photography suffices, while complex plant structures or internal insect morphology may benefit from CT scanning approaches [19].

Comparative Analysis: Strengths and Limitations

Geometric Morphometrics Advantages and Challenges

GMM provides several distinct advantages for taxonomic research. Its strongest benefit is biological interpretability—the ability to directly visualize and interpret shape changes associated with taxonomic differences through deformation grids and vector diagrams [31] [18]. This allows researchers to understand precisely which anatomical regions contribute most to group separation, facilitating hypotheses about functional, developmental, or evolutionary significance [31]. Additionally, GMM's explicit separation of size and shape through Procrustes methods enables rigorous investigation of allometry, which is crucial for taxonomic studies where size differences may confound true shape discrimination [31].

The method also benefits from well-established statistical frameworks for hypothesis testing, including methods for assessing measurement error, statistical power, and phylogenetic signal [36]. The ability to conduct formal tests for group differences, integration, modularity, and allometry makes GMM particularly valuable for evolutionary and taxonomic research questions [36]. Furthermore, GMM typically requires smaller sample sizes than deep learning approaches, making it suitable for studies with limited specimens, such as rare species or archaeological remains [4].

However, GMM faces significant practical challenges [19]. Identifying and digitizing homologous landmarks by hand is labor-intensive and demands substantial anatomical expertise, and establishing homology becomes difficult when comparing divergent taxa or structures that lack clear landmarks [19]. Results can also be sensitive to landmark selection and placement, which may introduce observer bias and affect reproducibility [19].

Computer Vision Advantages and Challenges

Computer vision approaches, particularly deep learning, offer compelling advantages for automated taxonomic identification. Their most significant strength is automated feature extraction, which eliminates the need for manual landmarking and allows the network to discover discriminative features directly from images without researcher bias [4]. This capability enables the analysis of complex morphological patterns that may be difficult to capture with discrete landmarks. Additionally, CNNs demonstrate superior classification performance in many applications, as evidenced by the substantially higher accuracy in seed classification compared to GMM approaches [4].

These methods also exhibit exceptional robustness to image variation, tolerating differences in orientation, scale, and positioning that would be problematic for traditional GMM [4]. The data augmentation strategies employed in deep learning further enhance this robustness by explicitly training networks to ignore irrelevant variation while focusing on discriminative features [4]. Furthermore, computer vision approaches scale well to large datasets. Once a network is trained, each new image is classified quickly and without manual input, making these methods well suited to large-scale biodiversity studies and monitoring applications [4].

However, deep learning approaches face their own significant challenges, most notably the "black box" problem of interpretability [4]. Unlike GMM's visually interpretable results, understanding which specific morphological features drive CNN classifications remains challenging, limiting biological insight beyond pure classification accuracy. These methods also typically require large training datasets spanning hundreds or thousands of images per category, making them unsuitable for studying rare taxa with limited specimens [4]. Additionally, they demand substantial computational resources for training and expertise in deep learning implementation, which may present barriers for researchers without specialized computing support [4].
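Occlusion sensitivity is one widely used, model-agnostic way to probe which image regions drive a CNN's decision. It masks one patch at a time and records how much the class score drops. The sketch below assumes a 2D image and a `score_fn` callable that stands in for a trained network. Here `score_fn` is a hypothetical toy function, and the patch size, stride, and fill value are illustrative choices.

```python
import numpy as np

def occlusion_map(image, score_fn, patch=4, stride=4, fill=0.0):
    """Drop in the model's score when each patch of the image is masked.

    score_fn: callable mapping a 2D array to a scalar class score (in
    practice, e.g., a trained CNN's probability for the class of interest).
    Larger values mark regions the model relies on more heavily.
    """
    base = score_fn(image)
    h, w = image.shape
    heat = np.zeros(((h - patch) // stride + 1, (w - patch) // stride + 1))
    for i in range(heat.shape[0]):
        for j in range(heat.shape[1]):
            occluded = image.copy()
            r, c = i * stride, j * stride
            occluded[r:r + patch, c:c + patch] = fill   # mask one patch
            heat[i, j] = base - score_fn(occluded)
    return heat

# Hypothetical toy "model" whose score depends only on the top-left corner.
img = np.ones((16, 16))
toy_score = lambda im: im[:4, :4].mean()
heat = occlusion_map(img, toy_score)
```

In this toy case only the top-left patch changes the score, so the heat map highlights that region alone. Mapped back onto a specimen image, the same idea shows which anatomical regions a classifier depends on. This partly addresses the black-box concern, although it does not explain *why* those regions matter.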

The comparison between geometric morphometrics and computer vision reveals a complementary rather than strictly competitive relationship, with each approach exhibiting distinct strengths suited to different research scenarios. GMM remains the method of choice for hypothesis-driven research requiring biological interpretability, allometric analysis, and studies with limited specimens [31] [18]. Its rigorous statistical framework and ability to visualize shape changes make it invaluable for understanding the morphological basis of taxonomic distinctions. In contrast, computer vision approaches excel at automated classification tasks with large datasets, applications requiring robustness to image variation, and when the primary goal is identification accuracy rather than morphological interpretation [4].

Future methodological developments will likely focus on hybrid approaches that leverage the strengths of both paradigms. Promising directions include landmark-free morphometric methods that automatically establish correspondences across specimens without manual landmarking [19], and interpretable deep learning approaches that combine the classification power of CNNs with visualization techniques to identify informative morphological regions [4]. As imaging technologies continue to advance and computational methods become more accessible, both GMM and computer vision will play increasingly important roles in taxonomic research, biodiversity monitoring, and evolutionary studies across entomology, botany, and beyond.

The success of intranasal drug delivery, particularly for nose-to-brain applications, is heavily influenced by the high inter-individual anatomical variability of the nasal cavity. This variability significantly impacts nasal airflow dynamics and intranasal drug deposition patterns, making personalized approaches essential for effective treatment [37]. Two distinct methodological approaches have emerged to quantify and analyze this morphological variability: Geometric Morphometrics (GMM), a traditional, hypothesis-driven method based on precise anatomical landmarks, and Computer Vision (CV) approaches, including deep learning, which leverage data-driven pattern recognition directly from medical images [20] [4]. This article provides a comparative analysis of these methodologies, focusing on their application in classifying nasal cavity morphology to optimize targeted drug delivery. We evaluate their performance, experimental protocols, and applicability within a personalized medicine framework, providing researchers with evidence-based guidance for methodological selection.

Methodological Face-Off: GMM vs. Computer Vision

Fundamental Principles and Workflows

Geometric Morphometrics (GMM) is a quantitative method for analyzing shape variation based on Cartesian landmark coordinates. When applied to nasal cavity analysis, the GMM workflow involves several standardized steps [37]:

- Landmark Digitization: Predefined anatomical landmarks are placed on a 3D reconstruction of the nasal cavity from CT scans. These typically include fixed points such as the highest point of the nasal valve and the anterior and posterior limits of the olfactory region.

- Procrustes Superimposition: The landmark configurations are translated, rotated, and scaled to remove non-shape variations using Generalized Procrustes Analysis (GPA).

- Statistical Shape Analysis: The aligned coordinates are analyzed using multivariate statistics, such as Principal Component Analysis (PCA), to identify major axes of shape variation.

- Cluster Identification: Morphological clusters are identified through techniques like Hierarchical Clustering on Principal Components (HCPC).
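The alignment and ordination steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the pipeline used in [37]. It assumes the landmarks are stored as an (n specimens, k landmarks, m dimensions) array and uses a simple iterative GPA that excludes reflections. It also omits semilandmark sliding, and it runs PCA directly on the aligned coordinates without the tangent-space projection that dedicated packages often apply. The function names are illustrative.

```python
import numpy as np

def align(source, target):
    """Rotate `source` (k x m, centered) to best match `target` using the
    orthogonal Procrustes solution from an SVD; reflections are excluded."""
    u, _, vt = np.linalg.svd(source.T @ target)
    fix = np.eye(source.shape[1])
    fix[-1, -1] = np.sign(np.linalg.det(u @ vt))   # force a proper rotation
    return source @ (u @ fix @ vt)

def gpa(configs, tol=1e-10, max_iter=100):
    """Iterative Generalized Procrustes Analysis of an (n, k, m) array.
    Returns the aligned configurations and the consensus shape."""
    x = configs - configs.mean(axis=1, keepdims=True)        # remove position
    x = x / np.linalg.norm(x, axis=(1, 2), keepdims=True)    # unit centroid size
    mean = x[0]
    for _ in range(max_iter):
        x = np.stack([align(c, mean) for c in x])            # remove orientation
        new_mean = x.mean(axis=0)
        new_mean /= np.linalg.norm(new_mean)
        if np.linalg.norm(new_mean - mean) < tol:
            break
        mean = new_mean
    return x, mean

def shape_pca(aligned):
    """PCA of aligned coordinates: PC scores, loadings (rows of vt), and the
    proportion of shape variance explained by each PC."""
    flat = aligned.reshape(len(aligned), -1)
    centered = flat - flat.mean(axis=0)
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    return u * s, vt, s ** 2 / (s ** 2).sum()
```

The leading columns of the returned scores are the coordinates that a clustering step such as HCPC would then operate on.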

In contrast, Computer Vision (CV) and Deep Learning approaches bypass manual landmarking and instead learn feature representations directly from image data [20] [4]. A typical workflow involves:

- Data Preparation: A large set of medical images (e.g., CT scans) is compiled and preprocessed.

- Model Training: A convolutional neural network (CNN) is trained on this dataset to classify shapes or morphological features based on the raw pixel/voxel data.

- Feature Learning: The network automatically learns the most discriminative features for the classification task without explicit instruction.

- Prediction and Classification: The trained model is used to predict morphological classes or regression values for new, unseen data.
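The NumPy sketch below makes "learning features from raw pixels" concrete. It shows the forward pass of a single convolutional layer, followed by ReLU and global average pooling. It is a deliberately simplified toy: the two kernels are hand-set edge detectors, whereas a trained CNN learns its kernels by backpropagation. A real model for CT data would also operate on 3D voxels with many stacked layers, typically built in a deep learning framework.

```python
import numpy as np

def conv2d(image, kernels):
    """Valid 2D cross-correlation, the operation CNN 'convolution' layers compute.

    image:   (H, W) pixel intensities.
    kernels: (K, kh, kw) filters; hand-set here, learned in a real CNN.
    Returns a (K, H-kh+1, W-kw+1) stack of feature maps.
    """
    k, kh, kw = kernels.shape
    h, w = image.shape
    out = np.zeros((k, h - kh + 1, w - kw + 1))
    for r in range(out.shape[1]):
        for c in range(out.shape[2]):
            patch = image[r:r + kh, c:c + kw]
            out[:, r, c] = (kernels * patch).sum(axis=(1, 2))
    return out

def conv_block(image, kernels, bias):
    """Convolution + ReLU + global average pooling: one feature per filter."""
    maps = conv2d(image, kernels) + bias[:, None, None]
    maps = np.maximum(maps, 0.0)        # ReLU nonlinearity
    return maps.mean(axis=(1, 2))       # global average pooling

img = np.zeros((6, 6))
img[:, 3:] = 1.0                        # bright right half: one vertical edge
kernels = np.array([[[-1.0, 1.0], [-1.0, 1.0]],     # vertical-edge filter
                    [[-1.0, -1.0], [1.0, 1.0]]])    # horizontal-edge filter
features = conv_block(img, kernels, np.zeros(2))
```

Here the vertical-edge filter yields a positive pooled feature and the horizontal-edge filter yields zero. Stacking many such learned layers is what lets a network build up more abstract shape descriptors without manual landmarks.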

Performance Comparison and Experimental Data

Direct comparative studies in nasal cavity analysis are still emerging, but evidence from related morphological classification tasks in other fields provides strong indications of their relative performance. A landmark study on archaeobotanical seed classification directly pitted GMM against Deep Learning and found that Convolutional Neural Networks (CNNs) significantly outperformed GMM in classification accuracy [4]. Similarly, research on classifying carnivore tooth marks reported low discriminant power for GMM (<40%) compared to much higher accuracy for Deep Learning models (81% for DCNN and 79.52% for Few-Shot Learning) [20].

Table 1: Quantitative Performance Comparison of GMM and Computer Vision in Morphological Classification

| Methodology | Application Context | Reported Accuracy/Performance | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Geometric Morphometrics (GMM) | Nasal Cavity Clustering [37] | Identified 3 distinct morphological clusters | High interpretability; Provides clear morphological characterization | Manual landmarking is time-consuming; Expertise-dependent |
| Geometric Morphometrics (GMM) | Carnivore Tooth Mark ID [20] | <40% discriminant power | As above | Limited by landmark selection and homology |
| Computer Vision / Deep Learning | Seed Classification [4] | Outperformed GMM (Specific metrics N/A) | High accuracy; Automated feature learning; Scalability | "Black box" nature; Large training datasets required |
| Computer Vision / Deep Learning | Carnivore Tooth Mark ID [20] | 81% (DCNN), 79.52% (FSL) | As above | As above |
| Hybrid Approach (Big AI) | Cardiac Safety Testing [38] | Combines strengths of both (See Table 2) | Speed of AI with interpretability of physics-based models | Conceptual and technical complexity |

For nasal cavity analysis specifically, a GMM study successfully categorized 151 nasal cavities into three distinct morphological clusters based on the shape of the region of interest (ROI) leading to the olfactory area [37]. This demonstrates GMM's capability to stratify patients into groups with potentially different olfactory accessibility, which is crucial for drug delivery planning. No direct CV counterpart for nasal cavity clustering appears among the studies reviewed here. However, the stronger performance of CNNs in other morphological tasks suggests potential for this application, provided sufficient labeled training data are available.

Table 2: Analytical Characteristics and Suitability for Personalized Medicine

| Characteristic | Geometric Morphometrics (GMM) | Computer Vision/Deep Learning | Emerging Hybrid: "Big AI" [38] |
|---|---|---|---|
| Core Principle | Landmark-based shape analysis | Data-driven pattern recognition | Integrates physics-based models with AI |
| Interpretability | High (Morphospaces, clear variables) | Low ("Black box" models) | High (Restores mechanistic insight) |
| Data Efficiency | Moderate (Smaller samples viable) | Low (Requires large datasets) | Varies with implementation |
| Automation Level | Low (Manual landmarking) | High (End-to-end learning) | High |
| Primary Output | Shape variables, clusters | Classification, prediction | Predictive, simulatable digital twins |
| Role in Personalized Medicine | Stratification into morphotypes | Individualized prediction | Creation of individual "healthcasts" |

Experimental Protocols in Practice

A GMM Protocol for Nasal Cavity Cluster Analysis

The following detailed protocol is adapted from a study that identified three morphological clusters of the nasal cavity's region of interest (ROI) using GMM [37].

1. Sample Preparation and Imaging:

- Patient Cohort: Collect cranioencephalic computed tomography (CT) scans from a sufficient number of patients (e.g., n=78) with no known rhinologic history.

- Segmentation: Import DICOM files into segmentation software (e.g., ITK-SNAP). Perform semi-automatic segmentation to generate 3D surface meshes of the nasal cavity lumen, excluding paranasal sinuses. Export meshes in STL format.

- Pre-processing: Clean meshes and separate them into unilateral cavities. Mirror left cavities to align with right ones. Define a consistent origin for all models.
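The mirroring and re-centering steps of the pre-processing stage can be sketched as follows. The sketch assumes each mesh has already been loaded by an STL reader into a vertex array (V x 3) and a triangle index array (F x 3), and that the x axis is the left-right axis of the scan. Reflecting a surface reverses its orientation, so the triangle winding is also reversed to keep face normals pointing outward. The function names and the choice of reference point are hypothetical.

```python
import numpy as np

def mirror_mesh(vertices, faces, axis=0):
    """Reflect a triangle mesh across the plane where coordinate `axis` is 0
    (e.g. a left cavity onto the right side) and reverse the triangle winding
    so that face normals keep pointing outward after the reflection."""
    mirrored = vertices.copy()
    mirrored[:, axis] *= -1.0
    return mirrored, faces[:, ::-1].copy()

def center_on_origin(vertices, origin):
    """Translate vertices so the chosen reference point sits at (0, 0, 0)."""
    return vertices - np.asarray(origin, dtype=float)

def face_normals(vertices, faces):
    """Unnormalized face normals from the triangle winding order."""
    a, b, c = (vertices[faces[:, i]] for i in range(3))
    return np.cross(b - a, c - a)
```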

2. Landmarking Procedure:

- Define ROI: The region of interest should extend from the nasal valve plane to the anterior part of the olfactory region.

- Landmark Set: Digitize a template model with:

- 10 Fixed Landmarks: Identify homologous anatomical points present in all specimens (e.g., most anterior point at the nostril plane, highest point of the nasal valve, highest point at the front of the olfactory region).

- 200 Semi-Landmarks: Distribute semi-landmarks across surface patches on the template. Use Thin-Plate Spline (TPS) warping to project these semi-landmarks onto all other specimen surfaces, allowing them to slide tangentially to minimize bending energy.

- Reliability Assessment: Conduct intra- and inter-operator repeatability tests on a subset of models (e.g., 20) using Lin’s Concordance Correlation Coefficient (CCC) to ensure landmarking consistency.
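Lin's CCC measures agreement rather than mere correlation. Unlike Pearson's r, it penalizes any systematic shift or scale difference between repeated digitizations:

CCC = 2·s_xy / (s_x² + s_y² + (x̄ − ȳ)²)

The minimal NumPy sketch below assumes the two landmark sets are arrays of identical shape, with corresponding points in the same order. The protocol above does not specify whether coordinates are pooled or assessed per landmark, or what threshold counts as acceptable, so those remain choices for the analyst.

```python
import numpy as np

def lins_ccc(x, y):
    """Lin's concordance correlation coefficient between two repeated
    measurements of the same quantities (e.g. landmark coordinates digitized
    twice by one operator, or once by each of two operators).
    Uses population (1/n) moments."""
    x = np.ravel(np.asarray(x, dtype=float))
    y = np.ravel(np.asarray(y, dtype=float))
    mx, my = x.mean(), y.mean()
    cov = ((x - mx) * (y - my)).mean()
    return float(2 * cov / (x.var() + y.var() + (mx - my) ** 2))
```

For instance, a constant offset of one unit between two otherwise identical series lowers the CCC below 1 even though Pearson's r stays at 1. Inter-operator agreement checks need exactly this sensitivity to systematic offsets.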

3. Data Analysis and Clustering:

- Shape Alignment: Perform Generalized Procrustes Analysis (GPA) on all landmark coordinates to remove effects of position, orientation, and scale.

- Principal Component Analysis (PCA): Run PCA on the Procrustes-aligned coordinates to identify the main axes of shape variation (Principal Components - PCs).

- Cluster Identification: Apply Hierarchical Clustering on Principal Components (HCPC) to group specimens into distinct morphological clusters (e.g., Cluster 1: broader anterior cavity; Cluster 3: narrower with deeper turbinates).

- Statistical Validation: Use MANOVA and post-hoc Tukey tests to characterize and validate significant morphological differences between the identified clusters.
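The clustering step can be approximated with Ward agglomerative clustering on the PC scores, which corresponds to the hierarchical stage of HCPC. This is a simplified and deliberately naive NumPy sketch. Its O(n³) cost is adequate only for small samples. It omits the automatic choice of the number of clusters and the k-means consolidation that HCPC as implemented in the R package FactoMineR can perform. It assumes `scores` is an (n, p) array of retained PC scores. In practice, `scipy.cluster.hierarchy.linkage(scores, method="ward")` followed by `fcluster(..., criterion="maxclust")` does the same job far faster.

```python
import numpy as np

def ward_clusters(scores, n_clusters):
    """Agglomerative Ward clustering of (n, p) PC scores into n_clusters.

    Starts from singletons and repeatedly merges the pair of clusters whose
    fusion gives the smallest increase in within-cluster sum of squares:
    delta = na * nb / (na + nb) * ||mean_a - mean_b||^2.
    Returns an (n,) array of integer labels 0..n_clusters-1.
    """
    clusters = [[i] for i in range(len(scores))]
    while len(clusters) > n_clusters:
        best, pair = np.inf, None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                ia, ib = clusters[a], clusters[b]
                d = scores[ia].mean(axis=0) - scores[ib].mean(axis=0)
                delta = len(ia) * len(ib) / (len(ia) + len(ib)) * (d @ d)
                if delta < best:
                    best, pair = delta, (a, b)
        a, b = pair                      # b > a, so deleting b keeps a valid
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    labels = np.empty(len(scores), dtype=int)
    for k, members in enumerate(clusters):
        labels[members] = k
    return labels
```

The resulting labels are what a MANOVA and post-hoc tests would then be run against to characterize differences between clusters.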

A Computer Vision Protocol for Morphological Classification

No CNN protocol specific to nasal cavity classification appears in the studies reviewed here. A general protocol can nevertheless be derived from high-performing applications in similar morphological tasks, such as archaeobotanical seed classification [4].

1. Data Curation and Preprocessing:

- Dataset Assembly: Compile a large and diverse dataset of medical images (e.g., thousands of nasal cavity CT scans). The dataset must be meticulously labeled with the target classifications (e.g., morphological cluster, deposition efficiency group).

- Data Preprocessing: Standardize all images to a uniform resolution and orientation. Apply data augmentation techniques (e.g., rotation, scaling, elastic deformations) to artificially increase the size and diversity of the training set and improve model generalizability.
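A NumPy sketch of intensity standardization and simple augmentation for 3D CT volumes is shown below. It rests on several assumptions. Volumes are taken to be already resampled to a common voxel grid. Augmentation is limited to small translations with zero padding, global intensity scaling, and Gaussian noise. The rotations, scaling, and elastic deformations mentioned above are omitted because they require an interpolation library such as `scipy.ndimage`. Left-right flips are also left out: if cavities have been mirrored to a common side, a flip would reintroduce the side difference that mirroring removed. All parameter defaults are illustrative, not tuned.

```python
import numpy as np

def standardize(volume):
    """Z-score voxel intensities so scans share a common intensity scale."""
    return (volume - volume.mean()) / (volume.std() + 1e-8)

def random_shift(volume, max_shift, rng):
    """Translate a volume by up to max_shift voxels along each axis,
    zero-filling the exposed border (no wrap-around, unlike np.roll)."""
    out = volume
    for axis in range(volume.ndim):
        s = int(rng.integers(-max_shift, max_shift + 1))
        if s == 0:
            continue
        pad = [(0, 0)] * volume.ndim
        pad[axis] = (s, 0) if s > 0 else (0, -s)
        out = np.pad(out, pad)                       # constant (zero) padding
        idx = [slice(None)] * volume.ndim
        idx[axis] = slice(0, volume.shape[axis]) if s > 0 else slice(-s, None)
        out = out[tuple(idx)]                        # crop back to original size
    return out

def augment(volume, rng, max_shift=4, noise_sd=0.05, scale_range=(0.9, 1.1)):
    """One random augmentation: shift, global intensity scaling, Gaussian noise."""
    v = random_shift(volume, max_shift, rng)
    v = v * rng.uniform(*scale_range)
    return v + rng.normal(0.0, noise_sd, size=v.shape)
```

Generating a fresh augmented copy of each training volume at every epoch, rather than storing a fixed enlarged dataset, is a common way to apply such transformations.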

2. Model Training and Validation:

- Model Selection: Choose a Convolutional Neural Network (CNN) architecture. This could be a relatively simple custom CNN or a pre-trained model adapted for the specific task (transfer learning).

- Training Loop: Split the data into training, validation, and test sets before training. Then train the model to map input images to the correct labels using a standard loss function (e.g., cross-entropy) and optimization algorithm (e.g., Adam).

- Hyperparameter Tuning: Systematically adjust learning rate, batch size, and other hyperparameters to maximize performance on the validation set.

- Performance Metrics: Evaluate the final model on the held-out test set using metrics such as accuracy, confusion matrices, sensitivity, and specificity [4].
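The data split and the evaluation metrics named above can be sketched without any deep learning framework. The helper names are hypothetical. The split is stratified by class so that rare morphological classes appear in every subset. Sensitivity and specificity are computed one-vs-rest for each class from the confusion matrix. With very small cohorts, k-fold cross-validation is often preferred over a single split.

```python
import numpy as np

def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Train/validation/test index arrays, splitting each class separately
    so that class proportions are roughly preserved in every subset."""
    rng = np.random.default_rng(seed)
    splits = ([], [], [])
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        splits[0].extend(idx[:n_train])
        splits[1].extend(idx[n_train:n_train + n_val])
        splits[2].extend(idx[n_train + n_val:])
    return tuple(np.array(s, dtype=int) for s in splits)

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows: true class; columns: predicted class."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    return cm

def per_class_metrics(cm):
    """Overall accuracy plus one-vs-rest sensitivity and specificity per class."""
    tp = np.diag(cm).astype(float)
    fn = cm.sum(axis=1) - tp
    fp = cm.sum(axis=0) - tp
    tn = cm.sum() - tp - fn - fp
    accuracy = tp.sum() / cm.sum()
    return accuracy, tp / (tp + fn), tn / (tn + fp)
```

For example, with true labels `[0, 0, 1, 1, 2, 2]` and predictions `[0, 1, 1, 1, 2, 0]`, accuracy is 4/6. Classes 0 and 2 have a sensitivity of 0.5, which a single accuracy figure would hide.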

The Scientist's Toolkit: Essential Research Reagents and Materials