Generative AI for Geometric Morphometrics: Augmenting Biomedical Data to Overcome Sample Size Limitations

Abstract

Geometric Morphometrics (GM) is a powerful multivariate tool for quantifying biological morphology, but its application in drug development and biomedical research is often constrained by small, incomplete, or imbalanced datasets. This article explores how generative computational learning algorithms, particularly Generative Adversarial Networks (GANs), can overcome these limitations. We provide a foundational understanding of GM's challenges, detail methodological implementations of generative models for data augmentation, address common troubleshooting and optimization strategies, and present a comparative analysis of validation techniques. By synthesizing the latest research, this review offers biomedical researchers a practical guide to leveraging synthetic data for enhanced predictive modeling, classification accuracy, and morphological analysis in clinical and preclinical development.

The Data Scarcity Challenge in Geometric Morphometrics and Biomedical Research

Geometric Morphometrics (GM) is a powerful multivariate statistical toolset that has revolutionized morphological research by enabling the rigorous analysis of form and shape using Cartesian geometric coordinates rather than traditional linear, areal, or volumetric variables [1] [2]. These methods employ two- or three-dimensional homologous points of interest, known as landmarks, to quantify geometric variation among individuals [3]. In biomedical contexts, GM provides indispensable capabilities for modern medical diagnostics, individualized treatment, forensics, and the investigation of human morphological diversity [4]. When combined with virtual imaging, image manipulation, and morphometric methods, GM allows researchers to readily visualize, explore, and study digital anatomical objects, leading to new insights into organismal growth, development, and evolution [5].

The application of GM to biomedical data presents unique opportunities and challenges. While the foundations of GM were established approximately 30 years ago, the field has continually evolved through refinement and extension of its methodologies [4]. Modern GM now incorporates advanced computational approaches, including generative computational learning algorithms for data augmentation, which help overcome the common limitation of small sample sizes in specialized biomedical research domains [3] [6]. This protocol outlines the fundamental principles, practical applications, and emerging innovations in GM, with particular emphasis on its relevance to biomedical data analysis within a research framework investigating geometric morphometric data augmentation using generative algorithms.

Fundamental Concepts and Terminology

Landmarks: The Foundation of Geometric Morphometrics

Landmarks are biologically or geometrically corresponding point locations on the measured objects that form the basis of all GM analyses [4]. These landmarks are typically categorized into three primary types:

Table 1: Types of Landmarks in Geometric Morphometrics

| Landmark Type | Definition | Examples | Application Context |
|---|---|---|---|
| Type I | Anatomical points of biological significance | Sutures between bones, foramina | Biological and anatomical studies [3] |
| Type II | Points of mathematical significance | Points of maximal curvature or length | Generalized morphological analyses [3] |
| Type III | Constructed points located around outlines or in relation to other landmarks | Extremities of structures, outline points | Analyses requiring additional points beyond homologous landmarks [3] |

In addition to these traditional landmarks, modern GM incorporates semilandmarks for quantifying curves and surfaces. These semilandmarks "slide" over curves and surfaces in an attempt to reduce bending energy, thus enabling a more comprehensive capture of geometrical information [3] [4].

Shape, Form, and Size

In GM terminology, form refers to the geometric information independent of location and orientation, but not scale, while shape specifically denotes the geometric information independent of location, scale, and orientation [4]. The most common approach to standardizing shape data involves Generalized Procrustes Analysis (GPA), which translates all configurations to the same centroid, scales them to the same centroid size, and rotates them to minimize the summed squared differences between the configurations and their sample average [4]. This process effectively isolates biological variation by minimizing non-biological factors such as position, orientation, and size [7].

Core Methodological Workflow

The standard GM analytical pipeline follows a systematic sequence of steps from data acquisition through statistical analysis and visualization: data acquisition and landmark digitization, Procrustes superimposition, and statistical analysis of the resulting shape data.

Data Acquisition and Landmark Digitization

The initial phase involves collecting two-dimensional or three-dimensional coordinate data from biological specimens. In biomedical contexts, this typically utilizes various imaging modalities:

- Computed Tomography (CT): Provides detailed 3D internal structures; used in equine skull studies [8]

- Surface Scanning: Captures external morphology with high precision

- Virtual Imaging: Enables digital extraction and manipulation of anatomical structures [5]

Landmarks are then digitized onto these images using specialized software. The precision of landmark placement is critical, as error at this stage propagates through all subsequent analyses. For comparative studies, all specimens must share the same configuration of biologically homologous landmarks.

Procrustes Superimposition

Generalized Procrustes Analysis (GPA) standardizes landmark configurations by:

- Translating all configurations to the same centroid

- Scaling them to unit centroid size (the square root of the summed squared distances of landmarks from their centroid)

- Rotating them to minimize the sum of squared distances between corresponding landmarks [4]

This process effectively removes the effects of position, orientation, and scale, isolating pure shape information for subsequent analysis.
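The three GPA steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation used by MorphoJ or any other package: the function names (`centroid_size`, `rotate_onto`, `gpa`) are hypothetical, rotation uses the standard SVD solution to the orthogonal Procrustes problem, and the test data are randomly posed copies of one made-up 2D configuration.

```python
import numpy as np

def centroid_size(config):
    """Square root of the summed squared distances of landmarks from their centroid."""
    centered = config - config.mean(axis=0)
    return float(np.sqrt((centered ** 2).sum()))

def rotate_onto(config, reference):
    """Rotate a centered configuration onto a reference (orthogonal Procrustes, no reflection)."""
    u, _, vt = np.linalg.svd(config.T @ reference)
    if np.linalg.det(u @ vt) < 0:      # would be a reflection: flip the last singular axis
        u[:, -1] *= -1
    return config @ (u @ vt)

def gpa(configs, max_iter=20, tol=1e-10):
    """configs: (n_specimens, n_landmarks, n_dims). Returns aligned shapes and their mean."""
    shapes = np.asarray(configs, dtype=float).copy()
    shapes -= shapes.mean(axis=1, keepdims=True)                       # 1. translate to a common centroid
    shapes /= np.sqrt((shapes ** 2).sum(axis=(1, 2), keepdims=True))   # 2. scale to unit centroid size
    mean = shapes[0]
    for _ in range(max_iter):                                          # 3. rotate onto the running mean
        shapes = np.array([rotate_onto(s, mean) for s in shapes])
        new_mean = shapes.mean(axis=0)
        new_mean /= centroid_size(new_mean)
        converged = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if converged:
            break
    return shapes, mean

# Hypothetical data: one 8-landmark 2D shape seen in five random poses and sizes.
rng = np.random.default_rng(0)
base = rng.normal(size=(8, 2))

def random_pose(shape):
    theta = rng.uniform(0, 2 * np.pi)
    rot = np.array([[np.cos(theta), -np.sin(theta)],
                    [np.sin(theta),  np.cos(theta)]])
    return rng.uniform(0.5, 3.0) * shape @ rot + rng.normal(size=2)

aligned, mean_shape = gpa([random_pose(base) for _ in range(5)])
```

Because the five inputs differ only in position, orientation, and scale, the aligned configurations coincide after superimposition. With real specimens the remaining differences are the shape variation of interest.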

Statistical Analysis of Shape Data

Following Procrustes alignment, the resulting shape coordinates undergo multivariate statistical analysis:

- Principal Component Analysis (PCA): Examines major patterns of variation in the dataset by projecting specimens into a new shape space [3] [9]

- Multivariate Regression: Assesses how form is influenced by meaningful factors such as age, size, or environmental variables [5] [4]

- Partial Least Squares (PLS): Examines associations among structures or between shape and other variables [5] [4]

- Canonical Variate Analysis (CVA): Explores group differences, though this method is highly sensitive to small or imbalanced datasets [3]

These analyses generate shape variables that can be related to other biological factors of interest through appropriate statistical modeling.
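As an illustration of the PCA step, the sketch below computes principal axes, specimen scores, and the proportion of variance per component from Procrustes coordinates using a singular value decomposition. The function name `shape_pca` and the random input array are assumptions for demonstration only; dedicated GM packages add further handling (for example, of the dimensions lost during superimposition) that is omitted here.

```python
import numpy as np

def shape_pca(shapes):
    """PCA of aligned shapes with layout (n_specimens, n_landmarks, n_dims)."""
    n = shapes.shape[0]
    flat = shapes.reshape(n, -1)              # one row of coordinates per specimen
    mean = flat.mean(axis=0)
    centered = flat - mean
    # SVD of the centered data: rows of vt are the principal axes.
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    scores = u * s                            # specimen coordinates in shape space
    explained = s ** 2 / np.sum(s ** 2)       # proportion of variance per component
    return mean, vt, scores, explained

# Hypothetical stand-in for Procrustes-aligned data: 12 specimens, 5 landmarks, 2D.
rng = np.random.default_rng(0)
demo_shapes = rng.normal(size=(12, 5, 2))
mean_vec, axes, scores, explained = shape_pca(demo_shapes)
```

The first two columns of `scores` are what is typically plotted as a PC1 versus PC2 morphospace.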

Application Notes for Biomedical Research

Case Study: Ontogenetic Changes in Equine Skulls

A representative application of GM in biomedical research investigated ontogenetic changes in equine skulls using CT imaging [8]. This study exemplifies the standard GM protocol in practice:

Experimental Protocol:

- Sample Preparation: Twenty-nine normal equine heads were divided into three age groups (<5 years, 6-15 years, >16 years)

- CT Imaging: Heads were scanned using multislice CT scanners with slice thickness of 1.5 mm or 1.25 mm

- Image Processing: Bone window DICOM images were reconstructed into isosurfaces using Stratovan Checkpoint software

- Landmarking: Twenty-nine homologous landmarks were placed on each skull, including internal structures like the ventral and dorsal conchal bullae and tooth pulps

- Data Analysis: Landmark coordinates were processed through Procrustes fitting in MorphoJ software, followed by principal component analysis

Key Findings: The analysis revealed that allometric shape changes (shape variation correlated with size) accounted for 27% of variance along PC1, successfully distinguishing the youngest horses from the two older age groups. When allometric effects were removed, age groups could not be distinguished, indicating that size-related shape changes dominate ontogenetic variation in equine skulls [8].
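One common way to quantify and remove allometric effects is a multivariate regression of shape on log centroid size, keeping the residuals as size-corrected shape data. The sketch below assumes that approach; it is not taken from the equine study, and the function name and simulated data are hypothetical.

```python
import numpy as np

def allometry_regression(flat_shapes, centroid_sizes):
    """Regress shape (n, p) on log centroid size (n,).

    Returns the slope vector, the size-corrected residuals, and the
    fraction of total shape variance explained by size.
    """
    x = np.log(centroid_sizes)
    design = np.column_stack([np.ones_like(x), x])        # intercept + log size
    coef, *_ = np.linalg.lstsq(design, flat_shapes, rcond=None)
    residuals = flat_shapes - design @ coef
    total_ss = ((flat_shapes - flat_shapes.mean(axis=0)) ** 2).sum()
    explained = 1.0 - (residuals ** 2).sum() / total_ss
    return coef[1], residuals, explained

# Simulated example: shape depends linearly on log size plus small noise.
rng = np.random.default_rng(1)
sizes = rng.uniform(10, 50, size=40)
true_slope = rng.normal(size=6)
sim_shapes = np.outer(np.log(sizes), true_slope) + 0.01 * rng.normal(size=(40, 6))
slope, size_corrected, frac_explained = allometry_regression(sim_shapes, sizes)
```

Running a PCA on `size_corrected` instead of the raw coordinates corresponds to the "allometric effects removed" analysis described above.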

Visualization and Interpretation

A critical strength of GM is the capacity to visualize statistical results as actual shapes or forms [4] [10]. Common visualization methods include:

- Deformation Grids: Thin-plate spline deformation grids show shape changes by analogy with physical surface deformation [9] [4]

- Vector Plots: Display relative landmark displacements between starting and target shapes [10]

- Shape Models: Generate theoretical shapes at specific positions within morphospaces (e.g., along principal components)

These visualization techniques transform abstract statistical outputs into biologically interpretable forms, facilitating insights into morphological patterns that might otherwise remain obscured in numerical results.
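The shape models and vector plots listed above rest on simple arithmetic: a theoretical shape is the mean shape plus a chosen score times a principal axis, and a vector plot draws the per-landmark difference between two such shapes. A minimal sketch follows, with hypothetical function names and made-up inputs.

```python
import numpy as np

def shape_along_pc(mean_shape, pc_axis, score):
    """Theoretical shape at a given score along one principal axis.

    mean_shape: (n_landmarks, n_dims); pc_axis: flat unit vector of matching length.
    """
    return mean_shape + score * pc_axis.reshape(mean_shape.shape)

def displacement_vectors(start, target):
    """Per-landmark displacement from a starting shape to a target shape."""
    return target - start

# Made-up mean shape (5 landmarks, 2D) and a unit-length axis for illustration.
rng = np.random.default_rng(2)
mean_shape = rng.normal(size=(5, 2))
axis = rng.normal(size=10)
axis /= np.linalg.norm(axis)

low = shape_along_pc(mean_shape, axis, -0.1)
high = shape_along_pc(mean_shape, axis, 0.1)
arrows = displacement_vectors(low, high)   # what a vector plot would draw at each landmark
```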

Data Augmentation Using Generative Algorithms

A significant challenge in GM, particularly for biomedical applications with rare specimens or clinical conditions, is limited sample size. Traditional resampling techniques like bootstrapping duplicate existing data but do not generate genuinely new information [3]. Emerging approaches using generative computational learning algorithms offer promising solutions.

Generative Adversarial Networks for GM

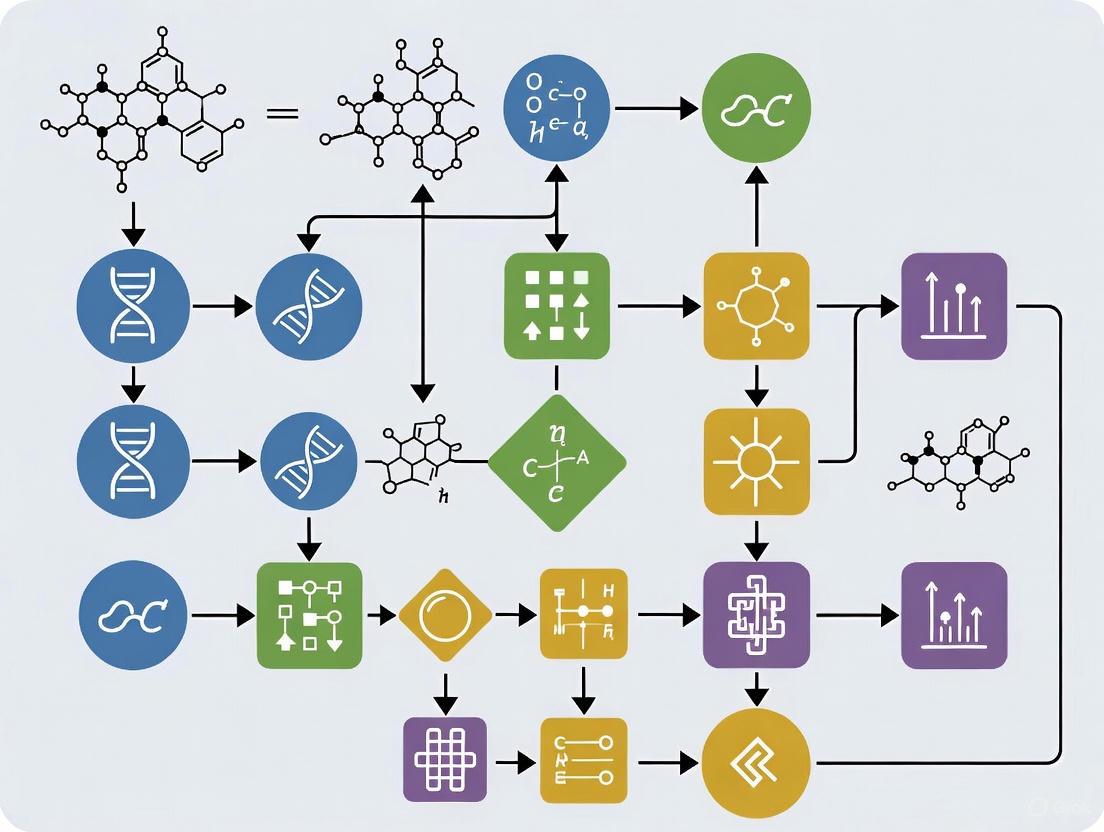

Generative Adversarial Networks (GANs) are a recent approach to geometric morphometric data augmentation [3] [6].

Protocol for GAN-Based Data Augmentation [3]:

- Data Preparation: Format Procrustes-aligned landmark coordinates as training data

- Model Selection: Choose appropriate GAN architecture (standard GANs with different loss functions have outperformed conditional GANs in GM applications)

- Training: Simultaneously train generator and discriminator networks in adversarial fashion

- Synthetic Data Generation: Use trained generator to produce novel landmark configurations

- Validation: Apply robust statistical methods to verify synthetic data equivalence to original training data

Applications and Benefits: GAN-based augmentation helps address the "insufficiency of information density" common with small sample sizes, reducing overfitting in subsequent classification algorithms and predictive models [3]. Experimental results demonstrate that GANs can produce highly realistic synthetic data that is statistically equivalent to original training data, thereby enhancing the robustness of downstream statistical analyses [3] [6].
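For the validation step, one simple check is a permutation test on the distance between the mean shapes of the real and synthetic samples. This is a stand-in for illustration and not the specific set of robust statistics used in [3]. The function name and simulated inputs are hypothetical. Note also that a non-significant result does not by itself demonstrate equivalence; a dedicated equivalence test is needed for that claim.

```python
import numpy as np

def permutation_test_mean_shape(real, synthetic, n_perm=999, seed=0):
    """Permutation p-value for the distance between group mean shapes.

    real, synthetic: arrays of flattened aligned coordinates, (n, p) each.
    """
    rng = np.random.default_rng(seed)
    pooled = np.vstack([real, synthetic])
    n_real = real.shape[0]

    def mean_distance(a, b):
        return np.linalg.norm(a.mean(axis=0) - b.mean(axis=0))

    observed = mean_distance(real, synthetic)
    count = 0
    for _ in range(n_perm):
        idx = rng.permutation(pooled.shape[0])     # shuffle group labels
        if mean_distance(pooled[idx[:n_real]], pooled[idx[n_real:]]) >= observed:
            count += 1
    return observed, (count + 1) / (n_perm + 1)

# Simulated example: one plausible and one clearly shifted "synthetic" sample.
rng = np.random.default_rng(3)
real = rng.normal(size=(30, 6))
synthetic_ok = rng.normal(size=(30, 6))
synthetic_bad = rng.normal(loc=5.0, size=(30, 6))

_, p_ok = permutation_test_mean_shape(real, synthetic_ok)
_, p_bad = permutation_test_mean_shape(real, synthetic_bad)
```

A very small `p_bad` flags a generator whose output has drifted away from the training distribution.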

Landmark-Free Approaches

Recent methodological innovations include landmark-free approaches such as Deterministic Atlas Analysis (DAA), which uses Large Deformation Diffeomorphic Metric Mapping (LDDMM) to compare shapes without manual landmarking [7]. These methods:

- Generate control points automatically based on morphological features

- Compute deformation momenta to quantify shape differences

- Enable analysis of highly disparate forms with limited homology

- Are particularly valuable for large-scale studies across disparate taxa [7]

While these methods show promise for automating shape analysis, they currently face challenges in consistency with traditional landmark-based approaches, especially for certain taxonomic groups like Primates and Cetacea [7].

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools for Geometric Morphometrics

| Tool Category | Specific Tools | Function | Application Context |
|---|---|---|---|
| Landmark Digitization Software | Stratovan Checkpoint, tps-series | Place and manage landmarks on 2D/3D images | Data acquisition phase [8] |
| Statistical Analysis Packages | MorphoJ, geomorph R package, PAST | Perform Procrustes analysis, PCA, and other multivariate statistics | Core analytical workflow [1] [8] |
| Programming Environments | R statistical computing, Wolfram Mathematica | Custom analysis scripting and implementation | Flexible, reproducible analyses [9] [1] |
| Generative Algorithms | Generative Adversarial Networks (GANs) | Synthetic data generation for small samples | Data augmentation for limited datasets [3] |
| Visualization Tools | Thin-plate spline, deformation grids | Visual representation of shape changes | Interpretation and communication of results [10] |

Geometric Morphometrics provides a powerful, visually intuitive framework for quantifying and analyzing form and shape in biomedical data. The core protocol—encompassing landmark digitization, Procrustes superimposition, multivariate statistical analysis, and shape visualization—offers a robust methodology for investigating morphological relationships across diverse biomedical contexts. The integration of emerging computational approaches, particularly generative adversarial networks for data augmentation and landmark-free analysis methods, addresses traditional limitations associated with small sample sizes and manual landmarking constraints. These advances position GM as an increasingly accessible and powerful tool for biomedical researchers investigating morphological variation in contexts ranging from clinical diagnostics to evolutionary studies. As these methodologies continue to evolve, they promise to enhance our understanding of form-function relationships in biological structures through rigorous quantitative analysis.

In scientific research, particularly in fields like paleontology, archaeology, and drug development, the quality and quantity of data directly determine the validity of statistical inferences. Geometric Morphometrics (GM) is a powerful multivariate statistical toolset for the analysis of morphology, with growing importance in biology, physical anthropology, and evolutionary studies [3]. These methods employ two or three-dimensional homologous points of interest, known as landmarks, to quantify geometric variances among individuals [3]. However, GM analyses are frequently compromised by incomplete fossil records, small sample sizes, and distorted preservation, creating a critical bottleneck that limits statistical power and reliability [3].

The statistical power of an analysis is the probability that it will detect an effect when there truly is one. Inadequate sample sizes directly diminish this power, increasing the risk of Type II errors (false negatives) and reducing the reliability of predictive models [3]. This application note examines how incomplete records and small samples impact statistical power in geometric morphometrics and details protocols for leveraging generative computational learning algorithms, particularly Generative Adversarial Networks (GANs), to overcome these limitations through data augmentation.
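To make the link between sample size and power concrete, the sketch below computes the approximate power of a two-sided, two-sample z-test for a standardized effect size. This is a deliberately simplified univariate case chosen for illustration; GM analyses are multivariate and their power depends on additional factors. The function name is hypothetical and only the Python standard library is used.

```python
from statistics import NormalDist

def z_test_power(effect_size, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test.

    effect_size: difference in means divided by the common standard deviation.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = effect_size * (n_per_group / 2) ** 0.5    # noncentrality of the test statistic
    # Probability that the statistic falls beyond either critical value.
    return (1 - z.cdf(z_crit - shift)) + z.cdf(-z_crit - shift)

# Power for a medium effect (0.5 SD) at increasing sample sizes per group.
power_by_n = {n: round(z_test_power(0.5, n), 3) for n in (5, 10, 20, 40, 64)}
```

With only a handful of specimens per group, the probability of detecting even a medium effect is low, which is the Type II error risk described above.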

The Impact of Limited Data on Geometric Morphometric Analyses

Fundamental Challenges in Geometric Morphometrics

Geometric morphometric practice involves projecting landmark configurations onto a common coordinate system through Generalized Procrustes Analysis (GPA), allowing for direct comparison of shapes by quantifying minute displacements of individual landmarks in space [3]. The resulting data are typically analyzed using multivariate statistical methods such as Principal Component Analysis (PCA) and Canonical Variate Analysis (CVA) [3].

The preservation rate of fossils often results in the loss of landmarks, significantly impeding these analyses [3]. For many species, particularly in paleoanthropology, obtaining large sample sizes is extraordinarily difficult, leading to substantial sample bias and reduced predictive capacity of discriminant models [3]. The impact of this bias is directly proportional to the number of variables included in multivariate analyses, creating a fundamental constraint on research progress [3].

Quantitative Impacts of Sample Size Limitations

Table 1: Statistical Consequences of Small Sample Sizes in Geometric Morphometrics

| Challenge | Impact on Analysis | Resulting Statistical Issue |
|---|---|---|
| Incomplete Fossil Records | Loss of landmarks and morphological information [3] | Reduced variable completeness, biased shape representation |
| Small Sample Sizes | Insufficient information density for population representation [3] | Overfitting, reduced model generalizability |
| Class Imbalance | Underrepresentation of certain morphological variants or species [3] | Biased classifiers, inaccurate group discrimination |
| High-Dimensional Data | Increased variables without corresponding sample increases [3] | Exponentiated bias impact, reduced discriminant power |

Generative Algorithms for Data Augmentation in Geometric Morphometrics

Generative Adversarial Networks (GANs) Fundamentals

Generative Adversarial Networks (GANs) represent a transformative approach to addressing data scarcity challenges in morphological analyses [3] [11]. A GAN consists of two neural networks trained simultaneously: a Generator that produces synthetic data, and a Discriminator that evaluates this data for authenticity [3]. The two models engage in adversarial competition, with the generator continuously improving its output to fool the discriminator, resulting in a network capable of producing highly realistic synthetic data statistically equivalent to original training data [3].
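The adversarial competition described above is usually written as a two-player minimax game. The notation here is the standard formulation of the original GAN objective and is introduced for illustration: $G$ is the generator, $D$ the discriminator, $x$ a real landmark configuration, and $z$ a random noise vector.

```latex
\min_{G}\,\max_{D}\; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator raises $V$ by scoring real data near 1 and generated data near 0, while the generator lowers it by producing samples that the discriminator scores as real. Variants with different loss functions replace this objective with alternatives.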

Recent advancements have led to more sophisticated implementations, such as adaptive identity-regularized GANs that integrate identity blocks to preserve critical species-specific features during generation, coupled with species-specific loss functions designed around distinctive morphological characteristics [11]. These biologically-informed approaches ensure that synthetic data generation respects phylogenetic relationships and morphological boundaries between distinct species [11].

Workflow for Geometric Morphometric Data Augmentation

[Figure: GM Data Augmentation with GANs]

Experimental Protocols for Geometric Morphometric Data Augmentation

Protocol 1: Standard GAN Implementation for Landmark Data

Purpose: To generate synthetic geometric morphometric data using standard Generative Adversarial Networks to augment small sample sizes.

Materials and Equipment:

- Landmark coordinate data from original specimens

- Python programming environment with TensorFlow/PyTorch

- High-performance computing resources with GPU acceleration

Procedure:

- Data Preprocessing:
  - Collect landmark coordinate data from all available specimens
  - Perform Generalized Procrustes Analysis to remove non-shape variation (position, orientation, scale)
  - Export Procrustes coordinates as training data

- GAN Architecture Configuration:
  - Implement generator network with 3 fully connected hidden layers (512, 256, 512 neurons)
  - Implement discriminator network with 3 fully connected hidden layers (256, 128, 64 neurons)
  - Use LeakyReLU activation functions in both networks
  - Configure Adam optimizers for both networks with learning rate of 0.0002

- Model Training:
  - Train generator and discriminator simultaneously for 10,000 epochs
  - Use minibatch training with batch size of 32
  - Monitor training stability to prevent mode collapse
  - Save model checkpoints every 500 epochs

- Synthetic Data Generation:
  - Use trained generator to produce synthetic landmark data
  - Generate number of synthetic specimens required to achieve target sample size
  - Validate synthetic data quality through statistical comparison with original data

- Statistical Validation:
  - Perform Multivariate Analysis of Variance (MANOVA) to test for significant differences between the original and synthetic data distributions
  - Use Principal Component Analysis to visualize overlap in morphospace
  - Verify that synthetic data falls within biologically plausible range

Troubleshooting:

- For training instability: Reduce learning rate or implement gradient penalty

- For mode collapse: Add noise to discriminator inputs or use multiple discriminators

- For unrealistic outputs: Increase training epochs or adjust network architecture
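The architecture and training settings in Protocol 1 can be sketched in PyTorch as follows. This is a minimal illustration and not a reference implementation. The hidden-layer sizes, LeakyReLU activations, Adam learning rate of 0.0002, and batch size of 32 come from the protocol. The 100-dimensional noise vector, the LeakyReLU slope of 0.2, the binary cross-entropy loss on logits, the helper names, and the random placeholder data are assumptions.

```python
import torch
from torch import nn

def mlp(sizes):
    """Fully connected network with LeakyReLU between layers (slope 0.2 is an assumption)."""
    layers = []
    for i in range(len(sizes) - 1):
        layers.append(nn.Linear(sizes[i], sizes[i + 1]))
        if i < len(sizes) - 2:
            layers.append(nn.LeakyReLU(0.2))
    return nn.Sequential(*layers)

def build_gan(n_coords, latent_dim=100):
    generator = mlp([latent_dim, 512, 256, 512, n_coords])   # hidden layers: 512, 256, 512
    discriminator = mlp([n_coords, 256, 128, 64, 1])         # hidden layers: 256, 128, 64; outputs a logit
    return generator, discriminator

def train_step(real, generator, discriminator, opt_g, opt_d, latent_dim=100):
    """One alternating update: discriminator first, then generator."""
    loss_fn = nn.BCEWithLogitsLoss()
    batch = real.shape[0]
    ones, zeros = torch.ones(batch, 1), torch.zeros(batch, 1)

    # Discriminator: real samples labeled 1, generated samples labeled 0.
    fake = generator(torch.randn(batch, latent_dim)).detach()
    loss_d = loss_fn(discriminator(real), ones) + loss_fn(discriminator(fake), zeros)
    opt_d.zero_grad()
    loss_d.backward()
    opt_d.step()

    # Generator: try to make the discriminator label generated samples as real.
    fake = generator(torch.randn(batch, latent_dim))
    loss_g = loss_fn(discriminator(fake), ones)
    opt_g.zero_grad()
    loss_g.backward()
    opt_g.step()
    return loss_d.item(), loss_g.item()

torch.manual_seed(0)
n_coords = 40                                   # e.g. 20 landmarks in 2D (placeholder)
gen, disc = build_gan(n_coords)
opt_g = torch.optim.Adam(gen.parameters(), lr=0.0002)
opt_d = torch.optim.Adam(disc.parameters(), lr=0.0002)

placeholder_batch = torch.randn(32, n_coords)   # stands in for Procrustes coordinates
for _ in range(5):                              # the protocol trains far longer
    loss_d, loss_g = train_step(placeholder_batch, gen, disc, opt_g, opt_d)

with torch.no_grad():
    synthetic = gen(torch.randn(10, 100))       # ten synthetic configurations
```

In practice the placeholder batch is replaced by minibatches of real Procrustes coordinates, and both losses are logged so that instability or mode collapse can be spotted early.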

Protocol 2: Adaptive Identity-Regularized GAN for Morphologically Complex Species

Purpose: To generate high-quality synthetic morphometric data for morphologically complex species while preserving essential diagnostic features.

Materials and Equipment:

- Landmark data with species identification labels

- Taxonomic reference database with diagnostic characteristics

- Python environment with custom GAN implementation capabilities

Procedure:

- Species-Specific Feature Identification:
  - Consult taxonomic literature to identify species-invariant morphological features
  - Label landmark constellations corresponding to diagnostic characteristics
  - Establish morphological constraints for each species

- Adaptive Identity Block Implementation:
  - Implement identity blocks that learn to preserve species-invariant features
  - Configure adaptive mechanism to modulate behavior based on input taxonomic characteristics
  - Connect identity blocks in parallel with standard generator layers

- Species-Specific Loss Function Formulation:
  - Develop multi-component loss function incorporating:
    - Morphological consistency terms
    - Phylogenetic relationship constraints
    - Feature preservation objectives
  - Weight loss components to balance diversity and biological accuracy

- Two-Phase Training Methodology:
  - Phase 1: Train identity mappings to establish stable feature preservation
  - Phase 2: Introduce controlled morphological variations for augmentation
  - Monitor both discriminator loss and species-specific loss components

- Biological Validation:
  - Engage domain experts to evaluate biological authenticity of synthetic specimens
  - Calculate biological validation score through expert assessment
  - Verify maintenance of diagnostic features in synthetic specimens

Troubleshooting:

- For blurred feature preservation: Increase weight of species-specific loss component

- For insufficient diversity: Adjust balance between identity and variation components

- For taxonomic inaccuracy: Review diagnostic feature identification and constraints
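One plausible way to combine the loss components named in Protocol 2 is a weighted sum added to the adversarial term. The exact formulation in [11] is not given here, so the form below, the symbols, and the weights $\lambda$ are all assumptions made for illustration.

```latex
\mathcal{L}_{G} = \mathcal{L}_{\text{adv}}
  + \lambda_{\text{morph}}\,\mathcal{L}_{\text{morph}}
  + \lambda_{\text{phylo}}\,\mathcal{L}_{\text{phylo}}
  + \lambda_{\text{feat}}\,\mathcal{L}_{\text{feat}}
```

Under this assumed form, the troubleshooting advice maps onto the weights: raising $\lambda_{\text{feat}}$ favors feature preservation, while lowering the constraint weights relative to $\mathcal{L}_{\text{adv}}$ allows more diversity.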

Research Reagent Solutions for Geometric Morphometric Data Augmentation

Table 2: Essential Research Tools for Geometric Morphometric Data Augmentation

| Research Reagent/Tool | Function | Application Example |
|---|---|---|
| Generative Adversarial Networks (GANs) | Generate synthetic landmark data statistically equivalent to original specimens [3] | Augmenting small fossil datasets for improved statistical power |
| Adaptive Identity Blocks | Preserve species-specific morphological features during generation [11] | Maintaining diagnostic characteristics in synthetic specimens of closely related species |
| Species-Specific Loss Functions | Incorporate taxonomic constraints to ensure biological plausibility [11] | Generating morphologically accurate data for rare or endangered species |
| Generalized Procrustes Analysis | Normalize landmark configurations to remove non-shape variation [3] | Preprocessing step before generative augmentation |
| Principal Component Analysis | Visualize and validate synthetic data distribution in morphospace [3] | Quality assessment of generated data |

Implementation Considerations and Limitations

While generative approaches present a valuable means of augmenting geometric morphometric datasets, several limitations must be considered. Generative Adversarial Networks are not the solution to all sample-size related issues, and excessive transformations can potentially generate unrealistic data if not properly constrained [3] [12]. Additionally, these methods require substantial computational resources and expertise to implement effectively [12].

The effectiveness of data augmentation in geometric morphometrics has been demonstrated across multiple applications. In one study, GANs using different loss functions produced multidimensional synthetic data statistically equivalent to the original training data, though Conditional Generative Adversarial Networks were notably less successful [3] [13]. Another investigation implementing adaptive identity-regularized GANs for fish classification achieved 95.1% classification accuracy, a gain of 9.7 percentage points over the 85.4% baseline and a reported 6.7% improvement over traditional augmentation approaches [11].

For optimal results, generative data augmentation should be combined with other preprocessing steps and traditional statistical techniques. This integrated approach can help overcome the persistent challenges posed by incomplete records and small samples, ultimately enhancing the statistical power and reliability of geometric morphometric analyses across biological, anthropological, and pharmaceutical research domains.

Data augmentation represents a cornerstone of modern data science, providing critical methodologies for enhancing the robustness and generalizability of statistical and machine learning models. In fields characterized by data scarcity, such as geometric morphometrics (GM), these techniques are particularly invaluable [3]. Geometric morphometrics, which involves the multivariate statistical analysis of form based on Cartesian landmark coordinates, frequently grapples with limited sample sizes due to factors inherent to its common applications—notably the incomplete fossil record in paleontology or the rarity of specific biological specimens [3] [14]. This data scarcity impedes complex statistical analyses, including classification tasks and predictive modeling, often leading to overfitting and reduced model performance [3].

The evolution of data augmentation strategies has transitioned from traditional resampling techniques to advanced generative artificial intelligence (AI). Traditional methods, such as bootstrapping, artificially inflate datasets by creating copies or simple variations of existing data but fail to generate novel data points that explore the "uncharted territory" between existing samples [3]. In contrast, modern generative AI, particularly Generative Adversarial Networks (GANs), can learn the underlying probability distribution of the training data and produce highly realistic, synthetic data that significantly enhance the diversity and representativeness of datasets [3] [11] [15]. This evolution is critically important for geometric morphometrics, where generative models can create new, biologically plausible landmark configurations, thereby overcoming historical limitations and enabling more powerful morphological analyses [3].

The Limitation of Traditional Resampling Methods

Traditional resampling methods have been widely used to address issues of small sample sizes and class imbalance. Techniques such as bootstrapping (resampling with replacement) and permutation tests have been standards in statistical practice for decades, offering robustness in parameter estimation and hypothesis testing [3]. Their primary strength lies in their ability to provide inferential power about a population from a single sample without stringent distributional assumptions.

However, these methods possess a fundamental limitation: they do not create new information. Bootstrapping, for instance, generates new datasets by duplicating existing data points, thereby inflating the sample size without increasing the information density about the population's true distribution [3]. This often results in models that are prone to overfitting, as the spaces between genuine data points remain unexplored. For geometric morphometric analyses, which rely on capturing the full spectrum of morphological variation in a multidimensional feature space, this insufficiency can be particularly detrimental, limiting the predictive accuracy and generalizability of subsequent models [3].
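The point that bootstrapping inflates sample size without adding information can be checked directly: however many rows are drawn, the number of distinct specimens never exceeds the original count. The sketch below uses simulated data, and the function name is hypothetical.

```python
import numpy as np

def bootstrap_sample(data, n_samples, rng):
    """Resample rows of data with replacement."""
    idx = rng.integers(0, len(data), size=n_samples)
    return data[idx]

rng = np.random.default_rng(0)
original = rng.normal(size=(15, 6))                 # 15 simulated specimens, 6 shape variables
boot = bootstrap_sample(original, 100, rng)         # "inflated" to 100 rows

n_unique = len(np.unique(boot, axis=0))             # distinct specimens actually present
```

Here `boot` has 100 rows but `n_unique` is at most 15, so the regions of shape space between the original specimens remain empty.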

The Rise of Generative AI in Data Augmentation

Generative AI has emerged as a transformative solution to the limitations of traditional resampling. Unlike methods that merely duplicate data, generative models learn to approximate the complex, high-dimensional probability distributions of real datasets and can then sample from this learned distribution to create novel, synthetic data [16] [15].

Core Generative Models

The landscape of generative AI for data augmentation is diverse, with several model architectures showing significant promise:

- Generative Adversarial Networks (GANs): Introduced in 2014, GANs consist of two neural networks—a Generator and a Discriminator—trained simultaneously in a competitive framework [3] [16]. The generator creates synthetic data, while the discriminator evaluates its authenticity against real training data. This adversarial process continues until the generator produces data indistinguishable from the original [3]. GANs have been successfully applied to generate synthetic geometric morphometric data, with studies showing that they can produce multidimensional data statistically equivalent to the original training set [3].

- Gaussian Mixture Models (GMM): As a probabilistic model, GMM assumes data is generated from a mixture of a finite number of Gaussian distributions. It is a robust, statistically-driven method for generating synthetic data, particularly effective for filling gaps in data distributions [17]. For example, in a study predicting soil organic carbon, augmenting the calibration set with 44 GMM-generated samples improved the Random Forest model's performance, increasing the R² value from 0.71 to 0.77 and reducing the RMSE [17].

- Diffeomorphic Transforms: Although not a generative AI model, this augmentation method is included for comparison. It performs diffeomorphic (smooth and invertible) transformations between two samples from the same class [18]. It is particularly effective for objects with high variability in shape and texture, such as biological specimens. By mimicking natural shape changes (e.g., those experienced in a diatom's life cycle), it generates new, realistic training samples that have been shown to improve classification accuracy beyond standard augmentation techniques [18].

Quantitative Comparison of Augmentation Strategies

The table below summarizes the performance of various data augmentation strategies as documented in recent scientific literature.

Table 1: Performance Comparison of Data Augmentation Strategies

| Augmentation Method | Application Context | Model Performance Before Augmentation | Model Performance After Augmentation | Key Metric |
|---|---|---|---|---|
| Gaussian Mixture Model (GMM) | Soil Organic Carbon Prediction [17] | R² = 0.71, RMSE = 0.93% | R² = 0.77, RMSE = 0.84% | Validation R² and RMSE |
| Adaptive Identity-Regularized GAN | Fish Species Classification [11] | 85.4% Accuracy (Baseline) | 95.1% ± 1.0% Accuracy | Classification Accuracy |
| Diffeomorphic Transforms | Diatom Classification [18] | Baseline Accuracy (Not Specified) | +0.47% Accuracy Improvement | Increase in Accuracy |
| GANs & GMM Combination | Geometric Morphometrics [3] | N/A (Theoretical) | Produced statistically equivalent synthetic data | Statistical Equivalence |

Application Notes & Protocols for Geometric Morphometrics

Integrating generative data augmentation into a geometric morphometrics workflow requires a structured pipeline, from data preparation to model validation. The following protocol outlines the key stages for a successful implementation.

Experimental Protocol: Data Augmentation for GM Using GANs

1. Objective: To augment a limited set of landmark configurations using a Generative Adversarial Network to enhance the performance and robustness of downstream statistical analyses (e.g., classification, PCA).

2. Materials and Data Pre-processing:

- Input Data: A matrix of Procrustes-aligned landmark coordinates [3]. The data should be derived from a Generalized Procrustes Analysis (GPA), which removes the effects of translation, rotation, and scale [3].

- Data Cleaning: Address any missing landmarks using appropriate imputation techniques if necessary [3].

- Feature Space Construction (Optional): For very high-dimensional data, a preliminary dimensionality reduction via Principal Components Analysis (PCA) may be performed. The GAN is then trained on the principal component scores, which represent the major axes of shape variation [3].
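
The pre-processing steps above can be sketched in NumPy as follows. This is a minimal Generalized Procrustes Analysis under simplifying assumptions (fixed landmarks only, no semilandmark sliding, no missing data); the function names are hypothetical, and established packages such as geomorph remain the reference implementations.

```python
import numpy as np

def center_and_scale(shape):
    """Remove translation and scale: centroid at the origin, unit centroid size."""
    centered = shape - shape.mean(axis=0)
    return centered / np.linalg.norm(centered)

def rotate_onto(shape, reference):
    """Rotate `shape` to best fit `reference` (orthogonal Procrustes via SVD)."""
    u, _, vt = np.linalg.svd(shape.T @ reference)
    if np.linalg.det(u @ vt) < 0:  # forbid reflections
        u[:, -1] *= -1
    return shape @ (u @ vt)

def gpa(shapes, n_iter=10, tol=1e-10):
    """Align an (n_specimens, n_landmarks, n_dims) array to its iteratively updated mean."""
    aligned = np.array([center_and_scale(s) for s in shapes])
    mean = aligned[0]
    for _ in range(n_iter):
        aligned = np.array([rotate_onto(s, mean) for s in aligned])
        new_mean = center_and_scale(aligned.mean(axis=0))
        shift = np.linalg.norm(new_mean - mean)
        mean = new_mean
        if shift < tol:
            break
    return aligned, mean

def flatten(aligned):
    """Turn aligned configurations into one row vector per specimen for model input."""
    return aligned.reshape(len(aligned), -1)
```

The flattened matrix returned by `flatten` (or its principal component scores) is the kind of input the GAN described in the next step would be trained on.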

3. GAN Architecture and Training:

- Model Selection: Standard GAN architectures are often sufficient, though more advanced variants like Wasserstein GANs can offer improved training stability [3].

- Generator Network: A neural network that takes a vector of random noise from a latent space (e.g., 100 dimensions) and outputs a synthetic data point with the same dimensionality as a real landmark configuration (or PC score vector).

- Discriminator Network: A binary classifier network that distinguishes between "real" (training data) and "fake" (generator output) samples.

- Training Loop: The model is trained in two alternating steps:

- Train Discriminator: Update the discriminator with a batch of real and a batch of generated data.

- Train Generator: Update the generator to produce data that "fools" the discriminator.

- Conditional GANs (cGANs): For labeled data (e.g., specimens from different species), a cGAN can be used. The class label is provided as an additional input to both the generator and discriminator, allowing for the targeted generation of synthetic data for specific groups [3].

4. Validation and Quality Control:

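- Statistical Validation: The synthetic data must be validated to ensure it is representative of the true morphological space. This can be achieved using multivariate statistical tests (e.g., PERMANOVA) and by visualizing real and synthetic specimens together in morphospace [3].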

- Downstream Task Evaluation: The ultimate validation is performance improvement in the target application. Compare the performance of a classifier (e.g., SVM, Random Forest) or a predictive model trained on the original data versus one trained on the augmented (original + synthetic) data [3] [11].

Workflow Visualization

The following diagram illustrates the end-to-end protocol for data augmentation in geometric morphometrics using a Generative Adversarial Network.

The Scientist's Toolkit: Key Research Reagents and Materials

Table 2: Essential Materials and Computational Tools for GM Data Augmentation

| Item / Reagent | Function / Application | Example / Note |
|---|---|---|
| Landmark Digitization Software | Precisely capture 2D/3D coordinates of homologous anatomical points from specimens or images. | Examples include MorphoJ, tpsDig2. Essential for building the initial raw dataset [3]. |
| Procrustes Analysis Software | Normalize landmark configurations by scaling, translating, and rotating them into a common coordinate system. | Implemented in R (geomorph package) or standalone software. Critical pre-processing step [3]. |
| Programming Framework | Provides the environment to build, train, and validate generative models. | Python with TensorFlow/PyTorch, or R. Necessary for implementing GANs and other AI models [3] [17]. |
| High-Performance Computing (HPC) | Accelerates the computationally intensive training process of deep learning models like GANs. | GPU clusters are often essential for training on large or high-dimensional morphometric datasets [11]. |
| Generative Model Architecture | The core algorithm for generating synthetic landmark data. | GANs, cGANs, or Gaussian Mixture Models (GMM). Choice depends on data structure and goals [3] [17]. |
| Statistical Validation Suite | Tools to test the quality and fidelity of the generated synthetic data. | Multivariate statistical tests (e.g., PERMANOVA) in R or Python; visualization in morphospace [3]. |

Challenges and Future Directions

Despite their promise, generative AI methods face several challenges. Model instability, particularly in GAN training, can lead to mode collapse where the generator produces limited varieties of samples [11]. Ensuring the biological plausibility of generated data is paramount; synthetic landmark configurations must represent anatomically possible forms [3] [11]. This has led to the development of biologically-informed GANs that incorporate taxonomic constraints and species-specific loss functions to maintain morphological authenticity [11].

Future research will likely focus on leveraging 3D geometric morphometric data more comprehensively, as current 2D analyses have shown limited discriminant power [14]. Furthermore, the integration of generative AI into broader scientific workflows, such as drug development—where it can help generate synthetic data for pharmacokinetic modeling or clinical trial simulation—showcases its expanding role beyond basic science [19]. As these technologies mature, they will become an indispensable tool in the scientist's arsenal, turning data scarcity from a roadblock into a surmountable challenge.

Generative Adversarial Networks (GANs) are a machine learning framework introduced by Ian Goodfellow and colleagues in 2014 that has transformed the field of generative modeling [20]. The approach is unsupervised: two deep neural networks, a generator and a discriminator, are trained in direct opposition to each other [20]. The fundamental objective of a GAN is to generate realistic synthetic data by learning and replicating the underlying patterns of an existing training dataset. This capacity has positioned GANs as powerful tools across numerous research domains, including geometric morphometrics, where they address the sample size limitations and data incompleteness commonly encountered in fossil records [3].

The application of GANs to geometric morphometrics presents a particularly promising solution to one of the field's most persistent challenges: the incompleteness and distortion of the fossil record, which often conditions the type of knowledge that can be extracted from morphological analyses [3]. Traditional statistical methods in geometric morphometrics, including Canonical Variate Analysis (CVA), are highly sensitive to small or imbalanced datasets, and the resulting bias grows with the number of variables included in the multivariate analysis [3]. GANs offer a way to overcome these limitations by generating synthetic landmark data that expands limited datasets while preserving the morphological variance needed for robust statistical analysis.

Fundamental GAN Architecture and Dynamics

Core Components and Adversarial Process

The GAN architecture consists of two deep neural networks engaged in a competitive minimax game [20]. The generator network takes random noise as input and transforms it into synthetic data that aims to mimic the real data from the training set. Simultaneously, the discriminator network functions as an adversarial evaluator, analyzing both real samples from the training dataset and synthetic samples produced by the generator, then assigning a probability score that each is real [20]. This dynamic creates a continuous feedback loop where the generator strives to produce increasingly realistic data to deceive the discriminator, while the discriminator concurrently refines its ability to distinguish real from synthetic samples.

The training process involves backpropagation to optimize both networks, where the gradient of the loss function is calculated according to each network's parameters, and these parameters are adjusted to minimize their respective losses [20]. The generator utilizes feedback from the discriminator to improve its synthetic data generation capabilities. This adversarial process continues until equilibrium is reached, ideally resulting in a generator capable of producing highly realistic data that the discriminator cannot distinguish from genuine samples, at which point the discriminator would assign a probability of 0.5 to all samples [20].
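
The minimax game and its equilibrium described above can be written compactly. The notation below follows the standard formulation of the original GAN objective; the symbols $p_{\text{data}}$ (real data distribution), $p_z$ (noise prior), and $p_g$ (distribution of generated samples) are introduced here for illustration and do not appear in the text above.

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]

% For a fixed generator, the optimal discriminator is
D^*(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)},
\qquad\text{so}\qquad
p_g = p_{\text{data}} \;\Rightarrow\; D^*(x) = \tfrac{1}{2}.
```

The second line makes the 0.5 equilibrium value explicit: once the generated distribution matches the real one, the best possible discriminator can do no better than chance.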

GAN Dynamics Workflow

The following diagram illustrates the fundamental adversarial process between the generator and discriminator:

GAN Variants and Architectural Evolution

Key GAN Architectures for Scientific Research

The fundamental GAN architecture has evolved into numerous specialized variants, each designed to address specific challenges or application requirements. The table below summarizes the key GAN architectures relevant to geometric morphometrics and scientific research:

Table 1: Key GAN Architectures for Geometric Morphometrics and Scientific Research

| GAN Variant | Key Features | Advantages | Relevant Applications |
|---|---|---|---|
| Vanilla GAN | Basic generator-discriminator architecture using multilayer perceptrons (MLPs) [20] | Simple implementation; foundational understanding [20] | Prototyping; educational purposes |
| Conditional GAN (cGAN) | Incorporates additional labels or conditions for both generator and discriminator [20] | Enables targeted generation with specific characteristics [20] | Category-specific morphological generation |
| Deep Convolutional GAN (DCGAN) | Utilizes convolutional neural networks (CNNs) for both generator and discriminator [20] | Improved performance for image-like data; stable training [20] | 2D and 3D morphological pattern generation |
| Wasserstein GAN (WGAN) | Employs the Wasserstein distance as the training objective; the WGAN-GP variant adds a gradient penalty [21] | Addresses training instability; more consistent convergence [21] | High-dimensional morphometric data |
| CycleGAN | Uses cyclic consistency with two generators and two discriminators [20] | Enables domain translation without paired training data [20] | Cross-domain morphological transformation |

Performance Comparison of GAN Architectures

Different GAN architectures demonstrate varying performance characteristics across evaluation criteria. The following table gives a qualitative comparison (Low, Moderate, or High) in key areas relevant to geometric morphometrics:

Table 2: Performance Comparison of GAN Architectures in Scientific Applications

| GAN Architecture | Training Stability | Sample Quality | Mode Coverage | Computational Efficiency | Recommended Use Cases |
|---|---|---|---|---|---|
| Vanilla GAN | Low [20] | Moderate [20] | Limited [20] | High [20] | Basic synthetic data generation |
| DCGAN | Moderate [20] | High [20] | Moderate [20] | Moderate [20] | Image-based morphometric data |
| WGAN-GP | High [21] | High [21] | High [21] | Low [21] | High-fidelity landmark generation |
| Conditional GAN | Moderate [20] | High [20] | High [20] | Moderate [20] | Category-specific augmentation |
| CycleGAN | Moderate [20] | Moderate [20] | Moderate [20] | Low [20] | Domain adaptation tasks |

Application Notes for Geometric Morphometrics

GANs for Morphometric Data Augmentation

In geometric morphometrics, GANs present a valuable solution for addressing the critical issue of sample size insufficiency that frequently impedes robust statistical analyses [3]. The field relies on the analysis of morphological variations using homologous points of interest known as landmarks, which are often scarce in paleontological and archaeological contexts due to fossil record incompleteness [3]. Traditional resampling techniques like bootstrapping merely duplicate existing data without creating new information, whereas GANs generate genuinely novel synthetic data that expands the information density of the dataset, thereby enabling more reliable statistical inferences and reducing overfitting in predictive models [3].
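
The limitation of bootstrapping noted above is easy to see in a small sketch: however many resamples are drawn, every row is a copy of an original specimen. The array sizes below are hypothetical and the data are random stand-ins for flattened landmark coordinates.

```python
import numpy as np

rng = np.random.default_rng(0)
real = rng.normal(size=(12, 20))  # hypothetical: 12 specimens x 20 flattened coordinates

# Bootstrap: draw 100 rows with replacement from the 12 originals.
idx = rng.integers(0, len(real), size=100)
boot = real[idx]

# The resampled set can never contain more distinct specimens than the original.
n_unique = len(np.unique(boot, axis=0))
```

Here `n_unique` is bounded by the 12 original specimens, which is the sense in which resampling adds no new configurations to the dataset.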

Experimental applications demonstrate that GANs can produce highly realistic synthetic morphometric data that is statistically equivalent to original training data, effectively overcoming limitations imposed by small sample sizes [3]. Different GAN architectures have been tested with geometric morphometric datasets, with standard GANs using various loss functions proving particularly successful in generating multidimensional synthetic data that preserves the essential morphological variances of the original specimens [3]. This capability is crucial for enhancing the reliability of statistical tests such as Canonical Variate Analysis (CVA), which is highly sensitive to dataset size and balance [3].

Comparative Analysis of Data Augmentation Methods

The table below compares traditional data augmentation approaches with GAN-based methods specifically for geometric morphometric applications:

Table 3: Data Augmentation Methods Comparison for Geometric Morphometrics

| Method | Principle | Advantages | Limitations | Effectiveness for GM |
|---|---|---|---|---|
| Bootstrapping | Resampling with replacement [3] | Simple implementation; preserves distribution [3] | Does not create new information; limited variance [3] | Low to moderate |
| Traditional Synthetic Data | Parametric distribution modeling | Controlled data generation | Relies on distribution assumptions | Moderate |
| GAN-Based Augmentation | Adversarial learning of data distribution [3] | Creates meaningful new data; reduces overfitting [3] | Computational intensity; training instability [3] | High |
| Conditional GAN | Label-guided adversarial generation [20] | Targeted category-specific generation [20] | Requires labeled data; complex architecture [20] | Very high |

Experimental Protocols for Geometric Morphometric Applications

Protocol 1: Basic GAN Implementation for Landmark Data Augmentation

Objective: To implement a GAN framework for generating synthetic landmark data to augment limited geometric morphometric datasets.

Materials and Requirements:

- Software Environment: Python with TensorFlow/PyTorch, NumPy, SciPy

- Computational Resources: GPU with minimum 8GB VRAM recommended

- Input Data: Procrustes-aligned landmark coordinates in matrix form

Procedure:

Data Preprocessing:

- Perform Generalized Procrustes Analysis (GPA) to align all landmark configurations [3]

- Convert landmark coordinates to vector format preserving specimen identity

- Normalize data to zero mean and unit variance

Generator Network Configuration:

- Implement a multilayer perceptron (MLP) with 3-5 hidden layers

- Use leaky ReLU activation functions (α=0.2) in hidden layers

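- Apply tanh activation in the output layer and rescale the training data to [-1, 1] to match its range (if the data are kept at zero mean and unit variance, use a linear output layer instead)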

- Input: 100-dimensional random noise vector ~N(0,1)

Discriminator Network Configuration:

- Implement MLP with 3-5 hidden layers (similar to generator)

- Use leaky ReLU activation functions (α=0.2) in hidden layers

- Apply sigmoid activation in output layer for binary classification

- Input: Landmark coordinate vector (real or synthetic)

Training Protocol:

- Initialize generator and discriminator with He normal initialization

- Set Adam optimizer with learning rate 0.0002, β₁=0.5

- Train for 10,000-50,000 epochs with batch size 32-128

- Alternate between discriminator and generator updates (1:1 ratio)

- Monitor loss functions and sample quality periodically

Synthetic Data Generation:

- Use trained generator to produce synthetic landmark data

- Apply inverse transformation to restore original coordinate scale

- Validate synthetic data quality through Principal Components Analysis (PCA)
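
The configuration and training steps above can be sketched in PyTorch roughly as follows. This is an illustrative sketch rather than the implementation from [3]: the hidden width of 128, the Adam β₂ of 0.999, the default step count, and the function names `mlp` and `train_gan` are assumptions, and the input is assumed to be already rescaled to [-1, 1] so that it matches the tanh output range.

```python
import torch
from torch import nn

def mlp(sizes, final_activation):
    """MLP with LeakyReLU(0.2) hidden activations and a chosen output activation."""
    layers = []
    for i in range(len(sizes) - 2):
        layers += [nn.Linear(sizes[i], sizes[i + 1]), nn.LeakyReLU(0.2)]
    layers += [nn.Linear(sizes[-2], sizes[-1]), final_activation]
    net = nn.Sequential(*layers)
    for module in net.modules():
        if isinstance(module, nn.Linear):  # He normal initialisation
            nn.init.kaiming_normal_(module.weight, a=0.2)
            nn.init.zeros_(module.bias)
    return net

def train_gan(real, noise_dim=100, hidden=128, steps=1000, batch_size=32, seed=0):
    """Train a vanilla GAN on `real`, a float tensor (n_specimens, n_features) in [-1, 1]."""
    torch.manual_seed(seed)
    n_features = real.shape[1]
    gen = mlp([noise_dim, hidden, hidden, hidden, n_features], nn.Tanh())
    disc = mlp([n_features, hidden, hidden, hidden, 1], nn.Sigmoid())
    opt_g = torch.optim.Adam(gen.parameters(), lr=2e-4, betas=(0.5, 0.999))
    opt_d = torch.optim.Adam(disc.parameters(), lr=2e-4, betas=(0.5, 0.999))
    bce = nn.BCELoss()
    ones = torch.ones(batch_size, 1)
    zeros = torch.zeros(batch_size, 1)
    for _ in range(steps):
        x_real = real[torch.randint(0, real.shape[0], (batch_size,))]
        x_fake = gen(torch.randn(batch_size, noise_dim))
        # Discriminator update: real samples labelled 1, generated samples labelled 0.
        opt_d.zero_grad()
        loss_d = bce(disc(x_real), ones) + bce(disc(x_fake.detach()), zeros)
        loss_d.backward()
        opt_d.step()
        # Generator update (1:1 ratio): try to make the discriminator output 1 on fakes.
        opt_g.zero_grad()
        loss_g = bce(disc(x_fake), ones)
        loss_g.backward()
        opt_g.step()
    return gen
```

After training, `gen(torch.randn(n, 100))` yields `n` synthetic vectors in the scaled space; the inverse of whatever scaling was applied beforehand restores the original coordinate scale.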

Validation Metrics:

- Average Coverage Error (ACE): For assessing distribution similarity [22]

- Procrustes Distance: Measure shape differences between real and synthetic specimens

- PCA Overlap: Compare variance explained and component loading patterns
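
As one way to make the Procrustes distance metric above concrete, the sketch below computes the partial Procrustes distance between two configurations and, for each synthetic specimen, the distance to its nearest real specimen. The function names are hypothetical, and what counts as an acceptable distance is study-specific and not prescribed here.

```python
import numpy as np

def procrustes_distance(a, b):
    """Partial Procrustes distance between two (n_landmarks, n_dims) configurations."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    a = a / np.linalg.norm(a)  # unit centroid size
    b = b / np.linalg.norm(b)
    u, _, vt = np.linalg.svd(a.T @ b)
    if np.linalg.det(u @ vt) < 0:  # keep a proper rotation, no reflection
        u[:, -1] *= -1
    return np.linalg.norm(a @ (u @ vt) - b)

def nearest_real_distance(synthetic, real):
    """For each synthetic configuration, the distance to the closest real one."""
    return np.array([min(procrustes_distance(s, r) for r in real) for s in synthetic])
```

Comparing the distribution of these nearest-neighbour distances with the real-to-real distances gives a rough check: synthetic specimens far outside the real range may be implausible, while distances near zero suggest the generator is simply copying training specimens.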

Protocol 2: Conditional GAN for Category-Specific Morphometric Generation

Objective: To generate synthetic landmark data for specific morphological categories or taxonomic groups using conditional GANs.

Materials and Requirements:

- Software Environment: Python with deep learning frameworks supporting conditional GANs

- Input Data: Labeled landmark data with categorical variables (e.g., species, treatment groups)

- Computational Resources: GPU with minimum 12GB VRAM

Procedure:

Data Preparation:

- Perform standard Procrustes alignment [3]

- Encode categorical variables as one-hot vectors

- Concatenate landmark coordinates with condition vectors
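
The encoding and concatenation steps above might look like this in NumPy. The species labels, array sizes, and the helper name `one_hot` are hypothetical, and the random arrays stand in for aligned landmark vectors and latent noise.

```python
import numpy as np

def one_hot(labels):
    """Encode categorical labels as one-hot rows; also return the class order used."""
    classes, codes = np.unique(labels, return_inverse=True)
    return np.eye(len(classes))[codes], classes

rng = np.random.default_rng(0)
labels = np.array(["sp_a", "sp_b", "sp_a", "sp_c"])  # hypothetical group labels
coords = rng.normal(size=(4, 10))                    # stand-in for aligned landmark vectors

condition, classes = one_hot(labels)

# Discriminator input: landmark vector followed by its condition vector.
disc_input = np.concatenate([coords, condition], axis=1)

# Generator input: noise vector followed by the condition to generate for.
noise = rng.normal(size=(4, 100))
gen_input = np.concatenate([noise, condition], axis=1)
```

Keeping the returned `classes` array alongside the model matters, since the same column order has to be used when requesting synthetic specimens for a given group later.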

Conditional Generator Architecture:

- Implement MLP with conditional input concatenation at input and hidden layers

- Use conditional batch normalization for improved performance

- Input: Concatenation of noise vector and condition vector

Conditional Discriminator Architecture:

- Implement MLP with conditional input concatenation at input layer

- Use projection discriminator for conditional probability estimation

- Input: Concatenation of landmark data and condition vector

Training Protocol:

- Use Wasserstein loss with gradient penalty (λ=10) for training stability [21]

- Set learning rate 0.0001 with RMSprop optimizer

- Train with 5:1 discriminator-to-generator update ratio initially

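- If a cross-entropy (non-Wasserstein) loss is used instead, apply label smoothing (0.9 for real, 0.1 for fake) to prevent discriminator overfitting; smoothing does not apply to the Wasserstein critic, whose outputs are unbounded scores rather than probabilities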

- Monitor category-specific generation quality

Quality Assessment:

- Perform discriminant analysis to verify category separation in synthetic data

- Compare within-group and between-group variances with original data

- Assess morphological plausibility through expert evaluation
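
For reference, the Wasserstein loss with gradient penalty named in the training protocol can be written as below, using the standard WGAN-GP formulation with the condition vector $y$ added as an extra input. The symbols $P_r$ (real data distribution), $P_g$ (generator distribution), and the interpolate $\hat{x}$ are notation introduced here, not taken from the protocol.

```latex
L_D = \mathbb{E}_{\tilde{x} \sim P_g}\big[D(\tilde{x} \mid y)\big]
    - \mathbb{E}_{x \sim P_r}\big[D(x \mid y)\big]
    + \lambda \, \mathbb{E}_{\hat{x}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x} \mid y) \rVert_2 - 1\big)^2\Big],
\qquad \lambda = 10

\hat{x} = \epsilon\, x + (1 - \epsilon)\, \tilde{x}, \qquad \epsilon \sim U[0, 1]

L_G = -\,\mathbb{E}_{z \sim p_z}\big[D(G(z \mid y) \mid y)\big]
```

The penalty term pushes the critic's gradient norm toward 1 along lines between real and generated samples, which is what replaces weight clipping as the Lipschitz constraint.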

Workflow for Geometric Morphometric Data Augmentation

The following diagram illustrates the complete experimental workflow for geometric morphometric data augmentation using GANs:

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Computational Tools for GAN Implementation in Geometric Morphometrics

| Tool/Category | Specific Examples | Function/Purpose | Implementation Notes |
|---|---|---|---|
| Deep Learning Frameworks | TensorFlow, PyTorch, Keras | GAN implementation and training [20] | PyTorch recommended for research flexibility |
| Geometric Morphometrics Software | MorphoJ, PAST, R (geomorph) | Landmark processing and analysis [3] | MorphoJ for GUI-based analysis |
| Data Visualization | ggplot2, Matplotlib, Plotly | Results visualization and quality assessment | Essential for synthetic data validation |
| GAN Architecture Variants | DCGAN, WGAN-GP, Conditional GAN | Specialized generation tasks [20] [21] | WGAN-GP for training stability [21] |
| Evaluation Metrics | Average Coverage Error (ACE), FID, PCA | Synthetic data quality assessment [22] | ACE particularly suited for time-series morphological data [22] |
| Computational Hardware | GPU clusters, Cloud computing (AWS, GCP) | Accelerate GAN training process | Minimum 8GB GPU RAM recommended |

Challenges and Mitigation Strategies

Technical Limitations and Solutions

Despite their promising applications in geometric morphometrics, GANs present several significant challenges that researchers must address. Training instability remains a fundamental issue, often manifesting as mode collapse where the generator produces limited varieties of samples [22] [20]. This problem can be mitigated through architectural improvements such as Wasserstein GAN with gradient penalty (WGAN-GP) which provides more stable training dynamics and better convergence [21]. Additionally, vanishing gradients during training can impede network learning, particularly in the early stages when the discriminator becomes too proficient at distinguishing real from synthetic data [22].

For geometric morphometric applications specifically, the high dimensionality of landmark data presents unique challenges. Each landmark consists of multiple coordinates (2D or 3D), and complete configurations may involve dozens of landmarks, resulting in complex high-dimensional spaces. Recent approaches have successfully addressed this through dimensionality reduction techniques such as Principal Components Analysis (PCA) applied prior to GAN training, allowing the model to learn the essential shape parameters rather than raw coordinate data [3]. This approach aligns with standard geometric morphometric practice where shape space is typically represented by principal components.
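
A minimal sketch of this PCA step, assuming the input is a matrix of flattened, Procrustes-aligned coordinates, is shown below. The function names are hypothetical; the point is that the generative model is trained on the scores and its outputs are mapped back to coordinates with the inverse transform.

```python
import numpy as np

def fit_pca(x, n_components):
    """Return the mean, the top principal axes, and their explained-variance ratios."""
    mean = x.mean(axis=0)
    _, s, vt = np.linalg.svd(x - mean, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)
    return mean, vt[:n_components], explained[:n_components]

def to_scores(x, mean, components):
    """Project coordinate vectors onto the principal axes (model training input)."""
    return (x - mean) @ components.T

def from_scores(scores, mean, components):
    """Map PC scores (e.g. generated ones) back to landmark coordinate vectors."""
    return scores @ components + mean
```

With n specimens, at most n - 1 components carry variance after centering, so a small dataset with dozens of landmarks is reduced to a space no larger than the sample size.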

Validation and Evaluation Framework

Robust validation of synthetic morphometric data requires multiple complementary approaches. Statistical equivalence testing should demonstrate that synthetic data preserves the multivariate distributional properties of original data [3]. Domain expert evaluation is crucial for assessing the morphological plausibility of generated specimens, particularly for paleontological applications where functional constraints must be maintained. Downstream task performance should be evaluated by comparing analytical results (e.g., classification accuracy, allometric patterns) between original and augmented datasets.
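
One possible form of such a distributional comparison is a permutation-based test on pairwise distances, sketched below as a one-way PERMANOVA on squared Euclidean distances. The function names and the default of 999 permutations are assumptions. A non-significant result only indicates that no difference was detected; it is not by itself proof of equivalence.

```python
import numpy as np

def pseudo_f(dist_sq, groups):
    """PERMANOVA pseudo-F from a matrix of squared distances and group labels."""
    n = len(groups)
    labels = np.unique(groups)
    ss_total = dist_sq[np.triu_indices(n, k=1)].sum() / n
    ss_within = 0.0
    for g in labels:
        idx = np.flatnonzero(groups == g)
        sub = dist_sq[np.ix_(idx, idx)]
        ss_within += sub[np.triu_indices(len(idx), k=1)].sum() / len(idx)
    a = len(labels)
    return ((ss_total - ss_within) / (a - 1)) / (ss_within / (n - a))

def permanova(x, groups, n_perm=999, seed=0):
    """Return the observed pseudo-F and a permutation p-value for group differences."""
    rng = np.random.default_rng(seed)
    groups = np.asarray(groups)
    diff = x[:, None, :] - x[None, :, :]
    dist_sq = (diff ** 2).sum(axis=-1)
    observed = pseudo_f(dist_sq, groups)
    permuted = np.array([pseudo_f(dist_sq, rng.permutation(groups)) for _ in range(n_perm)])
    p_value = (1 + np.sum(permuted >= observed)) / (n_perm + 1)
    return observed, p_value
```

In use, `x` would stack real and synthetic shape vectors and `groups` would label each row as `"real"` or `"synthetic"`.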

The Average Coverage Error (ACE) metric has been proposed as particularly suitable for evaluating GAN performance with time-series and morphological data, as it assesses how well the generated data covers the true distribution of the original dataset [22]. This metric can be adapted for geometric morphometrics by treating landmark configurations as multivariate observations and evaluating their coverage in the shape space.

Generative Adversarial Networks represent a transformative methodology for addressing fundamental challenges in geometric morphometrics, particularly the limitations imposed by incomplete fossil records and small sample sizes. The adversarial dynamics between generator and discriminator networks enable the creation of scientifically valid synthetic morphometric data that expands limited datasets while preserving essential morphological variances. The experimental protocols outlined provide researchers with practical frameworks for implementing GAN-based data augmentation in geometric morphometric studies.

Future research directions include the development of three-dimensional GAN architectures specifically designed for landmark data, integration with geometric deep learning approaches that respect the non-Euclidean nature of shape space, and conditional generation frameworks that can incorporate taxonomic, temporal, or environmental covariates. As these methodologies mature, GANs are poised to become indispensable tools in the geometric morphometrician's toolkit, enabling more robust statistical analyses and deeper insights into morphological evolution despite the inherent limitations of the fossil record.

Implementing Generative Algorithms for High-Fidelity Morphometric Data Augmentation

Generative Adversarial Networks (GANs) have revolutionized data augmentation across scientific domains, particularly for fields like geometric morphometrics and drug discovery where labeled data are scarce. These frameworks learn to generate synthetic data that closely mirrors the distribution of real datasets, thereby addressing fundamental challenges of sample size limitations and class imbalance. This document provides a detailed technical examination of three critical GAN architectures—Standard GANs, Conditional GANs (cGANs), and the novel Adaptive Identity-Regularized GANs—framed within the context of geometric morphometric data augmentation. We present structured performance comparisons, detailed experimental protocols, and essential reagent solutions to equip researchers with practical implementation guidelines. The architectural blueprints outlined here serve as a foundation for enhancing research in computational biology, paleontology, pharmaceutical development, and beyond, where accurate morphological representation is paramount.

Core Architectural Definitions and Applications

Standard GANs: The foundational framework consists of two neural networks, a generator (G) and a discriminator (D), engaged in a minimax game [3]. The generator creates synthetic data from random noise, while the discriminator distinguishes between real and generated samples. This architecture is particularly effective for learning general data distributions and performing basic data augmentation without class-specific conditioning [3] [23].

Conditional GANs (cGANs): An extension of standard GANs that incorporates additional conditioning information, such as class labels, to guide the generation process [23]. This conditional input is fed to both generator and discriminator, enabling targeted synthesis of data for specific categories. cGANs have demonstrated superior performance in medical imaging (e.g., femoral neck fracture reduction, with 88.37% of reductions rated satisfactory versus 53.49% for manual reduction) [24] and agricultural phenotyping (achieving 0.9970 segmentation accuracy) [25].

Adaptive Identity-Regularized GANs: A specialized architecture integrating adaptive identity blocks to preserve critical species-specific features during generation, coupled with species-specific loss functions incorporating morphological constraints and taxonomic relationships [26]. This biologically-informed approach is particularly valuable for fish classification and segmentation, where it achieved 95.1% classification accuracy and 89.6% mean Intersection over Union, representing significant improvements over baseline methods [26].

Quantitative Performance Comparison

Table 1: Performance Metrics of GAN Architectures Across Applications

| Architecture | Application Domain | Key Performance Metrics | Comparative Improvement |
|---|---|---|---|
| Standard GAN | Molecular Generation | AUC: 0.94 (AlexNet discriminator) [23] | Baseline for drug-like molecule generation |
| Conditional GAN | Femoral Neck Fracture Reduction | Satisfied Reduction: 88.37% [24] | +34.88 percentage points over manual reduction (53.49%) |
| Conditional GAN | Grape Berry Segmentation | Accuracy: 0.9970, IoU: 0.9813 [25] | Optimal with 6×6 kernel size |
| Conditional GAN | Molecular Generation | Target-specific compound generation [23] | Enabled class-controlled synthesis |
| Adaptive Identity-Regularized GAN | Fish Classification | Accuracy: 95.1% [26] | +9.7% over baseline methods |
| Adaptive Identity-Regularized GAN | Fish Segmentation | mean IoU: 89.6% [26] | +12.3% over baseline methods |
| Adaptive Identity-Regularized GAN | Biological Validation | Expert Quality Score: 88.7% [26] | Morphological plausibility assurance |

Table 2: Domain-Specific Advantages and Limitations

| Architecture | Geometric Morphometrics | Drug Discovery | Medical Imaging |
|---|---|---|---|
| Standard GAN | Generates basic shape variants [3] | Creates diverse drug-like molecules [23] | Limited application in complex anatomical contexts |
| Conditional GAN | Enables class-specific shape generation | Target-specific compound design [23] | Precision anatomical manipulation (fracture reduction) [24] |
| Adaptive Identity-Regularized GAN | Preserves taxonomically relevant morphological features | Species-specific bioactive compound generation | Biologically authentic synthetic tissue generation |

Experimental Protocols

Protocol 1: Implementing Adaptive Identity-Regularized GANs for Morphological Data Augmentation

This protocol details the procedure for implementing adaptive identity-regularized GANs, specifically designed for enhancing fish classification and segmentation performance through biologically-constrained data augmentation [26].

Materials: Fish dataset with 9,000 images across 9 species (1,000 samples each), deep learning framework with GAN implementation capabilities, high-performance computing resources, taxonomic reference database.

Procedure:

Data Preprocessing:

- Collect and annotate fish images with species labels and segmentation masks.

- Perform image normalization and augmentation using standard transformations.

- Partition data into training (70%), validation (15%), and test (15%) sets.

Model Architecture Configuration:

- Implement adaptive identity blocks within the generator network to preserve species-invariant features.

- Design species-specific loss functions incorporating morphological constraints and taxonomic relationships.

- Configure the discriminator with multi-scale feature extraction and attention mechanisms.

Two-Phase Training:

- Phase 1 (Feature Preservation): Train generator with emphasis on identity mapping to establish stable species characteristics.

- Phase 2 (Controlled Variation): Introduce morphological variations while maintaining biological plausibility through adaptive sampling.

Validation and Evaluation:

- Quantitative assessment using classification accuracy, mean IoU for segmentation.

- Biological validation by domain experts to evaluate morphological authenticity.

- Statistical significance testing with p<0.001 threshold and effect size calculation.

Troubleshooting:

- For training instability: Adjust learning rates separately for generator and discriminator.

- For mode collapse: Implement minibatch discrimination and feature matching.

- For biologically implausible outputs: Strengthen species-specific loss constraints.

Protocol 2: Conditional GANs for Geometric Morphometric Augmentation

This protocol adapts cGAN methodologies for geometric morphometric data augmentation, particularly valuable for paleontological and archaeological applications where sample sizes are limited [3] [27].

Materials: Landmark coordinate data, 3D specimen models when applicable, computing environment with support for geometric operations, reference taxonomy.

Procedure:

Data Preparation:

- Digitize landmark coordinates following standardized geometric morphometric protocols.

- Perform Generalized Procrustes Analysis (GPA) to remove non-shape variation.

- Convert landmark data to appropriate input format for cGAN processing.

Conditional GAN Configuration:

- Implement conditional input layers for taxonomic or morphological class labels.

- Configure generator to produce synthetic landmark configurations.

- Design discriminator to evaluate authenticity of landmark sets while considering class labels.

Training Process:

- Train generator and discriminator alternately with balanced batches.

- Incorporate graph-based regularization when working with population data [28].

- Monitor training progress with validation set shape statistics.

Synthetic Data Validation:

- Assess synthetic landmark quality through Procrustes distance to real specimens.

- Evaluate preservation of morphological relationships using Principal Component Analysis.

- Test utility through downstream classification tasks with augmented datasets.

Troubleshooting:

- For unrealistic shape generation: Increase weight of shape constraint terms in loss function.

- For poor class separation: Adjust conditional input architecture and embedding dimensions.

- For landmark correspondence issues: Implement landmark synchronization algorithms.

Protocol 3: Standard GANs for Molecular Structure Generation

This protocol outlines the application of standard GAN architectures for molecular generation in drug discovery contexts, based on the FSGLD pipeline and related approaches [29] [23].

Materials: Molecular database (e.g., ChEMBL, ZINC), molecular fingerprinting software, computing resources with GPU acceleration, molecular docking software.

Procedure:

Data Preparation:

- Curate molecular dataset with desired properties (e.g., drug-likeness, target activity).

- Convert molecular structures to appropriate representation (fingerprints, graphs, or SMILES).

- Split data into training, validation, and test sets.

GAN Implementation:

- Implement generator network that maps random noise to molecular representations.

- Design discriminator to distinguish real from generated molecular structures.

- Select appropriate architectural variants (DCGAN, WGAN) based on data characteristics.

Training and Optimization:

- Train adversarial networks with balanced sampling from real molecular dataset.

- Implement gradient penalty or other regularization for training stability.

- Monitor diversity and quality of generated structures throughout training.

Validation and Application:

- Assess chemical validity and novelty of generated molecules.

- Evaluate synthetic accessibility and drug-like properties.

- Integrate with downstream molecular docking and dynamics simulations.
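
The novelty and diversity checks mentioned above can be approximated on fingerprint bit sets without any cheminformatics dependency. The sketch below is a simplified stand-in: it assumes each molecule is represented by the set of its "on" fingerprint bits, and it treats identical fingerprints as identical molecules, which is only an approximation because distinct structures can share a fingerprint. The toy bit sets are invented for illustration.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def novelty(generated, training):
    """Fraction of generated fingerprints that do not appear in the training set."""
    seen = {frozenset(fp) for fp in training}
    return sum(frozenset(fp) not in seen for fp in generated) / len(generated)

def internal_diversity(generated):
    """Mean pairwise Tanimoto distance (1 - similarity) across generated fingerprints."""
    pairs = list(combinations(generated, 2))
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)

training = [{1, 4, 9}, {2, 4, 7}]                 # toy training fingerprints
generated = [{1, 4, 9}, {1, 4, 8}, {3, 5, 6}]     # toy generator output
nov = novelty(generated, training)
div = internal_diversity(generated)
print(f"novelty={nov:.3f}, internal diversity={div:.3f}")
```

Tracking both numbers during training helps distinguish a generator that memorizes the training set (low novelty) from one that has collapsed onto a few outputs (low diversity).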

Troubleshooting:

- For invalid molecular structures: Adjust representation or add validity constraints.

- For limited diversity: Implement diversity-enforcing loss terms or sampling strategies.

- For poor chemical properties: Incorporate property prediction into discriminator.

Workflow Visualization

Diagram 1: Comparative GAN workflow for data augmentation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for GAN Implementation in Geometric Morphometrics and Drug Discovery

| Reagent Category | Specific Solution | Function | Implementation Example |
|---|---|---|---|
| Data Representation | Landmark Coordinates | Capture morphological shape information | Type I, II, III landmarks with semi-landmarks for curves [3] |
| Data Representation | Molecular Fingerprints | Represent chemical structures | Extended-Connectivity Fingerprints (ECFP6), MACCS keys [23] |
| Data Representation | Image Tensors | Standardized image input | Normalized 3D arrays (e.g., 160×160×160 for CT scans) [24] |
| Architectural Components | Adaptive Identity Blocks | Preserve invariant features during generation | Species-specific morphological feature retention [26] |
| Architectural Components | Graph Regularization | Maintain population structure | Inter-subject similarity preservation in manifold-valued data [28] |
| Architectural Components | Multi-Scale Discriminators | Enhance sample discrimination | Hierarchical feature extraction for improved realism [26] |
| Training Mechanisms | Species-Specific Loss | Incorporate biological constraints | Taxonomic relationship integration in loss calculation [26] |
| Training Mechanisms | Adversarial Loss | Drive competition between networks | Standard, Wasserstein, or manifold-aware variants [28] |
| Training Mechanisms | Reconstruction Loss | Maintain input-output similarity | Mean squared error or structural similarity measures [28] |
| Validation Tools | Biological Expert Evaluation | Assess morphological plausibility | Quality scoring by domain specialists (e.g., 88.7% score) [26] |
| Validation Tools | Geometric Morphometric Analysis | Quantify shape characteristics | Procrustes analysis, principal component analysis [3] |
| Validation Tools | Molecular Docking | Evaluate binding affinity | Virtual screening of generated compounds [23] |

The architectural blueprints presented for Standard GANs, Conditional GANs, and Adaptive Identity-Regularized GANs provide a framework for geometric morphometric data augmentation across scientific domains. The reported performance figures come from different tasks and use different metrics, so they illustrate what each architecture has achieved rather than a direct ranking: standard architectures (molecular generation AUC: 0.94), conditional models (fracture reduction satisfaction: 88.37%), and specialized adaptive identity implementations (fish classification accuracy: 95.1%). The experimental protocols and reagent solutions offer practical guidance for implementation, while the workflow visualization illustrates how these approaches connect. As generative methods continue to evolve, these architectural foundations should help researchers address increasingly complex challenges in morphological analysis and pharmaceutical development, particularly in the data-limited scenarios common in specialized scientific fields.

Application Notes

The integration of generative artificial intelligence (AI) into geometric morphometrics (GM) offers a revolutionary approach to overcoming the critical limitation of small and incomplete datasets, particularly prevalent in paleontology and taxonomic studies [3]. The core challenge lies in augmenting these datasets in a way that preserves the fundamental biological shape relationships and inherent morphological constraints, ensuring that synthetic data are not just statistically plausible but also biologically meaningful [3] [30]. Geometric morphometrics provides a powerful multivariate statistical toolkit for the quantitative analysis of biological form based on Cartesian landmark coordinates, which mathematically define the geometry of a morphology [3] [31].

Generative models, such as Generative Adversarial Networks (GANs), have demonstrated significant potential in this domain. A GAN consists of two competing neural networks: a Generator that creates synthetic data and a Discriminator that evaluates its authenticity [3]. When trained on Procrustes-aligned landmark coordinates, which are shape variables independent of size, position, and orientation, these models can learn the complex, non-linear probability distribution of biological shapes in a sample [3] [31]. The success of this approach is evidenced by studies where GANs produced multidimensional synthetic data that were statistically equivalent to the original training data [3].
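
For reference, the competition between the two networks is commonly written as the minimax objective from the original GAN formulation. It is given here as general background; whether the cited morphometric studies use exactly this loss or a variant is not stated in this section. In this notation, x is a real Procrustes-aligned landmark vector, z is a random noise vector, G is the Generator, and D is the Discriminator's estimated probability that its input is real.

```latex
\min_{G}\,\max_{D}\; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\!\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_{z}}\!\left[\log\!\left(1 - D(G(z))\right)\right]
```

The Discriminator raises V by scoring real shapes near 1 and synthetic shapes near 0, while the Generator lowers V by producing shapes that the Discriminator scores as real.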

More recently, advanced architectures like latent diffusion models have shown even greater promise in biologically demanding contexts. For instance, MorphDiff, a transcriptome-guided latent diffusion model, simulates high-fidelity cell morphological responses to genetic and drug perturbations [32]. By using perturbed gene expression profiles as a conditioning input, the model effectively captures the intricate relationship between molecular state and phenotypic outcome, generating realistic cellular morphologies that can accurately predict mechanisms of action (MOA) for drugs [32]. This exemplifies a powerful method for incorporating rich domain knowledge (transcriptomics) directly into the generative process.

The fidelity of these models is paramount. As highlighted in taphonomic research, methods that fail to adequately represent the full spectrum of morphological variation, such as by excluding non-oval tooth pits from analyses, can produce misleading results and low classification accuracy [14]. Therefore, the key to preserving biological fidelity is the conscientious incorporation of domain knowledge, which can manifest as phylogenetic constraints, allometric growth trajectories, or functional/developmental modules [30].

Table 1: Key Generative Models for Morphometric Data Augmentation

| Model Type | Core Mechanism | Advantages in GM | Example Application |
|---|---|---|---|
| Generative Adversarial Network (GAN) [3] | Adversarial training between Generator and Discriminator. | Produces highly realistic synthetic landmark data; overcomes linearity assumptions. | Augmenting fossil landmark datasets with statistically equivalent synthetic specimens. |
| Latent Diffusion Model [32] | Reverses a gradual noising process conditioned on external data. | Highly robust to noise; supports flexible conditioning (e.g., on gene expression); superior image synthesis. | Predicting cell morphology changes under unseen drug perturbations (MorphDiff). |
| Conditional GAN (cGAN) [3] | GAN architecture where generation is conditioned on specific labels. | Potentially allows for targeted generation of shapes per taxonomic group or treatment. | Noted as less successful in some GM experiments compared to other GANs. |

Experimental Protocols

Protocol 1: Data Augmentation for Fossil Landmarks Using GANs

This protocol outlines the procedure for augmenting a landmark dataset of fossil specimens using a Generative Adversarial Network, as derived from experimental applications in geometric morphometrics [3].

1. Landmarking and Shape Variable Acquisition:

- Landmark Digitization: Collect two-dimensional (2D) or three-dimensional (3D) coordinate data from landmarks, defined as biologically homologous anatomical points that correspond across all specimens in the dataset [31].

- Procrustes Superimposition: Perform a Generalized Procrustes Analysis (GPA) to remove the non-shape variations of scale, position, and orientation from the raw landmark coordinates. This involves:

- Centering each configuration to a common origin.

- Scaling all configurations to unit size, with size typically measured as Centroid Size (the square root of the sum of squared distances of all landmarks from their centroid) [33] [31].

- Rotating configurations to minimize the Procrustes distance between each specimen and the sample mean shape.

- The resulting Procrustes shape coordinates constitute the primary shape variables for analysis [31].
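
The three superimposition steps above can be sketched in NumPy as follows. This is a minimal partial-Procrustes illustration, assuming fixed landmarks only (no sliding semi-landmarks) and excluding reflections; the function names and the synthetic test shapes are hypothetical, and established morphometrics packages should be preferred for real analyses.

```python
import numpy as np

def center_and_scale(config):
    """Translate a (k, d) configuration to the origin and scale to unit centroid size."""
    centered = config - config.mean(axis=0)
    centroid_size = np.sqrt((centered ** 2).sum())
    return centered / centroid_size

def rotate_onto(config, reference):
    """Rotate config onto reference with the least-squares optimal rotation."""
    u, _, vt = np.linalg.svd(config.T @ reference)
    # Flip the last axis if needed so the result is a rotation, not a reflection.
    u[:, -1] *= np.sign(np.linalg.det(u @ vt))
    return config @ u @ vt

def generalized_procrustes(configs, n_iter=10, tol=1e-10):
    """Iteratively align (n, k, d) landmark sets to their evolving mean shape."""
    shapes = np.array([center_and_scale(c) for c in configs])
    mean = shapes[0]
    for _ in range(n_iter):
        shapes = np.array([rotate_onto(s, mean) for s in shapes])
        new_mean = center_and_scale(shapes.mean(axis=0))
        if np.linalg.norm(new_mean - mean) < tol:
            break
        mean = new_mean
    return shapes, mean

# Toy check: one shape under random scale, rotation, and translation.
rng = np.random.default_rng(0)
base = rng.standard_normal((8, 2))

def random_pose(shape):
    theta = rng.uniform(0, 2 * np.pi)
    rot = np.array([[np.cos(theta), -np.sin(theta)],
                    [np.sin(theta), np.cos(theta)]])
    return rng.uniform(0.5, 3.0) * shape @ rot + rng.standard_normal(2)

configs = np.array([random_pose(base) for _ in range(5)])
aligned, mean_shape = generalized_procrustes(configs)
print(np.abs(aligned - aligned[0]).max())  # close to zero: only pose differed
```

Because the toy specimens differ only in scale, position, and orientation, superimposition collapses them onto a single shape; with real specimens, the residual differences are the shape variation passed on to the generative model.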

2. GAN Training and Data Generation:

- Input Data Preparation: Format the Procrustes shape coordinates into a single data matrix where each row represents one specimen and each column represents a shape coordinate.

- Model Configuration: Implement a GAN architecture. The generator network should take a random noise vector as input and output a vector of synthetic shape coordinates. The discriminator network should take a vector of shape coordinates (real or synthetic) and output a probability of it being real [3].

- Adversarial Training: Train the generator and discriminator together, alternating their updates. The generator learns to produce synthetic landmark data that the discriminator cannot distinguish from the real Procrustes-aligned training data [3].

- Synthetic Data Generation: After training, use the generator model to produce new, synthetic landmark configurations.
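
The data formatting and the adversarial losses in this step can be sketched as below. The reshaping helpers follow directly from the one-row-per-specimen layout described above; the two loss functions are the standard binary cross-entropy formulation with a non-saturating generator loss, which is an assumption here since the exact loss used in [3] is not specified in this section. The networks themselves are omitted, and all names are illustrative.

```python
import numpy as np

def to_data_matrix(shapes):
    """Flatten (n, k, d) aligned landmarks into an (n, k*d) training matrix."""
    shapes = np.asarray(shapes, dtype=float)
    return shapes.reshape(shapes.shape[0], -1)

def to_landmarks(flat, n_landmarks, n_dims):
    """Reshape generator output of shape (m, k*d) back into (m, k, d) landmark sets."""
    return np.asarray(flat, dtype=float).reshape(-1, n_landmarks, n_dims)

def discriminator_loss(p_real, p_fake, eps=1e-12):
    """Binary cross-entropy: push D(real) toward 1 and D(fake) toward 0."""
    return float(-(np.log(p_real + eps).mean() + np.log(1.0 - p_fake + eps).mean()))

def generator_loss(p_fake, eps=1e-12):
    """Non-saturating generator loss: push D(fake) toward 1."""
    return float(-np.log(p_fake + eps).mean())

shapes = np.arange(24, dtype=float).reshape(2, 6, 2)   # 2 specimens, 6 landmarks, 2D
flat = to_data_matrix(shapes)
restored = to_landmarks(flat, n_landmarks=6, n_dims=2)
print(flat.shape, restored.shape)
```

A discriminator that outputs 0.5 for every input gives a discriminator loss of 2 ln 2 and a generator loss of ln 2; this corresponds to a discriminator that cannot separate real from synthetic shapes.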

3. Validation and Fidelity Assessment:

- Statistical Comparison: Use robust statistical methods, such as Multivariate Analysis of Variance (MANOVA) or Procrustes distance-based tests, to check whether the synthetic data distribution differs significantly from the original training data distribution [3]. A non-significant result is consistent with the two samples sharing a distribution but does not by itself demonstrate equivalence.

- Visualization: Visualize the synthetic shapes using deformation grids (e.g., thin-plate splines) to qualitatively assess whether the generated forms are biologically plausible and fall within the expected morphospace [3] [31].
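
A distance-based comparison of the kind described above can be sketched as a permutation test on the distance between mean shapes. The example assumes real and synthetic specimens share one Procrustes alignment and are flattened to one row each, so that Euclidean distance between mean shapes approximates Procrustes distance. It is an illustrative test, not the specific procedure used in [3], and the random arrays are stand-ins for data.

```python
import numpy as np

def mean_shape_distance(a, b):
    """Euclidean distance between the mean shapes of two (n, p) samples."""
    return float(np.linalg.norm(a.mean(axis=0) - b.mean(axis=0)))

def permutation_test(real, synthetic, n_perm=999, seed=0):
    """Permutation p-value for the distance between real and synthetic mean shapes."""
    rng = np.random.default_rng(seed)
    observed = mean_shape_distance(real, synthetic)
    pooled = np.vstack([real, synthetic])
    n_real = len(real)
    exceed = 0
    for _ in range(n_perm):
        shuffled = rng.permutation(pooled)  # shuffles rows, i.e. group membership
        if mean_shape_distance(shuffled[:n_real], shuffled[n_real:]) >= observed:
            exceed += 1
    return observed, (exceed + 1) / (n_perm + 1)

rng = np.random.default_rng(1)
real = rng.normal(scale=0.01, size=(30, 20))
similar = rng.normal(scale=0.01, size=(30, 20))          # same distribution as real
shifted = rng.normal(scale=0.01, size=(30, 20)) + 0.05   # systematically biased shapes
_, p_similar = permutation_test(real, similar)
_, p_shifted = permutation_test(real, shifted)
print(f"p (similar) = {p_similar:.3f}, p (shifted) = {p_shifted:.3f}")
```

A small p-value flags a generator whose output is systematically displaced from the real mean shape. A large p-value only indicates that no such displacement was detected at this sample size.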

Protocol 2: Predicting Cellular Morphology with a Transcriptome-Guided Diffusion Model

This protocol details the methodology for MorphDiff, a state-of-the-art model that predicts cell morphological changes under perturbations using a conditioned diffusion model [32].

1. Multi-Modal Data Curation:

- Cell Morphology Imaging: Acquire high-throughput cell morphology images, for instance, using the Cell Painting assay which typically produces five-channel images (DNA, ER, RNA, AGP, Mito) [32].

- Transcriptomic Profiling: For the same cell populations under perturbation, obtain corresponding gene expression profiles. The L1000 assay is a common choice for this purpose [32].

- Data Pairing: Ensure that each cell morphology image (or a pool of images) has a paired transcriptomic profile from the same perturbation condition.

2. Morphology Latent Space Encoding:

- Train a Morphology VAE (MVAE): Construct and train a Variational Autoencoder (VAE). The encoder compresses the high-dimensional cell morphology images into a low-dimensional latent vector, and the decoder reconstructs the images from this vector [32].

- Latent Representation Extraction: Use the trained encoder to convert all cell morphology images into their latent representations. This compressed space is where the diffusion model will operate, making training more computationally efficient [32].

3. Conditional Latent Diffusion Model Training:

- Conditioning Setup: Use the paired L1000 gene expression profiles as the conditioning signal for the diffusion model.

- Diffusion Process: The Latent Diffusion Model (LDM) is trained using a two-part process:

- Noising (Forward Process): Sequentially add Gaussian noise to the latent morphology representation over T steps until it becomes pure noise.

- Denoising (Reverse Process): Train a U-Net model to iteratively predict and remove the noise at each step, conditioned on the gene expression profile. The model is trained to minimize the difference between the predicted and actual noise [32].

- Implementation Detail: The gene expression condition is integrated into the U-Net via an attention mechanism, allowing the model to learn complex relationships between gene expression and morphological features [32].
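
The forward noising process and the noise-prediction objective described above can be illustrated with the standard closed-form expression used in denoising diffusion models. The sketch assumes a linear noise schedule with commonly used default values; the schedule, step count, and latent size are illustrative assumptions rather than MorphDiff's published settings, and the conditioned U-Net denoiser is deliberately omitted.

```python
import numpy as np

def make_alpha_bar(n_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal retention for an assumed linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, n_steps)
    return np.cumprod(1.0 - betas)

def add_noise(z0, t, alpha_bar, rng):
    """Sample z_t from q(z_t | z_0) in closed form; also return the noise used."""
    noise = rng.standard_normal(z0.shape)
    a = alpha_bar[t].reshape(-1, *([1] * (z0.ndim - 1)))  # broadcast per sample
    return np.sqrt(a) * z0 + np.sqrt(1.0 - a) * noise, noise

def noise_prediction_loss(predicted_noise, true_noise):
    """Mean squared error between predicted and actual noise."""
    return float(np.mean((predicted_noise - true_noise) ** 2))

rng = np.random.default_rng(0)
alpha_bar = make_alpha_bar(n_steps=1000)
z0 = rng.standard_normal((4, 8))        # stand-in for VAE latent vectors
t = rng.integers(0, 1000, size=4)       # one random timestep per sample
zt, eps = add_noise(z0, t, alpha_bar, rng)
print(zt.shape, alpha_bar[0], alpha_bar[-1])
```

During training, a denoiser would receive `zt`, `t`, and the gene expression condition, and its output would be compared with `eps` using `noise_prediction_loss`. By the final step almost no signal remains, which matches the "pure noise" endpoint of the forward process.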

4. Model Application and Downstream Analysis:

- Prediction Modes: The trained MorphDiff model can be used in two primary modes:

- G2I (Gene-to-Image): Generate a perturbed cell morphology from a random noise vector, conditioned solely on a perturbed gene expression profile.

- I2I (Image-to-Image): Transform an unperturbed cell morphology image into its predicted perturbed state, using the perturbed gene expression profile as a guide [32].

- Feature Extraction & MOA Prediction: Use image analysis tools (e.g., CellProfiler, DeepProfiler) to extract quantitative morphological features from the generated images. These features can then be used for downstream tasks such as Mechanism of Action (MOA) retrieval and analysis [32].
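
As a simple illustration of MOA retrieval from extracted features, the sketch below assigns each generated profile the label of its most similar reference profile by cosine similarity. It assumes features have already been extracted into fixed-length numeric vectors; the nearest-neighbor rule, the feature values, and the MOA labels are hypothetical and are not taken from the MorphDiff evaluation.

```python
import numpy as np

def cosine_similarity_matrix(queries, references):
    """Pairwise cosine similarity between rows of two feature matrices.

    Rows must be non-zero vectors; an all-zero row would divide by zero.
    """
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    r = references / np.linalg.norm(references, axis=1, keepdims=True)
    return q @ r.T

def retrieve_moa(query_features, reference_features, reference_moas):
    """Assign each query profile the MOA label of its most similar reference profile."""
    sims = cosine_similarity_matrix(query_features, reference_features)
    return [reference_moas[i] for i in sims.argmax(axis=1)]

reference_features = np.array([[1.0, 0.0, 0.2],
                               [0.0, 1.0, 0.1],
                               [0.1, 0.1, 1.0]])
reference_moas = ["moa_A", "moa_B", "moa_C"]      # hypothetical labels
generated_features = np.array([[0.9, 0.1, 0.1],
                               [0.0, 0.2, 0.8]])
predicted = retrieve_moa(generated_features, reference_features, reference_moas)
print(predicted)
```

In practice, features are usually normalized per plate or per batch before similarity is computed, since raw morphological features differ widely in scale.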

Workflow and Pathway Visualizations

Generative Morphometrics Workflow

MorphDiff Model Architecture

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item/Tool Name | Type | Primary Function in GM & Generative AI |