FEA in Biomedical Engineering: Unlocking Advantages, Navigating Limitations for Research and Development


Abstract

This article provides a comprehensive analysis of the advantages and limitations of Finite Element Analysis (FEA) for researchers and professionals in biomedical engineering and drug development. It explores the foundational principles of FEA, details its methodological applications in areas like medical device design and material science, offers best practices for troubleshooting and optimizing simulations, and critically examines validation strategies against traditional experimental data. The synthesis aims to equip scientists with the knowledge to effectively leverage FEA as a powerful, predictive tool in R&D while understanding its constraints to ensure reliable and translatable results.

Understanding FEA: Core Principles and Its Transformative Role in Biomedical Research

Finite Element Analysis (FEA) is a computational technique for numerically solving partial differential equations (PDEs) that arise in engineering and mathematical modeling. By subdividing a complex problem domain into smaller, simpler parts called finite elements, FEA transforms intractable PDEs into solvable systems of algebraic equations. This method has become indispensable across numerous engineering disciplines, including structural mechanics, heat transfer, fluid dynamics, and electromagnetic field analysis [1] [2]. The fundamental principle of FEA lies in its discretization approach, where a continuous physical system is represented by a finite number of elements interconnected at nodes, allowing for the approximation of complex behaviors within each element using simpler mathematical functions [3].

In the context of modern engineering research, FEA provides a powerful framework for investigating system behavior under various physical constraints while reducing reliance on expensive and time-consuming physical prototyping. The method is particularly good at handling complicated geometries and dissimilar material properties, and at capturing local effects that would be difficult to analyze with analytical methods [1]. For researchers in fields ranging from traditional engineering to the biomedical sciences, FEA serves as a virtual laboratory in which design parameters can be optimized and performance can be evaluated under simulated operating conditions.

Mathematical Foundations of FEA

The Discretization Process

The mathematical foundation of FEA begins with the concept of spatial discretization, where the problem domain (Ω) is subdivided into a finite number of elements. This mesh generation process creates smaller, regular subdomains (Ωₑ) that collectively approximate the original, potentially complex, geometry [1] [4]. The solution to the PDE is then approximated by linear combinations of basis functions within each element, with the accuracy of the solution heavily dependent on the mesh resolution and element type [5].

For a dependent variable u (which could represent temperature, displacement, or other physical quantities), the FEA approximation can be expressed as:

$$u(\mathbf{x}) \approx u_h(\mathbf{x}) = \sum_{i=1}^{N} u_i \psi_i(\mathbf{x})$$

where $u_i$ are the coefficients representing the solution at discrete nodes, and $\psi_i(\mathbf{x})$ are the basis functions (also called shape functions) that interpolate the solution between nodes [5]. The power of this approach lies in the local support of these basis functions: each function is nonzero only over a small region of the domain, typically limited to the adjacent elements sharing a common node [5].
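To make the expansion $u_h(\mathbf{x}) = \sum_i u_i \psi_i(\mathbf{x})$ concrete, the sketch below builds piecewise-linear "hat" functions on a 1D mesh. It assumes linear Lagrange elements, the simplest common choice; the function names are illustrative, not taken from any FEA package. It also shows the local-support property: each $\psi_i$ is zero outside the elements touching node $i$.

```python
# Piecewise-linear ("hat") shape functions on a 1D mesh: an illustrative
# sketch of u_h(x) = sum_i u_i * psi_i(x), assuming linear Lagrange elements.

def hat(i, nodes, x):
    """Value of the i-th hat function psi_i at x (nodes sorted ascending)."""
    xi = nodes[i]
    # Rising branch on the element to the left of node i.
    if i > 0 and nodes[i - 1] <= x <= xi:
        return (x - nodes[i - 1]) / (xi - nodes[i - 1])
    # Falling branch on the element to the right of node i.
    if i < len(nodes) - 1 and xi <= x <= nodes[i + 1]:
        return (nodes[i + 1] - x) / (nodes[i + 1] - xi)
    # Local support: psi_i vanishes outside the elements sharing node i.
    return 0.0


def interpolate(coeffs, nodes, x):
    """Evaluate u_h(x) = sum_i u_i * psi_i(x)."""
    return sum(u_i * hat(i, nodes, x) for i, u_i in enumerate(coeffs))


nodes = [0.0, 0.5, 1.0]
coeffs = [xn ** 2 for xn in nodes]       # nodal values of u(x) = x^2
print(interpolate(coeffs, nodes, 0.25))  # prints 0.125; the exact u(0.25) is 0.0625
```

At the nodes the interpolant reproduces $u_i$ exactly, and between nodes it is only as accurate as a straight line, which is why mesh resolution governs accuracy.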

Weak Formulation

The transformation from the pointwise PDE to a solvable numerical system is achieved through the weak formulation. Rather than requiring the PDE to be satisfied exactly at every point, the weak form demands that the weighted average of the residual over the domain equals zero [1] [5]. This process begins by multiplying the PDE by a test function φ and integrating over the domain:

$$\int_{\Omega} [\nabla \cdot (k \nabla T)] \phi d\Omega = 0$$

Through integration by parts and application of boundary conditions, this formulation transforms the problem into finding a solution that satisfies the integral equation for all test functions in a specified function space [5]. The weak formulation offers significant mathematical advantages: it reduces the continuity requirements on the approximate solution, incorporates natural boundary conditions directly, and provides a framework for error analysis and convergence studies [1] [5].
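For the steady conduction example above, the integration-by-parts step can be written out. This is the standard derivation, given as a sketch; $\mathbf{n}$ (the outward unit normal) and $\partial\Omega$ (the domain boundary) are notation introduced here. Using the identity $\nabla \cdot (\phi k \nabla T) = \phi \, \nabla \cdot (k \nabla T) + k \nabla T \cdot \nabla \phi$ together with the divergence theorem:

$$\int_{\Omega} [\nabla \cdot (k \nabla T)] \phi \, d\Omega = \int_{\partial\Omega} \phi \, k \nabla T \cdot \mathbf{n} \, d\Gamma - \int_{\Omega} k \nabla T \cdot \nabla \phi \, d\Omega = 0$$

Only first derivatives of $T$ remain in the volume integral, which is the reduced continuity requirement noted above. The boundary integral is where prescribed fluxes (natural boundary conditions) enter; on boundaries where $T$ itself is prescribed (essential conditions), $\phi$ is chosen to vanish.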

Table 1: Key Mathematical Formulations in Finite Element Analysis

| Formulation Type | Mathematical Approach | Advantages | Application Context |
|---|---|---|---|
| Strong Form | Direct solution of the original PDE | Exact solution at every point (when obtainable) | Simple geometries with analytical solutions |
| Weak Form | Integral formulation using test functions | Handles non-smooth solutions, incorporates natural boundary conditions | Complex real-world problems with discontinuous material properties |
| Galerkin Method | Test functions same as basis functions | Symmetric matrices for self-adjoint problems, optimal approximation in the energy norm | Most standard FEA applications |
| Petrov-Galerkin | Different test and basis functions | Enhanced stability for convection-dominated problems | Fluid dynamics and transport problems |

Core FEA Methodology: From Problem Definition to Solution

Meshing Strategies and Element Types

The creation of an appropriate finite element mesh is a critical step that significantly influences the accuracy and computational cost of the analysis. The mesh consists of elements (triangles, quadrilaterals, tetrahedra, etc.) connected at nodes, forming a discrete representation of the continuous domain [3] [4]. Two fundamental mesh resolution strategies exist: h-refinement, which increases the number of elements to improve accuracy, and p-refinement, which increases the polynomial order of the shape functions within elements [1].

The selection of element type and size depends on the problem characteristics, with finer meshes typically required in regions with high solution gradients or complex geometry [4]. Modern FEA practice often employs adaptive meshing: the solution is first computed on a coarse mesh, and the mesh is then automatically refined in areas with high error estimates to balance computational efficiency against solution accuracy [4].
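The effect of h-refinement can be seen by halving the element size and measuring how the error of a piecewise-linear approximation shrinks. The sketch below uses interpolation of an assumed smooth test function, $\sin(\pi x)$, as a stand-in for a full FE solve, and samples the error at a fixed number of points per element. For linear elements on smooth solutions the maximum error is expected to scale as $O(h^2)$, so each halving should cut it by roughly a factor of four.

```python
import math


def max_interp_error(f, n_elems, samples_per_elem=20):
    """Sampled max |f(x) - I_h f(x)| on [0, 1] for piecewise-linear interpolation."""
    h = 1.0 / n_elems
    worst = 0.0
    for e in range(n_elems):
        a, b = e * h, (e + 1) * h
        fa, fb = f(a), f(b)
        for k in range(samples_per_elem + 1):
            x = a + k * h / samples_per_elem
            linear = fa + (fb - fa) * (x - a) / h  # linear shape functions on [a, b]
            worst = max(worst, abs(f(x) - linear))
    return worst


def smooth_test_function(x):
    return math.sin(math.pi * x)  # assumed stand-in for a smooth exact solution


errors = [max_interp_error(smooth_test_function, n) for n in (4, 8, 16, 32)]
ratios = [coarse / fine for coarse, fine in zip(errors, errors[1:])]
print([round(r, 2) for r in ratios])  # ratios should approach 4 as h shrinks
```

p-refinement would instead raise the polynomial order inside each element; for smooth solutions that typically improves the convergence rate rather than just the constant.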

Assembly and Solution of Global System

The core computational phase of FEA involves assembling the global system from element-level contributions and solving the resulting matrix equation [1] [2]. For each element, local matrices and vectors are computed based on the element geometry and material properties. These local contributions are then systematically combined into a global system of equations:

$$[K]\{u\} = \{F\}$$

where $[K]$ is the global stiffness matrix (typically sparse and symmetric), $\{u\}$ is the vector of unknown nodal values, and $\{F\}$ is the global load vector [2]. The solution of this linear system represents the approximate values of the field variable at the node points, from which the complete solution throughout the domain can be reconstructed using the shape functions [5].
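The assembly step can be illustrated on a 1D model problem: steady heat conduction $-(kT')' = q$ on $[0, L]$ with $T = 0$ at both ends, chosen here to match the conduction example in the weak-form discussion. This is a minimal sketch, assuming constant $k$ and $q$ and linear elements; the helper names are hypothetical, and dense lists are used for readability where production codes store [K] as a sparse matrix. Each element contributes a 2×2 local matrix that is scattered into [K] through a local-to-global node map, and the system [K]{u} = {F} is then solved directly.

```python
def assemble_1d_conduction(n_elems, length, k, q):
    """Assemble global [K] and {F} for -(k T')' = q on [0, length], linear elements."""
    n_nodes = n_elems + 1
    h = length / n_elems
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    F = [0.0] * n_nodes
    k_local = [[k / h, -k / h], [-k / h, k / h]]  # element conductivity ("stiffness")
    f_local = [q * h / 2.0, q * h / 2.0]           # consistent load for uniform source q
    for e in range(n_elems):
        dofs = (e, e + 1)                          # local-to-global node map
        for a in range(2):
            F[dofs[a]] += f_local[a]
            for b in range(2):
                K[dofs[a]][dofs[b]] += k_local[a][b]
    return K, F


def apply_dirichlet(K, F, node, value):
    """Impose T[node] = value by row/column elimination, keeping [K] symmetric."""
    for i in range(len(F)):
        F[i] -= K[i][node] * value
        K[i][node] = 0.0
        K[node][i] = 0.0
    K[node][node] = 1.0
    F[node] = value


def solve_dense(K, F):
    """Direct solve by Gaussian elimination without pivoting (adequate for SPD [K])."""
    n = len(F)
    A = [row[:] for row in K]
    b = F[:]
    for p in range(n):                             # forward elimination
        for r in range(p + 1, n):
            factor = A[r][p] / A[p][p]
            for c in range(p, n):
                A[r][c] -= factor * A[p][c]
            b[r] -= factor * b[p]
    u = [0.0] * n
    for r in range(n - 1, -1, -1):                 # back substitution
        s = sum(A[r][c] * u[c] for c in range(r + 1, n))
        u[r] = (b[r] - s) / A[r][r]
    return u


K, F = assemble_1d_conduction(n_elems=8, length=1.0, k=2.0, q=10.0)
apply_dirichlet(K, F, node=0, value=0.0)
apply_dirichlet(K, F, node=8, value=0.0)
T = solve_dense(K, F)
# Exact solution: T(x) = q x (L - x) / (2k), so T(0.5) = 0.625.
print(T[4])
```

For this particular 1D problem, linear elements reproduce the exact solution at the nodes, which makes it a convenient correctness check before moving to geometries without closed-form answers.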

Table 2: FEA Solution Algorithms and Applications

| Solution Method | Algorithm Characteristics | Computational Complexity | Typical Applications |
|---|---|---|---|
| Direct Solvers (LU, Cholesky) | Robust, predictable performance | O(n³) for dense matrices; much lower for sparse matrices with good ordering | Moderate-sized problems (roughly <10⁶ DOF) |
| Iterative Solvers (CG, GMRES) | Lower memory requirements | O(nnz) per iteration, where nnz is the number of nonzero matrix entries (≈O(n) for sparse FE matrices) | Large-scale problems with >10⁶ DOF |
| Preconditioned Iterative | Accelerates convergence | Problem-dependent | Ill-conditioned systems, multiphysics |
| Eigenvalue Solvers | Find natural frequencies and mode shapes | O(n³) for a full dense decomposition; Lanczos or subspace iteration for a few modes is much cheaper | Structural dynamics, wave propagation |
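Table 2 attributes lower memory requirements to iterative solvers. One reason is that a method such as conjugate gradient (CG) needs only matrix-vector products, so the matrix never has to be factorized or even stored explicitly. The sketch below, an unpreconditioned CG under the assumption of a symmetric positive-definite system, applies it "matrix-free" to tridiag(−1, 2, −1), which is the scaled 1D conduction stiffness on interior nodes. The function names are illustrative.

```python
import math


def tridiag_matvec(x):
    """y = A x for A = tridiag(-1, 2, -1); only this product is needed, so A is never stored."""
    n = len(x)
    y = [2.0 * xi for xi in x]
    for i in range(n):
        if i > 0:
            y[i] -= x[i - 1]
        if i < n - 1:
            y[i] -= x[i + 1]
    return y


def conjugate_gradient(matvec, b, tol=1e-10, max_iter=1000):
    """Unpreconditioned CG for symmetric positive-definite systems A x = b."""
    x = [0.0] * len(b)
    r = list(b)                                  # residual b - A x for x = 0
    p = list(r)                                  # first search direction
    rs_old = sum(rk * rk for rk in r)
    for it in range(max_iter):
        if math.sqrt(rs_old) < tol:
            return x, it
        Ap = matvec(p)
        alpha = rs_old / sum(pk * apk for pk, apk in zip(p, Ap))
        x = [xk + alpha * pk for xk, pk in zip(x, p)]
        r = [rk - alpha * apk for rk, apk in zip(r, Ap)]
        rs_new = sum(rk * rk for rk in r)
        p = [rk + (rs_new / rs_old) * pk for rk, pk in zip(r, p)]
        rs_old = rs_new
    return x, max_iter


n = 50
x, iterations = conjugate_gradient(tridiag_matvec, [1.0] * n)
print(iterations)  # iteration count; in exact arithmetic CG needs at most n
```

The iteration count grows with the condition number of the matrix, which for FE stiffness matrices worsens as the mesh is refined; this is the motivation for the preconditioned variants in the table.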

Research Reagent Solutions: Essential Tools for FEA

The effective implementation of FEA requires both sophisticated software tools and proper methodological approaches. The table below outlines key "research reagents" – essential software and methodological components – for conducting rigorous finite element analysis.

Table 3: Essential Research Reagents for Finite Element Analysis

| Tool Category | Representative Examples | Primary Function | Research Context |
|---|---|---|---|
| General-purpose FEA | ANSYS, Abaqus, COMSOL | Multiphysics simulation across mechanical, thermal, fluid domains | Broad engineering applications requiring coupled physics [3] [6] |
| Specialized FEA | NASTRAN (aerospace), LS-DYNA (impact), HeartFlow (medical) | Domain-specific solutions with tailored capabilities | Targeted applications with specialized material models or boundary conditions [1] [7] |
| Open-source simulation | MFEM, FEniCS (finite element); OpenFOAM (finite volume) | Customizable simulation frameworks for method development | Academic research, algorithm development, educational use [8] |
| Meshing Tools | Gmsh, ANSYS Meshing, HyperMesh | Geometry discretization with quality control | Pre-processing stage of FEA workflow [4] |
| CAD Integration | SolidWorks Simulation, Autodesk Inventor Nastran, Fusion 360 | Direct FEA on native CAD geometry | Design optimization and parametric studies [9] |

Advanced Discretization Strategies

Mesh Resolution and Adaptive Techniques

The accuracy of FEA solutions is intrinsically linked to the discretization strategy employed. The fundamental challenge lies in balancing mesh density against available computational resources [4]. A mesh that is too coarse may fail to capture critical solution features, while an excessively fine mesh consumes computational resources without a meaningful gain in accuracy [3]. A common rule of thumb for mesh resolution is to set the element size to 10-20% of the smallest spatial wavelength that must be resolved in the solution [4].
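The 10-20% element-size guideline turns into quick arithmetic that also shows why fine meshes become expensive. The sketch below assumes a structured hexahedral mesh of a cube-shaped domain. The 2 mm wavelength and 50 mm domain are illustrative values, not taken from any cited study.

```python
import math


def element_size_range(min_wavelength, low_frac=0.10, high_frac=0.20):
    """Element sizes from the 10-20%-of-smallest-wavelength rule of thumb."""
    return low_frac * min_wavelength, high_frac * min_wavelength


def elements_per_direction(domain_length, element_size):
    """Uniform-mesh element count along one direction, rounded up.

    The small subtraction guards against floating-point round-off turning an
    exact ratio such as 125.0 into 125.00000000000001 before ceil().
    """
    return math.ceil(domain_length / element_size - 1e-9)


# Illustrative values only: 2 mm smallest wavelength, 50 mm cube-shaped domain.
h_fine, h_coarse = element_size_range(2.0)
for h in (h_coarse, h_fine):
    n = elements_per_direction(50.0, h)
    print(f"h = {h:.2f} mm -> {n} per direction, ~{n ** 3:,} hexahedra in 3D")
```

Halving the element size doubles the count per direction but multiplies the 3D element count by eight, which is the main reason uniform refinement quickly becomes impractical.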

Adaptive meshing is a widely used advanced discretization strategy. It refines the mesh in regions with high solution gradients or large estimated errors while keeping a coarser discretization where the solution varies smoothly [4]. This improves computational efficiency while maintaining solution accuracy. The implementation typically follows an iterative process (solve → estimate error → refine → re-solve), continuing until global error measures fall below specified tolerances [4].
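The solve → estimate error → refine loop can be sketched in 1D. To keep the example self-contained, the "solve" step is replaced by evaluating an assumed field with a steep front, $\tanh(50(x - 0.5))$, and the error estimate is the interpolation error at each element midpoint. Both are assumptions for illustration, not the estimator used in any particular FEA package. Elements whose indicator exceeds the tolerance are bisected, and the loop stops when no element is flagged.

```python
import math


def midpoint_indicator(f, a, b):
    """Cheap per-element error estimate: |f(mid) - linear interpolant at mid|."""
    return abs(f(0.5 * (a + b)) - 0.5 * (f(a) + f(b)))


def adapt_mesh(f, nodes, tol, max_passes=30):
    """Solve -> estimate -> refine loop on a 1D mesh, using bisection refinement.

    Here "solve" is just evaluating f at the nodes; in a real FE code it would
    be a full solve on the current mesh.
    """
    for _ in range(max_passes):
        refined_nodes = [nodes[0]]
        changed = False
        for a, b in zip(nodes, nodes[1:]):
            if midpoint_indicator(f, a, b) > tol:    # estimate error
                refined_nodes.append(0.5 * (a + b))  # refine: bisect the element
                changed = True
            refined_nodes.append(b)
        nodes = refined_nodes
        if not changed:                              # every indicator is below tol
            break
    return nodes


def steep_front(x):
    return math.tanh(50.0 * (x - 0.5))  # assumed field with a sharp gradient at x = 0.5


mesh = adapt_mesh(steep_front, [0.0, 0.25, 0.5, 0.75, 1.0], tol=1e-3)
sizes = [b - a for a, b in zip(mesh, mesh[1:])]
print(len(sizes), min(sizes), max(sizes))
```

The resulting mesh keeps 0.25-wide elements where the field is flat and concentrates small elements around the front. A midpoint indicator is cheap but can miss features that happen to be symmetric about an element's midpoint, which is why production codes use residual- or recovery-based estimators.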

Specialized Discretization for Biomedical Applications

In biomedical research, FEA discretization strategies must address additional complexities such as anisotropic material properties, complex anatomical geometries, and multi-scale phenomena [7]. For cardiovascular applications, FEA models simulate patient-specific occluded coronary arteries to understand conditions favoring atherosclerotic plaques and evaluate treatment options like balloon angioplasty or stent implantation [7]. These models require particularly refined meshing at tissue-device interfaces where stress concentrations occur.

The discretization of physiological systems often employs multi-scale approaches, where different resolution levels are used for various anatomical features. For instance, in orthopedic biomechanics, multibody models represent body segments as rigid bodies for motion analysis, while detailed 3D FE models with refined meshing estimate stresses and strains exchanged between body segments and implanted prostheses [7]. This hierarchical discretization strategy enables efficient simulation of complex physiological systems.

FEA in Biomedical Research: Protocols and Applications

Cardiovascular Device Evaluation Protocol

The application of FEA in cardiovascular research follows a structured protocol for device evaluation and treatment planning. The patient-specific modeling protocol begins with acquiring medical imaging data (CT or MRI), followed by segmentation to create a 3D geometric model [7]. This model is then discretized into finite elements, with mesh refinement at critical regions such as vessel bifurcations or calcified plaques.

For stent implantation simulation, researchers assign appropriate material models to both the device (typically nitinol or stainless steel with nonlinear properties) and arterial tissue (often modeled as hyperelastic) [7]. Boundary conditions incorporate physiological pressures and vessel tethering, while contact algorithms model the stent-artery interaction. The simulation results predict vessel expansion, stent apposition, and stress distributions in the arterial wall—critical factors for evaluating treatment safety and efficacy [7].
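The text notes that arterial tissue is often modeled as hyperelastic without naming a specific model. As an assumed, simplest example, the sketch below evaluates the incompressible neo-Hookean model, $W = \frac{\mu}{2}(I_1 - 3)$, in uniaxial tension. With lateral stretches $\lambda^{-1/2}$ it gives axial Cauchy stress $\sigma = \mu(\lambda^2 - 1/\lambda)$. The shear modulus of 50 kPa is a placeholder, not a fitted arterial parameter. Arterial models in practice are often anisotropic and fiber-reinforced.

```python
def neo_hookean_uniaxial(stretch, mu):
    """Axial Cauchy stress of an incompressible neo-Hookean solid in uniaxial tension.

    Strain energy W = (mu / 2) * (I1 - 3); with lateral stretches 1/sqrt(stretch)
    and traction-free lateral faces, sigma = mu * (stretch**2 - 1/stretch).
    """
    if stretch <= 0:
        raise ValueError("stretch must be positive")
    return mu * (stretch ** 2 - 1.0 / stretch)


def nominal_stress(stretch, mu):
    """First Piola-Kirchhoff (force per undeformed area) = Cauchy stress / stretch."""
    return neo_hookean_uniaxial(stretch, mu) / stretch


MU = 50e3  # Pa, hypothetical placeholder shear modulus
for lam in (1.0, 1.1, 1.2, 1.3):
    print(f"stretch {lam:.1f}: Cauchy {neo_hookean_uniaxial(lam, MU) / 1e3:.1f} kPa")
```

At small strains the slope of the curve approaches $3\mu$, the Young's modulus of an incompressible linear-elastic material. At larger stretches the stress grows nonlinearly, which a linear-elastic tissue model cannot capture.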

FDA-cleared technologies such as HeartFlow and FEops HEARTguide exemplify the successful translation of these protocols to clinical practice, providing computational support for pre-operative planning of percutaneous coronary interventions and transcatheter aortic valve implantations [7].

Orthopedic Biomechanics Assessment

In orthopedic research, FEA protocols assess joint biomechanics, bone-implant interactions, and surgical outcomes. The standard protocol involves creating anatomical models from CT scans, with density-elasticity relationships mapping Hounsfield units to bone material properties [7]. Discretization strategies must balance computational demands with the need to capture complex trabecular structures and cortical shell geometries.
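A density-elasticity mapping of this kind usually has two stages: a linear calibration from Hounsfield units to apparent density, followed by a power law from density to Young's modulus. The sketch below follows that pattern, but every coefficient is a hypothetical placeholder. Real values come from a calibration phantom scanned with the patient and from a density-elasticity relation appropriate to the anatomical site. The modulus floor `e_min` is an added assumption to avoid zero-stiffness elements where voxels contain air or fat.

```python
def hu_to_density(hu, slope, intercept):
    """Linear CT calibration: apparent density (g/cm^3) from Hounsfield units.

    Negative densities (air, fat) are clipped to zero.
    """
    return max(intercept + slope * hu, 0.0)


def density_to_modulus(rho, coeff, exponent):
    """Power-law density-elasticity relation E = coeff * rho**exponent (MPa)."""
    return coeff * rho ** exponent


def element_moduli(element_hu, slope, intercept, coeff, exponent, e_min=1.0):
    """Map each element's mean HU to Young's modulus, with a floor e_min (MPa)
    so that near-empty voxels do not create zero-stiffness elements."""
    return [
        max(density_to_modulus(hu_to_density(hu, slope, intercept), coeff, exponent), e_min)
        for hu in element_hu
    ]


# Every coefficient below is a hypothetical placeholder, not a published calibration.
moduli = element_moduli([-100, 500, 1000], slope=0.001, intercept=0.0,
                        coeff=6000.0, exponent=1.5)
print([round(e, 1) for e in moduli])
```

Many workflows additionally bin the resulting moduli into a limited number of material groups to keep the model manageable in commercial solvers.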

For total joint replacement simulations, researchers apply physiological loading conditions representing activities of daily living, while modeling complex interactions between implant components and biological tissues [7]. These simulations predict bone remodeling patterns, implant stability, and potential failure mechanisms—providing valuable insights for implant design optimization and patient-specific surgical planning.

Advantages and Research Limitations

Methodological Strengths in Research Contexts

FEA offers researchers several distinct advantages that explain its widespread adoption across engineering and scientific disciplines. The method provides geometric flexibility, enabling the analysis of complex domains with irregular boundaries that would be intractable using analytical methods [1] [10]. This capability is particularly valuable in biomedical applications where anatomical structures defy simplified geometric representations.

The method's ability to handle multiphysics problems allows researchers to study coupled phenomena—such as thermomechanical, fluid-structure, or electro-thermal interactions—within a unified computational framework [7] [5]. Additionally, FEA supports material heterogeneity, allowing different material properties to be assigned to various regions of the model, which is essential for simulating biological tissues and composite materials [1].

Research Limitations and Methodological Constraints

Despite its powerful capabilities, FEA presents several limitations that researchers must acknowledge. The computational expense of high-fidelity simulations, particularly for nonlinear, transient, or multiphysics problems, can be prohibitive, requiring access to high-performance computing resources [4]. This constraint often forces researchers to make simplifying assumptions that may affect result accuracy.

The mesh dependency of solutions represents another significant limitation, where simulation results may vary with different discretization strategies, requiring careful mesh sensitivity studies to establish result reliability [4]. Additionally, the validation challenge is particularly acute in biomedical applications, where experimental data for model verification may be limited due to ethical and practical constraints [7].

For complex physiological systems, researchers face difficulties in establishing appropriate boundary conditions and material models that accurately represent in vivo environments and tissue behaviors [7]. These limitations highlight the importance of interpreting FEA results with appropriate scientific caution and employing rigorous verification and validation protocols.

Finite Element Analysis (FEA) has emerged as an indispensable numerical technique for modeling and simulating engineering processes across diverse industries, from biomedical implants to food packaging and automotive design [11]. The core strength of FEA lies in its ability to predict how products react to real-world forces, vibration, heat, fluid flow, and other physical effects, indicating whether a product will break, wear out, or function as designed [11]. This capability is particularly important in studying stress and strain concentration, the phenomenon in which stresses intensify at geometric discontinuities such as holes, notches, and grooves in continuous media under structural loading [12]. The advantages driving FEA adoption fall primarily into three areas: cost reduction through virtual prototyping, shorter design cycles, and strong predictive capability for complex physical behavior. Within the broader context of stress-concentration research, these advantages show how computational methods are transforming traditional engineering approaches, although they operate within methodological limitations that ongoing research continues to address.

Quantitative Advantages of FEA in Engineering Design

Table 1: Documented Performance Advantages of FEA Across Industries

| Application Domain | Reported Advantage | Documented Benefit | Source |
|---|---|---|---|
| Orthopedic Screw Design | Predictive accuracy for engagement failure | Identified dangerous (<30%) and optimal (>90%) engagement ranges | [13] |
| Food Packaging Design | Cost and time savings | Replaces the "design–prototype–test–redesign" approach; reduces physical prototyping | [11] |
| Dental Restoration Materials | Stress distribution prediction | Enabled material performance comparison under 100–250 N loads | [14] |
| General Engineering Design | Design optimization capability | Allows evaluation of multiple configurations without physical prototypes | [13] [11] |

Table 2: Economic and Efficiency Benefits of FEA Implementation

| Advantage Category | Traditional Approach | FEA-Enhanced Approach | Impact |
|---|---|---|---|
| Development Costs | Physical prototyping required | Virtual prototyping | Reduced material and manufacturing costs |
| Development Timeline | Sequential design-test-redesign | Concurrent design analysis | Accelerated time-to-market |
| Design Insight | Limited to surface strain measurements | Comprehensive stress/strain visualization | Enhanced understanding of failure mechanisms |
| Optimization Capability | Limited design variations due to cost | Numerous design iterations possible | Improved product performance and reliability |

Experimental Protocols in FEA Stress-Concentration Research

Case Study: Two-Part Compression Screw Engagement Analysis

A recent study on novel two-part compression screws illustrates a complete FEA protocol for determining optimal thread engagement percentages [13]. The methodology followed these steps:

Model Creation: Ten three-dimensional models representing different combinations of the two screw parts (ranging from 10% to 100% of the engagement length, at 10% intervals) were converted into finite element models [13].

Mesh Convergence Testing: A mesh convergence test was performed to determine the optimal element size. The model was considered converged when the change in peak von Mises stress between successive refinements was less than 5%. The final mesh consisted of 18,520 20-node tetrahedral solid elements [13].

Material Properties Assignment: The material properties of Ti6Al4V (elastic modulus: 113.8 GPa, Poisson's ratio: 0.342, and yield strength: 790 MPa) were assigned to the screw elements based on standardized data for orthopedic-grade titanium alloys [13].

Boundary Condition Application: To simulate clinically relevant loading scenarios, two extreme boundary conditions were applied at the screw head: a 1000-N axial pullout force and a 1-Nm bending moment. These values were selected to represent upper-bound physiological loads encountered in osteoporotic bone or during accidental overloading [13].

Interface Definition: The interface between the two screw parts was defined as a bonded contact, assuming complete thread interlocking without slippage or loosening, to isolate the structural response under idealized conditions [13].

Simulation Execution: All simulations were performed using linear static structural analysis in ANSYS 7.0 (ANSYS Inc., Canonsburg, PA, USA). Material behavior was modeled as homogeneous, isotropic, and linearly elastic [13].

This rigorous protocol yielded clinically significant findings: combinations with less than 30% engagement should be avoided due to high stress concentrations, while engagements exceeding 90% are recommended for optimal mechanical performance [13].
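The mesh convergence criterion in this protocol (less than 5% change in peak von Mises stress between successive refinements) is easy to automate. The sketch below assumes the refinement history is available as (element count, peak stress) pairs ordered coarse to fine. It returns the first finer mesh that meets the criterion. The numbers in the usage line are made up to exercise the function and are not results from the cited study.

```python
def first_converged_mesh(history, threshold=0.05):
    """Return (elements, peak_stress, relative_change) for the first mesh whose
    peak stress differs from the previous, coarser mesh by less than threshold.

    history: (element_count, peak_von_mises) pairs ordered coarse -> fine.
    Returns None if no successive pair meets the criterion yet.
    """
    for (_, s_prev), (n_curr, s_curr) in zip(history, history[1:]):
        change = abs(s_curr - s_prev) / abs(s_prev)
        if change < threshold:
            return n_curr, s_curr, change
    return None  # keep refining


# Made-up numbers purely to exercise the function; they are not from the study.
history = [(2000, 410.0), (5000, 455.0), (10000, 470.0), (20000, 474.0)]
print(first_converged_mesh(history))
```

One caution: at a true geometric singularity, such as a perfectly sharp re-entrant corner, the peak stress keeps rising with refinement, so a peak-stress criterion may never be met there. In that case, converge a quantity away from the singularity instead.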

Case Study: Stress Concentration in 3D-Printed Materials

Research on photosensitive resin parts printed on Masked Stereolithography (mSLA) devices employed an integrated validation approach combining FEA with analytical methods and experimental techniques [15]:

Specimen Preparation: Flat-plate specimens containing centrally located holes, which act as stress concentrators, were modeled in Computer-Aided Design (CAD) software and printed on mSLA devices [15].

Analytical Validation: The Whitney-Nuismer analytical method, based on point stress criteria, was used to predict the strength of specimens with central holes. This method considers the distribution of stresses along the load direction and uses two characteristic dimensions as material properties [15].

Experimental Validation: Digital Image Correlation (DIC) was used to validate the FEA results. DIC compares at least two images of the specimen surface (before and after deformation) to obtain in-plane strain fields; the surface must carry a suitable speckle pattern and the images must have adequate resolution [15].

Loading Conditions: Specimens were subjected to both axial and eccentric loads, with careful consideration of clamp restraint effects [15].

This multi-method approach showed close agreement: under axial loading, the stress concentration factors varied by only 0.42% to 5.25% across methods, supporting the accuracy of the FEA predictions [15].
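The analytical side of this comparison can be sketched for the simplest case. For an infinite isotropic plate with a circular hole, the Kirsch solution gives the normal stress ahead of the hole as $\sigma_y(x) = \frac{\sigma_\infty}{2}\left[2 + (R/x)^2 + 3(R/x)^4\right]$, with $K_t = 3$ at the edge. The Whitney-Nuismer point stress criterion predicts failure when this stress, at a characteristic distance $d_0$ ahead of the edge, reaches the unnotched strength $\sigma_0$. The sketch assumes isotropy and an infinite plate, so the orthotropic correction term and any finite-width correction are omitted. The method's average stress criterion, with its own characteristic distance, is not shown. The inputs are hypothetical, and the cited study's implementation may differ.

```python
def kirsch_sigma_y(x, radius, far_field):
    """Normal stress (load direction) at distance x from the centre of a circular
    hole in an infinite isotropic plate, along the axis perpendicular to the load.
    Exact Kirsch result on that axis; valid for x >= radius."""
    if x < radius:
        raise ValueError("x must lie outside the hole (x >= radius)")
    r = radius / x
    return far_field * (2.0 + r ** 2 + 3.0 * r ** 4) / 2.0


def whitney_nuismer_point_stress(radius, d0, unnotched_strength):
    """Notched (far-field) strength from the point stress criterion, isotropic case:
    failure when the stress at distance d0 ahead of the hole edge reaches the
    unnotched strength."""
    xi = radius / (radius + d0)
    return unnotched_strength * 2.0 / (2.0 + xi ** 2 + 3.0 * xi ** 4)


# Hypothetical inputs: 2 mm hole radius, d0 = 0.5 mm, unnotched strength 60 MPa.
sigma_n = whitney_nuismer_point_stress(radius=2.0, d0=0.5, unnotched_strength=60.0)
print(f"predicted notched strength: {sigma_n:.1f} MPa")
print(f"K_t at hole edge: {kirsch_sigma_y(2.0, 2.0, 1.0):.1f}")
```

With $d_0 \to 0$ the prediction collapses to $\sigma_0 / 3$ (full notch sensitivity). As $d_0$ grows it approaches $\sigma_0$, which is how the characteristic distance encodes the material's notch sensitivity.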

Visualization of FEA Workflows and Relationships

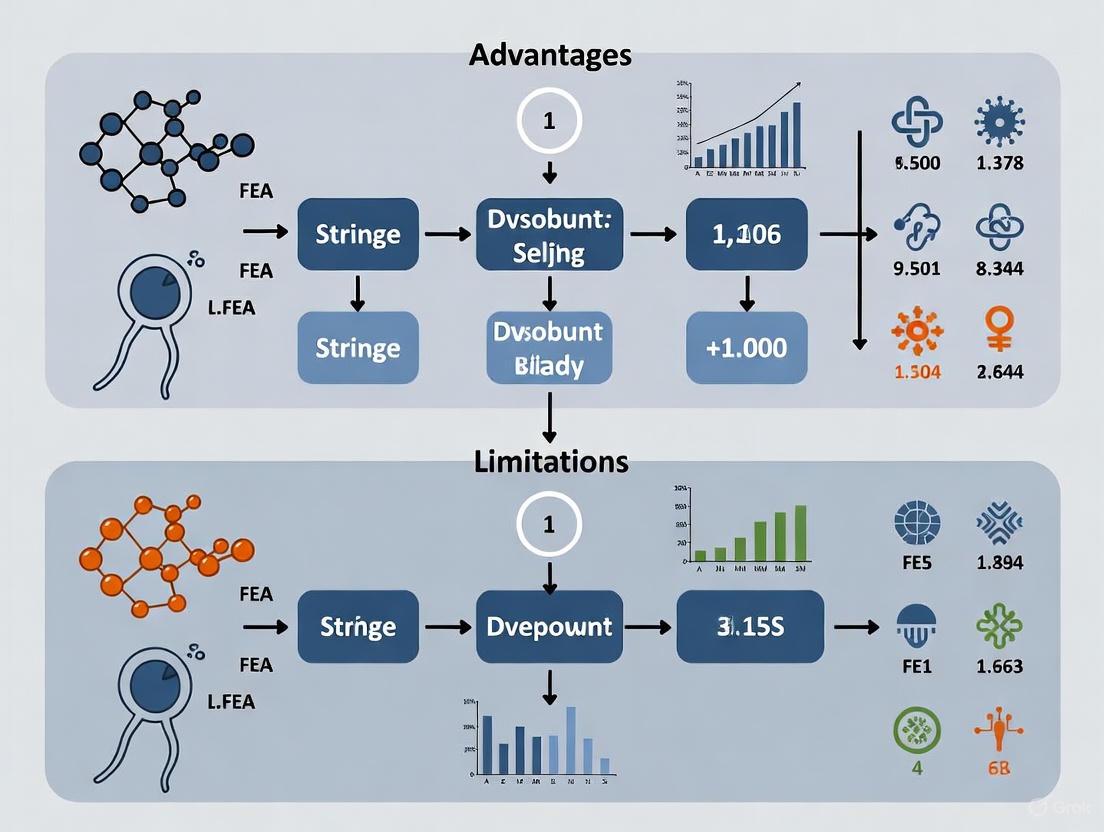

Diagram 1: Integrated FEA Workflow with Experimental Validation. This diagram illustrates the systematic process of finite element analysis, highlighting the integration of computational modeling with experimental validation techniques.

Diagram 2: Stress Concentration Factors and Analysis Methods. This diagram shows the relationship between geometric discontinuities, influencing factors, and resulting stress concentration phenomena, along with the primary research methods used for analysis.

Table 3: Essential Research Reagents and Computational Tools for FEA Stress-Concentration Analysis

| Tool Category | Specific Tool/Technique | Function in FEA Research | Example Application |
|---|---|---|---|
| Software Platforms | ANSYS | General-purpose FEA simulation | Structural analysis of orthopedic screws [13] |
| | ABAQUS | Advanced nonlinear FEA | Stress concentration in perforated steel sheets [12] |
| Material Models | Ti6Al4V Properties | Orthopedic implant simulation | Elastic modulus: 113.8 GPa, Poisson's ratio: 0.342 [13] |
| | DC04 Steel Properties | Automotive sheet metal analysis | Tensile and shear characterization [12] |
| Validation Methods | Digital Image Correlation (DIC) | Experimental strain field validation | Surface deformation measurement in 3D-printed specimens [15] |
| | Whitney-Nuismer Method | Analytical stress concentration validation | Predicting strength of specimens with central holes [15] |
| Meshing Technologies | Tetrahedral Solid Elements | 3D volume discretization | 20-node elements for accuracy near stress concentrations [13] |
| | Mesh Convergence Testing | Solution accuracy verification | Determining optimal element size (<5% stress variation) [13] |

The adoption of FEA for stress-concentration research is driven by advantages that directly address core engineering challenges. The cost reduction achieved through virtual prototyping marks a shift away from the traditional "design–prototype–test–redesign" approach, reducing material and manufacturing expenses [11]. The speed advantage comes from the ability to evaluate multiple design configurations without physical prototypes, which shortens development cycles [13]. Most significantly, FEA's predictive power lets researchers identify failure mechanisms and stress concentration factors that are difficult or impossible to measure experimentally. In orthopedic screw design, for example, it identified dangerous engagement ranges (<30%) and optimal configurations (>90%) [13].

Within the broader study of stress concentration, these advantages must be balanced against persistent limitations. The accuracy of FEA predictions remains dependent on appropriate material models, mesh quality, and boundary conditions, which makes experimental validation through techniques such as Digital Image Correlation necessary [15]. Furthermore, the computational demands of high-fidelity models continue to present challenges, particularly for complex three-dimensional analyses [11] [12]. Despite these limitations, FEA methodologies continue to evolve, including multi-trapping models for hydrogen embrittlement [16], beam element simplifications for pipeline analysis [17], and integrated experimental-computational approaches for additive manufacturing [15]. These developments show how the field is actively addressing its constraints while expanding the predictive power that makes FEA an indispensable tool across engineering disciplines.

Finite Element Analysis (FEA) is a computational technique that provides numerical solutions for predicting the behavior of physical systems under various conditions by solving partial differential equations across complex geometries [18]. While this method has revolutionized engineering and scientific research by enabling the simulation of everything from pharmaceutical tableting to aerospace components, its application is not without significant challenges [18] [19]. This section examines three core limitations inherent to FEA implementation: substantial computational resource requirements, the necessity of specialized expertise, and critical dependencies on model accuracy. These constraints are particularly relevant in pharmaceutical and biomedical research, where FEA guides critical decisions in drug delivery system design, medical device development, and biomechanical analysis [19] [20]. Understanding these limitations is essential for researchers to use FEA effectively while acknowledging the boundaries of its predictive capabilities.

Computational Cost and Resource Demands

The computational burden of FEA presents a fundamental constraint, particularly for large-scale, nonlinear, or multi-physics problems. The process involves discretizing a domain into numerous finite elements, forming a vast system of equations that must be solved simultaneously, demanding significant processing power and memory resources [21].

Scale of the Computational Challenge

Resource demands grow with problem size and complexity. State-of-the-art iterative solvers, while efficient for many problems, have problem-dependent computational cost, governed by the number of iterations required for convergence and the number of right-hand sides in the system [21]. For context, a pioneering direct FEM solver recently solved an electrodynamic system with over 22.8 million unknowns, a computation that required 16 hours on a single 3 GHz CPU core [21]. Although this solver achieved linear complexity, the example underscores the substantial computational resources that high-fidelity simulations demand.

In medical applications, computational cost can directly affect practical utility. For instance, in a method for estimating intraoperative brain shift, the original Finite Element Drift (FED) registration algorithm required approximately 70 seconds for combined registration and finite element analysis [22]. An improved combined method (CFED) reduced this to 3.2 seconds, a reduction of about 95%, and this advance was necessary to achieve near-real-time performance for clinical use [22]. Run times on the order of the original 70 seconds remain prohibitive for many interactive design processes that require rapid iteration.

Quantifying Computational Parameters in Research

Table 1: Computational Load in Representative FEA Studies

| Application Domain | Model Size / Unknowns | Element Type & Count | Solver Type | Computational Time | Citation |
|---|---|---|---|---|---|
| Electromagnetic Analysis | 22,848,800 unknowns | Not specified | Direct FEM solver | 16 hours (single 3 GHz CPU core) | [21] |
| Brain Shift Estimation | Not specified | Not specified | FED-based algorithm | 70 seconds | [22] |
| Optimized Brain Shift Estimation | Not specified | Not specified | Combined FED (CFED) | 3.2 seconds | [22] |
| Two-Part Compression Screw | Not specified | 18,520 tetrahedral elements | Linear static structural | Not specified | [13] |

Expertise and Knowledge Dependency

The accurate application and interpretation of FEA results demand substantial specialized knowledge across multiple domains, creating a significant barrier to entry and potential for misuse. As one source aptly notes, "FEA is like a super-cool-surgery-robot-5000™," emphasizing that sophisticated tools require equally sophisticated operators to deliver value [23].

The Multidisciplinary Knowledge Requirement

Successful FEA implementation requires a foundation in both theoretical principles and practical engineering judgment. The essential knowledge domains include:

- Engineering Mechanics Fundamentals: Understanding stress/strain relationships, material behavior, and structural mechanics is paramount [23]. This includes comprehending different stress types (normal, shear, von Mises) and their implications, rather than merely relying on equations [23].

- Material Science: Accurate modeling requires appropriate material properties including Young's Modulus, Poisson's Ratio, and yield criteria [23] [19]. Different materials exhibit distinct behaviors under load; for example, mild carbon steel shows a pronounced yield plateau, while stainless steel strain-hardens progressively without one [23].

- Software Proficiency: Competence with FEA platforms such as ANSYS, LS-DYNA, and COMSOL is necessary for model creation, solution, and validation [13] [24] [20].

- Critical and Analytical Thinking: Perhaps most crucially, engineers must possess the ability to critically evaluate results, identify potential errors, and make design recommendations based on simulation output [24].
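Knowing what a reported stress measure actually means is part of this foundation. The sketch below computes the von Mises equivalent stress from the six Cauchy stress components and compares it with a yield strength. The stress state is hypothetical. The 790 MPa yield strength is the Ti6Al4V value quoted for the screw study above. The function names are illustrative and not any solver's API.

```python
import math


def von_mises(sxx, syy, szz, sxy, syz, szx):
    """Von Mises equivalent stress from the six independent Cauchy stress components."""
    normal_part = 0.5 * ((sxx - syy) ** 2 + (syy - szz) ** 2 + (szz - sxx) ** 2)
    shear_part = 3.0 * (sxy ** 2 + syz ** 2 + szx ** 2)
    return math.sqrt(normal_part + shear_part)


def yield_safety_factor(equivalent_stress, yield_strength):
    """Ratio of yield strength to von Mises stress (> 1 means no predicted yielding)."""
    return yield_strength / equivalent_stress


# Hypothetical stress state (MPa) at one integration point; yield strength of
# Ti6Al4V taken as 790 MPa, the value quoted for the screw study.
sigma_vm = von_mises(300.0, 50.0, 0.0, 120.0, 0.0, 0.0)
print(f"von Mises = {sigma_vm:.1f} MPa, "
      f"safety factor = {yield_safety_factor(sigma_vm, 790.0):.2f}")
```

A purely hydrostatic state (equal normal stresses, no shear) gives zero von Mises stress. This is a quick sanity check that the measure reflects distortion rather than pressure, and it explains why a model can show high pressures yet no predicted yielding.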

Consequences of Inadequate Expertise

Without proper understanding, users risk committing critical errors in model setup, assumption selection, and results interpretation. The foundational engineering knowledge enables professionals to identify when results "don't look right" and to question numerical output that may violate physical principles [23]. As explicitly stated in one analysis, "the output is only as good as the input," and FEA models depend entirely on the accuracy of the information used to build them [18]. This expertise dependency means that "FEA should be used in collaboration with experts" to ensure appropriate guidance and safeguards [18].

Model Dependency and Validation Challenges

FEA results are fundamentally dependent on the accuracy of the created model, with potential errors introduced at multiple stages including geometry simplification, material property assignment, boundary condition definition, and mesh generation.

Critical Modeling Parameters and Their Impact

Table 2: Key Modeling Parameters in Pharmaceutical and Biomedical FEA

| Modeling Parameter | Impact on Accuracy | Example from Research | Citation |
|---|---|---|---|
| Material Constitutive Model | Determines stress-strain response; inappropriate models yield unrealistic predictions | Drucker-Prager Cap model used for pharmaceutical powder compression | [19] |
| Mesh Element Size & Type | Affects solution precision; improper sizing causes erroneous calculations | Mesh convergence test with <5% stress change criterion for screw analysis | [13] |
| Boundary Conditions | Must represent the real supports; incorrect conditions produce invalid deformation and stress | Fixed die walls, vertically constrained punches in tableting simulation | [19] |
| Contact/Friction Definitions | Govern interface behavior; inaccurate coefficients misrepresent real interactions | Constant friction coefficient (μ = 0.1-0.35) at powder/tooling interface | [19] |
| Material Properties | Define fundamental behavior; incorrect values invalidate results | Young's modulus (E) and Poisson's ratio (ν) for Ti6Al4V in orthopedic screws | [13] |

Validation Methodologies

Given these dependencies, rigorous validation protocols are essential. The recommended approaches include:

- Mesh Convergence Studies: Systematic refinement of element size until solution changes fall below an acceptable threshold (typically <5% variation in critical outputs like peak stress) [13]. This ensures results are not artifacts of discretization.

- Experimental Correlation: Comparing FEA predictions with physical test data. In microneedle research, this involves correlating simulated insertion forces with mechanical testing using texture analyzers or micromechanical test machines [20].

- Analytical Verification: For simpler geometries, verifying FEA results against known analytical solutions builds confidence in the modeling approach [25].

- Sensitivity Analysis: Systematically varying input parameters to quantify their influence on outputs, identifying which parameters require most careful determination [19].
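The mesh convergence criterion above can be expressed as a simple check on successive refinements. The sketch below assumes a list of hypothetical (element size, peak stress) pairs ordered from coarse to fine and normalizes each change by the finer mesh's value; the 5% threshold comes from the text, while the function names and data are illustrative only.

```python
from typing import Optional, Sequence, Tuple

def relative_change(previous: float, current: float) -> float:
    """Relative change in an output between two successive meshes,
    normalised by the finer (current) mesh result."""
    return abs(current - previous) / abs(current)

def first_converged_mesh(
    results: Sequence[Tuple[float, float]], threshold: float = 0.05
) -> Optional[int]:
    """Index of the first mesh whose peak stress differs from the previous
    (coarser) mesh by less than `threshold`, or None if none qualifies."""
    for i in range(1, len(results)):
        _, prev_stress = results[i - 1]
        _, curr_stress = results[i]
        if relative_change(prev_stress, curr_stress) < threshold:
            return i
    return None

# Hypothetical refinement study: (element size in mm, peak von Mises stress in MPa)
study = [(2.0, 310.0), (1.0, 352.0), (0.5, 371.0), (0.25, 376.0)]
idx = first_converged_mesh(study)
if idx is None:
    print("Not converged: refine further")
else:
    size, stress = study[idx]
    print(f"Converged at element size {size} mm (peak stress {stress} MPa)")
```

Here the change from 352 to 371 MPa (about 5.1%) still fails the criterion, so the 0.25 mm mesh is the first to pass. Confirming with one further refinement is often advisable, since a single small change can be coincidental.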

Experimental Protocols and Research Workflows

Protocol for Pharmaceutical Powder Compression Analysis

The application of FEA to pharmaceutical tableting exemplifies a sophisticated modeling workflow with specific methodological requirements [19]:

- Geometry Creation: Develop 2D axisymmetric or 3D CAD models of the powder domain, punch, and die walls, leveraging symmetry to reduce computation time [19].

- Material Model Selection: Implement the Drucker-Prager Cap (DPC) constitutive model to represent powder yield behavior during compression, decompression, and ejection phases [19].

- Meshing: Generate quadrilateral elements for the powder domain, conducting mesh sensitivity studies to optimize element size [19].

- Boundary Condition Application:

  - Constrain the upper punch to vertical movement along the y-axis at a specified compression speed

  - Fix the lower punch translationally and rotationally, or constrain its vertical movement

  - Apply fixed constraints to die walls

  - Define the powder/tooling interface friction coefficient (typically μ = 0.1-0.35) [19]

- Solution: Execute nonlinear analysis simulating compression, decompression, and ejection phases [19].

- Validation: Compare predicted stress distributions and density variations with experimental pressure transmission measurements and tablet density measurements [19].
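To make the material-model step more concrete, the sketch below evaluates only the shear-failure line of a Drucker-Prager Cap surface, F_s = q - p·tan(β) - d, in the form commonly used in Abaqus-style implementations (with the deviatoric measure taken as the von Mises stress q). Here p is the hydrostatic pressure with compression positive. The cap and transition surfaces, the hardening law, and the values of the cohesion d and friction angle β are assumptions for illustration, not parameters from [19].

```python
import math
from typing import List, Tuple

def pressure_and_mises(stress: List[List[float]]) -> Tuple[float, float]:
    """Return (p, q) for a 3x3 Cauchy stress tensor given with tension positive.

    p = -trace/3 is the hydrostatic pressure (compression positive, the usual
    powder-mechanics convention); q is the von Mises equivalent stress.
    """
    s11, s22, s33 = stress[0][0], stress[1][1], stress[2][2]
    s12, s23, s13 = stress[0][1], stress[1][2], stress[0][2]
    p = -(s11 + s22 + s33) / 3.0
    q = math.sqrt(
        0.5 * ((s11 - s22) ** 2 + (s22 - s33) ** 2 + (s33 - s11) ** 2)
        + 3.0 * (s12 ** 2 + s23 ** 2 + s13 ** 2)
    )
    return p, q

def dpc_shear_surface(p: float, q: float, cohesion: float, beta_deg: float) -> float:
    """Shear-failure line of a Drucker-Prager Cap model: F_s = q - p*tan(beta) - d.

    F_s < 0 means the stress state lies below the shear line.
    """
    return q - p * math.tan(math.radians(beta_deg)) - cohesion

# Hypothetical stress state in a compact during die compaction (MPa, tension positive):
# axial -100 MPa from the punch, radial -40 MPa from the die wall, no shear.
sigma = [[-40.0, 0.0, 0.0],
         [0.0, -40.0, 0.0],
         [0.0, 0.0, -100.0]]
p, q = pressure_and_mises(sigma)
f_s = dpc_shear_surface(p, q, cohesion=5.0, beta_deg=60.0)  # illustrative d and beta
print(f"p = {p:.1f} MPa, q = {q:.1f} MPa, F_s = {f_s:.2f} MPa")
```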

FEA Research Workflow and Failure Points

The following diagram maps the standard FEA methodology, highlighting critical points where limitations most commonly manifest:

Successful FEA implementation requires both software tools and material data resources. The following table catalogs key solutions employed across the referenced studies:

Table 3: Essential Research Reagents and Computational Tools for FEA

| Resource Category | Specific Tool/Material | Application in FEA Research | Citation |
|---|---|---|---|
| FEA Software Platforms | ANSYS | Structural analysis of orthopedic screws and shafts | [13] [26] |
| | COMSOL Multiphysics | Structural analysis of microneedles | [20] |
| | Custom Direct FEM Solver | Large-scale electromagnetic analysis | [21] |
| Material Libraries | Ti6Al4V Titanium Alloy | Orthopedic screw modeling (E = 113.8 GPa, ν = 0.342) | [13] |
| | Pharmaceutical Powders | Tablet compression simulation (Drucker-Prager model) | [19] |
| | Polymer Materials | Microneedle mechanical analysis | [20] |
| Validation Instruments | Texture Analyzers | Experimental validation of microneedle mechanical strength | [20] |
| | Micromechanical Test Machines | Measurement of microneedle penetration force | [20] |
| | Nanoindenters | Material property characterization for FEA input | [20] |
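Linear elastic inputs such as the Ti6Al4V values in Table 3 (E = 113.8 GPa, ν = 0.342) fully define an isotropic material. The sketch below derives the shear modulus, bulk modulus, and first Lamé parameter from them and builds the 6x6 stiffness matrix in Voigt notation, which is a quick way to sanity-check a material card. It assumes isotropic, linear elastic behavior; the function names are illustrative.

```python
from typing import Dict, List

def isotropic_constants(E: float, nu: float) -> Dict[str, float]:
    """Derived elastic constants for an isotropic linear elastic material."""
    if not -1.0 < nu < 0.5:
        raise ValueError("Poisson's ratio must lie in (-1, 0.5) for a stable isotropic solid")
    G = E / (2.0 * (1.0 + nu))                      # shear modulus
    K = E / (3.0 * (1.0 - 2.0 * nu))                # bulk modulus
    lam = E * nu / ((1.0 + nu) * (1.0 - 2.0 * nu))  # first Lame parameter
    return {"G": G, "K": K, "lambda": lam}

def isotropic_stiffness(E: float, nu: float) -> List[List[float]]:
    """6x6 stiffness matrix in Voigt order (11, 22, 33, 23, 13, 12),
    using engineering shear strains."""
    c = isotropic_constants(E, nu)
    lam, G = c["lambda"], c["G"]
    C = [[0.0] * 6 for _ in range(6)]
    for i in range(3):
        for j in range(3):
            C[i][j] = lam + (2.0 * G if i == j else 0.0)
        C[i + 3][i + 3] = G
    return C

# Ti6Al4V values quoted in Table 3 (GPa)
consts = isotropic_constants(113.8, 0.342)
C = isotropic_stiffness(113.8, 0.342)
print({k: round(v, 2) for k, v in consts.items()})
```

For these inputs the shear modulus comes out near 42.4 GPa and the bulk modulus near 120.0 GPa; a value of ν approaching 0.5 would make the bulk modulus blow up, which is one reason nearly incompressible tissues need special element formulations.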

The limitations of Finite Element Analysis—computational cost, expertise dependency, and model sensitivity—represent significant challenges that researchers must actively address through appropriate methodologies. Computational constraints necessitate careful balance between model fidelity and resource availability, while the expertise requirement underscores the need for specialized training or collaboration. Most fundamentally, the model-dependent nature of FEA demands rigorous validation and critical interpretation of results. By acknowledging and systematically addressing these inherent limitations through the protocols and methodologies outlined in this whitepaper, researchers can more effectively leverage FEA as a powerful tool for advancing pharmaceutical and biomedical engineering while maintaining appropriate perspective on its predictive capabilities.

The Finite Element Analysis (FEA) software market is experiencing robust growth, evolving from a specialized engineering tool into a critical technology driving innovation across many industries, including biomedical engineering [27] [28]. This expansion is fueled by increasing product complexity, stringent regulatory requirements, and the pursuit of faster, more cost-effective development cycles [27] [28]. The global FEA software market is projected to grow at a Compound Annual Growth Rate (CAGR) of approximately 8-12% from 2025 to 2033, potentially reaching a market size of around $12 billion [27] [28]. This whitepaper examines the core FEA market dynamics, presents a detailed experimental case study from biomedical dentistry, and critically evaluates the advantages and limitations of FEA-based research within a broader scientific context. For researchers and drug development professionals, understanding these elements is essential to leveraging FEA's full potential while navigating its inherent constraints in biomedical innovation.

The FEA software market is characterized by significant concentration, with a few major players like Ansys, Dassault Systèmes, and Siemens PLM Software commanding a substantial share of revenue, which for the top vendors likely exceeds $2 billion annually [27]. The market's evolution is being shaped by several convergent technological and economic forces.

Table 1: Finite Element Analysis Software Market Estimates and Projections

| Metric | Estimate/Projection | Time Period | Key Drivers |
|---|---|---|---|
| Market Size (2025) | ~$5-6 Billion [27] [28] | Base Year 2025 | Demand from automotive, aerospace, and manufacturing sectors [27] [28]. |
| Projected Market Size | ~$12 Billion [28] | Year 2033 | Advancements in computing power and cloud-based solutions [28]. |
| Compound Annual Growth Rate (CAGR) | 8% - 12% [27] [28] | 2025-2033 | Need for product optimization and adoption of additive manufacturing [27] [28]. |
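The figures in Table 1 can be cross-checked with the compound-growth relation V_end = V_start (1 + r)^n. The plain arithmetic below uses nothing beyond the table: growing $5-6 billion at 8-12% over the eight years from 2025 to 2033 gives roughly $9.3 billion to $14.9 billion, which brackets the ~$12 billion projection, and reaching $12 billion from a $5.5 billion midpoint implies a CAGR of about 10%.

```python
def project(value_start: float, cagr: float, years: int) -> float:
    """Compound growth: value after `years` at annual rate `cagr`."""
    return value_start * (1.0 + cagr) ** years

def implied_cagr(value_start: float, value_end: float, years: int) -> float:
    """Annual growth rate that turns value_start into value_end over `years`."""
    return (value_end / value_start) ** (1.0 / years) - 1.0

YEARS = 2033 - 2025  # 8 compounding periods

low = project(5.0, 0.08, YEARS)    # low base, low growth (billions USD)
high = project(6.0, 0.12, YEARS)   # high base, high growth (billions USD)
rate = implied_cagr(5.5, 12.0, YEARS)
print(f"2033 range: ${low:.1f}B to ${high:.1f}B; implied CAGR to reach $12B: {rate:.1%}")
```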

Table 2: Key Characteristics and Trends in the FEA Software Market

| Feature | Current Characteristic | Impact on Biomedical Research |
|---|---|---|
| Concentration | Market is concentrated with high barriers to entry due to R&D costs [27]. | Limits software options, but the established vendors generally offer mature, well-supported tools suited to validated medical applications. |
| Core Innovation | Cloud-based FEA, AI/ML integration, High-Performance Computing (HPC) [27] [28]. | Enables larger, more complex biological models (e.g., full organs) and faster, more accurate simulations. |
| Emerging Trend | Growth of multiphysics simulation and digital twins [27] [28]. | Allows for holistic modeling of complex physiological interactions (e.g., fluid-structure interaction in blood flow). |

The adoption of cloud-based solutions is democratizing access to powerful simulation tools, while the integration of Artificial Intelligence (AI) and Machine Learning (ML) is revolutionizing the simulation process by automating tasks and optimizing parameters [27] [28]. Furthermore, the rise of multiphysics capabilities allows engineers to model complex interactions between various physical phenomena, such as thermal, structural, and fluid dynamics, within a single, integrated environment [28]. This is particularly relevant for biomedical applications, where such interactions are the norm rather than the exception.

Fundamentals of Finite Element Analysis

FEA is a computational technique for predicting how objects will behave under various physical conditions. The process involves breaking down a complex real-world structure into a mesh of small, simple pieces called elements [18]. The collective behavior of these elements approximates the behavior of the entire structure.

The standard FEA workflow consists of three primary stages:

- Pre-processing: The physical geometry is defined, material properties are assigned, the mesh is generated, and loads and constraints (boundary conditions) are applied [18].

- Processing: The software assembles the element equations into a large global system of equations and solves it for the nodal unknowns (e.g., displacements), from which quantities like stress and strain are computed [18].

- Post-processing: The results are analyzed and visualized, often through color-coded stress plots, to aid in interpretation and decision-making [18].
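The three stages can be seen end to end in a deliberately tiny example: an axially loaded bar, fixed at one end and discretized into two-node linear elements. The NumPy sketch below uses illustrative dimensions and a modulus close to the Ti6Al4V value quoted elsewhere in this article. It performs pre-processing (mesh, material, load), processing (global assembly and solution), and post-processing (element stresses), then checks the tip displacement against the analytical value PL/(EA). It is a teaching sketch, not how production solvers store or solve their sparse systems.

```python
import numpy as np

def solve_axial_bar(length, area, youngs, n_elem, tip_load):
    """Linear-element FEA of a bar fixed at x = 0 with an axial load at x = length."""
    # Pre-processing: uniform mesh of two-node elements
    n_nodes = n_elem + 1
    le = length / n_elem
    k_elem = (youngs * area / le) * np.array([[1.0, -1.0], [-1.0, 1.0]])

    # Processing: assemble element matrices into the global stiffness matrix
    K = np.zeros((n_nodes, n_nodes))
    for e in range(n_elem):
        dofs = [e, e + 1]
        K[np.ix_(dofs, dofs)] += k_elem
    F = np.zeros(n_nodes)
    F[-1] = tip_load

    # Boundary condition u(0) = 0: solve the reduced system for the free nodes
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], F[1:])

    # Post-processing: constant strain and stress in each linear element
    stress = youngs * np.diff(u) / le
    return u, stress

# Units: N, mm, MPa. Illustrative bar: 100 mm long, 10 mm^2 cross-section.
L, A, E, P = 100.0, 10.0, 113_800.0, 1_000.0
u, stress = solve_axial_bar(L, A, E, n_elem=4, tip_load=P)
print("tip displacement (mm):", u[-1], "analytical:", P * L / (A * E))
print("element stresses (MPa):", stress)
```

For this simple load case linear elements reproduce the exact nodal displacements, which is why such problems are useful as analytical verification cases before moving to complex anatomy.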

For biomedical applications, this process allows researchers to simulate conditions that are difficult, expensive, or unethical to replicate in live subjects, such as extreme mechanical loads or the long-term performance of implants [29].

FEA Computational Workflow

FEA in Biomedical Innovation: A Case Study on Dental Splints

FEA's impact on biomedical innovation is profound, enabling advances in the design of prosthetics, implants, and surgical instruments [29]. The following section details a specific experiment that exemplifies a rigorous FEA methodology relevant to drug development professionals engaged in material science and device design.

Experimental Protocol: Evaluating Splint Materials for Periodontally Compromised Teeth

A 2025 study used FEA to evaluate and compare the stress distribution of four different splint materials on mandibular anterior teeth with significant (55%) bone loss [30]. The objective was to determine the most effective material for stabilizing compromised teeth by distributing occlusal forces.

1. Hypothesis:

- Null Hypothesis (H₀): No significant difference in stress distribution exists among composite, fiber-reinforced composite (FRC), polyetheretherketone (PEEK), and metal splint types under different loading angles.

- Alternate Hypothesis (H₁): A significant difference in stress distribution exists among at least two of the four splint types under varying loading angles [30].

2. Methodology:

- 3D Model Construction: Precise models of the mandibular anterior teeth, periodontal ligament (PDL), and surrounding bone with 55% bone loss were created using SOLIDWORKS 2020 CAD software [30].

- Material Properties: The four splint materials were assigned their real-world mechanical properties, including Young's modulus (stiffness), density, and Poisson's ratio (the ratio of lateral contraction to axial extension under uniaxial load) [30].

- Meshing: The models were discretized into a finite element mesh using ANSYS software, with mesh refinement used to capture stress concentrations [30].

- Boundary Conditions and Loading: The models were constrained at their boundaries to simulate anchorage to the jaw. Two simulated force conditions were applied:

  - Vertical loading: 100 N at a 0-degree angle.

  - Oblique loading: 100 N at a 45-degree angle [30].

- Simulation and Output: The FEA solver calculated the stress distribution within the models. The von Mises equivalent stress, a widely used indicator of yielding under multiaxial loading, was used to evaluate performance in the PDL and cortical bone [30].
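Two small calculations underpin this protocol: resolving the 100 N load into components and reducing a 3D stress state to a single von Mises value. The sketch below assumes the loading angle is measured from the tooth's long axis (0° = purely axial) within a single load plane; the actual axes and load application points used in [30] are not specified here, and the function names are illustrative.

```python
import math
from typing import Tuple

def load_components(magnitude: float, angle_deg: float) -> Tuple[float, float]:
    """Split a load into (axial, transverse) parts; angle measured from the long axis."""
    a = math.radians(angle_deg)
    return magnitude * math.cos(a), magnitude * math.sin(a)

def von_mises(sxx: float, syy: float, szz: float,
              sxy: float = 0.0, syz: float = 0.0, szx: float = 0.0) -> float:
    """von Mises equivalent stress from the six Cauchy stress components."""
    return math.sqrt(
        0.5 * ((sxx - syy) ** 2 + (syy - szz) ** 2 + (szz - sxx) ** 2)
        + 3.0 * (sxy ** 2 + syz ** 2 + szx ** 2)
    )

for angle in (0.0, 45.0):
    axial, transverse = load_components(100.0, angle)
    print(f"{angle:>4.0f} deg: axial {axial:.1f} N, transverse {transverse:.1f} N")

# Sanity checks: uniaxial stress returns itself; pure shear tau gives sqrt(3)*tau
print(von_mises(1.0, 0.0, 0.0), von_mises(0.0, 0.0, 0.0, sxy=1.0))
```

Under these assumptions the 45° case introduces a transverse component of about 70.7 N that is absent under vertical loading.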

3. Key Research Reagent Solutions

Table 3: Essential Materials and Software for the FEA Case Study

| Item Name | Function in the Experiment |
|---|---|
| SOLIDWORKS 2020 | Used for constructing the accurate 3D geometric models of the teeth, bone, and splints [30]. |
| ANSYS Software | The FEA platform used for meshing, applying physics, solving the equations, and post-processing the results [30]. |
| Composite Resin | A standard dental material tested as one splinting option, representing a baseline for performance comparison [30]. |
| Fiber-Reinforced Composite (FRC) | A high-strength material tested for its potential to provide superior stress distribution and stabilization [30]. |
| Polyetheretherketone (PEEK) | A high-performance polymer tested for its biocompatibility and mechanical strength in demanding applications [30]. |
| Metal Alloy | Represented the "gold standard" material against which the newer splint materials were compared [30]. |

Results and Interpretation

The FEA simulations yielded clear, quantifiable results. Non-splinted teeth exhibited the highest stress levels, particularly under oblique loading, where cortical bone stress reached 0.74 MPa [30]. Among the splinted groups, fiber-reinforced composite (FRC) demonstrated the most effective stress reduction. Under a 100 N oblique load, FRC reduced cortical bone stress to 0.41 MPa, roughly 45% below the non-splinted case and lower than with metal (0.51 MPa) or composite (0.62 MPa) splints [30]. These findings led to the rejection of the null hypothesis, confirming that the choice of splint material significantly affects the biomechanical outcome in periodontally compromised teeth [30].
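The relative benefit of each splint can be expressed as a percentage reduction from the non-splinted cortical bone stress of 0.74 MPa. The arithmetic below uses only the values reported above; PEEK is omitted because no value for it is given here.

```python
BASELINE_MPA = 0.74  # non-splinted cortical bone stress, 100 N oblique load [30]
splint_stress_mpa = {"FRC": 0.41, "Metal": 0.51, "Composite": 0.62}

reductions = {
    name: 100.0 * (BASELINE_MPA - s) / BASELINE_MPA
    for name, s in splint_stress_mpa.items()
}
for name, pct in sorted(reductions.items(), key=lambda kv: -kv[1]):
    print(f"{name:<9} {splint_stress_mpa[name]:.2f} MPa  ({pct:.1f}% lower than non-splinted)")
```

This gives reductions of about 44.6% for FRC, 31.1% for metal, and 16.2% for composite.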

Dental Splint FEA Stress Analysis Workflow

Advantages and Limitations of FEA in Biomedical Research

Conducting FEA research requires a clear understanding of its capabilities and constraints. The following table synthesizes the core advantages and limitations, providing a critical framework for evaluating FEA-based studies.

Table 4: Advantages and Limitations of Finite Element Analysis in Research

| Advantages | Limitations |
|---|---|
| Safety & Cost Efficiency: Enables virtual testing of scenarios that are dangerous, expensive, or impractical for physical prototypes (e.g., crash tests, extreme pressure vessel failure) [29]. | Input Dependency: The accuracy of results is entirely dependent on the quality of input data. Inaccurate material properties or boundary conditions lead to misleading outputs [29] [18]. |
| Design Optimization: Allows engineers to rapidly iterate and test multiple design concepts, materials, and geometries to achieve optimal performance long before manufacturing [29]. | Computational Intensity: High-fidelity models with fine meshes can require significant computational resources and processing time, especially for complex nonlinear or dynamic problems [29]. |
| Insight into Complex Systems: Provides detailed visualizations of physical behavior, such as stress distribution in internal structures, which is often impossible to measure physically [18]. | Requires Specialized Expertise: Properly setting up, running, and interpreting FEA models requires deep knowledge of both the software and the underlying engineering principles [31] [18]. |
| Simulation of Real-World Scenarios: Can model complex, multi-physics environments (e.g., fluid-structure interaction in blood flow, thermal effects) that are difficult to replicate in labs [29]. | Necessity of Simplifications: Models often involve simplifications (e.g., idealized geometry, homogeneous material properties) that can cause discrepancies with real-world behavior [29]. |

The "garbage in, garbage out" principle is particularly pertinent to FEA. The model's predictive power is contingent upon the analyst's accurate representation of the clinical or physical scenario, including appropriate material models, boundary conditions, and loading [31]. Furthermore, the complexity of biological tissues, which are often anisotropic (exhibiting different properties in different directions), adds a layer of difficulty that requires careful consideration during model creation [31]. Consequently, while FEA is a powerful tool for generating hypotheses and guiding design, its conclusions should be validated with complementary in vitro or in vivo studies whenever possible [31].

The FEA market is on a strong growth trajectory, propelled by technological advancements like cloud computing, AI, and multiphysics simulation. This growth is expanding FEA's role as a cornerstone of biomedical innovation, from optimizing medical devices to advancing fundamental research. The dental splint case study illustrates the power of FEA to provide precise, quantitative biomechanical data that can inform clinical decision-making and, ultimately, patient care. However, this power must be tempered with a critical understanding of the method's limitations. The validity of any FEA conclusion is inextricably linked to the accuracy of its input parameters and the expertise of the researcher. For scientists and drug development professionals, a rigorous, critical approach to both conducting and evaluating FEA research is essential. By acknowledging both its strengths and its constraints, the biomedical community can fully harness FEA to accelerate innovation while maintaining scientific integrity.

FEA in Action: Methodologies and Cutting-Edge Applications in Biomedicine

Finite Element Analysis (FEA) represents a cornerstone computational methodology in engineering research, enabling the prediction of physical system behavior through numerical simulation. This technical guide walks through the essential FEA workflow within the broader context of research on FEA's advantages and limitations. By examining each phase from geometry preparation to result interpretation, we establish a rigorous framework for researchers seeking to leverage FEA while acknowledging its inherent constraints as an approximation method. The protocol emphasizes verification and validation procedures critical for research credibility, particularly given the method's susceptibility to numerical artifacts and modeling assumptions that can compromise predictive accuracy if improperly implemented [32] [33].

Finite Element Analysis has evolved into an indispensable tool across engineering disciplines, from traditional structural mechanics to specialized applications including food packaging and biomedical device design [11]. The method's core principle involves discretizing complex continuous domains into simpler interconnected subdomains (finite elements), transforming intractable differential equations into solvable algebraic systems [34]. For research applications, FEA offers significant advantages: reduced physical prototyping (lowering costs by up to 50% in documented automotive cases), accelerated design cycles (30-50% reduction reported), and unprecedented capability to explore parametric design spaces [35] [36] [37]. However, these advantages coexist with substantive limitations including solution sensitivity to mesh quality, boundary condition uncertainty, material model fidelity, and numerical approximation errors that must be systematically addressed through rigorous methodology [33] [11].

Essential FEA Workflow Protocol

The FEA methodology follows a structured sequence ensuring mathematical rigor and physical relevance. The established research protocol encompasses five critical phases, each with defined validation checkpoints.

Phase 1: Geometry Preparation and Simplification

Objective: Transform CAD geometry into a computationally suitable model while preserving critical features.

- Geometry Import/Generation: Models originate from external CAD systems or dedicated preprocessor tools. Research indicates imported geometries often require simplification to eliminate numerically problematic features [38].

- Dimensionality Decision: Researchers must select appropriate element types based on structural characteristics: beam elements (1D) for slender members, shell elements (2D) for thin-walled structures, and solid elements (3D) for volumetric stress states [34].

- Geometry Simplification Protocol: Strategic removal of geometrically complex but mechanically insignificant features (tiny holes, minute fillets) that unnecessarily increase computational cost while potentially generating mesh artifacts. However, features influencing stress concentrations must be preserved [34].

Table 1: Geometry Simplification Guidelines for Research Applications

| Feature Type | Simplification Approach | Validation Requirement |
|---|---|---|
| Small holes (<1% characteristic length) | Fill/eliminate | Compare stress contours in adjacent regions |
| Non-critical fillets/rounds | Replace with sharp corners | Conduct mesh sensitivity analysis at simplification site |
| Complex surface textures | Smooth to planar surfaces | Verify global stiffness change <2% |
| Bolt threads/non-structural details | Replace with smooth cylinders | Validate load path integrity through reaction force checks |

Phase 2: Material Properties and Boundary Conditions

Objective: Define constitutive relationships and kinematic constraints governing system behavior.

- Material Model Selection: Linear elastic models suffice for preliminary analyses, but nonlinear material definitions (accounting for plasticity, hyperelasticity, or creep) are essential for accurate failure prediction [33] [39].

- Boundary Condition Application: Constraints must physically represent actual support conditions. Research indicates that misapplied boundary conditions are a prevalent source of error in computational studies [33] [38].

- Load Quantification: Operational loads are derived from experimental measurement, analytical calculation, or predictive algorithms (including AI-driven load case prediction from sensor data) [35].

Phase 3: Meshing and Discretization

Objective: Generate optimal finite element mesh balancing computational efficiency with solution accuracy.

- Element Selection: Higher-order elements (quadratic/parabolic) typically provide superior stress accuracy compared to linear elements for equivalent computational cost [33].

- Mesh Quality Metrics: Aspect ratio (ideally below 3:1), skew (expressed here as the minimum interior corner angle, ideally above 45°), and the Jacobian ratio determine element quality and solution stability [34].

- Mesh Refinement Strategy: Adaptive meshing techniques automatically refine regions with high stress gradients, while mapped meshing provides structured elements for regular geometries [34].

Table 2: Mesh Quality Standards for Research-Grade FEA

| Quality Metric | Acceptable Range | Unacceptable Indications |
|---|---|---|
| Aspect Ratio | < 10:1 | > 20:1 indicates potential instability |
| Skewness (minimum corner angle) | > 30° | < 10° compromises accuracy |
| Warpage (quad elements) | < 5° | > 15° generates numerical artifacts |
| Jacobian Ratio (scaled, 1.0 = ideal) | > 0.6 | < 0.2 indicates highly distorted elements |
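The thresholds in Table 2 can be checked programmatically. Metric definitions differ between preprocessors (Ansys, for example, defines skewness and Jacobian ratio differently), so the sketch below assumes simple textbook forms for a 2D four-node element: aspect ratio as longest over shortest edge, the minimum corner angle as the angular measure Table 2 appears to call skewness, and a scaled Jacobian per corner equal to the cross product of the two unit edge vectors (1.0 for a rectangle). The function names and example elements are illustrative.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]

def quad_quality(nodes: List[Point]) -> Tuple[float, float, float]:
    """Return (aspect_ratio, min_corner_angle_deg, min_scaled_jacobian).

    nodes: four corners in counter-clockwise order. A scaled Jacobian <= 0
    at any corner signals a degenerate or re-entrant (inverted) corner.
    """
    edges = [(nodes[(i + 1) % 4][0] - nodes[i][0],
              nodes[(i + 1) % 4][1] - nodes[i][1]) for i in range(4)]
    lengths = [math.hypot(ex, ey) for ex, ey in edges]
    angles, scaled_jac = [], []
    for i in range(4):
        ax, ay = edges[i]                             # corner -> next node
        bx, by = -edges[i - 1][0], -edges[i - 1][1]   # corner -> previous node
        cross = ax * by - ay * bx
        dot = ax * bx + ay * by
        angles.append(math.degrees(math.atan2(abs(cross), dot)))
        scaled_jac.append(cross / (lengths[i] * lengths[i - 1]))
    return max(lengths) / min(lengths), min(angles), min(scaled_jac)

def acceptable(aspect: float, min_angle: float, min_sj: float) -> bool:
    """Compare against the 'Acceptable Range' column of Table 2."""
    return aspect < 10.0 and min_angle > 30.0 and min_sj > 0.6

square = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
sheared = [(0.0, 0.0), (1.0, 0.0), (1.5, 1.0), (0.5, 1.0)]
sliver = [(0.0, 0.0), (12.0, 0.0), (12.0, 1.0), (0.0, 1.0)]
for name, quad in [("square", square), ("sheared", sheared), ("sliver", sliver)]:
    metrics = quad_quality(quad)
    print(name, [round(m, 3) for m in metrics], "OK" if acceptable(*metrics) else "REVIEW")
```

The sheared element (minimum angle about 63°) passes, while the 12:1 sliver is flagged on aspect ratio alone.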

Phase 4: Solution and Analysis Execution

Objective: Obtain numerical solutions to the discretized boundary value problem.

- Solver Selection: Direct solvers excel for smaller models; iterative solvers prove more efficient for large-scale problems [38].

- Analysis Type Determination: Linear static analysis suffices for proportional loading and small displacements; nonlinear analysis becomes necessary for large deformations, contact, or material nonlinearity [39] [37].

- Convergence Monitoring: Nonlinear analyses require careful monitoring of solution convergence through force, moment, and displacement residuals [39].
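Residual-based convergence monitoring can be illustrated with a single-degree-of-freedom problem: a hypothetical hardening spring with internal force k·u + c·u³ under an applied load F. The Newton-Raphson sketch below updates the displacement with the tangent stiffness and stops when the out-of-balance force is small relative to the applied load and the displacement correction is small. Commercial solvers apply the same idea to vectors of force and moment residuals, usually with load incrementation and line searches that are omitted here.

```python
from typing import List, Tuple

def newton_raphson_spring(force: float, k: float = 100.0, c: float = 50.0,
                          force_tol: float = 1e-6, disp_tol: float = 1e-9,
                          max_iter: int = 25) -> Tuple[float, List[float]]:
    """Solve k*u + c*u**3 = force; return (u, history of |residual|)."""
    u = 0.0
    history: List[float] = []
    for _ in range(max_iter):
        residual = force - (k * u + c * u ** 3)   # out-of-balance force
        history.append(abs(residual))
        tangent = k + 3.0 * c * u ** 2            # consistent tangent stiffness
        du = residual / tangent
        u += du
        force_ok = abs(residual) <= force_tol * max(abs(force), 1.0)
        disp_ok = abs(du) <= disp_tol * max(abs(u), 1.0)
        if force_ok and disp_ok:
            return u, history
    raise RuntimeError("Newton-Raphson failed to converge; consider load increments")

u, history = newton_raphson_spring(200.0)
print(f"u = {u:.6f} after {len(history)} iterations")
print("residual history:", [f"{r:.2e}" for r in history])
```

Printing the residual history is the scalar analogue of a solver's convergence plot: once the iterate is close, the residual should drop roughly quadratically.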

Phase 5: Result Interpretation and Validation

Objective: Extract engineering insight from numerical results while establishing solution validity.

- Stress Interpretation: Von Mises stress effectively predicts yielding in ductile materials but requires careful interpretation near singularities and in linear analyses exceeding yield [39].

- Validation Against Experimental Data: Correlation with physical measurements (strain gauge data, digital image correlation) remains the gold standard for model validation [32].

- Error Assessment: Quantify numerical error through mesh convergence studies, evaluating solution sensitivity to further mesh refinement [34].
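When three systematically refined meshes are available, the discretization error can be estimated rather than only bounded. The sketch below applies Richardson extrapolation, one common approach that is not necessarily the method of [34]: it computes the observed order of convergence p from solutions on meshes related by a constant refinement ratio r, then extrapolates an estimate of the mesh-independent value. It assumes monotonic convergence in the asymptotic range; the synthetic data follow f(h) = 100 + 20h².

```python
import math
from typing import Tuple

def richardson(f_fine: float, f_medium: float, f_coarse: float,
               ratio: float) -> Tuple[float, float, float]:
    """Observed order p, extrapolated value, and relative error of the fine mesh.

    Assumes h_coarse / h_medium = h_medium / h_fine = ratio > 1.
    """
    e_cm = f_coarse - f_medium
    e_mf = f_medium - f_fine
    if e_mf == 0.0 or e_cm / e_mf <= 0.0:
        raise ValueError("Solutions do not converge monotonically; extrapolation unreliable")
    p = math.log(e_cm / e_mf) / math.log(ratio)
    f_extrap = f_fine + (f_fine - f_medium) / (ratio ** p - 1.0)
    rel_err = abs((f_extrap - f_fine) / f_extrap)
    return p, f_extrap, rel_err

# Synthetic peak stresses (MPa) from f(h) = 100 + 20*h**2 at h = 1.0, 0.5, 0.25
p, f_ext, err = richardson(f_fine=101.25, f_medium=105.0, f_coarse=120.0, ratio=2.0)
print(f"observed order {p:.2f}, extrapolated {f_ext:.2f} MPa, fine-mesh error {err:.2%}")
```

An observed order far from the element's theoretical order is itself a warning sign, for example of a stress singularity or meshes that are not yet in the asymptotic range.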

Diagram 1: Comprehensive FEA workflow with validation feedback loops.

Verification and Validation Framework

Verification and validation (V&V) constitute the essential methodology for establishing FEA credibility within research contexts.

Accuracy Checks

Systematic inspection ensures the computational model accurately represents the physical system [32]:

- Dimensional Verification: Confirm model geometry matches physical specimen within measurement tolerance.

- Mesh Quality Assessment: Validate element quality metrics meet established standards.

- Property Verification: Confirm material assignments, orientations, and element properties align with physical system.

Mathematical Checks

Fundamental analyses confirm proper numerical formulation and solution [32]:

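- Free-free Modal Analysis: Verify rigid body modes in unconstrained structures. A fully connected 3D model should show six zero-frequency modes (three translations, three rotations); additional zero-frequency modes point to disconnected parts or mechanisms.

- Unit Load Validation: Apply unit gravity or enforced displacement to confirm the expected structural response, for example that reaction forces balance the applied load.

- Thermal Equilibrium: Ensure consistent thermal expansion under uniform temperature fields; an unconstrained, homogeneous model should expand freely without developing stress.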
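The free-free check amounts to counting zero-stiffness modes: because the mass matrix is positive definite, the number of zero-frequency modes equals the number of zero eigenvalues of the unconstrained stiffness matrix. The NumPy sketch below uses a hypothetical 2D pin-jointed truss, for which a properly connected structure has three rigid body modes (two translations, one rotation). The unbraced square shows how a mechanism appears as an extra zero mode.

```python
import numpy as np

def truss_stiffness(nodes, elements, ea=1.0):
    """Global stiffness of a 2D pin-jointed truss (2 DOF per node, no supports)."""
    K = np.zeros((2 * len(nodes), 2 * len(nodes)))
    for i, j in elements:
        dx = nodes[j][0] - nodes[i][0]
        dy = nodes[j][1] - nodes[i][1]
        length = np.hypot(dx, dy)
        c, s = dx / length, dy / length
        b = np.array([-c, -s, c, s])   # direction cosines; element K = (EA/L) * b b^T
        dofs = [2 * i, 2 * i + 1, 2 * j, 2 * j + 1]
        K[np.ix_(dofs, dofs)] += (ea / length) * np.outer(b, b)
    return K

def count_zero_modes(K, rel_tol=1e-10):
    """Number of (near-)zero eigenvalues of a symmetric stiffness matrix."""
    eig = np.linalg.eigvalsh(K)
    return int(np.sum(np.abs(eig) < rel_tol * np.max(np.abs(eig))))

triangle = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
square = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
print("triangle:", count_zero_modes(truss_stiffness(triangle, [(0, 1), (1, 2), (0, 2)])))
print("unbraced square:", count_zero_modes(truss_stiffness(square, [(0, 1), (1, 2), (2, 3), (3, 0)])))
```

The triangle returns three zero modes as expected, while the unbraced square returns four; adding a diagonal member brings it back to three.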

Experimental Correlation

Quantitative comparison with experimental data validates model predictive capability [32]:

- Strain Gauge Correlation: Compare FEA-predicted strains with physical measurements at identical locations.

- Validation Factors: Calculate quantitative metrics (R² values, validation factors) measuring agreement between simulation and experiment.

- Correlation Documentation: Comprehensive reporting of correlation methodology, results, and discrepancies.
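A minimal version of the quantitative correlation step is sketched below: the coefficient of determination R² of the FEA predictions against measured strains, plus the largest relative deviation at any single gauge. The strain values are hypothetical, and the specific validation-factor definitions used by tools such as SDC Verifier are not reproduced here.

```python
from typing import Sequence

def r_squared(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination of the predictions, with measurements as reference."""
    mean_m = sum(measured) / len(measured)
    ss_res = sum((m - p) ** 2 for m, p in zip(measured, predicted))
    ss_tot = sum((m - mean_m) ** 2 for m in measured)
    return 1.0 - ss_res / ss_tot

def max_relative_deviation(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Largest |predicted - measured| / |measured| over all gauge locations."""
    return max(abs(p - m) / abs(m) for m, p in zip(measured, predicted))

# Hypothetical strain gauge readings vs FEA predictions at the same locations (microstrain)
gauge = [120.0, 250.0, 380.0, 510.0]
fea = [130.0, 240.0, 390.0, 500.0]
print(f"R^2 = {r_squared(gauge, fea):.4f}, "
      f"worst gauge deviation = {max_relative_deviation(gauge, fea):.1%}")
```

Reporting both numbers is deliberate: a high global R² (here about 0.995) can hide a poorly matched location, such as the 8.3% deviation at the lowest-strain gauge.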

Diagram 2: Verification and validation methodology for research-grade FEA.

Advanced Considerations in FEA Research

AI-Enhanced FEA Methodologies

Emerging artificial intelligence approaches augment traditional FEA:

- Automated Meshing: AI algorithms intelligently generate optimal mesh density distributions, particularly valuable for complex geometries like automotive control arms [35].

- Load Case Prediction: Machine learning analyzes operational telemetry data to identify realistic yet extreme load cases beyond conventional assumptions [35].

- Failure Pattern Recognition: AI systems cross-reference FEA results with historical failure databases to flag high-risk regions matching known failure precursors [35].

Result Interpretation Framework

Proper interpretation requires understanding FEA limitations:

- Stress Interpretation Artifacts: Linear analyses compute stresses based on Hooke's law regardless of actual material behavior, potentially generating non-physical stress values exceeding yield strength [33] [39].

- Nonlinear Material Response: When yielding is anticipated, nonlinear material models with appropriate hardening rules provide physically meaningful plastic strain predictions rather than mathematically correct but physically impossible stresses [39].

- Acceptance Criteria: Engineering standards provide plastic strain limits (e.g., EN 1993-1-6 specifies approximately 5% for S235 steel), establishing quantitative failure thresholds [39].
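The difference between a linear result and a nonlinear material response shows up even in a uniaxial sketch. Assuming S235 steel with E = 210 GPa and a yield strength of 235 MPa, and an elastic-perfectly plastic law (no hardening, monotonic loading), a strain of 0.5% gives a linear "stress" of 1050 MPa, which is physically impossible. The nonlinear model instead caps stress at yield and reports about 0.39% plastic strain, well below the approximately 5% limit quoted above. Real acceptance checks use principal plastic strains from a full FE analysis; this is only an illustration.

```python
from typing import Tuple

E_MPA = 210_000.0     # Young's modulus typically assumed for structural steel
FY_MPA = 235.0        # nominal yield strength of S235
STRAIN_LIMIT = 0.05   # approximate plastic strain limit cited in the text

def linear_elastic_stress(strain: float) -> float:
    """Hooke's law with no yield cap, as in a linear static analysis."""
    return E_MPA * strain

def elastic_perfectly_plastic(strain: float) -> Tuple[float, float]:
    """Uniaxial (stress, plastic strain) for monotonic loading without hardening."""
    eps_y = FY_MPA / E_MPA
    if abs(strain) <= eps_y:
        return E_MPA * strain, 0.0
    sign = 1.0 if strain > 0 else -1.0
    return sign * FY_MPA, strain - sign * eps_y

total_strain = 0.005
stress, plastic = elastic_perfectly_plastic(total_strain)
print(f"linear: {linear_elastic_stress(total_strain):.0f} MPa")
print(f"elastic-plastic: {stress:.0f} MPa, plastic strain {plastic:.4f}, "
      f"within limit: {plastic < STRAIN_LIMIT}")
```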

Research Reagents: Essential FEA Computational Tools

Table 3: Essential Computational Tools for FEA Research

| Tool Category | Representative Examples | Research Function |
|---|---|---|
| General Purpose FEA Software | Ansys Mechanical, Autodesk Inventor Nastran | Comprehensive simulation environment for multiphysics problems |
| Specialized Solvers | Nastran, Abaqus, LS-DYNA | High-performance solution engines for specific problem classes |
| Pre/Post Processors | HyperMesh, Patran | Geometry preparation, meshing, and result visualization |
| Mesh Generation Tools | Ansys Meshing, Gmsh | Automated and manual discretization of complex geometries |
| Validation Frameworks | SDC Verifier, Custom Scripts | Mathematical checks and solution verification |

The essential FEA workflow represents a systematic methodology transforming geometric representations into validated engineering insight. When implemented with rigorous verification and validation protocols, FEA delivers substantial research advantages including accelerated development cycles, reduced prototyping costs, and enhanced predictive capability. However, researchers must remain cognizant of inherent limitations: all FEA results constitute approximate solutions dependent on model assumptions, material definitions, and numerical discretization. The integration of emerging AI methodologies promises enhanced automation and improved failure prediction, but cannot replace fundamental engineering judgment and experimental validation. Within the broader thesis of FEA advantages and limitations, this workflow establishes a foundational protocol for researchers seeking to leverage computational simulation while maintaining scientific rigor through comprehensive model validation.

Finite Element Analysis (FEA) has revolutionized the design and evaluation of medical devices, particularly in the orthopedics sector where implant performance is critical to patient outcomes. This computational method enables engineers and researchers to solve complex boundary value problems by computing the response at a discrete set of points (nodes) across a domain of interest, creating a virtual testing environment that simulates real-life applications [40]. For orthopedic implants and screws, FEA provides invaluable insights into biomechanical behavior under physiological loading conditions, allowing designers to predict device performance and identify potential failure modes before proceeding to costly physical prototyping and bench testing [40] [41]. The integration of FEA into the medical device development process has significantly reduced development timelines and costs while improving the safety and efficacy of orthopedic implants.

The advantages of FEA in medical device design are substantial, the most significant being the speed at which early device performance testing can be conducted prior to physical prototyping [40]. This capability for in silico testing allows for rapid iteration and optimization of implant designs, potentially reducing the number of bench testing iterations required. However, realizing these advantages requires substantial expertise to use computational platforms properly and avoid costly misinterpretations [40]. Established product development strategies must also be revised to integrate FEA into early design phases, which requires considerable effort for medical device companies. Despite these challenges, the method has gained significant traction in the orthopedics industry, particularly for evaluating stress distribution, interfacial mechanics at bone-implant interfaces, and load transfer to surrounding bone tissue [42].

FEA Applications in Orthopedic Implant Design

Current State of Orthopedic Implants

Orthopedic implants have become indispensable in restoring mobility and relieving pain for millions of patients worldwide. With over 7.5 million orthopedic devices implanted each year in the United States alone, and the global orthopedic implant market projected to reach $79.5 billion by 2030, the importance of these medical devices continues to grow [43]. However, traditional implants face significant clinical challenges that limit their longevity and success, including implant loosening, wear, and infection. These complications often result from inadequate osseointegration at the implant-bone interface, which can lead to fibrous tissue formation and mechanical instability [43]. Additionally, metallic implants can release ions and particles that trigger chronic inflammation and osteolysis over time, further compromising implant longevity. These persistent issues have driven the orthopedics industry to adopt advanced engineering tools such as FEA to address the root causes of implant failure and develop more reliable solutions.

The evolution of orthopedic implant technology has been marked by significant advances in materials science, bioengineering, and digital technologies. Recent developments include new biomaterials with superior biocompatibility and mechanical durability, additive manufacturing techniques that enable patient-specific implants with porous architectures resembling natural bone, and surface engineering techniques that enhance bone bonding and prevent infection [43]. The emergence of "smart" implants equipped with sensors and wireless connectivity further demonstrates the increasing sophistication of this field, enabling real-time monitoring of biomechanical parameters and paving the way for personalized, data-driven orthopedic care [43]. Throughout these advancements, FEA has served as a critical tool for evaluating new designs and materials, helping to ensure that innovations meet the stringent safety and performance requirements of orthopedic applications.

Specific FEA Applications for Implants and Screws

FEA finds diverse applications throughout the development lifecycle of orthopedic implants and screws, from initial concept evaluation to final design validation. One of the primary uses is in checking the feasibility of design ideas and determining whether a device design will likely fail under its intended loads [41]. Engineers can quickly compare multiple design options using FEA simulations, identifying the most promising candidates for further development. This capability is particularly valuable for orthopedic screws, which must withstand complex loading conditions while maintaining fixation in bone tissue. For example, FEA can simulate the performance of different screw thread designs, materials, and diameters under various loading scenarios, providing data-driven insights for optimization.
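To make the idea of comparing design options concrete, here is a minimal sketch of the discretize-assemble-solve cycle at the heart of FEA: a one-dimensional rod of two-node bar elements, standing in for a screw core under pure axial load. Everything here is an assumption for illustration (the function names, the 40 mm length, the 500 N load, the candidate diameters, and a titanium-alloy modulus of roughly 110 GPa); real screw models are three-dimensional and include threads, bending, and bone contact.

```python
import math


def solve_dense(K, f):
    """Solve K u = f with Gaussian elimination and partial pivoting (small systems only)."""
    n = len(f)
    A = [row[:] + [f[i]] for i, row in enumerate(K)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(col + 1, n):
            factor = A[r][col] / A[col][col]
            for c in range(col, n + 1):
                A[r][c] -= factor * A[col][c]
    u = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(A[r][c] * u[c] for c in range(r + 1, n))
        u[r] = (A[r][n] - s) / A[r][r]
    return u


def axial_rod_fe(length, diameter, modulus, load, n_elem=10):
    """Assemble and solve a 1D rod of 2-node bar elements.

    Node 0 is fixed; an axial load acts at the free end.
    Units: mm, N, MPa. Returns (tip displacement in mm, max element stress in MPa).
    """
    area = math.pi * diameter ** 2 / 4.0
    le = length / n_elem
    k = modulus * area / le  # element axial stiffness EA / L_e
    n_nodes = n_elem + 1
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    for e in range(n_elem):  # scatter each 2x2 element matrix into the global K
        i, j = e, e + 1
        K[i][i] += k
        K[j][j] += k
        K[i][j] -= k
        K[j][i] -= k
    f = [0.0] * n_nodes
    f[-1] = load
    # Fixed support at node 0: drop its row and column before solving
    u = [0.0] + solve_dense([row[1:] for row in K[1:]], f[1:])
    stresses = [modulus * (u[e + 1] - u[e]) / le for e in range(n_elem)]
    return u[-1], max(stresses)


# Compare two hypothetical core diameters (all values assumed)
for d in (3.5, 4.5):
    tip, smax = axial_rod_fe(length=40.0, diameter=d, modulus=110_000.0, load=500.0)
    print(f"d = {d} mm: tip displacement = {tip:.5f} mm, max stress = {smax:.1f} MPa")
```

For a uniform rod the FE result reproduces the closed-form values F·L/(E·A) and F/A exactly, which makes this a convenient sanity check before moving to geometries that have no closed-form solution.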

Another critical application of FEA in orthopedics is the evaluation of key materials used in medical devices. Plastics are nearly universal in medical devices, and many devices contain highly loaded polymer components that require careful analysis to ensure they can withstand extended loading periods [41]. This is especially relevant for orthopedic applications, where implants must maintain mechanical integrity over many years of service. FEA enables engineers to evaluate not only immediate mechanical performance but also long-term phenomena such as creep, the progressive deformation of a part under sustained load, and the related stress relaxation, the gradual loss of stress in a part held at constant deformation [41]. By accounting for these time-dependent material behaviors, FEA helps identify design risks that might otherwise not surface until physical performance testing late in development, potentially saving significant time and resources.
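The time dependence that an FE solver integrates for creep can be illustrated with the simplest viscoelastic model that shows it, a standard linear solid (an elastic spring in series with a Kelvin-Voigt spring-dashpot unit). This is a sketch under stated assumptions: the model choice and all parameter values are hypothetical, and real orthopedic polymers are usually described by multi-term or nonlinear creep laws fitted to test data.

```python
import math


def sls_creep_strain(stress, e1, e2, eta, t):
    """Creep strain of a standard linear solid (Kelvin form) under constant stress.

    e1: instantaneous spring modulus (MPa); e2, eta: Kelvin-Voigt spring modulus (MPa)
    and dashpot viscosity (MPa*h). Returns total strain at time t (h).
    """
    tau = eta / e2  # retardation time (h)
    return stress / e1 + (stress / e2) * (1.0 - math.exp(-t / tau))


# Hypothetical polymer parameters (assumed, not measured)
STRESS = 5.0                        # MPa, sustained load
E1, E2, ETA = 800.0, 1500.0, 3.0e5  # MPa, MPa, MPa*h
for hours in (0, 24, 24 * 30, 24 * 365):
    eps = sls_creep_strain(STRESS, E1, E2, ETA, hours)
    print(f"t = {hours:>5} h: strain = {eps:.5f}")
```

The strain starts at the elastic value σ/E1 and grows toward σ/E1 + σ/E2; a part that looks acceptable at t = 0 can therefore drift out of tolerance over months of service, which is the design risk described above.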

Table: Key Applications of FEA in Orthopedic Implant Development

| Application Area | Specific Use Cases | Benefits |
|---|---|---|
| Concept Evaluation | Feasibility assessment of new implant designs; Comparison of design alternatives | Rapid iteration without physical prototyping; Identification of promising concepts early in development |
| Structural Analysis | Stress distribution in implants and bone; Identification of stress concentrations; Fatigue life prediction | Prevention of mechanical failure; Optimization of load transfer; Enhanced implant longevity |
| Material Evaluation | Polymer performance under load; Creep and stress relaxation analysis; Composite material behavior | Prediction of long-term material behavior; Selection of appropriate materials for specific applications |
| Interface Analysis | Bone-implant interface stresses; Screw fixation stability; Osseointegration potential | Improved implant fixation; Reduced risk of loosening; Enhanced biological integration |
| Regulatory Support | Virtual testing for safety and effectiveness; Worst-case scenario analysis; Design verification evidence | Reduced physical testing requirements; Comprehensive data for regulatory submissions |

| Regulatory Support | Virtual testing for safety and effectiveness; Worst-case scenario analysis; Design verification evidence | Reduced physical testing requirements; Comprehensive data for regulatory submissions |

Case Study: Finite Element Analysis of Osseointegrated Prosthetic Designs

A recent study by Guo et al. (2025) provides an instructive case study of FEA applied to orthopedic implants, specifically osseointegrated prosthetic designs [42]. The research aimed to evaluate the biomechanical behavior of four representative osseointegrated prosthetic configurations using finite element analysis, in order to inform clinical application and guide optimization of prosthetic design. The investigators constructed three-dimensional finite element models simulating host bone integrated with four distinct prosthetic configurations: (1) a threaded prosthesis representing the Osseointegrated Prostheses for the Rehabilitation of Amputees (OPRA) system, (2) a smooth press-fit prosthesis simulating the Osseointegrated Prosthetic Limb (OPL), (3) a titanium alloy prosthesis with a multi-porous surface, and (4) a molybdenum-rhenium (Mo-Re) alloy prosthesis with a multi-porous surface [42]. This approach allowed direct comparison of different design philosophies and material choices under standardized conditions.

The study applied simulated physiological loading conditions to evaluate critical performance parameters, including stress distribution within the prosthetic structures, interfacial mechanics at the bone-prosthesis junction, and stress transfer to the surrounding osseous tissue [42]. These factors are essential for understanding long-term implant stability and for preventing complications such as stress shielding, in which a stiff implant carries load that would otherwise pass through the bone, so that the under-stimulated bone resorbs. The FEA models provided detailed quantitative data on these parameters, enabling objective comparison between the prosthetic designs. Obtaining similar data through experimental methods alone would require extensive physical testing, potentially involving animal models or cadaveric specimens, with significantly greater investment of time and resources.
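Why stress shielding depends on implant stiffness can be seen in a deliberately crude model: if bone and stem are perfectly bonded and share an axial load at equal strain (springs in parallel), each carries load in proportion to its axial stiffness E·A. The sketch below assumes this iso-strain idealization and hypothetical dimensions, moduli, and load; it ignores geometry, interface conditions, and porosity, which is exactly why studies such as the one above rely on three-dimensional FE models instead.

```python
import math


def load_sharing(force, e_bone, a_bone, e_stem, a_stem):
    """Iso-strain (parallel springs) split of an axial force between bone and stem.

    Assumes perfect bonding, so both carry the same axial strain.
    Returns (bone stress, stem stress) in MPa for force in N and areas in mm^2.
    """
    strain = force / (e_bone * a_bone + e_stem * a_stem)
    return e_bone * strain, e_stem * strain


def annulus_area(outer_d, inner_d):
    """Cross-sectional area of a hollow circular section (mm^2)."""
    return math.pi * (outer_d ** 2 - inner_d ** 2) / 4.0


# Hypothetical femoral cross-section and load (all values assumed)
A_BONE = annulus_area(28.0, 14.0)    # cortical tube, mm^2
A_STEM = math.pi * 14.0 ** 2 / 4.0   # stem filling the medullary canal, mm^2
E_BONE = 17_000.0                    # MPa, assumed cortical bone modulus
FORCE = 2_000.0                      # N, assumed axial load

intact = FORCE / A_BONE  # bone stress with no implant
for label, e_stem in (("titanium alloy", 110_000.0), ("cobalt-chromium", 210_000.0)):
    s_bone, _ = load_sharing(FORCE, E_BONE, A_BONE, e_stem, A_STEM)
    print(f"{label}: bone stress falls to {100 * s_bone / intact:.0f}% of intact")
```

The stiffer the stem relative to the bone, the smaller the share of load (and mechanical stimulus) left for the bone, which is the basic mechanism behind stress shielding.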

Key Findings and Implications

The FEA results revealed that all four prosthetic designs exhibited stress concentration at the distal stem region, with peak stress values ranging from 179 to 185 MPa, indicating comparable load-bearing characteristics across the different configurations [42]. This finding is significant as it suggests that while the overall load-bearing capacity may be similar, the location of stress concentrations could influence long-term performance and potential failure modes. A particularly important discovery was that the incorporation of a multi-porous surface effectively reduced stress concentration on the inner cortical wall associated with groove geometry [42]. This demonstrates how strategic design features can mitigate localized stress patterns that might contribute to bone resorption or implant loosening over time.

Further analysis showed that the two multi-porous configurations had similar load transfer patterns, with a maximum stress of 20.4 MPa in the adjacent bone tissue [42]. Owing to its higher elastic modulus, the Mo-Re alloy prosthesis deformed less under equivalent loading. The maximum stress within the porous section was 5.3 MPa for the Mo-Re prosthesis versus 9.3 MPa for the titanium alloy variant, with no evidence of critical stress accumulation [42]. Based on these findings, the researchers concluded that the multi-porous Mo-Re alloy prosthesis demonstrated favorable mechanical compatibility through the optimized integration of material properties and structural design, supporting its potential utility in osseointegrated orthopedic applications [42]. This case study illustrates how FEA enables quantitative comparison of implant designs, providing evidence-based guidance for clinical application and future development.
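The reported porous-section stresses can be put in relative terms with simple arithmetic, and the link between modulus and deformation follows from linear elasticity: for identical geometry and load, deflection scales with 1/E. The sketch below uses the two stresses reported above; the solid-material moduli are assumed round numbers, not values from the study, and the 1/E scaling strictly holds only for geometrically identical solid parts, not for porous structures whose effective stiffness also depends on architecture.

```python
def percent_reduction(reference, new):
    """Percentage reduction of `new` relative to `reference`."""
    return 100.0 * (reference - new) / reference


def deflection_ratio(e_reference, e_new):
    """Ratio delta_new / delta_reference for identical geometry and load.

    In linear elasticity deflection scales with 1/E, so the ratio is E_ref / E_new.
    """
    return e_reference / e_new


# Peak porous-section stresses reported in the case study (MPa)
ti_porous, more_porous = 9.3, 5.3
print(f"Porous-section stress reduction: {percent_reduction(ti_porous, more_porous):.0f}%")

# Assumed solid-material moduli (MPa), for illustration only
E_TI, E_MORE = 110_000.0, 360_000.0
print(f"Deflection ratio (Mo-Re / Ti): {deflection_ratio(E_TI, E_MORE):.2f}")
```

On the reported values, the Mo-Re porous section carries about 43% less peak stress than the titanium variant.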

Table: Performance Comparison of Four Osseointegrated Prosthetic Designs from FEA Study

| Prosthetic Design | Peak Stress at Distal Stem (MPa, range reported across all four designs) | Maximum Stress in Adjacent Bone (MPa) | Key Characteristics | Notable Findings |
|---|---|---|---|---|
| Threaded Prosthesis | 179-185 | Not specified | Represents OPRA system; Threaded interface | Stress concentration at distal stem; Comparable load-bearing capacity |
| Smooth Press-Fit | 179-185 | Not specified | Simulates OPL system; Smooth surface | Similar stress pattern to threaded design |
| Titanium Multi-Porous | 179-185 | 20.4 | Titanium alloy; Multi-porous surface | Reduced stress concentration on inner cortical wall; Load transfer similar to Mo-Re; Maximum porous-section stress: 9.3 MPa |
| Mo-Re Multi-Porous | 179-185 | 20.4 | Molybdenum-rhenium alloy; Multi-porous surface | Reduced deformation under load (higher elastic modulus); Maximum porous-section stress: 5.3 MPa |

Experimental Protocols and Methodologies

Model Creation and Validation Protocols

Applying FEA to orthopedic implants and screws requires rigorous modelling and validation protocols to ensure that results are accurate, reliable, and clinically relevant. A study by Wieding et al. (2012) describes in detail a methodology for FEA of osteosynthesis screw fixation, offering valuable insights into techniques for automatic screw modelling [44]. The team generated finite element models from CAD models of a composite femur and an angular-stable osteosynthesis plate, which were created from CT data with a voxel size of approximately 0.6 mm (cubic voxels) [44]. This approach exemplifies the integration of medical imaging with engineering simulation, enabling the creation of anatomically accurate models for biomechanical analysis. The researchers performed convergence testing with respect to femoral deflection to rule out any influence of mesh density on the results, a critical step in ensuring the accuracy of FEA simulations [44].
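A mesh convergence study of the kind described above can be sketched as a loop that refines the mesh until the monitored quantity stops changing. In this hypothetical example the monitored quantity is the tip deflection of a linearly tapered rod (a stand-in for femoral deflection), meshed with constant-area bar elements; the geometry, load, modulus, and 0.1% tolerance are all assumptions. Because the rod is fixed at one end and loaded only at the tip, every element carries the full load and element displacements simply add, so no matrix solve is needed, and a closed-form answer is available for checking.

```python
import math


def tapered_rod_tip_displacement(n_elem, length, d_root, d_tip, modulus, load):
    """Tip displacement of a linearly tapered rod meshed with constant-area bar elements.

    Node 0 is fixed and an axial load acts at the tip, so each element carries the
    full load and element elongations add (springs in series). Each element's area
    is evaluated at its midpoint. Units: mm, N, MPa.
    """
    le = length / n_elem
    tip = 0.0
    for e in range(n_elem):
        x_mid = (e + 0.5) * le
        d = d_root + (d_tip - d_root) * x_mid / length
        area = math.pi * d ** 2 / 4.0
        tip += load * le / (modulus * area)
    return tip


def converge_mesh(tol=1e-3, n_start=2, n_max=4096, **model):
    """Double the element count until the tip displacement changes by < tol (relative)."""
    n = n_start
    previous = tapered_rod_tip_displacement(n, **model)
    while n < n_max:
        n *= 2
        current = tapered_rod_tip_displacement(n, **model)
        if abs(current - previous) / abs(current) < tol:
            return n, current
        previous = current
    raise RuntimeError("no convergence within n_max elements")


# Hypothetical stem-like geometry (assumed values)
model = dict(length=120.0, d_root=16.0, d_tip=6.0, modulus=110_000.0, load=2_000.0)
n, tip = converge_mesh(**model)
# Closed form for a linear taper: u = 4 F L / (pi E d_root d_tip)
exact = (4 * model["load"] * model["length"]
         / (math.pi * model["modulus"] * model["d_root"] * model["d_tip"]))
print(f"converged at {n} elements: tip = {tip:.6f} mm (closed form {exact:.6f} mm)")
```

Doubling the element count and comparing successive results is one common refinement strategy; in a full femur model the same loop would wrap a re-meshing and re-solving step rather than a one-line sum.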

For model validation, the team tested a composite femur with a segmental defect, given primary stabilisation by an identical osteosynthesis plate with titanium screws [44]. They measured both the deflection of the femoral head and the change in gap width with an optical measuring system accurate to approximately 3 µm, establishing a high-precision benchmark for the FEA results [44]. This validation protocol showed a sufficient correlation of approximately 95% between numerical and experimental results for both screw modelling techniques [44]. The study also highlighted computational efficiency: with screws modelled as structural elements, meshing the femur with hexahedral rather than tetrahedral elements reduced computational time by 85% [44]. Such considerations are practical necessities in industrial and research settings, where computational resources are often limited.
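The metric behind the reported correlation of approximately 95% is not specified here, so the sketch below simply shows two common ways of scoring agreement between FE predictions and measurements: the Pearson correlation coefficient and the mean absolute percentage error. The paired values are hypothetical placeholders for illustration only, not data from Wieding et al.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between paired samples."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def mean_abs_percent_error(predicted, measured):
    """Mean absolute deviation of predictions from measurements, in percent."""
    total = sum(abs(p - m) / abs(m) for p, m in zip(predicted, measured))
    return 100.0 * total / len(measured)


# Placeholder pairs (hypothetical numbers for illustration only, not study data):
# FE-predicted vs optically measured deflections (mm) at increasing load steps
fe_pred = [0.21, 0.43, 0.62, 0.85, 1.04]
measured = [0.20, 0.41, 0.65, 0.82, 1.08]
print(f"Pearson r = {pearson_r(fe_pred, measured):.3f}")
print(f"Mean absolute error = {mean_abs_percent_error(fe_pred, measured):.1f}%")
```

Reporting both is often more informative than either alone: a high correlation shows that the model captures trends across load steps, while the percentage error shows whether the absolute magnitudes also match.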

Screw Modelling Techniques and Material Properties

The Wieding et al. study compared three numerical modelling techniques for implant fixation: (1) no screw modelling, (2) screws as solid elements, and (3) screws as structural elements [44]. The third approach made it possible to implement automatically generated screws of variable geometry in arbitrary FE models; the structural screws were generated parametrically by a Python script in the FE software Abaqus/CAE [44]. This automated approach represents a significant advance in FEA methodology for orthopedic screws, streamlining what has traditionally been a labor-intensive process. The researchers created three femur models to accommodate these techniques: one meshed with tetrahedral elements without screw holes, another meshed with tetrahedral elements including screw holes, and a third meshed with hexahedral elements without screw holes [44]. This systematic approach allowed direct comparison of the different modelling strategies.
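The original script is not reproduced in the source, so the following is only a hypothetical sketch of what parametric structural-screw generation involves: placing nodes along a screw axis from an entry point, direction, and length, connecting them with two-node beam elements, and writing the result as Abaqus-style `*NODE` and `*ELEMENT` keyword lines (B31 is the Abaqus two-node linear beam in space). It is plain Python, not the Abaqus/CAE scripting interface; all function names and parameters are assumptions, and a usable model would still need a beam section definition and coupling between the screw nodes and the surrounding bone.

```python
import math


def generate_beam_screw(entry, direction, length, n_elem, first_node=1, first_elem=1):
    """Create nodes and 2-node beam connectivity along a straight screw axis.

    entry: (x, y, z) of the screw head in mm; direction: axis vector (normalized here).
    Returns (nodes, elements): nodes as {id: (x, y, z)}, elements as {id: (n1, n2)}.
    """
    norm = math.sqrt(sum(c * c for c in direction))
    if norm == 0.0:
        raise ValueError("direction must be non-zero")
    unit = [c / norm for c in direction]
    step = length / n_elem
    nodes = {}
    for i in range(n_elem + 1):
        nodes[first_node + i] = tuple(p + unit[k] * step * i for k, p in enumerate(entry))
    elements = {first_elem + e: (first_node + e, first_node + e + 1) for e in range(n_elem)}
    return nodes, elements


def to_keyword_lines(nodes, elements, elset):
    """Format nodes and elements as Abaqus-style *NODE / *ELEMENT keyword lines (B31 beams)."""
    lines = ["*NODE"]
    lines += [f"{nid}, {x:.4f}, {y:.4f}, {z:.4f}" for nid, (x, y, z) in nodes.items()]
    lines.append(f"*ELEMENT, TYPE=B31, ELSET={elset}")
    lines += [f"{eid}, {a}, {b}" for eid, (a, b) in elements.items()]
    return lines


# Hypothetical screw: 40 mm long, 8 beam elements, pointing along -y (assumed values)
nodes, elements = generate_beam_screw(entry=(10.0, 25.0, 0.0), direction=(0.0, -1.0, 0.0),
                                      length=40.0, n_elem=8,
                                      first_node=100001, first_elem=100001)
print("\n".join(to_keyword_lines(nodes, elements, elset="SCREW_1")))
```

Offsetting the node and element IDs (here starting at 100001) is one simple way to keep generated screws from colliding with the IDs already used by the bone and plate meshes.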

For material properties, the study modelled bone as a linear elastic, isotropic material with an inhomogeneous material distribution derived from CT data [44]. This treatment reflects the challenge of accurately representing biological tissues in FEA simulations, which often requires balancing computational complexity against physiological accuracy. Assigning material properties from CT data is a sophisticated approach that accounts for variations in bone density and stiffness throughout the structure, which significantly influence load transfer and stress distribution. Such methodological details are crucial for researchers seeking to implement FEA for orthopedic applications, as they highlight both the capabilities and the complexities of simulating biological systems.
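CT-based material assignment typically follows a two-step mapping: Hounsfield units (HU) are converted to apparent density through a linear phantom calibration, and density is then converted to Young's modulus through an empirical power law of the form E = a·ρ^b. The exact relationships used by Wieding et al. are not given here, so in the sketch below the calibration slope and intercept, the power-law coefficients, and the minimum-modulus floor are all assumptions chosen only for illustration.

```python
def hu_to_density(hu, slope=0.0008, intercept=0.0):
    """Linear phantom calibration from Hounsfield units to apparent density (g/cm^3).

    slope and intercept are hypothetical; real values come from a calibration phantom.
    Negative densities (e.g. from soft tissue or air) are clipped to zero.
    """
    return max(slope * hu + intercept, 0.0)


def density_to_modulus(rho, a=6850.0, b=1.49, e_min=10.0):
    """Power-law density-modulus relation E = a * rho**b (MPa), floored at e_min.

    Coefficients are assumed for illustration; published fits vary by anatomical site.
    """
    return max(a * rho ** b, e_min)


# Hypothetical mean HU values for a few elements, sampled from the CT volume
element_hu = [150, 400, 800, 1200, 1600]
moduli = [density_to_modulus(hu_to_density(hu)) for hu in element_hu]
for hu, e in zip(element_hu, moduli):
    print(f"HU {hu:>5}: E = {e:8.0f} MPa")
```

Each element remains isotropic, but because every element receives its own modulus the model as a whole is inhomogeneous, which matches the material description above. The small floor on E keeps elements that fall in low-density regions from becoming singular.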

Diagram: Orthopedic FEA Workflow showing key stages in finite element analysis for implant design

Technical Implementation and Research Tools

Essential Research Reagent Solutions