Ensuring FEA Accuracy: A Comprehensive Guide to Quality Control and Validation for Biomedical Applications

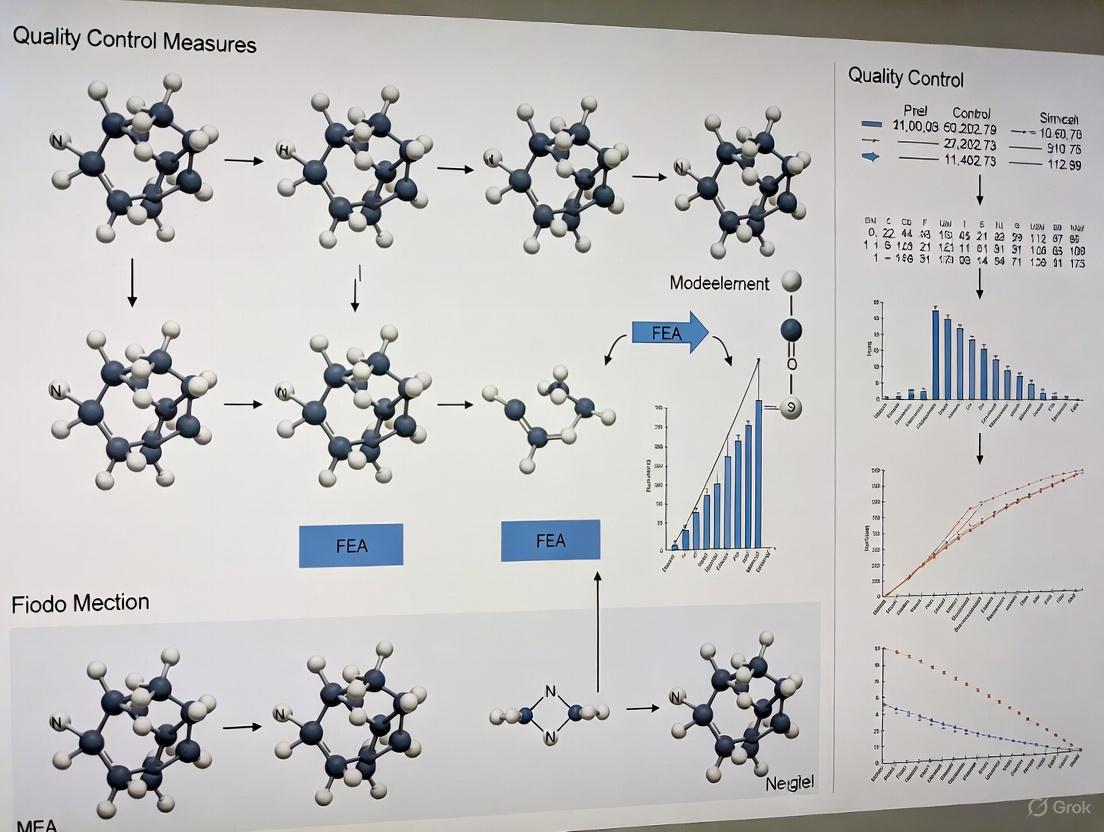



Abstract

This article provides a comprehensive framework for implementing robust quality control measures in Finite Element Analysis (FEA), specifically tailored for biomedical and clinical research. Covering foundational principles, methodological applications, systematic troubleshooting, and rigorous validation protocols, it equips researchers and drug development professionals with practical strategies to enhance the reliability and credibility of their computational models. By integrating verification and validation processes, this guide supports the development of safer and more effective medical products and therapies, ensuring FEA results are both accurate and clinically relevant.

Building a Foundation: Core Principles of FEA Quality and Error Management

Within a quality control framework for Finite Element Analysis (FEA), Verification and Validation (V&V) form the systematic process that establishes the credibility of computational simulations. For researchers and scientists, particularly in rigorous fields such as drug development where predictive modeling is crucial, understanding the distinction between the two is paramount. Together they are the foundational pillars of Finite Element Quality Assurance (FQA), providing a structured way to build confidence in simulation results. The core principle is summarized by the question each one answers: Verification asks "Are we solving the equations correctly?" (solving the problem right), while Validation asks "Are we solving the correct equations?" (solving the right problem) [1]. Verification therefore addresses the mathematical correctness of the solution, and validation addresses its physical relevance to the real-world problem being studied.

The failure to implement a robust V&V process constitutes a significant scientific and engineering risk. It can lead to false confidence, where a beautifully visualized but incorrect result misleads the research and development process, potentially leading to costly design failures or misguided scientific conclusions [1]. A documented V&V protocol is, therefore, not an optional step but an integral component of credible research methodology in computational mechanics and related disciplines.

Core Concepts: Verification vs. Validation

Verification and Validation are complementary but distinct processes. The following table outlines their key differences, providing a clear framework for researchers.

Table 1: Fundamental Distinctions Between Verification and Validation

| Aspect | Verification | Validation |
|---|---|---|
| Core Question | "Is the model solved correctly?" [1] [2] | "Does the model represent reality?" [1] [2] |
| Primary Focus | Mathematical correctness and numerical accuracy of the solution [1] [2]. | Physical accuracy and relevance of the model itself [1] [2]. |
| Primary Goal | Ensure the governing equations are solved without significant numerical error [1]. | Ensure the mathematical model accurately predicts real-world physical behavior [1]. |
| Addresses | Solving the problem right [1]. | Solving the right problem [1]. |
| Key Analogy | Checking the accuracy of a calculation; "debugging" the model. | Calibrating an instrument against a known standard. |

The process of V&V is a structured journey from mathematical model to a validated predictive tool, as illustrated in the workflow below.

Verification Protocols and Application Notes

Verification is the process of ensuring that the computational model accurately represents the underlying mathematical model and that the equations are solved correctly. It is primarily concerned with numerical accuracy.

Key Verification Methodologies

The following experimental protocols are essential for a comprehensive verification process.

- Mesh Convergence Studies: This is arguably the most critical verification step. It involves systematically refining the mesh in critical areas of the model and observing key results, such as maximum stress, strain, or displacement. A solution is considered "converged" when these results stop changing significantly with further mesh refinement. The goal is to ensure that the solution is independent of the discretization [1].

- Mathematical Sanity Checks: These are simple checks to ensure the model behaves as expected from a fundamental mathematical and physical perspective.

  - Unit Gravity Check: Apply a 1G load and verify that the resulting reaction forces equal the model's total weight to within numerical tolerance. This checks the consistency of loading and boundary conditions [1].

  - Rigid Body Mode Check: For an unconstrained (free-free) model, a modal analysis should produce zero-frequency rigid body modes (six for a 3D model). This confirms that the eigenvalue solution behaves correctly and that there are no unintended constraints [1].

- Patch Tests: These are used to verify the correctness of individual elements by ensuring they can represent states of constant stress or strain exactly [2].

- Input and Equilibrium Validation: This involves a meticulous review of all input parameters. Researchers must double-check applied material properties, loads, and boundary conditions. Furthermore, the sum of all reacted loads (e.g., reaction forces) must balance the sum of all applied loads in each direction to satisfy fundamental equilibrium principles [1].
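Two of the sanity checks above can be illustrated on a deliberately tiny model. The following is a minimal sketch, assuming a hypothetical uniform 1D bar meshed with 2-node axial elements; the element count, stiffness, and mass values are made-up illustration values, and the dense Gaussian elimination stands in for whatever solver a real FEA package would use.

```python
# Sanity-check sketch on a hypothetical uniform 1D bar (2-node axial elements).
# All numbers are illustrative assumptions, not data from a real model.


def assemble_bar_stiffness(n_elem, length, EA):
    """Global stiffness matrix of a free-free bar as a dense list of lists."""
    k = EA / (length / n_elem)  # axial stiffness of one element
    n_nodes = n_elem + 1
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    for e in range(n_elem):
        K[e][e] += k
        K[e][e + 1] -= k
        K[e + 1][e] -= k
        K[e + 1][e + 1] += k
    return K


def gravity_loads(n_elem, length, mass_per_length, g):
    """Nodal self-weight loads: each element puts half its weight on each node."""
    w = mass_per_length * (length / n_elem) * g
    f = [0.0] * (n_elem + 1)
    for e in range(n_elem):
        f[e] += 0.5 * w
        f[e + 1] += 0.5 * w
    return f


def matvec(K, u):
    return [sum(kij * uj for kij, uj in zip(row, u)) for row in K]


def solve(A, b):
    """Gaussian elimination with partial pivoting (adequate for a tiny demo)."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for i in range(n):
        p = max(range(i, n), key=lambda r: abs(M[r][i]))
        M[i], M[p] = M[p], M[i]
        for r in range(i + 1, n):
            factor = M[r][i] / M[i][i]
            for c in range(i, n + 1):
                M[r][c] -= factor * M[i][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][c] * x[c] for c in range(i + 1, n))) / M[i][i]
    return x


n_elem, length, EA, mass_per_length, g = 8, 2.0, 1.0e6, 3.0, 9.81
K = assemble_bar_stiffness(n_elem, length, EA)

# Rigid body check: a unit translation of the unconstrained bar must produce no forces.
rigid_residual = max(abs(fi) for fi in matvec(K, [1.0] * (n_elem + 1)))

# Unit gravity check: fix node 0, apply 1 g, compare the support reaction with the weight.
f = gravity_loads(n_elem, length, mass_per_length, g)
u = [0.0] + solve([row[1:] for row in K[1:]], f[1:])
reaction = matvec(K, u)[0] - f[0]
weight = mass_per_length * length * g
gravity_error = abs(abs(reaction) - weight) / weight

print(f"rigid body residual: {rigid_residual:.3e}")
print(f"unit gravity error:  {gravity_error:.3e}")
```

In a commercial package the same two numbers would come from the solver's reaction-force summary and from a free-free modal run; the sketch only shows what is being compared.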

Quantitative Checks for Verification

The table below summarizes the key quantitative checks and their pass/fail criteria.

Table 2: Quantitative Checks for FEA Model Verification

| Check Type | Methodology | Success Criteria | Tolerable Error/Threshold |
|---|---|---|---|
| Mesh Convergence | Successively refine mesh and monitor key outputs (stress, displacement). | Results show asymptotic behavior with less than ~5% change between refinements [1]. | < 2-5% change in critical result. |
| Load Equilibrium | Compare sum of applied forces/moments to sum of reacted forces/moments. | Applied and reacted loads are equal. | Near-zero imbalance (< 0.1-1% is typical). |
| Unit Gravity Test | Apply 1G acceleration to a model with known mass. | Calculated reaction force equals model weight (mass × gravity). | < 1% error. |
| Rigid Body Modes | Perform free-free modal analysis on an unconstrained model. | First six modes have near-zero frequency (≈ 0 Hz). | Frequency < 1e-6 Hz or as defined by solver tolerance. |
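The load equilibrium row can be checked with a few lines of post-processing. The sketch below assumes that applied loads and reactions have been exported as `(position, force)` pairs; that data layout, the function names, and the simply supported beam used as input are hypothetical choices for illustration.

```python
import math


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def norm(v):
    return math.sqrt(sum(x * x for x in v))


def resultant(loads):
    """Net force and net moment about the origin for [(position, force), ...]."""
    F = [0.0, 0.0, 0.0]
    M = [0.0, 0.0, 0.0]
    for r, f in loads:
        m = cross(r, f)
        for i in range(3):
            F[i] += f[i]
            M[i] += m[i]
    return F, M


def equilibrium_imbalance(applied, reactions):
    """Relative force and moment imbalance; both should be close to zero."""
    Fa, Ma = resultant(applied)
    Fr, Mr = resultant(reactions)
    force_gap = norm([a + r for a, r in zip(Fa, Fr)])
    moment_gap = norm([a + r for a, r in zip(Ma, Mr)])
    # Normalise by the applied resultants; fall back to 1.0 if they vanish.
    return force_gap / (norm(Fa) or 1.0), moment_gap / (norm(Ma) or 1.0)


# Hypothetical simply supported beam (span 1 m) with a 100 N midspan load.
applied = [((0.5, 0.0, 0.0), (0.0, -100.0, 0.0))]
reactions = [((0.0, 0.0, 0.0), (0.0, 50.0, 0.0)),
             ((1.0, 0.0, 0.0), (0.0, 50.0, 0.0))]

force_imbalance, moment_imbalance = equilibrium_imbalance(applied, reactions)
passed = force_imbalance < 0.001 and moment_imbalance < 0.001  # 0.1% threshold
print(force_imbalance, moment_imbalance, passed)
```

Checking moments as well as forces catches cases where the force totals match but a load was applied at the wrong location.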

Validation Protocols and Application Notes

Validation moves beyond the mathematical to the physical, asking whether the computational model accurately represents reality. It is the process of determining the degree to which a model is an accurate representation of the real world from the perspective of the intended uses of the model.

Key Validation Methodologies

- Comparison with Experimental Data: This is the gold standard for validation. The physical component or system is instrumented (e.g., with strain gauges) and subjected to known loads and boundary conditions. The measured physical response (strains, displacements, temperatures) is then directly compared to the FEA predictions at the corresponding locations and loading conditions [1]. A strong correlation provides high confidence in the model's predictive capability.

- Comparison with Analytical Solutions: For simpler problems or specific sub-components, the FEA results should be compared to closed-form analytical solutions. These solutions, derived from fundamental mechanics equations, provide a highly accurate benchmark for validating the FEA in a controlled context. A difference of less than 10% is often considered a good correlation for complex models [1].

- Benchmarking against Established Cases: Comparing results against well-documented and widely accepted benchmark cases or results from peer-reviewed models provides an additional layer of validation, especially when experimental data is scarce.
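As a concrete instance of an analytical comparison, the tip deflection of an end-loaded cantilever from Euler-Bernoulli beam theory is δ = PL³/(3EI). The sketch below compares that closed-form value with an FEA result; the load, geometry, modulus, and the "FEA" number are all placeholder assumptions chosen for illustration.

```python
import math


def cantilever_tip_deflection(P, L, E, I):
    """Euler-Bernoulli tip deflection of an end-loaded cantilever: P L^3 / (3 E I)."""
    return P * L**3 / (3.0 * E * I)


def percent_difference(fea_value, reference):
    return 100.0 * abs(fea_value - reference) / abs(reference)


# Hypothetical slender rod: 10 N tip load, 100 mm long, 5 mm diameter.
P = 10.0                   # N
L = 0.1                    # m
E = 110e9                  # Pa, assumed modulus (illustrative only)
d = 0.005                  # m
I = math.pi * d**4 / 64.0  # second moment of area of a solid circle

analytical = cantilever_tip_deflection(P, L, E, I)
fea_tip_deflection = 1.01e-3  # m, placeholder standing in for a solver result

diff = percent_difference(fea_tip_deflection, analytical)
print(f"analytical = {analytical:.4e} m, difference = {diff:.2f} %")
```

A solid-element model will not match beam theory exactly, since the closed form neglects shear deformation and local effects at the support, so a small residual difference is expected even for a correct model.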

Documentation of Validation

It is critical to maintain a "FEM Validation Report" that meticulously documents the entire process. This report should include the locations of gauges or measurement points, detailed test conditions, a quantitative comparison between FEA and test data (using metrics like correlation coefficients), and reasoned explanations for any observed discrepancies [1].
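The quantitative comparison in such a report can be computed from paired values at the gauge locations. The following is a minimal sketch of three commonly used metrics (Pearson correlation, root-mean-square error, and Bland-Altman bias with 95% limits of agreement); the strain values are invented for illustration and the function name is hypothetical.

```python
import math
import statistics


def validation_metrics(measured, predicted):
    """Agreement metrics between paired test measurements and FEA predictions."""
    n = len(measured)
    diffs = [p - m for p, m in zip(predicted, measured)]
    rmse = math.sqrt(sum(d * d for d in diffs) / n)

    mean_m = statistics.fmean(measured)
    mean_p = statistics.fmean(predicted)
    cov = sum((m - mean_m) * (p - mean_p) for m, p in zip(measured, predicted))
    var_m = sum((m - mean_m) ** 2 for m in measured)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    pearson_r = cov / math.sqrt(var_m * var_p)

    # Bland-Altman: mean difference (bias) and 95% limits of agreement.
    bias = statistics.fmean(diffs)
    sd = statistics.stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    return {"rmse": rmse, "pearson_r": pearson_r, "bias": bias, "limits": limits}


# Invented microstrain readings at four gauge locations.
gauge_strain = [100.0, 200.0, 300.0, 400.0]
fea_strain = [110.0, 210.0, 310.0, 410.0]

metrics = validation_metrics(gauge_strain, fea_strain)
print(metrics)
```

The invented data show why a correlation coefficient alone is not enough: the predictions are perfectly correlated with the measurements yet carry a constant offset, which only the bias and RMSE reveal.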

The Researcher's Toolkit for V&V

Successful implementation of V&V relies on a combination of theoretical knowledge, practical tools, and a systematic approach. The table below details key resources and methodologies essential for a researcher's toolkit.

Table 3: Essential Research Reagent Solutions for FEA V&V

| Tool / Solution Category | Specific Examples & Functions |
|---|---|
| Analytical Benchmarks | Closed-form solutions (e.g., for a cantilever beam, pressurized cylinder). Used to validate the FEA implementation for fundamental problems. |
| Software Utilities | Mesh quality checkers (check for aspect ratio, skew, Jacobian); Convergence study automation tools; Result parsers and comparators. |
| Experimental Validation Kits | Strain gauges and data acquisition systems; Digital Image Correlation (DIC) setups; 3D scanners for geometry acquisition; Load cells and displacement sensors. |
| Documentation & Reporting Tools | Validation Report Templates; Tools for creating data comparison plots (X-Y plots, Bland-Altman plots); Version control for models and inputs. |

The logical relationship between the various tools and the phases of V&V is shown below, illustrating how they integrate into a cohesive quality assurance strategy.

For researchers, scientists, and drug development professionals relying on FEA, a rigorous and documented Verification and Validation protocol is the non-negotiable foundation of credible simulation research. V&V transforms a mere colored contour plot into a trustworthy predictive tool. By systematically asking and answering the twin questions—"Are we solving the equations correctly?" (Verification) and "Are we solving the correct equations?" (Validation)—we can place justified confidence in our computational results, ensure the efficacy of our designs, and uphold the highest standards of scientific rigor in computational mechanics and related fields.

Computational models, particularly Finite Element Analysis (FEA), have become indispensable tools in engineering and scientific research, including drug development and medical device design. These models enable the prediction of system behavior under various physical conditions without the immediate need for costly physical prototypes [3] [4]. However, the reliability of these predictions is contingent upon the meticulous management of numerous potential error sources. Within a quality control framework for FEA technique research, understanding, quantifying, and mitigating these errors is paramount to ensuring the credibility of simulation outcomes, especially when applied to safety-critical fields like healthcare [5] [4]. This document outlines the common sources of error in computational modeling and provides structured protocols for their control.

A Categorization of Computational Modeling Errors

Errors in computational models can be systematically classified into three primary categories: modeling errors, discretization errors, and numerical errors [6]. A comprehensive understanding of this taxonomy is the first step in establishing robust quality control measures. The interrelationship and typical flow of these errors are illustrated in Figure 1.

Figure 1. A taxonomy of common error sources in the computational modeling workflow.

Modeling Errors

Modeling errors arise from simplifications and incorrect assumptions made during the translation of a real-world physical problem into a computational framework [6]. These are often considered the most significant source of inaccuracy and can render a simulation fundamentally non-representative.

- Inaccurate Boundary and Load Conditions: Defining unrealistic supports or loads is a frequent error. This includes over-constraining the model or applying forces in a non-physical manner (e.g., to a single node, which creates a stress singularity: the local stress keeps increasing as the mesh is refined instead of converging) [7] [6] [8].

- Material Property Misspecification: Using inaccurate or insufficiently tested material data is a critical error. A common mistake is modeling material behavior as linear-elastic beyond its yield point, ignoring nonlinear effects like plasticity [7].

- Geometric Simplifications: Over-simplification of geometry, such as removing small fillets, holes, or other features that act as stress concentrators, can lead to a non-conservative design by missing critical stress peaks [7].

- Physics Misspecification: Selecting an inappropriate analysis type (e.g., using a linear static analysis for a dynamic problem or ignoring nonlinear effects like contact or large deformations) is a fundamental error that invalidates results [6] [8].
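One inexpensive guard against the linear-beyond-yield mistake described above is to scan a linear result for stresses that exceed the yield strength. The sketch below computes the von Mises equivalent stress from the six stress components and flags offending elements; the element stresses and the yield value are invented for illustration.

```python
import math


def von_mises(sxx, syy, szz, sxy, syz, szx):
    """Von Mises equivalent stress from the six Cauchy stress components."""
    return math.sqrt(
        0.5 * ((sxx - syy) ** 2 + (syy - szz) ** 2 + (szz - sxx) ** 2)
        + 3.0 * (sxy ** 2 + syz ** 2 + szx ** 2)
    )


def elements_beyond_yield(element_stresses, yield_strength):
    """Indices of elements whose von Mises stress exceeds the yield strength."""
    return [i for i, s in enumerate(element_stresses)
            if von_mises(*s) > yield_strength]


# Invented element stresses in MPa: (sxx, syy, szz, sxy, syz, szx).
stresses = [
    (120.0, 0.0, 0.0, 0.0, 0.0, 0.0),      # uniaxial, below yield
    (900.0, 50.0, 0.0, 100.0, 0.0, 0.0),   # exceeds the assumed yield
    (0.0, 0.0, 0.0, 300.0, 0.0, 0.0),      # pure shear
]
assumed_yield = 800.0  # MPa, placeholder value

flagged = elements_beyond_yield(stresses, assumed_yield)
print("elements needing a nonlinear material model:", flagged)
```

A non-empty list means the linear-elastic assumption is violated somewhere in the model, so the stresses reported there should not be trusted without a nonlinear material model.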

Discretization Errors

Discretization errors originate from the approximation of a continuous domain (geometry and field variables) into a finite number of elements and nodes.

- Mesh-Related Errors: The core of discretization error lies in the mesh. This includes using a mesh that is too coarse to capture high-stress gradients, employing elements with poor quality (highly skewed or distorted), or selecting an inappropriate element type (e.g., linear elements for a curved boundary) [7] [6] [9].

- Mesh Incompatibility: In assemblies, using incompatible meshes at component interfaces can lead to numerical gaps or overlaps, violating physical continuity conditions and producing erroneous stress and strain results [9].

- Ignoring Mesh Convergence: Failing to perform a mesh convergence study is a major procedural error. A solution is only reliable when further mesh refinement does not yield significant changes in the results of interest (e.g., peak stress) [8].
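Beyond checking that results stop changing, the remaining discretization error can be estimated. One widely used approach, not prescribed by the sources cited here, is Richardson extrapolation from three systematically refined meshes. The sketch assumes a constant refinement ratio and monotonic convergence; the three input values are synthetic.

```python
import math


def richardson_estimate(f_coarse, f_medium, f_fine, refinement_ratio):
    """Observed order of convergence and extrapolated value from three meshes.

    Assumes the same refinement ratio between successive meshes and
    monotonic convergence; raises ValueError when that does not hold.
    """
    d_cm = f_coarse - f_medium
    d_mf = f_medium - f_fine
    if d_mf == 0.0 or d_cm / d_mf <= 1.0:
        raise ValueError("results are not converging monotonically")
    order = math.log(d_cm / d_mf) / math.log(refinement_ratio)
    extrapolated = f_fine + (f_fine - f_medium) / (refinement_ratio ** order - 1.0)
    rel_error_fine = abs((extrapolated - f_fine) / extrapolated)
    return order, extrapolated, rel_error_fine


# Synthetic peak-stress values from three meshes, element size halved each time.
order, extrapolated, rel_error_fine = richardson_estimate(
    96.875, 99.21875, 99.8046875, refinement_ratio=2.0
)
print(f"observed order ~ {order:.2f}, extrapolated value ~ {extrapolated:.3f}, "
      f"estimated error on finest mesh ~ {100 * rel_error_fine:.2f} %")
```

The estimate is only meaningful in the asymptotic range. Near a stress singularity the values never settle, and the function raises instead of returning a misleading number.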

Numerical Errors

Numerical errors are introduced during the computer solution of the finite element equations.

- Linear Solver Errors: Iterative solvers used for large linear systems have inherent convergence tolerances. Stopping iterations too early can leave a significant residual error in the solution [10].

- Rounding Errors: These are caused by the finite precision of computer arithmetic. They can accumulate in problems with a large number of degrees of freedom or be exacerbated by ill-conditioned system matrices [6] [10].

- Integration Errors: The use of numerical integration (e.g., Gauss quadrature) within elements can introduce errors, particularly if the integration rule is not sufficiently accurate for the element type or the physics being modeled [6].
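The effect of an iterative solver's stopping tolerance can be seen on a small system. The sketch below uses plain Jacobi iteration on a diagonally dominant tridiagonal matrix purely for illustration; production FEA solvers typically use preconditioned Krylov methods, and the matrix, size, and tolerances here are arbitrary assumptions.

```python
import math


def jacobi_tridiagonal(diag, off, b, tol, max_iter=10_000):
    """Jacobi iteration for a tridiagonal system with constant diagonal `diag`
    and constant off-diagonal `off`. Stops when ||b - A x|| <= tol * ||b||."""
    n = len(b)
    x = [0.0] * n
    b_norm = math.sqrt(sum(v * v for v in b))
    for iteration in range(max_iter + 1):
        residual = []
        for i in range(n):
            ax = diag * x[i]
            if i > 0:
                ax += off * x[i - 1]
            if i < n - 1:
                ax += off * x[i + 1]
            residual.append(b[i] - ax)
        rel_res = math.sqrt(sum(r * r for r in residual)) / b_norm
        if rel_res <= tol:
            return x, iteration, rel_res
        x = [xi + ri / diag for xi, ri in zip(x, residual)]  # Jacobi update
    raise RuntimeError("Jacobi iteration did not converge")


b = [1.0] * 50
x_loose, it_loose, res_loose = jacobi_tridiagonal(4.0, -1.0, b, tol=1e-2)
x_tight, it_tight, res_tight = jacobi_tridiagonal(4.0, -1.0, b, tol=1e-10)

# Relative gap between the early-stopped and the tightly converged solutions.
rel_gap = max(abs(a - c) for a, c in zip(x_loose, x_tight)) / max(abs(c) for c in x_tight)
print(f"loose: {it_loose} iterations, tight: {it_tight} iterations, gap = {rel_gap:.2e}")
```

The loose run stops much earlier and leaves a visible gap in the solution itself, which is the residual error the text refers to.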

Quantitative Data on Modeling Errors

Understanding the magnitude and impact of different errors is crucial for prioritization within a quality control system. The following tables summarize key quantitative and qualitative findings from the literature.

Table 1: Impact of Discretization Parameters on Solution Accuracy

| Parameter | Effect on Solution | Quantitative Example / Typical Target |
|---|---|---|
| Mesh Size (& Mesh Convergence) | Determines ability to capture field gradients (e.g., stress, concentration). A non-converged mesh yields unreliable results. | A mesh convergence study is mandatory. Refinement continues until change in key result (e.g., max stress) is below a threshold (e.g., 2-5%). [8] |
| Element Quality (Aspect Ratio, Skewness) | Poor quality leads to numerical instability and inaccurate results, especially in stress concentrations. | Targets: Skewness < 0.7 (lower is better), Aspect Ratio < 10 for most applications. Distorted elements can cause error > 20%. [9] |
| Near-Wall Grid Size (y+) | Critical for CFD/transport problems; affects prediction of boundary layer phenomena. | In CFD of ozone-human surface reaction, y+ > 10 under-predicted deposition velocity by 24.3% vs. y+ = 5. y+ = 1 is recommended for accuracy. [11] |
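The element quality targets in the table can be evaluated per element. Definitions of aspect ratio and skewness differ between meshing tools, so the sketch below fixes one set of assumptions for a 2D triangle: equiangle skewness relative to 60°, and aspect ratio as longest edge over shortest altitude, scaled so that an equilateral triangle scores 1. The coordinates are invented.

```python
import math


def triangle_quality(p0, p1, p2):
    """Return (aspect_ratio, equiangle_skewness) for a 2D triangle."""
    pts = [p0, p1, p2]
    edges = [math.dist(pts[i], pts[(i + 1) % 3]) for i in range(3)]
    area = 0.5 * abs((p1[0] - p0[0]) * (p2[1] - p0[1])
                     - (p2[0] - p0[0]) * (p1[1] - p0[1]))
    if area == 0.0:
        return math.inf, 1.0  # degenerate element

    longest = max(edges)
    shortest_altitude = 2.0 * area / longest
    aspect_ratio = (longest / shortest_altitude) * (math.sqrt(3.0) / 2.0)

    angles = []
    for i in range(3):
        a, b, c = pts[i], pts[(i + 1) % 3], pts[(i + 2) % 3]
        v1 = (b[0] - a[0], b[1] - a[1])
        v2 = (c[0] - a[0], c[1] - a[1])
        cos_angle = (v1[0] * v2[0] + v1[1] * v2[1]) / (math.hypot(*v1) * math.hypot(*v2))
        angles.append(math.degrees(math.acos(max(-1.0, min(1.0, cos_angle)))))

    ideal = 60.0
    skewness = max((max(angles) - ideal) / (180.0 - ideal),
                   (ideal - min(angles)) / ideal)
    return aspect_ratio, skewness


good = triangle_quality((0.0, 0.0), (1.0, 0.0), (0.5, math.sqrt(3.0) / 2.0))
sliver = triangle_quality((0.0, 0.0), (10.0, 0.0), (5.0, 0.1))

for name, (ar, skew) in (("equilateral", good), ("sliver", sliver)):
    ok = ar < 10.0 and skew < 0.7  # targets quoted in the table above
    print(f"{name}: aspect ratio {ar:.2f}, skewness {skew:.2f}, acceptable: {ok}")
```

Before applying the thresholds, confirm which definitions your own mesher reports, since the same element can score differently under another convention.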

Table 2: Impact of Modeling Assumptions on Solution Validity

| Assumption / Component | Potential Error Introduced | Recommended Quality Control Practice |
|---|---|---|
| Turbulence Model (in CFD) | Affects prediction of mixing, kinetic energy, and mass transfer. | LES or SST k-ω models show better agreement with experiments for near-human surface mass transfer than standard k-ε models, which can underpredict key parameters. [11] |
| Material Model (Linear vs. Nonlinear) | Modeling material as linear beyond yield point is "completely wrong in reality". [7] | Validate material model against experimental stress-strain data. For plasticity, use nonlinear material models with appropriate hardening rules. |
| Boundary Conditions | Small mistakes can make the difference between a correct and an incorrect simulation. [8] | Perform sensitivity analysis on boundary conditions. Check reaction forces for equilibrium. |
| Contact Definition | Incorrect parameters can cause large changes in system response and convergence problems. [8] | Conduct robustness studies to check sensitivity of numerical parameters. Simplify contact where possible without altering physics. |

Protocols for Error Mitigation and Model Validation

A rigorous, protocol-driven approach is essential for minimizing errors and establishing the credibility of a computational model. The following workflow, Figure 2, outlines a comprehensive validation and verification process.

Figure 2. A recommended workflow for model quality assurance, integrating verification and validation (V&V) steps.

Protocol 1: Model Setup and Pre-Processing

Objective: To minimize modeling errors by establishing a physically accurate and well-defined computational problem.

- Define Analysis Goals: Clearly articulate the Quantities of Interest (QoIs), such as peak stress, natural frequency, or flow rate. This guides all subsequent modeling decisions [8].

- Establish the Physics: Determine whether the problem is linear or nonlinear (geometric, material, contact) and whether it is static or dynamic. Choose the solution type accordingly [6] [8].

- Apply Boundary and Load Conditions: Define constraints and loads that realistically represent the physical environment. Avoid applying point loads; instead, distribute loads over a small area. Check for rigid body motion and global equilibrium [7] [8].

- Define Material Properties: Use validated material data. For nonlinear analyses, ensure the material model captures the full range of relevant behavior (e.g., plasticity, hyperelasticity) [7].

Protocol 2: Mesh Generation and Convergence

Objective: To control discretization error by creating a mesh that is both computationally efficient and sufficiently accurate.

- Element Selection: Choose element types suitable for the geometry and physics (e.g., quadratic elements for curved boundaries, shell elements for thin structures) [8] [9].

- Initial Meshing: Generate an initial mesh, targeting good element quality metrics (low skewness, aspect ratio close to 1). Use refinement in regions with anticipated high gradients [9].

- Mesh Convergence Study: Systematically refine the mesh (e.g., halving the global element size) and re-solve the model. Plot the QoIs against a mesh density parameter (e.g., number of degrees of freedom). The solution is considered converged when the change in the QoI between successive refinements falls below a pre-defined tolerance (e.g., 2%) [8].
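The convergence study in this protocol amounts to a simple loop around the solver. The following sketch shows the loop logic only: `synthetic_solver` is a made-up stand-in that returns a QoI with an error proportional to h², not a real FEA call, and the function names are hypothetical.

```python
def run_convergence_study(solve_model, h0, tol=0.02, max_levels=8):
    """Halve the element size until the QoI changes by less than `tol`.

    `solve_model(h)` must return the quantity of interest for element size h.
    Returns (converged, history) with history rows (h, qoi, relative_change).
    """
    history = []
    h, previous = h0, None
    for _ in range(max_levels):
        qoi = solve_model(h)
        change = None if previous is None else abs(qoi - previous) / abs(qoi)
        history.append((h, qoi, change))
        if change is not None and change < tol:
            return True, history
        previous = qoi
        h /= 2.0
    return False, history


def synthetic_solver(h):
    """Stand-in for an FEA run (assumption): exact value 100 with an O(h^2) error."""
    return 100.0 * (1.0 - 0.5 * h * h)


converged, history = run_convergence_study(synthetic_solver, h0=1.0, tol=0.02)
for h, qoi, change in history:
    shown = "n/a" if change is None else f"{100 * change:.2f} %"
    print(f"h = {h:<7} QoI = {qoi:9.4f}  change = {shown}")
print("converged:", converged)
```

In practice `solve_model` would re-mesh and re-run the actual model, and the returned history is what gets plotted against mesh density in the report.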

Protocol 3: Model Verification and Validation (V&V)

Objective: To build confidence in the model's correctness and its fidelity to the real-world system.

- Verification (Solving the Equations Right):

- Validation (Solving the Right Equations):

  - Experimental Correlation: Compare FEA results with experimental data from physical tests (e.g., strain gauge measurements, displacement data). Use error norms to quantify the difference [8] [4].

  - Benchmarking: If experimental data is unavailable, compare results against analytical solutions or highly trusted benchmark models [4].

The Scientist's Toolkit: Essential Research Reagents and Materials

In computational research, the "reagents" are the software tools, material databases, and numerical libraries that enable the modeling.

Table 3: Key Research "Reagents" for Quality-Controlled Computational Modeling

| Item / Solution | Function in Computational Modeling | Application Notes |
|---|---|---|
| FEA/CFD Software (e.g., ANSYS, Abaqus, OpenFOAM) | Provides the core environment for pre-processing, solving, and post-processing physics-based simulations. | Commercial tools offer extensive support and validation; open-source tools provide transparency and customization. Selection depends on project needs and budget. [3] |
| Material Property Database | A curated source of high-fidelity material data (e.g., elastic modulus, yield strength, viscosity). | Critical input for model accuracy. Data should be sourced from standardized tests or peer-reviewed literature relevant to the operating environment (e.g., strain rate, temperature). [7] |
| Mesh Generation Tool | Software component that discretizes the CAD geometry into finite elements or volumes. | Capabilities for automated and controlled refinement, hex-dominant meshing, and quality checking are essential for efficient model preparation. [9] |
| Linear Solver Libraries (e.g., PETSc, MUMPS, PARDISO) | High-performance software libraries for solving large systems of linear equations efficiently and accurately. | The choice of solver (direct vs. iterative) and its settings (preconditioner, tolerance) can significantly impact solution time and accuracy, especially for large-scale problems. [10] |
| Uncertainty Quantification (UQ) Framework | A set of computational methods (e.g., Monte Carlo, Polynomial Chaos) to propagate input uncertainties (e.g., in material properties) to the output QoIs. | Moving beyond deterministic simulation, UQ is a cutting-edge "reagent" for quantifying the confidence in model predictions, which is vital for risk assessment in drug development and medical device design. [5] [4] |
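To make the UQ entry concrete, the sketch below propagates uncertainty in an elastic modulus through a closed-form cantilever deflection using plain Monte Carlo sampling. The closed form stands in for an FEA run, and the normal distribution, 10% coefficient of variation, and all input values are assumptions chosen for illustration.

```python
import math
import random
import statistics


def tip_deflection(P, L, E, I):
    """Stand-in model (assumption): Euler-Bernoulli cantilever tip deflection."""
    return P * L**3 / (3.0 * E * I)


def propagate_modulus_uncertainty(n_samples, E_mean, E_cv, P, L, I, seed=0):
    """Monte Carlo propagation of a normally distributed modulus to the QoI."""
    rng = random.Random(seed)  # fixed seed so the study is reproducible
    deflections = []
    while len(deflections) < n_samples:
        E = rng.gauss(E_mean, E_cv * E_mean)
        if E > 0.0:  # discard non-physical draws
            deflections.append(tip_deflection(P, L, E, I))
    deflections.sort()
    lower = deflections[int(0.025 * (n_samples - 1))]
    upper = deflections[int(0.975 * (n_samples - 1))]
    return statistics.fmean(deflections), statistics.stdev(deflections), (lower, upper)


P, L, E_mean = 10.0, 0.1, 110e9        # N, m, Pa (illustrative values)
I = math.pi * 0.005**4 / 64.0          # 5 mm solid circular section
nominal = tip_deflection(P, L, E_mean, I)

mean, std, interval = propagate_modulus_uncertainty(5000, E_mean, 0.10, P, L, I)
print(f"nominal = {nominal:.4e} m")
print(f"mean = {mean:.4e} m, std = {std:.2e} m")
print(f"central 95% interval = ({interval[0]:.4e}, {interval[1]:.4e}) m")
```

With a real FEA model each sample is a full solve, which is why surrogate-based methods such as polynomial chaos are often preferred when runs are expensive.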

The Role of Quality Management Systems (ISO Standards and NAFEMS Guidelines)

In computational engineering, particularly in fields with high consequence-of-failure such as drug development and biomedical device design, the Finite Element Analysis (FEA) technique requires rigorous quality control measures to ensure reliable and reproducible results. The credibility of FEA in the clinical and scientific area hinges on robust verification and validation (V&V) processes [12]. While specialized guidelines from organizations like NAFEMS provide technical frameworks for FEA-specific best practices, overarching Quality Management Systems (QMS) based on ISO 9001 standards offer the structural foundation for maintaining consistency, traceability, and continuous improvement in research activities [13]. This integrated approach ensures that FEA methodologies produce accurate, defensible data suitable for critical decision-making in product development and regulatory submission.

The synergy between these systems is essential. ISO 9001 provides the high-level framework for documenting processes, managing resources, and implementing corrective actions, while NAFEMS guidelines translate this framework into the specific technical and procedural controls required for competent finite element analysis [14]. For researchers and scientists, adhering to this combined protocol mitigates the risk of erroneous design decisions that could lead to unsafe products or lengthened development cycles [15].

Current QMS Standards and Their Evolution

ISO 9001:2015 and the Upcoming 2026 Revision

ISO 9001 is the internationally recognized standard for QMS, designed to help organizations ensure they meet customer and regulatory requirements while demonstrating a commitment to continuous improvement. The standard is periodically revised to address evolving market needs and challenges. The current version, ISO 9001:2015, is scheduled for an update, with the new ISO 9001:2026 version anticipated for publication in September 2026 [16] [17].

Organizations certified to ISO 9001:2015 will have a three-year transition period, expected to last until approximately September 2029, to migrate their QMS to the new standard [17]. This revision is confirmed to be an evolutionary update rather than a radical overhaul, focusing on refinements in quality culture, ethical behavior, and clearer risk management, thereby ensuring a manageable transition for established QMS [17].

Table 1: Key Expected Changes in the ISO 9001:2026 Revision

| Area of Change | Specific Update | Impact on QMS |
|---|---|---|
| Organizational Context | Formal integration of climate change considerations as a factor in the organization's context [17]. | Requires organizations to consider how climate change can impact their QMS. |
| Leadership & Culture | Expanded leadership responsibilities to explicitly promote and demonstrate a "quality culture" and "ethical behaviour" [17]. | Top management must actively foster a culture where quality and ethics are central. |
| Risk-Based Thinking | Clarified risk and opportunity management with a reorganized clause structure for clearer separation [17]. | Promotes a more nuanced understanding and management of risks and opportunities. |
| Awareness | A new awareness requirement for employees to understand "quality culture and ethical behaviour" [17]. | Employees at all levels must understand their role in upholding the quality culture. |

NAFEMS Guidelines for FEA

NAFEMS is an international organization that provides authoritative guidance and education on engineering simulation technologies, including FEA. Its publications, such as "Management of Finite Element Analysis - Guidelines to Best Practice," serve as sector-specific interpretations of general quality standards like ISO 9001 [13]. These guidelines are designed to assist personnel in managing FEA activities and creating/maintaining QMS tailored for simulation [13] [14]. They address the critical need for rigorous procedures as FEA moves from the preserve of specialists to a tool routinely used by design engineers [13].

Integrated Application Notes for FEA Quality Control

For a research environment, integrating ISO 9001's management principles with NAFEMS' technical recommendations creates a powerful system for ensuring the quality of FEA. The following workflows and protocols outline this integrated approach.

FEA Quality Assurance Workflow

The diagram below illustrates the integrated quality management process for an FEA project, combining high-level QMS requirements with specific FEA quality assurance steps.

ISO 9001:2026 Transition Timeline

With the new standard forthcoming, organizations must plan their transition. The following diagram outlines the key milestones.

Experimental Protocols for FEA Quality Control

Protocol 1: FEA Model Verification and Validation

This protocol provides a detailed methodology for the verification and validation of FEA models, a cornerstone of reliable simulation research [12].

1.0 Objective: To ensure the computational model is solved correctly (Verification) and that it accurately represents the real-world physical phenomena (Validation).

2.0 Pre-Analysis Checklist (Before Solver Execution) [15] [18]:

- 2.1 Geometry: Confirm that the model geometry is appropriately simplified, and that small features that could cause mesh issues (e.g., very short edges, small holes) have been addressed [15].

- 2.2 Material Properties: Verify that correct, temperature-dependent material properties (e.g., Young's modulus, Poisson's ratio, density) have been assigned. The type of geometrical and/or material nonlinearity must be defined and justified [18].

- 2.3 Interactions: Check that all contact definitions, bonded interfaces, and other interactions are correctly defined with appropriate parameters.

- 2.4 Mesh: Inspect mesh quality. Use refinement and smoothing tools to avoid distorted or skewed elements [18]. Document the element types and sizes used.

3.0 Verification Procedure (Correct Solution of the Equations):

- 3.1 Convergence Study:

  - Methodology: Perform the analysis with at least three progressively finer mesh densities. Plot the key output variable(s) of interest (e.g., maximum stress, displacement) against a measure of mesh density (e.g., number of nodes, element size).

  - Acceptance Criterion: The results are considered mesh-independent when the change in the key output variable between the two finest meshes is less than a pre-defined threshold (e.g., 2-5%).

- 3.2 Energy Balance: For dynamic analyses, check that the total energy in the system is conserved (or accounts for dissipation correctly) to verify the time integration scheme.
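The energy balance check in 3.2 can be demonstrated on a single-degree-of-freedom undamped oscillator. The sketch below is an illustration under stated assumptions (unit mass, stiffness 100, fixed time step); it compares the Newmark average-acceleration scheme, which conserves energy for linear undamped systems, with forward Euler, which does not.

```python
def energy(m, k, u, v):
    """Total mechanical energy: kinetic plus elastic strain energy."""
    return 0.5 * m * v * v + 0.5 * k * u * u


def newmark_average_acceleration(m, k, u, v, dt):
    """One Newmark step (beta = 1/4, gamma = 1/2) for m*a + k*u = 0."""
    beta, gamma = 0.25, 0.5
    a = -k * u / m
    u_new = (u + dt * v + dt * dt * (0.5 - beta) * a) / (1.0 + beta * dt * dt * k / m)
    a_new = -k * u_new / m
    v_new = v + dt * ((1.0 - gamma) * a + gamma * a_new)
    return u_new, v_new


def forward_euler(m, k, u, v, dt):
    """One explicit Euler step; it adds energy at every step for this problem."""
    return u + dt * v, v - dt * (k / m) * u


def max_energy_drift(step, m, k, u0, v0, dt, n_steps):
    """Largest relative deviation of the total energy from its initial value."""
    u, v = u0, v0
    e0 = energy(m, k, u, v)
    worst = 0.0
    for _ in range(n_steps):
        u, v = step(m, k, u, v, dt)
        worst = max(worst, abs(energy(m, k, u, v) - e0) / e0)
    return worst


m, k, u0, v0, dt, n_steps = 1.0, 100.0, 1.0, 0.0, 0.01, 1000
drift_newmark = max_energy_drift(newmark_average_acceleration, m, k, u0, v0, dt, n_steps)
drift_euler = max_energy_drift(forward_euler, m, k, u0, v0, dt, n_steps)
print(f"Newmark energy drift: {drift_newmark:.2e}")
print(f"Euler energy drift:   {drift_euler:.2e}")
```

In a full transient analysis the same comparison is made with the solver's energy output history, with external work and dissipation included in the balance.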

4.0 Validation Procedure (Representation of Physical Reality):

- 4.1 Comparison with Benchmark Data:

  - Methodology: Compare FEA results with analytical solutions for simplified problems or with highly trusted benchmark results from established literature [18].

  - Documentation: Quantitatively document the difference between the FEA results and the benchmark.

- 4.2 Comparison with Experimental Data (Gold Standard):

  - Methodology: If available, compare FEA results with physical test data obtained from a well-controlled experiment designed to replicate the model's boundary conditions and loading [12].

  - Acceptance Criterion: Establish and document acceptable error margins based on the intended use of the model and measurement uncertainty.

5.0 Reporting: Adhere to a standardized reporting checklist, such as the one proposed for orthopedic and trauma biomechanics, to ensure all crucial methodologies for the V&V process are documented [12].

Protocol 2: QMS Internal Audit for FEA Processes

This protocol outlines the procedure for conducting an internal audit of FEA activities within an ISO 9001-based QMS.

1.0 Objective: To determine the conformity and effectiveness of FEA processes and their alignment with the organization's QMS and relevant guidelines.

2.0 Pre-Audit Preparation:

- 2.1 Audit Plan: Define the scope, objectives, and criteria (e.g., ISO 9001 clauses, NAFEMS guidelines, internal procedures) for the audit. Select an audit team with competence in both auditing and FEA fundamentals.

- 2.2 Checklist Development: Prepare an audit checklist based on the criteria. Example questions include:

  - Is the competence of FEA personnel defined, and are training records maintained? (ISO 9001:2015, 7.2)

  - Is there a procedure for the validation of FEA software? (NAFEMS Guidelines)

  - Is there evidence that pre-analysis checklists are being used? (Internal Procedure)

3.0 On-Site Audit Execution:

- 3.1 Opening Meeting: Brief the auditees on the plan and scope.

- 3.2 Evidence Collection: Collect objective evidence through interviews, observation of work, and review of records (e.g., project documentation, model files, V&V reports, training records) [15].

- 3.3 Data Analysis: Compare collected evidence against audit criteria to identify conformities and non-conformities.

4.0 Post-Audit Activities:

- 4.1 Audit Report: Prepare a detailed report that includes the audit scope, criteria, findings, and conclusions. The report should be distributed to relevant management.

- 4.2 Corrective Actions: For any non-conformities, the responsible area must perform a root cause analysis and implement corrective actions. The effectiveness of these actions must be verified.

The Scientist's Toolkit: Essential Reagents and Materials

For a research team implementing a QMS for FEA, the "reagents" are the software, hardware, and documented knowledge that enable quality outcomes.

Table 2: Essential Materials and Tools for a QMS-driven FEA Research Environment

| Item / Solution | Function / Purpose | QMS Consideration |

|---|---|---|

| FEA Software Package | Core tool for creating and solving computational models. | Must be validated for its intended use. Access and version control should be managed [13]. |

| High-Performance Computing (HPC) Hardware | Provides the computational power for complex models and convergence studies. | A managed IT infrastructure that ensures data integrity, security, and availability (ISO 9001:2015, 7.1.3). |

| Material Property Database | A centralized, curated source of validated material data for simulations. | Critical for reproducible results. Must be controlled and maintained as documented information (ISO 9001:2015, 7.5). |

| Pre- and Post-Analysis Checklists | Standardized forms to guide and verify key steps in the FEA process [15]. | Aids in mistake-proofing and ensures consistency. Part of the organization's documented information. |

| V&V Benchmark Case Library | A collection of solved benchmark problems for software and methodology validation. | Serves as objective evidence of competence and validation. Used for training and proficiency testing. |

| Electronic Document Management System (EDMS) | Manages controlled documents, records, and approval workflows. | The backbone of the QMS, ensuring control of documents and records (ISO 9001:2015, 7.5). |

Establishing an FQA Culture in Biomedical Research

Finite Element Analysis (FEA) has become an indispensable computational tool in biomedical research, enabling the simulation of complex physical phenomena from orthopedic implant stresses to cardiovascular fluid dynamics. The finite element method (FEM) operates by subdividing complex structures into smaller, manageable elements and solving the underlying differential equations governing system behavior [19]. In biomedical contexts, where patient safety and therapeutic efficacy are paramount, establishing a robust Finite Element Quality Assurance (FQA) culture is not merely beneficial; it is essential for producing reliable, validated results that can inform critical research and development decisions.

The transition of FEA from a specialist preserve to a routine tool used by non-specialists heightens this necessity [20]. Without systematic quality management, FEA risks becoming a "black box" that generates visually compelling but potentially misleading results [21]. This document outlines comprehensive protocols and application notes for embedding FQA principles within biomedical research organizations, with particular emphasis on quality management systems and validation frameworks aligned with biomedical regulatory requirements.

Core Principles of FQA for Biomedical FEA

Fundamental FQA Objectives

A robust FQA culture in biomedical research serves several critical functions:

- Enhanced Reliability: Ensures FEA simulations accurately represent biomechanical reality, reducing dependency on costly physical prototyping while maintaining scientific rigor [22] [23].

- Regulatory Preparedness: Facilitates compliance with quality standards relevant to medical devices and computational modeling in drug development [20].

- Error Reduction: Systematically addresses common pitfalls in the FEA process, from improper mesh generation to unrealistic boundary conditions [21].

- Knowledge Preservation: Creates institutional memory of validated methods rather than ad hoc analytical approaches.

Establishing a Quality Management Framework

The foundation of effective FQA implementation lies in adapting quality management systems specifically for finite element analysis. The NAFEMS Quality System Supplement provides a sector-specific framework that can be tailored to biomedical research contexts [20]. Key components include:

- Documented Analysis Procedures: Standardized protocols for different types of biomedical analyses (e.g., orthopedic, cardiovascular, soft tissue mechanics).

- Personnel Competency Standards: Defined requirements for FEA training and proficiency demonstration.

- Software Validation Protocols: Procedures for verifying that FEA software performs as expected for intended biomedical applications.

- Independent Review Mechanisms: Structured processes for technical review of critical FEA projects by qualified personnel not directly involved in the analysis.

Table: Core Components of a Biomedical FQA System

| Component | Description | Implementation Example |

|---|---|---|

| Quality Management System | Framework of procedures and responsibilities | ISO 9001 with NAFEMS QSS supplement [20] |

| Analysis Planning | Formal definition of objectives and methods | Pre-analysis checklist documenting design criteria and acceptance thresholds [21] |

| Model Validation | Processes for verifying model accuracy | Comparison with experimental biomechanical testing data [22] |

| Documentation | Comprehensive recording of analysis decisions | Electronic lab notebook with version-controlled protocols |

Pre-Analysis Planning and Protocol Definition

Strategic Analysis Planning

Effective FQA begins before any software is launched, with comprehensive planning that defines objectives, constraints, and acceptance criteria [21]. Biomedical researchers should document responses to the following fundamental questions:

- Design Objective: What specific biomedical question is being addressed? (e.g., "Will the spinal implant withstand cyclic loading corresponding to 10 years of use?")

- Analysis Justification: Why is FEA the appropriate tool compared to analytical methods or experimental approaches?

- Design Criteria: What specific thresholds define success or failure? (e.g., "Stress must remain below yield strength with a safety factor of 2.0")

- Model Boundaries: How much of the anatomical structure needs to be modeled to capture relevant phenomena?

- Tolerance for Error: What level of numerical accuracy is required for clinical or regulatory decision-making?
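
The planning questions above can be captured as a structured record so that the design criterion is fixed before any model is built. The following is a minimal sketch, not a standard template: the class name, field names, and the 800 MPa yield strength are illustrative assumptions, and only the "stress below yield with a safety factor of 2.0" criterion comes from the example in the list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalysisPlan:
    """Pre-analysis record; field names are illustrative, not a standard."""
    design_objective: str
    analysis_justification: str
    yield_strength_mpa: float    # material yield strength in MPa
    safety_factor: float         # required factor of safety on yield
    model_boundaries: str
    error_tolerance_pct: float   # acceptable numerical error in percent

    def allowable_stress_mpa(self) -> float:
        """Design criterion: peak stress must stay below yield / safety factor."""
        return self.yield_strength_mpa / self.safety_factor

    def passes(self, peak_stress_mpa: float) -> bool:
        """True if a predicted peak stress meets the design criterion."""
        return peak_stress_mpa < self.allowable_stress_mpa()


plan = AnalysisPlan(
    design_objective="Spinal implant under cyclic loading (10 years of use)",
    analysis_justification="Geometry too complex for a closed-form solution",
    yield_strength_mpa=800.0,    # placeholder value, not measured data
    safety_factor=2.0,
    model_boundaries="Implant plus adjacent vertebral bodies",
    error_tolerance_pct=5.0,
)
print(plan.allowable_stress_mpa())
print(plan.passes(350.0))
```

Freezing the dataclass is a deliberate choice here: once the plan is recorded, the thresholds cannot be edited silently after results are seen.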

Analysis Type Selection Protocol

Selecting the appropriate analysis type is critical for capturing relevant biomedical behaviors. The following decision protocol provides a systematic approach:

Static vs. Dynamic Assessment:

- Constant loading over relatively long periods → Static analysis

- Negligible inertial and damping effects → Static analysis

- Gradual load application → Static analysis

- Excitation frequency < 1/3 of the structure's lowest natural frequency → Static analysis [21]

Linearity Determination:

- Stiffness unchanged under loading → Linear problem

- Strains < 5% → Linear problem

- Stresses below proportional limit → Linear problem [21]

Nonlinearity Characterization (if applicable):

- Large deformations → Nonlinear geometric

- Material plasticity or hyperelasticity (e.g., soft tissues) → Nonlinear material

- Changing boundary conditions or contact → Nonlinear boundary conditions [21]
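
The decision rules above can be sketched as a small screening function. This is an illustrative aid rather than a substitute for engineering judgment: the function and argument names are assumptions, and only the one-third frequency ratio and the 5% strain limit are taken from the lists above.

```python
def select_analysis_type(
    excitation_hz: float,
    lowest_natural_hz: float,
    max_strain: float,
    stress_below_proportional_limit: bool,
    large_deformation: bool = False,
    contact_or_changing_bcs: bool = False,
) -> dict:
    """Apply the screening rules from the protocol; names are illustrative."""
    # Static if excitation is below one third of the lowest natural frequency.
    if excitation_hz < lowest_natural_hz / 3.0:
        time_domain = "static"
    else:
        time_domain = "dynamic"

    # Collect every reason the problem may need a nonlinear solution.
    nonlinear_sources = []
    if large_deformation:
        nonlinear_sources.append("geometric")
    if max_strain >= 0.05:
        nonlinear_sources.append("large strain")
    if not stress_below_proportional_limit:
        nonlinear_sources.append("material")
    if contact_or_changing_bcs:
        nonlinear_sources.append("boundary conditions")

    return {
        "time_domain": time_domain,
        "linear": not nonlinear_sources,
        "nonlinear_sources": nonlinear_sources,
    }


# Slowly loaded stiff implant: 2 Hz excitation, 30 Hz first mode, 1% strain.
implant = select_analysis_type(2.0, 30.0, 0.01, True)

# Soft tissue in contact: 15 Hz excitation, 20% strain, beyond the linear range.
tissue = select_analysis_type(
    15.0, 30.0, 0.20, False, large_deformation=True, contact_or_changing_bcs=True
)
print(implant)
print(tissue)
```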

Table: FEA Types for Biomedical Applications

| Analysis Type | Biomedical Applications | Key Considerations |

|---|---|---|

| Structural Static | Implant stress analysis, bone fixation | Majority of biomechanical assessments; assumes linear elastic behavior [22] |

| Modal Analysis | Prosthesis design, surgical instrument development | Identifies natural frequencies to prevent resonance [22] |

| Thermal Analysis | Tissue ablation planning, cryopreservation devices | Models heat distribution in steady or transient states [22] |

| Thermo-Structural | Dental implants, thermally-activated devices | Evaluates effect of thermal loads on mechanical behavior [22] |

| Fatigue & Life Prediction | Orthopedic implants, cardiovascular devices | Predicts failure due to cyclic loading over time [22] |

| Nonlinear Analysis | Soft tissue mechanics, hyperelastic materials | Handles large deformations, material nonlinearity, or contact problems [22] |

FEA Model Development and Execution Protocols

Geometry Preparation and Mesh Generation

Biomedical geometries derived from medical imaging present unique challenges for FEA. The following protocol establishes best practices for model preparation:

- Geometry Cleanup: Identify and remove non-essential anatomical features that do not contribute significantly to mechanical behavior (e.g., small vasculature in bone models, minor surface irregularities) [21].

- Dimensional Verification: Confirm imported geometry dimensions match anatomical reality, especially when derived from segmented medical images [21].

- Mesh Quality Standards: Establish element quality thresholds specific to biomedical applications:

- Aspect Ratio: < 5:1 for soft tissue analyses, < 10:1 for bone/implant analyses

- Jacobian: > 0.7 for critical regions

- Skewness: < 60° for tetrahedral elements

- Mesh Convergence: Implement systematic mesh refinement until critical outputs (e.g., peak stress) change by < 5% between successive refinements.
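
As a rough sketch, the element quality thresholds above can be applied automatically to metrics exported from a mesher. The `ElementQuality` record and `failing_elements` function are hypothetical names; real FEA packages report these metrics under their own definitions, so thresholds should be checked against the specific tool. For simplicity the sketch applies the Jacobian and skewness limits to every element, whereas the protocol scopes them to critical regions and tetrahedral elements respectively.

```python
from dataclasses import dataclass


@dataclass
class ElementQuality:
    """Per-element metrics as exported from a mesher (hypothetical layout)."""
    aspect_ratio: float
    jacobian: float     # scaled Jacobian, 1.0 is ideal
    skew_deg: float     # skew angle in degrees


def failing_elements(elements, soft_tissue: bool):
    """Return (index, reasons) for each element violating a threshold."""
    max_aspect = 5.0 if soft_tissue else 10.0   # limits from the protocol
    failures = []
    for index, quality in enumerate(elements):
        reasons = []
        if quality.aspect_ratio >= max_aspect:
            reasons.append("aspect ratio")
        if quality.jacobian <= 0.7:
            reasons.append("Jacobian")
        if quality.skew_deg >= 60.0:
            reasons.append("skewness")
        if reasons:
            failures.append((index, reasons))
    return failures


# Placeholder metrics for three elements, not taken from a real mesh.
mesh = [
    ElementQuality(aspect_ratio=3.0, jacobian=0.9, skew_deg=20.0),
    ElementQuality(aspect_ratio=7.0, jacobian=0.8, skew_deg=30.0),
    ElementQuality(aspect_ratio=2.0, jacobian=0.5, skew_deg=65.0),
]
print(failing_elements(mesh, soft_tissue=True))
print(failing_elements(mesh, soft_tissue=False))
```

The second element fails only under the stricter soft tissue limit, which shows why the tissue type needs to be an explicit input to the check.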

Boundary Condition Application

Anatomically accurate boundary conditions are perhaps the most challenging aspect of biomedical FEA. The protocol includes:

- Physiological Loading: Base applied forces and moments on published biomechanical studies or direct measurement when possible.

- Anatomical Constraints: Model joint articulations, ligamentous restraints, and muscle forces appropriate to the simulated activity.

- Contact Definitions: Specify appropriate contact types (bonded, frictionless, frictional) with coefficients based on tissue properties literature.

- Material Properties: Implement tissue-specific material models with sensitivity analysis to address biological variability.

Validation, Verification, and Documentation Standards

Model Validation Protocol

Validation establishes that the FEA model accurately represents the real biomedical system. The validation protocol requires:

- Experimental Correlation: Compare FEA predictions with physical measurements from biomechanical testing [22]. For example, strain gauge measurements on cadaveric specimens or implant prototypes.

- Multi-level Validation: Assess both global measures (e.g., structural stiffness, natural frequencies) and local measures (e.g., strain distributions, peak stresses).

- Acceptance Criteria: Define maximum permissible differences between FEA and experimental results (typically 10-15% for well-validated models).
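
One simple way to apply such an acceptance criterion is to compute the percent difference for each global and local measure and compare it with the agreed limit. The sketch below assumes a 15% limit and uses invented measurement names and values purely for illustration.

```python
def percent_difference(fea: float, experiment: float) -> float:
    """Absolute difference relative to the experimental value, in percent."""
    if experiment == 0:
        raise ValueError("experimental value must be non-zero")
    return abs(fea - experiment) / abs(experiment) * 100.0


def check_validation(pairs: dict, limit_pct: float = 15.0) -> dict:
    """Map each measure name to True if FEA agrees within limit_pct."""
    return {
        name: percent_difference(fea, experiment) <= limit_pct
        for name, (fea, experiment) in pairs.items()
    }


# (FEA prediction, experimental measurement); placeholder numbers only.
measures = {
    "global_stiffness_N_per_mm": (1050.0, 1000.0),   # 5% difference
    "local_peak_microstrain": (1300.0, 1000.0),      # 30% difference
}
outcome = check_validation(measures)
print(outcome)
```

In this made-up case the global measure passes while the local one fails, the situation multi-level validation is intended to expose.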

The case study from ACT demonstrates validation value: "ACT's FEA predicted cracking in a 3D-printed material subjected to combustion loading. Because we identified the failure mode early, we avoided more than six months of costly iteration between long-lead manufacturing and physical testing" [22].

Comprehensive Documentation Requirements

Thorough documentation enables reproducibility, peer review, and regulatory submission. The FQA documentation standard requires:

- Protocol Registration: Adapting the SPIRIT 2025 framework for computational studies, including registration of analysis protocols before execution [24].

- Assumption Logging: Explicit documentation of all modeling assumptions, simplifications, and their potential impact on results.

- Data Transparency: Following SPIRIT 2025 recommendations for sharing de-identified participant data (when applicable), statistical code, and other materials [24].

- Version Control: Maintenance of complete version history for models, inputs, and results.

Implementation Toolkit for Biomedical Researchers

Essential Research Reagent Solutions

Table: Critical Components for Biomedical FQA Implementation

| Component | Function | Implementation Examples |

|---|---|---|

| Quality Management System | Framework for procedures and responsibilities | NAFEMS QSS, ISO 9001 adaptation for biomedical FEA [20] |

| Pre-analysis Checklist | Ensures comprehensive planning before modeling | Documented responses to fundamental analysis questions [21] |

| Model Validation Database | Repository of experimental correlation data | Biomechanical test results for different tissue types and loading scenarios |

| Mesh Quality Tools | Quantitative assessment of discretization quality | Automated check for aspect ratio, Jacobian, skewness against thresholds |

| Material Property Library | Curated collection of tissue mechanical properties | Hyperelastic parameters for soft tissues, anisotropic properties for bone |

| Documentation Template | Standardized reporting format | Adapted SPIRIT 2025 checklist for computational studies [24] |

| Independent Review Protocol | Process for technical quality assessment | Checklist-driven review by qualified personnel not involved in analysis [20] |

Organizational Implementation Workflow

Establishing a sustainable FQA culture requires both technical protocols and organizational commitment. The integration of systematic quality management following international standards with biomedical research practice represents the most effective approach for ensuring reliable, reproducible FEA outcomes. As FEA continues to expand into new biomedical applications—from patient-specific surgical planning to implant design—a robust FQA culture will increasingly differentiate research excellence from merely computationally assisted conjecture.

The guidelines presented here provide a foundation for biomedical organizations to build their FQA systems, with particular emphasis on documentation standards, validation protocols, and organizational implementation. By adopting these practices, biomedical researchers can enhance the credibility of their computational findings, accelerate development cycles, and ultimately contribute to more effective healthcare solutions through reliable simulation.

Best Practices in Practice: A Step-by-Step FEA Quality Control Workflow

Defining Clear Analysis Objectives and Acceptance Criteria

In quality control for Finite Element Analysis (FEA) research, definitive analysis objectives and quantitative acceptance criteria are the cornerstone of reliable simulation outcomes. The spread of FEA from a specialist tool to one routinely used by design engineers has intensified the need for rigorous, procedure-driven analysis [25]. Without precisely defined targets and validation benchmarks, FEA results risk becoming subjective and non-reproducible, and may compromise product safety and corporate profitability [25]. This protocol outlines a systematic methodology for integrating these quality control measures into the FEA research lifecycle, so that analyses are fit for their intended use in scientific and industrial contexts, including drug development and medical device manufacturing.

Defining Unambiguous Analysis Objectives

Clear analysis objectives anchor the entire FEA process, guiding model development, material property selection, and the interpretation of results. Well-defined objectives are specific, measurable, and directly tied to the research or design question.

Table 1: Framework for Defining FEA Analysis Objectives

| Objective Category | Description | Example from Research | Key Performance Indicator (KPI) |

|---|---|---|---|

| Performance Assessment | Evaluate a component's behavior under specified service conditions. | Analyzing the three-stage yielding behavior of a novel steel buckling restrained brace (TSY-BRB) under cyclic loading [26]. | Distinct identification of three yielding stages in the hysteresis curve. |

| Design Validation | Verify that a design meets specific regulatory or safety standards. | Simulating a two-wheeler handlebar with a semi-active damping treatment to ensure rider comfort and structural integrity [27]. | Reduction in vibrational acceleration at the handlebar under transient loads. |

| Parametric Optimization | Identify the influence of specific parameters on system performance. | Investigating how varying magnetic field strengths affect the damping ratio of a Magnetorheological Elastomer (MRE) [27]. | Correlation between magnetic field intensity and measured damping ratio. |

| Material Characterization | Determine effective material properties through inverse analysis. | Using instrumented indentation and FEA inversion to determine power-law or linear hardening model parameters for metals [28]. | Close match between simulated and experimental load-depth indentation curves. |

Best Practices for Objective Definition

- Align with Intended Use: The objective must reflect the software's or component's role within the larger system, distinguishing between direct use (e.g., controlling a process) and supporting use [29].

- State the Physical Quantities of Interest: Explicitly define the primary outputs, such as stress, strain, displacement, natural frequency, or energy dissipation capacity [26].

- Reference Applicable Standards: Cite relevant quality standards, such as the NAFEMS Quality System Supplement or ISO 9001, which provide a foundation for quality management systems in FEA [25].

Establishing Quantitative Acceptance Criteria

Acceptance criteria are the quantitative thresholds that determine the success or failure of an FEA simulation. They are derived directly from the analysis objectives and provide an objective basis for decision-making.

Table 2: Examples of Quantitative Acceptance Criteria in FEA Research

| Criterion Type | Function | Exemplary Threshold | Associated FEA Validation Activity |

|---|---|---|---|

| Experimental Correlation | Quantifies the agreement between simulation and physical test data. | Hysteresis curves from FEA must match experimental curves with a correlation coefficient R² ≥ 0.95 [26]. | Comparison of force-displacement data from FEA and cyclic load tests. |

| Performance Metric | Defines a minimum required performance level. | The implemented MRE damping must achieve a damping ratio increase of at least 20% under optimal magnetic field [27]. | Transient dynamic analysis comparing damping ratios with and without MRE treatment. |

| Model Convergence | Ensures numerical accuracy and independence from discretization. | The result of interest (e.g., max stress) must change by less than 2% between successive mesh refinements [27]. | Mesh independence study, progressively refining element size from 4 mm to 1 mm. |

| Material Model Accuracy | Validates the chosen constitutive model's ability to replicate material behavior. | The identified material parameters must predict indentation response within 5% of the experimental measurement [28]. | Inverse analysis fitting FEA-simulated indentation to actual test data. |
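
The experimental correlation criterion can be evaluated with a few lines of code. The sketch below assumes R² means the coefficient of determination with the experimental curve as the reference, and that both curves are sampled at the same displacement points; the cited study may define its metric differently. The force values are placeholders.

```python
def r_squared(experiment, simulation):
    """Coefficient of determination of simulation against experiment."""
    if len(experiment) != len(simulation) or len(experiment) < 2:
        raise ValueError("need two equally sized series with at least 2 points")
    mean_exp = sum(experiment) / len(experiment)
    # Residual sum of squares: mismatch between test and simulation.
    ss_res = sum((e - s) ** 2 for e, s in zip(experiment, simulation))
    # Total sum of squares: spread of the experimental data.
    ss_tot = sum((e - mean_exp) ** 2 for e in experiment)
    if ss_tot == 0:
        raise ValueError("experimental data has no variation")
    return 1.0 - ss_res / ss_tot


# Forces (kN) at matching displacement points; placeholder data.
test_force = [0.0, 10.0, 20.0, 30.0, 40.0]
fea_force = [1.0, 9.0, 21.0, 29.0, 41.0]

score = r_squared(test_force, fea_force)
accepted = score >= 0.95    # threshold from the table above
print(score, accepted)
```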

Protocol for Setting and Verifying Acceptance Criteria

- Define Thresholds A Priori: Acceptance criteria must be established before running the final simulation to avoid subjective bias during result interpretation.

- Incorporate Risk-Based Analysis: The rigor of the criteria should be scaled to the process risk. A failure that could compromise patient safety demands stricter criteria and scripted testing, whereas lower-risk analyses may use more flexible, exploratory criteria [29].

- Document Rationale: Maintain a complete record including the intended use, risk-based analysis, summary of assurance activities, and the final conclusion of acceptability against the criteria [29].

Implementation Workflow and Protocol

[Figure: Integrated workflow for applying analysis objectives and acceptance criteria within a quality-assured FEA research process]

Detailed Experimental and Numerical Protocols

To ensure reproducibility, the core methodologies from cited research are detailed below.

Protocol: Experimental Calibration of Damping Ratio for MREs

This protocol details the procedure for determining the damping ratio of Magnetorheological Elastomers (MREs) under varying magnetic fields, a critical input for accurate transient FEA [27].

- Objective: To determine how different magnetic field intensities affect the damping ratio of a sandwiched MRE specimen.

- Applicable Standard: ASTM E756 [27].

- Materials and Equipment:

- MRE specimen (e.g., 180 mm × 10 mm × 2 mm).

- Stainless steel plates (same dimensions as MRE) for sandwich structure.

- Cantilever beam test apparatus with rigid clamp.

- Neodymium magnets for variable magnetic field application.

- PCB Piezotronics accelerometers (uncertainty ±1%).

- Data acquisition system.

- Procedure:

- Step 1: Prepare the sandwiched specimen by bonding the MRE between two stainless steel plates.

- Step 2: Mount the specimen as a cantilever beam, securing one end rigidly to the fixture.

- Step 3: Position neodymium magnets around the free-vibrating section of the specimen to apply a known magnetic field intensity.

- Step 4: Induce free vibration in the specimen.

- Step 5: Use the accelerometer and data acquisition system to record the vibration decay response over time.

- Step 6: Repeat steps 3-5 for different magnetic field strengths.

- Step 7: Analyze the recorded vibration data to calculate the damping ratio for each magnetic field condition using the logarithmic decrement method.

- Quality Control: Perform triplicate tests for each condition; the standard deviation across repeats should not exceed 2% to ensure measurement repeatability [27].
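
The logarithmic decrement calculation in the final analysis step can be sketched as follows. The function name is hypothetical, and the input is assumed to be a list of successive positive peak amplitudes already extracted from the accelerometer signal. The decrement over n cycles is δ = (1/n) ln(x₀/xₙ), and the damping ratio is ζ = δ / √(4π² + δ²).

```python
import math


def damping_ratio_from_peaks(peaks):
    """Damping ratio from successive positive peaks of a free-decay signal."""
    if len(peaks) < 2 or any(p <= 0 for p in peaks):
        raise ValueError("need at least two positive peak amplitudes")
    cycles = len(peaks) - 1
    # Logarithmic decrement averaged over all recorded cycles.
    delta = math.log(peaks[0] / peaks[-1]) / cycles
    return delta / math.sqrt(4.0 * math.pi ** 2 + delta ** 2)


# Synthetic peaks generated for a known damping ratio of 0.05,
# used only to check that the function recovers it.
true_zeta = 0.05
true_delta = 2.0 * math.pi * true_zeta / math.sqrt(1.0 - true_zeta ** 2)
synthetic_peaks = [math.exp(-k * true_delta) for k in range(6)]

estimate = damping_ratio_from_peaks(synthetic_peaks)
print(round(estimate, 6))
```

Averaging over several cycles rather than using a single pair of peaks reduces the influence of noise on any individual peak reading.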

Protocol: FEA Transient Vibration Analysis with MRE Damping

This protocol describes the FEA methodology for simulating the dynamic response of a structure incorporating experimentally characterized MREs [27].

- Objective: To perform a transient analysis of a two-wheeler handlebar with MRE damping treatment to evaluate vibration attenuation.

- Software: ANSYS Workbench 2023 R2 (or equivalent).

- Workflow:

- Material Property Assignment:

- Mesh Generation:

- Import the 3D CAD model.

- Conduct a mesh independence study. Refine tetrahedral mesh size from 4 mm down to 1 mm.

- Confirm that a mesh size of 1 mm produces results independent of further refinement [27].

- Boundary Conditions and Loading:

- Apply fixed supports at the handlebar mounting points to the frame, constraining all degrees of freedom.

- Apply a transient acceleration load (e.g., 1 m/s²) to simulate real-world operational conditions [27].

- Solution:

- Execute the transient dynamic analysis, ensuring the time step is sufficiently small to capture the vibration response.

- Post-Processing:

- Extract output parameters such as displacement, velocity, and acceleration at critical locations (e.g., handlebar grips).

- Compare the FEA-predicted vibration response with and without the MRE treatment to quantify performance improvement against the acceptance criteria.
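
The final comparison step can be quantified with a single attenuation figure. The sketch below assumes RMS acceleration as the comparison metric, which is one reasonable choice among several (peak acceleration is another), and uses synthetic samples rather than solver output.

```python
import math


def rms(signal):
    """Root-mean-square value of a sampled signal."""
    if not signal:
        raise ValueError("signal must not be empty")
    return math.sqrt(sum(x * x for x in signal) / len(signal))


def attenuation_pct(baseline, treated):
    """Percent reduction in RMS response due to the damping treatment."""
    base = rms(baseline)
    if base == 0:
        raise ValueError("baseline response is zero")
    return (base - rms(treated)) / base * 100.0


# Acceleration samples (m/s^2) at the grip; synthetic placeholder data.
without_mre = [1.0, -1.0, 1.0, -1.0]
with_mre = [0.5, -0.5, 0.5, -0.5]

reduction = attenuation_pct(without_mre, with_mre)
print(reduction)
```

The resulting percentage can then be compared with whichever acceptance threshold was fixed before the simulation was run.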

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and computational tools essential for conducting high-quality, reliable FEA research.

Table 3: Key Research Reagent Solutions for FEA Quality Control

| Item | Function / Description | Application Example |

|---|---|---|

| Magnetorheological Elastomer (MRE) | A "smart" material whose damping properties (e.g., shear modulus, damping ratio) can be tuned in real-time by applying an external magnetic field. | Used as a semi-active constrained layer damping treatment in structural components to mitigate vibrations [27]. |

| Constitutive Model (Mooney-Rivlin) | A mathematical model describing the non-linear stress-strain behavior of incompressible or nearly incompressible materials like elastomers. | Implemented in FEA software (e.g., ANSYS) to accurately simulate the mechanical response of MREs and other hyperelastic materials [27]. |

| Instrumented Indentation Technique (IIT) | A method for locally probing mechanical properties by analyzing the load-depth curve during indentation. Often coupled with FEA via inverse analysis. | Used for accurate in-situ evaluation of local mechanical properties (e.g., yield strength, hardening parameters) of metallic materials [28]. |

| Inverse Analysis Methodology | A computational framework for translating experimentally measured quantities (e.g., indentation data, vibration response) into desired material or model parameters. | Calibrating parameters for complex constitutive models (power-law, linear hardening) to ensure FEA simulations reliably match physical reality [28]. |

| Mesh Refinement Tools | Software capabilities to systematically reduce element size in a model to ensure results are independent of discretization. | Conducting a mesh independence study to guarantee that key outputs (e.g., maximum stress) do not change significantly with further mesh refinement [27]. |

| Quality System Supplement (e.g., NAFEMS QSS) | A sector-specific guideline interpreting international quality standards (ISO 9001) within the context of finite element analysis. | Provides a framework for the development, operation, and certification of quality management systems specific to FEA activities [25]. |

Geometry Simplification and Defeaturing

Core Principles

Effective geometry simplification is crucial for creating computationally efficient models without sacrificing result accuracy. The primary goal is to remove unnecessary details that minimally impact global simulation results while preserving features critical to structural performance [30].

Key Defeaturing Operations:

- Fillet and Round Removal: Small fillets and rounds can typically be eliminated from CAD models as they significantly increase mesh complexity while having negligible effect on global displacement calculations [30].

- Feature Suppression: Eliminate very small components that don't affect global stiffness, such as 0201 resistors on a 12x12-inch printed circuit board assembly during mechanical shock simulation [30].

- Symmetry Utilization: Model only symmetric sections when part or assembly geometry permits, substantially reducing computational effort [31].

Advanced Simplification Techniques

- Effective Geometries: Replace complex bodies with simplified equivalents; bolts and rivets can be represented using simplified 3D geometries, 1D beam elements, or approximated with rigid contact constraints [30].

- Midsurface Generation: For thin-walled structures, use midsurface tools to create surface bodies suitable for shell meshing, which provides more accurate results for bending-dominated applications [30].

Table: Geometry Simplification Guidelines

| Feature Type | Simplification Approach | Impact on Results |

|---|---|---|

| Small fillets/rounds | Remove entirely | Negligible effect on global displacements |

| Fasteners (bolts, rivets) | Replace with beam elements or constraints | Minimal if not in critical load path |

| Thin-walled structures | Use shell elements via midsurface | Improved accuracy for bending |

| Very small components | Remove if distant from area of interest | Negligible effect on global stiffness |

| Symmetric features | Model only symmetric section | Reduced computation time |

Material Properties Assignment

Essential Material Properties

Accurate material property definition is fundamental to obtaining valid FEA results. Properties must represent the actual physical characteristics under the simulated loading conditions [31].

Critical Properties for Structural Analysis:

- Elasticity Parameters: Young's Modulus (E) defines stiffness in the loading direction, while Poisson's ratio (ν) represents transverse strain behavior, typically ranging from 0.1 to 0.33 for bone materials [32].

- Strength Parameters: Yield strength (σy) indicates the stress beyond which plastic deformation occurs, and ultimate stress (σu) defines the maximum stress before fracture [32].

- Plasticity Models: Mathematical relationships defining material behavior beyond the yield point, particularly important for simulating permanent deformation [32].

Property Assignment Methodology

- Homogenized vs. Micro-Scale Models: Continuum models merge bone and marrow as continuous solids with differing properties, while micro-scale models preserve detailed internal structure from high-resolution images [32].

- Directional Properties: Account for material anisotropy when applicable, particularly for composite materials or biological tissues [32].

- Temperature Dependence: Include temperature-varying properties for thermal analyses, especially for components exhibiting significant property changes within operational ranges [30].

Table: Essential Material Properties for FEA

| Property | Symbol | Definition | Units |

|---|---|---|---|

| Young's Modulus | E | Normal stress to normal strain ratio | Pa (GPa) |

| Poisson's Ratio | ν | Negative ratio of transverse to longitudinal strain | Unitless |

| Yield Strength | σy | Stress beyond which plastic deformation occurs | Pa (MPa) |

| Ultimate Strength | σu | Maximum stress before fracture | Pa (MPa) |

| Density | ρ | Mass per unit volume | kg/m³ |

| Shear Modulus | G | Shear stress to shear strain ratio | Pa (GPa) |
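
For an isotropic linear elastic material, the properties in this table are not independent: the shear modulus follows from Young's modulus and Poisson's ratio as G = E / (2(1 + ν)). A quick consistency check like the sketch below can catch data entry errors in a material library. It does not apply to anisotropic tissues such as bone modeled with directional properties, and the 17 GPa value is a placeholder.

```python
def shear_modulus(youngs_modulus: float, poisson_ratio: float) -> float:
    """Isotropic shear modulus G = E / (2 (1 + nu)), same units as E."""
    # Thermodynamic bounds for a stable isotropic elastic material.
    if not -1.0 < poisson_ratio < 0.5:
        raise ValueError("Poisson's ratio must lie between -1 and 0.5")
    if youngs_modulus <= 0:
        raise ValueError("Young's modulus must be positive")
    return youngs_modulus / (2.0 * (1.0 + poisson_ratio))


# Placeholder inputs in GPa, treated as isotropic for illustration only.
e_gpa = 17.0
nu = 0.3
g_gpa = shear_modulus(e_gpa, nu)
print(round(g_gpa, 3))
```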

Boundary Condition Definition

Realistic Boundary Condition Strategies

Boundary conditions must accurately represent how the structure interacts with its environment without introducing artificial constraints that distort results [33].

Fundamental Approaches:

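- 3-2-1 Rule: In 3D space, restrain three translational degrees of freedom at one point, two at a second point, and one at a third, non-collinear point. This removes all six rigid-body modes without over-constraining the model [33].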

- Constraint Realism: Avoid assuming test fixtures are perfectly rigid; instead, model their actual stiffness characteristics [33].

- Connection Representation: Carefully consider whether to use common nodes (effectively welding components) or release individual degrees of freedom based on actual joint behavior [33].

Advanced Boundary Condition Techniques

- Sensitivity Analysis: Test boundary condition sensitivity by running comparative studies with different restraint approaches (e.g., fully fixed vs. spring supports) to quantify their influence on results [33].

- Experimental Validation: Measure actual interface impedance using impact hammer testing with accelerometers to determine realistic boundary stiffness values [33].

- Load Application Method Selection: Choose between static and transient analysis based on loading characteristics; use transient analysis for impact events where inertial effects are significant [30].
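
The effect of restraint assumptions can be illustrated with a closed-form case before committing to a full model. The sketch below compares the tip deflection of an end-loaded cantilever with a perfectly fixed root, δ = PL³/(3EI), against the same beam on a rotational spring of stiffness k_θ, which adds PL²/k_θ. All numerical values are placeholders chosen for illustration.

```python
def tip_deflection(load_n, length_m, modulus_pa, inertia_m4, k_theta=None):
    """Tip deflection of an end-loaded cantilever, in metres.

    k_theta is the rotational stiffness of the support in N*m/rad;
    None means a perfectly rigid (fully fixed) root.
    """
    bending = load_n * length_m ** 3 / (3.0 * modulus_pa * inertia_m4)
    if k_theta is None:
        return bending
    # Root rotation (P*L / k_theta) swings the tip by an extra rotation * L.
    return bending + load_n * length_m ** 2 / k_theta


# Placeholder values: 100 N load, 0.2 m beam, E = 110 GPa, I = 1e-9 m^4.
fixed = tip_deflection(100.0, 0.2, 110e9, 1e-9)
sprung = tip_deflection(100.0, 0.2, 110e9, 1e-9, k_theta=5000.0)

increase_pct = (sprung - fixed) / fixed * 100.0
print(f"fixed: {fixed * 1e3:.3f} mm, spring: {sprung * 1e3:.3f} mm, "
      f"increase: {increase_pct:.1f}%")
```

If the two restraint assumptions give materially different answers, the support stiffness deserves measurement rather than assumption.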

Mesh Generation Protocols

Element Selection Criteria

Appropriate element choice significantly impacts result accuracy and computational efficiency.

Element Type Guidelines:

- Shell vs. Solid Elements: Use shell elements for thin-walled structures where length greatly exceeds thickness and shear deformation is minimal; use solid elements for bulky components [30].

- Hexahedral vs. Tetrahedral Elements: Prefer hexahedral ("brick") elements for greater accuracy at lower element counts; use tetrahedral elements for complex geometries with acute angles [30].

- Element Order: Second-order elements with midside nodes provide higher accuracy for complex stress fields but increase computational cost; first-order elements are computationally efficient but may exhibit artificial stiffness [30].

Mesh Quality Assessment

- Convergence Studies: Perform mesh refinement studies to ensure results are independent of mesh size, particularly in regions with high stress gradients [31].

- Quality Metrics: Monitor element aspect ratios, skewness, and Jacobian values to identify poorly shaped elements that degrade solution accuracy [31].

- Selective Refinement: Increase mesh density in critical regions while maintaining coarser meshing in areas with low stress variation to optimize computational efficiency [31].

Table: Mesh Element Selection Guide

| Element Type | Best Application | Advantages | Limitations |

|---|---|---|---|

| Hexahedral | Regular geometries | Accuracy at lower element count | Difficult for complex shapes |

| Tetrahedral | Complex geometries | Handles acute angles well | Lower accuracy per element |

| Shell | Thin-walled structures | Efficient for bending | Limited to thin structures |

| Beam | Slender components | Computational efficiency | Simplified stress field |

Experimental Protocols for Validation

Model Verification Methodology

- Mesh Convergence Protocol: Systematically reduce element size in critical regions until percentage change in maximum stress and displacement falls below an acceptable threshold (typically <5%) [31].

- Result Examination Sequence: Always plot model displacements for each load case before examining stresses; if displacements appear unrealistic, stress results are likely invalid [33].

- Boundary Condition Stress Check: Examine loads and stresses at application points; wild stress peaks often indicate over-constrained models requiring boundary condition adjustment [33].

Validation Against Experimental Data

- Comparative Analysis: Where possible, compare FEA results with experimental data or analytical solutions to validate model accuracy [31].

- Sensitivity Analysis: Perform parameter sensitivity studies to understand how changes in input parameters affect outcomes, identifying critical variables requiring precise definition [31].

- Methodological Quality Assessment: Utilize structured assessment instruments like MQSSFE (Methodological Quality Assessment of Single-Subject Finite Element Analysis) for systematic quality evaluation in computational orthopedics [34].
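The sensitivity analysis item above can be illustrated with a one-at-a-time perturbation sketch. Here a closed-form cantilever tip deflection stands in for a full FE model; the function names, the 1% perturbation step, and the baseline values are illustrative assumptions, not values from the cited sources.

```python
def tip_deflection(force, length, youngs_modulus, second_moment):
    """Closed-form cantilever tip deflection, used as a stand-in for an FE run."""
    return force * length ** 3 / (3.0 * youngs_modulus * second_moment)


def normalized_sensitivities(model, baseline, rel_step=0.01):
    """Perturb one input at a time and return (dy/y) / (dx/x) for each input."""
    y0 = model(**baseline)
    sensitivities = {}
    for name, value in baseline.items():
        perturbed = dict(baseline)
        perturbed[name] = value * (1.0 + rel_step)
        sensitivities[name] = ((model(**perturbed) - y0) / y0) / rel_step
    return sensitivities


# Hypothetical baseline inputs in SI units (illustrative only).
baseline = {
    "force": 100.0,
    "length": 0.2,
    "youngs_modulus": 17e9,
    "second_moment": 1e-9,
}
sens = normalized_sensitivities(tip_deflection, baseline)

# Rank inputs by the magnitude of their influence on the output.
ranking = sorted(sens, key=lambda name: abs(sens[name]), reverse=True)
```

In this stand-in, `length` ranks first because deflection scales with its cube, which marks it as the input needing the most careful definition. With a real FE model, `model` would wrap a solver call, and each perturbation would cost one additional run.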

Workflow Visualization

Figure: FEA Model Setup Workflow

Table: Research Reagent Solutions for FEA

| Tool/Category | Function | Application Context |
|---|---|---|
| CAD Defeaturing Tools | Remove unnecessary features | Geometry simplification |
| Midsurface Generators | Create surface bodies from solids | Thin-walled structure modeling |
| Hexahedral Meshers | Generate brick elements | Regular geometry regions |
| Tetrahedral Meshers | Handle complex geometries | Components with acute angles |
| Convergence Assessment | Verify mesh independence | Quality assurance |
| Material Libraries | Provide validated properties | Material definition |
| Validation Datasets | Experimental comparison | Model validation |
| Sensitivity Analysis | Parameter impact assessment | Uncertainty quantification |

Element Selection Logic

Figure: Element Selection Decision Tree

In Finite Element Analysis (FEA), the pursuit of numerical accuracy is paramount, and the mesh convergence study stands as a fundamental quality control procedure within computational mechanics research. This process systematically verifies that a simulation's results are independent of the discretization of the domain, ensuring that the solution accurately captures the underlying physics rather than numerical artifacts. An inadequately converged mesh can dramatically impact the accuracy and reliability of simulation results, leading to underestimated stress values, incorrect failure predictions, and ultimately, misguided engineering decisions [35]. For researchers and scientists, particularly those applying FEA to critical domains like biomedical device development, establishing a rigorously converged mesh is not merely a best practice but an ethical imperative for generating trustworthy data.

The core principle of a mesh convergence study is to progressively refine the mesh and observe the stabilization of a Quantity of Interest (QoI). When further refinement produces a negligible change in the QoI, the solution is considered mesh-converged [36]. This process directly addresses one of the foundational assumptions of FEA: that the continuous domain can be accurately represented by a finite number of discrete elements. The following foundational diagram illustrates the logical relationship between the core components of a mesh convergence study.

Quantitative Standards and Convergence Criteria

Establishing quantitative criteria is essential for an objective assessment of convergence. While visual inspection of a convergence plot is informative, definitive judgment requires numerical tolerances. A common benchmark is to consider a solution converged when successive mesh refinements alter the QoI by less than a predefined percentage, often between 1% and 5% depending on the application's criticality [35]. For instance, safety-critical applications like aerospace or medical implants may demand a strict 1% criterion, whereas preliminary design studies might accept 5%.
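As a minimal sketch of the percentage-change criterion described above, the following computes the relative change in the QoI between successive meshes and reports the first refinement level that meets a given tolerance. The function names and the stress values are hypothetical and serve only to illustrate the arithmetic.

```python
def relative_change(previous, current):
    """Relative change of the QoI between two successive meshes."""
    if current == 0.0:
        raise ValueError("relative change is undefined for a zero QoI")
    return abs(current - previous) / abs(current)


def first_converged_index(qoi_values, tolerance):
    """Index of the first mesh whose QoI changed by less than `tolerance`
    relative to the previous mesh, or None if no mesh qualifies."""
    for i in range(1, len(qoi_values)):
        if relative_change(qoi_values[i - 1], qoi_values[i]) < tolerance:
            return i
    return None


# Hypothetical peak stress (MPa) from four successively finer meshes.
peak_stress = [100.0, 110.0, 113.0, 113.5]

idx_preliminary = first_converged_index(peak_stress, 0.05)  # 5% criterion
idx_critical = first_converged_index(peak_stress, 0.01)     # 1% criterion
```

With these illustrative numbers, the 5% criterion is met at the third mesh, while the 1% criterion requires the fourth. This shows how a stricter tolerance translates directly into additional refinement cost.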

Beyond simple percentage change, error norms provide a more rigorous, mathematically sound basis for evaluating convergence, especially when analytical solutions are unavailable. These norms compute the error in the solution over the entire domain, not just at a single point. The rate at which these error norms decrease with mesh refinement also serves as an indicator of solution quality and proper element formulation [36].

Table 1: Quantitative Error Norms for Mesh Convergence Analysis

| Error Norm | Mathematical Expression | Primary Application | Theoretical Convergence Rate |
|---|---|---|---|
| L²-Norm (Displacement) | $$\lVert e \rVert_{L^2} = \sqrt{\int_{\Omega} (u_h - u)^2 \, d\Omega}$$ | Measures error in displacement field across the entire domain. | Order \( p+1 \) |
| Energy Norm | $$\lVert e \rVert_{E} = \sqrt{\frac{1}{2} \int_{\Omega} (\sigma_h - \sigma):(\epsilon_h - \epsilon) \, d\Omega}$$ | Measures error in the strain energy, sensitive to stress/strain derivatives. | Order \( p \) |

Note: In the expressions, \( u \) and \( u_h \) represent the exact and FE solutions for displacement, \( \sigma \) and \( \sigma_h \) for stress, and \( \epsilon \) and \( \epsilon_h \) for strain. The variable \( p \) denotes the order of the element used. [36]
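The convergence rates in Table 1 can be checked numerically on a problem with a known exact solution. The sketch below is a simplified 1D illustration under stated assumptions: the piecewise-linear nodal interpolant of \( u(x) = \sin(\pi x) \) on \( [0, 1] \) stands in for an FE solution with \( p = 1 \), and unit stiffness is assumed so that stress equals strain. All names are hypothetical.

```python
import math

# 3-point Gauss-Legendre rule on the reference interval [-1, 1].
GAUSS_POINTS = (-math.sqrt(3.0 / 5.0), 0.0, math.sqrt(3.0 / 5.0))
GAUSS_WEIGHTS = (5.0 / 9.0, 8.0 / 9.0, 5.0 / 9.0)


def exact_u(x):
    return math.sin(math.pi * x)


def exact_du(x):
    return math.pi * math.cos(math.pi * x)


def error_norms(n_elements):
    """L2 and energy error norms of the linear interpolant on a uniform mesh."""
    h = 1.0 / n_elements
    l2_sq = 0.0
    energy_sq = 0.0
    for e in range(n_elements):
        a, b = e * h, (e + 1) * h
        ua, ub = exact_u(a), exact_u(b)
        slope = (ub - ua) / h  # constant strain within a linear element
        for xi, w in zip(GAUSS_POINTS, GAUSS_WEIGHTS):
            x = 0.5 * (a + b) + 0.5 * h * xi
            u_h = ua + slope * (x - a)
            l2_sq += 0.5 * h * w * (u_h - exact_u(x)) ** 2
            energy_sq += 0.5 * h * w * (slope - exact_du(x)) ** 2
    return math.sqrt(l2_sq), math.sqrt(0.5 * energy_sq)


def observed_rate(err_coarse, err_fine, refinement_ratio=2.0):
    """Observed order of convergence from errors on two meshes."""
    return math.log(err_coarse / err_fine) / math.log(refinement_ratio)


l2_coarse, energy_coarse = error_norms(16)
l2_fine, energy_fine = error_norms(32)
l2_rate = observed_rate(l2_coarse, l2_fine)
energy_rate = observed_rate(energy_coarse, energy_fine)
```

For linear elements the observed rates should approach 2 for the L² norm and 1 for the energy norm, matching orders \( p+1 \) and \( p \). A markedly lower observed rate in a real study can point to a singularity, locking, or an implementation problem.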

The choice of QoI is critical and must align with the research objective. While maximum stress is a common focus, other parameters like displacement at a critical point, natural frequency, reaction force, or temperature may be more relevant. It is also crucial to monitor the convergence of multiple parameters, as they may converge at different rates [35].

Methodological Protocol for Conducting a Mesh Convergence Study

A robust, systematic protocol is vital for producing defensible research results. The workflow below outlines the key stages of this process, from problem definition to the final recommendation for an optimal mesh.

Step-by-Step Experimental Procedure

Identify Quantity of Interest (QoI) and Critical Regions: Begin by selecting the specific output parameter that is most critical to the research objective, such as maximum principal strain in a specific tissue region or stress at a device-bone interface [35] [37]. Use engineering judgment and preliminary analyses to identify geometric features like holes, fillets, or contact regions where high gradients are expected, as these will require finer meshing.

Generate Initial Coarse Mesh: Create an initial mesh that captures all geometric features but uses relatively large elements. The global element size should be based on the smallest feature of interest. Document the initial mesh statistics, including the total number of elements and nodes, as well as element quality metrics (aspect ratio, skew, Jacobian) [37].

Execute Iterative Simulation Loop: Run a complete FEA for the current mesh density, ensuring all boundary conditions, loads, and material properties are consistent and representative of the physical scenario. The only variable changing between runs should be the mesh density. Record the QoI value and computational time for each run.

Systematically Refine the Mesh: Refine the mesh for the next iteration. This can be achieved through:

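- Global h-refinement: Uniformly decreasing the element size throughout the model, for example in steps chosen so that the number of elements approximately doubles [35]. In 3D the element count scales roughly with the inverse cube of the element size, so halving the size increases the count about eightfold.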

- Local h-refinement: Selectively reducing element size in the pre-identified critical regions and areas with high solution gradients [35] [36].

- p-refinement: Increasing the order of the elements (e.g., from linear to quadratic) without changing the mesh topology, which can often lead to faster convergence for smooth solutions [36].
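When planning global h-refinement steps, it helps to translate between element size and element count. The sketch below assumes a uniform mesh in which the count scales as \( h^{-d} \) for spatial dimension \( d \); real meshers only approximate this, and the function names are hypothetical.

```python
def size_factor_for_count_ratio(count_ratio, dimension=3):
    """Factor by which to scale element size so that the element count
    changes by `count_ratio` (assumes count ~ h**-dimension)."""
    return count_ratio ** (-1.0 / dimension)


def count_ratio_for_size_factor(size_factor, dimension=3):
    """Expected change in element count when element size is scaled
    by `size_factor` (assumes count ~ h**-dimension)."""
    return size_factor ** (-dimension)


# Doubling the element count in 3D needs about a 21% smaller element size.
factor_to_double = size_factor_for_count_ratio(2.0)

# Halving the element size in 3D gives about eight times as many elements.
ratio_when_halved = count_ratio_for_size_factor(0.5)
```

Reporting the refinement ratio used at each step makes the convergence plot easier to interpret and to reproduce.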

Plot Results and Assess Convergence: Plot the QoI on the Y-axis against a measure of mesh density on the X-axis, such as the total number of elements or the average element size. Assess the plot for stabilization. The solution is considered converged when the change in the QoI between two successive refinements falls below the pre-defined tolerance (e.g., 2%) [35] [38].

Document and Report: The convergence study must be thoroughly documented in any research output. This includes the convergence plot, the quantitative criteria used, the achieved tolerance, mesh statistics for the final model, and a discussion of any encountered issues, such as singularities [35].

Practical Implementation and Special Considerations

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for FEA Convergence Studies

| Item Category | Specific Examples / Formulations | Function & Research Purpose |
|---|---|---|
| Element Types | Linear Tetrahedra (C3D4), Quadratic Tetrahedra (C3D10), Linear Hexahedra with reduced integration (C3D8R), Linear Hexahedra with incompatible modes (C3D8I) [37] | Discrete building blocks of the model. Quadratic elements generally provide better strain accuracy and convergence behavior than linear elements. |
| Constitutive Models | First-order Ogden hyper-viscoelastic model [37], Neo-Hookean, Plasticity models. | Mathematical description of material behavior. Accurate models are essential for trustworthy results, especially in biological tissues. |
| Mesh Quality Metrics | Aspect Ratio (< 3), Skew (< 50°), Jacobian (> 0.8) [37] | Quantitative measures of element shape. High-quality elements are prerequisites for accuracy, independent of mesh density. |
| Solver & Integration Schemes | Explicit Dynamic Solver, Enhanced Full-Integration (C3D8I), Reduced Integration (C3D8R) with hourglass control [37] | Numerical engines that solve the system of equations. The choice affects stability, accuracy (e.g., locking), and computational cost. |
| Hourglass Control | Relax Stiffness Hourglass Control, Enhanced Hourglass Control, Viscous Hourglass Control [37] | Prevents spurious zero-energy deformation modes in reduced-integration elements. Hourglass energy should be monitored and kept small relative to the internal energy. |
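The mesh quality thresholds listed in Table 2 can be applied as an automated screen before any convergence run. The following is a minimal sketch that assumes per-element metrics have already been exported from the mesher; the `ElementQuality` record and the sample values are hypothetical.

```python
from dataclasses import dataclass

# Thresholds taken from Table 2 (aspect ratio < 3, skew < 50 deg, Jacobian > 0.8).
MAX_ASPECT_RATIO = 3.0
MAX_SKEW_DEG = 50.0
MIN_JACOBIAN = 0.8


@dataclass(frozen=True)
class ElementQuality:
    element_id: int
    aspect_ratio: float
    skew_deg: float
    jacobian: float


def failed_checks(quality):
    """Names of the quality metrics this element violates."""
    failures = []
    if quality.aspect_ratio >= MAX_ASPECT_RATIO:
        failures.append("aspect_ratio")
    if quality.skew_deg >= MAX_SKEW_DEG:
        failures.append("skew_deg")
    if quality.jacobian <= MIN_JACOBIAN:
        failures.append("jacobian")
    return failures


def flag_poor_elements(elements):
    """Map element id to its violated metrics, for elements with any violation."""
    flagged = {}
    for quality in elements:
        failures = failed_checks(quality)
        if failures:
            flagged[quality.element_id] = failures
    return flagged


# Hypothetical exported metrics for three elements.
sample = [
    ElementQuality(1, 1.4, 12.0, 0.95),
    ElementQuality(2, 4.2, 20.0, 0.90),
    ElementQuality(3, 2.0, 63.0, 0.55),
]
flagged = flag_poor_elements(sample)
```

Flagged elements located in critical regions are the first candidates for remeshing, since poor shape degrades accuracy regardless of mesh density.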

Addressing Numerical Pathologies and Singularities

A key challenge in convergence studies is dealing with non-convergent behaviors. A common issue is the stress singularity, which occurs at geometric discontinuities like sharp reentrant corners, point loads, or boundary condition application points. In these locations, the stress theoretically approaches infinity, and mesh refinement will cause the reported stress to increase without bound, preventing convergence [35] [36].

Strategies to Address Singularities:

- Geometric Modification: Add small fillets to sharp internal corners to match manufacturing reality [35] [36].

- Load/Constraint Realism: Distribute point loads and constraints over finite areas to better represent physical load introduction [35].

- Result Interpretation: Evaluate stress at a small, consistent distance away from the singularity location, as the singularity's influence is highly localized [35].

Another critical consideration is element locking, which includes volumetric locking in nearly incompressible materials (e.g., polymers, soft tissues) and shear locking in bending-dominated problems. Locking manifests as an overly stiff element response. Mitigation strategies rely on specialized element formulations: second-order or hybrid (mixed displacement-pressure) elements for near-incompressibility, and elements with selective or reduced integration schemes to avoid shear locking [36].

Protocol for a Mesh Independence Study in Nonlinear/CFD Analysis

For nonlinear transient analyses or Computational Fluid Dynamics (CFD), the concept of convergence expands to include solver iteration convergence in addition to mesh independence. The following integrated protocol is recommended [39]:

1. Define Values of Interest: Clearly identify key outputs (e.g., pressure drop, average temperature, force).

2. Establish Convergence Criteria:

   - Residual RMS error values should reduce to an acceptable level (typically 10⁻⁴ to 10⁻⁵).

   - Monitor points for values of interest must reach a steady state.

   - Global domain imbalances (e.g., mass, energy) should be less than 1%.

3. Perform Mesh Independence Loop: Start with an initial mesh and run the simulation until the criteria in Step 2 are met. Record the value of interest.

4. Refine and Compare: Globally refine the mesh (e.g., 1.5x more elements) and rerun the simulation. Compare the new value of interest with the previous one.

5. Check for Independence: If the change is within an acceptable tolerance (e.g., 0.5%), the solution is mesh-independent. If not, further refine the mesh and repeat until independence is achieved [39].

Table 3: Convergence Criteria for Nonlinear/CFD Simulations

| Criterion | Target Value | Purpose & Rationale |
|---|---|---|
| Residual RMS Error | ≤ 10⁻⁴ to 10⁻⁵ | Indicates that the governing equations (e.g., momentum, energy) are being satisfied accurately within the domain. |
| Monitor Point Stability | Steady-state value achieved | Ensures that the key output parameters are no longer changing with successive solver iterations. |
| Domain Imbalance | < 1% for all conserved quantities | Ensures conservation of mass, energy, and momentum across the entire computational domain. |
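The three criteria in Table 3 can be combined into a single check that is evaluated before a run is accepted into the mesh independence loop. This is a sketch under stated assumptions: the input dictionaries, the 50-iteration window, and the 0.1% band used to define "steady" are illustrative choices, not values prescribed by the cited protocol.

```python
from statistics import mean


def residuals_ok(residual_rms, target=1e-4):
    """True if every equation's RMS residual is at or below the target."""
    return all(value <= target for value in residual_rms.values())


def monitor_is_steady(history, window=50, rel_band=1e-3):
    """True if the monitored value varied by at most `rel_band` (relative
    to its mean) over the last `window` iterations."""
    if len(history) < window:
        return False
    recent = history[-window:]
    centre = mean(recent)
    if centre == 0.0:
        return max(recent) == min(recent)
    return (max(recent) - min(recent)) / abs(centre) <= rel_band


def imbalances_ok(imbalance_percent, limit=1.0):
    """True if every conserved quantity's imbalance is below `limit` percent."""
    return all(abs(value) < limit for value in imbalance_percent.values())


def solver_converged(residual_rms, monitor_history, imbalance_percent):
    """All three Table 3 criteria must hold."""
    return (
        residuals_ok(residual_rms)
        and monitor_is_steady(monitor_history)
        and imbalances_ok(imbalance_percent)
    )


# Hypothetical solver output: a pressure-drop monitor that settles near 120.
residuals = {"mass": 3e-5, "momentum": 8e-5, "energy": 1e-5}
pressure_drop = [120.0 + 30.0 * 0.9 ** i for i in range(200)]
imbalances = {"mass": 0.02, "energy": -0.4}

converged = solver_converged(residuals, pressure_drop, imbalances)
```

Only values of interest taken from runs that pass this check should be compared between meshes. Otherwise, incomplete solver convergence can be mistaken for mesh dependence.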