Ensuring Diagnostic Accuracy: A Comprehensive Guide to Inter-Rater Reliability in Parasite Morphology Identification

Abstract

Accurate parasite identification is foundational to effective disease diagnosis, treatment, and research. However, traditional morphological methods, reliant on expert microscopy, are inherently challenged by subjective interpretation, leading to significant variability between technologists. This article explores the critical issue of inter-rater reliability in parasite morphology identification, examining its impact on diagnostic consistency and patient care. We delve into foundational concepts, including the sources of human error and the complex life cycles of parasites that complicate identification. The review then investigates methodological advancements, with a particular focus on the emerging role of artificial intelligence and deep learning models in standardizing identification and achieving expert-level agreement. Furthermore, we address practical strategies for troubleshooting and optimizing laboratory workflows to enhance consistency. Finally, we present a comparative analysis of validation techniques, from statistical measures like Cohen's Kappa to advanced molecular methods, providing a holistic framework for researchers, scientists, and drug development professionals to assess and improve diagnostic accuracy in parasitology.

The Human Factor in Parasite ID: Understanding the Foundations of Diagnostic Variability

Defining Inter-Rater Reliability in the Context of Parasite Morphology

Inter-rater reliability (IRR) represents a fundamental metric in parasitology, quantifying the degree of agreement among independent observers when identifying and classifying parasites based on morphological characteristics. In both research and clinical diagnostics, morphological identification serves as a cornerstone for disease surveillance, treatment decisions, and understanding parasite epidemiology. However, this traditional approach is inherently susceptible to subjective interpretation, leading to potential inconsistencies that can undermine data quality and reproducibility.

The implications of unreliable morphological identification extend across multiple domains. For veterinary medicine, misidentification can lead to inappropriate anthelmintic treatment strategies in livestock and companion animals. In public health, it can compromise disease surveillance accuracy and outbreak response for parasitic diseases affecting human populations. Furthermore, in pharmaceutical development, inconsistent parasite identification can introduce variability into drug efficacy assessments, potentially obscuring treatment effects or leading to false conclusions about compound activity.

This guide objectively compares the performance of traditional morphological identification against emerging molecular and artificial intelligence (AI) technologies, providing researchers with experimental data to inform their methodological choices. The evaluation is framed within the critical context of improving IRR to enhance the rigor and reproducibility of parasitology research.

Comparative Analysis of Identification Methods

Table 1: Methodological Comparison of Parasite Identification Techniques

| Method | Theoretical Basis | Typical Reported IRR | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Morphological Identification | Visual analysis of structural features (size, shape, internal structures) | Variable; often "slight" to "fair" (e.g., κ for S. vulgaris: "poor") [1] | Low cost, equipment simplicity, provides immediate data | Subject to observer expertise and subjective interpretation |
| Molecular Identification | Detection of species-specific genetic markers via PCR/HRM analysis | High (gold standard for validation) [1] | High specificity, not reliant on morphological expertise | Requires specialized equipment, higher cost, complex sample preparation |
| AI-Assisted Identification | Deep learning algorithms trained on image datasets | Exceptional (e.g., >99% accuracy in model validation) [2] [3] | High throughput, consistency, eliminates observer fatigue | Requires extensive training datasets, computational resources |

Table 2: Quantitative Performance Comparison Across Parasite Groups

| Parasite Group | Morphological Identification Accuracy/IRR | Molecular Identification Accuracy | AI-Assisted Identification Accuracy |
|---|---|---|---|
| Strongylus spp. (Equine) | "Slight" to "poor" IRR for species [1] | 97-99% for species differentiation [1] | Not specifically reported for Strongylus |
| Plasmodium spp. (Avian) | Subject to inter-examiner variability [3] | Gold standard via PCR [3] | 99% accuracy with Darknet model [3] |
| Intestinal Parasites (Human) | Limited by morphological similarity [2] | High specificity/sensitivity [2] | 98.93% accuracy with DINOv2-large [2] |
| Schistosoma mansoni | Labor-intensive, subjective [4] | Not specifically reported | 96.6% mAP with YOLOv5 [4] |

Experimental Protocols for Assessing Inter-Rater Reliability

Protocol 1: Morphological Versus Molecular Identification in Strongylus Species

Background and Objectives: A 2025 comparative study aimed to evaluate the reliability of morphological larval identification for equine Strongylus species by using molecular techniques as a reference standard. The research sought to quantify discrepancies between these methods in routine diagnostic settings [1].

Methodology:

- Sample Collection and Preparation: 712 strongyle egg-positive equine fecal samples were collected during routine diagnostic investigations in Germany. Larval cultures were performed according to standard parasitological techniques to obtain third-stage larvae (L3) for analysis [1].

- Morphological Differentiation: Trained technicians examined L3 larvae under microscopy, identifying species based on established morphological characteristics including larval size, intestinal cell structure, and tail features. Specimens were categorized as Strongylus vulgaris, S. edentatus, S. equinus, or other Strongylus species [1].

- Molecular Validation: DNA was extracted from larval cultures. Samples were analyzed using an S. vulgaris-specific real-time PCR assay followed by high-resolution melting (HRM) PCR to differentiate S. edentatus, S. equinus, and S. asini. The 28S rRNA gene was amplified to confirm the presence of nematode DNA [1].

- IRR Statistical Analysis: Agreement between morphological and molecular identification was quantified using standard measures of diagnostic agreement, such as the kappa (κ) statistic. The frequency with which each species was identified was also compared across methods [1].
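As an illustration of the agreement analysis described in the last step, the following is a minimal sketch of Cohen's kappa for two sets of labels on the same specimens, together with the commonly used Landis and Koch descriptors ("poor", "slight", "fair", and so on). The function names and the example labels are hypothetical and are not taken from the cited study, which may have used a different implementation.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters (or methods) labelling the same specimens."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: fraction of specimens given the same label.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)


def landis_koch_label(kappa):
    """Verbal descriptor for kappa following Landis and Koch (1977)."""
    if kappa < 0:
        return "poor"
    for upper, label in [(0.20, "slight"), (0.40, "fair"),
                         (0.60, "moderate"), (0.80, "substantial")]:
        if kappa <= upper:
            return label
    return "almost perfect"


# Hypothetical calls for ten larval cultures (not data from the study).
morphology = ["pos", "pos", "neg", "neg", "neg", "neg", "neg", "neg", "neg", "neg"]
pcr        = ["pos", "neg", "pos", "neg", "neg", "neg", "neg", "neg", "neg", "neg"]
kappa = cohens_kappa(morphology, pcr)
print(round(kappa, 3), landis_koch_label(kappa))
```

In this made-up example the two methods agree on 8 of 10 samples, yet kappa is only 0.375 ("fair"), because most of that agreement is expected by chance when one category dominates.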

Protocol 2: AI-Assisted Identification of Intestinal Parasites

Background and Objectives: A 2025 study developed and validated deep learning models for automated identification of human intestinal parasites in stool samples, comparing model performance against human expert microscopy as the reference standard [2].

Methodology:

- Sample Processing and Reference Standard: Stool samples were processed using formalin-ethyl acetate centrifugation technique (FECT) and Merthiolate-iodine-formalin (MIF) staining performed by experienced medical technologists to establish the reference standard diagnosis [2].

- Image Dataset Preparation: Modified direct smears were prepared from samples, with images captured for model training (80% of dataset) and testing (20% of dataset). The dataset included diverse intestinal parasites with annotation by species [2].

- Model Training and Validation: Multiple state-of-the-art deep learning architectures were implemented including YOLOv4-tiny, YOLOv7-tiny, YOLOv8-m, ResNet-50, and DINOv2 variants. Models were trained using an in-house CIRA CORE platform with optimization of hyperparameters [2].

- Performance Evaluation: Model performance was assessed using confusion matrices with metrics including accuracy, precision, sensitivity, specificity, and F1-score calculated via one-versus-rest and micro-averaging approaches. Agreement between AI and human raters was quantified using Cohen's Kappa and Bland-Altman analysis [2].
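The one-versus-rest and micro-averaging calculations mentioned in the evaluation step can be sketched as follows. This is a minimal illustration, not the study's code: the function name, class labels, and confusion-matrix values are all hypothetical.

```python
def one_vs_rest_metrics(confusion, labels):
    """Per-class and micro-averaged metrics from a square confusion matrix.

    confusion[i][j] = number of specimens of true class i predicted as class j.
    """
    total = sum(sum(row) for row in confusion)
    per_class = {}
    sum_tp = sum_fp = sum_fn = sum_tn = 0
    for k, label in enumerate(labels):
        # Treat class k as "positive" and all other classes as "negative".
        tp = confusion[k][k]
        fn = sum(confusion[k]) - tp
        fp = sum(row[k] for row in confusion) - tp
        tn = total - tp - fn - fp
        per_class[label] = {
            "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
            "specificity": tn / (tn + fp) if tn + fp else float("nan"),
            "precision": tp / (tp + fp) if tp + fp else float("nan"),
            "accuracy": (tp + tn) / total,
        }
        sum_tp, sum_fp = sum_tp + tp, sum_fp + fp
        sum_fn, sum_tn = sum_fn + fn, sum_tn + tn
    # Micro-averaging pools the one-vs-rest counts before computing ratios.
    micro = {
        "sensitivity": sum_tp / (sum_tp + sum_fn),
        "specificity": sum_tn / (sum_tn + sum_fp),
        "precision": sum_tp / (sum_tp + sum_fp),
        "accuracy": (sum_tp + sum_tn) / (sum_tp + sum_tn + sum_fp + sum_fn),
    }
    return per_class, micro


# Hypothetical three-class example (rows = reference, columns = model).
labels = ["negative", "Ascaris", "Trichuris"]
confusion = [
    [90, 1, 0],
    [2, 5, 0],
    [1, 0, 1],
]
per_class, micro = one_vs_rest_metrics(confusion, labels)
print(per_class["Trichuris"])
print(micro)
```

Because every one-versus-rest split counts many true negatives, pooled accuracy and specificity can be high even when sensitivity for a rare class is modest, which is why per-class values are worth reporting alongside the headline accuracy.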

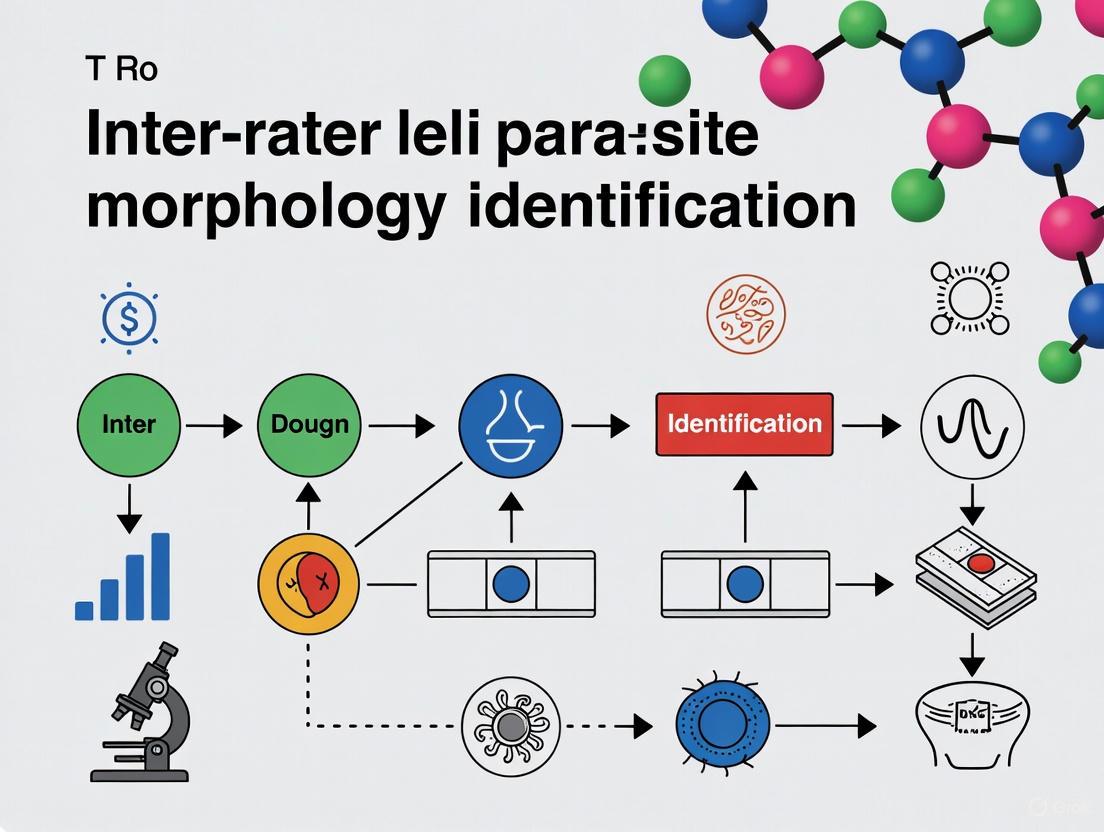

Figure 1: Experimental workflow for assessing parasite identification reliability, comparing traditional morphological and advanced AI-assisted pathways with molecular validation.

Key Research Reagents and Materials

Table 3: Essential Research Reagents for Parasite Identification Studies

| Reagent/Equipment | Specific Application | Function in Experimental Protocol |
|---|---|---|
| Formalin-ethyl acetate | Stool sample processing [2] | Concentration and preservation of parasitic elements for microscopy |
| Giemsa stain | Blood film and larval staining [5] [3] | Enhances visual contrast of parasitic structures for morphological analysis |
| PCR reagents | Molecular identification [1] | Amplification of species-specific genetic markers for definitive identification |
| High-resolution melting PCR | Species differentiation [1] | Discrimination of closely related species based on melt curve analysis |
| YOLOv5 algorithm | AI-assisted detection [4] | Object detection and classification of parasites in digital images |
| DINOv2 models | AI-based classification [2] | Self-supervised learning for parasite identification without extensive labeling |

Technological Advancements in Reliability Assessment

Artificial Intelligence and Machine Learning Applications

Recent advances in artificial intelligence have transformed approaches to parasite identification, offering solutions to the inherent variability of human-based morphological assessment. Deep learning models, particularly convolutional neural networks (CNNs) and vision transformers, have demonstrated remarkable performance in automated parasite detection and classification.

In avian malaria research, Darknet models achieved accuracy exceeding 99% for classifying Plasmodium gallinaceum blood stages, reducing misclassification relative to traditional microscopy [3]. Similarly, for human intestinal parasites, DINOv2-large models attained 98.93% overall accuracy with 78.00% sensitivity and 99.57% specificity, and showed strong agreement with expert microscopists (κ > 0.90) [2]. Because sensitivity was considerably lower than specificity, the headline accuracy should be read alongside the per-class metrics. These AI systems not only improve identification consistency but also help address the scarcity of expertise in resource-limited settings.

For drug discovery applications, YOLOv5 implementation in schistosomiasis research enabled high-throughput screening of compound efficacy against Schistosoma mansoni schistosomula. The model achieved 96.6% mean average precision in distinguishing healthy from damaged parasites, while significantly reducing analysis time compared to manual assessment [4]. This approach minimizes subjective viability assessments that traditionally introduce variability into drug efficacy studies.

Figure 2: Evolution of parasite identification methods demonstrating progressive improvement in reliability through technological integration.

Molecular Validation Frameworks

Molecular techniques have established themselves as reference standards for validating morphological identification, with PCR-based methods providing definitive species determination when morphological features are ambiguous or overlapping. The 2025 Strongylus study exemplifies this validation framework, where HRM-PCR revealed significant discrepancies in species-specific identification frequencies between morphological and molecular approaches [1].

Notably, molecular methods enabled the first report of a patent Strongylus asini infection in a domestic horse, a finding that morphological examination alone failed to detect [1]. This demonstrates how molecular techniques not only validate morphological identification but also expand our understanding of parasite epidemiology through detection of cryptic species or variants.

Implications for Research and Drug Development

The methodological comparisons presented in this guide carry significant implications for parasitology research and anti-parasitic drug development. Consistent and accurate parasite identification forms the foundation of reliable efficacy assessment for novel compounds. The integration of AI-assisted methods and molecular validation into screening pipelines addresses critical sources of variability that can compromise drug development efforts.

For veterinary parasitology, improved IRR directly enhances surveillance data quality, enabling more targeted anthelmintic intervention strategies and better resistance management. In human public health, reliable parasite identification strengthens disease burden assessments and treatment monitoring programs. Future methodological development should focus on integrated systems that leverage the respective strengths of morphological, molecular, and computational approaches while addressing limitations of individual methods through strategic combination.

As technological advancements continue to transform parasitology, maintaining focus on methodological reliability will remain essential for generating reproducible research and effective clinical interventions. The experimental frameworks and comparative data presented here provide researchers with evidence-based guidance for selecting identification methods appropriate to their specific research contexts and reliability requirements.

The High-Complexity Nature of Microscopic Parasitology and its Inherent Subjectivity

Microscopic morphology remains the cornerstone of parasitic disease diagnosis, yet it is technically complex and inherently subjective. This section compares established and emerging parasitological methods with a focus on inter-rater reliability in parasite identification. Data from controlled experiments quantifying variability between expert microscopists are presented alongside emerging computational solutions designed to reduce that variability. The analysis is structured to give researchers, scientists, and drug development professionals an evidence-based overview of methodological performance, experimental protocols, and the evolving toolkit for parasitological research.

In clinical diagnostics, microscopic parasitology is formally categorized as a high-complexity testing domain under the Clinical Laboratory Improvement Amendments (CLIA) [6]. This classification reflects the extensive knowledge and skill required for accurate morphological identification, which encompasses understanding parasite life cycles, taxonomic classification, and microscopic analysis across diverse specimen types [7]. Despite advancements in molecular techniques, microscopy persists as the gold standard for many parasitic infections, enabling direct parasite observation, species differentiation, and quantification crucial for treatment and research [5] [7].

However, this dependence on morphological expertise is paradoxically threatened by a widespread decline in these very skills. The parasitology community has raised concerns that increased reliance on non-morphology-based diagnostics like rapid antigen tests and nucleic acid amplification tests has led to a progressive loss of morphology expertise [7]. This loss directly impacts diagnostic reliability, potentially leading to missed diagnoses, inappropriate treatment, and mischaracterization of emerging pathogens [7]. The core of this problem lies in the field's inherent subjectivity, where identification accuracy is intrinsically linked to the observer's training and experience, resulting in substantial inter-rater variability.

Experimental Comparisons of Microscopic Methods and Inter-Rater Reliability

Quantifying Variability in Malaria Parasite Counting

A critical study directly compared the established methods for estimating malaria parasitaemia to determine which yields the least inter-rater and inter-method variation [5]. Experienced malaria microscopists counted asexual parasitaemia in 31 Plasmodium falciparum samples using three distinct methods.

Table 1: Comparison of Malaria Parasite Counting Methods and Their Reliability

| Counting Method | Principle | Relative Parasite Density Estimate | Sensitivity at Low Parasitaemia (<500/μL) | Inter-Rater Reliability |
|---|---|---|---|---|
| Thin Film Method | Parasites per 5000 erythrocytes, adjusted for total RBC count [5] | ~30% higher than thick film methods [5] | Low (loss of sensitivity) [5] | Not quantified in ANOVA model |
| Thick Film Method | Parasites per 500 white blood cells, adjusted for total WBC count [5] | Lower than thin film; similar to Earle and Perez [5] | High [5] | Best among the methods [5] |
| Earle and Perez Method | Number of parasites in fields containing 500 WBCs [5] | Similar to thick film method (little to no bias) [5] | High [5] | Good, but slightly lower than thick film [5] |

The statistical analysis, using ANOVA models on log-transformed counts, revealed that the thick film method demonstrated the best inter-rater reliability [5]. While the thin film method gave counts closer to the true parasite density, it was deemed impractical for low parasitaemias. The study concluded that the thick film method was both reproducible and practical, emphasizing that "the determination of malarial parasitaemia must be applied by skilled operators using standardized techniques" [5].
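The counting principles in the table above reduce to simple proportional scaling. The sketch below shows that arithmetic and one plausible reason the thin film loses sensitivity at low parasitaemia. The function names are hypothetical, and the blood-count values (8,000 WBC/μL, 5,000,000 RBC/μL) are illustrative assumptions rather than values from the study.

```python
def thick_film_density(parasites_counted, wbc_per_ul, wbc_counted=500):
    """Parasites/uL from a thick film: parasites seen per WBC counted,
    scaled by the patient's measured WBC count."""
    return parasites_counted * wbc_per_ul / wbc_counted


def thin_film_density(parasites_counted, rbc_per_ul, rbc_counted=5000):
    """Parasites/uL from a thin film: parasites seen per RBC counted,
    scaled by the patient's measured RBC count."""
    return parasites_counted * rbc_per_ul / rbc_counted


def expected_parasites_in_thin_film(density_per_ul, rbc_per_ul, rbc_counted=5000):
    """Average number of parasites a reader would expect to encounter while
    counting `rbc_counted` erythrocytes at a given true density."""
    return density_per_ul * rbc_counted / rbc_per_ul


# Illustrative values only (assumed, not from the study).
print(thick_film_density(40, wbc_per_ul=8_000))        # 40 parasites per 500 WBC
print(thin_film_density(5, rbc_per_ul=5_000_000))      # 5 parasites per 5000 RBC
print(expected_parasites_in_thin_film(500, rbc_per_ul=5_000_000))
```

Under these assumptions, a true density of 500 parasites/μL corresponds to an expected 0.5 parasites per 5,000 erythrocytes, so many thin-film reads at that level would encounter no parasites at all.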

Detailed Experimental Protocol: Malaria Parasite Counting

The key experimental steps of the comparative study of malaria parasite counting methods are summarized below [5].

Key Methodological Details:

- Sample Collection: Blood samples were collected from patients in Cambodia during a high transmission season after obtaining informed consent. Samples were transported on ice and processed upon receipt at the laboratory [5].

- Slide Preparation and Staining: Thick and thin smears were made in the field. Additional Earle and Perez slides and thin films were prepared from EDTA blood upon laboratory receipt. All slides were stained with Giemsa at pH 7.2 [5].

- Microscopy and Counting: Seven pre-qualified microscopists across four international sites performed counts. Raters were blinded to each other's results to prevent bias. Counts were performed using the three defined methods [5].

- Statistical Analysis: Parasite counts were log-transformed for analysis to assess proportional differences. Analysis of Variance (ANOVA) models were used to estimate inter-rater reliability, and paired t-tests and regression analyses assessed systematic bias between methods [5].
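The exact ANOVA model used in the study is not specified here. As one common way to turn a two-way ANOVA on log-transformed counts into a single reliability figure, the sketch below computes the Shrout and Fleiss ICC(2,1) for a samples-by-raters table. The function name and the example counts are hypothetical, and all counts are assumed to be positive (a real analysis would need an explicit policy for zero counts before taking logarithms).

```python
import math


def icc_two_way(counts):
    """ICC(2,1) from a table of positive counts: rows = samples, columns = raters."""
    logs = [[math.log10(c) for c in row] for row in counts]
    n, k = len(logs), len(logs[0])          # n samples, k raters
    grand = sum(map(sum, logs)) / (n * k)
    row_means = [sum(r) / k for r in logs]
    col_means = [sum(r[j] for r in logs) / n for j in range(k)]

    # Two-way ANOVA sums of squares (one observation per cell).
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between samples
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between raters
    ss_total = sum((x - grand) ** 2 for r in logs for x in r)
    ss_err = ss_total - ss_rows - ss_cols                    # residual

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))

    # Shrout & Fleiss ICC(2,1): absolute agreement, single rater.
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


# Hypothetical parasite counts per uL: four samples read by three raters.
counts = [
    [100, 120, 90],
    [1000, 900, 1100],
    [10000, 12000, 9500],
    [500, 450, 600],
]
print(round(icc_two_way(counts), 3))
```

Working on the log scale means the statistic reflects proportional disagreement between raters, which matches the stated purpose of the log transformation.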

The Evolving Toolkit: Addressing Complexity and Subjectivity with Technology

Automated Computational Methods for Malaria Diagnosis

To address the main drawbacks of manual microscopy (time consumption, tedium, and observer variability), researchers are developing automated computational methods [8]. These systems typically follow a multi-stage pipeline to diagnose malaria from digital blood smear images.

Table 2: Research Reagent Solutions for Parasitology Analysis

| Reagent/Material | Function/Application | Example Use-Case |
|---|---|---|
| Giemsa Stain (pH 7.2) | Staining malaria parasites in blood smears for microscopic visualization [5] | Differentiation of parasite stages (ring, trophozoite, schizont, gametocyte) in thin and thick films [5] [8] |
| EDTA Blood Tubes | Anticoagulant preservation of blood samples for subsequent smear preparation and cell counting [5] | Maintaining cell integrity for accurate parasite quantification and molecular analysis [5] |
| Block-Matching and 3D Filtering (BM3D) | Computational image denoising to enhance clarity of microscopic fecal images [9] | Preprocessing step in AI-based parasite egg segmentation to improve downstream analysis accuracy [9] |
| Contrast-Limited Adaptive Histogram Equalization (CLAHE) | Enhancing contrast in medical images to improve feature discrimination [9] | Improving distinction between parasite eggs and background in fecal specimen images [9] |
| U-Net Model | Deep learning architecture for precise image segmentation tasks [9] | Segmenting regions of interest (e.g., individual parasite eggs) from complex backgrounds [9] |
| Convolutional Neural Network (CNN) | Deep learning model for image classification through automatic feature learning [9] | Classifying parasite species from segmented image regions with high accuracy [9] |

These automated systems can achieve high accuracy, with one study reporting 97.38% accuracy for an AI-based intestinal parasite egg classifier [9]. This demonstrates the potential of computational methods to provide a standardized, objective approach, reducing reliance on expert morphological skill.

Digital Databases and Genomics to Supplement Morphology

Other technological approaches are being developed to combat the erosion of morphological expertise and provide additional, objective identification tools.

- Digital Parasite Specimen Databases: Initiatives are creating high-quality, digital repositories of parasite specimens to serve as enduring educational and reference resources. These virtual slides prevent deterioration and provide global access to rare specimens, aiding in the training of morphologists [10].

- Genomic Identification Platforms: Tools like the Parasite Genome Identification Platform (PGIP) leverage metagenomic next-generation sequencing (mNGS) for unbiased parasite detection. PGIP uses a curated database of 280 parasite genomes and a standardized bioinformatics pipeline to offer species-level resolution, reducing dependency on morphological expertise [11].

Microscopic parasitology remains a high-complexity field whose gold-standard status is challenged by inherent subjectivity and inter-rater variability, as quantitatively demonstrated in malaria parasite counting studies. While traditional methods like the thick film offer the best reproducibility among skilled operators, the declining pool of expertise poses a significant risk to diagnostic consistency and patient care. The path forward lies in a synergistic approach: preserving and propagating core morphological skills through digital reference databases, while actively integrating advanced computational and genomic methods. AI-based image analysis and platforms like PGIP represent a paradigm shift towards more objective, scalable, and accessible parasitological diagnostics, offering researchers and clinicians powerful tools to supplement and enhance traditional morphological expertise.

The accurate morphological identification of parasites remains a cornerstone of parasitology, crucial for both clinical diagnosis and research. This process, however, is fraught with challenges that can compromise the reliability and reproducibility of results. Inter-rater reliability—the degree of agreement among different microscopists—is a key metric for assessing the consistency of morphological identification in research settings. This guide objectively compares how different methodologies and technologies perform in addressing three pervasive challenges: the morphological similarity of closely related species, variations in sample preparation and staining, and the degradation of sample quality. By synthesizing current experimental data, we provide researchers, scientists, and drug development professionals with a clear comparison of conventional and emerging approaches, highlighting protocols and tools that enhance diagnostic precision and research validity.

Quantitative Comparison of Method Performance

The following tables summarize experimental data from key studies, providing a direct comparison of how different methods address core challenges in parasite morphology.

Table 1: Performance Comparison of Microscopy-Based Counting Methods for Malaria Parasitaemia [5] [12] [13]

| Counting Method | Systematic Bias | Inter-Rater Reliability | Optimal Use Case / Sensitivity |
|---|---|---|---|
| Thin Blood Film | ~30% higher counts than thick film/Earle & Perez [5] | Lower reliability due to counting fatigue [5] | High parasitaemia (>500 parasites/μL); species identification [5] |
| Thick Blood Film | Little to no bias vs. Earle & Perez [5] | Best reliability among methods [5] | Routine diagnosis; low parasitaemia detection [5] |
| Earle & Perez | Little to no bias vs. thick film [5] | Good, but slightly lower than thick film [5] | Historical and specialist comparison [5] |

Table 2: Efficacy of Molecular vs. Morphological Identification for Closely Related Species [14]

| Identification Method | Identification Accuracy | Key Findings & Limitations |
|---|---|---|
| Morphology (Male Spicule Length) | Prone to misidentification due to overlapping traits [14] | Body length/width aided differentiation; female traits were less reliable [14]. |
| Morphology (Female Posterior End) | Unreliable; minimal projection not a robust diagnostic character [14] | Misidentification common between A. cantonensis and A. malaysiensis [14]. |
| Molecular (Nuclear ITS2 Region) | High accuracy; resolved morphological ambiguity [14] | Revealed 8.2% hybrid forms and 1.9% mito-nuclear discordance [14]. |

Table 3: Impact of Sample Preservation Medium on Morphological Analysis [15]

| Preservation Medium | Morphotype Diversity Recovered | Preservation Quality (Larvae) | Suitability |
|---|---|---|---|
| 10% Formalin | Higher number of parasitic morphotypes identified [15] | Superior preservation of larval cuticle and internal structures [15] | Optimal for long-term morphological studies only [15]. |
| 96% Ethanol | Lower morphotype diversity vs. formalin [15] | Increased degradation; cuticle shrinking/puckering [15] | Ideal for combined molecular/morphological work; adequate for morphology [15]. |

Detailed Experimental Protocols

To ensure the reproducibility of the comparative data presented, this section outlines the key methodologies employed in the cited studies.

Malaria Parasite Counting Protocol [5]

- Sample Preparation: Thirty-one Plasmodium falciparum-infected blood samples were collected in Cambodia. From each sample, thick and thin blood films were prepared on glass slides and stained with Giemsa at pH 7.2.

- Microscopy and Counting: Seven experienced microscopists across four international laboratories performed blinded counts of asexual parasitaemia using three established methods:

  - Thin Film Method: Parasites were counted against a total of 5,000 erythrocytes, with the result adjusted using the patient's actual red blood cell count.

  - Thick Film Method: Parasites were counted against 500 white blood cells, with the result adjusted using the patient's actual white blood cell count.

  - Earle and Perez Method: A specific method utilizing a standardized slide format, with parasites also counted per white blood cell and adjusted.

- Statistical Analysis: Log-transformed parasite counts were analyzed using ANOVA models to determine inter-rater reliability for each method. The paired t-test was used to assess systematic bias between methods.

Angiostrongylus Species Identification Protocol [14]

- Sample Collection: 257 archived adult worm specimens from five zoogeographical regions in Thailand were used.

- Morphological Identification: Worms were identified based on key diagnostic characters: for males, spicule length and Δ bursal rays; for females, the distance of the anus and vulva from the posterior end, and the presence of a minute terminal projection. General morphometrics (body length/width, esophagus length/width) were also recorded.

- Molecular Identification: DNA was extracted from individual worms. Two molecular targets were used:

  - Mitochondrial Cytochrome b (cytb): A SYBR Green-based qPCR assay with species-specific primers was used to differentiate A. cantonensis from A. malaysiensis.

  - Nuclear ITS2 Region: PCR-RFLP, using the BtsI-v2 restriction enzyme, was employed for species differentiation and to detect potential hybrids based on banding patterns.

- Data Validation: Morphological character measurements were statistically compared across the molecularly defined groups (pure A. cantonensis, pure A. malaysiensis, and hybrids) to validate the reliability of morphological diagnoses.

Sample Preservation Comparison Protocol [15]

- Sample Collection and Storage: Fresh fecal samples from capuchin monkeys were collected and immediately split into two equal parts. One part was stored in 10% buffered formalin, and the other in 96% ethanol. Samples were stored at ambient temperature for 8-19 months before analysis.

- Microscopic Screening: Samples were processed using a modified Wisconsin sedimentation technique. The resulting pellets were screened microscopically for parasites, which were morphologically identified.

- Preservation Grading: A standardized 3-point grading scale was developed for both eggs and larvae in each preservative. A score of 3 indicated excellent preservation with intact structures, 2 indicated moderate degradation partially interfering with identification, and 1 indicated severe degradation making identification difficult or impossible.

- Statistical Comparison: Wilcoxon signed-rank tests were used to compare morphotype diversity, parasites per fecal gram (PFG), and average preservation rating between the paired formalin and ethanol samples.
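The paired comparison in the final step can be illustrated with a small, dependency-free sketch of the Wilcoxon signed-rank test. It computes an exact two-sided p-value by enumerating all sign patterns, which is only practical for small numbers of pairs (roughly 20 or fewer). The function name and the example morphotype counts are hypothetical; in practice a statistics library would normally be used.

```python
from itertools import product


def wilcoxon_signed_rank_exact(x, y):
    """Exact two-sided Wilcoxon signed-rank test for paired samples (small n).

    Returns (statistic, p_value), where statistic = min(W+, W-).
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]  # zero differences dropped
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")

    # Rank absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for m in range(i, j + 1):
            ranks[order[m]] = average_rank
        i = j + 1

    total = sum(ranks)
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    statistic = min(w_plus, total - w_plus)

    # Under the null hypothesis each rank is equally likely to carry + or -.
    extreme = 0
    for signs in product((0, 1), repeat=n):
        w = sum(r for r, s in zip(ranks, signs) if s)
        if min(w, total - w) <= statistic:
            extreme += 1
    return statistic, extreme / 2 ** n


# Hypothetical morphotype counts for eight paired samples (not study data).
formalin = [5, 4, 6, 3, 5, 4, 6, 5]
ethanol = [3, 3, 4, 3, 2, 2, 5, 3]
print(wilcoxon_signed_rank_exact(formalin, ethanol))
```

A paired, rank-based test suits this design because each fecal sample was split between the two preservatives and the graded outcomes are ordinal rather than normally distributed.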

Visualization of Workflows and Relationships

Experimental Workflow for Species Identification

The following diagram illustrates the integrated workflow for resolving species identity, combining traditional morphological and modern molecular approaches as described in the experimental protocols [14].

Diagnostic Pathway for Morphological Challenges

This flowchart outlines the decision-making process for diagnosing parasitic infections when faced with key challenges of similarity, staining, and sample quality, leading to different classes of solutions [16] [5] [17].

The Scientist's Toolkit: Key Research Reagents & Solutions

This table details essential reagents, tools, and technologies used in the featured experiments to address morphological identification challenges.

Table 4: Key Research Reagent Solutions for Parasite Morphology Studies

| Reagent / Solution | Function / Application | Experimental Context |
|---|---|---|
| Giemsa Stain (pH 7.2) | Standard staining for malaria blood films; highlights parasite chromatin and cytoplasm. | Used across all microscopy methods for malaria parasite counting to ensure consistent staining [5] [12]. |
| 10% Buffered Formalin | Tissue fixative; cross-links proteins to preserve morphological integrity long-term. | Preserved fecal samples for superior recovery of parasite morphotypes and larval structure [15]. |
| 96% Ethanol | Dehydrating fixative; preserves samples adequately for morphology and optimally for DNA. | Used for parallel sample preservation, enabling both morphological and downstream molecular analysis [15]. |
| BtsI-v2 Restriction Enzyme | Endonuclease for PCR-RFLP; cuts specific DNA sequences to generate species-specific band patterns. | Key reagent for differentiating A. cantonensis and A. malaysiensis using the nuclear ITS2 region [14]. |
| Species-specific qPCR Primers (cytb) | Targets mitochondrial gene for sensitive and quantitative species detection. | Enabled specific identification and quantification of Angiostrongylus species, revealing hybrids [14]. |
| Lightweight Deep Learning Models (e.g., DANet, Hybrid CapNet) | AI-based analysis of blood smear images; automates detection and classification. | Provides a computational solution to challenges of human fatigue and subjectivity in microscopy [16] [17]. |

Impact of Parasite Life Cycle and Pre-patency on Identification Consistency

The accurate identification of parasitic infections is a cornerstone of effective disease control, drug development, and epidemiological research. However, this process is fundamentally influenced by two intrinsic biological factors: the parasite's life cycle and the pre-patent period. The life cycle encompasses the distinct morphological and developmental stages a parasite undergoes, while pre-patency refers to the initial period after infection during which diagnostic signs, such as eggs or specific antigens, are not yet detectable. For research on inter-rater reliability in parasite morphology identification, these factors introduce variability that can affect the consistency of observations between different scientists. This section compares how traditional and novel diagnostic methodologies manage this variability, with supporting experimental data to inform researchers, scientists, and drug development professionals.

Parasite Life Cycle Stages and Identification Challenges

The complex life cycles of parasites present a primary challenge for consistent identification. For Plasmodium species, the causative agents of malaria, the intra-erythrocytic stages include the ring, trophozoite, schizont, and gametocyte, each with distinct morphologies [18]. The progression through these stages requires a tightly orchestrated transcriptional program, and fundamental changes in chromatin structure and epigenetic modifications during life cycle progression suggest a central role for these mechanisms in regulating the transcriptional program of malaria parasite development [19]. The protein PfSnf2L, an ISWI-related ATPase, has been identified as a key just-in-time regulator of gene expression, spatiotemporally determining nucleosome positioning at the promoters of stage-specific genes [19]. The functional absence of such regulators can phenocopy the loss of correct gene expression timing, disrupting development [19].

For intestinal parasites, the challenge often lies in differentiating eggs of species like Taenia sp., Trichuris trichiura, Diphyllobothrium latum, and Fasciola hepatica from artifacts in fecal smears [20]. Their identification relies on an expert's ability to recognize subtle morphological characteristics, a process susceptible to human error and subjectivity, especially under conditions of mental and physical exhaustion [21] [18]. The variability in staining uptake and the refractivity of parasites further complicates this manual process [21].

Table: Impact of Parasite Life Cycle on Diagnostic Consistency

| Parasite | Key Life Cycle Stages | Impact on Identification Consistency | Supporting Evidence |

|---|---|---|---|

| Plasmodium falciparum | Ring, Trophozoite, Schizont, Gametocyte [18] | Stage-dependent chromatin accessibility regulates gene expression; incorrect timing disrupts development [19]. | Depletion of PfSnf2L led to a global opening of chromatin and mis-timed gene expression, killing parasites [19]. |

| Intestinal Helminths (e.g., Taenia sp., F. hepatica) | Egg, Larval, Adult | Egg morphology is the primary diagnostic feature, but manual identification is variable and requires specialist training [20]. | An automated algorithm achieved near-perfect sensitivity (99.1-100%) and specificity (98.1-98.4%), highlighting human inconsistency [20]. |

| Pinworm (Enterobius vermicularis) | Egg, Larva, Adult | Small egg size (50-60 μm) and similarity to other particles lead to false negatives in manual exams [22]. | The scotch tape test has limited sensitivity and relies heavily on examiner ability [22]. |
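
The sensitivity and specificity figures quoted in the table above are derived from a confusion matrix that compares a method's calls with a reference standard. The sketch below shows that arithmetic; the counts are hypothetical and chosen only for illustration, not taken from [20] or [22].

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Counts from comparing a test method against a reference standard."""
    tp: int  # parasite present, method reports present
    fp: int  # parasite absent, method reports present
    tn: int  # parasite absent, method reports absent
    fn: int  # parasite present, method reports absent


def sensitivity(c: ConfusionCounts) -> float:
    """Share of truly positive specimens that the method detects (recall)."""
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    """Share of truly negative specimens that the method reports as negative."""
    return c.tn / (c.tn + c.fp)


def precision(c: ConfusionCounts) -> float:
    """Share of positive calls that are correct."""
    return c.tp / (c.tp + c.fp)


def f1_score(c: ConfusionCounts) -> float:
    """Harmonic mean of precision and sensitivity."""
    p, r = precision(c), sensitivity(c)
    return 2 * p * r / (p + r)


# Hypothetical counts for 200 fecal smears read against a reference standard.
counts = ConfusionCounts(tp=90, fp=5, tn=95, fn=10)
print(f"sensitivity={sensitivity(counts):.3f}  specificity={specificity(counts):.3f}")
print(f"precision={precision(counts):.3f}  F1={f1_score(counts):.3f}")
```

Reporting all four values together helps, because a method can reach high sensitivity simply by over-calling positives, which only the specificity and precision figures reveal.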

The Pre-patent Period and its Implications for Research

The pre-patent period directly impacts the sensitivity of diagnostic tests and the timing of intervention studies. For equine parasites like Parascaris equorum and Anoplocephala perfoliata, eggs are only expelled with feces after the larvae have matured and the infection load becomes substantial [23]. During the larval migration stage in the host, or when no signs of infection are found on the body surface, serological detection becomes a simple and effective method for rapid diagnosis of parasitic infection [23]. This underscores the necessity of selecting the appropriate diagnostic tool based on the timing post-infection.

In malaria research, the biology of gametocytes is particularly relevant. Stage V gametocytes are the only forms infectious to mosquitoes and can circulate quiescently for several weeks [24]. A significant challenge in developing transmission-blocking drugs is that most current antimalarials are ineffective against these quiescent stages [24]. Consequently, individuals can remain infectious for weeks after treatment has cleared the asexual blood stages [24]. This prolonged window of transmissibility is a major hurdle for eradication campaigns and requires specialized assays for drug discovery.

Comparative Analysis of Diagnostic Methodologies

Different diagnostic protocols offer varying levels of performance in managing the variability introduced by life cycle and pre-patency. The table below compares the experimental protocols and quantitative performance of several key approaches.

Table: Comparison of Diagnostic Method Performance and Protocols

| Methodology | Experimental Protocol Summary | Key Performance Metrics | Consistency Advantages |

|---|---|---|---|

| Manual Microscopy | Stained blood or fecal smears are examined by a technician for morphological identification of parasites/eggs [21] [18]. | Time-consuming, labor-intensive, and susceptible to human error and subjectivity [22] [18]. | Low inter-rater reliability; consistency is affected by examiner expertise and fatigue [21]. |

| Automated Image Analysis (Mathematical Algorithm) | Digital images are processed through a 14-step SCILAB algorithm: gray-scale conversion, contrast enhancement, Gaussian smoothing, binarization, border smoothing, labeling, boundary object exclusion, image closing, holes filtering, area filtering, skeletonization, border identification, and recoloring. Features are extracted for logistic regression classification [20]. | Sensitivity: 99.10%-100%; Specificity: 98.13%-98.38% for helminth eggs [20]. | High consistency; eliminates human subjectivity and fatigue; provides a standardized, objective assessment [20]. |

| Deep Learning (YOLO-CBAM) | The YOLOv8 architecture is integrated with a Convolutional Block Attention Module (CBAM) and self-attention mechanisms. The model is trained on datasets of labeled microscopic images to automatically detect and localize parasite eggs [22]. | Precision: 0.9971; Recall: 0.9934; mAP@0.5: 0.9950 [22]. | Superior at detecting small objects in complex backgrounds; reduces false negatives/positives; highly scalable and consistent [22]. |

| Staining-Independent AI Classification | Blood smear images are converted to grayscale to lessen staining impact. For detection, a YOLO-based model is used. For life stage classification, single-cell images are cropped and classified using a CNN (e.g., LeNet-5) architecture [18]. | Detection Accuracy: 0.79-0.92 (across species); Classification Accuracy: 0.93-0.96 (across stages) [18]. | Reduces variability from inconsistent staining; enables accurate life stage classification, crucial for research on stage-specific biology [18]. |
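
Several of the steps listed for the automated image analysis row (gray-scale conversion, binarization, labeling, boundary object exclusion, and area filtering) can be sketched in a few lines. The version below is a toy in pure Python operating on a nested-list image; it is not the SCILAB implementation from [20]. The luminance weights, the threshold, the minimum area, the assumption that eggs are darker than the background, and all function names are assumptions made for illustration.

```python
from collections import deque


def to_gray(rgb_image):
    """Convert rows of (r, g, b) pixels to luminance (Rec. 601 weights, assumed)."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
            for row in rgb_image]


def binarize(gray, threshold):
    """Mark pixels darker than the threshold as foreground (1)."""
    return [[1 if value < threshold else 0 for value in row] for row in gray]


def label_objects(binary):
    """4-connected component labeling; returns one pixel list per object."""
    height, width = len(binary), len(binary[0])
    seen = [[False] * width for _ in range(height)]
    objects = []
    for y in range(height):
        for x in range(width):
            if not binary[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue, pixels = deque([(y, x)]), []
            while queue:  # breadth-first flood fill from the seed pixel
                cy, cx = queue.popleft()
                pixels.append((cy, cx))
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and binary[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            objects.append(pixels)
    return objects


def keep_candidates(objects, shape, min_area):
    """Drop objects that touch the image border or are smaller than min_area."""
    height, width = shape
    kept = []
    for pixels in objects:
        on_border = any(y in (0, height - 1) or x in (0, width - 1)
                        for y, x in pixels)
        if not on_border and len(pixels) >= min_area:
            kept.append(pixels)
    return kept


# Hypothetical 6x6 image: '#' marks dark (stained) pixels on a light field.
DARK, LIGHT = (40, 30, 60), (240, 240, 240)
pattern = ["#.....", "..##..", "..##..", "......", "....#.", "......"]
image = [[DARK if ch == "#" else LIGHT for ch in row] for row in pattern]

binary = binarize(to_gray(image), threshold=128)
objects = label_objects(binary)
candidates = keep_candidates(objects, (len(binary), len(binary[0])), min_area=3)
print(len(objects), "objects found;", len(candidates), "kept as egg candidates")
```

Because every threshold is explicit, two runs on the same image give the same candidate set, which is the property that makes such pipelines attractive when inter-rater variability is the concern.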

Research Reagent Solutions for Parasite Identification

The following reagents and tools are essential for conducting research in parasite identification and managing the challenges of life cycle and pre-patency.

Table: Essential Research Reagents and Tools

| Research Reagent / Tool | Function in Experimental Protocol |

|---|---|

| Giemsa Stain | A classical dye used to highlight parasites in blood smears for microscopic identification of malaria life stages [18]. |

| Lugol's Iodine | A temporary stain used on fecal smears to enhance the visibility of protozoan cysts and helminth eggs [20]. |

| NF54/iGP1_RE9Hulg8 Transgenic Parasites | Genetically engineered P. falciparum parasites expressing a red-shifted firefly luciferase reporter; used in viability assays for high-throughput screening of gametocytocidal compounds [24]. |

| Scilab Open-Source Platform | A computational environment used to implement custom image processing and pattern recognition algorithms for the automated identification of parasite eggs [20]. |

| YOLO-CBAM Deep Learning Framework | An integrated object detection architecture that uses attention mechanisms to improve feature extraction from complex microscopic images, enabling high-accuracy automated detection [22]. |

| N-Acetyl-glucosamine (GlcNAc) | A chemical used in Plasmodium culture to eliminate asexual blood stage parasites, enabling the production of synchronous gametocyte populations for stage-specific drug assays [24]. |

Visualizing Experimental Workflows

The following diagrams illustrate the logical workflows for key experimental protocols discussed in this guide, highlighting how they address identification challenges.

Diagram: Automated Parasite Egg Recognition Workflow

Diagram: AI Malaria Detection & Staging Pipeline

The consistency of parasite identification is intrinsically linked to a deep understanding of parasite life cycles and the pre-patent period. Traditional manual methods, while foundational, are inherently variable and struggle to account for these biological complexities objectively. The comparative data presented in this guide demonstrate that automated methodologies, particularly those leveraging sophisticated mathematical algorithms and deep learning, offer a significant performance advantage. They provide higher sensitivity, specificity, and overall accuracy while establishing a standardized, objective framework that minimizes inter-rater variability. For researchers and drug developers, adopting these advanced tools, together with carefully designed experimental protocols that account for stage-specific biology, is critical for generating the reliable, reproducible data needed to advance the field of parasitology.

In scientific and clinical practice, reliability refers to the consistency of a measurement—the extent to which it can be reproduced when repeated under the same conditions [25]. In the specific context of parasite morphology identification, inter-rater reliability measures the degree of agreement between different scientists when identifying the same parasite specimens. When this reliability is low, the consequences cascade through healthcare systems and research enterprises, leading to misdiagnosis, delayed patient treatment, and compromised research integrity.

The challenges in parasite morphology identification exemplify these high-stakes reliability concerns. As molecular methods increasingly supplement or replace traditional microscopy, the morphological expertise necessary for accurate identification is diminishing across the scientific community [7]. This loss of expertise threatens diagnostic accuracy, as morphological identification remains the gold standard for many parasitic infections and is often the most appropriate, cost-effective, and sometimes the only accurate identification method in many settings [7]. This article examines the consequences of low inter-rater reliability through the lens of parasite morphology research, comparing diagnostic approaches and providing actionable methodologies for enhancing reliability in scientific practice.

Defining the Problem: Low Reliability in Parasite Morphology Identification

The Decline of Morphological Expertise

The field of parasitology is experiencing a paradoxical situation: while advanced diagnostic techniques like rapid antigen detection tests (RDTs), nucleic acid amplification tests (NAATs), and metagenomic next-generation sequencing (mNGS) have expanded diagnostic capabilities, they have simultaneously contributed to a progressive, widespread loss of morphology expertise for parasite identification [7]. This skill deficit is not easily remedied, as becoming an effective parasite morphologist requires several years of training in practical and theoretical knowledge of anatomy, biology, zoology, taxonomy, and epidemiology across the vast array of parasite taxa capable of infecting humans [7].

This erosion of morphological skills has direct implications for diagnostic accuracy and patient outcomes. As noted in parasitology literature, "Inadequate morphology experience may lead to missed and inaccurate diagnoses and erroneous descriptions of new human parasitic diseases" [7]. The problem is particularly acute for less common parasites and in resource-limited settings where advanced molecular diagnostics may be unavailable or cost-prohibitive.

Quantifying Reliability in Scientific Research

In research methodology, reliability is distinct from validity: while reliability concerns the consistency of a measure, validity refers to how accurately a method measures what it is intended to measure [25]. A measurement can be reliable without being valid, but if a measurement is valid, it is usually also reliable.

Several statistical approaches are used to quantify inter-rater reliability:

- Cohen's Kappa: Used for two raters, accounts for chance agreement [26] [27]

- Fleiss' Kappa: Extends Cohen's Kappa to multiple raters [27]

- Intraclass Correlation Coefficient (ICC): Commonly used for continuous measures, with values closer to 1 indicating higher agreement [28] [29]

Each metric has strengths and limitations, and the appropriate choice depends on the research design, number of raters, and type of data being analyzed [28].
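
To make the chance correction in Cohen's Kappa concrete, the sketch below computes it for two raters from first principles: observed agreement minus the agreement expected from each rater's label frequencies, divided by one minus that expected agreement. The slide labels are hypothetical and the function name is illustrative.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same specimens."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set")
    n = len(rater_a)
    # Observed agreement: fraction of specimens given identical labels.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if each rater assigned labels independently
    # at their own observed category frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return float("nan")  # undefined when both raters use one category only
    return (observed - expected) / (1 - expected)


# Hypothetical calls by two technologists on ten blood smears.
tech_1 = ["Pf", "Pf", "Pf", "Pv", "Pv", "neg", "neg", "neg", "neg", "Pf"]
tech_2 = ["Pf", "Pf", "Pv", "Pv", "Pv", "neg", "neg", "neg", "Pf", "Pf"]
print(f"kappa = {cohens_kappa(tech_1, tech_2):.3f}")
```

In this example the raters agree on 8 of 10 smears, yet kappa is noticeably lower than 0.80 because roughly a third of that agreement would be expected by chance alone.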

Table 1: Inter-Rater Reliability Metrics and Their Interpretation

| Metric | Appropriate Use Cases | Interpretation Range | Strengths |

|---|---|---|---|

| Cohen's Kappa | Two raters, categorical data | -1 to 1 (≤0: No agreement, 0.01-0.20: Slight, 0.21-0.40: Fair, 0.41-0.60: Moderate, 0.61-0.80: Substantial, 0.81-1.0: Almost Perfect) | Accounts for chance agreement |

| Fleiss' Kappa | Multiple raters, categorical data | Same as Cohen's Kappa | Extends Cohen's to multiple raters |

| Intraclass Correlation Coefficient (ICC) | Multiple raters, continuous measures | 0 to 1 (Higher values indicate better reliability) | Can be used for various experimental designs |
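
For more than two raters, Fleiss' Kappa follows the same logic, and the interpretation bands from Table 1 can be applied programmatically. The sketch below assumes every specimen is read by the same number of raters; the specimen labels are hypothetical, and the function names are illustrative.

```python
def fleiss_kappa(ratings):
    """Fleiss' kappa; ratings is a list of per-specimen label lists."""
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(r) != n_raters for r in ratings):
        raise ValueError("each specimen needs the same number (>= 2) of ratings")
    n_items = len(ratings)
    categories = sorted({label for r in ratings for label in r})
    # counts[i][j]: number of raters assigning specimen i to category j.
    counts = [[r.count(c) for c in categories] for r in ratings]
    # Per-specimen agreement, then its mean across specimens.
    p_i = [(sum(x * x for x in row) - n_raters) / (n_raters * (n_raters - 1))
           for row in counts]
    p_bar = sum(p_i) / n_items
    # Chance agreement from the overall category proportions.
    p_j = [sum(row[j] for row in counts) / (n_items * n_raters)
           for j in range(len(categories))]
    p_e = sum(p * p for p in p_j)
    if p_e == 1.0:
        return float("nan")  # undefined when only one category is ever used
    return (p_bar - p_e) / (1 - p_e)


def interpret_kappa(kappa):
    """Map a kappa value to the bands listed in Table 1."""
    if kappa <= 0:
        return "No agreement"
    for upper, label in ((0.20, "Slight"), (0.40, "Fair"),
                         (0.60, "Moderate"), (0.80, "Substantial")):
        if kappa <= upper:
            return label
    return "Almost Perfect"


# Hypothetical readings of four fecal-smear objects by three raters.
readings = [
    ["Taenia", "Taenia", "Taenia"],
    ["Trichuris", "Trichuris", "Trichuris"],
    ["Taenia", "Taenia", "Trichuris"],
    ["artifact", "artifact", "artifact"],
]
kappa = fleiss_kappa(readings)
print(f"Fleiss' kappa = {kappa:.3f} ({interpret_kappa(kappa)})")
```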

Direct Consequences: Misdiagnosis and Delayed Treatment

Diagnostic Error Categories and Patient Impact

Low reliability in parasite identification directly contributes to diagnostic errors, which the National Academies of Sciences, Engineering, and Medicine categorizes as the failure to establish an accurate and timely explanation of the patient's health problem or to communicate that explanation to the patient [30]. These errors manifest in three primary forms:

- Missed Diagnosis: The medical condition is entirely overlooked, leading to an absence of necessary treatment [31]

- Delayed Diagnosis: The condition is recognized but not promptly, allowing progression without intervention [30] [31]

- Misdiagnosis: The condition is incorrectly identified, leading to inappropriate treatment that may exacerbate the health concern [31]

The prevalence of these errors is substantial. In the United States alone, diagnostic errors affect an estimated 12 million people annually, with conditions such as cancer, cardiovascular diseases, and infections being particularly prone to diagnostic challenges due to their complex nature and subtle early symptoms [31].

Case Study: Angiostrongylus Identification Challenges

The difficulties in differentiating between closely related parasite species demonstrate how low reliability leads to diagnostic errors. Research on Angiostrongylus cantonensis and Angiostrongylus malaysiensis in Thailand reveals that morphological misidentifications between these two closely related species are common due to overlapping morphological characters [14].

A study analyzing 257 archived specimens found that while certain male traits (body length and width) aided species differentiation, female traits were less reliable for accurate identification [14]. Furthermore, the research revealed hybrid forms (8.2% of specimens) through nuclear ITS2 region analysis, complicating morphological identification even for experienced parasitologists [14]. This case illustrates how taxonomic complexities can undermine diagnostic reliability even before considering observer variability.

Patient Harm from Diagnostic Errors

The consequences of these diagnostic failures extend beyond academic concern to tangible patient harm:

- Emotional and Psychological Impact: Patients often experience stress, anxiety, and diminished trust in the healthcare system when faced with diagnostic uncertainty [31]

- Clinical Deterioration: Delayed or incorrect treatment allows disease progression, potentially transforming manageable conditions into life-threatening situations

- Economic Consequences: Prolonged illnesses lead to increased healthcare expenses through additional testing, extended care, or unnecessary treatments based on incorrect initial diagnoses [31]

Research Implications: Compromised Data Quality and Validity

Threats to Research Integrity

Low inter-rater reliability introduces significant threats to research integrity across multiple domains:

- Compromised Internal Validity: When reliability is low, the consistency of measurements within a study is undermined, making it difficult to distinguish true effects from measurement error [25]

- Reduced Statistical Power: Unreliable measurements introduce noise that obscures genuine relationships or treatment effects, potentially leading to Type II errors (false negatives)

- Impaired Reproducibility: The fundamental principle of scientific reproducibility is threatened when measurements cannot be consistently replicated across different research teams [28]

The problem is particularly acute in studies of neurodegenerative disorders and psychiatric conditions where clinical judgment plays a significant role in diagnosis and assessment. As one study noted, "variability in clinical judgment can hinder reliability and complicate the interpretation of findings" [26].

Limitations of Reliability Metrics in Complex Tasks

While statistical measures of inter-rater reliability are necessary, they are insufficient guarantees of data quality, particularly for complex annotation tasks [27]. High inter-rater reliability scores can sometimes be misleading when:

- Annotators Share the Same Biases: Multiple raters may consistently make the same errors due to common misconceptions or shared blind spots

- Task Complexity Exceeds Expertise: Difficult cases may be systematically misclassified by non-expert raters while still producing high agreement rates

- Simplistic Scoring Rubrics: Measurement instruments that fail to capture nuanced distinctions may produce high reliability at the cost of validity [27]

These limitations highlight why sophisticated research protocols incorporate multiple quality control mechanisms beyond simple reliability metrics.
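
The first limitation, shared bias, is easy to demonstrate numerically. In the sketch below, two hypothetical raters make the same systematic error, so they agree with each other perfectly while both disagree with the reference labels on a third of the specimens. The labels and the idea of a molecularly confirmed reference are illustrative assumptions, not data from [14] or [27].

```python
def percent_agreement(labels_a, labels_b):
    """Fraction of specimens on which two label lists match."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    return sum(a == b for a, b in zip(labels_a, labels_b)) / len(labels_a)


CAN, MAL = "A. cantonensis", "A. malaysiensis"

# Hypothetical reference labels (for example, confirmed by a molecular marker).
reference = [CAN, MAL, CAN, MAL, CAN, MAL]
# Both raters share the same habit of calling ambiguous specimens A. cantonensis.
rater_1 = [CAN, CAN, CAN, CAN, CAN, MAL]
rater_2 = [CAN, CAN, CAN, CAN, CAN, MAL]

inter_rater = percent_agreement(rater_1, rater_2)
accuracy_1 = percent_agreement(rater_1, reference)
accuracy_2 = percent_agreement(rater_2, reference)
print(f"inter-rater agreement: {inter_rater:.2f}")
print(f"accuracy vs reference: {accuracy_1:.2f} and {accuracy_2:.2f}")
```

Agreement between raters therefore says nothing about correctness unless at least some specimens are also checked against an independent reference.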

Comparative Analysis: Reliability Across Diagnostic Methods

Morphological vs. Molecular Identification

The ongoing transition from morphological to molecular identification methods in parasitology offers an instructive case study for comparing reliability across diagnostic approaches.

Table 2: Comparison of Diagnostic Methods in Parasitology

| Diagnostic Characteristic | Morphology-Based Diagnostics | PCR-Based Diagnostics | Sequencing-Based Diagnostics |

|---|---|---|---|

| Sensitivity | ++ | +++ | +++ |

| Specificity | +++ | +++ | +++ |

| Quantification Capacity | +++ | ++ | - |

| Turnaround Time | +++ (except histology) | ++ | + |

| Cost-Effectiveness | +++ | ++ | + |

| Genus-Level Identification | +++ | +++ | +++ |

| Species-Level Identification | ++ | +++ | +++ |

| Capacity to Detect Novel/Zoonotic Agents | +++ | - | +++ |

| Adaptability to Resource-Poor Settings | +++ | - | - |

Note: -, +, ++, +++ represent no, limited, moderate, or high capacity/efficacy respectively [7]

Methodological Limitations and Complementarity

Each diagnostic approach faces distinct limitations that can affect reliability:

- Morphological Methods: Subject to interpreter variability, require significant expertise, challenging for closely related species or atypical presentations [7] [14]

- Molecular Methods: Limited target range (not all parasites have available assays), specimen incompatibility (e.g., formalin fixation degrades DNA), inadequate reference databases for novel organisms [7]

- Hybrid Approaches: Combining morphological and molecular methods can enhance overall reliability but requires additional resources and expertise [14]

The Angiostrongylus study concluded that "nuclear ITS2 is a reliable marker for species identification of A. cantonensis and A. malaysiensis, especially in regions where both species coexist," suggesting a complementary approach rather than complete replacement of morphological methods [14].

Methodological Framework: Enhancing Reliability in Research Practice

Experimental Protocols for Reliability Assessment

Well-designed reliability studies require careful planning and execution. Key methodological considerations include:

- Clear Purpose Statement: The study purpose should clearly distinguish between hypothesis testing and parameter estimation, as this determines appropriate sample size calculations and statistical analyses [28]

- Rater Selection and Training: Clearly state whether raters represent the only raters of interest or a larger population, as this affects the choice of statistical model [28]

- Structured Diagnostic Processes: Implement standardized diagnostic criteria and consensus procedures, as exemplified by the Asian Cohort for Alzheimer's Disease (ACAD) study, which achieved almost perfect agreement (Cohen's Kappa = 0.835) through rigorous protocol harmonization [26]
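
The rater-selection point above determines which ICC form applies. When raters are treated as a random sample from a larger population and absolute agreement matters, one common choice is the two-way random-effects, single-rater form often labeled ICC(2,1). The sketch below computes it from the two-way ANOVA mean squares; the egg-length measurements are hypothetical, and other designs would call for a different ICC form.

```python
def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    scores[i][j] is the measurement of specimen i by rater j.
    """
    n, k = len(scores), len(scores[0])
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a complete n x k table with n, k >= 2")
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    # Sums of squares for specimens (rows), raters (columns), and residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical egg lengths (micrometers) measured by three raters on five eggs.
lengths_um = [
    [52.0, 53.5, 51.0],
    [58.0, 59.0, 57.5],
    [49.5, 50.0, 49.0],
    [61.0, 62.5, 60.0],
    [55.0, 55.5, 54.0],
]
print(f"ICC(2,1) = {icc_2_1(lengths_um):.3f}")
```

Because this form penalizes systematic offsets between raters, a rater who consistently measures slightly short lowers the coefficient even when the ranking of specimens is identical.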

Diagram: Experimental Protocol for Reliability Assessment

Quality Control Mechanisms Beyond Reliability Metrics

Sophisticated research protocols employ multiple quality control strategies:

- Task Decomposition: Complex identification tasks are broken into simpler subtasks assigned to annotators with appropriate skill levels [27]

- Skill-Based Annotation: Annotator skills are assessed through initial tests and continuously monitored using control tasks mixed with actual study tasks [27]

- Confidence-Based Aggregation: Multiple annotations are aggregated with weighting based on demonstrated annotator skill levels, with low-confidence cases receiving additional review [27]

- Comprehensive Auditing: Final datasets undergo sampling-based audits using quality metrics such as accuracy, precision, recall, and F-score rather than relying solely on inter-rater reliability [27]
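
The skill-based annotation and confidence-based aggregation steps above can be sketched as a weighted vote. The weighting scheme (skill equals the fraction of control specimens answered correctly), the 0.7 review threshold, and all names are assumptions for illustration; [27] describes the strategy only in general terms.

```python
from collections import defaultdict


def skill_from_controls(answers, truth):
    """Fraction of control specimens an annotator labeled correctly."""
    return sum(answers[key] == truth[key] for key in truth) / len(truth)


def aggregate(annotations, skill, review_threshold=0.7):
    """Skill-weighted vote over one specimen.

    annotations maps annotator -> label; skill maps annotator -> weight.
    Returns (winning label, confidence, needs_review flag).
    """
    totals = defaultdict(float)
    for annotator, label in annotations.items():
        totals[label] += skill[annotator]
    best = max(totals, key=totals.get)
    confidence = totals[best] / sum(totals.values())
    return best, confidence, confidence < review_threshold


# Hypothetical skill weights, e.g. estimated from control specimens.
skill = {"tech_a": 0.95, "tech_b": 0.80, "tech_c": 0.60}

clear_case = aggregate(
    {"tech_a": "Trichuris trichiura", "tech_b": "Trichuris trichiura",
     "tech_c": "artifact"}, skill)
hard_case = aggregate(
    {"tech_a": "artifact", "tech_b": "Taenia sp.", "tech_c": "Taenia sp."},
    skill)
print(clear_case)
print(hard_case)
```

In the second case the two lower-skill annotators outvote the most skilled one, but the weighted confidence falls below the threshold, so the specimen is routed to additional review rather than accepted outright.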

Essential Research Reagents and Methodological Tools

The Scientist's Toolkit for Reliability Enhancement

Implementing robust reliability assessment requires specific methodological tools and approaches:

Table 3: Essential Research Reagents and Tools for Reliability Studies

| Tool/Reagent | Primary Function | Application Context |

|---|---|---|

| Standardized Diagnostic Criteria | Provides consistent framework for classification | Essential for multi-center studies; enables comparison across research sites [26] |

| Molecular Markers (e.g., ITS2, cytb) | Validates morphological identifications; detects hybridization | Critical for resolving difficult taxonomic distinctions; identifies cryptic species [14] |

| Statistical Software Packages | Calculates reliability metrics (ICC, Kappa, etc.) | Required for quantitative reliability assessment; must be validated for appropriate application [28] |

| Reference Collections | Serves as ground truth for training and validation | Provides validated specimens for comparator studies; essential for method validation [7] |

| Structured Consensus Protocols | Formalizes diagnostic decision-making | Reduces individual bias; enhances transparency and reproducibility [26] |

| Control Tasks/Specimens | Monitors ongoing rater performance | Enables continuous quality assessment during data collection; identifies rater drift [27] |

The consequences of low reliability in parasite morphology identification—and scientific research more broadly—extend from compromised patient care to undermined research validity. Addressing these challenges requires a multifaceted approach that acknowledges the complementary strengths of traditional and modern methods while implementing rigorous methodological safeguards.

Maintaining morphological expertise remains essential even as molecular methods advance, particularly for detecting novel pathogens, working in resource-limited settings, and providing cost-effective diagnostics [7]. Simultaneously, molecular methods offer crucial validation for morphologically challenging distinctions and can identify hybridization events that complicate traditional classification [14].

Enhancing reliability in both research and clinical practice requires moving beyond simple reliability metrics to implement comprehensive quality frameworks that include careful study design, appropriate statistical application, ongoing rater training, and multimodal verification. By adopting these approaches, the scientific community can better ensure that diagnostic decisions and research findings rest on a foundation of methodological rigor and reproducible observation.

From Microscope to Machine: Modern Methods to Standardize Parasite Identification

In the field of parasitology, the accurate diagnosis of intestinal parasitic infections (IPIs) relies heavily on proven microscopic techniques. Despite advancements in molecular and automated technologies, conventional methods remain the cornerstone for routine diagnosis, particularly in resource-limited settings where the burden of these diseases is highest [32] [33]. The Formalin-Ethyl Acetate Centrifugation Technique (FECT), the Merthiolate-Iodine-Formalin (MIF) technique, and the Direct Smear method represent such foundational approaches. Their utility, however, must be understood within a critical research context: the ongoing investigation into inter-rater reliability in parasite morphology identification. The identification of parasitic elements is inherently dependent on the expertise of the microscopist, introducing a variable that can significantly impact diagnostic consistency, epidemiological data, and the evaluation of new technologies [32] [34]. This guide provides a detailed, objective comparison of these three techniques, framing their performance data and protocols within the broader thesis of analytical variability and standardization in parasitological research.

The following diagram illustrates the procedural relationships and key decision points leading to the use of FECT, MIF, and Direct Smear techniques in a research context focused on morphological identification.

Comparative Performance Analysis

Diagnostic Sensitivity and Operational Characteristics

The choice of diagnostic technique directly influences the detection capability for various parasitic elements and the operational workflow of a laboratory. The table below summarizes the key performance characteristics and comparative advantages of FECT, MIF, and Direct Smear, providing a basis for their application in research settings.

| Characteristic | Formalin-Ethyl Acetate Centrifugation Technique (FECT) | Merthiolate-Iodine-Formalin (MIF) | Direct Smear |

|---|---|---|---|

| Primary Principle | Sedimentation concentration [35] | Staining and sedimentation [32] | Direct wet mount [33] |

| Sensitivity (General) | High; considered a reference standard [34] | Competitive with FECT for IPI evaluation [32] | Low; suitable for high-intensity infections only [33] |

| Sensitivity (Opisthorchis viverrini) | 75.5% [34] | Not reported | 67.3% (Stool Kit, a commercial concentrator) [34] |

| Key Advantage | Concentrates a wide range of parasites; suitable for preserved samples [33] | Effective fixation and staining; long shelf life, good for field use [32] | Rapid; preserves motile trophozoites [33] [36] |

| Key Disadvantage | Requires centrifugation; logistical complexity [34] | Can distort trophozoite morphology [32] | Poor sensitivity for low-level infections [33] |

| Quantification Capability | Yes (Eggs Per Gram (EPG) can be calculated) [34] | Not reported | Semi-quantitative only [33] |

| Best For (Parasite Stages) | Helminth eggs, larvae, cysts, and oocysts [33] [35] | Broad-spectrum of helminths and protozoa [32] | Motile trophozoites and poorly floating stages [36] |
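
The EPG figure mentioned for FECT is, at its simplest, the egg count divided by the mass of stool that the examined material represents. The sketch below shows that arithmetic under two loud assumptions: eggs are evenly distributed in the sediment, and none are lost during concentration. The exact counting procedure used in [34] may differ, and the counts shown are hypothetical.

```python
def eggs_per_gram(egg_count, stool_mass_g, fraction_examined=1.0):
    """Estimate eggs per gram of stool from a concentrated sample.

    fraction_examined is the share of the final sediment that was actually
    read under the microscope (1.0 means the whole sediment).
    """
    if egg_count < 0:
        raise ValueError("egg_count cannot be negative")
    if stool_mass_g <= 0 or not 0 < fraction_examined <= 1:
        raise ValueError("mass must be positive and fraction in (0, 1]")
    return egg_count / (stool_mass_g * fraction_examined)


# Hypothetical reads: 36 eggs from a 2 g sample.
whole_sediment = eggs_per_gram(36, stool_mass_g=2.0)
half_sediment = eggs_per_gram(36, stool_mass_g=2.0, fraction_examined=0.5)
print(whole_sediment, half_sediment)
```

Recording the examined fraction alongside the raw count matters for reliability studies, since two raters who read different portions of the sediment would otherwise report incomparable intensities.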

Performance in Inter-Rater Reliability Context

The reliability of morphological identification is a central concern when using these techniques. A 2025 study evaluating deep-learning models against human experts using FECT and MIF as the ground truth provides insightful data. The models demonstrated a strong level of agreement with medical technologists, with Cohen's Kappa scores exceeding 0.90 for all models tested [32]. This high kappa score, achieved when a standardized reference is used, underscores that the analytical method itself can be highly reliable. However, it also highlights that the major source of variability in diagnosis often lies in human interpretation, a critical factor for designing studies on inter-rater reliability.

Furthermore, the distinct morphology of different parasites affects identification consistency. The same 2025 study noted that deep-learning models achieved high precision, sensitivity, and F1 scores for helminthic eggs and larvae due to their more distinct and uniform morphology compared to protozoans [32]. This finding can be extrapolated to human raters: techniques like FECT that are particularly strong at concentrating helminth eggs (e.g., Ascaris, Trichuris, hookworm) may inherently facilitate higher inter-rater agreement for these species.

Detailed Experimental Protocols

Formalin-Ethyl Acetate Centrifugation Technique (FECT)

The FECT protocol is a sedimentation method designed to concentrate parasitic elements by removing debris and fats [35].

Workflow Diagram: FECT Protocol

Step-by-Step Procedure [34] [35]:

- Sample Preparation: Homogenize approximately 2 grams of fresh stool and emulsify in 10% formalin. The suspension is then strained through multiple layers of gauze into a 15 ml conical centrifuge tube.

- First Centrifugation: Top up the tube with saline or 10% formalin and centrifuge at 500 × g for 10 minutes. The supernatant is decanted.

- Solvent Extraction: Resuspend the sediment in 10 ml of 10% formalin. Add 4 ml of ethyl acetate, stopper the tube, and shake vigorously for 30 seconds to dissolve fecal fats.

- Second Centrifugation: Centrifuge again at 500 × g for 10 minutes. This results in four layers, from top to bottom: a layer of ethyl acetate, a plug of debris, a formalin layer, and the sediment containing parasites.

- Sediment Examination: Free the debris plug, decant the top three layers, and use a swab to clean the tube walls. The final sediment is resuspended in a small volume of formalin and examined microscopically under low and high power.
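
The protocol specifies centrifugation as a relative centrifugal force (500 × g), whereas many benchtop centrifuges are set in revolutions per minute. The conversion depends on rotor radius through the standard relation RCF = 1.118 × 10⁻⁵ × r × rpm², with r in centimeters. The sketch below applies it; the 10 cm radius is a hypothetical value, and the actual figure should come from the rotor's documentation.

```python
import math

RCF_CONSTANT = 1.118e-5  # relates rpm^2 and radius (cm) to multiples of g


def rcf_from_rpm(rpm, radius_cm):
    """Relative centrifugal force (x g) for a given speed and rotor radius."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


def rpm_for_rcf(rcf, radius_cm):
    """Speed in rpm needed to reach a target RCF at a given rotor radius."""
    if rcf <= 0 or radius_cm <= 0:
        raise ValueError("rcf and radius must be positive")
    return math.sqrt(rcf / (RCF_CONSTANT * radius_cm))


# Hypothetical rotor with a 10 cm radius, targeting the 500 x g FECT step.
target_rpm = rpm_for_rcf(500, radius_cm=10.0)
print(f"Set the centrifuge to about {target_rpm:.0f} rpm")
```

Reporting the force rather than the speed keeps the protocol reproducible across laboratories that use different rotors.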

Merthiolate-Iodine-Formalin (MIF) Technique

The MIF technique serves as a combined fixative and stain, making it suitable for field surveys and for highlighting protozoan cysts [32].

- Solution Preparation: The MIF solution itself is a combination of merthiolate (a preservative), iodine (a stain), and formalin (a fixative). It is noted for its easy preparation and long shelf life [32].

- Staining Mechanism: The iodine component of the solution stains glycogen and nuclei of protozoan cysts, enhancing their visibility and aiding in species differentiation based on internal structures.

- Procedure: The technique involves mixing a stool sample with the MIF solution. The fixation preserves the parasites, while the staining allows for immediate examination. It can be used as a direct stain or followed by a sedimentation step to concentrate the parasitic elements [32] [36].

- Limitation for Research: A key consideration for morphology studies is that the iodine can potentially distort trophozoite morphology, and the fixation may be inadequate for certain trichrome stains used for permanent slides [32].

Direct Smear Microscopy

The direct smear is the simplest and fastest technique, primarily used for the initial assessment or when motility must be observed.

Step-by-Step Procedure [33] [36]:

- Smear Preparation: A small amount of fresh, unpreserved feces (approximately 2 mg) is emulsified in a drop of 0.9% saline on a microscope slide. The use of saline is critical, as water can destroy motile trophozoites.

- Coverslip: A large coverslip (22 × 22 mm) is placed over the suspension.

- Examination: The entire preparation is examined systematically under the microscope, first with a 10× objective and then with a 40× objective.

- Iodine Staining: For enhanced visualization of protozoan cysts, a separate smear can be prepared with a drop of Lugol's iodine instead of saline. This kills trophozoites but stains cyst walls and internal structures.

Key Application in Research: The primary research value of the direct smear is its utility in detecting motile trophozoites (e.g., of Giardia or Entamoeba), which can be lost or distorted in concentration procedures. It is also adequate for observing heavy infections with helminths like Ascaris lumbricoides [33].

The Scientist's Toolkit: Key Research Reagent Solutions

The table below lists essential materials and their functions for implementing the discussed techniques in a research setting.

| Research Reagent / Material | Primary Function in Protocol |
|---|---|
| 10% Formalin Solution | Universal fixative and preservative; inactivates pathogens for safe handling in FECT and MIF [33] [35]. |
| Ethyl Acetate | Organic solvent used in FECT to dissolve fats and debris, clearing the sample for easier microscopy [35]. |
| Merthiolate-Iodine-Formalin (MIF) Solution | All-in-one fixative and stain; preserves morphology and stains internal structures of cysts for identification [32]. |
| Lugol's Iodine Solution | Staining solution used in Direct Smear and other methods to contrast protozoan cysts and reveal nuclei [36]. |
| 0.9% Saline Solution | Isotonic diluent for Direct Smear; maintains viability and motility of trophozoites during examination [36]. |
| Cellophane Coverslips / Glycerol | Used in the Kato-Katz method (a related quantitative technique) to clear debris for better visualization of helminth eggs [32] [33]. |
| Conical Centrifuge Tubes & Gauze | Essential for the concentration steps in FECT; gauze is used to filter out large particulate matter [34] [35]. |

The Role of Digital Imaging and Whole-Slide Scanning in Standardizing Visual Data

In scientific fields reliant on visual data, such as parasite morphology identification research, inter-rater reliability remains a significant challenge. Studies consistently demonstrate that subjective visual interpretation introduces variability, even among experienced professionals. For instance, research on stress signatures in dentition found that more experience in assessment does not necessarily produce higher reliability between raters, with disagreements occurring frequently in intensity categorization [37]. This variability directly impacts diagnostic consistency, research reproducibility, and ultimately, scientific progress in parasitology and drug development.

Digital imaging and whole-slide imaging (WSI) technologies are transforming morphological sciences by addressing these standardization challenges. WSI systems create high-resolution digital reproductions of entire glass slides, enabling pathologists and researchers to examine tissue specimens on computer displays rather than through traditional microscopy [38]. The fundamental value proposition of these technologies lies in their potential to standardize visual data acquisition, management, and interpretation across institutions, research groups, and time periods.

The clinical validation of WSI systems for primary diagnosis has established their non-inferiority to conventional microscopy [38] [39] [40]. However, for research applications, particularly in specialized fields like parasite morphology, understanding the technical variations between systems and their implications for data standardization is crucial. This guide provides an objective comparison of WSI technologies, supported by experimental data, to inform researchers and drug development professionals in selecting and implementing digital pathology solutions.

Whole-Slide Imaging Technology Comparison

Scanner Platforms and Key Characteristics

Whole-slide imaging systems consist of three core components: the slide scanner, viewing software, and display monitor [38]. The market offers diverse scanner options with varying capabilities suited to different laboratory needs and throughput requirements. The following table summarizes major digital pathology scanners and their key characteristics:

Table: Comparison of Whole-Slide Imaging Scanners

| Manufacturer | Model Examples | Key Features | Capacity | Target Use Cases |
|---|---|---|---|---|
| 3DHISTECH | PANNORAMIC Flash DESK DX, PANNORAMIC 1000 DX | Affordable entry-level to high-speed models; standardized optical system; self-calibration | Entry-level to 1000 slides | Routine pathology; basic clinical diagnoses to high-volume labs [41] |
| Grundium | Ocus20, Ocus40, Ocus M 40 | Browser-based platform; high-resolution imaging; precision engineering | Varies by model | Clinical/research settings; remote consultations; intraoperative frozen sections [41] |
| Hamamatsu | NanoZoomer Series | Remarkable image quality; high-speed scanning; fluorescence capabilities | Not specified | Clinical and research applications requiring exceptional image quality [41] |
| Huron | TissueScope iQ, LE, LE120 | Broad file format compatibility; patented MSIA technology; fast scanning (≈60 s/slide) | 120-400 slides | High-volume labs; versatile research applications [41] |
| Leica Biosystems | Aperio GT 450 DX, CS2, LV1 | Custom optics; no-touch scanning; secure IT infrastructure | 450 slides (GT 450 DX) | High-volume clinical settings; medium-volume use; remote viewing [41] |
| Roche | VENTANA DP 200, DP 600 | Built-in calibration; dynamic focus technology; user-friendly interface | 240 slides (DP 600) | Frozen sections; urgent cases; labs scaling toward full digitization [41] |

Performance Validation and Equivalency Data

Multiple rigorous studies have validated the diagnostic equivalence between digital pathology and traditional microscopy. The following table summarizes key performance metrics from recent validation studies:

Table: Performance Metrics from WSI Validation Studies

| Study | Sample Size | Concordance Rate | Efficiency Findings | Notable Limitations |
|---|---|---|---|---|
| Roche FDA Validation Study [38] | 2,047 clinical cases | Difference in accuracy between digital reads and manual microscopy: -0.61% (lower bound of 95% CI: -1.59%) | Mean case reading times similar: 2.33 min (digital) vs. 2.34 min (manual) | Higher disagreement rates for longer sign-out diagnoses |
| Memorial Sloan Kettering Study [39] | 204 cases (2,091 glass slides) | Overall diagnostic equivalency: 99.3% | 19% decrease in efficiency per case with digital | Efficiency needs improvement for wider adoption |
| Forensic Pathology Multicenter Validation [40] | 100 forensic slides | Mean concordance: 97.8% | Scan times averaged 44 seconds per slide | First formal validation in forensic pathology setting |

The Roche Digital Pathology Dx system demonstrated precision metrics between 89.3% and 90.3% across different testing conditions, meeting all predetermined primary endpoints for FDA clearance [38]. Similarly, a forensic histopathology study achieved a mean concordance of 97.8% between digital and glass slide diagnoses, surpassing the College of American Pathologists' recommended threshold of 95% [40].

Experimental Protocols for WSI Validation

Interlaboratory Reproducibility and Precision Assessment

The precision study for Roche Digital Pathology Dx followed a rigorous protocol to assess feature identification consistency [38]. Researchers evaluated 23 histopathologic features across three sites, with a single screening pathologist identifying three different slides for each feature. Each slide contained three regions of interest (ROIs) with at least one example of the primary feature. The slide set (69 cases plus 12 "wildcard" cases) was scanned on three nonconsecutive days at each site, generating 729 whole-slide images and 2,187 ROIs for analysis. Statistical analysis measured precision between systems/sites (89.3%), between days (90.3%), and between readers (90.1%), with the lower bound of the 95% confidence interval for each exceeding the predetermined threshold of 85% [38].
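
The design counts and the acceptance rule described above can be checked with a few lines of arithmetic. The following is a minimal sketch, not the study's own analysis: the source does not say how its confidence intervals were computed, so the Wilson score interval is used here as an assumption, and the agreement counts in the example (900 of 1,000 comparisons) are hypothetical. Treating comparisons as independent also ignores any clustering by slide or reader.

```python
import math


def wilson_lower_bound(agreements: int, comparisons: int, z: float = 1.96) -> float:
    """Lower limit of the two-sided Wilson score interval for an agreement rate."""
    if comparisons <= 0 or not 0 <= agreements <= comparisons:
        raise ValueError("need 0 <= agreements <= comparisons and comparisons > 0")
    p = agreements / comparisons
    denom = 1 + z ** 2 / comparisons
    centre = p + z ** 2 / (2 * comparisons)
    margin = z * math.sqrt(p * (1 - p) / comparisons + z ** 2 / (4 * comparisons ** 2))
    return (centre - margin) / denom


# Design counts as reported in the text.
slides = 69 + 12                      # study cases plus "wildcard" cases
whole_slide_images = slides * 3 * 3   # three sites, three scanning days each
regions_of_interest = whole_slide_images * 3

# Hypothetical agreement counts, used only to illustrate the threshold check.
THRESHOLD = 0.85
lower = wilson_lower_bound(agreements=900, comparisons=1000)

if __name__ == "__main__":
    print(whole_slide_images, regions_of_interest)
    print(f"lower bound = {lower:.3f}; meets threshold: {lower > THRESHOLD}")
```

The same check could be applied to agreement between technologists reading parasite slides, provided the acceptance threshold is fixed before the data are collected.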

Method Comparison and Accuracy Assessment

The method comparison study for Roche Digital Pathology Dx evaluated the diagnostic accuracy of digital reads relative to manual microscopy [38]. Researchers assessed 2,047 clinical cases, with pathologists rendering diagnoses using both digital reads and manual microscopy. Each modality was scored against the reference sign-out diagnosis, and the primary endpoint was the difference in accuracy between digital and manual reads. The study design included exploratory analyses of subgroup-specific diagnostic discrepancy rates and review of cases from multiple organ systems (breast, lung, bladder, kidney, and stomach) to identify potential modality-specific root causes for major diagnostic disagreements [38].
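
A paired accuracy comparison of this kind can be sketched as follows. This is an illustration under stated assumptions rather than the study's statistical model: it uses a simple Wald interval for the difference between paired proportions, the discordant counts (30 and 42) are invented for the example, and the non-inferiority margin of -4 percentage points is a hypothetical placeholder because the source does not state the margin that was used.

```python
import math


def paired_accuracy_difference(digital_only: int, manual_only: int,
                               n_cases: int, z: float = 1.96):
    """Difference in accuracy (digital minus manual) with a Wald interval.

    digital_only: cases where only the digital read matched the reference.
    manual_only:  cases where only the manual read matched the reference.
    Cases where both reads agree with each other cancel out of the difference.
    """
    if n_cases <= 0 or digital_only < 0 or manual_only < 0:
        raise ValueError("counts must be non-negative and n_cases positive")
    if digital_only + manual_only > n_cases:
        raise ValueError("discordant cases cannot exceed n_cases")
    diff = (digital_only - manual_only) / n_cases
    variance = (digital_only + manual_only
                - (digital_only - manual_only) ** 2 / n_cases) / n_cases ** 2
    half_width = z * math.sqrt(variance)
    return diff, diff - half_width, diff + half_width


HYPOTHETICAL_MARGIN = -0.04  # placeholder margin, not taken from the source

# Invented discordant counts for a study of 2,047 paired cases.
diff, lower, upper = paired_accuracy_difference(30, 42, 2047)
non_inferior = lower > HYPOTHETICAL_MARGIN

if __name__ == "__main__":
    print(f"difference = {diff:.2%}, 95% CI = ({lower:.2%}, {upper:.2%})")
    print(f"non-inferior at margin {HYPOTHETICAL_MARGIN:.0%}: {non_inferior}")
```

Non-inferiority is concluded only when the lower confidence limit stays above the margin, which is why validation reports quote the lower bound alongside the point estimate.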

Diagram: WSI validation methodology for standardized visual data. The workflow progresses from study design through slide selection, standardized scanning, pathologist evaluation, and data analysis to reach validation endpoints.

Technical Considerations for Standardized Imaging

Scanner-Induced Variability in Quantitative Analysis

A critical technical consideration for standardization is that different slide scanners can introduce variations in downstream image analysis. A 2023 study directly compared three different slide scanners (Nikon, Olympus, and Huron) using identical prostate cancer tissue samples [42]. Researchers found that each mean color channel intensity (Red, Green, Blue) differed significantly between scanners (all P<.001). After color deconvolution, only the hematoxylin channel was similar across all three scanners. These optical differences translated to variations in computed pathomic features, with lumen and stroma densities showing significant differences between most scanner comparisons [42].

This demonstrates that for quantitative morphology studies, such as parasite feature measurement, scanner selection and consistent imaging protocols are essential for data standardization. The researchers implemented histogram-matching techniques to align intensity distributions between scanners, suggesting that computational harmonization may help mitigate inter-scanner variability [42].
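
Histogram matching of this kind can be sketched with NumPy by mapping each intensity in the source image to the reference intensity at the same cumulative probability. This is a generic CDF-based sketch, not the implementation used in [42]; the function names and the synthetic "scanner" images are assumptions made for illustration.

```python
import numpy as np


def match_histogram(source: np.ndarray, reference: np.ndarray) -> np.ndarray:
    """Remap one channel so its empirical CDF follows that of the reference."""
    src_values, src_index, src_counts = np.unique(
        source.ravel(), return_inverse=True, return_counts=True
    )
    ref_values, ref_counts = np.unique(reference.ravel(), return_counts=True)

    # Empirical cumulative distributions of both images.
    src_cdf = np.cumsum(src_counts) / source.size
    ref_cdf = np.cumsum(ref_counts) / reference.size

    # For each source intensity, look up the reference intensity that sits
    # at the same cumulative probability.
    mapped = np.interp(src_cdf, ref_cdf, ref_values)
    return mapped[src_index].reshape(source.shape)


def match_rgb(source_rgb: np.ndarray, reference_rgb: np.ndarray) -> np.ndarray:
    """Apply histogram matching independently to each colour channel."""
    channels = [
        match_histogram(source_rgb[..., c], reference_rgb[..., c])
        for c in range(source_rgb.shape[-1])
    ]
    return np.stack(channels, axis=-1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-ins for the same field scanned on two different devices.
    scanner_a = rng.normal(100, 30, (64, 64, 3)).clip(0, 255)
    scanner_b = rng.normal(150, 20, (64, 64, 3)).clip(0, 255)
    harmonized = match_rgb(scanner_a, scanner_b)
    print(scanner_a.mean(), scanner_b.mean(), harmonized.mean())
```

Matching each channel independently is the simplest choice; it aligns intensity distributions but does not guarantee that stain-specific colour relationships are preserved, so harmonized images should still be reviewed before quantitative features are extracted.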

Computational Approaches for Enhanced Standardization

Emerging computational approaches offer promising pathways for overcoming standardization challenges in whole-slide imaging. Foundation models like TITAN (Transformer-based pathology Image and Text Alignment Network) represent a significant advancement [43]. These models are pretrained on hundreds of thousands of whole-slide images through visual self-supervised learning and vision-language alignment. Once trained, they can extract general-purpose slide representations that generalize well to resource-limited scenarios, including rare conditions [43].

For parasite morphology research, such technologies could enable more consistent feature extraction across different laboratories and imaging platforms. The TITAN model demonstrates that multimodal pretraining with both images and corresponding textual reports produces slide representations that outperform supervised baselines and existing multimodal slide foundation models across diverse clinical tasks [43].

Implementation Framework for Standardized Imaging

The Scientist's Toolkit: Essential Research Reagent Solutions

Successfully implementing digital pathology for standardized morphological research requires specific tools and reagents. The following table details essential components:

Table: Research Reagent Solutions for Digital Pathology Implementation

| Item Category | Specific Examples | Function in Standardization |
|---|---|---|
| Slide Scanners | Roche VENTANA DP 200/600, Leica Aperio GT 450 DX, Huron TissueScope | Converts glass slides to high-resolution digital images with consistent quality [41] |
| Staining Reagents | Hematoxylin & Eosin, Special Stains (PTAH, PAS, Masson Trichrome), IHC markers | Provides consistent tissue and morphological contrast for visual analysis [40] |
| Image Management Software | uPath/navify Digital Pathology, O3 viewer, MSK Slide Viewer | Enables slide viewing, annotation, analysis, and sharing with standardized tools [38] |
| Display Monitors | ASUS ProArt Display PA248QV, other professional displays | Ensures consistent color reproduction and resolution for interpretation [38] |
| Quality Control Tools | Calibration slides, color standards, focus verification tools | Maintains consistent scanner performance and image quality over time [41] |

Diagram: Digital pathology system architecture. The framework progresses from hardware components through software layers and data standardization processes to generate analytical outputs.

Workflow Integration and Best Practices