Enhancing FEA Sensitivity: Advanced Techniques for Biomedical Researchers and Drug Development


Abstract

This article provides a comprehensive guide to Finite Element Analysis (FEA) technique modifications specifically aimed at increasing simulation sensitivity for biomedical applications. It covers foundational principles of sensitivity, advanced high-fidelity modeling methodologies, practical troubleshooting for optimization, and rigorous validation frameworks. Tailored for researchers and drug development professionals, the content synthesizes current research and proven strategies to improve the detection of subtle biomechanical changes, such as bone strength variations, directly impacting the reliability of pre-clinical assessments and device design.

Understanding FEA Sensitivity: Core Principles and Its Critical Role in Biomedical Research

FEA Sensitivity FAQ

What does "sensitivity" mean in the context of Finite Element Analysis? In FEA, sensitivity refers to how significantly the results of a simulation change in response to small variations in input parameters, such as material properties, component geometry, boundary conditions, or loading. In biomechanical research, a highly sensitive model can detect subtle changes in stress, strain, or displacement that result from modifications in biological tissues, implant designs, or physiological loads [1] [2].
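The sketch below shows what "sensitivity" means numerically. It estimates the derivative of an output with respect to one input by central differences. It also computes the normalized (dimensionless) sensitivity (p/R)·dR/dp, which reads as "% change in output per % change in input". The cantilever tip-deflection formula δ = PL³/(3EI) is a hypothetical stand-in for an FEA run, chosen only because its exact derivative is known. In practice, the `model` callable would launch and post-process a simulation.

```python
def tip_deflection(E, P=100.0, L=0.5, I=1.0e-8):
    """Hypothetical stand-in for an FEA run: Euler-Bernoulli cantilever tip deflection (SI units)."""
    return P * L**3 / (3.0 * E * I)


def central_difference_sensitivity(model, p, rel_step=1e-3):
    """Return (dR/dp, normalized sensitivity (p/R) * dR/dp) using central differences."""
    h = rel_step * p  # perturbation scaled to the parameter's magnitude
    dr_dp = (model(p + h) - model(p - h)) / (2.0 * h)
    normalized = p / model(p) * dr_dp
    return dr_dp, normalized


E = 17.0e9  # hypothetical elastic modulus in Pa
dr_dp, s = central_difference_sensitivity(tip_deflection, E)
exact = -100.0 * 0.5**3 / (3.0 * E**2 * 1.0e-8)  # analytical d(delta)/dE for comparison
print(f"dR/dE = {dr_dp:.4e} (exact {exact:.4e}), normalized = {s:.4f}")
```

Because δ is proportional to 1/E, the normalized sensitivity is -1: a 1% stiffer material gives about 1% less deflection. The finite-difference estimate should reproduce this closely.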

Why is my biomechanical FEA model not showing expected sensitivity to a material property change? This is a common issue often traced to these causes:

- Excessive Element Distortion: Highly distorted elements produce unreliable results and can mask the effect of the parameter being studied. Identify them with tools such as Named Selections for element violations in Ansys [3].

- Insufficient Mesh Refinement: A coarse mesh can miss critical stress gradients. Conduct a mesh convergence study to ensure your results do not significantly change with further mesh refinement [4] [5].

- Unrealistic Boundary Conditions: Over-constrained or improperly defined boundaries can "lock" the model, preventing it from exhibiting natural, sensitive deformation. Ensure your boundary conditions accurately represent the physiological environment [4] [6].

How can I improve my model's sensitivity for detecting subtle biomechanical changes?

- Perform a Mesh Sensitivity Analysis: Systematically reduce mesh size in critical regions until key outcomes (e.g., peak stress) stabilize. Non-linear analyses are particularly mesh-sensitive and demand careful convergence studies [5].

- Verify Material Model and Parameters: Ensure your material model (e.g., hyperelastic for soft tissues) is appropriate and its parameters are accurately defined. Choosing an inappropriate material model is a common modeling error [6].

- Check and Define Contact Properly: Nonlinear contact can significantly influence load transfer and model response. Use the Contact Tool to ensure contacts are initially closed and consider refining the mesh in contact regions [4] [3].

Troubleshooting Guide: Low Model Sensitivity

Problem: Model results are insensitive to small changes in a key input parameter.

Step 1: Investigate Mesh Quality and Convergence

- Action: Perform a mesh convergence study for the specific output you are monitoring (e.g., stress at a specific point).

- Protocol: Refine the global mesh, or use local mesh controls in areas of high stress or interest. Run the analysis at each refinement level and plot the result against element size or count. A converged result is achieved when further refinement causes negligible change [4] [5].

- Example: In a buckling analysis of composite shells, mesh size significantly impacted the critical load result. Finer meshes were required for a converged, accurate solution, especially in non-linear analysis [5].

Step 2: Validate Boundary Conditions and Loads

- Action: Scrutinize all boundary conditions and loads for realism.

- Protocol: Use a modal analysis with the same supports to check for unconstrained rigid-body modes, which appear as zero or near-zero natural frequencies. Animated deformation plots can reveal parts that move without deforming, which indicates under-constraint [3]. Ensure loads are applied realistically. A force applied to a single node produces a stress singularity, where the computed stress grows without bound as the mesh is refined, so it is not physically realistic [6].
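The modal check for under-constraint works because an unconstrained rigid-body mode stores no strain energy and therefore has a (near-)zero natural frequency. The sketch below uses a hypothetical 1D chain of springs with unit lumped masses rather than a real FE model. Counting the near-zero eigenvalues of the reduced stiffness matrix shows how many rigid-body modes remain for a given set of supports.

```python
import numpy as np


def spring_chain_stiffness(n_nodes, k=1.0):
    """Assemble the stiffness matrix of a hypothetical 1D chain of identical springs."""
    K = np.zeros((n_nodes, n_nodes))
    for i in range(n_nodes - 1):
        j = i + 1
        K[i, i] += k
        K[j, j] += k
        K[i, j] -= k
        K[j, i] -= k
    return K


def count_rigid_body_modes(K, fixed_dofs, tol=1e-9):
    """Remove constrained DOFs and count (near-)zero eigenvalues of the reduced matrix.

    With unit lumped masses, the eigenvalues of K equal omega**2, so a zero
    eigenvalue corresponds to a zero-frequency (rigid-body) mode.
    """
    fixed = set(fixed_dofs)
    free = [d for d in range(K.shape[0]) if d not in fixed]
    K_free = K[np.ix_(free, free)]
    omega_sq = np.linalg.eigvalsh(K_free)  # symmetric matrix -> real eigenvalues
    return int(np.sum(np.abs(omega_sq) < tol * np.max(np.abs(omega_sq))))


K = spring_chain_stiffness(5)
print("no supports:", count_rigid_body_modes(K, fixed_dofs=[]))      # 1 (rigid translation)
print("left end fixed:", count_rigid_body_modes(K, fixed_dofs=[0]))  # 0 (fully constrained)
```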

Step 3: Examine Numerical Error and Solver Settings

- Action: For nonlinear problems, use solver tools to diagnose convergence issues.

- Protocol: Enable the Newton-Raphson Residual plot in the solution information. This plot shows "hotspots" (red areas) where the solution error is highest, often pinpointing problematic regions like specific contact pairs. Mesh refinement in these areas or reducing the contact stiffness can improve convergence and solution sensitivity [3].

Step 4: Check for and Address Singularities

- Action: Identify locations of stress singularities.

- Protocol: Look for sharp re-entrant corners, point loads, or perfect constraints in the model. These features create theoretically infinite stresses, which can dominate the solution and mask the subtle changes you are trying to detect. Mitigate by adding small fillets, distributing loads over an area, or focusing on stresses away from the singularity [6].
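A practical way to tell a singularity from a genuine stress concentration is to watch the peak value under mesh refinement. A resolvable concentration converges, while a singular peak keeps growing. The sketch below uses two synthetic sequences, which are assumptions and not data from the cited studies. One approaches a limit as σ∞ + C·h². The other grows like h^(-1/2), the rate expected near a crack tip. The hypothetical helper `looks_converged` flags a sequence as converging when successive changes shrink and the last relative change falls below a tolerance.

```python
def looks_converged(values, tol=0.02):
    """Hypothetical check: successive changes must shrink and the last relative change must be < tol."""
    diffs = [abs(b - a) for a, b in zip(values, values[1:])]
    shrinking = all(d2 < d1 for d1, d2 in zip(diffs, diffs[1:]))
    last_rel_change = diffs[-1] / abs(values[-1])
    return shrinking and last_rel_change < tol


sizes = [2.0, 1.0, 0.5, 0.25, 0.125]  # element sizes in mm, halved at each step

# Synthetic peak stresses (MPa): a resolvable concentration vs. a singular point
converging = [100.0 + 20.0 * h**2 for h in sizes]
singular = [100.0 * h**-0.5 for h in sizes]

print("fillet-like peak converged:", looks_converged(converging))
print("singular peak converged:", looks_converged(singular))
```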

Quantitative Sensitivity Data from Biomechanical Research

The table below summarizes quantitative findings from a biomechanical FEA sensitivity study on a prosthetic foot. It shows how outcomes respond to 15 N/mm changes in hindfoot and forefoot stiffness [2].

Table 1: Sensitivity of Biomechanical Outcomes to Prosthetic Foot Stiffness

| Outcome Measure | Sensitivity to Hindfoot Stiffness (per 15 N/mm) | Sensitivity to Forefoot Stiffness (per 15 N/mm) |
|---|---|---|
| Prosthesis Energy Return | Decreased | Not Specified |
| Ground Reaction Force (GRF) Loading Rate | Increased | Not Specified |
| Stance-Phase Knee Flexion | Increased | Not Specified |
| Knee Extensor Moment (Early Stance) | Increased | Not Specified |
| Ankle Push-off Work | Not Specified | Decreased |
| Center-of-Mass (COM) Push-off Work | Not Specified | Decreased |
| Knee Flexor Moment (Late Stance) | Not Specified | Increased |

Experimental Protocol: Conducting a Mesh Sensitivity Analysis

Objective: To determine the mesh density required for a converged, accurate solution in a finite element model.

Materials:

- FEA software (e.g., Abaqus, Ansys Mechanical)

- The geometric model of the structure

Methodology:

- Initial Setup: Create the model with all necessary material properties, boundary conditions, and loads.

- Baseline Mesh: Generate an initial, relatively coarse mesh for the entire model.

- Solve and Record: Run the analysis and record the key output variable(s) of interest (e.g., maximum principal stress, critical buckling load, natural frequency).

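- Systematic Refinement: Refine the mesh globally or in critical regions. Either reduce the element size or increase the element order (e.g., from linear to quadratic elements).

- Iterate: Repeat the Solve and Record and Systematic Refinement steps, each time with a finer mesh or a higher element order.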

- Analysis: Plot the key output variable against a measure of mesh density (e.g., number of elements, element size). The solution is considered converged when the change in the output between successive refinements falls below an acceptable threshold (e.g., <2%).
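The Iterate and Analysis steps can be automated. In the sketch below, `run_analysis(h)` is a hypothetical placeholder for building the mesh at element size `h`, solving, and extracting the output of interest. Here it is a synthetic function so the loop runs on its own. The loop halves the element size until the relative change between successive refinements drops below the 2% threshold. A cap on the number of refinements keeps the loop from running indefinitely.

```python
def run_analysis(h):
    """Hypothetical stand-in for an FEA solve at element size h (mm); returns a peak stress in MPa."""
    return 250.0 * (1.0 - 0.3 * h / (h + 5.0))  # synthetic: approaches 250 MPa as h -> 0


def mesh_convergence_study(h0, tol=0.02, max_refinements=8):
    """Halve the element size until successive results differ by less than tol (relative)."""
    history = [(h0, run_analysis(h0))]
    for _ in range(max_refinements):
        h_prev, r_prev = history[-1]
        h = h_prev / 2.0
        r = run_analysis(h)
        history.append((h, r))
        if abs(r - r_prev) / abs(r) < tol:
            return history, True
    return history, False


history, converged = mesh_convergence_study(h0=10.0)
for h, r in history:
    print(f"h = {h:6.3f} mm -> {r:7.2f} MPa")
print("converged:", converged)
```

In this synthetic case, the loop stops at h = 0.3125 mm with about 245.6 MPa. The function's limit is 250 MPa, so the remaining error (about 1.8%) is larger than the last successive change (about 1.6%). A small change between refinements does not by itself guarantee an equally small discretization error, especially when convergence is slow.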

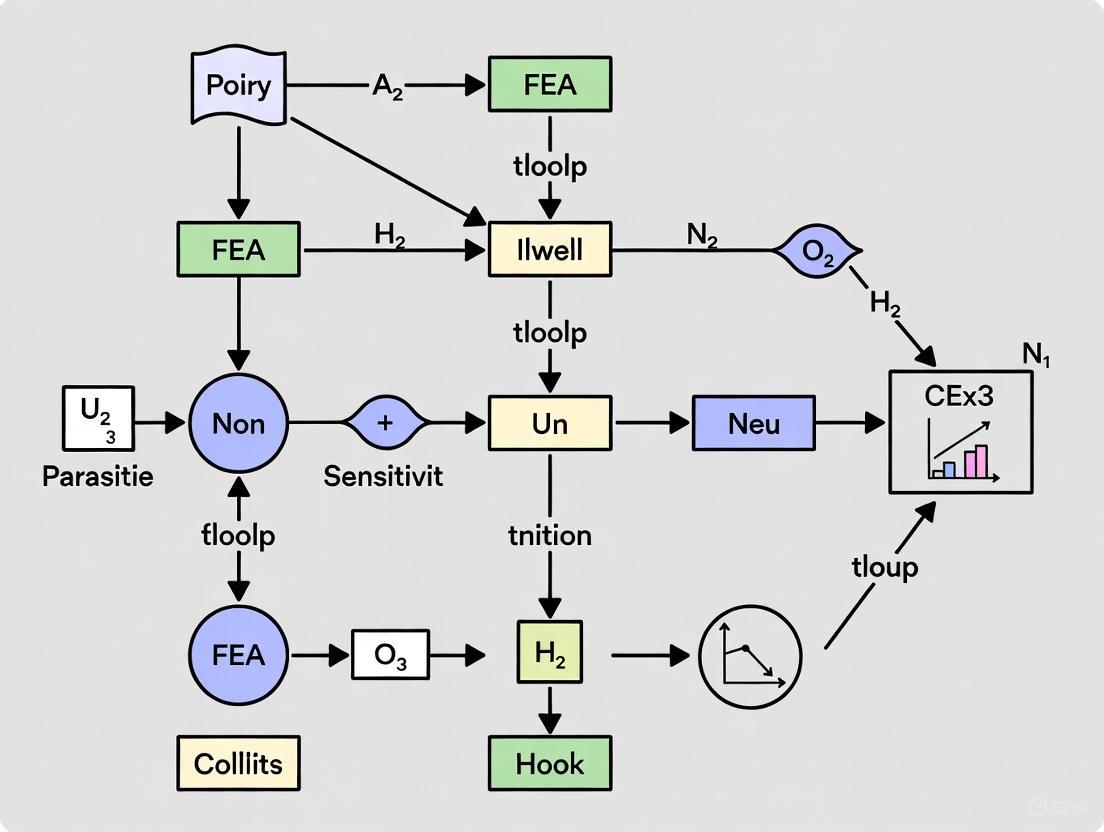

Workflow Diagram: Iterative workflow for a mesh sensitivity analysis.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for FEA Sensitivity Research

| Item | Function in FEA Sensitivity Analysis |
|---|---|
| FEA Software (Abaqus, Ansys) | Provides the computational environment to build, solve, and post-process finite element models. |
| High-Performance Computing (HPC) Cluster | Handles the significant computational load from complex models, nonlinear analyses, and fine meshes. |
| Mesh Generation & Refinement Tools | Used to discretize geometry and systematically increase mesh density for convergence studies [5]. |
| Material Model Library | Contains mathematical models (e.g., linear elastic, hyperelastic, plastic) that define the stress-strain relationship for biological and synthetic materials. |
| Nonlinear Solver (e.g., Static Riks) | Essential for analyzing instability problems such as buckling and large deformations, which are highly sensitive to inputs [5]. |
| Validation Data (Experimental) | Empirical data from physical tests (e.g., strain gauges, motion capture) used to validate and correlate FEA predictions, closing the validation loop [4]. |

Frequently Asked Questions (FAQs)

Q1: What is a sensitivity analysis in FEA, and why is it critical for medical device design? A sensitivity analysis in FEA is a process of quantifying how variations in input parameters (like material properties, geometric characteristics, and loading conditions) affect the simulation's output results [7]. It is critical for medical device design because it helps identify which parameters have the most significant effect on performance, assesses risky loading conditions, and ensures the device will behave reliably despite real-world uncertainties in manufacturing and operation [7].

Q2: My FEA model shows a localized "red spot" of very high stress. Is this a real failure risk or an error? A localized spot of extremely high stress is often a singularity. Singularities occur at sharp re-entrant corners or where a point load is applied to a single node [6]. They are confusing because the underlying elasticity solution is infinite at such a point. The finite element stress there is finite, but it keeps rising as the mesh is refined, so its value is not physically meaningful. In the real world, forces are distributed and corners have finite radii. Such a spot can sometimes coincide with a legitimate stress concentration, so engineering judgment is required. Check whether the boundary conditions or the geometry are causing the singularity, and consider applying loads over a small area rather than a single node [6].

Q3: How do I know if my mesh is fine enough to trust the results? Determining a sufficient mesh requires a mesh convergence study [4] [5]. This is a fundamental step where you systematically refine the mesh (make the elements smaller) and observe key results, such as peak stress or displacement. A mesh is considered "converged" when further refinement produces no significant change in the results you are interested in [4]. This process is essential for capturing phenomena like peak stress accurately and lends confidence to your model [4].

Q4: What is the difference between verification and validation in FEA? Verification asks, "Am I solving the equations correctly?" It confirms that the computational model accurately represents the underlying mathematical model and that there are no numerical errors [8]. Validation asks, "Am I solving the right equations?" It involves comparing the FEA predictions with real-world experimental data to ensure the model correctly represents the actual physical behavior [8]. Both are required for establishing model credibility.

Troubleshooting Guides

Problem 1: Model Fails to Converge in a Non-Linear Analysis

Non-linear analyses (involving large deformations, contact, or non-linear materials) can fail to converge for several reasons.

- Check 1: Review Material Model. Ensure the material model (e.g., hyperelastic, plastic) is appropriate and its parameters are correctly defined. Inaccurate material models are a common source of non-convergence.

- Check 2: Examine Contact Definitions. Contact conditions create significant computational complexity. Small parameter changes can cause large changes in system response. Check for initial penetrations and ensure contact parameters like penalty stiffness are suitably defined [4].

- Check 3: Manage Large Deformations. For processes with severe plastic deformation (like forming or tissue stretching), element distortion can cause failure. A remeshing technique can be used within a Lagrangian formulation to solve this problem by regenerating the mesh when deformation becomes too severe [9].

Problem 2: FEA Results Do Not Match Physical Test Data

A discrepancy between simulation and physical tests undermines the model's credibility.

- Check 1: Validate Boundary Conditions and Loads. This is the most common source of error. Ensure that the constraints and applied loads in the model truly represent the physical test setup. An "unrealistic boundary condition" is a frequent mistake that can make the difference between a correct and incorrect simulation [4].

- Check 2: Confirm Material Properties. The material properties (Young's modulus, yield strength, etc.) used in the FEA must match those of the actual physical test specimens. Using generic property data from a library instead of measured data can cause significant errors.

- Check 3: Revisit Model Assumptions. Re-examine the physics of the problem. Did you use the wrong type of analysis (e.g., linear instead of non-linear, static instead of dynamic)? Simplify the model to a case where you are confident of the result and build complexity back up [6].
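When comparing against physical tests, report the discrepancy at each measurement location rather than as a single overall number. That way, a local modeling problem (for example, a boundary condition near one gauge) stands out. The sketch below assumes hypothetical strain-gauge readings and FEA predictions at the same locations. It also assumes a 10% acceptance tolerance; a real study would set this from its context of use.

```python
import numpy as np

# Hypothetical microstrain values at four gauge locations (not from any cited study)
measured = np.array([520.0, 340.0, 880.0, 150.0])
predicted = np.array([505.0, 352.0, 1040.0, 146.0])

rel_error = np.abs(predicted - measured) / np.abs(measured)
rmse = np.sqrt(np.mean((predicted - measured) ** 2))
tolerance = 0.10  # assumed acceptance criterion

for i, err in enumerate(rel_error):
    status = "OK" if err <= tolerance else "CHECK"
    print(f"gauge {i}: relative error {err:.1%} [{status}]")
print(f"RMSE = {rmse:.1f} microstrain")
```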

Problem 3: Inaccurate Stresses in Regions of Interest

If you are not capturing the correct stress levels, the issue often lies with the mesh or element choice.

- Check 1: Perform a Mesh Convergence Study. As explained in Q3 above, a mesh that is too coarse will not accurately capture stress gradients. Perform the convergence study specifically for the regions of peak stress [4].

- Check 2: Use Higher-Order Elements. First-order elements (linear shape functions) can be too stiff and perform poorly in bending or stress concentration scenarios. Switching to second-order elements can provide better results, especially for nonlinear materials or curvilinear geometry, though at a higher computational cost [6].

Experimental Protocols for Sensitivity and Credibility

Protocol 1: Conducting a Mesh Sensitivity Study

Objective: To determine the mesh density required for a numerically accurate result.

Methodology:

- Create a Base Model: Start with a preliminary mesh, typically coarser to save time.

- Identify Output of Interest: Select the key result you want to converge (e.g., maximum von Mises stress, maximum displacement, buckling load).

- Refine Systematically: Run the analysis multiple times, each time uniformly refining the global mesh size or targeting specific critical regions.

- Record and Plot Results: For each mesh refinement level, record the output of interest and the number of elements or approximate element size.

- Analyze Convergence: Plot the output result against element size or number of elements. The mesh is considered converged when the change in the result between subsequent refinements is below an acceptable threshold (e.g., <2%). The results from [5] below demonstrate this process for buckling analysis.

Table: Example Mesh Sensitivity Results from a Buckling Analysis of a Composite Cylindrical Shell [5]

| Mesh Size (mm) | Model-1 Buckling Load (kN) | Model-2 Buckling Load (kN) |
|---|---|---|
| 50 | 112 | 223 |
| 40 | 111 | 171 |
| 30 | 110 | 146 |
| 25 | 109 | 138 |
| 20 | 109 | 129 |
| 10 | 109 | 117 |
| 5 | 109 | 111 |
| 2.5 | 109 | 107 |
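Applying the <2% criterion to the tabulated buckling loads makes the difference between the two models explicit. The script below uses the values from the table above. It measures each change relative to the finer-mesh result, which is one common convention and an assumption here.

```python
mesh_size_mm = [50, 40, 30, 25, 20, 10, 5, 2.5]
buckling_load_kN = {
    "Model-1": [112, 111, 110, 109, 109, 109, 109, 109],
    "Model-2": [223, 171, 146, 138, 129, 117, 111, 107],
}


def first_converged_size(sizes, loads, tol=0.02):
    """Return the first mesh size whose result differs from the previous one by < tol, else None."""
    for i in range(1, len(loads)):
        change = abs(loads[i] - loads[i - 1]) / abs(loads[i])  # relative to the finer mesh
        if change < tol:
            return sizes[i]
    return None


for model, loads in buckling_load_kN.items():
    print(model, "first meets the 2% criterion at:", first_converged_size(mesh_size_mm, loads))
```

Model-1 already meets the criterion at 40 mm. Model-2 never does: its last step (111 to 107 kN) is still a change of about 3.7% under this convention.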

Protocol 2: Workflow for Credibility Assessment of an In Silico Clinical Trial (ISCT)

Objective: To establish trust in the predictive capability of a computational model used to evaluate a medical device or drug delivery system across a virtual patient population.

Methodology: This workflow is based on hierarchical credibility assessment frameworks proposed for medical devices [8].

- Define Context of Use (COU): Precisely state the question the model is intended to answer and the role of the simulation in the decision-making process.

- Perform Model Verification: Ensure the computational model is solved correctly. This includes code verification (checking for software bugs) and calculation verification (ensuring numerical errors are small, e.g., via mesh convergence studies).

- Perform Model Validation: Compare the FEA predictions with real-world experimental data. This could be validation at the component level (e.g., microneedle mechanical strength) or system level (e.g., drug release profile).

- Conduct Uncertainty Quantification (UQ): Estimate the uncertainty in model inputs (e.g., material properties, physiological loads) and compute the subsequent uncertainty in the model outputs. Sensitivity analysis is a key part of this, identifying which uncertain inputs contribute most to output uncertainty [7].

- Assess Credibility for the COU: Weigh the evidence from the VVUQ activities to determine if the model has sufficient credibility for its intended use.
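The uncertainty-quantification step above can be sketched with Monte Carlo sampling, which is one of several possible UQ approaches. A hypothetical closed-form surrogate replaces the FEA model. Uncertain inputs are sampled from assumed distributions, propagated through the model, and summarized. The squared correlation between each input and the output gives a rough first-pass ranking of which inputs drive output uncertainty. This ranking is only reasonable when the model is close to linear and the inputs are independent.

```python
import numpy as np

rng = np.random.default_rng(seed=42)
n = 20_000

# Hypothetical input uncertainties (assumed, for illustration only)
inputs = {
    "load_N": rng.normal(800.0, 160.0, n),          # 20% coefficient of variation
    "modulus_Pa": rng.normal(110e9, 5.5e9, n),      # 5% coefficient of variation
    "diameter_m": rng.normal(5.0e-3, 0.05e-3, n),   # 1% coefficient of variation
}


def axial_strain(load_N, modulus_Pa, diameter_m):
    """Hypothetical surrogate for an FEA output: axial strain in a solid circular rod."""
    area = np.pi * diameter_m**2 / 4.0
    return load_N / (modulus_Pa * area)


strain = axial_strain(**inputs)
print(f"mean strain = {strain.mean():.3e}, std = {strain.std():.3e}")

# Squared Pearson correlation as a rough first-order importance measure
importance = {name: np.corrcoef(x, strain)[0, 1] ** 2 for name, x in inputs.items()}
for name, r2 in sorted(importance.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:>11}: r^2 = {r2:.3f}")
```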

Model Credibility Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials and Properties for FEA in Medical Applications

| Item / Reagent | Function / Relevance in FEA |

|---|---|

| Polymer Materials (e.g., PLGA, PC, PU) | Biocompatible matrix materials for degradable microneedles or device components; their mechanical strength (Young's Modulus) and degradation rate directly control drug release profiles and structural integrity [10]. |

| Silicon & Metals (e.g., Silicon, Titanium) | Used for stiff, non-degradable microneedles or structural device elements; provide high Young's Modulus for reliable skin penetration but carry risk of brittle fracture if not designed properly [10]. |

| Constitutive Material Models (e.g., Drucker-Prager Cap, Hyperelastic) | Mathematical models that define the complex stress-strain behavior of materials like pharmaceutical powders or soft biological tissues; essential for accurate non-linear FEA [11]. |

| Sensitivity Analysis Algorithms (e.g., ZFEM, DDM, ASM) | Computational methods used to calculate how FEA outputs (stress, strain) change with respect to input parameters, enabling robust design and inverse optimization [7]. |

| Abaqus/Standard with Python Scripting | A general-purpose FEA software platform that can be customized, for example, to implement automated remeshing techniques for simulating high-deformation processes [9]. |

Frequently Asked Questions (FAQs)

Q1: What is sensitivity analysis in Finite Element Analysis (FEA) and why is it critical for researchers?

Sensitivity analysis in FEA is the process of evaluating how the output of your computational model changes in response to variations in input parameters, such as material properties, boundary conditions, and geometry [12]. It is a critical step because it helps identify which parameters most significantly influence your results, assesses the model's uncertainty and robustness, and guides design optimization [12]. For instance, a study on a steel frame showed that while boundary condition assumptions had a minimal effect (less than 2% difference) on bending moments under a static gravity load, they caused a substantial difference (87%) in the first mode frequency during dynamic analysis [13]. This underscores that sensitivity is context-dependent, and understanding it is key to obtaining useful and reliable simulation data.

Q2: How do material properties act as a fundamental factor in FEA sensitivity?

Material properties define how a structure deforms and responds to loads. Inaccurate or insufficiently characterized properties are a common source of error and can dramatically alter simulation outcomes [14]. Sensitivity analysis quantifies this effect. Research on Fiber Reinforced Polymer (FRP) composites revealed that not all material parameters are equally influential; in some ply-based models, only fiber-related properties were significant, while parameters related to transverse properties had negligible impact [15]. Similarly, in biomechanical FEA, using high-fidelity, patient-specific material properties derived from CT scans—such as a Young's modulus of 14.88 GPa for cortical bone and 1.23 MPa for intervertebral discs—was crucial for creating models sensitive enough to detect subtle changes in bone strength [16] [17].

Q3: In what ways does geometry influence the sensitivity of an FEA model?

Geometric parameters directly control stress distributions and structural stiffness. Sensitivity analysis helps optimize these parameters for performance. A study on PLA scaffolds for bone repair used FEA and the Taguchi method to analyze geometric factors. It found that pore size was the most significant factor affecting mechanical strength, followed by overall porosity and the geometric configuration (orthogonal vs. offset). The optimal design balanced these parameters with a pore size of 400 µm and 70% porosity [18]. Furthermore, the specific geometric design of a component, such as using double posts (distal and mesiolingual) in a restored tooth instead of a single post, was shown to significantly reduce stress concentrations and improve the mechanical success of the restoration [19].

Q4: Why are boundary conditions often a major source of sensitivity in FEA?

Boundary conditions define how a model interacts with its environment, and simplifications here can lead to unrealistic results [14]. Their sensitivity is highly dependent on the type of analysis being performed. As demonstrated in the steel frame example, the assumption of pinned versus fixed constraints had a negligible impact on static bending moments but an enormous effect on dynamic modal frequencies [13]. This shows that an assumption made without a sensitivity study can lead to incorrect conclusions, particularly in dynamic or seismic applications. In geotechnical FEA, the sensitivity of pile bearing capacity to the boundary conditions represented by the surrounding soil was quantified, revealing that the Poisson's ratio of the soil was the most sensitive parameter [20].

Q5: What are some common methodologies for performing a sensitivity analysis?

Several established methodologies exist for conducting sensitivity analysis in FEA:

- Design of Experiments (DOE): A statistical method that systematically explores a set of design variables without needing to run every possible combination, making the process efficient [12].

- Perturbation and Gradient-Based Methods: These techniques involve varying input parameters by a small amount and computing the resulting change in outputs to determine sensitivity [12].

- Taguchi Experimental Design: This approach uses a special set of arrays to organize design parameters and their levels, allowing for a robust analysis of factor effects with a reduced number of simulation runs, as successfully applied in the optimization of PLA scaffolds [18].

- Software Tools: Many FEA packages have built-in modules for sensitivity analysis (e.g., ANSYS Parametric Design Language, Abaqus Sensitivity). Alternatively, custom scripts can be written in Python or MATLAB, or external tools like Dakota and Optimus can be used [12].
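The perturbation approach can be sketched as a one-at-a-time (OAT) screen. Each parameter is raised and lowered by an assumed ±10% while the others stay at baseline. The resulting output swing ranks the parameters, which is what a tornado chart displays. The first-mode frequency of a uniform cantilever, f₁ = (1.875²/2π)·√(EI/(ρAL⁴)), serves as a hypothetical stand-in for a modal FEA run, and all baseline values are illustrative assumptions. OAT screening ignores interactions between parameters, which is one reason DOE-based methods are preferred when inputs are coupled.

```python
import math


def cantilever_f1(E, rho, L, d):
    """Hypothetical stand-in for a modal FEA run: first bending frequency (Hz) of a solid round cantilever."""
    I = math.pi * d**4 / 64.0  # second moment of area
    A = math.pi * d**2 / 4.0   # cross-sectional area
    beta1_L = 1.8751           # first-mode eigenvalue for a clamped-free beam
    omega = beta1_L**2 * math.sqrt(E * I / (rho * A * L**4))
    return omega / (2.0 * math.pi)


baseline = {"E": 17e9, "rho": 1900.0, "L": 0.3, "d": 0.02}  # illustrative values, SI units
f0 = cantilever_f1(**baseline)

swings = {}
for name, value in baseline.items():
    lo = cantilever_f1(**{**baseline, name: 0.9 * value})
    hi = cantilever_f1(**{**baseline, name: 1.1 * value})
    swings[name] = abs(hi - lo) / f0  # output swing relative to the baseline frequency

for name, swing in sorted(swings.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:>3}: {swing:.3f}")
```

Because f₁ scales with d/L² and with √(E/ρ), length dominates this ranking and diameter comes second.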

Troubleshooting Guides

Problem 1: Inaccurate or Unphysical Simulation Results

- Symptoms: Stress concentrations in unrealistic locations, deformations that defy physics, or results that drastically change with minor mesh refinements.

- Root Causes:

- Over-simplified Boundary Conditions: Applying idealized fixed or pinned constraints when the real connection has some flexibility [13].

- Incorrect Material Model: Using a linear-elastic model for a material that exhibits plasticity, creep, or hyperelasticity [14].

- Poor Element Quality: The presence of highly distorted, skewed, or stretched elements that cannot accurately calculate stresses [14].

- Solutions:

- Perform a boundary condition sensitivity study. Test your assumptions by comparing extreme cases (e.g., fully fixed vs. pinned) to understand their impact. If the results are highly sensitive, model the connection with more detail, such as using spring elements or contact surfaces [13].

- Select a material model that replicates the real-world behavior of your material. Use experimental data for calibration whenever possible [14] [17].

- Check mesh quality metrics like aspect ratio, skewness, and Jacobian. Remesh the model to improve element quality, especially in areas of high-stress gradients [14].

Problem 2: Model is Insensitive to Expected Key Parameters

- Symptoms: Changes to a parameter you believe should be important have little to no effect on the output results.

- Root Causes:

- Constrained by Other Parameters: The effect of the parameter is being masked or dominated by a different, more influential input.

- Inappropriate Output Variable: The result you are monitoring (e.g., peak stress) may not be affected by the parameter, while another result (e.g., natural frequency or displacement) would be.

- Solutions:

- Use a systematic sensitivity analysis method, such as DOE or random sampling high-dimensional model representation (RS-HDMR), to untangle parameter interactions. This can reveal which parameters are truly influential and identify correlations between them [15] [12].

- Broaden the scope of your results evaluation. If you are only looking at stress, try evaluating natural frequencies, displacement, or strain energy. The steel frame case is a perfect example where the sensitive output changed from stress to frequency depending on the analysis type [13].

Problem 3: High Computational Cost of Sensitivity Studies

- Symptoms: Running multiple simulations for a sensitivity analysis takes an impractically long time.

- Root Causes:

- Running a Full Factorial DOE: Attempting to run every possible combination of parameters and levels.

- Overly Refined Mesh: Using a mesh that is finer than necessary for the required accuracy.

- Solutions:

- Employ an efficient sampling technique. Use fractional factorial, Taguchi, or other space-filling DOE designs to get the maximum information from a minimum number of simulation runs [18] [12].

- Perform a mesh sensitivity analysis first. Find the coarsest mesh that provides results within an acceptable error tolerance, and use that mesh for your parametric sensitivity runs [14].

- Consider using surrogate modeling or machine learning. Techniques like Physics-Informed Neural Networks (PINNs) or RS-HDMR can create fast, approximate models that are ideal for extensive parameter exploration [15] [17].
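The sketch below illustrates the surrogate idea with a quadratic polynomial response surface. This is a deliberately simple stand-in, not a PINN or RS-HDMR. It fits the surrogate to a handful of "expensive" evaluations and then queries it densely. `expensive_model` is a hypothetical power-law placeholder for a full FEA run. In practice, check the surrogate against a few additional held-out simulations before trusting it.

```python
import numpy as np


def expensive_model(porosity):
    """Hypothetical placeholder for a costly FEA run: apparent stiffness (MPa) vs. porosity (0-1)."""
    return 3500.0 * (1.0 - porosity) ** 2.3


# A few "expensive" training runs at chosen design points
train_x = np.array([0.50, 0.60, 0.70, 0.80])
train_y = np.array([expensive_model(p) for p in train_x])

# Fit a quadratic response surface and wrap it as a callable polynomial
surrogate = np.poly1d(np.polyfit(train_x, train_y, deg=2))

# Cheap dense exploration with the surrogate, then a spot-check against held-out runs
dense_x = np.linspace(0.50, 0.80, 301)
dense_y = surrogate(dense_x)
check_x = np.array([0.55, 0.75])
max_rel_err = np.max(np.abs(surrogate(check_x) - expensive_model(check_x)) / expensive_model(check_x))
print(f"surrogate evaluated at {dense_x.size} points; max held-out relative error = {max_rel_err:.2e}")
```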

Quantitative Data from Sensitivity Studies

The following tables summarize key quantitative findings from published sensitivity analyses, illustrating how different factors govern FEA model behavior.

Table 1: Sensitivity of Pile Bearing Capacity to Soil Parameters [20]

This study analyzed how varying parameters of the soil around a pile affected the pile's top displacement and bearing capacity.

| Soil Parameter | Change in Pile Top Displacement (mm) | Maximum Sensitivity |
|---|---|---|
| Density | -2.41 | 1.03 |
| Modulus of Elasticity | 3.14 | 0.87 |
| Poisson's Ratio | 5.03 | 2.75 |
| Cohesion | -0.04 | 0.014 |
| Angle of Internal Friction | 0.26 | 0.09 |
| Coefficient of Friction | 2.60 | 1.07 |

Table 2: Sensitivity Analysis for 3D-Printed PLA Scaffold Optimization [18]

This study used the Taguchi method and ANOVA to determine the significance of geometric factors on the mechanical performance of bone tissue engineering scaffolds.

| Geometric Factor | Influence on Mechanical Performance | Statistical Significance (from ANOVA) |
|---|---|---|
| Pore Size | Most significant factor | p < 0.01 (Highest F-value) |
| Porosity | Second most significant factor | p < 0.01 |
| Geometry (Orthogonal vs. Offset) | Significant factor | p < 0.01 |

Table 3: Material Properties for a Lumbar Spine FEA Model [17]

This study established high-fidelity material properties through the integration of FEA and Physics-Informed Neural Networks (PINNs).

| Component | Material Property | Value |
|---|---|---|
| Cortical Bone | Young's Modulus | 14.88 GPa |
| | Poisson's Ratio | 0.25 |
| | Bulk Modulus | 9.87 GPa |
| | Shear Modulus | 5.96 GPa |
| Intervertebral Disc | Young's Modulus | 1.23 MPa |
| | Poisson's Ratio | 0.47 |
| | Bulk Modulus | 6.56 MPa |
| | Shear Modulus | 0.42 MPa |
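For a linear isotropic material, only two of these four constants are independent: K = E/[3(1 - 2ν)] and G = E/[2(1 + ν)]. The sketch below recomputes K and G from the tabulated E and ν. It assumes both materials are treated as linear isotropic, which the table suggests but does not state. It also evaluates the normalized sensitivity of K to ν, (ν/K)·∂K/∂ν = 2ν/(1 - 2ν).

Both recomputed G values and the cortical-bone K agree with the table to within about 0.5%. The disc K, however, comes out near 6.83 MPa versus 6.56 MPa. A gap of that size is within what rounding ν to two decimals can produce, because K reacts sharply to ν as ν approaches 0.5. At ν = 0.47, a 1% change in ν changes K by roughly 16% to first order.

```python
def bulk_modulus(E, nu):
    """Bulk modulus of a linear isotropic material."""
    return E / (3.0 * (1.0 - 2.0 * nu))


def shear_modulus(E, nu):
    """Shear modulus of a linear isotropic material."""
    return E / (2.0 * (1.0 + nu))


def bulk_sensitivity_to_nu(nu):
    """Normalized sensitivity (nu / K) * dK/dnu = 2 nu / (1 - 2 nu); independent of E."""
    return 2.0 * nu / (1.0 - 2.0 * nu)


# (E, nu, tabulated K, tabulated G) from the lumbar spine table; GPa for bone, MPa for disc
materials = {
    "cortical bone (GPa)": (14.88, 0.25, 9.87, 5.96),
    "intervertebral disc (MPa)": (1.23, 0.47, 6.56, 0.42),
}

for name, (E, nu, K_tab, G_tab) in materials.items():
    K, G = bulk_modulus(E, nu), shear_modulus(E, nu)
    print(f"{name}: K = {K:.2f} (table {K_tab}), G = {G:.2f} (table {G_tab}), "
          f"normalized dK/dnu = {bulk_sensitivity_to_nu(nu):.2f}")
```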

Experimental Protocols

Protocol 1: Conducting a Basic Boundary Condition Sensitivity Study

Objective: To evaluate the impact of boundary condition assumptions on FEA results for static and dynamic analyses.

Methodology:

- Model Setup: Create a baseline FE model of the structure (e.g., a steel frame). Use appropriate element types (e.g., beam elements) and apply the primary load (e.g., a point mass for gravity) [13].

- Define Parameter Variations: Identify the boundary condition parameter to test. A common example is the fixity at supports. Create two alternative models:

- Model A: Apply fixed constraints (restricting all translation and rotation degrees of freedom).

- Model B: Apply pinned constraints (restricting only translation degrees of freedom).

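- Run Analyses: For each model, run a static analysis under the primary load (e.g., gravity) and record the response of interest (e.g., bending moments). Then run a modal analysis and record the natural frequencies (e.g., the first mode) [13].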

- Compare Results: Calculate the percentage difference for each result between the two models.

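$$\%\ \text{Difference} = \frac{\lvert \text{Result}_{\text{Fixed}} - \text{Result}_{\text{Pinned}} \rvert}{\max(\text{Result}_{\text{Fixed}},\ \text{Result}_{\text{Pinned}})} \times 100$$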

- Interpretation: A low percentage difference in static analysis indicates low sensitivity to the boundary condition assumption for that load case. A high percentage difference in dynamic analysis indicates high sensitivity, signaling that the boundary condition must be modeled with greater care for vibration or seismic studies [13].
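The comparison step reduces to a small helper that applies the percentage-difference formula above to each output. The result names and values below are hypothetical placeholders, not data from the steel frame study [13]. Following the formula, the difference is normalized by the larger of the two results, which are assumed to be positive.

```python
def percent_difference(result_fixed, result_pinned):
    """|fixed - pinned| / max(fixed, pinned) * 100, as in the protocol (assumes positive results)."""
    return abs(result_fixed - result_pinned) / max(result_fixed, result_pinned) * 100.0


# Hypothetical outputs from the two boundary-condition variants
results = {
    "static: peak bending moment (kN*m)": (48.6, 49.5),
    "modal: first natural frequency (Hz)": (12.0, 6.4),
}

for name, (fixed, pinned) in results.items():
    print(f"{name}: {percent_difference(fixed, pinned):.1f}% difference")
```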

Protocol 2: Sensitivity Analysis using Taguchi Experimental Design

Objective: To efficiently determine the most influential geometric and material parameters on a performance metric (e.g., scaffold strength).

Methodology:

- Identify Factors and Levels: Select the parameters (factors) to investigate (e.g., pore size, porosity, geometric configuration) and define at least two values (levels) for each [18].

- Select Taguchi Orthogonal Array: Choose a pre-defined Taguchi array (e.g., L9) that can accommodate your number of factors and levels with a minimal number of experimental runs [18].

- Build and Run FEA Models: Create an FEA model for each combination of parameters specified in the orthogonal array. Run the simulations to obtain the performance metric of interest (e.g., von Mises stress) [18].

- Analyze Data: Use Analysis of Variance (ANOVA) on the simulation results to calculate the percentage contribution of each factor to the total variation. The factor with the highest percentage contribution is the most sensitive or significant [18].

- Validation: Build and test an FEA model with the optimal combination of parameters predicted by the Taguchi analysis to validate the performance improvement [18].
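The ANOVA step can be sketched for a standard L9(3^4) orthogonal array with three three-level factors, leaving the fourth column unassigned. The responses below are synthetic. They come from an assumed additive model, so the expected answer is known. In a real study, each entry would come from one FEA run. A factor's percentage contribution is its sum of squares divided by the total sum of squares.

```python
import numpy as np

# Standard L9 orthogonal array (levels coded 0, 1, 2); columns A, B, C are used, the 4th is unassigned
L9 = np.array([
    [0, 0, 0, 0],
    [0, 1, 1, 1],
    [0, 2, 2, 2],
    [1, 0, 1, 2],
    [1, 1, 2, 0],
    [1, 2, 0, 1],
    [2, 0, 2, 1],
    [2, 1, 0, 2],
    [2, 2, 1, 0],
])
factors = ["A", "B", "C"]

# Synthetic response: assumed additive level effects, largest for A and smallest for C
effects = {"A": [0.0, 6.0, 12.0], "B": [0.0, 3.0, 6.0], "C": [0.0, 1.0, 2.0]}
y = np.array([sum(effects[f][row[j]] for j, f in enumerate(factors)) for row in L9])

grand_mean = y.mean()
ss_total = np.sum((y - grand_mean) ** 2)

contribution = {}
for j, f in enumerate(factors):
    level_means = [y[L9[:, j] == level].mean() for level in range(3)]
    ss_factor = 3 * sum((m - grand_mean) ** 2 for m in level_means)  # 3 runs per level
    contribution[f] = 100.0 * ss_factor / ss_total

for f, pct in sorted(contribution.items(), key=lambda kv: kv[1], reverse=True):
    print(f"factor {f}: {pct:.1f}% of total variation")
```

Because the array is orthogonal and the synthetic response has no noise or interactions, the three contributions add up to 100%.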

Workflow and Relationship Visualizations

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Software and Methodologies for FEA Sensitivity Research

| Tool / Method | Function in Sensitivity Analysis | Application Example |
|---|---|---|
| ANSYS Parametric Design Language (APDL) | Built-in tool for automating parameter variation and analysis runs [12]. | Automating a study on boundary condition fixity (fixed vs. pinned) [13]. |
| Abaqus Sensitivity | Integrated module for calculating output sensitivity to input parameters [12]. | Determining which material parameters in a composite model are most influential [15]. |
| Design of Experiments (DOE) | A statistical methodology for efficiently planning and analyzing parameter studies [12]. | Setting up a fractional factorial analysis to screen many factors with few runs. |
| Taguchi Method | A specific, robust DOE technique using orthogonal arrays for efficient optimization [18]. | Optimizing scaffold pore size, porosity, and geometry with a minimal set of FEA runs [18]. |
| Python/MATLAB Scripting | Custom programming to control FEA software and manage parametric data [12]. | Creating a custom loop to perturb material properties and record changes in natural frequency. |
| Random Sampling-High Dimensional Model Representation (RS-HDMR) | A surrogate modeling technique to decouple and quantify the influence of correlated inputs [15]. | Understanding the role of multiple, correlated FE input parameters in composite fracture simulation [15]. |

Frequently Asked Questions

Q1: What is the fundamental link between model accuracy and sensitivity analysis in FEA? A robust sensitivity analysis is entirely dependent on the quality of the underlying Finite Element Model. The analysis works by quantifying how changes in input parameters (like material properties) affect output responses (like stress or displacement) [21]. If the model contains idealization errors, such as incorrect boundary conditions or poor mesh quality, the sensitivity results will reflect the model's incorrect behavior rather than the true physics of the system [4] [22]. Essentially, a sensitivity analysis performed on a flawed model will give you precise, but inaccurate, measurements of the wrong behavior.

Q2: Why does my sensitivity analysis show negligible impact from a parameter I know is critical? This is often a symptom of a model-structure error. The parameter you are testing might be "locked" by an incorrect modeling assumption [22]. For example:

- If you are analyzing the sensitivity of a bolt's pre-load, but the connected parts are modeled with a tied contact that prevents sliding, the pre-load will not show a significant effect.

- Similarly, an incorrect boundary condition that over-constrains a structure can mask the sensitivity of material properties. Your analysis is not capturing the parameter's true role because the model's mathematical structure is incorrect.

Q3: How can I verify that my sensitivity results are reliable for decision-making? Reliability is established through a process of Verification and Validation (V&V) [4] [22].

- Verification: Ask, "Am I solving the equations correctly?" This includes performing a mesh convergence study to ensure your results are not skewed by numerical discretization errors [4].

- Validation: Ask, "Am I solving the correct equations?" This involves correlating your FEA results, including the trends identified by sensitivity analysis, with experimental test data. A model that cannot replicate measured behavior is not a valid basis for sensitivity studies [22].

Troubleshooting Guides

Problem: Sensitivity Analysis Yields Unexpected or Physically Impossible Results

| Step | Action | Rationale & Details |
|---|---|---|
| 1 | Verify Boundary Conditions | Unrealistic constraints are a primary cause of non-physical behavior [4]. Re-examine how the structure is fixed and loaded. Ensure that rigid body motions are prevented without introducing excessive artificial stiffness. |
| 2 | Check for Modeling Idealizations | Review the model for simplifications that might alter the load path, such as ignoring contact between components [4] or modeling a flexible support as rigid [22]. These can severely compromise sensitivity outcomes. |
| 3 | Conduct a Mesh Convergence Study | A coarse mesh can produce numerically stiff results that are insensitive to parameter changes. Refine the mesh in critical areas until key outputs (e.g., peak stress) change by less than a target threshold (e.g., 2-5%) [4]. |
| 4 | Validate with Test Data | Compare the model's predictions against experimental data, if available. The discrepancy between model behavior and real-world measurements is the most direct indicator of model error [22]. |

Problem: High Sensitivity to Numerically Controlled Parameters (e.g., Solver Settings)

| Step | Action | Rationale & Details |
|---|---|---|
| 1 | Tighten Solver Convergence Criteria | If results change significantly with solver tolerance, the solution is not fully converged. Stricter criteria provide a numerically robust baseline for sensitivity studies [21]. |
| 2 | Review Contact Definitions | Contact problems are highly nonlinear. Small changes in contact parameters can cause large changes in system response, making sensitivity analysis difficult [4]. Ensure contact parameters are physically justified and conduct robustness studies. |
| 3 | Classify Uncertainty Type | Determine whether the variation is aleatory (inherent randomness, best handled probabilistically) or epistemic (from lack of knowledge, reducible with better data). Applying a method suited to the other type (e.g., treating random scatter as a fixed interval) misrepresents the uncertainty and can distort the sensitivity results [21]. |

Quantitative Data on Parameter Sensitivity

The table below summarizes findings from a case study on a deep excavation, demonstrating how variations in soil parameters impact lateral displacement. This illustrates the tangible effect of input uncertainty on model predictions [23].

Table: Parameter Sensitivity in a Deep Excavation Model (Case Study)

| Parameter | Variation | Impact on Lateral Displacement | Key Finding |
|---|---|---|---|
| Internal Friction Angle | Minor decrease | Displacement doubled | The model was highly sensitive to shear strength parameters. Small inaccuracies in measuring these can lead to dangerously non-conservative designs [23]. |
| Cohesion | Moderate decrease | Significant increase (50-100%) | Reinforces that shear strength parameters are critical and must be precisely determined [23]. |
| Interface Strength (Rinter) | Reduction | Major impact on wall bending moment & deformation | The property governing soil-structure interaction is a critical sensitivity driver that is often overlooked [23]. |

Experimental Protocols for Reliable Sensitivity Analysis

Protocol: Conducting a Model Updating and Validation Study

This methodology uses experimental vibration data to calibrate and validate an FEA model, ensuring its sensitivity to parameters is physically meaningful [24] [22].

Objective: To update a finite element model using experimental modal data (natural frequencies and mode shapes) to minimize discrepancy between analysis and test results, thereby creating a validated model for accurate sensitivity analysis.

Workflow Diagram: Model Updating and Validation Process

Materials and Reagents:

- Finite Element Model: The initial analytical model of the structure.

- Experimental Structure: The physical prototype or real-world structure.

- Data Acquisition System: Hardware for measuring structural responses (e.g., accelerometers, force transducers).

- Excitation Source: An impact hammer or shaker to excite the structure in experimental modal analysis (OMA instead relies on ambient or operational excitation).

- Operational Modal Analysis (OMA) Software: Software to extract modal parameters (natural frequencies, mode shapes, damping) from measured data [24].

- Model Updating Software: Computational tools that implement sensitivity-based algorithms to adjust FEA parameters to match test data [22].

Procedure:

- Initial Model Assessment: Create a baseline finite element model. Perform an eigenvalue analysis to obtain its natural frequencies and mode shapes [22].

- Experimental Testing: Conduct an operational modal analysis on the physical structure. Measure the vibration response and use OMA software to extract the experimental natural frequencies and mode shapes [24].

- Mode Pairing and Correlation: Pair the analytical modes from the FEA with the corresponding experimental modes. This step is critical, as "mode inversion" (where the order of modes is different between test and model) can invalidate the updating process [24].

- Sensitivity Analysis: Perform a sensitivity analysis on the initial FEA model to determine which updating parameters (e.g., Young's modulus at specific locations, spring stiffnesses, non-structural mass) have the greatest influence on the modal frequencies and shapes targeted for correlation [24] [22].

- Parameter Updating: Use a computational updating algorithm (e.g., a sensitivity-based method) to adjust the pre-selected parameters. This is an iterative process that minimizes the difference between the analytical and experimental modal data [22].

- Validation and Verification: The updated model must be validated by checking its ability to predict responses to different loads or in frequency ranges not used in the updating process. This ensures it is not just "over-fitted" to the calibration data [22].
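The mode pairing, sensitivity, and updating steps can be illustrated on a toy problem. This is a minimal sketch, not a commercial updating code. It assumes a hypothetical two-degree-of-freedom spring-mass chain with known masses and two unknown spring stiffnesses, "test" data generated synthetically from assumed true stiffnesses, a finite-difference sensitivity matrix, and a Gauss-Newton update. Modes are paired by ascending frequency, and the Modal Assurance Criterion (MAC) is used to check the pairing.

```python
import numpy as np

MASSES = np.array([1.0, 1.0])  # kg, assumed known and fixed

def modal_model(stiffness):
    """Natural frequencies (Hz, ascending) and mode shapes of a hypothetical
    2-DOF chain: ground - k1 - m1 - k2 - m2."""
    k1, k2 = stiffness
    K = np.array([[k1 + k2, -k2], [-k2, k2]])
    m_inv_sqrt = np.diag(1.0 / np.sqrt(MASSES))
    # Symmetric standard eigenproblem equivalent to K phi = lambda M phi.
    eigvals, vecs = np.linalg.eigh(m_inv_sqrt @ K @ m_inv_sqrt)
    shapes = m_inv_sqrt @ vecs
    return np.sqrt(eigvals) / (2.0 * np.pi), shapes

def mac_matrix(phi_a, phi_e):
    """Modal Assurance Criterion between analytical and experimental mode sets (columns)."""
    num = np.abs(phi_a.T @ phi_e) ** 2
    den = np.outer(np.sum(phi_a**2, axis=0), np.sum(phi_e**2, axis=0))
    return num / den

def update_stiffness(theta0, f_meas, max_iter=20, tol=1e-10):
    """Sensitivity-based (Gauss-Newton) update of stiffnesses to match measured frequencies."""
    theta = np.asarray(theta0, dtype=float)
    for _ in range(max_iter):
        f_model, _ = modal_model(theta)
        residual = f_model - f_meas
        # Finite-difference sensitivity matrix J[i, j] = d f_i / d theta_j.
        J = np.empty((len(f_meas), len(theta)))
        for j in range(len(theta)):
            step = 1e-6 * theta[j]
            perturbed = theta.copy()
            perturbed[j] += step
            J[:, j] = (modal_model(perturbed)[0] - f_model) / step
        delta = np.linalg.lstsq(J, -residual, rcond=None)[0]
        theta = theta + delta
        if np.linalg.norm(delta) < tol * np.linalg.norm(theta):
            break
    return theta

# Synthetic "test" data from assumed true stiffnesses (N/m).
f_test, phi_test = modal_model([1.2e6, 0.8e6])
theta_updated = update_stiffness([1.1e6, 0.9e6], f_test)
f_upd, phi_upd = modal_model(theta_updated)
print("updated stiffnesses:", theta_updated)
print("MAC of paired modes:", np.diag(mac_matrix(phi_upd, phi_test)))
```

With real test data, the MAC matrix would be computed before updating to detect mode inversion. Only modes with high diagonal MAC values would then be used in the residual.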

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools for FEA Uncertainty and Sensitivity Analysis

| Tool / Methodology | Function in Analysis |
|---|---|
| Monte Carlo Simulation (MCS) | A probabilistic technique that runs thousands of simulations with randomized inputs to generate statistical output distributions. Highly accurate but computationally expensive [21]. |
| Polynomial Chaos Expansion (PCE) | A surrogate modeling approach that approximates the relationship between inputs and outputs using polynomial series, significantly reducing the computational cost of uncertainty quantification [21]. |
| Sensitivity Analysis | Identifies which input parameters have the greatest influence on simulation results, allowing engineers to prioritize refinement efforts and understand key drivers [21] [23]. |
| Bayesian Updating | A probabilistic method that refines uncertainty estimates and model parameters by incorporating new experimental data, improving predictive accuracy over time [21]. |
| Model Updating Software | Proprietary codes that implement sensitivity-based algorithms to automatically calibrate FEA model parameters using vibration test data [22]. |
| Digital Twin | A validated, updated virtual model of a physical structure that is continuously informed by sensor data, enabling real-time monitoring and predictive maintenance [24]. |
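The cost difference between Monte Carlo simulation and polynomial chaos expansion can be shown with a minimal Python sketch. It assumes one standard-normal input and a cheap analytical response standing in for an FE run. The PCE uses probabilists' Hermite polynomials fitted by least squares, so its mean is the zeroth coefficient and its variance is the sum of c_k^2 * k! over k >= 1.

```python
import math
import numpy as np
from numpy.polynomial import hermite_e as H

def model(x):
    """Hypothetical response depending on one standard-normal input (stand-in for an FE run)."""
    return 1.0 + 2.0 * x + 3.0 * (x**2 - 1.0)

rng = np.random.default_rng(0)

# Monte Carlo: many model evaluations, statistics taken directly from the samples.
x_mc = rng.standard_normal(100_000)
y_mc = model(x_mc)
print(f"MC  (100000 runs): mean={y_mc.mean():.3f}, var={y_mc.var():.3f}")

# PCE: fit Hermite coefficients by least squares on a small training set.
degree = 2
x_train = rng.standard_normal(20)
A = H.hermevander(x_train, degree)  # columns He_0, He_1, He_2 evaluated at x_train
coeffs, *_ = np.linalg.lstsq(A, model(x_train), rcond=None)
pce_mean = coeffs[0]
pce_var = sum(coeffs[k] ** 2 * math.factorial(k) for k in range(1, degree + 1))
print(f"PCE (20 runs):     mean={pce_mean:.3f}, var={pce_var:.3f}")
```

The toy response is exactly a degree-2 Hermite series, so the PCE recovers it from 20 runs. Real FE responses are only approximately polynomial, and the expansion degree and training-set size need their own convergence check.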

Technical Support Center: Troubleshooting Guides and FAQs

This technical support resource is designed for researchers and scientists implementing high-fidelity finite element analysis (FEA) techniques, particularly those aimed at increasing sensitivity to bone tissue changes following clinical interventions [16]. The following guides address common computational and methodological challenges.

Frequently Asked Questions (FAQs)

Q1: My FEA model fails to solve or terminates unexpectedly. What are the first steps I should take to diagnose the problem?

A1: A model that fails to solve often has issues that can be diagnosed by checking the solver output files [25]. We recommend this systematic approach:

- Check the .dat file: This is the first place to look for errors that prevent the model from starting. It contains ERROR messages that often state the problem clearly, such as incorrect node definitions or conflicting boundary conditions, and list the specific nodes involved [25].

- Inspect the .sta file: The status file provides a high-level overview of the analysis progress, showing the size of each solution increment and the number of iterations attempted. If the solver repeatedly "cuts back" the increment size, it is having difficulty converging on a solution for that step [25].

- Examine the .msg file: This file gives a detailed, iteration-by-iteration account of the solution process. It is invaluable for diagnosing convergence issues mid-analysis, such as rigid body motion or excessively high strains in elements [25].

- Review the .odb file: Even if the analysis failed, the output database may contain warning or error sets that highlight problematic nodes or elements. Visualizing these in the model can immediately reveal issues such as incorrect boundary conditions [25].
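When a parametric study produces many jobs, the first two checks can be scripted. This is a minimal Python sketch that assumes the output files are plain text and that the lines of interest contain the strings "ERROR" or "WARNING". Check the exact wording your solver version writes before relying on it.

```python
from pathlib import Path

def scan_solver_log(path, keywords=("ERROR", "WARNING")):
    """Return (line_number, text) pairs for lines containing any of the keywords."""
    hits = []
    with Path(path).open(errors="replace") as fh:
        for number, line in enumerate(fh, start=1):
            if any(key in line for key in keywords):
                hits.append((number, line.rstrip()))
    return hits
```

For example, calling scan_solver_log("Job-1.dat") and then scan_solver_log("Job-1.msg") (a hypothetical job name) lists the numbered lines to read first, in the same order as the checks above.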

Q2: What is the practical difference between a "light touch" and a "deep dive" FEA approach in a research context?

A2: The choice depends on your project's stage and the specific risks you are investigating.

- A "light touch" approach uses simpler models (e.g., linear materials, static loads) for rapid feasibility checks and initial design comparisons early in the research cycle [26].

- A "deep dive" approach involves more complex, non-linear simulations that account for phenomena like material creep, stress relaxation, dynamic loads, and complex contact conditions. This is essential for a robust, publication-ready analysis and for identifying non-obvious design risks before committing to physical prototyping [26].

Q3: Our high-fidelity model of a polymer microneedle suggests it should penetrate the skin, but experimental results show buckling. What could be the cause?

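A3: This discrepancy often stems from an oversimplified material definition in the simulation. The mechanical performance of a polymer needle depends on several material parameters, including Young's modulus, Poisson's ratio, and yield strength [10], and its resistance to buckling also depends on the needle's slenderness.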

- Check Material Parameters: Ensure your model uses appropriate, experimentally-derived values for Young's modulus, Poisson's ratio, and yield strength. Using generic values from literature can be misleading.

- Refine the Model: Avoid treating the microneedle as a simple analytical rigid body, as this ignores material deformation. Model the polymer as a non-linear material to better capture its real-world behavior under load [10].

- Validation is Key: FEA results must be complemented by experimental mechanical testing, such as with a texture analyzer or micromechanical testing machine, to validate the simulation's predictions [10].

Troubleshooting Guide: FEA Model Convergence

This guide outlines a structured workflow to diagnose and resolve common FEA convergence issues, based on the analysis of solver output files [25]. Work through the output files in the order described in A1 above (.dat, .sta, .msg, then .odb) to locate and fix the problem in your model.

Experimental Protocol: High-Fidelity FEA for Bone Strength Assessment

This protocol details the methodology for generating high-fidelity, patient-specific finite element models to assess changes in bone strength, as described in the referenced study [16].

1. Model Generation from Clinical Data

- Input: Quantitative Computed Tomography (QCT) scans of the target anatomy (e.g., hip, spine) taken at multiple time points.

- Segmentation: Use a high-fidelity segmentation approach to precisely delineate bone geometry from the medical images, capturing individual anatomical variations.

- Meshing: Generate a finite element mesh with high-quality, anatomically detailed elements. This technique moves beyond blocky voxel-based meshes to better capture complex geometries and stress distributions.

- Material Mapping: Assign heterogeneous, patient-specific material properties (e.g., bone density) to the model based on the grayscale values from the QCT scans.
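The material mapping step can be sketched in Python. This is only an illustration of the general approach. It assumes a linear phantom calibration from Hounsfield units (HU) to density and a power-law density-modulus relationship. All constants below are illustrative placeholders, not values from [16]. They must be replaced with the calibration for your scanner and a relationship appropriate to the anatomical site. One common choice is to evaluate the mapping on the mean HU of the voxels inside each element.

```python
import numpy as np

# Illustrative placeholder constants (assumptions, not values from the cited study).
SLOPE_MG_PER_HU = 0.8   # mg/cm^3 per HU, from a hypothetical phantom calibration
INTERCEPT_MG = 0.0      # mg/cm^3
E_COEFF_MPA = 6850.0    # MPa per (g/cm^3)^E_EXPONENT
E_EXPONENT = 1.49
E_MIN_MPA = 1.0         # floor to avoid zero stiffness in very low-density regions

def hu_to_modulus(hu):
    """Map CT Hounsfield units to an isotropic Young's modulus (MPa)."""
    hu = np.asarray(hu, dtype=float)
    density_g_cm3 = (SLOPE_MG_PER_HU * hu + INTERCEPT_MG) / 1000.0
    density_g_cm3 = np.clip(density_g_cm3, 0.0, None)  # negative densities are non-physical
    return np.maximum(E_COEFF_MPA * density_g_cm3**E_EXPONENT, E_MIN_MPA)

# Hypothetical per-element mean HU values.
print(hu_to_modulus([1250.0, 400.0, -100.0]))
```

The clip and floor are a design choice to keep soft-tissue and air voxels from producing zero or negative stiffness, which would make the stiffness matrix singular.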

2. Simulation and Analysis

- Boundary Conditions: Apply physiological loading conditions relevant to the fracture model (e.g., stance loading for the hip, compression for the spine).

- Solution: Execute a non-linear finite element analysis to simulate the mechanical response under load.

- Output: Calculate the factor of risk for fracture as the ratio of the applied physiological load to the computed bone strength (the load required to cause failure). Values of 1 or greater predict fracture, so a decrease in this factor indicates reduced fracture risk.
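The factor-of-risk comparison between time points is simple arithmetic. In this Python sketch, both loads are hypothetical numbers, not results from [16].

```python
def factor_of_risk(applied_load_n, failure_load_n):
    """Factor of risk = applied physiological load / FE-predicted failure load.
    Values >= 1 predict failure; lower values mean lower fracture risk."""
    if failure_load_n <= 0:
        raise ValueError("failure load must be positive")
    return applied_load_n / failure_load_n

# Hypothetical loads (N) for one patient before and after an intervention.
before = factor_of_risk(applied_load_n=3000.0, failure_load_n=4000.0)
after = factor_of_risk(applied_load_n=3000.0, failure_load_n=4800.0)
percent_change = 100.0 * (after - before) / before
print(f"before={before:.3f}, after={after:.3f}, change={percent_change:+.1f}%")
```

If the applied load is held constant, a 20% gain in predicted failure load lowers the factor of risk by about 16.7%. This is why sensitivity to small strength changes matters for longitudinal comparisons.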

Research Reagent Solutions & Essential Materials

The table below lists key "reagents" or components essential for conducting high-fidelity FEA in a biomedical research context.

| Item | Function in the FEA Experiment |
|---|---|
| Patient QCT Scans | Provides the 3D anatomical geometry and density information required for patient-specific model generation [16]. |
| High-Fidelity Segmentation Software | Converts medical image data into a precise geometric model, capturing individual anatomical details critical for accuracy [16]. |
| Non-Linear Solver | The computational engine that solves the complex mathematical equations of the FEA model, handling material and geometric non-linearities [25]. |
| Continuum Damage Mechanics (CDM) Model | A material model that simulates the progressive degradation and failure of materials, such as bone or composites, under load [15]. |
| Material Property Database | A curated set of mechanical properties (Young's modulus, yield strength) for biological and synthetic materials, necessary for realistic simulation [10]. |

Quantitative Data: Microneedle Material Properties for FEA

For researchers simulating medical devices like microneedles, accurate material data is crucial. The table below summarizes key mechanical parameters for common materials used in FEA simulations, compiled from the literature [10].

| Microneedle Material | Density (kg/m³) | Young's Modulus (GPa) | Poisson's Ratio | Key Characteristics |
|---|---|---|---|---|
| Silicon | 2329 | 170 | 0.28 | Brittle, high stiffness & biocompatibility [10]. |
| Titanium | 4506 | 115.7 | 0.321 | Excellent mechanical properties, low cost [10]. |
| Steel | 7850 | 200 | 0.33 | Excellent strength, but risk of brittle fracture in skin [10]. |
| Polycarbonate (PC) | 1210 | 2.4 | 0.37 | Good biodegradability and biocompatibility [10]. |
| Maltose | 1812 | 7.42 | 0.3 | Common, FDA-approved excipient; can absorb moisture [10]. |
| Silk | 1340 | 8.55 | 0.4 | Excellent toughness and ductility [10]. |
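A quick hand check helps explain buckling results such as those in Q3. This Python sketch uses the Young's moduli from the table above. It assumes a hypothetical needle idealized as a uniform solid cylinder (600 µm long, 100 µm in diameter) with a fixed base and free tip. It applies the classical Euler formula P_cr = π²EI / (KL)² with K = 2. Real microneedles are usually tapered, and the Euler formula assumes a slender linear-elastic column, so this is a first-order screen, not a substitute for the non-linear FE model.

```python
import math

# Young's moduli (GPa) from the table above.
YOUNGS_MODULUS_GPA = {
    "Silicon": 170.0,
    "Titanium": 115.7,
    "Steel": 200.0,
    "Polycarbonate (PC)": 2.4,
    "Maltose": 7.42,
    "Silk": 8.55,
}

def euler_buckling_load(E_pa, diameter_m, length_m, k_factor=2.0):
    """Critical Euler load (N) of a slender solid cylindrical column.
    k_factor = 2.0 corresponds to a fixed base and a free tip."""
    inertia = math.pi * diameter_m**4 / 64.0  # second moment of area of a solid circle
    return math.pi**2 * E_pa * inertia / (k_factor * length_m) ** 2

# Hypothetical geometry: 600 um long, 100 um diameter.
for material, e_gpa in YOUNGS_MODULUS_GPA.items():
    p_cr = euler_buckling_load(e_gpa * 1e9, diameter_m=100e-6, length_m=600e-6)
    print(f"{material:<20} P_cr = {p_cr:.3f} N")
```

Because P_cr scales linearly with E and with the fourth power of diameter, a polycarbonate needle of the same geometry buckles at roughly 1/70 of the silicon load. Increasing the polymer needle's diameter is therefore far more effective than small changes in material stiffness.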

Advanced Methodologies: Implementing High-Fidelity FEA to Boost Sensitivity in Biomedical Models

Troubleshooting Guide: Frequently Asked Questions

FAQ 1: My finite element model shows areas of extremely high, unrealistic stress. What is the likely cause and how can I resolve it?

This is often a singularity, a point in the model where stress values theoretically become infinite [6]. Common causes and solutions are detailed below.

| Cause | Description | Solution |
|---|---|---|
| Sharp Re-entrant Corners | Idealized geometry with sharp internal corners where stress concentrates unrealistically. | Add a small fillet to the sharp corner to reflect real-world geometry and distribute stress [6]. |
| Point Load Application | Applying a force or constraint to a single node, which creates infinite local stress. | Distribute the load over a finite area to simulate how forces are applied in reality [6]. |
| Boundary Conditions | Over-constrained displacements can create artificial stress risers. | Review constraints to ensure they accurately represent the physical system without over-constraining [4] [6]. |

FAQ 2: How can I ensure my segmentation and resulting FEA model is sensitive enough to detect small biological changes, like bone density loss?

Achieving high sensitivity requires a high-fidelity modeling approach. A 2025 study found that high-fidelity anatomically detailed modeling techniques are more sensitive than standard voxel-based techniques for detecting minor changes in bone strength [16] [27]. Key steps include:

- Use High-Resolution Scans: For accurate 3D models, the slice thickness of CT or MRI scans should be less than 1.25 mm [28].

- Employ Detailed Segmentation: Move beyond automated voxel-based methods. Use software that allows for manual refinement to capture individual anatomical variations and material properties with high precision [16].

- Perform a Mesh Convergence Study: Systematically refine your mesh and run analyses until the critical results (like peak stress) do not change significantly. This ensures your results are accurate and not dependent on mesh size [4].

FAQ 3: My model's results do not align with physical expectations. What fundamental steps might I have missed?

This often stems from errors in the initial modeling setup rather than the solution itself.

- Clearly Define FEA Objectives: Before modeling, precisely define what the analysis should capture (e.g., peak stress, stiffness, load distribution). This determines the modeling techniques and assumptions you will use [4].

- Understand the Underlying Physics: Use engineering judgment and knowledge of the system's real-world behavior to build a reliable simulation. Do not rely solely on FEA software to predict behavior; use it to validate your understanding [4].

- Verify and Validate: Implement a Verification & Validation process. This includes mathematical checks and, when possible, correlating FEA results with experimental test data to ensure modeling abstractions do not hide real physical problems [4].

FAQ 4: What are the key considerations for creating a segmentation protocol to ensure consistency in a research setting?

A standardized segmentation protocol is crucial for reducing variability and ensuring reproducible results [29].

- Plan in Advance: Define clear goals, patient selection guidelines, and imaging standards [29].

- Select Appropriate Software: Choose segmentation tools that fit your application, such as 3D Slicer or ITK-SNAP [29].

- Ensure Optimal Image Quality: Images should have a high enough signal-to-noise ratio (SNR) and be free of severe artifacts to be suitable for segmentation [29].

- Determine Slice Thickness and Planes: Use consistent slice thickness and segmentation planes (typically axial) across all datasets to minimize variability [29].

Experimental Protocols for High-Fidelity Modeling

Protocol 1: Developing a High-Fidelity Segmentation and FEA Workflow for Patient-Specific Bone Strength Analysis

This protocol is based on methodologies that have proven more sensitive to bone tissue changes than standard techniques [16] [27].

1. Objective: To generate a patient-specific finite element model from medical CT scans for the direct biomechanical evaluation of bone strength and fracture risk at metabolically active sites (e.g., hip and spine).

2. Materials and Reagents

| Research Reagent / Solution | Function in the Experiment |
|---|---|
| CT Scan DICOM Images | Provides the foundational 3D imaging data of the patient's anatomy. Slice thickness <1.25 mm is recommended [28]. |
| Segmentation Software | Software used to delineate the anatomical structure of interest from the DICOM images and convert them to a 3D surface model [29]. |
| Finite Element Analysis Software | Platform for applying material properties, boundary conditions, and loads to the 3D model to simulate biomechanical performance [16]. |
| High-Fidelity Anatomically Detailed Modeling Technique | A specific modeling approach that prioritizes capturing individual anatomical variations over simpler voxel-based methods to improve sensitivity [16]. |

3. Step-by-Step Methodology

Step 1: Image Acquisition and Preparation

- Acquire CT scans at the required anatomical sites at multiple time points (e.g., before and after an intervention).

- Export the scans as DICOM files and ensure they meet quality standards for segmentation [29].

Step 2: Segmentation and 3D Model Generation

- Import DICOM images into segmentation software.

- Manually or semi-automatically delineate the bone structure on each slice to create a detailed 3D volume.

- Export the segmented model in a format suitable for FEA (e.g., STL) [28].

Step 3: Finite Element Model Setup

- Import the 3D geometry into FEA software.

- Assign anisotropic, patient-specific material properties based on CT Hounsfield units or literature data.

- Apply physiological boundary conditions and loads based on established hip and spine fracture models [16].

Step 4: Mesh Convergence and Solution

- Perform a mesh convergence study on critical areas to ensure numerical accuracy [4].

- Run a nonlinear static structural analysis to simulate bone loading and predict fracture risk.

Step 5: Post-Processing and Analysis

- Analyze results such as stress distribution, strain energy, and predicted failure load.

- Compare results before and after intervention to assess changes in bone strength [16].

Protocol 2: Sensitivity Analysis for FEA Input Parameters

This protocol is adapted from studies on composite materials to understand the influence of various input parameters on FEA outcomes, which is crucial for model calibration and validation [15].

1. Objective: To determine the most influential input parameters in a finite element model and understand their correlation with simulation outputs.

2. Methodology:

- Identify all variable input parameters for the FE model (e.g., material properties, interface strengths).

- Use random sampling of the input space combined with a surrogate decomposition such as Random Sampling-High Dimensional Model Representation (RS-HDMR) to explore the high-dimensional parameter space efficiently.

- Run multiple FE simulations with different combinations of input parameters.

- Analyze the results to determine which parameters have the strongest influence on the output (e.g., peak load, damage progression). This helps identify which parameters require precise calibration and which can be simplified [15].
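The core idea of RS-HDMR can be sketched for the first-order terms only. This is a simplified Python illustration, not the full method used in [15]. It assumes independent inputs scaled to [0, 1] and expands each first-order component function in orthonormal shifted Legendre polynomials up to degree 2, with coefficients estimated by Monte Carlo integration over the random samples. Correlated inputs, as in the cited composite study, require extensions not shown here. The stand-in "FE model" is a hypothetical linear function.

```python
import numpy as np

def phi1(x):
    """Degree-1 orthonormal shifted Legendre polynomial on [0, 1]."""
    return np.sqrt(3.0) * (2.0 * x - 1.0)

def phi2(x):
    """Degree-2 orthonormal shifted Legendre polynomial on [0, 1]."""
    return np.sqrt(5.0) * (6.0 * x**2 - 6.0 * x + 1.0)

def first_order_hdmr_indices(X, y):
    """First-order sensitivity indices S_i from random samples X in [0, 1]^n.
    Each component f_i(x_i) = sum_r alpha_r * phi_r(x_i), with alpha_r
    estimated as the sample mean of (y - f0) * phi_r(x_i)."""
    y = np.asarray(y, dtype=float)
    f0 = y.mean()
    total_var = y.var()
    indices = []
    for i in range(X.shape[1]):
        xi = X[:, i]
        alphas = [np.mean((y - f0) * p(xi)) for p in (phi1, phi2)]
        # Orthonormality means the variance of f_i is the sum of squared coefficients.
        indices.append(sum(a**2 for a in alphas) / total_var)
    return np.array(indices)

def surrogate_fe_model(X):
    """Hypothetical stand-in for an FE output (e.g. peak load); inputs scaled to [0, 1]."""
    return 3.0 * X[:, 0] + 1.0 * X[:, 1] + 0.0 * X[:, 2]

rng = np.random.default_rng(42)
X = rng.random((20_000, 3))
S = first_order_hdmr_indices(X, surrogate_fe_model(X))
print(S.round(3))
```

For this additive test function, the analytical first-order indices are 0.9, 0.1, and 0, and the sampled estimates should land close to them. A parameter with an index near zero is a candidate for simplification. High-index parameters are the ones that need precise calibration.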


Troubleshooting Guides

Guide 1: Addressing Mesh-Related Convergence Difficulties

Problem: Your FEA simulation fails to converge, or the solver returns errors.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Poor Element Quality [30] [31] | Check mesh metrics for high aspect ratio, skewness, or warpage. | Use automatic mesh quality checks; repair distorted elements manually [30]. |
| Inadequate Mesh in Critical Regions [32] [33] | Identify locations of high stress gradients or geometric complexity from initial results. | Apply local mesh refinement sizing to stress concentration zones like fillets and holes [33]. |
| Mismatched Mesh at Contact Interfaces [33] | Inspect the mesh density on surfaces involved in frictional contact. | Enforce a 1:1 element size ratio across frictional contact faces using Contact Sizing [33]. |
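The quality metrics in the first row can be computed directly from element coordinates. This is a minimal Python sketch for triangular elements. Definitions differ between pre-processors, so the sketch assumes the equiangle skewness definition (0 for an equilateral triangle, approaching 1 as the element degenerates) and a simple longest-to-shortest edge ratio as an aspect-ratio-style measure. Acceptance thresholds are software-specific and are not implied here.

```python
import numpy as np

def triangle_angles_deg(p0, p1, p2):
    """Interior angles (degrees) of a triangle from its vertex coordinates (2D or 3D)."""
    pts = [np.asarray(p, dtype=float) for p in (p0, p1, p2)]
    angles = []
    for i in range(3):
        a, b, c = pts[i], pts[(i + 1) % 3], pts[(i + 2) % 3]
        u, v = b - a, c - a
        cos_theta = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
        angles.append(np.degrees(np.arccos(np.clip(cos_theta, -1.0, 1.0))))
    return angles

def equiangle_skewness(p0, p1, p2):
    """Equiangle skewness: 0 for an equilateral triangle, approaching 1 as it degenerates."""
    ideal = 60.0
    angles = triangle_angles_deg(p0, p1, p2)
    return max((max(angles) - ideal) / (180.0 - ideal), (ideal - min(angles)) / ideal)

def edge_length_ratio(p0, p1, p2):
    """Longest-to-shortest edge ratio, one simple aspect-ratio-style measure."""
    pts = [np.asarray(p, dtype=float) for p in (p0, p1, p2)]
    edges = [np.linalg.norm(pts[i] - pts[(i + 1) % 3]) for i in range(3)]
    return max(edges) / min(edges)

# A right isosceles triangle and a near-degenerate sliver.
print(equiangle_skewness((0, 0), (1, 0), (0, 1)), edge_length_ratio((0, 0), (1, 0), (0, 1)))
print(equiangle_skewness((0, 0), (1, 0), (0.5, 0.01)))
```

Looping these functions over all elements and flagging the worst ones reproduces, in a small way, what automatic mesh quality checks report.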

Guide 2: Resolving Inaccurate Stress Results

Problem: The simulated stresses are unreasonably high (singularities) or do not match expected values.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Excessively Coarse Mesh [32] | Perform a mesh convergence study on the critical region. | Systematically reduce element size in high-stress areas until results stabilize (<5% change) [32]. |
| Geometric Singularities [30] | Look for perfect sharp corners or points in the CAD geometry. | Add small fillets to sharp corners or use mesh defeaturing to simplify non-critical tiny features [30] [31]. |
| Inappropriate Element Type [30] | Review if element type (e.g., 1D, 2D, 3D) matches the physical behavior. | Use solid elements for 3D stress states; shell elements for thin-walled structures [30]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the single most important step to ensure my mesh is accurate enough?

The most critical step is performing a mesh convergence study [32]. This involves running your simulation with progressively finer meshes and monitoring key results (like maximum stress or displacement). When the difference in results between subsequent refinements falls below a pre-defined threshold (e.g., 2-5%), your mesh can be considered converged and the results reliable [32] [33].

FAQ 2: How can I reduce computational cost without sacrificing needed accuracy?

- Use a Hybrid Meshing Approach: Employ finer meshes only in critical areas (e.g., stress concentrations) and coarser meshes elsewhere [31] [32] [33].

- Leverage Symmetry: Model only a symmetric portion of the geometry (e.g., 1/2 or 1/4) and apply symmetry boundary conditions [33].

- Simplify Geometry: Defeature non-essential details (like small holes or nameplates) that do not significantly affect global results [30] [31].

- Choose Efficient Element Types: For slender structures, use beam elements; for thin walls, use shell elements [30].

FAQ 3: In the context of high-sensitivity research, what mesh strategy is best for detecting small changes in material properties?

As demonstrated in biomedical research for detecting subtle bone strength changes, high-fidelity, anatomically detailed modeling techniques are superior to standard voxel-based methods [16] [27]. This involves:

- Using higher-order elements that better capture curvatures and complex geometries.

- Applying very fine meshes to capture individual anatomical variations accurately.

- Ensuring the mesh is fine enough that discretization error is smaller than the effect size you are trying to detect; otherwise, numerical error can mask or mimic minor material property changes [16].

FAQ 4: How many elements are needed through the thickness of a thin structure?

For solid elements, a general rule is to use at least three elements through the thickness to adequately capture bending and through-thickness stress gradients [33]. However, the required number can depend on the element order and the specific stress state.

Experimental Protocols & Data

Table 1: Mesh Control Strategies for Different Physics

| Analysis Objective | Recommended Global Mesh Strategy | Key Local Refinement Areas |
|---|---|---|
| Global Stiffness / Displacement [33] | Coarser mesh is often sufficient. | Minimal; focus on connection points and load application areas. |
| Local Stress Analysis [32] [33] | Moderately fine mesh. | High-stress gradients: fillets, holes, notches, weld seams [33]. |
| Contact Stresses [33] | Fine mesh sufficient to define contact geometry. | Contact interfaces with matched mesh density (1:1 for frictional) [33]. |
| Modal Frequency [33] | Mesh fine enough to capture mode shape deformations. | Areas of high stiffness or mass concentration. |

Table 2: Quantitative Guidelines for Mesh Sizing

| Parameter | Guideline | Notes & Rationale |
|---|---|---|
| Global Element Size [33] | 1/5 to 1/10 of the smallest significant dimension. | A starting point; must be refined based on convergence. |
| Element Size Ratio at Bonded Contact [33] | Up to 2:1. | A node-to-node match is not strictly necessary for bonded interfaces. |
| Convergence Threshold [32] [33] | < 5% for design verification; < 2% for high-accuracy studies. | The acceptable relative change in key results upon further refinement. |

Detailed Methodology: Mesh Convergence Study Protocol

A convergence study is essential for verifying the reliability of your results [32].

- Define Outcome Metric: Identify the key result you want to converge (e.g., peak von Mises stress at a notch, maximum displacement).

- Establish Baseline: Run an analysis with a reasonably fine initial mesh.

- Refine and Compare: Systematically refine the mesh globally or in critical areas. Record the outcome metric and computational time for each run.

- Check for Stability: Compare the outcome metric between successive runs. Stop when the relative change is less than your acceptable threshold (e.g., 5%).

- Plot Results: Create a chart of your outcome metric versus the number of nodes or element size to visualize convergence [32].
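The stability check in this protocol can be automated. This is a minimal Python sketch that assumes the outcome metric has already been extracted from each run. The relative change is measured against the finer mesh, |r_i - r_(i-1)| / |r_i|, and the stress values are hypothetical.

```python
def first_converged_index(results, threshold=0.05):
    """Return the index of the first refinement whose relative change from the
    previous mesh is below `threshold`, or None if the sequence never converges.
    Relative change = |r_i - r_(i-1)| / |r_i|."""
    for i in range(1, len(results)):
        change = abs(results[i] - results[i - 1]) / abs(results[i])
        if change < threshold:
            return i
    return None

# Hypothetical peak von Mises stress (MPa) for successively refined meshes.
element_sizes_mm = [2.0, 1.0, 0.5, 0.25]
peak_stress_mpa = [100.0, 120.0, 126.0, 127.5]

idx = first_converged_index(peak_stress_mpa, threshold=0.05)
if idx is None:
    print("Not converged: check for a stress singularity or refine further")
else:
    print(f"Converged at element size {element_sizes_mm[idx]} mm")
```

Returning None instead of the last mesh is deliberate. A peak stress at a singularity, such as a sharp re-entrant corner or a point load, keeps growing with refinement and should be reported as non-converged, not accepted at the finest mesh.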

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for High-Fidelity FEA Modeling

| Item | Function in the FEA Context |
|---|---|
| CAD/Geometry Model [31] | The digital representation of the physical structure, serving as the foundation for mesh generation. |
| Pre-processing Software (e.g., Ansys Mechanical) [31] | The software environment for geometry preparation, material definition, and mesh generation. |
| High-Fidelity Segmentation Tools [16] [27] | Software used to create precise geometric models from medical scan data (e.g., CT), crucial for patient-specific analyses. |
| Automatic Meshing Algorithm [30] | Software tool that generates an initial mesh based on geometry, saving time and reducing user effort. |
| Mesh Quality Metrics (Aspect Ratio, Skewness) [30] | Quantitative measures used to evaluate the quality of the generated mesh and identify potential problem elements. |
| Computational Solver [30] | The numerical engine that solves the system of equations derived from the meshed model. |
| High-Performance Computing (HPC) Resources | Essential for handling the large computational cost associated with finely discretized, high-sensitivity models. |

Workflow Visualization

Diagram 1: Mesh Optimization Workflow

Diagram 2: Mesh Convergence Study Process

Core Concepts: Linear and Quadratic Finite Elements

What are the fundamental differences between linear and quadratic finite elements?

The primary difference between linear and quadratic elements lies in their shape functions, that is, in how they interpolate displacements within an element and, consequently, how the stresses derived from those displacements can vary. This fundamental distinction dictates their performance, accuracy, and computational cost.

- Linear Elements: These use linear shape functions, so displacements vary linearly between nodes. They typically have nodes only at their corners. Their key limitations are that they capture bending poorly and need a large number of elements to approximate curved geometries accurately [34].

- Quadratic Elements: These use second-order (quadratic) shape functions, so displacements are interpolated with a higher-order polynomial. They have mid-side nodes in addition to corner nodes (i.e., three nodes per element edge instead of two), which lets them represent curved edges and capture complex deformation patterns such as bending much more accurately [34].
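
The difference is easiest to see in one dimension. The sketch below (plain Python; the function names are illustrative and not taken from any FEA package) interpolates a curved field u(x) = x² across a single element on a local coordinate ξ in [0, 1], once with the two-node linear shape functions and once with the three-node quadratic ones (mid-side node at ξ = 0.5).

```python
# 1D shape functions on a local coordinate xi in [0, 1] (illustrative sketch).


def linear_shape(xi):
    """Two-node (linear) element: nodes at xi = 0 and xi = 1."""
    return [1.0 - xi, xi]


def quadratic_shape(xi):
    """Three-node (quadratic) element: corner nodes at xi = 0, 1 and a mid-side node at xi = 0.5."""
    return [(1.0 - xi) * (1.0 - 2.0 * xi), 4.0 * xi * (1.0 - xi), xi * (2.0 * xi - 1.0)]


def interpolate(shape_fn, nodal_values, xi):
    """Field value inside the element: u(xi) = sum_a N_a(xi) * u_a."""
    return sum(n * u for n, u in zip(shape_fn(xi), nodal_values))


def exact(x):
    """A curved (quadratic) field standing in for a bending-type displacement."""
    return x ** 2


linear_nodes = [exact(0.0), exact(1.0)]
quadratic_nodes = [exact(0.0), exact(0.5), exact(1.0)]

for xi in (0.25, 0.5, 0.75):
    u_lin = interpolate(linear_shape, linear_nodes, xi)
    u_quad = interpolate(quadratic_shape, quadratic_nodes, xi)
    print(f"xi={xi:.2f}  exact={exact(xi):.4f}  linear={u_lin:.4f}  quadratic={u_quad:.4f}")
```

The quadratic element reproduces x² exactly from its three nodal values, while the linear element, which can only draw a straight line between its two nodes, overestimates the field by up to 0.25 at the element midpoint.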

The table below summarizes the key characteristics of each element type.

Table 1: Characteristic Comparison of Linear and Quadratic Elements

| Characteristic | Linear Elements | Quadratic Elements |
|---|---|---|
| Shape Function | Linear [34] | Quadratic [34] |
| Stress State | Mostly constant within an element [34] | Linear variation within an element [34] |
| Geometry Capture | Poor for curved surfaces [34] | Accurate for curved surfaces [34] |
| Sensitivity to Distortion | Highly sensitive [34] | Relatively less sensitive [34] |
| Computational Cost | Lower (faster simulation) [34] | Higher (slower simulation) [34] |

Impact on Result Precision and Sensitivity

How does the choice of element type affect the precision of my results, particularly in sensitivity studies?

The choice of element type directly impacts the accuracy of your results, especially in studies sensitive to stress gradients, geometry, and deformation modes. An inappropriate choice can introduce significant errors.

- Stress and Strain Accuracy: Quadratic elements provide a linear variation of stress within a single element, offering a more realistic and accurate representation of stress gradients compared to the mostly constant stress state in linear elements [34]. This is critical for accurately capturing peak stresses.

- Bending and Curvature: In bending-dominated problems, fully integrated linear elements can respond too stiffly, a phenomenon known as "shear locking", which leads to underestimated displacements and stresses; on curved geometries they also need many elements to follow the shape. Quadratic elements are specifically recommended for such scenarios because they represent bending deformations much better [34] [35].

- Mesh Convergence: Quadratic elements generally converge to an accurate solution with fewer elements than linear elements. A study on a thin beam under bending showed that quadratic elements achieved less than 1% error with a much coarser mesh and lower computational time compared to linear elements, which required a much finer mesh to achieve the same accuracy [35].
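
To make the stress-gradient point concrete, the sketch below solves a hypothetical one-dimensional bar (fixed at x = 0, free at x = L, uniform axial load q per unit length) with linear (two-node) and quadratic (three-node) elements, using the standard 1D element stiffness matrices and consistent load vectors, and reports the axial force at the fixed end, where the exact peak is qL. It is a toy problem chosen because its exact stress varies linearly. It does not reproduce the cited beam study, and it cannot show shear locking, which requires 2D or 3D elements.

```python
# Fixed-free bar of length L under a uniform axial load q (force per unit length).
# Exact axial force: N(x) = q * (L - x), so the peak is N(0) = q * L.


def gauss_solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x


def peak_axial_force(n_el, order, L=1.0, EA=1.0, q=1.0):
    """FE estimate of N(0) with n_el equal elements of the given order (1 = linear, 2 = quadratic)."""
    h = L / n_el
    n_nodes = n_el * order + 1
    if order == 1:
        ke, k_scale, fe = [[1, -1], [-1, 1]], EA / h, [0.5, 0.5]
    elif order == 2:
        ke = [[7, -8, 1], [-8, 16, -8], [1, -8, 7]]
        k_scale, fe = EA / (3.0 * h), [1 / 6, 2 / 3, 1 / 6]
    else:
        raise ValueError("order must be 1 or 2")

    # Assemble the global stiffness matrix K and the consistent load vector F.
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    F = [0.0] * n_nodes
    for e in range(n_el):
        dofs = [e * order + a for a in range(order + 1)]
        for a, i in enumerate(dofs):
            F[i] += q * h * fe[a]
            for b, j in enumerate(dofs):
                K[i][j] += k_scale * ke[a][b]

    # Impose u(0) = 0 by dropping node 0, then solve for the remaining displacements.
    u = [0.0] + gauss_solve([row[1:] for row in K[1:]], F[1:])

    # Axial force at x = 0 from the first element's shape-function derivatives.
    if order == 1:
        return EA * (u[1] - u[0]) / h  # constant over the element
    return EA * (-3.0 * u[0] + 4.0 * u[1] - u[2]) / h  # varies linearly over the element


for n_el in (1, 2, 4, 8):
    print(n_el, round(peak_axial_force(n_el, 1), 4), round(peak_axial_force(n_el, 2), 4))
```

Because each linear element carries a single constant force, its estimate at the wall is the element average, qL(1 - 1/(2 n_el)), which approaches qL only as the mesh is refined; the quadratic element captures the linear force variation exactly, even with a single element.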

Table 2: Impact on Numerical Precision in Different Scenarios

| Analysis Scenario | Impact of Linear Elements | Impact of Quadratic Elements |
|---|---|---|
| Bending-Dominant Problems | Poor performance; overly stiff response; inaccurate stresses and displacements [34] [35]. | Excellent performance; accurate capture of deformations and stress [34] [35]. |
| Geometric Accuracy | Poor representation of curved edges; requires many elements [34]. | High-fidelity representation of curved edges [34]. |
| Convergence to True Solution | Slower convergence; requires more mesh refinement [35]. | Faster convergence; often requires fewer elements [35]. |

Diagram 1: A systematic workflow for selecting between linear and quadratic elements based on analysis goals.

Experimental Protocols for Element Type Validation

What is a robust experimental methodology to validate the choice of element type for a new model?

Establishing a validated simulation protocol is essential for reliable results, particularly in sensitivity-focused studies. The following procedure provides a framework for selecting and validating the appropriate element type.

Protocol 1: Element Type Selection and Convergence Study

- Problem Definition: Clearly define the analysis goals (e.g., peak stress, global stiffness, thermal stress). This determines the required output accuracy [4].

- Preliminary Model with Coarse Mesh: Create a simplified model and mesh it coarsely. Starting coarse lets you gauge run time and accuracy before refining the mesh [6].

- Comparative Analysis:

  - Run the analysis using both linear and quadratic elements with the same coarse mesh density.

  - Compare key results (e.g., max displacement, max stress) between the two.

- Mesh Refinement Study:

  - Refine the mesh globally or in critical areas using the h-method (reducing element size) for both element types [6].

  - For each refinement level, record the key result parameters.

- Convergence Check: Plot the key results (Y-axis) against the number of elements or degrees of freedom (X-axis). A converged solution is achieved when further refinement results in negligible change in the results (e.g., <1% difference) [4].

- Validation and Selection: The element type that achieves a converged solution with less computational effort is the optimal choice for that specific problem. Always validate FEA results against analytical solutions or experimental test data when available [4] [36].
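
The convergence check and selection steps above lend themselves to a small script. The sketch below is hypothetical (it is not part of any FEA package): it flags convergence with the <1% relative-change criterion, then picks the element type that converged with the fewest degrees of freedom. In practice the (DOF, result) pairs would be exported from the solver for each refinement level.

```python
# Hypothetical helpers for the element-type convergence study.


def converged_level(levels, tol=0.01):
    """levels: list of (n_dof, key_result) ordered coarse -> fine.

    Returns the first (n_dof, key_result) whose result changed by at most `tol`
    (relative) from the previous level, or None if the study has not converged yet.
    """
    for (_, r_prev), (dof, r) in zip(levels, levels[1:]):
        if abs(r - r_prev) <= tol * abs(r):
            return dof, r
    return None


def pick_element_type(studies, tol=0.01):
    """studies: {element_type: levels}. Returns the type that converged with the fewest DOFs."""
    converged = {}
    for name, levels in studies.items():
        result = converged_level(levels, tol)
        if result is not None:
            converged[name] = result
    if not converged:
        return None
    return min(converged, key=lambda name: converged[name][0])


# Made-up numbers purely to show the call pattern (peak stress in MPa vs. DOFs).
studies = {
    "linear": [(2_000, 180.0), (8_000, 205.0), (32_000, 214.0), (128_000, 216.0)],
    "quadratic": [(6_000, 212.0), (24_000, 216.0), (96_000, 216.8)],
}
print(pick_element_type(studies))
```

Returning None rather than the finest level keeps an unconverged study from being mistaken for a converged one.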

Diagram 2: A step-by-step protocol for validating a Finite Element Model, ensuring result reliability.

Troubleshooting Common Errors and Singularities

Why does my model show extreme stress concentrations (singularities), and how is this related to element type?

Stress singularities are locations in a model where stresses theoretically tend toward infinity, often occurring at sharp re-entrant corners, point loads, or abrupt changes in boundary conditions [6]. While the element type itself does not cause singularities, it influences how they are manifested and interpreted.

- Cause of Singularities: The primary cause is often the model's boundary conditions and geometry, such as a sharp re-entrant corner (e.g., a crack tip) or a point load applied to a single node [6].

- Element Type Interaction:

  - Linear Elements: May underestimate deformation and stress around a singularity due to their inherent stiffness, but the stress will still tend to increase with mesh refinement without converging to a finite value.

  - Quadratic Elements: With their higher-order shape functions, they can more accurately capture the high stress gradient near a singularity. However, this does not eliminate the singularity itself; the stress will still grow indefinitely with mesh refinement.
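
For a crack tip, this growth can be made concrete with the standard linear elastic near-tip field (mode I, directly ahead of the crack). As a simplified proxy for what the element at the tip reports, average that stress over a first element of size h:

$$
\sigma_{yy}(r) \approx \frac{K_I}{\sqrt{2\pi r}}, \qquad
\bar{\sigma}_h = \frac{1}{h}\int_0^h \frac{K_I}{\sqrt{2\pi r}}\,dr = \frac{2K_I}{\sqrt{2\pi h}} \propto h^{-1/2}
$$

Halving h therefore raises this averaged stress by a factor of √2 (about 41%) at every refinement step, so the peak never settles. Element order changes how well the gradient is resolved, but not this trend; with a fillet of finite radius the stress field stays bounded and the peak converges.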

Troubleshooting Guide:

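- Problem: Stresses keep increasing without convergence upon mesh refinement at a sharp corner.

- Solution: This is a geometric singularity. Modify the geometry by adding a small fillet radius to the sharp corner. The stress will then converge to a finite value upon mesh refinement [6].

- Problem: Infinite stresses at a point where a concentrated load is applied.

- Solution: Distribute the load over a small, physically realistic area (e.g., as a pressure) instead of a single node, or evaluate stresses away from the load point, where, by Saint-Venant's principle, they do not depend on how the load is applied.

- Problem: Poor stress results in areas of bending when using linear elements.

- Solution: Switch to quadratic elements, which represent bending much better, or refine the mesh so that several linear elements span the thickness of the bending region [34] [35].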

Essential Research Reagent Solutions (FEA Context)

In the context of FEA, "research reagents" translate to the fundamental building blocks and tools required to construct and execute a reliable simulation. The following table details these essential components.

Table 3: Essential FEA "Reagent" Solutions for Reliable Analysis

| Reagent (FEA Tool/Input) | Function & Explanation |
|---|---|
| Element Formulation Library | Provides the available element types (linear, quadratic, hexahedral, tetrahedral). The analyst must select the appropriate formulation (e.g., plane stress, plane strain, shell) based on the physics of the problem [4] [37]. |
| Material Model | Defines the stress-strain relationship of the component's material. Using an incorrect model (e.g., linear elastic beyond the yield point) is a classic error that produces mathematically correct but physically wrong results [36]. |
| Mesh Generator | Discretizes the geometry into finite elements. The quality and density of the mesh are critical for accuracy and must be verified through a convergence study [4] [6]. |
| Boundary Condition Definer | Applies realistic constraints and loads to the model. Unrealistic boundary conditions are a major source of error and can lead to singularities or incorrect load paths [4] [6]. |
| Solvers (Linear/Nonlinear) | The computational engine that solves the system of equations. The analyst must select the right solution type (e.g., static, dynamic, nonlinear) to capture the relevant behaviors [4] [37]. |
| Post-Processor & Validator | Tool for interpreting results (stresses, displacements). It is crucial for checking result plausibility, managing singularities, and comparing averaged vs. unaveraged stresses to gauge solution quality [4] [37]. |

Frequently Asked Questions (FAQs)

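Q1: Can I mix linear and quadratic elements in the same model? Yes, but it must be done with care. Where a quadratic element shares an edge or face with a linear one, the quadratic element's mid-side nodes have no counterpart on the linear side, so the two elements can deform differently along the shared boundary and open gaps or overlaps. A related incompatibility arises between elements with different degrees of freedom (e.g., solid elements with only translational DOFs and shell elements with both translational and rotational DOFs), which cannot be connected directly. In both cases, special techniques (such as transition elements or constraint equations) are required at the interface, or unexpected behavior and errors may occur [37].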

Q2: When should I absolutely avoid using linear elements? Lower-order (linear) tetrahedral elements should generally be avoided for structural analysis because they can make the model overly stiff, leading to inaccurate results. They are particularly unsuitable for thin, slender structures under bending and for capturing peak stresses accurately unless the mesh is made excessively fine [34] [35].

Q3: My model ran successfully, but the results look incorrect. What should I check first? First, always check the deformed shape to see if it matches your engineering intuition and expected behavior [6]. Then, verify your boundary conditions and loads to ensure the model is properly constrained and loaded realistically. Finally, initiate a mesh convergence study to rule out discretization errors [4].

Q4: How does element choice relate to the broader goal of increasing model sensitivity in FEA research? The choice of element type is a primary technique modification that directly influences the sensitivity of your model. Using an insufficient element order can mask or dampen the model's response to specific stimuli (like localized loads or bending), reducing its ability to detect critical phenomena. Selecting a higher-order element, such as a quadratic one, enhances the model's sensitivity to these effects, leading to more precise and reliable insights [34] [35]. This is fundamental to advancing the predictive capability of FEA.

Applying Sensitivity Analysis Methods for Optimal Design Parameter Identification

Technical Support Center

Frequently Asked Questions

Q1: What is sensitivity analysis in the context of Finite Element Analysis, and why is it crucial for my research?

Sensitivity Analysis (SA) quantifies how strongly FE model input parameters affect output parameters, helping you systematically identify which inputs most influence results such as stress, deformation, or damage progression [15] [38]. For FEA technique modifications aimed at increasing sensitivity, this is foundational: it shows which model parameters require precise calibration and which can be approximated, leading to more reliable, efficient, and accurately validated models [15] [16].
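
A simple starting point, before moving to global methods, is a local one-at-a-time (OAT) analysis: perturb each input slightly around a baseline and compute a normalized sensitivity coefficient, S_p = (∂Y/∂p)(p/Y). The sketch below uses central finite differences; a closed-form Euler-Bernoulli cantilever deflection (δ = PL³/(3EI)) stands in for the FE solve, and the function names and parameter values are hypothetical.

```python
# One-at-a-time local sensitivity with central finite differences (illustrative sketch).


def normalized_sensitivities(model, params, rel_step=1e-3):
    """Return S_p = (dY/dp) * (p / Y) for each parameter p, evaluated at `params`.

    S_p is dimensionless: S_p = 2 means a 1% change in p gives roughly a 2% change in Y.
    Parameters must be non-zero because the step is relative to their value.
    """
    y0 = model(**params)
    sens = {}
    for name, value in params.items():
        step = rel_step * value
        y_up = model(**{**params, name: value + step})
        y_down = model(**{**params, name: value - step})
        dy_dp = (y_up - y_down) / (2.0 * step)
        sens[name] = dy_dp * value / y0
    return sens


def tip_deflection(P, L, E, I):
    """Euler-Bernoulli cantilever tip deflection, standing in for an FE solve."""
    return P * L ** 3 / (3.0 * E * I)


# Illustrative SI values (N, m, Pa, m^4); not taken from the cited studies.
params = {"P": 500.0, "L": 0.1, "E": 1.5e10, "I": 2.0e-8}
for name, s in normalized_sensitivities(tip_deflection, params).items():
    print(f"{name}: {s:+.3f}")
```

For this surrogate the coefficients come out close to +1 for P, +3 for L, and -1 for E and I, showing at a glance that length dominates. Local coefficients describe behavior only near the baseline and ignore parameter interactions, which is the gap global methods fill.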

Q2: My FEA model has many input parameters. How can I efficiently determine which ones are truly influential?

For high-dimensional models, global sensitivity analysis methods like Random Sampling-High Dimensional Model Representation (RS-HDMR) are highly effective [15]. These techniques can efficiently handle many interacting variables and identify key parameters, separating them from non-influential ones. For instance, one study found that in certain ply-based composite models, only fiber-related properties were influential, while all parameters related to transverse properties were non-influential [15]. This allows for significant model simplification without sacrificing accuracy.
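
RS-HDMR builds a surrogate of the model from random samples and derives sensitivity indices from it; implementing it is beyond a short example. As a plainly swapped-in, related variance-based technique, the sketch below estimates first-order Sobol indices (the share of output variance each parameter explains on its own) with the Monte Carlo estimator of Saltelli et al. (2010). The function names, the uniform [0, 1] input ranges, and the toy model are assumptions for illustration; a real study would call the FE solver, or a surrogate of it, inside `model`.

```python
import random
from statistics import fmean, pvariance


def first_order_sobol(model, n_params, n_samples=20_000, seed=0):
    """Monte Carlo estimate of first-order Sobol indices (Saltelli et al. 2010 estimator).

    Assumes independent inputs, each uniform on [0, 1]; map them to physical ranges inside `model`.
    """
    rng = random.Random(seed)
    A = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    B = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    y_A = [model(x) for x in A]
    y_B = [model(x) for x in B]
    y_all = y_A + y_B
    mean_y, var_y = fmean(y_all), pvariance(y_all)

    indices = []
    for i in range(n_params):
        # A_B^(i): each row of A with column i taken from the matching row of B.
        y_ABi = [model(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(A, B)]
        # Centering y_B on the sample mean reduces scatter; the expectation is
        # unchanged up to O(1/N) because (y_ABi - y_A) has zero mean.
        v_i = fmean((yb - mean_y) * (yabi - ya) for yb, yabi, ya in zip(y_B, y_ABi, y_A))
        indices.append(v_i / var_y)
    return indices


def toy_model(x):
    """Hypothetical stand-in for an FE output: the third parameter has no influence."""
    return x[0] + 2.0 * x[1] + 0.0 * x[2]


print([round(s, 2) for s in first_order_sobol(toy_model, 3)])
```

For this toy model the analytical indices are 0.2, 0.8, and 0, so the third parameter would be flagged as non-influential, the same kind of screening result described above. The estimator needs n_samples × (n_params + 2) model runs, which is why surrogates are usually required for expensive FE models.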

Q3: I need to calibrate my material model, but lack high-precision test data. What are my options?

You can derive necessary parameters using more common test data and established empirical relationships. For example, in geotechnical FEA using the Hardening Soil Small (HSS) model, crucial stiffness parameters can be determined by first calculating a reference in-situ overburden pressure and a reference in-situ void ratio from standard oedometer test results [39]. These reference values can then be used in empirical equations to estimate advanced stiffness parameters, ensuring sufficient numerical analysis accuracy from economical, high-availability tests [39].
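
The first two steps of that workflow can be sketched as follows, assuming a layered profile with known unit weights and an oedometer e-log(p) curve. The empirical stiffness equations of [39] are not reproduced here. As a stand-in, the last function applies the standard textbook tangent constrained modulus implied by a log-linear compression curve. All names and input values are hypothetical.

```python
import math


def vertical_effective_stress(layers, depth, water_table, gamma_w=9.81):
    """In-situ vertical effective stress (kPa) at `depth` (m below ground surface).

    layers: [(thickness_m, unit_weight_kN_per_m3), ...] from the surface down. For brevity,
    the same unit weight is used above and below the water table (a simplification).
    """
    sigma_v, top = 0.0, 0.0
    for thickness, gamma in layers:
        if depth <= top:
            break
        sigma_v += gamma * (min(top + thickness, depth) - top)
        top += thickness
    pore_pressure = gamma_w * max(depth - water_table, 0.0)
    return sigma_v - pore_pressure


def void_ratio_at(stress, oedometer_points):
    """Void ratio at `stress`, interpolated linearly in log10(stress) between test points.

    oedometer_points: [(effective_stress_kPa, void_ratio), ...] read from the e-log(p) curve.
    """
    pts = sorted(oedometer_points)
    for (s0, e0), (s1, e1) in zip(pts, pts[1:]):
        if s0 <= stress <= s1:
            t = math.log10(stress / s0) / math.log10(s1 / s0)
            return e0 + t * (e1 - e0)
    raise ValueError("stress lies outside the tested range")


def tangent_oedometer_modulus(stress, void_ratio, cc):
    """Tangent constrained modulus implied by e = e_0 - Cc * log10(stress / stress_0):
    E_oed = ln(10) * (1 + e) * stress / Cc, in the same units as `stress`."""
    return math.log(10.0) * (1.0 + void_ratio) * stress / cc


# Hypothetical site and test data, for illustration only.
layers = [(2.0, 18.0), (8.0, 20.0)]
sigma_ref = vertical_effective_stress(layers, depth=5.0, water_table=2.0)
e_ref = void_ratio_at(sigma_ref, [(50.0, 0.90), (100.0, 0.84), (200.0, 0.78)])
print(round(sigma_ref, 2), round(e_ref, 3), round(tangent_oedometer_modulus(sigma_ref, e_ref, cc=0.2), 1))
```

The reference stress and void ratio computed this way are the inputs the empirical correlations would then take.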

Q4: How can I improve my FEA model's sensitivity to detect small changes in material properties?