Enhancing Contrast in Low-Cost USB Microscope Images: A Technical Guide for Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on enhancing image contrast from low-cost USB microscopes. It explores the fundamental limitations of these devices, details practical software and algorithmic enhancement methods including cutting-edge deep learning, offers solutions for common hardware and imaging challenges, and validates performance against traditional microscopy for applications like cell culture monitoring and forensic material analysis. The goal is to empower scientists to achieve research-grade image quality with accessible, cost-effective tools.

Understanding the Limits: Why USB Microscopes Struggle with Image Contrast

Frequently Asked Questions (FAQs)

Q1: What are the main types of optical aberrations that degrade image quality in microscopy? The two primary classes are chromatic aberrations and geometric (monochromatic) aberrations, the latter including spherical aberration and astigmatism [1]. Chromatic aberration occurs because a lens refracts different colors (wavelengths) of light at different angles, causing colored fringes as the wavelengths fail to converge at the same focal point [1]. Spherical aberration results from the spherical shape of a lens, where light rays passing through its edges focus at a different point than rays passing through the center, leading to blurry images [2] [1]. Astigmatism, another geometric aberration, causes off-axis points to appear as lines or ellipses instead of sharp dots [2].

Q2: How can I tell if my image is blurry due to spherical aberration versus simple defocus? An image that is simply out of focus will appear uniformly blurry and can often be corrected by adjusting the focus knob [3] [4]. Spherical aberration, however, often manifests as a haze or lack of sharpness that cannot be remedied by refocusing [3]. It can be caused by using an objective with a correction collar that is improperly set for the coverslip thickness, by examining a slide that is placed upside down, or by multiple coverslips stuck together [3].

Q3: My USB microscope is not detected by the software on my computer. What are the first steps I should take? This is a common connectivity issue. Please try the following steps in order:

- Check Privacy Settings (Windows): Your operating system's camera privacy permissions may be blocking access. Ensure that camera access is enabled for the software you are using [5] [6].

- Reinstall and Restart: Uninstall the microscope software, then restart your computer. After rebooting, reinstall the software and try connecting the microscope again [5] [4].

- Change USB Port: Plug the microscope into a different USB port on your computer [5].

- Select Correct Device in Software: If your computer has a built-in webcam, the software may default to it. Open the software's settings and manually select the "USB Microscope" as the video input device [6].

- Check for Hardware Conflicts: If you use an Oculus Rift, its sensors use a similar chipset and can cause driver conflicts. Disconnect the Oculus sensors, then follow specific driver update steps in the Device Manager to reassign the microscope's driver to "USB Video Device" [7].

Q4: What is the simplest way to improve contrast and clarity in a low-cost digital microscope? The most impactful and low-cost adjustments are often related to proper illumination and sample preparation:

- Adjust Your Light Source: Ensure the illumination is even and appropriately positioned to avoid shadows or bright spots [4].

- Clean the Optics: Use a soft brush or air blower to remove dust, and a microfiber cloth with lens cleaner to gently wipe the front lens of the objective. Contaminants like oil and dust are a major cause of haze and poor contrast [3] [4].

- Prepare Thin, Clear Specimens: Poor contrast can be inherent in thin biological specimens because their refractive index is very close to that of the surrounding medium, resulting in very weak scattering of light [8]. Ensuring thin, well-prepared samples can mitigate this.

Troubleshooting Guides

Guide 1: Correcting for Optical Aberrations

Objective: To identify and minimize the impact of optical aberrations on image quality. Background: Aberrations are imperfections in image formation caused by the inherent properties of lenses. Understanding and correcting for them is crucial for high-fidelity imaging, especially in quantitative research [1].

Protocol Steps:

- Identify the Aberration:

- Chromatic Aberration: Look for colored fringes (often purple or green) around the edges of your specimen [1].

- Spherical Aberration: The image appears hazy or blurry and cannot be brought into sharp focus across the entire field of view [3] [2].

- Astigmatism: Off-axis points appear as lines or ellipses instead of sharp dots [2].

- Select a Corrected Objective: The simplest method is to use an objective with a higher degree of optical correction. Refer to the table below for common types.

- Utilize Correction Collars: If available on your objective, adjust the correction collar while observing your sample to compensate for spherical aberration induced by coverslip thickness variations [3].

- Numerical Compensation (Advanced): For quantitative phase imaging techniques like Digital Holographic Microscopy (DHM), numerical aberration compensation methods can be employed. These methods use algorithms, such as Alternating Weighted Least Squares (AWLS) fitting with Zernike polynomials, to model and subtract phase aberrations from the acquired image computationally [9].

Table 1: Common Microscope Objective Types and Their Aberration Corrections

| Objective Type | Barrel Abbreviation | Chromatic Aberration Correction | Spherical Aberration Correction | Field Flatness | Typical Applications |

|---|---|---|---|---|---|

| Achromat | Achro, Achromat | 2 colors (red & blue) | 1 color | No (curved field) | Routine laboratory observation [1] |

| Plan-Achromat | Plan Achromat | 2 colors (red & blue) | 1 color | Yes (flat field) | Photomicrography where edge-to-edge focus is critical [1] |

| Semi-Apochromat | Fluor, Fl, Fluotar | 2-3 colors (improved) | 2-3 colors | Varies | Fluorescence microscopy; provides higher resolution and brightness [1] |

| Plan-Apochromat | Plan Apo | 4+ colors (deep blue to red) | 4+ colors | Yes (flat field) | Highest level of correction for demanding quantitative and research applications [1] |

Guide 2: Mitigating Sensor Noise and Improving Signal-to-Noise Ratio (SNR)

Objective: To implement strategies that reduce sensor noise and improve the Signal-to-Noise Ratio (SNR) for clearer images. Background: Sensor noise is the random variation in pixel signals that is not due to the light from the specimen. A high SNR is crucial for detecting weakly scattering specimens and for achieving good localization precision and spatial resolution [8]. SNR is quantified as the ratio of the average pixel value \(\overline{x}\) to the standard deviation of the noise \(\sigma\): \(SNR=\frac{\overline{x}}{\sigma}\) [8].

Protocol Steps:

- Maximize Signal Collection:

- Ensure Proper Illumination: Adjust the microscope's light source to its optimal brightness. Too little light results in a weak signal, while too much can cause saturation and bloom.

- Use the Highest Resolution Mode: Configure your digital microscope's software to capture images at its highest available native resolution [4].

- Minimize Noise Sources:

- Free System Resources: Close unnecessary background applications on your computer to free up RAM and CPU power, which can reduce processing-related lag and dropped frames during capture [4].

- Use a High-Speed USB Port: Connect the microscope to a USB 3.0 or higher port to ensure sufficient data bandwidth and prevent artifacts from a lagging video feed [4].

- Software-Based Noise Reduction: Use image processing software to apply spatial or temporal averaging techniques. For example, averaging multiple frames of the same field of view can significantly reduce random noise.
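
The effect of frame averaging on SNR can be illustrated with a minimal sketch. It assumes NumPy is available and uses a synthetic, uniformly lit field with Gaussian noise instead of real microscope frames; the function names `snr` and `average_frames` are illustrative, not part of any microscope software. Applying the mean/standard-deviation definition to a whole image is only meaningful for a featureless region, since specimen structure would otherwise count as "noise".

```python
import numpy as np

def snr(image):
    """SNR as mean pixel value divided by the standard deviation.

    Only meaningful on a uniform (featureless) region of the image.
    """
    image = np.asarray(image, dtype=np.float64)
    return image.mean() / image.std()

def average_frames(frames):
    """Temporal averaging: per-pixel mean over a stack of frames."""
    return np.mean(np.asarray(frames, dtype=np.float64), axis=0)

# Synthetic demo (assumption): true value 100, Gaussian noise with sigma 10.
rng = np.random.default_rng(0)
frames = 100.0 + rng.normal(0.0, 10.0, size=(16, 64, 64))

snr_single = snr(frames[0])
snr_averaged = snr(average_frames(frames))
print(f"single frame SNR:     {snr_single:.1f}")
print(f"16-frame average SNR: {snr_averaged:.1f}")
```

For uncorrelated noise, averaging N frames is expected to raise SNR by roughly the square root of N, so 16 frames should give about a fourfold improvement. The specimen and microscope must stay still during the capture for this to hold.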

Table 2: Quantitative Metrics for Image Quality Assessment and Improvement

| Metric | Calculation Formula | Description | Improvement Strategy |

|---|---|---|---|

| Signal-to-Noise Ratio (SNR) | \(SNR=\frac{\overline{x}}{\sigma}\) | Measures how well the structure of interest can be discerned from the background noise [8]. | Increase illumination intensity; use frame averaging; cool the camera sensor. |

| Contrast-to-Noise Ratio (CNR) | \(CNR=\frac{\lvert \overline{x}_{A}-\overline{x}_{B}\rvert}{\sigma}\) | Quantifies the ability to distinguish between two specific features 'A' and 'B' [8]. | Optimize staining; use optical contrast techniques (like phase contrast); ensure even illumination. |

| Spatial Resolution | \(\frac{\lambda_{det}}{NA_{ill}+NA_{det}}\) | The smallest distance between two points that can be distinguished (Abbe limit for coherent imaging) [8]. | Use objectives with higher NA; utilize oil immersion; employ super-resolution techniques. |
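
As a worked illustration of the CNR and spatial resolution formulas in the table, the following sketch evaluates both for made-up numbers. The pixel values, the 550 nm wavelength, and the NA values of 0.1 are assumptions chosen for easy arithmetic, not measurements from any particular USB microscope.

```python
import numpy as np

def cnr(region_a, region_b, noise_sigma):
    """Contrast-to-noise ratio between two image regions."""
    return abs(np.mean(region_a) - np.mean(region_b)) / noise_sigma

def abbe_resolution(wavelength_nm, na_ill, na_det):
    """Resolution limit lambda / (NA_ill + NA_det), in nanometres."""
    return wavelength_nm / (na_ill + na_det)

# Hypothetical values: feature A averages 120, feature B averages 100,
# and the noise standard deviation is 5 grey levels.
region_a = np.full((10, 10), 120.0)
region_b = np.full((10, 10), 100.0)
example_cnr = cnr(region_a, region_b, noise_sigma=5.0)

# Hypothetical optics: green light, illumination and detection NA both 0.1.
example_resolution_nm = abbe_resolution(550.0, na_ill=0.1, na_det=0.1)

print(f"CNR: {example_cnr:.1f}")
print(f"Resolution limit: {example_resolution_nm:.0f} nm")
```

With these inputs the CNR is 20 / 5 = 4 and the resolution limit is 550 / 0.2 = 2750 nm, or about 2.75 µm.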

Experimental Protocols for Enhanced Imaging

Protocol: Resolution and Contrast Measurement using a USAF Target

Objective: To quantitatively measure the spatial resolution and contrast of a low-cost USB microscope system. Background: This protocol uses a standardized USAF 1951 resolution target to determine the system's limiting resolution and to establish a baseline for image quality assessment.

Materials:

- USB Microscope

- USAF 1951 Resolution Target

- Computer with imaging software

- Stable platform

Workflow:

- Setup: Place the USAF target on the microscope stage. Ensure the microscope is firmly mounted and the target is perpendicular to the optical axis.

- Illuminate: Provide even, bright-field illumination from below the target (for transmitted light).

- Focus: Carefully adjust the focus to achieve the sharpest possible image of the target lines.

- Capture Image: Acquire an image of the target at the highest resolution setting.

- Analyze: In the captured image, identify the smallest group of lines where the line pairs are clearly distinguishable and not merged. The resolution is calculated based on the known line spacing of that group.
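
The final step relies on the standard USAF 1951 relationship between group number, element number, and spatial frequency: resolution in line pairs per millimetre equals 2 raised to the power (group + (element - 1) / 6). A short sketch of that conversion follows; the function names are illustrative.

```python
def usaf_lp_per_mm(group, element):
    """Spatial frequency (line pairs per mm) of a USAF 1951 element."""
    return 2.0 ** (group + (element - 1) / 6.0)

def usaf_line_width_um(group, element):
    """Width of a single line (half a line pair) in micrometres."""
    return 1000.0 / (2.0 * usaf_lp_per_mm(group, element))

# Example: the smallest resolvable element is group 7, element 6.
frequency = usaf_lp_per_mm(7, 6)
line_width = usaf_line_width_um(7, 6)
print(f"{frequency:.1f} lp/mm, line width {line_width:.2f} um")
```

Group 7, element 6 corresponds to roughly 228 lp/mm, i.e. lines about 2.19 µm wide, which is the finest element on many standard targets.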

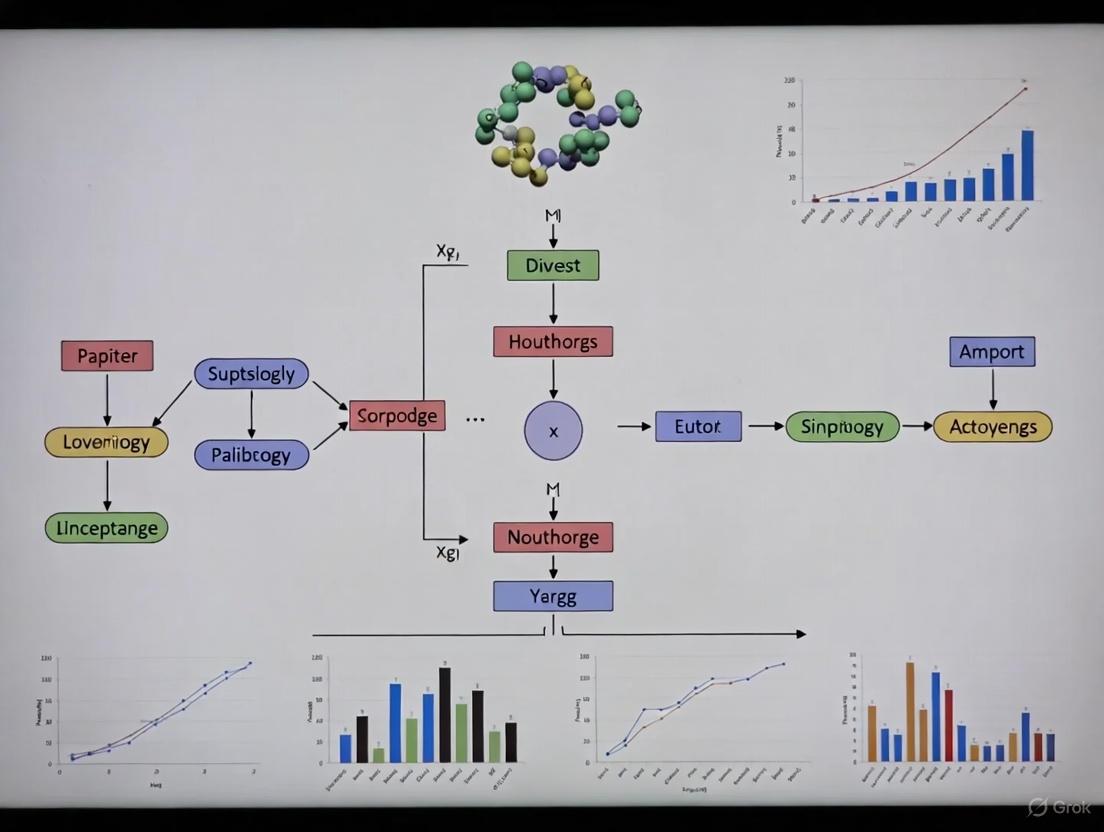

The following workflow diagram illustrates the logical sequence for diagnosing and addressing the core hardware constraints discussed in this guide.

Diagram 1: Troubleshooting workflow for hardware constraints.

Protocol: Phase Aberration Compensation using Alternating Weighted Least Squares (AWLS)

Objective: To automatically compensate for phase aberrations in quantitative phase images, such as those obtained from Digital Holographic Microscopy (DHM) setups. Background: Phase aberrations introduced by the optical system distort quantitative phase measurements. The AWLS method provides a robust numerical solution by iteratively separating the sample's true phase from the system's aberration profile [9].

Materials:

- DHM system or other quantitative phase microscope

- Computer with MATLAB or equivalent computational software

- Sample of interest

Workflow:

- Acquire Hologram: Record the digital hologram of your sample using the DHM system.

- Initial Phase Reconstruction: Reconstruct the phase map \(\Phi(x,y)\) from the hologram using standard methods (e.g., spectral filtering and numerical propagation). This initial phase contains the object phase, noise, and aberrations: \(\Phi(x,y)=\Phi_{obj}(x,y)+\Phi_{noise}(x,y)+\Phi_{aber}(x,y)\) [9].

- Model Aberration with Zernike Polynomials: Model the phase aberration \(\Phi_{aber}(x,y)\) as a linear combination of Zernike polynomials [9].

- AWLS Iteration:

- Variable Splitting: Decompose the problem into object terms and aberration terms.

- Alternate Updates: In each iteration, alternately update the estimate of the sample phase and the coefficients of the Zernike polynomials.

- Weighted Fitting: Use the Tukey biweight function to dynamically assign smaller weights to regions with high residuals (e.g., sample edges or noise outliers), making the fit robust to such artifacts [9].

- Compensation: Subtract the fitted aberration surface \(\Phi_{aber}(x,y)\) from the original reconstructed phase to obtain the corrected, aberration-free phase image of the object \(\Phi_{obj}(x,y)\).
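
The idea behind the weighted fitting and compensation steps can be sketched with a simplified stand-in. The code below is not the AWLS algorithm of [9]: it uses plain iteratively reweighted least squares with Tukey biweight weights, only four low-order Zernike-style terms (piston, two tilts, defocus) evaluated on a square grid, and a synthetic phase map. The function names, the tuning constant 4.685, and the test data are all assumptions made for illustration.

```python
import numpy as np

def low_order_basis(shape):
    """Piston, x-tilt, y-tilt and defocus terms on a [-1, 1] x [-1, 1] grid."""
    h, w = shape
    y, x = np.mgrid[-1:1:h * 1j, -1:1:w * 1j]
    terms = [np.ones_like(x), x, y, 2.0 * (x**2 + y**2) - 1.0]
    return np.stack([t.ravel() for t in terms], axis=1)  # (pixels, 4)

def tukey_weights(residuals, c=4.685):
    """Tukey biweight: weights drop to zero for residuals beyond c * scale."""
    mad = np.median(np.abs(residuals - np.median(residuals)))
    scale = 1.4826 * mad + 1e-12
    u = residuals / (c * scale)
    w = (1.0 - u**2) ** 2
    w[np.abs(u) >= 1.0] = 0.0
    return w

def fit_aberration(phase, n_iter=20):
    """Robustly fit the low-order surface; returns (coefficients, surface)."""
    basis = low_order_basis(phase.shape)
    target = phase.ravel()
    weights = np.ones_like(target)
    for _ in range(n_iter):
        sw = np.sqrt(weights)
        coeffs, *_ = np.linalg.lstsq(basis * sw[:, None], target * sw, rcond=None)
        weights = tukey_weights(target - basis @ coeffs)
    return coeffs, (basis @ coeffs).reshape(phase.shape)

# Synthetic test: known aberration + a small phase object + noise.
rng = np.random.default_rng(1)
shape = (64, 64)
true_coeffs = np.array([0.5, 2.0, -1.0, 3.0])
y, x = np.mgrid[-1:1:64j, -1:1:64j]
obj = np.where(x**2 + y**2 < 0.2**2, 1.5, 0.0)  # 1.5 rad disc
phase = (low_order_basis(shape) @ true_coeffs).reshape(shape)
phase = phase + obj + rng.normal(0.0, 0.02, shape)

coeffs, aberration = fit_aberration(phase)
corrected = phase - aberration
print("recovered coefficients:", np.round(coeffs, 3))
```

Because the object pixels produce large residuals, they receive zero weight after the first few iterations, so the fitted surface follows the background aberration rather than the specimen. A fuller implementation would use proper Zernike polynomials on the pupil and the alternating object/aberration updates described above.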

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for Troubleshooting and Enhancement

| Item | Function / Explanation | Relevance to Low-Cost Systems |

|---|---|---|

| USAF 1951 Resolution Target | A standardized slide with patterns of known size used to quantitatively measure and calibrate the spatial resolution of a microscope system. | Essential for benchmarking performance and verifying improvements after modifications. |

| Calibration Slide (Stage Micrometer) | A slide with a precise scale, used to calibrate the digital imaging software for accurate measurement of specimen dimensions. | Critical for ensuring measurement accuracy in quantitative analysis. |

| Immersion Oil | A high-refractive-index liquid placed between the objective lens and the coverslip. It reduces light refraction and increases the effective Numerical Aperture (NA), improving resolution [3]. | A cost-effective way to significantly boost resolution when using oil immersion objectives. |

| Lens Cleaning Kit | Includes a soft brush, air blower, microfiber cloths, and lens cleaning solution. Removes dust, oil, and debris that scatter light and degrade contrast [3] [4]. | The simplest and most immediate intervention to restore image quality. |

| Software (AWLS Algorithm) | Implements computational aberration compensation. The Alternating Weighted Least Squares method can model and subtract complex phase aberrations without hardware changes [9]. | A software-based way to overcome inherent optical flaws, in keeping with this guide's emphasis on computational image enhancement. |

Troubleshooting Common Image Clarity Issues

FAQ 1: My images lack sharpness and fine detail, even at high magnification. Is this just a camera quality issue?

Not necessarily. While sensor quality is a factor, a fundamental cause is often the diffraction limit of light. When fine specimen details approach the size of the light's wavelength, light waves bend (diffract) around them, so that neighboring details blur together [10]. This sets a maximum theoretical resolution, beyond which higher magnification will not reveal more detail.

- Actionable Protocol: To maximize your setup's resolution:

- Ensure Adequate Illumination: Use your microscope's adjustable LED ring light to its fullest. Proper illumination allows for shorter exposure times, reducing noise [10].

- Match Pixel Size to Magnification: Resolution is tied to your objective's Numerical Aperture (NA). For lower-cost microscopes with fixed optics, the best practice is to avoid using digital zoom beyond the optical capability. Instead, get physically closer to the specimen to fill the frame before capturing the image [10].

- Update Software: Always use the latest version of your microscope's control software, as updates can include improved image processing algorithms [10].

FAQ 2: I can only get a thin "slice" of my specimen in focus at a time. The rest is blurry. How can I improve this?

This is a classic symptom of a shallow depth of field, which is particularly pronounced at high magnifications. While a physical property of optics, you can employ techniques to mitigate its impact.

- Actionable Protocol: Focus Stacking

- Secure Your Microscope: Mount your microscope on its included stand to prevent movement [11] [12].

- Capture an Image Stack: Take a series of images of your specimen, moving the focus slightly (e.g., by turning the focus knob) between each shot. Capture the entire Z-axis range you wish to be in focus.

- Software Processing: Use image-editing software (like Adobe Photoshop or specialized microscope software that often comes with the device) to combine the in-focus regions from each image into a single, fully-focused composite image [13]. This technique is often referred to as "depth composition" or "extended depth-of-field" in microscope software [13] [14].
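
One simple way to combine a focus stack is to pick, for each pixel, the frame with the highest local sharpness. The sketch below assumes NumPy, grayscale frames that are already aligned, and a Laplacian-based sharpness measure; it is an illustration of the general idea, not the method used by Photoshop or any vendor software, and the function names are hypothetical.

```python
import numpy as np

def laplacian_energy(image):
    """Absolute 4-neighbour Laplacian as a per-pixel sharpness measure."""
    p = np.pad(image, 1, mode="edge")
    lap = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
           - 4.0 * p[1:-1, 1:-1])
    return np.abs(lap)

def box_blur(image, radius=2):
    """Mean filter, used here to smooth the sharpness map."""
    size = 2 * radius + 1
    h, w = image.shape
    p = np.pad(image, radius, mode="edge")
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(size):
        for dx in range(size):
            out += p[dy:dy + h, dx:dx + w]
    return out / size**2

def focus_stack(frames):
    """Per pixel, keep the value from the frame that is locally sharpest."""
    stack = np.asarray(frames, dtype=np.float64)  # (n_frames, h, w)
    sharpness = np.stack([box_blur(laplacian_energy(f)) for f in stack])
    best = np.argmax(sharpness, axis=0)
    return np.take_along_axis(stack, best[None], axis=0)[0]

# Synthetic demo: a checkerboard that is sharp on the left in frame A
# and sharp on the right in frame B.
sharp = (np.indices((32, 32)).sum(axis=0) % 2).astype(np.float64)
blurred = box_blur(sharp, radius=3)
left = np.arange(32)[None, :] < 16
frame_a = np.where(left, sharp, blurred)
frame_b = np.where(left, blurred, sharp)

fused = focus_stack([frame_a, frame_b])
print("fraction of pixels taken from the sharp frame:", np.mean(fused == sharp))
```

Real stacks usually need registration first, because turning the focus knob on a low-cost stand tends to shift and rescale the image slightly between shots.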

FAQ 3: My images have a grainy appearance, uneven lighting, or strange color casts. How can I correct this during capture?

These issues are related to electronic noise and illumination. Correcting them at the source provides the best raw data for later analysis.

- Actionable Protocol: Flat-Field and Dark-Frame Correction

- Capture a "Dark Frame": With the same exposure settings you will use for your specimen, cover the microscope's lens or turn off its lights and capture an image. This records the camera's thermal and electronic noise [15].

- Capture a "Background Image" (Flat Field): Remove your specimen and capture an image of a clean, blank area of your slide or stage under typical illumination. This records any dust, debris, or unevenness in the light source [15].

- Software Correction: Use your microscope's advanced software (if available) or image processing protocols to subtract the dark frame from both the specimen image and the background image, then divide the specimen image by the dark-subtracted background (some packages label this step "background subtraction"). This removes fixed-pattern noise and creates an even background [13] [15].
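
A common formulation of flat-field correction divides the dark-subtracted specimen image by the dark-subtracted background image and rescales by the mean background level. The sketch below assumes that formulation, NumPy, and synthetic data with artificial vignetting; `flat_field_correct` is an illustrative name, and vendor software may implement the correction differently.

```python
import numpy as np

def flat_field_correct(raw, dark, flat, eps=1e-6):
    """Return (raw - dark) / (flat - dark), rescaled to the mean flat level."""
    raw, dark, flat = (np.asarray(a, dtype=np.float64) for a in (raw, dark, flat))
    gain = np.maximum(flat - dark, eps)  # guard against division by zero
    return (raw - dark) / gain * gain.mean()

# Synthetic demo: uneven illumination (brighter in the centre),
# a constant dark offset, and a specimen that transmits 50% in one square.
h, w = 32, 32
yy, xx = np.mgrid[0:h, 0:w]
illum = 200.0 - 0.1 * ((xx - w / 2) ** 2 + (yy - h / 2) ** 2)
scene = np.ones((h, w))
scene[10:20, 10:20] = 0.5
dark = np.full((h, w), 5.0)

raw = scene * illum + dark   # what the camera records with the specimen
flat = illum + dark          # blank field under the same illumination
corrected = flat_field_correct(raw, dark, flat)

background = scene == 1.0
print("background std before:", raw[background].std())
print("background std after: ", corrected[background].std())
```

In practice the dark and flat frames should each be averaged from several captures so that their own noise is not imprinted on every corrected image.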

Experimental Protocol for Enhancing Image Contrast

This protocol outlines a method to enhance contrast in images captured with low-cost USB microscopes through post-processing.

1. Image Acquisition and Calibration

- Materials: USB microscope (e.g., models from Skybasic, AmScope, or Plugable with 2MP+ CMOS sensors [11] [13] [16]), computer with imaging software, specimen slides.

- Capture Raw Image: Acquire your specimen image, ensuring the focus is as good as possible.

- Record Background & Dark Frames: Follow the Flat-Field and Dark-Frame Correction protocol outlined in FAQ 3 to acquire the necessary correction images [15].

2. Image Processing Workflow

- Software: Use provided software (e.g., AmScope AmLite, ToupView) or general image editors (e.g., ImageJ, Photoshop [15]).

- Step 1: Background & Noise Correction. Subtract the dark frame from your raw specimen image, then correct for uneven illumination with the background image, typically by dividing by the dark-subtracted background [15].

- Step 2: Brightness and Contrast Adjustment. Use histogram stretching to redistribute pixel intensities across the full dynamic range, improving contrast [15].

- Step 3: Gamma Correction. Adjust gamma (typically between 1.2 and 1.8) to enhance the visibility of details in mid-tones without over-saturating bright areas [15].

- Step 4: Noise Reduction. Apply a mild smoothing or Gaussian blur filter to reduce random noise. Be cautious, as over-application will blur legitimate detail [15].

- Step 5: Sharpening. Apply an "Unsharp Mask" filter to enhance edge detail. Use a low radius and moderate amount to avoid introducing artifacts [15].

- Step 6: Color Balance. Adjust color sliders to remove unwanted color casts and restore natural colors based on your knowledge of the specimen [15].
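
Steps 2 to 5 can be sketched for a grayscale image with NumPy alone. The parameter values below are starting points only, and several choices are assumptions: the percentile clipping in the stretch, the editor-style gamma convention in which values above 1 brighten mid-tones, and all function names. Color balance (Step 6) is omitted because it depends on the specimen.

```python
import numpy as np

def stretch_histogram(img, low_pct=1.0, high_pct=99.0):
    """Map the chosen percentile range onto [0, 1]."""
    lo, hi = np.percentile(img, [low_pct, high_pct])
    return np.clip((img - lo) / max(hi - lo, 1e-12), 0.0, 1.0)

def apply_gamma(img, gamma=1.4):
    """Assumed convention: gamma > 1 brightens mid-tones (out = in ** (1/gamma))."""
    return img ** (1.0 / gamma)

def gaussian_blur(img, sigma=0.8):
    """Separable Gaussian blur with edge padding."""
    radius = max(1, int(np.ceil(3 * sigma)))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x**2 / (2 * sigma**2))
    kernel /= kernel.sum()
    padded = np.pad(img, radius, mode="edge")
    rows = np.apply_along_axis(
        lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(
        lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)

def unsharp_mask(img, sigma=1.0, amount=1.0):
    """Add back the difference between the image and a blurred copy."""
    return np.clip(img + amount * (img - gaussian_blur(img, sigma)), 0.0, 1.0)

def enhance(img):
    img = stretch_histogram(np.asarray(img, dtype=np.float64))
    img = apply_gamma(img, gamma=1.4)
    img = gaussian_blur(img, sigma=0.8)               # mild noise reduction
    return unsharp_mask(img, sigma=1.0, amount=1.0)   # restore edge contrast

# Synthetic low-contrast input: a ramp from 0.4 to 0.6 plus a little noise.
rng = np.random.default_rng(2)
flat_input = np.tile(np.linspace(0.4, 0.6, 64), (64, 1))
flat_input = flat_input + rng.normal(0.0, 0.005, (64, 64))
enhanced = enhance(flat_input)
print("input range:", np.ptp(flat_input), "output range:", np.ptp(enhanced))
```

For quantitative work, keep the unprocessed image alongside the enhanced one, since gamma and sharpening change pixel intensities nonlinearly.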

The logical flow of this image processing protocol is summarized in the following diagram:

Data Presentation: USB Microscope Specifications and Processing Parameters

Table 1: Representative Specifications of Common Low-Cost USB Microscopes

| Brand / Model | Sensor Resolution | Maximum Optical Magnification | Connectivity | Key Features for Research |

|---|---|---|---|---|

| Skybasic WiFi Microscope [11] | 2MP CMOS | 50x-1000x (Digital) | USB, WiFi | Handheld, compatible with iOS/Android, includes stand |

| AmScope UTP200X020MP [13] | 2MP CMOS | 200x | USB | UVC compatibility, software with measurement tools, stand included |

| AmScope HHD510-W [12] | 2MP CMOS | 50x-1000x (Digital) | USB, WiFi | Rechargeable battery, fully portable, table stand with stage clips |

| Plugable USB2-MICRO-250X [16] | 2MP | 60x-250x | USB | Flexible gooseneck stand, observation pad, UVC plug-and-play |

Table 2: Typical Parameters for Image Processing Steps

| Processing Step | Software Tool Example | Key Parameter | Recommended Setting (Starting Point) |

|---|---|---|---|

| Background Subtraction | AmScope Software [13], ImageJ | Control Points / Averaging | Use multiple background images for averaging [15] |

| Histogram Stretching | Adobe Photoshop [15], GIMP | Input Levels | 10/220 (spreads histogram to improve contrast) [15] |

| Gamma Correction | Most image editors | Gamma Value | 1.2 - 1.8 (adjusts mid-tone brightness) [15] |

| Noise Reduction | ImageJ, Photoshop | Gaussian Blur Radius | 0.5 - 1.0 pixels (minimal to avoid blurring) [15] |

| Sharpening | Photoshop, GIMP | Unsharp Mask: Amount/Radius | Amount: 80-150%, Radius: 0.5-1.5 pixels [15] |

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 3: Essential Materials for Sample Preparation and Imaging

| Item | Function in Research Context |

|---|---|

| Standard Microscope Slides & Coverslips | Provides a clean, flat, and stable platform for mounting specimens for observation. |

| Immersion Oil | Used with high-magnification objectives (e.g., 100x) to reduce light refraction and increase resolution by matching the refractive index of glass. |

| Calibration Slide (Stage Micrometer) | A slide with a precise engraved scale. Essential for calibrating software measurement tools to ensure quantitative data accuracy [13] [17]. |

| Stains and Dyes (e.g., Methylene Blue) | Applied to specimens to enhance contrast in transparent or colorless samples, making cellular and structural details more visible. |

| LED Ring Light with Adjustable Brightness | Provides even, shadow-free illumination. Adjustable intensity is crucial for optimizing contrast for different specimens [11] [12] [16]. |

FAQs on Core Imaging Metrics

What is the relationship between resolution and contrast in digital imaging? Resolution and contrast are interdependent. High resolution allows you to see fine details, while contrast makes those details distinguishable from their surroundings [18]. If contrast is too low, details will be invisible regardless of how high your resolution is [19]. In digital images, contrast is the color or grayscale differentiation between different image features [18].

How does Signal-to-Noise Ratio (SNR) affect my microscope images? SNR describes how strongly the true signal stands out from the noise in your imaging system. The signal is the actual data from your sample, while the noise is random interference that obscures that data [20]. A higher SNR means a clearer, more usable image. For example, an SNR of >500:1 is considered good for a spectrometer, meaning the true data is 500 times stronger than the background interference [20]. Low SNR results in grainy, indistinct images.

What are some software solutions to improve contrast in low-cost setups? Many software tools can apply intensity transformation operations to enhance contrast after an image is captured [18]. This process works by broadening the range of brightness values in each color channel. Most microscope software includes sliders to adjust brightness and contrast [18]. Techniques like background subtraction can also increase contrast dramatically in brightfield imaging [19].

My image looks flat and dull. Is this a contrast or brightness issue? This is likely a contrast issue. Brightness refers to the overall intensity of the image, while contrast is the difference in intensity between features [18]. A "flat" image typically has compressed brightness values, meaning the darks aren't very dark and the lights aren't very light. You can correct this in software by stretching the histogram to use the full range of available intensity levels [18].
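
The distinction between brightness and contrast can be checked numerically: the mean intensity reflects brightness, while the spread between low and high percentiles reflects how much of the available range the image uses. The sketch below assumes 8-bit grayscale data and NumPy; the function name and the 1st/99th percentile choice are illustrative.

```python
import numpy as np

def brightness_and_contrast(img, bit_depth=8):
    """Return mean brightness and the fraction of the intensity range in use."""
    full_scale = 2**bit_depth - 1
    img = np.asarray(img, dtype=np.float64) / full_scale
    p1, p99 = np.percentile(img, [1, 99])
    return {"brightness": img.mean(), "range_used": p99 - p1}

# A "flat" synthetic image: all values squeezed between 100 and 150 of 255.
flat_image = np.linspace(100, 150, 10000).reshape(100, 100)
span = flat_image.max() - flat_image.min()
stretched = (flat_image - flat_image.min()) / span * 255

before = brightness_and_contrast(flat_image)
after = brightness_and_contrast(stretched)
print("before:", before)
print("after: ", after)
```

Here the flat image has mid-level brightness but uses under a quarter of the available range, which points to a contrast problem rather than a brightness problem; after stretching, nearly the full range is in use.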

Troubleshooting Common Image Quality Problems

| Problem | Possible Cause | Solution |

|---|---|---|

| Low Contrast | Insufficient or non-uniform illumination [18]; incorrect microscope adjustment [18]. | Adjust light source intensity and ensure even illumination (e.g., Köhler illumination) [19]. |

| Grainy Image (Low SNR) | Short camera integration time or low light [20]; high electronic noise from the camera sensor. | Increase light exposure or integration time; use image averaging to reduce random noise [20]. |

| Blurry Details (Low Resolution) | Using resolution setting too high for hand-held operation [21]. | For hand-held use, choose a lower resolution (e.g., 800x600) for faster capture and less motion blur [21]. |

| Blurry Details (Low Resolution) | Incorrect focus or vibration. | Use a stable mount and carefully adjust focus. |

| Halos Around Edges | Use of phase contrast on unsuitable (thick) specimens [19]. | Use phase contrast only for thin specimens (e.g., single cell layers); for thicker samples, use techniques like DIC [19]. |

Quantitative Metrics for Image Analysis

The table below summarizes the target values for key metrics discussed.

| Metric | Description | Target Values / Guidelines |

|---|---|---|

| Spatial Resolution | The smallest distance between two distinguishable points in an image. | Determined by sensor pixel size and objective numerical aperture (NA). Higher NA provides better resolution [19]. |

| Signal-to-Noise Ratio (SNR) | The ratio of the level of the desired signal to the level of background noise. | A ratio greater than 500:1 is cited as good for a spectrometer [20]; treat it as a rough reference rather than a fixed target for USB microscope images. |

| Color Contrast Ratio | The luminance ratio between foreground (text) and background colors. | For accessibility (WCAG): 4.5:1 for standard text and 3:1 for large text at level AA; 7:1 and 4.5:1 respectively at level AAA [22] [23]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers focusing on enhancing contrast in biological samples, the following reagents and materials are fundamental.

| Item | Function in Experiment |

|---|---|

| Eosin and Hematoxylin | Classic dyes used in histology to generate color contrast in tissue sections (e.g., for brightfield imaging) [19]. |

| Alexa Fluor Dyes (e.g., 488, 568) | Fluorescent dyes (fluorophores) conjugated to antibodies or phalloidin to label specific cellular targets like actin filaments [24]. |

| DAPI (4',6-diamidino-2-phenylindole) | A fluorescent stain that binds strongly to DNA, used to label cell nuclei in fluorescence microscopy [24]. |

| Aqueous Mounting Media | A solution used to preserve and mount specimens under a coverslip, often critical for maintaining the optical properties of the sample [19]. |

| Hoechst Stains | Cell-permeable fluorescent stains that bind to DNA, commonly used for live-cell nuclear labeling [24]. |

| Phase Contrast Objectives | Specialized microscope objectives (inscribed with Ph1, Ph2, etc.) equipped with a phase ring to enable observation of unstained, live cells [19]. |

Experimental Protocol: Assessing and Improving Image Contrast

This workflow outlines the key steps for diagnosing and remedying poor contrast in images obtained from a USB microscope.

Step-by-Step Methodology:

- Initial Image Acquisition: Capture an image of your sample using your standard USB microscope setup. Ensure the initial lighting is even and the image is in focus.

- Quality Assessment: Critically evaluate the image. Look for a lack of differentiation between the specimen and background, or between internal structures of the specimen. The image may appear "flat" [18] [19].

- Optical Setup Check (Hardware): Before using software, ensure your hardware is optimized.

- Illumination: Verify that the sample is evenly illuminated. Adjust the intensity of the light source to ensure it is sufficient but not causing glare [18] [25].

- Condenser: If your microscope has one, ensure the condenser is properly aligned for Köhler illumination to achieve uniform brightness [19].

- Software Adjustments: Use your microscope's accompanying software to manipulate the image.

- Brightness/Contrast Sliders: Adjust these controls. Moving the contrast slider to the right will stretch the histogram, increasing the difference between dark and light pixels and improving contrast [18].

- Histogram Inspection: Use the software's histogram tool. A histogram clustered in the middle indicates low contrast. The goal of adjustment is to spread the histogram across the full intensity range [18].

- Sample Preparation (if optical/software methods are insufficient): If the specimen is inherently transparent and lacks contrast (like live, unstained cells), physical enhancement may be necessary.

- Staining: Apply colored dyes (for brightfield) or fluorescent dyes (for fluorescence microscopy) to specific cellular structures [19] [24].

- Optical Techniques: Use specialized methods like phase contrast, which translates subtle phase shifts in light into visible contrast differences, making unstained cells visible [19].

Assessing USB Microscope Capabilities in Forensic and Biological Contexts

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: My computer does not detect the USB microscope. What should I do? This is a common issue often related to software settings or USB port configurations.

- Solution: Follow these troubleshooting steps:

- Check Privacy Settings (Windows): Go to Windows Privacy Settings and ensure camera access is enabled for the microscope software you are using [5].

- Restart the Computer: A simple reboot can resolve many device detection issues [5].

- Try a Different USB Port: Plug the microscope into a different USB port on your computer, preferably a USB 3.0 port if available and compatible [5].

- Select the Correct Device in Software: If your laptop has a built-in webcam, the software may default to it. Open your camera software's settings (often a gear icon) and manually switch the device to the "USB Microscope" [6].

- Use Supported Software: Ensure you are using supported camera software, such as the manufacturer's Digital Viewer or the Windows Camera app, and close other programs that might be accessing the camera [5].

Q2: What are the main limitations of using a low-cost USB microscope for research? While USB microscopes offer excellent portability and convenience, researchers should be aware of their constraints compared to laboratory-grade systems [26].

- Limited Field of View: The small size of portable microscopes can result in a more restricted field of view than larger, stationary microscopes [26].

- Lower Magnification Power: They may have lower maximum magnification than traditional laboratory microscopes [26].

- Imaging Quality: Factors like depth of field, resolution, and image stability may be limited by the device's specifications [26].

- Battery Life: For cordless models, limited battery life can be a constraint during long fieldwork sessions [26].

Q3: Can USB microscopes be used with smartphones or tablets?

- Answer: Standard USB microscopes are designed for use with computers and are not typically supported for direct connection to smartphones, iPads, or Chromebooks. However, you may find third-party USB-to-device connectors and software, though this is not officially supported by most manufacturers. Alternatively, specific smartphone microscope attachments are available that clip onto your mobile device's camera [6].

Troubleshooting Guide: Image Quality and Contrast

Problem: Captured images have poor contrast, making it difficult to distinguish fine details in biological or trace evidence samples.

Objective: To enhance the contrast of images obtained from a USB microscope through simple, non-destructive sample preparation and optimal setup.

Methodology:

Sample Preparation for Enhanced Contrast:

- Liquid Samples (Biological): When analyzing cellular suspensions, add a small amount of a safe, colored dye (e.g., methylene blue for animal cells, safranin for plant cells) to stain the structures of interest. This selectively increases the color contrast between the sample and the background.

- Solid Samples (Trace Evidence): For pale samples like certain fibers or hairs, place them on a dark, non-reflective background. For dark samples, use a white or light-gray background. This creates a stark contrast against the sample's edges.

Optimal Microscope Setup:

- Angle of Illumination: Adjust the microscope's built-in LED lights. Instead of direct, on-axis lighting, angle the light source slightly. This oblique illumination can enhance the visibility of surface textures and edges in toolmarks or soils by creating small shadows [27].

- Maximize Resolution: Ensure the microscope is set to its highest resolution setting in the software before capturing images.

Digital Color Contrast Analysis:

- Procedure: After capturing an image, use a color contrast analyzer tool (like the WebAIM Contrast Checker) to evaluate the contrast ratio between key features and their immediate background in your image [23].

- Assessment: Although the WCAG guidelines were written for web text, they provide an excellent quantitative benchmark for visual analysis. Aim for a contrast ratio of at least 4.5:1 for critical details, as this is the minimum for standard text under accessibility guidelines [28]. This provides a measurable goal for image clarity.
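As a minimal sketch of what such a checker computes, the WCAG 2.x contrast ratio can be calculated directly from two sRGB colors sampled from the image. The function names and the two sample pixel values below are illustrative assumptions and are not taken from any cited tool.

```python
def relative_luminance(rgb):
    """WCAG 2.x relative luminance of an 8-bit sRGB color (R, G, B)."""
    def linearize(channel):
        c = channel / 255.0
        # Piecewise sRGB-to-linear conversion as defined in WCAG 2.x
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, from 1:1 (identical) to 21:1."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical pixel values sampled from a stained feature and its background
feature = (40, 60, 150)
background = (235, 235, 225)
ratio = contrast_ratio(feature, background)
print(f"contrast ratio: {ratio:.2f}:1, meets 4.5:1 target: {ratio >= 4.5}")
```

In practice it is more robust to average a small patch of pixels for the feature and for the background before computing the ratio, since single pixels from a noisy sensor vary considerably.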

Experimental Workflow for Sample Analysis

The following diagram outlines the core workflow for processing a sample using a USB microscope, from setup to analysis, incorporating contrast enhancement steps.

Research Reagent Solutions for Contrast Enhancement

The table below lists key reagents and materials used to enhance contrast in microscopic analysis for biological and forensic applications.

| Item | Function/Application |

|---|---|

| Methylene Blue | A histological stain used to enhance the visibility of cellular nuclei and other acidic structures in biological samples under the microscope. |

| Safranin | A biological stain commonly used in plant histology to color lignified and cutinized tissues a red hue, providing contrast with other cell types. |

| Non-Reflective Backgrounds | Cards or mats in black, white, and shades of gray used to create a high-contrast backdrop for trace evidence such as hairs, fibers, or soil particles. |

| Immersion Oil | A clear oil used with high-magnification microscope objectives to reduce light refraction and scatter, resulting in a brighter image with better resolution and contrast. |

| Color Contrast Analyzer Software | Digital tools (e.g., based on WCAG guidelines) used to quantitatively measure the contrast ratio between features in a digital image, providing an objective quality metric [23]. |

From Pixels to Insights: Software and Algorithmic Contrast Enhancement Techniques

Technical Support Center

This support center provides troubleshooting and methodological guidance for researchers working to enhance contrast in images from low-cost, USB-based microscopes, a common tool in resource-limited settings.

Frequently Asked Questions (FAQs)

1. The full-resolution images from my low-cost microscope look blurry. Why is this, and how can I get a truly sharper image? The blurriness is often due to Bayer interpolation. Most inexpensive color camera sensors use a Bayer filter, where each pixel sensor captures only red, green, or blue light. The camera's processor must then "guess" (interpolate) the two missing colors for every pixel, which inherently blurs the image by a pixel or two [29]. A practical solution is to capture at the sensor's highest resolution and then downsample the image in software. For example, saving a 12 MP image from a 48 MP sensor will be sharper than a native 12 MP image, because the downsampling process uses real data from multiple sensor pixels to create each output pixel, effectively bypassing the limitations of interpolation [29].

2. My microscope images lack defined edges, making feature analysis difficult. What is a robust method for edge detection? For enhancing edge definition, the Kirsch operator is an effective classical technique. It is a directional edge detector that calculates edge strength by convolving the image with eight different compass-direction kernels [30]. You can implement it in Python, and optional CUDA acceleration is available for processing larger images or batches [30]. The primary parameter to adjust is the derivative threshold, which filters out weak edges considered noise (the default is often 383) [30].

3. What is the most effective denoising technique for grayscale biological images? A comparative study of 2D denoising techniques on functional MRI data, which shares characteristics with microscopic biological images, found that the Wavelet transform with reverse biorthogonal basis functions provided the best performance. It excelled in two key metrics: improving the signal-to-noise ratio (SNR) while effectively preserving the shape of the original structures [31].

4. How can I use a Bayer sensor for high-quality computational microscopy like Fourier ptychography? Using a Bayer sensor in advanced techniques like Fourier ptychography (FP) requires special consideration. The Bayer filter means each color channel is sparsely sampled. Research indicates that treating the raw Bayer data as a sparsely-sampled image during the FP reconstruction algorithm can yield better results than first applying a standard demosaicing algorithm, as the latter can introduce interpolation artefacts that degrade the final reconstruction [32].
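To make the "sparsely-sampled" idea in FAQ 4 concrete, the sketch below separates a raw mosaic into three channels that hold values only where the sensor actually measured them, together with boolean masks that a reconstruction could use to ignore the unmeasured pixels. It assumes an RGGB layout, which must be checked against your sensor's datasheet; the function name is hypothetical, and this is only the data-preparation step, not the reconstruction algorithm of [32].

```python
import numpy as np


def split_bayer_rggb(raw):
    """Split a raw Bayer mosaic (assumed RGGB) into sparse channels and masks."""
    raw = np.asarray(raw, dtype=np.float64)
    masks = {name: np.zeros(raw.shape, dtype=bool) for name in ("R", "G", "B")}
    masks["R"][0::2, 0::2] = True   # red sites: even rows, even columns
    masks["G"][0::2, 1::2] = True   # green sites on the red rows
    masks["G"][1::2, 0::2] = True   # green sites on the blue rows
    masks["B"][1::2, 1::2] = True   # blue sites: odd rows, odd columns
    # Unmeasured positions are set to zero; the mask records which ones are real
    channels = {name: np.where(mask, raw, 0.0) for name, mask in masks.items()}
    return channels, masks


raw = np.arange(16, dtype=np.float64).reshape(4, 4)  # stand-in for real raw data
channels, masks = split_bayer_rggb(raw)
print(masks["G"].mean())  # fraction of pixels that carry green measurements
```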

Troubleshooting Guides

Problem: Persistent Color Artefacts (False Colours) in Images

- Description: Unnatural color shifts or rainbowing patterns appear along high-contrast edges in the image.

- Primary Cause: This is a classic artifact of the demosaicing process. When the algorithm interpolates missing colors across, rather than along, sharp edges, it can miscalculate color values [33].

- Solutions:

- Use RAW Mode: If your microscope camera supports it, capture images in a RAW format. This allows you to use more sophisticated demosaicing algorithms in post-processing software (e.g., Adobe Lightroom, DCRAW) that are better at handling edges [33].

- Post-Processing Algorithm: Apply a "smooth hue transition" algorithm during or after demosaicing. These algorithms are specifically designed to prevent false colors by ensuring hue changes gradually [33].

- Software Binning: As a workaround, you can downscale your image as mentioned in FAQ #1. This process can mitigate the effect of demosaicing artefacts by combining sensor pixels [29].
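The software binning workaround can be sketched with plain NumPy block averaging. A factor of 2 in each dimension corresponds to the 48 MP to 12 MP example in FAQ #1. The function name is an assumption for illustration, and block averaging is only one of several reasonable downsampling filters.

```python
import numpy as np


def downsample_by_averaging(image, factor=2):
    """Average each factor x factor block of pixels into one output pixel."""
    img = np.asarray(image, dtype=np.float64)
    h, w = img.shape[:2]
    # Crop so that both dimensions divide evenly by the factor
    h_crop, w_crop = h - h % factor, w - w % factor
    img = img[:h_crop, :w_crop]
    # Works for grayscale (H, W) and color (H, W, C) arrays
    new_shape = (h_crop // factor, factor, w_crop // factor, factor) + img.shape[2:]
    return img.reshape(new_shape).mean(axis=(1, 3))


full_res = np.arange(16, dtype=np.float64).reshape(4, 4)  # stand-in for a capture
binned = downsample_by_averaging(full_res, factor=2)
print(binned.shape)  # (2, 2)
```

Because each output pixel averages several measured sensor pixels, this also reduces random noise, at the cost of a smaller image.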

Problem: Noisy Images Under Low Light Conditions

- Description: Images have a grainy appearance, obscuring fine details, which is common when imaging with low illumination to preserve samples.

- Primary Cause: Low signal-to-noise ratio (SNR) due to insufficient light.

- Solutions:

- Select Optimal Denoising Technique: Based on empirical comparisons, implement a denoising algorithm using a Wavelet transform with a reverse biorthogonal basis [31].

- Technique Comparison: The table below summarizes the performance of different denoising methods from a comparative study to guide your selection.

Table 1: Comparative Performance of 2D Denoising Techniques

| Denoising Technique | Signal-to-Noise (SNR) Improvement | Shape Preservation Quality |

|---|---|---|

| Wavelet Transform (Reverse Biorthogonal) | Best | Best |

| Gaussian Smoothing | Moderate | Lower |

| Median / Weighted Median Filtering | Lower | Moderate |

| Anisotropic 2D Averaging | Moderate | Moderate |

Experimental Protocols

Protocol 1: Kirsch Edge Detection for Feature Enhancement

This protocol details how to apply the Kirsch operator to enhance edges in a grayscale microscope image.

- Input: Load an 8-bit grayscale image. If working with a color image, first convert it to grayscale.

- Parameter Setup: The Kirsch operator uses a set of eight 3x3 convolution kernels, each corresponding to a compass direction (N, NW, W, SW, S, SE, E, NE).

- Convolution: Convolve the input image with each of the eight Kirsch kernels.

- Edge Strength Calculation: For each pixel location, the edge strength (gradient magnitude) is defined as the maximum value output by any of the eight kernels at that pixel [34].

- Thresholding (Optional): Apply a threshold to the resulting edge strength map. Gradient values below the threshold (e.g., default of 383) are set to zero to suppress noise [30].

- Output: The final output is an 8-bit image map of edge strengths [34].
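The steps of Protocol 1 can be sketched in NumPy as follows. This is an illustrative implementation written for this guide, not the code from [30] or [34]; the function names and the rescaling helper are assumptions. For 8-bit input the largest possible response is 15 × 255 = 3825, so the threshold of 383 quoted above removes responses below roughly 10% of that maximum.

```python
import numpy as np

# Border positions of a 3x3 kernel, listed clockwise from the top-left corner
RING = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0), (1, 0)]


def kirsch_kernels():
    """Build the eight compass kernels by rotating three 5s around the border."""
    kernels = []
    for shift in range(8):
        k = np.zeros((3, 3), dtype=np.int32)
        for i, (r, c) in enumerate(RING):
            k[r, c] = 5 if (i - shift) % 8 < 3 else -3
        kernels.append(k)
    return kernels


def kirsch_edges(gray, threshold=383):
    """Return the per-pixel maximum Kirsch response, zeroed below the threshold."""
    img = np.asarray(gray, dtype=np.int32)
    h, w = img.shape
    padded = np.pad(img, 1, mode="edge")  # replicate borders to keep the size
    strength = np.zeros((h, w), dtype=np.int32)
    for k in kirsch_kernels():
        response = np.zeros((h, w), dtype=np.int32)
        for r in range(3):
            for c in range(3):
                response += k[r, c] * padded[r:r + h, c:c + w]
        strength = np.maximum(strength, response)
    strength[strength < threshold] = 0
    return strength


def to_uint8(strength, max_response=3825):
    """Rescale raw responses (0..3825 for 8-bit input) to an 8-bit edge map."""
    return (strength * 255 // max_response).astype(np.uint8)


# Synthetic test image: a vertical step edge between black and white
step = np.zeros((5, 6), dtype=np.uint8)
step[:, 3:] = 255
edges = kirsch_edges(step)
edge_map = to_uint8(edges)
```

Because the eight kernels form a set that is closed under 180° rotation, taking the maximum over all of them gives the same result whether the operation is implemented as correlation (as here) or as true convolution.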

Diagram: Kirsch Edge Detection Workflow

Protocol 2: Wavelet Denoising with Reverse Biorthogonal Basis

This protocol is based on the technique identified as most effective for preserving structure while reducing noise [31].

- Input: Acquire a 2D grayscale image (e.g., an fMRI slice or a microscope frame).

- Wavelet Transformation: Apply a 2D wavelet transform to the noisy image using reverse biorthogonal basis functions.

- Thresholding: Apply a thresholding function (e.g., soft-thresholding) to the wavelet coefficients. This step aims to suppress coefficients that are likely to represent noise while preserving those representing the actual signal.

- Inverse Transformation: Perform an inverse wavelet transform on the thresholded coefficients to reconstruct the image.

- Evaluation: Assess the output image using quantitative metrics like Signal-to-Noise Ratio (SNR) and qualitative assessment of shape preservation.
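A minimal sketch of Protocol 2 using the PyWavelets package (`pywt`) is shown below. Several choices here are assumptions made for illustration and are not specified in [31]: the particular reverse biorthogonal wavelet (`rbio2.2`), the two decomposition levels, and the universal threshold with a median-based noise estimate.

```python
import numpy as np
import pywt


def wavelet_denoise(image, wavelet="rbio2.2", level=2):
    """Soft-threshold the detail coefficients of a 2D wavelet decomposition."""
    img = np.asarray(image, dtype=np.float64)
    coeffs = pywt.wavedec2(img, wavelet, level=level)
    # Assumed noise estimate: median absolute deviation of the finest
    # diagonal detail band, followed by the universal threshold
    sigma = np.median(np.abs(coeffs[-1][2])) / 0.6745
    thresh = sigma * np.sqrt(2.0 * np.log(img.size))
    denoised = [coeffs[0]]  # keep the approximation band untouched
    for detail_bands in coeffs[1:]:
        denoised.append(tuple(pywt.threshold(band, thresh, mode="soft")
                              for band in detail_bands))
    out = pywt.waverec2(denoised, wavelet)
    # The inverse transform can return one extra row or column for odd sizes
    return out[:img.shape[0], :img.shape[1]]


# Synthetic check: a smooth blob with added Gaussian noise
rng = np.random.default_rng(0)
yy, xx = np.mgrid[0:64, 0:64]
clean = 100.0 * np.exp(-((yy - 32) ** 2 + (xx - 32) ** 2) / (2 * 10.0 ** 2))
noisy = clean + rng.normal(0.0, 10.0, clean.shape)
restored = wavelet_denoise(noisy)
mse_before = np.mean((noisy - clean) ** 2)
mse_after = np.mean((restored - clean) ** 2)
print(f"MSE before: {mse_before:.1f}, after: {mse_after:.1f}")
```

The threshold and wavelet order are the main tuning knobs. A lower threshold preserves more fine structure but leaves more noise, so it is worth checking shape preservation on a sample with known features.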

Diagram: Wavelet Denoising Process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Image Enhancement

| Item | Function / Explanation |

|---|---|

| Bayer Sensor (RAW Data) | The raw data from the sensor provides uncompromised, pre-demosaiced information, allowing for the application of superior interpolation algorithms in software [29] [33]. |

| Reverse Biorthogonal Wavelet | A specific mathematical function used in the most effective denoising protocol. It is optimal for decomposing an image and separating noise from signal without oversmoothing structures [31]. |

| Kirsch Convolution Kernels | A set of eight 3x3 matrices. Each is designed to highlight edges in a specific compass direction; used together, they provide a robust map of edge strengths [30] [34]. |

| Fourier Ptychography (FP) Algorithm | A computational super-resolution technique that uses multiple images taken with different illumination angles to synthesize a high-resolution, high-contrast image, overcoming the limits of the sensor's hardware [32]. |

For researchers utilizing low-cost USB microscopes, achieving high-quality, publication-ready images often presents a significant challenge. These affordable imaging tools, while increasing accessibility, frequently produce data compromised by noise, low resolution, and insufficient contrast, limiting their utility in critical research applications such as drug development and cellular imaging. Fortunately, the rapid advancement of deep learning, particularly Convolutional Neural Networks (CNNs) and Generative Adversarial Networks (GANs), offers powerful software-based solutions to overcome these hardware limitations. These models can computationally enhance image quality by learning complex mappings from low-quality to high-quality images, effectively denoising grainy images and increasing their resolution. This technical support center outlines how these technologies can be integrated into a research workflow, providing practical methodologies and troubleshooting guidance to help scientists enhance contrast and clarity in images from low-cost microscopes, making high-quality image analysis more accessible and affordable.

Model Performance at a Glance

The following tables summarize the performance and characteristics of popular deep learning models for super-resolution and denoising, providing a quick reference for model selection.

Table 1: Performance of Super-Resolution Models on Benchmark Datasets (PSNR in dB)

| Model | Set5 | Set14 | B100 | Urban100 | Manga109 | Key Characteristics |

|---|---|---|---|---|---|---|

| LrfSR (x4) [35] | 32.23 | 28.65 | 27.59 | 26.36 | 30.53 | Lightweight, large receptive field, efficient attention modules |

| SRDDGAN (x4) [36] | - | - | - | - | - | High perceptual quality, fast sampling (4 steps), diverse outputs |

| SRCNN (x?) [37] | - | - | - | - | - | Pioneering CNN model, simple three-layer architecture |

| SRGAN (x?) [37] | - | - | - | - | - | GAN-based, focuses on perceptual quality over PSNR |

Note: "-" indicates that specific quantitative values were not reported in the cited sources. PSNR (Peak Signal-to-Noise Ratio) is a common metric for image reconstruction quality, with higher values generally indicating better fidelity to the original image.

Table 2: Top Submissions from NTIRE 2025 Image Denoising Challenge (AWGN σ=50)

| Team Name | Rank | PSNR (dB) | SSIM |

|---|---|---|---|

| SRC-B | 1 | 31.20 | 0.8884 |

| SNUCV | 2 | 29.95 | 0.8676 |

| BuptMM | 3 | 29.89 | 0.8664 |

| HMiDenoise | 4 | 29.84 | 0.8653 |

| Pixel Purifiers | 5 | 29.83 | 0.8652 |

SSIM (Structural Similarity Index) measures the perceptual similarity between two images. A value of 1 indicates perfect similarity [38].

Experimental Protocols for Model Implementation

Implementing a Lightweight Super-Resolution Model (LrfSR)

The LrfSR model is ideal for resource-constrained environments, as it is designed to be lightweight while maintaining performance.

Core Methodology:

- Information Distillation with Large Receptive Fields: Use the proposed Large Receptive Field Distillation Module (LrfDM). This module employs dilated convolutions to expand the receptive field without increasing parameters, allowing the network to capture more contextual information and pixel-to-pixel relationships, which is crucial for reconstructing high-frequency details [35].

- Efficient Attention Mechanisms: Integrate two novel attention modules:

- Enhanced Contrast-Aware Channel Attention (ECCA): Enhances the model's focus on the most informative feature channels.

- Simplified Enhanced Spatial Attention (SESA): Helps the model prioritize spatially important regions within the feature maps. These mechanisms improve image quality without a significant parameter cost [35].

- Dense Connectivity: Employ a dense connectivity structure between LrfDMs. This allows for efficient refinement of local features by reusing features from all preceding modules, improving information flow and gradient propagation throughout the network [35].
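The claim that dilated convolutions enlarge the receptive field without adding parameters can be checked with simple arithmetic. For a stack of stride-1 convolutions, each layer adds (kernel size − 1) × dilation pixels to the receptive field, while the number of weights depends only on the kernel size and channel counts. The dilation rates and channel width below are illustrative assumptions, not the actual LrfSR configuration.

```python
def stacked_receptive_field(layers):
    """Receptive field of stacked stride-1 convs given (kernel_size, dilation)."""
    rf = 1
    for kernel_size, dilation in layers:
        rf += (kernel_size - 1) * dilation
    return rf


def conv_weight_count(kernel_size, channels_in, channels_out):
    """Number of weights in one 2D conv layer; dilation does not change it."""
    return kernel_size * kernel_size * channels_in * channels_out


plain = [(3, 1), (3, 1), (3, 1)]    # three ordinary 3x3 convolutions
dilated = [(3, 1), (3, 2), (3, 4)]  # same kernels with growing dilation

print(stacked_receptive_field(plain))    # 7
print(stacked_receptive_field(dilated))  # 15
# Both stacks have identical weight counts, e.g. with 64 channels throughout:
print(3 * conv_weight_count(3, 64, 64))
```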

Workflow Diagram: Low-Cost Microscope Image Enhancement

Denoising Microscope Images with a CNN (SRCNN-based)

This protocol is based on the SRCNN architecture and its 2.5D extension, which is simple to implement and effective for denoising and super-resolution.

Core Methodology:

- Data Preparation and Preprocessing:

- For a low-cost microscope, collect pairs of low-quality and high-quality images of your sample. If high-quality images are unavailable, you can synthetically generate training pairs by applying downsampling and noise to existing high-resolution images.

- Logarithmic Transformation: For images with a high dynamic range (e.g., some fluorescence images), apply a logarithmic transformation to the voxel values as a preprocessing step. This manages extreme values (e.g., very bright regions) and prevents them from dominating the learning process, thereby improving training effectiveness [39].

- Model Training with 2.5D-SRCNN:

- While the original SRCNN processes images in 2D, a 2.5D approach is more effective for 3D data like microscope z-stacks. This model takes multiple adjacent slices (e.g., the focus slice and 4 slices before and after it) as input. It outputs one or two high-resolution slices, effectively leveraging 3D contextual information with less memory consumption than full 3D processing [39].

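- Following the original SRCNN design, the network consists of three primary convolutional layers: patch extraction and representation, non-linear mapping, and reconstruction.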

- Loss Function: Use the Mean Squared Error (MSE) loss between the model's output and the ground-truth high-resolution image. This directly optimizes for the PSNR metric.
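The data-handling steps above (logarithmic preprocessing and 2.5D input assembly) can be sketched independently of any deep learning framework. The function names, the use of `log1p`, and the choice to repeat the edge slice at the ends of the stack are assumptions for illustration; [39] may handle these details differently.

```python
import numpy as np


def log_transform(volume):
    """Compress high dynamic range; log1p keeps zero-valued voxels at zero."""
    return np.log1p(np.clip(volume, 0, None))


def inverse_log_transform(volume):
    """Undo log_transform on the network output."""
    return np.expm1(volume)


def extract_25d_input(stack, index, half_width=4):
    """Return slices index-half_width .. index+half_width as one input block.

    Indices outside the stack are clamped, so edge slices are repeated.
    """
    stack = np.asarray(stack)
    depth = stack.shape[0]
    idx = np.clip(np.arange(index - half_width, index + half_width + 1), 0, depth - 1)
    return stack[idx]


def mse_loss(prediction, target):
    """Mean squared error between the model output and the ground truth."""
    return float(np.mean((np.asarray(prediction) - np.asarray(target)) ** 2))


# Synthetic z-stack: 20 slices of 8x8 pixels
rng = np.random.default_rng(0)
z_stack = rng.uniform(0, 4000, size=(20, 8, 8))
block = extract_25d_input(log_transform(z_stack), index=10)
print(block.shape)  # (9, 8, 8): the focus slice plus 4 slices on each side
```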

Implementing a Fast GAN for Super-Resolution (SRDDGAN)

SRDDGAN combines the stability of diffusion models with the speed of GANs, making it suitable for generating diverse, high-quality super-resolution results quickly.

Core Methodology:

- Addressing Slow Sampling: The model tackles the slow sampling of diffusion models by replacing the Gaussian assumption for the denoising distribution with a multimodal distribution modeled by a conditional GAN. This enables large-step denoising, reducing the required steps from thousands to as few as four [36].

- Conditional GAN Architecture:

- Generator: The generator is conditioned on the low-resolution (LR) input image. An LR Encoder module is used to extract feature details from the LR image and transform them into a latent representation, which constrains the solution space for the high-resolution (HR) output.

- Discriminator: The discriminator is trained to distinguish between the generated HR images and real HR images, given the LR input as a condition.

- Stabilized Training: To combat GAN training instability and promote output diversity, instance noise is injected into the inputs of the discriminator. This helps prevent overfitting and mode collapse [36].

- Loss Functions: Combine multiple loss functions to guide the training:

- Adversarial Loss: From the GAN, it encourages the generation of perceptually realistic images.

- Content Loss (e.g., MSE): Ensures pixel-wise fidelity to the ground-truth image.

- Style Loss: Helps in recovering and retaining realistic high-frequency details in the generated image [36].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Deep Learning-Enhanced Microscopy

| Item Name | Function/Application | Example/Notes |

|---|---|---|

| Low-Cost Microscope Platform | Core image acquisition device. | Raspberry Pi-based microscope [40] [41]; Open-source components for local manufacturing [41]. |

| Raspberry Pi Computer | Low-cost computational hardware for running models. | Can be used for image capture control and executing trained models [40] [41]. |

| DIV2K & LSDIR Datasets | Benchmark datasets for training super-resolution models. | Contain high-resolution images for training general-purpose models [38]. |

| TCGA (The Cancer Genome Atlas) | Source of histopathology images for training domain-specific models. | Used for training models on H&E stained tissue samples [41]. |

| CellPainting Assay | A multiplexed staining method for image-based profiling. | Generates rich morphological data for phenotypic screening in drug discovery [42]. |

| CellProfiler | Open-source software for automated image analysis. | Used for feature extraction and measurement in high-content screening [42]. |

| NEMA Phantom | Tool for validating quantitative accuracy in medical imaging. | Used to evaluate metrics like SUVmax in PET denoising studies [39]. |

Troubleshooting Guides and FAQs

Image Quality and Model Performance

Q1: The output of my super-resolution model is blurry and lacks high-frequency details. What could be wrong?

- A: This is a common issue. Several factors can contribute:

- Insufficient Receptive Field: Your model might not be capturing enough contextual information. Consider integrating modules that use dilated convolutions to artificially enlarge the receptive field without significantly increasing parameters, as seen in the LrfDM block [35].

- Loss Function: If you are only using MSE loss, the model may be producing "averaged" results that are perceptually blurry. Incorporate an adversarial loss (GAN) and a style loss to encourage the generation of sharper, more texturally realistic images [36].

- Model Capacity: Your network might be too shallow or have too few parameters to learn the complex mapping from LR to HR. Gradually increase model depth or width while monitoring performance on a validation set.

Q2: How can I trust the quantitative results from my denoised images, especially in medical or biological contexts?

- A: Quantitative validation is crucial.

- Use Phantoms: Perform a phantom study with known structures and concentrations (e.g., a NEMA phantom). This allows you to validate that your model maintains quantitative accuracy, such as preserving standardized uptake values (SUV) in PET imaging or intensity measurements in fluorescence microscopy [39].

- Robust Metrics: Rely on multiple metrics. While PSNR is good for pixel-wise fidelity, the Structural Similarity Index (SSIM) often correlates better with human perception of quality [38] [39].
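Both metrics are straightforward to compute when a reference image is available. The sketch below gives PSNR and a simplified, single-window ("global") SSIM. Note the assumption: standard SSIM is averaged over local sliding windows, so the global variant here is only a quick sanity check and will not match values reported by library implementations.

```python
import numpy as np


def psnr(reference, test, max_value=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    ref = np.asarray(reference, dtype=np.float64)
    tst = np.asarray(test, dtype=np.float64)
    mse = np.mean((ref - tst) ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(max_value ** 2 / mse))


def global_ssim(reference, test, max_value=255.0):
    """SSIM formula evaluated once over the whole image (a simplification)."""
    x = np.asarray(reference, dtype=np.float64)
    y = np.asarray(test, dtype=np.float64)
    c1, c2 = (0.01 * max_value) ** 2, (0.03 * max_value) ** 2
    mu_x, mu_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    cov_xy = np.mean((x - mu_x) * (y - mu_y))
    return float(((2 * mu_x * mu_y + c1) * (2 * cov_xy + c2))
                 / ((mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)))


rng = np.random.default_rng(0)
reference = rng.uniform(0, 255, size=(32, 32))
degraded = reference + rng.normal(0, 5, size=reference.shape)
print(f"PSNR: {psnr(reference, degraded):.2f} dB, "
      f"global SSIM: {global_ssim(reference, degraded):.4f}")
```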

Technical Implementation and Training

Q3: Training my GAN-based model is unstable. The results are poor, or the model collapses. How can I fix this?

- A: GAN instability is a well-known challenge.

- Instance Noise: Inject instance noise into the inputs of your discriminator. This technique helps stabilize training by preventing the discriminator from becoming too powerful too quickly, thus giving the generator a chance to learn effectively [36].

- Conditioning: Ensure your generator and discriminator are properly conditioned on the low-resolution input image. A well-designed LR Encoder can strongly guide the generation process and improve stability [36].

- Loss Functions: Experiment with different GAN loss functions (e.g., Wasserstein loss) that are known to be more stable than the original minimax loss.

Q4: I have 3D image stacks, but 3D convolutional models are too memory-intensive. What are my options?

- A: A 2.5D approach is an excellent compromise. Instead of processing the entire 3D volume at once, your model can take a small stack of consecutive 2D slices as input (e.g., 5-9 slices) and predict the central high-resolution slice(s). This leverages the 3D contextual information from adjacent slices while keeping computational demands manageable, as demonstrated in 2.5D-SRCNN [39].

Data and Workflow

Q5: My model works well on clean test data but fails on real-world images from my low-cost microscope. Why?

- A: This is typically a domain gap issue.

- Degradation Model: The degradation (downsampling, noise, blur) you applied to create your training data does not match the real degradation in your microscope images.

- Solution: Develop a more complex and realistic degradation model for training. Instead of simple bicubic downsampling, use a randomized pipeline that combines blur, complex downsampling, and noise degradation to better simulate the real-world conditions of your imaging setup [36]. Fine-tuning your model on a small set of real low-quality/high-quality image pairs from your microscope is also highly effective.
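A randomized degradation pipeline of this kind can be prototyped in a few lines. The sketch below chains a random Gaussian blur, block-average downsampling, and additive Gaussian noise. The parameter ranges are placeholder assumptions that should be tuned until synthetic images resemble real captures from your microscope, and the downsampling step is deliberately simpler than the pipelines described in [36].

```python
import numpy as np


def gaussian_kernel1d(sigma):
    """Normalized 1D Gaussian kernel truncated at about three sigma."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()


def gaussian_blur(img, sigma):
    """Separable Gaussian blur with reflected borders (output size unchanged)."""
    k = gaussian_kernel1d(sigma)
    pad = len(k) // 2
    padded = np.pad(img, pad, mode="reflect")
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)


def degrade(hr, scale=2, rng=None):
    """Create a synthetic low-quality image from a high-quality grayscale one."""
    rng = np.random.default_rng() if rng is None else rng
    img = np.asarray(hr, dtype=np.float64)
    img = gaussian_blur(img, sigma=rng.uniform(0.5, 2.0))   # random optical blur
    h, w = img.shape
    img = img[:h - h % scale, :w - w % scale]
    lr = img.reshape(h // scale, scale, w // scale, scale).mean(axis=(1, 3))
    lr = lr + rng.normal(0.0, rng.uniform(1.0, 10.0), lr.shape)  # sensor noise
    return np.clip(lr, 0, 255)


rng = np.random.default_rng(0)
high_quality = rng.uniform(0, 255, size=(64, 64))  # stand-in for a real image
low_quality = degrade(high_quality, scale=2, rng=rng)
print(low_quality.shape)  # (32, 32)
```

Calling `degrade` repeatedly on the same high-quality image yields different low-quality versions, which is what exposes the model to a range of degradations during training.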

Q6: Can these deep learning models be integrated into a high-content screening (HCS) pipeline for drug discovery?

- A: Absolutely. Using deep learning for image enhancement can significantly improve the quality of HCS data. Enhanced images can lead to more robust feature extraction in tools like CellProfiler and more accurate image-based profiling. This can improve the clustering of compounds by mechanism of action and the identification of novel therapeutic targets, thereby accelerating the drug discovery process [42] [43].

Implementing Deep Learning-Based Extended Depth of Field (EDoF) for Clearer Z-Stacks

FAQs: Core Concepts and Setup

Q1: What is the fundamental advantage of using a deep learning-based EDoF approach over traditional Z-stacking for low-cost microscopes?

Traditional Z-stacking requires capturing multiple images at different focal planes and combining them, which is time-consuming, causes photobleaching, and demands precise mechanical control often lacking in low-cost setups [44] [45]. A deep learning-based EDoF method, in contrast, can generate a single, fully-focused image from a limited number of inputs, or even a single snapshot, by using a computational model to overcome the optical limitations of affordable hardware [46]. This significantly speeds up acquisition and reduces hardware complexity.
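For comparison, the "combining" step of traditional Z-stacking can be sketched as a classical focus-stacking baseline: for every pixel, keep the value from the frame with the strongest local Laplacian response. This is a generic textbook approach written for illustration, not a method from the cited references, and it assumes the frames are already aligned.

```python
import numpy as np


def laplacian_abs(img):
    """Absolute 4-neighbour Laplacian, used here as a per-pixel focus measure."""
    p = np.pad(np.asarray(img, dtype=np.float64), 1, mode="edge")
    lap = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
           - 4.0 * p[1:-1, 1:-1])
    return np.abs(lap)


def fuse_z_stack(stack):
    """Pick, per pixel, the frame with the highest focus measure."""
    stack = np.asarray(stack, dtype=np.float64)
    focus = np.stack([laplacian_abs(frame) for frame in stack])
    best = np.argmax(focus, axis=0)          # index of the sharpest frame
    rows, cols = np.indices(best.shape)
    return stack[best, rows, cols], best


# Synthetic stack: each frame is "in focus" (textured) in only one half
rng = np.random.default_rng(0)
texture = rng.uniform(0, 255, size=(32, 32))
frame_a = texture.copy()
frame_a[:, 16:] = texture.mean()   # right half defocused to a flat value
frame_b = texture.copy()
frame_b[:, :16] = texture.mean()   # left half defocused to a flat value
fused, depth_index = fuse_z_stack([frame_a, frame_b])
```

On real data the focus measure is usually smoothed before taking the maximum, because per-pixel selection is sensitive to noise. A baseline like this is also a useful reference when judging whether a learned EDoF model is actually an improvement.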

Q2: My USB microscope produces images with chromatic aberrations and misalignments. Can EDoF methods still work?

Yes, but pre-processing is critical. Images from low-cost devices frequently suffer from issues like chromatic aberrations, vignetting, and spatial misalignments between focal planes. A successful workflow must include pre-processing steps such as chromatic alignment to correct color shifts and elastic image registration to align the frames in your Z-stack before they are fed into the deep learning model [46]. Neglecting this will severely degrade the quality of your final EDoF image.

Q3: What are the key hardware components for implementing PSF engineering in an EDoF system?

Point Spread Function (PSF) engineering modifies the optical path to create a depth-invariant blur that is later computationally decoded. Key components include:

- Phase Mask: A physical optical element placed at the Fourier (aperture) plane of the microscope to modulate the light wavefront [47] [45].

- 4f Optical System: A classic setup using two lenses to provide access to the Fourier plane where the phase mask is inserted [47].

- Computational Backbone: A computer with a capable GPU to run the post-processing deblurring convolutional neural network (D-CNN) that reconstructs the final sharp image [47].

Troubleshooting Guides

Issue 1: Blurry or Artifact-Ridden EDoF Reconstruction

| Symptom | Possible Cause | Solution |

|---|---|---|

| Final output is blurry across all depths. | The trained model is over-generalized or lacks sufficient features. | Increase model capacity or use a deeper network architecture [46]. |

| Strange, unrealistic textures or "hallucinations" in the output. | The training dataset was too small or not representative of your samples. | Augment your training data with more real-world images from your microscope or use a larger, more diverse public dataset [46]. |

| Good reconstruction in some areas, blurry in others. | Incorrect or insufficient Z-stack input. The stack does not cover the entire sample depth. | Ensure your Z-stack acquisition covers the full thickness of the specimen with adequate step size between frames [44]. |

| Persistent blur and chromatic fringes. | Failure to perform pre-processing alignment. | Implement a robust pre-processing pipeline including rigid and elastic alignment of the Z-stack frames before generating the EDoF image [46]. |

Issue 2: Poor Performance of the End-to-End Optimized System

| Symptom | Possible Cause | Solution |

|---|---|---|

| The system fails to converge during training. | Incompatibility between the learned optics (phase mask) and the D-CNN. | Jointly optimize the phase mask and the D-CNN parameters in a true end-to-end fashion, allowing both components to co-adapt [47]. |

| The reconstructed image lacks high-frequency details. | The loss function is oversimplified. | Use a loss function that penalizes perceptual dissimilarity, such as a combination of L1/L2 loss and a perceptual loss (e.g., VGG-based) [47]. |

| The PSF is not depth-invariant. | Sub-optimal phase mask design. | Utilize an end-to-end framework that specifically optimizes the phase mask to achieve a depth-invariant PSF across your desired depth range [47]. |

Experimental Protocols & Workflows

Protocol 1: Basic EDoF Generation from Z-stack with Pre-processing

This protocol is designed for generating an EDoF image from a Z-stack captured on a standard or low-cost microscope [46].

- Sample Preparation: Prepare and mount your specimen on the stage of your USB microscope.

- Z-stack Acquisition: Using the microscope's software, capture a series of images (a Z-stack) by moving the objective or stage vertically in fine, predefined steps (e.g., 0.5 µm). Ensure the stack covers the entire depth of the specimen.

- Pre-processing (Critical for Low-Cost Scopes):

- Chromatic Alignment: Correct for lateral chromatic aberration by aligning the color channels of each image in the stack based on a calibration image.

- Rigid & Elastic Registration: Align all images in the Z-stack to correct for any lateral shifts or warping that occurred during acquisition. This ensures each pixel location corresponds to the same point in the specimen across all focal planes.

- EDoF Generation: Feed the pre-processed Z-stack into a pre-trained deep learning model (e.g., EDoF-CNN-Fast or EDoF-CNN-Pairwise) to generate a single, all-in-focus output image [46].

- Validation: Compare the EDoF output with individual frames of the Z-stack to verify that features from all depths are in focus.
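The rigid part of the pre-processing step can be sketched with phase correlation, which estimates the translation between two images from their Fourier transforms. The example below aligns the red and blue channels to the green channel. It is a simplified assumption-laden sketch: it recovers only whole-pixel, image-wide shifts and uses wrap-around shifting, whereas real chromatic aberration is often sub-pixel and varies across the field, which is why the protocol also calls for elastic registration.

```python
import numpy as np


def estimate_shift(reference, moving):
    """Integer (row, col) shift to apply to `moving` so it aligns to `reference`."""
    f_ref = np.fft.fft2(reference)
    f_mov = np.fft.fft2(moving)
    cross = f_ref * np.conj(f_mov)
    cross /= np.abs(cross) + 1e-12           # keep phase only
    corr = np.fft.ifft2(cross).real
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peaks past the midpoint correspond to negative shifts
    return tuple(int(p) if p <= n // 2 else int(p) - n
                 for p, n in zip(peak, corr.shape))


def align_channels(rgb, reference_channel=1):
    """Shift each color channel onto the reference channel (green by default)."""
    rgb = np.asarray(rgb, dtype=np.float64)
    out = rgb.copy()
    ref = rgb[..., reference_channel]
    for ch in range(rgb.shape[-1]):
        if ch != reference_channel:
            shift = estimate_shift(ref, rgb[..., ch])
            out[..., ch] = np.roll(rgb[..., ch], shift, axis=(0, 1))
    return out


# Synthetic example: red and blue are displaced copies of the green channel
rng = np.random.default_rng(0)
base = rng.uniform(0, 255, size=(64, 64))
misaligned = np.stack([np.roll(base, (3, -2), axis=(0, 1)),
                       base,
                       np.roll(base, (-1, 4), axis=(0, 1))], axis=-1)
aligned = align_channels(misaligned)
```

The same `estimate_shift` function can be applied frame to frame within a Z-stack to remove lateral drift before the stack is passed to the EDoF model.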

Protocol 2: Implementing an End-to-End EDoF Microscope with PSF Engineering

This advanced protocol involves modifying the optics and jointly optimizing the hardware and software [47] [45].

- Optical Setup: Configure a 4f microscope system. Place a programmable phase mask (e.g., a spatial light modulator) or a metasurface at the Fourier plane of this system.

- Data Collection for Training: Collect a large dataset of high-resolution, sharp images of your sample of interest. This dataset will be used to teach the system what a "good" image looks like.

- End-to-End Optimization:

- Forward Model: In simulation, the sharp images are passed through an optical layer that applies the current phase mask pattern and simulates defocus blur.

- Reconstruction: The resulting blurred images are passed through the D-CNN to reconstruct a sharp output.

- Loss Calculation & Backpropagation: The difference between the reconstructed image and the original sharp image is calculated. This error is then backpropagated through both the D-CNN weights and the phase mask pattern simultaneously to update their parameters.

- Fabrication & Deployment: Once optimized, the final phase mask design is fabricated as a physical metasurface or diffractive optical element (DOE) and installed in the microscope. The corresponding D-CNN is deployed for image reconstruction.

The following diagram illustrates the data flow and optimization process of this end-to-end framework:

Quantitative Data & Specifications

Table 1: Comparison of EDoF Techniques for Microscopy

| Technique | Principle | Best For | Extended DOF Range (Example) | Key Hardware Needs |

|---|---|---|---|---|

| Traditional Z-stacking [44] | Multi-image acquisition & fusion | Static samples, high-end systems | N/A (Depends on stack depth) | Precision motorized stage |

| Deep Learning EDoF from Z-stack [46] | Computational fusion via CNN | Low-cost microscopes, legacy data | N/A (Software-based) | Standard USB microscope |

| PSF Engineering with Metasurfaces [47] | Depth-invariant PSF + Deconvolution | High-NA systems, snapshot imaging | Defocus coefficient ~245 (Superior EDoF) | 4f system, Metasurface/DOE |

| Compact PSF Engineering [45] | Phase mask in objective BFP | High-throughput systems, incubators | 1.9x DOF improvement | Modified objective lens |

Table 2: Computational Requirements for EDoF Models

| Model / Component | Function | Key Parameters | Training/Execution Context |

|---|---|---|---|

| EDoF-CNN-Fast / Pairwise [46] | Generates EDoF from aligned Z-stack | Convolutional layers, pairwise connections | Trained on public datasets (e.g., Cervix93) |

| Deblurring CNN (D-CNN) [47] | Recovers sharp image from encoded input | Optimized jointly with phase mask | End-to-end optimization framework |

| TrueSpot Software [48] | Automated quantification of puncta (2D/3D) | Automated threshold selection | Runs on desktop or computer cluster (ACCRE) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for EDoF Implementation

| Item | Function in the Experiment | Specification / Example |
|---|---|---|
| Low-Cost USB Microscope [40] [49] | Primary image acquisition device; the target for enhancement. | Example: AmScope UTP200X020MP (2MP sensor, LED ring light) or a custom Raspberry Pi microscope [40]. |
| Phase Mask / Metasurface [47] [45] | Modulates the light wavefront to create a depth-invariant PSF for snapshot EDoF. | Can be a diffractive optical element (DOE) or a nano-fabricated metasurface placed at the Fourier plane. |
| Pre-processing Software Tools [46] | Corrects chromatic aberrations and aligns Z-stack images before EDoF generation. | Tools for rigid and elastic image registration (e.g., in Python with OpenCV or in ImageJ). |
| Deep Learning Framework [47] [46] | Provides the environment to build, train, and run EDoF models (CNNs). | TensorFlow, PyTorch, or Keras. |
| Validation Samples [50] [45] | Samples with known 3D structure to validate EDoF performance and resolution. | Fluorescent microspheres suspended in gel or transgenic zebrafish embryos (e.g., Tg(myl7:mCherry)) [50] [45]. |
| Automated Quantification Software [48] | Objectively measures the quality and resolution of the final EDoF output. | Software like TrueSpot for automated detection and quantification of fluorescent spots in 2D or 3D [48]. |

Technical Support Center

Troubleshooting Guides

This section addresses common challenges researchers face when using low-cost USB microscopes for biomedical research, providing specific solutions to improve image quality and analysis reliability.

Problem 1: Blurry or Unsharp Images

Question: My captured images consistently appear blurry or out of focus, even when the live preview looks sharp. What are the primary causes and solutions?

Answer: Blurry images can stem from several sources, including equipment stability, optical issues, and software settings.

- Vibration and Stability: Ensure the microscope is on a stable surface. Even slight vibrations can cause motion blur, especially at high magnifications [3] [51]. Hand-holding the microscope is not recommended for image capture.

- Parfocal and Focus Settings: An image that looks focused in the software preview but is blurry when saved may indicate a focus offset. Manually fine-tune the focus using a specimen with sharp edges after the software indicates it is focused [3].

- Objective Lens Contamination: Check the front lens of the microscope for dust, fingerprints, or immersion oil. Clean lenses gently with a soft, lint-free cloth and an appropriate lens-cleaning solution [3] [51].

- Resolution and Speed Trade-off: Higher resolution settings can sometimes introduce lag, making the image more susceptible to blur from minute movements. If this occurs, try using a slightly lower resolution for a faster capture speed, which can reduce motion blur [21].
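When a saved frame looks softer than the live preview, it can help to put a number on sharpness rather than judge by eye. The following is a minimal sketch, assuming grayscale frames already loaded as NumPy arrays; it uses the variance of a discrete Laplacian as a focus score, which is a common heuristic and not a method prescribed by the cited sources. The function names and the synthetic checkerboard target are illustrative only.

```python
import numpy as np

def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian; higher values indicate sharper edges."""
    g = np.asarray(gray, dtype=np.float64)
    # Discrete Laplacian on interior pixels only (the 1-pixel border is dropped).
    lap = (g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
           - 4.0 * g[1:-1, 1:-1])
    return float(lap.var())

def box_blur(gray, passes=1):
    """Crude 3x3 mean blur, used here only to simulate a defocused frame."""
    g = np.asarray(gray, dtype=np.float64)
    h, w = g.shape
    for _ in range(passes):
        p = np.pad(g, 1, mode="edge")
        g = sum(p[i:i + h, j:j + w] for i in range(3) for j in range(3)) / 9.0
    return g

# Synthetic target with sharp edges (stands in for a specimen with crisp detail).
yy, xx = np.indices((64, 64))
sharp = (((yy // 8) + (xx // 8)) % 2) * 255.0
blurred = box_blur(sharp, passes=3)

sharp_score = laplacian_variance(sharp)
blurred_score = laplacian_variance(blurred)
```

Scores are only comparable between frames of the same field of view, resolution, and exposure, so the practical use is to compare a saved capture against a reference capture of the same specimen.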

Problem 2: Poor Image Contrast in Thin or Low-Stain Specimens

Question: How can I enhance the contrast of specimens that are inherently faint or have been imaged with low-cost staining methods?

Answer: Optimizing both hardware lighting and software processing is key to improving contrast.

- Adjust the Lighting Mode: Different samples require different lighting. For transparent or low-contrast samples, experiment with the microscope's lighting settings. If available, use darkfield lighting to make specimens appear bright against a dark background [51].

- Leverage Software Features: Use your microscope software's built-in tools to adjust brightness, contrast, and gamma levels [51]. If the software has a High Dynamic Range (HDR) function, enable it to reveal details in areas with low contrast [51].

- Post-Processing Color Settings: Certain structures are more visible with specific color enhancements. Apply grayscale mode or color gradient mapping in your software to highlight key areas of interest [52] [51].

Problem 3: Inconsistent Results Across Imaging Sessions

Question: My image quality and measurements vary from one day to the next, even with similar specimens. How can I improve workflow consistency?

Answer: Standardizing your imaging protocol is crucial for reproducible research data.

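- Calibrate Regularly: Calibrate your digital microscope before starting work to ensure measurement accuracy [51]. Recalibrate whenever the magnification or working distance changes, because the pixel-to-length scale changes with them.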

- Save Microscope Settings: For different specimen types, save the optimal software settings (e.g., resolution, lighting, contrast) as a preset profile. This allows for quick and consistent setup across sessions [51].

- Control the Environment: Maintain stable power to the microscope to prevent damage and ensure consistent performance [51]. Keep the microscope in a cool, dry place and cover it when not in use to protect it from dust [51].

- Software and Firmware Updates: Use the latest software and firmware provided by the manufacturer to access the best performance and newest features [51].
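The calibration step in the list above reduces to a single scale factor: a known length on a reference scale divided by the number of pixels it spans in the image. The sketch below illustrates that arithmetic and a simple session-to-session drift check. The numbers (a 1 mm scale spanning 800 pixels) and the function names are assumptions for illustration, not values from any particular microscope.

```python
def microns_per_pixel(known_length_um, measured_pixels):
    """Scale factor from a reference scale of known length."""
    if known_length_um <= 0 or measured_pixels <= 0:
        raise ValueError("length and pixel count must be positive")
    return known_length_um / measured_pixels

def pixels_to_microns(pixels, scale_um_per_px):
    """Convert a pixel measurement to micrometres using the calibrated scale."""
    return pixels * scale_um_per_px

def calibration_drift(previous_scale, current_scale):
    """Relative change between two calibrations (0.02 means a 2 % change)."""
    return abs(current_scale - previous_scale) / previous_scale

# Assumed example: a 1000 um reference scale spans 800 px at the current zoom.
scale = microns_per_pixel(1000.0, 800.0)
feature_um = pixels_to_microns(20, scale)

# Assumed example: yesterday the same scale spanned 820 px.
drift = calibration_drift(microns_per_pixel(1000.0, 820.0), scale)
```

Recording the scale and the drift with each session makes it easier to tell whether day-to-day measurement differences come from the specimen or from the setup.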

Frequently Asked Questions (FAQs)

Q1: What is the best resolution to use for my USB microscope? A1: For final captured images, use the sensor's highest native (non-interpolated) resolution to preserve the most detail [51]. Higher resolutions may slow down the live preview, which can make focusing on live specimens more challenging, so a lower resolution can be used for faster live previews and initial scanning [21].

Q2: How can I obtain a clear image of a thick specimen with structures at different depths? A2: Low-cost USB microscopes have a limited depth of field. To overcome this, you can use a technique called image stacking. Capture multiple images of the specimen, each focused on a different depth level. Then, use specialized image processing software to combine these images into a single, fully focused composite image [21].
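The image stacking idea in A2 can be sketched in a few lines: for every pixel, keep the value from whichever slice is locally sharpest. The example below is a simplified illustration, assuming the slices are grayscale, already aligned, and the same size; it uses a smoothed absolute Laplacian as the focus measure, which is one common choice rather than the method of any specific stacking package. The synthetic two-slice stack is hypothetical test data.

```python
import numpy as np

def local_sharpness(gray):
    """Smoothed absolute Laplacian: a simple per-pixel focus measure."""
    g = np.asarray(gray, dtype=np.float64)
    h, w = g.shape
    p = np.pad(g, 1, mode="edge")
    lap = p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:] - 4.0 * g
    a = np.pad(np.abs(lap), 1, mode="edge")
    # 3x3 mean so the slice choice is less sensitive to single-pixel noise.
    return sum(a[i:i + h, j:j + w] for i in range(3) for j in range(3)) / 9.0

def focus_stack(stack):
    """Fuse aligned slices of shape (n, h, w) by taking the sharpest slice per pixel."""
    s = np.asarray(stack, dtype=np.float64)
    sharp = np.stack([local_sharpness(sl) for sl in s])
    best = np.argmax(sharp, axis=0)  # (h, w) map of chosen slice indices
    fused = np.take_along_axis(s, best[None, :, :], axis=0)[0]
    return fused, best

# Synthetic two-slice stack: each slice is "in focus" on one half only.
yy, xx = np.indices((32, 32))
texture = (((yy // 4) + (xx // 4)) % 2) * 255.0
near = texture.copy()
near[:, 16:] = 127.5  # right half defocused to flat grey
far = texture.copy()
far[:, :16] = 127.5   # left half defocused to flat grey

fused, index_map = focus_stack([near, far])
```

Real stacks usually need registration first, since small shifts between slices produce ghosting at the seams where the chosen slice changes.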

Q3: My software lacks advanced analysis tools. What are my options? A3: You can export your high-resolution images and use third-party open-source or commercial image analysis software. Open-source options include ImageJ, while commercial platforms such as ZEISS arivis Pro and arivis Cloud offer advanced segmentation and analysis tools, including AI-powered models for complex tasks like cell counting and measurement [53]. Always save your original images in a compatible, lossless format such as TIFF during export to preserve data integrity [54].

Q4: Why is proper file management and metadata important? A4: A robust data management plan is critical. Lossy compression (e.g., JPEG) permanently discards image data, and proprietary formats can make files difficult to open in other software. Export images in standard, lossless formats like TIFF [54]. Permanently associate metadata (e.g., sample prep, staining, magnification) with your image files. This practice ensures the reproducibility of your analysis, facilitates correct interpretation, and enables future data reuse [54].
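One lightweight way to keep metadata attached to an image is a JSON "sidecar" file stored next to it, together with a checksum of the image so later edits can be detected. This is a sketch of that idea using only the Python standard library; the sidecar naming scheme (`<image name>.json`) and the metadata keys are assumptions for illustration, not a standard required by the cited sources.

```python
import hashlib
import json
from pathlib import Path

def _sha256(path):
    """Hex digest of the file contents."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()

def write_sidecar(image_path, metadata):
    """Write <image name>.json next to the image with metadata and a checksum."""
    image_path = Path(image_path)
    record = {"file": image_path.name, "sha256": _sha256(image_path)}
    record.update(metadata)
    sidecar = image_path.with_name(image_path.name + ".json")
    sidecar.write_text(json.dumps(record, indent=2, sort_keys=True))
    return sidecar

def verify_sidecar(image_path):
    """Return True if the image still matches the checksum stored in its sidecar."""
    image_path = Path(image_path)
    sidecar = image_path.with_name(image_path.name + ".json")
    record = json.loads(sidecar.read_text())
    return record["sha256"] == _sha256(image_path)
```

A call such as `write_sidecar("culture_day3.tif", {"stain": "none", "magnification": "200x"})` (hypothetical file name and values) would record the acquisition details once, and `verify_sidecar` can be run before analysis to confirm the raw file has not been altered.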

Experimental Protocols for Contrast Enhancement

Protocol 1: Optimizing Software-Based Contrast

- Capture: Acquire an image at the microscope's highest resolution in an uncompressed or losslessly compressed format (e.g., TIFF).

- Import: Open the image in your microscope's software or another image analysis application.

- Adjust Levels: Locate the brightness/contrast or "Levels" tool. Adjust the black point, white point, and gamma (mid-tones) to maximize the dynamic range without clipping the shadows or highlights.

- Apply Filters: Use available filters such as "Sharpen" or "Unsharp Mask" sparingly to enhance edge definition.

- Color Mapping: If the specimen is monochrome, apply a grayscale or false-color lookup table (LUT) to improve feature visibility [52] [51].

- Document Settings: Record all applied adjustments for reproducibility.
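The "Adjust Levels" step of this protocol can be expressed as a short function: map a chosen black point and white point to the ends of the output range, then apply a gamma curve to the mid-tones. The sketch below assumes an 8-bit grayscale output and uses the convention that gamma values above 1 brighten mid-tones; the percentile parameters are an illustrative way to pick the black and white points and default to the image minimum and maximum so that nothing is clipped.

```python
import numpy as np

def adjust_levels(gray, low_pct=0.0, high_pct=100.0, gamma=1.0):
    """Stretch intensities between two percentiles, then apply gamma.

    With the defaults, the black and white points are the image minimum and
    maximum, so no shadows or highlights are clipped.
    """
    g = np.asarray(gray, dtype=np.float64)
    black, white = np.percentile(g, [low_pct, high_pct])
    if white <= black:
        # Flat image: nothing to stretch, return it unchanged as 8-bit.
        return np.clip(np.round(g), 0, 255).astype(np.uint8)
    scaled = np.clip((g - black) / (white - black), 0.0, 1.0)
    out = scaled ** (1.0 / gamma)  # gamma > 1 brightens mid-tones
    return np.round(out * 255.0).astype(np.uint8)

# Assumed low-contrast input occupying only the 100..150 intensity range.
faint = np.linspace(100.0, 150.0, 256).reshape(16, 16)
stretched = adjust_levels(faint)
brightened = adjust_levels(faint, gamma=2.0)
settings = {"low_pct": 0.0, "high_pct": 100.0, "gamma": 2.0}  # record for reproducibility
```

Raising `low_pct` or lowering `high_pct` slightly (for example 0.5 and 99.5) makes the stretch robust to a few hot or dead pixels, at the cost of clipping that small fraction of values.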

Protocol 2: Empirical Lighting Adjustment for Contrast

- Start with Brightfield: Place your specimen on the stage and illuminate with standard transmitted brightfield light.

- Vary Angle and Intensity: If your microscope allows, adjust the angle and intensity of the light source. Sometimes, oblique lighting from a slight angle can enhance the contrast of edges and textures.

- Evaluate Darkfield (if available): Switch to darkfield mode if your microscope supports it. This is particularly effective for revealing edges, boundaries, and fine details in unstained, transparent specimens [51].

- Capture Comparison Set: Capture the same field of view under each lighting condition.

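- Quantify Contrast: Use software to measure the mean intensity of regions of interest (e.g., specimen vs. background) under each lighting condition, and compare the difference between them relative to the background level or noise to objectively determine the optimal setup.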
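The final step of this protocol can be made concrete with standard contrast definitions. The sketch below computes Weber contrast, Michelson contrast, and a contrast-to-noise ratio from a specimen region and a background region, then picks the lighting condition with the highest Michelson contrast. The masks, the synthetic intensities, and the choice of Michelson contrast as the ranking metric are assumptions for illustration.

```python
import numpy as np

def roi_contrast(gray, specimen_mask, background_mask):
    """Contrast metrics between a specimen region and a background region."""
    g = np.asarray(gray, dtype=np.float64)
    s, b = g[specimen_mask], g[background_mask]
    mean_s, mean_b, noise = s.mean(), b.mean(), b.std()
    diff = abs(mean_s - mean_b)
    return {
        # Weber: signed difference relative to the background level.
        "weber": float((mean_s - mean_b) / mean_b) if mean_b != 0 else float("inf"),
        # Michelson: difference relative to the sum, bounded in [0, 1].
        "michelson": float(diff / (mean_s + mean_b)) if (mean_s + mean_b) != 0 else 0.0,
        # CNR: difference relative to background noise (inf if noise-free).
        "cnr": float(diff / noise) if noise > 0 else float("inf"),
    }

# Synthetic comparison set of the same field of view (assumed intensities).
mask = np.zeros((20, 20), dtype=bool)
mask[5:15, 5:15] = True
brightfield = np.full((20, 20), 200.0)
brightfield[mask] = 180.0   # faint, slightly darker specimen
darkfield = np.full((20, 20), 10.0)
darkfield[mask] = 150.0     # bright specimen on dark background

results = {
    "brightfield": roi_contrast(brightfield, mask, ~mask),
    "darkfield": roi_contrast(darkfield, mask, ~mask),
}
best_condition = max(results, key=lambda name: results[name]["michelson"])
```

On real images the background has noise, so the contrast-to-noise ratio is often the more informative number when two conditions have similar Michelson contrast.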

Workflow and Signaling Pathway Diagrams

[Diagram: Low-Cost USB Microscope Image Enhancement Workflow]

[Diagram: Contrast Enhancement Decision Logic for USB Microscopy]

Research Reagent and Material Solutions

The following table details key materials and software tools referenced for improving imaging workflows with low-cost USB microscopes.

| Item Name | Type | Primary Function in Workflow |
|---|---|---|
| Lens Cleaning Solution & Tissue | Maintenance Tool | Gently removes oil, dust, and fingerprints from the objective lens to ensure optimal image clarity and prevent blur [3] [51]. |
| Standard Reference Slide (e.g., Stage Micrometer) | Calibration Tool | Provides a scale with known dimensions for calibrating software measurements and validating magnification accuracy across sessions [51]. |
| Immersion Oil (if applicable) | Optical Reagent | Fills the gap between the coverslip and the objective with a medium whose refractive index closely matches glass, improving resolution and light-gathering for high-magnification objectives [3]. |
| Lossless Image Format (e.g., TIFF) | Software/Data Standard | Preserves all original image data without compression artifacts during export, which is critical for quantitative analysis [54]. |
| AI-Enhanced Analysis Platforms (e.g., ZEISS arivis Cloud) | Analysis Software | Provides cloud-based AI tools to train custom models for segmenting and analyzing complex image structures without coding [53]. |
| Digital Slide Viewer Software (e.g., SlideViewer) | Viewing & Annotation | Enables whole-slide navigation, precise digital annotation, and seamless collaboration, replacing traditional microscope viewing [52]. |

Optimizing Your Setup: Practical Solutions for Hardware and Imaging Challenges

In low-cost USB microscopy, image contrast is often limited by poor illumination, which produces glare and uneven lighting that obscure critical specimen details. This guide provides targeted, practical strategies to overcome these challenges, enhancing the quality of images for research and analysis in contexts such as drug development and material inspection.

FAQs and Troubleshooting Guides

My image is mostly glare, especially on shiny circuit boards. How can I reduce this?

Glare from reflective surfaces like PCBs is a common issue caused by specular reflection. A highly effective and low-cost solution is to use polarizing films.

- Solution: Employ cross-polarization. Attach linear polarizer film over both the microscope's light source and its lens. Rotate one of the filters until its polarization axis is crossed (perpendicular) with the other's; this cancels the specular reflections, making details like laser-etched markings on ICs clearly visible [55].

- Required Materials:

  - Two sheets of linear polarizer film (can be sourced inexpensively online or salvaged from junked LCD screens) [55].

  - Scissors or cutting implement.

  - Method to temporarily attach the films to the light source and lens.

- Troubleshooting: If glare persists, experiment with rotating the filter on the light source while observing the image change in real-time on your screen. The effect can range from "glare-central" to a "darkened-but-clear" picture [55].
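Rotating the filter by eye works, but the "least glare" position can also be chosen from captured frames. The sketch below is one possible approach, assuming a frame is captured at each of several filter rotations and labelled with an approximate angle by hand; it scores each frame by the fraction of near-saturated pixels and picks the rotation with the lowest score. The threshold of 250 (for 8-bit images), the angle labels, and the synthetic frames are all assumptions.

```python
import numpy as np

def glare_fraction(gray, threshold=250):
    """Fraction of pixels at or above a near-saturation threshold (8-bit scale)."""
    g = np.asarray(gray)
    return float(np.count_nonzero(g >= threshold)) / g.size

def best_rotation(frames_by_angle, threshold=250):
    """Return the angle label whose frame shows the least glare, plus all scores."""
    scores = {angle: glare_fraction(frame, threshold)
              for angle, frame in frames_by_angle.items()}
    return min(scores, key=scores.get), scores

def synthetic_frame(glare_pixels):
    """Hypothetical 8-bit frame: mid-grey board with a saturated glare patch."""
    frame = np.full((20, 20), 80, dtype=np.uint8)
    frame.flat[:glare_pixels] = 255
    return frame

# Assumed captures at three hand-labelled filter rotations (degrees).
frames = {0: synthetic_frame(100), 45: synthetic_frame(40), 90: synthetic_frame(0)}
angle, scores = best_rotation(frames)
```

Because crossed polarizers also darken the whole image, exposure or LED brightness usually needs to be raised afterwards; the glare score should be compared at a fixed exposure.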

The lighting on my sample is uneven, causing shadows and hot spots. How do I fix this?

Uneven lighting is frequently a result of a single, direct light source and can be mitigated by diffusing and managing the light's angle and intensity.

- Solution:

  - Diffuse the Light: Place a semi-transparent material (like tracing paper, a white plastic lid, or a commercial light diffuser) between the built-in LED lights and your sample. This softens the light and spreads it more evenly [56].

  - Adjust Angle and Intensity: For advanced setups, try angling the lights from the side or using a ring light to minimize shadows cast by surface topography [56]. Dim the lights for transparent specimens and increase brightness for darker, opaque samples [56].

- Required Materials:

  - Diffuser material (tracing paper, milky plastic).

  - Microscope with adjustable LED intensity or an external, adjustable light source.
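To check whether a diffuser actually evened out the lighting, one option is to image a blank, featureless target (for example plain white paper) before and after, and compare how much the brightness varies across the field. The sketch below divides the frame into a grid and reports the ratio of the darkest block mean to the brightest, so 1.0 means perfectly even illumination. The grid size and the synthetic hot-spot image are assumptions for illustration.

```python
import numpy as np

def illumination_uniformity(blank_gray, grid=4):
    """Ratio of darkest to brightest block mean on a blank field (1.0 = even)."""
    g = np.asarray(blank_gray, dtype=np.float64)
    h, w = g.shape
    bh, bw = h // grid, w // grid
    # Crop to a multiple of the grid, then average each (bh x bw) block.
    blocks = g[:bh * grid, :bw * grid].reshape(grid, bh, grid, bw).mean(axis=(1, 3))
    return float(blocks.min() / blocks.max()) if blocks.max() > 0 else 0.0

# Synthetic blank-field captures (assumed): a central hot spot vs. even light.
yy, xx = np.indices((64, 64))
r2 = (yy - 32.0) ** 2 + (xx - 32.0) ** 2
hot_spot = 60.0 + 180.0 * np.exp(-r2 / (2.0 * 12.0 ** 2))
diffused = np.full((64, 64), 140.0)

before = illumination_uniformity(hot_spot)
after = illumination_uniformity(diffused)
```

Repeating the measurement after each change (diffuser material, light angle, LED intensity) gives a simple number to track instead of relying on visual impression alone.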

My images are blurry and lack detail, even when in focus. What can I do?

Blurry images can stem from multiple factors, including instability, incorrect working distance, and poor lighting, which collectively reduce effective contrast.

- Solution:

  - Ensure Stability: Use a solid, metal stand and place the microscope on a stable surface to eliminate vibrations, which are magnified at high magnification [56].

  - Optimize Working Distance: Find the correct distance between the lens and the object. Start at low magnification to find and center your subject, then gradually increase magnification. A shorter working distance is needed for tiny specimens, while larger objects require more space [56].

  - Verify Focus: Adjust the focus knob slowly while fine-tuning the working distance for the sharpest image [56].

Advanced Experimental Protocols

Protocol 1: Cross-Polarization for Glare Elimination

This protocol details the method for implementing a cross-polarization setup to remove glare from reflective samples.