Cross-Validation in Geometric Morphometrics: A Performance Review of Protocols for Biomedical and Clinical Research

Abstract

Geometric morphometrics (GM) is a powerful statistical tool for quantifying biological shape, with growing applications in clinical and pharmaceutical research. The reliability of its findings, however, hinges on the rigorous cross-validation of analytical protocols. This article provides a comprehensive review of GM cross-validation performance across diverse methodologies, from foundational landmark-based analyses and emerging functional data approaches to comparisons with machine learning. We explore common analytical pitfalls, offer optimization strategies for robust out-of-sample classification, and discuss the critical role of protocol validation in translating morphometric findings into reliable biomedical applications, such as personalized drug delivery and forensic anthropology.

Core Principles and Performance Challenges in Geometric Morphometrics

Defining Cross-Validation in the Context of Geometric Morphometric Workflows

Geometric morphometrics (GM) relies on sophisticated statistical models to quantify and analyze biological shape, making robust validation protocols essential for reliable results. Cross-validation serves as a critical methodology for assessing the generalizability and predictive performance of these models, guarding against overfitting—a significant risk given the high-dimensional nature of morphometric data. This guide objectively compares the cross-validation performance of various geometric morphometric protocols, including semi-landmark methods, outline-based analyses, and different dimensionality reduction techniques. We synthesize experimental data from multiple studies to provide researchers with evidence-based recommendations for optimizing their analytical workflows.

In geometric morphometrics, cross-validation provides a more reliable estimate of a model's classification accuracy than resubstitution methods, which are known to be biased upward as they use the same data to build and test the model [1]. The fundamental risk in GM analyses, particularly when using canonical variates analysis (CVA) for classification, is the high variable-to-specimen ratio. When outlines or curves are represented by numerous semi-landmarks, the number of parameters dramatically increases, demanding larger sample sizes for stable results [1]. Cross-validation, particularly leave-one-out cross-validation, mitigates this by iteratively training the model on all but one specimen and testing on the excluded one, providing a less biased performance estimate [1] [2].
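The gap between resubstitution and leave-one-out estimates can be illustrated with a minimal sketch. It assumes a nearest-centroid classifier as a simplified stand-in for CVA and uses pure-noise data (20 specimens, 200 variables, no real group difference). All names and data are hypothetical and are not taken from [1] or [2].

```python
import random

def centroid(rows):
    """Mean vector of a list of equal-length rows."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def fit(X, y):
    """Nearest-centroid 'model': one mean vector per group."""
    return {g: centroid([x for x, lab in zip(X, y) if lab == g]) for g in sorted(set(y))}

def predict(model, x):
    return min(model, key=lambda g: sq_dist(model[g], x))

def resubstitution_accuracy(X, y):
    # Same specimens are used to build and to test the rule.
    model = fit(X, y)
    return sum(predict(model, x) == lab for x, lab in zip(X, y)) / len(y)

def loo_accuracy(X, y):
    # Each specimen is classified by a rule built without it.
    hits = 0
    for i in range(len(y)):
        model = fit(X[:i] + X[i + 1:], y[:i] + y[i + 1:])
        hits += predict(model, X[i]) == y[i]
    return hits / len(y)

# Hypothetical data: many variables, few specimens, no true group signal.
rng = random.Random(0)
n_per_group, n_vars = 10, 200
X = [[rng.gauss(0, 1) for _ in range(n_vars)] for _ in range(2 * n_per_group)]
y = ["A"] * n_per_group + ["B"] * n_per_group

resub = resubstitution_accuracy(X, y)
loo = loo_accuracy(X, y)
print(f"resubstitution: {resub:.2f}  leave-one-out: {loo:.2f}")
```

Because both groups are drawn from the same distribution, any accuracy well above 50% reflects overfitting. In this setup resubstitution typically reports a much higher rate than leave-one-out, which stays close to chance.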

The choice of cross-validation strategy becomes paramount when evaluating different GM protocols. Studies demonstrate that optimal performance depends on the complex interaction between data acquisition methods, alignment algorithms, and dimensionality reduction techniques [1]. Furthermore, the challenge of out-of-sample classification—applying a classification rule derived from a reference sample to new individuals not included in the original analysis—represents a critical extension of cross-validation principles in applied contexts [2]. The following sections compare these protocols quantitatively, using cross-validation performance as the key metric for evaluation.

Comparative Performance of Geometric Morphometric Protocols

Semi-Landmark and Outline Analysis Methods

Table 1: Comparison of Cross-Validation Performance for Different GM Methods

| Method Category | Specific Method | Application Context | Reported Cross-Validation Accuracy | Key Findings |
|---|---|---|---|---|
| Semi-Landmark Alignment | Bending Energy Minimization (BEM) | Feather shape (Ovenbird) | Roughly equal classification rates [1] | Performance not highly dependent on number of points or acquisition method. |
| Semi-Landmark Alignment | Perpendicular Projection (PP) | Feather shape (Ovenbird) | Roughly equal classification rates [1] | Performance not highly dependent on number of points or acquisition method. |
| Outline-Based Analysis | Elliptical Fourier Analysis (EFA) | Feather shape (Ovenbird) | Roughly equal classification rates [1] | Comparable performance to extended eigenshape and semi-landmark methods. |
| Outline-Based Analysis | Extended Eigenshape Analysis | Feather shape (Ovenbird) | Roughly equal classification rates [1] | Comparable performance to Fourier and semi-landmark methods. |
| Semi-Landmark & Fourier | Outline and Semi-Landmark | Carnivore tooth marks | Low accuracy (~40%) [3] | Bi-dimensional application showed limited discriminant power. |
| Geometric Morphometrics | Landmark-based CVA | Malocclusion (Cephalograms) | 80% after cross-validation [4] | High discrimination among malocclusion classes (I, II, III). |

The classification of specimens based on shape appears less dependent on the specific choice of outline method than previously assumed. Research on ovenbird rectrices found that two semi-landmark methods (Bending Energy Minimization and Perpendicular Projection) produced roughly equal classification rates, as did Elliptical Fourier methods and the extended eigenshape method [1]. This suggests that for many biological applications, the choice between these established methods may not be the primary factor influencing predictive success.

However, significant performance limitations emerge when these methods are applied to certain real-world problems. A study on carnivore tooth marks found that both outline (Fourier) and semi-landmark approaches achieved low discriminant accuracy, below 40%, for identifying the carnivore modifying agent [3]. This highlights that methodological performance is context-dependent, and bi-dimensional information alone can sometimes be insufficient for complex classification tasks. In contrast, a landmark-based CVA on lateral cephalograms for malocclusion classification achieved a high cross-validation accuracy of 80%, demonstrating the method's power in clinical dental contexts [4].

Dimensionality Reduction and Machine Learning Approaches

Table 2: Comparison of Dimensionality Reduction and Classification Techniques

| Technique | Purpose | Key Feature | Cross-Validation Performance |
|---|---|---|---|
| Variable PC Axes | Dimensionality Reduction | Uses number of PC axes that optimizes cross-validation rate [1] | Produced higher cross-validation assignment rates than fixed PC or PLS [1] |
| Fixed PC Axes | Dimensionality Reduction | Uses a fixed number of PC axes (e.g., all with non-zero eigenvalues) [1] | Lower cross-validation rates due to potential overfitting [1] |
| Partial Least Squares (PLS) | Dimensionality Reduction | Finds axes with greatest covariation with classification variables [1] | Lower cross-validation rates than variable PC axes method [1] |
| Supervised Machine Learning | Classification | Uses classifiers like LDA on aligned coordinates [2] | More accurate than PCA for classification and detecting new taxa [5] |
| Computer Vision (DCNN) | Classification | Deep Convolutional Neural Networks on images [3] | 81% accuracy for tooth pit classification [3] |
| Computer Vision (FSL) | Classification | Few-Shot Learning models on images [3] | 79.52% accuracy for tooth pit classification [3] |

The approach to dimensionality reduction preceding CVA is a more significant factor for cross-validation performance than the choice of outline method. An approach using a variable number of principal component (PC) axes, which selects the number of PCs that maximizes the cross-validation assignment rate, outperformed both the standard fixed-number approach and a Partial Least Squares (PLS) method [1]. Using a fixed number of PC axes (often all axes with non-zero eigenvalues) can lead to high resubstitution rates but substantially lower cross-validation rates due to overfitting, where discriminant axes become too tailored to the specific sample [1].
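The variable-PC-axes idea can be sketched as a simple search: compute PC scores once, then keep the number of leading axes that gives the highest leave-one-out assignment rate. The sketch below is an illustration under stated assumptions, not the procedure of [1]: it uses NumPy, a pooled-covariance linear discriminant rule as a stand-in for CVA, and simulated data. The function names (`pc_scores`, `lda_predict`, `loo_rate`, `best_number_of_pcs`) are hypothetical.

```python
import numpy as np

def pc_scores(X):
    """PC scores of column-centered data, ordered by decreasing variance."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return U * s

def lda_predict(X_train, y_train, x_new):
    """Assign x_new to the group with the smallest Mahalanobis distance."""
    labels = np.unique(y_train)
    means = np.array([X_train[y_train == g].mean(axis=0) for g in labels])
    resid = X_train - means[np.searchsorted(labels, y_train)]
    pooled = resid.T @ resid / (len(y_train) - len(labels))
    pooled_inv = np.linalg.pinv(pooled)  # pseudo-inverse tolerates singular matrices
    diffs = means - x_new
    d2 = np.einsum("ij,jk,ik->i", diffs, pooled_inv, diffs)
    return labels[np.argmin(d2)]

def loo_rate(scores, y):
    """Leave-one-out assignment rate for a given set of PC scores."""
    n = len(y)
    hits = 0
    for i in range(n):
        keep = np.arange(n) != i
        hits += int(lda_predict(scores[keep], y[keep], scores[i]) == y[i])
    return hits / n

def best_number_of_pcs(X, y):
    """Try 1..max_k leading PCs; return the k with the best LOO rate."""
    scores = pc_scores(X)
    max_k = min(scores.shape[1], len(y) - 1)
    rates = {k: loo_rate(scores[:, :k], y) for k in range(1, max_k + 1)}
    best_k = max(rates, key=lambda k: (rates[k], -k))  # fewest axes wins ties
    return best_k, rates

# Simulated data (assumption): 30 specimens, 40 shape variables,
# with a group difference confined to the first three variables.
rng = np.random.default_rng(0)
y = np.array([0] * 15 + [1] * 15)
X = rng.normal(size=(30, 40))
X[y == 1, :3] += 4.0

best_k, rates = best_number_of_pcs(X, y)
print("best k:", best_k, "LOO rate:", rates[best_k], "rate with all axes:", rates[max(rates)])
```

One caveat on this design: because the number of axes is chosen by maximizing the cross-validation rate, the reported rate at the chosen `k` is itself somewhat optimistic. An independent test set or a nested cross-validation loop would give a cleaner estimate.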

Emerging evidence challenges the standard PCA-based workflow. A benchmark study on papionin crania found that PCA outcomes are "artefacts of the input data" and are "neither reliable, robust, nor reproducible," while supervised machine learning classifiers provided more accurate classification [5]. Similarly, in a challenging domain like carnivore tooth mark identification, Computer Vision methods like Deep Convolutional Neural Networks (DCNN) and Few-Shot Learning (FSL) models significantly outperformed traditional GM, achieving accuracies of 81% and 79.52%, respectively [3]. This indicates a potential paradigm shift towards machine learning for complex morphometric classification tasks.

Detailed Experimental Protocols and Workflows

Standard GM Workflow with Cross-Validation

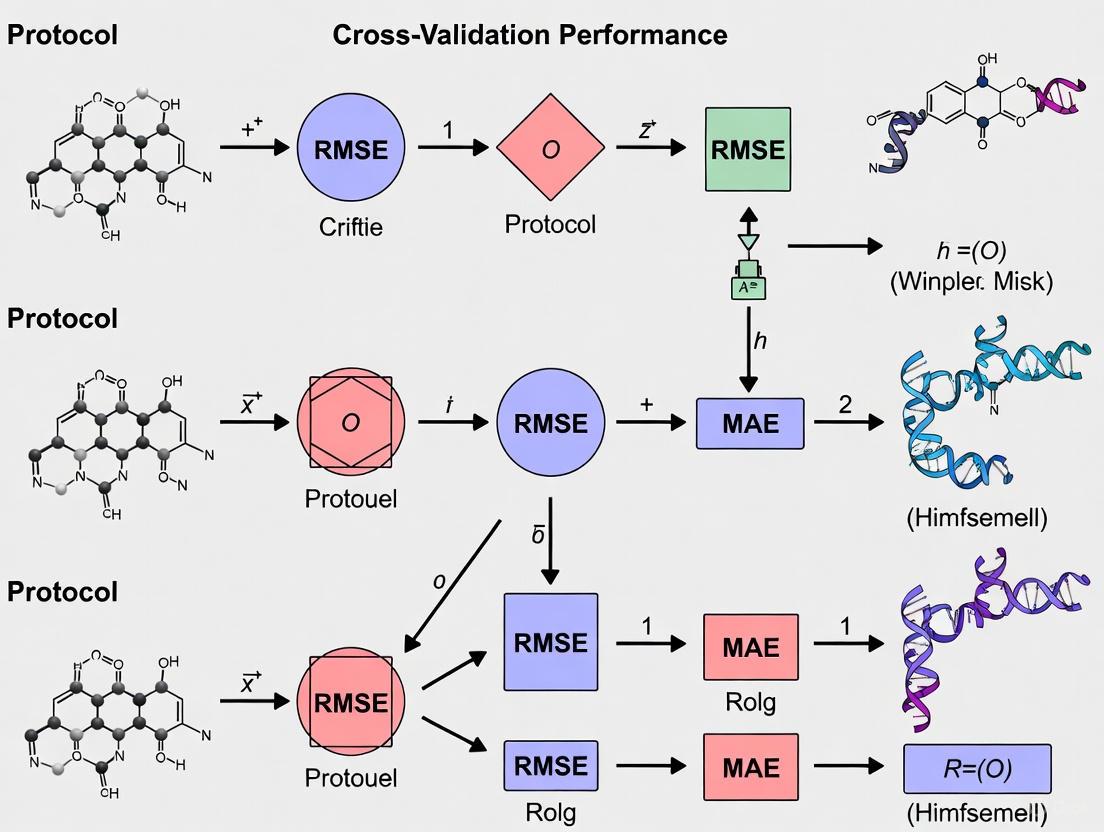

The following diagram illustrates the standard geometric morphometric workflow integrated with cross-validation, as applied in studies comparing methodological performance [1] [4] [2].

The standard workflow begins with Generalized Procrustes Analysis (GPA), which superimposes landmark configurations by translating, rescaling, and rotating them to minimize the sum of squared distances between corresponding landmarks, thus eliminating non-shape variations [4]. The resulting Procrustes coordinates are then subjected to dimensionality reduction, typically via Principal Component Analysis (PCA), to address the high dimensionality of the data [1] [5]. The reduced data serves as input for a classification model like Canonical Variates Analysis (CVA) or Linear Discriminant Analysis (LDA). The critical cross-validation step, often leave-one-out, involves iteratively refitting the model while holding out one specimen to test classification accuracy, providing a robust performance estimate [1] [2].
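The superimposition step described above can be sketched for 2D landmarks by storing each landmark as a complex number, which reduces the optimal rotation to a single complex multiplication. This is a minimal illustration under stated assumptions: it performs partial Procrustes alignment (unit centroid size, rotation only, no reflection), and the function names and the toy triangle are hypothetical rather than taken from any cited software.

```python
import cmath

def center_and_scale(z):
    """Remove location and size: center on the centroid, divide by centroid size."""
    centroid = sum(z) / len(z)
    centered = [p - centroid for p in z]
    size = sum(abs(p) ** 2 for p in centered) ** 0.5
    return [p / size for p in centered]

def rotate_onto(z, ref):
    """Rotate z to minimize the summed squared distance to ref."""
    s = sum(r * p.conjugate() for r, p in zip(ref, z))
    return [(s / abs(s)) * p for p in z]

def gpa(configs, max_iter=20, tol=1e-12):
    """Iteratively align all configurations to their evolving mean shape."""
    shapes = [center_and_scale(z) for z in configs]
    ref = shapes[0]
    for _ in range(max_iter):
        shapes = [rotate_onto(z, ref) for z in shapes]
        mean = [sum(pts) / len(shapes) for pts in zip(*shapes)]
        new_ref = center_and_scale(mean)
        change = sum(abs(a - b) ** 2 for a, b in zip(new_ref, ref))
        ref = new_ref
        if change < tol:
            break
    return shapes, ref

# Toy data: the same triangle, translated, rotated, and rescaled.
base = [0j, 1 + 0j, 0.3 + 0.8j]
configs = [
    base,
    [cmath.rect(2.5, 0.7) * p + (4 - 1j) for p in base],
    [cmath.rect(0.4, -2.0) * p + (-3 + 6j) for p in base],
]

aligned, consensus = gpa(configs)
spread = max(abs(p - q) for shape in aligned for p, q in zip(shape, consensus))
print("largest landmark deviation from consensus:", spread)
```

Since the three configurations differ only in position, orientation, and size, they collapse onto a single shape after alignment, and the printed deviation is at the level of floating-point rounding.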

Protocol for Out-of-Sample Classification

A significant challenge in applied morphometrics is classifying new individuals not included in the original sample. A workflow derived from nutritional assessment research addresses this [2].

This protocol requires selecting a template configuration from the training sample to serve as a target for registering the raw coordinates of a new individual [2]. This registration step is crucial for placing the new specimen into the same shape space as the training data, enabling the application of a pre-derived classification rule. The choice of template—such as the mean shape of the sample or a representative specimen—can influence classification performance and must be carefully considered [2]. This workflow is essential for real-world applications like the Severe Acute Malnutrition (SAM) Photo Diagnosis App, which classifies children's nutritional status from arm shape images without including them in the original model training [2].
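The registration step can be sketched as follows: the raw coordinates of a new individual are centered, scaled, and rotated onto a fixed template, and a previously derived rule is then applied in that shared shape space. This is only a sketch under stated assumptions. It uses 2D landmarks stored as complex numbers, a nearest-group-mean rule in place of the classifier used in [2], and invented template and group-mean coordinates.

```python
import cmath

def center_and_scale(z):
    """Center on the centroid and scale to unit centroid size."""
    centroid = sum(z) / len(z)
    centered = [p - centroid for p in z]
    size = sum(abs(p) ** 2 for p in centered) ** 0.5
    return [p / size for p in centered]

def rotate_onto(z, ref):
    """Rotate z to minimize the summed squared distance to ref."""
    s = sum(r * p.conjugate() for r, p in zip(ref, z))
    return [(s / abs(s)) * p for p in z]

def register(raw, template):
    """Place raw landmark coordinates into the template's shape space."""
    return rotate_onto(center_and_scale(raw), template)

def shape_distance(a, b):
    return sum(abs(p - q) ** 2 for p, q in zip(a, b)) ** 0.5

# Hypothetical training summary: a template and two group mean shapes,
# all expressed in the same registered space.
template = center_and_scale([0j, 2 + 0j, 2.3 + 1j, 0.3 + 1j])
group_means = {
    "A": register([0j, 2 + 0j, 2 + 1j, 1j], template),
    "B": register([0j, 2 + 0j, 2.6 + 1j, 0.6 + 1j], template),
}

def classify(raw):
    """Register a new specimen to the template, then apply the stored rule."""
    shape = register(raw, template)
    return min(group_means, key=lambda g: shape_distance(shape, group_means[g]))

# A new individual, digitized at a different position, angle, and scale.
new_raw = [cmath.rect(3.0, 1.1) * p + (5 - 2j)
           for p in [0j, 2 + 0j, 2.55 + 1j, 0.55 + 1j]]
label = classify(new_raw)
print("assigned group:", label)
```

The training sample is never re-aligned when a new individual arrives; only the new specimen is moved. That is why the choice of template matters: a different template changes where every new specimen lands relative to the stored rule.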

Research Reagent Solutions: Essential Materials and Tools

Table 3: Key Software and Tools for Geometric Morphometric Analysis

| Tool Name | Type | Primary Function in GM Workflow | Application Example |
|---|---|---|---|
| MorphoJ | Software | Statistical analysis and visualization of shape data [4] | Malocclusion classification from cephalograms [4] |
| tpsDig2 / tpsUtil | Software | Digitizing landmarks and managing landmark data files [6] | Acquiring 2D coordinates from specimen images [6] |
| geomorph | R Package | GM analysis including Procrustes ANOVA and phylogenetic comparisons [7] | Complex statistical modeling of shape data [7] |
| Momocs | R Package | Outline analysis, including Elliptical Fourier Analysis [7] | Analyzing closed outlines of structures [7] |
| morphospace | R Package | Building and visualizing ordinations of shape data [7] | Creating publication-ready morphospace plots [7] |
| MORPHIX | Python Package | Supervised machine learning classification of landmark data [5] | Alternative to PCA-based classification [5] |

The analytical tools listed above form the backbone of modern geometric morphometric research. MorphoJ is a widely used standalone application for performing essential GM operations, including Generalized Procrustes Analysis, Principal Component Analysis, and Discriminant Function Analysis with cross-validation [4]. The tps software suite, particularly tpsDig2 and tpsUtil, is fundamental for the initial stages of data acquisition and management, allowing researchers to digitize landmarks and organize data files [6].

The R statistical environment hosts several powerful packages that extend analytical capabilities. The geomorph package provides tools for complex analyses, such as Procrustes ANOVA, and for integrating phylogenetic information [7]. Momocs is specialized for handling outline data through methods like Elliptical Fourier Analysis [7]. The newer morphospace package streamlines the creation and visualization of ordinations, enhancing the biological interpretation of results [7]. For researchers seeking alternatives to traditional PCA-based classification, MORPHIX is a Python package that implements supervised machine learning classifiers for landmark data, reportedly offering higher accuracy [5].

The cross-validation performance of geometric morphometric protocols is influenced by multiple factors, with the choice of dimensionality reduction technique and classifier often mattering more than the specific type of outline method. Based on the synthesized experimental data:

- For traditional GM workflows, a variable PC axes approach to dimensionality reduction is recommended over fixed PC axes or PLS, as it directly optimizes cross-validation performance [1].

- When classification accuracy is the primary goal, supervised machine learning classifiers (as implemented in MORPHIX) and computer vision approaches should be considered, as they have demonstrated superior performance compared to standard PCA-based methods in several benchmark studies [3] [5].

- Researchers must be cautious of overfitting when the number of variables (landmarks, semi-landmarks) is high relative to the sample size. Cross-validation is non-negotiable for obtaining realistic performance estimates [1] [2].

- For applied systems that classify new individuals, a robust protocol for out-of-sample classification must be implemented, carefully considering the selection of an appropriate template for registration [2].

Future research should continue to bridge traditional morphometric methods with modern machine learning, validate protocols on diverse datasets, and develop standardized workflows for out-of-sample prediction to enhance the reliability and applicability of geometric morphometrics.

Geometric morphometrics (GM) has become a foundational tool for quantifying biological shape across diverse scientific fields, from paleontology to drug development. The standard analytical protocol in GM consistently relies on a two-step process: Generalized Procrustes Analysis (GPA) for shape alignment, followed by Principal Component Analysis (PCA) for dimensionality reduction and visualization of shape variation [8] [9]. This combination is considered the cornerstone of modern shape analysis.

However, within the context of broader research on the cross-validation performance of different geometric morphometric protocols, critical questions arise: How reliable and robust are the conclusions drawn from this standard GPA-PCA pipeline? Can researchers confidently use this protocol for taxonomic classification, clinical prediction, or evolutionary inference? Recent studies have begun to systematically evaluate this workflow, testing its limits and comparing its performance against emerging methodologies, including various machine learning (ML) classifiers [8] [10]. This guide provides an objective comparison of the GPA-PCA protocol's performance against alternative approaches, supported by experimental data.

The Standard GPA-PCA Workflow and Its Applications

The conventional geometric morphometric pipeline involves a series of structured steps to transform raw coordinate data into interpretable shape variables.

Core Procedural Steps

- Landmarking: Anatomically homologous points are digitized on biological structures. Studies use Type I, II, and III landmarks, often supplemented with semi-landmarks to capture the geometry of curves and surfaces [8] [11]. For instance, a study on killer whales used 6 landmarks on aerial images to assess body shape related to reproductive status [12].

- Generalized Procrustes Analysis (GPA): This step removes non-shape variations by superimposing landmark configurations. It uses least-squares estimates to optimally translate, rotate, and scale all specimens to a common coordinate system, effectively isolating pure shape information [9] [10].

- Principal Component Analysis (PCA): The aligned Procrustes coordinates are projected onto a new set of uncorrelated variables—the Principal Components (PCs). These PCs describe the major axes of shape variation within the dataset, typically visualized using 2D or 3D scatterplots [8].

Diverse Scientific Applications

The GPA-PCA pipeline has been successfully applied across numerous domains, demonstrating its utility as a versatile tool for shape-based classification and hypothesis testing.

Table 1: Applications of the Standard GPA-PCA Protocol in Research

| Field of Study | Biological Structure | Research Objective | Key Finding |
|---|---|---|---|
| Anesthesiology [10] | Human Face (3D Scan) | Predict Difficult Mask Ventilation (DMV) | Significant morphological difference in the mandibular region identified between DMV and easy mask ventilation groups. |
| Paleontology [13] | Fossil Shark Teeth | Support Taxonomic Identification | Geometric morphometrics validated qualitative taxonomic separation and captured more morphological information than traditional morphometrics. |
| Ecology [12] | Killer Whale Body | Detect Reproductive Status from Aerial Images | Significant separation of body shapes between most reproductive statuses (e.g., non-pregnant vs. late-stage pregnant). |
| Personalized Medicine [11] | Human Nasal Cavity | Classify Olfactory Accessibility for Drug Delivery | Identified three distinct morphological clusters of the nasal cavity, influencing accessibility to the olfactory region. |
| Taxonomy [9] | Shrew Crania | Classify Three Shrew Species | Functional Data GM (FDGM) combined with PCA and LDA outperformed classical GM in species classification. |

Reliability Assessment: A Critical Evaluation

A growing body of literature critically examines the reliability of the standard GPA-PCA protocol, often through direct comparison with other statistical and machine learning methods.

Performance in Classification and Prediction

Comparative studies consistently reveal that while the GPA-PCA pipeline is a powerful exploratory tool, its performance in classification tasks can be surpassed by other methods.

Table 2: Comparative Performance of GPA-PCA vs. Alternative Methods

| Study Context | Comparison | Performance Outcome | Reference |
|---|---|---|---|
| Difficult Mask Ventilation Prediction | PCA-based vs. 10 Machine Learning models on 3D facial scans. | The best ML model (Logistic Regression) achieved an AUC of 0.825, outperforming the traditional DIFFMASK score (AUC 0.785). PCA was part of the feature extraction, but ML improved classification. | [10] |
| Shrew Species Classification | Classical GM (PCA+LDA) vs. Functional Data GM (FDGM) with ML. | FDGM combined with machine learning (e.g., SVM, Random Forest) demonstrated better classification accuracy for shrew species than classical GM. | [9] |
| Papionin Crania Classification | Standard PCA vs. Supervised Machine Learning classifiers. | Supervised ML classifiers were found to be more accurate than PCA for both classification and detecting new taxa. | [8] |
| Nasal Cavity Clustering | PCA for identifying morphological clusters. | PCA successfully identified three distinct morphological clusters of the nasal cavity, demonstrating its continued utility for uncovering latent group structures. | [11] |

Identified Limitations and Criticisms of PCA

The central role of PCA in GM has recently been challenged. A compelling critique argues that PCA outcomes can be "artefacts of the input data" and are neither reliable, robust, nor reproducible as often assumed by researchers [8]. The main criticisms include:

- Subjectivity in Interpretation: Biological conclusions about relatedness, evolution, and taxonomy are often drawn from the visual clustering of samples on PCA scatterplots (e.g., PC1 vs. PC2). This interpretation is highly subjective, and different PC combinations can yield conflicting results, leading to questionable taxonomic decisions [8].

- Statistical Artefacts, Not Biological Reality: Principal Components are statistical constructs that maximize variance in the data, but this variance is not necessarily driven by biologically meaningful factors like population structure or sex. The assumption that proximity on a PCA plot proves relatedness is not statistically guaranteed [8].

- Irreproducibility: The aforementioned study found that PCA results were not reproducible in the way the scientific community assumes, raising concerns about the validity of PCA-based findings in an estimated 18,400 to 35,200 physical anthropology studies [8].

Figure: The Standard GM Workflow and Its Critiqued Pathway. The conventional path (red) from PCA to subjective interpretation is increasingly challenged. A more robust alternative (green) uses Procrustes coordinates as direct input to supervised machine learning models for objective classification.

Detailed Experimental Protocols from Key Studies

The difficult mask ventilation (DMV) study [10] offers a robust protocol for clinical prediction, integrating GPA with machine learning.

- Data Acquisition: 3D facial scans were collected from 669 patients using a FaceGo pro scanner (accuracy 0.1 mm). Patients maintained a neutral expression with heads in a natural position.

- Landmarking and Registration: A reference mesh (9,578 vertices) was created. All individual facial scans were non-rigidly registered to this template using MeshMonk toolbox, ensuring dense, corresponding landmarks across all subjects.

- GPA and Data Reduction: GPA was applied to the 9,578 landmarks to remove size, location, and orientation. The resulting Procrustes coordinates were used for subsequent analysis.

- Model Comparison: The Procrustes-aligned coordinate data were used to train ten different machine learning models, including Logistic Regression (LR), Support Vector Machines, and Random Forests. Model performance for predicting DMV was compared against a traditional clinical score (DIFFMASK).

- Key Result: The Logistic Regression model performed best, achieving an AUC of 0.825, which was higher than the DIFFMASK score (AUC 0.785). This demonstrates that GM with ML can surpass traditional clinical assessment.
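The AUC values compared in the Key Result can be read as the probability that a randomly chosen positive case (here, a DMV patient) receives a higher predicted score than a randomly chosen negative case. The sketch below computes that quantity directly from pairwise comparisons. The scores and labels are invented for illustration and are not data from [10].

```python
def auc(scores, labels):
    """Area under the ROC curve via pairwise comparison.

    Counts the share of (positive, negative) pairs in which the positive
    case has the higher score; ties count as half a win.
    """
    pos = [s for s, lab in zip(scores, labels) if lab == 1]
    neg = [s for s, lab in zip(scores, labels) if lab == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical predicted probabilities for six patients (1 = DMV, 0 = easy).
scores = [0.9, 0.8, 0.35, 0.6, 0.4, 0.2]
labels = [1, 1, 1, 0, 0, 0]

value = auc(scores, labels)
print(f"AUC = {value:.3f}")
```

In this toy example seven of the nine positive-negative pairs are ranked correctly, so the AUC is 7/9. A value of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation.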

The papionin crania study [8] designed a methodological test to evaluate PCA's reliability using benchmark data.

- Benchmark Data: The researchers used a known dataset of papionin (Old World monkeys) crania from five genera.

- Tool Development: They developed MORPHIX, a Python package for processing superimposed landmark data, which includes classifier and outlier detection methods.

- Comparative Analysis: The standard GPA-PCA approach was applied to the benchmark data. Its performance in classification and novelty detection was then compared against various supervised machine learning classifiers.

- Key Result: The study concluded that PCA results were not robust or reproducible and that supervised machine learning classifiers provided more accurate classification and better detection of new taxa. This challenges the central role of PCA in thousands of published studies.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of a geometric morphometrics study, especially one focused on cross-validation, requires a suite of specialized software and methodological tools.

Table 3: Key Research Reagents and Solutions for Geometric Morphometrics

| Tool Name | Type/Function | Brief Description of Role in Protocol |
|---|---|---|
| TPSdig [13] | Landmark Digitization Software | Used to collect two-dimensional landmark coordinates from digital images. |
| MeshMonk [10] | 3D Surface Registration Toolbox | An open-source toolbox for non-rigid, dense registration of 3D facial surfaces to a common template, generating thousands of corresponding landmarks. |
| Viewbox [11] | Landmark Digitization & Analysis | Software used to digitize both fixed landmarks and sliding semi-landmarks on 3D models. |
| MORPHIX [8] | Python Package for GM | A custom package for processing landmark data, featuring classifier and outlier detection methods as an alternative to standard PCA. |
| geomorph & FactoMineR [11] | R Packages for Statistical Analysis | Standard R packages for performing GPA, PCA, and other multivariate statistical analyses on landmark data. |
| Generalized Procrustes Analysis (GPA) | Core Statistical Method | The fundamental algorithm for aligning landmark configurations by removing differences in position, rotation, and scale. |
| Thin Plate Spline (TPS) [11] | Geometric Interpolation Function | Used to project semi-landmarks from a template onto individual specimens, ensuring homology across samples. |

The evidence from current research presents a nuanced view of the standard GPA-PCA protocol in geometric morphometrics. Generalized Procrustes Analysis remains a robust and reliable foundation for aligning shapes and isolating shape variation from other confounding variables. Its utility is not in question.

The primary subject of debate is the subsequent use of Principal Component Analysis. While PCA is an excellent tool for unsupervised exploration and visualization of the major trends in shape variation, its reliability for definitive taxonomic classification and phylogenetic inference is seriously challenged. The studies reviewed here report that supervised machine learning models outperform PCA-based analyses in predictive accuracy and classification tasks [8] [10].

Therefore, the choice of protocol should be guided by the research objective. For exploratory shape analysis and hypothesis generation, the standard GPA-PCA pipeline is sufficient. However, for classification, prediction, or whenever robust, cross-validated conclusions are required, the evidence strongly supports a shift towards a GPA-ML pipeline, where Procrustes-aligned coordinates are fed directly into supervised machine learning algorithms. This combined approach leverages the strengths of both worlds, ensuring rigorous statistical validation while maintaining a firm grounding in biological shape.

The accurate quantification of biological shape through geometric morphometrics is foundational to numerous fields, including ecology, paleontology, and biomedical research. These analyses rely on the precise placement of landmarks—discrete, homologous anatomical points—to capture form in two or three dimensions [14]. The reliability of downstream statistical interpretations, from taxonomic classifications to evolutionary inferences, is fundamentally constrained by the initial landmark data. Consequently, understanding how different landmark types, configurations, and data acquisition protocols influence measurement error is crucial for scientific reproducibility [14] [15].

Reproducibility, defined as the closeness of agreement between independent results obtained under different conditions (e.g., different operators or equipment), is a cornerstone of the scientific method [16]. In geometric morphometrics, this is threatened by various sources of error introduced during data collection, which can be substantial enough to explain over 30% of the total variation among datasets [14] [17]. This article provides a comparative guide to the reproducibility of different geometric morphometric protocols, synthesizing experimental data on error sources and their impacts on analytical outcomes. By framing this within the context of cross-validation performance, we aim to equip researchers with the evidence needed to design more robust and replicable morphometric studies.

Measurement error in geometric morphometrics is not a single entity but arises from multiple, distinct phases of data acquisition. A comprehensive understanding of these sources is the first step in mitigating their impact.

- Imaging Device (Instrumental Error): The choice and configuration of imaging equipment, such as different camera lenses or scanners, can generate dissimilar morphological reconstructions. This includes lens distortion effects, which vary based on lens curvature and the position of the specimen within the camera field [14].

- Specimen Presentation (Methodological Error): In 2D analyses, projecting a 3D object onto a 2D plane inevitably introduces distortion. The projected location of a landmark can be displaced from its true position relative to other loci. This error is exacerbated when specimens are oriented dissimilarly, making biological variation difficult to distinguish from artificial variation caused by presentation angle [14] [17].

- Interobserver Error (Personal Error): Different individuals may position landmarks differently on the same specimen and locus. This variation is influenced by the observer's experience and the clarity of the landmark's definition [14] [16].

- Intraobserver Error (Personal Error): The same observer may place a landmark inconsistently across different specimens or digitizing sessions. This is affected by factors such as fatigue and the ease of visualizing the landmark locus [14].
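One common way to put a number on these error sources is to digitize each specimen more than once and ask what share of the total variation lies between replicates of the same specimen. The sketch below shows that partition of sums of squares in its simplest one-way form. It is an illustration only, with invented measurements. Published studies such as [14] use fuller designs (for example Procrustes ANOVA with several error terms), which this does not reproduce.

```python
def mean_vec(rows):
    """Mean vector of a list of equal-length rows."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def error_fraction(replicates):
    """Share of total sum of squares that lies within specimens.

    replicates: dict mapping specimen id -> list of replicate
    measurement vectors (e.g. flattened aligned landmark coordinates).
    """
    all_rows = [row for reps in replicates.values() for row in reps]
    grand_mean = mean_vec(all_rows)
    ss_total = sum(sq_dist(row, grand_mean) for row in all_rows)
    ss_within = sum(
        sq_dist(row, mean_vec(reps))
        for reps in replicates.values()
        for row in reps
    )
    return ss_within / ss_total

# Hypothetical data: two specimens, each digitized twice.
replicates = {
    "specimen_1": [[1.0, 0.0], [1.2, 0.0]],
    "specimen_2": [[3.0, 0.0], [2.8, 0.0]],
}

fraction = error_fraction(replicates)
print(f"replicate error share of total variation: {fraction:.1%}")
```

Here the replicates of each specimen differ far less than the specimens differ from each other, so the error share is small. A share approaching the levels reported above would mean that replicate noise is competing with the biological signal.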

Quantitative Impact on Statistical Results

The impact of these error sources is not merely theoretical; they directly affect the statistical fidelity of morphometric analyses. A landmark study on vole (Microtus) molars quantified how error influences Linear Discriminant Analysis (LDA), a common classification tool [14] [17].

Table 1: Impact of Measurement Error on Species Classification Accuracy

| Error Source | Key Finding on Classification | Experimental Context |
|---|---|---|
| Specimen Presentation | Greatest discrepancies in species classification results | Comparison of in-situ teeth vs. isolated/tilted teeth [14] [17] |
| Imaging Device | Impacts group membership predictions | Comparison of Nikon D70s vs. Dino-Lite digital microscope [17] |
| Interobserver Variation | Greatest discrepancies in landmark precision | Comparison between experienced and new observers [14] [17] |
| All Error Sources | No two landmark dataset replicates produced the same predicted group memberships for fossil specimens | Analysis of 31 fossil Microtus specimens [14] [17] |

These findings underscore a critical point: the cumulative effect of measurement error can lead to fundamentally different interpretations of the same biological data. For instance, the taxonomic affinity of fossil specimens may be assigned to different groups depending solely on which replicated dataset is used to train the classifier [17]. This has profound implications for replicating studies in paleontology, ecology, and systematics.

Comparative Analysis of Morphometric Protocols

The reproducibility of a morphometric analysis is significantly influenced by the overarching methodological approach, which ranges from fully manual landmarking to automated, landmark-free techniques.

Traditional vs. Geometric Morphometrics

A direct comparison of four morphometric methods in ichthyology quantified their repeatability (agreement under the same conditions) and reproducibility (agreement under different conditions) [16].

Table 2: Performance Comparison of Morphometric Methods

| Method | Key Characteristics | Repeatability & Reproducibility | Subjectivity (Measurer Effect) |

|---|---|---|---|

| Traditional (TRA) | Caliper-based linear measurements on preserved specimens | Lowest repeatability and reproducibility | Population-level separation was entirely masked by the measurer effect [16] |

| Truss-Network (TRU) | Distance between homologous points from digital images | Similar repeatability to Geometric Methods on Scales (GMS) | Significant measurer effect [16] |

| Geometric on Body (GMB) | Landmark coordinates from digital images of the body | Highest overall repeatability and reproducibility | Least burdened by measurer effect [16] |

| Geometric on Scales (GMS) | Landmark coordinates from digital images of scales | Similar repeatability to GMB, but lower reproducibility | Significant measurer effect; aggregation of different measurers' datasets not recommended [16] |

The study strongly recommended image-based geometric methods (GMB) over traditional caliper-based methods due to their superior repeatability, reproducibility, and reduced subjectivity. It also cautioned against aggregating datasets from different measurers, especially when using TRA and GMS methods [16].

The Emergence of Landmark-Free and AI-Based Approaches

Emerging automated methods aim to overcome the bottlenecks of manual landmarking, which is time-consuming and prone to observer bias [18].

- Landmark-Free Morphometrics: Techniques like Large Deformation Diffeomorphic Metric Mapping (LDDMM) quantify shape by calculating the deformation energy required to map a reference "atlas" shape onto each specimen in a dataset. A macroevolutionary study of 322 mammals found that although a landmark-free method (Deterministic Atlas Analysis, DAA) correlated strongly with manual landmarking, differences emerged, particularly for certain clades like Primates and Cetacea. The two methods produced comparable but not identical estimates of phylogenetic signal and evolutionary rates, highlighting that the choice of method can influence macroevolutionary inferences [18].

- AI-Based Landmark Detection: Artificial intelligence, particularly deep learning models, offers high-throughput, automated landmarking. In cephalometrics, AI has demonstrated superior reproducibility compared to manual tracing by an experienced orthodontist [19]. One study reported that AI achieved the lowest coefficient of variation (CV) values, with particular consistency for landmarks like Menton (Me) and Pogonion (Pog) [19]. This enhanced reproducibility is attributed to the elimination of intra- and interobserver variability. However, systematic biases can occur; for example, AI reconstructing 3D models from 2D photos showed a consistent downward shift of inferior landmarks [20].

Experimental Protocols for Error Assessment

Robust morphometric studies require protocols to quantify and control for measurement error. Below are detailed methodologies from key studies.

Protocol for Evaluating Data Acquisition Error

Objective: To quantify error from four sources (imaging device, specimen presentation, inter- and intraobserver variation) and its impact on classification statistics [17].

- Dataset Replication: Create multiple replicated datasets.

- Imaging Device: Photograph the same set of specimens (e.g., vole dentaries) using two different cameras (e.g., Nikon D70s and Dino-Lite microscope).

- Specimen Presentation: Photograph specimens a second time after intentionally tilting them along their axis to simulate non-standardized orientation.

- Inter/Intraobserver Error: Have multiple observers (e.g., an experienced and a new observer) digitize the same image sets twice, with at least a week between sessions.

- Landmark Digitization: Digitize all images using a standardized landmark protocol (e.g., 21 landmarks on the lower first molar) in software such as TpsDig.

- Data Preprocessing: Perform Generalized Procrustes Analysis (GPA) using software like the gpagen function in the R package geomorph to superimpose all landmark configurations, removing variation due to position, orientation, and scale.

- Statistical Classification: Run Linear Discriminant Analysis (LDA) on each of the nine Procrustes-aligned datasets using the lda function in R. Use leave-one-out cross-validation to determine the correct classification rate for specimens of known species.

- Error Impact Assessment: Append fossil specimens of unknown affinity to each dataset and use the LDA models to predict their group membership. Compare the predictions across all replicated datasets to see how error influences the classification of unknowns.
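The classification step of this protocol can be illustrated with a minimal Python sketch. It is not the cited study's code (which used R); the function names `lda_fit`, `lda_predict`, and `loocv_accuracy` are hypothetical, the data are synthetic stand-ins for Procrustes shape variables, and a pseudo-inverse is assumed as one simple way to cope with the rank deficiency of Procrustes coordinates.

```python
import numpy as np

def lda_fit(X, y):
    """Fit a linear discriminant rule with a pooled within-group covariance."""
    classes = np.unique(y)
    means = np.array([X[y == c].mean(axis=0) for c in classes])
    priors = np.array([(y == c).mean() for c in classes])
    # Center each specimen on its own group mean, then pool the scatter.
    centered = X - means[np.searchsorted(classes, y)]
    cov = centered.T @ centered / (len(X) - len(classes))
    # Pseudo-inverse: Procrustes coordinates are rank deficient, so a plain
    # inverse may fail on real shape data (assumption of this sketch).
    precision = np.linalg.pinv(cov)
    return classes, means, priors, precision

def lda_predict(model, X):
    """Assign each row of X to the group with the highest discriminant score."""
    classes, means, priors, precision = model
    linear = X @ precision @ means.T
    const = -0.5 * np.einsum("kp,pq,kq->k", means, precision, means)
    return classes[np.argmax(linear + const + np.log(priors), axis=1)]

def loocv_accuracy(X, y):
    """Leave-one-out cross-validation: refit without specimen i, then predict it."""
    n = len(X)
    hits = 0
    for i in range(n):
        train = np.arange(n) != i
        model = lda_fit(X[train], y[train])
        hits += int(lda_predict(model, X[i:i + 1])[0] == y[i])
    return hits / n

# Synthetic stand-in for shape variables (NOT data from the cited study).
rng = np.random.default_rng(0)
X = rng.normal(size=(60, 4))
y = np.repeat([0, 1], 30)
X[y == 1, 0] += 4.0  # shift group 1 along the first variable
acc = loocv_accuracy(X, y)
print(f"LOOCV correct classification rate: {acc:.2f}")
```

Running the same function on each replicated dataset, and then comparing the predicted labels for the unknown specimens, would mirror the final comparison step described above.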

Protocol for Assessing Individual Landmark Precision

Objective: To evaluate the precision of individual landmarks and avoid the "Pinocchio effect," where highly variable landmarks inflate overall error estimates [15].

- Repeated Digitization: A single observer digitizes the landmark configuration on a specimen multiple times (e.g., 10 times). The specimen must be held in a constant orientation throughout this process to isolate digitization error from presentation error.

- Calculate Centroid and Distances: For each landmark, calculate the centroid (mean x, y, z coordinates) of its repeated placements.

- Measure Precision: Compute the Euclidean distance between each individual placement of a landmark and its centroid.

- Analyze by Landmark: The average distance and standard deviation for each landmark represent its precision. Landmarks with consistently large distances from their centroid are considered less precise and may require redefinition or removal.
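The per-landmark calculation above can be sketched in a few lines of Python. This is an illustrative sketch only: the function name `landmark_precision`, the array layout, and the simulated digitization noise are assumptions, not part of the cited protocol.

```python
import numpy as np

def landmark_precision(reps):
    """Per-landmark precision from repeated digitizations of one specimen.

    reps has shape (n_repeats, n_landmarks, n_dims).
    Returns the mean and standard deviation of the Euclidean distance
    between each placement and that landmark's centroid.
    """
    centroids = reps.mean(axis=0)                      # (n_landmarks, n_dims)
    dists = np.linalg.norm(reps - centroids, axis=2)   # (n_repeats, n_landmarks)
    return dists.mean(axis=0), dists.std(axis=0, ddof=1)

# Simulated example: 10 repeats, 5 landmarks in 3D, with the last landmark
# deliberately digitized much less precisely than the others.
rng = np.random.default_rng(0)
true_positions = rng.normal(size=(5, 3))
noise_sd = np.array([0.01, 0.01, 0.01, 0.01, 0.20])
reps = true_positions + rng.normal(size=(10, 5, 3)) * noise_sd[None, :, None]

mean_dist, sd_dist = landmark_precision(reps)
for i, (m, s) in enumerate(zip(mean_dist, sd_dist)):
    print(f"landmark {i}: mean distance {m:.3f}, SD {s:.3f}")
```

Because each landmark is evaluated against its own centroid in the raw coordinate system, a single imprecise landmark does not spread its error onto the others, which is the concern behind the "Pinocchio effect".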

Experimental Workflows for Assessing Landmark Error

The Scientist's Toolkit: Essential Reagents and Software

A summary of key computational tools and their functions in geometric morphometrics is provided below.

Table 3: Key Software and Tools for Geometric Morphometrics

| Tool Name | Primary Function | Application Context |

|---|---|---|

| TpsDig / TpsUtil | Digitizing landmarks and managing project files | Standard software for collecting 2D landmark data from images [17] |

| Geomorph (R package) | Performing Generalized Procrustes Analysis (GPA) and subsequent statistical shape analysis | Core statistical toolkit for morphometrics in R [17] |

| Deformetrica | Performing landmark-free analysis using Large Deformation Diffeomorphic Metric Mapping (LDDMM) | Automated shape comparison without manual landmarking [18] |

| WebCeph | AI-assisted and manual cephalometric landmark identification | Commercial platform for orthodontic analysis; used in AI reproducibility studies [19] |

| RENOIR | Platform for robust and reproducible machine learning model training and testing | Ensures generalizability of AI/ML models in biomedical sciences [21] |

The reproducibility of geometric morphometric analyses is profoundly affected by the choices researchers make regarding landmark types, data acquisition protocols, and analytical methods. The evidence demonstrates that image-based geometric methods on the body (GMB) offer superior repeatability and reduced subjectivity compared to traditional caliper-based or scale-based geometric methods. Furthermore, emerging AI and landmark-free methods show great promise for enhancing throughput and consistency, though they require careful validation to identify and correct for potential systematic biases.

To maximize reproducibility, researchers should: standardize imaging equipment and specimen presentations whenever possible; use a single, experienced observer for landmarking or use automated methods; quantify and report measurement error as a routine part of their methodology; and be cautious when aggregating datasets collected under different conditions. By adopting these rigorous protocols, the morphometrics community can strengthen the foundation of shape-based inferences across biological and biomedical disciplines.

Geometric morphometrics (GM) is a foundational tool across evolutionary biology, palaeontology, and drug development for quantifying and analyzing shape variation. The standard analytical pipeline typically involves two core steps: Generalized Procrustes Analysis (GPA) to superimpose landmark coordinates by removing shape-independent variations, followed by Principal Component Analysis (PCA) to project the high-dimensional data onto a lower-dimensional space of uncorrelated variables [8]. This PCA-based approach is deeply embedded in morphological studies, with an estimated 18,400 to 35,200 physical anthropology studies alone relying on its outcomes [8].
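As a rough illustration of the second step of this pipeline, the sketch below computes a PCA of already-superimposed coordinates through a singular value decomposition. It assumes GPA has been carried out beforehand; the function name `shape_pca` and the random input array are placeholders, not real shape data.

```python
import numpy as np

def shape_pca(aligned):
    """PCA of Procrustes-aligned coordinates via SVD.

    aligned has shape (n_specimens, n_landmarks, n_dims).
    Returns PC scores, PC axes (rows), and the proportion of variance per PC.
    """
    X = aligned.reshape(len(aligned), -1)   # one row of coordinates per specimen
    Xc = X - X.mean(axis=0)                 # center on the mean shape
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U * s                          # uncorrelated PC scores
    explained = s**2 / np.sum(s**2)         # variance proportions, descending
    return scores, Vt, explained

# Placeholder input: random numbers standing in for aligned landmark data.
rng = np.random.default_rng(0)
aligned = rng.normal(size=(20, 5, 2))
scores, axes, explained = shape_pca(aligned)
print("variance explained by PC1 and PC2:", explained[:2].round(3))
```

The scatterplots discussed in the following sections are typically drawn from the first two or three columns of `scores`, which is why the proportion of variance they carry matters for interpretation.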

However, a growing body of critical research challenges the reliability and robustness of PCA for drawing biological conclusions. This article provides a comparative guide evaluating PCA's performance against emerging alternative statistical and machine learning protocols, with a specific focus on cross-validation performance within geometric morphometric research. We synthesize current evidence to help researchers make informed methodological choices.

Theoretical and Methodological Biases of PCA

The application of PCA in morphometrics introduces several inherent biases that can compromise the validity of research findings.

Input Data Artefacts: PCA outcomes are highly sensitive to input data composition. Results are not stable, reliable, or reproducible in the way often assumed by field practitioners [8]. The patterns observed in PCA scatterplots (e.g., clustering, proximity) may represent statistical artefacts rather than genuine biological relationships.

Subjective Interpretation: Phenetic, evolutionary, and ontogenetic conclusions are frequently drawn from visual inspection of the first two or three principal components, despite these components being "statistical manifestations agnostic to the data" [8]. Researchers may selectively report PC combinations that support their hypotheses, as witnessed in controversial hominin taxonomy cases like Homo Nesher Ramla, where different PC plots produced conflicting phylogenetic results [8].

Dimensionality Reduction Limitations: While PCA effectively reduces dimensionality, it may oversimplify complex morphological spaces by focusing on global structure at the expense of locally relevant variations for classification tasks [22].

Table 1: Documented Methodological Biases of PCA in Morphometric Studies

| Bias Category | Description | Impact on Research |

|---|---|---|

| Input Sensitivity | Outcomes are artefacts of specific input data composition [8] | Compromised reliability and reproducibility of studies |

| Subjective Interpretation | Biological meaning is assigned to statistically-derived components [8] | Potential for confirmation bias in evolutionary hypotheses |

| Variance Overemphasis | Prioritizes directions of maximum variance, which may not be biologically relevant [23] | Possible misinterpretation of morphological patterns |

| Linearity Assumption | Assumes linear relationships in shape data [23] | Poor capture of complex morphological relationships |

Experimental Comparisons: PCA vs. Alternative Methods

Performance in Taxonomic Identification

A rigorous evaluation of statistical models for establishing morphometric taxonomic identifications compared PCA with Linear Discriminant Analysis (LDA) and Random Forest (RF) using cranial specimens of modern Dipodomys spp. and Leporidae species [22]. The results demonstrated that Random Forest consistently outperformed PCA across all test scenarios.

Table 2: Classification Error Rates (%) by Statistical Method and Dataset [22]

| Condition | Dataset | PCA | LDA | Random Forest |

|---|---|---|---|---|

| Complete Crania | Leporidae | 18.4 | 4.1 | 3.1 |

| Complete Crania | Dipodomys spp. | 42.9 | 16.3 | 16.3 |

| Cranial Fragments | Leporidae | 26.5 | 8.2 | 6.1 |

| Cranial Fragments | Dipodomys spp. | 46.9 | 18.4 | 16.3 |

The study concluded that "PCA should not be used to predict species identifications using morphometric data" due to its significantly higher error rates [22]. Random Forest not only achieved higher accuracy but also handled missing data more effectively through imputation.

Performance in Novel Taxon Detection

Beyond classification of known taxa, the detection of novel or outlier specimens represents a critical challenge in morphological research. A study developing MORPHIX, a Python package for processing landmark data, found that supervised machine learning classifiers were more accurate than PCA both for standard classification tasks and for detecting new taxa [8]. This capability is particularly valuable for identifying exceptional specimens that may represent new species or previously unknown morphological variants.

Limitations in Capturing Tooth Mark Morphology

In taphonomy research, a methodological comparison of techniques for identifying carnivore agency found that PCA-based geometric morphometric approaches showed less than 40% discriminant power when analyzing bi-dimensional tooth marks [3]. The study noted that previous claims of high accuracy using these methods were "heuristically incomplete" because they had only considered a small range of allometrically-conditioned tooth pits while excluding widely represented non-oval forms [3].

In contrast, computer vision approaches using Deep Convolutional Neural Networks classified experimental tooth pits with approximately 80% accuracy, demonstrating significantly superior performance for this specific morphological application [3].

Experimental Protocols for Method Comparison

Standard Geometric Morphometrics Workflow

The foundational protocol for landmark-based geometric morphometrics involves sequential steps that are consistent across most studies, whether using PCA or alternative multivariate methods.

Diagram 1: Standard workflow for geometric morphometric studies. The multivariate analysis stage is where PCA and alternative methods diverge.

Detailed Protocol: Killer Whale Reproduction Study

A comprehensive geometric morphometric study on killer whale reproductive stages provides an exemplary protocol for method comparison [12]:

- Landmark Configuration: Six landmarks were digitized on standardized aerial images of killer whales using software such as MorphoMetriX [12].

- Experimental Design: The study employed a balanced approach with multiple images per individual and tested the significance of Procrustes distances to validate landmark configurations.

- Validation Method: Discriminant Function Analysis (DFA) revealed significant shape differences between reproductive states (non-pregnant, early-stage pregnant, late-stage pregnant, lactating) with P-values ranging from <0.001 to 0.01 for pairwise comparisons [12].

- Cross-Validation: The protocol included testing for statistical differences in Procrustes distances based on the number of images per whale and number of landmarks per image to optimize the configuration.

This experimental design demonstrates how rigorous validation protocols can be implemented to ensure the reliability of morphometric analyses beyond standard PCA approaches.

Protocol for Out-of-Sample Classification

A critical challenge in applied morphometrics involves classifying new specimens not included in the original training set. A study on children's nutritional assessment from arm shapes developed a specialized protocol for this purpose [24]:

- Template Registration: Raw coordinates of new individuals are registered to a template configuration from the training sample.

- Procrustes Alignment: The registered coordinates undergo Procrustes analysis against the template.

- Classifier Application: Pre-trained classifiers (LDA, RF, or SVM) are applied to the aligned coordinates for nutritional status classification.

- Performance Validation: The method was tested with different template choices to evaluate impact on classification accuracy.

This approach addresses a significant limitation of standard PCA-based morphometrics, where classification rules derived from a sample cannot be directly applied to new individuals without repeating the entire alignment process [24].
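The registration step can be sketched as an ordinary Procrustes fit of one new configuration onto a fixed template. The sketch below is an assumption-laden illustration, not the cited study's implementation: the function name `register_to_template` is hypothetical, the template is random, and the new specimen is simply a rotated, rescaled, shifted copy of it.

```python
import numpy as np

def register_to_template(raw, template):
    """Ordinary Procrustes fit of one raw configuration (k x d) onto a template."""
    X = raw - raw.mean(axis=0)
    X = X / np.linalg.norm(X)              # scale to unit centroid size
    T = template - template.mean(axis=0)
    T = T / np.linalg.norm(T)
    # Optimal rotation from the SVD of the cross-product matrix.
    U, _, Vt = np.linalg.svd(X.T @ T)
    if np.linalg.det(U @ Vt) < 0:          # exclude reflections
        U[:, -1] *= -1
    return X @ (U @ Vt)

# Hypothetical template and a transformed copy acting as the "new individual".
rng = np.random.default_rng(0)
template = rng.normal(size=(8, 2))
angle = 0.7
rot = np.array([[np.cos(angle), -np.sin(angle)],
                [np.sin(angle),  np.cos(angle)]])
raw_new = 3.5 * template @ rot + np.array([10.0, -4.0])

registered = register_to_template(raw_new, template)
template_unit = template - template.mean(axis=0)
template_unit = template_unit / np.linalg.norm(template_unit)
print("max deviation from template:", np.abs(registered - template_unit).max())
```

The registered coordinates live in the same space as the template, so they could then be passed to whichever pre-trained classifier is in use without re-running the alignment on the whole training sample.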

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Software and Analytical Tools for Geometric Morphometrics

| Tool Name | Type | Primary Function | Application Context |

|---|---|---|---|

| MORPHIX [8] | Python Package | Processing landmark data with classifier and outlier detection | Evolutionary anthropology, novel taxon detection |

| TPSDig2 [25] [13] | Desktop Software | Landmark digitization on 2D images | Standardized landmark placement across studies |

| FaceDig [25] | AI Tool | Automated landmark placement on facial portraits | High-throughput facial morphometrics |

| MorphoJ [26] | Desktop Software | Comprehensive morphometric analysis | General-purpose shape analysis |

| XYOM [27] | Cloud Platform | Online morphometric analysis | Platform-independent collaborative research |

| R (geomorph) [24] | Statistical Package | GM analysis in statistical programming environment | Flexible, customizable analytical pipelines |

The cross-validation performance of different geometric morphometric protocols reveals significant limitations in PCA-based approaches compared to modern alternatives. The evidence indicates that PCA exhibits substantial biases that can lead to unreliable biological interpretations, particularly when used for taxonomic identification or phylogenetic inference.

Supervised machine learning methods, particularly Random Forest classifiers, demonstrate superior performance in multiple experimental contexts, offering higher classification accuracy and better handling of missing data [22]. These methods excel at capturing complex morphological patterns that may be overlooked by PCA's variance-maximizing approach.

For researchers engaged in geometric morphometrics, we recommend:

- Utilizing multiple analytical methods to validate findings, rather than relying exclusively on PCA

- Implementing rigorous cross-validation protocols using hold-out samples or leave-one-out approaches

- Considering supervised machine learning approaches for classification tasks, particularly when working with fragmentary or incomplete specimens

- Applying caution when interpreting PCA plots as direct evidence of biological relationships

Future methodological development should focus on integrating geometric morphometrics with robust machine learning frameworks and improving protocols for out-of-sample classification. As the field advances, the critical examination of analytical biases remains essential for generating reliable morphological insights across evolutionary biology, anthropology, and drug development research.

The Role of Sample Size and Power in Protocol Validation

In scientific research, particularly in fields employing advanced morphological analysis or machine learning, the principles of protocol validation are paramount. This process ensures that methodologies produce reliable, reproducible, and generalizable results. Two of the most critical factors influencing the success of validation are sample size and statistical power. Within the context of a broader thesis on the cross-validation performance of different geometric morphometric protocols, this article examines how sample size and power underpin protocol validation. We objectively compare the performance of different methodological approaches, using supporting experimental data to highlight the trade-offs and optimal strategies researchers must consider. The focus on geometric morphometrics serves as a powerful case study due to its high-dimensional data and reliance on robust validation, but the conclusions are applicable to a wide range of scientific domains, including drug development.

Theoretical Foundations: Sample Size, Power, and Cross-Validation

The Interplay of Key Concepts

At its core, protocol validation is the process of establishing that a specific methodological procedure is fit for its intended purpose. In data-driven sciences, this almost invariably involves using cross-validation (CV), a family of model validation techniques that assess how the results of a statistical analysis will generalize to an independent data set [28]. The goal is to flag problems like overfitting and provide insight into how a model will perform on unseen data.

The effectiveness of cross-validation is directly governed by two intertwined concepts:

- Sample Size (n): The number of observations or specimens available for analysis.

- Statistical Power: The probability that a test will correctly reject a false null hypothesis (i.e., detect an effect if one exists).

The relationship between them is simple yet profound: inadequate sample size leads to low statistical power. A study with low power not only risks missing true effects (Type II errors) but also produces effect sizes that are often inflated and unreliable [29]. This is especially critical in geometric morphometric studies, which analyze complex shape data and require sufficient samples to accurately estimate population mean shape and variance [30].

The Pitfalls of Small Sample Sizes in Validation

Small sample sizes create a cascade of problems that compromise protocol validation:

- Increased Error in Cross-Validation: With small samples, the estimate of a model's predictive accuracy becomes unstable and possesses large error bars. One study noted that cross-validation errors in measuring prediction accuracy can be as high as ±10% with sample sizes around 100 [31].

- Overfitting and Optimistic Bias: A model trained on a small dataset may learn the noise in the data rather than the underlying signal. While it may perform well on that specific training set, its performance will plummet on unseen data. Cross-validation with small samples struggles to detect this overfitting [32].

- Impact on Morphometric Analyses: In geometric morphometrics, reducing sample size directly impacts the accuracy of shape measurements. Studies have shown that smaller samples lead to biased estimates of mean shape and increased shape variance, making it difficult to detect true morphological differences between groups [30].
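To give a feel for the size of these error bars, the short sketch below uses the normal approximation to a binomial proportion. This is a simplification chosen for illustration, not the method of the cited study: it assumes independent test cases, whereas cross-validation folds share training data, so real uncertainty can be larger. The function name `accuracy_half_width` is hypothetical.

```python
import math

def accuracy_half_width(acc, n, z=1.96):
    """Approximate 95% half-width for an accuracy estimated on n test cases.

    Uses the normal approximation to the binomial and assumes the n
    predictions are independent (an assumption of this sketch).
    """
    return z * math.sqrt(acc * (1.0 - acc) / n)

for n in (30, 100, 1000):
    hw = accuracy_half_width(0.5, n)
    print(f"n = {n:4d}: accuracy 0.50 +/- {hw:.3f}")
```

For an accuracy near chance and n = 100, the approximation gives a half-width of roughly ten percentage points, which is in line with the figure quoted above.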

The following diagram illustrates the logical relationship between sample size, statistical power, and the outcomes of protocol validation.

Comparative Analysis of Methodological Protocols

The choice of analytical protocol and its interaction with sample size significantly influences the outcome of scientific studies. The table below summarizes key performance metrics for different methodological approaches as reported in the literature.

Table 1: Performance comparison of different methodological protocols under varying sample sizes

| Method / Protocol | Reported Accuracy / Performance | Key Sample Size Finding | Study Context |

|---|---|---|---|

| 2D Geometric Morphometrics (GMM) | Effective for species discrimination [30] | Sample size reduction significantly impacts mean shape & increases shape variance; n > 70 used for stable estimates [30] | Skull shape analysis in bat species |

| Computer Vision (Deep Learning) | 81% classification accuracy [3] | Outperformed 2D GMM which showed < 40% discriminant power in the same study [3] | Carnivore tooth mark identification |

| Machine Learning (SVM, NN, etc.) | Accuracy increases with sample size, plateauing after n ≈ 120 [29] | Small samples (n<120) show high variance in accuracy; overfitting exaggerates reported performance [29] | Arrhythmia dataset classification |

| Nested k-fold Cross-Validation | Highest statistical confidence and power [32] | Required sample size could be 50% lower than with single holdout method; reduces overestimation of accuracy [32] | General ML model validation |

Geometric Morphometrics vs. Computer Vision

A direct comparison in taphonomy highlights how protocol choice affects outcomes. A study aiming to identify carnivore agents from tooth marks found that while 2D Geometric Morphometrics (GMM) using outline analysis had limited discriminant power (<40%), a computer vision approach using Deep Convolutional Neural Networks (DCNN) achieved 81% accuracy [3]. This stark difference was attributed to the GMM's reliance on manual landmarking and outlines, which may not capture the full spectrum of shape complexity, especially with a constrained sample of "non-oval tooth pits." The computer vision protocol, designed to automatically learn relevant features from images, demonstrated superior performance with the same data, underscoring the importance of selecting a protocol with sufficient representational capacity for the task.

The Critical Role of Advanced Cross-Validation Protocols

The method of validation itself is a protocol that requires careful selection. A key finding from machine learning research is that the common practice of single holdout cross-validation (a single train-test split) leads to models with low statistical power and confidence, resulting in a significant overestimation of classification accuracy [32].

In contrast, nested k-fold cross-validation provides a more robust validation protocol. In this method, an outer loop performs k-fold cross-validation to estimate the generalization error, while an inner loop is used for model selection and hyperparameter tuning. This prevents data leakage and provides an unbiased estimate of model performance. The adoption of this more rigorous protocol is critical, as it can reduce the required sample size by up to 50% compared to the single holdout method to achieve the same level of confidence [32].
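The two-loop structure can be sketched with plain NumPy. Everything below is illustrative: a k-nearest-neighbour classifier stands in for whatever model is being validated, the number of neighbours is the only tuned hyperparameter, folds are not stratified, and the data are synthetic. The function names are hypothetical.

```python
import numpy as np

def knn_predict(X_train, y_train, X_test, k):
    """Majority vote among the k nearest training points (labels must be ints >= 0)."""
    d = np.linalg.norm(X_test[:, None, :] - X_train[None, :, :], axis=2)
    nearest = np.argsort(d, axis=1)[:, :k]
    return np.array([np.bincount(v).argmax() for v in y_train[nearest]])

def kfold_indices(n, n_folds, rng):
    """Random, non-stratified split of range(n) into n_folds index arrays."""
    return np.array_split(rng.permutation(n), n_folds)

def nested_cv(X, y, k_grid=(1, 3, 5, 7), outer=5, inner=4, seed=0):
    rng = np.random.default_rng(seed)
    outer_scores = []
    for test_idx in kfold_indices(len(X), outer, rng):
        train_idx = np.setdiff1d(np.arange(len(X)), test_idx)
        X_tr, y_tr = X[train_idx], y[train_idx]
        # Inner loop: pick the hyperparameter using the outer training data only.
        inner_folds = kfold_indices(len(X_tr), inner, rng)
        mean_acc = []
        for k in k_grid:
            accs = []
            for val_idx in inner_folds:
                fit_idx = np.setdiff1d(np.arange(len(X_tr)), val_idx)
                pred = knn_predict(X_tr[fit_idx], y_tr[fit_idx], X_tr[val_idx], k)
                accs.append(np.mean(pred == y_tr[val_idx]))
            mean_acc.append(np.mean(accs))
        best_k = k_grid[int(np.argmax(mean_acc))]
        # Outer loop: score the tuned model on data never seen during tuning.
        pred = knn_predict(X_tr, y_tr, X[test_idx], best_k)
        outer_scores.append(np.mean(pred == y[test_idx]))
    return float(np.mean(outer_scores)), float(np.std(outer_scores, ddof=1))

# Synthetic two-group data (not from any cited study).
rng = np.random.default_rng(42)
X = rng.normal(size=(80, 6))
y = np.repeat([0, 1], 40)
X[y == 1, 0] += 3.0
X[y == 1, 1] += 2.0
mean_acc, sd_acc = nested_cv(X, y)
print(f"nested CV accuracy: {mean_acc:.2f} (SD across outer folds {sd_acc:.2f})")
```

The key design point is that the outer test fold never influences the choice of hyperparameter, so the reported accuracy is not contaminated by the tuning step.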

Experimental Data on Sample Size Impact

Quantitative Evidence from Morphometrics and Machine Learning

Empirical studies consistently demonstrate a non-linear relationship between sample size and the reliability of outcomes. The following table synthesizes quantitative findings from multiple research domains.

Table 2: Impact of sample size on analytical outcomes across different fields

| Field of Study | Measured Metric | Small Sample Effect (n < ~30) | Effect with Larger Samples (n > ~100) |

|---|---|---|---|

| Geometric Morphometrics [30] | Mean shape estimation | Biased and unstable | Converges to stable population value |

| Geometric Morphometrics [30] | Shape variance | Inflated variance | Accurately reflects population variance |

| Machine Learning [29] | Classification Accuracy | High variance (e.g., 68-98%); overfitting | Stable and reliable (e.g., 85-99%) |

| Machine Learning [29] | Effect Size | Inflated and highly variable | Stable and accurate |

| Neuroimaging (MVPA) [31] | Cross-Validation Error Bar | Large (e.g., ±10%) | Substantially reduced |

A pivotal study on bat skull morphology systematically evaluated the impact of sample size on 2D geometric morphometric analyses. Using large intraspecific sample sizes (n > 70) for Lasiurus borealis and Nycticeius humeralis, researchers found that reducing sample size directly increased the distance from the true mean shape and inflated estimates of shape variance [30]. This means that studies with small samples are not only less precise but also prone to overestimating the morphological diversity within a group. Furthermore, they found that shape differences were not consistent across different 2D views of the skull, indicating that a single view analyzed with a small sample may lead to incomplete or misleading biological conclusions.
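The mean-shape part of this finding can be mimicked with a small subsampling simulation. The sketch below is a simplified illustration, not a reconstruction of the bat study: the landmark data are simulated, the configurations are assumed to be already aligned (no superimposition is re-run per subsample), and the function name `mean_shape_error` is hypothetical.

```python
import numpy as np

def mean_shape_error(sample, n_sub, n_draws, rng):
    """Average distance between subsample mean shapes and the full-sample mean."""
    full_mean = sample.mean(axis=0)
    errs = []
    for _ in range(n_draws):
        idx = rng.choice(len(sample), size=n_sub, replace=False)
        errs.append(np.linalg.norm(sample[idx].mean(axis=0) - full_mean))
    return float(np.mean(errs))

# Simulated "population" of 80 aligned configurations with 10 landmarks in 2D.
rng = np.random.default_rng(1)
true_mean = rng.normal(size=(10, 2))
sample = true_mean + rng.normal(scale=0.05, size=(80, 10, 2))

errors = {n: mean_shape_error(sample, n, 200, rng) for n in (5, 10, 20, 40, 80)}
for n, e in errors.items():
    print(f"n = {n:2d}: mean distance from full-sample mean shape = {e:.4f}")
```

The distance shrinks as the subsample grows and vanishes when the whole sample is used, which is the qualitative pattern described above. The variance inflation reported in the study is not modelled here.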

In machine learning, a systematic evaluation using a large arrhythmia dataset revealed that classification accuracy for multiple algorithms (Support Vector Machine, Neural Networks, etc.) increased sharply as the sample size grew from 16 to about 120. Crucially, the variance in accuracy was very high for sample sizes below 120, meaning that a single run of an experiment could yield a deceptively high or low result purely by chance. Beyond this point, the performance gains diminished and the results stabilized [29]. This provides a practical benchmark for a minimum sample size in similar ML-based studies.

Workflow for a Robust Validation Protocol

The following workflow integrates the critical steps of sample size consideration and robust cross-validation into a geometric morphometric study design, from data collection to final interpretation.

Essential Research Reagent Solutions

Successful protocol validation relies on a toolkit of robust software and methodological "reagents." The following table details key solutions essential for researchers in geometric morphometrics and related fields.

Table 3: Key research reagent solutions for geometric morphometric and validation studies

| Tool / Solution | Function / Purpose | Relevance to Protocol Validation |

|---|---|---|

| tpsDig2 [30] | Software for digitizing landmarks and semi-landmarks on 2D images. | Standardizes the initial data collection step, reducing observer bias and ensuring reproducibility in morphometric analyses. |

| Geomorph R Package [30] | A comprehensive R package for performing geometric morphometric analyses, including Generalized Procrustes Analysis (GPA) and statistical testing. | Provides a standardized, peer-reviewed toolkit for core GM procedures, ensuring analytical consistency and correctness. |

| Nested k-fold Cross-Validation Code [32] | Custom scripts (e.g., in MATLAB or Python) to implement nested cross-validation. | Critical for obtaining unbiased performance estimates in machine learning and model-based studies, preventing overfitting. |

| Whalength / ImageJ / MorphoMetriX [12] | Software tools for processing and measuring biological specimens from images. | Enables non-invasive, standardized body condition assessments, crucial for ecological and conservation studies. |

| Deep Learning Frameworks (e.g., for DCNN) [3] | Libraries like TensorFlow or PyTorch for implementing computer vision models. | Provides an alternative, high-capacity protocol for shape analysis that can outperform traditional GMM in some classification tasks. |

The body of evidence unequivocally demonstrates that sample size and statistical power are not mere afterthoughts but foundational elements of protocol validation. In geometric morphometrics, small samples lead to unstable estimates of shape and variance [30]. In machine learning and neuroimaging, they result in large, often underestimated, error bars in cross-validation, creating an "illusion of biomarkers that do not generalize" [31].

The comparative data shows that while advanced methods like deep learning can offer higher accuracy [3], they do not absolve the researcher from the sample size imperative. Furthermore, the choice of validation protocol itself, such as adopting nested k-fold cross-validation over a simple holdout method, is a powerful lever to improve statistical power and confidence, effectively making better use of available samples [32].

For researchers, scientists, and drug development professionals, this implies that study design must prioritize sample size estimation and power analysis from the outset. Relying on small, underpowered studies risks building scientific conclusions on an unstable foundation. The practical guidance is clear: invest in preliminary data and power analyses, aim for sample sizes shown to give stable estimates (e.g., n > 70 per species in the bat skull study [30]), and always employ the most robust cross-validation protocols available to ensure that validated methods perform reliably when applied in the real world.

Protocol Implementation and Domain-Specific Applications

Geometric morphometrics (GM) has become a foundational tool for the quantitative analysis of shape across biological, medical, and paleontological disciplines. Within this field, two predominant methodologies have emerged: landmark-based GM, which relies on anatomically defined point coordinates, and outline-based GM, which analyzes the complete contour of a structure using mathematical functions [33] [34]. The choice between these methods carries significant implications for the reliability, interpretability, and generalizability of research findings, particularly when classification models are applied to new data.

This guide objectively compares the performance of these two approaches through the critical lens of cross-validation. Cross-validation rigorously tests a model's predictive power by evaluating its performance on data not used during training, thus simulating real-world application [2]. For researchers in drug development and other applied sciences, where models must perform reliably on new samples, understanding the cross-validation performance of different geometric morphometric protocols is paramount.

Methodological Foundations

Landmark-Based Geometric Morphometrics

Landmark-based GM analyzes shape using discrete, homologous points that have direct biological correspondence across specimens. The methodology follows a structured pipeline:

- Landmark Typology: Landmarks are classified into three primary types. Type I landmarks are defined by discrete anatomical loci (e.g., junctions between bones or the tip of a structure). Type II landmarks represent mathematically defined points of maximum curvature. Type III landmarks are constructed points, such as midpoints or extreme points, defined by their relative position to other landmarks [34].

- Data Acquisition and Procrustes Superimposition: Cartesian coordinates from digitized landmarks undergo Generalized Procrustes Analysis (GPA). GPA standardizes configurations by scaling them to a unit centroid size, translating them to a common origin, and rotating them to minimize the sum of squared distances between corresponding landmarks [34] [35]. This process isolates shape variation from differences in size, position, and orientation.

- Statistical Analysis and Classification: The resulting Procrustes coordinates serve as variables for multivariate statistical analysis, including Principal Component Analysis (PCA) and Discriminant Function Analysis (DFA) or Canonical Variate Analysis (CVA) [34] [35]. Classification rules, such as linear discriminant functions, are built from these aligned coordinates.
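The superimposition step in the pipeline above can be sketched in a few lines of NumPy. This is a minimal illustration of the usual translate, scale, rotate iteration, not the implementation used by any particular GM package. The function names, the five-landmark test shape, and the convergence settings are assumptions made for the example.

```python
import numpy as np

def center_and_scale(config):
    """Translate a (k, d) configuration to the origin and scale to unit centroid size."""
    centered = config - config.mean(axis=0)
    return centered / np.linalg.norm(centered)

def rotate_onto(config, target):
    """Rotate `config` (k, d) onto `target` in the least-squares sense."""
    u, _, vt = np.linalg.svd(config.T @ target)
    # Flip the last axis if needed so the result is a pure rotation, not a reflection.
    u[:, -1] *= np.sign(np.linalg.det(u @ vt))
    return config @ (u @ vt)

def gpa(configs, n_iter=20, tol=1e-10):
    """Generalized Procrustes Analysis on an (n, k, d) array of landmark configurations."""
    shapes = np.array([center_and_scale(c) for c in configs])
    mean = shapes[0]  # initial reference: the first specimen
    for _ in range(n_iter):
        shapes = np.array([rotate_onto(s, mean) for s in shapes])
        new_mean = center_and_scale(shapes.mean(axis=0))
        converged = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if converged:
            break
    return shapes, mean

def random_pose(config, rng):
    """Apply a random rotation, scale, and translation to a 2D configuration."""
    angle = rng.uniform(0, 2 * np.pi)
    rot = np.array([[np.cos(angle), -np.sin(angle)], [np.sin(angle), np.cos(angle)]])
    return rng.uniform(0.5, 2.0) * config @ rot + rng.normal(scale=5.0, size=2)

# Six copies of one hypothetical five-landmark shape, differing only in pose and size.
rng = np.random.default_rng(0)
base = np.array([[0.0, 0.0], [2.0, 0.0], [3.0, 1.5], [1.0, 3.0], [-1.0, 1.0]])
specimens = np.array([random_pose(base, rng) for _ in range(6)])

aligned, mean_shape = gpa(specimens)
spread = np.abs(aligned - aligned[0]).max()
print("Largest residual after alignment:", spread)
```

Since the six specimens differ only in position, size, and orientation, the aligned coordinates coincide up to floating-point error. With real data, the residuals that remain after alignment are the shape variation passed on to PCA or DFA.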

A key challenge in landmark-based analysis, particularly for cross-validation, is the out-of-sample problem. Classification rules are constructed from a sample-dependent Procrustes alignment. Applying these rules to new individuals requires a method to register the new specimen's raw coordinates into the pre-existing shape space of the training sample, a process that is not standardized and can introduce error [2].

Outline-Based Geometric Morphometrics

Outline-based GM captures shape information from the entire contour of a structure, making it suitable for forms that lack discrete landmarks. The standard workflow involves:

- Outline Digitization: The contour of a structure is captured as a sequence of two-dimensional coordinates [34].

- Mathematical Representation: The outline is represented mathematically, most commonly using Elliptic Fourier Analysis (EFA). EFA decomposes a contour into a sum of trigonometric functions (harmonics), with the coefficients of these functions serving as shape descriptors [33] [36].

- Data Normalization and Analysis: Fourier coefficients are normalized to be invariant to the contour's starting point, rotation, and size. These normalized coefficients are then used as variables in subsequent statistical analyses and classifier construction [34].
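To make the harmonic decomposition concrete, the following sketch computes elliptic Fourier coefficients for a closed polygonal outline using the standard Kuhl and Giardina formulation. The function name and the circular test outline are assumptions for illustration. The normalization for starting point, rotation, and size described above is deliberately left out to keep the example short.

```python
import numpy as np

def elliptic_fourier(contour, n_harmonics):
    """Return an (n_harmonics, 4) array of coefficients (a_n, b_n, c_n, d_n).

    `contour` is an (m, 2) array of outline points in order, without repeating
    the first point at the end and without consecutive duplicate points.
    """
    closed = np.vstack([contour, contour[:1]])      # close the outline
    steps = np.diff(closed, axis=0)                 # (dx, dy) for each segment
    seg_len = np.hypot(steps[:, 0], steps[:, 1])    # segment lengths
    t = np.concatenate([[0.0], np.cumsum(seg_len)]) # cumulative arc length
    perimeter = t[-1]

    coeffs = np.zeros((n_harmonics, 4))
    for n in range(1, n_harmonics + 1):
        scale = perimeter / (2.0 * n**2 * np.pi**2)
        phase = 2.0 * np.pi * n * t / perimeter
        d_cos = np.cos(phase[1:]) - np.cos(phase[:-1])
        d_sin = np.sin(phase[1:]) - np.sin(phase[:-1])
        coeffs[n - 1] = (
            scale * np.sum(steps[:, 0] / seg_len * d_cos),  # a_n: x, cosine term
            scale * np.sum(steps[:, 0] / seg_len * d_sin),  # b_n: x, sine term
            scale * np.sum(steps[:, 1] / seg_len * d_cos),  # c_n: y, cosine term
            scale * np.sum(steps[:, 1] / seg_len * d_sin),  # d_n: y, sine term
        )
    return coeffs

# Test outline (assumption): a circle of radius 2 traced counterclockwise.
theta = np.linspace(0.0, 2.0 * np.pi, 200, endpoint=False)
circle = np.column_stack([2.0 * np.cos(theta), 2.0 * np.sin(theta)])
coeffs = elliptic_fourier(circle, n_harmonics=5)
print(np.round(coeffs, 3))
```

For a circle, essentially all of the shape is carried by the first harmonic, with a_1 and d_1 close to the radius and the remaining coefficients near zero. More complex outlines spread information across higher harmonics, which is why the number of harmonics retained matters.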

Alternative outline methods are also emerging. The shape-changing chain approach, for instance, models a profile using a chain of rigid, scalable, and extendible segments. The parameters of this chain (e.g., relative angles and length ratios) provide a modest number of variables for discriminant analysis, which can have physical or biological meaning [36].

Comparative Cross-Validation Performance

The table below synthesizes quantitative findings from multiple studies that directly compared the classification accuracy of landmark- and outline-based methods, often using cross-validation techniques.

Table 1: Cross-Validation Classification Accuracy of Landmark vs. Outline-Based GM

| Study Organism/Subject | Landmark-Based Accuracy | Outline-Based Accuracy | Cross-Validation Method | Key Findings | Source |
|---|---|---|---|---|---|
| Trichodinids (parasites) | Higher accuracy (specific value not provided) | Lower accuracy | Not specified | Landmarks provided greater differentiation; outlines may include points with less taxonomic information. | [37] |
| Mosquito Vectors | Effective for genus-level ID and Anopheles & Aedes species | Effective for genus-level ID and Anopheles & Aedes species | Validated reclassification | Both methods were successful, but performance varied by genus; less effective for Culex species. | [33] |
| Horse Flies (Tabanus) | Not tested | 86.67% (using 1st submarginal cell contour) | Validated classification test | Outline-based GM on a specific wing cell showed high accuracy and is useful for damaged specimens. | [38] |
| Children's Arm Shape | Model created from Procrustes coordinates | Not the focus | Out-of-sample application | Highlighted the central challenge of classifying new individuals not included in the original Procrustes alignment. | [2] |

Interpretation of Comparative Data

The data reveals that the superiority of one method over the other is often context-dependent.

- Data Quality and Structure: Outline-based methods performed well in a study of horse flies, where the contour of the first submarginal wing cell achieved 86.67% classification accuracy. Landmark-based analysis was not tested in that study, so the result shows the practical value of outlines rather than a head-to-head advantage. The approach proved particularly useful for analyzing specimens with incomplete wings but intact cells, a common issue with field-collected insects [38]. Conversely, a study on trichodinid parasites found landmark-based methods to be more accurate, suggesting that for certain structures, defined points may contain more taxonomically informative data than the overall contour [37].

- The Out-of-Sample Challenge: A critical finding from nutritional status research is that landmark-based models built on Procrustes coordinates face a fundamental hurdle in cross-validation. The entire classification pipeline, from alignment to classifier training, is sample-dependent. Applying this model to a new child's arm shape requires a non-standardized registration step to place the new individual into the pre-existing shape space, which can affect reliability [2]. A related risk arises when the Procrustes alignment is computed on the pooled sample before it is split into folds: held-out specimens then influence the alignment, which is a form of data leakage that proper cross-validation must account for.

Experimental Protocols for Cross-Validation

A Protocol for Landmark-Based GM with Cross-Validation

The following protocol, synthesized from multiple sources [2] [34] [35], is designed to properly address out-of-sample classification.

- Image Acquisition and Landmarking: Capture high-resolution, standardized 2D or 3D images. Using software such as tpsDig2, digitize Type I, II, and III landmarks on all specimens in the training set [34].

- Training-Sample Procrustes Analysis: Perform Generalized Procrustes Analysis (GPA) exclusively on the training sample to obtain Procrustes shape coordinates. Compute Centroid Size as a measure of isometric size [35].

- Classifier Construction: Use the Procrustes coordinates from the training set to build a classifier (e.g., Linear Discriminant Analysis). Evaluate its performance using leave-one-out cross-validation within the training sample [2].

- Out-of-Sample Registration: To classify a new specimen, its raw landmark coordinates must be aligned to the training sample's shape space. This is typically done by performing a Procrustes fit that translates, scales, and rotates the new specimen onto the mean shape of the training set, without including it in a new GPA.

- Out-of-Sample Prediction: Apply the pre-trained classifier to the registered coordinates of the new specimen to predict its group membership.
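The registration step in this protocol can be sketched as an ordinary Procrustes fit of a single specimen onto a fixed training mean. This is one plausible reading of the step, since the text notes that the procedure is not standardized. The function name, the choice to scale to unit centroid size, and the hypothetical mean shape are all assumptions made for the example.

```python
import numpy as np

def register_to_mean(raw_landmarks, training_mean):
    """Fit one (k, d) specimen onto a fixed training mean shape.

    Assumes `training_mean` is already centered with unit centroid size,
    as it would be after a GPA of the training sample.
    """
    centered = raw_landmarks - raw_landmarks.mean(axis=0)  # translate to origin
    scaled = centered / np.linalg.norm(centered)           # unit centroid size
    u, _, vt = np.linalg.svd(scaled.T @ training_mean)     # optimal rotation via SVD
    u[:, -1] *= np.sign(np.linalg.det(u @ vt))             # exclude reflections
    return scaled @ (u @ vt)

# Hypothetical training mean shape (stand-in for the mean returned by a training-set GPA).
mean_raw = np.array([[0.0, 0.0], [2.0, 0.0], [3.0, 1.5], [1.0, 3.0], [-1.0, 1.0]])
training_mean = mean_raw - mean_raw.mean(axis=0)
training_mean /= np.linalg.norm(training_mean)

# A new specimen with the same shape but a different size, orientation, and position.
angle = np.deg2rad(40.0)
rotation = np.array([[np.cos(angle), -np.sin(angle)], [np.sin(angle), np.cos(angle)]])
new_specimen = 7.5 * training_mean @ rotation + np.array([12.0, -4.0])

registered = register_to_mean(new_specimen, training_mean)
feature_row = registered.reshape(1, -1)  # one row of variables for a pre-trained classifier

print("Largest deviation from training mean:", np.abs(registered - training_mean).max())
```

The training mean is never recomputed here, so the new specimen cannot alter the shape space in which the classifier was trained. The flattened `feature_row` has the same layout as one row of the training Procrustes coordinates and can be passed to the classifier's prediction step.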

A Protocol for Outline-Based GM with Cross-Validation

This protocol, based on studies of fish, insects, and parasites [38] [33] [34], outlines the workflow for outline analysis.

- Image Preparation and Outline Extraction: Remove backgrounds from images using tools like ImageJ. Extract the outline coordinates of the structure of interest [34].

- Mathematical Representation: For closed outlines, apply Elliptic Fourier Analysis (EFA) using the Momocs package in R. Select a sufficient number of harmonics to capture the essential shape. Normalize the coefficients for size, rotation, and starting point [34].

- Classifier Construction and Validation: Use the normalized Fourier coefficients as variables. Construct a classifier (e.g., DFA) on the training set and validate its performance using leave-one-out cross-validation [38].

- Out-of-Sample Classification: For a new specimen, extract its outline, compute its normalized Fourier coefficients using the same harmonic model, and directly apply the pre-trained classifier. This process is typically more straightforward than landmark-based out-of-sample prediction as it avoids a re-alignment step [2].
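The validation and prediction steps of this protocol might look like the sketch below, which assumes scikit-learn and uses linear discriminant analysis as the classifier. The coefficient matrix is synthetic: the group labels, the number of coefficients, and the size of the group difference are illustrative assumptions standing in for normalized Fourier coefficients from real outlines.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import LeaveOneOut, cross_val_predict

rng = np.random.default_rng(0)

# Synthetic stand-in for normalized Fourier coefficients (assumption):
# 30 specimens per group, 12 coefficients, groups differing in the first coefficient.
n_per_group, n_coeffs = 30, 12
train_labels = np.repeat(["species_a", "species_b"], n_per_group)
train_coeffs = rng.normal(scale=0.05, size=(2 * n_per_group, n_coeffs))
train_coeffs[train_labels == "species_b", 0] += 0.15

# Leave-one-out cross-validation: each specimen is predicted by a model fit without it.
classifier = LinearDiscriminantAnalysis()
loo_pred = cross_val_predict(classifier, train_coeffs, train_labels, cv=LeaveOneOut())
loo_accuracy = float(np.mean(loo_pred == train_labels))
print("Leave-one-out accuracy:", round(loo_accuracy, 3))

# Out-of-sample use: fit once on the full training set, then classify a new specimen
# whose coefficients were computed with the same number of harmonics.
classifier.fit(train_coeffs, train_labels)
new_coeffs = np.zeros((1, n_coeffs))
new_coeffs[0, 0] = 0.15
predicted = classifier.predict(new_coeffs)[0]
print("Predicted group for new specimen:", predicted)
```

No alignment against the training sample is needed for the new specimen, because each outline's coefficients are computed and normalized on their own. This is the practical difference from the landmark-based protocol.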

[Figure: workflow diagram of the two protocols, showing the critical divergence in how they handle out-of-sample data and the additional registration step required for landmark-based GM.]

The Researcher's Toolkit: Essential Reagents and Software

Table 2: Essential Software and Analytical Tools for Geometric Morphometrics

| Tool Name | Type/Function | Application in GM | Relevance to Cross-Validation | Source |
|---|---|---|---|---|
| tpsDig2, tpsUtil | Software suite for digitization and file management | Digitizing landmarks and organizing data files | Foundational for creating reproducible landmark datasets. | [34] [35] |
| MorphoJ | Integrated software for GM analysis | Performing Procrustes superimposition, PCA, DFA, and CVA | Commonly used to build and perform leave-one-out cross-validation on training samples. | [34] [35] |
| R packages (Momocs, geomorph) | Statistical programming environment | Comprehensive outline analysis (Momocs) and general GM (geomorph) | Provides flexible, scripted environments for implementing custom cross-validation protocols. | [34] |
| ImageJ | Image processing and analysis | Background removal and outline extraction | Essential for preparing images for consistent and automated outline analysis. | [34] |
| Linear Discriminant Analysis (LDA) | Statistical classification method | Building classifiers from shape variables (Procrustes coordinates or Fourier coeffs.) | The primary method for creating classification rules that are tested via cross-validation. | [2] [34] |

Both landmark- and outline-based geometric morphometrics offer powerful, yet distinct, pathways for shape classification. The choice between them should be guided by the specific research context and the paramount importance of cross-validation performance.

- Opt for Landmark-Based GM when your structures possess clear, homologous points and the primary research goal requires deep biological interpretation tied to specific anatomical locations. However, researchers must be acutely aware of and develop robust solutions for the out-of-sample registration problem to ensure their models are generalizable [2].

- Opt for Outline-Based GM when analyzing structures lacking discrete landmarks, when dealing with damaged specimens where key areas are missing but contours remain [38], or when a more streamlined out-of-sample prediction pipeline is desirable. Methods like the analysis of wing cell contours [38] or the shape-changing chain [36] show high discriminatory power and practical advantages.

Ultimately, the most rigorous approach may often involve a combination of both methods, leveraging their respective strengths to validate findings and build a more comprehensive and reliable model of shape variation for real-world application.

Abstract

Geometric morphometrics (GM) is a fundamental tool for quantifying biological shape, but it can be limited by its reliance on discrete landmarks. This guide compares a novel protocol, Functional Data Geometric Morphometrics (FDGM), against classical GM and other alternatives. FDGM enhances sensitivity by converting discrete landmark data into continuous curves, capturing subtle shape variations often missed by traditional methods. Experimental data from species classification studies, particularly on shrew crania, demonstrates FDGM's superior performance in cross-validation and machine learning applications, establishing it as a powerful protocol for taxonomic and morphological research where high sensitivity is critical.

Geometric Morphometrics (GM) is a landmark-based approach that quantitatively analyzes the shape of biological organisms by comparing the coordinates of anatomically defined points after removing differences in size, position, and orientation through a process called Generalized Procrustes Analysis (GPA) [9] [39]. While powerful, a key limitation of classical GM is that important shape differences can occur between landmarks, which the discrete point data may fail to capture [9].

Functional Data Geometric Morphometrics (FDGM) is an advanced protocol that addresses this gap. FDGM treats the configuration of landmarks not as a set of discrete points, but as a continuous curve. It uses mathematical functions to represent the entire shape, thereby capturing the geometry between landmarks and providing a more comprehensive description of form [9]. This protocol is particularly valuable for enhancing the sensitivity of analyses aimed at distinguishing groups with very subtle morphological differences.

Objective Comparison of Protocol Performance

Experimental data from direct comparisons provides the most reliable evidence for evaluating protocol performance. A study on shrew classification offers a robust, head-to-head comparison between FDGM and classical GM.

Direct Performance Comparison: FDGM vs. Classical GM