Cross-Dataset Validation of Malaria Parasite Classification Models: Challenges, Strategies, and Clinical Translation


Abstract

This article provides a comprehensive analysis of cross-dataset validation for deep learning models in malaria parasite classification, a critical step for ensuring real-world clinical applicability. Aimed at researchers, scientists, and drug development professionals, it explores the foundational challenges of dataset variability, reviews state-of-the-art model architectures, and details methodological frameworks for robust validation. The content further addresses key troubleshooting strategies for data quality and model generalization, and establishes rigorous benchmarks for performance comparison. By synthesizing insights from recent scientific literature, this work offers an actionable roadmap for developing reliable, generalizable, and clinically translatable AI-driven diagnostic tools for malaria.

The Critical Imperative: Why Cross-Dataset Validation is Non-Negotiable for Clinical AI

For over a century, Giemsa-stained blood smear microscopy has constituted the undisputed gold standard for malaria diagnosis and remains the primary endpoint for clinical trials and drug efficacy studies. However, this method suffers from significant limitations that compromise its reliability as a reference standard, particularly in the context of developing and validating automated malaria classification models. This review systematically examines the technical and operational constraints of manual microscopy, analyzes its impact on cross-dataset validation of machine learning models, and explores emerging solutions that leverage artificial intelligence to overcome these challenges. We present quantitative performance comparisons between manual and automated diagnostic methods and provide detailed experimental protocols for benchmarking malaria detection systems. The analysis reveals that addressing microscopy's limitations is critical for advancing robust, generalizable AI solutions that can transform malaria diagnosis in resource-limited settings.

Since Gustav Giemsa introduced his staining mixture in 1904, microscopic examination of stained blood films has served as the cornerstone of malaria diagnosis [1]. This technique provides unparalleled benefits, including direct parasite visualization, species differentiation, and parasite quantification capabilities that inform clinical management and therapeutic decisions. The World Health Organization (WHO) designates microscopy as the essential reference standard for assessing new diagnostic tools, and it remains the only U.S. Food and Drug Administration (FDA)-approved endpoint for evaluating anti-malarial drugs and vaccines [1]. Despite this authoritative status, a substantial body of evidence demonstrates that manual microscopy exhibits significant variability in performance, undermining its reliability as a definitive diagnostic benchmark [1] [2] [3].

The limitations of manual microscopy present particularly acute challenges for the developing field of automated malaria diagnosis using artificial intelligence (AI). The performance of any machine learning model is fundamentally constrained by the quality and accuracy of its training labels and evaluation benchmarks. When the reference standard itself is inconsistent, validating model performance across diverse datasets becomes problematic [4]. This review examines the specific limitations of manual microscopy through the specialized lens of cross-dataset validation for malaria parasite classification models, an area where inconsistent reference standards directly impede algorithmic advancement and clinical translation.

Limitations of Manual Microscopy: A Systematic Analysis

Diagnostic Accuracy Challenges

The diagnostic performance of manual microscopy varies considerably across different settings, influenced by multiple factors including technician expertise, workload, equipment quality, and environmental conditions. Table 1 summarizes the key limitations and their impacts on diagnostic accuracy.

Table 1: Limitations of manual microscopy and their impact on diagnostic accuracy

| Limitation Category | Specific Issue | Impact on Diagnosis | Quantitative Evidence |
|---|---|---|---|
| Sensitivity Variation | Variable detection thresholds | Missed low-density infections | Field detection threshold: 50-100 parasites/μL (vs. 4-20 parasites/μL under ideal conditions) [1] |
| False Positives | Stain precipitation, platelets, debris | Misdiagnosis of non-malarial fevers | Specificity as low as 92.5% in field settings [3] |
| Species Identification | Differentiation challenges | Incorrect treatment protocols | Frequent confusion between P. vivax/P. ovale; underreporting of mixed infections [1] |
| Parasite Quantification | Inconsistent counting methods | Inaccurate severity assessment & treatment monitoring | High variability in parasite density estimates [1] |
| Operator Dependency | Training & experience level | Inconsistent results across facilities | Sensitivity range: 36.8% (inexperienced) to >90% (experts) [3] |

The sensitivity of microscopy demonstrates particular variability. Under ideal research conditions with expert microscopists, the detection threshold for Giemsa-stained thick blood films has been estimated at 4-20 parasites/μL [1]. However, under routine field conditions, this threshold rises substantially to approximately 50-100 parasites/μL, potentially missing low-density infections that can maintain transmission and contribute to chronic morbidity [1]. This sensitivity limitation was starkly demonstrated in an Angolan prevalence survey where microscopy detected only 60% of PCR-confirmed Plasmodium falciparum infections, with performance varying significantly by age group—68.4% in preschool children versus just 36.8% in adults [3].

Species misidentification represents another critical limitation. A well-trained, proficient microscopist should correctly recognize Plasmodium species in thick blood films at relatively low parasite density, but this expertise is uncommon in many endemic settings [1]. Most documented species errors involve differentiating between P. vivax and P. ovale or recognizing infections with simian plasmodia such as P. knowlesi [1]. Even confusion between P. falciparum and P. vivax, the two most common species, occurs with unexpected frequency in routine microscopy but is substantially underreported [1]. These errors have direct clinical consequences, as different Plasmodium species require distinct treatment regimens.

Impact on Model Validation and Generalization

The inconsistencies in manual microscopy create fundamental challenges for developing and validating automated classification models. When training data contains erroneous labels or inconsistent annotations, models learn incorrect features and patterns, compromising their performance and generalizability [4]. Table 2 compares the performance of manual microscopy against automated systems and PCR across different study conditions.

Table 2: Performance comparison of malaria diagnostic methods across studies

| Diagnostic Method | Study Context | Sensitivity (%) | Specificity (%) | Reference Standard |
|---|---|---|---|---|
| Manual Microscopy | Angolan prevalence survey | 60.0 | 92.5 | PCR [3] |
| RDT (Paracheck-Pf) | Angolan prevalence survey | 72.8 | 94.3 | PCR [3] |
| Manual Microscopy | UK imported malaria study | 93.6 (any species) | 99.4 | Expert microscopy [5] |
| RDT | UK imported malaria study | 100 (P. falciparum) | 98.8 | Expert microscopy [5] |
| EasyScan GO (automated) | WHO 55 slide set | 94.3 (detection) | - | Expert microscopy [2] |

The "cross-dataset validation gap" emerges clearly when models trained on data labeled by one group of microscopists perform poorly on data labeled by different groups. This problem stems not from algorithmic deficiencies but from inconsistent reference standards [4]. Variations in blood smear preparation techniques, staining protocols, and imaging equipment introduce significant biases that limit a model's applicability to new environments [4]. For instance, models trained on data from a specific region may perform poorly when tested on samples from other regions, a phenomenon that underscores the critical importance of domain adaptation and robust validation frameworks [4].

The impact of such data quality problems can be substantial. Studies have demonstrated that class imbalances in malaria datasets, where uninfected cells significantly outnumber parasitized cells, can lead to a 20% drop in F1-score, reflecting both reduced precision and recall [4]. Such data quality issues ultimately compromise the real-world applicability of otherwise sophisticated models, particularly in resource-constrained settings where automated diagnosis could offer the greatest benefit.
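
To make the effect of class imbalance concrete, the sketch below computes accuracy and F1-score for the parasitized class from a confusion matrix. The cell counts are hypothetical and chosen only for illustration; they are not taken from [4]. The point is that overall accuracy can look strong while the F1-score for the minority class is much lower.

```python
def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1 for the positive (parasitized) class."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical imbalanced test set: 1000 cells, only 50 parasitized.
tp, fn = 30, 20   # parasitized cells found / missed
fp, tn = 10, 940  # uninfected cells flagged / correctly rejected

accuracy = (tp + tn) / (tp + fp + fn + tn)
precision, recall, f1 = precision_recall_f1(tp, fp, fn)

print(f"accuracy={accuracy:.3f} precision={precision:.3f} "
      f"recall={recall:.3f} f1={f1:.3f}")
```

With these illustrative counts the accuracy is 0.97 while the F1-score is about 0.67, which is why reporting per-class precision, recall, and F1 alongside accuracy is advisable on imbalanced malaria datasets.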

Experimental Protocols for Benchmarking Diagnostic Performance

WHO External Competence Assessment Methodology

The World Health Organization has established standardized protocols for evaluating malaria diagnostic competence through its External Competence Assessment of Malaria Microscopists (ECAMM) programme. These protocols provide a rigorous framework for benchmarking both human technicians and automated systems [2].

Slide Set Composition: The ideal WHO 55 slide set consists of carefully validated Giemsa-stained blood films including:

- Detection subset: 20 negative samples and 20 positive samples with parasitemia ranging from 80-200 parasites/μL

- Species identification subset: The same 20 negative and 20 positive samples used for detection

- Quantitation subset: 15 P. falciparum slides with parasitemia within 200-2000 parasites/μL, plus one or two very high parasitemia slides [2]

Assessment Criteria:

- Detection accuracy: Correct classification of slides as positive or negative

- Species identification accuracy: Correct determination of Plasmodium species

- Quantitation accuracy: Parasite density estimates within 25% of reference values [2]

Reference Standard Establishment: All slides in the WHO set are validated by multiple independent microscopists certified as Level 1 malaria microscopists, with parasite species confirmed by at least 70% of readers and by polymerase chain reaction (PCR) [2]. Parasite counts are estimated against 500 white blood cells using an assumed average white cell count of 8000/μL, with the median of 24 readings taken as the reference count [2].
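
The density calculation described above can be sketched as follows. The function names are hypothetical, and the three example counts stand in for the 24 independent readings mentioned in the text; the assumed white cell count of 8000/μL and the 500-cell counting window come from the protocol as described.

```python
from statistics import median

ASSUMED_WBC_PER_UL = 8000  # assumed average white cell count per microlitre


def parasite_density(parasites_counted, wbc_counted=500,
                     wbc_per_ul=ASSUMED_WBC_PER_UL):
    """Parasites per microlitre, estimated from a count against white cells."""
    if wbc_counted <= 0:
        raise ValueError("wbc_counted must be positive")
    return parasites_counted * wbc_per_ul / wbc_counted


def reference_density(parasite_counts):
    """Median density over several independent readings of one slide."""
    return median(parasite_density(count) for count in parasite_counts)


# Hypothetical readings of one slide (parasites seen per 500 white cells).
readings = [48, 50, 55]
print(reference_density(readings))  # 800.0 parasites per microlitre
```

Using the median rather than the mean keeps a single outlying reading from shifting the reference count.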

Cross-Dataset Validation Protocol for AI Models

Robust evaluation of automated malaria classification models requires rigorous cross-dataset validation to assess generalization capability. The following protocol adapts principles from both malaria diagnostics and machine learning best practices:

Dataset Partitioning Strategy:

- Training Set: Diverse slides from multiple geographical regions (e.g., over 500 slides from 11 countries)

- Validation Set: Held-out data from same sources as training set for parameter tuning

- Test Set: Completely independent dataset (e.g., WHO 55 slide set) never used during training [2]

Performance Metrics:

- Detection: Sensitivity, specificity, positive and negative predictive values

- Species Identification: Per-class accuracy and overall species identification accuracy

- Quantitation: Percentage of estimates within 25% of reference values [2]
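
The detection and quantitation metrics listed above can be computed as in the following minimal sketch. The function names and the example counts are hypothetical; only the 25% tolerance is taken from the protocol.

```python
def detection_metrics(tp, fp, tn, fn):
    """Slide-level detection metrics from confusion-matrix counts."""
    def ratio(numerator, denominator):
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
    }


def quantitation_accuracy(estimates, references, tolerance=0.25):
    """Fraction of density estimates within +/- tolerance of the reference."""
    within = sum(
        1 for est, ref in zip(estimates, references)
        if abs(est - ref) <= tolerance * ref
    )
    return within / len(references)


# Hypothetical results on 20 positive and 20 negative slides.
metrics = detection_metrics(tp=18, fp=1, tn=19, fn=2)

# Hypothetical density estimates versus reference values (parasites/uL).
quant = quantitation_accuracy([900, 1500, 400], [1000, 1000, 500])
print(metrics, quant)
```

Predictive values depend on the prevalence in the slide set, so PPV and NPV measured on a balanced benchmark should not be read as field estimates.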

Generalization Assessment:

- Performance comparison between internal validation and external test sets

- Cross-dataset testing using slides with different preparation protocols, staining methods, and imaging equipment

- Analysis of performance variation across parasite density ranges and species [4]
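
One way to carry out the density-range analysis in the last item is to bin truly positive slides by reference parasite density and compute sensitivity per bin. The sketch below assumes a hypothetical record format of `(reference_density, predicted_positive)` and illustrative bin edges; neither comes from the cited protocols.

```python
def sensitivity_by_density(records, bin_edges):
    """Sensitivity per density bin.

    records: iterable of (reference_density, predicted_positive) pairs for
             slides that are truly positive.
    bin_edges: ascending edges; bin i covers [edges[i], edges[i + 1]).
    """
    counts = {}
    for density, predicted_positive in records:
        for low, high in zip(bin_edges, bin_edges[1:]):
            if low <= density < high:
                hits, total = counts.get((low, high), (0, 0))
                counts[(low, high)] = (hits + int(predicted_positive), total + 1)
                break
    return {bin_: hits / total for bin_, (hits, total) in counts.items()}


# Hypothetical positive slides: (parasites/uL, did the model flag the slide?)
positive_slides = [(80, False), (150, True), (500, True),
                   (1500, True), (5000, True)]
edges = [0, 200, 2000, float("inf")]

per_bin = sensitivity_by_density(positive_slides, edges)
print(per_bin)
```

A drop in the lowest bin relative to the others is the pattern to look for, since low-density infections are where both human readers and models tend to miss cases.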

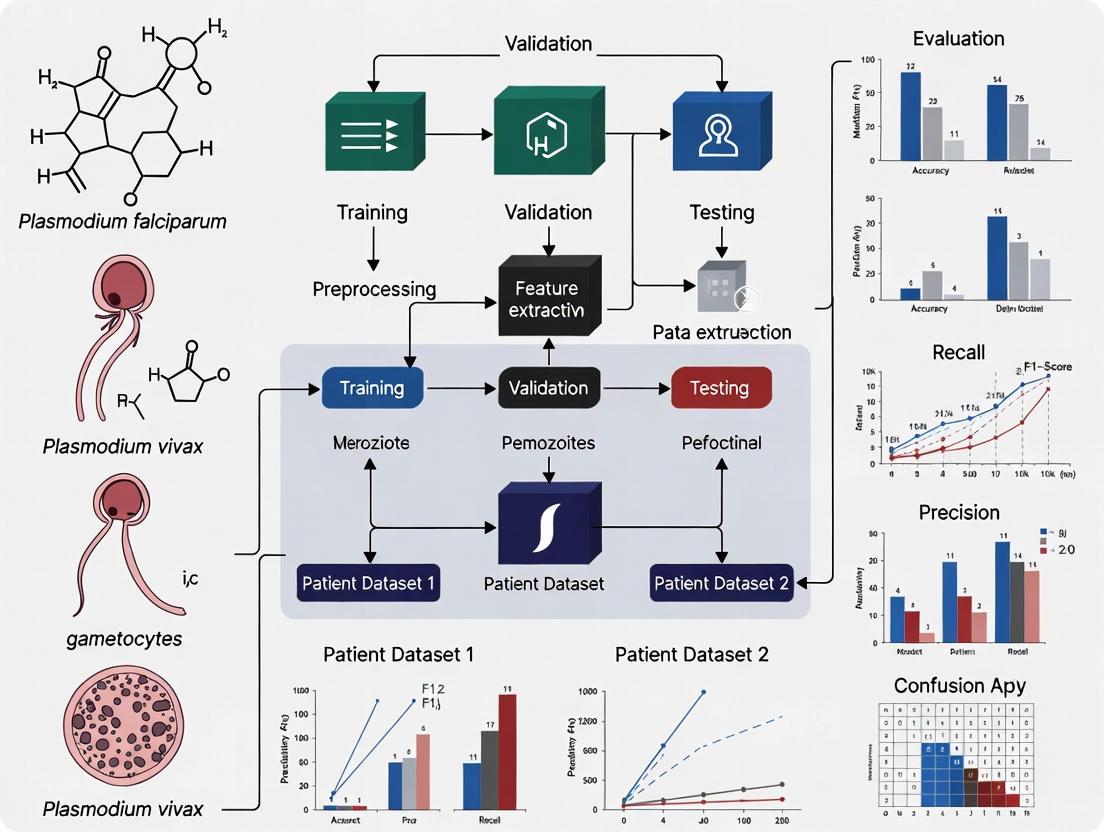

The following diagram illustrates the relationship between microscopy limitations and their impact on model validation:

Emerging Solutions and Alternative Approaches

Automated Microscopy Systems

Fully automated diagnostic systems represent a promising approach to overcoming the limitations of manual microscopy. These systems combine automated microscopy platforms with machine learning algorithms to provide reproducible, standardized diagnoses. The EasyScan GO system, tested on a WHO 55 slide set, achieved 94.3% detection accuracy, 82.9% species identification accuracy, and 50% quantitation accuracy, corresponding to WHO microscopy competence Levels 1, 2, and 1, respectively [2]. This performance demonstrates the potential of automated systems to mitigate human variability while maintaining diagnostic accuracy, particularly for detection and species identification.

Advanced AI and Data Processing Techniques

Addressing data quality challenges requires sophisticated technical approaches. Several promising strategies have emerged:

Data Augmentation with Generative Adversarial Networks (GANs): GAN-based augmentation has been shown to improve model accuracy by 15-20% by generating synthetic data to balance classes and enhance dataset diversity [4]. In one study, researchers employed WGAN-GP to augment training samples from multi-class cell images, significantly enhancing model robustness [6].

Domain Adaptation Techniques: Transfer learning and domain adaptation methods have been reported to improve cross-domain sensitivity by up to 25% [4].

Advanced Model Architectures: Convolutional Neural Networks (CNNs) and transformer-based models have shown remarkable capabilities in analyzing medical images. Among transformer-based models, Swin Transformer achieved the strongest detection performance, with up to 99.8% accuracy, while MobileViT offers lower memory usage and shorter inference times, enabling deployment on edge devices with limited computational resources [6].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key research reagents and materials for malaria diagnostics research

| Item | Function/Application | Specifications/Protocols |
|---|---|---|
| Giemsa Stain | Staining malaria parasites in blood films for microscopic visualization | 10% Giemsa for 15 minutes; distinguishes parasite chromatin and cytoplasm [1] [7] |
| Reference Blood Smears | Quality control, training, and validation of diagnostic methods | WHO reference slides available through Malaria Research and Reference Reagent Resource Center (MR4) [1] |
| RDTs (Rapid Diagnostic Tests) | Field-based rapid detection of malaria antigens | Immunochromatographic assays detecting HRP2, pLDH; results in 15-20 minutes [8] [5] |
| PCR Reagents | Molecular confirmation of Plasmodium species | Nested PCR targeting SSU-rRNA gene; high sensitivity but requires specialized equipment [3] |
| Digital Whole Slide Imaging Systems | Automated slide scanning and image acquisition | Systems like EasyScan GO with 40× objectives; enable automated image analysis [2] |

Manual microscopy remains an essential tool for malaria diagnosis and research, but its limitations as a reference standard significantly impact the development and validation of automated classification models. The documented variability in diagnostic accuracy, species identification, and parasite quantification creates fundamental challenges for cross-dataset validation and model generalization. Addressing these limitations requires a multi-faceted approach incorporating standardized evaluation protocols, advanced data processing techniques, and robust validation frameworks. Emerging technologies in automated digital microscopy and artificial intelligence offer promising pathways toward more consistent, reproducible malaria diagnosis that can transcend the constraints of traditional microscopy. As these technologies evolve, establishing more reliable reference standards will be crucial for advancing the field and developing diagnostic tools that perform consistently across diverse populations and settings.

The application of deep learning for malaria parasite classification represents a significant advancement in automated diagnostics, promising to alleviate the burden on microscopists in resource-limited settings. However, a critical challenge persists: models that demonstrate exceptional performance on their original benchmark datasets often fail to maintain this accuracy when applied to new data from different sources or clinical environments. This performance drop, known as the generalization gap, stems primarily from dataset biases—systematic inaccuracies or limitations in the training data that do not reflect the true variability encountered in real-world settings. These biases can arise from multiple sources, including variations in staining protocols, blood smear preparation techniques, microscope configurations, and demographic differences in patient populations [9].

The pursuit of malaria elimination by 2030, particularly in high-burden countries, depends on reliable diagnostic tools that can perform consistently across diverse clinical settings [10]. While recent models have reported accuracy exceeding 97% on controlled datasets, their translational potential to field conditions remains uncertain without rigorous cross-dataset validation [11] [12] [13]. This guide systematically compares current approaches, their experimental methodologies, and performance across datasets to provide researchers and drug development professionals with a clear understanding of the generalization challenge in malaria parasite classification.

Comparative Analysis of Model Architectures and Performance

Researchers have developed diverse architectural strategies to address malaria classification, each with distinct advantages and limitations concerning generalizability. The table below summarizes the performance of recently proposed models on their primary datasets.

Table 1: Performance Comparison of Recent Malaria Diagnostic Models

| Model Architecture | Reported Accuracy | Precision | Recall/Sensitivity | F1-Score | Primary Dataset | Key Innovation |
|---|---|---|---|---|---|---|
| Ensemble (VGG16, ResNet50V2, DenseNet201, VGG19) [11] | 97.93% | 97.93% | - | 97.93% | - | Adaptive weighted averaging ensemble |
| Multi-model Framework (ResNet-50, VGG-16, DenseNet-201 + SVM/LSTM) [12] | 96.47% | 96.88% | 96.03% | 96.45% | 27,558 thin blood smear images | Feature fusion with majority voting |
| CNN with Seven-Channel Input [13] | 99.51% | 99.26% | 99.26% | 99.26% | 190,399 thick smear images | Advanced image preprocessing |
| Hybrid Capsule Network [14] | ~100%* | - | - | - | Four benchmark datasets | Lightweight architecture for mobile deployment |
| DANet (Lightweight CNN) [15] | 97.95% | - | - | 97.86% | NIH Malaria Dataset | Dilated attention mechanism |
| Low-cost CNN System [16] | 89% | 89% | 89.5% | - | Public dataset | Optimized for portable, low-cost deployment |

*Note: Reported as "up to 100%" on specific benchmark datasets.

While these results appear promising, direct comparison is complicated by variations in evaluation datasets and protocols. For instance, the ensemble model achieving 97.93% accuracy utilized an adaptive weighted averaging approach that assigns greater influence to stronger models based on validation performance [11]. Similarly, the CNN with seven-channel input leveraged advanced preprocessing techniques including feature enhancement and the Canny Algorithm on RGB channels to achieve its notable 99.51% accuracy [13]. These specialized approaches, while effective on their test data, may not necessarily translate equally well to external datasets with different characteristics.
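
The idea behind a weighted averaging ensemble can be sketched as follows. The source does not give the exact weighting rule used in [11], so this example assumes weights proportional to each model's validation accuracy; the accuracies and class probabilities are hypothetical.

```python
def validation_weights(val_accuracies):
    """Weights proportional to validation accuracy (an assumed rule)."""
    total = sum(val_accuracies)
    return [acc / total for acc in val_accuracies]


def weighted_average_ensemble(model_probs, weights):
    """Fuse per-model class probabilities for one image.

    model_probs: one probability list per model, each of length n_classes.
    weights: one weight per model, summing to 1.
    """
    n_classes = len(model_probs[0])
    return [
        sum(w * probs[c] for w, probs in zip(weights, model_probs))
        for c in range(n_classes)
    ]


# Hypothetical validation accuracies for three base models.
weights = validation_weights([0.96, 0.94, 0.90])

# Hypothetical outputs for one cell image: [P(uninfected), P(parasitized)].
probs = [[0.4, 0.6], [0.7, 0.3], [0.2, 0.8]]

fused = weighted_average_ensemble(probs, weights)
predicted_class = max(range(len(fused)), key=fused.__getitem__)
print(weights, fused, predicted_class)
```

Because the weights are fitted to validation data from the same source as the training data, they can themselves encode dataset-specific behaviour, which is one reason an ensemble's in-distribution gain may not carry over to external datasets.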

Experimental Protocols for Cross-Dataset Validation

Standardized Evaluation Methodologies

To properly assess generalization capability, researchers have implemented several experimental protocols focused on cross-dataset validation:

K-fold Cross-Validation: The seven-channel CNN model implemented a stratified K-fold approach with five folds, where in each iteration, four folds were used for training while the remaining fold was split equally for validation and testing. After five iterations, results were averaged to obtain overall performance metrics (accuracy: 99.51%, precision: 99.26%, recall: 99.26%) [13]. This approach provides a more robust estimate of model performance than simple train-test splits.
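
A minimal, dependency-free sketch of this splitting scheme is shown below: five stratified folds, with the held-out fold divided equally into validation and test halves. It is an illustration of the scheme as described, not the implementation used in [13]; the function names, seed, and labels are hypothetical.

```python
import random
from collections import defaultdict


def stratified_folds(labels, k=5, seed=0):
    """Assign sample indices to k folds, preserving class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    return folds


def train_val_test_splits(labels, k=5, seed=0):
    """Yield (train, val, test): k-1 folds train, held-out fold halved."""
    folds = stratified_folds(labels, k, seed)
    for held_out in range(k):
        train = [i for f, fold in enumerate(folds) if f != held_out for i in fold]
        fold = folds[held_out]
        # Each fold lists indices class by class, so taking alternate items
        # keeps the class mix roughly equal in the two halves.
        yield train, fold[0::2], fold[1::2]


# Hypothetical labels: 50 uninfected (0) and 50 parasitized (1) images.
labels = [0] * 50 + [1] * 50
for train, val, test in train_val_test_splits(labels):
    print(len(train), len(val), len(test))
```

All five iterations still draw from a single dataset, so the averaged score estimates in-distribution performance and does not substitute for testing on an independent dataset.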

Cross-Dataset Evaluation: The Hybrid Capsule Network was explicitly evaluated on four benchmark malaria datasets (MP-IDB, MP-IDB2, IML-Malaria, MD-2019) to measure both intra-dataset and cross-dataset performance. The model maintained high accuracy while significantly reducing computational requirements (1.35M parameters, 0.26 GFLOPs), making it suitable for mobile deployment in resource-constrained settings [14].

Multi-Species Validation: PlasmoCount 2.0 incorporated a validation dataset of 164 images featuring simian malaria parasite species (P. knowlesi and P. cynomolgi) that were not represented in the primary training data. This approach tests the model's ability to handle truly unseen parasite morphologies and provides a more realistic assessment of field deployment capability [17].

Dataset Composition and Diversity Considerations

The composition of training datasets significantly impacts model generalizability. A comprehensive study investigating the impact of dataset integration examined eleven publicly available blood film datasets, analyzing classification performance based on infection status, parasite species, smear type, optical train, and staining method [9]. The research found that models trained on combined datasets generally outperformed those trained on individual datasets. For example, VGG19 achieved 85% validation accuracy for smear classification on combined data, compared with 81% for infection status on a single dataset, although these two figures come from different classification tasks and are therefore only loosely comparable.

Table 2: Impact of Dataset Diversity on Model Performance

| Model | Validation Task | Single Dataset Accuracy | Combined Dataset Accuracy | Performance Improvement |
|---|---|---|---|---|
| VGG19 [9] | Infection Status | 81% | - | - |
| RESNET50 [9] | Species Classification | 59% | - | - |
| VGG19 [9] | Smear Classification | - | 85% | +4% |
| VGG19 [9] | Optical Train | - | 96% | - |
| RESNET50 [9] | Stain Classification | 55% | - | - |

The relatively low performance on species (59%) and stain classification (55%) highlights the persistent challenges in generalizing across these specific variables, indicating areas where dataset biases most significantly impact model performance.

Visualization of Experimental Workflows

[Figure: Cross-Dataset Validation Workflow]

[Figure: Ensemble Model Architecture]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for Malaria Classification Studies

| Reagent/Material | Specification | Research Function | Considerations for Generalization |
|---|---|---|---|
| Giemsa Stain [13] [17] | Standard histological stain | Highlights parasites in blue/dark red against light red RBCs | Staining protocol variations affect color distribution; major source of dataset bias |
| Blood Smear Slides [12] [13] | Thin and thick smears | Gold standard for malaria diagnosis | Smear type (thin/thick) requires different feature extraction approaches |
| Microscopy Systems [9] | Various magnifications (40x, 100x) | Image acquisition | Field of view and resolution differences impact feature visibility |
| Datasets [12] [14] | MP-IDB, IML-Malaria, NIH Dataset | Model training and validation | Combined datasets improve robustness but require normalization |
| Computational Framework [15] | Python, TensorFlow/PyTorch | Model implementation and training | Lightweight architectures enable field deployment (e.g., DANet: 2.3M parameters) |
| Validation Samples [17] | Multiple Plasmodium species | Cross-species generalization testing | Essential for assessing real-world applicability across parasite diversity |

The generalization gap in malaria parasite classification models represents a significant barrier to the widespread deployment of AI-driven diagnostics in clinical and field settings. While current models demonstrate impressive performance on benchmark datasets, with accuracy frequently exceeding 97%, their reliability diminishes when confronted with data that exhibits variations in staining, microscopy, smear preparation, or parasite species [11] [12] [13]. This gap underscores the critical importance of cross-dataset validation as an essential component of model evaluation rather than an optional supplement.

To effectively bridge this gap, researchers should prioritize several key strategies: the systematic integration of diverse datasets during training [9], the development of lightweight architectures that maintain performance while reducing computational demands [14] [15], and the implementation of comprehensive multi-species validation protocols [17]. Additionally, standardized reporting of metadata including staining methods, microscope specifications, and patient demographics would significantly enhance the comparability of research findings across studies. As the field progresses toward the goal of malaria elimination by 2030, addressing these challenges will be essential for creating diagnostic tools that deliver consistent, reliable performance across the diverse range of settings where they are most urgently needed.

The development of robust deep learning models for malaria parasite classification is fundamentally challenged by the critical issue of dataset divergence. Models that demonstrate near-perfect accuracy on their original training dataset often experience a significant drop in performance when applied to new data, a phenomenon that severely limits their real-world clinical utility [18]. This divergence is not a minor inconvenience but a central obstacle to the deployment of automated diagnostics in the diverse and often resource-limited settings where malaria is most prevalent. The core of this problem lies in the inherent variability of the source data—microscopic images of blood smears. This variability arises from multiple technical and geographical factors that introduce differences in image characteristics, which are not related to the actual biological features of the parasites. This guide objectively analyzes the primary sources of this dataset divergence—staining protocols, imaging equipment, and regional variations in parasite species—by synthesizing experimental data from recent comparative studies. It further details the experimental methodologies used to quantify this performance gap and provides a toolkit of strategies researchers are employing to build more generalizable and reliable classification models [18] [19].

Quantitative Evidence of Performance Divergence

Cross-dataset validation experiments provide the most direct evidence of model performance degradation. The following table summarizes key findings from recent studies that evaluated their models on datasets different from their training data.

Table 1: Documented Performance Gaps in Cross-Dataset Validation

| Training Dataset | Testing Dataset | Reported Performance (Accuracy/Precision) | Cross-Dataset Performance Drop | Key Divergence Factor(s) Identified |
|---|---|---|---|---|
| MBB (P. vivax) [19] | MP-IDB (P. ovale, P. malariae, P. falciparum) [19] | Detection Accuracy: 0.92 (on MBB) | Detection Accuracy: 0.79-0.84 (on MP-IDB) [19] | Parasite Species, Staining Variation |
| PlasmoCount 2.0 (Multi-species) [17] | Unseen P. knowlesi & P. cynomolgi [17] | High classification accuracy (99.8%) on primary dataset | "Significant prediction improvements on out-of-domain data" noted after specific adaptations [17] | Parasite Species Morphology |
| P. vivax-specific Model [19] | MP-IDB (P. falciparum) [19] | N/A | Detection Accuracy: 0.92 (Highest among cross-species tests) [19] | Parasite Species (P. falciparum morphology may be more distinct) |

The data indicates that models trained on a single species, such as P. vivax, experience a measurable drop in detection accuracy when applied to other species like P. ovale and P. malariae [19]. Furthermore, while not all studies provide a single quantitative drop, the focus on achieving robustness to "out-of-domain data" and "variations in staining, microscopy platform, etc." underscores that dataset divergence is a widely recognized and significant challenge [17]. The fact that a model trained on P. vivax performed best on P. falciparum when tested cross-species also suggests that the degree of divergence is not uniform and may be influenced by the specific morphological characteristics of the parasite species involved [19].

Experimental Protocols for Quantifying Divergence

To systematically diagnose and address dataset divergence, researchers employ rigorous experimental protocols. The following methodologies are critical for benchmarking model robustness.

Cross-Dataset Validation

This is the foundational protocol for assessing generalizability. Instead of only performing a standard train-test split on a single dataset, models are trained on one or more source datasets and then tested on a completely separate, held-out target dataset with different characteristics [18] [19]. The performance gap between the source test set and the target test set is a direct measure of dataset divergence. For instance, one study trained their detection model exclusively on the MBB dataset (P. vivax) and then evaluated it on the multi-species MP-IDB dataset, revealing performance variations across species [19].
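
The gap measurement described above can be sketched in a few lines. Everything in the example is hypothetical: the classifier is a toy threshold on a single "stain intensity" feature, and the target samples simulate a paler staining protocol. It only illustrates how the source-versus-target comparison is set up.

```python
def accuracy(predict, samples):
    """Fraction of (features, label) samples the classifier gets right."""
    correct = sum(1 for features, label in samples if predict(features) == label)
    return correct / len(samples)


def generalization_gap(predict, source_test, target_test):
    """Accuracy on the source test set, the target test set, and their gap."""
    source_acc = accuracy(predict, source_test)
    target_acc = accuracy(predict, target_test)
    return {
        "source_accuracy": source_acc,
        "target_accuracy": target_acc,
        "gap": source_acc - target_acc,
    }


def toy_classifier(intensity):
    """Toy model: calls a cell parasitized if stain intensity exceeds 0.5."""
    return int(intensity > 0.5)


# Hypothetical held-out samples from the training source.
source_test = [(0.9, 1), (0.8, 1), (0.2, 0), (0.1, 0)]
# Hypothetical target dataset with paler staining of parasitized cells.
target_test = [(0.55, 1), (0.45, 1), (0.2, 0), (0.1, 0)]

report = generalization_gap(toy_classifier, source_test, target_test)
print(report)
```

The same model is evaluated on both sets without retraining or threshold tuning on the target data; any adjustment made using the target set would turn it into validation data and understate the gap.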

Multi-Species Training and Leave-One-Species-Out Evaluation

This protocol specifically probes a model's ability to handle morphological diversity across parasite species. Researchers train a single model on image data encompassing multiple Plasmodium species (e.g., P. falciparum, P. vivax, P. berghei) [17]. The model's robustness is then tested by evaluating its performance on a species that was excluded from the training set. This "leave-one-species-out" approach simulates the real-world challenge of deploying a diagnostic tool in a new region where a different parasite species may be prevalent and provides a clear measure of how well the model generalizes across species boundaries.
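
The split generation for a leave-one-species-out evaluation can be sketched as follows. The sample identifiers are hypothetical and the species are the examples named above; training and scoring a model on each split is left out.

```python
def leave_one_species_out(samples):
    """Yield (held_out_species, train_ids, test_ids) for each species.

    samples: list of (image_id, species) pairs.
    """
    species_names = sorted({species for _, species in samples})
    for held_out in species_names:
        train_ids = [image_id for image_id, s in samples if s != held_out]
        test_ids = [image_id for image_id, s in samples if s == held_out]
        yield held_out, train_ids, test_ids


# Hypothetical image catalogue.
catalogue = [
    ("img_001", "P. falciparum"),
    ("img_002", "P. falciparum"),
    ("img_003", "P. vivax"),
    ("img_004", "P. berghei"),
    ("img_005", "P. vivax"),
]

for held_out, train_ids, test_ids in leave_one_species_out(catalogue):
    print(held_out, len(train_ids), len(test_ids))
```

Because the held-out species never appears in training, this setup can only assess infected-versus-uninfected detection or life-stage tasks on that species; species identification of a class the model has never seen is not testable this way.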

Staining Invariance Analysis

To isolate the impact of staining variation, researchers preprocess images to minimize its effect. A key method involves color-to-grayscale conversion. By converting all images to grayscale before training and inference, the model is forced to learn from morphological and textural features rather than relying on color information that is highly dependent on the specific staining protocol (e.g., Giemsa concentration, staining time) [19]. Experiments comparing model performance on grayscale versus color images in cross-dataset scenarios can quantify the contribution of staining variation to overall dataset divergence.
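
A minimal sketch of the grayscale step is given below. The source does not state which conversion [19] used, so the example assumes the common ITU-R BT.601 luma weights (0.299, 0.587, 0.114); images are represented as nested lists of 8-bit RGB tuples to keep the example dependency-free.

```python
BT601_WEIGHTS = (0.299, 0.587, 0.114)  # assumed luma weights, not from [19]


def rgb_to_gray(pixel, weights=BT601_WEIGHTS):
    """Convert one (R, G, B) pixel with 0-255 channels to a gray level."""
    red, green, blue = pixel
    return round(weights[0] * red + weights[1] * green + weights[2] * blue)


def image_to_gray(image):
    """Convert an image given as rows of (R, G, B) tuples to gray levels."""
    return [[rgb_to_gray(pixel) for pixel in row] for row in image]


# Hypothetical 1x3 patch: white background, black debris, purple chromatin.
patch = [[(255, 255, 255), (0, 0, 0), (128, 0, 128)]]
gray_patch = image_to_gray(patch)
print(gray_patch)
```

Grayscale conversion removes hue differences between staining protocols but not differences in overall intensity or contrast, so it is often paired with a normalization step before cross-dataset comparison.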

Visualizing the Impact of Dataset Divergence

The diagram below maps the sources of dataset divergence, their interactions, and their ultimate impact on model performance.

Diagram: Pathways of Dataset Divergence in Malaria Image Analysis. This map illustrates how technical and regional factors introduce feature variations that are not biologically relevant, leading trained models to make decisions based on confounding artifacts and resulting in a performance drop during real-world use.

Successfully navigating dataset divergence requires a suite of data, software, and methodological tools. The following table details essential components for research in this field.

Table 2: Key Research Reagent Solutions for Cross-Dataset Validation

| Resource Category | Specific Example(s) | Function & Relevance to Divergence Research |
|---|---|---|
| Public Benchmark Datasets | NIH Malaria Dataset [20] [21], MP-IDB [19], MBB Dataset [19], IML-Malaria [18] | Provide standardized, annotated image data from specific sources for model training. Using multiple datasets is essential for cross-dataset validation experiments. |
| Object Detection Models | YOLO Series (YOLOv4, YOLOv8, YOLOv10/v11) [22] [23] [17], Faster R-CNN [17] | Detect and localize red blood cells and parasites in whole slide images, a crucial first step before classification. Different architectures offer trade-offs in speed and accuracy. |
| Classification Architectures | Convolutional Neural Networks (CNNs) [20] [21], Vision Transformers (ViTs) [24], Hybrid Models (e.g., CNN-ViT, Capsule Networks) [18] [24] | Extract features and perform the final classification (e.g., infected/uninfected, life stage). Hybrid models are increasingly used to capture both local and global image features for better generalization. |
| Preprocessing Techniques | Grayscale Conversion [19], Dilation, CLAHE, Normalization [21] | Reduce the influence of dataset-specific artifacts like staining color and contrast, forcing the model to focus on more invariant morphological features. |
| Validation Protocols | Cross-Dataset Validation [18] [19], Leave-One-Species-Out Evaluation | The core experimental methods for objectively quantifying a model's robustness and generalizability to new data sources. |

The pursuit of clinically viable AI models for malaria diagnosis hinges on directly confronting the challenge of dataset divergence. Quantitative evidence from cross-dataset experiments consistently reveals that performance degradation due to variations in staining, equipment, and parasite species is a real and significant barrier. By adopting rigorous validation protocols such as cross-dataset testing and leave-one-species-out evaluation, researchers can move beyond optimistic, dataset-specific accuracy metrics and obtain a true measure of model robustness. The path forward requires a concerted shift in model development strategy—from simply maximizing accuracy on a single benchmark to proactively engineering for invariance. This involves leveraging multi-source and multi-species datasets, employing preprocessing techniques that minimize technical artifacts, and designing architectures capable of learning the fundamental morphological features of malaria parasites, regardless of their origin.

The development of artificial intelligence (AI) models for malaria parasite classification represents a frontier in the fight against a disease that continues to cause hundreds of thousands of deaths annually [18] [25]. While numerous models demonstrate exceptional performance on their native datasets, achieving accuracies above 90% and even up to 100% in controlled settings, their real-world utility hinges on an often-overlooked factor: generalizability [18]. Performance on a single, curated dataset is an academic metric; performance across diverse, unseen datasets from different geographical locations, staining protocols, and imaging equipment is a clinical requirement. This guide objectively compares the performance of contemporary malaria diagnostic models, with a critical focus on their validation across multiple datasets, which is the true benchmark for a successful transition from research to clinical application.

Comparative Analysis of Malaria Diagnostic Models

The table below summarizes the key performance metrics and architectural features of recently published models, highlighting their computational efficiency and cross-dataset evaluation scope.

Table 1: Performance and Computational Comparison of Malaria Diagnostic Models

| Model Name | Reported Accuracy (%) | Key Metric (mAP%) | Parameters | Computational Cost (GFLOPs) | Cross-Dataset Evaluation |
|---|---|---|---|---|---|
| Hybrid CapNet [18] | Up to 100 (Multiclass) | N/A | 1.35 Million | 0.26 | Yes (4 datasets: MP-IDB, MP-IDB2, IML-Malaria, MD-2019) |
| YOLOv3 [25] | 94.41 | N/A | Not Specified | Not Specified | No (Single clinical dataset) |
| Optimized YOLOv4 [22] | N/A | 90.70 | Reduced via pruning | ~22% B-FLOPS saved | No (Focused on model pruning) |

The data reveals a critical distinction. While the YOLOv3 model demonstrates high accuracy (94.41%) in detecting Plasmodium falciparum-infected red blood cells (iRBCs) in a clinical setting [25], and the optimized YOLOv4 achieves a high mean Average Precision (mAP) through architectural efficiency [22], only the Hybrid Capsule Network (Hybrid CapNet) explicitly reports rigorous cross-dataset validation. This model was evaluated on four benchmark datasets (MP-IDB, MP-IDB2, IML-Malaria, MD-2019), achieving superior accuracy with a lightweight architecture of only 1.35 million parameters and 0.26 GFLOPs, making it suitable for mobile deployment [18]. This cross-dataset testing is a more robust indicator of potential clinical performance.

Experimental Protocols and Methodologies

A deep understanding of model performance requires insight into the experimental workflows that generated the data. The methodologies for the core models discussed herein are detailed below.

Hybrid CapNet for Multiclass Classification

The Hybrid CapNet architecture was designed for precise parasite identification and life-cycle stage classification (ring, trophozoite, schizont, gametocyte) [18]. The experimental protocol can be summarized as follows:

- Architecture: A lightweight hybrid model combining Convolutional Neural Networks (CNNs) for feature extraction with capsule layers to preserve spatial hierarchies within the parasite images.

- Training: Utilized a novel composite loss function integrating margin, focal, reconstruction, and regression losses. This enhanced classification accuracy, spatial localization, and robustness to class imbalance and annotation noise.

- Validation: Underwent both intra-dataset and cross-dataset evaluation on four public benchmarks. Performance was measured by classification accuracy and computational efficiency. Interpretability was validated using Grad-CAM visualizations to confirm the model focused on biologically relevant parasite regions [18].

YOLOv3 for Infected Red Blood Cell Detection

The YOLOv3 model was applied to the task of directly detecting iRBCs in thin blood smear images [25]. The workflow involved:

- Data Preparation: Thin blood smears from patients returning from endemic areas were prepared, stained with Giemsa, and imaged. Original high-resolution images (2592 × 1944 pixels) were cropped into smaller sub-images (518 × 486 pixels) using a non-overlapping sliding window strategy to preserve fine morphological features. These were then resized to 416 × 416 pixels for model input.

- Annotation: Experts created bounding box labels for each iRBC in the cropped images, taking care to exclude platelets and impurities.

- Training & Evaluation: The YOLOv3 model, with its Darknet-53 backbone and multi-scale prediction, was trained on the annotated dataset. Its performance was evaluated based on its false negative rate, false positive rate, and overall recognition accuracy for iRBCs [25].
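The tiling arithmetic in the data preparation step can be checked directly. A 2592 × 1944 image holds five full 518-pixel columns (5 × 518 = 2590, leaving a 2-pixel strip) and exactly four 486-pixel rows, giving 20 sub-images per original. The sketch below enumerates those crop boxes; dropping the leftover strip is an assumption, since how the original study handled the remainder is not stated here, and `tile_boxes` is a hypothetical helper.

```python
def tile_boxes(width, height, tile_w, tile_h):
    """Return (left, top, right, bottom) boxes for non-overlapping tiles.

    Pixels that do not fill a complete tile at the right or bottom edge
    are dropped (an assumption made for this sketch).
    """
    boxes = []
    for top in range(0, height - tile_h + 1, tile_h):
        for left in range(0, width - tile_w + 1, tile_w):
            boxes.append((left, top, left + tile_w, top + tile_h))
    return boxes


boxes = tile_boxes(2592, 1944, 518, 486)
print(len(boxes))  # 5 columns x 4 rows = 20 tiles
print(boxes[-1])   # (2072, 1458, 2590, 1944)
```

Each box can then be cropped and resized to the 416 × 416 network input with any image library.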

The following diagram illustrates the core workflow for the deep learning-based detection of malaria parasites from thin blood smears, as used in the YOLOv3 and similar studies.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful development and validation of malaria diagnostic models rely on a foundation of well-characterized biological and computational resources. The table below lists key reagents and their functions in this field.

Table 2: Key Research Reagent Solutions for Malaria Model Development

| Reagent / Resource | Function in Research | Example Use Case |
|---|---|---|
| Giemsa Stain | Stains nucleic acids of parasites, differentiating chromatin (red-purple) and cytoplasm (blue) in iRBCs for visual identification. | Standard staining protocol for preparing thin blood smear images for both manual microscopy and AI model training [25]. |
| Benchmark Datasets (e.g., MP-IDB, IML-Malaria) | Publicly available, labeled image collections of infected and uninfected RBCs; provide standardized ground truth for model training and comparative benchmarking. | Used for intra-dataset model training and, crucially, for cross-dataset validation to test generalizability [18]. |
| PlasmoFAB Benchmark | A curated dataset of P. falciparum protein sequences labeled as antigen candidates or intracellular proteins. | Used to train and evaluate machine learning models for predicting protein antigen candidates for vaccine development [26]. |
| qPCR Assays | Highly sensitive molecular technique for detecting parasite nucleic acids. | Used as a confirmatory diagnostic tool to validate infection status in patient samples used for model training and testing [25]. |

Signaling Pathways in Malaria Pathogenesis and Immunity

Beyond direct parasite detection, understanding the molecular interactions between the parasite and its human host is crucial for drug and vaccine development. A key player in pathogenesis is the Plasmodium falciparum erythrocyte membrane protein 1 (PfEMP1), a variant antigen expressed on infected red blood cells that mediates cytoadherence to host endothelial receptors, leading to sequestration and severe disease [27] [28].

The diagram above illustrates the central role of PfEMP1. Different PfEMP1 variants, containing domain cassettes such as DC8 and DC13, bind to specific host receptors such as endothelial protein C receptor (EPCR) and ICAM-1, and this binding is strongly associated with severe and cerebral malaria [27] [28]. The resulting cytoadherence triggers endothelial transcriptional responses linked to inflammation, apoptosis, and loss of barrier integrity [28]. Critically, the acquisition of antibodies against specific PfEMP1 variants, particularly those of the CIDRα1 class, has been longitudinally associated with protection from severe disease, highlighting their importance as targets of natural immunity and potential vaccine candidates [29].

The transition of AI-driven malaria diagnostics from an academic exercise to a clinically viable tool demands a redefinition of success. As this comparison guide illustrates, metrics such as accuracy on a single dataset are necessary but insufficient. The true differentiator is robust performance across multiple, heterogeneous datasets, as demonstrated by the Hybrid CapNet model [18]. Furthermore, for the broader goal of malaria eradication, computational efforts must extend beyond parasite detection to include the identification of key pathogenic mediators like PfEMP1 variants [28] [29] and liver-stage antigens [30] through specialized tools like the PlasmoFAB benchmark [26]. For researchers and drug development professionals, prioritizing cross-dataset validation and integrating molecular pathogenesis data will be critical in developing the next generation of diagnostic and therapeutic solutions that are not only accurate but also generalizable and biologically insightful.

Architectural Frontiers and Validation Frameworks for Robust Models

The development of automated diagnostic tools for malaria parasite classification represents a critical application of deep learning in global health. The performance and reliability of these tools are fundamentally governed by their underlying model architectures. This guide provides a comparative analysis of three dominant architectural paradigms—Convolutional Neural Networks (CNNs), Hybrid Models, and Transformer-based Networks—evaluating their performance, computational characteristics, and generalization capabilities within the essential context of cross-dataset validation. This approach rigorously tests model robustness against real-world variations in staining protocols, imaging equipment, and sample preparations encountered across different clinical settings [4].

Core Architectural Paradigms

Convolutional Neural Networks (CNNs): CNNs form the historical backbone of image classification tasks. They excel at hierarchical feature extraction through convolutional layers, pooling operations, and non-linear activations. Customized architectures, such as the Soft Attention Parallel CNN (SPCNN), have demonstrated exceptional accuracy on single-dataset evaluations, achieving up to 99.37% accuracy and a 99.95% AUC on specific benchmarks [21].

Hybrid Models: These architectures integrate components from different neural network paradigms to leverage their complementary strengths. A prominent example is the Hybrid Capsule Network (Hybrid CapNet), which combines CNN-based feature extraction with capsule layers. The capsule components are designed to better preserve hierarchical spatial relationships between features, which is crucial for identifying subtle morphological variations in parasites. This architecture has shown superior performance in cross-dataset evaluations [18]. Other hybrids fuse features from multiple pre-trained CNNs (e.g., ResNet-50, VGG-16, DenseNet-201) for classification by a meta-learner, achieving high accuracy through feature fusion and ensemble methods [12].

Transformer-based Networks: Originally developed for natural language processing, Transformers utilize a self-attention mechanism to weigh the importance of different parts of the input image. Models like the Swin Transformer have achieved leading performance on several malaria classification benchmarks, with reports of up to 99.8% accuracy [6]. Their ability to capture long-range dependencies across the image makes them particularly powerful. Their computational demands can be a constraint, although efficient variants such as MobileViT have been developed to offer a favorable balance between accuracy and resource consumption [6].

Quantitative Performance Comparison

The following table summarizes the reported performance metrics and computational demands of representative models from each architectural category.

Table 1: Performance and Computational Profile of Model Architectures for Malaria Classification

| Model Architecture | Representative Model | Reported Accuracy (%) | Key Metrics | Computational Cost |
|---|---|---|---|---|
| CNN | SPCNN [21] | 99.37 | Precision: 99.38%, Recall: 99.37%, AUC: 99.95% | 2.21M parameters |
| Hybrid | Hybrid CapNet [18] | Up to 100.00 (multiclass) | Superior cross-dataset generalization | 1.35M parameters, 0.26 GFLOPs |
| Hybrid | ResNet50+VGG16+DenseNet-201 Ensemble [12] | 96.47 | Sensitivity: 96.03%, Specificity: 96.90%, F1-Score: 96.45% | High (Multiple backbone networks) |
| Transformer | Swin Transformer [6] | 99.80 | High precision, recall, and F1-score | High computational demand |
| Transformer | MobileViT [6] | High (exact value not stated) | Competitive performance | Lower memory usage, shorter inference time |

The Critical Role of Cross-Dataset Validation

A model's performance on a single, curated dataset is an insufficient measure of its real-world utility. Cross-dataset validation, where a model trained on one dataset is tested on another, is the benchmark for assessing true generalization ability [4]. This process exposes models to variations that are inevitable in practice, such as differences in staining techniques (e.g., Giemsa, Wright), slide preparation, and microscope or digital scanner characteristics [18] [4].

Impact of Data Quality on Generalization

Challenges in data quality significantly impact model generalization, a key finding from cross-dataset studies:

- Class Imbalance: Models trained on datasets with an overrepresentation of uninfected cells can suffer a drop of up to 20% in F1-score, as predictions become biased toward the majority class and sensitivity to infected samples falls [4].

- Limited Diversity: Datasets lacking geographic and demographic diversity lead to models that perform poorly when deployed in new environments. For instance, a model trained on data from one region may fail in another due to divergent parasite strains or technical protocols [4].

- Annotation Variability: Inconsistencies in expert annotations introduce noise and reduce the reliability of the ground truth used for training [4].
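The class-imbalance effect in the list above is easy to see with a worked example. The sketch below uses a hypothetical test set of 950 uninfected and 50 infected cells with invented confusion counts; it is not data from any cited study. Accuracy stays high while the F1-score for the infected class is much lower.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 for the positive (infected) class."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical confusion counts on an imbalanced test set (illustrative only).
tp, fn = 30, 20    # 50 infected cells: 30 found, 20 missed
fp, tn = 10, 940   # 950 uninfected cells: 10 false alarms

accuracy = (tp + tn) / (tp + tn + fp + fn)
precision, recall, f1 = precision_recall_f1(tp, fp, fn)
print(f"accuracy={accuracy:.3f} precision={precision:.3f} "
      f"recall={recall:.3f} f1={f1:.3f}")
```

Here accuracy is 0.970 while recall is 0.600 and F1 is about 0.667, which is why accuracy alone is a poor summary on imbalanced smear data.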

Table 2: Impact of Data Quality Challenges and Mitigation Strategies

| Challenge | Impact on Model | Proposed Mitigation Strategies |
|---|---|---|
| Class Imbalance | Up to 20% reduction in F1-score; biased towards majority class | Data augmentation (rotation, flipping), GAN-based synthetic data [4], Focal Loss [18] |
| Limited Dataset Diversity | Poor cross-dataset performance; fails in new clinical settings | Multi-source dataset curation, domain adaptation techniques [4] |
| Annotation Variability | Reduced model reliability and trustworthiness | Annotation standardization, explainable AI (e.g., Grad-CAM) for validation [18] [21] |

Experimental Protocols for Model Evaluation

To ensure fair and rigorous comparison, studies employ standardized experimental protocols. The following workflow visualizes a typical benchmark validation process for malaria classification models.

Detailed Methodological Breakdown

Data Preparation and Preprocessing:

- Datasets: Models are trained and evaluated on publicly available benchmark datasets such as MP-IDB, MP-IDB2, IML-Malaria, and MD-2019 [18]. These datasets contain thousands of thin blood smear images with labels for infected vs. uninfected cells, and sometimes for parasite species and life-cycle stages.

- Preprocessing: A standardized sequential pipeline is often applied, including image dilation to accentuate structures, Contrast Limited Adaptive Histogram Equalization (CLAHE) to enhance contrast, and normalization to scale pixel values [21]. For cross-dataset validation, these steps are applied consistently to all datasets without dataset-specific tuning.

Model Training and Optimization:

- Loss Functions: To address class imbalance and improve localization, advanced composite loss functions are used. The Hybrid CapNet, for instance, employs a combination of margin loss, focal loss, reconstruction loss, and offset regression loss [18]. Focal loss is particularly effective for directing learning towards hard-to-classify examples.

- Training Regime: Models are typically trained using a form of gradient descent (e.g., Adam optimizer) with a carefully chosen learning rate. Techniques like five-fold cross-validation on the training set are used to estimate robustness and to detect overfitting before the final evaluation [31].
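To make the role of focal loss concrete, the following sketch implements the standard binary form, FL(p_t) = -α_t (1 - p_t)^γ log(p_t). The defaults γ = 2 and α = 0.25 are commonly used values and are an assumption here; the hyperparameters used in Hybrid CapNet [18] are not given in this guide.

```python
import math


def binary_focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Focal loss for a single prediction.

    p: predicted probability of the positive (infected) class.
    y: ground-truth label, 0 or 1.
    gamma, alpha: focusing and balancing parameters (assumed defaults).
    """
    eps = 1e-7
    p = min(max(p, eps), 1.0 - eps)          # avoid log(0)
    p_t = p if y == 1 else 1.0 - p           # probability of the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


easy = binary_focal_loss(0.95, 1)   # confident, correct prediction
hard = binary_focal_loss(0.30, 1)   # infected cell the model nearly missed
print(f"easy example loss: {easy:.6f}")
print(f"hard example loss: {hard:.6f}")
```

The (1 - p_t)^γ factor shrinks the contribution of well-classified cells, so the gradient is dominated by hard examples; with γ = 0 the expression reduces to α-weighted cross-entropy.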

Performance Evaluation:

- Intra-Dataset Evaluation: Model performance is first assessed on a held-out test set from the same dataset used for training, using metrics like accuracy, precision, recall, F1-score, and Area Under the Curve (AUC) [21] [12].

- Cross-Dataset Validation: This is the critical step for assessing generalizability. A model trained on one dataset (e.g., MD-2019) is directly applied to the test set of a completely different dataset (e.g., IML-Malaria) without any fine-tuning. The same performance metrics are calculated, and a significant drop in performance from intra-dataset to cross-dataset results indicates poor generalization [18] [4].
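The intra-dataset and cross-dataset steps above can be organized as a train-by-test score matrix. The sketch below is a toy under stated assumptions: the dataset names, the one-feature "intensity" samples, and the threshold classifier are hypothetical stand-ins for real image datasets and deep models, chosen so the example runs without dependencies.

```python
def cross_dataset_matrix(datasets, train_fn, eval_fn):
    """Train on each dataset, then score on every dataset's test split.

    datasets: {name: {"train": ..., "test": ...}}
    Returns {train_name: {test_name: score}}; no fine-tuning on targets.
    """
    results = {}
    for train_name, split in datasets.items():
        model = train_fn(split["train"])
        results[train_name] = {
            test_name: eval_fn(model, other["test"])
            for test_name, other in datasets.items()
        }
    return results


def generalization_gaps(results):
    """Intra-dataset score minus each cross-dataset score."""
    return {
        (src, dst): scores[src] - score
        for src, scores in results.items()
        for dst, score in scores.items()
        if dst != src
    }


def train_threshold(train):
    """Toy model: midpoint between the class means of a single feature."""
    pos = [x for x, y in train if y == 1]
    neg = [x for x, y in train if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2


def accuracy(threshold, test):
    return sum((x > threshold) == bool(y) for x, y in test) / len(test)


# Hypothetical sites whose feature values are shifted, mimicking stain drift.
toy = {
    "site_A": {"train": [(0.2, 0), (0.3, 0), (0.7, 1), (0.8, 1)],
               "test": [(0.25, 0), (0.75, 1)]},
    "site_B": {"train": [(0.5, 0), (0.6, 0), (1.0, 1), (1.1, 1)],
               "test": [(0.55, 0), (1.05, 1)]},
}

results = cross_dataset_matrix(toy, train_threshold, accuracy)
gaps = generalization_gaps(results)
print(results)
print(gaps)
```

The diagonal of the matrix holds intra-dataset scores and the off-diagonal entries hold cross-dataset scores; large positive gaps flag poor generalization.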

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and resources essential for conducting research in this field.

Table 3: Essential Research Tools for Malaria Classification Model Development

| Research Reagent / Resource | Function / Description | Example Use Case |
|---|---|---|
| Public Datasets (e.g., MP-IDB, NIH Dataset) | Provides standardized, annotated microscopic images for training and benchmarking models. | Serves as the foundational data for model development and intra-dataset evaluation [18] [4]. |
| Generative Adversarial Networks (GANs) | Generates synthetic, high-quality cell images to augment underrepresented classes in datasets. | Mitigates class imbalance; shown to improve model accuracy by 15-20% [4]. |
| Gradient-weighted Class Activation Mapping (Grad-CAM) | Produces visual explanations for model decisions, highlighting regions of the input image that were most influential. | Validates that models focus on biologically relevant parasite regions, increasing interpretability and trust [18] [21]. |
| Transfer Learning & Pre-trained Models | Leverages features from models pre-trained on large datasets (e.g., ImageNet) to boost performance on smaller medical imaging datasets. | Accelerates training and improves robustness, enhancing cross-dataset performance by up to 25% in sensitivity [4]. |
| Composite Loss Functions (e.g., Focal Loss) | Dynamically scales the loss to focus learning on hard, misclassified examples, addressing class imbalance. | Integrated into training pipelines to significantly improve sensitivity to infected (minority) cell classes [18]. |

The landscape of model architectures for malaria classification is diverse, with each paradigm offering distinct advantages. CNNs provide a strong, computationally efficient baseline, while Transformers achieve top-tier accuracy on specific benchmarks. However, for real-world deployment where robustness and generalization are paramount, Hybrid Models like the Hybrid CapNet present a compelling solution by balancing high accuracy with lower computational cost and demonstrated superiority in cross-dataset validation. The future of reliable, AI-driven malaria diagnostics lies not merely in pursuing higher accuracy on a single dataset, but in architecting models and building datasets that are inherently robust to the vast heterogeneity of the clinical world.

The application of artificial intelligence in malaria diagnostics represents a significant advancement in the global fight against this infectious disease. Within this domain, the transfer learning paradigm—where pre-trained deep learning models are adapted for new, specific tasks—has emerged as a particularly powerful approach. This methodology is especially valuable in medical imaging, where labeled data is often scarce and computational resources may be limited. By leveraging features learned from large general image datasets, researchers can develop highly accurate malaria detection systems without the prohibitive costs of training models from scratch. This guide provides an objective comparison of various transfer learning approaches applied to malaria parasite classification, with particular emphasis on their cross-dataset validation performance, which is crucial for assessing real-world applicability.

Performance Comparison of Transfer Learning Models

The evaluation of transfer learning models for malaria detection reveals a landscape of diverse architectural approaches, each with distinct strengths in accuracy, computational efficiency, and generalization capability. The table below provides a comprehensive comparison of recently published models based on their reported performance metrics and validation methodologies.

Table 1: Performance Comparison of Transfer Learning Models for Malaria Detection

| Model Architecture | Reported Accuracy | Precision/Recall/F1-Score | Validation Method | Key Distinguishing Feature |
|---|---|---|---|---|
| Ensemble (VGG16, ResNet50V2, DenseNet201, VGG19) [11] | 97.93% | Precision: 97.93%, Recall: N/A, F1-Score: 97.93% | Standard train-test split | Adaptive weighted averaging combines multiple architectures |
| Hybrid Capsule Network (Hybrid CapNet) [14] | Up to 100% (multiclass) | N/A | Intra- and cross-dataset evaluation | Lightweight (1.35M parameters), preserves spatial hierarchies |
| CNN with 7-channel input [13] | 99.51% | Precision: 99.26%, Recall: 99.26%, F1-Score: 99.26% | 5-fold cross-validation | Specialized for species identification (P. falciparum, P. vivax) |
| EfficientNet [32] | 97.57% | N/A | k-fold cross-validation | Balanced accuracy and computational efficiency |
| DenseNet201 [33] | N/A | AUC: 99.41% | 100 distinct partition cross-validations | Excels in texture feature identification |
| PlasmoCount 2.0 (YOLOv8) [17] | 99.8% | N/A | Multi-species validation | Rapid processing (<3 seconds per image), multi-species detection |

Beyond the core accuracy metrics, computational efficiency represents a critical consideration for practical deployment, particularly in resource-constrained settings. The Hybrid Capsule Network notably achieves its performance with only 1.35 million parameters and 0.26 GFLOPs, making it suitable for mobile applications [14]. Similarly, PlasmoCount 2.0's reduction in processing time from 40 to under 3 seconds per image through model architecture optimization demonstrates the importance of efficiency in clinical workflows [17].

The specialization level of models varies significantly across approaches. While some models focus primarily on binary classification (infected vs. uninfected), others like the CNN with 7-channel input and PlasmoCount 2.0 advance the field by addressing the more clinically challenging task of species identification [13] [17]. This capability is crucial for determining appropriate treatment regimens, as different Plasmodium species require different therapeutic approaches.

Experimental Protocols and Methodologies

Ensemble Learning with Adaptive Weighted Averaging

One notable approach implements a two-tiered ensemble strategy that combines hard voting with adaptive weighted averaging [11]. The methodology first involves training multiple pre-trained architectures—VGG16, VGG19, ResNet50V2, and DenseNet201—alongside a custom convolutional neural network on the same malaria dataset. Rather than employing simple majority voting or fixed-weight averaging, this approach dynamically assigns weights to each model's predictions based on their individual validation performance. This allows stronger models to exert more influence on the final prediction while the hard voting mechanism ensures consensus reliability. The researchers applied comprehensive data augmentation techniques including rotation, flipping, and scaling to enhance model robustness and prevent overfitting. This ensemble method demonstrated a test accuracy of 97.93%, outperforming all standalone models including individual components like VGG16 (97.65% accuracy) and the custom CNN (97.20% accuracy) [11].
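The weighting idea can be illustrated with a short sketch. The exact weighting rule used in [11] is not described here, so normalizing each model's validation accuracy into a weight is an assumption, as are the model names, accuracies, and probability vectors below.

```python
def adaptive_weights(val_accuracies):
    """Turn per-model validation accuracies into weights that sum to 1.

    Proportional normalization is an assumed rule for illustration only.
    """
    total = sum(val_accuracies.values())
    return {name: acc / total for name, acc in val_accuracies.items()}


def weighted_average(probabilities, weights):
    """Combine per-model class-probability vectors into one vector."""
    n_classes = len(next(iter(probabilities.values())))
    return [
        sum(weights[name] * probs[k] for name, probs in probabilities.items())
        for k in range(n_classes)
    ]


# Hypothetical validation accuracies and predictions for one cell image.
val_acc = {"vgg16": 0.97, "densenet201": 0.96, "custom_cnn": 0.95}
probs = {
    "vgg16": [0.20, 0.80],        # [uninfected, infected]
    "densenet201": [0.40, 0.60],
    "custom_cnn": [0.55, 0.45],
}

weights = adaptive_weights(val_acc)
combined = weighted_average(probs, weights)
predicted_class = combined.index(max(combined))
print(weights)
print(combined, predicted_class)
```

Because the weights are derived from validation performance rather than fixed in advance, a model that validates better pulls the combined probability further toward its own prediction.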

Hybrid Capsule Network with Composite Loss

The Hybrid Capsule Network (Hybrid CapNet) introduces a lightweight architecture combining convolutional layers for feature extraction with capsule layers that preserve spatial hierarchies [14]. This model employs a novel composite loss function integrating four distinct components: margin loss for classification accuracy, focal loss to address class imbalance, reconstruction loss to maintain spatial coherence, and regression loss for precise localization. The model was evaluated on four benchmark malaria datasets (MP-IDB, MP-IDB2, IML-Malaria, MD-2019) with both intra-dataset and cross-dataset validation. This comprehensive evaluation methodology specifically tests the model's generalization capability across different imaging conditions and staining protocols. The Hybrid CapNet architecture achieves high accuracy while maintaining computational efficiency (1.35M parameters, 0.26 GFLOPs), making it particularly suitable for deployment in resource-constrained environments [14].
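As a sketch of how such a composite objective can be assembled, the code below implements the standard capsule margin loss and a weighted sum of named loss terms. The margin constants (0.9, 0.1, 0.5) are the values commonly used for capsule networks; the term weights and the placeholder values for the focal, reconstruction, and regression terms are hypothetical, since the weighting used in [14] is not given here.

```python
def margin_loss(capsule_lengths, target, m_pos=0.9, m_neg=0.1, lam=0.5):
    """Capsule margin loss for one sample.

    capsule_lengths: output vector length per class, each in [0, 1].
    target: index of the true class.
    """
    loss = 0.0
    for k, length in enumerate(capsule_lengths):
        if k == target:
            loss += max(0.0, m_pos - length) ** 2
        else:
            loss += lam * max(0.0, length - m_neg) ** 2
    return loss


def composite_loss(terms, weights):
    """Weighted sum of named loss terms."""
    return sum(weights[name] * value for name, value in terms.items())


# Hypothetical per-sample loss terms; only the margin term is computed here.
terms = {
    "margin": margin_loss([0.95, 0.05, 0.20], target=0),
    "focal": 0.012,
    "reconstruction": 0.030,
    "regression": 0.004,
}
# Hypothetical weights, not the published configuration.
weights = {"margin": 1.0, "focal": 1.0, "reconstruction": 0.0005, "regression": 0.5}

total = composite_loss(terms, weights)
print(f"margin={terms['margin']:.4f} total={total:.6f}")
```

In the example, the true-class capsule (length 0.95) and the 0.05 capsule incur no penalty, so the margin term comes only from the wrong-class capsule of length 0.20.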

Multi-Species Detection with YOLOv8

PlasmoCount 2.0 implements a three-stage pipeline for malaria parasite detection and classification [17]. The first stage utilizes an object detection model (YOLOv8) to identify all red blood cells in a microscopic image and output bounding box coordinates. In the second stage, each detected cell is cropped and processed by a binary classification model that predicts infection status. The third stage takes infected cells and passes them to a regression model that predicts the developmental stage of the parasite (ring, trophozoite, or schizont). This approach was trained on a multi-species dataset including human-infective parasites (P. falciparum and P. vivax) and rodent malaria parasites (P. berghei, P. chabaudi, and P. yoelii), comprising 286,363 cells across 2,936 field-of-view images [17]. The model was further validated on completely unseen parasite species (P. knowlesi and P. cynomolgi) to test its generalization capability, achieving 99.8% classification accuracy with significantly reduced processing time compared to its predecessor.
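The data flow through the three stages can be sketched as follows. This is not PlasmoCount's code: the three callables are hypothetical stand-ins for the trained detector, classifier, and stage regressor, and the tiny nested-list "image" exists only to make the example runnable.

```python
def crop_box(image, box):
    """Cut a (left, top, right, bottom) region out of a row-major image."""
    left, top, right, bottom = box
    return [row[left:right] for row in image[top:bottom]]


def run_pipeline(image, detect_cells, classify_infected, estimate_stage):
    """Detect cells, classify each crop, and stage only the infected ones."""
    results = []
    for box in detect_cells(image):                         # stage 1
        crop = crop_box(image, box)
        infected = classify_infected(crop)                  # stage 2
        stage = estimate_stage(crop) if infected else None  # stage 3
        results.append({"box": box, "infected": infected, "stage": stage})
    return results


# Hypothetical stand-ins for the three trained models.
def fake_detector(image):
    return [(0, 0, 2, 2), (2, 0, 4, 2)]


def fake_classifier(crop):
    pixels = [p for row in crop for p in row]
    return sum(pixels) / len(pixels) > 5


def fake_stage_regressor(crop):
    return 1.0  # placeholder value on an arbitrary stage scale


toy_image = [[0, 0, 9, 9],
             [0, 0, 9, 9]]
report = run_pipeline(toy_image, fake_detector, fake_classifier, fake_stage_regressor)
print(report)
```

Running the stage regressor only on crops flagged as infected mirrors the staged design described above and avoids spending compute on uninfected cells.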

Cross-Validation Approaches

Robust evaluation methodologies are critical for assessing model performance in malaria detection. Several studies employed rigorous cross-validation strategies:

- Stratified k-fold cross-validation: One CNN model utilized a variant of k-fold cross-validation (5 folds) with stratified sampling to maintain class distribution in each fold [13]. In each iteration, four folds were used for training, while the remaining fold was split equally for validation and testing, with final results averaged across all iterations.

- Repeated random sub-sampling validation: The DenseNet201 transfer learning approach was evaluated using 100 distinct random partitions of the data, with 80% for training and 20% for testing in each partition [33]. Averaging over many partitions provides a more reliable estimate of model performance and reduces the variance of the accuracy estimate.

- Cross-dataset validation: The Hybrid CapNet study explicitly included cross-dataset evaluation, where models trained on one dataset were tested on completely different datasets [14]. This approach tests the model's ability to generalize across variations in staining protocols, imaging conditions, and sample preparations.
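The stratified five-fold variant described in the first item can be sketched without external libraries. The helpers below are hypothetical and only approximate the procedure in [13]: samples are dealt round-robin into folds per class, and the held-out fold is split into validation and test halves by alternating assignment (an assumption about how the equal split was made).

```python
import random
from collections import defaultdict


def stratified_folds(labels, k=5, seed=0):
    """Assign sample indices to k folds while preserving class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)
    return folds


def train_val_test_splits(folds):
    """Use k-1 folds for training; halve the held-out fold into val/test."""
    for i, held_out in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        # Alternating assignment keeps both halves roughly class-balanced,
        # because each fold lists its indices class by class.
        yield train, held_out[0::2], held_out[1::2]


# Hypothetical labels: 80 uninfected (0) and 20 infected (1) cells.
labels = [0] * 80 + [1] * 20
folds = stratified_folds(labels, k=5)

for train, val, test in train_val_test_splits(folds):
    print(len(train), len(val), len(test))
```

Metrics computed on the test half of each iteration would then be averaged across the five iterations.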

Visualization of Transfer Learning Workflow

The following diagram illustrates the generalized workflow for applying transfer learning to malaria parasite classification, integrating common elements from the methodologies discussed above:

Diagram 1: Transfer Learning Workflow for Malaria Classification

This workflow demonstrates how models pre-trained on general image datasets (like ImageNet) serve as feature extractors, which are then adapted through fine-tuning for malaria-specific classification tasks. The diagram highlights the three primary architectural approaches identified in the literature (ensemble methods, single model adaptation, and object detection pipelines), all culminating in cross-dataset validation as a critical final step for assessing real-world applicability.

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of transfer learning approaches for malaria detection requires specific data resources and computational tools. The following table catalogues essential reagents and their functions as identified from the evaluated studies:

Table 2: Essential Research Reagents and Resources for Malaria Detection Models

| Research Reagent | Function | Example Specifications |
|---|---|---|
| Giemsa-Stained Blood Smear Images | Gold standard for malaria parasite visualization; provides ground truth for model training | MP-IDB, MP-IDB2, IML-Malaria, MD-2019 datasets [14] |
| Pre-trained CNN Models | Feature extractors providing learned visual representations | VGG16/19, ResNet50V2, DenseNet201 [11] |
| Data Augmentation Pipelines | Increase dataset diversity and size; improve model generalization | Rotation, flipping, scaling transformations [11] |
| Object Detection Models | Identify and localize individual cells in microscopic images | YOLOv8, Faster R-CNN [17] |
| Cross-Validation Frameworks | Assess model robustness and generalization capability | k-fold, stratified sampling, cross-dataset validation [14] [13] [33] |
| Computational Resources | Enable model training and inference | GPU acceleration (e.g., Nvidia GeForce RTX 3060) [13] |
| Attention Mechanisms | Enhance focus on parasite regions; improve interpretability | Integrated in YOLO-Para series for small-object detection [34] |

These research reagents form the foundation for developing and validating transfer learning models for malaria detection. The selection of appropriate datasets is particularly crucial, with multi-species datasets becoming increasingly important for developing robust models [17]. Similarly, the integration of attention mechanisms addresses the specific challenge of detecting small parasites within complex blood smear images [34].

The transfer learning paradigm has substantially advanced the capabilities of automated malaria detection systems, with models now achieving accuracy levels exceeding 99% in controlled evaluations [13] [17]. The comparative analysis presented in this guide reveals several key insights: ensemble methods leveraging multiple architectures provide superior performance through complementary feature learning [11]; computational efficiency is increasingly addressed through lightweight designs and optimized object detection pipelines [14] [17]; and the field is evolving beyond simple binary classification toward clinically relevant species identification and life-stage classification [13] [17].

Cross-dataset validation emerges as a critical differentiator in assessing model robustness and real-world applicability [14]. While high accuracy on carefully curated datasets is now commonplace, maintaining performance across varied imaging conditions, staining protocols, and parasite species remains challenging. Future research directions should prioritize the development of models that generalize effectively across diverse clinical settings, the creation of standardized evaluation benchmarks, and the optimization of systems for deployment in resource-constrained environments where the need for automated malaria diagnostics is most acute.

Cross-validation is a cornerstone of robust model evaluation in medical artificial intelligence, particularly for critical applications like malaria parasite classification. Cross-validation techniques are essential for assessing how well a predictive model will perform on unseen data, providing crucial insights into its real-world viability before clinical deployment. In malaria diagnostics, where model accuracy can directly impact patient outcomes, proper validation strategies ensure that automated classification systems can reliably identify Plasmodium species and their life-cycle stages across diverse populations and laboratory conditions. The fundamental principle of all cross-validation methods is to test the model's ability to generalize beyond the data used for training, thereby flagging problems like overfitting or selection bias that could compromise diagnostic accuracy in clinical settings [35].

Within the specific context of malaria research, cross-validation takes on added significance due to the challenging nature of the classification task. Malaria parasites exhibit subtle color variations, indistinct demarcation lines, and diverse morphologies across species and life-cycle stages, creating a complex feature space for deep learning models to navigate [15]. Furthermore, models must demonstrate robustness across variations in staining protocols, microscope settings, and blood smear preparation techniques used in different clinical environments. This article systematically compares two fundamental validation approaches—K-Fold Cross-Validation and the Hold-Out Method—within the framework of malaria parasite classification research, providing experimental data and implementation protocols to guide researchers in selecting appropriate validation strategies for their specific contexts.

Theoretical Foundations of Validation Strategies

Hold-Out Validation Methodology

The hold-out method, also referred to as simple validation, constitutes the most fundamental approach to model evaluation. In this technique, the available dataset is randomly partitioned into two distinct subsets: a training set used to build the model and a testing set (or hold-out set) used exclusively for evaluating its performance [35] [36]. This separation is methodologically critical because testing a model on the same data used for training represents a fundamental flaw in machine learning experimentation; a model that simply memorizes the training labels would achieve a perfect score but would fail to predict anything useful on yet-unseen data, a phenomenon known as overfitting [37].

In typical implementations for malaria classification tasks, the dataset is divided according to a predetermined ratio. Common splits include 70:30 or 80:20 for training to testing data, though these proportions can vary based on overall dataset size [36]. For instance, in a study developing YOLOv3 for recognizing Plasmodium falciparum, researchers employed an 8:1:1 ratio for training, validation, and testing sets respectively, where the validation set was used for parameter tuning and the test set provided the final performance evaluation [25]. The principal advantage of the hold-out method lies in its computational efficiency and simplicity—since the model is trained and tested only once, it requires significantly less computation time compared to resampling methods [36]. However, this approach carries notable limitations: the performance estimate can be highly sensitive to how the data is partitioned, potentially leading to either optimistic or pessimistic bias depending on which samples end up in the test set [35]. This variability is particularly problematic with smaller datasets, where a single random split might not adequately represent the underlying data distribution.
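The 8:1:1 partitioning described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the cited study's code; the function name `holdout_split`, the `smear_XXXX` image identifiers, and the fixed seed are assumptions made for the example.

```python
import random

def holdout_split(items, ratios=(0.8, 0.1, 0.1), seed=0):
    """Shuffle once, then cut into train / validation / test partitions."""
    if abs(sum(ratios) - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = int(len(shuffled) * ratios[0])
    n_val = int(len(shuffled) * ratios[1])
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]      # whatever remains becomes the test set
    return train, val, test

# Hypothetical image identifiers standing in for blood smear files.
image_ids = [f"smear_{i:04d}" for i in range(1000)]
train, val, test = holdout_split(image_ids)
print(len(train), len(val), len(test))
```

Changing `seed` produces a different partition, which is exactly the source of the split sensitivity noted above: with a small dataset, each seed can yield a noticeably different test score.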

K-Fold Cross-Validation Methodology

K-fold cross-validation is a more sophisticated approach designed to provide a more reliable estimate of model performance while making efficient use of limited data. In this method, the dataset is randomly partitioned into k subsets (called "folds") of approximately equal size [35]. The model is trained and evaluated k times, with each iteration using a different fold as the test set and the remaining k-1 folds combined to form the training set. After k iterations, each fold has been used exactly once as the test set, and the overall performance metric is calculated as the average of the k individual evaluation results [37] [36].

The choice of k is a critical decision in implementing this method, with different values offering distinct trade-offs between bias, variance, and computational expense. Common configurations include 5-fold and 10-fold cross-validation, with the latter being particularly widely used in malaria classification research [35] [36]. The DANet study for malaria parasite detection, by contrast, employed 5-fold cross-validation to demonstrate the robustness of its model, achieving an accuracy of 97.95% [15]. As k increases, the bias of the performance estimate typically decreases because each training set becomes more representative of the overall dataset, but the variance may increase and computation time rises proportionally [36]. In the extreme case where k equals the number of observations (k = n), the method becomes Leave-One-Out Cross-Validation (LOOCV), which utilizes maximum training data but at significant computational cost, especially for large datasets [35] [36].
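The k-fold procedure can be written out from scratch to make the mechanics explicit. The sketch below uses only the standard library. The helper names (`kfold_indices`, `cross_validate`), the toy labels, and the majority-class "model" are illustrative assumptions, with the baseline standing in for a real classifier.

```python
import random
from statistics import mean

def kfold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; every sample is tested exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Slicing with stride k gives folds whose sizes differ by at most one.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, folds[i]

def cross_validate(n_samples, k, evaluate, seed=0):
    """Average evaluate(train_idx, test_idx) over the k folds."""
    scores = [evaluate(tr, te) for tr, te in kfold_indices(n_samples, k, seed)]
    return mean(scores), scores

# Toy labels: 70 infected (1) and 30 healthy (0) cells.
labels = [1] * 70 + [0] * 30

def majority_baseline(train_idx, test_idx):
    """Stand-in for training: predict the most common training label."""
    train_labels = [labels[i] for i in train_idx]
    majority = max(set(train_labels), key=train_labels.count)
    return sum(labels[i] == majority for i in test_idx) / len(test_idx)

mean_acc, fold_scores = cross_validate(len(labels), k=5, evaluate=majority_baseline)
print(f"mean accuracy: {mean_acc:.2f}")
```

Individual fold scores differ because each fold holds a different mix of classes, while their average recovers the overall majority proportion. Setting `k` equal to `len(labels)` turns the same code into LOOCV, at the cost of one training run per sample.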

Stratified Cross-Validation for Imbalanced Data

In malaria classification datasets, class imbalance frequently occurs when certain parasite species or life-cycle stages are underrepresented compared to others. Standard k-fold cross-validation may produce folds with unrepresentative class distributions, leading to misleading performance estimates. Stratified k-fold cross-validation addresses this issue by ensuring that each fold maintains approximately the same class proportions as the complete dataset [37]. This technique is "frequently recommended when the target variable is imbalanced" as it creates folds with the same probability distribution as the larger dataset [38]. For instance, in a dataset where 80% of images show infected cells and 20% show healthy cells, each fold in stratified cross-validation would preserve this 80:20 ratio, resulting in more reliable performance metrics, particularly for minority classes that might otherwise be overlooked in certain folds [38].
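The 80:20 example can be reproduced with a small from-scratch sketch. It deals the members of each class round-robin across the folds, which is one simple way to stratify. The function name `stratified_kfold` is an assumption for illustration, and library implementations balance fold sizes more carefully when class counts do not divide evenly by k.

```python
import random
from collections import defaultdict

def stratified_kfold(labels, k=5, seed=0):
    """Return k lists of sample indices whose class mix mirrors the full dataset."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for members in by_class.values():
        rng.shuffle(members)
        # Deal each class round-robin so every fold receives its share.
        for pos, idx in enumerate(members):
            folds[pos % k].append(idx)
    return folds

# 80% infected, 20% healthy, matching the example in the text.
labels = ["infected"] * 80 + ["healthy"] * 20
folds = stratified_kfold(labels, k=5)
for fold in folds:
    infected = sum(labels[i] == "infected" for i in fold)
    print(len(fold), infected / len(fold))
```

Each of the five folds ends up with 16 infected and 4 healthy samples, so the minority class is present in every test set.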

Comparative Analysis of Validation Methods

Methodological Comparison

The table below summarizes the fundamental differences between k-fold cross-validation and the hold-out method:

Table 1: Fundamental Methodological Differences Between K-Fold Cross-Validation and Hold-Out Method

| Feature | K-Fold Cross-Validation | Hold-Out Method |
|---|---|---|
| Data Split | Dataset divided into k folds; each fold used once as test set [36] | Dataset split once into training and testing sets [36] |
| Training & Testing | Model trained and tested k times; each fold serves as test set once [36] | Model trained once on training set and tested once on test set [36] |
| Data Utilization | All data points used for both training and testing [36] | Only a portion of the data used for training; remainder used only for testing [36] |
| Result Stability | Average of k results provides more stable estimate [35] | Single result can vary significantly based on split [35] |
| Computational Load | Higher; requires k model trainings [36] | Lower; requires only one model training [36] |

Performance and Practical Considerations

The choice between k-fold cross-validation and hold-out validation involves important trade-offs between statistical reliability and practical implementation factors:

Bias-Variance Trade-off: K-fold cross-validation generally provides lower bias estimates because the model is trained on a larger portion of the dataset in each iteration [36]. However, with higher values of k (approaching LOOCV), the estimates may exhibit higher variance because the training sets overlap almost entirely, which makes the per-fold results highly correlated [36]. The hold-out method typically shows higher bias, especially if the training set is not representative of the full dataset [36].

Computational Efficiency: The hold-out method is significantly faster computationally since it involves only a single training-testing cycle [36]. This advantage becomes particularly important with large datasets or complex models where training time is substantial. As noted in discussions among statisticians, "K-fold is super expensive, so hold out is sort of an 'approximation' to what k-fold does for someone with low computational power" [39].

Data Efficiency: K-fold cross-validation makes more efficient use of limited data, which is particularly valuable in medical imaging domains where annotated datasets may be small [37]. For example, in malaria research, collecting and expertly labeling blood smear images is time-consuming and expensive, making maximal data utilization a priority.

Representativeness of Results: The performance metrics from k-fold cross-validation tend to be more reliable and representative of true generalization ability because they're averaged across multiple different train-test splits [35]. A single hold-out split might yield misleading results if the test set happens to be particularly easy or difficult to classify [39].

Experimental Performance in Malaria Classification

Recent studies on malaria parasite classification provide empirical evidence of how these validation strategies perform in practice:

Table 2: Validation Approaches in Recent Malaria Classification Studies

| Study/Model | Validation Method | Reported Performance | Dataset Characteristics |
|---|---|---|---|
| Hybrid CapNet [18] | Cross-dataset validation across 4 benchmarks | Up to 100% multiclass accuracy | Multiple datasets (MP-IDB, MP-IDB2, IML-Malaria, MD-2019) |
| DANet [15] | 5-fold cross-validation | 97.95% accuracy, 97.86% F1-score | 27,558 images (NIH Malaria Dataset) |
| YOLOv3 Platform [25] | Hold-out (8:1:1 ratio) | 94.41% recognition accuracy | 262 original images, cropped to 518×486 sub-images |

These results demonstrate that both validation approaches can yield high performance metrics when appropriately implemented. The Hybrid CapNet study notably employed cross-dataset validation, which provides the most rigorous assessment of generalizability by testing on completely independent datasets collected under potentially different conditions [18]. This approach is particularly valuable for evaluating model performance across varying staining protocols, microscope magnifications, and blood smear preparation techniques encountered in different clinical settings.
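One common way to organize cross-dataset validation is a leave-one-dataset-out loop: train on all but one source and test on the held-out source. The sketch below illustrates that scheme only. The source does not state which protocol the Hybrid CapNet study followed, and the sample identifiers are placeholders.

```python
def leave_one_dataset_out(datasets):
    """Yield (held_out_name, train_samples, test_samples) for each source dataset."""
    for held_out in datasets:
        train = [
            sample
            for name, samples in datasets.items()
            if name != held_out
            for sample in samples
        ]
        yield held_out, train, list(datasets[held_out])

# Placeholder sample IDs; real entries would be labeled image paths.
datasets = {
    "MP-IDB": ["a1", "a2", "a3"],
    "MP-IDB2": ["b1", "b2"],
    "IML-Malaria": ["c1", "c2", "c3", "c4"],
    "MD-2019": ["d1"],
}
splits = list(leave_one_dataset_out(datasets))
for name, train, test in splits:
    print(f"test on {name}: {len(train)} train / {len(test)} test")
```

Because no image from the held-out source is seen during training, a drop in score relative to within-dataset cross-validation points to sensitivity to staining, magnification, or preparation differences rather than to ordinary sampling noise.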

Implementation Protocols for Malaria Classification Research

Workflow Visualization

The following diagram illustrates the systematic workflow for implementing k-fold cross-validation in malaria classification research:

Systematic K-Fold Cross-Validation Workflow for Malaria Classification

Experimental Protocol for K-Fold Cross-Validation

Implementing robust k-fold cross-validation for malaria classification requires careful attention to several critical steps:

Dataset Preparation and Preprocessing: Begin with a curated dataset of malaria blood smear images, such as the NIH Malaria Dataset comprising 27,558 images from infected and healthy individuals [15]. Preprocessing should include image cropping to focus on relevant regions, resizing to meet model input requirements (e.g., 416×416 pixels for YOLOv3 [25]), and normalization of color values to account for staining variations. For the DANet study, this included addressing challenges of "low contrast and blurry borders" through specialized preprocessing techniques [15].

Stratified Fold Generation: Partition the preprocessed dataset into k folds (typically k=5 or k=10) using stratified sampling to maintain consistent distribution of parasite classes (P. falciparum, P. vivax, etc.) and life-cycle stages (ring, trophozoite, schizont, gametocyte) across all folds [37]. This is particularly crucial for imbalanced datasets where certain classes may be underrepresented.

Iterative Training and Validation: For each fold iteration (k total iterations):

- Designate one fold as the validation set and combine the remaining k-1 folds as the training set.

- Train the classification model (e.g., Hybrid CapNet, DANet) on the training set.

- Evaluate the trained model on the validation set, recording relevant performance metrics (accuracy, F1-score, precision, recall).
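The metrics recorded in each iteration follow directly from confusion-matrix counts. Below is a minimal sketch for the binary case (infected vs. healthy); the function name `binary_metrics` and the toy label vectors are assumptions for illustration, and multiclass species or stage labels would need per-class averaging on top of this.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for one validation fold."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Toy fold: 4 infected (1) and 6 healthy (0) cells, with one miss and one false alarm.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

In this toy fold the accuracy is 0.8 while precision, recall, and F1 are each 0.75, which shows why accuracy alone can overstate performance on the infected class.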