Comparing Semi-Landmark Methods for Shape Discrimination: A Practical Guide for Biomedical Research

Abstract

This article provides a comprehensive comparison of semi-landmark methods for geometric morphometric analysis, tailored for researchers and professionals in drug development and biomedical science. It explores the foundational principles of landmark types and the biological rationale for semi-landmarks, reviews prevalent methodologies including sliding, patch-based, and particle-based approaches, and offers practical guidance for troubleshooting common issues like sample size and algorithmic choice. Through a critical evaluation of validation studies and performance benchmarks across anatomical structures, it synthesizes key trade-offs to inform robust methodological selection for shape discrimination tasks in clinical and research applications.

Understanding Semi-Landmarks: From Biological Homology to Mathematical Correspondence

In geometric morphometrics, the analysis of biological form relies on capturing shape through defined points. This guide compares two fundamental paradigms for defining these points: homologous landmarks, rooted in biological ancestry and developmental homology, and mathematical semi-landmarks, defined by algorithmic correspondence and spatial sampling. While landmarks provide a direct link to evolutionary biology, semi-landmarks enable the quantification of shape from surfaces and curves lacking discrete homologous points. This article objectively compares the performance, applications, and limitations of these approaches, providing a structured framework for selecting appropriate methodologies in shape discrimination research.

In geometric morphometrics, the comparison of shapes requires a set of corresponding points across all specimens in a study. The definition of these points, however, can follow two fundamentally different philosophies, each with distinct implications for biological interpretation.

Homologous Landmarks are defined by biological correspondence. A landmark is a point that is considered equivalent in each individual due to shared evolutionary ancestry and developmental origins [1] [2]. Examples include the junction of cranial sutures or the tips of teeth cusps. The homology (sameness) of these points is a biological hypothesis based on prior knowledge of anatomy, development, and evolution [3] [4].

Mathematical Semi-Landmarks are defined by geometric or algorithmic correspondence. These are points placed on curves or surfaces to capture their form, but their specific locations are not justified by independent biological homology [1] [5]. Instead, their equivalence across specimens is determined by mathematical procedures, such as sliding along a curve or projecting onto a surface between "true" homologous landmarks [1] [6].

The core distinction lies in the basis for claiming that point A in specimen X is "the same" as point A in specimen Y. Is it because they share a developmental and evolutionary history (homology), or because an algorithm has placed them in topographically similar positions (mathematical correspondence)? This guide explores the practical consequences of this distinction for research outcomes.

Theoretical Foundations: Homology, Homoplasy, and Correspondence

The Biological Basis of Homology

In biology, homology signifies a similarity in structures or genes between different taxa that arises from their shared ancestry [2]. A classic example is the forelimbs of vertebrates, where the wings of bats, the arms of primates, and the flippers of whales are all homologous as forelimbs, despite their different functions, because they derive from the same ancestral tetrapod structure [2]. Homology is a relation of correspondence between parts of individuals (e.g., organs, organisms, lineages) that can be traced back to a common ancestral precursor [3] [4]. This relationship is transitive, meaning that if A corresponds to B, and B to C, then A corresponds to C, regardless of how much the structures may have diverged in form [3].

The Challenge of Homoplasy

Contrasting with homology, homoplasy is a similarity that arises through independent evolution rather than common descent [7]. This includes convergence (where different developmental processes produce similar forms), parallelism, and reversals [3] [7]. The wings of birds and insects are analogous, not homologous, as they evolved independently. This is crucial for morphometrics because a mathematical correspondence established by an algorithm could inadvertently match homoplastic structures, potentially leading to misleading biological inferences [1].

Mathematical Correspondence as an Operational Tool

Mathematical correspondence methods do not rely on prior biological knowledge. Instead, they operationalize "sameness" through geometry and algorithms. Common approaches include:

- Sliding Semi-Landmarks: Points are placed between homologous landmarks and then "slid" to minimize bending energy or Procrustes distance, effectively removing the tangential component of variation that is arbitrary due to their initial placement [1] [5].

- Dense Correspondence Analysis: Algorithms like Non-rigid Iterative Closest Point (NICP) or Large Deformation Diffeomorphic Metric Mapping (LDDMM) deform a template specimen onto target specimens, transferring a dense set of points [1] [8].

- Conformal Mapping: Used in 2D analyses, this method transforms complex shapes (like a leaf outline) into a simpler space (like a circle) where correspondences are easier to establish [6].

These methods prioritize dense shape coverage over biological point homology, which is both their primary strength and their primary weakness.
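The sliding step described in the first bullet can be sketched in a few lines. This is a minimal illustration of the minimum-Procrustes-distance criterion only, assuming the curve and the reference (for example, a sample mean) are already superimposed, that the first and last points are fixed landmarks, and that tangents can be approximated by the chord between neighbouring points. The function name `slide_min_procrustes` is hypothetical and does not come from any of the cited packages; production implementations usually also reproject the slid points back onto the curve or surface.

```python
import numpy as np

def slide_min_procrustes(curve, reference):
    """Slide interior points of an open curve along their tangents.

    curve, reference: (n, d) arrays already superimposed in a common frame.
    The first and last points are treated as fixed landmarks (an assumption).
    """
    curve = np.asarray(curve, dtype=float)
    reference = np.asarray(reference, dtype=float)
    slid = curve.copy()
    # Tangent at each interior point, approximated by the chord between neighbours.
    tangents = curve[2:] - curve[:-2]
    tangents /= np.linalg.norm(tangents, axis=1, keepdims=True)
    # Signed step along each tangent that brings the point closest to the reference.
    diff = reference[1:-1] - curve[1:-1]
    step = np.sum(diff * tangents, axis=1, keepdims=True)
    slid[1:-1] = curve[1:-1] + step * tangents
    return slid

# Illustrative data: a straight curve and a reference that is offset from it.
curve = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0], [3.0, 0.0]])
reference = np.array([[0.0, 0.0], [1.3, 0.5], [1.8, -0.2], [3.0, 0.0]])
slid = slide_min_procrustes(curve, reference)
```

After sliding, the remaining difference between each semi-landmark and its reference point is perpendicular to the tangent, which is the sense in which the arbitrary tangential component has been removed.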

Comparative Methodologies and Experimental Protocols

Several experimental studies have directly compared the performance of different landmarking approaches. The protocols and findings from key studies are summarized below.

Protocol 1: Comparing Semi-Landmarking Approaches on Ape Crania

Shui et al. [1] conducted a comparison of three landmark-driven semilandmarking approaches using surface mesh datasets of ape crania and human heads.

Methodologies Compared:

- Optic Flow: Estimates dense correspondences based on image intensity patterns.

- Thin-Plate Spline (TPS) and Gaussian Process (GP): Uses known landmarks to guide the interpolation of correspondences across surfaces.

- Non-rigid Iterative Closest Point (NICP): Iteratively matches points between a template and a target surface using a non-rigid transformation.

Key Experimental Steps:

- A template specimen was manually landmarked.

- Semilandmarks were transferred to each target specimen using the different algorithms.

- Transferred points were slid to minimize bending energy.

- The resulting point configurations were analyzed using Procrustes ANOVA and other morphometric tools to compare patterns of shape variation.

Findings: The non-rigid approaches (NICP and Optic Flow) produced results that were more consistent with each other, but all methods placed semilandmarks at different locations, which in turn led to differences in statistical results. The choice of algorithm is therefore itself a source of error, and results should be treated as approximations [1].
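Before any of the statistical comparisons above, point configurations are superimposed. The following is a minimal sketch of generalized Procrustes alignment using only NumPy, assuming every specimen has the same number of points in the same order. The function names (`center_scale`, `rotate_onto`, `gpa`) are illustrative and are not the API of Morpho or geomorph; those packages add tangent-space projection, sliding, and convergence checks that are omitted here.

```python
import numpy as np

def center_scale(x):
    """Remove position and scale: centre at the origin, unit centroid size."""
    x = x - x.mean(axis=0)
    return x / np.linalg.norm(x)

def rotate_onto(x, ref):
    """Rotate x onto ref (orthogonal Procrustes, reflections excluded)."""
    u, _, vt = np.linalg.svd(x.T @ ref)
    r = u @ vt
    if np.linalg.det(r) < 0:
        u[:, -1] *= -1  # flip the weakest axis to keep a proper rotation
        r = u @ vt
    return x @ r

def gpa(shapes, n_iter=10):
    """Iteratively align all configurations to their evolving mean shape."""
    aligned = np.array([center_scale(np.asarray(s, dtype=float)) for s in shapes])
    mean = aligned[0]
    for _ in range(n_iter):
        aligned = np.array([rotate_onto(s, mean) for s in aligned])
        mean = center_scale(aligned.mean(axis=0))
    return aligned, mean

# Illustrative data: one shape plus rotated, scaled, and shifted copies of it.
rng = np.random.default_rng(0)
base = rng.normal(size=(6, 2))
theta = 0.7
rot = np.array([[np.cos(theta), -np.sin(theta)],
                [np.sin(theta), np.cos(theta)]])
shapes = [base, 2.5 * base @ rot + 3.0, 0.4 * base @ rot.T - 1.0]
aligned, mean_shape = gpa(shapes)
```

Because the three inputs differ only in position, scale, and orientation, they coincide after alignment; with real specimens the residual differences are the shape variation that downstream tests analyze.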

Protocol 2: Evaluation of Dense Sampling Strategies on Great Ape Skulls

Another study [5] implemented and compared three dense sampling strategies for analyzing 3D images of great ape skulls (Pan troglodytes, Gorilla gorilla, Pongo pygmaeus).

Methodologies Compared:

- Patch-based Sampling: Triangular patches defined by three manual landmarks are projected onto the specimen's surface independently for each specimen.

- Patch-TPS: Patches are defined on a single template and transferred to all specimens via a Thin-Plate Spline (TPS) transform and projection.

- Pseudo-landmark Sampling: A template model is regularly sampled at arbitrary locations, and points are transferred via TPS.

Key Experimental Steps:

- Manual landmarks were collected on all specimens.

- Each dense sampling method was used to generate hundreds to thousands of additional points.

- The accuracy of each method was quantified by using the landmark sets to estimate a transform between an individual and the population average template. The average mean root squared error between the transformed mesh and the template was the performance metric.

Findings: All methods provided shape estimations comparable to or better than using manual landmarks alone. The patch method was most sensitive to noise and missing data, while Patch-TPS and pseudo-landmarking were more robust [5].

Protocol 3: Landmark-Free Morphometrics Across Diverse Mammals

A large-scale study [8] assessed the application of a landmark-free method, Deterministic Atlas Analysis (DAA), across 322 mammals spanning 180 families, comparing it to a high-density geometric morphometric approach.

Methodologies Compared:

- Manual Landmarking with Semi-Landmarks: The traditional standard.

- Deterministic Atlas Analysis (DAA): A landmark-free method that computes diffeomorphic deformations from a sample-derived atlas to each specimen. Control points guide the comparison without predefined homology.

Key Experimental Steps:

- Specimens were processed with both methods.

- The correlation between shape matrices from each method was assessed using Mantel tests and PROTEST.

- Downstream macroevolutionary analyses (phylogenetic signal, morphological disparity, evolutionary rates) were computed from both datasets and compared.

Findings: After standardizing mesh data, DAA showed a significant correlation with manual landmarking results. However, differences emerged, particularly for certain clades like Primates and Cetacea. Downstream evolutionary metrics were comparable but not identical, indicating that methodological choice can influence biological inference [8].
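The matrix-correlation step in this protocol can be illustrated with a simple permutation-based Mantel test. This is a sketch under stated assumptions, not the procedure used in [8]: the data are synthetic, the function name `mantel` is hypothetical, and PROTEST is not implemented. The two coordinate sets stand in for the same specimens measured by two different methods.

```python
import numpy as np
from scipy.spatial.distance import pdist, squareform

def mantel(d1, d2, n_perm=999, seed=0):
    """Correlate two square distance matrices; one-sided permutation p-value."""
    rng = np.random.default_rng(seed)
    iu = np.triu_indices_from(d1, k=1)  # upper triangle, diagonal excluded
    r_obs = np.corrcoef(d1[iu], d2[iu])[0, 1]
    n = d1.shape[0]
    count = 0
    for _ in range(n_perm):
        p = rng.permutation(n)
        # Permute specimens (rows and columns together) in the first matrix.
        r = np.corrcoef(d1[p][:, p][iu], d2[iu])[0, 1]
        count += r >= r_obs
    return r_obs, (count + 1) / (n_perm + 1)

# Synthetic stand-ins for shape variables from two methods on 20 specimens.
rng = np.random.default_rng(1)
coords_a = rng.normal(size=(20, 30))
coords_b = coords_a + rng.normal(scale=0.05, size=(20, 30))
dist_a = squareform(pdist(coords_a))
dist_b = squareform(pdist(coords_b))
r_obs, p_value = mantel(dist_a, dist_b)
```

A high correlation with a small p-value indicates that the two methods order specimens similarly in shape space; as the findings above note, it does not guarantee identical downstream estimates.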

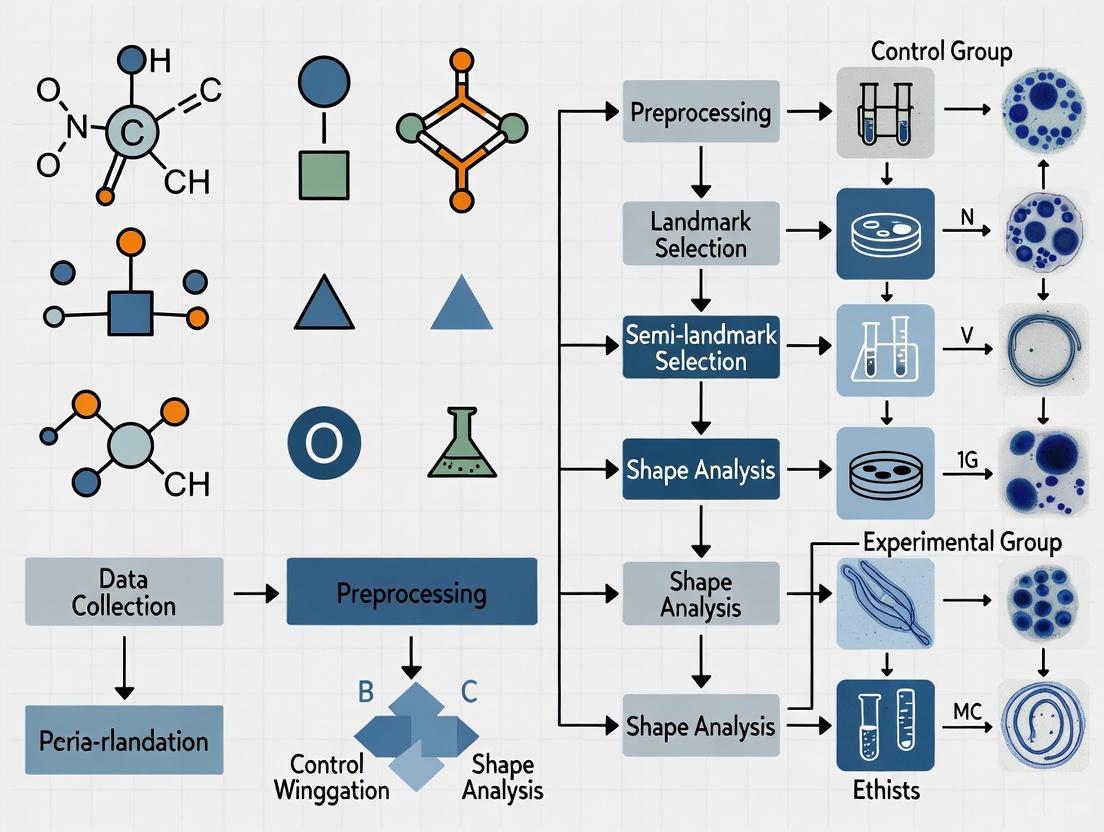

The following workflow diagram synthesizes the general process for comparing these methods, as used across the cited studies.

Performance Data: A Quantitative Comparison

The following tables synthesize quantitative and qualitative findings from the experimental studies cited, providing a direct comparison of methodological performance.

Table 1: Performance Comparison of Different Semi-Landmark and Landmark-Free Methods

| Method | Theoretical Basis | Key Strength | Key Limitation | Reported Performance/Outcome |
|---|---|---|---|---|
| Homologous Landmarks [1] [5] | Biological Homology | Direct biological interpretability; clear evolutionary context. | Limited to discrete, identifiable points; sparse shape representation. | Gold standard for biological inference, but captures limited morphological information [5]. |
| Sliding Semi-Landmarks [1] [5] | Mathematical (Bending Energy/Procrustes Distance) | Enables quantification of smooth curves and surfaces. | Arbitrary initial placement; sliding requires landmarks as anchors. | Greatly increases shape information, but results are sensitive to the sliding criterion (bending energy vs. Procrustes distance) [1]. |
| Patch & Patch-TPS [5] | Geometric (Template Projection) | Good coverage of user-defined regions; repeatable. | Sensitive to surface noise and patch definition. | Patch-TPS more robust than direct Patch method; outliers occur with noise/missing data [5]. |
| Pseudo-Landmarks [5] [8] | Mathematical (Arbitrary Sampling) | Very high density; can cover entire surface automatically. | No biological homology; correspondence is purely algorithmic. | Provides robust performance and high-density coverage, but biological meaning of variation is less direct [5]. |
| Landmark-Free (DAA) [8] | Mathematical (Diffeomorphic Mapping) | No manual landmarking needed; applicable across disparate taxa. | Complex setup; results depend on parameters (e.g., kernel width). | Correlates with manual landmarking but shows clade-specific differences; impacts downstream evolutionary metrics [8]. |

Table 2: Impact on Downstream Macroevolutionary Analyses [8]

| Analysis Type | Comparison Finding (Manual vs. Landmark-Free) | Implication |
|---|---|---|
| Phylogenetic Signal | Produced comparable but varying estimates. | Methodological choice can influence the perceived integration of shape with phylogeny. |
| Morphological Disparity | Patterns were broadly similar but not identical. | Estimates of morphological diversity within clades may be method-dependent. |
| Evolutionary Rates | Inferred rates were correlated but showed differences in magnitude. | Conclusions about the tempo of evolution could be affected by the chosen morphometric approach. |

The Scientist's Toolkit: Essential Research Reagents and Software

Successful implementation of landmark and semi-landmark analyses requires a suite of software tools and methodological considerations. The following table details key "research reagents" for this field.

Table 3: Essential Software and Methodological "Reagents" for Morphometrics

| Tool/Reagent | Function | Example Use-Case |
|---|---|---|
| 3D Slicer / SlicerMorph [5] | Open-source platform for 3D visualization and image analysis. | Used for manual landmarking, and implementing patch-based and pseudo-landmark sampling protocols [5]. |
| R packages (Morpho, geomorph) [5] | Statistical analysis and visualization of shape data. | Used for Procrustes superimposition, sliding semi-landmarks, and performing statistical tests (e.g., Procrustes ANOVA) [5]. |
| Deformetrica [8] | Software for implementing landmark-free methods like Deterministic Atlas Analysis (DAA). | Used for large-scale studies across disparate taxa where manual landmarking is not feasible [8]. |
| Thin-Plate Spline (TPS) Transformation [1] [5] | A mathematical function for smooth interpolation of deformations. | Core to many semilandmarking approaches for warping a template onto a target specimen [1]. |
| Poisson Surface Reconstruction [8] | Algorithm for creating watertight, closed surface meshes from scan data. | Critical for standardizing data from mixed modalities (CT vs. surface scans) before landmark-free analysis [8]. |
| Conformal Mapping & DTW Algorithm [6] | Transforms 2D outlines into a "fingerprint" function for automatic landmark matching. | Used for automatic landmarking of 2D leaf shapes, achieving <5% coordinate deviation from manual placement [6]. |

The choice between homologous landmarks and mathematical semi-landmarks is not a matter of which is universally superior, but which is most appropriate for the specific research question and dataset.

Use Homologous Landmarks when the research goal is to test explicit evolutionary hypotheses about the transformation of specific, identifiable anatomical structures. This approach is ideal for studies of closely related species or when analyzing modularity and integration of known anatomical units [1] [2].

Use Semi-Landmarks when the study requires quantification of overall form, especially from smooth curves and surfaces lacking discrete landmarks. This approach is powerful for quantifying gross morphological differences and is essential for capturing geometric information from regions like cranial vaults or leaf outlines [1] [5] [6].

Consider Landmark-Free Methods for large-scale, macroevolutionary studies across highly disparate taxa where identifying numerous homologous points is impractical. These methods offer unparalleled efficiency for big datasets, but researchers must be cautious, as the resulting patterns of shape variation may not be directly equivalent to those derived from homologous points [8].

In practice, many modern studies employ a hybrid approach, using a core set of homologous landmarks to provide a biological framework and a dense cloud of semi-landmarks to capture comprehensive shape information. This combines the biological grounding of homology with the rich geometric power of mathematical correspondence, offering a robust solution for the complex challenge of quantifying biological form.

In the field of geometric morphometrics, biological structures are often quantified using landmarks—discrete, homologous points that can be reliably identified across specimens. However, many biologically significant structures, such as the cranial vault or long bone surfaces, present extensive smooth areas devoid of such discrete landmarks. This fundamental challenge has led to the development of semi-landmark methods, which use algorithmic approaches to densely match points between surfaces lacking readily identifiable landmarks. These methods have become indispensable for capturing comprehensive shape information, yet their application involves critical choices that directly impact research outcomes in evolutionary biology, anthropology, and related fields [1].

This guide provides an objective comparison of predominant semi-landmark approaches, their performance characteristics, and detailed experimental protocols to inform methodological selection for shape discrimination research.

Methodological Approaches: A Comparative Framework

Three primary strategies have emerged for the dense sampling of three-dimensional biological surfaces, each with distinct operational characteristics and theoretical foundations.

Table 1: Core Semi-Landmark Methodologies

| Method | Core Principle | Homology Assurance | Key Requirement |
|---|---|---|---|
| Patch-Based | Projects points from triangular patches defined by manual landmarks onto the specimen surface | High (geometric relationship to manual landmarks) | Manual landmarks defining patch boundaries |
| Patch-TPS | Transfers semi-landmarks from a template to targets via Thin-Plate Spline (TPS) transformation | Moderate (guided by template landmarks) | Representative template specimen |
| Pseudo-Landmark | Automatically samples points on surfaces with no direct relationship to manual landmarks | Low (mathematical correspondence only) | High-quality surface meshes |

Patch-Based Semi-Landmarking

This approach operates independently on each specimen. Researchers first define triangular regions bounded by three manual landmarks. A template grid with a user-specified density is then registered to these boundaries using thin-plate spline deformation and projected onto the specimen surface along calculated normal vectors. The method preserves a clear geometric relationship between semi-landmarks and manual landmarks, maintaining stronger homology claims. However, it demonstrates sensitivity to surface noise and may produce placement errors in regions with sharp curvatures [5].
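The template grid for a single patch can be illustrated with a regular barycentric sampling of the triangle spanned by three landmarks. This sketch covers only the flat grid; the TPS registration and the projection onto the specimen surface along normal vectors need a mesh and are not shown. The function name `triangle_grid` and the density parameter `n` are assumptions for illustration, not SlicerMorph's interface.

```python
import numpy as np

def triangle_grid(a, b, c, n):
    """Regular grid of points inside the triangle (a, b, c).

    n is the number of subdivisions per edge (n >= 1); the grid has
    (n + 1) * (n + 2) / 2 points, including the three corner landmarks.
    """
    a, b, c = (np.asarray(v, dtype=float) for v in (a, b, c))
    points = []
    for i in range(n + 1):
        for j in range(n + 1 - i):
            k = n - i - j
            # Barycentric weights (i, j, k) / n always sum to one.
            points.append((i * a + j * b + k * c) / n)
    return np.array(points)

# Illustrative patch bounded by three hypothetical landmarks.
grid = triangle_grid([0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], n=4)
```

Raising `n` increases the density of semi-landmarks within the patch, which is the user-specified density mentioned above.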

Patch-Based with TPS Transfer

This template-dependent method applies patch-based semi-landmarking to a single representative specimen (either a synthetic average or an actual sample). These points are then transferred to all specimens in a dataset through a TPS transformation calculated from manual landmark correspondences, followed by projection along the template's normal vectors. This approach improves robustness against noise and missing data compared to the basic patch method, though it introduces dependency on template selection [5].
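The transfer step can be sketched with SciPy's `RBFInterpolator`, which fits a smooth warp that maps template landmarks exactly onto target landmarks and can then be applied to any other template points. This is an approximation under stated assumptions: SciPy's `thin_plate_spline` kernel is r² log r in every dimension, which differs from the kernel some morphometric packages use in 3D, and the final projection onto the target surface is omitted. The function name `tps_transfer` and all data below are hypothetical.

```python
import numpy as np
from scipy.interpolate import RBFInterpolator

def tps_transfer(template_landmarks, target_landmarks, template_points):
    """Warp template_points with a spline fitted to landmark correspondences."""
    warp = RBFInterpolator(template_landmarks, target_landmarks,
                           kernel="thin_plate_spline")
    return warp(template_points)

# Synthetic check: the target is an affine copy of the template, so the warp
# should reproduce that affine map for the semi-landmarks as well.
rng = np.random.default_rng(2)
template_landmarks = rng.normal(size=(8, 3))
template_points = rng.normal(size=(50, 3))
affine = np.array([[1.1, 0.2, 0.0],
                   [0.0, 0.9, 0.1],
                   [0.0, 0.0, 1.2]])
shift = np.array([0.5, -0.3, 0.2])
target_landmarks = template_landmarks @ affine + shift
transferred = tps_transfer(template_landmarks, target_landmarks, template_points)
at_landmarks = tps_transfer(template_landmarks, target_landmarks, template_landmarks)
```

Because the warp is driven entirely by the manual landmarks, regions far from any landmark are interpolated with the least constraint, which is one reason template choice matters.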

Pseudo-Landmark Sampling

This approach generates points directly on a template model through regular sampling, assuming spherical topology, so the point locations bear no relationship to the manual landmarks. A spatial filter enforces minimum distances between points to prevent clustering. These points are transferred to individual samples via TPS and normal projection. While this method offers extensive coverage independent of manual landmark placement, it severs the connection with biological homology entirely, relying instead on mathematical correspondence [5].
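The spatial filter can be illustrated with a greedy minimum-distance pass over candidate points. This is one simple way to enforce spacing, assumed here for illustration; it is not claimed to be the filter used in [5]. The candidates are random points on a unit sphere, standing in for a sampled template of spherical topology, and the function name `min_distance_filter` is hypothetical.

```python
import numpy as np
from scipy.spatial import cKDTree
from scipy.spatial.distance import pdist

def min_distance_filter(points, min_dist):
    """Keep a subset of points whose pairwise distances all exceed min_dist."""
    points = np.asarray(points, dtype=float)
    tree = cKDTree(points)
    keep = np.ones(len(points), dtype=bool)
    for i in range(len(points)):
        if not keep[i]:
            continue
        # Drop every later candidate that crowds the point just accepted.
        for j in tree.query_ball_point(points[i], r=min_dist):
            if j > i:
                keep[j] = False
    return points[keep]

# Synthetic candidates on a unit sphere.
rng = np.random.default_rng(3)
cloud = rng.normal(size=(2000, 3))
cloud /= np.linalg.norm(cloud, axis=1, keepdims=True)
kept = min_distance_filter(cloud, min_dist=0.2)
closest = pdist(kept).min()
```

The result depends on the order in which candidates are visited, so a fixed seed or a fixed template is needed for repeatable point sets.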

Experimental Performance Comparison

Recent studies have systematically evaluated these methods using great ape cranial datasets, providing quantitative performance data. The evaluation typically involves estimating a transformation between an individual specimen and a population average template, then calculating the mean root squared error (MRSE) between the transformed mesh and the template.
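The error metric can be sketched as follows, assuming the transformed mesh and the template share vertex correspondence and reading the metric as a root mean square of per-vertex distances. The exact definition used in [5] is not given here, so treat this as one plausible reading; the function name `rms_error` is illustrative.

```python
import numpy as np

def rms_error(warped_vertices, template_vertices):
    """Root mean square of per-vertex Euclidean distances between two meshes."""
    warped = np.asarray(warped_vertices, dtype=float)
    template = np.asarray(template_vertices, dtype=float)
    distances = np.linalg.norm(warped - template, axis=1)
    return float(np.sqrt(np.mean(distances ** 2)))

# Toy example: every vertex is displaced by the vector (3, 4, 0), i.e. 5 units.
template_vertices = np.zeros((4, 3))
warped_vertices = template_vertices + np.array([3.0, 4.0, 0.0])
error = rms_error(warped_vertices, template_vertices)
```

Averaging this value over all specimens gives a single score per sampling method, with lower values indicating that the point set recovers the template shape more closely.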

Table 2: Performance Comparison of Semi-Landmark Methods

| Method | Shape Estimation Accuracy | Robustness to Noise | Computational Demand | Sample Coverage |
|---|---|---|---|---|
| Manual Landmarks Only | Baseline reference | High | Low | Limited to defined points |
| Patch-Based | Comparable or superior to manual landmarks | Low (produces outliers) | Moderate | Dependent on manual landmark placement |
| Patch-TPS | Comparable or superior to manual landmarks | High | Moderate to High | Good (template-dependent) |
| Pseudo-Landmark | Comparable or superior to manual landmarks | High | High | Excellent |

Key Performance Findings

Studies implementing these methods on great ape cranial data have demonstrated that all three automated strategies can produce shape estimations of population average templates that are generally comparable to, and often exceed, the accuracy achieved using manual landmarks alone. This accuracy improvement comes while dramatically increasing the density of shape information [5].

The patch method demonstrates the greatest sensitivity to data quality issues, resulting in outliers with substantial deviations in mean shape estimates when surface noise or irregularities are present. Both patch-TPS and pseudo-landmark methods provide more robust performance across datasets with varying quality and morphological variability [5].

Research Reagent Solutions

The implementation of semi-landmark methodologies requires specific software tools and technical components.

Table 3: Essential Research Materials and Tools

| Research Reagent | Function/Description | Application Context |
|---|---|---|
| 3D Slicer with SlicerMorph | Open-source platform for visualization and analysis of 3D image data | Primary environment for implementing semi-landmark protocols [5] |
| Surface Meshes | 3D digital representations of biological structures (e.g., crania) | Fundamental input data for all semi-landmark analyses [1] [5] |
| Manual Landmark Sets | Expert-placed homologous points defining biological correspondences | Foundation for patch-based methods and TPS transformations [5] |
| Thin-Plate Spline (TPS) | Mathematical transformation for interpolation between landmark points | Core algorithm for transferring semi-landmarks in template-based approaches [1] [5] |

Workflow Visualization

The following diagram illustrates the logical relationships and decision pathways for selecting and implementing semi-landmark methodologies:

Critical Considerations for Method Selection

When implementing semi-landmark approaches in research, several critical factors require consideration:

Trade-offs in Method Selection: The choice between methods involves balancing correspondence quality, point spacing consistency, sample coverage, repeatability, and computational time. No single method optimizes all parameters simultaneously [5].

Interpretation Limitations: Results from semi-landmark analyses should be considered approximations of biological reality. Different approaches produce varying semi-landmark locations, which can lead to differences in statistical outcomes, necessitating cautious interpretation [1].

Template Sensitivity: Template-based methods (patch-TPS and pseudo-landmarks) demonstrate dependency on template selection. The ideal template exhibits high geometric similarity to the sample population to minimize projection artifacts [1].

Sliding Procedures: Most methodologies include an optional sliding step where semi-landmarks are adjusted to minimize bending energy or Procrustes distance. This refinement reduces potential artifacts introduced by initial point placement but adds computational complexity [1].

Semi-landmark methods have substantially advanced our ability to quantify biological shape on smooth surfaces where traditional landmarks are scarce. The patch, patch-TPS, and pseudo-landmark approaches each offer distinct advantages, with the optimal choice dependent on research questions, data quality, and biological constraints. While these methods significantly increase shape information density, researchers must acknowledge that all semi-landmark placements represent estimates rather than true biological homologies. As methodological refinement continues, these approaches will remain essential tools for extracting comprehensive shape data in evolutionary morphology, paleontology, and biological anthropology.

In geometric morphometrics, landmarks are defined as discrete, homologous points of biological correspondence that can be precisely located and measured across specimens. These points serve as the fundamental data for quantifying biological form, enabling researchers to statistically analyze shape variation within and between populations, species, and higher taxa. The conceptualization and classification of landmarks proposed by Fred Bookstein in the 1990s have profoundly influenced how researchers select points for morphological analysis, establishing a theoretical framework for evaluating the biological relevance and methodological reliability of landmark data [9] [10].

Bookstein's typology emerged during a pivotal period when morphometrics transitioned from traditional measurement-based approaches to geometry-based statistical frameworks. This classification system provides critical guidance for identifying points that best capture biologically meaningful shape variation rather than arbitrary geometric locations. The theoretical foundation of this typology rests on the principle of biological homology, where landmarks represent corresponding biological loci across specimens, thus enabling valid comparisons of form [11]. This review examines the three landmark types within Bookstein's framework, their biological and methodological characteristics, and their evolving role in contemporary morphometric research, particularly in relation to emerging semi-landmark methods for shape discrimination.

Bookstein's Landmark Typology: Definitions and Examples

Bookstein's classification system categorizes landmarks into three distinct types based on their anatomical locations and biological significance. This typology assists researchers in selecting landmarks that maximize biological information while considering practical constraints in identification and measurement.

Table 1: Bookstein's Three Landmark Types: Definitions and Biological Basis

| Landmark Type | Definition | Biological Basis | Examples | Methodological Considerations |
|---|---|---|---|---|
| Type I | Points at discrete juxtapositions of tissues | Highest degree of biological homology; defined by local topology | Foramina, suture intersections, enamel junctions | Considered most reliable; limited in number on many biological structures |
| Type II | Points at maxima of curvature or other local morphometric phenomena | Defined by geometric properties rather than specific tissue interactions | Tips of processes, furthest points on bulges | More abundant than Type I; may have less precise biological correspondence |
| Type III | Points at extreme points or constructed locations | Minimal biological homology; often defined by extremal points | Extremities of longest axes, tangent points | Most numerous but contain deficient information about shape variation |

Type I Landmarks

Type I landmarks represent the highest order of biological homology in Bookstein's classification, defined by discrete juxtapositions of tissues or structures. These points have a precise biological definition independent of the overall geometry of the form. Common examples include foramina (openings for nerves and blood vessels), intersections of cranial sutures, and junctions between different dental tissues. In craniometric analyses, Type I landmarks might include the intersection of the sagittal and coronal sutures (bregma) or the various cranial foramina such as the infraorbital foramen or mental foramen. These landmarks are considered the most reliable for morphometric analyses because their precise anatomical definition theoretically enables different researchers to identify the same location consistently across specimens [9].

Type II Landmarks

Type II landmarks are defined by local geometric properties rather than specific tissue interactions. These points typically occur at maxima of curvature, tips of processes, or the most concave or convex points on a structure. Examples include the tip of the chin (gnathion), the tip of the nasal bones (rhinion), or the furthest point on the occipital condyles. While Type II landmarks lack the precise tissue-level definition of Type I landmarks, they still represent locations that can be reliably identified based on their geometric properties and often correspond to functionally or developmentally significant aspects of morphology. In practice, Type II landmarks are more abundant on biological structures than Type I landmarks, making them essential for capturing comprehensive shape information [9].

Type III Landmarks

Type III landmarks represent the most geometrically defined category, consisting of extremal or constructed points with minimal biological homology. These points are defined primarily by their positional relationships to other landmarks or by being the most distant points in a particular direction. Examples include the furthest posterior point on the skull (opisthocranion) or the most lateral points on the zygomatic arches (zygion). Bookstein and others have argued that Type III landmarks contain deficient information about shape variation and are less reliably measured than Type I or II landmarks because their definition depends heavily on the overall orientation of the specimen and may not correspond to developmentally or functionally discrete entities [9].

Methodological Considerations and Contemporary Critique

While Bookstein's landmark typology provides a valuable theoretical framework for understanding biological correspondence in morphometric analyses, its practical application faces significant challenges that have led to contemporary critiques and methodological evolution.

Theoretical Basis for Landmark Selection

The hierarchical valuation of landmarks in Bookstein's typology stems from theoretical concerns about the biological meaningfulness of shape information captured by each type. Type I landmarks are privileged because their precise tissue-level definition theoretically makes them optimal for studying biological processes such as development, evolution, and functional adaptation. The decreasing preference for Type II and III landmarks reflects concerns about their potential measurement error and less direct relationship to underlying biological processes [9] [11].

This theoretical framework has practical implications for research design. Studies prioritizing tests of specific biological hypotheses might emphasize Type I landmarks, while those focused on comprehensive shape characterization might incorporate more Type II and III landmarks to increase the density of shape information. This balance exemplifies the constant trade-off in morphometrics between biological specificity and geometric comprehensiveness [5].

Empirical Challenges in Application

Recent empirical investigations have revealed substantial challenges in consistently applying Bookstein's typology. A comprehensive review of geometric morphometrics studies found considerable variation in landmark classifications among different researchers, with disagreement in the application of both Bookstein's landmark typology and individual landmark definitions [9]. This inconsistency stems from several factors:

- Ambiguity in classification criteria: Many anatomical locations display characteristics of multiple landmark types

- Structural variation: The same anatomical feature may present differently across specimens

- Disciplinary conventions: Different research traditions apply typology differently

Perhaps more significantly, the same review found little correlation between landmark type and measurement reproducibility, especially once differences in measurement tools (calipers, digitizer, or computer software) and data sources (dry crania, 3D models, or 2D images) are taken into account [9]. This finding challenges a fundamental assumption underlying the typology: that Type I landmarks are inherently more reliably measured.

Limitations and Contemporary Critique

In their seminal critique, Wärmländer et al. (2019) argue that while landmark typology is valuable for teaching biological shape analysis, "employing it in research design introduces confusion without providing useful information" [9]. This perspective is supported by several observations:

- The typology does not consistently predict measurement error in practical applications

- Over-reliance on Type I landmarks severely limits the number of points available for analysis

- Many biological structures lack sufficient Type I landmarks for comprehensive shape analysis

- The classification often becomes arbitrary for complex morphological structures

Instead, these researchers recommend that "researchers should choose landmark configurations based on their ability to test specific research hypotheses, and research papers should include justifications of landmark choices along with landmark definitions, details on landmark collection methods, and appropriate interobserver and intraobserver analyses" [9]. This approach emphasizes methodological transparency and empirical validation over theoretical categorization.

Landmark Typology in Contemporary Morphometric Methods

The evolution of morphometric methodologies, particularly the development of semi-landmark and landmark-free approaches, has transformed the practical relevance of Bookstein's typology while maintaining focus on the fundamental challenge of capturing biologically meaningful shape data.

Semi-Landmarks and Landmark Typology

Semi-landmarks were developed to quantify the shape of curves and surfaces between traditional landmarks, effectively bridging the information gap between discrete points [5]. These points are not individually homologous but capture information about boundaries and surfaces between Type I and II landmarks. The application of semi-landmarks represents both an extension and a departure from Bookstein's original typology:

- Relaxed homology requirements: Semi-landmarks sacrifice individual homology to capture comprehensive shape information

- Geometric correspondence: Points are placed based on geometric spacing rather than biological correspondence

- Statistical optimization: Final positions are determined through algorithms that minimize bending energy or Procrustes distance

Different semi-landmarking approaches navigate the trade-offs between correspondence, spacing, coverage, and repeatability differently. The patch-based approach projects points from triangular patches constructed from manual landmarks, maintaining some geometric relationship to Type I/II landmarks. The patch-TPS method transfers semi-landmarks from a template using thin-plate spline transformation, while pseudo-landmark sampling generates points with no direct relationship to manual landmarks [5]. As these methods demonstrate, contemporary morphometrics increasingly prioritizes comprehensive shape capture over strict adherence to traditional landmark typologies.

Landmark-Free Methods and Their Implications

Recent advances in landmark-free morphometrics represent a further departure from Bookstein's framework by eliminating the need for discrete landmarks altogether. Methods such as Large Deformation Diffeomorphic Metric Mapping (LDDMM) and Deterministic Atlas Analysis (DAA) compare entire shapes using control points that have no necessary relationship to biologically homologous locations [12]. These approaches offer particular advantages for analyzing morphologically disparate taxa where homologous landmarks are obscure or few in number [12].

Landmark-free methods address several limitations of traditional landmark-based approaches:

- Overcoming homology constraints: Enable comparisons across phylogenetically diverse taxa

- High-throughput capability: Automate shape analysis for large datasets

- Comprehensive shape capture: Quantify global shape differences beyond discrete points

However, these methods also face challenges in ensuring biological meaningfulness and may require validation against traditional landmark-based approaches [12]. The emergence of these techniques reflects an ongoing methodological evolution from discrete homologous points to comprehensive shape characterization.

Experimental Protocols and Research Applications

Comparative Evaluation of Landmarking Strategies

Research evaluating different landmarking approaches typically follows standardized protocols to ensure methodological rigor. In a comparative study of semi-landmarking approaches for analyzing great ape cranial morphology, researchers implemented three sampling strategies: patch, patch-TPS, and pseudo-landmark sampling [5]. The experimental workflow involved:

- Specimen preparation: DICOM stacks of great ape skulls (Pan troglodytes, Gorilla gorilla, and Pongo pygmaeus) were converted to volumes and reviewed for completeness

- Manual landmarking: Expert placement of traditional Type I and II landmarks using 3D Slicer software

- Semi-landmark generation: Application of each sampling strategy to generate dense point sets

- Template estimation: Use of semi-landmarks to estimate transforms between individuals and population average templates

- Error quantification: Calculation of mean root squared error between transformed meshes and templates

This study found that while all semi-landmarking strategies produced shape estimations comparable to manual landmarks alone, they differed significantly in their robustness to noise and missing data. The patch method demonstrated the highest sensitivity to noise, while patch-TPS and pseudo-landmarking provided more consistent performance across variable datasets [5].
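
The error quantification step above can be illustrated with a minimal sketch. It assumes that the transformed mesh and the template share the same vertices in the same order, which the source does not state, and it reads "mean root squared error" literally as the mean of per-vertex Euclidean distances. The function names are hypothetical, and the root-mean-square variant is included only to show how the two readings differ.

```python
import numpy as np

def vertex_distances(transformed, template):
    """Euclidean distance between corresponding vertices of two meshes."""
    transformed = np.asarray(transformed, dtype=float)
    template = np.asarray(template, dtype=float)
    if transformed.shape != template.shape:
        raise ValueError("meshes must have the same number of vertices")
    return np.linalg.norm(transformed - template, axis=1)

def mean_root_squared_error(transformed, template):
    """Mean of the per-vertex root squared error (assumed reading of the metric)."""
    return float(vertex_distances(transformed, template).mean())

def root_mean_squared_error(transformed, template):
    """Root of the mean squared per-vertex distance, shown for comparison."""
    return float(np.sqrt(np.mean(vertex_distances(transformed, template) ** 2)))

# Toy example: two vertices displaced by 3 and 4 units.
template = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
transformed = np.array([[0.0, 0.0, 3.0], [1.0, 4.0, 0.0]])
mrse = mean_root_squared_error(transformed, template)
rmse = root_mean_squared_error(transformed, template)
```

Whichever reading is used, lower values indicate that the landmark-driven transform brought the specimen closer to the template.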

Figure 1: Experimental workflow for comparing semi-landmarking approaches, showing three strategies evaluated for shape analysis of great ape cranial morphology [5].

Automated Landmark Identification Protocols

Studies evaluating automated landmark placement typically employ rigorous validation protocols to assess accuracy and reliability. In a comprehensive analysis of automated landmark identification on mouse skulls, researchers compared manually and automatically generated landmarks using a large sample (n=1205) representing 62 mouse genotypes [11]. The experimental methodology included:

- Dataset preparation: Micro-computed tomography (μCT) images of adult mouse skulls

- Manual landmarking: Expert placement of 32 anatomical landmarks on each specimen

- Automated landmarking: Atlas-based registration using genotype-specific templates

- Accuracy assessment: Comparison of landmark positions between methods

- Morphometric analysis: Comparison of shape variation and covariance structures

This research found that although automated landmark placement was significantly different from manual placement, it successfully captured skull shape covariation structure and could identify shape differences between inbred mouse genotypes with similar power to manual methods [11]. The study also noted that automated methods demonstrated reduced shape variance estimates, partially reflecting underestimation of extreme genotypes but also the elimination of intra-observer error inherent in manual landmarking.
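
A comparison of this kind can be sketched in a few lines. The example below is illustrative only: it assumes both landmark sets are stored as arrays of shape (specimens, landmarks, 3) in a common coordinate system, and the function names are hypothetical rather than taken from the cited study.

```python
import numpy as np

def per_landmark_deviation(manual, automated):
    """Mean Euclidean distance between manual and automated placement, per landmark."""
    manual = np.asarray(manual, dtype=float)
    automated = np.asarray(automated, dtype=float)
    distances = np.linalg.norm(manual - automated, axis=2)  # (specimens, landmarks)
    return distances.mean(axis=0)

def total_variance(coords):
    """Sum of the sample variances of every landmark coordinate across specimens."""
    coords = np.asarray(coords, dtype=float)
    return float(np.var(coords, axis=0, ddof=1).sum())

# Toy data: 2 specimens, 2 landmarks.
manual = np.zeros((2, 2, 3))
automated = np.array([
    [[3.0, 4.0, 0.0], [0.0, 0.0, 0.0]],
    [[0.0, 0.0, 1.0], [0.0, 0.0, 2.0]],
])
deviation = per_landmark_deviation(manual, automated)
variance_auto = total_variance(automated)
```

In practice the variance comparison would be run on Procrustes-aligned coordinates, so that differences in position, orientation, and size do not inflate the estimate.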

Table 2: Comparison of Manual and Automated Landmarking Methods in Mouse Skull Analysis

| Analysis Aspect | Manual Landmarking | Automated Landmarking | Research Implications |
|---|---|---|---|
| Landmark placement time | Time-consuming (hours to days) | Rapid (batch processing) | Enables high-throughput phenotyping |
| Measurement reproducibility | Subject to intra-observer error | Algorithmically standardized | Improves consistency across studies |
| Biological signal detection | Captures extreme shapes well | May underestimate morphological extremes | Important consideration for outlier detection |
| Shape variance estimates | Includes observer error | Reduced variance due to standardization | Affects statistical power in group comparisons |
| Applicability to large datasets | Limited by practical constraints | Scalable to very large samples | Facilitates phenomic analysis |

Contemporary morphometric research utilizes a diverse array of computational tools and methodological approaches. The following essential resources represent the current state of the field for landmark-based shape analysis:

Table 3: Essential Research Tools for Landmark-Based Morphometric Analysis

| Tool/Resource | Function | Application Context | Methodological Role |
|---|---|---|---|
| 3D Slicer with SlicerMorph | Open-source platform for 3D visualization and analysis | Great ape cranial morphology studies [5] | Provides semi-automated landmarking protocols and geometric morphometrics workflows |
| R packages (Morpho, geomorph) | Statistical shape analysis | Generalized Procrustes analysis, phylogenetic comparisons [5] | Standardized implementation of shape statistics and visualization |
| Atlas-based registration | Automated landmark identification | High-throughput mouse phenotyping [11] | Enables scalable landmark placement across large datasets |
| Deterministic Atlas Analysis (DAA) | Landmark-free shape comparison | Macroevolutionary analyses across mammalian taxa [12] | Facilitates shape comparisons without homologous landmarks |
| Elliptical Fourier Analysis | Outline-based shape quantification | Archaeological artifact analysis [13] | Captures shape information from boundaries rather than discrete points |

Bookstein's landmark typology represents a foundational conceptual framework that continues to inform discourse on biological correspondence in morphometrics, though its practical application has been tempered by empirical challenges and methodological evolution. While the theoretical hierarchy from Type I to Type III landmarks provides a valuable heuristic for understanding biological meaningfulness, contemporary research demonstrates that strict adherence to this typology may limit analytical scope without guaranteeing improved measurement reliability.

The development of semi-landmark and landmark-free methods reflects an ongoing methodological progression toward more comprehensive shape characterization, with researchers increasingly selecting landmark configurations based on their ability to test specific biological hypotheses rather than theoretical classifications. This pragmatic approach, coupled with transparent reporting of landmark definitions and measurement protocols, represents the current best practice in morphometric research.

As the field continues to evolve with advances in automated landmarking and dense shape correspondence methods, the core principles embodied in Bookstein's typology—attention to biological meaningfulness, concern for measurement reliability, and critical evaluation of landmark choices—remain essential guides for rigorous shape analysis. Future methodological development will likely further bridge the gap between discrete landmark-based approaches and comprehensive shape characterization, expanding our ability to address fundamental questions in evolutionary biology, functional morphology, and developmental genetics.

Geometric morphometrics (GM) has revolutionized the quantitative analysis of biological form. However, a significant limitation of traditional landmark-based methods is their sparse characterization of morphology, particularly across smooth surfaces and complex curves lacking discrete anatomical points. Semi-landmarks have emerged as an essential methodological innovation that addresses this constraint by dramatically increasing the density of shape information captured. This guide objectively compares the performance of leading semi-landmark methodologies, evaluating their experimental outcomes, technical requirements, and applicability across research contexts from evolutionary biology to medical imaging.

In geometric morphometrics, landmarks are defined as discrete, homologous anatomical points that can be reliably identified across all specimens in a study. While powerful, this approach captures only a fraction of morphological variation because many biologically significant structures—such as cranial vaults, feather outlines, or arm contours—contain few, if any, true homologous points [1]. This creates an information density problem, where sparse landmark configurations inadequately represent the continuous biological surfaces and curves that interest researchers.

Semi-landmarks solve this fundamental problem by providing a mathematically rigorous framework for quantifying shape along curves and surfaces between traditional landmarks. Unlike landmarks, which represent developmental or evolutionary homologies, semi-landmarks establish geometric correspondences through algorithmic placement and sliding procedures [1]. This methodological advancement has transformed morphometrics by enabling dense sampling of form while maintaining compatibility with established statistical shape spaces.

Comparative Performance of Semi-Landmark Methods

Classification Accuracy Across Biological Structures

Table 1: Discrimination Performance of Semi-Landmark vs. Alternative Approaches

| Biological Structure | Method Category | Specific Technique | Classification Accuracy | Study Reference |
|---|---|---|---|---|
| Feather outlines | Semi-landmark | Bending energy alignment | Roughly equal performance | [14] |
| Feather outlines | Semi-landmark | Perpendicular projection | Roughly equal performance | [14] |
| Feather outlines | Outline-based | Elliptical Fourier analysis | Similar to semi-landmarks | [14] |
| Feather outlines | Outline-based | Extended eigenshape method | Similar to semi-landmarks | [14] |
| Arthropod wings | Landmark-based | Traditional landmarks | 79% average correct assignment | [15] |
| Arthropod wings | Outline-based | Elliptic Fourier analysis | 85% average correct assignment | [15] |
| Great ape crania | Patch semi-landmarks | Direct projection | Comparable to manual landmarks | [5] |
| Great ape crania | Patch-TPS | Template-based transfer | Comparable or superior to manual landmarks | [5] |
| Great ape crania | Pseudo-landmarks | Automated sampling | Comparable to manual landmarks | [5] |

Technical Implementation and Robustness

Table 2: Methodological Characteristics of Semi-Landmark Approaches

| Method | Implementation Process | Correspondence Quality | Sensitivity to Noise | Computational Demand |
|---|---|---|---|---|
| Patch-based | Projects points from triangular patches defined by 3 landmarks | High (geometric relationship to manual landmarks) | High (vulnerable to surface artifacts) | Moderate |
| Patch-TPS | Transfers template semi-landmarks via Thin-Plate Spline transformation | High | Low (robust to noise and variability) | High |
| Pseudo-landmark | Automatically samples points with minimal landmark guidance | Variable (no guaranteed homology) | Low | Moderate |
| Sliding Semi-landmarks | Iteratively slides points to minimize bending energy or Procrustes distance | Improved after sliding | Moderate | High (iterative process) |

Experimental Protocols and Workflows

Patch-Based Semi-Landmarking

The patch method enables specimen-specific semi-landmark placement without a pre-defined template [5]. The workflow proceeds as follows:

Patch Definition: Select three previously digitized landmarks that define the triangular biological region of interest.

Grid Registration: Register a template triangular grid with user-specified point density to the landmark-bounded triangle using thin-plate spline deformation.

Surface Projection:

- Smooth surface normal vectors via Laplacian smoothing to minimize noise impact

- Calculate average surface normal from the three defining landmarks as projection vector

- Cast rays from grid points toward surface, selecting first intersection

- If no intersection found, reverse direction or select nearest mesh point

Grid Merging:

- Identify unique triangle edges in complex multi-patch configurations

- Place uniformly sampled lines between endpoints

- Project additional points to ensure continuous coverage

- Combine with manual landmarks into final configuration
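
The surface projection step in the workflow above can be sketched as follows. This is a simplified illustration, not the SlicerMorph implementation: it uses the standard Möller-Trumbore ray-triangle test on a mesh given as plain vertex and face arrays, tries the projection vector first and its reverse second (the order is an assumption), and falls back to the nearest mesh vertex. Normal smoothing is omitted, and all function names are hypothetical.

```python
import numpy as np

def ray_triangle_distance(origin, direction, triangle, eps=1e-9):
    """Moller-Trumbore test: distance along the ray to the triangle, or None."""
    v0, v1, v2 = triangle
    edge1, edge2 = v1 - v0, v2 - v0
    p = np.cross(direction, edge2)
    det = np.dot(edge1, p)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle plane
    inv_det = 1.0 / det
    s = origin - v0
    u = np.dot(s, p) * inv_det
    if u < 0.0 or u > 1.0:
        return None
    q = np.cross(s, edge1)
    v = np.dot(direction, q) * inv_det
    if v < 0.0 or u + v > 1.0:
        return None
    t = np.dot(edge2, q) * inv_det
    return t if t >= 0.0 else None  # ignore hits behind the ray origin

def project_point(point, normal, vertices, faces):
    """Project a grid point onto the mesh along +normal, then -normal, then nearest vertex."""
    point = np.asarray(point, dtype=float)
    vertices = np.asarray(vertices, dtype=float)
    normal = np.asarray(normal, dtype=float)
    normal = normal / np.linalg.norm(normal)
    for direction in (normal, -normal):
        hits = []
        for face in faces:
            t = ray_triangle_distance(point, direction, vertices[np.asarray(face)])
            if t is not None:
                hits.append(t)
        if hits:
            return point + min(hits) * direction  # first intersection along the ray
    nearest = np.argmin(np.linalg.norm(vertices - point, axis=1))
    return vertices[nearest]

# Toy mesh: a single triangle lying in the plane z = 1.
vertices = np.array([[0.0, 0.0, 1.0], [2.0, 0.0, 1.0], [0.0, 2.0, 1.0]])
faces = [(0, 1, 2)]
hit_forward = project_point([0.5, 0.5, 0.0], [0.0, 0.0, 1.0], vertices, faces)
hit_reversed = project_point([0.5, 0.5, 2.0], [0.0, 0.0, 1.0], vertices, faces)
hit_fallback = project_point([5.0, 1.0, 0.0], [0.0, 0.0, 1.0], vertices, faces)
```

The loop over faces is linear in mesh size; a production implementation would normally use a spatial index such as a bounding volume hierarchy.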

Template-Based Semi-Landmark Transfer

Template approaches improve consistency across specimens by propagating semi-landmarks from a reference specimen [5]:

Template Selection: Choose specimen with greatest geometric similarity to sample members as reference.

Template Marking: Apply patch-based or other semi-landmark method to template specimen.

Landmark Transfer:

- Compute thin-plate spline transformation based on manual landmark correspondences between template and target

- Warp template semi-landmarks to target specimen using TPS transformation

- Project each warped semi-landmark to target surface along template normal vector

- Apply reversal procedure or nearest point selection for failed intersections

Optional Sliding: Iteratively slide semi-landmarks to minimize bending energy or Procrustes distance, replacing template with mean shape after first iteration.
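
The thin-plate spline warp used in the landmark transfer step can be sketched with NumPy alone. The version below is a minimal interpolating 3D TPS that assumes the kernel U(r) = r and at least four non-coplanar manual landmarks; the source does not specify these details, and the function names are hypothetical. It covers only the warp, so the projection onto the target surface would follow as a separate step.

```python
import numpy as np

def fit_tps(source, target):
    """Fit an interpolating 3D thin-plate spline mapping source landmarks onto target."""
    source = np.asarray(source, dtype=float)
    target = np.asarray(target, dtype=float)
    n, d = source.shape
    kernel = np.linalg.norm(source[:, None, :] - source[None, :, :], axis=2)  # U(r) = r
    affine_basis = np.hstack([np.ones((n, 1)), source])
    system = np.zeros((n + d + 1, n + d + 1))
    system[:n, :n] = kernel
    system[:n, n:] = affine_basis
    system[n:, :n] = affine_basis.T
    rhs = np.zeros((n + d + 1, d))
    rhs[:n] = target
    params = np.linalg.solve(system, rhs)
    return source, params[:n], params[n:]  # landmarks, non-affine weights, affine part

def apply_tps(model, points):
    """Warp arbitrary points (for example template semi-landmarks) with a fitted spline."""
    source, weights, affine = model
    points = np.asarray(points, dtype=float)
    kernel = np.linalg.norm(points[:, None, :] - source[None, :, :], axis=2)
    affine_basis = np.hstack([np.ones((len(points), 1)), points])
    return kernel @ weights + affine_basis @ affine

# Toy example: template landmarks, target landmarks, and one template semi-landmark.
template_landmarks = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0],
                               [0.0, 0.0, 1.0], [1.0, 1.0, 1.0]])
target_landmarks = template_landmarks + np.array([[0.0, 0.0, 0.0], [0.1, 0.0, 0.0],
                                                  [0.0, -0.1, 0.0], [0.0, 0.0, 0.2],
                                                  [0.05, 0.05, 0.0]])
model = fit_tps(template_landmarks, target_landmarks)
warped_semilandmark = apply_tps(model, np.array([[0.5, 0.5, 0.5]]))
```

Because the spline interpolates, the manual landmarks map exactly onto their counterparts, and only the semi-landmarks between them are estimated by the warp.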

Table 3: Key Software and Analytical Tools for Semi-Landmark Research

| Tool Name | Function | Application Context |
|---|---|---|
| 3D Slicer with SlicerMorph | 3D visualization and landmark collection | Medical image analysis, 3D morphometrics |
| Morpho R package | Sliding semi-landmarks, Procrustes analysis | Statistical shape analysis |
| geomorph R package | GM analyses integration with comparative methods | Evolutionary biology, organismal shape |
| Viewbox 4 | Digitization template creation | Archaeological materials, osteology |
| auto3dgm | Landmark-free correspondence algorithm | Comparative morphology without landmarks |
| Artec Eva Scanner | High-resolution 3D surface scanning | Specimen digitization, mesh creation |

Discussion: Method Selection Guidelines

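The comparative data indicate that semi-landmark methods generally discriminate groups about as well as outline-based approaches [14], and that template-based semi-landmarks match or exceed manual landmarks alone when estimating shape [5]. In the arthropod wing comparison, which did not include semi-landmarks, outline analysis outperformed traditional landmarks (85% versus 79% average correct assignment) [15]. Method selection nonetheless involves important trade-offs between correspondence quality, robustness, and computational requirements.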

For highly variable datasets or those with substantial missing data, template-based approaches (especially Patch-TPS) demonstrate superior robustness compared to direct patch projection [5]. The integration of TPS transformation with surface projection accommodates greater shape variation while maintaining correspondence.

In applications where computational efficiency is prioritized or template selection is challenging, direct patch-based semi-landmarking provides a viable alternative, though with increased vulnerability to surface noise and artifacts.

For outline analysis of two-dimensional structures, semi-landmark and elliptical Fourier methods achieved roughly equal classification rates, suggesting that the choice can rest on researcher familiarity and software access [14].

Critically, all semi-landmark approaches introduce some degree of geometric approximation, as their locations are algorithmically determined rather than biologically homologous [1]. Consequently, results from such analyses should be interpreted as approximations of biological reality, with appropriate acknowledgment of methodological uncertainty.

Future Directions

Emerging methodologies continue to refine the balance between shape information density and biological correspondence. Machine learning approaches show promise for automating landmark and semi-landmark placement while learning optimal representations from large datasets. Additionally, integration with biomedical applications such as nutritional assessment from arm shapes [16] and craniofacial studies [17] demonstrates the expanding translational potential of dense shape quantification.

As semi-landmark methodologies mature, standardization of protocols and validation across diverse biological structures will be essential for comparative morphological research. The development of open-access templates and workflows, such as those for human ossa coxae [18], represents a crucial step toward reproducible morphometric science.

Geometric morphometrics relies on the precise capture of form to analyze biological shape variation. While traditional anatomical landmarks provide sparse but biologically homologous points, semi-landmarking methods have become indispensable for quantifying shape across surfaces and curves lacking discrete landmarks. These methods, however, involve significant methodological choices. Researchers must navigate key trade-offs between point correspondence, sample coverage, repeatability, and computational cost. This guide objectively compares the performance of major semi-landmarking approaches, providing experimental data to inform method selection for shape discrimination research in evolutionary biology, biomedicine, and beyond.

Method Comparison at a Glance

The table below summarizes the core characteristics and trade-offs of three prominent semi-landmarking approaches: patch-based, patch-TPS, and pseudo-landmarking.

| Method | Correspondence Basis | Sample Coverage | Repeatability | Computational Cost | Best Suited For |
|---|---|---|---|---|---|
| Patch-Based [5] | Geometric relationship to manual landmarks defining triangular patches | Dependent on manual landmark placement; risk of gaps or uneven density | Sensitive to surface noise and missing data; can produce outliers | Lower for individual specimens | Studies with abundant, reliable manual landmarks and low-noise surfaces |
| Patch-TPS [5] | Transferred from template via Thin-Plate Spline (TPS) transform | Consistent, template-driven coverage across all specimens | High; more robust to noise and dataset variability | Moderate; requires TPS warp and projection for each specimen | Standardized analyses where a representative template is available |
| Pseudo-Landmarking [5] | Automatically sampled points with no biological homology; correspondence is algorithmic | Comprehensive and even, based on template spherical topology | High; robust performance with noise and variability | Higher initial setup; efficient projection to new specimens | High-density shape capture for complex surfaces, without manual landmarks defining the sampled regions |

Experimental Performance Data

A comparative study implemented these three strategies to analyze cranial morphology in three species of great apes (Pan troglodytes, Gorilla gorilla, Pongo pygmaeus). The goal was to evaluate the shape information each method added when estimating a transform between an individual specimen and a population average template. The average mean root squared error (MRSE) between the transformed mesh and the template quantified performance [5] [19].

| Method | Average MRSE Performance | Sensitivity to Noise/Missing Data | Key Strength | Key Weakness |
|---|---|---|---|---|
| Patch-Based | Comparable to manual landmarks alone [5] | High; resulted in outliers with large deviations [5] | Does not require a prior template; geometric interpretation is preserved per specimen [5] | Coverage is dependent on the availability of manual landmarks [5] |
| Patch-TPS | Comparable to or better than manual landmarks alone [5] | Low; provides robust performance [5] | Improves robustness over the basic patch method [5] | Requires a representative template specimen [5] |
| Pseudo-Landmarking | Comparable to or better than manual landmarks alone [5] | Low; provides robust performance [5] | Excellent sample coverage and repeatability [5] | Points have no geometric relationship to original landmarks [5] |

Detailed Experimental Protocols

To ensure reproducibility and provide context for the performance data, this section details the key experimental methodologies from the cited studies.

Great Ape Cranial Morphology Study

This study serves as the primary source for the performance data in the previous section [5] [19].

- Imaging and Data: The analysis used DICOM stacks of great ape crania from the National Museum of Natural History collections, converted to 3D volumes. Manual landmarks were previously placed using 3D Slicer [5].

- Patch-Based Landmarking: For a defined triangular patch bounded by three manual landmarks, a template grid of points was generated. This grid was deformed to the patch using a Thin-Plate Spline (TPS) and projected onto the actual specimen surface. Projection involved casting a ray in the direction of the average surface normal of the three bounding landmarks. Adjacent patches were merged to prevent overlap [5].

- Patch-TPS Landmarking: A single template specimen was densely landmarked using the patch method. These semi-landmarks were then transferred to every other specimen in the dataset by first warping the specimen to the template using a TPS transformation defined by the manual landmarks. The template semi-landmarks were then projected onto the warped specimen's surface along the template's normal vectors [5].

- Pseudo-Landmarking: A template model was regularly sampled to generate a dense set of points, which were projected to the model's external surface assuming spherical topology. A spatial filter enforced a minimum distance between points. These pseudo-landmarks were transferred to target specimens via a TPS transform and projection [5].

- Evaluation Metric: The transformed mesh of an individual specimen was compared to the population average template, and the Mean Root Squared Error (MRSE) between them was calculated to quantify transform success [5].
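
The spatial filter mentioned in the pseudo-landmarking step can be illustrated with a simple greedy pass. The source does not describe the filter's algorithm, so the approach below, the function name, and the toy data are assumptions chosen for clarity rather than a reproduction of the original implementation.

```python
import numpy as np

def min_distance_filter(points, min_dist):
    """Greedily keep points that lie at least min_dist from every point already kept."""
    points = np.asarray(points, dtype=float)
    kept = []
    for index, candidate in enumerate(points):
        far_enough = all(
            np.linalg.norm(candidate - points[k]) >= min_dist for k in kept
        )
        if far_enough:
            kept.append(index)
    return points[kept]

# Toy example: densely sampled points along a line, thinned to unit spacing.
dense = np.array([[0.0, 0.0, 0.0], [0.5, 0.0, 0.0], [1.0, 0.0, 0.0],
                  [1.4, 0.0, 0.0], [2.0, 0.0, 0.0]])
filtered = min_distance_filter(dense, min_dist=1.0)
```

A greedy filter depends on the order of the input points and scales quadratically, so large templates would usually call for a spatial index or a dedicated sampling routine.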

Comparison of Semi-Landmarking Approaches

This study assessed the performance of three landmark-driven semi-landmarking approaches on ape crania and human heads, analyzing how different methods influence results [1].

- Methodology: The study compared different algorithms for establishing dense point correspondences, including sliding semi-landmarks (minimizing bending energy or Procrustes distance), and landmark-free algorithms like Iterative Closest Point (ICP). The consistency of statistical results from morphometric analyses based on these different point placements was evaluated [1].

- Findings: The different approaches produced semi-landmarks at different locations, leading to discrepancies in statistical results. However, non-rigid semi-landmarking approaches showed greater consistency with each other. Landmark-free algorithms, while powerful, could project points to different anatomical features on target specimens, especially when shape differences were large [1].

Outline Analysis in Feather Shape Discrimination

This study compared geometric morphometric methods for classifying specimens based on outlines, providing insights into semi-landmark alignment methods [14].

- Data: The research used rectrices (tail feathers) from Ovenbirds (Seiurus aurocapilla) of different age categories to compare outline methods [14].

- Semi-Landmark Alignment: Two semi-landmark alignment methods were tested: Perpendicular Projection (PP) and Bending Energy Minimization (BEM) [14].

- Performance: Both semi-landmark methods, along with Elliptical Fourier Analysis and the Extended Eigenshape method, produced roughly equal rates of correct classification in Canonical Variates Analysis (CVA). Classification success was not highly dependent on the number of points used to represent the curve [14].

Workflow and Relationship Diagram

Figure: Logical workflow for selecting a semi-landmarking method, based on the key trade-offs identified in the experimental data.

The Scientist's Toolkit: Essential Research Reagents and Software

Successful implementation of semi-landmarking methods requires a suite of specialized software tools and packages.

| Tool/Software | Primary Function | Relevance to Semi-Landmarking |
|---|---|---|
| 3D Slicer / SlicerMorph [5] | Open-source platform for biomedical image visualization and analysis | Provides the environment in which the patch, patch-TPS, and pseudo-landmarking methods were implemented and tested [5]. |
| R Package: Morpho [5] | Geometric morphometrics analysis in R | Offers tools for statistical analysis of landmark data, including sliding semi-landmarks to minimize bending energy or Procrustes distance [5]. |
| R Package: geomorph [5] [19] | Geometric morphometric analysis of landmark shapes in R | Used for the collection and analysis of geometric morphometric shape data, supporting the evaluation of semi-landmark approaches [5] [19]. |
| auto3dgm [1] | Landmark-free algorithm for establishing point correspondences | An ICP-based package that automatically projects semi-landmarks from a template to target specimens, without requiring manual landmarks [1]. |

The choice of a semi-landmarking method is a fundamental step in shape analysis that directly influences research outcomes. No single method is universally superior; each occupies a distinct point in the trade-off space between correspondence, coverage, repeatability, and cost. Patch-based methods offer direct geometric interpretation but are fragile with noisy data. Patch-TPS and pseudo-landmarking provide superior robustness and consistency at the cost of increased computational complexity and, for pseudo-landmarks, reduced direct biological homology. Researchers should align their choice with specific research goals, data quality, and the importance of biological homology versus dense, repeatable shape capture. Results from these analyses remain powerful approximations of biological reality and should be interpreted with corresponding caution.

A Practical Guide to Prevalent Semi-Landmarking Methodologies

In geometric morphometrics, the analysis of biological form often extends beyond the use of traditional landmarks to include outlines and surfaces. This is facilitated by sliding semi-landmarks, points that are slid along curves or surfaces to remove tangential variation and establish geometric, rather than strictly biological, homology across specimens [20] [21]. Two principal criteria govern this sliding process: minimizing bending energy (BE) and minimizing Procrustes distance (D). The choice between these criteria is not merely a technicality; it influences the estimation of shape variation and can alter the outcomes of statistical analyses, particularly when studying groups with low morphological disparity, such as modern human populations [20] [22]. This guide provides an objective comparison of these two methods, detailing their theoretical foundations, experimental performance, and practical implications for shape discrimination research.
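
Since one of the two criteria is defined in terms of Procrustes distance, a short sketch of that quantity may help. The code below computes the partial Procrustes distance between a specimen and a reference by centering both, scaling each to unit centroid size, and finding the optimal rotation via singular value decomposition. It is a generic textbook construction with hypothetical function names, not code from the cited studies.

```python
import numpy as np

def preshape(config):
    """Center a landmark configuration and scale it to unit centroid size."""
    config = np.asarray(config, dtype=float)
    centered = config - config.mean(axis=0)
    return centered / np.linalg.norm(centered)

def partial_procrustes(specimen, reference):
    """Return (distance, aligned specimen) after optimal rotation onto the reference."""
    x, y = preshape(specimen), preshape(reference)
    u, _, vt = np.linalg.svd(x.T @ y)
    correction = np.eye(x.shape[1])
    if np.linalg.det(u @ vt) < 0:
        correction[-1, -1] = -1.0  # exclude reflections, allow rotations only
    rotation = u @ correction @ vt
    aligned = x @ rotation
    return float(np.linalg.norm(aligned - y)), aligned

# Toy example: the specimen is a rotated, scaled, translated copy of the reference,
# so the two have the same shape.
reference = np.array([[0.0, 0.0, 0.0], [2.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 3.0]])
rot_z = np.array([[0.0, 1.0, 0.0], [-1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
specimen = 2.5 * reference @ rot_z + np.array([10.0, -4.0, 1.0])
distance_same, _ = partial_procrustes(specimen, reference)

stretched = reference * np.array([1.0, 1.0, 2.0])  # a genuinely different shape
distance_diff, _ = partial_procrustes(stretched, reference)
```

The distance is zero for configurations that differ only in position, size, and orientation, and grows as the shapes diverge.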

Methodological Comparison: Core Principles and Assumptions

The two sliding methods operate on different philosophical and mathematical principles, which underlie their distinct behaviors in morphometric analyses.

| Feature | Minimizing Bending Energy (BE) | Minimizing Procrustes Distance (D) |
|---|---|---|
| Core Principle | Slides points to minimize the bending energy of the Thin-Plate Spline (TPS) deformation from a reference form [20] [1]. | Slides points to minimize the partial Procrustes distance between the specimen and a reference form [20] [1]. |
| Underlying Assumption | Assumes the observed contour is the result of the smoothest possible deformation of the reference contour [20]. | Assumes the best correspondence is achieved when specimen points lie along lines perpendicular to the reference curve [20]. |
| Philosophical Basis | A model-based approach that imposes a specific (smooth) deformation model [1]. | A phenomenological approach that seeks a direct geometric fit without a specific deformation model [1]. |
| Spatial Influence | Sliding is localized, as bending energy gives greater weight to landmarks and semilandmarks that are spatially close [1]. | Sliding is global, as all landmarks and semilandmarks influence the minimization of Procrustes distance, regardless of proximity [1]. |
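The two criteria compared above can be illustrated with a minimal 2D sketch in NumPy. It is not the implementation used in any of the cited software. The function names, the assumption that unit tangents are already estimated, and the use of Bookstein's kernel U(r) = r² log r² are illustrative choices. `slide_min_procrustes` performs a single sliding step under the D criterion, moving each point along its tangent until its residual to the reference is perpendicular to that tangent. `tps_bending_energy` returns the quantity that the BE criterion would minimize, up to a constant factor.

```python
import numpy as np

def slide_min_procrustes(points, tangents, reference):
    """One sliding step under the Procrustes-distance (D) criterion.

    Each semi-landmark moves along its own tangent to the position closest
    to its reference counterpart, so the remaining residual is perpendicular
    to the tangent. Inputs are (k, 2) or (k, 3) arrays.
    """
    t = tangents / np.linalg.norm(tangents, axis=1, keepdims=True)
    step = np.sum((reference - points) * t, axis=1, keepdims=True)
    return points + step * t

def tps_bending_energy(reference, target):
    """Bending energy (up to a constant) of the 2D TPS mapping reference -> target.

    Assumes distinct, non-collinear reference points and the kernel
    U(r) = r^2 log r^2.
    """
    k = reference.shape[0]
    d2 = np.sum((reference[:, None, :] - reference[None, :, :]) ** 2, axis=-1)
    with np.errstate(divide="ignore", invalid="ignore"):
        K = np.where(d2 > 0, d2 * np.log(d2), 0.0)
    P = np.hstack([np.ones((k, 1)), reference])
    L = np.zeros((k + 3, k + 3))
    L[:k, :k] = K
    L[:k, k:] = P
    L[k:, :k] = P.T
    B = np.linalg.inv(L)[:k, :k]  # bending energy matrix
    return float(np.trace(target.T @ B @ target))
```

In practice the sliding step is repeated, with Procrustes superimposition and tangent re-estimation between iterations. A purely affine change of the reference configuration has zero bending energy, which is why the BE criterion penalizes only localized, non-uniform deformation.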

Experimental Data and Performance Comparison

Empirical studies comparing these two methods reveal that while they sometimes yield similar results, key differences can emerge, particularly in the estimation of variation and the resulting morphospace.

A seminal study by Perez et al. (2006) compared BE and D methods using human molars and craniofacial data, employing bootstrapped Goodall's F-tests, Foote's measurement, Principal Component Analysis (PCA), and Discriminant Function Analysis [20] [22]. The table below summarizes the main outcomes.

| Analysis Type | Findings for Bending Energy (BE) vs. Procrustes Distance (D) | Interpretation |
|---|---|---|
| Goodall's F-test | F-scores and P-values were similar for both criteria [20] [22]. | Both methods detect group mean shape differences with comparable statistical power in this context. |
| Foote's Measurement | BE and D yielded different estimates of within- and between-sample variation [20] [22]. | The methods disagree on the apportionment of morphological variance, which can impact evolutionary inferences. |
| Principal Component Analysis (PCA) | Low correlation between the first principal component axes obtained by D and BE [20] [22]. | The major axes of shape variation in the morphospace are method-dependent. |
| Discriminant Function Analysis | Percentage of correct classification was similar for BE and D, but the ordination of groups along discriminant scores differed between them [20] [22]. | While predictive accuracy may be comparable, the biological interpretation of group separation can vary. |
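One way to check how far the PCA result above applies to a given dataset is to correlate specimen scores on the first principal component after sliding with each criterion. The sketch below assumes two arrays of already superimposed coordinates with shape (specimens, points, dimensions), one per criterion and with specimens in the same order. The function names are hypothetical and this is not the procedure used in the cited study.

```python
import numpy as np

def pc1_scores(coords):
    """Scores on the first principal component of flattened shape coordinates."""
    X = coords.reshape(coords.shape[0], -1)
    X = X - X.mean(axis=0)  # center each coordinate across specimens
    U, S, _ = np.linalg.svd(X, full_matrices=False)
    return U[:, 0] * S[0]

def pc1_agreement(coords_a, coords_b):
    """Absolute Pearson correlation between the PC1 scores of two datasets.

    The absolute value is taken because the sign of a principal axis is arbitrary.
    """
    r = np.corrcoef(pc1_scores(coords_a), pc1_scores(coords_b))[0, 1]
    return float(abs(r))
```

A value near 1 suggests the dominant axis of variation is insensitive to the sliding criterion for that dataset; a low value signals that interpretations of PC1 should be reported for both criteria.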

Practical Considerations in Implementation

Beyond the statistical outcomes, practical aspects of implementation are critical for researchers.

- Effect of Iterations: The process of sliding semi-landmarks is iterative. Research on 3D facial images shows that classification accuracy is affected by the number of iterations, but not in a simple, progressive manner. Stability and highest accuracy were achieved at 12 iterations, with a decline in performance thereafter [23]. This indicates that more iterations are not necessarily better, and an optimal, dataset-specific number should be determined.

- Template Dependency and Alternatives: Some methodologies use a template-dependent approach for collecting and aligning semi-landmarks. This method, which projects semi-landmarks onto a target specimen using perpendiculars from a template, has been shown to produce shape distortion comparable to or lower than BE and D methods, though it can result in a greater loss of degrees of freedom [24].

- Comparative Performance with Other Techniques: Compared with other morphometric techniques such as Elliptic Fourier Analysis (EFA) and landmark-only analysis, semi-landmark methods (including both BE and D sliding) perform better on complex, ornamented structures where landmarks are sparse [25].

Essential Workflows and Research Toolkit

Generalized Workflow for Sliding Semi-Landmarks

[Diagram: standard workflow for processing sliding semi-landmarks, highlighting the key decision point between the BE and D criteria.]

Successful implementation of sliding semi-landmark studies requires a suite of software tools and methodological choices. The table below details key "research reagents" for this field.

| Tool / Resource | Function / Purpose | Examples & Notes |
|---|---|---|
| Digitization Software | To manually locate anatomical landmarks and initial semi-landmarks on 2D images or 3D models. | tpsDig [20], MakeFan [20] |
| Sliding & Analysis Software | To perform the iterative sliding of semi-landmarks and subsequent statistical shape analysis. | Morpho [23], geomorph [23], EVAN Toolbox [23], Viewbox [23] |
| Template Mesh | A reference specimen with predefined landmarks and semi-landmarks, used to warp and transfer points to target specimens. | Crucial for surface semi-landmark analysis; often a specimen with average shape [21] [1]. |
| Sliding Criterion | The algorithmic rule (BE or D) that defines how semi-landmarks are adjusted to establish correspondence. | A core methodological choice that influences results [20] [1]. |
| Alignment Algorithm | Methods for non-rigidly registering a template to a target specimen to transfer semi-landmarks. | Thin-Plate Spline (TPS) [23], Iterative Closest Point (ICP) [1] |

The choice between minimizing bending energy and minimizing Procrustes distance for sliding semi-landmarks is consequential. While both methods can effectively discriminate between groups, they can lead to different interpretations of morphological variation and group ordination [20] [22]. Bending energy, with its localized and model-based smoothing, is well-suited for hypotheses involving smooth biological deformations. In contrast, Procrustes distance provides a direct geometric fit without a strong prior model of deformation.

Recommendations for Researchers:

- Justify Your Choice: The selection of a sliding criterion should be driven by the biological question and the assumptions one is willing to make about the nature of shape change.

- Conduct Sensitivity Analyses: Especially in studies of groups with low morphological variation (e.g., modern human populations), it is prudent to analyze data using both criteria to test the robustness of the findings [20].

- Optimize Iterations: Avoid arbitrarily high iteration counts. Experiment to find the optimal number that provides stable results for your specific dataset [23].

- Report Methodology in Detail: Always clearly state the software, sliding algorithm, number of iterations, and template used to ensure the reproducibility of your research.

The ongoing development of methods, including functional data analysis and landmark-free algorithms, promises to further refine our ability to capture and analyze complex biological shapes [1] [26]. However, the BE and D approaches for sliding semi-landmarks remain foundational tools, and understanding their nuances is critical for rigorous shape discrimination research.

In geometric morphometrics, the quantitative assessment of three-dimensional shape often relies on landmark points. However, manual landmarks provide a sparse representation of anatomy. Semi-landmarks, particularly those placed via patch-based sampling, address this limitation by enabling the dense sampling of surface regions, thereby capturing richer morphological information. This guide objectively compares three predominant semi-landmarking strategies—Patch, Patch-TPS, and Pseudo-landmark sampling—evaluating their performance based on robustness, computational efficiency, and accuracy in shape estimation for shape discrimination research.

Semi-landmarks are points that are placed on an object's surface to supplement the information provided by manually placed anatomical landmarks. They relax the strict requirement of biological homology to capture shape information from regions where traditional landmarks are scarce or difficult to identify, such as smooth surfaces or areas with large morphological differences [5]. The core challenge in semi-landmarking lies in managing the trade-offs between point correspondence across specimens, sample coverage, repeatability, and computational time [5].

Patch-based sampling is a specific approach to semi-landmarking that defines regions of interest on a 3D surface using triangles bounded by manual landmarks. A template grid of points is generated within each triangular patch and then projected onto the actual specimen surface. This method provides a direct geometric relationship between the semi-landmarks and the manual landmarks defining the patch [5]. This guide details the protocols for this method and compares it with other prominent sampling strategies, providing researchers in morphometrics and drug development with the data needed to select an appropriate method for their shape discrimination work.

Methodologies and Experimental Protocols

The following section details the experimental setup and specific protocols for the three semi-landmarking strategies compared in this guide. The foundational research for this comparison analyzed cranial morphology across three species of great apes (Pan troglodytes, Gorilla gorilla, and Pongo pygmaeus) using 3D surface meshes [5].

Patch-Based Semi-Landmarking (Patch)

The patch method operates on each specimen independently.

- Step 1: Patch Definition: A user selects three pre-digitized manual landmarks on a specimen's surface to define the vertices of a triangular patch [5].

- Step 2: Grid Generation: A template triangular grid, with a user-specified density of points, is registered to the bounding triangle using a thin-plate-spline (TPS) deformation [5].

- Step 3: Surface Projection: The grid points are projected onto the specimen's mesh surface.

  - The mesh surface is smoothed using Laplacian smoothing to mitigate the impact of surface noise [5].

  - The surface normal vectors at the three manual landmarks are averaged to define the projection direction for the entire patch [5].

  - A ray is cast from each grid point in the direction of this averaged normal vector. The first intersection of the ray with the mesh surface is taken as the location of the semi-landmark [5].

  - If no intersection is found, the ray direction is reversed. If still no intersection is found, the closest mesh point to the grid point is selected [5].

- Step 4: Merging Patches: After all patches are processed, their points are merged into a single landmark set. To prevent overlap and ensure coverage, the edges of adjacent triangles are uniformly sampled, and these points are also projected onto the surface [5].
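The grid generation and projection steps above can be sketched as follows. This is a simplified illustration and not the published implementation. Barycentric interpolation stands in for the TPS registration of the template grid, Laplacian smoothing and patch merging are omitted, the "closest mesh point" fallback is approximated by the closest vertex, and all function names are hypothetical. Ray casting uses the Möller-Trumbore ray-triangle test over every face, which is adequate only for small meshes.

```python
import numpy as np

def triangle_grid(a, b, c, n):
    """Regular grid inside triangle (a, b, c) with n subdivisions per edge."""
    pts = []
    for i in range(n + 1):
        for j in range(n + 1 - i):
            u, v = i / n, j / n
            pts.append((1 - u - v) * a + u * b + v * c)
    return np.array(pts)

def ray_triangle(origin, direction, tri, eps=1e-9):
    """Moller-Trumbore test: distance along the ray to the triangle, or None."""
    v0, v1, v2 = tri
    e1, e2 = v1 - v0, v2 - v0
    p = np.cross(direction, e2)
    det = e1 @ p
    if abs(det) < eps:  # ray is parallel to the triangle plane
        return None
    s = origin - v0
    u = (s @ p) / det
    if u < 0 or u > 1:
        return None
    q = np.cross(s, e1)
    v = (direction @ q) / det
    if v < 0 or u + v > 1:
        return None
    t = (e2 @ q) / det
    return t if t >= 0 else None

def project_point(point, normal, vertices, faces):
    """First hit along the normal, then along the reversed normal, else closest vertex."""
    for d in (normal, -normal):
        hits = []
        for f in faces:
            t = ray_triangle(point, d, vertices[f])
            if t is not None:
                hits.append(t)
        if hits:
            return point + min(hits) * d
    return vertices[np.argmin(np.linalg.norm(vertices - point, axis=1))]

def project_patch(landmarks, landmark_normals, vertices, faces, n=4):
    """Semi-landmarks for one triangular patch, projected along the averaged normal."""
    normal = np.mean(landmark_normals, axis=0)
    normal = normal / np.linalg.norm(normal)
    grid = triangle_grid(landmarks[0], landmarks[1], landmarks[2], n)
    return np.array([project_point(p, normal, vertices, faces) for p in grid])
```

With `n` subdivisions per edge the patch contains (n + 1)(n + 2) / 2 points, including the three manual landmarks at its corners. Because a single averaged normal is used for the whole patch, strongly curved or noisy regions are where the reversal and closest-point fallbacks are most likely to be triggered.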

Patch-Based Semi-Landmarks Applied through Thin-Plate Splines (Patch-TPS)

This method uses a single template specimen to define the semi-landmark set, which is then transferred to all other specimens.

- Step 1: Template Landmarking: A single template (a representative specimen or a synthetic model) is used to define triangular patches and generate semi-landmarks using the patch method described above [5].

- Step 2: Thin-Plate Spline Warping: For each target specimen, a TPS transformation is calculated based on the correspondence between the manual landmarks on the template and the target specimen. This transformation warps the entire template mesh, including its semi-landmarks, toward the target specimen's shape [5].

- Step 3: Surface Projection: Each warped semi-landmark point is projected onto the target specimen's surface.

  - A ray is cast from the template semi-landmark point in the direction of the template's surface normal vector.

  - The final intersection of this ray with the warped target specimen's mesh is selected as the semi-landmark location [5].

  - The same fallback procedures (ray reversal, closest point selection) as the standard patch method are used if no intersection is found [5].
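The warping in Step 2 can be sketched with a small NumPy thin-plate spline. This is an illustrative sketch under stated assumptions, not the code used in the source study: it uses the 3D biharmonic kernel U(r) = r, requires at least four non-coplanar manual landmarks, and the function names are hypothetical. The surface projection of Step 3 is not shown.

```python
import numpy as np

def fit_tps_3d(source, target):
    """Fit a 3D thin-plate spline that maps source landmarks onto target landmarks.

    source, target: (k, 3) arrays of corresponding manual landmarks.
    Returns a (k + 4, 3) array: k kernel weights followed by 4 affine rows.
    """
    k = source.shape[0]
    K = np.linalg.norm(source[:, None, :] - source[None, :, :], axis=-1)
    P = np.hstack([np.ones((k, 1)), source])
    L = np.zeros((k + 4, k + 4))
    L[:k, :k] = K
    L[:k, k:] = P
    L[k:, :k] = P.T
    rhs = np.vstack([target, np.zeros((4, 3))])
    return np.linalg.solve(L, rhs)

def apply_tps_3d(params, source, points):
    """Warp arbitrary points (e.g. template semi-landmarks) with a fitted spline."""
    k = source.shape[0]
    U = np.linalg.norm(points[:, None, :] - source[None, :, :], axis=-1)
    P = np.hstack([np.ones((points.shape[0], 1)), points])
    return U @ params[:k] + P @ params[k:]
```

A typical use would fit the spline on the manual landmarks of the template and one target, then call `apply_tps_3d` on the template's semi-landmarks before projecting them onto the target surface. The fitted spline reproduces the manual landmarks exactly, so the quality of the transfer elsewhere depends on how well those landmarks cover the region of interest.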

Pseudo-Landmark Sampling

Pseudo-landmarks are points placed automatically on a surface with no direct geometric relationship to manual landmarks.

- Step 1: Template Sampling: A dense set of points is regularly sampled on the surface of a template mesh. This is often achieved by assuming spherical topology and applying a spatial filter to enforce a minimum distance between points, removing coincident ones [5].

- Step 2: Transfer to Specimens: The pseudo-landmarks are transferred to each specimen in the dataset using the same TPS warping and surface projection protocol described in the Patch-TPS method [5].
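The spatial filter mentioned in Step 1 can be approximated by a greedy pass that keeps a candidate point only if it lies at least a minimum distance from every point already kept. The sketch below is a simple quadratic-time illustration with a hypothetical function name; it assumes candidate points have already been sampled on the template surface and is not the implementation from the source study.

```python
import numpy as np

def min_distance_filter(points, min_dist):
    """Greedily keep points that are at least min_dist from all kept points.

    points: (n, 3) array of candidate surface points, visited in order.
    Coincident or nearly coincident candidates are dropped.
    """
    kept = []
    for p in points:
        if all(np.linalg.norm(p - q) >= min_dist for q in kept):
            kept.append(p)
    return np.array(kept)
```

The result depends on the order in which candidates are visited, so the same template and ordering should be used throughout a study. For large candidate sets a spatial index would be a more practical choice than the pairwise check shown here.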

[Diagram: core workflow for the Patch-TPS and Pseudo-landmark methods, which rely on a template specimen.]

Performance Comparison and Experimental Data

To evaluate the performance of the three dense sampling strategies, researchers estimated the transform between each individual specimen and a population average template. Accuracy was quantified as the average root mean squared error between the transformed mesh and the template [5]. The following table summarizes the key findings.

Table 1: Performance Comparison of Semi-Landmarking Strategies

| Method | Shape Estimation Accuracy | Robustness to Noise & Missing Data | Correspondence | Sample Coverage | Computational Demand |
|---|---|---|---|---|---|
| Patch | Comparable to manual landmarks alone [5] | Low: Highly sensitive to noise and missing data, can produce outliers with large deviations [5] | High: Direct geometric relationship to manual landmarks [5] | Dependent on manual landmark placement [5] | Lower (processed per specimen) |
| Patch-TPS | High: Comparable or exceeding manual landmark accuracy; more robust than Patch [5] | High: More robust performance [5] | High: Maintains relationship via template [5] | Consistent, defined by template [5] | Medium (requires TPS warp) |
| Pseudo-Landmark | High: Comparable or exceeding manual landmark accuracy [5] | High: More robust performance [5] | Low: No direct relationship to manual landmarks [5] | Excellent, can cover entire surface uniformly [5] | Medium (requires TPS warp) |
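The accuracy measure used for the comparison above can be sketched as a root mean squared error over mesh vertices, averaged across specimens. The sketch assumes that each transformed mesh has the same vertices, in the same order, as the template. How the source study paired points between meshes is not stated here, so this pairing and the function names are assumptions.

```python
import numpy as np

def mesh_rmse(transformed, template):
    """Root mean squared vertex-to-vertex distance between two (n, 3) arrays."""
    diff = transformed - template
    return float(np.sqrt(np.mean(np.sum(diff ** 2, axis=1))))

def average_rmse(transformed_meshes, template):
    """Mean of the per-specimen RMSE values against a common template."""
    return float(np.mean([mesh_rmse(m, template) for m in transformed_meshes]))
```

Lower values indicate that the landmark set supports a transform that brings the specimen closer to the template, which is the sense in which the table reports shape estimation accuracy.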