Building the Future of Parasitology: Digital Specimen Databases for Enhanced Research and Drug Development

Abstract

This article explores the construction, application, and validation of digital parasite specimen databases, a critical innovation addressing the decline in morphological expertise and scarce physical samples. Tailored for researchers and drug development professionals, it details the foundational need for these resources, the methodology behind whole-slide imaging and database architecture, solutions for data integrity and accessibility challenges, and the comparative validation of AI-driven analysis. By synthesizing the latest 2025 research, it positions digital databases as indispensable tools for accelerating parasite research, improving diagnostic accuracy, and fostering international collaboration in the development of novel therapeutics.

The Critical Need for Digital Parasite Databases in Modern Research

The Crisis in Morphological Expertise and Specimen Scarcity

The field of morphological taxonomy faces a critical juncture, characterized by parallel declines in expert capacity and in the availability of physical specimens. This erosion of expertise undermines fundamental biodiversity research, conservation efforts, and diagnostic capabilities across multiple scientific disciplines. Taxonomic expertise provides the essential foundation for species identification, description, and classification, enabling accurate documentation of Earth's biodiversity. Simultaneously, the declining accessibility of high-quality physical specimens for training and research creates a reinforcing cycle that further diminishes morphological skills. This crisis is particularly acute in parasitology and invertebrate taxonomy, where specialized morphological knowledge is essential for accurate diagnosis and research.

The significance of this dual crisis extends beyond academic taxonomy into practical applications in medicine, conservation biology, and environmental monitoring. In parasitology, for instance, morphological diagnosis remains the gold standard for identifying many parasitic infections, yet educational programs in developed countries are allocating significantly less time to parasitology education [1] [2]. This whitepaper examines the scope of this crisis, quantifies its impacts, and presents digital solutions that can help bridge the growing expertise gap while addressing specimen scarcity challenges.

Quantifying the Crisis: Data on Expertise and Specimen Availability

Global Disparities in Taxonomic Expertise

The distribution of taxonomic expertise shows significant global inequalities that directly impact biodiversity research capabilities. A comprehensive global survey reveals that 48% of countries have fewer than ten active plant taxonomists, with stark regional gaps in access to basic tools and infrastructure [3]. The "limitations index" developed in this survey identifies Angola, Benin, Botswana, Colombia, Sierra Leone, and Venezuela as facing the most severe challenges. This expertise shortage is most acute in low-income biodiversity-rich regions where species may become extinct before being scientifically described [3].

Table 1: Global Distribution of Taxonomic Expertise and Resources

| Region Type | Availability of Active Taxonomists | Access to Basic Tools & Infrastructure | Primary Challenges |
|---|---|---|---|
| Low-income biodiversity-rich regions | Critically low (globally, 48% of countries have <10 active plant taxonomists) | Severely limited | Lack of training resources, inadequate infrastructure, specimen scarcity |
| Central European countries (e.g., Hungary) | Declining sharply; research output back to 1970s levels | Available but underutilized | Aging expert population, decreased publications, administrative burdens |
| Developed nations | Relatively higher but declining | Well-developed | Reduced educational focus, shifting research priorities to molecular methods |

The Decline of Expertise in Specific Regions and Disciplines

The expertise crisis manifests dramatically at national levels. In Hungary, a Central European country with a strong history of taxonomic research, almost half of the nearly 36,000 animal species recorded in the country lack active biodiversity experts for identification [4]. More than a quarter of the fauna have only one or two active experts available. The research output has decreased to levels comparable to the 1970s, with the number of active experts and published papers showing a strong decline since approximately 2010 [4].
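Coverage figures like these are simple shares computed over a species-to-expert tally. A minimal sketch of that calculation, assuming a hypothetical dictionary that maps each recorded species to its number of active experts; the species names and counts are invented for illustration and are not data from [4]:

```python
from collections import Counter


def expert_coverage(experts_per_species: dict[str, int]) -> dict[str, float]:
    """Share of species with no active expert, one or two experts, or three or more."""
    if not experts_per_species:
        raise ValueError("no species supplied")

    def bucket(n_experts: int) -> str:
        if n_experts == 0:
            return "none"
        return "one_or_two" if n_experts <= 2 else "three_plus"

    counts = Counter(bucket(n) for n in experts_per_species.values())
    total = len(experts_per_species)
    # Counter returns 0 for buckets that never occurred
    return {key: counts[key] / total for key in ("none", "one_or_two", "three_plus")}


# Hypothetical toy fauna of four species (not real data)
fauna = {"species_a": 0, "species_b": 0, "species_c": 1, "species_d": 5}
print(expert_coverage(fauna))  # {'none': 0.5, 'one_or_two': 0.25, 'three_plus': 0.25}
```

Applied to a full national species list, the `none` share corresponds to the "almost half" figure and the `one_or_two` share to the "more than a quarter" figure reported for Hungary.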

In medical parasitology, Japan has seen a significant decrease in parasitology lecture hours in Medical Laboratory Technologist (MLT) programs compared with 1994 levels [2]. This decline is particularly concerning because MLTs play a critical role in detecting parasitosis, which physicians then diagnose and treat. The reduction in morphological training has occurred despite the continued importance of microscopy-based morphologic analysis for diagnosing parasitic infections [1] [2].

Table 2: Declining Educational Focus in Parasitology (Japan Case Study)

| Educational Aspect | Historical Status | Current Status | Impact on Expertise |
|---|---|---|---|
| Parasitology lecture hours in MLT programs | Substantial (1994 reference level) | Significantly decreased | Reduced morphological identification skills |
| Student interest in parasitology | Not formally measured | Students tend to regard parasitology as unnecessary | Shrinking pipeline of future experts |
| Practical specimen access | Available through physical collections | Diminished because parasitic infections have become less common | Limited hands-on experience with rare specimens |

Digital Specimen Databases: A Technological Solution

The Digital Database Approach

Digital specimen databases represent a promising technological solution to both specimen scarcity and expertise limitations. These databases use whole-slide imaging (WSI) technology to digitize physical glass specimens, creating virtual slides that can be accessed remotely [1]. The fundamental advantage of this approach is that digital copies of rare specimens do not deteriorate with repeated use, while valuable morphological reference material becomes widely accessible.

A pioneering project in Japan has demonstrated the practical implementation of this approach. Researchers created a preliminary digital parasite specimen database using 50 slide specimens (including parasite eggs, adults, and arthropods) from Kyoto University and Kyoto Prefectural University of Medicine [1]. The database successfully incorporated specimens ranging from parasitic eggs and adult worms to ticks and insects typically observed under low magnification, as well as malarial parasites requiring high magnification. Each specimen was accompanied by explanatory notes in both English and Japanese to facilitate learning, with the shared server enabling approximately 100 individuals to access the data simultaneously via web browsers on various devices [1].

Research Reagent Solutions for Digital Morphology

Table 3: Essential Research Reagents and Materials for Digital Specimen Databases

| Item | Function | Implementation Example |
|---|---|---|
| SLIDEVIEW VS200 slide scanner | Acquires high-resolution virtual slide data | Used with Z-stack function to accommodate thicker specimens by accumulating layer-by-layer data [1] |
| Whole-slide imaging (WSI) technology | Digitizes glass specimens for preservation and sharing | Prevents specimen damage and deterioration; simplifies data storage and backup [1] |
| Shared server infrastructure | Hosts virtual slide database for multi-user access | Windows Server 2022 implementation allows ~100 simultaneous users via web browsers [1] |
| Multi-language explanatory texts | Facilitates international educational use | English and Japanese annotations attached to each specimen [1] |
| Taxonomic folder organization | Structures database for efficient retrieval | Folder structure organized according to taxonomic classification of organisms [1] |

Methodological Framework: Creating Digital Specimen Databases

Workflow for Database Development

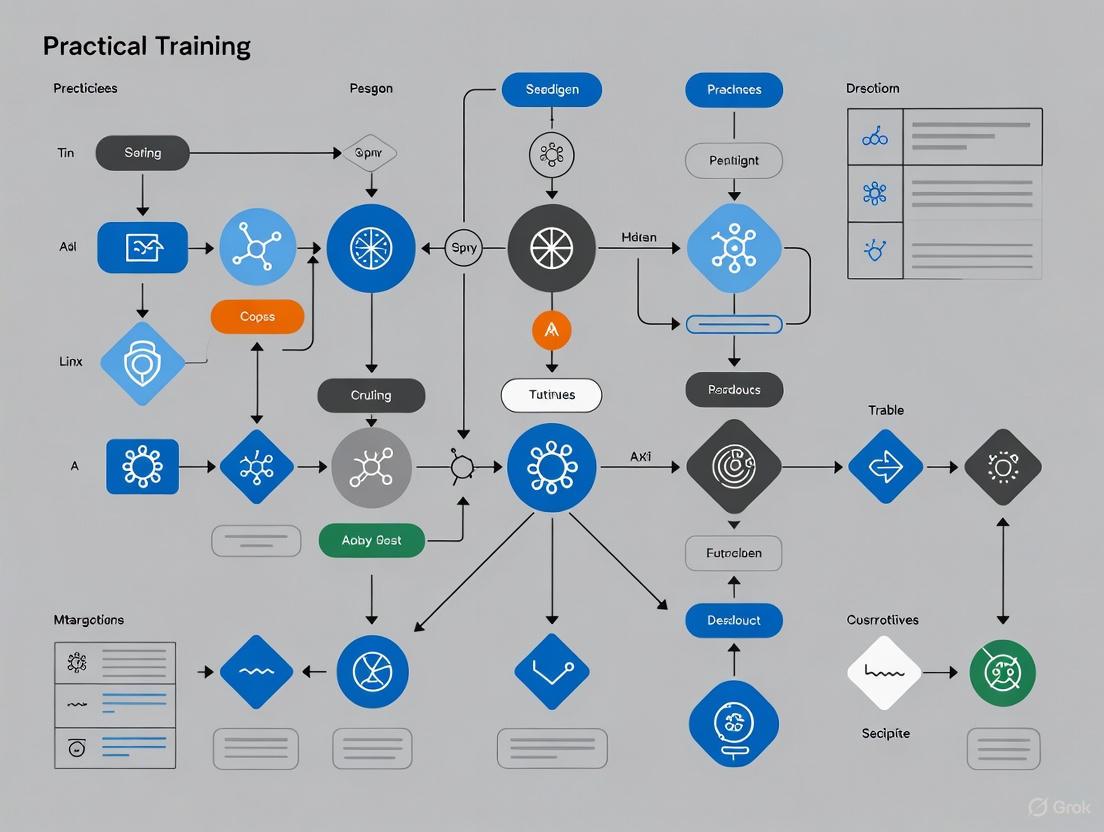

The development of a comprehensive digital specimen database follows a systematic workflow that ensures high-quality morphological data preservation and accessibility. The following diagram illustrates the key stages in this process:

Diagram 1: Digital Specimen Database Creation Workflow

Detailed Experimental Protocols

Specimen Acquisition and Preparation

The initial phase involves careful selection and preparation of physical specimens. The Japanese parasitology database project acquired 50 slide specimens of parasitic eggs, adult parasites, and arthropods from university collections [1]. Some specimens were prepared in-house, while others were purchased from commercial suppliers and museums. Critical considerations include:

- Ethical Compliance: Ensuring specimens contain no personal information and are intended solely for educational and research purposes [1].

- Specimen Diversity: Incorporating specimens across different life stages (eggs, adults) and taxonomic groups to ensure comprehensive coverage.

- Preservation State: Selecting specimens with optimal morphological preservation to facilitate high-quality digital reproduction.
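The three considerations above can be checked at intake with a simple record per slide. A minimal sketch, assuming a hypothetical schema: the class and field names (`SpecimenIntake`, `contains_personal_info`, `life_stage`), the slide IDs, and the life-stage vocabulary are illustrative and are not taken from [1].

```python
from collections import Counter
from dataclasses import dataclass, field

# Illustrative life-stage vocabulary (an assumption, not from the source project)
ALLOWED_STAGES = {"egg", "larva", "nymph", "adult", "blood stage"}


@dataclass
class SpecimenIntake:
    slide_id: str                 # hypothetical accession code
    taxonomy: list[str]           # higher to lower rank
    life_stage: str
    source: str                   # e.g. "in-house", "commercial", "museum"
    contains_personal_info: bool  # ethical compliance: must be False to accept
    preservation_ok: bool         # curator judgement of morphological preservation
    issues: list[str] = field(default_factory=list)

    def validate(self) -> bool:
        """Record every intake problem; accept the slide only if there are none."""
        self.issues.clear()
        if self.contains_personal_info:
            self.issues.append("personal information present")
        if self.life_stage not in ALLOWED_STAGES:
            self.issues.append(f"unknown life stage: {self.life_stage!r}")
        if not self.taxonomy:
            self.issues.append("missing taxonomic classification")
        if not self.preservation_ok:
            self.issues.append("poor preservation")
        return not self.issues


def stage_coverage(batch: list[SpecimenIntake]) -> dict[str, int]:
    """Count accepted slides per life stage to reveal gaps in specimen diversity."""
    return dict(Counter(s.life_stage for s in batch if s.validate()))


egg = SpecimenIntake("KU-001", ["Nematoda", "Ascarididae", "Ascaris lumbricoides"],
                     "egg", "in-house", False, True)
tick = SpecimenIntake("KU-002", ["Arachnida", "Ixodidae"], "adult", "museum", True, True)
print(egg.validate(), tick.validate(), tick.issues)  # True False ['personal information present']
print(stage_coverage([egg, tick]))                     # {'egg': 1}
```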

Digital Scanning Protocol

The digitization process employs specialized equipment and methodologies to capture high-fidelity representations of physical specimens:

- Equipment Specification: Using the SLIDEVIEW VS200 slide scanner (Evident Corporation) or equivalent systems capable of high-resolution imaging [1].

- Z-stack Implementation: Applying the Z-stack function for specimens with thicker smears, varying the scan depth to accumulate layer-by-layer data for optimal focus throughout the specimen [1].

- Quality Control: Rescanning slides with out-of-focus areas as needed, with authors reviewing all digital images for focus and clarity before database incorporation [1].

- Multi-resolution Capture: Scanning specimens at appropriate magnifications (40x for parasite eggs and adults, 1000x for malarial parasites) to ensure diagnostically relevant detail [1].
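The Z-stack and quality-control steps above can be illustrated with a generic extended-depth-of-field merge and a tile-level focus check. This is a minimal sketch, assuming the Z-stack is available as a NumPy array of grayscale layers. The VS200's own stacking and focus algorithms are not described in [1], so the variance-of-Laplacian focus measure, the tile size, and the rescan threshold are swapped-in, commonly used heuristics rather than the scanner's method.

```python
import numpy as np


def laplacian(img: np.ndarray) -> np.ndarray:
    """4-neighbour discrete Laplacian; edges handled by replicating border pixels."""
    p = np.pad(img.astype(float), 1, mode="edge")
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
            - 4.0 * p[1:-1, 1:-1])


def focus_score(img: np.ndarray) -> float:
    """Variance of the Laplacian: a common sharpness heuristic (higher = sharper)."""
    return float(laplacian(img).var())


def extended_focus(stack: np.ndarray) -> np.ndarray:
    """Merge a Z-stack of shape (layers, H, W) by taking each pixel from the layer
    with the strongest local Laplacian response. Production focus stacking usually
    smooths this choice; per-pixel selection keeps the sketch short."""
    sharpness = np.abs(np.stack([laplacian(layer) for layer in stack]))
    best_layer = sharpness.argmax(axis=0)                    # (H, W) layer indices
    return np.take_along_axis(stack, best_layer[None], axis=0)[0]


def out_of_focus_tiles(img: np.ndarray, tile: int, threshold: float) -> list[tuple[int, int]]:
    """Top-left (row, col) of tiles whose focus score is below threshold,
    i.e. candidate regions for rescanning."""
    h, w = img.shape
    return [(r, c)
            for r in range(0, h, tile)
            for c in range(0, w, tile)
            if focus_score(img[r:r + tile, c:c + tile]) < threshold]


# Synthetic demo: layer 0 is featureless (defocused), layer 1 is a sharp checkerboard
checker = (np.indices((8, 8)).sum(axis=0) % 2).astype(float)
stack = np.stack([np.full((8, 8), 0.5), checker])
merged = extended_focus(stack)
print(np.array_equal(merged, checker))  # True

partly_blurred = checker.copy()
partly_blurred[:, :4] = 0.5             # left half loses all detail
print(out_of_focus_tiles(partly_blurred, tile=4, threshold=1.0))  # [(0, 0), (4, 0)]
```

In practice the threshold would have to be calibrated per stain and magnification, and flagged tiles would still be confirmed by a human reviewer, as in the manual review described in [1].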

Database Architecture and Access Management

The technical implementation requires robust database architecture with appropriate access controls:

- Taxonomic Organization: Structuring folder organization according to taxonomic classification to facilitate intuitive navigation [1].

- Multi-language Support: Providing specimen names and descriptions in multiple languages (e.g., English and Japanese) to enhance accessibility for international users [1].

- Access Control: Implementing identification code and password requirements to ensure appropriate use while maintaining accessibility for educational and research purposes [1].

- Server Capacity: Configuring shared server infrastructure (e.g., Windows Server 2022) to support approximately 100 simultaneous users without specialized viewing software [1].
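The taxonomic folder layout and bilingual notes can be expressed as a small path-building step. A minimal sketch: [1] states only that folders follow taxonomic classification and that each specimen carries English and Japanese text, so the rank list, folder naming, and JSON sidecar files here are assumptions of this illustration.

```python
import json
import re
from pathlib import Path

# Hypothetical rank hierarchy used to build folder paths
RANKS = ("phylum", "class", "order", "family", "species")


def slug(name: str) -> str:
    """Filesystem-safe folder name, e.g. 'Plasmodium falciparum' -> 'Plasmodium_falciparum'."""
    return re.sub(r"[^A-Za-z0-9]+", "_", name).strip("_")


def specimen_dir(root: Path, taxonomy: dict[str, str]) -> Path:
    """root/phylum/class/order/family/species, skipping ranks that are not recorded."""
    return root.joinpath(*(slug(taxonomy[r]) for r in RANKS if taxonomy.get(r)))


def write_notes(folder: Path, slide_id: str, notes: dict[str, str]) -> Path:
    """Save explanatory text keyed by language code as a JSON file next to the slide."""
    missing = {"en", "ja"} - set(notes)
    if missing:
        raise ValueError(f"missing explanatory text for: {sorted(missing)}")
    folder.mkdir(parents=True, exist_ok=True)
    path = folder / f"{slide_id}.json"
    # ensure_ascii=False keeps Japanese text human-readable in the file
    path.write_text(json.dumps(notes, ensure_ascii=False, indent=2), encoding="utf-8")
    return path


taxonomy = {"phylum": "Apicomplexa", "class": "Aconoidasida", "order": "Haemosporida",
            "family": "Plasmodiidae", "species": "Plasmodium falciparum"}
print(specimen_dir(Path("db"), taxonomy).as_posix())
# db/Apicomplexa/Aconoidasida/Haemosporida/Plasmodiidae/Plasmodium_falciparum
```

Keeping the notes in a per-slide file rather than inside the image data means a third language could later be added without rescanning or re-uploading slides.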

Applications in Research and Drug Discovery

Enhancing Morphological Profiling in Discovery Research

The principles underlying digital specimen databases extend beyond taxonomy into drug discovery research, where morphological profiling has emerged as a powerful method for predicting compound bioactivity. The Cell Painting assay, for instance, captures morphological changes across various cellular compartments, enabling rapid prediction of compound properties and mechanisms of action [5].

Recent advancements have demonstrated how comprehensive morphological profiling resources using carefully curated compound collections can generate robust datasets across multiple imaging sites. These resources facilitate exploration of compound bioactivity and prediction of mechanisms of action by correlating morphological profiles with cellular toxicity and specific protein targets [5]. The integration of digital morphology databases with such profiling approaches creates new opportunities for understanding compound effects while preserving crucial morphological expertise.
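One common way to use such profiles for mechanism-of-action prediction is nearest-neighbour matching: a query compound inherits the annotation of the reference compound whose profile is most similar. A minimal sketch, assuming profiles are already normalized per-feature vectors (real Cell Painting pipelines typically extract many hundreds of image features per cell and aggregate them per well); the compound names, labels, and three-feature vectors below are invented for illustration and are not from [5].

```python
import numpy as np


def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def predict_moa(query: np.ndarray,
                references: dict[str, tuple[str, np.ndarray]]) -> tuple[str, str, float]:
    """Nearest-neighbour annotation: return (reference compound, its MoA label,
    similarity) for the reference profile most similar to the query profile."""
    scored = ((cosine_similarity(query, profile), name, moa)
              for name, (moa, profile) in references.items())
    sim, name, moa = max(scored)
    return name, moa, sim


# Hypothetical reference set: compound -> (annotated MoA, morphological profile)
refs = {
    "ref_A": ("tubulin inhibitor", np.array([2.0, -1.0, 0.5])),
    "ref_B": ("DNA synthesis inhibitor", np.array([-0.5, 1.5, 2.0])),
}
query = np.array([1.8, -0.9, 0.3])
name, moa, sim = predict_moa(query, refs)
print(name, moa)  # ref_A tubulin inhibitor
```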

Addressing the Cryptic Species Challenge

Digital specimen databases play a vital role in addressing the growing challenge of identifying cryptic species, that is, genetically distinct lineages with minimal morphological differentiation. In many invertebrate groups, current practice requires that the original morphospecies name be assigned to a particular genetic lineage before the other lineages are formally described, which considerably delays, and may even hinder, the scientific description of cryptic species [6].

Recommended adaptations to accelerate cryptic species description include:

- New Name Assignment: Assigning new names to each lineage without necessarily first obtaining DNA from the morphospecies holotype or designating a neotype [6].

- Basic Morphological Diagnosis: Providing fundamental morphological diagnosis in cryptic species descriptions rather than exhaustive characterization [6].

- Terminology Clarification: Systematically following morphospecies names by 'sensu lato' or 'species group' when referring to the entire morphospecies and by 'sensu stricto' when referring to the original lineage [6].

Digital databases facilitate this process by providing widespread access to reference specimens and standardized morphological data, enabling more researchers to contribute to cryptic species characterization.
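The terminology recommendation is mechanical enough to encode as a labelling helper for database records. A minimal sketch: the function name and its `scope` keywords are hypothetical, only the qualifiers ('sensu lato', 'species group', 'sensu stricto') come from the recommendation above, and *Gammarus fossarum* is used purely as an example name.

```python
def qualified_name(morphospecies: str, scope: str, species_group: bool = False) -> str:
    """Append the scope qualifier recommended for cryptic-species complexes.

    scope="complex"  -> the entire morphospecies ('sensu lato' or 'species group')
    scope="original" -> only the lineage carrying the original name ('sensu stricto')
    """
    if scope == "complex":
        return f"{morphospecies} {'species group' if species_group else 'sensu lato'}"
    if scope == "original":
        return f"{morphospecies} sensu stricto"
    raise ValueError(f"unknown scope: {scope!r}")


print(qualified_name("Gammarus fossarum", "complex"))        # Gammarus fossarum sensu lato
print(qualified_name("Gammarus fossarum", "complex", True))  # Gammarus fossarum species group
print(qualified_name("Gammarus fossarum", "original"))       # Gammarus fossarum sensu stricto
```

Applying the qualifier at data-entry time keeps search results unambiguous: a query for the bare morphospecies name can then be expanded to the whole complex, while records labelled sensu stricto refer only to the original lineage.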

Future Directions and Implementation Recommendations

Strategic Investment in Taxonomic Training

Addressing the crisis in morphological expertise requires strategic investment in regionally adapted training programs with improved access to infrastructure, engaging teaching methods, cascading mentorship, and stronger collaboration [3]. The massive decline in biodiversity expertise documented in Central Europe highlights the urgency of these investments [4]. Implementation should focus on:

- Integrating Traditional and Modern Approaches: Equipping the next generation of taxonomists with both robust morphology-based knowledge and fluency in modern techniques like molecular analysis and digital data management [4].

- Creating Specialized Positions: Establishing more positions and focused grants for biodiversity researchers to maintain national knowledge bases and reduce dependence on foreign expertise [4].

- Leveraging Digital Resources: Using digital specimen databases to supplement declining physical collections and provide equitable access to morphological reference materials across geographic and economic boundaries [1].

Expanding Digital Database Capabilities

Future development of digital specimen databases should focus on:

- Content Expansion: Systematically adding specimens from multiple national and international collections to create comprehensive taxonomic coverage [1].

- Analytical Integration: Combining morphological data with genetic, ecological, and distributional information to create multidimensional taxonomic resources.

- Educational Optimization: Structuring databases and interfaces specifically to support both classroom instruction and self-directed learning, particularly in contexts with reduced lecture hours [1] [2].

The crisis in morphological expertise and specimen scarcity represents a critical challenge for biodiversity research, parasitology, and drug discovery. Digital specimen databases offer a transformative solution by preserving rare specimens, facilitating widespread access to morphological data, and supporting the development of taxonomic skills despite declining physical collections and educational focus. By implementing these digital resources alongside strategic investments in taxonomic training and adapted practices for species description, the scientific community can work to reverse the current trends of expertise erosion and ensure the preservation of essential morphological knowledge for future generations.

Despite significant advances in global public health, vector-borne parasitic diseases (VBPDs) remain a profound and persistent challenge to human health and economic development worldwide. These diseases, including malaria, schistosomiasis, leishmaniasis, Chagas disease, African trypanosomiasis, lymphatic filariasis, and onchocerciasis, impose a heavy global health burden, accounting for more than 17% of all infectious diseases [7]. The World Health Organization classifies all of them except malaria as neglected tropical diseases, reflecting their concentration in impoverished and remote communities that lack the resources for effective prevention, diagnosis, and treatment [7]. These diseases are not merely health issues; they are both consequences and drivers of poverty, creating a vicious cycle that hampers economic development and traps communities in disadvantage.

The complex epidemiology of these diseases, shaped by environmental, socioeconomic, and healthcare-access factors, demands continued research despite progress in control measures. While overall trends show a decreasing burden for some VBPDs, others, such as leishmaniasis, show rising prevalence (estimated annual percentage change, EAPC = 0.713), indicating that control efforts remain insufficient [7]. Furthermore, diseases that have declined, such as African trypanosomiasis, Chagas disease, lymphatic filariasis, and onchocerciasis, persist in many endemic regions and require vigilant surveillance and ongoing research to prevent resurgence [7]. This technical guide examines the current global burden of parasitic diseases, analyzes the challenges in parasitology education and diagnosis, and presents digital solutions for maintaining research and diagnostic capabilities in an evolving global health landscape.
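In GBD-based trend analyses, an EAPC is commonly derived from a log-linear regression of the age-standardized rate on calendar year, ln(rate) = α + β·year, with EAPC = 100 × (exp(β) − 1), expressed in percent per year. A minimal sketch of that calculation, assuming annual rates are available; the series below is synthetic, built to grow by exactly 1% per year, and is not GBD data.

```python
import numpy as np


def eapc(years, rates) -> float:
    """Estimated annual percentage change from the log-linear model
    ln(rate) = alpha + beta * year  ->  EAPC = 100 * (exp(beta) - 1)."""
    years = np.asarray(years, dtype=float)
    log_rates = np.log(np.asarray(rates, dtype=float))
    beta, _alpha = np.polyfit(years, log_rates, 1)  # polyfit returns slope first
    return float(100.0 * (np.exp(beta) - 1.0))


# Synthetic age-standardized rates growing by exactly 1% per year (not GBD data)
years = np.arange(1990, 2022)
rates = 50.0 * 1.01 ** (years - 1990)
print(round(eapc(years, rates), 3))  # 1.0
```

Under this convention, an EAPC of 0.713 corresponds to a rate rising by roughly 0.7% per year. Published analyses often add a 95% confidence interval from the standard error of β, which this sketch omits.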

Quantitative Analysis of Global Parasite Burden

Current Epidemiological Profiles

Analysis of Global Burden of Disease (GBD) 2021 data reveals the scale and distribution of vector-borne parasitic diseases across regions and demographic groups. Malaria dominates the overall burden, accounting for 42% of all VBPD cases and 96.5% of all VBPD-related deaths, and disproportionately affects sub-Saharan Africa [7]. Schistosomiasis ranks second, at 36.5% of cases, reflecting its wide distribution across Asia, Africa, and Latin America, with approximately 1 billion people globally at risk [7] [8]. The distribution of VBPDs shows pronounced socioeconomic disparities, with low Socio-demographic Index (SDI) regions bearing the highest burden across nearly all disease metrics [7] [8].

Table 1: Global Burden of Vector-Borne Parasitic Diseases (2021)

| Disease | Prevalence (Share of VBPD Cases or Trend) | Mortality | Primary Endemic Regions | Population at Risk or Affected |
|---|---|---|---|---|
| Malaria | 42% of VBPD cases | 96.5% of VBPD deaths | Sub-Saharan Africa | Children under 5 |
| Schistosomiasis | 36.5% of VBPD cases | Low mortality | Asia, Africa, Latin America | Approx. 1 billion at risk globally |
| Leishmaniasis | Rising (EAPC = 0.713) | Significant in visceral form | Multiple regions, including sub-Saharan Africa | 700,000-1 million cases annually |
| Lymphatic Filariasis | Significant decline | Low mortality | 39 countries globally | 657+ million at risk |
| Chagas Disease | Rising global prevalence | Complications in chronic phase | Mainly Latin America | Increasing due to globalization |
| Onchocerciasis | Significant decline | Low mortality; causes blindness | Sub-Saharan Africa | >20 million affected |

Demographic and Socioeconomic Disparities

Analysis of GBD 2021 data reveals significant disparities in VBPD burden across sex, age, and socioeconomic groups. Males exhibit greater disability-adjusted life year (DALY) burdens than females, largely attributed to occupational exposure patterns in endemic areas [7]. Age disparities are particularly evident, with children under five facing high malaria mortality and leishmaniasis DALY peaks, while older adults experience complications from chronic conditions like Chagas disease and schistosomiasis [7]. The socioeconomic gradient is stark, with the age-standardized prevalence and DALY rates of VBPDs (except Chagas disease) highest in low-SDI regions by 2021 [8]. Correlation analysis confirms a significant decline in age-standardized prevalence and DALY rates with increasing SDI, highlighting the critical role of development in disease control [8].
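These comparisons rest on two calculations: direct age standardization, which weights age-specific rates by a fixed standard population so that regions with different age structures can be compared, and a correlation between SDI and the standardized rates. A minimal sketch of both, assuming three coarse age bands with made-up weights and rates. GBD uses its own standard population with many more age groups, and the correlation method used in [8] is not stated here, so Spearman's rank correlation is swapped in for illustration.

```python
import numpy as np


def age_standardized_rate(age_specific_rates, standard_weights) -> float:
    """Direct standardization: weighted sum of age-specific rates, with weights
    from a fixed standard population normalized to sum to 1."""
    rates = np.asarray(age_specific_rates, dtype=float)
    weights = np.asarray(standard_weights, dtype=float)
    if rates.shape != weights.shape or not np.isclose(weights.sum(), 1.0):
        raise ValueError("need one weight per age band, summing to 1")
    return float(rates @ weights)


def spearman_rho(x, y) -> float:
    """Spearman rank correlation via Pearson correlation of ranks (assumes no ties)."""
    rank_x = np.argsort(np.argsort(x))
    rank_y = np.argsort(np.argsort(y))
    return float(np.corrcoef(rank_x, rank_y)[0, 1])


# Hypothetical standard population weights for age bands 0-14, 15-64, 65+
weights = [0.3, 0.5, 0.2]
# Hypothetical regions: (age-specific rates per 100,000, SDI value)
regions = {
    "low SDI": ([900.0, 400.0, 300.0], 0.35),
    "middle SDI": ([300.0, 150.0, 120.0], 0.60),
    "high SDI": ([20.0, 15.0, 30.0], 0.85),
}
asr = [age_standardized_rate(r, weights) for r, _ in regions.values()]
sdi = [s for _, s in regions.values()]
print([round(a, 1) for a in asr])        # [530.0, 189.0, 19.5]
print(round(spearman_rho(sdi, asr), 6))  # -1.0 (rate falls as SDI rises)
```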

Table 2: Distribution of VBPD Burden by Sociodemographic Index (SDI) Regions

| SDI Level | Age-Standardized Prevalence Rate | Age-Standardized DALY Rate | Notable Disease Patterns |
|---|---|---|---|
| Low | Highest for all VBPDs except Chagas | Highest for all VBPDs except Chagas | Dominated by malaria; limited healthcare access |
| Low-Middle | High but lower than low-SDI | High but lower than low-SDI | Mixed burden with regional variations |
| Middle | Moderate | Moderate | Focal endemic areas persist |
| High-Middle | Low | Low | Mainly imported cases and localized transmission |
| High | Lowest | Lowest | Primarily travel-associated cases |

The attributable risk factors for malaria further illustrate the complex interplay between parasitic diseases and underlying social determinants. Globally, 0.14% of malaria-related DALYs are attributed to child underweight and 0.08% to child stunting, illustrating how malnutrition adds to the burden of parasitic infections [8]. These data underscore that VBPDs are not merely biological phenomena but diseases shaped and sustained by social inequities and development gaps.
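Risk-attributable figures such as these rest on a population attributable fraction (PAF): the share of the burden that would be avoided if the exposure were removed, multiplied by total DALYs. GBD's comparative risk assessment works with full exposure distributions and theoretical-minimum-risk levels; the sketch below swaps in the simpler single-exposure Levin formula, PAF = p(RR − 1) / (p(RR − 1) + 1), with made-up prevalence, relative risk, and DALY values, purely to show the arithmetic.

```python
def levin_paf(prevalence: float, relative_risk: float) -> float:
    """Population attributable fraction for one binary exposure (Levin's formula):
    PAF = p(RR - 1) / (p(RR - 1) + 1)."""
    if not 0.0 <= prevalence <= 1.0 or relative_risk <= 0.0:
        raise ValueError("prevalence must be in [0, 1] and relative risk > 0")
    excess = prevalence * (relative_risk - 1.0)
    return excess / (excess + 1.0)


def attributable_dalys(total_dalys: float, prevalence: float, relative_risk: float) -> float:
    """DALYs attributable to the exposure = PAF x total DALYs."""
    return total_dalys * levin_paf(prevalence, relative_risk)


# Hypothetical inputs (not GBD values): 20% of children exposed, RR = 1.5
print(round(levin_paf(0.20, 1.5), 4))                   # 0.0909
print(round(attributable_dalys(1_000_000, 0.20, 1.5)))  # 90909
```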

Challenges in Parasitology Education and Diagnosis

Declining Morphological Expertise

Despite advances in molecular diagnostic techniques, traditional microscopy-based morphologic analysis remains essential for diagnosing many parasitic infections [1]. The morphological identification of adult parasites and their eggs represents a crucial skill for medical laboratory technologists and healthcare providers in endemic regions [1]. However, over the past two decades, educational institutions in developed countries have significantly reduced time allocated to parasitology education for medical technologists who play a central role in parasitology testing [1]. This trend is reflected globally in the decreasing number of hours devoted to parasitology lectures in medical student educational programs, leading to concerns about declining physician ability to diagnose parasitic diseases in several countries [1].

A crucial factor contributing to this decline is the difficulty in obtaining specimens for educational purposes due to reduced parasitic infections in developed countries resulting from improved sanitation [1]. Consequently, only a limited number of parasite egg or body part specimens are available in training schools, and these specimens deteriorate over time owing to repeated use [1]. This creates a vicious cycle where reduced prevalence leads to reduced educational capacity, which in turn diminishes diagnostic capability even for the cases that do occur. The problem is particularly acute for rare or emerging parasite species that may not be included in standardized non-morphological test panels.

Limitations of Current Diagnostic Approaches

Non-morphological tests, including molecular biological techniques and antigen testing, have undoubtedly improved parasite detection and facilitated access to reliable diagnosis [1]. However, these approaches have significant limitations: they typically target a limited range of known parasites, potentially missing rare or emerging species, and can be hindered by inhibitory substances present in specimens [1]. Furthermore, the specialized equipment and workflows required for these tests make them less accessible in resource-limited areas where many parasitic diseases are endemic [1].

The decline in morphological expertise has significant implications for patient care, public health, and epidemiology [1]. Without trained morphologists, surveillance systems may fail to detect unusual outbreaks or emerging parasite species, potentially delaying appropriate public health responses. Additionally, in many resource-limited settings, microscopy remains the most accessible and cost-effective diagnostic method, making the maintenance of these skills essential for global health security. This challenge necessitates innovative approaches to preserve and disseminate morphological expertise despite decreasing hands-on opportunities with physical specimens.

Digital Specimen Databases: Revolutionizing Parasitology Training and Research

Digital Database Architecture and Implementation

In response to these challenges in parasitology education, researchers have developed preliminary digital parasite specimen databases using whole-slide imaging (WSI) technology [1] [9]. This approach involves acquiring slide specimens of parasite eggs, adults, and arthropods from existing collections and creating virtual slide data through high-resolution scanning [1]. Slide scanners such as the SLIDEVIEW VS200 (Evident Corporation) are used, with the Z-stack function applied to thicker specimens to accumulate layer-by-layer data by varying the scan depth [1]. The aim is to capture morphological features with diagnostic clarity across the full magnification range, from low-magnification subjects such as parasite eggs to high-magnification subjects such as malarial parasites [1].

The digital architecture includes a shared server system (Windows Server 2022) that enables approximately 100 individuals to access the data simultaneously via a web browser on various devices without requiring specialized viewing software [1]. The folder structure of the database is organized according to the taxonomic classification of the organisms, and each specimen is accompanied by explanatory text in both English and Japanese to facilitate learning and international collaboration [1]. This digital infrastructure represents a significant advancement over traditional specimen collections, which are constrained by physical degradation, limited access, and maintenance requirements.

Digital Parasite Database Workflow: This diagram illustrates the technical pipeline from physical specimen collection to digital accessibility for education and research applications.
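Browser-based WSI viewers commonly avoid transmitting a multi-gigapixel slide at once by serving an image pyramid: the slide is stored at successively halved resolutions, each cut into fixed-size tiles, and the browser fetches only the tiles currently in view. This is one plausible reason a single shared server can support about 100 concurrent browser users without dedicated viewing software; [1] does not describe the server's internals, so the pyramid design, the slide dimensions, and the 256-pixel tile size below are assumptions.

```python
import math


def pyramid_levels(width: int, height: int, tile: int = 256) -> list[tuple[int, int, int]]:
    """(level_width, level_height, n_tiles) for each pyramid level, from full
    resolution down to the first level that fits in a single tile. Each level
    halves both dimensions (rounding up)."""
    levels = []
    w, h = width, height
    while True:
        levels.append((w, h, math.ceil(w / tile) * math.ceil(h / tile)))
        if w <= tile and h <= tile:
            return levels
        w, h = math.ceil(w / 2), math.ceil(h / 2)


def tiles_for_viewport(view_w: int, view_h: int, tile: int = 256) -> int:
    """Upper bound on tiles needed to fill a viewport at one level (one extra row
    and column because the view rarely aligns with tile edges)."""
    return (math.ceil(view_w / tile) + 1) * (math.ceil(view_h / tile) + 1)


# Illustrative slide of 100,000 x 50,000 pixels
levels = pyramid_levels(100_000, 50_000)
print(len(levels), sum(n for *_, n in levels))  # 10 102326
print(tiles_for_viewport(1920, 1080))           # 54
```

The whole pyramid holds only about a third more tiles than the full-resolution level, while each screen view needs a few dozen tiles, so per-user bandwidth depends on screen size rather than slide size.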

Research Reagent Solutions for Parasitology

The creation and maintenance of digital parasite databases require specific technical resources and reagents that constitute essential research tools for parasitology. The table below details key research reagent solutions and their applications in both traditional and digital parasitology work.

Table 3: Essential Research Reagents and Resources in Parasitology

| Reagent/Resource | Technical Function | Research Application |
|---|---|---|
| Whole-Slide Imaging (WSI) System | Digitizes glass specimens at high resolution | Creates virtual slides for database; enables digital morphology |
| Ethanol-Preserved Specimens | Maintains structural integrity of parasites | Provides source material for slide preparation and molecular studies |
| Stained Slide Preparations | Enhances morphological features for identification | Forms basis of traditional and digital morphological diagnosis |
| Taxonomic Classification Framework | Organizes specimens by phylogenetic relationships | Structures database organization and educational content |
| Shared Server Infrastructure | Hosts digital database with multi-user access | Enables simultaneous remote education and research collaboration |
| Multi-language Annotation | Provides specimen descriptions in multiple languages | Facilitates international educational use and knowledge transfer |

Protocol for Database Construction and Utilization

The methodology for constructing a comprehensive digital parasite database involves systematic procedures for specimen acquisition, digitization, quality control, and deployment:

Specimen Acquisition and Curation: The process begins with obtaining existing slide specimens of parasitic eggs, adult parasites, and arthropods from institutional collections. For example, Kyoto University and Kyoto Prefectural University of Medicine provided 50 existing slide specimens, some prepared in-house and others purchased from companies and museums [1]. These specimens must be properly documented with taxonomic information and preparation methods.

Digital Scanning Protocol: Each slide specimen is individually scanned using a high-precision slide scanner. The scanning process must accommodate different specimen types: thicker specimens require the Z-stack function to accumulate layer-by-layer data by varying the scan depth [1]. Quality control is essential: slides with out-of-focus areas are rescanned as needed, and the clearest images are selected after expert review [1].

Database Architecture and Deployment: The digitized data are uploaded to a shared server with folders organized by taxonomic classification. The system implementation includes security measures requiring user identification codes and passwords provided by the host organization, ensuring appropriate use for educational and research purposes [1]. The technical infrastructure must support approximately 100 simultaneous users accessing the data via web browsers on various devices without specialized viewing software [1].

This protocol represents a standardized approach that can be replicated and scaled across institutions to build comprehensive global digital parasite resources. Similar initiatives, such as the digitization of the parasitology collection at the University of Nebraska State Museum, which houses the second-largest collection of parasite samples in the Western Hemisphere, demonstrate the feasibility and value of large-scale digitization efforts [10].
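The access model in the deployment step (host-issued identification codes and passwords, with roughly 100 simultaneous users) can be sketched as salted password hashing plus a cap on concurrent sessions. This is an illustrative assumption, not the project's implementation, which [1] does not describe: the class `AccessGate`, its parameters, and the use of PBKDF2 through Python's standard `hashlib.pbkdf2_hmac` are stand-ins chosen for the sketch.

```python
import hashlib
import hmac
import os
import secrets
from typing import Optional


class AccessGate:
    """Hypothetical login gate: checks host-issued IDs/passwords and caps concurrent sessions."""

    def __init__(self, max_sessions: int = 100, iterations: int = 200_000):
        self.max_sessions = max_sessions
        self.iterations = iterations
        self._users: dict[str, tuple[bytes, bytes]] = {}  # user_id -> (salt, password hash)
        self._sessions: dict[str, str] = {}               # session token -> user_id

    def _hash(self, password: str, salt: bytes) -> bytes:
        return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, self.iterations)

    def register(self, user_id: str, password: str) -> None:
        """Store only a salted hash of the password issued by the host organization."""
        salt = os.urandom(16)
        self._users[user_id] = (salt, self._hash(password, salt))

    def login(self, user_id: str, password: str) -> Optional[str]:
        """Return a session token, or None if credentials fail or the server is full."""
        record = self._users.get(user_id)
        if record is None:
            return None
        salt, stored = record
        if not hmac.compare_digest(stored, self._hash(password, salt)):  # constant-time compare
            return None
        if len(self._sessions) >= self.max_sessions:
            return None
        token = secrets.token_urlsafe(16)
        self._sessions[token] = user_id
        return token

    def logout(self, token: str) -> None:
        self._sessions.pop(token, None)


gate = AccessGate(max_sessions=1, iterations=1_000)  # tiny values for the demo only
gate.register("student01", "issued-password")
first = gate.login("student01", "issued-password")
print(first is not None)                                        # True
print(gate.login("student01", "wrong-password"))                # None
print(gate.login("student01", "issued-password"))               # None (session cap reached)
gate.logout(first)
print(gate.login("student01", "issued-password") is not None)  # True
```

Storing only salted hashes means a leaked user table does not reveal the issued passwords. A real deployment would also need session expiry, so that abandoned browser tabs do not hold slots against the user limit.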

Integration with Global Health Priorities

Alignment with Disease Control Targets

The development of digital parasite databases directly supports the achievement of global health targets for neglected tropical diseases and malaria. The World Health Organization's roadmap for neglected tropical diseases aims to control, eliminate, or eradicate specific diseases through enhanced surveillance, improved diagnostics, and strengthened capacity [7]. Digital databases contribute to these goals by preserving morphological expertise essential for surveillance and outbreak investigation, particularly as disease prevalence decreases and clinical familiarity wanes [1]. For diseases approaching elimination, such as lymphatic filariasis (projected to near elimination by 2029), maintaining diagnostic capability becomes increasingly important to detect residual transmission and prevent resurgence [7].

Forecasting models based on GBD 2021 data project divergent trends for different VBPDs: lymphatic filariasis prevalence nearing elimination by 2029, but the leishmaniasis burden rising across all metrics [7]. This divergence calls for targeted, disease-specific strategies, for which digital resources can provide crucial support. Furthermore, the disproportionate impact of VBPDs on vulnerable populations (including children under five, who face high malaria mortality, and older adults with complications from chronic conditions such as Chagas disease) underscores the importance of equitable access to diagnostic expertise and training resources [7].

Future Directions and Implementation Framework

The continued development of digital parasite databases requires systematic expansion and international collaboration. Current databases are limited to the specimens held by participating institutions, and expansion with additional national and international specimens is planned [1]. The digitization process also depends on external services and equipment availability, highlighting the need for sustainable funding models and technical infrastructure [1]. The implementation of the DAMA (Document, Assess, Monitor, Act) protocol, developed by parasitologists to facilitate sharing and acting on essential information about parasite evolution, ecology, and epidemiology, provides a cooperative framework for addressing the impact of environmental change on parasite distribution [10].

Digital Resources in Global Health Context: This diagram shows how digital specimen databases address the VBPD burden through multiple interconnected pathways to reduce health disparities.

The significant and ongoing global health burden imposed by vector-borne parasitic diseases, coupled with emerging challenges such as climate change, drug resistance, and uneven resource distribution, demands sustained research investment and innovative approaches to education and capacity building [7]. Digital parasite specimen databases represent a transformative approach to preserving essential morphological knowledge, expanding access to educational resources, and supporting diagnostic capabilities despite declining hands-on opportunities with physical specimens in many regions. By leveraging whole-slide imaging technology and shared server platforms, these resources directly address the critical gaps in parasitology education while supporting the global health goal of controlling and eliminating neglected tropical diseases and malaria.

The quantitative burden data from the Global Burden of Disease Study 2021 provides a compelling evidence base for prioritizing parasitic disease research and control efforts, particularly in low-SDI regions where the burden remains concentrated [7] [8]. As the global health community works toward elimination targets for several VBPDs, maintaining morphological expertise through digital archives will become increasingly important for surveillance, outbreak investigation, and confirming elimination. The integration of digital parasitology resources into broader global health strategies represents a cost-effective approach to preserving essential knowledge, building diagnostic capacity, and ultimately reducing the substantial health burden imposed by these persistent parasitic diseases.

The diagnosis of parasitic diseases stands at a critical juncture. While microscopy has been the cornerstone of parasitology for centuries, its limitations are increasingly evident in the face of modern global health challenges. Concurrently, traditional animal models, long used in drug development, often fail to predict human therapeutic outcomes. This whitepaper details the inherent constraints of these established methodologies and frames the emergence of a novel solution: the digital parasite specimen database. By integrating quantitative data on diagnostic performance, outlining experimental protocols for database construction, and visualizing key workflows, we position this digital framework as an indispensable tool for advancing research, refining diagnostics, and accelerating therapeutic development against parasitic diseases.

The discipline of parasitology is navigating a complex transition. In developed nations, improved sanitation has led to a decreased prevalence of parasitic infections, resulting in a scarcity of physical specimens for education and research [1]. This decline directly contributes to an erosion of morphological expertise among healthcare professionals, a concerning trend given that microscopy-based morphologic analysis remains the gold standard for diagnosing numerous parasitic infections [1] [11]. Compounding this diagnostic challenge is the high failure rate of drugs developed using traditional animal models: over 90% of drugs that appear safe and effective in animals fail in human trials due to safety or efficacy issues [12]. This dual crisis in diagnostics and research models underscores an urgent need for innovative approaches. Digital technologies, particularly comprehensive, accessible digital specimen databases, offer a promising pathway to preserve essential morphological knowledge, enhance diagnostic training, and integrate with modern, non-morphological diagnostic and research methods.

Limitations of Traditional Diagnostic Methods

Constraints of Morphology-Based Diagnostics

Despite being the foundational method for parasite identification, traditional microscopy possesses significant limitations that impact diagnostic accuracy and efficiency. These constraints are quantified and detailed in Table 1.

Table 1: Key Limitations of Traditional Morphology-Based Parasite Diagnostics

| Limitation Factor | Impact on Diagnostic Process | Quantitative/Severity Indicator |
|---|---|---|
| Observer Dependency | Accuracy heavily reliant on technician skill and experience; inconsistent results [11]. | Inexperienced personnel may overlook critical diagnostic signs [11]. |
| Low Parasite Load | Difficulty in detecting infections, leading to false negatives [11]. | Directly contributes to underdiagnosis of subclinical or early infections [11]. |
| Specimen Degradation | Physical slide specimens deteriorate with repeated use, reducing educational and reference value [1]. | Limited number of parasite egg or body part specimens available in training schools [1]. |
| Labor Intensive | Manual process is time-consuming and requires significant expert involvement [11]. | Contributes to workflow bottlenecks and longer turnaround times for results. |
| Artifact Interference | Non-parasitic structures can be misinterpreted, leading to false positives [11]. | Potential for misdiagnosis and unnecessary treatment. |

As illustrated, the skill of the observer is the primary determinant of accuracy, creating a vulnerability in diagnostic pipelines, especially in regions facing a shortage of trained parasitologists [11]. Furthermore, the scarcity of physical specimens in developed countries creates a vicious cycle where fewer practitioners are trained to proficiency, further diminishing diagnostic capacity [1]. This scarcity also severely hampers the education of new generations of medical technologists and researchers, who require exposure to a wide variety of specimens to achieve competency.

The Animal Model Dilemma in Drug Development

The use of animal models in parasitology and drug development is fraught with predictive limitations. As noted, the vast majority of drugs that pass animal tests fail in human trials [12]. This high attrition rate stems from inherent physiological and metabolic differences between animal models and humans, leading to poor translatability of findings. Beyond scientific limitations, traditional animal testing faces ethical implications and practical challenges such as high costs and supply chain limitations, including scarcities of non-human primates [12]. These factors have prompted regulatory agencies, including the U.S. Food and Drug Administration (FDA), to actively promote the "3Rs" principle (Reduce, Replace, Refine) and develop roadmaps to reduce reliance on animal testing [12]. This shift necessitates the development of human-relevant alternatives for the next stage of parasitology research and therapeutic development.

The Digital Paradigm: Database Construction and Workflow

A pivotal innovation for addressing the limitations in training and morphological standardization is the construction of a digital parasite specimen database. This approach leverages whole-slide imaging (WSI) technology to create a durable, accessible, and scalable resource for the global scientific community.

Experimental Protocol for Digital Database Creation

The methodology for constructing a preliminary digital database, as described for the database built at Kyoto University and Kyoto Prefectural University of Medicine, involves a meticulous multi-stage process [1]. The following workflow diagram delineates the key stages from physical specimen to a functional digital resource.

Diagram 1: Digital Specimen Database Construction Workflow. The process transitions from physical handling (yellow) to digital infrastructure (green).

Specimen Acquisition and Curation: The foundational step involves gathering existing slide specimens from collaborating institutions. The preliminary database by Kyoto University and Kyoto Prefectural University of Medicine was built using 50 slide specimens of parasitic eggs, adult parasites, and arthropods [1]. These specimens are verified for quality and suitability for digitization.

Digital Scanning and Image Processing: Specimens are scanned using a high-precision slide scanner (e.g., the SLIDEVIEW VS200) [1]. A critical technical step for thicker smears is the application of the Z-stack function, which captures multiple focal planes by accumulating layer-by-layer data to create a completely in-focus composite image [1]. Each slide is individually scanned, and images are rigorously reviewed for focus and clarity before inclusion.

Database Architecture and Annotation: The digitized slides are compiled into a structured database on a secured shared server (e.g., Windows Server 2022) [1]. The folder organization is based on taxonomic classification, facilitating intuitive navigation. To enhance the resource's educational value, each specimen is accompanied by explanatory text in both English and Japanese, making it accessible to a global audience [1].
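The taxonomy-based folder scheme and bilingual annotation described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `SlideRecord` structure, the `/virtual-slides` root, and the choice of taxonomic ranks are all hypothetical, since the cited database is only described as organized by taxonomic classification. POSIX-style paths keep the sketch platform-neutral; a Windows share would use `PureWindowsPath` instead.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass
class SlideRecord:
    """Hypothetical metadata record for one virtual slide."""
    slide_id: str
    taxonomy: tuple[str, ...]   # ranks from broadest to narrowest, e.g. (phylum, family, species)
    description_en: str
    description_ja: str


def folder_for(record: SlideRecord, root: str = "/virtual-slides") -> PurePosixPath:
    """Map a record to a folder path that mirrors its taxonomic classification."""
    # Normalise each rank (strip spaces, underscores for blanks) so folder names are predictable
    parts = [rank.strip().replace(" ", "_") for rank in record.taxonomy if rank.strip()]
    return PurePosixPath(root, *parts, record.slide_id)


def missing_translations(records: list[SlideRecord]) -> list[str]:
    """Return IDs of slides lacking either the English or the Japanese explanatory text."""
    return [r.slide_id for r in records
            if not r.description_en.strip() or not r.description_ja.strip()]


if __name__ == "__main__":
    egg = SlideRecord("EGG-001", ("Nematoda", "Ascarididae", "Ascaris lumbricoides"),
                      "Fertilized egg of Ascaris lumbricoides", "回虫卵")
    print(folder_for(egg))
```

Running the missing-translation check before upload is one way to keep every specimen bilingual, as the database intends.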

Deployment and Access Management: The final platform is deployed via a web-accessible server, allowing approximately 100 simultaneous users to access the data through a standard browser on various devices [1]. Confidentiality is maintained through a requirement for user credentials (ID and password), managed by the host organization to ensure appropriate use for education and research [1].
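The credential requirement and the roughly 100-user capacity can be illustrated with the Python standard library. This sketch is hypothetical: the `AccessGate` class, the one-session-per-ID policy, and the PBKDF2 parameters are assumptions rather than details of the cited platform, which in practice would more likely rely on the web server's own authentication.

```python
import hashlib
import hmac
import os


class AccessGate:
    """Hypothetical sketch: ID/password check plus a cap on concurrent sessions."""

    def __init__(self, max_sessions: int = 100, iterations: int = 200_000):
        self.max_sessions = max_sessions
        self.iterations = iterations
        self._users: dict[str, tuple[bytes, bytes]] = {}  # user_id -> (salt, password hash)
        self._active: set[str] = set()                    # one session per user ID

    def _hash(self, password: str, salt: bytes) -> bytes:
        # PBKDF2-HMAC-SHA256; the iteration count is illustrative, not a recommendation
        return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, self.iterations)

    def register(self, user_id: str, password: str) -> None:
        salt = os.urandom(16)  # fresh random salt per user
        self._users[user_id] = (salt, self._hash(password, salt))

    def login(self, user_id: str, password: str) -> bool:
        record = self._users.get(user_id)
        if record is None:
            return False
        salt, expected = record
        # Constant-time comparison avoids leaking timing information
        if not hmac.compare_digest(self._hash(password, salt), expected):
            return False
        if user_id not in self._active and len(self._active) >= self.max_sessions:
            return False  # capacity reached; a real service might queue or retry
        self._active.add(user_id)
        return True

    def logout(self, user_id: str) -> None:
        self._active.discard(user_id)
```

Storing only salted hashes means the host organization never keeps plaintext passwords, which fits the goal of restricting access to education and research users.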

The Scientist's Toolkit: Research Reagent Solutions

The construction and utilization of a state-of-the-art digital database rely on a suite of specific reagents and technologies. Key materials and their functions are outlined in the table below.

Table 2: Essential Research Reagents and Technologies for Digital Parasitology

| Item/Technology | Function in Database Construction/Use |
|---|---|
| Whole-Slide Imaging (WSI) Scanner | High-resolution digitization of physical glass slide specimens to create virtual slides [1]. |
| Z-Stack Imaging Software | Software function that varies the scan depth to accommodate thicker specimens, ensuring a fully focused final image [1]. |
| Shared Server Infrastructure | Hosts the virtual slide database, enabling multi-user, simultaneous access via web browsers [1]. |
| Existing Slide Specimens | Physical reference materials (e.g., parasite eggs, adults) that serve as the source material for digitization [1]. |
| Cloud-based Laboratory Information Management System (LIMS) | Manages the complex digital data and metadata associated with specimens [13]. |

Integration with Modern Diagnostic and Research Frameworks

The digital parasite database is not an isolated tool but a component that integrates synergistically with contemporary diagnostic and research trends, including artificial intelligence (AI), advanced data analytics, and the move toward personalized medicine.

Synergy with Advanced Diagnostic Technologies

The digitization of parasitological data creates the foundational dataset required to power other technological innovations. Artificial Intelligence (AI) and machine learning algorithms are increasingly deployed to analyze complex pathology images and identify subtle patterns that may elude the human eye [11] [14]. As it grows, a robust digital database can supply the large, high-quality annotated image sets needed to train and validate these AI models, which in turn may improve diagnostic accuracy.

Furthermore, digital specimens align with the growing trend of Point-of-Care Testing (POCT) and connectivity via the Internet of Medical Things (IoMT) [14] [13]. Digital images can be accessed remotely by experts to support diagnosis in field settings, and database information can be integrated with IoMT-connected devices to create a more efficient and collaborative diagnostic ecosystem [13]. This complements the rise of liquid biopsies and mass spectrometry in other diagnostic fields, as the digital database preserves the morphological knowledge essential for validating these new, non-morphological methods [14] [13].

Bridging to Modern Research Models

In the research domain, the digital database supports the transition away from sole reliance on animal models. It serves as a key reference and validation tool for emerging human-relevant research methodologies. For instance, findings from in vitro assays, organ-on-a-chip systems, or computational models studying host-parasite interactions can be cross-referenced and validated against high-fidelity morphological data from the digital database [12]. This enhances the reliability of these alternative models and helps build a more human-predictive research pipeline, contributing to the FDA's goal of reducing animal testing [12].

The limitations of traditional microscopy and animal models present significant and interconnected challenges to the future of parasitology. The decline in morphological expertise threatens diagnostic accuracy, while the poor predictive power of animal models hinders drug development. The construction of a preliminary digital parasite specimen database represents a critical step forward. By preserving the morphology of rare specimens in durable digital form, enabling broadly accessible practical training, and providing a structured data foundation for integration with AI and modern research models, this digital paradigm directly addresses these challenges. As these databases expand with international specimens and information, they are poised to become indispensable resources that both preserve and extend essential morphological knowledge for global parasitology education and research.

In the context of parasitology, the decline in morphological expertise, coupled with the increasing scarcity of physical specimens in developed regions due to improved sanitation, presents a significant challenge for both education and diagnostic practices [1]. A Digital Specimen Database is a structured, online collection of digitized representations of physical specimens, enabling unprecedented levels of data accessibility, linkage, and analysis [15] [16]. For researchers and drug development professionals, this represents a paradigm shift, transforming static collections into dynamic, interoperable resources that are Findable, Accessible, Interoperable, and Reusable (FAIR) [16]. This whitepaper defines the core concepts and advantages of digital specimen databases, framed within their critical application for practical training and research in parasitology.

Core Architectural Concepts

The infrastructure of a digital specimen database is built upon several foundational technical concepts that collectively ensure its robustness and long-term utility.

The Digital Specimen as a Central Entity

A "Digital Specimen" is not merely a scanned image of a physical specimen; it is a rich digital object that serves as a central, dynamic hub for all data related to that physical entity [16]. In parasitology, this could mean that a single digital specimen of a parasite egg links to its high-resolution virtual slide, genomic data, geographical collection data, and related literature.
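A digital specimen acting as a hub can be modelled as a record holding its persistent identifier plus typed links to related resources. The relation names (`hasVirtualSlide`, `hasSequence`), the `10.5555` DOI prefix, and the example.org URLs below are placeholders chosen for illustration; they are not taken from the DiSSCo data model.

```python
from dataclasses import dataclass, field


@dataclass
class DigitalSpecimen:
    """Hypothetical sketch of a digital specimen acting as a hub for linked data."""
    pid: str                      # persistent identifier of the digital specimen
    physical_catalogue_no: str    # label of the physical slide it represents
    links: dict[str, list[str]] = field(default_factory=dict)  # relation -> target IDs

    def link(self, relation: str, target: str) -> None:
        """Attach a related resource under a named relation, ignoring duplicates."""
        targets = self.links.setdefault(relation, [])
        if target not in targets:
            targets.append(target)

    def linked(self, relation: str) -> list[str]:
        """Return a copy of the targets for one relation (empty if none)."""
        return list(self.links.get(relation, []))


if __name__ == "__main__":
    egg = DigitalSpecimen("10.5555/example-egg-001", "EGG-001")
    egg.link("hasVirtualSlide", "https://example.org/slides/EGG-001")
    egg.link("hasSequence", "https://example.org/sequences/PLACEHOLDER")
    print(egg.links)
```

Keeping links as identifiers rather than copied data means the virtual slide, genomic record, and literature can each live in their own repository while the specimen remains the single point of reference.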

Persistent Identifiers (PIDs) and FAIR Principles

A cornerstone of this architecture is the use of Persistent Identifiers (PIDs), with the Digital Object Identifier (DOI) being the most prevalent [15]. A DOI is an alphanumeric code that provides a permanent, unique identifier for a digital specimen, ensuring it can be reliably located and cited even if its underlying web address changes [15]. Assigning PIDs is fundamental to implementing the FAIR Guiding Principles, which aim to make data Findable, Accessible, Interoperable, and Reusable [16]. A FAIR Digital Object (FDO) framework is intended to make each specimen more than a data point: a citable, traceable unit of scientific capital [16].
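Because a DOI stays fixed while the landing page behind it may move, client code typically stores the bare DOI and builds the resolver URL only when needed. The sketch below assumes a simplified syntax check (the prefix `10.` plus a 4- to 9-digit registrant code, a slash, and a non-empty suffix); the DOI Handbook permits more variation than this pattern enforces, and the `10.5555` DOI is a placeholder.

```python
import re
from urllib.parse import quote

# Simplified DOI syntax: "10." + registrant code, "/", non-empty suffix (an assumption)
DOI_PATTERN = re.compile(r"^10\.\d{4,9}(\.\d+)*/\S+$")
KNOWN_PREFIXES = ("https://doi.org/", "http://doi.org/", "https://dx.doi.org/", "doi:")


def normalize_doi(raw: str) -> str:
    """Strip common URL/label prefixes and lower-case (DOI names are case-insensitive)."""
    doi = raw.strip()
    for prefix in KNOWN_PREFIXES:
        if doi.lower().startswith(prefix):
            doi = doi[len(prefix):]
            break
    if not DOI_PATTERN.match(doi):
        raise ValueError(f"not a syntactically valid DOI: {raw!r}")
    return doi.lower()


def resolver_url(doi: str) -> str:
    """Build the doi.org proxy URL, which redirects to the specimen's current landing page."""
    return "https://doi.org/" + quote(normalize_doi(doi), safe="/")
```

Normalizing before storage makes `doi:10.5555/EGG-001` and `https://doi.org/10.5555/egg-001` compare equal, which matters when the same specimen is cited in different styles.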

Digital Object Architecture (DOA)

For large-scale infrastructures like the Distributed System of Scientific Collections (DiSSCo), the underlying framework is the Digital Object Architecture (DOA) [16]. DOA is a fundamental extension of internet architecture designed to efficiently manage research data as 'specimens on the internet.' It utilizes its own communication protocol, the Digital Object Interface Protocol (DOIP), to manage digital specimens in a way that is independent of web-based approaches, ensuring long-term stability and governance [16].

Table: Core Concepts of a Digital Specimen Database

| Concept | Technical Definition | Role in the Database Architecture |
|---|---|---|
| Digital Specimen | A rich digital object representing a physical specimen and all its associated data [16]. | Serves as the central, linkable entity for all information, enabling complex data relationships. |
| Persistent Identifier (PID) | A permanent, globally unique identifier for a digital object (e.g., a DOI) [15]. | Guarantees permanent citability, accessibility, and uniqueness of each specimen over time. |
| FAIR Principles | A set of guiding principles to make data Findable, Accessible, Interoperable, and Reusable [16]. | Informs the design of the infrastructure to maximize data utility and automated processing. |
| Digital Object Architecture (DOA) | An internet-scale architecture for managing digital objects using a specific protocol (DOIP) [16]. | Provides the robust, long-term technical foundation for managing millions of digital specimens. |

Key Advantages and Transformative Potential

The implementation of a digital specimen database offers transformative advantages over traditional methods.

Enhanced Data Linkage and Inter-Institutional Collaboration

The use of DOIs for individual specimens allows for the creation of an "extended digital specimen," which can be linked to other relevant information hosted in separate repositories, such as genomic data, ecological data, or protein structures [15]. This effectively fills a critical gap in scientific work, enabling true data exchange across institutional and disciplinary boundaries [15]. For parasitology research, this means a specimen can be directly linked to drug resistance studies or vaccine development projects.

Accessibility for Education and Advanced Analytics

Digital databases overcome the physical and temporal limitations of traditional specimens. A preliminary digital parasite specimen database demonstrated that virtual slides can be accessed simultaneously by approximately 100 individuals from any location via a web browser, without any physical deterioration of the original material [1]. Furthermore, the metadata stored with a digital specimen DOI allows Artificial Intelligence (AI) systems to navigate billions of specimens quickly and perform automated tasks, such as pattern recognition for parasite classification, which can save researchers considerable time [15].

Reliable Citability and Dynamic Scholarship

The ability to cite an individual specimen with a DOI in a scholarly publication marks a significant advancement [15]. This moves beyond citing an entire collection dataset, allowing for precise referencing of the specific evidence used in research. This also enables a more dynamic form of science, as the digital specimen can be annotated and commented upon, creating a living record of its scientific interpretation [15].

Table: Advantages of Digital Specimen Databases for Parasitology

| Advantage | Impact on Research | Impact on Practical Training |
|---|---|---|
| Global Accessibility & Preservation | Enables 24/7 access to rare specimens without logistical constraints [1]. | Provides unlimited access for students to high-quality specimens that do not degrade over time, crucial in regions where parasitic infections are now rare [1]. |
| Enhanced Data Linkage | Facilitates systems biology approaches by linking morphological data with genetic, clinical, and ecological datasets [15]. | Allows students to see the full context of a parasite, from its egg morphology to its genome and geographical distribution. |
| AI and Automation Readiness | The structured data and metadata enable the training of AI models for high-throughput analysis and diagnosis [15]. | Provides a vast, standardized resource for teaching and testing automated identification tools. |
| Precise Citability & Provenance | Ensures research is built on a foundation of verifiable and citable evidence, with a clear trail of annotations and use [15] [16]. | Teaches best practices in data provenance and reproducible science. |

Practical Implementation: A Parasitology Case Study

A 2025 study detailing the construction of a preliminary digital parasite specimen database provides a clear experimental protocol for implementation [1].

Methodology and Workflow

The following workflow diagram summarizes the key experimental steps involved in creating a digital specimen database for parasitology.

Research Reagent and Material Solutions

The following table details the key materials and tools used in the cited parasitology database construction, which are essential for replicating or scaling this methodology.

Table: Essential Research Reagents and Materials for Digital Database Construction

| Item / Reagent | Specification / Function | Application in Workflow |
|---|---|---|
| Existing Slide Specimens | 50 slides of parasite eggs, adults, and arthropods from institutional collections [1]. | Source of morphological data; the physical objects to be digitized. |
| Research Institution | Kyoto University and Kyoto Prefectural University of Medicine [1]. | Provides curated physical specimens and taxonomic expertise. |
| Slide Scanner | SLIDEVIEW VS200 by EVIDENT Corporation [1]. | Hardware for high-resolution whole-slide imaging (WSI) digitization. |
| Z-stack Function | Scanner technique that varies scan depth to accumulate layer-by-layer data for thicker specimens [1]. | Ensures high-quality, fully in-focus images of uneven specimen smears. |
| Shared Server | Windows Server 2022 [1]. | Hosts the virtual slide database, enabling secure, wide-area access. |
| Biopathology Institute | External service provider for digital scanning [1]. | Provides specialized digitization services if in-house capability is lacking. |

Digital specimen databases represent a fundamental modernization of biological collections. By leveraging core concepts like Persistent Identifiers, FAIR principles, and robust Digital Object Architecture, they offer profound advantages: breaking down data silos, enabling global and AI-ready access, and creating a dynamic, citable record of scientific evidence. For the field of parasitology, where the preservation of morphological expertise is paramount, these databases are not merely a convenience but a vital resource. They ensure that critical specimens remain accessible for practical training and can be integrally linked to modern drug development and research pipelines, securing their relevance for future scientific challenges.

From Slide to Server: Building and Accessing a Digital Parasite Database

Whole Slide Imaging (WSI) is a transformative technology that involves digitally scanning an entire glass microscope slide containing tissue sections or other specimens to create a high-resolution virtual slide [17]. This process allows for remote collaboration and analysis, fundamentally changing workflows in pathology, research, and education [17]. The technology has gained significant traction, with the U.S. Food and Drug Administration (FDA) beginning to clear WSI systems for use in primary surgical pathology diagnosis, opening avenues for wider acceptance and application in routine practice [18].

For parasitology education and research, WSI offers crucial advantages by preserving rare specimen morphology in a digital format, enabling widespread access without physical slide deterioration [1]. This is particularly valuable in developed countries where parasite specimen acquisition is challenging due to low infection rates from improved sanitation [1] [9].

Fundamentals of Z-Stack Scanning

The Depth of Field Challenge

In conventional microscopy, the depth of field is limited, so in any single image only a thin slice of the specimen around the focal plane is in sharp focus while the rest appears blurred [19]. This limitation becomes particularly problematic when imaging thicker specimens in which structures of interest lie at different depths [19].

Z-Stacking as a Solution

Z-stacking is an advanced imaging technique that addresses this challenge by capturing multiple images of a specimen at different focal planes along the Z-axis (vertical axis) and then combining them into a single composite image with an extended depth of field [19]. The stack of images also constitutes a three-dimensional (3D) representation of the specimen, allowing researchers to examine the entire thickness of the sample in detail [19].

The technique is especially valuable for parasitology specimens, which often have uneven surfaces or considerable thickness, such as whole parasites, arthropods, or thick tissue sections containing parasites [1]. For example, in creating a digital parasite database, specimens with thicker smears were successfully captured using the Z-stack function to accumulate layer-by-layer data [1].
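The compositing step can be illustrated with a basic focus-stacking rule: for each pixel, keep the value from the focal plane where a local sharpness measure is highest. The squared Laplacian used here is one common choice of focus measure and is an assumption; scanners such as the SLIDEVIEW VS200 use their own algorithms, which the cited work does not describe, and production code would usually smooth the focus map to suppress noise.

```python
import numpy as np


def laplacian_energy(plane: np.ndarray) -> np.ndarray:
    """Per-pixel focus measure: squared response of a 4-neighbour Laplacian."""
    p = np.pad(plane.astype(float), 1, mode="edge")  # replicate borders
    lap = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
           - 4.0 * p[1:-1, 1:-1])
    return lap ** 2


def extended_depth_of_field(stack: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Fuse a (Z, H, W) grayscale stack by picking, per pixel, the sharpest plane.

    Returns the (H, W) composite and the (H, W) map of chosen plane indices.
    """
    focus = np.stack([laplacian_energy(plane) for plane in stack])  # (Z, H, W)
    best = np.argmax(focus, axis=0)                                 # sharpest plane per pixel
    composite = np.take_along_axis(stack, best[None, ...], axis=0)[0]
    return composite, best
```

The index map `best` doubles as a coarse depth map, which is one way the same stack supports both the flat composite and a 3D view of the specimen.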

Technical Workflows and Integration

Whole Slide Imaging Process

The WSI process comprises four sequential stages: image acquisition, storage, processing, and visualization [18]. The hardware consists of two main systems: one for image capture and one for image display [18].
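Storage is the stage where Z-stacking has the most visible cost, since each extra focal plane multiplies the raw pixel count. The back-of-envelope calculation below assumes a 15 mm × 15 mm scan area, 0.25 µm per pixel (a resolution often quoted for 40x scanning), and 3 bytes per RGB pixel; these are illustrative values, and real WSI files are compressed, so the results are upper bounds on raw data rather than file sizes.

```python
def wsi_raw_size_gb(width_mm: float, height_mm: float,
                    um_per_pixel: float, z_planes: int = 1,
                    bytes_per_pixel: int = 3) -> float:
    """Uncompressed size in decimal gigabytes of an RGB scan, optionally z-stacked."""
    width_px = round(width_mm * 1000 / um_per_pixel)    # mm -> µm -> pixels
    height_px = round(height_mm * 1000 / um_per_pixel)
    return width_px * height_px * bytes_per_pixel * z_planes / 1e9


if __name__ == "__main__":
    print(wsi_raw_size_gb(15, 15, 0.25))               # single focal plane
    print(wsi_raw_size_gb(15, 15, 0.25, z_planes=10))  # ten-plane Z-stack
```

Under these assumptions a single plane is 60,000 × 60,000 pixels, about 10.8 GB raw, and a ten-plane stack about 108 GB, which is why server capacity should be planned around the thick specimens.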

Z-Stack Scanning Methodology

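The Z-stacking workflow involves precise optical sectioning through a specimen: the scanner captures an image at each of a series of focal planes along the Z-axis, and these layers are then combined into a single composite image with an extended depth of field [19].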
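How many focal planes a scan needs depends on specimen thickness and on the objective's depth of field. The sketch below uses the commonly cited approximation d = λn/NA² + n·e/(M·NA), where e is the detector pixel size, and spaces planes one depth of field apart. The 0.55 µm wavelength, NA 0.75, 40x magnification, and 3.45 µm camera pixel are illustrative assumptions, not SLIDEVIEW VS200 specifications.

```python
import math


def depth_of_field_um(wavelength_um: float, na: float, n: float = 1.0,
                      magnification: float = 40.0, pixel_um: float = 3.45) -> float:
    """Approximate total depth of field: diffraction term plus detector-limited term."""
    return wavelength_um * n / na**2 + n * pixel_um / (magnification * na)


def plan_z_planes(thickness_um: float, step_um: float) -> list[float]:
    """Evenly spaced focal positions (µm from the top surface) covering the specimen."""
    if thickness_um <= 0 or step_um <= 0:
        raise ValueError("thickness and step must be positive")
    n_steps = math.ceil(thickness_um / step_um)  # enough steps to reach the bottom
    return [round(i * step_um, 3) for i in range(n_steps + 1)]


if __name__ == "__main__":
    dof = depth_of_field_um(0.55, 0.75)
    print(round(dof, 3), len(plan_z_planes(20, dof)))
```

With these values the depth of field is about 1.09 µm, so a 20 µm thick smear would need about 20 planes; a thin blood film may need only one or two.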

Technical Specifications for Parasite Digitization

Table 1: Scanning Parameters for Parasite Specimens

| Specimen Type | Recommended Magnification | Z-Stack Requirements | Special Considerations |
|---|---|---|---|
| Parasite eggs | 40x | Minimal | Low magnification typically sufficient [1] |
| Adult worms | 40x-100x | Moderate | Variable thickness may require limited Z-stacking [1] |
| Malaria parasites | 1000x | Possible thin Z-stacks | High magnification for detailed morphology [1] |
| Ticks and insects | 40x-100x | Often essential | 3D structure benefits significantly from Z-stacking [1] |
| Thick smears | 400x-1000x | Essential | Multiple focal planes required for comprehensive visualization [1] |

Application in Digital Parasite Specimen Databases

Implementation Case Study

A recent initiative demonstrated the successful application of WSI and Z-stack scanning for parasitology education by constructing a preliminary digital parasite specimen database [1] [9]. Researchers acquired 50 slide specimens (parasite eggs, adults, and arthropods) from Kyoto University and Kyoto Prefectural University of Medicine and created virtual slide data using the SLIDEVIEW VS200 slide scanner [1].

For thicker specimens, the Z-stack function was employed to accommodate varying scan depths by accumulating layer-by-layer data [1]. All specimens, ranging from parasitic eggs, adult worms, ticks, and insects (typically observed under low magnification) to malarial parasites (typically observed under high magnification), were successfully digitized [1].

Database Architecture and Accessibility

The digitized data were uploaded to a shared server (Windows Server 2022) with folders organized according to taxonomic classification [1]. Each specimen was accompanied by explanatory text in both English and Japanese to facilitate learning and international collaboration [1]. The shared server enables approximately 100 individuals to access the data simultaneously via web browsers on various devices without requiring specialized viewing software [1].

Quality Control and Validation

Quantitative Quality Control Measures

Implementing robust quality control is essential for research-grade digital parasite databases. Recent advances include computational tools like HistoQC, an open-source pipeline that quantitatively measures visual characteristics of WSIs and detects artifacts [20].

Table 2: Essential Quality Metrics for Digital Slide Assessment

| Quality Feature | Description | Importance for Parasitology |
|---|---|---|
| RMS Contrast | Standard deviation of pixel intensities | Ensures sufficient contrast for morphological discrimination |
| Michelson Contrast | Difference between maximum and minimum luminance divided by their sum | Critical for visualizing subtle parasite features |
| Grayscale Brightness | Mean pixel intensity of grayscale image | Maintains consistent exposure across slides |
| Channel-specific Brightness | Mean pixel intensity per color channel | Verifies staining consistency and color balance |
| Focus Quality | Sharpness measurement across regions | Particularly crucial for Z-stack composites |
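The quality metrics listed in the table above are straightforward to compute on a single image tile. This sketch follows the textbook definitions (RMS contrast as the standard deviation of grayscale intensities, Michelson contrast as (max - min)/(max + min)) and uses the variance of a Laplacian as a stand-in focus metric. HistoQC's own implementation, tiling, and tissue masking differ, and the ITU-R BT.601 grayscale weights are an assumption.

```python
import numpy as np


def slide_quality_metrics(rgb: np.ndarray) -> dict[str, float]:
    """Compute simple quality metrics on an (H, W, 3) uint8 RGB tile, scaled to [0, 1]."""
    img = rgb.astype(float) / 255.0
    gray = img @ np.array([0.299, 0.587, 0.114])   # BT.601 luma weights (assumed)
    lo, hi = gray.min(), gray.max()
    p = np.pad(gray, 1, mode="edge")
    lap = p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:] - 4 * gray
    return {
        "rms_contrast": float(gray.std()),
        "michelson_contrast": float((hi - lo) / (hi + lo)) if hi + lo > 0 else 0.0,
        "grayscale_brightness": float(gray.mean()),
        "brightness_r": float(img[..., 0].mean()),
        "brightness_g": float(img[..., 1].mean()),
        "brightness_b": float(img[..., 2].mean()),
        "focus_laplacian_var": float(lap.var()),    # low values suggest blur
    }
```

Computing these per slide and storing them alongside the virtual slide gives the database a reviewable record of why each image passed or failed inclusion.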

Batch Effect Management

In multisite digital pathology repositories, batch effects (systematic technical differences introduced when samples are processed in different batches) can significantly affect computational analysis [20]. HistoQC metrics can quantify these batch effects, which is especially important when a parasite database is assembled from multiple institutional collections [20].
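One simple way to screen for batch effects is to compare each site's average for a quality metric against the pooled distribution. The standardized offset below is an illustrative statistic chosen for this sketch, not the method used by HistoQC or the cited study, and any flagging threshold (for example, an absolute offset above 1) would need to be set empirically.

```python
import statistics
from collections import defaultdict


def site_offsets(records: list[tuple[str, float]]) -> dict[str, float]:
    """Standardized difference between each site's mean metric and the pooled mean.

    records: (site_id, metric_value) pairs, e.g. per-slide RMS contrast.
    Needs at least two records. Large absolute values flag sites whose slides
    may differ systematically from the rest of the collection.
    """
    by_site: dict[str, list[float]] = defaultdict(list)
    for site, value in records:
        by_site[site].append(value)
    values = [v for _, v in records]
    pooled_mean = statistics.fmean(values)
    pooled_sd = statistics.stdev(values)
    if pooled_sd == 0:
        return {site: 0.0 for site in by_site}  # no variation, nothing to flag
    return {site: (statistics.fmean(v) - pooled_mean) / pooled_sd
            for site, v in by_site.items()}
```

A flagged site is a prompt to inspect staining and scanning conditions at that institution, not proof of a problem, since genuine differences in specimen types can also shift the metrics.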

Essential Research Tools and Reagents

Table 3: Research Reagent Solutions for Parasite Slide Digitization

| Item Category | Specific Examples | Function in Workflow |
|---|---|---|
| Slide Scanners | SLIDEVIEW VS200 [1], Aperio GT 450 [17], Philips IntelliSite Pathology Solution [18] | Converts glass slides to digital images with automated scanning |
| Image Viewing Software | Aperio ImageScope, PathXL [18] | Allows visualization, annotation, and analysis of digital slides |
| Quality Control Tools | HistoQC [20] | Identifies artifacts and computes quantitative quality metrics |
| Storage Infrastructure | Windows Server [1], Cloud-based platforms [21] | Manages large volumes of WSI data with appropriate access controls |
| Slide Preparation | Standard histology reagents | Tissue fixation, processing, cutting, and staining for optimal morphology |

The integration of WSI with artificial intelligence (AI) and machine learning algorithms represents the next frontier in digital parasitology [17] [21] [18]. As these technologies evolve, they are expected to make significant contributions to life sciences research, including automated parasite detection and classification [17].

For parasitology education and research, WSI and Z-stack scanning technologies offer transformative potential by preserving rare specimens in accessible digital formats, enabling standardization of educational materials across institutions, and facilitating international collaboration [1] [9]. As additional parasitic slides and information are added to digital databases, these resources are expected to become increasingly valuable for advancing global parasitology education and research [1].

The decline in traditional morphology-based diagnostic skills for parasitic infections, coupled with the increasing scarcity of physical specimens in developed regions, presents a significant challenge for parasitology education and research [1]. The construction of a digital parasite specimen database addresses this challenge directly by preserving valuable morphological resources and making them globally accessible. Such databases are crucial for maintaining diagnostic competency, supporting the training of new parasitologists, and facilitating international collaborative research [1]. This guide details the technical workflow for creating a comprehensive digital repository, from acquiring physical specimens to deploying the digital assets for practical training and research, framed within the broader objective of sustaining parasitological expertise.

Phase I: Specimen Acquisition and Curation

The foundation of a robust digital database is a well-characterized and curated collection of physical specimens.

Specimen Sourcing and Types

Physical specimens can be sourced from existing collections in university departments, research institutes, or museums, as well as through new collections from clinical or field settings [1]. A diverse collection is essential for a comprehensive database. The types of specimens typically included are:

- Parasite Eggs: For the diagnosis of helminth infections.

- Adult Parasites: Whole mounts or sections for morphological study.

- Arthropod Vectors: Such as ticks, fleas, and insects.

- Blood Parasites: Including smears for malaria and other hemoparasites, which require high-magnification observation [1].

All specimens must be properly prepared, mounted on standard glass slides, and free of personal identifying information so that they can be shared for education and research [1].

Essential Research Reagent Solutions

The following table summarizes key materials and reagents required for the initial phase of specimen handling and curation.

Table 1: Key Research Reagent Solutions for Specimen Curation

| Item Name | Function/Application |
|---|---|
| Existing Slide Specimens | Primary source material for digitization; provides a foundation of diverse parasite morphologies [1]. |
| Glass Slide Mounts | Standard medium for preserving and displaying parasite specimens for microscopic examination [1]. |
| Whole-Slide Imaging (WSI) Scanner | High-resolution digital scanning device for converting physical glass slides into virtual slide data [1]. |

Phase II: Digital Capture and Image Processing

This phase converts physical slides into high-fidelity digital images, a critical step for preserving each specimen's morphological information in a durable, shareable form.

Digital Scanning Methodology

The core of the digitization process is the use of a whole-slide imaging (WSI) scanner, such as the SLIDEVIEW VS200 model used in foundational studies [1]. The scanning protocol must accommodate the diverse nature of parasitological specimens:

- Resolution and Magnification: The scanning process should be capable of capturing images at a range of magnifications, from low power (e.g., 40x) for parasite eggs and adult worms to high power (e.g., 1000x) for intracellular parasites like Plasmodium [1].

- Z-Stack Function: For thick specimens and smears, the Z-stack function is essential. The scanner captures the same field at multiple focal depths and combines the layers into a single composite image that is in focus throughout [1].

- Quality Control: Each digitally scanned image must be rigorously reviewed for focus and clarity. Slides with out-of-focus areas should be rescanned to ensure the highest quality of the final digital asset [1].
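
The scanner's internal Z-stack and focus algorithms are not described in [1], so the following is only a generic sketch of the two ideas above, assuming grayscale tiles held as NumPy arrays. It scores sharpness with the variance of a discrete Laplacian (a common focus heuristic), flags tiles whose score falls below a threshold for rescanning, and fuses a Z-stack by keeping, for each pixel, the value from the focal plane with the strongest local Laplacian response. The function names and the threshold are hypothetical; a production pipeline would typically smooth the sharpness map and calibrate the threshold on scans already judged good or bad.

```python
import numpy as np


def laplacian(img: np.ndarray) -> np.ndarray:
    """4-neighbour discrete Laplacian; edges are padded by repetition so shape is kept."""
    p = np.pad(img.astype(float), 1, mode="edge")
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
            - 4.0 * p[1:-1, 1:-1])


def focus_score(img: np.ndarray) -> float:
    """Variance of the Laplacian: blurred images have little high-frequency detail."""
    return float(laplacian(img).var())


def needs_rescan(img: np.ndarray, threshold: float) -> bool:
    """Hypothetical QC rule: flag a tile whose focus score is below the threshold."""
    return focus_score(img) < threshold


def fuse_z_stack(stack: np.ndarray) -> np.ndarray:
    """Naive focus stacking for a (planes, height, width) array.

    Each output pixel is taken from the plane with the largest absolute
    Laplacian response at that pixel.
    """
    sharpness = np.abs(np.stack([laplacian(plane) for plane in stack]))
    best = sharpness.argmax(axis=0)  # (height, width) index of the sharpest plane
    return np.take_along_axis(stack, best[np.newaxis], axis=0)[0]
```

A uniform (featureless) tile scores zero and would be flagged, while a tile with sharp edges scores high; fusing a blurred plane with a sharp one returns the sharp plane's pixels.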

Image Processing and File Management

Once scanned, images should be uploaded to a centralized shared server. A logical folder structure, organized by taxonomic classification, is crucial for easy navigation and data retrieval [1]. Each specimen image must be accompanied by an explanatory text file that includes the specimen name and a description in multiple languages, such as English and Japanese, to enhance accessibility for international users [1].
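
A minimal sketch of this folder and explanatory-file convention, assuming one JSON sidecar per slide (the source specifies only "an explanatory text file") and taxonomic ranks supplied highest first. The function names, field names, and example file name are hypothetical.

```python
import json
from pathlib import Path


def specimen_dir(root: Path, taxonomy: list[str]) -> Path:
    """Nest folders by taxonomic rank, highest rank first (e.g. phylum/family/species)."""
    return root.joinpath(*taxonomy)


def sidecar_payload(name: str, slide_file: str, descriptions: dict[str, str]) -> dict:
    """Explanatory record stored next to the slide; descriptions are keyed by language code."""
    return {"specimen_name": name, "slide_file": slide_file, "description": descriptions}


def write_sidecar(root: Path, taxonomy: list[str], slide_file: str,
                  name: str, descriptions: dict[str, str]) -> Path:
    """Create the taxonomic folder if needed and write <slide stem>.json into it."""
    folder = specimen_dir(root, taxonomy)
    folder.mkdir(parents=True, exist_ok=True)
    sidecar = folder / (Path(slide_file).stem + ".json")
    # ensure_ascii=False keeps Japanese text human-readable in the file
    sidecar.write_text(
        json.dumps(sidecar_payload(name, slide_file, descriptions), ensure_ascii=False, indent=2),
        encoding="utf-8",
    )
    return sidecar
```

For example, an Ascaris lumbricoides egg slide would land under Nematoda/Ascarididae/Ascaris lumbricoides/ with English ("en") and Japanese ("ja") descriptions in its sidecar.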

Phase III: Data Structuring and Metadata Annotation

Standardizing the data associated with each digital specimen is key to making the database findable, accessible, interoperable, and reusable (FAIR).

Minimum Data Standard for Specimen Annotation

Adopting a minimum data standard ensures consistency. The following table outlines a proposed set of core fields, adapted from standards in wildlife disease research, which can be effectively applied to parasite specimens [22].

Table 2: Minimum Data Standard for Digital Parasite Specimens

| Category | Field Name | Description | Requirement Level |
|---|---|---|---|
| Host & Sample | Host Species | The species from which the parasite was isolated. | Required |
| | Sample Type | Type of sample (e.g., egg, adult worm, blood smear). | Required |
| | Collection Date | Date of sample collection. | Required |
| | Collection Location | Geographic location of collection. | Required |
| Parasite & Test | Parasite Identification | Taxonomic identification of the parasite. | Conditionally Required |
| | Diagnostic Method | Method used for identification (e.g., microscopy, PCR) [23]. | Required |
| | Test Result | Outcome of the diagnostic test (e.g., positive, negative). | Required |
| | Test Date | Date the diagnostic test was performed. | Required |
| Digital Asset | Image Resolution | Resolution of the digital image in pixels. | Recommended |
| | Scanner Model | Model of the WSI scanner used. | Recommended |
| | Accession Number | Unique identifier for the digital specimen. | Required |
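
The standard can be enforced at data-entry time. The sketch below is one possible check, assuming string-valued fields and, because the condition is not stated here, assuming that Parasite Identification becomes required when the test result is positive. The class and function names and the example accession numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Fields marked "Required" in Table 2 (snake_case versions of the field names)
REQUIRED_FIELDS = (
    "host_species", "sample_type", "collection_date", "collection_location",
    "diagnostic_method", "test_result", "test_date", "accession_number",
)


@dataclass
class SpecimenRecord:
    accession_number: str
    host_species: str
    sample_type: str
    collection_date: str          # ISO 8601 date string assumed, e.g. "2024-05-01"
    collection_location: str
    diagnostic_method: str
    test_result: str              # e.g. "positive" or "negative"
    test_date: str
    parasite_identification: Optional[str] = None  # conditionally required
    image_resolution: Optional[str] = None         # recommended
    scanner_model: Optional[str] = None            # recommended


def validate(record: SpecimenRecord) -> list[str]:
    """Return a list of problems; an empty list means the record meets the standard."""
    problems = [f"missing required field: {name}"
                for name in REQUIRED_FIELDS if not getattr(record, name)]
    # Assumed condition: a positive result must name the parasite.
    if record.test_result == "positive" and not record.parasite_identification:
        problems.append("parasite_identification is required for positive results")
    return problems
```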

Specimens that test negative must still be recorded along with their test data. Omitting negative results makes meaningful prevalence calculations impossible and can bias research findings [22].
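
A short worked example shows why. Prevalence is the number of positive specimens divided by the number tested, so dropping negative records removes them from the denominator. The counts below are purely illustrative.

```python
def prevalence(results: list[str]) -> float:
    """Proportion of tested specimens with a positive result."""
    if not results:
        raise ValueError("prevalence is undefined when no specimens were tested")
    return results.count("positive") / len(results)


# Illustrative survey: 3 positives among 50 tested specimens
all_results = ["positive"] * 3 + ["negative"] * 47
positives_only = [r for r in all_results if r == "positive"]

print(prevalence(all_results))     # 0.06
print(prevalence(positives_only))  # 1.0, because the negatives were never recorded
```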

Phase IV: Database Deployment and Accessibility

The final phase involves deploying the digital database in a way that maximizes its utility for education and research while ensuring long-term preservation.

Technical Deployment and Access Control

The compiled virtual slides and their associated metadata are hosted on a dedicated shared server (e.g., Windows Server 2022) [1]. This server should be configured to allow approximately 100 simultaneous users to access the data via a standard web browser on various devices without requiring specialized viewing software [1]. To ensure confidentiality and responsible use, access to the database should be protected by an authentication system requiring an identification code and password, which can be provided by the host organization upon request for educational or research purposes [1].
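
The source states only that access requires an identification code and password. As a minimal sketch of how such credentials are commonly verified, assuming the server stores a salted PBKDF2-HMAC-SHA256 hash rather than the password itself, the following uses only Python's standard library. The iteration count, the in-memory credential store, and the function names are assumptions for illustration; a Windows Server deployment might instead delegate authentication to its web server or directory service.

```python
import hashlib
import hmac
import os
from typing import Optional

ITERATIONS = 600_000  # assumed PBKDF2 work factor; tune for the host hardware

# Hypothetical in-memory store: identification code -> (salt, password hash)
CREDENTIALS: dict[str, tuple[bytes, bytes]] = {}


def hash_password(password: str, salt: Optional[bytes] = None) -> tuple[bytes, bytes]:
    """Derive a salted hash; a fresh random salt is generated when none is given."""
    if salt is None:
        salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def register(user_id: str, password: str) -> None:
    """Store only the salt and derived hash, never the plaintext password."""
    CREDENTIALS[user_id] = hash_password(password)


def authenticate(user_id: str, password: str) -> bool:
    entry = CREDENTIALS.get(user_id)
    if entry is None:
        return False
    salt, expected = entry
    _, candidate = hash_password(password, salt)
    # Constant-time comparison avoids leaking how many bytes matched
    return hmac.compare_digest(candidate, expected)
```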

Ensuring Digital Accessibility and Inclusivity

When designing the user interface for the database, it is imperative to adhere to the Web Content Accessibility Guidelines (WCAG). This ensures the database is usable by people with a wide range of disabilities.

- Non-Text Contrast (WCAG 1.4.11): All user interface components and graphical objects essential for understanding must have a contrast ratio of at least 3:1 against adjacent colors [24]. This includes buttons, form borders, and focus indicators, which help users with low vision perceive the components and their states.

- Use of Scalable Vector Graphics (SVG): For interface icons and diagrams, using SVGs is recommended. SVGs maintain quality when zoomed or magnified, ensuring that users who rely on screen magnification can clearly see details [24]. Furthermore, any essential information conveyed by color in an SVG must also be available through another means, such as shape or pattern [25].

- Text Contrast (WCAG 1.4.3): All text presented as part of the interface should have a contrast ratio of at least 4.5:1 against its background to ensure readability for users with low vision or in suboptimal lighting conditions [26].
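
Both contrast thresholds can be checked automatically during interface design. The sketch below implements the WCAG 2.x definitions of relative luminance and contrast ratio for 8-bit sRGB hex colors, using the 0.03928 linearization cutoff from WCAG 2.1 (for 8-bit channels this gives the same result as the sRGB value of 0.04045). The function names are illustrative.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.1 formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1:1 to 21:1."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes(fg: str, bg: str, *, text: bool) -> bool:
    """4.5:1 for normal text (SC 1.4.3), 3:1 for UI components and graphics (SC 1.4.11)."""
    return contrast_ratio(fg, bg) >= (4.5 if text else 3.0)
```

For instance, grey text #767676 on a white background just passes the 4.5:1 text threshold, while #777777 falls slightly short.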

Experimental Protocol: In Silico Prediction of Novel Anthelmintics

Beyond morphological training, a comprehensive parasitology database can fuel computational research for drug discovery. The following workflow details an in silico machine learning approach for predicting novel anthelmintic candidates, using the parasitic nematode Haemonchus contortus as a model.

Detailed Machine Learning Workflow

Objective: To accelerate the discovery of novel anthelmintic compounds by building a predictive model from existing bioactivity data.

Background: Widespread anthelmintic resistance in livestock parasites necessitates new drugs. High-throughput screening generates large bioactivity datasets, which can be leveraged for machine learning [27].

Diagram 1: In silico anthelmintic discovery workflow.

Data Curation and Labeling:

- Assemble a bioactivity dataset from high-throughput phenotypic screens (e.g., measuring parasite motility) and evidence-based data from peer-reviewed literature [27].

- Apply a three-tier labeling system to classify compounds based on their activity:

  - 'Active': Wiggle Index < 0.25, viability < 20%, reduction > 80%, EC50 < 50 µM, or MIC75 < 1 µg/mL.

  - 'Weakly Active': Wiggle Index 0.25-0.5, viability 20-50%, reduction 50-80%, EC50 50-100 µM, or MIC75 1-10 µg/mL.

  - 'None' (Inactive): Wiggle Index ≥ 0.5, viability ≥ 50%, reduction ≤ 50%, EC50 ≥ 100 µM, or MIC75 ≥ 10 µg/mL [27].
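
The labeling rules can be expressed as a small lookup table. The sketch below assumes each compound carries one primary measurement and treats the 'Weakly Active' ranges as half-open, so that they meet the 'Active' and 'None' cutoffs without overlapping; the metric keys and the function name are hypothetical. For every metric except percentage reduction, lower values indicate stronger activity.

```python
from typing import Literal

Label = Literal["active", "weakly_active", "none"]

# metric -> (active cutoff, inactive cutoff, do lower values mean more active?)
THRESHOLDS: dict[str, tuple[float, float, bool]] = {
    "wiggle_index": (0.25, 0.5, True),
    "viability_pct": (20.0, 50.0, True),
    "ec50_um": (50.0, 100.0, True),
    "mic75_ug_per_ml": (1.0, 10.0, True),
    "reduction_pct": (80.0, 50.0, False),  # higher reduction means more active
}


def label_compound(metric: str, value: float) -> Label:
    """Assign the three-tier activity label for a single measurement."""
    active_cut, inactive_cut, lower_is_active = THRESHOLDS[metric]
    if lower_is_active:
        if value < active_cut:
            return "active"
        if value >= inactive_cut:
            return "none"
    else:
        if value > active_cut:
            return "active"
        if value <= inactive_cut:
            return "none"
    # Anything between the two cutoffs falls in the middle tier
    return "weakly_active"
```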

Model Training and Validation:

- Train a Multi-layer Perceptron (MLP) classifier, a type of deep learning artificial neural network, on the labeled dataset. This model is suited for the complex, non-linear patterns in chemical data [27].

- Assess performance with class-specific metrics such as precision and recall. In the cited study, the trained model achieved 83% precision and 81% recall for the 'active' class, even though the dataset was highly imbalanced (only ~1% 'active' compounds) [27].
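
With roughly 1% actives, a model that labels everything inactive would still be about 99% accurate while finding no actives at all, which is why class-specific precision and recall matter here. The toy numbers below are illustrative only and are not taken from the cited study.

```python
def accuracy(y_true: list[str], y_pred: list[str]) -> float:
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision_recall(y_true: list[str], y_pred: list[str],
                     positive: str = "active") -> tuple[float, float]:
    """Precision and recall for one class, treating every other label as negative."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Toy set: 10 actives among 1,000 compounds (1%)
y_true = ["active"] * 10 + ["none"] * 990
always_none = ["none"] * 1000
# A model that finds 8 of the 10 actives and raises 2 false alarms
model = ["active"] * 8 + ["none"] * 2 + ["active"] * 2 + ["none"] * 988

print(accuracy(y_true, always_none))          # 0.99
print(precision_recall(y_true, always_none))  # (0.0, 0.0)
print(precision_recall(y_true, model))        # (0.8, 0.8)
```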

In Silico Screening and Prioritization:

- Use the trained model to screen millions of compounds from a public chemical database like ZINC15 [27].

- The model will output a list of candidate compounds predicted to have nematocidal activity, which are then prioritized for further testing based on their predicted activity and structural properties.
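
A minimal sketch of the ranking step, assuming the model has already assigned each screened compound a probability of being 'active' and that simple property limits serve as the structural filter. The Lipinski-style cutoffs (molecular weight at most 500, logP at most 5), the Candidate fields, and the placeholder IDs are assumptions, not the cited study's criteria. `heapq.nlargest` keeps only the top k compounds in memory, which matters when scoring millions of ZINC15 entries.

```python
import heapq
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Candidate:
    compound_id: str     # e.g. a ZINC15 identifier
    p_active: float      # model's predicted probability for the 'active' class
    mol_weight: float    # g/mol
    logp: float          # octanol-water partition coefficient


def prioritize(candidates: Iterable[Candidate], k: int = 10,
               max_mw: float = 500.0, max_logp: float = 5.0) -> list[Candidate]:
    """Filter on assumed property limits, then return the k highest-scoring compounds."""
    passing = (c for c in candidates if c.mol_weight <= max_mw and c.logp <= max_logp)
    return heapq.nlargest(k, passing, key=lambda c: c.p_active)
```

Because `candidates` can be any iterable, the same function works on a generator that streams predictions from disk rather than a list held in memory.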

Experimental Validation:

- Select a subset (e.g., 10) of the top predicted candidates for in vitro experimental assessment.

- Test the compounds using established phenotypic assays, such as larval motility and development assays for H. contortus [27].

- Compounds that exhibit significant inhibitory effects in vitro are considered promising lead candidates for further development as novel anthelmintics [27].

Reagent Solutions for Computational Analysis

Table 3: Key Reagents and Resources for In Silico Workflow

| Item Name | Function/Application |
|---|---|
| Bioactivity Datasets | Curated data from high-throughput screens used as the labeled training set for the machine learning model [27]. |
| Molecular Descriptors | Quantitative representations of chemical structures that serve as input features for the QSAR model [27]. |
| ZINC15 Database | A public database of commercially available chemical compounds used for virtual screening to discover new active molecules [27]. |
| Multi-layer Perceptron (MLP) | A class of artificial neural network used for deep learning-based classification of compounds into active/inactive categories [27]. |

The systematic curation and digitization of parasite specimens, from physical acquisition to a fully accessible online database, creates an indispensable resource for the global parasitology community. This workflow not only preserves morphological knowledge but also enables new, data-driven research avenues. By integrating detailed specimen metadata with computational approaches, these digital repositories support both foundational education in parasite identification and advanced research, such as the in silico discovery of novel therapeutics to combat the growing threat of anthelmintic resistance.

The global challenge of parasitic diseases, combined with declining opportunities for hands-on parasitology training in areas where infections have become rare, has created an urgent need for innovative educational and research resources [9]. This guide details the core architecture for constructing a digital parasite specimen database, framed within the broader goal of using digital tools for practical training and research. Such databases are critical for sustaining morphological expertise, which remains foundational for diagnosing parasitic infections, and for harnessing modern genomic tools in parasitology [9] [28]. We focus on two complementary architectural paradigms: one designed for organizing physical specimen scans to aid morphological identification, and another for enabling the taxonomic identification of parasites from complex clinical samples using metagenomic next-generation sequencing (mNGS) [9] [28].

Core Database Architectures

Morphology-Focused Database Architecture

This architecture is designed to digitize physical microscope slides and organize them for remote educational access.

Data Acquisition and Curation: The foundational step involves acquiring high-quality virtual slide data from physical parasite specimens (e.g., eggs, adult worms, arthropods) using slide scanning technology [9]. All specimens, from those requiring low magnification (like ticks) to those needing high magnification (like malarial parasites), can be successfully digitized. Each digital specimen is then associated with structured metadata.

Taxonomic Organization and Storage: The virtual slides are compiled into a central digital repository, with folders and database entries organized by taxonomic classification [9]. This structure allows users to intuitively navigate the database by evolutionary relationships. Explanatory notes in multiple languages (e.g., English and Japanese) are attached to each specimen to facilitate self-directed learning [9].

Remote Access and Sharing: The database is deployed on a shared server infrastructure that can support approximately 100 simultaneous users, enabling collaborative practical training and research across multiple institutions [9]. This architecture directly addresses the challenge of scarce physical specimens in developed nations by providing ubiquitous access to a curated digital collection.

Genomics-Focused Identification Platform Architecture

For parasite identification via mNGS, a more complex, automated bioinformatics architecture is required. The Parasite Genome Identification Platform (PGIP) exemplifies this approach [28].