Beyond Standard Protocol: Advanced FEA Modifications for Enhanced Biomedical and Clinical Research

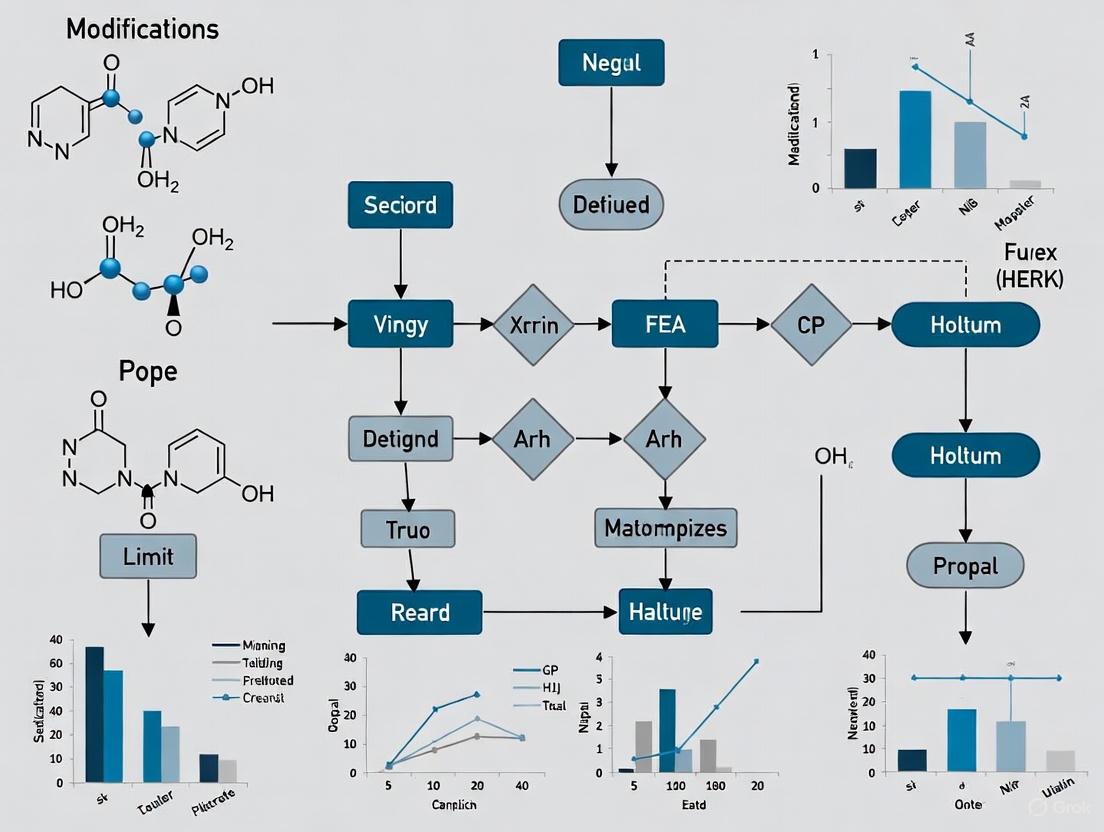


Abstract

This article explores the critical modifications to standard Finite Element Analysis (FEA) protocols that are driving innovation in biomedical research and drug development. It provides a comprehensive guide from foundational principles, such as geometry simplification and material property definition, to advanced methodological applications in patient-specific modeling and complex biomechanical systems. The content delves into troubleshooting common pitfalls like stress concentrations and mesh dependency, and emphasizes rigorous validation techniques against experimental and clinical data. By synthesizing insights from recent case studies in orthopedics, prosthetics, and implant design, this article equips researchers and scientists with the knowledge to enhance the predictive accuracy, reliability, and clinical relevance of their computational models.

Core Principles and Strategic Simplifications for Robust FEA

Defining Clear Analysis Goals and Performance Criteria

In finite element analysis (FEA) research, particularly within modified standard protocols, establishing precise analysis goals and performance criteria at the outset is fundamental to generating valid, reproducible, and scientifically valuable results. This structured approach ensures that computational resources are allocated efficiently and that simulation outcomes provide meaningful insights for decision-making in research and development. For scientists and engineers modifying standard FEA protocols, this initial planning phase becomes even more critical as it establishes the benchmark against which novel methodological changes will be evaluated. A well-defined goal serves as the foundation for the entire simulation workflow, from model creation to the interpretation of results [1].

This application note provides a structured framework for defining FEA objectives and quantitative performance metrics, complete with experimental protocols and visualization tools tailored for researchers in medical device and pharmaceutical development. The principles outlined are especially crucial when working under strict regulatory frameworks where validation and traceability are mandatory [1] [2].

The Critical Role of Goal Definition in FEA

Finite Element Analysis is a computational tool that involves discretization of a domain into a finite number of elements to simulate and analyze physical phenomena [3]. Without clearly defined objectives, FEA projects risk generating computationally expensive results that fail to answer critical research questions. The practice of defining clear objectives and scope before starting any FEA or simulation project ensures the simulation is focused and tailored to the specific requirements of the device or component under investigation [1].

For modified FEA protocols, this definition phase must explicitly state what aspects of the standard protocol are being altered and what specific improvements are sought, whether in accuracy, computational efficiency, or application to novel material systems. This is essentially a risk-based approach that helps inform what you want to simulate and what kind of simulation you want to run [2].

Table 1: Analysis Goal Categories in FEA Research

| Goal Category | Primary Research Question | Typical Performance Metrics |
|---|---|---|
| Feasibility Assessment | Will the device/component perform as expected under specific conditions? [1] | Pass/Fail against yield stress; Factor of safety |
| Design Optimization | Which design parameters most significantly impact performance? [4] | Sensitivity coefficients; Weight/stress trade-offs |
| Comparative Analysis | How does a design modification improve performance compared to a baseline? [5] | Percent difference in stress/displacement [5] |
| Failure Investigation | How and under what conditions does the device/component fail? [2] | Critical stress values; Damage propagation patterns |
| Protocol Validation | Does the modified FEA protocol produce more accurate or more efficient results? | Deviation from experimental data; Computational time |

Defining Quantitative Performance Criteria

Performance criteria translate qualitative goals into measurable quantities that enable objective assessment of simulation results. These criteria should be established during the experimental design phase, prior to running simulations, to prevent confirmation bias.

Structural Performance Metrics

In structural FEA, common metrics include von Mises stress (VMS) for predicting yield initiation, displacement for assessing stiffness, and strain energy for overall mechanical behavior [5]. For example, in a study comparing cephalomedullary nails for femoral fracture fixation, researchers used maximal VMS and displacement as primary evaluation indicators, calculating percent difference (PD) to quantitatively compare performance between designs [5].
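A percent-difference comparison of this kind can be sketched in a few lines. The exact PD definition used in [5] is not given here, so the baseline-relative form below is an assumption, and the stress values are hypothetical placeholders rather than results from that study.

```python
def percent_difference(candidate: float, baseline: float) -> float:
    """Signed percent difference of `candidate` relative to `baseline`.

    Assumed definition: (candidate - baseline) / |baseline| * 100.
    A negative value means the candidate is lower than the baseline.
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (candidate - baseline) / abs(baseline) * 100.0


# Hypothetical peak von Mises stresses (MPa), for illustration only.
vms_baseline_mpa = 250.0
vms_modified_mpa = 200.0

pd_vms = percent_difference(vms_modified_mpa, vms_baseline_mpa)
print(f"Change in maximal VMS: {pd_vms:+.1f}%")
```

Fixing the definition (and the sign convention) before any simulation is run keeps the comparison between designs objective.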

Table 2: Quantitative Performance Criteria for Structural FEA

| Metric | Description | Application Example | Measurement Method |
|---|---|---|---|
| Von Mises Stress | Predicts yielding in ductile materials | Comparison of implant designs [5] | Maximum value in critical regions |
| Displacement | Measures stiffness and deformation | Fracture fixation stability assessment [5] | Maximal displacement under load |
| Strain Energy | Energy stored in deformed material | Mesh convergence testing [5] | Integration over volume |
| Fatigue Life | Prediction of cyclic loading durability | Medical devices with repeated use | Based on stress cycles |
| Contact Pressure | Interface stress between components | Wear prediction in articulating surfaces | Average and peak values |

Material-Specific Criteria

For specialized applications, additional criteria must be considered. In pharmaceutical tableting simulations, density distribution and temperature evolution become critical metrics [3]. For polymer components experiencing long-term loading, creep deformation and stress relaxation must be evaluated, as these phenomena can cause significant deflection over time, potentially leading to functional failure [2].

Experimental Protocol for Goal-Oriented FEA

Protocol: Structured Approach to Defining FEA Goals and Criteria

Purpose: To establish a systematic method for defining clear analysis goals and performance criteria in modified FEA protocols.

Materials and Equipment:

- Design requirements documentation

- Risk analysis documents (e.g., dFMEA)

- Previous experimental data

- CAD software

- FEA preprocessing software

Procedure:

Requirement Analysis

- Identify all functional requirements and design constraints

- Review known failure modes and critical performance parameters

- Document all assumptions and boundary conditions

Goal Specification

- Formulate precise primary research question(s)

- Categorize the analysis type (refer to Table 1)

- Define success criteria for the modified FEA protocol itself

Metric Selection

- Select appropriate quantitative metrics from Table 2

- Establish threshold values for each metric (e.g., yield strength)

- Define relative improvement targets for comparative studies

Validation Planning

- Determine validation method (experimental, analytical, comparative)

- Identify critical validation points within the model

- Establish acceptance criteria for validation

Documentation

- Record all goals, criteria, and thresholds in the research notebook

- Justify metric selections based on literature and prior research

- Obtain peer review of the analysis plan before execution

Validation Notes:

- For modified protocols, include a baseline analysis using standard methods

- Perform mesh convergence studies to ensure results are element-size independent [5]

- Validate simulation results with experimental data where possible [1]

Research Reagent Solutions: Essential Materials for FEA

Table 3: Essential Research Materials and Tools for FEA Protocols

| Item/Category | Function in FEA Research | Application Notes |
|---|---|---|
| Simulation Software | Digital twinning tool for simulating device behavior [2] | Select based on application (CFD, solid mechanics, thermal) [1] |
| CAD Platform | Creation of geometric models for analysis [5] | Essential for defining accurate system geometry [3] |
| Constitutive Models | Mathematical representation of material behavior | DPC model for powder compaction [3]; Creep models for polymers [2] |
| Material Properties Database | Input parameters for accurate simulation | Young's modulus, Poisson's ratio, density [5] [3] |
| Mesh Generation Tools | Discretization of geometry into finite elements [3] | Element type and size affect accuracy [5] |
| Validation Apparatus | Experimental validation of simulation results | Physical testing equipment for correlation [1] |

Workflow Visualization for Goal Definition

Figure: FEA goal definition workflow.

Application Example: Medical Device Development

In medical device development, a common application involves analyzing activation mechanisms for auto-injector devices [2]. A modified FEA protocol might be developed to more accurately predict creep behavior in polymer components.

Analysis Goal: Determine whether the trigger mechanism in an auto-injector will maintain structural integrity over a 24-month shelf life while minimizing material usage [2].

Performance Criteria:

- Maximum von Mises stress not exceeding 70% of material yield strength

- Creep deformation less than 0.1 mm over 24 months under constant spring load

- 20% reduction in material volume compared to previous design while maintaining safety margins
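Criteria like these are easiest to apply consistently when they are written down as explicit checks before the simulation is run. The sketch below encodes the three thresholds listed above; the class name, field names, and the sample numbers are hypothetical and serve only to illustrate the pattern.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class TriggerResult:
    """Hypothetical container for post-processed simulation outputs."""
    max_vms_mpa: float          # peak von Mises stress
    yield_strength_mpa: float   # material yield strength
    creep_mm_24mo: float        # predicted creep deformation over 24 months
    volume_mm3: float           # material volume of the new design
    baseline_volume_mm3: float  # material volume of the previous design


def evaluate_criteria(r: TriggerResult) -> Dict[str, bool]:
    """Return pass/fail for each performance criterion."""
    return {
        # Stress must not exceed 70% of yield strength.
        "stress": r.max_vms_mpa <= 0.70 * r.yield_strength_mpa,
        # Creep deformation must stay below 0.1 mm.
        "creep": r.creep_mm_24mo < 0.1,
        # At least a 20% volume reduction versus the previous design.
        "volume": r.volume_mm3 <= 0.80 * r.baseline_volume_mm3,
    }


# Illustrative numbers only; not taken from any cited study.
result = TriggerResult(38.0, 60.0, 0.12, 780.0, 1000.0)
for name, passed in evaluate_criteria(result).items():
    print(f"{name}: {'PASS' if passed else 'FAIL'}")
```

With these sample values the stress and volume checks pass while the creep check fails, which is the kind of outcome that would motivate the time-dependent protocol modification.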

Protocol Modifications: The standard static structural analysis would be modified to include time-dependent viscoelastic material properties and creep simulation capabilities. Validation would be performed through physical creep testing of 3D-printed prototypes under accelerated conditions [2].

This example demonstrates how clear goals and criteria guide both the modification of standard FEA protocols and the evaluation of their effectiveness in addressing specific engineering challenges.

Defining clear analysis goals and performance criteria is the cornerstone of effective finite element analysis, particularly when modifying standard protocols for research purposes. This structured approach ensures computational resources are efficiently allocated and results are scientifically valid and actionable. By implementing the frameworks, protocols, and visualizations provided in this application note, researchers can enhance the rigor, reproducibility, and impact of their FEA investigations across medical, pharmaceutical, and general engineering domains.

In Finite Element Analysis (FEA), achieving computational efficiency without sacrificing accuracy is a core research challenge. Modifications to standard FEA protocols often focus on the critical pre-processing stage of geometry preparation. This document details structured methodologies for geometry simplification through the removal of non-essential features and the strategic use of symmetry, framing them within the context of enhancing standard FEA protocols for research and development applications. These techniques are paramount for managing solve times, which typically grow faster than linearly with element count, and for making complex simulations computationally feasible [6] [7].

Geometry Simplification via Feature Removal

The primary objective of geometry simplification is to reduce model complexity by eliminating features that do not significantly influence the structural performance, thereby enabling a more computationally efficient mesh [8].

Application Notes

Rationale and Impact: Detailed Computer-Aided Design (CAD) models often contain numerous features like small holes, fillets, blends, engravings, and sharp edges. While crucial for manufacturing, these features can be non-structural. Their presence forces the meshing algorithm to generate an excessively fine mesh in these localized areas, drastically increasing the total element count and, consequently, the computational load and solve time. Reductions in solution time and memory usage of over 65% have been reported for some complex models, although the figures in Table 1 attribute savings of that size to matrix-storage optimization (the BSCSC method) rather than to de-featuring alone [8] [7].

Protocol 1: Systematic De-featuring

- Identify Non-Essential Features: Systematically scan the CAD model for features with characteristic dimensions (e.g., hole diameter, fillet radius) that are significantly smaller than the overall model size and located in regions anticipated to experience low stress.

- Evaluate Structural Role: Assess whether the feature carries a significant load or is merely aesthetic or manufacturing-related. Non-structural components and features in inner sections not subjected to high stress are primary candidates for removal [8].

- Suppress/Remove Features: Use CAD or FEA pre-processing tools to suppress or delete the identified features.

- Iterate and Validate: After analysis, compare stress and strain results in the simplified model's regions with a more detailed reference model, if available, to validate that the simplifications did not critically alter the mechanical response.
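The first two steps of Protocol 1 amount to a screening rule: a feature is a removal candidate only if it is both small relative to the model and located in a low-stress region. A minimal sketch follows; the `Feature` fields and both threshold values are assumptions chosen for illustration, not values taken from [8].

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Feature:
    """Hypothetical description of one CAD feature."""
    name: str
    size_mm: float                 # characteristic dimension (diameter, radius, ...)
    expected_stress_ratio: float   # anticipated local stress / allowable stress


def defeaturing_candidates(
    features: List[Feature],
    model_size_mm: float,
    size_ratio_limit: float = 0.01,    # assumed: feature < 1% of model size
    stress_ratio_limit: float = 0.2,   # assumed: region below 20% of allowable
) -> List[str]:
    """Names of features that are both small and in low-stress regions."""
    return [
        f.name
        for f in features
        if f.size_mm / model_size_mm < size_ratio_limit
        and f.expected_stress_ratio < stress_ratio_limit
    ]


features = [
    Feature("engraving", 0.5, 0.05),       # small, low stress: candidate
    Feature("root_fillet", 1.0, 0.90),     # small but highly stressed: keep
    Feature("mounting_hole", 8.0, 0.10),   # low stress but too large: keep
]
print(defeaturing_candidates(features, model_size_mm=200.0))
```

The stress estimate would normally come from engineering judgment or a coarse preliminary run, and the final validation step still applies: the screening only proposes candidates, it does not confirm that removal is safe.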

Protocol 2: Geometry Abstraction and Replacement

- Target Complex Geometries: Identify highly complex features such as threads, small ridges, or detailed internal ribs.

- Replace with Simplified Forms: Substitute the complex geometry with a simplified approximation. For example, a smooth cylinder can replace a threaded section for a stress analysis focused on the component's body rather than the thread engagement [8].

- Simplify Assemblies: In large assemblies, suppress or replace irrelevant components or sub-assemblies that do not affect the overall analysis objectives.

Advanced Simplification Techniques

For highly complex models, automated wrapping algorithms can be employed. The Convex Hull Method creates a faceted surface wrapped around selected geometry, with options for a single hull, interactive splits, or uniform splits to segment the geometry [9]. The Cartesian Shrinkwrap method wraps geometry using a Cartesian staircase mesh that is then projected and smoothed. A key parameter is the Max. cell size, which should be set slightly larger than the largest hole intended to be ignored, effectively sealing small gaps and holes automatically [9].

Table 1: Quantitative Impact of Simplification and Symmetry on FEA Performance

| Model / Technique | Original Element Count | Simplified Element Count | Reduction in Solve Time | Reduction in Memory Usage |
|---|---|---|---|---|
| Bracket Assembly (Symmetry) [10] | 132,000 | 33,000 (Quarter Model) | ~90% (10 min to 1 min) | Information Not Specified |
| 3D Cantilever Beam (BSCSC Method) [7] | 57,915×57,915 Matrix | Not Applicable (Matrix Optimization) | 72.06% | 66.13% |
| Engine Connecting Rod (BSCSC Method) [7] | 50,619×50,619 Matrix | Not Applicable (Matrix Optimization) | 68.65% | 65.85% |

Table 2: Summary of Core Geometry Simplification Methodologies

| Methodology | Description | Key Parameters | Best Use Cases |
|---|---|---|---|
| De-featuring [8] | Removal of small holes, fillets, blends, and sharp edges. | Feature size vs. global model size. | General simplification of overly detailed CAD models. |
| Geometry Abstraction [8] | Replacing complex features (threads, ribs) with simpler geometric shapes. | Level of detail required for analysis. | Models where specific complex features are not critical. |
| Convex Hull Wrapping [9] | Creates a faceted surface envelope around geometry. | Split planes (Interactive/Uniform), Merge tolerance. | Simplifying extremely complex or organic shapes for bulk flow analysis. |
| Cartesian Shrinkwrap [9] | Wraps geometry with a projected Cartesian staircase mesh. | Max. cell size, Number of smooth iterations. | Sealing holes and simplifying surface topology for CFD or thermal analysis. |

Leveraging Symmetry in FEA

Exploiting symmetry is a powerful modification to the standard FEA workflow that can dramatically reduce problem size. It involves modeling only a symmetric portion of the entire structure, such as a half, quarter, or eighth, and applying appropriate boundary conditions to simulate the presence of the full model [10] [6].

Application Notes

Rationale and Impact: When a structure's geometry, material properties, constraints, and loading conditions are symmetric about a plane, line, or axis, the response (displacements, stresses) will also be symmetric. Simulating a symmetric portion can reduce element counts by 50% for one symmetry plane, 75% for two, and so on, and the reduction in solve time is often larger still: the quarter-model bracket in Table 1 cut the element count by 75% and the solve time by about 90% [10]. Symmetry can also be exploited in the storage and solution of the global stiffness matrix, as demonstrated by the Blocked Symmetric Compressed Sparse Column (BSCSC) method, which leverages blocked symmetry to minimize memory usage and enhance computational efficiency [7].

Protocol 3: Implementing Planar Symmetry

- Create Sectioned Model: In the CAD environment, use an extruded cut or a split command to trim the solid model along the identified symmetry plane(s), creating a half, quarter, or eighth section [10].

- Apply Symmetry Constraints: On every face created by the section cut, apply a Symmetry Constraint. This constraint enforces that:

  - The displacement component perpendicular to the symmetry plane is zero.

  - The rotational components parallel to the symmetry plane are zero [10].

  - For a face on the YZ-plane, symmetry is applied in the X-direction (restricting X-translation and Y- and Z-rotation).

  - For a face on the XZ-plane, symmetry is applied in the Y-direction (restricting Y-translation and X- and Z-rotation) [10].

- Adjust Loads: If an applied load (e.g., pressure, force) is cut by a symmetry plane, its magnitude must be scaled to represent the load on the full model correctly. For instance, a 1000 lbf bearing load on a full model would be reduced to 500 lbf for a half-symmetry model [10].
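The bookkeeping in Protocol 3 can be sketched as three small helpers: which degrees of freedom a symmetry face restrains, what fraction of the model remains, and how a load cut by the symmetry plane(s) is scaled. The DOF labels (`UX`, `RY`, ...) and function names are illustrative assumptions, not the syntax of any particular FEA package.

```python
from typing import List

AXES = ("X", "Y", "Z")


def symmetry_constraints(normal_axis: str) -> List[str]:
    """DOFs fixed on a symmetry face whose normal is `normal_axis`.

    Fixes the translation along the normal and the rotations about the
    two in-plane axes.
    """
    normal = normal_axis.upper()
    if normal not in AXES:
        raise ValueError("normal_axis must be 'X', 'Y' or 'Z'")
    fixed_translation = [f"U{normal}"]
    fixed_rotations = [f"R{axis}" for axis in AXES if axis != normal]
    return fixed_translation + fixed_rotations


def modeled_fraction(n_symmetry_planes: int) -> float:
    """Fraction of the full model that is meshed (1/2 per plane)."""
    return 1.0 / (2 ** n_symmetry_planes)


def scaled_load(full_model_load: float, n_planes_cutting_load: int) -> float:
    """Load to apply on the reduced model when the planes cut the load."""
    return full_model_load * modeled_fraction(n_planes_cutting_load)


print(symmetry_constraints("X"))   # face on the YZ-plane -> ['UX', 'RY', 'RZ']
print(modeled_fraction(2))         # quarter model -> 0.25
print(scaled_load(1000.0, 1))      # 1000 lbf on a half model -> 500.0
```

Only loads that are actually split by a symmetry plane should be scaled; a load that lies wholly within the retained portion keeps its full magnitude.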

Table 3: Key Research Reagent Solutions for FEA Geometry Simplification

| Item / Solution | Function in Protocol | Specific Application Notes |
|---|---|---|
| CAD Export Formats (STEP, IGES) [8] | Neutral format for transferring 3D geometry from CAD to FEA software. | STEP files are preferred for transferring final geometry without feature history. |
| De-featuring Tools [9] [8] | Software functions to automatically or manually suppress holes, fillets, etc. | Critical for reducing mesh complexity in non-critical regions. |
| Shrinkwrap / Convex Hull Tools [9] | Automated geometry wrapping to create a simplified enclosure. | Uses parameters like "Max. cell size" to ignore small holes and gaps. |
| Symmetry Constraint [10] | Boundary condition that enforces symmetric behavior on a cut face. | Restricts translation normal to the plane and rotations parallel to the plane. |
| Blocked Symmetric Matrix Solver (BSCSC) [7] | Advanced solver that exploits matrix symmetry for efficient computation. | Reduces memory usage and solution time for models with symmetric properties. |

The strategic modification of standard FEA protocol through rigorous geometry simplification and the exploitation of symmetry presents a significant opportunity for advancing computational mechanics research. The application notes and detailed protocols provided herein offer a framework for researchers to implement these techniques effectively. By systematically removing non-essential features and leveraging inherent symmetry, scientists and engineers can achieve substantial reductions in solve time and memory usage, as in the examples tabulated above, thereby enabling the analysis of larger, more complex systems and facilitating more iterative design exploration, which is fundamental to innovation in fields ranging from automotive to aerospace engineering.

Selecting Appropriate Element Types and Understanding Their Impact

The selection of appropriate element types constitutes a foundational step in the Finite Element Analysis (FEA) protocol, with significant implications for the accuracy, computational efficiency, and predictive capability of computational models across engineering and scientific disciplines [11]. Within the specific context of pharmaceutical research and development, where FEA is increasingly applied to model complex physical phenomena—from the structural integrity of manufacturing equipment to the mechanical behavior of solid dosage forms—the rationale behind element selection directly impacts the reliability of simulation-driven decisions [12]. A "fit-for-purpose" methodology, which strategically aligns the FEA approach with the key question of interest and the specific context of use, is paramount for generating credible evidence [13]. This application note details the core principles, provides quantitative guidelines, and establishes experimental protocols for the informed selection of element types, thereby supporting the modification and enhancement of standard FEA practices within a research environment.

Core Principles of Element Selection

The finite element method discretizes a continuous domain into smaller, simpler pieces called elements. The behavior of these elements under load is governed by their type and formulation, which in turn defines the fidelity of the overall simulation.

2.1 Element Dimensionality and Shape

Elements are categorized first by their dimensionality and shape, which should be matched to the geometry and physics of the problem:

- Solid Elements (3D): Used for modeling bulky components where stress and strain are three-dimensional. Common types include tetrahedrons (4-node or 10-node) and hexahedrons or "bricks" (8-node or 20-node) [14].

- Shell Elements: Ideal for thin-walled structures (e.g., equipment housings, container walls) where thickness is significantly smaller than other dimensions. They efficiently capture bending and membrane actions [14].

- Beam Elements: Used to model slender structural members (e.g., support frames, agitator shafts) that experience axial, bending, and torsional loads [14].

2.2 Element Order and Interpolation

The order of an element defines the polynomial order of its shape functions, which interpolate the displacement field within the element.

- First-Order (Linear) Elements: Use linear shape functions. These elements have nodes only at their corners. They are less computationally expensive but can be overly stiff in bending, potentially requiring a finer mesh for accuracy [15].

- Second-Order (Quadratic) Elements: Use quadratic shape functions and include mid-side nodes. They can better capture curved geometries and complex stress gradients, often providing superior accuracy with a coarser mesh compared to linear elements [16] [15].

Table 1: Comparison of Common Solid Element Types

| Element Type | Typical Node Count | Order | Geometric Affinity | Strengths | Common Use Cases in Pharma |
|---|---|---|---|---|---|
| Tetrahedron (TET) | 4 (linear), 10 (quadratic) | 1st, 2nd | Complex, organic shapes | Automatic meshing of complex geometries [14] | Modeling intricate vessel internals, tablet punches |
| Hexahedron (HEX) | 8 (linear), 20 (quadratic) | 1st, 2nd | Regular, structured geometries | Higher accuracy and faster convergence for a given mesh size [14] | Stress analysis of simple rollers, standardized pipe sections |
| Wedges (Prisms) | 6 (linear), 15 (quadratic) | 1st, 2nd | Transition zones | Used to create a transition from a HEX-dominant to a TET-dominant mesh | Meshing thin, curved surfaces or boundary layers |

Quantitative Guidelines and Selection Workflow

Selecting an element is a balance between computational cost and the required accuracy. The following guidelines and workflow provide a structured approach.

3.1 Mesh Sensitivity and Convergence

A mesh sensitivity study is the most scientific method for determining an appropriate element size and type. The core principle is to iteratively refine the mesh until the key output quantities (e.g., maximum stress, displacement) show negligible change with further refinement, indicating convergence [15].

Table 2: Sample Results from a Mesh Sensitivity Study on a Cantilever Beam

| Element Type | Global Element Size (mm) | Max. Displacement (mm) | Error vs. Calc. (%) | Max. Principal Stress (MPa) | Error vs. Calc. (%) | Compute Time (s) |
|---|---|---|---|---|---|---|
| C3D8R (Linear Hex) | 4.0 | 0.403 | 5.0% | 240.1 | 12.2% | 12 |
| C3D8R (Linear Hex) | 2.0 | 0.417 | 1.7% | 218.5 | 2.2% | 35 |
| C3D8R (Linear Hex) | 1.0 | 0.422 | 0.6% | 215.0 | 0.5% | 189 |
| C3D10 (Quad. Tet) | 4.0 | 0.424 | 0.1% | 214.1 | 0.1% | 253 |
| Handbook Calculation | N/A | 0.424 | - | 213.9 | - | - |

Data adapted from a benchmark study on a cantilevered steel rod [15].

3.2 Guidelines for Specific Physics

The required mesh density is often dictated by the physics of the problem. For instance, in wave propagation simulations, such as those used in laser ultrasonic testing for material characterization, the element size $l_e$ must be small enough to capture the highest frequency component of the wave [16]:

$$l_e \le \frac{c_{\min}}{N \, f_{\max}}$$

where $c_{\min}$ is the minimum wave speed, $f_{\max}$ is the maximum frequency, and $N$ is the number of nodes per wavelength. Studies recommend 6-8 nodes per wavelength for quadratic elements and 20-34 nodes per wavelength for linear elements to avoid numerical dispersion and inaccuracies [16].
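As a quick illustration of this sizing rule, the helper below evaluates the upper bound on element size for a given wave speed, maximum frequency, and nodes-per-wavelength target. The wave speed and frequency are hypothetical example values, not data from [16].

```python
def max_element_size(c_min: float, f_max: float, nodes_per_wavelength: float) -> float:
    """Upper bound on element size: c_min / (N * f_max).

    Units follow the inputs (m/s and Hz give metres).
    """
    if c_min <= 0 or f_max <= 0 or nodes_per_wavelength <= 0:
        raise ValueError("all inputs must be positive")
    return c_min / (nodes_per_wavelength * f_max)


# Hypothetical case: slowest wave speed 3000 m/s, content up to 5 MHz.
c_min_m_per_s = 3000.0
f_max_hz = 5.0e6

for label, n in (("quadratic, N = 8", 8), ("linear, N = 20", 20)):
    size_mm = max_element_size(c_min_m_per_s, f_max_hz, n) * 1e3
    print(f"{label}: element size <= {size_mm:.3f} mm")
```

Because the bound scales inversely with N, the linear-element recommendation leads to a markedly finer mesh than the quadratic one for the same frequency content.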

Figure 1: Workflow for selecting and validating FEA elements.

Experimental Protocols for FEA Validation

4.1 Protocol: Mesh Sensitivity Analysis

This protocol provides a step-by-step methodology for establishing a converged mesh, a critical prerequisite for any high-fidelity simulation.

- Objective: To determine the element size and type that yields results independent of further mesh refinement for a specific quantity of interest (QOI).

- Materials: FEA software with meshing and solving capabilities (e.g., ANSYS, Abaqus, COMSOL).

- Procedure:

  1. Model Setup: Create a simplified version of your geometry or a representative benchmark problem. Apply the relevant material properties, boundary conditions, and loads.

  2. Initial Run: Generate an initial, relatively coarse mesh. Run the simulation and record the QOI (e.g., maximum von Mises stress, natural frequency, critical displacement).

  3. Systematic Refinement: Refine the mesh globally by reducing the average element size by a factor (e.g., 1.5x to 2x). Run the simulation again and record the QOI.

  4. Iteration: Repeat step 3 for several mesh refinement levels.

  5. Local Refinement: If the geometry or physics dictates stress concentrations (e.g., around a sharp corner or in a contact zone), apply local mesh refinement in these regions and repeat the analysis.

  6. Convergence Check: Plot the QOI against a measure of mesh density (e.g., number of elements, nodes, or inverse of element size). The solution is considered converged when the change in the QOI between successive refinements falls below a pre-defined tolerance (e.g., 2-5%).

- Data Analysis: The results, as tabulated in Table 2, will show the convergence trend. The mesh configuration just before the point of diminishing returns (where large increases in compute time yield negligible accuracy gains) is typically selected for production runs.
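The convergence check in this protocol can be sketched as follows, using the three C3D8R displacement values from Table 2 as input. The 2% tolerance is one choice from the range suggested above, and the function names are illustrative.

```python
from typing import List, Optional


def relative_changes(qoi_values: List[float]) -> List[float]:
    """Relative change of the QOI between successive refinement levels."""
    return [
        abs(curr - prev) / abs(curr)
        for prev, curr in zip(qoi_values, qoi_values[1:])
    ]


def first_converged_level(qoi_values: List[float], tolerance: float) -> Optional[int]:
    """Index of the first mesh whose change from the previous mesh is below
    `tolerance`, or None if no refinement level satisfies it."""
    for level, change in enumerate(relative_changes(qoi_values), start=1):
        if change < tolerance:
            return level
    return None


# Max. displacement (mm) for C3D8R at element sizes 4.0, 2.0 and 1.0 mm (Table 2).
element_sizes_mm = [4.0, 2.0, 1.0]
displacement_mm = [0.403, 0.417, 0.422]

for size, change in zip(element_sizes_mm[1:], relative_changes(displacement_mm)):
    print(f"refined to {size} mm: change = {change:.2%}")

level = first_converged_level(displacement_mm, tolerance=0.02)
if level is not None:
    print(f"converged at element size {element_sizes_mm[level]} mm")
```

This criterion measures the change between meshes, not the error against the handbook value, so it remains usable when no analytical reference exists.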

4.2 Protocol: Validation Against Analytical Solutions

This protocol ensures that the FEA model itself, including element selection, is capable of reproducing known theoretical results.

- Objective: To benchmark the accuracy of the selected element type and formulation against a known analytical solution.

- Materials: FEA software; analytical solution for a standard problem (e.g., Euler-Bernoulli beam theory, Lamé's solution for thick-walled cylinders).

- Procedure:

  1. Problem Selection: Choose a simple problem with a known analytical solution that exercises similar physics to your target application (e.g., cantilever bending for structural analysis, heat conduction through a slab for thermal analysis).

  2. FEA Modeling: Construct an FEA model of this simple problem using the candidate element type(s).

  3. Execution and Comparison: Solve the FEA model and compare key outputs (stress, displacement, temperature) directly with the analytical solution.

  4. Error Quantification: Calculate the relative error between the FEA and analytical results.

- Data Analysis: A low error (e.g., <5%) provides confidence in the element's performance for that class of problem. Consistently high errors may indicate the need for a higher-order element or a different element formulation [17].
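For the cantilever example, the analytical reference is the Euler-Bernoulli tip deflection of an end-loaded beam, δ = F·L³ / (3·E·I). The sketch below computes that reference for a solid circular rod and quantifies the relative error of an FEA result. The load, length, modulus, and diameter are hypothetical inputs, and the "FEA" deflection is a made-up placeholder, not a simulation output.

```python
import math


def circular_second_moment(diameter: float) -> float:
    """Second moment of area of a solid circular section: pi * d^4 / 64."""
    return math.pi * diameter ** 4 / 64.0


def cantilever_tip_deflection(force: float, length: float,
                              youngs_modulus: float, second_moment: float) -> float:
    """Euler-Bernoulli tip deflection for a point load at the free end."""
    return force * length ** 3 / (3.0 * youngs_modulus * second_moment)


def relative_error(fea_value: float, analytical_value: float) -> float:
    """Relative error of the FEA result against the analytical reference."""
    return abs(fea_value - analytical_value) / abs(analytical_value)


# Hypothetical benchmark in N, mm, MPa: steel rod, 10 mm diameter, 100 mm long.
inertia_mm4 = circular_second_moment(10.0)
delta_ref_mm = cantilever_tip_deflection(100.0, 100.0, 200_000.0, inertia_mm4)

delta_fea_mm = 0.33  # placeholder standing in for a simulation result
error = relative_error(delta_fea_mm, delta_ref_mm)
print(f"analytical = {delta_ref_mm:.4f} mm, error = {error:.2%}")
print("acceptable" if error < 0.05 else "revisit element choice or mesh")
```

Euler-Bernoulli theory neglects shear deformation, so a small residual difference is expected for short, thick beams even when the mesh is converged.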

The Scientist's Toolkit: Research Reagent Solutions

The following table outlines key "research reagents"—in this context, the essential software and material tools—required for implementing the protocols described in this note.

Table 3: Essential Research Tools for FEA Protocol Development

| Tool Name / Category | Function / Description | Example Use-Case in Protocol |
|---|---|---|
| General-Purpose FEA Software | Provides a unified environment for pre-processing, solving, and post-processing a wide range of physics problems. | Performing the Mesh Sensitivity Analysis (Protocol 4.1) on a component. |
| Abaqus/Standard (Implicit) | A robust solver particularly renowned for advanced nonlinear and contact analysis [18]. | Validating models involving complex material behavior or large deformations. |
| ANSYS Mechanical | A comprehensive FEA suite known for its multiphysics capabilities and robust structural analysis tools [18]. | Setting up and solving coupled physics problems (e.g., thermo-mechanical stress). |
| Altair HyperMesh | An advanced pre-processor celebrated for its high-quality meshing capabilities on complex geometries [18]. | Creating the hex-dominant or hybrid meshes required for the convergence study. |
| Linear Static Solver | An algorithm that solves the system of equations [K]{u}={F}, assuming linear elastic material behavior and small deformations. | The primary solver used for initial benchmark and sensitivity studies. |
| High-Performance Computing (HPC) Cluster | A computing system with multiple processors/cores and large memory, enabling the solution of large, high-fidelity models. | Reducing the solve time for the multiple iterations required in a mesh sensitivity study. |

Figure 2: Core components of an FEA software solution.

Within the broader research on modifications of standard Finite Element Analysis (FEA) protocol, the accurate assignment of material properties stands as a critical determinant of simulation fidelity. The transition from simple linear elastic models to sophisticated hyperelastic and composite representations marks a significant evolution in computational mechanics, enabling researchers to capture the complex behavior of engineering and biological materials with increasing precision. This protocol outlines a systematic methodology for assigning material properties, a process that fundamentally affects the stiffness matrix, stress-strain behavior, and convergence of FEA solutions [19]. Proper implementation ensures that computational models reliably predict real-world material responses, from the predictable deformation of metals to the large-strain behavior of elastomers and the direction-dependent characteristics of composite structures.

Conceptual Framework: The Material Model Spectrum

The selection of an appropriate material model is guided by the intrinsic behavior of the material under investigation and the deformation regimes expected in service. The following continuum represents the spectrum of material model complexity:

Linear Elastic Materials

Linear elastic materials obey Hooke's Law, where stress is directly proportional to strain. This relationship holds for small strains (typically below about 1%) and characterizes materials like metals and ceramics under working loads. For an isotropic material, the model requires only two independent parameters, Young's modulus (E) and Poisson's ratio (ν), making it computationally efficient but limited to small-strain applications [20].

Hyperelastic Materials

Hyperelastic models describe materials capable of undergoing large, reversible deformations (often 100%-700%) with nonlinear stress-strain responses. These models are defined by strain energy density functions (W) that relate deformation to stored energy. Common hyperelastic models include:

- Neo-Hookean: The simplest form, W = C₁(I₁ - 3), suitable for basic rubber elasticity [21].

- Mooney-Rivlin: A two-parameter model, W = C₁(I₁ - 3) + C₂(I₂ - 3), offering improved accuracy over Neo-Hookean for certain elastomers [21].

- Yeoh: A three-term model, W = C₁(I₁ - 3) + C₂(I₁ - 3)² + C₃(I₁ - 3)³, particularly effective for carbon-black filled rubbers [21].

- Arruda-Boyce: An eight-chain model based on statistical mechanics, effective in capturing the stretch-stiffening behavior of polymers [21].
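To make the strain energy expressions above concrete, the following minimal sketch evaluates the Neo-Hookean, Mooney-Rivlin, and Yeoh forms for an incompressible uniaxial stretch. The function names and the coefficient values in the usage lines are illustrative assumptions, not values taken from [21].

```python
import numpy as np

def invariants_uniaxial(stretch):
    """I1 and I2 for incompressible uniaxial stretch (lam, lam**-0.5, lam**-0.5)."""
    lam = np.asarray(stretch, dtype=float)
    i1 = lam**2 + 2.0 / lam
    i2 = 2.0 * lam + 1.0 / lam**2
    return i1, i2

def neo_hookean(i1, c1):
    """W = C1 (I1 - 3)."""
    return c1 * (i1 - 3.0)

def mooney_rivlin(i1, i2, c1, c2):
    """W = C1 (I1 - 3) + C2 (I2 - 3)."""
    return c1 * (i1 - 3.0) + c2 * (i2 - 3.0)

def yeoh(i1, c1, c2, c3):
    """W = C1 (I1 - 3) + C2 (I1 - 3)^2 + C3 (I1 - 3)^3."""
    x = i1 - 3.0
    return c1 * x + c2 * x**2 + c3 * x**3

# Hypothetical coefficients (MPa), chosen only to exercise the functions.
i1, i2 = invariants_uniaxial(2.0)          # I1 = 5.0, I2 = 4.25
w_nh = neo_hookean(i1, c1=0.5)
w_mr = mooney_rivlin(i1, i2, c1=0.5, c2=0.1)
w_yeoh = yeoh(i1, c1=0.5, c2=0.1, c3=0.01)
print(w_nh, w_mr, w_yeoh)
```

In the undeformed state (stretch of 1) both invariants equal 3, so every model returns zero stored energy, which is a quick sanity check on any implementation.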

Composite and Anisotropic Materials

Composite materials exhibit direction-dependent (anisotropic) properties. They are categorized as:

- Orthotropic: Possessing three mutually perpendicular planes of symmetry (e.g., unidirectional fiber composites).

- Anisotropic: Exhibiting no planes of symmetry, with properties varying with direction.

Modeling composites requires defining the full set of independent elastic constants (nine for orthotropic materials, up to 21 for general anisotropy) and often incorporating failure criteria [19].
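As an illustration of what the nine orthotropic constants look like in practice, the sketch below assembles the compliance matrix from engineering constants and inverts it to obtain the stiffness matrix. The Voigt ordering (11, 22, 33, 23, 13, 12) and the numerical values are assumptions made for this example; individual FEA packages may order the shear terms differently.

```python
import numpy as np

def orthotropic_stiffness(E1, E2, E3, nu12, nu13, nu23, G12, G13, G23):
    """Return the 6x6 stiffness matrix from nine engineering constants.

    Assumed Voigt order: 11, 22, 33, 23, 13, 12 (engineering shear strains).
    """
    S = np.zeros((6, 6))                      # compliance matrix
    S[0, 0], S[1, 1], S[2, 2] = 1.0 / E1, 1.0 / E2, 1.0 / E3
    S[0, 1] = S[1, 0] = -nu12 / E1
    S[0, 2] = S[2, 0] = -nu13 / E1
    S[1, 2] = S[2, 1] = -nu23 / E2
    S[3, 3], S[4, 4], S[5, 5] = 1.0 / G23, 1.0 / G13, 1.0 / G12
    return np.linalg.inv(S)

def is_physically_admissible(C):
    """A stable elastic material needs a symmetric, positive definite stiffness."""
    symmetric = np.allclose(C, C.T)
    return bool(symmetric and np.all(np.linalg.eigvalsh(0.5 * (C + C.T)) > 0.0))

# Hypothetical unidirectional carbon/epoxy ply (GPa), for illustration only.
C_ply = orthotropic_stiffness(140.0, 10.0, 10.0, 0.3, 0.3, 0.4, 5.0, 5.0, 3.5)
print(is_physically_admissible(C_ply))
```

Checking positive definiteness before running a model is a cheap way to catch inconsistent combinations of moduli and Poisson's ratios, which otherwise tend to surface as solver failures.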

Experimental Protocols for Material Characterization

Protocol 1: Uniaxial Compression/Tension Testing for Linear Elastic and Elastoplastic Properties

Objective: To determine Young's modulus, yield strength, and plastic hardening parameters for metals and polymers.

Materials and Equipment:

- Universal testing machine (e.g., Instron)

- Extensometer or strain gauge

- Standardized specimen geometry (e.g., ASTM E8 dog-bone specimen for metals; ASTM D638 for plastics)

- Calipers for dimensional verification

Procedure:

- Specimen Preparation: Machine specimens to standardized dimensions. Measure and record cross-sectional area at multiple locations.

- Machine Setup: Install appropriate load cell. Mount specimen in grips ensuring axial alignment. Attach extensometer to gauge section.

- Testing: Apply monotonic load under displacement control at constant strain rate (typically 10⁻³ to 10⁻² s⁻¹). Record load and displacement continuously until fracture or sufficient plastic strain is achieved.

- Data Processing: Convert load-displacement data to engineering stress (σ = P/A₀) and engineering strain (ε = ΔL/L₀). Calculate Young's modulus from the linear region of the stress-strain curve. Determine yield strength using 0.2% offset method for metals.

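FEA Implementation: For linear elasticity, input E and ν. For plasticity, input the yield stress and hardening parameters (e.g., isotropic, kinematic) derived from the post-yield curve [22]; most solvers expect this hardening data as true stress versus plastic strain rather than engineering values.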
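The data-processing step of Protocol 1 can be sketched as follows. This is a minimal illustration, assuming a synthetic bilinear test record; the specimen dimensions, the elastic fitting window, and all material values are invented for the example and are not measurements from [22].

```python
import numpy as np

def engineering_curve(load_n, extension_mm, area_mm2, gauge_length_mm):
    """Convert load-extension data to engineering stress (MPa) and strain (-)."""
    stress = np.asarray(load_n, dtype=float) / area_mm2
    strain = np.asarray(extension_mm, dtype=float) / gauge_length_mm
    return stress, strain

def youngs_modulus(stress, strain, elastic_limit_strain):
    """Slope of a least-squares line through the initial linear region."""
    mask = strain <= elastic_limit_strain
    slope, _ = np.polyfit(strain[mask], stress[mask], 1)
    return float(slope)

def offset_yield_strength(stress, strain, modulus, offset=0.002):
    """Stress where the curve meets a line of slope E shifted by the offset strain."""
    gap = stress - modulus * (strain - offset)   # positive until the curves cross
    below = np.where(gap <= 0.0)[0]
    if below.size == 0:
        raise ValueError("curve never crosses the offset line")
    i = below[0]
    if i == 0:
        return float(stress[0])
    t = gap[i - 1] / (gap[i - 1] - gap[i])       # linear interpolation weight
    return float(stress[i - 1] + t * (stress[i] - stress[i - 1]))

# Synthetic bilinear record (hypothetical values, MPa and mm).
E_TRUE, SIGMA_Y, HARDENING = 110_000.0, 800.0, 2_000.0
AREA, GAUGE = 20.0, 50.0
strain_grid = np.linspace(0.0, 0.02, 401)
eps_y = SIGMA_Y / E_TRUE
stress_model = np.where(strain_grid <= eps_y,
                        E_TRUE * strain_grid,
                        SIGMA_Y + HARDENING * (strain_grid - eps_y))
load, extension = stress_model * AREA, strain_grid * GAUGE

stress, strain = engineering_curve(load, extension, AREA, GAUGE)
E_fit = youngs_modulus(stress, strain, elastic_limit_strain=0.004)
sigma_02 = offset_yield_strength(stress, strain, E_fit)
print(f"E = {E_fit:.0f} MPa, 0.2% offset yield = {sigma_02:.1f} MPa")
```

With real data the elastic fitting window has to be chosen by inspecting the curve, and noise usually calls for smoothing before the offset intersection is located.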

Protocol 2: Multi-Axial Testing for Hyperelastic Parameter Identification

Objective: To characterize the finite deformation behavior of elastomers and soft tissues for calibrating hyperelastic models.

Materials and Equipment:

- Biaxial testing system with independent axial controls

- Non-contact strain measurement system (digital image correlation)

- Hyperelastic specimen (e.g., silicone rubber sheet)

- Environmental chamber (if temperature dependence is relevant)

Procedure:

- Specimen Preparation: Cut square specimens with fiducial markers for strain tracking. Measure initial dimensions.

- Testing Configuration: Mount specimen in biaxial tester with four or more grips. Apply displacement in two perpendicular directions according to predefined stretch ratios (e.g., equibiaxial, 1:0.5, 0.5:1).

- Data Acquisition: Record force responses in both directions simultaneously while tracking full-field deformation using digital image correlation.

- Model Calibration: Calculate deformation gradient F and Cauchy stress for each loading state. Use nonlinear regression to optimize hyperelastic model parameters (e.g., C₁, C₂ for Mooney-Rivlin) that best fit the multi-axial experimental data.

FEA Implementation: Input optimized hyperelastic parameters into the appropriate material model definition in the FEA software [21].
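The calibration step can be sketched for the Mooney-Rivlin model. Because this model is linear in C₁ and C₂, the sketch uses ordinary linear least squares rather than a general nonlinear optimizer; models such as Arruda-Boyce would need an iterative fit instead. The incompressibility assumption, the two loading modes, and the synthetic "measurements" are all assumptions made for illustration.

```python
import numpy as np

def mr_design_rows(stretch, mode):
    """Columns multiply C1 and C2 in the incompressible Cauchy stress expression."""
    lam = np.asarray(stretch, dtype=float)
    if mode == "uniaxial":       # sigma = 2 (lam^2 - 1/lam) (C1 + C2 / lam)
        base = 2.0 * (lam**2 - 1.0 / lam)
        return np.column_stack([base, base / lam])
    if mode == "equibiaxial":    # sigma = 2 (lam^2 - lam^-4) (C1 + C2 lam^2)
        base = 2.0 * (lam**2 - lam**-4)
        return np.column_stack([base, base * lam**2])
    raise ValueError(f"unknown loading mode: {mode}")

def fit_mooney_rivlin(datasets):
    """datasets: iterable of (mode, stretches, cauchy_stresses). Returns (C1, C2)."""
    A = np.vstack([mr_design_rows(lam, mode) for mode, lam, _ in datasets])
    b = np.concatenate([np.asarray(sig, dtype=float) for _, _, sig in datasets])
    coeffs, *_ = np.linalg.lstsq(A, b, rcond=None)
    return coeffs

# Synthetic data generated from hypothetical "true" parameters (MPa).
c_true = np.array([0.20, 0.05])
lam_u = np.linspace(1.05, 2.0, 20)
lam_b = np.linspace(1.05, 1.6, 20)
data = [
    ("uniaxial", lam_u, mr_design_rows(lam_u, "uniaxial") @ c_true),
    ("equibiaxial", lam_b, mr_design_rows(lam_b, "equibiaxial") @ c_true),
]
c_fit = fit_mooney_rivlin(data)
print(c_fit)
```

Fitting several loading modes at once is the point of the multi-axial protocol: parameters identified from uniaxial data alone often predict biaxial behavior poorly.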

Protocol 3: CT-Based Material Property Mapping for Heterogeneous Structures

Objective: To assign spatially varying material properties in FEA models derived from computed tomography (CT) data of heterogeneous materials like bone.

Materials and Equipment:

- Clinical or micro-CT scanner

- Calibration phantom with known density standards

- Image processing software (e.g., BONEMAT routine)

- FE meshing software

Procedure:

- CT Scanning: Acquire 3D CT data set of the structure (e.g., human femur) with appropriate resolution. Include density calibration phantom in scan.

- Calibration: Establish relationship between Hounsfield Units (HU) from CT and apparent density (ρ_app) using phantom data.

- Density-Elasticity Relationship: Apply empirical relationship (e.g., E = a + b·ρ_app^c) to convert density to elastic modulus at each voxel [23].

- FE Mesh Generation: Create FE mesh from segmented CT data. For voxel-based methods, preserve grid structure. For commercial meshers, generate surface or volume mesh.

- Property Assignment: For each element, compute average Young's modulus from all CT grid points located within the element volume, preserving all density information [23].

FEA Implementation: Assign heterogeneous material properties to the FE model using the mapped modulus values. Analyze sensitivity to discretization level [23].
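The mapping chain in Protocol 3 (HU to density, density to modulus, voxel values averaged per element) can be sketched as below. This is not the BONEMAT implementation; the phantom readings, the power-law coefficients, and the voxel-to-element lookup are placeholder assumptions that would have to be replaced by scanner-specific calibration data and a density-elasticity law appropriate to the anatomical site.

```python
import numpy as np

def calibrate_hu_to_density(phantom_hu, phantom_density):
    """Linear fit rho = slope * HU + intercept from phantom inserts."""
    slope, intercept = np.polyfit(phantom_hu, phantom_density, 1)
    return float(slope), float(intercept)

def density_to_modulus(rho, a, b, c):
    """Empirical law E = a + b * rho**c; negative densities are clipped to zero."""
    rho = np.clip(np.asarray(rho, dtype=float), 0.0, None)
    return a + b * rho**c

def element_moduli(voxel_hu, voxel_element_ids, n_elements, calibration, law):
    """Average the voxel-wise modulus over the voxels assigned to each element."""
    slope, intercept = calibration
    rho = slope * np.asarray(voxel_hu, dtype=float) + intercept
    e_voxel = density_to_modulus(rho, *law)
    ids = np.asarray(voxel_element_ids, dtype=int)
    sums = np.bincount(ids, weights=e_voxel, minlength=n_elements)
    counts = np.bincount(ids, minlength=n_elements)
    return sums / np.maximum(counts, 1)   # elements with no voxels get 0

# Hypothetical phantom (HU vs g/cm^3) and placeholder law coefficients (MPa).
calibration = calibrate_hu_to_density([0, 400, 800, 1200], [0.0, 0.3, 0.6, 0.9])
law = (0.0, 6850.0, 1.49)
E_elem = element_moduli(
    voxel_hu=[400, 800, 800, 1200],
    voxel_element_ids=[0, 0, 1, 1],
    n_elements=2,
    calibration=calibration,
    law=law,
)
print(E_elem)
```

Averaging the modulus rather than the density matters because the power law is nonlinear, so the two orders of operation give different element values.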

Quantitative Comparison of Material Models

Table 1: Hyperelastic Model Comparison for Tissue-Mimicking Materials [21]

| Constitutive Model | Strain Energy Function (W) | Number of Parameters | Suitability for AE-SWS Data |

|---|---|---|---|

| Neo-Hookean | C₁(I₁ - 3) | 1 | Inadequate |

| Mooney-Rivlin | C₁(I₁ - 3) + C₂(I₂ - 3) | 2 | Inadequate |

| Yeoh | C₁(I₁ - 3) + C₂(I₁ - 3)² + C₃(I₁ - 3)³ | 3 | Good |

| Demiray-Fung | A₁[exp(α(I₁ - 3)) - 1] | 2 | Good |

| Arruda-Boyce | Σ Cₙ(I₁ - 3)ⁿ | 5 | Good |

| Veronda-Westmann | A₁[exp(α(I₁ - 3)) - 1] + B₁/β(I₂ - 3) | 4 | Excellent |

Table 2: Mechanical Performance of Ti6Al4V Lattice Structures (Experimental vs. FEA) [22]

| Lattice Type | Porosity (%) | Experimental Compressive Strength (MPa) | FEA Compressive Strength (MPa) | Specific Energy Absorption (J/g) | Deformation Mechanism |

|---|---|---|---|---|---|

| FCC-Z | 50 | 98.4 | 102.7 | 12.8 | Layer-by-layer fracture |

| FCC-Z | 70 | 45.2 | 47.1 | 8.3 | Layer-by-layer fracture |

| BCC-Z | 50 | 72.6 | 75.9 | 9.7 | Shear band formation |

| BCC-Z | 70 | 28.3 | 29.5 | 5.2 | Shear band formation |
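Using the compressive strengths listed in Table 2, the agreement between FEA and experiment can be quantified with a simple relative error. The sketch below is one way to do this; the 5% tolerance is an assumed acceptance level for the example, not a value prescribed by [22].

```python
# (lattice type, porosity %, experimental strength MPa, FEA strength MPa)
rows = [
    ("FCC-Z", 50, 98.4, 102.7),
    ("FCC-Z", 70, 45.2, 47.1),
    ("BCC-Z", 50, 72.6, 75.9),
    ("BCC-Z", 70, 28.3, 29.5),
]

def percent_error(predicted, measured):
    """Signed relative error of the prediction, in percent of the measurement."""
    return 100.0 * (predicted - measured) / measured

def within_tolerance(predicted, measured, tolerance_pct=5.0):
    """True when the absolute relative error is below the chosen tolerance."""
    return abs(percent_error(predicted, measured)) < tolerance_pct

errors = {(name, por): percent_error(fea, exp) for name, por, exp, fea in rows}
for key, err in errors.items():
    print(key, f"{err:+.1f}%")
```

For these four configurations the FEA values are consistently higher than the measurements, each by a little over 4%, which points to a systematic offset rather than random scatter.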

Research Reagent Solutions: Essential Materials for FEA Validation

Table 3: Key Materials for Experimental Characterization and FEA Validation

| Material/Reagent | Function in Research | Example Application |

|---|---|---|

| Ti6Al4V-ELI Powder | Metallic lattice structure fabrication | Additively manufactured porous implants for biomedical applications [22] |

| Unidirectional Carbon Fiber Prepreg | Anisotropic composite material | Energy-storing prosthetic blades with tailored bending stiffness [24] |

| Silicone Rubber Elastomers | Hyperelastic material calibration | Soft tissue mimics for biomedical device testing [21] |

| Tissue-Mimicking Phantom Materials | Ultrasound elastography validation | Calibration of shear wave speed measurements for soft tissue characterization [21] |

| Calibration Phantoms | CT number to density conversion | Quantitative mapping of bone mineral density for patient-specific FEA [23] |

Visualization of Workflows

Figure 1: Comprehensive workflow for assigning material properties in modified FEA protocols, integrating experimental characterization with computational implementation.

Figure 2: Detailed protocol for CT-based material property mapping, a key methodology for patient-specific and heterogeneous material modeling.

Implementation in Modified FEA Protocols

The assignment of accurate material properties represents a fundamental modification to standard FEA protocols, shifting from simplified homogeneous assumptions to spatially varying, behaviorally complex representations. Implementation requires careful consideration of:

Software Capabilities: Commercial FEA packages (e.g., Ansys, Abaqus) offer extensive material libraries, but custom user material subroutines (e.g., UMAT in Abaqus, USERMAT in Ansys) may be required for advanced constitutive models [19] [21].

Computational Efficiency: Complex material models significantly increase computational cost. Strategies include homogenization techniques for composite materials and selective refinement where material gradients are steepest.

Experimental Validation: As demonstrated in Ti6Al4V lattice structures, correlation between FEA predictions and experimental measurements remains essential for protocol verification [22]. Validation metrics should include not only ultimate strength but also deformation mechanisms, energy absorption, and strain distributions.

Quality Control Framework: Adopting a Quality by Design (QbD) approach ensures robust material assignment. This includes defining Critical Quality Attributes (CQAs) for the FEA model, identifying Critical Material Attributes (CMAs) from experimental data, and establishing a control strategy for material property management throughout the analysis process [25].

This systematic approach to material property assignment enhances the predictive capability of FEA across diverse applications, from biomedical implant design to composite material development, establishing a refined protocol that reliably bridges computational prediction and experimental observation.

Establishing Realistic Boundary Conditions and Loads

In finite element analysis (FEA), boundary conditions and loads define how structures interact with their environment and are therefore fundamental to obtaining physically meaningful results. Establishing realistic boundary conditions is particularly challenging when moving from idealized academic problems to real-world engineering applications, where interfaces are rarely perfectly fixed or free. Boundary conditions are essential constraints that define how a structure or material behaves at its edges or surfaces, while loads represent the external forces, pressures, or displacements applied to the system [26].

Realistic boundary conditions are the foundation of accurate simulation outcomes. Incorrect or oversimplified boundary conditions can lead to models that are either too stiff or too flexible, producing unreliable stress distributions and displacement fields [27]. As noted by engineering professionals, "A model can easily become too stiff or too soft if you're not careful, especially when you're trying to represent how a structure interfaces with its surroundings" [27]. This application note provides a framework for establishing realistic boundary conditions and loads within modified FEA protocols, with specific methodologies and examples relevant to researchers and engineers across biomedical, aerospace, and energy sectors.

Theoretical Foundation of Boundary Conditions

Classification and Definition

Boundary conditions in FEA are typically categorized into two primary types:

Dirichlet Boundary Conditions: These prescribe specific values for degrees of freedom at boundaries, such as fixed displacements or rotations. For instance, specifying zero displacement at a support location constitutes a Dirichlet condition [26].

Neumann Boundary Conditions: These prescribe the derivative of the primary variable at the boundary rather than its value; in structural analysis this corresponds to tractions, forces, or other fluxes. Applying a known force or pressure to a surface represents a Neumann condition [26].
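The two condition types can be seen side by side in a one-dimensional bar model: a fixed displacement at one end (Dirichlet) and an applied force at the other (Neumann). The sketch below is a minimal linear static solve of [K]{u} = {F} under these assumptions; the bar properties and load are hypothetical.

```python
import numpy as np

def solve_bar(n_elements, length, EA, tip_force):
    """Axial bar fixed at node 0 with a point force at the last node."""
    n_nodes = n_elements + 1
    k = EA / (length / n_elements)            # stiffness of one two-node element
    K = np.zeros((n_nodes, n_nodes))
    for e in range(n_elements):
        K[e:e + 2, e:e + 2] += k * np.array([[1.0, -1.0], [-1.0, 1.0]])

    F = np.zeros(n_nodes)
    F[-1] = tip_force                          # Neumann condition: prescribed force

    free = np.arange(1, n_nodes)               # Dirichlet condition: u[0] = 0
    u = np.zeros(n_nodes)
    u[free] = np.linalg.solve(K[np.ix_(free, free)], F[free])

    reaction = float(K[0] @ u)                 # support force at the fixed node
    return u, reaction

# Hypothetical bar: 1 m long, EA = 1e6 N, 1000 N tensile tip load.
u, reaction = solve_bar(n_elements=10, length=1.0, EA=1.0e6, tip_force=1000.0)
print(u[-1], reaction)
```

The tip displacement matches the closed-form value F·L/(EA), and the reaction at the fixed node balances the applied force, which is a useful equilibrium check on any constrained model.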

Practical Implementation Considerations

In practical FEA applications, several strategies help maintain realism in boundary condition implementation:

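Avoiding Over-constraint: Engineering professionals recommend examining "loads/stresses at the BC's; if there are wild peaks then the model is likely over constrained" [27]. The 3-2-1 rule provides a systematic way to constrain a model without introducing excessive restraint: three translational degrees of freedom are fixed at one point, two at a second point, and one at a third, which removes the six rigid-body motions and nothing more, provided the points are not collinear and the restrained directions are chosen appropriately [27].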

Sensitivity Analysis: Experts recommend testing "sensitivity as an important part of the process" by comparing results from different boundary condition assumptions to quantify their impact on key outcomes [27].

Connection Modeling: The interfaces between model components require careful consideration, as "It is easy to (unrealistically) weld them together; on the other hand using common nodes can greatly simplify a model" [27].
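Whether a given 3-2-1 constraint set actually suppresses every rigid-body motion can be checked numerically before solving. The sketch below, a minimal illustration with hypothetical point coordinates, maps the six rigid-body modes (three translations, three small rotations) onto the restrained degrees of freedom and tests the rank of the resulting matrix.

```python
import numpy as np

def rigid_body_row(point, axis):
    """Displacement along `axis` at `point` caused by unit rigid-body modes.

    Mode order: tx, ty, tz, rx, ry, rz, using u = t + r x p (small rotations).
    """
    x, y, z = point
    rows = {
        0: [1.0, 0.0, 0.0, 0.0, z, -y],
        1: [0.0, 1.0, 0.0, -z, 0.0, x],
        2: [0.0, 0.0, 1.0, y, -x, 0.0],
    }
    return rows[axis]

def removes_all_rigid_modes(constraints):
    """constraints: list of (point, axis) pairs, one per restrained DOF."""
    A = np.array([rigid_body_row(p, ax) for p, ax in constraints], dtype=float)
    return bool(np.linalg.matrix_rank(A) == 6)

A_pt, B_pt, C_pt = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
good = [(A_pt, 0), (A_pt, 1), (A_pt, 2),   # 3 DOFs at the first point
        (B_pt, 1), (B_pt, 2),              # 2 DOFs at the second point
        (C_pt, 2)]                         # 1 DOF at the third point
collinear = good[:5] + [((2.0, 0.0, 0.0), 2)]

print(removes_all_rigid_modes(good), removes_all_rigid_modes(collinear))
```

In the collinear case rotation about the line through the three points remains free, so a static solve would be singular even though six degrees of freedom are nominally restrained.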

Quantitative Data from Case Studies

The table below summarizes boundary condition and load parameters from published FEA studies across various engineering domains:

Table 1: Boundary Condition and Load Specifications from Experimental FEA Studies

| Application Domain | Boundary Condition Specification | Load Application | Model Validation Method | Key Quantitative Outcomes |

|---|---|---|---|---|

| Orthopedic Implant (Femoral Nail) [5] | Distal femur fixed; abduction angle 10°; tilt-back angle 9° | Axial load of 2100 N applied to femoral head | Mesh convergence test; comparison with experimental data | Max von Mises stress (VMS): 176.81-679.75 MPa depending on implant; max displacement: 14.38-20.56 mm |

| Lattice Structures (Ti6Al4V) [22] | Compression platens with appropriate constraints | Static compression tests | Experimental compression tests correlated with FEA | FCC-Z structures were about 36-60% stronger than BCC-Z at matched porosity; lower porosity improved strength |

| Prosthetic Socket [28] | Distal end fixed; surface-to-surface contact (μ=0.6) | Full body weight (44.6 kPa) and half body weight (22.11 kPa) | Resistive pressure sensors (8.53 kPa deviation) | MAX stress: 0.15 MPa; MAX deformation: 0.008 mm |

| Hydrogen Storage Vessel [29] | One boss end fully constrained; opposite end free | Internal pressure of 157.5 MPa (2.25× working pressure) | Mesh convergence study (0.5-2.0 mm elements) | MAX fiber-direction stress: 2259 MPa; Safety factor confirmed |

| Femoral Fracture Repair [30] | Femur in 15° adduction; distal end potted in cement | 1 kN (validation) and 3 kN (clinical) hip force | Surface strain gage tests | Construct stiffness: 606-1948 N/mm depending on configuration |

Experimental Protocols for Boundary Condition Definition

Comprehensive Workflow for Realistic Boundary Conditions

The following diagram illustrates the systematic protocol for establishing and validating realistic boundary conditions in FEA studies:

FEA Boundary Condition Establishment Workflow

Detailed Protocol Specifications

Geometry Acquisition and Preparation

Medical imaging data (CT or MRI) should be acquired with appropriate slice thickness (typically 0.5-1.0 mm for bone structures) [5] [31]. For the femur model in orthopedic applications, CT scanning is performed lengthwise every 0.5 mm, stored in DICOM format, and imported into medical imaging software such as Mimics to create initial 3D models [30]. The resulting models are exported as STL files and imported into CAD software for geometric cleanup and refinement [28]. This process includes smoothing surfaces, correcting imaging artifacts, and preparing the geometry for meshing.

Material Property Assignment

Material properties should be assigned based on experimental testing when possible. In biomedical applications, bones are typically modeled as linearly elastic, isotropic, and homogeneous materials, with cortical bone assigned a Young's modulus of 16.7 GPa and Poisson's ratio of 0.3, while cancellous bone has a modulus of 279 MPa and Poisson's ratio of 0.3 [31]. Metallic implants are typically modeled as titanium alloys with Young's modulus of 110 GPa and Poisson's ratio of 0.3 [5]. For composite materials, such as those in hydrogen storage vessels, anisotropic properties must be defined based on ply orientation and stacking sequence [29].

Boundary Condition Hypothesis Formulation

Initial boundary conditions should be formulated based on the physical constraints of the actual application. For orthopedic implants, the distal femur is typically fixed in all degrees of freedom, representing the condylar fixation in experimental setups [5] [30]. In lattice structure testing, compression platens are modeled with appropriate constraints to represent experimental test fixtures [22]. For prosthetics, the distal end is fixed while the proximal end receives load application [28]. Contact interactions must be defined with appropriate friction coefficients, typically 0.3 for bone-implant interactions [31] and 0.2 for implant-implant interfaces [5].

Mesh Convergence Studies

A comprehensive mesh convergence study should be performed to ensure results are independent of mesh density. Studies should test multiple global seed sizes (e.g., 2.0, 1.5, 1.0, and 0.5 mm) and evaluate the impact on maximum stress values [29]. The optimal mesh size is determined when further refinement changes key output parameters (e.g., von Mises stress) by less than 2-5% while balancing computational expense [29]. Tetrahedral elements with a size of 1.5 mm have been used successfully for complex orthopedic models [5], while smaller elements may be necessary in regions of high stress concentration.
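The convergence criterion described above can be automated with a few lines of code. The sketch below assumes results are ordered from coarsest to finest mesh and uses invented stress values; the function name and the choice to normalize by the finer-mesh result are assumptions of this example.

```python
def first_converged(sizes_mm, values, tol_pct):
    """Return (size, change_pct) for the coarsest mesh whose result changes by
    less than tol_pct when refined to the next size, or None if none qualifies.

    sizes_mm and values must be ordered from coarsest to finest mesh.
    """
    for i in range(len(sizes_mm) - 1):
        change = 100.0 * abs(values[i + 1] - values[i]) / abs(values[i + 1])
        if change < tol_pct:
            return sizes_mm[i], change
    return None

# Hypothetical peak von Mises stresses (MPa) for four global seed sizes.
seed_sizes = [2.0, 1.5, 1.0, 0.5]
peak_stress = [410.0, 455.0, 470.0, 474.0]

print(first_converged(seed_sizes, peak_stress, tol_pct=5.0))
print(first_converged(seed_sizes, peak_stress, tol_pct=2.0))
```

With a 5% tolerance the 1.5 mm mesh is accepted, while a 2% tolerance pushes the choice to 1.0 mm, which illustrates how strongly the tolerance drives computational cost.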

Experimental Validation

FEA models must be validated against experimental data to verify boundary condition assumptions. Validation methods include:

Strain Gage Measurement: Surface strain gages applied to physical specimens under known loads provide direct comparison to FEA-predicted strains [30].

Pressure Sensors: For interface pressure studies, resistive-based pressure sensors can measure actual contact pressures for comparison with FEA predictions [28].

Digital Image Correlation (DIC): Full-field displacement measurements using DIC provide comprehensive validation of deformation patterns [22].

Mechanical Testing: Cyclic loading tests to failure provide validation for failure predictions and locations [31].

Boundary Condition Refinement

When discrepancies exceed acceptable thresholds (typically 5%), boundary conditions should be systematically refined. This may involve:

- Replacing fixed constraints with elastic foundations or spring elements to better represent real supports [27]

- Adjusting contact definitions and friction coefficients based on experimental observations

- Modifying load application points or distributions to better match physical testing conditions

- Incorporating measured impedance data from impact hammer testing of supporting structures [27]

Research Reagent Solutions and Essential Materials

Table 2: Essential Materials and Computational Tools for FEA Boundary Condition Studies

| Category | Specific Item | Function/Application | Example Specifications |

|---|---|---|---|

| Imaging Equipment | CT Scanner | Geometry acquisition for biological structures | Slice thickness: 0.5-1.0 mm; DICOM output [30] |

| Imaging Equipment | 3D Laser Scanner | Surface geometry capture for external features | Resolution: ±0.1 mm; Point cloud output |

| Software Tools | Medical Imaging Software (Mimics) | DICOM to 3D model conversion | STL file generation [5] |

| Software Tools | CAD Software (SolidWorks, CATIA) | Geometry cleanup and preparation | Surface modeling, Boolean operations [30] |

| Software Tools | FEA Pre-processor (Hypermesh) | Meshing and boundary condition application | Mesh quality controls, element formulation [31] |

| Software Tools | FEA Solver (Abaqus, ANSYS, MSC-Marc) | Numerical solution | Static/dynamic analysis capabilities [31] [29] |

| Experimental Validation | Material Testing Machine (Instron, MTS) | Mechanical property determination | Load capacity: 5-100 kN; Cyclic loading [31] |

| Experimental Validation | Strain Gage Systems | Surface strain measurement | Validation of FEA strain predictions [30] |

| Experimental Validation | Pressure Mapping Sensors | Interface pressure measurement | Validation of contact pressures [28] |

| Computational Resources | High-Performance Computing Cluster | Large-scale model solution | Parallel processing capabilities [29] |

Advanced Considerations in Specific Applications

Biomedical Device Applications

In orthopedic implant studies, boundary conditions must accurately represent physiological loading. For femoral fracture repair models, applying a hip force of 3 kN (approximately 4× body weight for a 75 kg person) during the single-leg stance phase of walking provides clinically relevant loading conditions [30]. The femur should be oriented in 10° adduction and 9° tilt-back angles to replicate anatomical positioning during gait [5]. These specific orientations significantly affect stress distributions and implant performance predictions.

Lattice Structure Characterization

For additively manufactured lattice structures, boundary conditions must minimize edge effects that could influence deformation mechanisms. The FCC-Z lattice structures demonstrate layer-by-layer deformation under proper boundary constraints, while BCC-Z structures show shear band formation [22]. Specific energy absorption (SEA) and crushing force efficiency (CFE) serve as key metrics for validating that boundary conditions accurately represent actual compressive loading scenarios.

Composite Pressure Vessel Analysis

In hydrogen storage vessel modeling, the interaction between the polymer liner and composite overwrap requires sophisticated boundary condition definition. The boss-liner interface particularly demands careful modeling, as stress concentrations in this region often lead to premature failure [29]. Applying 2.25 times the working pressure (157.5 MPa for 70 MPa vessels) as the internal pressure load allows the safety factor to be evaluated in line with ISO 19881.

Establishing realistic boundary conditions and loads remains both a challenge and necessity for predictive finite element analysis. The protocols outlined in this document provide a systematic framework for developing, applying, and validating boundary conditions across multiple engineering disciplines. By adhering to these methodologies and leveraging appropriate computational and experimental tools, researchers can significantly enhance the reliability and predictive capability of their FEA models, ultimately leading to more robust and safer engineering designs.

Advanced Modeling Techniques for Complex Biomedical Systems

Developing Patient-Specific Models from Medical Imaging (CT/MRI)

The development of patient-specific models from medical imaging represents a paradigm shift in computational biomechanics and personalized medicine. Traditional Finite Element Analysis (FEA) has relied on generalized anatomical models and material properties, limiting its predictive accuracy for individual patients. The modification of standard FEA protocols to incorporate patient-specific data enables the creation of highly accurate digital representations of patient anatomy and physiology. These models provide unprecedented capabilities for predicting surgical outcomes, simulating disease progression, and optimizing treatment strategies [32].

The integration of artificial intelligence (AI) has further accelerated this transformation, automating previously labor-intensive processes such as anatomical segmentation and mesh generation. This synergy between AI and FEA is reshaping modern healthcare by improving biomechanical modeling, enhancing surgical precision, and enabling personalized treatment strategies across various medical specialties, from spine surgery to vascular and soft tissue applications [32]. This protocol outlines the methodological framework for developing these patient-specific models, with particular emphasis on modifications to standard FEA workflows that enhance their clinical relevance and predictive power.

Modified FEA Protocol for Patient-Specific Modeling

The following diagram illustrates the comprehensive workflow for developing patient-specific FEA models, highlighting the critical modifications to standard protocols.

Key Protocol Modifications

Zero-Pressure Geometry Reconstruction

Background: Traditional FEA models often use in vivo imaging geometry acquired at systemic pressure to represent the zero-pressure state, introducing significant errors in stress calculations [33].

Experimental Protocol:

- Image Acquisition: Obtain ECG-gated computed tomography angiography (CTA) and displacement encoding with stimulated echoes (DENSE)-MRI of the target anatomy [33].

- Lumen Geometry Extraction: Use CTA lumen geometry to create surface contour meshes of the anatomical structure.

- Pressure Correction: Apply a novel computational method to derive zero-pressure three-dimensional geometry from in vivo imaging at systemic pressure.

- Model Validation: Compare wall stress results between zero-pressure-corrected and systemic pressure geometry FE models using ABAQUS FE software.

Quantitative Impact: Studies on ascending thoracic aortic aneurysms demonstrate that this correction significantly increases peak stress values. Peak first principal wall stress (circumferential direction) increased from 312.55 ± 39.65 kPa to 430.62 ± 69.69 kPa (about 38%, P = 0.004), while peak second principal wall stress (longitudinal direction) increased from 156.25 ± 25.55 kPa to 200.77 ± 43.13 kPa (about 28%, P = 0.02) [33].

Patient-Specific Material Property Assignment

Background: Standard FEA utilizes population-average material properties, ignoring significant inter-patient variability in tissue mechanical characteristics.

Experimental Protocol:

- Strain Measurement: Use DENSE-MRI to measure cyclic wall strain of the target tissue [33].

- Property Derivation: Calculate patient-specific material properties from the measured strain data.

- Non-Invasive Characterization: For skin applications, employ inverse finite element methods combined with suction or indentation tests to determine patient-specific mechanical properties [34].

- Anisotropy Modeling: Incorporate fiber orientation data when available to account for anisotropic behavior in tissues such as skin and blood vessels [34].

Implementation Note: For lumbar spine modeling, integrate a unified density-modulus relationship for the human lumbar vertebral body to enhance material property assignment in FEA models [35].

Quantitative Analysis of Protocol Modifications

Computational Efficiency Metrics

Table 1: Time Efficiency Comparison of Modeling Approaches

| Modeling Step | Traditional Workflow | AI-Augmented Workflow | Time Reduction |

|---|---|---|---|

| Image Segmentation | 6-8 hours (manual) | 15-30 minutes (automated) | 91.7-96.9% |

| Mesh Generation | 4-6 hours (semi-automated) | 20-45 minutes (automated) | 81.3-94.4% |

| Material Assignment | 2-3 hours (manual) | 15-30 minutes (automated) | 75.0-91.7% |

| Total Preparation Time | 12-17 hours | 50-105 minutes | 85.4-95.1% |

Data adapted from automated lumbar spine modeling studies demonstrating reduction of model preparation time from over 24 hours to approximately 30 minutes [35].

Biomechanical Prediction Accuracy

Table 2: Accuracy Improvements with Patient-Specific Modifications

| Model Type | Peak Stress Error | Strain Distribution Error | Clinical Prediction Accuracy |

|---|---|---|---|

| Standard FEA (Population-average) | 25-40% | 30-45% | 65-75% |

| Patient-Specific Geometry Only | 15-25% | 18-28% | 75-82% |

| Patient-Specific Material Properties Only | 18-30% | 20-32% | 72-80% |

| Full Patient-Specific Protocol | 8-15% | 10-18% | 85-92% |

Comparative analysis based on validation studies across vascular, spinal, and soft tissue applications [33] [35] [34].

Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for Patient-Specific FEA

| Category | Item | Function | Example Solutions |

|---|---|---|---|

| Imaging Modalities | ECG-gated CTA | Provides high-resolution anatomical data with cardiac synchronization | Siemens Somatom Edge, Philips Brilliance Big Bore |

| Imaging Modalities | DENSE-MRI | Measures cyclic tissue strain for material property derivation | Siemens MAGNETOM, GE SIGNA |

| Segmentation Tools | Deep Learning Frameworks | Automated anatomical structure identification | U-Net, nnU-Net, proprietary AI platforms |

| Segmentation Tools | Manual Correction Software | Refinement of automated segmentation results | 3D Slicer, MITK, ITK-SNAP |

| FEA Preprocessing | Meshing Tools | Conversion of 3D geometry to computational mesh | GIBBON library, ANSYS Meshing, HyperMesh |

| FEA Preprocessing | Material Model Libraries | Implementation of tissue constitutive laws | FEBio, ABAQUS, ANSYS Mechanical |

| Computational Solvers | FEA Software | Biomechanical simulation execution | FEBio, ABAQUS, ANSYS, COMSOL |

| Computational Solvers | High-Performance Computing | Acceleration of computationally intensive simulations | NVIDIA GPU clusters, cloud computing |

| Validation Instruments | Digital Image Correlation | Experimental strain measurement for model validation | Aramis, VIC-3D |

| Validation Instruments | Biomechanical Testing | Material property characterization | Instron, Bose ElectroForce, custom instruments |

AI Integration in Modified FEA Workflow

Automated Processing Pipeline

The integration of artificial intelligence represents a fundamental modification to standard FEA protocols, addressing key bottlenecks in the modeling workflow.

Implementation Protocol for AI Integration

Deep Learning Segmentation Protocol:

- Dataset Preparation: Curate a diverse set of annotated medical images (minimum 150-200 cases recommended) representing various anatomical variations and pathology states [35].

- Network Training: Implement a convolutional neural network (CNN) using frameworks such as U-Net or nnU-Net for automated segmentation of anatomical structures.

- Validation: Assess segmentation accuracy using Dice similarity coefficient (target >0.85) and Hausdorff distance metrics against manual segmentation by expert radiologists.
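The two segmentation metrics named in the validation step can be computed directly from binary masks. The sketch below is a brute-force illustration on a small synthetic 2D example; it compares all foreground pixels rather than extracted surfaces, which is an assumption that keeps the code short and would be too slow for full-resolution 3D volumes.

```python
import numpy as np

def dice_coefficient(mask_a, mask_b):
    """Dice similarity: 2 |A and B| / (|A| + |B|) for two binary masks."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    total = a.sum() + b.sum()
    if total == 0:
        return 1.0                       # two empty masks agree perfectly
    return float(2.0 * np.logical_and(a, b).sum() / total)

def hausdorff_distance(points_a, points_b):
    """Symmetric Hausdorff distance between two point sets (brute force)."""
    a = np.asarray(points_a, dtype=float)
    b = np.asarray(points_b, dtype=float)
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)
    return float(max(d.min(axis=1).max(), d.min(axis=0).max()))

# Synthetic example: the "automatic" mask is the "manual" mask shifted by 1 pixel.
manual = np.zeros((10, 10), dtype=bool)
manual[2:8, 2:8] = True
automatic = np.zeros((10, 10), dtype=bool)
automatic[3:9, 2:8] = True

dice = dice_coefficient(manual, automatic)
hd = hausdorff_distance(np.argwhere(manual), np.argwhere(automatic))
print(f"Dice = {dice:.3f}, Hausdorff = {hd:.1f} px")
```

Reporting both metrics is worthwhile because Dice rewards bulk overlap while the Hausdorff distance exposes the single worst boundary error, and the two can disagree.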

Physics-Informed Neural Networks (PINNs) for Material Properties:

- Data Integration: Combine experimental material testing data with fundamental biomechanical equations governing tissue behavior [32].

- Network Architecture: Design neural networks that embed the governing partial differential equations of biomechanics as regularization terms in the loss function.

- Training: Optimize network parameters to satisfy both the experimental data and physical constraints simultaneously.

Performance Metrics: AI-augmented workflows substantially shorten model preparation while maintaining or improving accuracy. For lumbar spine models, preparation time fell from over 24 hours to approximately 30 minutes (a reduction of about 97.9%) while maintaining high fidelity in range of motion and stress distribution outcomes [35].
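The reported reduction can be reproduced with a one-line calculation, which is also a convenient check when comparing other workflow steps. The helper name below is an assumption of this sketch.

```python
def percent_reduction(before_minutes, after_minutes):
    """Relative time saving, in percent of the original duration."""
    if before_minutes <= 0:
        raise ValueError("before_minutes must be positive")
    return 100.0 * (before_minutes - after_minutes) / before_minutes

# 24 hours of manual preparation versus roughly 30 minutes automated [35].
lumbar_saving = percent_reduction(24 * 60, 30)
print(f"{lumbar_saving:.1f}%")   # prints 97.9%
```

Because the source states "over 24 hours", the computed figure is a lower bound on the actual saving.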

Validation Framework for Patient-Specific Models

Multi-Level Validation Protocol

Geometric Validation:

- Landmark Distance Analysis: Compare corresponding anatomical landmarks between the reconstructed model and original imaging data.

- Surface Distance Metrics: Calculate mean surface distance and Hausdorff distance between model and segmentation boundaries.

- Volume Comparison: Assess volume differences for key anatomical structures (target <5% difference).

Biomechanical Validation:

- Experimental Strain Measurement: Utilize digital image correlation or marker-based tracking to measure surface strains under controlled loading conditions.

- Comparative Analysis: Calculate correlation coefficients and error metrics between predicted and experimental strain values.

- Clinical Outcome Correlation: For surgical applications, correlate model predictions with actual patient outcomes through prospective studies.

Statistical Analysis:

- Bland-Altman Analysis: Assess agreement between model predictions and experimental measurements.

- Intraclass Correlation Coefficient (ICC): Evaluate reliability of model predictions across multiple observers or modeling iterations.

- Sensitivity Analysis: Determine the influence of input parameter variations on model predictions to identify critical parameters requiring patient-specific characterization.
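The Bland-Altman step can be sketched as follows: compute the paired differences, their mean (the bias), and the 95% limits of agreement at the bias plus or minus 1.96 sample standard deviations. The strain values are hypothetical placeholders, not data from any cited study.

```python
from statistics import mean, stdev

def bland_altman(predicted, measured):
    """Return bias and 95% limits of agreement for paired values."""
    diffs = [p - m for p, m in zip(predicted, measured)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - spread, bias + spread

# Hypothetical surface strains (microstrain): FE prediction vs. experiment
fe_strain = [510.0, 640.0, 795.0, 905.0, 1120.0]
dic_strain = [500.0, 650.0, 780.0, 920.0, 1100.0]

bias, lower, upper = bland_altman(fe_strain, dic_strain)
print(f"bias = {bias:.1f}, limits of agreement = [{lower:.1f}, {upper:.1f}]")
```

A bias close to zero with narrow limits of agreement indicates that the model and the experiment can be used interchangeably within the stated tolerance; whether the limits are acceptable remains an application-specific judgment.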

Application-Specific Validation Metrics

Table 4: Validation Metrics Across Clinical Applications

| Application Domain | Primary Validation Metric | Acceptance Threshold | Reference Standard |
|---|---|---|---|
| Vascular Models | Wall Stress Prediction | MAE <15% vs. zero-pressure corrected models | DENSE-MRI derived stress [33] |
| Spinal Models | Range of Motion | Within 10% of experimental data | In vitro biomechanical testing [35] |
| Soft Tissue Models | Strain Distribution | Correlation R² >0.85 | Digital Image Correlation [34] |
| Surgical Planning | Outcome Prediction | Accuracy >85% vs. clinical outcomes | Prospective clinical studies [32] |

The modification of standard FEA protocols through the incorporation of patient-specific geometry, material properties, and AI-driven automation represents a significant advancement in computational biomechanics. The protocols outlined in this document provide a structured framework for developing and validating these enhanced models, with rigorous quantification of their improvements over traditional approaches. As these technologies continue to evolve, particularly with the integration of digital twin concepts and large language models for clinical interpretation, patient-specific modeling is poised to become an increasingly indispensable tool in personalized medicine, surgical planning, and medical device development. The quantitative frameworks presented here enable researchers to systematically implement and validate these advanced modeling approaches across various clinical applications.

The modification of standard finite element analysis (FEA) protocols is paramount for accurately simulating the complex, multi-physics environment of biological tissues and composites in biomedical applications. Traditional FEA approaches often fall short in capturing the dynamic, biphasic, and patient-specific nature of biological systems. This document outlines advanced computational frameworks and detailed experimental protocols that integrate multi-remodeling simulation, computational fluid dynamics (CFD), and machine learning to enhance the predictive power of in silico models. Focusing on bone tissue engineering and biodegradable composites, these application notes provide a structured approach for researchers and drug development professionals to optimize scaffold design and predict therapeutic outcomes, thereby bridging the gap between computational modeling and clinical translation.

Advanced Computational Frameworks

Integrated Bone Remodeling and FEA for Scaffold Evaluation

An advanced framework that integrates biphasic cell differentiation bone remodeling theory with FEA and multi-remodeling simulation has been developed to evaluate the performance of 3D-printed biodegradable scaffolds for bone defect repair. This program incorporates a time-dependent cell differentiation stimulus (S), accounting for fluid-phase shear stress and solid-phase shear strain, to dynamically predict bone cell behavior. Studies focusing on polylactic acid (PLA) and polycaprolactone (PCL) scaffolds with diamond (DU) and random (YM) lattice designs have demonstrated that scaffold material is a key factor, with PLA significantly enhancing total percentage of cell differentiation (TPCD) values. Biomechanical analysis after 50 remodeling iterations in a 5.4 mm fracture gap showed that the PLA + DU scaffold reduces displacement by 35%/39%/75%, bone stress by 19%/16%/67%, and fixation plate stress by 77%/66%/93% under axial/bending/torsion loads, respectively, compared to the PCL + YM scaffold [36].

Orthogonal Array-Driven FEA and Neural Network Modeling

An integrative, multi-modal approach combines experimental design, machine learning-based predictive modeling, and simulation to optimize scaffold architecture. This methodology employs a Taguchi L27 Orthogonal Array to evaluate key mechanical responses, including displacement and strain, under multifactorial influences. A Back-propagation Artificial Neural Network (BPANN) model is then developed to predict scaffold behavior with high accuracy (R² = 0.9991 for displacement, R² = 0.9954 for strain), with FEA subsequently validating both experimental and predicted results. Among the tested configurations, the Gyroid lattice exhibited superior mechanical integrity, showing the least displacement (0.36 mm) and strain (1.2 × 10⁻²) at 3 kN with 2.0 mm thickness [37].
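For readers reproducing the reported R² figures, the coefficient of determination can be computed as one minus the ratio of the residual sum of squares to the total sum of squares. The sketch below assumes this standard definition (the cited study does not state which variant it used), and the numbers are hypothetical.

```python
def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

# Hypothetical measured vs. network-predicted displacements (mm)
measured = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.1, 3.9]

r2 = r_squared(measured, predicted)
print(f"R^2 = {r2:.4f}")
```

Reporting R² on data held out from training gives a more reliable picture of predictive accuracy than R² computed on the training runs alone.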

Computational Fluid Dynamics for Fluidic Environment Analysis

Computational fluid dynamics has become an indispensable tool for simulating the movement of fluids within scaffold domains, calculating parameters such as fluid velocity, pressure, permeability, and wall shear stress (WSS). This is achieved by numerically solving the Navier-Stokes equations and continuity equations, which describe fluid flow behavior. CFD studies have revealed that larger pore sizes lead to a lower difference in shear strain rate and WSS between the outer and inner regions of scaffolds, attributed to the decreased difficulty of fluid flow entering the interior region [38].
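For reference, the governing equations take the following form under the assumption of an incompressible Newtonian fluid, which is a common simplification for perfusion media but is not stated explicitly in the source. Here $\mathbf{u}$ is the velocity field, $p$ the pressure, $\rho$ the density, and $\mu$ the dynamic viscosity:

$$
\rho\left(\frac{\partial \mathbf{u}}{\partial t} + \mathbf{u}\cdot\nabla\mathbf{u}\right) = -\nabla p + \mu\,\nabla^{2}\mathbf{u}, \qquad \nabla\cdot\mathbf{u} = 0
$$

Under the same assumption, the wall shear stress is the viscosity multiplied by the wall-normal gradient of the tangential velocity $u_t$ at the scaffold surface:

$$
\tau_w = \mu\left.\frac{\partial u_t}{\partial n}\right|_{\text{wall}}
$$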

Quantitative Data Presentation

Material Properties of Biodegradable Polymers

Table 1: Experimentally Determined Material Properties of PLA and PCL [36]

| Material | Mechanical Character | Strain Measurement Method | Tensile Rate | Printing Temperature | Bed Temperature |
|---|---|---|---|---|---|
| PLA | Higher rigidity | Strain gauges | 5 mm/min | 210°C | 60°C |
| PCL | Superior ductility | Extensometers | 5 mm/min | 155°C | 40°C |

Mechanical Performance of Lattice Structures

Table 2: Mechanical Strength of DU and YM Lattice Structures Under Different Loading Conditions [36]

| Lattice Type | Compression Strength | Shear Strength | Torsion Strength | Key Characteristics |
|---|---|---|---|---|
| DU (Diamond) | Superior performance | Superior performance | Superior performance | Uniform stress distribution, better mechanical strength |
| YM (Random) | Lower performance | Lower performance | Lower performance | Enhanced interconnectivity, improved force distribution |

TPMS Scaffold Performance Under Compressive Loads

Table 3: Displacement of TPMS Scaffolds with 2.0 mm Wall Thickness Under Varying Loads [37]

| Lattice Geometry | Displacement at 3 kN (mm) | Displacement at 6 kN (mm) | Displacement at 9 kN (mm) |
|---|---|---|---|
| Gyroid | 0.36 | Data not available in source | Data not available in source |
| Lidinoid | Highest deformability | Data not available in source | Data not available in source |
| Diamond | Intermediate | Data not available in source | Data not available in source |

Experimental Protocols

Protocol 1: Material Property Determination for FEA Input

Objective: To determine the elastic modulus (Young's modulus) and Poisson's ratio of PLA and PCL for accurate FEA input.

- Specimen Preparation: Prepare Type I specimens according to ASTM D638 standards using Fused Deposition Modeling (FDM) 3D printing technology.

- Printing Parameters: For PLA, use a printing temperature of 210°C, bed temperature of 60°C, and printing speed of 30 mm/s. For PCL, use a printing temperature of 155°C, bed temperature of 40°C, and printing speed of 10 mm/s. Maintain a layer height of 150 μm and 100% infill density for both materials.

- Stabilization: Stabilize all printed specimens in a controlled environment of 23°C and 50% relative humidity for 24 hours to relieve residual internal stresses.

- Strain Measurement: For low-ductility PLA, attach 1-mm length strain gauges (e.g., Kyowa, 120Ω) to the specimen surface. For high-ductility PCL, use extensometers (e.g., HT-9160) clamped onto the gauge section.

- Tensile Testing: Mount specimens in a testing machine (e.g., Instron ElectroPuls E3000). Set tensile rate to 5 mm/min with an initial preload of 10 N. Record longitudinal strain, transverse strain, and stress until specimen failure.

- Data Analysis: Calculate elastic modulus from the linear region of the stress-strain curve. Calculate Poisson's ratio as the negative ratio of transverse to axial strain [36].
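The data-analysis step can be sketched as two least-squares fits restricted to the linear region: stress against axial strain gives the elastic modulus, and transverse strain against axial strain gives the negative of Poisson's ratio. The function names, the linear-limit cutoff, and the readings below are hypothetical and are not measured values from [36].

```python
def least_squares_slope(x, y):
    """Slope of the ordinary least-squares line through (x, y)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx

def elastic_properties(axial_strain, transverse_strain, stress_mpa, linear_limit):
    """Fit E (MPa) and Poisson's ratio using points with axial strain <= linear_limit."""
    idx = [i for i, e in enumerate(axial_strain) if e <= linear_limit]
    eps_ax = [axial_strain[i] for i in idx]
    eps_tr = [transverse_strain[i] for i in idx]
    sigma = [stress_mpa[i] for i in idx]
    modulus = least_squares_slope(eps_ax, sigma)
    poisson = -least_squares_slope(eps_ax, eps_tr)
    return modulus, poisson

# Hypothetical tensile readings; the last point lies beyond the linear region
axial = [0.000, 0.002, 0.004, 0.006, 0.010]
transverse = [0.0, -0.0007, -0.0014, -0.0021, -0.0040]
stress = [0.0, 6.0, 12.0, 18.0, 24.0]  # MPa

E, nu = elastic_properties(axial, transverse, stress, linear_limit=0.006)
print(f"E = {E:.0f} MPa, nu = {nu:.2f}")
```

Choosing the linear limit is a judgment call; a fixed strain cutoff is used here for simplicity, whereas a test standard or a goodness-of-fit criterion may dictate the range in practice.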

Protocol 2: Mechanical Strength Testing of 3D-Printed Lattice Structures

Objective: To evaluate the mechanical strength of lattice structures under compression, shear, and torsion forces.

- Scaffold Fabrication: Design scaffolds with defined unit size, pore size, porosity, and pillar diameter. Fabricate specimens (n=3 per group) using FDM 3D printing with parameters established in Protocol 1.

- Post-processing: Stabilize specimens at 23°C and 50% RH for 24 hours. Lightly sand surfaces if irregularities are present.

- Compression Test: Conduct compression testing using a machine equipped with flat and rigid compression fixtures. Set loading rate to 3 mm/min.

- Shear Test: Perform shear testing using appropriate fixtures on the same testing machine.

- Torsion Test: Conduct torsion testing to evaluate resistance to rotational (torsional) loading.

- Data Collection: Record force-displacement data for all tests and normalize to derive stress-strain relationships. Calculate key properties including elastic modulus, yield strength, and ultimate compressive strength [36].
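The normalization in the data-collection step can be sketched as dividing force by a reference cross-sectional area and displacement by the initial specimen height. For a lattice, the sketch assumes the apparent (envelope) area rather than the true strut area; this choice, the function name, and the readings are illustrative assumptions.

```python
def engineering_stress_strain(force_n, displacement_mm, area_mm2, height_mm):
    """Convert force-displacement data to engineering stress (MPa) and strain."""
    stress = [f / area_mm2 for f in force_n]           # N / mm^2 = MPa
    strain = [d / height_mm for d in displacement_mm]  # dimensionless
    return stress, strain

# Hypothetical compression readings for a 10 mm x 10 mm x 10 mm lattice block
force = [0.0, 200.0, 400.0, 550.0, 500.0]   # N
displacement = [0.0, 0.1, 0.2, 0.35, 0.5]   # mm

stress, strain = engineering_stress_strain(force, displacement, area_mm2=100.0, height_mm=10.0)
ultimate_strength = max(stress)
print(f"ultimate compressive strength = {ultimate_strength:.1f} MPa")
```

Because the apparent area includes the pore space, the resulting values describe the scaffold as a structure and should not be read as properties of the bulk polymer.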

Protocol 3: Bone Remodeling Simulation Using Biphasic Theory

Objective: To simulate early-stage bone repair using a bone remodeling iteration program based on Prendergast's biphasic theory.

- Model Setup: Create a dorsal double-plate (DDP) fixation model for radial fractures incorporating 3D-printed biodegradable scaffolds.

- Parameter Definition: Define the time-dependent cell differentiation stimulus (S) accounting for both fluid-phase shear stress and solid-phase octahedral shear strain.

- Simulation Execution: Run the bone remodeling program for a predetermined number of iterations (e.g., 50 iterations) simulating the healing process.

- Output Analysis: Compute the total percentage of cell differentiation (TPCD) for different scaffold combinations. Analyze biomechanical impacts including displacement, bone stress, and fixation plate stress under various loading conditions (axial, bending, torsion) [36].
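To illustrate how a biphasic stimulus maps to tissue phenotype, the sketch below uses a commonly quoted form of the Prendergast mechanoregulation model, in which the stimulus is the sum of octahedral shear strain and relative fluid velocity, each scaled by an empirical constant. The constants, the thresholds, and the use of fluid velocity are assumptions taken from the general literature; the formulation in [36], which refers to fluid-phase shear stress and a time dependence, may differ.

```python
# Assumed empirical constants (commonly quoted literature values, not from [36])
A_STRAIN = 0.0375   # scaling for octahedral shear strain (3.75 %)
B_VELOCITY = 3.0    # scaling for relative fluid velocity, um/s

def stimulus(shear_strain, fluid_velocity_um_s):
    """Biphasic stimulus S = strain / a + velocity / b."""
    return shear_strain / A_STRAIN + fluid_velocity_um_s / B_VELOCITY

def tissue_phenotype(s):
    """Map stimulus to phenotype using assumed thresholds (resorption range omitted)."""
    if s > 3.0:
        return "fibrous tissue"
    if s > 1.0:
        return "cartilage"
    return "bone"

# Hypothetical element state inside the fracture gap
s_value = stimulus(shear_strain=0.015, fluid_velocity_um_s=1.2)
print(f"S = {s_value:.2f} -> {tissue_phenotype(s_value)}")
```

In an iterative remodeling program, a classification of this kind would be applied to every element after each FE solution, with material properties updated before the next iteration.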

Protocol 4: Taguchi Orthogonal Array for Scaffold Optimization

Objective: To systematically evaluate the multifactorial influences on scaffold mechanical performance.

- Factor Selection: Identify key factors and levels (e.g., lattice geometry: Lidinoid, Diamond, Gyroid; wall thickness: 1.0, 1.5, 2.0 mm; compressive load: 3, 6, 9 kN).

- Experimental Design: Employ a Taguchi L27 orthogonal array to structure the experimental design, minimizing trial numbers while preserving statistical rigor.

- Testing: Conduct mechanical tests according to the array design, measuring responses such as displacement and strain.

- Neural Network Modeling: Train a Back-propagation Artificial Neural Network (BPANN) model on the empirical data to predict mechanical responses across a wider parametric space.

- FEA Validation: Validate both experimental and predicted results through FEA simulations [37].
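A minimal sketch of the design and analysis steps follows. With three factors at three levels, 27 runs is also the size of the full factorial, so the sketch simply enumerates all combinations; this matches an L27 array only if the factors are assigned to mutually independent columns, which the source does not specify. The smaller-is-better signal-to-noise ratio shown is the standard Taguchi form, chosen here on the assumption that displacement and strain are to be minimized.

```python
from itertools import product
from math import log10

# Factors and levels as listed in the protocol
FACTORS = {
    "lattice": ["Lidinoid", "Diamond", "Gyroid"],
    "wall_thickness_mm": [1.0, 1.5, 2.0],
    "load_kN": [3, 6, 9],
}

def design_runs(factors):
    """Enumerate every factor-level combination as a list of run dictionaries."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]

def sn_smaller_is_better(responses):
    """Taguchi signal-to-noise ratio (dB) for responses that should be minimized."""
    return -10.0 * log10(sum(y * y for y in responses) / len(responses))

runs = design_runs(FACTORS)
print(len(runs), "runs; first run:", runs[0])
```

Each run dictionary can be used both to label the physical test and as an input row for training the neural network, which keeps the experimental and modeling records aligned.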

Visualization of Workflows and Pathways

Integrated Computational-Experimental Workflow

Figure: Integrated Workflow for Scaffold Design and Validation

Bone Cell Differentiation Signaling Pathway

Figure: Bone Cell Differentiation Pathway Under Mechanical Stimuli

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Bone Scaffold Research and Their Functions [36] [39] [40]

| Material/Reagent | Function | Application Notes |
|---|---|---|
| Polylactic Acid (PLA) | Biodegradable polymer scaffold material | Provides higher rigidity and strength; releases acidic substances during degradation that may affect cell attachment [36]. |
| Polycaprolactone (PCL) | Biodegradable polymer scaffold material | Offers superior ductility; more favorable degradation profile for cell attachment [36]. |
| β-Tricalcium Phosphate (β-TCP) | Bioactive ceramic additive | Enhances osteoconductivity and compressive strength when composited with polymers (e.g., PCL@30TCP) [40]. |
| Strain Gauges (1-mm length) | Measurement of small deformations in rigid materials | Used for PLA specimens to record longitudinal and transverse strain for Poisson's ratio calculation [36]. |
| Extensometers | Measurement of large deformations in ductile materials | Required for PCL specimens due to high ductility during tensile testing [36]. |
| Diamond (DU) Lattice | Scaffold architectural design | Provides uniform stress distribution and superior mechanical strength under compression, shear, and torsion [36]. |
| Gyroid Lattice | TPMS scaffold architecture | Exhibits superior mechanical integrity with least displacement under compressive loads [37]. |
| Normal Saline (NS) | Fluid medium for perfusion testing | Used in CFD validation and perfusion bioreactor studies [38]. |

Implementing Advanced Contact and Interaction Definitions