Beyond Single-Dataset Performance: A Practical Guide to Optimizing Deep Learning Models for Robust Cross-Dataset Generalization in Biomedicine

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on achieving robust cross-dataset performance in deep learning models. It covers the foundational challenges of dataset bias and domain shift, explores advanced optimization and domain adaptation methodologies, presents troubleshooting strategies for performance degradation, and outlines rigorous validation frameworks using cross-dataset benchmarking. With a focus on real-world biomedical applications, such as drug response prediction, the content synthesizes current research and best practices to equip scientists with the tools needed to build models that generalize reliably to new, unseen data, thereby enhancing their potential for clinical translation.

The Generalization Challenge: Understanding Dataset Bias and Domain Shift in Biomedical Deep Learning

FAQ: Understanding Cross-Dataset Evaluation

What is cross-dataset evaluation and why is it critical for real-world AI?

Cross-dataset evaluation is a framework that assesses a model's generalization by training it on one or more datasets and then testing it on entirely separate datasets. This methodology directly tests for robustness against dataset-specific biases, domain shift, and annotation artifacts, providing a more realistic measure of how a model will perform in heterogeneous real-world environments than within-dataset validation [1].

My model achieves 99% accuracy on its test set. Why should I be concerned?

High performance on a held-out test set from the same data distribution often reflects mastery of dataset-specific shortcuts or annotation patterns, not generalizable learning. Empirical studies consistently show that even state-of-the-art models can suffer drastic performance drops, sometimes to near-random accuracy, when evaluated on a different dataset due to factors like varying image resolution, data collection protocols, or labeling conventions [1] [2].

Which is more important for improving cross-dataset performance: a better model or better data?

While both are important, a data-centric approach often yields significant gains. One systematic study found that by focusing on data quality (through methods like deduplication, correcting noisy labels, and augmentation), researchers achieved a consistent 3% or greater performance improvement on standard benchmarks, rivaling or surpassing the gains from model-centric improvements alone [3].

Troubleshooting Guide: Common Experimental Pitfalls and Solutions

Problem: Severe performance drop when testing on a new dataset.

- Potential Cause 1: Domain Shift. The target dataset may differ from your source data in terms of resolution, acquisition hardware, or environmental context (e.g., cracks in lab concrete vs. weathered outdoor concrete) [2].

- Solution: Implement domain adaptation techniques. This can include unsupervised fine-tuning on unlabeled data from the target domain or using domain-invariant feature learning methods to align the source and target distributions [1].

- Potential Cause 2: Label Inconsistency. Class definitions or annotation guidelines may not be perfectly aligned across datasets (e.g., the distinction between "cup" and "mug," or varying thresholds for "hate speech") [1].

- Solution: Perform label reconciliation before training. Carefully audit and map the label spaces of all datasets to a unified ontology to ensure semantic alignment [1].

Problem: Inconsistent and non-reproducible results across different dataset pairs.

- Potential Cause: Lack of a Standardized Benchmark. Ad-hoc selection of source and target datasets makes comparisons with other studies difficult [4].

- Solution: Adopt or create a standardized benchmarking framework. Use fixed dataset splits, predefined source-target pairs, and consistent evaluation metrics. For example, in drug response prediction, benchmarks now specify particular datasets (like CTRPv2) as standard sources for training to ensure fair model comparison [4] [1].

Problem: My multi-task model for drug discovery is not converging well.

- Potential Cause: Gradient Conflict. When a model learns multiple tasks (e.g., drug-target affinity prediction and drug generation) simultaneously, gradients from different tasks can conflict, leading to unstable optimization and poor performance [5].

- Solution: Use algorithms designed to mitigate gradient conflict. The FetterGrad algorithm, for instance, helps align gradients from different tasks by minimizing the Euclidean distance between them, promoting more stable and effective multi-task learning [5].
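A generic way to check whether two task gradients conflict is their cosine similarity: a negative value means the two updates pull the shared parameters in opposing directions. The sketch below runs that check and then applies a PCGrad-style projection as an illustrative stand-in. It is not the FetterGrad algorithm from [5]; the function names and toy gradient values are assumptions made for this example.

```python
import numpy as np

def cosine_similarity(g1, g2):
    """Cosine of the angle between two flattened gradient vectors."""
    return float(np.dot(g1, g2) / (np.linalg.norm(g1) * np.linalg.norm(g2)))

def project_conflicting(g1, g2):
    """If g1 conflicts with g2 (negative dot product), remove from g1 its
    component along g2 (PCGrad-style projection); otherwise return g1 unchanged."""
    dot = np.dot(g1, g2)
    if dot < 0:
        return g1 - (dot / np.dot(g2, g2)) * g2
    return g1

# Toy gradients for two tasks sharing parameters (illustrative values only).
g_affinity = np.array([1.0, 0.0])
g_generation = np.array([-1.0, 1.0])

similarity = cosine_similarity(g_affinity, g_generation)
g_fixed = project_conflicting(g_affinity, g_generation)

print(f"cosine similarity: {similarity:.3f}")        # negative, so the tasks conflict
print(f"projected gradient: {g_fixed}")              # no longer opposes g_generation
```

Logging the cosine similarity between task gradients during training is a cheap diagnostic to run before adopting a dedicated multi-task optimizer.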

Quantitative Insights: Measuring Robustness Across Domains

The following table summarizes key quantitative findings from cross-dataset evaluations in different fields, highlighting the pervasive challenge of generalization.

| Domain / Study | Key Metric | In-Dataset Performance | Cross-Dataset Performance | Notes |

|---|---|---|---|---|

| Lightweight Vision Models [6] | Cross-Dataset Score (xScore) | N/A | Varies by architecture | ImageNet accuracy did not reliably predict performance on fine-grained or medical datasets. |

| Drug Response Prediction [4] | R² Score | High (e.g., >0.8) | Substantial drop | Performance drop observed even for leading models; CTRPv2 identified as a robust source dataset. |

| Crack Classification [2] | Accuracy | Up to 100% (e.g., VGG16) | Substantial degradation | Models trained on high-res data performed poorly on lower-res, complex-texture datasets. |

| Data-Centric vs. Model-Centric [3] | Accuracy | Baseline (Model-Centric) | +3% relative improvement | Focus on data quality (cleaning, deduplication) consistently outperformed model-tuning alone. |

Experimental Protocols for Robust Evaluation

Protocol 1: Systematic Cross-Dataset Benchmarking

This protocol, used in evaluating drug response prediction models, provides a standardized method for assessing generalization [4].

- Dataset Selection: Curate multiple public datasets (e.g., for drug response: CTRPv2, GDSC, etc.).

- Data Alignment: Implement uniform pre-processing and feature extraction pipelines across all datasets to ensure input consistency.

- Source-Target Splits: Design experiments where models are trained on one "source" dataset and tested on all others as "targets." Perform this for all possible pairwise combinations.

- Evaluation: Use metrics that quantify both absolute performance on the target dataset and relative performance drop compared to within-dataset results. The aggregated off-diagonal score, \(g_a[s] = \frac{1}{d - 1} \sum_{t \ne s} g[s,t]\), where \(d\) is the number of datasets and \(g[s,t]\) is the performance of a model trained on source \(s\) and tested on target \(t\), provides a single measure of a model's generalization capability [4] [1].

Protocol 2: Quantifying Robustness with the xScore Metric

This metric offers a unified way to score model robustness across diverse visual domains [6].

- Fixed Training: Train a set of models (e.g., 11 lightweight vision models) under an identical, fixed regime (e.g., 100 epochs) across several diverse datasets (e.g., 7 datasets).

- Cross-Testing: Evaluate each trained model on every dataset, including those it was not trained on.

- Calculate xScore: Compute the Cross-Dataset Score, which quantifies the consistency and robustness of a model's performance across all these visual domains. Research indicates that a reliable xScore can be estimated using results from as few as four datasets [6].

The Scientist's Toolkit: Essential Research Reagents

The table below lists key computational tools and metrics essential for conducting rigorous cross-dataset evaluation.

| Item Name | Function / Description |

|---|---|

| Standardized Benchmarking Framework [4] | A pre-defined set of datasets, models, and evaluation workflows that ensure fair and reproducible model comparisons. |

| Cross-Dataset Score (xScore) [6] | A unified metric that quantifies the consistency and robustness of model performance across diverse visual domains. |

| Aggregated Off-Diagonal Score \(g_a[s]\) [1] | A generalization metric calculated as the average of a model's performance across all unseen target datasets. |

| FetterGrad Algorithm [5] | An optimization algorithm that mitigates gradient conflicts in multitask learning, ensuring stable training for complex objectives like simultaneous drug affinity prediction and generation. |

| Data-Centric Pipeline [3] | A systematic approach for generating high-quality data through deduplication (e.g., multi-stage hashing) and confident learning for detecting/correcting noisy labels. |

| Domain Adaptation Techniques [1] | Methods such as unsupervised fine-tuning and pseudo-labeling that help a model adapt to a new target dataset without requiring extensive new labels. |

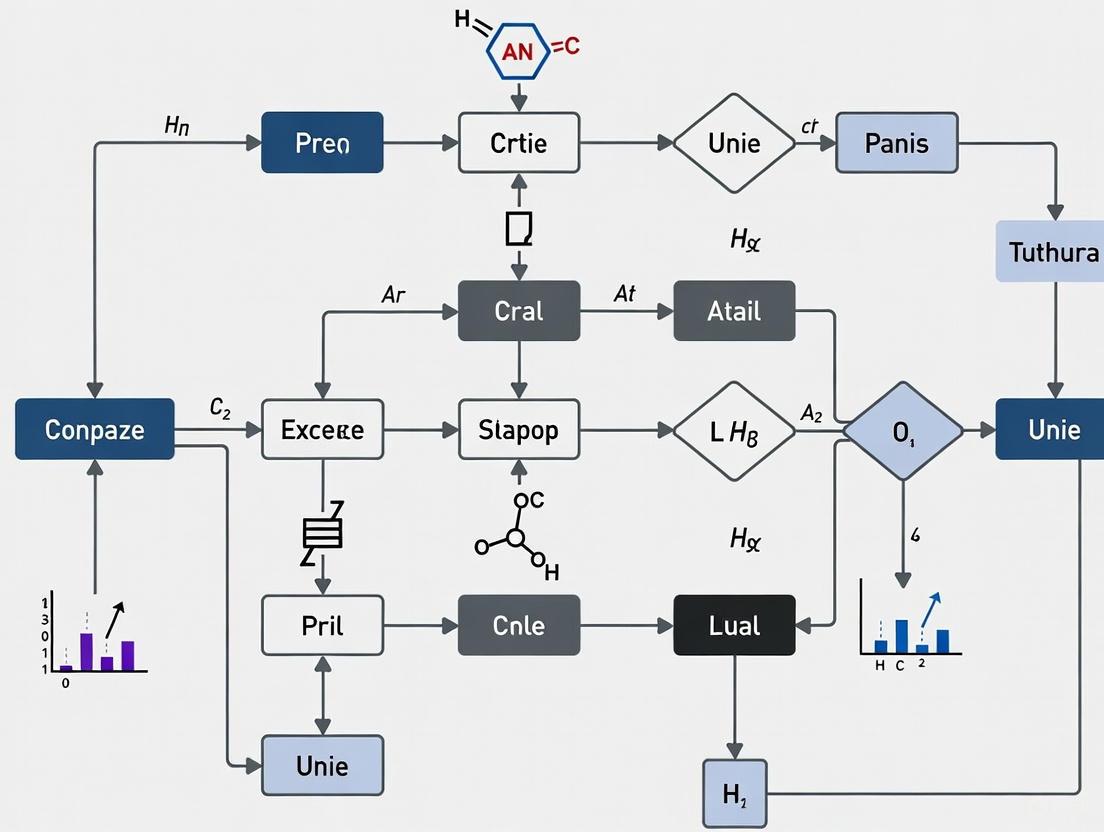

Workflow Diagram: Cross-Dataset Evaluation Protocol

The diagram below visualizes the logical workflow and decision points in a standardized cross-dataset evaluation protocol.

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Data Skew and Representation Bias

Problem: Model performance degrades significantly for specific demographic subgroups or under-represented conditions.

Symptoms:

- High overall accuracy, but low performance on data from new geographic locations, demographics, or environmental conditions [7] [1].

- The model makes systematic errors for certain skin tones, age groups, or in specific weather conditions [7] [8].

Diagnosis Steps:

- Audit Dataset Composition: Break down your dataset by sensitive attributes like gender, age, ancestry, and Fitzpatrick skin tone. Check for under-represented groups [9] [8].

- Subgroup Performance Evaluation: Do not just look at aggregate metrics. Calculate accuracy, precision, and recall for each demographic and scenario subgroup to identify performance disparities [9].

- Check for Missing Feature Values: Investigate if data for certain features (e.g., `temperament` in a dog adoptability model) is missing more frequently for particular subgroups, as this can indicate collection bias [9].
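The subgroup evaluation step can be sketched in a few lines of plain Python. The example assumes binary labels (1 = positive) and one group tag per sample; the function name and the toy data are hypothetical.

```python
from collections import defaultdict

def subgroup_metrics(y_true, y_pred, groups):
    """Return accuracy, precision, and recall per subgroup (binary labels, 1 = positive)."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            key = "tp" if p == 1 else "fn"
        else:
            key = "fp" if p == 1 else "tn"
        counts[g][key] += 1

    results = {}
    for g, c in counts.items():
        total = sum(c.values())
        predicted_pos = c["tp"] + c["fp"]
        actual_pos = c["tp"] + c["fn"]
        results[g] = {
            "n": total,
            "accuracy": (c["tp"] + c["tn"]) / total,
            # None marks a metric that is undefined for this subgroup.
            "precision": c["tp"] / predicted_pos if predicted_pos else None,
            "recall": c["tp"] / actual_pos if actual_pos else None,
        }
    return results

# Toy data: the aggregate accuracy (0.625) hides a large gap between groups A and B.
y_true = [1, 0, 1, 0, 1, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 0, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

metrics = subgroup_metrics(y_true, y_pred, groups)
for group, m in sorted(metrics.items()):
    print(group, m)
```

Reporting `n` alongside each metric matters: a disparity measured on a handful of samples is weak evidence, and that small count is itself a sign of under-representation.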

Mitigation Strategies:

- Data Augmentation: Use techniques like horizontal flipping (`fliplr`) and color variation (`hsv_v`) to artificially increase dataset diversity and force the model to learn more robust features [7].

- Leverage Synthetic Data: Use synthetic data generation to fill gaps where real-world data for under-represented groups is scarce [7].

- Utilize Fairness Benchmarks: Evaluate your models on dedicated fairness datasets like the Fair Human-Centric Image Benchmark (FHIBE), which provides dense annotations and global diversity for granular bias diagnosis [8].

Guide 2: Resolving Label Inconsistencies and Annotation Artifacts

Problem: Models learn spurious correlations from labeling patterns rather than the underlying task, leading to poor generalization.

Symptoms:

- Model performance is high on the original test set but falls drastically on a new, carefully curated test set or a different dataset (cross-dataset evaluation) [1].

- The model relies on background features, watermarks, or other non-causal signals for prediction [10].

Diagnosis Steps:

- Conduct Cross-Dataset Evaluation: Train your model on one dataset and test it on another. A significant performance drop indicates overfitting to dataset-specific artifacts [1].

- Audit for Shortcut Learning: Use frameworks like G-AUDIT (Generalized Attribute Utility and Detectability-Induced bias Testing) to identify metadata attributes (e.g., image `width`, `height`, hospital token) that are both detectable from the data and useful for predicting the task label [10].

- Implement Inter-Rater Reliability Checks: If possible, review the annotation guidelines and check for consistency between different annotators. Low agreement often signals ambiguous guidelines or subjective labels [8].

Mitigation Strategies:

- Label Reconciliation: Meticulously remap and merge class labels from different datasets to create a consistent ontology before training [1].

- Data Preprocessing: Remove or standardize non-task-related signals like hospital-specific tokens or consistent background elements during data preprocessing [10].

- Advanced Training Techniques: Use dataset-aware loss functions or adversarial training to force the model to learn features that are invariant to the source dataset [1].

Frequently Asked Questions

Q1: Our model achieved 98% accuracy on our internal test set, but it performs poorly in real-world trials. What could be wrong?

This is a classic sign of dataset bias and overfitting. Your internal test set likely suffers from the same biases as your training data. To diagnose this:

- Perform a cross-dataset evaluation: Test your model on an external benchmark dataset like FHIBE [8] or any other independent collection.

- Audit for data skew: Ensure your test set reflects the real-world prevalence of different classes and conditions, and evaluate performance by subgroup [9] [7]. A model might exploit a statistical correlation in your dataset that does not hold in the real world.

Q2: What are the most common types of dataset bias we should audit for?

The most prevalent sources of bias are [7]:

- Selection Bias: The data collected does not randomly represent the target population (e.g., a facial recognition system trained only on young people).

- Representation Bias: Certain groups are significantly under-represented relative to their real-world prevalence (e.g., a dataset featuring mostly European cities).

- Labeling Bias: Human subjectivity during annotation introduces consistent errors or prejudices (e.g., consistently misclassifying certain objects due to ambiguous guidelines).

Q3: How can we proactively detect bias before training a large, expensive model?

Recent research focuses on early bias detection from "bias symptoms" in the dataset statistics themselves, avoiding computationally intensive training [11]. Furthermore, you can:

- Run a dataset audit: Apply a framework like G-AUDIT to quantify the relationship between data attributes (age, sex, acquisition site) and task labels. Attributes with high "utility" and "detectability" scores pose a high risk of being learned as shortcuts [10].

- Analyze metadata: Check for strong correlations between simple metadata (like image `height` and `width`, which can be a proxy for clinical site) and your class labels [10].
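A crude version of the metadata check needs no model at all: predict each label from the metadata value alone and compare the result with a majority-class baseline. The sketch below is a simple proxy under stated assumptions, not the G-AUDIT utility score from [10]; the function names and records are hypothetical.

```python
from collections import Counter, defaultdict

def majority_baseline_accuracy(labels):
    """Accuracy of always predicting the most common label."""
    return Counter(labels).most_common(1)[0][1] / len(labels)

def metadata_only_accuracy(metadata, labels):
    """Accuracy of predicting each label from its metadata value alone,
    using the majority label within each metadata value (in-sample estimate)."""
    by_value = defaultdict(Counter)
    for m, y in zip(metadata, labels):
        by_value[m][y] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return correct / len(labels)

# Hypothetical records: image height (a possible proxy for clinical site) and class label.
heights = [450, 450, 450, 450, 1024, 1024, 1024, 1024]
labels = ["benign", "benign", "benign", "malignant",
          "malignant", "malignant", "malignant", "benign"]

baseline = majority_baseline_accuracy(labels)
shortcut = metadata_only_accuracy(heights, labels)
print(f"baseline={baseline:.2f}, metadata-only={shortcut:.2f}")
```

A metadata-only accuracy well above the baseline suggests the attribute carries label information a model could exploit as a shortcut. Because the estimate is in-sample, it is optimistic for attributes with many distinct values; binning the values or scoring on a held-out split gives a fairer number.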

Q4: How does dataset bias relate to algorithmic bias?

It is crucial to distinguish between the two [7]:

- Dataset Bias is data-centric; the inputs themselves are flawed or non-representative. The model learns perfectly from a distorted reality.

- Algorithmic Bias is model-centric; it arises from the design of the algorithm. For example, an optimization algorithm might be inclined to prioritize the majority class to maximize overall accuracy. Both contribute to unfair AI systems, and addressing dataset bias is the foundational step.

Experimental Protocols & Data

Table 1: Quantitative Metrics for Cross-Dataset Robustness Evaluation

This table summarizes key metrics for evaluating how well a model generalizes across different datasets [1].

| Metric Name | Formula | Interpretation |

|---|---|---|

| Cross-Dataset Error Rate | Error_cross = 1 - (Correct Predictions on Target / Total Target Samples) | The absolute error rate on a held-out target dataset. |

| Normalized Performance | g_norm[s, t] = g[s, t] / g[s, s] | Performance on target dataset t relative to performance on source dataset s. A value <1 indicates a performance drop. |

| Aggregated Off-Diagonal Score | g_a[s] = (1/(d-1)) * Σ g[s, t] for t≠s | An average measure of a model's generalization capability from source s to all other target datasets. |
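The metrics in Table 1 follow directly from a square performance matrix whose rows are source datasets and whose columns are target datasets. The sketch below assumes the matrix holds accuracies; the function names and numbers are illustrative, not results from [1].

```python
import numpy as np

def normalized_performance(g):
    """g_norm[s, t] = g[s, t] / g[s, s]: each row divided by its diagonal entry."""
    g = np.asarray(g, dtype=float)
    return g / np.diag(g)[:, None]

def aggregated_off_diagonal(g):
    """g_a[s] = (1 / (d - 1)) * sum over t != s of g[s, t]."""
    g = np.asarray(g, dtype=float)
    d = g.shape[0]
    return (g.sum(axis=1) - np.diag(g)) / (d - 1)

# Hypothetical accuracy matrix: rows = source (training) dataset, columns = target dataset.
g = np.array([
    [0.90, 0.60, 0.45],
    [0.70, 0.80, 0.50],
    [0.50, 0.60, 1.00],
])

cross_error = 1.0 - g                    # error rate per pair; off-diagonal entries are cross-dataset
g_norm = normalized_performance(g)       # diagonal is 1 by construction
g_a = aggregated_off_diagonal(g)         # one generalization score per source dataset

print(np.round(g_norm, 3))
print(np.round(g_a, 3))
```

In this toy matrix the third dataset has the best within-dataset score (1.00) but not the best aggregated off-diagonal score, the pattern the protocol is designed to expose.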

Table 2: G-AUDIT Framework Results on ISIC 2019 Skin Lesion Dataset

This table shows the output of a modality-agnostic dataset audit, identifying potential sources of shortcut learning. High utility and detectability indicate high bias risk [10].

| Attribute | Utility Score | Detectability Score | Bias Risk |

|---|---|---|---|

| Image Height | 0.050 | 0.887 | High |

| Image Width | 0.048 | 0.865 | High |

| Year | 0.052 | 0.862 | High |

| Skin Color (Fitzpatrick) | 0.000 | 0.424 | Medium |

| Anatomical Location | 0.012 | 0.169 | Low |

| Sex | 0.003 | 0.168 | Low |

Protocol 1: Cross-Dataset Evaluation Protocol

Objective: To systematically assess model generalization and uncover hidden dataset biases [1].

Methodology:

- Dataset Selection: Curate multiple datasets (`D1, D2, ..., Dn`) for the same general task (e.g., object detection, medical image classification).

- Label Reconciliation: Align the label spaces across datasets. This may involve merging similar classes (e.g., "bike" and "bicycle") into a unified ontology.

- Experiment Design: For each dataset `i` as the source (training) dataset, train a model and evaluate its performance on all datasets, including itself.

- Metric Calculation: For each source-target pair (`D_i`, `D_j`), calculate the metrics listed in Table 1. The performance matrix `g[i, j]` provides a complete picture of generalization.

- Analysis: Analyze the matrix. High diagonal values (`g[i, i]`) with low off-diagonal values (`g[i, j]` for i≠j) indicate models that overfit to dataset-specific biases.
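The experiment design above reduces to a double loop over datasets. The sketch below keeps the loop generic by taking the training and evaluation routines as arguments; the 1-D threshold "model", the accuracy metric, and the two toy datasets are hypothetical stand-ins for a real pipeline.

```python
def cross_dataset_matrix(datasets, train_fn, eval_fn):
    """Train on each dataset i and evaluate on every dataset j; returns g[i][j]."""
    names = list(datasets)
    g = {}
    for src in names:
        model = train_fn(datasets[src]["train"])
        g[src] = {tgt: eval_fn(model, datasets[tgt]["test"]) for tgt in names}
    return g

def train_threshold(data):
    """Toy 'model': the midpoint between the two class means of a 1-D feature."""
    xs, ys = data
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def accuracy(threshold, data):
    """Fraction of samples whose thresholded prediction matches the label."""
    xs, ys = data
    return sum(int(x > threshold) == y for x, y in zip(xs, ys)) / len(ys)

# D2 poses the same task with shifted inputs, mimicking a different acquisition setup.
datasets = {
    "D1": {"train": ([0, 1, 4, 5], [0, 0, 1, 1]),
           "test": ([0.5, 1.5, 3.5, 4.5], [0, 0, 1, 1])},
    "D2": {"train": ([4, 5, 8, 9], [0, 0, 1, 1]),
           "test": ([4.5, 5.5, 7.5, 8.5], [0, 0, 1, 1])},
}

g = cross_dataset_matrix(datasets, train_threshold, accuracy)
for src, row in g.items():
    print(src, row)
```

Both toy models score 1.0 on their own test split and 0.5 on the other dataset, which is the high-diagonal, low-off-diagonal signature described in the analysis step.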

Protocol 2: Early Bias Detection Using Dataset Symptoms

Objective: To predict variables that may induce bias before training a model, increasing development sustainability [11].

Methodology:

- Identify Sensitive Variables: Define a set of candidate attributes (e.g., demographic, acquisition-related).

- Compute Bias Symptoms: For each attribute, calculate a set of dataset statistics that serve as "bias symptoms." These could be measures of correlation with the label, class imbalance, or feature value distributions.

- Empirical Analysis: Using a reference set of known biased datasets, establish a predictive relationship between the computed bias symptoms and the actual variables that cause bias under different fairness definitions.

- Screening: For a new dataset, compute the bias symptoms for its attributes. Use the established model to flag attributes with a high probability of causing downstream algorithmic bias.

The Scientist's Toolkit

Table 3: Essential Research Reagents for Bias-Aware ML

| Tool / Resource | Type | Primary Function |

|---|---|---|

| FHIBE Dataset [8] | Evaluation Dataset | A consensually collected, globally diverse image benchmark for granular bias diagnosis across tasks like pose estimation and face verification. |

| G-AUDIT Framework [10] | Auditing Framework | A modality-agnostic tool to quantify shortcut learning risks by measuring attribute "utility" and "detectability." |

| Cross-Dataset Score (xScore) [6] | Evaluation Metric | A unified metric that quantifies the consistency and robustness of lightweight model performance across diverse visual domains. |

| Data Augmentation (e.g., fliplr, hsv_v) [7] | Mitigation Technique | Artificially increases dataset diversity and variance to improve model robustness and mitigate representation bias. |

| AdamW Optimizer [12] | Optimization Algorithm | An optimization technique that integrates weight decay, often leading to better generalization performance on unseen data. |

Workflow Diagrams

Dataset Bias Auditing and Mitigation Workflow

This technical support center provides troubleshooting guides and FAQs to help researchers address performance drops in deep learning models, a core challenge in cross-dataset performance research for medical applications.

Troubleshooting Guide: Performance Drops in Cross-Dataset Evaluation

Q1: Why does my model, which performs perfectly on its original dataset, fail on a new dataset with similar medical images?

This is a classic case of domain shift or dataset bias. The model has learned features specific to your original training data that do not generalize. Key factors causing this include [13]:

- Resolution and Image Quality: Models trained on high-resolution, clean images often struggle with lower-resolution, noisier data.

- Variations in Data Collection: Differences in medical imaging equipment, protocols, or institutional settings create underlying differences in data distributions.

- Surface Texture and Complexity: The model may overfit to specific textural patterns in the source dataset that are not prevalent in the target dataset.

Mitigation Strategies:

- Employ Domain Adaptation: Use advanced techniques like domain adversarial training to learn features that are invariant across datasets [14].

- Utilize Data Augmentation: During training, apply aggressive augmentation (random rotations, flips, contrast adjustments, blurring) to simulate the variability expected in real-world data [15].

- Explore Hybrid Models: Combine the strengths of different architectures. For instance, a model with strong spatial feature extraction (like VGG16) can be paired with temporal or sequential analysis components if needed [13].

Q2: How can I improve my drug response prediction model so that it translates from preclinical models (like PDX) to human patients?

The biological dissimilarity between preclinical models and human tumors creates a significant translational gap [14].

Mitigation Strategy: Implement a Domain Adaptation Framework. A framework like TRANSPIRE-DRP is specifically designed for this problem. Its workflow involves [14]:

- Pre-training a Domain-Invariant Feature Extractor: An autoencoder is trained on large-scale, unlabeled genomic data from both PDX models and patient tumors. This forces the model to learn robust, generalizable representations of genomic features before it even sees the drug response labels.

- Adversarial Adaptation: The pre-trained model is then fine-tuned using the labeled PDX data. An adversarial component is introduced to align the feature distributions of the PDX and patient domains, ensuring that the drug response signals learned from PDXs become applicable to patients.

Empirical Evidence of Performance Gaps

Table 1: Documented Performance Drops in Medical Imaging Deep Learning Models During Cross-Dataset Evaluation [13]

| Deep Learning Model | Reported Self-Testing Accuracy (Best Case) | Observed Cross-Testing Performance | Primary Challenge in Cross-Dataset Context |

|---|---|---|---|

| VGG16 | 100% (on SCD & CPC datasets) | Substantial performance degradation | Struggles with lower-resolution images and complex, noisy textures from different sources. |

| ResNet50 | High accuracy on source datasets | Holds its own but is troubled by variability | Performance is impacted by surface complexity and environmental noise in new data. |

| LSTM | Varies by application | Becomes less useful in cross-domain tasks | Struggles to extract relevant spatial characteristics from image data. |

Table 2: Diagnostic Accuracy of Deep Learning Models in Medical Imaging (Specialty-Specific) [16]

| Medical Specialty & Task | Imaging Modality | Pooled AUC (95% CI) | Key Limitation & Heterogeneity |

|---|---|---|---|

| Ophthalmology: Diabetic Retinopathy | Retinal Fundus Photographs | 0.939 (0.920 - 0.958) | High heterogeneity; extensive variation in methodology and outcome measures between studies. |

| Ophthalmology: Diabetic Retinopathy | Optical Coherence Tomography (OCT) | 1.00 (0.999 - 1.000) | High heterogeneity; extensive variation in methodology and outcome measures between studies. |

| Respiratory: Lung Nodules | CT Scans | 0.937 (0.924 - 0.949) | High heterogeneity; only 2 of 115 studies used prospective data collection. |

| Respiratory: Lung Cancer/Mass | Chest X-Ray | 0.864 (0.827 - 0.901) | High heterogeneity; only 2 of 115 studies used prospective data collection. |

| Breast: Breast Cancer | Mammogram, Ultrasound, MRI | 0.868 - 0.909 (Range) | High heterogeneity; extensive variation in methodology and outcome measures between studies. |

Experimental Protocols for Robust Model Evaluation

Protocol 1: Cross-Dataset Evaluation for Medical Imaging Models

This protocol is designed to stress-test your model's generalizability.

- Dataset Selection: Choose multiple publicly available datasets relevant to your task (e.g., for crack detection, datasets like Structural Defects Network 2018, SCD, and CPC were used) [13].

- Data Preprocessing: Resize all images to a consistent resolution (e.g., 224x224 pixels) and apply the same normalization scheme across all datasets [13].

- Training Pipeline:

  - Incorporating Augmentation: Use random flips and rotations to expand the effective diversity of your training data [13].

  - Leveraging Transfer Learning: Start with a model pre-trained on a large, general dataset (like ImageNet) to benefit from learned fundamental features [13].

  - Preventing Overfitting: Implement early stopping by monitoring performance on a held-out validation set from the training domain [13].

- Rigorous Evaluation:

  - Self-Testing: Report the model's performance on a test set held out from the same dataset it was trained on.

  - Cross-Testing: Evaluate the trained model directly on the test splits of the other datasets without any fine-tuning. This is the primary measure of generalizability [13].
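The early-stopping step in the training pipeline can be sketched as a small framework-agnostic helper. The class name, the `patience` and `min_delta` parameters, and the loss values below are assumptions for illustration, not settings reported in [13].

```python
class EarlyStopping:
    """Stop training when the validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Hypothetical validation losses measured on the source-domain validation split.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.61, 0.65, 0.70]

stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break

print(f"stopped at epoch {stopped_at}, best epoch {stopper.best_epoch}")
```

Because the validation split comes from the training domain, this guards against overfitting to the source data but says nothing about the target datasets, so the cross-testing step is still required.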

Protocol 2: Translating Drug Response Predictions from PDX to Patients

This protocol outlines the key steps for applying the TRANSPIRE-DRP framework [14].

- Problem Formulation:

  - Source Domain (`D_s`): PDX models, represented as `{ (x_i^s, y_i) }`, where `x` is a genomic feature vector and `y` is a binary drug response label (sensitive/resistant).

  - Target Domain (`D_t`): Patient tumors, represented as `{ x_i^t }` (unlabeled genomic features).

- Model Pre-training (Unsupervised):

  - Objective: Learn a domain-invariant genomic representation.

  - Method: Train an autoencoder on large-scale unlabeled genomic profiles from both PDXs and patients. The architecture uses separate private encoders for each domain and a shared encoder, with a shared decoder to reconstruct the genomic input.

- Model Adaptation (Supervised):

  - Objective: Fine-tune the model to preserve drug response signals while aligning the PDX and patient domains.

  - Method: Use the labeled PDX data to fine-tune the pre-trained encoder within a domain adversarial framework. A domain classifier tries to distinguish PDX from patient features, while the feature extractor is trained to fool it, thereby creating aligned representations.
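For orientation, the adversarial adaptation step is commonly written in the style of a domain-adversarial neural network. The form below is the generic objective, offered as an assumption about the general shape of the loss and not as the exact TRANSPIRE-DRP formulation in [14]. The symbols are introduced here for illustration: \(G_f\) is the feature extractor, \(G_y\) the response predictor, \(G_d\) the domain classifier, \(\lambda\) the trade-off weight, and \(n_s\), \(n_t\) the numbers of PDX and patient samples.

```latex
\begin{aligned}
E(\theta_f, \theta_y, \theta_d)
  &= \frac{1}{n_s} \sum_{i=1}^{n_s}
     \mathcal{L}_y\!\left(G_y(G_f(x_i^s)),\, y_i\right) \\
  &\quad - \lambda \left[
     \frac{1}{n_s} \sum_{i=1}^{n_s} \mathcal{L}_d\!\left(G_d(G_f(x_i^s)),\, 0\right)
   + \frac{1}{n_t} \sum_{i=1}^{n_t} \mathcal{L}_d\!\left(G_d(G_f(x_i^t)),\, 1\right)
     \right] \\[4pt]
(\hat\theta_f, \hat\theta_y)
  &= \arg\min_{\theta_f,\, \theta_y} E(\theta_f, \theta_y, \hat\theta_d),
  \qquad
\hat\theta_d = \arg\max_{\theta_d} E(\hat\theta_f, \hat\theta_y, \theta_d)
\end{aligned}
```

The response loss \(\mathcal{L}_y\) uses only labeled PDX samples, while the domain loss \(\mathcal{L}_d\) uses both domains. The feature extractor minimizes the first term and maximizes the domain classifier's loss, which is what pushes PDX and patient representations toward each other.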

Experimental Workflow Visualizations

Diagram 1: Cross-Dataset Model Evaluation Workflow

Diagram 2: TRANSPIRE-DRP Domain Adaptation Framework

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Computational Tools for Cross-Domain DL Research

| Item / Resource | Function / Application | Relevance to Cross-Dataset Performance |

|---|---|---|

| Patient-Derived Xenograft (PDX) Models | Preclinical cancer models with high biological fidelity to human tumors [14]. | Serves as the critical source domain data for translating drug response predictions to patients. |

| Micro-gap Plate (MGP) | A microfluidic device for high-throughput drug screening with extremely low cell requirements (e.g., 9,000 cells per test) [17]. | Enables the generation of robust drug response data from precious PDX and primary patient samples, expanding data available for model training. |

| Coherent Raman Scattering (CARS/SRS) Microscopy | A non-invasive, label-free imaging method to capture cellular-level morphological and chemical information [18]. | Provides high-quality, quantitative cellular data for training models to assess conditions like dermatitis, reducing reliance on subjective macroscale cues. |

| Domain Adversarial Neural Network | A deep learning architecture that includes a domain classifier to encourage domain-invariant feature learning [14]. | The core computational technique for bridging the distribution gap between source (e.g., PDX) and target (e.g., Patient) domains. |

| TensorFlow / PyTorch | Primary deep learning frameworks for building and training complex models like CNNs and adversarial networks [19]. | The foundational software infrastructure for implementing, experimenting with, and deploying domain adaptation models. |

| Experiment Management Tools (e.g., Neptune.ai) | Platforms to track hyperparameters, code/data versions, and metrics across many experiments [20]. | Essential for reproducibility and managing the complexity of hyperparameter tuning and multiple training runs inherent in cross-dataset research. |

Frequently Asked Questions (FAQ)

1. What is the fundamental difference between domain shift and overfitting?

While both can cause poor model performance on new data, overfitting occurs when a model learns patterns specific to the training dataset (including noise) that do not represent the broader underlying data distribution. Domain shift, however, happens when the model is applied to data that comes from a different probability distribution than the training data, even if the model has generalized perfectly from its training set [21] [22]. You can identify overfitting if your model performs well on the training set but poorly on a held-out test set from the same distribution. Domain shift is indicated when the model performs well on the original test set but fails on data collected under different conditions (e.g., a new hospital, different season, or different patient population) [23].

2. My model has a low training error but a high validation error. Is this always caused by domain shift?

Not necessarily. A large gap between training and validation error is a classic sign of overfitting [24] [25]. Before concluding that domain shift is the issue, you should first rule out overfitting by using standard regularization techniques such as:

- Dropout [24]

- L1/L2 regularization [24] [25]

- Early stopping [24]

- Reducing model complexity [25]

If these measures successfully reduce the validation error on your original test set, the problem was overfitting. True domain shift is suspected when the model, after being properly regularized, still fails on data from a new, distinct environment [21].

3. What is a simple experimental technique to gauge the impact of domain shift before full deployment?

Blocking is a heuristic technique that allows you to simulate domain shift during testing [21]. The core idea is to split your data in a way that makes the training/validation distribution different from the test distribution, mimicking a real-world shift.

- For time-series data: Instead of a random train/test split, put contiguous blocks of time in your test set (e.g., use the most recent 20% of data for testing). This assesses the model's ability to predict the future from the past [21].

- For data from multiple groups/individuals: Perform blocking at the group level. For example, put all data from a specific hospital or patient demographic group exclusively in the test set. This tests the model's performance on previously unseen groups [21].
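Both blocking variants can be sketched with the standard library alone. The record layout (`day`, `hospital`) and the function names are hypothetical; libraries such as scikit-learn offer group-aware and time-aware splitters that serve the same purpose.

```python
def time_block_split(records, time_key, test_fraction=0.2):
    """Hold out the most recent `test_fraction` of records as the test set."""
    ordered = sorted(records, key=lambda r: r[time_key])
    n_test = max(1, round(len(ordered) * test_fraction))
    return ordered[:-n_test], ordered[-n_test:]

def group_block_split(records, group_key, test_groups):
    """Put every record from `test_groups` in the test set and all others in training."""
    test_groups = set(test_groups)
    train = [r for r in records if r[group_key] not in test_groups]
    test = [r for r in records if r[group_key] in test_groups]
    return train, test

# Hypothetical records: one row per scan, with an acquisition day and a hospital code.
records = [{"id": i, "day": i, "hospital": "ABC"[i % 3]} for i in range(10)]

train_t, test_t = time_block_split(records, "day", test_fraction=0.2)
train_g, test_g = group_block_split(records, "hospital", test_groups=["C"])

print([r["id"] for r in test_t])   # the two most recent scans
print([r["id"] for r in test_g])   # every scan from hospital C
```

The property to verify in either case is that no time period or group appears on both sides of the split; a random split would silently break it.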

4. What are the main types of domain shift I should be aware of?

Domain shift problems are often categorized based on the nature of the distribution change [26]:

- Covariate Shift: The input distribution P(X) changes between source and target domains, but the conditional distribution of the outputs given the inputs, P(Y|X), remains the same. Example: A model trained on high-resolution MRI scans (source) is applied to low-resolution scans (target). The relationship between a tumor's appearance and its malignancy is unchanged, but the input images look different.

- Prior Shift (or Label Shift): The distribution of the output labels P(Y) changes, but the conditional distribution P(X|Y) is stable. Example: A model trained to diagnose a disease in a general hospital (where the disease is rare) is deployed in a specialist clinic (where the disease is common). The symptoms for the disease are the same, but the base rate of the disease is higher.

- Concept Shift: The relationship between inputs and outputs changes, meaning P(Y|X) is different. Example: The same clinical symptoms (input) might indicate different diseases (output) in different geographical regions due to varying prevalence of endemic illnesses.
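Prior shift is the one case where a simple closed-form correction exists. Because P(X|Y) is assumed stable, Bayes' rule implies that the target-domain posterior is proportional to the source-domain posterior multiplied by the ratio of target to source class priors. The sketch below applies that re-weighting; the function name and the numbers are illustrative assumptions, and in practice the target prior has to be estimated.

```python
def adjust_for_prior_shift(source_posterior, source_prior, target_prior):
    """Re-weight class probabilities for a new base rate, assuming P(X|Y) is unchanged."""
    weighted = [
        p * (t / s)
        for p, s, t in zip(source_posterior, source_prior, target_prior)
    ]
    total = sum(weighted)
    return [w / total for w in weighted]


# Classes are [healthy, disease]. Hypothetical numbers: the model was trained
# where the disease had a 5% base rate and is deployed where it is 50%.
model_output = [0.9, 0.1]
adjusted = adjust_for_prior_shift(
    model_output, source_prior=[0.95, 0.05], target_prior=[0.5, 0.5]
)
```

With these numbers the disease probability rises from 0.10 to 19/28 (about 0.68), which shows how strongly an unadjusted model can under-call a condition once its base rate changes.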

5. How can I create a model that is inherently more robust to domain shift? Domain Adaptation is a subfield of transfer learning dedicated to this problem. The method you choose depends on what data is available from the target domain [23] [26].

- Unsupervised Domain Adaptation (UDA): Used when you have unlabeled data from the target domain. A popular method is Domain-Adversarial Training (e.g., DANN), where the model is trained to extract features that are indistinguishable between the source and target domains, forcing it to learn domain-invariant representations [23] [27].

- Supervised Domain Adaptation: Used when you have a small amount of labeled data from the target domain. This typically involves fine-tuning a model pre-trained on the source domain using the labeled target data [23] [26].

Troubleshooting Guide: Poor Cross-Dataset Performance

This guide provides a step-by-step methodology for diagnosing and addressing performance degradation caused by domain shift.

Step 1: Diagnose the Problem

First, systematically rule out other common issues before focusing on domain-specific solutions.

- Action 1.1: Overfit a Single Batch. Take a small batch of data (e.g., 2-4 samples) and try to drive the training loss to zero. If the model cannot, there is likely an implementation bug, not a domain shift issue [28].

- Action 1.2: Compare to a Known Baseline. Reproduce the results of a well-established model (e.g., ResNet) on a benchmark dataset (e.g., ImageNet). This verifies your training pipeline is correct. Then, test this baseline model on your target domain data; a performance drop strongly indicates domain shift [28].

- Action 1.3: Establish a Simple Baseline. Train a simple model (e.g., a linear classifier or a small CNN) on your source data and evaluate it on the target data. This provides a performance floor and confirms whether more complex models are learning useful, transferable features [28].
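As a minimal sketch of the single-batch check in Action 1.1, the snippet below trains a tiny logistic regression on four samples in plain Python and records the loss at every step. The model, learning rate, and step count are stand-in assumptions; with a real network you would run the same check through your own training loop.

```python
import math


def overfit_single_batch(features, labels, lr=0.5, steps=2000):
    """Train logistic regression on one tiny batch and return the loss history."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    n = len(features)
    history = []
    for _ in range(steps):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        loss = 0.0
        for x, y in zip(features, labels):
            z = sum(w * xi for w, xi in zip(weights, x)) + bias
            p = 1.0 / (1.0 + math.exp(-z))
            p = min(max(p, 1e-12), 1 - 1e-12)  # guard against log(0)
            loss -= y * math.log(p) + (1 - y) * math.log(1 - p)
            for j, xi in enumerate(x):
                grad_w[j] += (p - y) * xi
            grad_b += p - y
        # Plain gradient descent on the mean cross-entropy loss.
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
        history.append(loss / n)
    return history


# Four linearly separable samples: the label equals the first feature.
batch_x = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
batch_y = [0, 0, 1, 1]
loss_history = overfit_single_batch(batch_x, batch_y)
```

A loss that starts near log(2) and falls steadily toward zero suggests the pipeline can at least memorize a batch. A loss that plateaus, rises, or becomes NaN points to a bug in the data handling, the loss, or the update step.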

Step 2: Quantify the Shift and Set a Performance Target

Use blocking to measure the potential impact of domain shift and set a realistic goal.

- Action 2.1: Implement a Blocking Strategy. As described in the FAQ, use blocking to create a validation set that simulates your target domain. The performance gap between a standard validation set and this "blocked" validation set quantifies the expected degradation from domain shift [21].

- Action 2.2: Define Your Target Performance. Establish the minimum acceptable performance for clinical deployment. This could be based on human-level performance, published results on similar datasets, or clinical requirements [24].

Step 3: Implement Mitigation Strategies

Based on your diagnosis and data availability, choose and apply one or more of the following strategies.

- Action 3.1: Apply Standard Regularization. If you haven't already, implement dropout, weight decay (L2 regularization), and/or early stopping. This is a prerequisite to ensure any remaining performance gap is due to domain shift and not simple overfitting [24].

- Action 3.2: Employ Domain Adaptation.

- If you have labeled target data: Use Supervised Domain Adaptation by fine-tuning your source model on the target data [23].

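- If you only have unlabeled target data: Use Unsupervised Domain Adaptation (UDA), for example domain-adversarial training. As an indication of what adversarial adaptation can achieve, the table below summarizes a real-world result on chest X-rays [27]; note that the reported figures are for the supervised variant, which also draws on labeled target data.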

Table 1: Quantitative Results of Adversarial Domain Adaptation (ADA) on a Nigerian Chest X-Ray Dataset [27]

| Source Domain (Training Data) | Performance without ADA (AUC) | Performance with Supervised ADA (AUC) |

|---|---|---|

| Dataset A | 0.81 | 0.94 |

| Dataset B | 0.79 | 0.96 |

| Dataset C | 0.83 | 0.95 |

- Action 3.3: Utilize Data Augmentation. Artificially expand your training data using transformations that mirror potential variations in the target domain. For medical images, this could include realistic variations in contrast, brightness, or minor rotations. This helps the model learn more invariant features [24].

Step 4: Plan for Dynamic Deployment

For clinical applications, assume that domain shift will occur over time and plan for continuous monitoring and updating [29].

- Action 4.1: Establish Feedback Loops. Implement systems to collect new patient data, outcomes, and user feedback in a structured way post-deployment [29].

- Action 4.2: Implement Continuous Monitoring. Monitor key performance metrics (e.g., accuracy, AUC) and data distributions in real-time to detect performance degradation or data/model drift early [29].

- Action 4.3: Enable Model Updating. Develop protocols for safely updating models using techniques like online learning or periodic fine-tuning with new data, following a framework like Dynamic Deployment [29].
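One way to monitor data distributions, as Action 4.2 suggests, is to compare a reference sample of a feature collected at deployment time with a recent sample. The sketch below uses the two-sample Kolmogorov-Smirnov statistic as the drift signal. This is only one possible choice, and the alert threshold of 0.3 is a hypothetical value that would need tuning for a real system.

```python
def ks_statistic(reference, current):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    i = j = 0
    max_gap = 0.0
    for value in sorted(set(ref + cur)):
        # Advance each pointer past all observations <= value.
        while i < len(ref) and ref[i] <= value:
            i += 1
        while j < len(cur) and cur[j] <= value:
            j += 1
        max_gap = max(max_gap, abs(i / len(ref) - j / len(cur)))
    return max_gap


def drift_alert(reference, current, threshold=0.3):
    """Flag drift when the KS statistic exceeds a (hypothetical) threshold."""
    return ks_statistic(reference, current) > threshold


# Hypothetical feature values, e.g. mean pixel intensity per scan.
baseline = [1.0, 2.0, 3.0, 4.0]
recent = [3.0, 4.0, 5.0, 6.0]
```

Here `ks_statistic(baseline, recent)` is 0.5, so `drift_alert` fires. A per-feature check like this complements, rather than replaces, monitoring of outcome metrics such as AUC.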

Table 2: The Scientist's Toolkit: Key Methods and Their Functions

| Method / Reagent | Primary Function |

|---|---|

| Blocking | A data-splitting heuristic to simulate domain shift and gauge its potential impact on model performance [21]. |

| Domain-Adversarial Neural Networks (DANN) | An unsupervised domain adaptation technique that learns domain-invariant features by fooling a domain classifier [23] [27]. |

| Dynamic Deployment Framework | A systems-level approach for clinical trials and deployment that allows for continuous model monitoring, learning, and validation [29]. |

| Adversarial Domain Adaptation (ADA) | A feature-level adaptation technique that uses adversarial training to align the feature distributions of the source and target domains [27]. |

Experimental Protocol: Supervised Adversarial Domain Adaptation for Medical Imaging

This protocol details the methodology used in a published study that successfully addressed cross-population domain shift in chest X-ray classification [27].

1. Objective: To adapt a deep learning model trained on chest X-rays from source populations (e.g., the USA, Europe) to perform accurately on a target population (e.g., Nigeria) where a domain shift exists.

2. Hypothesis: Supervised Adversarial Domain Adaptation (ADA) will improve classification performance on the target domain by learning features that are invariant to the population-specific domain shift.

3. Materials (Research Reagents):

- Source Datasets: Publicly available chest X-ray datasets from populations different from the target (e.g., CheXpert, MIMIC-CXR).

- Target Dataset: A curated dataset of chest X-rays from the Nigerian population.

- Base Model: A convolutional neural network (CNN) such as DenseNet or ResNet, pre-trained on the source domain(s).

- Software: Deep learning framework (e.g., PyTorch, TensorFlow) with libraries for implementing adversarial training.

4. Methodology: The experimental workflow involves a two-stage training process to first learn general features from the source domain and then adapt them to be domain-invariant.

Workflow for Adversarial Domain Adaptation

Detailed Steps:

- Source Model Pre-training: Train the initial CNN (feature extractor and classifier) on the labeled source data using a standard supervised loss function (e.g., cross-entropy) [27]. The resulting weights serve as the starting point for the next stage, in which the feature extractor must remain trainable so that it can be updated adversarially.

- Adversarial Fine-Tuning:

- Setup: Introduce a domain discriminator, a small neural network that takes features from the feature extractor and tries to classify them as originating from the source or target domain.

- Adversarial Loop:

- The domain discriminator is trained to correctly distinguish between source and target features.

- The feature extractor is simultaneously trained to "fool" the discriminator by producing features that are indistinguishable across domains. This is typically achieved using a gradient reversal layer.

- The classifier continues to be trained to correctly predict labels from the source domain features.

- Outcome: This adversarial process forces the feature extractor to learn domain-invariant features that are predictive of the class label but not of the data domain [27].
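The adversarial loop above is often written as a saddle-point problem in the domain-adversarial training literature. The formulation below is a sketch under assumed notation, and the text does not state whether study [27] used exactly this form: \theta_f, \theta_y, and \theta_d denote the parameters of the feature extractor, label classifier, and domain discriminator; \mathcal{L}_y is the classification loss; \mathcal{L}_d is the domain-classification loss; and \lambda is a trade-off weight.

```latex
E(\theta_f, \theta_y, \theta_d)
  = \mathcal{L}_y(\theta_f, \theta_y) - \lambda \, \mathcal{L}_d(\theta_f, \theta_d)

(\hat{\theta}_f, \hat{\theta}_y)
  = \arg\min_{\theta_f, \theta_y} E(\theta_f, \theta_y, \hat{\theta}_d),
\qquad
\hat{\theta}_d
  = \arg\max_{\theta_d} E(\hat{\theta}_f, \hat{\theta}_y, \theta_d)
```

The discriminator minimizes \mathcal{L}_d while the feature extractor maximizes it. A gradient reversal layer implements this in a single backward pass: it acts as the identity in the forward direction and multiplies the gradient flowing from the discriminator into the feature extractor by -\lambda.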

5. Evaluation:

- Primary Metric: Compare the Area Under the Receiver Operating Characteristic Curve (AUC) on the Nigerian test set before and after applying ADA.

- Baseline Comparison: Compare the performance of the ADA model against the pre-trained source model without adaptation, and against other baseline methods like multi-task learning (MTL) or continual learning (CL) [27].

Building Robust Models: Optimization Techniques and Domain Adaptation Strategies for Cross-Dataset Success

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary goal of using data-centric strategies in cross-dataset evaluation? The primary goal is to improve model generalization and robustness by addressing dataset bias and domain shift. Cross-dataset evaluation trains models on one dataset and tests on others, revealing hidden artifacts and quantifying true performance in real-world, heterogeneous environments, which is critical for reliable deployment in fields like medical imaging and drug discovery [1].

FAQ 2: Why does model performance often degrade significantly in cross-dataset scenarios? Performance degrades due to dataset bias, where each dataset has unique selection criteria, acquisition hardware, or annotation protocols. This creates a domain shift, causing models to overfit to dataset-specific cues and artifacts rather than learning generalizable features. Empirical studies show that even state-of-the-art models can experience precipitous drops in performance metrics like R² scores when evaluated out-of-domain [1].

FAQ 3: What is label reconciliation and why is it a critical step? Label reconciliation is the process of harmonizing class ontologies and annotation conventions across different datasets. It involves meticulous remapping of labels (e.g., reconciling "bike" with "bicycle") to create a consistent, normalized label space. This is a prerequisite for valid cross-dataset evaluation and multi-domain aggregation, as inconsistent semantics otherwise invalidate performance comparisons [1].

FAQ 4: How does multi-domain aggregation improve model robustness? Multi-domain aggregation involves jointly training models on multiple, diverse datasets. This technique dilutes the influence of dataset-specific artifacts and biases by exposing the model to a wider variety of data distributions, acquisition protocols, and contextual features. It is a validated data-centric approach for learning more invariant and generalizable features [1].

FAQ 5: What role does data augmentation play in this context? Data augmentation generates high-quality artificial data by manipulating existing samples, directly addressing data scarcity and class imbalance. It introduces diversity into the training dataset, narrowing the gap between training data and real-world applications. These techniques have been shown to improve the applicability and generalization capability of AI models, especially when data are limited or imbalanced [30].

Troubleshooting Guides

Problem 1: Sharp Performance Drop in Cross-Dataset Testing

Symptoms: Your model achieves high accuracy on its source (training) dataset but shows a dramatic performance decrease (e.g., large drop in R², accuracy, or Dice score) when evaluated on a new target dataset [1].

Diagnosis and Solutions:

Diagnosis 1: Severe Domain Shift

- Root Cause: The data distributions between your source and target datasets are too different, often due to variations in data acquisition protocols, sensor types, or environmental contexts [1].

- Solution: Implement unsupervised domain adaptation.

- Methodology: Perform online fine-tuning on the target dataset using pseudo-labels generated by the model itself or by using presumed positive/negative pairs. This allows the model to adapt to the new distribution without requiring labeled target data [1].

- Protocol:

- Train your model on the labeled source dataset.

- Use the trained model to generate pseudo-labels for the unlabeled target dataset.

- Fine-tune the model on the target dataset using these pseudo-labels, typically with a lower learning rate.
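The pseudo-labeling step of this protocol can be sketched as follows. Keeping only confident predictions is a common safeguard against reinforcing the model's own mistakes, but the confidence threshold (0.9 here) is an assumption added for illustration and is not part of the protocol as described above.

```python
def select_pseudo_labels(probabilities, threshold=0.9):
    """Return (sample_index, predicted_class) pairs whose top probability clears the threshold."""
    selected = []
    for idx, probs in enumerate(probabilities):
        best_class = max(range(len(probs)), key=probs.__getitem__)
        if probs[best_class] >= threshold:
            selected.append((idx, best_class))
    return selected


# Hypothetical class probabilities predicted by the source-trained model
# for four unlabeled target samples.
target_probs = [
    [0.97, 0.03],
    [0.55, 0.45],
    [0.08, 0.92],
    [0.40, 0.60],
]
pseudo_labeled = select_pseudo_labels(target_probs, threshold=0.9)
```

Only samples 0 and 2 pass the threshold here. The fine-tuning step would then use just those pairs, and the selection can be repeated as the model adapts.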

Diagnosis 2: Overfitting to Dataset-Specific Artifacts

- Root Cause: The model has learned shortcuts or biases specific to your training dataset instead of the underlying task [1].

- Solution: Apply multi-domain aggregation and dataset-aware training.

- Methodology: Instead of training on a single source, aggregate multiple diverse datasets. Use techniques like a dataset-aware loss function, which encourages the model to learn features that are discriminative and invariant to the dataset origin [1].

- Protocol:

- Curate and align multiple datasets using label reconciliation.

- During training, incorporate a loss component that penalizes the model for being able to predict which dataset a sample came from.

Problem 2: Label Space Mismatch and Inconsistent Ontologies

Symptoms: You are unable to directly evaluate a model trained on Dataset A against Dataset B because their class labels are different (e.g., "automobile" vs. "car") or have different levels of granularity [1].

Diagnosis and Solutions:

- Diagnosis: Label Misalignment

- Root Cause: Datasets were annotated under different protocols, using different class definitions or ontologies [1].

- Solution: Perform label reconciliation.

- Methodology: Create a mapping schema that harmonizes the label spaces across all datasets involved in your experiment. This often requires domain expertise to correctly merge or map fine-grained classes into a unified, coarser-grained ontology [1].

- Protocol:

- Audit Labels: List all class labels from every dataset.

- Define Unified Ontology: Establish a common set of class labels that all original labels can map to.

- Create Mapping Rules: Define rules for converting original labels to the unified labels (e.g., map both "bike" and "bicycle" to a unified "bicycle" class).

- Apply Mapping: Apply this mapping consistently to all datasets before training or evaluation.
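A mapping schema of this kind can be as simple as a dictionary applied uniformly to every dataset. The sketch below uses the label pairs mentioned in this guide; the name `LABEL_MAP` and the choice to fail loudly on unmapped labels are illustrative assumptions.

```python
# Unified ontology: every original label maps to exactly one unified label.
LABEL_MAP = {
    "bike": "bicycle",
    "bicycle": "bicycle",
    "automobile": "car",
    "car": "car",
}


def reconcile_labels(labels, mapping):
    """Map original labels to the unified label space, failing on unmapped labels."""
    unmapped = sorted({label for label in labels if label.lower() not in mapping})
    if unmapped:
        # Silently dropping or guessing labels would invalidate later comparisons.
        raise KeyError(f"No mapping rule for labels: {unmapped}")
    return [mapping[label.lower()] for label in labels]


dataset_a = ["bike", "automobile", "bike"]
dataset_b = ["Bicycle", "car"]
unified_a = reconcile_labels(dataset_a, LABEL_MAP)
unified_b = reconcile_labels(dataset_b, LABEL_MAP)
```

Raising an error on unknown labels forces the audit step to be complete before any training or evaluation runs.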

Problem 3: Handling Class Imbalance in Aggregated Data

Symptoms: After aggregating multiple datasets, the combined dataset exhibits severe class imbalance, leading to poor model performance on minority classes during cross-dataset testing [1].

Diagnosis and Solutions:

- Diagnosis: Amplified Imbalance from Aggregation

- Root Cause: Combining datasets can compound existing imbalances, making minority classes even more underrepresented [1].

- Solution: Leverage data augmentation and imbalance-aware metrics.

- Methodology: Use advanced data augmentation techniques, such as synthetic data generation, to create more samples for the minority classes. Furthermore, avoid using overall accuracy and instead rely on metrics that are robust to imbalance [30] [1].

- Protocol:

- Synthetic Data Generation: Use generative models (e.g., GANs, VAEs, Diffusion Models) to artificially create labeled data for minority classes from their existing samples [30] [31].

- Use Robust Metrics: Evaluate your model using Matthews Correlation Coefficient (MCC) or balanced accuracy instead of standard accuracy for a more reliable performance assessment [1].
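To see why overall accuracy misleads on imbalanced data, the sketch below computes accuracy, balanced accuracy, and MCC for a binary task from the confusion-matrix counts. The toy data are hypothetical, and returning 0 for MCC when its denominator is zero is a common convention adopted here as an assumption.

```python
import math


def confusion_counts(y_true, y_pred):
    """Count (tp, tn, fp, fn) for binary labels where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy(y_true, y_pred):
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    return (tp + tn) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return (sensitivity + specificity) / 2


def mcc(y_true, y_pred):
    """Matthews Correlation Coefficient; 0.0 by convention if undefined."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Hypothetical target set: 5 positives, 95 negatives.
y_true = [1] * 5 + [0] * 95
y_majority = [0] * 100  # a model that always predicts the majority class
```

The always-negative model scores 0.95 accuracy but only 0.5 balanced accuracy and 0.0 MCC, which correctly signals that it has learned nothing about the minority class.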

Experimental Protocols & Data Presentation

Key Metrics for Cross-Dataset Evaluation

The following table summarizes the essential metrics for quantifying model performance and generalization in cross-dataset experiments [1].

Table 1: Key Metrics for Cross-Dataset Evaluation

| Metric Name | Formula/Description | Use Case |

|---|---|---|

| Error Rate | \( \text{Error}_{cross} = 1 - \frac{\text{Correct predictions on target}}{\text{Total target samples}} \) | Measures basic performance on a target dataset. |

| Normalized Performance | \( g_{norm}[s, t] = \frac{g[s, t]}{g[s, s]} \) | Compares cross-dataset performance to within-dataset performance for a source s. |

| Aggregated Off-Diagonal Score | \( g_a[s] = \frac{1}{d - 1} \sum_{t \ne s} g[s, t] \) | Provides a single score for a model's average generalization from source s to the other d - 1 datasets. |

| Matthews Correlation Coefficient (MCC) | - | A balanced metric reliable even when classes are of very different sizes. |

| Simulation Quality (A_O) | - | Quantifies the fidelity of synthetic datasets in cross-domain scenarios [1]. |

| Transfer Quality (S_O) | - | Quantifies the domain coverage and practical utility of synthetic datasets [1]. |
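The Normalized Performance and Aggregated Off-Diagonal Score rows of the table can be computed directly from a performance matrix g, where g[s][t] is the score of a model trained on dataset s and evaluated on dataset t. The matrix values below are made up for illustration.

```python
def normalized_performance(g):
    """g_norm[s][t] = g[s][t] / g[s][s]: cross-dataset score relative to within-dataset score."""
    d = len(g)
    return [[g[s][t] / g[s][s] for t in range(d)] for s in range(d)]


def aggregated_off_diagonal(g):
    """g_a[s]: mean score of source s over the other d - 1 target datasets."""
    d = len(g)
    return [sum(g[s][t] for t in range(d) if t != s) / (d - 1) for s in range(d)]


# Hypothetical accuracy matrix for three datasets (rows: source, columns: target).
g = [
    [0.90, 0.60, 0.45],
    [0.50, 0.80, 0.40],
    [0.70, 0.63, 0.70],
]
g_norm = normalized_performance(g)
g_a = aggregated_off_diagonal(g)
```

In this toy matrix the third dataset has the lowest within-dataset score (0.70) but the highest aggregated off-diagonal score (about 0.665), the kind of pattern that within-dataset validation alone would hide.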

Standard Cross-Dataset Evaluation Protocol

This protocol provides a step-by-step methodology for a robust cross-dataset evaluation benchmark [1].

Dataset Curation & Label Reconciliation:

- Select multiple datasets relevant to your task.

- Perform label reconciliation to create a unified label space across all datasets.

Source-Target Partitioning:

- Define all possible or a specific set of source-target dataset pairs. A common scenario is training on one or more large public datasets and testing on smaller, more specific ones.

Model Training & Evaluation:

- For each defined source-target pair (s, t):

- Train a model exclusively on the source dataset s.

- Evaluate the trained model on the target dataset t.

- Record all relevant metrics from Table 1.

Analysis & Visualization:

- Compile results into a performance matrix where rows are sources and columns are targets.

- Use visualization tools like performance hexagons or rank plots to compare model generalization statistically.

Workflow and Strategy Visualization

Diagram: Cross-Dataset Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Techniques for Data-Centric Research

| Tool / Technique | Category | Function |

|---|---|---|

| Synthetic Data Generation (GANs, VAEs, Diffusion) [30] [31] | Data Augmentation | Artificially creates labeled data to address scarcity, balance classes, and preserve privacy. |

| Semi-Supervised Learning (SSL) [31] | Learning Paradigm | Leverages a small labeled dataset alongside vast unlabeled data to reduce manual labeling costs. |

| Self-Supervised Learning (Self-SL) [31] | Learning Paradigm | Pretrains models on unlabeled data by solving pretext tasks, creating robust initial representations. |

| Label Reconciliation Framework [1] | Data Preprocessing | Harmonizes class ontologies across datasets to enable valid multi-domain aggregation and evaluation. |

| Dataset-Aware Loss Function [1] | Training Strategy | Encourages the model to learn features invariant to the specific dataset origin, improving generalization. |

| Unsupervised Domain Adaptation [1] | Adaptation Technique | Adapts a model to a new, unlabeled target domain using pseudo-labeling and fine-tuning. |

| Digital Twin Technology [32] | Simulation | Creates a virtual replica of a system (e.g., data center) for simulation and performance planning. |

Troubleshooting Guides & FAQs

This technical support center addresses common challenges researchers face when applying model compression techniques to improve the efficiency and generalization of deep learning models, particularly in cross-dataset scenarios like drug response prediction.

Pruning

Q: My model's accuracy drops severely after pruning. How can I recover the performance?

A: Significant accuracy drop usually indicates overly aggressive pruning or insufficient fine-tuning. Implement these steps:

- Iterative Pruning: Don't remove all target weights at once. Use an iterative process: prune a small percentage (e.g., 10-20%), then fine-tune the model, and repeat. This allows the network to adapt gradually [33] [34].

- Fine-Tuning with a Lower Learning Rate: After pruning, fine-tune the model using your training data with a lower learning rate (e.g., 1/10th of the original training rate) to recover performance without distorting the remaining weights [34].

- Validate Sparsity Impact: Use a small calibration dataset to analyze the sensitivity of different layers to pruning. Avoid pruning critical layers, such as the final classification head, in early stages [35].
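The prune-then-fine-tune cycle can be sketched on a flat list of weights. Magnitude-based selection is used here as a common criterion; the text above does not say which criterion the cited sources use. The `fine_tune` callback is a hypothetical placeholder (identity by default) standing in for a real low-learning-rate training pass.

```python
def magnitude_prune(weights, fraction):
    """Zero out the smallest-magnitude `fraction` of the still-nonzero weights."""
    alive = [i for i, w in enumerate(weights) if w != 0.0]
    n_prune = int(len(alive) * fraction)
    to_prune = sorted(alive, key=lambda i: abs(weights[i]))[:n_prune]
    pruned = list(weights)
    for i in to_prune:
        pruned[i] = 0.0
    return pruned


def iterative_prune(weights, fraction_per_round, rounds, fine_tune=lambda w: w):
    """Alternate small pruning steps with fine-tuning instead of pruning all at once."""
    for _ in range(rounds):
        weights = magnitude_prune(weights, fraction_per_round)
        weights = fine_tune(weights)  # placeholder: a real pass would update surviving weights
    return weights


# Hypothetical layer weights.
layer = [0.05, -0.9, 0.3, -0.02, 0.7, 0.15, -0.4, 0.6, -0.08, 0.25]
sparse_layer = iterative_prune(layer, fraction_per_round=0.2, rounds=3)
sparsity = sparse_layer.count(0.0) / len(sparse_layer)
```

Three rounds at 20% of the surviving weights remove 2, 1, and 1 weights respectively, for a final sparsity of 0.4. A real fine-tuning pass should keep already-pruned positions at zero, for example by applying a mask.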

Q: How do I decide between structured and unstructured pruning?

A: The choice depends on your deployment environment and performance goals [36] [34].

- Choose Structured Pruning (removing entire neurons, filters, or layers) if your goal is to achieve faster inference on standard hardware (GPUs/CPUs) and to reduce model size directly. It creates a smaller, dense model that is computationally efficient [35] [33].

- Choose Unstructured Pruning (removing individual weights) if your primary goal is to maximize the compression rate and model sparsity for storage, and you have access to specialized software or hardware libraries that can accelerate sparse matrix computations [33] [34].

Experimental Protocol: Depth Pruning of a Transformer Model [35]

| Step | Description | Key Parameters |

|---|---|---|

| 1. Model & Data Preparation | Convert a pre-trained model (e.g., Hugging Face format) to a compatible framework format (e.g., NVIDIA NeMo). Prepare a small calibration dataset. | Model: Qwen2-7B. Dataset: WikiText (1024 samples). |

| 2. Pruning Execution | Run a pruning script to reduce the model's depth by removing a specific number of transformer layers. | target_num_layers: 24 (original: 32). seq_length: 4096. |

| 3. Fine-Tuning | Use Knowledge Distillation to fine-tune the pruned model, using the original full model as the teacher. | teacher_path: Original model. lr: 1e-4. max_steps: 40. |

Quantization

Q: What are the best practices for deciding the level of quantization (e.g., 8-bit vs. 4-bit)?

A: The decision involves a trade-off between efficiency and accuracy [37] [34].

- Use 8-bit Quantization as a default starting point. It offers a significant model size reduction (about 75% relative to 32-bit floating-point weights) and speedup with minimal accuracy loss for most networks and is widely supported by hardware [37].

- Reserve 4-bit or lower precision for highly resource-constrained environments (e.g., edge devices). Be aware that this can lead to more substantial accuracy degradation, especially for models that are not robust to such low precision. Techniques like Quantization-Aware Training (QAT) are often necessary to maintain acceptable performance [33].

Q: How can I mitigate the accuracy loss from Post-Training Quantization (PTQ)?

A: The key is proper calibration [34].

- Use Representative Calibration Data: Your calibration dataset must be a representative subset of your real-world data, not random noise. This helps the quantization algorithm accurately determine the range of activations and weights.

- Try Different Quantization Schemes: Experiment with quantizing only the weights (which is safer) versus both weights and activations (which is more efficient but can impact accuracy more).

- Switch to Quantization-Aware Training (QAT): If PTQ accuracy is unacceptable, use QAT. This method models the quantization error during the fine-tuning process, allowing the model to learn parameters that are more robust to lower precision [37] [34].
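The role of calibration data can be illustrated with a minimal affine (scale and zero-point) quantizer in plain Python. This sketch assumes simple min/max calibration and unsigned integer codes; production toolchains offer more elaborate schemes, and all values below are hypothetical.

```python
def calibrate(values, num_bits=8):
    """Derive an affine (scale, zero_point) pair from the observed value range."""
    qmax = 2 ** num_bits - 1
    lo = min(min(values), 0.0)  # the representable range must include zero
    hi = max(max(values), 0.0)
    scale = (hi - lo) / qmax or 1.0
    zero_point = int(round(-lo / scale))
    return scale, zero_point


def quantize(values, scale, zero_point, num_bits=8):
    """Map floats to integer codes, clamping anything outside the calibrated range."""
    qmax = 2 ** num_bits - 1
    return [min(max(int(round(v / scale)) + zero_point, 0), qmax) for v in values]


def dequantize(codes, scale, zero_point):
    return [(q - zero_point) * scale for q in codes]


def max_roundtrip_error(values, scale, zero_point, num_bits=8):
    restored = dequantize(quantize(values, scale, zero_point, num_bits), scale, zero_point)
    return max(abs(v - r) for v, r in zip(values, restored))


# Representative calibration sample covering the real activation range.
calibration = [-1.0, -0.37, 0.0, 0.42, 1.0]
scale8, zp8 = calibrate(calibration, num_bits=8)
scale4, zp4 = calibrate(calibration, num_bits=4)
err8 = max_roundtrip_error(calibration, scale8, zp8, num_bits=8)
err4 = max_roundtrip_error(calibration, scale4, zp4, num_bits=4)

# Unrepresentative calibration sample: the range is far too narrow.
narrow_scale, narrow_zp = calibrate([-0.1, 0.1], num_bits=8)
clip_err = max_roundtrip_error([1.0], narrow_scale, narrow_zp, num_bits=8)
```

The 4-bit error is noticeably larger than the 8-bit error on the same data. With the narrow calibration range, a real activation of 1.0 is clipped to roughly 0.1, which is the failure mode that representative calibration data is meant to prevent.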

Compression Performance Comparison (Sentiment Analysis Tasks) [38]

| Model | Compression Technique | Accuracy (%) | F1-Score (%) | Energy Reduction (%) |

|---|---|---|---|---|

| BERT | Pruning & Distillation | 95.90 | 95.90 | 32.097 |

| DistilBERT | Pruning | 95.87 | 95.87 | -6.709* |

| ELECTRA | Pruning & Distillation | 95.92 | 95.92 | 23.934 |

| ALBERT | Quantization | 65.44 | 63.46 | 7.120 |

*Note: The negative energy reduction for DistilBERT indicates an increase in consumption, highlighting that compression effects are not always additive and depend on the base model.

Knowledge Distillation

Q: In which situations is distillation a better choice than quantization or pruning?

A: Distillation is particularly advantageous in the following scenarios [33] [36]:

- Architectural Flexibility: When you need a student model with a completely different, more efficient architecture (e.g., a smaller transformer, a CNN) than the large teacher model.

- Task-Specific Optimization: When you want to train a small model that specializes in a specific task or domain by learning from a large, generalist teacher model. This is common in drug discovery, where a model distilled on general bio-data is fine-tuned for a specific prediction task.

- Cross-Dataset Generalization: When a powerful teacher has learned robust, generalizable features from multiple datasets, distillation can transfer this generalization ability to a smaller student, which is a key goal in cross-dataset research [4].

Q: The student model fails to match the teacher's performance. What can I do?

A: This is often due to a capacity gap or suboptimal distillation loss.

- Adjust the Loss Temperature: Increase the temperature parameter (T) in the softmax function to create "softer" target probabilities from the teacher. This provides more information about class relationships (e.g., that a "cat" is more similar to a "tiger" than a "car") and helps the student learn more effectively [35] [34].

- Use Feature-Based Distillation: Don't just match the final outputs. Force the student to mimic the teacher's intermediate hidden layer representations or attention maps. This provides a richer learning signal than output logits alone [35] [33].

- Tune the Loss Weight (Alpha): The total loss is often alpha * distillation_loss + (1 - alpha) * task_loss. Experiment with the alpha parameter to balance learning from the teacher versus learning from the ground-truth labels [34].
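The temperature and alpha parameters discussed above fit together as in the sketch below, written in plain Python for a single example. The distillation term is taken to be the KL divergence between temperature-softened teacher and student distributions, multiplied by T squared. That scaling is a widely used convention but is an assumption here, as are the example logits.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives a flatter distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_total_loss(student_logits, teacher_logits, true_class,
                            temperature=4.0, alpha=0.5):
    """alpha * distillation_loss + (1 - alpha) * task_loss for one example."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student) on the softened distributions, scaled by T^2.
    kd = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    kd *= temperature ** 2
    # Standard cross-entropy against the hard label, at temperature 1.
    task = -math.log(softmax(student_logits)[true_class])
    return alpha * kd + (1 - alpha) * task


# Hypothetical logits for the classes [cat, tiger, car].
teacher = [6.0, 4.0, -2.0]
student = [2.0, 0.5, 0.0]
loss = distillation_total_loss(student, teacher, true_class=0, temperature=4.0, alpha=0.5)
```

Comparing `softmax(teacher, 1.0)` with `softmax(teacher, 4.0)` shows the effect of temperature: the softened distribution gives "tiger" noticeably more mass than "car", which is the class-similarity signal the student is meant to pick up.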

Experimental Protocol: Response-Based Knowledge Distillation [35] [34]

| Step | Description | Key Parameters |

|---|---|---|

| 1. Teacher Model | A large, pre-trained, and high-performing model that serves as the source of knowledge. | Model: Qwen2-7B. |

| 2. Student Model | A smaller, more efficient model architecture to be trained. | Model: Architecturally smaller (e.g., fewer layers/parameters). |

| 3. Distillation Training | Train the student model to mimic the teacher's soft label distributions, often while also using the true hard labels. | temperature (T): 3-10. alpha: 0.5-0.7. |

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Technique | Function in Optimization | Example Use Case |

|---|---|---|

| TensorRT Model Optimizer | A comprehensive framework that streamlines the application of pruning and distillation at scale [35]. | Automating the pipeline for creating a small, efficient model from a large pre-trained LLM for deployment [35]. |

| CodeCarbon | An open-source tool for tracking energy consumption and carbon emissions during model training and inference [38]. | Quantifying the environmental impact and energy efficiency gains from different compression techniques [38]. |

| LoRA / QLoRA | Parameter-Efficient Fine-Tuning (PEFT) methods that adapt large models to new tasks by updating only a very small number of parameters [33]. | Efficiently fine-tuning a base drug prediction model for a new, smaller dataset or a specific cancer type with minimal computational cost [33]. |

| Quantization-Aware Training (QAT) | A methodology that incorporates quantization simulation during training, allowing the model to adapt to lower precision [37] [34]. | Preparing a model for deployment on edge devices with 8-bit integer precision while minimizing accuracy loss. |

| NeMo Framework | A toolkit for building, training, and optimizing conversational AI models, with strong support for compression [35]. | Provides ready-to-use scripts for model pruning and distillation experiments, as cited in the protocols above [35]. |

FAQs: Core Concepts and Decision Making

Q1: What is the fundamental difference between transfer learning and fine-tuning?

A1: While both techniques adapt pre-trained models to new tasks, their scope and approach differ. Transfer Learning typically freezes most of the pre-trained model's layers and only trains newly added final layers on the new data. It is a safer approach for smaller datasets. In contrast, Fine-Tuning updates part or all of the pre-trained model's weights, allowing for deeper adaptation to the new task, which is beneficial for larger datasets [39].

Q2: When should I choose fine-tuning over transfer learning for my project?

A2: The choice depends on your dataset size, computational resources, and the similarity between your new task and the model's original training task [39]. The following table summarizes the key decision factors:

| Factor | Prefer Transfer Learning | Prefer Fine-Tuning |

|---|---|---|

| Dataset Size | Small | Large enough to avoid overfitting |

| Task Similarity | New task is very similar to the original | New task differs significantly from the original |

| Compute Resources | Limited | Sufficient for more extensive training |

| Risk of Overfitting | Lower risk | Higher risk, requires careful management |

Q3: What are Parameter-Efficient Fine-Tuning (PEFT) methods and why are they important?

A3: PEFT methods, such as LoRA (Low-Rank Adaptation) and QLoRA (Quantized LoRA), dramatically reduce the computational cost of adaptation [40]. Instead of updating all of the model's parameters, LoRA freezes the original weights, injects small low-rank matrices into the model layers, and trains only those matrices. This can reduce the number of trainable parameters to a tiny fraction of the original model size. QLoRA goes a step further by first quantizing the base model to 4-bit precision, making it possible to fine-tune very large models (e.g., 65B parameters) on a single GPU [40].
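A quick calculation shows where the savings come from. For a weight matrix of shape d_out x d_in, LoRA with rank r trains two factors of shapes d_out x r and r x d_in, so r * (d_out + d_in) parameters instead of d_out * d_in. The layer size and rank below are hypothetical.

```python
def full_params(d_out, d_in):
    """Parameters updated when fine-tuning the full weight matrix."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Parameters in the two low-rank factors (d_out x rank and rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical projection layer of size 4096 x 4096 adapted with rank 8.
d_out, d_in, rank = 4096, 4096, 8
full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
trainable_fraction = lora / full
```

For this layer the trainable parameters drop from 16,777,216 to 65,536, about 0.39% of the original count.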

Q4: My fine-tuned model performs well on its target task but has forgotten its general knowledge. What happened?

A4: This is a classic problem known as catastrophic forgetting [40] [41]. It occurs when a model over-specializes on the new, fine-tuning dataset, degrading its performance on tasks it previously handled well. Mitigation strategies include:

- Using PEFT methods like LoRA, which are less prone to catastrophic forgetting as the original model weights are preserved [40].

- Employing a multi-task learning objective that combines the new task with a sample of the original tasks [41].

- Carefully curating the fine-tuning data to include a mix of the new domain and general-domain data.

Troubleshooting Guides: Common Experimental Issues

Problem: Unexpected performance drop on out-of-distribution (OOD) data after fine-tuning.

- Potential Cause: The fine-tuning dataset introduced hidden biases or altered the model's sensitivity to certain linguistic or statistical features not present in the OOD data [42]. Studies have shown that factors like source label imbalance or output length distribution can negatively impact OOD performance, even if the source and target tasks seem unrelated [42].

- Solution:

- Analyze Source Data Traits: Before fine-tuning, profile your dataset's statistical properties, such as label distribution, average output length, and vocabulary usage [42].

- Systematic Evaluation: Construct a performance matrix by evaluating your fine-tuned model not just on the target task, but on a suite of validation tasks representing different latent abilities (e.g., reasoning, sentiment, NLI) [42]. This helps uncover negative transfer effects.

- Leverage PCA: Apply Principal Component Analysis (PCA) to the performance matrix to identify the latent "traits" (e.g., Reasoning, Arithmetic) that the fine-tuning process has most affected, guiding your data selection [42].

Problem: The fine-tuned model's outputs have an unnatural length.

- Potential Cause: The model has overfitted to the output-length distribution of your fine-tuning dataset. If your training data consists mostly of short responses, the model will learn to generate short responses, even when longer, more detailed answers are required [42].

- Solution: Audit and diversify the length distribution of examples in your fine-tuning dataset. Ensure it contains a representative mix of short, medium, and long-form outputs appropriate for your application.

Problem: Gradient conflicts and unstable training in a multi-task learning setup.

- Potential Cause: In frameworks designed for tasks like simultaneous drug-target affinity prediction and molecule generation, gradients from different tasks can point in opposing directions, leading to optimization challenges and biased learning [5].

- Solution: Implement a gradient harmonization algorithm. For example, the FetterGrad algorithm was developed specifically for multi-task drug discovery models. It works by minimizing the Euclidean distance between task gradients, keeping them aligned and mitigating conflicts during training [5].
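Before adopting a harmonization algorithm, it can help to confirm that the task gradients really do conflict. The sketch below is a diagnostic only and is not the FetterGrad implementation: it assumes the two task gradients have already been flattened into plain lists of floats, and it reports their cosine similarity (negative values indicate opposing directions) and their Euclidean distance, the quantity the source says FetterGrad minimizes. All function names are hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cosine_similarity(u, v):
    """Cosine of the angle between two gradient vectors (0.0 if either is zero)."""
    norm_u = math.sqrt(dot(u, u))
    norm_v = math.sqrt(dot(v, v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot(u, v) / (norm_u * norm_v)


def euclidean_distance(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def gradient_conflict_report(grad_a, grad_b):
    """Summarize how strongly two task gradients disagree."""
    cos = cosine_similarity(grad_a, grad_b)
    return {
        "cosine": cos,
        "distance": euclidean_distance(grad_a, grad_b),
        "conflict": cos < 0.0,  # opposing directions
    }


# Toy flattened gradients for the affinity and generation tasks.
g_affinity = [1.0, 0.0]
g_generation = [-1.0, 1.0]
report = gradient_conflict_report(g_affinity, g_generation)
print(report)
```

Logging this report every few hundred steps shows whether conflicts are persistent or confined to particular phases of training.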

Experimental Protocols and Methodologies

Protocol 1: Analyzing Cross-Task Transfer Effects

This methodology helps deconstruct the interactions between datasets during fine-tuning, which is crucial for optimizing cross-dataset performance [42].

- Model Training: Fine-tune multiple instances of a base model (e.g., Llama 3.2 3B), each on a different source dataset (e.g., MetaMath, Goat, PAWS, MNLI, Flipkart).

- Evaluation Matrix Construction: Evaluate each fine-tuned model on all source datasets and a set of diverse target tasks (e.g., GSM8K for math, IMDB for sentiment). Organize the results into an I x N performance matrix, where I is the number of fine-tuned models and N is the number of evaluation datasets.

- Latent Trait Discovery: Apply Principal Component Analysis (PCA) to the performance matrix. The principal components represent latent abilities (e.g., "Reasoning," "Sentiment Classification") that the models have acquired.

- Outlier Analysis: Identify and investigate performance matrix outliers (e.g., a model fine-tuned on dataset A performs surprisingly well or poorly on unrelated dataset B). Correlate these outliers with hidden statistical factors of the source data.
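The matrix construction and latent trait discovery steps can be sketched without external dependencies. The example below uses made-up scores for four hypothetical fine-tuned models on three evaluation datasets, centers each column, and extracts the first principal component with power iteration. In practice a library routine such as scikit-learn's `PCA` would normally be used; power iteration is chosen here only to keep the sketch self-contained.

```python
import math


def center_columns(matrix):
    """Subtract each column mean so PCA captures variation across models."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    means = [sum(row[j] for row in matrix) / n_rows for j in range(n_cols)]
    return [[row[j] - means[j] for j in range(n_cols)] for row in matrix]


def covariance(centered):
    """Sample covariance between evaluation datasets (N x N)."""
    n_rows, n_cols = len(centered), len(centered[0])
    return [
        [sum(r[i] * r[j] for r in centered) / (n_rows - 1) for j in range(n_cols)]
        for i in range(n_cols)
    ]


def first_principal_component(cov, iterations=200):
    """Leading eigenvector and eigenvalue of cov via power iteration."""
    n = len(cov)
    vec = [1.0 / (i + 1) for i in range(n)]  # deterministic, non-uniform start
    for _ in range(iterations):
        nxt = [sum(cov[i][j] * vec[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in nxt))
        if norm == 0.0:
            raise ValueError("covariance matrix has no variance to explain")
        vec = [x / norm for x in nxt]
    eigenvalue = sum(
        vec[i] * sum(cov[i][j] * vec[j] for j in range(n)) for i in range(n)
    )
    return vec, eigenvalue


# Toy I x N matrix (made-up numbers): rows are fine-tuned models,
# columns are evaluation datasets (math, arithmetic, sentiment).
scores = [
    [0.80, 0.90, 0.50],  # model tuned on a math dataset
    [0.70, 0.80, 0.55],  # model tuned on an arithmetic dataset
    [0.30, 0.35, 0.90],  # model tuned on a sentiment dataset
    [0.25, 0.30, 0.95],  # model tuned on a review dataset
]

centered = center_columns(scores)
cov = covariance(centered)
pc1, eigenvalue = first_principal_component(cov)
explained = eigenvalue / sum(cov[i][i] for i in range(len(cov)))
projections = [sum(r[j] * pc1[j] for j in range(len(pc1))) for r in centered]
print(pc1, explained, projections)
```

In this toy matrix the first component loads with one sign on the two math-style columns and the opposite sign on the sentiment column, so the models' projections separate into two groups. Interpreting a component as a named "trait" remains a judgment call for the analyst.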

Protocol 2: Standardized Workflow for Drug-Target Affinity Prediction

This protocol outlines the core steps for a multi-task deep learning framework in drug discovery, as exemplified by DeepDTAGen [5].

- Data Preparation: Use benchmark datasets like KIBA, Davis, or BindingDB. Represent drugs as SMILES strings or molecular graphs, and proteins as amino acid sequences.

- Model Architecture (DeepDTAGen):

- Feature Encoder: Use a shared encoder (e.g., Graph Neural Network for drugs, CNN or Transformer for protein sequences) to create a common latent feature space.

- Multi-Task Heads: Attach two prediction heads to the shared encoder:

- Regression Head: For predicting continuous Drug-Target Binding Affinity (DTA) values.

- Generative Head: A transformer decoder for generating novel, target-aware drug molecules (SMILES strings).

- Training with Gradient Harmonization: Train the model using a combined loss function (e.g., Mean Squared Error for DTA and cross-entropy for generation). Employ the FetterGrad algorithm to align gradients from the two tasks and prevent optimization conflicts [5].

- Evaluation:

- DTA Prediction: Use Mean Squared Error (MSE), Concordance Index (CI), and the modified squared correlation coefficient (r²m).

- Drug Generation: Assess the Validity, Novelty, and Uniqueness of generated molecules, followed by chemical property analysis (Solubility, Drug-likeness).
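As an illustration of the DTA prediction metrics, the sketch below computes MSE and the Concordance Index using the pairwise definition that is common in the affinity-prediction literature: over all pairs whose true affinities differ, CI is the fraction whose predictions are ordered the same way, with prediction ties counted as 0.5. The affinity values are made up, and the source does not specify which CI variant DeepDTAGen uses.

```python
def mean_squared_error(y_true, y_pred):
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    if len(y_true) != len(y_pred):
        raise ValueError("inputs must be of equal length")
    concordant = 0.0
    comparable = 0
    for i in range(len(y_true)):
        for j in range(len(y_true)):
            if y_true[i] > y_true[j]:  # each unordered pair is visited once
                comparable += 1
                if y_pred[i] > y_pred[j]:
                    concordant += 1.0
                elif y_pred[i] == y_pred[j]:
                    concordant += 0.5  # tied predictions get half credit
    if comparable == 0:
        raise ValueError("no pairs with distinct true affinities")
    return concordant / comparable


# Made-up affinities for four drug-target pairs.
affinity_true = [5.0, 6.0, 7.0, 8.0]
affinity_pred = [5.5, 5.0, 7.5, 7.0]

mse = mean_squared_error(affinity_true, affinity_pred)
ci = concordance_index(affinity_true, affinity_pred)
print(mse, ci)
```

The double loop is quadratic in the number of samples, which is acceptable for a sanity check but slow for large test sets.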

Multi-Task Drug Discovery Model Workflow

The Scientist's Toolkit: Key Research Reagents

The following table details essential "reagents" — datasets, models, and algorithms — for conducting research in model adaptation for cross-domain performance.

| Research Reagent | Function & Explanation | Example Use Case |
|---|---|---|
| LoRA (Low-Rank Adaptation) | A PEFT method that adds small, trainable low-rank matrices to model layers. Drastically reduces compute and memory needs, enabling fine-tuning of large models on limited hardware [40]. | Adapting a 7B parameter LLM on a single GPU for a specific domain like legal document analysis. |
| Cross-Task Performance Matrix | An I x N matrix organizing performance scores of I fine-tuned models on N datasets. Serves as the foundational data for analyzing transfer learning effects and latent trait discovery [42]. | Systematically quantifying how fine-tuning on a math dataset affects performance on sentiment analysis and NLI tasks. |
| PCA (Principal Component Analysis) | A dimensionality reduction technique applied to the performance matrix. It uncovers the underlying latent abilities (e.g., reasoning, sentiment) that are enhanced or degraded by fine-tuning [42]. | Identifying that fine-tuning on dataset A primarily strengthens a "Reasoning" trait, while dataset B strengthens a "Linguistic Formality" trait. |
| FetterGrad Algorithm | A custom optimization algorithm designed for multi-task learning. It mitigates gradient conflicts between tasks by minimizing the Euclidean distance between their gradients, ensuring stable and balanced learning [5]. | Training a unified model that simultaneously predicts drug-target affinity and generates novel drug candidates. |
| Domain-Specific Benchmarks | Evaluation datasets from specialized fields (e.g., biomedical text, clinical notes, financial reports). Critical for measuring true in-domain performance gains after adaptation [41]. | Evaluating a model fine-tuned on biomedical literature using the BLURB benchmark to assess its grasp of medical concepts. |

This technical support center provides troubleshooting guides and FAQs for researchers and scientists designing deep learning models for robust cross-dataset performance.

Troubleshooting Guides

Guide 1: Diagnosing and Remedying Poor Cross-Dataset Generalization

Problem: Your model performs well on its training dataset but shows significantly degraded performance on new, external datasets.

Diagnosis Steps:

- Perform a Cross-Dataset Evaluation: Train your model on your primary source dataset and evaluate it on one or more held-out target datasets. Calculate the cross-dataset error rate as Error_cross = 1 - (Number of correct predictions on target dataset / Total number of target test samples) [1].

- Check for Dataset Bias: Investigate differences in data acquisition, annotation protocols, and class definitions between your source and target datasets. Inconsistent semantics or annotation artifacts are common culprits [1].

- Analyze the Performance Drop: Calculate the normalized performance for a source/target pair as g_norm[s, t] = g[s, t] / g[s, s], where g[s, s] is the within-dataset performance. A low ratio indicates poor generalization [1].
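As a worked example of the two diagnostic quantities, the sketch below computes the cross-dataset error rate from toy target labels and predictions, then normalizes the resulting target accuracy by an assumed within-dataset accuracy of 0.90. All numbers and function names are illustrative.

```python
def cross_dataset_error(y_true, y_pred):
    """Error_cross = 1 - correct / total, computed on the target dataset."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return 1.0 - correct / len(y_true)


def normalized_performance(g_st, g_ss):
    """g_norm[s, t] = g[s, t] / g[s, s]."""
    if g_ss <= 0:
        raise ValueError("within-dataset performance must be positive")
    return g_st / g_ss


# Toy target-dataset labels and predictions (6 of 10 correct).
target_labels = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
target_preds = [1, 0, 0, 1, 0, 0, 0, 1, 1, 0]

error_cross = cross_dataset_error(target_labels, target_preds)
target_accuracy = 1.0 - error_cross
source_accuracy = 0.90  # assumed within-dataset accuracy g[s, s]
g_norm = normalized_performance(target_accuracy, source_accuracy)
print(error_cross, g_norm)
```

Here the model retains roughly two thirds of its within-dataset accuracy on the target. What counts as an acceptable ratio depends on the application.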

Solutions:

- Architectural Adaptation: Implement a multi-task learning architecture with separate task-specific layers for different domains or subjects, while maintaining a shared feature representation to learn invariant features [43].

- Causal Feature Learning: Employ a sample reweighting strategy to eliminate spurious correlations introduced by selection bias and iteratively estimate the causal effect between features and labels to identify truly invariant features [44].

- Reconcile Label Spaces: Carefully map and consolidate class labels and feature extraction pipelines across datasets to reduce semantic drift and ensure valid comparisons [1].

Guide 2: Addressing Training Instability in Complex Architectures

Problem: During training, the model's loss becomes volatile, shows explosions, or fails to converge, especially when using deep or specialized architectures for invariance.

Diagnosis Steps:

- Conduct a Learning Rate Sweep: Perform a hyperparameter search to find the best learning rate (lr). Then, plot training loss curves for learning rates just above lr [45].

- Monitor Gradient Norms: Log the L2 norm of the full loss gradient during training. Look for outlier values, which can cause sudden instability in the middle of training [45].

- Identify Instability Type: Determine if the instability occurs at initialization/early training or suddenly in the middle of training, as this guides the solution [45].

Solutions:

- Apply Learning Rate Warmup: Prepend a schedule that ramps up the learning rate from 0 to a stable base_learning_rate over warmup_steps. This is best for early training instability. With warmup in place, the stable base_learning_rate should be at least one order of magnitude larger than the rate at which training was unstable without warmup [45].

- Use Gradient Clipping: If the gradient norm |g| is greater than a threshold λ, set the new gradient to g' = λ * g / |g|. This helps with both early and mid-training instability. Set the threshold based on the 90th percentile of observed gradient norms [45].

- Leverage Normalization: Ensure inputs are normalized, and consider adding normalization layers (like Batch Normalization) within the network. For residual connections, apply normalization as the last operation before the result is added to the residual: x + Norm(f(x)) [45].
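The warmup and clipping rules above can be sketched in a few lines. The example assumes a linear ramp (the source does not specify the shape of the warmup schedule), represents a gradient as a flat list of floats, and uses a nearest-rank percentile to pick the clipping threshold. Function names and the logged norms are hypothetical.

```python
import math


def warmup_learning_rate(step, base_learning_rate, warmup_steps):
    """Linear ramp from 0 to base_learning_rate over warmup_steps, then constant."""
    if warmup_steps <= 0 or step >= warmup_steps:
        return base_learning_rate
    return base_learning_rate * step / warmup_steps


def clip_by_norm(grad, threshold):
    """If |g| > threshold, rescale to g' = threshold * g / |g|; otherwise keep g."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm > threshold:
        return [threshold * g / norm for g in grad]
    return list(grad)


def percentile(values, q):
    """Nearest-rank percentile for an integer q in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


# Toy gradient norms logged during training, with one outlier spike.
observed_norms = [1.0, 1.2, 0.9, 1.1, 1.0, 1.3, 0.8, 1.1, 1.2, 25.0]
threshold = percentile(observed_norms, 90)

clipped = clip_by_norm([3.0, 4.0], threshold)  # original norm is 5.0
clipped_norm = math.sqrt(sum(g * g for g in clipped))

schedule = [warmup_learning_rate(s, 0.1, 100) for s in (0, 50, 100, 500)]
print(threshold, clipped, schedule)
```

Clipping preserves the gradient direction and only shrinks its magnitude, so occasional spikes like the outlier above no longer dominate an update.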

Frequently Asked Questions (FAQs)

Q1: What are the most effective architectural patterns for learning features that are invariant across different data distributions?

A1: Two state-of-the-art approaches are:

- Multi-Task with Subject-Specific Layers: This architecture, used in VALERIAN, involves a shared feature extraction backbone. The features are then fed into separate, parallel task-specific layers (e.g., one per subject or data domain). This allows the model to handle distribution shifts and noisy labels on a per-domain basis while benefiting from a common, robust feature representation [43].

- Causally Invariant Feature Learning: FedCIFL is a novel approach that uses a sample reweighting strategy to eliminate spurious correlations. It iteratively estimates the federated causal effect between each feature and the labels, refining the set of confounding features to identify the true invariant causal features, which greatly improves out-of-distribution performance [44].

Q2: My model overfits the training data quickly. How can I design my network to improve generalization?

A2: Beyond gathering more data, consider these architectural and training strategies:

- Regularization Techniques: Integrate L1 or L2 weight regularization into your loss function to prevent weights from becoming too large. Use Dropout, which randomly deactivates a fraction of neurons during training to prevent overspecialization [46].

- Early Stopping: Monitor performance on a validation set and halt training when performance on this set begins to degrade, indicating the start of overfitting [46].

- Simplify the Architecture: Start with a simple model (e.g., a single hidden layer) and a small training set (e.g., 10,000 examples) to establish a baseline and ensure it can learn effectively before ramping up complexity [28].
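The early stopping rule can be illustrated on a recorded validation-loss curve. The sketch below assumes a patience-based variant (stop once the loss has failed to improve for `patience` consecutive epochs). The `patience` and `min_delta` parameters are common conventions rather than details from the source, and the loss values are made up.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops at the first epoch that is `patience` epochs past the
    best one without an improvement larger than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    # Never triggered: training ran to the final epoch.
    return len(val_losses) - 1, best_epoch


# Toy validation curve: improves until epoch 2, then degrades.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70, 0.80]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=3)
print(stop_epoch, best_epoch)
```

In a real training loop the weights saved at `best_epoch` would typically be restored after stopping.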

Q3: What is the standard experimental protocol for evaluating cross-dataset performance?

A3: The core protocol involves:

- Dataset Partitioning: Designate one or more datasets as "source" for training and distinct datasets as "target" for testing [1].

- Training and Evaluation: Systematically train models on source datasets and evaluate them on target datasets. This is often done for all possible source-target pairs [1].

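- Performance Metrics: Use standard metrics (Accuracy, F1, AUROC) but report them in a cross-dataset context. Key constructs include the cross-dataset error rate, normalized performance, and the aggregated off-diagonal score (see Table 1).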

- Visualization: Use performance matrices to visualize results across all dataset pairs [1].

Q4: How can I debug my model if it fails to learn anything useful from the data?

A4: Follow this structured debugging workflow:

- Start Simple: Use a simple architecture (e.g., LeNet for images, 1-layer LSTM for sequences) and sensible defaults (ReLU activation, normalized inputs) [28].

- Overfit a Single Batch: Try to drive the training error on a single, small batch of data arbitrarily close to zero. This tests the model's basic capacity. If it fails, check for issues like incorrect loss functions or data preprocessing [28] [46].

- Compare to a Known Result: Reproduce the results of an official implementation on a benchmark dataset to verify your training pipeline is correct [28].

- Check Intermediate Outputs: Use debugging tools to track the outputs and gradients after each layer, ensuring they are within expected ranges and are not vanishing or exploding [46].
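The "overfit a single batch" check can be demonstrated on a deliberately tiny problem. The sketch below fits a one-feature linear model to a four-example batch with plain gradient descent and verifies that the training loss approaches zero. The model, learning rate, and step count are illustrative assumptions; with a real network the same check would be run through its own training step.

```python
def overfit_single_batch(xs, ys, lr=0.05, steps=2000):
    """Fit y = w * x + b to one small batch and return (w, b, final_mse)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    loss = sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / n
    return w, b, loss


# Toy batch generated from y = 2x + 1, so a correct pipeline can fit it exactly.
batch_x = [0.0, 1.0, 2.0, 3.0]
batch_y = [1.0, 3.0, 5.0, 7.0]

w, b, final_loss = overfit_single_batch(batch_x, batch_y)
print(w, b, final_loss)
```

If the loss plateaus well above zero on a batch the model should be able to memorize, the usual suspects are the loss function, the label alignment, or the preprocessing.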

Experimental Protocols & Data

Table 1: Cross-Dataset Performance Metrics

| Metric Name | Formula | Use Case |
|---|---|---|
| Cross-Dataset Error Rate | Error_cross = 1 - (Correct Predictions / Total Test Samples) | Measures absolute performance drop on a target dataset [1]. |
| Normalized Performance | g_norm[s, t] = g[s, t] / g[s, s] | Quantifies relative performance drop from source (s) to target (t) [1]. |
| Aggregated Off-Diagonal Score | g_a[s] = (1/(d-1)) * Σ g[s,t] for t≠s | Provides a single score for a model's average generalization across all other datasets [1]. |
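The matrix-level formulas in Table 1 can be applied to a full performance matrix as sketched below. The 3 x 3 matrix holds made-up accuracies, with rows indexing the source (training) dataset and columns the target (evaluation) dataset, so the diagonal is within-dataset performance.

```python
def normalized_matrix(g):
    """g_norm[s][t] = g[s][t] / g[s][s] for a square performance matrix."""
    d = len(g)
    return [[g[s][t] / g[s][s] for t in range(d)] for s in range(d)]


def aggregated_off_diagonal(g):
    """g_a[s] = (1 / (d - 1)) * sum of g[s][t] over all t != s."""
    d = len(g)
    if d < 2:
        raise ValueError("need at least two datasets")
    return [sum(g[s][t] for t in range(d) if t != s) / (d - 1) for s in range(d)]


# Toy accuracies: rows are source datasets, columns are target datasets.
g = [
    [0.90, 0.60, 0.45],
    [0.55, 0.88, 0.66],
    [0.50, 0.70, 0.80],
]

g_norm = normalized_matrix(g)
g_a = aggregated_off_diagonal(g)
print(g_norm)
print(g_a)
```

In this toy matrix the first source dataset has the highest within-dataset accuracy but the lowest aggregated off-diagonal score, which is the pattern cross-dataset evaluation is designed to expose.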

Table 2: Invariant Feature Learning Methods Performance