Beyond Bailenger: A Comparative Analysis of FEA and Traditional Methods for Biomedical Concentration and Detection

Abstract

This article provides a critical evaluation of Finite Element Analysis (FEA) alongside traditional physical concentration and testing methods, with a specific focus on applications in biomedical research and drug development. It explores the foundational principles of both approaches, details their methodological applications in processes like sample preparation and viral detection, and addresses key troubleshooting and optimization strategies. A core component is a rigorous comparative analysis of validation paradigms, weighing computational predictions against empirical data from methods like filtration-centrifugation and precipitation. Aimed at researchers and scientists, this review synthesizes how a hybrid strategy, integrating FEA's predictive power with the tangible validation of traditional methods, can accelerate innovation and enhance reliability in complex biomedical workflows.

Understanding the Core Principles: From Computational FEA to Physical Concentration Methods

In engineering and scientific research, predicting how a product or material will behave under real-world conditions is paramount to ensuring safety, reliability, and performance. Two fundamental approaches dominate this analytical landscape: the computational power of Finite Element Analysis (FEA) and the empirical foundation of Traditional Engineering Methods, often referred to as traditional stress testing or hand calculations [1] [2].

FEA is a computer-based simulation technique that breaks down complex physical structures into a finite number of small, interconnected elements. By applying mathematical models to this mesh, engineers can predict how the entire structure will react to forces, vibration, heat, and other physical effects [1] [3]. In contrast, traditional methods rely on established formulas and principles derived from engineering theory. These hand calculations are often used for simpler designs with predictable behaviors and are frequently mandated by industry codes for validation [1] [2]. This guide provides an objective comparison for researchers and development professionals, framing the selection of an analytical method as a critical step in the research and development workflow.

Fundamental Principles and Methodologies

Finite Element Analysis (FEA)

FEA operates on the principle of discretization, subdividing a complex geometry into a mesh of simpler elements. The process follows a defined workflow to approximate the behavior of a continuum [1]:

- Model Creation: A digital CAD model of the component is developed.

- Meshing: The model is divided into a network of small, simple finite elements (e.g., tetrahedrons, hexahedrons). The density of this mesh can be increased in areas of anticipated high stress for greater accuracy.

- Material Property Assignment: Key properties such as Young's modulus, Poisson's ratio, and yield strength are assigned to the model.

- Application of Boundary Conditions and Loads: Real-world constraints (e.g., fixed points) and forces are applied to the simulation.

- Solution and Post-Processing: A solver computes the results, which are then analyzed through visualizations of stress distribution, strain, and deformation [1].

Traditional Engineering Methods

Traditional methods are grounded in analytical mechanics and applied mathematical formulas. These calculations are based on fundamental principles of statics, dynamics, and mechanics of materials, using equations that have been validated through decades of empirical research [2]. They often apply simplifying assumptions to make problems tractable, such as treating components as beams, plates, or shells with standard support conditions and load paths. This approach is codified in many industry standards (e.g., ASME, ASTM, ISO) which provide approved formulas for the design and validation of common components like pressure vessels, beams, and shafts [1].
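As one illustration of such a closed-form calculation, the sketch below evaluates the standard thin-wall hoop stress formula, sigma = p·r/t, for a cylindrical pressure vessel. The function name and the numeric inputs are illustrative assumptions, not values from the cited sources, and the r/t ≥ 10 check reflects the usual rule of thumb for the thin-wall simplification.

```python
def hoop_stress(pressure_pa: float, radius_m: float, thickness_m: float) -> float:
    """Thin-wall hoop stress sigma = p * r / t for a cylindrical vessel."""
    if thickness_m <= 0:
        raise ValueError("wall thickness must be positive")
    # Rule-of-thumb validity limit for the thin-wall simplification (assumed here).
    if radius_m / thickness_m < 10:
        raise ValueError("thin-wall assumption (r/t >= 10) is violated")
    return pressure_pa * radius_m / thickness_m


# Illustrative inputs (assumed): 2 MPa internal pressure, 0.5 m radius, 10 mm wall.
sigma = hoop_stress(2.0e6, 0.5, 0.010)
print(f"hoop stress = {sigma / 1e6:.1f} MPa")
```

The explicit validity check is the point of the example: a hand formula is fast, but only inside the range where its simplifying assumptions hold.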

Comparative Analysis: FEA vs. Traditional Methods

The choice between FEA and traditional methods is not a matter of which is universally superior, but which is more appropriate for a given research or design context. The table below summarizes their core characteristics.

Table 1: Core Characteristics of FEA and Traditional Methods

| Feature | Finite Element Analysis (FEA) | Traditional Engineering Calculations |
|---|---|---|
| Fundamental Principle | Discretization of complex geometry into a mesh of elements for numerical solution [1]. | Application of closed-form analytical formulas and principles from engineering theory [2]. |
| Typical Workflow | CAD modeling → Meshing → Applying loads & BCs → Solving → Post-processing [1]. | Problem definition → Selecting appropriate formula → Inputting parameters → Manual calculation [2]. |
| Analysis Capability | Handles complex geometries, non-linear materials, dynamic loads, and multi-physics (thermal, fluid) problems [3]. | Best for simple geometries, linear material behavior, and static loads with predictable paths [2]. |
| Key Strength | High detail in stress analysis and ability to identify concentration areas and failure modes in complex designs [1]. | Speed, cost-effectiveness for simple problems, and strong foundation for code-compliant design [2]. |
| Primary Limitation | Computational intensity; requires specialized expertise; accuracy depends on correct input and assumptions [1]. | Becomes inaccurate or inapplicable for complex geometries, materials, or load cases [2]. |

Experimental Protocols and Validation

A Representative Experimental Workflow

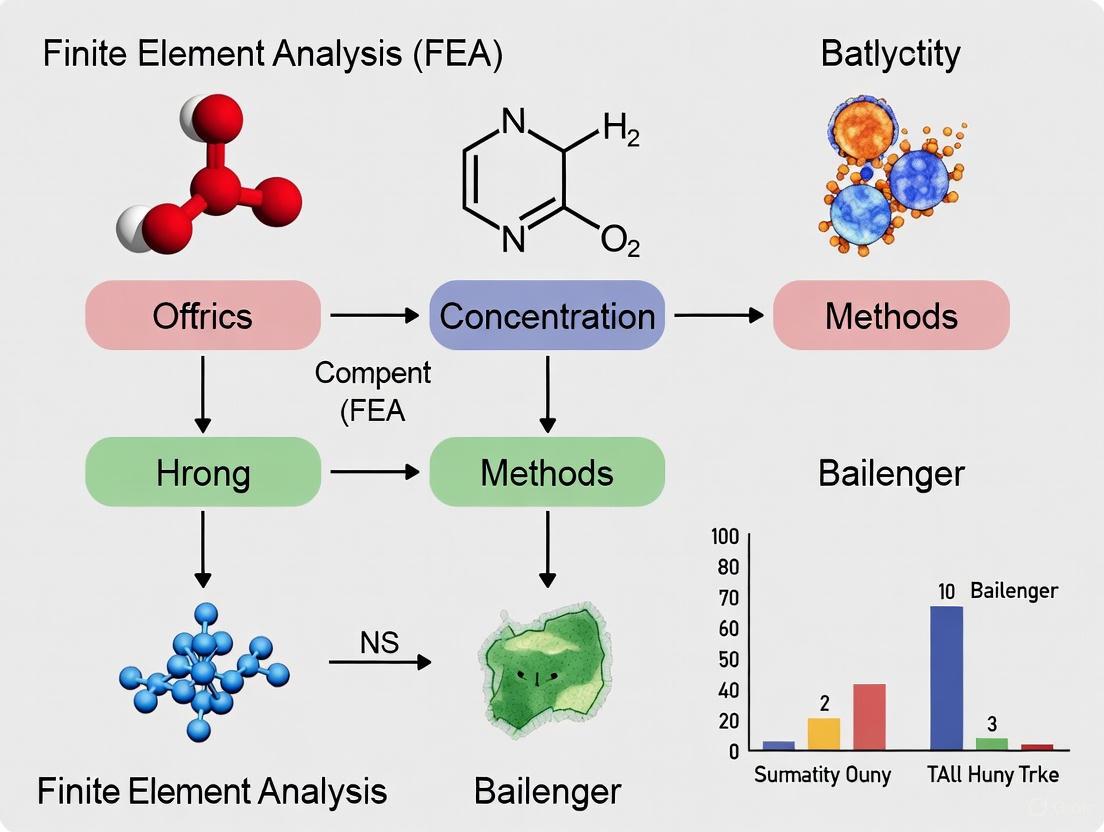

A robust research strategy often integrates both FEA and traditional methods to leverage their respective strengths. The following diagram illustrates a typical hybrid validation workflow.

Methodologies for Key Experiments

FEA Protocol for Structural Analysis

- Model Preparation: Create or import a 3D CAD model. Simplify geometry by removing irrelevant features like small fillets or threads that unnecessarily complicate meshing [1].

- Meshing: Generate a finite element mesh. Conduct a mesh sensitivity study to ensure results are independent of element size. Use finer meshes in areas of high-stress gradients [4].

- Material Modeling: Define material properties (e.g., elastic modulus, yield strength, Poisson's ratio). For non-linear analyses, specify plasticity models [1] [4].

- Boundary Conditions: Apply realistic constraints to mimic physical supports. Apply operational loads such as pressures, forces, or thermal gradients [1].

- Solving and Validation: Execute the solver. Critically, validate results by comparing them with analytical calculations for simplified sub-components or against established experimental data [4].

Traditional Stress Testing Protocol

- Specimen Preparation: Manufacture physical prototypes or test coupons according to relevant standards (e.g., ASTM) [1] [4].

- Test Setup: Calibrate testing equipment (e.g., universal testing machine). Mount the specimen precisely to ensure uniaxial loading and avoid eccentricities [1].

- Instrumentation: Attach strain gauges or use Digital Image Correlation (DIC) systems to measure strain fields. Set up load cells and displacement transducers [4].

- Testing: Subject the specimen to controlled loads (tensile, compressive, cyclic) until yield or failure. Monitor and record load-displacement data in real-time [1].

- Data Analysis: Calculate key mechanical properties like yield strength, ultimate tensile strength, and modulus of elasticity from the acquired data [1] [4].
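The data-analysis step of the protocol above can be sketched as follows. This is a minimal example, assuming the stress-strain record is already available as arrays. The data points are synthetic, the `elastic_limit_strain` cut-off is an assumed parameter, and yield strength (for example by the 0.2% offset method) is deliberately left out to keep the sketch short.

```python
import numpy as np


def tensile_properties(strain, stress_mpa, elastic_limit_strain=0.002):
    """Return (modulus of elasticity in MPa, ultimate tensile strength in MPa)."""
    strain = np.asarray(strain, dtype=float)
    stress = np.asarray(stress_mpa, dtype=float)
    # Fit a straight line to the initial, assumed-linear part of the curve.
    elastic = strain <= elastic_limit_strain
    modulus, _intercept = np.polyfit(strain[elastic], stress[elastic], 1)
    # Ultimate tensile strength is the peak engineering stress in the record.
    return modulus, stress.max()


# Synthetic record for illustration only (not measured data).
strain = np.array([0.0, 0.0005, 0.001, 0.0015, 0.002, 0.01, 0.05, 0.10])
stress = np.array([0.0, 55.0, 110.0, 165.0, 220.0, 300.0, 380.0, 360.0])

modulus_mpa, uts_mpa = tensile_properties(strain, stress)
print(f"E = {modulus_mpa / 1000:.1f} GPa, UTS = {uts_mpa:.0f} MPa")
```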

Supporting Experimental Data and Case Studies

Data from Lattice Structure Analysis

Research on additively manufactured Ti6Al4V lattice structures provides quantitative data on the correlation between FEA predictions and physical experiments. The study evaluated two lattice configurations—Face-Centred Cubic (FCC-Z) and Body-Centred Cubic (BCC-Z)—with varying porosity levels [4].

Table 2: Experimental vs. FEA Results for Ti6Al4V Lattice Structures [4]

| Lattice Type | Porosity | Experimental Compressive Strength (MPa) | FEA-Predicted Compressive Strength (MPa) | Key Observed Deformation Mechanism |
|---|---|---|---|---|
| FCC-Z | 50% | 95.2 | 98.1 | Layer-by-layer fracture |
| FCC-Z | 80% | 18.7 | 19.5 | Layer-by-layer fracture |
| BCC-Z | 50% | 64.8 | 66.3 | Shear band formation |
| BCC-Z | 80% | 12.1 | 12.9 | Shear band formation |

The study concluded that the FEA results "closely aligned with the experimental data, validating the accuracy of the simulation in predicting peak forces, displacement trends, and failure mechanisms" [4]. Furthermore, the FCC-Z structures demonstrated superior mechanical performance in Specific Energy Absorption (SEA) and Crushing Force Efficiency (CFE) compared to BCC-Z structures, a finding consistently captured by both experimental and FEA methods [4].
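To make the degree of agreement in Table 2 concrete, the short script below computes the relative deviation of each FEA prediction from the corresponding experimental value. The numbers are copied from Table 2. The helper name `relative_error_pct` is introduced here for illustration only.

```python
# (lattice type, porosity in %, experimental MPa, FEA-predicted MPa) from Table 2
TABLE_2 = [
    ("FCC-Z", 50, 95.2, 98.1),
    ("FCC-Z", 80, 18.7, 19.5),
    ("BCC-Z", 50, 64.8, 66.3),
    ("BCC-Z", 80, 12.1, 12.9),
]


def relative_error_pct(experimental: float, predicted: float) -> float:
    """Signed deviation of the prediction from the measurement, in percent."""
    return 100.0 * (predicted - experimental) / experimental


errors = {
    (lattice, porosity): relative_error_pct(exp_mpa, fea_mpa)
    for lattice, porosity, exp_mpa, fea_mpa in TABLE_2
}

for (lattice, porosity), err in errors.items():
    print(f"{lattice} at {porosity}% porosity: FEA deviates by {err:+.2f}%")
```

Run against the tabulated values, the FEA prediction is higher than the measurement in all four cases, by roughly 2 to 7 percent, with the larger relative deviations at 80% porosity.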

Performance Comparison in Engineering Practice

Beyond specific case studies, the two methods exhibit distinct performance profiles across general engineering metrics.

Table 3: Performance and Resource Comparison

| Aspect | FEA | Traditional Methods |
|---|---|---|
| Cost | Higher upfront due to software/hardware; cost-effective by reducing physical prototypes [1]. | Lower upfront cost; can become expensive if multiple prototype iterations are needed [1]. |
| Time | Faster for digital iterations; slower for initial model setup and computation of high-fidelity simulations [1]. | Faster for simple, standard calculations; time-consuming for multiple design iterations requiring new prototypes [1]. |
| Accuracy for Complex Problems | High, provided the model is well-constructed and validated. Can identify internal stress concentrations [1]. | Low to medium, as simplifying assumptions break down for intricate geometries and complex loads [2]. |
| Regulatory Acceptance | Often used for design insight; typically supplemented by physical testing for final validation in critical applications [1]. | Widely accepted for code-compliant designs and is often mandatory for final product certification [1]. |

The Researcher's Toolkit: Essential Materials and Reagents

Table 4: Essential Research Tools for Analytical Methods

| Tool / Solution | Function in Analysis |
|---|---|
| FEA Software (e.g., ANSYS, Abaqus) | Platform for creating digital models, running simulations, and post-processing results for stress, thermal, and fluid flow analysis [4] [3]. |
| Universal Testing Machine | Applies controlled tensile, compressive, or cyclic loads to physical specimens to measure mechanical properties and validate simulations [1]. |
| Strain Gauge / DIC System | Measures local strain on a specimen's surface during physical testing, providing critical data for correlating with FEA-predicted strain fields [4]. |
| CAD Software (e.g., SolidWorks, CATIA) | Used to create the precise digital geometry that serves as the foundation for both FEA meshing and the generation of prototypes [1]. |
| Calibrated Material Coupons | Test specimens with known properties used to calibrate and verify the accuracy of both FEA material models and traditional calculation inputs [1] [4]. |

The analytical landscape defined by FEA and traditional methods offers researchers a powerful, complementary toolkit. FEA excels in handling complexity, providing detailed insights, and enabling rapid design optimization for novel structures and materials. Traditional methods provide a fast, reliable, and code-mandated approach for simpler, well-understood problems. As the experimental data above suggest, a hybrid strategy that uses traditional calculations for initial sizing and FEA for detailed analysis and optimization, followed by physical testing for final validation, offers a robust and efficient pathway for engineering researchers and drug development professionals aiming to deliver innovative and reliable solutions.

Finite Element Analysis (FEA) is a computational technique used by engineers to predict how products will react to real-world forces, vibration, heat, and other physical effects [5]. The method works by breaking down a complex structure into smaller, manageable pieces called finite elements, which are interconnected at nodes [5]. This process transforms complicated real-world problems into solvable mathematical models by converting the governing partial differential equations into a system of simpler algebraic equations [6]. By solving these equations collectively, FEA allows engineers to visualize stress concentrations, deformation, and thermal effects that are often invisible to the naked eye, reducing the need for costly physical prototypes and accelerating time-to-market across industries from aerospace to biomedical engineering [5] [6].

The significance of FEA lies in its ability to handle complex geometries, diverse materials, and challenging boundary conditions that would be impractical or impossible to analyze using traditional analytical methods alone [7]. As we approach 2025, the application of finite element methods continues to expand into new industries and more complex scenarios, driven by advances in computing power, software capabilities, and integration with other digital tools [5]. This expansion makes understanding FEA's core principles—from meshing to mathematical prediction—essential for researchers and engineers working with computational modeling across scientific disciplines.

The Meshing Process: Discretization Fundamentals

Core Concepts of Discretization

Discretization represents the foundational step in FEA where a continuous system or structure is divided into finite elements [7]. This process creates a mesh, a network of smaller interconnected parts that helps simulate and analyze local effects and their impact on the overall structure [7]. For structural problems, the mathematical foundation of FEA rests on the Principle of Minimum Potential Energy, which states that a structure is in equilibrium when its total potential energy is minimized [7]. When a structure deforms under applied loads, it stores strain energy while the loads do work on it. FEA applies the principle by finding the nodal displacements that minimize the total potential energy summed over all finite elements, which predicts how the structure will behave under various loading conditions [7].
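For a linear elastic model, the energy minimization can be written compactly in discrete form. The following is a standard textbook sketch rather than a derivation taken from the cited sources. It assumes a symmetric global stiffness matrix $\mathbf{K}$, nodal displacement vector $\mathbf{u}$, and applied nodal force vector $\mathbf{F}$, and it shows how setting the gradient of the total potential energy $\Pi$ to zero yields the algebraic system that FEA solvers work with.

```latex
\Pi(\mathbf{u}) \;=\; \tfrac{1}{2}\,\mathbf{u}^{\mathsf{T}}\mathbf{K}\,\mathbf{u} \;-\; \mathbf{u}^{\mathsf{T}}\mathbf{F},
\qquad
\frac{\partial \Pi}{\partial \mathbf{u}} \;=\; \mathbf{K}\,\mathbf{u} - \mathbf{F} \;=\; \mathbf{0}
\quad\Longrightarrow\quad
\mathbf{K}\,\mathbf{u} = \mathbf{F}
```

The first term is the strain energy stored in the deformed structure and the second is the work done by the applied loads.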

The accuracy of FEA depends heavily on the type, size, and quality of the finite elements used in the mesh [7]. Engineers must carefully select element shapes (e.g., triangles, tetrahedrons, quadrilaterals, hexahedrons) and sizing to balance computational efficiency with the precision of results. Proper meshing is crucial for capturing the correct behavior of the structure and minimizing errors in simulation [7]. The relationship between mesh characteristics and solution accuracy represents a critical consideration in FEA, with finer meshes typically providing more accurate results but requiring greater computational resources [8].

Meshing Workflow and Best Practices

The standard workflow for mesh generation in finite element analysis proceeds through the following stages.

The FEA meshing process begins with geometry definition, where the physical structure is converted into a digital model, often imported from CAD software [7] [1]. Next, engineers select appropriate element types and formulations based on the analysis requirements—common choices include linear elements for simpler analyses and higher-order elements for complex stress distributions [8]. The mesh density is then determined, balancing accuracy needs with computational constraints [8]. Areas with expected stress concentrations typically require finer meshing, while regions with minimal stress variation can utilize coarser elements to reduce computational load [8].

Following initial mesh generation, a comprehensive quality check is performed to assess metrics such as element aspect ratios, skewness, and Jacobian values [8]. Poor mesh quality can lead to significant errors in analysis, compromising the safety and integrity of the structure being modeled [7]. If quality metrics are unsatisfactory, the refinement process begins, which may involve localized mesh densification in critical areas or global adjustments to element sizing and distribution [7]. This iterative process continues until the mesh meets predefined quality standards, resulting in a final mesh suitable for accurate simulation [8].
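A quality check of this kind can be sketched for a 2D triangular mesh. The example below uses one simple definition of aspect ratio (longest edge divided by shortest edge) and an acceptance threshold of 5.0. Both are assumptions chosen for illustration, since commercial meshers use their own metric definitions and limits.

```python
import numpy as np


def edge_aspect_ratios(nodes, triangles):
    """Longest-to-shortest edge ratio for each triangle in a 2D mesh."""
    nodes = np.asarray(nodes, dtype=float)
    triangles = np.asarray(triangles, dtype=int)
    corners = nodes[triangles]                      # shape (n_tri, 3, 2)
    # Edge vectors between consecutive corners, wrapping back to the first.
    edge_vectors = corners - np.roll(corners, -1, axis=1)
    edge_lengths = np.linalg.norm(edge_vectors, axis=2)
    return edge_lengths.max(axis=1) / edge_lengths.min(axis=1)


# Tiny illustrative mesh: one well-shaped triangle and one sliver.
nodes = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (10.0, 0.5)]
triangles = [(0, 1, 2), (1, 3, 2)]

ratios = edge_aspect_ratios(nodes, triangles)
MAX_ASPECT_RATIO = 5.0                              # assumed threshold
flagged = np.flatnonzero(ratios > MAX_ASPECT_RATIO)
print("aspect ratios:", np.round(ratios, 2))
print("elements needing refinement:", flagged.tolist())
```

In an iterative workflow, the flagged element indices would feed the local refinement step before the check is repeated.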

Mathematical Foundation of FEA

Governing Equations and Solution Methods

The mathematical framework of FEA transforms physical laws into solvable systems of equations through several key steps. The process begins with establishing governing equations based on the relevant physical principles for the problem domain, such as the equations of elasticity for structural mechanics or the heat equation for thermal analysis [7]. These partial differential equations (PDEs) describe the continuous behavior of the system but are generally impossible to solve analytically for complex geometries [6].

The core mathematical operation in FEA involves converting these PDEs into simpler algebraic equations through the formulation of element stiffness matrices [6]. Each element in the mesh contributes to a global stiffness matrix that represents the entire structure's resistance to deformation [7]. The assembly of these element matrices creates a comprehensive system of equations that represents the entire structure: [K]{u} = {F}, where [K] is the global stiffness matrix, {u} is the nodal displacement vector, and {F} is the applied force vector [7]. This system is then solved using numerical methods to determine unknown quantities such as displacements, stresses, or temperatures throughout the model [6].
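The assembly and solution of [K]{u} = {F} can be illustrated on the simplest possible case: a one-dimensional bar fixed at one end and pulled at the other, meshed with two-node linear elements. This is a minimal sketch under those assumptions. The function name, mesh size, and material values are illustrative, and the dense matrix stands in for the sparse storage a production code would use.

```python
import numpy as np


def solve_axial_bar(n_elements, length, youngs_modulus, area, tip_force):
    """Nodal displacements of a bar fixed at x=0 with a point load at x=length."""
    n_nodes = n_elements + 1
    h = length / n_elements
    # Stiffness matrix of one two-node linear bar element.
    k_element = (youngs_modulus * area / h) * np.array([[1.0, -1.0],
                                                        [-1.0, 1.0]])
    # Assemble the global stiffness matrix element by element.
    K = np.zeros((n_nodes, n_nodes))
    for e in range(n_elements):
        K[e:e + 2, e:e + 2] += k_element
    # Global force vector: a single point load at the free end.
    F = np.zeros(n_nodes)
    F[-1] = tip_force
    # Apply the fixed boundary condition u[0] = 0 by removing that row/column.
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], F[1:])
    return u


# Illustrative values (assumed): 1 m steel bar, 1 cm^2 section, 1 kN load.
u = solve_axial_bar(n_elements=4, length=1.0, youngs_modulus=210e9,
                    area=1e-4, tip_force=1000.0)
analytic_tip = 1000.0 * 1.0 / (210e9 * 1e-4)        # hand formula P*L/(E*A)
print(f"FE tip displacement:  {u[-1]:.6e} m")
print(f"analytic P*L/(E*A):   {analytic_tip:.6e} m")
```

For this problem the hand formula and the finite element result agree, which is also a small example of checking a simulation against an analytical solution.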

Solver Technologies and Algorithmic Approaches

Finite element software employs various solver technologies to handle different problem types efficiently. Direct solvers, such as those based on LU decomposition, provide robust solutions for smaller problems but face memory limitations with large-scale simulations [8]. Iterative solvers like the conjugate gradient method offer better scalability for large problems but require careful parameter tuning for convergence [8]. The selection of appropriate solver algorithms significantly impacts both solution accuracy and computational efficiency, particularly for nonlinear or transient analyses where convergence behavior becomes critical [8].
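The difference between the two solver families can be seen on a small symmetric positive-definite system. The sketch below solves the same system with NumPy's dense direct solver (LAPACK, LU-based) and with a textbook conjugate gradient loop. The test matrix is a simple tridiagonal stand-in for a stiffness matrix, and the tolerance and iteration cap are assumed values, not recommendations from any particular FEA package.

```python
import numpy as np


def conjugate_gradient(A, b, tol=1e-10, max_iter=1000):
    """Textbook conjugate gradient for a symmetric positive-definite A."""
    x = np.zeros_like(b, dtype=float)
    r = b - A @ x                       # initial residual
    p = r.copy()                        # initial search direction
    rs_old = r @ r
    for iteration in range(1, max_iter + 1):
        Ap = A @ p
        alpha = rs_old / (p @ Ap)       # step length along p
        x += alpha * p
        r -= alpha * Ap
        rs_new = r @ r
        # Stop when the residual is small relative to the right-hand side.
        if np.sqrt(rs_new) < tol * np.linalg.norm(b):
            return x, iteration
        p = r + (rs_new / rs_old) * p   # next conjugate direction
        rs_old = rs_new
    return x, max_iter


# Tridiagonal SPD matrix standing in for a small stiffness matrix (assumed).
n = 50
K = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
F = np.ones(n)

u_direct = np.linalg.solve(K, F)                    # direct solve
u_cg, iterations = conjugate_gradient(K, F)         # iterative solve
print(f"CG iterations: {iterations}")
print(f"max difference vs direct: {np.abs(u_cg - u_direct).max():.2e}")
```

The direct solver needs no tuning but factorizes the whole matrix, whereas the iterative loop only needs matrix-vector products, which is why it scales better when the matrix is large and sparse.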

The mathematical implementation also varies between implicit and explicit solution schemes. Implicit methods, used in solvers like Abaqus/Standard, are preferred for static and low-speed dynamic problems because their time integration can be unconditionally stable, which permits relatively large time steps [9]. Explicit methods, such as those in Abaqus/Explicit or LS-DYNA, excel at modeling high-speed dynamic events like impacts and crashes but are only conditionally stable, so they require much smaller time steps [9]. The mathematical sophistication of modern FEA solvers enables them to handle increasingly complex scenarios, including material nonlinearity, large deformations, and multi-physics couplings that would be mathematically intractable using traditional analytical approaches [7] [9].
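The time-step restriction on explicit schemes can be estimated with a simple rule: the step must be shorter than the time a stress wave needs to cross the smallest element. The sketch below uses the one-dimensional bar wave speed, c = sqrt(E/ρ), and an assumed safety factor of 0.9. Production solvers compute their own, more refined estimates, so this is an order-of-magnitude illustration only.

```python
import math


def stable_time_step(min_element_length_m, youngs_modulus_pa,
                     density_kg_m3, safety_factor=0.9):
    """Estimate the explicit stable time step from element size and wave speed."""
    # 1D elastic wave speed in a bar; 3D solids use a somewhat higher speed.
    wave_speed = math.sqrt(youngs_modulus_pa / density_kg_m3)
    return safety_factor * min_element_length_m / wave_speed


# Illustrative steel properties (assumed): E = 210 GPa, rho = 7850 kg/m^3.
dt_1mm = stable_time_step(1.0e-3, 210e9, 7850.0)
dt_half_mm = stable_time_step(0.5e-3, 210e9, 7850.0)
print(f"1.0 mm elements: dt ~ {dt_1mm:.2e} s")
print(f"0.5 mm elements: dt ~ {dt_half_mm:.2e} s")
```

Because the estimate is proportional to the smallest element length, local mesh refinement in an explicit analysis shortens the time step for the entire model.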

Comparative Analysis: Leading FEA Software Platforms

Evaluation Criteria for FEA Tools

Selecting appropriate FEA software requires careful consideration of multiple technical factors that influence simulation accuracy, efficiency, and applicability to specific research needs. Based on comprehensive analyses of available platforms, the following criteria represent essential evaluation dimensions:

- Accuracy: The fidelity with which software approximates real-world physical behavior, assessed through mesh density sensitivity, validation against analytical solutions, material model implementation, and solver precision [8].

- Computational Efficiency: The software's ability to deliver accurate results within reasonable time and resource constraints, evaluated through solver performance, scalability, parallel processing capabilities, and optimization algorithms [8].

- User Interface: The accessibility and workflow efficiency provided by the software environment, including model creation tools, mesh generation controls, simulation setup processes, and post-processing visualization capabilities [8].

- Supported Physics: The range of physical phenomena the software can simulate, from basic structural mechanics to advanced multi-physics couplings like thermal-structural, fluid-structure interaction, and electromechanical analyses [8].

- Integration and Automation: Compatibility with CAD, PLM, and other engineering systems, along with scripting and API access for workflow customization and parametric studies [9].

Comprehensive Software Comparison

The table below provides a detailed comparison of leading FEA software platforms based on the key evaluation criteria:

| Software Platform | Core Strengths | Primary Applications | Accuracy Features | Computational Efficiency | Learning Curve |
|---|---|---|---|---|---|
| ANSYS Mechanical | Comprehensive multi-physics capabilities, extensive material library, high-fidelity results [9] | Aerospace, automotive, electronics [9] | Robust structural analysis, validated solvers, advanced contact modeling [9] | High-performance computing support, parallel processing [9] | Steep learning curve, extensive training resources [9] |
| Abaqus (Dassault Systèmes) | Advanced non-linear analysis, complex material behavior, sophisticated contact modeling [9] | Automotive (tire modeling, crashworthiness), defense [9] | Excellence in material nonlinearity, reliable for complex physics [9] | Separate modules for standard (implicit) and explicit dynamics [9] | Less intuitive interface, significant training investment [9] |
| MSC Nastran | Structural analysis, vibration, buckling analysis, reliability [9] | Aerospace, automotive (aircraft frames, vehicle chassis) [9] | Industry standard for stress/vibration, extensive verification history [9] | Efficient for large models with millions of degrees of freedom [9] | Moderate learning curve, especially with pre-processors like Patran/Femap [9] |
| Altair HyperWorks (OptiStruct) | Design optimization, lightweighting, meshing capabilities [9] | Automotive NVH, crash, durability, industrial design [9] | Strong linear/nonlinear capabilities, focus on optimization-driven accuracy [9] | Units-based licensing, efficient optimization algorithms [9] | Moderate to steep, depending on module (HyperMesh for pre-processing) [9] |
| COMSOL Multiphysics | Integrated multi-physics environment, equation-based modeling [5] | Academia, research, electronics, biomedical [5] | Direct coupling of multiple physics, customizable equations [5] | Adaptive meshing, specialized solvers for coupled phenomena [5] | Moderate, intuitive interface for coupled physics [5] |

This comparative analysis reveals that while all major platforms provide robust FEA capabilities, each excels in specific application domains. ANSYS and Abaqus lead in handling complex nonlinear and multi-physics problems, while Nastran remains the preferred choice for traditional structural analysis in aerospace applications [9]. Altair HyperWorks distinguishes itself through optimization-focused workflows, and COMSOL offers unique strengths in coupled physics phenomena [9] [5]. The selection of an appropriate platform should align with the specific physics requirements, computational resources, and technical expertise available within a research team.

Experimental Protocols for FEA Validation

Standard Verification Methodology

Validating FEA results requires systematic experimental protocols to ensure simulation accuracy and reliability. The standard verification process involves multiple methodological approaches:

Benchmarking Against Analytical Solutions: Comparing simulation results with known analytical solutions for simplified cases provides a fundamental accuracy assessment. This process involves modeling idealized scenarios with established mathematical solutions and evaluating the deviation between software output and expected analytical outcome [8]. For example, comparing the deflection of a cantilever beam under a point load simulated by the software with the classical beam theory solution validates basic structural mechanics capabilities [8].
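The cantilever benchmark mentioned above can be reproduced in a few lines. The sketch below models the beam with a single Euler-Bernoulli beam element (an assumption made to keep the example small), clamps one end, applies a tip load, and compares the computed tip deflection with the classical result P·L³/(3·E·I). All numeric inputs are illustrative.

```python
import numpy as np


def cantilever_tip_deflection_fe(load, length, youngs_modulus, inertia):
    """Tip deflection from one Euler-Bernoulli beam element clamped at node 1."""
    L = length
    # Stiffness terms for the free-end degrees of freedom (deflection, rotation);
    # the clamped-end rows and columns have already been removed.
    k_free = (youngs_modulus * inertia / L**3) * np.array(
        [[12.0, -6.0 * L],
         [-6.0 * L, 4.0 * L**2]]
    )
    forces = np.array([load, 0.0])       # transverse tip load, no tip moment
    deflection, _rotation = np.linalg.solve(k_free, forces)
    return deflection


# Illustrative inputs (assumed): 100 N load, 2 m beam, E = 200 GPa, I = 1e-6 m^4.
P, L, E, I = 100.0, 2.0, 200e9, 1.0e-6
fe_deflection = cantilever_tip_deflection_fe(P, L, E, I)
beam_theory = P * L**3 / (3.0 * E * I)
deviation_pct = 100.0 * abs(fe_deflection - beam_theory) / beam_theory
print(f"FE: {fe_deflection:.6e} m, beam theory: {beam_theory:.6e} m, "
      f"deviation: {deviation_pct:.2e} %")
```

A cubic beam element reproduces this particular load case essentially exactly, so any sizeable deviation in such a benchmark would point to a modeling or input error rather than discretization error.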

Mesh Convergence Studies: Performing systematic mesh refinement to evaluate solution sensitivity to element size and distribution represents a critical validation step. The protocol involves progressively refining mesh density in critical regions and observing how key output parameters (such as stress concentrations or natural frequencies) change with each refinement [8]. A solution is considered converged when further mesh refinement produces negligible changes in results, typically less than 1-2% variation in critical output parameters [8].
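A mesh convergence loop of this kind can be sketched on a one-dimensional bar under a uniform axial line load, where the stress at the fixed end is mesh-dependent when linear elements are used. The doubling strategy, the 1% tolerance, and the unit material values are assumptions chosen to keep the example small. The exact root stress for this setup is q0·L/A = 1.0, which gives a reference to check against.

```python
import numpy as np


def root_stress(n_elements, length=1.0, E=1.0, A=1.0, q0=1.0):
    """Element stress at the fixed end of a bar under uniform axial line load q0."""
    h = length / n_elements
    n_nodes = n_elements + 1
    K = np.zeros((n_nodes, n_nodes))
    F = np.zeros(n_nodes)
    k_element = (E * A / h) * np.array([[1.0, -1.0], [-1.0, 1.0]])
    f_element = 0.5 * q0 * h * np.ones(2)    # consistent nodal loads
    for e in range(n_elements):
        K[e:e + 2, e:e + 2] += k_element
        F[e:e + 2] += f_element
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], F[1:])  # node 0 is fixed
    return E * (u[1] - u[0]) / h               # constant stress in element 1


def refine_until_converged(tolerance=0.01, n_start=2, max_doublings=12):
    """Double the element count until the root stress changes by < tolerance."""
    n = n_start
    previous = root_stress(n)
    for _ in range(max_doublings):
        n *= 2
        current = root_stress(n)
        change = abs(current - previous) / abs(current)
        print(f"{n:5d} elements: stress = {current:.6f}, change = {change:.2%}")
        if change < tolerance:
            return n, current, change
        previous = current
    raise RuntimeError("mesh study did not converge within the allowed refinements")


n_final, stress_final, last_change = refine_until_converged()
```

The printed history is the useful output in practice: it shows how quickly the quantity of interest settles and how far the accepted value still is from the reference.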

Material Model Validation: Testing the software's implementation of material models against experimental data for specific materials under various loading conditions. This protocol involves creating standardized test specimens (tensile, compression, shear) with instrumented measurement systems, conducting physical tests, and comparing the empirical stress-strain response with software predictions using the same material models [1]. This validation is particularly important for nonlinear materials like polymers, composites, or biological tissues [1].

Physical Correlation Protocols

Correlating FEA results with physical testing provides the most comprehensive validation approach:

Strain Gauge Testing: Applying strain gauges to physical prototypes at critical locations identified through preliminary FEA and comparing measured strains with predicted values under identical loading conditions [1]. This protocol requires careful attention to loading application, boundary condition replication, and measurement precision to ensure meaningful comparisons.

Digital Image Correlation (DIC): Using advanced optical measurement systems to capture full-field displacement and strain data on component surfaces during physical testing [1]. The protocol involves applying speckle patterns to test articles, conducting load tests while capturing high-resolution images, and processing the image data to generate comprehensive deformation maps for direct comparison with FEA predictions across the entire component surface rather than discrete measurement points.

Modal Testing: For dynamic analyses, conducting experimental modal analysis through impact hammer or shaker testing to determine natural frequencies, damping ratios, and mode shapes [1]. The protocol involves instrumenting the test structure with accelerometers, applying controlled excitation, and measuring the dynamic response to extract modal parameters for comparison with FEA-predicted modal characteristics.

These experimental protocols collectively provide a robust framework for validating FEA methodologies, building confidence in simulation results, and identifying potential limitations in mathematical models, material properties, or boundary condition assumptions [1].

Essential Research Reagent Solutions for FEA

The following table details key computational tools and resources that constitute the essential "research reagent solutions" for conducting finite element analysis across scientific disciplines:

| Research Reagent | Function & Purpose | Examples & Implementation |
|---|---|---|
| Element Formulations | Mathematical basis for element behavior; determines how elements interpolate solutions and respond to loads [8] | Linear/quadratic elements, solid/shell formulations, hybrid elements for incompressible materials [8] |
| Material Model Libraries | Define material stress-strain relationships and failure criteria for accurate physical representation [8] [9] | Linear elastic, plastic, hyperelastic, composite, creep models; implementation varies by software [8] [9] |
| Solution Algorithms | Numerical methods for solving the system of equations derived from discretization [8] [9] | Direct solvers (LU decomposition), iterative solvers (conjugate gradient), explicit/implicit methods [8] [9] |
| Meshing Tools | Generate finite element mesh from geometry with quality controls for analysis accuracy [8] [7] | Automatic tetrahedral/hexahedral meshing, mesh refinement algorithms, quality metrics checking [8] [7] |
| Pre/Post-Processing Modules | Prepare models for analysis and interpret results through visualization and data extraction [8] | CAD integration, boundary condition application, contour plotting, animation, report generation [8] |

These computational reagents form the essential toolkit for conducting rigorous finite element analyses across engineering and scientific disciplines. The selection and implementation of these components significantly influence the accuracy, efficiency, and reliability of FEA outcomes, much like traditional laboratory reagents affect experimental results in physical sciences. Researchers must carefully select and validate these computational resources based on their specific application requirements, available computational infrastructure, and validation capabilities [8] [9].

FEA in Context: Comparison with Alternative Methods

FEA versus Traditional Experimental Methods

Finite Element Analysis provides distinct advantages and limitations when compared to traditional experimental stress analysis methods, and selecting between the two approaches is a strategic decision.

The comparative analysis between FEA and traditional experimental methods reveals a complementary relationship rather than a competitive one. FEA excels in early design stages through its ability to perform rapid design iterations, identify internal stress distributions invisible to physical measurement, simulate extreme or dangerous conditions impractical for physical testing, and optimize material usage while maintaining structural integrity [1]. These capabilities come with limitations: FEA requires specialized expertise, demands substantial computing resources for high-resolution simulations, and depends on accurate material data inputs [1].

Conversely, traditional stress testing methods (including tensile testing, fatigue testing, impact testing, and pressure testing) provide irreplaceable real-world validation, direct observation of failure mechanisms, and essential data for regulatory compliance in industries such as aerospace, automotive, and medical devices [1]. However, these experimental approaches are limited by cost and time requirements, and they cannot provide comprehensive information about the internal stress state [1]. The optimal research strategy typically involves a hybrid approach that leverages FEA for design exploration and optimization, followed by targeted physical testing for final validation and regulatory certification [1].

FEA in the Context of Bailenger Research Concentration Methods

The Bailenger-type concentration methods named in this article's title are not described in detail by the sources cited here, so the comparison in this section is conceptual rather than procedural. FEA can be compared to the concentration and reduction methods used in scientific computing and engineering analysis: like mathematical concentration techniques that simplify complex systems to their essential parameters, FEA employs discretization to reduce continuous physical phenomena to solvable algebraic equations [7]. This methodological approach shares a philosophical commonality with other scientific reduction methods that break complex problems into manageable components while preserving essential physical behaviors.

The distinctive value of FEA within the landscape of analytical methods lies in its ability to maintain high fidelity to original system complexity while achieving mathematical tractability. Unlike some concentration methods that sacrifice detail for computational simplicity, FEA systematically preserves spatial and temporal resolution through controlled mesh refinement and time stepping [7]. This balanced approach enables FEA to serve as a bridge between oversimplified analytical models and computationally prohibitive direct numerical simulations, positioning it as a versatile tool for researchers across disciplines requiring predictive modeling of physical systems [7] [1].

Finite Element Analysis represents a sophisticated computational methodology that transforms continuous physical systems into discrete mathematical models through meshing and numerical solution techniques. The process encompasses geometry definition, mesh generation, mathematical formulation based on physical principles, and numerical solution followed by results interpretation [7]. As FEA continues to evolve through 2025, trends such as increased AI-driven optimization, cloud computing adoption, and integrated digital twin workflows are expanding its capabilities and accessibility [10] [5].

The comparative analysis of leading FEA platforms reveals distinctive strengths tailored to different application domains, from ANSYS and Abaqus for complex multi-physics and nonlinear problems to Nastran for traditional structural analysis and Altair HyperWorks for optimization-focused workflows [9]. This specialization underscores the importance of selecting FEA tools aligned with specific research requirements, computational resources, and technical expertise [8] [9]. When complemented with appropriate experimental validation protocols and understood within the broader context of analytical methods, FEA provides researchers with a powerful predictive tool that continues to transform engineering design and scientific inquiry across diverse disciplines [1].

The isolation and concentration of biological nanoparticles, such as extracellular vesicles (EVs) and proteins, are critical steps in biomedical research and drug development. Among the most established physical methods are ultracentrifugation, precipitation, and ultrafiltration, each with distinct principles and performance characteristics [11]. These techniques are essential for obtaining high-quality samples for downstream analysis in fields like biomarker discovery and therapeutic development. The choice of method significantly impacts the yield, purity, and biological functionality of the isolated materials, influencing the reliability and reproducibility of experimental data [12] [13]. This guide provides an objective, data-driven comparison of these three core techniques to inform method selection by researchers and scientists.

Method Fundamentals and Experimental Protocols

The following sections detail the core principles and standard operating procedures for each concentration technique.

Ultracentrifugation (UC)

Principle: This technique separates particles based on their size, density, and shape by applying a high centrifugal force. Differential ultracentrifugation involves a series of centrifugation steps at increasing speeds and durations to sequentially pellet larger particles, followed by the desired smaller nanoparticles like exosomes [11]. The relative centrifugal force (RCF) is calculated as \(RCF = 1.118 \times 10^{-5} \times RPM^2 \times r\), where \(RPM\) is revolutions per minute and \(r\) is the rotor radius in centimeters [11].
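The RCF relation above can be turned into a small helper for converting between rotor speed and g-force. This is a minimal sketch, assuming the rotor radius is given in centimeters (the unit for which the 1.118 × 10⁻⁵ constant holds); the function names and the 8 cm radius are illustrative assumptions, not values from the cited protocols.

```python
import math

RCF_CONSTANT = 1.118e-5  # valid when the rotor radius is expressed in centimeters


def rcf_from_rpm(rpm: float, radius_cm: float) -> float:
    """Relative centrifugal force (multiples of g) for a rotor speed and radius."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


def rpm_from_rcf(rcf: float, radius_cm: float) -> float:
    """Rotor speed (RPM) needed to reach a target RCF at a given radius."""
    return math.sqrt(rcf / (RCF_CONSTANT * radius_cm))


if __name__ == "__main__":
    radius_cm = 8.0  # hypothetical rotor radius; check the rotor's data sheet
    rpm = rpm_from_rcf(100_000, radius_cm)  # the 100,000 x g pelleting step
    print(f"Set the rotor to about {rpm:.0f} RPM")
```

Because RCF grows with the square of the speed, the same RPM setting on rotors of different radii gives different forces, which is why protocols are usually reported in × g rather than RPM.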

Detailed Protocol:

- Sample Pre-conditioning: Centrifuge the cell culture supernatant or biofluid at 300 × g for 10 minutes at 4°C to remove dead cells [12] [14].

- Clearing of Debris: Transfer the supernatant to a new tube and centrifuge at 3,000 × g for 30 minutes at 4°C to eliminate cellular debris and larger microvesicles [13].

- Filter Sterilization: Carefully filter the supernatant through a 0.22 µm sterilization filter to remove any remaining cell particulates or apoptotic bodies [14].

- Ultracentrifugation: Transfer the filtered supernatant to ultracentrifugation tubes. Pellet the nanoparticles via centrifugation at 100,000 × g for 70 minutes at 4°C [12] [14].

- Washing and Final Pellet: Resuspend the pellet in a large volume of phosphate-buffered saline (PBS). To obtain a relatively pure pellet, repeat the ultracentrifugation step (100,000 × g for 70 minutes) [14]. The final pellet, containing the concentrated nanoparticles, can be resuspended in a small volume of PBS or buffer and stored at -80°C [14].

Precipitation

Principle: This method reduces the solubility of nanoparticles by using water-excluding polymers, such as polyethylene glycol (PEG), which disrupt the hydration shell and force the particles out of solution. The precipitated particles are then collected using low-speed centrifugation [14] [13].

Detailed Protocol:

- Sample Pre-conditioning and Clearing: Follow the same initial steps as ultracentrifugation: centrifuge at 300 × g for 10 minutes and 3,000 × g for 30 minutes to remove cells and debris [14].

- Filter Sterilization: Filter the supernatant through a 0.22 µm filter [14].

- Precipitation: Transfer the conditioned supernatant to a sterile vessel. Add a specified volume of a commercial exosome precipitation solution (e.g., PEG-based solution) as per the manufacturer's protocol. Mix thoroughly and refrigerate the mixture overnight (16 hours) to allow for complete precipitation [14].

- Collection: Centrifuge the mixture at 1,500 × g for 30 minutes to pellet the precipitated nanoparticles. Aspirate the supernatant carefully [14].

- Removal of Residual Solution: Centrifuge the tube again at 1,500 × g for 5 minutes and remove any residual liquid to minimize contaminant carry-over [14]. The pellet can then be resuspended for downstream applications.

Ultrafiltration (UF)

Principle: This technique separates particles based on size and molecular weight using a semipermeable membrane with a defined molecular weight cutoff (MWCO), such as 100 kDa. Smaller molecules and solvents pass through the membrane as permeate, while larger particles are retained and concentrated [14] [11].

Detailed Protocol:

- Sample Pre-conditioning and Clearing: Begin with the same initial clearing steps: centrifugation at 300 × g for 10 minutes and 3,000 × g for 30 minutes [14].

- Filter Sterilization: Filter the supernatant through a 0.22 µm sterilization filter [14].

- Ultrafiltration: Load the conditioned supernatant into an ultrafiltration device (e.g., a 100 kDa MWCO centrifugal concentrator). Centrifuge at a specified force (e.g., 3,000 × g) for a set time or until the desired volume reduction is achieved. This step concentrates the sample; diluting the retained fraction with fresh buffer (e.g., PBS) and repeating the spin also exchanges the buffer [14]. The retained fraction contains the concentrated nanoparticles.

Comparative Performance Data

Independent studies directly comparing these three methods for isolating exosomes reveal significant differences in their outcomes regarding particle size, yield, and purity.

Table 1: Comparative Analysis of Exosomes Isolated by Different Methods

| Performance Metric | Ultrafiltration | Precipitation | Ultracentrifugation |
|---|---|---|---|
| Mean Particle Size | 122 nm [12] | 89 nm [12] | 60 nm [12] |
| Particle Homogeneity | Lower (higher shape variability) [12] | Moderate [12] | Higher (narrow size distribution) [12] |
| Total Protein Content | Higher | Higher | Lower (50 µg/ml) [14] |
| Particle-to-Protein Ratio | Lower [13] | Lowest [13] | Higher [13] |
| Functional Efficacy | 11% increase in hypoxic cell viability [12] | 15% increase in hypoxic cell viability [12] | 22% increase in hypoxic cell viability [12] |
| Relative Process Speed | Fast [11] | Moderate (requires overnight incubation) [14] | Slow (time-consuming) [13] |

A comprehensive 2025 study corroborates these findings: the precipitation method yielded the highest particle concentration but the lowest purity, as measured by the particle-to-protein ratio. In contrast, ultracentrifugation and size-exclusion chromatography achieved higher purity [13].
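The particle-to-protein ratio used as a purity proxy above is a simple quotient of particle concentration and protein concentration. The sketch below shows one way to compute and rank it; the measurement values are hypothetical placeholders chosen only to mirror the qualitative trend described (high yield but low purity for precipitation), not data from the cited studies.

```python
def particle_to_protein_ratio(particles_per_ml: float, protein_ug_per_ml: float) -> float:
    """Particles per microgram of protein; higher values suggest a purer isolate."""
    if protein_ug_per_ml <= 0:
        raise ValueError("protein concentration must be positive")
    return particles_per_ml / protein_ug_per_ml


# Hypothetical measurements for illustration only (e.g., particle counts from
# nanoparticle tracking analysis and protein from a colorimetric assay).
isolates = {
    "ultracentrifugation": {"particles_per_ml": 2.0e10, "protein_ug_per_ml": 50.0},
    "precipitation": {"particles_per_ml": 8.0e10, "protein_ug_per_ml": 800.0},
}

ratios = {name: particle_to_protein_ratio(**m) for name, m in isolates.items()}
ranked = sorted(ratios, key=ratios.get, reverse=True)  # purest first

for name in ranked:
    print(f"{name}: {ratios[name]:.2e} particles per µg protein")
```

Reporting yield and this ratio together helps avoid mistaking co-precipitated protein for a higher vesicle recovery.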

The Scientist's Toolkit: Essential Research Reagents

The following table lists key materials and reagents required for implementing these isolation protocols.

Table 2: Key Research Reagents and Solutions

| Item | Function/Description | Example Usage |
|---|---|---|
| Polyethylene Glycol (PEG) Solution | Water-excluding polymer that precipitates nanoparticles by disrupting solvation [14] [13]. | Used in precipitation-based isolation kits. |
| Ultrafiltration Devices | Centrifugal concentrators with membranes of defined MWCO (e.g., 100 kDa) for size-based separation [14] [15]. | Available as centrifugal units (e.g., Amicon) for small volumes; materials include PES or regenerated cellulose [15]. |
| Dulbecco's Phosphate Buffered Saline (PBS) | Isotonic buffer for washing pellets, resuspending final isolates, and buffer exchange [14]. | Used in all protocols for resuspension and washing steps. |
| Polyethersulfone (PES) Membrane | A common ultrafiltration membrane material with high pH and thermal stability [15]. | Used in stirred cells and centrifugal ultrafilters for concentrating viral vectors and EVs [15]. |
| Regenerated Cellulose (RC) Membrane | A hydrophilic ultrafiltration membrane material, less prone to fouling than PES [15]. | An alternative membrane material for ultrafiltration devices. |
| Exosome-Depleted FBS | Fetal bovine serum processed to remove endogenous bovine exosomes for cell culture [14]. | Used in cell culture media to ensure that isolated exosomes are cell-derived. |
| Protease Inhibitor Cocktails | Added to lysis buffers or samples to prevent proteolytic degradation of proteins in the isolate [13]. | Used during and after isolation for downstream protein analysis. |

Workflow and Decision Pathways

The following diagrams summarize the logical steps for each isolation protocol and provide a guideline for method selection.

Diagram 1: Ultracentrifugation workflow.

Diagram 2: Method selection guide.

Finite Element Analysis (FEA) has become a cornerstone computational method for simulating complex physical phenomena across engineering and scientific disciplines. Within the broader context of concentration methods explored in Bailenger research, evaluating FEA's performance requires a rigorous framework of key metrics: sensitivity (ability to detect true positive effects), specificity (ability to avoid false positives), recovery efficiency (ability to accurately reconstruct true system responses), and computational accuracy (deviation from experimental or analytical benchmarks). These metrics collectively determine FEA's reliability for critical applications from drug development to structural integrity assessment. This guide provides an objective comparison of FEA performance against alternative methods, supported by experimental data and detailed protocols, offering researchers a comprehensive toolkit for method selection and validation.

Performance Metrics Framework and Comparative Analysis

Defining the Core Performance Metrics

- Sensitivity: In FEA and computational mechanics, sensitivity measures how effectively a model or analysis detects a true positive effect or condition, such as identifying a critical stress concentration or detecting a structural defect. High sensitivity ensures that genuine phenomena are not missed, which is crucial for safety-critical applications [16].

- Specificity: Specificity quantifies a method's ability to correctly exclude false positive indications. For FEA, this translates to minimizing spurious results or misidentified problem areas that do not correspond to actual physical behavior. Maintaining high specificity prevents unnecessary design changes and resource allocation [16].

- Recovery Efficiency: This metric evaluates how completely and accurately an analysis method can reconstruct or "recover" the true system response from limited data. In FEA context, it assesses how well simulation results match comprehensive experimental measurements or known analytical solutions across the entire domain of interest.

- Computational Accuracy: Computational accuracy measures the numerical deviation between FEA predictions and ground truth data from controlled experiments or established physical laws. It represents the fundamental fidelity of the simulation methodology and its implementation.
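The metric definitions above map onto a few short formulas. The following is a minimal sketch, assuming that model flags (for example, predicted stress hot spots) have been compared against confirmed experimental findings and tallied as true/false positives and negatives; the counts and the predicted/reference values are hypothetical.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Fraction of real conditions that the analysis correctly flags."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Fraction of benign regions that the analysis correctly leaves unflagged."""
    return true_neg / (true_neg + false_pos)


def relative_error(predicted: float, reference: float) -> float:
    """Computational accuracy expressed as relative deviation from a benchmark."""
    return abs(predicted - reference) / abs(reference)


# Hypothetical tallies from comparing model flags with experimental findings.
counts = {"true_pos": 18, "false_neg": 2, "true_neg": 45, "false_pos": 5}

sens = sensitivity(counts["true_pos"], counts["false_neg"])
spec = specificity(counts["true_neg"], counts["false_pos"])
err = relative_error(predicted=102.0, reference=100.0)  # e.g., failure moment

print(f"sensitivity={sens:.2f}, specificity={spec:.2f}, relative error={err:.1%}")
```

Sensitivity and specificity only make sense once a decision threshold has been fixed (what counts as a flagged region), so the threshold should be reported alongside the metrics.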

Comparative Performance Data

Table 1 summarizes quantitative performance data for FEA and alternative analytical methods across different application domains and loading conditions, based on experimental validations.

Table 1: Comparative Performance Metrics for FEA and Alternative Methods

| Method | Application Domain | Sensitivity | Specificity | Computational Accuracy | Experimental Validation |
|---|---|---|---|---|---|
| FEA (Non-linear) | Pipe with local wall thinning [17] | High (Detects all failure modes) | High (Correctly classifies failure modes) | >95% correlation with experimental failure moments [17] | Four-point bending tests on carbon steel pipes |
| FEA (Model Updated) | Historical masonry church [18] | High (Identifies critical modal frequencies) | Medium-High (Some parameter uncertainty) | <5% error in natural frequency prediction [18] | Ambient vibration tests and operational modal analysis |
| Machine Learning (SSL) | Lower-limb joint moment estimation [19] | Very High | Very High | MAE reduced by 26.48% vs baseline [19] | Optical motion capture and force plates |
| Infrared Imaging + ML | Hidden bubble detection [20] | Medium (Depth-dependent) | High | Validated against FEA models [20] | Thermal imaging experiments |
| Experimental (Static Load) | RC beams [21] | Reference Standard | Reference Standard | Reference Standard | Sensor arrays and universal testing machine |

FEA Performance in Bailenger Research Context

Within Bailenger research frameworks investigating concentration methods, FEA demonstrates distinct advantages in multiscale modeling and complex geometry handling. Studies integrating FEA with machine learning show enhanced predictive capability for thermal conductivity in composite materials, achieving coefficients of determination (R²) greater than 0.97 across multiple spatial directions [22]. This integrated approach outperforms traditional analytical methods for problems with intricate microstructures where simplifying assumptions break down.

For defect detection applications relevant to material characterization, FEA-enabled methods successfully identify critical failure modes in structures with local weaknesses, correctly classifying failure mechanisms (ovalization, buckling, crack initiation) with high specificity based on geometric parameters [17]. This capability directly supports concentration analysis in material stress zones.

Experimental Protocols for Method Validation

FEA Model Updating with Dynamic Identification

The validation of FEA models through experimental testing follows rigorous protocols to ensure metric reliability:

- Objective: Calibrate numerical model parameters to minimize discrepancy between computational predictions and experimental measurements of dynamic behavior [18].

- Equipment: High-sensitivity accelerometers (e.g., PCB 393B12, 10 V/g sensitivity), 24-bit acquisition systems, anti-aliasing filters [18].

- Procedure:

  - Perform ambient vibration tests (AVT) with strategically positioned sensors

  - Process data through Operational Modal Analysis (OMA) to extract natural frequencies, mode shapes, and damping ratios

  - Develop initial FEA model using best-available material parameters

  - Perform sensitivity analysis to identify most influential parameters

  - Iteratively update parameters using Douglas-Reid method until error <5%

- Validation Metrics: Natural frequency matching, Modal Assurance Criterion (MAC) for mode shape correlation [18].
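The two validation metrics named above can be computed directly from paired numerical and experimental modal data. The sketch below implements the standard Modal Assurance Criterion for real-valued mode shapes and a percentage frequency error; the sample mode-shape vectors and frequencies are hypothetical, and complex mode shapes would need conjugate products instead of plain ones.

```python
def mac(phi_model, phi_test):
    """Modal Assurance Criterion between two real-valued mode shape vectors.

    Returns a value in [0, 1]; 1 means the shapes are identical up to scaling.
    """
    if len(phi_model) != len(phi_test):
        raise ValueError("mode shapes must be sampled at the same sensor points")
    cross = sum(a * b for a, b in zip(phi_model, phi_test))
    return cross ** 2 / (sum(a * a for a in phi_model) * sum(b * b for b in phi_test))


def frequency_error_percent(f_model: float, f_test: float) -> float:
    """Relative natural-frequency discrepancy, in percent of the measured value."""
    return 100.0 * abs(f_model - f_test) / f_test


# Hypothetical first bending mode sampled at five accelerometer positions.
phi_fea = [0.00, 0.38, 0.71, 0.92, 1.00]
phi_oma = [0.00, 0.40, 0.69, 0.93, 1.00]

print(f"MAC = {mac(phi_fea, phi_oma):.4f}")
print(f"Frequency error = {frequency_error_percent(2.06, 2.00):.1f}%")
```

Because MAC is insensitive to scaling and sign, it checks shape agreement only; the frequency error is what the 5% updating target above applies to.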

Static Mechanical Testing with FEA Correlation

- Objective: Establish ground truth data for FEA validation under controlled loading conditions [17] [21].

- Equipment: Universal testing machine, displacement sensors (LVDT, FLS), strain gauges, force-resisting sensors [21].

- Procedure:

  - Instrument test specimens with sensor arrays at critical locations

  - Apply monotonic or cyclic loading under displacement or force control

  - Record force-displacement data at appropriate sampling frequency

  - Develop FEA model with identical geometry and boundary conditions

  - Compare numerical and experimental results for load-deformation response

  - Quantify accuracy using statistical measures (R², RMSE, MAE)

- Validation Metrics: Maximum load capacity, deformation at failure, stiffness, strain distribution [17].
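The statistical measures listed in the procedure (R², RMSE, MAE) can be computed from paired FEA predictions and measurements with a few lines of standard-library Python. This is a sketch under the assumption that both series are sampled at the same load steps; the displacement values are hypothetical.

```python
import math


def mae(predicted, measured):
    """Mean absolute error."""
    return sum(abs(p - m) for p, m in zip(predicted, measured)) / len(measured)


def rmse(predicted, measured):
    """Root-mean-square error."""
    return math.sqrt(sum((p - m) ** 2 for p, m in zip(predicted, measured)) / len(measured))


def r_squared(predicted, measured):
    """Coefficient of determination of the predictions against the measurements."""
    mean_measured = sum(measured) / len(measured)
    ss_res = sum((m - p) ** 2 for p, m in zip(predicted, measured))
    ss_tot = sum((m - mean_measured) ** 2 for m in measured)
    return 1.0 - ss_res / ss_tot


# Hypothetical mid-span displacements (mm) at five load steps.
measured_mm = [0.0, 1.0, 2.0, 3.0, 4.0]
fea_mm = [0.1, 0.9, 2.1, 2.9, 4.1]

print(f"MAE={mae(fea_mm, measured_mm):.3f} mm, "
      f"RMSE={rmse(fea_mm, measured_mm):.3f} mm, "
      f"R2={r_squared(fea_mm, measured_mm):.4f}")
```

RMSE penalizes occasional large misses more than MAE does, so reporting both gives a sense of whether the error is uniform or concentrated near failure.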

Integrated FEA-Machine Learning Workflow

- Objective: Enhance predictive capability while reducing computational expense through hybrid methodology [22] [19].

- Equipment: Computational resources (workstations with adequate RAM/CPU), FEA software (ANSYS, Abaqus), machine learning libraries (Python/TensorFlow) [22].

- Procedure:

  - Generate comprehensive training dataset using parametric FEA studies

  - Split data into training, validation, and test sets

  - Train ML models (Kriging, ANN, SVM) on FEA results

  - Validate ML predictions against holdout FEA data

  - Conduct experimental validation of hybrid model predictions

  - Compare computational efficiency vs. pure FEA approach

- Validation Metrics: Prediction accuracy (MSE, MAE), computational time savings, generalization capability [22] [19].
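The first four procedure steps can be illustrated end to end with a deliberately small stand-in. In the sketch below, a closed-form cantilever deflection formula plays the role of the FEA solver, and a log-linear least-squares fit plays the role of the surrogate; both are simplifying assumptions made to keep the example dependency-free, not the Kriging or ANN models used in the cited studies, and all parameter values are hypothetical.

```python
import math
import random


def run_fea_stand_in(length_m: float) -> float:
    """Stand-in for one FEA run: cantilever tip deflection F*L^3 / (3*E*I), in meters."""
    force_n = 100.0        # hypothetical tip load
    youngs_pa = 200e9      # hypothetical steel-like modulus
    inertia_m4 = 8.0e-9    # hypothetical second moment of area
    return force_n * length_m ** 3 / (3.0 * youngs_pa * inertia_m4)


def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Step 1: parametric study over beam length.
samples = [(0.5 + 0.05 * i, run_fea_stand_in(0.5 + 0.05 * i)) for i in range(30)]

# Step 2: reproducible split into training, validation, and test sets.
random.Random(0).shuffle(samples)
train, validation, test = samples[:20], samples[20:25], samples[25:]

# Step 3: train the surrogate in log space (a power law becomes a straight line).
slope, intercept = fit_line([math.log(x) for x, _ in train],
                            [math.log(y) for _, y in train])


def surrogate(length_m: float) -> float:
    return math.exp(intercept + slope * math.log(length_m))


# Step 4: check the surrogate against held-out solver results.
def mean_relative_error(data):
    return sum(abs(surrogate(x) - y) / y for x, y in data) / len(data)


validation_error = mean_relative_error(validation)
test_error = mean_relative_error(test)
print(f"fitted exponent={slope:.3f}, test error={test_error:.2e}")
```

The near-zero error here only reflects that the stand-in solver is an exact power law; with a real FEA dataset the held-out error is the quantity to watch, and the remaining steps (experimental validation and timing comparison) still require laboratory and solver data.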

Visualization of Methodologies and Relationships

FEA Model Updating Workflow

Diagram 1: FEA model updating workflow based on experimental data.

Integrated FEA-ML Methodology

Diagram 2: Integrated FEA and machine learning methodology for enhanced efficiency.

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Materials and Equipment for FEA Validation

| Item | Function | Application Example |
|---|---|---|
| Accelerometers (PCB 393B12) | Measure structural vibration responses | Operational modal analysis for FEA model updating [18] |
| Universal Testing Machine | Apply controlled mechanical loading | Static validation of FEA stress predictions [17] [21] |
| ANSYS Mechanical | General-purpose FEA simulation | Multiphysics structural and thermal analysis [22] [9] |
| Abaqus/Standard | Advanced nonlinear FEA | Complex material behavior and contact problems [9] |
| Python with Scikit-learn | Machine learning implementation | Developing surrogate models from FEA data [22] [19] |
| Force-Resisting Sensors | Measure applied and reaction forces | Load quantification in mechanical testing [21] |
| Flex Sensors | Measure surface deformation | Deflection monitoring in beam experiments [21] |
| Thermal Imaging Camera | Capture surface temperature distributions | Hidden defect detection and thermal analysis [20] |

The comparative analysis of FEA performance metrics reveals a sophisticated computational methodology with well-established validation protocols. When properly implemented with experimental calibration, FEA achieves high sensitivity and specificity in detecting critical structural responses and failure mechanisms. The integration of FEA with machine learning techniques demonstrates particularly promising enhancements in computational efficiency while maintaining accuracy, with Kriging models showing superior performance to traditional ANN approaches in some applications [22].

For researchers in drug development and material science, selection of appropriate concentration methods should consider the multiscale capabilities of modern FEA alongside its requirements for experimental validation. The continued development of hybrid approaches leveraging both physical modeling and data-driven techniques represents the most promising direction for achieving optimal balance between computational accuracy and efficiency in complex Bailenger research applications.

The pharmaceutical and biotechnology industries are undergoing a foundational shift in preclinical drug development, moving toward a reduced reliance on traditional animal testing. This transformation is driven by a combination of ethical concerns, well-documented scientific limitations of animal models, and progressive regulatory changes. New Approach Methodologies (NAMs) are a suite of scientific approaches that provide human-relevant data to evaluate drug safety and efficacy. These include advanced in vitro systems (such as 3D cell cultures and organ-on-a-chip devices), in silico tools (computational models and AI), and in chemico methods [23] [24]. The impetus for this change is clear: surveys show that more than 85% of US adults support discontinuing animal testing, and more than 90% of drugs that succeed in animal trials fail to gain FDA approval, primarily because of lack of efficacy or unexpected safety issues in humans [23].

This guide objectively compares the performance of these NAMs against traditional methods and within the context of different NAM types themselves. It frames this comparison within a broader thesis on the role of Finite Element Analysis (FEA) and other computational methods, providing researchers and drug development professionals with the experimental data and regulatory context needed to navigate this evolving landscape.

The Driving Forces: Regulatory and Ethical Imperatives

The Evolving Regulatory Landscape

The regulatory environment for drug development has recently undergone landmark changes that formally enable the use of non-animal data.

- FDA Modernization Act 2.0: Enacted in December 2022, this law removed the long-standing federal mandate for animal testing for all new drug applications. It explicitly permits pharmaceutical companies to use alternative testing methods—including cell-based assays, organ-on-a-chip systems, and computer models—to establish drug safety and efficacy [23] [25].

- FDA's 2025 Roadmap: In April 2025, the FDA published a "Roadmap to reducing animal testing in preclinical safety studies," outlining a strategic, stepwise approach to reduce, refine, and replace animal testing with scientifically validated NAMs. The agency identified monoclonal antibodies (mAbs) as the first promising area for reducing animal use, with plans to expand to other biological molecules and eventually new chemical entities [23] [25].

- European Medicines Agency (EMA) Initiatives: The EMA fosters regulatory acceptance of NAMs by providing developers with pathways for interaction, including briefing meetings, scientific advice, and a formal qualification procedure for specific contexts of use [26].

The Ethical and Scientific Case for Change

The ethical imperative to reduce animal suffering aligns with growing recognition of the scientific limitations of animal models. There are fundamental differences between animal physiology and human biology. The genetic homogeneity of most laboratory test animals contrasts sharply with the vast genetic diversity in human populations, making it difficult for animal studies to predict drug responses across different individuals [23]. Consequently, drugs deemed safe in animals have sometimes proved lethal in first-in-human trials [23]. As noted by experts, "NAMs allow us to study human biology directly, instead of transposing animal results" [24].

Comparative Analysis of NAMs and Traditional Methods

Performance Metrics of NAMs vs. Animal Models

The transition to NAMs is justified by quantitative data demonstrating their potential for improved predictivity, efficiency, and cost-effectiveness.

Table 1: Quantitative Comparison of NAMs versus Traditional Animal Models

| Performance Metric | Traditional Animal Models | New Approach Methodologies (NAMs) | Data Source / Supporting Evidence |
|---|---|---|---|
| Predictivity of Human Response | ~10% success rate for drugs entering clinical trials [23] | Improved human relevance using human cells and tissues; potential for patient-specific testing [23] [27] | High attrition rate (90%) of drugs passing animal trials [23] [27] |
| Testing Timeline | Often requires months to years for chronic toxicity and carcinogenicity studies [28] | Faster results; organ-on-a-chip and computational models can provide data in days to weeks [23] | Accelerated timelines for toxicity and efficacy screening [23] |
| Direct Financial Cost | High (animal procurement, long-term housing, care) [23] | Lower operational costs per test after initial investment [23] | Reduced R&D costs and drug prices [25] |
| Species Translation Gap | Significant due to physiological differences [23] [29] | Minimized by using human-derived cells and tissues [23] [24] | Tragic failures like TGN1412 and unpredictable immunotherapy toxicity [29] |
| Regulatory Acceptance | Long-standing, well-defined pathway [28] | Emerging but growing; FDA Modernization Act 2.0 and specific EMA pathways [23] [26] | FDA's 2025 roadmap and EMA's qualification advice [23] [26] |

Comparative Performance of Different NAM Types

NAMs are not a monolithic category; they encompass a range of technologies with different strengths, applications, and readiness levels.

Table 2: Comparative Analysis of Different NAM Categories

| NAM Category | Key Technologies | Strengths | Limitations / Current Challenges | Sample Experimental Readouts |
|---|---|---|---|---|
| In Vitro Microphysiological Systems | Organoids, Organs-on-chips [23] [24] | Human-specific biology; can detect tissue-specific responses; enable precision medicine [23] [27] | Typically single organs; fail to capture complex multi-organ interactions [23] | Contractility in cardiac organoids [27]; Cytokine release in immunotoxicity assays [29] |
| In Silico Computational Models | AI/ML predictive models, FEA, PBPK, QSP models [23] [29] | High-throughput; can simulate diverse human populations; analyze complex datasets [23] [29] | Dependent on quality and quantity of input data; validation required [23] | Predicted toxicity scores [23]; Simulated von Mises stresses in implant FEA [30] |
| Advanced Cell Culture & Assays | 2D & 3D cell cultures, patient-derived tumor organoids [24] [27] | More physiologically relevant than standard 2D culture; scalable for screening [24] | May lack the complexity of more advanced MPS [24] | IC50 values for drug efficacy; Biomarker changes for hepatotoxicity [28] [29] |

Experimental Protocols and Methodologies

Protocol for Organ-on-a-Chip Efficacy and Toxicity Screening

This protocol is used by companies like Roche and Johnson & Johnson in partnership with platforms like Emulate [23].

- Step 1: System Preparation: Prime the organ-on-a-chip device (e.g., liver-chip) with appropriate cell culture medium. Seed human primary cells (e.g., hepatocytes) or stem cell-derived cells into the microfluidic channels.

- Step 2: Cell Maturation: Allow cells to adhere and form a functional tissue under physiologically relevant flow conditions for 5-14 days. Monitor for established biomarkers of function (e.g., albumin production for liver chips).

- Step 3: Drug Dosing: Introduce the drug candidate into the medium flow at concentrations scaled from anticipated human exposure. A range of doses is tested, typically from therapeutic to multiples thereof to assess safety margins.

- Step 4: Endpoint Analysis: After a set exposure period (e.g., 7 days for repeat-dose toxicity):

  - Efficacy: Measure specific functional endpoints relevant to the target (e.g., reduction of a pathogenic protein).

  - Toxicity: Assess cell viability (using assays like ATP content), measure release of damage biomarkers (e.g., ALT for liver), and monitor morphological changes.

- Step 5: Data Integration: Compare the drug's efficacy and toxicity profiles to those of known compounds to establish a risk-benefit assessment [23] [27].

Protocol for Finite Element Analysis (FEA) in Biomedical Application

FEA is a computational in silico NAM used to simulate and predict the mechanical behavior of structures under load, with applications in medical device and implant design [30].

- Step 1: Geometry Creation: Develop a precise 3D computer model of the structure. For a lattice-structured implant, this is generated from CAD software, defining parameters like strut diameter and unit cell type (e.g., FCC-Z, BCC-Z) [4] [30].

- Step 2: Meshing: The geometry is subdivided into a finite number of small, simple elements (e.g., tetrahedral or hexahedral elements). A mesh convergence study is performed to ensure results are independent of mesh size [4] [30].

- Step 3: Material Property Assignment: Define the linear elastic, plastic, or hyper-elastic material properties for each part (e.g., Young’s modulus, Poisson’s ratio, yield strength) based on experimental data from mechanical testing [4] [30].

- Step 4: Applying Loads and Boundary Conditions: Simulate real-world physical constraints and forces. For a spinal implant, this includes applying a 100 N follower load and 1.5 N·m pure moments to simulate flexion, extension, and lateral bending [30].

- Step 5: Solving and Validation: The FEA software solves the complex system of equations. Results such as von Mises stress distribution, deformation, and factor of safety are then validated against experimental data from physical compression or biomechanical tests to ensure accuracy [4] [30].
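The five steps above can be seen in miniature on a one-dimensional problem. The sketch below is not an implant model: it assumes a linearly tapered elastic bar, fixed at one end and pulled at the other, meshed with two-node bar elements, and it repeats the solve on finer meshes until the tip displacement stops changing (a basic mesh convergence study). The material and geometry values are hypothetical, and the closed-form solution stands in for experimental validation data.

```python
import math

E_PA = 110e9      # hypothetical titanium-alloy-like Young's modulus
AREA0_M2 = 1e-4   # hypothetical cross-section area at the fixed end
LENGTH_M = 0.1    # hypothetical bar length
LOAD_N = 1000.0   # hypothetical axial tip load


def area(x: float) -> float:
    """Step 1 (geometry): cross-section tapers linearly to half its base value."""
    return AREA0_M2 * (1.0 - x / (2.0 * LENGTH_M))


def solve_tapered_bar(n_elements: int) -> float:
    """Steps 2-5: mesh, assign stiffness, apply load, solve; returns tip displacement."""
    h = LENGTH_M / n_elements
    # Element stiffness E*A/h, with A evaluated at the element midpoint.
    k = [E_PA * area((e + 0.5) * h) / h for e in range(n_elements)]
    n = n_elements  # unknowns are nodes 1..n; node 0 is fixed
    diag = [k[j] + (k[j + 1] if j + 1 < n else 0.0) for j in range(n)]
    off = [-k[j + 1] for j in range(n - 1)]
    rhs = [0.0] * n
    rhs[-1] = LOAD_N  # point load on the free end
    # Thomas algorithm for the symmetric tridiagonal stiffness system.
    for j in range(1, n):
        w = off[j - 1] / diag[j - 1]
        diag[j] -= w * off[j - 1]
        rhs[j] -= w * rhs[j - 1]
    u = [0.0] * n
    u[-1] = rhs[-1] / diag[-1]
    for j in range(n - 2, -1, -1):
        u[j] = (rhs[j] - off[j] * u[j + 1]) / diag[j]
    return u[-1]


# Closed-form tip displacement for this taper: 2*P*L*ln(2) / (E*A0).
exact_tip = 2.0 * LOAD_N * LENGTH_M * math.log(2.0) / (E_PA * AREA0_M2)

# Mesh convergence: double the element count until the result changes by < 0.1%.
n_elements, previous = 1, solve_tapered_bar(1)
while True:
    n_elements *= 2
    current = solve_tapered_bar(n_elements)
    if abs(current - previous) / abs(current) < 1e-3:
        break
    previous = current

print(f"converged with {n_elements} elements; "
      f"error vs closed form = {abs(current - exact_tip) / exact_tip:.2e}")
```

In a real implant study the same loop is run on the 3D mesh, with a quantity such as peak von Mises stress as the convergence target, and physical test results replace the closed-form check.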

Workflow for an Integrated NAM-Based Safety Assessment

The following diagram illustrates a logical workflow for integrating multiple NAMs into a safety assessment strategy, moving from simple, high-throughput systems to more complex, human-relevant models.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of NAMs relies on a suite of specialized tools and platforms. The following table details key solutions used in the development and application of these methodologies.

Table 3: Essential Research Reagent Solutions for NAMs

| Tool / Solution | Function | Example Use Case |
|---|---|---|
| Human Pluripotent/Adult Stem Cells | Source for generating patient-specific human tissues and organoids. | Creating patient-derived tumor organoids for ex vivo testing of oncology therapies [27]. |
| Specialized Cell Culture Media & Growth Factors | Supports the growth, differentiation, and maintenance of complex 3D cell cultures. | Enabling the maturation of cardiac organoids that beat and pump fluid [24] [27]. |
| Organ-on-a-Chip Platforms | Microfluidic devices that mimic the structure and function of human organs. | Used by Roche & J&J with Emulate for predictive toxicity evaluation of new therapeutics [23]. |
| AI/ML Analytics Platforms | Analyzes complex, high-dimensional data from NAMs (e.g., transcriptomics, imaging). | Translating in vitro phenotypic data into clinically meaningful predictions for dose selection [29]. |
| FEA Software (e.g., ANSYS, Abaqus) | Performs computational simulation of mechanical stress, strain, and deformation. | Predicting the biomechanical performance and failure modes of lattice-structured implants [4] [30]. |
| Quantitative Systems Pharmacology (QSP) Tools | Mechanistic modeling platforms that integrate NAM data to predict clinical outcomes. | Translating in vitro NAM efficacy/toxicity data into predictions of clinical exposure for FIH dose selection [29]. |

The landscape of preclinical drug development is being reshaped by the convergent forces of ethical responsibility, scientific necessity, and regulatory evolution. New Approach Methodologies are no longer speculative concepts but are maturing into practical, powerful tools that offer a more human-relevant, efficient, and predictive path forward. As this guide has illustrated through comparative data and experimental protocols, NAMs—from organ-on-a-chip systems to FEA and other computational models—each have distinct strengths and optimal contexts of use.

The transition will be phased, with NAMs initially complementing animal studies before potentially replacing them in specific areas. For researchers and drug development professionals, success in this new paradigm will require interdisciplinary collaboration, strategic investment in technologies like those listed in the toolkit, and proactive engagement with regulators. By embracing NAMs, the industry is poised to enhance the predictive accuracy of preclinical development, reduce attrition rates in clinical trials, and ultimately get safer, more effective treatments to patients faster and more reliably.

Methodology in Action: Implementing FEA and Laboratory Techniques in Research Pipelines

Finite Element Analysis (FEA) has become a cornerstone of modern engineering, allowing designers to virtually test how products and structures behave under various forces and conditions [9]. In biomedical engineering, FEA provides a powerful computational tool to simulate the biomechanical behavior of biological tissues, medical devices, and their interactions. By breaking complex biological structures into smaller "finite" elements and simulating physical phenomena on each element, FEA tools can predict real-world performance with impressive accuracy, which is crucial for advancing medical research and device development [9]. This capability helps researchers and engineers identify critical biomechanical factors and optimize designs early in the development process, saving time and cost by reducing the need for physical prototypes [9].

The application of FEA in biomedical contexts presents unique challenges and opportunities compared to traditional engineering fields. Biological tissues exhibit complex, often nonlinear material behaviors, and patient-specific anatomical variations must be carefully considered. This article breaks down the complete FEA workflow—pre-processing, solving, and post-processing—with specific focus on biomedical applications, providing researchers with a framework for implementing reliable computational models in their work.

The FEA Workflow: A Three-Stage Process

The finite element method follows a systematic workflow consisting of three main stages: pre-processing, solving, and post-processing. Each stage contributes to the overall accuracy and reliability of the simulation, with particular considerations for biomedical applications.

Stage 1: Pre-processing - Model Preparation

The pre-processing stage involves converting a geometrical model into a discretized system suitable for numerical analysis. This stage establishes the foundation for the entire simulation.

Geometry Acquisition and Creation: Biomedical FEA often begins with acquiring subject-specific anatomical geometries. Recent research emphasizes "the use of subject-specific data" to enhance clinical relevance [31]. Geometries can be generated from medical imaging data (CT, MRI, micro-CT) using segmentation algorithms [31]. For example, one study developed "subject-specific geometries of the defect from in vivo micro-CT scans" to model bone defect healing [31]. Commercial CAD software like SOLIDWORKS is also used for creating detailed component geometries [32].

Meshing and Element Selection: The geometrical model is subdivided into small discrete regions called elements, connected at nodes. This process, known as meshing, transforms the continuous problem into a computationally solvable discrete one [33]. Element selection significantly impacts results; simpler elements like TRI3 (triangle with 3 nodes) can be "too stiff" and "undervalue stress," while higher-order elements like TRI6 (triangle with 6 nodes) or QUAD8 (quadrilateral with 8 nodes) provide better accuracy through quadratic interpolation [33]. For complex biological structures, tetrahedral elements (TET4, TET10) are commonly used, though hexahedral elements (HEX8, HEX20) offer superior accuracy when geometry permits [33].

Material Property Assignment: Biomedical models require careful assignment of material properties that reflect the complex behavior of biological tissues. Unlike most engineering materials, biological tissues often exhibit nonlinear, anisotropic, and viscoelastic properties. The development of accurate "nonlinear material models" is essential for model reliability [34]. One study highlighted the importance of using a "ductile damage model" for titanium trabecular structures fabricated via additive manufacturing [34].

Boundary Conditions and Loading: Applying realistic constraints and loads is particularly important in biomedical simulations. Research shows that "incorporating subject-specific boundary conditions significantly enhanced model accuracy" [31]. These may include muscle forces, joint reactions, occlusal loads in dental applications, or physiological pressure distributions. For example, a dental study applied "vertical (100N at 0°) and oblique (100N at 45°) loading conditions" to simulate biting forces [32].
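To make the loading description concrete, the following minimal sketch resolves a 100 N load into components along and across a tooth's long axis. The angle convention (0° along the long axis) and the function name `load_components` are assumptions made for illustration; the cited study's exact load application is not specified here.

```python
import math

def load_components(magnitude_n, angle_deg):
    """Split a load into axial and transverse components.

    Assumed convention: angle_deg is measured from the long axis,
    so 0 deg is purely axial (vertical) and 90 deg is purely transverse.
    """
    theta = math.radians(angle_deg)
    axial = magnitude_n * math.cos(theta)
    transverse = magnitude_n * math.sin(theta)
    return axial, transverse

# The two load cases quoted in the text: 100 N at 0 deg and 100 N at 45 deg.
vertical = load_components(100.0, 0.0)
oblique = load_components(100.0, 45.0)

print(f"vertical: axial={vertical[0]:.1f} N, transverse={vertical[1]:.1f} N")
print(f"oblique:  axial={oblique[0]:.1f} N, transverse={oblique[1]:.1f} N")
```

Under this convention the oblique case carries equal axial and transverse components of roughly 70.7 N each, which is why oblique loading introduces bending that a purely vertical load does not.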

Stage 2: Solving - Computational Analysis

The solving stage involves the computational process of assembling system matrices and solving the governing equations across the discretized domain.

Solution Methods: The General Stiffness Method (also known as the Displacement Method) is used by most FEA software [33]. This method "calculates the displacement at each node and then uses interpolation over the elements to determine the solution" [33]. Strain is then derived from the displacement solution, and stress is calculated from strain using the material's stress-strain relationship [33].
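The displacement-to-strain-to-stress sequence can be sketched on the simplest possible case: a one-dimensional bar, fixed at one end and pulled at the other, discretized with two-node linear elements. This is an illustrative toy problem, not the formulation of any particular commercial solver; the function name `solve_axial_bar` and the material and geometry values are assumptions chosen only so the result can be checked by hand.

```python
import numpy as np

def solve_axial_bar(n_elements, length, area, youngs_modulus, tip_force):
    """Displacement-method solution of a bar fixed at x=0 with a tip load."""
    n_nodes = n_elements + 1
    le = length / n_elements  # element length

    # Stiffness matrix of one two-node linear bar element.
    k_local = (youngs_modulus * area / le) * np.array([[1.0, -1.0],
                                                       [-1.0, 1.0]])

    # Assemble the global stiffness matrix element by element.
    K = np.zeros((n_nodes, n_nodes))
    for e in range(n_elements):
        K[e:e + 2, e:e + 2] += k_local

    # Load vector: a single point force on the last node.
    F = np.zeros(n_nodes)
    F[-1] = tip_force

    # Boundary condition: node 0 is fixed, so solve the reduced system.
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], F[1:])

    # Post-process: strain from displacement, stress from Hooke's law.
    strain = np.diff(u) / le
    stress = youngs_modulus * strain
    return u, strain, stress

# Assumed illustrative values: 0.1 m bar, 1e-4 m^2 section, E = 110 GPa, 100 N load.
u, strain, stress = solve_axial_bar(4, 0.1, 1e-4, 110e9, 100.0)
print("nodal displacements (m):", u)
print("element stresses (Pa):", stress)
```

For this uniform bar the closed-form answer is a constant stress of F/A (1 MPa with the values above) and a tip displacement of FL/(EA), so the sketch can be checked directly against a hand calculation.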

Analysis Types: Biomedical simulations may employ various analysis types depending on the research question:

- Linear static analysis for equilibrium problems

- Nonlinear analysis for large deformations and complex material behaviors [9]

- Dynamic analysis for time-dependent phenomena

- Multiphysics simulations for coupled phenomena (thermal-structural, fluid-structure interactions)

Specialized solvers may be employed for specific applications. For instance, "Abaqus/Explicit (explicit solver suited for high-speed dynamic events like impacts)" might be used for trauma simulations, while "Abaqus/Standard (implicit solver for static and low-speed dynamic problems)" would be appropriate for most physiological loading scenarios [9].

Computational Considerations: The solving process is computationally intensive, with high-resolution simulations demanding "powerful computing resources and extended processing times" [1]. Many modern FEA packages support high-performance computing (HPC) to distribute calculations across multiple processors, significantly reducing solution times for complex biomedical models [9].

Stage 3: Post-processing - Results Interpretation

Post-processing involves analyzing and interpreting the solution data to extract meaningful engineering insights and draw research conclusions.

Data Visualization and Extraction: Results such as stress distributions, displacement fields, and strain patterns are visualized using contour plots, vector diagrams, and deformation animations. In biomedical contexts, specific quantitative measures are often extracted, such as "Von Mises stress values in the PDL and cortical bone" in dental studies [32] or "compressive strains within the defect" in bone healing research [31].
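Because Von Mises stress is the scalar reported throughout the dental example, a short sketch of how it is obtained from a full stress tensor may help. The function below uses the standard definition based on the deviatoric stress; the function name and the sample tensors are illustrative assumptions, and commercial post-processors perform this calculation internally.

```python
import numpy as np

def von_mises(stress_tensor):
    """Von Mises equivalent stress from a symmetric 3x3 Cauchy stress tensor."""
    s = np.asarray(stress_tensor, dtype=float)
    # Remove the hydrostatic part to obtain the deviatoric stress.
    deviatoric = s - np.trace(s) / 3.0 * np.eye(3)
    # sigma_vm = sqrt(3/2 * s_ij * s_ij)
    return float(np.sqrt(1.5 * np.sum(deviatoric * deviatoric)))

# Illustrative stress states (units are arbitrary but must be consistent).
uniaxial = np.diag([1.0, 0.0, 0.0])
pure_shear = np.array([[0.0, 1.0, 0.0],
                       [1.0, 0.0, 0.0],
                       [0.0, 0.0, 0.0]])
hydrostatic = np.diag([2.0, 2.0, 2.0])

print(von_mises(uniaxial), von_mises(pure_shear), von_mises(hydrostatic))
```

The three sample states show the expected behavior: a uniaxial stress maps to itself, pure shear is scaled by the square root of three, and a purely hydrostatic state gives zero.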

Validation and Verification: Establishing model credibility is essential, particularly for biomedical applications with clinical implications. This involves "comparing experiments and simulations" [34] to ensure the model accurately represents reality. For example, one study used "axial strain data from strain gauges on the fixators" to validate their bone healing models [31].
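One simple way to quantify agreement between simulated and measured strains is sketched below. The metrics (root-mean-square error, range-normalized error, Pearson correlation) are common choices rather than the method of the cited studies, the function name `validation_metrics` is hypothetical, and the strain values are made-up placeholders, not data from any study.

```python
import numpy as np

def validation_metrics(measured, simulated):
    """Agreement metrics between experimental and simulated values."""
    measured = np.asarray(measured, dtype=float)
    simulated = np.asarray(simulated, dtype=float)
    residual = simulated - measured
    rmse = float(np.sqrt(np.mean(residual ** 2)))
    # Normalize by the measured range so the error is unit-free.
    nrmse = rmse / float(measured.max() - measured.min())
    pearson_r = float(np.corrcoef(measured, simulated)[0, 1])
    return {"rmse": rmse, "nrmse": nrmse, "pearson_r": pearson_r}

# Placeholder microstrain values for illustration only (NOT experimental data).
gauge_strain = [120.0, 240.0, 355.0, 480.0]
fea_strain = [130.0, 250.0, 365.0, 490.0]

metrics = validation_metrics(gauge_strain, fea_strain)
print(metrics)
```

A high correlation alone does not establish validity: in this placeholder case the simulation tracks the measurements perfectly in trend but carries a constant offset, which only the error metrics reveal.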

Statistical Analysis: Quantitative results often require statistical processing to draw meaningful conclusions. Research protocols may specify that "stress distribution results were analyzed using MedCalc software" to compare performance across different conditions or designs [32].

Leading FEA Software for Biomedical Applications

Selecting appropriate FEA software requires careful consideration of capabilities, usability, and specialized features for biomedical modeling.

Table 1: Comparison of Leading FEA Software Platforms

| Software | Strengths | Biomedical Applications | Limitations |
|---|---|---|---|
| ANSYS Mechanical | Comprehensive multiphysics capabilities; High-fidelity results; Extensive material library [9] | Orthopedic implant analysis; Surgical instrument design; Biomedical device testing [9] | Steep learning curve; High cost [9] |
| Abaqus (SIMULIA) | Advanced non-linear analysis; Complex material behavior (plastics, rubbers, composites); Sophisticated contact modeling [9] | Soft tissue mechanics; Bone-implant interactions; Cardiovascular devices [9] | Less intuitive interface; Significant cost [9] |
| MSC Nastran | Reliable for linear statics, dynamics, and buckling; Extensive verification history; Efficient for large models [9] | Structural analysis of external fixators; Prosthetic components; Surgical guides [9] | Nonlinear capabilities less advanced than Abaqus [9] |
| Altair OptiStruct | Strong design optimization; Topology optimization; Lightweighting [9] | Patient-specific implant design; Bone-conserving prosthesis design [9] | Units-based licensing may be complex [9] |

Case Study: FEA of Dental Splinting Materials

A recent study demonstrates a complete FEA workflow applied to periodontal splinting, providing an excellent example of biomedical FEA implementation.

Experimental Protocol and Methodology

Research Objective: To evaluate and compare stress distribution of four different splint materials—composite, fiber-reinforced composite (FRC), polyetheretherketone (PEEK), and metal—on mandibular anterior teeth with 55% bone loss [32].

Model Development:

- 3D models of mandibular anterior teeth were constructed using SOLIDWORKS 2020 [32]

- Finite element models underwent meshing using ANSYS software [32]

- Each splint material was assigned specific mechanical properties (Young's modulus, density, Poisson's ratio) [32]

- Models simulated both non-splinted and splinted configurations [32]

Loading Conditions: Vertical (100N at 0°) and oblique (100N at 45°) loads were applied to simulate biting forces [32].

Analysis Method: Finite element analysis simulations were performed in ANSYS software, with stress distribution evaluated by the Von Mises stress criterion [32].

Quantitative Results and Interpretation

Table 2: Von Mises Stress (MPa) in Cortical Bone Across Splint Types

| Splint Type | Vertical Load (100N at 0°) | Oblique Load (100N at 45°) |
|---|---|---|
| Non-Splinted | 0.43 | 0.74 |
| Composite | 0.44 | 0.62 |
| FRC | 0.36 | 0.41 |
| Metal Wire | 0.34 | 0.51 |
| PEEK | Data not shown in excerpt | Data not shown in excerpt |

Table 3: Von Mises Stress (MPa) in Periodontal Ligament (Oblique Loading)

| Tooth Location | Non-Splinted | Composite | FRC | Metal |
|---|---|---|---|---|
| Central Incisors | 0.39 | 0.19 | 0.13 | 0.26 |
| Lateral Incisors | 0.32 | 0.24 | 0.19 | 0.25 |
| Canine | 0.31 | 0.45 | 0.38 | 0.36 |

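The results demonstrated that "non-splinted teeth exhibited the highest stress levels, particularly under oblique loading conditions" [32]. Among splinting materials, "FRC showed the most effective reduction in stress across all teeth, especially under vertical loads" [32]. The study concluded that "FRC splints emerged as the most effective material for minimizing stress under both vertical and oblique loading conditions" [32]. The tabulated values add nuance to these summary statements. In cortical bone under vertical load, the metal wire splint (0.34 MPa) is slightly lower than FRC (0.36 MPa), and composite (0.44 MPa) is marginally above the non-splinted case (0.43 MPa). In the canine periodontal ligament under oblique load, every splinted configuration exceeds the non-splinted value of 0.31 MPa, which suggests that splinting redistributes load toward the canines rather than reducing stress uniformly.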
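The relative effect of each splint is easier to compare as a percentage change from the non-splinted baseline. The sketch below performs that arithmetic on the cortical bone values reported in Table 2; the variable and function names are illustrative, and PEEK is omitted because its values are not available in the excerpt.

```python
# Von Mises stress in cortical bone (MPa) from Table 2: (vertical, oblique).
cortical_stress_mpa = {
    "Non-Splinted": (0.43, 0.74),
    "Composite": (0.44, 0.62),
    "FRC": (0.36, 0.41),
    "Metal Wire": (0.34, 0.51),
}

def percent_change(value, baseline):
    """Signed percentage change of value relative to baseline."""
    return 100.0 * (value - baseline) / baseline

base_vertical, base_oblique = cortical_stress_mpa["Non-Splinted"]

# Negative numbers mean the splint lowered stress relative to no splint.
changes = {
    name: (percent_change(v, base_vertical), percent_change(o, base_oblique))
    for name, (v, o) in cortical_stress_mpa.items()
    if name != "Non-Splinted"
}

for name, (dv, do) in changes.items():
    print(f"{name:<11} vertical {dv:+6.1f}%   oblique {do:+6.1f}%")
```

On these figures FRC gives the largest reduction under oblique loading, metal wire gives a slightly larger reduction than FRC under vertical loading, and composite shows a small increase under vertical loading.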

Essential Research Reagents and Computational Tools

Successful implementation of biomedical FEA requires both computational resources and specialized materials for model validation.

Table 4: Essential Research Toolkit for Biomedical FEA

| Tool/Category | Specific Examples | Function/Role in Research |
|---|---|---|
| FEA Software | ANSYS, Abaqus, MSC Nastran, Altair OptiStruct [9] | Primary computational platform for simulation and analysis |
| CAD Software | SOLIDWORKS, CATIA [32] [9] | Geometrical model creation and modification |
| Imaging & Segmentation | Micro-CT, CT, MRI scanners; Segmentation algorithms [31] | Acquisition of subject-specific anatomical geometries |
| Material Testing Equipment | Tensile testers, Dynamic mechanical analyzers [1] | Characterization of material properties for biological tissues and biomaterials |
| Validation Instruments | Strain gauges, Load cells, Motion capture systems [31] | Experimental validation of computational models |
| Statistical Analysis | MedCalc, MATLAB, Python with statistical libraries [32] | Statistical processing of simulation results |

FEA vs. Traditional Experimental Methods

While FEA offers powerful capabilities, understanding its relationship with traditional experimental methods is essential for comprehensive biomedical research.

Advantages of FEA:

- Cost-Effective: Significantly reduces the need for expensive prototypes and physical testing [1]

- Detailed Analysis: Identifies stress concentrations, weak points, and failure modes with precision [1]

- Faster Iterations: Allows engineers to modify and test designs efficiently [1]

- Extreme Condition Simulation: Evaluates performance under high pressure, temperature, and impact loads that may be unsafe or impractical for physical testing [1]

Advantages of Traditional Stress Testing:

- Real-World Accuracy: Reflects actual performance under operational conditions [1]

- Regulatory Compliance: Often mandatory for meeting industry safety and quality standards [1]

- Failure Mode Analysis: Offers direct visual and measurable insights into failure points [1]

Hybrid Approach: For optimal results, researchers often adopt "a hybrid approach that integrates both Finite Element Analysis (FEA) and Traditional Stress Testing" [1]. This strategy balances computational efficiency with experimental validation, which is particularly important in biomedical applications where both accuracy and safety are critical.