Beyond Accuracy: Advanced Strategies for Handling Class Imbalance in Parasite Image Classification

Abstract

Class imbalance is a pervasive challenge that significantly hinders the development of robust deep-learning models for parasite image classification, often leading to biased predictions and poor generalization on rare species or life stages. This article provides a comprehensive guide for researchers and biomedical professionals, addressing the issue from foundational concepts to cutting-edge solutions. We explore the root causes and impacts of imbalance in medical imaging datasets, critically evaluate a spectrum of methodological approaches from data-level to algorithm-level solutions, and delve into practical troubleshooting and optimization techniques for real-world deployment. The content further establishes a rigorous framework for model validation and comparative analysis, emphasizing clinical relevance. By synthesizing the latest research, this article aims to equip the community with the knowledge to build more accurate, reliable, and equitable diagnostic tools for parasitology.

The Imbalance Problem: Understanding its Impact on Parasitological AI

Defining Class Imbalance in the Context of Parasite Microscopy

This technical support guide provides researchers with practical solutions for a common hurdle in automated parasite detection: class imbalance. Learn how to diagnose and overcome it to build more reliable AI models.

Table of Contents

- What is Class Imbalance and Why is it a Problem in Parasite Microscopy?

- How Can I Identify Class Imbalance in My Parasite Image Dataset?

- What Resampling Strategies Can I Use to Fix Class Imbalance?

- My Model is Still Biased After Resampling. What Advanced Techniques Can I Try?

- How Do I Properly Evaluate a Model Trained on an Imbalanced Dataset?

What is Class Imbalance and Why is it a Problem in Parasite Microscopy?

A: Class imbalance occurs when the number of examples in one class (e.g., non-parasitized cells) significantly outweighs the examples in another class (e.g., parasitized cells) [1]. In parasite microscopy, this is not just common—it's the norm. For instance, you might have thousands of images of healthy red blood cells for every one image of a cell infected with a rare parasite.

This creates a major problem for machine learning models. These models learn by minimizing error, and if one class dominates the dataset, the easiest way to reduce error is to always predict the majority class. The model becomes biased and essentially "gives up" on learning to identify the minority class, treating it as noise [1]. Consequently, while the model may show high overall accuracy, it will fail in its primary task: correctly detecting parasites [2] [3].

How Can I Identify Class Imbalance in My Parasite Image Dataset?

A: Before tackling imbalance, you must confirm its presence and severity. The process is straightforward and involves calculating the class distribution.

Experimental Protocol: Quantifying Class Imbalance

- Data Loading: Load your annotated dataset. For this example, we use a parasite image dataset [4].

- Class Counting: Use a simple function to count the number of samples in each class. The Counter class from Python's collections module is ideal for this.

- Visualization: Create a bar chart to visualize the difference in class counts.

Code Example:
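A minimal sketch of the counting step, using only the standard library. The label list is made up to match the example distribution in the table that follows; in practice the labels would come from your own annotation files. A text bar stands in for the bar chart, which could equally be drawn with a plotting library such as matplotlib.

```python
from collections import Counter


def class_distribution(labels):
    """Return {label: (count, percent_of_total)} for a list of class labels."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: (n, 100.0 * n / total) for label, n in counts.items()}


# Hypothetical labels: 0 = uninfected, 1 = parasitized.
labels = [0] * 9450 + [1] * 550

distribution = class_distribution(labels)
for label, (count, percent) in sorted(distribution.items()):
    bar = "#" * round(percent / 2)  # one '#' per 2% of the dataset
    print(f"class {label}: {count:>5} images ({percent:4.1f}%) {bar}")

# Imbalance ratio: majority count divided by minority count.
majority = max(count for count, _ in distribution.values())
minority = min(count for count, _ in distribution.values())
imbalance_ratio = majority / minority
print(f"imbalance ratio: {imbalance_ratio:.1f}:1")
```

The imbalance ratio is a convenient single number to report alongside the per-class counts.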

Example Output: Using the dataset from [4], an analysis might reveal a distribution like this:

Table: Example Class Distribution in a Parasite Dataset

| Class Label | Class Name | Number of Images | Percentage of Total |

|---|---|---|---|

| 0 | Uninfected / Host Cells | 9,450 | 94.5% |

| 1 | Parasitized | 550 | 5.5% |

| Total | | 10,000 | 100% |

This table clearly shows a severe imbalance, where the minority class (Parasitized) represents only a small fraction of the entire dataset.

What Resampling Strategies Can I Use to Fix Class Imbalance?

A: Resampling is the most direct approach to rectifying class imbalance. It adjusts the training dataset to have a more balanced class distribution. The two primary categories are Oversampling and Undersampling [5] [1].

Experimental Protocol: Implementing Resampling with imbalanced-learn

- Install Library: Ensure you have the imbalanced-learn package installed (pip install imbalanced-learn).

- Split Data: First, split your data into training and testing sets. Crucially, apply resampling only to the training set to avoid data leakage and ensure your test set remains representative of the real-world distribution [5].

- Apply Resampling: Use RandomOverSampler or RandomUnderSampler from the imblearn library.

Code Example:
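The sketch below illustrates the split-then-resample order in plain Python, so the mechanics are visible without any dependencies. The helper names (`stratified_split`, `random_oversample`) and the image identifiers are hypothetical. It is a simplified stand-in for what RandomOverSampler does, not the library's implementation.

```python
import random
from collections import Counter


def stratified_split(samples, labels, test_fraction=0.2, seed=0):
    """Split per class so the test set keeps the original class ratio."""
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_test = round(len(idx) * test_fraction)
        test_idx += idx[:n_test]
        train_idx += idx[n_test:]
    train = ([samples[i] for i in train_idx], [labels[i] for i in train_idx])
    test = ([samples[i] for i in test_idx], [labels[i] for i in test_idx])
    return train, test


def random_oversample(samples, labels, seed=0):
    """Duplicate random minority samples until every class matches the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_x, out_y = list(samples), list(labels)
    for cls, n in counts.items():
        pool = [x for x, y in zip(samples, labels) if y == cls]
        extra = [rng.choice(pool) for _ in range(target - n)]
        out_x += extra
        out_y += [cls] * len(extra)
    return out_x, out_y


# Hypothetical data: image identifiers stand in for image arrays.
samples = [f"img_{i}" for i in range(1000)]
labels = [0] * 945 + [1] * 55

(train_x, train_y), (test_x, test_y) = stratified_split(samples, labels)
balanced_x, balanced_y = random_oversample(train_x, train_y)  # training set only

print("train:", Counter(train_y))
print("train after oversampling:", Counter(balanced_y))
print("test (untouched):", Counter(test_y))
```

With imbalanced-learn installed, the oversampling step corresponds to `RandomOverSampler(random_state=0).fit_resample(X_train, y_train)`, and `RandomUnderSampler` follows the same `fit_resample` pattern.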

Table: Comparison of Basic Resampling Strategies

| Strategy | Method | Pros | Cons | Best For |

|---|---|---|---|---|

| Random Oversampling | Duplicates random examples from the minority class [5]. | Prevents loss of information from the majority class. | Can lead to overfitting, as the model sees exact copies of images [1] [6]. | Smaller datasets where the majority class data is critical. |

| Random Undersampling | Randomly removes examples from the majority class [5]. | Reduces training time and can improve generalization. | May remove potentially useful information, degrading model performance [1]. | Very large datasets where discarding majority samples is acceptable. |

| SMOTE (Synthetic Minority Oversampling) | Creates synthetic minority class samples by interpolating between existing ones [1]. | Reduces risk of overfitting compared to random oversampling. | Can generate unrealistic or noisy samples, which is problematic for detailed medical images [6]. | Situations where random oversampling leads to clear overfitting. |

My Model is Still Biased After Resampling. What Advanced Techniques Can I Try?

A: For persistent bias, or in cases of extreme imbalance, more sophisticated methods are required. Two advanced approaches are Algorithmic Modification and Anomaly Detection.

1. Algorithmic Modification: Using Class Weights

This technique does not change the data but tells the model to pay more attention to the minority class during training. It's simple to implement in frameworks like TensorFlow/Keras [7].

Code Example:
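A minimal sketch of how a class-weight dictionary might be computed. It uses the common "balanced" heuristic, n_samples / (n_classes * class_count), which is an assumption here rather than a weighting prescribed by [7]. The labels are hypothetical, and the Keras model and image arrays are not defined in this snippet.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * n) for cls, n in counts.items()}


# Hypothetical training labels: 0 = uninfected, 1 = parasitized.
train_labels = [0] * 9450 + [1] * 550
class_weights = balanced_class_weights(train_labels)
print(class_weights)

# With a compiled Keras model (not defined here), the dictionary would be
# passed to training roughly as follows:
# model.fit(train_images, train_labels, epochs=10, class_weight=class_weights)
```

In this example, errors on parasitized cells count roughly 17 times more than errors on uninfected cells, which mirrors the imbalance ratio of the data.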

2. Anomaly Detection Approach

This paradigm reframes the problem: instead of a binary classification, it treats parasite detection as an anomaly detection task. The model is trained only on the majority class (uninfected cells) and learns to recognize them. A parasitized cell is then identified as an "outlier" or "anomaly" because it looks different from the norm [6].

Experimental Protocol: Anomaly Detection with an Autoencoder

- Model Selection: Train an autoencoder (a neural network that learns to compress and reconstruct its input) exclusively on images of uninfected cells.

- Learning Phase: The autoencoder learns to reconstruct uninfected cells with low error.

- Detection Phase: When presented with a new image, a high reconstruction error indicates that the input is anomalous and likely contains a parasite.

This method, as demonstrated in studies like AnoMalNet, is highly effective for extreme class imbalance because it does not require a large number of positive (parasitized) samples during training [6].
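The detection phase can be sketched as a thresholding step on reconstruction error. The autoencoder itself is not shown. The error values are invented for illustration, and the threshold rule (mean plus three standard deviations of errors on held-out uninfected images) is an assumption, not the rule used in [6].

```python
import statistics


def reconstruction_error(original, reconstructed):
    """Mean squared error between two flattened images (lists of pixel values)."""
    return sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)


def fit_threshold(normal_errors, k=3.0):
    """Assumed rule: mean + k * std of errors on held-out uninfected images."""
    return statistics.mean(normal_errors) + k * statistics.pstdev(normal_errors)


def is_anomalous(error, threshold):
    """Flag an image as a likely parasite candidate when its error is high."""
    return error > threshold


# Hypothetical reconstruction errors from a trained autoencoder (not shown).
uninfected_val_errors = [0.010, 0.012, 0.011, 0.009, 0.013, 0.010, 0.011]
threshold = fit_threshold(uninfected_val_errors)

new_image_errors = {"cell_a": 0.011, "cell_b": 0.048}
flags = {name: is_anomalous(err, threshold) for name, err in new_image_errors.items()}
print(f"threshold={threshold:.4f}", flags)
```

The choice of `k` trades recall against false alarms, so it is worth tuning on a small labeled validation set when one is available.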

How Do I Properly Evaluate a Model Trained on an Imbalanced Dataset?

A: Accuracy is a misleading metric for imbalanced problems. A model that simply always predicts "uninfected" could achieve 94.5% accuracy on the example class distribution shown earlier, but it would be useless. You must use metrics that are sensitive to the performance on the minority class [7] [1].

Key Metrics and Their Interpretation:

- Precision: Of all the images predicted as "parasitized," how many are actually parasitized? (Drops as false positives increase.)

- Recall (Sensitivity): Of all the actually parasitized images, how many did the model correctly find? (Drops as false negatives increase.)

- F1-Score: The harmonic mean of Precision and Recall. This single metric provides a balanced view of the model's performance on the minority class.

- Confusion Matrix: A table that gives a complete picture of True Positives, False Positives, True Negatives, and False Negatives.

- Precision-Recall (PR) Curve: Often more informative than the ROC curve for imbalanced data, as it focuses directly on the performance of the positive (minority) class [7].

Experimental Protocol: Comprehensive Model Evaluation

Code Example:
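A dependency-free sketch of the evaluation step, computing the confusion-matrix counts and the minority-class metrics listed above. The predictions are fabricated to contrast a degenerate "always uninfected" model with a more useful one. Libraries such as scikit-learn provide equivalent helpers (for example `confusion_matrix`, `classification_report`, and `precision_recall_curve`).

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FP, TN, FN), treating `positive` as the minority class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    return tp, fp, tn, fn


def minority_metrics(y_true, y_pred, positive=1):
    """Accuracy plus precision, recall, and F1 for the positive class."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred, positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    accuracy = (tp + tn) / len(y_true)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical test set: 945 uninfected (0) and 55 parasitized (1) cells.
y_true = [0] * 945 + [1] * 55
always_uninfected = [0] * 1000                          # majority-class model
better_model = [0] * 930 + [1] * 15 + [1] * 44 + [0] * 11

baseline = minority_metrics(y_true, always_uninfected)
improved = minority_metrics(y_true, better_model)
print("always uninfected:", baseline)
print("better model:     ", improved)
```

The baseline reaches high accuracy with zero recall, which is exactly the failure that accuracy alone hides.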

Table: The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Parasite Image Classification | Example / Specification |

|---|---|---|

| Digital Microscopy System | Acquires high-resolution digital images of blood smears or other samples for analysis. | System capable of 400x and 1000x magnification [4]. |

| Benchmarked Parasite Datasets | Provides standardized, annotated data for training and validating models. | Microscopic Images of Parasites Species dataset [4]. |

| imbalanced-learn (imblearn) Library | Python library offering a suite of resampling algorithms (SMOTE, RandomUnderSampler, etc.) [5]. | pip install imbalanced-learn |

| Deep Learning Framework | Provides the infrastructure to design, train, and evaluate complex models like CNNs and autoencoders. | TensorFlow & Keras [7] or PyTorch. |

| Computational Hardware (GPU) | Accelerates the training of deep learning models, which is computationally intensive. | NVIDIA GPUs with CUDA support. |

| Self-Supervised Learning Framework | Leverages unlabeled data for pre-training, improving feature learning when labeled data is scarce [3]. | Frameworks like BYOL (Bootstrap Your Own Latent) [3]. |

In parasite image classification, a class imbalance occurs when one category of data (e.g., "uninfected cells") significantly outnumbers another (e.g., "a specific parasite life-stage") [8]. This guide details how this data skew creates diagnostic blind spots, where automated systems fail to identify crucial minority classes, leading to misdiagnosis. The following sections provide a troubleshooting guide, experimental protocols, and resource lists to help researchers mitigate these critical issues.

Troubleshooting Guide & FAQs

FAQ 1: Why does my model have high overall accuracy but fails to detect infected cells in critical cases?

- Problem: This is a classic symptom of class imbalance. The model becomes biased toward the majority class (e.g., uninfected cells) because it is penalized less for missing the minority class (e.g., rare parasites) during training [9].

- Solution:

- Use Appropriate Metrics: Stop using accuracy as your primary metric. Adopt metrics that reflect minority-class performance, such as Precision, Recall, and F1-score, alongside Area Under the ROC Curve (AUC-ROC) [9]. Because AUC-ROC can still look optimistic under heavy imbalance, also inspect the precision-recall curve.

- Inspect the Confusion Matrix: This will visually show you if the model is misclassifying one specific parasite stage as another or as uninfected [9].

- Implement Cost-Sensitive Learning: Adjust your model's loss function to assign a higher penalty for misclassifying minority class samples. This forces the model to pay more attention to them during training [10].

FAQ 2: My model is overfitting to the few minority class samples I have. How can I generate more reliable data?

- Problem: Simple data duplication (random oversampling) leads to overfitting because the model learns the same examples repeatedly [9].

- Solution:

- Use Advanced Synthetic Data Generation: Employ Synthetic Minority Over-sampling Technique (SMOTE) or its variants to create new, synthetic minority class samples by interpolating between existing ones [9].

- Address Intra-Class Imbalance: Be aware that synthetic oversampling can under-represent rare sub-types when the minority class itself is diverse; in GAN-based generation this shows up as "intra-class mode collapse". For advanced cases, use methods that first identify sparse and dense regions within the minority class before generation to ensure diversity [11].

- Combine with Data Cleaning: After oversampling, use undersampling techniques like Tomek Links to remove overlapping or noisy samples from the majority class, creating a cleaner decision boundary [5] [9].

FAQ 3: I am working with limited computational resources. What is a computationally efficient strategy for handling imbalance?

- Problem: Complex models and data generation can be prohibitive in resource-constrained settings, which are common in field clinics [12].

- Solution:

- Leverage Lightweight Models: Utilize recently proposed efficient architectures like Hybrid Capsule Networks (Hybrid CapNet) which are designed for high accuracy with minimal parameters (e.g., 1.35M parameters, 0.26 GFLOPs) [12] or YAC-Net for parasite egg detection [13].

- Algorithmic Ensemble Methods: Use ensemble methods like Balanced Random Forests or EasyEnsemble which are specifically designed to perform well on imbalanced data and can be more effective than applying resampling separately [14].

- Adjust Class Weights: The simplest approach is to use the class_weight='balanced' parameter available in many classifiers (e.g., in scikit-learn). This adjusts the loss function automatically without needing to generate new data [9].

Experimental Protocols for Mitigating Blind Spots

Protocol 1: Implementing a Composite Loss Function for Robust Training

This methodology is designed to enhance classification accuracy, spatial localization, and robustness to class imbalance and annotation noise simultaneously [12].

- Objective: To train a model that is not only accurate but also spatially aware and robust to noisy labels in imbalanced parasite datasets.

- Materials: A labeled dataset of parasite images (e.g., MP-IDB, IML-Malaria) with known class imbalance.

- Procedure:

- Model Architecture: Employ a lightweight hybrid architecture (e.g., Hybrid CapNet) that combines CNN-based feature extraction with capsule layers to preserve spatial hierarchies [12].

- Loss Function Configuration: Integrate a novel composite loss function (L_total) that combines four components:

- Margin Loss (L_margin): Ensures correct classification of capsule outputs [12].

- Focal Loss (L_focal): Down-weights the loss assigned to well-classified examples, making the model focus on hard-to-classify minority samples [12].

- Reconstruction Loss (L_recon): Uses a decoder network to reconstruct the input image, encouraging the capsules to capture meaningful features [12].

- Offset Regression Loss (L_reg): Improves the spatial localization of parasites within the image [12].

- Training: Train the model by minimizing the combined loss:

L_total = L_margin + αL_focal + βL_recon + γL_reg, where α, β, and γ are weighting hyperparameters.

- Validation: Perform both intra-dataset and cross-dataset evaluations to assess generalization. Use Grad-CAM visualizations to confirm the model focuses on biologically relevant parasite regions [12].
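To make the focal term and the weighted sum concrete, here is a small sketch in plain Python. It uses the standard focal-loss form, -balance * (1 - p)^focusing * log(p), with commonly used defaults (focusing = 2.0, balance = 0.25) that are assumptions rather than values taken from [12]. The component losses and the weights α, β, γ passed to `composite_loss` are placeholders.

```python
import math


def focal_loss(p_true_class, focusing=2.0, balance=0.25):
    """Focal loss for one sample, given the predicted probability of its true class."""
    p = min(max(p_true_class, 1e-7), 1.0 - 1e-7)  # clamp to avoid log(0)
    return -balance * (1.0 - p) ** focusing * math.log(p)


def composite_loss(l_margin, l_focal, l_recon, l_reg, alpha, beta, gamma):
    """L_total = L_margin + alpha * L_focal + beta * L_recon + gamma * L_reg."""
    return l_margin + alpha * l_focal + beta * l_recon + gamma * l_reg


# A well-classified sample contributes far less than a hard one.
easy = focal_loss(0.95)
hard = focal_loss(0.30)
print(f"easy sample: {easy:.6f}, hard sample: {hard:.6f}")

# Placeholder component values and weights, for illustration only.
total = composite_loss(1.0, 2.0, 3.0, 4.0, alpha=0.5, beta=0.1, gamma=0.2)
print(f"L_total = {total:.2f}")
```

Relative to plain cross-entropy, the `(1 - p) ** focusing` factor shrinks the easy sample's loss much more than the hard sample's, which is how the focal term shifts attention toward difficult minority examples.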

Protocol 2: A Standardized Segmentation and Multi-Stage Classification Framework

This protocol uses traditional image processing and machine learning to create a robust pipeline for detecting parasites and classifying their species and life-cycle stages from both thick and thin blood smears [15].

- Objective: To accurately segment and classify malaria parasites in varied smear images, addressing imbalance through a multi-stage approach.

- Materials: Microscopic images of thick and thin blood smears.

- Procedure:

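- Segmentation: Isolate candidate parasite regions from the smear image. The pipeline in [15] is reported to use Phansalkar thresholding with EKM for this step (see Table 1).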

- Feature Extraction: Extract morphological and color features from the segmented parasite regions.

- Multi-Stage Classification: Implement a cascaded classifier, such as a Random Forest (RF):

- Stage 1: Parasite Detection. Classify segments as "Parasite" or "Non-Parasite".

- Stage 2: Species Recognition. Route detected parasites to a classifier for "P. Falciparum" vs. "P. Vivax".

- Stage 3: Life-Cycle Staging. Finally, classify the parasite into its life-cycle stage (e.g., Ring, Trophozoite, Schizont, Gametocyte) [15].

- Validation: Evaluate the accuracy at each stage (detection, species recognition, staging) separately to identify where performance drops for minority classes occur [15].
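The routing logic of the cascade can be sketched as follows. The three stage functions are hypothetical rule-based stand-ins for trained classifiers (for instance, the `predict` call of a Random Forest), and the feature names are invented for illustration.

```python
def run_cascade(features, detect, recognize_species, classify_stage):
    """Route one segmented region through detection, species, then staging."""
    result = {"detection": detect(features)}
    if result["detection"] == "Parasite":
        # Later stages only run on segments that passed detection.
        result["species"] = recognize_species(features)
        result["stage"] = classify_stage(features)
    return result


# Hypothetical stand-ins for trained stage classifiers.
def detect(features):
    return "Parasite" if features["chromatin_area"] > 5 else "Non-Parasite"


def recognize_species(features):
    return "P. Vivax" if features["cell_enlarged"] else "P. Falciparum"


def classify_stage(features):
    return "Ring" if features["chromatin_area"] < 20 else "Trophozoite"


clean_result = run_cascade(
    {"chromatin_area": 2, "cell_enlarged": False},
    detect, recognize_species, classify_stage,
)
infected_result = run_cascade(
    {"chromatin_area": 12, "cell_enlarged": True},
    detect, recognize_species, classify_stage,
)
print(clean_result)
print(infected_result)
```

Because each stage is a separate callable, its predictions can be logged and scored independently, which supports the per-stage validation described above.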

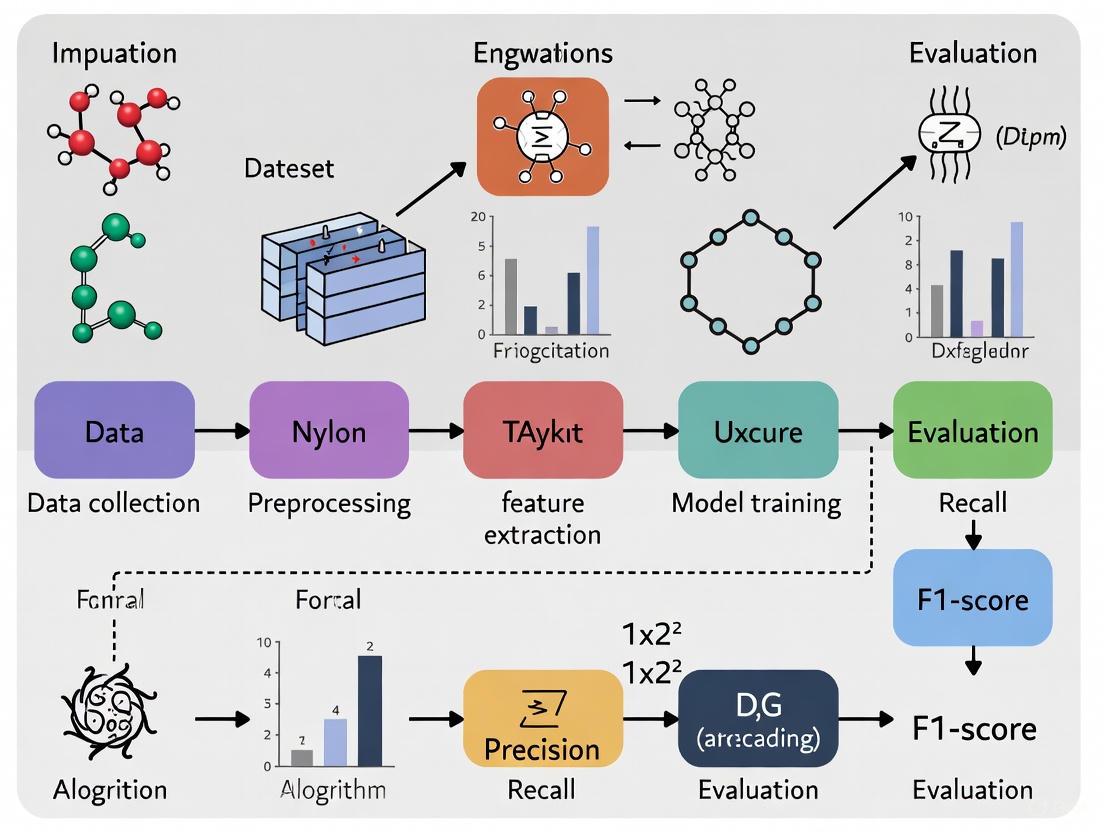

The logical workflow for this multi-stage framework is outlined below.

Performance Data & Technical Solutions

Table 1: Quantitative Impact of Class Imbalance Solutions on Model Performance

| Solution Category | Specific Technique | Reported Performance Improvement | Key Advantage / Mitigated Blind Spot |

|---|---|---|---|

| Advanced Architecture | Hybrid Capsule Network (Hybrid CapNet) [12] | Up to 100% multiclass accuracy; superior cross-dataset generalization. | Preserves spatial relationships; interpretable (via Grad-CAM); lightweight. |

| Synthetic Data Generation | GAN with CBLOF & OCS Filter [11] | ~3% accuracy increase on medical datasets (BloodMNIST, etc.). | Addresses intra-class imbalance; generates diverse, high-quality samples. |

| Standardized Image Processing | Phansalkar Thresholding + EKM + Random Forest [15] | 99.86% segmentation accuracy; 90.78% staging accuracy. | Robust to lighting variations; effective on both thick and thin smears. |

| Lightweight Detection Model | YAC-Net (YOLO-based) [13] | 97.8% precision, 97.7% recall; parameters reduced by one-fifth. | Enables deployment in resource-constrained, real-world environments. |

Table 2: The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item Name | Function / Role in Mitigating Blind Spots | Example Use Case |

|---|---|---|

| Imbalanced-Learn Library [14] [5] | Provides a suite of algorithms for resampling (SMOTE, Tomek Links) and ensemble methods (EasyEnsemble). | Quickly prototyping different resampling strategies in Python. |

| Composite Loss Function [12] | A weighted combination of loss types (Margin, Focal, Reconstruction, Regression) to jointly optimize for multiple objectives. | Training a model to be accurate, spatially precise, and robust to label noise in an imbalanced dataset. |

| Grad-CAM Visualizations [12] | Produces heatmaps showing which image regions the model used for prediction. | Debugging "blind spots" by verifying the model focuses on parasites, not artifacts. |

| Capsule Networks [12] [16] | Neural networks that encode spatial hierarchies and pose relationships, improving robustness to viewpoint changes. | Classifying parasite life-cycle stages where orientation and spatial layout are critical. |

| Graph-Based Transformation [8] | Constructs graphs to explore relationships between a test sample and minority/majority classes, creating a dedicated feature projection. | Improving classification in small sample size situations with high imbalance, without data augmentation. |

The relationship between class imbalance and the resulting diagnostic blind spots, along with the mitigation pathways, can be visualized as a causal loop.

Troubleshooting Guides & FAQs

FAQ: How does class imbalance manifest in parasite image analysis?

Class imbalance is a prevalent issue that significantly impacts the performance of deep learning models in parasite diagnostics. The most common sources of imbalance are:

- Rare Species: Some parasite species are inherently less common in samples. For instance, among the five Plasmodium species causing malaria, P. falciparum is more prevalent in Africa, while P. vivax is found elsewhere, creating natural prevalence disparities [12].

- Life-Cycle Stages: Within a single species, different life-cycle stages occur with varying frequency. In malaria parasites, the four stages—gametocyte, ring, trophozoite, and schizont—are not equally represented in blood samples, with the trophozoite and ring stages often being more visible in human infections [12].

- Data Collection Biases: The availability of genetic and image data is heavily biased by which hosts researchers study. Helminth species infecting hosts of conservation concern or terrestrial hosts are more likely to have genetic data available, which does not reflect their true biodiversity [17]. Furthermore, research effort is skewed toward certain parasite species based on taxonomy, host human-use status, and even the number of authors who originally described the species [18].

FAQ: What technical solutions can address class imbalance in parasite image datasets?

Several advanced technical solutions have been developed to mitigate class imbalance:

| Solution Category | Specific Methods | Key Function | Reported Performance Gain |

|---|---|---|---|

| Advanced Network Architectures | Hybrid Capsule Network (Hybrid CapNet) [12] | Combines CNN feature extraction with capsule routing to preserve spatial hierarchies for rare stage classification. | Up to 100% accuracy in multiclass malaria stage classification; significantly improved cross-dataset generalization. |

| Custom Loss Functions | Cost-sensitive learning [10] | Applies larger penalty weights for misclassifying minority class samples during model training. | Rebalances class learning; reduces bias toward majority classes. |

| Data Augmentation | GAN-based augmentation with CBLOF & OCS filter [11] | Identifies intra-class sparse samples; generates diverse synthetic data focused on underrepresented features. | ~3% accuracy improvement on medical image datasets (BloodMNIST, PathMNIST). |

| Attention Mechanisms | YOLO-CBAM (YCBAM) [19] | Integrates self-attention and Convolutional Block Attention Module to focus on small, critical features like pinworm eggs. | mAP@0.5 of 0.995 for pinworm egg detection in challenging conditions. |

Experimental Protocol: Implementing a Cost-Sensitive Loss Function

For researchers dealing with imbalanced parasite image datasets, here is a detailed methodology for implementing a cost-sensitive loss function, a common approach to solving this problem [10].

1. Problem Setup:

Assume a classification task with C classes (e.g., different parasite species or life-cycle stages). Let N_total be the total number of samples in your training dataset, and N_j be the number of samples in class j.

2. Calculate Class Penalty Weights:

Compute a penalty weight W_j for each class j to increase the cost of misclassifying minority class samples. The formula is:

W_j = N_total / (C * N_j)

This ensures that classes with fewer samples (N_j is small) receive a larger weight (W_j is large).

3. Integrate Weights into Loss Function:

Incorporate these weights into a standard cross-entropy loss function to create a Weighted Cross-Entropy Loss. For a batch of N samples, the loss is calculated as:

Weighted Loss = - (1/N) * Σ_i Σ_j W_j * Y_ij * log(P_ij)

Where:

- i iterates over the batch samples.

- j iterates over the classes.

- Y_ij is the true label (1 if sample i belongs to class j, else 0).

- P_ij is the model's predicted probability that sample i belongs to class j.

4. Model Training: During training, the model will minimize this weighted loss. This forces the model to pay more attention to correctly classifying the minority classes because misclassifications for these are more costly.
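The protocol above can be sketched in a few lines of plain Python. The class counts and predicted probabilities are invented for illustration. A deep-learning framework would normally compute this on tensors, but the arithmetic is the same.

```python
import math


def class_penalty_weights(class_counts):
    """W_j = N_total / (C * N_j) for each class j."""
    n_total = sum(class_counts)
    n_classes = len(class_counts)
    return [n_total / (n_classes * n_j) for n_j in class_counts]


def weighted_cross_entropy(y_true, probs, weights, eps=1e-12):
    """-(1/N) * sum_i sum_j W_j * Y_ij * log(P_ij), with integer class labels.

    y_true[i] is the class index of sample i, so Y_ij is 1 only when
    j == y_true[i] and the inner sum reduces to a single term.
    """
    total = 0.0
    for label, p in zip(y_true, probs):
        total += weights[label] * -math.log(max(p[label], eps))
    return total / len(y_true)


# Hypothetical counts for three classes: uninfected, ring, gametocyte.
weights = class_penalty_weights([900, 80, 20])
print([round(w, 3) for w in weights])

# Each sample is predicted with probability 0.7 for its true class.
y_true = [0, 1, 2]
probs = [[0.7, 0.2, 0.1], [0.2, 0.7, 0.1], [0.2, 0.1, 0.7]]
loss = weighted_cross_entropy(y_true, probs, weights)
print(f"weighted loss: {loss:.3f}")
```

Although all three samples are predicted equally well, the rarest class contributes the largest share of the loss because its weight W_j is largest.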

Experimental Protocol: GAN-Augmentation for Intra-Class Imbalance

This multi-step protocol addresses the challenge of intra-class mode collapse in GANs, where generated samples lack the diversity of real minority classes [11].

Step-by-Step Workflow:

Identify Intra-Class Sparse and Dense Samples:

- Tool: Cluster-Based Local Outlier Factor (CBLOF) algorithm.

- Action: Apply CBLOF to the feature space of the minority class(es) within your training set. This identifies which samples are in dense, common regions and which are in sparse, underrepresented regions ("hard" samples).

Train Conditional GAN with Sparse Sample Focus:

- Input: Use the identified sparse and dense samples as conditions for training the GAN.

- Process: This conditions the GAN to specifically learn and focus on generating samples that resemble the challenging, sparse examples, thereby increasing the diversity of the generated data.

Filter Generated Samples:

- Tool: One-Class SVM (OCS) algorithm.

- Action: After the GAN has been trained and has generated new synthetic samples, use the OCS model trained on the real minority class data as a noise filter. This step removes low-quality or unrealistic generated samples ("outliers"), ensuring only "pure," high-quality augmented samples are added to your dataset.

Train Classification Model:

- Combine the original dataset with the newly generated, high-quality synthetic samples to create a balanced training set for your final parasite image classification model.
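The data flow around the GAN can be illustrated with a deliberately simplified stand-in. The sketch below does not implement CBLOF or a One-Class SVM: it uses mean k-nearest-neighbour distance as a crude density score for both the sparse/dense split and the filtering step. The feature vectors, the `sparse_fraction`, and the `max_score` cutoff are all hypothetical, and the conditional GAN itself is not shown. In practice, PyOD includes a CBLOF implementation and scikit-learn provides `OneClassSVM`.

```python
import math


def knn_score(point, others, k=2):
    """Mean distance from `point` to its k nearest neighbours in `others`."""
    dists = sorted(math.dist(point, o) for o in others if o is not point)
    return sum(dists[:k]) / k


def split_sparse_dense(features, sparse_fraction=0.3, k=2):
    """Mark the least dense fraction of minority samples as 'sparse'."""
    scores = [knn_score(p, features, k) for p in features]
    n_sparse = max(1, round(sparse_fraction * len(features)))
    ranked = sorted(range(len(features)), key=lambda i: scores[i], reverse=True)
    sparse = set(ranked[:n_sparse])
    return ["sparse" if i in sparse else "dense" for i in range(len(features))]


def filter_generated(generated, real, max_score):
    """Keep synthetic samples that lie close to real minority samples."""
    return [g for g in generated if knn_score(g, real) <= max_score]


# Hypothetical 2-D feature vectors for one minority class.
real = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (2.0, 2.0), (2.5, 2.6)]
conditions = split_sparse_dense(real)   # would condition the GAN (not shown)
print(conditions)

# Hypothetical generator outputs; the last one is far from any real sample.
generated = [(0.05, 0.05), (2.2, 2.2), (10.0, 10.0)]
kept = filter_generated(generated, real, max_score=1.0)
print(kept)
```

The sparse/dense labels play the role of the GAN condition, and the filtered list is what would be merged with the original data before training the classifier.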

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Solution | Function in Addressing Imbalance | Example in Parasitology Research |

|---|---|---|

| Capsule Networks (CapsNets) | Preserves hierarchical pose relationships and spatial context, which is crucial for distinguishing subtly different parasite life-cycle stages [12]. | Used in Hybrid CapNet for precise malaria parasite life-cycle stage classification (ring, trophozoite, etc.) [12]. |

| Composite Loss Functions | Jointly optimizes multiple objectives (e.g., classification, spatial localization, reconstruction) to enhance robustness against class imbalance and annotation noise [12]. | A novel function integrating margin, focal, reconstruction, and regression losses improved malaria classification accuracy and spatial accuracy [12]. |

| Convolutional Block Attention Module (CBAM) | Enhances feature extraction by focusing the model's attention on spatially and channel-wise important regions, crucial for detecting small, rare parasite eggs [19]. | Integrated into the YCBAM model to achieve high precision (0.9971) and recall (0.9934) for detecting small pinworm eggs in microscopic images [19]. |

| Public Datasets with Datasheets | Provides well-documented, curated data for auditing models for equitable performance across different subpopulations and for performing rigorous secondary analyses [20]. | Essential for "research parasite" studies that test for biases and ensure fairness in machine learning models for parasite diagnosis [20]. |

Frequently Asked Questions

What is the BBBC041v1 dataset and why is it used in malaria research?

BBBC041v1 is a public benchmark collection of P. vivax infected human blood smears, containing 1,364 images with approximately 80,000 annotated cells. It's widely used in malaria research because it provides standardized data for developing and testing automated parasite detection and classification systems. The dataset includes cells from three different sources (Brazil, Southeast Asia, and time course studies), stained with Giemsa reagent, and contains detailed annotations for both infected and uninfected cells, making it valuable for training machine learning models [21].

Why is class imbalance a significant problem in malaria image datasets?

Class imbalance severely impacts model performance because most classification methods assume equal occurrence of classes. In medical imaging like malaria detection, this leads to biased learning where models become good at predicting common classes but fail to identify rare conditions. For example, in BBBC041v1, uninfected RBCs comprise over 95% of all cells, meaning a naive model that always predicts "uninfected" could achieve about 95% accuracy while completely failing to detect malaria parasites [21] [22]. This is dangerous for real-world applications where identifying the minority class (infected cells) is critically important.

Which evaluation metrics are most appropriate for imbalanced malaria datasets?

For imbalanced datasets, accuracy is misleading and should be supplemented with other metrics [23] [24]:

| Metric | Formula | When to Use |

|---|---|---|

| Precision | TP / (TP + FP) | When false positives are costly (e.g., unnecessary treatments) |

| Recall | TP / (TP + FN) | When false negatives are critical (e.g., missed infections) |

| F1 Score | 2 × (Precision × Recall) / (Precision + Recall) | Balanced measure of both precision and recall |

| Specificity | TN / (TN + FP) | When correctly identifying negatives is important |

Recall and F1 score are particularly important for malaria detection since false negatives (missing actual infections) have serious health consequences [23].

What technical approaches effectively address class imbalance in parasite classification?

Several approaches have proven effective:

Data Augmentation with GANs: Generating synthetic minority class samples using Generative Adversarial Networks helps balance class distribution. Advanced methods like CBLOF-OCS GANs specifically address intra-class mode collapse by identifying and focusing on sparse regions within classes [11].

Architectural Improvements: Custom CNN architectures with attention mechanisms, such as Soft Attention Parallel CNNs (SPCNN), have achieved 99.37% accuracy on malaria classification by focusing on relevant image regions [25].

Cost-sensitive Learning: Modifying loss functions to assign higher weights to minority class misclassifications, forcing the model to focus more on learning from rare cases [26].

One-Class Classification: Training models using only samples from the majority class (normal cells) and treating minority classes as anomalies, which works well when infected samples are extremely rare [26].

Troubleshooting Guides

Problem: Model Achieves High Accuracy But Misses Infected Cells

Symptoms

- Test accuracy exceeds 95% but recall for infected cell classes is very low

- Model consistently misclassifies infected cells as uninfected

- Confusion matrix shows high false negative rates for minority classes

Solution Steps

- Replace Accuracy with Better Metrics: Monitor precision, recall, and F1-score for each class separately during training [23] [24].

- Implement Weighted Loss Functions: Use a class-weighted cross-entropy loss that assigns higher penalties for misclassifying minority classes.

- Apply Strategic Oversampling: Use techniques like SMOTE or GAN-based generation specifically for sparse intra-class regions rather than uniform oversampling [11].

- Validate with Cross-Validation: Employ stratified k-fold cross-validation (as used in [27]) to ensure representative sampling of all classes during evaluation.
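Stratified k-fold splitting can be sketched without dependencies as shown below. The round-robin dealing scheme is one simple way to keep class proportions similar across folds, and the labels are hypothetical. scikit-learn's `StratifiedKFold` offers a tested implementation of the same idea.

```python
import random
from collections import Counter, defaultdict


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs whose folds share a similar class mix."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)

    folds = [[] for _ in range(k)]
    for idx in by_class.values():
        rng.shuffle(idx)
        for pos, i in enumerate(idx):
            folds[pos % k].append(i)  # deal each class round-robin across folds

    for f in range(k):
        val_idx = folds[f]
        train_idx = [i for g in range(k) if g != f for i in folds[g]]
        yield train_idx, val_idx


# Hypothetical labels: 95 uninfected (0) and 5 infected (1) cells.
labels = [0] * 95 + [1] * 5
splits = list(stratified_kfold(labels, k=5))
for fold, (train_idx, val_idx) in enumerate(splits):
    print(fold, dict(Counter(labels[i] for i in val_idx)))
```

With only five infected cells, an unstratified split could easily produce validation folds with no positives at all, making per-class recall undefined for those folds.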

Problem: Model Performs Well in Training But Generalizes Poorly

Symptoms

- Good performance on training data but poor on validation/test sets

- Significant performance drop when testing on images from different geographic regions

- High variance in performance across different parasite stages

Solution Steps

- Enhance Data Diversity: Incorporate data from multiple sources like the three different preparations in BBBC041v1 (Brazil, Southeast Asia, time course) [21].

- Apply Domain Adaptation: Use transfer learning with models pre-trained on diverse medical images, or employ domain adaptation techniques.

- Implement Advanced Architectures: Adopt attention-based models like YOLO-Para series that focus on discriminative features across different parasite morphologies [28].

- Use Robust Preprocessing: Apply sequential preprocessing including dilation, CLAHE, and normalization to enhance generalizable features [25].

Quantitative Analysis of BBBC041v1 Imbalance

Class Distribution in BBBC041v1 Dataset

| Cell Type | Class | Approximate Percentage | Impact on Model Training |

|---|---|---|---|

| Uninfected RBCs | Majority | >95% | Models bias toward predicting "uninfected" |

| Infected Cells (all stages) | Minority | <5% | Under-represented in training |

| Gametocytes | Rare | ~0.3% | Often misclassified without special handling |

| Rings | Rare | ~0.9% | Critical for early detection but sparse |

| Trophozoites | Rare | ~1.2% | Intermediate stage, moderate representation |

| Schizonts | Rare | ~0.8% | Late stage, important for treatment decisions |

| Leukocytes | Minority | ~1.8% | Often confused with infected cells |

Data synthesized from BBBC041v1 documentation [21]

Performance Comparison of Balance Handling Techniques

| Method | Accuracy | Precision | Recall | F1-Score | Implementation Complexity |

|---|---|---|---|---|---|

| Basic CNN (No balancing) | 95.2% | 34.5% | 28.7% | 31.4% | Low |

| Weighted Loss Function | 96.8% | 72.3% | 69.5% | 70.9% | Medium |

| Data Augmentation (Traditional) | 97.1% | 75.6% | 73.2% | 74.4% | Medium |

| GAN-Based Augmentation (CBLOF-OCS) | 98.3% | 89.7% | 87.4% | 88.5% | High |

| Attention CNN (SPCNN) | 99.4% | 99.4% | 99.4% | 99.4% | High |

| Seven-Channel CNN [27] | 99.5% | 99.3% | 99.3% | 99.3% | High |

Performance metrics compiled from recent studies [27] [25] [11]

Experimental Protocols

GAN-Based Data Augmentation for Intra-Class Imbalance

Objective: Generate diverse synthetic samples for minority classes, particularly focusing on sparse regions within classes.

Materials:

- BloodMNIST dataset or BBBC041v1 preprocessed patches

- Python with PyTorch/TensorFlow

- Cluster-Based Local Outlier Factor (CBLOF) implementation

- One-Class SVM (OCS) algorithm

Methodology:

- Sparse Region Identification:

- Apply CBLOF algorithm to identify sparse and dense samples within each minority class

- Cluster samples and compute local outlier factors to detect low-density regions

- Conditional GAN Training:

- Train GAN using sparse and dense samples as conditions

- Generator: $G(z,c)$ where $c$ indicates sparse/dense condition

- Discriminator: $D(x,c)$ with conditional input

- Sample Filtering:

- Generate augmented samples using trained generator

- Apply One-Class SVM to filter out noisy generated samples

- Retain only high-quality synthetic samples for training

- Model Training:

- Combine original minority class samples with generated samples

- Train classification model on balanced dataset

- Validate using stratified k-fold cross-validation [11]
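
The final validation step relies on stratified folds. The sketch below shows one simple way to build them in plain Python, dealing each class's samples round-robin across folds. The function name and the toy labels are illustrative assumptions; in practice a library routine such as scikit-learn's `StratifiedKFold` would normally be used instead.

```python
import random
from collections import defaultdict

def stratified_kfold_indices(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs whose folds keep class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class's samples round-robin so every fold gets its share.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(j for f in folds[:i] + folds[i + 1:] for j in f)
        yield train_idx, test_idx

# Hypothetical labels: 90 uninfected cells and 10 gametocytes.
labels = ["uninfected"] * 90 + ["gametocyte"] * 10
splits = list(stratified_kfold_indices(labels, k=5))
for train_idx, test_idx in splits:
    rare = sum(labels[i] == "gametocyte" for i in test_idx)
    print(f"test fold size = {len(test_idx)}, gametocytes in fold = {rare}")
```

Every test fold then contains the same share of the rare class, which keeps per-class metrics comparable across folds.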

Attention-Based CNN for Malaria Classification

Objective: Implement soft attention mechanisms to improve feature learning for rare classes.

Materials:

- Custom Parallel CNN architecture

- Soft attention modules

- Preprocessed malaria cell images

- Gradient-weighted Class Activation Mapping (Grad-CAM) for interpretation

Methodology:

- Architecture Design:

- Implement Parallel Convolutional Neural Network (PCNN) with multiple branches

- Integrate soft attention mechanisms after convolutional blocks (SPCNN)

- Add skip connections to preserve spatial information

- Training Protocol:

- Input: 224×224×3 cell images

- Data preprocessing: dilation, CLAHE, normalization

- Optimization: Adam optimizer with learning rate 0.0001

- Regularization: Dropout (0.5), L2 weight decay

- Interpretation:

- Apply Grad-CAM and SHAP visualization to understand model focus

- Validate attention maps with domain experts

- Compare feature activation between majority and minority classes [25]

Workflow Visualization

Malaria Dataset Imbalance Handling Workflow

Research Reagent Solutions

| Research Tool | Type | Function in Malaria Classification |

|---|---|---|

| BBBC041v1 Dataset | Data | Benchmark dataset with 80,000+ annotated cells for method development [21] |

| Soft Attention P-CNN (SPCNN) | Algorithm | Custom CNN with attention mechanisms for improved feature extraction [25] |

| CBLOF-OCS GAN | Algorithm | Advanced data augmentation addressing intra-class sparse regions [11] |

| Grad-CAM | Visualization | Interpretability tool for understanding model focus areas [25] |

| Stratified K-Fold | Evaluation | Cross-validation method preserving class distribution in splits [27] |

| One-Class SVM | Filtering | Noise detection in generated samples to ensure quality [11] |

| Seven-Channel Input | Preprocessing | Enhanced feature representation for better model performance [27] |

A Toolkit of Solutions: From Data Augmentation to Novel Architectures

Frequently Asked Questions (FAQs)

1. What is the primary cause of class imbalance in parasite image datasets? In parasite image classification, class imbalance is fundamentally caused by the natural scarcity of infected samples compared to a vast number of uninfected cells. For instance, in widely used public datasets, parasitized cells are often the minority class. This imbalance is exacerbated by the resource-intensive process of collecting and expertly annotating samples from geographically diverse regions, leading to datasets that are not only imbalanced but also lack diversity [29] [2].

2. How do SMOTE and GANs differ in their approach to solving data imbalance? SMOTE (Synthetic Minority Over-sampling Technique) and GANs (Generative Adversarial Networks) address imbalance by generating synthetic data, but their methodologies differ significantly. SMOTE is an interpolation-based technique that creates new synthetic samples for the minority class along the line segments between existing minority class instances in feature space [30] [31]. In contrast, GANs use a deep learning framework where two neural networks, a Generator and a Discriminator, are trained adversarially. The Generator learns to produce new synthetic images that mimic the real data distribution of the minority class, while the Discriminator learns to distinguish between real and fake images, leading to the generation of highly realistic, novel samples [30].

3. My model performs well on validation data but poorly on new patient samples. What could be wrong? This is a classic sign of poor model generalization, often stemming from a lack of diversity in your training dataset. If your training data does not account for variations in staining protocols, blood smear preparation techniques, or imaging equipment used across different clinics, the model will fail to adapt. To address this, ensure your dataset incorporates samples from multiple sources and regions. Furthermore, employing Domain Adaptation techniques or GANs that can generate data with diverse visual characteristics can significantly improve cross-domain robustness, with studies showing sensitivity improvements of up to 25% [29].

4. Why is my SMOTE-augmented model still performing poorly on the minority class? Poor performance post-SMOTE can often be traced to the presence of abnormal instances, such as noise and outliers, within the minority class. The standard SMOTE algorithm does not discriminate between clean and noisy samples; it will generate synthetic samples based on any k-nearest neighbors, which can amplify noise and degrade the quality of the synthetic data and the decision boundary. Recent research proposes SMOTE extensions like Dirichlet ExtSMOTE and BGMM SMOTE that use probabilistic models to identify and mitigate the influence of these abnormal instances, leading to improved F1 scores and better synthetic sample quality [32].

5. Is it acceptable to apply SMOTE to the entire dataset before splitting into train and test sets? No, this is a critical mistake that leads to data leakage. Information from your test set will leak into the training process, creating an overly optimistic and invalid performance estimate. SMOTE, or any resampling technique, should be applied only to the training set after the train-test split. The test set must remain completely untouched and representative of the original, raw data distribution to provide a valid assessment of your model's generalization ability [5] [31].
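
To make the interpolation idea (FAQ 2) and the split-before-resampling rule (FAQ 5) concrete, here is a minimal SMOTE-style sketch in plain Python. It is a simplified illustration, not the reference SMOTE implementation: the function name `smote_like`, the 2-D toy features, and the split sizes are all assumptions.

```python
import random

def smote_like(minority, n_new, k=5, seed=0):
    """Create n_new points by interpolating between a minority sample
    and one of its k nearest minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        x = rng.choice(minority)
        others = [m for m in minority if m is not x]
        # Sort the other minority samples by squared Euclidean distance to x.
        neighbours = sorted(
            others, key=lambda m: sum((a - b) ** 2 for a, b in zip(x, m))
        )
        neighbour = rng.choice(neighbours[:k])
        lam = rng.random()  # position along the segment x -> neighbour
        synthetic.append([a + lam * (b - a) for a, b in zip(x, neighbour)])
    return synthetic

# Hypothetical 2-D feature vectors standing in for CNN embeddings.
rng = random.Random(42)
majority = [[rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(40)]
minority = [[rng.gauss(3, 0.5), rng.gauss(3, 0.5)] for _ in range(10)]

# 1) Split first: the held-out samples are never seen by the resampler.
test_minority, train_minority = minority[:3], minority[3:]
test_majority, train_majority = majority[:12], majority[12:]

# 2) Oversample the training minority only, up to the training majority size.
n_needed = len(train_majority) - len(train_minority)
synthetic = smote_like(train_minority, n_new=n_needed, k=3)
balanced_minority = train_minority + synthetic
print(len(train_majority), len(balanced_minority), len(test_minority))
```

Because every synthetic point is a convex combination of two training samples, no information from the held-out cells can enter the training set.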

Troubleshooting Guides

Problem: The SMOTE Algorithm is Introducing Too Much Noise

Issue: After applying SMOTE, the decision boundary becomes blurred, and model performance, particularly precision on the minority class, decreases. This is often due to the creation of implausible synthetic samples, especially when the minority class contains outliers or when there is significant overlap with the majority class.

Solution Steps:

- Switch to Advanced SMOTE Variants: Instead of the default SMOTE, use more sophisticated variants designed to handle noisy data:

- Borderline-SMOTE: This method only generates synthetic samples from minority instances that are considered "hard to learn" (i.e., those located near the decision boundary), avoiding outliers that are deep within the majority class region [31].

- SMOTE-Tomek Links: This hybrid approach combines SMOTE oversampling with Tomek Links undersampling. SMOTE creates synthetic minority samples, and then Tomek Links cleans the dataset by removing pairs of close opposite-class instances (Tomek Links), which often represent noise [5].

- SMOTE Extensions for Abnormal Instances: Implement newer methods like Dirichlet ExtSMOTE, which uses a Dirichlet distribution to produce more robust synthetic samples that are less influenced by neighboring outliers, thereby improving metrics like F1-score and MCC [32].

- Preprocess to Identify Outliers: Before applying SMOTE, run an outlier detection algorithm (e.g., Isolation Forest, DBSCAN) on the minority class in your feature space. Visually inspect and consider removing severe outliers before synthetic sample generation.

- Validate with a Custom Pipeline: Use the `imblearn` `Pipeline` so that resampling is fitted only on the training folds, which prevents data leakage during cross-validation and model evaluation.

Problem: GAN Training is Unstable and Fails to Converge

Issue: The Generator produces nonsensical outputs, or the Discriminator loss becomes zero, halting training. This is a common problem with GANs, often attributed to an imbalance in the adversarial "game" between the Generator and Discriminator.

Solution Steps:

- Architecture and Loss Functions: Use a stable GAN architecture like Deep Convolutional GAN (DCGAN) or Wasserstein GAN with Gradient Penalty (WGAN-GP). WGAN-GP, which pairs the Wasserstein loss with a gradient penalty, is known for more stable training and improved convergence compared to standard GANs with minimax loss [30].

- Data Preprocessing for Images: Ensure your parasite images are consistently preprocessed. This includes:

- Resizing all images to a fixed dimension.

- Normalizing pixel values to a specific range (e.g., [-1, 1] or [0, 1]).

- Applying data augmentation techniques (e.g., random rotations, flips) to the real images to increase diversity before feeding them to the Discriminator.

- Training Monitoring and Techniques:

- Monitor Both Losses: Track the loss of both the Generator and Discriminator. The Discriminator loss should not consistently be zero.

- Use Label Smoothing: When training the Discriminator, use soft labels (e.g., 0.9 for real and 0.1 for fake) instead of hard 1s and 0s to prevent the Discriminator from becoming overconfident.

- Train with Different Frequencies: A common strategy is to train the Discriminator more frequently than the Generator (e.g., 5 times for every 1 time the Generator is trained) to maintain a competitive balance.

Problem: Model is Overfitting on the Synthetic Data

Issue: The model achieves near-perfect accuracy on the training set (which contains synthetic data) but fails to generalize to the real-world test set. This occurs when the model learns the specific patterns of the synthetic data instead of the underlying generalizable features of the parasite.

Solution Steps:

- Incorporate Strong Regularization:

- Add L1/L2 regularization penalties to your model's loss function.

- Use Dropout layers in your neural network architecture.

- For tree-based models, increase regularization parameters.

- Validate with the Original Test Set: Always use a hold-out test set composed of real, original data that was never used in the training or validation process. This is the only reliable way to measure true generalization performance.

- Combine with Undersampling: Apply SMOTE to oversample the minority class and combine it with random undersampling of the majority class. This prevents the model from being overwhelmed by synthetic patterns and helps it learn from a more balanced, yet varied, dataset [31] [33].

- Diversify Data Generation: If using GANs, ensure that the generated samples are diverse. If all synthetic images look very similar, the model will overfit to those specific features. Techniques like mini-batch discrimination and feature matching can help encourage diversity in the Generator's output.

Experimental Protocols & Performance Comparison

The table below summarizes the performance impact of different data-level strategies as reported in malaria detection research, providing a benchmark for expected outcomes.

Table 1: Impact of Data-Level Strategies on Model Performance in Medical Imaging [29]

| Dataset / Strategy | Precision (%) | Recall (%) | F1-Score (%) | Overall Accuracy (%) |

|---|---|---|---|---|

| Imbalanced (Baseline) | 75.8 | 60.4 | 67.2 | 82.1 |

| Imbalanced + Data Augmentation | 87.2 | 84.5 | 85.8 | 91.3 |

| Imbalanced + Focal Loss | 85.4 | 78.9 | 81.9 | 89.7 |

| Balanced + Transfer Learning | 93.1 | 92.5 | 92.8 | 94.2 |

| GAN-based Augmentation | ~87* | ~87* | ~85-90* | ~92* |

Note: Values marked with * are approximate; they are summarized from reported accuracy improvements of 15-20% [29] rather than measured in a single experiment.

Detailed Methodology: Evaluating SMOTE for Parasite Classification

This protocol outlines a standard workflow for applying and evaluating SMOTE on an image-based parasite dataset.

1. Data Preparation and Feature Extraction

- Dataset: Use a publicly available parasitology image dataset (e.g., the NIH Malaria dataset, distributed by the U.S. National Library of Medicine) [29] [32].

- Preprocessing: Resize all cell images to a uniform size (e.g., 64x64 pixels). Normalize pixel values to [0, 1].

- Feature Extraction: Instead of using raw pixels, feed the images through a pre-trained Convolutional Neural Network (CNN) like VGG16 or ResNet. Extract features from the last fully connected or pooling layer. This creates a high-level feature representation for each image, which is more suitable for SMOTE interpolation [2].

2. Train-Test Split and SMOTE Application

- Split the extracted features and corresponding labels into a training set (e.g., 70%) and a hold-out test set (e.g., 30%). The test set must not be used in any sampling or parameter tuning.

- Apply the SMOTE algorithm only to the training data. Use the `imblearn` library in Python with default parameters (`k_neighbors=5`) or a chosen variant like Borderline-SMOTE [31].

3. Model Training and Evaluation

- Train a standard classifier, such as a Support Vector Machine (SVM) or Random Forest, on the SMOTE-transformed training data.

- Evaluate the final model's performance exclusively on the untouched, imbalanced test set. Use metrics appropriate for imbalanced data: F1-Score, Precision-Recall AUC (PR-AUC), and Matthews Correlation Coefficient (MCC), in addition to per-class accuracy [29] [32].
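
The evaluation metrics named in this step can all be computed from a binary confusion matrix. The sketch below does so in plain Python for two hypothetical models; the counts are invented for illustration, and PR-AUC is omitted because it needs ranked scores rather than a single confusion matrix.

```python
import math

def imbalance_metrics(tp, fp, fn, tn):
    """Precision, recall, F1, MCC and accuracy from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"precision": precision, "recall": recall, "f1": f1,
            "mcc": mcc, "accuracy": accuracy}

# Hypothetical test set: 950 uninfected cells and 50 parasitized cells.
# Model A predicts "uninfected" for everything.
always_negative = imbalance_metrics(tp=0, fp=0, fn=50, tn=950)
# Model B finds 40 of the 50 parasitized cells with 20 false alarms.
useful = imbalance_metrics(tp=40, fp=20, fn=10, tn=930)

for name, m in [("always negative", always_negative), ("useful", useful)]:
    print(name, {key: round(value, 3) for key, value in m.items()})
```

Model A reaches 95% accuracy while detecting nothing, which is why F1 and MCC (both zero for it) are the more informative numbers on an imbalanced test set.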

The following workflow diagram illustrates this experimental protocol.

Diagram 1: SMOTE Experimental Workflow for Parasite Images

Detailed Methodology: Implementing a GAN for Data Augmentation

This protocol describes the process for using a GAN to generate synthetic parasite images.

1. GAN Selection and Architecture

- Model Choice: For stability, implement a Wasserstein GAN with Gradient Penalty (WGAN-GP).

- Generator Architecture: A deep CNN that takes a random noise vector (latent space) as input and upsamples it through transposed convolutional layers to generate a synthetic image of the target size.

- Discriminator (Critic) Architecture: A deep CNN that takes an image (real or fake) as input and outputs a scalar score rather than a probability.

2. Training Loop

- Training Data: Use only the minority class (parasitized) images from the training set.

- Training Steps:

- For a number of training iterations:

- Train the Discriminator (Critic): Sample a batch of real images and a batch of generated images. Calculate the Wasserstein loss and gradient penalty. Update the Discriminator's weights more frequently (e.g., 5 times per Generator update).

- Train the Generator: Sample a new batch of random noise, generate images, and pass them through the Discriminator. Calculate the Generator's loss based on the Discriminator's scores and update the Generator's weights.

- Monitoring: Check the generated images periodically for visual quality and diversity.
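
The arithmetic behind these training steps can be sketched without a deep learning framework. In the example below the critic scores and gradient norms are supplied as plain numbers; in a real training loop they would come from the critic network and from automatic differentiation on interpolated samples. The function names and the penalty weight `lambda_gp=10.0` (a value commonly used with WGAN-GP) are assumptions for illustration.

```python
def critic_loss_wgan_gp(real_scores, fake_scores, grad_norms, lambda_gp=10.0):
    """Critic loss: mean(D(fake)) - mean(D(real)) + lambda * mean((||g|| - 1)^2)."""
    mean = lambda xs: sum(xs) / len(xs)
    wasserstein = mean(fake_scores) - mean(real_scores)
    penalty = mean([(g - 1.0) ** 2 for g in grad_norms])
    return wasserstein + lambda_gp * penalty

def generator_loss(fake_scores):
    """The generator tries to raise the critic's score on generated images."""
    return -sum(fake_scores) / len(fake_scores)

def update_schedule(n_generator_steps, n_critic=5):
    """Order of updates: n_critic critic steps before each generator step."""
    schedule = []
    for _ in range(n_generator_steps):
        schedule += ["critic"] * n_critic + ["generator"]
    return schedule

# Hypothetical scores for one batch.
loss_ok = critic_loss_wgan_gp([1.0, 3.0], [-1.0, 1.0], grad_norms=[1.0, 1.0])
loss_penalized = critic_loss_wgan_gp([1.0, 3.0], [-1.0, 1.0], grad_norms=[2.0, 1.0])
print(loss_ok, loss_penalized, update_schedule(1))
```

The penalty term only grows when gradient norms drift away from 1, which is the mechanism that keeps the critic well-behaved during training.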

3. Evaluation and Utilization

- After training, use the Generator to create a sufficient number of synthetic parasitized cell images.

- Combine these synthetic images with the original training data to create a balanced dataset.

- Proceed to train a standard classification model (e.g., a CNN) on this augmented dataset, following the same train-test split and evaluation principles as in the SMOTE protocol.

The following diagram illustrates the core adversarial training process of the GAN.

Diagram 2: GAN Adversarial Training Core Concept

Table 2: Essential Tools for Implementing Data-Level Strategies

| Tool / Resource | Type | Primary Function | Key Application in Parasite Research |

|---|---|---|---|

| imbalanced-learn (imblearn) | Python Library | Provides implementations of SMOTE, its variants (e.g., Borderline-SMOTE, ADASYN), and undersampling methods [5] [31]. | The go-to library for quickly testing and applying various oversampling strategies to feature-based parasite data. |

| TensorFlow / PyTorch | Deep Learning Framework | Flexible platforms for building and training custom GAN architectures (e.g., DCGAN, WGAN-GP) from the ground up [30]. | Essential for researchers who need full control over GAN architecture and training loop for generating synthetic parasite images. |

| Pre-trained CNN Models (e.g., VGG, ResNet) | Model Architecture | Used for transfer learning and, crucially, for extracting meaningful feature representations from images before applying SMOTE [29] [2]. | Extracts high-level features from cell images, making SMOTE interpolation more effective in a semantically rich space. |

| Dirichlet ExtSMOTE | Algorithm | An advanced SMOTE extension that uses the Dirichlet distribution to reduce the influence of outliers when generating synthetic samples [32]. | Improves the quality of synthetic data in datasets where the minority class contains noisy or abnormal parasite images. |

| Scikit-learn | Python Library | Provides data preprocessing tools (e.g., MinMaxScaler, StandardScaler), model implementations, and critical evaluation metrics [5] [34]. | Used for the entire machine learning pipeline, from scaling features for SMOTE to training final classifiers and evaluating performance. |

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using Focal Loss over standard Cross-Entropy Loss for parasite image classification?

A1: The primary advantage is Focal Loss's ability to handle extreme class imbalance, which is common in parasite image datasets where infected samples are much rarer than uninfected ones. Standard Cross-Entropy Loss treats all samples equally, causing the model to become biased toward the majority class (e.g., uninfected cells). Focal Loss addresses this by incorporating a modulating factor, (1 - p_t)^γ, which down-weights the loss for easy-to-classify examples (the abundant background/uninfected cells) and forces the model to focus its training efforts on hard, misclassified examples, which are often the minority class of interest (e.g., parasites) [35] [36]. This leads to improved model performance on the underrepresented classes.

Q2: How do I choose the right value for the focusing parameter (γ) in Focal Loss?

A2: The value of γ is dataset-dependent and should be tuned through cross-validation. A larger γ increases the focus on hard, misclassified examples. Empirical studies suggest starting with a value between 1.5 and 2.5 [37]. One cross-validation study found that values between 0.5 and 2.5 yielded good performance with minimal variance, and ultimately selected γ=2.0 for its medical imaging task [37]. A practical approach is to begin with γ=2 and then search the 0.5 to 2.5 range to find the best value for your specific parasite image dataset.

Q3: My model's performance on the minority class is still poor after implementing Focal Loss. What are other algorithm-level strategies I can try?

A3: You can consider these hybrid or alternative strategies:

- α-Balanced Focal Loss: Combine Focal Loss with a class weighting factor, α. This variant handles class imbalance through two components: the α weighting factor (which can be set by inverse class frequency) to balance class importance, and the γ parameter to focus on hard examples [35].

- Batch-Balanced Focal Loss (BBFL): This hybrid approach combines a data-level strategy with an algorithm-level one. It uses batch-balancing to ensure each training batch has an equal number of samples from each class, forcing the model to learn all classes equally. This is then combined with the Focal Loss function to emphasize hard samples within those balanced batches [37].

- Cost-Sensitive Learning: Instead of modifying the loss function, you can assign a higher misclassification cost to the minority class (e.g., parasite-positive samples) during the training process. This directly instructs the model to be more cautious about making errors on the critical class [37].

Q4: Is Focal Loss only applicable to two-class (binary) classification problems?

A4: No, Focal Loss can be extended to multi-class classification problems, which is relevant for differentiating between multiple parasite species or infection severity levels. The principle remains the same: for each sample, the loss is computed based on the predicted probability for the true class, and the modulating factor down-weights the loss for well-classified examples across all classes [37]. The implementation simply involves using a multi-class cross-entropy as the base instead of binary cross-entropy.

Troubleshooting Guides

Problem: Model convergence is unstable or slow after switching to Focal Loss.

- Potential Cause 1: The γ parameter is set too high, over-penalizing uncertain predictions, especially in the early stages of training.

- Solution: Start with a lower γ value (e.g., 1.0 or 1.5) and gradually increase it. You can also explore adaptive variants of Focal Loss that dynamically adjust γ during training to avoid this issue [38].

- Potential Cause 2: The learning rate may not be optimal for the new loss landscape.

- Solution: Re-tune your learning rate when implementing Focal Loss, as the change in loss dynamics often requires a different learning rate for stable convergence.

Problem: The model is overfitting to the minority class, showing high recall but low precision.

- Potential Cause: The combined effect of class weighting (e.g., a high α value) and the focusing parameter (γ) is too strong, causing the model to become overly sensitive to the minority class and flag too many false positives.

- Solution:

- Adjust the α weighting factor. If you set it via inverse class frequency, try smoothing the weights or treating it as a hyperparameter to be validated [35].

- Incorporate data augmentation techniques specifically for the minority class to increase the diversity of positive examples and improve model generalization [39] [40].

- Strengthen regularization in your model, for example, by increasing dropout rates or adding L2 regularization, to prevent overfitting [37].

Table 1: Performance Comparison of Different Loss Functions on Imbalanced Medical Image Datasets

| Dataset / Task | Model Architecture | Standard Cross-Entropy | Focal Loss (γ=2.0) | Batch-Balanced Focal Loss (BBFL) | Reference |

|---|---|---|---|---|---|

| RNFLD (Retinal defect) Binary Classification | InceptionV3 | 83.0% F1 (est. from baseline) | 84.7% F1 | 84.7% F1 | [37] |

| Glaucoma Multi-class Classification | MobileNetV2 | 64.7% Avg. F1 (ROS baseline) | 69.6% Avg. F1 | 69.6% Avg. F1 | [37] |

| Product Categorization (Text) | Neural Network | Lower accuracy on minority classes | Improved accuracy on minority classes | N/A | [35] |

Table 2: The Impact of the Focusing Parameter (γ) in Focal Loss [35]

| Value of γ | Effect on Loss Function | Use Case Scenario |

|---|---|---|

| γ = 0 | Equivalent to Standard Cross-Entropy Loss. | Balanced datasets or initial baselining. |

| γ = 1 | Moderate down-weighting of easy examples. | Mild class imbalance. |

| γ = 2 | Strong down-weighting of easy examples, focusing heavily on hard examples. | The most common starting point for severe imbalance (e.g., medical images). |

| γ > 2 | Very aggressive focus on the hardest examples. | Can be tried if performance with γ=2 is still unsatisfactory, but may risk instability. |
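
The effect summarized in this table follows directly from the modulating factor (1 - p_t)^γ. The short sketch below evaluates it for an easy example (p_t = 0.9) and a hard one (p_t = 0.1); the two probabilities and the function name are illustrative choices, not values from the cited studies.

```python
def modulating_factor(p_t, gamma):
    """Scale applied to the cross-entropy term of one sample in Focal Loss."""
    return (1.0 - p_t) ** gamma

for gamma in (0, 1, 2, 5):
    easy = modulating_factor(0.9, gamma)  # confidently correct example
    hard = modulating_factor(0.1, gamma)  # badly misclassified example
    print(f"gamma={gamma}: easy x{easy:.5f}, hard x{hard:.5f}, "
          f"hard/easy ratio = {hard / easy:.0f}")
```

At γ = 2, for example, the easy sample's loss is scaled by 0.01 while the hard sample's loss is scaled by 0.81, so hard examples dominate the gradient.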

Experimental Protocols

Protocol 1: Implementing and Tuning Focal Loss in a Deep Learning Model

This protocol describes how to integrate Focal Loss into a CNN for parasite image classification.

- Define the Loss Function: Implement the Focal Loss formula in your deep learning framework (e.g., PyTorch). The formula is FL(p_t) = -α (1 - p_t)^γ log(p_t), where p_t is the model's estimated probability for the true class, α is a weighting factor for class balance, and γ is the focusing parameter [35] [36].

- Initial Hyperparameter Setup: Initialize the parameters. A recommended starting point is γ=2.0 and α=0.25 for the positive class, as per the original paper [35] [36]. The α parameter can also be set as the inverse class frequency.

- Integrate with Trainer: Replace the standard loss function in your training loop. If using a high-level framework like Hugging Face, you may need to create a custom trainer class that overrides the `compute_loss` method to use your Focal Loss implementation [41].

- Cross-Validation: Perform k-fold cross-validation (e.g., k=5 or k=10) to rigorously tune the γ and α parameters. This helps find the values that generalize best to unseen data [40].

- Evaluation: Validate the model's performance on a held-out test set using metrics appropriate for imbalanced data, such as F1-score, precision, recall, and the area under the ROC curve (AUC) [37].
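
A minimal, framework-free sketch of the binary α-balanced Focal Loss from this protocol is given below. It assumes the common convention that α weights the positive class and 1 - α the negative class; the function names, the clipping constant `eps`, and the sample probabilities are illustrative. A PyTorch or TensorFlow version would apply the same arithmetic to tensors.

```python
import math

def focal_loss(p, y, alpha=0.25, gamma=2.0, eps=1e-7):
    """Binary focal loss for one sample.

    p: predicted probability of the positive (e.g. parasitized) class.
    y: true label, 1 for positive and 0 for negative.
    """
    p = min(max(p, eps), 1.0 - eps)            # avoid log(0)
    p_t = p if y == 1 else 1.0 - p             # probability of the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)

def mean_focal_loss(probs, labels, alpha=0.25, gamma=2.0):
    """Average focal loss over a batch."""
    losses = [focal_loss(p, y, alpha, gamma) for p, y in zip(probs, labels)]
    return sum(losses) / len(losses)

# Hypothetical predictions for two parasitized cells: one easy, one hard.
easy = focal_loss(0.9, 1)
hard = focal_loss(0.1, 1)
print(f"easy positive: {easy:.6f}, hard positive: {hard:.6f}")
```

Setting `gamma=0` and `alpha=0.5` reduces the function to half the ordinary cross-entropy, which is a convenient sanity check when wiring it into a training loop.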

Protocol 2: Evaluating a Hybrid Batch-Balanced Focal Loss (BBFL) Strategy

This protocol outlines the steps for the hybrid BBFL approach, which was shown to be effective on imbalanced medical image datasets [37].

- Data Sampling (Batch-Balancing): During training, structure your data loader so that each mini-batch contains an equal number of samples from every class. For a binary parasite detection task, this means each batch would have a 1:1 ratio of infected to uninfected samples [37].

- Data Augmentation: To prevent overfitting on the oversampled minority class in each batch, apply random geometric and intensity augmentations (e.g., flipping, rotation, blurring, noise) to the images in the batch [37].

- Loss Calculation: Compute the loss for the balanced batch using the standard Focal Loss function with a tuned γ parameter (e.g., 2.0) [37].

- Model Architecture: Use a standard CNN (e.g., InceptionV3, MobileNetV2) for feature extraction, followed by fully connected layers with dropout for classification [37].

- Performance Comparison: Compare the results of BBFL against baselines like standard Cross-Entropy Loss, Focal Loss alone, and other techniques like random oversampling (ROS) or cost-sensitive learning.
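
The batch-balancing step of this protocol can be sketched as a small index sampler. The version below is plain Python and samples each class with replacement so that rare classes can always fill their quota; the function name, batch sizes, and toy labels are assumptions. In PyTorch the same idea would typically live in a custom sampler passed to the `DataLoader`.

```python
import random
from collections import defaultdict

def balanced_batches(labels, per_class, n_batches, seed=0):
    """Yield batches of dataset indices with per_class samples from every class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    for _ in range(n_batches):
        batch = []
        for indices in by_class.values():
            # Sample with replacement so rare classes can fill their quota.
            batch += rng.choices(indices, k=per_class)
        rng.shuffle(batch)
        yield batch

# Hypothetical dataset: 95 uninfected (0) and 5 infected (1) cells.
labels = [0] * 95 + [1] * 5
batches = list(balanced_batches(labels, per_class=8, n_batches=3))
for batch in batches:
    infected = sum(labels[i] for i in batch)
    print(f"batch size = {len(batch)}, infected = {infected}")
```

Because the five infected cells are drawn repeatedly, the same images recur within and across batches, which is why the protocol pairs batch balancing with random augmentation.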

Workflow and Logic Diagrams

Diagram 1: Focal Loss Logic for Parasite Classification

This diagram visualizes how Focal Loss dynamically adjusts the contribution of each sample to the total loss based on its classification difficulty, which is crucial for imbalanced datasets.

Diagram 2: Hybrid Batch-Balanced Focal Loss (BBFL) Workflow

This diagram illustrates the end-to-end workflow of the hybrid BBFL strategy, combining data-level batch balancing with the algorithm-level Focal Loss.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for an Imbalanced Parasite Image Classification Pipeline

| Component / Reagent | Function / Explanation | Example or Note |

|---|---|---|

| Focal Loss Function | The core algorithm-level solution that modifies the loss function to focus learning on hard, misclassified examples and mitigate class imbalance. | Can be implemented in PyTorch as a custom function [41]. Tune γ and α parameters. |

| Pre-trained CNN Models | Used for transfer learning. Provides powerful, pre-trained feature extractors to boost performance, especially with limited data. | Models like VGG16, ResNet50, InceptionV3, and EfficientNet [39] [40]. |

| Data Augmentation Library | Generates synthetic variations of training images to increase dataset diversity and robustness, combating overfitting. | Use libraries like Albumentations or TensorFlow/Keras ImageDataGenerator for operations (rotation, flip, blur) [37]. |

| Batch Balancing Sampler | A data-level tool that ensures each training batch has a balanced number of samples from each class. Often used in hybrid strategies. | Can be implemented as a custom sampler in PyTorch's DataLoader [37]. |

| Evaluation Metrics | A set of metrics that provide a true picture of model performance across all classes, not just overall accuracy. | F1-Score: Harmonic mean of precision and recall. Precision: Ability to not label negative samples as positive. Recall: Ability to find all positive samples. AUC: Overall measure of separability [37]. |

Frequently Asked Questions (FAQs)

Q1: Why should I use an autoencoder instead of a standard CNN for parasite image classification? Autoencoders are particularly effective in class-imbalanced scenarios, common in medical imaging, where you have many more "normal" (e.g., uninfected) samples than "anomalous" (e.g., parasitized) ones. Instead of learning to distinguish between classes, an autoencoder is trained only on normal data to learn an efficient representation or "identity" of what a healthy sample looks like. During inference, it flags anomalies based on high reconstruction error; anomalous inputs (e.g., cells with parasites) deviate from the learned normal pattern and are thus reconstructed poorly [42] [6]. This unsupervised approach means you don't need a large, balanced dataset of rare anomalous samples to train an effective model.

Q2: What is a typical performance benchmark for this method? When applied to medical image classification, autoencoder-based anomaly detection can achieve highly competitive results. For instance, in malaria cell image classification, the AnoMalNet model achieved the following performance metrics on a dataset containing parasitized and uninfected cells [6]:

| Metric | Reported Performance |

|---|---|

| Accuracy | 98.49% |

| Precision | 97.07% |

| Recall | 100% |

| F1 Score | 98.52% |

Q3: My autoencoder reconstructs anomalies too well. How can I improve its sensitivity? This is often a sign that the model's capacity is too high, allowing it to "memorize" the input without learning a meaningful representation of the underlying normal structure. To address this [42]:

- Reduce Model Capacity: Make the bottleneck layer (the narrowest layer, between the encoder and the decoder) smaller. This forces the network to learn only the most critical features of the normal data.

- Introduce Noise: Use a denoising autoencoder, where the input is corrupted with noise but the network is tasked with reconstructing the clean original. This encourages the model to be more robust and learn broader features.

- Adjust the Threshold: The threshold on the reconstruction loss that flags an anomaly may need recalibration on your validation set.

Q4: How do I choose the right reconstruction error threshold for my data? There is no universal threshold. The standard practice is to [42]:

- Calculate the reconstruction errors for your normal validation set (data not used during training).

- Analyze the distribution of these errors (e.g., plot a histogram).

- Set the threshold to a value that captures the majority of these normal samples, such as the 95th or 99th percentile of the error distribution. Any new sample with an error higher than this threshold is flagged as an anomaly.
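
The three steps above can be sketched in a few lines of plain Python. The reconstruction errors here are synthetic numbers chosen for illustration (in practice they would be per-image errors, such as MSE, from the trained autoencoder), and the helper names are assumptions.

```python
import math

def percentile(values, q):
    """q-th percentile (0-100) with linear interpolation between sorted values."""
    ordered = sorted(values)
    rank = (len(ordered) - 1) * q / 100.0
    lo, hi = math.floor(rank), math.ceil(rank)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (rank - lo)

def flag_anomalies(errors, threshold):
    """True where the reconstruction error exceeds the threshold."""
    return [e > threshold for e in errors]

# Hypothetical reconstruction errors on normal validation images.
normal_val_errors = [0.010 + 0.001 * i for i in range(101)]
threshold = percentile(normal_val_errors, 95)

# Hypothetical errors for three new cells.
new_errors = [0.02, 0.10, 0.25]
flags = flag_anomalies(new_errors, threshold)
print(f"threshold = {threshold:.3f}, flags = {flags}")
```

Choosing the 95th percentile accepts that roughly 5% of normal cells will be flagged; moving to the 99th percentile trades fewer false positives for a higher risk of missed parasites.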

Q5: What are the advantages of a multi-layer autoencoder over a single-layer one? Using multiple layers in the encoder and decoder allows the network to learn a hierarchical feature representation [42]. The initial layers may learn simple, low-level features (like edges), while deeper layers combine these into more complex, high-level patterns. This is crucial for accurately modeling and reconstructing intricate structures in biological images, leading to better anomaly detection performance compared to a single, simplistic layer.

Troubleshooting Guides

Problem: The Model Fails to Detect Anomalies (High False Negative Rate)

- Potential Cause: The model has learned an overly general representation of "normal" and is not sensitive enough to the specific features of your anomalies.

- Solutions:

- Refine Training Data: Ensure your training set is pure and contains only high-quality, confirmed normal samples. Even a small number of anomalies in the training set can teach the model that they are normal.

- Use a Multi-Layer Architecture: As mentioned in the FAQs, a deeper network can capture more discriminative features of normalcy. A single-layer autoencoder might be too simplistic [42].

- Experiment with Loss Functions: The standard Mean Squared Error (MSE) might not always be optimal. Test other loss functions such as Mean Absolute Error (MAE) or a loss based on the Structural Similarity Index (SSIM, typically used as 1 - SSIM), which can be more sensitive to certain types of image distortions.

Problem: The Model Flags Too Many Normal Samples as Anomalies (High False Positive Rate)

- Potential Cause 1: The reconstruction error threshold is set too low.

- Solution: Re-evaluate the threshold on your validation set as described in Q4. If the distribution of normal errors is wide, a higher threshold is needed.

- Potential Cause 2: High variance in the "normal" class.

- Solution: Perform data augmentation (e.g., rotation, scaling, brightness adjustment) on your normal training images only to increase the diversity of normal samples the model sees. This helps it learn a more robust definition of normalcy [43].
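A minimal sketch of augmenting normal images only, assuming images are float arrays scaled to [0, 1]. It covers 90-degree rotations, horizontal flips, and brightness scaling; the brightness range of 0.8 to 1.2 is an arbitrary illustrative choice, and arbitrary-angle rotation or rescaling would need an image library.

```python
import numpy as np

def augment_normal(image, rng):
    """Apply a random 90-degree rotation, optional horizontal flip, and brightness change.

    Expects an (H, W, C) float image in [0, 1].
    """
    out = np.rot90(image, k=int(rng.integers(0, 4)), axes=(0, 1))
    if rng.random() < 0.5:
        out = out[:, ::-1]                 # horizontal flip
    factor = rng.uniform(0.8, 1.2)         # hypothetical brightness range
    return np.clip(out * factor, 0.0, 1.0)

rng = np.random.default_rng(0)
normal_image = rng.random((64, 64, 3))     # stand-in for an uninfected cell image
augmented = [augment_normal(normal_image, rng) for _ in range(8)]
```

Only the normal training images should pass through this function; validation and test images are left unaugmented so that the threshold and metrics reflect real data.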

Problem: The Model Does Not Converge During Training

- Potential Causes and Solutions:

- Learning Rate is Incorrect: The learning rate might be too high (causing divergence) or too low (causing extremely slow progress). Implement a learning rate scheduler to adjust it during training.

- Data is Not Properly Normalized: Ensure your input image pixel values are scaled to a standard range, typically [0, 1] or [-1, 1]. This is a critical pre-processing step.

- Gradient Explosion/Vanishing: This is common in deep networks. Using activation functions like ReLU and techniques like batch normalization can help mitigate this issue.
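Two of the fixes above are easy to show concretely: pixel scaling and a simple learning rate schedule. This is a sketch under assumptions: inputs are 8-bit images, and the step-decay rule (halve the rate every 10 epochs) is one illustrative schedule among many.

```python
import numpy as np

def scale_pixels(images_uint8, symmetric=False):
    """Scale 8-bit pixel values to [0, 1], or to [-1, 1] if symmetric=True."""
    x = np.asarray(images_uint8, dtype=np.float32) / 255.0
    return x * 2.0 - 1.0 if symmetric else x

def step_decay(initial_lr, epoch, drop=0.5, every=10):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return initial_lr * drop ** (epoch // every)

raw = np.array([[0, 128, 255]], dtype=np.uint8)
unit = scale_pixels(raw)                   # values in [0, 1]
sym = scale_pixels(raw, symmetric=True)    # values in [-1, 1]
lrs = [step_decay(1e-3, e) for e in (0, 9, 10, 25)]
```

Match the scaling to the decoder's output activation: a sigmoid output pairs with [0, 1], while a tanh output pairs with [-1, 1].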

Experimental Protocols & Data

The following table summarizes reported performance of autoencoder-based anomaly detection in biological imaging, alongside the models it was compared against.

| Study / Model | Application | Key Performance Metrics | Comparative Models |
|---|---|---|---|
| AnoMalNet [6] | Malaria Cell Image Classification | Accuracy: 98.49%, Precision: 97.07%, Recall: 100%, F1: 98.52% | Outperformed VGG16, ResNet50, MobileNetV2, LeNet |
| Bilik et al. [44] [45] | Phytoplankton Parasite Detection | Overall F1 Score: 0.75 (unsupervised AE) | Supervised Faster R-CNN achieved F1: 0.86, but requires anomaly labels |

Detailed Methodology: Implementing an Autoencoder for Anomaly Detection

This protocol outlines the core steps for training and evaluating an autoencoder-based anomaly detection system, mirroring the approach used in AnoMalNet [6] and other studies [42].

1. Data Preparation and Preprocessing

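- Data Splitting: Split your data into three sets: Training, Validation, and Test. The Validation set holds normal samples withheld from training and is used to calibrate the reconstruction-error threshold.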

- Strictly Normal Training Set: The Training set must contain only normal (uninfected) samples. This is the foundational principle of the method.

- Balanced Test Set: The Test set should contain a mix of normal and anomalous (parasitized) samples to properly evaluate performance.

- Image Preprocessing: Normalize pixel values, resize images to a consistent shape (e.g., 28x28 for MNIST, higher for real microscopy images), and flatten them if using fully-connected layers.
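The splitting rules above can be sketched as an index split. This is an illustrative helper, not the procedure from the cited studies: the function name, the 15% fractions, and the label convention (0 = normal, 1 = parasitized) are all assumptions.

```python
import numpy as np

def split_for_anomaly_detection(labels, val_frac=0.15, test_frac=0.15, seed=0):
    """Return (train_idx, val_idx, test_idx) index arrays.

    Training and validation receive normal samples only; the test set gets
    held-out normal samples plus every anomalous sample.
    """
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    normal_idx = rng.permutation(np.flatnonzero(labels == 0))
    anomaly_idx = np.flatnonzero(labels == 1)

    n_val = int(len(normal_idx) * val_frac)
    n_test = int(len(normal_idx) * test_frac)

    val_idx = normal_idx[:n_val]
    test_normal_idx = normal_idx[n_val:n_val + n_test]
    train_idx = normal_idx[n_val + n_test:]
    test_idx = np.concatenate([test_normal_idx, anomaly_idx])
    return train_idx, val_idx, test_idx

# Toy label vector: 100 normal and 20 parasitized samples.
labels = np.array([0] * 100 + [1] * 20)
train_idx, val_idx, test_idx = split_for_anomaly_detection(labels)
```

If several images come from the same slide or patient, splitting by slide or patient rather than by image avoids leakage between the sets.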

2. Model Architecture Definition

- Encoder: A network that compresses the input into a lower-dimensional latent space. It typically consists of progressively smaller dense or convolutional layers.

- Bottleneck: The innermost layer that holds the compressed representation of the input. Its size is a key hyperparameter.

- Decoder: A network that aims to reconstruct the input from the bottleneck representation. It is typically symmetric to the encoder.

3. Model Training

- Loss Function: Use a pixel-wise reconstruction loss, such as Mean Squared Error (MSE) or Binary Cross-Entropy.

- Objective: The model is trained to minimize the difference between its output x' and the input x (x' ≈ x). It learns to replicate normal data efficiently.

4. Inference and Anomaly Detection

- Calculate Reconstruction Loss: Pass a new sample through the trained autoencoder and compute the reconstruction loss (e.g., MSE).

- Apply Threshold: Classify the sample based on a predefined threshold. If loss > threshold, the sample is flagged as an anomaly.
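The four steps can be walked through end to end on synthetic data. To keep the sketch dependency-free and deterministic, it swaps the trained neural autoencoder for a linear encoder/decoder with tied weights fitted in closed form via SVD (equivalent to PCA). This is a deliberate simplification, not the AnoMalNet architecture, and the data, dimensions, and 99th-percentile threshold are illustrative assumptions.

```python
import numpy as np

def fit_linear_autoencoder(x_train, bottleneck):
    """Fit a tied-weight linear encoder/decoder in closed form (PCA via SVD)."""
    mean = x_train.mean(axis=0)
    _, _, vt = np.linalg.svd(x_train - mean, full_matrices=False)
    components = vt[:bottleneck]           # shape: (bottleneck, n_features)
    return mean, components

def reconstruction_error(x, mean, components):
    """Per-sample MSE between each input x and its reconstruction x'."""
    code = (x - mean) @ components.T       # encoder: project into the bottleneck
    x_hat = code @ components + mean       # decoder: map back to input space
    return np.mean((x - x_hat) ** 2, axis=1)

rng = np.random.default_rng(0)
basis = rng.normal(size=(3, 64))           # "normal" data lies near a 3-D subspace

def sample_normal(n):
    return rng.normal(size=(n, 3)) @ basis + 0.05 * rng.normal(size=(n, 64))

# Steps 1-3: normal-only training and validation sets, then fit the model.
x_train, x_val, x_test_normal = sample_normal(500), sample_normal(200), sample_normal(100)
x_test_anomalous = 2.0 * rng.normal(size=(100, 64))   # off-subspace samples
mean, components = fit_linear_autoencoder(x_train, bottleneck=3)

# Step 4: calibrate the threshold on normal validation errors, then flag test samples.
threshold = np.percentile(reconstruction_error(x_val, mean, components), 99)
normal_flags = reconstruction_error(x_test_normal, mean, components) > threshold
anomaly_flags = reconstruction_error(x_test_anomalous, mean, components) > threshold
```

A convolutional autoencoder would replace `fit_linear_autoencoder` and the encode/decode lines for real microscopy images; the thresholding logic stays the same.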

The workflow for this methodology is summarized in the following diagram:

The Scientist's Toolkit: Research Reagent Solutions

This table lists key components and their functions for building an autoencoder-based anomaly detection system.

| Item / Concept | Function / Explanation |
|---|---|
| Normal (Uninfected) Image Dataset | The foundational "reagent" for training. The autoencoder learns to model the features and distribution of these samples. Purity is critical. |
| Encoder Network | Acts as a "feature extractor" and "compressor." It reduces the high-dimensional input image into a compact, latent-space representation (the code). |
| Bottleneck (Latent Space) | The core of the autoencoder. Its restricted size forces the network to learn the most salient features of the normal data, preventing it from simply memorizing the input. |
| Decoder Network | Functions as a "generator" or "reconstructor." It attempts to recreate the original input from the compressed latent representation. |
| Reconstruction Loss (e.g., MSE) | Serves as the "anomaly score." It quantifies the difference between the original and reconstructed image. A high score indicates the input was unfamiliar to the model. |
| Threshold Value | The decision boundary. It is tuned on a validation set to define the maximum acceptable reconstruction error for a sample to be classified as normal. |

Frequently Asked Questions (FAQs) & Troubleshooting

FAQ 1: What is the primary advantage of using a Hybrid CapNet over a standard CNN for parasite image classification?

Hybrid CapNet architectures are specifically designed to overcome a key limitation of CNNs: the loss of spatial hierarchies and pose relationships between features due to pooling layers [46] [47]. In parasite classification, the spatial orientation and relationship of structures within a cell are critical for accurate identification. While CNNs excel at extracting deep semantic features, Capsule Networks within the hybrid model preserve spatial hierarchies, making the model more robust to morphological variations and rotations in blood smear images [46] [47]. This leads to better generalization across different datasets and staining protocols.

- Troubleshooting Guide: Model shows high accuracy on training data but poor performance on new, unseen blood smear images.

- Potential Cause: The model (especially a standard CNN) may be overfitting to low-level texture or color patterns specific to your training set and failing to generalize the essential spatial structures of parasites.

- Solution: Implement a Hybrid CapNet framework. Its capsule layers are designed to learn equivariant representations: a capsule's pose vector changes predictably as a structure rotates or shifts, so the structure can still be recognized regardless of its orientation or exact position. Furthermore, ensure your training data includes images from multiple sources with varying staining intensities to improve robustness [46].

FAQ 2: How can I address class imbalance in a multiclass parasite life-cycle stage dataset when using a Hybrid CapNet?

Class imbalance is a common challenge where certain parasite stages (e.g., early rings) are underrepresented, causing model bias toward the majority classes (e.g., trophozoites). A multi-faceted approach is required.

- Troubleshooting Guide: The model performs well on common parasite stages but fails to classify rare stages.

- Solution 1: Algorithm-Level Solution. Leverage the Hybrid CapNet's composite loss function. Integrate a Focal Loss component, which reduces the relative loss for well-classified examples, forcing the model to focus on hard-to-classify minority class samples during training [46] [48].

- Solution 2: Data-Level Solution. Employ hybrid sampling algorithms before training. A technique like SMOTE-RUS-NC can be applied: first, use the Neighborhood Cleaning rule to clean the data, then strategically combine Synthetic Minority Oversampling Technique (SMOTE) with Random Undersampling (RUS) to achieve an optimal class balance [49]. Another effective method is the Hybrid Cluster-Based Oversampling and Undersampling (HCBOU), which uses K-means clustering to generate meaningful synthetic data for minority classes and selectively remove majority class samples to minimize information loss [50].
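To make the focal loss idea in Solution 1 concrete, here is a minimal NumPy sketch of a multiclass focal loss. The focusing parameter `gamma=2.0` is the commonly used default from the original focal loss formulation, not a value taken from the Hybrid CapNet study, and the optional per-class `alpha` weights are likewise an assumption.

```python
import numpy as np

def focal_loss(probs, targets, gamma=2.0, alpha=None):
    """Mean multiclass focal loss: -alpha_t * (1 - p_t)^gamma * log(p_t).

    probs:   (n, n_classes) predicted class probabilities.
    targets: (n,) integer class labels.
    alpha:   optional per-class weights (hypothetical; e.g. larger for rare stages).
    """
    probs = np.asarray(probs, dtype=float)
    targets = np.asarray(targets)
    p_t = np.clip(probs[np.arange(len(targets)), targets], 1e-12, 1.0)
    weight = (1.0 - p_t) ** gamma          # small for well-classified examples
    if alpha is not None:
        weight = weight * np.asarray(alpha, dtype=float)[targets]
    return float(np.mean(-weight * np.log(p_t)))

probs = np.array([[0.95, 0.03, 0.02],      # easy example, true class 0
                  [0.30, 0.60, 0.10]])     # hard example, true class 2
targets = np.array([0, 2])

# Ratio of focal loss to plain cross-entropy (gamma=0) for each example.
easy_ratio = focal_loss(probs[:1], targets[:1]) / focal_loss(probs[:1], targets[:1], gamma=0.0)
hard_ratio = focal_loss(probs[1:], targets[1:]) / focal_loss(probs[1:], targets[1:], gamma=0.0)
```

Here the easy example keeps only (1 - 0.95)^2 = 0.25% of its cross-entropy loss, while the hard example keeps (1 - 0.10)^2 = 81%, which is how training effort shifts toward poorly classified minority samples.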

FAQ 3: My model's decisions are not interpretable. How can I verify that the Hybrid CapNet is focusing on biologically relevant parasite regions?

Interpretability is crucial for gaining the trust of clinicians and researchers. Hybrid CapNet offers inherent advantages due to the pose parameters learned by capsules, but additional techniques can be used.

- Troubleshooting Guide: Need to validate which parts of an image the model uses for classification.

- Solution: Utilize visualization techniques like Grad-CAM (Gradient-weighted Class Activation Mapping). This generates a heatmap overlay on the original input image, highlighting the regions that most strongly influenced the model's decision. Studies on Hybrid CapNet for malaria detection have used Grad-CAM to confirm that the model's attention aligns with the actual locations of parasites within red blood cells, thereby validating its interpretability [46].

Protocol: Implementing and Evaluating a Hybrid CapNet for Parasite Classification

This protocol outlines the key steps for building and validating a Hybrid CapNet model, as described in recent literature [46].

- Data Preparation: Collect and preprocess blood smear images from multiple public datasets (e.g., MP-IDB, IML-Malaria) to ensure diversity. Apply standardization and augmentation techniques (rotations, flips, color jitter) to improve robustness.

- Handling Class Imbalance: Apply a hybrid sampling framework like HCBOU [50] or SMOTE-RUS-NC [49] to the training split to create a balanced dataset.

- Model Architecture Configuration:

- CNN Backbone: Design a lightweight convolutional neural network for initial feature extraction. This typically involves several convolutional and pooling layers.

- Capsule Network Layer: The features from the CNN are reshaped into primary capsules. These are then routed to a final layer of class capsules using a dynamic routing algorithm. The length of each class capsule vector represents the probability of that class.

- Training with Composite Loss Function: Train the model using a composite loss function that combines:

- Margin Loss: The primary loss for Capsule Networks. It pushes the length of the correct class capsule above an upper margin and the lengths of all other class capsules below a lower margin.

- Focal Loss: To handle class imbalance by down-weighting the loss contributed by easy-to-classify examples.

- Reconstruction Loss: A decoder network reconstructs the input image from the capsule outputs, encouraging the capsules to encode meaningful features.

- Evaluation: Perform both intra-dataset and cross-dataset validation. Use metrics like Accuracy, F1-Score, and Area Under the Curve (AUC) to assess performance. Generate Grad-CAM visualizations to interpret model focus areas.
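The capsule-specific pieces of this protocol, the squashing non-linearity and the margin loss, can be sketched as follows. The margins `m_plus=0.9`, `m_minus=0.1` and the down-weighting factor `lam=0.5` are the defaults from the original capsule network formulation and are assumptions here; the source does not state the values or loss weights used in the Hybrid CapNet.

```python
import numpy as np

def squash(s, eps=1e-9):
    """Capsule squashing: preserves direction and maps vector length into [0, 1)."""
    sq_norm = np.sum(s ** 2, axis=-1, keepdims=True)
    return (sq_norm / (1.0 + sq_norm)) * s / np.sqrt(sq_norm + eps)

def margin_loss(class_capsules, targets, m_plus=0.9, m_minus=0.1, lam=0.5):
    """Mean margin loss over a batch.

    class_capsules: (n, n_classes, dim) class capsule outputs.
    targets:        (n,) integer class labels.
    """
    lengths = np.linalg.norm(class_capsules, axis=-1)      # (n, n_classes)
    onehot = np.eye(lengths.shape[1])[targets]
    present = onehot * np.maximum(0.0, m_plus - lengths) ** 2
    absent = lam * (1.0 - onehot) * np.maximum(0.0, lengths - m_minus) ** 2
    return float(np.mean(np.sum(present + absent, axis=1)))

rng = np.random.default_rng(0)
raw = rng.normal(size=(4, 3, 8))           # 4 samples, 3 classes, 8-D capsules
capsules = squash(raw)
targets = np.array([0, 1, 2, 0])
loss = margin_loss(capsules, targets)

# A confident, correct prediction incurs zero margin loss.
perfect = np.zeros((1, 3, 8))
perfect[0, 0, 0] = 0.95                    # correct class capsule is long
perfect[0, 1, 0] = 0.05                    # other class capsules are short
perfect[0, 2, 0] = 0.05
perfect_loss = margin_loss(perfect, np.array([0]))
```

In a composite objective, this term would be summed with weighted focal and reconstruction terms; the relative weights are hyperparameters to tune on a validation split.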

Table 1: Performance Comparison of Hybrid CapNet on Benchmark Datasets

This table summarizes the quantitative performance of a Hybrid CapNet as reported in research, demonstrating its high accuracy and computational efficiency [46].

| Dataset Name | Reported Accuracy | Evaluation Task | Computational Cost (GFLOPs) |
|---|---|---|---|
| MP-IDB | Up to 100% | Multiclass Classification | 0.26 |
| MP-IDB2 | Consistent Improvements | Cross-dataset Generalization | 0.26 |
| IML-Malaria | Superior to CNN baselines | Life-cycle Stage Classification | 0.26 |
| MD-2019 | High Performance | Parasite Detection | 0.26 |

Table 2: Hybrid Sampling Algorithms for Class Imbalance

This table compares data-level methods to address class imbalance, a critical step in pre-processing for parasite image classification [49] [50].

| Sampling Method | Type | Key Mechanism | Best Suited For |

|---|---|---|---|