Automating Parasitology: A Comprehensive Guide to YOLO Models for Accurate Parasite Egg Detection

Abstract

Intestinal parasitic infections remain a significant global health challenge, particularly in resource-limited settings. Traditional diagnostic methods relying on manual microscopic examination are time-consuming, labor-intensive, and prone to human error. This article explores the transformative potential of YOLO (You Only Look Once) deep learning models for automating the detection and classification of parasite eggs in microscopic images. We provide a comprehensive analysis spanning from foundational concepts and state-of-the-art model architectures to practical optimization techniques and rigorous validation metrics. Tailored for researchers, scientists, and drug development professionals, this review synthesizes current research findings, demonstrates performance benchmarks achieving over 99% precision in some studies, and discusses implementation strategies for developing accurate, efficient, and accessible diagnostic tools for biomedical and clinical applications.

The Diagnostic Challenge: Why Automated Parasite Egg Detection Matters

For over a century, traditional light microscopy has served as the cornerstone of pathological and parasitological diagnosis and remains the gold standard for examining tissue samples and identifying parasitic infections [1]. Despite this foundational role, the conventional method has significant inherent limitations in modern healthcare settings, chiefly its time-consuming nature and susceptibility to human error [2] [3]. These challenges persist because diagnosis relies heavily on manual examination by skilled technicians, a labor-intensive task that can lead to diagnostic delays, increased healthcare costs, and misdiagnoses, particularly in resource-constrained environments [2] [4]. The emergence of digital pathology and advanced deep learning models, specifically YOLO (You Only Look Once) architectures, offers a way to address these longstanding limitations by automating detection workflows [1] [2] [5].

Quantifying the Limitations of Traditional Microscopy

The constraints of manual microscopy can be systematically categorized and quantified, providing a clear rationale for the adoption of automated systems.

Table 1: Key Limitations of Traditional Microscopy in Parasite Diagnosis

| Limitation Category | Specific Challenge | Impact on Diagnostic Workflow |
|---|---|---|
| Time Consumption | Labor-intensive manual examination [4] [6] | Slow throughput (approx. 30 minutes per sample [5]); delays in diagnosis and treatment |
| | Requirement for specialist expertise [2] [7] | Creates bottlenecks in high-volume settings [2] |
| Human Error & Subjectivity | Susceptibility to false negatives/positives [2] [3] | Compromised diagnostic accuracy; varies with examiner skill and fatigue |
| | Morphological similarities between eggs and artifacts [2] | Leads to misdiagnosis; low sensitivity in manual identification [3] |
| Operational Inefficiency | Fragile glass slides and physical storage [1] | Logistical complications and risk of sample damage during transport |
| | Inefficient remote consultations [1] | Requires shipping physical slides, causing significant delays |

The YOLO Framework: An Automated Solution for Parasite Egg Detection

YOLO-based deep learning models represent a paradigm shift in parasitological diagnostics. These one-stage object detection algorithms can identify and classify parasitic elements in microscopic images with remarkable speed and accuracy, directly mitigating the constraints of manual microscopy [8] [5].

Experimental Performance of YOLO Models

Published studies report that optimized YOLO variants achieve high precision, recall, and mAP in detecting and recognizing parasitic eggs, supporting their use for rapid, automated diagnostics.

Table 2: Performance Metrics of YOLO Models in Parasite Detection

| Model Variant | Reported Performance Metrics | Experimental Context |
|---|---|---|
| YCBAM (YOLOv8-based) [2] [3] | Precision: 0.9971, Recall: 0.9934, mAP@0.5: 0.9950 | Detection of pinworm parasite eggs in microscopic images |
| YOLOv7-tiny [9] | mAP: 98.7% | Recognition of 11 species of intestinal parasitic eggs in stool microscopy |
| YOLOv5 [5] | mAP: ~97%, Detection Time: 8.5 ms per sample | Detection and classification of six common classes of protozoan cysts and helminthic eggs |
| YOLOv3 [7] | Recognition Accuracy: 94.41% | Recognition of Plasmodium falciparum in clinical thin blood smears |
| YAC-Net (YOLOv5n-based) [8] | Precision: 97.8%, mAP@0.5: 0.9913, Parameters: 1.92 million | Lightweight model for parasite egg detection; optimized for low computational resources |

Detailed Experimental Protocol for YOLO-Based Parasite Egg Detection

The following protocol outlines a standard methodology for training and validating a YOLO model for automated parasite egg detection, synthesizing common approaches from recent studies [2] [9] [5].

Objective: To train a deep learning model for the automated detection and localization of parasite eggs in digitized whole-slide microscopic images.

Materials and Reagents:

- Microscopy System: A standard light microscope equipped with a high-resolution digital camera or a dedicated whole-slide scanner (e.g., Grundium Ocus series) [1].

- Glass Slides: Prepared sample smears stained using standard parasitological methods (e.g., Giemsa stain, as used for blood smears in [7]).

- Computing Hardware: A computer with a dedicated GPU (e.g., a CUDA-capable NVIDIA card) for model training. Embedded platforms like Jetson Nano or Raspberry Pi can be used for deployment [9].

- Software: Python programming language with PyTorch or TensorFlow frameworks, and specific YOLO repositories (e.g., Ultralytics for YOLOv5/v8).

Procedure:

Sample Preparation & Image Acquisition:

- Prepare thin smears of stool samples on glass slides and apply appropriate staining [7].

- Scan the slides using a digital slide scanner or capture images directly from the microscope using a high-resolution camera to create a dataset of digital images [1]. Ensure consistent magnification (e.g., 10x or 40x) [5].

Data Preprocessing & Annotation:

- Image Cropping and Resizing: Use a sliding window approach to crop large scanned images into smaller sub-images compatible with the YOLO model's input size (e.g., 416x416 pixels). Resize images while preserving the aspect ratio, using padding if necessary [7].

- Data Augmentation: Apply techniques such as rotation, flipping, scaling, and color space adjustments (e.g., hue, saturation) to increase the diversity and size of the training dataset, improving model robustness [5].

- Annotation: Using a graphical annotation tool (e.g., Roboflow), expert parasitologists manually draw bounding boxes around each parasite egg in every image and assign the correct class label (e.g., Enterobius vermicularis, Hookworm) [5]. This creates the ground truth data.
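
The cropping and padding step described above can be sketched in plain Python. This is a minimal sketch under stated assumptions: the function names and the 64-pixel tile overlap are illustrative choices, not values from [7], and the code only computes crop boxes and padding amounts, leaving the pixel operations to whatever imaging library you use. Overlapping tiles reduce the chance that an egg is cut in half at a tile border; eggs that still straddle a border appear in two crops, and their detections need to be merged afterwards.

```python
from typing import List, Tuple


def tile_origins(length: int, tile: int, overlap: int) -> List[int]:
    """Start offsets along one axis so that tiles of size `tile` cover [0, length)."""
    if overlap < 0 or tile <= overlap:
        raise ValueError("need 0 <= overlap < tile")
    if length <= tile:
        return [0]  # image smaller than one tile: a single crop, padded later
    stride = tile - overlap
    starts = list(range(0, length - tile + 1, stride))
    if starts[-1] + tile < length:
        starts.append(length - tile)  # shift the last tile back so it ends at the border
    return starts


def sliding_windows(width: int, height: int, tile: int = 416,
                    overlap: int = 64) -> List[Tuple[int, int, int, int]]:
    """(x0, y0, x1, y1) crop boxes that together cover a width x height image."""
    return [
        (x, y, min(x + tile, width), min(y + tile, height))
        for y in tile_origins(height, tile, overlap)
        for x in tile_origins(width, tile, overlap)
    ]


def letterbox_params(width: int, height: int,
                     target: int = 416) -> Tuple[float, int, int, int, int]:
    """Scale factor and (left, top, right, bottom) padding that fit an image
    into a target x target square without distorting its aspect ratio."""
    scale = min(target / width, target / height)
    new_w, new_h = round(width * scale), round(height * scale)
    pad_w, pad_h = target - new_w, target - new_h
    left, top = pad_w // 2, pad_h // 2
    return scale, left, top, pad_w - left, pad_h - top


if __name__ == "__main__":
    for box in sliding_windows(1000, 800):
        print(box)
    print(letterbox_params(1000, 800))
```

For a 1000 x 800 pixel image, `sliding_windows(1000, 800)` yields a 3 x 3 grid of 416 x 416 crops, with the last row and column shifted back so they end exactly at the image border.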

Model Training & Validation:

- Dataset Splitting: Randomly divide the annotated dataset into training (80%), validation (10%), and test (10%) sets [7].

- Model Selection & Configuration: Choose a YOLO model architecture (e.g., YOLOv5, YOLOv8). Initialize the model with pre-trained weights from a general dataset (like COCO) to leverage transfer learning.

- Integration of Attention Mechanisms (Optional): For enhanced performance on small objects like pinworm eggs, integrate attention modules such as the Convolutional Block Attention Module (CBAM) into the YOLO architecture to help the model focus on relevant features [2] [3].

- Training Loop: Train the model on the training set. Use the validation set to tune hyperparameters (e.g., learning rate, batch size) and monitor for overfitting.
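
A reproducible 80/10/10 split might look like the following sketch; the function name and seed are hypothetical. One assumption worth making explicit: the split is done on whole images (ideally whole slides or patients) before any tiling or augmentation, so that near-duplicate crops of the same egg cannot end up in both the training and test sets.

```python
import random
from typing import Dict, List, Sequence


def split_dataset(image_ids: Sequence[str], train: float = 0.8, val: float = 0.1,
                  seed: int = 42) -> Dict[str, List[str]]:
    """Randomly partition image IDs into train/val/test; the remainder goes to test."""
    if not 0.0 < train + val < 1.0:
        raise ValueError("train + val must lie strictly between 0 and 1")
    ids = sorted(image_ids)            # sort first so the result depends only on the seed
    random.Random(seed).shuffle(ids)   # local RNG: leaves the global random state untouched
    n_train = int(len(ids) * train)
    n_val = int(len(ids) * val)
    return {
        "train": ids[:n_train],
        "val": ids[n_train:n_train + n_val],
        "test": ids[n_train + n_val:],
    }


if __name__ == "__main__":
    parts = split_dataset([f"img_{i:03d}" for i in range(100)])
    print({name: len(subset) for name, subset in parts.items()})
```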

Model Evaluation:

- Performance Metrics: Evaluate the final model on the held-out test set using standard object detection metrics, including Precision, Recall, F1-score, and mean Average Precision (mAP) at an Intersection over Union (IoU) threshold of 0.5 [2] [8].

- Explainable AI (XAI) Analysis: Employ visualization techniques like Gradient-weighted Class Activation Mapping (Grad-CAM) to generate heatmaps that highlight the image regions most influential to the model's decision. These heatmaps help build trust and reveal whether the model attends to the eggs themselves rather than to background artifacts [9].
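
To make the IoU criterion concrete, here is a sketch of how one image's detections might be scored at IoU 0.5. It assumes boxes in pixel (x1, y1, x2, y2) format and uses greedy, confidence-ordered matching, the usual convention for detection benchmarks. The helper names are hypothetical, and frameworks such as Ultralytics compute these metrics automatically during validation.

```python
from typing import List, Set, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_detections(preds: List[Tuple[Box, float]], gts: List[Box],
                     iou_thr: float = 0.5) -> Tuple[int, int, int]:
    """Count (TP, FP, FN) for one image and one class.

    Predictions are visited from highest to lowest confidence; each claims the
    unmatched ground-truth box it overlaps best, provided IoU >= iou_thr.
    """
    matched: Set[int] = set()
    tp = fp = 0
    for box, _score in sorted(preds, key=lambda p: p[1], reverse=True):
        best_j, best_iou = -1, iou_thr
        for j, gt in enumerate(gts):
            overlap = iou(box, gt)
            if j not in matched and overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j >= 0:
            matched.add(best_j)
            tp += 1
        else:
            fp += 1  # no egg left to match: a false positive
    return tp, fp, len(gts) - len(matched)  # unmatched eggs are false negatives


def prf1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall and F1 from detection counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


if __name__ == "__main__":
    gts = [(10, 10, 50, 50), (100, 100, 140, 140)]
    preds = [((12, 12, 52, 52), 0.9), ((100, 100, 140, 140), 0.8), ((200, 200, 230, 230), 0.4)]
    tp, fp, fn = match_detections(preds, gts)
    print(tp, fp, fn, prf1(tp, fp, fn))
```

In the demo, two predictions overlap a ground-truth egg and one falls on background, giving TP = 2, FP = 1 and FN = 0, hence precision 2/3, recall 1.0 and F1 0.8.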

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Solutions for Automated Parasite Detection

| Item | Function/Application |

|---|---|

| Whole-Slide Digital Scanner | Converts physical glass slides into high-resolution digital whole-slide images (WSIs) for analysis [1]. |

| Giemsa Stain | Standard staining reagent used to enhance the contrast and visibility of parasitic structures in blood smears and other samples [7]. |

| Roboflow Annotation Tool | Web-based graphical interface for efficiently labeling and annotating bounding boxes on training images [5]. |

| Pre-trained YOLO Weights | Model parameters pre-trained on large datasets (e.g., COCO); used as a starting point for transfer learning, reducing required data and training time [5]. |

| Grad-CAM (Explainable AI Tool) | Provides visual explanations for model decisions, highlighting the features used to identify parasite eggs, which is crucial for clinical validation [9]. |

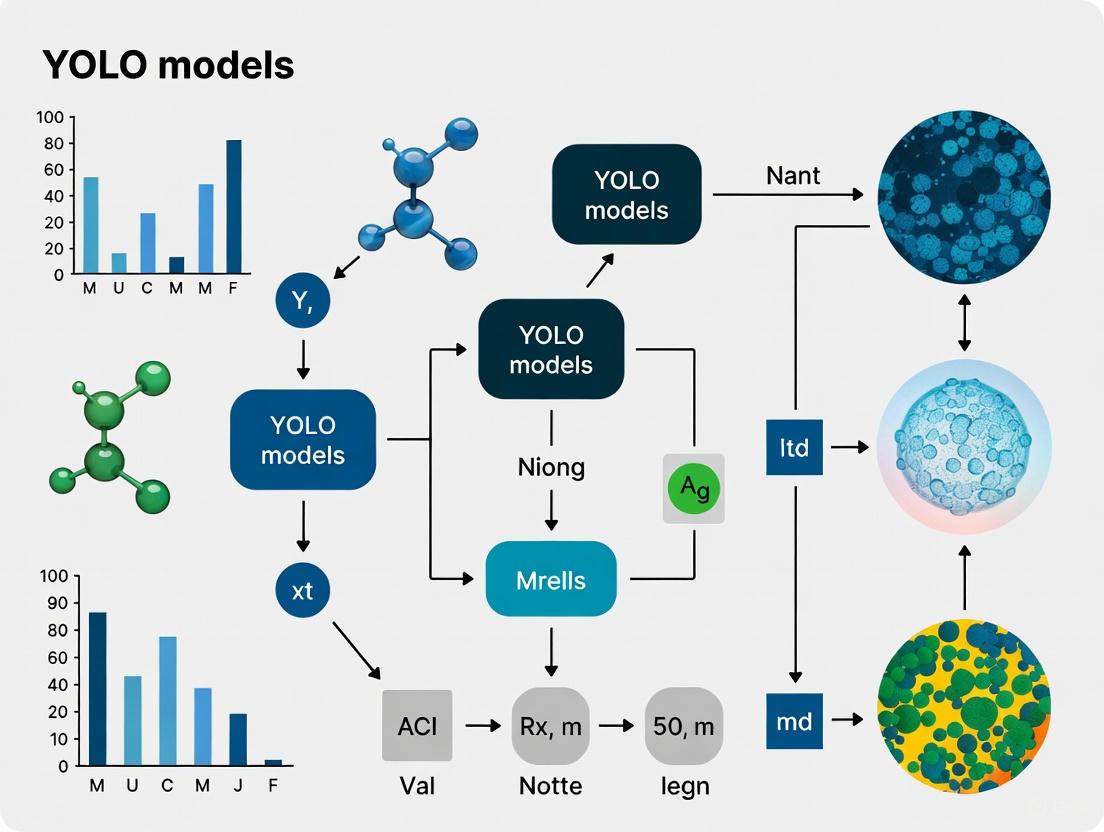

Workflow Visualization: From Sample to Diagnosis

The following diagram illustrates the integrated workflow, contrasting the traditional manual microscopy path with the automated AI-assisted diagnostic pipeline.

The limitations of traditional microscopy—specifically its time-consuming processes and vulnerability to human error—are no longer insurmountable obstacles in parasitology. The integration of digital pathology with robust, lightweight YOLO models provides a viable and superior alternative. These AI-driven systems enable rapid, accurate, and automated detection of parasitic eggs, significantly enhancing diagnostic efficiency and reliability. This technological evolution promises to reshape diagnostic workflows, particularly in resource-limited settings, ensuring faster and more precise patient care.

Public Health Impact of Intestinal Parasitic Infections (IPIs)

Intestinal parasitic infections (IPIs) represent a significant global public health challenge, particularly in low-income settings where access to clean water, sanitation, and hygiene (WASH) facilities is limited. These infections are caused by various protozoa and helminths and disproportionately affect vulnerable populations, including children, institutionalized groups, and communities in resource-poor regions [10] [11] [12]. The World Health Organization estimates that approximately 1.5 billion people are infected with soil-transmitted helminths globally, while protozoan parasites such as Giardia lamblia and Entamoeba histolytica also contribute substantially to the disease burden [10] [8].

The global distribution of IPIs varies significantly by region and population group. Recent systematic reviews indicate that the overall prevalence of IPIs among institutionalized populations is approximately 34.0%, with rehabilitation centers showing the highest prevalence at 57.0% [12]. Among general school-aged children in endemic areas, prevalence can be even higher, with studies from Jalalabad, Afghanistan, reporting infection rates of 48.8% [10]. The substantial burden of these infections manifests through nutritional deficiencies, impaired growth, poor cognitive development, and reduced academic performance in children, creating long-term consequences for human capital development in affected regions [10].

Table 1: Global Prevalence of Intestinal Parasitic Infections in Different Populations

| Population Group | Prevalence | Most Common Parasites | Geographic Context | Citation |
|---|---|---|---|---|
| Schoolchildren | 48.8% | Giardia lamblia (35.8%), Entamoeba histolytica (34.3%) | Jalalabad, Afghanistan | [10] |
| Institutionalized Populations | 34.0% | Blastocystis hominis (18.6%), Ascaris lumbricoides (5.0%) | Global (59 studies) | [12] |
| Rehabilitation Center Residents | 57.0% | Mixed protozoan and helminth infections | Multi-continental | [12] |

The economic impact of parasitic infections extends beyond human health to livestock and agriculture. Plant-parasitic nematodes alone cause global crop yield losses estimated at $125-350 billion annually, while human parasitic diseases reduce productivity and strain healthcare resources in endemic regions [11]. The World Health Organization reports that IPIs contribute significantly to disability-adjusted life years (DALYs), particularly in children, although their mortality rates are typically lower than those of many other infectious diseases [11].

Current Diagnostic Challenges and the Need for Automation

Limitations of Conventional Diagnostic Methods

Traditional methods for diagnosing IPIs rely primarily on microscopic examination of stool samples using techniques such as direct smear, formalin-ethyl acetate concentration technique (FECT), and Kato-Katz thick smear [13]. While these methods remain the gold standard in many settings due to their simplicity and cost-effectiveness, they suffer from several significant limitations:

- Time-consuming processes: Manual microscopic examination is labor-intensive, requiring skilled laboratory technicians to process and analyze each sample individually [2] [8].

- Diagnostic variability: Accuracy is highly dependent on the expertise and experience of the microscopist, leading to substantial inter-observer variability [14] [13].

- Low sensitivity: Especially in cases of low parasitic load, manual methods may yield false negatives, necessitating repeated examinations for reliable diagnosis [13].

- Workplace challenges: The process involves exposure to unpleasant odors and potentially infectious materials, creating an undesirable work environment [8].

These limitations are particularly problematic in resource-constrained settings where the burden of IPIs is highest, yet trained personnel and laboratory resources are most scarce. The diagnostic challenges contribute to underreporting, delayed treatment, and ongoing transmission in endemic communities.

The Promise of Automated Detection Systems

Recent advances in artificial intelligence, particularly deep learning and computer vision, offer transformative solutions to these diagnostic challenges. Automated detection systems based on convolutional neural networks (CNNs) and YOLO (You Only Look Once) architectures could substantially improve parasitology diagnostics [2] [9] [8] by:

- Reducing reliance on specialist expertise: Well-validated automated systems can approach expert-level accuracy without requiring highly trained parasitologists at every diagnostic site.

- Increasing throughput: AI-based systems can process samples significantly faster than human technicians, enabling large-scale screening programs.

- Improving accuracy and consistency: Computer vision models eliminate human fatigue factors and provide consistent application of diagnostic criteria.

- Enabling remote diagnostics: Digital pathology allows for telemedicine applications in remote or underserved areas.

The integration of automated detection systems into public health programs represents a promising strategy for expanding access to accurate diagnosis and enabling timely intervention for IPIs.

YOLO-Based Detection: Experimental Protocols and Workflows

Model Selection and Optimization Framework

The implementation of YOLO-based detection systems for intestinal parasites requires careful consideration of model architecture, computational requirements, and diagnostic performance. Recent research has evaluated multiple YOLO variants to identify optimal configurations for parasitic egg detection in microscopic images [9] [8] [13].

Table 2: Performance Comparison of YOLO Models for Parasite Egg Detection

| Model Variant | mAP@0.5 | Precision | Recall | Inference Speed (FPS) | Parameters | Key Strengths |
|---|---|---|---|---|---|---|
| YOLOv7-tiny | 98.7% | - | - | - | - | Highest mAP [9] |
| YOLOv10n | - | - | 100% | - | - | Highest recall [9] |
| YOLOv8n | - | - | - | 55 | - | Fastest inference [9] |
| YAC-Net | 99.13% | 97.8% | 97.7% | - | 1.92M | Optimized for low-resource settings [8] |
| YOLOv8-m | - | 62.02% | 46.78% | - | - | High specificity (99.13%) [13] |

The YAC-Net architecture exemplifies model optimization specifically for parasitic egg detection. This approach modifies the standard YOLOv5n baseline by [8]:

- Replacing the feature pyramid network (FPN) with an asymptotic feature pyramid network (AFPN) to better integrate spatial contextual information

- Substituting the C3 module with a C2f module in the backbone to enrich gradient flow

- Implementing adaptive spatial feature fusion to reduce computational complexity while maintaining detection performance

These modifications resulted in a 1.1% increase in precision, 2.8% improvement in recall, and reduction of parameters by one-fifth compared to the baseline YOLOv5n model, making it particularly suitable for resource-constrained environments [8].

Comprehensive Experimental Protocol

Sample Preparation and Image Acquisition

- Stool Processing: Collect fresh stool samples and process using formalin-ethyl acetate concentration technique (FECT) to concentrate parasitic elements [13].

- Slide Preparation: Prepare microscopic slides using direct smear or MIF (Merthiolate-iodine-formalin) staining techniques to enhance visual contrast [13].

- Digital Imaging: Capture high-resolution images (minimum 1000x1000 pixels) using microscope-mounted digital cameras at 100x-400x magnification.

- Data Curation: Assemble a diverse image dataset representing various parasite species, staining conditions, and image qualities to ensure model robustness.

Model Training and Validation

- Data Annotation: Manually annotate images using bounding boxes to identify and classify parasitic eggs, cysts, and trophozoites using standardized labeling protocols.

- Data Partitioning: Split the dataset into training (80%), validation (10%), and test (10%) sets, or use five-fold cross-validation, in which each fold serves once as the held-out set, to assess statistical reliability [8].

- Augmentation Strategies: Implement comprehensive data augmentation including rotation, flipping, color variation, and synthetic noise to improve model generalization.

- Training Configuration: Utilize transfer learning from pre-trained weights, with hyperparameter optimization focusing on learning rate (0.01-0.001), batch size (8-16 based on GPU memory), and optimizer selection (AdamW or SGD with momentum).

- Evaluation Metrics: Assess model performance using mean average precision (mAP), precision-recall curves, F1 scores, and inference latency on embedded platforms.
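
Five-fold cross-validation, cited above for [8], can be sketched as follows (a hypothetical stdlib-only helper, not the YAC-Net authors' code). Each image lands in exactly one held-out fold, so every image is used for evaluation once; metrics are then averaged across the five runs, and a validation subset for hyperparameter tuning can still be carved out of each training portion.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def k_fold_splits(image_ids: Sequence[str], k: int = 5,
                  seed: int = 0) -> Iterator[Tuple[List[str], List[str]]]:
    """Yield (train_ids, held_out_ids) for each fold; every ID is held out exactly once."""
    if not 2 <= k <= len(image_ids):
        raise ValueError("k must be between 2 and the number of images")
    ids = sorted(image_ids)
    random.Random(seed).shuffle(ids)
    folds = [ids[i::k] for i in range(k)]  # round-robin: fold sizes differ by at most one
    for i, held_out in enumerate(folds):
        train = [x for j, fold in enumerate(folds) if j != i for x in fold]
        yield train, held_out


if __name__ == "__main__":
    for fold, (train, held_out) in enumerate(k_fold_splits([f"img_{i}" for i in range(23)])):
        print(fold, len(train), len(held_out))
```

With 23 images and k = 5, the held-out folds contain 5, 5, 5, 4 and 4 images.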

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of YOLO-based parasite detection systems requires specific materials and computational resources. The following table outlines essential components of the research and deployment pipeline.

Table 3: Essential Research Reagents and Materials for Automated Parasite Detection

| Category | Item | Specification/Function | Application Notes |
|---|---|---|---|
| Sample Processing | Formalin-ethyl acetate | Concentration of parasitic elements | Gold standard concentration technique [13] |
| Staining Reagents | Merthiolate-iodine-formalin (MIF) | Fixation and staining of specimens | Enhances contrast for imaging [13] |
| Imaging Hardware | Microscope with digital camera | 100-400x magnification, ≥5MP resolution | Image acquisition quality critical for accuracy |
| Computational Resources | GPU-accelerated workstations | NVIDIA GTX 1080 Ti or superior | Model training and development |
| Deployment Platforms | Embedded systems (Jetson Nano, Raspberry Pi 4) | Edge computing for field deployment | Enables point-of-care diagnostics [9] |
| Annotation Software | LabelImg, VGG Image Annotator | Bounding box annotation for training data | Critical for supervised learning approach |
| Software Frameworks | PyTorch, TensorFlow, Ultralytics | Deep learning model implementation | Pre-trained models accelerate development |

Performance Validation and Comparative Analysis

Rigorous validation of YOLO-based detection systems demonstrates their strong potential for revolutionizing parasitology diagnostics. Recent comparative studies have evaluated these systems against human expert performance and alternative AI approaches.

In performance validation studies, the DINOv2-large model achieved remarkable accuracy of 98.93%, precision of 84.52%, and sensitivity of 78.00% in intestinal parasite identification [13]. Meanwhile, YOLOv8-m demonstrated accuracy of 97.59% with specificity of 99.13%, indicating exceptional performance in confirming true negatives [13]. These metrics approach or exceed human expert performance while offering significantly higher throughput.
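
A large gap between accuracy and sensitivity, as in the DINOv2-large figures above, is what one would expect when most evaluated samples are negative for a given parasite: true negatives then dominate both accuracy and specificity. The sketch below uses hypothetical counts (not data from [13]) to illustrate this effect; the helper names are likewise illustrative.

```python
from typing import Dict


def _ratio(num: int, den: int) -> float:
    return num / den if den else 0.0


def binary_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Screening metrics from a one-class-vs-rest 2x2 confusion matrix."""
    return {
        "accuracy": _ratio(tp + tn, tp + fp + tn + fn),
        "precision": _ratio(tp, tp + fp),
        "sensitivity": _ratio(tp, tp + fn),  # also called recall
        "specificity": _ratio(tn, tn + fp),
    }


if __name__ == "__main__":
    # Hypothetical counts: 1,000 samples, only 50 of which contain the target parasite.
    for name, value in binary_metrics(tp=39, fp=7, tn=943, fn=11).items():
        print(f"{name:12s} {value:.4f}")
```

With these counts, accuracy is 0.982 and specificity about 0.993, yet 11 of the 50 positive samples (22%) are missed, so sensitivity is only 0.78. Reporting sensitivity and precision alongside accuracy therefore matters for screening applications.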

The agreement between AI models and human technologists has been assessed quantitatively. Cohen's Kappa scores exceeded 0.90 for all models, indicating almost perfect agreement with human experts [13]. Bland-Altman analysis further confirmed strong concordance; the closest agreement was between FECT performed by medical technologists and YOLOv4-tiny predictions, with a mean difference of 0.0199 [13].
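
Both agreement statistics are short to compute. The sketch below is a generic implementation with hypothetical helper names: Cohen's kappa compares the observed agreement p_o with the agreement p_e expected by chance, kappa = (p_o - p_e) / (1 - p_e), and Bland-Altman analysis reports the mean paired difference (bias) together with 95% limits of agreement (mean ± 1.96 SD of the differences). Which quantities [13] paired for its Bland-Altman analysis is not specified here, so the inputs below are illustrative.

```python
from collections import Counter
from statistics import mean, stdev
from typing import Hashable, Sequence, Tuple


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two raters labelling the same items."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("ratings must be non-empty and of equal length")
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)


def bland_altman(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Bias (mean difference) and 95% limits of agreement for paired measurements."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for a, b in zip(x, y)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


if __name__ == "__main__":
    technologist = ["pos", "pos", "neg", "neg", "pos", "neg", "neg", "neg", "pos", "neg"]
    model = ["pos", "pos", "neg", "neg", "pos", "neg", "neg", "neg", "neg", "neg"]
    print(round(cohens_kappa(technologist, model), 3))
```

In the demo the two raters agree on 9 of 10 samples, but because the technologist calls 4 samples positive and the model 3, chance agreement is already 0.54, so kappa is about 0.78 rather than 0.90.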

Notably, YOLO-based models demonstrate particular strength in detecting helminthic eggs and larvae, which have more distinct morphological features than protozoan cysts and trophozoites [13]. This strength is significant from a public health perspective, as soil-transmitted helminths infect an estimated 1.5 billion people globally and are primary targets for mass drug administration programs.

Implications for Public Health and Future Directions

The integration of YOLO-based automated detection systems into public health programs offers transformative potential for combating intestinal parasitic infections. These technologies align with several critical public health priorities:

Enhanced Surveillance and Outbreak Response

Automated detection systems enable large-scale screening programs that can accurately monitor prevalence trends and rapidly identify outbreaks. The high throughput of these systems allows public health authorities to implement more responsive and targeted interventions based on real-time data [9] [8].

Resource Optimization in Endemic Settings

The development of lightweight YOLO variants capable of running on embedded systems like Raspberry Pi and Jetson Nano brings sophisticated diagnostic capabilities to remote and resource-limited settings [9]. This deployment flexibility addresses the critical gap in diagnostic resources that has historically hampered parasitic disease control in endemic regions.

Integration with Existing Health Systems

Successful implementation requires careful integration with existing laboratory systems and health infrastructure. Future development should focus on [8] [13]:

- Creating user-friendly interfaces for laboratory technicians

- Establishing standardized validation protocols

- Developing continuous learning systems that adapt to local parasite morphology

- Ensuring interoperability with laboratory information management systems

The promising performance of YOLO-based detection systems, with mAP scores exceeding 98% in some configurations [9], demonstrates the technical feasibility of automated parasite detection. As these systems continue to evolve, they offer the potential to significantly reduce the global burden of intestinal parasitic infections through earlier detection, more targeted treatment, and improved surveillance capabilities.

The Role of Deep Learning in Revolutionizing Parasitology Diagnostics

Parasitic infections remain a major global health challenge, affecting billions of people worldwide and causing significant morbidity and mortality [13] [15]. Traditional diagnostic methods in parasitology, particularly manual microscopic examination of stool samples, are time-consuming, labor-intensive, and susceptible to human error [3] [13]. These limitations are especially pronounced in resource-constrained settings and regions with high parasitic disease burden. The emergence of deep learning (DL), a subset of artificial intelligence (AI), has introduced transformative solutions to these diagnostic challenges. By automating the detection and identification of parasitic elements in microscopic images, DL technologies offer the potential to enhance diagnostic accuracy, improve efficiency, and enable large-scale screening programs. This article explores the revolutionary impact of DL on parasitology diagnostics, with a specific focus on You Only Look Once (YOLO) models for automated parasite egg detection, providing detailed application notes and experimental protocols for researchers and drug development professionals.

Deep Learning Approaches in Parasitology

Evolution of Diagnostic Methods

The gold standard for parasitology diagnostics has historically involved conventional techniques such as the formalin-ethyl acetate centrifugation technique (FECT) and Merthiolate-iodine-formalin (MIF) staining, followed by manual microscopic examination [13]. These methods, while cost-effective and widely available, present significant limitations. They are inherently subjective, dependent on technician expertise, and poorly suited for high-throughput settings. Studies have shown that in routine laboratory practice, only approximately 3% of submitted stool samples test positive for parasites, indicating substantial inefficiency in resource allocation [16]. Molecular methods like polymerase chain reaction (PCR) offer improved sensitivity and specificity but are often time-consuming, costly, and require specialized equipment and personnel [13].

Deep Learning Architectures for Parasite Detection

Deep learning has emerged as a powerful alternative, with several architectures demonstrating remarkable performance in parasitology diagnostics:

- YOLO Models: Single-stage object detection networks that provide real-time detection capabilities by framing detection as a regression problem [13]. Versions like YOLOv4-tiny, YOLOv7-tiny, and YOLOv8 have been successfully applied to parasite identification.

- Convolutional Neural Networks: Used for classification tasks, with architectures like ResNet-50, ResNet-101, and NASNet-Mobile achieving high accuracy in distinguishing parasitic elements from artifacts [3].

- Self-Supervised Learning Models: Approaches like DINOv2 learn features from unlabeled datasets, reducing dependency on extensive manual annotation [13].

- Attention-Enhanced Architectures: Recent innovations like the YOLO Convolutional Block Attention Module integrate attention mechanisms with object detection to improve feature extraction and focus on relevant image regions [3].

Performance Comparison of Deep Learning Models

Recent validation studies report that deep learning approaches approach or exceed the performance of conventional methods and human experts on several metrics. The tables below summarize quantitative performance metrics across different models and architectures.

Table 1: Performance comparison of object detection models for parasite identification

| Model | Precision (%) | Sensitivity/Recall (%) | Specificity (%) | F1 Score (%) | mAP@0.5 (%) |
|---|---|---|---|---|---|
| YCBAM (Pinworm eggs) [3] | 99.71 | 99.34 | - | - | 99.50 |
| YOLOv8-m (Intestinal parasites) [13] | 62.02 | 46.78 | 99.13 | 53.33 | - |
| YOLOv4-tiny (34 parasite classes) [13] | 96.25 | 95.08 | - | - | - |
| DINOv2-large (Intestinal parasites) [13] | 84.52 | 78.00 | 99.57 | 81.13 | - |

Table 2: Performance of classification models for specific parasitic infections

| Parasite/Infection | Model Architecture | Accuracy (%) | Precision (%) | Recall (%) | Specificity (%) |
|---|---|---|---|---|---|
| Malaria species [17] | Custom CNN (7-channel) | 99.51 | 99.26 | 99.26 | 99.63 |
| Pinworm eggs [3] | NASNet-Mobile/ResNet-101 | 97.00 | - | - | - |
| Plasmodium spp. [15] | ROENet (ResNet-18) | 95.73 | - | 94.79 | 96.68 |

The data reveal that DL models consistently achieve high performance metrics, with certain architectures like the YCBAM for pinworm detection reaching exceptional precision and recall above 99% [3]. For intestinal parasite identification, DINOv2-large demonstrates balanced performance across multiple metrics, making it suitable for complex diagnostic scenarios [13].
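
The F1 column in the object detection comparison is the harmonic mean of precision P and recall R, and the reported values can be checked against the precision and recall columns. The worked values below show why DINOv2-large is described as more balanced: its F1 is far closer to its precision than YOLOv8-m's is.

$$
F_1 = \frac{2PR}{P + R}
$$

$$
\text{YOLOv8-m: } \frac{2 \times 62.02 \times 46.78}{62.02 + 46.78} = \frac{5802.59}{108.80} \approx 53.33
$$

$$
\text{DINOv2-large: } \frac{2 \times 84.52 \times 78.00}{84.52 + 78.00} = \frac{13185.12}{162.52} \approx 81.13
$$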

Application Notes: YOLO Models for Parasite Egg Detection

YOLO Convolutional Block Attention Module

The YCBAM architecture represents a significant advancement in automated parasite egg detection. This framework integrates YOLOv8 with self-attention mechanisms and the Convolutional Block Attention Module to enhance detection capabilities, particularly for challenging imaging conditions [3]. The self-attention component enables the model to focus on essential image regions while suppressing irrelevant background features. Simultaneously, CBAM enhances feature extraction by sequentially applying channel and spatial attention modules, improving sensitivity to small critical features like pinworm egg boundaries [3].
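
The sequential channel-then-spatial attention described above can be traced in a small forward pass. The sketch below is a pure-Python illustration with random, untrained weights on a toy feature map; it is not the YCBAM authors' code, and practical models implement CBAM as a PyTorch module whose weights are learned during training. Following the original CBAM design, channel attention passes average- and max-pooled channel descriptors through a shared two-layer MLP, and spatial attention convolves channel-wise mean and max maps; the toy sizes are assumptions, whereas the CBAM paper uses a reduction ratio of 16 and a 7x7 kernel.

```python
import math
import random
from typing import List

FeatureMap = List[List[List[float]]]  # indexed as [channel][row][col]


def sigmoid(v: float) -> float:
    # Numerically stable logistic function.
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def channel_attention(x: FeatureMap, w1: List[List[float]],
                      w2: List[List[float]]) -> FeatureMap:
    """Reweight channels using a shared MLP on average- and max-pooled descriptors."""
    avg = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in x]
    mx = [max(map(max, ch)) for ch in x]

    def mlp(v: List[float]) -> List[float]:
        hidden = [max(0.0, sum(w * a for w, a in zip(row, v))) for row in w1]  # C -> C/r, ReLU
        return [sum(w * h for w, h in zip(row, hidden)) for row in w2]        # C/r -> C

    weights = [sigmoid(a + m) for a, m in zip(mlp(avg), mlp(mx))]
    return [[[v * s for v in row] for row in ch] for ch, s in zip(x, weights)]


def spatial_attention(x: FeatureMap, kernel: List[List[List[float]]]) -> FeatureMap:
    """Reweight positions using a k x k convolution over channel-wise mean and max maps."""
    c, h, w = len(x), len(x[0]), len(x[0][0])
    pooled = [
        [[sum(x[k][i][j] for k in range(c)) / c for j in range(w)] for i in range(h)],
        [[max(x[k][i][j] for k in range(c)) for j in range(w)] for i in range(h)],
    ]
    size = len(kernel[0])
    pad = size // 2  # zero padding keeps the H x W resolution
    out = [[[0.0] * w for _ in range(h)] for _ in range(c)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for p in range(2):
                for di in range(size):
                    for dj in range(size):
                        ii, jj = i + di - pad, j + dj - pad
                        if 0 <= ii < h and 0 <= jj < w:
                            acc += kernel[p][di][dj] * pooled[p][ii][jj]
            s = sigmoid(acc)
            for k in range(c):
                out[k][i][j] = x[k][i][j] * s
    return out


def cbam(x: FeatureMap, w1, w2, kernel) -> FeatureMap:
    """CBAM applies channel attention first, then spatial attention."""
    return spatial_attention(channel_attention(x, w1, w2), kernel)


if __name__ == "__main__":
    rng = random.Random(0)
    C, H, W, r, k = 4, 6, 6, 2, 3  # toy sizes chosen for illustration
    x = [[[rng.random() for _ in range(W)] for _ in range(H)] for _ in range(C)]
    w1 = [[rng.uniform(-1, 1) for _ in range(C)] for _ in range(C // r)]
    w2 = [[rng.uniform(-1, 1) for _ in range(C // r)] for _ in range(C)]
    kernel = [[[rng.uniform(-1, 1) for _ in range(k)] for _ in range(k)] for _ in range(2)]
    y = cbam(x, w1, w2, kernel)
    print(len(y), len(y[0]), len(y[0][0]))  # shape is preserved
```

Because each attention map passes through a sigmoid, CBAM only rescales features, down-weighting uninformative channels and background positions, and never changes the feature map's shape, which is why it can be inserted after existing convolutional blocks.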

Experimental validation of YCBAM demonstrated a precision of 0.9971, recall of 0.9934, and training box loss of 1.1410, indicating efficient learning and convergence. The model achieved a mean Average Precision of 0.9950 at an IoU threshold of 0.50 and a mAP50-95 score of 0.6531 across varying IoU thresholds [3]. This performance surpasses traditional YOLO implementations and establishes a new benchmark for parasitic egg detection in microscopic images.

Implementation Considerations

Successful implementation of YOLO models for parasite detection requires attention to several critical factors:

- Dataset Curation: Models require extensive datasets of annotated microscopic images. The YCBAM study utilized datasets with hundreds to thousands of images, with precise bounding box annotations for training [3].

- Computational Requirements: Training YOLO models demands significant computational resources, typically requiring GPUs such as NVIDIA GeForce RTX series for efficient processing [17].

- Preprocessing Techniques: Image enhancement techniques including noise reduction, contrast adjustment, and color normalization substantially improve model performance [3] [17].

- Data Augmentation: To address limited training data and improve model generalization, techniques like rotation, flipping, and scaling are essential [3].
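
Geometric augmentations must transform the bounding-box labels along with the pixels. Ultralytics handles this automatically for its built-in augmentations, but offline augmentation needs it done explicitly. The hypothetical helpers below handle flips and 90-degree rotations for YOLO-format labels (class, normalized centre x/y, width, height); arbitrary angles such as ±15° would instead require rotating the box corners and taking their enclosing axis-aligned box, which this sketch does not cover.

```python
from typing import Callable, List, Tuple

# (class_id, x_center, y_center, width, height); coordinates normalized to [0, 1]
YoloBox = Tuple[int, float, float, float, float]


def hflip(box: YoloBox) -> YoloBox:
    c, x, y, w, h = box
    return c, 1.0 - x, y, w, h


def vflip(box: YoloBox) -> YoloBox:
    c, x, y, w, h = box
    return c, x, 1.0 - y, w, h


def rot90_cw(box: YoloBox) -> YoloBox:
    """Label for an image rotated 90 degrees clockwise: the centre moves to
    (1 - y, x) and the box width and height swap."""
    c, x, y, w, h = box
    return c, 1.0 - y, x, h, w


def parse_line(line: str) -> YoloBox:
    c, x, y, w, h = line.split()
    return int(c), float(x), float(y), float(w), float(h)


def format_line(box: YoloBox) -> str:
    c, x, y, w, h = box
    return f"{c} {x:.6f} {y:.6f} {w:.6f} {h:.6f}"


def transform_labels(lines: List[str], fn: Callable[[YoloBox], YoloBox]) -> List[str]:
    """Apply one geometric transform to every non-empty line of a YOLO label file."""
    return [format_line(fn(parse_line(s))) for s in lines if s.strip()]


if __name__ == "__main__":
    print(transform_labels(["0 0.250000 0.500000 0.100000 0.200000"], hflip))
```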

Experimental Protocols

Protocol 1: YOLO-based Parasite Egg Detection in Microscopic Images

Objective: To automate the detection and localization of parasite eggs in microscopic images using YOLO models with attention mechanisms.

Materials:

- Microscope with digital imaging capabilities

- Stool samples preserved in formalin or MIF solution

- Standard laboratory equipment for sample preparation

- Computer workstation with GPU (minimum: NVIDIA GeForce RTX 3060)

- Python 3.8+ with PyTorch and Ultralytics YOLO implementation

Procedure:

Sample Preparation:

- Prepare stool samples using formalin-ethyl acetate concentration technique (FECT) or MIF staining [13].

- Create standardized smears on microscope slides.

- Capture digital images at 100-400x magnification using calibrated microscope cameras.

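Dataset Curation:

- Annotate each parasite egg with a bounding box and class label to create the ground-truth data.

- Randomly divide the annotated dataset into training (80%), validation (10%), and test (10%) sets.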

Model Configuration:

- Implement YOLOv8 architecture as baseline.

- Integrate Convolutional Block Attention Module after each convolutional block.

- Incorporate self-attention mechanisms in the backbone network.

- Set hyperparameters: initial learning rate 0.01, momentum 0.937, weight decay 0.0005.

Training:

- Train model for 100-200 epochs with batch size 16-32.

- Apply data augmentation: rotation (±15°), scaling (0.8-1.2x), horizontal flipping.

- Monitor loss functions (box loss, classification loss) and metrics (precision, recall, mAP).
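Rotating, scaling, or flipping an image also moves its bounding boxes. The label side of this augmentation can be sketched in plain Python, assuming YOLO-normalized boxes (xc, yc, w, h), transforms about the image centre, and a fixed canvas size. The function names and the 0.5 flip probability are illustrative assumptions, and the matching pixel transform (done with an image library) is not shown:

```python
import math
import random


def _corners(box, img_w, img_h):
    """Pixel-space corners of a normalized YOLO box (xc, yc, w, h)."""
    xc, yc, w, h = box
    x1, y1 = (xc - w / 2) * img_w, (yc - h / 2) * img_h
    x2, y2 = (xc + w / 2) * img_w, (yc + h / 2) * img_h
    return [(x1, y1), (x2, y1), (x1, y2), (x2, y2)]


def _enclosing_box(points, img_w, img_h):
    """Clip points to the image and return their axis-aligned enclosing box, normalized."""
    xs = [min(max(x, 0.0), img_w) for x, _ in points]
    ys = [min(max(y, 0.0), img_h) for _, y in points]
    x1, x2, y1, y2 = min(xs), max(xs), min(ys), max(ys)
    return ((x1 + x2) / 2 / img_w, (y1 + y2) / 2 / img_h,
            (x2 - x1) / img_w, (y2 - y1) / img_h)


def hflip_box(box):
    """A horizontal flip mirrors the x-centre; width and height are unchanged."""
    xc, yc, w, h = box
    return (1.0 - xc, yc, w, h)


def rotate_scale_box(box, angle_deg, scale, img_w, img_h):
    """Rotate by angle_deg and zoom by `scale` about the image centre (canvas size fixed)."""
    t = math.radians(angle_deg)
    cos_t, sin_t = math.cos(t), math.sin(t)
    ox, oy = img_w / 2, img_h / 2
    moved = [(ox + scale * ((x - ox) * cos_t - (y - oy) * sin_t),
              oy + scale * ((x - ox) * sin_t + (y - oy) * cos_t))
             for x, y in _corners(box, img_w, img_h)]
    return _enclosing_box(moved, img_w, img_h)


def augment_labels(boxes, img_w, img_h, rng=random):
    """Draw one transform per image with the protocol's ranges and apply it to every box."""
    angle = rng.uniform(-15.0, 15.0)  # rotation of +/-15 degrees
    scale = rng.uniform(0.8, 1.2)     # scaling of 0.8-1.2x
    flip = rng.random() < 0.5         # flip probability of 0.5 is an assumption
    out = []
    for box in boxes:
        box = rotate_scale_box(box, angle, scale, img_w, img_h)
        out.append(hflip_box(box) if flip else box)
    return out
```

Boxes that end up with near-zero width or height after clipping (eggs pushed off the canvas) should be dropped before training. Re-enclosing a rotated box also inflates it slightly, which argues for modest angles such as ±15°.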

Validation:

- Evaluate model on test set using standard metrics: precision, recall, F1-score, mAP at IoU thresholds 0.5 and 0.5-0.95.

- Compare performance with human experts using Cohen's Kappa and Bland-Altman analysis [13].
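Both agreement statistics reduce to a few lines. A minimal sketch, assuming categorical per-sample calls (e.g. positive/negative) for Cohen's kappa and paired per-sample egg counts for the Bland-Altman analysis; the function names and the example data are hypothetical:

```python
from collections import Counter
from statistics import mean, stdev


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same samples."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected by chance, from each rater's marginal label frequencies
    expected = sum(freq_a[k] * freq_b[k] for k in set(freq_a) | set(freq_b)) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)


def bland_altman(x, y):
    """Bias (mean difference) and 95% limits of agreement for paired measurements."""
    diffs = [a - b for a, b in zip(x, y)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread


# Hypothetical example: model vs. expert calls on four slides
model = ["pos", "pos", "neg", "neg"]
expert = ["pos", "neg", "neg", "neg"]
print(cohens_kappa(model, expert))  # 0.5
```

Kappa corrects raw agreement (0.75 here) for the agreement expected by chance (0.5 here), whereas Bland-Altman shows whether the model systematically over- or under-counts eggs relative to the expert.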

Troubleshooting:

- For poor precision: Increase attention module capacity, adjust confidence threshold.

- For low recall: Enhance data augmentation, review annotation quality.

- For training instability: Reduce learning rate, implement gradient clipping.

Protocol 2: Multi-species Parasite Identification Using DINOv2

Objective: To accurately identify and classify multiple parasite species from microscopic images using self-supervised learning.

Materials:

- Similar to Protocol 1, with emphasis on diverse parasite species

- DINOv2 implementation (ViT-S, ViT-B, or ViT-L architectures)

Procedure:

- Sample Preparation and Imaging: Follow steps from Protocol 1, ensuring representation of target parasite species.

Feature Extraction:

- Utilize DINOv2 pre-trained on diverse image datasets.

- Extract features from microscopic images without extensive labeling [13].

Classifier Training:

- Add sequential classifier transforming features to 256 dimensions before final classification.

- Train with limited labeled data (1-10% of full dataset) [13].
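Drawing the small labelled fraction per class keeps rare species represented in the training subset. A minimal sketch, assuming a list of (image_id, class_name) pairs; `stratified_fraction` is a hypothetical helper (not part of DINOv2), and the "at least one image per class" rule is our assumption:

```python
import random
from collections import defaultdict


def stratified_fraction(items, fraction, seed=0):
    """Sample about `fraction` of the (image_id, class_name) items per class, at least one each."""
    by_class = defaultdict(list)
    for image_id, label in items:
        by_class[label].append(image_id)
    rng = random.Random(seed)
    subset = []
    for label in sorted(by_class):
        ids = sorted(by_class[label])
        rng.shuffle(ids)
        k = max(1, round(fraction * len(ids)))
        subset.extend((image_id, label) for image_id in ids[:k])
    return subset


# Hypothetical pool: 100 Ascaris, 20 Trichuris and 5 Taenia images, 5% labelled
pool = ([(f"asc_{i}", "Ascaris") for i in range(100)]
        + [(f"tri_{i}", "Trichuris") for i in range(20)]
        + [(f"tae_{i}", "Taenia") for i in range(5)])
labelled = stratified_fraction(pool, 0.05)
```

A plain random 5% sample of this pool could easily contain no Taenia images at all; the per-class draw guarantees at least one.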

Evaluation:

- Assess class-wise performance for helminthic eggs, larvae, and protozoan cysts.

- Validate against reference standards (FECT/MIF with expert microscopy).

Workflow Visualization

Diagram 1: Workflow for deep learning-based parasite detection system

Research Reagent Solutions

Table 3: Essential research reagents and materials for deep learning in parasitology

| Reagent/Material | Specifications | Application/Function |
|---|---|---|
| Formalin-ethyl acetate | Laboratory grade | Sample preservation and concentration [13] |
| Merthiolate-iodine-formalin (MIF) | Standard formulation | Staining and fixation of parasitic elements [13] |
| Annotation software | LabelImg, CVAT, or similar | Bounding box annotation for training data [3] |
| Deep learning framework | PyTorch, TensorFlow | Model implementation and training [3] [17] |
| YOLO implementation | Ultralytics YOLOv8 | Object detection baseline model [3] |
| Attention modules | CBAM implementation | Enhanced feature extraction [3] |
| GPU computing resources | NVIDIA RTX 3000/4000 series | Accelerated model training [17] |

Deep learning technologies, particularly YOLO-based models with attention mechanisms, are fundamentally transforming parasitology diagnostics. The exceptional performance demonstrated by architectures like YCBAM and DINOv2 highlights the potential for automated systems to achieve expert-level accuracy while offering superior scalability and efficiency. These advancements address critical limitations of conventional microscopy, including inter-observer variability, labor intensiveness, and throughput constraints. For researchers and drug development professionals, the protocols and application notes provided herein offer practical guidance for implementing these cutting-edge technologies. Future directions will likely focus on multi-modal approaches combining computer vision with molecular diagnostics, expanded model capabilities for rare parasite species, and deployment optimization for point-of-care applications in resource-limited settings. As deep learning continues to evolve, its integration into parasitology diagnostics promises to enhance global capacity for parasitic infection control, outbreak management, and public health surveillance.

Object detection, a fundamental task in computer vision, involves identifying and localizing objects within an image by predicting bounding boxes and corresponding class labels [18]. The You Only Look Once (YOLO) framework, first introduced in 2015, revolutionized this field by proposing a unified architecture that predicts bounding boxes and class probabilities in a single pass over the image, significantly improving inference speed while maintaining competitive accuracy compared to previous two-stage detectors [18]. Over the past decade, YOLO has evolved from a streamlined detector into a diverse family of architectures characterized by efficient design, modular scalability, and cross-domain adaptability [18]. This evolution has made YOLO particularly valuable for specialized applications such as automated parasite egg detection, where real-time performance and accuracy are critical for diagnostic efficiency [2] [5] [8].

The development of YOLO marked a turning point in object detection by offering an unprecedented balance between accuracy and efficiency that resonated strongly across both academic and industrial communities [18]. Prior to YOLO, two-stage detectors dominated the deep learning era by decoupling the detection process into region proposal generation followed by region classification and refinement [18]. While effective, these approaches introduced latency and increased computational cost, making them less suitable for real-time applications [18]. YOLO's single-stage, unified approach addressed these limitations, establishing itself as one of the most influential and widely adopted object detection frameworks [18].

Evolution of YOLO Architectures

Key Architectural Milestones

The YOLO family has undergone significant architectural evolution since its initial release, with each version introducing innovations to improve performance, efficiency, and applicability across diverse domains. YOLOv5 incorporated Cross Stage Partial networks (CSP) into the CSPDarknet backbone and utilized Path Aggregation Network (PANet) in its neck section to improve information flow, enhancing both parameter efficiency and feature utilization [5]. These advancements made YOLOv5 particularly effective for medical imaging tasks, including intestinal parasite egg detection, where it achieved a mean Average Precision (mAP) of approximately 97% [5].

YOLO-NAS further advanced the architecture through Neural Architecture Search, which automatically searches for configurations that balance accuracy and computational efficiency [19]. An optimized YOLO-NAS variant described in [19] integrated DenseNet with Spatial Pyramid Average Pooling (SPAP) to improve multi-scale feature extraction and the sharing of context information. It also adopted the Mish activation function, whose non-monotonic shape is intended to improve feature representation and gradient flow, and used the Artificial Bee Colony (ABC) optimization algorithm to automate hyperparameter tuning [19]. In the authors' evaluation, the resulting model outperformed YOLOv6, YOLOv7, and YOLOv8 on multiple metrics, including precision, recall, and mAP [19].
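Mish is defined as x · tanh(softplus(x)). A minimal standard-library sketch of the function itself, showing its non-monotonic dip for negative inputs; how [19] wires it into the network is not shown:

```python
import math


def softplus(x):
    """log(1 + e^x), rearranged so that large |x| does not overflow."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x):
    """Mish activation: x * tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


# Non-monotonic for negative inputs: the curve dips to about -0.31 near x = -1.2
for x in (-3.0, -2.0, -1.0, -0.5, 0.0, 1.0, 3.0):
    print(f"mish({x:+.1f}) = {mish(x):+.4f}")
```

Unlike ReLU, Mish lets small negative values pass and is smooth everywhere, which is the property usually cited for its gradient-flow benefits.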

The recently introduced YOLO12 represents a paradigm shift toward attention-centric architectures while maintaining the real-time inference speed essential for many applications [20]. It introduces an Area Attention mechanism that efficiently processes large receptive fields by dividing feature maps into equal-sized regions, significantly reducing computational cost compared to standard self-attention [20]. Additionally, YOLO12 incorporates Residual Efficient Layer Aggregation Networks (R-ELAN) with block-level residual connections and a redesigned feature aggregation method to address optimization challenges in larger-scale attention-centric models [20].
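The cost reduction follows from letting each token attend only within its own area. A back-of-the-envelope sketch that counts query-key pairs only (ignoring projections and constant factors), assuming an H×W feature map split into equal horizontal strips. The default of four areas reflects our understanding of YOLO12 and should be treated as an assumption; the function names are illustrative:

```python
def strip_ranges(height, areas):
    """Row ranges [start, stop) of `areas` equal horizontal strips of a feature map."""
    if height % areas:
        raise ValueError("this sketch assumes height is divisible by the number of areas")
    step = height // areas
    return [(i * step, (i + 1) * step) for i in range(areas)]


def attention_pairs(height, width, areas=1):
    """Query-key pairs scored when each token attends only to tokens in its own strip.

    areas=1 corresponds to ordinary global self-attention over all height*width tokens.
    """
    pairs = 0
    for start, stop in strip_ranges(height, areas):
        tokens = (stop - start) * width
        pairs += tokens * tokens  # every token in the strip attends to every other
    return pairs


# An 80x80 map (stride 8 at 640x640 input): global attention vs. four areas
global_pairs = attention_pairs(80, 80)         # 6400 tokens -> 40,960,000 pairs
area_pairs = attention_pairs(80, 80, areas=4)  # 4 strips of 1600 -> 10,240,000 pairs
print(global_pairs // area_pairs)              # 4
```

Each strip still spans the full width of the map, so the receptive field stays large while the attention cost falls by a factor equal to the number of areas.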

Quantitative Performance Comparison

Table 1: Performance Comparison of Selected YOLO Variants on COCO Dataset

| Model | Input Size (pixels) | mAP50-95 (val) | Parameters (M) | FLOPs (B) | Speed, T4 TensorRT (ms) |
|---|---|---|---|---|---|
| YOLO12n | 640 | 40.6 | 2.6 | 6.5 | 1.64 |
| YOLO12s | 640 | 48.0 | 9.3 | 21.4 | 2.61 |
| YOLO12m | 640 | 52.5 | 20.2 | 67.5 | 4.86 |
| YOLO12l | 640 | 53.7 | 26.4 | 88.9 | 6.77 |
| YOLO12x | 640 | 55.2 | 59.1 | 199.0 | 11.79 |

Performance metrics sourced from published results on COCO val2017 dataset [20]

Table 2: Specialized YOLO Models for Parasite Egg Detection

| Model | Application | Precision | Recall | mAP@0.5 | Key Innovation |
|---|---|---|---|---|---|
| YOLOv5 [5] | Intestinal Parasite Detection | ~97% | - | ~97% | CSPDarknet, PANet |
| YCBAM [2] | Pinworm Egg Detection | 99.7% | 99.3% | 99.5% | Convolutional Block Attention Module |
| Optimized YOLO-NAS [19] | General Object Detection | 98% | - | - | Mish activation, ABC optimization |
| YAC-Net [8] | Parasite Egg Detection | 97.8% | 97.7% | 99.1% | Asymptotic Feature Pyramid Network |

YOLO for Automated Parasite Egg Detection: Methodological Framework

Experimental Protocol for Parasite Egg Detection

Dataset Preparation and Annotation

- Image Collection: Acquire microscopic images of stool samples at 10× magnification, with a recommended resolution of 416×416 pixels [5]. The dataset should include diverse parasite species; for example, the dataset used in [5] included hookworm eggs, Hymenolepis nana, Taenia, Ascaris lumbricoides, and Fasciolopsis buski.

- Data Annotation: Utilize annotation tools such as Roboflow for labeling bounding boxes around parasite eggs [5]. Annotation should be performed by trained parasitologists to ensure accuracy.

- Data Augmentation: Implement augmentation techniques including rotation, scaling, color space adjustments, and noise injection to increase dataset diversity and improve model generalization [5]. This is particularly important given the limited availability of annotated medical images.

Model Configuration and Training

- Backbone Selection: Choose appropriate backbone based on computational constraints; CSPDarknet for balanced performance [5] or DenseNet with SPAP for enhanced multi-scale context [19].

- Attention Integration: For challenging detection scenarios with small objects, incorporate attention mechanisms such as Convolutional Block Attention Module (CBAM) or self-attention to help the model focus on relevant spatial regions and channel features [2].

- Hyperparameter Optimization: Utilize optimization algorithms like Artificial Bee Colony (ABC) for automated hyperparameter tuning, particularly for learning rate, batch size, and confidence thresholds [19].

- Training Protocol: Implement five-fold cross-validation to ensure robust performance evaluation [8]. Monitor key metrics including precision, recall, F1-score, and mAP at various IoU thresholds.
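The text does not state how the five folds in [8] were formed, so the following is a generic sketch over image indices; `k_fold_splits` is a hypothetical helper. If several images come from the same slide or patient, assigning folds at that level instead prevents near-duplicate images from leaking between training and validation:

```python
import random


def k_fold_splits(n_samples, k=5, seed=0):
    """Return k (train_indices, val_indices) pairs; each sample is validated exactly once."""
    order = list(range(n_samples))
    random.Random(seed).shuffle(order)
    # Round-robin assignment keeps fold sizes within one sample of each other
    folds = [sorted(order[i::k]) for i in range(k)]
    splits = []
    for i in range(k):
        train = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        splits.append((train, folds[i]))
    return splits
```

A fresh model is trained on each `train` list and scored on the matching validation fold; reporting the mean and spread of the five scores gives a more robust estimate than a single split.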

Validation and Evaluation

- Performance Metrics: Evaluate model using precision, recall, F1-score, and mAP at IoU thresholds of 0.50, 0.75, and 0.50-0.95 [2] [8].

- Comparative Analysis: Benchmark performance against existing state-of-the-art models and traditional manual microscopy [5] [8].

- Clinical Validation: Conduct blind testing with expert parasitologists to establish diagnostic concordance and identify potential failure modes.
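Per-class AP, the building block of every mAP figure in this article, can be computed from ranked detections. A minimal sketch using all-point interpolation (the Pascal VOC convention from 2010 on). COCO-style evaluators use 101-point interpolation, so their numbers can differ slightly. The sketch assumes detections were already matched to ground-truth eggs at one IoU threshold; the function name is illustrative:

```python
def average_precision(detections, n_ground_truth):
    """Per-class AP from (confidence, is_true_positive) pairs, all-point interpolation.

    Each detection is assumed to have been matched against the ground truth at one
    IoU threshold (e.g. 0.50); missed eggs appear only in n_ground_truth.
    """
    if n_ground_truth == 0:
        return 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    recall, precision = [], []
    tp = fp = 0
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        recall.append(tp / n_ground_truth)
        precision.append(tp / (tp + fp))
    # Sentinels at both ends, then a non-increasing precision envelope (right to left)
    mrec = [0.0] + recall + [1.0]
    mpre = [0.0] + precision + [0.0]
    for i in range(len(mpre) - 2, -1, -1):
        mpre[i] = max(mpre[i], mpre[i + 1])
    # Area under the envelope: sum of rectangles wherever recall increases
    return sum((mrec[i + 1] - mrec[i]) * mpre[i + 1] for i in range(len(mrec) - 1))


# Three detections, two true eggs: the false positive at rank 2 costs some precision
print(average_precision([(0.9, True), (0.8, False), (0.7, True)], n_ground_truth=2))
```

mAP at IoU 0.50 averages this AP over classes. mAP at 0.50-0.95 repeats the matching at the ten thresholds 0.50, 0.55, ..., 0.95 and averages again; mAP at 0.75 is a single stricter threshold.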

Architectural Workflow for Parasite Egg Detection

Diagram 1: Parasite egg detection workflow

Research Reagent Solutions

Table 3: Essential Research Materials and Computational Tools

| Component | Function | Example Implementation |
|---|---|---|
| Annotation Tool | Bounding box labeling for training data | Roboflow GUI [5] |
| Backbone Network | Feature extraction from input images | CSPDarknet, DenseNet-SPAP [19] [5] |
| Attention Module | Enhanced focus on relevant regions | Convolutional Block Attention Module (CBAM) [2] |
| Feature Fusion Neck | Multi-scale feature integration | AFPN, PANet [5] [8] |
| Optimization Algorithm | Hyperparameter tuning | Artificial Bee Colony (ABC) [19] |
| Evaluation Framework | Performance quantification | mAP, precision, recall, F1-score [2] [8] |

Advanced Architectural Innovations

Attention Mechanisms in YOLO

The integration of attention mechanisms has significantly enhanced YOLO's capability for parasite egg detection, where target objects are often small and embedded in complex backgrounds. The YOLO Convolutional Block Attention Module (YCBAM) integrates self-attention mechanisms with CBAM to enable precise identification and localization of parasitic elements in challenging imaging conditions [2]. This integration employs channel attention to emphasize important feature channels and spatial attention to focus on relevant spatial regions, substantially improving detection accuracy for small objects like pinworm eggs [2].

YOLO12's Area Attention mechanism represents a further innovation, processing large receptive fields efficiently by dividing feature maps into equal-sized regions either horizontally or vertically [20]. This approach avoids complex operations while maintaining a large effective receptive field, significantly reducing computational cost compared to standard self-attention [20]. The model also incorporates FlashAttention to minimize memory access overhead and removes positional encoding for a cleaner, faster architecture [20].

Lightweight Architecture Optimizations

For deployment in resource-constrained settings typical of parasitic infection hotspots, lightweight YOLO variants have been developed. YAC-Net modifies YOLOv5n by replacing the feature pyramid network (FPN) with an asymptotic feature pyramid network (AFPN) structure [8]. This hierarchical and asymptotic aggregation structure fully fuses spatial contextual information of egg images, with adaptive spatial feature fusion helping the model select beneficial features while ignoring redundant information [8]. Additionally, the C3 module in the backbone is modified to a C2f module to enrich gradient flow information, improving feature extraction capability while reducing parameters by one-fifth compared to the baseline YOLOv5n [8].
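The adaptive spatial feature fusion step can be illustrated with a per-location softmax over pyramid levels. A minimal sketch, assuming single-channel maps that have already been resized to a common resolution. In a real model the weight logits come from learned 1×1 convolutions; here they are passed in. This follows the general ASFF idea and is not YAC-Net's code:

```python
import math


def softmax(values):
    """Numerically stable softmax over a short list."""
    top = max(values)
    exps = [math.exp(v - top) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def adaptive_fuse(levels, logits):
    """Per-location weighted sum of same-sized maps, with weights = softmax over levels.

    levels[l][i][j]: feature of pyramid level l at (i, j), already resized to a common size.
    logits[l][i][j]: unnormalized weight for that level (learned by 1x1 convs in a real model).
    """
    h, w = len(levels[0]), len(levels[0][0])
    fused = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            weights = softmax([lg[i][j] for lg in logits])  # sums to 1 at every location
            fused[i][j] = sum(wt * lv[i][j] for wt, lv in zip(weights, levels))
    return fused


# Two levels: equal logits give a plain average, a strong logit selects one level
levels = [[[1.0, 2.0]], [[3.0, 4.0]]]
print(adaptive_fuse(levels, [[[0.0, 0.0]], [[0.0, 0.0]]]))
print(adaptive_fuse(levels, [[[10.0, 0.0]], [[0.0, 10.0]]]))
```

Because the weights are computed per location, the model can favour a fine-resolution level where a small egg sits and a coarser level elsewhere, which is how it "selects beneficial features while ignoring redundant information".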

Diagram 2: Key developments in YOLO architecture

The evolution of YOLO architecture has transformed the landscape of real-time object detection, with significant implications for automated parasite egg detection in clinical and field settings. From its initial unified detection approach to recent attention-optimized architectures, YOLO has consistently balanced the critical demands of accuracy and computational efficiency. The integration of specialized components—including attention mechanisms, optimized backbone networks, and lightweight feature fusion modules—has enabled YOLO-based frameworks to achieve remarkable performance in detecting challenging microscopic targets like parasite eggs, with recent models achieving precision and recall rates exceeding 97% [2] [5] [8]. These advancements provide a solid foundation for deploying automated diagnostic systems in resource-constrained environments where parasitic infections are most prevalent, potentially revolutionizing public health approaches to these widespread neglected tropical diseases.

Current Landscape of AI-Assisted Parasite Egg Detection Research

Parasitic infections remain a major global health challenge, affecting billions of people worldwide, particularly in resource-limited settings where traditional diagnostic methods struggle with throughput and accuracy requirements [21] [22]. The current gold standard for parasite diagnosis relies on manual microscopic examination of stool samples, a process that is time-consuming, labor-intensive, and susceptible to human error due to examiner fatigue and the morphological similarities between different parasite eggs [2] [21]. These limitations have prompted significant research into artificial intelligence (AI)-assisted diagnostic solutions, with You Only Look Once (YOLO) models emerging as particularly promising frameworks for automated parasite egg detection.

This application note provides a comprehensive overview of the current landscape of AI-assisted parasite egg detection research, with particular emphasis on YOLO model architectures, their performance characteristics, and detailed experimental protocols for implementation. It is framed within a broader thesis on YOLO models for automated parasite egg detection and aims to give researchers, scientists, and drug development professionals practical guidance for developing and validating these systems.

Current Research Landscape

Performance Comparison of Detection Models

Recent studies have demonstrated the exceptional capability of YOLO-based models in detecting and classifying helminth eggs from microscopic images. The table below summarizes key performance metrics from recent investigations:

Table 1: Performance metrics of recent AI models for parasite egg detection

| Model | mAP@0.5 | Precision | Recall | F1-Score | Primary Parasites Detected | Citation |
|---|---|---|---|---|---|---|
| YCBAM (YOLO with attention) | 0.9950 | 0.9971 | 0.9934 | - | Pinworm (Enterobius vermicularis) | [2] |
| YOLOv7-tiny | 0.987 | - | - | - | 11 parasite species including Enterobius vermicularis, Hookworm, Opisthorchis viverrini | [9] |
| YOLOv10n | - | - | 1.00 | 0.986 | Mixed helminth species | [9] |
| YOLOv4 | - | - | - | - | E. vermicularis (89.31%), F. buski (88.00%), T. trichiura (84.85%) | [21] |
| YAC-Net (YOLOv5-based) | 0.9913 | 0.978 | 0.977 | 0.9773 | Multiple intestinal parasites | [8] |
| YOLOv8-m | 0.755 (AUROC) | 0.6202 | 0.4678 | 0.5333 | Mixed intestinal parasites | [13] |

The integration of attention mechanisms with YOLO architectures represents a significant advancement. The YOLO Convolutional Block Attention Module (YCBAM) integrates self-attention mechanisms and the Convolutional Block Attention Module (CBAM) to enable precise identification and localization of parasitic elements in challenging imaging conditions [2]. This approach has demonstrated remarkable precision (0.9971) and recall (0.9934) for pinworm egg detection, highlighting the value of architectural innovations in improving detection accuracy.

Comparative Analysis of YOLO Variants

A comparative analysis of resource-efficient YOLO models for intestinal parasitic egg recognition found that different YOLO variants offer distinct advantages depending on the application requirements [9]. The study evaluated YOLOv5n, YOLOv5s, YOLOv7, YOLOv7-tiny, YOLOv8n, YOLOv8s, YOLOv10n, and YOLOv10s for rapid and accurate recognition of the eggs of 11 parasite species, and analyzed real-time performance on embedded platforms including the Raspberry Pi 4, the Intel upSquared with the Neural Compute Stick 2, and the Jetson Nano.

Table 2: Comparison of YOLO model characteristics for parasite egg detection

| Model | mAP | Inference Speed (FPS) | Model Size | Best Use Cases |
|---|---|---|---|---|
| YOLOv7-tiny | 98.7% | Moderate | Small | High accuracy applications |
| YOLOv8n | - | 55 FPS (Jetson Nano) | Small | Real-time detection on edge devices |
| YOLOv10n | - | - | Small | Applications requiring high recall |
| YOLOv5n (baseline) | - | - | Small | Resource-constrained environments |
| YAC-Net | 99.13% | - | Small (1.9M parameters) | Low-computing power settings |

Notably, YOLOv7-tiny achieved the overall highest mean Average Precision (mAP) score of 98.7%, while YOLOv8n offered the fastest inference time with a processing speed of 55 frames per second on Jetson Nano hardware [9]. This highlights the importance of selecting model architectures based on specific deployment constraints and performance requirements.

Experimental Protocols

Dataset Preparation and Annotation

Protocol 1: Microscope Image Acquisition and Preprocessing

Sample Collection: Collect helminth egg suspensions for target parasite species. Common species include Ascaris lumbricoides, Trichuris trichiura, Enterobius vermicularis, Ancylostoma duodenale, Schistosoma japonicum, Paragonimus westermani, Fasciolopsis buski, Clonorchis sinensis, and Taenia spp. [21].

Slide Preparation: Place two drops of vortex-mixed egg suspension (approximately 10 μL) on a slide and cover with a coverslip (18 mm × 18 mm), avoiding air bubbles.

Image Acquisition: Photograph slides using a light microscope (e.g., Nikon E100). Ensure consistent lighting conditions and magnification across samples.

Image Cropping: For high-resolution images, employ a sliding window approach to crop original images into smaller tiles of consistent size (e.g., 518 × 486 pixels) to facilitate detection [21].
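The sliding-window crop can be expressed as a list of tile coordinates. A minimal sketch that reads the 518 × 486 tile size as width × height. The 20% overlap is an assumption, not from [21]; it lets an egg cut at one tile border appear whole in a neighbouring tile. The function names are illustrative:

```python
def tile_starts(length, tile, stride):
    """Start offsets along one axis so tiles of size `tile` cover [0, length)."""
    if length <= tile:
        return [0]
    starts = list(range(0, length - tile + 1, stride))
    if starts[-1] + tile < length:
        starts.append(length - tile)  # last tile sits flush with the border
    return starts


def sliding_windows(img_w, img_h, tile_w=518, tile_h=486, overlap=0.2):
    """(x0, y0, x1, y1) crop boxes covering the image with a fractional overlap."""
    step_x = max(1, int(tile_w * (1 - overlap)))
    step_y = max(1, int(tile_h * (1 - overlap)))
    return [(x, y, min(x + tile_w, img_w), min(y + tile_h, img_h))
            for y in tile_starts(img_h, tile_h, step_y)
            for x in tile_starts(img_w, tile_w, step_x)]
```

With overlapping tiles, the same egg can be detected twice at inference time, so per-tile detections need to be mapped back to full-image coordinates and merged, for example with non-maximum suppression.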

Dataset Splitting: Divide the dataset into training set (80%), validation set (10%), and test set (10%) to ensure proper model evaluation and prevent overfitting [21].
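A reproducible 80/10/10 split needs only a seeded shuffle. A minimal sketch; `split_dataset` is a hypothetical helper. If images were tiled, passing source-image IDs here and expanding them to tiles afterwards keeps tiles of one image from landing in both the training and test sets:

```python
import random


def split_dataset(items, fractions=(0.8, 0.1, 0.1), seed=42):
    """Shuffle once with a fixed seed, then cut into train, validation and test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = round(len(items) * fractions[0])
    n_val = round(len(items) * fractions[1])
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]
```

Fixing the seed means the same images stay in the test set across experiments, so models trained later remain directly comparable.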

Protocol 2: Data Annotation for YOLO Training

Bounding Box Annotation: Using annotation tools such as LabelImg, draw bounding boxes around each parasite egg in the images.

Class Labeling: Assign appropriate class labels to each bounding box based on parasite species.

Annotation Format: Save annotations in YOLO format, with each image having a corresponding text file containing:

- Object class index

- Normalized bounding box coordinates (xcenter, ycenter, width, height)

Quality Control: Have annotations verified by multiple trained parasitologists to ensure consistency and accuracy.

Background Images: Include 0-10% background images (images without eggs) to reduce false positives [23].
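LabelImg can save YOLO-format labels directly. When annotations exist as pixel corner boxes (for example, exported from another tool), the conversion is short. A minimal sketch, assuming zero-indexed class IDs and one label file per image with the same file stem; the function names are illustrative. Writing an empty file for a background image is how we understand Ultralytics expects such images to be marked:

```python
from pathlib import Path


def to_yolo_line(class_id, box, img_w, img_h):
    """Convert a pixel box (x_min, y_min, x_max, y_max) into one YOLO label line."""
    x_min, y_min, x_max, y_max = box
    xc = (x_min + x_max) / 2 / img_w  # box centre, normalized to [0, 1]
    yc = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w       # box size, normalized to [0, 1]
    h = (y_max - y_min) / img_h
    return f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


def write_label_file(label_path, annotations, img_w, img_h):
    """Write one .txt file per image; a background image gets an empty file."""
    lines = [to_yolo_line(cls, box, img_w, img_h) for cls, box in annotations]
    Path(label_path).write_text("".join(line + "\n" for line in lines))


# One egg of class 0 in a 640x480 image
print(to_yolo_line(0, (100, 50, 160, 110), 640, 480))
# -> 0 0.203125 0.166667 0.093750 0.125000
```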

Model Training and Optimization

Protocol 3: YOLO Model Training with Ultralytics

Environment Setup:

- Install Python 3.8+ and necessary dependencies (PyTorch, Ultralytics)

- Ensure access to GPU resources (NVIDIA GPU with CUDA support recommended)

Model Selection and Initialization:

Training Configuration:
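A sketch of model selection and training configuration, assuming the Ultralytics package. The dictionary keys are Ultralytics `train()` argument names, and the values are the hyperparameters quoted earlier in this article (initial learning rate 0.01, momentum 0.937, weight decay 0.0005, 100-200 epochs, batch size 16-32). The dataset file `parasite_eggs.yaml` and the helper `launch_training` are hypothetical. With the default `optimizer="auto"`, recent Ultralytics versions may choose the optimizer and learning rate themselves and ignore `lr0` and `momentum`, so an explicit optimizer is set here; check the documentation for your installed version:

```python
# Values quoted elsewhere in this article; keys are Ultralytics train() argument names.
TRAIN_ARGS = {
    "data": "parasite_eggs.yaml",  # hypothetical dataset YAML (paths + class names)
    "epochs": 200,
    "batch": 16,
    "imgsz": 640,
    "optimizer": "SGD",      # explicit optimizer so lr0/momentum below are honoured
    "lr0": 0.01,             # initial learning rate
    "momentum": 0.937,
    "weight_decay": 0.0005,
    "close_mosaic": 10,      # disable mosaic for the final 10 epochs
    "seed": 0,
}


def launch_training(weights="yolov8n.pt", **overrides):
    """Load pretrained weights and train; requires `pip install ultralytics`."""
    from ultralytics import YOLO  # imported lazily so this module loads without Ultralytics

    model = YOLO(weights)  # e.g. "yolov8s.pt" for a larger variant
    return model.train(**{**TRAIN_ARGS, **overrides})
```

A call such as `launch_training(epochs=100, batch=32)` overrides individual values. Ultralytics' `freeze` argument freezes the first N layers for the whole run; unfreezing the backbone after 50 epochs, as described for YCBAM, would need a second training stage or a custom callback.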

Advanced Training with Attention Mechanisms (for YCBAM implementation):

- Integrate Convolutional Block Attention Module (CBAM) into the YOLO architecture

- CBAM sequentially applies channel and spatial attention to enhance feature representation

- Freeze backbone layers for the first 50 epochs to expedite training convergence [2]

Hyperparameter Tuning:

- Use Ultralytics hyperparameter tuner to optimize learning rate, momentum, and weight decay

Protocol 4: Data Augmentation Strategies

Implement the following data augmentation techniques to improve model generalization:

HSV Augmentation:

- hsv_h=0.015 (hue variation)

- hsv_s=0.7 (saturation variation)

- hsv_v=0.4 (value/brightness variation)

Spatial Transformations:

- degrees=0.0 (rotation)

- translate=0.1 (translation)

- scale=0.5 (scaling)

- shear=0.0 (shearing)

Advanced Augmentations:

- mosaic=1.0 (combines 4 images into one)

- mixup=0.0 (blends two images and their labels)

- copy_paste=0.0 (copies and pastes objects between images)

- erasing=0.4 (random erasing of image portions) [23]

Disable mosaic augmentation for the last 10 epochs (close_mosaic=10) to improve final model accuracy [23].
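The `hsv_h`, `hsv_s`, and `hsv_v` values are fractions. As we understand Ultralytics' implementation, each sets the range of a random multiplicative gain (1 ± value) drawn once per image for hue, saturation, and brightness. A per-pixel sketch with the standard-library `colorsys` module; it approximates the idea and is not the library's code, which operates on whole images:

```python
import colorsys
import random


def draw_hsv_gains(hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, rng=random):
    """One random gain per channel in [1 - g, 1 + g]; drawn once per image."""
    return tuple(1.0 + rng.uniform(-1.0, 1.0) * g for g in (hsv_h, hsv_s, hsv_v))


def jitter_pixel(rgb, gains):
    """Apply multiplicative HSV gains to one RGB pixel with channels in [0, 1]."""
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    gain_h, gain_s, gain_v = gains
    h = (h * gain_h) % 1.0              # hue wraps around the colour wheel
    s = min(max(s * gain_s, 0.0), 1.0)  # saturation and value are clipped to [0, 1]
    v = min(max(v * gain_v, 0.0), 1.0)
    return colorsys.hsv_to_rgb(h, s, v)


gains = draw_hsv_gains(rng=random.Random(0))
image = [[(0.2, 0.4, 0.6), (0.9, 0.8, 0.1)]]  # a tiny 1x2 RGB "image"
augmented = [[jitter_pixel(px, gains) for px in row] for row in image]
```

The small hue range keeps stained eggs close to their true colour, while the wider saturation and brightness ranges plausibly help the model tolerate differences in staining intensity and microscope illumination.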

Visualization of Workflows

Experimental Workflow for AI-Assisted Parasite Detection

The following diagram illustrates the complete experimental workflow for developing an AI-assisted parasite egg detection system:

YCBAM Architecture with Attention Mechanisms

The YCBAM (YOLO Convolutional Block Attention Module) architecture integrates attention mechanisms with YOLO to improve detection performance:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential research reagents and materials for AI-assisted parasite detection

| Item | Specification/Example | Function/Purpose | Reference |
|---|---|---|---|
| Parasite Egg Suspensions | Commercially available suspensions (Ascaris lumbricoides, Trichuris trichiura, etc.) from suppliers like Deren Scientific Equipment Co. Ltd. | Provide standardized biological material for creating training datasets | [21] |
| Microscopy Equipment | Light microscope (e.g., Nikon E100) with digital camera | Image acquisition of parasite eggs at appropriate magnifications | [21] |
| Slide Preparation Materials | Microscope slides (75 × 25 mm), coverslips (18 × 18 mm) | Preparation of samples for imaging | [21] |
| Computational Hardware | NVIDIA GPUs (e.g., RTX 3090, A100), embedded systems (Jetson Nano, Raspberry Pi 4) | Model training (high-end GPUs) and deployment (embedded systems) | [9] [21] |
| Deep Learning Frameworks | PyTorch, Ultralytics YOLO, TensorFlow | Model development, training, and evaluation | [24] [21] |
| Data Annotation Tools | LabelImg, CVAT, Make Sense AI | Creating bounding box annotations for training data | - |
| Staining Solutions | Merthiolate-iodine-formalin (MIF), other staining protocols | Enhance contrast and visibility of parasite structures | [13] |

The current landscape of AI-assisted parasite egg detection research demonstrates significant advancements in accuracy, efficiency, and accessibility of parasitic infection diagnostics. YOLO-based models, particularly those enhanced with attention mechanisms like YCBAM, have achieved remarkable performance metrics with precision and recall rates exceeding 99% in some configurations [2]. The development of lightweight models such as YAC-Net and optimization for embedded systems like Jetson Nano have further increased the potential for deploying these systems in resource-limited settings where parasitic infections are most prevalent [9] [8].

Future research directions should focus on expanding model capabilities to handle a wider range of parasite species, improving performance in mixed infection scenarios, and enhancing model interpretability through explainable AI techniques such as Grad-CAM visualization [9]. As these technologies continue to mature, AI-assisted parasite egg detection systems hold tremendous promise for transforming diagnostic workflows in both clinical and public health settings, ultimately contributing to more effective management and control of parasitic infections worldwide.

Architectural Innovations: Advanced YOLO Frameworks for Parasite Detection

YOLO Convolutional Block Attention Module (YCBAM) for Pinworm Eggs

Parasitic infections, including intestinal helminth infections such as pinworm (Enterobius vermicularis), remain a significant global public health challenge. Traditional diagnostic methods rely on manual microscopic examination of stool or perianal samples, a process that is time-consuming, labor-intensive, and susceptible to human error, especially in settings with high sample volumes [3] [2]. The need for specialized expertise and the risk of false negatives, given the small size (50–60 μm long and 20–30 μm wide) and transparent appearance of pinworm eggs, further complicate accurate diagnosis [3].

Recent advancements in automated microscopic imaging and deep learning offer promising solutions to enhance diagnostic accuracy and efficiency. Within this domain, the YOLO (You Only Look Once) family of object detection models has emerged as a powerful tool for real-time medical image analysis [5]. This application note focuses on a novel framework that integrates YOLO with attention mechanisms—the YOLO Convolutional Block Attention Module (YCBAM)—designed specifically to automate the detection of pinworm parasite eggs in microscopic images [3] [2]. The content is framed within a broader research thesis on YOLO models for automated parasite egg detection, detailing the architecture, performance, and experimental protocols for the YCBAM model to aid researchers and scientists in replicating and advancing this technology.

The YCBAM architecture is a sophisticated deep-learning framework built upon the YOLOv8 foundation. Its core innovation lies in the integration of self-attention mechanisms and the Convolutional Block Attention Module (CBAM) to enhance feature extraction and focus on morphologically critical regions of pinworm eggs within complex microscopic backgrounds [3].

The model functions as a single-stage detector, directly predicting bounding boxes and class probabilities for parasite eggs in a single forward pass of the network. This design is essential for maintaining high processing speeds, a crucial requirement for large-scale screening applications. The integration of attention mechanisms specifically addresses the challenge of distinguishing small, translucent pinworm eggs from other microscopic artifacts and debris, a common limitation of traditional models [3].

Table 1: Core Components of the YCBAM Architecture

| Component | Function | Benefit for Pinworm Egg Detection |
|---|---|---|
| YOLOv8 Backbone | Base feature extraction network. | Provides efficient, multi-scale feature learning from input images. |
| Self-Attention Mechanism | Dynamically weights the importance of different image regions. | Helps the model focus on small, salient features like egg boundaries, reducing background interference [3]. |
| Convolutional Block Attention Module (CBAM) | Sequentially applies channel and spatial attention [25]. | Enhances sensitivity to critical features: channel attention refines feature maps by emphasizing important channels, while spatial attention highlights key spatial locations [3]. |
| Feature Pyramid Network (FPN) / Path Aggregation Network (PANet) | Combines multi-scale feature maps. | Improves detection of objects of varying sizes, ensuring small pinworm eggs are detected across different image resolutions [5]. |

Figure 1: YCBAM Architectural Workflow. The diagram illustrates the sequential flow from image input through the YOLOv8 backbone, the parallel application of self-attention and CBAM, feature fusion in the neck, and final detection.

Quantitative Performance Evaluation

The YCBAM model has been rigorously evaluated against standard object detection metrics, demonstrating superior performance in pinworm egg detection. Experimental evaluations report a precision of 0.9971 and a recall of 0.9934, indicating an exceptionally low rate of false positives and false negatives [3] [2]. The model achieved a mean Average Precision (mAP) of 0.9950 at an Intersection over Union (IoU) threshold of 0.50, which is a standard benchmark for detection accuracy [3]. Furthermore, the model attained a mAP50–95 score of 0.6531 across a range of IoU thresholds from 0.50 to 0.95, reflecting its robust performance under more stringent localization criteria [3].

For context, the following table compares the performance of YCBAM with other YOLO-based models applied to the broader task of intestinal parasite egg detection, highlighting YCBAM's specific excellence in pinworm detection.

Table 2: Performance Comparison of YOLO Models in Parasite Egg Detection

| Model | Target Parasite(s) | Key Metric | Reported Performance | Inference Speed |
|---|---|---|---|---|
| YCBAM (YOLOv8) | Pinworm (Enterobius vermicularis) | mAP@0.5 | 99.5% [3] | Not specified |
| YOLOv5n | Multiple Intestinal Parasites | mAP | ~97% [5] | 8.5 ms/sample [5] |
| YOLOv7-tiny | 11 Parasite Species | mAP | 98.7% [9] | Not specified (the fastest model in [9], at 55 FPS on Jetson Nano, was YOLOv8n) |
| YOLOv10n | 11 Parasite Species | Recall / F1-Score | 100% / 98.6% [9] | Not specified |
| YAC-Net (YOLOv5-based) | Multiple Parasitic Eggs | mAP@0.5 | 99.13% [8] | Not specified |

Experimental Protocols

This section provides a detailed methodology for training and validating a YCBAM model for pinworm egg detection, as derived from the cited literature.

Dataset Curation and Preprocessing

- Sample Collection: Pinworm egg suspensions are typically obtained from clinical samples or commercial biological suppliers [21]. Perianal samples collected via the scotch tape technique are a standard source for Enterobius vermicularis.

- Image Acquisition: Images are captured using a light microscope with a mounted digital camera. Consistent magnification (e.g., 10× or 40× objectives) is crucial. Automated digital microscopes like the Schistoscope or Kubic FLOTAC Microscope (KFM) can standardize this process for high-throughput image acquisition [26] [27].

- Data Annotation: Annotate pinworm eggs in the acquired images using bounding boxes. Specialized software like Roboflow or LabelImg is used, ensuring that annotations are performed by experienced microscopists to establish a reliable ground truth [26] [5].

- Data Augmentation: Apply transformations to increase dataset diversity and improve model generalization. Common techniques include rotation, scaling, and color jitter [3].
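YOLO frameworks expect one label line per object, in the form `class x_center y_center width height`, with coordinates normalized by image size. The sketch below uses hypothetical helpers, not the cited studies' tooling. It does two things:

- It converts a pixel-corner box, such as one from a Pascal VOC export, into YOLO format.
- It shows how geometric augmentations must update the labels along with the pixels.

The image size, box, and class index are made up.

```python
# Sketch: YOLO-format labels and how geometric augmentations update them.
# Hypothetical helpers; class 0 = pinworm egg; image size and box are invented.
from typing import NamedTuple


class YoloBox(NamedTuple):
    cls: int
    xc: float  # box centre x / image width
    yc: float  # box centre y / image height
    w: float   # box width / image width
    h: float   # box height / image height


def corners_to_yolo(cls, x_min, y_min, x_max, y_max, img_w, img_h):
    """Convert pixel corners (e.g. from a Pascal VOC export) to normalised YOLO values."""
    return YoloBox(cls,
                   (x_min + x_max) / 2 / img_w,
                   (y_min + y_max) / 2 / img_h,
                   (x_max - x_min) / img_w,
                   (y_max - y_min) / img_h)


def to_label_line(b):
    """One line of a YOLO .txt label file: 'class xc yc w h'."""
    return f"{b.cls} {b.xc:.6f} {b.yc:.6f} {b.w:.6f} {b.h:.6f}"


def hflip(b):
    """Horizontal flip: mirror the centre x; box size is unchanged."""
    return b._replace(xc=1.0 - b.xc)


def rot90_cw(b):
    """90-degree clockwise rotation: pixel (x, y) in a W x H image moves to (H - y, x)
    in the rotated H x W image, so the normalised centre becomes (1 - yc, xc) and
    width and height swap."""
    return YoloBox(b.cls, 1.0 - b.yc, b.xc, b.h, b.w)


# Colour jitter, and resizing the whole image without cropping or padding,
# leave normalised labels unchanged.

egg = corners_to_yolo(0, 100, 120, 160, 200, img_w=640, img_h=480)
print(to_label_line(egg))            # 0 0.203125 0.333333 0.093750 0.166667
print(to_label_line(hflip(egg)))     # 0 0.796875 0.333333 0.093750 0.166667
print(to_label_line(rot90_cw(egg)))  # 0 0.666667 0.203125 0.166667 0.093750
```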

Model Training Configuration

- Hardware & Software: Training is conducted using a high-performance GPU (e.g., NVIDIA GeForce RTX 3090) [21]. The Python programming environment with the PyTorch framework is standard for implementing YOLO models.

- Hyperparameters:

  - Initial Learning Rate: 0.01 [21].

  - Optimizer: Adam with momentum = 0.937 [21]. Ultralytics YOLO trainers pass this momentum value to Adam as its β1 coefficient.

  - Batch Size: 64 [21].

  - Epochs: 300, with early stopping if validation performance does not improve for 200 consecutive epochs [21].

  - Image Size: Images are typically resized to a standard dimension (e.g., 416×416 or 640×640 pixels) before being fed into the network [5].

- Training Procedure: The backbone feature extraction network is often frozen for the initial 50 epochs to accelerate training convergence. The model is trained on the training set, with validation performance monitored after each epoch to select the best-performing weights [21].
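The epoch-level logic in the configuration above can be sketched in plain Python. That logic is:

- freezing the backbone for the first 50 epochs;
- keeping the weights with the best validation score;
- stopping after 200 epochs without improvement.

The names `TrainConfig`, `EarlyStopper`, and `run_schedule` are hypothetical. The validation scores are synthetic, and no model is actually trained. In practice, the training framework handles early stopping and best-weight saving. The demo shrinks the schedule so the behavior is visible.

```python
# Sketch of the epoch-level bookkeeping: backbone freezing, best-weights selection,
# and patience-based early stopping. Names are hypothetical; no model is trained.
from dataclasses import dataclass


@dataclass
class TrainConfig:
    lr0: float = 0.01
    momentum: float = 0.937  # with Adam, passed as beta1 by Ultralytics-style trainers
    batch_size: int = 64
    epochs: int = 300
    patience: int = 200      # epochs without improvement before stopping
    freeze_epochs: int = 50  # backbone frozen for epochs 0 .. freeze_epochs - 1
    img_size: int = 640


@dataclass
class EarlyStopper:
    patience: int
    best_score: float = float("-inf")
    best_epoch: int = -1

    def update(self, epoch, score):
        """Record a validation score (higher is better); return True to stop."""
        if score > self.best_score:
            self.best_score, self.best_epoch = score, epoch
            # A real trainer would save the current weights as the best checkpoint here.
        return epoch - self.best_epoch >= self.patience


def run_schedule(cfg, val_scores):
    """Return (number of frozen-backbone epochs, stop epoch or None, best epoch)."""
    stopper = EarlyStopper(cfg.patience)
    frozen_epochs = 0
    for epoch, score in enumerate(val_scores[: cfg.epochs]):
        if epoch < cfg.freeze_epochs:
            frozen_epochs += 1  # backbone parameters would have requires_grad=False
        # ... one training epoch and one validation pass would run here ...
        if stopper.update(epoch, score):
            return frozen_epochs, epoch, stopper.best_epoch
    return frozen_epochs, None, stopper.best_epoch


# Shrunken schedule: synthetic validation mAP improves for 10 epochs, then plateaus.
demo = TrainConfig(epochs=30, patience=5, freeze_epochs=3)
scores = [i / 10 for i in range(10)] + [0.85] * 20
print(run_schedule(demo, scores))  # (3, 14, 9)
```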

Model Validation and Testing

- Data Split: The dataset is split into training (70–80%), validation (10–20%), and test (10%) sets. The final evaluation therefore uses images the model never saw during training or model selection [26] [21].

- Evaluation Metrics: The model's final performance is assessed on the held-out test set using:

  - Precision and Recall.

  - F1-Score (harmonic mean of precision and recall).

  - Mean Average Precision at an IoU threshold of 0.5 (mAP@0.5), and averaged over IoU thresholds from 0.5 to 0.95 in steps of 0.05 (mAP@0.5:0.95) [3].

- Training Box Loss: The bounding-box regression loss on the training data is also reported as an indicator of convergence (e.g., 1.1410 for YCBAM). Unlike the metrics above, it is not a test-set measure [3].
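The metrics above can be computed from matched detections. This minimal sketch assumes predictions have already been matched to ground-truth boxes at a single IoU threshold. AP here uses all-point interpolation of the precision–recall curve. COCO-style evaluators sample 101 recall points instead, so values can differ slightly. The function names and the ranked predictions are illustrative.

The F1 formula can also be applied to the precision (0.9971) and recall (0.9934) reported for YCBAM. This gives about 0.9952, a derived figure rather than one reported in [3].

```python
# Sketch: precision, recall, F1 and all-point-interpolated AP for one class.
# Assumes predictions were already matched to ground truth at a single IoU threshold.
# mAP averages this AP over classes (and, for mAP@0.5:0.95, over IoU thresholds).


def precision_recall_f1(tp, fp, fn):
    """Counts of true positives, false positives and false negatives -> (P, R, F1)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def f1_from(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def average_precision(scored_hits, n_ground_truth):
    """scored_hits: (confidence, is_true_positive) pairs for every prediction of one class."""
    ranked = sorted(scored_hits, key=lambda s: s[0], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for _, hit in ranked:
        tp, fp = (tp + 1, fp) if hit else (tp, fp + 1)
        recalls.append(tp / n_ground_truth)
        precisions.append(tp / (tp + fp))
    # Precision envelope: make it non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Area under the stepwise curve: precision times each recall increment.
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


print(round(f1_from(0.9971, 0.9934), 4))  # 0.9952, derived from the YCBAM P and R
# Five ranked predictions against four ground-truth eggs (invented values).
hits = [(0.95, True), (0.90, True), (0.80, False), (0.70, True), (0.60, False)]
print(average_precision(hits, n_ground_truth=4))  # 0.6875
```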

Figure 2: YCBAM Experimental Validation Workflow. This diagram outlines the end-to-end process for developing and validating the YCBAM model, from data preparation to final evaluation.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials, tools, and software used in developing and deploying an automated pinworm detection system based on the YCBAM model.

Table 3: Essential Research Reagents and Tools for YCBAM-based Detection

| Item Name | Function/Description | Application Note |
|---|---|---|
| Kubic FLOTAC Microscope (KFM) | A portable, automated digital microscope for standardizing image acquisition from fecal or parasite concentration samples [27]. | Enables high-throughput, consistent image capture in both field and laboratory settings, crucial for building a robust dataset. |
| Schistoscope | A cost-effective, automated digital microscope designed for scanning microscopy slides in resource-limited settings [26]. | Facilitates the creation of large-scale image datasets from field samples; can be integrated with AI models for edge computing. |
| Roboflow | A cloud-based graphical user interface (GUI) tool for annotating images and managing datasets for computer vision projects [5]. | Streamlines the process of labeling pinworm eggs with bounding boxes, managing dataset versions, and applying pre-processing augmentations. |
| PyTorch Framework | An open-source machine learning library based on the Torch library. | The primary programming framework used for implementing, training, and evaluating the YOLOv8 and YCBAM models [21]. |
| Microscopic Image Dataset | A curated collection of annotated images of pinworm eggs and other parasites. | Can be sourced from clinical partners, commercial suppliers, or public challenges (e.g., ICIP 2022 Challenge, Chula-ParasiteEgg-11) [8] [27]. |
| GPU (e.g., NVIDIA RTX 3090) | A graphics processing unit optimized for parallel computation. | Accelerates the deep learning training process, significantly reducing the time required to train complex models like YCBAM [21]. |

The diagnosis of intestinal parasitic infections, which affect over 1.5 billion people globally, relies heavily on microscopic examination of stool samples. This process is time-consuming, labor-intensive, and requires significant expertise [28] [8]. Automated detection systems based on deep learning can reduce this dependence on highly trained professionals. However, their deployment in resource-constrained settings, where parasitic infections are most prevalent, faces a significant barrier: the substantial computational requirements of conventional detection algorithms [28] [8]. This application note details YAC-Net and its Asymptotic Feature Pyramid Network (AFPN) neck. Both are designed to provide high-accuracy parasite egg detection while maintaining a lightweight computational profile suitable for low-power hardware.

Model Architectures and Performance

YAC-Net: A Lightweight Model for Parasite Egg Detection

YAC-Net is a lightweight deep-learning model designed for rapid and accurate detection of parasitic eggs in microscopy images. It is built upon the YOLOv5n architecture but incorporates two key modifications to enhance performance and reduce computational complexity [28] [8]:

- AFPN Neck: The original Feature Pyramid Network (FPN) in YOLOv5n is replaced with an Asymptotic Feature Pyramid Network (AFPN). Unlike FPN, which primarily integrates semantic feature information at adjacent levels, AFPN's hierarchical and asymptotic aggregation structure fully fuses spatial contextual information through direct interaction between non-adjacent levels. Its adaptive spatial fusion mode helps the model select beneficial features and ignore redundant information [28] [29].

- C2f Module in Backbone: The C3 modules in the YOLOv5n backbone are replaced with C2f modules. A C2f module concatenates the outputs of all its internal bottlenecks rather than only the last one. This change enriches gradient flow, thereby improving the feature extraction capability of the backbone network [28].
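The difference between C3 and C2f lies in what is concatenated before the block's final 1×1 convolution. The sketch below traces that data flow symbolically. It follows the Ultralytics implementations as we read them and uses hypothetical function names. It also assumes the same hidden width for both blocks to keep the channel arithmetic simple.

In C2f, every bottleneck output feeds the final convolution directly. This gives gradients shorter paths back to each bottleneck.

```python
# Sketch: which tensors C3 and C2f concatenate before their final 1x1 convolution.
# Follows the published Ultralytics blocks as we read them; names are hypothetical.


def c3_branches(n_bottlenecks):
    """C3: one path runs cv1 then all bottlenecks in series; a parallel cv2 path is a
    shortcut. Only the last bottleneck's output reaches the concatenation."""
    return [f"bottleneck_{n_bottlenecks}(... bottleneck_1(cv1(x)))", "cv2(x)"]


def c2f_branches(n_bottlenecks):
    """C2f: cv1 output is split into two halves; bottlenecks run in series starting
    from the second half, and every intermediate output is kept for the concatenation."""
    branches = ["split_0", "split_1"]
    for i in range(1, n_bottlenecks + 1):
        branches.append(f"bottleneck_{i}")
    return branches


def concat_channels(branches, hidden):
    """Channels entering the final 1x1 conv when every branch has `hidden` channels."""
    return len(branches) * hidden


n, hidden = 3, 32
print(len(c3_branches(n)), concat_channels(c3_branches(n), hidden))    # 2 64
print(len(c2f_branches(n)), concat_channels(c2f_branches(n), hidden))  # 5 160
```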

The Asymptotic Feature Pyramid Network (AFPN)

AFPN addresses a common limitation in classic feature pyramid networks like FPN and PANet: the loss or degradation of feature information during fusion, which impairs the fusion effect of non-adjacent levels [29] [30]. AFPN supports direct interaction at non-adjacent levels by initiating the fusion of two adjacent low-level features and then asymptotically incorporating higher-level features into the fusion process. This approach avoids the larger semantic gap that typically exists between non-adjacent levels [29]. Furthermore, an adaptive spatial fusion operation is utilized at each spatial location to mitigate potential multi-object information conflicts during feature fusion [28] [29].
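Adaptive spatial fusion can be illustrated with a single-channel toy example. The sketch makes two simplifications:

- The per-level weight logits are supplied by hand. In AFPN they are learned from the features themselves.
- The feature maps are assumed to be already resampled to a common resolution.

At each location, a softmax over pyramid levels decides how much each level contributes. Different positions can therefore draw on different levels. The helper names are hypothetical.

```python
# Toy adaptive spatial fusion (single channel). Hypothetical helpers; in AFPN the
# per-level logits come from learned layers, here they are supplied by hand.
import math


def softmax(values):
    m = max(values)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def adaptive_spatial_fusion(features, logits):
    """features, logits: one H x W grid per pyramid level, already at the same resolution.
    At each location the levels are weighted by a softmax over their logits."""
    h, w = len(features[0]), len(features[0][0])
    fused = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            weights = softmax([lg[y][x] for lg in logits])
            fused[y][x] = sum(wt * f[y][x] for wt, f in zip(weights, features))
    return fused


low_level = [[1.0, 1.0], [1.0, 1.0]]     # e.g. a fine, detail-rich level
high_level = [[5.0, 5.0], [5.0, 5.0]]    # e.g. a coarse, semantic level
logits_low = [[10.0, 0.0], [0.0, 0.0]]   # top-left prefers the low level
logits_high = [[0.0, 10.0], [0.0, 0.0]]  # top-right prefers the high level

fused = adaptive_spatial_fusion([low_level, high_level], [logits_low, logits_high])
print([[round(v, 3) for v in row] for row in fused])  # [[1.0, 5.0], [3.0, 3.0]]
```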

Quantitative Performance Comparison

The following tables summarize the performance of YAC-Net and other relevant models as reported in the literature.

Table 1: Performance of YAC-Net on the ICIP 2022 Challenge Dataset [28] [8]

| Model | Precision (%) | Recall (%) | F1 Score | mAP@0.5 | Parameters |
|---|---|---|---|---|---|
| YOLOv5n (Baseline) | 96.7 | 94.9 | 0.9578 | 0.9642 | ~2.5 M |
| YAC-Net | 97.8 | 97.7 | 0.9773 | 0.9913 | 1,924,302 |

Table 2: Performance of YCBAM Model for Pinworm Egg Detection [3] [31]

| Model | Precision | Recall | mAP@0.50 | mAP@0.50:0.95 | Training Box Loss |
|---|---|---|---|---|---|
| YCBAM (YOLO + CBAM) | 0.9971 | 0.9934 | 0.9950 | 0.6531 | 1.1410 |

As shown in Table 1, YAC-Net not only improves upon its baseline across all metrics but does so with a 20% reduction in the number of parameters [28]. This demonstrates an effective balance of high detection performance and model efficiency. The YCBAM model (Table 2), which integrates a Convolutional Block Attention Module with YOLO, also achieves exceptionally high precision and recall, highlighting the potential of architectural refinements for specific parasitic targets [3] [31].

Experimental Protocols

Protocol 1: Model Training and Validation for YAC-Net

This protocol outlines the procedure for training and validating the YAC-Net model as described in [28] [8].

1. Objective: To train and evaluate a lightweight deep-learning model (YAC-Net) for the detection of parasite eggs in microscopy images.

2. Materials:

* Dataset: ICIP 2022 Challenge dataset.

* Hardware: A computer with a dedicated GPU is recommended for accelerated training.

* Software: Python, PyTorch, Ultralytics YOLOv5 (or similar deep learning framework).

3. Procedure:

* Step 1: Data Preparation. Organize the dataset according to the requirements of the model framework (e.g., YOLO format). It is recommended to split the data into training, validation, and test sets.

* Step 2: Experimental Setup. Configure the experiment to use fivefold cross-validation. This ensures a robust evaluation of model performance by training and testing on different data splits.

* Step 3: Model Configuration.

a. Use YOLOv5n as the baseline model.

b. Modify the model architecture by replacing the native FPN neck with an AFPN structure.

c. Replace the C3 modules in the backbone network with C2f modules.

* Step 4: Model Training. Train the model on the training set. Key hyperparameters from related work often include an initial learning rate (lr0) of 0.01, momentum of 0.937, and weight decay of 0.0005 [32].

* Step 5: Model Validation. Evaluate the trained model on the validation and test sets. Key performance metrics to report include Precision, Recall, F1 Score, and mean Average Precision at an IoU threshold of 0.5 (mAP@0.5).

* Step 6: Ablation Study (Optional). Design and conduct ablation experiments to independently verify the performance contributions of the AFPN and C2f modules.

4. Analysis: Compare the final performance metrics (Precision, Recall, F1, mAP@0.5) and the number of parameters of YAC-Net against the baseline YOLOv5n model and other state-of-the-art detection methods.
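Step 2's fivefold cross-validation can be sketched with the standard library alone. The hypothetical generator below shuffles image indices with a fixed seed and yields one train/validation split per fold. Each image appears in exactly one validation fold. If several images come from the same slide or patient, splitting by that group instead of by image would avoid leakage between folds.

```python
# Sketch: fivefold cross-validation splits over image indices (hypothetical helper).
import random
import statistics


def k_fold_splits(n_images, k=5, seed=0):
    """Yield (fold, train_indices, val_indices); every image is validated exactly once."""
    order = list(range(n_images))
    random.Random(seed).shuffle(order)       # fixed seed -> reproducible folds
    folds = [order[i::k] for i in range(k)]  # round-robin keeps fold sizes within 1
    for f in range(k):
        val = sorted(folds[f])
        train = sorted(i for g, fold in enumerate(folds) if g != f for i in fold)
        yield f, train, val


def summarize(fold_scores):
    """Report cross-validated performance as mean and sample standard deviation."""
    return statistics.mean(fold_scores), statistics.stdev(fold_scores)


for fold, train, val in k_fold_splits(23):
    print(fold, len(train), len(val))  # validation fold sizes: 5, 5, 5, 4, 4
```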

Protocol 2: Evaluating Model Performance with Attention Mechanisms

This protocol is derived from methodologies used to integrate attention modules, such as the Convolutional Block Attention Module (CBAM), for enhanced parasite egg detection [3] [31].

1. Objective: To integrate an attention mechanism into a YOLO model and evaluate its efficacy in detecting pinworm parasite eggs in microscopic images.

2. Materials:

* Dataset: A dataset of microscopic images containing pinworm eggs and other artifacts.

* Hardware: A computer with a CUDA-enabled GPU.

* Software: Python, PyTorch, a YOLO framework (e.g., Ultralytics YOLOv8).

3. Procedure:

* Step 1: Data Preparation. Curate and annotate a dataset of pinworm egg images. Apply data augmentation techniques (e.g., rotation, scaling, color jitter) to improve model generalization [3].

* Step 2: Model Architecture Design.

a. Select a base YOLO model (e.g., YOLOv8).

b. Integrate the Convolutional Block Attention Module (CBAM) and self-attention mechanisms into the architecture to form a YCBAM (YOLO Convolutional Block Attention Module) model. This helps the model focus on salient features and suppress irrelevant background information [3].

* Step 3: Model Training. Train the YCBAM model on the prepared dataset. Monitor the training loss (e.g., box loss) to ensure convergence.

* Step 4: Performance Evaluation. Evaluate the model on a held-out test set. Report standard object detection metrics, including Precision, Recall, and mAP at different IoU thresholds (e.g., mAP@0.50 and mAP@0.50:0.95).

4. Analysis: Assess the model's performance based on the evaluation metrics. High precision and recall, along with a low training box loss, indicate efficient learning and a robust model for precise identification and localization of pinworm eggs [3] [31].
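CBAM refines a feature map in two steps:

1. Channel attention: average- and max-pooled descriptors pass through a shared MLP to produce one weight per channel.
2. Spatial attention: channel-wise average and max maps are convolved to produce one weight per pixel.

The plain-Python sketch below implements that sequence on tiny nested lists. It is not the YCBAM implementation from [3]. Weights are hand-set instead of learned, and biases are omitted. The spatial kernel is 3×3 for brevity, whereas the original CBAM design uses 7×7. Function names are hypothetical.

```python
# Plain-Python sketch of a CBAM block: channel attention, then spatial attention.
# Illustrative only: hand-set weights, no biases, 3x3 spatial kernel (CBAM uses 7x7).
# Tensors are nested lists indexed feat[channel][y][x].
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def shared_mlp(vec, w0, w1):
    """C -> C/r -> C with ReLU; the same weights serve both pooled descriptors."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, vec))) for row in w0]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w1]


def channel_attention(feat, w0, w1):
    avg = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in feat]  # global avg pool
    mx = [max(map(max, ch)) for ch in feat]                              # global max pool
    a, m = shared_mlp(avg, w0, w1), shared_mlp(mx, w0, w1)
    return [sigmoid(ai + mi) for ai, mi in zip(a, m)]


def spatial_attention(feat, kernel):
    """kernel[0] weights the channel-average map, kernel[1] the channel-max map (k x k each)."""
    c, h, w = len(feat), len(feat[0]), len(feat[0][0])
    avg = [[sum(feat[ch][y][x] for ch in range(c)) / c for x in range(w)] for y in range(h)]
    mx = [[max(feat[ch][y][x] for ch in range(c)) for x in range(w)] for y in range(h)]
    k = len(kernel[0])
    pad = k // 2  # zero padding keeps the H x W size
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for pooled, kern in zip((avg, mx), kernel):
                for dy in range(k):
                    for dx in range(k):
                        yy, xx = y + dy - pad, x + dx - pad
                        if 0 <= yy < h and 0 <= xx < w:
                            acc += kern[dy][dx] * pooled[yy][xx]
            out[y][x] = sigmoid(acc)
    return out


def cbam(feat, w0, w1, kernel):
    mc = channel_attention(feat, w0, w1)
    refined = [[[mc[ch] * v for v in row] for row in feat[ch]] for ch in range(len(feat))]
    ms = spatial_attention(refined, kernel)
    return [[[ms[y][x] * v for x, v in enumerate(row)] for y, row in enumerate(chan)]
            for chan in refined]


# Two channels of a 3x3 map; the MLP (reduction r = 2) is set to favour channel 0.
feat = [[[2.0] * 3 for _ in range(3)], [[1.0] * 3 for _ in range(3)]]
w0 = [[1.0, 0.0]]     # C=2 -> hidden=1
w1 = [[1.0], [-1.0]]  # hidden=1 -> C=2
kernel = [[[0.0] * 3 for _ in range(3)] for _ in range(2)]
kernel[1][1][1] = 1.0  # spatial map driven by the channel-max value at each pixel
print([round(v, 3) for v in channel_attention(feat, w0, w1)])  # [0.982, 0.018]
out = cbam(feat, w0, w1, kernel)
```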

Architecture and Workflow Visualization

Workflow for AFPN-based Detection System

The following diagram illustrates the logical workflow and data transformation from image input to final detection in a system like YAC-Net.

Conceptual Structure of AFPN

This diagram provides a simplified, conceptual view of the Asymptotic Feature Pyramid Network (AFPN), highlighting its asymptotic fusion process.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Parasite Egg Detection Experiments

| Item Name | Function / Application | Specifications / Examples |
|---|---|---|
| Annotated Dataset | Provides ground-truth data for model training and evaluation. | ICIP 2022 Challenge Dataset; In-house datasets of microscopic images [28] [8]. |
| Deep Learning Framework | Provides the software environment for building, training, and deploying models. | PyTorch, Ultralytics YOLO (YOLOv5, YOLOv8) [28] [3]. |
| Computational Hardware | Accelerates model training and inference. | NVIDIA GPUs (e.g., T4, A100) with CUDA support [32] [33]. |
| Model Optimization Tools | Converts models for efficient deployment on various hardware. | TensorRT, OpenVINO for quantization and speed enhancement [32] [33]. |
| Attention Modules | Enhances feature extraction by focusing on spatially and channel-wise important features. | Convolutional Block Attention Module (CBAM), Self-Attention mechanisms [3] [31]. |
| Feature Pyramid Networks | Manages multi-scale feature extraction for detecting objects of different sizes. | Asymptotic FPN (AFPN), PANet, BiFPN [28] [29] [30]. |
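The quantization offered by deployment tools rests on mapping floating-point values to 8-bit integers through a scale factor. The sketch below shows the simplest symmetric, per-tensor version, with a max-abs scale. It illustrates the arithmetic only. TensorRT and OpenVINO choose scales through their own calibration procedures. The weight values and function names are made up.

```python
# Sketch: symmetric per-tensor INT8 quantisation with a max-abs scale.
# Illustrative arithmetic only; TensorRT and OpenVINO use their own calibration.


def quantize_int8(values):
    """Map floats to integers in [-127, 127] with a single scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamping is a safeguard; with a max-abs scale no value falls outside the range.
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Approximate reconstruction of the original floats."""
    return [qi * scale for qi in q]


weights = [0.02, -0.5, 0.31, 1.27]  # invented example values
q, scale = quantize_int8(weights)
print(q)  # [2, -50, 31, 127]
restored = dequantize(q, scale)
print(max(abs(a - b) for a, b in zip(weights, restored)) <= scale / 2)  # True
```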