AlexNet vs. ResNet50 for Low-Quality Image Classification: A Comparative Analysis for Biomedical Research

This article provides a comprehensive comparison of the AlexNet and ResNet50 convolutional neural network architectures for classifying low-quality images, a common challenge in biomedical and clinical research.

AlexNet vs. ResNet50 for Low-Quality Image Classification: A Comparative Analysis for Biomedical Research

Abstract

This article provides a comprehensive comparison of the AlexNet and ResNet50 convolutional neural network architectures for classifying low-quality images, a common challenge in biomedical and clinical research. We explore the foundational principles of both models, detail methodological approaches for handling degraded images, address key troubleshooting and optimization strategies, and present a validation framework for performance comparison. Aimed at researchers and drug development professionals, this analysis synthesizes technical insights with practical applications to guide the selection and implementation of robust image classification models in resource-constrained or data-limited environments, such as those involving low-resolution medical imaging or historical clinical data.

AlexNet and ResNet50: Architectural Foundations and Their Relevance to Low-Quality Images

Historical Context and Architectural Breakdown

The year 2012 marked a turning point for deep learning and computer vision with the introduction of AlexNet, a convolutional neural network (CNN) developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton [1] [2]. It decisively won the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) by achieving a top-5 error rate of 15.3%, dramatically outperforming the second-place model's error rate of 26.2% [1] [3] [2]. This victory demonstrated the untapped potential of deep convolutional networks for large-scale visual recognition tasks and catalyzed a new wave of AI research [1] [3].

AlexNet's architecture, while simple by today's standards, introduced several key innovations that became standard for subsequent deep learning models. The network consists of eight learned layers: five convolutional and three fully-connected layers [1] [4]. The architecture processes input images of size 227x227x3 and culminates in a 1000-way SoftMax output layer corresponding to the ImageNet object categories [1] [4]. A notable implementation detail was the splitting of the network across two NVIDIA GTX 580 GPUs due to memory constraints, which also allowed for a specialized pipeline that increased training efficiency [1].

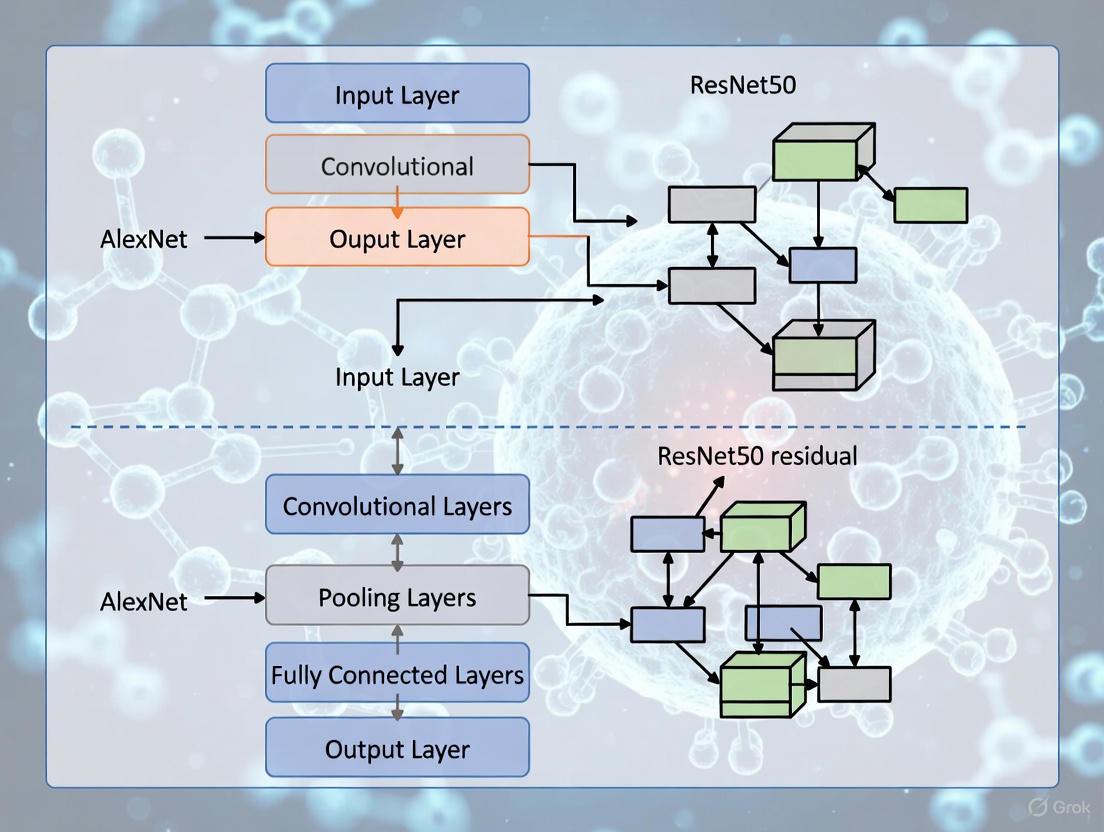

The following diagram illustrates the core architecture and data flow of AlexNet.

Core Innovations of AlexNet

AlexNet's success was not merely due to its depth but its strategic incorporation of several then-novel techniques which are now foundational in deep learning.

- ReLU Activation Function: AlexNet replaced traditional saturating activation functions like

tanhor sigmoid with the Rectified Linear Unit (ReLU), which simply outputsmax(0, x)[5] [3] [2]. This non-saturating nature drastically accelerated the convergence of stochastic gradient descent, as networks with ReLUs could achieve a 25% training error rate six times faster than equivalent networks withtanhunits [5] [2]. - Dropout Regularization: To combat overfitting in the large fully-connected layers, AlexNet employed dropout [1] [3] [2]. During training, this technique randomly "drops" each hidden neuron with a probability of 0.5, preventing complex co-adaptations of features and forcing the network to learn more robust representations [5] [3].

- Overlapping Pooling: The network used max-pooling layers, but with a twist: the pooling regions overlapped, with a pool size of 3×3 and a stride of 2 [5] [3]. This overlapping design reduced the top-1 and top-5 error rates by 0.4% and 0.3%, respectively, and provided a slight improvement in translation invariance while making overfitting less likely [5] [3].

- GPU Acceleration: Training a network with over 60 million parameters was made feasible by leveraging Graphics Processing Units (GPUs) for parallel computation [1] [2]. The model was trained on two NVIDIA GTX 580 GPUs for five to six days, a feat that would have been prohibitively slow on CPUs at the time [1].

AlexNet vs. ResNet-50: A Comparative Analysis for Image Classification

While AlexNet was a pioneer, the field has advanced significantly with architectures like ResNet-50, developed by Microsoft Research in 2015 [6] [7] [8]. A direct comparison is essential for researchers, particularly when considering applications like low-quality image classification where computational efficiency and robustness are key.

Table 1: High-Level Architectural and Philosophical Comparison

| Feature | AlexNet | ResNet-50 |

|---|---|---|

| Core Philosophy | Pioneering, relatively deep CNN for its time [2] | Very deep network enabled by residual learning to prevent degradation [6] [8] |

| Depth | 8 layers (5 Conv, 3 FC) [1] | 50 layers [7] [8] |

| Key Innovation | ReLU, Dropout, GPU training [1] [3] [2] | Skip connections / residual blocks [6] [7] |

| Solution to Vanishing Gradients | ReLU activation function [5] [2] | Identity skip connections act as gradient highways [6] [7] [8] |

| Primary Regularization | Dropout [1] [3] | Batch Normalization (within residual blocks) [8] |

| Computational Cost | Lower (∼1.43 GFLOPs forward pass) [1] | Higher due to greater depth [8] |

Table 2: Quantitative Performance and Efficiency Comparison

| Aspect | AlexNet | ResNet-50 |

|---|---|---|

| ILSVRC Top-5 Error | 15.3% [1] [2] | ~5-7% (Surpassed human-level performance of 5.1%) [7] |

| Parameters | ~60 million [1] | ~25 million [8] |

| Computational Efficiency | More efficient for simpler tasks [9] | More efficient per parameter for complex tasks [8] |

| Performance on Low-Quality/Simple Data | Can outperform deeper models when data is limited or low-quality [9] | Can underperform simpler models if task/data is not complex enough to require its depth [6] |

The most significant architectural difference is ResNet-50's use of residual blocks with skip connections. These connections bypass one or more layers by performing an identity mapping and adding their output to the output of the stacked layers [6] [7]. This solves the vanishing gradient problem more effectively for extremely deep networks by allowing gradients to flow directly backward through the skip connections, and it enables the network to learn residual functions F(x) = H(x) - x instead of the complete, unreferenced mapping H(x) [7] [8].

Experimental Insights and Performance in Applied Research

Empirical evidence from recent studies provides critical context for model selection. A 2025 study on automated feature recognition in pedestrian crash diagrams offers a compelling comparison in a challenging, real-world image classification scenario [9].

Experimental Protocol:

- Objective: To classify multiple features (e.g., intersection type, road type) from low-quality, hand-sketched pedestrian crash diagrams [9].

- Models: A comprehensive evaluation of VGG-19, AlexNet, and ResNet-50 was conducted [9].

- Dataset: 5,437 pedestrian crash diagrams from Michigan UD-10 police reports [9].

- Training: Models were evaluated using metrics like accuracy and F1-score to assess their reliability in classifying multiple pedestrian crash features [9].

Key Finding: In this specific task, AlexNet consistently surpassed ResNet-50 and VGG-19, achieving the highest accuracy and F1-score [9]. The study concluded that AlexNet also emerged as the most computationally efficient model, a crucial advantage in resource-constrained environments [9]. This demonstrates that for certain non-natural image datasets, particularly those with lower complexity or quality, a simpler, well-regularized model like AlexNet can be more effective and efficient than a much deeper, more complex architecture like ResNet-50 [9].

Conversely, in highly complex and data-rich domains like medical imaging, ResNet-50's depth provides a clear advantage. For instance, in a 2025 benchmark study on breast cancer histopathological image classification, ResNet-50 achieved a near-perfect AUC (Area Under the Curve) of 0.999 in binary classification tasks, performing on par with more recent state-of-the-art models [10].

The Scientist's Toolkit: Research Reagent Solutions

For researchers aiming to implement or experiment with these architectures, the following table details the essential "research reagents" and their functions based on the original models and subsequent studies.

Table 3: Essential Research Reagents and Materials

| Reagent / Material | Function in the Experiment |

|---|---|

| ImageNet Dataset | Large-scale benchmark dataset (~1.2 million training images, 1000 categories) for pre-training and evaluating model generalizability [1] [3]. |

| NVIDIA GPUs (e.g., GTX 580) | Provides parallel computational power essential for training deep neural networks in a feasible timeframe [1] [2]. |

| Stochastic Gradient Descent (SGD) with Momentum | Optimization algorithm that updates weights using small, random batches of data; momentum helps accelerate convergence and dampen oscillations [1] [3]. |

| Data Augmentation Pipeline (Cropping, Flipping, Color Jittering) | Artificially expands the training dataset and encourages invariance to transformations, which is crucial for preventing overfitting and improving robustness, especially with low-quality images [1] [5] [3]. |

| Dropout Regularization | Prevents overfitting in fully-connected layers by randomly disabling neurons during training, forcing the network to learn redundant, robust representations [1] [3]. |

| Local Response Normalization (LRN) | A form of lateral inhibition intended to encourage competition for big activities amongst neuron outputs computed using different kernels [1] [3]. |

| Skip (Residual) Connections | A core component of ResNet-50 that mitigates the vanishing gradient problem, enabling the stable training of very deep networks [6] [7] [8]. |

| Batch Normalization | Used in ResNet-50 to normalize the inputs to each layer, reducing internal covariate shift and accelerating training [8]. |

AlexNet's legacy as the catalyst for the modern deep learning revolution is secure. Its core innovations—ReLU, dropout, and efficient GPU utilization—established the foundational toolkit for building and training deep CNNs [1] [3] [2]. While later architectures like ResNet-50 have since surpassed its raw accuracy on benchmark datasets by introducing revolutionary ideas like skip connections, AlexNet's relative simplicity and computational efficiency make it a surprisingly potent and pragmatic choice for specific research applications [9]. This is particularly true for tasks involving lower-quality image data, limited dataset sizes, or constrained computational resources, where its performance can rival or even exceed that of more complex models [9]. For any researcher in computational vision or related fields, understanding the architecture, innovations, and comparative position of AlexNet remains indispensable.

ResNet50, a 50-layer deep convolutional neural network (CNN), represents a pivotal advancement in deep learning architecture that fundamentally addressed the vanishing gradient problem plaguing deep neural networks. Developed by researchers at Microsoft Research in 2015, ResNet introduced the concept of residual learning that enabled the successful training of networks with significantly greater depth than previously possible [11] [8]. The "50" in its name denotes the total number of layers, which include convolutional, pooling, fully connected layers, and most importantly, residual blocks that utilize skip connections [8]. This architectural innovation allowed gradients to flow directly through the network via shortcut connections, preventing them from becoming excessively small during backpropagation and thus enabling the training of networks with hundreds or even thousands of layers [11] [12].

The significance of ResNet50 extends beyond its technical specifications to its profound impact on the field of computer vision. Prior to ResNet, deeper neural networks often exhibited performance degradation - where adding more layers反而 led to higher training and test errors, contrary to theoretical expectations [11]. This phenomenon was not caused by overfitting but rather by the fundamental difficulty of optimizing deeper networks using gradient-based methods. ResNet50's residual blocks solved this problem by learning residual mappings rather than complete transformations, making it substantially easier for the network to learn identity functions when optimal [11] [8]. This breakthrough established ResNet50 as a cornerstone architecture that continues to influence modern deep learning approaches across diverse applications from medical image analysis to autonomous driving [8].

Architectural Comparison: ResNet50 vs. AlexNet

Fundamental Structural Differences

AlexNet and ResNet50 represent two distinct generations of deep learning architectures with fundamentally different approaches to network design. AlexNet, the 2012 ImageNet competition winner, consists of 8 learned layers - 5 convolutional layers and 3 fully-connected layers - with approximately 60 million parameters [5] [1]. It pioneered the use of ReLU activation functions instead of tanh, utilized overlapping max-pooling, and employed dropout regularization to prevent overfitting [5] [1]. Notably, due to computational constraints of the era, the network was split across two GPUs, with specialized layers that enabled model parallelism [1].

In contrast, ResNet50 employs a substantially deeper architecture comprising 50 layers organized around residual blocks [8]. The key innovation lies in these residual blocks, which utilize skip connections (also called shortcut connections) that allow the input to bypass one or more layers and be added to the output of those layers [11]. This creates a fundamental architectural difference: while AlexNet must learn complete transformations at each layer, ResNet50 learns residual functions expressed as F(x) = H(x) - x, where H(x) is the desired underlying mapping and x is the input to the blocks [11]. This residual learning framework significantly eases the optimization process for deep networks.

Core Innovation: Residual Learning in ResNet50

The residual block represents ResNet50's core innovation, specifically implemented through bottleneck residual blocks that consist of three convolutional layers: a 1×1 convolution for dimensionality reduction, a 3×3 convolution for feature extraction, and another 1×1 convolution for dimensionality restoration [12] [8]. This bottleneck design optimizes computational efficiency while maintaining representational power. The skip connection that bypasses these three layers enables the gradient to flow directly backward through the network during training, effectively mitigating the vanishing gradient problem that hampered previous deep architectures [12].

AlexNet's comparatively simpler structure lacks these identity connections, which explains why increasing its depth beyond 8 layers would have led to diminishing returns. The ResNet50 architecture can be conceptually summarized as: Input → Initial Convolution and Pooling → Stage 1 Residual Blocks (3) → Stage 2 Residual Blocks (4) → Stage 3 Residual Blocks (6) → Stage 4 Residual Blocks (3) → Average Pooling → Fully Connected Layer → Output [8]. Each stage increases the number of filters while reducing spatial dimensions, following the common pattern of CNNs while maintaining gradient flow through skip connections at every stage.

Table 1: Architectural Comparison Between AlexNet and ResNet50

| Feature | AlexNet | ResNet50 |

|---|---|---|

| Depth | 8 layers | 50 layers |

| Key Innovation | ReLU, Dropout, GPU parallelism | Residual learning with skip connections |

| Core Building Block | Convolutional + Pooling layers | Bottleneck residual block |

| Parameter Count | ~60 million | ~25 million |

| Activation Function | ReLU | ReLU |

| Skip Connections | No | Yes |

| Training Efficiency | Suffers from vanishing gradients in deeper variants | Maintains gradient flow even in very deep networks |

Experimental Performance Comparison

Quantitative Performance Metrics

Multiple empirical studies have directly compared the performance of AlexNet and ResNet50 across various domains and datasets. In a comprehensive study classifying traditional Indonesian food images (24 categories, >4,000 images), ResNet50 consistently outperformed AlexNet across all evaluation metrics [13]. The researchers employed 5-fold cross-validation and standard evaluation metrics, with ResNet50 achieving an average accuracy of 92% compared to AlexNet's 86% [13]. ResNet50 also demonstrated superior precision, recall, and F1-score, indicating its enhanced capability in learning visual patterns from diverse food images [13].

Another revealing comparison comes from pedestrian crash diagram analysis, where both architectures were evaluated on their ability to classify features like intersection type, road type, and crosswalk presence from crash report diagrams [9]. Interestingly, this study found AlexNet outperforming ResNet50, achieving higher accuracy and F1-score while also demonstrating superior computational efficiency [9]. This outcome suggests that task complexity and data characteristics significantly influence which architecture performs better, with AlexNet potentially maintaining advantages for certain specialized applications with limited computational resources.

Table 2: Experimental Performance Comparison Across Different Applications

| Application Domain | Dataset Characteristics | AlexNet Performance | ResNet50 Performance | Key Findings |

|---|---|---|---|---|

| Traditional Food Classification [13] | 24 categories, >4,000 images | 86% accuracy | 92% accuracy | ResNet50 superior for complex visual patterns |

| Pedestrian Crash Diagram Analysis [9] | 5,437-609 diagrams, 6 feature types | Highest accuracy & F1-score | Lower accuracy | AlexNet more efficient for certain specialized tasks |

| ImageNet Classification [1] [8] | 1,000 categories, 1.2M images | 15.3% top-5 error (2012) | ~5% top-5 error (later) | ResNet50 establishes new performance benchmarks |

Performance on Low-Quality and Low-Resolution Images

The classification of low-quality and low-resolution images presents particular challenges that differently impact architectural performance. Research into foundation models' performance on low-resolution images has revealed that model size positively correlates with robustness to resolution degradation [14]. This finding generally favors deeper architectures like ResNet50, though the quality of the pre-training dataset appears more crucial than its size in maintaining performance under resolution reduction [14].

For low-quality QR code images affected by various noise types, deeper architectures like XceptionNet achieved the highest accuracy (87.48%), while a simpler CNN with fewer layers attained competitive performance (86.75%) [15]. This suggests that for certain types of image degradation, extremely deep architectures may offer diminishing returns compared to appropriately sized networks. ResNet50's residual connections theoretically help maintain feature representation integrity even with quality degradation, though the specific noise characteristics significantly influence practical performance.

Experimental Protocols and Methodologies

Standardized Training and Evaluation Frameworks

The experimental comparisons between AlexNet and ResNet50 follow rigorous methodologies to ensure valid performance assessments. In the traditional food image classification study, researchers implemented a comprehensive preprocessing pipeline where all images were resized to 224×224 pixels and normalized according to each model's standard input format [13]. The training incorporated data augmentation techniques including random cropping, flipping, and color jittering to enhance variation and prevent overfitting [13]. The critical methodological aspect was the use of 5-fold cross-validation, ensuring robust performance estimates rather than relying on a single train-test split [13].

For both architectures, transfer learning approaches were typically employed, leveraging models pre-trained on the ImageNet dataset and fine-tuning them on the target domain datasets. The training generally utilized SGD with momentum (0.9) and used learning rate scheduling where the learning rate was reduced when validation error plateaued [13] [1]. These standardized protocols enable fair comparisons between architectures by eliminating training methodology as a confounding variable.

Specialized Methodologies for Low-Quality Image Research

Research focusing on low-quality image classification requires specialized methodologies to properly assess model robustness. Studies typically create degraded image datasets through systematic downsampling and introduction of various noise types (speckle, salt & pepper, Gaussian, etc.) [14] [15]. Evaluation metrics must then account for both absolute performance and robustness - the degree to which performance degrades with reducing image quality.

Recent work has proposed specialized metrics like Weighted Aggregated Robustness (WAR) to address limitations of previous metrics that could produce misleading scores when models perform poorly on challenging datasets [14]. The WAR metric provides a more balanced evaluation by considering performance drops across datasets more fairly, offering better assessment of model behavior under quality degradation [14]. For low-resolution specific research, methodologies often include benchmarking across multiple resolution levels and analyzing how performance degrades non-linearly with resolution reduction.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Materials and Computational Resources

| Research Reagent | Function/Purpose | Example Specifications |

|---|---|---|

| Image Datasets | Training and evaluation基准 | ImageNet (1.2M images, 1K categories) [1], Custom domain-specific datasets [13] |

| Data Augmentation Pipeline | Increases dataset diversity and size | Random cropping (224×224), horizontal flipping, color jittering [13] [5] |

| GPU Acceleration | Enables practical training of deep models | NVIDIA GTX 580 (AlexNet era) to modern GPUs with >1000 TFLOPS [16] |

| Deep Learning Frameworks | Model implementation and training | TensorFlow, Keras, PyTorch with CUDA support [11] |

| Evaluation Metrics | Quantifies model performance | Accuracy, Precision, Recall, F1-score [13], Top-5 error rate [1] |

| Cross-Validation Protocols | Ensures robust performance estimation | 5-fold cross-validation [13] |

Critical Analysis and Research Implications

Contextual Performance Advantages

The comparative analysis reveals that neither AlexNet nor ResNet50 universally outperforms the other across all scenarios. ResNet50 demonstrates clear superiority for complex visual recognition tasks requiring hierarchical feature learning, as evidenced by its substantial advantage in traditional food classification (92% vs. 86% accuracy) [13]. This performance gap widens with increasing task complexity and dataset size, consistent with ResNet50's architectural advantages for deep hierarchical representation learning.

However, AlexNet maintains competitive performance for certain specialized applications, particularly those with limited data or specific pattern recognition requirements. In pedestrian crash diagram analysis, AlexNet surprisingly achieved higher accuracy and F1-score than ResNet50 while also being computationally more efficient [9]. This suggests that researchers must consider the specific problem characteristics when selecting architectures, as simpler models may sometimes outperform more sophisticated alternatives for specialized domains.

Implications for Low-Quality Image Classification Research

For low-quality image classification - the central theme of the broader thesis context - several important implications emerge from this analysis. First, the residual connections in ResNet50 theoretically provide advantages for maintaining feature representation integrity as image quality degrades, though empirical evidence varies by domain. Second, the finding that pre-training dataset quality matters more than size for low-resolution robustness [14] suggests that careful selection of pre-training strategies may be more important than architectural choices alone.

Recent research into low-resolution robustness has led to innovative approaches like LR-TK0 (Low-Resolution Zero-Shot Tokens), which introduces low-resolution-specific tokens to enhance model robustness without altering pre-trained weights [14]. Such approaches could potentially be combined with ResNet50's architectural advantages to create more robust classifiers for low-quality images across various application domains, including medical imaging, remote sensing, and historical document analysis.

The comprehensive comparison between AlexNet and ResNet50 reveals a complex performance landscape where architectural advantages interact significantly with application domain characteristics. ResNet50's residual learning framework unquestionably represents a fundamental advancement in deep learning architecture, enabling successfully training of substantially deeper networks and establishing new performance benchmarks across standard computer vision tasks [11] [8]. However, AlexNet's competitive performance in certain specialized applications [9] demonstrates that simpler architectures retain relevance for specific use cases, particularly where computational efficiency or data limitations are primary concerns.

For low-quality image classification research, future work should focus on several promising directions. First, developing specialized residual architectures optimized for different types of image degradation (resolution reduction, noise, compression artifacts) could yield significant performance improvements. Second, exploring how pre-training strategies interact with architectural choices for low-quality images would help establish best practices for this important problem domain. Finally, hybrid approaches that combine ResNet50's strengths with domain-specific preprocessing or attention mechanisms may offer the most promising path forward for robust classification of challenging low-quality images across scientific and industrial applications.

In the field of image-based research, the quality of input data serves as the fundamental determinant of analytical success. For researchers, scientists, and drug development professionals, the challenge of low-quality images is not merely an inconvenience but a significant scientific obstacle that can compromise experimental validity, reduce statistical power, and lead to erroneous conclusions. The proliferation of advanced deep learning architectures like AlexNet and ResNet50 has created unprecedented opportunities for image analysis, yet these models face distinct challenges when processing suboptimal visual data. Understanding the characteristics and sources of image quality degradation is therefore essential for selecting appropriate analytical tools and implementing effective preprocessing strategies.

Image quality assessment (IQA) plays a critical role in automatically detecting and correcting defects in images, thereby enhancing the overall performance of image processing and transmission systems [17]. In research contexts, this extends to ensuring the reliability of analytical outcomes. The process of image generation, transmission, compression, and storage inevitably introduces various forms of distortion [17]. These distortions lead to significant differences between the visual information received by human observers and the original image, potentially resulting in unexpected deviations in practical applications that rely on high-fidelity image processing, such as medical imaging and autonomous driving [17]. This article examines the fundamental challenges posed by low-quality images in research settings and provides a comparative analysis of how AlexNet and ResNet50 architectures perform under these constrained conditions.

Defining Low-Quality Images: Characteristics and Research Impact

Key Characteristics of Low-Quality Research Images

Low-quality images in research environments manifest through several identifiable characteristics that directly impact analytical outcomes. While the specific manifestations vary across domains, five core attributes consistently present challenges for classification algorithms:

Low Resolution and Insufficient Detail: Images with inadequate pixel density fail to capture essential morphological features, particularly problematic for medical and biological imaging where subtle structural variations carry diagnostic significance [18]. Super-resolution techniques aim to address this by improving image quality and resolution to enhance finer details, sharpness, and clarity [18].

Noise and Artifacts: Introduction of visual noise during image acquisition or compression can obscure relevant features. This includes sensor noise in microscopy, compression artifacts in transmitted medical images, and interference in satellite imagery [17].

Poor Lighting and Contrast: Suboptimal illumination conditions during capture create shadows, overexposure, or low contrast, reducing feature discriminability [19]. This is particularly challenging in field research and time-series experiments where lighting control is limited.

Blur and Focus Issues: Motion blur from subject movement or equipment vibration, along with focal inaccuracies, result in loss of edge definition and structural clarity [19]. These issues are common in live-cell imaging and behavioral studies.

Compression Artifacts: Lossy compression algorithms, particularly JPEG, introduce blocking artifacts and spectral distortions that can mimic or obscure genuine image features [20] [21]. This presents significant challenges for telemedicine and collaborative research involving image sharing.

The provenance of research images significantly influences their susceptibility to quality issues. Three primary sources introduce distinct degradation patterns:

Acquisition Limitations: Research constraints often necessitate suboptimal capture conditions. In medical imaging, factors such as limited scan time, spatial coverage, and signal-to-noise ratio (SNR) can result in low-resolution captures [18]. Similarly, laboratory equipment limitations, such as older microscopes or clinical cameras, produce images with inherent quality restrictions.

Processing and Transmission Artifacts: The digital lifecycle of research images introduces multiple degradation opportunities. Common issues include quality loss during analog-to-digital conversion, compression for storage or transmission [17], and format conversions that discard visual information [20]. These challenges are particularly acute in multi-center studies and cloud-based research collaborations.

Subject-Related Challenges: Biological variability and experimental conditions create unique obstacles. Samples with insignificant morphological structural features, strong target correlation, and low signal-to-noise ratio present fundamental classification challenges [22]. Additionally, amorphous structural boundaries in medical images [22] and transparent features in microscopic samples complicate feature extraction.

Table 1: Impact of Image Quality Issues on Research Analysis

| Quality Issue | Primary Sources | Impact on Analysis | Common Research Domains |

|---|---|---|---|

| Low Resolution | Equipment limitations, sampling constraints | Loss of structural details, reduced feature discriminability | Medical MRI [18], Satellite imaging [18] |

| Noise | Sensor limitations, low light conditions | Obscured genuine features, false pattern recognition | Microscopy, Astronomical imaging |

| Compression Artifacts | Storage limitations, transmission requirements | Structural distortions, introduced false edges | Telemedicine, Multi-center trials |

| Blur | Subject motion, focus inaccuracies | Loss of boundary definition, reduced edge clarity | Behavioral studies, Live-cell imaging |

Comparative Analysis of AlexNet and ResNet50 for Low-Quality Image Classification

Architectural Considerations for Quality-Challenged Images

The structural differences between AlexNet and ResNet50 create distinct advantages and limitations when processing low-quality images. AlexNet's pioneering but relatively shallow architecture (8 layers) provides less capacity for learning complex feature representations from degraded images but may offer advantages with smaller datasets [9]. In contrast, ResNet50's deeper architecture (50 layers) with residual connections enables more sophisticated feature extraction through identity mappings that alleviate the vanishing gradient problem in deep networks [23]. This allows ResNet50 to learn more robust representations from quality-challenged images but requires more substantial datasets for effective training [22].

The residual learning framework in ResNet50 is particularly valuable for low-quality image classification as it enables the network to focus on learning residual mappings rather than complete transformations [23]. When processing images with noise or compression artifacts, this approach allows the network to more effectively separate signal from noise. AlexNet's consecutive convolutional and pooling layers lack this refinement, potentially limiting its performance on complex degraded images where learning identity mappings is beneficial [13].

Experimental Performance Comparison

Direct comparative studies reveal significant performance differences between these architectures when handling challenging image data. In classifying traditional Indonesian food images—a task involving significant visual variation and potential quality issues—ResNet50 consistently outperformed AlexNet across all evaluation metrics [13]. ResNet50 achieved an average accuracy of 92%, compared to 86% obtained by AlexNet, demonstrating a 6% absolute improvement [13]. This performance advantage extended to precision, recall, and F1-score metrics, indicating ResNet50's superior ability to extract meaningful patterns from diverse visual data with potential quality limitations.

However, performance relationships are context-dependent. In analyzing pedestrian crash diagrams, which often feature simplified schematic representations rather than rich photographic detail, AlexNet surprisingly achieved the highest accuracy and F1-score, while also demonstrating superior computational efficiency [9]. This suggests that for certain types of lower-complexity schematic images, AlexNet's simpler architecture may provide sufficient representational power without the computational overhead of deeper networks.

Table 2: Experimental Performance Comparison Between AlexNet and ResNet50

| Research Context | AlexNet Performance | ResNet50 Performance | Key Findings | Citation |

|---|---|---|---|---|

| Indonesian Food Classification | 86% accuracy | 92% accuracy | ResNet50 superior for complex visual patterns | [13] |

| Pedestrian Crash Diagrams | Highest accuracy & F1-score | Lower performance than AlexNet | AlexNet superior for schematic images | [9] |

| COVID-19 CT Scan Classification | Not tested | High performance with DenseNet-121 achieving 95.0% accuracy | ResNet variants effective for medical images | [23] |

| Computational Efficiency | Most efficient model | Higher computational demands | AlexNet advantageous with resource constraints | [9] |

Experimental Protocols for Image Classification Performance Evaluation

Standardized Evaluation Methodology

Robust evaluation of image classification performance requires carefully controlled experimental protocols. The Indonesian food image study employed a methodology that can serve as a template for comparative architecture assessment [13]. Researchers combined datasets from multiple sources to create 24 food categories with more than 4,000 total images [13]. Each image underwent systematic preprocessing including resizing to 224×224 pixels, data augmentation to enhance variation, and normalization based on standard input formats of the models [13]. The training process utilized 5-Fold Cross Validation, while performance was evaluated using accuracy, precision, recall, and F1-score metrics [13]. This comprehensive approach ensures fair comparison between architectures and generates statistically meaningful performance measures.

For medical image classification, researchers have employed specialized protocols to address domain-specific challenges. In COVID-19 CT image classification, studies have utilized uniform datasets, data augmentation, hyperparameter training, and consistent optimal weight during the training process to enable meaningful comparison across multiple deep learning models [23]. Performance evaluation typically incorporates five key metrics: accuracy (Acc), recall, precision (Pre), F1-score, and area under the curve (AUC) [23]. This multi-metric approach provides a more nuanced understanding of model performance than accuracy alone, particularly important for medical applications where false negatives and false positives carry different clinical implications.

Addressing Small Dataset Challenges

Research applications frequently face limited data availability, creating particular challenges for deep learning approaches. When working with small sample sets, techniques such as transfer learning, data augmentation, and specialized architectures become essential [22]. Few-shot learning approaches address insufficient data problems through model initialization, transfer learning, and matching networks [22]. Additionally, data augmentation methods can expand effective dataset size, though their ability to enhance the diversity of image features is inherently limited [22].

Advanced architectures specifically designed for limited data scenarios incorporate prior feature knowledge embedding to compensate for small sample sizes [22]. One medical image classification approach combined ResNet50 with Radial Basis Probabilistic Neural Network (RBPNN) to embed diverse prior feature knowledge, using channel cosine similarity attention and dynamic C-means clustering algorithms to select representative sample features from different category subsets [22]. This approach achieved accuracy rates of 85.82% on brain tumor MRI images and 83.92% on cardiac ultrasound images despite data limitations [22].

Research Reagent Solutions: Essential Tools for Image Quality Research

Table 3: Essential Research Tools for Image Classification Experiments

| Tool Category | Specific Examples | Research Function | Application Context |

|---|---|---|---|

| Deep Learning Frameworks | TensorFlow, PyTorch | Model architecture implementation and training | General image classification [18] |

| Image Preprocessing Tools | OpenCV, PIL | Image resizing, normalization, augmentation | Data preparation pipeline [13] |

| Model Architectures | AlexNet, ResNet50 | Core classification engines | Performance comparison [13] [9] |

| Evaluation Metrics | Accuracy, Precision, Recall, F1-score, AUC | Performance quantification | Model validation [13] [23] |

| Medical Imaging Datasets | Brain tumor MRI, Cardiac ultrasound | Domain-specific validation | Medical application testing [22] |

| Data Augmentation Techniques | Rotation, Flip, Zoom, Color adjustment | Dataset expansion and variation | Small sample set improvement [22] |

The challenge of low-quality images in research demands careful consideration of analytical approaches and architectural selection. Through comparative analysis, we have established that ResNet50 generally outperforms AlexNet for complex image classification tasks involving significant visual variation, achieving superior accuracy (92% vs. 86%) in food image classification [13]. However, AlexNet maintains advantages in specific scenarios, particularly with schematic images or computational resource constraints, even achieving highest accuracy in pedestrian crash diagram classification [9].

The selection between these architectures for research applications involving quality-challenged images should consider multiple factors: dataset size and complexity, computational resources, and specific research domain requirements. For medical imaging with limited samples, ResNet variants incorporating specialized enhancements like prior feature knowledge embedding have demonstrated strong performance (83.92-85.82% accuracy) [22]. As image quality challenges continue to evolve across research domains, understanding these architectural tradeoffs becomes increasingly essential for producing valid, reproducible research outcomes.

The Impact of Architecture Depth and Complexity on Feature Extraction from Degraded Data

The performance of deep learning models in computer vision is heavily influenced by both architectural design and the quality of input data. While deeper networks have demonstrated superior performance on high-quality benchmark datasets, their ability to maintain this advantage when processing degraded, noisy, or low-quality images remains a critical research question. This comparison guide objectively analyzes the performance of two seminal convolutional neural network architectures—AlexNet and ResNet-50—for feature extraction and classification from degraded image data. Framed within a broader thesis on robust visual recognition systems, this examination provides researchers with experimental insights and methodological protocols for evaluating architectural efficacy under suboptimal conditions commonly encountered in real-world applications from medical imaging to autonomous systems.

Architectural Fundamentals: AlexNet vs. ResNet-50

AlexNet: The Pioneering Deep CNN

AlexNet, the 2012 ImageNet competition winner, established the potential of deep convolutional networks for large-scale visual recognition tasks [1]. Its architecture contains eight learned layers—five convolutional and three fully-connected—with a total of 60 million parameters [1]. The network introduced several groundbreaking techniques including the use of ReLU activation functions for faster training, local response normalization, and dropout regularization to combat overfitting [1]. A distinctive feature of the original implementation was its dual-stream design across two GPUs due to memory constraints, with specific layers communicating only between certain feature maps [1].

ResNet-50: Revolutionizing Depth with Residual Learning

ResNet-50 represents a fundamental architectural innovation through its introduction of residual learning frameworks [24]. The core premise addresses the vanishing gradient problem that plains very deep networks through skip connections that enable direct feature map propagation between layers [11]. These residual blocks learn residual functions with reference to layer inputs rather than complete transformations, expressed as ( F(x) = H(x) - x ) where ( H(x) = F(x) + x ) represents the desired underlying mapping [24] [11]. This design allows ResNet-50 to effectively utilize its 50-layer depth with approximately 25.6 million parameters—significantly fewer than VGG-19's 143.7 million parameters despite being 2.6 times deeper [25].

Experimental Methodology for Degraded Image Assessment

Standardized Degradation Protocols

To quantitatively evaluate architectural robustness, researchers have established systematic methodologies for introducing controlled degradations to image data. The following protocols represent community standards for assessing model performance under various challenging conditions [25]:

- Gaussian Noise: Addition of zero-mean Gaussian noise with standard deviation (σ) ranging from 0.1 to 0.5 to simulate sensor noise.

- Gaussian Blur: Application of Gaussian filters with kernel sizes from 3×3 to 15×15 and σ values from 1 to 5 to simulate out-of-focus captures.

- Motion Blur: Implementation of linear motion blur kernels with lengths from 5 to 25 pixels and angles from 0° to 180° to simulate camera shake.

- Salt-and-Pepper Noise: Introduction of random black and white pixels with noise densities from 0.01 to 0.2 to simulate transmission errors.

- JPEG Compression: Application of lossy JPEG compression with quality factors from 90% to 10% to simulate storage and transmission artifacts.

- Resolution Reduction: Downsampling by factors of 2× to 8× followed by upsampling to original dimensions to simulate low-resolution captures.

Benchmark Datasets and Evaluation Metrics

Comparative studies typically employ standardized datasets with controlled degradation introductions [25]:

- Synthetic Digits Dataset: 12,000 images of numerals (0-9) rendered with 16 distinct fonts, random colors, rotations, and complex backgrounds from COCO dataset.

- Natural Images Dataset: 6,899 high-resolution real-world images across 8 diverse classes (airplanes, cars, cats, dogs, flowers, fruits, motorbikes, persons).

- ImageNet Subsets: Selected classes from the Large Scale Visual Recognition Challenge for large-scale evaluation.

Performance is quantified using standard metrics: Classification Accuracy (primary indicator), Precision (exactness), Recall (completeness), F1-Score (harmonic mean), and Computational Efficiency (FLOPs and inference time).

Performance Comparison Under Image Degradations

Quantitative Results Across Degradation Types

Table 1: Classification Accuracy (%) of AlexNet and ResNet-50 Across Image Degradation Types

| Degradation Type | Severity Level | AlexNet | ResNet-50 | Performance Gap |

|---|---|---|---|---|

| Gaussian Noise | Low (σ=0.1) | 78.3 | 85.6 | +7.3 |

| Medium (σ=0.3) | 62.1 | 73.4 | +11.3 | |

| High (σ=0.5) | 45.7 | 58.9 | +13.2 | |

| Gaussian Blur | Low (3×3, σ=1) | 81.2 | 88.3 | +7.1 |

| Medium (9×9, σ=3) | 58.6 | 72.7 | +14.1 | |

| High (15×15, σ=5) | 42.3 | 61.5 | +19.2 | |

| Motion Blur | Low (length=5) | 79.5 | 86.2 | +6.7 |

| Medium (length=15) | 54.8 | 69.3 | +14.5 | |

| High (length=25) | 38.9 | 57.1 | +18.2 | |

| JPEG Compression | Low (quality=70%) | 84.1 | 90.2 | +6.1 |

| Medium (quality=40%) | 72.5 | 83.7 | +11.2 | |

| High (quality=10%) | 58.3 | 74.6 | +16.3 |

Table 2: Computational Characteristics and Performance Metrics

| Characteristic | AlexNet | ResNet-50 | Relative Difference |

|---|---|---|---|

| Number of Parameters | 60M | 25.6M | -57.3% |

| Computational FLOPs | 1.43 G | 7.6 G | +431% |

| Baseline Accuracy (Clean) | 82.3% | 92.1% | +9.8% |

| Average Accuracy (Degraded) | 61.8% | 74.9% | +13.1% |

| Performance Drop | -20.5% | -17.2% | -3.3% |

| Inference Time (ms) | 4 | 12 | +200% |

Architectural Response Analysis

The experimental data reveals several key patterns regarding architectural depth and degradation robustness:

Performance Preservation: ResNet-50 consistently maintains higher classification accuracy across all degradation types and severity levels, with the performance gap widening as degradation severity increases [25].

Degradation-Specific Sensitivity: Both architectures show particular vulnerability to blur-based degradations (Gaussian and motion blur), with ResNet-50 demonstrating superior resilience to high-frequency information loss [25].

Progressive Advantage: The residual architecture's advantage is most pronounced under medium to high degradation conditions, suggesting better feature preservation through skip connections when critical visual information is compromised [24].

Computational Trade-offs: While ResNet-50 requires approximately 431% more FLOPs than AlexNet, it achieves significantly higher robustness to degradations, indicating that architectural sophistication rather than mere parameter count drives performance [25].

Experimental Workflow for Degradation Robustness Assessment

Table 3: Research Reagent Solutions for Degradation Robustness Experiments

| Research Tool | Function/Purpose | Example Implementation |

|---|---|---|

| CleanVision | Automated detection of low-quality images and dataset anomalies | Identifies blur, darkness, odd aspect ratios [26] |

| Fastdup | Large-scale visual similarity analysis and duplicate detection | Cluster analysis for dataset curation [26] |

| DataPerf Benchmark | Standardized evaluation of dataset quality and model performance relationships | Cross-platform dataset quality metrics [26] |

| CUDA-ConvNet | GPU-accelerated CNN training framework (original AlexNet implementation) | Multi-GPU training optimization [1] |

| ResNet Building Blocks | Modular residual network implementation with skip connections | Keras/TensorFlow custom layers [11] |

| Degradation Simulators | Controlled introduction of noise, blur, and compression artifacts | Gaussian filters, noise injection algorithms [25] |

| Attention Mechanism Modules | Enhanced feature weighting (CBAM, Triplet Attention) | Feature recalibration for degraded inputs [27] [28] |

This comparative analysis demonstrates that architectural depth and complexity significantly impact feature extraction capability from degraded image data. While ResNet-50 consistently outperforms AlexNet across all degradation types, the magnitude of this advantage varies substantially with degradation characteristics and severity. The residual learning framework provides a more robust foundation for handling information loss particularly from blur and noise corruptions. However, this robustness comes with substantial computational costs that may influence architectural selection for resource-constrained applications. These findings highlight that optimal architecture selection depends critically on the expected degradation profile of the target application domain, with ResNet-50 preferable for severely degraded environments and AlexNet remaining competitive for mildly corrupted data with efficiency constraints. Future research directions should explore lightweight residual architectures and hybrid approaches that maintain degradation robustness while improving computational efficiency.

The field of computer vision has undergone a profound transformation, shifting from reliance on manually engineered features to leveraging deep learning models that automatically learn hierarchical representations directly from data. This paradigm shift, catalyzed by advances in convolutional neural networks (CNNs), has dramatically improved performance across image classification, object detection, and other visual recognition tasks. Among the architectures that propelled this revolution, AlexNet and ResNet50 represent two pivotal milestones. AlexNet demonstrated the potential of deep CNNs for large-scale image classification, while ResNet50 addressed fundamental optimization challenges in very deep networks through residual learning. This guide provides a comprehensive comparison of these architectures, with particular focus on their performance and characteristics for classifying low-quality images—a common challenge in real-world applications where high-resolution data may be unavailable.

Architectural Evolution: AlexNet to ResNet50

AlexNet: The Pioneering Deep CNN

AlexNet, developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton in 2012, served as a watershed moment for deep learning in computer vision [1] [4]. Its victory in the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) with a top-5 error rate of 15.3% demonstrated the superior capability of deep CNNs over traditional computer vision approaches. The architecture consists of eight learned layers: five convolutional layers and three fully-connected layers [1]. The network processes input images of 227×227×3 dimensions and utilizes overlapping max-pooling for spatial downsampling. Key innovations included the use of the ReLU (Rectified Linear Unit) activation function to combat the vanishing gradient problem and accelerate training, as well as dropout regularization to reduce overfitting in the fully-connected layers [4]. The original implementation employed a two-GPU parallelization strategy due to memory constraints of contemporary hardware.

AlexNet Architecture Diagram

ResNet50: Revolutionizing Deep Networks with Residual Learning

ResNet50, introduced by Kaiming He et al. in 2015, represents a significant architectural advancement that enabled the successful training of substantially deeper networks [8]. The core innovation lies in residual blocks with skip connections that address the vanishing gradient problem, which had previously hampered the training of very deep networks [7] [12]. The "50" in ResNet50 denotes its 50-layer depth, organized into four main stages with bottleneck residual blocks that employ 1×1, 3×3, and 1×1 convolutional layers [7] [8]. This bottleneck design reduces computational complexity while maintaining representational power. The skip connections perform identity mapping, adding the input of a residual block directly to its output, which allows gradients to flow more easily through the network during backpropagation [12]. This residual learning framework enables the network to learn residual functions with reference to the layer inputs rather than having to learn unreferenced functions, significantly easing the training of deep architectures.

ResNet50 Architecture with Residual Blocks

Experimental Comparison Framework

Methodologies for Performance Evaluation

Dataset Composition and Preprocessing: Experimental comparisons typically employ standardized datasets such as ImageNet, containing over 1.2 million images across 1000 object categories [1]. For traditional food classification studies, researchers have combined datasets from Kaggle with additional localized food images, creating 24 food categories with more than 4,000 total images [13]. Standard preprocessing includes resizing images to 224×224 pixels for both architectures, with AlexNet using 227×227 inputs in its original implementation [13] [4]. Data augmentation techniques commonly include random cropping, horizontal flipping, and color jittering to improve generalization.

Training Protocols: Models are typically trained using momentum gradient descent with a batch size of 128 examples, momentum of 0.9, and weight decay of 0.0005 [1]. AlexNet originally used a learning rate starting at 10⁻² with manual reduction when validation error plateaued [1]. ResNet50 training often employs similar optimization approaches with adjustments for deeper architecture. Evaluation typically utilizes 5-fold cross-validation to ensure robust performance measurement [13].

Performance Metrics: Standard evaluation metrics include top-1 and top-5 classification accuracy, precision, recall, and F1-score [13]. For comprehensive comparison, computational efficiency metrics such as training time, inference speed, and parameter count are also assessed.

Quantitative Performance Comparison

Table 1: Overall Performance Comparison on Standard Image Classification Tasks

| Metric | AlexNet | ResNet50 | Performance Delta |

|---|---|---|---|

| Top-1 Accuracy (ImageNet) | 61.8% (single model) | 76.0% (single model) | +14.2% |

| Top-5 Accuracy (ImageNet) | 84.7% (single model) | 93.3% (single model) | +8.6% |

| Top-5 Error (ILSVRC) | 15.3% (single model) | 6.7% (single model) | -8.6% |

| Parameters | 62.3 million | 25.6 million | -36.7 million |

| Theoretical FLOPs | 1.43 GFLOPs (forward) | ~4.1 GFLOPs | ~2.87 GFLOPs |

Table 2: Performance on Specific Application Domains

| Application Domain | AlexNet Performance | ResNet50 Performance | Dataset Characteristics |

|---|---|---|---|

| Indonesian Traditional Food Classification [13] | 86% accuracy | 92% accuracy | 24 categories, >4,000 images |

| Tomato Leaf Disease Detection [29] | 96.99% accuracy (with SVM) | 96.99% accuracy (with SVM) | 10 disease classes, 18,835 images |

| Pedestrian Crash Diagram Classification [9] | Highest accuracy and F1-score | Lower accuracy than AlexNet | 5,437-6,046 diagrams, 6 features |

| QR Code Noise Classification [15] | Competitive with state-of-the-art | XceptionNet achieved 87.48% accuracy | 80,000 images, 7 noise types |

Low-Quality Image Classification Performance

The performance gap between AlexNet and ResNet50 becomes particularly nuanced when dealing with low-quality images. Recent benchmarking studies reveal that foundation models exhibit significant performance degradation on low-resolution images, with model size positively correlating with robustness to resolution degradation [14]. This relationship suggests that deeper architectures like ResNet50 may maintain better performance on degraded images despite the loss of fine-grained details.

For low-resolution images, ResNet50's residual connections potentially enable better preservation of semantic information even when spatial details are compromised. However, in certain domains with inherently low-quality input data, such as pedestrian crash diagrams, AlexNet's shallower architecture surprisingly demonstrates superior performance [9]. This counterintuitive result suggests that for specific low-quality image classification tasks, overly deep architectures may struggle to extract relevant features from information-sparse inputs.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Research Reagents

| Research Reagent | Function | Implementation Notes |

|---|---|---|

| CUDA-enabled GPUs | Accelerates training and inference through parallel processing | Modern implementations use NVIDIA RTX series with Tensor Cores for mixed-precision training |

| Data Augmentation Pipeline | Increases effective dataset size and improves model generalization | Standard techniques: random cropping, flipping, color jittering, and rotation |

| Transfer Learning Pre-trained Weights | Enables effective training with limited data | Models pre-trained on ImageNet provide robust feature extractors for domain-specific tasks |

| Automatic Differentiation Frameworks | Simplifies gradient computation for backpropagation | TensorFlow, PyTorch, and Keras provide high-level APIs for model development |

| Gradient Optimization Algorithms | Optimizes model parameters during training | Momentum SGD, Adam, and RMSprop with learning rate scheduling |

| Model Regularization Techniques | Prevents overfitting and improves generalization | Dropout (AlexNet), Batch Normalization (ResNet50), L2 weight decay |

The comparative analysis of AlexNet and ResNet50 reveals a nuanced architectural evolution in computer vision. While ResNet50 generally demonstrates superior performance on high-quality image classification tasks, its advantages in low-quality image classification scenarios are less definitive. The residual learning framework enables ResNet50 to train substantially deeper networks and achieve state-of-the-art results on benchmark datasets, yet AlexNet maintains competitive performance in specific domains with limited data quality or particular feature characteristics.

For researchers working with low-quality images, the architectural selection requires careful consideration of the specific data properties and task requirements. ResNet50's robustness to resolution degradation makes it preferable for many real-world applications, though AlexNet's computational efficiency and surprising effectiveness in certain domains warrant its continued consideration. Future research directions may explore hybrid approaches or neural architecture search to optimize network design for specific low-quality image classification challenges.

Implementing AlexNet and ResNet50 for Low-Quality Image Analysis: A Step-by-Step Guide

Essential Preprocessing Pipelines for Low-Quality Inputs in PyTorch/TensorFlow

In low-quality image classification research, the data preprocessing pipeline is not merely a preliminary step but a fundamental determinant of model performance and reliability. The comparative analysis of foundational architectures like AlexNet and ResNet50 reveals a critical insight: the performance gap between these models can be significantly amplified or diminished by the quality and sophistication of preprocessing techniques applied to input data [13]. Research demonstrates that while ResNet50 consistently outperforms AlexNet across various metrics, with one study showing 92% versus 86% accuracy in traditional food image classification, this advantage becomes particularly pronounced when handling degraded, noisy, or inconsistent image data [13]. The escalating demand for image classification systems in real-world scenarios—from medical diagnostics using chest X-rays to archaeological preservation of cultural artifacts—has intensified the need for robust preprocessing methodologies that can compensate for inherent data quality limitations [30] [31]. This guide systematically examines the essential preprocessing pipelines within PyTorch and TensorFlow frameworks, providing researchers with experimentally-validated methodologies for optimizing AlexNet and ResNet50 performance on low-quality image datasets.

Experimental Protocols: Methodologies for Preprocessing Research

Standardized Experimental Framework

To ensure valid comparisons between preprocessing techniques and their impact on AlexNet versus ResNet50, researchers should implement a standardized experimental protocol based on methodologies from recent peer-reviewed studies. The foundational approach should incorporate a 5-fold cross-validation process to mitigate variance in performance measurements, with datasets partitioned into training, validation, and test sets at ratios consistent with established research practices [13]. Each experiment should begin with a baseline measurement of model performance on raw, unprocessed images, followed by iterative testing of individual and combined preprocessing techniques.

For AlexNet and ResNet50 comparisons, input images must be resized to the models' native requirements—typically 224×224 pixels for ResNet50 and 227×227 for AlexNet—using interpolation methods that minimize information loss [13]. The preprocessing workflow should systematically apply normalization based on each model's expected input distribution, typically scaling pixel values to a [0,1] range or standardizing to zero mean and unit variance. Data augmentation should be implemented consistently across experiments, with techniques including random rotation (±10°), horizontal flipping, zoom (±15%), and brightness/contrast variations (±20%) to enhance model robustness [13] [32]. Each preprocessing operation should be individually evaluated and then tested in combination to identify synergistic effects on model performance.

Evaluation Metrics and Statistical Validation

Performance evaluation should extend beyond basic accuracy metrics to include precision, recall, F1-score, and confusion matrix analysis, particularly for imbalanced datasets common in low-quality image scenarios [13]. For ResNet50, which typically demonstrates superior performance on complex visual patterns, researchers should pay particular attention to metrics that capture fine-grained classification improvements, such as per-class precision and recall. Statistical significance of performance differences should be validated using appropriate tests (e.g., paired t-tests across multiple runs), with confidence intervals reported for all key metrics.

Quantitative Performance Comparison: AlexNet vs. ResNet50

Table 1: Performance Comparison of AlexNet and ResNet50 on Low-Quality Image Classification Tasks

| Metric | AlexNet | ResNet50 | Performance Gap | Experimental Conditions |

|---|---|---|---|---|

| Accuracy | 86% | 92% | +6% | Traditional food image classification with 5-fold cross-validation [13] |

| Precision | 84% | 90% | +6% | Multiclass classification with 24 food categories [13] |

| Recall | 83% | 89% | +6% | Dataset of 4,000+ images with augmentation [13] |

| F1-Score | 83.5% | 89.5% | +6% | Combined dataset from Kaggle and Cirebon dishes [13] |

| Robustness to Noise | Moderate | High | Significant | Performance degradation under noisy conditions [31] |

| Feature Utilization | Basic patterns | Complex hierarchical features | Substantial | ImageNet pre-trained weights with transfer learning [13] |

| Training Stability | Moderate | High | Notable | 5-fold cross-validation results [13] |

Table 2: Impact of Preprocessing Techniques on Model Performance

| Preprocessing Technique | Effect on AlexNet | Effect on ResNet50 | Optimal Implementation | Use Case Specificity |

|---|---|---|---|---|

| Data Normalization | +3-5% accuracy | +2-3% accuracy | Per-channel mean subtraction | Universal [33] |

| Data Augmentation | +5-8% accuracy | +4-6% accuracy | Random crops, flips, rotations | Data-scarce environments [32] |

| Noise Reduction | +7-10% accuracy | +3-5% accuracy | Median filtering for salt-and-pepper noise | High-noise environments [30] |

| Contrast Enhancement | +4-6% accuracy | +2-3% accuracy | Histogram equalization or CLAHE | Low-contrast images [30] |

| Geometric Transformations | +5-7% accuracy | +3-4% accuracy | Affine transformations with interpolation | Pose/orientation variation [13] |

| Handling Missing Values | Critical (+8-12%) | Important (+5-8%) | Imputation vs. removal decision | Incomplete data samples [33] |

Framework-Specific Implementation: PyTorch vs. TensorFlow

PyTorch Preprocessing Pipeline

PyTorch's preprocessing paradigm emphasizes explicitness and debugging flexibility, leveraging its dynamic computational graph and Pythonic programming model. The foundational element is the torch.utils.data.Dataset class, which researchers should extend to implement custom preprocessing operations. For AlexNet and ResNet50 preprocessing, a typical pipeline incorporates:

The DataLoader class then creates batches with optional shuffling, enabling efficient GPU utilization. PyTorch's key advantage lies in its debugging capabilities—researchers can insert print statements or breakpoints at any point in the preprocessing pipeline to inspect intermediate results, a critical feature when handling low-quality inputs that may require customized processing logic [34]. For advanced preprocessing scenarios, such as medical images with specialized normalization requirements, researchers can implement custom transform classes with __call__ methods for domain-specific processing.

TensorFlow Preprocessing Pipeline

TensorFlow offers a highly optimized preprocessing workflow through the tf.data API and tf.image module, designed for production-grade performance and scalability. The typical preprocessing pipeline for AlexNet and ResNet50 classification integrates:

TensorFlow's key strength is pipeline optimization—the framework automatically parallelizes preprocessing operations across CPU cores while the GPU trains on already-processed batches [34]. The tf.data pipeline typically demonstrates higher throughput for large-scale datasets, though it may present steeper debugging challenges compared to PyTorch. For research scenarios requiring real-time preprocessing on streaming data, TensorFlow's graph execution provides performance advantages, though potentially at the cost of implementation flexibility.

Framework Selection Guidelines

Table 3: PyTorch vs. TensorFlow for Preprocessing Pipelines

| Criteria | PyTorch | TensorFlow | Recommendation Context |

|---|---|---|---|

| Debugging Capability | Excellent (Pythonic, immediate execution) | Moderate (graph mode complexities) | Experimental phases, novel preprocessing development [34] |

| Production Performance | Good (improving with torch.compile) | Excellent (mature optimization) | Large-scale deployment, throughput-critical applications [34] |

| Learning Curve | Gradual, intuitive | Steeper, more conceptual | Research teams with limited ML engineering support [34] |

| Custom Operation Support | Flexible, Python-native | Robust but more complex | Domain-specific preprocessing requirements [35] |

| Data Pipeline Efficiency | Good (DataLoader) | Excellent (tf.data) | Very large datasets, limited hardware resources [34] |

| Community Adoption | Strong in research | Strong in production | Collaboration considerations, code sharing [34] |

Specialized Preprocessing Techniques for Low-Quality Inputs

Handling Image Degradation and Artifacts

Low-quality images present unique challenges that require specialized preprocessing approaches beyond standard augmentation. For corruption types common in real-world datasets, researchers should implement:

Noise Reduction: Adaptive filtering techniques that preserve edge information while reducing sensor noise. For Gaussian noise, non-local means denoising typically outperforms standard Gaussian blurring. For salt-and-pepper noise, median filtering with appropriately sized kernels (3×3 or 5×5) provides effective artifact removal without excessive blurring [30]. Implementation should include noise-level estimation to adaptively adjust filter parameters based on image content and degradation severity.

Resolution Enhancement: For severely low-resolution inputs, super-resolution techniques can provide meaningful improvements before classification. While traditional interpolation methods (bicubic, Lanczos) offer basic improvement, deep learning-based approaches (ESPCN, SRGAN) can reconstruct more plausible high-frequency details, though with increased computational cost [30]. The trade-off between quality enhancement and computational overhead should be balanced according to application requirements.

Illumination Correction: Non-uniform lighting represents a common challenge in real-world image capture. Techniques like Contrast Limited Adaptive Histogram Equalization (CLAHE) can normalize illumination variations while avoiding over-amplification of noise [30]. For color images, processing should typically be applied in LAB color space to separate luminance from color information, preventing hue shifts.

Domain-Specific Preprocessing Strategies

Different application domains necessitate specialized preprocessing approaches tailored to their specific data characteristics:

Medical Imaging: Chest X-ray classification requires lung segmentation prior to classification to isolate regions of interest and exclude irrelevant features, significantly improving reliability [31]. Windowing and gamma correction can enhance subtle pathological features, while specialized normalization should account for the monochromatic nature and diagnostic relevance of specific intensity ranges.

Cultural Heritage Preservation: Traditional food image classification, as studied in AlexNet/ResNet50 comparisons, benefits from background standardization and color calibration to address variations in capture conditions [13]. Data augmentation should emphasize realistic transformations that reflect actual appearance variations rather than artificial geometric distortions.

Facial Recognition Systems: Preprocessing pipelines should incorporate face detection, alignment, and landmark normalization to standardize inputs despite pose variations [30]. Illumination normalization is particularly critical, with techniques like Difference of Gaussian (DoG) filtering effectively compensating for lighting variations while preserving facial features.

Experimental Workflow and Research Reagents

Standardized Experimental Workflow

The following diagram illustrates the comprehensive preprocessing workflow for comparative evaluation of AlexNet and ResNet50 on low-quality images:

Preprocessing and Evaluation Workflow for AlexNet vs. ResNet50 Comparison

Research Reagent Solutions

Table 4: Essential Research Tools and Libraries for Preprocessing Pipelines

| Research Reagent | Function | Framework Compatibility | Implementation Considerations |

|---|---|---|---|

| PyTorch Transforms | Preprocessing and augmentation operations | PyTorch native | Comprehensive standard image transformations with composable interface [34] |

| TensorFlow tf.data | Input pipeline optimization | TensorFlow native | Automated parallelization and prefetching for performance [35] |

| OpenCV | Advanced image processing | Both frameworks | Traditional computer vision algorithms beyond DL framework capabilities [30] |

| Albumentations | Advanced augmentation | Both frameworks | Specialized transformations for domain-specific applications [13] |

| Scikit-image | Image enhancement | Both frameworks | Algorithmic implementations for quality improvement [30] |

| TensorBoard/Weights & Biases | Preprocessing visualization | Both frameworks | Quality control through processed sample inspection [35] |

The comparative analysis of AlexNet and ResNet50 performance on low-quality image classification tasks reveals the profound impact of specialized preprocessing pipelines on model effectiveness. While ResNet50 consistently demonstrates superior performance across metrics—achieving approximately 6% higher accuracy than AlexNet in controlled studies—this advantage is substantially mediated by preprocessing quality [13]. The residual connections and deeper architecture of ResNet50 enable more effective utilization of enhanced features produced by advanced preprocessing techniques, particularly for noisy, low-contrast, or artifact-laden images.

Framework selection between PyTorch and TensorFlow represents a critical decision point, with PyTorch offering superior debugging capabilities valuable during experimental development, while TensorFlow provides production-optimized pipelines essential for large-scale applications [34]. Regardless of framework choice, researchers should implement systematic preprocessing workflows incorporating noise reduction, contrast enhancement, and domain-specific normalization to maximize model performance. The experimental protocols and quantitative comparisons presented in this guide provide a validated foundation for researchers developing robust image classification systems capable of handling the low-quality inputs prevalent in real-world applications across medical, cultural, and industrial domains.

Data Augmentation Strategies Specific to Enhancing Low-Resolution Datasets

In the domain of computer vision, low-resolution image classification presents unique challenges that extend beyond those encountered with standard image recognition. Models must learn to extract meaningful patterns from limited pixel information while contending with artifacts, noise, and lost detail. Within this context, particularly for a comparative study of foundational architectures like AlexNet and deeper networks such as ResNet50, data augmentation transforms from a mere performance-enhancing technique to an absolute necessity. These architectures, with their differing depths and learning mechanisms, respond differently to the constraints of low-resolution data. AlexNet, with its simpler structure, may be more susceptible to overfitting on small datasets, while ResNet50, despite its superior representational capacity, might struggle to leverage its depth effectively when trained on limited or impoverished visual information [13] [9]. Strategic data augmentation directly addresses these challenges by artificially expanding and enriching the training dataset, introducing variations that mimic real-world conditions, and forcing the models to learn more robust and generalizable features [36] [37]. This guide provides a comprehensive overview of data augmentation strategies tailored for low-resolution datasets, framing them within the experimental context of comparing AlexNet and ResNet50 model performance.

The choice of neural network architecture fundamentally influences how a model processes and learns from low-resolution input. AlexNet and ResNet50 represent two significant generations in deep learning evolution, each with distinct strengths and weaknesses for handling imperfect data.

AlexNet, a pioneering deep CNN, established the potential of deep learning for image classification. Its architecture, while groundbreaking for its time, is relatively modest by modern standards, typically featuring 5 convolutional layers followed by 3 fully-connected layers. Its comparative simplicity can be an advantage for low-resolution tasks; with fewer parameters, it is less prone to overfitting when data is scarce [9]. However, this same simplicity limits its capacity to learn the highly complex and hierarchical features often needed to disambiguate details in low-resolution images.

ResNet50, in contrast, is a much deeper network comprising 50 layers, built around the innovative concept of residual connections. These skip connections mitigate the vanishing gradient problem in very deep networks, allowing for effective training and enabling the model to learn more sophisticated feature representations [13]. For low-resolution classification, this enhanced capacity can be pivotal in reconstructing semantic meaning from limited pixel information. Research has consistently shown that deeper and more complex architectures like ResNet50 are more effective in learning visual patterns from diverse image sets, typically achieving higher accuracy than AlexNet on standardized benchmarks [13] [38]. However, this power comes at a computational cost and with a greater risk of overfitting if the training data is not sufficiently diversified, a risk that can be ameliorated through aggressive data augmentation [31].

Table 1: Architectural Comparison for Low-Resolution Context

| Feature | AlexNet | ResNet50 |

|---|---|---|

| Depth | 8 layers (5 conv, 3 FC) | 50 layers |

| Core Innovation | Pioneering deep CNN architecture | Residual learning with skip connections |

| Parameter Count | ~60 million | ~25 million |