AI-Powered Microscopy: Revolutionizing Helminth Egg Detection and Classification for Biomedical Research

Abstract

This article examines the role of artificial intelligence (AI) in the image-based classification of helminth eggs, a critical diagnostic task in parasitology. It explores the need for AI to overcome the limitations of traditional manual microscopy, which is time-consuming, labor-intensive, and prone to human error. The review details the application of state-of-the-art deep learning models, including YOLOv4, EfficientNet, and ConvNeXt, for automated detection and classification across multiple parasite species. It further addresses key methodological challenges such as dataset robustness and model optimization for complex scenarios like mixed infections and low-intensity cases. Finally, the article provides a comparative analysis of AI performance against manual microscopy, reporting higher sensitivity at comparable specificity, particularly in resource-limited settings, and discusses its implications for drug development and global health surveillance.

The Diagnostic Imperative: Why AI is Revolutionizing Helminth Parasitology

Soil-transmitted helminth (STH) infections are among the most common neglected tropical diseases (NTDs) globally, affecting approximately 1.5 billion people worldwide and representing a substantial public health challenge [1] [2]. These infections, primarily caused by Ascaris lumbricoides (roundworm), Trichuris trichiura (whipworm), and hookworm species, disproportionately affect impoverished communities in tropical and subtropical regions [1]. The global burden is characterized by chronic disability that impacts physical growth, cognitive development, and economic productivity, particularly among vulnerable populations such as school-aged children [3] [4].

The World Health Organization has identified STH infections as a priority for control, with targets set to eliminate them as a public health problem by 2030 [1]. Recent advancements in artificial intelligence (AI) have created new opportunities for improving the diagnosis and monitoring of these infections, particularly through image-based classification of helminth eggs in microscopic samples [5] [6]. This application note examines the global burden of helminth infections and presents innovative AI-driven approaches for their detection and quantification, providing researchers and public health professionals with comprehensive data and methodologies to support control efforts.

Global Epidemiology and Burden of Disease

Prevalence and Distribution

The global prevalence of STH infections remains substantial, with recent estimates from the Global Burden of Disease Study 2021 indicating approximately 642.72 million cases worldwide [2]. The age-standardized prevalence rate (ASPR) was 8,429.89 per 100,000 population, representing a significant 69.6% decrease since 1990 [2]. This decline reflects the success of expanded control programs, though considerable geographic variation persists.

School-aged children bear the highest burden of infection, with a recent systematic review and meta-analysis reporting a global prevalence of 20.6% among this demographic [3]. The highest prevalence rates have been observed in Tanzania (67.41%) and Vietnam (65.04%), with Toxocara spp. (10.36%) and Ascaris lumbricoides (9.47%) identified as the most prevalent helminthic parasites [3]. This distribution underscores the importance of school-based deworming programs and the potential for AI-assisted screening to enhance surveillance in these high-risk populations.

Table 1: Global Prevalence and Disease Burden of Soil-Transmitted Helminths (2021)

| Helminth Species | Global Prevalence (millions) | DALYs (thousands) | Age-Standardized Prevalence Rate (per 100,000) |
|---|---|---|---|
| All STHs | 642.72 | 1,380 | 8,429.89 |
| Ascariasis | 293.80 | 647.53 | 3,856.33 |
| Trichuriasis | 266.87 | 193.92 | 3,482.27 |
| Hookworm | 112.82 | 540.20 | 1,505.49 |

Source: Global Burden of Disease Study 2021 [2]

Disability-Adjusted Life Years (DALYs)

The disease burden of STH infections extends beyond prevalence to include significant disability. In 2021, STH infections were responsible for 1.38 million disability-adjusted life years (DALYs) globally [2]. This metric quantifies the total health loss attributable to these infections, combining years of life lost due to premature mortality with years lived with disability.

The distribution of DALYs varies by parasite species. Hookworm infections account for the largest proportion (51.14%) of the total age-standardized DALY rate for STHs, primarily due to their contribution to anemia and protein malnutrition [1]. Trichuriasis, while highly prevalent, contributes less to overall DALYs (14.05%), with ascariasis intermediate in its impact [1] [2]. This distribution reflects the different pathophysiological mechanisms of each parasite species and their varying effects on human health.

Temporal Trends and Socioeconomic Correlations

Significant progress has been made in reducing the global burden of STH infections over the past three decades. From 1990 to 2021, the prevalence and DALYs of STH infections in China decreased dramatically by 85.08% and 98.01%, respectively [1]. The age-standardized prevalence rate dropped from 34,073.24 to 4,981.01 per 100,000, with an estimated annual percentage change (EAPC) of -6.62% [1]. Similar declining trends have been observed globally, though the rate of reduction varies by region and socioeconomic factors.
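
The EAPC quoted above is conventionally obtained by fitting a straight line to the natural logarithm of the rate over calendar years and converting the slope β to 100 × (exp(β) − 1). The source does not state how [1] computed its value, so the following is only a minimal sketch of that conventional definition; the function name `eapc` and the demonstration series are hypothetical and are not data from [1].

```python
import math

def eapc(years, rates):
    """Estimated annual percentage change from a log-linear least-squares fit.

    Fits ln(rate) = alpha + beta * year and returns 100 * (exp(beta) - 1).
    """
    if len(years) != len(rates) or len(years) < 2:
        raise ValueError("need at least two (year, rate) pairs of equal length")
    logs = [math.log(r) for r in rates]
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    beta = sxy / sxx  # slope of the fitted line
    return 100.0 * (math.exp(beta) - 1.0)

# Hypothetical series: a rate that falls by exactly 5% every year (illustration only).
demo_years = list(range(1990, 2022))
demo_rates = [30000.0 * (0.95 ** (y - 1990)) for y in demo_years]
demo_eapc = eapc(demo_years, demo_rates)
```

Because the fit uses every annual value, an EAPC computed this way generally differs from the figure obtained by comparing only the first and last year.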

The socio-demographic index (SDI) shows a strong negative correlation with STH burden, with higher prevalence and DALY rates observed in regions with lower SDI scores [2]. This relationship highlights the connection between poverty, inadequate sanitation, and helminth transmission. Despite overall progress, projections indicate a potential rebound in trichuriasis by 2035 if control efforts are not sustained, underscoring the need for continued investment and innovation in STH control strategies [1].

AI-Based Approaches for Helminth Egg Detection and Classification

Diagnostic Challenges and AI Solutions

Traditional microscopic diagnosis of helminth infections relies on manual examination of fecal samples, a process that is time-consuming, labor-intensive, and requires specialized expertise [5] [7]. This method is prone to false negatives and missed detections, particularly in low-intensity infections or when multiple parasite species are present [6]. These limitations have motivated the development of AI-based diagnostic platforms that can automate the identification and quantification of helminth eggs with greater speed, accuracy, and consistency.

Artificial intelligence approaches, particularly deep learning algorithms, have demonstrated remarkable success in recognizing parasitic helminth eggs in microscopic images [5] [7]. These systems can enhance image clarity, remove noise, segment regions of interest, and classify parasite species with high accuracy, significantly reducing reliance on human expertise while maintaining real-time efficiency [5]. The integration of these technologies into diagnostic workflows has the potential to revolutionize parasitological examination in both clinical and public health settings.

Deep Learning Architectures and Platforms

Several deep learning architectures have been successfully applied to helminth egg detection, including Single Shot MultiBox Detector (SSD), U-Net, Faster R-CNN (Faster Region-based Convolutional Neural Network), and YOLOv4 (You Only Look Once) [6] [7]. Each architecture offers distinct advantages for different aspects of the detection and classification process, from image segmentation to object recognition.

The Helminth Egg Analysis Platform (HEAP) represents an integrated approach that combines multiple deep learning strategies to identify and quantify helminth eggs in microscopic specimens [6]. This user-friendly platform allows technicians to choose the best predictions from different algorithms and includes specialized features such as image binning and egg-in-edge algorithms based on pixel density detection to improve performance. The platform employs a distributed computing structure that enables efficient processing across multiple computer systems, making it adaptable to resource-limited settings [6].

Table 2: Performance Metrics of AI Algorithms for Helminth Egg Detection

| AI Algorithm | Application | Accuracy (%) | Precision (%) | Sensitivity (%) | Reference |
|---|---|---|---|---|---|
| U-Net with Watershed Algorithm | Image segmentation | 96.47 | 97.85 | 98.05 | [5] |
| CNN Classifier | Feature extraction and classification | 97.38 | N/A | N/A | [5] |
| YOLOv4 | Single species detection | 84.85-100* | N/A | N/A | [7] |
| YOLOv4 | Mixed species detection | 75.00-98.10* | N/A | N/A | [7] |

*Accuracy range varies by parasite species; highest for Clonorchis sinensis and Schistosoma japonicum (100%), lower for E. vermicularis (89.31%), F. buski (88.00%), and T. trichiura (84.85%) [7]

Experimental Protocol for AI-Based Helminth Egg Detection

Protocol Title: Automated Detection and Classification of Helminth Eggs in Fecal Samples Using Deep Learning

Principle: This protocol describes a standardized method for preparing fecal samples, acquiring microscopic images, and applying deep learning algorithms for the automated detection and classification of helminth eggs.

Materials and Reagents:

- Stool samples preserved in 10% formalin or other suitable fixative

- Standard fecal parasite concentration reagents (formalin-ethyl acetate)

- Microscope slides (75 × 25 mm) and coverslips (18 × 18 mm)

- Light microscope with digital camera attachment (recommended 100x, 400x magnification)

- Computer workstation with GPU capability (NVIDIA GeForce RTX 3090 recommended)

- Image analysis software (Python 3.8 with PyTorch framework)

Sample Preparation:

- Concentration Procedure: Process preserved stool samples using formalin-ethyl acetate concentration method to concentrate helminth eggs.

- Slide Preparation: Place two drops (approximately 10 μL) of vortex-mixed sediment on a clean microscope slide and cover with a coverslip, avoiding air bubbles.

- Quality Control: Examine slides under microscope to confirm presence and species of helminth eggs before proceeding to digital imaging.

Image Acquisition:

- Microscopy: Use light microscope with 100x and 400x magnification objectives for image capture.

- Digital Imaging: Capture multiple digital images from each slide using consistent lighting and exposure settings across all samples.

- Dataset Organization: Create separate directories for training, validation, and test sets with 8:1:1 ratio respectively.
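
The 8:1:1 partition described in the last step can be sketched as below. This is a minimal illustration, assuming images are identified by file name; `split_dataset` and the file names are hypothetical, and the source does not say whether the original split was random or stratified.

```python
import random

def split_dataset(items, ratios=(8, 1, 1), seed=0):
    """Shuffle items reproducibly and split them into train/val/test by ratio."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    total = sum(ratios)
    n = len(shuffled)
    n_train = n * ratios[0] // total
    n_val = n * ratios[1] // total
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # any remainder goes to the test set
    return train, val, test

# Hypothetical file names; real paths would come from the image acquisition step.
image_names = [f"slide_{i:04d}.png" for i in range(100)]
train_set, val_set, test_set = split_dataset(image_names)
```

When several images come from the same slide, splitting by slide rather than by image may be preferable so that near-identical fields of view do not end up in both the training and test sets.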

AI Model Training (Using YOLOv4):

- Environment Setup: Configure Python 3.8 programming environment with PyTorch framework on GPU-enabled system.

- Image Preprocessing: Compress images to standard size, apply k-means algorithm for anchor size determination.

- Data Augmentation: Implement mosaic data augmentation and mixup data augmentation for sample expansion.

- Parameter Configuration: Set the initial learning rate to 0.01 with a decay factor of 0.0005; use the Adam optimizer with a momentum value of 0.937 (the first-moment coefficient β1 in Adam's notation) and a batch size of 64.

- Training: Execute 300 epochs with backbone feature extraction network frozen for first 50 epochs to accelerate convergence.

- Validation: Use validation set for parameter optimization and prevention of overfitting.
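
The anchor-size step in the preprocessing item can be illustrated with a small k-means routine over labelled box sizes. The protocol does not specify the distance measure; the sketch below assumes the 1 − IoU distance commonly used for YOLO anchors, and the names `wh_iou`, `kmeans_anchors`, and the box sizes are hypothetical.

```python
import random

def wh_iou(box, anchor):
    """IoU of two boxes given as (width, height), aligned at a common corner."""
    inter = min(box[0], anchor[0]) * min(box[1], anchor[1])
    union = box[0] * box[1] + anchor[0] * anchor[1] - inter
    return inter / union

def kmeans_anchors(boxes, k, iterations=100, seed=0):
    """Cluster (w, h) pairs using distance 1 - IoU; return k anchors sorted by area."""
    rng = random.Random(seed)
    anchors = rng.sample(boxes, k)  # initialise from k distinct labelled boxes
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for box in boxes:
            # Highest IoU is the same as smallest (1 - IoU) distance.
            best = max(range(k), key=lambda i: wh_iou(box, anchors[i]))
            clusters[best].append(box)
        new_anchors = []
        for i, members in enumerate(clusters):
            if members:
                new_anchors.append((
                    sum(w for w, _ in members) / len(members),
                    sum(h for _, h in members) / len(members),
                ))
            else:
                new_anchors.append(anchors[i])  # keep the anchor of an empty cluster
        if new_anchors == anchors:
            break  # assignments no longer change
        anchors = new_anchors
    return sorted(anchors, key=lambda a: a[0] * a[1])

# Hypothetical box sizes in pixels: two clearly separated egg-size groups.
demo_boxes = [(20, 22), (22, 20), (21, 21), (60, 80), (62, 78), (58, 82)]
demo_anchors = kmeans_anchors(demo_boxes, k=2)
```

A real detector would typically request more anchors (for example nine, three per detection scale), but the clustering logic stays the same.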

Evaluation Metrics:

- Calculate precision and recall using formulas:

- Precision = TP / (TP + FP)

- Recall = TP / (TP + FN)

- Determine average precision (AP) for single target classes and mean average precision (mAP) for multiclass detection accuracy.

- Assess model performance using Intersection over Union (IoU) and Dice Coefficient at object level.
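
The precision and recall formulas above, together with the box-level IoU used to decide whether a detection matches a labelled egg, can be written as a few short functions. This is an illustrative sketch only; the function names, counts, and box coordinates are hypothetical.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall); a zero denominator yields 0.0."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

def box_iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union else 0.0

# Hypothetical counts and boxes for illustration only.
p, r = precision_recall(tp=90, fp=10, fn=30)
iou = box_iou((0, 0, 10, 10), (5, 0, 15, 10))
```

With these inputs, precision is 0.90, recall is 0.75, and the two boxes overlap with an IoU of one third, which would count as a miss at the common 0.5 matching threshold.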

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Reagents and Materials for Helminth Research

| Item | Function/Application | Specifications/Examples |
|---|---|---|
| Albendazole | Benzimidazole anthelmintic; frontline treatment for STH infections | Standard dose: 400 mg single administration; cure rates: A. lumbricoides (95.7%), hookworm (79.5%), T. trichiura (30.7%) [4] |
| Mebendazole | Benzimidazole anthelmintic; alternative frontline treatment | Standard dose: 500 mg single administration; child-friendly formulation available [4] |
| Praziquantel | Primary treatment for schistosomiasis and food-borne trematodiases | Standard dose: 40-60 mg/kg; active against all Schistosoma species [8] |
| Artemisinins | Repurposed antimalarials with anthelmintic activity | Includes artemether, artesunate; effective against Schistosoma spp., Fasciola spp. [8] |
| Microscope with Digital Camera | Image acquisition for AI-based diagnosis | Recommended: Light microscope with 100x, 400x magnification; digital camera attachment [7] |
| Helminth Egg Suspensions | Reference materials for algorithm training | Commercially available from scientific suppliers (e.g., Deren Scientific Equipment Co. Ltd.) [7] |
| Formalin-Ethyl Acetate | Fecal sample concentration and preservation | Standard parasitological concentration method for egg detection [7] |

Workflow and Signaling Pathways

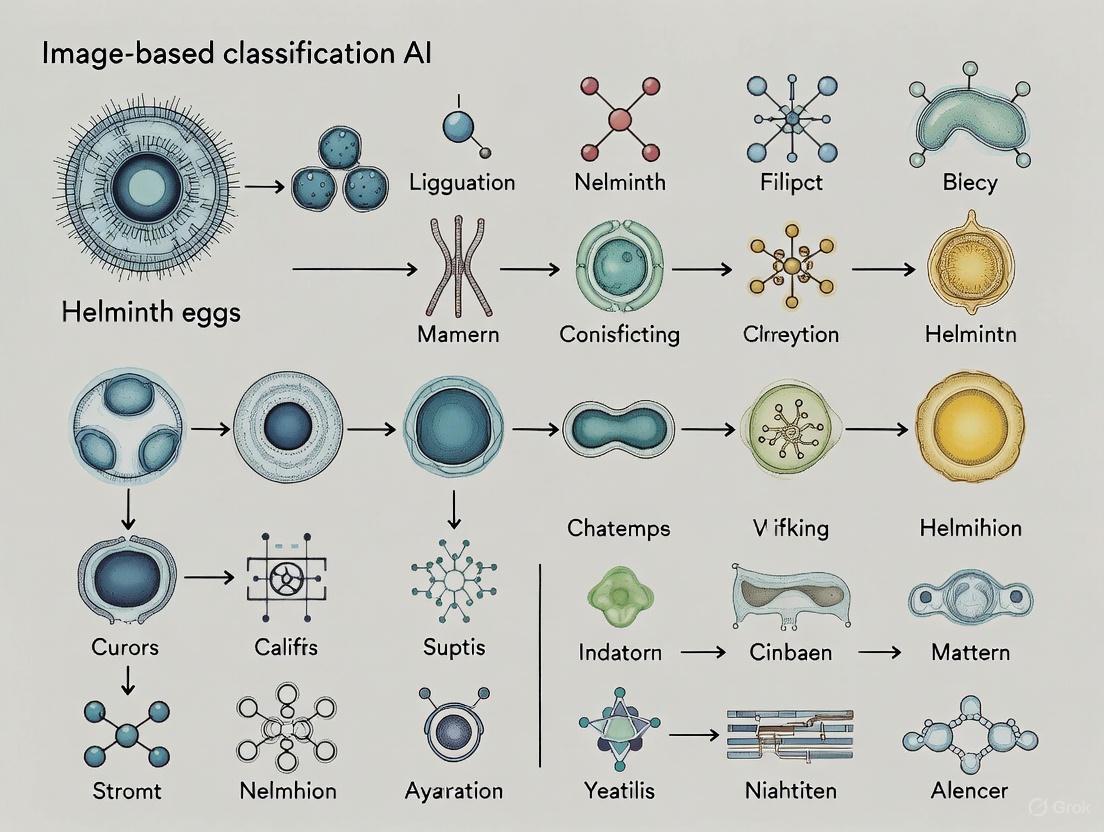

The following diagram illustrates the integrated workflow for AI-based detection of helminth eggs, combining both laboratory procedures and computational analysis:

The AI-based diagnostic workflow integrates traditional parasitological methods with modern computational approaches, creating an efficient pipeline for helminth egg detection and classification. This integrated system addresses limitations of conventional microscopy while maintaining compatibility with established laboratory procedures [5] [6] [7].

Discussion and Future Perspectives

The integration of AI technologies into helminth diagnosis represents a paradigm shift in parasitology, offering solutions to longstanding challenges in manual microscopy. These advanced platforms demonstrate remarkable accuracy, with some studies reporting up to 100% recognition accuracy for specific helminth species such as Clonorchis sinensis and Schistosoma japonicum [7]. The continued development and validation of these systems will be essential for expanding their implementation in both clinical and public health settings.

Future research directions should focus on expanding training datasets to include more diverse helminth species and imaging conditions, refining recognition algorithms to improve performance on mixed infections, and optimizing platforms for use in resource-limited settings [6] [7]. Additionally, the combination of AI-based diagnostic tools with novel therapeutic approaches, including drug repurposing and combination therapies, holds promise for integrated control strategies that can more effectively reduce the global burden of helminth infections [8] [4].

As the field advances, the standardization of methodologies and validation across different population settings will be crucial for establishing the reliability and comparability of AI-based diagnostic platforms. With continued innovation and collaboration between computer scientists, parasitologists, and public health experts, these technologies have the potential to significantly contribute to global efforts to control and eliminate helminth infections as public health problems by 2030.

Manual microscopy remains the gold standard for diagnosing helminth infections and other parasitic diseases in many clinical and research settings. However, this method faces significant limitations related to the extensive time required for analysis, its dependence on highly trained expert personnel, and susceptibility to human error and variability. This application note details these limitations through quantitative data, outlines experimental protocols for AI-based diagnostic evaluation, and visualizes the critical workflows, underscoring the transformative potential of artificial intelligence in parasitology.

Manual microscopy of stool samples, particularly using the Kato-Katz thick smear technique, is the globally established gold standard for diagnosing soil-transmitted helminths (STHs) such as Ascaris lumbricoides, Trichuris trichiura, and hookworms [9]. Despite its widespread use, this method is increasingly recognized as a bottleneck in large-scale monitoring programs and drug development trials. The procedure is inherently labor-intensive, requiring skilled technicians to meticulously prepare and examine samples [10] [11]. Diagnostic accuracy depends on the expertise and subjective judgment of the microscopist, which raises concerns about consistency and reproducibility [10] [11]. Furthermore, the time-sensitive nature of certain assays, like the Kato-Katz technique, which requires analysis within 30–60 minutes before hookworm eggs disintegrate, imposes significant logistical constraints [9]. These limitations collectively compromise diagnostic sensitivity, particularly for light-intensity infections, which are becoming increasingly prevalent as global control efforts advance [9].

Quantitative Analysis of Limitations

The constraints of manual microscopy can be quantified across several key performance metrics. The following tables consolidate empirical data from recent studies, providing a direct comparison with AI-assisted methods.

Table 1: Comparative Diagnostic Performance of Manual Microscopy vs. AI-Based Methods for Soil-Transmitted Helminths (STHs)

| Diagnostic Method | Metric | A. lumbricoides | T. trichiura | Hookworm |
|---|---|---|---|---|
| Manual Microscopy | Sensitivity | 50.0% | 31.2% | 77.8% |
| Autonomous AI | Sensitivity | 50.0% | 84.4% | 87.4% |
| Expert-Verified AI | Sensitivity | 100% | 93.8% | 92.2% |
| Manual Microscopy | Specificity | >97% | >97% | >97% |
| Autonomous AI | Specificity | >97% | >97% | >97% |
| Expert-Verified AI | Specificity | >97% | >97% | >97% |

Data adapted from a study on 704 Kato-Katz smears, using a composite reference standard [9].

Table 2: Broader Operational Limitations of Manual Microscopy

| Limitation Category | Quantitative/Qualitative Impact |
|---|---|
| Time Consumption | Manual examination of slides consumes approximately 80% of the total time-to-result for Kato-Katz smear analysis [12]. |
| Expertise Dependency | Diagnostic accuracy is closely tied to the prior knowledge and physical condition of the operator, leading to inter-user variability [11] [13]. |
| Sensitivity in Light Infections | 96.7% of positive STH infections in a recent study were of light intensity, which are notoriously challenging to detect manually [9]. |
| Error and Variability | Human operators introduce variability in image acquisition and interpretation, affecting the consistency and reliability of results [10]. |
| Workflow Rigidity | Lack of remote-work capabilities; requires experts to be physically present in the laboratory, hindering collaboration and consultation [11]. |

Experimental Protocols for AI-Assisted Diagnostics Evaluation

To objectively evaluate and compare the performance of AI models against manual microscopy, standardized experimental protocols are essential. The following outlines a general workflow and a specific implementation for training a deep learning model.

General Workflow for AI-Based Diagnostic Assessment

[Figure: AI Diagnostic Assessment Workflow]

Procedure:

- Sample Collection and Preparation: Collect stool samples from the target population (e.g., school children in endemic areas). Prepare Kato-Katz thick smears according to WHO standard protocols [9].

- Digital Slide Imaging: Digitize the entire microscope slide using a portable whole-slide imaging scanner. This creates a high-resolution digital image that can be stored and analyzed remotely [9].

- Data Preprocessing: The digital whole-slide image is typically divided into smaller, manageable image patches. Techniques like BM3D for noise reduction and Contrast-Limited Adaptive Histogram Equalization (CLAHE) can be applied to enhance image clarity and contrast [5].

- AI Model Inference: Process the preprocessed image patches through a trained deep learning model (e.g., a YOLO variant). The model autonomously identifies and classifies parasite eggs within the images [7] [12] [9].

- Expert Verification: For validation studies, the AI-generated detections are reviewed and verified by expert microscopists. This step is crucial for generating high-quality training data and for the "expert-verified AI" diagnostic method [9].

- Result Interpretation & Performance Reporting: Compare the outputs from manual microscopy, autonomous AI, and expert-verified AI against a composite reference standard. Report key metrics such as sensitivity, specificity, and mean Average Precision (mAP).
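
The preprocessing step that divides a whole-slide image into patches can be sketched as a coordinate generator. This is a minimal illustration under assumed values: the 640-pixel patch size, the 64-pixel overlap, and the function names are hypothetical and are not taken from the cited studies.

```python
def patch_origins(length, patch, stride):
    """Start offsets along one axis so that patches cover [0, length) completely."""
    if patch >= length:
        return [0]
    starts = list(range(0, length - patch + 1, stride))
    if starts[-1] != length - patch:
        starts.append(length - patch)  # extra patch flush with the far edge
    return starts

def tile_image(width, height, patch=640, overlap=64):
    """Return (x, y, w, h) patch boxes covering a width x height image."""
    if not 0 <= overlap < patch:
        raise ValueError("overlap must be non-negative and smaller than patch")
    stride = patch - overlap
    xs = patch_origins(width, patch, stride)
    ys = patch_origins(height, patch, stride)
    return [(x, y, min(patch, width), min(patch, height)) for y in ys for x in xs]

# Hypothetical slide dimensions in pixels.
patches = tile_image(2000, 1500)
```

Overlapping neighbouring patches is one way to keep an egg that straddles a patch border fully visible in at least one patch; duplicate detections in the overlap region then need to be merged after inference.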

Specific Protocol: Training a Lightweight YOLO Model for Egg Detection

This protocol details the methodology for developing a computationally efficient AI model, as described in [13].

Objective: To train and evaluate a lightweight deep learning model (YAC-Net) for rapid and accurate detection of parasitic eggs in microscopy images.

Materials: See the "Research Reagent Solutions" section below.

Method:

- Dataset and Preprocessing:

- Utilize a publicly available dataset such as the ICIP 2022 Challenge dataset.

- Partition the dataset for fivefold cross-validation to ensure robust performance evaluation.

- Apply standard image preprocessing, including resizing and normalization.

- Model Architecture and Training:

- Baseline: Use YOLOv5n as the baseline model.

- Architecture Modification: Replace the standard Feature Pyramid Network (FPN) in the model's neck with an Asymptotic Feature Pyramid Network (AFPN). This structure better fuses spatial contextual information from different levels and reduces computational complexity.

- Backbone Enhancement: Modify the C3 module in the backbone to a C2f module to enrich gradient flow and improve feature extraction.

- Training Parameters: Train the model using an Adam optimizer. Set the initial learning rate to 0.01, use a batch size of 64, and train for a sufficient number of epochs (e.g., 300) with early stopping to prevent overfitting [7] [13].

- Evaluation:

- Evaluate the model on a held-out test set.

- Report standard object detection metrics, including Precision, Recall, F1-score, and mean Average Precision at an IoU threshold of 0.5 (mAP_0.5).

- Compare the number of parameters and computational requirements against the baseline and other state-of-the-art models.
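
The fivefold cross-validation partition mentioned in the dataset step can be sketched as an index generator. This is an assumption-laden illustration: `kfold_indices` is a hypothetical helper, the sample count is arbitrary, and the source does not describe how the folds were actually drawn.

```python
import random

def kfold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # fold sizes differ by at most one
    for i in range(k):
        test_idx = folds[i]
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx

# Hypothetical dataset size; the real image count of the dataset is not assumed here.
splits = list(kfold_indices(103, k=5))
```

Each image serves as test data exactly once, and the reported metrics are then averaged over the five runs.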

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for AI-Based Parasitology Research

| Item | Function/Description | Example Use Case |
|---|---|---|
| Kato-Katz Kit | Preparation of thick smears from stool samples for microscopic examination. Contains templates, cellophane soaked in glycerol-malachite green, etc. | Standardized sample preparation for STH diagnosis in field studies [9]. |
| Portable Whole-Slide Scanner | Digitizes entire microscope slides into high-resolution digital images for remote analysis and AI processing. | Enabling digital pathology in field laboratories and primary healthcare settings [9]. |
| Kubic FLOTAC Microscope (KFM) | A compact, portable digital microscope designed to autonomously analyze fecal specimens prepared with FLOTAC or Mini-FLOTAC techniques. | Automated image acquisition for on-field parasite egg detection in veterinary and human medicine [14]. |
| Annotated Image Datasets | Curated collections of digital microscopy images with labeled parasite eggs. Essential for training and validating AI models. | ICIP 2022 Challenge dataset [13]; Chula-ParasiteEgg-11 [14]; AI-KFM challenge dataset [14]. |
| Deep Learning Framework (e.g., PyTorch) | An open-source software library used for developing and training deep neural networks. | Implementing and training object detection models like YOLOv4, YOLOv7, and custom architectures like YAC-Net [7] [12] [13]. |
| High-Performance Computing (HPC) | GPU-accelerated workstations (e.g., NVIDIA GeForce RTX 3090). | Significantly reducing the time required for model training and hyperparameter optimization [7]. |

The limitations of manual microscopy—its time-consuming nature, reliance on scarce expertise, and proneness to human error—are quantifiable and significant, particularly in the context of large-scale helminth control programs and drug development trials. The integration of artificial intelligence, facilitated by standardized experimental protocols and specialized reagents, presents a viable and superior alternative. AI-assisted diagnostics demonstrably enhance sensitivity, especially for critical light-intensity infections, while improving standardization and operational efficiency. The ongoing development of lightweight, computationally efficient models promises to make this technology accessible in resource-limited settings, ultimately contributing to the global goal of eliminating parasitic diseases as a public health concern.

Application Notes: Performance of Deep Learning Models in Helminth Egg Classification

The application of deep learning for the image-based classification of helminth eggs demonstrates significant potential to automate and enhance traditional diagnostic processes. The following tables summarize quantitative performance data from recent studies, providing researchers with a benchmark for model selection and development.

Table 1: Performance Metrics of Deep Learning Models in Helminth Egg Classification [15]

| Deep Learning Model | Accuracy (%) | Precision (%) | Sensitivity/Recall (%) | Macro Average F1-Score (%) |
|---|---|---|---|---|
| ConvNeXt Tiny | - | - | - | 98.6 |
| EfficientNet V2 S | - | - | - | 97.5 |
| MobileNet V3 S | - | - | - | 98.2 |
| U-Net (Pixel-Level) | 96.47 | 97.85 | 98.05 | - |
| Custom CNN (Image-Level) | 97.38 | - | - | 97.67 |

Table 2: Object-Level Segmentation Performance of U-Net Model [5]

| Evaluation Metric | Performance Value (%) |
|---|---|
| Intersection over Union (IoU) | 96 |
| Dice Coefficient | 94 |

Experimental Protocols

Protocol A: Multi-Model Comparative Evaluation for Helminth Egg Classification

This protocol outlines the methodology for a comparative analysis of state-of-the-art deep learning models to classify Ascaris lumbricoides, Taenia saginata, and uninfected eggs from microscopic images [15].

I. Research Reagent Solutions

- Dataset of Microscopic Images: A diverse dataset comprising images of Ascaris, Taenia, and uninfected eggs. The dataset must be partitioned into training, validation, and test sets.

- Deep Learning Models: Pre-trained versions of ConvNeXt Tiny, EfficientNet V2 S, and MobileNet V3 S.

- Software Framework: A deep learning framework such as TensorFlow or PyTorch with necessary libraries for image preprocessing, model training, and evaluation (e.g., scikit-learn for metric calculation).

II. Experimental Procedure

- Data Preparation: Resize all images to the required input dimensions of each model (e.g., 224x224 pixels). Apply data augmentation techniques such as rotation, flipping, and brightness adjustment to increase dataset variability and improve model robustness.

- Model Configuration: Initialize the three pre-trained models. Replace the final classification layer of each model with a new layer containing three units (corresponding to the three classes: Ascaris, Taenia, uninfected).

- Model Training: Train each model on the training dataset. Use the validation set for hyperparameter tuning and to prevent overfitting. It is critical to maintain consistent training epochs and batch sizes across all models to ensure a fair comparison.

- Model Evaluation: Use the held-out test set to evaluate the final models. Calculate key performance metrics, including accuracy, precision, recall, and F1-score for each model and for each class.

- Statistical Analysis: Perform statistical assessment (e.g., confidence intervals, hypothesis testing) to demonstrate the reliability of the performance results.
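
The evaluation step can be illustrated by deriving per-class precision, recall, and F1, and the macro-average F1, from a three-class confusion matrix. The counts below are invented for illustration and are not results from [15]; the function names are likewise hypothetical.

```python
def per_class_metrics(confusion, labels):
    """Precision, recall and F1 per class from a square confusion matrix.

    confusion[i][j] counts samples of true class i predicted as class j.
    """
    results = {}
    for i, label in enumerate(labels):
        tp = confusion[i][i]
        fn = sum(confusion[i]) - tp
        fp = sum(row[i] for row in confusion) - tp
        precision = tp / (tp + fp) if (tp + fp) else 0.0
        recall = tp / (tp + fn) if (tp + fn) else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if (precision + recall) else 0.0)
        results[label] = {"precision": precision, "recall": recall, "f1": f1}
    return results

def macro_f1(results):
    """Unweighted mean of the per-class F1 scores."""
    return sum(m["f1"] for m in results.values()) / len(results)

# Hypothetical test-set counts (rows: true class, columns: predicted class).
labels = ["Ascaris", "Taenia", "uninfected"]
confusion = [
    [48, 1, 1],
    [2, 47, 1],
    [0, 2, 48],
]
metrics = per_class_metrics(confusion, labels)
score = macro_f1(metrics)
accuracy = sum(confusion[i][i] for i in range(3)) / sum(map(sum, confusion))
```

Macro averaging weights every class equally, so a weak result on a rare class lowers the score even when overall accuracy remains high.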

Protocol B: AI-Based Workflow for Parasite Egg Segmentation and Classification

This protocol details an end-to-end AI approach that integrates image filtering, segmentation, and classification for diagnosing intestinal parasitic infections [5].

I. Research Reagent Solutions

- Microscopic Fecal Images: Images acquired from stool samples, containing various types of noise (Gaussian, Salt and Pepper, Speckle, Fog).

- Image Processing Algorithms: Block-Matching and 3D Filtering (BM3D) for denoising and Contrast-Limited Adaptive Histogram Equalization (CLAHE) for contrast enhancement.

- Segmentation Model: A U-Net model architecture for precise pixel-level segmentation of parasite eggs.

- Classification Model: A Convolutional Neural Network (CNN) for automatic feature learning and classification of the segmented regions of interest.

II. Experimental Procedure

- Image Preprocessing:

- Denoising: Apply the BM3D technique to the input microscopic images to remove noise while preserving the structural information of the parasite eggs [5].

- Contrast Enhancement: Use the CLAHE algorithm on the denoised images to improve the contrast between the eggs and the background, facilitating more accurate segmentation [5].

- Image Segmentation:

- Model Training: Train the U-Net model on the preprocessed images using the Adam optimizer. The training labels should be pixel-wise masks that identify egg regions.

- Region Extraction: Apply the trained U-Net model to segment the images. Subsequently, use a watershed algorithm on the segmented output to separate touching objects and extract precise Regions of Interest (ROI) [5].

- Classification:

- Feature Learning & Classification: Feed the extracted ROIs into the custom CNN. The network will automatically learn discriminative features in the spatial domain and perform the final classification (e.g., Ascaris, Taenia, uninfected) [5].

- Performance Validation:

- Segmentation Metrics: Evaluate the U-Net model at the pixel level (Accuracy, Precision, Sensitivity) and the object level (Intersection over Union, Dice Coefficient).

- Classification Metrics: Evaluate the final diagnostic output of the CNN using accuracy and macro-average F1-score.
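
The segmentation metrics named in the validation step can be computed from a predicted mask and a ground-truth mask as follows. This is a minimal sketch on toy masks stored as nested lists; `mask_metrics` is a hypothetical helper, and object-level IoU and Dice would be obtained by applying the same counts to the mask of each individual egg.

```python
def mask_metrics(pred, truth):
    """Pixel-level metrics for two equally sized binary masks (rows of 0/1)."""
    tp = fp = fn = tn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p and not t:
                fp += 1
            elif t:
                fn += 1
            else:
                tn += 1
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "sensitivity": tp / (tp + fn) if (tp + fn) else 0.0,
        "iou": tp / (tp + fp + fn) if (tp + fp + fn) else 0.0,
        "dice": 2 * tp / (2 * tp + fp + fn) if (tp + fp + fn) else 0.0,
    }

# Hypothetical 4x4 masks: 1 marks egg pixels, 0 marks background.
truth_mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
pred_mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 1],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]
scores = mask_metrics(pred_mask, truth_mask)
```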

Workflow Visualization

[Figure: AI Diagnostic Workflow]

[Figure: Model Comparison Logic]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for AI-Based Helminth Egg Diagnostics

| Item | Function/Application in Research |
|---|---|
| Curated Image Dataset | A foundational reagent comprising high-quality, annotated microscopic images of helminth eggs (e.g., Ascaris lumbricoides, Taenia saginata) and uninfected samples for model training and validation [15] [5]. |
| Pre-trained Deep Learning Models (ConvNeXt, EfficientNet, etc.) | Model architectures with weights pre-trained on large-scale image datasets (e.g., ImageNet). They serve as a starting point for transfer learning, significantly reducing training time and data requirements [15]. |
| U-Net Architecture | A specific convolutional network architecture designed for biomedical image segmentation. It is essential for creating precise pixel-wise masks of parasite eggs in complex microscopic images [5]. |
| Block-Matching and 3D Filtering (BM3D) Algorithm | An advanced image processing algorithm used as a reagent to enhance image quality by effectively removing various types of noise (Gaussian, Salt-and-Pepper) from raw microscopic images [5]. |
| Contrast-Limited Adaptive Histogram Equalization (CLAHE) | A digital image processing reagent used to improve the contrast between the parasite eggs and the background, which is a critical preprocessing step for robust segmentation [5]. |
| Watershed Algorithm | A classical image segmentation reagent used in post-processing to separate touching or overlapping eggs in the image, ensuring accurate individual egg analysis and feature extraction [5]. |

The accurate detection and identification of parasitic helminths—specifically Ascaris, Trichuris, hookworm, Schistosoma, and Taenia—is a cornerstone of effective public health interventions, drug efficacy trials, and surveillance programs. Traditional diagnostic methods, primarily based on microscopic examination of stool samples, have long been the standard. However, these methods face significant challenges, including low sensitivity, especially in low-intensity infections, labor-intensive procedures, and reliance on skilled microscopists. The emergence of molecular techniques and artificial intelligence (AI) is reshaping the diagnostic landscape. This document provides a detailed overview of current diagnostic methodologies, highlights the integration of AI for image-based classification, and presents standardized protocols for researchers and drug development professionals working within the framework of advanced helminth research.

Current Diagnostic Landscape and Quantitative Comparisons

The following tables summarize the performance and characteristics of key diagnostic methods for the target helminths.

Table 1: Comparison of Traditional, Molecular, and AI-Based Diagnostic Methods

| Diagnostic Method | Key Parasites Detected | Typical Sensitivity & Specificity | Major Advantages | Major Limitations |

|---|---|---|---|---|

| Kato-Katz (KK) [16] [17] | Ascaris, Trichuris, Hookworm, Schistosoma | Varies; lower in low-intensity infections [16] | Low cost, quantifies eggs per gram (EPG), field-deployable | Low sensitivity post-treatment, labor-intensive, unable to differentiate cryptic species [16] [18] |

| qPCR [16] [19] | Ascaris, Trichuris, Hookworm, Schistosoma, Taenia | High sensitivity and specificity [19] | High sensitivity, species differentiation, quantifiable | Higher cost, requires lab infrastructure, complex result interpretation [16] |

| AI-Based Image Analysis [5] | Ascaris, Trichuris, Hookworm (Microscopy-based targets) | 97.38% accuracy, 97.85% precision reported [5] | High-throughput, automated, reduces manual labor | Requires high-quality images, initial model training, computational resources |

Table 2: Key Diagnostic Markers and Recent Discoveries for Target Helminths

| Parasite | Key Diagnostic Marker/Feature | Recent Finding / Clinical Significance |

|---|---|---|

| Trichuris spp. [18] | ITS2 rDNA region / Egg morphology | Existence of cryptic species (T. incognita); requires molecular differentiation [18] |

| Ascaris spp. [20] | Immunogenic proteins (e.g., ABA-1, paramyosin) | Recombinant proteins show promise for serodiagnostic assays detecting IgG4 [20] |

| Hookworm [21] | Egg morphology / Larval culture | Mathematical modeling informs transmission dynamics and intervention strategies [21] |

| Schistosoma spp. [17] | Egg morphology (spine location) / qPCR | WHO goals focus on heavy-intensity infection prevalence (<1% for EPHP) [17] |

| Taenia spp. [22] | Egg/proglottid morphology / Coproantigen | Monitoring & Surveillance (M&S) systems are less developed compared to other helminths [22] |

The Role of Artificial Intelligence in Image-Based Classification

AI, particularly deep learning, is changing how helminth eggs are classified from images. A typical AI workflow automates the entire process from image preparation to final classification, and high accuracy has been reported for such pipelines [5].

AI Classification Workflow

The following diagram illustrates the sequential steps for AI-based classification of helminth eggs from microscopic images:

This workflow integrates several advanced computational techniques:

- Image Preprocessing: The BM3D (Block-Matching and 3D Filtering) technique is employed to remove various types of noise (Gaussian, Salt and Pepper, Speckle, and Fog Noise) from microscopic images. Contrast-Limited Adaptive Histogram Equalization (CLAHE) enhances the contrast between parasite eggs and the background, improving feature visibility for subsequent analysis [5].

- Image Segmentation: A U-Net model, optimized with the Adam optimizer, is used for precise segmentation of potential parasite eggs. This model has demonstrated excellent performance at the pixel level, with reported accuracy of 96.47%, precision of 97.85%, and sensitivity of 98.05%. At the object level, it achieved a 96% Intersection over Union (IoU) and a 94% Dice Coefficient [5].

- Feature Extraction and Classification: Following segmentation, a watershed algorithm extracts Regions of Interest (ROIs). A Convolutional Neural Network (CNN) then performs automatic feature learning in the spatial domain to classify the eggs. This CNN classifier has achieved an overall accuracy of 97.38% with macro average F1 scores of 97.67% [5].
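The macro-average F1 score reported for the classifier weights every class equally, regardless of how many samples each class has. The sketch below shows one way to compute it from predicted and true labels; the label names and the six-sample example are hypothetical and are not data from [5].

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores."""
    classes = sorted(set(y_true) | set(y_pred))
    f1_scores = []
    for cls in classes:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == cls and p == cls)
        fp = sum(1 for t, p in pairs if t != cls and p == cls)
        fn = sum(1 for t, p in pairs if t == cls and p != cls)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never occurs.
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)


# Hypothetical labels: one Ascaris egg is misclassified as Taenia.
y_true = ["ascaris", "ascaris", "ascaris", "taenia", "taenia", "uninfected"]
y_pred = ["ascaris", "ascaris", "taenia", "taenia", "taenia", "uninfected"]

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"accuracy={accuracy:.3f}, macro F1={macro_f1(y_true, y_pred):.3f}")
```

Because each class contributes equally, a rare species that is classified poorly lowers the macro F1 more than it lowers overall accuracy.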

Detailed Experimental Protocols

Protocol 1: qPCR for Trichuris trichiura Detection

Principle: This protocol uses quantitative real-time PCR (qPCR) to detect Trichuris trichiura DNA in stool samples with high sensitivity and specificity, suitable for prevalence studies and efficacy trials [19].

Materials:

- Quick-DNA Fecal/Soil Microbe Miniprep Kit (Zymo Research)

- CFX96 Real-Time PCR Cycler (Bio-Rad Laboratories)

- Specific primers and FAM-labelled probe for T. trichiura [19]

Procedure:

- Sample Washing: Transfer approximately 150 mg of stool to a 15 mL tube with 10 mL of 1X PBS. Homogenize by shaking, centrifuge at 2000 × g for 3 minutes, and discard the supernatant. Repeat twice [19].

- DNA Extraction: Use the commercial kit according to the manufacturer's protocol. Include a bead-beating step (5 minutes at maximum speed) for mechanical lysis [19].

- qPCR Setup:

- Prepare a master mix containing primers and probe.

- Pipette 5 µL of master mix into reaction wells and add 2 µL of DNA template.

- Include positive controls (serial dilutions of plasmid standard) and no-template controls (nuclease-free water).

- Thermal Cycling: Run on the CFX96 system with the following conditions:

- Pre-denaturation: 95°C for 3 minutes.

- 40 cycles of: 95°C for 10 seconds (denaturation), 61°C for 1 minute (annealing/extension) [19].

- Analysis: A cycle threshold (Ct) value of ≤40 is considered positive for T. trichiura infection [19].
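The analysis step can be illustrated with a short sketch that fits a standard curve to the plasmid dilution series and applies the Ct cutoff. The dilution values, Ct readings, and function names below are hypothetical; only the Ct ≤ 40 rule comes from the protocol. The efficiency formula E = 10^(-1/slope) - 1 is the standard relationship for qPCR standard curves.

```python
def fit_standard_curve(log10_copies, ct_values):
    """Least-squares fit of Ct = slope * log10(copies) + intercept."""
    n = len(log10_copies)
    mean_x = sum(log10_copies) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_copies)
    sxy = sum(
        (x - mean_x) * (y - mean_y) for x, y in zip(log10_copies, ct_values)
    )
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def amplification_efficiency(slope):
    """E = 10^(-1/slope) - 1; a slope near -3.32 corresponds to E near 1.0."""
    return 10 ** (-1.0 / slope) - 1.0


def is_positive(ct, cutoff=40.0):
    """ct is None when no amplification was detected."""
    return ct is not None and ct <= cutoff


# Hypothetical 10-fold plasmid dilution series (log10 copies per reaction).
log10_copies = [6, 5, 4, 3, 2]
ct_values = [15.0, 18.4, 21.8, 25.2, 28.6]

slope, intercept = fit_standard_curve(log10_copies, ct_values)
efficiency = amplification_efficiency(slope)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, efficiency={efficiency:.1%}")

# Estimate copies for a hypothetical sample and apply the Ct cutoff.
sample_ct = 31.5
estimated_copies = 10 ** ((sample_ct - intercept) / slope)
print(f"positive={is_positive(sample_ct)}, copies≈{estimated_copies:.0f}")
```

Acceptance ranges for slope and efficiency vary between laboratories and are not specified in the protocol, so any threshold applied to them would be a local choice.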

Protocol 2: AI-Based Egg Segmentation and Classification

Principle: This protocol outlines the steps for using a U-Net model for segmentation and a CNN for classification of helminth eggs in digital microscopic images [5].

Materials:

- Dataset of microscopic fecal images

- Computing hardware (GPU recommended)

- Python with libraries (TensorFlow/Keras, OpenCV, Scikit-image)

Procedure:

- Image Preprocessing:

- Apply the BM3D algorithm to reduce noise.

- Use CLAHE to enhance image contrast.

- Model Training (U-Net for Segmentation):

- Train the U-Net model on manually annotated images of helminth eggs.

- Use the Adam optimizer and a loss function like binary cross-entropy.

- Validate model performance using IoU and Dice Coefficient.

- Segmentation Inference:

- Apply the trained U-Net to new images to create segmentation masks.

- Use the watershed algorithm on the masks to separate touching eggs and extract ROIs.

- Classification:

- Feed the extracted ROIs into the pre-trained CNN classifier.

- The CNN outputs the probability for each helminth species.

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Helminth Diagnostics

| Item | Function / Application | Example / Note |

|---|---|---|

| Kato-Katz Kit | Quantitative microscopic detection of STH and Schistosome eggs | Standard for field surveys; low cost but lower sensitivity [16] [17] |

| Nucleic Acid Extraction Kit | DNA purification from stool samples (e.g., Zymo Research, QIAamp DNA Mini Kit) | Includes bead-beating for mechanical disruption of tough egg shells [16] [19] |

| Species-Specific Primers/Probes | Target DNA amplification in qPCR/PCR | Enables species differentiation (e.g., T. trichiura vs T. incognita) [18] [19] |

| Recombinant Antigens (e.g., ABA-1, Paramyosin) | Targets for serological assays (ELISA) to detect host antibodies | Useful for detecting pre-patent or larval stage infections (e.g., in ascariasis) [20] |

| U-Net & CNN Models | AI-based segmentation and classification of egg images | Achieves high accuracy (>97%); requires computational resources and training data [5] |

| Block-Matching and 3D Filtering (BM3D) | Advanced image denoising in AI workflow | Effectively removes Gaussian, Salt and Pepper, Speckle, and Fog Noise [5] |

Deep Learning in Action: Architectures and Workflows for Egg Detection

The image-based classification of helminth eggs represents a critical challenge in parasitology and public health. Traditional diagnostic methods, reliant on manual microscopy, are characterized by subjectivity, low throughput, and a significant reliance on specialized expertise [23] [7]. This application note details the implementation and evaluation of two advanced object detection models, YOLOv4 and EfficientDet, for automating the detection and classification of soil-transmitted helminth (STH) and Schistosoma mansoni eggs. These models offer the potential to deliver rapid, accurate, and scalable diagnostic solutions, which are crucial for monitoring and evaluation programs in resource-limited settings [23]. We provide a detailed, comparative framework to guide researchers and scientists in selecting, implementing, and validating these models for helminth egg analysis.

Model Architectures and Comparative Analysis

YOLOv4: Optimal Speed and Accuracy for Real-Time Detection

YOLOv4 is designed as a high-speed, one-stage object detector that prioritizes real-time performance without sacrificing accuracy. Its architecture is systematically divided into three key components, each contributing to its robust performance [24] [25]:

- Backbone: CSPDarknet53. This backbone serves as the primary feature extractor. It is based on Cross-Stage-Partial-connections (CSP) and DenseNet, which help to alleviate the vanishing gradient problem in deep networks, strengthen feature propagation, and reduce the number of parameters. This design removes computational bottlenecks and improves learning by passing an unedited version of the feature map to subsequent layers [24].

- Neck: PANet with SPP Block. The Path Aggregation Network (PANet) is used for feature aggregation, collecting feature maps from different stages of the backbone to prepare for detection. Additionally, a Spatial Pyramid Pooling (SPP) block is incorporated to increase the receptive field and isolate the most significant contextual features from the backbone [24].

- Head: YOLOv3. The detection head is responsible for the final prediction of bounding boxes and class labels. It utilizes an anchor-based approach and makes predictions at three different scales to improve the detection of objects of varying sizes [24].

A significant contribution of the YOLOv4 framework is its systematic use of training enhancements, termed "Bag of Freebies" and "Bag of Specials" [24] [25]:

- Bag of Freebies: These methods improve training accuracy without increasing inference time. They include advanced data augmentation techniques like Mosaic augmentation, which tiles four training images into one, teaching the model to recognize smaller objects and be less dependent on specific contextual backgrounds, and Self-Adversarial Training (SAT), which identifies and obscures the most relied-upon parts of an image, forcing the network to generalize. The loss function is also refined using CIoU loss to improve bounding box regression [24].

- Bag of Specials: These are modules that add a marginal computational cost during inference for a significant boost in accuracy. They include the Mish activation function, a smooth, non-monotonic activation that can improve gradient flow and feature learning, and DIoU NMS, which also considers the distance between box centers when suppressing overlapping bounding boxes [24].
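Both CIoU loss and DIoU NMS build on the Distance-IoU score, which subtracts a normalized center-distance penalty from the plain IoU. The sketch below illustrates that score for axis-aligned boxes in (x1, y1, x2, y2) format. It is an illustration of the formula, not code from the YOLOv4 implementation, and the function names are assumptions.

```python
def box_iou(a, b):
    """Plain IoU of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def box_diou(a, b):
    """DIoU = IoU - (squared center distance) / (squared enclosing diagonal)."""
    center_ax, center_ay = (a[0] + a[2]) / 2, (a[1] + a[3]) / 2
    center_bx, center_by = (b[0] + b[2]) / 2, (b[1] + b[3]) / 2
    rho2 = (center_ax - center_bx) ** 2 + (center_ay - center_by) ** 2
    # Smallest box that encloses both inputs.
    enclose_w = max(a[2], b[2]) - min(a[0], b[0])
    enclose_h = max(a[3], b[3]) - min(a[1], b[1])
    c2 = enclose_w ** 2 + enclose_h ** 2
    penalty = rho2 / c2 if c2 > 0 else 0.0
    return box_iou(a, b) - penalty


# Two hypothetical egg boxes that overlap by half their width.
box_a = (0, 0, 10, 10)
box_b = (5, 0, 15, 10)
print(f"IoU={box_iou(box_a, box_b):.4f}, DIoU={box_diou(box_a, box_b):.4f}")
```

CIoU extends this score with an additional aspect-ratio consistency term, and DIoU NMS uses the score in place of plain IoU when deciding which overlapping boxes to suppress.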

EfficientDet: Scalable and Efficient Network Architecture

EfficientDet is a family of object detection models that achieves state-of-the-art performance with notably fewer parameters and training epochs compared to other architectures. Its core innovation lies in its scalable and efficient design, making it highly suitable for environments with limited computational resources [26].

- Backbone: EfficientNet. The backbone is pre-trained on ImageNet and is optimized through a compound scaling method that carefully balances the network's depth, width, and resolution. This results in a model that is both highly accurate and computationally efficient [24] [26].

- Neck: BiFPN. The Bi-directional Feature Pyramid Network (BiFPN) serves as the neck. It performs fast multi-scale feature fusion by letting information flow both top-down and bottom-up, with learnable weights that balance the contribution of each input feature map. The design builds on earlier feature pyramid networks, including topologies found through neural architecture search (NAS) [24] [26].

- Scalability. A key advantage of the EfficientDet architecture is its scalable nature, denoted by the suffix (e.g., D0-D7), allowing researchers to select a model size that matches their specific accuracy and speed requirements [26].
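The weighted fusion used in the BiFPN neck can be sketched in a few lines. The version below follows the "fast normalized fusion" idea, in which each input feature receives a non-negative learnable weight and the weights are normalized by their sum. Features are represented as flat lists for simplicity, and the function name and example values are assumptions for illustration only.

```python
def fast_normalized_fusion(features, raw_weights, eps=1e-4):
    """Fuse same-length feature vectors with non-negative, normalized weights."""
    # ReLU keeps every weight >= 0 so the normalization stays well defined.
    weights = [max(0.0, w) for w in raw_weights]
    total = sum(weights) + eps
    length = len(features[0])
    return [
        sum(w * feature[i] for w, feature in zip(weights, features)) / total
        for i in range(length)
    ]


# Hypothetical top-down and lateral features at one pyramid level.
top_down = [1.0, 2.0]
lateral = [3.0, 4.0]

print(fast_normalized_fusion([top_down, lateral], [1.0, 1.0]))
# A negative raw weight is clipped to zero, so only the first input remains.
print(fast_normalized_fusion([top_down, lateral], [1.0, -5.0]))
```

In a real network the fused tensor would then pass through a convolution, and the raw weights would be trained together with the rest of the model.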

Quantitative Model Comparison

The table below summarizes the key architectural and performance characteristics of YOLOv4 and EfficientDet, providing a direct comparison for researchers.

Table 1: Architectural and Performance Comparison of YOLOv4 and EfficientDet

| Feature | YOLOv4 | EfficientDet |

|---|---|---|

| Core Architecture | One-stage detector | One-stage detector |

| Backbone | CSPDarknet53 [24] | EfficientNet [26] |

| Neck | PANet with SPP [24] | BiFPN [24] |

| Key Strengths | High speed; ideal for real-time applications; extensive "Bag of Freebies" for robust training [24] [25] | High computational efficiency; excellent accuracy with fewer parameters and training epochs [26] |

| Typical mAP on COCO | State-of-the-art results (exact values depend on variant and configuration) [25] | Achieves best performance in fewest training epochs (exact values depend on variant) [26] |

| Inference Speed | Optimized for real-time detection on a single conventional GPU [25] | Highly efficient, suitable for scalable deployment [26] |

Experimental Protocols for Helminth Egg Analysis

This section provides detailed, step-by-step protocols for training and evaluating object detection models on a dataset of helminth egg images.

Dataset Curation and Preprocessing

A robust dataset is foundational for training an accurate model. The following protocol is adapted from recent studies on STH and S. mansoni detection [23] [7].

Image Acquisition:

- Equipment: Use a digital microscope (e.g., Schistoscope) or a standard light microscope (e.g., Nikon E100) capable of capturing high-resolution images (e.g., 2028x1520 pixels) [23] [7].

- Sample Preparation: Prepare fecal smears using the standard Kato-Katz technique with a 41.7 mg template. This ensures consistency with current field methods [23].

- Magnification: A 4x objective lens is typically sufficient for initial imaging [23].

Data Annotation:

- Software: Use annotation tools like LabelImg or Roboflow to draw bounding boxes around each parasite egg.

- Classes: Annotate eggs by species (e.g., A. lumbricoides, T. trichiura, hookworm, S. mansoni). Annotation should be performed by expert microscopists to ensure ground truth accuracy [23].

- Data Splitting: Randomly shuffle the entire dataset and split it into training (70-80%), validation (10-20%), and test (10%) sets. Keeping the three sets disjoint guards against data leakage and supports unbiased evaluation [23] [7].

Data Preprocessing:

- Image Cropping: Large field-of-view images can be automatically cropped into smaller tiles (e.g., 518x486 pixels) using a sliding window approach to facilitate model training and increase the number of samples [7].

- Data Augmentation: Apply a suite of augmentations to improve model generalization. For YOLOv4, this natively includes Mosaic and MixUp [24]. General augmentations beneficial for helminth eggs are:

- Geometric: Random scaling, cropping, flipping, and rotating [25].

- Photometric: Adjustments to brightness, contrast, hue, and saturation [25].

- Advanced Filtering: For pre-processing, techniques like Block-Matching and 3D Filtering (BM3D) can be used to remove noise, and Contrast-Limited Adaptive Histogram Equalization (CLAHE) can enhance contrast [5].
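The sliding-window cropping described in the Image Cropping step can be sketched as a function that returns the top-left corner of each tile. Pairing the 2028x1520 image size with the 518x486 tile size is an assumption made for illustration (the two figures come from different studies), and shifting the last tile inward so that it stays inside the image is one possible edge policy, not necessarily the one used in [7].

```python
def tile_origins(image_w, image_h, tile_w, tile_h, stride_x=None, stride_y=None):
    """Top-left corners of tiles that cover the image without leaving it."""
    stride_x = stride_x or tile_w  # default: non-overlapping steps
    stride_y = stride_y or tile_h

    def axis_starts(size, tile, stride):
        if size <= tile:
            return [0]
        starts = list(range(0, size - tile + 1, stride))
        if starts[-1] != size - tile:
            # Add a final tile flush with the border; it overlaps its neighbor.
            starts.append(size - tile)
        return starts

    return [
        (x, y)
        for y in axis_starts(image_h, tile_h, stride_y)
        for x in axis_starts(image_w, tile_w, stride_x)
    ]


origins = tile_origins(2028, 1520, 518, 486)
print(len(origins), "tiles; first:", origins[0], "last:", origins[-1])
```

With these example sizes the function yields a 4 x 4 grid of 16 tiles. Bounding-box annotations must be shifted by the same offsets, and eggs cut by a tile border need a handling rule of their own.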

Model Training Protocol

The following steps outline the training procedure for a model like YOLOv4 on a custom helminth egg dataset.

Environment Setup:

Parameter Configuration:

- Anchor Boxes: Use the k-means clustering algorithm on your training set to determine optimal initial anchor box sizes tailored to helminth eggs [7].

- Optimizer: Use the Adam optimizer with a momentum (first-moment decay rate, β1) of 0.937 [7].

- Learning Rate: Set an initial learning rate (e.g., 0.01) with a decay factor (e.g., 0.0005) [7].

- Batch Size: Set according to GPU memory (e.g., 64) [7].

- Training Epochs: Train for a sufficient number of epochs (e.g., 300), implementing early stopping if performance on the validation set does not improve for a set number of epochs (e.g., 200) [7].
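The anchor box step above can be illustrated with a small k-means routine over annotated box widths and heights. Using 1 - IoU as the distance is the convention popularized by the YOLO family and is assumed here; the source only states that k-means is used. The box sizes are hypothetical.

```python
import random


def wh_iou(box, anchor):
    """IoU of two (width, height) pairs aligned at a shared corner."""
    inter = min(box[0], anchor[0]) * min(box[1], anchor[1])
    union = box[0] * box[1] + anchor[0] * anchor[1] - inter
    return inter / union


def kmeans_anchors(boxes, k, iterations=50, seed=0):
    """Cluster (width, height) pairs; each box joins its highest-IoU anchor."""
    rng = random.Random(seed)
    anchors = rng.sample(boxes, k)
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for box in boxes:
            best = max(range(k), key=lambda i: wh_iou(box, anchors[i]))
            clusters[best].append(box)
        new_anchors = []
        for i, members in enumerate(clusters):
            if members:
                mean_w = sum(w for w, _ in members) / len(members)
                mean_h = sum(h for _, h in members) / len(members)
                new_anchors.append((mean_w, mean_h))
            else:
                new_anchors.append(anchors[i])  # keep an empty cluster's anchor
        if new_anchors == anchors:
            break  # assignments are stable
        anchors = new_anchors
    return sorted(anchors, key=lambda a: a[0] * a[1])


# Hypothetical egg boxes in pixels: a small round group and a larger oval one.
boxes = [(18, 20), (22, 20), (20, 22), (58, 40), (62, 40), (60, 42)]
for width, height in kmeans_anchors(boxes, k=2):
    print(f"anchor: {width:.1f} x {height:.1f}")
```

A YOLO-style detector would normally use more anchors (for example, three per detection scale), and the resulting sizes are written into the model configuration before training.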

Model Evaluation and Metrics

Rigorous evaluation is critical to assess model performance. The following metrics, computed on the held-out test set, are essential [27] [28].

- Intersection over Union (IoU): Measures the overlap between a predicted bounding box and the ground truth box. An IoU threshold of 0.50 is commonly used to define a correct detection [27] [28].

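- Precision and Recall: Precision is the fraction of predicted eggs that are correct, TP / (TP + FP). Recall is the fraction of true eggs that are detected, TP / (TP + FN). TP, FP, and FN are the true-positive, false-positive, and false-negative counts at the chosen IoU threshold.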

- F1-Score: The harmonic mean of precision and recall, providing a single balanced metric [27] [28].

- Average Precision (AP) and mean Average Precision (mAP):

- AP computes the area under the precision-recall curve for a single class.

- mAP is the average of AP over all object classes. mAP@0.50 uses an IoU threshold of 50%, while mAP@0.50:0.95 averages mAP over IoU thresholds from 0.50 to 0.95 in steps of 0.05, providing a more stringent assessment [27].
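The metrics above can be tied together in a short sketch for a single class. Predictions are matched greedily to ground-truth boxes in order of confidence, and AP is computed with all-point interpolation. The matching rule is a simplification of the benchmark protocols, and the boxes and confidences are hypothetical.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def evaluate_class(predictions, ground_truths, iou_threshold=0.5):
    """predictions: [(confidence, box)]; ground_truths: [box].

    Returns (precision, recall, f1, average_precision) for one class.
    """
    ranked = sorted(predictions, key=lambda p: p[0], reverse=True)
    matched, hits = set(), []  # hits[i] is True for a true positive
    for _, box in ranked:
        candidates = [
            (box_iou(box, gt), i)
            for i, gt in enumerate(ground_truths)
            if i not in matched
        ]
        best_iou, best_i = max(candidates, default=(0.0, None))
        if best_i is not None and best_iou >= iou_threshold:
            matched.add(best_i)
            hits.append(True)
        else:
            hits.append(False)

    tp = sum(hits)
    precision = tp / len(hits) if hits else 0.0
    recall = tp / len(ground_truths) if ground_truths else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0

    # Precision-recall curve: one (recall, precision) point per prediction.
    curve, running_tp = [], 0
    for rank, hit in enumerate(hits, start=1):
        running_tp += 1 if hit else 0
        curve.append((running_tp / max(1, len(ground_truths)), running_tp / rank))
    # All-point interpolation: use the best precision at any higher recall.
    envelope, best = [], 0.0
    for _, p in reversed(curve):
        best = max(best, p)
        envelope.append(best)
    envelope.reverse()
    ap, prev_recall = 0.0, 0.0
    for (r, _), p in zip(curve, envelope):
        ap += (r - prev_recall) * p
        prev_recall = r
    return precision, recall, f1, ap


# Hypothetical image: two eggs, three predictions (one is a false positive).
ground_truths = [(10, 10, 50, 50), (100, 100, 140, 140)]
predictions = [
    (0.9, (12, 12, 50, 50)),
    (0.8, (200, 200, 240, 240)),
    (0.6, (100, 100, 138, 142)),
]
precision, recall, f1, ap = evaluate_class(predictions, ground_truths)
print(f"P={precision:.3f} R={recall:.3f} F1={f1:.3f} AP@0.50={ap:.3f}")
```

Repeating the calculation per species and averaging the AP values gives mAP@0.50. Averaging again over IoU thresholds from 0.50 to 0.95 gives mAP@0.50:0.95.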

Table 2: Performance of Deep Learning Models in Helminth Egg Detection and Classification

| Model / Study | Task / Species | Key Metric | Reported Performance |

|---|---|---|---|

| YOLOv4 [7] | Detection of 9 helminth species | Accuracy | Ranged from 84.85% (T. trichiura) to 100% (C. sinensis, S. japonicum) |

| EfficientDet [23] | Detection of STH & S. mansoni | Weighted Average F-Score | 94.0% (± 1.98%) |

| ConvNeXt Tiny [15] | Classification of A. lumbricoides & T. saginata | F1-Score | 98.6% |

| EfficientNet V2 S [15] | Classification of A. lumbricoides & T. saginata | F1-Score | 97.5% |

| MobileNet V3 S [15] | Classification of A. lumbricoides & T. saginata | F1-Score | 98.2% |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials and Tools for AI-Based Helminth Egg Detection

| Item | Function / Description | Example / Specification |

|---|---|---|

| Digital Microscope | High-resolution image acquisition of prepared slides. | Schistoscope [23], Nikon E100 [7] |

| Annotation Software | For creating bounding box labels on images; generates dataset for model training. | LabelImg, Roboflow |

| Deep Learning Framework | Provides libraries and tools for building, training, and evaluating models. | PyTorch [7] |

| GPU Hardware | Accelerates the deep learning training process through parallel computation. | NVIDIA GeForce RTX 3090 [7] |

| Kato-Katz Kit | Standard method for preparing thick fecal smears for microscopic analysis. | 41.7 mg template [23] |

Workflow and Architecture Diagrams

The following diagram illustrates the end-to-end pipeline for developing an AI-based helminth egg detection system, from sample collection to model deployment.

YOLOv4 Architecture Breakdown

This diagram details the internal structure of the YOLOv4 object detector, showing the flow of data through its backbone, neck, and head.

This document provides detailed application notes and experimental protocols for using three advanced Convolutional Neural Network (CNN) families (EfficientNet, ConvNeXt, and MobileNet) for image-based classification of helminth eggs. Accurate and efficient identification of helminth eggs in microscopic images is a critical step in diagnosing parasitic infections, monitoring community health, and assessing the efficacy of drug development campaigns. Manual microscopic examination is time-consuming, requires significant expertise, and is prone to human error. Artificial Intelligence (AI), particularly deep learning-based computer vision, offers a promising way to automate this process, enabling high-throughput, reproducible, and precise analysis. The choice of neural network architecture is paramount because it must balance high accuracy against computational resources, which are often limited in field or clinical settings. This guide focuses on three modern CNN families that represent different trade-offs between these competing demands, giving researchers practical tools to implement and evaluate these models for parasitology research.

The following sub-sections detail the core architectural principles and relative strengths of EfficientNet, ConvNeXt, and MobileNet.

EfficientNet: Compound Scaling for Balanced Performance

EfficientNet revolutionized model design by introducing a compound scaling method that systematically balances the network's depth (number of layers), width (number of channels), and resolution (input image dimensions) [29] [30]. Instead of arbitrarily scaling a single dimension, which leads to rapidly diminishing returns, EfficientNet scales all three in a coordinated manner, governed by a single compound coefficient (φ) [29]. This approach results in a family of models (B0 to B7) that achieve state-of-the-art accuracy with an order-of-magnitude fewer parameters and computational requirements (FLOPs) than previous models [30].

Its core building block is the MBConv layer (Mobile Inverted Bottleneck Convolution), which features depthwise separable convolutions and a Squeeze-and-Excitation (SE) module [29] [30]. The SE module acts as an attention mechanism, adaptively weighting the importance of each channel in a feature map, allowing the model to focus on more informative features [30]. For helminth egg classification, where subtle textural and morphological differences distinguish species, this focused feature extraction is highly beneficial. EfficientNet models, particularly versions like EfficientNetV2, are known for fine-tuning efficiently on small to mid-sized datasets, making them an excellent choice when labeled microscopic image data is limited [31].
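The compound scaling rule can be written out as a short calculation. In the sketch below, depth, width, and resolution grow as α^φ, β^φ, and γ^φ, using the base coefficients reported for EfficientNet (α = 1.2, β = 1.1, γ = 1.15), which were chosen so that α·β²·γ² is close to 2. The function name is an assumption, and released model variants may use rounded settings that differ from this raw formula.

```python
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15  # depth, width, resolution bases


def compound_scale(phi):
    """Multipliers relative to the baseline network for coefficient phi."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = GAMMA ** phi
    # Convolution FLOPs grow linearly with depth and quadratically with
    # width and resolution, hence roughly 2**phi overall.
    flops = depth * width ** 2 * resolution ** 2
    return depth, width, resolution, flops


for phi in range(4):
    depth, width, resolution, flops = compound_scale(phi)
    print(
        f"phi={phi}: depth x{depth:.2f}, width x{width:.2f}, "
        f"resolution x{resolution:.2f}, FLOPs x{flops:.2f}"
    )
```

Each unit increase in φ therefore roughly doubles the compute budget, which is a convenient way to match a model variant to the hardware available for helminth egg analysis.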

ConvNeXt: A Modernized CNN for the 2020s

ConvNeXt is a pure CNN architecture that was redesigned by systematically modernizing a standard ResNet using insights and techniques from Vision Transformers (ViTs) [32]. It demonstrates that CNNs, when properly updated, can match or even surpass the performance of state-of-the-art transformers on various vision tasks while retaining the computational efficiency of convolutions [31] [32].

Key innovations of ConvNeXt include:

- Large Kernel Depthwise Convolutions: Replacing small (e.g., 3x3) kernels with larger (e.g., 7x7) depthwise convolutions to increase the receptive field, similar to the global context modeling of self-attention in ViTs [32].

- Inverted Bottleneck and Layer Normalization: Adopting an inverted bottleneck design common in mobile networks and replacing Batch Normalization with Layer Normalization, which improves training stability [32].

- Modernized Training Recipes: Utilizing advanced training strategies like AdamW optimizer, data augmentation (MixUp, CutMix), and regularization (label smoothing) [32].

ConvNeXt is particularly suited for research pipelines where high accuracy is the priority and computational resources for training are adequate. Its hierarchical design and powerful feature extraction capabilities make it a strong "safe bet for scalable production pipelines" in biomedical image analysis [31].

MobileNet: Lightweight Champion for Edge Deployment

The MobileNet family is specifically engineered for low-power, low-latency deployment on mobile and embedded devices [31] [33]. Its primary innovation is the heavy use of depthwise separable convolutions, which factorize a standard convolution into a depthwise convolution (applying a single filter per input channel) and a pointwise convolution (a 1x1 convolution to combine channel outputs) [31]. This factorization drastically reduces the model's parameter count and computational cost.
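The saving from this factorization follows directly from counting weights. The sketch below compares a standard convolution with its depthwise separable counterpart, ignoring biases; the layer sizes are arbitrary examples.

```python
def standard_conv_params(kernel, in_channels, out_channels):
    """Weights in a standard k x k convolution (bias ignored)."""
    return kernel * kernel * in_channels * out_channels


def depthwise_separable_params(kernel, in_channels, out_channels):
    """Depthwise k x k filter per input channel plus a 1x1 pointwise mix."""
    depthwise = kernel * kernel * in_channels
    pointwise = in_channels * out_channels
    return depthwise + pointwise


kernel, in_channels, out_channels = 3, 32, 64  # example layer
standard = standard_conv_params(kernel, in_channels, out_channels)
separable = depthwise_separable_params(kernel, in_channels, out_channels)
print(f"standard={standard}, separable={separable}, "
      f"ratio={separable / standard:.3f}")
```

The ratio equals 1/out_channels + 1/kernel², so for a 3x3 kernel the separable layer needs roughly one eighth to one ninth of the weights of the standard layer.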

MobileNetV3 uses neural architecture search (NAS) to further optimize the network structure and includes a Squeeze-and-Excitation module in its bottleneck blocks [31] [33]. It is a common choice for applications that require real-time inference on resource-constrained hardware, such as point-of-care diagnostic devices or portable field microscopes [31]. For helminth egg classification, a MobileNetV3-based model can be quantized (e.g., to INT8 precision) to run efficiently on a smartphone or single-board computer, enabling decentralized analysis without reliance on cloud connectivity [31].

Table 1: Comparative Analysis of CNN Architectures for Image Classification

| Feature | EfficientNet | ConvNeXt | MobileNetV3 |

|---|---|---|---|

| Core Innovation | Compound Scaling of depth, width, & resolution [29] [30] | Modernized CNN with ViT-inspired designs [32] | Depthwise Separable Convolutions & NAS [31] |

| Key Building Block | MBConv with SE attention [29] [30] | Large-kernel depthwise conv [32] | Inverted Residual Bottleneck [31] |

| Typical Use Case | High-accuracy, efficient training on small datasets [31] | High-accuracy, research & cloud deployment [31] | Real-time inference on mobile/embedded devices [31] [33] |

| Strengths | Best trade-off: accuracy vs. efficiency [31] [30] | High performance, modern & scalable [31] | Extremely fast, low power, easily quantized [31] |

| Helminth Egg Application | Default choice for balanced performance | When highest accuracy is needed and compute is available | For point-of-care/field deployment |

Quantitative Performance Comparison

To make an informed model selection, it is essential to compare the quantitative performance of different variants within and across these model families. The following table summarizes key metrics on the standard ImageNet-1K benchmark, which serves as a proxy for their potential performance on other image classification tasks, such as helminth egg analysis.

Table 2: Model Performance and Complexity on ImageNet-1K Benchmark [31]

| Model Variant | Top-1 Accuracy (%) | Parameters (Millions) | FLOPs (Billions) | Notes |

|---|---|---|---|---|

| EfficientNetV2-L | 86.8 - 87.3 | 120 | 53 | Strong balance; efficient fine-tuning [31] |

| ConvNeXt-B | 85.8 | 89 | 15.4 | Modern CNN; matches Swin Transformer performance [31] |

| ViT-B/16 | ~85.4 | 86 | 17.6-55.5 | Transformer baseline; needs large-scale pre-training [31] |

| Swin-B | 86.4 | 88 | 47 | Hierarchical transformer for reference [31] |

| MobileNetV3-Large | ~75.2 | 5.4 | 0.2 | Edge-optimized; high speed, lower accuracy [31] |

| ResNet-50 (modern) | ~80.4 | 25.6 | 4.1 | Classic baseline for comparison [31] |

Experimental Protocols for Helminth Egg Classification

This section outlines a standardized workflow and detailed protocols for training and evaluating the featured CNN models on a proprietary dataset of helminth egg images.

The diagram below illustrates the end-to-end pipeline for developing a helminth egg classification system, from data preparation to model deployment.

Protocol 1: Data Preparation and Augmentation

Objective: To create a robust, balanced, and standardized dataset for training and evaluating deep learning models.

Data Preprocessing:

- Resizing: Uniformly resize all raw microscopic images to the input resolution required by the chosen model (e.g., 224x224 for EfficientNet-B0, 384x384 for higher-resolution fine-tuning) [31]. Maintain aspect ratio by padding if necessary to avoid distortion.

- Normalization: Normalize pixel values using the mean and standard deviation of the pre-training dataset (typically ImageNet). Common values are mean = [0.485, 0.456, 0.406], std = [0.229, 0.224, 0.225].
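The resizing and normalization steps can be sketched as two small helpers. One computes the aspect-preserving size and padding for a square model input, and the other normalizes an 8-bit RGB pixel with the ImageNet statistics listed above. The helper names and the 2028x1520 example size are assumptions; in practice the same arithmetic is applied to whole image arrays by an imaging library.

```python
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def letterbox_geometry(width, height, target=224):
    """Resized size and (left, top, right, bottom) padding for a square input."""
    scale = target / max(width, height)
    new_w, new_h = round(width * scale), round(height * scale)
    pad_x, pad_y = target - new_w, target - new_h
    # Split the padding as evenly as possible between opposite sides.
    padding = (pad_x // 2, pad_y // 2, pad_x - pad_x // 2, pad_y - pad_y // 2)
    return new_w, new_h, padding


def normalize_pixel(rgb):
    """Scale an 8-bit RGB pixel to [0, 1], then standardize per channel."""
    return tuple(
        (channel / 255.0 - mean) / std
        for channel, mean, std in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)
    )


new_w, new_h, padding = letterbox_geometry(2028, 1520, target=224)
print(f"resize to {new_w}x{new_h}, pad (l, t, r, b) = {padding}")
print("white pixel:", tuple(round(v, 3) for v in normalize_pixel((255, 255, 255))))
```

Padding should be applied before normalization, and the fill color is a design choice; a value close to the slide background avoids introducing artificial edges.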

Data Augmentation (Training Set Only): Apply random transformations to increase dataset diversity and improve model generalization. This is critical for preventing overfitting, especially with limited medical data [32].

- Geometric: Random horizontal and vertical flips, random rotations (e.g., ±15 degrees) [34].

- Photometric: Adjust brightness, contrast, and saturation slightly. Adding random Gaussian noise can also improve robustness.

- Advanced Techniques: For better performance, consider incorporating MixUp or CutMix, which combine images and labels to create new training samples [31] [32].
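Of the advanced techniques, MixUp is the simplest to illustrate: two images and their one-hot labels are blended with a coefficient λ drawn from a Beta(α, α) distribution. The sketch below uses flat lists in place of image tensors, and α = 0.2 is an illustrative assumption rather than a value taken from the cited sources.

```python
import random


def mixup(image_a, label_a, image_b, label_b, alpha=0.2, rng=None):
    """Blend two samples; returns (mixed_image, mixed_label, lam)."""
    rng = rng or random
    lam = rng.betavariate(alpha, alpha)  # mixing coefficient in [0, 1]
    mixed_image = [lam * a + (1 - lam) * b for a, b in zip(image_a, image_b)]
    mixed_label = [lam * a + (1 - lam) * b for a, b in zip(label_a, label_b)]
    return mixed_image, mixed_label, lam


# Hypothetical 4-pixel "images" with one-hot labels [ascaris, taenia].
ascaris_image, ascaris_label = [0.9, 0.8, 0.7, 0.6], [1.0, 0.0]
taenia_image, taenia_label = [0.1, 0.2, 0.3, 0.4], [0.0, 1.0]

mixed_image, mixed_label, lam = mixup(
    ascaris_image, ascaris_label, taenia_image, taenia_label,
    rng=random.Random(0),
)
print(f"lambda={lam:.3f}, label={[round(v, 3) for v in mixed_label]}")
```

The loss is then computed against the soft label. CutMix follows the same labeling idea but pastes a rectangular patch from one image into the other instead of blending every pixel.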

Dataset Splitting: Partition the data into three sets:

- Training (70%): Used to fit the model parameters.

- Validation (15%): Used for hyperparameter tuning and selecting the best model during training.

- Test (15%): Used only once for the final evaluation of the selected model's generalization performance. Ensure splits are stratified to preserve the class distribution.
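A stratified 70/15/15 split can be sketched with the standard library alone. The function below shuffles the indices of each class separately and then slices them, so every subset keeps approximately the original class proportions. The class counts and the seed are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=42):
    """Return (train, val, test) index lists that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(len(indices) * fractions[0])
        n_val = round(len(indices) * fractions[1])
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Hypothetical, imbalanced dataset of 200 labeled images.
labels = ["ascaris"] * 100 + ["taenia"] * 40 + ["uninfected"] * 60
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

If several images come from the same slide or patient, grouping by that source before splitting would be a stricter way to keep closely related images out of different subsets.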

Protocol 2: Model Training and Fine-tuning

Objective: To adapt a pre-trained model to the specific task of helminth egg classification effectively.

Model Initialization: Always start with a model pre-trained on a large-scale dataset like ImageNet. This transfer learning approach significantly speeds up convergence and improves final accuracy compared to training from scratch [31].

- Source: Download pre-trained weights from official sources like Torchvision (for ResNet, ConvNeXt), Hugging Face Transformers or timm library (for a wide variety of models including EfficientNet and ConvNeXt), or TensorFlow Hub (for EfficientNet and MobileNet) [31].

Fine-tuning Strategy:

- Feature Extraction (for very small datasets): Freeze all pre-trained layers (the "backbone") and only train a new randomly initialized classification head.

- Full Fine-tuning (recommended for datasets of >1000 images per class): Unfreeze all or most of the layers and train the entire network with a low learning rate. This allows the model to adapt its pre-learned features to the specifics of helminth egg morphology.

Training Configuration:

- Optimizer: Use AdamW with a learning rate (lr) between 1e-5 and 1e-4 [34] [32]. This optimizer often provides better generalization than SGD with momentum in modern training recipes.

- Loss Function: Use Cross-Entropy Loss. For imbalanced datasets, replace or supplement it with Focal Loss or class-weighted cross-entropy so that the model focuses on harder examples and the majority class does not dominate [31] [33].

- Regularization: Apply Dropout (rates of 0.2-0.5) and/or Stochastic Depth (also known as DropPath) to prevent overfitting [30] [35]. Label Smoothing can also be beneficial [31] [32].

- Epochs & Scheduling: Train for a sufficient number of epochs (e.g., 50-100) and use a cosine learning rate scheduler with a warm-up period to stabilize training early on [32].
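The learning rate schedule recommended in this configuration, a linear warm-up followed by cosine decay, can be written as a single function of the training step. The base rate of 1e-4 sits within the range given above; the warm-up length and total step count are illustrative assumptions.

```python
import math


def lr_at_step(step, total_steps, base_lr=1e-4, warmup_steps=500, min_lr=0.0):
    """Linear warm-up to base_lr, then cosine decay toward min_lr."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return min_lr + (base_lr - min_lr) * cosine


total_steps = 10_000  # e.g., epochs multiplied by batches per epoch
for step in (0, 250, 499, 500, 5_250, 10_000):
    print(f"step {step:>6}: lr = {lr_at_step(step, total_steps):.2e}")
```

Deep learning frameworks provide built-in schedulers with the same shape, so a hand-written function like this is mainly useful for checking that a configured schedule behaves as intended.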

Protocol 3: Model Evaluation and Inference

Objective: To rigorously assess model performance and prepare it for deployment.

Performance Metrics: Evaluate the model on the held-out test set.

- Primary Metrics: Report Top-1 Accuracy, Precision, Recall, and F1-Score (preferably per-class and macro-averaged) [33]. The F1-Score is especially important for imbalanced datasets.

- Confusion Matrix: Generate a confusion matrix to identify specific inter-class confusion patterns (e.g., between morphologically similar helminth eggs).
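The metrics listed above can all be derived from a confusion matrix. The following sketch shows one way to do so in plain Python; the function names and the ten-sample toy labels are illustrative assumptions, not data from any cited study.

```python
def confusion_matrix(y_true, y_pred, labels):
    """Rows are true classes, columns are predicted classes."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def per_class_report(matrix, labels):
    """Precision, recall and F1 for each class, from the confusion matrix."""
    report = {}
    n = len(labels)
    for i, label in enumerate(labels):
        tp = matrix[i][i]
        fp = sum(matrix[r][i] for r in range(n)) - tp  # column total minus diagonal
        fn = sum(matrix[i]) - tp                       # row total minus diagonal
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        report[label] = {"precision": precision, "recall": recall, "f1": f1}
    return report


def macro_f1(report):
    """Unweighted mean of per-class F1, so rare classes count equally."""
    return sum(m["f1"] for m in report.values()) / len(report)


# Toy example with invented labels.
labels = ["ascaris", "trichuris", "hookworm"]
y_true = ["ascaris"] * 4 + ["trichuris"] * 3 + ["hookworm"] * 3
y_pred = ["ascaris", "ascaris", "ascaris", "hookworm",
          "trichuris", "trichuris", "trichuris",
          "hookworm", "hookworm", "ascaris"]
cm = confusion_matrix(y_true, y_pred, labels)
report = per_class_report(cm, labels)
print(cm, report, macro_f1(report))
```

Off-diagonal cells of `cm` show which species are mistaken for one another; in this toy data the only errors are between the Ascaris and hookworm classes.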

Robustness and Deployment Readiness:

- Inference Latency: Measure the average time to classify a single image or a batch of images on the target hardware (e.g., a server GPU, a desktop CPU, or a mobile phone) [31].

- Model Compression (for MobileNet & EfficientNet): For edge deployment, apply post-training quantization to reduce model size and accelerate inference. Converting 32-bit floating-point weights to 8-bit integers shrinks a model roughly 4x, typically with minimal accuracy loss [31].
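Both deployment checks can be approximated with simple tooling. The sketch below times an arbitrary prediction callable and estimates weight storage at different bit widths. The names `measure_latency_ms` and `weight_size_mb`, the warm-up and run counts, and the 5-million-parameter figure are assumptions made for illustration; the stand-in `predict` function is not a real model.

```python
import statistics
import time


def measure_latency_ms(predict, sample, warmup=5, runs=50):
    """Time repeated calls to predict(sample) and summarise in milliseconds.

    Warm-up calls are discarded so one-off start-up costs do not skew results.
    """
    for _ in range(warmup):
        predict(sample)
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        predict(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    return {
        "mean_ms": statistics.mean(timings),
        "median_ms": statistics.median(timings),
        "max_ms": max(timings),
    }


def weight_size_mb(num_parameters, bits_per_weight):
    """Approximate storage for the weights alone, in megabytes (10^6 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e6


# Stand-in for a model's inference call (hypothetical placeholder).
def predict(pixels):
    return sum(pixels) / len(pixels)


print(measure_latency_ms(predict, list(range(10_000))))

# Hypothetical 5-million-parameter network: 32-bit floats vs 8-bit integers.
print(weight_size_mb(5_000_000, 32), weight_size_mb(5_000_000, 8))
```

Latency should be measured on the actual target device with the real model and realistic input sizes, since results from a development workstation rarely transfer to a phone or embedded board.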

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential software and hardware "reagents" required to implement the described experimental protocols.

Table 3: Essential Research Reagents for AI-based Helminth Egg Classification

| Reagent / Tool | Type | Function / Application | Example / Note |

|---|---|---|---|

| Pre-trained Models | Software | Provides a powerful starting point via transfer learning, drastically reducing data and compute needs. | Torchvision, Hugging Face Hub, TIMM (PyTorch Image Models) library [31] |

| PyTorch / TensorFlow | Software | Core deep learning frameworks for model implementation, training, and inference. | PyTorch is common in research; TensorFlow has strong production tools (TF Lite) [31] |

| High-Performance GPU | Hardware | Accelerates model training and hyperparameter tuning, reducing experiment time from days to hours. | NVIDIA RTX series with >=8GB VRAM |

| Microscopy with Camera | Hardware | Captures high-quality digital images of helminth egg samples for dataset creation. | Standard laboratory microscope with a digital camera attachment |

| Labeling Software | Software | Enables researchers to annotate collected images, assigning the correct species label to each. | LabelImg, VGG Image Annotator, or commercial solutions |

| Optimization Libraries | Software | Provides implementations of optimizers, learning rate schedulers, and loss functions. | Included in PyTorch/TensorFlow (e.g., AdamW, CosineAnnealingLR) [32] |

The final choice of model architecture depends on the specific constraints and goals of the helminth egg classification project. The following decision diagram provides a clear pathway for researchers to select the most appropriate model.

In summary, EfficientNet, ConvNeXt, and MobileNet each offer distinct advantages for the image-based classification of helminth eggs. EfficientNet provides the most balanced approach for typical research settings, ConvNeXt is ideal for projects where accuracy is paramount and resources are plentiful, and MobileNet is indispensable for point-of-care applications. By following the detailed application notes, performance comparisons, and experimental protocols outlined in this document, researchers and drug development professionals can effectively leverage these state-of-the-art AI tools to advance the field of parasitology and improve global health outcomes.

End-to-End Artificial Intelligence-Powered Digital Pathology (AI-DP) platforms represent a transformative approach to diagnosing parasitic infections in resource-limited settings. These systems integrate field-deployable digital slide scanners with onboard AI analysis to automate the detection and classification of soil-transmitted helminth (STH) and Schistosoma mansoni (SCH) eggs in fecal samples [36]. This automation addresses critical limitations of conventional microscopy, which relies on labor-intensive processes requiring trained technicians and poses significant logistical challenges in areas where neglected tropical diseases (NTDs) are most prevalent [36] [23]. By providing near-real-time data with integrated quality controls, AI-DP platforms demonstrate significant potential for efficient monitoring and evaluation of large-scale deworming programs, aligning with the World Health Organization's (WHO) goal to eliminate NTDs as a public health problem by 2030 [36] [37].

Platform Architecture & Workflow

An AI-DP platform is engineered as an integrated system comprising several core components that work in sequence to transform a physical stool sample into a digitized, analyzed diagnostic result.

Core Components

The architecture typically consists of four main subsystems [36] [37]:

- Electronic Data Capture Tools: Facilitate the recording of sample metadata and patient information.

- Whole Slide Imaging (WSI) Scanners: Portable, robust scanners capable of automatically capturing high-resolution images of prepared microscopy slides in field conditions.

- Onboard AI Analysis Engine: A pre-trained deep learning model that processes the digitized slide images to detect, count, and classify parasite eggs.

- Result Verification Software: A user interface that allows technicians to review the AI-generated annotations, confirm results, and make corrections if necessary, thereby creating a human-in-the-loop validation system.
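The human-in-the-loop step in the last component can be pictured as a small data model in which technician decisions override AI output. The sketch below is purely illustrative: the class names `Detection` and `SlideResult`, their fields, and the override logic are hypothetical and do not describe the software of any cited platform.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Detection:
    species: str                            # class predicted by the AI engine
    confidence: float                       # model score in [0, 1]
    box: tuple                              # (x_min, y_min, x_max, y_max) in pixels
    reviewed_species: Optional[str] = None  # technician's correction, if any
    rejected: bool = False                  # technician marked it as not an egg

    @property
    def final_species(self):
        """Technician decisions take precedence over the AI prediction."""
        if self.rejected:
            return None
        return self.reviewed_species or self.species


@dataclass
class SlideResult:
    slide_id: str
    detections: list = field(default_factory=list)

    def egg_counts(self):
        """Per-species egg counts after applying technician review."""
        return Counter(
            d.final_species for d in self.detections if d.final_species is not None
        )


# Example: one accepted detection, one corrected, one rejected as an artifact.
slide = SlideResult("demo-slide", [
    Detection("hookworm", 0.93, (10, 10, 60, 40)),
    Detection("hookworm", 0.58, (100, 80, 150, 110), reviewed_species="trichuris"),
    Detection("ascaris", 0.41, (200, 30, 260, 90), rejected=True),
])
print(slide.egg_counts())
```

Keeping the original prediction alongside the reviewed label preserves an audit trail and yields corrected examples that could later be used to retrain the model.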

Integrated Workflow

The end-to-end process, from sample collection to final reporting, is visualized in the following workflow.

Performance Metrics of AI Models

The efficacy of the AI detection models is paramount to the platform's overall performance. Various deep learning architectures have been validated on large datasets of Kato-Katz slide images. The following table summarizes the reported performance metrics for different parasite species across multiple studies, highlighting the high accuracy achieved by these systems.

Table 1: Analytical Performance of AI Models for Helminth Egg Detection

| Parasite Species | Model Architecture | Precision (%) | Recall / Sensitivity (%) | F1-Score / mAP@0.5 | Data Source |

|---|---|---|---|---|---|

| Ascaris lumbricoides | EfficientDet [23] | 95.4 | 91.7 | 97.1 (AP) | 951 KK slides, 43,919 eggs [36] |

| Trichuris trichiura | EfficientDet [23] | 95.9 | 86.7 | 94.8 (AP) | 951 KK slides, 43,919 eggs [36] |

| Hookworm | EfficientDet [23] | 84.6 | 86.6 | 91.4 (AP) | 951 KK slides, 43,919 eggs [36] |

| Schistosoma mansoni | EfficientDet [23] | 89.1 | 79.1 | 89.2 (AP) | 951 KK slides, 43,919 eggs [36] |

| Multiple STH & SCH | YOLOv7-E6E (ID Setting) [37] | - | - | 97.47 (F1) | AI4NTD KK2.0 Dataset [37] |

| Multiple Parasite Eggs | YAC-Net (Lightweight YOLO) [13] | 97.8 | 97.7 | 97.73 (F1) | ICIP 2022 Challenge Dataset [13] |

| Multiple Parasite Eggs | U-Net + CNN [5] | 97.85 (Pixel-level) | 98.05 (Sensitivity) | 97.38 (Accuracy) | Custom Dataset [5] |
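The EfficientDet rows in Table 1 report average precision (AP) rather than F1, which makes them hard to compare directly with the rows that report F1. As a rough aid, F1 can be derived as the harmonic mean of the precision and recall given in the table. The sketch below does this arithmetic; the derived values are a calculation from the tabulated figures, not results reported by the cited studies, and the function name is an assumption.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall (same units in, same units out)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision %, recall %) as listed for the EfficientDet rows of Table 1.
reported = {
    "Ascaris lumbricoides": (95.4, 91.7),
    "Trichuris trichiura": (95.9, 86.7),
    "Hookworm": (84.6, 86.6),
    "Schistosoma mansoni": (89.1, 79.1),
}

derived_f1 = {
    species: f1_from_precision_recall(p, r) for species, (p, r) in reported.items()
}
for species, f1 in derived_f1.items():
    print(f"{species}: derived F1 = {f1:.1f}%")
```

Because the harmonic mean is pulled toward the lower of its two inputs, species with a large gap between precision and recall show a derived F1 well below their precision.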

Experimental Protocols

To ensure reproducible and reliable results, standardized protocols for data generation and model training are essential. The following sections detail the key experimental methodologies cited in the literature.

Sample Preparation and Image Acquisition

This protocol is foundational for creating high-quality datasets for both model training and clinical diagnosis [36] [23].

- Sample Collection: Collect fresh fecal samples in sterile, leak-proof containers. Maintain a cool chain if processing is not immediate.

- Kato-Katz Smear Preparation:

- Place a small amount of sieved stool sample on a clean glass slide.

- Press a template with a 41.7 mg hole onto the slide to standardize the sample amount.

- Fill the template hole with stool and remove excess material.

- Carefully remove the template.

- Place a glycerol-soaked cellophane strip over the sample and press firmly to create a uniform, transparent smear.

- Microscopy and Imaging:

- Place the prepared slide into a portable, automated digital microscope (e.g., Schistoscope [23] or other WSI scanner).

- Scan the slide using a 4x to 10x objective lens to capture field-of-view (FOV) images with resolutions such as 2028 x 1520 pixels [23].

- Systematically scan the entire slide to generate a whole-slide image composed of multiple FOVs.
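Because the template standardizes the smear at 41.7 mg of stool, a whole-slide egg count can be scaled to eggs per gram (EPG), the usual unit for infection intensity. The sketch below shows the arithmetic; the exact factor is 1000 / 41.7 (about 23.98), which is conventionally rounded to 24 in Kato-Katz practice. The function name and the example count are illustrative assumptions.

```python
TEMPLATE_MG = 41.7  # stool mass delivered by the Kato-Katz template, in milligrams


def eggs_per_gram(egg_count, template_mg=TEMPLATE_MG):
    """Scale a whole-slide egg count to eggs per gram of stool."""
    return egg_count * 1000.0 / template_mg


def eggs_per_gram_rounded(egg_count, factor=24):
    """Same conversion using the conventional rounded multiplication factor."""
    return egg_count * factor


# Example: 10 eggs counted on one slide (hypothetical count).
print(eggs_per_gram(10))          # exact scaling
print(eggs_per_gram_rounded(10))  # conventional factor of 24
```

This scaling only holds when the entire smear has been scanned and counted; partial scans would need an additional correction for the fraction of the slide covered.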

AI Model Training and Evaluation

This protocol outlines the standard workflow for developing the core detection algorithm, as used in recent studies [23] [37] [13].

- Data Annotation:

- Expert microscopists manually annotate images from the training set, drawing bounding boxes around each parasite egg and labeling them with the correct species class.

- This creates the "ground truth" dataset used for supervised learning.

- Data Preprocessing & Augmentation:

- Model Training:

- Select a model architecture (e.g., YOLOv7, EfficientDet).

- Initialize the model with weights pre-trained on general image datasets (transfer learning).

- Train the model using an optimizer (e.g., Adam) with a defined initial learning rate (e.g., 0.01) and learning rate decay.

- Use the validation set for hyperparameter tuning and to avoid overfitting.

- Model Evaluation:

- Evaluate the final model on the held-out test set.

- Calculate standard object detection metrics: precision, recall, F1-score, and mean Average Precision (mAP) at an Intersection over Union (IoU) threshold of 0.5.
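The evaluation step hinges on deciding when a predicted box counts as a correct detection. The sketch below computes IoU between two boxes and greedily matches predictions to ground-truth eggs at a threshold of 0.5, then derives precision, recall, and F1. It is a simplified illustration: the function names, the greedy highest-score-first matching rule, and the toy boxes are assumptions, and it does not compute mAP, which additionally requires sweeping the confidence threshold to build a precision-recall curve per class.

```python
def iou(box_a, box_b):
    """Intersection over Union for boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def evaluate_detections(predictions, ground_truth, iou_threshold=0.5):
    """predictions: [(box, label, score)], ground_truth: [(box, label)].

    Predictions are processed in descending score order; each ground-truth
    egg can be matched at most once, and only by a box of the same class.
    """
    matched = set()
    tp = fp = 0
    for box, label, _score in sorted(predictions, key=lambda p: p[2], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, (gt_box, gt_label) in enumerate(ground_truth):
            if idx in matched or gt_label != label:
                continue
            overlap = iou(box, gt_box)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            tp += 1
        else:
            fp += 1
    fn = len(ground_truth) - len(matched)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "recall": recall, "f1": f1}


# Toy example: one good match, one spurious box, one missed egg.
gt = [((0, 0, 10, 10), "ascaris"), ((20, 20, 30, 30), "hookworm")]
preds = [((1, 1, 11, 11), "ascaris", 0.9), ((50, 50, 60, 60), "hookworm", 0.8)]
print(evaluate_detections(preds, gt))
```

Requiring the class labels to agree means a correctly localized egg with the wrong species label counts as both a false positive and a false negative, which is the behavior usually wanted for species-level diagnosis.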

The relationships between the core components of the AI model and the training process are illustrated below.

The Scientist's Toolkit: Research Reagent Solutions

Successful development and deployment of an AI-DP platform rely on a suite of specific materials and software tools. The following table details these essential components and their functions.

Table 2: Key Research Reagents and Materials for AI-DP Platform Development

| Item Name | Function / Application | Specifications / Examples |

|---|---|---|

| Kato-Katz Kit | Standardized preparation of thick fecal smears for microscopic detection of helminth eggs. | 41.7 mg template, glass slides, glycerol, cellophane strips [23]. |

| Portable Whole Slide Scanner | Automated digital imaging of microscopy slides in field settings. | Schistoscope [23]; Scanners with 4x-10x objectives, automated staging [36]. |

| AI4NTD KK2.0 Dataset | Benchmark dataset for training and evaluating STH and SCH egg detection models. | Contains >10,000 FOV images with ~20,000 annotated eggs [23] [37]. |

| Deep Learning Framework | Software environment for building, training, and deploying AI models. | PyTorch, TensorFlow [7] [37]. |

| Object Detection Model | The core algorithm for detecting and classifying parasite eggs in images. | YOLO variants (v4, v5, v7) [7] [37] [13], EfficientDet [23]. |

| Annotation Software | Tool for experts to create ground truth data by labeling eggs in images. | Software for drawing bounding boxes and assigning class labels [23]. |

| Computing Hardware | Infrastructure for model training and for running inference in deployment. | Training: NVIDIA GPUs (e.g., RTX 3090) [7]. Deployment: Edge computing devices [36] [23]. |

Accurate diagnosis of helminth infections is a critical public health challenge, with soil-transmitted helminths (STH) and schistosomiasis affecting over a billion people globally [36]. Traditional diagnostic methods, particularly manual microscopy of stool samples, are labor-intensive, time-consuming, and require substantial expertise, often leading to variable sensitivity and specificity [37]. Artificial intelligence (AI) has emerged as a transformative approach to automate and improve the accuracy of helminth egg classification in microscopic images.

This application note provides a comprehensive analysis of performance benchmarks for AI-driven classification of helminth eggs, focusing on the critical metrics of precision and recall across both single-species and mixed-species scenarios. We synthesize recent experimental data, detail standardized protocols for model training and evaluation, and identify key factors influencing diagnostic performance to support researchers and developers in creating robust, clinically applicable AI tools.

Performance Benchmarks

Single-Species Classification Performance

Table 1: Performance Benchmarks for Single-Species Helminth Egg Detection

| Helminth Species | Model Architecture | Precision (%) | Recall (%) | F1-Score (%) | mAP@IoU 0.5 (%) | Research Context |

|---|---|---|---|---|---|---|

| Ascaris lumbricoides | AI-DP Platform [36] | 95.4 | 91.7 | - | 97.1 | Field-deployable system |

| Trichuris trichiura | AI-DP Platform [36] | 95.9 | 86.7 | - | 94.8 | Field-deployable system |

| Hookworm | AI-DP Platform [36] | 84.6 | 86.6 | - | 91.4 | Field-deployable system |

| Schistosoma mansoni | AI-DP Platform [36] | 89.1 | 79.1 | - | 89.2 | Field-deployable system |

| Clonorchis sinensis | YOLOv4 [7] | 100.0 | - | - | - | Laboratory setting |

| Schistosoma japonicum | YOLOv4 [7] | 100.0 | - | - | - | Laboratory setting |

| Enterobius vermicularis | YOLOv4 [7] | 89.3 | - | - | - | Laboratory setting |

| Fasciolopsis buski | YOLOv4 [7] | 88.0 | - | - | - | Laboratory setting |

| Ascaris lumbricoides | YOLOv7-E6E [37] | - | - | 97.5 | - | In-distribution setting |

| Ascaris lumbricoides | ConvNeXt Tiny [38] | - | - | 98.6 | - | Multiclass classification |

| Taenia saginata | ConvNeXt Tiny [38] | - | - | 98.6 | - | Multiclass classification |

Mixed-Species and Complex Scenario Performance