AI in Parasitic Disease Control: Transforming Diagnostics, Drug Discovery, and Outbreak Prediction

Abstract

This article provides a comprehensive analysis of the transformative role of Artificial Intelligence (AI) and Machine Learning (ML) in parasitology and parasitic disease control, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of AI, including machine and deep learning, and their specific applications in automating parasite diagnostics through image analysis, accelerating antiparasitic drug discovery via virtual screening and target identification, and modeling disease transmission risks. The article further investigates the technical challenges, optimization strategies, and validation frameworks necessary for deploying robust AI solutions, comparing their performance against traditional methods. By synthesizing current research and future directions, this review serves as a critical resource for integrating AI-driven approaches into biomedical research and public health strategies for combating parasitic diseases.

The New Frontier: Understanding AI's Foundational Role in Modern Parasitology

The field of parasitic disease control is undergoing a profound transformation driven by artificial intelligence (AI). Traditional approaches to diagnosis, drug discovery, and outbreak management have faced persistent challenges including time-intensive processes, limited accuracy, and resource constraints, particularly in endemic regions [1]. The AI revolution, marked by the transition from traditional machine learning (ML) to sophisticated deep learning (DL) architectures, is poised to overcome these hurdles. This paradigm shift enables the analysis of complex, high-dimensional data at unprecedented scales and speeds, leading to enhanced diagnostic precision, accelerated therapeutic development, and improved public health interventions [2]. Within parasitology, this technological evolution is proving critical for addressing the significant global burden of diseases such as malaria, leishmaniasis, and trypanosomiasis, which disproportionately affect vulnerable populations in resource-limited settings [1]. This document delineates the core technical principles of this revolution and its transformative applications in parasitic disease research and control.

Theoretical Foundations: From Machine Learning to Deep Learning

Core Concepts and Definitions

The AI revolution in biomedicine is built upon a hierarchy of computational techniques. Artificial Intelligence is the broadest concept, encompassing machines designed to perform tasks that typically require human intelligence. Machine Learning, a subset of AI, involves algorithms that parse data, learn from that data, and then apply learned patterns to make informed decisions or predictions. Traditional ML models often require manual feature engineering, where domain experts identify and extract the most relevant variables from raw data for the model to process [1] [2].

Deep Learning, a specialized branch of ML, uses artificial neural networks with many stacked layers, an architecture loosely inspired by biological neurons. These "deep" architectures learn hierarchical feature representations directly from raw data, such as images, genomic sequences, or chemical structures, which largely removes the need for manual feature engineering and often yields superior performance on complex tasks [3] [4]. Key DL architectures making a significant impact in parasitology include Convolutional Neural Networks (CNNs) for image analysis, Recurrent Neural Networks (RNNs) and their variants like Long Short-Term Memory (LSTM) networks for sequential data, and Vision Transformers (ViT) for advanced pattern recognition [3] [4].

Comparative Analysis of ML and DL in Biomedical Research

The transition from ML to DL represents a fundamental shift in approach and capability. The table below summarizes the key technical distinctions relevant to biomedical applications.

Table 1: Comparative Analysis of Machine Learning vs. Deep Learning in Biomedical Contexts

| Feature | Machine Learning (ML) | Deep Learning (DL) |
|---|---|---|
| Data Dependency | Effective on smaller, structured datasets [5] | Requires very large datasets (e.g., thousands of images) for training [3] [4] |
| Feature Engineering | Manual, domain-expert driven | Automatic, hierarchical feature learning from raw data |
| Hardware Requirements | Standard CPUs often sufficient | High-performance GPUs/TPUs typically required |
| Model Interpretability | Generally more interpretable (e.g., decision rules) | Often considered a "black box"; explainable AI techniques needed |
| Typical Applications in Parasitology | Predictive modeling using epidemiological data [1], basic classification | Image-based parasite detection [3], complex drug candidate screening [1], protein structure prediction [6] |

AI in Parasitic Disease Control: A Technical Review of Applications

Diagnostic Revolution via Deep Learning

Microscopy, the longstanding gold standard for parasitic diagnosis, is being revolutionized by DL-based computer vision. CNNs are trained on vast datasets of annotated microscopic images (blood smears, stool samples) to identify and classify parasitic stages with expert-level accuracy [1] [3] [4].

Case Study 1: Advanced Malaria Detection

A 2025 study demonstrated a multi-model DL framework for malaria detection using thin blood smear images. The methodology integrated transfer learning from pre-trained models (ResNet-50, VGG16, DenseNet-201) for feature extraction, followed by feature fusion and dimensionality reduction via Principal Component Analysis (PCA). A hybrid classifier combining Support Vector Machine (SVM) and LSTM networks was employed, with a majority voting mechanism finalizing the prediction [3]. This ensemble approach yielded a state-of-the-art accuracy of 96.47%, sensitivity of 96.03%, and specificity of 96.90% [3].
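The final majority-voting step can be illustrated with a minimal sketch. It assumes each classifier has already produced one label per image; the three prediction lists, the label names, and the tie-breaking rule are hypothetical and are not taken from the cited study.

```python
from collections import Counter


def majority_vote(votes):
    """Return the label chosen by most classifiers for one sample.

    Ties are broken in favour of the label that appears first in `votes`
    (an assumption made for this sketch).
    """
    counts = Counter(votes)
    top = max(counts.values())
    for label in votes:
        if counts[label] == top:
            return label


def ensemble_predict(per_model_predictions):
    """Combine per-model label lists (one list per model), sample by sample."""
    return [majority_vote(votes) for votes in zip(*per_model_predictions)]


# Hypothetical outputs of three classifiers on four blood-smear images.
model_a = ["parasitized", "uninfected", "parasitized", "uninfected"]
model_b = ["parasitized", "parasitized", "uninfected", "uninfected"]
model_c = ["uninfected", "parasitized", "parasitized", "uninfected"]

final = ensemble_predict([model_a, model_b, model_c])
print(final)
```

With an odd number of voters and two classes, ties cannot occur, which is one reason ensembles of this kind often use three or five members.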

Case Study 2: Intestinal Parasite Identification

A 2025 performance validation study compared several DL models for diagnosing human intestinal parasitic infections (IPI) from stool samples. The study benchmarked state-of-the-art models, including YOLOv8-m (an object detection model) and DINOv2 (a self-supervised Vision Transformer), against traditional microscopy performed by human experts [4]. The DINOv2-large model achieved an accuracy of 98.93%, precision of 84.52%, sensitivity of 78.00%, and specificity of 99.57%, demonstrating strong agreement with medical technologists (Cohen's Kappa >0.90) [4].

Table 2: Performance Metrics of Deep Learning Models in Parasite Detection

| Model / Task | Accuracy (%) | Precision (%) | Sensitivity (%) | Specificity (%) | F1-Score (%) |
|---|---|---|---|---|---|
| Multi-model Malaria Detection [3] | 96.47 | 96.88 | 96.03 | 96.90 | 96.45 |
| DINOv2-large (Intestinal Parasites) [4] | 98.93 | 84.52 | 78.00 | 99.57 | 81.13 |
| YOLOv8-m (Intestinal Parasites) [4] | 97.59 | 62.02 | 46.78 | 99.13 | 53.33 |
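As a consistency check on Table 2, the F1-score is the harmonic mean of precision and sensitivity, so it can be recomputed from the other two columns. The short sketch below does this for the three rows; the dictionary keys are shortened labels chosen for this example.

```python
def f1_score(precision, sensitivity):
    """Harmonic mean of precision and sensitivity (recall); inputs in percent."""
    return 2 * precision * sensitivity / (precision + sensitivity)


# (precision, sensitivity, reported F1) taken from Table 2.
reported = {
    "Multi-model malaria detection": (96.88, 96.03, 96.45),
    "DINOv2-large": (84.52, 78.00, 81.13),
    "YOLOv8-m": (62.02, 46.78, 53.33),
}

for name, (precision, sensitivity, f1) in reported.items():
    computed = f1_score(precision, sensitivity)
    print(f"{name}: computed {computed:.2f}, reported {f1:.2f}")
```

For the two intestinal-parasite models, F1 sits well below accuracy. That pattern is typical when negative samples dominate the test set, so accuracy alone can overstate how well positives are detected.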

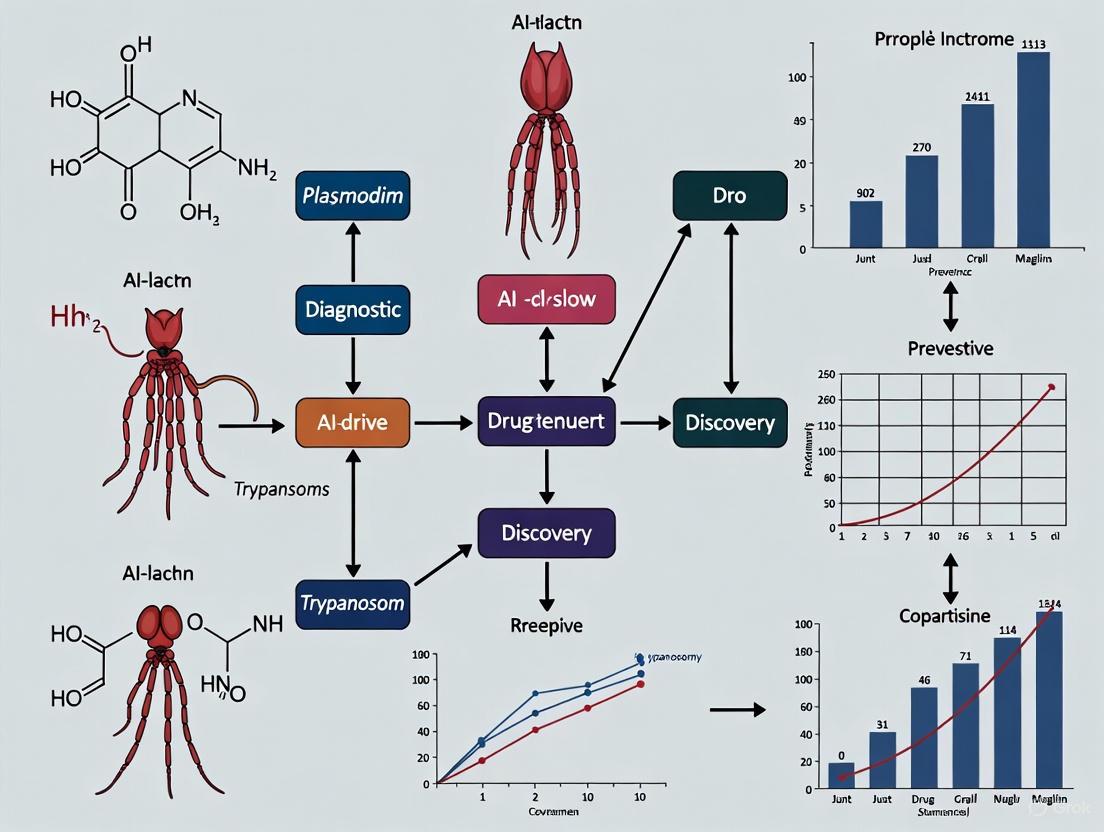

The following diagram illustrates the generalized workflow for a DL-based diagnostic system using microscopic images, as applied in the cited case studies.

AI-Driven Predictive Modeling and Drug Discovery

Beyond diagnostics, AI is revolutionizing the forecasting of outbreaks and the discovery of new antiparasitic drugs.

Predictive Modeling: ML algorithms are being deployed to forecast parasitic disease outbreaks by analyzing vast amounts of epidemiological data, environmental factors (e.g., temperature, rainfall), and population demographics [1] [5]. For instance, a convolutional neural network algorithm trained on 2013–2017 data for vector-borne diseases achieved 88% accuracy in predicting outbreaks of chikungunya, malaria, and dengue [1]. Such models enable proactive public health interventions, resource allocation, and preparedness strategies.

Drug Discovery: The traditional drug discovery process is notoriously lengthy and costly. AI-driven methods are streamlining this pipeline by identifying novel drug targets, predicting the efficacy and safety of candidates, and even repurposing existing drugs [1] [6]. For example:

- DeepMalaria: A Graph CNN-based DL model identified compound DC-9237 as a fast-acting antimalarial candidate; over 85% of its identified compounds showed significant parasite inhibition [1].

- Generative Chemistry: Companies like Exscientia and Insilico Medicine use generative AI and automated precision chemistry to design novel drug-like molecules, compressing discovery timelines from years to months [6]. Insilico Medicine's AI-designed drug for idiopathic pulmonary fibrosis progressed from target discovery to Phase I trials in just 18 months [6].

- Drug Repurposing: The AI system "Eve" identified the antimicrobial fumagillin as a potential inhibitor of Plasmodium falciparum, which was subsequently validated in a mouse model [1].

Table 3: Key AI Platforms and Their Applications in Parasitic Drug Discovery

| AI Platform/Company | Core AI Technology | Application in Parasitology |
|---|---|---|
| Exscientia [6] | Generative Chemistry, Automated Design-Make-Test-Learn Cycles | Design of small-molecule therapeutics; platform can reduce design cycles by ~70% using 10x fewer synthesized compounds. |
| Insilico Medicine [6] | Generative AI, Target Identification | Accelerated target-to-clinic pipeline; used AI-assisted virtual screening to identify antiplasmodial compounds like LabMol-167. |
| DeepMind (AlphaFold) [1] [7] | Deep Learning for Protein Structure Prediction | Prediction of target protein structures in parasites like Trypanosoma, aiding in rational drug design. |

Experimental Protocols and Methodologies

This section provides a detailed methodological breakdown of key experiments cited in this review, serving as a reference for researchers seeking to implement similar approaches.

Objective: To train and validate the performance of deep learning models in identifying and classifying human intestinal parasites from stool sample images.

Sample Preparation and Ground Truth:

- Techniques: Stool samples are processed using the Formalin-Ethyl Acetate Centrifugation Technique (FECT) and Merthiolate-Iodine-Formalin (MIF) technique, performed by human experts (medical technologists). This serves as the reference "ground truth."

- Imaging: A modified direct smear is prepared from the sample. Digital images are captured using a microscope-connected camera.

- Dataset Curation: Images are split into training (80%) and testing (20%) datasets. Each image is meticulously annotated by experts, labeling the bounding boxes and classes of parasitic elements (eggs, cysts, larvae).
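The 80/20 split described above can be sketched as follows. The file names, the fixed seed, and the helper name `split_dataset` are assumptions made for illustration; the cited study does not specify how the split was implemented.

```python
import random


def split_dataset(items, train_fraction=0.8, seed=42):
    """Shuffle a copy of `items` reproducibly and split it into train/test lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    cut = int(round(len(shuffled) * train_fraction))
    return shuffled[:cut], shuffled[cut:]


# Hypothetical image file names standing in for the annotated dataset.
image_ids = [f"img_{i:04d}.png" for i in range(100)]
train_ids, test_ids = split_dataset(image_ids)
print(len(train_ids), len(test_ids))
```

When several images come from the same slide or patient, splitting at the slide or patient level rather than per image is generally the safer choice, since it keeps near-duplicate fields of view from appearing in both sets.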

Model Training and Evaluation:

- Model Selection: Choose appropriate state-of-the-art models. The cited study evaluated:

  - Object Detection Models: YOLOv4-tiny, YOLOv7-tiny, YOLOv8-m.

  - Classification Models: ResNet-50.

  - Self-Supervised Learning (SSL) Models: DINOv2 (base, small, large).

- Training: Models are trained on the annotated training dataset. SSL models like DINOv2 can leverage unlabeled data for pre-training, followed by fine-tuning on the labeled parasite dataset.

- Performance Metrics: Models are evaluated on the held-out test set using a comprehensive set of metrics calculated from confusion matrices:

  - Accuracy, Precision, Sensitivity (Recall), Specificity, F1-Score.

  - Area Under the Receiver Operating Characteristic Curve (AUROC).

  - Statistical agreement with human experts (Cohen's Kappa) and Bland-Altman analysis.
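The confusion-matrix metrics listed above, together with Cohen's Kappa for a two-class comparison between a model and a human reader, can be computed as in the sketch below. The counts are invented for illustration and do not come from the cited study; AUROC and Bland-Altman analysis are not covered here.

```python
def binary_metrics(tp, fp, fn, tn):
    """Compute standard metrics from a 2x2 confusion matrix.

    Assumes each denominator is non-zero (at least one positive and one
    negative in both the reference and the predictions).
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)

    # Cohen's kappa: observed agreement corrected for agreement expected by chance.
    p_observed = accuracy
    p_both_positive = ((tp + fp) / total) * ((tp + fn) / total)
    p_both_negative = ((fn + tn) / total) * ((fp + tn) / total)
    p_expected = p_both_positive + p_both_negative
    kappa = (p_observed - p_expected) / (1 - p_expected)

    return {
        "accuracy": accuracy,
        "precision": precision,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": f1,
        "kappa": kappa,
    }


# Hypothetical counts: 100 true positives in 1,000 samples.
metrics = binary_metrics(tp=90, fp=10, fn=10, tn=890)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

In this example accuracy is 0.98 while kappa is about 0.89, which shows how kappa discounts the agreement that a rare positive class would produce by chance.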

Objective: To identify novel, potential antiplasmodial compounds using AI-driven in-silico screening.

Workflow:

- Target Identification: Define a specific molecular target within the malaria parasite (e.g., a specific protein kinase like PK7).

- Library Preparation: Compile a vast virtual library of chemical compounds for screening.

- AI-Based Screening:

  - Shape-Based and Machine-Learning Models: Train models on known active and inactive compounds to predict the binding affinity and activity of new molecules.

  - Virtual Screening: Run the compound library through the trained AI models to score and rank candidates based on predicted efficacy and desirable pharmacological properties (e.g., ADME - Absorption, Distribution, Metabolism, and Excretion).

- Hit Confirmation: Top-ranked compounds from the virtual screen are procured and subjected to in vitro biological assays to validate antiplasmodial activity (e.g., measuring half-maximal inhibitory concentration - IC50).

- Lead Optimization: Promising "hit" compounds can be further optimized using generative AI models to design analogues with improved potency and safety profiles.
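The score-and-rank step of this workflow can be illustrated with a deliberately simple stand-in. Instead of the shape-based and machine-learning models described above, the sketch ranks compounds by Tanimoto similarity to known actives, a common baseline in ligand-based screening. The compound names and fingerprint bit sets are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_library(library, known_actives, top_k=2):
    """Score each compound by its best similarity to any known active, then rank."""
    scored = [
        (max(tanimoto(fingerprint, active) for active in known_actives), name)
        for name, fingerprint in library.items()
    ]
    # Highest score first; ties resolved alphabetically by compound name.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [(name, round(score, 3)) for score, name in scored[:top_k]]


# Hypothetical fingerprints: each set holds the indices of the bits that are on.
known_actives = [{1, 4, 7, 9}, {2, 4, 7, 11}]
library = {
    "cmpd_A": {1, 4, 7, 10},
    "cmpd_B": {3, 5, 6},
    "cmpd_C": {2, 4, 7, 11, 12},
}

hits = rank_library(library, known_actives)
print(hits)
```

In a real pipeline the similarity score would be replaced or complemented by trained model predictions and ADME filters, and the top-ranked compounds would then move to the in vitro hit-confirmation step.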

The following diagram maps this multi-stage AI-driven drug discovery workflow.

The Scientist's Toolkit: Essential Research Reagents and Materials

The implementation of AI-driven research in parasitology relies on a foundation of both computational and wet-lab resources. The table below details key solutions and their functions.

Table 4: Essential Research Reagent Solutions for AI-Driven Parasitology Research

| Research Reagent / Material | Function and Application |
|---|---|
| Giemsa Stain | Standard staining reagent for blood smears. Differentiates malaria parasite chromatin (red-purple) and cytoplasm (blue) under microscopy, creating the color contrast essential for training diagnostic AI models [3]. |
| Formalin-Ethyl Acetate (FECT) | A concentration technique for stool samples. It preserves parasitic elements and removes debris, producing cleaner microscopic slides and higher-quality digital images for AI-based diagnosis of intestinal parasites [4]. |
| Merthiolate-Iodine-Formalin (MIF) | A combined fixation and staining solution for stool specimens. It preserves protozoan cysts and helminth eggs while staining internal structures, providing critical morphological features for AI classifiers [4]. |
| Curated Image Datasets | Large, well-annotated collections of microscopic images (e.g., from thin/thick blood smears, stool samples). These are not traditional "reagents" but are fundamental data resources for training, validating, and benchmarking DL models [3] [4]. Publicly available datasets are crucial for reproducibility. |
| Pre-trained Deep Learning Models (e.g., ResNet-50, YOLOv8) | Foundational AI models pre-trained on large general image datasets (e.g., ImageNet). Researchers use transfer learning to fine-tune these models on specific, smaller parasitology datasets, significantly reducing the computational cost and data required to develop accurate diagnostic tools [3] [4]. |

The AI revolution, characterized by the shift from traditional machine learning to sophisticated deep learning, is fundamentally redefining the landscape of parasitic disease control. The technical applications detailed in this document—from DL-powered diagnostics achieving expert-level accuracy to generative AI accelerating the drug discovery pipeline—demonstrate a move towards more precise, proactive, and accessible solutions. While challenges such as data quality, model interpretability, and integration into diverse healthcare systems remain, the progress is unequivocal. The continued collaboration between computational scientists, parasitologists, and clinical researchers is essential to refine these tools, validate them in real-world settings, and ultimately realize their full potential in mitigating the global burden of parasitic diseases.

Parasitic diseases continue to pose a significant global health challenge, disproportionately affecting nearly a quarter of the world's population, particularly in tropical, subtropical, and resource-limited settings. These diseases, including malaria, leishmaniasis, trypanosomiasis, and soil-transmitted helminths, result in severe health complications, economic losses, and perpetuate cycles of poverty. Traditional approaches to parasitic disease control—including diagnostics, drug discovery, and public health interventions—are hampered by lengthy timelines, high costs, and limited scalability, creating a critical unmet need for innovative solutions. Artificial intelligence (AI) has emerged as a transformative tool with immense potential to revolutionize parasitic disease control. This whitepaper examines the persistent challenges in managing parasitic diseases and details how AI-driven approaches in predictive modeling, diagnostics, and drug discovery are poised to create a paradigm shift, offering enhanced speed, accuracy, and efficiency for researchers and drug development professionals.

The Persistent Burden of Parasitic Diseases

Global Health and Economic Impact

Parasitic diseases represent a massive and ongoing global health crisis, with a particularly severe impact on vulnerable populations in developing regions.

Table 1: Global Burden of Select Parasitic Diseases and NTDs

| Disease / Indicator | Global Burden (People Affected or Economic Cost) | Regional Concentration & Notes |
|---|---|---|
| Overall NTD Interventions Needed | 1.495 billion people required interventions in 2023 [8] | 32% decrease from 2010 baseline [8] |
| NTD Burden in Africa | 578 million people affected [9] | Africa ranks second globally (33% of global burden) [9] |
| Overall NTD Disease Burden | 14.1 million DALYs (Disability-Adjusted Life Years) [8] | Measured between 2015 and 2021 [8] |
| NTD-Related Deaths | 119,000 deaths annually [8] | Measured between 2015 and 2021 [8] |
| Malaria Economic Loss (India) | US$ 1,940 million (in 2014) [10] | Country-specific economic drain [10] |
| Visceral Leishmaniasis (Bihar, India) | 11% of annual household expenditure [10] | Devastating impact on individual households [10] |
| Neurocysticercosis (US) | >US$ 400 million annually [10] | Substantial societal costs including healthcare and lost productivity [10] |

The economic impact extends beyond direct healthcare costs to include significant losses in productivity and livestock production. For example, India's dairy production incurs a loss of US$787.63 million annually due to ticks and tick-borne diseases, while porcine cysticercosis results in economic losses exceeding US$164 million in Latin America [10]. These infections lead to impaired cognitive and physical development in children, reduced productivity in adults, and entrenched socioeconomic disparities [10].

Key Challenges Complicating Control Efforts

The persistent burden of parasitic diseases is fueled by a complex interplay of biological, social, and economic factors:

Complex Parasite Life Cycles: Many parasites have intricate life cycles involving multiple hosts, complicating control and eradication efforts [10]. Parasites frequently manipulate host behavior to enhance transmission and can adaptively divide growth between hosts to optimize their life cycles [10].

Drug Resistance: The emergence of drug resistance poses a significant threat to control efforts. Genetic variability among parasites enables them to develop resistance through mechanisms like altered drug uptake and metabolism [10]. Continuous reliance on specific drugs, such as macrocyclic lactones for filarial infections, has led to resistance in certain regions [10].

Poverty and Sanitation: Parasitic diseases are strongly influenced by poverty and poor sanitation, particularly in low- and middle-income countries (LMICs) [10]. Nearly one billion people are affected by soil-transmitted helminths (STHs) globally, with socioeconomic vulnerability correlating with increased transmission risk [10].

Climate Change: Alterations in temperature, rainfall, and host movement due to climate change create favorable conditions for parasites, leading to expanded geographical distribution and increased transmission rates [10]. Rising temperatures have dissolved geospatial boundaries and impacted the basic reproductive number of parasites [10].

Sociopolitical Instability: Countries facing sociopolitical instability, particularly in Africa, bear a high burden of NTDs [9]. Internal displacement and migration disrupt health systems and can facilitate the spread of parasites to new regions [9].

Limitations of Conventional Approaches

Diagnostic Challenges

The diagnosis of parasitic infections has evolved from basic microscopy to advanced molecular techniques, yet significant limitations remain:

Microscopy Limitations: While microscopy revolutionized parasitology in the 17th century, it remains labor-intensive, requires significant expertise, and has variable sensitivity [10]. These limitations are particularly acute in remote regions with limited access to diagnostic facilities and trained personnel [1].

Serological Challenges: Serodiagnostics, including enzyme-linked immunosorbent assays (ELISAs) and immunoblot techniques, have advanced but still face challenges with cross-reactivity and difficulty distinguishing between past and current infections [10].

Molecular Diagnostics: Technologies such as polymerase chain reaction (PCR), multiplex assays, and next-generation sequencing offer improved sensitivity and specificity but can be resource-intensive, costly, and difficult to scale in low-resource settings [10].

Drug Discovery Hurdles

The traditional drug discovery process for parasitic diseases is characterized by extensive timelines, high costs, and substantial failure rates:

Lengthy Timelines: The conventional drug discovery process typically spans around 15 years from initial target identification to market approval [11]. This protracted timeline is ill-suited to addressing the urgent need for new parasitic therapies.

High Costs and Failure Rates: Traditional drug discovery is extremely lengthy and expensive, with an estimated 90% of potential drug candidates failing to progress beyond preclinical testing [1]. This high failure rate is due to various factors, including poor target selection, inadequate efficacy, unacceptable toxicity, and unfavorable pharmacokinetic properties [1].

Empirical Approaches: Traditional processes primarily rely on empirical approaches often lacking predictive models that can accurately assess the likelihood of a drug candidate's success [1]. This leads to inefficient resource allocation and prolonged development timelines.

AI as a Paradigm Shift in Parasitic Disease Control

Artificial intelligence encompasses a broad spectrum of techniques, including machine learning (ML), deep learning (DL), and other advanced computational methods that have demonstrated remarkable potential to address the limitations of conventional approaches to parasitic disease control [11].

AI-Driven Diagnostic Advancements

AI is revolutionizing parasitic diagnostics by enhancing the accuracy, speed, and accessibility of detection methods:

Enhanced Image Analysis: AI algorithms, particularly convolutional neural networks (CNNs), can analyze large datasets of parasitic images from blood smears, stool samples, and tissue biopsies with remarkable accuracy [1] [10]. These systems enable rapid identification and classification of parasitic stages such as eggs, larvae, and adult worms, even in remote settings with limited diagnostic facilities [1].

Consistency and Throughput: AI-powered diagnostic tools offer more consistent readings and can process a high volume of samples, significantly increasing laboratory throughput [2]. This capability is particularly valuable for large-scale screening programs and surveillance efforts in endemic regions.

Predictive Modeling for Outbreak Preparedness

Predictive AI modeling is transforming the approach to outbreak preparedness and response by enabling proactive interventions:

Epidemiological Forecasting: Predictive models analyze vast amounts of epidemiological data, environmental factors, and population demographics to identify patterns and trends in disease incidence [1]. For example, a convolutional neural network (CNN) algorithm trained with 2013-2017 data for chikungunya, malaria, and dengue predicted disease outbreaks with 88% accuracy [1].

Geospatial Analysis: Researchers are using geospatial AI that integrates ML algorithms with geographic information system (GIS)-based approaches for mapping disease risk. One study successfully mapped cutaneous leishmaniasis risk in Isfahan province, identifying northern and central areas as high-risk regions [1].

Accelerating Drug Discovery and Development

AI-driven approaches are streamlining multiple aspects of the drug discovery pipeline for parasitic diseases:

Virtual Screening and Target Identification: AI-driven virtual screening approaches leverage machine learning algorithms to rapidly sift through vast datasets of chemical compounds and predict their biological activity against specific drug targets [1] [11]. These algorithms analyze structural features, physicochemical properties, and molecular interactions to prioritize compounds with the highest likelihood of therapeutic efficacy.

De Novo Drug Design: Generative AI models, including generative adversarial networks (GANs) and variational autoencoders, can design novel molecular structures with desired pharmacological profiles [12]. These approaches can generate optimized molecular structures targeting specific biological activity while matching specific pharmacological and safety profiles [11].

Drug Repurposing: AI algorithms can analyze large-scale biomedical data to uncover hidden relationships between existing drugs and parasitic diseases, facilitating the identification of new therapeutic uses for approved drugs [11]. This approach is particularly valuable for diseases affecting developing countries, as it can significantly accelerate clinical translation [11].

Experimental Protocols and Workflows

AI-Assisted Diagnostic Workflow

The implementation of AI for parasitic diagnosis follows a structured workflow that ensures accuracy and reliability.

AI-Powered Parasite Diagnostic Workflow

Detailed Methodology:

Sample Collection and Preparation: Collect appropriate clinical samples (blood, stool, tissue biopsies) using standard protocols. For stool samples, this may include concentration techniques such as formalin-ethyl acetate sedimentation. Prepare microscopic slides using the appropriate staining or preparation technique (e.g., Giemsa staining for blood parasites, Kato-Katz thick smears for helminth eggs) [1] [10].

Digital Imaging: Capture high-resolution digital images of microscopy slides using automated digital microscopy systems or smartphone-enabled portable devices. Ensure consistent magnification and lighting conditions across images. A minimum of 1,000-10,000 annotated images per parasitic species is typically required for robust model training [1] [10].

AI Preprocessing: Implement image preprocessing techniques to enhance quality and standardize inputs. This includes:

- Color normalization to correct for staining variations

- Background subtraction to improve object contrast

- Image augmentation (rotation, flipping, scaling) to increase dataset diversity and improve model generalization [11]
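A minimal sketch of two of these preprocessing steps follows, using nested lists as a stand-in for a grayscale image so that it runs without any imaging library. Min-max intensity scaling is used here as a simple substitute for color normalization; stain-specific normalization methods are more involved. All function names are chosen for this example.

```python
def flip_horizontal(image):
    """Mirror each row left to right."""
    return [row[::-1] for row in image]


def rotate_90_clockwise(image):
    """Rotate the image a quarter turn clockwise."""
    return [list(column) for column in zip(*image[::-1])]


def min_max_normalize(image):
    """Rescale pixel intensities to the range [0, 1]."""
    flat = [pixel for row in image for pixel in row]
    low, high = min(flat), max(flat)
    if high == low:  # constant image: avoid division by zero
        return [[0.0 for _ in row] for row in image]
    return [[(pixel - low) / (high - low) for pixel in row] for row in image]


def augment(image):
    """Return the original, a horizontal flip, and the three quarter-turn rotations."""
    variants = [image, flip_horizontal(image)]
    rotated = image
    for _ in range(3):
        rotated = rotate_90_clockwise(rotated)
        variants.append(rotated)
    return variants


tile = [[0, 50], [100, 200]]  # hypothetical 2x2 grayscale patch
normalized = min_max_normalize(tile)
variants = augment(tile)
print(normalized)
print(len(variants))
```

Flips and right-angle rotations are usually label-preserving for microscopy fields because parasites have no canonical orientation on the slide; whether scaling is equally safe depends on how much size matters for distinguishing the species in question.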

Feature Extraction: Utilize convolutional neural networks (CNNs) to automatically extract relevant morphological features. Lower layers detect simple features (edges, textures), while deeper layers identify complex patterns specific to different parasite species and life cycle stages [1] [10].

CNN Classification: Implement a classification algorithm, typically using a softmax activation function in the final layer, to generate probability distributions across possible parasite identities. Common architectures include ResNet, VGG, or custom CNN architectures optimized for parasitic morphology [1].
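The softmax step can be written out in a few lines. The sketch below converts the raw scores (logits) of a final layer into a probability distribution and picks the most likely class; the class names and logit values are hypothetical.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1.

    Subtracting the maximum logit first leaves the result unchanged but
    avoids overflow in exp() for large scores.
    """
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


classes = ["P. falciparum", "P. vivax", "uninfected"]  # hypothetical label set
logits = [2.0, 1.0, 0.1]                               # hypothetical network outputs

probs = softmax(logits)
predicted = classes[probs.index(max(probs))]
print([round(p, 3) for p in probs], predicted)
```

Because the outputs are probabilities, a deployment can also set a confidence threshold and refer low-confidence fields to a human reader instead of always accepting the top class.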

Result Validation: Establish a validation protocol where AI predictions are compared against expert microbiologist interpretations for a subset of samples. Calculate performance metrics including sensitivity, specificity, and accuracy, with a common benchmark being >90% accuracy for field-deployable systems [1] [2].

AI-Driven Drug Discovery Pipeline

The application of AI in antiparasitic drug discovery follows a multi-stage process that significantly compresses traditional timelines.

AI-Driven Antiparasitic Drug Discovery Pipeline

Detailed Methodology:

Target Identification: Identify essential proteins or enzymes critical for parasite survival and replication using genomic, proteomic, and structural information. AI tools like AlphaFold can predict protein structures for targets with unknown experimental structures [1] [12].

Data Aggregation: Compile diverse datasets for model training:

Model Training: Develop predictive models using various AI approaches:

Compound Generation: Utilize generative AI models for de novo design of novel compounds. For example, Generative Tensorial Reinforcement Learning (GENTRL) can design novel kinase inhibitors, as demonstrated with DDR1 inhibitors for fibrosis, reducing discovery time from years to 21 days [12].

Virtual Screening: Implement AI-powered virtual screening to prioritize candidates. This includes:

Experimental Validation: Conduct in vitro and in vivo testing of top-ranked compounds:

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Research Reagents for AI-Driven Parasitology Research

| Reagent / Material | Function in AI-Driven Research | Application Examples |
|---|---|---|
| Annotated Image Datasets | Training and validation data for AI diagnostic models; enables feature recognition [1] [10] | Public parasite image repositories; in-house curated datasets of blood smears, stool samples [1] |
| High-Throughput Screening Assays | Generate bioactivity data for ML model training; compound validation [1] [11] | In vitro parasite growth inhibition assays; target-based screening [1] |
| Chemical Compound Libraries | Foundation for virtual screening; training data for generative models [11] [12] | Commercially available libraries (e.g., ZINC); proprietary compound collections [12] |
| QSAR Modeling Software | Predict biological activity from chemical structure; optimize lead compounds [1] [11] | Commercial platforms (e.g., Schrödinger); open-source tools; custom ML models [1] |
| Generative AI Platforms | De novo molecular design; chemical space exploration [12] | GENTRL for DDR1 inhibitors; GANs/VAEs for novel compound generation [12] |

The significant unmet needs in parasitic disease control—spanning diagnostics, drug discovery, and epidemic preparedness—create an imperative for innovative solutions that can overcome the limitations of conventional approaches. Artificial intelligence represents a paradigm shift in our ability to address these challenges, offering transformative potential across the entire spectrum of parasitic disease control. From AI-enhanced microscopy that improves diagnostic accuracy in remote settings to generative AI models that dramatically accelerate therapeutic development, these technologies are poised to revolutionize how researchers and drug development professionals combat these persistent global health threats. The integration of AI into parasitology research requires disciplined implementation, robust validation, and cross-disciplinary collaboration, but offers the promise of significantly reducing the global burden of parasitic diseases within the coming decade.

The fight against parasitic diseases, which impose a significant burden on global health and livestock productivity, is being transformed by artificial intelligence (AI) [13]. Conventional diagnostic methods, such as microscopy and serological assays, are often constrained by limitations in sensitivity, specificity, and reliance on skilled personnel [13]. In this context, AI paradigms are emerging as powerful tools to automate diagnostics, enhance predictive surveillance, and accelerate research. This whitepaper provides an in-depth technical overview of three core AI methodologies—Convolutional Neural Networks (CNNs), Random Forest, and Predictive Modeling—detailing their fundamental principles, experimental protocols, and specific applications within parasitic disease control research. The integration of these technologies, particularly into novel diagnostic platforms like CRISPR-Cas systems, represents a promising frontier for next-generation solutions in both human and veterinary medicine [13].

Core AI Paradigms: Technical Foundations

Convolutional Neural Networks (CNNs)

CNNs are a class of deep learning algorithms specifically designed for processing structured grid data, such as images. Their architecture is inspired by the human visual cortex, making them exceptionally adept at automatically learning hierarchical features from pixel data without the need for hand-crafted feature extraction [14].

2.1.1 Architectural Components and Workflow

A typical CNN comprises several key layers that work in concert. The process begins with convolutional layers, which apply a set of learnable filters (or kernels) to the input image. Each filter slides across the input, computing element-wise multiplications and summations to produce feature maps that highlight specific patterns like edges or textures [14]. Following this, activation functions, most commonly the Rectified Linear Unit (ReLU), are applied to introduce non-linearity, enabling the network to learn a wider range of complex representations [14]. Pooling layers (e.g., max pooling) then downsample the feature maps, reducing their spatial dimensions to control computational cost and overfitting by making the representations more invariant to small input translations [14]. Finally, after several cycles of convolution and pooling, the resulting features are flattened and passed through one or more fully connected layers to perform the final classification or regression task [14].
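The convolution, activation, and pooling steps described above can be illustrated with a minimal pure-Python sketch. The toy image and the hand-written vertical-edge kernel are assumptions chosen for illustration; in a trained CNN the kernel weights are learned from data, and a deep learning framework would replace these loops.

```python
def conv2d(image, kernel):
    """Valid (no padding), stride-1 sliding-window filter, as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Rectified Linear Unit: negative responses are set to zero."""
    return [[max(0, value) for value in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling; incomplete trailing windows are dropped."""
    height = len(feature_map) // size * size
    width = len(feature_map[0]) // size * size
    return [
        [
            max(feature_map[i + di][j + dj]
                for di in range(size) for dj in range(size))
            for j in range(0, width, size)
        ]
        for i in range(0, height, size)
    ]


# Toy 6x6 "image" with a dark vertical band (0) between bright regions (1).
image = [[1, 1, 0, 0, 1, 1] for _ in range(6)]

# Hand-written vertical-edge filter (illustrative; real filters are learned).
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

feature_map = conv2d(image, kernel)   # each row: [-3, -3, 3, 3]
activated = relu(feature_map)         # each row: [0, 0, 3, 3]
pooled = max_pool(activated, size=2)  # [[0, 3], [0, 3]]
```

The filter responds negatively to the bright-to-dark edge and positively to the dark-to-bright edge; ReLU keeps only the positive response, and pooling halves each spatial dimension.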

2.1.2 Application in Parasitic Disease Research

In parasitology, CNNs have been widely adopted for the automated analysis of medical images. A prominent application is the diagnosis of malaria from images of Giemsa-stained blood smears. CNNs can be trained to identify and classify Plasmodium parasites within red blood cells, achieving high accuracy while significantly reducing diagnostic time and human error [15] [16]. Transfer learning, in which a pre-trained CNN (e.g., VGG16, ResNet) is fine-tuned on a specialized medical dataset, is commonly employed to achieve state-of-the-art performance even with limited data [17].

Random Forest

Random Forest (RF) is an ensemble machine learning algorithm used for both classification and regression tasks. It operates by constructing a multitude of decision trees during training and outputting the mode of the classes (for classification) or mean prediction (for regression) of the individual trees [14].

2.2.1 Core Algorithmic Mechanics

The "forest" is built using a technique called bagging (bootstrap aggregating), which involves training each tree on a random sample of the original data, drawn with replacement. This ensures diversity among the trees [14]. Furthermore, when splitting nodes in each decision tree, the algorithm is restricted to a random subset of features. This dual randomness, in data and in features, decorrelates the trees, making the ensemble more robust and less prone to overfitting than a single decision tree [18] [14]. Node splitting is typically optimized using metrics like Gini impurity, which measures the probability that a randomly chosen sample from a node would be misclassified if it were labeled at random according to the node's class distribution [14]. The final prediction is determined by majority voting (for classification) or averaging (for regression) across all trees in the forest [14].
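The building blocks named above (bootstrap sampling, random feature subsets, Gini impurity, and majority voting) can be sketched in a few lines of standard-library Python. The function names are illustrative, and the sketch deliberately omits tree construction itself; it is not a substitute for a library implementation.

```python
import random
from collections import Counter


def gini_impurity(labels):
    """1 - sum_k p_k^2, where p_k is the share of class k in the node."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def bootstrap_sample(rows, rng):
    """Bagging step: draw len(rows) rows with replacement."""
    return [rng.choice(rows) for _ in rows]


def random_feature_subset(n_features, k, rng):
    """Feature randomness: the candidate features considered at one split."""
    return sorted(rng.sample(range(n_features), k))


def majority_vote(tree_predictions):
    """Classification output of the forest: the most common tree prediction."""
    return Counter(tree_predictions).most_common(1)[0][0]


rng = random.Random(0)  # fixed seed so the sketch is reproducible

mixed_node = ["parasitized"] * 5 + ["uninfected"] * 5
pure_node = ["parasitized"] * 10
print(gini_impurity(mixed_node))  # 0.5: maximally mixed two-class node
print(gini_impurity(pure_node))   # 0.0: pure node

sample = bootstrap_sample(list(range(10)), rng)
features = random_feature_subset(n_features=8, k=3, rng=rng)
label = majority_vote(["parasitized", "uninfected", "parasitized"])
```

A split is preferred when it lowers the size-weighted Gini impurity of the child nodes relative to the parent.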

Predictive Modeling

Predictive modeling leverages statistical and machine learning techniques to forecast future outcomes based on historical data. In the context of parasitic diseases, this extends beyond image-based diagnosis to forecasting disease incidence and outbreak risk.

2.3.1 Modeling Techniques and Temporal Dynamics

Techniques range from traditional time-series models to more advanced machine learning and deep learning algorithms. For instance, Long Short-Term Memory (LSTM) networks, a type of recurrent neural network, have demonstrated high accuracy in forecasting malaria cases by effectively modeling temporal dependencies in epidemiological data [19]. These models can integrate various predictors, including historical case counts, meteorological data (e.g., temperature, humidity), and social factors, to predict morbidity and identify high-risk areas [16] [19]. One such analysis reported, for example, that temperatures exceeding 34°C can halt mosquito vector reproduction, thereby slowing malaria transmission [19].
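Sequence models such as LSTMs are usually trained on fixed-length windows of past observations paired with the next value. The sketch below shows that framing together with the RMSE metric and a simple persistence baseline against which a learned model could be compared. The monthly case counts are invented for illustration and do not come from any cited study.

```python
import math


def make_windows(series, window):
    """Turn a series into (past `window` values, next value) training pairs."""
    return [
        (series[i:i + window], series[i + window])
        for i in range(len(series) - window)
    ]


def rmse(actual, predicted):
    """Root mean squared error between two equal-length sequences."""
    return math.sqrt(
        sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)
    )


# Invented monthly case counts, for illustration only.
cases = [120, 135, 160, 210, 190, 150, 130, 140]
pairs = make_windows(cases, window=3)  # 5 pairs; the first is ([120, 135, 160], 210)

# Persistence baseline: predict that next month equals the last observed month.
targets = [target for _, target in pairs]
baseline = [history[-1] for history, _ in pairs]
baseline_rmse = rmse(targets, baseline)  # sqrt(1000), about 31.6 cases
```

A forecasting model is only useful if its error on held-out periods is clearly lower than that of such a naive baseline.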

Integrated AI Frameworks and Experimental Protocols

While powerful individually, CNNs and Random Forest are often combined into hybrid models to leverage their complementary strengths. The following section outlines a standard protocol for such a framework and its application.

Hybrid CNN-Random Forest Protocol for Image Analysis

This protocol describes a late fusion model where a CNN acts as a feature extractor and a Random Forest classifier makes the final decision, ideal for tasks like segmenting and classifying parasitic structures in microscopy images [14].

Experimental Workflow Overview

[Figure: key stages of the hybrid CNN-RF model pipeline for image-based parasitic disease analysis.]

Step-by-Step Methodology:

Data Acquisition and Preparation:

- Image Collection: Acquire a dataset of relevant images. For malaria diagnosis, this would be peripheral blood smear images [15] [17]. For studying spore morphology, Transmission Electron Microscopy (TEM) images are used [14].

- Preprocessing: Apply preprocessing techniques to standardize and enhance image quality. This typically includes:

  - Noise Reduction: Using Gaussian or median filters to smooth images and reduce artifacts [17] [14].

  - Segmentation (optional but impactful): For some tasks, segmenting the region of interest first can significantly boost performance. The Otsu thresholding method, for instance, has been used to effectively isolate parasitic regions in malaria-infected cells, reducing background noise and improving subsequent classification accuracy from 95% to 97.96% in one study [15].

  - Data Augmentation: Artificially expand the training dataset by applying random transformations (e.g., rotation, flipping, scaling) to improve model robustness and generalizability [17].
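The Otsu segmentation step mentioned above chooses the intensity threshold that maximizes the variance between the two resulting pixel classes. A minimal sketch, assuming 8-bit grayscale intensities supplied as a flat list, is shown below; the pixel values are invented, and which side of the threshold counts as foreground depends on the stain and image channel.

```python
def otsu_threshold(pixels, levels=256):
    """Return the threshold t that maximizes between-class variance.

    Pixels with intensity <= t form one class; the rest form the other.
    """
    histogram = [0] * levels
    for value in pixels:
        histogram[value] += 1

    total = len(pixels)
    total_sum = sum(level * count for level, count in enumerate(histogram))

    best_threshold, best_variance = 0, -1.0
    weight_low = 0  # number of pixels at or below the candidate threshold
    sum_low = 0     # sum of their intensities
    for t in range(levels):
        weight_low += histogram[t]
        sum_low += t * histogram[t]
        weight_high = total - weight_low
        if weight_low == 0 or weight_high == 0:
            continue  # one class is empty, so this split is undefined
        mean_low = sum_low / weight_low
        mean_high = (total_sum - sum_low) / weight_high
        between_class_variance = weight_low * weight_high * (mean_low - mean_high) ** 2
        if between_class_variance > best_variance:
            best_threshold, best_variance = t, between_class_variance
    return best_threshold


# Invented intensities: four dark pixels and four bright pixels.
pixels = [20, 22, 25, 30, 200, 210, 220, 215]
threshold = otsu_threshold(pixels)        # 30
mask = [p > threshold for p in pixels]    # False for dark, True for bright
```

Because ties are broken in favor of the first maximum, the returned value is the lowest threshold that achieves the best separation.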

Model Training and Implementation:

- CNN Feature Extraction: A CNN architecture (e.g., a custom 12-layer CNN or a pre-trained model like VGG16) is trained on the image data. The key is to use the outputs of the final layers before the classification layer as a high-level, low-dimensional feature vector that represents the essential characteristics of each input image [18] [14] [20].

- Random Forest Classification: The feature vectors extracted by the CNN for all images in the training set are used as the input features for a Random Forest classifier. The RF is then trained to map these features to the correct labels (e.g., "parasitized" or "uninfected") [18] [14].

- Hyperparameter Tuning: Optimize hyperparameters for both the CNN (e.g., learning rate, number of filters) and the RF (e.g., number of trees, maximum depth) to maximize performance on a validation set.

Model Evaluation:

- Performance Metrics: Evaluate the final hybrid model on a held-out test set using standard metrics, including Accuracy, Precision, Sensitivity (Recall), and F1-score [17] [14].

- Comparison: Compare the hybrid model's performance against standalone CNNs and other machine learning classifiers to test whether it offers better robustness and generalization, particularly for non-linear data [14].
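The evaluation metrics listed above follow directly from the four confusion-matrix counts. The sketch below computes them for a binary task; the label strings and the ten example predictions are illustrative assumptions, not data from the cited studies.

```python
def classification_metrics(y_true, y_pred, positive="parasitized"):
    """Accuracy, precision, sensitivity (recall), and F1 for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


P, U = "parasitized", "uninfected"
y_true = [P, P, P, P, U, U, U, U, U, U]
y_pred = [P, P, P, U, U, U, U, U, U, P]  # one missed infection, one false alarm

metrics = classification_metrics(y_true, y_pred)
# accuracy 0.8; precision, recall, and F1 all 0.75
```

Reporting sensitivity alongside accuracy matters in diagnostics because a model can score high accuracy on an imbalanced test set while still missing many infections.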

Advanced Ensemble Protocol for Enhanced Diagnosis

For even higher diagnostic accuracy, an advanced ensemble framework integrating multiple pre-trained models can be employed, as demonstrated in recent malaria detection research achieving 97.93% test accuracy [17].

Advanced Diagnostic Workflow

[Figure: adaptive weighted ensemble process combining multiple deep-learning models for diagnosis.]

Methodology:

- Model Selection and Training: Select multiple pre-trained CNN architectures (e.g., VGG16, VGG19, ResNet50V2, DenseNet201). Fine-tune each model on the target medical image dataset [17].

- Prediction Generation: Each model in the ensemble generates its own prediction for a given input image.

- Adaptive Weighted Averaging: Instead of simple averaging, assign a dynamic weight to each model's prediction based on its individual performance on a validation set. This gives more influence to stronger models [17].

- Hard Voting Consensus: Combine the weighted predictions with a hard voting mechanism, where the final classification output is determined by the consensus of the models, further enhancing reliability [17].
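One plausible reading of the weighting and voting steps above is sketched below. The exact weighting rule used in [17] is not specified here, so normalizing validation accuracies into weights is an assumption, as are the validation accuracies and per-model probabilities, which are invented for illustration.

```python
from collections import Counter


def adaptive_weights(validation_accuracy):
    """Weights proportional to each model's validation accuracy (assumed rule)."""
    total = sum(validation_accuracy.values())
    return {name: acc / total for name, acc in validation_accuracy.items()}


def weighted_average(probabilities, weights):
    """Weighted mean of each model's predicted probability of infection."""
    return sum(weights[name] * p for name, p in probabilities.items())


def hard_vote(probabilities, threshold=0.5):
    """Each model casts one vote; the most common label wins."""
    votes = ["parasitized" if p >= threshold else "uninfected"
             for p in probabilities.values()]
    return Counter(votes).most_common(1)[0][0]


# Invented validation accuracies for four fine-tuned models.
validation_accuracy = {"VGG16": 0.96, "VGG19": 0.95,
                       "ResNet50V2": 0.97, "DenseNet201": 0.97}
# Invented per-model probabilities that one image is parasitized.
probabilities = {"VGG16": 0.9, "VGG19": 0.4,
                 "ResNet50V2": 0.8, "DenseNet201": 0.7}

weights = adaptive_weights(validation_accuracy)
score = weighted_average(probabilities, weights)  # about 0.70
consensus = hard_vote(probabilities)              # "parasitized" (3 votes to 1)
```

With accuracies this close together the weights are nearly uniform; a sharper rule (for example, weighting by accuracy above a baseline) would give stronger models more influence.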

Quantitative Performance of AI Models

The following tables summarize the performance metrics of various AI models as reported in recent literature for parasitic disease applications, particularly malaria diagnosis.

Table 1: Performance Comparison of AI Models in Malaria Detection from Blood Smear Images

| Model / Approach | Reported Accuracy | Precision | Sensitivity/Recall | F1-Score | Key Features |
|---|---|---|---|---|---|
| Hybrid CNN-RF (RF-CNN-F) [18] | 99.18% | - | - | - | Uses CNN predictions as features for RF; excellent accuracy. |
| Optimized CNN + Otsu Segmentation [15] | 97.96% | - | - | - | Simple preprocessing (Otsu) significantly boosts baseline CNN (95%). |
| Advanced Ensemble (VGG16, ResNet, etc.) [17] | 97.93% | 0.9793 | - | 0.9793 | Adaptive weighted averaging of multiple transfer learning models. |
| Custom Standalone CNN [17] | 97.20% | - | - | 0.9720 | Serves as a baseline for ensemble model comparison. |
| CNN-SVM Hybrid [17] | 82.47% | - | - | 0.8266 | Highlights performance difference with CNN-RF hybrid. |

Table 2: Performance of Predictive Models for Forecasting Malaria Incidence

| Model | Task | Reported Accuracy | RMSE | Key Findings |
|---|---|---|---|---|
| LSTM [19] | Forecasting malaria cases in Adamaoua, Cameroon | 76% | 0.08 | Identified high-risk areas; cases projected to peak in 2029. |
| AI-Powered Predictive Analytics [16] | Forecasting malaria outbreaks | - | - | Can predict outbreaks up to 9 months in advance with ~80% accuracy. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The development and application of the AI models described rely on a foundation of wet-lab and computational resources. The following table details key reagents and materials essential for research in this field.

Table 3: Key Research Reagent Solutions for AI-Driven Parasitic Disease Research

| Reagent / Material | Function in Research Context |
|---|---|
| Stained Blood Smears | Provides the primary image data for training and testing AI models for malaria diagnosis. Staining (e.g., Giemsa) highlights parasites within red blood cells [15] [17]. |
| CRISPR-Cas Reagents (Cas12, Cas13) | Forms the core of next-generation molecular diagnostics. These endonucleases, combined with amplification techniques, provide high-sensitivity detection of parasitic nucleic acids, generating data that can be analyzed or validated by AI systems [13]. |
| Nucleic Acid Amplification Kits (RPA, LAMP) | Used to pre-amplify target DNA/RNA from parasites before CRISPR-Cas detection. This enhances the sensitivity of the diagnostic assay, enabling detection of low-parasitemia infections [13]. |
| Transmission Electron Microscopy (TEM) Reagents | Chemicals for sample preparation (e.g., glutaraldehyde for fixation) used to create high-resolution images of parasitic ultrastructure. These images are used for advanced AI segmentation and classification tasks [14]. |
| Publicly Accessible Image Datasets | Curated datasets (e.g., from Kaggle) of parasitized and uninfected cells. These are critical for training, validating, and benchmarking new AI models in a standardized manner [17] [20]. |

The integration of CNNs, Random Forest, and predictive modeling into parasitology research represents a paradigm shift in how we diagnose, monitor, and forecast parasitic diseases. Hybrid models that leverage the feature extraction power of CNNs with the robust classification of Random Forest have demonstrated superior performance in automating image-based diagnosis, achieving accuracies exceeding 97% [18] [17]. Meanwhile, predictive models like LSTM networks offer a powerful tool for public health planning by forecasting outbreak trajectories. The future of this field lies in the deeper integration of these AI paradigms with emerging diagnostic technologies, such as CRISPR-Cas, and their deployment in scalable, point-of-care devices. Overcoming challenges related to data interoperability, infrastructure in resource-limited settings, and model interpretability will be crucial to fully realizing the potential of AI in the global effort to control and eliminate parasitic diseases [16] [13].

The One Health framework is an integrated, unifying approach that aims to sustainably balance and optimize the health of people, animals, and ecosystems [21] [22]. It recognizes that the health of humans, domestic and wild animals, plants, and the wider environment are closely linked and interdependent [21]. This approach mobilizes multiple sectors, disciplines, and communities at varying levels of society to work together to foster well-being and tackle threats to health and ecosystems [22]. The approach can be applied at the community, subnational, national, regional, and global levels and relies on shared and effective governance, communication, collaboration, and coordination, often referred to as the "4 Cs" [21] [22].

The recent SARS-CoV-2 pandemic has underscored the close connections between humans, animals, and the shared environment, highlighting the urgent need for operationalizing One Health principles in disease control strategies [22]. This is particularly relevant for parasitic diseases, which continue to plague populations worldwide, especially in resource-limited settings, and disproportionately affect vulnerable populations [1]. The inevitable future of frequent outbreaks and pandemics, fueled by factors such as human expansion into wildlife habitats, climate change, and increased global movement, necessitates more resilient health-care innovations and interventions [1] [23] [24].

Core Principles and Components of One Health

Foundational Principles

The One Health approach is grounded in several fundamental principles that guide its implementation [22]:

- Equity between sectors and disciplines

- Sociopolitical and multicultural parity and inclusion of communities and marginalized voices

- Socioecological equilibrium that seeks harmonious balance in human-animal-environment interactions

- Stewardship and responsibility for sustainable solutions that recognize animal welfare and ecosystem integrity

- Transdisciplinarity and multisectoral collaboration, including modern and traditional knowledge systems

Key Interconnected Health Domains

One Health issues encompass a broad spectrum of shared health threats [24]:

- Emerging, re-emerging, and endemic zoonotic diseases

- Neglected tropical diseases and vector-borne diseases

- Antimicrobial resistance

- Food safety and food security

- Environmental contamination and climate change impacts

This framework is particularly relevant for parasitic disease control, as many parasites have complex life cycles involving human, animal, and environmental components. The rising incidence of diseases like malaria (263 million cases in 2023) demonstrates the urgent need for innovative, integrated control strategies [16].

Quantitative Data Integration in One Health

Effective One Health implementation requires the integration of diverse datasets from human, animal, and environmental domains. The table below summarizes key data types relevant to parasitic disease control.

Table 1: Data Types for One Health Parasitic Disease Surveillance

| Domain | Data Category | Specific Metrics | Application Examples |
|---|---|---|---|
| Human | Epidemiological Data | Parasite incidence/prevalence, case demographics, treatment outcomes | Monitoring malaria transmission intensity [1] [16] |
| Human | Mobility Patterns | Mobile phone data, travel history, commuter flows | Understanding human-vector exposure risk [23] |
| Animal | Wildlife Movement | GPS collar data, migration patterns, habitat use | Assessing deer-human interactions for zoonoses [23] |
| Animal | Domestic Animal Health | Livestock parasite loads, seroprevalence, morbidity | Tracking zoonotic parasite reservoirs [13] |
| Environmental | Climatic Factors | Temperature, precipitation, humidity | Predicting vector habitat suitability [1] [16] |
| Environmental | Land Use | Vegetation indices, urbanization, water bodies | Mapping disease risk areas [23] |

Analytical Approaches for Integrated Data

Statistical analysis of integrated One Health data, particularly parasite counts, presents particular challenges because such counts are typically right-skewed with excess zeros (non-infected individuals) [25]. The table below compares appropriate analytical methods for such data.

Table 2: Analytical Methods for Skewed Parasite Count Data

| Method | Appropriate Use Cases | Advantages | Limitations |
|---|---|---|---|
| Non-parametric Tests | Initial group comparisons when distribution assumptions violated | Does not require normal distribution; robust to outliers | Less powerful than parametric tests when assumptions met [25] |
| Negative Binomial Regression | Modeling overdispersed count data common in parasitology | Specifically handles variance greater than mean | More complex interpretation than Poisson regression [23] [25] |
| Generalized Linear Mixed Models (GLMMs) | Hierarchical data with repeated measures or spatial correlation | Accounts for dependency in clustered data | Computational complexity with large datasets [25] |
| Machine Learning Algorithms | Complex pattern recognition in multidimensional One Health data | Handles nonlinear relationships; feature importance ranking | Requires large sample sizes; risk of overfitting [1] [16] |
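Before choosing between the count models in the table above, it can help to check how far the data depart from a Poisson assumption, under which the variance equals the mean. The sketch below computes the variance-to-mean ratio, the zero fraction, and a method-of-moments estimate of the negative binomial dispersion parameter k, using the standard parameterization Var = mean + mean^2 / k. The egg counts are invented for illustration.

```python
from statistics import mean, variance


def dispersion_summary(counts):
    """Simple diagnostics for overdispersion and excess zeros in count data."""
    m = mean(counts)
    v = variance(counts)  # sample variance (n - 1 denominator)
    summary = {
        "mean": m,
        "variance": v,
        "variance_to_mean": v / m,  # about 1 under a Poisson model
        "zero_fraction": counts.count(0) / len(counts),
    }
    # Method-of-moments estimate of k; only defined when variance exceeds the mean.
    summary["k_moment"] = m ** 2 / (v - m) if v > m else None
    return summary


# Invented parasite egg counts for ten hosts: most uninfected, one heavily infected.
egg_counts = [0, 0, 0, 0, 0, 0, 1, 2, 3, 14]
summary = dispersion_summary(egg_counts)
# mean 2, variance about 18.9, variance-to-mean about 9.4, zero fraction 0.6
```

A ratio well above 1, as here, suggests that a Poisson model would understate uncertainty and that a negative binomial or zero-inflated model is worth considering.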

One Health Data Integration Framework

[Figure: conceptual framework for integrating human, animal, and environmental data within the One Health approach to parasitic disease control.]

Artificial Intelligence in One Health Applications for Parasitic Disease Control

AI Applications Across the Parasitic Disease Continuum

Artificial intelligence has emerged as a transformative tool with immense promise in parasitic disease control within the One Health framework, offering enhanced diagnostics, precision drug discovery, predictive modeling, and personalized treatment [1].

[Figure: AI workflows for parasitic disease control.]

AI-Enhanced Diagnostic and Predictive Methodologies

Image-Based Parasite Detection Using Convolutional Neural Networks

Experimental Protocol:

- Sample Collection: Prepare blood smears (for malaria) or stool samples (for intestinal parasites) using standard clinical procedures [1].

- Image Acquisition: Capture high-resolution digital images of samples using standardized microscopy protocols at 1000x magnification.

- Dataset Curation: Compile a diverse image dataset with expert-annotated parasite identifications, ensuring representation of various parasite life stages and species.

- Model Training: Implement a Convolutional Neural Network (CNN) architecture (e.g., ResNet, VGG) using transfer learning approaches. Train on 70% of the dataset with data augmentation techniques (rotation, flipping, brightness adjustment) to improve model robustness.

- Validation: Evaluate model performance on a 15% validation set, optimizing hyperparameters to balance precision and recall.

- Testing: Assess final model performance on a held-out 15% test set, comparing against expert microscopist readings as the gold standard.
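The 70/15/15 partition used in the protocol above can be sketched as a seeded shuffle followed by slicing. The image identifiers are hypothetical. In practice, splits are often made at the patient or slide level so that images from the same source do not appear in more than one partition; this sketch does not do that.

```python
import random


def split_dataset(items, train=0.70, val=0.15, seed=0):
    """Shuffle reproducibly, then cut into train / validation / test partitions."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * val)
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])  # remainder is the held-out test set


# Hypothetical image identifiers.
image_ids = [f"smear_{i:04d}" for i in range(200)]
train_ids, val_ids, test_ids = split_dataset(image_ids)
# 140 training, 30 validation, 30 test images, with no overlap
```

Fixing the seed keeps the test set stable across experiments, so that hyperparameter tuning on the validation set never touches the images used for the final comparison.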

Performance Metrics: AI models have achieved diagnostic accuracies exceeding 88% for malaria parasite detection, significantly reducing diagnostic time and human error compared to conventional microscopy [1] [16].

Predictive Modeling of Parasitic Disease Outbreaks

Experimental Protocol:

- Data Compilation: Integrate heterogeneous datasets including historical case counts, atmospheric data (temperature, precipitation, humidity), environmental factors (vegetation indices, water bodies), and population demographics [1] [16].

- Feature Engineering: Create temporal features (lagged case counts, seasonal indicators) and spatial features (proximity to water bodies, land use characteristics).

- Model Selection: Implement ensemble machine learning methods (Random Forest, Gradient Boosting) or deep learning approaches (LSTM networks) capable of capturing complex spatiotemporal patterns.

- Training Regimen: Train models on historical data using time-series cross-validation to assess temporal generalization performance.

- Prospective Validation: Deploy models in real-time surveillance systems and compare predicted outbreaks with actual epidemiological data.
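Time-series cross-validation, as called for in the training step above, differs from ordinary k-fold in that every training fold must precede its test fold in time. A minimal sketch of an expanding-window scheme follows; the function name and parameters are illustrative, and libraries such as scikit-learn offer comparable utilities.

```python
def expanding_window_splits(n_samples, n_splits, test_size):
    """Yield (train_indices, test_indices) with training always before testing.

    The training window grows with each split; test windows do not overlap.
    """
    if n_samples - n_splits * test_size <= 0:
        raise ValueError("not enough samples for the requested splits")
    for k in range(n_splits):
        test_end = n_samples - (n_splits - 1 - k) * test_size
        test_start = test_end - test_size
        yield list(range(test_start)), list(range(test_start, test_end))


# 24 months of data, three folds, each tested on the following 4 months.
splits = list(expanding_window_splits(n_samples=24, n_splits=3, test_size=4))
for train_idx, test_idx in splits:
    print(f"train 0..{train_idx[-1]}  test {test_idx[0]}..{test_idx[-1]}")
# train 0..11  test 12..15
# train 0..15  test 16..19
# train 0..19  test 20..23
```

Averaging the error across folds gives an estimate of how the model would have performed had it been deployed at each of those points in the past.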

Performance Metrics: Predictive AI models have demonstrated approximately 80% accuracy in forecasting malaria outbreaks up to 9 months in advance when incorporating factors like sea surface temperatures and historical transmission patterns [16].

AI-Driven Drug Discovery for Parasitic Diseases

Experimental Protocol:

- Target Identification: Use AI-driven computational methods to analyze genomic, proteomic, and structural information to identify essential parasitic proteins or enzymes [1].

- Virtual Screening: Implement deep learning architectures (e.g., Graph CNN-based models like DeepMalaria) to screen chemical compound libraries against identified targets [1].

- Hit Confirmation: Apply AI models such as "Eve" to integrate screening, confirmation, and lead generation processes, identifying promising candidate compounds [1].

- Lead Optimization: Utilize neural network-based models to optimize pharmacological properties (e.g., oral absorption, metabolic stability) of lead compounds [1].

- Experimental Validation: Progress top AI-identified candidates to in vitro and in vivo testing in appropriate disease models.

Performance Metrics: AI-assisted virtual screening has identified novel antiplasmodial compounds (e.g., LabMol-167) that inhibit Plasmodium falciparum at nanomolar concentrations with low cytotoxicity in mammalian cells [1]. Deep learning models have successfully identified potential drug candidates where more than 85% of compounds showed parasite inhibition with ≥50% effectiveness [1].

Advanced Diagnostic Technologies in One Health

CRISPR-Cas Systems for Parasitic Disease Detection

CRISPR-Cas systems have emerged as transformative tools in molecular diagnostics, offering high sensitivity, specificity, rapidity, and cost-effectiveness [13]. These systems are particularly valuable for parasitic disease detection within the One Health framework due to their potential for field deployment and point-of-care applications.

Table 3: CRISPR-Cas Systems for Parasitic Disease Diagnostics

| CRISPR System | Key Features | Detection Mechanism | Parasitic Disease Applications |
|---|---|---|---|
| Cas12 | Most widely utilized; collateral cleavage of single-stranded DNA | Fluorescent or colorimetric readout via reporter molecules | Malaria, Leishmaniasis, Trypanosomiasis [13] |
| Cas13 | RNA targeting; collateral cleavage of single-stranded RNA | Fluorescent or lateral flow detection | Soil-transmitted helminths, Schistosomiasis [13] |
| Cas9 | Programmable DNA cleavage; requires additional reporter systems | Lateral flow assays with gold nanoparticles | Cryptosporidiosis, Giardiasis [13] |
| Cas10 | Emerging promise; multi-protein effector complex | Collateral cleavage of both DNA and RNA | Potential for multiplexed parasite detection [13] |

Integrated Diagnostic Workflows

Experimental Protocol: CRISPR-Cas Diagnostic Assay for Parasitic Detection

- Sample Processing: Extract nucleic acids from clinical samples (blood, stool, tissue) using simplified protocols compatible with field deployment [13].

- Target Amplification: Implement isothermal amplification techniques (LAMP, RPA, RAA) to enhance detection sensitivity. These run at a constant temperature (about 60-65°C for LAMP; about 37-42°C for RPA and RAA), typically for 15-30 minutes [13].

- CRISPR-Cas Detection: Program Cas effector proteins (Cas12, Cas13) with guide RNAs specific to parasitic target sequences. Target recognition triggers the enzyme's collateral cleavage activity.

- Signal Detection: Utilize fluorescent reporters for quantitative analysis or lateral flow strips for visual, binary readouts suitable for low-resource settings.

- Result Interpretation: Develop smartphone-based applications or simple readers to standardize result interpretation and facilitate data recording.

Performance Metrics: CRISPR-Cas systems coupled with isothermal amplification can detect target sequences at femtomolar to attomolar concentrations, enabling identification of low-parasitemia infections that challenge conventional diagnostics [13].
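To put femtomolar and attomolar detection limits in practical terms, a molar concentration can be converted to target copies per microliter using Avogadro's number. The short calculation below is a unit conversion only; it makes no claim about the performance of any particular assay.

```python
AVOGADRO = 6.02214076e23  # molecules per mole (exact SI definition)


def copies_per_microliter(molar_concentration):
    """Convert a concentration in mol/L to target copies per microliter."""
    copies_per_liter = molar_concentration * AVOGADRO
    return copies_per_liter / 1e6  # 1 L = 1,000,000 microliters


one_femtomolar = copies_per_microliter(1e-15)  # about 602 copies per microliter
one_attomolar = copies_per_microliter(1e-18)   # about 0.6 copies per microliter
```

At attomolar concentrations there is on average less than one target molecule per microliter, which is why pre-amplification and adequate sample volume matter for low-parasitemia infections.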

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents for One Health Parasitic Disease Research

| Reagent/Material | Specifications | Application in One Health Research | Representative Examples |
|---|---|---|---|
| GPS Collars | High-resolution (hourly locations), long battery life | Wildlife movement tracking to assess human-animal interactions and disease transmission risk [23] | White-tailed deer movement studies in urban environments [23] |
| CRISPR-Cas Reagents | Lyophilized Cas proteins, guide RNAs, reporter molecules | Point-of-care diagnostic development for field-based parasite detection [13] | Cas12-based detection of malaria parasites in blood samples [13] |
| AI Training Datasets | Curated, annotated medical images (microscopy, radiology) | Training convolutional neural networks for automated parasite detection [1] [16] | Malaria blood smear image datasets with expert annotations [1] |
| Environmental DNA (eDNA) Sampling Kits | Water filtration systems, DNA preservation buffers | Detecting parasite presence in aquatic environments and vector habitats [26] | Schistosoma detection in freshwater bodies [26] |
| Human Mobility Data | Anonymized, aggregated mobile device location data | Modeling human movement patterns and disease spread potential [23] | Advan Patterns data assessing human-deer spatial overlap [23] |

Implementation Challenges and Future Directions

While the One Health framework offers significant promise for revolutionizing parasitic disease control, several challenges remain in its full implementation:

Data Integration Barriers: Significant technical and ethical challenges exist in integrating human, animal, and environmental data streams, particularly regarding data ownership, privacy protection, and standardization across sectors [23] [26]. Future efforts should focus on developing interoperable data standards and secure data sharing frameworks that maintain privacy while enabling comprehensive analysis.

Technological Access Limitations: The promising AI and CRISPR-based technologies face implementation barriers in resource-limited settings where parasitic diseases are most prevalent, including limited infrastructure, technical training requirements, and cost considerations [1] [16] [13]. Research should prioritize development of ruggedized, low-cost, and user-friendly implementations that can function in challenging field conditions.

Analytical Complexity: The multidimensional nature of One Health data requires advanced analytical approaches that can handle complex, nonlinear relationships across biological, environmental, and social domains [1] [25]. Future methodological development should focus on interpretable AI approaches that provide not only predictions but also actionable insights for intervention planning.

The integration of artificial intelligence with the One Health framework represents a paradigm shift in how we approach parasitic disease control. By leveraging interconnected data streams from human, animal, and environmental domains, researchers and public health professionals can develop more effective, targeted interventions that address the complex ecological context of parasitic diseases. Continued innovation in AI methodologies, coupled with strengthened cross-sectoral collaboration, will be essential for realizing the full potential of this integrated approach to achieve sustainable disease control and elimination goals.

From Theory to Practice: Methodological Applications of AI in Parasite Diagnostics and Drug Development

The field of parasitic disease control is undergoing a profound transformation through the integration of artificial intelligence (AI). Parasitic infections, including soil-transmitted helminths (STHs) and intestinal protozoa, continue to plague global populations, particularly in resource-limited settings where conventional healthcare delivery faces significant challenges [1]. Traditional diagnostic methods have relied heavily on manual microscopic examination of blood, stool, and tissue samples, a process that is inherently subjective and time-consuming and that requires highly trained technologists [27] [28]. These limitations are particularly problematic in regions where parasitic diseases are most endemic, as the scarcity of expert microscopists can hinder both individual patient care and large-scale public health monitoring programs [29].

AI-powered microscopy represents a paradigm shift in parasitic disease control, offering the potential for enhanced diagnostics, precision drug discovery, predictive modeling, and personalized treatment strategies [1]. By leveraging machine learning (ML) and deep learning (DL) algorithms, particularly convolutional neural networks (CNNs), these systems can analyze vast datasets of microscopic images to identify parasitic elements with remarkable accuracy and speed [1]. This technological advancement addresses critical limitations of traditional methods by providing faster, more consistent results while reducing the burden on human experts [27]. The integration of AI into parasitology not only improves diagnostic accuracy but also enables more proactive herd monitoring and targeted treatment interventions, ultimately leading to improved health outcomes and reduced economic losses from parasitic infections [27].

Performance Comparison: AI vs. Traditional Microscopy

Diagnostic Accuracy for Soil-Transmitted Helminths

Table 1: Comparison of diagnostic sensitivity for soil-transmitted helminths between manual microscopy and AI-based methods against a composite reference standard (n=704 smears) [29].

| Parasite Species | Manual Microscopy Sensitivity (%) | Autonomous AI Sensitivity (%) | Expert-Verified AI Sensitivity (%) |
|---|---|---|---|
| A. lumbricoides | 50.0 | 50.0 | 100.0 |
| T. trichiura | 31.2 | 84.4 | 93.8 |
| Hookworms | 77.8 | 87.4 | 92.2 |

Table 2: Specificity comparison for soil-transmitted helminth detection across diagnostic methods [29].

| Parasite Species | Manual Microscopy Specificity (%) | Autonomous AI Specificity (%) | Expert-Verified AI Specificity (%) |
|---|---|---|---|
| A. lumbricoides | 100.0 | 99.4 | 99.7 |
| T. trichiura | 98.9 | 96.7 | 97.8 |
| Hookworms | 99.1 | 95.7 | 97.1 |

Economic and Operational Impact

Table 3: Operational and economic impact of AI-powered microscopy for livestock parasite detection [27].

| Parameter | Traditional Microscopy | AI-Powered System |
|---|---|---|
| Analysis Time | 2-5 days | 10 minutes |
| Technician Training | Extensive training required | Minimal training required |
| Cost Implications | Higher long-term personnel costs | Fraction of the cost |
| Economic Burden | $141 million annually (NC cattle industry) | Potential for significant reduction |

Experimental Protocols and Methodologies

AI-Assisted Digital Microscopy for Intestinal Protozoa

The Clinical Parasitology Laboratory at Mayo Clinic has implemented a comprehensive digital pathology workflow for the detection of intestinal protozoa in trichrome-stained stool specimens [28]. This protocol leverages the Techcyte intestinal protozoa algorithm, which utilizes a deep convolutional neural network trained to identify protozoan parasites in digitally scanned samples.

Sample Preparation Protocol:

- Specimen Preservation: Stool samples are preserved in Ecofix or mercury/copper-free PVA to maintain morphological integrity while eliminating toxic heavy metals traditionally used in fixatives [28].

- Slide Preparation: A thin monolayer of stool is created using concentrated stool specimen to optimize scanning quality while maintaining diagnostic sensitivity. This represents a modification from traditional methods that use unconcentrated stool for permanently stained slides [28].

- Staining Procedure: Slides are stained with a single standardized trichrome stain method, specifically Ecostain, to ensure consistency in digital imaging [28].

- Coverslipping: Slides are permanently coverslipped using an automated coverslipping system with fast-drying mounting medium to prevent movement during scanning. This replaces previous methods that used temporary immersion oil mounting [28].

Digital Imaging and Analysis:

- Slide Scanning: Prepared slides are scanned using a Hamamatsu NanoZoomer 360 digital slide scanner, which processes up to 360 slides per batch with a 40x dry objective lens and produces digital images equivalent to the 1000x magnification of traditional microscopy [28].

- AI Analysis: The Techcyte algorithm searches through the digital image matrix to identify objects of interest corresponding to protozoan parasites, including Giardia, Dientamoeba fragilis, Entamoeba histolytica, and other intestinal protozoa [28].

- Technologist Verification: Identified objects are grouped into suggested categories and presented to laboratory technologists for final interpretation. The system assists but does not replace the technologist, maintaining human oversight in the diagnostic process [28].
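The verification step can be pictured as a review queue: candidate objects are grouped by suggested category and ordered so that the most convincing examples appear first. The sketch below is an illustration only; the `Detection` structure, the `build_review_queue` function, and the confidence threshold are assumptions and do not describe the Techcyte software.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One candidate object flagged by the detector (hypothetical structure)."""
    slide_id: str
    label: str         # suggested category, e.g. "Giardia"
    confidence: float  # model score in [0, 1]


def build_review_queue(detections, min_confidence=0.5):
    """Group detections by suggested category, highest confidence first.

    The threshold is an assumed parameter; a real laboratory would choose it
    so that true parasites are not hidden from the technologist.
    """
    groups = defaultdict(list)
    for det in detections:
        if det.confidence >= min_confidence:
            groups[det.label].append(det)
    return {
        label: sorted(items, key=lambda d: d.confidence, reverse=True)
        for label, items in groups.items()
    }


# Hypothetical detections from a single scanned slide.
detections = [
    Detection("S1", "Giardia", 0.91),
    Detection("S1", "Dientamoeba fragilis", 0.62),
    Detection("S1", "Giardia", 0.74),
    Detection("S1", "Giardia", 0.30),  # below threshold, not queued
]

queue = build_review_queue(detections)
for label, items in queue.items():
    print(label, [d.confidence for d in items])
```

The queue only proposes categories; the final call on each object stays with the technologist, as in the workflow described above.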

AI-Powered Fecal Egg Counting for Veterinary Parasitology

Researchers at Appalachian State University have developed an automated microscopy system for fecal egg counting (FEC) in livestock, addressing the substantial economic burden of gastrointestinal parasites [27].

System Development Protocol:

- Technology Foundation: The system builds on a decade of development in custom automated microscope and image-processing platforms, with specific adaptation to FEC analyses through NCInnovation grant funding [27].

- Image Acquisition: The platform incorporates specialized solutions for rapidly scanning sample areas thousands of times larger than typical microscope fields and increasing contrast without relying on dyes or expensive equipment [27].

- Field Validation: The current development phase focuses on refining hardware for field testing and validation by accrediting agencies, including customer discovery to inform user experience design tailored to farmers' workflows [27].

Deep Learning for Soil-Transmitted Helminths in Kato-Katz Smears

A study conducted in a primary healthcare setting in Kenya implemented a protocol for AI-based detection of soil-transmitted helminths (STHs) in Kato-Katz thick smears, targeting light-intensity infections, which accounted for 96.7% of positive cases [29].

Sample Processing and Digitization:

- Sample Collection: 965 stool samples were collected from school children in Kwale County, Kenya, an area endemic for A. lumbricoides, T. trichiura, and hookworm [29].

- Kato-Katz Preparation: Standard Kato-Katz thick smears were prepared according to WHO guidelines. Because glycerol causes hookworm eggs to disintegrate, slides had to be analyzed within 30-60 minutes of preparation [29].

- Whole Slide Imaging: Smears were digitized using portable whole-slide scanners suitable for field deployment in primary healthcare settings, enabling digital pathology outside high-end laboratories [29].
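Infection intensity, such as the light-intensity category mentioned above, is conventionally derived from the Kato-Katz egg count. The sketch below assumes a single smear made with the standard 41.7 mg template (multiplication factor 24) and the WHO intensity thresholds in eggs per gram (EPG). Neither assumption is taken from [29], and the function names are illustrative.

```python
KATO_KATZ_FACTOR = 24  # assumed 41.7 mg template: eggs counted x 24 = eggs per gram

# WHO intensity thresholds in EPG: (start of moderate, start of heavy).
THRESHOLDS = {
    "A. lumbricoides": (5_000, 50_000),
    "T. trichiura": (1_000, 10_000),
    "hookworm": (2_000, 4_000),
}


def eggs_per_gram(egg_count: int) -> int:
    """Convert a single-smear egg count to eggs per gram of stool."""
    if egg_count < 0:
        raise ValueError("egg count cannot be negative")
    return egg_count * KATO_KATZ_FACTOR


def intensity_class(species: str, egg_count: int) -> str:
    """Classify infection intensity for one species from one smear."""
    epg = eggs_per_gram(egg_count)
    if epg == 0:
        return "negative"
    moderate_from, heavy_from = THRESHOLDS[species]
    if epg >= heavy_from:
        return "heavy"
    if epg >= moderate_from:
        return "moderate"
    return "light"


# Hypothetical egg counts, for illustration only.
for species, count in [("hookworm", 10), ("T. trichiura", 50), ("A. lumbricoides", 2100)]:
    print(species, eggs_per_gram(count), intensity_class(species, count))
```

A light infection can correspond to only a few eggs on the whole smear, which is why missed or disintegrated eggs have a large effect on sensitivity in this group.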

AI Implementation and Verification:

- Algorithm Architecture: The system employed deep learning algorithms, specifically convolutional neural networks and vision transformers, trained to identify STH eggs in digital smears [29].

- Disintegration Compensation: An additional DL algorithm was implemented specifically to detect partially disintegrated hookworm eggs, addressing a limitation identified in previous studies where disintegration reduced sensitivity [29].

- Expert Verification Tool: An AI-verification tool was developed to allow experts to verify AI findings, creating a composite reference standard that combined expert-verified eggs in both physical and digital smears [29].
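One way to read the composite reference standard is as a logical OR: a sample is treated as truly positive if experts verified eggs in either the physical or the digital smear. The sketch below applies that reading to hypothetical records; the record layout and function names are assumptions, and the exact rule used in [29] may differ in detail.

```python
def composite_reference(physical_positive: bool, digital_positive: bool) -> bool:
    """Sample is reference-positive if experts verified eggs in either smear."""
    return physical_positive or digital_positive


def confusion_counts(samples):
    """Tally a method's results against the composite reference.

    Each sample is a tuple:
    (expert-verified physical smear, expert-verified digital smear, method result).
    """
    counts = {"tp": 0, "fn": 0, "tn": 0, "fp": 0}
    for physical, digital, method_result in samples:
        reference = composite_reference(physical, digital)
        if reference and method_result:
            counts["tp"] += 1
        elif reference and not method_result:
            counts["fn"] += 1
        elif not reference and method_result:
            counts["fp"] += 1
        else:
            counts["tn"] += 1
    return counts


# Hypothetical records, for illustration only.
samples = [
    (True, True, True),     # eggs confirmed in both smears, method positive
    (True, False, False),   # eggs confirmed only in the physical smear, method missed them
    (False, True, True),    # eggs confirmed only in the digital smear, method positive
    (False, False, False),  # no eggs confirmed, method negative
    (False, False, True),   # no eggs confirmed, method positive
]

print(confusion_counts(samples))
```

Under this reading, no single method defines the truth, so manual microscopy and AI are each penalized for eggs that only the other modality revealed.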

Workflow Visualization

[Figure: AI-Parasite Detection Workflow]

System Architecture & Algorithm Integration

[Figure: AI System Architecture]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key research reagent solutions and essential materials for AI-powered parasite detection experiments.

| Reagent/Material | Function/Application | Implementation Example |
|---|---|---|
| Ecofix | Stool specimen preservation for optimal digital imaging | Maintains morphological integrity while eliminating toxic heavy metals [28] |
| Mercury/Copper-Free PVA | Alternative fixative for stool specimens | Environmentally friendly preservation compatible with AI analysis [28] |
| Ecostain | Standardized trichrome staining for digital pathology | Ensures consistent staining quality for AI algorithm performance [28] |
| Fast-Drying Mounting Medium | Permanent coverslipping for slide scanning | Prevents movement during high-resolution digitization [28] |
| Kato-Katz Reagent Kit | Preparation of thick smears for STH detection | Standardized field-deployable method for soil-transmitted helminths [29] |
| Portable Whole-Slide Scanner | Digital imaging in field settings | Enables digitization outside traditional laboratories [29] |
| Convolutional Neural Network Algorithm | Core AI technology for parasite detection | Analyzes digital images to identify parasitic elements [1] [29] |
| Disintegration Detection Algorithm | Specialized hookworm egg identification | Compensates for glycerol-induced disintegration in Kato-Katz smears [29] |

The integration of AI-powered microscopy into parasitic disease control represents a fundamental shift in diagnostic capabilities and public health interventions. In the Kenyan study, expert-verified AI achieved higher sensitivity than manual microscopy for all three STH species (for example, 93.8% versus 31.2% for T. trichiura) while keeping specificity above 97% [29]. This gain matters most for light-intensity infections, which comprise the majority of cases in declining transmission settings [29]. The enhanced detection capability directly addresses the growing need for more sensitive diagnostic methods as global STH prevalence decreases and light infections become increasingly predominant [29].

Beyond improved diagnostic accuracy, AI-powered microscopy offers transformative benefits for healthcare systems. The technology reduces analysis time from days to minutes, decreases reliance on highly specialized technicians, and enables more cost-effective mass screening programs [27]. Furthermore, these systems facilitate remote diagnosis, quality assurance, and educational reviews while potentially allowing technologists to work in non-traditional settings, including from home [28]. As the technology continues to evolve, the integration of predictive modeling and automated reporting will further enhance its utility in both clinical and public health contexts, ultimately contributing to more effective parasitic disease control and improved patient outcomes worldwide.

The fight against parasitic diseases such as malaria, trypanosomiasis, and leishmaniasis represents one of the most persistent challenges in global health, particularly in resource-limited settings where these diseases disproportionately affect vulnerable populations [1]. Traditional drug discovery is characterized by lengthy development cycles that often span a decade or more, prohibitive costs exceeding $2.5 billion per approved drug, and high failure rates, with approximately 90% of potential drug candidates failing to progress beyond preclinical testing [1] [30]. This inefficient model has severely limited the development of new treatments for neglected tropical diseases, because pharmaceutical business models often prioritize conditions prevalent in affluent countries [31].

Artificial intelligence has emerged as a transformative force in pharmaceutical research, integrating data, computational power, and algorithms to improve the efficiency, accuracy, and success rates of drug discovery [32] [33]. AI, particularly through machine learning (ML) and deep learning (DL), accelerates the drug development pipeline from target identification to clinical trials, reducing both timelines and costs while increasing the probability of success [30]. For parasitic diseases specifically, AI offers new capabilities for understanding transmission patterns, enabling rapid diagnostics, identifying novel drug targets, predicting drug efficacy and safety, and repurposing existing therapeutics [1]. This shift is particularly important because parasites are adapting to climatic changes and vector-borne parasitic infections are spreading geographically, which calls for more responsive and resilient healthcare innovations [1].

AI Fundamentals for Drug Discovery

Artificial intelligence in drug discovery encompasses multiple computational techniques that mimic human intelligence to analyze complex biological and chemical data. The AI ecosystem in pharmaceutical research consists of several interconnected technologies, each with distinct capabilities and applications in combating parasitic diseases.

Machine Learning (ML) represents a foundational AI approach that enables computers to learn from data without explicit programming [34]. ML algorithms identify patterns within large datasets to build predictive models for various drug discovery applications. Key ML paradigms include: supervised learning using labeled datasets for classification and regression tasks; unsupervised learning that identifies latent structures in unlabeled data through clustering and dimensionality reduction; semi-supervised learning that leverages both labeled and unlabeled data; and reinforcement learning that optimizes decisions through reward-based systems [34] [30].

Deep Learning (DL), a subset of ML inspired by the human brain's neural networks, uses multiple processing layers to extract hierarchical features from raw data [34]. DL architectures have demonstrated strong performance on the large and complex datasets common in pharmaceutical research. Principal DL algorithms include Multilayer Perceptrons (MLP) for general-purpose prediction from fixed-length feature vectors; Convolutional Neural Networks (CNN) for processing image-based data such as microscopic parasite images; and Long Short-Term Memory Recurrent Neural Networks (LSTM-RNN) for sequential data analysis [34]. Other specialized architectures include Self-Organizing Maps (SOM), Autoencoders (AE), Restricted Boltzmann Machines (RBM), Deep Belief Networks (DBN), and Generative Adversarial Networks (GAN) for specific analytical tasks [34].

Network-Based Approaches study relationships between biological entities including protein-protein interactions (PPIs), drug-disease associations (DDAs), and drug-target associations (DTAs) [34]. These methods operate on the principle that drugs proximate to a disease's molecular site in biological networks tend to be more suitable therapeutic candidates, employing mathematical approaches like random walks to predict network relationships [34].
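The network-proximity idea can be illustrated with a random walk with restart, one common random-walk formulation: a walker starts at disease-associated nodes, moves to a random neighbor at each step, and occasionally jumps back to the start, so nodes close to the disease accumulate more visiting probability. The sketch below is a minimal pure-Python version on a toy graph; the node names, the restart probability of 0.3, and the graph itself are hypothetical and not drawn from [34].

```python
def random_walk_with_restart(graph, seeds, restart=0.3, tol=1e-9, max_iter=1000):
    """Return steady-state visiting probabilities for each node.

    graph: dict mapping each node to a list of neighbors (undirected,
           every node must have at least one neighbor).
    seeds: set of starting nodes (e.g. disease-associated proteins).
    """
    if not seeds or not set(seeds) <= set(graph):
        raise ValueError("seeds must be a non-empty subset of the graph nodes")
    for node, neighbors in graph.items():
        if not neighbors or any(nb not in graph for nb in neighbors):
            raise ValueError(f"node {node!r} has missing or unknown neighbors")

    # The walker restarts uniformly over the seed nodes.
    start = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in graph}
    prob = dict(start)
    for _ in range(max_iter):
        nxt = {n: restart * start[n] for n in graph}
        for node, neighbors in graph.items():
            share = (1.0 - restart) * prob[node] / len(neighbors)
            for nb in neighbors:
                nxt[nb] += share
        delta = sum(abs(nxt[n] - prob[n]) for n in graph)
        prob = nxt
        if delta < tol:
            break
    return prob


# Toy undirected network (hypothetical nodes and edges).
graph = {
    "disease_protein": ["target_A"],
    "target_A": ["disease_protein", "drug_X", "target_B"],
    "target_B": ["target_A", "drug_Y"],
    "drug_X": ["target_A"],
    "drug_Y": ["target_B"],
}

scores = random_walk_with_restart(graph, seeds={"disease_protein"})
ranked = sorted(["drug_X", "drug_Y"], key=scores.get, reverse=True)
print(ranked)
```

In this toy network drug_X sits two steps from the disease protein and drug_Y three, so drug_X receives the higher score; real applications run the same computation over networks with thousands of proteins, drugs, and diseases.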

Table 1: Core AI Technologies in Parasitic Drug Discovery

| Technology | Primary Function | Key Algorithms | Parasitic Disease Applications |
|---|---|---|---|
| Machine Learning (ML) | Pattern recognition and predictive modeling from data | RF, SVM, ANN, kNN, NBC [34] | Compound screening, QSAR modeling, efficacy prediction [31] |
| Deep Learning (DL) | Hierarchical feature extraction from complex datasets | CNN, LSTM-RNN, GAN, MLP [34] | Image-based parasite detection, molecular design [1] |
| Network-Based Approaches | Mapping biological relationships and interactions | Random walks, multiview learning [34] | Target identification, drug repurposing [34] |
| Natural Language Processing (NLP) | Extracting information from textual data | Text mining, entity recognition [35] | Literature-based discovery, clinical data analysis [35] |

AI-Enabled Target Prediction and Identification