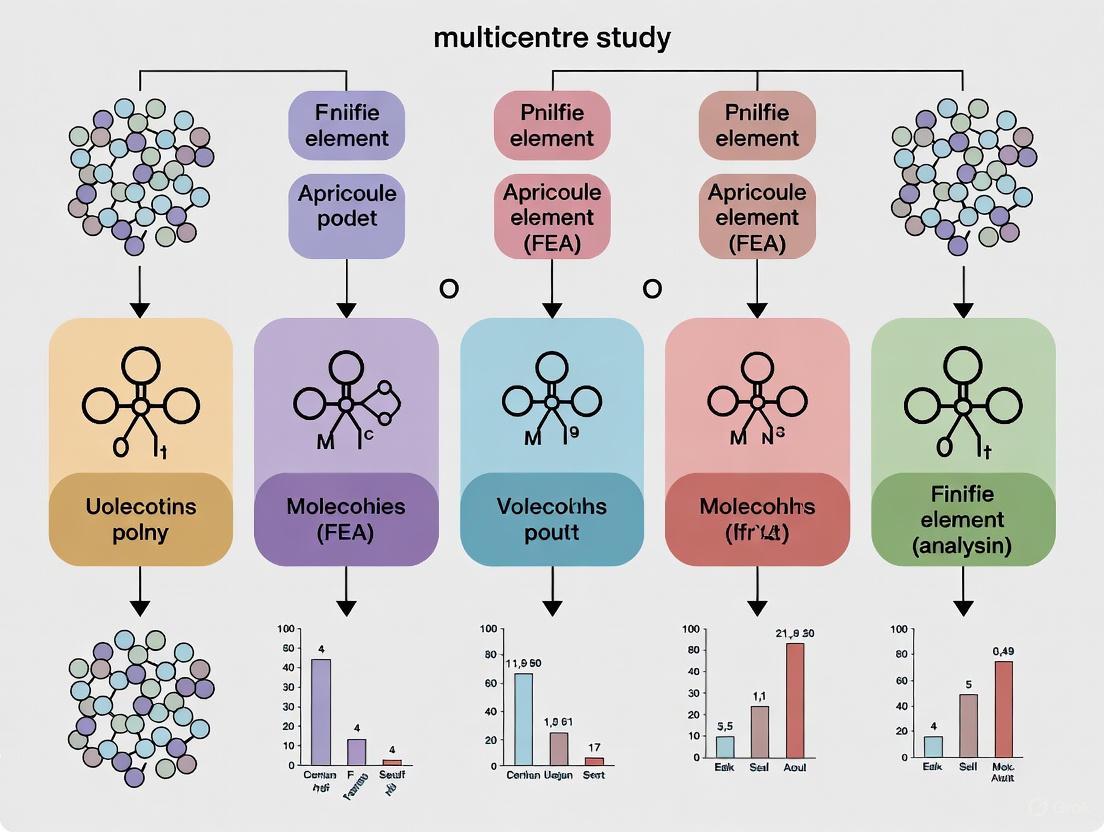

Advancing Multicenter Studies with Finite Element Analysis: A Framework for Robust, Scalable, and Clinically Translational Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the application of Finite Element Analysis (FEA) in multicenter study settings. It covers the foundational principles of FEA and the critical challenge of uncertainty quantification, which is paramount for ensuring reliability across diverse centers. The piece explores advanced methodological integrations, including multi-objective optimization and machine learning surrogates, to enhance scalability. It further details strategies for troubleshooting model robustness and optimizing computational efficiency. Finally, the article establishes a rigorous framework for the external validation and comparative analysis of FEA models, highlighting their growing role in supporting regulatory decisions and Model-Informed Drug Development (MIDD).

Establishing a Robust Foundation: Core FEA Principles and Multicenter Challenges

Demystifying the FEA and FEM Workflow in Biomedical Contexts

Finite Element Analysis (FEA) and the Finite Element Method (FEM) have become indispensable tools in biomedical engineering, enabling researchers to simulate and understand the complex mechanical behavior of biological systems and medical devices without extensive physical prototyping. The technique numerically approximates the solution to the partial differential equations that govern physical phenomena by dividing complex structures into smaller, simpler pieces called elements [1]. In multicenter study settings, standardized FEA workflows are crucial for ensuring consistent, comparable, and clinically relevant results across research sites. FEM integration has transformed the biomedical field, particularly in modeling biological systems, optimizing medical devices, and developing personalized treatment strategies [2].

The fundamental principle of FEA involves discretizing a continuous domain into a finite number of elements connected at nodes, creating a mesh that represents the geometry of the structure being analyzed. This approach allows researchers to solve complex biomechanical problems by applying material properties, boundary conditions, and loads to predict how biological structures will respond to various mechanical stimuli. In bone research, for example, micro-scale FEA (µFEA) accounts for different loading scenarios and detailed three-dimensional bone structure to estimate mechanical properties and predict potential fracture risk [1]. The accuracy of these models depends heavily on the congruence between calibration data and real-world load cases, as demonstrated in stent development studies where simplified geometries are often necessary due to the high effort required for prototype manufacturing [3].
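As a minimal illustration of the discretize, assemble, constrain, and solve sequence described above, the following sketch solves a one-dimensional, axially loaded bar with two-node linear elements. It is a teaching example under stated assumptions, not a biomedical model: the function name `solve_bar`, the unit material and geometric values, and the use of NumPy are all choices made here for illustration.

```python
import numpy as np

def solve_bar(n_elements, length=1.0, youngs_modulus=1.0, area=1.0, tip_load=1.0):
    """Axially loaded bar, fixed at x = 0, point load at x = length.

    Returns nodal coordinates and nodal displacements.
    All default values are illustrative placeholders.
    """
    n_nodes = n_elements + 1
    x = np.linspace(0.0, length, n_nodes)
    h = length / n_elements

    # Stiffness of a 2-node linear bar element: (E*A/h) * [[1, -1], [-1, 1]]
    k_e = (youngs_modulus * area / h) * np.array([[1.0, -1.0], [-1.0, 1.0]])

    # Assemble the global stiffness matrix element by element.
    K = np.zeros((n_nodes, n_nodes))
    for e in range(n_elements):
        K[e:e + 2, e:e + 2] += k_e

    # Load vector: a single point load at the free end.
    f = np.zeros(n_nodes)
    f[-1] = tip_load

    # Boundary condition: node 0 is fixed, so solve only for the free nodes.
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], f[1:])
    return x, u

x, u = solve_bar(n_elements=4)
print(u[-1])  # compare with the analytical tip displacement P*L/(E*A)
```

For this uniform bar the nodal displacements coincide with the analytical solution, which makes it a convenient smoke test before moving to realistic geometries and constitutive models.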

Core FEA Workflow in Biomedical Contexts

Standardized Workflow Diagram

The following diagram illustrates the generalized FEA workflow adapted for biomedical applications, integrating components from multiple research domains:

Stage-by-Stage Workflow Description

Medical Imaging and 3D Reconstruction: The workflow begins with acquiring high-resolution medical images using computed tomography (CT) or magnetic resonance imaging (MRI). For bone evaluation, micro-CT scanners provide voxel sizes from ~1 to 100 μm, enabling detailed capture of trabecular architecture [1]. In pelvic floor studies, researchers combine CT (for bone tissue) and MRI (for soft tissues) to overcome the similar density challenges of pelvic muscles, fascia, and other tissues [4]. The imaging data is processed using specialized software like Mimics to generate 3D models, with manual outlining of anatomical structures by experienced radiologists to ensure accuracy.

Mesh Generation and Discretization: The reconstructed 3D geometry is converted into a finite element mesh through discretization. Element type and size are critical parameters determined through mesh convergence studies, in which the mesh is refined until the change in key outputs (e.g., peak reaction force) between successive refinements falls below a tolerance, typically 2.5-5% [5]. Linear tetrahedral elements (C3D4) are commonly used for complex anatomical geometries, while modified quadratic tetrahedral elements (C3D10M) are preferred for scenarios involving contact and large strains [6] [5].
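The convergence criterion above can be sketched as a simple refinement loop. The example below is a toy, assuming a tapered bar whose cross-section halves along its length as the stand-in model and a 2.5% tolerance taken from the lower end of the quoted range; the names `tip_displacement` and `refine_until_converged` are hypothetical helpers, not part of any FEA package.

```python
import math

def tip_displacement(n_elements, length=1.0, youngs_modulus=1.0, area0=1.0, tip_load=1.0):
    """Tip displacement of a tapered bar (area halves along its length).

    Each linear element uses the area at its midpoint. The bar is a serial
    chain under a tip load, so the element elongations simply add up.
    """
    h = length / n_elements
    total = 0.0
    for e in range(n_elements):
        x_mid = (e + 0.5) * h
        area = area0 * (1.0 - x_mid / (2.0 * length))
        total += tip_load * h / (youngs_modulus * area)
    return total

def refine_until_converged(model, n_start=1, tolerance=0.025, max_refinements=12):
    """Double the element count until the output changes by less than `tolerance`."""
    n = n_start
    previous = model(n)
    history = [(n, previous)]
    for _ in range(max_refinements):
        n *= 2
        current = model(n)
        history.append((n, current))
        if abs(current - previous) / abs(current) < tolerance:
            return n, current, history
        previous = current
    raise RuntimeError("mesh did not converge within the allowed refinements")

n_converged, u_tip, history = refine_until_converged(tip_displacement)
for n, value in history:
    print(n, round(value, 4))
print("analytical:", round(2.0 * math.log(2.0), 4))
```

In a multicenter setting, recording the full refinement history rather than only the final mesh size makes it easier to confirm that each site applied the same criterion.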

Material Property Assignment: Biological materials require appropriate constitutive models to capture their mechanical behavior. Bone is often modeled as linear elastic due to its inherent stiffness [6], while soft tissues typically require hyperelastic or viscoelastic models. For polymeric biomaterials, advanced constitutive models like the Parallel Rheological Framework (PRF) and Three-Network (TN) model provide better fits for time-dependent behavior compared to simpler linear elastic-plastic models [3]. Material parameters are derived from experimental testing or literature values.

Boundary Conditions and Loading: Physiologically accurate boundary conditions and loading scenarios are essential for clinical relevance. This includes simulating specific activities (gait, Valsalva maneuver) [6] [4] or medical device interactions (stent expansion, prosthetic loading) [3] [6]. In miniscrew-assisted rapid palatal expansion (MARPE) studies, accurate boundary conditions must account for anisotropic bone behavior and time-dependent sutural mechanics [7].

Numerical Solution and Validation: The assembled model is solved using numerical methods, with explicit approaches often necessary for dynamic effects [3]. Validation against experimental measurements is crucial, with quantitative comparison of parameters like force-displacement responses [3] [5] or qualitative assessment of deformation patterns [3]. In multicentre studies, standardized validation protocols ensure consistency across research sites.

Application-Specific Protocols

Protocol 1: FEA for Polymer Stent Development

Objective: To validate material models for bioresorbable polymer stents using a simplified planar geometry approach for efficient material screening and design optimization [3].

Materials and Specimen Preparation:

- Polymers: Poly(L-lactide) (PLLA) and poly(glycolide-co-trimethylene carbonate) (PGA-co-TMC)

- Specimen Fabrication: Injection molding of planar 2D substructures from stent designs

- Equipment: Haake MiniJet II injection molding system

Experimental Methodology:

- Conduct quasi-static and cyclic mechanical testing including loading, stress relaxation, unloading, and strain recovery

- Perform planar stent segment expansion (PSSE) experiments for validation

- Capture strain data using video-assisted correction methods

FEA Model Calibration:

- Calibrate material model coefficients for three constitutive models: linear elastic-plastic (LEP), Parallel Rheological Framework (PRF), and Three-Network (TN) model

- Implement manual tuning of material coefficients and boundary conditions to improve robustness

- Validate models against experimental PSSE results and stress relaxation analyses

Multicentre Considerations: Standardize testing protocols across sites using identical specimen geometries, testing parameters, and validation metrics to ensure comparable results.

Protocol 2: Micro-CT Based Bone Evaluation

Objective: To predict bone mechanical competence and fracture risk using micro-scale FEA based on high-resolution micro-CT images [1].

Sample Preparation and Imaging:

- Sample Types: Animal model bone specimens (in vivo or ex vivo)

- Imaging Parameters: Micro-CT scanning with voxel sizes of 1-100 μm

- Calibration: Use phantom scans to convert radiodensity to Hounsfield units or bone mineral density

Model Development Workflow:

- Image Segmentation: Separate bone from marrow space using threshold-based methods

- Mesh Generation: Convert segmented images to tetrahedral element mesh

- Material Assignment: Assign bone material properties based on density-elasticity relationships

- Loading Scenarios: Apply physiologically relevant loads (compression, tension, shear)

- Solution: Solve for mechanical parameters including stress, strain, and deformation

Output Analysis:

- Calculate apparent elastic modulus and ultimate strength

- Identify high-strain regions predisposed to fracture

- Compare trabecular and cortical bone contributions to mechanical competence

Validation Approach: Validate µFEA predictions against experimental mechanical testing results from the same specimens.

Protocol 3: Prosthetic Liner Optimization

Objective: To evaluate the effects of liner material and thickness on stress distribution at the residual limb-liner interface in transfemoral amputees [6].

Geometric Modeling:

- Develop 3D models based on CT scan data with approximately 1 mm slice increment

- Process medical images using 3D Slicer and Autodesk Meshmixer

- Extract geometric structure of muscles and bones

- Create models with varying liner thicknesses (2 mm, 4 mm, 6 mm) while adjusting socket dimensions accordingly

Material Definitions:

- Bone: Linear elastic material (E = 16.8 GPa, ν = 0.3)

- Muscle: Linear elastic material (E = 0.92 MPa, ν = 0.49)

- Gel Liner: Linear elastic material (E = 1.15 MPa, ν = 0.49)

- Silicone Liner: Hyperelastic Ogden model (μ₁ = 0.294, α₁ = 4.365, D1 = 0.5)
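To give a feel for what the one-term Ogden coefficients imply, the sketch below evaluates the nominal stress in uniaxial loading. Several assumptions are made that the text does not confirm: the strain-energy convention W = (2μ₁/α₁²)(λ₁^α₁ + λ₂^α₁ + λ₃^α₁ − 3), full incompressibility (so D1 is ignored), and stress reported in the same units as μ₁. The function name is hypothetical.

```python
def ogden_uniaxial_nominal_stress(stretch, mu=0.294, alpha=4.365):
    """Nominal (engineering) stress for incompressible uniaxial loading.

    Assumed one-term Ogden form:
        W = (2*mu/alpha**2) * (l1**alpha + l2**alpha + l3**alpha - 3)
    With l1 = stretch and l2 = l3 = stretch**-0.5, differentiating W with
    respect to the stretch gives the expression below.
    """
    return (2.0 * mu / alpha) * (
        stretch ** (alpha - 1.0) - stretch ** (-alpha / 2.0 - 1.0)
    )

for stretch in (0.8, 1.0, 1.2, 1.5):
    print(stretch, ogden_uniaxial_nominal_stress(stretch))
```

Under these assumptions the small-strain tangent modulus is 3μ₁, which offers a quick consistency check against the linear elastic liner values listed above.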

Simulation Parameters:

- Element Type: Tetrahedral elements (C3D4)

- Mesh Size: Uniform element size of 5 mm after convergence study

- Loading: Apply physiological loading conditions

- Output Parameters: Contact pressure (CPRESS), maximum principal logarithmic strain (LE, Max. Principal), contact shear stress (CSHEAR1), vertical displacement (U3)

Multicentre Standardization: Establish consistent mesh density, element types, and boundary conditions across participating research sites.

Quantitative Data Synthesis

Table 1: Material Properties for Biomedical FEA Applications

| Material | Application Context | Constitutive Model | Parameters | Source |

|---|---|---|---|---|

| PLLA | Stent development | Parallel Rheological Framework | Calibrated from experimental data | [3] |

| PGA-co-TMC | Stent development | Three-Network Model | Calibrated from experimental data | [3] |

| Bone | General orthopedic | Linear Elastic | E = 16.8 GPa, ν = 0.3 | [6] |

| Muscle | Prosthetic interfaces | Linear Elastic | E = 0.92 MPa, ν = 0.49 | [6] |

| Gel Liner | Prosthetic interfaces | Linear Elastic | E = 1.15 MPa, ν = 0.49 | [6] |

| Silicone Liner | Prosthetic interfaces | Ogden Hyperelastic | μ₁ = 0.294, α₁ = 4.365, D1 = 0.5 | [6] |

Table 2: Prosthetic Liner Performance Comparison

| Liner Thickness | Material | Contact Pressure (MPa) | Pressure Reduction | Key Findings |

|---|---|---|---|---|

| 2 mm | Gel/Silicone | 0.4656 | Baseline | Highest pressure, potential discomfort |

| 4 mm | Gel/Silicone | 0.4153 | 10.8% | Moderate pressure reduction |

| 6 mm | Gel/Silicone | 0.3825 | 17.9% | Optimal pressure distribution |
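The pressure reductions in Table 2 follow from the tabulated contact pressures relative to the 2 mm baseline. The short check below recomputes them; the dictionary name is illustrative, and the inputs are the rounded values from the table, so the last digit may differ slightly from figures derived from unrounded simulation output.

```python
# Contact pressures from Table 2, keyed by liner thickness in mm.
pressures_mpa = {2: 0.4656, 4: 0.4153, 6: 0.3825}

baseline = pressures_mpa[2]
reductions = {
    thickness: 100.0 * (baseline - pressure) / baseline
    for thickness, pressure in pressures_mpa.items()
}

for thickness, reduction in reductions.items():
    print(f"{thickness} mm liner: {reduction:.2f}% reduction vs. 2 mm")
```

Recomputing from the rounded pressures gives roughly 10.8% and 17.85%, in line with the tabulated 10.8% and 17.9%.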

Table 3: FEA Validation Metrics Across Biomedical Applications

| Application Domain | Primary Validation Metrics | Typical Accuracy | Key Challenges | Source |

|---|---|---|---|---|

| Polymer Stents | Force-displacement response, Deformation patterns | Strong agreement for deformation, varying for force response | Capturing time-dependent effects | [3] |

| Bone Mechanics | Apparent elastic modulus, Ultimate strength | High correlation with experimental testing (R² > 0.8 in many studies) | Accounting for anisotropy and heterogeneity | [1] |

| Prosthetic Liners | Contact pressure, Shear stress | Quantitative agreement with pressure measurements | Modeling soft tissue nonlinearity | [6] |

| Pelvic Floor | Tissue deformation, Strain patterns | Qualitative agreement with dynamic MRI | Complex material interactions | [4] |

Advanced Integration Techniques

Machine Learning-Enhanced FEA

The integration of machine learning with FEA represents a paradigm shift in biomedical simulation capabilities. Machine learning-assisted approaches address the critical challenge of parameter identification, which is often time-consuming and requires expert knowledge [5]. A physics-informed artificial neural network (PIANN) model can be trained using data generated through automated FEA workflows to predict optimal modeling parameters based on experimental force-displacement curves as input [5]. This approach has demonstrated superior performance compared to state-of-the-art models in both quantitative and qualitative accuracy when applied to 3D-printed meta-biomaterials.

In thermal ablation therapy, ensemble machine learning combined with finite element modeling accurately predicts temperature distribution and optimizes probe positioning and power delivery [8]. This integration reduces the need for costly experiments and enables personalized cancer treatment planning through improved prediction of ablation zones [8]. The random forest regression model in this application was trained on FEM-generated data to optimize antenna insertion depth and predict ablation geometry with high fidelity.

Multicentre Study Implementation

Standardization Challenges: Implementing FEA in multicentre research presents unique challenges, including variability in imaging protocols, segmentation methodologies, and boundary condition definitions. The review of MARPE studies found that only 6 out of 79 studies included clinical validation data, highlighting the validation gap in multicentre applications [7].

Recommended Standardization Framework:

- Imaging Protocols: Establish consistent scanning parameters across sites (voxel size, resolution, calibration)

- Segmentation Standards: Implement standardized segmentation protocols with quality control measures

- Material Property Databases: Develop shared repositories of material properties for biological tissues

- Validation Benchmarks: Create standardized validation cases for cross-site comparison

- Mesh Quality Guidelines: Define minimum mesh quality standards and convergence criteria
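One way to make such a framework operational is to encode the agreed settings as a machine-readable reference protocol and check each site's configuration against it. The sketch below is purely illustrative: the `SiteProtocol` fields, the 5% voxel-size tolerance, and the example values are assumptions, not requirements drawn from the cited studies.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SiteProtocol:
    """Hypothetical subset of settings a consortium might standardize."""
    site: str
    voxel_size_um: float
    element_type: str
    convergence_tolerance: float

def find_deviations(reference, site, voxel_rel_tol=0.05):
    """Return the names of settings where `site` departs from `reference`."""
    issues = []
    voxel_gap = abs(site.voxel_size_um - reference.voxel_size_um)
    if voxel_gap > voxel_rel_tol * reference.voxel_size_um:
        issues.append("voxel_size_um")
    if site.element_type != reference.element_type:
        issues.append("element_type")
    # A looser convergence tolerance than the reference counts as a deviation.
    if site.convergence_tolerance > reference.convergence_tolerance:
        issues.append("convergence_tolerance")
    return issues

reference = SiteProtocol("reference", 10.0, "C3D4", 0.05)
site_b = SiteProtocol("site_b", 12.0, "C3D10M", 0.05)
print(find_deviations(reference, site_b))
```

Running a check of this kind before pooling results gives an auditable record of which sites deviated and on which parameter.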

Data Integration: For digital phenotyping studies like PREACT-digital, which combines ecological momentary assessment with passive sensing, FEA integration requires careful temporal alignment of mechanical simulations with physiological data streams [9]. This multimodal approach enables correlation of mechanical environment with biological response and clinical outcomes.

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for Biomedical FEA

| Tool Category | Specific Tools | Function | Application Examples |

|---|---|---|---|

| Medical Imaging | Micro-CT, MRI, CT | Provides 3D anatomical data for model reconstruction | Bone microarchitecture [1], Pelvic floor dynamics [4] |

| Image Processing | Mimics, 3D Slicer, Geomagic Studio | Converts medical images to 3D CAD models | Stent geometry [3], Bone specimens [1] |

| FEA Software | Abaqus, FEBio, ANSYS | Performs numerical simulation and analysis | Prosthetic liners [6], Thermal ablation [8] |

| Material Testing | Universal testing systems | Generates experimental data for material model calibration | Polymer stent materials [3] |

| Machine Learning | Keras, Scikit-learn | Enhances parameter identification and model optimization | Meta-biomaterials [5], Thermal therapy [8] |

The finite element method provides a powerful framework for investigating complex biomechanical problems across diverse biomedical applications, from stent development to orthopedic interventions and prosthetic design. Successful implementation in multicentre research settings requires rigorous standardization of imaging protocols, material properties, boundary conditions, and validation methodologies. The integration of machine learning approaches with traditional FEA workflows represents a promising direction for enhancing predictive accuracy while reducing dependency on expert-driven parameter tuning. As these computational methods continue to evolve, their potential to accelerate medical device innovation, personalize treatment strategies, and improve clinical outcomes will further expand, solidifying FEA's role as an essential tool in biomedical research.

The Critical Imperative of Uncertainty Quantification (UQ) for Multicenter Generalizability

In the realm of finite element analysis (FEA) within multicenter study settings, Uncertainty Quantification (UQ) transitions from a best practice to a critical imperative for ensuring model generalizability and reliability. Multicenter research introduces inherent variability through differences in equipment, operational protocols, and population characteristics across different locations. A multi-analysis framework that combines various computational methods informed by statistical data is essential to simulate progressive damage evolution in composites, including their uncertainty [10]. Such frameworks employ efficient FEA to generate large datasets, global sensitivity analysis to identify influential input parameters, and simplified surrogate models based on polynomial regression for rapid analysis [10]. This approach enables coupling with Bayesian parameter estimation in the form of Markov Chain Monte Carlo to determine probability distributions of FEA input parameters, thereby representing measured uncertainty across multiple centers.

The fundamental challenge in multicenter FEA research lies in the fact that subjects entering a trial constitute a "collection" of patients rather than a random sample from a well-defined population [11]. Consequently, the basis for any inference becomes questionable without proper UQ methodologies. Randomization processes can serve as a basis for inference as an alternative to relying on random sampling, but this approach strictly applies to the "collection" of patients who have entered the trial [11]. Any generalization of inference to a broader population must be made based on how well the "collection" of patients in the trial approximates a well-defined disease population, necessitating robust UQ frameworks.

Quantitative Foundations of UQ

Performance Metrics for UQ Methodologies

Table 1: Performance Comparison of UQ Methods in Multicenter Studies

| Method Category | Specific Method | Key Performance Indicators | Optimal Use Cases |

|---|---|---|---|

| Conditional Models | Mixed-Effects Logistic Regression with Random Intercept | Maintains type I error; handles center variation; Power: >80% in most scenarios [12] | Most scenarios except very low event rates (≤2%) with small samples (n≤500) [12] |

| Marginal Models | GEE with Small Sample Correction | Maintains nominal type I error; reduced power in small centers [12] | Large number of centers; requires explicit correlation structure [12] |

| Design-Based Methods | Randomization-Based Inference | Increased power in presence of center variation; utilizes ancillary statistics [11] | Permuted block designs; stratification by center [11] |

| Surrogate Modeling | Polynomial Regression with Bayesian Estimation | B-Basis values consistent with experiments (2-9% difference) [10] | Rapid parameter estimation; large dataset generation [10] |

Statistical Evidence for UQ Implementation

Table 2: Quantitative Evidence for UQ in Multicenter Research

| Study Context | Sample Size & Centers | Key UQ Findings | Statistical Performance |

|---|---|---|---|

| Postoperative Complication Prediction [13] | Derivation: 66,152 cases; Validation: Two cohorts with 13,285 and 2,813 cases | Multitask learning model for acute kidney injury (AKI), respiratory failure, and mortality | AUROCs: 0.805-0.863 (AKI); 0.886-0.925 (respiratory failure); 0.849-0.907 (mortality) [13] |

| Smoking Cessation RCT [12] | 54 companies; 6,006 participants; 80 total events (1.3%) | Extreme low event rate scenario requiring specialized UQ | Cessation percentages: 0.1%-2.9% across arms; many centers with zero events [12] |

| Compact Tension Testing [10] | Simulation-based design allowables | Bayesian parameter estimation with Markov Chain Monte Carlo | B-Basis values consistent with experiments (2-9% difference); A-Basis varied significantly [10] |

| Permuted Block Design [11] | Theoretical framework for multicenter trials | Randomization as basis for inference conditioning on ancillary statistics | Significant power increase in presence of center variation [11] |

Experimental Protocols for UQ in Multicenter FEA

Protocol 1: Multi-Analysis Framework for FEA UQ

Objective: To implement a comprehensive UQ pipeline for FEA in multicenter settings, combining computational methods with experimental data.

Materials and Equipment:

- FEA software with parameterization capabilities

- Statistical analysis environment (R, Python with scikit-learn, PyMC3)

- Experimental dataset from multiple centers

- High-performance computing resources for large dataset generation

Procedure:

- Initial FEA Dataset Generation: Execute parameterized FEA simulations to generate large datasets representing geometric, material, and boundary condition variations across centers [10].

- Global Sensitivity Analysis: Employ Sobol or Morris methods to identify influential FEA input parameters contributing most to output variance [10].

- Surrogate Model Development: Create simplified models based on polynomial regression or Gaussian process regression to enable rapid parameter estimation [10].

- Bayesian Parameter Estimation: Implement Markov Chain Monte Carlo sampling to determine probability distributions of FEA input parameters, representing measured uncertainty [10].

- Design Allowables Calculation: Compute A- and B-Basis design allowables for various structural configurations, validating against experimental data from multiple centers [10].

- Cross-Center Validation: Assess model performance across different centers, quantifying generalizability through metrics in Table 2.
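The surrogate and Bayesian steps of this procedure can be sketched end to end on a toy problem. Everything specific here is an assumption made for illustration: the closed-form `run_fea` stands in for an expensive simulation, the measurements are synthetic, the prior is uniform over the training range, and a hand-written random-walk Metropolis sampler replaces the dedicated MCMC packages a real study would more likely use.

```python
import numpy as np

rng = np.random.default_rng(0)

def run_fea(stiffness):
    """Stand-in for an expensive FEA run (hypothetical closed-form response)."""
    return 10.0 / stiffness

# 1. Generate a training set over the plausible input range.
train_x = np.linspace(1.0, 3.0, 25)
train_y = run_fea(train_x)

# 2. Fit a polynomial surrogate to the simulated responses.
surrogate = np.poly1d(np.polyfit(train_x, train_y, deg=5))

# 3. Synthetic pooled measurements (true stiffness 2.0, noise sd 0.1).
true_stiffness, noise_sd = 2.0, 0.1
measurements = run_fea(true_stiffness) + rng.normal(0.0, noise_sd, size=30)

def log_posterior(stiffness):
    # Uniform prior restricted to the range the surrogate was trained on.
    if not 1.0 <= stiffness <= 3.0:
        return -np.inf
    residuals = measurements - surrogate(stiffness)
    return -0.5 * np.sum((residuals / noise_sd) ** 2)

def metropolis(n_samples, start=1.5, step=0.02):
    """Random-walk Metropolis sampler for the single input parameter."""
    samples = np.empty(n_samples)
    current, current_lp = start, log_posterior(start)
    for i in range(n_samples):
        proposal = current + rng.normal(0.0, step)
        proposal_lp = log_posterior(proposal)
        if np.log(rng.uniform()) < proposal_lp - current_lp:
            current, current_lp = proposal, proposal_lp
        samples[i] = current
    return samples

chain = metropolis(5000)[1000:]  # discard the first 1000 draws as burn-in
print("posterior mean:", chain.mean(), "posterior sd:", chain.std())
```

Because the sampler only ever calls the surrogate, each likelihood evaluation is cheap, which is the main reason for placing a surrogate between the FEA model and the MCMC step.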

Validation Criteria:

- B-Basis values consistent with experimental results (2-9% difference acceptable) [10]

- Convergence of MCMC chains assessed through Gelman-Rubin statistics

- Surrogate model accuracy verified against full FEA simulations
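For the MCMC convergence criterion, a basic form of the Gelman-Rubin statistic (the potential scale reduction factor) can be computed directly from several independent chains. The sketch below implements the classic, non-split version; the function name and the synthetic chains are illustrative, and the acceptance threshold is left to the study protocol.

```python
import numpy as np

def gelman_rubin(chains):
    """Potential scale reduction factor for an array of shape (n_chains, n_draws)."""
    chains = np.asarray(chains, dtype=float)
    n_chains, n_draws = chains.shape
    chain_means = chains.mean(axis=1)
    within = chains.var(axis=1, ddof=1).mean()      # mean within-chain variance W
    between = n_draws * chain_means.var(ddof=1)     # between-chain variance B
    pooled = (n_draws - 1) / n_draws * within + between / n_draws
    return np.sqrt(pooled / within)

rng = np.random.default_rng(0)
mixed = rng.normal(size=(4, 1000))                        # chains agree
stuck = mixed + np.array([[0.0], [0.0], [3.0], [3.0]])    # two chains offset
print(gelman_rubin(mixed), gelman_rubin(stuck))
```

Values close to 1 suggest the chains are sampling the same distribution, while clearly larger values indicate that at least one chain has not mixed.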

Protocol 2: Randomization-Based Analysis for Multicenter FEA

Objective: To implement design-based analysis methods that account for center effects through randomization inference.

Materials and Equipment:

- Multicenter FEA dataset with randomization records

- Statistical software with permutation testing capabilities

- Computing resources for combinatorial calculations

Procedure:

- Randomization Structure Documentation: Document the permuted block design used within each center, including block sizes and allocation sequences [11].

- Ancillary Statistics Calculation: Compute conditioning statistics based on the number of patients assigned to each treatment within a center [11].

- Test Statistic Definition: Define appropriate test statistics (e.g., treatment effect size) that incorporate the design structure.

- Reference Distribution Generation: Generate the exact or approximate randomization distribution through permutation or resampling methods [11].

- Conditional Inference: Conduct statistical tests conditioning on the ancillary statistics to increase power and account for center effects [11].

- Model-Based Comparison: Compare results with traditional model-based analyses (linear, logistic models) to assess performance differences.
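The reference-distribution step can be approximated by re-randomizing treatment labels within each center, which keeps the per-center arm counts fixed. The following is a minimal Monte Carlo sketch using only the standard library; the pooled difference in means as test statistic, the function name, and the toy records are assumptions, and a center-weighted statistic would be a natural alternative.

```python
import random
from statistics import mean

def stratified_permutation_test(records, n_permutations=2000, seed=0):
    """records: list of (center, arm, outcome) tuples with arm in {0, 1}.

    Labels are shuffled only within each center, so the number of subjects
    per arm in every center is held fixed (the conditioning statistics).
    Returns the observed difference in means and a two-sided p-value.
    """
    rng = random.Random(seed)
    centers = {}
    for center, arm, outcome in records:
        centers.setdefault(center, []).append((arm, outcome))

    def mean_difference(assignments):
        treated = [y for arm, y in assignments if arm == 1]
        control = [y for arm, y in assignments if arm == 0]
        return mean(treated) - mean(control)

    observed = mean_difference([pair for rows in centers.values() for pair in rows])

    extreme = 0
    for _ in range(n_permutations):
        permuted = []
        for rows in centers.values():
            arms = [arm for arm, _ in rows]
            rng.shuffle(arms)
            permuted.extend(zip(arms, (y for _, y in rows)))
        if abs(mean_difference(permuted)) >= abs(observed) - 1e-12:
            extreme += 1
    # Add-one correction keeps the Monte Carlo p-value strictly positive.
    return observed, (extreme + 1) / (n_permutations + 1)

# Toy data: three centers with different baselines and a treatment effect of 2.
records = []
for center, baseline in [("A", 10.0), ("B", 14.0), ("C", 18.0)]:
    for i in range(4):
        records.append((center, 0, baseline + 0.1 * i))
        records.append((center, 1, baseline + 2.0 + 0.1 * i))

observed, p_value = stratified_permutation_test(records)
print(observed, p_value)
```

Shuffling within centers rather than across the pooled sample is what prevents between-center baseline differences from inflating the reference distribution.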

Validation Criteria:

- Increased statistical power in the presence of center variation compared to unadjusted methods [11]

- Appropriate type I error control under null hypothesis of no treatment effect

- Consistency with model-based approaches when sample sizes are large

Protocol 3: UQ for Low Event Rate Scenarios in Multicenter FEA

Objective: To address UQ challenges in multicenter FEA studies with rare events or low outcome proportions.

Materials and Equipment:

- Multicenter dataset with low event rates

- Statistical software supporting mixed-effects models and GEE

- Computational resources for simulation studies

Procedure:

- Event Rate Assessment: Quantify overall and center-specific event rates, identifying centers with zero events [12].

- Method Selection Matrix: Apply appropriate statistical methods based on event rates and center characteristics (refer to Table 1).

- Random Intercept Model Implementation: For most scenarios, implement mixed-effects logistic regression with random intercepts for center [12].

- Small Sample Corrections: When using GEE, apply small sample corrections to maintain appropriate type I error rates with limited centers [12].

- Convergence Monitoring: Closely monitor model convergence, particularly for scenarios with event rates ≤2% and sample sizes ≤500 [12].

- Alternative Method Specification: Pre-specify alternative methods in statistical analysis plans to address potential non-convergence issues [12].
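The event rate assessment that opens this procedure is straightforward to automate. The sketch below summarizes per-center rates, lists zero-event centers, and flags the low-rate, small-sample regime mentioned above (event rate ≤2% and sample size ≤500); the function name and the example counts are hypothetical.

```python
def summarize_event_rates(center_counts):
    """center_counts maps center name -> (events, participants)."""
    total_events = sum(events for events, _ in center_counts.values())
    total_n = sum(n for _, n in center_counts.values())
    overall = total_events / total_n
    return {
        "overall_rate": overall,
        "per_center": {c: e / n for c, (e, n) in center_counts.items()},
        "zero_event_centers": sorted(
            c for c, (e, _) in center_counts.items() if e == 0
        ),
        # Regime in which convergence should be monitored especially closely.
        "needs_convergence_check": overall <= 0.02 and total_n <= 500,
    }

# Hypothetical counts for three centers.
summary = summarize_event_rates(
    {"site_a": (3, 120), "site_b": (0, 95), "site_c": (2, 140)}
)
print(summary)
```

A summary like this can be produced before unblinding and attached to the statistical analysis plan to justify the pre-specified fallback method.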

Validation Criteria:

- Successful model convergence without algorithmic failures

- Maintenance of nominal type I error rates (≤0.05)

- Maximized statistical power while accounting for center effects

- Adherence to intention-to-treat principles without unnecessary participant exclusion

Visualization Methods for UQ

Uncertainty Visualization Framework

Effective visualization of uncertainty is paramount for interpreting multicenter FEA results. The visualization pipeline must include uncertainty at each stage, from data transformation to visual mapping and ultimately user perception [14]. A general approach treats statistical graphics as functions of the underlying distribution, propagating uncertainty through to the visualization [15]. By repeatedly sampling from the data distribution and generating complete statistical graphics for each sample, a distribution over graphics is produced, which can be aggregated pixel-by-pixel to create a single, static image that communicates uncertainty [15].

Visual Mapping Strategies for UQ

Multiple visual mapping strategies can be employed to represent uncertainty in multicenter FEA results:

- Explicit Distribution Representation: Direct visualization of probability distributions through error bars, confidence intervals, box plots, violin plots, or quantile dot plots [15].

- Summary Statistics: Display of statistical summaries such as confidence intervals for point estimates or confidence bands for regression curves [15].

- Hybrid Approaches: Combination of distributional representations and summary statistics through techniques like gradient-based uncertainty fields, contouring, or ambiguated charts [14] [15].

- Pixel-Level Aggregation: Generation of static images through aggregation of multiple statistical graphics created from distribution samples, effectively showing the uncertainty in the visualization itself [15].
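The pixel-level aggregation idea can be sketched without any plotting library by rasterizing one fitted curve per bootstrap resample onto a fixed grid and averaging the results. The example below is a simplified illustration on synthetic data; the grid size, the straight-line fit, and the bootstrap resampling scheme are assumptions chosen for brevity rather than the procedure of the cited work.

```python
import numpy as np

rng = np.random.default_rng(1)
x = np.linspace(0.0, 1.0, 40)
y = 2.0 * x + rng.normal(0.0, 0.3, size=x.size)    # synthetic observations

n_boot, width, height = 500, 50, 60
x_grid = np.linspace(0.0, 1.0, width)              # one column per x position
y_edges = np.linspace(-1.0, 3.0, height + 1)       # pixel rows along y
image = np.zeros((height, width))
columns = np.arange(width)

for _ in range(n_boot):
    idx = rng.integers(0, x.size, size=x.size)     # resample with replacement
    slope, intercept = np.polyfit(x[idx], y[idx], deg=1)
    line = slope * x_grid + intercept
    rows = np.digitize(line, y_edges) - 1          # pixel row hit in each column
    inside = (rows >= 0) & (rows < height)
    image[rows[inside], columns[inside]] += 1

image /= n_boot   # fraction of bootstrap graphics that marked each pixel
print(image.shape, image.max())
```

The resulting array can be shown as a grayscale image in which darker pixels are those covered by more of the resampled regression lines.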

Research Reagent Solutions

Table 3: Essential Research Tools for UQ in Multicenter FEA

| Tool Category | Specific Solution | Function in UQ Process | Implementation Considerations |

|---|---|---|---|

| Sensitivity Analysis | Sobol Method, Morris Method | Identifies influential input parameters for prioritization in UQ [10] | Computational cost increases with parameter dimension; effective screening reduces burden |

| Surrogate Modeling | Polynomial Regression, Gaussian Process Regression | Creates rapid approximation models for coupling with Bayesian methods [10] | Balance between model accuracy and computational efficiency; validate against full FEA |

| Bayesian Estimation | Markov Chain Monte Carlo (MCMC) | Determines probability distributions of input parameters representing uncertainty [10] | Convergence diagnostics essential; potential for software implementations like PyMC3, Stan |

| Randomization Inference | Permutation Tests, Conditional Exact Tests | Provides design-based analysis accounting for center effects [11] | Conditions on ancillary statistics; increases power in presence of center variation |

| Mixed-Effects Modeling | Random Intercept Models, Generalized Linear Mixed Models | Accounts for center effects in statistical analysis [12] | Preferred for most scenarios except very low event rates with small samples |

| Uncertainty Visualization | Bootplot, Hypothetical Outcome Plots | Communicates uncertainty in statistical graphics and analysis results [15] | Pixel-level aggregation of multiple graphics; provides theoretical coverage guarantees |

Within the framework of Failure Mode and Effect Analysis (FMEA) for multicentre studies, the systematic classification and management of uncertainty is paramount for ensuring reliable and trustworthy results. In medical image analysis and clinical prediction models, failing to effectively quantify uncertainty can lead to severe consequences, including misdiagnosis [16]. Uncertainty in artificial intelligence (AI) and machine learning (ML) is broadly categorized into two fundamental types: aleatoric and epistemic [16]. Aleatoric uncertainty refers to the inherent randomness or noise within a system or dataset, stemming from unpredictable fluctuations in the data generation process, such as measurement errors or biological variability. This uncertainty is typically irreducible and cannot be eliminated even with more data [17] [16]. Epistemic uncertainty arises from a lack of knowledge or insufficient information about the system, the model, or its parameters. This reflects the model's incompleteness or a lack of sufficient training data to cover all possible scenarios, and is therefore reducible through more data or improved models [17] [16].

The distinction between these uncertainties is critical in multicentre studies, where data heterogeneity and model generalizability are major concerns. A prospective risk analysis of automated radiotherapy workflows highlighted that the highest-risk failure modes were associated with human interactions with the system and the difficulty of judging scenarios where AI models lack generalizability, underscoring a form of epistemic uncertainty [18]. Consequently, educational programs and interpretative tools are deemed essential prerequisites for the widespread clinical application of such automated systems [18].

Quantitative Comparison of Aleatoric and Epistemic Uncertainty

The table below summarizes the core characteristics of aleatoric and epistemic uncertainty, providing a structured comparison for researchers.

Table 1: Fundamental Characteristics of Aleatoric and Epistemic Uncertainty

| Characteristic | Aleatoric Uncertainty | Epistemic Uncertainty |

|---|---|---|

| Origin / Source | Inherent randomness in data; measurement noise [17] [16] | Lack of knowledge; model limitations; insufficient training data [17] [16] |

| Reducibility | Irreducible (cannot be eliminated with more data) [16] | Reducible (can be mitigated with more data or improved models) [16] |

| Mathematical Representation | Variance of residual errors (e.g., in regression: \( \epsilon \sim \mathcal{N}(0,\sigma^2) \)) [16] | Posterior distribution over model parameters, \( p(\theta \mid D) \) [16] |

| Typical Quantification Methods | Learned loss attenuation, probabilistic model outputs [17] | Bayesian inference, ensemble methods, Monte Carlo dropout [17] [16] |

| Primary Influence in Multicentre Studies | Data heterogeneity across sites; protocol variations [18] | Model generalizability; small sample sizes for rare subgroups [18] |

The practical quantification of these uncertainties is demonstrated in medical imaging segmentation tasks. A study using a 3D U-Net for brain MRI segmentation derived aleatoric and epistemic uncertainty maps per voxel. The research showed that both types of uncertainty decreased as the number of training data volumes increased from 200 to 898, with high uncertainty primarily observed in tissue boundary regions [17]. This provides a direct quantification method applicable for both 2D and 3D neural networks in a clinical setting [17].

Protocols for Quantifying Uncertainty in Multicentre Studies

Protocol 1: Quantifying Uncertainty in Medical Image Segmentation

This protocol details the procedure for deriving voxel-level maps of aleatoric and epistemic uncertainty from a 3D U-Net segmentation network, based on a multinomial probability function [17].

- Objective: To generate tissue segmentation maps alongside quantitative measures of aleatoric and epistemic uncertainty for each voxel in a 3D medical image (e.g., T1 MRI).

- Materials and Reagents:

- Experimental Procedure:

- Neural Network Training:

- Train a 3D U-Net neural network using a loss function defined as the negative logarithm of the likelihood based on a multinomial probability function: ( L(\alpha) = -\log(\Pr(Y \mid \alpha)) = \log\left(\sum_{j=1}^m \alpha_j\right) - \sum_{j=1}^m c_j \log(\alpha_j) ), where ( \alpha_j ) are the tissue probability predictions and ( c_j ) is the ground truth indicator [17].

- Use an Adam optimizer with a learning rate of 0.0001, batch size of 3, and 140 epochs. Minimal data augmentation (e.g., 1% signal intensity perturbation) is recommended [17].

- Uncertainty Quantification:

- For a trained network, pass the test data (e.g., Connectome or tumor data) through the model to obtain the output ( \alpha ) [17].

- Calculate the total ( S_\alpha = \sum_j \alpha_j ) [17].

- Compute Aleatoric Uncertainty for tissue class ( j ) using the derived equation: ( \text{Aleatoric}_j = \frac{\alpha_j (S_\alpha - \alpha_j)}{S_\alpha^2 (S_\alpha + 1)} ) [17].

- Compute Epistemic Uncertainty for tissue class ( j ) using the derived equation: ( \text{Epistemic}_j = \frac{\alpha_j}{S_\alpha^2} - \frac{\alpha_j^2}{S_\alpha^3} ) [17].

- Validation:


Uncertainty Quantification Workflow
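
The loss and the two uncertainty formulas in Protocol 1 can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation from [17]: the function names `multinomial_nll` and `voxel_uncertainty` are hypothetical, the network output ( \alpha ) is assumed to be strictly positive, and the tissue class is assumed to lie on the last array axis.

```python
import numpy as np

def multinomial_nll(alpha, c):
    """Loss L(alpha) = log(sum_j alpha_j) - sum_j c_j * log(alpha_j).

    alpha: positive network outputs, classes on the last axis (assumption).
    c: ground-truth indicator with the same shape as alpha.
    """
    alpha = np.asarray(alpha, dtype=float)
    c = np.asarray(c, dtype=float)
    return np.log(alpha.sum(axis=-1)) - (c * np.log(alpha)).sum(axis=-1)

def voxel_uncertainty(alpha):
    """Per-class aleatoric and epistemic uncertainty from the Protocol 1 formulas."""
    alpha = np.asarray(alpha, dtype=float)
    s = alpha.sum(axis=-1, keepdims=True)  # S_alpha, the sum over classes
    aleatoric = alpha * (s - alpha) / (s**2 * (s + 1.0))
    epistemic = alpha / s**2 - alpha**2 / s**3
    return aleatoric, epistemic

# Illustrative single voxel with three tissue classes (made-up values).
alpha_example = np.array([2.0, 1.0, 1.0])
aleatoric_example, epistemic_example = voxel_uncertainty(alpha_example)
loss_example = multinomial_nll(alpha_example, [1, 0, 0])
```

Because both functions broadcast over leading axes, the same code applies to a full volume stored as an array of shape `(depth, height, width, classes)`.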

Protocol 2: FMEA for Risk Analysis in Automated Workflows

This protocol outlines a multicentre prospective FMEA for a fully automated radiotherapy workflow, identifying failure modes associated with human-automation interaction and model trust [18].

- Objective: To identify and prioritize potential failure modes in a hypothetical fully automated radiotherapy workflow, with a specific focus on risks stemming from uncertainty in human-computer interaction and AI model generalizability.

- Materials:

- FMEA Framework: Standardized templates for documenting failure modes, causes, effects, and risk scoring.

- Multicentre Panel: Experts from multiple radiotherapy centres (e.g., eight European centres) [18].

- Experimental Procedure:

- Workflow Decomposition: Break down the fully automated radiotherapy workflow (including auto-segmentation, auto-planning, and a final manual review step) into its constituent steps [18].

- Failure Mode Identification: For each workflow step, the expert panel identifies potential failure modes. These can be provided from a common list or newly added by individual centres based on local experience [18].

- Risk Scoring: Each centre assesses the identified failure modes on three metrics:

- Occurrence (O): Likelihood of the failure occurring.

- Severity (S): Impact of the failure on the patient or process.

- Detectability (D): Likelihood that the failure will be detected before causing harm.

- Calculate a Risk Priority Number (RPN): ( \text{RPN} = O \times S \times D ) [18].

- Data Analysis:

- Quantitative: Perform statistical analysis on the curated risk scores to identify the highest-risk steps and failure modes.

- Qualitative: Summarize free-text comments from experts to capture nuances not reflected in the scores, such as concerns about skill degradation or difficulty recognizing automation errors [18].

- Expected Output:

- A ranked list of high-risk failure modes. The analysis is expected to highlight that points of human interaction (e.g., manual review) pose higher risk than purely technical components, and that a major concern is the human ability to judge output when AI models have low generalizability (epistemic uncertainty) [18].
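
The risk scoring and ranking steps of this protocol can be sketched as follows. The sketch assumes each centre reports one (O, S, D) triple per failure mode on a 1 to 10 scale, with a higher D meaning the failure is harder to detect (the usual FMEA scoring convention), and it ranks failure modes by the mean RPN across centres. The function names and all scores are hypothetical placeholders and are not data from [18].

```python
from statistics import mean

def rpn(occurrence, severity, detectability):
    """Risk Priority Number: RPN = O x S x D."""
    return occurrence * severity * detectability

def rank_failure_modes(scores_by_mode):
    """Rank failure modes by mean RPN across centres, highest risk first.

    scores_by_mode maps a failure-mode name to a list of (O, S, D) tuples,
    one tuple per participating centre.
    """
    summary = {
        mode: mean(rpn(o, s, d) for o, s, d in scores)
        for mode, scores in scores_by_mode.items()
    }
    return sorted(summary.items(), key=lambda item: item[1], reverse=True)

# Made-up scores from two hypothetical centres, for illustration only.
example_scores = {
    "inadequate manual review": [(4, 8, 7), (5, 9, 6)],
    "incorrect application of the FAW": [(2, 9, 4), (3, 9, 5)],
    "protocol violation during patient preparation": [(7, 4, 3), (6, 5, 3)],
}
ranked = rank_failure_modes(example_scores)
for mode, mean_rpn in ranked:
    print(f"{mode}: mean RPN = {mean_rpn}")
```

Averaging across centres is only one possible aggregation; a median or a per-centre ranking may be more appropriate when scoring habits differ between sites.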

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Uncertainty Quantification in Clinical AI Research

| Item / Tool | Function in Uncertainty Analysis |
|---|---|
| 3D U-Net Neural Network | A convolutional neural network architecture for volumetric image segmentation, which can be modified to output uncertainty measures directly [17]. |
| Multinomial Loss Function | A custom loss function derived from the multinomial probability distribution, enabling the direct quantification of both aleatoric and epistemic uncertainty from the network's outputs [17]. |
| PyTorch / TensorFlow | Deep learning frameworks that provide the flexibility to implement custom loss functions and uncertainty quantification layers for research and development [17]. |
| Failure Mode and Effect Analysis (FMEA) | A systematic, prospective risk assessment method used to identify and prioritize potential failures in a process, crucial for managing epistemic risk in clinical workflows [18]. |
| Monte Carlo Dropout | A technique that approximates Bayesian inference in deep learning models by performing multiple stochastic forward passes during prediction to estimate epistemic uncertainty [16]. |
| SHapley Additive exPlanations (SHAP) | A method to interpret the output of any machine learning model, quantifying the contribution of each feature to a single prediction, which helps explain model uncertainty [19]. |

Uncertainty Sources and Reducibility

Application Notes and Data Visualization

Quantitative data from clinical and imaging studies should be visualized effectively to communicate uncertainty and model performance. The best graphs for quantitative data comparison include bar charts for categorical data, line charts for trends over time, and scatter plots for relationships between variables [20] [21]. For model evaluation, Receiver Operating Characteristic (ROC) curves and Area Under the Curve (AUC) values are standard for reporting performance, as seen in a multicenter glaucoma surgical outcome prediction study where a convolutional neural network achieved an AUROC of 76.4% [22]. Similarly, a random forest model for predicting spinal cord injury in cervical spondylosis exhibited superior performance with elevated AUC values across training and testing sets [19].

Table 3: Example Quantitative Outcomes from Multicenter Clinical AI Studies

| Study Focus | Best-Performing Model | Key Performance Metric (Internal Test) | External Validation Performance | Noted Uncertainty / Risk Factor |
|---|---|---|---|---|
| Glaucoma Surgical Outcome Prediction [22] | 1D-CNN (Convolutional Neural Network) | AUROC: 76.4%, Accuracy: 71.6% | AUROC declined slightly (2-4%) | Outcome variability based on patient-specific factors; model generalizability. |
| Spinal Cord Injury Prediction in Cervical Spondylosis [19] | Random Forest | Elevated AUC and Accuracy (specific values not repeated) | Validated on external set of 149 patients | Heterogeneity in patient clinical presentation and imaging findings. |
| Breast Tumor Malignancy Classification [23] | Vision Transformer-based Multimodal Fusion | AUC: 0.994 (95% CI: 0.988-0.999) | AUC: 0.942 and 0.945 on two independent test cohorts | Integration of imaging histology, deep learning features, and clinical parameters. |

The FMEA study on automated radiotherapy workflows adds a qualitative perspective: the highest-scoring failure modes were "inadequate manual review" (high detectability and severity scores), "incorrect application of the FAW" (high severity score), and "protocol violations during patient preparation" (high occurrence score) [18]. In a clinical FMEA context, human factors and process adherence are therefore critical sources of epistemic risk that must be managed alongside technical model performance.

Defining Context of Use (COU) and Key Questions for a 'Fit-for-Purpose' FEA Model

Finite Element Analysis (FEA) is a computational technique for numerically solving differential equations arising in engineering and mathematical modeling, widely used for solving complex physical problems in multiple dimensions [24]. In multicentre research settings, FEA provides a robust framework for standardizing computational simulations across different institutions, enabling the validation of predictive models through coordinated, geographically distributed studies. The method operates by subdividing large systems into smaller, simpler parts called finite elements, then systematically reassembling them into a global system of equations for final calculation [24]. This approach enables accurate representation of complex geometry, inclusion of dissimilar material properties, and capture of local effects—all essential characteristics for collaborative research.

Defining Context of Use (COU) for FEA Models

The Context of Use (COU) provides a precise specification of how a finite element model should be implemented, the conditions under which it operates, and its intended purpose within a multicentre study framework. A clearly defined COU is fundamental for ensuring that FEA models produce reliable, reproducible results across multiple research sites.

Table 1: Core Components of Context of Use for FEA Models

| COU Component | Description | Considerations for Multicentre Studies |
|---|---|---|
| Intended Purpose | Specific research question or prediction goal the model addresses | Must be consistently defined across all participating centres to ensure uniform application |
| Boundary Conditions | Constraints, loads, and environmental factors applied to the model | Requires standardization of loading protocols and constraint definitions to minimize inter-centre variability |
| Input Parameters | Material properties, geometric data, and initial conditions | Essential to establish acceptable ranges for input parameters and validate measurement techniques across centres |
| Output Metrics | Specific quantities of interest extracted from simulation results | Must define precise post-processing methodologies to ensure comparable output assessment |
| Performance Criteria | Accuracy thresholds, validation requirements, and acceptance criteria | Should include both technical performance metrics and clinical/biological relevance where applicable |
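
One way to make a COU enforceable across centres is to store it as a machine-readable record that each site checks its inputs against before running a simulation. The sketch below is an assumption about how this could look, not a prescribed format: the `ContextOfUse` class, its field names, and every value in the example are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical machine-readable COU record mirroring the five components."""
    intended_purpose: str
    boundary_conditions: dict
    input_ranges: dict          # parameter name -> (lowest, highest) accepted value
    output_metrics: tuple
    performance_criteria: dict

    def check_inputs(self, inputs):
        """Return the names of inputs that are missing or outside the agreed range."""
        problems = []
        for name, (low, high) in self.input_ranges.items():
            value = inputs.get(name)
            if value is None or not (low <= value <= high):
                problems.append(name)
        return problems

# Illustrative values only; a real study would take these from its protocol.
cou = ContextOfUse(
    intended_purpose="Predict peak strain in a bone segment under a defined load",
    boundary_conditions={"distal_end": "fully fixed", "axial_load_n": 1000.0},
    input_ranges={"youngs_modulus_mpa": (5000.0, 20000.0), "poisson_ratio": (0.2, 0.4)},
    output_metrics=("peak_principal_strain",),
    performance_criteria={"max_relative_error": 0.10},
)
out_of_range = cou.check_inputs({"youngs_modulus_mpa": 15000.0, "poisson_ratio": 0.6})
print(out_of_range)
```

Keeping the record immutable (`frozen=True`) reflects the idea that a COU changes only through a coordinated protocol update, not through local edits at one centre.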

Key Questions for Establishing Fit-for-Purpose FEA Models

Developing a fit-for-purpose FEA model requires addressing critical questions throughout the model lifecycle. These questions ensure the computational framework adequately serves its intended research function while maintaining scientific rigor across multiple institutions.

Model Conceptualization Questions

- What specific biological, mechanical, or physical phenomenon does the model seek to represent?

- What are the key input variables and their acceptable ranges based on experimental data?

- What simplifying assumptions are appropriate given the research context?

- How will the model structure accommodate multicentre data integration?

Technical Implementation Questions

- What discretization strategy (h-version, p-version, hp-version) best balances accuracy and computational efficiency?

- What mesh density and element types are appropriate for capturing phenomena of interest?

- What solution algorithms (direct vs. iterative solvers) are most suitable for the problem class?

- How will software and hardware variations across centres be managed?

Validation and Verification Questions

- What experimental data will be used for model validation, and how will it be standardized?

- What statistical metrics will determine whether the model adequately represents reality?

- How will sensitivity analysis be performed to identify critical parameters?

- What constitutes sufficient model verification to ensure correct implementation?

Multicentre Coordination Questions

- What quality control procedures will ensure consistent model implementation?

- How will data sharing and interoperability be managed between institutions?

- What training and documentation are required to standardize operations?

- How will model updates and modifications be communicated and implemented?

Experimental Protocols for FEA Model Development and Validation

Protocol 1: Pre-Processing Phase Methodology

The pre-processing stage establishes the foundation for FEA by defining the computational domain and its properties [25].

Step 1: Geometric Modeling

- Acquire anatomical or structural geometry through medical imaging (CT, MRI) or coordinate measurement

- Segment regions of interest using consistent thresholds across all centres

- Create simplified geometric representations suitable for meshing while preserving critical features

- Document all geometric assumptions and simplification criteria

Step 2: Material Property Definition

- Define material constitutive models (linear elastic, hyperelastic, viscoelastic, etc.) based on experimental data

- Establish probability distributions for material parameters when accounting for biological variability

- Specify isotropic, anisotropic, or composite material orientations as appropriate

- Validate material models against experimental tests where possible

Step 3: Meshing Protocol

- Select appropriate element types (tetrahedral, hexahedral, shell, beam) based on geometry and physics

- Perform mesh convergence study to determine optimal element size

- Implement consistent mesh quality metrics across all centres (aspect ratio, skewness, Jacobian)

- Document mesh statistics including number of elements, nodes, and degrees of freedom
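
The mesh convergence study in Step 3 can be illustrated with a deliberately small problem. The sketch below uses a one-dimensional bar, fixed at one end and loaded by a uniform axial load, as a stand-in for a real model; the mesh is doubled until the stress in the element at the fixed end changes by less than a relative tolerance. The function names, the unit material values, and the 1% tolerance are illustrative assumptions.

```python
import numpy as np

def bar_root_stress(n_elem, length=1.0, E=1.0, A=1.0, q0=1.0):
    """Stress in the first element of a fixed-free bar under uniform axial load q0,
    solved with n_elem two-node linear elements."""
    h = length / n_elem
    n_nodes = n_elem + 1
    K = np.zeros((n_nodes, n_nodes))
    f = np.zeros(n_nodes)
    ke = (E * A / h) * np.array([[1.0, -1.0], [-1.0, 1.0]])  # element stiffness
    fe = (q0 * h / 2.0) * np.array([1.0, 1.0])               # consistent element load
    for e in range(n_elem):
        idx = [e, e + 1]
        K[np.ix_(idx, idx)] += ke
        f[idx] += fe
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], f[1:])  # node 0 is fixed (u = 0)
    return E * (u[1] - u[0]) / h

def mesh_convergence(tol=0.01, n_start=2, max_refinements=12):
    """Double the element count until the quantity of interest changes by < tol."""
    history = []
    n, previous = n_start, None
    for _ in range(max_refinements):
        qoi = bar_root_stress(n)
        history.append((n, qoi))
        if previous is not None and abs(qoi - previous) / abs(qoi) < tol:
            return n, history
        previous = qoi
        n *= 2
    raise RuntimeError("Quantity of interest did not converge within the refinement limit")

n_converged, history = mesh_convergence(tol=0.01)
for n, stress in history:
    print(f"{n:4d} elements: root stress = {stress:.6f}")
```

In a multicentre setting the same loop structure applies, but the quantity of interest, the refinement rule, and the tolerance would be fixed in the shared protocol so that every centre stops at a comparable mesh.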

Step 4: Boundary Condition Application

- Define displacement constraints, applied loads, and contact interactions

- Standardize loading conditions based on physiological or mechanical relevance

- Implement boundary conditions consistently with minimal edge effects

- Validate boundary condition application through simplified analytical solutions

Protocol 2: Processing Phase Methodology

The processing stage involves solving the discretized system of equations to obtain simulation results [25].

Step 1: Solver Selection and Configuration

- Choose appropriate solver type (direct vs. iterative) based on problem size and nonlinearity

- Configure solver parameters (tolerances, convergence criteria, time stepping)

- Establish maximum computational time limits and resource allocation

- Implement solver diagnostics to monitor solution progress

Step 2: Solution Execution

- Execute simulation with standardized computational settings across centres

- Monitor solution convergence and implement fallback strategies for non-convergence

- Generate intermediate results for long-running simulations to permit progress assessment

- Log all computational parameters and performance metrics

Step 3: Result Extraction

- Output raw result data at consistent intervals and locations

- Extract primary variables (displacements, temperatures, pressures) at all nodes

- Compute derived quantities (stresses, strains, fluxes) at integration points

- Implement data compression strategies for large result files while preserving accuracy

Protocol 3: Post-Processing Phase Methodology

The post-processing stage involves analyzing and interpreting simulation results [25].

Step 1: Data Visualization

- Generate standardized contour plots, graphs, and animations across all centres

- Implement consistent colormaps and scaling for quantitative comparison

- Create deformation visualizations with standardized magnification factors

- Produce cross-sectional views and probe locations at anatomically relevant positions

Step 2: Quantitative Analysis

- Extract specific numerical values at predefined regions of interest

- Calculate performance metrics (safety factors, failure indices, risk scores)

- Compute statistical measures across patient-specific or population models

- Perform comparative analysis against control groups or baseline conditions

Step 3: Validation and Verification

- Compare FEA predictions against experimental measurements using standardized metrics

- Calculate error measures (mean absolute error, root mean square error, correlation coefficients)

- Generate Bland-Altman plots or similar comparative visualizations

- Document discrepancies and potential sources of error
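
The error measures and Bland-Altman statistics listed in Step 3 can be computed with a short helper such as the one below. It is a sketch under simple assumptions: predictions and measurements are paired one-to-one, and the limits of agreement use the common bias ± 1.96 standard deviations convention. The function name and the example values are hypothetical.

```python
import numpy as np

def agreement_metrics(predicted, measured):
    """Error measures and Bland-Altman statistics for paired FEA and experimental values."""
    p = np.asarray(predicted, dtype=float)
    m = np.asarray(measured, dtype=float)
    diff = p - m
    bias = diff.mean()        # mean difference (Bland-Altman bias)
    sd = diff.std(ddof=1)     # sample standard deviation of the differences
    return {
        "mae": np.abs(diff).mean(),
        "rmse": np.sqrt((diff**2).mean()),
        "pearson_r": np.corrcoef(p, m)[0, 1],
        "bias": bias,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
    }

# Made-up paired values, for illustration only.
metrics = agreement_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Reporting the bias and limits of agreement alongside the correlation coefficient is useful because a high correlation alone does not rule out a systematic offset between model and experiment.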

FEA Workflow in Multicentre Research

The following diagram illustrates the standardized workflow for implementing FEA within multicentre research studies, highlighting critical coordination points across distributed teams.

FEA Multicentre Workflow

Research Reagent Solutions and Computational Tools

Table 2: Essential Research Tools for FEA in Multicentre Studies

| Tool Category | Specific Examples | Function in FEA Research |
|---|---|---|
| Pre-Processing Tools | 3D Slicer, Mimics, SolidWorks, Abaqus/CAE | Image segmentation, geometric modeling, mesh generation |
| FEA Solvers | Abaqus, ANSYS, FEBio, CalculiX; OpenFOAM (a finite-volume CFD solver) | Numerical solution of discretized PDEs using various algorithms |
| Post-Processing Software | HyperView, ParaView, EnSight, FieldView | Visualization, quantitative analysis, and result interpretation |
| Material Testing Equipment | Instron machines, rheometers, DMA, DIC systems | Experimental characterization of material properties for model inputs |
| Medical Imaging | CT, MRI, micro-CT, ultrasound scanners | Acquisition of anatomical geometry and tissue property data |
| Statistical Analysis Software | R, Python, SAS, SPSS, MATLAB | Statistical comparison of FEA predictions with experimental data |
| Collaboration Platforms | Git, SVN, Open Science Framework, REDCap | Version control, data sharing, and protocol management across centres |

Establishing a clearly defined Context of Use and addressing key methodological questions are fundamental prerequisites for developing fit-for-purpose FEA models in multicentre research settings. The structured approach presented in this protocol enables standardization of FEA implementation across multiple institutions, facilitating collaborative model development and validation. By adhering to these guidelines, researchers can enhance the reliability, reproducibility, and translational impact of computational modeling in biomedical applications, ultimately supporting regulatory evaluation and clinical adoption of in silico technologies.

Advanced FEA Applications and Integration with Multitask Learning in Drug Development

Finite Element Analysis (FEA) has revolutionized engineering design by enabling accurate simulation of complex physical phenomena under real-world conditions. Multi-objective optimization (MOO) integrated with FEA represents a paradigm shift from traditional single-objective design, allowing engineers to systematically balance competing performance criteria such as structural integrity, weight, computational efficiency, and manufacturing constraints. This approach is particularly valuable in advanced engineering applications where design requirements are frequently conflicting and must be satisfied simultaneously.

In biomedical engineering, for instance, the development of a novel scissor-type thrombolytic micro-actuator for treating ischemic stroke demonstrates the critical importance of MOO. Researchers simultaneously maximized tip amplitude and stirring force—two conflicting performance indicators—to enhance vascular recanalization effectiveness while ensuring patient safety [26]. Similarly, in precision manufacturing, turning-milling machine tool beds have been optimized to reduce maximum deformation, decrease mass, and improve natural frequency concurrently [27] [28].

The fundamental challenge in multi-objective FEA lies in navigating the complex trade-offs between simulation accuracy, computational expense, and design performance. High-fidelity models provide greater accuracy but demand substantial computational resources, creating an inherent tension between these objectives. Modern MOO frameworks address this challenge through sophisticated methodologies that efficiently explore the design space and identify optimal compromise solutions.

Core Methodologies and Algorithms

Optimization Approaches and Techniques

Multi-objective optimization in FEA employs various methodological approaches, each with distinct strengths and implementation considerations. The selection of an appropriate methodology depends on factors including problem complexity, computational resources, and the nature of design objectives.

Table 1: Comparison of Multi-Objective Optimization Methods in FEA

| Method | Key Features | Advantages | Limitations | Representative Applications |
|---|---|---|---|---|
| Response Surface Methodology (RSM) | Uses quadratic empirical functions to approximate relationships between variables and responses [29] | Reduces number of required experiments; identifies variable interactions [26] | Accuracy depends on design space sampling; limited to pre-defined parameter ranges | Thrombolytic micro-actuator optimization [26] |
| Non-dominated Sorting Genetic Algorithm (NSGA) | Evolutionary algorithm constructing Pareto fronts; NSGA-III provides more diverse alternatives than NSGA-II [26] | Maintains population diversity; reduces computational complexity [26] | Requires numerous function evaluations; computationally intensive for complex problems | Auxetic coronary stent optimization [30] |
| Taguchi Method | Employs orthogonal arrays and signal-to-noise ratios for quality evaluation [28] | Efficient with limited experiments; robust parameter design [28] | Limited to discrete factor levels; may miss optimal solutions between levels | Machine tool bed optimization [28] |
| Weighted Sum Method | Combines multiple objectives into single function using weighting factors [31] | Simple implementation; intuitive weighting of objective importance [31] | Weight selection subjective; difficult to capture non-convex Pareto fronts [31] | FE model updating [31] |

Finite Element Implementation Framework

The effective integration of FEA within multi-objective optimization requires a systematic workflow that ensures computational efficiency while maintaining accuracy:

Model Preparation and Objective Definition

The process begins with creating a precise 3D CAD model and assigning accurate material properties (e.g., Young's modulus, density, Poisson's ratio) [32]. Engineers must identify primary optimization objectives, such as weight reduction, improved strength, or thermal efficiency, and define practical constraints including material properties, budget limitations, manufacturing capabilities, and compliance requirements [32].

Initial FEA Simulation and Result Analysis

Using specialized software (e.g., NASTRAN, ANSYS, Abaqus), engineers perform initial simulations to analyze structural, thermal, fluid, or dynamic behavior depending on the product's purpose [32]. The results, including stress distribution, strain, and heat transfer parameters, are evaluated to identify potential design flaws, over-engineering, or material inefficiencies [32].

Iterative Optimization and Validation

Based on FEA insights, the design is modified through reinforcement of weak areas or material reduction where stress is minimal [32]. Advanced techniques like topology optimization create lightweight, performance-driven designs by removing unnecessary material [32]. The optimized design must be validated through physical testing to confirm FEA predictions, with simulation models adjusted based on test results for improved accuracy [32].

Application Protocols

Protocol 1: RSM-NSGA-III Integration for Medical Device Optimization

This protocol details the integrated Response Surface Methodology and Non-dominated Sorting Genetic Algorithm III approach for optimizing biomedical devices, as demonstrated for thrombolytic micro-actuators [26].

Experimental Workflow

Step-by-Step Procedure

Parameter Identification and FEA Modeling

- Identify critical structural parameters affecting device performance through preliminary sensitivity analysis [26].

- Develop a dynamic FEA model of the device incorporating all identified parameters. For thrombolytic micro-actuators, this includes slit beam thickness, beam cross-sectional area, tip length, and groove angle [26].

- Establish performance indicators (e.g., tip amplitude and stirring force for micro-actuators) as optimization objectives [26].

Experimental Design and Response Surface Development

- Conduct single-factor FEA experiments to determine preliminary parameter effects [26].

- Employ Central Composite Design or Box-Behnken design to define design points for RSM [26].

- Execute FEA simulations at all design points and record response values.

- Fit quadratic regression models for each response indicator using analysis of variance (ANOVA) to assess model significance [26].

- Validate model accuracy through statistical metrics (R-squared, adjusted R-squared) and residual analysis.
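
The quadratic regression step can be sketched with ordinary least squares. The example below fits a full second-order model in two coded factors and reports R-squared and adjusted R-squared. It is illustrative only: the design is a simple 3 x 3 factorial grid rather than a Central Composite or Box-Behnken design, the responses are generated from a made-up surface instead of FEA runs, and the function names are hypothetical.

```python
import numpy as np

def quadratic_design_matrix(x1, x2):
    """Columns: 1, x1, x2, x1^2, x2^2, x1*x2 (full second-order model, two factors)."""
    x1 = np.asarray(x1, dtype=float)
    x2 = np.asarray(x2, dtype=float)
    return np.column_stack([np.ones_like(x1), x1, x2, x1**2, x2**2, x1 * x2])

def fit_response_surface(x1, x2, y):
    """Least-squares fit returning coefficients, R-squared, and adjusted R-squared."""
    X = quadratic_design_matrix(x1, x2)
    y = np.asarray(y, dtype=float)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ coef
    ss_res = residuals @ residuals
    ss_tot = ((y - y.mean()) ** 2).sum()
    n, p = X.shape  # p counts the intercept
    r2 = 1.0 - ss_res / ss_tot
    adj_r2 = 1.0 - (1.0 - r2) * (n - 1) / (n - p)
    return coef, r2, adj_r2

# Coded factor levels (-1, 0, +1) on a 3 x 3 grid; responses come from a made-up surface.
levels = np.array([-1.0, 0.0, 1.0])
g1, g2 = np.meshgrid(levels, levels)
x1, x2 = g1.ravel(), g2.ravel()
true_coef = np.array([5.0, 2.0, -1.0, 0.5, 1.5, 0.8])
y = quadratic_design_matrix(x1, x2) @ true_coef
coef, r2, adj_r2 = fit_response_surface(x1, x2, y)
print(np.round(coef, 6), round(r2, 6), round(adj_r2, 6))
```

With real FEA responses the fit will not be exact, which is where the ANOVA and residual analysis named in the protocol become informative.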

Genetic Algorithm Optimization

- Define optimization objectives and constraints based on RSM models.

- Configure NSGA-III parameters: population size, crossover and mutation probabilities, and termination criteria [26].

- Execute optimization algorithm to generate Pareto-optimal solutions balancing multiple objectives [26].

- Select final optimal parameter combination from Pareto front based on application requirements.
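
The notion of a Pareto-optimal solution used in this step can be made concrete with a plain non-dominated filter. The sketch below is not NSGA-III; it only shows the dominance test that underlies a Pareto front, applied to a handful of candidate designs. All objectives are treated as minimized, so objectives to be maximized (such as tip amplitude and stirring force) are negated first. The function name and the candidate values are hypothetical.

```python
import numpy as np

def pareto_mask(objectives):
    """Boolean mask of non-dominated rows, assuming every column is minimized."""
    f = np.asarray(objectives, dtype=float)
    mask = np.ones(f.shape[0], dtype=bool)
    for i in range(f.shape[0]):
        # A row dominates row i if it is no worse in all objectives and better in one.
        dominates_i = np.all(f <= f[i], axis=1) & np.any(f < f[i], axis=1)
        if dominates_i.any():
            mask[i] = False
    return mask

# Made-up candidates: columns are (tip amplitude, stirring force), both to be maximized.
candidates = np.array([
    [1.0, 5.0],
    [2.0, 4.0],
    [1.5, 3.0],
    [3.0, 1.0],
    [0.5, 0.5],
])
front = pareto_mask(-candidates)  # negate to turn maximization into minimization
print(candidates[front])
```

The pairwise comparison costs on the order of the square of the number of candidates, which is acceptable for response-surface evaluations but is one reason dedicated algorithms are used for large populations.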

Validation and Prototyping

- Fabricate physical prototype based on optimized parameters [26].

- Conduct experimental performance testing comparing results with FEA predictions [26].

- For thrombolytic micro-actuators, experimental results demonstrated a 61.33% improvement in maximum tip amplitude and an 80.19% improvement in maximum stirring force after optimization [26].

Protocol 2: FEA-Taguchi Hybrid Approach for Structural Lightweighting

This protocol outlines the combined FEA and Taguchi method for multi-objective optimization of structural components, with application to machine tool beds [27] [28].

Experimental Workflow

Step-by-Step Procedure

FEA Model Development and Objective Definition

- Create parametric CAD model of the target structure suitable for design modifications [28].

- Perform static and dynamic FEA to establish baseline performance characteristics [28].

- Define optimization objectives (e.g., mass reduction, deformation minimization, natural frequency improvement) and identify corresponding performance metrics [28].

Taguchi Experimental Design

- Select critical design factors influencing performance objectives through preliminary studies [28].

- Determine appropriate factor levels representing feasible design variations.

- Construct orthogonal array (e.g., L9, L18, L27) to define simulation trials, significantly reducing required experiments while maintaining statistical validity [28].

- Assign design factors to appropriate columns in the orthogonal array.

FEA Execution and Signal-to-Noise Analysis

- Execute FEA simulations for all experimental combinations in the orthogonal array [28].

- Calculate appropriate signal-to-noise (S/N) ratios for each objective:

- "Smaller is better" for minimization objectives (e.g., deformation, stress)

- "Larger is better" for maximization objectives (e.g., natural frequency, stiffness)

- "Nominal is better" for target value objectives [28]

- Compute average S/N ratios for each factor at different levels.
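
The three signal-to-noise criteria and the per-level averaging can be sketched as follows, using the standard Taguchi definitions in decibels. The nominal-is-better form shown is the common mean-squared-over-variance variant, which is an assumption since the source does not state which variant was used. The function names and the numbers in the example are hypothetical.

```python
import numpy as np

def sn_smaller_is_better(y):
    """S/N = -10 log10(mean(y^2)); use for deformation or stress."""
    y = np.asarray(y, dtype=float)
    return -10.0 * np.log10(np.mean(y**2))

def sn_larger_is_better(y):
    """S/N = -10 log10(mean(1 / y^2)); use for natural frequency or stiffness."""
    y = np.asarray(y, dtype=float)
    return -10.0 * np.log10(np.mean(1.0 / y**2))

def sn_nominal_is_better(y):
    """S/N = 10 log10(mean^2 / variance); needs at least two replicates."""
    y = np.asarray(y, dtype=float)
    return 10.0 * np.log10(y.mean() ** 2 / y.var(ddof=1))

def mean_sn_by_level(factor_levels, sn_values):
    """Average S/N ratio for each level of one factor (one orthogonal-array column)."""
    factor_levels = np.asarray(factor_levels)
    sn_values = np.asarray(sn_values, dtype=float)
    return {
        int(level): float(sn_values[factor_levels == level].mean())
        for level in np.unique(factor_levels)
    }

# Made-up example: one two-level factor column from a four-run array and its S/N values.
column_a = [1, 1, 2, 2]
sn_per_run = [10.0, 12.0, 14.0, 16.0]
level_means = mean_sn_by_level(column_a, sn_per_run)
print(level_means)
```

Under all three criteria a higher S/N ratio is preferred, so the level with the largest mean is the candidate optimum for that factor.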

Optimal Parameter Identification and Validation

- Identify optimal factor levels based on highest S/N ratios for each objective [28].

- Perform analysis of variance (ANOVA) to determine relative factor significance.

- Conduct confirmatory FEA with optimal parameters to verify improvement.

- In machine tool bed optimization, this approach achieved a 5.14% reduction in maximum deformation, a 1.75% decrease in mass, and a 1.04% improvement in fourth-order natural frequency [28].

Research Reagent Solutions

Essential Computational Tools and Materials

Table 2: Research Reagent Solutions for Multi-Objective FEA

| Category | Item | Specification/Function | Application Examples |
|---|---|---|---|
| FEA Software | ANSYS | General-purpose FEA with multi-physics capabilities | Structural, thermal, and fluid analysis [32] |
| FEA Software | NASTRAN | Advanced structural analysis with optimization modules | Aerospace and automotive structural optimization [32] |
| FEA Software | Abaqus | Nonlinear and dynamic FEA with material modeling | Complex contact and material nonlinearities [32] |
| FEA Software | SolidWorks Simulation | Integrated CAD-FEA with design studies | Design integration and parametric optimization [32] |
| Optimization Algorithms | NSGA-II/III | Evolutionary multi-objective optimization with non-dominated sorting [26] | Biomedical device optimization [26] |
| Optimization Algorithms | MOPSO | Multi-objective particle swarm optimization | Continuous parameter space exploration |
| Optimization Algorithms | Weighted Sum Method | Scalarization of multiple objectives with weighting factors [31] | FE model updating [31] |
| Materials | Polylactic Acid (PLA) | Biodegradable polymer with suitable mechanical properties | Bioresorbable coronary stents [30] |
| Materials | Resin Concrete | High damping capacity and stiffness for machine tools | Machine tool bed lightweight design [28] |
| Materials | Piezoelectric Ceramics | Electromechanical energy conversion | Thrombolytic micro-actuator transducers [26] |
| Experimental Validation | 3D Scanning | Geometric deviation analysis between CAD and as-built | Prototype geometry verification |
| Experimental Validation | Dynamic Signal Analyzer | Experimental modal analysis for model correlation | Natural frequency and mode shape validation [28] |
| Experimental Validation | Load Frame | Mechanical property testing under controlled loading | Static performance validation [32] |

Data Presentation and Analysis

Quantitative Optimization Results

Table 3: Performance Improvements Achieved Through Multi-Objective FEA Optimization

| Application Domain | Optimization Methodology | Performance Metrics | Improvement Achieved | Reference |
|---|---|---|---|---|
| Thrombolytic Micro-actuator | RSM-NSGA-III | Maximum tip amplitude | +61.33% | [26] |
| Thrombolytic Micro-actuator | RSM-NSGA-III | Maximum stirring force | +80.19% | [26] |
| Turning-Milling Machine Tool Bed | FEA-Taguchi Method | Maximum deformation | -5.14% | [28] |
| Turning-Milling Machine Tool Bed | FEA-Taguchi Method | Mass | -1.75% | [28] |
| Turning-Milling Machine Tool Bed | FEA-Taguchi Method | Fourth-order natural frequency | +1.04% | [28] |
| Auxetic Coronary Stent (PLA-RH) | Surrogate Modeling + FEA | Bending stiffness | -60.12% | [30] |
| Auxetic Coronary Stent (PLA-RH) | Surrogate Modeling + FEA | Radial recoil and force | Maintained with no compromise | [30] |
| Transcatheter Aortic Valve Stent | NSGA-II | Maximum compressive strain | -40% | [26] |
| Transcatheter Aortic Valve Stent | NSGA-II | Radial strength | +261% | [26] |
| Transcatheter Aortic Valve Stent | NSGA-II | Eccentricity | -67% | [26] |

Advanced Integration Techniques

Uncertainty Quantification and Robust Design

Real-world engineering applications must account for various uncertainties in material properties, manufacturing tolerances, and loading conditions. Advanced MOO frameworks incorporate uncertainty quantification through several approaches:

Monte Carlo Simulation Integration

Combining Response Surface Methodology with optimization via Monte Carlo simulation (OvMCS) enables effective handling of coefficient uncertainties in the empirical functions, which better represents real conditions [29]. This approach reduces or eliminates the need for additional confirmation experiments and adjusts factor values and response variables better than classic multiple-response methods [29].

Stochastic FEA Frameworks

Probabilistic elasticity models account for microstructure uncertainties in materials such as long-fiber-reinforced thermoplastics [29]. Techniques such as the stochastic finite element method using Monte Carlo simulation propagate uncertainty robustly through complex models [29].
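
Monte Carlo propagation of material uncertainty can be sketched with a closed-form model standing in for the FEA solve. The example below samples Young's modulus from a lognormal distribution and pushes each sample through the end-loaded cantilever formula ( \delta = F L^3 / (3 E I) ). The choice of a lognormal distribution, the function names, and all numerical values are assumptions made for illustration; in practice each sample would trigger a finite element run or a surrogate evaluation.

```python
import numpy as np

def cantilever_tip_deflection(force, length, youngs_modulus, inertia):
    """Tip deflection of an end-loaded cantilever: F L^3 / (3 E I)."""
    return force * length**3 / (3.0 * youngs_modulus * inertia)

def monte_carlo_deflection(n_samples, e_mean, e_cv, force, length, inertia, seed=0):
    """Sample E from a lognormal with the given mean and coefficient of variation,
    then evaluate the deflection for every sample."""
    rng = np.random.default_rng(seed)
    sigma = np.sqrt(np.log(1.0 + e_cv**2))   # lognormal shape from the CV
    mu = np.log(e_mean) - 0.5 * sigma**2     # keeps the mean of E equal to e_mean
    e_samples = rng.lognormal(mean=mu, sigma=sigma, size=n_samples)
    return cantilever_tip_deflection(force, length, e_samples, inertia)

# Hypothetical inputs in SI units: 10 N load, 0.1 m beam, E = 2 GPa with 10% CV.
nominal = cantilever_tip_deflection(10.0, 0.1, 2.0e9, 1.0e-10)
samples = monte_carlo_deflection(20000, 2.0e9, 0.10, 10.0, 0.1, 1.0e-10, seed=1)
low, high = np.percentile(samples, [2.5, 97.5])
print(f"nominal = {nominal:.5f} m, mean = {samples.mean():.5f} m, "
      f"95% interval = [{low:.5f}, {high:.5f}] m")
```

Because deflection depends on 1/E, the mean of the sampled deflections sits slightly above the nominal value, a small reminder that running the model at mean inputs does not give the mean output.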

Pareto-Optimal Solution Selection Criteria

Identifying the preferred solution from multiple Pareto-optimal alternatives requires systematic decision-making strategies:

Equilibrium Point Method This approach defines the objective function as the distance between a candidate point and the equilibrium point in the objective function space [31]. The minimum distance criterion identifies solutions representing the best compromise between conflicting objectives without requiring computation of the entire Pareto front, significantly reducing computational effort [31].

Adaptive Weighted Sum Method: Unlike traditional fixed weighting, adaptive approaches change the weighting factors according to the shape of the Pareto front. This addresses a known limitation of fixed weights: evenly spaced weights do not produce evenly spaced solutions on the Pareto front [31]. The method also identifies non-convex regions of the Pareto front that conventional weighted sum methods can miss [31].
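The limitation that motivates adaptive weighting can be reproduced in a few lines. The sketch below is not an implementation of the adaptive weighted sum method itself; it only shows, on a synthetic non-convex front (a quarter circle, chosen for illustration), that a fixed weighted sum selects nothing but the two end points regardless of how finely the weights are swept.

```python
import math


def weighted_sum_pick(front, w1):
    """Index of the point minimizing w1 * f1 + (1 - w1) * f2 (both minimized)."""
    return min(range(len(front)),
               key=lambda i: w1 * front[i][0] + (1.0 - w1) * front[i][1])


# Synthetic non-convex Pareto front for two minimized objectives:
# points on the quarter circle f1^2 + f2^2 = 1, which bulges away from the origin.
n = 21
front = [(math.cos(k * math.pi / (2 * (n - 1))),
          math.sin(k * math.pi / (2 * (n - 1)))) for k in range(n)]

# Sweep 21 evenly spaced weights and record which front points are ever chosen.
weights = [i / 20 for i in range(21)]
picked = {weighted_sum_pick(front, w) for w in weights}
print("indices reached by fixed weighted sums:", sorted(picked))
print("front points never reached:", n - len(picked))
```

Every interior point of this front is Pareto-optimal, yet none is reachable with a fixed linear weighting, which is why methods that adapt the weights or add constraints are needed for non-convex regions.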

Multi-objective optimization in FEA represents a sophisticated framework for addressing complex engineering design challenges with competing requirements. The methodologies and protocols presented demonstrate significant performance improvements across diverse applications, from biomedical devices to precision manufacturing equipment. Successful implementation requires careful selection of appropriate optimization strategies based on specific application requirements, computational resources, and validation capabilities.

Uncertainty quantification and robust decision-making criteria further enhance the practical applicability of optimized designs in real-world conditions. As computational capabilities advance, combining machine learning and artificial intelligence with multi-objective FEA promises to accelerate design optimization cycles while improving solution quality across increasingly complex engineering systems.

Leveraging Multitask Learning Models for Simultaneous Prediction of Multiple Clinical Outcomes

The accurate prediction of clinical outcomes is a cornerstone of personalized medicine, yet it remains a complex challenge due to the multifactorial nature of disease progression and patient recovery. Traditional single-task learning (STL) models, which predict one outcome at a time, often fail to leverage the inherent relatedness between different clinical endpoints, potentially leading to suboptimal performance and inefficient use of data [33]. Multitask learning (MTL) has emerged as a powerful machine learning paradigm that addresses these limitations by simultaneously training a single model on multiple related tasks, enabling knowledge sharing across tasks and improving data utilization [34] [33].

In the context of multicenter studies, which are essential for achieving statistically powerful and generalizable clinical findings, MTL offers particular advantages. These studies inherently generate diverse, multimodal data across different patient populations and clinical settings, creating an ideal environment for MTL approaches that can learn robust, shared representations from this variability [35]. Furthermore, the principles of finite element analysis (FEA)—a computational method for simulating complex physical systems—can provide a valuable conceptual framework for MTL in healthcare. Just as FEA breaks down complex structures into smaller, manageable elements to understand system-level behavior [36], MTL deconstructs complex clinical prognosis into constituent predictive tasks to build a more comprehensive understanding of patient outcomes.

This protocol outlines the application of MTL models for simultaneous prediction of multiple clinical outcomes, with specific consideration for multicenter study settings and the conceptual framework provided by FEA methodologies.

Theoretical Foundations and Key Concepts

Multitask Learning in Healthcare

Multitask learning is a machine learning approach where a single model is trained to perform multiple related tasks simultaneously, leveraging shared representations to improve learning efficiency and prediction accuracy [34] [33]. In clinical applications, this typically involves predicting several patient outcomes—such as mortality, length of stay, and functional recovery—from the same set of input features. The most common MTL architecture employs hard parameter-sharing, where a shared feature extractor processes input data for all tasks of interest before task-specific branches generate individual predictions [34]. This design encourages the model to learn more generalizable patterns that benefit all tasks, reducing the risk of overfitting—particularly valuable in clinical settings where labeled data may be limited [33].

The rationale for MTL in clinical prediction is supported by the interrelated nature of clinical outcomes. For instance, a patient's functional recovery is intrinsically linked to the extent of tissue damage, and both are influenced by common underlying pathophysiological processes [34]. By modeling these outcomes jointly, MTL can capture these shared underlying factors more effectively than separate STL models.

The Multicenter Study Context

Multicenter clinical trials (MCCTs) investigate research questions through coordinated efforts across multiple healthcare institutions, offering significant advantages over single-center studies including larger sample sizes, enhanced patient diversity, and improved generalizability of findings [35]. The heterogeneous data generated across centers with varying equipment, protocols, and patient populations creates both challenges and opportunities for machine learning models. MTL is particularly well-suited to this context as it can learn robust representations that are invariant to center-specific variations, potentially improving model generalizability across diverse clinical settings.

Finite Element Analysis as a Conceptual Framework

Finite element analysis is a computational technique that uses mathematical approximations to simulate real physical systems by breaking down complex geometries into smaller, manageable elements [36]. While traditionally applied in engineering contexts such as microneedle design [36], FEA provides a valuable conceptual framework for MTL in clinical prediction. In this analogy, the overall clinical prognosis represents the complex system, while individual outcome tasks correspond to the discrete elements analyzed in FEA. The MTL model, like FEA, integrates information from these discrete elements (tasks) to form a comprehensive understanding of the whole system (patient prognosis). This conceptual alignment underscores how complex clinical prediction problems can be decomposed and analyzed systematically.

Current State of Multitask Learning in Clinical Prediction

Recent research has demonstrated successful applications of MTL across various clinical domains, utilizing diverse data modalities including medical images, clinical metadata, and temporal data from electronic health records.

Table 1: Recent Multitask Learning Applications in Clinical Prediction

| Clinical Domain | Model Name | Prediction Tasks | Data Modalities | Performance Highlights |
|---|---|---|---|---|
| Rectal Cancer | Multitask Deep Learning Model [37] | Recurrence/Metastasis; Disease-Free Survival | Clinicopathologic data; Multiparametric MRI | AUC: 0.846 (internal test), 0.797 (external test); C-index: 0.794 (internal test), 0.733 (external test) |
| Acute Ischemic Stroke | CTPredict [34] | Follow-up Lesion; 90-day Functional Outcome (mRS) | 4D CTP Imaging; Clinical metadata | Dice score: 0.23; Accuracy: 0.77 |
| ICU Patient Outcomes | MTLNFM [33] | Frailty Status; Hospital Length of Stay; Mortality | Electronic Health Records (66 variables) | AUROC: 0.7514 (Frailty), 0.6722 (LOS), 0.7754 (Mortality) |
| General ICU Benchmarking | Multitask LSTM [38] | In-hospital Mortality; Decompensation; Length of Stay; Phenotype Classification | Clinical time series (17 variables) | AUC-ROC: 0.8459-0.9474 across tasks |

The integration of multimodal data has been a critical factor in the success of these MTL approaches. As noted in a review of multimodal machine learning in healthcare, "clinicians typically rely on a variety of data sources including patients' demographic information, laboratory data, vital signs and various imaging data modalities to make informed decisions and contextualise their findings" [39]. MTL provides a natural framework for integrating these diverse data sources while modeling multiple clinical outcomes.

MTL Model Architectures and Implementation Framework

Common Architectural Patterns

MTL models for clinical prediction typically follow several common architectural patterns:

Hard Parameter-Sharing Encoder: This architecture uses a shared backbone (e.g., convolutional neural networks for images or recurrent networks for temporal data) to extract general features from input data, followed by task-specific heads that generate predictions for each outcome [34]. This approach is computationally efficient and reduces overfitting.

Cross-Attention Fusion Modules: For multimodal data, cross-attention mechanisms enable dynamic integration of features from different modalities (e.g., imaging and clinical data), allowing the model to focus on the most relevant features from each modality for each prediction task [34].

Neural Factorization Machine Integration: Frameworks like MTLNFM combine factorization machines with deep neural networks to capture both low-order and high-order feature interactions across tasks, particularly effective for structured clinical data [33].
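The hard parameter-sharing pattern can be illustrated with a forward pass written in plain Python. This is a structural sketch only: the layer sizes, random initialization, task names, and the equally weighted binary cross-entropy loss are assumptions made for illustration, and a real model would be built and trained in a deep learning framework.

```python
import math
import random


def linear(weights, bias, x):
    """Dense layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def init_layer(rng, n_out, n_in):
    """Small random weights and zero biases."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class HardSharingMTL:
    """Shared encoder followed by one small head per task (forward pass only)."""

    def __init__(self, n_features, n_hidden, task_names, seed=0):
        rng = random.Random(seed)
        self.encoder = init_layer(rng, n_hidden, n_features)
        self.heads = {name: init_layer(rng, 1, n_hidden) for name in task_names}

    def encode(self, x):
        weights, bias = self.encoder
        return [max(0.0, h) for h in linear(weights, bias, x)]  # ReLU

    def predict(self, x):
        shared = self.encode(x)  # computed once, reused by every task head
        return {name: 1.0 / (1.0 + math.exp(-linear(w, b, shared)[0]))
                for name, (w, b) in self.heads.items()}


def joint_loss(predictions, labels, task_weights):
    """Weighted sum of per-task binary cross-entropy losses."""
    total = 0.0
    for name, p in predictions.items():
        y = labels[name]
        p = min(max(p, 1e-7), 1.0 - 1e-7)  # avoid log(0)
        total += task_weights[name] * -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total


# Hypothetical tasks and a hypothetical standardized feature vector.
model = HardSharingMTL(n_features=4, n_hidden=8, task_names=["mortality", "long_stay"])
patient = [0.2, -1.0, 0.5, 1.3]
preds = model.predict(patient)
loss = joint_loss(preds, {"mortality": 0, "long_stay": 1},
                  {"mortality": 1.0, "long_stay": 1.0})
print(preds, loss)
```

Because the gradient of the joint loss flows through the shared encoder from every head, each task regularizes the representation used by the others, which is the mechanism behind the reduced overfitting noted above.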

Workflow Diagram

[Figure: generalized workflow for developing and validating an MTL model in a multicenter setting]

Table 2: Essential Resources for MTL Clinical Prediction Research

| Category | Item | Specification/Examples | Function/Purpose |
|---|---|---|---|
| Data Resources | Multicenter Clinical Datasets | MIMIC-III [38], Custom MCCT Collections | Training and validation data source with diverse patient populations |
| | Medical Imaging Data | Multiparametric MRI [37], 4D CTP [34] | Provides spatial and/or temporal imaging features for prediction tasks |
| | Clinical Metadata | Electronic Health Records, Laboratory Results, Vital Signs [33] [38] | Complementary patient information for multimodal prediction |
| Computational Tools | Deep Learning Frameworks | PyTorch [40], DGL [40] | Model implementation, training, and evaluation |
| | Multimodal Fusion Libraries | Custom cross-attention modules [34] | Integration of diverse data modalities within MTL architecture |
| | Data Preprocessing Tools | Normalization, Resampling, Augmentation pipelines [37] | Data preparation and harmonization across multicenter sources |
| Model Evaluation | Performance Metrics | AUC-ROC, AUPRC, C-index, Dice Score [37] [40] [34] | Quantitative assessment of model performance across tasks |
| | Statistical Analysis Tools | Bootstrapping, Confidence Interval estimation [38] | Robust evaluation of model performance and significance testing |
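Two of the metrics listed in the table, together with a bootstrap confidence interval, can be computed from first principles as sketched below. The percentile bootstrap variant, the number of resamples, and the toy predictions are assumptions for illustration; the cited studies may use different estimators.

```python
import random


def dice_score(pred_mask, true_mask):
    """Dice = 2 * |A and B| / (|A| + |B|) for flat binary (0/1) masks."""
    intersection = sum(p and t for p, t in zip(pred_mask, true_mask))
    total = sum(pred_mask) + sum(true_mask)
    return 1.0 if total == 0 else 2.0 * intersection / total


def auc_roc(scores, labels):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def bootstrap_auc_ci(scores, labels, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for AUC-ROC."""
    rng = random.Random(seed)
    n = len(scores)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if 0 < sum(ys) < n:  # a resample needs both classes for AUC to be defined
            stats.append(auc_roc([scores[i] for i in idx], ys))
    stats.sort()
    lower = stats[int((alpha / 2) * n_boot)]
    upper = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Toy data, not taken from any cited study.
pred_mask = [1, 1, 0, 0]
true_mask = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 0, 0]

dice = dice_score(pred_mask, true_mask)
auc = auc_roc(scores, labels)
ci_low, ci_high = bootstrap_auc_ci(scores, labels)
print(f"Dice: {dice:.2f}, AUC-ROC: {auc:.3f}, 95% CI: [{ci_low:.3f}, {ci_high:.3f}]")
```

For multicenter evaluation, resampling patients within each center (or resampling whole centers) may be more appropriate than the simple patient-level resampling shown here, since observations from one center are not independent.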

Detailed Experimental Protocol for MTL Model Development

Multicenter Data Collection and Preprocessing

Objective: To gather and preprocess heterogeneous multimodal data from multiple clinical centers to ensure compatibility with MTL model requirements.

Materials:

- Access to multicenter clinical datasets with appropriate ethical approvals

- Data sharing agreements between participating institutions

- Computational infrastructure for large-scale data processing

Procedure:

- Data Acquisition: Collect multimodal clinical data according to standardized protocols across participating centers. Essential data categories include:

  - Medical Images: Acquire according to consensus sequences/parameters (e.g., for rectal cancer: T2WI and DKI MRI sequences [37]; for stroke: 4D CTP imaging [34])

  - Clinical Metadata: Structured electronic health record data including demographics, laboratory values, comorbidities, and treatment histories [33]

  - Outcome Labels: Annotate ground truth labels for all prediction tasks (e.g., recurrence/metastasis status, disease-free survival, functional outcomes)

- Data Harmonization: Address center-specific variations through:

  - Spatial Alignment: Implement rigid transformation to register images to a common space [37]

  - Intensity Normalization: Apply modality-specific intensity normalization to ensure consistent voxel intensity distributions [37]

  - Temporal Alignment: For time-series data, align measurements to common temporal grids [38]

- Handling Missing Data: Rather than deletion or simple imputation, explicitly label missing values as a separate category to allow the model to learn from missingness patterns [33]

- Data Augmentation: Address class imbalance through targeted augmentation of minority classes using techniques including random 3D rotations, zooming, and shifting [37]
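Three of the preprocessing steps above (intensity normalization, temporal alignment, and explicit missing-value encoding) can be sketched with the standard library. The specific choices here are assumptions rather than the cited authors' methods: z-score normalization per image, last-observation-carried-forward resampling onto an hourly grid, and binning of numeric values with a dedicated `"missing"` category. All values are hypothetical.

```python
import statistics

MISSING = "missing"  # explicit category so the model can learn from missingness


def normalize_intensity(voxels):
    """Z-score a flat list of voxel intensities (zero mean, unit variance)."""
    mean = statistics.mean(voxels)
    std = statistics.pstdev(voxels) or 1.0  # constant images are left centered
    return [(v - mean) / std for v in voxels]


def align_to_grid(samples, step, n_steps):
    """Resample irregular (time, value) pairs onto a regular grid.

    Each grid point takes the last observation at or before it; grid points
    before the first observation are None (that is, missing).
    """
    ordered = sorted(samples)
    grid, last, j = [], None, 0
    for k in range(n_steps):
        t = k * step
        while j < len(ordered) and ordered[j][0] <= t:
            last = ordered[j][1]
            j += 1
        grid.append(last)
    return grid


def encode_with_missing(value, bin_edges):
    """Map a numeric value to a bin index, or to the MISSING category if absent."""
    if value is None:
        return MISSING
    return sum(value >= edge for edge in bin_edges)


# Hypothetical heart-rate measurements: (hours since admission, beats per minute).
heart_rate = [(0.5, 80), (2.2, 95), (3.0, 90)]
hourly = align_to_grid(heart_rate, step=1.0, n_steps=5)
encoded = [encode_with_missing(v, bin_edges=[60, 100]) for v in hourly]
normalized = normalize_intensity([120.0, 135.0, 150.0, 95.0])

print(hourly)
print(encoded)
```

Applying the same functions with the same parameters at every center is what makes the resulting features comparable, so the grid step and bin edges are best fixed in the study protocol rather than tuned per site.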

MTL Model Implementation

Objective: To implement a multimodal MTL model capable of simultaneous prediction of multiple clinical outcomes.

Architecture Specifications:

- Modality-Specific Encoders: Implement separate input encoders for each data modality: